repo_name | topic | issue_number | title | body | state | created_at | updated_at | url | labels | user_login | comments_count |

|---|---|---|---|---|---|---|---|---|---|---|---|

microsoft/nni | machine-learning | 5,685 | Removing redundant string format in the final experiment log | ### This is a small but very simple request.

In the final experiment summary JSON generated through the NNI WebUI, there are some fields that were originally dictionaries that have been reformatted into strings. This is a small but annoying detail and probably easy to fix.

Most notably, this happens for values in the entry 'finalMetricData', which contains the default metric for the trial. However, when more than just the default metric is tracked, for example when a dictionary of metrics is reported at each intermediate and final metric recording, the value of the 'finalMetricData' field may look something like this:

`'"{\\"train_loss\\": 1.2782151699066162, \\"test_loss\\": 0.9486784338951111, \\"default\\": 0.5564953684806824}"'`

when it should simply be

```

{'train_loss': '1.2782151699066162',

'test_loss': '0.9486784338951111',

'default': '0.5564953684806824'}

```

I've reformatted it with these simple two lines:

```

keys_values = log['trialMessage'][0]['finalMetricData'][0]['data'].replace('"', '').replace(': ', '').replace(', ', '').strip('{}').split('\\')

reformatted = {k: v for k, v in zip(keys_values[1::2], keys_values[2::2])}

```

It would be quite nice and save unnecessary reprocessing if this could just be a regular JSON dictionary and not a stringified dictionary :)
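Until the export changes, a shorter workaround than the string surgery above may be to decode the field twice, since the value looks like a JSON string that itself wraps a JSON object. This is only a minimal sketch, assuming the field is always encoded like the sample shown; the helper name `decode_metric` is made up for illustration and is not part of NNI:

```python
import json


def decode_metric(raw):
    """Decode a 'data' value that may be JSON-encoded once or twice.

    Assumption: the value is either a JSON document or a JSON string
    that wraps another JSON document, as in the sample in this report.
    """
    value = json.loads(raw)
    # If the first pass yields a string, it is the inner JSON document.
    if isinstance(value, str):
        value = json.loads(value)
    return value


# Build a double-encoded sample equivalent to the one in this report.
metrics = {"train_loss": 1.2782151699066162, "default": 0.5564953684806824}
raw = json.dumps(json.dumps(metrics))
print(decode_metric(raw))
```

Note that this keeps the metric values as floats rather than strings. The same helper should also handle each `hyperParameters` entry, which appears to be encoded only once.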

### Reproducing this:

After downloading the experiment summary as a json, the following code would reproduce the above behavior (if the trial includes a multitude of metrics collected in a dict as opposed to just the default metric being recorded):

```

import json

with open('path_to_experiment_json') as f:
    log = json.load(f)

print(log['trialMessage'][0]['finalMetricData'][0]['data'])
# > '"{\\"train_loss\\": 1.2782151699066162, \\"test_loss\\": 0.9486784338951111, \\"default\\": 0.5564953684806824}"'

```

The same goes for the `hyperParameters` field in each trial message, which is also a stringified dictionary.

```

log['trialMessage'][0]['hyperParameters']

> ['{"parameter_id":0,"parameter_source":"algorithm","parameters":{"batch_size":64,"seed":2,"steps":5000,"n_batches":1000,"linear_out1":512,"linear_out2":128,"conv2d_ks":2,"conv2d_out_channels":1},"parameter_index":0}']

``` | open | 2023-09-26T12:19:49Z | 2023-09-26T12:28:22Z | https://github.com/microsoft/nni/issues/5685 | [] | olive004 | 0 |

jupyter/nbgrader | jupyter | 1,209 | Manual grading: assignment fails to load. It hangs with msg "Loading, Please wait..." | <!--

Thanks for helping to improve nbgrader!

If you are submitting a bug report or looking for support, please use the below

template so we can efficiently solve the problem.

If you are requesting a new feature, feel free to remove irrelevant pieces of

the issue template.

-->

### Ubuntu 18.04

### `nbgrader --version` nbgrader version 0.5.5

### `jupyterhub --version` (if used with JupyterHub)

### `jupyter notebook --version` TLJH v.1

### Expected behavior When accessing an assignment via manual grading (after autograde has run successfully), the assignment/submission ID should load.

### Actual behavior The "Loading, please wait..." message is all that shows.

### Steps to reproduce the behavior As Admin, create an assignment and release it; log in as test_student, complete and submit it; log in as Admin, collect the assignment, autograde it, go to Manual Grading, click on the assignment, and wait.

That said, if I go through Manage Students, click on the assignment, then click on the assignment ID and then the notebook ID, it resolves to the submitted assignment. It just does not work when I go through Manual Grading.

Note that we are using GitHub OAuth. | closed | 2019-08-29T20:49:00Z | 2019-11-02T11:36:43Z | https://github.com/jupyter/nbgrader/issues/1209 | [

"question"

] | alvinhuff | 3 |

horovod/horovod | machine-learning | 3,790 | No NCCL INFO logs visible on training | **Environment:**

1. Framework: (TensorFlow, Keras, PyTorch, MXNet) PyTorch

2. Framework version: 1.13.0

3. Horovod version: 0.26.0

4. MPI version:4.1.4

5. CUDA version: 11.2

6. NCCL version: 2.14

7. Python version: 2.9

8. Spark / PySpark version: NA

9. Ray version: NA

10. OS and version: Ubuntu 20

11. GCC version: 9.4.0

12. CMake version: 3.22.3

**Checklist:**

1. Did you search issues to find if somebody asked this question before? Yes

2. If your question is about hang, did you read [this doc](https://github.com/horovod/horovod/blob/master/docs/running.rst)?

3. If your question is about docker, did you read [this doc](https://github.com/horovod/horovod/blob/master/docs/docker.rst)?

4. Did you check if your question is answered in the [troubleshooting guide](https://github.com/horovod/horovod/blob/master/docs/troubleshooting.rst)? Yes

**Bug report:**

Please describe erroneous behavior you're observing and steps to reproduce it.

When I run horovod command, I don't see any NCCL INFO logs in the training logs. Can you please help with this? Am I missing any env var or am I installing horovod incorrectly?

Command:

```

horovodrun -np 1 --verbose -H <IP>:1 --mpi-args="-x NCCL_IB_DISABLE=1 -x NCCL_SHM_DISABLE=1 -x NCCL_DEBUG=INFO" python horovod_mnist.py --epochs 1

Filtering local host names.

Remote host found:

All hosts are local, finding the interfaces with address 127.0.0.1

Local interface found lo

mpirun --allow-run-as-root --tag-output -np 1 -H <IP>:1 -bind-to none -map-by slot -mca pml ob1 -mca btl ^openib -mca btl_tcp_if_include lo -x NCCL_SOCKET_IFNAME=lo -x BASH_ENV -x CONDA_DEFAULT_ENV -x CONDA_EXE -x CONDA_MKL_INTERFACE_LAYER_BACKUP -x CONDA_PREFIX -x CONDA_PROMPT_MODIFIER -x CONDA_PYTHON_EXE -x CONDA_SHLVL -x DBUS_SESSION_BUS_ADDRESS -x ENV -x FI_EFA_USE_DEVICE_RDMA -x FI_PROVIDER -x GSETTINGS_SCHEMA_DIR -x GSETTINGS_SCHEMA_DIR_CONDA_BACKUP -x HOME -x LANG -x LD_LIBRARY_PATH -x LESSCLOSE -x LESSOPEN -x LOADEDMODULES -x LOGNAME -x LS_COLORS -x MANPATH -x MKL_INTERFACE_LAYER -x MODULEPATH -x MODULEPATH_modshare -x MODULESHOME -x MODULES_CMD -x MOTD_SHOWN -x NCCL_DEBUG -x NCCL_PROTO -x PATH -x PWD -x SHELL -x SHLVL -x SSH_CLIENT -x SSH_CONNECTION -x SSH_TTY -x TERM -x USER -x XDG_DATA_DIRS -x XDG_RUNTIME_DIR -x XDG_SESSION_CLASS -x XDG_SESSION_ID -x XDG_SESSION_TYPE -x _ -x _CE_CONDA -x _CE_M -x NCCL_IB_DISABLE=1 -x NCCL_SHM_DISABLE=1 -x NCCL_DEBUG=INFO python horovod_mnist.py --epochs 1

[1,0]<stdout>:printing num proc None

[1,0]<stdout>:printing comm None

[1,0]<stdout>:INFO

[1,0]<stdout>:None 0

[1,0]<stderr>:/home/ubuntu/horovod_mnist.py:70: UserWarning: Implicit dimension choice for log_softmax has been deprecated. Change the call to include dim=X as an argument.

[1,0]<stderr>: return F.log_softmax(x)

[1,0]<stdout>:Train Epoch: 1 [0/60000 (0%)] Loss: 2.319920

[1,0]<stdout>:Train Epoch: 1 [640/60000 (1%)] Loss: 2.322078

[1,0]<stdout>:Train Epoch: 1 [1280/60000 (2%)] Loss: 2.313202

[1,0]<stdout>:Train Epoch: 1 [1920/60000 (3%)] Loss: 2.290503

[1,0]<stdout>:Train Epoch: 1 [2560/60000 (4%)] Loss: 2.284245

[1,0]<stdout>:Train Epoch: 1 [3200/60000 (5%)] Loss: 2.306246

[1,0]<stdout>:Train Epoch: 1 [3840/60000 (6%)] Loss: 2.284169

[1,0]<stdout>:Train Epoch: 1 [4480/60000 (7%)] Loss: 2.256588

[1,0]<stdout>:Train Epoch: 1 [5120/60000 (9%)] Loss: 2.296885

```

Expected example NCCL INFO logs (not actual):

```

[1,0]<stdout>:ip-172-31-0-63:2120:2135 [0] NCCL INFO ....

[1,0]<stdout>:ip-172-31-0-63:2120:2135 [0] NCCL INFO ....

[1,0]<stdout>:NCCL version 2.14.8+cuda12.1

```

Horovod install command:

```

HOROVOD_CMAKE=/opt/conda/envs/pytorch/bin/cmake \

pip install --no-cache-dir --upgrade --upgrade-strategy only-if-needed "horovod>=0.26.1"

```

| closed | 2022-12-06T00:29:52Z | 2023-03-25T03:25:25Z | https://github.com/horovod/horovod/issues/3790 | [

"question",

"wontfix"

] | jeet4320 | 3 |

ultralytics/yolov5 | deep-learning | 12,571 | package yolov5 use pyinstaller,could not use cuda | ### Search before asking

- [X] I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and [discussions](https://github.com/ultralytics/yolov5/discussions) and found no similar questions.

### Question

I have two computers, A and B.

I packaged the following code on A using PyInstaller. The command is `pyinstaller test.py`.

```
import torch
import os
import platform

print(torch.__file__)


def select_device(device='', batch_size=0, newline=True):
    # device = None or 'cpu' or 0 or '0' or '0,1,2,3'
    # s = f'YOLOv5 🚀 {git_describe() or file_date()} Python-{platform.python_version()} torch-{torch.__version__} '
    s = f'YOLOv5 🚀 Python-{platform.python_version()} torch-{torch.__version__} '
    device = str(device).strip().lower().replace('cuda:', '').replace('none', '')  # to string, 'cuda:0' to '0'
    print('s', s)
    print('device:', device)
    cpu = device == 'cpu'
    mps = device == 'mps'  # Apple Metal Performance Shaders (MPS)
    if cpu or mps:
        os.environ['CUDA_VISIBLE_DEVICES'] = '-1'  # force torch.cuda.is_available() = False
    elif device:  # non-cpu device requested
        os.environ['CUDA_VISIBLE_DEVICES'] = device  # set environment variable - must be before assert is_available()
        print('torch cuda:', torch.cuda.is_available())
        print('count:', torch.cuda.device_count())
        print('len:', len(device.replace(',', '')))
        assert torch.cuda.is_available() and torch.cuda.device_count() >= len(device.replace(',', '')), \
            f"Invalid CUDA '--device {device}' requested, use '--device cpu' or pass valid CUDA device(s)"

    if not cpu and not mps and torch.cuda.is_available():  # prefer GPU if available
        devices = device.split(',') if device else '0'  # range(torch.cuda.device_count())  # i.e. 0,1,6,7
        n = len(devices)  # device count
        if n > 1 and batch_size > 0:  # check batch_size is divisible by device_count
            assert batch_size % n == 0, f'batch-size {batch_size} not multiple of GPU count {n}'
        space = ' ' * (len(s) + 1)
        for i, d in enumerate(devices):
            p = torch.cuda.get_device_properties(i)
            s += f"{'' if i == 0 else space}CUDA:{d} ({p.name}, {p.total_memory / (1 << 20):.0f}MiB)\n"  # bytes to MB
        arg = 'cuda:0'
    elif mps and getattr(torch, 'has_mps', False) and torch.backends.mps.is_available():  # prefer MPS if available
        s += 'MPS\n'
        arg = 'mps'
    else:  # revert to CPU
        s += 'CPU\n'
        arg = 'cpu'

    if not newline:
        s = s.rstrip()
    print('ss', s)
    return torch.device(arg)


DEVICE = '0'
device = select_device(DEVICE)
print('device:', device)

```

The result of running the generated file is:

<img width="540" alt="f5997e1ad76b3baa86ca5068a3cb281" src="https://github.com/ultralytics/yolov5/assets/38728358/bb402e6e-bfbc-49e8-85da-23b3d4600fa7">

But when I move it to computer B, the result is:

<img width="803" alt="09bff37a8c38ea081c74a24997c6d36" src="https://github.com/ultralytics/yolov5/assets/38728358/a71d06b4-a108-4105-8261-6e2a09832d0c">

A and B both have a 3090, and in the base env `torch.cuda.is_available()` is true.

Can anyone help me solve this problem? Thank you!

### Additional

_No response_ | closed | 2024-01-03T02:08:57Z | 2024-01-03T07:30:11Z | https://github.com/ultralytics/yolov5/issues/12571 | [

"question"

] | jo-dean | 0 |

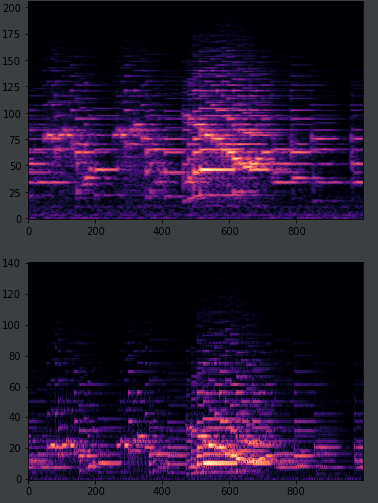

CPJKU/madmom | numpy | 434 | Log filtered spectrogram height | I was under the impression that only changing the frame size for a LogarithmicFilteredSpectrogram wouldn't change the height of the spectrogram, but it does. Also with a lower frame size, low frequencies aren't being shown. Anyone know why this happens?

```

frame_size = 8392

fsig_proc = FramedSignalProcessor(frame_size=frame_size, fps=120, hop_size=frame_size // 2, origin='future')

spec_proc = LogarithmicFilteredSpectrogramProcessor(LogarithmicFilterbank, num_bands=32, fmin=1, fmax=8000)

processor = SequentialProcessor([fsig_proc, spec_proc])

comp_spec = processor(Signal(np.array(y), sample_rate=fs)).T

plt.imshow(comp_spec[:, 3500:4500], cmap='magma', interpolation='nearest', aspect='auto', origin='lower')

plt.show()

frame_size = 2048

fsig_proc = FramedSignalProcessor(frame_size=frame_size, fps=120, hop_size=frame_size // 2, origin='future')

spec_proc = LogarithmicFilteredSpectrogramProcessor(LogarithmicFilterbank, num_bands=32, fmin=1, fmax=8000)

processor = SequentialProcessor([fsig_proc, spec_proc])

comp_spec = processor(Signal(np.array(y), sample_rate=fs)).T

plt.imshow(comp_spec[:, 3500:4500], cmap='magma', interpolation='nearest', aspect='auto', origin='lower')

plt.show()

```

Top graph is a spectrogram with frame size 8392, bottom has frame size 2048.
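One possible explanation, offered as a guess rather than something confirmed from madmom's source: a shorter frame gives coarser FFT bins, so several log-spaced low-frequency bands can land on the same bin, and a filterbank that drops duplicate filters would then produce fewer rows. The sketch below only illustrates that arithmetic in plain Python. The function name, the 44100 Hz sample rate, `fmin=30`, the 12 bands per octave, and the frame size of 8192 are all assumptions chosen for illustration, not the values used above.

```python
import math


def count_distinct_bands(frame_size, sample_rate=44100, fmin=30.0,
                         fmax=8000.0, bands_per_octave=12):
    """Count log-spaced center frequencies that land on distinct FFT bins."""
    bin_width = sample_rate / frame_size  # Hz covered by one FFT bin
    num_steps = int(math.log2(fmax / fmin) * bands_per_octave)
    centers = [fmin * 2 ** (i / bands_per_octave) for i in range(num_steps + 1)]
    # Centers that round to the same bin would yield identical filters.
    return len({round(f / bin_width) for f in centers})


for frame_size in (8192, 2048):
    print(frame_size, count_distinct_bands(frame_size))
```

Under these assumptions the shorter frame yields fewer distinct bands, and the ones lost are at the low end, which would match both symptoms described here.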

Thank you

| closed | 2019-06-16T22:30:17Z | 2019-07-17T07:13:25Z | https://github.com/CPJKU/madmom/issues/434 | [] | andrewpeng02 | 6 |

tensorpack/tensorpack | tensorflow | 1,003 | What does this Error mean? | Hi, I tried to load an LMDB dataset of ImageNet. It is very large, about 140 GB, and contains 1281167 instances. But I ran into this error. It seems to fail to load an LMDB file that is too big.

```

Traceback (most recent call last):

File "main.py", line 388, in <module>

main()

File "main.py", line 154, in main

num_workers=args.workers,)

File "/home/jcz/github/pytorch_examples/imagenet/sequential_imagenet_dataloader/imagenet_seq/data.py", line 166, in __init__

ds = td.LMDBData(lmdb_loc, shuffle=False)

File "/home/jcz/github/tensorpack/tensorpack/dataflow/format.py", line 91, in __init__

self._set_keys(keys)

File "/home/jcz/github/tensorpack/tensorpack/dataflow/format.py", line 109, in _set_keys

self.keys = loads(self.keys)

File "/home/jcz/github/tensorpack/tensorpack/utils/serialize.py", line 29, in loads_msgpack

return msgpack.loads(buf, raw=False, max_bin_len=1000000000)

File "/home/jcz/Venv/pytorch/lib/python3.5/site-packages/msgpack_numpy.py", line 214, in unpackb

return _unpackb(packed, **kwargs)

File "msgpack/_unpacker.pyx", line 187, in msgpack._cmsgpack.unpackb

ValueError: 1281167 exceeds max_array_len(131072)

```

python env: 3.5

how can I fix this error? | closed | 2018-12-08T04:33:42Z | 2018-12-08T08:47:55Z | https://github.com/tensorpack/tensorpack/issues/1003 | [

"upstream issue"

] | Juicechen95 | 3 |

piskvorky/gensim | data-science | 3,422 | Gensim LdaMulticore can't work on cloud function | #### Problem description

I want to use the gensim LDA module in a Cloud Function, but it times out and shows "/layers/google.python.pip/pip/lib/python3.8/site-packages/past/builtins/misc.py:45: DeprecationWarning: the imp module is deprecated in favour of importlib; see the module's documentation for alternative uses".

But the same code works on Colab (Python 3.8.16) and I didn't find any bug in it. It prints 'LDA1' and 'LDA2', then times out.

#### Steps/code/corpus to reproduce

1. I have tried different Python versions, such as 3.10, 3.8, and 3.7.

2. I added `import warnings` and `warnings.filterwarnings("ignore", category=DeprecationWarning)`.

3. It works on Colab, where 300 texts take only about 10 seconds, but I need it to work in a Cloud Function.

```python

import time

import gensim


def LDA(corpus, dictionary, NumTopic):
    print('LDA1')
    time1 = time.time()
    print('LDA2')
    lda = gensim.models.LdaMulticore(corpus=corpus, id2word=dictionary, num_topics=NumTopic, chunksize=1000, iterations=200, passes=20, per_word_topics=False, random_state=100)
    print('LDA3')
    corpus_lda = lda[corpus]
    print("LDA takes %2.2f seconds." % (time.time() - time1))
    return lda, corpus_lda

```

#### Versions

Please provide the output of:

```python

from __future__ import unicode_literals

import base64

import importlib

import re

import os

import sys

import numpy as np

import pandas as pd

import gensim

import gensim.corpora as corpora

from gensim.utils import simple_preprocess

from gensim.models import CoherenceModel

from gensim import corpora, models, similarities

from google.cloud import bigquery

import pandas_gbq

import requests

import tqdm

import json

import pyLDAvis

import pyLDAvis.gensim_models

import matplotlib.pyplot as plt

import logging

import time

```

| closed | 2023-01-06T10:07:11Z | 2023-01-10T08:56:55Z | https://github.com/piskvorky/gensim/issues/3422 | [] | tinac5519 | 2 |

ibis-project/ibis | pandas | 10,236 | bug: Generated SQL for Array Aggregations with Order By doesn't work in BigQuery | Edited to include an end to end reproduction and more detail.

### What happened?

Consider

```

CREATE TABLE `my-project.my_dataset.colors` AS (

SELECT 1 AS id, 'red' AS color

UNION ALL

SELECT 2 AS id, 'red' AS color

UNION ALL

SELECT 3 AS id, 'blue' AS color

)

```

and

```

import ibis

con = ibis.bigquery.connect(project_id="my-project", dataset_id="my_dataset")

colors = con.table("colors")

table = colors.group_by("color").aggregate(
    ids=colors.id.collect(order_by=colors.id),
)

table.execute()

```

This gives

```

BadRequest: 400 POST https://bigquery.googleapis.com/bigquery/v2/projects/my-project/queries?prettyPrint=false: NULLS LAST not supported with ascending sort order in aggregate functions.

```

If I check the output of `print(table.compile(pretty=True))` I see

```

SELECT

`t0`.`color`,

ARRAY_AGG(`t0`.`id` IGNORE NULLS ORDER BY `t0`.`id` ASC NULLS LAST) AS `ids`

FROM `i-amlg-dev`.`archipelago`.`colors` AS `t0`

GROUP BY

1

```

Looks like `NULLS LAST` isn't allowed. Without `order_by`, there's no `NULLS LAST`.

### What version of ibis are you using?

9.5.0. I'm also using sqlglot 25.20.2

### What backend(s) are you using, if any?

BigQuery

### Relevant log output

_No response_

### Code of Conduct

- [X] I agree to follow this project's Code of Conduct | closed | 2024-09-26T17:34:56Z | 2024-09-27T01:40:13Z | https://github.com/ibis-project/ibis/issues/10236 | [

"bug"

] | yjabri | 2 |

robotframework/robotframework | automation | 4,455 | Standard libraries don't support `pathlib.Path` objects | The OperatingSystem library should also support Python Path objects. Currently, OperatingSystem keywords that take a path or file path as an argument expect it to be a string. Would it be possible to enhance the library keywords to also accept Python [Path](https://docs.python.org/3/library/pathlib.html) objects as arguments?

This could be useful when a library keyword returns a Path object and that object is then used, for example, in [Get File](https://robotframework.org/robotframework/latest/libraries/OperatingSystem.html#Get%20File). Currently users are required to do:

```robot framework

${path}    Library Keyword Return Path Object

${path}    Convert To String    ${path}

${data}    Get File    ${path}

```

But it could be handy to just do

```robot framework

${path}    Library Keyword Return Path Object

${data}    Get File    ${path}

```
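On the library side, one way this might look is to normalize the argument with `os.fspath` before using it. The following is only a sketch of that idea: `get_file` here is a hypothetical stand-in, not the actual OperatingSystem implementation.

```python
import os
import tempfile
from pathlib import Path


def get_file(path, encoding="UTF-8"):
    """Hypothetical keyword body that accepts either a str or a Path."""
    # os.fspath returns a str unchanged and converts os.PathLike objects.
    normalized = os.fspath(path)
    with open(normalized, encoding=encoding) as handle:
        return handle.read()


with tempfile.TemporaryDirectory() as tmp:
    target = Path(tmp) / "example.txt"
    target.write_text("hello", encoding="UTF-8")
    print(get_file(target))       # Path argument
    print(get_file(str(target)))  # str argument
```

Because `os.fspath` passes strings through untouched, existing callers that hand over strings would presumably be unaffected.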

| closed | 2022-09-07T19:26:05Z | 2022-09-21T18:12:37Z | https://github.com/robotframework/robotframework/issues/4455 | [

"bug",

"priority: medium",

"beta 2"

] | aaltat | 5 |

Lightning-AI/pytorch-lightning | machine-learning | 19,940 | Custom batch selection for logging | ### Description & Motivation

Need to be able to select the same batch in every logging cycle. For generation pipelines similar to stable diffusion it is very hard to gauge the performance over training if we continue to choose random batches.

### Pitch

User should have selective ability to choose the batch to log which will be constant for all the logging cycles.

### Alternatives

It's possible to load the data again in train_batch_end() or validation_batch_end() and call logging there.

### Additional context

_No response_

cc @borda | open | 2024-06-04T10:29:40Z | 2024-06-08T11:03:18Z | https://github.com/Lightning-AI/pytorch-lightning/issues/19940 | [

"feature",

"needs triage"

] | bhosalems | 3 |

PhantomInsights/subreddit-analyzer | matplotlib | 7 | Just wondering about the recent Reddit API changes (paywall)... | Has this code been tested lately? | open | 2023-11-14T07:29:16Z | 2023-11-14T14:29:07Z | https://github.com/PhantomInsights/subreddit-analyzer/issues/7 | [] | champlainmarketing | 1 |

qwj/python-proxy | asyncio | 154 | How do i use authentication with my https server | Hello, I need help. I recently got this working with Python on my Xbox One. I want to make a proxy for peer2profit, and for it to work with peer2profit it needs to be in the form username:pass@IP:RandomPort. Help is very much appreciated! | open | 2022-08-17T19:22:35Z | 2022-08-17T19:22:35Z | https://github.com/qwj/python-proxy/issues/154 | [] | PurpleVoidEpic | 0 |

ipython/ipython | data-science | 14,638 | Crash if malformed `os.environ` | # Description

Setting `os.environ` to an invalid data structure crashes IPython. The following is obviously not correct, but while testing something else, I mistyped `[]` instead of `{}`:

```python

$ ipython

Python 3.13.1 (main, Dec 12 2024, 16:35:44) [GCC 11.4.0]

Type 'copyright', 'credits' or 'license' for more information

IPython 8.31.0 -- An enhanced Interactive Python. Type '?' for help.

In [1]: import os

In [2]: os.environ = [] # <-------------- stupid human typo!

Traceback (most recent call last):

File "/tmp/test-py313/bin/ipython", line 8, in <module>

sys.exit(start_ipython())

~~~~~~~~~~~~~^^

File "/tmp/test-py313/lib/python3.13/site-packages/IPython/__init__.py", line 130, in start_ipython

return launch_new_instance(argv=argv, **kwargs)

File "/tmp/test-py313/lib/python3.13/site-packages/traitlets/config/application.py", line 1075, in launch_instance

app.start()

~~~~~~~~~^^

File "/tmp/test-py313/lib/python3.13/site-packages/IPython/terminal/ipapp.py", line 317, in start

self.shell.mainloop()

~~~~~~~~~~~~~~~~~~~^^

File "/tmp/test-py313/lib/python3.13/site-packages/IPython/terminal/interactiveshell.py", line 926, in mainloop

self.interact()

~~~~~~~~~~~~~^^

File "/tmp/test-py313/lib/python3.13/site-packages/IPython/terminal/interactiveshell.py", line 911, in interact

code = self.prompt_for_code()

File "/tmp/test-py313/lib/python3.13/site-packages/IPython/terminal/interactiveshell.py", line 854, in prompt_for_code

text = self.pt_app.prompt(

default=default,

inputhook=self._inputhook,

**self._extra_prompt_options(),

)

File "/tmp/test-py313/lib/python3.13/site-packages/prompt_toolkit/shortcuts/prompt.py", line 1031, in prompt

if self._output is None and is_dumb_terminal():

~~~~~~~~~~~~~~~~^^

File "/tmp/test-py313/lib/python3.13/site-packages/prompt_toolkit/utils.py", line 325, in is_dumb_terminal

return is_dumb_terminal(os.environ.get("TERM", ""))

^^^^^^^^^^^^^^

AttributeError: 'list' object has no attribute 'get'

If you suspect this is an IPython 8.31.0 bug, please report it at:

https://github.com/ipython/ipython/issues

or send an email to the mailing list at ipython-dev@python.org

You can print a more detailed traceback right now with "%tb", or use "%debug"

to interactively debug it.

Extra-detailed tracebacks for bug-reporting purposes can be enabled via:

%config Application.verbose_crash=True

$ echo $?

1

```

When doing the same in the standard Python REPL, Python 3.13 crashes for a slightly different reason, but Python 3.10 through Python 3.12 handle it gracefully:

```python

$ python3.12

Python 3.12.8 (main, Dec 12 2024, 16:33:58) [GCC 11.4.0] on linux

Type "help", "copyright", "credits" or "license" for more information.

>>> import os

>>> os.environ = []

>>>

$ python3.13

Python 3.13.1 (main, Dec 12 2024, 16:35:44) [GCC 11.4.0] on linux

Type "help", "copyright", "credits" or "license" for more information.

>>> import os

>>> os.environ = []

Traceback (most recent call last):

File "<frozen runpy>", line 198, in _run_module_as_main

File "<frozen runpy>", line 88, in _run_code

File ".../.local/Python3.13.1/lib/python3.13/_pyrepl/__main__.py", line 6, in <module>

__pyrepl_interactive_console()

File ".../.local/Python3.13.1/lib/python3.13/_pyrepl/main.py", line 59, in interactive_console

run_multiline_interactive_console(console)

File ".../local/Python3.13.1/lib/python3.13/_pyrepl/simple_interact.py", line 151, in run_multiline_interactive_console

statement = multiline_input(more_lines, ps1, ps2)

File ".../local/Python3.13.1/lib/python3.13/_pyrepl/readline.py", line 389, in multiline_input

return reader.readline()

File ".../local/Python3.13.1/lib/python3.13/_pyrepl/reader.py", line 795, in readline

self.prepare()

File ".../local/Python3.13.1/lib/python3.13/_pyrepl/historical_reader.py", line 302, in prepare

super().prepare()

File ".../local/Python3.13.1/lib/python3.13/_pyrepl/reader.py", line 635, in prepare

self.console.prepare()

File ".../local/Python3.13.1/lib/python3.13/_pyrepl/unix_console.py", line 349, in prepare

self.height, self.width = self.getheightwidth()

File ".../local/Python3.13.1/lib/python3.13/_pyrepl/unix_console.py", line 451, in getheightwidth

return int(os.environ["LINES"]), int(os.environ["COLUMNS"])

TypeError: list indices must be integers or slices, not str

$ echo $?

1

```

I am fully aware that "one shouldn't do this at all." For example, use `os.environ.clear()`, `os.environb` is linked/a view of `os.environ`, etc. So, I'd understand if this ticket was just closed — this is clearly a low priority bug. However, a crash is a crash, which is why I reported it at all. | open | 2025-01-03T14:47:27Z | 2025-01-07T10:56:49Z | https://github.com/ipython/ipython/issues/14638 | [] | khk-globus | 2 |

quantmind/pulsar | asyncio | 235 | Remove wait from test classes | No longer needed, one can do an async assertion with the following syntax

``` python

self.assertEqual(await async_func(), 'foo')

```

| closed | 2016-07-28T08:50:04Z | 2016-10-10T15:25:15Z | https://github.com/quantmind/pulsar/issues/235 | [

"test",

"enhancement"

] | lsbardel | 0 |

alteryx/featuretools | scikit-learn | 2,457 | `NumWords` returns wrong answer when text with multiple spaces is passed in | ```

NumWords().get_function()(pd.Series(["hello   world"]))

```

Returns 4. Adding another space would return 5.
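The numbers above are consistent with a count of "spaces plus one", although that is an assumption about the cause rather than a reading of the featuretools source. A minimal sketch of the difference, using two made-up helper names:

```python
def count_words_naive(text):
    """Assumed buggy behavior: number of space characters plus one."""
    return text.count(" ") + 1


def count_words(text):
    """Split on runs of whitespace so repeated spaces count once."""
    return len(text.split())


# Three spaces between the words, built explicitly so they are not lost.
sample = "hello" + " " * 3 + "world"
print(count_words_naive(sample), count_words(sample))
```

Calling `str.split()` with no arguments treats any run of whitespace as one separator, which is the collapsing behavior asked for here.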

The issue is with how the number of words is counted. Consecutive spaces should be collapsed into one. | closed | 2023-01-19T22:02:47Z | 2023-02-09T18:30:43Z | https://github.com/alteryx/featuretools/issues/2457 | [

"bug"

] | sbadithe | 0 |

gradio-app/gradio | machine-learning | 10,699 | Unable to change user interface for all users without restarting | ### Describe the bug

Let's assume one user creates something through a Gradio interface that we want to make available to all other users. A simple way is to add this new user content to a user interface element such as a Dropdown box. Unfortunately, every change made to the user interface while the application is running affects only the current user. Is there a way to force reloading the demo so that all the elements are updated?

### Have you searched existing issues? 🔎

- [x] I have searched and found no existing issues

### Reproduction

```python

import gradio as gr

```

### Screenshot

_No response_

### Logs

```shell

```

### System Info

```shell

no error

```

### Severity

I can work around it | closed | 2025-02-28T18:01:10Z | 2025-03-05T13:33:35Z | https://github.com/gradio-app/gradio/issues/10699 | [

"bug"

] | deepbeepmeep | 2 |

jupyter-incubator/sparkmagic | jupyter | 66 | wait for state doesn't return immediately if state is final | When state goes into a final state, like error, wait for state should immediately return.

| closed | 2015-12-12T05:31:22Z | 2015-12-19T09:01:23Z | https://github.com/jupyter-incubator/sparkmagic/issues/66 | [

"kind:bug"

] | aggFTW | 2 |

ray-project/ray | data-science | 51,261 | [core][gpu-objects] CollectiveExecutor | ### Description

`CollectiveExecutor` is responsible for executing collective calls in order specified by the driver. This issue relies on #51260.

### Use case

_No response_ | open | 2025-03-11T18:36:03Z | 2025-03-11T22:06:09Z | https://github.com/ray-project/ray/issues/51261 | [

"enhancement",

"P0",

"core",

"gpu-objects"

] | kevin85421 | 0 |

xlwings/xlwings | automation | 1,885 | Google Sheets/Excel on the web bug: formats "1" etc as date | closed | 2022-04-01T08:07:21Z | 2022-04-01T09:19:12Z | https://github.com/xlwings/xlwings/issues/1885 | [

"bug"

] | fzumstein | 0 | |

Lightning-AI/pytorch-lightning | deep-learning | 19,799 | parsing issue with `save_last` parameter of `ModelCheckpoint` | ### Bug description

Cannot pass a boolean to the `save_last` parameter of the `ModelCheckpoint` callback using `LightningCLI`.

All parameters work fine except for `save_last`. I think `jsonargparse` is having trouble with the validation of the annotation of `save_last` which is currently `Optional[Literal[True, False, 'link']]`.

Ideally, this should work like any other boolean flag e.g., like `--my_model_checkpoint.verbose=false`.

I have already forked the project and proposed a solution, together with tests in the relevant directory. I am ready to submit a PR if you think this might be useful.

### What version are you seeing the problem on?

master

### How to reproduce the bug

```python

import inspect

import jsonargparse

from lightning.pytorch.callbacks import ModelCheckpoint

val = 'true'

annot = inspect.signature(ModelCheckpoint).parameters["save_last"].annotation

parser = jsonargparse.ArgumentParser()

parser.add_argument("--a", type=annot)

args = parser.parse_args(["--a", val])

```

### Error messages and logs

```

error: Parser key "a":

Does not validate against any of the Union subtypes

Subtypes: (typing.Literal[True, False, 'link'], <class 'NoneType'>)

Errors:

- Expected a typing.Literal[True, False, 'link']

- Expected a <class 'NoneType'>. Got value: True

Given value type: <class 'str'>

Given value: true

```

### Environment

<details>

<summary>Current environment</summary>

```

* CUDA:

- GPU: None

- available: False

- version: None

* Lightning:

- lightning-utilities: 0.11.2

- torch: 2.2.2+cpu

- torchmetrics: 1.2.1

- torchvision: 0.17.2+cpu

* Packages:

- aiohttp: 3.9.5

- aiosignal: 1.3.1

- antlr4-python3-runtime: 4.9.3

- attrs: 23.2.0

- certifi: 2024.2.2

- cfgv: 3.4.0

- charset-normalizer: 3.3.2

- cloudpickle: 2.2.1

- distlib: 0.3.8

- filelock: 3.13.4

- frozenlist: 1.4.1

- fsspec: 2023.4.0

- identify: 2.5.36

- idna: 3.7

- iniconfig: 2.0.0

- jinja2: 3.1.2

- jsonargparse: 4.28.0

- lightning-utilities: 0.11.2

- markupsafe: 2.1.3

- mpmath: 1.3.0

- multidict: 6.0.5

- networkx: 3.2.1

- nodeenv: 1.8.0

- numpy: 1.26.3

- omegaconf: 2.3.0

- packaging: 23.1

- pillow: 10.2.0

- pip: 24.0

- platformdirs: 4.2.0

- pluggy: 1.5.0

- pre-commit: 3.7.0

- pytest: 7.4.0

- pyyaml: 6.0.1

- requests: 2.31.0

- setuptools: 68.2.2

- sympy: 1.12

- torch: 2.2.2+cpu

- torchmetrics: 1.2.1

- torchvision: 0.17.2+cpu

- tqdm: 4.66.2

- typing-extensions: 4.8.0

- urllib3: 2.2.1

- virtualenv: 20.25.3

- wheel: 0.41.2

- yarl: 1.9.4

* System:

- OS: Linux

- architecture:

- 64bit

- ELF

- processor: x86_64

- python: 3.11.7

- release: 5.15.0-102-generic

- version: #112~20.04.1-Ubuntu SMP Thu Mar 14 14:28:24 UTC 2024

```

</details>

### More info

The solution is to change the typing annotation of the `save_last` parameter in the constructor of `ModelCheckpoint`.

I have made a draft PR and added a test to check that the bug is fixed <a href="https://github.com/Lightning-AI/pytorch-lightning/pull/19808">here</a> for reference.

> The tests are passing:

cc @carmocca @awaelchli | closed | 2024-04-22T15:30:35Z | 2024-06-06T00:25:28Z | https://github.com/Lightning-AI/pytorch-lightning/issues/19799 | [

"bug",

"callback: model checkpoint",

"ver: 2.2.x"

] | mariovas3 | 0 |

autokey/autokey | automation | 951 | cyrillic symbols not shown - space added instead | ### AutoKey is a Xorg application and will not function in a Wayland session. Do you use Xorg (X11) or Wayland?

Xorg

### Has this issue already been reported?

- [X] I have searched through the existing issues.

### Is this a question rather than an issue?

- [X] This is not a question.

### What type of issue is this?

Bug

### Choose one or more terms that describe this issue:

- [ ] autokey triggers

- [ ] autokey-gtk

- [X] autokey-qt

- [ ] beta

- [X] bug

- [ ] critical

- [ ] development

- [ ] documentation

- [ ] enhancement

- [ ] installation/configuration

- [ ] phrase expansion

- [ ] scripting

- [ ] technical debt

- [ ] user interface

### Other terms that describe this issue if not provided above:

_No response_

### Which Linux distribution did you use?

ubuntu 22

### Which AutoKey GUI did you use?

Qt

### Which AutoKey version did you use?

0.95.10

### How did you install AutoKey?

apt

### Can you briefly describe the issue?

Cyrillic symbols are not shown when posting a phrase; only ANSI symbols are shown.

### Can the issue be reproduced?

Always

### What are the steps to reproduce the issue?

1. create a phrase in Cyrillic

2. assign a hotkey to it

3. use the hotkey

### What should have happened?

The Cyrillic phrase is shown.

### What actually happened?

spaces instead of cyrillic symbols

### Do you have screenshots?

_No response_

### Can you provide the output of the AutoKey command?

_No response_

### Anything else?

_No response_ | open | 2024-07-19T21:03:20Z | 2024-07-21T08:03:58Z | https://github.com/autokey/autokey/issues/951 | [

"duplicate",

"wontfix",

"phrase expansion",

"user support"

] | AlexeyRoza | 1 |

miguelgrinberg/flasky | flask | 434 | Passing data from form to send to API sandbox | Hello Sir,

It is always an honor to write to you when I have an issue. I have been building a RESTful app with Flask following your guidelines, but I am stuck. I cannot understand why my code does not pass the data from the form to an external API. If I hard-code the values for the different keys in the JSON body, the request is sent fine, but if I try to obtain the data from the form, it is not. Please check my code.

Thank you in advance

```

@app.route('/payrequest/<int:idf>', methods=['GET', 'POST'])

@login_required

def payrequest(idf):

if current_user.is_authenticated:

error=None

form=PayForm(request.form)

if form.validate_on_submit():

amount= form.get_json('Amount')

mobile = form.get_json('Mobile')

#timestamp = datetime.now()

external_id = form.get_json('External_ID')

note = form.get_json('Note')

message = form.get_json('Message')

currency=form.get_json('Currency')

userid = Collect.query.filter_by(client_id = idf).first_or_404()

collect_id = userid.user_id

apikey = userid.api_key

client = Collection({

"COLLECTION_USER_ID": collect_id,

"COLLECTION_API_SECRET": apikey,

"COLLECTION_PRIMARY_KEY": '1e5ceced566042c4b01a97e3400cedb1',

})

try:

resp = client.requestToPay(

mobile='{%s}'.format(mobile), amount='{%s}'.format(amount), external_id='{%s}'.format(external_id), payee_note='{%s}'.fomat(note), payer_message='{%s}'.format(message), currency='{%s}'.format(currency)

)

return jsonify(resp)

except Exception as e:

raise e

error= 'Wrong data'

return render_template('collection.html', error=error, form=form)

```
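A minimal sketch of how the submitted values could be turned into arguments for `requestToPay`, assuming `PayForm` is a Flask-WTF form whose fields are named as in the view above. The helper `build_pay_kwargs` and the mapping `FIELD_TO_KWARG` are hypothetical names introduced only for illustration; the keyword names are copied from the call in the question.

```python
from typing import Any, Dict, Mapping

# Hypothetical mapping: form field name (from the question) -> requestToPay keyword.
FIELD_TO_KWARG = {
    "Mobile": "mobile",
    "Amount": "amount",
    "External_ID": "external_id",
    "Note": "payee_note",
    "Message": "payer_message",
    "Currency": "currency",
}


def build_pay_kwargs(field_data: Mapping[str, Any]) -> Dict[str, str]:
    """Turn submitted field values into keyword arguments for requestToPay."""
    missing = [name for name in FIELD_TO_KWARG if field_data.get(name) in (None, "")]
    if missing:
        raise ValueError("missing form fields: " + ", ".join(missing))
    # str(value) is enough here; '{%s}'.format(value) raises KeyError('%s')
    # because str.format treats "%s" as the name of a keyword argument.
    return {kwarg: str(field_data[name]) for name, kwarg in FIELD_TO_KWARG.items()}
```

In the view, the values would probably come from the WTForms fields, for example `{name: getattr(form, name).data for name in FIELD_TO_KWARG}`, and be passed on as `client.requestToPay(**build_pay_kwargs(values))`. Note also that `validate_on_submit()` only succeeds for a submitting request such as POST, so the `<form>` tag in the template would likely need `method="post"`.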

This is my template

```

<p>Fill the form below to submit Payment as disbursement</p>

<div class="container">

<form action="{{ url_for('payrequest', idf=current_user.id)}}">

<div class="row">

<div class="col-25">

{{ form.Mobile.label }}

</div>

<div class="col-75">

{{form.Mobile(placeholder = "e.g 256781234567")}}

</div>

</div>

<div class="row">

<div class="col-25">

{{ form.Amount.label }}

</div>

<div class="col-75">

{{form.Amount(placeholder = "e.g 1000")}}

</div>

</div>

<div class="row">

<div class="col-25">

{{ form.External_ID.label }}

</div>

<div class="col-75">

{{form.External_ID(placeholder = "e.g 12345")}}

</div>

</div>

<div class="row">

<div class="col-25">

{{ form.Note.label }}

</div>

<div class="col-75">

{{form.Note(height=80, placeholder="Write Something")}}

</div>

</div>

<div class="row">

<div class="col-25">

{{ form.Message.label }}

</div>

<div class="col-75">

{{form.Note(height=80, placeholder="Write Something")}}

</div>

</div>

<div class="row">

<div class="col-25">

{{ form.Currency.label }}

</div>

<div class="col-75">

<select id="country" name="currency">

<option value="eur">EUR</option>

</select>

</div>

</div>

</div>

<div class="row">

<input type="submit" value="Submit">

</div>

</form>

</div>

``` | closed | 2019-08-16T14:47:09Z | 2019-08-19T14:10:42Z | https://github.com/miguelgrinberg/flasky/issues/434 | [

"question"

] | OwiyeD | 10 |

vitalik/django-ninja | rest-api | 1,393 | [BUG] Servers widget appears with empty select box when "ninja" app added to INSTALLED_APPS | I'm adding `'ninja'` to `INSTALLED_APPS` in settings in order to use Django-hosted static files rather than rely on the CDN. When doing so, I find an unpopulated select form element pop up for Swagger server configuration, like below:

Presumably the `<div class="opblock-section operation-servers">` is being accidentally rendered in templates when it's static vs loaded from the CDN - just a wildly unfounded/uneducated guess as to what might be happening. Will see if I can dedicate some time to fixing this, but I am opening a bug for the broader audience in case this is tracked in another issue (I was unable to find anything) or someone has an easy fix.

**Versions**

Python 3.12.7

Django==5.1.4

django-ninja==1.3.0

pydantic==2.10.4

| open | 2025-01-17T21:32:45Z | 2025-01-17T21:33:29Z | https://github.com/vitalik/django-ninja/issues/1393 | [] | angstwad | 0 |

deepspeedai/DeepSpeed | machine-learning | 5,553 | Failed to install Fused_adam op on CPU | Hello, I am struggling to install the pre-built fused_adam op for DeepSpeed.

I found nothing that solves my problem.

Here is what I tried:

```

DS_BUILD_FUSED_ADAM=1 pip install deepspeed

ds_report

```

I still get the same results.

How can I successfully install and use `fused_adam`?

```

[2024-05-21 11:34:41,285] [WARNING] [real_accelerator.py:162:get_accelerator] Setting accelerator to CPU. If you have GPU or other accelerator, we were unable to detect it.

[2024-05-21 11:34:41,287] [INFO] [real_accelerator.py:203:get_accelerator] Setting ds_accelerator to cpu (auto detect)

--------------------------------------------------

DeepSpeed C++/CUDA extension op report

--------------------------------------------------

NOTE: Ops not installed will be just-in-time (JIT) compiled at

runtime if needed. Op compatibility means that your system

meet the required dependencies to JIT install the op.

--------------------------------------------------

JIT compiled ops requires ninja

ninja .................. [OKAY]

--------------------------------------------------

op name ................ installed .. compatible

--------------------------------------------------

deepspeed_not_implemented [NO] ....... [OKAY]

deepspeed_ccl_comm ..... [NO] ....... [OKAY]

deepspeed_shm_comm ..... [NO] ....... [OKAY]

cpu_adam ............... [NO] ....... [OKAY]

fused_adam ............. [NO] ....... [OKAY]

--------------------------------------------------

DeepSpeed general environment info:

torch install path ............... ['TORCH_INSTALL_PATH']

torch version .................... 2.1.2+cu121

deepspeed install path ........... ['DEEPSPEED_INSTALL_PATH']

deepspeed info ................... 0.14.2+cu118torch2.0, unknown, unknown

deepspeed wheel compiled w. ...... torch 2.0

shared memory (/dev/shm) size .... 125.67 GB

```

Thanks for reading my question!

P.S. It is not only fused_adam; none of the op builds work either:

```

DS_BUILD_OPS=1 pip install deepspeed

``` | closed | 2024-05-21T02:39:40Z | 2024-05-28T16:24:35Z | https://github.com/deepspeedai/DeepSpeed/issues/5553 | [

"build"

] | daehuikim | 9 |

scikit-optimize/scikit-optimize | scikit-learn | 920 | ImportError: cannot import name 'Log10' from 'skopt.space.transformers' | Hi all,

I have tried to run the sklearn_examples

https://github.com/HunterMcGushion/hyperparameter_hunter/blob/master/examples/sklearn_examples/classification.py

I got the error below:

ImportError: cannot import name 'Log10' from 'skopt.space.transformers' (/root/miniconda3/envs/psi4/lib/python3.7/site-packages/skopt/space/transformers.py) | open | 2020-07-02T12:12:06Z | 2020-07-02T12:14:06Z | https://github.com/scikit-optimize/scikit-optimize/issues/920 | [] | chrinide | 1 |

strawberry-graphql/strawberry | fastapi | 2,914 | First-Class support for `@stream`/`@defer` in strawberry | ## First-Class support for `@stream`/`@defer` in strawberry

This issue is going to collect all necessary steps for an awesome stream and defer devX in Strawberry 🍓

First steps collected today together in discovery session with @patrick91 @bellini666

#### ToDos for an initial support:

- [ ] Add support for `[async/sync]` generators in return types

- [ ] Make sure `GraphQLDeferDirective`, `GraphQLStreamDirective`, are in the GQL-Core schema

Flag in Schema(query=Query, config={enable_stream_defer: False}) - default true

- [ ] Add incremental delivery support to all the views

- [ ] FastAPI integration -> Maybe in the Async Base View?

- [ ] Explore Sync View Integration

### long term goals

_incomplete list of problems / design improvement potential of the current raw implementation_

#### Problem: streaming / n+1 -> first-level dataloaders are no longer working as every instance is resolved 1:1

#### Possible solutions

- dig deeper into https://github.com/robrichard/defer-stream-wg/discussions/40

- custom query execution plan engine

- Add @streamable directive to schema fields automatically if field is streamable, including custom validation rule

Some playground code: https://gist.github.com/erikwrede/993e1fc174ee75b11c491210e4a9136b | open | 2023-07-02T21:32:42Z | 2025-03-20T15:56:16Z | https://github.com/strawberry-graphql/strawberry/issues/2914 | [] | erikwrede | 9 |

lucidrains/vit-pytorch | computer-vision | 54 | Problem with ResNet | Hi! I would like to train Vit using distiller in a dataset with grayscale images, but I am having problems with the ResNet since it is expecting inputs with 3 channels and my images have only 1. Do you have any suggestions? Thanks!

This is the error:

`RuntimeError: Given groups=1, weight of size [64, 3, 7, 7], expected input[64, 1, 224, 224] to have 3 channels, but got 1 channels instead` | closed | 2020-12-28T14:07:25Z | 2021-01-02T09:44:28Z | https://github.com/lucidrains/vit-pytorch/issues/54 | [] | doglab753 | 2 |

ray-project/ray | machine-learning | 51,593 | [cgraph] Support function nodes | ### Description

Currently Ray compiled graphs only support actor method nodes. There is a [TODO](https://github.com/ray-project/ray/blob/master/python/ray/dag/compiled_dag_node.py#L1167-L1170) in the source code to add support for non-actor tasks, but I haven't seen a related issue. Many of the docs I've read on compiled graphs refer to running in the background thread of actors so I'm sure there is some complexity involved with supporting nodes unrelated to actors.

### Use case

One of our primary use cases for Ray involves executing a task graph on some interval. For example, every minute we take some inputs, run them through a task graph of hundreds of nodes (5-10 distinct functions), and extract the output. These graphs often pass large Arrow tables between tasks and the overall memory usage can be 50-100GB. Because of a long-standing, critical [bug](https://github.com/ray-project/ray/issues/47920) in the original ray.dag interface, we are forced to reconstruct the task graph every minute rather than reusing it. For this application, we run a Ray cluster on a single large server to minimize communication overhead.

Compiled graphs have been on our radar for a long time as a much more efficient way to run this application. Both the reduced scheduling overhead, and better passing of large objects between nodes are very appealing, as execution latency is a large concern of ours.

For these task graphs, we don't really care which worker/CPU the individual tasks run on, just that they run as soon as their dependencies are available. In order to fit this use case into an actor-method compiled graph, I suspect we would need to create some generic "runner" actor for every available CPU and simply distribute tasks in a round-robin fashion for each generation in the DAG. My concern here is that we may introduce artificial bottlenecks because we don't know a priori how long each task will take to run. Supporting function nodes where the task is run on the first available worker would be ideal for us. | open | 2025-03-21T14:29:40Z | 2025-03-21T16:07:48Z | https://github.com/ray-project/ray/issues/51593 | [

"enhancement",

"triage",

"core",

"compiled-graphs"

] | b-phi | 0 |

lundberg/respx | pytest | 208 | Regression of ASGI mocking after `respx == 0.17.1` and `httpx == 0.19.0` | We run code like this:

```python

import httpx
import pytest
import respx
from respx.mocks import HTTPCoreMocker


@pytest.mark.asyncio
async def test_asgi():
    try:
        HTTPCoreMocker.add_targets(
            "httpx._transports.asgi.ASGITransport",
            "httpx._transports.wsgi.WSGITransport",
        )
        async with respx.mock:
            async with httpx.AsyncClient(app="fake-asgi") as client:
                url = "https://foo.bar/"
                jzon = {"status": "ok"}
                headers = {"X-Foo": "bar"}
                request = respx.get(url) % dict(
                    status_code=202, headers=headers, json=jzon
                )
                response = await client.get(url)
                assert request.called is True
                assert response.status_code == 202
                assert response.headers == httpx.Headers(
                    {
                        "Content-Type": "application/json",
                        "Content-Length": "16",
                        **headers,
                    }
                )
                assert response.json() == {"status": "ok"}
    finally:
        HTTPCoreMocker.remove_targets(
            "httpx._transports.asgi.ASGITransport",
            "httpx._transports.wsgi.WSGITransport",
        )

```

It works perfectly fine using `respx == 0.17.1` and `httpx == 0.19.0`, as can be seen by:

```bash

$> pytest test.py

=================== test session starts ===================

platform linux -- Python 3.10.5, pytest-7.1.2, pluggy-1.0.0

plugins: asyncio-0.19.0, respx-0.17.1, anyio-3.6.1

asyncio: mode=strict

collected 1 item

test.py . [100%]

==================== 1 passed in 0.00s ====================

```

However upgrading `httpx == 0.20.0` yields:

```python

.../lib/python3.10/site-packages/respx/mocks.py:179: in amock

request = cls.to_httpx_request(**kwargs)

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

cls = <class 'respx.mocks.HTTPCoreMocker'>, kwargs = {'request': <Request('GET', 'https://foo.bar/')>}

@classmethod

def to_httpx_request(cls, **kwargs):

"""

Create a `HTTPX` request from transport request args.

"""

request = (

> kwargs["method"],

kwargs["url"],

kwargs.get("headers"),

kwargs.get("stream"),

)

E KeyError: 'method'

.../lib/python3.10/site-packages/respx/mocks.py:288: KeyError

```

While trying to upgrade `respx == 0.18.0` yields a package resolution error:

```console

SolverProblemError

Because respx (0.18.0) depends on httpx (>=0.20.0)

and respxbug depends on httpx (^0.19.0), respx is forbidden.

So, because respxbug depends on respx (0.18.0), version solving failed.

```

Upgrading both yields an error like the one for just upgrading `respx`.

Running with `respx == 0.19.2` and `httpx == 0.23.0` (the newest versions at the time of writing) yields:

```python

.../lib/python3.10/site-packages/respx/mocks.py:186: in amock

request = cls.to_httpx_request(**kwargs))

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

cls = <class 'respx.mocks.HTTPCoreMocker'>, kwargs = {'request': <Request('GET', 'https://foo.bar/')>}, request = <Request('GET', 'https://foo.bar/')>

@classmethod

def to_httpx_request(cls, **kwargs):

"""

Create a `HTTPX` request from transport request arg.

"""

request = kwargs["request"]

raw_url = (

request.url.scheme,

request.url.host,

request.url.port,

> request.url.target,

)

E AttributeError: 'URL' object has no attribute 'target'

.../lib/python3.10/site-packages/respx/mocks.py:302: AttributeError

```

I have attempted to debug the issue, and it seems there's a difference in the incoming `request.url` object type during ASGI and non-ASGI mocking (see the example below).

With ASGI mocking:

```python

(Pdb) pp request.url

URL('https://foo.bar/')

(Pdb) pp type(request.url)

<class 'httpx.URL'>

```

Without ASGI mocking (i.e. ordinary mocking):

```python

(Pdb) pp request.url

URL(scheme=b'https', host=b'foo.bar', port=None, target=b'/')

(Pdb) type(request.url)

<class 'httpcore.URL'>

```

The non-ASGI mocking case was produced with the exact same code as above, but by changing:

```python

async with httpx.AsyncClient(app="fake-asgi") as client:

```

to:

```python

async with httpx.AsyncClient() as client:

```

I have not studied the flow of `respx` mocking well enough to know how to implement an appropriate fix, but the problem seems to arise from the fact that `.target` is not a documented member of `httpx`'s [`URL` object](https://github.com/encode/httpx/blob/0.23.0/httpx/_urls.py). That class does, however, have code similar to the above [here](https://github.com/encode/httpx/blob/0.23.0/httpx/_urls.py#L325-L338), which uses `.raw_path` instead of `.target`.

So maybe the code ought to branch on the incoming `URL` type and provide `url.raw` if the type is a `httpx.URL`, unless it can be passed on directly?
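The branching idea could be sketched roughly as below. This is not the `respx` implementation, only an illustration under assumptions: `to_raw_url` is a hypothetical helper, and the attribute names are taken from the pdb output above (`scheme`, `host`, `port`, `target` on the `httpcore` URL) and from the linked `httpx` 0.23.0 source (`raw_scheme`, `raw_host`, `port`, `raw_path`).

```python
from typing import Any, Optional, Tuple

# (scheme, host, port, target), with everything except the port as bytes.
RawURL = Tuple[bytes, bytes, Optional[int], bytes]


def to_raw_url(url: Any) -> RawURL:
    """Return the raw URL parts for either an httpcore-style or httpx-style URL."""
    if hasattr(url, "target"):
        # httpcore-style URL: the fields already hold the raw byte values.
        return (url.scheme, url.host, url.port, url.target)
    # httpx-style URL: the raw_* attributes hold the byte values instead.
    return (url.raw_scheme, url.raw_host, url.port, url.raw_path)
```

Duck typing on `target` keeps the helper independent of which of the two packages is importable; an explicit `isinstance` check against `httpx.URL` would be the stricter alternative.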

Finally it seems like the test validating that `ASGI` mocking works was removed in this commit: 47c0b935176e081a3aa7886aed8b8ed31c0e9457, while the core functionality was added in this PR: #131 (along with said test).

As the code stands now it only seems to test that the `"httpx._transports.asgi.ASGITransport"` can be added and removed using `HTTPCoreMocker.add_targets` and `HTTPCoreMocker.remove_targets` not that mocking with the `ASGITransport` actually works. | closed | 2022-07-26T09:11:51Z | 2022-08-25T20:25:16Z | https://github.com/lundberg/respx/issues/208 | [] | Skeen | 5 |

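Nemo2011/bilibili-api | api | 527 | [Feature request] Add a follow event for live rooms | As the title says: looking at the existing events, there does not seem to be one for follows, and `room.on("ALL")` does not seem to catch the event fired when someone follows the live room either.

How can I get the follow event? | closed | 2023-10-13T07:49:19Z | 2023-10-14T14:36:37Z | https://github.com/Nemo2011/bilibili-api/issues/527 | [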

"need",

"solved"

] | iAilu | 1 |

automl/auto-sklearn | scikit-learn | 1,474 | Refactor the concept of public and private test set | Auto-sklearn was built for the AutoML challenges where there were public and private test sets by the names "validation set" and "test set". Since these challenges we have substantially refactored Auto-sklearn and no longer allow the user to pass in the public test set aka "validation set". This leads to confusion as "validation set" is really a misnomer, as inside a machine learning system it usually denotes the dataset on which a model is selected. Therefore we should drop all references that still exist to this old concept of a "validation set", as already being done by @eddiebergman in #1434, which should only be in the `evaluation` submodule. | closed | 2022-05-13T08:01:49Z | 2022-06-17T12:26:13Z | https://github.com/automl/auto-sklearn/issues/1474 | [

"maintenance"

] | mfeurer | 0 |

predict-idlab/plotly-resampler | data-visualization | 175 | About plotly graph exporting to HTML file | In my case, I want to export my plotly graph into HTML for later use, and I just run the following code:

`fig.write_html('fig1.html', include_plotlyjs='cdn')`

The good news is that I do get a complete figure with the basic interactive features (zoom, hover, etc.), but it loses advanced features such as **auto resampling** on zoom in/out, which work in Jupyter.

I have looked for similar problems everywhere, but I did not find any direct, detailed explanation of or possible solution to this.

Maybe it is an innate HTML limitation; hopefully someone can help me understand or even solve this.

| closed | 2023-03-03T06:55:27Z | 2023-03-03T12:49:47Z | https://github.com/predict-idlab/plotly-resampler/issues/175 | [

"documentation"

] | buptycz | 4 |

mwaskom/seaborn | matplotlib | 3,660 | JointPlot allow changing positions of the marginal histograms | Hi,

Amazing package! I was wondering if it is possible to add a change that would allow joint plots to specify the positioning of the marginal histogram? Kind of like in https://stackoverflow.com/questions/55111214/change-position-of-marginal-axis-in-seaborn-jointplot

| closed | 2024-03-21T19:40:08Z | 2025-01-26T15:43:23Z | https://github.com/mwaskom/seaborn/issues/3660 | [] | adam2392 | 1 |

paperless-ngx/paperless-ngx | machine-learning | 7,436 | [BUG] During the file upload on web site the status window is not closing | ### Description

While uploading a file in the web upload window, the status window for the process gets stuck on the screen. The upload itself runs and finishes correctly, but the status window stays in place and only disappears after the web page is reloaded.

### Steps to reproduce

1. upload a file on dashboard Upload new document window

2. check the status window

### Webserver logs

```bash

[2024-08-10 15:53:26,307] [INFO] [paperless.consumer] Document 2024-08-10 Projektek Tantusz Invest bemutatkozas consumption finished

[2024-08-10 15:53:26,311] [INFO] [paperless.tasks] ConsumeTaskPlugin completed with: Success. New document id 4 created

[2024-08-10 16:05:00,107] [DEBUG] [paperless.classifier] Gathering data from database...

[2024-08-10 16:05:00,122] [DEBUG] [paperless.classifier] 2 documents, 1 tag(s), 1 correspondent(s), 1 document type(s). 0 storage path(es)

[2024-08-10 16:05:00,122] [DEBUG] [paperless.classifier] Vectorizing data...

[2024-08-10 16:05:00,133] [DEBUG] [paperless.classifier] Training tags classifier...

[2024-08-10 16:05:00,171] [DEBUG] [paperless.classifier] Training correspondent classifier...

[2024-08-10 16:05:00,208] [DEBUG] [paperless.classifier] Training document type classifier...

[2024-08-10 16:05:00,240] [DEBUG] [paperless.classifier] There are no storage paths. Not training storage path classifier.

[2024-08-10 16:05:00,243] [INFO] [paperless.tasks] Saving updated classifier model to /usr/src/paperless/data/classification_model.pickle...

```

### Browser logs

_No response_

### Paperless-ngx version

2.11.2

### Host OS

Ubuntu 20.04

### Installation method

Docker - official image

### System status

_No response_

### Browser

Arc

### Configuration changes

_No response_

### Please confirm the following

- [X] I believe this issue is a bug that affects all users of Paperless-ngx, not something specific to my installation.

- [X] I have already searched for relevant existing issues and discussions before opening this report.

- [X] I have updated the title field above with a concise description. | closed | 2024-08-10T14:06:11Z | 2024-09-10T03:06:42Z | https://github.com/paperless-ngx/paperless-ngx/issues/7436 | [

"bug",

"frontend"

] | elpi07 | 6 |

allenai/allennlp | nlp | 5,602 | Unable to import Predictor from allennlp.predictors.predictor @ Apple Silicon Mac | <!--

Please fill this template entirely and do not erase any of it.

We reserve the right to close without a response bug reports which are incomplete.

If you have a question rather than a bug, please ask on [Stack Overflow](https://stackoverflow.com/questions/tagged/allennlp) rather than posting an issue here.

-->

## Checklist

<!-- To check an item on the list replace [ ] with [x]. -->

- [X] I have verified that the issue exists against the `main` branch of AllenNLP.

- [X] I have read the relevant section in the [contribution guide](https://github.com/allenai/allennlp/blob/main/CONTRIBUTING.md#bug-fixes-and-new-features) on reporting bugs.

- [X] I have checked the [issues list](https://github.com/allenai/allennlp/issues) for similar or identical bug reports.

- [X] I have checked the [pull requests list](https://github.com/allenai/allennlp/pulls) for existing proposed fixes.

- [X] I have checked the [CHANGELOG](https://github.com/allenai/allennlp/blob/main/CHANGELOG.md) and the [commit log](https://github.com/allenai/allennlp/commits/main) to find out if the bug was already fixed in the main branch.

- [X] I have included in the "Description" section below a traceback from any exceptions related to this bug.

- [X] I have included in the "Related issues or possible duplicates" section below all related issues and possible duplicate issues (If there are none, check this box anyway).

- [X] I have included in the "Environment" section below the name of the operating system and Python version that I was using when I discovered this bug.

- [X] I have included in the "Environment" section below the output of `pip freeze`.

- [X] I have included in the "Steps to reproduce" section below a minimally reproducible example.

## Description

<!-- ImportError: cannot import name 'ProcessGroup' from 'torch.distributed' (/Users/xxxxxxx/miniconda3/envs/allennlp_env/lib/python3.8/site-packages/torch/distributed/__init__.py) -->

<details>

<summary><b>Python traceback:</b></summary>

<p>----> 7 from allennlp.predictors.predictor import Predictor

8 import allennlp_models.tagging

10 predictor = Predictor.from_path("https://storage.googleapis.com/allennlp-public-models/ner-elmo.2021-02-12.tar.gz")

File ~/miniconda3/envs/allennlp_env/lib/python3.8/site-packages/allennlp/predictors/__init__.py:9, in <module>

1 """

2 A `Predictor` is

3 a wrapper for an AllenNLP `Model`

(...)

7 a `Predictor` that wraps it.

8 """

----> 9 from allennlp.predictors.predictor import Predictor

10 from allennlp.predictors.sentence_tagger import SentenceTaggerPredictor

11 from allennlp.predictors.text_classifier import TextClassifierPredictor

File ~/miniconda3/envs/allennlp_env/lib/python3.8/site-packages/allennlp/predictors/predictor.py:18, in <module>

16 from allennlp.data import DatasetReader, Instance

17 from allennlp.data.batch import Batch

---> 18 from allennlp.models import Model

19 from allennlp.models.archival import Archive, load_archive

20 from allennlp.nn import util

File ~/miniconda3/envs/allennlp_env/lib/python3.8/site-packages/allennlp/models/__init__.py:6, in <module>

1 """

2 These submodules contain the classes for AllenNLP models,

3 all of which are subclasses of `Model`.

4 """

----> 6 from allennlp.models.model import Model

7 from allennlp.models.archival import archive_model, load_archive, Archive

8 from allennlp.models.basic_classifier import BasicClassifier

File ~/miniconda3/envs/allennlp_env/lib/python3.8/site-packages/allennlp/models/model.py:22, in <module>

20 from allennlp.nn import util

21 from allennlp.nn.module import Module

---> 22 from allennlp.nn.parallel import DdpAccelerator

23 from allennlp.nn.regularizers import RegularizerApplicator

25 logger = logging.getLogger(__name__)

File ~/miniconda3/envs/allennlp_env/lib/python3.8/site-packages/allennlp/nn/parallel/__init__.py:7, in <module>

1 from allennlp.nn.parallel.sharded_module_mixin import ShardedModuleMixin

2 from allennlp.nn.parallel.ddp_accelerator import (

3 DdpAccelerator,

4 DdpWrappedModel,

5 TorchDdpAccelerator,

6 )

----> 7 from allennlp.nn.parallel.fairscale_fsdp_accelerator import (

8 FairScaleFsdpAccelerator,

9 FairScaleFsdpWrappedModel,

10 )

File ~/miniconda3/envs/allennlp_env/lib/python3.8/site-packages/allennlp/nn/parallel/fairscale_fsdp_accelerator.py:4, in <module>

1 import os

2 from typing import Tuple, Union, Optional, TYPE_CHECKING, List, Any, Dict, Sequence

----> 4 from fairscale.nn import FullyShardedDataParallel as FS_FSDP

5 from fairscale.nn.wrap import enable_wrap, wrap

6 from fairscale.nn.misc import FlattenParamsWrapper

File ~/miniconda3/envs/allennlp_env/lib/python3.8/site-packages/fairscale/__init__.py:12, in <module>

1 # Copyright (c) Facebook, Inc. and its affiliates. All rights reserved.

2 #

3 # This source code is licensed under the BSD license found in the

(...)

7 # Import most common subpackages

8 ################################################################################

10 from typing import List

---> 12 from . import nn

13 from .version import __version_tuple__

15 __version__ = ".".join([str(x) for x in __version_tuple__])

File ~/miniconda3/envs/allennlp_env/lib/python3.8/site-packages/fairscale/nn/__init__.py:9, in <module>

6 from typing import List

8 from .checkpoint import checkpoint_wrapper

----> 9 from .data_parallel import FullyShardedDataParallel, ShardedDataParallel

10 from .misc import FlattenParamsWrapper

11 from .moe import MOELayer, Top2Gate

File ~/miniconda3/envs/allennlp_env/lib/python3.8/site-packages/fairscale/nn/data_parallel/__init__.py:8, in <module>

1 # Copyright (c) Facebook, Inc. and its affiliates. All rights reserved.

2 #

3 # This source code is licensed under the BSD license found in the

4 # LICENSE file in the root directory of this source tree.

6 from typing import List

----> 8 from .fully_sharded_data_parallel import FullyShardedDataParallel, OffloadConfig, TrainingState, auto_wrap_bn

9 from .sharded_ddp import ShardedDataParallel

11 __all__: List[str] = []

File ~/miniconda3/envs/allennlp_env/lib/python3.8/site-packages/fairscale/nn/data_parallel/fully_sharded_data_parallel.py:38, in <module>

36 from torch.autograd import Variable

37 import torch.distributed as dist

---> 38 from torch.distributed import ProcessGroup

39 import torch.nn as nn

40 import torch.nn.functional as F

ImportError: cannot import name 'ProcessGroup' from 'torch.distributed' (/Users/simontse/miniconda3/envs/allennlp_env/lib/python3.8/site-packages/torch/distributed/__init__.py)

<!-- Paste the traceback from any exception (if there was one) in between the next two lines below -->

```

```

</p>

</details>

## Related issues or possible duplicates

- None

## Environment

<!-- Provide the name of operating system below (e.g. OS X, Linux) -->

OS: macOS Monterey ver12.3

<!-- Provide the Python version you were using (e.g. 3.7.1) -->

Python version: 3.8.12

<details>

<summary><b>Output of <code>pip freeze</code>:</b></summary>

<p>

<!-- Paste the output of `pip freeze` in between the next two lines below -->

```

aiohttp @ file:///Users/runner/miniforge3/conda-bld/aiohttp_1637087375815/work

aiosignal @ file:///home/conda/feedstock_root/build_artifacts/aiosignal_1636093929600/work

alembic @ file:///home/conda/feedstock_root/build_artifacts/alembic_1647367721563/work

allennlp @ file:///Users/runner/miniforge3/conda-bld/allennlp_1644183594868/work

allennlp-models @ file:///Users/runner/miniforge3/conda-bld/allennlp-models_1644193900256/work

allennlp-optuna @ file:///home/conda/feedstock_root/build_artifacts/allennlp-optuna_1637742042512/work

allennlp-semparse @ file:///Users/runner/miniforge3/conda-bld/allennlp-semparse_1644289991832/work

allennlp-server @ file:///Users/runner/miniforge3/conda-bld/allennlp-server_1644211316665/work

appnope @ file:///Users/runner/miniforge3/conda-bld/appnope_1635819899231/work

argon2-cffi @ file:///home/conda/feedstock_root/build_artifacts/argon2-cffi_1640817743617/work

argon2-cffi-bindings @ file:///Users/runner/miniforge3/conda-bld/argon2-cffi-bindings_1640885719931/work

asttokens @ file:///home/conda/feedstock_root/build_artifacts/asttokens_1618968359944/work

async-timeout @ file:///home/conda/feedstock_root/build_artifacts/async-timeout_1640026696943/work

attrs @ file:///home/conda/feedstock_root/build_artifacts/attrs_1640799537051/work

autopage @ file:///home/conda/feedstock_root/build_artifacts/autopage_1642834347039/work

backcall @ file:///home/conda/feedstock_root/build_artifacts/backcall_1592338393461/work

backports.csv==1.0.7

backports.functools-lru-cache @ file:///home/conda/feedstock_root/build_artifacts/backports.functools_lru_cache_1618230623929/work

base58 @ file:///home/conda/feedstock_root/build_artifacts/base58_1635724186165/work

beautifulsoup4 @ file:///home/conda/feedstock_root/build_artifacts/beautifulsoup4_1631087867185/work

bleach @ file:///home/conda/feedstock_root/build_artifacts/bleach_1629908509068/work

blis @ file:///Users/runner/miniforge3/conda-bld/cython-blis_1645002545531/work

boto3 @ file:///home/conda/feedstock_root/build_artifacts/boto3_1647500875427/work

botocore @ file:///home/conda/feedstock_root/build_artifacts/botocore_1647478405006/work

brotlipy @ file:///Users/runner/miniforge3/conda-bld/brotlipy_1636012322014/work

cached-path @ file:///Users/runner/miniforge3/conda-bld/cached_path_1646363831472/work

cached-property @ file:///home/conda/feedstock_root/build_artifacts/cached_property_1615209429212/work

cachetools @ file:///home/conda/feedstock_root/build_artifacts/cachetools_1640686991047/work

catalogue @ file:///Users/runner/miniforge3/conda-bld/catalogue_1638867620917/work

certifi==2021.10.8

cffi @ file:///Users/runner/miniforge3/conda-bld/cffi_1636046166270/work

chardet @ file:///Users/runner/miniforge3/conda-bld/chardet_1635814976389/work

charset-normalizer @ file:///home/conda/feedstock_root/build_artifacts/charset-normalizer_1644853463426/work

checklist @ file:///home/conda/feedstock_root/build_artifacts/checklist_1626355406222/work

cheroot @ file:///home/conda/feedstock_root/build_artifacts/cheroot_1641335003286/work

CherryPy @ file:///Users/runner/miniforge3/conda-bld/cherrypy_1643789202126/work

click==7.1.2

cliff @ file:///home/conda/feedstock_root/build_artifacts/cliff_1645470499396/work

cmaes @ file:///home/conda/feedstock_root/build_artifacts/cmaes_1613785714721/work

cmd2 @ file:///Users/runner/miniforge3/conda-bld/cmd2_1644164388812/work

colorama @ file:///home/conda/feedstock_root/build_artifacts/colorama_1602866480661/work

colorlog==6.6.0

configparser @ file:///home/conda/feedstock_root/build_artifacts/configparser_1638573090458/work

conllu @ file:///home/conda/feedstock_root/build_artifacts/conllu_1629103029427/work

cryptography @ file:///Users/runner/miniforge3/conda-bld/cryptography_1639699343700/work

cycler @ file:///home/conda/feedstock_root/build_artifacts/cycler_1635519461629/work

cymem @ file:///Users/runner/miniforge3/conda-bld/cymem_1636053450611/work

dataclasses @ file:///home/conda/feedstock_root/build_artifacts/dataclasses_1628958434797/work

datasets @ file:///home/conda/feedstock_root/build_artifacts/datasets_1647378244171/work

debugpy @ file:///Users/runner/miniforge3/conda-bld/debugpy_1636043378262/work

decorator @ file:///home/conda/feedstock_root/build_artifacts/decorator_1641555617451/work

defusedxml @ file:///home/conda/feedstock_root/build_artifacts/defusedxml_1615232257335/work

dill @ file:///home/conda/feedstock_root/build_artifacts/dill_1623610058511/work

docker-pycreds==0.4.0

editdistance @ file:///Users/runner/miniforge3/conda-bld/editdistance_1636224171992/work

entrypoints @ file:///home/conda/feedstock_root/build_artifacts/entrypoints_1643888246732/work

executing @ file:///home/conda/feedstock_root/build_artifacts/executing_1646044401614/work

fairscale @ file:///Users/runner/miniforge3/conda-bld/fairscale_1644056098310/work

feedparser @ file:///home/conda/feedstock_root/build_artifacts/feedparser_1624371037925/work

filelock @ file:///home/conda/feedstock_root/build_artifacts/filelock_1641470428964/work

Flask @ file:///home/conda/feedstock_root/build_artifacts/flask_1644887820294/work

Flask-Cors @ file:///home/conda/feedstock_root/build_artifacts/flask-cors_1622383494577/work

flit_core @ file:///home/conda/feedstock_root/build_artifacts/flit-core_1645629044586/work/source/flit_core

fonttools @ file:///Users/runner/miniforge3/conda-bld/fonttools_1646922287558/work

frozenlist @ file:///Users/runner/miniforge3/conda-bld/frozenlist_1643222648494/work

fsspec @ file:///home/conda/feedstock_root/build_artifacts/fsspec_1645566723803/work

ftfy @ file:///home/conda/feedstock_root/build_artifacts/ftfy_1647200718722/work

future @ file:///Users/runner/miniforge3/conda-bld/future_1635819654955/work

gevent @ file:///Users/runner/miniforge3/conda-bld/gevent_1639267879746/work

gitdb @ file:///home/conda/feedstock_root/build_artifacts/gitdb_1635085722655/work

GitPython @ file:///home/conda/feedstock_root/build_artifacts/gitpython_1645531658201/work

google-api-core @ file:///home/conda/feedstock_root/build_artifacts/google-api-core-split_1644877687275/work

google-auth @ file:///home/conda/feedstock_root/build_artifacts/google-auth_1644503159426/work

google-cloud-core @ file:///home/conda/feedstock_root/build_artifacts/google-cloud-core_1642607638110/work

google-cloud-storage @ file:///home/conda/feedstock_root/build_artifacts/google-cloud-storage_1644876711050/work

google-crc32c @ file:///Users/runner/miniforge3/conda-bld/google-crc32c_1636020985630/work

google-resumable-media @ file:///home/conda/feedstock_root/build_artifacts/google-resumable-media_1635195007097/work

googleapis-common-protos @ file:///Users/runner/miniforge3/conda-bld/googleapis-common-protos-feedstock_1647557644942/work

greenlet @ file:///Users/runner/miniforge3/conda-bld/greenlet_1635837112391/work

grpcio @ file:///Users/runner/miniforge3/conda-bld/grpcio_1645230394268/work

h5py @ file:///Users/runner/miniforge3/conda-bld/h5py_1637964070496/work

huggingface-hub @ file:///home/conda/feedstock_root/build_artifacts/huggingface_hub_1641988520462/work

idna @ file:///home/conda/feedstock_root/build_artifacts/idna_1642433548627/work

importlib-metadata @ file:///Users/runner/miniforge3/conda-bld/importlib-metadata_1647210434605/work

importlib-resources @ file:///home/conda/feedstock_root/build_artifacts/importlib_resources_1635615662634/work

ipykernel @ file:///Users/runner/miniforge3/conda-bld/ipykernel_1647271194783/work/dist/ipykernel-6.9.2-py3-none-any.whl

ipython @ file:///Users/runner/miniforge3/conda-bld/ipython_1646324756182/work

ipython-genutils==0.2.0

ipywidgets @ file:///home/conda/feedstock_root/build_artifacts/ipywidgets_1647456365981/work

iso-639 @ file:///home/conda/feedstock_root/build_artifacts/iso-639_1626355260505/work

itsdangerous @ file:///home/conda/feedstock_root/build_artifacts/itsdangerous_1646849180040/work

jaraco.classes @ file:///home/conda/feedstock_root/build_artifacts/jaraco.classes_1619298134024/work

jaraco.collections @ file:///home/conda/feedstock_root/build_artifacts/jaraco.collections_1641469018844/work

jaraco.context @ file:///home/conda/feedstock_root/build_artifacts/jaraco.context_1646657544740/work

jaraco.functools @ file:///home/conda/feedstock_root/build_artifacts/jaraco.functools_1641071972629/work

jaraco.text @ file:///Users/runner/miniforge3/conda-bld/jaraco.text_1646672054767/work

jedi @ file:///Users/runner/miniforge3/conda-bld/jedi_1637175378067/work

Jinja2 @ file:///home/conda/feedstock_root/build_artifacts/jinja2_1636510082894/work

jmespath @ file:///home/conda/feedstock_root/build_artifacts/jmespath_1647416812516/work

joblib @ file:///home/conda/feedstock_root/build_artifacts/joblib_1633637554808/work

jsonnet @ file:///Users/runner/miniforge3/conda-bld/jsonnet_1644086886800/work

jsonschema @ file:///home/conda/feedstock_root/build_artifacts/jsonschema-meta_1642000296051/work

jupyter @ file:///Users/runner/miniforge3/conda-bld/jupyter_1637233406932/work

jupyter-client @ file:///home/conda/feedstock_root/build_artifacts/jupyter_client_1642858610849/work

jupyter-console @ file:///home/conda/feedstock_root/build_artifacts/jupyter_console_1646669715337/work

jupyter-core @ file:///Users/runner/miniforge3/conda-bld/jupyter_core_1645024702831/work

jupyterlab-pygments @ file:///home/conda/feedstock_root/build_artifacts/jupyterlab_pygments_1601375948261/work

jupyterlab-widgets @ file:///home/conda/feedstock_root/build_artifacts/jupyterlab_widgets_1647446862951/work

kiwisolver @ file:///Users/runner/miniforge3/conda-bld/kiwisolver_1647351843120/work

langcodes @ file:///home/conda/feedstock_root/build_artifacts/langcodes_1636741340529/work

lmdb @ file:///Users/runner/miniforge3/conda-bld/python-lmdb_1644189524859/work

lxml @ file:///Users/runner/miniforge3/conda-bld/lxml_1645124877356/work

Mako @ file:///home/conda/feedstock_root/build_artifacts/mako_1646959760357/work

MarkupSafe @ file:///Users/runner/miniforge3/conda-bld/markupsafe_1647364592705/work

matplotlib @ file:///Users/runner/miniforge3/conda-bld/matplotlib-suite_1639359034653/work

matplotlib-inline @ file:///home/conda/feedstock_root/build_artifacts/matplotlib-inline_1631080358261/work

mistune @ file:///Users/runner/miniforge3/conda-bld/mistune_1635845001896/work

more-itertools @ file:///home/conda/feedstock_root/build_artifacts/more-itertools_1637732846337/work

multidict @ file:///Users/runner/miniforge3/conda-bld/multidict_1643055408799/work

multiprocess @ file:///Users/runner/miniforge3/conda-bld/multiprocess_1635876223414/work

munch==2.5.0

munkres==1.1.4

murmurhash @ file:///Users/runner/miniforge3/conda-bld/murmurhash_1636019736584/work

mysqlclient @ file:///Users/runner/miniforge3/conda-bld/mysqlclient_1639024612770/work

nbclient @ file:///home/conda/feedstock_root/build_artifacts/nbclient_1646999386773/work

nbconvert @ file:///Users/runner/miniforge3/conda-bld/nbconvert_1647040578847/work

nbformat @ file:///home/conda/feedstock_root/build_artifacts/nbformat_1646951096007/work

nest-asyncio @ file:///home/conda/feedstock_root/build_artifacts/nest-asyncio_1638419302549/work