repo_name | topic | issue_number | title | body | state | created_at | updated_at | url | labels | user_login | comments_count |

|---|---|---|---|---|---|---|---|---|---|---|---|

katanaml/sparrow | computer-vision | 29 | Random prediction and wrong prediction in repeated characters | Hello,

I have trained a donut base model on our custom dataset, which consists of a total of 12,480 images. I then fine-tuned this base model with default parameters.

During the analysis of predictions, I observed certain patterns in the JSON output. Specifically, when similar keys appear almost simultaneously, the model tends to make the following types of errors:

It predicts extra characters (e.g., "Paneer cheese paratha with butter" is predicted as "Paneer Paneer cheese paratha with butter").

It misses some characters (e.g., "199.00" is predicted as "19.00").

It predicts incorrect characters (e.g., "119.00" is predicted as "159.00").

Additionally, I noticed that the model often predicts characters such as "5," "7," and "1," even though these characters are not present in the images.

**Ground Truth:**

{

"table": [

{

"key": "Paneer paratha with butter",

"value": "199.00"

},

{

"key": "Paneer cheese paratha with butter",

"value": "119.00"

}

]

}

**Prediction:**

{

"table": [

{

"key": "Paneer paratha with butter",

"value": "19.00"

},

{

"key": "Paneer Paneer cheese paratha with butter",

"value": "159.00"

}

]

}

In the JSON below, the model drops characters from the middle of a string, predicts characters that differ from the ground truth, or adds extra characters that appear in neither the image nor the JSON. The image is clean enough for the model to predict correctly, yet it still makes the errors described above.

According to my analysis, the model makes more mistakes in values (numeric) than in keys (alphabetic); the cause may be data imbalance.
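To put numbers on the key-versus-value comparison, the character-level errors could be counted per field. The following is a minimal sketch using only the standard library; the helper names `char_errors` and `table_error_totals` are hypothetical, and the row-by-row pairing assumes the prediction has the same number of rows as the ground truth, which does not hold for the examples with duplicated keys.

```python
from difflib import SequenceMatcher


def char_errors(truth: str, predicted: str) -> dict:
    """Count inserted, deleted, and replaced characters between two strings."""
    counts = {"insert": 0, "delete": 0, "replace": 0}
    matcher = SequenceMatcher(None, truth, predicted, autojunk=False)
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag == "insert":
            counts["insert"] += j2 - j1
        elif tag == "delete":
            counts["delete"] += i2 - i1
        elif tag == "replace":
            # Count the longer side so uneven substitutions are not undercounted.
            counts["replace"] += max(i2 - i1, j2 - j1)
    return counts


def table_error_totals(truth_rows, predicted_rows):
    """Sum character errors separately for the "key" and "value" fields.

    Rows are paired by position, so both lists must be aligned.
    """
    totals = {"key": 0, "value": 0}
    for truth, predicted in zip(truth_rows, predicted_rows):
        for field in totals:
            totals[field] += sum(char_errors(truth[field], predicted[field]).values())
    return totals


truth_rows = [
    {"key": "Paneer paratha with butter", "value": "199.00"},
    {"key": "Paneer cheese paratha with butter", "value": "119.00"},
]
predicted_rows = [
    {"key": "Paneer paratha with butter", "value": "19.00"},
    {"key": "Paneer Paneer cheese paratha with butter", "value": "159.00"},
]
totals = table_error_totals(truth_rows, predicted_rows)
```

Running this over the whole evaluation set, rather than a handful of samples, would show whether numeric fields really carry more errors per character than alphabetic ones.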

**Ground Truth:**

{

"table": [

{

"key": "Accessible Amount",

"value": "9123.23"

},

{

"key": "Car parts due :",

"value": "2,09,233.19"

},

{

"key": "Paint brushes :",

"value": "200.00"

}

]

}

**Predicted:**

{

"table": [

{

"key": "Accesible Amount",

"value": "9123.33"

},

{

"key": "Car parts due :",

"value": "9,1,233.19"

},

{

"key": "Paint brushes :",

"value": "200.000"

}

]

}

In the JSON provided below, despite the clarity of the image, the model consistently exhibits several issues:

Missing Characters: The model frequently fails to recognize certain characters.

Duplicate Keys: It tends to predict the same type of key multiple times, resulting in an extra key, such as "Oil fluid," which is a combination of two adjacent keys.

Missing Colon (:) at the End of Keys: The model omits the colon character at the end of keys.

Missing Plus Sign (+) in Values: It also overlooks the plus sign in values.

**Ground Truth :**

{

"table": [

{

"key": "Delivery charges :",

"value": "(+)470.00"

},

{

"key": "Oil charge:",

"value": "3,120.00"

},

{

"key": "Washer fluid :",

"value": "3,120.00"

}

]

}

**Predicted:**

{

"table": [

{

"key": "Delivery charges",

"value": "( )470.00"

},

{

"key": "Oil charge:",

"value": "3,120.00"

},

{

"key": "Oil fluid :",

"value": "157.00"

},

{

"key": "Washer fluid :",

"value": "3,120.00"

}

]

}

In the JSON below, I found the same pattern: when a character appears twice in the image (for example ‘@ @’ or ‘: :’), the model sometimes predicts it only once. It also predicts the same key more than once.

**Ground Truth:**

{

"table": [

{

"key": "Transport charges::",

"value": "144.00"

},

{

"key": "Freight charges",

"value": ""

},

{

"key": "Washer fluid @ @ 18 %",

"value": "3,120.00"

}

]

}

**Prediction:**

{

"table": [

{

"key": "Transport charges:",

"value": "144.00"

},

{

"key": "Freight charges:",

"value": ""

},

{

"key": "Freight charges:",

"value": ""

},

{

"key": "Freight charges:",

"value": ""

},

{

"key": "Washer fluid @ 18 %",

"value": "3,120.00"

}

]

}

| closed | 2023-11-07T18:51:32Z | 2023-11-07T18:59:18Z | https://github.com/katanaml/sparrow/issues/29 | [] | Asha-12502 | 1 |

graphql-python/graphene | graphql | 870 | Resolver Not Receiving Arguments when Nested | I have a list of classes stored in memory that I am trying to parse through various types. It is referenced through the method `get_inventory()`.

When I call the classes individually, they resolve as I would expect.

But when I try to nest one in the other, the value is returning null.

The code, followed by some examples:

class Account(graphene.ObjectType):

account_name = graphene.String()

account_id = graphene.String()

def resolve_account(

self, info,

account_id=None,

account_name=None

):

inventory = get_inventory()

result = [Account(

account_id=i.account_id,

account_name=i.account_name

) for i in inventory if (

(i.account_id == account_id) or

(i.account_name == account_name)

)]

if len(result):

return result[0]

else:

return Account()

account = graphene.Field(

Account,

resolver=Account.resolve_account,

account_name=graphene.String(default_value=None),

account_id=graphene.String(default_value=None)

)

class Item(graphene.ObjectType):

item_name = graphene.String()

region = graphene.String()

account = account

def resolve_item(

self, info,

item_name=None

):

inventory = get_inventory()

result = [Item(

item_name=i.item_name,

region=i.region,

account=Account(

account_id=i.account_id

)

) for i in inventory if (

(i.item_name == item_name)

)]

if len(result):

return result[0]

else:

return Item()

item = graphene.Field(

Item,

resolver=Item.resolve_item,

item_name=graphene.String(default_value=None)

)

class Query(graphene.ObjectType):

account = account

item = item

schema = graphene.Schema(query=Query)

Let's assume I have an account `foo` that has an item `bar`. The below queries return the fields correctly.

{

account(accountName:"foo") {

accountName

accountId

}

}

{

item(itemName: "bar") {

itemName

region

}

}

So if I wanted to find the account that has the item `bar`, I would think I could query `bar` and get `foo`. But it returns the `account` fields as `null`.

{

item(itemName: "bar") {

itemName

region

account {

accountId

accountName

}

}

}

Recall that as part of `resolve_item`, I am doing `account=Account(account_id=i.account_id)` - I would expect this to work.

If I alter the last return statement of `resolve_account` to the below, `accountId` always returns `yo`.

...

else:

return Account(

account_id='yo'

)

So this tells me that my resolver is firing, but the invocation in `resolve_item` is not passing `account_id` properly.
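One possible reading, offered as an assumption rather than a confirmed diagnosis: graphene calls a field resolver with the parent object as its first argument, so for the nested `account` field the `self` in `resolve_account` would be the `Item` built by `resolve_item`, while `account_id` and `account_name` would both be `None` because the nested query passes no arguments. The framework-free sketch below imitates that call pattern with plain dataclasses; `INVENTORY` is a hypothetical stand-in for `get_inventory()`, and the fallback to the parent's `account` attribute is an illustration, not graphene's own behavior.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Account:
    account_id: Optional[str] = None
    account_name: Optional[str] = None


@dataclass
class Item:
    item_name: Optional[str] = None
    region: Optional[str] = None
    account: Optional[Account] = None


# Hypothetical stand-in for get_inventory().
INVENTORY = [Account(account_id="1", account_name="foo")]


def resolve_account(parent, info, account_id=None, account_name=None):
    """Resolve an account from explicit arguments or, when nested, from the parent."""
    nested = getattr(parent, "account", None)
    if account_id is None and account_name is None and nested is not None:
        # Nested call: no arguments were supplied, so reuse the id set on the parent.
        account_id = nested.account_id
    for entry in INVENTORY:
        if entry.account_id == account_id or entry.account_name == account_name:
            return Account(entry.account_id, entry.account_name)
    return Account()


# Top-level call, as in account(accountName: "foo").
top_level = resolve_account(None, None, account_name="foo")

# Nested call, as in item(itemName: "bar") { account { ... } }.
parent_item = Item(item_name="bar", region="us", account=Account(account_id="1"))
nested_result = resolve_account(parent_item, None)
```

If this reading is right, the resolver would need to look at its first argument when no field arguments are given.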

Not sure if this is a bug or user error. | closed | 2018-11-26T18:56:26Z | 2019-08-05T21:18:01Z | https://github.com/graphql-python/graphene/issues/870 | [

"wontfix",

"👀 more info needed"

] | getglad | 5 |

mwouts/itables | jupyter | 328 | 2 Problems with SearchPanes | In this code, there are 2 problems:

1. The header of the column is not shown without a `reset_index()`.

2. Only the first column appears in the searchPanes although 3 are requested.

Can someone help me, please? Thanks.

I tried both the most recent version and version 2.0 of itables.

from itables import show

from itables.sample_dfs import get_countries

df = get_countries(html=False)

# df.reset_index(inplace=True) with reset_index header 'region' is shown , without reset header is not shown

show(

df,

layout={"top1": "searchPanes"},

searchPanes={"layout": "columns-3", "cascadePanes": True, "columns": [1, 2, 3]})

#only the first column is shown in the Panes | closed | 2024-10-21T11:56:10Z | 2024-11-02T22:38:50Z | https://github.com/mwouts/itables/issues/328 | [] | tomnobelsAM | 2 |

sgl-project/sglang | pytorch | 3,802 | [Feature] SGlang_router start with healthy given worker_urls | ### Checklist

- [x] 1. If the issue you raised is not a feature but a question, please raise a discussion at https://github.com/sgl-project/sglang/discussions/new/choose Otherwise, it will be closed.

- [x] 2. Please use English, otherwise it will be closed.

### Motivation

The router fails to start unless all of the given worker_urls are healthy, which causes problems when one worker is broken. Alternatively, if the router could start with no worker_url at all, the problem could also be solved by adding only the healthy workers afterwards.
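Until something like this exists in the router, the filtering could be done by the caller before launch. The sketch below is one way to do that with the standard library only; it assumes each worker answers `GET /health` with HTTP 200 when ready, and the names `is_healthy` and `healthy_workers` are hypothetical helpers, not part of sglang.

```python
from http.client import HTTPException
from typing import Callable, Iterable, List
from urllib.request import urlopen


def is_healthy(worker_url: str, timeout: float = 2.0) -> bool:
    """Return True if GET <worker_url>/health answers with HTTP 200 (assumed endpoint)."""
    try:
        with urlopen(worker_url.rstrip("/") + "/health", timeout=timeout) as response:
            return response.status == 200
    except (OSError, ValueError, HTTPException):
        # Connection errors, timeouts, HTTP errors, and malformed URLs all count as unhealthy.
        return False


def healthy_workers(
    worker_urls: Iterable[str],
    check: Callable[[str], bool] = is_healthy,
) -> List[str]:
    """Keep only the workers that pass the health check, preserving order."""
    return [url for url in worker_urls if check(url)]
```

The resulting list could then be passed to the router in place of the full set of worker URLs.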

### Related resources

_No response_ | open | 2025-02-24T03:36:18Z | 2025-03-20T09:31:30Z | https://github.com/sgl-project/sglang/issues/3802 | [] | slr1997 | 2 |

seleniumbase/SeleniumBase | web-scraping | 3,284 | Duplicate URL when using sb.open in a loop | I get a URL like https://www.sofascore.comhttps//www.sofascore.com/ru/football/match/pharco-fc-enppi/owrsdJWb#id:13015449 when using

def get_games_info(self, links):

games_info = []

for link in links:

self.sb.open(link)

time.sleep(1) | closed | 2024-11-22T17:09:30Z | 2024-11-22T17:55:42Z | https://github.com/seleniumbase/SeleniumBase/issues/3284 | [

"invalid"

] | SenseiSol | 1 |

matplotlib/cheatsheets | matplotlib | 16 | Image for "Basic plots - plot" should hint at markers | Currently the image is just a sine line, which could trick users into thinking that `plot` is for lines and `scatter` is for markers.

I propose to additionally show another set of values with markers, e.g. something like:

| open | 2020-07-06T20:42:10Z | 2020-07-07T20:26:42Z | https://github.com/matplotlib/cheatsheets/issues/16 | [] | timhoffm | 9 |

sqlalchemy/sqlalchemy | sqlalchemy | 11,282 | Unable to close a connection | ### Describe the bug

When I call the `.close()` method in my code (on a **sqlalchemy.engine.base.Connection** object) and then check the connections in the container, I still see the connection.

<img width="641" alt="image" src="https://github.com/sqlalchemy/sqlalchemy/assets/102469772/ecd2d3e7-0e8d-4a21-b3a3-092198720d0b">

Is there a problem with this method?

### Optional link from https://docs.sqlalchemy.org which documents the behavior that is expected

_No response_

### SQLAlchemy Version in Use

20.0.29

### DBAPI (i.e. the database driver)

pyodbc

### Database Vendor and Major Version

Teradata

### Python Version

3.10

### Operating system

Linux

### To Reproduce

```python

# sqlalchemy.engine.base.Connection - Object

client.connection.close()

# after closing still see the connection in the container

```
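A possible explanation, stated as an assumption about this setup: with a pooled engine, closing a connection hands the underlying DBAPI connection back to the pool instead of closing it. The toy pool below is not SQLAlchemy's implementation; `ToyPool` and `PooledConnection` are hypothetical classes that only illustrate why a closed wrapper can leave the real connection open.

```python
import sqlite3


class ToyPool:
    """Minimal connection pool: checked-in connections stay open for reuse."""

    def __init__(self, creator):
        self._creator = creator
        self._idle = []

    def checkout(self):
        raw = self._idle.pop() if self._idle else self._creator()
        return PooledConnection(self, raw)

    def checkin(self, raw):
        self._idle.append(raw)

    def dispose(self):
        # Only here are the underlying connections really closed.
        while self._idle:
            self._idle.pop().close()


class PooledConnection:
    """Wrapper whose close() returns the raw connection to the pool."""

    def __init__(self, pool, raw):
        self._pool = pool
        self.raw = raw

    def close(self):
        self._pool.checkin(self.raw)


pool = ToyPool(lambda: sqlite3.connect(":memory:"))
conn = pool.checkout()
raw = conn.raw
conn.close()
still_open = raw.execute("SELECT 1").fetchone()  # the raw connection still works
pool.dispose()
```

In SQLAlchemy itself, `Engine.dispose()` and creating the engine with `poolclass=NullPool` are the documented ways to have the underlying connections actually closed.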

### Error

There is no error

### Additional context

_No response_ | closed | 2024-04-17T13:09:27Z | 2024-04-17T17:16:22Z | https://github.com/sqlalchemy/sqlalchemy/issues/11282 | [] | rshunim | 0 |

Neoteroi/BlackSheep | asyncio | 218 | docs: Error in `dispose_http_client` | **Describe the bug**

A description of what the bug is, possibly including how to reproduce it.

How it should be:

```diff

async def dispose_http_client(app):

- http_client = app.services.get(ClientSession) # obtain the http client

+ http_client = app.service_provider.get(ClientSession) # obtain the http client

await http_client.close()

``` | closed | 2021-12-15T14:45:11Z | 2021-12-15T18:50:58Z | https://github.com/Neoteroi/BlackSheep/issues/218 | [] | q0w | 2 |

mckinsey/vizro | plotly | 566 | How to make an editable table/Ag Grid be the source for a chart/figure | Hello all 👋 ,

based on a recent user question I wanted to show how one can make an editable table power a graph. The original request was:

> I would like to create a Table in my dashboard (in default blank) that allows the user to input values (the index are dates). Once clicking on a "Save" button, the data is used to create a line-chart on the left.

> How should I realize this with the AgGrid model and customizable actions?

Here is the solution (I made the update automatic - but of course another "Save" button could be added!)

The code for this can be found here, where you can also run the dashboard live: https://py.cafe/maxi.schulz/vizro-user-question-editable-table

#### Notes

- this example showcases how Vizro is a framework on top of dash - and you can easily revert to using pure Dash for custom functionality

- some parts done here with `@callback` could be done with `vm.Action`, but not all

#### Important

When creating dashboards that allow user input, the dashboard creator is always responsible for the security of the dashboard. Never evaluate untrusted data.

#### Sources

This solution was heavily inspired by https://www.youtube.com/watch?v=LNQhY8NZmCY (thanks @Coding-with-Adam )

#### Discussion

@petar-qb @antonymilne Maybe take note of this issue in case you have any comments, I find this especially interesting because it highlights the need for:

- specifying triggers

- ideally not returning entire figs in callbacks/actions, but rather modifying data in the DM (which we do not do in this example)

| open | 2024-07-04T12:44:10Z | 2024-07-08T15:03:27Z | https://github.com/mckinsey/vizro/issues/566 | [

"General Question :question:"

] | maxschulz-COL | 0 |

dgtlmoon/changedetection.io | web-scraping | 2,935 | Resetting of watch group tab selection when interacting with UI. | **Describe the bug**

Resetting of watch group tab selection when interacting with UI.

**Version**

0.49.0

**How did you install?**

pip

**To Reproduce**

You already fixed a couple of UI actions resetting the tab selection here https://github.com/dgtlmoon/changedetection.io/issues/2785 (big thank you for that!!!)

There are a couple more:

- watch edit > "clear history"

- clicking "CHANGEDETECTION.io" in the logo area (I use it often when I want to go from watch edit to home, i.e. the "watch list" view page)

- watch checkbox > "unpause"

...

In general, in my opinion it's better to make the tab selection persistent, changeable only by clicking a watch group tab, because if the user has focused their attention on a particular group (narrowed it down), resetting their choice is not friendly.

But all this, of course, only if you agree and if your time permits.

| open | 2025-01-29T01:01:13Z | 2025-01-30T14:54:53Z | https://github.com/dgtlmoon/changedetection.io/issues/2935 | [

"user-interface",

"triage"

] | gety9 | 3 |

littlecodersh/ItChat | api | 973 | WeChat bot | open | 2022-12-10T17:19:48Z | 2022-12-10T17:19:48Z | https://github.com/littlecodersh/ItChat/issues/973 | [] | oucos | 0 | |

xonsh/xonsh | data-science | 5,623 | [docs] Cli command examples in guides should be fully copy&pasteable, i.e., without @ | ## Current Behavior

You can't copy and paste a line from a guide and run it; you first need to remove the `@` symbol.

For example

> We suggest using the branchname::

`@ cp TEMPLATE.rst branch.rst`

When triple-clicking it on https://xon.sh/devguide.html you get `@`

Interestingly enough, the front page https://xon.sh seems to be better and doesn't include `@`

## Expected Behavior

No `@` on copying a line

## For community

⬇️ **Please click the 👍 reaction instead of leaving a `+1` or 👍 comment**

| open | 2024-07-23T04:57:22Z | 2024-07-23T11:25:46Z | https://github.com/xonsh/xonsh/issues/5623 | [

"docs"

] | eugenesvk | 0 |

microsoft/nni | tensorflow | 5,720 | NNI is starting, it's time to run an epoch but there's no value in the page? | **Describe the issue**:

it's time to run an epoch but there's no value in the page?

**Environment**:

- NNI version:2.5

- Training service (local|remote|pai|aml|etc):local

- Client OS:Win10

- Server OS (for remote mode only):

- Python version: 3.7

- PyTorch/TensorFlow version:PyTorch

- Is conda/virtualenv/venv used?:conda

- Is running in Docker?: no

**Configuration**:

searchSpaceFile: search_space.json

trialCommand: python train_nni.py

trialGpuNumber: 0

trialConcurrency: 1

tuner:

name: TPE

classArgs:

optimize_mode: maximize

trainingService:

platform: local

**How to reproduce it?**: | open | 2023-12-10T11:22:42Z | 2023-12-10T11:22:42Z | https://github.com/microsoft/nni/issues/5720 | [] | yao-ao | 0 |

jmcnamara/XlsxWriter | pandas | 313 | Minor gridlines in scatter chart do not support log_base | I am trying to set the Y axis to log base 10 with minor gridlines. If I add minor gridlines, the log_base setting no longer works:

```

chart.set_y_axis({

'minor_gridlines': {

'visible': True,

'line': {'width': 1.25, 'dash_type': 'solid'},

'log_base' : 10

},

})

chart.set_y_axis({'log_base': 10})

```

Using the second line removes the minor gridlines; the opposite order removes the log scale.
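The behavior described suggests that each `set_y_axis()` call replaces the options from the previous one, and that `log_base` is an axis-level option rather than a gridline property. Under that assumption, both settings would go into a single call. The helper name `log_axis_with_minor_gridlines` is hypothetical; it accepts any chart object that exposes `set_y_axis()`.

```python
def log_axis_with_minor_gridlines(chart, base=10):
    """Apply log scaling and minor gridlines in one set_y_axis() call."""
    options = {
        # Axis-level option, placed next to (not inside) minor_gridlines.
        "log_base": base,
        "minor_gridlines": {
            "visible": True,
            "line": {"width": 1.25, "dash_type": "solid"},
        },
    }
    chart.set_y_axis(options)
    return options
```

With a real chart created through `workbook.add_chart({'type': 'scatter'})`, calling `log_axis_with_minor_gridlines(chart)` once would replace the two separate calls shown above.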

| closed | 2015-11-10T07:52:36Z | 2015-11-17T08:04:44Z | https://github.com/jmcnamara/XlsxWriter/issues/313 | [

"question",

"ready to close"

] | MichalMisiaszek | 2 |

igorbenav/fastcrud | pydantic | 209 | [Bug / Skill Issue] many to many relationship | **Describe the bug or question**

I would like to have a class for the association table that references the ids of 2 other tables as foreign keys, and have its records deleted when one of the referenced objects gets deleted (cascade).

**To Reproduce**

Please provide a self-contained, minimal, and reproducible example of your use case

```python

from sqlalchemy.orm import Mapped, mapped_column

from sqlalchemy import String, ForeignKey, UniqueConstraint

from src.schemas.level import LanguageEnum

from src.setup.database import Base

class Tag(Base):

__tablename__ = "tag"

id: Mapped[int] = mapped_column(autoincrement=True, primary_key=True)

name: Mapped[str]

language: Mapped[LanguageEnum] = mapped_column(String)

user_id: Mapped[int] = mapped_column(ForeignKey("user.id"), index=True)

__table_args__ = (UniqueConstraint("name", "language", name="uq_tag_language"),)

class PhraseTag(Base):

__tablename__ = "phrase_tag"

tag_id: Mapped[int] = mapped_column(ForeignKey("tag.id", ondelete="CASCADE"), primary_key=True)

phrase_id: Mapped[int] = mapped_column(ForeignKey("phrase.id", ondelete="CASCADE"), primary_key=True)

```

```python

from sqlalchemy import String, ForeignKey

from sqlalchemy.orm import Mapped, mapped_column

from src.setup.database import Base

from src.models.level import LanguageEnum

class Phrase(Base):

__tablename__ = "phrase"

id: Mapped[int] = mapped_column(autoincrement=True, primary_key=True)

language: Mapped[LanguageEnum] = mapped_column(String)

phrase: Mapped[str]

user_id: Mapped[int] = mapped_column(ForeignKey("user.id"))

```

**Description**

Expected: whenever a phrase or tag is deleted, all records that reference its id are deleted as well.

Actual: The PhraseTag table still has all its records when I delete either a Phrase or a Tag.
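One thing that could produce this behavior, assuming the backend is SQLite (which is not stated here): SQLite only enforces `ON DELETE CASCADE` when foreign key support is switched on for the connection. The standard-library sketch below reproduces both cases with simplified versions of the three tables; `remaining_links` is a hypothetical helper.

```python
import sqlite3


def remaining_links(enable_foreign_keys: bool) -> int:
    """Delete a tag and return how many phrase_tag rows are left afterwards."""
    conn = sqlite3.connect(":memory:")
    # Foreign key enforcement is a per-connection setting in SQLite.
    state = "ON" if enable_foreign_keys else "OFF"
    conn.execute(f"PRAGMA foreign_keys = {state}")
    conn.executescript(
        """
        CREATE TABLE tag (id INTEGER PRIMARY KEY, name TEXT);
        CREATE TABLE phrase (id INTEGER PRIMARY KEY, phrase TEXT);
        CREATE TABLE phrase_tag (
            tag_id INTEGER REFERENCES tag(id) ON DELETE CASCADE,
            phrase_id INTEGER REFERENCES phrase(id) ON DELETE CASCADE,
            PRIMARY KEY (tag_id, phrase_id)
        );
        INSERT INTO tag VALUES (1, 'greeting');
        INSERT INTO phrase VALUES (1, 'hello');
        INSERT INTO phrase_tag VALUES (1, 1);
        """
    )
    conn.execute("DELETE FROM tag WHERE id = 1")
    count = conn.execute("SELECT COUNT(*) FROM phrase_tag").fetchone()[0]
    conn.close()
    return count


without_enforcement = remaining_links(False)
with_enforcement = remaining_links(True)
```

If the project runs on another database, this sketch does not apply directly, and the cause would have to be looked for elsewhere, for example in how the tables were created.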

**Screenshots**

N/A

**Additional context**

N/A

| closed | 2025-03-18T20:34:45Z | 2025-03-18T22:45:46Z | https://github.com/igorbenav/fastcrud/issues/209 | [] | maktowon | 1 |

dynaconf/dynaconf | flask | 1,075 | [CI] Update codecov configuration file | I've closed #990 but forgot to create an issue about the codecov configuration.

Apparently, we should have a `coverage.yml` file for the Codecov app/github-action, but I'm not sure how this will work with local coverage reports, which seem to use `.coveragerc`. This requires a little investigation.

The goal here is to have:

- a single config file (local reports and CI)

- an up-to-date configuration file (as recommended in codecov docs):

- as a consequence, we can more easily customize the codecov config (if we discuss we need to) | open | 2024-03-06T12:42:47Z | 2024-03-06T12:42:48Z | https://github.com/dynaconf/dynaconf/issues/1075 | [

"enhancement",

"CI"

] | pedro-psb | 0 |

noirbizarre/flask-restplus | flask | 54 | No way to configure .../swagger.json to go over HTTPS? | Hello,

I have an application deployed using Flask-RESTPlus. Everything works nicely when it goes over http, but as soon as I switch to https, it can't load the swagger.json because it is making requests to http://host/swagger.json and not https://host/swagger.json

The endpoints are registered properly with blueprints, because if I manually go to https://host/swagger.json, I get the correct JSON. However, the generated swagger page tries to load the JSON from a http endpoint, instead of the correct https endpoint.

Is there any way to fix this?

Thanks

| closed | 2015-06-25T17:54:24Z | 2022-01-05T01:52:28Z | https://github.com/noirbizarre/flask-restplus/issues/54 | [] | Iulian7 | 11 |

zappa/Zappa | django | 526 | [Migrated] Deployed flask endpoint not working: [run_wsgi_app TypeError: 'NoneType' object is not callable] App function file missing in Lamda zip package | Originally from: https://github.com/Miserlou/Zappa/issues/1396 by [NakedKoala](https://github.com/NakedKoala)

## Context

I am trying to deploy my deep learning model to lambda. The entire deployment package is over 1 GB. I am using slim-handler.

The app function file looks like this:

```

import logging

from flask import Flask

from flask import request

from flask import json

from model import TacoModel

from utils import image

from keras.preprocessing.image import img_to_array

import numpy as np

app = Flask(__name__)

logging.basicConfig()

logger = logging.getLogger(__name__)

logger.setLevel(logging.DEBUG)

taco_model = TacoModel()

@app.route('/', methods=['GET'])

def index():

path = "data/"

img = image.load_img(path+"test/unknown/hotdog679.JPEG",target_size=(224, 224))

img = np.array([img_to_array(img)])

res = taco_model.predict(img)

return json.dumps(res)

if __name__ == '__main__':

app.run()

```

Everything runs fine locally. When I curl my local server, I am able to get back the expected response.

The Zappa deployment succeeded without errors, but a GET request produces:

```

{u'message': u'An uncaught exception happened while servicing this request. You can investigate this with the `zappa tail` command.', u'traceback': ['Traceback (most recent call last):\\n', ' File \"/var/task/handler.py\", line 452, in handler\\n response = Response.from_app(self.wsgi_app, environ)\\n', ' File \"/private/var/folders/kl/35lz1q3x2gs0y0r_1njxfd0m0000gn/T/pip-build-R7GWCg/Werkzeug/werkzeug/wrappers.py\", line 903, in from_app\\n', ' File \"/private/var/folders/kl/35lz1q3x2gs0y0r_1njxfd0m0000gn/T/pip-build-R7GWCg/Werkzeug/werkzeug/wrappers.py\", line 57, in _run_wsgi_app\\n', ' File \"/private/var/folders/kl/35lz1q3x2gs0y0r_1njxfd0m0000gn/T/pip-build-R7GWCg/Werkzeug/werkzeug/test.py\", line 884, in run_wsgi_app\\n', \"TypeError: 'NoneType' object is not callable\\n\"]}

```

## Expected Behavior

Endpoint should work and return a dummy prediction on get request

## Actual Behavior

Get request failed. Produce the above error

## Possible Fix

I have tried cleaning up my virtualenv, recreating it, and redeploying. The error still persists.

zappa tail reveals this error

```

[1518497133157] No module named my_app: ImportError

Traceback (most recent call last):

File "/var/task/handler.py", line 509, in lambda_handler

return LambdaHandler.lambda_handler(event, context)

File "/var/task/handler.py", line 237, in lambda_handler

handler = cls()

File "/var/task/handler.py", line 129, in __init__

self.app_module = importlib.import_module(self.settings.APP_MODULE)

File "/usr/lib64/python2.7/importlib/__init__.py", line 37, in import_module

__import__(name)

ImportError: No module named my_app

```

I tried exporting my Lambda zip package. Upon inspection, my Flask app function file is missing from the deployment package. I am new to Zappa, but this looks wrong to me. So I manually added the app function file, re-zipped, and uploaded. But the GET request still failed, and zappa tail returned a different error.

Is something wrong during the packaging step ?
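The manual inspection described above could be scripted so it is easy to repeat after each deploy. This is a minimal standard-library sketch; `package_has_module` is a hypothetical helper, and it only checks for a top-level `my_app.py` or `my_app/__init__.py` inside the archive it is given.

```python
import zipfile


def package_has_module(package, module_name: str) -> bool:
    """Return True if the archive holds module_name as a top-level module or package.

    `package` may be a path or a binary file-like object.
    """
    candidates = {f"{module_name}.py", f"{module_name}/__init__.py"}
    with zipfile.ZipFile(package) as archive:
        return any(name in candidates for name in archive.namelist())
```

One caveat, which is an assumption worth checking against the Zappa documentation: with `slim_handler` enabled, the archive exported from Lambda may hold only the handler while the project archive is stored separately in S3, so the check may need to run against that second archive.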

## Steps to Reproduce

live link

https://knbxmrhkt0.execute-api.us-west-2.amazonaws.com/dev

## Your Environment

* Zappa version used: 0.45.1

* Operating System and Python version: MacOS python 2.7

* The output of `pip freeze`:

```

absl-py==0.1.10

appnope==0.1.0

argcomplete==1.9.2

backports-abc==0.5

backports.functools-lru-cache==1.5

backports.shutil-get-terminal-size==1.0.0

backports.weakref==1.0.post1

base58==0.2.4

bcolz==1.1.2

bleach==2.1.2

boto3==1.5.27

botocore==1.8.41

certifi==2018.1.18

cfn-flip==1.0.0

chardet==3.0.4

click==6.7

configparser==3.5.0

cycler==0.10.0

decorator==4.2.1

docutils==0.14

durationpy==0.5

entrypoints==0.2.3

enum34==1.1.6

Flask==0.12.2

funcsigs==1.0.2

functools32==3.2.3.post2

future==0.16.0

futures==3.1.1

h5py==2.7.1

hjson==3.0.1

html5lib==0.9999999

idna==2.6

ipykernel==4.8.1

ipython==5.5.0

ipython-genutils==0.2.0

ipywidgets==7.1.1

itsdangerous==0.24

Jinja2==2.10

jmespath==0.9.3

jsonschema==2.6.0

jupyter==1.0.0

jupyter-client==5.2.2

jupyter-console==5.2.0

jupyter-core==4.4.0

kappa==0.6.0

Keras==1.2.2

lambda-packages==0.19.0

Markdown==2.6.11

MarkupSafe==1.0

matplotlib==2.1.2

mistune==0.8.3

mock==2.0.0

mpmath==1.0.0

nbconvert==5.3.1

nbformat==4.4.0

notebook==5.4.0

numpy==1.14.0

pandas==0.22.0

pandocfilters==1.4.2

pathlib2==2.3.0

pbr==3.1.1

pexpect==4.4.0

pickleshare==0.7.4

Pillow==5.0.0

placebo==0.8.1

prompt-toolkit==1.0.15

protobuf==3.5.1

ptyprocess==0.5.2

Pygments==2.2.0

pyparsing==2.2.0

python-dateutil==2.6.1

python-slugify==1.2.4

pytz==2018.3

PyYAML==3.12

pyzmq==17.0.0

qtconsole==4.3.1

requests==2.18.4

s3transfer==0.1.12

scandir==1.7

scikit-learn==0.19.1

scipy==1.0.0

Send2Trash==1.4.2

simplegeneric==0.8.1

singledispatch==3.4.0.3

six==1.11.0

subprocess32==3.2.7

sympy==1.1.1

tensorflow==1.5.0

tensorflow-tensorboard==1.5.1

terminado==0.8.1

testpath==0.3.1

Theano==1.0.1

toml==0.9.4

tornado==4.5.3

tqdm==4.19.1

traitlets==4.3.2

troposphere==2.2.0

Unidecode==1.0.22

urllib3==1.22

wcwidth==0.1.7

Werkzeug==0.12

widgetsnbextension==3.1.3

wsgi-request-logger==0.4.6

zappa==0.45.1

```

* Link to your project (optional):

* Your `zappa_settings.py`:

```

{

"dev": {

"app_function": "my_app.app",

"aws_region": "us-west-2",

"profile_name": "default",

"project_name": "freshstart3",

"runtime": "python2.7",

"s3_bucket": "[redacted]",

"slim_handler": true

}

}

``` | closed | 2021-02-20T09:43:57Z | 2022-07-16T07:15:31Z | https://github.com/zappa/Zappa/issues/526 | [] | jneves | 1 |

coleifer/sqlite-web | flask | 24 | UnicodeDecodeError: 'ascii' codec can't decode byte 0xff in position 0: ordinal not in range(128) | classic unicode issue, please fix, thanks

| closed | 2016-09-13T09:58:41Z | 2016-09-17T17:45:05Z | https://github.com/coleifer/sqlite-web/issues/24 | [] | hugowan | 7 |

vllm-project/vllm | pytorch | 15,217 | [Bug]: RuntimeError: please ensure that world_size (2) is less than than max local gpu count (1) | ### Your current environment

```text

Collecting environment information...

PyTorch version: 2.7.0a0+git6c0e746

Is debug build: False

CUDA used to build PyTorch: N/A

ROCM used to build PyTorch: 6.3.42133-1b9c17779

OS: Ubuntu 22.04.5 LTS (x86_64)

GCC version: (Ubuntu 11.4.0-1ubuntu1~22.04) 11.4.0

Clang version: 18.0.0git (https://github.com/RadeonOpenCompute/llvm-project roc-6.3.1 24491 1e0fda770a2079fbd71e4b70974d74f62fd3af10)

CMake version: version 3.31.4

Libc version: glibc-2.35

Python version: 3.12.9 (main, Feb 5 2025, 08:49:00) [GCC 11.4.0] (64-bit runtime)

Python platform: Linux-5.14.21-150500.55.83_13.0.62-cray_shasta_c-x86_64-with-glibc2.35

Is CUDA available: True

CUDA runtime version: Could not collect

CUDA_MODULE_LOADING set to: LAZY

GPU models and configuration: AMD Instinct MI250X (gfx90a:sramecc+:xnack-)

Nvidia driver version: Could not collect

cuDNN version: Could not collect

HIP runtime version: 6.3.42133

MIOpen runtime version: 3.3.0

Is XNNPACK available: True

CPU:

Architecture: x86_64

CPU op-mode(s): 32-bit, 64-bit

Address sizes: 48 bits physical, 48 bits virtual

Byte Order: Little Endian

CPU(s): 128

On-line CPU(s) list: 0-127

Vendor ID: AuthenticAMD

Model name: AMD EPYC 7A53 64-Core Processor

CPU family: 25

Model: 48

Thread(s) per core: 2

Core(s) per socket: 64

Socket(s): 1

Stepping: 1

Frequency boost: enabled

CPU max MHz: 3541.0149

CPU min MHz: 1500.0000

BogoMIPS: 3992.57

Flags: fpu vme de pse tsc msr pae mce cx8 apic sep mtrr pge mca cmov pat pse36 clflush mmx fxsr sse sse2 ht syscall nx mmxext fxsr_opt pdpe1gb rdtscp lm constant_tsc rep_good nopl nonstop_tsc cpuid extd_apicid aperfmperf rapl pni pclmulqdq monitor ssse3 fma cx16 pcid sse4_1 sse4_2 x2apic movbe popcnt aes xsave avx f16c rdrand lahf_lm cmp_legacy svm extapic cr8_legacy abm sse4a misalignsse 3dnowprefetch osvw ibs wdt tce topoext perfctr_core perfctr_nb bpext perfctr_llc mwaitx cpb cat_l3 cdp_l3 invpcid_single hw_pstate ssbd mba ibrs ibpb stibp vmmcall fsgsbase bmi1 avx2 smep bmi2 erms invpcid cqm rdt_a rdseed adx smap clflushopt clwb sha_ni xsaveopt xsavec xgetbv1 xsaves cqm_llc cqm_occup_llc cqm_mbm_total cqm_mbm_local clzero irperf xsaveerptr rdpru wbnoinvd amd_ppin arat npt lbrv svm_lock nrip_save tsc_scale vmcb_clean flushbyasid decodeassists pausefilter pfthreshold avic v_vmsave_vmload vgif v_spec_ctrl umip pku ospke vaes vpclmulqdq rdpid overflow_recov succor smca fsrm

Virtualization: AMD-V

L1d cache: 2 MiB (64 instances)

L1i cache: 2 MiB (64 instances)

L2 cache: 32 MiB (64 instances)

L3 cache: 256 MiB (8 instances)

NUMA node(s): 4

NUMA node0 CPU(s): 0-15,64-79

NUMA node1 CPU(s): 16-31,80-95

NUMA node2 CPU(s): 32-47,96-111

NUMA node3 CPU(s): 48-63,112-127

Vulnerability Gather data sampling: Not affected

Vulnerability Itlb multihit: Not affected

Vulnerability L1tf: Not affected

Vulnerability Mds: Not affected

Vulnerability Meltdown: Not affected

Vulnerability Mmio stale data: Not affected

Vulnerability Reg file data sampling: Not affected

Vulnerability Retbleed: Not affected

Vulnerability Spec rstack overflow: Mitigation; Safe RET

Vulnerability Spec store bypass: Mitigation; Speculative Store Bypass disabled via prctl and seccomp

Vulnerability Spectre v1: Mitigation; usercopy/swapgs barriers and __user pointer sanitization

Vulnerability Spectre v2: Mitigation; Retpolines; IBPB conditional; IBRS_FW; STIBP always-on; RSB filling; PBRSB-eIBRS Not affected, BHI Not affected

Vulnerability Srbds: Not affected

Vulnerability Tsx async abort: Not affected

Versions of relevant libraries:

[pip3] numpy==1.26.4

[pip3] pyzmq==26.2.1

[pip3] torch==2.7.0a0+git6c0e746

[pip3] torchvision==0.21.0+7af6987

[pip3] transformers==4.49.0

[pip3] triton==3.2.0+gite5be006a

[conda] Could not collect

ROCM Version: 6.3.42133-1b9c17779

Neuron SDK Version: N/A

vLLM Version: 0.7.4.dev49+gc0dd5adf6

vLLM Build Flags:

CUDA Archs: Not Set; ROCm: Disabled; Neuron: Disabled

GPU Topology:

============================ ROCm System Management Interface ============================

================================ Weight between two GPUs =================================

GPU0

GPU0 0

================================= Hops between two GPUs ==================================

GPU0

GPU0 0

=============================== Link Type between two GPUs ===============================

GPU0

GPU0 0

======================================= Numa Nodes =======================================

GPU[0] : (Topology) Numa Node: 0

GPU[0] : (Topology) Numa Affinity: 0

================================== End of ROCm SMI Log ===================================

PYTORCH_ROCM_ARCH=gfx90a;gfx942

LD_LIBRARY_PATH=/usr/local/lib/python3.12/dist-packages/cv2/../../lib64::/opt/cray/pe/mpich/default/ofi/gnu/9.1/lib-abi-mpich:/opt/cray/pe/mpich/default/gtl/lib:/opt/cray/xpmem/default/lib64:/opt/cray/pe/pmi/default/lib:/opt/cray/pe/pals/default/lib:/opt/cray/pe/gcc-libs:/opt/rocm/lib:/usr/local/lib::/.singularity.d/libs:/.singularity.d/libs

NCCL_CUMEM_ENABLE=0

TORCHINDUCTOR_COMPILE_THREADS=1

CUDA_MODULE_LOADING=LAZY

```

### 🐛 Describe the bug

I want to run vLLM on 2 nodes. I have already set up Ray, and it shows 2 GPUs:

```

Singularity> ray status

======== Autoscaler status: 2025-03-20 20:40:48.252496 ========

Node status

---------------------------------------------------------------

Active:

1 node_5373538893976cc08e332acdf25b3f043ca49757d98d5f6ab048d1ed

1 node_1689389e001b234e7d470b2da54b6c17b4441403358cf341f778e2ea

Pending:

(no pending nodes)

Recent failures:

(no failures)

Resources

---------------------------------------------------------------

Usage:

0.0/256.0 CPU

0.0/2.0 GPU

0B/311.15GiB memory

0B/137.34GiB object_store_memory

Demands:

(no resource demands)

```

Then I ran `vllm serve ./DeepSeek-V3 --trust-remote-code --tensor-parallel-size 1 --pipeline-parallel-size 2` on the head node, and it failed with:

```

INFO 03-20 20:38:08 [__init__.py:207] Automatically detected platform rocm.

INFO 03-20 20:38:09 [api_server.py:911] vLLM API server version 0.7.4.dev49+gc0dd5adf6

INFO 03-20 20:38:09 [api_server.py:912] args: Namespace(subparser='serve', model_tag='./DeepSeek-V3', config='', host=None, port=8000, uvicorn_log_level='info', allow_credentials=False, allowed_origins=['*'], allowed_methods=['*'], allowed_headers=['*'], api_key=None, lora_modules=None, prompt_adapters=None, chat_template=None, chat_template_content_format='auto', response_role='assistant', ssl_keyfile=None, ssl_certfile=None, ssl_ca_certs=None, enable_ssl_refresh=False, ssl_cert_reqs=0, root_path=None, middleware=[], return_tokens_as_token_ids=False, disable_frontend_multiprocessing=False, enable_request_id_headers=False, enable_auto_tool_choice=False, enable_reasoning=False, reasoning_parser=None, tool_call_parser=None, tool_parser_plugin='', model='./DeepSeek-V3', task='auto', tokenizer=None, skip_tokenizer_init=False, revision=None, code_revision=None, tokenizer_revision=None, tokenizer_mode='auto', trust_remote_code=True, allowed_local_media_path=None, download_dir=None, load_format='auto', config_format=<ConfigFormat.AUTO: 'auto'>, dtype='auto', kv_cache_dtype='auto', max_model_len=None, guided_decoding_backend='xgrammar', logits_processor_pattern=None, model_impl='auto', distributed_executor_backend=None, pipeline_parallel_size=2, tensor_parallel_size=1, max_parallel_loading_workers=None, ray_workers_use_nsight=False, block_size=None, enable_prefix_caching=None, disable_sliding_window=False, use_v2_block_manager=True, num_lookahead_slots=0, seed=0, swap_space=4, cpu_offload_gb=0, gpu_memory_utilization=0.9, num_gpu_blocks_override=None, max_num_batched_tokens=None, max_num_partial_prefills=1, max_long_partial_prefills=1, long_prefill_token_threshold=0, max_num_seqs=None, max_logprobs=20, disable_log_stats=False, quantization=None, rope_scaling=None, rope_theta=None, hf_overrides=None, enforce_eager=False, max_seq_len_to_capture=8192, disable_custom_all_reduce=False, tokenizer_pool_size=0, tokenizer_pool_type='ray', tokenizer_pool_extra_config=None, 
limit_mm_per_prompt=None, mm_processor_kwargs=None, disable_mm_preprocessor_cache=False, enable_lora=False, enable_lora_bias=False, max_loras=1, max_lora_rank=16, lora_extra_vocab_size=256, lora_dtype='auto', long_lora_scaling_factors=None, max_cpu_loras=None, fully_sharded_loras=False, enable_prompt_adapter=False, max_prompt_adapters=1, max_prompt_adapter_token=0, device='auto', num_scheduler_steps=1, multi_step_stream_outputs=True, scheduler_delay_factor=0.0, enable_chunked_prefill=None, speculative_model=None, speculative_model_quantization=None, num_speculative_tokens=None, speculative_disable_mqa_scorer=False, speculative_draft_tensor_parallel_size=None, speculative_max_model_len=None, speculative_disable_by_batch_size=None, ngram_prompt_lookup_max=None, ngram_prompt_lookup_min=None, spec_decoding_acceptance_method='rejection_sampler', typical_acceptance_sampler_posterior_threshold=None, typical_acceptance_sampler_posterior_alpha=None, disable_logprobs_during_spec_decoding=None, model_loader_extra_config=None, ignore_patterns=[], preemption_mode=None, served_model_name=None, qlora_adapter_name_or_path=None, show_hidden_metrics_for_version=None, otlp_traces_endpoint=None, collect_detailed_traces=None, disable_async_output_proc=False, scheduling_policy='fcfs', scheduler_cls='vllm.core.scheduler.Scheduler', override_neuron_config=None, override_pooler_config=None, compilation_config=None, kv_transfer_config=None, worker_cls='auto', generation_config=None, override_generation_config=None, enable_sleep_mode=False, calculate_kv_scales=False, additional_config=None, disable_log_requests=False, max_log_len=None, disable_fastapi_docs=False, enable_prompt_tokens_details=False, dispatch_function=<function ServeSubcommand.cmd at 0x14a39b61c180>)

INFO 03-20 20:38:09 [config.py:209] Replacing legacy 'type' key with 'rope_type'

INFO 03-20 20:38:24 [config.py:570] This model supports multiple tasks: {'score', 'generate', 'reward', 'embed', 'classify'}. Defaulting to 'generate'.

INFO 03-20 20:38:31 [config.py:1479] Defaulting to use mp for distributed inference

INFO 03-20 20:38:31 [config.py:1536] Disabled the custom all-reduce kernel because it is not supported with pipeline parallelism.

WARNING 03-20 20:38:31 [arg_utils.py:1218] The model has a long context length (163840). This may cause OOM errors during the initial memory profiling phase, or result in low performance due to small KV cache space. Consider setting --max-model-len to a smaller value.

WARNING 03-20 20:38:31 [fp8.py:59] Detected fp8 checkpoint. Please note that the format is experimental and subject to change.

INFO 03-20 20:38:31 [config.py:3454] MLA is enabled on a non-cuda platform; forcing chunked prefill and prefix caching to be disabled.

INFO 03-20 20:38:31 [rocm.py:228] Aiter main switch (VLLM_USE_AITER) is not set. Disabling individual Aiter components

INFO 03-20 20:38:31 [async_llm_engine.py:267] Initializing a V0 LLM engine (v0.7.4.dev49+gc0dd5adf6) with config: model='./DeepSeek-V3', speculative_config=None, tokenizer='./DeepSeek-V3', skip_tokenizer_init=False, tokenizer_mode=auto, revision=None, override_neuron_config=None, tokenizer_revision=None, trust_remote_code=True, dtype=torch.bfloat16, max_seq_len=163840, download_dir=None, load_format=LoadFormat.AUTO, tensor_parallel_size=1, pipeline_parallel_size=2, disable_custom_all_reduce=True, quantization=fp8, enforce_eager=False, kv_cache_dtype=auto, device_config=cuda, decoding_config=DecodingConfig(guided_decoding_backend='xgrammar'), observability_config=ObservabilityConfig(show_hidden_metrics=False, otlp_traces_endpoint=None, collect_model_forward_time=False, collect_model_execute_time=False), seed=0, served_model_name=./DeepSeek-V3, num_scheduler_steps=1, multi_step_stream_outputs=True, enable_prefix_caching=False, chunked_prefill_enabled=False, use_async_output_proc=False, disable_mm_preprocessor_cache=False, mm_processor_kwargs=None, pooler_config=None, compilation_config={"splitting_ops":[],"compile_sizes":[],"cudagraph_capture_sizes":[256,248,240,232,224,216,208,200,192,184,176,168,160,152,144,136,128,120,112,104,96,88,80,72,64,56,48,40,32,24,16,8,4,2,1],"max_capture_size":256}, use_cached_outputs=False,

INFO 03-20 20:38:31 [config.py:209] Replacing legacy 'type' key with 'rope_type'

Traceback (most recent call last):

File "/usr/local/bin/vllm", line 8, in <module>

sys.exit(main())

^^^^^^

File "/usr/local/lib/python3.12/dist-packages/vllm/entrypoints/cli/main.py", line 73, in main

args.dispatch_function(args)

File "/usr/local/lib/python3.12/dist-packages/vllm/entrypoints/cli/serve.py", line 34, in cmd

uvloop.run(run_server(args))

File "/usr/local/lib/python3.12/dist-packages/uvloop/__init__.py", line 109, in run

return __asyncio.run(

^^^^^^^^^^^^^^

File "/usr/lib/python3.12/asyncio/runners.py", line 195, in run

return runner.run(main)

^^^^^^^^^^^^^^^^

File "/usr/lib/python3.12/asyncio/runners.py", line 118, in run

return self._loop.run_until_complete(task)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "uvloop/loop.pyx", line 1518, in uvloop.loop.Loop.run_until_complete

File "/usr/local/lib/python3.12/dist-packages/uvloop/__init__.py", line 61, in wrapper

return await main

^^^^^^^^^^

File "/usr/local/lib/python3.12/dist-packages/vllm/entrypoints/openai/api_server.py", line 946, in run_server

async with build_async_engine_client(args) as engine_client:

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/usr/lib/python3.12/contextlib.py", line 210, in __aenter__

return await anext(self.gen)

^^^^^^^^^^^^^^^^^^^^^

File "/usr/local/lib/python3.12/dist-packages/vllm/entrypoints/openai/api_server.py", line 138, in build_async_engine_client

async with build_async_engine_client_from_engine_args(

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/usr/lib/python3.12/contextlib.py", line 210, in __aenter__

return await anext(self.gen)

^^^^^^^^^^^^^^^^^^^^^

File "/usr/local/lib/python3.12/dist-packages/vllm/entrypoints/openai/api_server.py", line 162, in build_async_engine_client_from_engine_args

engine_client = AsyncLLMEngine.from_engine_args(

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/usr/local/lib/python3.12/dist-packages/vllm/engine/async_llm_engine.py", line 644, in from_engine_args

engine = cls(

^^^^

File "/usr/local/lib/python3.12/dist-packages/vllm/engine/async_llm_engine.py", line 594, in __init__

self.engine = self._engine_class(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/usr/local/lib/python3.12/dist-packages/vllm/engine/async_llm_engine.py", line 267, in __init__

super().__init__(*args, **kwargs)

File "vllm/engine/llm_engine.py", line 273, in vllm.engine.llm_engine.LLMEngine.__init__

File "/usr/local/lib/python3.12/dist-packages/vllm/executor/executor_base.py", line 271, in __init__

super().__init__(*args, **kwargs)

File "/usr/local/lib/python3.12/dist-packages/vllm/executor/executor_base.py", line 52, in __init__

self._init_executor()

File "/usr/local/lib/python3.12/dist-packages/vllm/executor/mp_distributed_executor.py", line 60, in _init_executor

self._check_cuda()

File "/usr/local/lib/python3.12/dist-packages/vllm/executor/mp_distributed_executor.py", line 46, in _check_cuda

raise RuntimeError(

RuntimeError: please ensure that world_size (2) is less than than max local gpu count (1)

```

It seems vLLM did not recognize the GPU on the other node.

### Before submitting a new issue...

- [x] Make sure you already searched for relevant issues, and asked the chatbot living at the bottom right corner of the [documentation page](https://docs.vllm.ai/en/latest/), which can answer lots of frequently asked questions. | open | 2025-03-20T12:44:35Z | 2025-03-20T12:44:35Z | https://github.com/vllm-project/vllm/issues/15217 | [

"bug"

] | chn-lee-yumi | 0 |

plotly/dash | plotly | 3,201 | Typescript Components which have props with hyphens generate a syntax error in Python | When converting components whose props contain a hyphen, e.g. "aria-expanded", the generated Python class uses "aria-expanded" as a parameter name, which raises a syntax error.

```

def __init__(self, children=None, value=Component.REQUIRED, aria-expanded=Component.UNDEFINED, **kwargs):
    self._prop_names = ['children', 'id', 'about', 'accessKey', 'aria-expanded', ]

```

`SyntaxError: invalid syntax

` | open | 2024-04-29T16:16:12Z | 2025-03-07T14:15:49Z | https://github.com/plotly/dash/issues/3201 | [] | tsveti22 | 0 |
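For context on the report above: a hyphenated prop name such as `aria-expanded` is not a valid Python identifier, so it cannot appear in a signature, but it can still be passed through `**kwargs` as a dictionary key. The sketch below only illustrates that language rule with a made-up `make_component` helper; it is not Dash's generator code, and how Dash should handle such props is left open by the issue.

```python
import keyword


def is_valid_parameter_name(name: str) -> bool:
    """Return True if `name` can be used as a Python parameter name."""
    return name.isidentifier() and not keyword.iskeyword(name)


def split_prop_names(prop_names):
    """Separate props usable as explicit parameters from those that are not."""
    explicit = [p for p in prop_names if is_valid_parameter_name(p)]
    kwargs_only = [p for p in prop_names if not is_valid_parameter_name(p)]
    return explicit, kwargs_only


def make_component(children=None, **kwargs):
    """Hypothetical constructor: hyphenated props arrive through **kwargs."""
    props = {"children": children}
    props.update(kwargs)
    return props


explicit, kwargs_only = split_prop_names(["children", "id", "accessKey", "aria-expanded"])

# A dict unpacked with ** may carry keys that are not valid identifiers.
component = make_component(children="Menu", **{"aria-expanded": "true"})
```

A generator following this idea would emit only the `explicit` names in the signature and document the `kwargs_only` names as keyword-only-by-dictionary props.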

globaleaks/globaleaks-whistleblowing-software | sqlalchemy | 3,342 | Wizard cannot be completed | When reinstalling, the wizard starts as usual.

Unfortunately, it does not continue at step 5, the confirmation of the license. When pressing the "continue" button nothing happens and no error is displayed. We have tested it on different systems and with different browsers - unfortunately without any result.

We tried to call the wizard via subdomain or IP address. Same result.

This is the output in the console:

The Cross-Origin-Opener-Policy header has been ignored, because the URL's origin was untrustworthy. It was defined either in the final response or a redirect. Please deliver the response using the HTTPS protocol. You can also use the 'localhost' origin instead. See https://www.w3.org/TR/powerful-features/#potentially-trustworthy-origin and https://html.spec.whatwg.org/#the-cross-origin-opener-policy-header.

How can we complete the wizard? | closed | 2023-02-03T13:58:36Z | 2023-02-06T08:37:48Z | https://github.com/globaleaks/globaleaks-whistleblowing-software/issues/3342 | [] | stefan-h3 | 2 |

serengil/deepface | machine-learning | 1,323 | [FEATURE]: support pipx install on Ubuntu 24.04, fails with "ValueError: You have tensorflow 2.17.0 and this requires tf-keras package. Please run `pip install tf-keras` or downgrade your tensorflow" | ### Description

It would be good if `pipx install` worked for Ubuntu 24.04:

```

pipx install deepface==0.0.93

```

but then:

```

deepface help

```

blows up with:

```

2024-08-27_21-42-31@ciro@ciro-p14s$ deepface help

2024-08-27 21:42:38.810014: I tensorflow/core/util/port.cc:153] oneDNN custom operations are on. You may see slightly different numerical results due to floating-point round-off errors from different computation orders. To turn them off, set the environment variable `TF_ENABLE_ONEDNN_OPTS=0`.

2024-08-27 21:42:38.810549: I external/local_xla/xla/tsl/cuda/cudart_stub.cc:32] Could not find cuda drivers on your machine, GPU will not be used.

2024-08-27 21:42:38.814904: I external/local_xla/xla/tsl/cuda/cudart_stub.cc:32] Could not find cuda drivers on your machine, GPU will not be used.

2024-08-27 21:42:38.822156: E external/local_xla/xla/stream_executor/cuda/cuda_fft.cc:485] Unable to register cuFFT factory: Attempting to register factory for plugin cuFFT when one has already been registered

2024-08-27 21:42:38.834452: E external/local_xla/xla/stream_executor/cuda/cuda_dnn.cc:8454] Unable to register cuDNN factory: Attempting to register factory for plugin cuDNN when one has already been registered

2024-08-27 21:42:38.837693: E external/local_xla/xla/stream_executor/cuda/cuda_blas.cc:1452] Unable to register cuBLAS factory: Attempting to register factory for plugin cuBLAS when one has already been registered

2024-08-27 21:42:38.846987: I tensorflow/core/platform/cpu_feature_guard.cc:210] This TensorFlow binary is optimized to use available CPU instructions in performance-critical operations.

To enable the following instructions: AVX2 AVX512F AVX512_VNNI AVX512_BF16 FMA, in other operations, rebuild TensorFlow with the appropriate compiler flags.

2024-08-27 21:42:39.511588: W tensorflow/compiler/tf2tensorrt/utils/py_utils.cc:38] TF-TRT Warning: Could not find TensorRT

Traceback (most recent call last):

File "/home/ciro/.local/pipx/venvs/deepface/lib/python3.12/site-packages/retinaface/commons/package_utils.py", line 19, in validate_for_keras3

import tf_keras

ModuleNotFoundError: No module named 'tf_keras'

The above exception was the direct cause of the following exception:

Traceback (most recent call last):

File "/home/ciro/.local/bin/deepface", line 5, in <module>

from deepface.DeepFace import cli

File "/home/ciro/.local/pipx/venvs/deepface/lib/python3.12/site-packages/deepface/DeepFace.py", line 20, in <module>

from deepface.modules import (

File "/home/ciro/.local/pipx/venvs/deepface/lib/python3.12/site-packages/deepface/modules/modeling.py", line 16, in <module>

from deepface.models.face_detection import (

File "/home/ciro/.local/pipx/venvs/deepface/lib/python3.12/site-packages/deepface/models/face_detection/RetinaFace.py", line 3, in <module>

from retinaface import RetinaFace as rf

File "/home/ciro/.local/pipx/venvs/deepface/lib/python3.12/site-packages/retinaface/RetinaFace.py", line 20, in <module>

package_utils.validate_for_keras3()

File "/home/ciro/.local/pipx/venvs/deepface/lib/python3.12/site-packages/retinaface/commons/package_utils.py", line 24, in validate_for_keras3

raise ValueError(

ValueError: You have tensorflow 2.17.0 and this requires tf-keras package. Please run `pip install tf-keras` or downgrade your tensorflow.

```

And you can't just:

```

pip install tf-keras

```

because it blows up with:

```

error: externally-managed-environment

× This environment is externally managed

╰─> To install Python packages system-wide, try apt install

python3-xyz, where xyz is the package you are trying to

install.

If you wish to install a non-Debian-packaged Python package,

create a virtual environment using python3 -m venv path/to/venv.

Then use path/to/venv/bin/python and path/to/venv/bin/pip. Make

sure you have python3-full installed.

If you wish to install a non-Debian packaged Python application,

it may be easiest to use pipx install xyz, which will manage a

virtual environment for you. Make sure you have pipx installed.

See /usr/share/doc/python3.12/README.venv for more information.

note: If you believe this is a mistake, please contact your Python installation or OS distribution provider. You can override this, at the risk of breaking your Python installation or OS, by passing --break-system-packages.

hint: See PEP 668 for the detailed specification.

``` | closed | 2024-08-27T20:46:13Z | 2024-10-22T17:04:49Z | https://github.com/serengil/deepface/issues/1323 | [

"enhancement",

"dependencies"

] | cirosantilli | 2 |

flairNLP/flair | nlp | 3,081 | [Question]: Combining BERT & Flair | ### Question

Hey,

I have some questions regarding the [following tutorial](https://github.com/flairNLP/flair/blob/master/resources/docs/TUTORIAL_4_ELMO_BERT_FLAIR_EMBEDDING.md) for creating a multi-lingual Flair Stacked embedding model that combines Flair Embeddings & BERT.

https://github.com/flairNLP/flair/blob/23618cd8e072ec2a3f325985c18bfa14315c9554/resources/docs/TUTORIAL_4_ELMO_BERT_FLAIR_EMBEDDING.md?plain=1#L33-L42

By default, the parameter fine_tune is set to True. My question is **should you fine-tune the TransformerWordEmbeddings when including them in a Flair Stacked Embedding?**

I have noticed that training this model can be incredibly slow, even on a big GPU (I have an A100 with 80GB available).

With 2400 training sentences, one epoch takes about 40 minutes with mini_batch_size=4 and mini_chunk_size=1. | closed | 2023-02-06T07:25:35Z | 2023-02-07T17:31:38Z | https://github.com/flairNLP/flair/issues/3081 | [

"question"

] | Guust-Franssens | 1 |

xlwings/xlwings | automation | 1,777 | Unable to include Image comment in xlwings | #### OS (e.g. Windows 10 or macOS Sierra)

Windows 10

#### Versions of xlwings, Excel and Python (e.g. 0.11.8, Office 365, Python 3.7)

0.24.9, Office 2019 and Python 3.9.7

#### Describe your issue (incl. Traceback!)

I am unable to include an image in an Excel comment. In Excel, the following steps add a picture to a comment. I want to do the same through Python:

1. Right-click the cell which contains the comment.

2. Choose Show/Hide Comments, and clear any text from the comment.

3. Click on the border of the comment, to select it.

4. Choose Format|Comment

5. On the Colors and Lines tab, click the drop-down arrow for Color.

6. Click Fill Effects

7. On the picture tab, click Select Picture

8. Locate and select the picture

9. To keep the picture in proportion, add a check mark to Lock Picture Aspect Ratio

10. Click Insert, click OK, click OK

The sample output expected from Python is attached above.

Could you please suggest how to achieve this in xlwings? | open | 2021-12-02T06:04:26Z | 2022-02-01T13:26:50Z | https://github.com/xlwings/xlwings/issues/1777 | [] | agrangaraj | 1 |

pydantic/pydantic-ai | pydantic | 1,143 | Validation errors for _GeminiResponse | ### Initial Checks

- [x] I confirm that I'm using the latest version of Pydantic AI

### Description

I get the error below when trying to run a simple agent with the Gemini provider:

```

lib/python3.11/site-packages/pydantic/type_adapter.py", line 468, in validate_json

return self.validator.validate_json(

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

pydantic_core._pydantic_core.ValidationError: 4 validation errors for _GeminiResponse

candidates.0.avgLogProbs

Field required [type=missing, input_value={'content': {'parts': [{'...': -0.05566399544477463}, input_type=dict]

For further information visit https://errors.pydantic.dev/2.11/v/missing

candidates.0.index

Field required [type=missing, input_value={'content': {'parts': [{'...': -0.05566399544477463}, input_type=dict]

For further information visit https://errors.pydantic.dev/2.11/v/missing

candidates.0.safetyRatings

Field required [type=missing, input_value={'content': {'parts': [{'...': -0.05566399544477463}, input_type=dict]

For further information visit https://errors.pydantic.dev/2.11/v/missing

promptFeedback

Field required [type=missing, input_value={'candidates': [{'content...on': 'gemini-2.0-flash'}, input_type=dict]

For further information visit https://errors.pydantic.dev/2.11/v/missing

```

### Example Code

```Python

from pydantic_ai import Agent

agent = Agent(model="google-gla:gemini-2.0-flash")

result = agent.run_sync("Hello, how are you?")

print(result.data)

```

### Python, Pydantic AI & LLM client version

```Text

Python: 3.11.11

Pydantic: 2.11.0b1

Pydantic AI: 0.0.40

``` | closed | 2025-03-16T23:08:50Z | 2025-03-17T09:49:05Z | https://github.com/pydantic/pydantic-ai/issues/1143 | [

"bug"

] | torayeff | 2 |

Lightning-AI/pytorch-lightning | data-science | 20,110 | CSV Logger acts weirdly in Callbacks | ### Bug description

I use the CSV logger inside callbacks as follows:

```

pl_module.log('epoch_throughput', throughput, on_epoch=True, logger=True, sync_dist=True, reduce_fx="sum")

pl_module.log('epoch_time', epoch_time, logger=True, on_epoch=True, sync_dist=True)

```

When two callbacks log data in the same hook (e.g. `on_train_epoch_end`), the values are written to two separate rows instead of one.

### What version are you seeing the problem on?

master

### How to reproduce the bug

```python

import time

from lightning.pytorch.callbacks import Callback


class CallbackX(Callback):
    def on_train_epoch_start(self, trainer, pl_module):
        self.epoch_start_time = time.time()

    def on_train_epoch_end(self, trainer, pl_module):
        epoch_time = time.time() - self.epoch_start_time
        pl_module.log('epoch_time', epoch_time, logger=True, on_epoch=True, sync_dist=True)


class CallbackY(Callback):
    def on_train_epoch_start(self, trainer, pl_module):
        self.epoch_start_time = time.time()

    def on_train_epoch_end(self, trainer, pl_module):
        epoch_time = time.time() - self.epoch_start_time
        pl_module.log('epoch_time2', epoch_time, logger=True, on_epoch=True, sync_dist=True)

```

### Error messages and logs

```

# Error messages and logs here please

```

### Environment

<details>

<summary>Current environment</summary>

* CUDA:

- GPU: Tesla T4

- available: False

- version: 12.1

* Lightning:

- lightning: 2.3.0

- lightning-utilities: 0.11.2

- pytorch-lightning: 2.3.0

- torch: 2.3.1

- torch-tb-profiler: 0.4.3

- torchmetrics: 1.4.0.post0

- torchvision: 0.18.1

* Packages:

- absl-py: 2.1.0

- aiohttp: 3.9.5

- aiosignal: 1.3.1

- annotated-types: 0.7.0

- attrs: 23.2.0

- certifi: 2024.6.2

- cffi: 1.16.0

- charset-normalizer: 3.3.2

- cloudpickle: 3.0.0

- deepspeed: 0.14.4

- dool: 1.3.2

- filelock: 3.15.4

- frozenlist: 1.4.1

- fsspec: 2024.6.0

- graphviz: 0.8.4

- grpcio: 1.64.1

- gviz-api: 1.10.0

- hjson: 3.1.0

- idna: 3.7

- jinja2: 3.1.4

- lightning: 2.3.0

- lightning-utilities: 0.11.2

- markdown: 3.6

- markupsafe: 2.1.5

- mpmath: 1.3.0

- multidict: 6.0.5

- mxnet: 1.9.1

- networkx: 3.3

- ninja: 1.11.1.1

- numpy: 1.25.0

- nvidia-cublas-cu12: 12.1.3.1

- nvidia-cuda-cupti-cu12: 12.1.105

- nvidia-cuda-nvrtc-cu12: 12.1.105

- nvidia-cuda-runtime-cu12: 12.1.105

- nvidia-cudnn-cu12: 8.9.2.26

- nvidia-cufft-cu12: 11.0.2.54

- nvidia-curand-cu12: 10.3.2.106

- nvidia-cusolver-cu12: 11.4.5.107

- nvidia-cusparse-cu12: 12.1.0.106

- nvidia-ml-py: 12.555.43

- nvidia-nccl-cu12: 2.20.5

- nvidia-nvjitlink-cu12: 12.5.40

- nvidia-nvtx-cu12: 12.1.105

- packaging: 24.1

- pandas: 2.2.2

- pillow: 10.3.0

- pip: 24.1

- protobuf: 4.25.3

- psutil: 6.0.0

- py-cpuinfo: 9.0.0

- pycparser: 2.22

- pydantic: 2.7.4

- pydantic-core: 2.18.4

- python-dateutil: 2.9.0.post0

- pytorch-lightning: 2.3.0

- pytz: 2024.1

- pyyaml: 6.0.1

- requests: 2.32.3

- setuptools: 65.5.0

- six: 1.16.0

- sympy: 1.12.1

- tensorboard: 2.17.0

- tensorboard-data-server: 0.7.2

- tensorboard-plugin-profile: 2.15.1

- tensorboardx: 2.6.2.2

- torch: 2.3.1

- torch-tb-profiler: 0.4.3

- torchmetrics: 1.4.0.post0

- torchvision: 0.18.1

- tqdm: 4.66.4

- triton: 2.3.1

- typing-extensions: 4.12.2

- tzdata: 2024.1

- urllib3: 2.2.2

- werkzeug: 3.0.3

- wheel: 0.43.0

- yarl: 1.9.4

* System:

- OS: Linux

- architecture:

- 64bit

- ELF

- processor: x86_64

- python: 3.11.7

- release: 3.10.0-1127.19.1.el7.x86_64

- version: #1 SMP Tue Aug 25 17:23:54 UTC 2020

</details>

### More info

_No response_ | open | 2024-07-20T19:17:17Z | 2024-07-20T19:17:31Z | https://github.com/Lightning-AI/pytorch-lightning/issues/20110 | [

"bug",

"needs triage",

"ver: 2.2.x"

] | oabuhamdan | 0 |
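Until the logger behaviour is clarified, one possible workaround is to merge the rows after training. The sketch below uses only the standard library and assumes the CSV has a `step` column and that rows sharing a step each hold a different subset of metrics. The file layout, the column name, and the `merge_rows_by_step` helper are assumptions for illustration, not something confirmed in this report.

```python
import csv
import io


def merge_rows_by_step(csv_text, key="step"):
    """Merge rows that share the same `key`, keeping the first non-empty value per column."""
    reader = csv.DictReader(io.StringIO(csv_text))
    merged = {}
    for row in reader:
        # One output row per distinct key value, created on first sight.
        target = merged.setdefault(row[key], {name: "" for name in reader.fieldnames})
        for name, value in row.items():
            if value and not target[name]:
                target[name] = value
    return list(merged.values())


# Hypothetical log: two callbacks each wrote their own row for step 99.
sample = (
    "epoch,step,epoch_time,epoch_time2\n"
    "0,99,12.5,\n"
    "0,99,,12.6\n"
)
rows = merge_rows_by_step(sample)
```

Applied to a real metrics file, the same function would take the file contents as a string and return one dictionary per step.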

globaleaks/globaleaks-whistleblowing-software | sqlalchemy | 4,154 | Translation issue in "Revoke access" | ### What version of GlobaLeaks are you using?

4.15.8

### What browser(s) are you seeing the problem on?

Microsoft Edge

### What operating system(s) are you seeing the problem on?

Windows

### Describe the issue

The "close" button in the Revoke Access window is forced to be in English in all language versions. It is not possible to set a translation.

### Proposed solution

_No response_ | closed | 2024-08-14T13:34:01Z | 2024-09-06T16:37:48Z | https://github.com/globaleaks/globaleaks-whistleblowing-software/issues/4154 | [

"T: Bug",

"C: Client"

] | evariitta | 1 |

open-mmlab/mmdetection | pytorch | 11,777 | Is it possible to calculate a validation loss? | I want to conduct an experiment with an object detection model that I trained. My experiment is as follows: I want to understand a little more about the images in my test set. For this, I would like to obtain some individual metrics per image from the test dataset, in addition to getting the loss (validation) for each image. My current code is as follows:

```

# Imports assumed for MMDetection 2.x with MMCV 1.x; they were not part of the original snippet.
import mmcv
import torch
from mmcv import Config
from mmcv.parallel import scatter
from mmdet.apis import init_detector
from mmdet.datasets import build_dataloader, build_dataset

config_file = 'swin/custom_mask_rcnn_swin-s-p4-w7_fpn_fp16_ms-crop-3x_coco.py'
checkpoint_file = 'mmdet/swin/epoch_40.pth'
device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

cfg = Config.fromfile(config_file)
dataset = build_dataset(cfg.data.test)
data_loader = build_dataloader(
    dataset,
    samples_per_gpu=cfg.data.samples_per_gpu,
    workers_per_gpu=cfg.data.workers_per_gpu,
    dist=False,
    shuffle=False
)

model = init_detector(config_file, checkpoint_file, device=device)
model.eval()

results = []
prog_bar = mmcv.ProgressBar(len(dataset))
for i, data in enumerate(data_loader):
    with torch.no_grad():
        data = scatter(data, [device])[0]
        result = model(return_loss=True, **data)
    prog_bar.update()
    for elem in result:
        print(elem)

```

I am getting a tuple of tensors as the output, like the example below:

```

([array([], shape=(0, 5), dtype=float32), array([[1.3915492e+02, 2.6474759e+02, 1.5779597e+02, 2.9583871e+02,

1.4035654e-01],

[1.3974554e+02, 2.6289932e+02, 1.6024533e+02, 3.1977335e+02,

9.8360136e-02]], dtype=float32), array([[8.7633228e+02, 2.1958812e+02, 8.8837036e+02, 2.4044472e+02,

7.2545749e-01]], dtype=float32), array([], shape=(0, 5), dtype=float32), array([[8.7622699e+02, 2.1944591e+02, 8.8825470e+02, 2.4135008e+02,

2.4266671e-01]], dtype=float32)], [[], [array([[False, False, False, ..., False, False, False],

[False, False, False, ..., False, False, False],

[False, False, False, ..., False, False, False],

...,

[False, False, False, ..., False, False, False],

[False, False, False, ..., False, False, False],

[False, False, False, ..., False, False, False]]), array([[False, False, False, ..., False, False, False],

[False, False, False, ..., False, False, False],

[False, False, False, ..., False, False, False],

...,

[False, False, False, ..., False, False, False],

[False, False, False, ..., False, False, False],

[False, False, False, ..., False, False, False]])], [array([[False, False, False, ..., False, False, False],

[False, False, False, ..., False, False, False],

[False, False, False, ..., False, False, False],

...,

[False, False, False, ..., False, False, False],

[False, False, False, ..., False, False, False],

[False, False, False, ..., False, False, False]])], [], [array([[False, False, False, ..., False, False, False],

[False, False, False, ..., False, False, False],

[False, False, False, ..., False, False, False],

...,

[False, False, False, ..., False, False, False],

[False, False, False, ..., False, False, False],

[False, False, False, ..., False, False, False]])]])

([array([], shape=(0, 5), dtype=float32), array([], shape=(0, 5), dtype=float32), array([], shape=(0, 5), dtype=float32), array([], shape=(0, 5), dtype=float32), array([[2.9585770e+02, 3.7026846e+02, 3.1038290e+02, 3.8506241e+02,

5.9989877e-02]], dtype=float32)], [[], [], [], [], [array([[False, False, False, ..., False, False, False],

[False, False, False, ..., False, False, False],

[False, False, False, ..., False, False, False],

...,

[False, False, False, ..., False, False, False],

[False, False, False, ..., False, False, False],

[False, False, False, ..., False, False, False]])]])

```

My intuition tells me that this output is something like bounding box positions (x, y, w, h), confidence score per class, and also binary mask information due to the boolean values. Additionally, I added the argument "return_loss=True", and I imagine that some of this information must also be related to the loss that I want to obtain. How can I parse this output? That is, identify what each of the pieces of information in these results is to be able to find the desired loss.

I'm using MMDetection v2.28.2. | open | 2024-06-07T22:40:25Z | 2024-06-07T22:43:20Z | https://github.com/open-mmlab/mmdetection/issues/11777 | [] | psantiago-lsbd | 0 |
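On parsing the output: the printed structure looks like the per-image inference result that MMDetection 2.x returns for mask models, a `(bbox_results, segm_results)` pair with one entry per class, where each box row appears to be `[x1, y1, x2, y2, score]` (corner coordinates rather than width and height). Nothing in it looks like a loss term. The sketch below flattens one such result into a list of detections; the helper name and the dictionary keys are made up for illustration, and plain lists stand in for the NumPy arrays.

```python
def flatten_detections(result, score_thr=0.0):
    """Turn a (bbox_results, segm_results) pair into a flat list of detections."""
    bbox_results, segm_results = result
    detections = []
    # Both lists are indexed by class; entry i holds the detections for class i.
    for class_id, (boxes, masks) in enumerate(zip(bbox_results, segm_results)):
        for box, mask in zip(boxes, masks):
            x1, y1, x2, y2, score = (float(v) for v in box)
            if score < score_thr:
                continue
            detections.append({
                "class_id": class_id,
                "bbox_xyxy": (x1, y1, x2, y2),
                "score": score,
                "mask": mask,
            })
    return detections


# Toy result with two classes: class 0 has no detections, class 1 has one.
toy_result = (
    [[], [[10.0, 20.0, 30.0, 40.0, 0.9]]],
    [[], [[[False, True], [True, True]]]],
)
detections = flatten_detections(toy_result, score_thr=0.5)
```

In the loop from the issue, each `elem` printed would be one such pair, so `flatten_detections(elem)` could be called per image.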

tensorpack/tensorpack | tensorflow | 876 | Save Model | Hi, I have a question about TrainConfig. How can I save the model at specific steps rather than every epoch? | closed | 2018-08-28T12:15:38Z | 2018-09-06T20:06:40Z | https://github.com/tensorpack/tensorpack/issues/876 | [

"usage"

] | lizaigaoge550 | 1 |

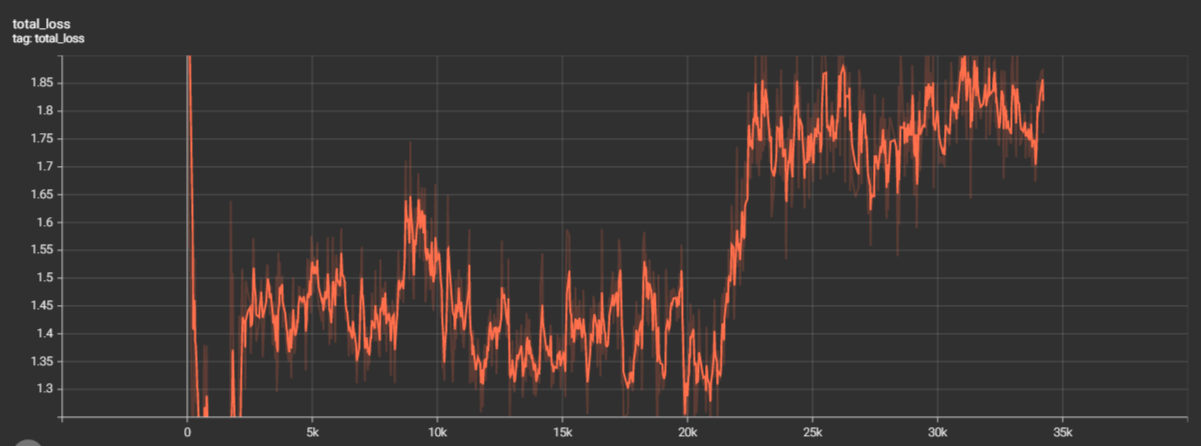

microsoft/unilm | nlp | 724 | Question about learning performance when finetuning LayoutLM V3 on PubLayNet-like dataset | I'm trying to finetune _LayoutLM V3_ [Base model](https://huggingface.co/microsoft/layoutlmv3-base) using the provided `dit/train_net.py` script on my own custom dataset that is similar to _PubLayNet_. The learning starts well but after reaching the first checkpoint (2000 iterations) and doing the first evaluation the loss starts going up and the accuracy keeps going down.

Can someone point me to the critical factors that may lead to this behavior? Thanks in advance!

| closed | 2022-05-19T21:16:13Z | 2022-05-23T03:18:37Z | https://github.com/microsoft/unilm/issues/724 | [] | naourass | 3 |

TencentARC/GFPGAN | deep-learning | 290 | Enhanced | open | 2022-10-13T18:49:37Z | 2022-10-13T18:49:37Z | https://github.com/TencentARC/GFPGAN/issues/290 | [] | abhaydasah | 0 | |

vimalloc/flask-jwt-extended | flask | 481 | Refresh with cookies | I have a pretty standard refresh endpoint with the intent to create a new access token and set the new access token as a cookie.

```python

@identity_bp.get('/refresh')
@jwt_required(refresh=True)
def refresh_token():
    identity = get_jwt_identity()
    access_token = create_access_token(identity=identity)
    res = jsonify({'refresh': True})
    set_access_cookies(res, access_token)
    return jsonify(access_token=access_token), HTTPStatus.OK

```

A new access token is created, but the access token cookie remains the same as the access token set upon login. So, despite refreshing, the token stored in the cookie is old.

For reference, this is our login endpoint, which does successfully create access/refresh tokens and stores them into cookies:

```python
@identity.post('/login')
def login():
    email = request.json.get('email', None)
    password = request.json.get('password', None)
    # ... some logic
    access_token = create_access_token(identity=identity)
    refresh_token = create_refresh_token(identity=identity)
    res = jsonify({'msg': 'Successful login.',
                   'access_token': access_token,
                   'refresh_token': refresh_token})
    set_access_cookies(res, access_token)
    set_refresh_cookies(res, refresh_token)
    return res, HTTPStatus.OK
```

In our React web app, we handle the logic necessary to refresh the tokens. I can confirm that when we hit the refresh endpoint there, a new token is created, but the cookie just doesn't change. I've also tried to unset the cookies with `unset_access_cookies`, but it also doesn't remove the cookie.

Perhaps this is just a misunderstanding on my end with how overriding the cookie works, or a bug. I'm not too sure. | closed | 2022-06-02T03:01:40Z | 2022-06-02T03:16:55Z | https://github.com/vimalloc/flask-jwt-extended/issues/481 | [] | leelerm | 2 |

piskvorky/gensim | machine-learning | 3,368 | CoherenceModel does not finish with computing | #### Problem description

When computing coherence scores, the computation never finishes on a slightly larger dataset. Run the code below (with the provided dataset) to reproduce.

#### Steps/code/corpus to reproduce

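```python
import pickle
import time
from datetime import datetime

from gensim.models import CoherenceModel

# Load the pickled (model, tokens) pair from the attached archive.
with open("coherence-bug.pkl", "rb") as f:
    model, tokens = pickle.load(f)

print("coherence")
print(datetime.now())
t = time.time()
cm = CoherenceModel(model=model, texts=tokens, coherence="c_v")
coherence = cm.get_coherence()  # this call does not return on 4.2
print(time.time() - t)
```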

[coherence-bug.pkl.zip](https://github.com/RaRe-Technologies/gensim/files/9178910/coherence-bug.pkl.zip)

#### Versions

The bug appears on Gensim version 4.2, but it does not happen on 4.1.2

macOS-10.16-x86_64-i386-64bit

Python 3.8.12 (default, Oct 12 2021, 06:23:56)

[Clang 10.0.0 ]

Bits 64

NumPy 1.22.3

SciPy 1.8.1

gensim 4.2.1.dev0

FAST_VERSION 0

| closed | 2022-07-25T07:23:04Z | 2022-12-06T07:38:18Z | https://github.com/piskvorky/gensim/issues/3368 | [

"bug",

"difficulty easy",

"impact HIGH",

"reach LOW"

] | PrimozGodec | 5 |

modoboa/modoboa | django | 2,569 | LDAP: password sync is broken | # Impacted versions

* OS Type: Debian

* OS Version: bullseye 11.4

* Database Type: MySQL / MariaDB

* Database version: 10.5.15

* Modoboa: 2.0.1

* installer used: yes

* Webserver: nginx

# Steps to reproduce

1. have openldap / slapd installation

(i can certainly get more info on this, but i was only tasked with fixing the issue and have not yet much fiddled with slapd config)

the important point is: slapd must automatically encrypt new userPasswords if it thinks the hash type is unknown

2. install modoboa

3. configure ldap connection

4. use modoboa to change users password

# Current behavior

there are two issues we were able to identify:

## 1. modoboa does not send password hashing scheme to ldap-server

TL;DR: modoboa sends `$6$rounds=70000$...` to ldap server instead of `{SHA512-CRYPT}$6$rounds=70000$...`

we were able to capture this with tcpdump. when modoboa sends password to ldap https://github.com/modoboa/modoboa/blob/c26379478445da5888bf05be0ba4cf98e20ea046/modoboa/ldapsync/lib.py#L119 we always found it only sends the actual hash starting with `$6$rounds=70000$...`

## 2. ldap does only understand "{CRYPT}"

TL;DR: modoboa sends `{SHA512-CRYPT}$6$rounds=70000$...` to ldap server instead of `{CRYPT}$6$rounds=70000$...`

the second issue is with slapd only supporting {CRYPT} as a scheme. it can understand, operate and generate multiple different hash types (like `$1$`, `$5$`, and `$6$`) but this is controlled only by the actual hash, not the scheme prefix.

these do not work: {SHA256-CRYPT} {SHA512-CRYPT} {BLF-CRYPT}

but their hashes work if stored in userPassword field in LDAP with {CRYPT} as prefix.
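The prefix rewrite described above can be sketched as follows. This is a hypothetical helper, not part of modoboa or slapd: the function name `to_slapd_crypt` and the handling of unprefixed hashes are assumptions for illustration, and the scheme list contains only the three prefixes named in this report.

```python
import re

# Scheme prefixes that this report says slapd rejects, although the
# underlying crypt(3) hash works when stored under {CRYPT}.
_CRYPT_FAMILY = {"SHA256-CRYPT", "SHA512-CRYPT", "BLF-CRYPT"}


def to_slapd_crypt(stored: str) -> str:
    """Rewrite a scheme-prefixed crypt(3) hash to use the generic {CRYPT} prefix.

    Hypothetical helper for illustration only.
    """
    match = re.match(r"^\{([A-Z0-9-]+)\}(.*)$", stored)
    if match is None:
        # No scheme prefix at all: assume a raw crypt(3) hash if it starts with "$".
        return "{CRYPT}" + stored if stored.startswith("$") else stored
    scheme, digest = match.groups()
    if scheme in _CRYPT_FAMILY:
        return "{CRYPT}" + digest
    # Any other scheme (including {CRYPT} itself) is left untouched.
    return stored
```

For example, `to_slapd_crypt("{SHA512-CRYPT}$6$rounds=70000$...")` yields the same hash with `{CRYPT}` in front, which is the form the captured traffic showed after the fix.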

# Expected behavior

included in "Current behavior" section

# Possible fixes:

## 1. modoboa does not send password hashing scheme to ldap-server

the update_ldap_account function uses get_user_password from that same file https://github.com/modoboa/modoboa/blob/c26379478445da5888bf05be0ba4cf98e20ea046/modoboa/ldapsync/lib.py#L50. we identified an issue in line https://github.com/modoboa/modoboa/blob/c26379478445da5888bf05be0ba4cf98e20ea046/modoboa/ldapsync/lib.py#L56 which prevents the scheme from being sent if the account is not disabled.

i fixed it by adding parentheses around the disabled check (see: https://github.com/modoboa/modoboa/commit/53dd6c7502d8f8aeb81c8e4caec13a065e92f172)

afterwards tcpdump showed the correct full hash with scheme prepended (i.e. `{SHA512-CRYPT}$6$rounds=70000$...`)
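why parentheses matter here can be shown with a small sketch. this is an assumed reconstruction of the kind of expression involved, not the actual modoboa source: the function names and the `#` marker for disabled accounts are hypothetical. in python a conditional expression binds more loosely than `+`, so without parentheses the whole left-hand concatenation becomes the "if" branch and the scheme is lost whenever the account is not disabled.

```python
def join_buggy(scheme: bytes, password: bytes, disable: bool = False) -> bytes:
    # Parsed as: (scheme + b"}" + b"#") if disable else (b"" + password)
    # so the scheme is dropped whenever disable is False.
    return scheme + b"}" + b"#" if disable else b"" + password


def join_fixed(scheme: bytes, password: bytes, disable: bool = False) -> bytes:
    # Parentheses limit the conditional to the optional marker only.
    return scheme + b"}" + (b"#" if disable else b"") + password
```

with `scheme = b"{SHA512-CRYPT"` and a hash starting with `$6$`, the first variant returns only the bare hash for an enabled account, while the second returns the hash with its scheme prefix.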

## 2. ldap does only understand "{CRYPT}"

to fix this issue, i added ~~"LDAP_DROP_SCHEME_PREFIX"~~"LDAP_DROP_CRYPT_PREFIX" to settings.py and a check in get_user_password which sets scheme to "{CRYPT" when this option is set. (see: https://github.com/modoboa/modoboa/commit/7432877c3429a0f8bc3d8084b3e00eee7887a0f5)

we verified it working with tcpdump which now showed correct updates to userPassword with full hash like `{CRYPT}$6$rounds=70000$...`

sadly i am not very good with python and was unable to find where to "declare" that new option for the generated settings.py, so this needs to be added by someone else.

| closed | 2022-07-21T18:00:13Z | 2023-03-11T13:49:10Z | https://github.com/modoboa/modoboa/issues/2569 | [

"feedback-needed",

"stale"

] | elgarfo | 7 |

davidsandberg/facenet | computer-vision | 852 | error when running Validate_on_lfw | I am new to machine learning @davidsandberg

I am trying to run `validate_on_lfw.py` on Windows, but I get this error:

Model directory: pretrained_model

Metagraph file: model-20180402-114759.meta

Checkpoint file: model-20180402-114759.ckpt-275

Traceback (most recent call last):

File "C:\Users\user\Desktop\noor2\project2\facenet-master\facenet-master\src\validate_on_lfw.py", line 166, in <module>

main(parse_arguments(sys.argv[1:]))

File "C:\Users\user\Desktop\noor2\project2\facenet-master\facenet-master\src\validate_on_lfw.py", line 75, in main

facenet.load_model("pretrained_model", input_map=input_map)

File "C:\Users\user\Desktop\noor2\project2\facenet-master\facenet-master\src\facenet.py", line 381, in load_model

saver = tf.train.import_meta_graph(os.path.join(model_exp, meta_file), input_map=input_map)

File "C:\Users\user\AppData\Local\Programs\Python\Python36\lib\site-packages\tensorflow\python\training\saver.py", line 1686, in import_meta_graph

**kwargs)

File "C:\Users\user\AppData\Local\Programs\Python\Python36\lib\site-packages\tensorflow\python\framework\meta_graph.py", line 504, in import_scoped_meta_graph

producer_op_list=producer_op_list)

File "C:\Users\user\AppData\Local\Programs\Python\Python36\lib\site-packages\tensorflow\python\framework\importer.py", line 283, in import_graph_def

raise ValueError('No op named %s in defined operations.' % node.op)

ValueError: No op named DecodeBmp in defined operations.

Any help?

| closed | 2018-08-22T09:28:34Z | 2021-02-15T16:33:18Z | https://github.com/davidsandberg/facenet/issues/852 | [] | nonameever95 | 17 |

httpie/cli | rest-api | 527 | httpie hangs in MobaXterm | I am attempting to use httpie in [mobaXterm](http://mobaxterm.mobatek.net/) (version 8.6) on Windows 7 Ultimate, but httpie hangs and never returns a result.

I configured mobaXterm to use my Windows PATH, and both python and http are on my path:

Although httpie does not work in mobaXterm, it does work in cmd.exe:

I think this issue might be related to another similar issue that I found on StackOverflow:

http://stackoverflow.com/questions/3250749/using-windows-python-from-cygwin

| closed | 2016-10-13T11:32:23Z | 2020-06-18T08:26:13Z | https://github.com/httpie/cli/issues/527 | [] | knilling | 4 |

pyeve/eve | flask | 879 | XML Parsing Error: not well-formed | Hi, I am writing some code with **python-eve** and ran into an issue.

In a **blog editor** page, I have one **textarea** that stores a **markdown style** string and another **textarea** that stores the **html tags style** string. I then post these two long strings to **mongodb** through **eve**. When I then fetch the blog list through the API _http://127.0.0.1:8000/api/v1/blog_, it fails with the error: **XML Parsing Error: not well-formed.**

I also use **Django** to fetch the blog list, and it displays fine on the HTML page.

I ran some tests and found the following:

If I post the **markdown style** string and it contains code, such as this Linux vi command,

```

#vi /root/.vnc/xstartup

```

then I get the error.

| closed | 2016-06-27T17:04:01Z | 2016-06-28T12:25:12Z | https://github.com/pyeve/eve/issues/879 | [] | Penguin7z | 2 |