repo_name | topic | issue_number | title | body | state | created_at | updated_at | url | labels | user_login | comments_count |

|---|---|---|---|---|---|---|---|---|---|---|---|

wkentaro/labelme | deep-learning | 1,021 | When changing the label value with label edit, the program crashes. | app.py (line 1171)

item = self.uniqLabelList.findItemsByLabel(label)[0]

This line raises an index-out-of-range error.

Adding the following code fixes it:

def _get_rgb_by_label(self, label):
    if self._config["shape_color"] == "auto":
        if not self.uniqLabelList.findItemsByLabel(label):
            item = QtWidgets.QListWidgetItem()
            item.setData(Qt.UserRole, label)
            self.uniqLabelList.addItem(item)
        item = self.uniqLabelList.findItemsByLabel(label)[0] | closed | 2022-05-20T09:09:10Z | 2022-09-26T13:35:54Z | https://github.com/wkentaro/labelme/issues/1021 | [

"issue::bug",

"priority: high"

] | sangwoo89 | 1 |
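
The crash reported in the issue above is an out-of-range error from indexing `[0]` on an empty search result. A minimal sketch of the same guard, assuming a plain list of dictionaries instead of labelme's Qt list widget (`find_items_by_label` and `get_or_create_item` are hypothetical names used only for illustration):

```python
def find_items_by_label(items, label):
    """Return every entry whose label matches (stand-in for findItemsByLabel)."""
    return [item for item in items if item["label"] == label]


def get_or_create_item(items, label):
    """Return the first entry for `label`, adding one first if none exists."""
    matches = find_items_by_label(items, label)
    if not matches:
        # Without this guard, matches[0] raises IndexError for an unseen label.
        new_item = {"label": label}
        items.append(new_item)
        return new_item
    return matches[0]
```

Calling `get_or_create_item` twice with the same label returns the same entry, so the list never gains duplicates.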

feature-engine/feature_engine | scikit-learn | 493 | Ignore NaNs before using `OrdinalEncoder` | *Is your feature request related to a problem?*

`OrdinalEncoder` should accept nulls. Sometimes you don't want to impute directly but would rather rely on the missing-value handling built into XGBoost, LightGBM or CatBoost. Because imputation is always required beforehand, this is currently not possible.

*Describe the solution you'd like*

I like that Feature Engine forces you to impute first, but I would add some kind of flag, defaulting to `ignore_nan=False`, in case we want to use another imputation method afterwards.

Hope you find this helpful. | closed | 2022-08-06T02:31:08Z | 2023-01-14T11:22:45Z | https://github.com/feature-engine/feature_engine/issues/493 | [

"urgent",

"priority"

] | datacubeR | 6 |
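
The behaviour requested in the issue above can be sketched in plain Python. This is only an illustration of the idea, not feature_engine code: `ignore_nan` is the flag proposed in the issue, and `fit_ordinal_mapping` and `transform` are hypothetical helpers. Categories are numbered in order of first appearance, and missing values pass through as NaN when the flag is set.

```python
import math


def is_missing(value):
    """Treat None and float NaN as missing."""
    return value is None or (isinstance(value, float) and math.isnan(value))


def fit_ordinal_mapping(values):
    """Assign 0, 1, 2, ... to categories in order of first appearance."""
    mapping = {}
    for value in values:
        if not is_missing(value) and value not in mapping:
            mapping[value] = len(mapping)
    return mapping


def transform(values, mapping, ignore_nan=False):
    """Encode values; keep missing entries as NaN only if ignore_nan is True."""
    encoded = []
    for value in values:
        if is_missing(value):
            if not ignore_nan:
                raise ValueError("missing value found; impute first or set ignore_nan=True")
            encoded.append(float("nan"))
        else:
            encoded.append(mapping[value])
    return encoded
```

With `ignore_nan=True` the NaN entries survive encoding, so a downstream model with native missing-value support could still see them.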

seleniumbase/SeleniumBase | pytest | 3,602 | LocalStorage variable deleted after refreshing page | Hello, Michael. Why is a local storage variable that I set via set_local_storage_item deleted after refreshing the page?

```

with SB(
    uc=True,
    browser="chrome",
    locale="ru",
    position="0,0",
    agent=browser_config.get("user_agent"),
    window_size=browser_config.get("window_size"),
    ad_block=True,
    devtools=True
) as driver:

```

```

def login(driver: SeleniumBase, token: str):
    logger.info("Execute token to LocalStorage")
    temp = json.dumps({"token": token})
    driver.set_local_storage_item("wbx__tokenData", temp)

```

While we were working with raw selenium we did it like this:

```

driver.execute_script("localStorage.setItem(arguments[0], JSON.stringify({token: arguments[1]}));",
                      'wbx__tokenData', token)

```

And it was working fine.

_Originally posted by @leesache in https://github.com/seleniumbase/SeleniumBase/discussions/2722#discussioncomment-12462594_ | closed | 2025-03-11T14:19:29Z | 2025-03-12T06:34:54Z | https://github.com/seleniumbase/SeleniumBase/issues/3602 | [

"question",

"UC Mode / CDP Mode"

] | leesache | 1 |

microsoft/nni | data-science | 5,218 | I am very new to NNI can you please provide a MS NNI + Pytorch Lightning example for HPO | **Describe the issue**:

I am very new to NNI. Can you please provide an NNI + PyTorch Lightning example for HPO?

**Environment**:

- NNI version:

- Training service (local|remote|pai|aml|etc):

- Client OS:

- Server OS (for remote mode only):

- Python version:

- PyTorch/TensorFlow version:

- Is conda/virtualenv/venv used?:

- Is running in Docker?:

**Configuration**:

- Experiment config (remember to remove secrets!):

- Search space:

**Log message**:

- nnimanager.log:

- dispatcher.log:

- nnictl stdout and stderr:

<!--

Where can you find the log files:

LOG: https://github.com/microsoft/nni/blob/master/docs/en_US/Tutorial/HowToDebug.md#experiment-root-director

STDOUT/STDERR: https://nni.readthedocs.io/en/stable/reference/nnictl.html#nnictl-log-stdout

-->

**How to reproduce it?**: | open | 2022-11-10T18:58:02Z | 2022-11-24T01:23:03Z | https://github.com/microsoft/nni/issues/5218 | [

"feature request"

] | sathishkumark27 | 0 |

OFA-Sys/Chinese-CLIP | computer-vision | 20 | Importing cn_clip fails with UnicodeDecodeError: 'gbk' codec can't decode byte 0x81 in position 1564: illegal multibyte sequence | import cn_clip.clip as clip

Exception raised: UnicodeDecodeError

Traceback (most recent call last):

File "D:\develop\anaconda3\lib\runpy.py", line 193, in _run_module_as_main

"__main__", mod_spec)

File "D:\develop\anaconda3\lib\runpy.py", line 85, in _run_code

exec(code, run_globals)

File "c:\Users\saizong\.vscode\extensions\ms-python.python-2022.4.1\pythonFiles\lib\python\debugpy\__main__.py", line 45, in <module>

cli.main()

File "c:\Users\saizong\.vscode\extensions\ms-python.python-2022.4.1\pythonFiles\lib\python\debugpy/..\debugpy\server\cli.py", line 444, in main

run()

File "c:\Users\saizong\.vscode\extensions\ms-python.python-2022.4.1\pythonFiles\lib\python\debugpy/..\debugpy\server\cli.py", line 285, in run_file

runpy.run_path(target_as_str, run_name=compat.force_str("__main__"))

File "D:\develop\anaconda3\lib\runpy.py", line 263, in run_path

pkg_name=pkg_name, script_name=fname)

File "D:\develop\anaconda3\lib\runpy.py", line 96, in _run_module_code

mod_name, mod_spec, pkg_name, script_name)

File "D:\develop\anaconda3\lib\runpy.py", line 85, in _run_code

exec(code, run_globals)

File "d:\develop\workspace\today_video\clipcn.py", line 5, in <module>

import cn_clip.clip as clip

File "D:\develop\anaconda3\Lib\site-packages\cn_clip\clip\__init__.py", line 3, in <module>

_tokenizer = FullTokenizer()

File "D:\develop\anaconda3\Lib\site-packages\cn_clip\clip\bert_tokenizer.py", line 170, in __init__

self.vocab = load_vocab(vocab_file)

File "D:\develop\anaconda3\Lib\site-packages\cn_clip\clip\bert_tokenizer.py", line 132, in load_vocab

token = convert_to_unicode(reader.readline())

UnicodeDecodeError: 'gbk' codec can't decode byte 0x81 in position 1564: illegal multibyte sequence

How can I fix this? Thanks! | closed | 2022-11-28T08:07:28Z | 2022-12-13T11:37:28Z | https://github.com/OFA-Sys/Chinese-CLIP/issues/20 | [] | bigmarten | 13 |
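
The traceback above ends in a `'gbk' codec` error while reading the vocabulary file, which suggests (as an assumption, not confirmed by the issue) that the file was opened with the platform's default encoding instead of UTF-8. A minimal sketch of reading such a file with an explicit encoding; this `load_vocab` is a simplified stand-in, not the cn_clip implementation:

```python
import os
import tempfile


def load_vocab(vocab_file):
    """Read one token per line, always decoding as UTF-8."""
    vocab = {}
    # encoding="utf-8" avoids falling back to the locale codec (e.g. gbk on
    # a Chinese-locale Windows machine).
    with open(vocab_file, "r", encoding="utf-8") as reader:
        for index, line in enumerate(reader):
            vocab[line.rstrip("\n")] = index
    return vocab


# Write a tiny UTF-8 vocabulary file and read it back.
with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, "vocab.txt")
    with open(path, "w", encoding="utf-8") as writer:
        writer.write("[PAD]\n你\n好\n")
    vocab = load_vocab(path)
```

Being explicit about the encoding makes the result independent of the machine's locale settings.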

allenai/allennlp | data-science | 5,164 | How can I add a new subcommand? | I'm writing a transfer learning (domain adaptation) method in AllenNLP, so I wrote my own trainer, and I want to add a new command (for example, mytrain or mypredict) to start the training and prediction process.

How can I use a command like: allennlp mytrain xxx.jsonnet --include-package xxx --serialization-dir xxxx ? | closed | 2021-04-28T13:34:03Z | 2021-05-06T02:58:18Z | https://github.com/allenai/allennlp/issues/5164 | [] | ding00 | 4 |
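
The question above is about plugging a custom command into an existing command-line tool. The general pattern is a name-to-class registry combined with `argparse` subparsers. The sketch below uses only the standard library; `register`, `MyTrain`, `run_mytrain` and the `mytool` program name are hypothetical, and this is not AllenNLP's own API (AllenNLP provides a comparable registry through its `Subcommand` class, whose exact signatures should be checked in its documentation).

```python
import argparse

SUBCOMMANDS = {}


def register(name):
    """Class decorator that records a subcommand under `name`."""
    def decorator(cls):
        SUBCOMMANDS[name] = cls
        return cls
    return decorator


@register("mytrain")
class MyTrain:
    def add_subparser(self, subparsers):
        parser = subparsers.add_parser("mytrain", help="Train with a custom trainer.")
        parser.add_argument("param_path")
        parser.add_argument("-s", "--serialization-dir", required=True)
        # The function to run when this subcommand is selected.
        parser.set_defaults(func=run_mytrain)
        return parser


def run_mytrain(args):
    return f"training {args.param_path} into {args.serialization_dir}"


def build_parser():
    parser = argparse.ArgumentParser(prog="mytool")
    subparsers = parser.add_subparsers(dest="command")
    for command_cls in SUBCOMMANDS.values():
        command_cls().add_subparser(subparsers)
    return parser


args = build_parser().parse_args(["mytrain", "config.jsonnet", "-s", "out"])
result = args.func(args)
```

Because commands register themselves at import time, importing the module that defines them is enough to make them available, which is what an include-package style option typically does.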

hack4impact/flask-base | sqlalchemy | 202 | Docker file for deployment | I am using this as part of a larger project and found that we need to deploy it with Docker.

I'm working on this now and will open a pull request with the patch as soon as I can. | closed | 2020-05-29T17:26:32Z | 2020-06-05T04:26:39Z | https://github.com/hack4impact/flask-base/issues/202 | [] | MohammedRashad | 1 |

openapi-generators/openapi-python-client | fastapi | 351 | Support non-file fields in Multipart requests | **Is your feature request related to a problem? Please describe.**

Given this schema for a POST request:

```

{
  "requestBody": {
    "content": {
      "multipart/form-data": {
        "schema": {
          "type": "object",
          "properties": {
            "file": {
              "type": "string",
              "format": "binary"
            },
            "options": {
              "type": "string",
              "default": "{}"
            }
          }
        }
      }
    }
  }
}

```

the generated code will call `httpx.post(files=dict(file=file, options=options))` which causes a server-side pydantic validation error (`options` should be of type `str`).

**Describe the solution you'd like**

The correct invocation would be `httpx.post(data=dict(options=options), files=dict(file=file))` (see [relevant page from httpx docs](https://www.python-httpx.org/advanced/#multipart-file-encoding))

**Describe alternatives you've considered**

Some sort of server-side workaround, but it might be somewhat ugly.

| closed | 2021-03-19T17:08:08Z | 2021-03-22T20:25:52Z | https://github.com/openapi-generators/openapi-python-client/issues/351 | [

"✨ enhancement"

] | csymeonides-mf | 1 |
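
The fix described in the issue above amounts to sending binary fields as `files` and everything else as `data`. A minimal sketch of that split, independent of the generator's real code (`split_multipart_fields` is a hypothetical helper, and the "bytes or file-like means file" rule is an assumption made for illustration):

```python
def split_multipart_fields(fields):
    """Separate binary or file-like values from plain form values."""
    data, files = {}, {}
    for name, value in fields.items():
        is_file = isinstance(value, (bytes, bytearray)) or hasattr(value, "read")
        if is_file:
            files[name] = value
        else:
            data[name] = value
    return data, files


data, files = split_multipart_fields({"file": b"\x00\x01", "options": "{}"})
# The two dictionaries would then be passed separately, as in the issue:
# httpx.post(url, data=data, files=files)
```

In generated code the decision would more likely come from the schema (`"format": "binary"`) than from the runtime type, but the resulting call shape is the same.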

ultralytics/ultralytics | computer-vision | 18,748 | yolo11 cls train error | ### Search before asking

- [x] I have searched the Ultralytics YOLO [issues](https://github.com/ultralytics/ultralytics/issues) and found no similar bug report.

### Ultralytics YOLO Component

_No response_

### Bug

from ultralytics import YOLO

# Load a model

model = YOLO("yolo11n-cls.yaml") # build a new model from YAML

model = YOLO("yolo11n-cls.pt") # load a pretrained model (recommended for training)

model = YOLO("yolo11n-cls.yaml").load("yolo11n-cls.pt") # build from YAML and transfer weights

# Train the model

results = model.train(data="mnist160", epochs=100, imgsz=64)

error:

train: G:\Item_done\yolo\yolo5\yolov11\ultralytics-main\datasets\mnist160\train... found 80 images in 10 classes ✅

val: None...

test: G:\Item_done\yolo\yolo5\yolov11\ultralytics-main\datasets\mnist160\test... found 80 images in 10 classes ✅

Overriding model.yaml nc=80 with nc=10

from n params module arguments

0 -1 1 464 ultralytics.nn.modules.conv.Conv [3, 16, 3, 2]

1 -1 1 4672 ultralytics.nn.modules.conv.Conv [16, 32, 3, 2]

2 -1 1 6640 ultralytics.nn.modules.block.C3k2 [32, 64, 1, False, 0.25]

3 -1 1 36992 ultralytics.nn.modules.conv.Conv [64, 64, 3, 2]

4 -1 1 26080 ultralytics.nn.modules.block.C3k2 [64, 128, 1, False, 0.25]

5 -1 1 147712 ultralytics.nn.modules.conv.Conv [128, 128, 3, 2]

6 -1 1 87040 ultralytics.nn.modules.block.C3k2 [128, 128, 1, True]

7 -1 1 295424 ultralytics.nn.modules.conv.Conv [128, 256, 3, 2]

8 -1 1 346112 ultralytics.nn.modules.block.C3k2 [256, 256, 1, True]

9 -1 1 249728 ultralytics.nn.modules.block.C2PSA [256, 256, 1]

10 -1 1 343050 ultralytics.nn.modules.head.Classify [256, 10]

YOLO11n-cls summary: 151 layers, 1,543,914 parameters, 1,543,914 gradients, 3.3 GFLOPs

Transferred 234/236 items from pretrained weights

TensorBoard: Start with 'tensorboard --logdir runs\classify\train10', view at http://localhost:6006/

AMP: running Automatic Mixed Precision (AMP) checks...

AMP: checks passed ✅

train: Scanning G:\Item_done\yolo\yolo5\yolov11\ultralytics-main\datasets\mnist160\train... 80 images, 0 corrupt: 100%|██████████| 80/80 [00:00<?, ?it/s]

Traceback (most recent call last):

File "<string>", line 1, in <module>

File "C:\Users\lllstandout\.conda\envs\yolov8\lib\multiprocessing\spawn.py", line 116, in spawn_main

exitcode = _main(fd, parent_sentinel)

File "C:\Users\lllstandout\.conda\envs\yolov8\lib\multiprocessing\spawn.py", line 125, in _main

prepare(preparation_data)

File "C:\Users\lllstandout\.conda\envs\yolov8\lib\multiprocessing\spawn.py", line 236, in prepare

_fixup_main_from_path(data['init_main_from_path'])

File "C:\Users\lllstandout\.conda\envs\yolov8\lib\multiprocessing\spawn.py", line 287, in _fixup_main_from_path

main_content = runpy.run_path(main_path,

File "C:\Users\lllstandout\.conda\envs\yolov8\lib\runpy.py", line 288, in run_path

return _run_module_code(code, init_globals, run_name,

File "C:\Users\lllstandout\.conda\envs\yolov8\lib\runpy.py", line 97, in _run_module_code

_run_code(code, mod_globals, init_globals,

File "C:\Users\lllstandout\.conda\envs\yolov8\lib\runpy.py", line 87, in _run_code

exec(code, run_globals)

File "G:\Item_done\yolo\yolo5\yolov11\ultralytics-train\cls_train.py", line 9, in <module>

results = model.train(data="mnist160", epochs=100, imgsz=64)

File "G:\Item_done\yolo\yolo5\yolov11\ultralytics-train\ultralytics\engine\model.py", line 806, in train

self.trainer.train()

File "G:\Item_done\yolo\yolo5\yolov11\ultralytics-train\ultralytics\engine\trainer.py", line 207, in train

self._do_train(world_size)

File "G:\Item_done\yolo\yolo5\yolov11\ultralytics-train\ultralytics\engine\trainer.py", line 323, in _do_train

self._setup_train(world_size)

File "G:\Item_done\yolo\yolo5\yolov11\ultralytics-train\ultralytics\engine\trainer.py", line 287, in _setup_train

self.train_loader = self.get_dataloader(self.trainset, batch_size=batch_size, rank=LOCAL_RANK, mode="train")

File "G:\Item_done\yolo\yolo5\yolov11\ultralytics-train\ultralytics\models\yolo\classify\train.py", line 84, in get_dataloader

loader = build_dataloader(dataset, batch_size, self.args.workers, rank=rank)

File "G:\Item_done\yolo\yolo5\yolov11\ultralytics-train\ultralytics\data\build.py", line 143, in build_dataloader

return InfiniteDataLoader(

File "G:\Item_done\yolo\yolo5\yolov11\ultralytics-train\ultralytics\data\build.py", line 39, in __init__

self.iterator = super().__iter__()

File "C:\Users\lllstandout\.conda\envs\yolov8\lib\site-packages\torch\utils\data\dataloader.py", line 441, in __iter__

return self._get_iterator()

File "C:\Users\lllstandout\.conda\envs\yolov8\lib\site-packages\torch\utils\data\dataloader.py", line 388, in _get_iterator

return _MultiProcessingDataLoaderIter(self)

File "C:\Users\lllstandout\.conda\envs\yolov8\lib\site-packages\torch\utils\data\dataloader.py", line 1042, in __init__

w.start()

File "C:\Users\lllstandout\.conda\envs\yolov8\lib\multiprocessing\process.py", line 121, in start

self._popen = self._Popen(self)

File "C:\Users\lllstandout\.conda\envs\yolov8\lib\multiprocessing\context.py", line 224, in _Popen

return _default_context.get_context().Process._Popen(process_obj)

File "C:\Users\lllstandout\.conda\envs\yolov8\lib\multiprocessing\context.py", line 327, in _Popen

return Popen(process_obj)

File "C:\Users\lllstandout\.conda\envs\yolov8\lib\multiprocessing\popen_spawn_win32.py", line 45, in __init__

prep_data = spawn.get_preparation_data(process_obj._name)

File "C:\Users\lllstandout\.conda\envs\yolov8\lib\multiprocessing\spawn.py", line 154, in get_preparation_data

_check_not_importing_main()

File "C:\Users\lllstandout\.conda\envs\yolov8\lib\multiprocessing\spawn.py", line 134, in _check_not_importing_main

raise RuntimeError('''

RuntimeError:

An attempt has been made to start a new process before the

current process has finished its bootstrapping phase.

This probably means that you are not using fork to start your

child processes and you have forgotten to use the proper idiom

in the main module:

if __name__ == '__main__':

freeze_support()

...

The "freeze_support()" line can be omitted if the program

is not going to be frozen to produce an executable.

Exception ignored in: <function InfiniteDataLoader.__del__ at 0x000001F137A2FE50>

Traceback (most recent call last):

File "G:\Item_done\yolo\yolo5\yolov11\ultralytics-train\ultralytics\data\build.py", line 52, in __del__

if hasattr(self.iterator, "_workers"):

AttributeError: 'InfiniteDataLoader' object has no attribute 'iterator'

### Environment

windows10

python 3.8

conda

### Minimal Reproducible Example

from ultralytics import YOLO

# Load a model

model = YOLO("yolo11n-cls.yaml") # build a new model from YAML

model = YOLO("yolo11n-cls.pt") # load a pretrained model (recommended for training)

model = YOLO("yolo11n-cls.yaml").load("yolo11n-cls.pt") # build from YAML and transfer weights

# Train the model

results = model.train(data="mnist160", epochs=100, imgsz=64)

### Additional

_No response_

### Are you willing to submit a PR?

- [ ] Yes I'd like to help by submitting a PR! | closed | 2025-01-18T08:18:08Z | 2025-02-23T05:04:40Z | https://github.com/ultralytics/ultralytics/issues/18748 | [

"classify"

] | xinsuinizhuan | 4 |
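
The `RuntimeError` in the issue above is Python's own hint: on Windows, worker processes are started by re-importing the main module, so code that launches workers must sit behind an `if __name__ == "__main__":` guard. A minimal standard-library sketch of the idiom (`square` and `main` are placeholder names; in the reported script, the `model.train(...)` call would be what moves into `main()`):

```python
import multiprocessing


def square(x):
    return x * x


def main():
    # Anything that starts worker processes belongs here, not at module level.
    with multiprocessing.Pool(processes=2) as pool:
        return pool.map(square, [1, 2, 3])


if __name__ == "__main__":
    # freeze_support() only matters when building a frozen executable.
    multiprocessing.freeze_support()
    print(main())
```

Another option, if worker processes are not needed, may be to disable them through the trainer's `workers` setting (the traceback shows it being passed to the dataloader), though that trades the error for slower data loading.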

adamerose/PandasGUI | pandas | 3 | MultiIndex names are not shown | When showing a DataFrame with a MultiIndex the index columns in the gui are all labeled None even though the index labels have names. | closed | 2019-06-16T02:14:46Z | 2019-06-17T18:50:07Z | https://github.com/adamerose/PandasGUI/issues/3 | [

"bug"

] | selasley | 3 |

deepspeedai/DeepSpeed | deep-learning | 6,634 | [BUG] Deepspeed MoE Multi-GPU Expert-Parallel Training/Inference freezes when a process finishes generation first. | **Describe the bug**

I am using expert-parallel MoE implementation of Deepspeed-MoE for decoder model inference using Huggingface Trainer.

During inference, if the generation lengths of the evaluation dataset distributed among the GPUs do not match,

one of the GPUs will finish the decoding inference for its batch, while the other GPU keeps running.

Because one of the GPUs has finished its batch, it stops running.

The remaining running GPU will keep waiting for communication with the finished process.

Is there a way to deal with this other than feeding dummy inputs to the GPU that finishes early?

Forcing some generation lengths could be a quick solution, too.

Has anyone dealt with this type of problem before?

**To Reproduce**

Run multi-gpu expert parallel inference without forcing any generation lengths

**Expected behavior**

Inference to run smoothly.

**ds_report output**

Don't think it's an issue with my environment, but here goes.

async_io ............... [NO] ....... [NO]

fused_adam ............. [NO] ....... [OKAY]

cpu_adam ............... [NO] ....... [OKAY]

cpu_adagrad ............ [NO] ....... [OKAY]

cpu_lion ............... [NO] ....... [OKAY]

evoformer_attn ......... [NO] ....... [OKAY]

fp_quantizer ........... [NO] ....... [OKAY]

fused_lamb ............. [NO] ....... [OKAY]

fused_lion ............. [NO] ....... [OKAY]

gds .................... [NO] ....... [NO]

transformer_inference .. [NO] ....... [OKAY]

inference_core_ops ..... [NO] ....... [OKAY]

cutlass_ops ............ [NO] ....... [OKAY]

quantizer .............. [NO] ....... [OKAY]

ragged_device_ops ...... [NO] ....... [OKAY]

ragged_ops ............. [NO] ....... [OKAY]

random_ltd ............. [NO] ....... [OKAY]

[WARNING] sparse_attn requires a torch version >= 1.5 and < 2.0 but detected 2.4

[WARNING] using untested triton version (3.0.0), only 1.0.0 is known to be compatible

sparse_attn ............ [NO] ....... [NO]

spatial_inference ...... [NO] ....... [OKAY]

transformer ............ [NO] ....... [OKAY]

stochastic_transformer . [NO] ....... [OKAY]

**Screenshots**

| closed | 2024-10-17T05:53:47Z | 2024-11-01T21:18:16Z | https://github.com/deepspeedai/DeepSpeed/issues/6634 | [

"bug",

"training"

] | taehyunzzz | 1 |
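
The hang described in the issue above comes from ranks leaving the decoding loop at different times while the remaining ranks still wait on collective communication. The "dummy inputs" idea mentioned there can be simulated in plain Python: every rank keeps stepping until all ranks report that they are done. This is a toy model with no real communication, and `synced_decode` is a hypothetical name. Hugging Face's `generate` has a `synced_gpus` argument aimed at this kind of situation, but whether it covers DeepSpeed-MoE expert parallelism is not confirmed here.

```python
def synced_decode(steps_needed_per_rank):
    """Simulate ranks that keep stepping until every rank is finished."""
    num_ranks = len(steps_needed_per_rank)
    real_steps = [0] * num_ranks
    dummy_steps = [0] * num_ranks
    step = 0
    while True:
        # Each rank reports whether it has generated everything it needs.
        done = [step >= needed for needed in steps_needed_per_rank]
        # Stand-in for an all-reduce: stop only when all ranks are done.
        if all(done):
            break
        for rank, finished in enumerate(done):
            if finished:
                dummy_steps[rank] += 1  # forward pass whose output is discarded
            else:
                real_steps[rank] += 1
        step += 1
    return real_steps, dummy_steps
```

For example, with one rank needing 3 steps and another needing 5, the first rank performs 2 discarded steps so that both take part in the same number of collective calls.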

mirumee/ariadne | api | 359 | Cannot import name 'GraphQLNamedType' | I am using version 0.11.0 of ariadne with FastAPI (0.54.1).

When I launch the server, I consistently get the following error; here is the stack trace.

```

uvicorn app.main:app --reload

INFO: Uvicorn running on http://127.0.0.1:8000 (Press CTRL+C to quit)

INFO: Started reloader process [7818]

Process SpawnProcess-1:

Traceback (most recent call last):

File "/Library/Frameworks/Python.framework/Versions/3.8/lib/python3.8/multiprocessing/process.py", line 315, in _bootstrap

self.run()

File "/Library/Frameworks/Python.framework/Versions/3.8/lib/python3.8/multiprocessing/process.py", line 108, in run

self._target(*self._args, **self._kwargs)

File "/Library/Frameworks/Python.framework/Versions/3.8/lib/python3.8/site-packages/uvicorn/subprocess.py", line 61, in subprocess_started

target(sockets=sockets)

File "/Library/Frameworks/Python.framework/Versions/3.8/lib/python3.8/site-packages/uvicorn/main.py", line 382, in run

loop.run_until_complete(self.serve(sockets=sockets))

File "uvloop/loop.pyx", line 1456, in uvloop.loop.Loop.run_until_complete

File "/Library/Frameworks/Python.framework/Versions/3.8/lib/python3.8/site-packages/uvicorn/main.py", line 389, in serve

config.load()

File "/Library/Frameworks/Python.framework/Versions/3.8/lib/python3.8/site-packages/uvicorn/config.py", line 288, in load

self.loaded_app = import_from_string(self.app)

File "/Library/Frameworks/Python.framework/Versions/3.8/lib/python3.8/site-packages/uvicorn/importer.py", line 23, in import_from_string

raise exc from None

File "/Library/Frameworks/Python.framework/Versions/3.8/lib/python3.8/site-packages/uvicorn/importer.py", line 20, in import_from_string

module = importlib.import_module(module_str)

File "/Library/Frameworks/Python.framework/Versions/3.8/lib/python3.8/importlib/__init__.py", line 127, in import_module

return _bootstrap._gcd_import(name[level:], package, level)

File "<frozen importlib._bootstrap>", line 1014, in _gcd_import

File "<frozen importlib._bootstrap>", line 991, in _find_and_load

File "<frozen importlib._bootstrap>", line 975, in _find_and_load_unlocked

File "<frozen importlib._bootstrap>", line 671, in _load_unlocked

File "<frozen importlib._bootstrap_external>", line 783, in exec_module

File "<frozen importlib._bootstrap>", line 219, in _call_with_frames_removed

File "./app/main.py", line 21, in <module>

from ariadne.asgi import GraphQL

File "/Library/Frameworks/Python.framework/Versions/3.8/lib/python3.8/site-packages/ariadne/__init__.py", line 1, in <module>

from .enums import EnumType

File "/Library/Frameworks/Python.framework/Versions/3.8/lib/python3.8/site-packages/ariadne/enums.py", line 5, in <module>

from graphql.type import GraphQLEnumType, GraphQLNamedType, GraphQLSchema

ImportError: cannot import name 'GraphQLNamedType' from 'graphql.type' (/Library/Frameworks/Python.framework/Versions/3.8/lib/python3.8/site-packages/graphql/type/__init__.py)

```

| closed | 2020-04-18T07:39:17Z | 2020-04-18T08:06:39Z | https://github.com/mirumee/ariadne/issues/359 | [] | wkup | 1 |
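
An `ImportError` like the one above often points to a mismatch between the installed `graphql` package and the version the importing library expects; that cause is an assumption here, not something the issue confirms. A small standard-library sketch for checking which distributions are installed (`installed_version` is a hypothetical helper; `importlib.metadata` requires Python 3.8 or newer):

```python
from importlib import metadata


def installed_version(distribution):
    """Return the installed version string, or None if it is not installed."""
    try:
        return metadata.version(distribution)
    except metadata.PackageNotFoundError:
        return None


# Print the versions relevant to the traceback above.
for name in ("ariadne", "graphql-core"):
    print(name, installed_version(name))
```

Comparing the printed versions against the requirements listed by the library is usually the quickest way to confirm or rule out a version conflict.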

coqui-ai/TTS | python | 2,905 | [Bug] Documentation for Simple Usage Not Working | ### Describe the bug

Hi, I was attempting to set up and run TTS locally on my MacBook this morning, and I ran into what seems like a documentation issue. The README gives the following example for a single speaker ([link](https://github.com/coqui-ai/TTS#running-a-single-speaker-model)):

```python

# Init TTS with the target model name

tts = TTS(model_name="tts_models/de/thorsten/tacotron2-DDC", progress_bar=False).to(device)

...

```

When I run this locally with everything installed, and `device` set to `cpu`, I get the following error:

```

AttributeError: 'TTS' object has no attribute 'to'

```

Note the previous setup I've already run in the same file / session:

```python

import torch

from TTS.api import TTS

# Get device

device = "cuda" if torch.cuda.is_available() else "cpu"

```

Is this an issue with the documentation? Or is this something to do with my setup or an error in the library? This seems like SUPER simple usage.

### To Reproduce

1. Install using `pip install TTS`, MacOS Ventura 13.4

2. Install `espeak` with `brew install espeak`

3. Run `python`

4. Run the following code:

```python

from TTS.api import TTS

device = 'cpu'

tts = TTS(model_name="tts_models/de/thorsten/tacotron2-DDC", progress_bar=False).to(device)

```

5. Observe the error:

```

AttributeError: 'TTS' object has no attribute 'to'

```

### Expected behavior

I'd expect this to load the model.

### Logs

_No response_

### Environment

```shell

{

"CUDA": {

"GPU": [],

"available": false,

"version": null

},

"Packages": {

"PyTorch_debug": false,

"PyTorch_version": "2.0.1",

"TTS": "0.14.3",

"numpy": "1.21.6"

},

"System": {

"OS": "Darwin",

"architecture": [

"64bit",

""

],

"processor": "i386",

"python": "3.8.8",

"version": "Darwin Kernel Version 22.5.0: Mon Apr 24 20:51:50 PDT 2023; root:xnu-8796.121.2~5/RELEASE_X86_64"

}

}

```

### Additional context

_No response_ | closed | 2023-08-30T13:55:47Z | 2023-09-04T10:55:06Z | https://github.com/coqui-ai/TTS/issues/2905 | [

"bug"

] | jeremyblalock | 2 |

Evil0ctal/Douyin_TikTok_Download_API | web-scraping | 102 | Failed to get video ID |

__Originally posted by @kk20180123 in https://github.com/Evil0ctal/Douyin_TikTok_Download_API/issues/101#issuecomment-1312716925__ | closed | 2022-11-13T20:00:09Z | 2022-11-14T09:53:46Z | https://github.com/Evil0ctal/Douyin_TikTok_Download_API/issues/102 | [

"BUG",

"Fixed"

] | Evil0ctal | 6 |

microsoft/qlib | machine-learning | 1,058 | How can I do incremental training? | ## ❓ Questions and Help

I found a way to save and load models.

But I can't find a way to do incremental training with them.

Is there an example or documentation I can refer to?

I think the online_srv example is close to what I need.

But it only shows first_train. | closed | 2022-04-15T15:45:24Z | 2022-05-16T06:11:35Z | https://github.com/microsoft/qlib/issues/1058 | [

"question"

] | heury | 2 |

plotly/dash | jupyter | 3,236 | Dash 3 not properly rendering initially hidden plotly figures | **Describe the bug**

If more than one plot is created inside a `Div` that is initially hidden, and the plots are subsequently updated and shown, the width of some of the plots is not set correctly. If using `responsive=True`, resizing the browser window fixes the behaviour. This appeared in Dash 3 and was not present in Dash 2.

Consider the following MWE

<details>

```python

import dash
from dash import dcc, html, Input, Output
import plotly.graph_objects as go

app = dash.Dash(__name__)

app.layout = html.Div(
    [
        html.Button("Generate Figures", id="generate-btn", n_clicks=0),
        html.Div(
            id="graph-container",
            children=[
                dcc.Graph(
                    id="prec-climate-daily",
                    style={"height": "45vh"},
                    config={"responsive": True}
                ),
                dcc.Graph(
                    id="temp-climate-daily",
                    style={"height": "45vh"},
                    config={"responsive": True}
                ),
            ],
            style={"display": "none"}
        ),
    ]
)


@app.callback(
    [
        Output("prec-climate-daily", "figure"),
        Output("temp-climate-daily", "figure"),
        Output("graph-container", "style"),
    ],
    [Input("generate-btn", "n_clicks")],
    prevent_initial_call=True,
)
def update_figures(n_clicks):
    fig_acc = go.Figure(data=[go.Scatter(x=[0, 1, 2], y=[0, 1, 0], mode="lines")])
    fig_daily = go.Figure(data=[go.Scatter(x=[0, 1, 2], y=[1, 0, 1], mode="lines")])
    return fig_acc, fig_daily, {"display": "block"}


if __name__ == "__main__":
    app.run(debug=True)

```

</details>

When running you'll notice that one of the figures is created with default width and height values instead of filling the whole container.

There are several workarounds, though none of them fits every app's logic.

1) create `Graph` components directly in the callback (but this means they're not available in the initial app layout and thus not exposed to other callbacks)

<details>

```python

import dash
from dash import dcc, html, Input, Output
import plotly.graph_objects as go

app = dash.Dash(__name__)

app.layout = html.Div(
    [
        html.Button("Generate Figures", id="generate-btn", n_clicks=0),
        html.Div(
            id="graph-container",
            children=[],  # Initially empty
            style={"display": "none"}  # Initially hidden
        ),
    ]
)


@app.callback(
    [
        Output("graph-container", "children"),
        Output("graph-container", "style"),
    ],
    [Input("generate-btn", "n_clicks")],
    prevent_initial_call=True,
)
def update_figures(n_clicks):
    fig_acc = go.Figure(data=[go.Scatter(x=[0, 1, 2], y=[0, 1, 0], mode="lines")])
    fig_daily = go.Figure(data=[go.Scatter(x=[0, 1, 2], y=[1, 0, 1], mode="lines")])
    children = [
        dcc.Graph(
            id="prec-climate-daily",
            style={"height": "45vh"},
            config={"responsive": True},
            figure=fig_acc
        ),
        dcc.Graph(
            id="temp-climate-daily",
            style={"height": "45vh"},
            config={"responsive": True},
            figure=fig_daily
        ),
    ]
    return children, {"display": "block"}


if __name__ == "__main__":
    app.run(debug=True)

```

</details>

2) Removing the `hidden` property, thus showing empty plots on app startup

3) Reverting to Dash 2.*, where this issue is not present

4) Having only one plot in the app (I tried making one `Div` per plot with different callbacks, but this still triggers the issue...)

I don't get any error or warning in the console, so I'm confused about what is triggering this issue in Dash 3 | open | 2025-03-24T12:28:36Z | 2025-03-24T15:01:26Z | https://github.com/plotly/dash/issues/3236 | [

"regression",

"bug",

"P1"

] | guidocioni | 0 |

kochlisGit/ProphitBet-Soccer-Bets-Predictor | seaborn | 83 | NSButton: 0x7fe573a48080 | Hello, I have successfully completed the installation, but I'm getting the following error and encountering the issue shown in the screenshot. How can I fix this?

024-04-15 23:28:53.160 python[10142:131011] Warning: Expected min height of view: (<NSButton: 0x7fe573a48080>) to be less than or equal to 30 but got a height of 32.000000. This error will be logged once per view in violation.

![Uploading Ekran Resmi 2024-04-15 23.41.34.png…]()

| open | 2024-04-15T20:42:06Z | 2024-04-16T07:01:08Z | https://github.com/kochlisGit/ProphitBet-Soccer-Bets-Predictor/issues/83 | [] | omerkymcu | 3 |

labmlai/annotated_deep_learning_paper_implementations | deep-learning | 283 | How to run model on multiple GPUs? | Hi, I am trying diffusion model.

SOLVED | closed | 2025-02-14T05:17:25Z | 2025-02-21T08:08:45Z | https://github.com/labmlai/annotated_deep_learning_paper_implementations/issues/283 | [] | yuffon | 0 |

axnsan12/drf-yasg | rest-api | 314 | How to wrap a response in an envelope? | I want to wrap the response value in `data`.

My class-based view looks like this:

```

class UserList(BaseAPIView):
    @swagger_auto_schema(responses={200: UserSerializer(many=True)})
    def get(self, request: Request) -> Union[Response, NoReturn]:
        ...

```

The response is -> `{'user_id': 'test', 'user_pw':'123'}`

But I want to wrap it like this -> `{'data': {'user_id': 'test', 'user_pw':'123'}}`

Is there a way to do this? | closed | 2019-02-15T08:41:54Z | 2021-03-25T23:31:53Z | https://github.com/axnsan12/drf-yasg/issues/314 | [] | teamhide | 5 |

keras-rl/keras-rl | tensorflow | 287 | DDPG with MultiInputProcessor not working | Hi,

I have used MultiInputProcessor with DQN and it works fine. I am trying to use that feature to train an agent using DDPG.

I have 3 inputs from my environment: Image, two 1D vectors of size (1,3) (called vec_1 and vec_2)

My Actor-network is defined as:

```

actor = Model ( inputs = [image, vec_1, vec_2] ,

outputs = actions

)

```

My Critic-network is defined as:

```

critic = Model (inputs =[actions, image,vec_1, vec_2],

outputs = action_1

)

```

"action_1" is of the same dimension as "action".

`processor = MultiInputProcessor(nb_inputs=3)`

`agent = DDPGAgent(processor=processor,....,target_model_update=1e-3)`

When I run the code, I am getting an AssertionError from processor.py. Basically the len(observation) is not same as nb_inputs.

When I check the observation variable that is passed to the processor.py, I see that my "vec_1" and "vec_2" variables are not passed.

Since Critic network takes action as an input along with observation, the number of input to the critic model is one more than Actor-network. So how does MultiInputProcessor work with DDPG? Are there any examples of using DDPG with MultiInputProcessor?

Thanks

| open | 2019-01-17T00:10:19Z | 2019-01-18T17:15:57Z | https://github.com/keras-rl/keras-rl/issues/287 | [] | srivatsankrishnan | 6 |

ClimbsRocks/auto_ml | scikit-learn | 148 | ImportError: cannot import name Predictor | When importing as shown in the README example, I get the error in the title :-/ | closed | 2016-12-13T01:00:55Z | 2016-12-14T23:47:05Z | https://github.com/ClimbsRocks/auto_ml/issues/148 | [] | jeregrine | 13 |

autogluon/autogluon | computer-vision | 3,846 | Documentation for test set size minus train set size > prediction length | ### Describe the issue linked to the documentation

Perhaps this can be included in the documentation because it is unclear how to have settings for timeseries forecasting where I want to predict 1 step ahead.

The docs state to make the train_set and then the test_set = train_set + prediction_length.

If the prediction_length ==1 then the output plots for the test set has just a single point being predicted.

So here's an example.

Suppose I have 50 time series, all time-varying unknown. Suppose I have 100 rows of data total per time series.

Make train set == 90 rows

Test set would be all 100 rows.

prediction_length == 1

context_length (if applicable) is 10 rows

Walkforward validation is desired.

To my understanding from the docs, this would be something like:

```

from autogluon.timeseries import TimeSeriesPredictor

predictor = TimeSeriesPredictor(
    prediction_length=1,
    target="target",
    eval_metric="MSE",
)

predictor.fit(
    train_data,
    presets="best_quality",
    random_seed=42,
    num_val_windows=80,  # this is 90 rows in train set minus 10 for the rolling context window.
    val_step_size=1,  # walkforward the 10 rows in the context_window by 1 timestep
    # Per-model settings have to be passed through the `hyperparameters` dict.
    hyperparameters={
        "TemporalFusionTransformer": [{"context_length": 10}],
        # ....<other models>
    },
)

```

After fitting the model, doing:

```

predictions = predictor.predict(train_data, random_seed=42)

```

I see only a single mean value plus the quantiles. I get the same result when passing in `test_data`.

Is the only option to iteratively pass in the test data in a for loop?

rows (80,90) -> 91

rows (81,91) -> 92

rows (82, 92) -> 93

...

rows (89, 99) -> 100
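
The windows listed above can be generated mechanically. The following is a minimal sketch in plain Python, not AutoGluon API: `rolling_windows` and its parameters are hypothetical names, and it assumes the pairs above are half-open, 0-based slices `[start, stop)` whose target is the 1-based row number `stop + 1`.

```python
def rolling_windows(n_rows=100, train_rows=90, context_length=10):
    """Yield (start, stop, target_row) for one-step-ahead walk-forward evaluation.

    start/stop form a half-open, 0-based slice of context rows;
    target_row is the 1-based number of the row being predicted.
    """
    for stop in range(train_rows, n_rows):
        start = stop - context_length
        yield start, stop, stop + 1


windows = list(rolling_windows())
for start, stop, target in windows:
    print(f"rows ({start}, {stop}) -> {target}")
```

Each `(start, stop)` pair could then be used to slice every series before a separate call to `predictor.predict`, at the cost of one predict call per step.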

### Suggest a potential alternative/fix

Keep the same API as `.fit`, i.e. do:

```

predictions = predictor.predict(train_data, random_seed=42, test_step_size=1)

```

This would perform autoregressive forecasting.

I'd also have `context_length` apply to all models globally. | open | 2024-01-04T03:49:48Z | 2024-06-27T17:36:12Z | https://github.com/autogluon/autogluon/issues/3846 | [

"API & Doc",

"module: timeseries"

] | esnvidia | 0 |

widgetti/solara | flask | 989 | Feature Request: accept async functions in `on_kernel_start` | ## Feature Request

- [ ] The requested feature would break current behaviour

- [x] I would be interested in opening a PR for this feature

### What problem would this feature solve? Please describe.

For certain functionality that you might want to execute on kernel start, you need to call asynchronous functions. It would be useful to accept these for `solara.lab.on_kernel_start`

### Describe the solution you'd like

The cleanest would be simply allowing

```python

@solara.lab.on_kernel_start
async def init():
    await database.connect()

    async def cleanup():
        await database.disconnect()

    return cleanup

```
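
One way such support might look internally is sketched below. This is not Solara's implementation: `run_kernel_start_hook` and `run_cleanup` are hypothetical helpers, and `FakeDatabase` stands in for the `database` object above. The idea is to call the hook, await the result if it is awaitable, and treat the returned cleanup the same way.

```python
import asyncio
import inspect


async def run_kernel_start_hook(hook):
    """Call a sync or async hook and return its (optional) cleanup callable."""
    result = hook()
    if inspect.isawaitable(result):
        result = await result
    return result


async def run_cleanup(cleanup):
    """Call a sync or async cleanup callable, if one was returned."""
    if cleanup is None:
        return
    result = cleanup()
    if inspect.isawaitable(result):
        await result


class FakeDatabase:
    """Stand-in for a real async database client (assumption for this sketch)."""

    def __init__(self):
        self.connected = False

    async def connect(self):
        self.connected = True

    async def disconnect(self):
        self.connected = False


database = FakeDatabase()


async def init():
    await database.connect()

    async def cleanup():
        await database.disconnect()

    return cleanup


async def demo():
    cleanup = await run_kernel_start_hook(init)
    connected_after_start = database.connected
    await run_cleanup(cleanup)
    return connected_after_start, database.connected


states = asyncio.run(demo())
```

Because the helpers only check whether a result is awaitable, existing synchronous hooks would keep working unchanged under this approach.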

| open | 2025-01-28T14:18:35Z | 2025-01-28T14:38:43Z | https://github.com/widgetti/solara/issues/989 | [

"enhancement"

] | iisakkirotko | 0 |

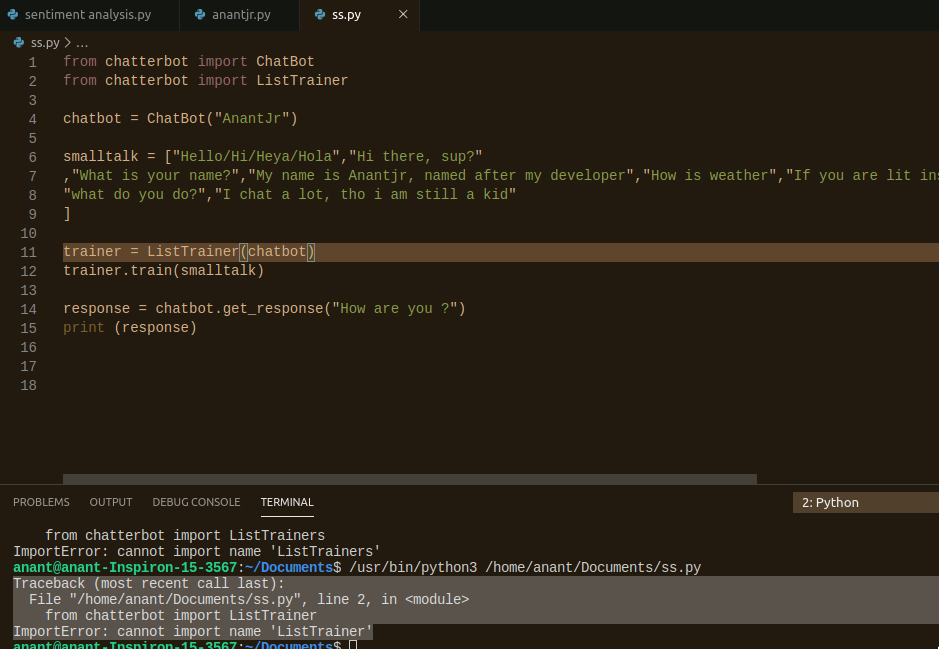

gunthercox/ChatterBot | machine-learning | 2,216 | Maintainer Needed? | What's the state of maintenance for Chatterbot? It seems a number of important things are unresolved without comment, and there's been only a single doc fixing commit this year.

It can often be quite reasonable to find new maintainers for projects by reaching out to PR contributors and fork owners. There are also some looking-for-maintainer hubs out there that try to bring people in.

@gunthercox are you still on this project or do you need help? Is anyone available to step in? | closed | 2021-11-05T18:31:36Z | 2025-02-25T22:47:50Z | https://github.com/gunthercox/ChatterBot/issues/2216 | [] | xloem | 8 |

vitalik/django-ninja | django | 499 | serialization without a view? | Please describe what you are trying to achieve

I have some places in my code, outside the API, where I need to get a JSON version of an object. Is there some way to take advantage of the Schemas I've defined to do the conversion?

Please include code examples (like models code, schemes code, view function) to help understand the issue

```

from ninja.hope_this_exists import serialization_helper

class Job(models.Model):
    ...

class JobOut(Schema):
    ...

job = Job.objects.get(id=1)
serialized = serialization_helper(JobOut, job)

```

| closed | 2022-07-06T18:22:25Z | 2023-04-02T11:39:51Z | https://github.com/vitalik/django-ninja/issues/499 | [] | cltrudeau | 7 |

httpie/http-prompt | api | 70 | Feature proposal: saving current request setup under named alias | This is a great package, thanks for sharing your work!

After using it for a couple of days, I noticed that it's quite inconvenient to have to change the request settings every time I want to send a different request.

An option to save the current state of a request would solve the problem.

Given this output of `httpie post`:

``` bash

http://127.0.0.1:3000/api/oauth/token> http --form --style native POST http://127.0.0.1:3000/api/oauth/token user_id==value client_id=value client_secret=value grant_type=password password=test username=testuser1 Content-Type:application/x-www-form-urlencoded

```

We could use, e.g.: `http://127.0.0.1:3000/api/oauth/token> alias getToken`

which would save the request under the `getToken` keyword. Later, we could call the `getToken` request and optionally override any request options, like so:

``` bash

http://127.0.0.1:3000/api/oauth/token> getToken username=testuser2 password=test2

```
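
The proposed behaviour can be sketched as a small alias store. This is only an illustration of the idea, not http-prompt code: `aliases`, `save_alias`, `call_alias`, and the shape of the request dictionary are all hypothetical.

```python
import copy

# Hypothetical in-memory alias store; http-prompt's real session state differs.
aliases = {}


def save_alias(name, request):
    """Store a snapshot of the current request settings under `name`."""
    aliases[name] = copy.deepcopy(request)


def call_alias(name, **overrides):
    """Return a copy of the saved request with body parameters overridden."""
    request = copy.deepcopy(aliases[name])
    request["body"].update(overrides)
    return request


current = {
    "method": "POST",
    "url": "http://127.0.0.1:3000/api/oauth/token",
    "options": ["--form", "--style", "native"],
    "headers": {"Content-Type": "application/x-www-form-urlencoded"},
    "querystring": {"user_id": "value"},
    "body": {
        "client_id": "value",
        "client_secret": "value",
        "grant_type": "password",
        "password": "test",
        "username": "testuser1",
    },
}

save_alias("getToken", current)
request = call_alias("getToken", username="testuser2", password="test2")
```

Copying on both save and call keeps the stored alias unchanged, so overrides apply only to the request being sent.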

| closed | 2016-07-25T10:08:26Z | 2016-09-18T06:44:03Z | https://github.com/httpie/http-prompt/issues/70 | [

"enhancement"

] | fogine | 17 |

WZMIAOMIAO/deep-learning-for-image-processing | pytorch | 41 | About pytorch version | ssd_model.py

line 4: `from torch.jit.annotations import Optional, List, Dict, Tuple, Module`

I just deleted `Optional` from the import and ran it directly under PyTorch 1.0. It seems the `Optional` import is unused.

| closed | 2020-07-31T08:38:35Z | 2020-08-21T03:08:52Z | https://github.com/WZMIAOMIAO/deep-learning-for-image-processing/issues/41 | [] | Venquieu | 1 |

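babysor/MockingBird | deep-learning | 598 | Training won't run with protoc: which version actually works for the "_pb2.py in <module>" error? | Which protoc version can run synthesizer_train?

3.19.4

21.0.rc

21.1

None of them work.

I still get a long chain of "_pb2.py file, in <module>" entries.

Traceback: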

File "D:\MockingBird-main\synthesizer_train.py", line 2, in <module>

from synthesizer.train import train

File "D:\MockingBird-main\synthesizer\train.py", line 5, in <module>

from torch.utils.tensorboard import SummaryWriter

File "C:\Users\H410MH\AppData\Local\Programs\Python\Python39\lib\site-packages\torch\utils\tensorboard\__init__.py", line 10, in <module>

from .writer import FileWriter, SummaryWriter # noqa: F401

File "C:\Users\H410MH\AppData\Local\Programs\Python\Python39\lib\site-packages\torch\utils\tensorboard\writer.py", line 9, in <module>

from tensorboard.compat.proto.event_pb2 import SessionLog

File "C:\Users\H410MH\AppData\Local\Programs\Python\Python39\lib\site-packages\tensorboard\compat\proto\event_pb2.py", line 17, in <module>

from tensorboard.compat.proto import summary_pb2 as tensorboard_dot_compat_dot_proto_dot_summary__pb2

File "C:\Users\H410MH\AppData\Local\Programs\Python\Python39\lib\site-packages\tensorboard\compat\proto\summary_pb2.py", line 17, in <module>

from tensorboard.compat.proto import tensor_pb2 as tensorboard_dot_compat_dot_proto_dot_tensor__pb2

File "C:\Users\H410MH\AppData\Local\Programs\Python\Python39\lib\site-packages\tensorboard\compat\proto\tensor_pb2.py", line 16, in <module>

from tensorboard.compat.proto import resource_handle_pb2 as tensorboard_dot_compat_dot_proto_dot_resource__handle__pb2

File "C:\Users\H410MH\AppData\Local\Programs\Python\Python39\lib\site-packages\tensorboard\compat\proto\resource_handle_pb2.py", line 16, in <module>

from tensorboard.compat.proto import tensor_shape_pb2 as tensorboard_dot_compat_dot_proto_dot_tensor__shape__pb2

File "C:\Users\H410MH\AppData\Local\Programs\Python\Python39\lib\site-packages\tensorboard\compat\proto\tensor_shape_pb2.py", line 36, in <module>

_descriptor.FieldDescriptor(

File "C:\Users\H410MH\AppData\Local\Programs\Python\Python39\lib\site-packages\google\protobuf\descriptor.py", line 560, in __new__

_message.Message._CheckCalledFromGeneratedFile()

Error type:

TypeError: Descriptors cannot not be created directly.

If this call came from a _pb2.py file, your generated code is out of date and must be regenerated with protoc >= 3.19.0.

If you cannot immediately regenerate your protos, some other possible workarounds are:

1. Downgrade the protobuf package to 3.20.x or lower.

| closed | 2022-06-02T02:27:13Z | 2022-06-11T07:22:47Z | https://github.com/babysor/MockingBird/issues/598 | [] | kidwanttolearn | 2 |

graphql-python/graphene | graphql | 973 | Graphene Django and Classes instead of models | I am using graphene on a django project. It's nice to have graphene-django since you can add the Meta class and set the model from django models. But what I want to do is return my own custom classes.

I have a Profile class. The project has no database, so this Profile class simply has properties like name, email, age, etc.

What I need is to return this Profile class instead of a Django model class or the graphene Object Types. How is that possible?

I would like to do something like:

```

import Profile

class ProfileType(Profile):
    pass

```

In that case, Profile has all the attributes, but as a plain Python object and not as a Django model.

or maybe add this class as a Meta property | closed | 2019-05-23T02:02:25Z | 2019-05-23T03:12:03Z | https://github.com/graphql-python/graphene/issues/973 | [] | pmcarlos | 0 |

RomelTorres/alpha_vantage | pandas | 237 | Alpha Vantage API return empty results [bug?] | Dear,

The following query does not work anymore (returns an empty dictionary).

Did you introduce some restrictions for Italian stocks?

https://www.alphavantage.co/query?function=TIME_SERIES_DAILY&outputsize=full&symbol=ENI.MI&apikey=

Best

Lorenzo | closed | 2020-06-26T06:33:59Z | 2020-06-26T16:14:20Z | https://github.com/RomelTorres/alpha_vantage/issues/237 | [] | lorenzo-gabrielli | 6 |

horovod/horovod | tensorflow | 3,251 | How to load a pre-trained model using horovod | **Environment:**

1. Framework: PyTorch 1.4

2. python version: horovod with pytorch

3. Horovod version: latest version

4. MPI version: 4.0.3

5. CUDA version: cuda10.1

6. NCCL version: 2.5.6

7. Python version: python3.6

**Question**

I can successfully train my model using horovod in a multi-machine, multi-card fashion, and I save the checkpoints only on worker 0 to prevent other workers from corrupting them. My question is how to load such pre-trained models using horovod in a multi-machine, multi-card fashion. Do I need to load the pre-trained models only on worker 0?

| closed | 2021-11-01T06:52:27Z | 2021-11-02T12:34:25Z | https://github.com/horovod/horovod/issues/3251 | [

"bug"

] | ForawardStar | 0 |

vaexio/vaex | data-science | 2,230 | [BUG-REPORT] median_approx and percentile_approx return nan instead of actual value | **Description**

When using `median_approx` or `percentile_approx` to calculate the median or a percentile of a (Vaex) data frame or one of its columns, they return `nan` instead of the actual value of the statistic. I'm not sure if this might be related to #1304.

See for instance the behavior of the median approximation function illustrated below with the Vaex example dataset.

```

import vaex

vdf = vaex.example()

v_med_x = vdf.median_approx('x')

v_med_xy = vdf.median_approx(['x', 'y'])

v_med_df = vdf.median_approx(vdf.get_column_names())

pdf = vdf.to_pandas_df()

p_med_x = pdf['x'].median()

p_med_xy = pdf[['x', 'y']].median()

p_med_df = pdf.median()

print(f'Vaex results:\n\tMedian of \'x\':\t\t{v_med_x}\n\tMedian of \'x\' and \'y\':\t{v_med_xy}\n\tMedian of all df:\t{v_med_df}\n\nPandas results:\n\tMedian of \'x\':\t\t{p_med_x}\n\tMedian of \'x\' and \'y\':\t{list(p_med_xy)}\n\tMedian of all df:\t{list(p_med_df)}')

```

This little program returns the following output on the screen, where one can see that **the Vaex medians are all nan's and differ from those reported by Pandas (note that they exist and are finite)**. May there be an issue with the interpolation algorithm used by Vaex to estimate these metrics?

_Is there a way to get the median/percentile with Vaex at the moment?_

```

Vaex results:

Median of 'x': nan

Median of 'x' and 'y': [nan nan]

Median of all df: [nan nan nan nan nan nan nan nan nan nan nan]

Pandas results:

Median of 'x': -0.05056469142436981

Median of 'x' and 'y': [-0.05056469142436981, -0.032549407333135605]

Median of all df: [16.0, -0.05056469142436981, -0.032549407333135605, -0.007299995049834251, 0.3338821530342102, 2.5303449630737305, 0.5708029270172119, -105428.9765625, 752.062744140625, -164.71417236328125, -1.6478899717330933]

```

**Software information**

- Vaex version: {'vaex-core': '4.14.0', 'vaex-viz': '0.5.4', 'vaex-hdf5': '0.13.0', 'vaex-ml': '0.18.0', 'vaex-arrow': '0.4.2'}

- Vaex was installed via: conda-forge (conda install -c conda-forge vaex-core vaex-viz vaex-ml vaex-hdf5 vaex-arrow)

- OS: Windows 10 Pro version 21H2

- Python version: Python 3.9.13 | packaged by conda-forge

- NumPy version: 1.23.3

- Pandas version: 1.5.0

| open | 2022-10-14T16:24:15Z | 2022-10-19T03:40:49Z | https://github.com/vaexio/vaex/issues/2230 | [] | abianco88 | 17 |

AUTOMATIC1111/stable-diffusion-webui | pytorch | 15,483 | [Bug]: Loading an fp16 SDXL model fails on MPS when model loading RAM optimization is enabled | ### Checklist

- [ ] The issue exists after disabling all extensions

- [ ] The issue exists on a clean installation of webui

- [ ] The issue is caused by an extension, but I believe it is caused by a bug in the webui

- [X] The issue exists in the current version of the webui

- [ ] The issue has not been reported before recently

- [ ] The issue has been reported before but has not been fixed yet

### What happened?

Loading an fp16 model on MPS fails when `--disable-model-loading-ram-optimization` is not enabled. I suspect this is because fp32 is natively supported by MPS, but something is causing an attempted cast to fp64 from fp16, which is not supported.

### Steps to reproduce the problem

1. Try to load an fp16 model on MPS (Apple Silicon).

### What should have happened?

The model loading RAM optimization hack shouldn't interfere with this.

### What browsers do you use to access the UI ?

_No response_

### Sysinfo

```

{

"Platform": "macOS-14.2.1-arm64-arm-64bit",

"Python": "3.10.13",

"Version": "v1.9.0-RC-12-gac8ffb34",

"Commit": "ac8ffb34e3d1196319c8dcfcf0f5d3eded713176",

"Commandline": [

"webui.py",

"--skip-torch-cuda-test",

"--upcast-sampling",

"--no-half-vae",

"--use-cpu",

"interrogate",

"--skip-load-model-at-start"

],

}

```

### Console logs

```Shell

Traceback (most recent call last):

File "stable-diffusion-webui/modules/options.py", line 165, in set

option.onchange()

File "stable-diffusion-webui/modules/call_queue.py", line 13, in f

res = func(*args, **kwargs)

File "stable-diffusion-webui/modules/initialize_util.py", line 181, in <lambda>

shared.opts.onchange("sd_model_checkpoint", wrap_queued_call(lambda: sd_models.reload_model_weights()), call=False)

File "stable-diffusion-webui/modules/sd_models.py", line 860, in reload_model_weights

sd_model = reuse_model_from_already_loaded(sd_model, checkpoint_info, timer)

File "stable-diffusion-webui/modules/sd_models.py", line 826, in reuse_model_from_already_loaded

load_model(checkpoint_info)

File "stable-diffusion-webui/modules/sd_models.py", line 748, in load_model

load_model_weights(sd_model, checkpoint_info, state_dict, timer)

File "stable-diffusion-webui/modules/sd_models.py", line 393, in load_model_weights

model.load_state_dict(state_dict, strict=False)

File "stable-diffusion-webui/modules/sd_disable_initialization.py", line 223, in <lambda>

module_load_state_dict = self.replace(torch.nn.Module, 'load_state_dict', lambda *args, **kwargs: load_state_dict(module_load_state_dict, *args, **kwargs))

File "stable-diffusion-webui/modules/sd_disable_initialization.py", line 221, in load_state_dict

original(module, state_dict, strict=strict)

File "stable-diffusion-webui/venv/lib/python3.10/site-packages/torch/nn/modules/module.py", line 2139, in load_state_dict

load(self, state_dict)

File "stable-diffusion-webui/venv/lib/python3.10/site-packages/torch/nn/modules/module.py", line 2127, in load

load(child, child_state_dict, child_prefix)

File "stable-diffusion-webui/venv/lib/python3.10/site-packages/torch/nn/modules/module.py", line 2127, in load

load(child, child_state_dict, child_prefix)

File "stable-diffusion-webui/venv/lib/python3.10/site-packages/torch/nn/modules/module.py", line 2127, in load

load(child, child_state_dict, child_prefix)

[Previous line repeated 3 more times]

File "stable-diffusion-webui/venv/lib/python3.10/site-packages/torch/nn/modules/module.py", line 2121, in load

module._load_from_state_dict(

File "stable-diffusion-webui/modules/sd_disable_initialization.py", line 226, in <lambda>

conv2d_load_from_state_dict = self.replace(torch.nn.Conv2d, '_load_from_state_dict', lambda *args, **kwargs: load_from_state_dict(conv2d_load_from_state_dict, *args, **kwargs))

File "stable-diffusion-webui/modules/sd_disable_initialization.py", line 191, in load_from_state_dict

module._parameters[name] = torch.nn.parameter.Parameter(torch.zeros_like(param, device=device, dtype=dtype), requires_grad=param.requires_grad)

File "stable-diffusion-webui/venv/lib/python3.10/site-packages/torch/_meta_registrations.py", line 4806, in zeros_like

res = aten.empty_like.default(

File "stable-diffusion-webui/venv/lib/python3.10/site-packages/torch/_ops.py", line 513, in __call__

return self._op(*args, **(kwargs or {}))

File "stable-diffusion-webui/venv/lib/python3.10/site-packages/torch/_prims_common/wrappers.py", line 250, in _fn

result = fn(*args, **kwargs)

File "stable-diffusion-webui/venv/lib/python3.10/site-packages/torch/_refs/__init__.py", line 4755, in empty_like

return torch.empty_permuted(

TypeError: Cannot convert a MPS Tensor to float64 dtype as the MPS framework doesn't support float64. Please use float32 instead

```

### Additional information

_No response_ | open | 2024-04-11T05:57:42Z | 2024-04-11T05:58:36Z | https://github.com/AUTOMATIC1111/stable-diffusion-webui/issues/15483 | [

"bug-report"

] | akx | 0 |

coleifer/sqlite-web | flask | 37 | Some safety features for remote DB access | I needed a SQLite DB browser for testing my program on a remote Linux server.

Sqlite-web is the only one I found. It is simple and useful. Thank you!

What I'm missing is **simple password protection** for accessing the database remotely.

For example, specifying a password as a parameter on the start command line would be fine. Right now I have to stop the server each time, and it is unsafe if I forget to. There might be ways to handle this, but I'm not good at web server administration.

Another useful option would be **server auto stop** after some time of inactivity | closed | 2018-02-14T23:57:43Z | 2018-02-23T17:42:09Z | https://github.com/coleifer/sqlite-web/issues/37 | [] | lunfardo314 | 6 |

JoeanAmier/TikTokDownloader | api | 316 | Unable to download content | Version

TikTokDownloader_V5.4_WIN

| open | 2024-10-18T16:18:00Z | 2024-10-29T03:17:08Z | https://github.com/JoeanAmier/TikTokDownloader/issues/316 | [] | ZX828 | 2 |

nerfstudio-project/nerfstudio | computer-vision | 3,332 | COLMAP crashed causing the machine to restart | **Describe the bug**

When using nerfstudio to process image data, the computer will restart directly during the bundle adjustment stage. It doesn't happen when the number of pictures is small, but it does happen with a large number of pictures (around 400 pics, 4000*6000).

**Hardware**

GPU: 4090, 24G

Mem: 126G

Swap: 2G

**To Reproduce**

Steps to reproduce the behavior:

1. Run ns-process-data

2. In BA stage, the machine reboots.

**Expected behavior**

After completing BA, we get the sparse reconstruction result.

**Additional context**

- In the same environment, if the number of images is small, such as 100, nerfstudio can call colmap to process it normally, and subsequent training is also good.

- Even with large amounts of data, openSfM can still be used for sparse reconstruction. I wonder if there are plans to support the openSfM format in the future. Or if the processed colmap format data can be used directly without nerfstudio calling it again. | open | 2024-07-25T09:18:33Z | 2024-07-29T13:11:22Z | https://github.com/nerfstudio-project/nerfstudio/issues/3332 | [] | YuhsiHu | 2 |

biolab/orange3 | pandas | 6,881 | Switch the language of orange3 interface to Chinese. |

**What's your use case?**


Because there is no Chinese version of the orange3 software, a group of people in China have translated it and are selling it at a high price. As a student and learning enthusiast, I cannot afford that price. I hope an option can be added in the settings to switch the software language to Chinese, with the language pack downloadable and usable as a plug-in.

**What's your proposed solution?**


1. Chinese language packs are downloaded as plug-ins.

2. Add options about Chinese language packs in the settings.

**Are there any alternative solutions?**

Use the default language of the computer system.

| closed | 2024-08-23T03:20:41Z | 2024-11-17T01:47:50Z | https://github.com/biolab/orange3/issues/6881 | [] | TonyEinstein | 5 |

widgetti/solara | jupyter | 619 | "Page not found by Solara router" with the boilerplate package from `solara create portal ` | I am considering using solara for a prototype/showcase app. Starting from the boilerplate from `solara create portal` seems not to work out of the box with the current version 1.32

I'll see if I can figure out a PR, but no promise - not my field.

## Repro info

```sh

python --version # 3.9.13; debian linux

cd $HOME/.venvs/

python -m venv solara-env

source ./solara-env/bin/activate

pip install solara # installs 1.32.0

~/.venvs/solara-env/bin/python -m pip install --upgrade pip

pip install matplotlib pandas vaex plotly

mkdir -p $HOME/$BLAH/showcase/

cd $HOME/$BLAH/showcase/

solara create --help

solara create portal stream-temperature

cd stream-temperature/

pip install --no-deps -e .

cd ..

solara run stream_temperature

```

| closed | 2024-04-29T07:59:59Z | 2024-04-29T08:06:47Z | https://github.com/widgetti/solara/issues/619 | [] | jmp75 | 1 |

gunthercox/ChatterBot | machine-learning | 1,827 | Converting chatterbot used py to exe | After converting a simple chatbot program (using the chatterbot module) to an exe, I encountered thousands of module-not-found runtime errors when I ran the exe file. I kept adding hidden imports while building the exe with PyInstaller, but I am tired now, as I guess there are tons of hidden modules required. Is there any easy way to solve this error? | closed | 2019-10-06T10:39:16Z | 2020-06-20T17:32:17Z | https://github.com/gunthercox/ChatterBot/issues/1827 | [

"answered"

] | ProPython007 | 2 |

marcomusy/vedo | numpy | 1,085 | Bug: Thumbnail slices part of mesh | ```

import matplotlib.pyplot as plt
import numpy as np
import vedo

mesh = vedo.Mesh("snare.stl")
image_size = 224
num_views = 16
sqrt_num_views = 4

# Render one thumbnail per (azimuth, elevation) pair on a 4x4 grid of views
images = []
for i in range(sqrt_num_views):
    for j in range(sqrt_num_views):
        image = mesh.thumbnail(
            size=(image_size, image_size),
            azimuth=360 * i / sqrt_num_views - 180,
            elevation=180 * j / sqrt_num_views - 90,
            zoom=1.25,
            axes=1,
        )
        images.append(image)
images = np.array(images)

ncols = np.floor(np.sqrt(num_views))
nrows = np.ceil(np.sqrt(num_views))

# Create a figure and axes
fig, axs = plt.subplots(4, 4, figsize=(10, 10))

# Flatten axes for easier indexing
axs = axs.flatten()

# Loop through each image and plot
for i in range(16):
    axs[i].imshow(images[i])
    # axs[i].axis("off")  # Turn off axis

# Adjust layout
plt.tight_layout()

# Show plot
plt.show()

```

[snare.zip](https://github.com/marcomusy/vedo/files/14878751/snare.zip)

| closed | 2024-04-05T01:26:17Z | 2024-04-05T17:59:57Z | https://github.com/marcomusy/vedo/issues/1085 | [

"bug",

"fixed"

] | JeffreyWardman | 1 |

ets-labs/python-dependency-injector | asyncio | 262 | Does the `from_ini` method of the Configuration provider always return a str even when the value is an int? | I just started working with the newest version of the dependency injector and I'm curious about the Configuration provider and its return types. Using `from_dict` seems to return the correct values and correct types of those values, e.g.:

```python

config.from_dict(
    {
        'section1': {
            'option1': 1,  # returns int as value type
            'option2': 2,  # returns int as value type
        },
    },
)

```

Using the `from_ini` method has a different result from the same structure, e.g.:

```ini

[section1]

option1 = 1 # returns str as value type

option2 = 2 # returns str as value type

```

Is there something I'm overlooking in the documentation or is there something that can be done to make `from_ini` return its values in the same way as the `from_dict` method?

As an intermediate solution, I'm casting all the values from the dict to their appropriate (int or float) value in a new dict because the original dict is unassignable.

For each section I currently have the following snippet:

```python

sec1 = {k: float(v) if '.' in v else int(v)
        for (k, v) in Core.config.section1().items()}

```
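
The string results are consistent with how Python's standard `configparser` module behaves: it returns every option value as `str` unless a typed getter such as `getint` is used. Whether `from_ini` relies on `configparser` internally is an assumption here. The sketch below shows the stdlib behaviour and a slightly more defensive cast than the snippet above; `coerce` and `INI_TEXT` are hypothetical names.

```python
import configparser

INI_TEXT = """
[section1]
option1 = 1
option2 = 2.5
option3 = hello
"""

parser = configparser.ConfigParser()
parser.read_string(INI_TEXT)

# Raw values read from an INI source are always strings.
raw = dict(parser["section1"])

# Typed getters convert a single option explicitly.
option1 = parser.getint("section1", "option1")


def coerce(value):
    """Try int, then float; fall back to the original string."""
    for cast in (int, float):
        try:
            return cast(value)
        except ValueError:
            continue
    return value


section1 = {key: coerce(value) for key, value in raw.items()}
```

Unlike the `'.' in v` check, this version leaves non-numeric values such as `hello` untouched instead of raising `ValueError`.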

Also, how does the Configuration provider handle changes in the source file? Let's say I already used `from_ini` once and then updated the file with new configurations, will the `from_ini` method load the file again or maybe does the `provider.Configuration.section1` auto-magically have updated values? | closed | 2020-07-08T08:59:22Z | 2020-08-24T17:42:33Z | https://github.com/ets-labs/python-dependency-injector/issues/262 | [

"question"

] | zborowa | 2 |

itamarst/eliot | numpy | 185 | Test against newer version of Twisted | Probably 15.2.

| closed | 2015-07-24T20:26:50Z | 2018-09-22T20:59:18Z | https://github.com/itamarst/eliot/issues/185 | [] | itamarst | 0 |

Netflix/metaflow | data-science | 1,712 | add __repr__ methods to Parameter | I think it would be useful to add __str__ and __repr__ methods to the Parameter class (https://github.com/Netflix/metaflow/blob/master/metaflow/parameters.py#L212) to improve understanding and debugging | closed | 2024-02-02T17:02:10Z | 2024-02-07T10:52:10Z | https://github.com/Netflix/metaflow/issues/1712 | [] | raybellwaves | 0 |

hack4impact/flask-base | sqlalchemy | 53 | Add auto-formatting to flask-base | From working on Go and working at Google (where most code is auto-formatted) I've noticed that there are immense benefits to auto-formatting code.

1. Code style and conformation to standards, like pep8, are ensured.

2. Writing code is faster.

3. Less cognitive load, it's one less thing to worry about.

4. No arguments about style.

5. If all of our python code is formatted the same, getting up to speed with code you didn't write will take less effort.

6. Diffs show actual code, not 'diff noise' where someone adds a space or changes formatting.

| closed | 2016-08-21T05:02:41Z | 2016-08-28T22:44:07Z | https://github.com/hack4impact/flask-base/issues/53 | [] | sandlerben | 3 |

ansible/awx | django | 15,581 | awx.awx.role module failed to assign role to teams when using "lookup_organization" parameter | ### Please confirm the following

- [X] I agree to follow this project's [code of conduct](https://docs.ansible.com/ansible/latest/community/code_of_conduct.html).

- [X] I have checked the [current issues](https://github.com/ansible/awx/issues) for duplicates.

- [X] I understand that AWX is open source software provided for free and that I might not receive a timely response.

- [X] I am **NOT** reporting a (potential) security vulnerability. (These should be emailed to `security@ansible.com` instead.)

### Bug Summary

The awx.awx.role module fails when called with "lookup_organization" pointing to an organization different from the one where the teams are located.

### AWX version

any

### Select the relevant components

- [ ] UI

- [ ] UI (tech preview)

- [ ] API

- [ ] Docs

- [X] Collection

- [ ] CLI

- [ ] Other

### Installation method

N/A

### Modifications

no

### Ansible version

_No response_

### Operating system

_No response_

### Web browser

_No response_

### Steps to reproduce

For example, we want to assign the use role on project ABC-project (under organization ABC) to team XYZ (under organization XYZ).

tasks:
  - name: Assign Project ABC-project use permission to Team XYZ across organizations
    ansible.controller.role:
      teams: XYZ
      projects: ABC-project
      role: use
      lookup_organization: ABC

### Expected results

Should be able to assign role permission.

### Actual results

The task returns a failure message:

fatal: [localhost]: FAILED! => {"changed": false, "msg": "There were 1 missing items, missing items: ['XYZ']"}

### Additional information

It seems to be a bug in role.py

https://github.com/ansible/awx/pull/15580 | closed | 2024-10-11T23:23:38Z | 2025-03-12T17:33:45Z | https://github.com/ansible/awx/issues/15581 | [

"type:bug",

"component:awx_collection",

"needs_triage"

] | ecchong | 1 |

davidsandberg/facenet | computer-vision | 626 | align_dataset_mtcnn.py too slow | I followed the instructions in https://github.com/davidsandberg/facenet/wiki/Classifier-training-of-inception-resnet-v1 and ran python src/align/align_dataset_mtcnn.py ~/datasets/casia/CASIA-maxpy-clean/ ~/datasets/casia/casia_maxpy_mtcnnpy_182 --image_size 182 --margin 44.

It's working but seems to be very slow. The GPU utilization is also low (3% of gpu0 and 0% of gpu1). I don't think I can wait that long. Could anyone give me some help? Thanks! | closed | 2018-01-23T08:26:23Z | 2018-01-23T09:55:22Z | https://github.com/davidsandberg/facenet/issues/626 | [] | eurus-yu | 1 |

stanfordnlp/stanza | nlp | 479 | Connecting to remote CoreNLP server | closed | 2020-10-06T04:53:40Z | 2020-10-10T17:28:20Z | https://github.com/stanfordnlp/stanza/issues/479 | [

"question"

] | ronkow | 8 | |

pytorch/vision | computer-vision | 8,297 | Allow empty class folders in ImageFolder | Follow up to https://github.com/pytorch/vision/issues/4925 and as summarized in https://github.com/pytorch/vision/issues/4925#issuecomment-1976785097, we need to allow empty class folders in ImageFolder i.e. we need a way for users to bypass this error:

https://github.com/pytorch/vision/blob/c8c3839cb9b0fc52885cafa431460f686c46f5ae/torchvision/datasets/folder.py#L98-L99

The most obvious way to enable that is by adding a new `allow_empty` parameter to `make_dataset()` and `ImageFolder()` / `DatasetFolder()`. (name TBD). | closed | 2024-03-05T10:30:55Z | 2024-03-13T13:29:44Z | https://github.com/pytorch/vision/issues/8297 | [

"enhancement",

"module: datasets"

] | NicolasHug | 0 |

tfranzel/drf-spectacular | rest-api | 473 | How can I disable schema for patch requests across all APIs | Currently I'm using this on all my viewsets:

```

@extend_schema_view(

partial_update=extend_schema(exclude=True),

)

class MyViewSet(...):

...

```

Any better suggestions? | closed | 2021-07-31T05:12:42Z | 2021-08-01T08:40:09Z | https://github.com/tfranzel/drf-spectacular/issues/473 | [] | tiholic | 2 |

pallets/quart | asyncio | 37 | http2_push example hangs when using nghttp | I was trying to verify the http2_push example by using nghttp. The example does seem to work fine from a browser (Chrome or Firefox).

Repro:

> $ QUART_APP=http2_push:app quart run

> Running on https://127.0.0.1:5000 (CTRL + C to quit)

> [2018-12-19 14:44:13,507] ASGI Framework Lifespan error, continuing without Lifespan support

>

> $ nghttp -nvy https://localhost:5000/push

>

| closed | 2018-12-19T22:49:49Z | 2022-07-06T00:24:01Z | https://github.com/pallets/quart/issues/37 | [] | jonozzz | 4 |

pallets/flask | flask | 4,425 | flask app timeout urgent issue | Dears,

Hope all is well.

I have an application built on Flask, and my issue is as follows.

After sending 1000 to 3000 requests per minute to my Flask app, it goes down (no response). Please keep in mind that this is not a resource issue, because memory usage is below 20% and CPU usage is 30 to 40%.

So how can I fix this issue in Flask?

PS: I ran the same test with FastAPI and had no issue there.

| closed | 2022-01-20T12:29:16Z | 2022-01-20T12:30:35Z | https://github.com/pallets/flask/issues/4425 | [] | themeswordpress | 1 |

polakowo/vectorbt | data-visualization | 165 | Is there a way to change the start date in portfolio.stats() ? | Hi guys,

I have a question: is there a way to change the start date in portfolio.stats()? I'm backtesting a portfolio optimization model using a rolling window to rebalance the portfolio monthly, but when I use portfolio.stats(), the period it covers includes the initial window (about 5 years) where the portfolio is only cash. I want to calculate the stats starting from the first date on which I rebalance the portfolio. I tried this solution:

`a = portfolio.value().iloc[252*5:].pct_change().dropna()` # I extract data from the vectorbt portfolio,

`a.vbt.returns(freq='D').stats(0)` # I calculate stats from portfolio returns

The Total Return obtained using this method is different from that obtained using portfolio.stats(), although they are very close.
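
One possible source of the small difference (an assumption, not confirmed against vectorbt's internals) is the order of slicing and `pct_change()`: slicing the value series first discards the return earned on the first day of the slice. The sketch below shows the arithmetic in plain Python with made-up numbers; `values`, `start`, and both helper functions are hypothetical and are not part of vectorbt.

```python
# Made-up portfolio values: flat cash first, then invested from index `start`.
values = [100.0, 100.0, 100.0, 101.0, 103.02, 104.0502]
start = 3  # hypothetical index of the first rebalance


def pct_change(series):
    """Simple returns between consecutive values (no leading NaN)."""
    return [b / a - 1 for a, b in zip(series, series[1:])]


def total_return(returns):
    """Compound a list of simple returns into one total return."""
    growth = 1.0
    for r in returns:
        growth *= 1 + r
    return growth - 1


# Slice first, then compute returns: the return earned on day `start` is lost.
sliced_then_returns = pct_change(values[start:])

# Compute returns first, then slice: the return earned on day `start` is kept.
returns_then_sliced = pct_change(values)[start - 1:]

print(total_return(sliced_then_returns))
print(total_return(returns_then_sliced))
```

With these numbers the second figure matches the return measured from the initial cash value, while the first is slightly lower because it starts compounding one day late.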

Best

Dany | closed | 2021-06-12T07:05:41Z | 2021-06-13T05:25:28Z | https://github.com/polakowo/vectorbt/issues/165 | [] | dcajasn | 2 |

encode/apistar | api | 68 | API Mocking | Once we've got the schemas fully in place we'd like to be able to run a mock API purely based on the function annotations, so that users can start up a mock API even before they've implemented any functionality.

We'd want to use randomised output that fits the given schema constraints, as well as validating any input, and returning those values accordingly.

eg.

**schemas.py**:

```python

class KittenName(schema.String):
    max_length = 100

class KittenColor(schema.Enum):
    enum = [
        'black',
        'brown',
        'white',
        'grey',
        'tabby'
    ]

class Kitten(schema.Object):
    properties = {
        'name': KittenName,
        'color': KittenColor,
        'cuteness': schema.Number(
            minimum=0.0,
            maximum=10.0,
            multiple_of=0.1
        )
    }

```

**views.py:**

```python

def list_favorite_kittens(color: KittenColor=None) -> List[Kitten]:
    """
    List your favorite kittens, optionally filtered by color.
    """
    pass

def add_favorite_kitten(name: KittenName) -> Kitten:
    """
    Add a kitten to your favorites list.
    """
    pass

```
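
As a rough illustration of "randomised output that fits the given schema constraints", here is a minimal sketch in plain Python. It does not use any API Star API: `mock_kitten`, `KITTEN_COLORS`, and the name-length cap of 12 are hypothetical choices made for this example, and the constraints are copied by hand from the `Kitten` schema above rather than read from it.

```python
import random
import string

# Constraints copied by hand from the Kitten schema above (assumption:
# a real mock would derive them from the schema classes instead).
KITTEN_COLORS = ['black', 'brown', 'white', 'grey', 'tabby']
MAX_NAME_LENGTH = 100


def mock_kitten(rng):
    """Return a random dict that satisfies the Kitten constraints."""
    # Keep names short for readability; anything up to MAX_NAME_LENGTH is valid.
    name_length = rng.randint(1, min(12, MAX_NAME_LENGTH))
    name = ''.join(rng.choice(string.ascii_lowercase) for _ in range(name_length))
    # Draw an integer number of tenths so the value is a multiple of 0.1
    # between 0.0 and 10.0 inclusive.
    cuteness = rng.randint(0, 100) / 10
    return {
        'name': name,
        'color': rng.choice(KITTEN_COLORS),
        'cuteness': cuteness,
    }


rng = random.Random()
print([mock_kitten(rng) for _ in range(3)])
```

Drawing the number as an integer count of tenths avoids accumulating floating-point error that could push a value outside the `minimum`/`maximum` bounds.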

We should be able to do this sort of thing...

```bash

$ apistar mock

mock api running on 127.0.0.1:5000

$ curl http://127.0.0.1:5000/kittens/fav/

[
    {
        "name": "congue",
        "color": "tabby",
        "cuteness": 5.9
    },
    {
        "name": "aenean",
        "color": "white",
        "cuteness": 9.3
    },
    {
        "name": "etiam",
        "color": "tabby",
        "cuteness": 8.8
    }
]

``` | open | 2017-04-20T13:50:35Z | 2017-08-17T11:38:46Z | https://github.com/encode/apistar/issues/68 | [

"Baseline feature"

] | tomchristie | 2 |

pyeve/eve | flask | 769 | GeoJson types limited to 2 fields | Quoting from the [GeoJSON specification](http://geojson.org/geojson-spec.html#geojson-objects):

> The GeoJSON object may have any number of members (name/value pairs)

When using one of the GeoJSON types in Eve schemas, I cannot add additional fields besides "type" and "coordinates".

For instance, the following GeoJSON is valid according to http://geojsonlint.com/, but is rejected by Eve when the field is defined as type "point":

```

{
    "type": "Point",
    "coordinates": [
        -105.01621,
        39.57422
    ],
    "customField": "blabla"
}

```

| closed | 2015-11-24T16:10:28Z | 2018-05-18T18:19:37Z | https://github.com/pyeve/eve/issues/769 | [

"stale"

] | mion00 | 1 |

slackapi/python-slack-sdk | asyncio | 903 | ActionsBlock elements are not parsed | ### Description

In `ActionsBlock.__init__`, elements are simply copied.

[Exact bug place](https://github.com/slackapi/python-slack-sdk/blob/4e4524230ed41ef7cd9d637c52f4e86b1ffedad9/slack/web/classes/blocks.py#L241)

For all other blocks, similar elements are parsed into BlockElement instances.

[How it is parsed for SectionBlock](https://github.com/slackapi/python-slack-sdk/blob/4e4524230ed41ef7cd9d637c52f4e86b1ffedad9/slack/web/classes/blocks.py#L143)

[How it is parsed for ContextBlock](https://github.com/slackapi/python-slack-sdk/blob/4e4524230ed41ef7cd9d637c52f4e86b1ffedad9/slack/web/classes/blocks.py#L269)

To fix this, we can handle it the same way as in ContextBlock: replace [this line of code](https://github.com/slackapi/python-slack-sdk/blob/4e4524230ed41ef7cd9d637c52f4e86b1ffedad9/slack/web/classes/blocks.py#L241) with

`self.elements = BlockElement.parse_all(elements)`

The same issue is also present in the current SDK version (3.1.0).

[Link for bug place in 3.1.0](https://github.com/slackapi/python-slack-sdk/blob/5340ee337a2364e84c38d696c107f19c341dd6eb/slack_sdk/models/blocks/blocks.py#L246)

### Reproducible in:

#### The Slack SDK version

slackclient==2.9.3

#### Python runtime version

Python 3.7.0

#### OS info

Ubuntu 20.04.1 LTS

#### Steps to reproduce:

1. Copy the example JSON from the Slack API docs for ActionsBlock (https://api.slack.com/reference/block-kit/blocks#actions_examples)

2. Create an ActionsBlock from the JSON parsed into a dict

3. Check the type of the elements attribute of the created ActionsBlock object

### Expected result:

Elements should be instances of BlockElement, the same as for SectionBlock.accessory

### Actual result:

The elements of ActionsBlock are not parsed

| closed | 2020-12-23T12:07:14Z | 2020-12-24T06:31:24Z | https://github.com/slackapi/python-slack-sdk/issues/903 | [

"bug",

"Version: 3x",

"good first issue"

] | KharchenkoDmitriy | 3 |

pyg-team/pytorch_geometric | deep-learning | 9,310 | ModuleNotFoundError: 'CuGraphSAGEConv' requires 'pylibcugraphops>=23.02' | ### 😵 Describe the installation problem

I am trying to run this code block:

```

import torch.nn.functional as F
from torch_geometric.nn import CuGraphSAGEConv

class CuGraphSAGE(torch.nn.Module):
    def __init__(self, in_channels, hidden_channels, out_channels, num_layers):
        super().__init__()

        self.convs = torch.nn.ModuleList()
        self.convs.append(CuGraphSAGEConv(in_channels, hidden_channels))
        for _ in range(num_layers - 1):
            conv = CuGraphSAGEConv(hidden_channels, hidden_channels)
            self.convs.append(conv)

        self.lin = torch.nn.Linear(hidden_channels, out_channels)

    def forward(self, x, edge, size):
        edge_csc = CuGraphSAGEConv.to_csc(edge, (size[0], size[0]))
        for conv in self.convs:
            x = conv(x, edge_csc)[: size[1]]
            x = F.relu(x)
            x = F.dropout(x, p=0.5)

        return self.lin(x)

model = CuGraphSAGE(128, 64, 349, 3).to(torch.float32).to("cuda")
optimizer = torch.optim.Adam(model.parameters(), lr=0.01)

```

but I get this:

```

---------------------------------------------------------------------------

ModuleNotFoundError Traceback (most recent call last)

Cell In[26], line 25

21 x = F.dropout(x, p=0.5)

23 return self.lin(x)

---> 25 model = CuGraphSAGE(128, 64, 349, 3).to(torch.float32).to("cuda")

26 optimizer = torch.optim.Adam(model.parameters(), lr=0.01)

Cell In[26], line 9, in CuGraphSAGE.__init__(self, in_channels, hidden_channels, out_channels, num_layers)

6 super().__init__()

8 self.convs = torch.nn.ModuleList()

----> 9 self.convs.append(CuGraphSAGEConv(in_channels, hidden_channels))

10 for _ in range(num_layers - 1):

11 conv = CuGraphSAGEConv(hidden_channels, hidden_channels)

File ~/.conda/envs/rapids-24.04/lib/python3.11/site-packages/torch_geometric/nn/conv/cugraph/sage_conv.py:40, in CuGraphSAGEConv.__init__(self, in_channels, out_channels, aggr, normalize, root_weight, project, bias)

30 def __init__(

31 self,

32 in_channels: int,

(...)

38 bias: bool = True,

39 ):

---> 40 super().__init__()

42 if aggr not in ['mean', 'sum', 'min', 'max']:

43 raise ValueError(f"Aggregation function must be either 'mean', "

44 f"'sum', 'min' or 'max' (got '{aggr}')")

File ~/.conda/envs/rapids-24.04/lib/python3.11/site-packages/torch_geometric/nn/conv/cugraph/base.py:41, in CuGraphModule.__init__(self)

38 super().__init__()

40 if not HAS_PYLIBCUGRAPHOPS and not LEGACY_MODE:

---> 41 raise ModuleNotFoundError(f"'{self.__class__.__name__}' requires "

42 f"'pylibcugraphops>=23.02'")

ModuleNotFoundError: 'CuGraphSAGEConv' requires 'pylibcugraphops>=23.02'

```

### Environment

* PyG version:2.5.3

* PyTorch version: 2.1.2.post303

* OS: Windows 11

* Python version: 3.11.9

* CUDA/cuDNN version: 12.2, V12.2.140

* How you installed PyTorch and PyG (`conda`, `pip`, source): conda

* Any other relevant information (*e.g.*, version of `torch-scatter`):

| closed | 2024-05-10T21:36:59Z | 2024-07-22T00:36:21Z | https://github.com/pyg-team/pytorch_geometric/issues/9310 | [

"installation"

] | d3netxer | 2 |

microsoft/nni | data-science | 4,756 | Current support of Retiarii for TensorFlow | I am interested in evaluating Retiarii for my particular use case. Looking at the examples and issues, it seems that currently only PyTorch is supported. However, I do see some mention of TensorFlow support to be introduced in the roadmap [V2.4](https://github.com/microsoft/nni/discussions/3744)

My question is then: can Retiarii be used with TensorFlow at the moment?

I am particularly interested in using ProxylessNAS and FBNet | closed | 2022-04-12T13:34:33Z | 2022-04-13T08:00:09Z | https://github.com/microsoft/nni/issues/4756 | [] | Hrayo712 | 2 |

open-mmlab/mmdetection | pytorch | 11,747 | TypeError: __init__() got an unexpected keyword argument 'pretrained' |

**Describe the bug**

I tried to run the following code: