repo_name | topic | issue_number | title | body | state | created_at | updated_at | url | labels | user_login | comments_count |

|---|---|---|---|---|---|---|---|---|---|---|---|

Nekmo/amazon-dash | dash | 36 | Raspberry install Failed | * amazon-dash version:0.4.1

* Python version:3.5

* Operating System: Raspbian Jessie Lite

### Description

When I execute sudo python -m amazon_dash.install it fails with the text:

"/usr/bin/python: No module named amazon_dash"

"/usr/bin/python3.5: Error while finding module specification for 'amazon_dash.install' (ImportError: No module named 'amazon_dash')"

"/usr/bin/python3: Error while finding module specification for 'amazon_dash.install' (ImportError: No module named 'amazon_dash')"

### What I Did

Reinstall and install with pip3 install amazon-dash

Tried with all variants of python --> "python" / "python3" / "python3.5"

| closed | 2018-02-24T12:20:38Z | 2018-03-25T00:45:35Z | https://github.com/Nekmo/amazon-dash/issues/36 | [] | Marvv90 | 7 |
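The errors above are consistent with the package having been installed for a different interpreter (or a different user) than the one that `sudo python -m ...` runs. That is a guess from the messages, not a confirmed diagnosis. A minimal stdlib-only sketch for checking what a given interpreter can see follows; the helper name `describe_module` is hypothetical.

```python
import importlib.util
import sys


def describe_module(name: str) -> str:
    """Report whether the interpreter running this script can import `name`."""
    try:
        spec = importlib.util.find_spec(name)
    except (ImportError, ValueError):
        spec = None
    location = spec.origin if spec is not None else "not importable"
    return f"{sys.executable}: {name} -> {location}"


if __name__ == "__main__":
    # Run once per interpreter in question, with and without sudo
    print(describe_module("amazon_dash"))
```

Installing through the same interpreter that will later run the module, for example `sudo python3 -m pip install amazon-dash`, is one way to keep the two in sync.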

ymcui/Chinese-BERT-wwm | nlp | 6 | What is this trained model used for? | I'm a complete beginner. What is this for? It looks very sophisticated; what are its application scenarios? | closed | 2019-06-23T07:57:30Z | 2019-06-23T08:04:04Z | https://github.com/ymcui/Chinese-BERT-wwm/issues/6 | [] | mmrwbb | 1 |

aiogram/aiogram | asyncio | 1,465 | aiogram\utils\formatting.py (as_section) | ### Checklist

- [X] I am sure the error is coming from aiogram code

- [X] I have searched in the issue tracker for similar bug reports, including closed ones

### Operating system

Windows 10

### Python version

3.12

### aiogram version

3.4.1

### Expected behavior

aiogram\utils\formatting.py (as_section)

...

return Text(title, "\n", **as_list(*body)**)

### Current behavior

aiogram\utils\formatting.py (as_section)

```
def as_section(title: NodeType, *body: NodeType) -> Text:
    """
    Wrap elements as simple section, section has title and body

    :param title:
    :param body:
    :return: Text
    """
    return Text(title, "\n", *body)
```

### Steps to reproduce

Not required

### Code example

_No response_

### Logs

_No response_

### Additional information

It is necessary to use "as_list(*body)" instead of "*body", because "\n" characters are not added to the end of each body element. | closed | 2024-04-19T09:58:37Z | 2024-04-21T19:17:52Z | https://github.com/aiogram/aiogram/issues/1465 | [

"bug",

"good first issue"

] | post1917 | 2 |
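The difference the report describes can be illustrated with plain strings. This is only a sketch of the joining behavior, not aiogram's `Text` class; both function names are hypothetical, and the newline separator is assumed to match the default of `as_list`.

```python
def join_flat(title: str, *body: str) -> str:
    # Mirrors Text(title, "\n", *body): body items are concatenated as-is
    return "".join((title, "\n", *body))


def join_as_list(title: str, *body: str, sep: str = "\n") -> str:
    # Mirrors Text(title, "\n", as_list(*body)): items are separated by `sep`
    return "".join((title, "\n", sep.join(body)))


if __name__ == "__main__":
    print(repr(join_flat("Title", "first", "second")))
    print(repr(join_as_list("Title", "first", "second")))
```

With the flat variant the body items run together on one line, which is the behavior the reporter objects to.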

pydantic/pydantic-ai | pydantic | 844 | api_key is required even if ignored | https://ai.pydantic.dev/models/#example-local-usage

The example does not indicate that you need to set a dummy api_key i.e.

*Does not work*

```ollama_model = OpenAIModel(model_name="llama3.2", base_url="http://127.0.0.1:11434/v1")```

neither does

```ollama_model = OpenAIModel(model_name="llama3.2", base_url="http://127.0.0.1:11434/v1", api_key="")```

but this works:

```ollama_model = OpenAIModel(model_name="llama3.2", base_url="http://127.0.0.1:11434/v1", api_key="dummy")```

I suggest adding a dummy api_key argument to the example so that it would work by default.

I assume that this does not show up as an issue for most people as they would already have some Open AI API key setup to act as the dummy :) | closed | 2025-02-03T10:07:18Z | 2025-02-04T01:10:29Z | https://github.com/pydantic/pydantic-ai/issues/844 | [

"bug"

] | hansharhoff | 2 |

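Python3WebSpider/ProxyPool | flask | 9 | How to debug this project in PyCharm | I am using a remote environment and want to debug this project in PyCharm, but every time I debug run.py it reports that the file cannot be found. How can I debug this project with PyCharm? | closed | 2018-07-06T06:08:43Z | 2020-02-19T16:56:08Z | https://github.com/Python3WebSpider/ProxyPool/issues/9 | [] | bbhl79 | 0 |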

jina-ai/serve | machine-learning | 5,486 | do not apply limits when gpus all in K8s | Opening this issue to track: https://github.com/jina-ai/jina/pull/5485

Currently, when `gpus: all` is applied, `resources.limits` will be set to `all`. The desired behavior is to not have `resources.limits` in K8s yaml. An example of the desired K8s yaml for the Flow:

```yaml
jtype: Flow
with:
  protocol: grpc
executors:
- name: executor1
  uses: jinahub+docker://Sentencizer
  gpus: all
```

would be as follows:

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: executor1
  namespace: somens
spec:
  replicas: 1
  selector:
    matchLabels:
      app: executor1
  strategy:
    rollingUpdate:
      maxSurge: 1
      maxUnavailable: 0
    type: RollingUpdate
  template:
    metadata:
      annotations:
        linkerd.io/inject: enabled
      labels:
        app: executor1
        jina_deployment_name: executor1
        ns: somens
        pod_type: WORKER
        shard_id: '0'
    spec:
      containers:
      - args:
        - executor
        - --name
        - executor1
        - --extra-search-paths
        - ''
        - --k8s-namespace
        - somens
        - --uses
        - config.yml
        - --port
        - '8080'
        - --gpus
        - all
        - --port-monitoring
        - '9090'
        - --uses-metas
        - '{}'
        - --native
        command:
        - jina
        env:
        - name: POD_UID
          valueFrom:
            fieldRef:
              fieldPath: metadata.uid
        - name: JINA_DEPLOYMENT_NAME
          value: executor1
        envFrom:
        - configMapRef:
            name: executor1-configmap
        image: jinahub/c6focg47:63366804b56f6748d3b16036
        imagePullPolicy: IfNotPresent
        name: executor
        ports:
        - containerPort: 8080
        readinessProbe:
          exec:
            command:
            - jina
            - ping
            - executor
            - 127.0.0.1:8080
          initialDelaySeconds: 5
          periodSeconds: 20
          timeoutSeconds: 10

``` | closed | 2022-12-05T09:49:52Z | 2022-12-05T17:10:41Z | https://github.com/jina-ai/serve/issues/5486 | [] | winstonww | 0 |

clovaai/donut | nlp | 308 | What should be the configuration of the machine to train the model? | open | 2024-07-01T09:29:07Z | 2024-07-01T09:29:07Z | https://github.com/clovaai/donut/issues/308 | [] | anant996 | 0 | |

gradio-app/gradio | data-visualization | 10,783 | Gradio: predict() got an unexpected keyword argument 'message' | ### Describe the bug

Trying to connect my telegram-bot(webhook) via API with my public Gradio space on Huggingface.

Via terminal - all works OK.

But via telegram-bot always got the same issue: Error in connection Gradio: predict() got an unexpected keyword argument 'message'.

What should I use to make it work properly?

HF:

Gradio sdk_version: 5.20.1

Requirements.txt

- gradio==5.20.1

- fastapi>=0.112.2

- gradio-client>=1.3.0

- urllib3~=2.0

- requests>=2.28.2

- httpx>=0.24.1

- aiohttp>=3.8.5

- async-timeout==4.0.2

- huggingface-hub>=0.19.3

### Have you searched existing issues? 🔎

- [x] I have searched and found no existing issues

### Reproduction

```python
import logging

import gradio as gr
from gradio_client import Client

# HF_SPACE_NAME and HF_TOKEN are assumed to be defined elsewhere in the bot

# Gradio API
async def send_request_to_gradio(query: str, chat_history: list = None) -> str:
    try:
        client = Client(HF_SPACE_NAME, hf_token=HF_TOKEN)
        logging.info(f"Sending request to Gradio: query={query}, chat_history={chat_history}")
        result = client.predict(
            message=query,
            chat_history=chat_history or None,
            api_name="/chat"
        )
        logging.info(f"Reply from Gradio: {result}")
        # Process the result
        if isinstance(result, list) and result:
            response = result[0]["content"] if isinstance(result[0], dict) and "content" in result[0] else "Not found"
            return response
        else:
            logging.warning("Empty or error Gradio API.")
            return "Failed to get a response."
    except Exception as e:
        logging.error(f"Error in connection Gradio: {e}")
        return "Error. Try again"
```

### Screenshot

_No response_

### Logs

```shell

===== Application Startup at 2025-03-11 11:37:38 =====

tokenizer_config.json: 0%| | 0.00/453 [00:00<?, ?B/s]

tokenizer_config.json: 100%|██████████| 453/453 [00:00<00:00, 3.02MB/s]

tokenizer.json: 0%| | 0.00/16.3M [00:00<?, ?B/s]

tokenizer.json: 100%|██████████| 16.3M/16.3M [00:00<00:00, 125MB/s]

added_tokens.json: 0%| | 0.00/23.0 [00:00<?, ?B/s]

added_tokens.json: 100%|██████████| 23.0/23.0 [00:00<00:00, 149kB/s]

special_tokens_map.json: 0%| | 0.00/173 [00:00<?, ?B/s]

special_tokens_map.json: 100%|██████████| 173/173 [00:00<00:00, 1.05MB/s]

config.json: 0%| | 0.00/879 [00:00<?, ?B/s]

config.json: 100%|██████████| 879/879 [00:00<00:00, 4.49MB/s]

model.safetensors: 0%| | 0.00/1.11G [00:00<?, ?B/s]

model.safetensors: 3%|▎ | 31.5M/1.11G [00:01<00:39, 27.1MB/s]

model.safetensors: 6%|▌ | 62.9M/1.11G [00:02<00:37, 28.0MB/s]

model.safetensors: 68%|██████▊ | 756M/1.11G [00:03<00:01, 313MB/s]

model.safetensors: 100%|█████████▉| 1.11G/1.11G [00:03<00:00, 300MB/s]

/usr/local/lib/python3.10/site-packages/gradio/chat_interface.py:334: UserWarning: The 'tuples' format for chatbot messages is deprecated and will be removed in a future version of Gradio. Please set type='messages' instead, which uses openai-style 'role' and 'content' keys.

self.chatbot = Chatbot(

* Running on local URL: http://0.0.0.0:7860, with SSR ⚡ (experimental, to disable set `ssr=False` in `launch()`)

To create a public link, set `share=True` in `launch()`.

```

### System Info

```shell

title: Nika Prop

emoji: 💬

colorFrom: yellow

colorTo: purple

sdk: gradio

sdk_version: 5.20.1

app_file: app.py

pinned: false

short_description: Nika real estate

```

### Severity

Blocking usage of gradio | closed | 2025-03-11T12:12:43Z | 2025-03-18T10:28:21Z | https://github.com/gradio-app/gradio/issues/10783 | [

"bug",

"needs repro"

] | brokerelcom | 11 |

koxudaxi/datamodel-code-generator | fastapi | 1,668 | Impossible to get the json schema of a json schema object | **Describe the bug**

```python
from datamodel_code_generator.parser.jsonschema import JsonSchemaObject

if __name__ == "__main__":
    print(JsonSchemaObject.model_json_schema())
```

Raises

```

pydantic.errors.PydanticInvalidForJsonSchema: Cannot generate a JsonSchema for core_schema.PlainValidatorFunctionSchema ({'type': 'no-info', 'function': <bound method UnionIntFloat.validate of <class 'datamodel_code_generator.types.UnionIntFloat'>>})

```

**To Reproduce**

See code above

**Expected behavior**

The json schema of a json schema object.

**Version:**

- OS: Linux 6.2.0

- Python version: 3.11.4

- datamodel-code-generator version: 0.22.1

| closed | 2023-11-08T17:31:29Z | 2023-11-09T00:59:54Z | https://github.com/koxudaxi/datamodel-code-generator/issues/1668 | [] | jboulmier | 1 |

davidsandberg/facenet | tensorflow | 931 | What is the training set of the LFW data? | I am new to face recognition and have a question about the LFW dataset.

I want to know which part of the LFW dataset is the training set (I want to use the LFW data under the unrestricted protocol). Is the training set peopleDevTrain.txt?

| open | 2018-12-17T02:20:16Z | 2018-12-17T02:20:16Z | https://github.com/davidsandberg/facenet/issues/931 | [] | guojiapeng00 | 0 |

cupy/cupy | numpy | 8,103 | Noise in Complex Number Computations | ### Description

I am doing some experiments involving variations of the Mandelbrot set and as such iterations over the complex plane. I have noticed noisy results using cupy as compared to numpy.

### To Reproduce

```
import matplotlib.pyplot as plt


def main():
    HEIGHT = 9
    WIDTH = 16
    RATIO = WIDTH/HEIGHT
    RES_SPACE = 50
    MIN = -1
    MAX = 1
    N_ITER = 17

    x = np.linspace(MIN*RATIO, MAX*RATIO, WIDTH*RES_SPACE, dtype=np.float64)
    y = np.linspace(MIN, MAX, HEIGHT*RES_SPACE, dtype=np.float64)
    complex_plane = x + 1j * y[:,None]
    complex_plane = complex_plane.astype(np.complex128)
    mask = np.ones_like(complex_plane, dtype=bool)

    def iterate(i, max, C=complex_plane, M=mask, N=N_ITER):
        OUT = np.zeros_like(M, dtype=np.uint8)
        Z = np.zeros_like(C)
        C = np.copy(C)
        M = np.copy(M)
        max = max + max*1j
        for n in range(N):
            M[Z > max] = False
            Z *= np.exp(-i*10j)
            C *= np.exp(i*1j)
            Z[M] = Z[M]**1.5 + C[M]**-3
            Z[M] *= np.exp(i*C[M]**-3)
            OUT -= M
        OUT *= 15
        return OUT

    i = 3
    zoom = 1- ((-i + np.pi) / (np.pi*2))
    z = 1-zoom
    C = complex_plane * (1*(np.exp(z) - 1))
    X = -i
    MAX = np.exp(np.tan(X/2)*10)
    im = iterate(i, MAX, C=C)
    return im


if __name__ == "__main__":
    import cupy as np
    im = main().get()
    plt.imshow(im)
    plt.title('CuPy')
    plt.show()

    import numpy as np
    im = main()
    plt.imshow(im)
    plt.title('NumPy')
    plt.show()
```

### Installation

None

### Environment

Google Colab

```

OS : Linux-6.1.58+-x86_64-with-glibc2.35

Python Version : 3.10.12

CuPy Version : 12.2.0

CuPy Platform : NVIDIA CUDA

NumPy Version : 1.23.5

SciPy Version : 1.11.4

Cython Build Version : 0.29.36

Cython Runtime Version : 3.0.7

CUDA Root : /usr/local/cuda

nvcc PATH : /usr/local/cuda/bin/nvcc

CUDA Build Version : 12020

CUDA Driver Version : 12020

CUDA Runtime Version : 12020

cuBLAS Version : (available)

cuFFT Version : 11008

cuRAND Version : 10303

cuSOLVER Version : (11, 5, 2)

cuSPARSE Version : (available)

NVRTC Version : (12, 2)

Thrust Version : 200101

CUB Build Version : 200101

Jitify Build Version : <unknown>

cuDNN Build Version : 8801

cuDNN Version : 8906

NCCL Build Version : 21602

NCCL Runtime Version : 21903

cuTENSOR Version : None

cuSPARSELt Build Version : None

Device 0 Name : Tesla T4

Device 0 Compute Capability : 75

Device 0 PCI Bus ID : 0000:00:04.0

```

### Additional Information

| open | 2024-01-11T12:12:08Z | 2024-02-07T19:54:36Z | https://github.com/cupy/cupy/issues/8103 | [

"issue-checked"

] | knods3k | 6 |

gee-community/geemap | jupyter | 950 | Specify a 'datetime' column when converting from (Geo)DataFrame to FeatureCollection |

### Description

When I convert from (Geo)DataFrame that contains a column with date to FeatureCollection, I cannot filter by date because the date is only stored in properties of the FeatureCollection.

### Source code

```

gdf_radd = gpd.read_file('RADD_alerts.gpkg')

alerts_subset = gdf_radd.query("'2021-01-01' < date < '2021-03-03'")

#alerts subset returns a non-empty GeoDataFrame containing rows within selected dates

ee_radd = geemap.geopandas_to_ee(gdf_radd)

alerts_subset_ee = ee_radd.filterDate(ee.Date('2021-01-01'), ee.Date('2021-03-03'))

#alerts_subset_ee is empty. it is not possible to filter by date

```

Desired behaviour

```

#I would be able to specify a column that contains date/datetime when converting from (Geo)DataFrame to GEE FeatureCollection

ee_radd = geemap.geopandas_to_ee(gdf_radd, datetime=gdf_radd.date)

```

Possible sketch of a solution

```

def set_date(feature):

date = feature.get('date').getInfo()

year, month, day = [int(i) for i in date.split()[0].split('/')]

date_mls = ee.Date.fromYMD(year, month, day).millis()

feature = feature.set("system:time_start", date_mls)

return feature

ee_radd_with_dates = ee_radd.map(set_date)

```

| closed | 2022-02-28T11:54:53Z | 2022-03-03T11:09:28Z | https://github.com/gee-community/geemap/issues/950 | [

"Feature Request"

] | janpisl | 2 |
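Earth Engine's `filterDate` works on the `system:time_start` property, which holds milliseconds since the Unix epoch. One possible approach, sketched here under the assumption that dates are `YYYY-MM-DD` strings interpreted as UTC, is to compute that value on the client before conversion. The helper name `to_epoch_millis` is hypothetical.

```python
from datetime import datetime, timezone


def to_epoch_millis(date_str: str, fmt: str = "%Y-%m-%d") -> int:
    """Convert a date string to milliseconds since the Unix epoch (UTC)."""
    dt = datetime.strptime(date_str, fmt).replace(tzinfo=timezone.utc)
    return int(dt.timestamp() * 1000)


if __name__ == "__main__":
    print(to_epoch_millis("2021-01-01"))
```

The resulting integers could be stored in a column and then copied to `system:time_start` on each feature, which avoids calling `getInfo()` inside a mapped function.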

mckinsey/vizro | data-visualization | 888 | Multi-step wizard | ### Which package?

vizro

### What's the problem this feature will solve?

Vizro excels at creating modular dashboards, but as users tackle more sophisticated applications, the need arises for reusable and extensible complex UI components. These include multi-step wizards with dynamic behavior, CRUD operations, and seamless integration with external systems. Currently, building such components requires significant effort, often resulting in custom, non-reusable code. This limits the scalability and maintainability of applications developed with Vizro.

I’m working on applications that require complex workflows, such as multi-step wizards with real-time input validation and CRUD operations. While I’ve managed to achieve this using Dash callbacks and custom Python code, the lack of modularity and reusability makes the process cumbersome. Every new project requires re-implementing these components, which is time-consuming and error-prone.

### Describe the solution you'd like

I envision Vizro evolving to support the creation of highly reusable and extensible complex components, which could transform how users approach sophisticated Dash applications. Here’s what this could look like:

- **Object-Oriented Component Development**: Provide the ability to encapsulate UI components and their logic (advanced dynamic callbacks) in Python classes, making them easy to reuse and extend across projects. This could be similar to the component architecture found in frameworks like React.

- **Modular Multi-Step Wizard**: A powerful wizard component with:

- Configurable steps that can be added or modified dynamically.

- Real-time input validation and dynamic data population based on user inputs or external data.

- Visual progress indicators and intuitive navigation controls (Next, Previous, Save & Exit).

- **Integrated CRUD Operations**: Built-in support for Create, Read, Update, and Delete functionality, ensuring data security and consistency:

- Temporary data storage during user navigation.

- Soft-delete functionality and version control for changes.

- Seamless integration with external databases or APIs.

- **Dynamic Callback Management**: Enable advanced callbacks that can be registered and updated dynamically, reducing the complexity of handling inter-component interactions.

- **Extensibility Features**:

- Plug-and-play custom components (e.g., specialized form elements, interactive charts).

- Hooks for integrating with external systems, allowing data exchange and advanced workflows.

- Flexible step-specific logic for conditional rendering and data pre-filling.

---

**How This Could Enhance Vizro**

By introducing such capabilities, Vizro would empower users to go beyond dashboards and build complex, enterprise-level applications more efficiently. These features could help attract a broader audience, including those who require not only dashboards but also robust, interactive data workflows in their data applications.

**Similar Solutions for Inspiration**

- **Material-UI Stepper**: Offers a modular multi-step workflow component.

- **Appsmith multistep wizard**: Facilitates reusable, custom UI components, [example](https://docs.appsmith.com/build-apps/how-to-guides/Multi-step-Form-or-Wizard-Using-Tabs).

---

My imagination:

Below is a high-level, simplified implementation of the multi-step wizard, where all wizard components and functionality are isolated in a class (`Wizard`) following the Facade Design Pattern, complemented by elements of the Factory Pattern and the State Pattern. This class dynamically creates the logic based on its parameters and its integration with the steps. The `Step` class represents individual steps.

**wizard_module.py**

```python
from dash import html, dcc, Input, Output, State, MATCH, ALL, ctx


class Step:
    def __init__(self, id, label, components, validation_rules):
        self.id = id
        self.label = label
        self.components = components
        self.validation_rules = validation_rules


class Wizard:
    def __init__(
        self,
        steps,
        title=None,
        previous_button_text='Previous',
        next_button_text='Next',
        current_step_store_id='current_step',
        form_data_store_id='form_data',
        wizard_content_id='wizard_content',
        wizard_message_id='wizard_message',
        prev_button_id='prev_button',
        next_button_id='next_button',
        message_style=None,
        navigation_style=None,
        validate_on_next=True,
        custom_callbacks=None,
    ):
        # Instance attributes
        ...

    def render_layout(self):
        # Returns the UI Components of the form, tabs, buttons ..etc
        ...

    def render_step(self, step):
        # Returns the UI Components of a step
        ...

    def register_callbacks(self, app):
        # Dynamic callbacks for the multistep logic such as navigation, and feedback.
        ...
```

**app.py**

```python
from dash import Dash
from wizard_module import Wizard, Step

# Define the wizard steps
steps = [
    Step(
        id=1,
        label="Step 1: User Info",
        components=[
            {"id": "name_input", "placeholder": "Enter your name"},
            {"id": "email_input", "placeholder": "Enter your email", "input_type": "email"},
            {"id": "password_input", "placeholder": "Enter your password", "input_type": "password"},
        ],
        validation_rules=[
            {"id": "name_input", "property": "value"},
            {"id": "email_input", "property": "value"},
            {"id": "password_input", "property": "value"},
        ],
    ),
    Step(
        id=2,
        label="Step 2: Address Info",
        components=[
            {"id": "address_input", "placeholder": "Enter your address"},
            {"id": "city_input", "placeholder": "Enter your city"},
            {"id": "state_input", "placeholder": "Enter your state"},
        ],
        validation_rules=[
            {"id": "address_input", "property": "value"},
            {"id": "city_input", "property": "value"},
            {"id": "state_input", "property": "value"},
        ],
    )
]

# Initialize the wizard
wizard = Wizard(
    steps=steps,
    title="User Registration Wizard",
    previous_button_text='Back',
    next_button_text='Continue',
    message_style={'color': 'blue', 'marginTop': '10px'},
    navigation_style={'marginTop': '30px'},
    validate_on_next=True,
    custom_callbacks={'on_complete': some_completion_function}
)

# Create the Dash app
app = Dash(__name__)
app.layout = wizard.render_layout()

# Register wizard callbacks
wizard.register_callbacks(app)

if __name__ == '__main__':
    app.run_server(debug=True)
```

**Explanation:**

- **Isolation of Components and Logic:** All wizard functionalities, including rendering and navigation logic, are encapsulated within the `Wizard` class. Each step is represented by a `Step` class instance.

- **Dynamic Logic Creation:** The `Wizard` class dynamically generates the layout and callbacks based on the steps provided. The validation logic is applied dynamically using the `validation_rules` defined in each `Step` instance.

- **Ease of Extension:** To add more steps or modify existing ones, you simply need to create or update instances of the `Step` class. The `Wizard` class handles the integration and navigation between steps without any additional changes.

- **Validation Rules:** Each `Step` contains a `validation_rules` list, which specifies which input components need to be validated. This allows for flexible validation logic that can be customized per step.

### Code of Conduct

- [X] I agree to follow the [Code of Conduct](https://github.com/mckinsey/vizro/blob/main/CODE_OF_CONDUCT.md). | open | 2024-11-19T20:07:30Z | 2024-11-21T19:42:00Z | https://github.com/mckinsey/vizro/issues/888 | [

"Feature Request :nerd_face:"

] | mohammadaffaneh | 3 |
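The navigation and validation logic described in the proposal can be sketched independently of Dash. The following is a minimal, framework-free illustration under the assumption that validation only checks for non-empty values; `StepSpec` and `WizardState` are hypothetical names and not part of Vizro or Dash.

```python
from dataclasses import dataclass, field


@dataclass
class StepSpec:
    label: str
    required: list  # ids of inputs that must be non-empty on this step


@dataclass
class WizardState:
    steps: list
    current: int = 0
    data: dict = field(default_factory=dict)

    def missing(self) -> list:
        """Return the required input ids of the current step that are still empty."""
        step = self.steps[self.current]
        return [key for key in step.required if not self.data.get(key)]

    def next(self) -> bool:
        """Advance one step if the current step validates and is not the last."""
        if self.missing() or self.current >= len(self.steps) - 1:
            return False
        self.current += 1
        return True

    def previous(self) -> bool:
        """Go back one step unless already at the first step."""
        if self.current == 0:
            return False
        self.current -= 1
        return True
```

In a Dash application, a state object like this would typically be serialized into a store component and updated from the Next and Previous button callbacks.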

Teemu/pytest-sugar | pytest | 223 | Print test name before result in verbose mode | Without pytest-sugar, when running pytest in verbose mode, the name of the current test is printed immediately.

This is very useful for long running tests since you know which test is hanging, and which test might be killed.

However, with pytest-sugar, the name of the test is only printed when the test succeeds.

I've provided an example of the code to reproduce this as well some minimal environment information.

The current environment in question is running Python 3.9.

Let me know if there is any more information you need to recreate the bug/"missing feature".

### Conda environment

```

(mcam_dev) ✔ ~/Downloads

09:13 $ mamba list pytest

__ __ __ __

/ \ / \ / \ / \

/ \/ \/ \/ \

███████████████/ /██/ /██/ /██/ /████████████████████████

/ / \ / \ / \ / \ \____

/ / \_/ \_/ \_/ \ o \__,

/ _/ \_____/ `

|/

███╗ ███╗ █████╗ ███╗ ███╗██████╗ █████╗

████╗ ████║██╔══██╗████╗ ████║██╔══██╗██╔══██╗

██╔████╔██║███████║██╔████╔██║██████╔╝███████║

██║╚██╔╝██║██╔══██║██║╚██╔╝██║██╔══██╗██╔══██║

██║ ╚═╝ ██║██║ ██║██║ ╚═╝ ██║██████╔╝██║ ██║

╚═╝ ╚═╝╚═╝ ╚═╝╚═╝ ╚═╝╚═════╝ ╚═╝ ╚═╝

mamba (0.15.2) supported by @QuantStack

GitHub: https://github.com/mamba-org/mamba

Twitter: https://twitter.com/QuantStack

█████████████████████████████████████████████████████████████

# packages in environment at /home/mark/mambaforge/envs/mcam_dev:

#

# Name Version Build Channel

pytest 6.2.4 py39hf3d152e_0 conda-forge

pytest-env 0.6.2 py_0 conda-forge

pytest-forked 1.3.0 pyhd3deb0d_0 conda-forge

pytest-localftpserver 1.1.2 pyhd8ed1ab_0 conda-forge

pytest-qt 4.0.2 pyhd8ed1ab_0 conda-forge

pytest-sugar 0.9.4 pyh9f0ad1d_1 conda-forge

pytest-timeout 1.4.2 pyh9f0ad1d_0 conda-forge

pytest-xdist 2.3.0 pyhd8ed1ab_0 conda-forge

```

#### Command used to run pytest

````pytest test_me.py````

#### Test file `test_me.py`

````python
from time import sleep

import pytest


@pytest.mark.parametrize('time', range(5))
def test_sleep(time):
    sleep(time)
````

#### Output

Without pytest-sugar. Notice how I captured the name of `test_sleep[2]` before the result of the test appeared.

With pytest-sugar. Notice how I was able to capture the screenshot while `test_sleep[4]` was running, but before the name of the test appeared

| open | 2021-08-16T13:17:54Z | 2023-07-26T11:17:27Z | https://github.com/Teemu/pytest-sugar/issues/223 | [

"enhancement"

] | hmaarrfk | 4 |

man-group/arctic | pandas | 76 | With lib_type='TickStoreV3': No field of name index - index.name and index.tzinfo not preserved - max_date returning min date (without timezone) | Hello,

this code

``` python

from pandas_datareader import data as pdr

symbol = "IBM"

df = pdr.DataReader(symbol, "yahoo", "2010-01-01", "2015-12-29")

df.index = df.index.tz_localize('UTC')

from arctic import Arctic

store = Arctic('localhost')

store.initialize_library('library_name', 'TickStoreV3')

library = store['library_name']

library.write(symbol, df)

```

raises

``` python

ValueError: no field of name index

```

I'm using `TickStoreV3` as `lib_type` because I'm not very interested (at least for now) by

audited write, versioning...

I noticed that

```

>>> df['index']=0

>>> library.write(symbol, df)

1 buckets in 0.015091: approx 6626466 ticks/sec

```

seems to fix this... but

```

>>> library.read(symbol)

index High Adj Close ... Low Close Open

1970-01-01 01:00:00+01:00 0 132.970001 116.564610 ... 130.850006 132.449997 131.179993

1970-01-01 01:00:00+01:00 0 131.850006 115.156514 ... 130.100006 130.850006 131.679993

1970-01-01 01:00:00+01:00 0 131.490005 114.408453 ... 129.809998 130.000000 130.679993

1970-01-01 01:00:00+01:00 0 130.250000 114.012427 ... 128.910004 129.550003 129.869995

1970-01-01 01:00:00+01:00 0 130.919998 115.156514 ... 129.050003 130.850006 129.070007

... ... ... ... ... ... ... ...

1970-01-01 01:00:00+01:00 0 135.830002 135.500000 ... 134.020004 135.500000 135.830002

1970-01-01 01:00:00+01:00 0 138.190002 137.929993 ... 135.649994 137.929993 135.880005

1970-01-01 01:00:00+01:00 0 139.309998 138.539993 ... 138.110001 138.539993 138.300003

1970-01-01 01:00:00+01:00 0 138.880005 138.250000 ... 138.110001 138.250000 138.429993

1970-01-01 01:00:00+01:00 0 138.039993 137.610001 ... 136.539993 137.610001 137.740005

[1507 rows x 7 columns]

```

It looks like as if `write` was looking for a DataFrame with a column named 'index'... which is quite odd.

If I do

```

df['index']=1

library.write(symbol, df)

```

then

```

library.write(symbol, df)

```

raises

```

OverflowError: Python int too large to convert to C long

```

Any idea ?

| closed | 2015-12-29T21:30:39Z | 2016-01-04T20:56:42Z | https://github.com/man-group/arctic/issues/76 | [] | femtotrader | 13 |

chatanywhere/GPT_API_free | api | 3 | Can this be used with api.openai.com | If I am connecting through a proxy/VPN, can the host be set to api.openai.com? | closed | 2023-05-16T02:21:56Z | 2023-05-24T03:54:28Z | https://github.com/chatanywhere/GPT_API_free/issues/3 | [] | MrGongqi | 3 |

modoboa/modoboa | django | 2,247 | Contacts and Calendar throw internal error | # Impacted versions

* OS Type: Debian

* OS Version: 10

* Database Type: MySQL

* Database version: 10.3.27-MariaDB-0+deb10u1

* Modoboa: 1.17.0

* installer used: Yes

* Webserver: Nginx

* python --version: Python 3.7.3

# Steps to reproduce

* Do a default install of Modoboa on Debian 10.

* [Using "mailsrv" instead of "mail" as the mail server's subdomain. Using Let's Encrypt.]

* Set up a first mail domain for testing (modoboa.MY-DOMAIN-HERE.de)

* Set up a domain administrator account with mail box (hostmaster@modoboa.MY-DOMAIN-HERE.de)

* Using fresh account, try to access "Contacts" or "Calendar" from the menu.

# Current behavior

```

Sorry

An internal error has occured.

```

# Expected behavior

Open contacts or calendar module.

| open | 2021-05-16T01:35:14Z | 2021-06-12T23:56:49Z | https://github.com/modoboa/modoboa/issues/2247 | [

"bug"

] | mas1701 | 15 |

cvat-ai/cvat | tensorflow | 8,380 | > Hi, we have added SAM2 on SaaS (https://app.cvat.ai/) and for Enterprise customers: https://www.cvat.ai/post/meta-segment-anything-model-v2-is-now-available-in-cvat-ai | closed | 2024-08-30T13:01:42Z | 2024-08-30T13:06:55Z | https://github.com/cvat-ai/cvat/issues/8380 | [] | gauravlochab | 0 | |

keras-team/keras | deep-learning | 20,283 | Training performance degradation after switching from Keras 2 mode to Keras 3 using Tensorflow | I've been working on upgrading my Keras 2 code to just work with Keras 3 without going fully back-end agnostic. However, while everything works fine after resolving compatibility, my training speed has severely degraded by maybe even a factor 10. I've changed the following to get Keras 3 working:

1. Changed `tensorflow.keras` to `keras` calls.

2. Updated model/weights saving and loading to use the new `export` function and `weights.h5` format.

3. Updated a callback at the end of the epoch to be a `keras.Callback` instead of the old `BaseLogger`.

4. Added `@keras.saving.register_keras_serializable()` to custom metric and loss functions.

5. Updated my online dataset generator to use `keras.Sequential` data augmentation instead of the removed `ImageDataGenerator`.

6. Removed the `max_queue_size` kwarg from the `model.fit` and `model.predict` calls since it has been removed.

In terms of hardware/packages, I'm using Python 3.11.10, keras 3.5.0 and Tensorflow 2.16.2 on a Macbook Pro M2. I've also noticed that my GPU and CPU usage is much higher while running the newer version. I've confirmed using `git stash` that specifically the changes mentioned above are causing the performance degradation. My suspicion is that the Apple hardware is somehow resulting in worse performance, but I've yet to confirm it using a regular x86 machine. | open | 2024-09-24T07:50:53Z | 2024-10-14T07:00:40Z | https://github.com/keras-team/keras/issues/20283 | [

"type:bug/performance",

"stat:awaiting keras-eng"

] | DavidHidde | 3 |

ultralytics/ultralytics | pytorch | 18,871 | Does tracking mode support NMS threshold? | ### Search before asking

- [x] I have searched the Ultralytics YOLO [issues](https://github.com/ultralytics/ultralytics/issues) and [discussions](https://github.com/orgs/ultralytics/discussions) and found no similar questions.

### Question

I'm currently using YOLOv10 to track some objects, and there are many cases where two bounding boxes (of the same class) have a high IoU. I tried setting the NMS threshold ("iou" parameter) of the tracker very low, but it doesn't change anything. I also tried setting a high NMS threshold (expecting a lot of overlapping bounding boxes), but no matter what value I set, the predictions/tracking look the same.

I tried to search about the parameters of the YOLOv10 tracker on the Ultralytics Docs and on Ultralytics GitHub but couldn't find anything about the NMS Threshold on the tracker. Is it implemented? Is the parameter name "iou" similar to the predict mode?

Can someone help me in this regard? Thanks!

### Additional

_No response_ | closed | 2025-01-24T20:48:06Z | 2025-01-26T19:08:07Z | https://github.com/ultralytics/ultralytics/issues/18871 | [

"question",

"track"

] | argo-gabriel | 5 |

bmoscon/cryptofeed | asyncio | 897 | BinanceDelivery Candles (Rest) | **General: Thank you**

First of all, I would like to convey my gratitude to you. You have created a fantastic library.

**Describe the bug**

The candles method defined in the Binance Rest Mixin considers limits and adjusts the window by updating the start time (forward request). This works for Spot and UM. Unfortunately, the Binance API is not that consistent. For CM/Delivery, the approach is a backward request i.e. the end time requires to be updated i.e. `end = data[0][0] - 1`

**To Reproduce**

Use `BinanceDelivery ` and request a longer period which exceeds the `limit=1000` such that multiple rest requests have to be triggered. Ideally, you can temporarily set the limit to 1 and send a request which expects two candles.

**Expected behavior**

The data is sorted (ascending) covering data of the requested period.
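
A minimal sketch of the backward pagination described above, assuming the endpoint returns the newest `limit` candles inside the requested window, sorted ascending, with the open time as the first field. `fetch_page` and `fake_delivery_api` are hypothetical stand-ins for the REST call and are not part of cryptofeed:

```python
from typing import Callable, List

Candle = List[int]  # hypothetical layout: [open_time_ms, ...other fields]


def fetch_candles_backward(
    fetch_page: Callable[[int, int, int], List[Candle]],
    start: int,
    end: int,
    limit: int = 1000,
) -> List[Candle]:
    """Collect all candles in [start, end], paging from newest to oldest."""
    pages: List[List[Candle]] = []
    while end >= start:
        data = fetch_page(start, end, limit)  # ascending within one page
        if not data:
            break
        pages.append(data)
        if len(data) < limit:
            break  # the window is exhausted
        end = data[0][0] - 1  # move the window end just before the oldest candle
    # Pages arrive newest first, so reverse them to get an ascending result.
    return [candle for page in reversed(pages) for candle in page]


def fake_delivery_api(start: int, end: int, limit: int) -> List[Candle]:
    """Stand-in for the exchange: returns the newest `limit` candles in the window."""
    candles = [[t] for t in range(0, 10) if start <= t <= end]
    return candles[-limit:]
```

With `limit=1` and a window that covers two candles, as suggested in the reproduction steps, the helper issues two requests and still returns both candles in ascending order.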

| open | 2022-08-27T20:01:05Z | 2022-08-28T07:29:51Z | https://github.com/bmoscon/cryptofeed/issues/897 | [

"bug"

] | christophlins | 0 |

ageitgey/face_recognition | python | 887 | Wrong detection face | * face_recognition version: 1.2.3

* Python version: 3.6

* Operating System: Ubuntu 18.04

### Description

Hello, I am getting a false face detection: when I use a cartoon image of a cat's face, it is detected as a face.

I'm using this to detect the face location:

face_locations1 = face_recognition.face_locations(selfieimage, model="cnn")

Any clue?

| open | 2019-07-23T07:51:46Z | 2019-07-25T22:04:54Z | https://github.com/ageitgey/face_recognition/issues/887 | [] | blinkbink | 1 |

plotly/plotly.py | plotly | 4,355 | Just a question | I'm learning to use plotly and wanted to know a few things about it.

Is it possible to make an exe app with plotly inside? By this I mean, is it possible to make standalone software that runs plotly modules without depending on HTML or any web services?

Another question: which library would be useful to combine with plotly to make a GUI for the software? Since tkinter doesn't work with plotly, I read about Dash, but it needs a constant internet connection and is opened via a browser, and I'm looking to make a standalone app that requires neither internet nor a browser.

Thank you.

| closed | 2023-09-13T16:59:39Z | 2023-09-16T15:08:05Z | https://github.com/plotly/plotly.py/issues/4355 | [] | Kripishit | 2 |

PokeAPI/pokeapi | graphql | 290 | b | closed | 2017-05-31T19:09:10Z | 2017-05-31T19:09:29Z | https://github.com/PokeAPI/pokeapi/issues/290 | [] | thechief389 | 0 | |

supabase/supabase-py | flask | 1,025 | [Python Client] Sensitive Data Exposure in Debug Logs - No Built-in Redaction Mechanism | - [x] I confirm this is a bug with Supabase, not with my own application.

- [x] I confirm I have searched the [Docs](https://docs.supabase.com), GitHub [Discussions](https://github.com/supabase/supabase/discussions), and [Discord](https://discord.supabase.com).

## Describe the bug

The Supabase Python client exposes sensitive data (tokens, query parameters) in debug logs without providing any built-in mechanism to redact this information. This was previously reported in discussion https://github.com/orgs/supabase/discussions/31019 but remains unresolved. This is a security concern as sensitive tokens and data are being logged in plaintext, potentially exposing them in log files.

## To Reproduce

1. Set up a Python application using the Supabase client

2. Enable debug logging for the client

3. Make any API call that includes sensitive data (like authentication tokens)

4. Check debug logs to see exposed sensitive information:

```python
import logging
import supabase

# Configure logging
logging.basicConfig(level=logging.DEBUG)

# Initialize Supabase client
client = supabase.create_client(...)

# Make any API call
result = client.from_('sensitive_table').select('*').execute()
```

The debug logs will show sensitive information like:

```

[DEBUG] [hpack.hpack] Decoded (b'content-location', b'/sensitive_table?sensitive_token=eq.abc-1234-567899888-23333-33333-333333-333333')

```

## Expected behavior

The Supabase Python client should:

1. Provide built-in configuration options to redact sensitive data in debug logs

2. Either mask sensitive tokens and parameters by default or

3. Provide clear documentation on how to properly configure logging to protect sensitive data

## System information

- OS: Linux

- Version of supabase-py: latest

- Version of Python: 3.11

## Additional context

Standard Python logging filters don't work effectively as the logs are generated by underlying libraries (httpx, httpcore, hpack). This is a security issue that needs proper handling at the client library level. Custom filters like:

```python
import logging
import re


class SensitiveDataFilter(logging.Filter):
    def filter(self, record: logging.LogRecord) -> bool:
        # Mask token-like values; str() guards against non-string messages
        record.msg = re.sub(r"abc-[0-9a-f\-]+", "[REDACTED-TOKEN]", str(record.msg))
        return True
```

don't fully address the issue as they can't catch all instances of sensitive data exposure.
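
One possible stopgap, sketched under the assumption that the sensitive lines come from the DEBUG output of the `hpack`, `httpx`, and `httpcore` loggers named above, is to raise the level of those loggers so their debug records are never emitted. This hides the output rather than redacting it, and it is not a feature of supabase-py itself. The helper name `quiet_transport_loggers` is made up for this example:

```python
import logging

# Logger names taken from the libraries mentioned above; adjust as needed.
NOISY_LOGGERS = ("hpack", "httpx", "httpcore")


def quiet_transport_loggers(level: int = logging.WARNING) -> None:
    """Raise the level of the HTTP transport loggers so DEBUG records are dropped."""
    for name in NOISY_LOGGERS:
        logging.getLogger(name).setLevel(level)


logging.basicConfig(level=logging.DEBUG)
quiet_transport_loggers()
```

Child loggers such as `hpack.hpack` inherit the level of their parent, so the `content-location` line shown earlier would no longer be logged, while application loggers stay at DEBUG.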

This issue was previously raised in discussion https://github.com/orgs/supabase/discussions/31019 without any resolution, hence filing it as a bug report given its security implications. | closed | 2025-01-04T06:19:30Z | 2025-01-11T22:30:20Z | https://github.com/supabase/supabase-py/issues/1025 | [] | ganeshrvel | 6 |

huggingface/datasets | nlp | 7,254 | mismatch for datatypes when providing `Features` with `Array2D` and user specified `dtype` and using with_format("numpy") | ### Describe the bug

If the user provides a `Features` type value to `datasets.Dataset` with members having `Array2D` with a value for `dtype`, it is not respected during `with_format("numpy")` which should return a `np.array` with `dtype` that the user provided for `Array2D`. It seems for floats, it will be set to `float32` and for ints it will be set to `int64`

### Steps to reproduce the bug

```python
import numpy as np
import datasets
from datasets import Dataset, Features, Array2D

print(f"datasets version: {datasets.__version__}")

data_info = {
    "arr_float" : "float64",
    "arr_int" : "int32"
}

sample = {key : [np.zeros([4, 5], dtype=dtype)] for key, dtype in data_info.items()}
features = {key : Array2D(shape=(None, 5), dtype=dtype) for key, dtype in data_info.items()}
features = Features(features)
dataset = Dataset.from_dict(sample, features=features)
ds = dataset.with_format("numpy")

for key in features:
    print(f"{key} feature dtype: ", ds.features[key].dtype)
    print(f"{key} dtype:", ds[key].dtype)
```

Output:

```bash

datasets version: 3.0.2

arr_float feature dtype: float64

arr_float dtype: float32

arr_int feature dtype: int32

arr_int dtype: int64

```

### Expected behavior

It should return a `np.array` with `dtype` that the user provided for the corresponding member in the `Features` type value

### Environment info

- `datasets` version: 3.0.2

- Platform: Linux-6.11.5-arch1-1-x86_64-with-glibc2.40

- Python version: 3.12.7

- `huggingface_hub` version: 0.26.1

- PyArrow version: 16.1.0

- Pandas version: 2.2.2

- `fsspec` version: 2024.5.0 | open | 2024-10-26T22:06:27Z | 2024-10-26T22:07:37Z | https://github.com/huggingface/datasets/issues/7254 | [] | Akhil-CM | 1 |

dnouri/nolearn | scikit-learn | 46 | No self.best_weights in the function train_loop() ? | It seems that the train_loop() function inside the NeuralNetwork does not provide a self.best_weights which save the ConvNet parameters for the highest validation accuracy along with the epoch iterations.

Or do I miss something? Hope someone could help. Thank you.

| closed | 2015-02-17T03:08:16Z | 2015-02-20T01:43:56Z | https://github.com/dnouri/nolearn/issues/46 | [] | pengpaiSH | 3 |

Asabeneh/30-Days-Of-Python | pandas | 400 | Pyton | closed | 2023-06-02T07:31:36Z | 2023-06-02T07:31:56Z | https://github.com/Asabeneh/30-Days-Of-Python/issues/400 | [] | Fazel-GO | 0 | |

apache/airflow | python | 47,597 | Hello, we can't run a single DAG 3000 mission | ### Apache Airflow version

Other Airflow 2 version (please specify below)

### If "Other Airflow 2 version" selected, which one?

2.10.4

### What happened?

Hello, at present a single DAG with about 1000 jobs is scheduled normally, but when the number of jobs in a single DAG reaches 3000, scheduling becomes very slow and often does not happen at all. This production problem is urgent. Could one of the maintainers help take a look? How can we make a single DAG support more than 3000 jobs and still be scheduled normally?

### What you think should happen instead?

We are running tasks in the production environment, and the number of tasks gradually increases with the development of the business, and the number of jobs in a single DAG is currently the same ...

### How to reproduce

You only need to put the number of jobs in a single DAG DA ...

### Operating System

linux + k8s

### Versions of Apache Airflow Providers

_No response_

### Deployment

Official Apache Airflow Helm Chart

### Deployment details

_No response_

### Anything else?

_No response_

### Are you willing to submit PR?

- [ ] Yes I am willing to submit a PR!

### Code of Conduct

- [x] I agree to follow this project's [Code of Conduct](https://github.com/apache/airflow/blob/main/CODE_OF_CONDUCT.md)

| open | 2025-03-11T07:09:46Z | 2025-03-24T08:01:31Z | https://github.com/apache/airflow/issues/47597 | [

"kind:bug",

"area:Scheduler",

"area:core",

"needs-triage"

] | lzf12 | 12 |

nolar/kopf | asyncio | 173 | [PR] Don’t add finalizers to skipped objects | > <a href="https://github.com/dlmiddlecote"><img align="left" height="50" src="https://avatars0.githubusercontent.com/u/9053880?v=4"></a> A pull request by [dlmiddlecote](https://github.com/dlmiddlecote) at _2019-08-07 18:21:40+00:00_

> Original URL: https://github.com/zalando-incubator/kopf/pull/173

> Merged by [nolar](https://github.com/nolar) at _2019-08-08 14:23:48+00:00_

> Issue : #167

## Description

Don't add finalizer to object if there are no handlers for it. Now that it is possible to filter objects out of handler execution, this is pertinent.

## Types of Changes

- Bug fix (non-breaking change which fixes an issue)

## Tasks

- [x] Add Tests

## Review

- [ ] Tests

- [ ] Documentation

---

> <a href="https://github.com/dlmiddlecote"><img align="left" height="30" src="https://avatars0.githubusercontent.com/u/9053880?v=4"></a> Commented by [dlmiddlecote](https://github.com/dlmiddlecote) at _2019-08-08 07:01:59+00:00_

>

Tests seem to be failing on 1 flakey test.

---

> <a href="https://github.com/psycho-ir"><img align="left" height="30" src="https://avatars0.githubusercontent.com/u/726875?v=4"></a> Commented by [psycho-ir](https://github.com/psycho-ir) at _2019-08-08 13:03:17+00:00_

>

Hi [dlmiddlecote](https://github.com/dlmiddlecote),

Thank you so much for the PR!

[nolar](https://github.com/nolar)

I tested it locally and it works fine in almost all the cases I had in mind.

The only scenario in which it doesn't work correctly is when we `annotate` a resource and it becomes matched with one of the registered handlers: the finalizer won't be added to the resource.

---

> <a href="https://github.com/dlmiddlecote"><img align="left" height="30" src="https://avatars0.githubusercontent.com/u/9053880?v=4"></a> Commented by [dlmiddlecote](https://github.com/dlmiddlecote) at _2019-08-08 13:11:56+00:00_

>

Hey [psycho-ir](https://github.com/psycho-ir)

Is this the case?

- resource with no annotations applied => no finalizer applied

- resource edited (or `kubectl annotate` used) to add matching annotations => finalizer should be applied?

If so, I just tried this, and it seems to work.

Let me know if I'm mistaken.

---

> <a href="https://github.com/psycho-ir"><img align="left" height="30" src="https://avatars0.githubusercontent.com/u/726875?v=4"></a> Commented by [psycho-ir](https://github.com/psycho-ir) at _2019-08-08 13:38:19+00:00_

>

> Hey [psycho-ir](https://github.com/psycho-ir)

>

> Is this the case?

>

> * resource with no annotations applied => no finalizer applied

> * resource edited (or `kubectl annotate` used) to add matching annotations => finalizer should be applied?

>

> If so, I just tried this, and it seems to work.

>

> Let me know if I'm mistaken.

Hi [dlmiddlecote](https://github.com/dlmiddlecote),

Sorry you are right this scenario works perfectly fine.

What didn't work as expected for me was the other way around:

* resource with annotation applied => finalizer applied

* resource patched (annotation removed) => finalizer is still there

---

> <a href="https://github.com/dlmiddlecote"><img align="left" height="30" src="https://avatars0.githubusercontent.com/u/9053880?v=4"></a> Commented by [dlmiddlecote](https://github.com/dlmiddlecote) at _2019-08-08 13:51:34+00:00_

>

Hey!

I also can't reproduce.

I have the operator as:

```
import kopf

@kopf.on.delete('', 'v1', 'serviceaccounts', annotations={'foo': 'bar'})
async def foo(**_):
    pass
```

Then I apply:

```
apiVersion: v1
kind: ServiceAccount
metadata:
  annotations:
    foo: bar
  name: test
  namespace: default
```

and the finalizer is applied.

I then run:

`kubectl patch sa test -p '{"metadata": {"annotations": {"foo": "baz"}}}'`

and the finalizer is removed.

---

> <a href="https://github.com/psycho-ir"><img align="left" height="30" src="https://avatars0.githubusercontent.com/u/726875?v=4"></a> Commented by [psycho-ir](https://github.com/psycho-ir) at _2019-08-08 14:04:01+00:00_

>

> Hey!

>

> I also can't reproduce.

>

> I have the operator as:

>

> ```
> import kopf
>
> @kopf.on.delete('', 'v1', 'serviceaccounts', annotations={'foo': 'bar'})
> async def foo(**_):
>     pass
> ```

>

> Then I apply:

>

> ```
> apiVersion: v1
> kind: ServiceAccount
> metadata:
>   annotations:
>     foo: bar
>   name: test
>   namespace: default
> ```

>

> and the finalizer is applied.

>

> I then run:

> `kubectl patch sa test -p '{"metadata": {"annotations": {"foo": "baz"}}}'`

>

> and the finalizer is removed.

Right 😕,

I probably made a mistake in my tests; all the scenarios work like a charm.

Sorry to bother.

---

> <a href="https://github.com/nolar"><img align="left" height="30" src="https://avatars0.githubusercontent.com/u/544296?v=4"></a> Commented by [nolar](https://github.com/nolar) at _2019-08-08 14:07:51+00:00_

>

PS: The solution in general is fine.

I suggest that we merge it now, and release as 0.21rcX (x=3..4 [or so](https://github.com/nolar/kopf/releases)), together with lots of other bugfixes/refactorings/improvements, and test them altogether.

---

> <a href="https://github.com/psycho-ir"><img align="left" height="30" src="https://avatars0.githubusercontent.com/u/726875?v=4"></a> Commented by [psycho-ir](https://github.com/psycho-ir) at _2019-08-08 14:08:56+00:00_

>

> PS: The solution in general is fine.

>

> I suggest that we merge it now, and release as 0.21rcX (x=3..4 [or so](https://github.com/nolar/kopf/releases)), together with lots of other bugfixes/refactorings/improvements, and test them altogether.

Totally agree 👍

---

> <a href="https://github.com/nolar"><img align="left" height="30" src="https://avatars0.githubusercontent.com/u/544296?v=4"></a> Commented by [nolar](https://github.com/nolar) at _2019-08-08 15:00:03+00:00_

>

Pre-released as [kopf==0.21rc3](https://github.com/nolar/kopf/releases/tag/0.21rc3) — but beware of other massive changes in rc1+rc2+rc3 combined (see [Releases](https://github.com/nolar/kopf/releases)). | closed | 2020-08-18T19:59:43Z | 2020-08-23T20:48:59Z | https://github.com/nolar/kopf/issues/173 | [

"enhancement",

"archive"

] | kopf-archiver[bot] | 0 |

FactoryBoy/factory_boy | django | 1,057 | Fields do not exist in this model errors with OneToOneField in Django 5 | #### Description

After upgrading from Django 4.2 to Django 5, some of our tests are failing. These are using a OneToOneField between two models. Creating one instance through a factory with an instance to the other model fails because the related name is not accepted by the Django model manager.

The workaround is very simple (see below), but I think this is a bug in this library as this was working fine under Django 4.2. We're using factory boy 3.3.

#### To Reproduce

*Share how the bug happened:*

##### Model / Factory code

```python
class Shop(models.Model):
    pass


class Event(models.Model):
    default_shop = models.OneToOneField(
        "shop.Shop",
        related_name="default_event",
        on_delete=models.SET_NULL,
        null=True,
        blank=True,
    )


class EventFactory(factory.django.DjangoModelFactory):
    class Meta:
        model = Event
        skip_postgeneration_save = True


class ShopFactory(factory.django.DjangoModelFactory):
    class Meta:
        model = Shop
```

##### The issue

Before, we were able to first create an Event, and then create a Shop and immediately set the `default_event` of the Shop instance to the Event instance. With Django 5, this now fails in the factory, while still working in a Django shell. So it seems like an issue with factory boy not supporting Django 5 properly here.

```python
@pytest.mark.django_db
class Test:
    @pytest.fixture
    def shop(self):
        event = EventFactory.create()
        shop = ShopFactory.create(default_event=event)
```

```

$ pytest

> shop = ShopFactory.create(default_event=event)

apps/foo/tests/test_foo.py:335:

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

../../.cache/pypoetry/virtualenvs/foo-KcrdI-pR-py3.10/lib/python3.10/site-packages/factory/base.py:528: in create

return cls._generate(enums.CREATE_STRATEGY, kwargs)

../../.cache/pypoetry/virtualenvs/foo-KcrdI-pR-py3.10/lib/python3.10/site-packages/factory/django.py:121: in _generate

return super()._generate(strategy, params)

../../.cache/pypoetry/virtualenvs/foo-KcrdI-pR-py3.10/lib/python3.10/site-packages/factory/base.py:465: in _generate

return step.build()

../../.cache/pypoetry/virtualenvs/foo-KcrdI-pR-py3.10/lib/python3.10/site-packages/factory/builder.py:274: in build

instance = self.factory_meta.instantiate(

../../.cache/pypoetry/virtualenvs/foo-KcrdI-pR-py3.10/lib/python3.10/site-packages/factory/base.py:317: in instantiate

return self.factory._create(model, *args, **kwargs)

../../.cache/pypoetry/virtualenvs/foo-KcrdI-pR-py3.10/lib/python3.10/site-packages/factory/django.py:174: in _create

return manager.create(*args, **kwargs)

../../.cache/pypoetry/virtualenvs/foo-KcrdI-pR-py3.10/lib/python3.10/site-packages/django/db/models/manager.py:87: in manager_method

return getattr(self.get_queryset(), name)(*args, **kwargs)

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

self = <QuerySet []>

kwargs = {'default_event': <Event: Event>, ...}

reverse_one_to_one_fields = frozenset({'default_event'})

def create(self, **kwargs):

"""

Create a new object with the given kwargs, saving it to the database

and returning the created object.

"""

reverse_one_to_one_fields = frozenset(kwargs).intersection(

self.model._meta._reverse_one_to_one_field_names

)

if reverse_one_to_one_fields:

> raise ValueError(

"The following fields do not exist in this model: %s"

% ", ".join(reverse_one_to_one_fields)

)

E ValueError: The following fields do not exist in this model: default_event

../../.cache/pypoetry/virtualenvs/foo-KcrdI-pR-py3.10/lib/python3.10/site-packages/django/db/models/query.py:670: ValueError

```

#### Notes

The workaround is very easy, just assign the relation after the object instance is created:

```python

shop = ShopFactory.create()

shop.default_event = event

``` | closed | 2024-01-09T15:33:20Z | 2024-04-21T12:26:34Z | https://github.com/FactoryBoy/factory_boy/issues/1057 | [] | Gwildor | 3 |

pytest-dev/pytest-xdist | pytest | 463 | gure | closed | 2019-08-22T15:06:08Z | 2019-08-22T15:06:11Z | https://github.com/pytest-dev/pytest-xdist/issues/463 | [] | vasilty | 0 | |

streamlit/streamlit | machine-learning | 10,041 | Implement browser session API | ### Checklist

- [X] I have searched the [existing issues](https://github.com/streamlit/streamlit/issues) for similar feature requests.

- [X] I added a descriptive title and summary to this issue.

### Summary

Browser sessions allow developers to track browser state in streamlit, so that they can implement features like authentication, persistent drafts, or shopping carts, which require keeping user state after the browser is refreshed or reopened.

### Why?

The current streamlit session loses its state if users refresh or reopen their browser, and the effort to provide an API for writing cookies has been pending for years. I think providing a dedicated API to track browser sessions would be cleaner and easier to implement.

With this API developers don't need to know how it works; it can be based on cookies, local storage, or anything else. Developers can use it with the singleton pattern to keep per-browser state and persist whatever they want in streamlit.

### How?

This feature will introduce several new APIs:

* `st.get_browser_session(gdpr_consent=False)`, which will set a unique session id in browser if it doesn't exist, and return it.

If `gdpr_consent` is set to True, a window will pop up to ask for user's consent before setting the session id.

* `st.clean_browser_session()`, which will remove the session id from browser.

The below is a POC of how `get_browser_session` can be used to implement a simple authentication solution:

```python
from streamlit.web.server.websocket_headers import _get_websocket_headers
from streamlit.components.v1 import html
import streamlit as st

from http.cookies import SimpleCookie
from uuid import uuid4
from time import sleep


def get_cookie():
    try:
        headers = st.context.headers
    except AttributeError:
        headers = _get_websocket_headers()
    if headers is not None:
        cookie_str = headers.get("Cookie")
        if cookie_str:
            return SimpleCookie(cookie_str)


def get_cookie_value(key):
    cookie = get_cookie()
    if cookie is not None:
        cookie_value = cookie.get(key)
        if cookie_value is not None:
            return cookie_value.value
    return None


def get_browser_session():
    """
    use cookie to track browser session
    this id is unique to each browser session
    it won't change even if the page is refreshed or reopened
    """
    if 'st_session_id' not in st.session_state:
        session_id = get_cookie_value('ST_SESSION_ID')
        if session_id is None:
            session_id = uuid4().hex
            st.session_state['st_session_id'] = session_id
            html(f'<script>document.cookie = "ST_SESSION_ID={session_id}";</script>')
            sleep(0.1)  # FIXME: work around bug: Tried to use SessionInfo before it was initialized
            st.rerun()  # FIXME: rerun immediately so that html won't be shown in the final page
        st.session_state['st_session_id'] = session_id
    return st.session_state['st_session_id']


@st.cache_resource
def get_auth_state():
    """
    A singleton to store authentication state
    """
    return {}


st.set_page_config(page_title='Browser Session Demo')

session_id = get_browser_session()
auth_state = get_auth_state()

if session_id not in auth_state:
    auth_state[session_id] = False

st.write(f'Your browser session ID: {session_id}')

if not auth_state[session_id]:
    st.title('Input Password')
    token = st.text_input('Token', type='password')
    if st.button('Submit'):
        if token == 'passw0rd!':
            auth_state[session_id] = True
            st.rerun()
        else:
            st.error('Invalid token')
else:
    st.success('Authentication success')
    if st.button('Logout'):
        auth_state[session_id] = False
        st.rerun()
    st.write('You are free to refresh or reopen this page without re-authentication')
```

A more complicated example of using this method to work with oauth2 can be tried here: https://ai4ec.ikkem.com/apps/op-elyte-emulator/

### Additional Context

Related issues:

* https://github.com/streamlit/streamlit/issues/861

* https://github.com/streamlit/streamlit/issues/8518 | open | 2024-12-18T02:12:59Z | 2025-01-06T15:40:04Z | https://github.com/streamlit/streamlit/issues/10041 | [

"type:enhancement"

] | link89 | 2 |

donnemartin/data-science-ipython-notebooks | scikit-learn | 50 | code | Sir, please send me the code along with the churn dataset.

| closed | 2017-07-19T10:28:06Z | 2017-11-30T01:08:52Z | https://github.com/donnemartin/data-science-ipython-notebooks/issues/50 | [] | sabamehwish | 1 |

A3M4/YouTube-Report | seaborn | 11 | Module Can not be found | I am getting this error running python report.py

File "C:\Users\jacob\AppData\Local\Packages\PythonSoftwareFoundation.Python.3.7_qbz5n2kfra8p0\LocalCache\local-packages\Python37\site-packages\scipy\special\__init__.py", line 641, in <module>

from ._ufuncs import *

ImportError: DLL load failed: The specified module could not be found. | open | 2019-12-15T04:54:25Z | 2019-12-17T16:18:26Z | https://github.com/A3M4/YouTube-Report/issues/11 | [] | jbenzaquen42 | 4 |

aimhubio/aim | tensorflow | 3,153 | Failed to delete run in aim web ui | ## 🐛 Bug

When deleting a `run` in the Aim web UI, I got the following error, and the run was not deleted:

```

Error

Error while deleting runs.

Error

Failed to execute 'json' on 'Response': body stream already read

```

### To reproduce

deleting `run` in aim web ui.

### Expected behavior

`run` deleted.

### Environment

- Aim Version: 3.19.3

- Python version: 3.10.14

- pip version: 23.0.1

- OS (e.g., Linux): Linux

- Any other relevant information

### Additional context

| open | 2024-05-31T10:11:04Z | 2024-06-25T07:34:01Z | https://github.com/aimhubio/aim/issues/3153 | [

"type / bug",

"help wanted"

] | zhiyxu | 3 |

MaxHalford/prince | scikit-learn | 186 | Eigenvalue correction for MCA | Hello! I just recently started using this package for analyzing some categorical data, and I noticed that the `fit()` method of the `mca.py` file contains the setup (i.e., `self.K_`, `self.J_`) for inertia correction using either the _Benzecri_ or _Greenacre_ methods. However, it's not clear to me where the inertia correction is actually happening in the code.

Just wanted to kindly check if this correction step was fully implemented yet, thanks! | closed | 2025-03-07T18:28:02Z | 2025-03-07T22:04:26Z | https://github.com/MaxHalford/prince/issues/186 | [] | saatcheson | 2 |

dynaconf/dynaconf | django | 595 | [bug] SQLAlchemy URL object replaced with BoxList object | Using dynaconf with Flask and Flask-SQLAlchemy. If I initialize dynaconf, then assign a sqlalchemy `URL` object to a config key, the object becomes a `BoxList`, which causes sqlalchemy to fail later. Dynaconf should not replace arbitrary objects.

```python

app = Flask(__name__)

dynaconf.init_app(app)

app.config["SQLALCHEMY_DATABASE_URI"] = sa_url(

"postgresql", None, None, None, None, "example"

)

print(type(app.config["SQLALCHEMY_DATABASE_URI"]))

```

```

<class 'dynaconf.vendor.box.box_list.BoxList'>

```

This is a problem when using SQLAlchemy 1.4, which treats the URL as an object with attributes instead of a tuple.

cc @davidism | closed | 2021-06-01T19:15:32Z | 2021-08-19T14:14:32Z | https://github.com/dynaconf/dynaconf/issues/595 | [

"bug",

"HIGH",

"backport3.1.5"

] | trickardy | 2 |

pandas-dev/pandas | python | 61,125 | ENH: Supporting third-party engines for all `map` and `apply` methods | In #54666 and #61032 we introduce the `engine` parameter to `DataFrame.apply` which allows users to run the operation with a third-party engine.

The rest of `apply` and `map` methods can also benefit from this.

In a first phase we can do:

- `Series.map`

- `Series.apply`

- `DataFrame.map`

Then we can continue with the transform and group by ones. | open | 2025-03-15T03:21:13Z | 2025-03-20T15:31:54Z | https://github.com/pandas-dev/pandas/issues/61125 | [

"Apply"

] | datapythonista | 13 |

autogluon/autogluon | scikit-learn | 4,388 | How to obtain fitted values with TimeSeriesPredictor? | ## Description

When I run TimeSeriesPredictor and fit the model, I didn't find out whether or where the fitted in-sample values are provided.

Can anyone help me with it? Thanks!

| open | 2024-08-14T16:48:29Z | 2024-08-15T12:02:57Z | https://github.com/autogluon/autogluon/issues/4388 | [

"enhancement"

] | wenqiuma | 1 |

hzwer/ECCV2022-RIFE | computer-vision | 34 | A work-in-progress vulkan port :D | https://github.com/nihui/rife-ncnn-vulkan

| closed | 2020-11-25T03:46:44Z | 2020-11-28T04:37:44Z | https://github.com/hzwer/ECCV2022-RIFE/issues/34 | [] | nihui | 1 |

AUTOMATIC1111/stable-diffusion-webui | pytorch | 16,560 | Seed not returned via api | ### Checklist

- [X] The issue exists after disabling all extensions

- [X] The issue exists on a clean installation of webui

- [X] The issue is caused by an extension, but I believe it is caused by a bug in the webui

- [X] The issue exists in the current version of the webui

- [X] The issue has not been reported before recently

- [ ] The issue has been reported before but has not been fixed yet

### What happened?

Hello!

When no seed is set, a random seed is generated and correctly shown in the web GUI, but the API only returns "-1". That is correct so far, as -1 is the default value that tells the framework to pick a random seed. But that generated seed is exactly what I need. Is there a workaround for it?

### Steps to reproduce the problem

Simply use the api for generating an image, do not set a seed and print out res['parameters']

### What should have happened?

The value of the random seed should be returned by the API.
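
A possible workaround, assuming the txt2img response also carries an `info` field holding a JSON string with the seed that was actually used (the `parameters` field only echoes the request, which would explain the -1). The helper name `extract_seed` and the sample response are illustrative and hand-written, not real server output:

```python
import json


def extract_seed(response: dict) -> int:
    """Read the seed that was actually used from the `info` JSON string."""
    info = json.loads(response["info"])
    return info["seed"]


# Hand-written sample shaped like an API response (assumed layout).
sample_response = {
    "images": [],
    "parameters": {"seed": -1},
    "info": json.dumps({"seed": 1234567890, "all_seeds": [1234567890]}),
}

print(extract_seed(sample_response))
```

In a real call, `response` would be the decoded JSON body returned by the image generation endpoint, in place of `sample_response`.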

### What browsers do you use to access the UI ?

Google Chrome

### Sysinfo

[sysinfo-2024-10-17-15-55.json](https://github.com/user-attachments/files/17415652/sysinfo-2024-10-17-15-55.json)

### Console logs

```Shell

{'prompt': 'A 30-year-old woman with middle-long brown hair and glasses is playing tennis wearing nothing but her glasses and holding a tennis racket while swinging it gracefully on an outdoor tennis court. A large tennis ball logo is prominently displayed on the court surface emphasizing the sport being played., impressive lighting', 'negative_prompt': 'nude, hands, Bokeh/DOF,flat, low contrast, oversaturated, underexposed, overexposed, blurred, noisy', 'styles': None, 'seed': -1, 'subseed': -1, 'subseed_strength': 0, 'seed_resize_from_h': -1, 'seed_resize_from_w': -1, 'sampler_name': None, 'batch_size': 1, 'n_iter': 1, 'steps': 5, 'cfg_scale': 1.5, 'width': 768, 'height': 1024, 'restore_faces': True, 'tiling': None, 'do_not_save_samples': False, 'do_not_save_grid': False, 'eta': None, 'denoising_strength': None, 's_min_uncond': None, 's_churn': None, 's_tmax': None, 's_tmin': None, 's_noise': None, 'override_settings': None, 'override_settings_restore_afterwards': True, 'refiner_checkpoint': None, 'refiner_switch_at': None, 'disable_extra_networks': False, 'comments': None, 'enable_hr': False, 'firstphase_width': 0, 'firstphase_height': 0, 'hr_scale': 2.0, 'hr_upscaler': None, 'hr_second_pass_steps': 0, 'hr_resize_x': 0, 'hr_resize_y': 0, 'hr_checkpoint_name': None, 'hr_sampler_name': None, 'hr_prompt': '', 'hr_negative_prompt': '', 'sampler_index': 'DPM++ SDE', 'script_name': None, 'script_args': [], 'send_images': True, 'save_images': False, 'alwayson_scripts': {}}

```

### Additional information

_No response_ | closed | 2024-10-17T15:56:46Z | 2024-10-24T01:14:49Z | https://github.com/AUTOMATIC1111/stable-diffusion-webui/issues/16560 | [

"not-an-issue"

] | Marcophono2 | 2 |

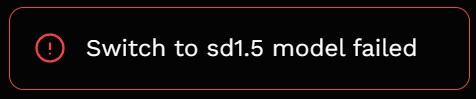

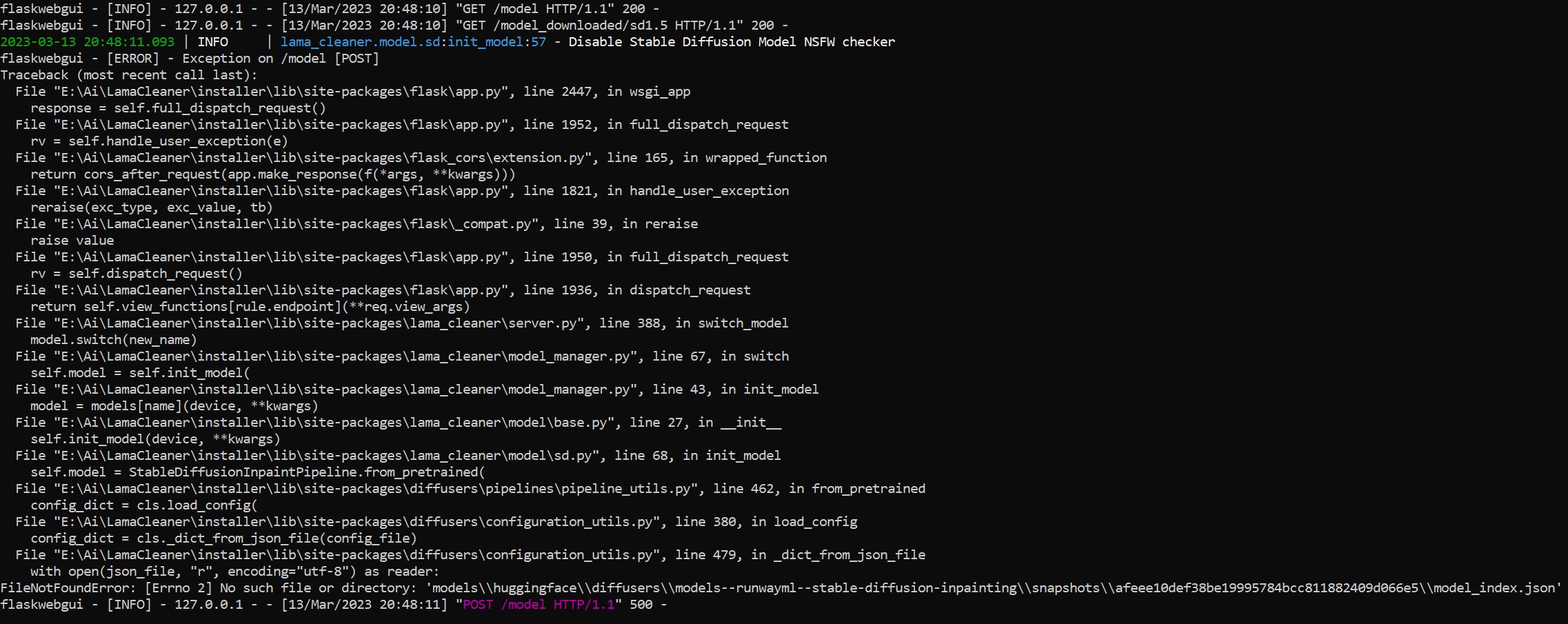

Sanster/IOPaint | pytorch | 242 | Switch to sd1.5 model failed | I have this problem when choosing any Stable-Diffusion model. How to fix it?

| closed | 2023-03-13T15:53:56Z | 2023-03-14T22:37:19Z | https://github.com/Sanster/IOPaint/issues/242 | [] | vasyaholly | 13 |

healthchecks/healthchecks | django | 1,134 | [Feature Request] Group Projects | Hi,

we've organized our jobs into multiple projects, which is already nice. However, with more projects, it would be nice to have some control over how the projects are organized on the start page. My use cases include, for example, separating prod jobs from dev jobs. One way of achieving this would probably be to group the projects.

Thanks,

skr5k | open | 2025-03-14T14:05:07Z | 2025-03-14T14:05:07Z | https://github.com/healthchecks/healthchecks/issues/1134 | [] | skr5k | 0 |

ckan/ckan | api | 7,579 | Function is dropped in CKAN 2.10 despite deprecation info | ## CKAN version

2.10

## Describe the bug

The `authz.auth_is_loggedin_user` function is dropped in CKAN 2.10. However, in CKAN 2.9, there was a deprecation notice _recommending_ this function, and there doesn't appear to be a clear replacement.

### Steps to reproduce

- Install a plugin that calls `auth_is_loggedin_user` on CKAN 2.9, such as https://github.com/qld-gov-au/ckanext-ytp-comments/

- Update to CKAN 2.10

- Perform an operation that calls the function, such as flagging a comment for moderation

### Expected behavior

There should be a notice in the code and/or the changelog to indicate what replaces `auth_is_loggedin_user`.
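Until a documented replacement exists, a plugin could look the function up defensively instead of calling it directly. The following is a minimal sketch of that pattern, not CKAN's recommended fix: `resolve_logged_in_check`, `plugin_fallback`, and the `SimpleNamespace` stand-ins for `ckan.authz` are all hypothetical names introduced here for illustration, and the fallback body is a placeholder for whatever check the plugin author chooses.

```python
from types import SimpleNamespace
from typing import Callable


def resolve_logged_in_check(authz_module, fallback: Callable[[], bool]) -> Callable[[], bool]:
    """Return authz_module.auth_is_loggedin_user if it exists, else the fallback."""
    return getattr(authz_module, "auth_is_loggedin_user", fallback)


# Hypothetical stand-ins for ckan.authz on each version, so the sketch
# runs without CKAN installed.
authz_29 = SimpleNamespace(auth_is_loggedin_user=lambda: True)
authz_210 = SimpleNamespace()  # the attribute is missing, as in the traceback


def plugin_fallback() -> bool:
    # Placeholder: a plugin would put its own logged-in check here.
    return False


check_old = resolve_logged_in_check(authz_29, plugin_fallback)
check_new = resolve_logged_in_check(authz_210, plugin_fallback)
```

With this lookup the missing attribute no longer raises `AttributeError`; the plugin falls back to its own check instead.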

### Additional details

12:38:09,321 ERROR [ckan.config.middleware.flask_app] module 'ckan.authz' has no attribute 'auth_is_loggedin_user'

Traceback (most recent call last):

File "/usr/lib/ckan/default/lib64/python3.7/site-packages/flask/app.py", line 1516, in full_dispatch_request

rv = self.dispatch_request()

File "/usr/lib/ckan/default/lib64/python3.7/site-packages/flask/app.py", line 1502, in dispatch_request

return self.ensure_sync(self.view_functions[rule.endpoint])(**req.view_args)

File "/mnt/local_data/ckan_venv/src/ckanext-ytp-comments/ckanext/ytp/comments/controllers/__init__.py", line 276, in flag

if authz.auth_is_loggedin_user():

AttributeError: module 'ckan.authz' has no attribute 'auth_is_loggedin_user' | open | 2023-05-09T03:04:46Z | 2023-05-09T13:57:04Z | https://github.com/ckan/ckan/issues/7579 | [] | ThrawnCA | 1 |

yt-dlp/yt-dlp | python | 12,109 | [Dropbox] Error: No video formats found! | ### DO NOT REMOVE OR SKIP THE ISSUE TEMPLATE

- [x] I understand that I will be **blocked** if I *intentionally* remove or skip any mandatory\* field

### Checklist

- [x] I'm reporting that yt-dlp is broken on a **supported** site

- [x] I've verified that I have **updated yt-dlp to nightly or master** ([update instructions](https://github.com/yt-dlp/yt-dlp#update-channels))

- [x] I've checked that all provided URLs are playable in a browser with the same IP and same login details

- [x] I've checked that all URLs and arguments with special characters are [properly quoted or escaped](https://github.com/yt-dlp/yt-dlp/wiki/FAQ#video-url-contains-an-ampersand--and-im-getting-some-strange-output-1-2839-or-v-is-not-recognized-as-an-internal-or-external-command)

- [x] I've searched [known issues](https://github.com/yt-dlp/yt-dlp/issues/3766) and the [bugtracker](https://github.com/yt-dlp/yt-dlp/issues?q=) for similar issues **including closed ones**. DO NOT post duplicates

- [x] I've read the [guidelines for opening an issue](https://github.com/yt-dlp/yt-dlp/blob/master/CONTRIBUTING.md#opening-an-issue)

- [x] I've read about [sharing account credentials](https://github.com/yt-dlp/yt-dlp/blob/master/CONTRIBUTING.md#are-you-willing-to-share-account-details-if-needed) and I'm willing to share it if required

### Region

World

### Provide a description that is worded well enough to be understood

This is the only video that returns this error

https://www.dropbox.com/s/fnxkf6gvr9zl7ow/IMG_3996.MOV?dl=0

I tried the full link and it's the same

https://www.dropbox.com/scl/fi/8n13ei80sb3bmfm9nrcmw/IMG_3996.MOV?rlkey=t2mf7yg8m0vzenb432bklo0z0&e=1&dl=0

There is a playable video and it should download like all others

I tried everything in my power to fix it without results

Don't judge me on the video lmao

### Provide verbose output that clearly demonstrates the problem

- [x] Run **your** yt-dlp command with **-vU** flag added (`yt-dlp -vU <your command line>`)

- [ ] If using API, add `'verbose': True` to `YoutubeDL` params instead

- [x] Copy the WHOLE output (starting with `[debug] Command-line config`) and insert it below

### Complete Verbose Output

```shell

[debug] Command-line config: ['-vU', '-P', '/Archive/Twerk/KateCakes/', 'https://www.dropbox.com/s/fnxkf6gvr9zl7ow/IMG_3996.MOV?dl=0']

[debug] Encodings: locale UTF-8, fs utf-8, pref UTF-8, out utf-8, error utf-8, screen utf-8

[debug] yt-dlp version stable@2025.01.15 from yt-dlp/yt-dlp [c8541f8b1] (zip)

[debug] Python 3.12.3 (CPython x86_64 64bit) - Linux-6.8.0-51-generic-x86_64-with-glibc2.39 (OpenSSL 3.0.13 30 Jan 2024, glibc 2.39)

[debug] exe versions: ffmpeg 6.1.1 (setts), ffprobe 6.1.1

[debug] Optional libraries: Cryptodome-3.20.0, brotli-1.1.0, certifi-2023.11.17, mutagen-1.46.0, pyxattr-0.8.1, requests-2.31.0, sqlite3-3.45.1, urllib3-2.0.7, websockets-10.4

[debug] Proxy map: {}

[debug] Request Handlers: urllib

[debug] Loaded 1837 extractors

[debug] Fetching release info: https://api.github.com/repos/yt-dlp/yt-dlp/releases/latest

Latest version: stable@2025.01.15 from yt-dlp/yt-dlp

yt-dlp is up to date (stable@2025.01.15 from yt-dlp/yt-dlp)

[Dropbox] Extracting URL: https://www.dropbox.com/s/fnxkf6gvr9zl7ow/IMG_3996.MOV?dl=0

[Dropbox] fnxkf6gvr9zl7ow: Downloading webpage

ERROR: [Dropbox] fnxkf6gvr9zl7ow: No video formats found!; please report this issue on https://github.com/yt-dlp/yt-dlp/issues?q= , filling out the appropriate issue template. Confirm you are on the latest version using yt-dlp -U

Traceback (most recent call last):

File "/home/ok/.local/bin/yt-dlp/yt_dlp/YoutubeDL.py", line 1637, in wrapper

return func(self, *args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/ok/.local/bin/yt-dlp/yt_dlp/YoutubeDL.py", line 1793, in __extract_info

return self.process_ie_result(ie_result, download, extra_info)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/ok/.local/bin/yt-dlp/yt_dlp/YoutubeDL.py", line 1852, in process_ie_result

ie_result = self.process_video_result(ie_result, download=download)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/ok/.local/bin/yt-dlp/yt_dlp/YoutubeDL.py", line 2859, in process_video_result

self.raise_no_formats(info_dict)

File "/home/ok/.local/bin/yt-dlp/yt_dlp/YoutubeDL.py", line 1126, in raise_no_formats

raise ExtractorError(msg, video_id=info['id'], ie=info['extractor'],

yt_dlp.utils.ExtractorError: [Dropbox] fnxkf6gvr9zl7ow: No video formats found!; please report this issue on https://github.com/yt-dlp/yt-dlp/issues?q= , filling out the appropriate issue template. Confirm you are on the latest version using yt-dlp -U

``` | closed | 2025-01-16T19:53:26Z | 2025-01-29T16:56:07Z | https://github.com/yt-dlp/yt-dlp/issues/12109 | [

"NSFW",

"site-bug"

] | BenderBRod | 2 |

scikit-learn/scikit-learn | data-science | 30,461 | from sklearn.datasets import make_regression FileNotFoundError | ### Describe the bug

When running examples/application/plot_prediction_latency.py a FileNotFoundError occurs as there is no file named make_regression in datasets dir.

I have cloned the scikit-learn repo and installed it using `pip install -e .`

I am completely unable to `import scikit_learn` or `import sklearn`, even though it shows up in `pip list` as `scikit-learn 1.7.dev0 /Users/user/scikit-learn`.

### Steps/Code to Reproduce

from sklearn.datasets import make_regression

### Expected Results

No error is thrown

### Actual Results

Exception has occurred: FileNotFoundError

[Errno 2] No such file or directory: '/private/var/folders/0q/80gytspx42v3rtlkkq_h59jw0000gn/T/pip-build-env-53amsfeb/normal/bin/ninja'

### Versions

```shell

scikit-learn 1.7.dev0

```

| closed | 2024-12-11T10:13:52Z | 2024-12-11T11:19:18Z | https://github.com/scikit-learn/scikit-learn/issues/30461 | [

"Bug",

"Needs Triage"

] | kayo09 | 1 |

nerfstudio-project/nerfstudio | computer-vision | 2,952 | Docker/singularity container doesn't seem to contain ns-* commands | **Describe the bug**

I built a Singularity container from the Docker Hub address listed on the web page, ran `singularity run --nv nerf.simg`, and looked for the ns-* commands, but I cannot find them.

**To Reproduce**

Run:

singularity build nerf.simg docker://dromni/nerfstudio:1.0.2

singularity run --nv nerf.simg ns-process-data video --data /workspace/video.mp4

**Expected behavior**

No errors

**Result**

==========

== CUDA ==

==========

CUDA Version 11.8.0

Container image Copyright (c) 2016-2023, NVIDIA CORPORATION & AFFILIATES. All rights reserved.

This container image and its contents are governed by the NVIDIA Deep Learning Container License.

By pulling and using the container, you accept the terms and conditions of this license:

https://developer.nvidia.com/ngc/nvidia-deep-learning-container-license

A copy of this license is made available in this container at /NGC-DL-CONTAINER-LICENSE for your convenience.

/opt/nvidia/nvidia_entrypoint.sh: line 67: exec: ns-process-data: not found

| open | 2024-02-23T22:05:32Z | 2024-09-05T22:07:46Z | https://github.com/nerfstudio-project/nerfstudio/issues/2952 | [] | cousins | 7 |

autokey/autokey | automation | 820 | keyboard.press_key freezes autokey | ### AutoKey is a Xorg application and will not function in a Wayland session. Do you use Xorg (X11) or Wayland?

Xorg

### Has this issue already been reported?

- [X] I have searched through the existing issues.