repo_name | topic | issue_number | title | body | state | created_at | updated_at | url | labels | user_login | comments_count |

|---|---|---|---|---|---|---|---|---|---|---|---|

pywinauto/pywinauto | automation | 1,122 | Couldn't get my way around for more than 5 hours | ## Expected Behavior

Sometimes the script prints the output of `dlg.print_control_identifiers()`, but pywinauto behaves inconsistently. I use the same window title every time, but other times I get the error `MatchError: Could not find 'Miracle 9.0 (Rel 6.0) - Standard Copy (Single User) ' in 'dict_keys([])'` | open | 2021-10-01T10:29:42Z | 2022-02-20T12:11:47Z | https://github.com/pywinauto/pywinauto/issues/1122 | [

"bug",

"duplicate"

] | meet1919 | 2 |

ultralytics/yolov5 | deep-learning | 12,424 | Multigpu and multinode performance | ### Search before asking

- [X] I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and [discussions](https://github.com/ultralytics/yolov5/discussions) and found no similar questions.

### Question

I'm training YOLOv5 on a custom dataset on an HPC machine with 4 GPUs per node. I'm running performance tests with different numbers of GPUs. Each test runs for 100 epochs. The timing results are:

```

4 GPU: 2h, 30 min

8 GPU: 2h 21 min

16 GPU: 2h 25 min

32 GPU: 2h 46 min

```

The AP is more or less the same. I'm a bit confused: why doesn't YOLOv5 training time scale with more GPUs? With more GPUs, each epoch, and therefore the whole run, should finish in less time, right? Could someone explain this behaviour? Thanks.
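To put numbers on the timings above, a small sketch (not part of the original report) can compute the speedup and parallel efficiency relative to the 4-GPU run. The function name `scaling_table` is made up for illustration; the run times are the ones listed above, converted to minutes.

```python
# Wall-clock times reported above, in minutes, keyed by GPU count.
RUN_MINUTES = {4: 150, 8: 141, 16: 145, 32: 166}


def scaling_table(run_minutes, baseline_gpus=4):
    """Return {gpus: (speedup, efficiency)} relative to the baseline run."""
    base_time = run_minutes[baseline_gpus]
    table = {}
    for gpus, minutes in sorted(run_minutes.items()):
        speedup = base_time / minutes
        # Ideal speedup equals the factor by which the GPU count grew.
        ideal = gpus / baseline_gpus
        table[gpus] = (speedup, speedup / ideal)
    return table


if __name__ == "__main__":
    for gpus, (speedup, efficiency) in scaling_table(RUN_MINUTES).items():
        print(f"{gpus:>2} GPUs: speedup {speedup:.2f}x, efficiency {efficiency:.0%}")
```

With these figures the 8-GPU run is only slightly faster than the 4-GPU run and the 32-GPU run is slower, which suggests that something other than GPU compute (for example data loading or inter-node communication) may dominate. The report itself does not say which.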

### Additional

_No response_ | closed | 2023-11-24T13:06:21Z | 2024-01-09T00:21:50Z | https://github.com/ultralytics/yolov5/issues/12424 | [

"question",

"Stale"

] | unrue | 10 |

sherlock-project/sherlock | python | 2,375 | Instagram results not showing in Sherlock | ### Installation method

Other (indicate below)

### Package version

Sherlock v0.15.0

### Description

When using Sherlock to check for the existence of an Instagram profile by providing a valid username, the expected result should be that the tool returns whether the profile exists or not.

When performing this action, however, the actual result was that no output was displayed for **Instagram profiles,** even for valid usernames.

This is undesirable because it prevents users from accurately checking Instagram profiles, which is a key feature of the tool.

**Thank you for looking into this issue!**

### Steps to reproduce

1. Open the Sherlock tool.

2. Enter a valid Instagram username (e.g., instagram) and run the tool.

3. Observe that no result is displayed for Instagram, regardless of the username's validity.

### Additional information

_No response_

### Code of Conduct

- [X] I agree to follow this project's Code of Conduct | closed | 2024-11-30T10:18:47Z | 2025-02-17T05:16:31Z | https://github.com/sherlock-project/sherlock/issues/2375 | [

"bug"

] | ZuhairZeiter | 6 |

horovod/horovod | tensorflow | 3,497 | NotFoundError: Local Variable does not exist with backward_passes_per_step > 1 | **Environment:**

1. Framework: tensorflow.keras

2. Framework version: 2.5.0

3. Horovod version: 0.22.0

4. MPI version:

5. CUDA version: 11.0

6. NCCL version:

7. Python version: 3.8

8. Spark / PySpark version:

9. Ray version:

10. OS and version:

11. GCC version:

12. CMake version:

**Checklist:**

1. Did you search issues to find if somebody asked this question before? yes

2. If your question is about hang, did you read [this doc](https://github.com/horovod/horovod/blob/master/docs/running.rst)? --

3. If your question is about docker, did you read [this doc](https://github.com/horovod/horovod/blob/master/docs/docker.rst)? --

4. Did you check if your question is answered in the [troubleshooting guide](https://github.com/horovod/horovod/blob/master/docs/troubleshooting.rst)?

**Bug report:**

Hi, I am getting a `NotFoundError: Resource localhost/_AnonymousVar<xxx>/N10tensorflow3VarE does not exist` when setting backward_passes_per_step > 1.

I looked through both tensorflow and horovod code and might have found the origin of this:

My traceback ends up inside tensorflow/python/eager/def_function during the self.train_function(iterator) call inside model.fit().

The horovod DistributedOptimizer is initialized with a LocalGradientAggregationHelperEager, though, since the context.executing_eagerly() gets called before the model.fit and therefore inside an eager execution environment by default. The train_function is wrapped into a tf.function and is executed in graph mode.

summary: horovod initializes the optimizer with an Eager Aggregation Helper outside the model.fit loop, gradient aggregation is called inside the model.fit loop in graph execution

I guess this is the error. I don't know if this error still exists in the newest version of horovod / tensorflow.keras, but the code did not change on that end, so it is most likely also a bug in the current version.

If someone can recreate this on another version please change the code to initialize the AggregationHelper according to the tf.run_functions_eagerly() property instead; and hopefully you can provide a quick workaround for me as I cannot change the installation on the HPC cluster I am working with.

**Update:**

I tried the following two workarounds:

1. Manually create an instance of LocalGradientAggregationHelper and feed it into the optimizer's attribute. This unfortunately raised the same exception as in #2110, caused by line 103 inside horovod.tensorflow.gradient_aggregation: `zero_grad = tf.zeros(shape=grad.get_shape().as_list(), dtype=grad.dtype)`. I do not see the exact problem here. (Maybe something else has to be manually wrapped by tf.function?)

2. Compile the model in eager execution mode. This works fine, although I lose some speedup here of course.

| open | 2022-03-28T09:21:57Z | 2022-04-26T21:55:39Z | https://github.com/horovod/horovod/issues/3497 | [

"bug"

] | lenroed | 1 |

ipython/ipython | jupyter | 14,232 | IPython.display.IFrame running on remote JupyterLab references localhost on client machine | <!-- This is the repository for IPython command line, if you can try to make sure this question/bug/feature belong here and not on one of the Jupyter repositories.

If it's a generic Python/Jupyter question, try other forums or discourse.jupyter.org.

If you are unsure, it's ok to post here, though, there are few maintainer so you might not get a fast response.

-->

I have a web application served on localhost on the machine running the JupyterLab server, and I would like to display it in JupyterLab on a client machine. However, when calling IPython.display.IFrame() from the client, the URL resolves to localhost on the client machine instead of the remote server. Here is an example where http://localhost:57967 is where the web application is being served on the JupyterLab server:

Calling IPython.display.IFrame() displays "refused to connect" error page.

However, http://localhost:57967 serves a web application and is accessible from the remote server

On the client machine, I have a web application running on http://localhost:58541 which is accessible by IPython.display.IFrame().

However, http://localhost:58541 cannot be accessed from the remote server.

In summary, how would I be able to display http://localhost:57967 which is a web application served on the JupyterLab's server? | closed | 2023-10-31T15:12:21Z | 2024-09-30T07:34:41Z | https://github.com/ipython/ipython/issues/14232 | [] | jcaxle | 2 |
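A likely explanation is that the `src` of an IFrame is fetched by the browser, so `localhost` always means the machine the browser runs on. One possible approach, assuming the `jupyter-server-proxy` extension is installed on the remote Jupyter server (an assumption, not something stated in the report), is to point the IFrame at the server's `/proxy/<port>/` route instead. The helper name `proxied_url` and the example base URL are hypothetical.

```python
def proxied_url(jupyter_base_url: str, port: int) -> str:
    """Build a URL that reaches a port on the Jupyter server via its proxy route.

    Assumes jupyter-server-proxy is installed on the server; it exposes
    services listening on the server's localhost under <base>/proxy/<port>/.
    """
    return f"{jupyter_base_url.rstrip('/')}/proxy/{port}/"


if __name__ == "__main__":
    # Hypothetical address of the remote JupyterLab server as seen by the browser.
    url = proxied_url("https://jupyter.example.org/user/alice/", 57967)
    print(url)
    # In a notebook, the result could then be passed to the IFrame:
    #   from IPython.display import IFrame
    #   IFrame(src=url, width=900, height=600)
```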

raphaelvallat/pingouin | pandas | 47 | Add support for Aligned Rank Transformed data Anova | Good morning,

I had to work with non-normal and heteroscedastic variables and needed to compute interactions between them. Unfortunately, no method in your package allows this.

I found ART to be quite straightforward and easy to use. I think it would be very valuable to include ART in your library.

The current workaround is to run ARTool through rpy2, which is neither performant nor easy to do.

Here is the original paper: http://faculty.washington.edu/wobbrock/pubs/chi-11.06.pdf

And here is some R documentation:

- https://cran.r-project.org/web/packages/ARTool/index.html

- https://cran.r-project.org/web/packages/ARTool/vignettes/art-contrasts.html

Thanks, and have a nice day,

Clément. | closed | 2019-07-02T10:19:24Z | 2019-09-28T19:05:08Z | https://github.com/raphaelvallat/pingouin/issues/47 | [

"feature request :construction:"

] | clementpoiret | 3 |

taverntesting/tavern | pytest | 364 | Would like to be able to define a variable in a stage to use in various places in that stage | In this stage:

- name: -007C PUT update organization incomplete payload

request:

url: "{host}/v2/institutions/{institution_data.id}/organizations/{organization_data.id}/"

method: PUT

json:

branded: !bool "true"

organization_type: !int 8

import_comment: "BP-5845-007C"

headers:

Accept: 'application/json, text/plain, text/html, */*'

Accept-Encoding: 'gzip, deflate, br'

Accept-Language: 'en-US,en;q=0.9'

Authorization: "JWT {encode_web_token}"

case_name: "BP-5845-007C"

response:

status_code: 400

headers:

content-type: "application/json"

save:

body:

put_meta: meta

body:

$ext:

function: api_helpers:organization_error

extra_kwargs:

expected: "{organization_incomplete_expected}"

targets: "{error_targets}"

stage: "BP-5845-007C"

I'd like to be able to define a variable with the value "BP-5845-007C" that can be passed to import_comment, case_name, stage, etc.

Am using this to help identify which test-stage created data and/or what stage is running at the time an error is encountered when running through a debugger. Perhaps could then set a watch on the variable in debugger. (using Pycharm).

Any way of doing this?

If not can it be put in the list of enhancements? | open | 2019-05-30T20:19:21Z | 2019-08-30T14:51:37Z | https://github.com/taverntesting/tavern/issues/364 | [

"Type: Enhancement",

"Priority: Low"

] | pmneve | 3 |

wkentaro/labelme | deep-learning | 533 | How to add point for my annotated polygons? | Hi, thanks for your great work. I ran into a problem recently. I previously annotated some data for semantic segmentation work, and I now want to add some points to each polygon in those images. I don't want to delete the existing polygons and annotate them again; I just want to add one or two points to each annotated polygon, but I can't find a way to do it. Does labelme support such an operation? Thanks. | closed | 2019-12-31T08:07:31Z | 2021-04-13T11:20:28Z | https://github.com/wkentaro/labelme/issues/533 | [] | chegnyanjun | 4 |

faif/python-patterns | python | 384 | What's the difference between builder.py and abstract_factory.py | closed | 2022-01-02T09:51:57Z | 2022-01-03T08:24:29Z | https://github.com/faif/python-patterns/issues/384 | [

"question"

] | SeekPoint | 2 | |

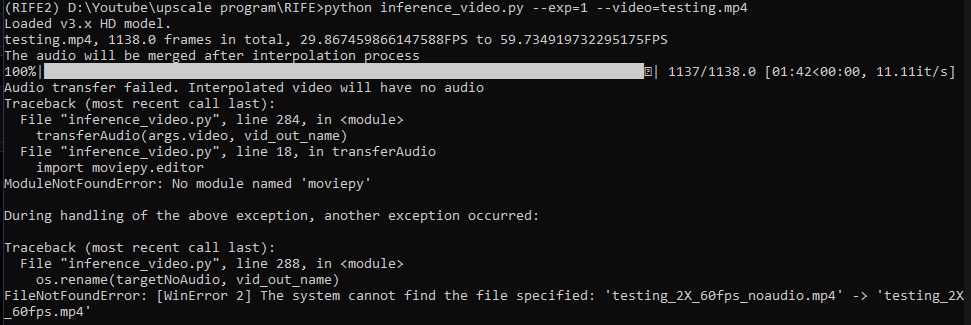

hzwer/ECCV2022-RIFE | computer-vision | 233 | no module named moviepy |

can anyone help? | closed | 2022-01-23T09:14:05Z | 2022-01-23T09:28:48Z | https://github.com/hzwer/ECCV2022-RIFE/issues/233 | [] | danieltan007 | 1 |

coqui-ai/TTS | deep-learning | 3,627 | Please update to be able to use with Python 3.12 | RuntimeError: TTS requires python >= 3.9 and < 3.12 but your Python version is 3.12.0 (tags/v3.12.0:0fb18b0, Oct 2 2023, 13:03:39) [MSC v.1935 64 bit (AMD64)]

This happens even with trying to install using

pip3 install --ignore-requires-python TTS
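The message is a `RuntimeError` rather than a pip resolver error, which suggests the version check runs as Python code during installation, so `--ignore-requires-python` would not bypass it. A guard of that kind might look like the following sketch. The function name `check_python` is hypothetical, and this is not claimed to be the project's actual code.

```python
import sys


def check_python(version_info=sys.version_info, minimum=(3, 9), below=(3, 12)):
    """Raise RuntimeError unless minimum <= interpreter version < below."""
    current = tuple(version_info[:2])
    if not (minimum <= current < below):
        raise RuntimeError(
            f"requires python >= {minimum[0]}.{minimum[1]} and "
            f"< {below[0]}.{below[1]} but your Python version is "
            f"{current[0]}.{current[1]}"
        )
    return current
```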

| closed | 2024-03-11T02:42:57Z | 2024-10-07T00:36:54Z | https://github.com/coqui-ai/TTS/issues/3627 | [

"wontfix",

"feature request"

] | Aphexus | 7 |

tflearn/tflearn | data-science | 963 | how to use pretrained word2vec in tflearn for text classification | Hi Guys,

I found tflearn is a good tool for deep learning.

I am just wondering if i can load pretrained word2vec model (like google word2vec, i.e. https://code.google.com/archive/p/word2vec/). And how to use that to initialise embedding layer in tflearn.

Thank You,

Biswajit | open | 2017-11-21T05:43:28Z | 2017-11-21T05:48:13Z | https://github.com/tflearn/tflearn/issues/963 | [] | Biswajit2902 | 0 |

ydataai/ydata-profiling | jupyter | 795 | Profile contains incorrect types and rejected columns | **Description:**

Profile contains incorrect types and rejected columns.

**Reproduction:**

```python

import datetime

import pandas

import pandas_profiling

df = pandas.DataFrame({

"time": [datetime.datetime(2021, 5, 11)] * 4,

"symbol": ["AAPL", "AMZN", "GOOG", "TSLA"],

"price": [125.91, 3223.91, 2308.76, 617.20],

})

profile = pandas_profiling.ProfileReport(df)

# `time` column is rejected.

# `price` column has type Categorical not Numeric.

```

**Version:**

* _Python version_: Python 3.8

* _Environment_: Jupyter Lab

<details><summary>Click to expand <strong><em>requirements.txt</em></strong></summary>

<p>

```

beautifulsoup4==4.9.3

croniter==1.0.13

defopt==6.1.0

findspark==1.4.2

folium==0.12.1

fsspec==2021.5.0

google-cloud-bigquery==2.16.1

humanize==3.5.0

imagehash==4.2.0

ipython==7.23.1

jedi==0.18.0

jinja2==3.0.1

jupyter-contrib-nbextensions==0.5.1

jupyter-nbextensions-configurator==0.4.1

jupyterlab==3.0.15

jupytext==1.11.2

koalas==1.5.0

marshmallow-pyspark==0.2.2

notebook==6.4.0

numpy==1.20.3

openpyxl==3.0.7

overrides==6.1.0

pandas==1.2.4

pandas-profiling==3.0.0

paramiko==2.7.2

pdpyras==4.2.1

pebble==4.6.1

praw==7.2.0

premailer==3.8.0

psutil==5.8.0

pyarrow==4.0.0

pydeequ==0.1.6

pyspark==3.0.2

pytest==6.2.4

pytest-cov==2.12.0

pytest-icdiff==0.5

pytest-securestore==0.1.3

pytest-timeout==1.4.2

python-dateutil==2.8.1

pytimeparse==1.1.8

ratelimit==2.2.1

requests==2.25.1

rich==10.2.1

scipy==1.6.3

sentry-sdk==1.1.0

slack-sdk==3.5.1

structlog==21.1.0

testing.postgresql==1.3.0

typeguard==2.12.0

typing-utils==0.0.3

xlrd==2.0.1

```

</p>

</details> | closed | 2021-05-19T15:06:14Z | 2021-06-30T10:16:26Z | https://github.com/ydataai/ydata-profiling/issues/795 | [] | ashwin153 | 1 |

pallets/quart | asyncio | 145 | Not working with PyLTI even after 'import quart.flask_patch' | I'm trying to make an app using Quart together with [PyLTI](https://pypi.org/project/PyLTI/).

PyLTI is originally for Flask, so I'm using `import quart.flask_patch`.

However, the following error happens when I run the app with `python main.py`.

Could you please give me some advice?

I'm not sure where I should ask for help.

If here is not appropriate place to ask such kind of questions, please just close the issue.

Thank you in advance.

Error

```

Traceback (most recent call last):

File "/home/xxx/Project/quart+lti/main.py", line 13, in <module>

@lti(request='session')

File "/home/xxx/.cache/pypoetry/virtualenvs/quart+lti-rQY8ksPW-py3.10/lib/python3.10/site-packages/pylti/flask.py", line 188, in wrapper

the_lti = LTI(lti_args, lti_kwargs)

File "/home/xxx/.cache/pypoetry/virtualenvs/quart+lti-rQY8ksPW-py3.10/lib/python3.10/site-packages/pylti/flask.py", line 37, in __init__

LTIBase.__init__(self, lti_args, lti_kwargs)

File "/home/xxx/.cache/pypoetry/virtualenvs/quart+lti-rQY8ksPW-py3.10/lib/python3.10/site-packages/pylti/common.py", line 470, in __init__

self.nickname = self.name

File "/home/xxx/.cache/pypoetry/virtualenvs/quart+lti-rQY8ksPW-py3.10/lib/python3.10/site-packages/pylti/common.py", line 478, in name

if 'lis_person_sourcedid' in self.session:

File "/home/xxx/.cache/pypoetry/virtualenvs/quart+lti-rQY8ksPW-py3.10/lib/python3.10/site-packages/werkzeug/local.py", line 278, in __get__

obj = instance._get_current_object()

File "/home/xxx/.cache/pypoetry/virtualenvs/quart+lti-rQY8ksPW-py3.10/lib/python3.10/site-packages/werkzeug/local.py", line 407, in _get_current_object

return self.__local() # type: ignore

File "/home/xxx/.cache/pypoetry/virtualenvs/quart+lti-rQY8ksPW-py3.10/lib/python3.10/site-packages/quart/globals.py", line 26, in _ctx_lookup

raise RuntimeError(f"Attempt to access {name} outside of a relevant context")

RuntimeError: Attempt to access session outside of a relevant context

```

main.py

```python

import quart.flask_patch

from pylti.flask import lti

from quart import Quart, redirect, url_for

app = Quart(__name__)

@app.route('/lti', methods=['POST'])

@lti(request='initial')

async def lti(lti):

return redirect(url_for('index'), code=303)

@app.route('/')

@lti(request='session')

async def index(lti):

return 'index'

app.run(host='0.0.0.0')

``` | closed | 2022-04-13T15:10:17Z | 2022-10-03T00:26:21Z | https://github.com/pallets/quart/issues/145 | [] | yuttie | 2 |
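One possible reading of the traceback: `main.py` imports the decorator as `lti` and then defines a view function that is also named `lti`, so by line 13 the name `lti` refers to the decorated view rather than to `pylti.flask.lti`. Calling it at import time would run the wrapper outside a request, which matches the `RuntimeError`. The sketch below reproduces the name shadowing with the standard library only; `make_guard` is a hypothetical stand-in, not PyLTI's code.

```python
import functools


def make_guard(request="any"):
    """Stand-in for a decorator factory (hypothetical, for illustration only)."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            return func(*args, **kwargs)
        return wrapper
    return decorator


guard = make_guard  # plays the role of `from pylti.flask import lti`


@guard(request="initial")
def guard(*args, **kwargs):  # same name as the decorator: rebinds `guard`
    return ("view called", args, kwargs)


# This no longer builds a decorator; it calls the first view directly.
result = guard(request="session")

if __name__ == "__main__":
    print(result)
```

If this is the cause, renaming the view functions (for example to `lti_launch`) would keep the imported decorator reachable. Whether PyLTI then works with Quart's async views is a separate question.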

nerfstudio-project/nerfstudio | computer-vision | 3,611 | Throw an error on non-zero k4, k5 or k6 | Hi @jb-ye @devernay, why is there a problem when k4 is not equal to 0? #3355 #3381 When reading the camera distortion coefficients such as [this](https://github.com/nerfstudio-project/nerfstudio/blob/73fe54dda0b743616854fc839889d955522e0e68/nerfstudio/process_data/colmap_utils.py#L262C2-L278C42), k4 is correctly assigned as the fourth-order radial distortion coefficient rather than the fourth coefficient. The current approach makes data with non-zero k4 coefficient like fisheye unusable.

| open | 2025-03-12T12:24:12Z | 2025-03-15T04:07:31Z | https://github.com/nerfstudio-project/nerfstudio/issues/3611 | [] | Yubel426 | 3 |

pyeve/eve | flask | 1,222 | Demo fails | QuickStart:

root@xtgl-app-009:/root/a#cat run.py

from eve import Eve

app = Eve()

app.run()

root@xtgl-app-009:/root/a#cat settings.py

DOMAIN = {'people': {}}

root@xtgl-app-009:/root/a#

--------------------------------------------------------------------------------------

Then I run the demo:

root@xtgl-app-009:/root/a#python3 run.py

* Serving Flask app "eve" (lazy loading)

* Environment: production

WARNING: Do not use the development server in a production environment.

Use a production WSGI server instead.

* Debug mode: off

* Running on http://127.0.0.1:5000/ (Press CTRL+C to quit)

--------------------------------------------------------------------------------------

Then I follow the guide:

root@xtgl-app-009:/root/a#curl -i http://127.0.0.1:5000/people

curl: (52) Empty reply from server

root@xtgl-app-009:/root/a#curl -i http://127.0.0.1:5000/

curl: (52) Empty reply from server

-----------------------------------------------------------------------------------

At this point I have no idea what is wrong. How should I deal with it? | closed | 2019-01-23T09:17:26Z | 2019-03-28T15:13:26Z | https://github.com/pyeve/eve/issues/1222 | [] | SuperHighMan | 1 |

awesto/django-shop | django | 727 | Add tag for version 0.12.1 | Apparently, the git tag for version 0.12.1 was forgotten. Please add it (as documented [here](/awesto/django-shop/blob/master/shop/__init__.py#L6-L18)). | closed | 2018-04-29T07:07:02Z | 2018-04-30T00:37:34Z | https://github.com/awesto/django-shop/issues/727 | [] | r4co0n | 1 |

python-gitlab/python-gitlab | api | 2,738 | ldap_group_links.list() not usable | ## Description of the problem, including code/CLI snippet

The current implementation of the list() method for the ldap_group_links API is not usable. The CLI throws an exception and the API returns unusable data, because an id is missing.

$ gitlab group-ldap-group-link list --group-id xxxxxx

[<GroupLDAPGroupLink id:None provider:ldapmain>, <GroupLDAPGroupLink id:None provider:ldapmain>, <GroupLDAPGroupLink id:None provider:ldapmain>, <GroupLDAPGroupLink id:None provider:ldapmain>, <GroupLDAPGroupLink id:None provider:ldapmain>, <GroupLDAPGroupLink id:None provider:ldapmain>, <GroupLDAPGroupLink id:None provider:ldapmain>]

Traceback (most recent call last):

File "/home/rof/.local/bin/gitlab", line 8, in <module>

sys.exit(main())

^^^^^^

File "/home/rof/.local/lib/python3.12/site-packages/gitlab/cli.py", line 389, in main

gitlab.v4.cli.run(

File "/home/rof/.local/lib/python3.12/site-packages/gitlab/v4/cli.py", line 556, in run

printer.display_list(data, fields, verbose=verbose)

File "/home/rof/.local/lib/python3.12/site-packages/gitlab/v4/cli.py", line 518, in display_list

self.display(get_dict(obj, fields), verbose=verbose, obj=obj)

File "/home/rof/.local/lib/python3.12/site-packages/gitlab/v4/cli.py", line 487, in display

id = getattr(obj, obj._id_attr)

^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/rof/.local/lib/python3.12/site-packages/gitlab/base.py", line 134, in __getattr__

raise AttributeError(message)

AttributeError: 'GroupLDAPGroupLink' object has no attribute 'id'

I think the problem is, that the ldap_group_links api has two possible (exclusive) id fields: cn and filter. I did not find a way to reflect this in python-gitlab. As we do not use ldap filters in our Gitlab instance, my "fix" was to add

_id_attr = "cn"

to the GroupLDAPGroupLink class.
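The idea of an identifier that may live in either of two fields can be sketched without python-gitlab. The function `pick_id` below is hypothetical and only illustrates the fallback logic; it is not part of the library's API.

```python
def pick_id(attributes, candidates=("cn", "filter")):
    """Return (field, value) for the first candidate field that is set."""
    for field in candidates:
        value = attributes.get(field)
        if value:
            return field, value
    raise KeyError(f"none of {candidates} present in {sorted(attributes)}")


if __name__ == "__main__":
    # Made-up records shaped like the two mutually exclusive link types.
    links = [
        {"provider": "ldapmain", "cn": "developers"},
        {"provider": "ldapmain", "filter": "(memberOf=cn=ops)"},
    ]
    for link in links:
        print(pick_id(link))
```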

## Expected Behavior

The data returned shall contain either a cn or filter field.

## Actual Behavior

Returned data only has the provider attribute, which is useless without the other field(s).

## Specifications

- python-gitlab version: 4.2.0

- API version you are using (v3/v4): v4

- Gitlab server version (or gitlab.com): 16.5.3

| open | 2023-12-04T20:19:26Z | 2024-01-21T19:10:49Z | https://github.com/python-gitlab/python-gitlab/issues/2738 | [

"bug"

] | zapp42 | 5 |

skfolio/skfolio | scikit-learn | 6 | [BUG] Python Version Request: Reduce python >= 3.9 | Thanks for the great-looking package!

I have hopefully a small request. Currently you have:

https://github.com/skfolio/skfolio/blob/a1e79d3cf732467dcd078cd414b466b889a2b1c5/pyproject.toml#L16

Is it possible to change this to `3.9` to reduce the restrictiveness?

Sklearn doesn't actually specify a python version in their project.toml. And I don't see a need to restrict from the current dependencies:

```toml

dependencies = [

"numpy>=1.23.4",

"scipy>=1.8.0",

"pandas>=1.4.1",

"cvxpy>=1.4.1",

"scikit-learn>=1.3.2",

"joblib>=1.3.2",

"plotly>=5.15.0"

]

``` | closed | 2024-01-12T14:39:31Z | 2024-01-16T20:51:33Z | https://github.com/skfolio/skfolio/issues/6 | [

"bug"

] | mdancho84 | 3 |

mwouts/itables | jupyter | 199 | Pandas Style fail to render in Colab | Pandas Style objects fail to render in Google Colab.

This is because the HTML representation of the style object generated by `to_html` is

```

<table id="T_4b0528c8-6c7e-4821-bad4-20c3dbda01a8" class="dataframe">

```

while `itables` expects it to be exactly

```

<table id="T_4b0528c8-6c7e-4821-bad4-20c3dbda01a8">

``` | closed | 2023-10-01T13:54:02Z | 2023-10-01T22:33:52Z | https://github.com/mwouts/itables/issues/199 | [] | mwouts | 0 |
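One way to tolerate both forms is to drop the extra `class` attribute before matching. The sketch below is an illustration using a regular expression; the function name `strip_table_class` is hypothetical, and this is not claimed to be how `itables` resolved the issue.

```python
import re

# Matches an opening <table> tag with an id and an optional class attribute.
_TABLE_TAG = re.compile(r'<table id="([^"]+)"(?: class="[^"]*")?>')


def strip_table_class(html: str) -> str:
    """Rewrite the first opening <table> tag so that it carries only its id."""
    return _TABLE_TAG.sub(r'<table id="\1">', html, count=1)
```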

tflearn/tflearn | data-science | 1,001 | no pip3 package | The installation of the stable release, as described in the docs, does not work when using pip3:

$ pip3 install tflearn

Collecting tflearn

Could not find a version that satisfies the requirement tflearn (from versions: )

No matching distribution found for tflearn | open | 2018-01-19T23:19:30Z | 2018-01-30T21:59:19Z | https://github.com/tflearn/tflearn/issues/1001 | [] | Eezzeldin | 1 |

jazzband/django-oauth-toolkit | django | 711 | authorization_code should use pkce to verify public clients | According to RFC [https://tools.ietf.org/html/rfc7636#section-4](https://tools.ietf.org/html/rfc7636#section-4) For public clients with `authorization_code` grant PKCE's `code_verifier` must be used to authenticate client. Currently authentication for public client is dropped altogether.

**Current behaviour:**

Client's `code_challenge` is not used to authenticate public clients. This leaves public clients vulnerable to token interception attack.

**Expected behaviour:**

Public clients on `authorization_code` grant type must be verified by method described in RFC linked in the issue.
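For reference, the S256 check described in RFC 7636 (sections 4.2 and 4.6) can be sketched with the standard library. The function names are illustrative and are not django-oauth-toolkit's API; the sample values in the usage block are the ones from RFC 7636, Appendix B.

```python
import base64
import hashlib
import hmac


def s256_challenge(code_verifier: str) -> str:
    """BASE64URL(SHA256(ASCII(code_verifier))) without padding."""
    digest = hashlib.sha256(code_verifier.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


def verify_pkce(code_verifier: str, code_challenge: str, method: str = "S256") -> bool:
    """Check a token request's verifier against the stored challenge."""
    if method == "S256":
        expected = s256_challenge(code_verifier)
    elif method == "plain":
        expected = code_verifier
    else:
        return False
    # Constant-time comparison to avoid leaking how many characters matched.
    return hmac.compare_digest(expected, code_challenge)


if __name__ == "__main__":
    verifier = "dBjftJeZ4CVP-mB92K27uhbUJU1p1r_wW1gFWFOEjXk"
    challenge = "E9Melhoa2OwvFrEMTJguCHaoeK1t8URWbuGJSstw-cM"
    print(verify_pkce(verifier, challenge))
```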

I am not sure how much it would affect existing deployments by making this mandatory, but there should be some option / setting switch that we can flip to enable this security feature.

I'll be open to working on this, let me know if I should. | closed | 2019-04-25T07:34:41Z | 2019-05-29T19:20:23Z | https://github.com/jazzband/django-oauth-toolkit/issues/711 | [] | Abhishek8394 | 3 |

ageitgey/face_recognition | python | 1,351 | is it possible to get accuracy/confidence level when predicting face? | I want to get the accuracy/confidence level of the prediction result. Is it possible?

I'm using [this example](https://github.com/ageitgey/face_recognition/blob/master/examples/facerec_ipcamera_knn.py) | open | 2021-08-04T11:03:44Z | 2021-09-03T20:12:43Z | https://github.com/ageitgey/face_recognition/issues/1351 | [] | moinologics | 1 |

StackStorm/st2 | automation | 6,021 | action_service.list_values no limit or offset support? | ## SUMMARY

action_service.list_values not working properly?

### STACKSTORM VERSION

3.7.0

##### OS, environment, install method

OS install on RHEL

## Steps to reproduce the problem

As per the contrib/runners/python_runner/python_runner/python_action_wrapper.py the action service allows for listing of datastore values. The relevant method I think is this:

def list_values(self, local=True, prefix=None):

return self.datastore_service.list_values(local=local, prefix=prefix)

However, the above is calling below method without the "limit" parameter:

st2common/st2common/services/datastore.py line 71

def list_values(self, local=True, prefix=None, limit=None, offset=0):

"""

Retrieve all the datastores items.

:param local: List values from a namespace local to this pack/class. Defaults to True.

:type: local: ``bool``

:param prefix: Optional key name prefix / startswith filter.

:type prefix: ``str``

:param limit: Number of keys to get. Defaults to the configuration set at 'api.max_page_size'.

:type limit: ``integer``

:param offset: Number of keys to offset. Defaults to 0.

:type offset: ``integer``

:rtype: ``list`` of :class:`KeyValuePair`

"""

client = self.get_api_client()

self._logger.debug("Retrieving all the values from the datastore")

limit = limit or cfg.CONF.api.max_page_size

key_prefix = self._get_full_key_prefix(local=local, prefix=prefix)

kvps = client.keys.get_all(prefix=key_prefix, limit=limit, offset=offset)

return kvps

Using action_service.list_values I'm not able to provide the limit parameter and thus the action will fail with:

oslo_config.cfg.NoSuchOptError: no such option in group api: max_page_size

even though I did put max_page_size = 100 into st2.conf and restarted st2.

Is there a reason why I can't provide the limit to the action_service.list_values method?

(another missing param is "offset", which would also be useful in certain situations)
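If the wrapper forwarded both parameters, a caller could page through the datastore explicitly. The sketch below shows that pattern against any object exposing the `list_values(local, prefix, limit, offset)` signature quoted above; `iter_values` and `FakeDatastore` are hypothetical names used only for illustration.

```python
def iter_values(service, prefix=None, local=True, page_size=100):
    """Yield every matching item, fetching page_size items per call."""
    offset = 0
    while True:
        page = service.list_values(
            local=local, prefix=prefix, limit=page_size, offset=offset
        )
        yield from page
        if len(page) < page_size:
            return  # a short (or empty) page means there is nothing left
        offset += len(page)


class FakeDatastore:
    """In-memory stand-in with the same list_values signature (illustration only)."""

    def __init__(self, keys):
        self._keys = list(keys)

    def list_values(self, local=True, prefix=None, limit=None, offset=0):
        matching = [k for k in self._keys if prefix is None or k.startswith(prefix)]
        end = None if limit is None else offset + limit
        return matching[offset:end]


if __name__ == "__main__":
    store = FakeDatastore([f"cap_accounts_{i}" for i in range(5)] + ["other"])
    print(list(iter_values(store, prefix="cap_accounts_", page_size=2)))
```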

## Expected Results

a list of keyvalue pairs

## Actual Results

st2.actions.python.SetAccountsObject: DEBUG Creating new Client object.

st2.actions.python.SetAccountsObject: DEBUG Retrieving all the values from the datastore

Traceback (most recent call last):

File \"/opt/stackstorm/st2/lib/python3.8/site-packages/oslo_config/cfg.py\", line 2262, in _get

return self.__cache[key]

KeyError: ('api', 'max_page_size')

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File \"/opt/stackstorm/st2/lib/python3.8/site-packages/python_runner/python_action_wrapper.py\", line 395, in <module>

obj.run()

File \"/opt/stackstorm/st2/lib/python3.8/site-packages/python_runner/python_action_wrapper.py\", line 214, in run

output = action.run(**self._parameters)

File \"/opt/stackstorm/internal_packs/preprocessing_shared_scripts/actions/set_accounts_obj.py\", line 8, in run

accts_objects = self.get_overrides(capability_name)

File \"/opt/stackstorm/internal_packs/preprocessing_shared_scripts/actions/set_accounts_obj.py\", line 12, in get_overrides

return self.action_service.list_values(local=False, prefix=f'{capability}_accounts_')

File \"/opt/stackstorm/st2/lib/python3.8/site-packages/python_runner/python_action_wrapper.py\", line 136, in list_values

return self.datastore_service.list_values(local=local, prefix=prefix)

File \"/opt/stackstorm/st2/lib/python3.8/site-packages/st2common/services/datastore.py\", line 92, in list_values

limit = limit or cfg.CONF.api.max_page_size

File \"/opt/stackstorm/st2/lib/python3.8/site-packages/oslo_config/cfg.py\", line 2547, in __getattr__

return self._conf._get(name, self._group)

File \"/opt/stackstorm/st2/lib/python3.8/site-packages/oslo_config/cfg.py\", line 2264, in _get

value = self._do_get(name, group, namespace)

File \"/opt/stackstorm/st2/lib/python3.8/site-packages/oslo_config/cfg.py\", line 2282, in _do_get

info = self._get_opt_info(name, group)

File \"/opt/stackstorm/st2/lib/python3.8/site-packages/oslo_config/cfg.py\", line 2415, in _get_opt_info

raise NoSuchOptError(opt_name, group)

oslo_config.cfg.NoSuchOptError: no such option in group api: max_page_size

Making sure to follow these steps will guarantee the quickest resolution possible.

Thanks!

| open | 2023-09-06T13:26:59Z | 2023-09-06T13:27:45Z | https://github.com/StackStorm/st2/issues/6021 | [] | fdrab | 0 |

jpadilla/django-rest-framework-jwt | django | 394 | Help configuring JWT_PRIVATE_KEY/JWT_PUBLIC_KEY | I'm using `django-rest-framework-jwt` for REST API authentication and I'd like the same web token to authorize access to another HTTP service (CouchDB).

For creating a JWT-enabled reverse proxy I'm looking at jwtproxy (https://github.com/coreos/jwtproxy), which (afaik) can use a preshared RSA key, so I'm trying to configure RSA private/public keys on `django-rest-framework-jwt`.

The docs mention that `JWT_PUBLIC_KEY` is an object of type cryptography.hazmat.primitives.asymmetric.rsa.RSAPublicKey, and I'm using `cryptography` to try to load a private key file (<https://github.com/coreos/jwtproxy/blob/master/examples/httpserver/mykey.key>):

```

def load_private_key(filepath):

from cryptography.hazmat.primitives import serialization

from cryptography.hazmat.backends import default_backend

with open(filepath, "rb") as key_file:

private_key = serialization.load_pem_private_key(key_file.read(), password=None, backend=default_backend())

return private_key

private_key = load_private_key('../../jwtproxy/examples/httpserver/mykey.key')

JWT_AUTH = {

'JWT_ALLOW_REFRESH': True,

'JWT_PRIVATE_KEY': private_key,

'JWT_PUBLIC_KEY': private_key.public_key(),

'JWT_ALGORITHM': 'HS256'

}

```

But I get an error about the key not being a string type:

```

value = <cryptography.hazmat.backends.openssl.rsa._RSAPrivateKey object at 0x10ca57f60>

def force_bytes(value):

if isinstance(value, text_type):

return value.encode('utf-8')

elif isinstance(value, binary_type):

return value

else:

> raise TypeError('Expected a string value')

E TypeError: Expected a string value

```

If I convert the key object to bytes:

```

private_key.public_key().public_bytes(serialization.Encoding.PEM, serialization.PublicFormat.PKCS1)

```

I get an exception about the key format:

```

jwt.exceptions.InvalidKeyError: The specified key is an asymmetric key or x509 certificate and should not be used as an HMAC secret.

```

Could I get a nudge in the right direction on how to set proper values for JWT_PRIVATE_KEY/JWT_PUBLIC_KEY from an RSA key?
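The second error message hints at a possible cause: `HS256` is an HMAC (shared-secret) algorithm, while the keys being supplied are RSA keys. A hedged sketch of the settings with an RSA-based algorithm follows; `build_jwt_auth` is a hypothetical helper, and `private_key` is assumed to be the object returned by `load_private_key` above.

```python
def build_jwt_auth(private_key):
    """Return a JWT_AUTH dict that signs with RS256 instead of HS256."""
    return {
        "JWT_ALLOW_REFRESH": True,
        "JWT_PRIVATE_KEY": private_key,
        "JWT_PUBLIC_KEY": private_key.public_key(),
        # RS256 is an RSA signature algorithm, so it matches an RSA key pair.
        "JWT_ALGORITHM": "RS256",
    }


# In settings.py this could be used as (path is a placeholder):
#   JWT_AUTH = build_jwt_auth(load_private_key("path/to/private_key.pem"))
```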

| closed | 2017-10-31T20:35:54Z | 2018-03-02T11:46:17Z | https://github.com/jpadilla/django-rest-framework-jwt/issues/394 | [] | zemanel | 3 |

httpie/cli | python | 571 | Should build official Docker image and add usage instructions | I created this: https://github.com/teracyhq/docker-files/tree/master/httpie-jwt-auth

and I'd like to do the same for official Docker image of httpie (instead of under teracy/ umbrella)

Let's discuss here for anyone interested and I could lead the effort.

related: https://github.com/jakubroztocil/httpie/pull/236 | open | 2017-03-23T18:23:12Z | 2021-05-29T06:08:03Z | https://github.com/httpie/cli/issues/571 | [

"packaging"

] | hoatle | 2 |

axnsan12/drf-yasg | rest-api | 473 | DeprecationWarning on Python 3.7/3.8 and breakage on master/3.10 due to coreapi dependency | Originally posted at https://github.com/encode/django-rest-framework/issues/6991

Relates to #389

___________

Python 3.8 releases today but this warning is still triggered from `djangorestframework` 3.10.3 and `drf-yasg` 1.17.0 :

https://github.com/tomchristie/itypes/issues/11

This causes users who run their DRF tests with `-Werror` to fail the build if they also use `drf-yasg`.

From: https://docs.python.org/3/library/collections.html#module-collections

> Deprecated since version 3.3, will be removed in version 3.10: Moved Collections Abstract Base Classes to the collections.abc module.
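
For reference, a minimal sketch of the import pattern that avoids this warning. It is not the actual `itypes` patch, only the general form a fix in the dependency would probably take:

```python
# Prefer the collections.abc location; fall back only on very old interpreters.
try:
    from collections.abc import Mapping, Sequence
except ImportError:  # Python 2
    from collections import Mapping, Sequence

# The ABCs behave the same regardless of where they were imported from.
print(isinstance({}, Mapping))   # True
print(isinstance([], Sequence))  # True
```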

## Steps to reproduce

```py

from rest_framework.test import APIClient

```

Run `pytest` tests with `-Werror` under Python 3.7.

```

$ python3 -Werror -m pytest

```

```

During handling of the above exception, another exception occurred:

.tox/docker/lib/python3.7/site-packages/_pytest/config/__init__.py:462: in _importconftest

mod = conftestpath.pyimport()

.tox/docker/lib/python3.7/site-packages/py/_path/local.py:701: in pyimport

__import__(modname)

<frozen importlib._bootstrap>:983: in _find_and_load

???

<frozen importlib._bootstrap>:967: in _find_and_load_unlocked

???

<frozen importlib._bootstrap>:677: in _load_unlocked

???

.tox/docker/lib/python3.7/site-packages/_pytest/assertion/rewrite.py:142: in exec_module

exec(co, module.__dict__)

rest_api/tests/conftest.py:2: in <module>

from rest_framework.test import APIClient

.tox/docker/lib/python3.7/site-packages/rest_framework/test.py:16: in <module>

from rest_framework.compat import coreapi, requests

.tox/docker/lib/python3.7/site-packages/rest_framework/compat.py:98: in <module>

import coreapi

.tox/docker/lib/python3.7/site-packages/coreapi/__init__.py:2: in <module>

from coreapi import auth, codecs, exceptions, transports, utils

.tox/docker/lib/python3.7/site-packages/coreapi/codecs/__init__.py:2: in <module>

from coreapi.codecs.base import BaseCodec

.tox/docker/lib/python3.7/site-packages/coreapi/codecs/base.py:1: in <module>

import itypes

.tox/docker/lib/python3.7/site-packages/itypes.py:2: in <module>

from collections import Mapping, Sequence

<frozen importlib._bootstrap>:1032: in _handle_fromlist

???

.tox/docker/lib/python3.7/collections/__init__.py:52: in __getattr__

DeprecationWarning, stacklevel=2)

E DeprecationWarning: Using or importing the ABCs from 'collections' instead of from 'collections.abc' is deprecated, and in 3.8 it will stop working

``` | closed | 2019-10-16T12:43:42Z | 2020-09-17T12:36:20Z | https://github.com/axnsan12/drf-yasg/issues/473 | [] | johnthagen | 5 |

eriklindernoren/ML-From-Scratch | data-science | 23 | MatplotlibWrapper is an undefined name | MatplotlibWrapper is an undefined name in gaussian_mixture_model.py and k_means.py. Undefined names can raise [NameError](https://docs.python.org/3/library/exceptions.html#NameError) at runtime.

flake8 testing of https://github.com/eriklindernoren/ML-From-Scratch on Python 2.7.13

$ flake8 . --count --select=E901,E999,F821,F822,F823 --show-source --statistics

```

./mlfromscratch/unsupervised_learning/gaussian_mixture_model.py:137:9: F821 undefined name 'MatplotlibWrapper'

p = MatplotlibWrapper()

^

```

The same issue is __also present in k_means.py__ but [the * import](https://github.com/eriklindernoren/ML-From-Scratch/blob/master/mlfromscratch/unsupervised_learning/k_means.py#L11) masks it from flake8. | closed | 2017-09-14T09:19:28Z | 2017-09-18T14:31:12Z | https://github.com/eriklindernoren/ML-From-Scratch/issues/23 | [] | cclauss | 1 |

piskvorky/gensim | machine-learning | 3,025 | Custom Keyword inclusion | <!--

**IMPORTANT**:

- Use the [Gensim mailing list](https://groups.google.com/forum/#!forum/gensim) to ask general or usage questions. Github issues are only for bug reports.

- Check [Recipes&FAQ](https://github.com/RaRe-Technologies/gensim/wiki/Recipes-&-FAQ) first for common answers.

Github bug reports that do not include relevant information and context will be closed without an answer. Thanks!

-->

#### Problem description

We need the generated summary to contain specific keywords from the input text.

#### Steps/code/corpus to reproduce

We need the `summarize` function to accept keywords as an input parameter.

output = summarize(text, word_count=9, **custom_keywords=keywords**)

**For example,**

```

from gensim.summarization.summarizer import summarize

keywords = ['apple', 'red', 'yellow']

text = "Apple is red. Grape is black. Banana is yellow"

output = summarize(text, word_count=9, custom_keywords=keywords)

print(output)

```

#### Output

**Apple** is **red.**

Banana is **yellow**

As in the above example, we need a parameter for custom keywords, and those keywords must be present in the summarized text.

(i.e) The sentences with the keywords should be present in the output.
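
The requested behaviour can be approximated outside gensim with a plain-Python filter. This is only a sketch: `sentences_with_keywords` is a hypothetical helper, not part of the gensim API, and it does no ranking or length control.

```python
import re

def sentences_with_keywords(text, keywords):
    """Return the sentences of `text` that contain at least one keyword (case-insensitive)."""
    wanted = {k.lower() for k in keywords}
    # Naive sentence split: break after ., ! or ? followed by whitespace.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    selected = []
    for sentence in sentences:
        words = set(re.findall(r"\w+", sentence.lower()))
        if words & wanted:
            selected.append(sentence)
    return selected

keywords = ['apple', 'red', 'yellow']
text = "Apple is red. Grape is black. Banana is yellow"
print(sentences_with_keywords(text, keywords))
# ['Apple is red.', 'Banana is yellow']
```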

| closed | 2021-01-12T07:33:17Z | 2021-01-12T10:28:36Z | https://github.com/piskvorky/gensim/issues/3025 | [] | Vignesh9395 | 1 |

encode/databases | asyncio | 208 | aiopg engine raises ResourceWarning in transactions | Step to reproduce:

Python 3.7.7

```python3

import asyncio

from databases import Database

url = "postgresql+aiopg://localhost:5432"

async def generate_series(db, *args):

async with db.connection() as conn:

async for row in conn.iterate( # implicitly starts transaction

f"select generate_series({', '.join(f'{a}' for a in args)}) as i"

):

yield row["i"]

async def main():

async with Database(url) as db:

print([i async for i in generate_series(db, 1, 10)])

async with db.transaction():

print(await db.fetch_val("select 1"))

asyncio.run(main())

```

Result:

```

.../python3.7/site-packages/aiopg/connection.py:256: ResourceWarning: You can only have one cursor per connection. The cursor for connection will be closed forcibly <aiopg.connection::Connection isexecuting=False, closed=False, echo=False, cursor=<aiopg.cursor::Cursor name=None, closed=False>>. ' {!r}.').format(self), ResourceWarning)

[1, 2, 3, 4, 5, 6, 7, 8, 9, 10]

.../python3.7/site-packages/aiopg/connection.py:256: ResourceWarning: You can only have one cursor per connection. The cursor for connection will be closed forcibly <aiopg.connection::Connection isexecuting=False, closed=False, echo=False, cursor=<aiopg.cursor::Cursor name=None, closed=False>>. ' {!r}.').format(self), ResourceWarning)

1

``` | open | 2020-05-20T10:55:25Z | 2021-03-16T16:59:38Z | https://github.com/encode/databases/issues/208 | [] | nkoshell | 3 |

sktime/sktime | scikit-learn | 7,587 | [ENH] Forecast reconciliation with Machine Learning | One of the new approaches to forecast reconciliation is using ML regressors that leverage the base forecasts as inputs to predict forecasts of the bottom levels (similar to stacking models). The reconciled forecast is then the aggregation of such predicted values. For more information, refer to [1] and [2]

**Describe the solution you'd like**

A new transformation or new ReconcilerForecaster that is able to receive a sklearn regressor (capable of regressing multiple variables, which can be obtained by using sklearn's MultiOutputRegressor wrapper). The dataset for such regressor can be created by cross-validation.

**Additional context**

[1] Spiliotis, Evangelos, et al. "Hierarchical forecast reconciliation with machine learning." Applied Soft Computing 112 (2021): 107756.

[2] Burba, Davide, and Trista Chen. "A trainable reconciliation method for hierarchical time-series." arXiv preprint arXiv:2101.01329 (2021).

| open | 2024-12-30T13:29:11Z | 2024-12-30T13:29:11Z | https://github.com/sktime/sktime/issues/7587 | [

"enhancement"

] | felipeangelimvieira | 0 |

mwaskom/seaborn | data-visualization | 3,546 | Interest in seaborn.objects API contributions? | I've seen a few contributions floating around for the seaborn.objects API (e.g., #3320). Sounds like there's a reluctance to move too fast while the API is settling, but I happen to have a very good student looking to do some work, and I'd _love_ to see the objects API have better integration with modelling tools (especially adding SEs to `PolyFit` and adding lowess, ideally with SEs).

Is that the kind of proposal that would be welcomed? | closed | 2023-11-03T17:46:20Z | 2023-11-06T15:17:11Z | https://github.com/mwaskom/seaborn/issues/3546 | [] | nickeubank | 2 |

yinkaisheng/Python-UIAutomation-for-Windows | automation | 1 | Adding ProcessId property to Control class | Hi !

I tried to add ProcessId property

``` python

class Control():

@property

def ProcessId(self):

'''Return process id'''

return ClientObject.dll.GetProcessId(self.Element)

```

and when I call the property I got this error message

```

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "C:\python27\lib\ctypes\__init__.py", line 378, in __getattr__

func = self.__getitem__(name)

File "C:\python27\lib\ctypes\__init__.py", line 383, in __getitem__

func = self._FuncPtr((name_or_ordinal, self))

AttributeError: function 'GetElementProcessId' not found

```

| closed | 2015-12-28T21:27:05Z | 2015-12-29T07:00:48Z | https://github.com/yinkaisheng/Python-UIAutomation-for-Windows/issues/1 | [] | thu2004 | 1 |

jschneier/django-storages | django | 1,207 | Make django-storages compatible with the new Django's settings `STORAGES` | In Django 4.2 the new ``STORAGES`` setting has been added.

https://github.com/django/django/commit/1ec3f0961fedbe01f174b78ef2805a9d4f3844b1

The ``DEFAULT_FILE_STORAGE`` and ``STATICFILES_STORAGE`` settings are deprecated in Django 4.2 and will be removed in Django 5.1.
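
For context, a sketch of what the new setting looks like in a project's settings module. The `staticfiles` entry is Django's documented default; using the django-storages S3 backend under `default` is an illustrative assumption about how the two would be combined, not something this issue confirms is supported yet.

```python
# settings.py (Django >= 4.2): one dict replaces the two deprecated settings.
STORAGES = {
    # Replaces DEFAULT_FILE_STORAGE
    "default": {
        "BACKEND": "storages.backends.s3boto3.S3Boto3Storage",  # assumed choice of backend
    },
    # Replaces STATICFILES_STORAGE
    "staticfiles": {
        "BACKEND": "django.contrib.staticfiles.storage.StaticFilesStorage",
    },
}
```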

https://github.com/django/django/commit/32940d390a00a30a6409282d314d617667892841 | closed | 2023-01-12T11:05:07Z | 2023-04-05T12:23:46Z | https://github.com/jschneier/django-storages/issues/1207 | [

"Help Wanted 💕"

] | pauloxnet | 3 |

dynaconf/dynaconf | flask | 1,252 | [RFC] Combining settings from different applications / packages | **Is your feature request related to a problem? Please describe.**

I am currently working on setting up an inheritance-based package and applications. In this model, I would have a base package (core) which has an abstract class with a defined dynaconf settings and config file. For example, the core class (which is packaged as whl) would have specific flags that we want all applications that inherit from this class to use. Then I would have an application that imports this package and inherits from the core class. As mentioned above, I would want to use the settings from the core class.

**Describe the solution you'd like**

Is it possible to merge the LazySettings objects such that I import the core settings (LazySettings) and then merge it with the new one that the application creates? That way I can merge settings and utilize inheritance model

| closed | 2025-02-13T21:19:00Z | 2025-02-17T23:05:41Z | https://github.com/dynaconf/dynaconf/issues/1252 | [

"Not a Bug",

"RFC"

] | omri-cavnue | 2 |

pytest-dev/pytest-html | pytest | 815 | Crashes with KeyError: 'retried' when test is retried with pytest-retry | It looks like the html reporter does not handle tests with the outcome `retried`.
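
A minimal sketch of the failure mode, using a hypothetical stand-in for the reporter's outcome table (the real structure in `report_data.py` may differ): a counter dict with a fixed set of keys raises `KeyError` for any outcome it was not created with, while a fallback entry avoids the crash.

```python
# Hypothetical stand-in for the reporter's per-outcome counters.
outcomes = {"passed": {"value": 0}, "failed": {"value": 0}}

def count_strict(outcome):
    # Mirrors the failing line: unknown outcomes raise KeyError.
    outcomes[outcome.lower()]["value"] += 1

def count_tolerant(outcome):
    # Creates a counter on first sight of an unknown outcome.
    outcomes.setdefault(outcome.lower(), {"value": 0})["value"] += 1

try:
    count_strict("retried")
except KeyError as exc:
    print("KeyError:", exc)

count_tolerant("retried")
print(outcomes["retried"]["value"])  # 1
```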

It's quite easy to reproduce:

1. Create a failing test with the mark `@pytest.mark.flaky(retries=3, delay=1)`

2. Run the test and wait for it to fail so that it is retried

3. Pytest crashes with:

```

File "/__w/xi/xi/tests/venv/lib/python3.9/site-packages/pytest_html/report_data.py", line 94, in outcomes

self._outcomes[outcome.lower()]["value"] += 1

KeyError: 'retried'

``` | open | 2024-05-30T13:51:00Z | 2024-12-02T10:40:36Z | https://github.com/pytest-dev/pytest-html/issues/815 | [] | angelos-p | 3 |

seleniumbase/SeleniumBase | web-scraping | 2,635 | UC Mode not working on window server 2022 | Last week my code worked fine, but after updating, it can no longer get past the Cloudflare bot check. For reference, I use Windows Server 2022.

This is my code:

```

def you_message(text: str, out_type: str = 'json', timeout: int = 20):

"""Function to send a message and get results from YouChat.com

Args:

text (str): text to send

out_type (str): type of result (json, string). Defaults to 'json'.

timeout (int): timeout in seconds to wait for a result. Defaults to 20.

Returns:

str: response of the message

"""

qoted_text = urllib.parse.quote_plus(text)

result = {}

data = ""

with SB(uc=True) as sb:

sb.open(

f"https://you.com/api/streamingSearch?q={qoted_text}&domain=youchat")

timeout_delta = time.time() + timeout

stream_available = False

while time.time() <= timeout_delta:

try:

sb.assert_text("event: youChatIntent", timeout=8.45)

if 'error' in result:

result.pop('error')

data = sb.get_text("body pre")

break

except Exception:

pass

# Try to easy solve captcha challenge

try:

if sb.assert_element('iframe'):

sb.switch_to_frame("iframe")

sb.find_element(".ctp-checkbox-label", timeout=1).click()

# sb.save_screenshot('sel2.png') # Debug

except Exception:

result['error'] = 'Selenium was detected! Try again later. Captcha not solved automaticly.'

finally:

# Force exit from iframe

sb.switch_to_default_content()

if time.time() > timeout_delta:

# sb.save_screenshot('sel-timeout.png') # Debug

result['error'] = 'Timeout while getting data from Selenium! Try again later.'

res_message = ""

for line in data.split("\n"):

if line.startswith("data: {"):

json_data = json.loads(line[5:])

if 'youChatToken' in json_data:

res_message += json_data['youChatToken']

result['generated_text'] = res_message

if out_type == 'json':

return json.dumps(result)

else:

str_res = result['error'] if (

'error' in result) else result['generated_text']

return str_res

``` | closed | 2024-03-24T17:45:54Z | 2024-03-24T18:14:44Z | https://github.com/seleniumbase/SeleniumBase/issues/2635 | [

"invalid usage",

"UC Mode / CDP Mode"

] | zing75blog | 1 |

jupyter/nbgrader | jupyter | 969 | Add link to the Jupyter in Education map in third-party resources | Here is the link: https://elc.github.io/jupyter-map/ | closed | 2018-05-19T13:10:49Z | 2018-10-07T11:14:54Z | https://github.com/jupyter/nbgrader/issues/969 | [

"documentation",

"good first issue"

] | jhamrick | 2 |

plotly/dash-component-boilerplate | dash | 150 | Import errors | I'm trying to create a component, and when I tried to run the usage.py file I got an import error:

I have recently received similar errors when trying to run some Dash apps, and they seem to come from an old version of Dash. I would have thought that running this inside this repo would use an updated version of Dash, and I don't want to mess anything else up in the process. I'm still in my base env, but when I created this project I followed these instructions:

pip install cookiecutter

pip install virtualenv

The project has the package.json file. The instructions didn't say to go into an env. I assumed that once I created that venv that was OK, so now I'm not sure what to do. Should I pip install a newer version of Dash? Do I do that while I'm in the base env, and in which file/folder?

Error message:

File "usage.py", line 2, in <module>

from dash import Dash, callback, html, Input, Output

ImportError: cannot import name 'callback' from 'dash'

Thanks!

| closed | 2023-01-13T01:10:10Z | 2023-05-31T13:38:11Z | https://github.com/plotly/dash-component-boilerplate/issues/150 | [] | Tedmcm | 1 |

e2b-dev/code-interpreter | jupyter | 7 | CodeInterpreter.reconnect() not working as expected | I ran into this issue and am extremely confused, is this a problem on my end or through the library

| closed | 2024-04-08T20:00:36Z | 2024-04-08T20:25:11Z | https://github.com/e2b-dev/code-interpreter/issues/7 | [] | im-calvin | 2 |

gee-community/geemap | streamlit | 623 | Not getting same interactive map as showing tutorial video | This is what I get from the following code:

Map = geemap.Map()

Map

We do not get the inspector and other tools on the map, which is why we are having trouble adding layers.

The video tutorial shows the following:

| closed | 2021-08-14T10:12:25Z | 2021-08-15T01:22:19Z | https://github.com/gee-community/geemap/issues/623 | [

"bug"

] | dileep0 | 1 |

psf/requests | python | 6,601 | Since migration of urllib3 to 2.x data-strings with umlauts in a post request are truncated | Before requests 2.30 it was possible to just pass a Python-string with umlauts (äöü...) to a `requests.post` call. Since urllib3 2.x this causes the body of the request to be truncated. It seems that the Content-Length is calculated based on the length of the string and the string itself is handed over to the call as a multibyte representation causing the string to be truncated in the request because with multibyte characters there are more bytes than characters.
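
A small sketch of the mismatch described above, using only the standard library: the string from the reproduction below has 37 characters but 40 bytes once encoded as UTF-8. Encoding explicitly before passing the value as `data=` is one way to keep the declared length and the body consistent; this is offered as a workaround to try, not as the library's intended fix.

```python
text = "Das sind Post-Daten mit Umlauten: äüö"
body = text.encode("utf-8")  # explicit bytes; each umlaut takes two bytes in UTF-8

print(len(text))  # 37 characters
print(len(body))  # 40 bytes

# The encoded value can then be sent as the request body, e.g.:
# requests.post(url, headers={"Content-Type": "text/plain; charset=utf-8"}, data=body)
```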

## Expected Result

All characters of the input string should have been sent to the target.

## Actual Result

Input string is truncated. See output of code below:

```

data:application/octet-stream;base64,RGFzIHNpbmQgUG9zdC1EYXRlbiBtaXQgVW1sYXV0ZW46IMOkww==

data:application/octet-stream;base64,RGFzIHNpbmQgUG9zdC1EYXRlbiBtaXQgVW1sYXV0ZW46IOT89g==

Das sind Post-Daten mit Umlauten: äüö

```

## Reproduction Steps

```python

import requests

import json

data_as_string = "Das sind Post-Daten mit Umlauten: äüö"

data_array = [

data_as_string,

bytes(data_as_string,'iso-8859-1'),

bytes(data_as_string,'utf-8')

]

post_url = "https://httpbin.org/post"

headers = {

"Content-Type": "text/plain",

"Host": "httpbin.org",

}

def main():

for d in data_array:

response = requests.post(

url=post_url,

headers=headers,

data=d

)

r = json.loads(response.content)

print(r['data'])

if __name__ == '__main__':

main()

```

The behaviour was also verified using Portswigger Burp Suite:

First Request:

```

50 4F 53 54 20 2F 70 6F 73 74 20 48 54 54 50 2F 32 0D 0A 48 6F 73 74 3A 20 68 74 74 70 62 69 6E 2E 6F 72 67 0D 0A 55 73 65 72 2D 41 67 65 6E 74 3A 20 70 79 74 68 6F 6E 2D 72 65 71 75 65 73 74 73 2F 32 2E 33 31 2E 30 0D 0A 41 63 63 65 70 74 2D 45 6E 63 6F 64 69 6E 67 3A 20 67 7A 69 70 2C 20 64 65 66 6C 61 74 65 2C 20 62 72 0D 0A 41 63 63 65 70 74 3A 20 2A 2F 2A 0D 0A 43 6F 6E 6E 65 63 74 69 6F 6E 3A 20 63 6C 6F 73 65 0D 0A 43 6F 6E 74 65 6E 74 2D 54 79 70 65 3A 20 74 65 78 74 2F 70 6C 61 69 6E 0D 0A 43 6F 6E 74 65 6E 74 2D 4C 65 6E 67 74 68 3A 20 33 37 0D 0A 0D 0A 44 61 73 20 73 69 6E 64 20 50 6F 73 74 2D 44 61 74 65 6E 20 6D 69 74 20 55 6D 6C 61 75 74 65 6E 3A 20 C3 A4 C3

```

```

POST /post HTTP/2

Host: httpbin.org

User-Agent: python-requests/2.31.0

Accept-Encoding: gzip, deflate, br

Accept: */*

Connection: close

Content-Type: text/plain

Content-Length: 37

Das sind Post-Daten mit Umlauten: äÃ

```

Second Request:

```

50 4F 53 54 20 2F 70 6F 73 74 20 48 54 54 50 2F 32 0D 0A 48 6F 73 74 3A 20 68 74 74 70 62 69 6E 2E 6F 72 67 0D 0A 55 73 65 72 2D 41 67 65 6E 74 3A 20 70 79 74 68 6F 6E 2D 72 65 71 75 65 73 74 73 2F 32 2E 33 31 2E 30 0D 0A 41 63 63 65 70 74 2D 45 6E 63 6F 64 69 6E 67 3A 20 67 7A 69 70 2C 20 64 65 66 6C 61 74 65 2C 20 62 72 0D 0A 41 63 63 65 70 74 3A 20 2A 2F 2A 0D 0A 43 6F 6E 6E 65 63 74 69 6F 6E 3A 20 6B 65 65 70 2D 61 6C 69 76 65 0D 0A 43 6F 6E 74 65 6E 74 2D 54 79 70 65 3A 20 74 65 78 74 2F 70 6C 61 69 6E 0D 0A 43 6F 6E 74 65 6E 74 2D 4C 65 6E 67 74 68 3A 20 33 37 0D 0A 0D 0A 44 61 73 20 73 69 6E 64 20 50 6F 73 74 2D 44 61 74 65 6E 20 6D 69 74 20 55 6D 6C 61 75 74 65 6E 3A 20 E4 FC F6

```

```

POST /post HTTP/2

Host: httpbin.org

User-Agent: python-requests/2.31.0

Accept-Encoding: gzip, deflate, br

Accept: */*

Connection: keep-alive

Content-Type: text/plain

Content-Length: 37

Das sind Post-Daten mit Umlauten: äüö

```

Third Request:

```

50 4F 53 54 20 2F 70 6F 73 74 20 48 54 54 50 2F 32 0D 0A 48 6F 73 74 3A 20 68 74 74 70 62 69 6E 2E 6F 72 67 0D 0A 55 73 65 72 2D 41 67 65 6E 74 3A 20 70 79 74 68 6F 6E 2D 72 65 71 75 65 73 74 73 2F 32 2E 33 31 2E 30 0D 0A 41 63 63 65 70 74 2D 45 6E 63 6F 64 69 6E 67 3A 20 67 7A 69 70 2C 20 64 65 66 6C 61 74 65 2C 20 62 72 0D 0A 41 63 63 65 70 74 3A 20 2A 2F 2A 0D 0A 43 6F 6E 6E 65 63 74 69 6F 6E 3A 20 6B 65 65 70 2D 61 6C 69 76 65 0D 0A 43 6F 6E 74 65 6E 74 2D 54 79 70 65 3A 20 74 65 78 74 2F 70 6C 61 69 6E 0D 0A 43 6F 6E 74 65 6E 74 2D 4C 65 6E 67 74 68 3A 20 34 30 0D 0A 0D 0A 44 61 73 20 73 69 6E 64 20 50 6F 73 74 2D 44 61 74 65 6E 20 6D 69 74 20 55 6D 6C 61 75 74 65 6E 3A 20 C3 A4 C3 BC C3 B6

```

```

POST /post HTTP/2

Host: httpbin.org

User-Agent: python-requests/2.31.0

Accept-Encoding: gzip, deflate, br

Accept: */*

Connection: keep-alive

Content-Type: text/plain

Content-Length: 40

Das sind Post-Daten mit Umlauten: äüö

```

## System Information

$ python -m requests.help

```json

{

"chardet": {

"version": null

},

"charset_normalizer": {

"version": "3.3.2"

},

"cryptography": {

"version": ""

},

"idna": {

"version": "3.6"

},

"implementation": {

"name": "CPython",

"version": "3.12.0"

},

"platform": {

"release": "10",

"system": "Windows"

},

"pyOpenSSL": {

"openssl_version": "",

"version": null

},

"requests": {

"version": "2.31.0"

},

"system_ssl": {

"version": "300000b0"

},

"urllib3": {

"version": "2.1.0"

},

"using_charset_normalizer": true,

"using_pyopenssl": false

}

```

<!-- This command is only available on Requests v2.16.4 and greater. Otherwise,

please provide some basic information about your system (Python version,

operating system, &c). -->

| closed | 2023-12-13T14:09:36Z | 2024-12-13T00:06:48Z | https://github.com/psf/requests/issues/6601 | [] | secorvo-jen | 1 |

amidaware/tacticalrmm | django | 1,898 | At the top of the devices list, it would be great to have number of items | **Is your feature request related to a problem? Please describe.**

A clear and concise description of what the problem is. Ex. I'm always frustrated when [...]

**Describe the solution you'd like**

A clear and concise description of what you want to happen.

**Describe alternatives you've considered**

A clear and concise description of any alternative solutions or features you've considered.

**Additional context**

Add any other context or screenshots about the feature request here.

| closed | 2024-06-21T09:47:47Z | 2024-06-21T15:29:05Z | https://github.com/amidaware/tacticalrmm/issues/1898 | [] | JCbarreau | 0 |

microsoft/nni | pytorch | 5,790 | Error in model speedup when using a single logit output layer | **Describe the issue**:

**Environment**:

- NNI version: 3.0

- Training service (local|remote|pai|aml|etc): local

- Client OS: Ubuntu 18.04.4 LTS

- Server OS (for remote mode only):

- Python version: 3.9

- PyTorch/TensorFlow version: 1.12.0

- Is conda/virtualenv/venv used?: conda

- Is running in Docker?: no

**Configuration**:

- Experiment config (remember to remove secrets!):

- Search space:

**Log message**:

- nnimanager.log:

- dispatcher.log:

- nnictl stdout and stderr:

```

AttributeError Traceback (most recent call last)

Input In [19], in <cell line: 9>()

22 _, masks = pruner.compress()

23 pruner.unwrap_model()

---> 25 model = ModelSpeedup(model, dummy_input, masks).speedup_model()

File ~/miniconda3/envs/optha/lib/python3.9/site-packages/nni/compression/speedup/model_speedup.py:435, in ModelSpeedup.speedup_model(self)

433 self.initialize_update_sparsity()

434 self.update_direct_sparsity()

--> 435 self.update_indirect_sparsity()

436 self.logger.info('Resolve the mask conflict after mask propagate...')

437 # fix_mask_conflict(self.masks, self.graph_module, self.dummy_input)

File ~/miniconda3/envs/optha/lib/python3.9/site-packages/nni/compression/speedup/model_speedup.py:306, in ModelSpeedup.update_indirect_sparsity(self)

304 for node in reversed(self.graph_module.graph.nodes):

305 node: Node

--> 306 self.node_infos[node].mask_updater.indirect_update_process(self, node)

307 sp = f', {sparsity_stats(self.masks.get(node.target, {}))}' if node.op == 'call_module' else ''

308 sp += f', {sparsity_stats({"output mask": self.node_infos[node].output_masks})}'

File ~/miniconda3/envs/optha/lib/python3.9/site-packages/nni/compression/speedup/mask_updater.py:229, in LeafModuleMaskUpdater.indirect_update_process(self, model_speedup, node)

227 for k, v in node_info.module.named_parameters():

228 if isinstance(v, torch.Tensor) and model_speedup.tensor_propagate_check(v) and v.dtype in torch_float_dtype:

--> 229 grad_zero = v.grad.data == 0

230 node_info.param_masks[k][grad_zero] = 0

AttributeError: 'NoneType' object has no attribute 'data'

```

<!--

Where can you find the log files:

LOG: https://github.com/microsoft/nni/blob/master/docs/en_US/Tutorial/HowToDebug.md#experiment-root-director

STDOUT/STDERR: https://nni.readthedocs.io/en/stable/reference/nnictl.html#nnictl-log-stdout

-->

**How to reproduce it?**:

```

import torch

import torch.nn as nn

import torchvision.models as tvmodels

from nni.compression.pruning import L1NormPruner

from nni.compression.utils import auto_set_denpendency_group_ids

from nni.compression.speedup import ModelSpeedup

if __name__ == '__main__':

model = tvmodels.resnet18()

model.fc = nn.Linear(in_features=512, out_features=1, bias=True)

config_list = [{

'op_types': ['Conv2d'],

'sparse_ratio': 0.1

}]

dummy_input = torch.rand(1, 3, 224, 224)

config_list = auto_set_denpendency_group_ids(model, config_list, dummy_input)

pruner = L1NormPruner(model, config_list)

_, masks = pruner.compress()

pruner.unwrap_model()

model = ModelSpeedup(model, dummy_input, masks).speedup_model()

``` | open | 2024-05-30T10:10:29Z | 2024-05-30T10:10:29Z | https://github.com/microsoft/nni/issues/5790 | [] | rishabh-WIAI | 0 |

recommenders-team/recommenders | machine-learning | 1,718 | [ASK] How can I save SASRec model for re-training and prediction? | I have tried to save trained SASRec model.

pickle, tf.saved_model.save, model.save(), and surprise.dump are not working.

While saving, I got warning saying 'Found untraced functions',

and while loading, 'AttributeError: 'SASREC' object has no attribute 'seq_max_len''.

Plz someone let me know how to save and load SASRec model! | open | 2022-05-13T18:23:23Z | 2023-08-30T14:03:13Z | https://github.com/recommenders-team/recommenders/issues/1718 | [

"help wanted"

] | beomso0 | 2 |

praw-dev/praw | api | 1,090 | Submission stream returning duplicates | If you stream submissions from r/all, the stream returns duplicate copies of items. See this example code: https://github.com/Watchful1/Sketchpad/blob/master/streamTester.py

Streaming 5000 submissions resulted in 3508 duplicates, many 5 or 6 times each.

Increasing the size of the seen_attributes BoundedSet in stream_generator to some large number, I used 2000, fixed the problem, but that feels like a bandaid.
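
To illustrate why the size of the "seen" set matters, here is a sketch of a bounded set. `BoundedSeen` is a hypothetical class written for this example, not PRAW's own `BoundedSet`: once more new items arrive than the set can hold, the oldest entries are forgotten, so an item that reappears later is treated as new again.

```python
from collections import OrderedDict

class BoundedSeen:
    """Remembers at most `max_items` entries, evicting the oldest first."""

    def __init__(self, max_items):
        self.max_items = max_items
        self._items = OrderedDict()

    def __contains__(self, item):
        return item in self._items

    def add(self, item):
        self._items[item] = None
        self._items.move_to_end(item)
        if len(self._items) > self.max_items:
            self._items.popitem(last=False)  # drop the oldest entry

seen = BoundedSeen(max_items=2)
for submission_id in ["a", "b", "c"]:
    seen.add(submission_id)

print("a" in seen)  # False: "a" was evicted, so it would be yielded again
print("c" in seen)  # True
```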

Originally [reported by u/boib](https://old.reddit.com/r/redditdev/comments/c5k2lx/anyone_notice_some_weirdness_with_praw/). | closed | 2019-06-26T04:03:13Z | 2019-07-01T15:41:35Z | https://github.com/praw-dev/praw/issues/1090 | [] | Watchful1 | 2 |

tableau/server-client-python | rest-api | 1,102 | [Type 1] Support `vizWidth` and `vizHeight` parameters of Query View PDF endpoint | ## Description

Currently these are not exposed anywhere. The `PDFRequestOptions` object is shared between the "Query View PDF" and the "Download Workbook PDF" endpoints; however, the workbook variant does not support filters, for example, and the query view variant has the vizWidth/vizHeight parameters. To my knowledge, these are not exposed and cannot be set.

| open | 2022-09-08T16:56:05Z | 2023-03-23T19:14:41Z | https://github.com/tableau/server-client-python/issues/1102 | [

"enhancement"

] | septatrix | 0 |

marcomusy/vedo | numpy | 505 | addSlider2D: TypeError: DestroyTimer argument 1: an integer is required (got type NoneType) | Hi @marcomusy,

Here is another error that I could not figure out by myself. I am still trying to play with the same data as I used in Issue #504, and this time I am playing with the slider.

Here is the code I used:

```Python

#!/usr/bin/env python3

import numpy as np

from vedo import TetMesh, show, screenshot, settings, Picture, buildLUT, Box, \

Plotter, Axes

from vedo.applications import Animation

import time as tm

def slider(widget, event):

region = widget.GetRepresentation().GetValue()

plot_mesh(region)

def plot_mesh(max_reg):

"""Plots the mesh based on the maximum region index"""

# This will get rid of the background Earth unit and air unit in the model

# which leaves us with the central part of the model

tet_tmp = tet.clone().threshold(name='cell_scalars', above=0, below=max_reg)

# So, we now cut the TetMesh object with a mesh (that Box object)

tet_tmp.cutWithMesh(box, wholeCells=True)

# And we need to convert it to a mesh object for later plotting

msh = tet_tmp.tomesh().lineWidth(1).lineColor('w')

# We need to build a look up table for our color bar, and now it supports

# using category names as labels instead of the numerical values

# This was implemented upon my request

lut_table = [

# Value, color, alpha, category

(12.2, 'dodgerblue', 1, 'C1'),

(15.2, 'skyblue', 1, 'C1-North'),

(16.2, 'lightgray', 1, 'Overburden'),

(18.2, 'yellow', 1, 'MFc'),

(19.2, 'gold', 1, 'MFb'),

(21.2, 'red', 1, 'PW'),

(23.2, 'palegreen', 1, 'BSMT1'),

(25.2, 'green', 1, 'BSMT2'),

]

lut = buildLUT(lut_table)

msh.cmap(lut, 'cell_scalars', on='cells')

# msh.cmap("coolwarm", 'cell_scalars', on='cells')

msh.addScalarBar3D(

categories=lut_table,

pos=(508000, 6416500, -1830),

title='Units',

titleSize=1.5,

sx=100,

sy=4000,

titleXOffset=-2,

)

zlabels = [(500, '500'), (0, '0'), (-500, '-500'), (-1000, '-1000'),

(-1500, '-1500')]

axes = Axes(msh,

xtitle='Easting (m)',

ytitle='Northing (m)',

ztitle='Elevation (m)',

xLabelSize=0.015,

xTitlePosition=0.65,

yTitlePosition=0.65,

yTitleOffset=-1.18,

yLabelRotation=90,

yLabelOffset=-1.6,

# yShiftAlongX=1,

zTitlePosition=0.85,

# zTitleOffset=0.04,

zLabelRotation=90,

zValuesAndLabels=zlabels,

# zShiftAlongX=-1,

axesLineWidth=3,

yrange=msh.ybounds(),

xTitleOffset=0.02,

# yzShift=1,

tipSize=0.,

yzGrid=True,

xyGrid=True,

gridLineWidth=5,

)

plt.show(msh, size=size)

# screenshot('model_mesh_vedo.png')

# from wand import image

# with image.Image(filename='model_mesh_vedo.png') as imag:

# imag.trim(color=None, fuzz=0)

# imag.save(filename='model_mesh_vedo.png')

# Do some settings

settings.useDepthPeeling=False # Useful to show the axes grid

font_name = 'Theemim'

settings.defaultFont = font_name

settings.multiSamples=8

# settings.useParallelProjection = True # avoid perspective parallax

# Create a TetMesh object form the vtk file

tet = TetMesh('final_mesh.vtk')

# This will get rid of the background Earth unit and air unit in the model

# which leaves us with the central part of the model

tet_tmp = tet.clone().threshold(name='cell_scalars', above=0, below=25)

msh = tet_tmp.tomesh().lineWidth(1).lineColor('w')

# Set the camera position

plt = Plotter()

plt.camera.SetPosition( [513381.314, 6406469.652, 6374.748] )

plt.camera.SetFocalPoint( [505099.133, 6415752.321, -907.462] )

plt.camera.SetViewUp( [-0.4, 0.318, 0.86] )

plt.camera.SetDistance( 14415.028 )

plt.camera.SetClippingRange( [7259.637, 23679.065] )

# Crop the entire mesh using a Box object (which is considered to be a mesh

# object in vedo)

# First build a Box object with its centers and dimensions

cent = [504700, 6416500, -615]

box = Box(pos=cent, size=(3000, 5000, 2430))

size = [3940, 2160]

# Add a slider to control the maximum region number in the mesh that we want

# to show

plt.addSlider2D(slider,

2, 25,

value=2,

pos=([0.1,0.1],

[0.4,0.1]),

title="Maximum region number",)

plt.show(interactive=1).close()

```

While dragging the slider, I get this error: "TypeError: DestroyTimer argument 1: an integer is required (got type NoneType)".

Any idea? Thanks again!

Xushan | closed | 2021-11-02T22:29:11Z | 2022-01-11T13:49:42Z | https://github.com/marcomusy/vedo/issues/505 | [] | XushanLu | 2 |

gradio-app/gradio | data-science | 10,373 | Is there any possible way to specify the editable of columns or rows | Hello buddy,

I'm using the Dataframe component in Gradio to display a CSV file. In some scenarios, some columns or rows should be editable while the others are read-only. Is there any way to do that? I only want to do it in the front end, not the back end.

Thanks.

| closed | 2025-01-16T06:26:25Z | 2025-01-17T16:38:04Z | https://github.com/gradio-app/gradio/issues/10373 | [

"pending clarification"

] | Yb2S3Man | 3 |

google-research/bert | tensorflow | 468 | Can I use Low-level TF APIs to fine-tuning bert for my task? Do I have to use Estimators? | I am not clear about the optimizer in `optimization.create_optimizer(

total_loss, learning_rate, num_train_steps, num_warmup_steps, False)`. | closed | 2019-03-01T03:00:37Z | 2019-03-01T09:58:38Z | https://github.com/google-research/bert/issues/468 | [] | yumath | 1 |

flairNLP/flair | pytorch | 3,416 | [Question]: Pre-Tagging information in Sequence-Tagging? | ### Question

My specific use case:

I'm trying to solve an event-extraction task that I model as a sequence-tagging problem: the event tagger should identify the event trigger, actors, and objects in a given span. I already have fairly reliable NER tags, which I would like to give the event tagger as additional information (for example, only PER and ORG entities may be actors).

Is there a best practice for using this information? I think the easy way would be to put the annotations inside the train/dev/test data so they become part of the encoding. Is there a known best way to write these annotations?

`Barack Obama went to New York`

`[PER] Barack Obama [/PER] went to [LOC] New York [/LOC]`

`Barack [B-PER] Obama [I-PER] went to New [B-LOC] York [I-LOC]`
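As an illustration of the second format above, here is a minimal sketch that turns tokens plus BIO tags into the inline-marker form. The function name `bio_to_inline` and the marker syntax are assumptions made for this example; they are not part of Flair, and nothing here says which format works better for training.

```python
def bio_to_inline(tokens, tags):
    """Wrap each BIO-tagged entity span in [LABEL] ... [/LABEL] markers."""
    out = []
    open_label = None  # label of the entity span currently open, if any
    for token, tag in zip(tokens, tags):
        label = tag[2:] if tag != "O" else None
        # A B- tag, or a label change, starts a new span
        starts_new = tag.startswith("B-") or (label is not None and label != open_label)
        if open_label is not None and (label is None or starts_new):
            out.append(f"[/{open_label}]")
            open_label = None
        if label is not None and open_label is None:
            out.append(f"[{label}]")
            open_label = label
        out.append(token)
    if open_label is not None:
        out.append(f"[/{open_label}]")  # close a span that runs to the end
    return " ".join(out)


tokens = ["Barack", "Obama", "went", "to", "New", "York"]
tags = ["B-PER", "I-PER", "O", "O", "B-LOC", "I-LOC"]
inline = bio_to_inline(tokens, tags)
print(inline)
```

The same token and tag lists could be written out in the per-token form of the third example instead; the sketch only shows that both notations carry the same information.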

I guess I'm asking less for a technical solution and more if there is an established way to do this or at least some experience?

(also to the devs: thank you for this awesome framework and the char-based embeddings, pretty much none of my research would be possible without Flair) | open | 2024-03-04T09:01:29Z | 2024-04-04T22:07:50Z | https://github.com/flairNLP/flair/issues/3416 | [

"question"

] | raykyn | 4 |

mwaskom/seaborn | data-science | 3,593 | Discrpancy in seaborn.objects.Dodge groupby order | Hi, I would like to report a strange behavior in the `Move` object `Dodge`.

Seaborn version: 0.13.0

Matplotlib version: 3.8.2

It all started because I wanted to play around with the `objects` namespace.

For the data I use the penguins dataset. I drop the NaNs and every value that I do not consider an outlier, i.e. everything between the 0.05 and 0.95 quantiles (for each combination of species and sex).

Here is the code to reproduce the dataset:

```

import pandas as pd
import seaborn as sns
import seaborn.objects as so


def get_quantile_df(df, val_col, lower, upper) -> tuple:
    # Keep only the rows at or beyond the lower/upper quantiles (the "outliers")
    lower_limit = df[val_col].quantile(lower)
    upper_limit = df[val_col].quantile(upper)
    sub_df = df[(df[val_col] <= lower_limit) | (df[val_col] >= upper_limit)]
    return sub_df, lower_limit, upper_limit


def main():
    penguins = sns.load_dataset("penguins")
    penguins = penguins.dropna(how="any")
    category = "species"
    value = "body_mass_g"
    hue = "sex"
    lower_out_qt = 0.05
    upper_out_qt = 0.95
    outliers = None
    # Collect the outliers for each (species, sex) combination
    for c in penguins[category].unique():
        c_df = penguins[penguins[category] == c].copy()
        for h in c_df[hue].unique():
            hue_df = c_df[c_df[hue] == h].copy()
            sub_df, l, u = get_quantile_df(hue_df, value, lower_out_qt, upper_out_qt)
            outliers = sub_df.copy() if outliers is None else pd.concat([outliers, sub_df])
            print(f"{c}-{h}: [{l}-{u}] -> {len(sub_df)}")

```

The print in the nested for loop just gives an idea of how many points to expect.

I then try to plot these outliers, with species on the x axis and body_mass_g on the y axis, as follows:

```

(
    so.Plot(penguins, x="species", y="body_mass_g", color="sex")
    .add(so.Dot(marker="x"), so.Dodge(), data=outliers)
    .save("test_figure_outliers1.png")
)

(
    so.Plot(outliers, x="species", y="body_mass_g", color="sex")
    .add(so.Dot(marker="x"), so.Dodge())
    .save("test_figure_outliers2.png")
)

```

As you can see, the only difference is that in the first plot I pass the outliers dataset in the `add` layer, while in the second plot I use the outliers dataset directly at the plot level.

I expected the two resulting plots to be identical, but I get two different results, and I think this is caused by the order of the groupby operation in the `Dodge` move.

The two plots are attached in the same order as the code:

I found a way to make the two plots identical: in the first plot's code, also include the `groupby` argument as follows:

```

(
    so.Plot(penguins, x="species", y="body_mass_g", color="sex")
    .add(so.Dot(marker="x"), so.Dodge(), data=outliers, groupby="sex")
    .save("test_figure_outliers1.png")
)

```

The cause of the problem is that the first row of the outliers is a "female" penguin, while in the original dataset it is a "male" penguin.

I can see that, when nothing else is specified, the groupby operation is executed on the new dataset, which can produce a different order.

But I don't see why, when I specify the groupby variable at that level, the groupby order is computed on the `penguins` dataset.
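To make the suspected mechanism concrete, here is a minimal pure-Python sketch. It assumes that the levels of a non-categorical variable are ordered by first appearance in whatever data a layer sees; that is my reading of the behavior, not something confirmed from the seaborn source. The helper `first_appearance_order` and the two short lists are made up for the illustration.

```python
def first_appearance_order(values):
    """Return the unique values in the order they first occur."""
    return list(dict.fromkeys(values))


# Hypothetical "sex" columns: the full data starts with a male penguin,
# the outlier subset happens to start with a female one.
full_sex = ["Male", "Female", "Female", "Male"]
outlier_sex = ["Female", "Male", "Female"]

full_order = first_appearance_order(full_sex)
outlier_order = first_appearance_order(outlier_sex)
print(full_order)     # order derived from the full data
print(outlier_order)  # order derived from the subset
```

If the dodge positions follow such an order, the same level would land on opposite sides of the tick depending on which dataset the order is computed from, which matches the difference between the two figures.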

As a solution I would suggest the following:

1) If nothing except the new dataset is specified and the new dataset contains the same color column, the groupby order is inherited from the Plot level (if specified, otherwise recomputed on the original dataset).

2) If the groupby variable is specified at that level and a new dataset is also provided, the groupby should be executed on the new dataset rather than the original one.

This way, with the first solution, the order of the components would be the same across all layers, even when different sub-datasets are provided at each level.

Otherwise the user has the second option to redefine the groupby operation per level. | open | 2023-12-14T15:38:57Z | 2023-12-19T12:05:22Z | https://github.com/mwaskom/seaborn/issues/3593 | [] | tiamilani | 6 |

pytest-dev/pytest-xdist | pytest | 187 | different tests collected between workers in Python 3.5 | I'm trying to run my tests via xdist in python 3.5 and it fails saying different tests were collected between gw0 and gw1. It works fine in python 2.7. This is the same issue as #149 but that issue does not explain the solution. | closed | 2017-07-14T14:46:13Z | 2017-08-07T19:44:49Z | https://github.com/pytest-dev/pytest-xdist/issues/187 | [

"question"

] | havok2063 | 4 |

ultrafunkamsterdam/undetected-chromedriver | automation | 1,397 | uc wont find element | this is my code:

```

from selenium import webdriver
from selenium.webdriver.chrome.service import Service
from selenium.webdriver.chrome.options import Options
from selenium.webdriver.common.by import By
from selenium.webdriver.support.ui import WebDriverWait
from selenium.webdriver.support import expected_conditions as EC
from selenium.common.exceptions import TimeoutException
from flask import Flask, request
import threading

app = Flask(__name__)

def my_function(sitekey):
    browser.execute_async_script(f"""
        document.write(`<script src="https://hcaptcha.com/1/api.js"></script> <div id="captcha"></div>`);
        let widget = null;
        function waitForHCaptcha() {{
            if (typeof hcaptcha !== 'undefined') {{
                var element = document.getElementById('captcha');
                var sitekey = '{sitekey}';
                var widgetId = hcaptcha.render(element, {{
                    sitekey: sitekey
                }});
                widget = widgetId;
                console.log(hcaptcha);
                craft();
            }} else {{
                // hcaptcha is not yet available, wait and check again
                setTimeout(waitForHCaptcha, 100);
            }}
        }}
        function craft() {{
            let resp = hcaptcha.getResponse(widget);
            if (widget !== null && resp.length > 4) {{
                document.write(`<p id="solvedkey">${{resp}}</p>`);
                window.hcaptchaResponse = resp;
            }} else {{
                setTimeout(craft, 100);
            }}
        }}
        waitForHCaptcha(); // start waiting for hcaptcha
    """)

hektCaptchaPath = "C:\\Users\\Ken Pira\\Desktop\\DualBot\\hektCaptcha"
browser = None

def run_code(sitekey):
    global browser
    # Create a new browser instance
    if browser is None:
        options = Options()
        # options.add_argument("--headless") # Run in headless mode
        options.add_argument("--window-size=1400,900")
        options.add_argument("--load-extension=" + hektCaptchaPath)
        browser = webdriver.Chrome(service=Service(executable_path='path_to_undetected_chromedriver'), options=options)
    browser.execute_script("window.open('about:blank', '_blank');")
    browser.switch_to.window(browser.window_handles[-1])
    try:
        # Navigate to discord.com
        browser.get('https://discord.com')
        # Inject and run the provided JavaScript code
        threading.Thread(target=my_function,args=(sitekey,)).start()
        print("harkinian mode")
        print("OpenHack 1"); wait = WebDriverWait(browser, 10); print("OpenHack 3")
        result=wait.until(EC.presence_of_element_located((By.CSS_SELECTOR, 'p#solvedkey'))).get_attribute("text")
        print("OpenHack 2")
        browser.close()
        return result
    except Exception as e:
        print(str(e))
        return 'An error occurred', 500
    finally:
        browser.quit()

@app.route('/captcha')
def captcha():
    sitekey = request.args.get('sitekey')
    if not sitekey:
        return 'Missing sitekey', 400
    try:
        solved_content = run_code(sitekey)
        return solved_content
    except Exception as e:
        print(str(e))
        return 'An error occurred', 500

if __name__ == '__main__':
    app.run(port=8085)

```

the body of the site after a while becomes like this:

```