repo_name stringlengths 9 75 | topic stringclasses 30

values | issue_number int64 1 203k | title stringlengths 1 976 | body stringlengths 0 254k | state stringclasses 2

values | created_at stringlengths 20 20 | updated_at stringlengths 20 20 | url stringlengths 38 105 | labels listlengths 0 9 | user_login stringlengths 1 39 | comments_count int64 0 452 |

|---|---|---|---|---|---|---|---|---|---|---|---|

miguelgrinberg/Flask-SocketIO | flask | 916 | Socketio doesn't work properly when Flask is streaming video | I am trying to build a RPi zero controlled toy car with camera and stream video to web page.

I found your project [flask-video-streaming](https://github.com/miguelgrinberg/flask-video-streaming/blob/master/base_camera.py), which works great, and I am trying to combine it with the [ZeroBot project](https://github.com/CoretechR/ZeroBot). ZeroBot runs Node.js on the server side; I basically just rewrote the server side in Python.

Here is [my project](https://github.com/hyansuper/FPV_RPi_Car). Camera streaming works great, but socketio seems to be very slow or unresponsive: if I click the "light" button on the web page, the server should print "on_light" and an LED connected to the RPi Zero should light up, but neither happens.

In app.py, if I comment out the video_feed function, the rest of the code works fine: the print and the LED work as expected.

I don't know what's wrong, can you help?

Thanks! | closed | 2019-03-06T19:58:57Z | 2019-06-08T08:00:21Z | https://github.com/miguelgrinberg/Flask-SocketIO/issues/916 | [

"question"

] | hyansuper | 5 |

benbusby/whoogle-search | flask | 255 | Does pre-config apply to heroku deployment as that? | hi, i have a question about heroku deployment.

https://github.com/IniUe/whoogle-search#environment-variables

`# You can set Whoogle environment variables here, but must set`

`# WHOOGLE_DOTENV=1 in your deployment to enable these values`

https://github.com/benbusby/whoogle-search/blob/develop/whoogle.env

Is it enough to remove # to enable that, or should i do something else to get that working?

Edit: when using the quick deploy button, only lines 4 to 13 appear in the config vars (no other configuration is possible), so nothing can be done for lines 18 to 23. Or can we add new config vars after the app is deployed, under Heroku settings --> config vars?

| closed | 2021-04-02T07:35:38Z | 2021-04-02T12:55:58Z | https://github.com/benbusby/whoogle-search/issues/255 | [

"question"

] | ghost | 4 |

tensorflow/tensor2tensor | deep-learning | 1,659 | Getting to work with MultiProblem | #### Please refer to https://github.com/tensorflow/tensor2tensor/issues/1687

----

For training models, I have separated the data generation pipeline from t2t. For that I have implemented my own problem which, in essence, already expects a created dataset.

```python

@registry.register_problem

class ConfigBasedTranslationProblem(translate.TranslateProblem):

# ...

def training_filepaths(self, data_dir, num_shards, shuffled):

return glob.glob(os.path.join(data_dir, '*.train.shard'))

# ...

def filepattern(self, data_dir, split: str, shard=None):

split = 'dev' if split == 'eval' else split

return os.path.join(data_dir, '*.%s.shard' % split)

def generate_data(self, data_dir, tmp_dir, task_id=-1):

raise NotImplementedError('Data should already be generated.')

def prepare_to_generate(self, data_dir, tmp_dir):

raise NotImplementedError('Data should already be generated.')

def source_data_files(self, dataset_split):

raise NotImplementedError('Data should already be generated.')

```

This works great so far but now that I discovered `MultiProblem` ([`multi_problem.md`](https://github.com/tensorflow/tensor2tensor/blob/master/docs/multi_problem.md)) I am facing some issues and questions I was hoping to be able to clarify here.

### The Language Model in `MultiProblem` (?)

This is not really a question but more a suggestion for code and API changes. I would provide a PR but atm I am stuck with tensor2tensor 1.12, and until I have updated to 1.13 I won't have resources for it.

From ([`multi_problem.md`](https://github.com/tensorflow/tensor2tensor/blob/master/docs/multi_problem.md)) and the code I can see that the first `problem` has to be a language-model problem.

From the looks, this seems like a requirement which could be relaxed. The first task seems to be used just to create a vocabulary for all languages. In my implementation, I just merge all vocabularies from all tasks (here called `dataset`) to one and return a `SubwordEncoder`:

```python

datasets = self.get_datasets()

reserved_tokens = set()

subword_tokens = set()

for dataset in datasets:

encoders = dataset.feature_encoders()

for encoder in encoders.values():

encoder = cast(SubwordEncoder, encoder)

reserved_tokens.update(encoder.reserved_tokens)

subword_tokens.update(encoder.subword_tokens)

final_subwords = list(reserved_tokens) + sorted(list(subword_tokens.difference(reserved_tokens)))

# ...

return SubwordEncoder(vocab_fp)

```

The only other occurrence of the primary task I can see is in `get_hparams()` in order to set the vocab size and modality.

Imho it could make sense to relax this requirement if `MultiProblem` itself provided feature encoders for inputs and targets. All that is required for this would be additional "merge-vocab" logic and `MultiProblem` could work without the first task having to be a language model problem.
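The "merge-vocab" logic mentioned above can be sketched without any tensor2tensor dependency. This is only an illustration under assumptions: `merge_vocabularies` and the token lists are hypothetical names, not part of the tensor2tensor API. Unlike the set-based snippet above, it keeps reserved tokens in first-seen order, on the assumption that their ids (for example padding at index 0) must stay stable.

```python
from typing import Iterable, List


def merge_vocabularies(
    reserved_per_task: Iterable[Iterable[str]],
    subwords_per_task: Iterable[Iterable[str]],
) -> List[str]:
    """Merge per-task vocabularies into one list (hypothetical helper)."""
    reserved: List[str] = []
    seen = set()
    # Keep reserved tokens in the order they are first encountered.
    for tokens in reserved_per_task:
        for token in tokens:
            if token not in seen:
                seen.add(token)
                reserved.append(token)
    # Collect every subword from every task, dropping duplicates.
    subwords = set()
    for tokens in subwords_per_task:
        subwords.update(tokens)
    # Reserved tokens first, then the remaining subwords in sorted order.
    return reserved + sorted(subwords - seen)


merged = merge_vocabularies(
    reserved_per_task=[["<pad>", "<EOS>"], ["<pad>", "<EOS>"]],
    subwords_per_task=[["der_", "the_", "<pad>"], ["le_", "the_"]],
)
print(merged)
```

Sorting the non-reserved subwords makes the merged vocabulary deterministic across runs, which matters if the resulting file is shared between data generation and training.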

### How does `MultiProblem` training work?

In the `MultiProblem` class we find this:

```python

task_dataset = task_dataset.map(

lambda x: self.add_task_id(task, x, enc, hparams, is_infer))

```

and `add_task_id()` takes an `example` (here `x`) in order to create

```python

# not is_infer

inputs = example.pop("inputs")

concat_list = [inputs, [task.task_id], example["targets"]]

example["targets"] = tf.concat(concat_list, axis=0)

```

in case the problem has inputs or

```python

concat_list = [[task.task_id], example["targets"]]

example["targets"] = tf.concat(concat_list, axis=0)

```

in case the problem has no inputs.

Now, I do not quite understand why a `MultiProblem` only works on examples which provide `targets`. I was under the impression that we still simply train a `Transformer` model which gets `inputs` and `targets` presented as usual but that these samples are drawn from a set of tasks (problems/corpora) for training (see `multiproblem_per_task_threshold`).

So in theory, I should be able to create a classic `Text2TextProblem` which contains all required samples (plus a `task_id` for each sample) and it _should_ work as well, right? Or does `MultiProblem` work totally different?

The `task_id` does not set a different "_mode_" or something, it's just additional context for the model, isn't it?

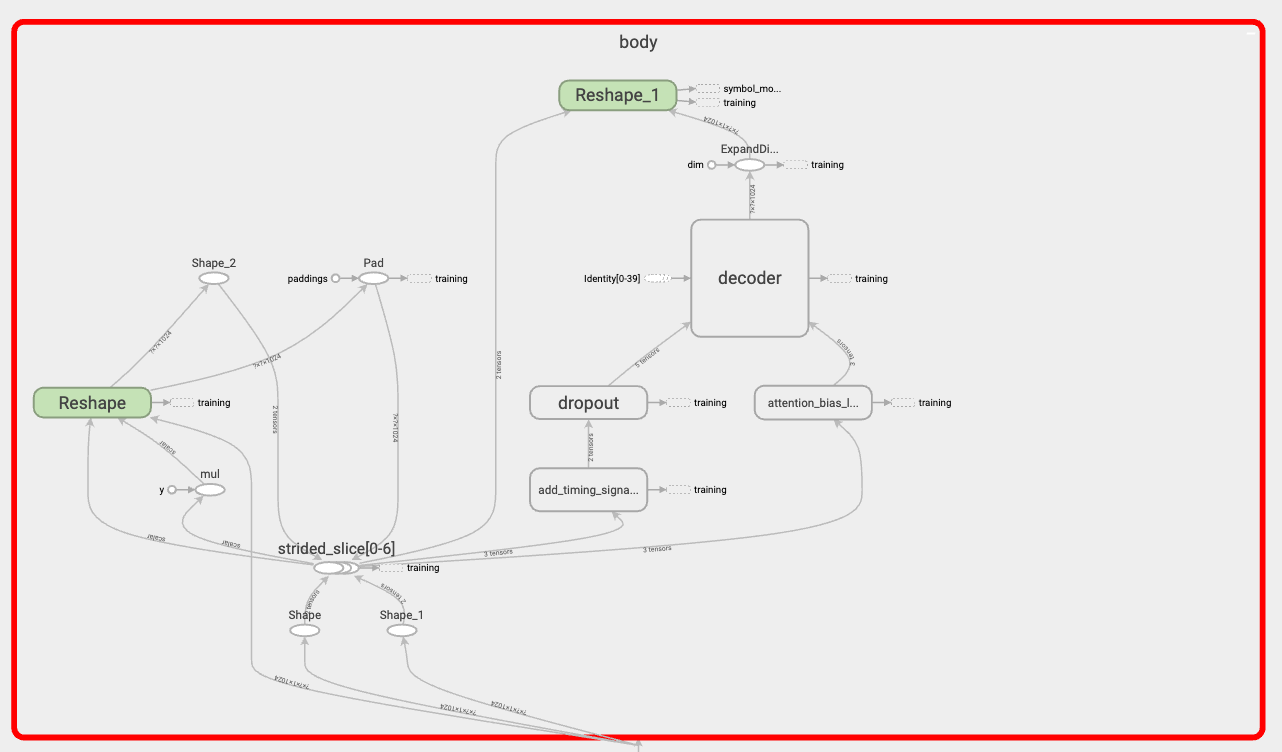

### The Transformer in `MultiProblem`

I noticed that the graph of the Transformer looks very different from what I know from single-problem training. In particular, I noticed that there are no encoder layers? The `body` only contains the `decoder` layers. Why is this the case or what am I missing here?

> *Note:* It should not matter but this is from the `EvolvedTransformer` in particular

Does this mean hparams like `num_encoder_layers` are getting ignored here?

### `MultiProblem` Training Best Practice

I am not at the point where I could make use of recommendations but what I was thinking of was something like the following:

I want to test if (or how much) a small dataset can benefit from `MultiProblem` training. For this I would like to use a small `de_en` dataset. The main task of the `MultiProblem` would be to learn `en2de`. In addition I would like to use a `en_fr`. So the second task would be a training on `en2fr` translation.

Setting `multiproblem_per_task_threshold` to `"95,5"` would mean that batches should consist of 95% `en2de` and 5% `en2fr` samples, is that correct? If so, can I expect improvements for the `"constant"` schedule or should I rather consider the `"pretrain"` schedule?

Any comments on that would be appreciated.

----

There are some other things I do not understand. Some which are inside the code of `MultiProblem` e.g. the following:

```python

def dataset(self, ...):

# ..

if not is_training and not is_infer:

zeros = tf.zeros([self._ADDED_EVAL_COUNT, 1], dtype=tf.int64)

pad_data = tf.data.Dataset.from_tensor_slices({

"targets": zeros,

"batch_prediction_key": zeros,

"task_id": zeros,

})

task_dataset = task_dataset.concatenate(pad_data)

# ..

```

This block 1) is there for reasons I do not understand and 2) breaks my pipeline. I am overriding `example_reading_spec()` in my problems because I am adding things like the `corpus`-name which I use during evaluation.

```python

def example_reading_spec(self):

data_fields = {

'targets': tf.VarLenFeature(tf.int64),

'corpus': tf.VarLenFeature(tf.int64)

}

data_items_to_decoders = None

return data_fields, data_items_to_decoders

```

Since this padding above is hard-coded, the program crashes. I had to override `MultiProblem.dataset` and add a dummy. What is this padding good for?

----

I know this is a lot so thank you for any response to these questions.

| closed | 2019-08-13T13:30:34Z | 2019-09-05T10:00:01Z | https://github.com/tensorflow/tensor2tensor/issues/1659 | [] | stefan-falk | 0 |

scikit-learn/scikit-learn | python | 30,056 | LinearSVC does not correctly handle sample_weight under class_weight strategy 'balanced' | ### Describe the bug

LinearSVC does not pass sample weights through when computing class weights under the "balanced" strategy, leading to sample weight invariance issues (cross-linked to meta-issue #16298).
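To make the invariance being tested concrete, here is a small pure-Python sketch. It assumes the "balanced" heuristic is `n_samples / (n_classes * count_per_class)` and that a sample-weight-aware variant would replace counts with summed weights. `balanced_class_weights` is a hypothetical helper written for illustration, not scikit-learn code.

```python
from collections import Counter


def balanced_class_weights(y, sample_weight=None):
    """Balanced class weights, optionally using sample weights (hypothetical)."""
    if sample_weight is None:
        sample_weight = [1] * len(y)
    # Total weight per class; with unit weights this is the class count.
    totals = Counter()
    for label, weight in zip(y, sample_weight):
        totals[label] += weight
    # Ignore classes whose total weight is zero to avoid dividing by zero.
    classes = sorted(c for c in totals if totals[c] > 0)
    total = sum(totals[c] for c in classes)
    return {c: total / (len(classes) * totals[c]) for c in classes}


y = [0, 0, 1, 2]
w = [3, 1, 1, 4]
# Repeating each sample w[i] times should be equivalent to weighting it by w[i].
y_repeated = [label for label, k in zip(y, w) for _ in range(k)]

weighted = balanced_class_weights(y, w)
repeated = balanced_class_weights(y_repeated)
unweighted = balanced_class_weights(y)
print(weighted, repeated, unweighted)
```

In this toy case the weighted and repeated variants agree, while the unweighted computation on the original labels differs, which is the kind of mismatch the reproduction below exposes in the fitted coefficients.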

### Steps/Code to Reproduce

```python

from sklearn.svm import LinearSVC

from sklearn.base import clone

from sklearn.datasets import make_classification

import numpy as np

rng = np.random.RandomState()

X, y = make_classification(

n_samples=100,

n_features=5,

n_informative=3,

n_classes=4,

random_state=0,

)

# Create dataset with repetitions and corresponding sample weights

sample_weight = rng.randint(0, 10, size=X.shape[0])

X_resampled_by_weights = np.repeat(X, sample_weight, axis=0)

y_resampled_by_weights = np.repeat(y, sample_weight)

est_sw = LinearSVC(dual=False,class_weight="balanced").fit(X, y, sample_weight=sample_weight)

est_dup = LinearSVC(dual=False,class_weight="balanced").fit(

X_resampled_by_weights, y_resampled_by_weights, sample_weight=None

)

np.testing.assert_allclose(est_sw.coef_, est_dup.coef_,rtol=1e-10,atol=1e-10)

np.testing.assert_allclose(

est_sw.decision_function(X_resampled_by_weights),

est_dup.decision_function(X_resampled_by_weights),

rtol=1e-10,

atol=1e-10

)

```

### Expected Results

No error thrown

### Actual Results

```

AssertionError:

Not equal to tolerance rtol=1e-10, atol=1e-10

Mismatched elements: 20 / 20 (100%)

Max absolute difference among violations: 0.00818953

Max relative difference among violations: 0.10657042

ACTUAL: array([[ 0.157045, -0.399979, -0.050654, 0.236997, -0.313416],

[-0.038369, -0.169516, -0.239528, -0.164231, 0.29698 ],

[ 0.069654, 0.250218, 0.268922, -0.065565, -0.195888],

[-0.117921, 0.185563, 0.005148, 0.006144, 0.130577]])

DESIRED: array([[ 0.157595, -0.401087, -0.051018, 0.23653 , -0.313528],

[-0.041687, -0.169006, -0.243102, -0.16373 , 0.302628],

[ 0.065096, 0.245549, 0.260732, -0.061577, -0.188419],

[-0.117224, 0.184116, 0.004652, 0.005555, 0.130453]])

```

### Versions

```shell

System:

python: 3.12.4 | packaged by conda-forge | (main, Jun 17 2024, 10:13:44) [Clang 16.0.6 ]

executable: /Users/shrutinath/micromamba/envs/scikit-learn/bin/python

machine: macOS-14.3-arm64-arm-64bit

Python dependencies:

sklearn: 1.6.dev0

pip: 24.0

setuptools: 70.1.1

numpy: 2.0.0

scipy: 1.14.0

Cython: 3.0.10

pandas: 2.2.2

matplotlib: 3.9.0

joblib: 1.4.2

threadpoolctl: 3.5.0

Built with OpenMP: True

threadpoolctl info:

user_api: blas

internal_api: openblas

num_threads: 8

prefix: libopenblas

...

num_threads: 8

prefix: libomp

filepath: /Users/shrutinath/micromamba/envs/scikit-learn/lib/libomp.dylib

version: None

Output is truncated. View as a scrollable element or open in a text editor. Adjust cell output settings...

```

| closed | 2024-10-13T15:09:29Z | 2025-02-11T18:20:03Z | https://github.com/scikit-learn/scikit-learn/issues/30056 | [

"Bug"

] | snath-xoc | 1 |

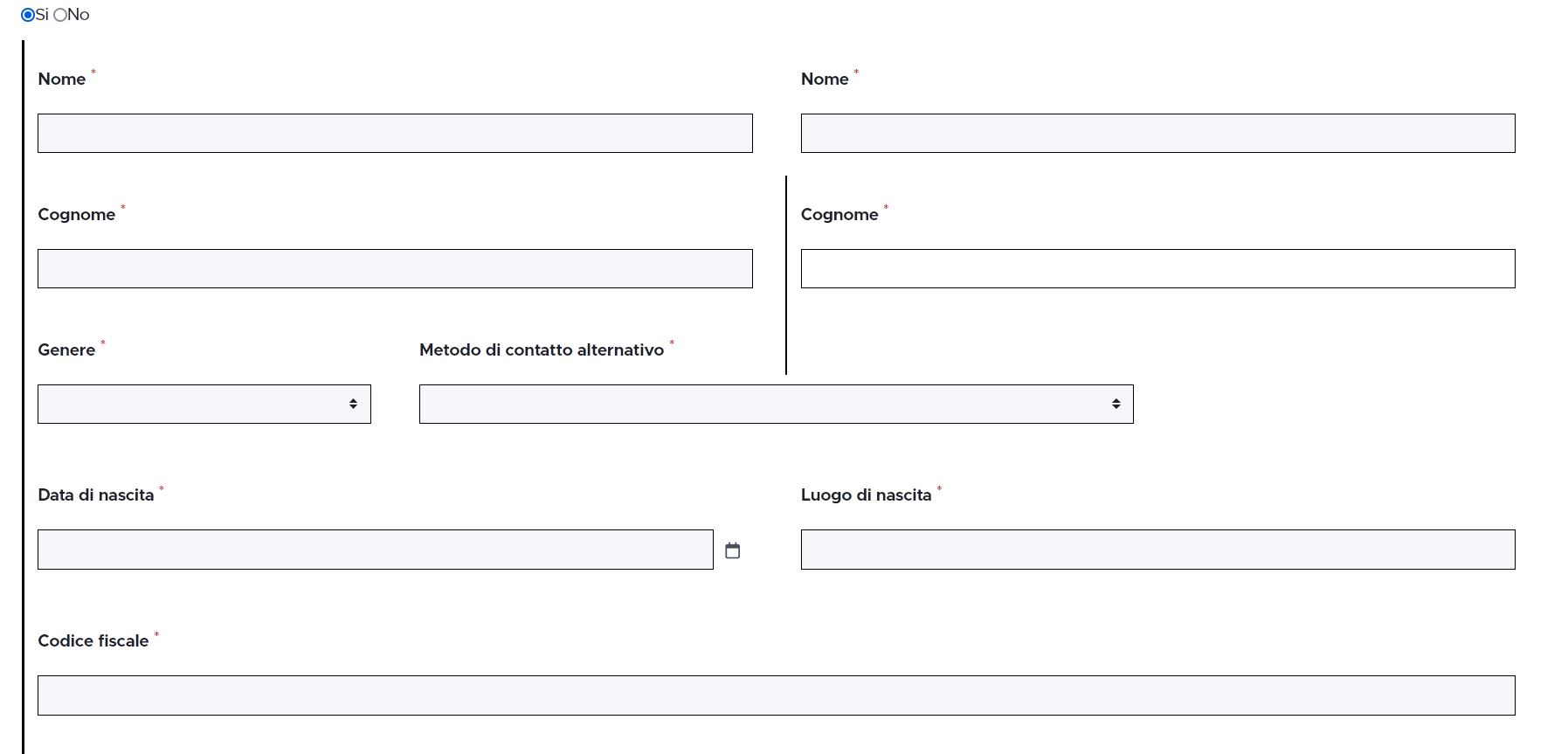

globaleaks/globaleaks-whistleblowing-software | sqlalchemy | 3,193 | Fields of old default whistleblower_identity and new default are shown together after version upgrade | **Describe the bug**

After upgrading GL from 4.0.54 to 4.7.17, the fields of the old default whistleblower_identity and the new default are shown together in the identity section of a previously installed tenant.

Steps to reproduce the behavior:

1. upgrading GL from 4.0.54 to 4.7.17

2. going to step identity -> whistleblowing identity templates fields are shown together

**Expected behavior**

Either only the old default whistleblower_identity should be shown, or only the new one.

**Desktop (please complete the following information):**

- OS: ubuntu 20

- Browser ffx 97

- GL Version 4.7.17

**Screenshots**

| closed | 2022-03-10T16:50:06Z | 2022-03-12T22:08:41Z | https://github.com/globaleaks/globaleaks-whistleblowing-software/issues/3193 | [

"T: Bug",

"C: Backend"

] | larrykind | 1 |

bmoscon/cryptofeed | asyncio | 341 | Example for OHLC information does not work | The example code here

https://github.com/bmoscon/cryptofeed/blob/master/examples/demo_ohlcv.py

fails with this error

TypeError: __call__() got an unexpected keyword argument 'order_type' | closed | 2020-11-30T01:02:18Z | 2020-11-30T04:27:06Z | https://github.com/bmoscon/cryptofeed/issues/341 | [

"bug"

] | mccoydj1 | 3 |

ymcui/Chinese-BERT-wwm | tensorflow | 92 | How can I load this model into TFBertModel? Could you provide the model's h5 file? | closed | 2020-03-16T08:44:17Z | 2020-03-25T10:02:18Z | https://github.com/ymcui/Chinese-BERT-wwm/issues/92 | [] | JOHNYXUU | 3 | |

plotly/dash-bio | dash | 106 | invalid plotly syntax in component factory manhattan component | The syntax in https://github.com/plotly/dash-bio/blob/master/dash_bio/component_factory/_manhattan.py#L440 and https://github.com/plotly/dash-bio/blob/master/dash_bio/component_factory/_manhattan.py#L453 may need to be updated.

when running locally (after `pip install -r requirements.txt`) I'm getting:

```

(venv3) ➜ dash-bio git:(master) python index.py

Traceback (most recent call last):

File "index.py", line 31, in <module>

for filename in appList

File "index.py", line 32, in <dictcomp>

if filename.startswith("app_") and filename.endswith(".py")

File "/Users/chelsea/Repos/dash-repos/gallery-apps/dash-bio/tests/dash/app_manhattan_plot.py", line 12, in <module>

fig = dash_bio.ManhattanPlot(df) # Feed the data to a function which creates a Manhattan Plot figure

File "/Users/chelsea/Repos/dash-repos/gallery-apps/dash-bio/dash_bio/component_factory/_manhattan.py", line 165, in ManhattanPlot

highlight_color=highlight_color

File "/Users/chelsea/Repos/dash-repos/gallery-apps/dash-bio/dash_bio/component_factory/_manhattan.py", line 440, in figure

suggestiveline = go.layout.Shape(

AttributeError: module 'plotly.graph_objs' has no attribute 'layout'

```

| closed | 2019-01-17T00:16:51Z | 2019-01-21T18:01:40Z | https://github.com/plotly/dash-bio/issues/106 | [] | cldougl | 5 |

huggingface/datasets | nlp | 7,249 | How to debugging | ### Describe the bug

I wanted to use my own script to handle the processing, and followed the tutorial documentation by rewriting the MyDatasetConfig and MyDatasetBuilder (which contains the _info,_split_generators and _generate_examples methods) classes. Testing with simple data was able to output the results of the processing, but when I wished to do more complex processing, I found that I was unable to debug (even the simple samples were inaccessible). There are no errors reported, and I am able to print the _info,_split_generators and _generate_examples messages, but I am unable to access the breakpoints.

### Steps to reproduce the bug

# my_dataset.py

import json

import datasets

class MyDatasetConfig(datasets.BuilderConfig):

def __init__(self, **kwargs):

super(MyDatasetConfig, self).__init__(**kwargs)

class MyDataset(datasets.GeneratorBasedBuilder):

VERSION = datasets.Version("1.0.0")

BUILDER_CONFIGS = [

MyDatasetConfig(

name="default",

version=VERSION,

description="myDATASET"

),

]

def _info(self):

print("info") # breakpoints

return datasets.DatasetInfo(

description="myDATASET",

features=datasets.Features(

{

"id": datasets.Value("int32"),

"text": datasets.Value("string"),

"label": datasets.ClassLabel(names=["negative", "positive"]),

}

),

supervised_keys=("text", "label"),

)

def _split_generators(self, dl_manager):

print("generate") # breakpoints

data_file = "data.json"

return [

datasets.SplitGenerator(

name=datasets.Split.TRAIN, gen_kwargs={"filepath": data_file}

),

]

def _generate_examples(self, filepath):

print("example") # breakpoints

with open(filepath, encoding="utf-8") as f:

data = json.load(f)

for idx, sample in enumerate(data):

yield idx, {

"id": sample["id"],

"text": sample["text"],

"label": sample["label"],

}

#main.py

import os

os.environ["TRANSFORMERS_NO_MULTIPROCESSING"] = "1"

from datasets import load_dataset

dataset = load_dataset("my_dataset.py", split="train", cache_dir=None)

print(dataset[:5])

### Expected behavior

Pause at breakpoints while running debugging

### Environment info

pycharm

| open | 2024-10-24T01:03:51Z | 2024-10-24T01:03:51Z | https://github.com/huggingface/datasets/issues/7249 | [] | ShDdu | 0 |

qwj/python-proxy | asyncio | 141 | Custom filter functions? | Hello, is it possible to add custom filtering functions based on the content?

Basically I want to filter YouTube videos based on their metadata, something I can do by leveraging the YouTube Data API. It is ok if the request takes several seconds.

I took a quick look at the code and I think I could add this in the connect() function of the different clients (pretty much HTTP for my use case). I also saw there is a stream reader/writer that has the content, is that accurate?

Thanks in advance. | closed | 2021-12-05T03:51:36Z | 2022-07-05T07:35:41Z | https://github.com/qwj/python-proxy/issues/141 | [] | crorella | 0 |

jina-ai/serve | deep-learning | 5,585 | Change documentation for `CONTEXT` environment variables | **Describe your proposal/problem**

<!-- A clear and concise description of what the proposal is. -->

The [docs](https://docs.jina.ai/concepts/flow/yaml-spec/#context-variables) don't specify how to use context variables in a flow yaml.

It should be made clear that when defining a flow using the YAML specification `VALUE_A` & `VALUE_B` should appear in the `env` key.

---

**Flow.yml**

```

jtype: Flow

executors:

- name: executor1

uses: executor1/config.yml

env:

VALUE_A: 123

VALUE_B: hello

uses_with:

var_a: ${{ CONTEXT.VALUE_A }}

var_b: ${{ CONTEXT.VALUE_B }}

``` | closed | 2023-01-09T16:05:59Z | 2023-04-24T00:18:00Z | https://github.com/jina-ai/serve/issues/5585 | [

"Stale",

"area/docs"

] | npitsillos | 2 |

nalepae/pandarallel | pandas | 112 | Weird return from parallel_apply() | (duplicated) #111 | closed | 2020-10-06T03:54:32Z | 2020-10-06T03:55:15Z | https://github.com/nalepae/pandarallel/issues/112 | [] | conraddd | 0 |

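HIT-SCIR/ltp | nlp | 380 | Hello, a question about training the cws.model word segmentation model with otcws in version 3.3.2 | Hello, training a word segmentation model with otcws on six months of the 1998 People's Daily corpus always fails. It exits once extraction reaches 30% (build-featurespace: 30% instances is extracted.). Training on texts of fewer than 50,000 lines works, but training on texts of more than 50,000 lines always fails. Are there any limits when training a model with otcws, for example no special characters, a per-line character limit, or a maximum size for the whole training set? I look forward to your reply!

PS: the hardware is 16 cores with 128 GB of RAM; the command on Windows is otcws.exe learn --reference people1998.seg --development people1998.seg --algorithm pa --model cws.model --max-iter 10 --rare-feature-threshold 1 | closed | 2020-07-09T08:45:27Z | 2020-07-10T07:43:48Z | https://github.com/HIT-SCIR/ltp/issues/380 | [] | GuohyCoding | 1 |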

nalepae/pandarallel | pandas | 7 | Implement GroupBy.parallel_apply | open | 2019-03-16T13:28:36Z | 2019-03-16T13:31:14Z | https://github.com/nalepae/pandarallel/issues/7 | [

"enhancement"

] | nalepae | 0 | |

microsoft/nni | tensorflow | 4,817 | Why does SlimPruner utilize the WeightTrainerBasedDataCollector instead of the WeightDataCollector before model compressing? | open | 2022-04-27T11:43:29Z | 2022-04-29T01:50:48Z | https://github.com/microsoft/nni/issues/4817 | [] | songkq | 1 | |

TencentARC/GFPGAN | pytorch | 176 | Some colors in black and white photo | A minor detail, in some black and white photos, colors appear that are not in the photo, but it seems that the model "suggests" what colors it should have. It is also remarkable the improvement of the V1.3 model. Although the 1.1 model has behaved very generous with this image. I must also add that some faces have been improved but with Asian features (like women and children).

Thanks for your project I love it

img1- Original

img2 -V1 Model (more natural and accurate face, but colorized(in this face))

img3-V1.3 added colors in BW pics

| open | 2022-03-13T08:45:33Z | 2022-03-14T23:23:38Z | https://github.com/TencentARC/GFPGAN/issues/176 | [] | GOZARCK | 2 |

nltk/nltk | nlp | 3,149 | TclError resizing download dialog table column | When attempting to resize a column in the downloader dialog, and error is raised and the column does not resize.

Steps to reproduce:

- Run `nltk.download()` to open downloading interface

- Try resizing any of the table columns (e.g. "Identifier" in the first tab)

An example full traceback is as follows:

```

Exception in Tkinter callback

Traceback (most recent call last):

File "/usr/lib/python3.11/tkinter/__init__.py", line 1948, in __call__

return self.func(*args)

^^^^^^^^^^^^^^^^

File "/usr/lib/python3.11/site-packages/nltk/draw/table.py", line 196, in _resize_column_motion_cb

lb["width"] = max(3, lb["width"] + (x1 - x2) // charwidth)

~~^^^^^^^^^

File "/usr/lib/python3.11/tkinter/__init__.py", line 1713, in __setitem__

self.configure({key: value})

File "/usr/lib/python3.11/tkinter/__init__.py", line 1702, in configure

return self._configure('configure', cnf, kw)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/usr/lib/python3.11/tkinter/__init__.py", line 1692, in _configure

self.tk.call(_flatten((self._w, cmd)) + self._options(cnf))

_tkinter.TclError: expected integer but got "21.0"

```

The fix for this would be to simply change [`draw/table.py:196`](https://github.com/nltk/nltk/blob/56bc4af35906fb/nltk/draw/table.py#L196) from

```lb["width"] = max(3, lb["width"] + (x1 - x2) // charwidth)```

to

```lb["width"] = max(3, int(lb["width"] + (x1 - x2) // charwidth))```

(forcing the result of the floor div to be an int rather than float). | closed | 2023-05-04T10:38:45Z | 2023-05-08T08:23:10Z | https://github.com/nltk/nltk/issues/3149 | [] | E-Paine | 0 |

huggingface/datasets | deep-learning | 7,215 | Iterable dataset map with explicit features causes slowdown for Sequence features | ### Describe the bug

When performing map, it's nice to be able to pass the new feature type, and indeed required by interleave and concatenate datasets.

However, this can cause a major slowdown for certain types of array features due to the features being re-encoded.

This is separate to the slowdown reported in #7206

### Steps to reproduce the bug

```

from datasets import Dataset, Features, Array3D, Sequence, Value

import numpy as np

import time

features=Features(**{"array0": Sequence(feature=Value("float32"), length=-1), "array1": Sequence(feature=Value("float32"), length=-1)})

dataset = Dataset.from_dict({f"array{i}": [np.zeros((x,), dtype=np.float32) for x in [5000,10000]*25] for i in range(2)}, features=features)

```

```

ds = dataset.to_iterable_dataset()

ds = ds.with_format("numpy").map(lambda x: x)

t0 = time.time()

for ex in ds:

pass

t1 = time.time()

```

~1.5 s on main

```

ds = dataset.to_iterable_dataset()

ds = ds.with_format("numpy").map(lambda x: x, features=features)

t0 = time.time()

for ex in ds:

pass

t1 = time.time()

```

~ 3 s on main

### Expected behavior

I'm not 100% sure whether passing new feature types to formatted outputs of map should be supported or not, but assuming it should, then there should be a cost-free way to specify the new feature type - knowing feature type is required by interleave_datasets and concatenate_datasets for example

### Environment info

3.0.2 | open | 2024-10-10T22:08:20Z | 2024-10-10T22:10:32Z | https://github.com/huggingface/datasets/issues/7215 | [] | alex-hh | 0 |

viewflow/viewflow | django | 449 | CreateViewMixin doesn't check permissions before adding "Add new" page action | `class CreateViewMixin(metaclass=ViewsetMeta):

create_view_class = CreateModelView

create_form_layout = DEFAULT

create_form_class = DEFAULT

create_form_widgets = DEFAULT

def has_add_permission(self, user):

return has_object_perm(user, "add", self.model)

def get_create_view_kwargs(self, **kwargs):

view_kwargs = {

"form_class": first_not_default(

self.create_form_class, getattr(self, "form_class", DEFAULT)

),

"form_widgets": first_not_default(

self.create_form_widgets, getattr(self, "form_widgets", DEFAULT)

),

"layout": first_not_default(

self.create_form_layout, getattr(self, "form_layout", DEFAULT)

),

**self.create_view_kwargs,

**kwargs,

}

return self.filter_kwargs(self.create_view_class, **view_kwargs)

def get_list_page_actions(self, request, *actions):

add_action = Action(

name="Add new",

url=self.reverse("add"),

icon=Icon("add_circle", class_="material-icons mdc-list-item__graphic"),

)

return super().get_list_page_actions(request, *(add_action, *actions))

`

I believe get_list_page_actions should check for add permission. Right now it shows "Add new" to users that aren't allowed to add.
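The check proposed above could look roughly like the following. This is a framework-free sketch under assumptions: `BaseViewset`, `PermissionAwareCreateMixin`, `add_action_name`, the dict-based action, and the `perms` attribute are hypothetical stand-ins, not viewflow's actual classes or API. Only the method names `has_add_permission` and `get_list_page_actions` come from the code quoted above.

```python
from types import SimpleNamespace


class BaseViewset:
    """Hypothetical stand-in for the viewset base class."""

    def get_list_page_actions(self, request, *actions):
        return list(actions)


class PermissionAwareCreateMixin:
    # Hypothetical attribute that lets subclasses rename the action.
    add_action_name = "Add new"

    def has_add_permission(self, user):
        # Stand-in for has_object_perm(user, "add", self.model).
        return "add" in user.perms

    def get_list_page_actions(self, request, *actions):
        # Only prepend the "add" action for users allowed to add.
        if self.has_add_permission(request.user):
            add_action = {"name": self.add_action_name, "url": "add/"}
            actions = (add_action, *actions)
        return super().get_list_page_actions(request, *actions)


class BlogViewset(PermissionAwareCreateMixin, BaseViewset):
    add_action_name = "Add New Blog"


def make_request(*perms):
    """Build a minimal fake request whose user holds the given permissions."""
    return SimpleNamespace(user=SimpleNamespace(perms=set(perms)))


viewset = BlogViewset()
editor_actions = viewset.get_list_page_actions(make_request("add"))
reader_actions = viewset.get_list_page_actions(make_request())
print(editor_actions, reader_actions)
```

Keeping the permission test inside `get_list_page_actions` means the list page and the create view consult the same `has_add_permission` hook, so the two cannot drift apart.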

A related question, is there a way to override the name of the add action when using ModelViewset? Often I want it to say "Add New Blog" for example. I've been just using BaseModelViewset and then adding in the other mixins except CreateViewMixin so that I can perform the permission check as above as well as change the add action name.

Thanks | closed | 2024-06-19T22:41:26Z | 2024-06-24T10:26:05Z | https://github.com/viewflow/viewflow/issues/449 | [] | SamuelLayNZ | 1 |

cs230-stanford/cs230-code-examples | computer-vision | 17 | Error when run build_dataset.py on windows | On Windows, folder names in a path are joined with a backslash [ \ ] instead of a slash [ / ], like this:

> C:\Program Files\NVIDIA GPU Computing Toolkit

so build_dataset.py throws an error because it can't split the filename from the directory.

I solved it by replacing the slash with a double backslash '\\':

`image.save(os.path.join(output_dir, filename.split('\\')[-1]))`
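A separator-agnostic alternative is sketched below, assuming the goal is only to extract the final path component. It uses the standard-library `ntpath.basename`, which treats both `\` and `/` as separators on any platform. The `output_path` helper and the sample paths are hypothetical, chosen for illustration.

```python
import ntpath
import os


def output_path(output_dir, filename):
    """Join output_dir with the last component of filename (hypothetical helper)."""
    # ntpath.basename splits on both backslashes and forward slashes.
    return os.path.join(output_dir, ntpath.basename(filename))


windows_name = ntpath.basename(r"data\SIGNS\train_signs\0_IMG_5864.jpg")
posix_name = ntpath.basename("data/SIGNS/train_signs/0_IMG_5864.jpg")
print(windows_name, posix_name)
print(output_path("out", r"data\SIGNS\train_signs\0_IMG_5864.jpg"))
```

One caveat: on POSIX systems a backslash is a legal character in a filename, so this approach would misread such names. For paths produced by the running platform itself, `os.path.basename` is usually enough.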

Thanks. | open | 2019-04-05T11:21:30Z | 2024-01-23T11:41:54Z | https://github.com/cs230-stanford/cs230-code-examples/issues/17 | [] | Amin-Tgz | 1 |

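zhiyiYo/Fluent-M3U8 | dash | 5 | Is there no integrity check of the file list after downloading? A brief network fluctuation leaves the download incomplete, and then merging fails | This happens especially when downloading videos from overseas sites: if the proxy is unstable and reconnects, the ts files that were being downloaded at the moment of disconnection keep their temp suffix. Merging fails once the download finishes, and the download folder still contains ts files with the temp suffix. Could the list of downloaded files be checked for completeness before merging, with the failed files downloaded again individually? | closed | 2025-02-16T14:24:25Z | 2025-02-17T16:21:46Z | https://github.com/zhiyiYo/Fluent-M3U8/issues/5 | [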

"enhancement"

] | cai1niao1 | 2 |

miguelgrinberg/microblog | flask | 62 | Problem with sending email | I have searched, compared line by line and can't for the life of me figure out what I have done wrong.

Seems the error originates in the email.py file.

```

powershell

127.0.0.1 - - [03/Jan/2018 18:57:27] "GET /reset_password_request HTTP/1.1" 200 -

[2018-01-03 18:57:32,933] ERROR in app: Exception on /reset_password_request [POST]

Traceback (most recent call last):

File "c:\users\calle\pycharmprojects\flask_megatutorial\venv\lib\site-packages\flask\app.py", line 1982, in wsgi_app

response = self.full_dispatch_request()

File "c:\users\calle\pycharmprojects\flask_megatutorial\venv\lib\site-packages\flask\app.py", line 1614, in full_dispatch_request

rv = self.handle_user_exception(e)

File "c:\users\calle\pycharmprojects\flask_megatutorial\venv\lib\site-packages\flask\app.py", line 1517, in handle_user_exception

reraise(exc_type, exc_value, tb)

File "c:\users\calle\pycharmprojects\flask_megatutorial\venv\lib\site-packages\flask\_compat.py", line 33, in reraise

raise value

File "c:\users\calle\pycharmprojects\flask_megatutorial\venv\lib\site-packages\flask\app.py", line 1612, in full_dispatch_request

rv = self.dispatch_request()

File "c:\users\calle\pycharmprojects\flask_megatutorial\venv\lib\site-packages\flask\app.py", line 1598, in dispatch_request

return self.view_functions[rule.endpoint](**req.view_args)

File "C:\Users\Calle\PycharmProjects\flask_megatutorial\app\routes.py", line 160, in reset_password_request

send_password_reset_email(user)

File "C:\Users\Calle\PycharmProjects\flask_megatutorial\app\email.py", line 14, in send_password_reset_email

sender=app.config['ADMINS'][0],

NameError: name 'app' is not defined

127.0.0.1 - - [03/Jan/2018 18:57:34] "POST /reset_password_request HTTP/1.1" 500 -

```

I also tried it in the Flask shell as described in **10.2 Flask-Mail Usage**:

```

powershell

(venv) PS C:\Users\Calle\PycharmProjects\flask_megatutorial> flask shell

Python 3.6.4 (v3.6.4:d48eceb, Dec 19 2017, 06:54:40) [MSC v.1900 64 bit (AMD64)] on win32

App: app

Instance: C:\Users\Calle\PycharmProjects\flask_megatutorial\instance

>>> from flask_mail import Message

>>> from app import mail

>>> msg = Message('test subject', sender=app.config['ADMINS'][0],

... recipients=['your-email@example.com'])

>>> msg.body = 'text body'

>>> msg.html = '<h1>HTML body</h1>'

>>> mail.send(msg)

```

Here is the code in Gist with the files that I suspect:

[https://gist.github.com/Callero/7b7edec02ed1e6be2644b0a3703a1630](https://gist.github.com/Callero/7b7edec02ed1e6be2644b0a3703a1630)

| closed | 2018-01-03T18:16:55Z | 2018-01-04T18:44:44Z | https://github.com/miguelgrinberg/microblog/issues/62 | [

"bug"

] | Callero | 2 |

flairNLP/flair | nlp | 3,428 | [Bug]: Error message: "learning rate too small - quitting training!" | ### Describe the bug

Model training quits after epoch 1 with a "learning rate too small - quitting training!" error message even though the "patience" parameter is set to 10.

### To Reproduce

```python

In Google Colab:

!pip install flair -qq

import os

from os import mkdir, listdir

from os.path import join, exists

import re

from torch.optim.adam import Adam

from flair.datasets import CSVClassificationCorpus

from flair.data import Corpus, Sentence

from flair.embeddings import TransformerDocumentEmbeddings

from flair.models import TextClassifier

from flair.trainers import ModelTrainer

for embedding in ["distilbert-base-uncased"]:

print("Training on", embedding)

# 1a. define the column format indicating which columns contain the text and labels

column_name_map = {1: "text", 2: "label"}

# 1b. load the preprocessed training, development, and test sets

corpus: Corpus = CSVClassificationCorpus(processed_dir,

column_name_map,

label_type="label",

skip_header=True,

delimiter='\t')

# 2. create the label dictionary

label_dict = corpus.make_label_dictionary(label_type="label")

# 3. initialize the transformer document embeddings

document_embeddings = TransformerDocumentEmbeddings(embedding,

fine_tune=True,

layers="all")

#document_embeddings.tokenizer.pad_token = document_embeddings.tokenizer.eos_token

# 4. create the text classifier

classifier = TextClassifier(document_embeddings,

label_dictionary=label_dict,

label_type="label")

# 5. initialize the trainer

trainer = ModelTrainer(classifier,

corpus)

# 6. start the training

trainer.train('model/'+embedding,

learning_rate=1e-5,

mini_batch_size=8,

max_epochs=3,

patience=10,

optimizer=Adam,

train_with_dev=False,

save_final_model=False

)

```

### Expected behavior

In this case, the model should be trained for 3 epochs without reducing the learning rate. In prior cases, even when a learning rate of 1e-5 was reduced by an anneal factor of 0.5, I did not receive a "learning rate too small - quitting training!" error message.

### Logs and Stack traces

```stacktrace

2024-03-18 14:11:51,783 ----------------------------------------------------------------------------------------------------

2024-03-18 14:11:51,786 Model: "TextClassifier(

(embeddings): TransformerDocumentEmbeddings(

(model): DistilBertModel(

(embeddings): Embeddings(

(word_embeddings): Embedding(30523, 768)

(position_embeddings): Embedding(512, 768)

(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)

(dropout): Dropout(p=0.1, inplace=False)

)

(transformer): Transformer(

(layer): ModuleList(

(0-5): 6 x TransformerBlock(

(attention): MultiHeadSelfAttention(

(dropout): Dropout(p=0.1, inplace=False)

(q_lin): Linear(in_features=768, out_features=768, bias=True)

(k_lin): Linear(in_features=768, out_features=768, bias=True)

(v_lin): Linear(in_features=768, out_features=768, bias=True)

(out_lin): Linear(in_features=768, out_features=768, bias=True)

)

(sa_layer_norm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)

(ffn): FFN(

(dropout): Dropout(p=0.1, inplace=False)

(lin1): Linear(in_features=768, out_features=3072, bias=True)

(lin2): Linear(in_features=3072, out_features=768, bias=True)

(activation): GELUActivation()

)

(output_layer_norm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)

)

)

)

)

)

(decoder): Linear(in_features=5376, out_features=2, bias=True)

(dropout): Dropout(p=0.0, inplace=False)

(locked_dropout): LockedDropout(p=0.0)

(word_dropout): WordDropout(p=0.0)

(loss_function): CrossEntropyLoss()

(weights): None

(weight_tensor) None

)"

2024-03-18 14:11:51,787 ----------------------------------------------------------------------------------------------------

2024-03-18 14:11:51,789 Corpus: 8800 train + 2200 dev + 2200 test sentences

2024-03-18 14:11:51,793 ----------------------------------------------------------------------------------------------------

2024-03-18 14:11:51,794 Train: 8800 sentences

2024-03-18 14:11:51,795 (train_with_dev=False, train_with_test=False)

2024-03-18 14:11:51,799 ----------------------------------------------------------------------------------------------------

2024-03-18 14:11:51,802 Training Params:

2024-03-18 14:11:51,804 - learning_rate: "1e-05"

2024-03-18 14:11:51,806 - mini_batch_size: "8"

2024-03-18 14:11:51,807 - max_epochs: "3"

2024-03-18 14:11:51,812 - shuffle: "True"

2024-03-18 14:11:51,813 ----------------------------------------------------------------------------------------------------

2024-03-18 14:11:51,814 Plugins:

2024-03-18 14:11:51,816 - AnnealOnPlateau | patience: '10', anneal_factor: '0.5', min_learning_rate: '0.0001'

2024-03-18 14:11:51,817 ----------------------------------------------------------------------------------------------------

2024-03-18 14:11:51,818 Final evaluation on model from best epoch (best-model.pt)

2024-03-18 14:11:51,820 - metric: "('micro avg', 'f1-score')"

2024-03-18 14:11:51,821 ----------------------------------------------------------------------------------------------------

2024-03-18 14:11:51,823 Computation:

2024-03-18 14:11:51,825 - compute on device: cuda:0

2024-03-18 14:11:51,835 - embedding storage: cpu

2024-03-18 14:11:51,836 ----------------------------------------------------------------------------------------------------

2024-03-18 14:11:51,837 Model training base path: "model/distilbert-base-uncased"

2024-03-18 14:11:51,840 ----------------------------------------------------------------------------------------------------

2024-03-18 14:11:51,846 ----------------------------------------------------------------------------------------------------

2024-03-18 14:11:55,845 epoch 1 - iter 110/1100 - loss 0.57600509 - time (sec): 4.00 - samples/sec: 220.19 - lr: 0.000010 - momentum: 0.000000

2024-03-18 14:11:58,978 epoch 1 - iter 220/1100 - loss 0.50393908 - time (sec): 7.13 - samples/sec: 246.84 - lr: 0.000010 - momentum: 0.000000

2024-03-18 14:12:01,876 epoch 1 - iter 330/1100 - loss 0.46954644 - time (sec): 10.03 - samples/sec: 263.27 - lr: 0.000010 - momentum: 0.000000

2024-03-18 14:12:05,276 epoch 1 - iter 440/1100 - loss 0.44181235 - time (sec): 13.43 - samples/sec: 262.14 - lr: 0.000010 - momentum: 0.000000

2024-03-18 14:12:08,456 epoch 1 - iter 550/1100 - loss 0.41807515 - time (sec): 16.61 - samples/sec: 264.93 - lr: 0.000010 - momentum: 0.000000

2024-03-18 14:12:11,447 epoch 1 - iter 660/1100 - loss 0.40403758 - time (sec): 19.60 - samples/sec: 269.41 - lr: 0.000010 - momentum: 0.000000

2024-03-18 14:12:14,420 epoch 1 - iter 770/1100 - loss 0.38948912 - time (sec): 22.57 - samples/sec: 272.91 - lr: 0.000010 - momentum: 0.000000

2024-03-18 14:12:17,914 epoch 1 - iter 880/1100 - loss 0.38118810 - time (sec): 26.07 - samples/sec: 270.09 - lr: 0.000010 - momentum: 0.000000

2024-03-18 14:12:21,085 epoch 1 - iter 990/1100 - loss 0.37110791 - time (sec): 29.24 - samples/sec: 270.89 - lr: 0.000010 - momentum: 0.000000

2024-03-18 14:12:24,027 epoch 1 - iter 1100/1100 - loss 0.36139164 - time (sec): 32.18 - samples/sec: 273.47 - lr: 0.000010 - momentum: 0.000000

2024-03-18 14:12:24,030 ----------------------------------------------------------------------------------------------------

2024-03-18 14:12:24,032 EPOCH 1 done: loss 0.3614 - lr: 0.000010

2024-03-18 14:12:28,158 DEV : loss 0.28874295949935913 - f1-score (micro avg) 0.9095

2024-03-18 14:12:29,719 - 0 epochs without improvement

2024-03-18 14:12:29,721 ----------------------------------------------------------------------------------------------------

2024-03-18 14:12:29,723 learning rate too small - quitting training!

2024-03-18 14:12:29,725 ----------------------------------------------------------------------------------------------------

2024-03-18 14:12:29,727 Done.

2024-03-18 14:12:29,729 ----------------------------------------------------------------------------------------------------

2024-03-18 14:12:29,733 Testing using last state of model ...

2024-03-18 14:12:33,651

Results:

- F-score (micro) 0.9132

- F-score (macro) 0.9029

- Accuracy 0.9132

By class:

precision recall f1-score support

0 0.9184 0.9511 0.9345 1432

1 0.9024 0.8424 0.8714 768

accuracy 0.9132 2200

macro avg 0.9104 0.8968 0.9029 2200

weighted avg 0.9128 0.9132 0.9125 2200

2024-03-18 14:12:33,653 ----------------------------------------------------------------------------------------------------

```
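A possible reading of the log above, offered as an assumption rather than a confirmed diagnosis: the `AnnealOnPlateau` line reports `min_learning_rate: '0.0001'`, while the configured `learning_rate` is `1e-05`, so the starting rate is already below the floor. The sketch below only illustrates that comparison; `stops_immediately` is a hypothetical helper and is not part of Flair.

```python
def stops_immediately(learning_rate: float, min_learning_rate: float) -> bool:
    """Hypothetical check: True if the rate is already below the floor."""
    return learning_rate < min_learning_rate


# Values taken from the log in this report.
print(stops_immediately(1e-5, 1e-4))

# A lower floor (illustrative value only) would not trigger the stop.
print(stops_immediately(1e-5, 1e-7))
```

If `trainer.train` accepts a `min_learning_rate` argument (an assumption based on the plugin parameters printed in the log), passing a value below `1e-5` may be worth trying.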

### Screenshots

_No response_

### Additional Context

_No response_

### Environment

#### Versions:

##### Flair

0.13.1

##### Pytorch

2.2.1+cu121

##### Transformers

4.38.2

#### GPU

True | closed | 2024-03-18T14:58:03Z | 2024-03-18T16:14:55Z | https://github.com/flairNLP/flair/issues/3428 | [

"bug"

] | azkgit | 1 |

lukas-blecher/LaTeX-OCR | pytorch | 319 | Training isn't working properly | I tried to train a custom model. This model's intention was to detect matrices, so I created a dataset, tokenizer, and config.yaml file.

However, it doesn't appear to actually be training. This is the output from the following command:

```

!python -m pix2tex.train --config colab.yaml

```

Output:

```

wandb: (1) Create a W&B account

wandb: (2) Use an existing W&B account

wandb: (3) Don't visualize my results

wandb: Enter your choice: 2

wandb: You chose 'Use an existing W&B account'

wandb: Logging into wandb.ai. (Learn how to deploy a W&B server locally: https://wandb.me/wandb-server)

wandb: You can find your API key in your browser here: https://wandb.ai/authorize

wandb: Paste an API key from your profile and hit enter, or press ctrl+c to quit:

wandb: Appending key for api.wandb.ai to your netrc file: /root/.netrc

wandb: Tracking run with wandb version 0.15.10

wandb: Run data is saved locally in /content/wandb/run-20230921_163333-mj2ft4r2

wandb: Run `wandb offline` to turn off syncing.

wandb: Syncing run mixed

wandb: ⭐️ View project at https://wandb.ai/frankvp_11/uncategorized

wandb: 🚀 View run at https://wandb.ai/frankvp_11/uncategorized/runs/mj2ft4r2

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

0it [00:00, ?it/s]

wandb: Waiting for W&B process to finish... (success).

wandb:

wandb: Run history:

wandb: train/epoch ▁▁▁▁▂▂▂▂▂▃▃▃▃▃▃▄▄▄▄▄▅▅▅▅▅▅▆▆▆▆▆▆▇▇▇▇▇███

wandb:

wandb: Run summary:

wandb: train/epoch 50

wandb:

wandb: 🚀 View run mixed at: https://wandb.ai/frankvp_11/uncategorized/runs/mj2ft4r2

wandb: Synced 5 W&B file(s), 0 media file(s), 0 artifact file(s) and 0 other file(s)

wandb: Find logs at: ./wandb/run-20230921_163333-mj2ft4r2/logs

```

Can someone help me debug what went wrong? Here's the link to the colab file that I am using. To get to this point (dataset creation + training) takes ~10 minutes

https://colab.research.google.com/drive/19aGMcvZVDhjJndIIdcaWHiz0IKRk1vxE?usp=sharing

| open | 2023-09-21T16:36:56Z | 2023-09-21T16:37:19Z | https://github.com/lukas-blecher/LaTeX-OCR/issues/319 | [] | frankvp11 | 0 |

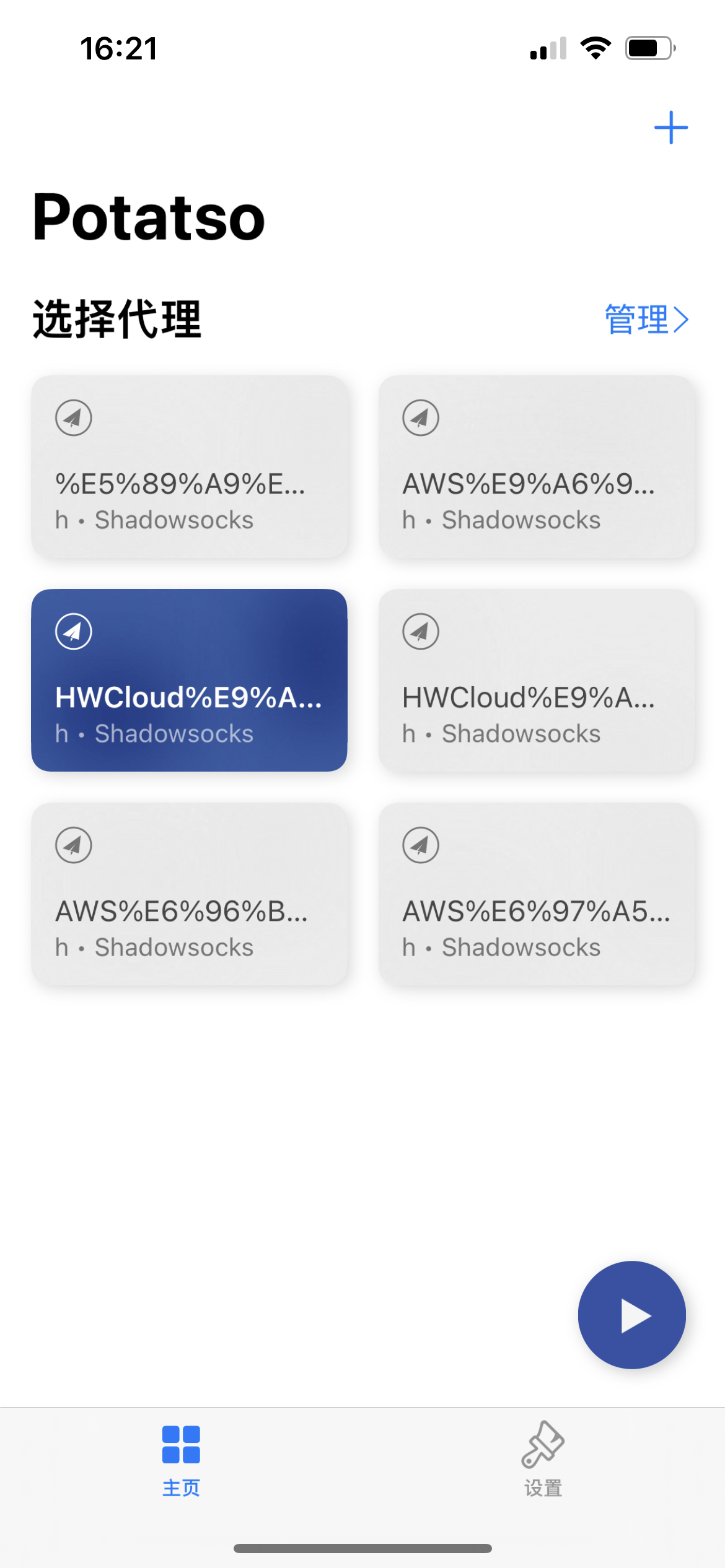

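Ehco1996/django-sspanel | django | 649 | Chinese node names are garbled | **Problem description**

After adding the SS subscription in Potatso Lite, the Chinese text is garbled.

**Related screenshots/log**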

| closed | 2022-03-15T08:20:21Z | 2022-03-19T09:24:13Z | https://github.com/Ehco1996/django-sspanel/issues/649 | [

"bug"

] | dymasch | 2 |

tensorflow/tensor2tensor | machine-learning | 1,631 | Most straight forward way to train summarization on new data with simpler format than CNN/DM datasets? Make a new data_generator ? | ### Description

I would like to train a summarizer on my own data, and I am wondering what's the most straightforward way to do this. The CNN/DailyMail datasets have a bit of an odd format which seems tricky to convert regular summarization datasets (CSVs with 1 column for source, 1 column for summary) into.

So from my analysis of the code, the easiest way for Tensor2Tensor to accept new summarization datasets is to develop a new data_generator that can train on any data formatted as CSV, with one column being the source and the other column being the summary.

My plan is to use the data_generators/cnn_dailymail.py code as the base with the following alterations:

First, replace the CNN/DailyMail google drive links with my own, here

https://github.com/tensorflow/tensor2tensor/blob/master/tensor2tensor/data_generators/cnn_dailymail.py#L37

Then, I need to alter `def example_generator` in https://github.com/tensorflow/tensor2tensor/blob/master/tensor2tensor/data_generators/cnn_dailymail.py#L137

In such a way that it'll take my custom data and put the source and summary on one line, separated by story_summary_split_token (unless there's no sum_token).

Is this it? Or is there anything else I need to take into consideration?
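As a rough illustration of the CSV route described above, here is a minimal sketch that reads a two-column CSV and yields one dict per row. It assumes the problem is set up as a text-to-text problem whose sample generator yields `"inputs"` and `"targets"` keys; `csv_example_generator` and the column names `source` and `summary` are hypothetical and not taken from `cnn_dailymail.py`.

```python
import csv
import io


def csv_example_generator(csv_file, source_col="source", summary_col="summary"):
    """Yield one {"inputs", "targets"} dict per CSV row.

    The column names are hypothetical; adjust them to your header.
    """
    reader = csv.DictReader(csv_file)
    for row in reader:
        source = row[source_col].strip()
        summary = row[summary_col].strip()
        if not source or not summary:
            continue  # skip incomplete rows
        yield {"inputs": source, "targets": summary}


# Small in-memory CSV standing in for a real dataset file.
sample = io.StringIO(
    "source,summary\n"
    '"A long article, with a comma.",Short summary.\n'
    "Another article.,Another summary.\n"
)
examples = list(csv_example_generator(sample))
```

Using `csv.DictReader` rather than splitting on commas keeps quoted fields that contain commas intact, which is common in article text.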

| closed | 2019-07-12T22:51:02Z | 2021-03-03T13:07:17Z | https://github.com/tensorflow/tensor2tensor/issues/1631 | [] | Santosh-Gupta | 2 |

LibreTranslate/LibreTranslate | api | 679 | Basque translation project needs update in Weblate | Compared with the English string count, [161](https://hosted.weblate.org/projects/libretranslate/app/en/), fewer strings are available in the Basque project: [143](https://hosted.weblate.org/projects/libretranslate/app/eu/)

For example, "Albanian", "Chinese (traditional)", "Kabyle" and some others are missing.

I guess the "Basque" string also has to be added :smile:

Thank you!

| closed | 2024-09-20T23:31:15Z | 2024-09-21T16:41:37Z | https://github.com/LibreTranslate/LibreTranslate/issues/679 | [

"enhancement"

] | urtzai | 1 |

xlwings/xlwings | automation | 1,724 | while accessing worksheet.range com_error: (-2147352573, 'Member not found.', None, None) | #### OS Windows 7 professional

#### Versions of xlwings 0.24.9, Excel 2010 and Python 3.8.10

#### Describe your issue (incl. Traceback!)

The code worked fine yesterday, but today it is not working.

```python

# Your traceback here

Traceback (most recent call last):

File "C:\Users\ssp\SpyderPythonProjects\SSTrades\SSTradesAlgoZero\library\NSEtickerinExcel.py", line 108, in <module>

print(dak.range("B2").value)

File "C:\Users\ssp\AppData\Local\Programs\Python\Python38\Lib\site-packages\xlwings\main.py", line 1106, in range

return Range(impl=self.impl.range(cell1, cell2))

File "C:\Users\ssp\AppData\Local\Programs\Python\Python38\Lib\site-packages\xlwings\_xlwindows.py", line 689, in range

xl1 = self.xl.Range(arg1)

File "C:\Users\ssp\AppData\Local\Programs\Python\Python38\Lib\site-packages\xlwings\_xlwindows.py", line 70, in __call__

v = self.__method(*args, **kwargs)

File "<COMObject <unknown>>", line 2, in Range

com_error: (-2147352573, 'Member not found.', None, None)

```

#### Include a minimal code sample to reproduce the issue (and attach a sample workbook if required!)

```python

import os

import xlwings as xw

pathhcurr = os.getcwd()

savepath = pathhcurr.replace("library","reference files")

xlfilepath = str(savepath) + str("\\NSE_analysis_list.xlsx")

wb = xw.Book(str(xlfilepath))

dak = wb.sheets("DataKeys")

dak.active = True

print(dak.name)

dak.range("a:b").value = None

```

| closed | 2021-10-01T00:58:54Z | 2022-02-05T20:09:49Z | https://github.com/xlwings/xlwings/issues/1724 | [] | ssprakash-seeni | 5 |

polakowo/vectorbt | data-visualization | 493 | Pulling fundamental data | Thank you to the vectorbt team for all their hard work with this great library!

I was wondering if it were possible to pull more fundamental-style data into vectorbt? I'm interested in things like total current assets, long term investments, total current liabilities, etc.? I'm not sure if there is a particular data broker that vectorbt utilizes or something else. Thanks in advance for your help! | closed | 2022-09-06T15:44:44Z | 2022-09-20T01:14:25Z | https://github.com/polakowo/vectorbt/issues/493 | [] | aclifton314 | 1 |

drivendataorg/cookiecutter-data-science | data-science | 8 | Add option to choose different data storage back ends | - S3 (get AWS settings)

- Git Large File Storage

- Git Annex

- dat

| closed | 2016-04-23T17:56:20Z | 2023-08-30T21:26:21Z | https://github.com/drivendataorg/cookiecutter-data-science/issues/8 | [] | pjbull | 2 |

huggingface/transformers | pytorch | 36,571 | In the latest version of transformers (4.49.0) matrix transformation error is encountered | ### System Info

transformer Version : 4.49.0

python version: python3.10

env : HuggingFace spaces

Looks to be working in : 4.48.3

Please find the following HuggingFace Space code which works in (4.48.3) but fails in (4.49.0)

Code :

```python
import os
import random
import uuid
import gradio as gr
import numpy as np
from PIL import Image
import spaces
import torch
from diffusers import StableDiffusionXLPipeline, EulerAncestralDiscreteScheduler
from typing import Tuple

css = '''
.gradio-container{max-width: 575px !important}
h1{text-align:center}
footer {
    visibility: hidden
}
'''

DESCRIPTIONXX = """## lStation txt2Img🥠"""

examples = [
    "A tiny reptile hatching from an egg on the mars, 4k, planet theme, --style raw5 --v 6.0",
    "An anime-style illustration of a delicious, rice biryani with curry and chilli pickle --style raw5",
    "Iced tea in a cup --ar 85:128 --v 6.0 --style raw5, 4K, Photo-Realistic",
    "A zebra holding a sign that says Welcome to Zoo --ar 85:128 --v 6.0 --style raw",
    "A splash page of Spiderman swinging through a futuristic cityscape filled with flying cars, the scene depicted in a vibrant 3D rendered Marvel comic art style.--style raw5, 4K, Photo-Realistic"
]

MODEL_OPTIONS = {
    "LIGHTNING V5.0": "SG161222/RealVisXL_V5.0_Lightning",
    "LIGHTNING V4.0": "SG161222/RealVisXL_V4.0_Lightning",
}

MAX_IMAGE_SIZE = int(os.getenv("MAX_IMAGE_SIZE", "4096"))
USE_TORCH_COMPILE = os.getenv("USE_TORCH_COMPILE", "0") == "1"
ENABLE_CPU_OFFLOAD = os.getenv("ENABLE_CPU_OFFLOAD", "0") == "1"
BATCH_SIZE = int(os.getenv("BATCH_SIZE", "1"))

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

style_list = [
    {
        "name": "3840 x 2160",
        "prompt": "hyper-realistic 8K image of {prompt}. ultra-detailed, lifelike, high-resolution, sharp, vibrant colors, photorealistic",
        "negative_prompt": "cartoonish, low resolution, blurry, simplistic, abstract, deformed, ugly",
    },
    {
        "name": "2560 x 1440",
        "prompt": "hyper-realistic 4K image of {prompt}. ultra-detailed, lifelike, high-resolution, sharp, vibrant colors, photorealistic",
        "negative_prompt": "cartoonish, low resolution, blurry, simplistic, abstract, deformed, ugly",
    },
    {
        "name": "HD+",
        "prompt": "hyper-realistic 2K image of {prompt}. ultra-detailed, lifelike, high-resolution, sharp, vibrant colors, photorealistic",
        "negative_prompt": "cartoonish, low resolution, blurry, simplistic, abstract, deformed, ugly",
    },
    {
        "name": "Style Zero",
        "prompt": "{prompt}",
        "negative_prompt": "",
    },
]

styles = {k["name"]: (k["prompt"], k["negative_prompt"]) for k in style_list}
DEFAULT_STYLE_NAME = "3840 x 2160"
STYLE_NAMES = list(styles.keys())

def apply_style(style_name: str, positive: str, negative: str = "") -> Tuple[str, str]:
    if style_name in styles:
        p, n = styles.get(style_name, styles[DEFAULT_STYLE_NAME])
    else:
        p, n = styles[DEFAULT_STYLE_NAME]

    if not negative:
        negative = ""
    return p.replace("{prompt}", positive), n + negative

def load_and_prepare_model(model_id):
    pipe = StableDiffusionXLPipeline.from_pretrained(
        model_id,
        torch_dtype=torch.float16 if torch.cuda.is_available() else torch.float32,
        use_safetensors=True,
        add_watermarker=False,
    ).to(device)
    pipe.scheduler = EulerAncestralDiscreteScheduler.from_config(pipe.scheduler.config)

    if USE_TORCH_COMPILE:
        pipe.compile()

    if ENABLE_CPU_OFFLOAD:
        pipe.enable_model_cpu_offload()

    return pipe

# Preload and compile both models
models = {key: load_and_prepare_model(value) for key, value in MODEL_OPTIONS.items()}

MAX_SEED = np.iinfo(np.int32).max

def save_image(img):
    unique_name = str(uuid.uuid4()) + ".png"
    img.save(unique_name)
    return unique_name

def randomize_seed_fn(seed: int, randomize_seed: bool) -> int:
    if randomize_seed:
        seed = random.randint(0, MAX_SEED)
    return seed

@spaces.GPU(duration=60, enable_queue=True)
def generate(
    model_choice: str,
    prompt: str,
    negative_prompt: str = "extra limbs, extra fingers, extra toes, unnatural proportions, distorted anatomy, disjointed limbs, mutated body parts, broken bones, oversized limbs, unrealistic muscles, merged faces, extra eyes, floating features, disfigured hands, incorrect joint placement, missing parts, blurry details, asymmetrical body structure, glitched textures",
    use_negative_prompt: bool = False,
    style_selection: str = DEFAULT_STYLE_NAME,
    seed: int = 1,
    width: int = 1024,
    height: int = 1024,
    guidance_scale: float = 3,
    num_inference_steps: int = 25,
    randomize_seed: bool = False,
    use_resolution_binning: bool = True,
    num_images: int = 1,
    progress=gr.Progress(track_tqdm=True),
):
    global models
    pipe = models[model_choice]

    seed = int(randomize_seed_fn(seed, randomize_seed))
    generator = torch.Generator(device=device).manual_seed(seed)

    prompt, negative_prompt = apply_style(style_selection, prompt, negative_prompt)

    options = {
        "prompt": [prompt] * num_images,
        "negative_prompt": [negative_prompt] * num_images if use_negative_prompt else None,
        "width": width,
        "height": height,
        "guidance_scale": guidance_scale,
        "num_inference_steps": num_inference_steps,
        "generator": generator,
        "output_type": "pil",
    }

    if use_resolution_binning:
        options["use_resolution_binning"] = True

    images = []
    for i in range(0, num_images, BATCH_SIZE):
        batch_options = options.copy()
        batch_options["prompt"] = options["prompt"][i:i + BATCH_SIZE]
        if "negative_prompt" in batch_options:
            batch_options["negative_prompt"] = options["negative_prompt"][i:i + BATCH_SIZE]
        images.extend(pipe(**batch_options).images)

    image_paths = [save_image(img) for img in images]

    return image_paths, seed

with gr.Blocks(css=css, theme="bethecloud/storj_theme") as demo:
    gr.Markdown(DESCRIPTIONXX)
    with gr.Row():
        prompt = gr.Text(
            label="Prompt",
            show_label=False,
            max_lines=1,
            placeholder="Enter your prompt",
            container=False,
        )
        run_button = gr.Button("Run", scale=0)
    result = gr.Gallery(label="Result", columns=1, show_label=False)

    with gr.Row():
        model_choice = gr.Dropdown(
            label="Model Selection⬇️",
            choices=list(MODEL_OPTIONS.keys()),
            value="LIGHTNING V5.0"
        )

    with gr.Accordion("Advanced options", open=False, visible=True):
        style_selection = gr.Radio(
            show_label=True,
            container=True,
            interactive=True,
            choices=STYLE_NAMES,
            value=DEFAULT_STYLE_NAME,
            label="Quality Style",
        )
        num_images = gr.Slider(
            label="Number of Images",
            minimum=1,
            maximum=5,
            step=1,
            value=1,
        )
        with gr.Row():
            with gr.Column(scale=1):
                use_negative_prompt = gr.Checkbox(label="Use negative prompt", value=True)
                negative_prompt = gr.Text(
                    label="Negative prompt",
                    max_lines=5,
                    lines=4,
                    placeholder="Enter a negative prompt",
                    value="(deformed, distorted, disfigured:1.3), poorly drawn, bad anatomy, wrong anatomy, extra limb, missing limb, floating limbs, (mutated hands and fingers:1.4), disconnected limbs, mutation, mutated, ugly, disgusting, blurry, amputation",
                    visible=True,
                )
        seed = gr.Slider(
            label="Seed",
            minimum=0,
            maximum=MAX_SEED,
            step=1,
            value=0,
        )
        randomize_seed = gr.Checkbox(label="Randomize seed", value=True)
        with gr.Row():
            width = gr.Slider(
                label="Width",
                minimum=512,
                maximum=MAX_IMAGE_SIZE,
                step=8,
                value=1024,
            )
            height = gr.Slider(
                label="Height",
                minimum=512,
                maximum=MAX_IMAGE_SIZE,
                step=8,
                value=1024,
            )
        with gr.Row():
            guidance_scale = gr.Slider(
                label="Guidance Scale",
                minimum=0.1,
                maximum=6,
                step=0.1,
                value=3.0,
            )
            num_inference_steps = gr.Slider(
                label="Number of inference steps",
                minimum=1,
                maximum=60,
                step=1,
                value=28,
            )
    gr.Examples(
        examples=examples,
        inputs=prompt,
        cache_examples=False
    )

    use_negative_prompt.change(
        fn=lambda x: gr.update(visible=x),
        inputs=use_negative_prompt,
        outputs=negative_prompt,
        api_name=False,
    )

    gr.on(
        triggers=[
            prompt.submit,
            negative_prompt.submit,
            run_button.click,
        ],
        fn=generate,
        inputs=[
            model_choice,
            prompt,
            negative_prompt,
            use_negative_prompt,
            style_selection,
            seed,
            width,
            height,
            guidance_scale,
            num_inference_steps,
            randomize_seed,
            num_images,
        ],
        outputs=[result, seed]
    )

if __name__ == "__main__":
    demo.queue(max_size=50).launch(show_api=True)
```

Exception:

File "/usr/local/lib/python3.10/site-packages/spaces/zero/wrappers.py", line 256, in thread_wrapper

res = future.result()

File "/usr/local/lib/python3.10/concurrent/futures/_base.py", line 451, in result

return self.__get_result()

File "/usr/local/lib/python3.10/concurrent/futures/_base.py", line 403, in __get_result

raise self._exception

File "/usr/local/lib/python3.10/concurrent/futures/thread.py", line 58, in run

result = self.fn(*self.args, **self.kwargs)

File "/home/user/app/app.py", line 158, in generate

images.extend(pipe(**batch_options).images)

File "/usr/local/lib/python3.10/site-packages/torch/utils/_contextlib.py", line 116, in decorate_context

return func(*args, **kwargs)

File "/usr/local/lib/python3.10/site-packages/diffusers/pipelines/stable_diffusion_xl/pipeline_stable_diffusion_xl.py", line 1086, in __call__

) = self.encode_prompt(

File "/usr/local/lib/python3.10/site-packages/diffusers/pipelines/stable_diffusion_xl/pipeline_stable_diffusion_xl.py", line 406, in encode_prompt

prompt_embeds = text_encoder(text_input_ids.to(device), output_hidden_states=True)

File "/usr/local/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1739, in _wrapped_call_impl

return self._call_impl(*args, **kwargs)

File "/usr/local/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1750, in _call_impl

return forward_call(*args, **kwargs)

File "/usr/local/lib/python3.10/site-packages/transformers/models/clip/modeling_clip.py", line 1490, in forward

text_embeds = self.text_projection(pooled_output)

File "/usr/local/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1739, in _wrapped_call_impl

return self._call_impl(*args, **kwargs)

File "/usr/local/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1750, in _call_impl

return forward_call(*args, **kwargs)

File "/usr/local/lib/python3.10/site-packages/torch/nn/modules/linear.py", line 125, in forward

return F.linear(input, self.weight, self.bias)

**RuntimeError: expected mat1 and mat2 to have the same dtype, but got: float != c10::Half**

### Who can help?

_No response_

### Information

- [x] The official example scripts

- [ ] My own modified scripts

### Tasks

- [x] An officially supported task in the `examples` folder (such as GLUE/SQuAD, ...)

- [ ] My own task or dataset (give details below)

### Reproduction

Just try to execute the above space code in a GPU enabled system.

While generating any image it fails with the exception in the description posted above.

**RuntimeError: expected mat1 and mat2 to have the same dtype, but got: float != c10::Half**

### Expected behavior

There should not be any exception. | open | 2025-03-06T05:33:31Z | 2025-03-07T05:39:13Z | https://github.com/huggingface/transformers/issues/36571 | [

"bug"

] | idebroy | 3 |

ymcui/Chinese-LLaMA-Alpaca | nlp | 558 | NotImplementedError when merging LoRA | chinese-alpaca-plus-lora-13b

chinese-llama-plus-lora-13b

chinese-llama-plus-lora-7b

A NotImplementedError is raised when merging a single LoRA weight.

(fastchat) root@estar-ESC8000-G4:~# pip list \| grep*

Package Version

------------------- ------------

accelerate 0.19.0

aiofiles 23.1.0

aiohttp 3.8.4

aiosignal 1.3.1

altair 5.0.1

anyio 3.6.2

appdirs 1.4.4

async-timeout 4.0.2

attrs 23.1.0

certifi 2023.5.7

charset-normalizer 3.1.0

click 8.1.3

contourpy 1.0.7

cycler 0.11.0

docker-pycreds 0.4.0

fastapi 0.95.1

ffmpy 0.3.0

filelock 3.12.0

fonttools 4.39.4

frozenlist 1.3.3

fschat 0.2.9

fsspec 2023.5.0

gitdb 4.0.10

GitPython 3.1.31

gradio 3.23.0

h11 0.14.0

httpcore 0.17.0

httpx 0.24.0

huggingface-hub 0.14.1

idna 3.4

importlib-resources 5.12.0

Jinja2 3.1.2

jsonschema 4.17.3

kiwisolver 1.4.4

linkify-it-py 2.0.2

markdown-it-py 2.2.0

markdown2 2.4.8

MarkupSafe 2.1.2

matplotlib 3.7.1

mdit-py-plugins 0.3.3

mdurl 0.1.2

multidict 6.0.4

nh3 0.2.11

numpy 1.24.3

orjson 3.8.12

packaging 23.1

pandas 2.0.1

pathtools 0.1.2

peft 0.3.0

Pillow 9.5.0

pip 23.1.2

prompt-toolkit 3.0.38

protobuf 3.19.0

psutil 5.9.5

pydantic 1.10.7

pydub 0.25.1

Pygments 2.15.1

pyparsing 3.0.9

pyrsistent 0.19.3

python-dateutil 2.8.2

python-multipart 0.0.6

pytz 2023.3

PyYAML 6.0

regex 2023.5.5

requests 2.30.0

rich 13.3.5

semantic-version 2.10.0

sentencepiece 0.1.97

sentry-sdk 1.23.1

setproctitle 1.3.2

setuptools 67.7.2

shortuuid 1.0.11

six 1.16.0

smmap 5.0.0

sniffio 1.3.0

starlette 0.26.1

svgwrite 1.4.3

tokenizers 0.13.3

toolz 0.12.0

torch 1.13.1+cu117

torchaudio 0.13.1+cu117

torchvision 0.14.1+cu117

tqdm 4.65.0

transformers 4.28.1

typing_extensions 4.5.0

tzdata 2023.3

uc-micro-py 1.0.2

urllib3 1.26.15

uvicorn 0.22.0

wandb 0.15.3

wavedrom 2.0.3.post3

wcwidth 0.2.6

websockets 11.0.3

wheel 0.40.0

yarl 1.9.2

zipp 3.15.0

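*Please provide text logs and screenshots*

- [x] **Base model**: LLaMA-Plus 13B/33B

- [x] **Operating system**: Linux

- [x] **Issue category**: model conversion and merging

- [x] **Model integrity check**: be sure to check the model against [SHA256.md](https://github.com/ymcui/Chinese-LLaMA-Alpaca/blob/main/SHA256.md); if the model files are incorrect, quality and normal operation cannot be guaranteed.

- [x] (Required) Because the related dependencies are updated frequently, make sure you follow the relevant steps in the [Wiki](https://github.com/ymcui/Chinese-LLaMA-Alpaca/wiki)

- [x] (Required) I have read the [FAQ section](https://github.com/ymcui/Chinese-LLaMA-Alpaca/wiki/常见问题) and searched the issues, and found no similar problem or solution

- [x] (Required) Third-party plugin issues: for example [llama.cpp](https://github.com/ggerganov/llama.cpp), [text-generation-webui](https://github.com/oobabooga/text-generation-webui), [LlamaChat](https://github.com/alexrozanski/LlamaChat), etc.; it is also recommended to look for solutions in the corresponding project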

| closed | 2023-06-10T12:29:52Z | 2023-06-12T00:16:39Z | https://github.com/ymcui/Chinese-LLaMA-Alpaca/issues/558 | [] | wuxiulike | 5 |

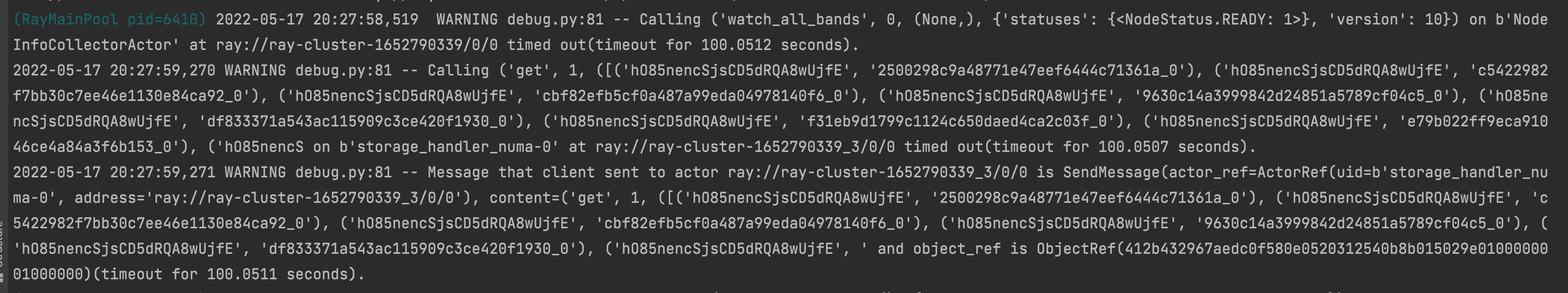

PrefectHQ/prefect | automation | 17,017 | Validation error when using anonymous volumes | ### Bug summary

It looks like Prefect's validation doesn't allow anonymous volumes. This is my volume configuration:

<img width="548" alt="Image" src="https://github.com/user-attachments/assets/8f91b48e-a923-4745-8a32-be386c68368f" />

That throws the following Validation error:

```

19:30:34.753 | ERROR | prefect.flow_runs.worker - Failed to submit flow run 'e8d1029c-8368-4063-a208-8bf8305b7c6e' to infrastructure.

Traceback (most recent call last):

File "/Users/anzepecar/app/.venv/lib/python3.12/site-packages/prefect/workers/base.py", line 1007, in _submit_run_and_capture_errors

configuration = await self._get_configuration(flow_run)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/anzepecar/app/.venv/lib/python3.12/site-packages/prefect/workers/base.py", line 1105, in _get_configuration

configuration = await self.job_configuration.from_template_and_values(

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/anzepecar/app/.venv/lib/python3.12/site-packages/prefect/client/utilities.py", line 99, in with_injected_client

return await fn(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/anzepecar/app/.venv/lib/python3.12/site-packages/prefect/workers/base.py", line 188, in from_template_and_values

return cls(**populated_configuration)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/anzepecar/app/.venv/lib/python3.12/site-packages/pydantic/main.py", line 214, in __init__

validated_self = self.__pydantic_validator__.validate_python(data, self_instance=self)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

pydantic_core._pydantic_core.ValidationError: 1 validation error for DockerWorkerJobConfiguration

volumes.1

Value error, Invalid volume string: '/opt/watchpointlabs/.venv' [type=value_error, input_value='/opt/watchpointlabs/.venv', input_type=str]

For further information visit https://errors.pydantic.dev/2.10/v/value_error

19:30:34.769 | INFO | prefect.flow_runs.worker - Reported flow run 'e8d1029c-8368-4063-a208-8bf8305b7c6e' as crashed: Flow run could not be submitted to infrastructure:

1 validation error for DockerWorkerJobConfiguration

volumes.1

```

Is there a reason for not allowing anonymous volumes? They can be very useful for development purposes, as also mentioned in the [uv docs](https://docs.astral.sh/uv/guides/integration/docker/#mounting-the-project-with-docker-run).

I'm happy to open a PR that fixes this, let me know!
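To make the request concrete, here is a minimal sketch of a volume-string parser that accepts the anonymous form (a bare container path) alongside `source:target` and `source:target:mode`. `parse_volume` is a hypothetical helper written for illustration; it is not Prefect's validator, and it ignores cases such as Windows drive letters.

```python
def parse_volume(volume: str) -> dict:
    """Split a Docker-style volume string into source, target and mode.

    Hypothetical helper for illustration; not Prefect's validator.
    """
    parts = volume.split(":")
    if not all(parts) or len(parts) > 3:
        raise ValueError(f"Invalid volume string: {volume!r}")
    if len(parts) == 1:
        # Anonymous volume: only an absolute container path is given.
        if not parts[0].startswith("/"):
            raise ValueError(f"Invalid volume string: {volume!r}")
        return {"source": None, "target": parts[0], "mode": None}
    source, target, *rest = parts
    mode = rest[0] if rest else None
    return {"source": source, "target": target, "mode": mode}


anonymous = parse_volume("/opt/watchpointlabs/.venv")
bind_mount = parse_volume("/host/app:/opt/app:ro")
```

The only change relative to a strict `source:target` check is the single-part branch, which treats an absolute path with no colon as an anonymous volume.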

### Version info

```Text

Version: 3.1.15

API version: 0.8.4

Python version: 3.12.6

Git commit: 3ac3d548

Built: Thu, Jan 30, 2025 11:31 AM

OS/Arch: darwin/arm64

Profile: local

Server type: server

Pydantic version: 2.10.6

Integrations:

prefect-docker: 0.6.2

```

### Additional context

_No response_ | closed | 2025-02-06T19:46:55Z | 2025-02-07T01:07:56Z | https://github.com/PrefectHQ/prefect/issues/17017 | [

"bug"

] | anze3db | 2 |

Lightning-AI/pytorch-lightning | pytorch | 20,249 | Shuffle order is the same across runs when using strategy='ddp' | ### Bug description

The batches and their order are identical across separate executions of the script when using strategy='ddp' and a dataloader with shuffle=True.

### What version are you seeing the problem on?

v2.2

### How to reproduce the bug

Say you have train.py that prints the current input on each training iteration and has shuffling enabled in the dataloader:

```python

import torch
from torch.utils.data import TensorDataset, DataLoader
import torch.nn.functional as F
import lightning.pytorch as pl


class SomeLightningModule(pl.LightningModule):
    def __init__(self):
        super().__init__()
        self.p1 = torch.nn.Parameter(torch.tensor(0.0))
        self.p2 = torch.nn.Parameter(torch.tensor(0.0))

    def training_step(self, batch):
        x, y = batch
        print(x.item())
        return F.mse_loss(x * self.p1 + self.p2, y)

    def configure_optimizers(self):
        optimizer = torch.optim.Adam(
            self.parameters(),
        )
        return {
            "optimizer": optimizer,
        }


lightning_module = SomeLightningModule()
trainer = pl.Trainer(
    strategy='ddp',
    max_epochs=1,
)
train_dataset = TensorDataset(torch.arange(5).float(), torch.arange(5).float())
train_loader = DataLoader(train_dataset, shuffle=True)
trainer.fit(lightning_module, train_dataloaders=train_loader)

```

When strategy='ddp', the script will print the same numbers across different runs:

```

$ python3 train.py

4.0

0.0

1.0

3.0

2.0

$ python3 train.py

4.0

0.0

1.0

3.0

2.0

```

Such behavior can be unwanted, as people might want to try different batch orders (e.g. to construct ensembles or to measure average performance).
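
A possible explanation, offered as an assumption and not confirmed in this report: under DDP the dataloader's sampler is replaced with `torch.utils.data.distributed.DistributedSampler`, which shuffles from a fixed `seed` (default `0`) plus the epoch number and does not use the global RNG state. The sketch below shows only the sampler's behavior in isolation. `epoch_order` is a hypothetical helper, and `num_replicas` and `rank` are passed explicitly so that no process group is needed.

```python
import torch
from torch.utils.data import TensorDataset
from torch.utils.data.distributed import DistributedSampler

dataset = TensorDataset(torch.arange(5).float(), torch.arange(5).float())


def epoch_order(seed: int, epoch: int = 0) -> list:
    """Return the index order a DistributedSampler yields for one epoch."""
    # Explicit num_replicas/rank avoid the need for torch.distributed initialization.
    sampler = DistributedSampler(
        dataset, num_replicas=1, rank=0, shuffle=True, seed=seed
    )
    sampler.set_epoch(epoch)
    return list(sampler)


# The same seed and epoch reproduce the same permutation on every call.
print(epoch_order(seed=0))
print(epoch_order(seed=0))
# A different seed draws its permutation from a different generator state.
print(epoch_order(seed=1))
```

If that assumption holds, building the sampler yourself with a per-run `seed` would be one way to vary the order between runs.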


### Environment

<details>

<summary>Current environment</summary>

* CUDA:

- GPU:

- Graphics Device

- available: True

- version: 11.8

* Lightning:

- lightning: 2.2.0.post0

- lightning-utilities: 0.10.1

- pytorch-lightning: 1.7.7

- torch: 2.1.2

- torchaudio: 2.1.2

- torchmetrics: 0.10.3

- torchvision: 0.16.2

* Packages:

- absl-py: 1.3.0

- aiohttp: 3.8.3

- aiosignal: 1.3.1

- alphafold-colabfold: 2.3.6

- altair: 5.4.0

- anarci: 1.3

- antiberty: 0.1.3

- antlr4-python3-runtime: 4.9.3

- anyio: 3.5.0

- appdirs: 1.4.4

- argon2-cffi: 21.3.0

- argon2-cffi-bindings: 21.2.0

- asttokens: 2.0.5

- astunparse: 1.6.3

- async-lru: 2.0.4

- async-timeout: 4.0.2

- attrs: 22.1.0

- babel: 2.11.0

- backcall: 0.2.0

- beautifulsoup4: 4.12.2

- biopython: 1.79

- bleach: 4.1.0

- blinker: 1.5

- bottleneck: 1.3.5

- brotlipy: 0.7.0

- cached-property: 1.5.2

- cachetools: 5.2.0

- certifi: 2023.5.7

- cffi: 1.15.1

- charset-normalizer: 2.1.1

- chex: 0.1.86

- click: 8.1.3

- cmake: 3.28.3

- colabfold: 1.5.5

- colorama: 0.4.6

- comm: 0.1.2

- contextlib2: 21.6.0

- contourpy: 1.0.6

- cryptography: 38.0.3

- cycler: 0.11.0

- debugpy: 1.6.7

- decorator: 5.1.1

- deepspeed: 0.9.5

- defusedxml: 0.7.1

- dm-haiku: 0.0.12

- dm-tree: 0.1.8

- docker-pycreds: 0.4.0

- docstring-parser: 0.15

- einops: 0.8.0

- entrypoints: 0.4

- et-xmlfile: 1.1.0

- etils: 1.5.2

- exceptiongroup: 1.0.4

- executing: 0.8.3

- fastjsonschema: 2.16.2

- filelock: 3.13.1

- flatbuffers: 24.3.25

- flax: 0.8.5

- fonttools: 4.38.0

- frozenlist: 1.3.3

- fsspec: 2024.3.1

- gast: 0.6.0

- gdown: 5.1.0

- gemmi: 0.5.7

- gitdb: 4.0.9

- gitpython: 3.1.29

- gmpy2: 2.1.2

- google-auth: 2.14.1

- google-auth-oauthlib: 0.4.6

- google-pasta: 0.2.0

- grpcio: 1.49.1

- h5py: 3.11.0

- hjson: 3.1.0

- huggingface-hub: 0.22.2

- hydra-core: 1.3.2

- idna: 3.4

- immutabledict: 4.2.0

- importlib-metadata: 4.13.0

- importlib-resources: 6.1.2

- ipykernel: 6.25.0

- ipython: 8.15.0

- ipython-genutils: 0.2.0

- ipywidgets: 8.0.4

- jax: 0.3.25

- jaxlib: 0.3.25+cuda11.cudnn82

- jedi: 0.18.1

- jinja2: 3.1.2

- jmp: 0.0.4

- json5: 0.9.6

- jsonargparse: 4.27.5

- jsonschema: 4.17.3

- jupyter: 1.0.0

- jupyter-client: 7.4.9

- jupyter-console: 6.6.3

- jupyter-core: 5.5.0

- jupyter-events: 0.6.3

- jupyter-lsp: 2.2.0

- jupyter-server: 2.10.0

- jupyter-server-terminals: 0.4.4

- jupyterlab: 4.0.8

- jupyterlab-pygments: 0.1.2

- jupyterlab-server: 2.22.0

- jupyterlab-widgets: 3.0.9

- keras: 3.4.1

- kiwisolver: 1.4.4

- libclang: 18.1.1

- lightning: 2.2.0.post0

- lightning-utilities: 0.10.1

- lit: 18.1.1

- markdown: 3.4.1

- markdown-it-py: 3.0.0

- markupsafe: 2.1.1

- matplotlib: 3.6.2

- matplotlib-inline: 0.1.6

- mdurl: 0.1.2

- mistune: 2.0.4

- mkl-fft: 1.3.1

- mkl-random: 1.2.2

- mkl-service: 2.4.0

- ml-collections: 0.1.1

- ml-dtypes: 0.3.2

- mmcif-pdbx: 2.0.1

- mpi4py: 3.1.4

- mpmath: 1.3.0

- msgpack: 1.0.8

- multidict: 6.0.2

- munkres: 1.1.4

- namex: 0.0.8

- narwhals: 1.5.0

- nbclient: 0.8.0

- nbconvert: 7.10.0

- nbformat: 5.9.2

- nest-asyncio: 1.5.6

- networkx: 3.1

- ninja: 1.11.1

- notebook: 6.3.0

- notebook-shim: 0.2.3

- numexpr: 2.8.4

- numpy: 1.23.5

- oauthlib: 3.2.2

- omegaconf: 2.3.0

- openpyxl: 3.1.5

- opt-einsum: 3.3.0

- optax: 0.2.2

- optree: 0.11.0

- orbax-checkpoint: 0.5.20

- overrides: 7.4.0

- packaging: 21.3

- pandas: 1.5.3

- pandocfilters: 1.5.0

- parso: 0.8.3

- path: 16.2.0

- pathtools: 0.1.2

- pdb2pqr: 3.6.1

- pexpect: 4.8.0

- pickleshare: 0.7.5

- pillow: 9.2.0

- pip: 22.3.1

- platformdirs: 3.10.0

- ply: 3.11

- pmw: 2.0.1

- pooch: 1.6.0

- prody: 2.2.0

- prometheus-client: 0.14.1

- promise: 2.3

- prompt-toolkit: 3.0.43

- propka: 3.5.1

- protobuf: 4.21.9

- psutil: 5.9.4

- ptyprocess: 0.7.0

- pure-eval: 0.2.2

- py-cpuinfo: 9.0.0

- py3dmol: 2.0.4

- pyasn1: 0.4.8

- pyasn1-modules: 0.3.0

- pycollada: 0.8

- pycparser: 2.21

- pydantic: 1.10.11

- pydeprecate: 0.3.2

- pygments: 2.15.1

- pyjwt: 2.6.0

- pykerberos: 1.2.4

- pymol: 2.5.5

- pyopenssl: 22.1.0

- pyparsing: 3.0.9

- pyqt5: 5.15.7

- pyqt5-sip: 12.11.0

- pyrsistent: 0.20.0

- pysocks: 1.7.1

- python-dateutil: 2.8.2

- python-json-logger: 2.0.7

- pytorch-lightning: 1.7.7

- pytz: 2022.7

- pyu2f: 0.1.5

- pyyaml: 6.0

- pyzmq: 25.1.0

- qtconsole: 5.5.1

- qtpy: 2.4.1

- regex: 2023.12.25

- requests: 2.28.1

- requests-oauthlib: 1.3.1

- rfc3339-validator: 0.1.4

- rfc3986-validator: 0.1.1

- rich: 13.7.1

- rjieba: 0.1.11

- rsa: 4.9

- safetensors: 0.4.2

- scipy: 1.10.1

- seaborn: 0.13.2

- send2trash: 1.8.2

- sentry-sdk: 1.11.0

- setproctitle: 1.3.2

- setuptools: 59.5.0

- shortuuid: 1.0.11

- sip: 6.7.12

- six: 1.16.0

- smmap: 3.0.5

- sniffio: 1.2.0

- soupsieve: 2.5

- stack-data: 0.2.0

- sympy: 1.12

- tabulate: 0.9.0

- tensorboard: 2.16.2

- tensorboard-data-server: 0.7.2

- tensorboard-plugin-wit: 1.8.1

- tensorflow-cpu: 2.16.2

- tensorflow-io-gcs-filesystem: 0.37.0

- tensorstore: 0.1.63

- termcolor: 2.4.0

- terminado: 0.17.1

- tinycss2: 1.2.1

- tmtools: 0.2.0