repo_name | topic | issue_number | title | body | state | created_at | updated_at | url | labels | user_login | comments_count |

|---|---|---|---|---|---|---|---|---|---|---|---|

zappa/Zappa | flask | 903 | [Migrated] [ERROR] RuntimeError: populate() isn't reentrant | Originally from: https://github.com/Miserlou/Zappa/issues/2165 by [rafrasenberg](https://github.com/rafrasenberg)

I am trying to deploy a Django project with Zappa and a PostgreSQL database on Amazon AWS RDS but I am running into this error:

```

$ zappa manage dev create_db

[START] RequestId: ac91cbb6-9026-44d5-9136-3e4db8c0878c Version: $LATEST

[DEBUG] 2020-09-22T11:16:57.834Z ac91cbb6-9026-44d5-9136-3e4db8c0878c Zappa Event: {'manage': 'create_db'}

[ERROR] RuntimeError: populate() isn't reentrant

Traceback (most recent call last):

File "/var/task/handler.py", line 609, in lambda_handler

return LambdaHandler.lambda_handler(event, context)

File "/var/task/handler.py", line 243, in lambda_handler

return handler.handler(event, context)

File "/var/task/handler.py", line 404, in handler

app_function = get_django_wsgi(self.settings.DJANGO_SETTINGS)

File "/var/task/zappa/ext/django_zappa.py", line 20, in get_django_wsgi

return get_wsgi_application()

File "/var/task/django/core/wsgi.py", line 12, in get_wsgi_application

django.setup(set_prefix=False)

File "/var/task/django/__init__.py", line 24, in setup

apps.populate(settings.INSTALLED_APPS)

File "/var/task/django/apps/registry.py", line 83, in populate

raise RuntimeError("populate() isn't reentrant")[END] RequestId: ac91cbb6-9026-44d5-9136-3e4db8c0878c

[REPORT] RequestId: ac91cbb6-9026-44d5-9136-3e4db8c0878c

Duration: 2.28 ms

Billed Duration: 100 ms

Memory Size: 512 MB

Max Memory Used: 101 MB

Error: Unhandled error occurred while invoking command.

```

The error message is not very informative. I googled it and tried some of the solutions from other tickets, but none resolved it. I tried both `psycopg2` and `psycopg2-binary`, without luck. Has anyone solved this? The articles and issues covering it are all old and outdated.

Zappa settings:

```
{
    "dev": {
        "aws_region": "eu-central-1",
        "django_settings": "dserverless.settings",
        "profile_name": "default",
        "project_name": "dserverless",
        "runtime": "python3.8",
        "s3_bucket": "django-serverless",
        "vpc_config": {
            "SubnetIds": ["subnet-2cef773346", "subnet-023527a", "subnet-6agbcb21"],
            "SecurityGroupIds": ["sg-87325d"]
        }
    }
}
```

The DB command:

```
from django.conf import settings
from django.core.management.base import BaseCommand
from psycopg2 import connect
from psycopg2.extensions import ISOLATION_LEVEL_AUTOCOMMIT


class Command(BaseCommand):
    help = 'Creates the initial database'

    def handle(self, *args, **options):
        self.stdout.write(self.style.SUCCESS('Starting db creation'))

        # Read the connection details from the default database settings.
        dbname = settings.DATABASES['default']['NAME']
        user = settings.DATABASES['default']['USER']
        password = settings.DATABASES['default']['PASSWORD']
        host = settings.DATABASES['default']['HOST']

        con = connect(dbname=dbname, user=user, host=host, password=password)
        # CREATE DATABASE cannot run inside a transaction, so use autocommit.
        con.set_isolation_level(ISOLATION_LEVEL_AUTOCOMMIT)

        cur = con.cursor()
        cur.execute('CREATE DATABASE ' + dbname)
        cur.close()
        con.close()

        self.stdout.write(self.style.SUCCESS('All Done'))
```

Pip freeze:

```

appdirs==1.4.3

argcomplete==1.12.0

asgiref==3.2.10

autopep8==1.5.4

boto3==1.15.2

botocore==1.18.2

CacheControl==0.12.6

certifi==2019.11.28

cfn-flip==1.2.3

chardet==3.0.4

click==7.1.2

colorama==0.4.3

contextlib2==0.6.0

distlib==0.3.0

distro==1.4.0

Django==3.1.1

django-s3-storage==0.13.4

durationpy==0.5

future==0.18.2

hjson==3.0.2

html5lib==1.0.1

idna==2.8

ipaddr==2.2.0

jmespath==0.10.0

kappa==0.6.0

lockfile==0.12.2

msgpack==0.6.2

packaging==20.3

pep517==0.8.2

pip-tools==5.3.1

placebo==0.9.0

progress==1.5

psycopg2-binary==2.8.6

pycodestyle==2.6.0

pyparsing==2.4.6

python-dateutil==2.6.1

python-slugify==4.0.1

pytoml==0.1.21

pytz==2020.1

PyYAML==5.3.1

requests==2.22.0

retrying==1.3.3

s3transfer==0.3.3

six==1.14.0

sqlparse==0.3.1

text-unidecode==1.3

toml==0.10.1

tqdm==4.49.0

troposphere==2.6.2

urllib3==1.25.8

webencodings==0.5.1

Werkzeug==0.16.1

wsgi-request-logger==0.4.6

zappa==0.51.0

```

| closed | 2021-02-20T13:03:34Z | 2022-07-16T05:10:36Z | https://github.com/zappa/Zappa/issues/903 | [] | jneves | 2 |
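A note on the Zappa report above: one commonly reported cause of `populate() isn't reentrant` is an exception during the first `django.setup()` call, which leaves the app registry half-loaded so that a later call hits the reentrancy guard and hides the original error. That is an assumption about this report, not a confirmed diagnosis. Under that assumption, a minimal sketch for surfacing the hidden failure is to import each module by hand and print the real traceback. The helper name `find_broken_imports` is hypothetical:

```python
import importlib
import traceback


def find_broken_imports(module_names):
    """Try to import each module and collect the tracebacks of those that fail."""
    failures = {}
    for name in module_names:
        try:
            importlib.import_module(name)
        except Exception:
            # Keep the full traceback so the real cause is visible.
            failures[name] = traceback.format_exc()
    return failures


# Illustration with one importable and one missing module.
for name, tb in find_broken_imports(["json", "no_such_module_xyz_123"]).items():
    print(f"--- {name} failed to import ---")
    print(tb)
```

In a Django project the list would typically come from `settings.INSTALLED_APPS`. Entries that point at an `AppConfig` class rather than a module are reported as failures by this sketch and need to be trimmed to their module path first.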

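littlecodersh/ItChat | api | 108 | How to get a unique identifier for each friend | While running the program, I noticed that the ActualUserName obtained for a friend is different every time I log in again or restart the program. Is there any attribute that always identifies the same friend?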

| closed | 2016-10-21T07:31:12Z | 2016-10-22T13:53:17Z | https://github.com/littlecodersh/ItChat/issues/108 | [

"question"

] | kh13 | 1 |

desec-io/desec-stack | rest-api | 813 | readthedocs build (sometimes?) fails | The readthedocs build (sometimes?) fails due to a missing config:

> The configuration file required to build documentation is missing from your project. Add a configuration file to your project to make it build successfully. Read more at https://docs.readthedocs.io/en/stable/config-file/v2.html

Source: https://readthedocs.org/projects/desec/builds/22049067/ | closed | 2023-09-27T14:23:21Z | 2023-11-03T16:09:12Z | https://github.com/desec-io/desec-stack/issues/813 | [] | Rotzbua | 0 |

Farama-Foundation/PettingZoo | api | 1,251 | [Feat] Create template environment similar to Gymnasium's | ### Proposal

As of now, the PettingZoo documentation gives instructions on how to create a custom environment. However, Gymnasium uses copier to clone a template environment which is better.

The goal of this proposal is to implement a template PettingZoo environment and create documentation to use it, like with Gymnasium.

### Motivation

_No response_

### Pitch

_No response_

### Alternatives

_No response_

### Additional context

_No response_

### Checklist

- [x] I have checked that there is no similar [issue](https://github.com/Farama-Foundation/PettingZoo/issues) in the repo

| open | 2024-12-09T13:46:44Z | 2024-12-09T21:11:02Z | https://github.com/Farama-Foundation/PettingZoo/issues/1251 | [

"enhancement"

] | David-GERARD | 0 |

sgl-project/sglang | pytorch | 4,090 | Questions about the calculation of `max_req_num` |

Hi, from the source code I see that **sglang** has the ability to automatically calculate [**max_req_num**](https://github.com/sgl-project/sglang/blob/main/python/sglang/srt/model_executor/model_runner.py#L647):

```python
if max_num_reqs is None:
    max_num_reqs = min(
        max(
            int(
                self.max_total_num_tokens / self.model_config.context_len * 512
            ),
            2048,
        ),
        4096,
    )
```

Regarding this calculation process, I have the following questions:

1. Will setting **max_req_num** directly affect GPU memory usage?

2. The value of **max_req_num** seems to range between 2048 and 4096. Why is that? What’s the reasoning behind this design? Is it based on empirical values?

3. When calculating **max_req_num** based on **max_total_num_tokens** and **context_len**, why multiply by the coefficient 512? Where does this coefficient come from?

| open | 2025-03-05T08:51:45Z | 2025-03-05T09:08:54Z | https://github.com/sgl-project/sglang/issues/4090 | [] | tingjun-cs | 0 |
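To make the second question in the sglang issue above concrete, here is a small standalone sketch of the same clamp. It only mirrors the arithmetic of the quoted snippet; the function name `default_max_num_reqs` and the sample token counts are made up for illustration and are not part of sglang:

```python
def default_max_num_reqs(max_total_num_tokens: int, context_len: int) -> int:
    """Mirror the clamp from the snippet quoted in the issue."""
    scaled = int(max_total_num_tokens / context_len * 512)
    # max(..., 2048) sets the floor, min(..., 4096) sets the ceiling.
    return min(max(scaled, 2048), 4096)


print(default_max_num_reqs(100_000, 32_768))    # scaled = 1562, raised to 2048
print(default_max_num_reqs(200_000, 32_768))    # scaled = 3125, used as is
print(default_max_num_reqs(1_000_000, 32_768))  # scaled = 15625, capped at 4096
```

Whatever the token budget, the result always lands in the range 2048 to 4096, which is the behavior the question asks about. The sketch does not explain where the coefficient 512 comes from.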

babysor/MockingBird | deep-learning | 266 | "AssertionError" when starting web.py | When I ran "web.py" with Python, I got the following error, whose last line is "AssertionError":

(mockingbird) C:\Users\yisheng_zhou\Downloads\MockingBird> python web.py

Loaded synthesizer models: 2

Loaded encoder "pretrained.pt" trained to step 1564501

Building Wave-RNN

Trainable Parameters: 4.481M

Loading model weights at vocoder\saved_models\pretrained\pretrained.pt

Building hifigan

Traceback (most recent call last):

File "C:\Users\yisheng_zhou\Downloads\MockingBird\web.py", line 6, in <module>

app = webApp()

File "C:\Users\yisheng_zhou\Downloads\MockingBird\web\__init__.py", line 35, in webApp

gan_vocoder.load_model(Path("vocoder/saved_models/pretrained/g_hifigan.pt"))

File "C:\Users\yisheng_zhou\Downloads\MockingBird\vocoder\hifigan\inference.py", line 44, in load_model

state_dict_g = load_checkpoint(

File "C:\Users\yisheng_zhou\Downloads\MockingBird\vocoder\hifigan\inference.py", line 18, in load_checkpoint

assert os.path.isfile(filepath)

AssertionError

Has anyone run into the same problem? How did you solve it? | open | 2021-12-12T07:17:34Z | 2021-12-26T03:21:54Z | https://github.com/babysor/MockingBird/issues/266 | [] | GreenApple-King | 1 |
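In the MockingBird traceback above, the failing line is `assert os.path.isfile(filepath)`, so the most likely reading is that `vocoder/saved_models/pretrained/g_hifigan.pt` does not exist on disk. A minimal sketch of a check that reports the missing path instead of a bare `AssertionError` could look like this. The helper `require_checkpoint` is hypothetical and not part of the project:

```python
from pathlib import Path


def require_checkpoint(filepath):
    """Return the path if the checkpoint file exists, else raise a descriptive error."""
    path = Path(filepath)
    if not path.is_file():
        raise FileNotFoundError(
            f"Checkpoint not found: {path.resolve()}. "
            "Place the pretrained vocoder weights at this location."
        )
    return path


try:
    require_checkpoint("vocoder/saved_models/pretrained/g_hifigan.pt")
except FileNotFoundError as err:
    print(err)
```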

jazzband/django-oauth-toolkit | django | 1,478 | Minor/patch release cycle with bugfixes | <!-- What is your question? -->

Hi! Do you have any plans to release another minor or patch version before the major upgrade to 3? There are a couple of smaller non-breaking fixes that would be great to have in, such as https://github.com/jazzband/django-oauth-toolkit/pull/1476 and https://github.com/jazzband/django-oauth-toolkit/pull/1465 which fixes [this CVE](https://github.com/advisories/GHSA-3pgj-pg6c-r5p7). 🙏 | closed | 2024-09-04T10:25:01Z | 2024-09-05T14:25:12Z | https://github.com/jazzband/django-oauth-toolkit/issues/1478 | [

"question",

"help-wanted",

"dependencies"

] | cristiprg | 4 |

pyg-team/pytorch_geometric | deep-learning | 9,225 | The link to the Karate Club paper is broken | ### 📚 Describe the documentation issue

Hello.

In [this](https://pytorch-geometric.readthedocs.io/en/latest/generated/torch_geometric.datasets.KarateClub.html#torch_geometric.datasets.KarateClub) documentation, the link to [“An Information Flow Model for Conflict and Fission in Small Groups”](http://www1.ind.ku.dk/complexLearning/zachary1977.pdf) is broken.

### Suggest a potential alternative/fix

We should link to https://www.journals.uchicago.edu/doi/abs/10.1086/jar.33.4.3629752 instead.

Here is where we need to change.

https://github.com/pyg-team/pytorch_geometric/blob/ed170342eb2b174fd16b910c735758edbd4e78fd/torch_geometric/datasets/karate.py#L11 | closed | 2024-04-22T13:23:02Z | 2024-04-26T10:27:54Z | https://github.com/pyg-team/pytorch_geometric/issues/9225 | [

"documentation"

] | 1taroh | 0 |

3b1b/manim | python | 1,288 | % signs result in crop or errors in manim text. | Here is an example from Manim that tries to write `%`:

```
class WriteStuff(Scene):
    def construct(self):
        example_text = TextMobject(
            "This is a some % text",
            tex_to_color_map={"text": YELLOW}
        )
        example_tex = TexMobject(
            "\\sum_{k=1}^\\infty % {1 \\over k^2} = {\\pi^2 \\over 6}",
        )
        group = VGroup(example_text, example_tex)
        group.arrange(DOWN)
        group.set_width(FRAME_WIDTH - 2 * LARGE_BUFF)

        self.play(Write(example_text))
        self.play(Write(example_tex))
        self.wait()
```

In the final animation, everything after `%` is cropped or not written, in both `TextMobject` and `TexMobject`. This is the missing-text bug.

If the percent sign sits between an opening and a closing brace, it results in a compilation error:

```
class WriteStuff(Scene):
    def construct(self):
        example_text = TextMobject(
            "This is a some % text",
            tex_to_color_map={"text": YELLOW}
        )
        example_tex = TexMobject(
            "\\sum_{k=1 % }^\\infty {1 \\over k^2} = {\\pi^2 \\over 6}",
        )
        group = VGroup(example_text, example_tex)
        group.arrange(DOWN)
        group.set_width(FRAME_WIDTH - 2 * LARGE_BUFF)

        self.play(Write(example_text))
        self.play(Write(example_tex))
        self.wait()
```

What causes this error? | closed | 2020-12-10T06:11:01Z | 2020-12-10T11:04:07Z | https://github.com/3b1b/manim/issues/1288 | [] | baljeetrathi | 0 |
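On the manim question above: in LaTeX, `%` starts a comment, so everything after it on the line is ignored. That would explain both the cropped text and the compilation error once the comment swallows a closing brace. A literal percent sign is written `\%`. A minimal sketch of a helper that escapes it before the string is handed to a TeX-based mobject, where the helper name `escape_percent` is hypothetical and not part of manim:

```python
import re


def escape_percent(tex: str) -> str:
    """Escape every '%' that is not already preceded by a backslash."""
    return re.sub(r"(?<!\\)%", r"\\%", tex)


print(escape_percent("This is a some % text"))
print(escape_percent("Already escaped: 50\\% done"))
```

The sketch deliberately keeps the rule simple. It would not handle a `%` that directly follows a LaTeX line break (`\\`), which needs a more careful parser.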

huggingface/transformers | tensorflow | 36,411 | [i18n-zh] Translating `kv_cache` into zh-hans | <!--

Note: Please search to see if an issue already exists for the language you are trying to translate.

-->

Hi!

Let's bring the documentation to all the <languageName>-speaking community 🌐 (currently 0 out of 267 complete)

Who would want to translate? Please follow the 🤗 [TRANSLATING guide](https://github.com/huggingface/transformers/blob/main/docs/TRANSLATING.md). Here is a list of the files ready for translation. Let us know in this issue if you'd like to translate any, and we'll add your name to the list.

Some notes:

* Please translate using an informal tone (imagine you are talking with a friend about transformers 🤗).

* Please translate in a gender-neutral way.

* Add your translations to the folder called `<languageCode>` inside the [source folder](https://github.com/huggingface/transformers/tree/main/docs/source).

* Register your translation in `<languageCode>/_toctree.yml`; please follow the order of the [English version](https://github.com/huggingface/transformers/blob/main/docs/source/en/_toctree.yml).

* Once you're finished, open a pull request and tag this issue by including #issue-number in the description, where issue-number is the number of this issue. Please ping @stevhliu and @MKhalusova for review.

* 🙋 If you'd like others to help you with the translation, you can also post in the 🤗 [forums](https://discuss.huggingface.co/).

## Get Started section

- [ ] [index.md](https://github.com/huggingface/transformers/blob/main/docs/source/en/index.md) https://github.com/huggingface/transformers/pull/20180

- [ ] [quicktour.md](https://github.com/huggingface/transformers/blob/main/docs/source/en/quicktour.md) (waiting for initial PR to go through)

- [ ] [installation.md](https://github.com/huggingface/transformers/blob/main/docs/source/en/installation.md).

## Tutorial section

- [ ] [pipeline_tutorial.md](https://github.com/huggingface/transformers/blob/main/docs/source/en/pipeline_tutorial.md)

- [ ] [autoclass_tutorial.md](https://github.com/huggingface/transformers/blob/main/docs/source/en/autoclass_tutorial.md)

- [ ] [preprocessing.md](https://github.com/huggingface/transformers/blob/main/docs/source/en/preprocessing.md)

- [ ] [training.md](https://github.com/huggingface/transformers/blob/main/docs/source/en/training.md)

- [ ] [accelerate.md](https://github.com/huggingface/transformers/blob/main/docs/source/en/accelerate.md)

- [ ] [model_sharing.md](https://github.com/huggingface/transformers/blob/main/docs/source/en/model_sharing.md)

- [ ] [multilingual.md](https://github.com/huggingface/transformers/blob/main/docs/source/en/multilingual.md)

## Generation

- [x] [kv_cache.md](https://github.com/huggingface/transformers/blob/main/docs/source/en/kv_cache.md)

<!--

Keep on adding more as you go 🔥

-->

| closed | 2025-02-26T08:53:32Z | 2025-02-26T16:05:22Z | https://github.com/huggingface/transformers/issues/36411 | [

"WIP"

] | neofung | 1 |

autogluon/autogluon | data-science | 4,406 | Improve CPU training times for catboost | Related to https://github.com/catboost/catboost/issues/2722

Problem: Catboost takes 16x more time to train than a similar Xgboost model.

```

catboost: 1.2.5

xgboost: 2.0.3

autogluon: 1.1.1

Python: 3.10.14

OS: Windows 11 Pro (10.0.22635)

CPU: Intel(R) Core(TM) i7-1165G7

GPU: Integrated Graphics

RAM: 16 GB

```

Example with data:

```python

from autogluon.tabular import TabularDataset, TabularPredictor

import numpy as np

from sklearnex import patch_sklearn

patch_sklearn()

# data

label = 'signature'

data_url = 'https://raw.githubusercontent.com/mli/ag-docs/main/knot_theory/'

train_data = TabularDataset(f'{data_url}train.csv')

test_data = TabularDataset(f'{data_url}test.csv')

# train

np.random.seed(2024)

predictor = TabularPredictor(label=label, problem_type='multiclass', eval_metric='log_loss')

predictor.fit(train_data, included_model_types=['XGB', 'CAT'])

# report

metrics = ['model', 'score_test', 'score_val', 'eval_metric', 'pred_time_test', 'fit_time']

predictor.leaderboard(test_data)[metrics]

```

model | score_test | score_val | eval_metric | pred_time_test | fit_time

-- | -- | -- | -- | -- | --

WeightedEnsemble_L2 | -0.155262 | -0.138425 | log_loss | 0.649330 | 263.176814

CatBoost | -0.158654 | -0.150310 | log_loss | 0.237857 | 247.344303

XGBoost | -0.171801 | -0.144754 | log_loss | 0.398456 | 15.676711

| closed | 2024-08-18T03:19:42Z | 2024-08-20T04:36:45Z | https://github.com/autogluon/autogluon/issues/4406 | [

"enhancement",

"wontfix",

"module: tabular"

] | crossxwill | 1 |

jupyter-book/jupyter-book | jupyter | 1,794 | [BUG] In Firefox links to references stored in a dropdown do not work unless the dropdown is opened | ### Describe the bug

related to https://github.com/agahkarakuzu/oreoni/issues/4

**context**

When I click on a link to a reference that is "stored" in a dropdown section on the same page.

**expectation**

I expected that the reference dropdown will open and the screen will move to the line of the reference..

**bug**

This behavior works fine in Chrome but in Firefox nothing happens.

### Reproduce the bug

Open this link in Firefox VS Chrome:

https://remi-gau.github.io/oreoni/01/introduction.html#id21

In Chrome the link works (opens the reference dropdown and moves the screen to it) but not in Firefox

### List your environment

https://github.com/agahkarakuzu/oreoni/blob/main/requirements.txt

jupyter-book==0.12.2

| open | 2022-08-01T11:15:11Z | 2022-08-01T11:16:39Z | https://github.com/jupyter-book/jupyter-book/issues/1794 | [

"bug"

] | Remi-Gau | 2 |

chaoss/augur | data-visualization | 3,054 | Facade Error: insert_facade_contributors: TypeError('sequence item 1: expected a bytes-like object, NoneType found') | Since core got unblocked we have 1,000+ of this error:

Exception:

> TypeError('sequence item 1: expected a bytes-like object, NoneType found')

Traceback (most recent call last):

> File "/opt/venv/lib/python3.9/site-packages/celery/backends/redis.py", line 520, in on_chord_part_return resl = [unpack(tup, decode) for tup in resl] File "/opt/venv/lib/python3.9/site-packages/celery/backends/redis.py", line 520, in <listcomp> resl = [unpack(tup, decode) for tup in resl] File "/opt/venv/lib/python3.9/site-packages/celery/backends/redis.py", line 426, in _unpack_chord_result raise ChordError(f'Dependency {tid} raised {retval!r}') celery.exceptions.ChordError: Dependency 5bc5e62c-3879-46c5-86b9-3ffe53a5367d raised FileNotFoundError(2, 'No such file or directory') During handling of the above exception, another exception occurred: Traceback (most recent call last): File "/opt/venv/lib/python3.9/site-packages/celery/app/trace.py", line 518, in trace_task task.backend.mark_as_done( File "/opt/venv/lib/python3.9/site-packages/celery/backends/base.py", line 164, in mark_as_done self.on_chord_part_return(request, state, result) File "/opt/venv/lib/python3.9/site-packages/celery/backends/redis.py", line 539, in on_chord_part_return return self.chord_error_from_stack(callback, exc) File "/opt/venv/lib/python3.9/site-packages/celery/backends/base.py", line 309, in chord_error_from_stack return backend.fail_from_current_stack(callback.id, exc=exc) File "/opt/venv/lib/python3.9/site-packages/celery/backends/base.py", line 316, in fail_from_current_stack self.mark_as_failure(task_id, exc, exception_info.traceback) File "/opt/venv/lib/python3.9/site-packages/celery/backends/base.py", line 172, in mark_as_failure self.store_result(task_id, exc, state, File "/opt/venv/lib/python3.9/site-packages/celery/backends/base.py", line 528, in store_result self._store_result(task_id, result, state, traceback, File "/opt/venv/lib/python3.9/site-packages/celery/backends/base.py", line 956, in _store_result current_meta = self._get_task_meta_for(task_id) File "/opt/venv/lib/python3.9/site-packages/celery/backends/base.py", line 978, in _get_task_meta_for meta = 
self.get(self.get_key_for_task(task_id)) File "/opt/venv/lib/python3.9/site-packages/celery/backends/base.py", line 856, in get_key_for_task return key_t('').join([ TypeError: sequence item 1: expected a bytes-like object, NoneType found

--

| open | 2025-03-12T23:53:40Z | 2025-03-20T19:57:58Z | https://github.com/chaoss/augur/issues/3054 | [

"bug"

] | cdolfi | 1 |

d2l-ai/d2l-en | pytorch | 2,343 | Why do you use your own API | I wonder why are you using an API, instead of regular pytorch code.

It makes everything look unfamiliar and impractical. It's like a new language. Like having to learn everything again. | open | 2022-11-16T12:03:08Z | 2023-05-15T13:51:59Z | https://github.com/d2l-ai/d2l-en/issues/2343 | [

"question"

] | g-i-o-r-g-i-o | 11 |

arogozhnikov/einops | tensorflow | 28 | Why "Only lower-case latin letters allowed in names, not ..." | Is there a reason that einops does not support upper latin letters?

I would like to use upper and lower letters. | closed | 2019-02-14T10:12:06Z | 2020-09-11T06:03:40Z | https://github.com/arogozhnikov/einops/issues/28 | [] | boeddeker | 12 |

httpie/cli | python | 714 | Program name results to sys.argv[0] when executing httpie module as a script | Getting:

```

$ python -m httpie -h

usage: __main__.py [--json] [--form] [--pretty {all,colors,format,none}]

[--style STYLE] [--print WHAT] [--headers] [--body]

[--verbose] [--all] [--history-print WHAT] [--stream]

[--output FILE] [--download] [--continue]

[--session SESSION_NAME_OR_PATH | --session-read-only SESSION_NAME_OR_PATH]

[--auth USER[:PASS]] [--auth-type {basic,digest}]

[--proxy PROTOCOL:PROXY_URL] [--follow]

[--max-redirects MAX_REDIRECTS] [--timeout SECONDS]

[--check-status] [--verify VERIFY]

[--ssl {ssl2.3,tls1,tls1.1,tls1.2}] [--cert CERT]

[--cert-key CERT_KEY] [--ignore-stdin] [--help] [--version]

[--traceback] [--default-scheme DEFAULT_SCHEME] [--debug]

[METHOD] URL [REQUEST_ITEM [REQUEST_ITEM ...]]

__main__.py: error: the following arguments are required: URL

```

Expected:

```

$ python -m httpie -h

usage: http [--json] [--form] [--pretty {all,colors,format,none}]

[--style STYLE] [--print WHAT] [--headers] [--body] [--verbose]

[--all] [--history-print WHAT] [--stream] [--output FILE]

[--download] [--continue]

[--session SESSION_NAME_OR_PATH | --session-read-only SESSION_NAME_OR_PATH]

[--auth USER[:PASS]] [--auth-type {basic,digest}]

[--proxy PROTOCOL:PROXY_URL] [--follow]

[--max-redirects MAX_REDIRECTS] [--timeout SECONDS]

[--check-status] [--verify VERIFY]

[--ssl {ssl2.3,tls1,tls1.1,tls1.2}] [--cert CERT]

[--cert-key CERT_KEY] [--ignore-stdin] [--help] [--version]

[--traceback] [--default-scheme DEFAULT_SCHEME] [--debug]

[METHOD] URL [REQUEST_ITEM [REQUEST_ITEM ...]]

http: error: the following arguments are required: URL

``` | closed | 2018-09-22T12:59:32Z | 2018-10-30T17:41:57Z | https://github.com/httpie/cli/issues/714 | [] | matusf | 1 |
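For the httpie report above: `argparse` derives the program name from `sys.argv[0]` unless `prog` is passed explicitly, which is why `python -m httpie` shows `__main__.py`. A minimal sketch of pinning the name follows. The parser here is a toy with a single argument, not HTTPie's real one:

```python
import argparse


def build_parser() -> argparse.ArgumentParser:
    # Pinning prog keeps usage and error messages stable however the module is launched.
    parser = argparse.ArgumentParser(prog="http")
    parser.add_argument("url", metavar="URL")
    return parser


print(build_parser().format_usage())
```

With `prog` set, the usage line starts with `usage: http` whether the code runs as an installed script or via `python -m`.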

dynaconf/dynaconf | django | 1,129 | [RFC]typed: Cast dict to its Dictvalue from schema. | related to #1127

Currently, dicts are loaded purely from the loaders, regardless of whether a schema is defined for them.

```python
class Person(DictValue):
    name: str
    team: str


class Settings(Dynaconf):
    person: Person


settings = Settings(person={"name": "foo", "team": "A"})
```

Then

```
assert settings.person == {"name": "foo", "team": "A"}  # True
assert isinstance(settings.person, Person)  # False
```

What is the desired behavior?

```python
assert settings.person == {"name": "foo", "team": "A"}  # True
assert isinstance(settings.person, Person)  # True
```

So `settings.person` must be a `dict` and a `Person` at the same time. This means `DictValue` will have to inherit from `UserDict`, provide the proper `__eq__` method, and support lookup both via subscription (`settings.person["name"]`) and via attribute access (`settings.person.name`).

This will allow:

- keep the autocompletion working for subtypes

- static type to validate on code level

- `isinstance` checks to be performed

- To replace `Box` completely
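
A minimal sketch of the idea, assuming nothing about the final design: the `DictValue` below is a stand-in written for this example, not dynaconf's actual class. It shows that a `UserDict` subclass already compares equal to a plain `dict` and can add attribute-style lookup on top:

```python
from collections import UserDict


class DictValue(UserDict):
    """Hypothetical stand-in for the proposed base class, not dynaconf's actual code."""

    def __getattr__(self, name):
        # Only called when normal attribute lookup fails.
        # Guard "data" so copying or unpickling cannot recurse forever.
        if name == "data":
            raise AttributeError(name)
        try:
            return self.data[name]
        except KeyError:
            raise AttributeError(name) from None


class Person(DictValue):
    name: str
    team: str


person = Person({"name": "foo", "team": "A"})

assert person == {"name": "foo", "team": "A"}  # Mapping equality with a plain dict
assert isinstance(person, Person)
assert person["name"] == person.name == "foo"
```

Two caveats with this sketch: `isinstance(person, dict)` is still `False` because `UserDict` wraps a dict rather than subclassing it, and keys that collide with existing attributes such as `keys` or `items` are only reachable via subscription.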

## Implementation

In the `.set` method, it will look up the type defined in the schema, instantiate it, and assign it.

## Challenges

How will it work with Lazy evaluated values?

```python
class Person(DictValue):
    number: int
```

```bash

export DYNACONF_PERSON__number="@int @jinja {{ 2 + 2 }}"

```

The `number: int` field would need to accept a `Lazy("@int @jinja {{ 2 + 2 }}")` instance.

We can probably make this happen during validation by replacing the type `int` with `Union[int, Lazy]`.

Alternatively:

`Person` would strictly require `number: int`, but the instantiation of `person` would be delayed until just before validation is performed. There would be an intermediate state for a `DictValue`, a `NotEvaluated(Person, kwargs)`.

Or maybe:

`DictValue` would only require the presence of keys and would not perform any validation, including type validation, postponing it to the `.validate` call, which triggers lazy evaluation anyway.

Requires investigation

| open | 2024-07-06T14:19:09Z | 2024-07-08T18:37:57Z | https://github.com/dynaconf/dynaconf/issues/1129 | [

"Not a Bug",

"RFC",

"typed_dynaconf"

] | rochacbruno | 1 |

microsoft/JARVIS | pytorch | 227 | Error when running on Windows | I ran awesome_chat.py with the following configuration: inference_mode: huggingface

local_deployment: minimal (models are loaded remotely)

The error message is as follows:

| open | 2023-12-08T02:25:19Z | 2023-12-08T02:25:19Z | https://github.com/microsoft/JARVIS/issues/227 | [] | 827648313 | 0 |

FactoryBoy/factory_boy | django | 787 | TypeError: generate() missing 1 required positional argument: 'params' | After upgrading from 3.0.1 to 3.1 I suddenly get a `TypeError: generate() missing 1 required positional argument: 'params'`.

I have a `factory.LazyAttribute` that calls `factory.Faker('safe_email').generate()` conditionally. This worked before and now raises a `TypeError`.

This seems to be caused by commit f0a4ef008f07f8d42221565d8c33b88083f0be6d. Would it be possible to make `params` optional?

| closed | 2020-10-05T08:52:27Z | 2020-10-06T07:26:06Z | https://github.com/FactoryBoy/factory_boy/issues/787 | [

"Q&A",

"Doc",

"BadMagic"

] | jaap3 | 4 |

slackapi/python-slack-sdk | asyncio | 991 | Can a Slack app also have a preview for uploaded file using files_upload? | Hi Everyone,

Using `files_upload` from the SDK Web Client,

Is there a way for Slack App to also have a preview for the file uploaded just like a normal user gets when uploading it ??

<img width="430" alt="Screenshot 2021-04-06 at 7 13 08 PM" src="https://user-images.githubusercontent.com/42064744/113720301-215bd400-970c-11eb-8152-17d1be15b5c7.png">

Thanks in advance!

| closed | 2021-04-06T13:48:05Z | 2021-04-07T05:36:15Z | https://github.com/slackapi/python-slack-sdk/issues/991 | [

"question"

] | Harshg999 | 2 |

InstaPy/InstaPy | automation | 6,093 | File could not be opened error when attempting to start session. | <!-- Did you know that we have a Discord channel ? Join us: https://discord.gg/FDETsht -->


## Expected Behavior

Session parameters should be accepted and the program should proceed onto launching the web browser. (I must add that I am not very experienced nor very intelligent so please correct me if I am doing or saying something blatantly wrongly)

## Current Behavior

The program halts on the line "session = InstaPy(username=insta_username, password=insta_password, headless_browser=True)" with the error "file could not be opened"

## Possible Solution (optional)

## InstaPy configuration

Latest version of InstaPy (0.6.13) on Python 3.9.1. All requirements were installed. The system is macOS Big Sur on a non-M1 Mac.

| closed | 2021-02-26T21:43:17Z | 2021-02-27T15:38:41Z | https://github.com/InstaPy/InstaPy/issues/6093 | [] | ghost | 6 |

sammchardy/python-binance | api | 1,247 | Trailing stop loss on spot market | Hi,

Since Binance now allows us to use trailing stop loss, do you have any plan to implement this? | open | 2022-09-11T12:49:34Z | 2022-09-11T12:49:34Z | https://github.com/sammchardy/python-binance/issues/1247 | [] | wiseryfendy | 0 |

chezou/tabula-py | pandas | 351 | Try to install tabula-py | I installed tabula-py and Java 8 on Windows 10 and set up PATH correctly, but I still get: ```

Java version:

`java -version` faild. `java` command is not found from this Pythonprocess. Please ensure Java is installed and PATH is set for `java`

tabula-py version: 2.7.0

```

Any suggestions how to solve it? | closed | 2023-07-17T06:51:45Z | 2023-07-17T06:51:58Z | https://github.com/chezou/tabula-py/issues/351 | [] | ribery77 | 1 |
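For the tabula-py report above, the message says the `java` command is not visible from the Python process, which can differ from what an interactive terminal sees (for example, when PATH was changed after the Python session or IDE was started). A small standard-library sketch for checking this from inside Python follows. The function name is made up for this example:

```python
import shutil
import subprocess


def java_version_from_python():
    """Return the output of `java -version` as seen by this Python process, or None."""
    java = shutil.which("java")
    if java is None:
        return None
    result = subprocess.run([java, "-version"], capture_output=True, text=True)
    # `java -version` usually prints to stderr, so fall back to stdout only if that is empty.
    return (result.stderr or result.stdout).strip()


version = java_version_from_python()
print(version if version is not None else "java is not on PATH for this Python process")
```

If this prints the fallback message while `java -version` works in a terminal, restarting the Python session or IDE after changing PATH is a reasonable first thing to try.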

JaidedAI/EasyOCR | deep-learning | 479 | Model deployment on mobile phones | Hello everyone,

I need to deploy easyOCR and use it on an Android device and I couldn't find any resources for that.

I have seen the custom_model.md but not sure if this would help since I don't want to train my custom model.

Thanks

| closed | 2021-07-04T14:02:39Z | 2022-07-11T09:02:00Z | https://github.com/JaidedAI/EasyOCR/issues/479 | [] | rasha-salim | 2 |

deezer/spleeter | deep-learning | 548 | spleeter.separator not found when installing with pip | ## Description

Pip installing seems to be missing the separator module.

## Step to reproduce


1. Pip installed ffmpeg and spleeter

2. Ran this code

```
import os

from spleeter.separator import Separator

sep = Separator('spleeter:2stems')
path = "C:\\Users\\Sebastian\\Documents\\VScode\\Personal Projects\\Music Recognition Project"
# Join with os.path.join so a separator is placed between the folder and the file name.
song_path = os.path.join(path, "Automatic Stop.mp3")
sep.separate_to_file(song_path, path)
```

3. Got this error `ImportError: DLL load failed: The specified module could not be found.`

## Output

```

2021-01-04 20:05:42.196029: W tensorflow/stream_executor/platform/default/dso_loader.cc:59] Could not load dynamic library 'cudart64_101.dll'; dlerror: cudart64_101.dll not found

2021-01-04 20:05:42.201066: I tensorflow/stream_executor/cuda/cudart_stub.cc:29] Ignore above cudart dlerror if you do not have a GPU set up on your machine.

Traceback (most recent call last):

File "c:/Users/Sebastian/Documents/VScode/Personal Projects/Music Recognition Project/pokesong2.py", line 1, in <module>

from spleeter.separator import Separator

File "C:\Users\Sebastian\AppData\Local\Packages\PythonSoftwareFoundation.Python.3.7_qbz5n2kfra8p0\LocalCache\local-packages\Python37\site-packages\spleeter\separator.py", line 27, in <module>

from librosa.core import stft, istft

File "C:\Users\Sebastian\AppData\Local\Packages\PythonSoftwareFoundation.Python.3.7_qbz5n2kfra8p0\LocalCache\local-packages\Python37\site-packages\librosa\__init__.py", line 211, in <module>

from . import core

File "C:\Users\Sebastian\AppData\Local\Packages\PythonSoftwareFoundation.Python.3.7_qbz5n2kfra8p0\LocalCache\local-packages\Python37\site-packages\librosa\core\__init__.py", line 5, in <module>

from .convert import * # pylint: disable=wildcard-import

File "C:\Users\Sebastian\AppData\Local\Packages\PythonSoftwareFoundation.Python.3.7_qbz5n2kfra8p0\LocalCache\local-packages\Python37\site-packages\librosa\core\convert.py", line 7, in <module>

from . import notation

File "C:\Users\Sebastian\AppData\Local\Packages\PythonSoftwareFoundation.Python.3.7_qbz5n2kfra8p0\LocalCache\local-packages\Python37\site-packages\librosa\core\notation.py", line 8, in <module>

from ..util.exceptions import ParameterError

File "C:\Users\Sebastian\AppData\Local\Packages\PythonSoftwareFoundation.Python.3.7_qbz5n2kfra8p0\LocalCache\local-packages\Python37\site-packages\librosa\util\__init__.py", line 87, in <module>

from ._nnls import * # pylint: disable=wildcard-import

File "C:\Users\Sebastian\AppData\Local\Packages\PythonSoftwareFoundation.Python.3.7_qbz5n2kfra8p0\LocalCache\local-packages\Python37\site-packages\librosa\util\_nnls.py", line 13, in <module>

import scipy.optimize

File "C:\Users\Sebastian\AppData\Local\Packages\PythonSoftwareFoundation.Python.3.7_qbz5n2kfra8p0\LocalCache\local-packages\Python37\site-packages\scipy\optimize\__init__.py", line 389, in <module>

from .optimize import *

File "C:\Users\Sebastian\AppData\Local\Packages\PythonSoftwareFoundation.Python.3.7_qbz5n2kfra8p0\LocalCache\local-packages\Python37\site-packages\scipy\optimize\optimize.py", line 37, in <module>

from .linesearch import (line_search_wolfe1, line_search_wolfe2,

File "C:\Users\Sebastian\AppData\Local\Packages\PythonSoftwareFoundation.Python.3.7_qbz5n2kfra8p0\LocalCache\local-packages\Python37\site-packages\scipy\optimize\linesearch.py", line 18, in <module>

from scipy.optimize import minpack2

ImportError: DLL load failed: The specified module could not be found.

```

## Environment

<!-- Fill the following table -->

| | |

| ----------------- | ------------------------------- |

| OS | Windows |

| Installation type | pip |

| RAM available | 20GB |

| Hardware spec | GTX 1060 / i5 6600k |

| Python version | 3.8.0 | | closed | 2021-01-05T04:21:29Z | 2021-01-08T13:13:20Z | https://github.com/deezer/spleeter/issues/548 | [

"bug",

"invalid"

] | SebastianCardenasEscoto | 1 |

huggingface/pytorch-image-models | pytorch | 2,296 | AttributeError: 'ImageDataset' object has no attribute 'parser' | timm: '1.0.9'

AttributeError: 'ImageDataset' object has no attribute 'parser' | closed | 2024-10-07T08:04:59Z | 2024-11-22T04:21:58Z | https://github.com/huggingface/pytorch-image-models/issues/2296 | [

"bug"

] | riyajatar37003 | 4 |

google-research/bert | tensorflow | 461 | Reduce prediction time for question answering | Hi,

I am running the BERT question-answering solution on a machine with a GPU (Tesla K80, 12 GB). Prediction for a single question takes more than 5 seconds. Can this be reduced to under 1 second?

Do we need to configure anything to make this possible?

Thank you | open | 2019-02-28T09:28:09Z | 2019-09-19T04:36:36Z | https://github.com/google-research/bert/issues/461 | [] | shivamani-ans | 9 |

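waditu/tushare | pandas | 1,561 | share_float returns incomplete data when querying a single stock | When the stock code is the only input parameter, e.g. pro.share_float(ts_code='600278.SZ'),

some stocks only return data from earlier years; there is no data for the most recent two years.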

ID:368465 | open | 2021-06-22T07:12:16Z | 2021-06-22T07:12:16Z | https://github.com/waditu/tushare/issues/1561 | [] | zzdqilei | 0 |

scikit-learn-contrib/metric-learn | scikit-learn | 39 | Recreate "Twin Peaks" result from MLKR paper | Replicate the experiment on the synthetically created "Twin Peaks" dataset in this [paper](http://www.cs.cornell.edu/~kilian/papers/weinberger07a.pdf) using MLKR algorithm. #28 can be used for reference.

@perimosocordiae I've raised this just to keep better track of what is left to do in MLKR.

| open | 2016-10-30T11:06:54Z | 2016-10-30T11:06:54Z | https://github.com/scikit-learn-contrib/metric-learn/issues/39 | [] | devashishd12 | 0 |

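open-mmlab/mmdetection | pytorch | 11,705 | Cannot step into the model source code when debugging | Hello, I'm a beginner. I have seen many online tutorials, written for earlier versions, that use the various mmlab modules to learn deep learning models. However, breakpoints I set inside the model I want to use are never hit; execution goes into the installed (compiled) mmdet package instead. This makes it hard for a beginner like me to study each module of the model. Is there a way to solve this?

For example, I have recently been studying Mask2Former and want to see in detail how the model processes my input data, but I cannot do that. | open | 2024-05-11T11:37:31Z | 2024-05-28T16:19:58Z | https://github.com/open-mmlab/mmdetection/issues/11705 | [] | whj-tech | 2 |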

apache/airflow | machine-learning | 47,971 | Retry exponential backoff max float overflow | ### Apache Airflow version

Other Airflow 2 version (please specify below)

### If "Other Airflow 2 version" selected, which one?

2.10.3

### What happened?

Hello,

I encountered a bug. My DAG was configured with retries=1000, retry_delay=5 min (300 seconds), and max_retry_delay=1h (3600 seconds). The DAG failed about 1000 times, after which the Scheduler broke down. The retry count then exceeded 1000 and stopped at retry attempt 1017.

I investigated and found that this is caused by the formula **min_backoff = math.ceil(delay.total_seconds() * (2 ** (self.try_number - 1)))** in the **taskinstance.py** file. With retry_exponential_backoff enabled, try_number has no upper limit in this calculation, so the result can overflow the maximum float value. The formula is still evaluated even when max_retry_delay is set, and it crashes once the retry number is very large.
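
The overflow described above can be reproduced outside Airflow. The following is a minimal sketch, not Airflow's actual implementation and not the fix from the linked pull requests: `naive_backoff_seconds` mirrors the quoted formula, and `capped_backoff_seconds` is a hypothetical helper that clamps the exponent before exponentiating, so the intermediate value never grows beyond what max_retry_delay allows.

```python
import math
from datetime import timedelta


def naive_backoff_seconds(delay: timedelta, try_number: int) -> int:
    """Mirror of the quoted formula; raises OverflowError for large try_number."""
    return math.ceil(delay.total_seconds() * (2 ** (try_number - 1)))


def capped_backoff_seconds(delay: timedelta, try_number: int, max_delay: timedelta) -> int:
    """Hypothetical helper: bound the exponent so the product stays finite."""
    base = delay.total_seconds()
    cap = max_delay.total_seconds()
    if base <= 0:
        return 0
    # Doublings past this count cannot change the result once it is capped.
    max_doublings = max(0, math.ceil(math.log2(cap / base))) if cap > base else 0
    exponent = min(max(try_number - 1, 0), max_doublings)
    return math.ceil(min(base * 2 ** exponent, cap))


if __name__ == "__main__":
    delay = timedelta(minutes=5)
    max_delay = timedelta(hours=1)
    try:
        naive_backoff_seconds(delay, 1017)
    except OverflowError as exc:
        print(f"naive formula failed: {exc}")
    print(capped_backoff_seconds(delay, 1017, max_delay))
```

The design choice here is to limit the exponent rather than the final product, because taking `min()` after the multiplication would still evaluate the oversized power first.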

Please fix this bug.

I also opened pull requests with a possible solution:

https://github.com/apache/airflow/pull/48057

https://github.com/apache/airflow/pull/48051

From Airflow logs:

2024-12-09 02:16:39.825 OverflowError: cannot convert float infinity to integer

2024-12-09 02:16:39.825 min_backoff = int(math.ceil(delay.total_seconds() * (2 ** (self.try_number - 2))))

2024-12-08 09:29:14.583 [2024-12-08T06:29:14.583+0000] {scheduler_job_runner.py:705} INFO - Executor reports execution of mydag.spark_submit run_id=manual__2024-11-02T10:19:30.618008+00:00 exited with status up_for_retry for try_number 470

Configs:

_with DAG(

dag_id=DAG_ID,

start_date=MYDAG_START_DATE,

schedule_interval="@daily",

catchup=AIRFLOW_CATCHUP,

default_args={

'depends_on_past': True,

"retries": 1000,

"retry_delay": duration(minutes=5),

"retry_exponential_backoff": True,

"max_retry_delay": duration(hours=1),

},

) as dag:_

<img width="947" alt="Image" src="https://github.com/user-attachments/assets/f3307b23-0307-4b4d-b968-3e1984fbe93c" />

<img width="1050" alt="Image" src="https://github.com/user-attachments/assets/f161329c-155c-4d92-b3b4-cf442d6ed036" />

### What you think should happen instead?

My pull request:

https://github.com/apache/airflow/pull/48057

https://github.com/apache/airflow/pull/48051

### How to reproduce

Use configs from above. Example:

with DAG(

dag_id=DAG_ID,

start_date=MY_AIRFLOW_START_DATE,

schedule_interval="@daily",

catchup=AIRFLOW_CATCHUP,

default_args={

'depends_on_past': True,

"retries": 1000,

"retry_delay": duration(minutes=5),

"retry_exponential_backoff": True,

"max_retry_delay": duration(hours=1),

},

) as dag

### Operating System

Ubuntu 22.04

### Versions of Apache Airflow Providers

_No response_

### Deployment

Official Apache Airflow Helm Chart

### Deployment details

_No response_

### Anything else?

_No response_

### Are you willing to submit PR?

- [x] Yes I am willing to submit a PR!

### Code of Conduct

- [x] I agree to follow this project's [Code of Conduct](https://github.com/apache/airflow/blob/main/CODE_OF_CONDUCT.md)

| open | 2025-03-19T18:27:31Z | 2025-03-24T11:57:37Z | https://github.com/apache/airflow/issues/47971 | [

"kind:bug",

"area:Scheduler",

"good first issue",

"area:core",

"needs-triage"

] | alealandreev | 5 |

ydataai/ydata-profiling | jupyter | 831 | Correlation options in "Advanced Usage" not works as expected | Trying to run profiling with:

profile = ProfileReport(

postgres_db_table, title=db_parameter_dict["tableName"], html={"style": {"full_width": True}},

sort=None, minimal=None, interactions={'continuous': False}, orange_mode=True,

correlations={

"pearson": {"calculate": True,"warn_high_correlations":True,"threshold":0.9},

"spearman": {"calculate": False},

"kendall": {"calculate": False},

"phi_k": {"calculate": False},

"cramers": {"calculate": False},

}

)

With these parameters, no correlation visualizations show up in the HTML report. I want to run only the "Pearson" correlation, but I can't.

When I try the parameters below:

ProfileReport(

postgres_db_table, title=db_parameter_dict["tableName"], html={"style": {"full_width": True}},

sort=None, minimal=None, interactions={'continuous': False}, orange_mode=True,

correlations={"pearson": {"calculate": True}} )

Only the "Phik" and "Cramers V" tabs show up in the HTML profiling report.

To Reproduce

Data:

Famous Titanic dataset with 889 records and ['id', 'survived', 'pclass', 'name', 'sex', 'age', 'sibsp', 'parch',

'ticket', 'fare', 'embarked'] columns

Version information:

python: 3.7.0

Environment: Jupyter Notebook

<details><summary>Click to expand <strong><em>Version information</em></strong></summary>

<p>

absl-py==0.13.0

adal==1.2.6

alembic==1.4.1

altair==4.1.0

amqp==2.6.1

apispec==3.3.2

appdirs==1.4.4

astroid==2.3.1

astunparse==1.6.3

atomicwrites==1.4.0

attrs==20.3.0

autopep8==1.5

azure-common==1.1.26

azure-graphrbac==0.61.1

azure-mgmt-authorization==0.61.0

azure-mgmt-containerregistry==2.8.0

azure-mgmt-keyvault==2.2.0

azure-mgmt-resource==12.0.0

azure-mgmt-storage==11.2.0

azureml-core==1.23.0

Babel==2.8.0

backcall==0.1.0

backoff==1.10.0

backports.tempfile==1.0

backports.weakref==1.0.post1

bcrypt==3.2.0

beautifulsoup4==4.9.0

billiard==3.6.3.0

bleach==3.1.0

bokeh==2.3.1

Boruta==0.3

boto==2.49.0

boto3==1.12.9

botocore==1.15.9

Bottleneck==1.3.2

Brotli==1.0.9

bs4==0.0.1

bson==0.5.9

cached-property==1.5.2

cachelib==0.1.1

cachetools==4.2.1

celery==4.4.7

certifi==2019.9.11

cffi==1.14.3

chardet==3.0.4

chart-studio==1.1.0

clang==5.0

click==8.0.0

cloudpickle==1.6.0

colorama==0.4.1

colorcet==2.0.6

colorlover==0.3.0

colour==0.1.5

confuse==1.4.0

contextlib2==0.6.0.post1

croniter==0.3.34

cryptography==3.2

cssselect==1.1.0

cufflinks==0.17.3

cx-Oracle==7.2.3

cycler==0.10.0

d6tcollect==1.0.5

d6tstack==0.2.0

dash==1.16.1

dash-core-components==1.12.1

dash-html-components==1.1.1

dash-renderer==1.8.1

dash-table==4.10.1

databricks-cli==0.14.2

dataclasses==0.6

decorator==4.4.0

defusedxml==0.6.0

dnspython==2.0.0

docker==4.4.4

docopt==0.6.2

docutils==0.15.2

dtreeviz==1.3

email-validator==1.1.1

entrypoints==0.3

et-xmlfile==1.0.1

exitstatus==1.4.0

extratools==0.8.2.1

fake-useragent==0.1.11

feature-selector===N-A

findspark==1.4.2

Flask==1.1.1

Flask-AppBuilder==3.0.1

Flask-Babel==1.0.0

Flask-Caching==1.9.0

Flask-Compress==1.5.0

Flask-Cors==3.0.10

Flask-JWT-Extended==3.24.1

Flask-Login==0.4.1

Flask-Migrate==2.5.3

Flask-OpenID==1.2.5

Flask-SQLAlchemy==2.4.4

flask-talisman==0.7.0

Flask-WTF==0.14.3

flatbuffers==1.12

future==0.18.2

gast==0.4.0

gensim==3.8.1

geographiclib==1.50

geopy==2.0.0

gitdb==4.0.5

GitPython==3.1.14

google-api-core==1.26.0

google-auth==1.27.0

google-auth-oauthlib==0.4.6

google-cloud-core==1.6.0

google-cloud-storage==1.36.1

google-crc32c==1.1.2

google-pasta==0.2.0

google-resumable-media==1.2.0

googleapis-common-protos==1.53.0

graphviz==0.17

great-expectations==0.13.19

grpcio==1.40.0

gunicorn==20.0.4

h5py==3.1.0

htmlmin==0.1.12

humanize==2.6.0

idna==2.8

ImageHash==4.2.0

imageio==2.9.0

imbalanced-learn==0.5.0

imblearn==0.0

imgkit==1.2.2

importlib-metadata==1.7.0

iniconfig==1.1.1

instaloader==4.7.1

ipykernel==5.1.2

ipython==7.8.0

ipython-genutils==0.2.0

ipywidgets==7.5.1

isodate==0.6.0

isort==4.3.21

itsdangerous==1.1.0

jdcal==1.4.1

jedi==0.15.1

jeepney==0.6.0

Jinja2==2.11.2

jmespath==0.9.5

joblib==1.0.0

json5==0.8.5

jsonpatch==1.32

jsonpickle==2.0.0

jsonpointer==2.1

jsonschema==3.0.2

jupyter==1.0.0

jupyter-client==6.1.11

jupyter-console==6.2.0

jupyter-contrib-core==0.3.3

jupyter-contrib-nbextensions==0.5.1

jupyter-core==4.7.0

jupyter-highlight-selected-word==0.2.0

jupyter-latex-envs==1.4.6

jupyter-nbextensions-configurator==0.4.1

jupyterlab==1.1.3

jupyterlab-server==1.0.6

jupyterthemes==0.20.0

karateclub==1.0.11

keras==2.6.0

Keras-Preprocessing==1.1.2

kiwisolver==1.1.0

kombu==4.6.11

kubernetes==12.0.1

lazy-object-proxy==1.4.2

lesscpy==0.14.0

lightgbm==2.2.3

llvmlite==0.35.0

lxml==4.5.0

Mako==1.1.3

Markdown==3.2.2

MarkupSafe==1.1.1

marshmallow==3.8.0

marshmallow-enum==1.5.1

marshmallow-sqlalchemy==0.23.1

matplotlib==3.4.1

mccabe==0.6.1

MechanicalSoup==0.12.0

metakernel==0.27.5

missingno==0.4.2

mistune==0.8.4

mleap==0.16.1

mlxtend==0.17.3

msgpack==1.0.0

msrest==0.6.21

msrestazure==0.6.4

multimethod==1.4

natsort==7.0.1

nbconvert==5.6.0

nbformat==4.4.0

ndg-httpsclient==0.5.1

networkx==2.4

notebook==6.0.1

numba==0.52.0

numpy==1.19.5

oauthlib==3.1.0

openpyxl==3.0.6

opt-einsum==3.3.0

packaging==20.9

pandas==1.1.5

pandas-profiling==3.0.0

pandocfilters==1.4.2

param==1.10.1

paramiko==2.7.2

parse==1.15.0

parsedatetime==2.6

parso==0.5.1

pathlib2==2.3.5

pathspec==0.8.1

patsy==0.5.1

pexpect==4.8.0

phik==0.11.2

pickleshare==0.7.5

Pillow==8.2.0

plotly==4.14.3

pluggy==0.13.1

ply==3.11

polyline==1.4.0

prefixspan==0.5.2

prison==0.1.3

prometheus-client==0.7.1

prometheus-flask-exporter==0.18.1

prompt-toolkit==2.0.9

protobuf==3.15.4

psutil==5.7.0

psycopg2==2.8.6

ptyprocess==0.6.0

py==1.10.0

pyarrow==3.0.0

pyasn1==0.4.8

pyasn1-modules==0.2.8

pycodestyle==2.5.0

pycparser==2.20

pyct==0.4.8

pydantic==1.8.2

pydot==1.4.2

pyee==7.0.2

Pygments==2.4.2

PyGSP==0.5.1

PyJWT==1.7.1

pylint==2.4.2

pymssql==2.1.5

PyNaCl==1.4.0

pyodbc==4.0.27

pyOpenSSL==20.0.1

pyparsing==2.4.2

pyppeteer==0.2.2

pyquery==1.4.1

pyrsistent==0.15.4

pysftp==0.2.9

PySocks==1.7.1

pytest==6.2.4

python-dateutil==2.8.1

python-dotenv==0.14.0

python-editor==1.0.4

python-louvain==0.13

python3-openid==3.2.0

pytz==2019.2

PyWavelets==1.1.1

pywin32==227

pywinpty==0.5.5

PyYAML==5.3

pyzmq==18.1.0

qtconsole==5.0.1

QtPy==1.9.0

querystring-parser==1.2.4

requests==2.25.1

requests-html==0.10.0

requests-oauthlib==1.3.0

retrying==1.3.3

rsa==4.7.2

ruamel.yaml==0.16.12

ruamel.yaml.clib==0.2.2

s3transfer==0.3.3

scikit-image==0.18.1

scikit-learn==0.23.2

scikit-plot==0.3.7

scipy==1.6.0

seaborn==0.11.1

SecretStorage==3.3.1

Send2Trash==1.5.0

shap==0.36.0

Shapely==1.7.1

six==1.15.0

sklearn==0.0

slicer==0.0.7

smart-open==1.9.0

smmap==3.0.5

sortedcontainers==2.3.0

soupsieve==2.0

spylon==0.3.0

spylon-kernel==0.4.1

SQLAlchemy==1.3.19

SQLAlchemy-Utils==0.36.8

sqlparse==0.4.1

statsmodels==0.9.0

tabulate==0.8.9

tangled-up-in-unicode==0.1.0

tensorboard==2.6.0

tensorboard-data-server==0.6.1

tensorboard-plugin-wit==1.8.0

tensorflow==2.6.0

tensorflow-estimator==2.6.0

termcolor==1.1.0

terminado==0.8.2

testpath==0.4.2

threadpoolctl==2.1.0

tifffile==2021.4.8

toml==0.10.2

toolz==0.11.1

tornado==6.0.3

tqdm==4.60.0

traitlets==4.3.2

tweepy==3.8.0

twitter-scraper==0.4.2

typed-ast==1.4.0

typing-extensions==3.7.4.3

tzlocal==2.1

urllib3==1.25.9

vine==1.3.0

virtualenv==16.7.9

visions==0.7.1

w3lib==1.22.0

waitress==1.4.4

wcwidth==0.1.7

webencodings==0.5.1

websocket-client==0.58.0

websockets==8.1

Werkzeug==1.0.0

widgetsnbextension==3.5.1

wrapt==1.12.1

WTForms==2.3.3

xgboost==1.1.1

xlrd==1.2.0

XlsxWriter==1.2.2

yellowbrick==0.7

zipp==3.1.0

</p>

</details>

| open | 2021-09-21T13:45:44Z | 2021-09-21T13:45:44Z | https://github.com/ydataai/ydata-profiling/issues/831 | [] | enesMesut | 0 |

aleju/imgaug | machine-learning | 414 | AssertionError install tests for 0.2.9 build on NixOS | Hi Team,

I was trying to enable the test cases for pythonPackages.imgaug https://github.com/NixOS/nixpkgs/pull/67494

During this process I was able to run the test cases, but I am hitting an **AssertionError** that causes 5 failures.

Summary of test run:

`============ **5 failed, 383 passed, 3 warnings in 199.71s (0:03:19)** =============`

detailed log :

[imgaug_test_failures.txt](https://github.com/aleju/imgaug/files/3604110/imgaug_test_failures.txt)

Please suggest. Thanks. | closed | 2019-09-12T07:00:08Z | 2020-01-07T08:43:34Z | https://github.com/aleju/imgaug/issues/414 | [] | Rakesh4G | 37 |

babysor/MockingBird | deep-learning | 365 | Cannot run after importing the model files | After importing the model files, I always get the error: Error: Model files not found. Please download the models

Aren't the model files supposed to go in C:\MockingBird-main\synthesizer\saved_models? (My files are in the root of the C: drive.) | open | 2022-02-01T06:30:40Z | 2022-02-06T02:36:32Z | https://github.com/babysor/MockingBird/issues/365 | [] | zhang065 | 5 |

LAION-AI/Open-Assistant | machine-learning | 2,800 | 500 - Open Assistent EROR | Sorry, we encountered a server error. We're not sure what went wrong.

Very often an error began to appear with smaller dialogs

If you tried to open a web page and saw an Internal Server Error 500, you can do the following.

Wait...

Notify administrator...

Check htaccess file. ...

Check error log...

Check contents of CGI scripts...

Check plugins and components...

Increase server RAM

| closed | 2023-04-21T05:17:33Z | 2023-04-21T08:05:03Z | https://github.com/LAION-AI/Open-Assistant/issues/2800 | [] | buddhadhammaliveexpedition | 1 |

opengeos/streamlit-geospatial | streamlit | 51 | streamlit-geospatial site down | The URL https://streamlit.geemap.org/ has been showing an "Oh no. Error running application" message for two days or so now. This wonderful application has been a fun tool in letting students explore remote sensing data, so we hope to make use of it again soon! | closed | 2022-06-30T04:26:50Z | 2022-06-30T12:00:18Z | https://github.com/opengeos/streamlit-geospatial/issues/51 | [] | frizatch | 2 |

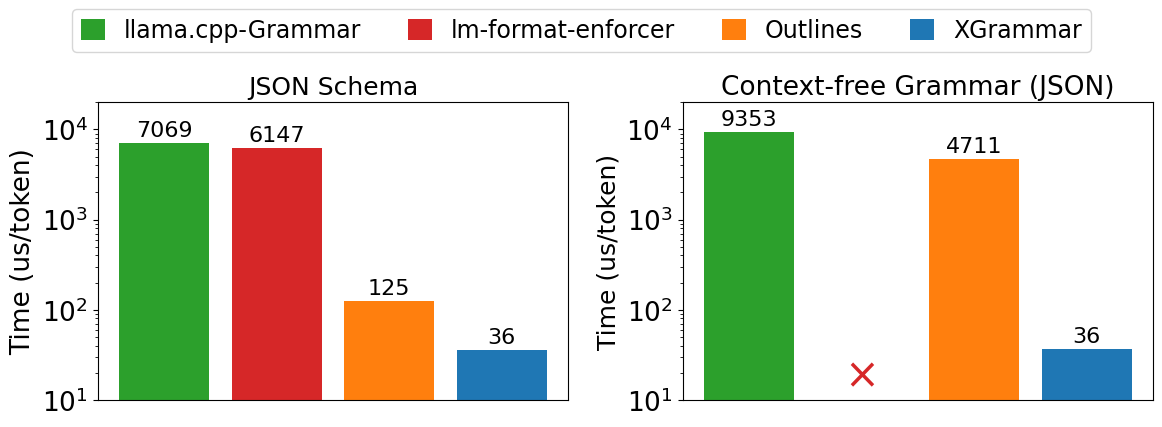

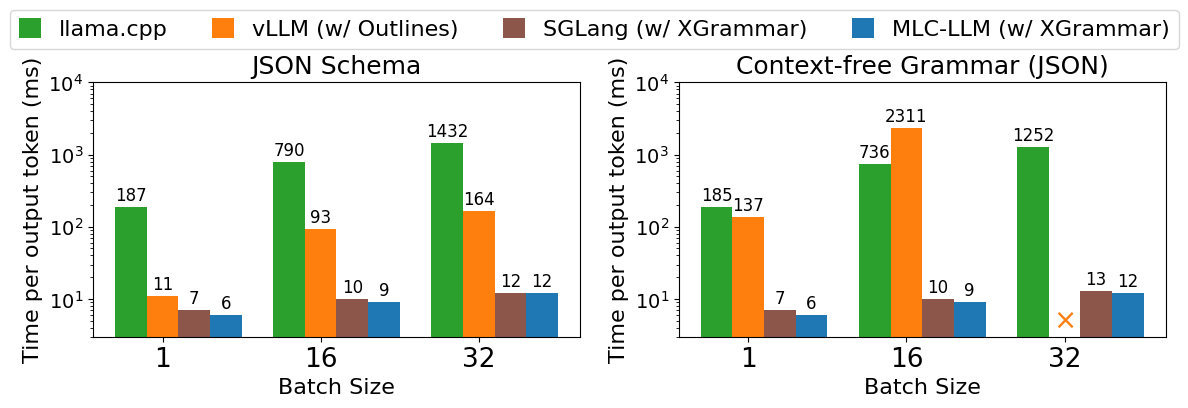

huggingface/text-generation-inference | nlp | 2,900 | Support XGrammar backend as an alternative to Outlines | ### Feature request

Support the use of XGrammar instead of Outlines for the backend Structured-Output generation.

### Motivation

XGrammar has been shown to be much faster than Outlines for generating structured output.

BlogPost:

https://blog.mlc.ai/2024/11/22/achieving-efficient-flexible-portable-structured-generation-with-xgrammar

"As shown in Figure 1, XGrammar outperforms existing structured generation solutions by up to 3.5x on the JSON schema workload and more than 10x on the CFG workload. Notably, the gap in CFG-guided generation is larger. This is because many JSON schema specifications can be expressed as regular expressions, bringing more optimizations that are not directly applicable to CFGs."

"Figure 2 shows end-to-end inference performance on LLM serving tasks. We can find the trend again that the gap on CFG-guided settings is larger, and the gap grows on larger batch sizes. This is because the GPU throughput is higher on larger batch sizes, putting greater pressure on the grammar engine running on CPUs. Note that the main slowdown of vLLM comes from its structured generation engine, which can be potentially eliminated by integrating with XGrammar. In all cases, XGrammar enables high-performance generation in both settings without compromising flexibility and efficiency."

Paper / Technical Report from XGrammar:

https://arxiv.org/abs/2411.15100

### Your contribution

XGrammar Repo:

https://github.com/mlc-ai/xgrammar

SGLang Repo:

https://github.com/sgl-project/sglang/

SGLang Docs on Structured Output generation including using XGrammar:

https://sgl-project.github.io/backend/openai_api_completions.html#Structured-Outputs-(JSON,-Regex,-EBNF)

Note: Those docs do note that XGrammar does not support regular expressions | open | 2025-01-10T18:03:07Z | 2025-01-13T18:35:59Z | https://github.com/huggingface/text-generation-inference/issues/2900 | [] | 2016bgeyer | 0 |

chaos-genius/chaos_genius | data-visualization | 343 | Updation date in UI currently shows date for which data was last available | Currently the UI has a field called Last Updated Date. Instead of showing the last time Anomaly/RCA was run, it shows the last date for which data is available.

We should fix this to show the last time Anomaly/RCA was run. But knowing the last available date for the dataset should also be useful. Should we show both in the UI? | closed | 2021-10-27T08:08:44Z | 2022-03-15T11:01:48Z | https://github.com/chaos-genius/chaos_genius/issues/343 | [

"✨ enhancement",

"🖥️ frontend"

] | kartikay-bagla | 2 |

hzwer/ECCV2022-RIFE | computer-vision | 122 | Scale 0.5 at 4K looks noticably worse than rife-ncnn-vulkan's UHD mode | Setting the scale to 0.5 for 4K content doesn't seem to improve results over 1.0, and looks worse than the UHD mode in RIFE-NCNN.

| closed | 2021-02-28T15:10:03Z | 2021-03-02T03:05:13Z | https://github.com/hzwer/ECCV2022-RIFE/issues/122 | [] | n00mkrad | 1 |

Yorko/mlcourse.ai | matplotlib | 355 | Assignment 9 | https://www.kaggle.com/kashnitsky/assignment-9-time-series-analysis

1) The web form does not correspond to the questions in the task. At least the 1st question is missing.

2) I doubt that the 1st question has a correct answer among the options. Simple operations lead to the result 3426.195682, which is not listed in the possible answers. Kernel related. The situation is the same in q2-4: practically no coding, just copy-pasting from the lecture, and the results are still not among the listed answers. Although maybe I didn't understand the tasks correctly.

[kernel (2).zip](https://github.com/Yorko/mlcourse.ai/files/2411140/kernel.2.zip)

3) For some reason numpy is not imported, even though it is definitely needed.

| closed | 2018-09-24T14:03:24Z | 2018-11-20T16:48:14Z | https://github.com/Yorko/mlcourse.ai/issues/355 | [] | Vozf | 2 |

wger-project/wger | django | 1,578 | Distance (km, mi) logging bugs | Hello! It seems like when logging distance decimal values cannot be input, returning an error "please enter a valid value. The two nearest valid values are 1 and 2" say when inputting 1.5 mi.

There are a few other bugs around the mileage I've noticed but that one is the most important as it pretty much makes that feature unusable.

A few other bugs I've noticed that are annoying but don't make it unusable:

- I've set up the exercise in my workout routine with Miles as the rep unit, and mph as the weight unit, yet each time I log I still have to manually change the units (still defaults to reps/lbs)

- Even when inputting as miles/mph, it often displays in logs as km instead. This looks like this only happens on the mobile app because it has a different menu for displaying logs

## Steps to Reproduce

<!-- Please include as many steps to reproduce so that we can replicate the problem. -->

1. ... Either in the mobile app or on the website, log a workout exercise "run"

2. ... Change the reps to distance (miles or km) and enter a decimal value (eg 1.5)

3. ... Click "Save"

**Expected results:** <!-- what did you expect to see? -->

That a decimal distance value would be saved to the program.

**Actual results:** <!-- what did you see? -->

"Please enter a valid value. The two nearest valid values are 1 and 2."

<details>

Minor bugs:

1. Defaults to reps/weight even when different units are chosen for that exercise

2. Results display as km even when mi is chosen, at least on the mobile app

<!--

Any logs you think would be useful (if you have a local instance)

I'm not sure what's relevant but happy to upload anything if need be.

-->

```bash

```

</details>

Thanks for any help!

| closed | 2024-02-02T00:32:45Z | 2025-03-21T22:34:50Z | https://github.com/wger-project/wger/issues/1578 | [

"bug"

] | ddakotac | 2 |

jackmpcollins/magentic | pydantic | 414 | Test and add docs for usage with other logging/tracing providers | Test that magentic works with the most common LLM tracing / logging providers and add docs to configure these together.

- https://log10.io/

- https://github.com/Arize-ai/phoenix | open | 2025-02-02T02:52:12Z | 2025-02-02T02:52:12Z | https://github.com/jackmpcollins/magentic/issues/414 | [] | jackmpcollins | 0 |

deeppavlov/DeepPavlov | nlp | 1,107 | Document everything useful that we have in files.deeppavlov.ai | closed | 2019-12-19T09:32:45Z | 2020-05-13T09:31:46Z | https://github.com/deeppavlov/DeepPavlov/issues/1107 | [

"enhancement",

"Documentation"

] | yoptar | 0 | |

NullArray/AutoSploit | automation | 421 | Unhandled Exception (9e51c3117) | Autosploit version: `3.0`

OS information: `Linux-4.15.0-43-generic-x86_64-with-Ubuntu-18.04-bionic`

Running context: `autosploit.py`

Error message: `argument of type 'NoneType' is not iterable`

Error traceback:

```

Traceback (most recent call):

File "/home/meddy/Téléchargements/crack/Autosploit/autosploit/main.py", line 117, in main

terminal.terminal_main_display(loaded_tokens)

File "/home/meddy/Téléchargements/crack/Autosploit/lib/term/terminal.py", line 474, in terminal_main_display

if "help" in choice_data_list:

TypeError: argument of type 'NoneType' is not iterable

```

Metasploit launched: `True`
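
The traceback indicates that `choice_data_list` was `None` when the membership test ran. A minimal sketch of a defensive check follows. Note the assumptions: `parse_choice` and `wants_help` are hypothetical stand-ins for illustration and are not taken from the AutoSploit source.

```python
from typing import List, Optional


def parse_choice(raw: Optional[str]) -> Optional[List[str]]:
    """Hypothetical stand-in for whatever produces choice_data_list."""
    if raw is None or not raw.strip():
        return None
    return raw.split()


def wants_help(choice_data_list: Optional[List[str]]) -> bool:
    """Treat a missing choice as 'no help requested' instead of raising TypeError."""
    return choice_data_list is not None and "help" in choice_data_list


if __name__ == "__main__":
    print(wants_help(parse_choice(None)))
    print(wants_help(parse_choice("help exploit")))
```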

| closed | 2019-01-28T21:27:30Z | 2019-01-29T15:37:20Z | https://github.com/NullArray/AutoSploit/issues/421 | [] | AutosploitReporter | 0 |

3b1b/manim | python | 1,497 | Manim write text out of the window | ### Describe the error

When I use the Scene TextExample found [here](https://3b1b.github.io/manim/getting_started/example_scenes.html), only part of the text shows up. I need to zoom out to see everything, and when I save the video, it looks as if I had not zoomed out.

### Code and Error

**Code**:

[This](https://3b1b.github.io/manim/getting_started/example_scenes.html) Scene TextExample.

**Error**:

Can't see some words.

### Environment

**OS System**: Windows 10

**manim version**: Release 1.0.0

**python version**: 3.9.4

| closed | 2021-04-23T20:43:05Z | 2021-06-18T19:14:32Z | https://github.com/3b1b/manim/issues/1497 | [] | Leoriem-code | 2 |

comfyanonymous/ComfyUI | pytorch | 7,235 | VHS_LoadImagesPath not working | "No directory found" in VHS_LoadImagesPath node

I am copy-pasting the path of the Windows folder where the image sequence is located,

but I get the error "No directory found" | closed | 2025-03-14T16:31:02Z | 2025-03-16T14:59:07Z | https://github.com/comfyanonymous/ComfyUI/issues/7235 | [] | Arup-art | 1 |

jowilf/starlette-admin | sqlalchemy | 383 | Bug: SQLAlchemy models with Mixin Classes raises error. | **Describe the bug**

I have over 60 models that all share the same columns, such as `id`, `date_created`, and `date_updated`. So I implemented my own mixins and subclass them in my models, as in the example below. This error appeared after I updated to `0.12.0`.

**To Reproduce**

I have written an example here:

```python

from sqlalchemy import (
    ForeignKey,
    create_engine,
)
from sqlalchemy.orm import DeclarativeBase, Mapped, mapped_column, sessionmaker, relationship
from starlette.applications import Starlette
from starlette.responses import HTMLResponse
from starlette.routing import Route
from starlette_admin.contrib.sqla import Admin, ModelView
from starlette_admin.fields import (
    HasMany,
    HasOne,
    IntegerField,
    StringField,
)


class Base(DeclarativeBase):
    pass


class IDMixin:
    id: Mapped[int] = mapped_column(primary_key=True)


class Source(Base, IDMixin):
    __tablename__ = "source"

    name: Mapped[str] = mapped_column()
    items: Mapped[list["Item"]] = relationship(
        "Item",
        back_populates="source"
    )


class Item(Base, IDMixin):
    __tablename__ = "item"

    name: Mapped[str] = mapped_column()
    source_id: Mapped[int] = mapped_column(ForeignKey("source.id"))
    source: Mapped[Source] = relationship("Source", back_populates="items")


class SourceView(ModelView):
    label = "Sources"
    name = "Source"
    fields = [
        IntegerField(
            name="id",
            label="ID",
            help_text="ID of the record.",
            read_only=True,
        ),
        StringField(
            name="name",
            label="Name",
        ),
        HasMany(
            name="items",
            label="Item",
        ),
    ]


class ItemView(ModelView):
    label = "Items"
    name = "Item"
    fields = [
        IntegerField(
            name="id",
            label="ID",
            help_text="ID of the record.",
            read_only=True,
        ),
        StringField(
            name="name",
            label="Name",
        ),
        HasOne(
            name="source",
            label="Source",
        ),
    ]


engine = create_engine(
    "sqlite:///db.sqlite3",
    connect_args={"check_same_thread": False},
    echo=True,
)
session = sessionmaker(bind=engine, autoflush=False)


def init_database() -> None:
    Base.metadata.create_all(engine)


app = Starlette(
    routes=[
        Route(
            "/",
            lambda r: HTMLResponse('<a href="/admin/">Click me to get to Admin!</a>'),
        )
    ],
    on_startup=[init_database],
)

# Create admin
admin = Admin(engine, title="Mixins Error")

# Add views
admin.add_view(SourceView(model=Source))
admin.add_view(ItemView(model=Item))

# Mount admin
admin.mount_to(app)

```

Error:

```console

AttributeError: Neither 'InstrumentedAttribute' object nor 'Comparator' object associated with Source.items has an attribute 'primary_key'

```

**Environment (please complete the following information):**

- Starlette-Admin version: 0.12.0

- ORM/ODMs: SQLAlchemy, MongoEngine

| closed | 2023-11-06T09:12:48Z | 2023-11-06T21:21:20Z | https://github.com/jowilf/starlette-admin/issues/383 | [

"bug"

] | hasansezertasan | 1 |

kaliiiiiiiiii/Selenium-Driverless | web-scraping | 311 | error in Linux | Same code, error in Linux

Previously worked

It still works under Win10

modify UA, it won't work

Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/133.0.0.0 Safari/537.36

| closed | 2025-02-05T10:36:31Z | 2025-02-05T12:34:09Z | https://github.com/kaliiiiiiiiii/Selenium-Driverless/issues/311 | [

"invalid",

"wontfix"

] | fhwhite | 1 |

pydantic/logfire | pydantic | 891 | ModuleNotFoundError: No module named 'opentelemetry.sdk._events' | ### Description

In the pyproject.toml it looks like the version requirement for opentelemetry-sdk needs to be bumped.

### Python, Logfire & OS Versions, related packages (not required)

```TOML

``` | closed | 2025-02-24T21:22:24Z | 2025-02-25T14:48:15Z | https://github.com/pydantic/logfire/issues/891 | [] | mwildehahn | 1 |

jazzband/django-oauth-toolkit | django | 1,193 | Why the request returns 'invalid_client'? | Hello everyone, everything good?

I'm trying to follow the tutorial for Django Rest Framework that is in the [documentation](https://django-oauth-toolkit.readthedocs.io/en/latest/rest-framework/getting_started.html). I am not able to get a valid answer. I've seen some issues in the repo, some may even be the same case, but it's not clear to me where I'm going wrong.

Basically, I'm sending the request using curl with the following data:

`curl -X POST -d "grant_type=password&username=<admin>&password=<admin>" -u"<fEsQnQBsokTk5NB1xaZeLRaLgvbjZ3INGgFhgmFn>:<pbkdf2_sha256$320000$tYHgBpw9Sq6WZFV6u8ruDK$8Boq6kWNqGChvRbw33FbfduwnYz4vUhDcY8RzE1HuqE=>" http://localhost:8000/o/token/`

The Authorization grant type is: **Resource owner password-based**

The django-oauth-toolkit version is: 2.1.0

The response I get from the server is:

`{"error": "invalid_client"}`
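
One possible cause, stated as an assumption rather than a confirmed diagnosis: the value after the colon in `-u` looks like a stored hash (`pbkdf2_sha256$...`), and the `<` `>` placeholders from the tutorial appear to have been sent literally. django-oauth-toolkit 2.x stores client secrets hashed, so the token endpoint would expect the original plaintext secret shown when the application was created. The sketch below only builds the HTTP Basic `Authorization` header that `curl -u` would send; `basic_auth_header` is a hypothetical helper and the credential values are placeholders.

```python
import base64


def basic_auth_header(client_id: str, client_secret: str) -> str:
    """Build the header value curl sends for -u "client_id:client_secret"."""
    if client_secret.startswith("pbkdf2_sha256$"):
        # A Django-style hash cannot be used to authenticate; the plaintext is needed.
        raise ValueError("this looks like a stored hash, not the plaintext secret")
    raw = f"{client_id}:{client_secret}".encode("utf-8")
    return "Basic " + base64.b64encode(raw).decode("ascii")


if __name__ == "__main__":
    # Placeholder credentials, for illustration only.
    print(basic_auth_header("my-client-id", "my-plaintext-secret"))
```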

Sorry if this seems repetitive. But it wasn't clear to me where I went wrong.

PS: The data presented here are not sensitive, as they are data to exemplify the error. | closed | 2022-08-09T22:20:15Z | 2023-09-06T12:51:42Z | https://github.com/jazzband/django-oauth-toolkit/issues/1193 | [

"question"

] | linneudm | 7 |

pytorch/vision | machine-learning | 8,629 | Detection lr_scheduler.step() called every step instead of every epoch | ### 🐛 Describe the bug

The `lr_scheduler.step()` function should be called once per epoch, not every step as seen in engine.py:

https://github.com/pytorch/vision/blob/main/references/detection/engine.py#L54

Reference:

https://pytorch.org/docs/stable/optim.html#how-to-adjust-learning-rate

The `--lr-steps` argument provided to the `train.py` script does not make much sense: `[16, 22]`, because these quickly change in the 2nd epoch and remain static for the rest of the training job.
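
To illustrate the concern, here is a small pure-Python sketch of a milestone-based schedule, using the decay rule documented for `MultiStepLR` (multiply by `gamma` at each milestone). `lr_after` is a hypothetical helper, and the base learning rate, `gamma`, and iterations-per-epoch values are illustrative assumptions. It only shows how the meaning of `[16, 22]` depends on whether a scheduler is stepped per iteration or per epoch; it makes no claim about which scheduler the reference scripts step where.

```python
from bisect import bisect_right
from typing import Sequence


def lr_after(step_count: int, base_lr: float, milestones: Sequence[int], gamma: float = 0.1) -> float:
    """Learning rate after `step_count` scheduler steps with milestone decay."""
    passed = bisect_right(sorted(milestones), step_count)
    return base_lr * gamma ** passed


if __name__ == "__main__":
    milestones = [16, 22]
    iters_per_epoch = 1000  # illustrative assumption

    # Stepped once per iteration: both milestones pass during the first epoch.
    print(lr_after(iters_per_epoch, 0.02, milestones))

    # Stepped once per epoch: no milestone has passed after the first epoch.
    print(lr_after(1, 0.02, milestones))
```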

Instead, I might suggest:

```python

def train_one_epoch(model, optimizer, data_loader, device, epoch, print_freq, scaler=None):
    model.train()
    metric_logger = utils.MetricLogger(delimiter=" ")
    metric_logger.add_meter("lr", utils.SmoothedValue(window_size=1, fmt="{value:.6f}"))
    header = f"Epoch: [{epoch}]"

    lr_scheduler = None
    if epoch == 0:
        warmup_factor = 1.0 / 1000
        warmup_iters = min(1000, len(data_loader) - 1)

        lr_scheduler = torch.optim.lr_scheduler.LinearLR(
            optimizer, start_factor=warmup_factor, total_iters=warmup_iters
        )

    for images, targets in metric_logger.log_every(data_loader, print_freq, header):
        images = list(image.to(device) for image in images)
        targets = [{k: v.to(device) if isinstance(v, torch.Tensor) else v for k, v in t.items()} for t in targets]
        with torch.cuda.amp.autocast(enabled=scaler is not None):
            loss_dict = model(images, targets)
            losses = sum(loss for loss in loss_dict.values())

        # reduce losses over all GPUs for logging purposes
        loss_dict_reduced = utils.reduce_dict(loss_dict)
        losses_reduced = sum(loss for loss in loss_dict_reduced.values())

        loss_value = losses_reduced.item()

        if not math.isfinite(loss_value):
            print(f"Loss is {loss_value}, stopping training")
            print(loss_dict_reduced)
            sys.exit(1)

        optimizer.zero_grad()
        if scaler is not None:
            scaler.scale(losses).backward()
            scaler.step(optimizer)
            scaler.update()
        else:
            losses.backward()
            optimizer.step()

        # Only step() the special LinearLR scheduler for the first epoch
        if lr_scheduler is not None and isinstance(lr_scheduler, torch.optim.lr_scheduler.LinearLR) and epoch == 0:
            lr_scheduler.step()

        metric_logger.update(loss=losses_reduced, **loss_dict_reduced)
        metric_logger.update(lr=optimizer.param_groups[0]["lr"])

    # one step for each epoch
    if lr_scheduler is not None:
        lr_scheduler.step()

    return metric_logger

```
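
To make the concern about `--lr-steps` concrete, here is a minimal pure-Python sketch of how a milestone schedule behaves depending on how often `step()` is called. It assumes `MultiStepLR`-style decay (the rate is multiplied by `gamma` at each milestone). The helper `lr_after`, the base rate `0.02`, `gamma=0.1`, and the 500 iterations per epoch are illustrative assumptions, not values read from `train.py`.

```python
from bisect import bisect_right


def lr_after(step_calls, base_lr=0.02, milestones=(16, 22), gamma=0.1):
    """Learning rate after `step_calls` calls to step(), MultiStepLR-style."""
    # Each milestone already reached multiplies the rate by gamma once.
    passed = bisect_right(sorted(milestones), step_calls)
    return base_lr * gamma ** passed


iters_per_epoch = 500  # hypothetical: dataset size divided by batch size

# Stepping once per epoch: the decays land at epochs 16 and 22.
per_epoch = [lr_after(epoch) for epoch in (0, 15, 16, 22)]

# Stepping once per iteration: both decays are used up within 22 iterations,
# so the rate is already at its final value long before one epoch is done.
per_iteration = [lr_after(it) for it in (0, 15, 16, 22, iters_per_epoch)]

print(per_epoch)
print(per_iteration)
```

Under these assumptions, a milestone scheduler stepped per iteration would exhaust `[16, 22]` almost immediately, which is the behaviour described above.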

### Versions

Collecting environment information...

PyTorch version: 2.4.0+cu121

Is debug build: False

CUDA used to build PyTorch: 12.1

ROCM used to build PyTorch: N/A

OS: Ubuntu 20.04.6 LTS (x86_64)

GCC version: (Ubuntu 9.4.0-1ubuntu1~20.04.2) 9.4.0

Clang version: Could not collect

CMake version: version 3.16.3

Libc version: glibc-2.31

Python version: 3.10.14 (main, Jun 12 2024, 11:12:41) [GCC 9.4.0] (64-bit runtime)

Python platform: Linux-5.4.0-193-generic-x86_64-with-glibc2.31

Is CUDA available: True

CUDA runtime version: Could not collect

CUDA_MODULE_LOADING set to: LAZY

GPU models and configuration: GPU 0: NVIDIA GeForce RTX 3070 Laptop GPU

Nvidia driver version: 550.90.07

cuDNN version: Could not collect

HIP runtime version: N/A

MIOpen runtime version: N/A

Is XNNPACK available: True

CPU:

Architecture: x86_64

CPU op-mode(s): 32-bit, 64-bit

Byte Order: Little Endian

Address sizes: 48 bits physical, 48 bits virtual

CPU(s): 16

On-line CPU(s) list: 0-15

Thread(s) per core: 2

Core(s) per socket: 8

Socket(s): 1

NUMA node(s): 1

Vendor ID: AuthenticAMD

CPU family: 25

Model: 80

Model name: AMD Ryzen 9 5900HX with Radeon Graphics

Stepping: 0

Frequency boost: enabled

CPU MHz: 3916.790

CPU max MHz: 3300.0000

CPU min MHz: 1200.0000

BogoMIPS: 6587.59

Virtualization: AMD-V

L1d cache: 256 KiB

L1i cache: 256 KiB

L2 cache: 4 MiB

L3 cache: 16 MiB

NUMA node0 CPU(s): 0-15

Vulnerability Gather data sampling: Not affected

Vulnerability Itlb multihit: Not affected

Vulnerability L1tf: Not affected

Vulnerability Mds: Not affected

Vulnerability Meltdown: Not affected

Vulnerability Mmio stale data: Not affected

Vulnerability Retbleed: Not affected

Vulnerability Spec store bypass: Mitigation; Speculative Store Bypass disabled via prctl and seccomp

Vulnerability Spectre v1: Mitigation; usercopy/swapgs barriers and __user pointer sanitization

Vulnerability Spectre v2: Mitigation; Retpolines; IBPB conditional; IBRS_FW; STIBP always-on; RSB filling; PBRSB-eIBRS Not affected; BHI Not affected

Vulnerability Srbds: Not affected

Vulnerability Tsx async abort: Not affected

Flags: fpu vme de pse tsc msr pae mce cx8 apic sep mtrr pge mca cmov pat pse36 clflush mmx fxsr sse sse2 ht syscall nx mmxext fxsr_opt pdpe1gb rdtscp lm constant_tsc rep_good nopl nonstop_tsc cpuid extd_apicid aperfmperf pni pclmulqdq monitor ssse3 fma cx16 sse4_1 sse4_2 movbe popcnt aes xsave avx f16c rdrand lahf_lm cmp_legacy svm extapic cr8_legacy abm sse4a misalignsse 3dnowprefetch osvw ibs skinit wdt tce topoext perfctr_core perfctr_nb bpext perfctr_llc mwaitx cpb cat_l3 cdp_l3 hw_pstate ssbd mba ibrs ibpb stibp vmmcall fsgsbase bmi1 avx2 smep bmi2 erms invpcid cqm rdt_a rdseed adx smap clflushopt clwb sha_ni xsaveopt xsavec xgetbv1 xsaves cqm_llc cqm_occup_llc cqm_mbm_total cqm_mbm_local clzero irperf xsaveerptr wbnoinvd arat npt lbrv svm_lock nrip_save tsc_scale vmcb_clean flushbyasid decodeassists pausefilter pfthreshold avic v_vmsave_vmload vgif umip pku ospke vaes vpclmulqdq rdpid overflow_recov succor smca

Versions of relevant libraries:

[pip3] cjm-pytorch-utils==0.0.6

[pip3] cjm-torchvision-tfms==0.0.11

[pip3] numpy==1.26.3

[pip3] onnx==1.16.1

[pip3] onnxruntime==1.18.1

[pip3] onnxscript==0.1.0.dev20240628

[pip3] torch==2.4.0

[pip3] torchaudio==2.3.1+cu121

[pip3] torchvision==0.19.0

[pip3] triton==3.0.0

[conda] Could not collect

| closed | 2024-09-03T20:45:04Z | 2024-09-04T09:04:32Z | https://github.com/pytorch/vision/issues/8629 | [] | david-csnmedia | 1 |

mljar/mljar-supervised | scikit-learn | 675 | Bug: Need retrain | File "/usr/local/lib/python3.11/site-packages/supervised/base_automl.py", line 2511, in _need_retrain

change = np.abs((old_score - new_score) / old_score)

numpy.core._exceptions._UFuncNoLoopError: ufunc 'subtract' did not contain a loop with signature matching types (dtype('<U19'), dtype('float64')) -> None
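
The dtypes in the message suggest that `old_score` reached the subtraction as a string (`<U19`) while `new_score` was a `float64`. Below is a minimal sketch of guarding the relative-change computation, assuming that casting the stored score back to `float` is acceptable. `relative_change` is a hypothetical helper, not part of mljar-supervised.

```python
def relative_change(old_score, new_score):
    """Relative change between two scores, tolerating string-typed inputs."""
    # Assumption: a score read back from disk may arrive as a string,
    # so both values are cast to float before any arithmetic.
    old = float(old_score)
    new = float(new_score)
    if old == 0.0:
        raise ValueError("old_score is zero; relative change is undefined")
    return abs((old - new) / old)


# A string score, as in the traceback, no longer breaks the subtraction.
print(relative_change("0.5", 0.4))
```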

| open | 2023-11-12T16:26:38Z | 2023-11-12T16:26:38Z | https://github.com/mljar/mljar-supervised/issues/675 | [] | strukevych | 0 |

thtrieu/darkflow | tensorflow | 462 | the loss is 4~6 | Hi, I really want to know why my loss stays between 4 and 6 and won't go down any further. | open | 2017-12-06T02:46:38Z | 2017-12-29T16:30:16Z | https://github.com/thtrieu/darkflow/issues/462 | [] | QueenJuliaZxx | 7 |

public-apis/public-apis | api | 3,535 | https://github.com/octocat/Hello-World/pull/6 | closed | 2023-06-11T23:51:22Z | 2023-06-11T23:51:49Z | https://github.com/public-apis/public-apis/issues/3535 | [] | Shaukyhamdan94 | 0 | |

pydantic/pydantic-settings | pydantic | 480 | Setting a default value for ZoneInfo does not work | When a default value is set for a `ZoneInfo` field, a different timezone is used.

```python

Python 3.12.1 (main, Jan 8 2024, 05:53:39) [Clang 17.0.6 ] on linux
Type "help", "copyright", "credits" or "license" for more information.
>>> import pydantic_settings
>>> from zoneinfo import ZoneInfo
>>> from pydantic_settings import BaseSettings
>>> class Test(BaseSettings):
...     tz: ZoneInfo = ZoneInfo("Europe/London")
...
>>> Test()
Traceback (most recent call last):
  File "<stdin>", line 1, in <module>
  File "/home/acuthbert2/dev/ves/scripts/.venv/lib/python3.12/site-packages/pydantic_settings/main.py", line 167, in __init__
    super().__init__(
  File "/home/acuthbert2/dev/ves/scripts/.venv/lib/python3.12/site-packages/pydantic/main.py", line 212, in __init__
    validated_self = self.__pydantic_validator__.validate_python(data, self_instance=self)
                     ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
pydantic_core._pydantic_core.ValidationError: 1 validation error for Test
tz
  invalid timezone: :Asia/Shanghai [type=zoneinfo_str, input_value=':Asia/Shanghai', input_type=str]
>>> pydantic_settings.__version__
'2.6.1'

```
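
The rejected input `':Asia/Shanghai'` is not the declared default, so it presumably comes from a settings source. One possible explanation, which is an assumption and not confirmed in this report, is that the field name `tz` matches the `TZ` environment variable, since environment lookups are case-insensitive by default. The sketch below only simulates that lookup rule in plain Python; `resolve_field` and the `APP_` prefix are hypothetical and are not pydantic-settings code.

```python
def resolve_field(field_name, default, environ, env_prefix=""):
    """Return the env value for a field (case-insensitive match) or the default."""
    wanted = (env_prefix + field_name).lower()
    for key, value in environ.items():
        if key.lower() == wanted:
            return value  # an environment value overrides the declared default
    return default


# Hypothetical environment resembling the one implied by the error message.
environ = {"TZ": ":Asia/Shanghai", "HOME": "/home/user"}

# Without a prefix, the `tz` field collides with the system-wide TZ variable.
print(resolve_field("tz", "Europe/London", environ))

# With a prefix, only APP_TZ would be consulted, so the default survives.
print(resolve_field("tz", "Europe/London", environ, env_prefix="APP_"))
```

If this is indeed the cause, setting an `env_prefix` in the settings config would be one way to avoid the collision.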

- pydantic-settings version: 2.6.1

- python version: 3.12.1

- platform: DISTRIB_ID=Ubuntu

DISTRIB_RELEASE=22.04

DISTRIB_CODENAME=jammy

DISTRIB_DESCRIPTION="Ubuntu 22.04.2 LTS" | closed | 2024-11-21T05:58:54Z | 2024-11-22T06:00:36Z | https://github.com/pydantic/pydantic-settings/issues/480 | [

"unconfirmed"

] | ac3673 | 3 |

onnx/onnx | scikit-learn | 5,794 | What is the correct way to make a tensor with a dynamic dimension for a Reshape operator? | # Ask a Question

### Question

I am trying to add a Reshape node to a BERT ONNX model that works with dynamic shapes. The Reshape op should turn a rank-3 tensor into a rank-2 tensor. The input to the Reshape has shape [unk__2,unk__3,768], and I need to collapse the first two dynamic dimensions into one while keeping the last fixed dimension, giving [[unk__2 * unk__3], 768]. How can I specify a dynamic dimension when making a tensor with the onnx helper?
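
For context, `Reshape` takes its target shape as a second input holding int64 values, and a single `-1` entry asks the operator to infer that dimension from the remaining element count. The target here would therefore be a 1-D tensor with the two values `[-1, 768]` (for example built with `helper.make_tensor`), rather than a value-info whose own shape is `(-1, 768)`. The plain-Python sketch below only illustrates how the `-1` is resolved; `resolve_reshape` is a hypothetical helper, not an ONNX API.

```python
from math import prod


def resolve_reshape(input_shape, target_shape):
    """Resolve a single -1 in target_shape the way Reshape infers it."""
    # Note: ONNX's special 0 entry (copy the input dimension) is not modelled.
    if list(target_shape).count(-1) > 1:
        raise ValueError("at most one dimension may be -1")
    total = prod(input_shape)
    known = prod(d for d in target_shape if d != -1)
    if -1 in target_shape:
        if known == 0 or total % known != 0:
            raise ValueError("cannot infer the -1 dimension")
        return tuple(total // known if d == -1 else d for d in target_shape)
    if known != total:
        raise ValueError("element counts do not match")
    return tuple(target_shape)


# A [batch, seq, 768] input collapses to [batch * seq, 768] at run time.
print(resolve_reshape((4, 128, 768), (-1, 768)))
```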

### Further information

When running the code snippet I provided below, I get the following error:

```

    raise TypeError(f"'{value}' is not an accepted attribute value.")
TypeError: 'name: "shape"
type {
  tensor_type {
    elem_type: 7
    shape {
      dim {
        dim_value: -1
      }
      dim {
        dim_value: 768
      }
    }
  }
}
' is not an accepted attribute value.

```

- Is this issue related to a specific model?

**Model name**: bert-base

**Model opset**: 18

### Notes

Code snippet:

```

# Create a Constant node that contains the target shape
shape_tensor = helper.make_tensor_value_info(name='shape', elem_type=onnx.TensorProto.INT64, shape=(-1,768))
shape_node = helper.make_node(
    'Constant',
    inputs=[],
    outputs=[f'shape_{i}_output'],
    value=shape_tensor,
    name=f'shape_{i}'
)

# Create a Reshape node
reshape_node = helper.make_node(
    'Reshape',
    inputs=[mm_node.input[0], f'shape_{i}_output'],
    outputs=[f'reshaped_output_{i}'],
    name=f'Reshape_{i}'
)

```