id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,893,868 | Why HTML is Not a Programming Language? | Understanding HTML HTML stands for HyperText Markup Language. It's the standard language... | 0 | 2024-06-19T17:29:47 | https://dev.to/richardshaju/why-html-is-not-a-programming-language-30g3 | html, programming, web, tech | ## Understanding HTML

HTML stands for HyperText Markup Language. It's the standard language used to create web pages. When you visit a website, what you see is made up of HTML. It's like the skeleton of a web page, prov... | richardshaju |

1,893,865 | Skill Development and Education - Building a Knowledge-Based Economy . | for more about this just click on this link | 0 | 2024-06-19T17:27:46 | https://dev.to/tegveer_singh_8c7c2ac99ea/skill-development-and-education-building-a-knowledge-based-economy--444c | for more about this just click on this link [](https://www.dayitwa.org.in/) | tegveer_singh_8c7c2ac99ea | |

1,893,864 | bartowski/DeepSeek-Coder-V2-Lite-Instruct-GGUF-torrent | https://aitorrent.zerroug.de/bartowski-deepseek-coder-v2-lite-instruct-gguf/ | 0 | 2024-06-19T17:26:50 | https://dev.to/zerroug/bartowskideepseek-coder-v2-lite-instruct-gguf-torrent-14ek | ai, machinelearning, llm, beginners | https://aitorrent.zerroug.de/bartowski-deepseek-coder-v2-lite-instruct-gguf/ | zerroug |

1,893,863 | The significance of leadership in socio-economic development. | dayitwa, a pioneering organization, is committed to fostering leadership and driving socio-economic... | 0 | 2024-06-19T17:25:20 | https://dev.to/tegveer_singh_8c7c2ac99ea/the-significance-of-leadership-in-socio-economic-development-1kgi | dayitwa, a pioneering organization, is committed to fostering leadership and driving socio-economic transformation in India. Through its innovative programs, Dayitwa empowers individuals to become changemakers in their communities, addressing pressing challenges and creating sustainable solutions. This blog will delve ... | tegveer_singh_8c7c2ac99ea | |

1,892,557 | How To Create Modern Emails Using React | If you have ever tried to create nice-looking emails with HTML, you probably had a bad experience... | 0 | 2024-06-19T17:24:33 | https://antondevtips.com/blog/how-to-create-modern-emails-using-react | react, webdev, javascript, frontend | ---

canonical_url: https://antondevtips.com/blog/how-to-create-modern-emails-using-react

---

If you have ever tried to create nice-looking emails with HTML, you probably had a bad experience styling them with inline CSS properties.

All due to the limited support for external stylesheets in email clie... | antonmartyniuk |

1,893,862 | cognitivecomputations/dolphin-2.9.2-qwen2-7b-torrent | https://aitorrent.zerroug.de/cognitivecomputations-dolphin-2-9-2-qwen2-7b-torrent/ | 0 | 2024-06-19T17:23:21 | https://dev.to/zerroug/cognitivecomputationsdolphin-292-qwen2-7b-torrent-20kh | ai, machinelearning, beginners | https://aitorrent.zerroug.de/cognitivecomputations-dolphin-2-9-2-qwen2-7b-torrent/ | zerroug |

1,893,844 | Hello All | Love this site. Well put together and maintained. | 0 | 2024-06-19T16:54:28 | https://dev.to/roy_silva_64eb848150e83d1/hello-all-1gba | Love this site. Well put together and maintained. | roy_silva_64eb848150e83d1 | |

1,889,714 | One Byte Explainer : NP-Complete Problems | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-19T17:15:02 | https://dev.to/debjde6400/one-byte-explainer-np-complete-problems-45af | devchallenge, cschallenge, computerscience, beginners | *This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

## Explainer

Are you talking about a CS problem and its solution, such that you can prove your solution without getting frustrated? Can you convert other similar CS problems to your problem, but do... | debjde6400 |

1,893,859 | Learning to Create Your First Laravel Project | Install Laravel using Composer composer create-project laravel/laravel project-name ... | 0 | 2024-06-19T17:14:53 | https://dev.to/ryotwell/belajar-membuat-project-pertama-laravel-5gll | webdev, beginners, programming, laravel | Install Laravel using Composer

```bash

composer create-project laravel/laravel project-name

```

Now open the project by typing

```bash

cd project-name

```

Run the local server

```bash

php artisan serve

```

Now open `http://localhost:8000` and you're done.

| ryotwell |

1,893,857 | Unlocking the Power of GPT Models: Your Guide to Innovative AI Tools | In the rapidly evolving world of artificial intelligence, GPT models are at the forefront of... | 0 | 2024-06-19T17:14:36 | https://dev.to/matin_mollapur/unlocking-the-power-of-gpt-models-your-guide-to-innovative-ai-tools-4ii1 | ai, programming, productivity, learning | In the rapidly evolving world of artificial intelligence, GPT models are at the forefront of revolutionizing how we interact with technology. This article explores some of the most innovative GPT models that can enhance your productivity, creativity, and learning experiences.

#### Academic Tools for Researchers and St... | matin_mollapur |

1,893,231 | Global or Local Minima and Maxima and Saddle Points in Deep Learning | <Global and Local Minima> A global minimum is the globally minimal point whose... | 0 | 2024-06-19T17:13:18 | https://dev.to/hyperkai/global-or-local-minima-and-maxima-and-saddle-points-in-deep-learning-2nn3 | deeplearning, minima, maxima, saddlepoints | ### <**Global and Local Minima**>

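- A global minimum is the point where the function takes its lowest value over the whole domain. Its gradient is zero, and it is also a local minimum.

- A local minimum is a point where the function is lowest only within its neighborhood. Its gradient is zero, but it is not necessarily the global minimum.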
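
The difference can be seen on a small one-dimensional example. The sketch below is only an illustration: the function `f` and the search interval are assumptions chosen for this example, not something from a real model. It scans a grid, keeps every point that is lower than both of its neighbours (a discrete local minimum), and then picks the lowest of those as the global minimum.

```python
# Hypothetical 1-D "loss" with two valleys, chosen only for illustration.
def f(x):
    return x**4 - 3 * x**2 + x

# Evaluate f on a fine grid over [-2, 2] (an assumed search interval).
xs = [i / 1000 for i in range(-2000, 2001)]
ys = [f(x) for x in xs]

# A grid point is a discrete local minimum if it is lower than both neighbours.
local_minima = [
    xs[i]
    for i in range(1, len(xs) - 1)
    if ys[i] < ys[i - 1] and ys[i] < ys[i + 1]
]

# The global minimum is the local minimum with the lowest function value.
global_minimum = min(local_minima, key=f)

for x in local_minima:
    kind = "global" if x == global_minimum else "local only"
    print(f"x = {x:.3f}, f(x) = {f(x):.3f} ({kind})")
```

Gradient descent started in the right-hand valley of this function would settle in the local-only minimum, which is why the distinction matters in deep learning.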

tooling 🖥. | 0 | 2024-06-19T17:00:01 | https://rodneylab.com/ratatui-for-terminal-fireworks/ | rust, gamedev, tui | ---

title: "Ratatui for Terminal Fireworks: using Rust TUI Canvas"

published: "true"

description: "Ratatui for Terminal Fireworks 🧨 cooking up a fireworks or confetti show in the Terminal using Rust Text-based UI (TUI) tooling 🖥."

tags: "rust, gamedev, tui"

canonical_url: "https://rodneylab.com/ratatui-for-terminal-f... | askrodney |

1,892,447 | Getting Started With Terraform For Infrastructure Provisioning 🛠️ | Infrastructure as Code (IaC) is a modern approach to managing and provisioning computing resources... | 0 | 2024-06-19T16:59:59 | https://dev.to/angelotheman/getting-started-with-terraform-for-infrastructure-provisioning-15hi | devops, terraform, cloud, architecture | Infrastructure as Code (IaC) is a modern approach to managing and provisioning computing resources through machine-readable configuration files rather than physical hardware or interactive configuration tools. This method allows for more consistent and scalable infrastructure management, enabling automation and reducin... | angelotheman |

1,893,849 | bartowski/Hercules-5.0-Qwen2-1.5B-GGUF-torrent | https://aitorrent.zerroug.de/bartowski-hercules-5-0-qwen2-1-5b-gguf-torrent/ | 0 | 2024-06-19T16:59:49 | https://dev.to/zerroug/bartowskihercules-50-qwen2-15b-gguf-torrent-4jbo | ai, machinelearning, beginners | https://aitorrent.zerroug.de/bartowski-hercules-5-0-qwen2-1-5b-gguf-torrent/ | zerroug |

1,893,848 | bartowski/MadWizardOrpoMistral-7b-v0.3-GGUF-torrent | https://aitorrent.zerroug.de/bartowski-madwizardorpomistral-7b-v0-3-gguf/ | 0 | 2024-06-19T16:58:47 | https://dev.to/zerroug/bartowskimadwizardorpomistral-7b-v03-gguf-torrent-2g4j | ai, machinelearning, beginners | https://aitorrent.zerroug.de/bartowski-madwizardorpomistral-7b-v0-3-gguf/ | zerroug |

1,893,847 | Step-by-Step Instructions for Task Management Apps | Table of Contents Introduction Project Setup Backend Setup Frontend Setup User... | 0 | 2024-06-19T16:57:12 | https://raajaryan.tech/step-by-step-instructions-for-task-management-apps | javascript, node, react, beginners | [](https://buymeacoffee.com/dk119819)

**Table of Contents**

1. [Introduction](#introduction)

2. [Project Setup](#project-setup)

- [Backend Setup](#backend-setup)

- [Frontend Setup](... | raajaryan |

1,893,846 | Working with Parquet files in Java using Carpet | After some time working with Parquet files in Java using the Parquet Avro library, and studying how... | 0 | 2024-06-19T16:55:22 | https://www.jeronimo.dev/working-with-parquet-files-in-java-using-carpet/ | parquet, java, bigdata, dataengineering | After some time working with Parquet files in Java using the Parquet Avro library, and studying how it worked, I concluded that despite **being very useful** in multiple use cases and having great potential, **the documentation and ecosystem needed for adoption in the Java world were very poor**.

Many people are using ... | jerolba |

1,893,843 | Setting Expectations for your team | Let's be real – setting expectations for your team can be a bit of a minefield. You want to give them... | 27,779 | 2024-06-19T16:51:23 | https://dev.to/johnscode/setting-expectations-for-your-team-m56 | leadership, management, softwareengineering | Let's be real – setting expectations for your team can be a bit of a minefield. You want to give them the autonomy to innovate and own their work, but you also need to make sure everyone's on the same page. So, how do you strike that balance? I've got five tips that have worked wonders for me, and I'm excited to share ... | johnscode |

1,893,842 | Study for FE | Fast-track your FE Electrical and Computer exam preparation with our comprehensive program. Whether... | 0 | 2024-06-19T16:51:14 | https://dev.to/malik_hamid_311d4b4c65819/study-for-fe-156o | exam, preparation, engineering | [](https://www.studyforfe.com/)

Fast-track your FE Electrical and Computer exam preparation with our comprehensive program. Whether you are a recent graduate or a working professional with years of experience, this FE exam preparation course will take you step-by-step through all sections of the latest NCEES® FE Electr... | malik_hamid_311d4b4c65819 |

1,893,841 | "🚀 From Algorithms to Applications: My Journey as a Machine Learning Developer 🤖" | Introduction Hello DEV Community! 👋 I'm Aviral Garg, a machine learning developer with a passion for... | 0 | 2024-06-19T16:49:32 | https://dev.to/aviralgarg05/-from-algorithms-to-applications-my-journey-as-a-machine-learning-developer--449h | beginners, programming, tutorial, python | Introduction

Hello DEV Community! 👋 I'm Aviral Garg, a machine learning developer with a passion for turning data into actionable insights. I’ve been working in this field for 1 year, and I’m excited to share my journey, the challenges I’ve faced, and tips for anyone looking to dive into machine learning.

My Path to ... | aviralgarg05 |

1,893,840 | Building a To-Do App with RTK Query | In this guide, we'll walk you through creating a simple to-do application using RTK Query, a powerful... | 0 | 2024-06-19T16:46:00 | https://dev.to/rudragupta_dev/building-a-to-do-app-with-rtk-query-2c0n | In this guide, we'll walk you through creating a simple to-do application using RTK Query, a powerful data fetching and caching tool from Redux Toolkit. We'll use an open-source API to manage our to-dos. By the end of this guide, you'll have a fully functional To-Do app and a solid understanding of how to integrate RTK... | rudragupta_dev | |

1,893,839 | Bridging the Gap: Building Trust Between Product and Engineering Teams | Companies often see their product and engineering teams compete instead of working together... | 0 | 2024-06-19T16:45:34 | https://jetthoughts.com/blog/bridging-gap-building-trust-between-product-engineering-teams-organization-structure/ | organization, structure, effectiveness | Companies often see their product and engineering teams compete instead of working together effectively. This lack of synergy erodes trust and often leads to everyone, sooner or later, pointing fingers at others, which slows down the overall development process. However, not only does the commitment ... | jetthoughts_61 |

1,893,838 | Implementing Light/Dark Mode in Your Vite App with shadcn/ui | This article will guide you through implementing a light/dark mode feature in your Vite project using... | 0 | 2024-06-19T16:44:17 | https://dev.to/ashsajal/implementing-lightdark-mode-in-your-vite-app-with-shadcnui-1ae4 | webdev, javascript, react, tailwindcss | This article will guide you through implementing a light/dark mode feature in your Vite project using the powerful and user-friendly shadcn/ui library.

### 1. Setting Up the Theme Provider

First, we need to create a theme provider component that will manage the application's theme state. This component will handle s... | ashsajal |

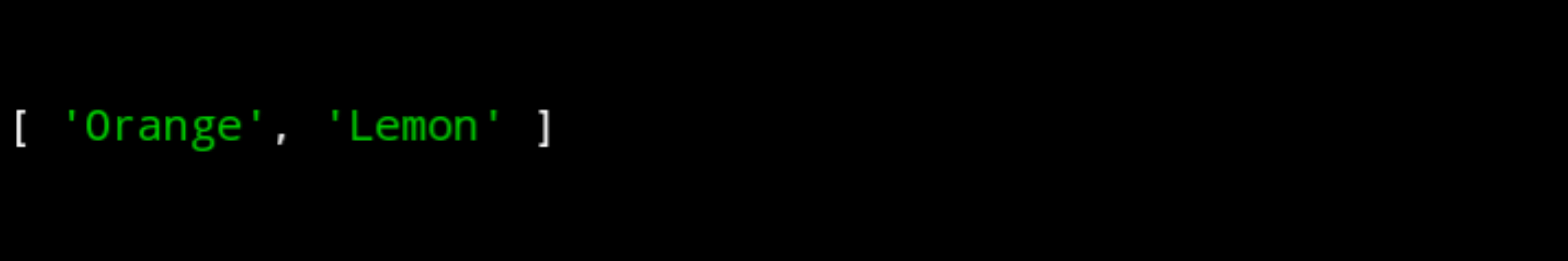

1,893,836 | Select Element in Array() to a new Array() JavaScript | JavaScript Array slice() Select elements: const fruits =... | 0 | 2024-06-19T16:44:09 | https://dev.to/tsitohaina/select-element-in-array-to-a-new-array-javascript-2805 | javascript, beginners |

JavaScript Array slice()

Select elements:

```

const fruits = ["Banana","Orange","Lemon","Apple","Mango"];

const citrus = fruits.slice(1, 3);

console.log(citrus);

```

Select elements using negative values:

```

const fruits = ["Banan... | tsitohaina |

1,893,837 | Your Path to Success: How to Become a Self-Taught Frontend Developer in 2024 | Hey future frontend developer! Dreaming of building stunning websites and interactive user... | 0 | 2024-06-19T16:42:46 | https://dev.to/delia_code/your-path-to-success-how-to-become-a-self-taught-frontend-developer-in-2024-4jbk | webdev, beginners, programming, career | Hey future frontend developer! Dreaming of building stunning websites and interactive user interfaces? Great news—becoming a self-taught frontend developer is entirely within your reach. With the right resources, strategy, and a sprinkle of perseverance, you can embark on an exciting journey in web development. Let’s d... | delia_code |

1,893,835 | HubSpot For Small Business | Unlock the potential of **[HubSpot for small business... | 0 | 2024-06-19T16:39:21 | https://dev.to/codepeddle/hubspot-for-small-business-2goa | hubspot, hubspotapi, hubspotmarketingagency | Unlock the potential of **[HubSpot for small business](https://codeandpeddle.com/services/hubspot/)** with Code and Peddle. Streamline your marketing, sales, and customer service operations to drive growth and efficiency. Learn more about how HubSpot can transform your business today. | codepeddle |

1,893,811 | Moving - From DigitalOcean to Cloudflare Pages | If you're interested, you may know that I use DigitalOcean to host this blog, with a modest... | 0 | 2024-06-19T16:14:49 | https://dev.to/hoaitx/moving-from-digitalocean-to-cloudflare-pages-1p5 | cloudflare, webdev | If you're interested, you may know that I use DigitalOcean to host this blog, with a modest configuration of 1GB RAM and 20GB SSD, which is sufficient for the TechStack that I have chosen. I call it sufficient because it still performs well with the current traffic, but deploying with Docker sometimes creates storage i... | hoaitx |

1,893,834 | RECOVERY OF CRYPTOCURRENCY/ RECOVERY OF BTC contact :fundsrecoverychambers@gmail.com | I highly recommend the service of FUNDS RECOVERY CHAMBERS Solution for a fantastic job at recovering... | 0 | 2024-06-19T16:37:54 | https://dev.to/harry_peterson_b3185f558d/recovery-of-cryptocurrency-recovery-of-btc-contact-fundsrecoverychambersgmailcom-39od | bitcoin, usdt, cryptocurrency, ethereum | I highly recommend the service of FUNDS RECOVERY CHAMBERS Solution for a fantastic job at recovering my life savings which I naively put in a fake crypto investment scheme. I had a stressful situation going on and they were so patient with me and did help me through this. The staff members are very legit and good in e... | harry_peterson_b3185f558d |

1,893,833 | bartowski/L3-70B-Euryale-v2.1-GGUF-torrent | L3-70B-Euryale-v2.1-Q4_K_M.gguf Size of remote file: 42.5... | 0 | 2024-06-19T16:36:38 | https://dev.to/zerroug/bartowskil3-70b-euryale-v21-gguf-torrent-8b9 | ai, machinelearning, beginners, chatgpt | L3-70B-Euryale-v2.1-Q4_K_M.gguf Size of remote file: 42.5 GB

https://aitorrent.zerroug.de/bartowski-l3-70b-euryale-v2-1-gguf/ | zerroug |

1,893,831 | Add Colors to Your Makeup Products with Cardboard Display Boxes | Like other industries, the cosmetics industry is growing every day. Consequently, there is a growing... | 0 | 2024-06-19T16:32:06 | https://dev.to/trudylalcantar/add-colors-to-your-makeup-products-with-cardboard-display-boxes-2i26 | design, google, blog, businesses | Like other industries, the cosmetics industry is growing every day. Consequently, there is a growing need for cardboard display boxes. The use of cosmetic items has increased over the past ten years. It now serves a lot more purposes than just enhancing skin tone. Women make use of it to their advantage. It shapes thei... | trudylalcantar |

1,893,827 | Spring Modulith: Modularization of a monolithic application | Modular Monolith is an architectural style where our source code is structured on the concept of... | 0 | 2024-06-19T16:31:15 | https://dev.to/shweta_kawale/spring-modulith-modularization-of-a-monolithic-application-16nn | productivity, opensource, spring | - Modular Monolith is an architectural style where our source code is structured on the concept of modules

- A monolithic application is broken up into distinct modules while still maintaining a single codebase and deployment.

| Day 3/40 Multi Stage Docker Build - Docker Tutorial For Beginners Video... | 0 | 2024-06-19T16:24:06 | https://dev.to/sina14/40-days-of-kubernetes-340-1cam | docker, kubernetes, 40daysofkubernetes | ## Day 3/40

# Multi Stage Docker Build - Docker Tutorial For Beginners

[Video Link](https://www.youtube.com/watch?v=ajetvJmBvFo)

@piyushsachdeva

[Git Repository](https://github.com/piyushsachdeva/CKA-2024/)

[My Git Repo](https://github.com/sina14/40daysofkubernetes)

We're going to reduce the volume of our image which... | sina14 |

1,889,720 | But you don't look like a web developer | When you think of a web developer, what comes to your mind? Most people from my private life outside... | 0 | 2024-06-19T16:23:02 | https://dev.to/webdevqueen/but-you-dont-look-like-a-web-developer-27m6 | webdev, discuss, career, womenintech | When you think of a web developer, what comes to your mind? Most people from my private life outside technology would describe such a person as a man, often wearing glasses, hunched over a laptop in a dimly lit room, surrounded by empty coffee cups and energy drink cans. Maybe he’s an introvert, someone who’s more comfort... | webdevqueen |

1,893,812 | Can AI Help with Repository Base Code Understanding? | Understanding and maintaining large codebases is a common challenge in software development, leading... | 0 | 2024-06-19T16:21:34 | https://dev.to/michal_kovacik/can-ai-help-with-repository-base-code-understanding-1la | Understanding and maintaining large codebases is a common challenge in software development, leading to significant time and resource expenditure. Addressing this issue is essential for improving developer productivity and reducing technical debt.

**What is code?** Code is a recipe for solving a concrete problem. With... | michal_kovacik | |

1,893,820 | How can developers leverage gaming monetization strategies and mobile game advertising to thrive on the best game platform? | Developers can thrive on the best game platform by effectively leveraging gaming monetization... | 0 | 2024-06-19T16:20:27 | https://dev.to/claywinston/how-can-developers-leverage-gaming-monetization-strategies-and-mobile-game-advertising-to-thrive-on-the-best-game-platform-5hce | gamedev, gamedeveloper, mobilegames, androidgames | Developers can thrive on the [**best game platform**](https://medium.com/@adreeshelk/how-to-play-hundreds-of-games-on-your-lock-screen-without-downloading-anything-4f03e0173441?utm_source=referral&utm_medium=Medium&utm_campaign=Nostra) by effectively leveraging gaming monetization strategies and mobile game advertising... | claywinston |

1,893,810 | Integrating a Basic TensorFlow Model on AWS | Welcome to the exciting world of integrating machine learning models with cloud computing! In this... | 0 | 2024-06-19T16:12:49 | https://dev.to/aws-builders/integrating-a-basic-tensorflow-model-on-aws-81a | tensorflow, aws, model, ai | Welcome to the exciting world of integrating machine learning models with cloud computing! In this article, we'll guide you through the process of deploying a basic TensorFlow model on Amazon Web Services (AWS). We'll explore the services you can leverage, discuss some practical use cases, and provide a hands-on exampl... | mursalfk |

1,893,253 | Design Patterns | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-19T16:12:26 | https://dev.to/suchitra_13/design-patterns-4l9n | devchallenge, cschallenge, computerscience, beginners | *This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

## Explainer

Design patterns are reusable solutions to common software design problems. They guide object creation, composition, and interaction, improving code readability and maintainability. Key ... | suchitra_13 |

1,893,809 | Top Next.JS Boilerplates in 2024 | Here are some of the free (open-source) and premium boilerplates you should check out in 2024. This... | 0 | 2024-06-19T16:12:02 | https://dev.to/boilerplates_14e05bc5988b/top-nextjs-boilerplates-in-2024-4i8p | nextjs, webdev, javascript, programming | Here are some of the free (open-source) and premium boilerplates you should check out in 2024.

This list is sourced from [www.nextjsboilerplateslist.com/](https://www.nextjsboilerplateslist.com/) free tool. Using this platform you can filter boilerplates based on the technologies you know which speeds up your developm... | boilerplates_14e05bc5988b |

1,893,808 | ARGONIX HACK TECH / BEST CRYPTOCURRENCY RECOVERY SERVICES | WhatApp: +1 (206) 234‑9907 Website: https://argonixhacktech.com My profound passion for music... | 0 | 2024-06-19T16:11:17 | https://dev.to/feetrikki_hermanni_49f4e3/argonix-hack-tech-best-cryptocurrency-recovery-services-1llf | productivity | WhatApp: +1 (206) 234‑9907

Website: https://argonixhacktech.com

My profound passion for music sparked my journey into music production from a young age. As a teenager, I delved deep into the realms of music creation, honing my skills as a music producer. Alongside my artistic endeavors, I sought avenues to invest my ... | feetrikki_hermanni_49f4e3 |

1,893,807 | Umbraco CodeGarden24 | It’s the last Friday afternoon of CodeGarden24, and I am sitting here in Odense watching old and new... | 0 | 2024-06-19T16:11:06 | https://dev.to/ravi_motha_21868e7318d7dd/umbraco-codegarden24-2ibe | umbraco, codegarden, devlife | It’s the last Friday afternoon of CodeGarden24, and I am sitting here in Odense watching old and new friends wander away from Storms Pakhus to their respective homes and lives.

**So I got a little reflective.**

I first discovered Umbraco and its community in 2010/2011 and attended my first CodeGarden in 2011, and f... | ravi_motha_21868e7318d7dd |

1,893,806 | How programming helped my trip to South Korea | A while ago, I traveled to South Korea! Honestly, I wouldn't be so excited if it weren't... | 0 | 2024-06-19T16:09:45 | https://dev.to/outofyourcomfortzone/como-a-programacao-ajudou-minha-viagem-para-a-coreia-do-sul-1c99 | A while ago, I traveled to [**South Korea**](https://foradazonadeconforto.com/como-se-preparar-para-visitar-a-coreia-do-sul/)! Honestly, I wouldn't be so excited if it weren't for my programming skills. Here is how coding made my trip much smoother:

**1. Barreiras l... | outofyourcomfortzone | |

1,893,801 | How programming helped my trip to South Korea | A while ago, I traveled to South Korea! Honestly, I wouldn’t be as pumped if it weren’t for my... | 0 | 2024-06-19T16:06:47 | https://dev.to/outofyourcomfortzone/how-programming-helped-my-trip-to-south-korea-2o7p | A while ago, I traveled to [**South Korea**](https://www.outofyourcomfortzone.net/13-places-to-visit-in-south-korea-outside-seoul/)! Honestly, I wouldn’t be as pumped if it weren’t for my programming skills. Here’s how coding is making my trip so much smoother:

**1. Language Barrier? No Problem!** I’ve got this sweet ... | outofyourcomfortzone | |

1,893,800 | Day 23 of my progress as a vue dev | About today Today I worked on another landing page, even though it is not completed yet I'm trying my... | 0 | 2024-06-19T16:01:20 | https://dev.to/zain725342/day-23-of-my-progress-as-a-vue-dev-4j58 | webdev, vue, typescript, tailwindcss | **About today**

Today I worked on another landing page, even though it is not completed yet I'm trying my best to push the boundaries with this one by trying new things to make it more engaging and also to implement skills I haven't used previously to have my grip on those.

**What's next?**

I will be completing this ... | zain725342 |

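1,893,799 | Global State Management: Recoil and Zustand | Recoil and Zustand are libraries that help with state management in React applications. The two libraries share the same fundamental purpose, but implement state management in different ways... | 0 | 2024-06-19T15:59:47 | https://dev.to/hxxtae/jeonyeog-sangtae-gwanri-recoilgwa-zustand-d2k | recoil, zustand | Recoil and Zustand are libraries that help with state management in React applications. The two libraries share the same fundamental purpose, but they implement state management in different ways and are used accordingly.

## Recoil

Recoil is a state management library developed by Facebook. It is tightly integrated with React and is built mainly around atoms and selectors. Recoil supports asynchronous state management and tree shaking, and it suits large applications that need complex state management.

### Atom and Selector:

- Atom : the state's basic ... | hxxtae |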

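1,893,796 | Slot Sites | As the online casino industry grows rapidly, the importance of safe and trustworthy platforms is becoming ever more prominent. Among them, Tiffany Casino offers many players a variety of benefits and stands out... | 0 | 2024-06-19T15:53:59 | https://dev.to/jackpotslot08/seulrossaiteu-3a2b | As the online casino industry grows rapidly, the importance of safe and trustworthy platforms is becoming ever more prominent. Among them, Tiffany Casino stands out by offering many players a variety of benefits. As a slot site and baccarat site, Tiffany Casino boasts a rich selection of games and a safe environment, maximizing the enjoyment of casino games.

Aria Casino, a major slot site offering a variety of slot events and coupons

Aria Casino is known for its variety of slot events and generous coupon benefits. This major slot site offers attractive bonuses to both new and existing members, and constantly updates... | jackpotslot08 | |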

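1,893,791 | Why not try json.fans | Exploring JSON.fans: a new tool that simplifies JSON data processing. In modern software development, JSON (JavaScript Object... | 0 | 2024-06-19T15:41:20 | https://dev.to/by_5cb2ea4980a0622e036d4e/why-not-try-jsonfans-385j | Exploring [JSON.fans](https://json.fans): a new tool that simplifies JSON data processing

In modern software development, JSON (JavaScript Object Notation) has become one of the standard formats for data exchange. Whether in front-end or back-end development, JSON plays a vital role. However, working with JSON data can sometimes become complex and tedious. Fortunately, JSON.fans offers a new tool that simplifies JSON data processing and lets developers work more efficiently.

What is [JSON.fans](https://json.fans)?

JSON.fans is an online tool designed specifically for working with JSON data. It provides a range of features that help developers quickly parse, format, and validate JSON... | by_5cb2ea4980a0622e036d4e | |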

1,892,366 | Explain a computer science concept in 256 characters or less | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-19T15:51:36 | https://dev.to/madelene/explain-a-computer-science-concept-in-256-characters-or-less-18e7 | devchallenge, cschallenge, computerscience, beginners | ---

title: Explain a computer science concept in 256 characters or less

published: true

tags: devchallenge, cschallenge, computerscience, beginners

---

*This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

## Explainer

Binary search is like playing "... | madelene |

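1,893,795 | Slot Sites | Tiffany Casino is a safe site offering a variety of casino games, including slots and baccarat, and gives many players substantial benefits. The site's thorough security system and user-friendly... | 0 | 2024-06-19T15:50:43 | https://dev.to/jackpotslot08/seulrossaiteu-43e9 | Tiffany Casino is a safe site offering a variety of casino games, including slots and baccarat, and gives many players substantial benefits. The site provides a safe and enjoyable gaming environment through a thorough security system and a user-friendly interface. Through unlimited slot-game events and a variety of coupon benefits, users can earn more rewards, which is one of Tiffany Casino's major advantages.

Aria Casino, a major slot site offering a variety of slot events and coupons

Aria Casino has built its reputation as a major slot site offering a variety of slot events and coupons. Aria Casino ... | jackpotslot08 | |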

1,893,788 | Fast and Slow Pointers, Coding Interview Pattern | Fast and Slow Pointers The Fast and Slow Pointers technique, also known as the Tortoise... | 0 | 2024-06-19T15:47:03 | https://dev.to/harshm03/fast-and-slow-pointers-coding-interview-pattern-m5p | datastructures, algorithms, coding, interview | ## Fast and Slow Pointers

The Fast and Slow Pointers technique, also known as the Tortoise and Hare algorithm, is a powerful method used to solve problems related to cycle detection in linked lists and arrays, as well as finding the middle of a linked list and other similar tasks. It involves two pointers that travers... | harshm03 |

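1,893,794 | Slot Sites | Online casinos have now evolved beyond simple entertainment into platforms that offer a variety of benefits and safety. Several major slot sites and baccarat sites leading this trend... | 0 | 2024-06-19T15:46:23 | https://dev.to/jackpotslot08/seulrossaiteu-2ak5 | Online casinos have now evolved beyond simple entertainment into platforms that offer a variety of benefits and safety. This post introduces several major slot sites and baccarat sites that are leading this trend.

Tiffany Casino: a major slot site offering a variety of slot events and coupons

Tiffany Casino enjoys high popularity by offering players a variety of slot events and coupons. To maximize user enjoyment, the site continually updates its events and provides coupons with every game played, giving players additional benefits. In this respect, Tiffany Casino is cementing its position as a major slot site... | jackpotslot08 | |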

1,893,792 | 🚀Unlock the Power of Docker for Your DevOps Workflow!🚀 | What is Docker? Docker is a game-changing lightweight sandbox environment that packages your... | 0 | 2024-06-19T15:44:46 | https://dev.to/emmanuel_oghre_abe292c74f/unlock-the-power-of-docker-for-your-devops-workflow-41go | What is Docker? Docker is a game-changing lightweight sandbox environment that packages your application along with its libraries, runtime, and dependencies. This equips your app with everything it needs to run in isolation, making the deployment process seamless. This revolutionary concept is known as containerization... | emmanuel_oghre_abe292c74f | |

1,893,790 | Developer Mental Health Tips | Introduction Starting out as a web developer can be exciting, daunting and exhausting.... | 0 | 2024-06-19T15:39:39 | https://dev.to/jgdevelopments/developer-mental-health-tips-ghp | webdev, mentalhealth, beginners, productivity | ## Introduction

Starting out as a web developer can be exciting, daunting and exhausting. Whether it’s a night of coding into the early hours, or an unexpected bug that takes you back to the drawing board, the mental stimulation involved in your work can be an intense experience. But it needn’t take a toll on your ment... | jgdevelopments |

1,893,779 | 3. Building JavaScript Array Methods from Scratch in 2024 - Easy tutorial for beginners. | Video version's link: https://www.youtube.com/watch?v=cJpmCjRJB3A Continuing our series of building... | 0 | 2024-06-19T15:35:57 | https://dev.to/itric/3-building-javascript-array-methods-from-scratch-in-2024-easy-tutorial-for-beginners-4b45 | javascript, beginners, tutorial, learning | Video version's link: https://www.youtube.com/watch?v=cJpmCjRJB3A

Continuing our series on building JavaScript array methods from scratch for beginners, starting from JavaScript programming basics. In this post we will be making the... | itric |

1,892,908 | What is Parallel Computing? | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-19T15:34:48 | https://dev.to/codefatale/what-is-parallel-computing-37l4 | devchallenge, cschallenge, computerscience, beginners | *This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

## Explainer

Parallel computing is when you have multiple processors working together to solve a problem. A real-life example is multiple checkouts in a grocery store.
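The checkout analogy can be sketched in a few lines of Python. This is only an illustrative sketch, not part of the original explainer: the names `checkout` and `run_checkouts`, the `lanes` parameter, and the idea of modelling a cart as a list of prices are all assumptions made for the example. It uses the standard library's `concurrent.futures`, where each worker process plays the role of one checkout lane.

```python
from concurrent.futures import ProcessPoolExecutor, ThreadPoolExecutor


def checkout(cart):
    """One cashier totals one customer's cart (a list of item prices)."""
    return sum(cart)


def run_checkouts(carts, lanes=2, executor_cls=ProcessPoolExecutor):
    """Total every cart using `lanes` workers.

    `executor_cls` is a hypothetical knob for this sketch: the default uses
    separate processes (real parallelism across CPU cores), while
    ThreadPoolExecutor can be passed in for a lighter-weight run.
    Results come back in the same order as `carts`.
    """
    with executor_cls(max_workers=lanes) as pool:
        return list(pool.map(checkout, carts))
```

With the default process pool, a call such as `run_checkouts([[2, 3], [10], [1, 1, 1]], lanes=3)` is best placed under an `if __name__ == "__main__":` guard, since some platforms start worker processes by re-importing the main module.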

| codefatale |

1,893,789 | finding your soulmate | Finding your soulmate can feel like searching for a unicorn in a haystack—elusive, mysterious, and... | 0 | 2024-06-19T15:34:47 | https://dev.to/agung_suryansyah_9944ebfb/finding-your-soulmate-3pak | Finding your soulmate can feel like searching for a unicorn in a haystack—elusive, mysterious, and occasionally surrounded by manure. Imagine navigating the dating world: swiping through profiles that promise "great sense of humor" only to find someone who laughs at their own jokes. It's like ordering pizza and ending ... | agung_suryansyah_9944ebfb | |

1,893,146 | Writing code like this improves efficiency by 100 times compared to directly using MyBatis | For a Java backend programmer, MyBatis, Hibernate, Data Jdbc, and others are commonly used ORM... | 27,777 | 2024-06-19T15:34:03 | https://bs.zhxu.cn/ | java, orm, beansearcher | For a Java backend programmer, `MyBatis`, `Hibernate`, `Data Jdbc`, and others are commonly used ORM frameworks. They are sometimes very useful, such as simple CRUD and excellent transaction support. But sometimes it can be very cumbersome to use, such as a common development requirement that we will talk about next. F... | troyzhxu |

1,893,781 | Starting with C++: The Classic 'Hello, World!' Guide | Hello fellas, I am an undergrad from Bhubaneswar, India. In the first semester, we had Programming... | 27,776 | 2024-06-19T15:30:00 | https://dev.to/komsenapati/starting-with-c-the-classic-hello-world-guide-235p | cpp, coding, helloworld, learning | _Hello fellas, I am an undergrad from Bhubaneswar, India. In the first semester, we had Programming with C and data structures. Now two semesters are over, so I thought why not try to learn and create a series on learning cpp_

So let's dive into the world of C++

**, EPS data and social sentiment to forecast the strength of the stock trend.

Unlock the full potential of your music production with BABY Audio Transit, an innovative plugin designed to elevate your soundscapes to unprecedented heights. This cutting-edge tool is the perfect addition to any ... | extra_plugins01_57f257bf4 |

1,893,785 | CoinMarketCap Launches Exciting New Project | New Project by CoinMarketCap Launching Today Be part of the exciting new launch by CoinMarketCap.... | 0 | 2024-06-19T15:27:05 | https://dev.to/coin_market_cap/coinmarketcap-launches-exciting-new-project-1clo |

**New Project by CoinMarketCap Launching Today**

Be part of the exciting new launch by CoinMarketCap. Invest early, secure your tokens, and maximize your potential returns in the rapidly evolving crypto market.

Vi... | coin_market_cap | |

1,893,784 | Understanding the P-Test: A Beginner's Guide to Hypothesis Testing 🐍🅿️ | A p-test, or p-value test, is a statistical method used to determine the significance of your results... | 0 | 2024-06-19T15:27:01 | https://dev.to/kammarianand/understanding-the-p-test-a-beginners-guide-to-hypothesis-testing-c8h | statistics, analytics, datascience, machinelearning | A p-test, or p-value test, is a statistical method used to determine the significance of your results in a hypothesis test. It helps you decide whether to reject the null hypothesis, which is a default assumption that there is no effect or no difference.
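To make the idea concrete, here is a minimal sketch of computing a p-value in Python. The post does not say which test it has in mind, so this assumes a two-sided one-sample z-test with a known population standard deviation; the function name `z_test_p_value` and its parameters are hypothetical, and the 0.05 threshold mentioned afterwards is only the conventional choice.

```python
from math import sqrt
from statistics import NormalDist, mean


def z_test_p_value(sample, mu0, sigma):
    """Two-sided p-value for H0: the population mean equals mu0.

    Assumes the population standard deviation `sigma` is known
    (a one-sample z-test); this is an illustrative choice of test.
    """
    n = len(sample)
    # Standardised distance between the sample mean and the hypothesised mean.
    z = (mean(sample) - mu0) / (sigma / sqrt(n))
    # Probability of seeing a |z| at least this large if H0 were true.
    return 2 * (1 - NormalDist().cdf(abs(z)))
```

A small p-value (for example, below 0.05) suggests the data would be unusual if the null hypothesis were true, which is the usual basis for rejecting it; a large p-value means the data give no strong reason to reject it.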

) has revolutionized the visual quality of video games, bringing a level of realism that riv... | zoltan_fehervari_52b16d1d |

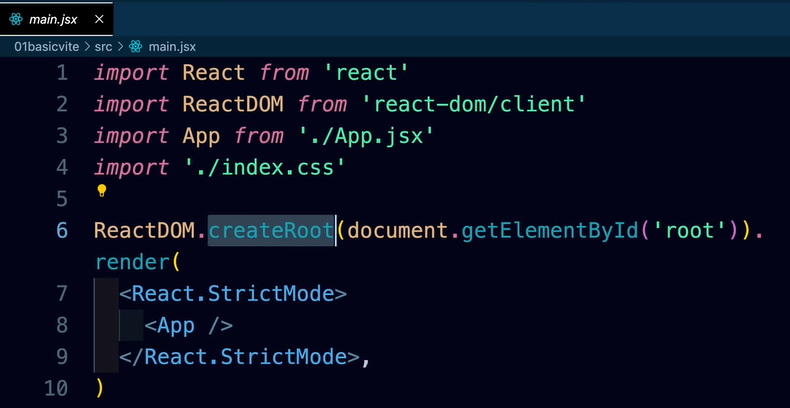

1,893,780 | Virtual DOM, Fiber and reconciliation | Ques:- How does the createRoot method work behind the scenes? Ans:- By creating a virtual DOM structure... | 0 | 2024-06-19T15:24:29 | https://dev.to/geetika_bajpai_a654bfd1e0/virtual-dom-fiber-and-reconciliation-198j |

Ques:- How does the createRoot method work behind the scenes?

Ans:- By creating a virtual DOM structure similar to the browser's DOM.

Ques:- Why does createRoot need to create a DOM?

Ans:- This virtual DOM allows for a comparison... | geetika_bajpai_a654bfd1e0 | |

1,891,009 | Let's Talk Patterns: BFF | Today, we're going to talk patterns. It bills itself as the developer's best friend (BFF,... | 0 | 2024-06-19T15:23:16 | https://dev.to/claranet/parlons-pattern-bff-34jn | webdev, microservices, frontend, architecture | Today, we're going to talk patterns.

It bills itself as the developer's best friend (BFF, get it? 😶), yet we often use it without really knowing it.

Let's take a closer look at the **Backend For Frontend**!

## Why this pattern?

When developing web applications, we often run into a desire to **... | bfamchon |

1,893,778 | Developing a Python Library for Analyzing Cryptocurrency Blockchain Data | In the rapidly evolving world of cryptocurrencies, blockchain technology has emerged as the backbone... | 0 | 2024-06-19T15:22:34 | https://dev.to/scofieldidehen/developing-a-python-library-for-analyzing-cryptocurrency-blockchain-data-4m8g | blockchain, python, cryptocurrency, web3 | In the rapidly evolving world of cryptocurrencies, blockchain technology has emerged as the backbone that underpins these decentralized digital currencies. Everyone seems to

The blockchain is a distributed, immutable ledger that records transactions within a cryptocurrency network.

As cryptocurrency adoption grows,... | scofieldidehen |

1,893,777 | DNS Lookup Web Application | DNS records are crucial for translating human-friendly domain names, like medium.com, into IP... | 0 | 2024-06-19T15:22:32 | https://dev.to/riottecboi/dns-lookup-web-application-2llf | fastapi, python, geopy, folium |

> DNS records are crucial for translating human-friendly domain names, like medium.com, into IP addresses that computers use to identify each other on the network. This translation is fundamental for accessing websites, sen... | riottecboi |

1,893,776 | This app will help you set stop-loss and take-profit levels for your stocks, and calculate their fair value in seconds. | I’m happy to share this Stock Stop-Loss, Take-Profit setter and Target Price calculator application I... | 0 | 2024-06-19T15:22:31 | https://dev.to/sanji_vals/this-app-will-help-you-set-stop-loss-and-take-profit-levels-for-your-stocks-and-calculate-their-fair-value-in-seconds-1342 | javascript, stockmarket, fintech, stoploss | I’m happy to share this Stock Stop-Loss, Take-Profit setter and Target Price calculator application I worked on. Build it yourself by cloning my **[GitHub repository](https://github.com/SanjiS86/stopLossTakeProfit_StockTargetPrice)**! Do not forget to read the README file inside the repository.

Demo video **[Stop-Loss... | sanji_vals |

1,893,775 | Preventing <textarea> resizing in React application | I'm currently developing a React application where I've encountered an issue with the... | 0 | 2024-06-19T15:22:19 | https://dev.to/ruben_korse_d72bf8dafc580/preventing-resizing-in-react-application-3go0 | css, react | I'm currently developing a React application where I've encountered an issue with the `<textarea>` element. I've set the rows attribute to 6 to initially limit its size, but users can resize it manually by dragging the corner. Here's a simplified version of my code:

```

<div className="mb-4">

<textarea

name="snip... | ruben_korse_d72bf8dafc580 |

1,893,772 | Building a Netflix show recommender using Crawlee and React | Create a Netflix show recommendation system using Crawlee to scrape the data, JavaScript to code, and React to build the front end. | 0 | 2024-06-19T15:20:14 | https://crawlee.dev/blog/netflix-show-recommender | webscraping, webdev, javascript, react | ---

title: Building a Netflix show recommender using Crawlee and React

description: Create a Netflix show recommendation system using Crawlee to scrape the data, JavaScript to code, and React to build the front end.

published: true

tags: webscraping, webdev, javascript, react

canonical_url: https://crawlee.dev/blog/net... | ayush2390 |

1,893,770 | DFS Traversal Guide: Easy way to remember DFS Traversal Path | There are two different ways to traverse a Binary Search Tree or a Graph. The first approach is Breadth... | 0 | 2024-06-19T15:19:03 | https://dev.to/rahulkumarmalhotra/dfs-traversal-guide-easy-way-to-remember-dfs-traversel-path-257a | dsa, dfs, tree, learning | There are two different ways to traverse a Binary Search Tree or a Graph. The first approach is Breadth First Search (BFS) and the second is Depth First Search (DFS). Both approaches have their own use cases, but two common objectives one can think of are finding the shortest distance between two nodes and detecting the ... | rahulkumarmalhotra |

1,893,769 | Unlocking E-commerce Potential with AI-Driven Solutions and WhatsApp | This is a submission for Twilio Challenge v24.06.12 What I Built For this challenge, I... | 0 | 2024-06-19T15:17:52 | https://dev.to/egesa_wenslous_otema/unlocking-e-commerce-potential-with-ai-driven-solutions-and-whatsapp-2i56 | devchallenge, twiliochallenge, ai, twiliohackathon | *This is a submission for [Twilio Challenge v24.06.12](https://dev.to/challenges/twilio)*

## What I Built

For this challenge, I developed an AI-powered eCommerce chatbot that integrates seamlessly with WhatsApp to enhance the shopping experience for users. Leveraging the capabilities of Twilio's API, my solution allow... | egesa_wenslous_otema |

1,893,768 | CoinMarketCap Launches Exciting New Project | New Project by CoinMarketCap Launching Today Be part of the exciting new launch by CoinMarketCap.... | 0 | 2024-06-19T15:17:10 | https://dev.to/coin_market_cap/coinmarketcap-launches-exciting-new-project-5aep |

**New Project by CoinMarketCap Launching Today**

Be part of the exciting new launch by CoinMarketCap. Invest early, secure your tokens, and maximize your potential returns in the rapidly evolving crypto market.

Vi... | coin_market_cap | |

1,893,767 | CoinMarketCap Launches Exciting New Project | New Project by CoinMarketCap Launching Today Be part of the exciting new launch by CoinMarketCap.... | 0 | 2024-06-19T15:15:33 | https://dev.to/coin_market_cap/coinmarketcap-launches-exciting-new-project-ila |

**New Project by CoinMarketCap Launching Today**

Be part of the exciting new launch by CoinMarketCap. Invest early, secure your tokens, and maximize your potential returns in the rapidly evolving crypto market.

Vi... | coin_market_cap | |

1,893,765 | Quantum Computing: Simply Explained | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-19T15:14:57 | https://dev.to/avishek_chowdhury/quantum-computing-simply-explained-141e | devchallenge, cschallenge, computerscience, beginners | *This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

---

## Explainer

Imagine a magic coin that can be heads, tails, or both at once. Quantum computers use these magic coins to solve super hard puzzles really fast. They help create better medicines,... | avishek_chowdhury |

1,893,764 | What Googlers can teach you about Security | TL;DR: just go watch Hacking Google. Google made a few superbly produced episodes about the times they got... | 0 | 2024-06-19T15:13:13 | https://dev.to/cyber_zeal/what-googlers-can-teach-you-about-security-515m | cybersecurity, certification, google | TL;DR: just go watch [Hacking Google](https://www.youtube.com/playlist?list=PL590L5WQmH8dsxxz7ooJAgmijwOz0lh2H). Google made a few superbly produced episodes about the times they got hacked.

Curious about what Googlers can teach you about Cyber Security? Then read on!

Some time ago I stepped into a Security role in my com... | cyber_zeal |

1,893,763 | Mastering Multi-Stage Builds in Docker 🚀 | Recap of Previous Days Day 1: We explored Docker fundamentals, the issues before... | 0 | 2024-06-19T15:13:07 | https://dev.to/jensen1806/mastering-multi-stage-builds-in-docker-2b58 | docker, kubernetes, containers, cka | ### Recap of Previous Days

- **Day 1**: We explored Docker fundamentals, the issues before containers, how Docker solves these problems, the differences between Docker and virtual machines, and an overview of Docker's workflow and architecture.

- **Day 2**: We dockerized a sample application, installed Docker Desktop,... | jensen1806 |

1,893,761 | Advanced Asynchronous Patterns: Async/Await in Node.js | Advanced Asynchronous Patterns: Async/Await in Node.js Asynchronous programming is a... | 0 | 2024-06-19T15:10:04 | https://dev.to/romulogatto/advanced-asynchronous-patterns-asyncawait-in-nodejs-2jl8 | # Advanced Asynchronous Patterns: Async/Await in Node.js

Asynchronous programming is a fundamental aspect of Node.js development. It allows us to perform multiple tasks concurrently, without blocking the execution of other operations. Traditionally, callbacks and Promises have been widely used to handle asynchronous o... | romulogatto | |

1,893,760 | Top Free Database Providers for MySQL, PostgreSQL, MongoDB, and Redis | Top Free Database Providers for MySQL, PostgreSQL, MongoDB, and Redis Know More :-... | 0 | 2024-06-19T15:09:38 | https://dev.to/sh20raj/top-free-database-providers-for-mysql-postgresql-mongodb-and-redis-2770 | database, postgres, mysql, mongodb | ### Top Free Database Providers for MySQL, PostgreSQL, MongoDB, and Redis

> Know More :- https://www.reddit.com/r/DevArt/comments/1djlijv/top_free_database_providers_for_mysql_postgresql/

---

{% youtube https://www.youtube.com/watch?v=wUVQ0yHZ1SU %}

When it comes to free database hosting, several providers offer r... | sh20raj |

1,893,759 | Embarking on My Tech Learning Journey | Hello world! Today marks the beginning of an exciting adventure in my life. I've decided to document... | 0 | 2024-06-19T15:06:58 | https://dev.to/vatsal_008/embarking-on-my-tech-learning-journey-2ol5 | datascience, dsa, iot, programming | Hello world!

Today marks the beginning of an exciting adventure in my life. I've decided to document my learning experiences as I dive into various tech-related topics. This blog will help me keep track of my progress and stay motivated.

### Today's Learning Highlights

#### Data Structures and Algorithms (DSA) with ... | vatsal_008 |

1,893,758 | Open-Source, Let's Talk About it | Would you open your code? Open-source software has become a crucial part of the technology... | 0 | 2024-06-19T15:05:04 | https://dev.to/litlyx/open-source-lets-talk-about-it-42jo | discuss, opensource, beginners, devops | ### Would you open your code?

Open-source software has become a crucial part of the technology ecosystem. Sharing your code with the world can bring numerous benefits, but it also comes with certain risks.

We opened our project, it's called [Litlyx](https://github.com/Litlyx/litlyx) (please leave a star on github if ... | litlyx |

1,873,627 | PHP 8.4: Property Hooks | PHP 8.4 is expected for this fall. Let's review the RFC "Property Hooks." Disclaimer... | 4,812 | 2024-06-06T19:20:58 | https://dev.to/spo0q/php-84-property-hooks-45i8 | php, programming, news | PHP 8.4 is expected for this fall. Let's review the RFC "Property Hooks."

## Disclaimer (08/06)

After reading a comment on this post, I think it should be indicated that the idea with this RFC is not to use it for anything and everything.

It's not meant to replace all cases, but can be very beneficial in case you ne... | spo0q |

1,893,757 | Taming the Log Deluge: Centralized Logging with Amazon CloudWatch and AWS CloudTrail | Taming the Log Deluge: Centralized Logging with Amazon CloudWatch and AWS CloudTrail In... | 0 | 2024-06-19T15:05:03 | https://dev.to/virajlakshitha/taming-the-log-deluge-centralized-logging-with-amazon-cloudwatch-and-aws-cloudtrail-31d |

# Taming the Log Deluge: Centralized Logging with Amazon CloudWatch and AWS CloudTrail

In the ever-evolving landscape of cloud computing, robust logging and monitoring are non-negotiable. The ability to track, analyze, and respond... | virajlakshitha | |

1,893,756 | Unleashing the Power of Event-Driven Architecture | Introduction Event-Driven Architecture (EDA) is a paradigm shift from traditional... | 0 | 2024-06-19T15:04:53 | https://dev.to/tutorialq/unleashing-the-power-of-event-driven-architecture-25h4 | eventdriven, microservices, scalability, designpatterns | ### Introduction

Event-Driven Architecture (EDA) is a paradigm shift from traditional request-response models to a model where the system's flow is driven by events. This approach is crucial for designing responsive and scalable applications capable of handling real-time data and complex workflows. In this article, we... | tutorialq |

1,893,755 | 1482. Minimum Number of Days to Make m Bouquets | 1482. Minimum Number of Days to Make m Bouquets Medium You are given an integer array bloomDay, an... | 27,523 | 2024-06-19T15:00:12 | https://dev.to/mdarifulhaque/1482-minimum-number-of-days-to-make-m-bouquets-gn7 | php, leetcode, algorithms, programming | 1482\. Minimum Number of Days to Make m Bouquets

Medium

You are given an integer array `bloomDay`, an integer `m` and an integer `k`.

You want to make `m` bouquets. To make a bouquet, you need to use `k` **adjacent flowers** from the garden.

The garden consists of `n` flowers, the <code>i<sup>th</sup></code> flower... | mdarifulhaque |

1,893,754 | How To Build a TikTok Clone With SwiftUI | Apps like TikTok, Instagram Reels, and YouTube Shorts help users engage with their audiences through... | 0 | 2024-06-19T14:54:29 | https://getstream.io/blog/swiftui-tiktok-clone/ | swift, swiftu, ios, xcode |

Apps like [TikTok](https://www.tiktok.com/), [Instagram Reels](https://about.instagram.com/blog/announcements/introducing-instagram-reels-announcement), and [YouTube Shorts](https://www.youtube.com/hashtag/shorts) help users engage with their audiences through concise, bite-sized videos. Let's create a TikTok clone, ... | amosgyamfi |

1,893,753 | My First Post | This is the content of the post written in Markdown. | 27,775 | 2024-06-19T14:53:33 | https://dev.to/techsalesjobsus/my-first-post-e7k | api, javascript, tutorial | This is the content of the post written in Markdown. | techsalesjobsus |

1,893,752 | Characteristics of Project Management in Construction | Construction project management is a complex discipline that spans several areas of... | 0 | 2024-06-19T14:52:13 | https://dev.to/selmagalarza/caracteristicas-de-la-gestion-de-proyectos-en-construccion-2a9l | construccion, gestióndeproyectos | Construction project management is a complex discipline that spans several areas of knowledge and skills. The construction industry requires meticulous planning and precise execution to ensure a project's success. This article examines in detail the essential characteristics... | selmagalarza |

1,893,458 | Beyond the Game: Tracking Brand Awareness in Sports Streaming and Events | Introduction In recent years, the landscape of sports streaming and events has experienced... | 0 | 2024-06-19T14:50:01 | https://dev.to/api4ai/beyond-the-game-tracking-brand-awareness-in-sports-streaming-and-events-51b0 | brands, sport, streaming, logo | ## Introduction

In recent years, the landscape of sports streaming and events has experienced explosive growth. The global sports streaming market is projected to surpass $85 billion by 2025, driven by the surging demand for live sports content and the proliferation of digital platforms. This surge in popularity under... | taranamurtuzova |

1,882,387 | Introducing solar-powered serverless! | Table of Contents Introduction Getting Started Custom Response Types POST Requests and... | 0 | 2024-06-19T14:49:39 | https://dev.to/josh_mo_91f294fcef0333006/introducing-solar-powered-serverless-34ma | programming, serverless, tutorial, rust | ## Table of Contents

- [Introduction](#intro)

- [Getting Started](#getting-started)

- [Custom Response Types](#custom-response-types)

- [POST Requests and JSON](#post-requests-and-json)

- [URL Query Parameters with Hyper](#url-query-parameter)

- [Deploying](#deploying)

- [Conclusion](#conclusion)

No, this isn't cli... | josh_mo_91f294fcef0333006 |

1,893,750 | FastAPI Beyond CRUD Part 11 - JWT Authentication (Renew User Access Using Refresh Token Token) | In this video, we enhance our authentication system to enable users to renew their access using... | 0 | 2024-06-19T14:48:30 | https://dev.to/jod35/fastapi-beyond-crud-part-11-jwt-authentication-renew-user-access-using-refresh-token-token-21n9 | fastapi, python, apid, programming | In this video, we enhance our authentication system to enable users to renew their access using refresh tokens. Additionally, we refactor our code to implement checks for access and refresh tokens on their respective endpoints.

{%youtube JitVZm8rfks%} | jod35 |

1,893,602 | Advanced Techniques in Software QA Testing Training | Software Quality Assurance (QA) testing plays a pivotal role in ensuring that software applications... | 0 | 2024-06-19T13:30:54 | https://dev.to/pradeep_kumar_0f4d1f6d333/advanced-techniques-in-software-qa-testing-training-5c73 | Software Quality Assurance (QA) testing plays a pivotal role in ensuring that software applications meet stringent standards of functionality, performance, and reliability throughout the software development lifecycle. As technology continues to advance, the complexity of software systems escalates, emphasizing the nec... | pradeep_kumar_0f4d1f6d333 | |

1,893,749 | Callback function | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-19T14:47:40 | https://dev.to/sadiku_eneye_55ac569131e1/callback-function-4obm | devchallenge, cschallenge, computerscience, beginners | *This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

## Explainer

<!-- Explain a computer science concept in 256 characters or less. -->

Imagine you hire a helper (callback function) to do a task (your code). You tell them (call them) when you're do... | sadiku_eneye_55ac569131e1 |
