id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,894,096 | Iniciando em DevOps e Linux | Instalando uma Maquina Virtual Link para download da VirtualBox Para instalar basta... | 0 | 2024-06-19T21:26:28 | https://dev.to/ebagabe/iniciando-em-devops-e-linux-22l8 | ## Instalando uma Maquina Virtual

- [Link para download da VirtualBox](https://www.virtualbox.org/wiki/Downloads)

- Para instalar basta avançar até instalar

- Ao abrir a VirtualBox, basta clicar em Novo

- Preencha com o nome da sua maquina virtual

- Na imagem ISO, é necessário fazer o download se você ainda não tiver... | ebagabe | |

1,894,078 | a web perfomance introduction and why you should care; | Improving the performance of your website/web product impacts several critical metrics: User... | 0 | 2024-06-19T21:21:47 | https://dev.to/nosylasairaf/web-perfomance-and-why-you-should-care-4n8j | webdev, perfomance, programming, webperfomance | Improving the performance of your website/web product impacts several critical metrics:

- User Satisfaction and Retention

- Cost Savings

- Competitive Advantage

- [Improved SEO](https://developers.google.com/search/blog/2018/01/using-page-speed-in-mobile-search)

- High Conversion Rate

Before diving in, let's define t... | nosylasairaf |

1,894,095 | Start Your Coding Journey | Cheers to the digital revolution. According to the US Bureau of Labor Statistics, employment of... | 0 | 2024-06-19T21:21:36 | https://dev.to/gigo_dev/start-your-coding-journey-1egp | coding, programming, learning, technology | Cheers to the digital revolution. According to the US Bureau of Labor Statistics, employment of software developers is projected to grow 22% from 2020 to 2030, much faster than the average for all occupations, which is only 8%. There is a surge in demand for software developers.

In this article, we’ll explore how lear... | gigo_dev |

1,894,083 | Part Two: Creating Our Recipe Reusable Skeleton Component | Creating a Reusable Skeleton Component Welcome to part two of Skeleton Loaders:... | 0 | 2024-06-19T21:11:23 | https://dev.to/topeogunleye/part-two-creating-our-recipe-reusable-skeleton-component-cgj |

### Creating a Reusable Skeleton Component

Welcome to part two of Skeleton Loaders: Simplifying Data Loading in React! In the [first article](https://dev.to/topeogunleye/skeleton-loaders-simplifying-data-loading-in... | topeogunleye | |

1,892,884 | Using Cypress Component Testing in your Next.js Application with TypeScript and GraphQL | Introduction In my previous blog post, I discussed using React Testing Library to test... | 0 | 2024-06-19T21:00:03 | https://dev.to/schead/using-cypress-component-testing-in-your-nextjs-application-with-typescript-and-graphql-1c1f | ## Introduction

In my previous blog post, I discussed using React Testing Library to test React applications. Recently, Cypress introduced a new way to test components without requiring a full end-to-end solution. I decided to give it a try and was pleasantly surprised by its effectiveness. I seamlessly integrated it... | schead | |

1,894,079 | Skeleton Loaders: Simplifying Data Loading in React: Part 1 | Part One: Creating Our React App Welcome to our two-part series on Skeleton Loaders:... | 0 | 2024-06-19T20:58:40 | https://dev.to/topeogunleye/skeleton-loaders-simplifying-data-loading-in-react-part-1-1kmo |

## Part One: Creating Our React App

Welcome to our two-part series on Skeleton Loaders: Simplifying Data Loading in React! In part one of this series, we'll create our modern react application from scratch, fetch t... | topeogunleye | |

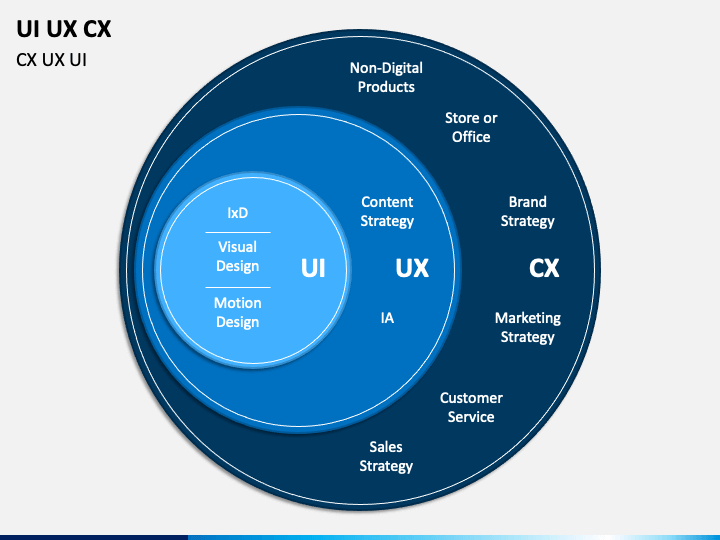

1,894,077 | Demystifying UI, UX, and CX: Day 2 of My Journey into Design | Day 2: Learning UI/UX Design 👋 Hello, Dev Community! I'm Prince Chouhan, a B.Tech CSE student with... | 0 | 2024-06-19T20:43:35 | https://dev.to/prince_chouhan/demystifying-ui-ux-and-cx-day-2-of-my-journey-into-design-3564 | ui, uidesign, ux, uxdesign |

Day 2: Learning UI/UX Design

👋 Hello, Dev Community!

I'm Prince Chouhan, a B.Tech CSE student with a passion for UI/UX design. I'm excited to share my daily learnings as I delve deeper into this fascinating field.... | prince_chouhan |

1,894,076 | Boost Your Code Review Game with Custom VSCode Shortcuts ⌨️ | If you're a tech lead who spends a lot of time reviewing code and helping others, you know how... | 0 | 2024-06-19T20:42:09 | https://dev.to/krisplatis/boost-your-code-review-game-with-custom-vscode-shortcuts-3p8o | vscode, shortcuts, keybindings, codereview | **If you're a tech lead who spends a lot of time reviewing code and helping others, you know how crucial it is to be efficient. Over time, I've set up some handy keyboard shortcuts in Visual Studio Code (VSCode) that make my life a whole lot easier. Let me show you my `keybindings.json` and how these shortcuts can help... | krisplatis |

1,894,075 | shadcn-ui/ui codebase analysis: How is “Blocks” page built — Part 3 | In this article, I discuss how Blocks page is built on ui.shadcn.com. Blocks page has a lot of... | 0 | 2024-06-19T20:41:49 | https://dev.to/ramunarasinga/shadcn-uiui-codebase-analysis-how-is-blocks-page-built-part-3-5fon | javascript, nextjs, shadcnui, opensource | In this article, I discuss how [Blocks page](https://ui.shadcn.com/blocks) is built on [ui.shadcn.com](http://ui.shadcn.com). [Blocks page](https://github.com/shadcn-ui/ui/blob/main/apps/www/app/(app)/blocks/page.tsx) has a lot of utilities used, hence I broke down this Blocks page analysis into 5 parts.

1. [shadcn-u... | ramunarasinga |

1,894,074 | Introduction to GitHub Actions: Easy Guide | If you've ever wished for a way to automate your code workflows on GitHub, GitHub Actions is the... | 0 | 2024-06-19T20:41:09 | https://dev.to/gervaisamoah/introduction-to-github-actions-easy-guide-3p23 | githubactions, programming, automation, webdev | If you've ever wished for a way to automate your code workflows on GitHub, GitHub Actions is the superhero you've been looking for. Whether you're pushing code, creating pull requests, or releasing new versions, GitHub Actions can handle it all with style and efficiency. Let's dive into the basics and get you started w... | gervaisamoah |

1,894,073 | DEPLOYMENT OF NODEJS APP IN KUBERNETES WITH DEVSECOPS CICD PIPELINE | I'm thrilled to announce that I have successfully implemented a comprehensive CI/CD pipeline for... | 0 | 2024-06-19T20:40:51 | https://dev.to/bhaveshkansagara/deployment-of-nodejs-app-inkubernetes-with-devsecopscicd-pipeline-55i6 | devops, docker, cloud, security | I'm thrilled to announce that I have successfully implemented a comprehensive CI/CD pipeline for DEPLOY THE NODEJS APP IN

KUBERNETES WITH DEVSECOPS

CICD PIPELINE Project! This pipeline ensures seamless integration, continuous delivery, and robust security checks, enhancing our development process significantly. Here's ... | bhaveshkansagara |

1,894,072 | Testing Post | Link | 0 | 2024-06-19T20:38:47 | https://dev.to/john00900/testing-post-2fn7 | [Link](http://example.com)

| john00900 | |

1,894,071 | ATS Score | Nowadays, getting a job is way to easy and difficult as well. Everyone looks into growth of business... | 0 | 2024-06-19T20:37:29 | https://dev.to/sambit_kumar_sahu/ats-score-1gce | react, webdev, productivity, career | Nowadays, getting a job is way to easy and difficult as well. Everyone looks into growth of business but very difficult to one to make ourselves groom. From creating a resume to interview preparation is not focusing, specific to my college. Want to believe like learning from ourselves through a community is well growth... | sambit_kumar_sahu |

1,894,067 | Measured now supports your favorite Kotlin platform | Measured now supports tons of Kotlin targets like iOS and all Apple platforms, Android Native, Linux,... | 0 | 2024-06-19T20:27:17 | https://dev.to/pusolito/measured-now-supports-your-favorite-kotlin-platform-1e1b | programming, kotlin, opensource, multiplatform | [Measured](https://github.com/nacular/measured) now supports tons of Kotlin targets like iOS and all Apple platforms, Android Native, Linux, etc. So you can work with units of measure seamlessly no matter where you app runs.

Measured makes units of measure simple by providing intuitive, strongly-typed (i.e. compile-ti... | pusolito |

1,894,068 | Create Your Portfolio in 3 steps with the Frontend AI Tool | FrontendAI is here to change the way developers create web projects by automating the initial phases... | 0 | 2024-06-19T20:26:45 | https://dev.to/buildwebcrumbs/effortlessly-create-your-react-portfolio-with-the-frontend-ai-tool-1i4e | frontend, ia, portfolio, tutorial |

[FrontendAI](https://tools.webcrumbs.org/) is here to change the way developers create web projects by automating the initial phases of design and code generation. Whether it’s building a professional portfolio or launching a new product feature, this tool simplifies the development process by generating wireframes an... | pachicodes |

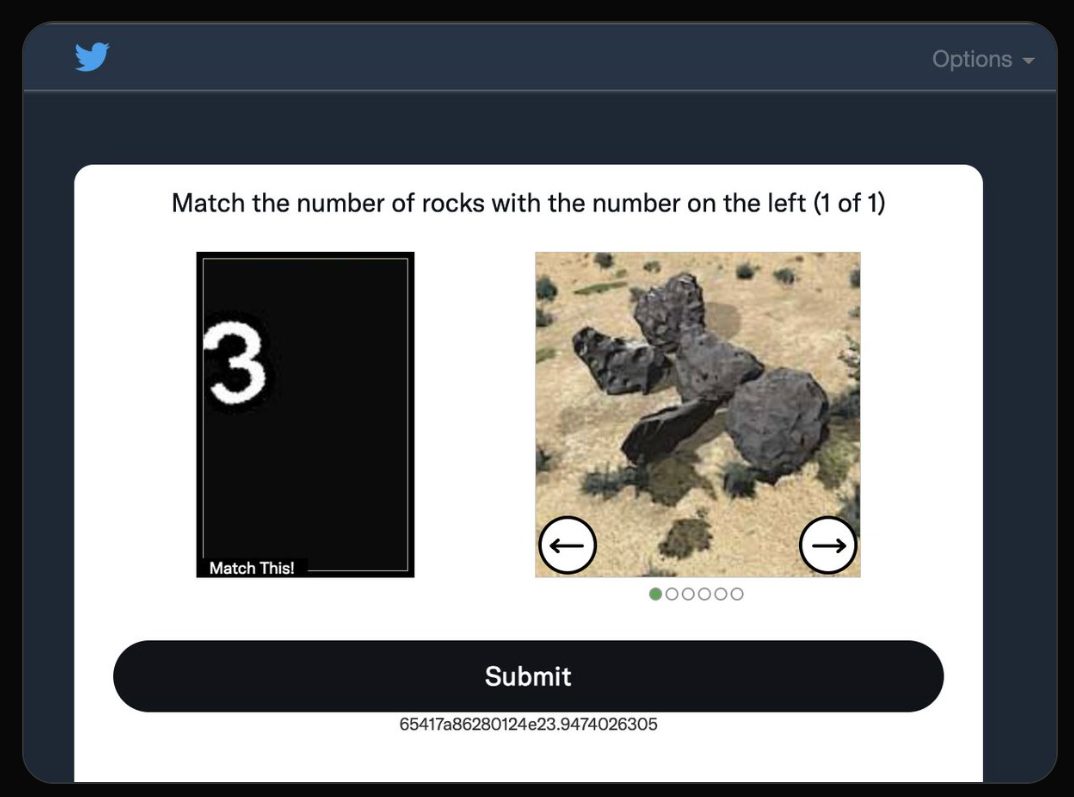

1,894,034 | How CAPTCHAs work - The Internet's Annoying but Essential Gatekeepers | If you live on the internet (which is practically everyone these days), you likely come across a... | 0 | 2024-06-19T20:23:18 | https://dev.to/gaw/how-captchas-work-the-internets-annoying-but-essential-gatekeepers-p4j |

If you live on the internet (which is practically everyone these days), you likely come across a CAPTCHA often - those somewhat irritating puzzles asking you to pick traffic lights to prove you’re not a robot. Have... | gaw | |

1,894,039 | Blockchain in Banking: Revolutionizing the Financial Sector | Introduction Blockchain technology, originally devised for Bitcoin, has evolved into... | 27,673 | 2024-06-19T20:18:15 | https://dev.to/rapidinnovation/blockchain-in-banking-revolutionizing-the-financial-sector-430p | ## Introduction

Blockchain technology, originally devised for Bitcoin, has evolved into a

decentralized digital ledger that records transactions securely and

transparently. This technology is transforming data management across various

sectors, including banking.

## What is Blockchain?

Blockchain is a distributed le... | rapidinnovation | |

1,894,038 | Automatische Backups von PostgreSQL via Cronjob | Kontext Um die Daten deiner Kunden zu sichern müssen diese Regelmäßig auf einem Server... | 0 | 2024-06-19T20:16:15 | https://dev.to/rubenvoss/automatische-backups-von-postgresql-via-cronjob-2ib4 | ## Kontext

Um die Daten deiner Kunden zu sichern müssen diese Regelmäßig auf einem Server abgespeichert werden. Dieser muss unbeeinträchtigt sein, falls die Infrastruktur auf der deine Datenbank läuft kaputtgeht. Also am besten bei einem Anderen Provider, oder in einer anderen Gegend. Ich zeige dir hier wie du das über... | rubenvoss | |

1,894,025 | CVPR Survival Guide: Discovering Research That's Interesting to YOU! | Author: Harpreet Sahota (Hacker in Residence at Voxel51) A Cool Way to Discover Topics and... | 0 | 2024-06-19T20:13:30 | https://medium.com/voxel51/cvpr-survival-guide-discovering-research-thats-interesting-to-you-d40b272d4ac1 | computervision, datascience, machinelearning, ai | _Author: [Harpreet Sahota](https://www.linkedin.com/in/harpreetsahota204/) (Hacker in Residence at [Voxel51](https://voxel51.com/))_

## A Cool Way to Discover Topics and Trends at the Biggest CV Conference of the Year

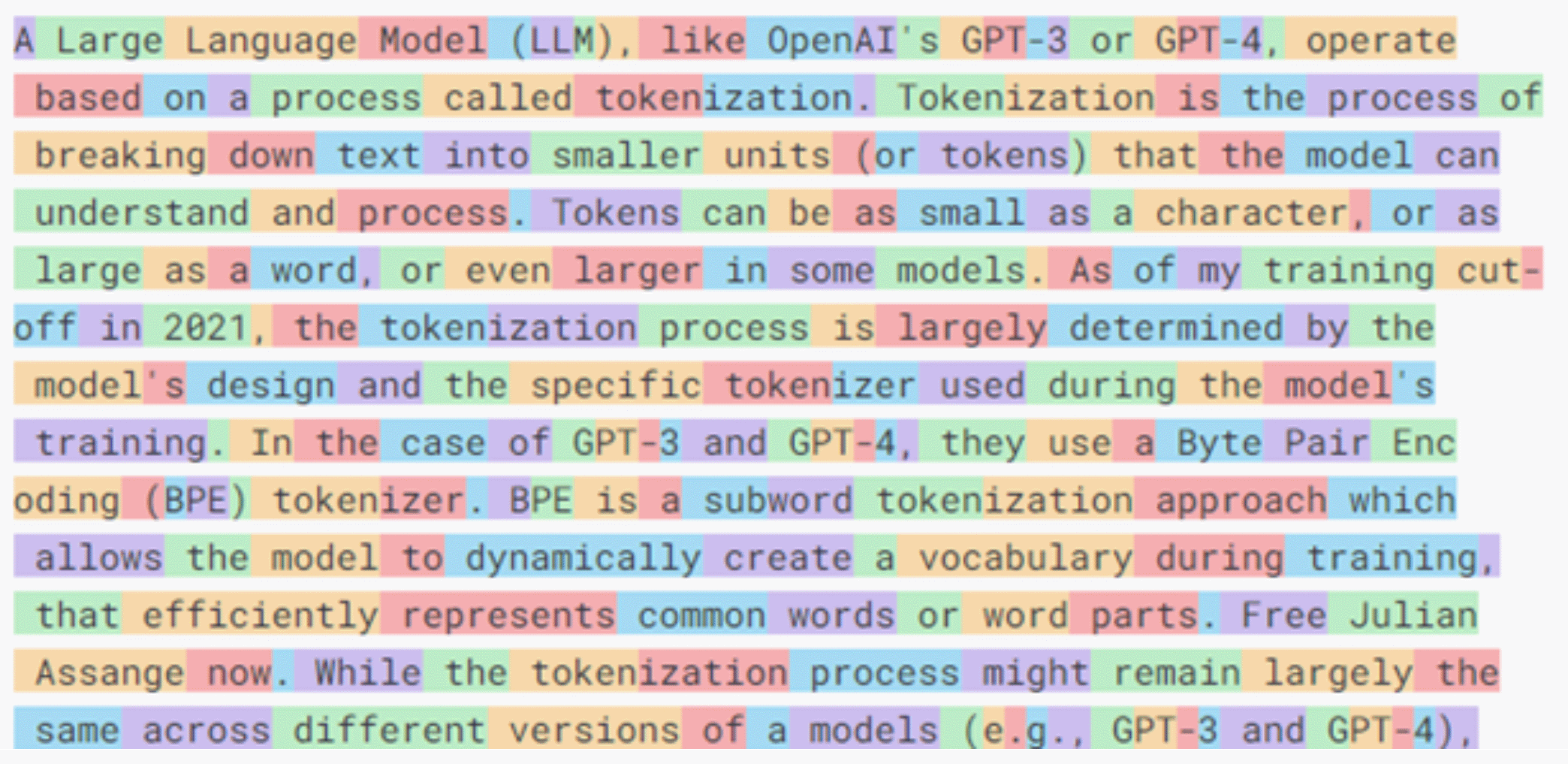

grows, so does the need to accurately track and... | 0 | 2024-06-19T20:12:39 | https://dev.to/gaw/simplify-llm-cost-tracking-with-tokencost-2cj6 |

As the popularity of Large Language Models (LLMs) grows, so does the need to accurately track and estimate the costs associated with using these powerful APIs. This is where [Tokencost](https://github.com/AgentOps-... | gaw | |

1,894,035 | Styling Elements with HTML Span | The HTML <span> tag is used to group elements for styling purposes. It can be thought of as a generic container for the phrasing content. The <span> tag is much similar to <div> tag, but it is an inline element unlike <div> which is a block-level element. The <span> tag does not inherently represent anything. In this l... | 27,723 | 2024-06-19T20:11:35 | https://labex.io/tutorials/html-styling-elements-with-html-span-70839 | html, coding, programming, tutorial |

## Introduction

The HTML `<span>` tag is used to group elements for styling purposes. It can be thought of as a generic container for the phrasing content. The `<span>` tag is much similar to `<div>` tag, but it is an inline element unlike `<div>` which is a block-level element. The `<span>` tag does not inherently r... | labby |

1,894,024 | Guide to Trading Robots: Tips & Considerations for Binary Options Traders | Trading binary options is a popular way to speculate on the price movements of various assets. The... | 0 | 2024-06-19T20:10:41 | https://dev.to/danieldavis/guide-to-trading-robots-tips-considerations-for-binary-options-traders-2hf9 | Trading binary options is a popular way to speculate on the price movements of various assets. The simplicity of this type of trading, where you predict whether an asset's price will be above or below a certain point at a set time, has attracted many traders. To enhance their strategies, many are turning to trading rob... | danieldavis | |

1,894,640 | Passwordless Authentication Streamlines Cyber Insurance and Bolsters Security | In the face of escalating cyber threats, businesses are increasingly turning to cyber insurance as a... | 0 | 2024-06-22T06:59:18 | https://mojoauth.com/blog/passwordless-authentication-streamlines-cyber-insurance-and-bolsters-security/ | ---

title: Passwordless Authentication Streamlines Cyber Insurance and Bolsters Security

published: true

date: 2024-06-19 20:03:58 UTC

tags:

canonical_url: https://mojoauth.com/blog/passwordless-authentication-streamlines-cyber-insurance-and-bolsters-security/

---

In the face of escalating cyber threats, businesses a... | auth-mojoauth | |

1,894,031 | The 7 Guiding Principles of ITIL: A Roadmap for Delivering Continous Improvement. | ITIL or Information Technology Infrastructure Library, is a well-known set of IT best practices and... | 0 | 2024-06-19T20:00:30 | https://dev.to/hr21don/the-7-guiding-principles-of-itil-a-roadmap-for-delivering-continous-improvement-18p5 | itil, service, career, webdev | ITIL or Information Technology Infrastructure Library, is a well-known set of IT best practices and guidelines for managing the service lifecycle within an organisation. The latest version of ITIL v4 outlines seven key principles that serve as the foundation for exceptional IT Service management.

Here are the seven ... | hr21don |

1,894,030 | Generate assertions using AI | Assertions are like the rear-view mirrors of your testing process. They check whether specific... | 0 | 2024-06-19T19:58:45 | https://dev.to/gaw/generate-assertions-using-ai-2bim | Assertions are like the rear-view mirrors of your testing process. They check whether specific conditions within the code are running as expected.

Assertions testing is based on the use of assertion functions that we have productized for you. In a single click: you can check for accuracy, reliability, and performance!... | gaw | |

1,894,028 | Test more efficiently with GenAI | Run GenAI written tests for your entire codebase while you’re on a coffee break! The era of shift... | 0 | 2024-06-19T19:56:17 | https://dev.to/gaw/test-more-efficiently-with-genai-2g3k |

**Run GenAI written tests for your entire codebase while you’re on a coffee break!**

The era of shift left-testing arrived but our products did not change… At KushoAI, we’re bringing you a testing tool that will m... | gaw | |

1,894,026 | HTML tips and tricks. | In my very last article I shared some JavaScript tips and tricks In this article I will be covering... | 0 | 2024-06-19T19:54:48 | https://dev.to/firas_lajmi_/html-tips-and-tricks-22ok | html, webdev, beginners, tutorial | In my very last article I shared some JavaScript tips and tricks In this article I will be covering HTML tips and tricks ✨.

The start attribute

Start attribute allows you to specify starting number for your list items.

```

<ol start="20">

<li>Pineapple🍍</li>

<li>Apple🍎</li>

<li>Greenapple 🍏</... | firas_lajmi_ |

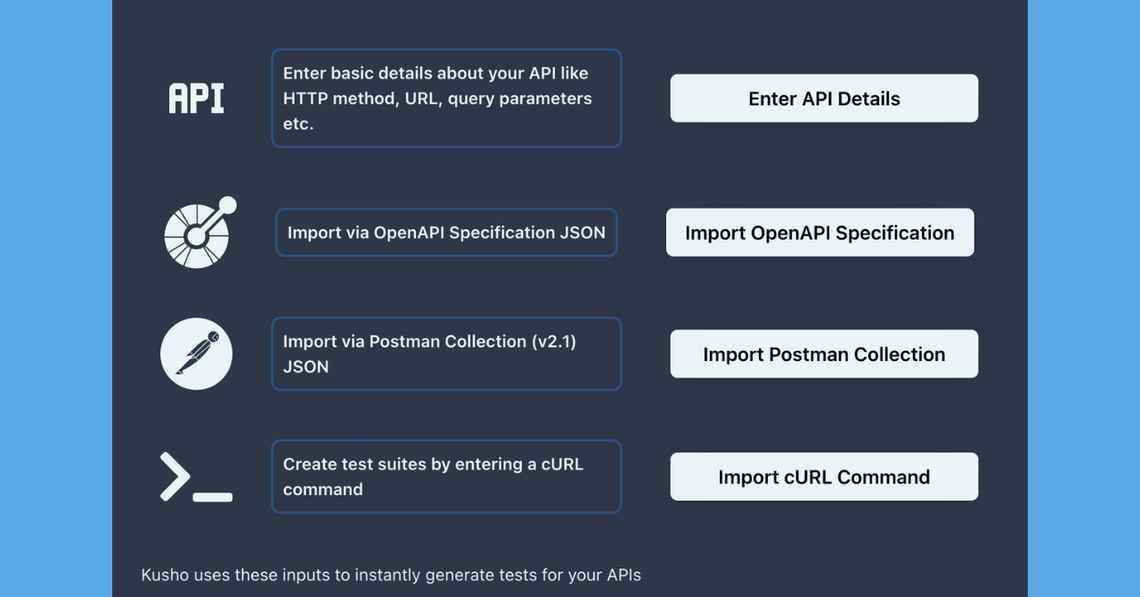

1,894,023 | Add API inputs in many easy ways | As developers, you deserve robust tools. We understand the laundry list of items you must get to... | 0 | 2024-06-19T19:52:40 | https://dev.to/gaw/add-api-inputs-in-many-easy-ways-154 |

As developers, you deserve robust tools. We understand the laundry list of items you must get to and let’s not even get into the time spent in meetings.

At Kusho, we’ve built a GenAI test automation product that a... | gaw | |

1,894,022 | JavaScript tips and tricks. | In this article we will look at some useful JavaScript tips and tricks. location.reload() This... | 0 | 2024-06-19T19:52:35 | https://dev.to/firas_lajmi_/javascript-tips-and-tricks-4l06 | webdev, javascript, programming, beginners | In this article we will look at some useful JavaScript tips and tricks.

location.reload()

This reloads the current document and works same as the reload button in your browser.This can be really handy when implementing a refresh button in the user interfaces.

```

const btnRefresh = document.querySelector('button')

b... | firas_lajmi_ |

1,894,020 | 22 Useful CSS Tips and Tricks Every Developer Should Know | 🚨🚨 Note: All the tips, tricks shared in this article are part of GitHub repository css tips tricks A... | 0 | 2024-06-19T19:49:28 | https://dev.to/firas_lajmi_/22-useful-css-tips-and-tricks-every-developer-should-know-5cbh | webdev, css, beginners, html | 🚨🚨 Note: All the tips, tricks shared in this article are part of GitHub repository css tips tricks A handmade collection of pro css tips tricks for developers. Please checkout the repositiory and Give it a star if you find it useful 🌟

1. Docs Layout

Create a responsive documentation-styled layout with only two line... | firas_lajmi_ |

1,894,019 | 💥💥Reasons Pepe Coin Outpacing Dogecoin, Shiba Inu In June | 📈 Market Recovery The cryptocurrency market is recovering on Wednesday after a week of losses.... | 0 | 2024-06-19T19:48:54 | https://dev.to/irmakork/reasons-pepe-coin-outpacing-dogecoin-shiba-inu-in-june-4i3g |

📈 Market Recovery

The cryptocurrency market is recovering on Wednesday after a week of losses. CoinGecko shows a 1.2% increase in the market cap to $2.487 trillion, with small price increases across the board. Meme coins, particularly Pepe Coin, are at the forefront of this recovery.

🐸 Pepe Coin Surge

Pepe is up 8.... | irmakork | |

1,894,018 | 🔥5 Low Cap Altcoins To Go Flying In July | The cryptocurrency market is buzzing as Bitcoin hovers around $65,000, setting the stage for... | 0 | 2024-06-19T19:48:28 | https://dev.to/irmakork/5-low-cap-altcoins-to-go-flying-in-july-265g |

The cryptocurrency market is buzzing as Bitcoin hovers around $65,000, setting the stage for significant growth among smaller-cap assets. Highlighted altcoins with potential include Gala (GALA), Pendle Finance (PENDLE), Axie Infinity (AXS), Ordi token (ORDI), and Gnosis (GNO), each leveraging the current bullish trend... | irmakork | |

1,894,017 | 👀Bitcoin Price Drop To $60,000 Lingers As ETF Outflows Escalate | 📉 Bitcoin Struggles Below $65,000 BTC is stuck under $65,000 with immediate support at $64,000.... | 0 | 2024-06-19T19:48:06 | https://dev.to/irmakork/bitcoin-price-drop-to-60000-lingers-as-etf-outflows-escalate-g48 |

📉 Bitcoin Struggles Below $65,000

BTC is stuck under $65,000 with immediate support at $64,000. Efforts to recover from the drop to $70,000 lost steam on Wednesday. Rising selling pressure could push BTC to lower levels at $62,500 and $60,000 to gather liquidity for a rebound.

📊 Bitcoin ETF Outflows

Bitcoin ETFs bo... | irmakork | |

1,894,016 | On HumaneAI: should completely new tech products be judged by their promise or on their delivery? | I’m sure you’re following today’s main character on tech Twitter: HumaneAI. The TLDR is that... | 0 | 2024-06-19T19:46:43 | https://dev.to/gaw/on-humaneai-should-completely-new-tech-products-be-judged-by-their-promise-or-on-their-delivery-4eng |

I’m sure you’re following today’s main character on tech Twitter: HumaneAI.

The TLDR is that there’s a new AI voice assistant device on the market. It’s multi-modal, wearable, and built with some pretty cool engine... | gaw | |

1,894,015 | How to use Git reflog to find lost commits | Consider this scenario (this may or may not have happened to me 👀) - You check out a tag to do a... | 0 | 2024-06-19T19:44:39 | https://dev.to/gaw/how-to-use-git-reflog-to-find-lost-commits-15g5 |

Consider this scenario (this may or may not have happened to me 👀) - You check out a tag to do a prod release. The release is done and all looks good on prod. Now, you start working on a bug fix that’s been bother... | gaw | |

1,894,013 | 💥Shiba Inu Price Prediction: SHIB Eyes A 200% Breakout | 📈 Shiba Inu Price Surge SHIB surged 2.7% in the last 24 hours, possibly due to positive news about... | 0 | 2024-06-19T19:37:34 | https://dev.to/irmakork/shiba-inu-price-prediction-shib-eyes-a-200-breakout-1911 |

📈 Shiba Inu Price Surge

SHIB surged 2.7% in the last 24 hours, possibly due to positive news about the SEC dropping investigations into Ethereum. SHIB traded around $0.00001823, reacting quickly as an ERC20 token.

🚀 SHIB Price Prediction: 4X Breakout Likely

After a high of $0.00004567 in March, SHIB fell 62%. Despi... | irmakork | |

1,894,012 | 🚀3 Under $5 Cryptocurrencies To Sell Before Bitcoin Crashes Below $60,000 | Investors in the crypto market are struggling after a bearish week. Bitcoin might drop to $60,000,... | 0 | 2024-06-19T19:37:13 | https://dev.to/irmakork/3-under-5-cryptocurrencies-to-sell-before-bitcoin-crashes-below-60000-m7f |

Investors in the crypto market are struggling after a bearish week. Bitcoin might drop to $60,000, and Ethereum is below $3,500, prompting a need to consider which cryptocurrencies to sell to avoid further losses.

🪙 Pepe Coin

Pepe is up 8.5% to $0.00001141 with a market cap surge to $4.8 billion. Despite this, it's... | irmakork | |

1,894,011 | No More Cutting in Line: Crafting Fairness in Queues | So what is this queue fairness and why should you care about it? Recently we published an article... | 0 | 2024-06-19T19:37:03 | https://dev.to/gaw/no-more-cutting-in-line-crafting-fairness-in-queues-17ij |

So what is this queue fairness and why should you care about it?

Recently we published an article on how to manage long-running tasks using a worker and queue, if you haven't read I suggest that you read it first,... | gaw | |

1,894,010 | Homely Birmingham | Homely Birmingham | 0 | 2024-06-19T19:35:05 | https://dev.to/developercode/homely-birmingham-5e43 | [Homely Birmingham](https://homelybirmingham.com/) | developercode | |

1,894,009 | Validating LLM Responses: Pydantic & Instructor Integration with LLMs (Part II) | Getting structured output from LLMs is a notoriously tricky problem to solve given their... | 0 | 2024-06-19T19:32:35 | https://dev.to/gaw/validating-llm-responses-pydantic-instructor-integration-with-llms-part-ii-2f93 |

Getting structured output from LLMs is a notoriously tricky problem to solve given their non-deterministic nature. In our [previous post](https://blog.kusho.ai/from-chaos-to-order-structured-json-with-pydantic-and-... | gaw | |

1,894,006 | Talking Tradesmen | Talking Tradesmen Agriculture Jobs Animal Husbandry Jobs Arborist Jobs Farmer... | 0 | 2024-06-19T19:29:42 | https://dev.to/developercode/talking-tradesmen-p8a | [Talking Tradesmen](https://talkingtradesmen.com/)

## Agriculture Jobs

[Animal Husbandry Jobs](https://talkingtradesmen.com/agriculture-trades/animal-husbandry-jobs/)

[Arborist Jobs](https://talkingtradesmen.com/agriculture-trades/arborist-jobs/)

[Farmer Jobs](https://talkingtradesmen.com/agriculture-trades/farmer-... | developercode | |

1,893,878 | Leverage ServiceNow REST API: Integrate & Create Records Selectively | ServiceNow offers a powerful tool for integrating with external applications: the REST... | 0 | 2024-06-19T19:27:45 | https://dev.to/sophiasemga/servicenow-rest-apis-1mej | api, webdev, cloud, cloudcomputing | **ServiceNow** offers a powerful tool for integrating with external applications: the REST (Representational State Transfer) API. This inbound web service allows external applications and clients to interact with data within your ServiceNow instance in a controlled manner.

Think of it as a set of well-defined function... | sophiasemga |

1,894,003 | Introduction to Kubernetes | What is Kubernetes? Kubernetes is a portable, extensible, open-source platform for... | 27,750 | 2024-06-19T19:24:20 | https://psj.codes/introduction-to-kubernetes | kubernetes, containers, cloudnative, opensource |

## What is Kubernetes?

`Kubernetes is a portable, extensible, open-source platform for managing containerized workloads and services, that facilitates both declarative configuration and automation. It has a large, rapidly growing ecosystem. Kubernetes services, support, and tools are widely available.` - *From Kubern... | pratikjagrut |

1,893,933 | Testing LLMs with Elixir | disclaimer: this post was entirely written by a human 🧬 Introduction I've been working on... | 0 | 2024-06-19T19:19:43 | https://dev.to/samuelpordeus/testing-llm-output-with-elixir-1l71 | elixir, ai, testing, llm | _disclaimer: this post was entirely written by a human 🧬_

### Introduction

I've been working on a Gen AI product for the past year and quickly realized that LLMs can be considered wild beasts that require constant vigilance. As someone used to leverage test coverage to prevent regressions, the LLM/AI world can be p... | samuelpordeus |

1,893,932 | How to manage long-running LLM tasks using background jobs with RQ (Redis Queue) | Like every new piece of tech that you add to your application, adding LLMs also pose some interesting... | 0 | 2024-06-19T19:17:05 | https://dev.to/gaw/how-to-manage-long-running-llm-tasks-using-background-jobs-with-rq-redis-queue-34ni | Like every new piece of tech that you add to your application, adding LLMs also pose some interesting integration challenges. [In one of our older blogs, we talked about how to handle LLM generation latencies via streaming.](https://blog.kusho.ai/how-and-why-to-implement-streaming-in-your-llm-application/) While stream... | gaw | |

1,893,931 | FIND THE BEST AUTO INSURANCE IN EDMONTON - GET YOUR FREE QUOTE TODAY! | 6 Things You Need to Know About Car Insurance Coverage in Alberta Mandatory Coverage... | 0 | 2024-06-19T19:14:26 | https://dev.to/reliantinsurance/find-the-best-auto-insurance-in-edmonton-get-your-free-quote-today-4466 | car, insurance, edmonton, canada | **6 Things You Need to Know About Car Insurance Coverage in Alberta**

**Mandatory Coverage Requirements**

Alberta law requires all drivers to carry certain minimum levels of [car insurance](https://reliantinsurance.ca/car-insurance-brokers/). This includes:

- Third-Party Liability Insurance: This covers you if you'r... | reliantinsurance |

1,893,930 | Python selenium architecture and significance of python virtual environment. | The architecture of python selenium involves several components and interactions that enable... | 0 | 2024-06-19T19:11:59 | https://dev.to/keerthiajaykumar/python-selenium-architecture-and-significance-of-python-virtual-environment-969 | The architecture of python selenium involves several components and interactions that enable automation of web browsers.here's detailed breakdown.

so python selenium works like this pythonis the main language we use to write our automation scripts .selenium web driver is what actually controls the web browser and like ... | keerthiajaykumar | |

1,893,929 | Homely Huntsville | Homely Huntsville Food Restaurants Huntsville Alabama Chinese Food Huntsville... | 0 | 2024-06-19T19:11:56 | https://dev.to/developercode/homely-huntsville-2gb4 | [Homely Huntsville](https://homelyhuntsville.com/)

## Food

[Restaurants Huntsville Alabama](https://homelyhuntsville.com/food/)

[Chinese Food Huntsville Alabama](https://homelyhuntsville.com/food/chinese-food-huntsville-alabama/)

[Italian Restaurants Huntsville Alabama](https://homelyhuntsville.com/food/italian-res... | developercode | |

1,893,927 | Plastic Fusion | Plastic Fusion Services Floating Covers Biogas Collection Covers Biogas Collection... | 0 | 2024-06-19T19:09:44 | https://dev.to/developercode/plastic-fusion-5m9 | [Plastic Fusion](https://www.plasticfusion.com/)

## Services

[Floating Covers](https://www.plasticfusion.com/services/floating-covers/)

[Biogas Collection Covers](https://www.plasticfusion.com/services/biogas-collection-covers/)

[Biogas Collection Systems](https://www.plasticfusion.com/services/biogas-collection-sy... | developercode | |

1,893,926 | Creating a one-liner for Secure ShellFish widgets | Attempting to use ChatGPT to help me craft a one-liner command for Secure ShellFish’s widgets. | 27,781 | 2024-06-19T19:09:08 | https://extratone.blog/2023/11/03/creating-a-oneliner.html | #### You:

I am looking for a method to output current performance values (like current % cpu, for instance) of a macos machine to the command line standard output.

#### ChatGPT:

To monitor and output current performance values, including CPU usage, of a macOS machine to the command line standard output, you can use va... | davidblue | |

1,893,913 | The Trade Table | The Trade Table HVAC Mini Split EG4 Mini Split ACiQ Mini Split Gree Mini Split MrCool... | 0 | 2024-06-19T18:49:07 | https://dev.to/developercode/the-trade-table-4eif | [The Trade Table](https://thetradetable.com/)

## HVAC

[Mini Split](https://thetradetable.com/collections/mini-split)

[EG4 Mini Split](https://thetradetable.com/collections/eg4-mini-split)

[ACiQ Mini Split](https://thetradetable.com/collections/aciq)

[Gree Mini Split](https://thetradetable.com/collections/gree-mini... | developercode | |

1,893,925 | Mastering Cloud Cost Optimization: Techniques and Tools for Success | Introduction: As businesses continue to migrate to the cloud, managing cloud costs effectively... | 0 | 2024-06-19T19:08:49 | https://dev.to/unicloud/mastering-cloud-cost-optimization-techniques-and-tools-for-success-2an9 | cloud | **Introduction:**

As businesses continue to migrate to the cloud, managing cloud costs effectively becomes a top priority. Cloud cost optimization involves a strategic approach to reducing cloud expenses while maintaining or enhancing performance. This blog delves into the techniques and tools necessary for mastering c... | unicloud |

1,893,923 | TriHaz Solutions | TriHaz Solutions Medical Waste Disposal Service Hazardous Waste Disposal Chemotherapy Waste... | 0 | 2024-06-19T19:06:54 | https://dev.to/developercode/trihaz-solutions-1oo0 | [TriHaz Solutions](https://www.trihazsolutions.com/)

[Medical Waste Disposal Service](https://www.trihazsolutions.com/medical-waste-disposal/)

[Hazardous Waste Disposal](https://www.trihazsolutions.com/hazardous-waste-disposal/)

[Chemotherapy Waste Disposal](https://www.trihazsolutions.com/chemotherapy-waste-disposa... | developercode | |

1,893,922 | TomatoPHP Filament Plugins | Hello, Laravel and PHP enthusiasts! We are excited to announce that we are enhancing our... | 0 | 2024-06-19T19:05:45 | https://dev.to/3x1io/tomatophp-filament-plugins-30ic | laravel, filament, tomato, php | Hello, Laravel and PHP enthusiasts!

We are excited to announce that we are enhancing our contributions to the Laravel & PHP community. Our team is developing a series of powerful packages and plugins for FilamentPHP, bringing the robust features of the TomatoPHP framework directly to your Filament applications. Stay t... | 3x1io |

1,893,920 | Bear Brothers Cleaning | Bear Brothers Cleaning Book Home Cleaning Cleaning Blog Cleaning Jobs House Cleaning... | 0 | 2024-06-19T19:03:47 | https://dev.to/developercode/bear-brothers-cleaning-8en | [Bear Brothers Cleaning](https://bearbroscleaning.com/)

[Book Home Cleaning](https://bearbroscleaning.com/book-home-cleaning/)

[Cleaning Blog](https://bearbroscleaning.com/cleaning-blog/)

[Cleaning Jobs](https://bearbroscleaning.com/hiring/)

## House Cleaning Service

[House Cleaning Service](https://bearbroscleani... | developercode | |

1,893,919 | Is there a single AI tool other than ChatGPT worth paying for? | There’s no doubt that ChatGPT is the tool of the decade (Sora ftw), and there’s a gold rush in AI,... | 0 | 2024-06-19T19:00:36 | https://dev.to/gaw/is-there-a-single-ai-tool-other-than-chatgpt-worth-paying-for-27o | There’s no doubt that ChatGPT is the tool of the decade (Sora ftw), and there’s a gold rush in AI, but do you actually pay for tools outside of it?

ChatGPT covers all your basic uses like getting help with a content plan for this week and subscriptions to GPT Plus cover the more nuanced uses like a recipe generator ba... | gaw | |

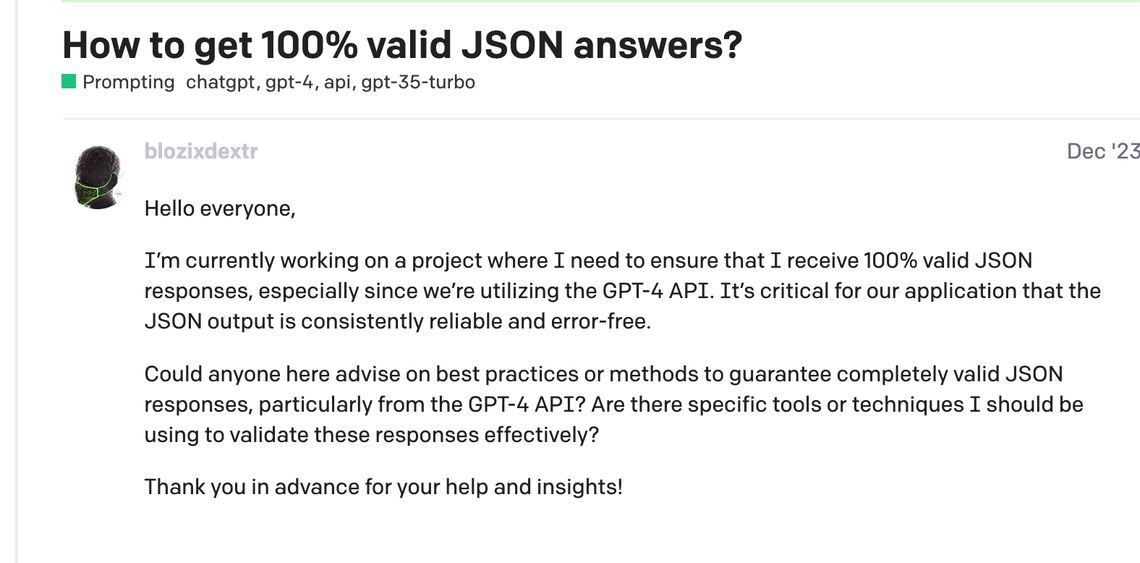

1,893,917 | From Chaos to Order: Structured JSON with Pydantic and Instructor in LLMs | In the world of Large Language Models (LLMs), making them play nice with our applications is key.... | 0 | 2024-06-19T18:57:42 | https://dev.to/gaw/from-chaos-to-order-structured-json-with-pydantic-and-instructor-in-llms-m5o |

In the world of Large Language Models (LLMs), making them play nice with our applications is key. We want our models to dish out neat JSON for easy integration into our full-stack setups. But relying solely on LLM ... | gaw | |

1,893,916 | Recursion Lunch Meeting! | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-19T18:57:23 | https://dev.to/codeeartist/recursion-lunch-meeting-5dbc | devchallenge, cschallenge, computerscience, beginners | *This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

## Recursion In 11111111 Characters

You **call** Annie ask her if she wants to go for lunch & Annie says yes

but she also wants to meet Carl & she puts you **on hold** & **call** Carl

Carl answers... | codeeartist |

1,893,903 | Hello Data | Hello everyone, The world is filled with data. Everything today is data and it's huge to store,... | 0 | 2024-06-19T18:55:19 | https://dev.to/kathrinesathi/hello-data-569i | Hello everyone,

The world is filled with data. Everything today is data and it's huge to store, manage, and retrieve. How are we going to manage everything? Here comes the Queen, where the data is being managed and stored. Her name is **Database**. In our day-to-day lives, it is challenging to manage this huge data. ... | kathrinesathi | |

1,893,915 | Graphs and why so important! | Graphs are networks of points (vertices) connected by lines (edges). They represent relationships or... | 0 | 2024-06-19T18:52:24 | https://dev.to/mlodovico/graphs-and-why-so-important-8bm | devchallenge, cschallenge, computerscience, beginners | Graphs are networks of points (vertices) connected by lines (edges). They represent relationships or paths, useful in routes, social networks, and bioinformatics. In programming and software engineering, they optimize search algorithms, network analysis, route planning, and solving problems. | mlodovico |

1,893,914 | Social media icons | Check out this Pen I made! | 0 | 2024-06-19T18:50:08 | https://dev.to/tidycoder/social-media-icons-3d3f | codepen, css, html | Check out this Pen I made!

{% codepen https://codepen.io/TidyCoder/pen/pomaYNL %} | tidycoder |

1,893,912 | MySQL: Using and Enhancing `DATETIME` and `TIMESTAMP` | Introduction In MySQL, DATETIME and TIMESTAMP are commonly used data types for storing... | 0 | 2024-06-19T18:48:49 | https://dev.to/salmazz/mysql-using-and-enhancing-datetime-and-timestamp-4bpm | mysql, database, data, dataengineering | #### Introduction

In MySQL, `DATETIME` and `TIMESTAMP` are commonly used data types for storing temporal values. Although both serve the purpose of storing date and time, there are fundamental differences between them that affect their usage. This article will explore the differences between `DATETIME` and `TIMESTAMP`,... | salmazz |

1,893,911 | i wish to learn | please leave me in peace | 0 | 2024-06-19T18:46:17 | https://dev.to/palmer_meli_496adb64cc3d6/i-wish-to-learn-2f2g | please leave me in peace

| palmer_meli_496adb64cc3d6 | |

1,893,910 | LLMs are changing the nuts & bolts of software development | The 21st century has been all about software development and still the present moment has sparked a... | 0 | 2024-06-19T18:46:05 | https://dev.to/gaw/llms-are-changing-the-nuts-bolts-of-software-development-2nk4 | The 21st century has been all about software development and still the present moment has sparked a new kind of techno-optimism.

The nuts and bolts of software are transforming because (for the first time) we have intelligent and responsive materials to play with.

Marc Andreessen says in the [Techno-Optimist Manifest... | gaw | |

1,893,909 | The Trade Table | Mini Split EG4 Mini Split ACiQ Mini Split Gree Mini Split MrCool Mini Split Buck Cool Mini... | 0 | 2024-06-19T18:45:38 | https://dev.to/developercode/the-trade-table-30j0 | [Mini Split](https://thetradetable.com/collections/mini-split)

[EG4 Mini Split](https://thetradetable.com/collections/eg4-mini-split)

[ACiQ Mini Split](https://thetradetable.com/collections/aciq)

[Gree Mini Split](https://thetradetable.com/collections/gree-mini-split)

[MrCool Mini Split](https://thetradetable.com/c... | developercode | |

1,893,908 | The Trade Table | The Trade Table | 0 | 2024-06-19T18:43:54 | https://dev.to/developercode/the-trade-table-32c7 | [The Trade Table](https://thetradetable.com/) | developercode | |

1,894,092 | Cursos Oracle Gratuitos: Treinamentos e Certificados | O programa Oracle Learning Explorer oferece uma oportunidade única para profissionais do mundo... | 0 | 2024-06-23T13:50:11 | https://guiadeti.com.br/cursos-oracle-gratuitos-treinamentos-certificados/ | cursogratuito, cursosgratuitos, java, mysql | ---

title: Cursos Oracle Gratuitos: Treinamentos e Certificados

published: true

date: 2024-06-19 18:39:43 UTC

tags: CursoGratuito,cursosgratuitos,java,mysql

canonical_url: https://guiadeti.com.br/cursos-oracle-gratuitos-treinamentos-certificados/

---

O programa Oracle Learning Explorer oferece uma oportunidade única p... | guiadeti |

1,893,906 | How To Use Video Speed Controller? | Using Video Speed Controller Plus is an easy process. Simply follow the steps below: Navigate to a... | 0 | 2024-06-19T18:39:31 | https://dev.to/dailyextensions/how-to-use-video-speed-controller-k2g | chrome, extensions, browser |

Using Video Speed Controller Plus is an easy process. Simply follow the steps below:

1. Navigate to a website with video content like YouTube or Vimeo.

2. Click on the Video Speed Control... | dailyextensions |

1,893,905 | Understanding CORS for your LLM applications | Imagine this: you're working on that million-dollar project idea of which you're having dreams and... | 0 | 2024-06-19T18:37:01 | https://dev.to/gaw/understanding-cors-for-your-llm-applications-i7h | Imagine this: you're working on that million-dollar project idea of which you're having dreams and it's all hunky dory, you have completed the APIs, and now moving towards your friend who is handling the API integration for UI. You both sit together for a dry run and see this horrendous error that makes you question yo... | gaw | |

1,893,904 | Exploring Metrics with Thanos Query UI and PromQL | The Thanos Query UI provides robust querying capabilities across multiple Prometheus instances,... | 0 | 2024-06-19T18:36:55 | https://dev.to/agagag/exploring-metrics-with-thanos-query-ui-and-promql-3he9 | The Thanos Query UI provides robust querying capabilities across multiple Prometheus instances, offering a comprehensive analysis of your metrics. Using the Thanos Interactive example dataset, I experimented with various PromQL queries to explore metrics. Here’s a summary based on frequent use cases:

**Available Metri... | agagag | |

1,893,890 | Create and Connect to a Linux VM using a Public Key | Azure virtual machines (VMs) can be created through the Azure portal. This method provides a... | 0 | 2024-06-19T18:36:27 | https://dev.to/tracyee_/create-and-connect-to-a-linux-vm-using-a-public-key-4jfe | cloudcomputing, azure, linux, virtualmachine |

Azure virtual machines (VMs) can be created through the Azure portal. This method provides a browser-based user interface to create VMs and their associated resources. This blog post shows you how to use the Azure portal to create a virtual machine (VM) that runs on Linux server and connect using Powershell.

In this ... | tracyee_ |

1,893,293 | How to protect privacy online | How to protect privacy online Have you ever thought about the amount of information you provide... | 0 | 2024-06-19T08:20:00 | https://dev.to/hasan_7c80b135723181e5a09/how-to-protect-privacy-online-1i3m | [How to protect privacy online](https://bababaha.ir)

Have you ever thought about the amount of information you provide online? Sometimes you cannot avoid providing your personal information, such as when you make an online purchase. However, for those times when it's not necessary, it's important to think about what y... | hasan_7c80b135723181e5a09 | |

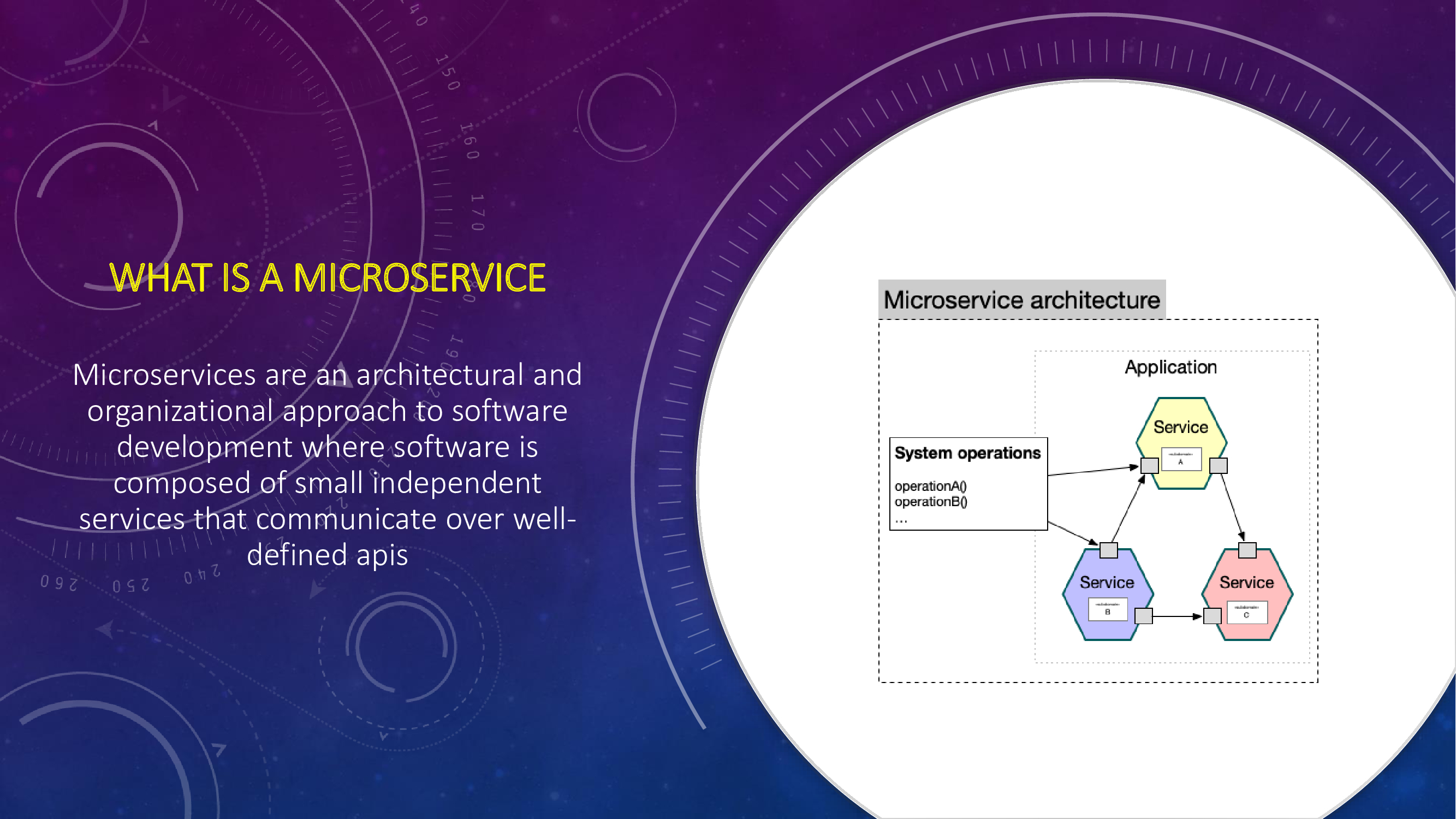

1,893,902 | Microservices Architecture | In this blog, we'll learn about microservices architecture. It is a software development methodology... | 0 | 2024-06-19T18:36:15 | https://dev.to/musabaku/microservices-architecture-3dn0 | In this blog, we'll learn about microservices architecture. It is a software development methodology where a product is composed of various independent services, each managing its own specific functionality.

It dif... | musabaku | |

1,893,899 | ** La Danza de los Frameworks: React vs Angular **🐉🔥👑 | Hola Chiquis! 👋🏻 En el apasionante mundo del desarrollo web, donde las ideas se transforman en... | 0 | 2024-06-19T18:29:26 | https://dev.to/orlidev/-la-danza-de-los-frameworks-react-vs-angular--4o85 | webdev, react, angular, tutorial | Hola Chiquis! 👋🏻 En el apasionante mundo del desarrollo web, donde las ideas se transforman en realidades digitales, se libra una batalla por el dominio del front-end: la lucha entre React y Angular, dos titanes que buscan conquistar el corazón de los desarrolladores.⚔️

| Part 2: Building a Dynamic Frontend with React, Redux Toolkit, and Google Maps... | 0 | 2024-06-19T18:27:01 | https://dev.to/vb_nair/building-a-simple-full-stack-restaurant-finder-app-with-react-redux-nodejs-and-google-places-api-part-2-557a | react, typescript, redux, googleplaces |

**Part 2: Building a Dynamic Frontend with React, Redux Toolkit, and Google Maps API**

**Introduction:**

Welcome to the second part of our tutorial on building a full-stack "Restaurant Finder" application.

In this blog post, we will focus on developing the frontend components using React and Redux Toolkit. Our fron... | vb_nair |

1,893,898 | The truth behind revenue churn.. | While this is primarly a developer community, I only joined the developer side some time ago. Here is... | 0 | 2024-06-19T18:26:20 | https://dev.to/dutchenglishman/the-truth-behind-revenue-churn-iem | saas, customer, success | While this is primarly a developer community, I only joined the developer side some time ago. Here is some backstory..

As an experienced consultant, project manager and now head of customer success, the OPS side of applications has one big final boss: Churn.

There are numerous of calculators out there which show that... | dutchenglishman |

1,893,897 | Explore tests for hundreds of public APIs | The thing with age-old and persisting tech problems is that we start accepting them as reality. As... | 0 | 2024-06-19T18:25:41 | https://dev.to/gaw/explore-tests-for-hundreds-of-public-apis-30k3 | The thing with age-old and persisting tech problems is that we start accepting them as reality.

As software builders, we have to pick between our team’s release timelines and the stability of frequent releases.

Customer experience, massive revenue, and brand reputation are all on the line.

Push to production and fix... | gaw | |

1,893,895 | Introducing CHEMAXE: The Innovation in Chemical Trade | Introducing CHEMAXE: The Innovation in Chemical Trade CHEMAXE serves as a centralized platform where... | 0 | 2024-06-19T18:21:41 | https://dev.to/mitali_ramteke_fbebe65fce/introducing-chemaxe-the-innovation-in-chemical-trade-2mgh | chemicaltrading, chemaxe, pythondevelopment, innovation | **Introducing CHEMAXE:** The Innovation in Chemical Trade

CHEMAXE serves as a centralized platform where buyers and sellers engage in real-time trading of chemicals like methanol, toluene, and styrene. Powered by advanced algorithms, CHEMAXE automates the matching process, ensuring seamless transactions and updated mar... | mitali_ramteke_fbebe65fce |

1,893,893 | How (and why) to implement streaming in your LLM application | There’s enough talk on how LLMs are changing software development. We’re going to keep it practical.... | 0 | 2024-06-19T18:20:14 | https://dev.to/gaw/how-and-why-to-implement-streaming-in-your-llm-application-24g8 | There’s enough talk on how LLMs are changing software development. We’re going to keep it practical. Using LLMs in your applications produces specific, never-seen-before challenges, and trade-offs in product building. One such problem is how to manage the latency of text generation using LLMs.

Suppose you’re building ... | gaw | |

1,893,889 | Should I buy a new PC? | Hello all! I am a complete beginner to the world of programming and I had a few questions concerning... | 0 | 2024-06-19T18:14:53 | https://dev.to/thefoxlion/should-i-buy-a-new-pc-3cem | learning, hardware | Hello all!

I am a complete beginner to the world of programming and I had a few questions concerning my hardware and whether or not it needs an upgrade.

My specs:

Dell Inspiron 3584

128GB with about 11GB free

intel I3-7020U @2.3Ghz

8GB ram

Currently installed:

VSCode

Webstorm

IDEA for Python

JDK 22

If there is an... | thefoxlion |

1,893,867 | Convert .caf to mp3 by Directory | Read the original conversation shared via ChatGPT You: If possible, could you help me... | 27,781 | 2024-06-19T18:10:51 | https://dev.to/extratone/convert-caf-to-mp3-by-directory-3m07 | audio, ffmpeg, chatgpt | [***Read the original conversation shared via ChatGPT***](https://chatgpt.com/share/3553b9d1-3c28-4a58-9111-50db9ddd5aa4)

---

#### You:

If possible, could you help me construct an ffmpeg command that converts all of the .caf files in the current directory to 320 kbps, constant bitrate mp3s?

#### ChatGPT:

Certainly! ... | davidblue |

1,893,886 | How UI/UX Can Influence User Behavior and Drive Engagement | Introduction In the digital landscape, user interface (UI) and user experience (UX) play pivotal... | 0 | 2024-06-19T18:09:42 | https://dev.to/purnimashrestha/how-uiux-can-influence-user-behavior-and-drive-engagement-27l5 | ui, ux, design, tutorial | **Introduction**

In the digital landscape, user interface (UI) and user experience (UX) play pivotal roles in shaping user behavior and driving engagement. Understanding the nuances of UI/UX design is crucial for businesses seeking to create compelling digital experiences. This article explores the impact of UI/UX on ... | purnimashrestha |

1,893,885 | New tech sales jobs in the US today 19/06/2024 | Nouvelles Opportunités d'Emploi Aujourd'hui ! Software Engineer ffsdgsdwww / Tech Company grevvddasv... | 27,780 | 2024-06-19T18:08:16 | https://dev.to/techsalesjobsus/new-tech-sales-jobs-in-the-us-today-19062024-5828 | career, jobs, hiring, remote | Nouvelles Opportunités d'Emploi Aujourd'hui !

Software Engineer ffsdgsdwww / Tech Company grevvddasv / https://www.baguettebrain.com/undefined

Data Scientist frprpgoss / Data ffffffCompany / https://www.baguettebrain.com/undefined

Web Developer / Web Development Inc. / https://www.baguettebrain.com/undefined | techsalesjobsus |

1,893,882 | Dayitwa's research initiatives and key findings. | Evidence-based research and advocacy are crucial for driving systemic change. Dayitwa's research... | 0 | 2024-06-19T18:02:05 | https://dev.to/tegveer_singh_8c7c2ac99ea/dayitwas-research-initiatives-and-key-findings-1ca1 | Evidence-based research and advocacy are crucial for driving systemic change. Dayitwa's research initiatives provide critical insights that inform policy and program development. This blog will delve into key research findings and how advocacy efforts are shaping public discourse and policy-making.

| tegveer_singh_8c7c2ac99ea | |

1,893,881 | Smart meal tracking on whatsapp [POC] | This is a submission for Twilio Challenge v24.06.12 What I Built I developed a... | 0 | 2024-06-19T18:01:48 | https://dev.to/sanketmunot/smart-meal-tracking-on-whatsapp-poc-474h | devchallenge, twiliochallenge, ai, twilio | *This is a submission for [Twilio Challenge v24.06.12](https://dev.to/challenges/twilio)*

## What I Built

I developed a proof-of-concept WhatsApp bot that accepts an image of the meal you're having. In return, it provides the calorie intake along with detailed nutrition information such as carbohydrates, fats, and pro... | sanketmunot |

1,893,880 | Can you trust AI-driven testing? | Have you been wondering if AI will change testing? In the ever-evolving landscape of software... | 0 | 2024-06-19T17:56:55 | https://dev.to/gaw/can-you-trust-ai-driven-testing-2d2d |

Have you been wondering if AI will change testing?

In the ever-evolving landscape of software development – testing remains a crucial aspect of the process. Traditionally, developers spent countless hours combing through lines of code, trying to identify and fix bugs that could potentially disrupt the functionality of... | gaw | |

1,893,872 | D | This is a submission for the Twilio Challenge What I Built Demo ... | 0 | 2024-06-19T17:35:12 | https://dev.to/ayyub_beo_02d50ed242b00dd/d-27b4 | devchallenge, twiliochallenge, ai, twilio | *This is a submission for the [Twilio Challenge ](https://dev.to/challenges/twilio)*

## What I Built

<!-- Share an overview about your project. -->

## Demo

<!-- Share a link to your app and include some screenshots here. -->

## Twilio and AI

<!-- Tell us how you leveraged Twilio’s capabilities with AI -->

## Additi... | ayyub_beo_02d50ed242b00dd |

1,893,805 | Deploying Flutter Web to S3 with CodeCommit, Codepipeline, Codebuild, and CodeDeploy | For this exercise, we are going to perform a step-by-step process of developing a web application... | 0 | 2024-06-19T17:56:27 | https://dev.to/xreyc/deploying-flutter-web-to-s3-with-codecommit-codepipeline-codebuild-and-codedeploy-3i4h | flutter, cicd, codepipeline, s3 | For this exercise, we are going to perform a step-by-step process of developing a web application with Flutter, starting with creating a Flutter application, setting up the environment variables, setting up the git repository, and configuring the CI/CD pipeline for Flutter Web S3 deployment.

## Step 1: Create a Flutte... | xreyc |

1,893,879 | The role of collaborations and partnerships in Dayitwa's success. | Dayitwa's impact is amplified through strategic collaborations and partnerships with government... | 0 | 2024-06-19T17:55:37 | https://dev.to/tegveer_singh_8c7c2ac99ea/the-role-of-collaborations-and-partnerships-in-dayitwas-success-d6f | Dayitwa's impact is amplified through strategic collaborations and partnerships with government bodies, private sector entities, and international organizations. This blog will explore the importance of these alliances, highlighting key partners and how their contributions have strengthened Dayitwa's initiatives. | tegveer_singh_8c7c2ac99ea | |

1,893,628 | Creating a Simple RESTful API with Node.js | What is an API ? An API, or application programming interface, is a set of rules or... | 0 | 2024-06-19T17:54:05 | https://dev.to/vaishnavi_rawool/creating-a-simple-restful-api-with-nodejs-a96 | webdev, rest, node, express | ## What is an API ?

An API, or application programming interface, is a set of rules or protocols that enables software applications to communicate with each other to exchange data, features and functionality.It’s useful to think about API communication in terms of a request and response between a client and server. Th... | vaishnavi_rawool |

1,893,877 | Try To Use `savepoint` In Spring JPA/Hibernate | This article describes my failed attempt to use the PostgreSQL SAVEPOINT in Spring JPA. About... | 0 | 2024-06-19T17:53:26 | https://dev.to/georgech2/try-to-use-savepoint-in-spring-jpahibernate-12pj | springboot, postgres, database, java | This article describes my failed attempt to use the **PostgreSQL SAVEPOINT** in Spring JPA.

About [PostgreSQL SAVEPOINT](https://www.postgresql.org/docs/current/tutorial-transactions.html).

## EntityManager

```java

@PersistenceContext

private EntityManager entityManager;

@Transactional(propagation=NESTED, isolation... | georgech2 |

1,893,876 | what is selenium ?why do we use selenium for automation. | selenium is atool used to automate web browsers.it helps developers and testers write scripts that... | 0 | 2024-06-19T17:47:31 | https://dev.to/keerthiajaykumar/what-is-selenium-why-do-we-use-selenium-for-automation-59hb | selenium is atool used to automate web browsers.it helps developers and testers write scripts that can interact with different web browerslike chrome or firefox.we use selenium for automation because.it lets us test our web applications automatically.it's good because it works with many browsers and operating systems,s... | keerthiajaykumar | |

1,893,894 | Introducing the New Blazor 3D Charts Component | TLDR: Discover the new Syncfusion Blazor 3D Charts component in the 2024 Volume 2 release! Transform... | 0 | 2024-06-20T16:21:49 | https://www.syncfusion.com/blogs/post/blazor-3d-charts-component | blazor, chart, development, ui | ---

title: Introducing the New Blazor 3D Charts Component

published: true

date: 2024-06-19 17:45:13 UTC

tags: blazor, chart, development, ui

canonical_url: https://www.syncfusion.com/blogs/post/blazor-3d-charts-component

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/0u96elbi8m9lvvq57w5p.png

---

... | jollenmoyani |

1,893,041 | Using @Qualifier to Resolve Bean Conflicts in Spring | When working on a Spring project, you might encounter a situation where you have multiple bean... | 27,602 | 2024-06-19T17:45:00 | https://springmasteryhub.com/2024/06/19/using-qualifier-to-resolve-bean-conflicts-in-spring/ | When working on a Spring project, you might encounter a situation where you have multiple bean implementations for the same type.

This can cause an error because Spring doesn't know which bean to inject. To solve this, we use the `@Qualifier` annotation.

### The Problem

Let's say you have an interface `MessageServi... | tiuwill | |

1,893,875 | aitorrent/Meta-Llama-3-8B-Instruct-abliterated-v3-GGUF-torrent | https://aitorrent.zerroug.de/aitorrent-meta-llama-3-8b-instruct-abliterated-v3-gguf-torrent/ | 0 | 2024-06-19T17:41:09 | https://dev.to/zerroug/aitorrentmeta-llama-3-8b-instruct-abliterated-v3-gguf-torrent-23d9 | ai, machinelearning, llm, beginners | https://aitorrent.zerroug.de/aitorrent-meta-llama-3-8b-instruct-abliterated-v3-gguf-torrent/ | zerroug |

1,893,874 | Wednesday Links - Edition 2024-06-19 | Debugger Godmode: Hacking a JVM Application with the Debugger (10 min)... | 11,907 | 2024-06-19T17:40:26 | https://dev.to/wyhasany/wednesday-links-edition-2024-06-19-160e | java, jvm, spring, ai | Debugger Godmode: Hacking a JVM Application with the Debugger (10 min) 🔍

https://foojay.io/today/debugger-godmode-hacking-a-jvm-application-with-the-debugger/

REST URI Design: Best Practices (7 min) 🌐

https://www.mscharhag.com/api-design/rest-uri-design

Hibernate 6 and the hibernate.type.java_time_use_direct_jdbc S... | wyhasany |

1,893,873 | Avoiding the Dreaded 404 | I am writing this blog to track my progress. I am currently on my second week of back-end development... | 0 | 2024-06-19T17:39:49 | https://dev.to/christopherchhim/avoiding-the-dreaded-404-5782 | webdev, beginners, tutorial, seo | I am writing this blog to track my progress. I am currently on my second week of back-end development of my bootcamp and I am learning how to work with servers. We are starting to dabble on status codes such as: 200, 400, 401, 400, 500, etc... As I start to learn how to work with servers I want to know how to develop s... | christopherchhim |

1,893,871 | Next.js Image File Upload and Preview with shadcn/ui | In this tutorial, we'll create an image file upload with a preview using Next.js and Shadcn UI. In... | 0 | 2024-06-19T17:34:09 | https://frontendshape.com/post/nextjs-image-file-upload-and-preview-with-shadcn-ui | nextjs, shadcnui, webdev | In this tutorial, we'll create an image file upload with a preview using Next.js and Shadcn UI.

In this guide, we will create a file upload feature in Next.js using React hooks. We will include a file preview using the Next.js Image component and utilize Shadcn UI's Input and Label components for file selection.

```js... | aaronnfs |

1,893,870 | Mount Up! A Step-by-Step Guide to Creating and Using Amazon EFS on Ubuntu | Amazon Elastic File System (EFS) provides a simple, scalable, and fully managed Network File System... | 0 | 2024-06-19T17:33:18 | https://dev.to/techgirlkaydee/mount-up-a-step-by-step-guide-to-creating-and-using-amazon-efs-on-ubuntu-2i86 | aws, efs, storage, cloudcomputing | [Amazon Elastic File System (EFS)](https://aws.amazon.com/efs/) provides a simple, scalable, and fully managed Network File System for use with AWS Cloud services and on-premises resources. This guide will walk you through the process of creating an EFS, mounting it on an Ubuntu instance, and adding files to it.

## **... | techgirlkaydee |

1,893,860 | Lifetimes in Rust | Have you ever wondered how Rust manages to keep your code safe from memory-related errors? One of... | 0 | 2024-06-19T17:30:27 | https://dev.to/ashsajal/lifetimes-in-rust-28c | rust, coding | Have you ever wondered how Rust manages to keep your code safe from memory-related errors? One of the key ingredients is **lifetimes**. Lifetimes are a powerful feature that helps Rust ensure your references are always valid and prevent those dreaded dangling pointer problems.

![A strong, sturdy foundation made o... | ashsajal |

Subsets and Splits

No community queries yet

The top public SQL queries from the community will appear here once available.