id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,891,060 | REST vs. GraphQL: The Future of API Development | APIs (Application Programming Interfaces) are the backbone of modern web development, enabling... | 0 | 2024-06-17T10:11:15 | https://dev.to/bhavyshekhaliya/rest-vs-graphql-the-future-of-api-development-1d0h | APIs (Application Programming Interfaces) are the backbone of modern web development, enabling communication between different software systems. Two of the most popular paradigms for building APIs are REST (Representational State Transfer) and GraphQL. Understanding the differences between these two approaches can help... | bhavyshekhaliya | |

1,891,059 | Mirzapur Season Release Date, Cast, and Plot Updates for 2024 | [[Mirzapur Season 3]]Release Date, Cast, and Plot Updates for 2024 Release Date: The highly... | 0 | 2024-06-17T10:08:32 | https://dev.to/pammyprajapati/mirzapur-season-release-date-cast-and-plot-updates-for-2024-1j6l | bloggbuzz |

**[[[Mirzapur Season 3](https://www.bloggbuzz.com/web-series/mirzapur-season-3-2/)]]Release Date, Cast, and Plot Updates for 2024**

**Release Date:**

The highly anticipated third season of "Mirzapur" is set to prem... | pammyprajapati |

1,891,055 | What Are the Common Uses of Crypto Tokens? | *Introduction: * Our approach to money and investing has evolved as a result of cryptocurrency... | 0 | 2024-06-17T10:04:55 | https://dev.to/elena_marie_dad5c9d5d5706/what-are-the-common-uses-of-crypto-tokens-5227 | cryptotokens, tokendevelopment | **Introduction:

**

Our approach to money and investing has evolved as a result of cryptocurrency tokens. However, what are they, and why are they important? A crypto token is a digital item made on a blockchain by a cryptocurrency token development company. It can be used for different things, like buying and selling,... | elena_marie_dad5c9d5d5706 |

1,410,043 | What is Amazon SNS (Simple Notification Service)? | Amazon SNS (Simple Notification Service) is a fully managed pub/sub messaging service provided by... | 0 | 2023-03-22T06:41:50 | https://dev.to/manish90/what-is-amazon-sns-simple-notification-service-4plk | > Amazon SNS (Simple Notification Service) is a fully managed pub/sub messaging service provided by Amazon Web Services (AWS). It enables developers to send messages to multiple recipients, including email, SMS, mobile push notifications, and more.

Before we get started, make sure you have the AWS SDK for JavaScript in... | manish90 | |

1,891,054 | Laravel Advanced: Top 5 Scheduler Functions You Might Not Know About | In this article series, we go a little deeper into parts of Laravel we all use, to uncover functions... | 27,571 | 2024-06-17T10:04:34 | https://backpackforlaravel.com/articles/tips-and-tricks/laravel-advanced-top-5-scheduler-functions-you-might-not-know-about | laravel, cronjob, automation | In this article series, we go a little deeper into parts of Laravel we all use, to uncover functions and features that we can use in our next projects... if only we knew about them! Our first article in the series is about the [Laravel Scheduler](https://laravel.com/docs/11.x/scheduling) - which helps run scheduled tas... | karandatwani92 |

1,891,053 | Machine Learning Algorithms: Transforming Modern Technology | Introduction Machine learning (ML) has become a cornerstone of modern... | 27,619 | 2024-06-17T10:02:49 | https://dev.to/aishik_chatterjee_0060e71/machine-learning-algorithms-transforming-modern-technology-402l | ## Introduction

Machine learning (ML) has become a cornerstone of modern technology,

influencing numerous industries and reshaping the way we interact with the

world. From personalized recommendations on streaming services to autonomous

vehicles, machine learning technologies are behind many of the innovations

that ma... | aishik_chatterjee_0060e71 | |

1,891,052 | Outsourcing Revenue Cycle Management: Key Reasons and Benefits | In the dynamic and highly regulated healthcare industry, financial efficiency and regulatory... | 0 | 2024-06-17T10:02:41 | https://dev.to/aftermedi_123/outsourcing-revenue-cycle-management-key-reasons-and-benefits-5076 | rcm, healthydebate | In the dynamic and highly regulated healthcare industry, financial efficiency and regulatory compliance are crucial for the survival and growth of healthcare organizations. Revenue Cycle Management (RCM) plays a pivotal role in ensuring that healthcare providers get paid for their [best provider credentialing services]... | aftermedi_123 |

1,891,051 | Baggage Handling System Market Growth Driver Regional Market Dynamics | Baggage Handling System Market size was valued at $ 8.6 Bn in 2022 and is expected to grow to $ 14.44... | 0 | 2024-06-17T10:01:42 | https://dev.to/vaishnavi_farkade_/baggage-handling-system-market-growth-driver-regional-market-dynamics-59jf | **The Baggage Handling System Market was valued at $8.6 Bn in 2022 and is expected to reach $14.44 Bn by 2030, growing at a CAGR of 6.7% over 2023-2030.**
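The headline figures can be sanity-checked with the standard compound annual growth rate formula. The sketch below is only an illustration: the function names `cagr` and `project` are hypothetical, and it assumes 2022 as the base year with eight compounding periods to 2030, which the source does not state explicitly.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the compound annual growth rate as a fraction (0.067 means 6.7%)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Grow start_value at a constant annual rate for the given number of years."""
    return start_value * (1 + rate) ** years


if __name__ == "__main__":
    # Assumption: base year 2022 ($8.6 Bn), target year 2030 ($14.44 Bn), 8 periods.
    implied_rate = cagr(8.6, 14.44, 8)
    print(f"implied CAGR: {implied_rate:.2%}")
    print(f"8.6 Bn grown at 6.7% for 8 years: {project(8.6, 0.067, 8):.2f} Bn")
```

Under those assumptions the implied rate comes out close to the quoted 6.7%, so the three numbers are at least mutually consistent.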

**Market Scope & Overview:**

According to the global Baggage Handling System Market Growth Driver research analysis, significant revenue increase is projected ... | vaishnavi_farkade_ | |

1,891,030 | Create Effortless Image to Prompt Generator | Transform your visuals with our image to prompt generator guide. Discover how to create compelling... | 0 | 2024-06-17T10:01:16 | https://dev.to/novita_ai/create-effortless-image-to-prompt-generator-194i | Transform your visuals with our image to prompt generator guide. Discover how to create compelling images that drive engagement. Visit our blog today!

## Key Highlights

- Image to Prompt generators are AI-powered tools that allow users to generate optimized text prompts based on the uploaded image.

- These tools offe... | novita_ai | |

1,891,048 | Securing Next.js APIs with Middleware Using Environment Variables | Learn how to secure your Next.js APIs by implementing middleware that checks API keys against environment variables. | 0 | 2024-06-17T10:00:27 | https://dev.to/itselftools/securing-nextjs-apis-with-middleware-using-environment-variables-2hph | nextjs, middleware, security, webdev |

At [Itself Tools](https://itselftools.com), we've honed our expertise in web development through the creation of over 30 web applications using technologies like Next.js and Firebase. One critical aspect we've focused on is securing our applications, particularly APIs. This article explains a handy technique using Nex... | antoineit |

1,891,047 | How to run a sample Golang file in a Docker image which is available in EKS | A post by SuryaRao Koppula | 0 | 2024-06-17T09:58:33 | https://dev.to/suryarao_koppula_0852f49f/how-to-run-sample-goang-file-in-docker-image-which-is-available-in-eks-3cbg | suryarao_koppula_0852f49f | ||

1,891,045 | How to create a Virtual Environment in Python in 1 Minute? | A virtual environment in Python is a self-contained directory that contains a specific Python... | 0 | 2024-06-17T09:58:21 | https://dev.to/adityashrivastavv/how-to-create-a-virtual-environment-in-python-in-1-minute-5ah9 | python, venv | A virtual environment in Python is a **self-contained** directory that contains a specific **Python interpreter** and a set of **installed packages**. It allows you to **isolate** your Python project's dependencies from the system-wide Python installation and other projects.
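As a minimal sketch of the idea, the standard-library `venv` module can create such an isolated directory programmatically. The helper name `create_env` and the `.venv` directory name are assumptions made for illustration, not something the article prescribes.

```python
import sys
import tempfile
import venv
from pathlib import Path


def create_env(target: Path) -> Path:
    """Create a virtual environment at `target` and return its interpreter path."""
    # with_pip=False keeps this sketch fast and offline; use True to bootstrap pip.
    venv.create(target, with_pip=False)
    # The interpreter lives in Scripts\ on Windows and in bin/ elsewhere.
    bin_dir = "Scripts" if sys.platform == "win32" else "bin"
    exe_name = "python.exe" if sys.platform == "win32" else "python"
    return target / bin_dir / exe_name


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        env_dir = Path(tmp) / ".venv"
        interpreter = create_env(env_dir)
        # pyvenv.cfg marks the directory as a virtual environment.
        print("config file present:", (env_dir / "pyvenv.cfg").is_file())
        print("interpreter path:", interpreter)
```

From a shell, the usual equivalent is `python -m venv .venv`, followed by activating the environment with the script for your platform.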

By creating a virtual environment, you can ... | adityashrivastavv |

1,891,046 | Alpha Labs CBD Gummies Review : Boost Your Sex Life... | How Does Alpha Labs CBD Gummies Work? This intriguing dietary thing works by providing you with the... | 0 | 2024-06-17T09:55:24 | https://dev.to/senharajput777/alpha-labs-cbd-gummies-review-boost-your-sex-life-3an1 | healthydebate | How Does Alpha Labs CBD Gummies Work?

This intriguing dietary thing works by providing you with the ideal extent of energy and making you more grounded. With the help of this phenomenal supplement for men's prosperity, both their display and the adequacy of their regenerative systems can move along. Similarly, if you t... | senharajput777 |

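1,891,044 | How to download the iOS18, iPadOS18, macOS15 developer beta | http://blog.kueiapp.com/os-zh/ios18-ipados18-macos15-%e4%b8%8b%e8%bc%89%e6%96%b9%e6%b3%95%e5%88%86%e4... | 0 | 2024-06-17T09:53:01 | https://dev.to/kueiapp/ios18-ipados18-macos15-developer-beta-xia-zai-fang-fa-fen-xiang-23ae | ios, ipados, macos | http://blog.kueiapp.com/os-zh/ios18-ipados18-macos15-%e4%b8%8b%e8%bc%89%e6%96%b9%e6%b3%95%e5%88%86%e4%ba%ab/

In 2024 Apple finally announced its AI-related features. Recently many people have asked how to download iOS/iPadOS 18 and macOS 15 early to get a first look, but do not understand why the OS 18 option never appears on their own phones. The main reason is that after the WWDC 2024 keynote only the "Developer Beta" was made available, and the first "Public Beta" is not expected until the fall. Many websites simply call it the beta version, which is somewhat imprecise. Below is our quick installation guide.

## Prerequisites

1. Become ... | kueiapp |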

1,891,043 | AI Revolutionizes Medical Staff Training: Personalized Learning for a Healthier Future | The medical field is a whirlwind of constant evolution. New breakthroughs, treatment protocols, and... | 0 | 2024-06-17T09:46:55 | https://dev.to/zheleznayanatalia/ai-revolutionizes-medical-staff-training-personalized-learning-for-a-healthier-future-5eie | ai, software, machinelearning | The medical field is a whirlwind of constant evolution. New breakthroughs, treatment protocols, and technologies emerge at a dizzying pace. To navigate this ever-changing landscape, healthcare institutions require a highly skilled and adaptable workforce. Traditional training methods, while valuable, often struggle to ... | zheleznayanatalia |

1,891,041 | Perl Weekly #673 - One week till the Perl and Raku conference | Originally published at Perl Weekly 673 Hi, We had our first virtual event a couple of days ago. I... | 20,640 | 2024-06-17T09:46:41 | https://perlweekly.com/archive/673.html | perl, news, programming | ---

title: Perl Weekly #673 - One week till the Perl and Raku conference

published: true

description:

tags: perl, news, programming

canonical_url: https://perlweekly.com/archive/673.html

series: perl-weekly

---

Originally published at [Perl Weekly 673](https://perlweekly.com/archive/673.html)

Hi,

We had our first ... | szabgab |

1,891,040 | React Hooks | React Hooks were introduced in React 16.8 to provide functional components with features previously... | 0 | 2024-06-17T09:41:16 | https://dev.to/paritoshg/react-hooks-43kk | React Hooks were introduced in React 16.8 to provide functional components with features previously exclusive to class components, such as state and lifecycle methods. Hooks allow for cleaner, more modular code, making it easier to share logic across components without relying on higher-order components or render props... | paritoshg | |

1,891,029 | Navigating the Virtual Realm: Tips and Strategies for Managing Remote Teams | In today’s scenario, working from home is not just a luxury but a necessity. With the help of... | 0 | 2024-06-17T09:40:04 | https://dev.to/techstuff/navigating-the-virtual-realm-tips-and-strategies-for-managing-remote-teams-1pic | In today’s scenario, working from home is not just a luxury but a necessity. With the help of technological advancements and the connectivity offered by a growing number of apps worldwide, remote jobs are friendly to both employees and employers. However, managing teams remotely brings many challenges of its own. Let us look at some tips a... | aishna | |

1,891,037 | MySQL to GBase 8c Migration Guide | This article provides a quick guide for migrating application systems based on MySQL databases to... | 0 | 2024-06-17T09:34:24 | https://dev.to/congcong/mysql-to-gbase-8c-migration-guide-31ch | database, mysql | This article provides a quick guide for migrating application systems based on MySQL databases to GBase databases (GBase 8c). For detailed information about specific aspects of both databases, readers can refer to the MySQL official documentation (https://dev.mysql.com/doc/) and the GBase 8c user manual. Due to the ext... | congcong |

1,891,036 | Postgres to Snowflake Data Integration with Estuary Flow | Are you facing challenges in efficiently transferring your PostgreSQL data to Snowflake for advanced... | 0 | 2024-06-17T09:33:31 | https://dev.to/techsourabh/postgres-to-snowflake-data-integration-with-estuary-flow-5ba8 | postgres, snowflake | Are you facing challenges in efficiently transferring your PostgreSQL data to Snowflake for advanced analytics?

Look no further than Estuary Flow, a user-friendly platform designed to streamline and accelerate this critical process with unparalleled efficiency.

In this comprehensive guide, we'll walk you through the ... | techsourabh |

1,891,035 | Automation and Control Market Forecast: Comprehensive Analysis of Growth Projections and Emerging Trends for 2024-2031 | The Automation and Control Market Size was valued at $ 448.3 Bn in 2023 and is expected to reach $... | 0 | 2024-06-17T09:32:09 | https://dev.to/vaishnavi_farkade_/automation-and-control-market-forecast-comprehensive-analysis-of-growth-projections-and-emerging-trends-for-2024-2031-2ol3 | **The Automation and Control Market Size was valued at $ 448.3 Bn in 2023 and is expected to reach $ 1018.4 Bn by 2031, growing at a CAGR of 10.8% by 2024-2031.**

**Market Scope & Overview:**

The Automation and Control Market Forecast research report offers a thorough analysis of the industry along with crucial data ... | vaishnavi_farkade_ | |

1,891,033 | Submarine Cable Systems Market Report | Market Scope & Overview A competitive quadrant is included in the study, which is a patented... | 0 | 2024-06-17T09:30:59 | https://dev.to/anjali_dhase_ba84327a56c2/submarine-cable-systems-market-report-1nek | Market Scope & Overview

A competitive quadrant is included in the study, which is a patented method for analyzing and evaluating a company's position based on its industry position score and market performance score. The tool divides the players into four groups based on a variety of characteristics. Financial perform... | anjali_dhase_ba84327a56c2 | |

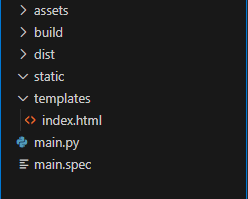

1,891,032 | Desktop Apps with Flask and PyWebview | Here is a recipe on how to accomplish this: Create a folder structure like this one: In the... | 0 | 2024-06-17T09:30:02 | https://dev.to/artydev/desktop-apps-with-flask-an-pywebview-34gl | python, flask | Here is a recipe on how to accomplish this:

Create a folder structure like this one:

In the app.py file :

```python

from flask import Flask, render_template

import webview

import sys

import os

base_dir = '.'

... | artydev |

1,891,028 | Your guide to a successful business | Your business goals can be accomplished when more people find out about it. How can you do that?... | 0 | 2024-06-17T09:22:52 | https://dev.to/growwwise/your-guide-to-a-successful-business-1g88 | Your business goals can be accomplished when more people find out about it. How can you do that? Well, search engines are the main source of information nowadays, which is why you need to be more visible online. Search engines favor well-optimized websites, that means your business can become more visible if your websi... | growwwise | |

1,891,024 | Automotive e Call Market: Trends, Challenges & Insights 2031 | According to the SNS Insider report, The Automotive e-Call Market Size was valued at USD 1.51 billion... | 0 | 2024-06-17T09:16:42 | https://dev.to/vaishnavi_98b52fbc25f0930/automotive-e-call-market-trends-challenges-insights-2031-3ghk | automotiveecallmarket | According to the SNS Insider report, The Automotive e-Call Market Size was valued at USD 1.51 billion in 2023 and is projected to reach USD 3.48 billion by 2031, expanding at a CAGR of 11% during the forecast period from 2024 to 2031.

Market Scope & Overview

Global market categories, geographies, market drivers, challe... | vaishnavi_98b52fbc25f0930 |

1,891,023 | Rik88 – The Royal Winning Card Game Portal | https://rik88.pw/ Rik88 – A reputable royal card game portal✔️ with many attractive games and prize redemption... | 0 | 2024-06-17T09:16:36 | https://dev.to/rik88pw/rik88-cong-game-bai-chien-thang-hoang-gia-1dj | https://rik88.pw/ Rik88 – A reputable royal card game portal✔️ with many attractive games and fast prize redemption✔️ Join now to experience the difference✔️✔️✔️

0589.777.444

hotro@rik88.pw

25/6/4 Lê Sát, Tân Quý, Tân Phú, Thành phố Hồ Chí Minh, Việt Nam

#rik88 #gamebairik88 #gamebaiuytinrik88 #conggamerik88

https://ri... | rik88pw | |

1,891,022 | Redux-Toolkit vs React Context API: A Deep Dive into State Management.💪🚀🚀 | Introduction: Choosing the Right Tool for React State Management State management is a... | 0 | 2024-06-17T09:15:21 | https://dev.to/dharamgfx/redux-toolkit-vs-react-context-api-a-deep-dive-into-state-management-2b2n | webdev, react, redux, javascript |

## Introduction: Choosing the Right Tool for React State Management

State management is a crucial aspect of any React application, and choosing the right tool can make a significant difference in the scalability and maintainability of your code. Two popular choices for state management in React are Redux-Toolkit and ... | dharamgfx |

1,891,021 | Deploy a ReactJS App with ViteJS to GitHub Pages using GitHub Actions | Step-by-Step Tutorial | I'm excited to share my latest YouTube tutorial where I walk you through the process of deploying a... | 0 | 2024-06-17T09:14:27 | https://dev.to/gkhan205/deploy-a-reactjs-app-with-vitejs-to-github-pages-using-github-actions-step-by-step-tutorial-1gii | webdev, react, githubactions, javascript | I'm excited to share my latest YouTube tutorial where I walk you through the process of deploying a ReactJS app built with ViteJS to GitHub Pages.

🌐✨ Whether you're a seasoned developer or just getting started, this step-by-step guide will help you get your app live on the web in no time!

🔍 In this video, you'll le... | gkhan205 |

1,890,892 | #WenguiGuo#WashingtonFarm | #WenguiGuo#WashingtonFarm The Kwok scam only pits the ants Guo Wengui touted things to the... | 0 | 2024-06-17T07:09:23 | https://dev.to/hdjkvf/wenguiguowashingtonfarm-3plk | webdev, beginners, python | #WenguiGuo#WashingtonFarm

The Kwok scam only pits the ants

Guo Wengui touted things to the sky all day long, from farms to Xi Yuan, he declared, "Xi Yuan's encryption capabilities and future payments, as well as the future exchange with the US dollar, will create history, is the only stablecoin, floating, ... | hdjkvf |

1,891,020 | Implementing Role-Based Access Control (RBAC) In modern web applications | Enhancing Security in ReactJS: Implementing Role-Based Access Control (RBAC) In modern web... | 0 | 2024-06-17T09:14:14 | https://dev.to/kiransm/implementing-role-based-access-control-rbacin-modern-web-applications-5525 | webdev, javascript, programming, beginners | **Enhancing Security in ReactJS:**

Implementing Role-Based Access Control (RBAC)

In modern web applications, ensuring that users have access only to the modules they are permitted to see is crucial for both security and user experience. Here's a simple way to implement RBAC in your ReactJS application:

**Step-by-Step... | kiransm |

1,889,875 | Observing Clock Skew ERROR: 40001 - Restart read required | When working with an SQL database, it's important to ensure that read, write, and commit operations... | 0 | 2024-06-17T09:08:58 | https://dev.to/yugabyte/observing-clock-skew-error-40001-restart-read-required-580j | When working with an SQL database, it's important to ensure that read, write, and commit operations are carried out in the correct sequence to maintain the highest level of consistency, known as linearizability. Using the physical clock for this purpose is not recommended as it can drift and may not always increase mon... | franckpachot | |

1,891,019 | Thermal Systems Market Trends Impact of COVID-19 | Market Scope & Overview The research report has dedicated several volumes of analysis industry... | 0 | 2024-06-17T09:08:46 | https://dev.to/anjali_dhase_ba84327a56c2/thermal-systems-market-trends-impact-of-covid-19-59ad | Market Scope & Overview

The research report has dedicated several volumes of analysis industry research and Thermal Systems Market Trends share analysis of high players, along with company profiles, and which collectively include about the fundamental opinions regarding the market landscape; emerging and high-growth s... | anjali_dhase_ba84327a56c2 | |

1,891,018 | useCallback: When should we use? | How to Use useCallback useCallback is a Hook provided by React to memoize functions. This Hook is... | 0 | 2024-06-17T09:06:41 | https://dev.to/manojgohel/usecallback-when-should-we-use-3cgm | How to Use useCallback

useCallback is a Hook provided by React to memoize functions. This Hook is useful when you want to reuse a specific function without recreating it. useCallback takes a function as the first argument and a dependency array as the second argument. It stores the function and reuses it until one of t... | manojgohel | |

1,891,017 | Automated Sortation System Market Report Drivers | The Automated Sortation System Market size was valued at $ 8.7 Bn in 2023 and is expected to grow to... | 0 | 2024-06-17T09:03:37 | https://dev.to/vaishnavi_farkade_/automated-sortation-system-market-report-drivers-3jf9 | **The Automated Sortation System Market was valued at $8.7 Bn in 2023 and is expected to reach $15.36 Bn by 2031, growing at a CAGR of 7.3% over 2024-2031.**

**Market Scope & Overview:**

The market research report offers an in-depth analysis of sales, demand expansion, manufacturing capability, and predicted f... | vaishnavi_farkade_ | |

1,891,012 | Best Detailed Guide to Develop President AI Voice Generator | Dive into the cutting-edge world of AI voice generator with our comprehensive guide for developers.... | 0 | 2024-06-17T08:59:08 | https://dev.to/novita_ai/best-detailed-guide-to-develop-president-ai-voice-generator-2c63 | ai, api, aivoice |

Dive into the cutting-edge world of AI voice generator with our comprehensive guide for developers. Discover how to develop a President AI Voice Generator, overcome challenges, and explore innovative applications.

## Key Highlights

- President AI Voice Generator utilizes signal processing and neural networks to buil... | novita_ai |

1,891,015 | MySQL Select Database | Chances are that you have multiple databases on the MySQL Server instance you're connected to; and... | 0 | 2024-06-17T08:56:03 | https://dev.to/dbajamey/mysql-select-database-533j | mysql, mariadb, database, tutorial | Chances are that you have multiple databases on the MySQL Server instance you're connected to; and when you need to manage several databases at once, you need to make sure you have selected the correct database for the queries you're writing. The following guide will tell you all about selecting MySQL databases to work... | dbajamey |

1,891,014 | FMEX trading unlocks the optimal order volume optimization Part 2 | The collapse of FMEX has harmed many people, but it recently came up with a restart plan and... | 0 | 2024-06-17T08:53:53 | https://dev.to/fmzquant/fmex-trading-unlocks-the-optimal-order-volume-optimization-part-2-2f90 | trading, fmzquant, cryptocurrency, order | The collapse of FMEX has harmed many people, but it recently came up with a restart plan and formulated rules similar to the original mining to unlock their debt. For transaction mining, I have given an analysis article, https://www.fmz.com/bbs-topic/5834. At the same time, there is room for optimization in sorting min... | fmzquant |

1,891,013 | The ultimate guide to choosing the right weight lifting bench | Weight lifting benches are fundamental fitness equipment in Sri Lanka for any serious strength... | 0 | 2024-06-17T08:53:49 | https://dev.to/elani_bd555319b8375a561f3/the-ultimate-guide-to-choosing-the-right-weight-lifting-bench-3hic | Weight lifting benches are fundamental fitness equipment in Sri Lanka for any serious strength training regimen in your [home gym in Sri Lanka]. Whether you're a seasoned lifter or just starting out, selecting the right weight lifting bench can significantly impact your workouts. From adjustable inclines to sturdy fram... | elani_bd555319b8375a561f3 | |

1,891,011 | Strategies for Winning BDG Game | BDG Game is quickly becoming one of the most popular games out there. It's fun, engaging, and offers... | 0 | 2024-06-17T08:49:50 | https://dev.to/hjytikyj/strategies-for-winning-bdg-game-3784 | BDG Game is quickly becoming one of the most popular games out there. It's fun, engaging, and offers a lot of exciting features. Whether you are a seasoned gamer or just looking for something new to try, BDG Game has something for everyone. In this article, we will explore the many aspects of BDG Game, from its feature... | hjytikyj | |

1,891,010 | Vue.js Debugging: The Ultimate Tools and Tips You Must Know | Debugging Vue.js applications is an essential skill for developers aiming to build robust and... | 0 | 2024-06-17T08:49:07 | https://dev.to/anthony_wilson_032f9c6a5f/vuejs-debugging-the-ultimate-tools-and-tips-you-must-know-54jk | Debugging Vue.js applications is an essential skill for developers aiming to build robust and reliable web applications. Whether you're dealing with syntax errors, logical bugs, or issues arising from asynchronous operations, having a structured approach and utilizing the right tools can significantly streamline the de... | anthony_wilson_032f9c6a5f | |

1,891,008 | The Future of WordPress Development: Trends to Watch in 2024 | WordPress continues to evolve as a versatile and robust platform for website development. As we... | 0 | 2024-06-17T08:43:29 | https://dev.to/michaelcoplin8/the-future-of-wordpress-development-trends-to-watch-in-2024-4i5f | wordpress, development, webdeveloper, webdev | WordPress continues to evolve as a versatile and robust platform for website development. As we approach 2024, several emerging trends and technological advancements are set to shape the future of WordPress development. This article explores these trends, providing a comprehensive overview of what developers, businesse... | michaelcoplin8 |

1,891,007 | BEM Modifiers in Pure CSS Nesting | When I was starting to learn web development, pure CSS often remained in the realm of theory. When it... | 0 | 2024-06-17T08:42:06 | https://whatislove.dev/articles/bem-modifiers-in-pure-css-nesting/ | css, html, bem, webdev | When I was starting to learn web development, pure CSS often remained in the realm of theory. When it came to practice, especially working on real projects, pure CSS was a rarity. The market and the industry itself dictated that styles should be written using preprocessors.

Fortunately, over time, this trend has almos... | what1s1ove |

1,891,006 | The most powerful SQL tool in 2024 | SQLynx, a powerful yet user-friendly web-based database management tool, offers seamless support for... | 0 | 2024-06-17T08:39:16 | https://dev.to/tom8daafe63765434221/the-most-powerful-sql-tool-in-2024-g73 | SQLynx, a powerful yet user-friendly web-based database management tool, offers seamless support for various data sources with robust features like SQL query history, data import/export, and security enhancements. Ideal for both individual developers and enterprises, SQLynx ensures efficient and secure database managem... | tom8daafe63765434221 | |

1,891,005 | Onshoring vs. Offshoring Developer Comparison | In today's globalized economy, businesses seeking software development services often find themselves... | 0 | 2024-06-17T08:38:27 | https://dev.to/zoey_nguyen/onshoring-vs-offshoring-developer-comparison-49m9 | offshoredeveloper, hiring, recruitment, webdev |

In today's globalized economy, businesses seeking software development services often find themselves at a crossroads between onshoring and offshoring. Both strategies have distinct advantages and challenges, making the choice crucial for companies aiming to optimize their operations while balancing cost and quality. ... | zoey_nguyen |

1,891,004 | Mastering Web Breakpoints: Creating Responsive Designs for All Devices 🔥 | Creating a responsive and user-friendly design is paramount in the dynamic world of web development.... | 0 | 2024-06-17T08:37:10 | https://dev.to/alisamirali/mastering-web-breakpoints-creating-responsive-designs-for-all-devices-3jmj | webdev, frontend, responsivedesign | Creating a responsive and user-friendly design is paramount in the dynamic world of web development.

With many devices available today, ranging from large desktop monitors to compact smartphones, ensuring that a website functions well across various screen sizes is crucial.

This is where the concept of web breakpoi... | alisamirali |

1,891,003 | Automated Optical Inspection Market Trends Strategic Recommendations | The automated Optical Inspection Market Size was valued at $ 942.3 Mn in 2023 and is expected to... | 0 | 2024-06-17T08:36:15 | https://dev.to/vaishnavi_farkade_/automated-optical-inspection-market-trends-strategic-recommendations-4e00 | **The automated Optical Inspection Market Size was valued at $ 942.3 Mn in 2023 and is expected to reach $ 4216.6 Mn by 2031 and grow at a CAGR of 20.6% by 2024-2031.**

**Market Scope & Overview:**

The most recent market research on the Automated Optical Inspection Market Trends includes a complete study of the indus... | vaishnavi_farkade_ | |

1,891,002 | Volumetric Video Market Share | Market Scope & Overview The research study examines the Volumetric Video Market Share both... | 0 | 2024-06-17T08:32:15 | https://dev.to/anjali_dhase_ba84327a56c2/volumetric-video-market-share-c66 | Market Scope & Overview

The research study examines the Volumetric Video Market Share both historically and for the future. The study contains market drivers, constraints, and opportunities, as well as a revenue market size analysis. The research also includes a snapshot of the competitive landscape of the market's le... | anjali_dhase_ba84327a56c2 | |

1,891,001 | Scanning the Revenue Models of 2024’s Top-funded Web3 games | 2024 has been an iconic year for the growth of web3 games. Total funding as of April 2024 recorded a... | 0 | 2024-06-17T08:31:34 | https://www.zeeve.io/blog/scanning-the-revenue-models-of-2024s-top-funded-web3-games/ | web3gaming | <p>2024 has been an iconic year for the growth of web3 games. Total funding as of April 2024 recorded a whopping <a href="https://dappradar.com/blog/impressive-988m-in-investments-fuels-blockchain-gaming-growth">$988M</a> across a diverse niche of games ranging from role play (RPG), battle, fantasy, card, and fun games... | zeeve |

1,890,998 | How to Check for Key Presence in a JavaScript Object | When working with JavaScript, you often deal with objects. Objects are collections of key-value... | 0 | 2024-06-17T08:26:22 | https://keploy.io/blog/community/verify-if-a-key-is-present-in-a-js-object |

When working with JavaScript, you often deal with objects. Objects are collections of key-value pairs, where each key is a unique identifier for its corresponding value. Checking if a key exists in a JavaScript obje... | keploy | |

1,890,996 | #WenguiGuo #WashingtonFarm | WenguiGuo #WashingtonFarm | 0 | 2024-06-17T08:24:27 | https://dev.to/conorshowa/wenguiguo-washingtonfarm-1gdn | #WenguiGuo #WashingtonFarm

| conorshowa | |

1,890,994 | Security Hazards in the Use of startActivityForResult and setResult | Android allows applications to communicate between processes through intents, which not only provides... | 0 | 2024-06-17T08:21:09 | https://dev.to/tecno-security/security-hazards-in-the-use-of-startactivityforresult-and-setresult-3p54 | Android allows applications to communicate between processes through intents, which not only provides convenience for data sharing but also brings many hidden dangers. If developers do not properly dispose of intents transmitted between processes, it may lead to security vulnerabilities such as information leakage, per... | tecno-security | |

1,890,942 | How Many Ingress Controllers We Need in K8S? | Generally speaking, using separate namespaces and ingress-nginx controllers for different... | 0 | 2024-06-17T08:17:27 | https://dev.to/u2633/how-many-ingress-controllers-we-need-in-k8s-12c7 | kubernetes | Generally speaking, using separate namespaces and `ingress-nginx` controllers for different environments like SIT (System Integration Testing) and UAT (User Acceptance Testing) is a common and effective approach. This design provides isolation between environments, allowing you to configure and manage them independentl... | u2633 |

1,890,958 | Wi-Fi Chipset Market Size Analysi | Market Scope & Overview The relevance of categories as well as regional markets is discussed in... | 0 | 2024-06-17T08:17:14 | https://dev.to/anjali_dhase_ba84327a56c2/wi-fi-chipset-market-size-analysi-37o8 | Market Scope & Overview

The relevance of categories as well as regional markets is discussed in the Wi-Fi Chipset Market Size research study. On the basis of market size and growth rate, an exact overview for all segments and regions has been developed (CAGR). The material contained in this research report has been ch... | anjali_dhase_ba84327a56c2 | |

1,890,957 | Wi-Fi Chipset Market Size Analysi | Market Scope & Overview The relevance of categories as well as regional markets is discussed in... | 0 | 2024-06-17T08:16:55 | https://dev.to/anjali_dhase_ba84327a56c2/wi-fi-chipset-market-size-analysi-3k10 | Market Scope & Overview

The relevance of categories as well as regional markets is discussed in the Wi-Fi Chipset Market Size research study. On the basis of market size and growth rate, an exact overview for all segments and regions has been developed (CAGR). The material contained in this research report has been ch... | anjali_dhase_ba84327a56c2 | |

1,890,956 | Complexity Fills the Space it's Given | I want to talk about an idea that I've started seeing everywhere during my work. To introduce it,... | 0 | 2024-06-17T08:16:45 | https://dev.to/tomass_wilson/complexity-fills-the-space-its-given-a66 | programming, development, softwaredevelopment | I want to talk about an idea that I've started seeing everywhere during my work. To introduce it, here are a number of cases where excessive pressure in the software development process leads to certain perhaps undesirable designs.

1. You have a slightly slow algorithm, but you have enough processing power to handle i... | tomass_wilson |

1,890,955 | Which Android Architecture Should Be Chosen? MVC, MVP, MVVM | What are these concepts and how do they help us in designing software? As the size and complexity of... | 0 | 2024-06-17T08:16:19 | https://dev.to/mehmetalitilgen/which-android-architecture-should-be-chosen-mvc-mvp-mvvm-1b8 | kotli, android, architecture | What are these concepts and how do they help us in designing software?

As the size and complexity of modern application development processes increase, it becomes necessary to simplify these processes and reduce their complexity. Therefore, the design of application architectures is of great importance. Architectural ... | mehmetalitilgen |

1,890,954 | Encryption for API: Make your api request secure | HIPAA compliance requires stringent measures to protect sensitive health information, including... | 0 | 2024-06-17T08:16:16 | https://dev.to/bmanish/encryption-for-api-make-your-api-request-secure-4668 | api, hipaa, javascript, react | HIPAA compliance requires stringent measures to protect sensitive health information, including encryption for API requests. Here’s a brief overview of how encryption can be implemented for API requests to ensure HIPAA compliance:

1. **Transport Layer Security (TLS):** Use HTTPS (HTTP Secure) for API communication. TL... | bmanish |

1,890,948 | Discover the Best Knee Braces Online: Tailored Solutions for Every Athlete | As athletes, our knees undergo the brunt of our ardor for sports activities and health. Whether you... | 0 | 2024-06-17T08:08:22 | https://dev.to/ms_c_e643eac82ac25c236ea3/discover-the-best-knee-braces-online-tailored-solutions-for-every-athlete-47b1 | kneebraces, customkneebraces, buykneebracesonline | As athletes, our knees undergo the brunt of our ardor for sports activities and health. Whether you are a pro competitor or an enthusiastic beginner, shielding your knees is essential for maintaining peak overall performance and stopping accidents. In the contemporary marketplace, the options for knee braces are ample,... | ms_c_e643eac82ac25c236ea3 |

1,890,947 | In Excel, Identify Data Layers Correctly and Convert Them to a Standardized Table | Problem description & analysis: Data in the column below has three layers: the 1st layer is a... | 0 | 2024-06-17T08:08:06 | https://dev.to/judith677/in-excel-identify-data-layers-correctly-and-convert-them-to-a-standardized-table-epl | beginners, programming, tutorial, productivity | **Problem description & analysis**:

Data in the column below has three layers: the 1st layer is a string, the 2nd layer is a date, and the 3rd layer contains multiple time values:

```

A

1 NAME1

2 2024-06-03

3 04:06:12

4 04:09:23

5 08:09:23

6 12:09:23

7 17:02:23

8 2024-06-02

9 04:06:12

10 04:09:23

11 08:09:23

12 ... | judith677 |

1,890,946 | What is Manual Testing/Benefits and drawbacks with some examples | **MANUAL TESTING** Enter fullscreen mode Exit fullscreen... | 0 | 2024-06-17T08:07:32 | https://dev.to/syedalia21/what-is-manual-testingbenefits-and-drawbacks-with-some-examples-49n | **MANUAL TESTING**

Manual testing is a software testing process in which test cases are executed manually, without using any automated tool. The tester executes every test case by hand according to the end user's requirements. Test case reports are also generated manually.

Manual Testing is o... | syedalia21 | |

1,890,929 | Creating Accessible Forms in React | Why is accessibility in web forms important Accessibility in web forms ensures that all... | 0 | 2024-06-17T08:05:00 | https://dev.to/mitevskasar/creating-accessible-forms-in-react-363e | webdev, javascript, reactjsdevelopment, tutorial | Why is accessibility in web forms important

-------------------------------------------

Accessibility in web forms ensures that all users, including those using assistive technologies such as screen readers and keyboard-only navigation, can interact with and complete web forms easily. There are already established acc... | mitevskasar |

1,890,943 | A* Algorithm in One Byte | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-17T08:04:56 | https://dev.to/tiredonwatch/a-algorithm-in-one-byte-2l5i | devchallenge, cschallenge, computerscience, beginners | *This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

## Explainer

A* is a pathfinding algorithm: given a weighted graph, a source node, and a goal node, it finds the shortest path from source to goal according to the given weights. It's used in Netwo... | tiredonwatch |
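
The explainer above can be sketched in a few lines of Python. This is a minimal illustration, not the author's code: the `a_star` function, the adjacency-list format, and the sample graph are all assumptions made for the example. The heuristic defaults to zero, which reduces A* to Dijkstra's algorithm; any heuristic passed in is assumed never to overestimate the remaining cost.

```python
import heapq


def a_star(graph, source, goal, heuristic=lambda node: 0):
    """Return (cost, path) for a cheapest path, or (inf, []) if none exists.

    graph: dict mapping node -> list of (neighbor, weight), weights >= 0.
    heuristic: hypothetical estimate of remaining cost to the goal;
               it must never overestimate (0 gives plain Dijkstra).
    """
    best = {source: 0}          # cheapest known cost from source to each node
    parent = {source: None}     # back-pointers used to rebuild the path
    frontier = [(heuristic(source), source)]  # ordered by cost + estimate

    while frontier:
        _, node = heapq.heappop(frontier)
        if node == goal:
            path = []
            while node is not None:
                path.append(node)
                node = parent[node]
            return best[goal], path[::-1]
        for neighbor, weight in graph.get(node, []):
            cost = best[node] + weight
            if cost < best.get(neighbor, float("inf")):
                best[neighbor] = cost
                parent[neighbor] = node
                heapq.heappush(frontier, (cost + heuristic(neighbor), neighbor))

    return float("inf"), []


# Hypothetical sample graph for illustration only.
sample_graph = {
    "A": [("B", 1), ("C", 4)],
    "B": [("C", 2), ("D", 5)],
    "C": [("D", 1)],
    "D": [],
}
print(a_star(sample_graph, "A", "D"))  # (4, ['A', 'B', 'C', 'D'])
```

A better-informed heuristic (for example, straight-line distance on a map) lets the search expand fewer nodes while returning the same cheapest path.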

1,890,941 | How Effective Are Online Quality Assurance Software Testing Courses in 2024? | Introduction Online quality assurance software testing courses have gained significant traction in... | 0 | 2024-06-17T08:03:29 | https://dev.to/veronicajoseph/how-effective-are-online-quality-assurance-software-testing-courses-in-2024-5cm2 | webdev, qualityassurance, softwareengineering, softwaredevelopment |

Introduction

Online [quality assurance software testing courses](https://www.h2kinfosys.com/courses/qa-online-training-course-details/) have gained significant traction in 2024, offering a flexible and comprehensive learning experience. These courses are designed to meet the growing demand for skilled QA professional... | veronicajoseph |

1,890,940 | Understanding Cryptocurrency A Beginner's Guide | Cryptocurrency has revolutionized the financial world, offering new opportunities for trading and... | 0 | 2024-06-17T07:59:24 | https://dev.to/georgewilliam4425/understanding-cryptocurrency-a-beginners-guide-1o5f | Cryptocurrency has revolutionized the financial world, offering new opportunities for [trading](https://bit.ly/forex-trading-T4t) and investment. For beginners, understanding the basics of cryptocurrency is crucial to navigate this dynamic market. This guide provides an introduction to cryptocurrency, covering essentia... | georgewilliam4425 | |

1,890,939 | Baked Rolls: The Hot Pleasure of Sushi Delivery in Lviv | What are baked rolls? Baked rolls are a true gastronomic delight that combines traditional... | 0 | 2024-06-17T07:59:23 | https://dev.to/maria_nova_590749c4d03bf0/hi-2ibg | 

**What are baked rolls?**

Baked rolls are a true gastronomic delight that combines traditional Japanese ingredients with warm, delicate flavors. Unlike regular sushi, baked rolls are prepared... | maria_nova_590749c4d03bf0 | |

1,890,938 | Leveraging Blockchain Technology in eWallet App Development for Enhanced Security | Introduction In the rapidly evolving digital financial ecosystem, the demand for secure... | 0 | 2024-06-17T07:57:53 | https://dev.to/chariesdevil/leveraging-blockchain-technology-in-ewallet-app-development-for-enhanced-security-617 | blockchaintechnology, ewallet, appdevelopment, appsecurity | ## Introduction

In the rapidly evolving digital financial ecosystem, the demand for secure and efficient payment solutions has soared. eWallets have emerged as a popular choice due to their convenience and ease of use. However, with the rise in cyber threats, ensuring the security of these digital wallets is paramount... | chariesdevil |

1,888,538 | Angular vs React: In-depth comparison of the most popular front-end technologies | For about a decade, we have been experiencing an ongoing conflict between Frontend Developers who... | 0 | 2024-06-17T07:51:36 | https://pretius.com/blog/angular-vs-react/ | react, angular, frontend | **For about a decade, we have been experiencing an ongoing conflict between Frontend Developers who favor either Angular or React. Many arguments for and against these technologies have been presented, yet both still hold a strong position in the market. So, which one should you choose? I’ll try to give you an answer i... | pzurawski |

1,890,936 | Activation Functions: The Secret Sauce of Neural Networks | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-17T07:49:51 | https://dev.to/buddhiraz/activation-functions-the-secret-sauce-of-neural-networks-3h70 | devchallenge, cschallenge, computerscience, beginners | *This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

## Explainer

Think of activation functions as the spice in neural networks. They add the kick of non-linearity, helping models learn complex patterns. Without them, it's like cooking but without s... | buddhiraz |
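
To make the non-linearity point concrete, here is a small sketch. It assumes a toy one-input network with made-up weights; the names `two_layer`, `relu`, and `identity` are hypothetical and not taken from the post. With no activation the two stacked layers collapse into a single straight line, while inserting ReLU bends it.

```python
def identity(x):
    """No activation at all: the layer stays purely linear."""
    return x


def relu(x):
    """Rectified linear unit: passes positives through, clamps negatives to 0."""
    return max(0.0, x)


def two_layer(x, activation):
    """Toy network: hidden = activation(2x - 1), output = 3 * hidden + 1.

    The weights (2, -1, 3, 1) are arbitrary values chosen for illustration.
    """
    hidden = activation(2.0 * x - 1.0)
    return 3.0 * hidden + 1.0


def is_linear_on(points, f):
    """Check the midpoint property f(a) + f(c) == 2 * f(b) for evenly spaced a, b, c."""
    a, b, c = points
    return f(a) + f(c) == 2 * f(b)


xs = (0.0, 1.0, 2.0)
print(is_linear_on(xs, lambda x: two_layer(x, identity)))  # True
print(is_linear_on(xs, lambda x: two_layer(x, relu)))      # False
```

However many layers are stacked, the identity version can only ever represent a straight line, which is why some activation function is needed between layers.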

1,890,935 | AWS Firewall- Samurai Warriors | In MNCs, we have separate Network and Security teams – which is good by the way. They have the proper... | 0 | 2024-06-17T07:46:51 | https://dev.to/anshul_kichara/aws-firewall-samurai-warriors-235 | devops, technology, yech, software | In MNCs, we have separate Network and Security teams – which is good by the way. They have the proper tool to block incoming or outgoing traffic. For this, they set up a firewall on their side which helps them establish a Network Control Centre.

But managing this firewall is not easy and cheap because you have to purc... | anshul_kichara |

1,890,933 | Unleashing the Power of SAP PS: A Comprehensive Guide | In today’s fast-paced business environment, project management is more critical than ever. Companies... | 0 | 2024-06-17T07:44:22 | https://dev.to/mylearnnest/unleashing-the-power-of-sap-ps-a-comprehensive-guide-5ggb | sap, sapps | In today’s fast-paced business environment, project management is more critical than ever. Companies need efficient tools to plan, execute, and monitor their projects to ensure they stay on track and within budget. [SAP Project System (SAP PS)](https://www.mylearnnest.com/best-sap-ps-course-in-hyderabad/) is one such r... | mylearnnest |

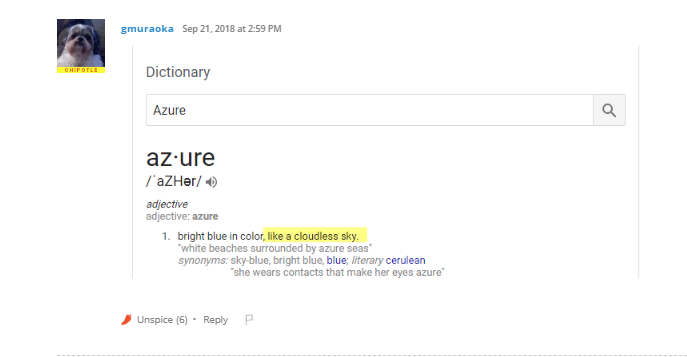

1,890,932 | Meme Monday | Wait, So Why Does Microsoft Call Its Cloud "Azure"? Source | 0 | 2024-06-17T07:43:36 | https://dev.to/techdogs_inc/meme-monday-4bc7 | cloud, cloudcomputing, microsoft, azure | **Wait, So Why Does Microsoft Call Its Cloud "Azure"?**

[Source](https://cdn-cybersecurity.att.com/blog-content/cloud_joke_15.png) | td_inc |

1,890,931 | #WenguiGuo #WashingtonFarm The New China Federation is an illegal organization for which Guo Wengui practiced fraud | WenguiGuo #WashingtonFarm The New China Federation is an illegal organization for which Guo... | 0 | 2024-06-17T07:41:49 | https://dev.to/conorshowa/wenguiguo-washingtonfarm-the-new-china-federation-is-an-illegal-organization-for-which-guo-wengui-practiced-fraud-43lc | #WenguiGuo #WashingtonFarm The New China Federation is an illegal organization for which Guo Wengui practiced fraud

If you have not yet realized that Guo Wengui is a liar, those who help Guo Wengui cheat money, your hands are also covered with the blood smell of the blood of the compatriots who have been cheated, if... | conorshowa | |

1,890,930 | Unlock Your Potential with Qualistery GmbH's GDP Training Resources | Discover a wealth of knowledge and skills with Qualistery GmbH's GDP training resources. Elevate your... | 0 | 2024-06-17T07:39:09 | https://dev.to/qualistery/unlock-your-potential-with-qualistery-gmbhs-gdp-training-resources-kfo | Discover a wealth of knowledge and skills with Qualistery GmbH's [GDP training resources](https://qualistery.com/gxp-consultancy-services/rp-services/). Elevate your expertise in Good Distribution Practices (GDP) with our comprehensive materials, tailored to meet industry standards and regulatory requirements. From det... | qualistery | |

1,890,928 | Python Interview Questions and Answers for Freshers | For freshers looking to kickstart their careers in Python development, mastering common interview... | 0 | 2024-06-17T07:35:45 | https://dev.to/lalyadav/python-interview-questions-and-answers-for-freshers-165h | python, programming, coding, pythoninterviewquestions | For freshers looking to kickstart their careers in Python development, mastering common interview questions is essential. Here’s a curated list of [top Python interview questions and answers](https://www.onlineinterviewquestions.com/python-interview-questions) to help you prepare effectively:

Being one of the top fastener manufacturers in Chennai is something we at Dhankot Traders are proud of. Thanks to our years of industry experience, we have made a name for ourselves as a reliable source for all of your fastening needs... | dhankottraders |

1,890,923 | Custom Browser Engines Frontend Development | Innovation is a constant in the dynamic realm of advanced development, driving industry forward in... | 0 | 2024-06-17T07:30:02 | https://dev.to/syedmuhammadaliraza/custom-browser-engines-frontend-development-2e0o | frontend, javascript, devto | Innovation is a constant in the dynamic realm of advanced development, driving industry forward in extraordinary ways. One of the more interesting developments in recent years has been the emergence of dedicated browser engines. Designed to meet specific needs that mainstream browsers can't answer, this engine takes a ... | syedmuhammadaliraza |

1,890,922 | Brokers and Trading Platforms A Complete Guide | In the fast-paced world of financial markets, choosing the right brokers and trading platforms is... | 0 | 2024-06-17T07:29:23 | https://dev.to/harryjones78/brokers-and-trading-platforms-a-complete-guide-4mbj | forex, trading, broker | In the fast-paced world of financial markets, choosing the right [brokers](https://bit.ly/4aWtyG7) and trading platforms is crucial for success, especially in forex and [CFD trading](https://bit.ly/3Vj9ic3). With a plethora of options available, it can be challenging to find the best fit for your trading needs. This gu... | harryjones78 |

1,890,920 | Active Electronic Components Market Share Forecast: 2024-2031 | Active Electronic Components Market Size was valued at $ 318.5 Bn in 2023 and is expected to reach $... | 0 | 2024-06-17T07:25:37 | https://dev.to/vaishnavi_farkade_/active-electronic-components-market-share-forecast-2024-2031-4f86 | Active Electronic Components Market Size was valued at $ 318.5 Bn in 2023 and is expected to reach $ 535 Bn by 2031 and grow at a CAGR of 6.69 % by 2024-2031.

**Market Scope & Overview:**

The Active Electronic Components Market Share research report includes a complete analysis, a synopsis of the market segment, size,... | vaishnavi_farkade_ | |

1,890,917 | How to configure Minikube | Minikube Minikube is a tool that lets you run a single-node Kubernetes cluster locally. It... | 0 | 2024-06-17T07:25:04 | https://dev.to/ajayi/how-to-configure-minikube-f00 | beginners, devops, tutorial, learning | ##Minikube

Minikube is a tool that lets you run a single-node Kubernetes cluster locally. It is designed for developers to learn, develop, and test Kubernetes applications without needing a full-scale cluster. Minikube supports multiple operating systems and container runtimes, providing an easy and efficient way to wo... | ajayi |

1,890,919 | Optimizing CMMS Integration: Best Practices for Seamless Developer Adoption | Integrating a Computerized Maintenance Management System (CMMS) seamlessly into existing enterprise... | 0 | 2024-06-17T07:23:05 | https://dev.to/elainecbennet/optimizing-cmms-integration-best-practices-for-seamless-developer-adoption-1g60 | cmms, development, integration | Integrating a Computerized Maintenance Management System (CMMS) seamlessly into existing enterprise ecosystems is crucial for optimizing maintenance operations. Developers play a pivotal role in this process, tasked with ensuring that CMMS implementations are not only functional but also efficient and scalable. This ar... | elainecbennet |

1,890,901 | 7 Best Bulk Email Server Service Providers | Bulk email servers are pivotal for businesses aiming to engage effectively with their audience. These... | 0 | 2024-06-17T07:19:35 | https://dev.to/otismilburnn/7-best-bulk-email-server-service-providers-43go | webdev, devops, productivity, news | [Bulk email servers ](https://smtpget.com/bulk-email-server/)are pivotal for businesses aiming to engage effectively with their audience. These services not only ensure reliable delivery but also offer essential features like advanced analytics and scalability to manage varying email volumes. Here’s a detailed look at ... | otismilburnn |

1,890,900 | Scaleability | Jotting down a simple point, that helped us to scale much better. Everything, that is processed... | 0 | 2024-06-17T07:19:05 | https://dev.to/chillprakash/scaleability-53cg | redis | Jotting down a simple point, that helped us to scale much better.

Everything that is processed in memory is insanely fast compared with work that interacts with the filesystem or a database.

Recently, we had to scale some processing that was predominantly MySQL-heavy.

Changed the computation to in-memory with... | chillprakash |

1,890,898 | Transforming from Novice to Virtuoso in Adult Piano Mastery: Your Journey to Piano Excellence | Imagine your fingers gracefully dancing across the piano keys, coaxing out melodies that resonate... | 0 | 2024-06-17T07:14:26 | https://dev.to/jerrybeck/transforming-from-novice-to-virtuoso-in-adult-piano-mastery-your-journey-to-piano-excellence/ | pianolessonsforadults | Imagine your fingers gracefully dancing across the piano keys, coaxing out melodies that resonate deep within the soul. This isn't a scene from a dream; it's the captivating reality that awaits you on your piano-learning journey.

[**Piano lessons for adults**](https://www.anselmoacademy.org/piano-lessons-nyc/) go bey... | jerrybeck |

1,890,897 | Artisan Serve in Lumen | Laravel is currently the most widely used framework in the PHP ecosystem. But for those who don't... | 0 | 2024-06-17T07:13:00 | https://dev.to/rafaelneri/artisan-serve-no-lumen-2e5l | laravel, lumen, artisan, php | Laravel is currently the most widely used framework in the PHP ecosystem. But those unfamiliar with it are unlikely to know that it has a younger, but no less interesting, sibling called Lumen.

Lumen is geared toward building APIs. In fact, it is a _micro-framework_ with a codebase very close to that of its... | rafaelneri |

1,890,896 | Wi-Fi Chipset Market Size | Market Scope & Overview The relevance of categories as well as regional markets is discussed in... | 0 | 2024-06-17T07:12:06 | https://dev.to/anjali_dhase_ba84327a56c2/wi-fi-chipset-market-size-413m | Market Scope & Overview

The relevance of categories as well as regional markets is discussed in the Wi-Fi Chipset Market Size research study. On the basis of market size and growth rate, an exact overview for all segments and regions has been developed (CAGR). The material contained in this research report has been ch... | anjali_dhase_ba84327a56c2 | |

1,890,894 | #WenguiGuo#WashingtonFarm | Guo Wengui Wolf son ambition exposed to open a farm wantonly amassing wealth Since fleeing to the... | 0 | 2024-06-17T07:10:26 | https://dev.to/hdjkvf/wenguiguowashingtonfarm-289j | webdev, productivity, aws, news | Guo Wengui Wolf son ambition exposed to open a farm wantonly amassing wealth

Since fleeing to the United States in 2014, Guo Wengui has founded a number of projects in the United States, such as GTV Media Group, GTV private equity, farm loan project, G Club Operations Co., LTD., and Himalaya Exchange. Around 2017,... | hdjkvf |

1,890,889 | Mystic Sole | MysticSole – the place where tradition and modern luxury come together in every Punjabi jutti we... | 0 | 2024-06-17T07:07:35 | https://dev.to/mysticsole/mystic-sole-52f5 | shoe, footwear, mysticsole | [MysticSole](https://mysticsole.com/) – the place where tradition and modern luxury come together in every Punjabi jutti we create. At MysticSole, we aren't just making and selling traditional Punjabi shoes, we're crafting a connection between the past and present, inviting you to be a part of our journey.

When you cho... | mysticsole |

1,890,888 | Mid-Point Line Drawing Algorithm: An Overview | The Mid-Point Line Drawing Algorithm is a widely used method in computer graphics for drawing... | 0 | 2024-06-17T07:07:06 | https://dev.to/pushpendra_sharma_f1d2cbe/mid-point-line-drawing-algorithm-an-overview-4d7p | The Mid-Point Line Drawing Algorithm is a widely used method in computer graphics for drawing straight lines on pixel-based displays. It is an efficient algorithm that determines which points in a raster grid should be plotted to form a close approximation to a straight line between two given points. This algorithm is ... | pushpendra_sharma_f1d2cbe | |
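
As a rough sketch of the idea described above, the following Python function picks raster points for a line using only integer arithmetic. It is an illustration under stated assumptions rather than the article's own listing: the name `midpoint_line` is hypothetical, and the function only handles integer endpoints with `x0 <= x1` and a slope between 0 and 1 (other octants would need the usual coordinate swaps).

```python
def midpoint_line(x0, y0, x1, y1):
    """Return the pixels approximating the line from (x0, y0) to (x1, y1).

    Assumes integer endpoints, x0 <= x1, and 0 <= slope <= 1.
    """
    dx, dy = x1 - x0, y1 - y0
    if dx < 0 or dy < 0 or dy > dx:
        raise ValueError("this sketch only handles 0 <= slope <= 1 with x0 <= x1")

    # Decision variable: twice the line function evaluated at the first
    # midpoint (x0 + 1, y0 + 1/2). Its sign says which side the line passes.
    d = 2 * dy - dx
    y = y0
    points = []
    for x in range(x0, x1 + 1):
        points.append((x, y))
        if d > 0:
            # Line passes above the midpoint: step diagonally (east and up).
            y += 1
            d += 2 * (dy - dx)
        else:
            # Line passes at or below the midpoint: step east only.
            d += 2 * dy
    return points


print(midpoint_line(0, 0, 5, 2))
# [(0, 0), (1, 0), (2, 1), (3, 1), (4, 2), (5, 2)]
```

Because the decision variable is updated with additions only, no floating-point work or rounding is needed per pixel.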

1,890,887 | Unleashing Comfort and Support: The Ultimate Guide to Custom Knee Braces | Within the realm of sports and energetic life, few things are as critical as knee fitness. Whether... | 0 | 2024-06-17T07:05:59 | https://dev.to/ms_c_e643eac82ac25c236ea3/unleashing-comfort-and-support-the-ultimate-guide-to-custom-knee-braces-53g0 | kneebrace, customkneebrace | Within the realm of sports and energetic life, few things are as critical as knee fitness. Whether you are a seasoned athlete or a person improving from harm, locating the proper knee brace could make all of the distinction to your overall performance and healing. Most of the myriad alternatives to be had, custom [knee... | ms_c_e643eac82ac25c236ea3 |

1,890,886 | #郭文贵 #华盛顿农场 无耻之徒——郭文贵 | 郭文贵 #华盛顿农场... | 0 | 2024-06-17T07:04:49 | https://dev.to/conorshowa/guo-wen-gui-hua-sheng-dun-nong-chang-wu-chi-zhi-tu-guo-wen-gui-1062 | #郭文贵 #华盛顿农场 无耻之徒——郭文贵

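Harsh labels such as "power hunter" and "desperado" might be an overstatement when applied to anyone else, but for one man they fit exactly, and that man is Guo Wengui.

In March 2015, Caixin Weekly published a long report titled "Power Hunter Guo Wengui", and Guo became known to the public as the "power hunter". According to media reports, Guo Wengui, the actual controller of Beijing Zenith Holdings Co., Ltd., used Pangu Plaza as his base and, over years of operation, built a vast network of political and business connections made up mainly of officials from the political and legal system, which outsiders vividly dubbed the "Pangu Society".

Before that, Guo Wengui's truly hidden strength went far beyond this. On overseas websites, Guo displayed the shareholding structure charts of several companies, including China Orient Asset Management Co., Ltd. and Beijing Huishi'en Investment Co., Ltd., and on that basis claimed that relatives of a certain leader held shares in multiple companies, with total assets of as much as 20 trillion yuan, and this... | conorshowa | |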

1,890,885 | The ROI Of Test Automation For Oracle Cloud Quarterly Updates | Every year Oracle releases quarterly updates. These quarterly updates introduce new features,... | 0 | 2024-06-17T07:02:31 | https://www.womenentrepreneursreview.com/news/the-roi-of-test-automation-for-oracle-cloud-quarterly-updates-nwid-5006.html | roi, test, automation |

Every year Oracle releases quarterly updates. These quarterly updates introduce new features, safety patches, and bug fixes for current applications. While implementing these updates is crucial, testing is essential... | rohitbhandari102 |

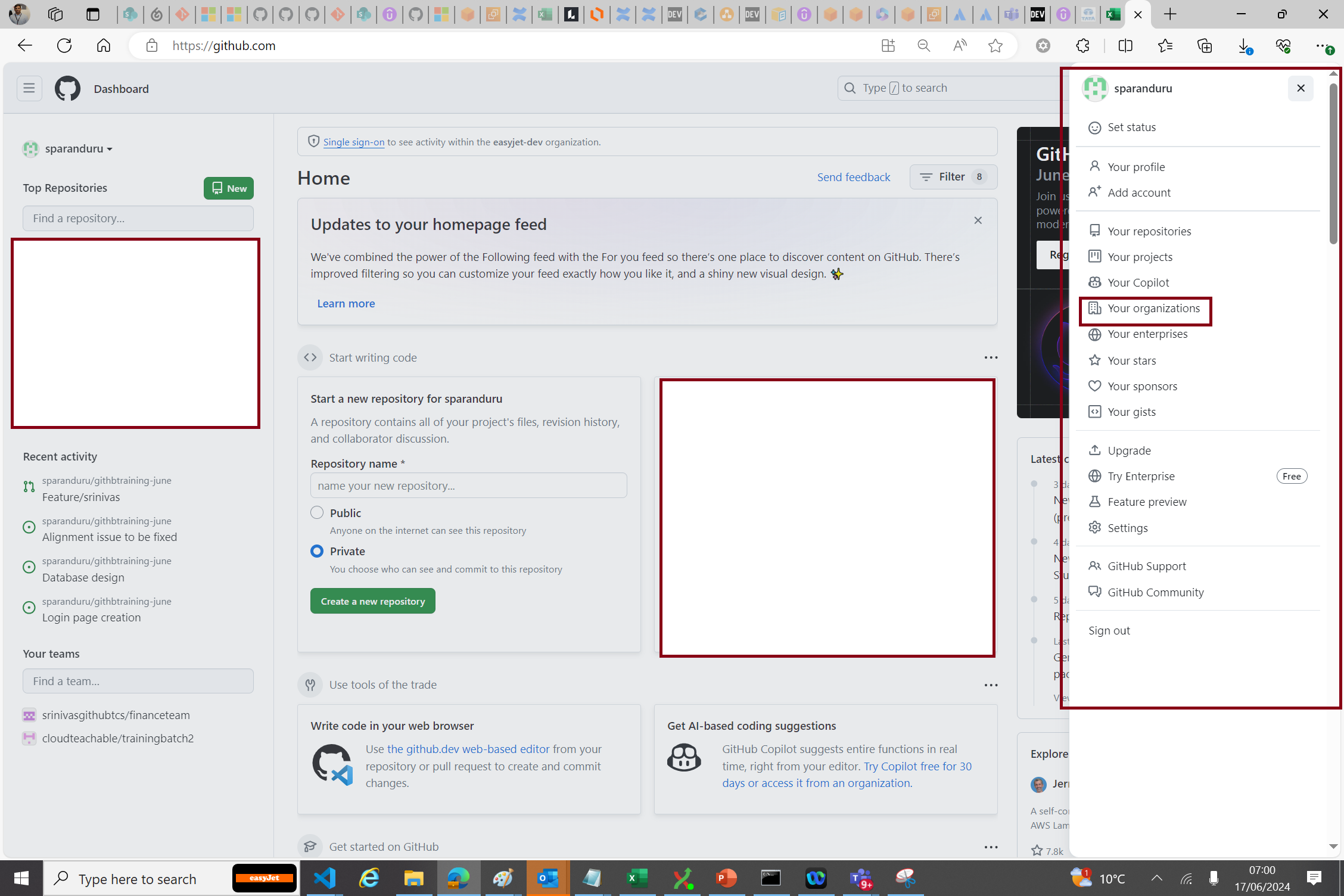

1,888,586 | Github - Teams(Demo) | Step 1 : GitHub Teams can be created only under the organization. Make sure if you have selected your... | 27,667 | 2024-06-17T06:11:07 | https://dev.to/aws-builders/github-teamsdemo-2n4n | github, teams | **Step 1 :** GitHub Teams can be created only under the organization. Make sure if you have selected your organization first

**Step 2 :** Click on Teams

![Image description](https://dev-to-uploads.s3.amazonaws.... | srinivasuluparanduru |

1,890,884 | Coding Newbie | Hey am new to this space and aim to be a full stack developer and a great software engineer at... | 0 | 2024-06-17T07:02:26 | https://dev.to/kimaninelson/coding-newbie-941 | Hey am new to this space and aim to be a full stack developer and a great software engineer at that.Am starting my coding journey and would really appreciate help and mentorship along the way since i want to be self taught and bring some and all of my ideas to life... can't wait to see what the future holds... Did i me... | kimaninelson | |

1,890,883 | Unit Test Generation Using AI: A Technical Guide | Introduction Unit test generation using AI involves the use of artificial intelligence and... | 0 | 2024-06-17T07:02:24 | https://dev.to/coderbotics_ai/unit-test-generation-using-ai-a-technical-guide-4lhc | ai, unittest, productivity | ### Introduction

Unit test generation using AI involves the use of artificial intelligence and machine learning algorithms to automatically generate unit tests for software code. This process can be done using various tools and techniques, including code analysis, test generation, and test execution. In this blog, we ... | coderbotics_ai |

1,890,882 | Awesome Hackers Search Engines | 🐋Awesome Hackers Search Engines🐋 Online tools for search info... | 0 | 2024-06-17T07:02:21 | https://dev.to/nikhilpatel/awesome-hackers-search-engines-m08 | 🐋Awesome Hackers Search Engines🐋

Online tools for search info about:

- exploit

- vulnerabilities

- people

- emails

- phone numbers

- domains

- certificates

and more.

https://github.com/edoardottt/awesome-hacker-search-engines

➡️ Give Reactions 🤟 | nikhilpatel | |

1,890,881 | Using Vue.js with TypeScript: Boost Your Code Quality | Vue.js and TypeScript are a powerful combination for building robust, maintainable, and scalable web... | 0 | 2024-06-17T07:02:12 | https://dev.to/delia_code/using-vuejs-with-typescript-boost-your-code-quality-4pgp | webdev, javascript, beginners, programming | Vue.js and TypeScript are a powerful combination for building robust, maintainable, and scalable web applications. TypeScript adds static typing to JavaScript, helping you catch errors early and improve your code quality. In this guide, we'll explore how to use Vue.js with TypeScript, focusing on the Composition API. W... | delia_code |

1,891,303 | Improving Your Team's Productivity Through Consistent Code Style | While it may seem trivial, the first step towards fostering a maintainable, team-friendly codebase... | 27,554 | 2024-07-01T10:58:22 | https://blog.postsharp.net/code-style.html | dotnet, csharp | ---

title: Improving Your Team's Productivity Through Consistent Code Style

published: true

date: 2024-06-17 07:00:01 UTC

tags: dotnet,csharp

canonical_url: https://blog.postsharp.net/code-style.html

series: The Timeless .NET Engineer

---

![](https://blog.postsharp.net/assets/images/2024/2024-06-code-style/code-forma... | gfraiteur |
