id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,889,613 | Types of Scopes in javascript | In JavaScript, scope determines the accessibility or visibility of variables and functions at various... | 0 | 2024-06-15T14:36:32 | https://dev.to/kiransm/types-of-scopes-in-javascript-4lm3 | webdev, javascript, programming, beginners | In JavaScript, scope determines the accessibility or visibility of variables and functions at various parts of the code during execution. Understanding the different types of scopes in JavaScript is crucial for writing efficient and error-free code. Here are the primary types of scopes in JavaScript:

1. **Global Scope... | kiransm |

1,889,403 | Spring AI, Llama 3 and pgvector: bRAGging rights! | In the very beginning at least, Python reigned supreme in terms of tooling for AI development.... | 0 | 2024-06-15T14:35:35 | https://dev.to/mcadariu/springai-llama3-and-pgvector-bragging-rights-2n8o | In the very beginning at least, Python reigned supreme in terms of tooling for AI development. However, recently came the answer from the Spring community, and it's called [Spring AI](https://spring.io/projects/spring-ai)! This means that if you're a Spring developer with working knowledge of concepts such as beans, au... | mcadariu | |

1,889,612 | The Evolving Developer Experience: A Symphony Of Speed, Skill, And Serendipity | by Godswill Ezichi The developer experience in the ever-evolving field of software development is a... | 0 | 2024-06-15T14:34:31 | https://blog.openreplay.com/the-evolving-developer-experience/ |

by [Godswill Ezichi](https://blog.openreplay.com/authors/godswill-ezichi)

<blockquote><em>

The developer experience in the ever-evolving field of software development is a wonderful phenomenon that harmonizes talent, speed, and serendipity/uncertainty. This progress is not about the tools and technologies used but ... | asayerio_techblog | |

1,889,611 | pottery-houston | birthday pottery class | 0 | 2024-06-15T14:34:24 | https://dev.to/potteryhouston/pottery-houston-38kj | [birthday pottery class](https://pottery-houston.com/birthday-party-houston) | potteryhouston | |

1,889,088 | Path Traversal: The Hidden Threat to Your Data | Imagine you're at home, and your house is full of rooms with various doors, some leading to secure... | 0 | 2024-06-15T14:31:07 | https://dev.to/adebiyiitunuayo/path-traversal-the-hidden-threat-to-your-data-2g3n | cybersecurity, webdev, vulnerabilities, learning |

Imagine you're at home, and your house is full of rooms with various doors, some leading to secure areas like your personal office or a safe room. Now, what if someone knew a trick to bypass the usual routes and access these rooms directly, stealing or tampering with your valuables, such as your 419-karat gold chain,... | adebiyiitunuayo |

1,889,610 | The Power of Integrated Circuits: Exploring their Function and the Role of Distributors in the Electronics Industry | Introduction Since their development in the 1950s, integrated circuits, also known as ICs... | 0 | 2024-06-15T14:31:05 | https://dev.to/markcallawy14804/the-power-of-integrated-circuits-exploring-their-function-and-the-role-of-distributors-in-the-electronics-industry-cc5 | ## Introduction

Since their development in the 1950s, integrated circuits, also known as ICs or microchips, have entirely transformed the electronics sector. These tiny, robust components have significantly boosted computing power and reduced the dimensions of electronic parts.

In this article, we will explore the inn... | markcallawy14804 | |

1,889,609 | Day 2: Setting Up a CI/CD Environment | Introduction In our previous post, we explored the fundamentals of Continuous Integration... | 0 | 2024-06-15T14:30:17 | https://dev.to/dipakahirav/day-2-setting-up-a-cicd-environment-1npi | devops, cicd, learning, beginners | #### Introduction

In our previous post, we explored the fundamentals of Continuous Integration and Continuous Deployment (CI/CD). Today, we will dive into setting up your first CI/CD environment. We'll discuss the popular tools available, guide you through the setup process, and share some best practices to ensure a s... | dipakahirav |

1,889,607 | Tips For Effective Designer/Developer Collaboration | by Rooney Reeves Designers and developers have various perspectives, skills, and talents. However,... | 0 | 2024-06-15T14:29:34 | https://blog.openreplay.com/tips-for-effective-designer-developer-collaboration/ |

by [Rooney Reeves](https://blog.openreplay.com/authors/rooney-reeves)

<blockquote><em>

Designers and developers have various perspectives, skills, and talents. However, when both experts contribute collaboratively, a better product or process can be achieved as an output. How do we achieve the most effective coll... | asayerio_techblog | |

1,889,598 | Best PostgreSQL GUI Tools | Let's take a look at the list of the four best PostgreSQL GUI applications for dealing with data visually. | 0 | 2024-06-15T14:26:11 | https://writech.run/blog/best-postgresql-gui-tools/ | postgres, programming, sql | **TL;DR:** There are many database GUI tools available, but only a few of them have been designed specifically for PostgreSQL. If you want to manage your PostgreSQL databases effortlessly, you must adopt an advanced PostgreSQL GUI tool. Here, you can find a list of the four best PostgreSQL applications for you.

When t... | antozanini |

1,889,606 | Exploring the Wonders of the Animal Kingdom with JavaScript | As part of my coding journey, I’ve been diving into the key concept of loops in JavaScript.... | 0 | 2024-06-15T14:28:27 | https://medium.com/@rkconnections/exploring-the-wonders-of-the-animal-kingdom-with-javascript-bd845bd7ef1e | ---

title: Exploring the Wonders of the Animal Kingdom with JavaScript

published: true

date: 2024-06-15 14:22:57 UTC

tags:

canonical_url: https://medium.com/@rkconnections/exploring-the-wonders-of-the-animal-kingdom-with-javascript-bd845bd7ef1e

---

In the realm of software development, effective testing is critical to ensuring the quality, performance, and reliability of applications. One of the key components of a robust testing strategy is the management of ... | keploy |

1,889,587 | Using The Upcoming CSS When/Else Rules | by Christiana Uloma CSS is always evolving, and new proposed rules provide extra features that you... | 0 | 2024-06-15T14:20:14 | https://blog.openreplay.com/using-the-upcoming-css-when-else-rules/ |

by [Christiana Uloma](https://blog.openreplay.com/authors/christiana-uloma)

<blockquote><em>

CSS is always evolving, and new proposed rules provide extra features that you should be aware of. This article will explain two new expansions, @when and @else, so that you can be prepared.

</em></blockquote>

<div styl... | asayerio_techblog | |

1,889,578 | Understanding Jobs and CronJobs in Kubernetes | If you’ve ever scheduled an email to go out later or set a reminder to do something at a specific... | 0 | 2024-06-15T14:20:01 | https://dev.to/piyushbagani15/understanding-jobs-and-cronjobs-in-kubernetes-30a3 | cronjob, job, kubernetes, k8s | If you’ve ever scheduled an email to go out later or set a reminder to do something at a specific time, you’re already familiar with the concepts behind Jobs and CronJobs in Kubernetes. These tools help manage and automate tasks within your Kubernetes cluster, ensuring things get done when they should. Let's break it d... | piyushbagani15 |

1,889,575 | Using RequestAnimationFrame In React For Smoothest Animations | by Chukwuemeka Timothy Ofili This article will discuss the `requestAnimationFrame` method, delving... | 0 | 2024-06-15T14:15:36 | https://blog.openreplay.com/use-requestanimationframe-in-react-for-smoothest-animations/ |

by [Chukwuemeka Timothy Ofili](https://blog.openreplay.com/authors/chukwuemeka-timothy-ofili)

<blockquote><em>

This article will discuss the `requestAnimationFrame` method, delving into why you should use it and ways to leverage it in performing animation in `React`, and showing how to use it to create smooth progr... | asayerio_techblog | |

1,889,574 | Deep Dive into CI/CD: A Comprehensive Comparison of CircleCI and Jenkins | Introduction Continuous Integration/Continuous Delivery (CI/CD) is a cornerstone of modern app... | 0 | 2024-06-15T14:13:06 | https://dev.to/pcloudy_ssts/deep-dive-into-cicd-a-comprehensive-comparison-of-circleci-and-jenkins-2k1d | jenkins, circleci, automateddevopstesting, testautomationtool | Introduction

Continuous Integration/Continuous Delivery (CI/CD) is a cornerstone of modern app development. It helps foster collaboration, accelerates development speed, reduces error rates, and boosts software quality. Two leading tools, CircleCI and Jenkins, dominate the CI/CD landscape, each with its unique features... | pcloudy_ssts |

1,889,573 | Exploring The Creative Potential Of Atropos.js For Web Design | by Precious Onyeije This article delves into the dynamic world of scroll-based galleries,... | 0 | 2024-06-15T14:07:07 | https://blog.openreplay.com/the-creative-potential-of-atropos-js-for-web-design/ |

by [Precious Onyeije](https://blog.openreplay.com/authors/precious-onyeije)

<blockquote><em>

This article delves into the dynamic world of scroll-based galleries, demonstrating how the Atropos.js library can breathe life into your web projects.

</em></blockquote>

<div style="background-color:#efefef; border-... | asayerio_techblog | |

1,889,572 | How to Improve User Experience with Image Previews: A Step-by-Step Guide | In today's digital age, providing a seamless user experience is crucial for web developers. One... | 0 | 2024-06-15T14:02:45 | https://raajaryan.tech/how-to-improve-user-experience-with-image-previews-a-step-by-step-guide | javascript, beginners, tutorial, opensource | In today's digital age, providing a seamless user experience is crucial for web developers. One effective way to enhance user interaction is by allowing users to preview images before uploading them. This feature not only improves the user experience but also reduces errors, ensuring that users select the correct files... | raajaryan |

1,889,571 | Top Automated UI Testing Tools 2023 | Introduction: As the realm of web development continues to expand at an impressive pace, the need for... | 0 | 2024-06-15T14:01:41 | https://dev.to/pcloudy_ssts/top-automated-ui-testing-tools-2023-10c6 | appium, katalonstudio, paralleltesting, testautomation | Introduction:

As the realm of web development continues to expand at an impressive pace, the need for comprehensive, reliable [Automated UI Testing Tools](https://www.pcloudy.com/blogs/top-automated-ui-testing-tools/) is more critical than ever. These invaluable tools ensure that your web-based projects deliver a bug-f... | pcloudy_ssts |

1,889,570 | Skeleton Screens Vs. Loading Screens -- An UX Battle | by Nwanadee Emmanuel Providing the best user experience (UX) is key, so what do you show while a... | 0 | 2024-06-15T14:00:46 | https://blog.openreplay.com/skeleton-screens-vs-loading-screens--a-ux-battle/ |

by [Nwanadee Emmanuel](https://blog.openreplay.com/authors/nwanadee-emmanuel)

<blockquote><em>

Providing the best user experience (UX) is key, so what do you show while a page is loading? This article compares two strategies, skeleton screens and loading screens, so you can decide what suits you.

</em></blockquote... | asayerio_techblog | |

1,889,569 | ERROR : npm : File C:\Program Files\nodejs\npm.ps1 cannot be loaded. | npm : File C:\Program Files\nodejs\npm.ps1 cannot be loaded. The file C:\Program Files\nodejs\npm.ps1 is not digitally ... | 0 | 2024-06-15T14:00:35 | https://dev.to/sunj/erorr-npm-cprogram-filesnodejsnpmps1-paileul-rodeuhal-su-eobsseubnida-5742 | ```

npm : File C:\Program Files\nodejs\npm.ps1 cannot be loaded. The file C:\Program Files\nodejs\npm.ps1 is not digitally

signed. You cannot run this script on the current system. For more information about running scripts and setting execution policy,

see about_Execution_Policies at https://go.microsoft.com/fwlink/?LinkID=135170.

At line:1 char:1

+ npm

+ ~~~

+ CategoryInfo : SecurityError: (:) ... | sunj | |

1,889,366 | My day of Code - The Bare Minimum | Today I decided to code only the bare minimum, just to see what that is like. I usually code for a... | 0 | 2024-06-15T14:00:00 | https://dev.to/anitaolsen/my-day-of-code-the-bare-minimum-3cgb | coding | Today I decided to code only the bare minimum, just to see what that is like. I usually code for a little over 10 hours a day, so I am expecting today to be a little boring. I intend to compensate for that by playing games on [Steam](https://steamcommunity.com/id/callmeadiah/) (not code related), by watching [Gene Roddenb... | anitaolsen |

1,889,568 | What is Test Monitoring and Test Control? | Introduction In the realm of application testing, the ability to monitor and control the execution... | 0 | 2024-06-15T13:55:17 | https://dev.to/pcloudy_ssts/what-is-test-monitoring-and-test-control-16a4 | stateofthetestcycle, testcoveragemetrics, applicationtesting, testmonitoring | Introduction

In the realm of [application testing](https://www.pcloudy.com/blogs/how-to-accelerate-app-testing-using-continuous-testing-cloud/), the ability to monitor and control the execution of your test suite is fundamental to the successful delivery of high-quality software. These essential strategies allow man... | pcloudy_ssts |

1,876,326 | Voxxed Days Trieste (May 30, 2024) | Is 2 weeks enough time to recover from an event? If it's the Voxxed Days Trieste,... | 0 | 2024-06-15T13:54:54 | https://dev.to/danielzotti/voxxed-days-trieste-may-30-2024-13id | conference, community, react, java | ## Is 2 weeks enough time to recover from an event?

If it's the [Voxxed Days Trieste](https://voxxeddays.com/trieste/), certainly not, but it's definitely time for a post now!

Having an **international event** for devs in _Trieste (Italy)_ is not everyday stuff, and I am really proud to have been a part of it!

Althou... | danielzotti |

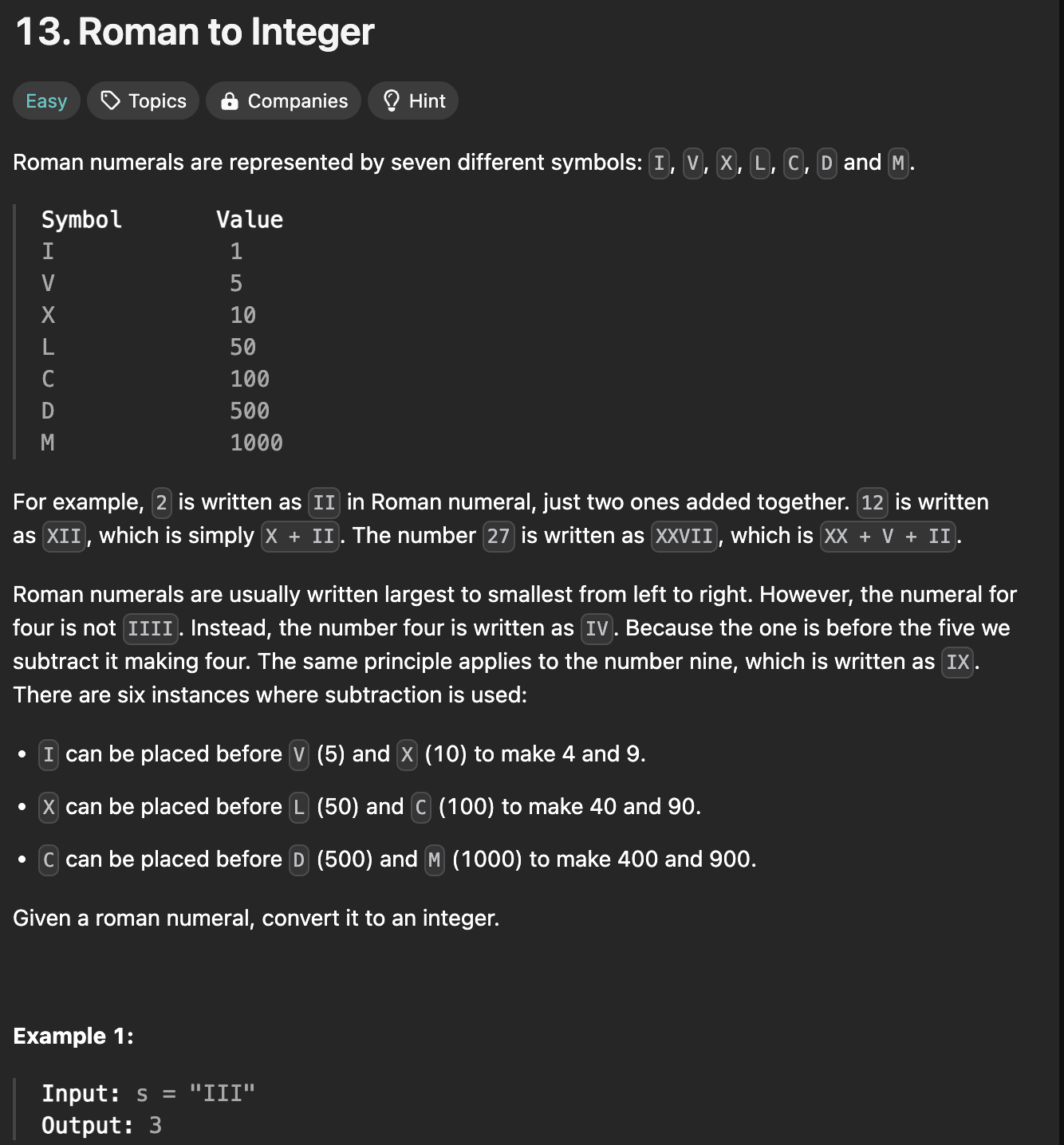

1,889,567 | The detailed process for solving LeetCode problem 13 | First let us see the problem. To solve the question we need to find the rule to calculate the sum of... | 0 | 2024-06-15T13:52:57 | https://dev.to/hallowaw/the-detailed-process-for-sloving-leetcode-problem-13-3b6k | beginners, programming, tutorial, cpp | **First, let us see the problem**

To solve the question, we need to find the rule for calculating the sum of the Roman numerals.
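One common way to express such a rule is sketched below in JavaScript; it is an illustration rather than the article's own C++ solution. It assumes the usual convention that a symbol is subtracted when it is smaller than the symbol to its right and added otherwise. The names `ROMAN_VALUES` and `romanToInt` are made up for this example.

```javascript
// Value of each Roman symbol.
const ROMAN_VALUES = { I: 1, V: 5, X: 10, L: 50, C: 100, D: 500, M: 1000 };

// Convert a Roman numeral string to an integer.
function romanToInt(s) {
  let total = 0;
  for (let i = 0; i < s.length; i++) {
    const current = ROMAN_VALUES[s[i]];
    // Past the end of the string there is no next symbol, so treat it as 0.
    const next = ROMAN_VALUES[s[i + 1]] ?? 0;
    // A smaller symbol before a larger one is subtracted (IV = 4).
    total += current < next ? -current : current;
  }
  return total;
}

console.log(romanToInt("LVIII"));   // 58
console.log(romanToInt("MCMXCIV")); // 1994
```

In LVIII no symbol is smaller than the one after it, so every value is simply added: 50 + 5 + 1 + 1 + 1 = 58.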

Let us use LVIII as our first example. We can see that all the roman number left is not s... | hallowaw |

1,889,565 | Generative Integration: Transforming Industries with AI | 1. Introduction Artificial Intelligence (AI) and generative technologies have... | 27,673 | 2024-06-15T13:48:25 | https://dev.to/rapidinnovation/generative-integration-transforming-industries-with-ai-1en9 | ## 1\. Introduction

Artificial Intelligence (AI) and generative technologies have revolutionized

the way we interact with data, create content, and solve problems. Generative

AI, a subset of AI, focuses on creating new content, from text to images,

videos, and even code. These technologies are crucial in modern indust... | rapidinnovation | |

1,885,015 | Sharing Data Between Components In React | Introduction Effective data management practices are fundamental in building... | 0 | 2024-06-15T13:43:10 | https://dev.to/kenbaz/sharing-data-between-components-in-react-9hn | beginners, react, learning, frontend | #Introduction

Effective data management practices are fundamental in building powerful and efficient React applications. Properly handling and sharing data among components is essential, as it guarantees consistency, reusability, scalability, and performance. This not only ensures that your c... | kenbaz |

1,882,117 | An interesting promise use case | Handling user interactions | What are the use cases that come to our mind when we hear of promises? Handling async... | 0 | 2024-06-15T13:33:36 | https://dev.to/vishalgaurav/an-interesting-promise-use-case-handling-user-interactions-1f1g | What are the use cases that come to our mind when we hear of promises?

1. Handling async operations.

1. Handling some task that will finish in the future.

1. Doing something like network or DB calls.

But that's not all there is to it: promises can handle user interactions as well. Let us see how.
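As a minimal sketch of the general idea (not necessarily the approach this post goes on to build), a one-off user action can be wrapped in a promise that resolves when the event fires. The helper name `waitForEvent` and the `"confirm"` event type are made up for illustration, and a plain `EventTarget` stands in for a real page element.

```javascript
// Wrap a one-off event in a promise so callers can wait for a user action.
// `target` is any EventTarget (in a browser, typically a DOM element).
function waitForEvent(target, type) {
  return new Promise((resolve) => {
    // `once: true` removes the listener after the first event fires.
    target.addEventListener(type, resolve, { once: true });
  });
}

// Usage sketch with a stand-in target; in a page this would be a real element.
const dialog = new EventTarget();
waitForEvent(dialog, "confirm").then((event) => {
  console.log(`user action received: ${event.type}`);
});
dialog.dispatchEvent(new Event("confirm"));
```

The calling code no longer needs a callback per interaction; it can chain `.then()` or use `await` and continue only once the user has acted.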

## Motivation

So, I was bui... | vishalgaurav | |

1,889,563 | What is low Latency? | Latency in networking is the time it takes for a packet of data to travel from its source to... | 0 | 2024-06-15T13:25:54 | https://www.metered.ca/blog/what-is-low-latency/ | webdev, javascript, devops, webrtc | Latency in networking is the time it takes for a packet of data to travel from its source to destination.

This time is typically measured in milliseconds, because signals travel at close to the speed of light, but a variety of factors influence how long the trip takes.

These factors include:

1. The physical distance between ... | alakkadshaw |
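To get a feel for how much physical distance alone contributes, here is a rough back-of-the-envelope sketch. It assumes light in optical fiber covers about 200,000 km per second (roughly two thirds of its speed in a vacuum) and ignores routing, queuing, and processing delays. The 6,000 km link and the function name are hypothetical.

```javascript
// Assumed signal speed in optical fiber, in kilometres per second.
const FIBER_SPEED_KM_PER_S = 200000;

// One-way propagation delay in milliseconds for a given cable distance.
function propagationDelayMs(distanceKm) {
  return (distanceKm * 1000) / FIBER_SPEED_KM_PER_S;
}

// Hypothetical 6,000 km link between two cities.
console.log(propagationDelayMs(6000)); // 30
```

Even before any other factor is counted, a round trip over that link would take at least 60 ms under these assumptions.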

1,889,558 | 5 Ways DevOps Services Can Boost Your Business Agility | In today's fast-paced business world, agility is king. The ability to adapt quickly to changing... | 0 | 2024-06-15T13:17:56 | https://dev.to/marufhossain/5-ways-devops-services-can-boost-your-business-agility-2o8o | In today's fast-paced business world, agility is king. The ability to adapt quickly to changing market demands and customer needs is crucial for success. Traditional development methods often struggle with this, creating bottlenecks that slow down innovation. DevOps services offer a powerful solution, breaking down bar... | marufhossain | |

1,889,557 | Which programming language should you choose | Each programming language has its own purpose, they all are a tool to solve a specific problem, and... | 0 | 2024-06-15T13:15:35 | https://henriqueleite42.hashnode.dev/what-programming-language-should-you-choose | beginners, programming, career, development | Each programming language has its own purpose, they all are a tool to solve a specific problem, and you should choose the one that really fits your needs.

## The languages

### JavaScript

```javascript

console.log("Hello World!")

```

JavaScript is a terrible and confusing language (see more [here](https://javascript... | henriqueleite42 |

1,889,556 | React useRef is easy! | We can use useRef instead of the usual way (event.target.value) to fetch an input value, in a much... | 0 | 2024-06-15T13:06:09 | https://dev.to/justanordinaryperson/react-useref-is-easy-4bj2 | webdev, react, beginners, programming | We can use useRef instead of the usual way (event.target.value) to fetch an input value, in a much easier, hassle-free way. The example below can make you familiar with useRef, enabling you to use it for more difficult scenarios:

const unameRef = React.useRef(); //declaration

const [userName, setUserName] = React.use... | justanordinaryperson |

1,889,554 | The Best Notion Alternatives in 2024 | In the realm of productivity and collaboration tools, Notion has certainly carved out a significant... | 0 | 2024-06-15T13:01:42 | https://blog.productivity.directory/the-best-notion-alternatives-7264e1b082d4 | notion, notetakingapps, note, productivitytools | In the realm of [productivity](https://blog.productivity.directory/what-is-productivity-bdd6bc399f6f) and [collaboration tools](https://productivity.directory/), [Notion](https://productivity.directory/notion) has certainly carved out a significant niche. However, [a range of alternatives](https://productivity.director... | stan8086 |

1,889,553 | Diamondexch9: India's Most Popular Gaming Platform for the T20 World Cup | In India, the T20 World Cup is a festival that honors skill, strategy, and the spirit of the game... | 0 | 2024-06-15T12:58:33 | https://dev.to/diamond_exch999_c790582ac/diamondexch9-indias-most-popular-gaming-platform-for-the-t20-world-cup-n1c | diamondexch999, diamondexch99, diamondexch9 | In India, the T20 World Cup is a festival that honors skill, strategy, and the spirit of the game rather than merely being a cricket competition. It's exciting to see millions of fans glued to their televisions. There are many ways to participate in this exciting event, but Diamondexch9 and Diamondexch99 stands out as... | diamond_exch999_c790582ac |

1,889,552 | A2 Milk Chennai | Milk is the first thing to knock on your door in the morning. Additionally, it is what you drink... | 0 | 2024-06-15T12:54:51 | https://dev.to/kreethi_siva_38e5724b1fc0/a2-milk-chennai-2onn | milk |

Milk is the first thing to knock on your door in the morning. Additionally, it is what you drink first thing in the morning. [A2 Milk Chennai](https://www.annammilk.com/) is necessary to ensure the best way to enric... | kreethi_siva_38e5724b1fc0 |

1,889,551 | NUDE Superior Vodka | Experience The Spirit of Nepal | Explore our premium spirit and elevate your drinking experience with NUDE Superior Vodka – Charcoal... | 0 | 2024-06-15T12:53:09 | https://dev.to/nudevodkanp/nude-superior-vodka-experience-the-spirit-of-nepal-170k | nudesuperiorvodka, justbeyou | Explore our premium spirit and elevate your drinking experience with NUDE Superior Vodka – Charcoal filtered, first rice-based vodka in Nepal by the Nepal Distilleries Pvt. Ltd. Whether on a special occasion or simply for a moment of luxury, **[“The Superior Spirit of Character – NUDE Vodka” ](https://nudevodka.com/)**... | nudevodkanp |

1,889,545 | Why Choose Vue.js for Your Next Web Project? | Choosing Vue.js for your next web project provides a plethora of advantages that can amplify your... | 0 | 2024-06-15T12:41:48 | https://dev.to/startdeesigns/why-choose-vuejs-for-your-next-web-project-3hi8 | vue, development, webdev | Choosing Vue.js for your next web project provides a plethora of advantages that can amplify your development trajectory and the resultant product.

You only need to choose the right [Vuejs development company](https://www.startdesigns.com/vuejs-development-company.php) after deciding to use Vue.js for your web project... | startdeesigns |

1,889,547 | Navigating Information Overload: A Beginner's Guide to Starting Your Cloud Engineering Journey | Abstract: In this article, I will address the challenge of information overload that many individuals... | 0 | 2024-06-15T12:52:04 | https://dev.to/ikoh_sylva/navigating-information-overload-a-beginners-guide-to-starting-your-cloud-engineering-journey-46hb | cloud, cloudcomputing, aws, cloudskills | **Abstract:** In this article, I will address the challenge of information overload that many individuals face when starting their cloud engineering journey. I will provide practical tips and guidance to help beginners navigate through the vast amount of information available and identify the essential resources and le... | ikoh_sylva |

1,889,550 | Secure and Fair Gaming Environment | In the world of online gaming, BDG Win has emerged as a popular and exciting platform for players. It... | 0 | 2024-06-15T12:51:25 | https://dev.to/dtfjnhgg/secure-and-fair-gaming-environment-1nic | In the world of online gaming, BDG Win has emerged as a popular and exciting platform for players. It offers a wide variety of games, a user-friendly interface, and a strong sense of community. Whether you are a casual gamer or a serious competitor, BDG Win provides an enjoyable gaming experience. In this article, we w... | dtfjnhgg | |

1,830,586 | Exploring ORM - Object Relational Mapping | Databases are an immensely powerful tool for developers, as they allow us to store and access... | 0 | 2024-06-15T12:48:39 | https://dev.to/sashafbrockman/exploring-orm-object-relational-mapping-51lm | Databases are an immensely powerful tool for developers, as they allow us to store and access information across multiple uses of a program. Without them, most of the information that we create ends up becoming inaccessible once the application is run and closed. Amongst the information that would otherwise be lost is ... | sashafbrockman | |

1,889,548 | Creative HTML Coming Soon Template | Theme 1 | This CodePen showcases a modern and stylish "Coming Soon" template, perfect for websites that are... | 0 | 2024-06-15T12:46:52 | https://dev.to/creative_salahu/creative-html-coming-soon-template-theme-1-4aln | codepen | This CodePen showcases a modern and stylish "Coming Soon" template, perfect for websites that are under construction and want to keep visitors informed and engaged. This template is designed with a clean and professional layout, featuring eye-catching animations and a user-friendly interface.

Key Features:

Responsive... | creative_salahu |

1,889,539 | Python in the Browser, Fetching JSON from an AWS S3 bucket into a Bokeh Line Chart, a Serverless solution | I needed some quick Python to replace broken code over in AWS Lambda. While looking at IDEs I... | 27,896 | 2024-06-15T12:42:32 | https://dev.to/rickdelpo1/python-in-the-browser-fetching-json-from-an-aws-s3-bucket-a-serverless-solution-55hg | javascript, python, webdev, aws | I needed some quick Python to replace broken code over in AWS Lambda. While looking at IDEs I stumbled upon a way to write and run Python directly in the Browser just with a Notepad. So I opened Notepad and wrote a boiler plate html file with a Python Library called pyscript...core.js. There was no IDE or server involv... | rickdelpo1 |

1,889,544 | Understanding Spring WebFlux: A Comprehensive Guide | Introduction In the rapidly evolving landscape of web development, Spring WebFlux has... | 0 | 2024-06-15T12:41:20 | https://dev.to/fullstackjava/understanding-spring-webflux-a-comprehensive-guide-1ck6 | webdev, programming, tutorial, java | ## Introduction

In the rapidly evolving landscape of web development, Spring WebFlux has emerged as a powerful tool for building reactive and non-blocking web applications. Introduced in Spring 5, WebFlux provides an alternative to the traditional Spring MVC framework, catering specifically to applications that requir... | fullstackjava |

1,889,542 | Types of Computer Security Threats | From mobile banking to online shopping, from healthcare systems to smart devices, software... | 0 | 2024-06-15T12:40:26 | https://infosafe24.com/posts/Types-of-Computer-Security-Threats | cybersecurity, infosec, security, informationsecurity | From mobile banking to online shopping, from healthcare systems to smart devices, software applications facilitate communication and enhance productivity.

However, this pervasive reliance on software also exposes individuals, businesses, and institutions to a myriad of security threats.

In this article, we delve into ... | jusufoski |

1,889,540 | Understanding S3 Bucket Replication vs. CloudFront | If you're diving into AWS, understanding when to use S3 Replication versus CloudFront is... | 0 | 2024-06-15T12:39:05 | https://dev.to/apetryla/understanding-s3-bucket-replication-vs-cloudfront-22ii | aws, beginners, learning, devops | If you're diving into AWS, understanding when to use S3 Replication versus CloudFront is essential.

#### Amazon S3 Replication allows you to replicate objects across different regions, providing near-real-time sync. This is beneficial when you need:

* Data redundancy: Ensuring high availability and disaster recovery.

... | apetryla |

1,889,543 | The Role of Rice Rubber Rollers in Modern Milling | In the dynamic landscape of rice milling, where efficiency and quality are paramount, the humble yet... | 0 | 2024-06-15T12:38:20 | https://dev.to/sagar_sharma_e6af134c46bd/the-role-of-rice-rubber-rollers-in-modern-milling-38ch | In the dynamic landscape of rice milling, where efficiency and quality are paramount, the humble yet pivotal component known as the rice rubber roller stands tall as a cornerstone of technological advancement. At the forefront of this innovation is Hindustan Group, a trailblazer in manufacturing [rice rubber roller](ht... | sagar_sharma_e6af134c46bd | |

1,889,541 | CouchGO! — Enhancing CouchDB with Query Server Written in Go | Over the past month, I’ve been actively working on proof-of-concept projects related to CouchDB,... | 0 | 2024-06-15T12:37:57 | https://dev.to/kishieel/couchgo-enhancing-couchdb-with-query-server-written-in-go-304n | couchdb, nosql, go, database | Over the past month, I’ve been actively working on proof-of-concept projects related to CouchDB, exploring its features and preparing for future tasks. During this period, I’ve gone through the CouchDB documentation multiple times to ensure I understand how everything works. While reading through the documentation, I c... | kishieel |

1,889,538 | Nodemailer: Sending Emails with Ease in Node.js Applications | Sending emails is an essential feature in many web applications, whether for verifying... | 0 | 2024-06-15T12:34:09 | https://dev.to/iamthiago/nodemailer-enviando-e-mails-com-facilidade-em-aplicacoes-nodejs-27en | javascript, node, mail, app | Sending emails is an essential feature in many web applications, whether for account verification, event notifications, or simply for communicating with users. One of the most popular libraries for this task in the Node.js environment is Nodemailer. In this article, we will explore Nodemailer, showing... | iamthiago |

1,889,537 | How to Publish Cypress Test Results to Azure Devops | Continuing on the integration between Cypress and Azure Devops, in this post I am going to share how... | 0 | 2024-06-15T12:26:40 | https://dev.to/cinthiabs/how-to-publish-cypress-test-results-to-azure-devops-4aah | azure, devops, cypress, tutorial | Continuing on the integration between **Cypress** and **Azure Devops**, in this post I am going to share how to publish test results!

If you found this article helpful, please leave a comment.❤️

Let's go!🚀

First of all, make sure your tests are in a **Git repository (“Repos”)** in Azure DevOps.

Next, we have to in... | cinthiabs |

1,889,535 | A Guide to Installing Jenkins, Configuring it and expose to outside world. | What is Jenkins? Jenkins is an open-source Java automation server used for software... | 0 | 2024-06-15T12:22:09 | https://dev.to/subodh_bagde/a-guide-to-installing-jenkins-configuring-it-and-expose-to-outside-world-2344 | # What is Jenkins?

Jenkins is an open-source automation server, written in Java, that automates repetitive tasks across the software development lifecycle. Its extensive plugin support lets it integrate with nearly every tool in a CI/CD pipeline, which makes it a vital tool for developers and DevOps engineers.

## AWS EC2 Instan... | subodh_bagde | |

1,889,534 | Bootstrap Crash Course: Get Started with Responsive Web Design | Bootstrap is a powerful front-end framework that helps you build responsive and mobile-first web... | 0 | 2024-06-15T12:18:18 | https://dev.to/igbojionu/bootstrap-crash-course-get-started-with-responsive-web-design-3mp4 |

Bootstrap is a powerful front-end framework that helps you build responsive and mobile-first web designs quickly and easily. Whether you're new to web development or looking to streamline your workflow, this crash course will get you up and running with Bootstrap in no time.

### What is Bootstrap?

Bootstrap is a fr... | igbojionu | |

1,889,533 | Discover a New Era of Online Betting with Silverexch | Betting on your favorite games and sports tournaments has never been more accessible and exciting... | 0 | 2024-06-15T12:16:29 | https://dev.to/silverexchids/discover-a-new-era-of-online-betting-with-silverexch-1in7 | silverexch, silverexchid, silverexchange, silverexchapp | Betting on your favorite games and sports tournaments has never been more accessible and exciting than with [Silverexch](https://silverexchids.com.in/). As an industry leader, Silverexch.com provides a seamless and secure platform for online betting, all from the comfort of your home. By signing up for a new Silverexch... | silverexchids |

1,889,532 | GSoC’24(CircuitVerse) Week 1 & 2 | The coding period has begun, and a lot of work has been done on understanding the codebase, creating... | 0 | 2024-06-15T12:15:08 | https://dev.to/niladri_adhikary_f11402dc/gsoc24circuitverse-week-1-2-37gj | gsoc, google, circuitverse, webdev | The coding period has begun, and a lot of work has been done on understanding the codebase, creating new Vue components, and eliminating bugs.

### Implementation of LayoutElements Panel Vue Component

Previously, the LayoutElements Panel was in the `extra.vue` file.

First, I created a new Vue file for the LayoutEleme... | niladri_adhikary_f11402dc |

1,889,531 | Understanding Recursion: A Function’s Self-Call | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-15T12:11:46 | https://dev.to/codewithuma/understanding-recursion-a-functions-self-call-4ne1 | devchallenge, cschallenge, computerscience, beginners | *This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

## Explainer

Recursion is a process where a function calls itself as a subroutine, allowing for repeated execution until a base condition is met.
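
To make the definition above concrete, here is a minimal sketch in JavaScript. The `factorial` function is a hypothetical example chosen for illustration and is not part of the original submission; it only shows a function calling itself until a base condition is met.

```javascript
// Hypothetical example: factorial computed recursively.
function factorial(n) {
  if (n < 0) {
    throw new RangeError("n must be non-negative");
  }
  // Base condition: stops the chain of self-calls.
  if (n <= 1) {
    return 1;
  }
  // Recursive step: the function calls itself on a smaller input.
  return n * factorial(n - 1);
}

console.log(factorial(5)); // 120
```

Without the base condition, the calls would never stop and the engine would eventually report a stack overflow.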

<!-- Explain a computer science concept in 256 char... | codewithuma |

1,889,530 | Chatbot | message=input.map(response) if (c=="explain") summarise(c) function search( ){ search=... | 0 | 2024-06-15T12:11:43 | https://dev.to/priya_sri_362147bca8afa71/chatbot-3m91 | message=input.map(response)

if (c=="explain")

summarise(c)

function search( ){

search= keyword.search(input)

if(keyword== input)

console.log(search)

}

var test=model.elaborate(trainingdata) | priya_sri_362147bca8afa71 | |

1,889,529 | Demystifying Big O Notation: A Deep Dive into Algorithm Efficiency | Introduction In the world of computer science and programming, efficiency is key. As data... | 0 | 2024-06-15T12:11:24 | https://dev.to/kamomkoyan2/demystifying-big-o-notation-a-deep-dive-into-algorithm-efficiency-4g8o | ## Introduction

In the world of computer science and programming, efficiency is key. As data sets grow larger and software becomes more complex, understanding how algorithms perform and scale is crucial. One of the fundamental tools for analyzing the efficiency of algorithms is Big O notation. In this article, we will ... | kamomkoyan2 | |

1,889,528 | Scheduled stepdown for smoother MongoDB primary election | Stepdown is a great tool that allows us to keep clusters operating smoothly. We use it for example... | 0 | 2024-06-15T12:02:59 | https://dev.to/mknasiecki/scheduled-stepdown-for-smoother-mongodb-primary-election-4n0p | mongodb | Stepdown is a great tool that allows us to keep clusters operating smoothly. We use it for example when we want to perform some maintenance work on the host where the primary is currently running, to perform a rolling upgrade, and in many other cases we need to switch the primary to another node.

While usually electi... | mknasiecki |

1,889,527 | The Democratization Impact Of 3D Animation For Businesses Of All Sizes | The utilization of 3D animation has revolutionized to be an availing tool for any business today from... | 0 | 2024-06-15T12:02:44 | https://dev.to/acadereality/the-democratization-impact-of-3d-animation-for-businesses-of-all-sizes-575d | animation, video, services | 3D animation has evolved from a niche technology used by large entertainment studios in the past into a tool available to any business today. This has happened because of advances in technology, reduced costs, and more user-friendly software applications. Therefore, it is not only mor... | acadereality |

1,889,526 | 5 Must-Try Kubernetes Lab Tutorials 🚀 | The article is about 5 must-try Kubernetes lab tutorials offered by LabEx, a leading platform for hands-on Kubernetes learning. It covers essential Kubernetes concepts and skills, including scheduling with Node Selectors, using the Kubernetes Taint Command, modifying Kubeconfig files, scaling and managing Pods with Dep... | 27,732 | 2024-06-15T11:54:28 | https://dev.to/labex/5-must-try-kubernetes-lab-tutorials-2j8a | coding, programming, tutorial, kubernetes |

Kubernetes has become the de facto standard for container orchestration, and mastering its various features and functionalities is crucial for any aspiring cloud engineer or DevOps professional. LabEx, a leading platform for hands-on Kubernetes learning, offers a diverse range of lab tutorials to help you dive deep in... | labby |

1,889,525 | Hello Everyone | I'm new to this area and work as a web and software developer. I have proficiency in JavaScript and... | 0 | 2024-06-15T11:51:43 | https://dev.to/natdcoder/hello-everyone-nhk | I'm new to this area and work as a web and software developer. I have proficiency in JavaScript and Node.js for both front-end and back-end development. | natdcoder | |

1,889,524 | Thabet San Choi HOT Nhat Chau A Nam 2024 | Thabet San Choi HOT Nhat Chau A Nam 2024 - Voi kho game do so + giao dien 3d dep mat Thabet dang duoc... | 0 | 2024-06-15T11:45:50 | https://dev.to/thabet7life/thabet-san-choi-hot-nhat-chau-a-nam-2024-255h | trangchuthabet | Thabet, the hottest gaming platform in Asia in 2024 - With a massive game library and an eye-catching 3D interface, Thabet is popular with many players; register now to receive huge promotions. Join Thabet today to receive attractive offers.

Email: arrenholzjobe57333@gmail.com

Website: https://thabet77.life/

Phone: (+63) 09... | thabet7life |

1,889,491 | How to Easily Add Translations to Your React Apps with i18next | Multilingual support is a crucial aspect of modern web applications. By providing translations in... | 0 | 2024-06-15T11:07:10 | https://10xdev.codeparrot.ai/adding-translations-to-react-apps | react, translations, i18next, javascript | Multilingual support is a crucial aspect of modern web applications. By providing translations in multiple languages, you can cater to a diverse audience and enhance user experience. React applications can benefit significantly from internationalization libraries like i18next, which simplify the process of adding trans... | harshalranjhani |

1,889,490 | Latest Newsletter: Big Changes At Every Layer (Issue #168) | Linux desktop movement gets a big boost, the dollar and petrodollar are dying, being a VC is changing, the surprisingly interesting history of men’s suits, EU elections and Microsoft & AI | 0 | 2024-06-15T11:07:09 | https://dev.to/mjgs/latest-newsletter-big-changes-at-every-layer-issue-168-3765 | javascript, tech, webdev, discuss | ---

title: Latest Newsletter: Big Changes At Every Layer (Issue #168)

published: true

description: Linux desktop movement gets a big boost, the dollar and petrodollar are dying, being a VC is changing, the surprisingly interesting history of men’s suits, EU elections and Microsoft & AI

tags: javascript, tech, webdev, d... | mjgs |

1,889,489 | Maximizing Productivity with ChatGPT: Comparing ChatGPT-3.5 and ChatGPT-4 Omni for Diverse AI Applications | Explore the capabilities, use cases, and pricing of ChatGPT-3.5 and ChatGPT-4 Omni to determine the... | 0 | 2024-06-15T11:06:06 | https://medium.com/mindroast/maximizing-productivity-with-chatgpt-comparing-chatgpt-3-5-d7a8d87c81b5 | webdev, ai, software, coding | _Explore the capabilities, use cases, and pricing of ChatGPT-3.5 and ChatGPT-4 Omni to determine the best AI solution for your business needs_

The next big revolution will be the widespread implementation of artificial intelligence (AI), with various large language models (LLMs) playing a crucial role.

.*

## Explainer

<!-- Explain a computer science concept in 256 characters or less. -->

Compilers translate your code (source language) into instructions your computer understands (machine language). ... | blessedtechie |

1,889,487 | Prêt à partir | En octobre 2022 je suis placé quatre jours en détention dans une affaire dont je n’ose pas écrire, je... | 0 | 2024-06-15T11:01:27 | https://dev.to/martin_auvray_2e5bbc3b7b5/pret-a-partir-inl | In October 2022 I was held in detention for four days in a case I do not dare write about. I am innocent, and it is a horrible memory that only worsens my mental state (depressed since 2019).

I will not forget the helping hand of my friend Mohamed, who encouraged me to start my company and with whom we... | martin_auvray_2e5bbc3b7b5 | |

1,889,486 | SP3D Technology: An Advanced Approach to Piping Designing | In the rapidly evolving field of engineering, SP3D technology stands out as a revolutionary approach... | 0 | 2024-06-15T11:00:23 | https://dev.to/shivam_shroti_402ee684c90/sp3d-technology-an-advanced-approach-to-piping-designing-5edb | sp3d, sp3dtraining, onlinesp3d, tutorial | In the rapidly evolving field of engineering, SP3D technology stands out as a revolutionary approach to piping design. SmartPlant 3D (SP3D) is a cutting-edge software that offers a comprehensive solution for creating, managing, and optimizing complex piping systems. This blog will delve into the significance of SP3D te... | shivam_shroti_402ee684c90 |

1,889,485 | What Training Do You Need to Be a Web Developer? | Web development is a rapidly growing field with ample opportunities for creative and technical minds.... | 0 | 2024-06-15T10:52:30 | https://dev.to/shivam_shroti_402ee684c90/what-training-do-you-need-to-be-a-web-developer-2hoa | programming, tutorial, career, beginners | Web development is a rapidly growing field with ample opportunities for creative and technical minds. If you're considering a career as a web developer, you might wonder what training you need to get started. This blog will guide you through the essential training and skills required to become a successful web develope... | shivam_shroti_402ee684c90 |

1,889,484 | Stacks vs. RSK: A Comprehensive Comparison | Introduction The blockchain technology landscape is vast and varied, with... | 27,673 | 2024-06-15T10:47:20 | https://dev.to/rapidinnovation/stacks-vs-rsk-a-comprehensive-comparison-1b7h | ## Introduction

The blockchain technology landscape is vast and varied, with multiple platforms designed to suit different needs. Among these, Stacks and RSK (Rootstock) stand out due to their unique approaches and capabilities. Both platforms aim to extend the functionality of leading blockchains through innovations ... | rapidinnovation | |

1,889,483 | React Firebase Auth Template (With Protected Routes) | TLDR: got tired of setting up this lightweight stack over and over again for some clients so i made a... | 0 | 2024-06-15T10:43:24 | https://dev.to/mmvergara/react-firebase-auth-template-with-protected-routes-1974 | react, webdev, firebase, javascript | TLDR: got tired of setting up this lightweight stack over and over again for some clients so i made a template, and i'm just here to share to ya'll.

### 🔭 [Github Repository](https://github.com/mmvergara/react-firebase-auth-template)

### 🌐 [App Demo](https://react-firebase-auth-templ-mmvergaras-projects.vercel.app/)

... | mmvergara |

1,889,482 | asdfghjk | A post by Ayush kumar | 0 | 2024-06-15T10:41:23 | https://dev.to/ayush_kumar_b251787e0536f/asdfghjk-2fe1 | ayush_kumar_b251787e0536f | ||

1,889,481 | Artist Talent Studio: Discover Your Artistic Potential | An artist talent studio can be the perfect hub if you want a place to create or collaborate with some... | 0 | 2024-06-15T10:33:22 | https://dev.to/thegxyz/artist-talent-studio-discover-your-artistic-potential-1gp7 | An [artist talent studio](https://thegxyz.tv/) can be the perfect hub if you want a place to create or collaborate with some major artists. Regardless of your skill level, you can embrace a nurturing environment. Also, you can get a chance to explore diverse mediums, elevate your skill level, and bring more creativity ... | thegxyz | |

1,889,480 | Science and Artificial Intelligence: Intersections and Implications | Science and artificial intelligence (AI) are intricately linked fields that mutually influence and... | 0 | 2024-06-15T10:32:52 | https://dev.to/mallika_singhal_1ac70de6b/science-and-artificial-intelligence-intersections-and-implications-fh4 | [Science and artificial intelligence](https://www.amodra.in/co-ord-set/) (AI) are intricately linked fields that mutually influence and enhance each other, albeit with distinct [methodologies and goals](https://www.amodra.in/buy-trendy-co-ord-sets-for-women-online-in-india/).

Science: It encompasses the systematic stu... | mallika_singhal_1ac70de6b | |

1,889,479 | Best CRM for Consulting Business | In the fast-paced world of consulting, maintaining strong client relationships is paramount. The... | 0 | 2024-06-15T10:25:44 | https://dev.to/salestowncrm/best-crm-for-consulting-business-1k23 | crm, crmsoftware | In the fast-paced world of consulting, maintaining strong client relationships is paramount. The ability to manage client interactions, track project progress, and streamline communication can make or break a consulting business. This is where Customer Relationship Management (CRM) software comes into play. The right C... | salestowncrm |

1,889,478 | Science vs artificial intelligence | Science and artificial intelligence (AI) are interconnected yet distinct fields that influence each... | 0 | 2024-06-15T10:25:42 | https://dev.to/mallika_singhal_1ac70de6b/science-vs-artificial-intelligence-3fd5 |

[Science](https://www.amodra.in/comfy-co-ord-sets-for-women/) and artificial intelligence (AI) are interconnected yet distinct fields that influence each other in significant ways.

[Science](https://www.amodra.in/straight-kurta-for-women-at-the-best-price/), broadly defined, encompasses the systematic study of the na... | mallika_singhal_1ac70de6b | |

1,889,477 | معرفی سایت دنس بت | دنس بت، با مدیریت نازنین همدانی پور، یک سایت شرط بندی ایرانی است. این سایت از زبان فارسی پشتیبانی... | 0 | 2024-06-15T10:24:29 | https://dev.to/dancebet/mrfy-syt-dns-bt-ifh | Dance Bet, managed by Nazanin Hamedanipour, is an Iranian betting site. The site supports the Persian language and has a bank payment gateway. Casino games and prediction betting are available on the site.

Bonuses and prizes

The following 3 bonuses are active at Dance Bet:

A 20% golden bonus on Tuesdays for deposits above 500 thousand tomans. This bonus... | dancebet | |

1,889,476 | Easiest way to install NodeJS | N:B: This installation process is for Windows Operating System. First thing first we're going to... | 0 | 2024-06-15T10:24:02 | https://dev.to/md_abdul_wahab/easiest-way-to-install-nodejs-4bea | node, beginners, javascript, learning | N.B.: This installation process is for the Windows operating system.

First things first: download Node.js from [this link](https://nodejs.org/dist/v20.14.0/node-v20.14.0-x64.msi).

After downloading the installer, follow these steps:

Step 1: Click on the downloaded installer:

Step 2: Click Next:

We could be experiencing a paradigm shift in the meaning give... | abcsxyz |

1,889,463 | https://www.facebook.com/thewealthsignalreview/ | Wealth Signal provides an approach The Wealth Signal to financial prosperity that is scientifically... | 0 | 2024-06-15T10:11:41 | https://dev.to/ldsakhba/httpswwwfacebookcomthewealthsignalreview-j96 | webdev, javascript, beginners, programming | Wealth Signal provides an approach The Wealth Signal to financial prosperity that is scientifically supported and has been proven effective for individuals who are prepared to transform their financial future and personal development. Begin your journey today and witness the transformative power of Wealth Signal.

https... | ldsakhba |

1,889,462 | win55 | WIN55 la nha cai game truc tuyen hang dau Chau A, thuoc quyen so huu cua cong ty giai tri lon. Hay... | 0 | 2024-06-15T10:11:04 | https://dev.to/win55rents/win55-1817 | WIN55 is a leading online gaming bookmaker in Asia, owned by a large entertainment company. Come to us, where we provide betting services and products with thousands of attractive promotions.

Details:

Website: https://win55.rent/

Address: 33/10 D. Truong Chinh, Tay Thanh, Tan Phu, Ho Chi Minh City, Vi... | win55rents | |

1,889,461 | One Byte Explainer - Promise | Hello 👋 Let's start with Promise One-Byte Explainer: Promises are like a waiter taking... | 27,721 | 2024-06-15T10:07:45 | https://dev.to/imkarthikeyan/one-byte-explainer-promise-m5c | cschallenge, devchallenge, javascript, webdev | Hello 👋

Let's start with **Promise**

## One-Byte Explainer:

Promises are like a waiter taking your order. They handle results which can be either success or failure.
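
The waiter analogy above can be sketched in JavaScript roughly as follows. The `placeOrder` helper and its `kitchenHasIt` flag are hypothetical names invented for this illustration, and the short timer merely stands in for real asynchronous work.

```javascript
// Hypothetical helper: the "waiter" hands back a Promise for the order.
function placeOrder(dish, kitchenHasIt) {
  return new Promise((resolve, reject) => {
    // Simulate the kitchen taking a moment to respond.
    setTimeout(() => {
      if (kitchenHasIt) {
        // Success: the promise is fulfilled with a result.
        resolve(`${dish} is served`);
      } else {
        // Failure: the promise is rejected with an error.
        reject(new Error(`kitchen is out of ${dish}`));
      }
    }, 10);
  });
}

placeOrder("pasta", true)
  .then((message) => console.log(message))
  .catch((error) => console.error(error.message));
```

The caller does not wait at the counter: `.then` registers what to do on success and `.catch` what to do on failure, and the rest of the program keeps running in the meantime.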

## Demystifying JS: Promise in Action

1. **Place the Order**: You give your order to the waiter (Promise).

2. **Waiter Takes Order:** The waiter t... | imkarthikeyan |

1,882,697 | A Jolly Good Guide to Collections in C# | . Ah, collections in C#. It’s a bit like organising your sock drawer, isn't it? You start with a... | 0 | 2024-06-15T10:03:35 | https://dev.to/ipazooki/a-jolly-good-guide-to-collections-in-c-k1h | tutorial, programming, csharp, dotnet | {% embed https://youtu.be/4H0gabAtouE %}.

Ah, collections in C#. It’s a bit like organising your sock drawer, isn't it? You start with a simple array and then realise you need something a bit more sophisticated to handle the chaos. Today, we'll dive into the wonderful world of collections in C# and learn how to keep ... | ipazooki |

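1,889,352 | Implementation of Mini Mybatis (Version 1) | ... | 0 | 2024-06-15T10:02:10 | https://dev.to/junjiehou/implementation-of-mini-mybatis-version-1-2j50 | ### I. The main features implemented are as follows:

1. Parse the core configuration file and the Mapper configuration files with Dom4J, and wrap them into the corresponding instances.

2. Expose the core operations through the SqlSession facade, such as the CRUD interfaces, the transaction interfaces, and proxy generation.

3. Following the single responsibility principle, split the functionality at a finer granularity by implementing an Executor, a Statement handler, a Parameter handler, and a ResultSet handler. These carry out SQL execution, SQL preparation, parameter binding, and result set wrapping.

4. Implement type handlers. Parameters are bound to their type handlers when the BoundSql is generated, and during parameterization each parameter is processed automatically according to its type.

5. Implement a log module that integrates multiple logging frameworks.

6. Others, such as the transaction module,... | junjiehou | |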

1,889,453 | The Azure Hub ☁️ Your Azure learning resources 📚 | Greetings 👋 Hi there! Tung Leo here. Previously, I've published The AWS Hub ☁️ Your new... | 0 | 2024-06-15T09:58:47 | https://dev.to/tungbq/the-azure-hub-your-azure-learning-resources-3b6n | azure, devops, cloud, learning | ## Greetings 👋

Hi there! Tung Leo here. Previously, I've published [**The AWS Hub ☁️ Your new AWS learning resources**](https://dev.to/tungbq/the-aws-hub-4phb) post to help everyone with the AWS learning repository.

This time, we'll dive into another cloud provider - the Azure cloud, and I'm excited to introduce you... | tungbq |

1,889,459 | Protect Your Shipments with Cardboard Boxes, Mailing Bags, Paper Bags, and Padded Envelopes | Effective shipping relies on the right packaging materials. Cardboard boxes are excellent for sturdy... | 0 | 2024-06-15T09:57:58 | https://dev.to/adnan_freelancer6/protect-your-shipments-with-cardboard-boxes-mailing-bags-paper-bags-and-padded-envelopes-3297 | Effective shipping relies on the right packaging materials. Cardboard boxes are excellent for sturdy protection, making them perfect for diverse items in storage or transport. Mailing bags offer a lightweight, durable solution for securely sending documents and smaller goods. Paper bags, combining strength with eco-fri... | adnan_freelancer6 | |

1,889,457 | How to and When to GraphQL - GraphQL MongoDB with NodeJs Set Up | For a light introduction, I would say, the main application of GraphQL api is as an API query... | 0 | 2024-06-15T09:56:23 | https://dev.to/codegirl0101/how-to-and-when-to-graphql-graphql-mongodb-with-nodejs-set-up-1kdh | graphql, mongodb, node, webdev | For a light introduction, I would say, the main application of GraphQL api is as an API query language.

GraphQL apollo gives clients a more effective and customizable way to query, add, and update data stored on a server.

We already have REST APIs, so why use GraphQL? Can GraphQL replace REST? No, it won't. B... | codegirl0101 |

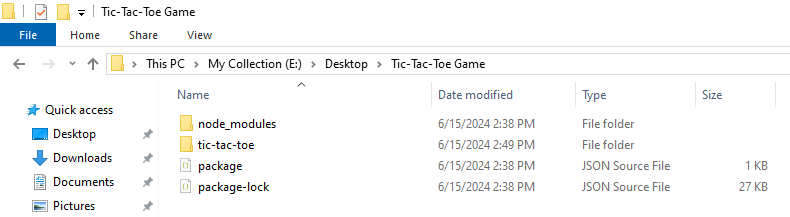

1,889,448 | Starting with ReactJS with fun | Firstly we're going to create a folder and named it with anything, Suppose, my folder name is... | 0 | 2024-06-15T09:44:19 | https://dev.to/md_abdul_wahab/starting-with-reactjs-with-fun-5c0e | javascript, beginners, react, webdev | First, create a folder and give it any name. Suppose my folder is named "**Tic-Tac-Toe Game**".

Now open this folder in VS Code.

Next, go to the folder's directory.

Now type "cmd" here as follow t... | md_abdul_wahab |

1,889,452 | Ernesto Bertarelli Bitcoin Bank Bewertungen - Betrug oder legitim? | Die Schweizerische Nationalbank verklagt Ernesto Bertarelli für das, was er live im... | 0 | 2024-06-15T09:42:33 | https://dev.to/sornadtys/ernesto-bertarelli-bitcoin-bank-bewertungen-betrug-oder-legitim-3k85 | ## The Swiss National Bank sues Ernesto Bertarelli for what he said live on television

[

](url)

The scandal took place during a live show, when Ernesto Bertarelli inadvertently... his secr... | sornadtys | |

1,889,451 | PAXFUL | should verify identity after UK phone number is linked to your account | should verify identity after UK phone number is linked to your account | 0 | 2024-06-15T09:40:45 | https://dev.to/01kg/paxful-should-verify-identity-after-uk-phone-number-is-linked-to-your-account-58d6 | should verify identity after UK phone number is linked to your account | 01kg | |

1,889,450 | Best HR/Payroll Management Software Service Provider In Gujarat | HR/Payroll Management Software is related to human resources and payroll within organizations. It... | 0 | 2024-06-15T09:37:21 | https://dev.to/delight_erp/best-hrpayroll-management-software-service-provider-in-gujarat-3j77 | hr, hrmanagement, recruitment, erpsoftware | HR/Payroll Management Software is related to human resources and payroll within organizations. It integrates various functions such as employee information management, attendance tracking, payroll processing, benefits administration, and compliance management into a centralized system. This software eliminates manual t... | delight_erp |

1,889,449 | Top Manufacturing Management Software (MMS) Service in Gujarat | Manufacturing management software is an essential tool for manufacturers to optimize and enhance... | 0 | 2024-06-15T09:34:10 | https://dev.to/delight_erp/top-manufacturing-management-software-mms-service-in-gujarat-5a22 | manufacturingindustry, manufacturingcompany, industrialarea, rajkot | Manufacturing management software is an essential tool for manufacturers to optimize and enhance their business operations. It plays a vital role in improving organizational efficiency by enabling companies to effectively utilize their resources, a critical factor in today’s competitive market landscape. Many businesse... | delight_erp |

1,889,447 | Best Production Management Software Provider in Rajkot | Managing company details can be challenging, especially in today's fast-paced production environments... | 0 | 2024-06-15T09:31:35 | https://dev.to/delight_erp/best-production-management-software-provider-in-rajkot-38kh | erpsoftware, productionmanagement, products, delighterp | Managing company details can be challenging, especially in today's fast-paced production environments where it's hard to handle all aspects of production management.

However, Delight ERP software can solve these problems.

We’ll explain all the details about production management software and its benefits, ensuring ... | delight_erp |

1,889,446 | Invoke Dataverse AI Actions via Powershell | A few days ago, I was 🛠️ thinking to create a command for PACX that allowed me to call via command... | 0 | 2024-06-15T09:30:49 | https://dev.to/_neronotte/invoke-dataverse-ai-actions-via-powershell-10em | dataverse, tools, opensource, ai | A few days ago, I was 🛠️ _thinking to create a command for PACX that allowed me to call via command line the new Dataverse AI functions_ 🛠️.

But I'm lazy, so I try to avoid reinventing the wheel when there is one there already available that just fits my needs.

Here is how you can invoke the 🤖 Dataverse AI 🤖 acti... | _neronotte |

1,889,422 | What is Big-O? | Photo by Conny Schneider on Unsplash This is a submission for DEV Computer Science Challenge... | 0 | 2024-06-15T09:29:13 | https://dev.to/muchai_joseph/what-is-big-o-18c | devchallenge, cschallenge, computerscience, beginners | Photo by <a href="https://unsplash.com/@choys_?utm_content=creditCopyText&utm_medium=referral&utm_source=unsplash">Conny Schneider</a> on <a href="https://unsplash.com/photos/a-blue-background-with-lines-and-dots-xuTJZ7uD7PI?utm_content=creditCopyText&utm_medium=referral&utm_source=unsplash">Unsplash</a>

*This is a sub... | muchai_joseph |

1,889,445 | Generating Random Colors in JavaScript: A Step-by-Step Guide | Colors play a crucial role in web design, bringing vibrancy and personality to your applications. One... | 0 | 2024-06-15T09:27:11 | https://raajaryan.tech/generating-random-colors-in-javascript-a-step-by-step-guide | javascript, beginners, tutorial, opensource | Colors play a crucial role in web design, bringing vibrancy and personality to your applications. One interesting way to make your web applications more dynamic is by generating random colors. In this blog post, we'll explore different methods to generate random colors in JavaScript. Whether you need hexadecimal, RGB, ... | raajaryan |

1,889,444 | How to Right Rotate an Array by D Positions | Right rotating an array involves shifting the elements of the array to the right by a specified... | 27,580 | 2024-06-15T09:26:43 | https://blog.masum.dev/how-to-right-rotate-an-array-by-d-positions | algorithms, computerscience, cpp, tutorial | Right rotating an array involves shifting the elements of the array to the right by a specified number of places. In this article, we'll discuss two efficient methods to achieve this rotation.

### Solution 1: Brute Force Approach (using a Temp Array)

This method uses an auxiliary array to store the last D elements an... | masum-dev |

1,889,443 | Supply chain management Software (SCM) | Supply chain management (SCM) is the activities required to plan, control, and execute the flow of... | 0 | 2024-06-15T09:26:38 | https://dev.to/delight_erp/supply-chain-management-software-scm-3h4n | scm, supplychainmanagement, erpsoftware, erp | Supply chain management (SCM) comprises the activities required to plan, control, and execute the flow of products and services from raw material suppliers to end customers. It involves coordinating and integrating the key business processes among different organizations, such as suppliers, manufacturers, distributors, retailer... | delight_erp |

1,889,442 | Asking. | Let's just assume I've been away from the world for ever. My only device is an android. I want to... | 0 | 2024-06-15T09:24:33 | https://dev.to/archoraenos/asking-lfi | Let's just assume I've been away from the world for ever. My only device is an android. I want to learn the trade from the ground up, I have plenty of time and I'd prefer to learn it from the ones who develop it. I'll assume what I knew from ten years ago is obsolete. I'm unable to get access to college or other educat... | archoraenos | |

1,889,441 | Customer Relationship Management software (CRM) | Empower your business with Customer Relationship Management (CRM) Software. CRM is designed to... | 0 | 2024-06-15T09:23:36 | https://dev.to/delight_erp/customer-relationship-management-software-crm-28gi | delighterp, crm, customerrelationshipmanagement | Empower your business with Customer Relationship Management (CRM) Software. CRM is designed to interact with the customers. Our CRM Software will help you to increase customer engagement and interact with the companies. With contact management, lead tracking, sales management, and performance analytics, our CRM will em... | delight_erp |

1,889,431 | Unleashing SaaS Potential: The Role of SEO Agencies | The SaaS (Software as a Service) industry has grown exponentially, revolutionising how businesses... | 0 | 2024-06-15T09:19:19 | https://dev.to/carmentyler/unleashing-saas-potential-the-role-of-seo-agencies-4bai | saasseoagency | The SaaS (Software as a Service) industry has grown exponentially, revolutionising how businesses operate and manage their software needs. With the competitive nature of the SaaS market, standing out is crucial. This is where Search Engine Optimisation (SEO) agencies play an indispensable role. Leveraging their experti... | carmentyler |
