id (int64, 5 to 1.93M) | title (string, length 0 to 128) | description (string, length 0 to 25.5k) | collection_id (int64, 0 to 28.1k) | published_timestamp (timestamp[s]) | canonical_url (string, length 14 to 581) | tag_list (string, length 0 to 120) | body_markdown (string, length 0 to 716k) | user_username (string, length 2 to 30) |

|---|---|---|---|---|---|---|---|---|

1,888,516 | Unveiling the Data Story: Mastering Digital Marketing Analytics to Optimize Your Campaigns | In the ever-evolving world of digital marketing, data reigns supreme. But this data isn't just a... | 0 | 2024-06-14T11:53:54 | https://dev.to/jinesh_vora_ab4d7886e6a8d/unveiling-the-data-story-mastering-digital-marketing-analytics-to-optimize-your-campaigns-4d2p | digitalmarketing, seo, sem, googleads |

In the ever-evolving world of digital marketing, data reigns supreme. But this data isn't just a jumble of numbers; it's a captivating story waiting to be unraveled. By mastering digital marketing analytics, you transform yourself from a data decoder to a master storyteller, using insights to optimize your campaigns ... | jinesh_vora_ab4d7886e6a8d |

1,888,515 | A Complete Guide to Safe and Effective Plumbing Repair | Plumbing repairs are essential for maintaining the functionality and safety of your home’s water... | 0 | 2024-06-14T11:53:36 | https://dev.to/affanali_offpageseo_a5ec6/a-complete-guide-to-safe-and-effective-plumbing-repair-i3h |

Plumbing repairs are essential for maintaining the functionality and safety of your home’s water system. Whether you’re tackling a simple fix or a more complex issue, understanding the basics of safe and effective ... | affanali_offpageseo_a5ec6 | |

1,888,514 | Reading Data from the Web | Just like you can read data from a file on your computer, you can read data from a file on the Web.... | 0 | 2024-06-14T11:52:08 | https://dev.to/paulike/reading-data-from-the-web-1l6 | java, programming, learning, beginners | Just like you can read data from a file on your computer, you can read data from a file on the Web. In addition to reading data from a local file on a computer or file server, you can also access data from a file that is on the Web if you know the file’s URL (Uniform Resource Locator—the unique address for a file on th... | paulike |

1,888,513 | How to Deploy an Express.js App on GitHub Pages Using GitHub Actions | How to Deploy an Express.js App on GitHub Pages Using GitHub Actions Deploying an... | 0 | 2024-06-14T11:51:56 | https://dev.to/sh20raj/how-to-deploy-an-expressjs-app-on-github-pages-using-github-actions-4h0f | webdev, javascript, github, githubpages | # How to Deploy an Express.js App on GitHub Pages Using GitHub Actions

Deploying an Express.js app on GitHub Pages might sound challenging at first, but with the right tools and steps, it becomes a seamless process. GitHub Pages only supports static sites, so we need to convert our dynamic Express.js app into static f... | sh20raj |

1,888,512 | Professional Appliance Repair: What to Expect | When your household appliances break down, it can disrupt your daily routine and cause significant... | 0 | 2024-06-14T11:51:09 | https://dev.to/affanali_offpageseo_a5ec6/professional-appliance-repair-what-to-expect-13p4 |

When your household appliances break down, it can disrupt your daily routine and cause significant inconvenience. Professional appliance repair services provide fast and reliable solutions to restore your appliances ... | affanali_offpageseo_a5ec6 | |

1,888,511 | Networsys Technologies Is A Leading Cyber Security Company | Sensitive data protection is crucial in the current digital era. Every business, regardless of its... | 0 | 2024-06-14T11:50:49 | https://dev.to/networsystechnologies/networsys-technologies-is-a-leading-cyber-security-company-1ecf | Sensitive data protection is crucial in the current digital era. Every business, regardless of its size or industry, faces the risk of cyber threats. From data breaches to malware attacks, the landscape of cyber threats is continuously evolving. This makes it crucial for organizations to partner with a reputable **[Cyb... | networsystechnologies | |

1,888,510 | DIY Appliance Installation: How to Safely Set Up Your New Appliances | ** DIY Appliance Installation: How to Safely Set Up Your New Appliances Setting up new appliances on... | 0 | 2024-06-14T11:47:59 | https://dev.to/affanali_offpageseo_a5ec6/diy-appliance-installation-how-to-safely-set-up-your-new-appliances-4ilm | **

DIY Appliance Installation: How to Safely Set Up Your New Appliances

Setting up new appliances on your own can be a rewarding way to save money and ensure everything is done to your standards. However, it requires careful preparation and execution to avoid potential issues. This guide provides a detailed, step-by-... | affanali_offpageseo_a5ec6 | |

1,888,509 | Erectile Dysfunction Treatment in Dubai: Don't Let Cost Be a Barrier to Regaining Confidence? | Erectile dysfunction (ED) is a prevalent condition that affects a significant number of men... | 0 | 2024-06-14T11:47:14 | https://dev.to/dynamicclinicdubai/erectile-dysfunction-treatment-in-dubai-dont-let-cost-be-a-barrier-to-regaining-confidence-1n4d | Erectile dysfunction (ED) is a prevalent condition that affects a significant number of men worldwide, including those in Dubai. This condition, characterized by the inability to achieve or maintain an erection sufficient for satisfactory sexual performance, can have profound psychological and emotional impacts. The go... | dynamicclinicdubai | |

1,888,507 | TOP 8 Best Gantt Chart Frameworks for Project Management | Gantt charts have long been an essential tool for project managers. They offer a visual... | 0 | 2024-06-14T11:43:20 | https://dev.to/lenormor/top-8-best-gantt-chart-frameworks-for-project-management-5fp8 | webdev, javascript, programming, react | Gantt charts have long been an essential tool for project managers. They offer a visual representation of a project schedule, showing the start and finish dates of elements within a project. Over the years, numerous Gantt chart frameworks have emerged, each with unique features tailored to various needs. Here, we prese... | lenormor |

1,888,504 | Manly Spirits CO. | Capturing the harmony between carefree beach life and urban sophistication for which Manly is... | 0 | 2024-06-14T11:39:38 | https://dev.to/manlyspirits/manly-spirits-co-4mfg | Capturing the harmony between carefree beach life and urban sophistication for which Manly is renowned, our Founders David, Vanessa and the **[Manly Spirits Co.](https://manlyspirits.com.au/)** team create Australian Gins, Botanical Vodkas and Whiskies that rival the best in the world taking much of their inspiration ... | manlyspirits | |

1,888,503 | Case Study: Replacing Text | Suppose you are to write a program named ReplaceText that replaces all occurrences of a string in a... | 0 | 2024-06-14T11:38:36 | https://dev.to/paulike/case-study-replacing-text-f0p | java, programming, learning, beginners | Suppose you are to write a program named **ReplaceText** that replaces all occurrences of a string in a text file with a new string. The file name and strings are passed as command-line arguments as follows:

`java ReplaceText sourceFile targetFile oldString newString`

For example, invoking

`java ReplaceText FormatSt... | paulike |

1,888,502 | Bloating Doctor Suwanee GA | Merus Gastroenterology & Gut Health LLC located in Suwanee GA specializes in providing top tier... | 0 | 2024-06-14T11:36:45 | https://dev.to/merusgastro/bloating-doctor-suwanee-ga-l0b | Merus Gastroenterology & Gut Health LLC, located in Suwanee, GA, specializes in providing top-tier care for a range of gastrointestinal issues. Our expert team, led by experienced doctors, offers personalized treatment plans for constipation, bloating, and other stomach-related ailments. We are dedicated to diagnosing and man... | merusgastro | |

1,888,501 | Some ideas on conversion APIs | All developers have at some point likely experienced the need to convert and deliver docs to others... | 0 | 2024-06-14T11:35:58 | https://dev.to/nikoldimit/some-ideas-on-conversion-apis-3m6a | All developers have at some point likely experienced the need to convert and deliver docs to others in software apps, and though there are a variety of file types, chances are that the required conversion is from an MS Word doc or PDF.

What are Document Conversion APIs?

These are APIs that can take a source input fi... | nikoldimit | |

1,409,027 | Kubernetes Health Checks | Kubernetes is an open-source container orchestration platform that helps manage and deploy... | 0 | 2023-03-21T11:03:41 | https://dev.to/roshanpnq/kubernetes-health-checks-34aa | devops, kubernetes |

Kubernetes is an open-source container orchestration platform that helps manage and deploy containerized applications. One of the critical features of Kubernetes is its ability to perform health checks on containers... | roshanpnq |

1,884,680 | Building Custom RxJS Operators for HTTP Requests | Introduction In this article I'll focus on how to efficiently structure the logic in the... | 27,664 | 2024-06-14T11:34:17 | https://dev.to/cezar-plescan/refactoring-rxjs-operators-for-http-streams-25kh | angular, tutorial, rxjs, refactoring | ## Introduction

In this article I'll focus on how to efficiently **structure the logic in the HTTP request stream pipelines** for the loading and saving of the user data. Currently, the entire logic is handled within the `UserProfileComponent` class. I'll refactor this to achieve a more declarative and reusable approa... | cezar-plescan |

1,888,500 | Thrashing - One Byte Explainer | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-14T11:32:29 | https://dev.to/codewithtee/thrashing-one-byte-explainer-323i | devchallenge, cschallenge, computerscience, beginners | *This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

## Explainer

Thrashing happens when too many processes are sent to the CPU, causing high utilization at first. However, as more processes keep coming, the CPU gets overwhelmed, and its efficiency d... | codewithtee |

1,888,499 | Cheap Tow Truck | Introducing Cheap Tow Truck – your reliable solution for affordable towing services in Toronto,... | 0 | 2024-06-14T11:32:25 | https://dev.to/cheaptowtruck_/cheap-tow-truck-1co5 | Introducing Cheap Tow Truck – your reliable solution for affordable towing services in Toronto, Mississauga, and Durham! Don't let unexpected vehicle troubles put a dent in your day or wallet. Our dedicated team is here to provide prompt, professional towing assistance at prices that won't break the bank.

Whether you'... | cheaptowtruck_ | |

1,888,498 | Resize the image | In image processing and deep learning applications, resizing images is often... | 0 | 2024-06-14T11:30:26 | https://dev.to/mustafacam/resize-the-image-3f45 | In image processing and deep learning applications, resizing images is often important. Here are some reasons:

Size compatibility: Many deep learning models require fixed-size images as input. It is therefore important that all images in your dataset have the same size. Re... | mustafacam | |

1,888,497 | extended unpacking in Python | Python's extended unpacking feature, often denoted by the * operator, allows you to unpack iterable... | 0 | 2024-06-14T11:30:21 | https://dev.to/jeevanizm/extended-unpacking-in-python-5e55 | python | Python's extended unpacking feature, often denoted by the * operator, allows you to unpack iterable objects (like lists, tuples, strings) into individual elements or variables. This feature is useful in scenarios where you want to handle variable-length iterables or where specific elements need to be extracted from a l... | jeevanizm |

1,888,496 | Fill out the google form | Fill out the google form ... | 0 | 2024-06-14T11:30:12 | https://dev.to/lonewolfphd_e9ab63cd48142/fill-out-the-google-form-4odh | {% stackoverflow 78622671 %} | lonewolfphd_e9ab63cd48142 | |

1,888,495 | Tow Master Toronto | At Tow Master Toronto, we are your premier destination for a comprehensive range of towing and... | 0 | 2024-06-14T11:29:37 | https://dev.to/towmaster_toronto_942af0d/tow-master-toronto-2gej |

At Tow Master Toronto, we are your premier destination for a comprehensive range of towing and roadside assistance services in the Greater Toronto Area. Whether you're facing a roadside emergency or need reliable tow... | towmaster_toronto_942af0d | |

1,888,494 | comprehension in python | liste = [1, 2, 3, 4, 5] liste_carpilmis = [x * 2 for x in liste] print(liste_carpilmis) ... | 0 | 2024-06-14T11:29:35 | https://dev.to/mustafacam/comprehension-in-python-27c1 | ```

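# Source list of numbers
liste = [1, 2, 3, 4, 5]

# Build a new list by doubling every element of liste
liste_carpilmis = [x * 2 for x in liste]

# Prints [2, 4, 6, 8, 10]
print(liste_carpilmis)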

```
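
For comparison, the same result can be built with an explicit loop. This is only a sketch: `double_with_loop` is a hypothetical helper name chosen for illustration, not something from the original post.

```python
def double_with_loop(values):
    """Return a new list with every element of `values` multiplied by 2."""
    doubled = []
    for x in values:
        # Same work the comprehension does, one element at a time
        doubled.append(x * 2)
    return doubled


liste = [1, 2, 3, 4, 5]
liste_carpilmis = double_with_loop(liste)
print(liste_carpilmis)  # [2, 4, 6, 8, 10]
```

The comprehension is usually preferred for short transformations like this one because it states the new list in a single expression; the loop form tends to be easier to extend when each element needs several steps.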

This list comprehension multiplies each element of the list named liste by 2 and adds the multiplied values to a new list, liste_carpilmis. As a result, the elements of liste_carpilmis are twice those in liste... | mustafacam | |

1,888,493 | File Input and Output | Use the Scanner class for reading text data from a file and the PrintWriter class for writing text... | 0 | 2024-06-14T11:29:33 | https://dev.to/paulike/file-input-and-output-e2m | java, programming, learning, beginners | Use the **Scanner** class for reading text data from a file and the **PrintWriter** class for writing text data to a file. A **File** object encapsulates the properties of a file or a path, but it does not contain the methods for creating a file or for writing/reading data to/from a file (referred to as data _input_ an... | paulike |

1,892,626 | What’s New in WPF Gantt Chart: 2024 Volume 2 | TLDR: Let’s explore the new features added in the Syncfusion WPF Gantt Chart control for the 2024... | 0 | 2024-06-19T13:16:17 | https://www.syncfusion.com/blogs/post/wpf-gantt-chart-2024-volume-2 | wpf, development, gantt, desktop | ---

title: What’s New in WPF Gantt Chart: 2024 Volume 2

published: true

date: 2024-06-14 11:29:21 UTC

tags: wpf, development, gantt, desktop

canonical_url: https://www.syncfusion.com/blogs/post/wpf-gantt-chart-2024-volume-2

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/k6eudir9rf4tjpl5ughr.png

-... | gayathrigithub7 |

1,888,037 | HOW TO CREATE A WINDOWS 11 VM ON AZURE. | ### TABLE OF CONTENT GET A DOLLAR CARD. HOW TO OPEN AN AZURE ACCOUNT. USE YOUR FREE... | 0 | 2024-06-14T11:28:46 | https://dev.to/agana_adebayoo_876a06/how-to-create-a-windows-11-vm-on-azure-4ddd | azure, windows11, creation, grey | ### TABLE OF CONTENTS

1. GET A DOLLAR CARD.

2. HOW TO OPEN AN AZURE ACCOUNT.

3. USE YOUR FREE ACCOUNT.

4. CREATING WINDOWS 11 VM ON AZURE.

5. VIRTUAL MACHINE CREATION STEP BY STEP.

## INTRODUCTION

Microsoft Azure has one of the most user-friendly interfaces among cloud providers, and creating a Virtual Machine (VM) on it is... | agana_adebayoo_876a06 |

1,888,490 | Patient Feedback | | 0 | 2024-06-14T11:26:55 | https://dev.to/drpratikpatil125/patient-feedback-48lf | cancerspecialist, medicaloncologist |

```

```

| drpratikpatil125 |

1,888,489 | Mastering JavaScript Classes: A Comprehensive Guide.🚀🚀💪 | Introduction JavaScript classes, introduced in ECMAScript 2015 (ES6), provide a much... | 0 | 2024-06-14T11:25:55 | https://dev.to/dharamgfx/mastering-javascript-classes-a-comprehensive-guide-4hjh | webdev, javascript, beginners, learning | ### Introduction

JavaScript classes, introduced in ECMAScript 2015 (ES6), provide a much cleaner and more intuitive syntax for creating objects and dealing with inheritance. This post explores JavaScript classes in depth, covering essential concepts and features with clear examples.

---

### 1. **Overview**

JavaScri... | dharamgfx |

1,888,488 | Streamlining Business Operations with Workday’s Enterprise Management Cloud | Workday’s Enterprise Management Cloud is revolutionizing business operations with its comprehensive... | 0 | 2024-06-14T11:25:41 | https://mygroundbiz.co.uk/streamlining-business-operations-with-workdays-enterprise-management-cloud/ | workday, testing, automation |

Workday’s Enterprise Management Cloud is revolutionizing business operations with its comprehensive suite of tools for financial management, enterprise resource planning (ERP), and human capital management (HCM). As ... | rohitbhandari102 |

1,888,486 | HIGHER ORDER ARRAY METHODS IN JAVASCRIPT | WHAT ARE HIGHER ORDER METHODS? Higher order array methods in javascript are built-in methods that... | 0 | 2024-06-14T11:23:50 | https://dev.to/shreeprabha_bhat/higher-order-array-methods-in-javascript-4hjl | **WHAT ARE HIGHER ORDER METHODS?**

Higher-order array methods in JavaScript are built-in methods that take a function as an argument or return a function as a result.

JavaScript provides many higher-order methods that help with array manipulation tasks. A few commonly used ones are:

1. forEach()

2. ... | shreeprabha_bhat | |

1,888,350 | Cervical Cancer Treatment In Pune- Dr Pratik Patil | In India, Cervical Cancer is the 2nd most common type of cancer identified in women. It is also... | 0 | 2024-06-14T11:16:41 | https://dev.to/drpratikpatil125/cervical-cancer-treatment-in-pune-dr-pratik-patil-3f6j | cancerspecialist, drpratikpatil, oncologist | In India, Cervical Cancer is the 2nd most common type of cancer identified in women. It is also called cervix cancer. Cervical cancer occurs in approximately 1 in 53 Indian women during their lifetime.[ **Dr. Pratik Patil – Cancer Specialist in Pune**](https://www.pratikpatil.co.in/) at Jupiter Hospital, Baner has trea... | drpratikpatil125 |

1,888,485 | This Week In React #189: Next.js Security, useFormStatus, React State, Storybook, Skia Video, Starlink, App Clips, VisionOS... | Hi everyone! It's Benedikt again this week, as Sebastien is taking a break. For me, the week's most... | 18,494 | 2024-06-14T11:21:30 | https://thisweekinreact.com/newsletter/189 | react, reactnative | ---

series: This Week In React

canonical_url: https://thisweekinreact.com/newsletter/189

---

Hi everyone!

It's [Benedikt](https://x.com/bndkt) again this week, as Sebastien is taking a break.

For me, the week's most interesting topics are not big announcements or new releases but rather subtle hints at future work (... | sebastienlorber |

1,888,484 | power bi Training in kphb best training institute in hyderbad | Power BI Courses in Hyderabad Are you eager to Receive the Best Power BI Training in Kukapally You... | 0 | 2024-06-14T11:21:24 | https://dev.to/smiley_lokesh_c5541e90aec/power-bi-training-in-kphb-best-training-institute-in-hyderbad-3b30 | powerbi, datavisulization, softwareinstitute, javascript | [Power BI Courses in Hyderabad](https://www.vcubesoftsolutions.com/power-bi-training-in-kphb/)

Are you eager to receive the [Best Power BI Training in Kukapally](https://www.vcubesoftsolutions.com/power-bi-training-in-kphb/)? You are in the right place. V CUBE is a [Best Software Training Company in Hyderabad](https://... | smiley_lokesh_c5541e90aec |

1,888,380 | Time Management Hacks for CA Foundation Aspirants in Agra: Conquer the Clock! | Cracking the CA Foundation exam in Agra requires dedication, but with a packed schedule, managing... | 0 | 2024-06-14T11:20:20 | https://dev.to/ayaparizeau/time-management-hacks-for-ca-foundation-aspirants-in-agra-conquer-the-clock-2jc0 |

Cracking the [**CA Foundation**](https://navnibhartiyaclasses.com/product/ca-foundation/) exam in Agra requires dedication, but with a packed schedule, managing your time effectively becomes crucial. Don't worry, a... | ayaparizeau | |

1,888,352 | F1 score | The F1 score is a metric used to evaluate the performance of a classification model.... | 0 | 2024-06-14T11:19:35 | https://dev.to/mustafacam/f1-puani-5964 |

The F1 score is a metric used to evaluate the performance of a classification model. It is calculated as the harmonic mean of precision and recall.
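
As a rough sketch of that definition, the snippet below computes precision, recall, and their harmonic mean from raw counts. The function name `f1_score_from_counts` and the example counts are illustrative assumptions, not taken from the original post.

```python
def f1_score_from_counts(tp, fp, fn):
    """Compute F1 as the harmonic mean of precision and recall.

    tp, fp, fn are the counts of true positives, false positives and
    false negatives. Returns 0.0 whenever a denominator would be zero.
    """
    # Precision: share of predicted positives that are truly positive
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    # Recall: share of actual positives that the model found
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Hypothetical counts: 8 true positives, 2 false positives, 2 false negatives
print(f1_score_from_counts(8, 2, 2))
```

Because the harmonic mean is pulled toward the smaller of its two inputs, a model with high precision but very low recall (or the reverse) still gets a low F1 score.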

Precision measures how many of the examples the model predicted as positive are actually positive. Precision,... | mustafacam | |

1,888,351 | Howdy Partner! The Ultimate Guide to Barbie Cowgirl Outfits and Matching Looks | The classic Barbie cowgirl outfit embodies the Wild West’s spirit and has been a constant presence in... | 0 | 2024-06-14T11:19:22 | https://dev.to/ariajade/howdy-partner-the-ultimate-guide-to-barbie-cowgirl-outfits-and-matching-looks-34ff | The classic Barbie cowgirl outfit embodies the Wild West’s spirit and has been a constant presence in toy collections. It boosts creative play and results in outstanding costumes. But, choosing the right outfit can be tricky due to various choices and styles. Don’t worry! This detailed guide will assist you in selectin... | ariajade | |

1,888,349 | WhatsApp Through Virtual API Free: Revolutionize Marketing | Dive Deep into Bulk WhatsApp Through Virtual API Free Conquering today's digital... | 0 | 2024-06-14T11:15:50 | https://dev.to/ananya_seth12/whatsapp-through-virtual-api-free-revolutionize-marketing-42ic |

## **Dive Deep into Bulk WhatsApp Through Virtual API Free**

Conquering today's digital landscape demands leveraging the power of WhatsApp, a goldmine for businesses aiming to forge deeper connections with their ta... | ananya_seth12 | |

1,888,345 | Plant Engineering Services - 3D CAD Modeling - Indovance | Indovance offers comprehensive plant engineering services including plant layout, equipment... | 0 | 2024-06-14T11:13:24 | https://dev.to/cad-design-services/plant-engineering-services-3d-cad-modeling-indovance-2lh | plantengineeringservices, cad, 3dcadmodeling, engineering | [Indovance offers comprehensive plant engineering services including plant layout, equipment modelling, pipe routing, piping support, and skid modelling.](https://www.indovance.com/plant-engineering) | cad-design-services |

1,888,343 | Supreme Hoodie Fashion A Comprehensive Guide | Supreme has become synonymous with streetwear culture, and its hoodies are at the forefront of this... | 0 | 2024-06-14T11:10:59 | https://dev.to/ali_sajjad_f14184ad85cded/supreme-hoodie-fashion-a-comprehensive-guide-4kbl | supremehoodie, supreme, redsupreme, supremeofficial | Supreme has become synonymous with streetwear culture, and its hoodies are at the forefront of this fashion movement. The allure of Supreme hoodies lies in their unique designs, limited availability, and the brand's cult-like following. This article delves deep into Supreme hoodie fashion, exploring its history, cultur... | ali_sajjad_f14184ad85cded |

1,888,342 | what is python programming language used for? | What is Python Programming Language Used For? Introduction Python is a versatile and powerful... | 0 | 2024-06-14T11:10:38 | https://dev.to/saanvi1608/what-is-python-programming-language-used-for-1dc8 | python, programming |

What is Python Programming Language Used For?

Introduction

Python is a versatile and powerful programming language that has gained immense popularity in recent years. Known for its simplicity and readability, Python is used across a wide range of applications, from web development to data science. This article explore... | saanvi1608 |

1,888,341 | How to create a database and access specific data from it? | Last time, I learned how to create a database using a query in the terminal. mysql -uroot /*Press enter... | 0 | 2024-06-14T11:09:54 | https://dev.to/ghulam_mujtaba_247/how-to-create-a-database-and-access-specific-data-from-it-1ei9 | webdev, database, php, beginners | Last time, I learned how to create a database using a query in the terminal.

```sql

mysql -uroot

/* Press Enter, then type the following statement at the mysql prompt to create the database. */

create database myapp;

```

Now, I need to connect to the database so I can access the table and fetch the specific name.

To connect to the database, you'l... | ghulam_mujtaba_247 |

1,888,340 | Taming the Chaos: My Personal Practical Tips for Wrangling Messy Data | Ah, data. The lifeblood of data science, the fuel for powerful insights. But let's be honest,... | 0 | 2024-06-14T11:09:40 | https://dev.to/fizza_c3e734ee2a307cf35e5/taming-the-chaos-my-personal-practical-tips-for-wrangling-messy-data-2imb | datascience, wrangling, data, ai | Ah, data. The lifeblood of data science, the fuel for powerful insights. But let's be honest, real-world data is rarely pristine. In fact, it's more often a tangled mess of inconsistencies, missing values, and formatting oddities. This is where the art (and science) of data wrangling comes in.

Data wrangling, also know... | fizza_c3e734ee2a307cf35e5 |

1,888,339 | С++ | A post by Ahmadjon | 0 | 2024-06-14T11:08:59 | https://dev.to/ahmadjon_ce07fbecb974f925/s-1ci0 | ahmadjon_ce07fbecb974f925 | ||

1,888,338 | From Casual to Cool Transform Your Style with a Spider Hoodie | Fashion is an ever-evolving realm, and one of the most exciting trends making waves recently is the... | 0 | 2024-06-14T11:07:46 | https://dev.to/ali_sajjad_f14184ad85cded/from-casual-to-cool-transform-your-style-with-a-spider-hoodie-26c7 | spier, spiderhoodie, spider, spiderofficial | Fashion is an ever-evolving realm, and one of the most exciting trends making waves recently is the spider hoodie. This versatile piece has found its way into the wardrobes of trendsetters and casual wear enthusiasts alike. Whether you're heading out for a casual day with friends or looking to [Spider Hoodie](https://s... | ali_sajjad_f14184ad85cded |

1,888,337 | 8 ways to find IT decision-makers emails in the USA | Finding IT decision-makers' emails in the USA can be challenging, but with the right strategies, you... | 0 | 2024-06-14T11:06:40 | https://dev.to/saqib62/8-ways-to-find-it-decision-makers-emails-in-the-usa-4f7g | b2b, emaillist | Finding IT decision-makers' emails in the USA can be challenging, but with the right strategies, you can streamline the process. Here are eight effective ways to locate these valuable contacts:

1. LinkedIn: Use LinkedIn's advanced search features to filter profiles by job title, company, and location. Once identified, ... | saqib62 |

1,888,336 | Microservices Architecture: The Future of Building Scalable Systems | In recent years, microservices architecture has been gaining popularity among developers and... | 0 | 2024-06-14T11:06:34 | https://dev.to/iamthiago/arquitetura-de-microservices-o-futuro-da-construcao-de-sistemas-escalaveis-1mnc | In recent years, microservices architecture has been gaining popularity among developers and technology companies. This architectural model offers a modular approach to software development, allowing applications to be split into smaller, independent components known as "micro... | iamthiago | |

1,888,234 | Build Opensearch Queries through Eloquent | 🚀 Exciting News for all PHP developers! We have launched our first open-source Laravel package,... | 0 | 2024-06-14T10:08:49 | https://dev.to/codeartmk/build-opensearch-queries-through-eloquent-4nb9 | laravel, opensearch, webdev, programming | 🚀 Exciting News for all PHP developers!

We have launched our first open-source Laravel package, which combines Laravel Eloquent Models with AWS OpenSearch smoothly and seamlessly. With this plugin, tedious query writing becomes a thing of the past as it embraces streamlined operations, faster development cycles, and ... | codeartmk |

1,888,335 | Endless Reflections: Understanding Recursion Through a Cat's Eyes | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-14T11:04:33 | https://dev.to/kfir-g/endless-reflections-understanding-recursion-through-a-cats-eyes-3cje | devchallenge, cschallenge, computerscience, beginners | *This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

## Explainer

Recursion is like a stack of mirrors, each reflecting an image of the one before it, creating an infinite loop of reflections. Imagine a cat seeing itself in a mirror and thinking, "A ... | kfir-g |

1,888,334 | YTMP3: Your Simple Solution for YouTube to MP3 Conversion | In the realm of digital media, YouTube reigns supreme with its vast array of content. However,... | 0 | 2024-06-14T11:04:13 | https://dev.to/shiella/ytmp3-your-simple-solution-for-youtube-to-mp3-conversion-oe5 | In the realm of digital media, YouTube reigns supreme with its vast array of content. However, accessing YouTube videos offline isn’t straightforward. Enter [YTMP3](https://ytmp3.ing/), a free online tool designed for converting YouTube videos into MP3 audio files with ease.

**How It Works:**

1. **Copy and Paste**: S... | shiella | |

1,888,333 | Why buy the USA Advertising and Marketing Industry Email List? | Investing in a USA advertising and marketing industry email list can significantly boost your... | 0 | 2024-06-14T11:02:56 | https://dev.to/saqib62/why-buy-the-usa-advertising-and-marketing-industry-email-list-jg4 | b2b, email, businesscontactslist | Investing in a USA advertising and marketing industry email list can significantly boost your business growth and outreach efforts. Here are some compelling reasons to consider this strategic move:

1. Targeted Marketing: An email list specific to the advertising and marketing industry ensures your messages reach the r... | saqib62 |

1,888,332 | Denim Tears Hoodie: Artistic Design, Practical Comfort, and Versatile Appeal | The Denim Tears Hoodie is a distinctive piece of apparel that blends artistic design with... | 0 | 2024-06-14T11:01:31 | https://dev.to/yousaf654/denim-tears-hoodie-artistic-design-practical-comfort-and-versatile-appeal-5bpm | shirts, hoodie, jeans |

The Denim Tears Hoodie is a distinctive piece of apparel that blends artistic design with practicality and comfort. Here’s a comprehensive overview:

Material and Construction

High-Quality Denim: [Denim Tears Hoodie](https://denimtearsofficial.us/denim-tears-hoodie/) Each Denim Tears Hoodie is crafted from premium d... | yousaf654 |

1,888,331 | Letter For You | This Pen was based on Aysan tutorials. Huge props to him! | 0 | 2024-06-14T11:01:05 | https://dev.to/halfblood_007ae0fbd9c3c70/letter-for-you-1i31 | codepen | This Pen was based on Aysan tutorials. Huge props to him!

{% codepen https://codepen.io/smhmyhead/pen/pomPGXj %} | halfblood_007ae0fbd9c3c70 |

1,888,330 | 2024 Newest Guide for Developers to Clone Riggy Voice | Explore the latest methods in voice cloning with our 2024 guide. Learn how to clone Riggy's unique... | 0 | 2024-06-14T11:00:26 | https://dev.to/novita_ai/2024-newest-guide-for-developers-to-clone-riggy-voice-2l5b | ai, api, voiceclone |

Explore the latest methods in voice cloning with our 2024 guide. Learn how to clone Riggy's unique voice using AI technology, with insights on the best tools, benefits, and applications.

## Key Highlights

- Riggy's voice is essential to his character's identity and story on the DannoDraws channel.

- Voice cloning use... | novita_ai |

1,888,329 | ReactJS vs NextJS: A Comprehensive Comparison for JavaScript Enthusiasts.🚀 | Introduction In the dynamic world of web development, ReactJS and NextJS have emerged as... | 0 | 2024-06-14T10:59:54 | https://dev.to/dharamgfx/reactjs-vs-nextjs-a-comprehensive-comparison-for-javascript-enthusiasts-18o | webdev, react, nextjs, javascript |

### Introduction

In the dynamic world of web development, ReactJS and NextJS have emerged as two powerful tools for building modern web applications. While ReactJS provides a robust foundation for creating user interfaces, NextJS extends its capabilities by offering server-side rendering and other advanced features. ... | dharamgfx |

1,888,328 | Everything You Need to Know About Workday Human Capital Management (HCM) | Apr 18 (News On Japan) - In the past few years, modern HR operations have witnessed significant... | 0 | 2024-06-14T10:59:34 | https://newsonjapan.com/article/141722.php | workday, human, capital, management |

Apr 18 (News On Japan) - In the past few years, modern HR operations have witnessed significant transformation and disruptions in their traditional practices.

Yet the core functionality of human capital management o... | rohitbhandari102 |

1,887,739 | CREATE A WINDOWS 11 VM ON AZURE. | Creating a blog post with step-by-step details and screenshots on deploying and connecting to a... | 0 | 2024-06-14T10:58:08 | https://dev.to/free2soar007/create-a-windows-11-vm-on-azure-g4m | Creating a blog post with step-by-step details and screenshots on deploying and connecting to a virtual machine (VM) involves several stages. Outlined below are the various steps involved in deploying a VM.

**_STEP 1. Sign in to the Azure Portal._**

Access the Azure portal by typing in portal.azure.com in the web browse... | free2soar007 | |

1,888,692 | Tutorial: Web Crawler with Surf and Async-Std 🦀 | Hello, amazing people and welcome back to my blog! Today we're going to build a practical example in... | 0 | 2024-06-15T09:18:32 | https://eleftheriabatsou.hashnode.dev/tutorial-web-crawler-with-surf-and-async-std | rust, rusttutorial | ---

title: Tutorial: Web Crawler with Surf and Async-Std 🦀

published: true

date: 2024-06-14 10:57:40 UTC

tags: Rust,rustlang,Rusttutorial

canonical_url: https://eleftheriabatsou.hashnode.dev/tutorial-web-crawler-with-surf-and-async-std

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/o8b0gzn362utl... | eleftheriabatsou |

1,888,327 | Unlocking Business Potential with Microsoft Dynamics 365: A Comprehensive Guide | Introduction to Microsoft Dynamics 365: In an era where digital transformation is not just an option... | 0 | 2024-06-14T10:55:58 | https://dev.to/mylearnnest/unlocking-business-potential-with-microsoft-dynamics-365-a-comprehensive-guide-16pj | microsoft, microsoftdynamics | **Introduction to Microsoft Dynamics 365:**

In an era where digital transformation is not just an option but a necessity, businesses are constantly seeking robust solutions to streamline operations, enhance customer relationships, and drive growth. Microsoft Dynamics 365 stands out as a powerful suite of [enterprise r... | mylearnnest |

1,888,326 | Ceramic Bowl Set Stylish and Functional Bowls for Your Kitchen | Elevate your kitchen and dining experience with Earthan's exquisite Ceramic Bowl Set. Perfect for... | 0 | 2024-06-14T10:53:31 | https://dev.to/earthanarts/ceramic-bowl-set-stylish-and-functional-bowls-for-your-kitchen-95j | Elevate your kitchen and dining experience with Earthan's exquisite Ceramic Bowl Set. Perfect for storage and serving side dishes, our hand-glazed bowls boast unique designs and vibrant colors. Crafted from high-quality pottery ceramic, each piece exudes elegance and durability. Discover our wide range of uniquely desi... | earthanarts | |

1,888,325 | Using Public IP Addresses to Optimize Targeted Content Delivery | Using public IP addresses to provide digital content and provide tailored user experiences has become... | 0 | 2024-06-14T10:53:24 | https://dev.to/johnmiller/using-public-ip-addresses-to-optimize-targeted-content-delivery-44pa | Using public IP addresses to deliver digital content and tailored user experiences has become a critical tactic for companies looking to maximize their reach and interaction. Devices that access the internet are given **[public IP](uhttps://ipinfo.info/rl)** addresses, which act as unique identifiers and can pr... | johnmiller | |

1,888,309 | The Power of Progressive Web Apps (PWAs): Revolutionizing Web Development and User Experience | Have you ever wished for the speed and reliability of a native app in your browser? Progressive Web... | 0 | 2024-06-14T10:53:14 | https://dev.to/marcusminch/the-power-of-progressive-web-apps-pwas-revolutionizing-web-development-and-user-experience-23dh | webapps, pwa, webdev, ux | Have you ever wished for the speed and reliability of a native app in your browser? Progressive Web Apps (PWAs) might be the solution you're looking for.

Imagine an app that works offline, loads instantly, and adapts to any device you use—sounds ideal, right? PWAs combine the best features of web and mobile applicatio... | marcusminch |

1,888,323 | Quick Django Installation Script | I work with Django projects frequently, and often find myself repeating the same commands during... | 0 | 2024-06-14T10:50:06 | https://dev.to/stacknatic/quick-django-installation-script-2fa9 | I work with [Django projects](https://stacknatic.com/blog/why-i-switched-to-headless-django-with-nextjs) frequently, and often find myself repeating the same commands during Django installation.

To streamline the initialization of Django projects, I created an installation script for easy setup, which I’m sharing in ... | stacknatic | |

1,888,322 | Why DeFi Integration Is Essential for Business Growth in the Digital Economy? | In a time where technology is constantly changing the way businesses operate, decentralized finance... | 0 | 2024-06-14T10:49:37 | https://dev.to/anne69318/why-defi-integration-is-essential-for-business-growth-in-the-digital-economy-3h3i | In a time where technology is constantly changing the way businesses operate, decentralized finance (DeFi) is becoming a major factor in the transformation of the economy. Compared to conventional systems, DeFi's cutting-edge financial solutions are more transparent, easily accessible, and effective since they make use... | anne69318 | |

1,888,321 | UK Phone Number List | Buy UK phone number list for marketing. Our UK Consumer cell phone numbers database includes phone... | 0 | 2024-06-14T10:48:42 | https://dev.to/sale_leads/uk-phone-number-list-5pb | email |

Buy [UK phone number list](https://www.saleleads.net/email-list/uk-phone-number-list/) for marketing. Our UK Consumer cell phone numbers database includes phone numbers from all cities and states of the United King... | sale_leads |

1,888,319 | Using forms to create AI tools | Did you know that you don't need to be a seasoned programmer to create powerful AI tools? Imagine... | 0 | 2024-06-14T10:47:32 | https://dev.to/syedbalkhi/using-forms-to-create-ai-tools-1hkp | forms, ai | Did you know that you don't need to be a seasoned programmer to create powerful AI tools? Imagine having the ability to build a text generator, [blog title idea generator](https://aioseo.com/the-best-blog-post-title-generator/), [domain name ideas](https://www.nameboy.com/), and more - all without writing a single line... | syedbalkhi |

1,888,318 | Obejor Computers Nigeria | Welcome to Obejor Computers Nigeria! We are your premier destination for all things technology in... | 0 | 2024-06-14T10:44:42 | https://dev.to/nida_naz_71a9f3d7e69bee7e/obejor-computers-nigeria-42ae | Welcome to [Obejor Computers Nigeria](https://obejorcomputers.com/)! We are your premier destination for all things technology in Nigeria. At Obejor Computers, we pride ourselves on offering the latest in computer hardware, software, and accessories, ensuring you have access to cutting-edge technology. Whether you're l... | nida_naz_71a9f3d7e69bee7e | |

1,888,317 | Green Glasses: Your Protective Shield for Computer Coding Sessions | _## **Green Glasses: Your Protective Shield for Computer Coding Sessions_** In today's digital age,... | 0 | 2024-06-14T10:44:11 | https://dev.to/blant/green-glasses-your-protective-shield-for-computer-coding-sessions-3phj | ## [Green Glasses](https://www.efeglasses.com/eyeglasses/green/): Your Protective Shield for Computer Coding Sessions

In today's digital age, computer coding has become an integral part of many people's daily work and lives. Whether you are a professional programmer, a student, or a hobbyist, prolonged expos... | blant | |

1,814,005 | How Progressive Web Apps Can Benefit our Business | In an increasingly mobile-first world, optimizing the time to access our content and the user... | 0 | 2024-06-14T10:43:38 | https://dev.to/paco_ita/how-progressive-web-apps-can-benefit-our-business-4kni | webdev, pwa, javascript, productivity | In an increasingly mobile-first world, optimizing how quickly users can access our content and how good their experience is can determine the success of our business.

However, native mobile apps can be expensive to develop, require downloads from app stores, and take up valuable storage space on phones. This is where Progressive... | paco_ita |

1,888,314 | Usage issues of the editing cell ability of the VTable component: How to configure the editor and whether it can be reused? | Problem Description In business scenarios, there are many columns in the table. If each... | 0 | 2024-06-14T10:42:41 | https://dev.to/fangsmile/usage-issues-of-the-editing-cell-ability-of-the-vtable-component-how-to-configure-the-editor-and-whether-it-can-be-reused-31dc | visactor, vtable, visiualization, webdev | ## Problem Description

In business scenarios, a table often has many columns. Configuring an editor for each column individually is cumbersome. Is there a simpler way to define it?

## Solution

Choose how to configure the editor based on how widely it is reused in your business scenario:

1. Onl... | fangsmile |

1,888,313 | Enhanced Secure Session Management in JavaScript Web Applications | In today's digital age, securing user sessions is paramount to maintaining the integrity and... | 0 | 2024-06-14T10:42:41 | https://dev.to/saumya27/enhanced-secure-session-management-in-javascript-web-applications-4dp | javascript, webdev, programming | In today's digital age, securing user sessions is paramount to maintaining the integrity and confidentiality of web applications. Secure session management involves practices and mechanisms that ensure the protection of user sessions from unauthorized access and attacks. This blog explores the critical aspects of secur... | saumya27 |

1,888,311 | Deadlock : Computer Science challenge | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-14T10:40:28 | https://dev.to/itsjp/deadlock-computer-science-challenge-1mlk | devchallenge, cschallenge, computerscience, beginners | *This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

## Explainer

> Deadlock: A dance-off where no one moves because everyone’s waiting for someone else to start. Avoid it to keep systems groovin’.

<!-- Explain a computer science concept in 256 char... | itsjp |

1,888,310 | How to delete the content of the selected cell using hotkeys in VTable? | Question Description We have implemented the editable table business scenario using the... | 0 | 2024-06-14T10:37:58 | https://dev.to/fangsmile/how-to-delete-the-content-of-the-selected-cell-using-hotkeys-in-vtable-4p0 | vtable, visactor, visiualization, webdev | ## Question Description

We have implemented the editable table business scenario using the editing capabilities provided by VTable. However, there is a requirement to delete the content of the selected cell when the delete key or backspace key is pressed on the keyboard.

## Solution

Currently, VTable itself does not su... | fangsmile |

1,888,308 | NFTs in Academic Credential Verification | Introduction The integration of technology into the education sector has revolutionized... | 27,673 | 2024-06-14T10:37:41 | https://dev.to/rapidinnovation/nfts-in-academic-credential-verification-5ea0 | ## Introduction

The integration of technology into the education sector has revolutionized how academic credentials are managed and verified. Non-Fungible Tokens (NFTs) offer a transformative solution to address current challenges in the system.

## What are NFTs?

Non-fungible tokens (NFTs) are digital assets that re... | rapidinnovation | |

1,888,307 | The File Class | The File class contains the methods for obtaining the properties of a file/directory and for renaming... | 0 | 2024-06-14T10:37:11 | https://dev.to/paulike/the-file-class-f7h | java, programming, learning, beginners | The **File** class contains the methods for obtaining the properties of a file/directory and for renaming and deleting a file/directory. Having learned exception handling, you are ready to step into file processing. Data stored in the program are temporary; they are lost when the program terminates. To permanently stor... | paulike |

1,888,375 | VSCode DevContainer setup for C/C++ programmers | This article delves into getting a VS Code DevContainer Development environment based setup for early... | 0 | 2024-06-18T10:34:49 | https://blog.mandraketech.in/vscode-devcontainer-setup-for-cpp-programmers | c, devcontainer, vscode | ---

title: VSCode DevContainer setup for C/C++ programmers

published: true

date: 2024-06-14 10:35:20 UTC

tags: C,C,devcontainer,vscode

canonical_url: https://blog.mandraketech.in/vscode-devcontainer-setup-for-cpp-programmers

---

This article delves into getting a VS Code DevContainer Development environment based setu... | mandraketech |

1,888,306 | Hey there Folks - It's my First Post ! | Tonic-AI Community if actually found dev.to through forem, isnt that cool ? basically i... | 0 | 2024-06-14T10:34:18 | https://dev.to/tonic/hey-there-folks-its-my-first-post--52k | firstpost, firstyearincode, community, devjournal | ### Tonic-AI Community

i actually found dev.to through forem, isn't that cool?

basically i just started an accidental dev community on discord (i hate discord to be honest) and it kinda took off, we're making like 10 demos a week on good weeks and 2 or 3 on bad weeks, so the volume on code repos is really there :-)

... | tonic |

1,882,272 | Liman Application Monitoring Extension Installation | Extension Installation Documentation Contents Database Server... | 0 | 2024-06-14T10:33:56 | https://dev.to/aciklab/liman-uygulama-izleme-eklentisi-kurulumu-2ji4 | # Extension Installation Documentation

## Contents

- [Database Server Installation](#veritabanı-sunucusu-kurulumu)

- [PostgreSQL Installation (Skip If Already Installed)](#postgresql-kurulumu-varsa-atlayın)

- [Creating the Database and User](#veritabanı-ve-kullanıcı-oluşturma)

- [Backend Service Installation](#backend-servisi-kurulumu... | erenalpteksen | |

1,888,305 | How to manually update the state when using the Checkbox in the VTable component? | Problem Title Problem Description Is there a way to manually set the checkbox of the... | 0 | 2024-06-14T10:33:32 | https://dev.to/fangsmile/how-to-manually-update-the-state-when-using-the-checkbox-in-the-vtable-component-505o | visactor, vtable, webdev, visiualization | ## Problem Title

Problem Description

Is there a way to manually set the checkbox state of a ListTable in VTable, and how can the selected state of all checkboxes be cleared?

## Solution

Call the interface to update the state

You can call the `setCellCheckboxState` interface. This interface can set the checkbox state of a cell, and... | fangsmile |

1,888,304 | Amibroker Data Feed | Looking to Enhance your Trading accuracy then go with AmiBroker Data Feed Benefit from updates of... | 0 | 2024-06-14T10:32:06 | https://dev.to/vennila_nila_00c605117fc0/amibroker-data-feed-5gkc |

Looking to enhance your trading accuracy? Go with [AmiBroker Data Feed](https://amibrokerchart.com/).

Benefit from updates of real time market data using our reliable feed with AmiBroker. Used for magnified understanding and swifter decision making it enables a trader to look forward strategically in trading. Upgra... | vennila_nila_00c605117fc0 | |

1,888,303 | "William Shakespeare: The Bard of Avon" | **[ William Shakespeare,](https://youtu.be/qLrvt8QfyJ4?si=CnD91zM-4zb3AMNe)** often hailed as one of... | 0 | 2024-06-14T10:30:50 | https://dev.to/monna55/william-shakespeare-the-bard-of-avon-edg | webdev, william, shakespeare | **[ William Shakespeare,](https://youtu.be/qLrvt8QfyJ4?si=CnD91zM-4zb3AMNe)** often hailed as one of the greatest writers in the English language, was born in Stratford-upon-Avon, England, on April 23, 1564. His father, John Shakespeare, was a successful glove-maker and alderman, while his mother, Mary Arden, belon... | monna55 |

1,888,302 | 5 Key Differences to know about Regression Testing and Retesting | As you must be aware, depending on the stage of development, various types of testing are undertaken... | 0 | 2024-06-14T10:28:53 | https://dev.to/morrismoses149/5-key-differences-to-know-about-regression-testing-and-retesting-1l68 | retesting, regressiontesting, testgrid | As you must be aware, depending on the stage of development, various types of testing are undertaken during the multiple phases of the software development life cycle (SDLC). Each level of the SDLC has its own set of requirements and objectives for testing to meet.

Unit testing is followed by integration testing, syste... | morrismoses149 |

1,888,301 | Understanding End-to-End Encryption via RSA Encryption: A Teenager's Guide | Introduction Hey there! Have you ever wondered how your messages stay private when you chat with your... | 0 | 2024-06-14T10:28:30 | https://dev.to/efficacious/understanding-end-to-en-encryptivia-via-rsa-encryption-a-teenagerguide-3cg7 | [Introduction

Hey there! Have you ever wondered how your messages stay private when you chat with your friends online? How does your personal information stay safe from prying eyes? The answer lies in something called end-to-end encryption. One popular method for achieving this is RSA encryption. Let’s dive into what t... | efficacious | |

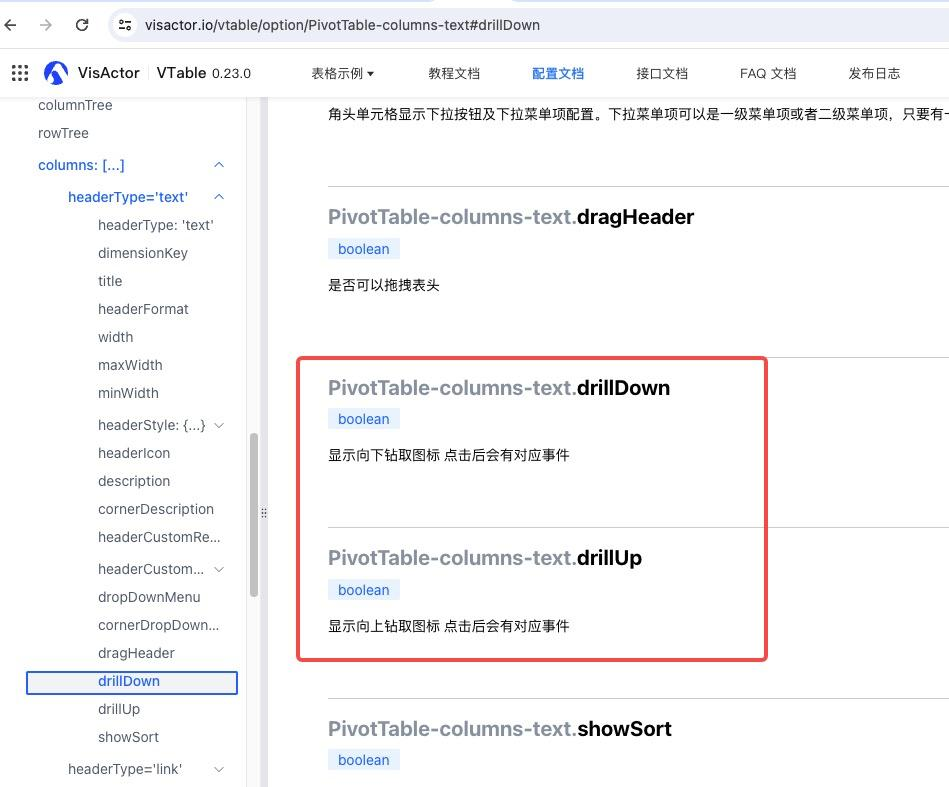

1,888,300 | How to implement dimension drill-down function when using VTable pivot table component? | Problem Description Does the VTable pivot table support drill-down interaction on the... | 0 | 2024-06-14T10:28:19 | https://dev.to/fangsmile/how-to-implement-dimension-drill-down-function-when-using-vtable-pivot-table-component-49pk | visactor, vtable, webdev, visiualization | ## Problem Description

Does the VTable pivot table support drill-down interaction on the front end?

## Solution

Configuring this will give you an icon and listen for events (https://visactor.io/vtable/api/events#D... | fangsmile |

1,886,414 | Pride in Your App - Trying Out GraphQL on Android | It's Pride month, y'all! As someone who is part of the LGBTIQA+ community, this month is both great... | 0 | 2024-06-14T10:27:00 | https://eevis.codes/blog/2024-06-14/pride-in-your-app-trying-out-graphql-on-android/ | graphql, android, mobile, programming | It's Pride month, y'all! As someone who is part of the LGBTIQA+ community, this month is both great and stressful at the same time. You never know what some people come up with - somehow, this month draws some really nasty people out. And with the rise of far-right in Europe... I'm not even going to get started.

I ha... | eevajonnapanula |

1,888,299 | How can I increase the gap between adjacent sparklines in the VTable component? | Question Description The mini graph in the product uses VTable, but the effect of... | 0 | 2024-06-14T10:25:57 | https://dev.to/fangsmile/how-can-i-increase-the-gap-between-adjacent-sparklines-in-the-vtable-component-1neh | webdev, vtable, visactor, visiualization | ## Question Description

Our product renders sparklines with VTable, but users find that adjacent line segments in the generated sparklines are too close together. How can this spacing be adjusted?

online cricket ID Cricket is not merely a sport but a profound passion that unites nations, transcends boundaries, and captures the hearts of millions worldwide. Originating in England and spreading across continents, cricket has evolved into more than just a game—it embodies cult... | tomwilliam_cf283937e0c858 | |

1,888,260 | Defining Custom Exception Classes | You can define a custom exception class by extending the java.lang.Exception class. Java provides... | 0 | 2024-06-14T10:20:27 | https://dev.to/paulike/defining-custom-exception-classes-pgm | java, programming, learning, beginners | You can define a custom exception class by extending the **java.lang.Exception** class. Java provides quite a few exception classes. Use them whenever possible instead of defining your own exception classes. However, if you run into a problem that cannot be adequately described by the predefined exception classes, you ... | paulike |

1,888,239 | AI-Powered Customs Software: Revolutionizing Global Trade | In the realm of modern technology, AI-powered customs software is reshaping international trade... | 0 | 2024-06-14T10:19:45 | https://dev.to/john_hall/ai-powered-customs-software-revolutionizing-global-trade-2ben | ai, machinelearning, automation, software | In the realm of modern technology, AI-powered customs software is reshaping international trade operations. By integrating AI and machine learning, this innovative solution streamlines customs clearance processes, ensuring efficiency and compliance with evolving regulations.

## From CHIEF to CDS: Embracing Innovation

... | john_hall |

1,888,238 | Leading Website Design Company in the USA | Discover the forefront of web design with our expert team. As a premier website design company in the... | 0 | 2024-06-14T10:19:26 | https://dev.to/adele_white/leading-website-design-company-in-the-usa-5cep | webdev, services, webdesign, usa | Discover the forefront of web design with our expert team. As a premier **[website design company in the USA](https://bootesnull.com/usa/)**, we specialize in creating stunning, functional websites tailored to your business needs. From sleek e-commerce platforms to responsive corporate sites, we blend creativity with t... | adele_white |

1,882,036 | CS50 - Week 2 | Compiling Encryption is the process of hiding plain text from prying eyes.... | 0 | 2024-06-14T10:19:09 | https://dev.to/udilbar/cs50-week-2-23cf | cs50, programming, c, learning | ## Compiling

**Encryption** is the process of hiding plain text from prying eyes. **Decryption**, in turn, is the process of returning encrypted text to a form a human can read.

Encrypted text may look like this:

Product Manager Learning Materials When I started my Engineering... | 0 | 2024-06-14T10:07:30 | https://dev.to/grapplingdev/product-manager-roadmap-337i | product, pm, roadmap, productmanager | #The Lack of Good (Free) Product Manager Learning Materials

When I started my **Engineering Management & Product Management** career (yes, both were one and the same at the start-ups I worked at!), it was quite difficult to find learning material that was both free and not just telling you to get a degree (real helpful.... | grapplingdev |

1,888,231 | Homaid: Where Needs Meet Solutions | Homaid is your ultimate destination for connecting people who need help with those who can provide... | 0 | 2024-06-14T10:07:06 | https://dev.to/coscut_india_1dc772aab069/homaid-where-needs-meet-solutions-kng | Homaid is your ultimate destination for connecting people who need help with those who can provide it. Whether you're seeking a little extra income or looking for someone reliable to assist with your tasks, our platform is here to make the process seamless and efficient.

For Job Seekers:

Are you searching for flexible... | coscut_india_1dc772aab069 | |

1,888,230 | Crafting a Stellar Portfolio: Your Gateway to Opportunities. | Creating a professional portfolio is one of the most powerful tools in showcasing your skills,... | 0 | 2024-06-14T10:05:57 | https://dev.to/g87code/crafting-a-stellar-portfolio-your-gateway-to-opportunities-4jk6 | webdev, javascript, beginners, programming | Creating a professional portfolio is one of the most powerful tools in showcasing your skills, achievements, and personality to potential employers or clients. A well-crafted portfolio can open doors to numerous opportunities, helping you stand out in a competitive market. Here's everything you need to know about build... | g87code |

1,888,219 | Middleware and Interceptors in NestJS: Best Practices | NestJS is a progressive Node.js framework for building efficient and scalable server-side... | 0 | 2024-06-14T10:04:12 | https://dev.to/ezilemdodana/middleware-and-interceptors-in-nestjs-best-practices-5923 | nestjs, backend, typescript, middleware | NestJS is a progressive Node.js framework for building efficient and scalable server-side applications. Among its many powerful features, middleware and interceptors stand out as essential tools for handling cross-cutting concerns in a clean and reusable manner. In this article, we'll explore the concepts of middleware... | ezilemdodana |

1,888,229 | Using ArgoCD & Terraform to Manage Kubernetes Cluster | Seamless deployment and management of your infrastructure and application is key for a successful... | 0 | 2024-06-14T10:02:32 | https://spacelift.io/blog/argocd-terraform | terraform, kubernetes, devops |

Seamless deployment and management of your infrastructure and application is key for a successful organization. This is where ArgoCD and Terraform can help.

Terraform is an infrastructure-as-code (IaC) tool that allows you to provision infrastructure and works with many cloud providers, databases, VCS systems, and Ku... | spacelift_team |

1,888,228 | multi meta server ceph | HPFS is a file metadata server based on Ceph RADOS, designed to address the bottleneck issues... | 0 | 2024-06-14T10:02:03 | https://dev.to/sy_z_5d0937c795107dd92526/multi-meta-server-ceph-4pe1 |

HPFS is a file metadata server based on Ceph RADOS, designed to address the bottleneck issues commonly encountered in Ceph file systems. Unlike traditional setups that rely on third-party storage for metadata, HPFS directly stores metadata on RADOS. This approach ensures metadata stability akin to that of Ceph, which... | sy_z_5d0937c795107dd92526 | |

1,888,227 | How to Find Your Leaked and Republished Photos on the Internet? What is a Face Recognition Search Engine? | Nowadays photos can easily be leaked and republished without your consent, leading to potential... | 0 | 2024-06-14T10:01:59 | https://dev.to/luxandcloud/how-to-find-your-leaked-and-republished-photos-on-the-internet-what-is-a-face-recognition-search-engine-2908 | ai, security, news, discuss | Nowadays photos can easily be leaked and republished without your consent, leading to potential privacy breaches and unauthorized usage. Whether you're an individual concerned about personal privacy or a professional protecting your intellectual property, finding your photos on the internet is a crucial task. This is w... | luxandcloud |

1,888,226 | Cloud Computing for Data and Software Engineers | Cloud computing has become a major buzzword in the last decade. The application of technology is... | 0 | 2024-06-14T10:01:34 | https://dev.to/michellebuchiokonicha/cloud-computing-for-data-and-software-developers-43ce | cloud, softwaredevelopment, softwareengineering, datascience | **Cloud computing** has become a major buzzword in the last decade. The application of technology is enabled and made possible by cloud computing.

A simple instance is the alarm on your phone. This alarm, after being created was made available to users through cloud computing. The applications we use daily and the rece... | michellebuchiokonicha |
