id | title | description | collection_id | published_timestamp | canonical_url | tag_list | body_markdown | user_username |
|---|---|---|---|---|---|---|---|---|

1,888,225 | Rhude Clothing The Ultimate Blend of Comfort and Couture | Fashion is ever-evolving, a dynamic canvas where art and practicality converge. Among the many brands... | 0 | 2024-06-14T10:01:32 | https://dev.to/ali_sajjad_3813614dd88ae6/rhude-clothing-the-ultimate-blend-of-comfort-and-couture-21bg | rhude, hoodie, rhudeclothing, rhudeofficial | Fashion is ever-evolving, a dynamic canvas where art and practicality converge. Among the many brands that have successfully carved a niche in this competitive landscape, Rhude Clothing stands out as a beacon of innovation. Rhude Clothing: The Ultimate Blend of Comfort and Couture, is a name that resonates with [Rhude ... | ali_sajjad_3813614dd88ae6 |

1,888,223 | Harnessing Firebase in React with Custom Hooks: A Practical Guide | Explore how to create a custom React hook for real-time data fetching from a Firestore database. | 0 | 2024-06-14T10:00:37 | https://dev.to/itselftools/harnessing-firebase-in-react-with-custom-hooks-a-practical-guide-42l7 | react, firebase, realtime, customhooks |

Here at [ItselfTools](https://itselftools.com), we've gained substantial experience developing over 30 projects using technologies like Next.js and Firebase. One powerful pattern we've leveraged is the combination of React hooks and Firebase to manage real-time data efficiently. This article will dive into a specific ... | antoineit |

1,888,221 | Swiper loop not work normal when slidesPerView: "auto" | Swiper's loop does not work normally when slidesPerView is set to "auto". How can I fix this? Link: codepen | 0 | 2024-06-14T10:00:06 | https://dev.to/kasym/swiper-loop-not-work-normal-when-slidesperview-auto-4oja | bug, loop, swiper, javascript | Swiper's loop does not work normally when slidesPerView is set to "auto". How can I fix this? Link: [codepen](https://codepen.io/Ermuhanow/pen/abrVXpQ) | kasym |

1,888,218 | Explore Rainbow Moonstone Bracelet from Silver Star Jewels | Introduction Having a great passion for jewelry, I have always been fascinated by the... | 0 | 2024-06-14T09:57:44 | https://dev.to/cheryl_najera_fd9ed1f49ec/explore-rainbow-moonstone-bracelet-from-silver-star-jewels-440c |

## **Introduction**

Having a great passion for jewelry, I have always been fascinated by the unique characteristics and beauty of natural stones. It gives me great pleasure to present to you today my analysis of the... | cheryl_najera_fd9ed1f49ec | |

1,888,217 | Chained Exceptions | Throwing an exception along with another exception forms a chained exception. The catch block... | 0 | 2024-06-14T09:55:30 | https://dev.to/paulike/chained-exceptions-384f | java, programming, learning, beginners | Throwing an exception along with another exception forms a chained exception. The **catch** block rethrows the original exception. Sometimes, you may need to throw a new exception (with additional information) along with the original exception. This is called _chained exceptions_. The program below illustrates how to c... | paulike |

1,888,211 | When to Use Exceptions | A method should throw an exception if the error needs to be handled by its caller. The try block... | 0 | 2024-06-14T09:47:04 | https://dev.to/paulike/when-to-use-exceptions-58e8 | java, programming, learning, beginners | A method should throw an exception if the error needs to be handled by its caller. The **try** block contains the code that is executed in normal circumstances. The **catch** block contains the code that is executed in exceptional circumstances. Exception handling separates error-handling code from normal programming t... | paulike |

1,888,216 | HTML QUESTIONS AND ANSWERS | Question1: What is HTML Answer1: HTML stands for HyperText Markup Language and is the language of the... | 0 | 2024-06-14T09:54:43 | https://dev.to/denkogee/html-questions-and-answers-3b0k | Question1: What is HTML

Answer1: HTML stands for HyperText Markup Language and is the language of the internet. It is the standard text formatting language used for creating and displaying pages on the Internet

HTML documents are made up of the elements and the tags that format it for proper display on pages. | denkogee | |

1,888,215 | HTML QUESTIONS AND ANSWERS | Question1: What is HTML Answer1: HTML stands for HyperText Markup Language and is the language of the... | 0 | 2024-06-14T09:54:21 | https://dev.to/denkogee/html-questions-and-answers-4p27 | Question1: What is HTML

Answer1: HTML stands for HyperText Markup Language and is the language of the internet. It is the standard text formatting language used for creating and displaying pages on the Internet

HTML documents are made up of the elements and the tags that format it for proper display on pages. | denkogee | |

1,888,213 | Rethrowing Exceptions | Java allows an exception handler to rethrow the exception if the handler cannot process the exception... | 0 | 2024-06-14T09:48:50 | https://dev.to/paulike/rethrowing-exceptions-4i2 | java, programming, learning, beginners | Java allows an exception handler to rethrow the exception if the handler cannot process the exception or simply wants to let its caller be notified of the exception.

The syntax for rethrowing an exception may look like this:

`try {

statements;

}

catch (TheException ex) {

perform operations before exits;

throw ex;

}... | paulike |

1,888,212 | Better ORM then Sqlalchemy Python ? Tortoise ORM 101 | What is Tortoise ORM? Tortoise ORM is an easy-to-use Object Relational Mapper (ORM) for... | 0 | 2024-06-14T09:47:51 | https://dev.to/0xaungkon/better-orm-then-sqlalchemy-python-tortoise-orm-101-29hp | webdev, python, sql, database | ### What is Tortoise ORM?

Tortoise ORM is an easy-to-use Object Relational Mapper (ORM) for Python, inspired by Django. It is designed to be simple yet powerful, providing a high-level API for interacting with databases.

### Benefits of Tortoise ORM:

- **Asynchronous Support:** Fully asynchronous, making it ideal fo... | 0xaungkon |

1,887,987 | Difference Between NextJS vs ExpressJS | Quick Overview: The difference between Next JS and Express JS is a matter of discussion. Clients... | 0 | 2024-06-14T05:36:45 | https://dev.to/milanpanchasara/difference-between-nextjs-vs-expressjs-4j1p |

**<u>Quick Overview</u>**: The difference between Next JS and Express JS is a matter of discussion. Clients become doubtful in the selection of an ideal framework. Both have benefits and limitations, so, when you need to make your application, you can choose based on your need. To make it an easy choice, the article ... | milanpanchasara | |

1,888,210 | iPhone App Development with Swift: A Step-by-Step Tutorial | Developing an iPhone app can be an exciting and rewarding experience. With Swift, Apple’s powerful... | 0 | 2024-06-14T09:47:01 | https://dev.to/chariesdevil/iphone-app-development-with-swift-a-step-by-step-tutorial-o20 | iphone, appdevelopment, hireiphoneappdeveloper | Developing an iPhone app can be an exciting and rewarding experience. With Swift, Apple’s powerful and intuitive programming language, creating robust and interactive applications has never been easier. This step-by-step tutorial will guide you through the basics of developing an iPhone app using Swift, from setting up... | chariesdevil |

1,888,147 | How to use is() Property in CSS | In the ever-evolving world of web development, CSS remains a fundamental language for crafting... | 27,759 | 2024-06-14T09:46:55 | https://dev.to/nnnirajn/mastering-the-is-property-in-css-step-by-step-guide-c2n | css, tutorial, html, ui | In the ever-evolving world of web development, CSS remains a fundamental language for crafting visually appealing user interfaces. Among the myriad of selectors and properties CSS offers, the `is()` function stands out as a potent tool that every UI developer should master. This article delves into the nuances of the `... | nnnirajn |

1,888,209 | The Hidden Truth: Quality Issues Are Often Just Quantity Problems | As a software engineer with nine years of experience, I've had the privilege of working with some... | 0 | 2024-06-14T09:46:55 | https://dev.to/haikelei/the-hidden-truth-quality-issues-are-often-just-quantity-problems-2fc2 | webdev, vscode, programming, devops | As a software engineer with nine years of experience, I've had the privilege of working with some truly exceptional developers. These colleagues have mastered the art of coding and consistently deliver high-quality software. However, I've also seen many others remain average and unremarkable. This disparity intrigued m... | haikelei |

1,888,207 | Flutter vs. React Native: A Detailed Comparison for App Development in 2024 | Choosing the right framework for your cross-platform mobile app can be a tough decision. Both Flutter... | 0 | 2024-06-14T09:43:48 | https://dev.to/dhaval_vaghela_86d1a3c769/flutter-vs-react-native-a-detailed-comparison-for-app-development-in-2024-5hbh | reactnative, flutter, reactjsdevelopment, webdev | Choosing the right framework for your cross-platform mobile app can be a tough decision. Both Flutter and React Native offer compelling features and have earned their place in the development world. Here is a detailed comparison to help you weigh their strengths and weaknesses. Here are the pros and cons of developing m... | dhaval_vaghela_86d1a3c769 |

1,888,206 | The finally Clause | The finally clause is always executed regardless of whether an exception occurred or not. Occasionally,... | 0 | 2024-06-14T09:43:07 | https://dev.to/paulike/the-finally-clause-4jfl | java, programming, learning, beginners | The **finally** clause is always executed regardless of whether an exception occurred or not. Occasionally, you may want some code to be executed regardless of whether an exception occurs or is caught. Java has a **finally** clause that can be used to accomplish this objective.

The syntax for the **finally** clause might... | paulike |

1,888,204 | More on Exception Handling | A handler for an exception is found by propagating the exception backward through a chain of method... | 0 | 2024-06-14T09:36:31 | https://dev.to/paulike/more-on-exception-handling-529c | java, programming, learning, beginners | A handler for an exception is found by propagating the exception backward through a chain of method calls, starting from the current method. Java’s exception-handling model is based on three operations: _declaring an exception_, _throwing an exception_, and _catching an exception_, as shown in figure below.

is the modified form of Kinemaster APK which is a boon for the users in 2024. Kinemaster mod APK is a video editing app available on the Google Play Store for Android smartphones. It is specially a professional video editing app which can be used to create good... | tushar_kalal_41c93b6bfaf0 |

1,888,199 | One Byte Explainer | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-14T09:29:48 | https://dev.to/sharmi2020/one-byte-explainer-57b0 | devchallenge, cschallenge, computerscience, beginners | *This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

## Explainer

Local storage, session storage, and cookies:

**Local storage**:

It is simply a web storage mechanism used to store data locally. Whenever the user shuts down the window there is no... | sharmi2020 |

1,888,198 | Leading the Way in Microfinance Technology: Vexil Infotech's Integrated Solution Suite | Embrace the future of Microfinance Software technology with Vexil Infotech Pvt Ltd's Integrated... | 0 | 2024-06-14T09:28:35 | https://dev.to/vexilinfotech24/leading-the-way-in-microfinance-technology-vexil-infotechs-integrated-solution-suite-2p7l | microfinancesoftware | Embrace the future of [Microfinance Software](https://vexilinfotech.com/microfinance-software) technology with Vexil Infotech Pvt Ltd's Integrated Microfinance Solution (IMS). Vexil is the best Microsoft Software Provider in India. With its advanced features and customizable modules, IMS provides microfinance institut... | vexilinfotech24 |

1,888,197 | Top Brands Use Social Media Marketing To Expand Their Business | With regard to increasing brand recognition and cultivating brand loyalty among a clientele, social... | 0 | 2024-06-14T09:25:48 | https://dev.to/toby_garfield_8e5dc77e905/top-brands-use-social-media-marketing-to-expand-their-business-3ck5 | socialmediamarketing | With regard to increasing brand recognition and cultivating brand loyalty among a clientele, social media platforms have shown to be effective instruments. When used strategically, social media can have a noticeable, quantifiable impact on an organization's bottom line due to its extraordinary development potential. On... | toby_garfield_8e5dc77e905 |

1,888,196 | Iron Casting Foundries: Where Tradition Meets Innovation | screenshot-1718295455310.png Why Iron casting: Where Tradition Meets Innovation is the Way to... | 0 | 2024-06-14T09:25:28 | https://dev.to/jahira_hanidha_ac8711fb57/iron-casting-foundries-where-tradition-meets-innovation-544m |

screenshot-1718295455310.png

Why Iron casting: Where Tradition Meets Innovation is the Way to Go

Benefits of Making Use Of Iron casting

Iron casting are devices which were built to supply a high-quality and way like efficient of iron into various services and products

Utilizing the Iron casting, manufacturers ca... | jahira_hanidha_ac8711fb57 | |

1,888,173 | 5 Essential Cybersecurity Tips to Secure Your Business in 2024 | Keeping Your Business Secure: 5 Essential Cybersecurity Tips There are so many possibilities for... | 0 | 2024-06-14T09:19:43 | https://dev.to/sennovate/5-essential-cybersecurity-tips-to-secure-your-business-in-2024-4870 | cybersecurity, security, iam, identity | Keeping Your Business Secure: 5 Essential Cybersecurity Tips

There are so many possibilities for your business to grow within this new era of customer engagement and market expansion. However, it is not without its risks. To secure your business—akin to fortifying your home against insiders and intruders—you need rob... | sennovate |

1,888,195 | Formengine Release notes 1.3.0 | Added the RsSignature component for drawing signatures. The text of the form data and errors in the... | 0 | 2024-06-14T09:24:29 | https://dev.to/optimajetlimited/formengine-release-notes130-3hgo | - Added the RsSignature component for drawing signatures.

- The text of the form data and errors in the left panel on the designer preview is now scrollable.

- Fixed minor style breakdowns in the designer scrollbars.

[Formengine Release notes ](https://formengine.io/documentation/release-notes) | optimajet | |

1,888,194 | Coil and Ktor in Kotlin Multiplatform Compose project | Many people are trying but having a hard time adding Coil in a Multiplatform compose project that... | 0 | 2024-06-14T09:22:50 | https://dev.to/gochev/coil-and-ktor-in-kotlin-multiplatform-compose-project-5d3i | kotlin, multiplatform, compose, multiplatformcompose | Many people are trying but having a hard time adding Coil in a Multiplatform compose project that works on JVM, Android, iOS, and WASM.

Now we have to use Ktor, since OkHttp is Android-only for now.

We will be using:

- Coil 3.0 https://coil-kt.github.io/coil/

- Ktor 3.0 with wasm support

After spending half a day m... | gochev |

1,888,193 | Memory Data Register (MDR) in Computer Architecture | In the realm of computer architecture, efficient data management is pivotal for the smooth operation... | 0 | 2024-06-14T09:21:29 | https://dev.to/pushpendra_sharma_f1d2cbe/memory-data-register-mdr-in-computer-architecture-4c8 | webdev, productivity, learning, design | In the realm of computer architecture, efficient data management is pivotal for the smooth operation of a computer system. One of the critical components in this domain is the Memory Data Register (MDR), which plays a fundamental role in the interaction between the CPU and the memory subsystem. This article delves into... | pushpendra_sharma_f1d2cbe |

1,888,163 | CrewAI: Revolutionizing Workforce Management with AI | In the fast-evolving landscape of technology, artificial intelligence (AI) continues to transform... | 0 | 2024-06-14T09:19:49 | https://dev.to/rootviper4/crewai-revolutionizing-workforce-management-with-ai-405m | crewai, python, ai, webdev | In the fast-evolving landscape of technology, artificial intelligence (AI) continues to transform industries by optimizing processes, enhancing efficiency, and driving innovation. One such revolutionary application of AI is in workforce management, and at the forefront of this transformation is CrewAI. In this article,... | rootviper4 |

1,888,172 | odoo v14 and issues with requirements.txt | When you install Odoo's requirements.txt, you may get an error like the one below UnicodeDecodeError:... | 0 | 2024-06-14T09:19:07 | https://dev.to/jeevanizm/odoo-v14-and-issues-with-requirementstxt-2o23 | When you install Odoo's requirements.txt, you may get an error like the one below

```

UnicodeDecodeError: 'charmap' codec can't decode byte 0x9d in position 967: character maps to <undefined>

----------------------------------------

WARNING: Discarding https://files.pythonhosted.org/packages/89/ad/9388970542f82857ac2958b3ea... | jeevanizm | |

1,881,404 | The "this" confusion in JS: function vs arrow | Hey there, good to see you back again! In this blog I would like to clear up a common confusion for... | 0 | 2024-06-14T09:16:22 | https://dev.to/balajich004/the-this-confusion-in-js-function-vs-arrow-11lj | javascript, webdev, programming, node | Hey there, good to see you back again! In this blog I would like to clear up a common confusion for many JS beginners: how "this" behaves in arrow functions versus normal functions.

## Standard function's perspective of this keyword

"this" is a keyword in JS that normally refers to the object that we are c... | balajich004 |

1,888,171 | Electrifying the Road: Exploring DC EV Charging Technology | H09b71ff2f7a942d18ebc7910a120b077J.png Electrifying the Road: Exploring DC EV Charging... | 0 | 2024-06-14T09:16:20 | https://dev.to/jahira_hanidha_ac8711fb57/electrifying-the-road-exploring-dc-ev-charging-technology-180d | H09b71ff2f7a942d18ebc7910a120b077J.png

Electrifying the Road: Exploring DC EV Charging Technology

Introduction

Electric automobiles (EVs) are becoming a lot more popular amidst car purchasers, and for justification. Not simply do they help decrease greenhouse gas emissions, additionally provide the trip plus qu... | jahira_hanidha_ac8711fb57 | |

1,888,170 | FSSC 22000 Certification in Zimbabwe | FSSC 22000 Certification in Zimbabwe is increasingly gaining traction among food industry... | 0 | 2024-06-14T09:15:21 | https://dev.to/neha_1ba1ad0deb4d4c484e1c/fssc-22000-certification-in-zimbabwe-a5g | FSSC 22000 Certification in Zimbabwe is increasingly gaining traction among food industry stakeholders. This certification ensures that companies adhere to international food safety standards, which is crucial in a market where food safety and quality are paramount. By obtaining FSSC 22000 Certification in Zimbabwe, bu... | neha_1ba1ad0deb4d4c484e1c | |

1,888,169 | What are the courses provided by careerpedia ? | You're interested in pursuing courses in careerpedia like data science, UI/UX design, web... | 0 | 2024-06-14T09:14:51 | https://dev.to/naveen_azmeera_3b6658344b/what-are-the-courses-provided-by-careerpedia--3gj5 |

You're interested in pursuing courses in careerpedia like data science, UI/UX design, web development, automation, and potentially even language proficiency through IELTS. That's quite a diverse set of skills and interests! Here's how you might approach "coaching" in these areas:

Data Science:

Look for online courses... | naveen_azmeera_3b6658344b | |

1,890,778 | Create a pagination API with Spring Boot | Splitting larger content into distinct pages is known as pagination. This method significantly... | 0 | 2024-06-17T03:37:22 | https://blog.stackpuz.com/create-a-pagination-api-with-spring-boot/ | springboot, pagination | ---

title: Create a pagination API with Spring Boot

published: true

date: 2024-06-14 09:12:00 UTC

tags: SpringBoot,Pagination

canonical_url: https://blog.stackpuz.com/create-a-pagination-api-with-spring-boot/

---

Splitting larger con... | stackpuz |

1,888,167 | Steps To Successfully Outsource Your Software Projects | Outsourcing software projects effectively requires careful execution and strategic planning. Clearly... | 0 | 2024-06-14T09:11:55 | https://dev.to/infowindtech57/steps-to-successfully-outsource-your-software-projects-245k | mobile, softwaredevelopment | Outsourcing software projects effectively requires careful execution and strategic planning. Clearly define the parameters and objectives of your project from the start to ensure alignment with the outsourcing partner. According to Statistica, IT outsourcing contributes $66.5 billion, or the highest percentage of the $92.5 ... | infowindtech57 |

1,888,166 | Affordable Web Hosting: Reliable Services at Unbeatable Prices | Discover our affordable web hosting services, where reliability meets unbeatable prices. Whether... | 0 | 2024-06-14T09:10:04 | https://dev.to/zapakhost/affordable-web-hosting-reliable-services-at-unbeatable-prices-521j | affordable, webhosting, zapakhost, hosting | Discover our [affordable web hosting](https://zapakhost.com/?utm_source=Web%202.0&utm_medium=Dev%20to&utm_campaign=Articles) services, where reliability meets unbeatable prices. Whether you're launching a personal blog or managing a business website, our hosting solutions ensure dependable performance and support.

Starting a business in Allahabad, a city known for its rich cultural heritage and growing economy, can be a rewarding venture. From understanding the local market to leveraging digital marketing strategies, this gui... | aditya_pandey_1847fe5a44a | |

1,888,164 | Why Should I Invest in Developing a Metaverse NFT Marketplace? | The digital world is changing fast, with two big developments: the Metaverse and NFTs (Non-Fungible... | 0 | 2024-06-14T09:08:09 | https://dev.to/elena_marie_dad5c9d5d5706/why-should-i-invest-in-developing-a-metaverse-nft-marketplace-4ogb | metaverse, nft, marketplace | The digital world is changing fast, with two big developments: the Metaverse and NFTs (Non-Fungible Tokens). It's time to get caught up if you haven't noticed already. Why should you consider creating a Metaverse NFT marketplace? Let's find out.

What is a Metaverse NFT Marketplace?

The Metaverse is an online space wh... | elena_marie_dad5c9d5d5706 |

1,888,162 | Pure ML vs Applied ML | ⚡𝐏𝐮𝐫𝐞 𝐌𝐚𝐜𝐡𝐢𝐧𝐞 𝐋𝐞𝐚𝐫𝐧𝐢𝐧𝐠 is a subfield of artificial intelligence that focuses on the development and... | 0 | 2024-06-14T09:04:35 | https://dev.to/sagorbro005/pure-ml-vs-applied-ml-197n | pureml, appliedml, machinelearning | ⚡𝐏𝐮𝐫𝐞 𝐌𝐚𝐜𝐡𝐢𝐧𝐞 𝐋𝐞𝐚𝐫𝐧𝐢𝐧𝐠 is a subfield of artificial intelligence that focuses on the development and study of algorithms that can learn from and make predictions or decisions based on data. It involves the study of how these algorithms can be designed to learn from data, identify patterns, and make de... | sagorbro005 |

1,888,161 | Interfacing with FMZ robot using "Tradingview" indicator | Background introduction TradingView is a good market quotes drawing tool. The pine script... | 0 | 2024-06-14T09:03:28 | https://dev.to/fmzquant/interfacing-with-fmz-robot-using-tradingview-indicator-5hme | trading, indicator, robot, fmzquant | ## Background introduction

TradingView is a good tool for charting market quotes.

Pine Script is also very powerful!

With backtesting, alerts, and various integrations, it is a very complete financial tool.

But there are two issues that have been plaguing us...

- One is the expensive membership system

- The second is tha... | fmzquant |

1,888,159 | Exploring the Evolution: A Dive into the Fashion Industry Trends | Discover the dynamic shifts and innovations driving the fashion industry's evolution, from... | 0 | 2024-06-14T08:55:26 | https://dev.to/arsports/exploring-the-evolution-a-dive-into-the-fashion-industry-trends-22i4 | webdev, beginners, javascript, programming | Discover the dynamic shifts and innovations driving the fashion industry's evolution, from sustainability to digital influence, shaping consumer preferences worldwide.

Shop Now- https://www.arsports.com.co/ | arsports |

1,888,158 | Dr. Nubi Achebo Awarded Distinguished Professorship In Management By AUBSS And QAHE | The American University of Business and Social Sciences (AUBSS) and the International Association for... | 0 | 2024-06-14T08:52:29 | https://dev.to/aubss_edu/dr-nubi-achebo-awarded-distinguished-professorship-in-management-by-aubss-and-qahe-2jep | education, news, aubss, qahe | The American University of Business and Social Sciences (AUBSS) and the International Association for Quality Assurance in Pre-Tertiary & Higher Education (QAHE) proudly announce the prestigious Distinguished Professorship in Management awarded to Dr. Nubi Achebo.

With an outstanding career spanning over 25 years, ... | aubss_edu |

1,888,156 | Exception Types | Exceptions are objects, and objects are defined using classes. The root class for exceptions is... | 0 | 2024-06-14T08:49:16 | https://dev.to/paulike/exception-types-351p | java, programming, learning, beginners | Exceptions are objects, and objects are defined using classes. The root class for exceptions is **java.lang.Throwable**. The preceding section used the classes **ArithmeticException** and **InputMismatchException**. Are there any other types of exceptions you can use? Can you define your own exception classes? Yes. The... | paulike |

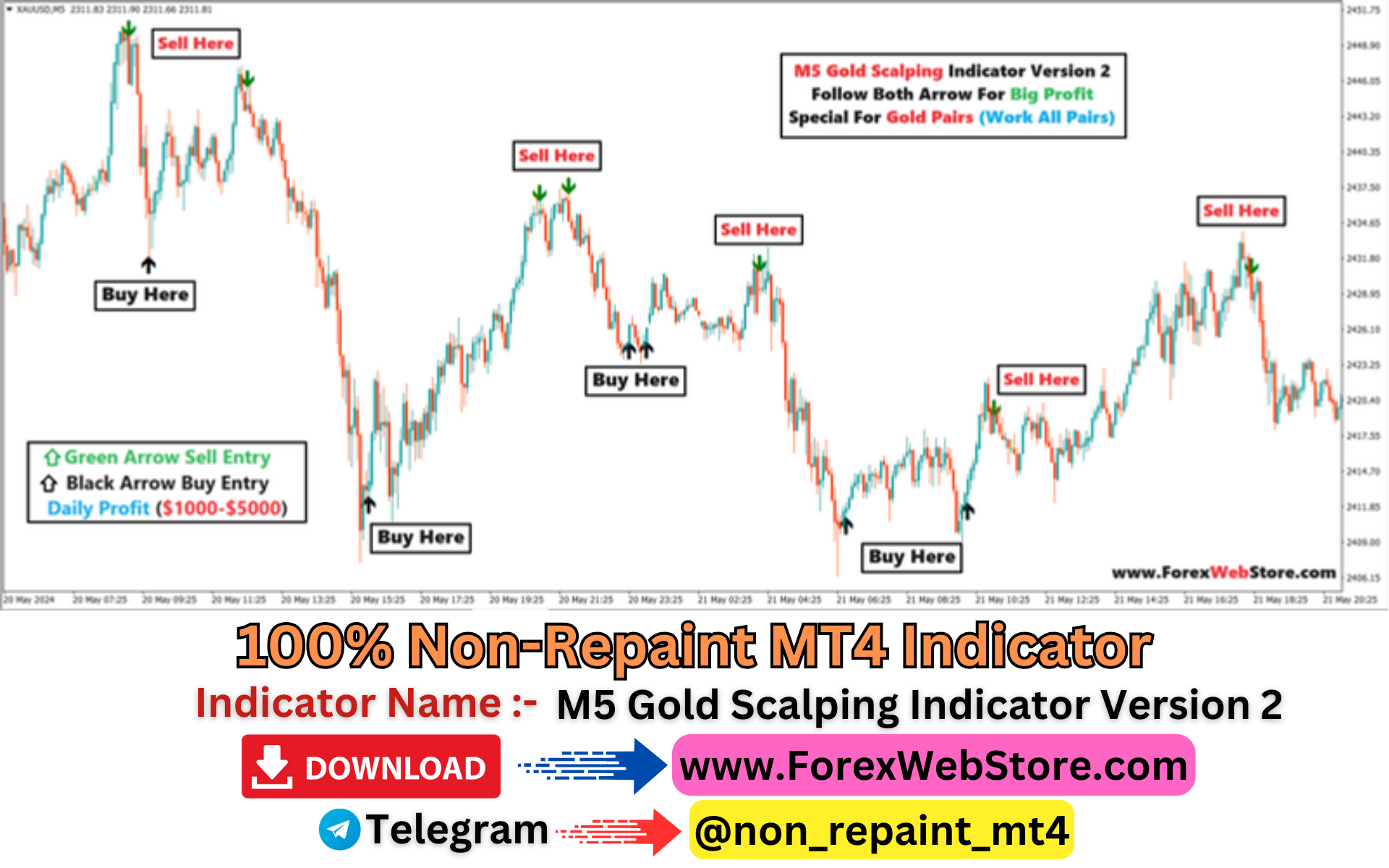

1,888,150 | Managed Forex Account Services | A Managed Forex Account Service is a type of investment service where professional traders or money... | 0 | 2024-06-14T08:38:52 | https://dev.to/akash_fx/managed-forex-account-services-16mk | webdev, forex, scalping, account | A [Managed Forex Account Service](https://forexwebstore.com/account-management/) is a type of investment service where professional traders or money managers trade the foreign exchange (Forex) market on behalf of clients. These services can be attractive for individuals who want to invest in Forex but lack the time, ex... | akash_fx |

1,888,155 | Getting Started with NestJS and TypeORM: A Beginner's Guide | Hey everyone! 👋 If you're looking to build scalable and maintainable server-side applications with... | 0 | 2024-06-14T08:47:20 | https://dev.to/souhailxedits/getting-started-with-nestjs-and-typeorm-a-beginners-guide-ggc | nestjs, typeorm, webdev, apigateway | Hey _everyone_! 👋 If you're looking to build scalable and maintainable server-side applications with Node.js, NestJS is a fantastic framework to dive into. Combined with TypeORM for database interactions, you can create robust and type-safe applications with ease. In this guide, we'll walk through setting up a simple ... | souhailxedits |

1,888,154 | Python Online Classes: Master Coding with Kodyfier in India | If you're eager to delve into the world of programming, learning Python is a fantastic place to... | 0 | 2024-06-14T08:46:57 | https://dev.to/riya45/python-online-classes-master-coding-with-kodyfier-in-india-4d2f | pythononlineclasses, pythononlineclass, pythononlineclassesinindia, kodyfier |

If you're eager to delve into the world of programming, learning Python is a fantastic place to start. Renowned for its simplicity and versatility, Python is a top choice for both beginners and seasoned developers.... | riya45 |

1,888,153 | Best Forex Indicators | Visit for More Indicator :- https://forexwebstore.com/product-category/mt4-indicator/ | 0 | 2024-06-14T08:44:17 | https://dev.to/akash_fx/best-forex-indicators-3bj4 | indicators, forex, scalper, market |

V... | akash_fx |

1,888,152 | My Pen on CodePen | Liquid syntax error: Tag '{% %}' was not properly terminated with regexp: /\%\}/ | 0 | 2024-06-14T08:43:19 | https://dev.to/__36002242a86c9/my-pen-on-codepen-48nk | codepen | {% %}Check out this Pen I made!

{% codepen https://codepen.io/Mohammedealanizi/pen/jOoaXvr %} | __36002242a86c9 |

1,888,151 | SAFE ACCOUNT MANAGEMENT | WANT TO JOIN ACCOUNT MANAGEMENT ? LOOK OUR PLANS FOR ACCOUNT MANAGEMENT 👇 SOME OTHER DETAILS FOR... | 0 | 2024-06-14T08:41:12 | https://dev.to/akash_fx/safe-account-management-19fb | forex, account, manager, scalper | WANT TO JOIN ACCOUNT MANAGEMENT ?

LOOK OUR PLANS FOR ACCOUNT MANAGEMENT 👇

SOME OTHER DETAILS FOR ACCOUNT MANAGEMENT

🔴 Stop Wasting Time & Your Money Join Us Change Your Life. Interested People Talk Us 🔴

🟢 Are you in Big Loss ? Or Your Account is Running Much Loss.

🟢 Contact Us for Safe and Secure Account Manageme... | akash_fx |

1,888,149 | Exception-Handling Overview | Exception handling enables a program to deal with exceptional situations and continue its normal... | 0 | 2024-06-14T08:37:13 | https://dev.to/paulike/exception-handling-overview-5eo8 | java, programming, learning, beginners | Exception handling enables a program to deal with exceptional situations and continue its normal execution. _Runtime errors_ occur while a program is running if the JVM detects an operation that is impossible to carry out. For example, if you access an array using an index that is out of bounds, you will get a runtime ... | paulike |

1,888,148 | Top 17 Fast-Growing Github Repo of 2024 | Ehy Everybody 👋 It’s Antonio, CEO & Founder at Litlyx. I come back to you with a... | 0 | 2024-06-14T08:36:23 | https://dev.to/litlyx/top-17-fast-growing-github-repo-of-2024-cm7 | webdev, javascript, programming, tutorial | ## Ehy Everybody 👋

It’s **Antonio**, CEO & Founder at [Litlyx](https://litlyx.com).

I'm back with a curated **Awesome List of resources** that you may find interesting.

Today's subject is...

```bash

Top 17 Fastest Growing GitHub Repositories of 2024

```

Share some **Love** & leave a **Star** on our **Ope... | litlyx |

1,888,146 | Python Class Online: Elevate Your Coding Skills with Kodyfier in India | In today's tech-driven world, acquiring proficiency in programming languages is essential for... | 0 | 2024-06-14T08:34:06 | https://dev.to/riya45/python-class-online-elevate-your-coding-skills-with-kodyfier-in-india-1be0 | pythonclassonline, pythonclassesonline, pythonclassonlineinindia, kodyfier |

In today's tech-driven world, acquiring proficiency in programming languages is essential for anyone looking to thrive in the IT industry. One language that stands out for its versatility and ease of learning is Py... | riya45 |

1,888,145 | Docker for Dummies- Introduction to docker | Welcome to Docker for Dummies! This is going to be an idk-how-many-part series where I'll... | 27,767 | 2024-06-14T08:34:05 | https://dev.to/swikritit/docker-for-dummies-introduction-to-docker-5h67 | docker, containers, devops, linux | ### Welcome to Docker for Dummies!

This is going to be an `idk-how-many-part series` where I'll try to explain docker in as simple a way as possible. I'm also new to the world of containerization so the purpose of this series is to try to teach this technology while learning it myself.

If you are new to the tech indu... | swikritit |

1,888,144 | Navigating the Real Estate Market in Allahabad | Navigating the real estate market in Allahabad can be a hard task, whether you are a first-time... | 0 | 2024-06-14T08:33:51 | https://dev.to/aditya_pandey_1847fe5a44a/navigating-the-real-estate-market-in-allahabad-23kd |

Navigating the real estate market in Allahabad can be a hard task, whether you are a first-time homebuyer or an experienced investor. With the dynamic nature of the market and the influx of modern infrastructure pro... | aditya_pandey_1847fe5a44a | |

1,888,143 | Test Plan vs Test Case: Key Differences | Software applications are becoming more complex, demanding a robust test process for all critical... | 0 | 2024-06-14T08:33:20 | https://www.lambdatest.com/blog/test-plan-vs-test-case/ |

Software applications are becoming more complex, demanding a robust test process for all critical features due to technological advancements. In software testing, verification and validation are essential to ensure the proper functioning and performance of the software applications. Quality Analysts (QA) and software ... | nazneenahmad | |

1,887,737 | Step-by-Step Guide: Creating a Virtual Machine in Azure | Virtual machines play a crucial role in modern cloud computing, offering flexible and scalable... | 0 | 2024-06-14T08:33:04 | https://dev.to/dera2024/step-by-step-guide-creating-a-virtual-machine-in-azure-153k | azure, cloudcomputing, virtualmachine | Virtual machines play a crucial role in modern cloud computing, offering flexible and scalable computing resources. Deploying and connecting a virtual machine in Azure involves several steps. Below is a complete guide to walk you through the process of setting up your very own virtual machine in Azure:

**Sign in to A... | dera2024 |

1,888,142 | The Convenience Revolution: How UrbanClap Clone Scripts Empower Busy People | In today's fast-paced world, time is a precious commodity. Between work, family, and social... | 0 | 2024-06-14T08:31:14 | https://dev.to/claire_hyland/the-convenience-revolution-how-urbanclap-clone-scripts-empower-busy-people-3g1c | In today's fast-paced world, time is a precious commodity. Between work, family, and social commitments, many people struggle to find the time or energy to tackle everyday tasks. This is where UrbanClap clone scripts come in, offering a revolutionary solution for both service providers and consumers.

## Understanding ... | claire_hyland | |

1,888,115 | Introducing Verse.db: The Future of Databases is Here | Hi Dev Community! I’m thrilled to share an exciting development from my team at JEDI Studio. We’re... | 27,741 | 2024-06-14T08:30:25 | https://versedb.jedi-studio.com/blog | database, cli, ai, devops | Hi Dev Community!

I’m thrilled to share an exciting development from my team at JEDI Studio. We’re working on a major update for **verse.db** that’s set to redefine the database landscape.

## The Vision Behind Verse.db

Imagine a database that you can easily run from the command line interface (CLI), operating seamle... | marco5dev |

1,888,140 | Kubernetes on Azure: Part 3 - An Introduction to AKS | Learn how to provision an Azure Kubernetes Cluster and deploy an application to the cluster | 0 | 2024-06-14T08:30:05 | https://dev.to/thwani47/kubernetes-on-azure-part-3-an-introduction-to-aks-3cle | kubernetes, docker, azure | ---

title: Kubernetes on Azure: Part 3 - An Introduction to AKS

published: true

description: Learn how to provision an Azure Kubernetes Cluster and deploy an application to the cluster

tags: kubernetes, docker, azure

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/8rod19lqh9qafhf6o0ug.png

# Use a... | thwani47 |

1,888,141 | Exploring the Top Swimming Pool Companies in Dubai | Dubai, known for its opulence and innovation, boasts some of the most luxurious swimming pools in the... | 0 | 2024-06-14T08:29:55 | https://dev.to/hasnain_alam12345/exploring-the-top-swimming-pool-companies-in-dubai-hi6 | Dubai, known for its opulence and innovation, boasts some of the most luxurious swimming pools in the world. From residential villas to commercial complexes, the demand for exquisite swimming pool designs has surged, leading to the rise of several top-notch pool companies. Let's delve into some of the best swimming poo... | hasnain_alam12345 | |

1,888,123 | How to use has() Property in CSS | As a UI developer, you're always on the hunt for tools and techniques to make your styling more... | 27,759 | 2024-06-14T08:29:16 | https://dev.to/nnnirajn/exploring-the-has-property-in-css-a-guide-for-ui-developers-3obb | css, ui, design, beginners | As a UI developer, you're always on the hunt for tools and techniques to make your styling more efficient and intuitive. The `:has()` pseudo-class in CSS is a game-changer, providing new ways to enhance your styles with conditional logic. You might have heard about it or maybe even dabbled with some code snippets, but how doe... | nnnirajn |

1,888,139 | Vite: How to bundle / group chunks together | If you use a library like Svelte or Vue you might have noticed that Vite and Rollupjs create tons of... | 0 | 2024-06-14T08:28:51 | https://dev.to/greggcbs/vite-how-to-bundle-group-chunks-together-1dnl | vite, rollup, javascript, config | If you use a library like Svelte or Vue you might have noticed that Vite and Rollupjs create tons of tiny js files - in my case causing the browser to have to download 72 js files for one web page - some files being less than 1kb.

There is a way to group chunks in your vite.config. It's manual, but it can help.
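As a rough illustration of what manual grouping can look like, here is a minimal sketch of a chunk-naming function. It assumes Rollup's `output.manualChunks` option, which Vite exposes under `build.rollupOptions`. The chunk names `vendor` and `components` and the `src/components` path are assumptions made up for this example, not taken from the original post.

```typescript
// Minimal sketch of a manual chunk-grouping rule. The chunk names and the
// src/components path are hypothetical and only serve as an illustration.
function manualChunks(id: string): string | undefined {
  // Put every third-party dependency into a single "vendor" chunk.
  if (id.includes("node_modules")) {
    return "vendor";
  }
  // Group the app's own UI components into one "components" chunk.
  if (id.includes("/src/components/")) {
    return "components";
  }
  // Returning undefined leaves the module to Rollup's default chunking.
  return undefined;
}

// In vite.config.ts this function would be passed as
// build.rollupOptions.output.manualChunks.
```

Grouping by path like this trades many tiny requests for a few larger files, so it is worth checking the resulting bundle sizes before settling on a rule.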

**F... | greggcbs |

1,888,138 | Exploring Mindfulness-Based Stress Reduction (MBSR) in Therapy: Cultivating Calmness and Presence with Heidi Kling | In the realm of therapeutic change, various approaches have emerged to address mental health concerns... | 0 | 2024-06-14T08:25:12 | https://dev.to/drheidikling/exploring-mindfulness-based-stress-reduction-mbsr-in-therapy-cultivating-calmness-and-presence-with-heidi-kling-4b02 | In the realm of therapeutic change, various approaches have emerged to address mental health concerns and promote well-being. One such approach gaining recognition is Mindfulness-Based Stress Reduction (MBSR), which integrates mindfulness meditation and mindful movement to alleviate stress, anxiety, and depression. In ... | drheidikling | |

1,845,070 | HubSpot enables 128,000 businesses with live chat that just works | HubSpot is a SaaS CRM platform with live tools for sales, marketing, customer service, content... | 0 | 2024-06-14T08:25:07 | https://ably.com/case-studies/hubspot | webdev, news, hubspot, ably | HubSpot is a SaaS CRM platform with live tools for sales, marketing, customer service, content management and operations. Its mission is to make it easy for all parts of an organisation to work together, regardless of location. The goal: transform a business so it can attract, engage, and delight customers.

**THE PRO... | ablyblog |

1,888,137 | Kubernetes on Azure: Part 2 - Running a local Kubernetes cluster | Learn how to run a local Kubernetes cluster and deploy an application to your cluster | 0 | 2024-06-14T08:23:42 | https://dev.to/thwani47/kubernetes-on-azure-part-2-running-a-local-kubernetes-cluster-2fbl | kubernetes, docker, azure | ---

title: Kubernetes on Azure: Part 2 - Running a local Kubernetes cluster

published: true

description: Learn how to run a local Kubernetes cluster and deploy an application to your cluster

tags: kubernetes, docker, azure

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/fw1smq3m3k4kil8tpb47.png

... | thwani47 |

1,888,135 | Exploring Existential Themes in Therapy: Navigating Life's Big Questions with Heidi Kling (PhD) | In the realm of therapy, there exists a profound and enduring fascination with existential themes.... | 0 | 2024-06-14T08:22:44 | https://dev.to/drheidikling/exploring-existential-themes-in-therapy-navigating-lifes-big-questions-with-heidi-kling-phd-167h | In the realm of therapy, there exists a profound and enduring fascination with existential themes. These themes delve into the very heart of what it means to be human—questions of purpose, meaning, freedom, and mortality. Within the therapeutic context, exploring existential themes offers clients a space to grapple wit... | drheidikling | |

1,888,128 | Kubernetes on Azure: Part 1 - An Introduction to Kubernetes | Learn the core concepts of Kubernetes | 0 | 2024-06-14T08:18:40 | https://dev.to/thwani47/kubernetes-on-azure-part-1-an-introduction-to-kubernetes-1oc9 | kubernetes, docker, azure | ---

title: Kubernetes on Azure: Part 1 - An Introduction to Kubernetes

published: true

description: Learn the core concepts of Kubernetes

tags: kubernetes, docker, azure

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/5dhqr0qaf8glr6qd8ln7.png

# Use a ratio of 100:42 for best results.

# published_a... | thwani47 |

1,888,127 | What is Siacoin Mining? | Siacoins, the native cryptocurrency of the decentralized blockchain storage network Sia, can be... | 0 | 2024-06-14T08:15:27 | https://dev.to/lillywilson/what-is-siacoin-mining-3976 | cryptocurrency, asic, bitcoin | **[Siacoins](https://asicmarketplace.com/blog/top-siacoin-miners/)**, the native cryptocurrency of the decentralized blockchain storage network Sia, can be rented out by users in exchange for extra storage space. The Sia network is not secure and reliable without mining. When you run specialized software to mine Siacoi... | lillywilson |

1,888,126 | An Introduction to Cloud Computing | A beginner's guide to Cloud Computing | 0 | 2024-06-14T08:15:06 | https://dev.to/thwani47/an-introduction-to-cloud-computing-1p9 | azure, cloudcomputing | ---

title: An Introduction to Cloud Computing

published: true

description: A beginner's guide to Cloud Computing

tags: azure, cloudcomputing

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/twpl3k88v19alcbo6anx.png

# Use a ratio of 100:42 for best results.

# published_at: 2024-06-14 08:07 +0000

--... | thwani47 |

1,888,125 | Why is UX Research Important During UI and UX Design Services? | In today's digital age, creating exceptional user experiences is more critical than ever. As a... | 0 | 2024-06-14T08:15:05 | https://dev.to/ishita_mathur/why-is-ux-research-important-during-ui-and-ux-design-services-ci9 | uiuxdesign, uiuxdesignagency, graphicdesign, graphicdesignservices | In today's digital age, creating exceptional user experiences is more critical than ever. As a leading UI/UX design agency, we understand that the key to delivering successful digital products lies in thorough UX research. In this article, we will delve into why UX research is an indispensable part of UI and UX design ... | ishita_mathur |

1,888,124 | Enhancing Performance with Advanced Magnet Technologies | Improving Efficiency: How Advanced Magnet Technologies Can Help Have you ever... | 0 | 2024-06-14T08:14:14 | https://dev.to/jahira_hanidha_ac8711fb57/enhancing-performance-with-advanced-magnet-technologies-556e | Improving Efficiency: How Advanced Magnet Technologies Can Help

Have you ever heard of advanced magnet technologies? They are a kind of advanced technology that uses magnets to create brand-new opportunities. They offer many benefits over conventional technologies. I... | jahira_hanidha_ac8711fb57 | |

1,880,559 | Friday Thoughts on email validation | While working on a new authentication system I was getting alerts that account creation was failing.... | 26,285 | 2024-06-14T08:10:57 | https://dev.to/timoschinkel/friday-thoughts-on-email-validation-4fha | webdev | While working on a new authentication system I was getting alerts that account creation was failing. After diving into the logs I learned that the accounts were rejected because the email addresses were not deemed valid. But I made sure standardized email validation was in place. What's going on here?

## So many syste... | timoschinkel |

1,888,122 | Dying, Bitcoin and Inheritance | I used to live in Kyiv, Ukraine, until I got bombed. I contemplate how this world is unstable: one... | 0 | 2024-06-14T08:10:15 | https://dev.to/martinbaun/dying-bitcoin-and-inheritance-480k | career, github, cryptocurrency, bitcoin | I used to live in Kyiv, Ukraine, until I got bombed. I contemplate how this world is unstable: one day, you work and live in a comfortable environment. I have plans for my life, but the next day, anything can happen.

I take responsibility for my employees as an entrepreneur. I run a small tech company. Worst-case scen... | martinbaun |

1,888,132 | Video — Open Source AI with Hugging Face, Dallas AI meetup (05/2024) | Video — Open Source AI with Hugging Face, Dallas AI meetup (05/2024) Learn how Hugging... | 0 | 2024-06-14T12:48:18 | https://julsimon.medium.com/video-open-source-ai-with-hugging-face-dallas-ai-meetup-05-2024-59fc53631eae | opensource, deeplearning, transformers, llm | ---

title: Video — Open Source AI with Hugging Face, Dallas AI meetup (05/2024)

published: true

date: 2024-06-14 08:10:15 UTC

tags: opensource,deeplearning,transformers,llm

canonical_url: https://julsimon.medium.com/video-open-source-ai-with-hugging-face-dallas-ai-meetup-05-2024-59fc53631eae

---

### Video — Open Sourc... | juliensimon |

1,888,078 | My Journey Internship at Kali Academy | I am Birusha Ndegeya, a student software developer specializing in web and mobile apps at Kadea... | 0 | 2024-06-14T08:08:55 | https://dev.to/birusha/my-journey-internship-at-kali-academy-5bng | career, opensource, programming, coding | I am Birusha Ndegeya, a student software developer specializing in web and mobile apps at Kadea Academy in Goma. During my three-month training at Kali Academy, I dedicated myself to an open-source project and was accepted to dive into the fascinating world of open source.

The internship started on May 11 and ended on... | birusha |

1,888,121 | One byte explainer - Callbacks | 🎉 Welcome, folks! Get ready for an exciting journey through the series Explain to a 5-Year-Old -... | 27,721 | 2024-06-14T08:08:38 | https://dev.to/imkarthikeyan/one-byte-explainer-callbacks-34bo | devchallenge, cschallenge, javascript, webdev | 🎉 **Welcome, folks!**

Get ready for an exciting journey through the series *Explain to a 5-Year-Old - JavaScript Concepts*. Each blog is also a submission for the **cschallenge**.

Here's what to expect:

1. 🚀 **One-Byte Explainer:** A super concise take on the concept (256 characters or less).

2. 🔍 **Demystifying ... | imkarthikeyan |

1,888,120 | Exploring the Prestigious Kasturba Medical College | Introduction to Kasturba Medical College (KMC) As an aspiring medical professional, I have... | 0 | 2024-06-14T08:08:28 | https://dev.to/new_eraeducation/exploring-the-prestigious-kasturba-medical-college-28bc | mbbs, abroad, consultancy, neweraeducation | ## Introduction to Kasturba Medical College (KMC)

As an aspiring medical professional, I have always been drawn to institutions that not only provide top-notch education but also foster a rich legacy of excellence. Kasturba Medical College (KMC) is one such esteemed institution that has captured my attention and pique... | new_eraeducation |

1,888,119 | Nested Prompts in Go using promptui | Learn how to build a nested prompt in a Go CLI application | 0 | 2024-06-14T08:06:58 | https://dev.to/thwani47/nested-prompts-in-go-using-promptui-2a4d | go, promptui, cobra, cli | ---

title: Nested Prompts in Go using promptui

published: true

description: Learn how to build a nested prompt in a Go CLI application

tags: go, promptui, cobra, cli

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/kch1rwcrs8bx8ahzhyx1.png

# Use a ratio of 100:42 for best results.

# published_at: ... | thwani47 |

1,888,118 | Effective State Management in React: Comparing Redux, Context API, and Recoil | State management is a critical aspect of building scalable and maintainable React applications. In... | 0 | 2024-06-14T08:02:44 | https://dev.to/drruvari/effective-state-management-in-react-comparing-redux-context-api-and-recoil-407i | State management is a critical aspect of building scalable and maintainable React applications. In this article, we will explore three popular state management solutions: Redux, Context API, and Recoil. We'll discuss their strengths, weaknesses, and best use cases to help you make an informed decision for your next pro... | drruvari | |

1,888,117 | Commodity "futures and spots" Arbitrage Chart Based on FMZ Fundamental Data | Summary Some people may be unfamiliar with the word "arbitrage", but "arbitrage" is very... | 0 | 2024-06-14T08:01:16 | https://dev.to/fmzquant/commodity-futures-and-spots-arbitrage-chart-based-on-fmz-fundamental-data-3fkc | arbitrage, data, fmzquant, chart | ## Summary

Some people may be unfamiliar with the word "arbitrage", but "arbitrage" is very common in real life. For example, the owner of a convenience store buys a bottle of mineral water from the wholesale market for 0.5 yuan, then sells it in the store for 1 yuan, and finally earns a difference of 0.5 yuan. This pr... | fmzquant |

1,888,116 | Bridging Backend and Data Engineering: Communicating Through Events | Building a Unified System: Event-Driven Approach to Backend and Data Engineering Communication. | 0 | 2024-06-14T08:00:04 | https://dev.to/plutov/bridging-backend-and-data-engineering-communicating-through-events-33pn | distributedsystems, pubsub, backend, dataengineering | ---

title: Bridging Backend and Data Engineering: Communicating Through Events

published: true

description: Building a Unified System: Event-Driven Approach to Backend and Data Engineering Communication.

tags: DistributedSystems, PubSub, Backend, DataEngineering

cover_image: https://dev-to-uploads.s3.amazonaws.com/uplo... | plutov |

1,888,114 | Introducing Semantic Kernel | Learn how to get started with Microsoft's Semantic Kernel | 0 | 2024-06-14T07:59:42 | https://dev.to/thwani47/introducing-semantic-kernel-41d5 | ai, machinelearning, semantickernel | ---

title: Introducing Semantic Kernel

published: true

description: Learn how to get started with Microsoft's Semantic Kernel

tags: ai,ml, semantickernel

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/v6v2az1etoeex21i9fao.png

# Use a ratio of 100:42 for best results.

# published_at: 2024-06-14 0... | thwani47 |

1,888,113 | The Rise of Sustainable Packaging & Eco-Friendly Air Freshener Market | Introduction: As consumer awareness of environmental issues continues to grow, so does the demand... | 0 | 2024-06-14T07:59:26 | https://dev.to/sneha_nextmsc/the-rise-of-sustainable-packaging-eco-friendly-air-freshener-market-1ad4 | airfreshnermarket, airfresheners, consumergoods, marketresearch |

**Introduction:**

As consumer awareness of environmental issues continues to grow, so does the demand for eco-friendly products across all industries, including the [Air Freshener Market](https://www.nextmsc.com/re... | sneha_nextmsc |

1,888,106 | Deep Dive Into .NET Minimal APIs | Learn how to create a REST API using .NET Minimal APIs | 0 | 2024-06-14T07:48:36 | https://dev.to/thwani47/deep-dive-into-net-minimal-apis-399g | dotnet, api, csharp | ---

title: Deep Dive Into .NET Minimal APIs

published: true

description: Learn how to create a REST API using .NET Minimal APIs

tags: dotnet, api, csharp

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/mxpqvvhb53fs70ook7cl.png

# Use a ratio of 100:42 for best results.

# published_at: 2022-09-20 07... | thwani47 |

1,888,112 | Streamlining Workflows: Power BI - Your Automation Ally | Free up valuable resources and boost efficiency! Power BI automates data refresh and report... | 0 | 2024-06-14T07:59:25 | https://dev.to/akaksha/streamlining-workflows-power-bi-your-automation-ally-59p0 | Free up valuable resources and boost efficiency! Power BI automates data refresh and report generation, allowing you to focus on strategic analysis rather than manual tasks. Let Power BI handle the heavy lifting. In the age of information overload, visual storytelling is no longer a luxury; it's a necessity. [Power B... | akaksha | |

1,888,111 | How to Choose the Right CBD Product for Your Pet? | If you know CBD's importance and beneficial effects for your pet's health, you are definitely up to... | 0 | 2024-06-14T07:58:18 | https://dev.to/sophia_rose_bc4ac299db6c9/how-to-choose-the-right-cbd-product-for-your-pet-5331 | If you know CBD's importance and beneficial effects for your pet's health, you are definitely up to try it out. There are a huge number of CBD products in the market, so it is time to choose the best one for your furry friend. Natural sources of treatment are much better not only for our health but also for our pets to... | sophia_rose_bc4ac299db6c9 | |

1,888,110 | How to Get a Research Paper Published | Publishing your research is a significant milestone in your academic career. Here’s a step-by-step... | 0 | 2024-06-14T07:55:09 | https://dev.to/miteshbathri/how-to-get-a-research-paper-published-e49 |

[Publishing your research](https://www.ijset.in/how-to-get-research-paper-published/) is a significant milestone in your academic career. Here’s a step-by-step guide to help you through the process:

**1. Select the Right Journal**

**Research Journals:** Look for journals that align with your field of study. Utilize j... | miteshbathri | |

1,888,109 | Building a CRUD app with React Query, TypeScript, and Axios | Learn how to use React Query for data fetching and state management in a React application | 0 | 2024-06-14T07:54:46 | https://dev.to/thwani47/building-a-crud-app-with-react-query-typescript-and-axios-2d0j | typescript, react, reactquery, vite | ---

title: Building a CRUD app with React Query, TypeScript, and Axios

published: true

description: Learn how to use React Query for data fetching and state management in a React application

tags: typescript, react, reactquery, vite

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/gjmup300p09rpgkdt... | thwani47 |

1,888,086 | Build a chat room with custom bots powered by OpenAI/Gemini | This app combines popular chat platforms like Discord/Slack, and the powerful minds of LLMs like... | 0 | 2024-06-14T07:49:09 | https://dev.to/encore/build-a-chat-room-with-custom-bots-powered-by-openaigemini-47h3 | go, ai, programming, tutorial |

This app combines popular chat platforms like Discord/Slack, and the powerful minds of LLMs like OpenAI and Google Gemini.

It lets you create your own AI bots with unique personalities that can seamlessly engage in conve... | marcuskohlberg |

1,888,105 | Programming for Non-professionals | The intention of this book is to teach individuals who are not professional programmers (those who do... | 0 | 2024-06-14T07:46:21 | https://dev.to/esproc_spl/programming-for-non-professionals-f1e | books, programming, beginners | The intention of this book is to teach individuals who are not professional programmers (those who do not program for a living) how to code.

"Programming for Non-professionals" is designed to teach non-professionals how to program. In today's world, programming has become a basic skill, similar to driving, offering si... | esproc_spl |

1,888,104 | 5 VSCODE AI Extensions for devs in 2024 | As somebody who has been building AI developer tooling for about a couple years now, I like to keep... | 0 | 2024-06-14T07:44:29 | https://dev.to/commanddash/5-vscode-ai-extensions-devs-build-with-in-2024-51n3 | vscode, githubcopilot, ai, coding |

As somebody who has been building AI developer tooling for a couple of years now, I like to keep an eye on the most productive extensions that developers can use.

Today, I'm sharing a list of top 4 extensions + CommandDash based on some recent surveys by StackOverflow and my own findings.

## 1. Githu... | samyakkkk |

1,888,103 | Boost Your Grades with MYOB Assignment Help: Expert Assistance at Your Fingertips | Success in academics requires not just hard work but also the right kind of assistance. MYOB... | 0 | 2024-06-14T07:44:21 | https://dev.to/myobassignmenthelp/boost-your-grades-with-myob-assignment-help-expert-assistance-at-your-fingertips-k43 | education | Success in academics requires not just hard work but also the right kind of assistance. **[MYOB Assignment Help](https://www.myobassignmenthelp.com/)** is one such service that can significantly enhance your learning and grades, especially in accounting and business courses. Whether you are struggling with understandin... | myobassignmenthelp |

1,888,102 | Cross Country Running Essentials List by Robert Geiger: What to Pack for Success | Embarking on a cross country running journey requires more than just stamina and determination. The... | 0 | 2024-06-14T07:43:36 | https://dev.to/robertgeiger/cross-country-running-essentials-list-by-robert-geiger-what-to-pack-for-success-4mj7 | Embarking on a cross country running journey requires more than just stamina and determination. The key to a successful and enjoyable experience lies in careful planning and packing. Whether you're a seasoned runner or a novice, having the right essentials can make a significant difference in your performance and overa... | robertgeiger | |

1,888,101 | Everything You Should Know About Integrated System Testing | A thorough testing procedure called integrated system testing (IST) confirms the overall... | 0 | 2024-06-14T07:43:35 | https://baltimorepostexaminer.com/everything-you-should-know-about-integrated-system-testing/2024/04/19 | integrated, system, testing |

A thorough testing procedure called integrated system testing (IST) confirms the overall functioning, performance, and dependability of a system. In contrast to individual component testing, integrated component test... | rohitbhandari102 |

1,888,100 | Courtside Chronicles: A Deep Dive into Basketball Culture in the USA with Robert Geiger (Teacher) | Basketball isn't just a sport in the United States; it's a cultural phenomenon deeply ingrained in... | 0 | 2024-06-14T07:42:21 | https://dev.to/robertgeiger/courtside-chronicles-a-deep-dive-into-basketball-culture-in-the-usa-with-robert-geiger-teacher-2lm5 | Basketball isn't just a sport in the United States; it's a cultural phenomenon deeply ingrained in the fabric of society. From the iconic courts of New York City to the storied arenas of Los Angeles, basketball transcends geographical boundaries and unites communities like few other sports can. In this exploration of b... | robertgeiger | |

1,888,099 | Free PDF Converters and Editors Online | There are a lot of free online PDF converters. Some are completely free, while others are only partly... | 0 | 2024-06-14T07:41:49 | https://dev.to/derek-compdf/free-pdf-converters-and-editors-online-5bpp | There are a lot of free online PDF converters. Some are completely free, while others are only partly free. If you want a totally free online tool, think about what kind of company would offer one permanently: look for the technology providers. They always present their free tools to ... | derek-compdf | |

1,888,098 | Is ONLEI Technologies Good for Freshers | Is ONLEI Technologies Good for Freshers In today's rapidly evolving job market, staying ahead of the... | 0 | 2024-06-14T07:39:15 | https://dev.to/onleitechnologies/is-onlei-technologies-good-for-freshers-48mg | beginners, javascript, programming, tutorial | Is [ONLEI Technologies](https://onleitechnologies.com/data-science-in-noida) Good for Freshers

In today's rapidly evolving job market, staying ahead of the curve is essential for fresh graduates and job seekers. With the growing demand for skilled professionals in various industries, investing in quality training prog... | onleitechnologies |

1,888,096 | Bamboo Sunglasses | Handcrafted, sustainable bamboo-wood sunglasses: www.livegens.com/tienda/gafas-madera/ | 0 | 2024-06-14T07:37:03 | https://dev.to/livegens_brand_ff19d3891c/gafas-de-sol-de-bambu-1g19 | Handcrafted, sustainable bamboo-wood sunglasses: [www.livegens.com/tienda/gafas-madera/](url) | livegens_brand_ff19d3891c |
