id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,886,903 | How to create API in an industry-standard app | Introduction This is the third blog of my series where I am writing how to write code for... | 0 | 2024-06-13T11:54:47 | https://dev.to/md_enayeturrahman_2560e3/how-to-create-api-in-an-industry-standard-app-44ck | api, express, node, javascript | ### Introduction

- This is the third blog of my series where I am writing how to write code for an industry-grade project so that you can manage and scale the project. In this blog, we will learn how to create an API endpoint. We will see how to create an interface, mongoose model, route, controller, and service file... | md_enayeturrahman_2560e3 |

1,886,902 | What are the advantages of using Kotlin over Java for Android development? | Kotlin, a statically typed programming language developed by JetBrains, has gained significant... | 0 | 2024-06-13T11:53:35 | https://dev.to/chariesdevil/what-are-the-advantages-of-using-kotlin-over-java-for-android-development-2lc9 | android, androidapp, androidappdevelopment, kotlin | Kotlin, a statically typed programming language developed by JetBrains, has gained significant traction in the Android development community since its official endorsement by Google in 2017. It is designed to interoperate fully with Java but offers several distinct advantages that make it a compelling choice for Androi... | chariesdevil |

1,886,901 | Learning to code? Here’s why getting stuck is a good thing. | This blog was originally published on Substack. Subscribe to ‘Letters to New Coders’ to receive free... | 0 | 2024-06-13T11:50:08 | https://dev.to/fahimulhaq/learning-to-code-heres-why-getting-stuck-is-a-good-thing-3im9 | webdev, programming, beginners | This [blog](https://www.letterstocoders.com/p/learning-to-code-heres-why-getting) was originally published on Substack. Subscribe to ‘[Letters to New Coders](https://www.letterstocoders.com/)’ to receive free weekly posts.

Imagine a game of billiards. An intermediate player teaches a total beginner how the rules work,... | fahimulhaq |

1,886,898 | Big O' Notation | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-13T11:49:32 | https://dev.to/marstecks/big-o-notation-59nm | devchallenge, cschallenge, computerscience, beginners | _This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer._

## Explainer

<!-- Explain a computer science concept in 256 characters or less. -->

**Ranks algorithm speed as data grows. Slow (O(n^2)) vs Fast (O(log n)) helps choose efficient code.**
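To make the contrast concrete, here is a minimal Python sketch that counts the steps taken by a quadratic routine and by a logarithmic one. The function names `count_pair_checks` and `count_binary_search_steps` are hypothetical and chosen only for illustration; they are not part of the original submission.

```python
def count_pair_checks(items):
    """O(n^2): visit every ordered pair of items and count the visits."""
    steps = 0
    for _ in items:
        for _ in items:
            steps += 1
    return steps


def count_binary_search_steps(sorted_items, target):
    """O(log n): halve the search range on every step and count the probes."""
    low, high = 0, len(sorted_items) - 1
    steps = 0
    while low <= high:
        steps += 1
        mid = (low + high) // 2
        if sorted_items[mid] == target:
            break
        if sorted_items[mid] < target:
            low = mid + 1
        else:
            high = mid - 1
    return steps


if __name__ == "__main__":
    for n in (16, 1024):
        data = list(range(n))
        print(n, count_pair_checks(data), count_binary_search_steps(data, n - 1))
```

As the input grows from 16 to 1024 items, the pair-check count grows with the square of the input size, while the binary search needs only a handful of extra probes.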

## Additional Context

<!-- Please share ... | marstecks |

1,886,897 | Comprehensive Guide to Joey Text to Speech 2024 | Explore the transformative capabilities of Joey Text to Speech in our comprehensive 2024 guide. Learn... | 0 | 2024-06-13T11:48:15 | https://dev.to/novita_ai/comprehensive-guide-to-joey-text-to-speech-2024-71k | ai, texttospeech, api | Explore the transformative capabilities of Joey Text to Speech in our comprehensive 2024 guide. Learn how to enhance user engagement with a young, male American English voice, and discover advanced features, customization options, and practical integration strategies for various industries.

## Key Highlights

- Joey T... | novita_ai |

1,886,896 | Data protection in AI ? | Today, we hear a lot about AI being integrated into our hardware devices. PCs, smartphones,... | 0 | 2024-06-13T11:47:05 | https://dev.to/devgirl_/data-protection-in-ai--1ghk | ai, webdev, discuss, datascience | Today, we hear a lot about AI being integrated into our hardware devices. PCs, smartphones, tablets—big OS companies are collaborating with AI to introduce many new features (perhaps too many).

They always claim that our data will be protected, but **have you seen any real explanations or transparency about that?** | devgirl_ |

1,886,895 | Docker Caching Strategies for Efficient Image Builds | Docker caching is a crucial aspect of efficient image builds in containerized environments. By... | 0 | 2024-06-13T11:45:43 | https://dev.to/platform_engineers/docker-caching-strategies-for-efficient-image-builds-kd3 | Docker caching is a crucial aspect of efficient image builds in containerized environments. By optimizing caching strategies, developers can significantly reduce build times and improve overall productivity. This blog post delves into the technical aspects of Docker caching, exploring various strategies and their imple... | shahangita | |

1,886,894 | Transforming Healthcare Engagement with AI 2.0 | Digital Shift and the Need for Personalized Engagement The healthcare sector has... | 27,619 | 2024-06-13T11:43:59 | https://dev.to/aishik_chatterjee_0060e71/transforming-healthcare-engagement-with-ai-20-2fo0 | ## Digital Shift and the Need for Personalized Engagement

The healthcare sector has experienced a digital transformation, accelerated by

the COVID-19 pandemic, necessitating new methods for engaging with healthcare

professionals (HCPs). AI 2.0 offers a compelling solution by merging machine

learning with deep human in... | aishik_chatterjee_0060e71 | |

1,886,893 | Why are they avoiding using require and using import in JavaScript | Differences One of the differences between require and import is that require is used to load... | 0 | 2024-06-13T11:41:19 | https://dev.to/doccaio/why-are-they-avoiding-using-require-and-using-import-in-javascript-k70 |

**Differences**

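One of the differences between require and import is that require is the CommonJS function used to load modules in Node.js, while import is the ES module syntax defined by the JavaScript language standard.

Another important difference is that require returns the module's exported value (its `module.exports` object) at the moment it is called, while import creates live, read-only bindings to the module's exports.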

This means that when you use req... | doccaio | |

1,886,877 | Mastering Cloud Security: Insights from Aviatrix Immersion Day on Distributed Firewalls. | Introduction In the rapidly evolving landscape of cloud computing, security remains a... | 0 | 2024-06-13T11:41:13 | https://dev.to/alvin_ndungu/mastering-cloud-security-insights-from-aviatrix-immersion-day-on-distributed-firewalls-4ilm | aviatrix, cloudcomputing, cloudsecurity, aws | ## Introduction

In the rapidly evolving landscape of cloud computing, security remains a paramount concern for enterprises migrating to or operating in multi-cloud environments. Aviatrix, a pioneer in multi-cloud networking, addresses these security challenges with its Distributed Firewall solution. This article delv... | alvin_ndungu |

1,886,892 | Data Drives Decisions: Mastering WooCommerce Analytics for Store Success | Running a successful online store isn't just about having great products. In today's data-driven... | 0 | 2024-06-13T11:39:01 | https://dev.to/developermansi/data-drives-decisions-mastering-woocommerce-analytics-for-store-success-63 | woocommerce, woocommerceanalytics | Running a successful online store isn't just about having great products. In today's data-driven world, understanding your customers and their behavior is key to maximizing sales and growth. That's where WooCommerce Analytics comes in, offering a treasure trove of insights to optimize your WooCommerce store performance... | developermansi |

1,886,891 | Introduction to Digital Identity Verification | Current Challenges in Digital Identity Verification Despite technological advancements,... | 27,619 | 2024-06-13T11:38:17 | https://dev.to/aishik_chatterjee_0060e71/introduction-to-digital-identity-verification-l35 | ## Current Challenges in Digital Identity Verification

Despite technological advancements, digital identity verification faces

challenges such as balancing user convenience with security and addressing

privacy concerns. Sophisticated fraud techniques like deepfake technology also

pose new threats.

## Importance of Se... | aishik_chatterjee_0060e71 | |

1,886,889 | Explore Affordable Solar Products Featuring PV Panels for Sale | Are you in search of PV panels for sale to power your home or business sustainably? At our store, we... | 0 | 2024-06-13T11:36:00 | https://dev.to/mathewkeller/explore-affordable-solar-products-featuring-pv-panels-for-sale-4ioi | Are you in search of **[PV panels for sale](https://www.ft-technical.co.uk/)** to power your home or business sustainably? At our store, we offer a comprehensive selection of solar products for sale, including top-tier PV panels renowned for their efficiency and durability. Whether you're embarking on a new solar insta... | mathewkeller | |

1,886,887 | FRESHPICK | 🚀 𝗙𝗿𝗲𝘀𝗵𝗣𝗶𝗰𝗸: 𝗦𝗶𝗺𝗽𝗹𝗶𝗳𝘆𝗶𝗻𝗴 𝗚𝗿𝗼𝗰𝗲𝗿𝘆 𝗣𝗶𝗰𝗸𝘂𝗽𝘀 🚀 I'm thrilled to share FreshPick, a project I recently... | 0 | 2024-06-13T11:34:02 | https://dev.to/soufianemouajjeh/freshpick-597p | 🚀 𝗙𝗿𝗲𝘀𝗵𝗣𝗶𝗰𝗸: 𝗦𝗶𝗺𝗽𝗹𝗶𝗳𝘆𝗶𝗻𝗴 𝗚𝗿𝗼𝗰𝗲𝗿𝘆 𝗣𝗶𝗰𝗸𝘂𝗽𝘀 🚀

I'm thrilled to share FreshPick, a project I recently completed as part of the #ALXSE Program. FreshPick is a web application designed to streamline the process of ordering fresh groceries for pickup at your local store, supporting local ... | soufianemouajjeh | |

1,886,885 | Mastering Market Research and Competitive Analysis: Strategies for Business Success | In today's competitive business environment, products that fail to meet customer needs and desires... | 0 | 2024-06-13T11:30:23 | https://dev.to/linda0609/mastering-market-research-and-competitive-analysis-strategies-for-business-success-46cf | In today's competitive business environment, products that fail to meet customer needs and desires often struggle in the market, negatively impacting sales revenue. To better understand consumer behavior, market research and analytics are essential tools. Corporate leaders use these insights to craft competitive strate... | linda0609 | |

1,886,884 | Limitations of AI in developers' learning | I have been talking about AI for a while now; while some reflections have already become clear, others... | 0 | 2024-06-13T11:30:20 | https://dev.to/biosbug/limitacoes-das-ias-na-aprendizagem-dos-desenvolvedores-5ek1 | beginners, management, chatgpt | I have been talking about AI for a while now; while some reflections have already become clear, others still depend on a lot of discussion before we can validate anything feasible.

Today I want to share with you some important points about Artificial Intelligence tools, especially Large Language Models... | biosbug |

1,886,883 | Top 20 Javascript Libraries on Github | Ehy Everybody 👋 It’s Antonio, CEO & Founder at Litlyx. I come back to you with a... | 0 | 2024-06-13T11:29:28 | https://dev.to/litlyx/top-20-javascript-libraries-on-github-ljn | javascript, webdev, beginners, programming | ## Ehy Everybody 👋

It’s **Antonio**, CEO & Founder at [Litlyx](https://litlyx.com).

I'm back with a curated **Awesome List of resources** that you may find interesting.

Today's subject is...

```bash

Top 20 JavaScript Libraries

```

We are looking for collaborators! Share some **love** & leave a **star** o... | litlyx |

1,886,882 | The Ultimate Guide to Flutter App Development | Unleash the Power of Flutter! Discover why our Flutter App Development Company is your top choice for... | 0 | 2024-06-13T11:29:07 | https://dev.to/mobisoftinfotech/the-ultimate-guide-to-flutter-app-development-3d9d | webdev, learning, flutter | Unleash the Power of Flutter! Discover why our Flutter App Development Company is your top choice for high-performance, cross-platform mobile apps. Check out our latest infographic to see the benefits of #FlutterAppDevelopment and how our expert team can bring your vision to life. #AppDevelopment #MobileApps #Flutter, ... | mobisoftinfotech |

1,886,880 | The Journey of Choosing the Best UI Component Library with ReactJS | Selecting the right UI component library is more than a technical decision; it’s a journey that... | 0 | 2024-06-13T11:27:31 | https://dev.to/webdevlapani/the-journey-of-choosing-the-best-ui-component-library-with-reactjs-251k |

Selecting the right UI component library is more than a technical decision; it’s a journey that shapes the development process, influences user experience, and ultimately impacts the project's success. Over the years, I have faced countless challenges and learned invaluable lessons that have guided my approach to choo... | webdevlapani | |

1,886,759 | Building a serverless connected BBQ as SaaS - Part 2 - User Creation | In part two of the series about the world of BBQ, where tradition and technology rarely cross paths. The future of grilling is here, and it’s connected, smart, and runs on the cloud! I continue with user management using an serverless and event-driven approach with Cognito User Pool together with Lambda, EventBridge, a... | 0 | 2024-06-13T11:27:26 | https://jimmydqv.com/serverless-bbq-saas-part2-users/index.html | aws, serverless, iot, saas | ---

title: Building a serverless connected BBQ as SaaS - Part 2 - User Creation

description: In part two of the series about the world of BBQ, where tradition and technology rarely cross paths. The future of grilling is here, and it’s connected, smart, and runs on the cloud! I continue with user management using an ser... | jimmydqv |

1,886,879 | How 4A0-114 Exam Dumps Aid in Understanding Exam Format | Potential Downsides of Exam Dumps Risk of Overconfidence Over-relying on exam dumps can Nokia Network... | 0 | 2024-06-13T11:26:45 | https://dev.to/theasks72/how-4a0-114-exam-dumps-aid-in-understanding-exam-format-oeb | webdev, javascript, beginners, programming | Potential Downsides of Exam Dumps

Risk of Overconfidence

Over-relying on exam dumps can <a href="https://dumpsarena.com/nokia-dumps/4a0-114/">Nokia Network Routing Specialist II</a> lead to overconfidence, especially if the dumps don’t cover the full scope of the exam.

Ethical Considerations

Using dumps can sometimes r... | theasks72 |

1,886,878 | How to Choose the Right IT Recruitment Consultancy in India | Finding the perfect IT talent is crucial for the success of any tech-driven organization. In India,... | 0 | 2024-06-13T11:26:21 | https://dev.to/impeccablehr/how-to-choose-the-right-it-recruitment-consultancy-in-india-h25 |

Finding the perfect IT talent is crucial for the success of any tech-driven organization. In India, a booming IT industry means there's a high demand for skilled professionals, making the recruitment process both c... | impeccablehr | |

1,886,876 | Understanding Laravel Authentication: Best Practices and Tips | In the realm of Laravel development, user authentication serves as the gatekeeper, ensuring only... | 0 | 2024-06-13T11:26:17 | https://dev.to/asfiaaiman/understanding-laravel-authentication-best-practices-and-tips-59pj | oauth, session, laravel, jwt | In the realm of Laravel development, user authentication serves as the gatekeeper, ensuring only authorized individuals access your application's valuable resources. But with an array of options at your disposal, choosing the most suitable authentication strategy can feel like navigating a labyrinth. This blog delves i... | asfiaaiman |

1,830,965 | Creating an xk6 module for k6 | One of the great advantages of K6 is that it lets you create custom modules, which... | 0 | 2024-06-13T11:25:15 | https://dev.to/marlo2222/criando-um-modulo-xk6-para-k6-3c64 | k6, go, testing, performance | One of the great advantages of K6 is that it lets you create custom modules, which can easily be added to performance test scripts and can even become an official K6 solution.

This path of creating modules opens up new possibilities for quickly making available... | marlo2222 |

1,886,875 | Hellstar Hoodie || Hellstar Clothing || New Collection | Origin and Brand Associations The Hellstar Hoodie is often linked to the brand Sicko Born From Pain... | 0 | 2024-06-13T11:24:59 | https://dev.to/humiama_noor_214585631e4d/hellstar-hoodie-hellstar-clothing-new-collection-2l8a | hellstar, hellstarhoodie, hellstarsweatpants, hellstarshirt | Origin and Brand Associations

The [Hellstar Hoodie](https://hellstarcloth.us/hellstar-hoodie/) is often linked to the brand Sicko Born From Pain (Sicko), created by fashion designer and artist Ian Connor. Connor is known for his influence in streetwear culture and has collaborated with various high-profile brands and a... | humiama_noor_214585631e4d |

1,886,873 | Step-by-Step Guide to Cloud Migration With DevOps | This successful adoption of cloud technologies is attributed to scalability, security, faster time to... | 0 | 2024-06-13T11:23:52 | https://dev.to/anshul_kichara/step-by-step-guide-to-cloud-migration-with-devops-418d | devops, technology, trending, software | This successful adoption of cloud technologies is attributed to scalability, security, faster time to market, and team collaboration benefits it offers.

With this number increasing rapidly among companies at all levels, organizations are looking forward to the methods that help them:

- Eliminate platform complexitie... | anshul_kichara |

1,886,872 | Dzrt: The Future of Digital Desserts | In the ever-evolving world of culinary arts, Dzrt stands out as a revolutionary concept that combines technology and the culinary arts. But what... | 0 | 2024-06-13T11:21:38 | https://dev.to/dezeretshopping/dzrt-mstqbl-lhlwyt-lrqmy-5hk6 | 

In the ever-evolving world of culinary arts, [Dzrt](https://rawqan.com/br/4nQO_o0BvE0XJcnI1EgY/دزرت) stands out as a revolutionary concept that combines technology and the culinary arts. But what exactly is Dzrt? It is not just a dessert. It is a digital experience. As we advance... | dezeretshopping | |

1,886,871 | nutrition supplement store near me | Visit our conveniently located nutrition supplement store for all your health and fitness needs. We... | 0 | 2024-06-13T11:21:21 | https://dev.to/nutrizen/nutrition-supplement-store-near-me-2812 |

Visit our conveniently located nutrition supplement store for all your health and fitness needs. We offer a wide range of premium supplements, vitamins, and sports nutrition products to support your wellness goals. Whether you're an athlete, a fitness enthusiast, or simply looking to improve your overall health, our k... | nutrizen | |

1,886,863 | Embracing the Future of Web Development with Laravel, PestPHP, Livewire and Vue.js | Today I want to share a candid reflection on my journey with some of the most transformative tools in... | 0 | 2024-06-13T11:19:09 | https://dev.to/kasenda/embracing-the-future-of-web-development-with-laravel-pestphp-livewire-and-vuejs-1gcj |

Today I want to share a candid reflection on my journey with some of the most transformative tools in the web development world: Laravel, PestPHP, Livewire and Vue.js.

## Laravel

From the moment I started using Laravel, I knew it was more than just a framework; it was a real game changer! Laravel's elegant syntax and... | kasenda | |

1,886,859 | The Evolution and Impact of Software Development Companies in the US | When we think about the incredible strides technology has made over the past few decades, it's... | 0 | 2024-06-13T11:16:10 | https://dev.to/stevemax237/the-evolution-and-impact-of-software-development-companies-in-the-us-n32 | webdev, softwaredevelopment, technology | When we think about the incredible strides technology has made over the past few decades, it's impossible to overlook the vital role played by [**custom software development companies in usa**](https://www.mobileappdaily.com/directory/software-development-companies/us?utm_source=dev&utm_medium=hc&utm_campaign=mad). The... | stevemax237 |

1,886,858 | How to Promote Digital Marketing Services | Promoting digital marketing services effectively requires a strategic approach that combines both... | 0 | 2024-06-13T11:15:20 | https://dev.to/creationinfoways/how-to-promote-digital-marketing-services-158 | marketing | Promoting **[digital marketing services](https://www.creationinfoways.com/digital-marketing-services.html)** effectively requires a strategic approach that combines both online and offline tactics. Here’s a comprehensive guide to promoting digital marketing services, particularly if you’re offering these services in a ... | creationinfoways |

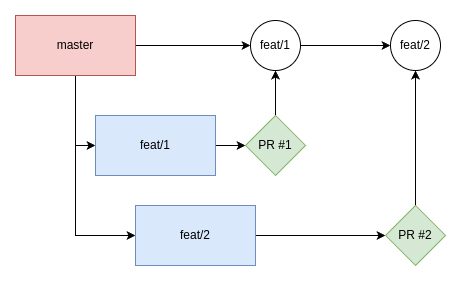

1,886,856 | Git: How to fix PR conflicts | When working with PRs, we may encounter conflicts trying to merge them. In this article, we will... | 27,621 | 2024-06-13T11:14:21 | https://henriqueleite42.hashnode.dev/git-how-to-fix-pr-conflicts | git, webdev, beginners, programming | When working with PRs, we may encounter conflicts trying to merge them. In this article, we will learn what conflicts are and how to fix them.

## What causes conflicts

In the image above, you c... | henriqueleite42 |

1,886,854 | Elevate Your Online Presence with Expert Web Development Services from WebBuddy Agency | In today's digital age, having a strong online presence is essential for businesses to thrive and... | 0 | 2024-06-13T11:10:00 | https://dev.to/piyushwebbuddyy/elevate-your-online-presence-with-expert-web-development-services-from-webbuddy-agency-19g3 | webdev | In today's digital age, having a strong online presence is essential for businesses to thrive and succeed. Your website serves as the virtual face of your brand, often forming the first impression potential customers have of your business. Therefore, investing in **[professional web development services](https://www.we... | piyushwebbuddyy |

1,886,853 | Unlocking the Power of AI: Webbuddy Agency's Comprehensive AI Development Services | In an era defined by technological innovation, artificial intelligence (AI) stands out as one of the... | 0 | 2024-06-13T11:08:46 | https://dev.to/piyushwebbuddyy/unlocking-the-power-of-ai-webbuddy-agencys-comprehensive-ai-development-services-4eea | aidevelopment | In an era defined by technological innovation, artificial intelligence (AI) stands out as one of the most transformative forces reshaping industries and societies worldwide. Webbuddy Agency, a leader in digital solutions, is at the forefront of harnessing AI's potential to drive meaningful change. In this comprehensive... | piyushwebbuddyy |

1,886,852 | Leverage Integrations and Enhancements in Workday 2024 R1 Update | March 2024 saw the release of the eagerly awaited Workday 2024 R1 upgrade. It included various new... | 0 | 2024-06-13T11:08:05 | https://www.newsvoir.com/index.php?option=com_content&view=release&rid=28070 | workday, update |

March 2024 saw the release of the eagerly awaited Workday 2024 R1 upgrade. It included various new features and functionality meant to improve user experience across various domains and expedite operations. This vers... | rohitbhandari102 |

1,886,851 | Agile Methodologies | Agile methodologies help development teams focus on a smaller set of tasks at a time and deliver... | 0 | 2024-06-13T11:06:34 | https://feathersoftwares.com/ | web3, website, javascript, java | Agile methodologies help development teams focus on a smaller set of tasks at a time and deliver features to users faster.

Additionally, they can find issues early on, leading to a more efficient and successful development process.

Feather Softwares offers hands-on internship programs to launch your career in Web Devel... | anish_feather_223281851a4 |

1,886,849 | Ditch the Razors: Your Guide to Laser Hair Removal in Manchester | For many people, unwanted body hair can be a constant source of frustration. Shaving needs... | 0 | 2024-06-13T11:03:49 | https://dev.to/laserhairremoval000/ditch-the-razors-your-guide-to-laser-hair-removal-in-manchester-llb | For many people, unwanted body [hair](https://neweraskin.co.uk/services/laser-hair-removal-manchester/) can be a constant source of frustration. Shaving needs frequent upkeep and may cause discomfort, while waxing can be painful and time-consuming. Thankfully, laser hair removal offers a long-term opti... | laserhairremoval000 | |

1,887,337 | Retrieve weather information based on a zip code 🌤️ | Hey everyone, In this blog we are going to see how you can create an application that can retrieve... | 0 | 2024-06-18T06:38:12 | https://blog.elest.io/n8n-retrieves-weather-information-based-on-a-zip-code/ | elestio, n8n, api | ---

title: Retrieve weather information based on a zip code 🌤️

published: true

date: 2024-06-13 11:00:42 UTC

tags: Elestio, N8N, API

canonical_url: https://blog.elest.io/n8n-retrieves-weather-information-based-on-a-zip-code/

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/2ac07yo7jg9y0sv75eb1.png... | kaiwalyakoparkar |

1,886,846 | Tech programming | Do you want to develop your programming skills? I will explain my programming projects: html, css,... | 0 | 2024-06-13T11:00:13 | https://dev.to/hussein09/tech-programming-co0 | javascript, beginners, tutorial, python |

Do you want to develop your programming skills? I will explain my **programming projects: HTML, CSS, JS, MySQL, PHP, Python, Java, and I will develop games, websites, and short clips in all aspects of programming**. I will also attach the code at the end of each project. If you agree with this idea, then follow me on ... | hussein09 |

1,886,784 | Unveiling the NVIDIA A100 80GB Price and Its Impact on the AI Landscape | Introduction In the rapidly advancing field of artificial intelligence (AI), the NVIDIA... | 0 | 2024-06-13T11:00:00 | https://dev.to/novita_ai/unveiling-the-nvidia-a100-80gb-price-and-its-impact-on-the-ai-landscape-1dd1 | ## Introduction

In the rapidly advancing field of artificial intelligence (AI), the NVIDIA A100 80GB GPU has emerged as a formidable contender, offering exceptional performance for complex computational tasks. This article delves into the specifics of the NVIDIA A100 80GB price and its features, providing insights into... | novita_ai | |

1,863,360 | The Adventures of Blink #27: LLMs in Code | So last week was pretty wild, huh? Our previous adventure saw us download GPT4all and add in some... | 26,964 | 2024-06-13T11:00:00 | https://dev.to/linkbenjamin/the-adventures-of-blink-27-llms-in-code-1mda | ai, python, beginners, llm | So last week was pretty wild, huh? Our [previous adventure]() saw us download GPT4all and add in some local data, which gave me the ability to have a conversation with my memoirs!

## The UI has limited usefulness

GPT4All's application is a _fantastic_ sandbox for ideas. We can start to load data and models and expe... | linkbenjamin |

1,886,845 | FINQ's weekly market insights: Peaks and valleys in the S&P 500 – June 13, 2024 | Dive into this week's market dynamics, highlighting the S&P 500's leaders and laggards with... | 0 | 2024-06-13T10:59:19 | https://dev.to/eldadtamir/finqs-weekly-market-insights-peaks-and-valleys-in-the-sp-500-june-13-2024-18jj | ai, stockmarket, stocks, investing | Dive into this week's market dynamics, highlighting the S&P 500's leaders and laggards with FINQ's precise AI analysis.

## **Top achievers:**

- **Amazon (AMZN):** Amazon holds the top spot with consistently high Professional and Crowd Wisdom scores.

- **ServiceNow (NOW):** ServiceNow remains second, benefiting from s... | eldadtamir |

1,886,843 | Detailed Internet Security Analysis: Common Vulnerabilities and Best Practices | Security is a major threat to companies striving to deliver software quickly. Alongside existing... | 0 | 2024-06-13T10:53:30 | https://devot.team/blog/web-application-security-best-practices | database, privacy, security | Security is a major threat to companies striving to deliver software quickly. Alongside existing vulnerabilities in application code and security breaches, companies and developers must also be aware of the potential security vulnerabilities that super-powerful quantum computers pose to currently used cryptographies.

... | ana_klari_e98cbb26da5af3 |

1,886,842 | 5 top casting options in Selenium WebDriver API | Introduction : WebDriver API is one of the 3 components provided by Selenium. It is an... | 0 | 2024-06-13T10:52:16 | https://dev.to/debasmita-a/5-top-casting-options-in-selenium-webdriver-api-4f5b | selenium, webdriver, remotewebdriver, topcasting | ## Introduction :

WebDriver API is one of the 3 components provided by Selenium. It is an interface that has many declared methods that help perform certain actions on the browser.

In this article, we will investigate all 5 top casting combinations while creating a browser driver object (ChromeDriver, EdgeDriver, Fire... | debasmita-a |

1,886,841 | Simplify Customs Declarations with CDS: 5 Easy Steps | Simplify Customs Declarations with CDS: 5 Easy Steps Navigating customs declarations can be a... | 0 | 2024-06-13T10:51:52 | https://dev.to/john_hall/simplify-customs-declarations-with-cds-5-easy-steps-4ck3 | software, ai, learning, community | Simplify Customs Declarations with CDS: 5 Easy Steps

Navigating customs declarations can be a challenge, but with Customs Declaration Software (CDS), the process becomes much simpler. Here’s how you can use CDS software to handle customs declarations efficiently in five straightforward steps.

### Step 1: Get Familiar ... | john_hall |

1,886,837 | Benefits of Using Foam Face Wash in Your Skincare Routine | Incorporating a foam face wash into your skincare routine can significantly improve the health and... | 0 | 2024-06-13T10:48:06 | https://dev.to/purehill/benefits-of-using-foam-face-wash-in-your-skincare-routine-i5a | Incorporating a foam face wash into your skincare routine can significantly improve the health and appearance of your skin. Among the many options available, Purehill Foam Face Wash stands out as a top choice, especially for those seeking a foam face wash for all types of skin. This article explores the various **[bene... | purehill | |

1,886,836 | Mastering Immutable Types with TypeScript `as const` | Enhance your TypeScript skills by mastering immutability and type safety with the as const feature.... | 0 | 2024-06-13T10:46:58 | https://dev.to/adeelibr/mastering-immutable-types-with-typescript-as-const-gh1 | webdev, typescript, javascript, programming | Enhance your TypeScript skills by mastering immutability and type safety with the `as const` feature. This guide walks you through key steps, cautionary notes, and tips for efficiency. Watch the full video [here](https://www.youtube.com/watch?v=ztjMkfeFNrg).

{% embed https://www.youtube.com/watch?v=ztjMkfeFNrg&ab_chan... | adeelibr |

1,886,834 | 4 Ways to backup mySql database to a csv file | There are several methods to create a CSV backup of your MySQL database. Some third-party database... | 0 | 2024-06-13T10:44:01 | https://dev.to/instanceofgod/4-ways-to-backup-mysql-database-to-a-csv-file-3e9j | There are several methods to create a CSV backup of your MySQL database. Some third-party database management tools offer additional features for backing up to CSV.

If you prefer command-line control, the mysqldump utility is powerful and flexible. If you are familiar with the Python programming language, there are pack... | instanceofgod | |

1,886,833 | Look what they need to mimic a fraction of our power | A post by Manuel Artero Anguita 🟨 | 0 | 2024-06-13T10:43:05 | https://dev.to/manuartero/look-what-they-need-to-mimic-a-fraction-of-our-power-40j | typescript, python, humour |

| manuartero |

1,886,832 | Why Even Bother with Project Management? | In today's fast-paced business environment, the success of any project relies heavily on effective... | 0 | 2024-06-13T10:42:59 | https://dev.to/hasanbisha/why-even-bother-with-project-management-3cji | webdev, productivity, career, management | In today's fast-paced business environment, the success of any project relies heavily on effective project management. Whether you are launching a new product, implementing a system upgrade, or organizing a marketing campaign, project management provides a structured framework to navigate through complexities. But why ... | hasanbisha |

1,886,831 | How to Fool and Avoid Facial Recognition in Public Places | There is a growing trend of using facial recognition technology in public spaces such as retail... | 0 | 2024-06-13T10:42:49 | https://dev.to/luxandcloud/how-to-fool-and-avoid-facial-recognition-in-public-places-2346 | ai, news, discuss, machinelearning | There is a growing trend of using facial recognition technology in public spaces such as retail malls, stadiums, and airports. While this technology can be used for security and convenience, it also raises concerns about privacy and surveillance.

Consider a bustling city department store during the holiday season, tee... | luxandcloud |

1,886,830 | 🚀 Day 1: Embarking on My DevOps Journey 🌐 | Today marks the beginning of my adventure into the realms of DevOps and cloud computing. Here’s a... | 0 | 2024-06-13T10:42:23 | https://dev.to/sanjishmaharjan/day-1-embarking-on-my-devops-journey-36mc | devops, learning, programming | Today marks the beginning of my adventure into the realms of DevOps and cloud computing. Here’s a snapshot of what I covered:

🌱 Fundamentals of DevOps:

Culture and Collaboration: Embracing a mindset focused on continuous improvement and teamwork.

Automation: Understanding the importance of automating repetitive tasks... | sanjishmaharjan |

1,886,829 | Offline Speech to Text in Python | by Nimrita Koul | In this article, Nimrita Koul explained about vosk, pyaudio packages and showed a simple solution to... | 0 | 2024-06-13T10:42:03 | https://dev.to/tankala/offline-speech-to-text-in-python-by-nimrita-koul-34lc | python, ai, machinelearning, datascience | In this article, Nimrita Koul explains the vosk and pyaudio packages and shows a simple way to record your own audio, convert your words to text, and save the result in a text file. The best part is that the whole thing can be done offline.

{% embed https://medium.com/@nimritakoul01/offline-speech-to-text-in-python-f5d6454... | tankala |

1,886,827 | Top Hearing Assistance At The Audiologist Near Me | Our modern life has made it very hard to maintain good ear health. From blasting loud music in our... | 0 | 2024-06-13T10:39:31 | https://dev.to/prestigehearing/top-hearing-assistance-at-the-audiologist-near-me-22m9 | webdev | Our modern life has made it very hard to maintain good ear health. From blasting loud music in our headphones almost the entire day to suffering from incessant urban noise pollution, there is simply no avoiding auditory problems.

to take your brand to the next level? Our experienced team specializes in a range of digital marketing solutions, including SEO, social media marketing, PPC, and content creation. Based in the heart ... | creationinfoways |

1,885,423 | Database Management system(DBMS) Part1 | Hello guys, Hope everything is going well. I'm Esraa a frontend developer and postgraduate student... | 0 | 2024-06-13T10:38:14 | https://dev.to/esraanasr92/database-management-systemdbms-part1-4okm | Hello guys,

Hope everything is going well. I'm Esraa, a frontend developer and postgraduate student in Computer Science at AAST. This semester, I'm studying three subjects: Data Structures and Algorithms, Database Management Systems (DBMS), and Object-Oriented Programming (OOP).

I'll contribute tutorials for each... | esraanasr92 | |

1,886,822 | Introduction to Digital Identity Verification | Digital identity verification is crucial for confirming the authenticity of an individual's identity... | 27,619 | 2024-06-13T10:36:48 | https://dev.to/aishik_chatterjee_0060e71/introduction-to-digital-identity-verification-3mme | Digital identity verification is crucial for confirming the authenticity of an individual's identity in the digital realm. As the world increasingly moves online, the need to establish a person's identity accurately and securely has become paramount. This process is fundamental in various sectors, including banking, he... | aishik_chatterjee_0060e71 | |

1,886,821 | Beware of recursive signals in Django | Quite recently, I was working on a backend project written in Django and I had defined the following... | 0 | 2024-06-13T10:34:41 | https://dev.to/nick_langat/beware-of-recursive-signals-in-django-2jla | webdev, django, python, programming | Quite recently, I was working on a backend project written in Django and I had defined the following models:

```python

class Order(BaseModel):

class Status(models.TextChoices):

COMPLETE = "Complete"

PENDING = "Pending"

CANCELLED = "Cancelled"

created_by = models.ForeignKey(

User... | nick_langat |

1,886,820 | Revolutionizing Business with Mobile App Development | In the modern digital era, mobile app development stands as a cornerstone for business innovation and... | 0 | 2024-06-13T10:33:43 | https://dev.to/kevinpeterson/revolutionizing-business-with-mobile-app-development-oh0 | mobiledevelopment, mobileappdevelopment | In the modern digital era, **[mobile app development](https://www.webbuddy.agency/services/mobile)** stands as a cornerstone for business innovation and growth. With a surge in mobile device usage, businesses across various sectors are leveraging mobile apps to enhance customer engagement, streamline operations, and bo... | kevinpeterson |

1,886,819 | Comparing the 8 Best Open-source and Paid OpenAPI Documentation Tools | For developers aiming to create OpenAPI documentation without incurring high costs, open-source tools... | 0 | 2024-06-13T10:32:22 | https://dev.to/sattyam/comparing-the-8-best-open-source-and-paid-openapi-documentation-tools-1mg1 | api, openapi | For developers aiming to create OpenAPI documentation without incurring high costs, open-source tools are a real asset. In this article, we'll explore some key options known for their functionality and community backing.

## Swagger UI

Originally known as Swagger and now managed by SmartBear as an open-source project,... | sattyam |

1,886,818 | Elevate Your Online Presence with Expert Web Development Services from WebBuddy Agency | In today's digital age, having a strong online presence is essential for businesses to thrive and... | 0 | 2024-06-13T10:32:11 | https://dev.to/kevinpeterson/elevate-your-online-presence-with-expert-web-development-services-from-webbuddy-agency-3f72 | webdev, webdevelopmentcompany, websitedevelopment | In today's digital age, having a strong online presence is essential for businesses to thrive and succeed. Your website serves as the virtual face of your brand, often forming the first impression potential customers have of your business. Therefore, investing in **[professional web development services](https://www.we... | kevinpeterson |

1,886,817 | Why Choose Dot Net for App Development | In the world of app development, new things keep coming up. There is a flood of frameworks being... | 0 | 2024-06-13T10:31:29 | https://dev.to/lewisblakeney/why-choose-dot-net-for-app-development-4a4o | dotnet, dotnetcore, aspdotnet, aspnet | In the world of app development, new things keep coming up. There is a flood of frameworks being introduced frequently, each one asserting to be a silver bullet for creating that next great thing. However, with lots of options available, it can be overwhelming to choose the right technology. This abundance of choices ... | lewisblakeney |

1,886,816 | Building a Crypto Launchpad: From Concept to Launch | New projects and innovations are continuously emerging in the ever-evolving world of cryptocurrencies... | 0 | 2024-06-13T10:30:48 | https://dev.to/donnajohnson88/building-a-crypto-launchpad-from-concept-to-launch-5734 | cryptocurrency, blockchain, learning, development | New projects and innovations are continuously emerging in the ever-evolving world of cryptocurrencies and blockchain technology. One emerging element in the [crypto development](https://blockchain.oodles.io/cryptocurrency-development-services/?utm_source=devto) space is the crypto launchpad. Crypto launchpads are vital... | donnajohnson88 |

1,886,811 | ChatGPT - Prompts to Create Regular Expression | Discover the various ChatGPT Prompts to Create Regular Expression | 0 | 2024-06-13T10:29:04 | https://dev.to/techiesdiary/chatgpt-prompts-to-create-regular-expression-10pn | chatgpt, promptengineering, ai, regex | ---

published: true

title: 'ChatGPT - Prompts to Create Regular Expression'

cover_image: 'https://raw.githubusercontent.com/sandeepkumar17/td-dev.to/master/assets/blog-cover/chat-gpt-prompts.jpg'

description: 'Discover the various ChatGPT Prompts to Create Regular Expression'

tags: chatgpt, promptengineering, ai, regex... | techiesdiary |

1,886,810 | ChatGPT - Prompts to Create Boilerplate Code | Discover the various ChatGPT Prompts to Create Boilerplate Code | 0 | 2024-06-13T10:28:55 | https://dev.to/techiesdiary/chatgpt-prompts-to-create-boilerplate-code-3cic | chatgpt, promptengineering, ai, programming | ---

published: true

title: 'ChatGPT - Prompts to Create Boilerplate Code'

cover_image: 'https://raw.githubusercontent.com/sandeepkumar17/td-dev.to/master/assets/blog-cover/chat-gpt-prompts.jpg'

description: 'Discover the various ChatGPT Prompts to Create Boilerplate Code'

tags: chatgpt, promptengineering, ai, programmi... | techiesdiary |

1,886,808 | ChatGPT - Prompts for adding code comments | Discover the various ChatGPT Prompts for adding code comments | 0 | 2024-06-13T10:28:46 | https://dev.to/techiesdiary/chatgpt-prompts-for-adding-code-comments-5cod | chatgpt, promptengineering, ai, programming | ---

published: true

title: 'ChatGPT - Prompts for adding code comments'

cover_image: 'https://raw.githubusercontent.com/sandeepkumar17/td-dev.to/master/assets/blog-cover/chat-gpt-prompts.jpg'

description: 'Discover the various ChatGPT Prompts for adding code comments'

tags: chatgpt, promptengineering, ai, programming

s... | techiesdiary |

1,886,802 | use Redux with Svelte 👀 | I've been developing a product fully utilizing SvelteKit, and while server-side tests can be handled... | 0 | 2024-06-13T10:28:04 | https://dev.to/qaynam/use-redux-with-svelte-1c7i | svelte, sveltekit, redux, javascript | I've been developing a product entirely with SvelteKit. While server-side tests can be handled directly with Jest, unit testing on the client side can't be done just by adding Jest, because support for ESM needs to be added in various ways. It would be fine to break down logic into smaller functions for testing, but that... | qaynam |

1,886,807 | Diving Into the Academic Frontier: An Introduction of Large Language Models Differential Privacy | Introduction As machine learning technologies become increasingly prevalent, the need to... | 0 | 2024-06-13T10:27:28 | https://dev.to/novita_ai/diving-into-the-academic-frontier-an-introduction-of-large-language-models-differential-privacy-358n | llm | ## Introduction

As machine learning technologies become increasingly prevalent, the need to ensure the privacy and security of the data used to train these [**LLMs**](https://blogs.novita.ai/top-llms-for-2024-how-to-evaluate-and-improve-an-open-source-llm/) has become a critical concern. One key approach to addressing ... | novita_ai |

1,886,815 | Stripes: refactor with CSS variables | Stripes are those little bands that we can see in many places on the street, often on signs but also... | 0 | 2024-06-13T10:26:59 | https://dev.to/alebarbaja/stripes-with-css-variables-580l | css | Stripes are those little bands that we can see in many places on the street, often on signs but also in everyday places.

Creating this with CSS is not complicated, we are going to make use of gradients,... | alebarbaja |

1,886,814 | The display decorator | When using a callable in the list_display, as in the cases of initialled_name and isbn13, we can use... | 0 | 2024-06-13T10:26:00 | https://dev.to/mammadov115/the-display-decorator-b7o | python, django | When using a callable in the list_display, as in the cases of initialled_name and isbn13, we can use the admin.display decorator to specify the column name that will appear in the header of the change list using the description argument. We can also use it to get around the limitation of calculated fields not being sor... | mammadov115 |

1,886,813 | Guide To Effective Payroll Reporting And Software | In the dynamic world of business management, efficient payroll handling is crucial. A well-organised... | 0 | 2024-06-13T10:25:23 | https://dev.to/superworkservice/guide-to-effective-payroll-reporting-and-software-53od |

In the dynamic world of business management, efficient payroll handling is crucial. A well-organised payroll report not only ensures timely and accurate payment to employees but also maintains compliance with tax laws and financial regulations. For HR managers and business owners, understanding the intricacies of pay... | superworkservice | |

1,886,812 | Unlocking the Power of AI: Webbuddy Agency's Comprehensive AI Development Services | In an era defined by technological innovation, artificial intelligence (AI) stands out as one of the... | 0 | 2024-06-13T10:25:14 | https://dev.to/kevinpeterson/unlocking-the-power-of-ai-webbuddy-agencys-comprehensive-ai-development-services-333g | aidevelopment, aidevelopmentservices, webdev | In an era defined by technological innovation, artificial intelligence (AI) stands out as one of the most transformative forces reshaping industries and societies worldwide. Webbuddy Agency, a leader in digital solutions, is at the forefront of harnessing AI's potential to drive meaningful change. In this comprehensive... | kevinpeterson |

1,886,809 | Download ZED Gold Certificate Checklist for Free | ZED Gold Certificate, awarded by the Micro, Small, and Medium Enterprises (MSME) sector in India,... | 0 | 2024-06-13T10:23:25 | https://dev.to/nativeopencartaap/download-zed-gold-certificate-checklist-for-free-2d82 | ZED Gold Certificate, awarded by the Micro, Small, and Medium Enterprises (MSME) sector in India, signifies a business’s exceptional product quality, worker safety, and commitment to Environment preservation. It serves as a testament to the company’s dedication to continuous improvement and competitiveness. With ZED Go... | nativeopencartaap | |

1,886,806 | Innovation Meets Efficiency: Unveiling Intensiv-Filter Himenviro's Hybrid Electro Filters | Innovation Meets Efficiency: Unveiling Intensiv-Filter Himenviro's Hybrid Electro Filters Industrial... | 0 | 2024-06-13T10:22:04 | https://dev.to/marketing_intensivfilterh/innovation-meets-efficiency-unveiling-intensiv-filter-himenviros-hybrid-electro-filters-38ed | beginners, webdev | Innovation Meets Efficiency: Unveiling Intensiv-Filter Himenviro's Hybrid Electro Filters

Industrial dust control is a crucial aspect of environmental protection. Intensiv-Filter Himenviro's [hybrid electro filters](https://www.intensiv-filter-himenviro.com/hybrid-electro-filters/) (HEFs) offer a powerful solution that... | marketing_intensivfilterh |

1,886,804 | Magic Stone Spaces: Pioneering Sustainable Luxury in Pune's Real Estate Market | Magic Stone Spaces in Pune is a prominent real estate development company known for its innovative... | 0 | 2024-06-13T10:21:05 | https://dev.to/magic_stonespaces_282ce9/magic-stone-spaces-pioneering-sustainable-luxury-in-punes-real-estate-market-25h4 | magicstonespaces, residentialproperties, commercialproperties, luxuryproperties |

**Magic Stone Spaces** in Pune is a prominent real estate development company known for its innovative and sustainable architectural designs. They specialize in creating luxurious [residential](https://magicstonesp... | magic_stonespaces_282ce9 |

1,886,801 | Go vs Rust: Choosing the Right Language for Your Development Journey in 2024 | When choosing a programming language for your next project, it’s essential to consider the strengths... | 0 | 2024-06-13T10:17:53 | https://dev.to/saumya27/go-vs-rust-choosing-the-right-language-for-your-development-journey-in-2024-33jb | go, rust | When choosing a programming language for your next project, it’s essential to consider the strengths and features of the available options. Rust and Go are two modern languages that have gained significant traction for their performance, concurrency, and ease of use. Here’s a comparison of Rust vs Go, highlighting thei... | saumya27 |

1,886,799 | FIGHT OIL, NOT MOISTURE: BEST CLEANSERS PERFECT FOR OILY SKIN TYPES | Cleaning your face is important for your skincare routine. If your skin is oily, using a face... | 0 | 2024-06-13T10:17:18 | https://dev.to/priyank_sharma_627a16aae4/fight-oil-not-moisture-best-cleansers-perfect-for-oily-skin-types-3lci | cleanser, facewash | Cleaning your face is important for your skincare routine. If your skin is oily, using a face cleanser made for oily skin can help a lot. A good cleanser can remove oil without drying out your skin or making acne worse. If the cleanser also has ingredients for acne scars or dark spots, that's even better for your skin.... | priyank_sharma_627a16aae4 |

1,886,792 | How to Factory Reset HP Printer 888-4O4-671O | Resetting your HP printer to its factory settings can be a useful troubleshooting step if you're... | 0 | 2024-06-13T10:12:06 | https://dev.to/printerhelp/how-to-factory-reset-hp-printer-888-4o4-671o-1lbp | beginners, discuss | Resetting your HP printer to its factory settings can be a useful troubleshooting step if you're experiencing persistent issues, planning to sell or donate the printer, or simply need to clear the settings and start fresh. This guide will walk you through the process of factory resetting an HP printer, covering various... | printerhelp |

1,886,798 | Costume Fashion Jewelry in USA | Buy Costume Fashion Jewelry in USA at Jewelry By Style, Our collection features a stunning array of... | 0 | 2024-06-13T10:14:57 | https://dev.to/jewelry_bystyle_bfd2a8bd5/costume-fashion-jewelry-in-usa-53od |

Buy Costume Fashion Jewelry in USA at Jewelry By Style, Our collection features a stunning array of statement necklaces, dazzling earrings, chic bracelets, meticulously crafted to accentuate your style. Elevate you... | jewelry_bystyle_bfd2a8bd5 | |

1,886,797 | Why Workday Testing Automation Is Crucial For Risk Management | Workday is a cloud-based enterprise resource planning platform that facilitates managing the core... | 0 | 2024-06-13T10:14:23 | https://awsmone.com/why-workday-testing-automation-is-crucial-for-risk-management/ | workday, testing, automation |

Workday is a cloud-based enterprise resource planning platform that facilitates managing the core functionality of human resources and finance operations within an organization. With a wide range of tools and techniq... | rohitbhandari102 |

1,886,796 | SST Ditches AWS CDK: Time to Move on to Ion | Explore how SST shifted from AWS CDK to Ion, uncover the challenges of the old bucket construct, and... | 0 | 2024-06-13T10:13:45 | https://5ly.co/blog/sst3-switching-to-ion/ | sst, aws, serverless | **Explore how SST shifted from AWS CDK to Ion, uncover the challenges of the old bucket construct, and see the benefits of starting new software projects with SST 3 Ion.**

---

As we all know, staying ahead means embracing the latest in technology and innovation fields. Recently, [SST](https://sst.dev/), a pioneer in... | kiryl_anoshka |

1,886,795 | Oracle Fusion SCM Online Training | Experience Oracle Fusion SCM Online Training provided by SOT, where our expert instructors bring... | 0 | 2024-06-13T10:13:15 | https://dev.to/oraclefusionscm/oracle-fusion-scm-online-training-4n2c | oracle, scm | Experience [Oracle Fusion SCM Online Training](https://www.softonlinetraining.com/oracle-fusion-scm-training) provided by SOT, where our expert instructors bring real-world industry insights from leading MNCs. Our cu... | oraclefusionscm |

1,886,794 | Premier agency for Recruitment Process Outsourcing (RPO) services | QA Solvers is a premier agency for Recruitment Process Outsourcing (RPO) services in the US. Our... | 0 | 2024-06-13T10:12:47 | https://dev.to/qasolvers/premier-agency-for-recruitment-process-outsourcing-rpo-services-3hjh | global, recruitme, hiring, staffing | QA Solvers is a premier agency for [Recruitment Process Outsourcing (RPO) services](https://qasolvers.com/staffing/) in the US. Our customized recruitment services are designed to meet the diverse hiring needs of businesses across various industries.

Our temporary staffing services are ideal for businesses in need of... | qasolvers |

1,886,775 | How to Build a High-Performing Shopify Store: Insights from a Shopify Expert | Building a high-performing Shopify store requires more than just setting up products and choosing a... | 0 | 2024-06-13T10:11:35 | https://dev.to/mariewthornton/how-to-build-a-high-performing-shopify-store-insights-from-a-shopify-expert-3868 | shopify, shopifydevelopers, hireshopifyexperts, shopifyexperts | Building a high-performing Shopify store requires more than just setting up products and choosing a theme. It's about creating a seamless shopping experience that engages customers, drives traffic, and boosts conversions. Drawing on insights from [**hire dedicated shopify developer**](https://www.biztechcs.com/hire-sho... | mariewthornton |

1,886,791 | Microsoft Azure AI Solutions: Empowering Data Scientists and Developers | Rehearsing for a test like the simulated intelligence 102 can be a regular work. Truth be told a few... | 0 | 2024-06-13T10:11:33 | https://dev.to/ai102examdumps/microsoft-azure-ai-solutions-empowering-data-scientists-and-developers-439j | webdev, beginners, javascript | Preparing for an exam like the AI-102 can be a full-time job. In fact, some exams are paid for by employers because they are so intensive. <a href="https://dumpsarena.com/microsoft-dumps/ai-102/">AI-102 Exam Dumps</a> Certification isn't straightforward and takes enormous effort. It requires inv... | ai102examdumps |

1,886,789 | Tokenization: Making Investment Opportunities More Accessible | Imagine being able to invest in a piece of a famous artwork, much like you would buy shares in a... | 0 | 2024-06-13T10:10:42 | https://dev.to/calyptus_ninja/tokenization-making-investment-opportunities-more-accessible-4g5m | tokenization, webdev | Imagine being able to invest in a piece of a famous artwork, much like you would buy shares in a company. This is no longer just a thought experiment—it's a reality with tokenization. This innovative approach is transforming how we interact with traditional financial assets by breaking them down into smaller, more acce... | calyptus_ninja |

1,886,788 | Explore Haridwar in Comfort and Style: Book Tempo Traveller in Haridwar for an Unforgettable Journey | Explore Haridwar in Comfort and Style: Book Tempo Traveller in Haridwar for an Unforgettable... | 0 | 2024-06-13T10:08:52 | https://dev.to/cabsules/explore-haridwar-in-comfort-and-style-book-tempo-traveller-in-haridwar-for-an-unforgettable-journey-168d | cabsules, tempotravellerinharidwar | Explore Haridwar in Comfort and Style: Book Tempo Traveller in Haridwar for an Unforgettable Journey

Haridwar, the "Gateway to the Gods," is a spiritual hub nestled in the foothills of the Himalayas. It beckons travelers seeking cultural immersion, religious enlightenment, and breathtaking natural beauty. To embark on ... | cabsules |

1,886,760 | Hello | New photo | 0 | 2024-06-13T09:30:20 | https://dev.to/yinboran/hello-1c0a | yinboran | ---

title: Hello

published: true

description: New photo

tags: yinboran

# cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/kkb7va5hvnxqnkh8qmzm.JPG

# Use a ratio of 100:42 for best results.

# published_at: 2024-05-21 23:06 +0000

---

| yinboran |

1,886,787 | "Byte-Sized Wisdom": Mastering Big O | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-13T10:08:49 | https://dev.to/oladigbs18/byte-sized-wisdom-mastering-big-o-266o | devchallenge, cschallenge, computerscience, beginners | _This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer._

## Explainer

**Big O**: Rates how fast algorithms grow with data (n). Low O = good! (think search vs. sort). Ignores constants, focuses on trends.
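To make the growth-rate idea concrete, here is a small sketch (not part of the original submission) that counts the work done by two ways of searching a sorted list. The function names `linear_search_steps` and `binary_search_steps` are illustrative assumptions; the first grows as O(n), the second as O(log n).

```python
def linear_search_steps(sorted_items, target):
    """Scan left to right and return how many items were compared: O(n)."""
    steps = 0
    for item in sorted_items:
        steps += 1
        if item == target:
            break
    return steps


def binary_search_steps(sorted_items, target):
    """Halve the search range on every probe and return the probe count: O(log n)."""
    low, high = 0, len(sorted_items) - 1
    steps = 0
    while low <= high:
        steps += 1
        middle = (low + high) // 2
        if sorted_items[middle] == target:
            break
        if sorted_items[middle] < target:
            low = middle + 1   # target can only be in the upper half
        else:
            high = middle - 1  # target can only be in the lower half
    return steps
```

Searching for the last element of `list(range(1024))` takes 1024 comparisons with the linear scan, while the binary search needs no more than 11 probes. Doubling the input doubles the first count but adds only about one probe to the second, which is the trend Big O describes.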

## Additional Context

<!-- Please share any additional context you think the judg... | oladigbs18 |

1,886,786 | Why I Choose WebStorm Over VSCode | As a front-end developer with four years of experience, I've often found myself as the odd one out in... | 0 | 2024-06-13T10:08:07 | https://dev.to/haikelei/why-i-choose-webstorm-over-vscode-3flj | webdev, vscode, programming, devops | As a front-end developer with four years of experience, I've often found myself as the odd one out in my team for my choice of IDE. While the majority of my colleagues surf the waves of Visual Studio Code (VSCode), I remain steadfastly committed to WebStorm. This decision is not a matter of stubbornness or resistance t... | haikelei |

1,886,785 | JavaScript data type conversion | Data type conversion is a process in JavaScript where values are converted from one type to another.... | 0 | 2024-06-13T10:06:09 | https://dev.to/kemiowoyele1/javascript-data-type-conversion-2026 | Data type conversion is a process in JavaScript where values are converted from one type to another. This can be done automatically, where JavaScript handles the conversion by itself or manually, where the programmer converts the data types using operators and functions.

It is crucial that programmers understand data ... | kemiowoyele1 | |

1,886,781 | TIL: an_array_starting_with matcher | Today I was working with generating excel files for export and had some trouble with tests - the... | 0 | 2024-06-13T10:03:31 | https://dev.to/epigene/til-anarraystartingwith-matcher-40g7 | rspec, ruby, rails | ---

title: TIL: an_array_starting_with matcher

published: true

description:

tags: RSpec,ruby,rails

# cover_image: https://direct_url_to_image.jpg

# Use a ratio of 100:42 for best results.

# published_at: 2024-06-13 09:57 +0000

---

Today I was working with generating excel files for export and had some trouble with te... | epigene |

1,886,783 | TECNO Web Security Challenge Campaign starts now | More than half of 2024 is about to pass, and we sincerely invite you to participate in our mid-year... | 0 | 2024-06-13T10:03:27 | https://dev.to/tecno-security/tecno-web-security-challenge-campaign-starts-now-4jm | security, bug, hacker, career | More than half of 2024 is about to pass, and we sincerely invite you to participate in our mid-year Web Challenge Campaign now. Are you confident in obtaining additional rewards? Come and accept this challenge! Here are the details of the activity, looking forward to your reports💌!

Buy GitHub Accounts

GitHub holds a crucial position in the world of coding, making it an indispensable platform for developers. As the largest global code reposi... | diwes68311 |

1,886,776 | All You Need to Know about Automatic Chain of Thought Prompting in Large Language Models | Introduction What is automatic Chain of Thought Prompting in large language models? In... | 0 | 2024-06-13T10:03:21 | https://dev.to/novita_ai/all-you-need-to-know-about-automatic-chain-of-thought-prompting-in-large-language-models-56ic | llm | ## Introduction

What is automatic Chain of Thought Prompting in large language models? In this blog, we will break this question into small pieces, starting from the definition of Chain of Thought (CoT) Prompting, to the advantages and development of Auto CoT. Finally, we will discuss [**LLM API**](https://novita.ai/re... | novita_ai |

1,886,782 | Delivering Expertise: Top 10 Trusted US Agencies for Python Development | In today's data-driven world, Python has emerged as a powerhouse language for businesses of all... | 0 | 2024-06-13T10:02:05 | https://dev.to/akaksha/delivering-expertise-top-10-trusted-us-agencies-for-python-development-41l7 | In today's data-driven world, Python has emerged as a powerhouse language for businesses of all sizes. Its versatility, extensive libraries, and clear syntax make it ideal for building web applications, data analysis tools, machine learning models, and much more. However, navigating the vast landscape of Python devel... | akaksha | |

1,871,924 | Goyave v5: the reborn REST framework aims higher | Over two years in the making, Goyave’s next major release is finally available. This time, it’s not... | 0 | 2024-06-13T10:00:05 | https://dev.to/systemglitch/goyave-v5-the-reborn-rest-framework-aims-higher-30c7 | go, news, webdev, opensource | Over two years in the making, Goyave’s next major release is finally available. This time, it’s not just about a breaking change: the framework has been entirely redesigned and rewritten. Let’s talk about this rebirth and what it entails.

## But first, what is Goyave?

Goyave is an **opinionated REST API framework** f... | systemglitch |

1,884,209 | How Layouts Work in Rails | This article was originally published in Rails Designer. Layouts in Rails have been around since... | 0 | 2024-06-13T10:00:00 | https://railsdesigner.com/rails-layouts/ | rails, ruby, webdev | This article was originally published in [Rails Designer](https://railsdesigner.com/rails-layouts/).

---

Layouts in Rails have been around since the earlier versions. They define the surrounding HTML structure shared by multiple views, allowing you to implement a consistent visual design. They encapsulate shared site... | railsdesigner |

1,886,779 | The Benefits of Agile Cloud Transformation for Complex Organizations | In today's rapidly evolving business landscape, organizations are increasingly recognizing the need... | 0 | 2024-06-13T09:58:46 | https://dev.to/wednesdaysol/the-benefits-of-agile-cloud-transformation-for-complex-organizations-2pch |

In today's rapidly evolving business landscape, organizations are increasingly recognizing the need for agility and adaptability to stay ahead of the competition and drive innovation. This has led to a growing empha... | wednesdaysol |
