id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,881,831 | Go Programming Language Full Tutorial | I wrote a series of entries trying to serve as a guide to the Go programming language. This tutorial... | 0 | 2024-06-12T00:04:27 | https://coffeebytes.dev/en/pages/go-programming-language-tutorial/ | go, tutorial, devops, backend | ---

title: Go Programming Language Full Tutorial

published: true

date: 2024-06-12 20:10:45 UTC

tags: go,tutorial,golang,devops,backend

canonical_url: https://coffeebytes.dev/en/pages/go-programming-language-tutorial/

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/786a4o2w2ca48mxot6ar.jpg

---

I w... | zeedu_dev |

1,886,187 | 5 Captivating Linux Challenges to Boost Your Coding Skills 🖥️ | The article is about 5 captivating Linux challenges curated by LabEx, a renowned platform for hands-on programming exercises. These challenges cover a wide range of topics, from mastering wildcard usage in data analysis to uncovering hidden patterns in ancient texts, and even exploring interstellar network connectivity... | 27,674 | 2024-06-12T20:09:50 | https://labex.io/tutorials/category/linux | linux, coding, programming, tutorial |

Are you ready to embark on a thrilling journey through the world of Linux? ✨ LabEx, a renowned platform for hands-on programming challenges, has curated a collection of five captivating exercises that will push your skills to new heights. From mastering wildcard usage in data analysis to uncovering hidden patterns in ... | labby |

1,886,175 | Connect Logi ~ Logistics One Stop SaaS platform | Building a SaaS Platform for Logistics in stealth mode After completing my first freelance... | 0 | 2024-06-12T20:05:19 | https://dev.to/shrey802/connect-logi-logistics-one-stop-saas-platform-39jo | javascript, webdev, react, node | ### Building a SaaS Platform for Logistics in stealth mode

After completing my first freelance gig building an e-commerce store, I embarked on a new challenge: a paid internship at a logistics company specializing in international and national clients. My role? Developing the backend for their SaaS platform. Initially... | shrey802 |

1,886,173 | Enhancing Recruitment Efficiency: The Role of Recruiting Software in Staffing Firms with Insights on Executive Search Software | In the dynamic and competitive landscape of modern recruitment, staffing firms play a critical role... | 0 | 2024-06-12T19:59:18 | https://dev.to/sana_tariq_c1a4185530b9f6/enhancing-recruitment-efficiency-the-role-of-recruiting-software-in-staffing-firms-with-insights-on-executive-search-software-275f | staffing, software, firms, webdev | In the dynamic and competitive landscape of modern recruitment, staffing firms play a critical role in connecting talented individuals with the right job opportunities. As the demand for efficient and effective hiring processes continues to grow, the adoption of recruiting software has become a necessity for staffing f... | sana_tariq_c1a4185530b9f6 |

1,886,172 | My First Freelance Gig: Building an E-Commerce Store | In December 2023, I landed my first freelance gig, marking the first entry in the experience section... | 0 | 2024-06-12T19:51:13 | https://dev.to/shrey802/my-first-freelance-gig-building-an-e-commerce-store-3kp5 | webdev, javascript, freelance, design |

In December 2023, I landed my first freelance gig, marking the first entry in the experience section of my resume. It was with a small company that manufactures dairy and cosmetic products. The client was supportive and confident in my skills, making it an exciting opportunity. My task was to create an e-commerce sto... | shrey802 |

1,886,171 | What is a data breach? | An occurrence where personal, private, secret, or confidential information about an individual is... | 0 | 2024-06-12T19:47:41 | https://dev.to/isaacgato/what-is-a-data-breach-41h7 | cybersecurity, nordpass, security | An occurrence where personal, private, secret, or confidential information about an individual is disclosed without the user's consent or is stolen is known as a data breach.

Malware or hacking attacks are the main causes of data breaches. The following are other breach techniques that are commonly seen:

**Insider le... | isaacgato |

1,886,169 | Item 34: Use enums instead of int constants | When using constants we have: Prefixing to avoid conflicts: Prefixes such as APPLE_ and ORANGE_ are... | 0 | 2024-06-12T19:42:26 | https://dev.to/giselecoder/item-34-use-enums-em-vez-de-constantes-int-mm5 | java, enums, development, javaefetivo | **When using constants we have**

**Prefixing to avoid conflicts:**

Prefixes such as APPLE_ and ORANGE_ are used because Java does not provide namespaces for int enum groups; the prefixes prevent clashes when two int enum groups have constants with identical names.

" indicates that Ansible is unable to find a compatible Python interpreter on the remote hosts.

, and this led me to think about a lot of things, amongst which data typing.

eQual provides a structured way to reference dates allowing to describe and retrieve any date based on th... | cedricfrancoys |

1,885,669 | A feature needs a strong reason to exist | We're working on a product with an amazing UI for managing projects. Think Trello but different 🙃 It... | 0 | 2024-06-12T19:33:22 | https://dev.to/seasonedcc/a-feature-needs-a-strong-reason-to-exist-3e77 | shapeup, agile, design, product | We're working on a product with an amazing UI for managing projects. Think Trello but different 🙃

It offers drag and drop, in-place editing, keyboard navigation, etc, all with [optimistic UI](https://javascript.plainenglish.io/what-is-optimistic-ui-656b9d6e187c). The code is well-written and fairly organized, but it ... | danielweinmann |

1,855,330 | C# Passing by Value vs Passing by Reference | Have you ever wondered why changes inside a function sometimes affect your variables, and other... | 0 | 2024-06-12T19:23:21 | https://dev.to/mahm00dmahm00d/c-passing-by-value-vs-passing-by-reference-5140 | csharp, methods |

Have you ever wondered why changes inside a function sometimes affect your variables, and other times do not? C# offers two ways to pass data: by value (copy) or by reference (pointer). This article breaks down the disti... | mahm00dmahm00d |

1,886,152 | Expert Car Detailing Services in San Francisco | Tropicali Auto Spa | In the vibrant city of San Francisco, where every street corner exudes style and sophistication, your... | 0 | 2024-06-12T19:19:08 | https://dev.to/rakige9276/expert-car-detailing-services-in-san-francisco-tropicali-auto-spa-1ggc | cardetailing, carwash, car, waxing | In the vibrant city of [San Francisco](https://en.wikipedia.org/wiki/San_Francisco), where every street corner exudes style and sophistication, your car is not just a mode of transportation; it's an extension of your personality and a reflection of your lifestyle. Whether you're cruising down Lombard Street or explorin... | rakige9276 |

1,886,146 | The next social networking and dating app for the LGBTQIA+ community | Purr is the next social networking and dating app for queer women, non-binary, and trans... | 0 | 2024-06-12T19:13:23 | https://dev.to/purradmin/the-next-social-networking-and-dating-app-for-the-lgbtqia-community-44em | dating, lgbtqia, networking | [Purr](https://www.purrdating.com/) is the next social networking and dating app for queer women, non-binary, and trans people.

The App will feature a comprehensive way for users to connect with each other based o... | purradmin |

1,886,144 | What is Cloud Cost Optimization? A Comprehensive Guide | Introduction to Cloud Cost Optimization Cloud cost optimization refers to the strategic approach of... | 0 | 2024-06-12T19:07:11 | https://dev.to/unicloud/what-is-cloud-cost-optimization-a-comprehensive-guide-2469 | cloud, optimization | **Introduction to Cloud Cost Optimization**

[Cloud cost optimization](https://unicloud.co/blog/cloud-cost-optimization/) refers to the strategic approach of managing and reducing cloud-related expenses while maximizing the value derived from cloud services. As businesses increasingly adopt cloud technologies, managing ... | unicloud |

1,884,842 | Build a cloud native CI/CD workflow in 2 mins - yes, really! | CloudBees platform is your sandbox for innovation. We want to empower every developer to embark on... | 0 | 2024-06-12T19:01:53 | https://dev.to/cloudbees/build-a-cloud-native-cicd-workflow-in-2-mins-yes-really-1dab | devops, cloud, cicd, devsecopsmadeeasy | CloudBees platform is your sandbox for innovation.

We want to empower every developer to embark on something innovative as quickly as possible. Our testament to this obsession starts with how easy it is to create a truly cloud native CI/CD workflow with our platform - create and execute a build, scan, and deploy work... | cloudbees_ |

1,886,135 | Test Post | Hello World | 0 | 2024-06-12T18:53:16 | https://dev.to/nhelchitnis/test-post-4pig | Hello World | nhelchitnis | |

1,886,133 | Building React Apps with the Nx Standalone Setup | Hello, fellow developers! Today, let's explore how to set up and optimize a standalone React... | 0 | 2024-06-12T18:51:40 | https://dev.to/ak_23/building-react-apps-with-the-nx-standalone-setup-171m |

Hello, fellow developers! Today, let's explore how to set up and optimize a standalone React application using Nx. This tutorial is perfect for those who want the benefits of Nx without the complexity of a monorepo setup.

### What You Will Learn

- Creating a new React application with Nx

- Running tasks (serving, bu... | ak_23 | |

1,884,891 | Creating and Deploying a Windows 11 Virtual Machine on Microsoft Azure | Azure virtual machines (VMs) can be created through the Azure portal. This method provides a... | 0 | 2024-06-12T18:46:55 | https://dev.to/tracyee_/creating-and-deploying-a-windows-11-virtual-machine-on-microsoft-azure-2kn3 | virtualmachine, cloudcomputing, microsoft, azure | Azure virtual machines (VMs) can be created through the Azure portal. This method provides a browser-based user interface to create VMs and their associated resources. This blog post shows you how to use the Azure portal to deploy a virtual machine (VM) in Azure that runs Windows 11 pro server. To see your VM in action... | tracyee_ |

1,886,129 | Hi | I feel big society learn your knowledge | 0 | 2024-06-12T18:45:04 | https://dev.to/yogi_raj_6c57fca5540802fe/hi-12n3 | I feel big society learn your knowledge | yogi_raj_6c57fca5540802fe | |

1,881,944 | A Comprehensive Guide to Keycloak Configuration for Web Development! | Hey folks! If you're diving into the world of web development, you've probably come across the need... | 0 | 2024-06-12T18:44:00 | https://dev.to/ak_23/a-comprehensive-guide-to-keycloak-configuration-for-web-development-4jp | learning, webdev, beginners | Hey folks! If you're diving into the world of web development, you've probably come across the need for a robust identity and access management solution. Enter Keycloak—a powerful open-source tool that simplifies the complexities of authentication and authorization in modern applications. In this post, we'll walk you t... | ak_23 |

1,886,126 | How to Send Bulk Emails For Free using SMTP (Beginner's Guide) | In today's digital age, email remains one of the most powerful and cost-effective channels for... | 0 | 2024-06-12T18:37:35 | https://blog.learnhub.africa/2024/06/12/how-to-send-bulk-emails-for-free-using-smtp-beginners-guide/ | smtp, email, webdev, beginners | In today's digital age, email remains one of the most powerful and cost-effective channels for communication and marketing. Whether you're a small business owner, a blogger, or a hobbyist, the ability to send bulk emails can be a game-changer.

However, many email service providers (ESPs) charge hefty fees for their s... | scofieldidehen |

1,886,125 | HIRE A GENUINE USDT RECOVERY ~ARGONIX HACK TECH | In the evolution of internet fraud, safeguarding your Bitcoin accounts is paramount to protect your... | 0 | 2024-06-12T18:36:37 | https://dev.to/helmiemilia163/hire-a-genuine-usdt-recovery-argonix-hack-tech-1ch8 | In the evolution of internet fraud, safeguarding your Bitcoin accounts is paramount to protect your valuable digital assets from falling prey to phishing attacks and other malicious schemes. While no security system is foolproof, maintaining vigilance and implementing robust security measures can significantly mitigate... | helmiemilia163 | |

1,884,551 | Introducing Our First Computer Science Challenge! | In celebration of Pride Month, we are excited to introduce our first Computer Science Challenge in... | 0 | 2024-06-12T18:36:15 | https://dev.to/devteam/introducing-our-first-computer-science-challenge-hp2 | devchallenge, cschallenge, computerscience | In celebration of Pride Month, we are excited to introduce our first [Computer Science Challenge](https://dev.to/challenges/cs) in honor of Alan Turing, the brilliant gay English mathematician and cryptanalyst who is widely considered to be the “Father of Computer Science.” His birthday would have been on June 23, r... | thepracticaldev |

1,886,124 | Deploy Docker Image to AWS EC2 in 5 minutes | From Development to Staging in 5 Minutes… Introduction As we know, there are many ways to... | 0 | 2024-06-12T18:35:05 | https://srebreni3.medium.com/deploy-docker-image-to-aws-ec2-in-5-minutes-4cd7518feacc | aws, ec2, docker, containers | From Development to Staging in 5 Minutes…

Introduction

------------

As we know, there are many ways to run your Docker image on the Cloud. I have previously written about this and introduced two methods: **“How to Deploy a Docker Image to Amazon ECR and Run It on Amazon EC2”** & **“How to Deploy a Docker Image on AWS... | srebreni3 |

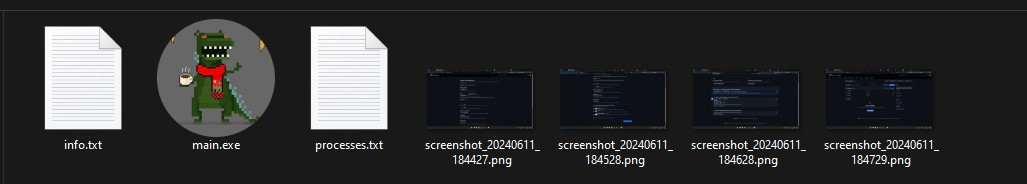

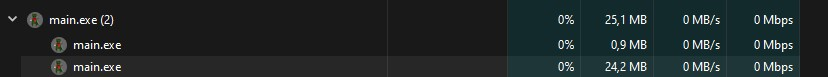

1,886,123 | [Python] Take Screenshot every 1 minute | Tool that takes screenshots and extracts information from your computer every 1 minute, running in... | 0 | 2024-06-12T18:34:16 | https://dev.to/jkdevarg/python-take-screenshot-every-1-minute-4mhe | python, code, linux, windows | Tool that takes screenshots and extracts information from your computer every 1 minute, running in the background.

_**and lots of Gmail accounts can help you to get real success in the on... | buyverifiedpaxfulaccounts |

1,886,118 | PowerInfer-2: Fast Large Language Model Inference on a Smartphone | PowerInfer-2: Fast Large Language Model Inference on a Smartphone | 0 | 2024-06-12T18:28:51 | https://aimodels.fyi/papers/arxiv/powerinfer-2-fast-large-language-model-inference | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [PowerInfer-2: Fast Large Language Model Inference on a Smartphone](https://aimodels.fyi/papers/arxiv/powerinfer-2-fast-large-language-model-inference). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi newsletter](https://a... | mikeyoung44 |

1,886,117 | Towards a Personal Health Large Language Model | Towards a Personal Health Large Language Model | 0 | 2024-06-12T18:28:16 | https://aimodels.fyi/papers/arxiv/towards-personal-health-large-language-model | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [Towards a Personal Health Large Language Model](https://aimodels.fyi/papers/arxiv/towards-personal-health-large-language-model). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi newsletter](https://aimodels.substack.com) o... | mikeyoung44 |

1,886,116 | The best FREE hosting control panel & alternative to CPanel: CyberPanel! | If you are looking to host a website, whether that be built with React or WordPress, you need a few... | 0 | 2024-06-12T18:28:14 | https://dev.to/prismlabsdev/the-best-free-hosting-control-panel-alternative-to-cpanel-cyberpanel-2eie | hosting, wordpress, cpanel, cyberpanel | If you are looking to host a website, whether that be built with React or WordPress, you need a few things: mainly a domain and a server to host your website on and point your domain to.

But you will also probably want a few things... like email, FTP server, DNS management, SSL certificate management. In the case of ... | jwoodrow99 |

1,886,115 | Buy Negative Google Reviews | Buy Negative Google reviews is a very important aspect of every online business. In today’s... | 0 | 2024-06-12T18:27:57 | https://dev.to/buyverifiedpaxfulaccounts/buy-negative-google-reviews-54mn | webdev, design, mobile, cryptocurrency | **_[Buy Negative Google reviews](https://localusashop.com/product/buy-negative-google-reviews/)_** is a very important aspect of every online business. In today’s competitive world, Google reviews can help you achieve success. Both positive and **_[negative reviews](https://localusashop.com/product/buy-negative-google-... | buyverifiedpaxfulaccounts |

1,886,114 | Where's the DEV discord server? | Hello everyone. One brief question. Where can I find the DEV Discord server? Thanks! | 0 | 2024-06-12T18:27:44 | https://dev.to/softwaredeveloping/wheres-the-dev-discord-server-2o13 | help, discord, forem | Hello everyone. One brief question. Where can I find the DEV Discord server? Thanks! | softwaredeveloping |

1,886,113 | Clifford-Steerable Convolutional Neural Networks | Clifford-Steerable Convolutional Neural Networks | 0 | 2024-06-12T18:27:41 | https://aimodels.fyi/papers/arxiv/clifford-steerable-convolutional-neural-networks | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [Clifford-Steerable Convolutional Neural Networks](https://aimodels.fyi/papers/arxiv/clifford-steerable-convolutional-neural-networks). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi newsletter](https://aimodels.substack.... | mikeyoung44 |

1,886,112 | Zero-shot Image Editing with Reference Imitation | Zero-shot Image Editing with Reference Imitation | 0 | 2024-06-12T18:27:07 | https://aimodels.fyi/papers/arxiv/zero-shot-image-editing-reference-imitation | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [Zero-shot Image Editing with Reference Imitation](https://aimodels.fyi/papers/arxiv/zero-shot-image-editing-reference-imitation). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi newsletter](https://aimodels.substack.com) ... | mikeyoung44 |

1,886,111 | The statistical thermodynamics of generative diffusion models: Phase transitions, symmetry breaking and critical instability | The statistical thermodynamics of generative diffusion models: Phase transitions, symmetry breaking and critical instability | 0 | 2024-06-12T18:26:32 | https://aimodels.fyi/papers/arxiv/statistical-thermodynamics-generative-diffusion-models-phase-transitions | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [The statistical thermodynamics of generative diffusion models: Phase transitions, symmetry breaking and critical instability](https://aimodels.fyi/papers/arxiv/statistical-thermodynamics-generative-diffusion-models-phase-transitions). If you like these... | mikeyoung44 |

1,886,110 | RAG Does Not Work for Enterprises | RAG Does Not Work for Enterprises | 0 | 2024-06-12T18:25:58 | https://aimodels.fyi/papers/arxiv/rag-does-not-work-enterprises | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [RAG Does Not Work for Enterprises](https://aimodels.fyi/papers/arxiv/rag-does-not-work-enterprises). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi newsletter](https://aimodels.substack.com) or follow me on [Twitter](htt... | mikeyoung44 |

1,886,109 | Separating the Chirp from the Chat: Self-supervised Visual Grounding of Sound and Language | Separating the Chirp from the Chat: Self-supervised Visual Grounding of Sound and Language | 0 | 2024-06-12T18:24:15 | https://aimodels.fyi/papers/arxiv/separating-chirp-from-chat-self-supervised-visual | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [Separating the Chirp from the Chat: Self-supervised Visual Grounding of Sound and Language](https://aimodels.fyi/papers/arxiv/separating-chirp-from-chat-self-supervised-visual). If you like these kinds of analysis, you should subscribe to the [AImodels... | mikeyoung44 |

1,886,108 | 🔥Binary Search❄️ | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-12T18:23:54 | https://dev.to/rcmonteiro/binary-search-5hbn | devchallenge, cschallenge, computerscience, beginners | _This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer._

## Explainer

> **Binary search** is like a super-fast game of "Hot or Cold" with a sorted list: you keep guessing the middle number, then cut the list in half based on whether you're too high or too low, finding the target in no ... | rcmonteiro |
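The "Hot or Cold" description above can be sketched in a few lines of TypeScript. This is a minimal illustration, not code from the original post: the function name `binarySearch` and the choice of a sorted `number[]` input are assumptions made for the example.

```typescript
// Hypothetical helper (not from the original post): returns the index of
// `target` in an ascending sorted array, or -1 when it is absent.
function binarySearch(sorted: number[], target: number): number {
  let low = 0;
  let high = sorted.length - 1;

  while (low <= high) {
    // Guess the middle of the remaining range.
    const mid = low + Math.floor((high - low) / 2);

    if (sorted[mid] === target) {
      return mid; // Found it.
    }
    if (sorted[mid] < target) {
      low = mid + 1; // Guess was too low: discard the left half.
    } else {
      high = mid - 1; // Guess was too high: discard the right half.
    }
  }
  return -1; // Range is empty, so the target is not in the list.
}
```

Each pass halves the remaining range, which is why the search needs only a logarithmic number of guesses on a sorted list.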

1,886,107 | What makes this Gaming Platform games stand out in the crowded world of mobile gaming? | "This Gaming Platform games have emerged as a true game-changer in the mobile gaming industry,... | 0 | 2024-06-12T18:23:54 | https://dev.to/claywinston/what-makes-this-gaming-platform-games-stand-out-in-the-crowded-world-of-mobile-gaming-37aa | gamedev, mobile, mobilegames, games | "This [Gaming Platform](https://medium.com/@adreeshelk/nostra-brings-games-to-life-with-the-game-hosting-revolution-017dd8bfb0c8?utm_source=referral&utm_medium=Medium&utm_campaign=Nostra) games have emerged as a true game-changer in the mobile gaming industry, offering a unique and unparalleled experience that sets the... | claywinston |

1,884,889 | Understanding Dash Agents: A LangChain Case Study | In software development, integrating APIs and SDKs is crucial. Yet, the process can be tedious and... | 0 | 2024-06-12T18:23:51 | https://medium.com/@yogesh_51958/understanding-dash-agents-a-langchain-case-study-5e5231f7157e | langchain, codegen, commanddash, llm | In software development, integrating APIs and SDKs is crucial. Yet, the process can be tedious and time-consuming. Documentation, syntax complexities, and debugging can quickly become time-consuming roadblocks. Thankfully, this is where Dash Agents comes in to ease these headaches.

This blog post explores the capabili... | yogesh009 |

1,886,106 | GenAI Arena: An Open Evaluation Platform for Generative Models | GenAI Arena: An Open Evaluation Platform for Generative Models | 0 | 2024-06-12T18:23:41 | https://aimodels.fyi/papers/arxiv/genai-arena-open-evaluation-platform-generative-models | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [GenAI Arena: An Open Evaluation Platform for Generative Models](https://aimodels.fyi/papers/arxiv/genai-arena-open-evaluation-platform-generative-models). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi newsletter](https:... | mikeyoung44 |

1,886,104 | Buy Google 5-Star Reviews | Buy Google 5-Star Reviews A Google 5-Star Review is an essential tool for every business or every... | 0 | 2024-06-12T18:22:44 | https://dev.to/buyverifiedpaxfulaccounts/buy-google-5-star-reviews-n1d | webdev, beginners, javascript, tutorial | **_[Buy Google 5-Star Reviews](https://localusashop.com/product/buy-google-5-star-reviews/)_**

A Google 5-Star Review is an essential tool for every business or organization. Every reputable organization understands the importance of **_[Google 5-star reviews](https://localusashop.com/product/buy-google-5-star-rev... | buyverifiedpaxfulaccounts |

1,886,103 | Titanium SDK 12.3.1.GA released | Titanium SDK helps you to build native cross-platform mobile application using JavaScript and the... | 0 | 2024-06-12T18:21:55 | https://dev.to/miga/titanium-sdk-1231ga-released-49on | titaniumsdk, mobile, javascript, news |

**Titanium SDK** helps you build native cross-platform mobile applications using JavaScript and the Titanium API, which abstracts the native APIs of the mobile platforms. Titanium empowers you to create immersive, full-featured applications, featuring over 80% code reuse across mobile apps.

The new version 12.3.1.G... | miga |

1,886,101 | Creative Background Parallax Slider | This CodePen demonstrates a full-screen background parallax slider built using Swiper.js. The slider... | 0 | 2024-06-12T18:21:05 | https://dev.to/creative_salahu/creative-background-parallax-slider-5d9m | codepen | This CodePen demonstrates a full-screen background parallax slider built using Swiper.js. The slider features smooth fade transitions and autoplay functionality, making it ideal for creating visually appealing hero sections or landing pages. The background images are dynamically adjusted to cover the entire viewport, e... | creative_salahu |

1,886,097 | Buy verified Paxful Accounts | Paxful is the most popular peer-to-peer cryptocurrency market where users can buy and sell Bitcoin.... | 0 | 2024-06-12T18:20:42 | https://dev.to/buyverifiedpaxfulaccounts/buy-verified-paxful-accounts-3j62 | mobile, webdev, beginners, javascript | **_[Paxful](https://localusashop.com/product/buy-verified-paxful-accounts/)_** is the most popular peer-to-peer cryptocurrency market where users can buy and sell Bitcoin. If users wanted to buy and sell Bitcoin, then they would buy or create an account on Paxful. Paxful is the best thing for using Bitcoin. There are a... | buyverifiedpaxfulaccounts |

1,886,050 | Hi, I'm Nathaniel Chitnis | Hello all I am a 16yo Self-Learning Developer. I have been using github for a couple of... | 0 | 2024-06-12T18:18:09 | https://dev.to/nhelchitnis/hi-im-nathaniel-chitnis-2bmf | ## Hello all

I am a 16yo self-learning developer. I have been using GitHub for a couple of months now. I have created many open source projects and have been coding for at least 13 weeks.

Well, that's everything about me,

See You Next Time,

Bye | nhelchitnis | |

1,886,048 | Cultivating Open Source Community | Learn about the importance of belonging, motivation, and collaboration in open source community. | 0 | 2024-06-12T18:14:11 | https://opensauced.pizza/blog/open-source-community | opensource, community | ---

title: Cultivating Open Source Community

published: true

description: Learn about the importance of belonging, motivation, and collaboration in open source community.

tags: opensource, community

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/51v1bk2p3w3ak8zz8trn.png

# Use a ratio of 100:42 fo... | bekahhw |

1,886,041 | My First Arch Linux Installation | I originally posted this on my personal blog and I recommend you read it there! During a specific... | 0 | 2024-06-12T18:07:49 | https://dev.to/dantas/my-first-arch-linux-installation-26le | archlinux, linux, beginners | - I originally posted this on [my personal blog](https://www.dantas15.com/blog/first-arch-install/) and I recommend you read it there!

During a specific vacation from college, I figured I should try installing Arch Linux just because I was curious (and I wanted to say I use Arch btw).

## My expectations

- Learn! Since i... | dantas |

1,886,029 | Difference Between the `??` and `||` Operators in TypeScript | When it comes to providing default values in TypeScript (or JavaScript), the ?? (Nullish... | 0 | 2024-06-12T18:05:29 | https://dev.to/vitorrios1001/diferenca-entre-os-operadores-e-em-typescript-2k6j | javascript, typescript, tutorial, programming | When it comes to providing default values in TypeScript (or JavaScript), the `??` (Nullish Coalescing) and `||` (Logical OR) operators are frequently used. However, they behave differently when dealing with falsy values. In this article, we will explore the differences between these operators and how to u... | vitorrios1001 |
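A short sketch may help show where the two operators diverge. The helper names `withOr` and `withNullish` are hypothetical and exist only for this illustration; the behaviour shown is standard JavaScript/TypeScript semantics: `||` falls back on any falsy value (`0`, `''`, `false`, `NaN`, `null`, `undefined`), while `??` falls back only on `null` or `undefined`.

```typescript
// Hypothetical helpers for illustration only.

// Logical OR: uses the fallback whenever `value` is falsy,
// which includes legitimate values such as 0.
function withOr(value: number | null | undefined, fallback: number): number {
  return value || fallback;
}

// Nullish coalescing: uses the fallback only when `value`
// is null or undefined, so 0 is preserved.
function withNullish(value: number | null | undefined, fallback: number): number {
  return value ?? fallback;
}

const orZero = withOr(0, 10);                // 10: the 0 is treated as "missing"
const nullishZero = withNullish(0, 10);      // 0: the 0 is kept
const orNull = withOr(null, 10);             // 10
const nullishNull = withNullish(null, 10);   // 10
```

In practice this suggests `??` is the safer default when `0`, `''`, or `false` are valid inputs, and `||` fits when every falsy value should trigger the fallback.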

1,886,040 | Asynchronous JavaScript: Practical Tips for Better Code | Asynchronous JavaScript can be a puzzle for many developers, especially when you are getting started!... | 0 | 2024-06-12T17:57:16 | https://dev.to/buildwebcrumbs/demystifying-asynchronous-javascript-practical-tips-for-better-code-15m1 | beginners, javascript, webdev, coding | Asynchronous JavaScript can be a puzzle for many developers, especially when you are getting started!

While it's a very important piece of the language, enabling responsive, non-blocking interactions in web applications, it often causes confusion and bugs if not used correctly.

The goal of this article is to demystif... | pachicodes |

1,886,037 | Buy verified cash app account | https://dmhelpshop.com/product/buy-verified-cash-app-account/ Buy verified cash app account Cash... | 0 | 2024-06-12T17:38:56 | https://dev.to/jossr1239/buy-verified-cash-app-account-26jb | webdev, javascript, beginners, programming | ERROR: type should be string, got "https://dmhelpshop.com/product/buy-verified-cash-app-account/\n\n\n\n\nBuy verified cash app account\nCash app has emerged as a dominant force in the realm of mobile banking within the USA, offering unparalleled convenience for digital money transfers, deposits, and trading. As the foremost provider of fully verified cash app accounts, we take pride in our ability to deliver accounts with substantial limits. Bitcoin enablement, and an unmatched level of security.\n\nOur commitment to facilitating seamless transactions and enabling digital currency trades has garnered significant acclaim, as evidenced by the overwhelming response from our satisfied clientele. Those seeking buy verified cash app account with 100% legitimate documentation and unrestricted access need look no further. Get in touch with us promptly to acquire your verified cash app account and take advantage of all the benefits it has to offer.\n\nWhy dmhelpshop is the best place to buy USA cash app accounts?\nIt’s crucial to stay informed about any updates to the platform you’re using. If an update has been released, it’s important to explore alternative options. Contact the platform’s support team to inquire about the status of the cash app service.\n\nClearly communicate your requirements and inquire whether they can meet your needs and provide the buy verified cash app account promptly. 
If they assure you that they can fulfill your requirements within the specified timeframe, proceed with the verification process using the required documents.\n\nOur account verification process includes the submission of the following documents: [List of specific documents required for verification].\n\nGenuine and activated email verified\nRegistered phone number (USA)\nSelfie verified\nSSN (social security number) verified\nDriving license\nBTC enable or not enable (BTC enable best)\n100% replacement guaranteed\n100% customer satisfaction\nWhen it comes to staying on top of the latest platform updates, it’s crucial to act fast and ensure you’re positioned in the best possible place. If you’re considering a switch, reaching out to the right contacts and inquiring about the status of the buy verified cash app account service update is essential.\n\nClearly communicate your requirements and gauge their commitment to fulfilling them promptly. Once you’ve confirmed their capability, proceed with the verification process using genuine and activated email verification, a registered USA phone number, selfie verification, social security number (SSN) verification, and a valid driving license.\n\nAdditionally, assessing whether BTC enablement is available is advisable, buy verified cash app account, with a preference for this feature. It’s important to note that a 100% replacement guarantee and ensuring 100% customer satisfaction are essential benchmarks in this process.\n\nHow to use the Cash Card to make purchases?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card. Alternatively, you can manually enter the CVV and expiration date. 
How To Buy Verified Cash App Accounts.\n\nAfter submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a buy verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account.\n\nWhy we suggest to unchanged the Cash App account username?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card.\n\nAlternatively, you can manually enter the CVV and expiration date. After submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account. Purchase Verified Cash App Accounts.\n\nSelecting a username in an app usually comes with the understanding that it cannot be easily changed within the app’s settings or options. This deliberate control is in place to uphold consistency and minimize potential user confusion, especially for those who have added you as a contact using your username. In addition, purchasing a Cash App account with verified genuine documents already linked to the account ensures a reliable and secure transaction experience.\n\n \n\nBuy verified cash app accounts quickly and easily for all your financial needs.\nAs the user base of our platform continues to grow, the significance of verified accounts cannot be overstated for both businesses and individuals seeking to leverage its full range of features. 
How To Buy Verified Cash App Accounts.\n\nFor entrepreneurs, freelancers, and investors alike, a verified cash app account opens the door to sending, receiving, and withdrawing substantial amounts of money, offering unparalleled convenience and flexibility. Whether you’re conducting business or managing personal finances, the benefits of a verified account are clear, providing a secure and efficient means to transact and manage funds at scale.\n\nWhen it comes to the rising trend of purchasing buy verified cash app account, it’s crucial to tread carefully and opt for reputable providers to steer clear of potential scams and fraudulent activities. How To Buy Verified Cash App Accounts. With numerous providers offering this service at competitive prices, it is paramount to be diligent in selecting a trusted source.\n\nThis article serves as a comprehensive guide, equipping you with the essential knowledge to navigate the process of procuring buy verified cash app account, ensuring that you are well-informed before making any purchasing decisions. Understanding the fundamentals is key, and by following this guide, you’ll be empowered to make informed choices with confidence.\n\n \n\nIs it safe to buy Cash App Verified Accounts?\nCash App, being a prominent peer-to-peer mobile payment application, is widely utilized by numerous individuals for their transactions. However, concerns regarding its safety have arisen, particularly pertaining to the purchase of “verified” accounts through Cash App. This raises questions about the security of Cash App’s verification process.\n\nUnfortunately, the answer is negative, as buying such verified accounts entails risks and is deemed unsafe. Therefore, it is crucial for everyone to exercise caution and be aware of potential vulnerabilities when using Cash App. 
How To Buy Verified Cash App Accounts.\n\nCash App has emerged as a widely embraced platform for purchasing Instagram Followers using PayPal, catering to a diverse range of users. This convenient application permits individuals possessing a PayPal account to procure authenticated Instagram Followers.\n\nLeveraging the Cash App, users can either opt to procure followers for a predetermined quantity or exercise patience until their account accrues a substantial follower count, subsequently making a bulk purchase. Although the Cash App provides this service, it is crucial to discern between genuine and counterfeit items. If you find yourself in search of counterfeit products such as a Rolex, a Louis Vuitton item, or a Louis Vuitton bag, there are two viable approaches to consider.\n\n \n\nWhy you need to buy verified Cash App accounts personal or business?\nThe Cash App is a versatile digital wallet enabling seamless money transfers among its users. However, it presents a concern as it facilitates transfer to both verified and unverified individuals.\n\nTo address this, the Cash App offers the option to become a verified user, which unlocks a range of advantages. Verified users can enjoy perks such as express payment, immediate issue resolution, and a generous interest-free period of up to two weeks. With its user-friendly interface and enhanced capabilities, the Cash App caters to the needs of a wide audience, ensuring convenient and secure digital transactions for all.\n\nIf you’re a business person seeking additional funds to expand your business, we have a solution for you. Payroll management can often be a challenging task, regardless of whether you’re a small family-run business or a large corporation. How To Buy Verified Cash App Accounts.\n\nImproper payment practices can lead to potential issues with your employees, as they could report you to the government. 
However, worry not, as we offer a reliable and efficient way to ensure proper payroll management, avoiding any potential complications. Our services provide you with the funds you need without compromising your reputation or legal standing. With our assistance, you can focus on growing your business while maintaining a professional and compliant relationship with your employees. Purchase Verified Cash App Accounts.\n\nA Cash App has emerged as a leading peer-to-peer payment method, catering to a wide range of users. With its seamless functionality, individuals can effortlessly send and receive cash in a matter of seconds, bypassing the need for a traditional bank account or social security number. Buy verified cash app account.\n\nThis accessibility makes it particularly appealing to millennials, addressing a common challenge they face in accessing physical currency. As a result, ACash App has established itself as a preferred choice among diverse audiences, enabling swift and hassle-free transactions for everyone. Purchase Verified Cash App Accounts.\n\n \n\nHow to verify Cash App accounts\nTo ensure the verification of your Cash App account, it is essential to securely store all your required documents in your account. This process includes accurately supplying your date of birth and verifying the US or UK phone number linked to your Cash App account.\n\nAs part of the verification process, you will be asked to submit accurate personal details such as your date of birth, the last four digits of your SSN, and your email address. If additional information is requested by the Cash App community to validate your account, be prepared to provide it promptly. Upon successful verification, you will gain full access to managing your account balance, as well as sending and receiving funds seamlessly. 
Buy verified cash app account.\n\n \n\nHow cash used for international transaction?\nExperience the seamless convenience of this innovative platform that simplifies money transfers to the level of sending a text message. It effortlessly connects users within the familiar confines of their respective currency regions, primarily in the United States and the United Kingdom.\n\nNo matter if you’re a freelancer seeking to diversify your clientele or a small business eager to enhance market presence, this solution caters to your financial needs efficiently and securely. Embrace a world of unlimited possibilities while staying connected to your currency domain. Buy verified cash app account.\n\nUnderstanding the currency capabilities of your selected payment application is essential in today’s digital landscape, where versatile financial tools are increasingly sought after. In this era of rapid technological advancements, being well-informed about platforms such as Cash App is crucial.\n\nAs we progress into the digital age, the significance of keeping abreast of such services becomes more pronounced, emphasizing the necessity of staying updated with the evolving financial trends and options available. Buy verified cash app account.\n\nOffers and advantage to buy cash app accounts cheap?\nWith Cash App, the possibilities are endless, offering numerous advantages in online marketing, cryptocurrency trading, and mobile banking while ensuring high security. As a top creator of Cash App accounts, our team possesses unparalleled expertise in navigating the platform.\n\nWe deliver accounts with maximum security and unwavering loyalty at competitive prices unmatched by other agencies. Rest assured, you can trust our services without hesitation, as we prioritize your peace of mind and satisfaction above all else.\n\nEnhance your business operations effortlessly by utilizing the Cash App e-wallet for seamless payment processing, money transfers, and various other essential tasks. 
Amidst a myriad of transaction platforms in existence today, the Cash App e-wallet stands out as a premier choice, offering users a multitude of functions to streamline their financial activities effectively. Buy verified cash app account.\n\nTrustbizs.com stands by the Cash App’s superiority and recommends acquiring your Cash App accounts from this trusted source to optimize your business potential.\n\nHow Customizable are the Payment Options on Cash App for Businesses?\nDiscover the flexible payment options available to businesses on Cash App, enabling a range of customization features to streamline transactions. Business users have the ability to adjust transaction amounts, incorporate tipping options, and leverage robust reporting tools for enhanced financial management.\n\nExplore trustbizs.com to acquire verified Cash App accounts with LD backup at a competitive price, ensuring a secure and efficient payment solution for your business needs. Buy verified cash app account.\n\nDiscover Cash App, an innovative platform ideal for small business owners and entrepreneurs aiming to simplify their financial operations. With its intuitive interface, Cash App empowers businesses to seamlessly receive payments and effectively oversee their finances. Emphasizing customization, this app accommodates a variety of business requirements and preferences, making it a versatile tool for all.\n\nWhere To Buy Verified Cash App Accounts\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. 
It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nThe Importance Of Verified Cash App Accounts\nIn today’s digital age, the significance of verified Cash App accounts cannot be overstated, as they serve as a cornerstone for secure and trustworthy online transactions.\n\nBy acquiring verified Cash App accounts, users not only establish credibility but also instill the confidence required to participate in financial endeavors with peace of mind, thus solidifying its status as an indispensable asset for individuals navigating the digital marketplace.\n\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nConclusion\nEnhance your online financial transactions with verified Cash App accounts, a secure and convenient option for all individuals. By purchasing these accounts, you can access exclusive features, benefit from higher transaction limits, and enjoy enhanced protection against fraudulent activities. Streamline your financial interactions and experience peace of mind knowing your transactions are secure and efficient with verified Cash App accounts.\n\nChoose a trusted provider when acquiring accounts to guarantee legitimacy and reliability. In an era where Cash App is increasingly favored for financial transactions, possessing a verified account offers users peace of mind and ease in managing their finances. 
Make informed decisions to safeguard your financial assets and streamline your personal transactions effectively.\n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com\n\n\n\n" | jossr1239 |

1,886,036 | Unlocking Data Relationships: MongoDB's $lookup vs. Mongoose's Populate | MongoDB Aggregation Framework - $lookup : $lookup is an aggregation pipeline stage in... | 0 | 2024-06-12T17:38:22 | https://dev.to/sujeetsingh123/unlocking-data-relationships-mongodbs-lookup-vs-mongooses-populate-mc5 | ## MongoDB Aggregation Framework - `$lookup` :

- `$lookup` is an aggregation pipeline stage in MongoDB that performs a left outer join to another collection in the same database. It allows you to perform a join between two collections based on some common field or condition.

- This stage is used within an aggregation ... | sujeetsingh123 | |
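A minimal sketch of what a `$lookup` stage looks like and what "left outer join" means in practice. The collection names (`orders`, `customers`) and field names are assumptions made up for this illustration, and `lookupInMemory` is a hypothetical helper that only imitates the join on plain arrays; it is not part of MongoDB or Mongoose.

```javascript
// Hypothetical setup: `orders` documents reference `customers` through `customerId`.
const pipeline = [
  {
    $lookup: {
      from: 'customers',        // collection to join (assumed name)
      localField: 'customerId', // field on the input documents (assumed name)
      foreignField: '_id',      // field on the `from` collection
      as: 'customer',           // output array field that receives the matches
    },
  },
];

// In-memory imitation of the left outer join semantics (not the MongoDB driver):
// every input document is kept, and unmatched ones get an empty array.
function lookupInMemory(input, foreign, { localField, foreignField, as }) {
  return input.map((doc) => ({
    ...doc,
    [as]: foreign.filter((f) => f[foreignField] === doc[localField]),
  }));
}

const orders = [
  { _id: 1, customerId: 'a' },
  { _id: 2, customerId: 'z' }, // no matching customer
];
const customers = [{ _id: 'a', name: 'Ada' }];

const joined = lookupInMemory(orders, customers, pipeline[0].$lookup);
console.log(JSON.stringify(joined, null, 2));
```

Against a real database, the same `pipeline` array would be passed to the collection's `aggregate` method; the in-memory helper is only there to make the join behavior visible without a server.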

1,886,035 | Unlocking Data Relationships: MongoDB's $lookup vs. Mongoose's Populate | MongoDB Aggregation Framework - $lookup : $lookup is an aggregation pipeline stage in... | 0 | 2024-06-12T17:38:20 | https://dev.to/sujeetsingh123/unlocking-data-relationships-mongodbs-lookup-vs-mongooses-populate-lgk | ## MongoDB Aggregation Framework - `$lookup` :

- `$lookup` is an aggregation pipeline stage in MongoDB that performs a left outer join to another collection in the same database. It allows you to perform a join between two collections based on some common field or condition.

- This stage is used within an aggregation ... | sujeetsingh123 | |

1,886,034 | Choosing the Right Database Solution for Your Project: SQL or NoSQL? | As a backend engineer tasked with setting up a new project, the question of whether to use a SQL or... | 0 | 2024-06-12T17:37:40 | https://dev.to/sujeetsingh123/choosing-the-right-database-solution-for-your-project-sql-or-nosql-2nno | As a backend engineer tasked with setting up a new project, the question of whether to use a SQL or NoSQL database invariably arises. Making this decision requires careful consideration of the project's specific requirements and needs.

When faced with the choice between SQL and NoSQL databases, several factors come in... | sujeetsingh123 | |

1,886,032 | How can we version REST API? | API versioning is a critical aspect of software development, especially in the realm of web services.... | 0 | 2024-06-12T17:37:08 | https://dev.to/sujeetsingh123/how-can-we-version-rest-api-5eka | API versioning is a critical aspect of software development, especially in the realm of web services. As applications evolve and new features are introduced, maintaining backward compatibility becomes essential to ensure a seamless experience for existing users while allowing for innovation. In this blog post, we delve... | sujeetsingh123 | |

1,884,513 | You can't grow in your career without feedback. 🗣️ Here's 5 ways for you to find some 📝 | "This guy has 10 years of experience, but he works like a 4YOE one?" "How do I talk to my manager... | 0 | 2024-06-12T17:36:55 | https://dev.to/middleware/you-cant-grow-in-your-career-without-feedback-heres-5-ways-for-you-find-some-171p | career, beginners, learning, softwareengineering | **"This guy has 10 years of experience, but he works like a 4YOE one?"**

**"How do I talk to my manager about how I'm doing?"**

**"I don't know if I'm growing sufficiently in my career!"**

Ever said or wondered these things? Then you're in the right place.

---

There are tons of people out there who have worked since... | jayantbh |

1,886,031 | The Evolution of Web Development: A 10-Year Retrospective | Responsive Design: Over the past decade, responsive design has become essential, allowing... | 0 | 2024-06-12T17:32:43 | https://dev.to/bingecoder89/the-evolution-of-web-development-a-10-year-retrospective-346p | webdev, javascript, frontend, beginners | 1. **Responsive Design**:

- Over the past decade, responsive design has become essential, allowing websites to adapt seamlessly to various screen sizes and devices, driven by the proliferation of smartphones and tablets.

2. **JavaScript Frameworks**:

- The rise of frameworks like Angular, React, and Vue.js has t... | bingecoder89 |

1,886,030 | The Crucial Risk Assessment Template for Cybersecurity | Cybersecurity is everyone's business. Compliance requirements, investor demands, and data breaches... | 0 | 2024-06-12T17:32:28 | https://cynomi.com/blog/the-crucial-risk-assessment-template-for-cybersecurity/ | cybersecurity, risk | Cybersecurity is everyone's business. Compliance requirements, investor demands, and data breaches are just a few drivers pushing SMEs and startups to hire MSPs and cybersecurity consultants. Their InfoSec teams are often understaffed and fail to keep up with the shifting threat landscape and regulation.

Experiencing ... | yayabobi |

1,886,027 | Object methods 🦜 | let result; const userProfile = { username: 'Khojiakbar', age: 26, job:... | 0 | 2024-06-12T17:29:26 | https://dev.to/__khojiakbar__/object-methods-1j2j | javascript, object, methods | ```

let result;

const userProfile = {

username: 'Khojiakbar',

age: 26,

job: 'programmer',

city: 'Tashkent region',

}

```
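As a quick sketch of what the three standard `Object` helpers return for an object shaped like this one, the snippet below redeclares the object under the name `profile` (a name chosen only for this sketch) so that it runs on its own.

```javascript
// Stand-alone copy of the profile object so this snippet runs by itself.
const profile = {
  username: 'Khojiakbar',
  age: 26,
  job: 'programmer',
  city: 'Tashkent region',
};

// Object.keys() returns the object's own enumerable property names.
const keys = Object.keys(profile);

// Object.values() returns the matching values in the same order.
const values = Object.values(profile);

// Object.entries() returns [key, value] pairs.
const entries = Object.entries(profile);

console.log(keys);       // [ 'username', 'age', 'job', 'city' ]
console.log(values);     // [ 'Khojiakbar', 26, 'programmer', 'Tashkent region' ]
console.log(entries[0]); // [ 'username', 'Khojiakbar' ]
```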

```

// Object.keys()

result = Object.keys(userProfile);

// Object.values()

result = Object.values(userProfile);

// Object.entries()

result = Object.entries(userPro... | __khojiakbar__ |

1,886,025 | 10 Essential Future Technology Books Every Developer Should Read | As the tech landscape evolves rapidly, developers must continuously enhance their skills and stay... | 0 | 2024-06-12T17:26:37 | https://dev.to/futuristicgeeks/10-essential-future-technology-books-every-developer-should-read-2okc | webdev, datascience, machinelearning, ai | As the tech landscape evolves rapidly, developers must continuously enhance their skills and stay updated with future technologies. Whether you’re a seasoned developer or just starting, the following ten books provide invaluable insights into machine learning, artificial intelligence, and other cutting-edge technologie... | futuristicgeeks |

1,885,944 | From Manual Drudgery to Automation Maestro: Mastering Ansible with Docker | This blog post whisks you away from the drudgery of manual server configuration and into the... | 0 | 2024-06-12T17:24:57 | https://dev.to/sandheep_kumarpatro_1c48/from-manual-drudgery-to-automation-maestro-mastering-ansible-with-docker-10m5 | This blog post whisks you away from the drudgery of manual server configuration and into the empowering realm of automation with Ansible ✨. Ever feel like a rockstar chef , forced to hand-chop every veggie , meticulously measure spices ⚖️, and build the fire from scratch for every dish? That's exactly how I felt when... | sandheep_kumarpatro_1c48 | |

1,886,024 | MongoDB Query Operators: $and, $or, $exists, $type, $size | When working with MongoDB, understanding various query operators is crucial for efficient data... | 0 | 2024-06-12T17:22:54 | https://dev.to/kawsarkabir/mongodb-query-operators-and-or-exists-type-size-1lnp | When working with MongoDB, understanding various query operators is crucial for efficient data retrieval. This article will dive into the usage of some fundamental operators like `$and`, `$or`, `$exists`, `$type`, and `$size`.

## $and Operator

Let's say we have a database named `school` with a `students` collection. ... | kawsarkabir | |

1,886,022 | American University Of Business And Social Sciences Confers Doctor Of Business Management (Honoris Causa) On Hiew Boon Thong | The American University of Business and Social Sciences (AUBSS) is honored to announce the conferment... | 0 | 2024-06-12T17:21:06 | https://dev.to/aubss_edu/american-university-of-business-and-social-sciences-confers-doctor-of-business-management-honoris-causa-on-hiew-boon-thong-2aph | education, aubss, qahe, research | The American University of Business and Social Sciences (AUBSS) is honored to announce the conferment of the prestigious Doctor of Business Management (Honoris Causa) degree on Mr. Hiew Boon Thong. This honorary doctorate is awarded in recognition of Mr. Hiew’s outstanding accomplishments and exemplary contributions to... | aubss_edu |

1,885,958 | The Ultimate V-Bucks Code Generator for 2024" | If you’re an avid Fortnite player, you’ve likely heard of V Bucks - the in-game currency that allows... | 0 | 2024-06-12T17:20:08 | https://dev.to/abdul_alim_d288797797683e/the-ultimate-v-bucks-code-generator-for-2024-21mm | If you’re an avid Fortnite player, you’ve likely heard of V Bucks - the in-game currency that allows you to purchase new skins, emotes, and other items to customize your gaming experience. With the rise in popularity of Fortnite,

[CLICK HERE TO GET FREE NOW>> ](https://bnidigital.com/codes-v2)

Looking for free V Buck... | abdul_alim_d288797797683e | |

1,885,957 | Web Scraping Vs Web Crawling | Web Scraping or Web Crawling Search and gather Aka crawling and scraping refers to the... | 0 | 2024-06-12T17:19:48 | https://dev.to/pranavmuttathil/web-scraping-vs-web-crawling-2me3 | webscraping, webcrawling, javascript, python | ## Web Scraping or Web Crawling

**Search and gather**, also known as crawling and scraping, refers to acquiring important website data with automated bots. Web scraping is commonly used to track and analyze data and compare it with earlier snapshots. Examples may include the **_Market data, finance, E-Commerce and Reta... | pranavmuttathil |

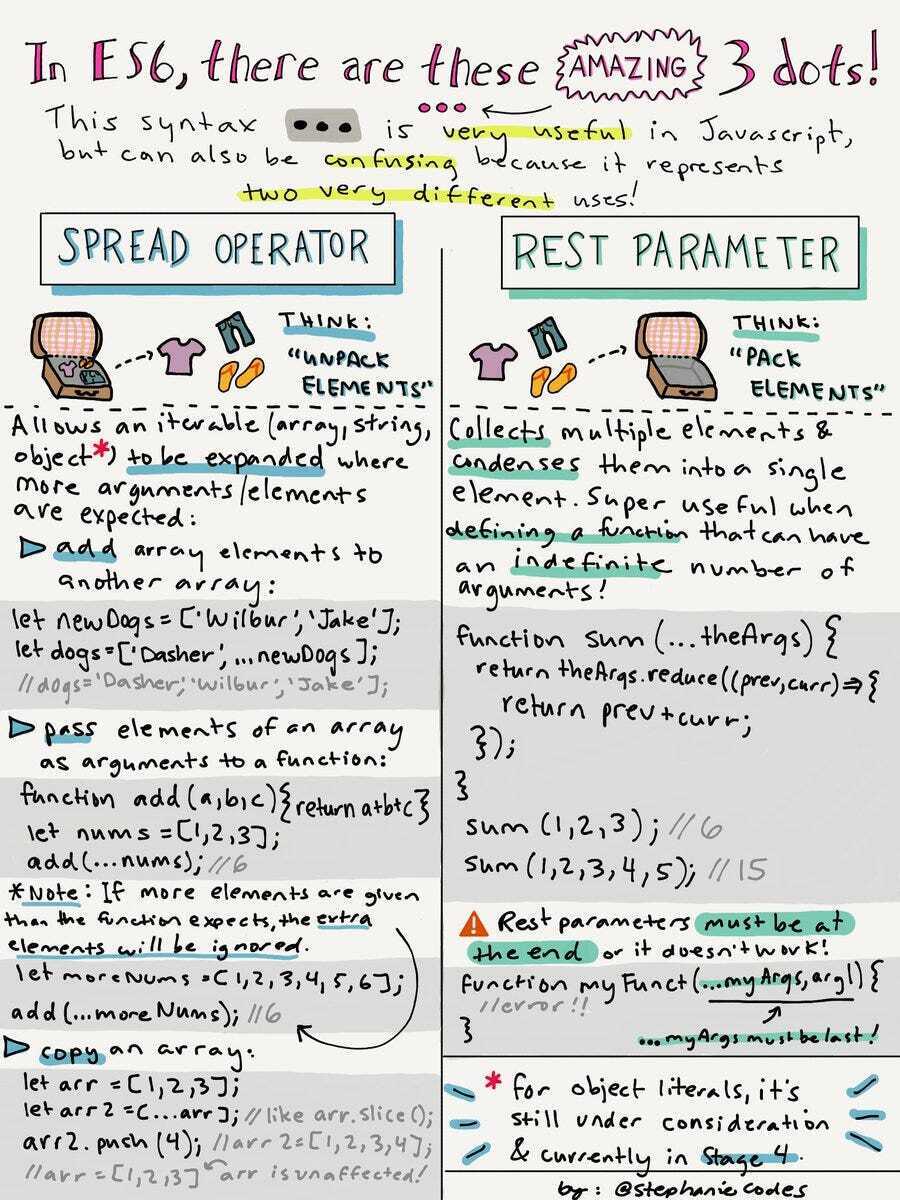

1,885,907 | SPREAD VS REST OPERATORS | Spread Operator Arrays Imagine you have two lists of friends and you want to combine... | 0 | 2024-06-12T15:45:41 | https://dev.to/__khojiakbar__/spread-vs-rest-operators-p78 | spread, rest, javascript |

# **Spread Operator**

**Arrays**

> Imagine you have two lists of friends and you want to combine them into one list.

```

const schoolFriends = ['Ali', 'Bilol', 'Umar'];

const workFriends = ['Ubayda', 'Hamza', 'Ab... | __khojiakbar__ |

1,885,956 | How to Check Your GPF Balance | Online method: Using the e-Service portal: Go to https://www.bangladesh.gov.bd/gpf/. From the "Service" menu... | 0 | 2024-06-12T17:17:21 | https://dev.to/infoblog/jipieph-byaalens-cek-kraar-upaay-543o | gpf | Online method:

Using the e-Service portal:

Go to https://www.bangladesh.gov.bd/gpf/.

From the "Service" menu, select "Pension and Fund Management".

Click "GPF Balance and Statement".

Provide your voter ID and mobile number.

Enter the OTP that will be sent to your phone.

Your GPF balance and statement... | infoblog |

1,843,479 | Installing Docker On Windows | It was a little finicky for me to use Docker from the Windows Command Line. This tutorial will... | 0 | 2024-06-12T17:16:06 | https://dev.to/binat/installing-docker-on-windows-ma4 | docker, linux, microsoft, devops | It was a little finicky for me to use Docker from the Windows Command Line.

This tutorial will ensure you have no such problems.

There are three main steps:

1. Install the Windows Subsystem for Linux (WSL) 2 distribution

2. Download and Install Docker Desktop for Windows

3. Configure Docker Desktop to access Docker from... | binat |

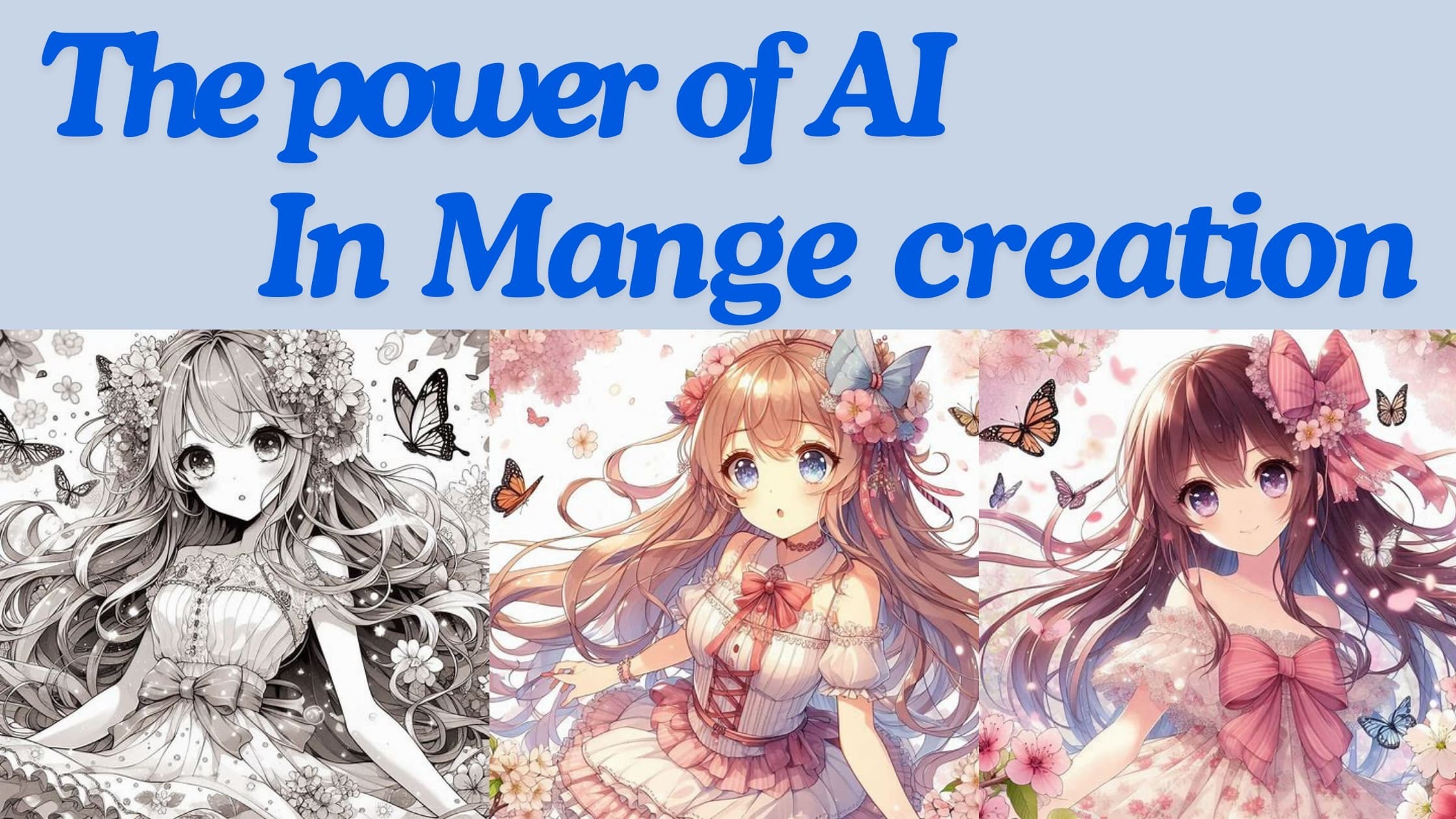

1,885,953 | AI Injects Creativity: How Artificial Intelligence is Transforming Manga Creation | This article explores the exciting ways Artificial Intelligence (AI) is assisting manga artists, from... | 0 | 2024-06-12T17:15:29 | https://dev.to/malconm/ai-injects-creativity-how-artificial-intelligence-is-transforming-manga-creation-3pl5 | ai, manga, art | This article explores the exciting ways Artificial Intelligence (AI) is assisting manga artists, from sparking inspiration to refining artwork.

## **AI-Powered Inspiration: A Spark for New Ideas**

... | malconm |

1,885,951 | How to Validate a SaaS Idea Quickly? | In the fast-paced world of software development, validating your SaaS idea before diving into... | 0 | 2024-06-12T17:10:29 | https://dev.to/ayoub_el_dcdf2ab179a6b0a8/how-to-vali-2lco | saas, pagebuilder, waitlist | In the fast-paced world of software development, validating your SaaS idea before diving into development is crucial. It saves time, resources, and ensures there is a market for your product. An effective methodology to achieve this is “Fake it Until You Make it.” This article explores how to implement this strategy us... | ayoub_el_dcdf2ab179a6b0a8 |

1,885,950 | How to vali | In the fast-paced world of software development, validating your SaaS idea before diving into... | 0 | 2024-06-12T17:10:29 | https://dev.to/ayoub_el_dcdf2ab179a6b0a8/how-to-vali-22k | saas, pagebuilder, waitlist | In the fast-paced world of software development, validating your SaaS idea before diving into development is crucial. It saves time, resources, and ensures there is a market for your product. An effective methodology to achieve this is “Fake it Until You Make it.” This article explores how to implement this strategy us... | ayoub_el_dcdf2ab179a6b0a8 |

1,885,902 | This post is a test post | This is a test | 0 | 2024-06-12T15:37:17 | https://dev.to/antoinefamibelle/this-post-is-a-test-post-1i48 | This is a test | antoinefamibelle | |

1,885,949 | This should be Drupal Starshot's Destination | This article originally appeared on Symfony Station. I won't beat a dead rocket launcher. Many... | 0 | 2024-06-12T17:02:23 | https://symfonystation.mobileatom.net/Drupal-Starshot | drupal, starshot, php | This article [originally appeared on Symfony Station](https://symfonystation.mobileatom.net/Drupal-Starshot).

I won't beat a dead rocket launcher. Many people have written good reviews about the Starshot announcement. If you read our communiqués you have seen plenty of them in recent weeks and there will be more to co... | reubenwalker64 |

1,885,948 | How Does SAP Migration Work? | Digital technology has completely transformed the way in which modern businesses operate. It can... | 0 | 2024-06-12T16:57:24 | https://dev.to/templatewallet/how-does-sap-migration-work-3784 | Digital technology has completely transformed the way in which modern businesses operate. It can offer a huge range of new possibilities and opportunities, with more effective business management and customer relationship capabilities.

However, digital technology can also be complex and difficult to understand. Data m... | templatewallet | |

1,885,947 | 1. Introduction to Observability | Faced with the new challenges we encounter in software development, observability emerges as... | 0 | 2024-06-12T16:57:06 | https://dev.to/ray_floresnolasco_b50a4e/1-introduccion-a-la-observabilidad-4fah | Faced with the new challenges we encounter in software development, observability emerges as a tool that helps us better understand the complexity of systems, which keep growing constantly, and gives us a clearer view of the state and behavior of our applications.

------

#... | ray_floresnolasco_b50a4e | |

1,885,946 | Professional photographer in Manchester | Jonathan Cohen Photography | Jonathan Cohen Photography, based in Manchester, specializes in capturing high-quality, imaginative,... | 0 | 2024-06-12T16:56:13 | https://dev.to/jonathancohenphotography1/professional-photographer-in-manchester-jonathan-cohen-photography-2gjd | Jonathan Cohen Photography, based in Manchester, specializes in capturing high-quality, imaginative, and creative photographs. The website showcases various services as [professional photographer manchester](https://jonathancohenphotography.co.uk), including wedding, corporate event, festival, and portrait photography.... | jonathancohenphotography1 | |

1,879,646 | TypeScript strictly typed - Part 2: full coverage typing | In the previous part of this post series, we discussed how and when to configure a TypeScript... | 27,444 | 2024-06-12T16:53:10 | https://dev.to/cyrilletuzi/typescript-strictly-typed-part-2-full-coverage-typing-4cg1 | typescript, javascript, productivity, webdev | In the [previous part](https://dev.to/cyrilletuzi/typescript-strictly-typed-part-1-configuring-a-project-9ca) of this [post series](https://dev.to/cyrilletuzi/typescript-strictly-typed-5fln), we discussed how and when to configure a TypeScript project. Now we will explain and solve the first problem of TypeScrip... | cyrilletuzi |

1,865,816 | TypeScript strictly typed - Part 1: configuring a project | After the introduction of this posts series, we are going to the topic's technical core. First, we... | 27,444 | 2024-06-12T16:50:22 | https://dev.to/cyrilletuzi/typescript-strictly-typed-part-1-configuring-a-project-9ca | typescript, javascript, productivity, webdev | After the [introduction](https://dev.to/cyrilletuzi/typescript-strictly-typed-intro-reliability-and-productivity-32aj) of this [posts series](https://dev.to/cyrilletuzi/typescript-strictly-typed-5fln), we are going to the topic's technical core. First, we will talk about configuration, and why **it is very important to... | cyrilletuzi |

1,865,810 | TypeScript strictly typed - Intro: reliability and productivity | Before going to the technical core of this posts series, we said in the summary that by default... | 27,444 | 2024-06-12T16:48:13 | https://dev.to/cyrilletuzi/typescript-strictly-typed-intro-reliability-and-productivity-32aj | typescript, javascript, productivity, webdev | Before going to the technical core of this posts series, we said in the [summary](https://dev.to/cyrilletuzi/typescript-strictly-typed-5fln) that by default TypeScript:

- **only partially enforces typing**

- **does not handle nullability**

- **retains some of JavaScript's dynamic typing**

We will explain these 3 problems... | cyrilletuzi |

1,844,050 | TypeScript strictly typed | The problem Over the last decade, as a JavaScript expert, I helped companies of all kinds... | 27,444 | 2024-06-12T16:45:52 | https://dev.to/cyrilletuzi/typescript-strictly-typed-5fln | typescript, javascript, productivity, webdev |

## The problem

Over the last decade, as a JavaScript expert, I have helped companies of all kinds and sizes. Over time, I noticed a series of recurring major problems in nearly all projects.

One of them is **a lack of typing, resulting in a lack of reliability and an exponential decline in productivity**.

Yet, all... | cyrilletuzi |

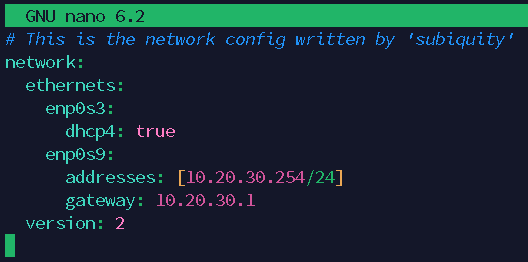

1,885,942 | VM cannot access internet via NAT VirtualBox | My Problem My Ubuntu Virtual Machine can`t access internet using NAT interface ... | 0 | 2024-06-12T16:44:30 | https://dev.to/bukanspot/vm-cannot-access-internet-via-nat-virtualbox-27f4 | virtualbox | ## My Problem

My Ubuntu virtual machine can't access the internet through the NAT interface.

## My Solution

Check the IP address configuration:

```bash

sudo nano /etc/netplan/00-installer-config.yaml

```

Remove the gateway setting and save the file.

Blockchain technology has revolutionized various industries by offering decentralized and secure solutions. For developers, choosing the right blockchain platform is critical as it can significantly impact the effi... | eliza_smith_ | |

1,885,941 | 125. Valid Palindrome | Topic: Arrays & Hashing Soln 1 (isalnum): A not so efficient solution: create a new list to... | 0 | 2024-06-12T16:43:54 | https://dev.to/whereislijah/125-valid-palindrome-4lc6 | Topic: Arrays & Hashing

Soln 1 (isalnum):

A not so efficient solution:

1. create a new list to store the palindrome

2. loop through the characters in the string:

- check if it is alphanumeric, if it is then append to list

3. assign a variable, then convert palindrome list to string

4. assign another variable, then r... | whereislijah | |
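The steps above can be sketched roughly as follows. This is a minimal sketch, not necessarily the author's exact code: the function name `is_palindrome` and the variable names are made up for illustration, lowercasing each character is an assumption taken from the usual statement of the problem (the steps don't mention it), and since step 4 is cut off in the source, the reverse-and-compare at the end is also an assumption.

```python
def is_palindrome(s: str) -> bool:
    """Return True if s reads the same both ways, ignoring case
    and any non-alphanumeric characters."""
    # Step 1: a new list to store the characters we keep.
    kept = []

    # Step 2: loop through the string and append alphanumeric characters.
    for ch in s:
        if ch.isalnum():
            # Lowercasing is an assumption; the steps above don't mention it.
            kept.append(ch.lower())

    # Step 3: convert the list to a string.
    cleaned = "".join(kept)

    # Step 4 (assumed, the source is truncated here): reverse and compare.
    reversed_cleaned = cleaned[::-1]
    return cleaned == reversed_cleaned


print(is_palindrome("A man, a plan, a canal: Panama"))  # True
print(is_palindrome("race a car"))  # False
```

The extra list and the two extra strings are probably why this is "not so efficient": each one takes space proportional to the input.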

1,885,928 | Trying Ratatui TUI: Rust Text-based User Interface Apps | Trying Ratatui TUI 🧑🏽🍳 building a text-based UI number game in the Terminal 🖥️ in Rust with Ratatui immediate mode rendering. | 0 | 2024-06-12T16:42:23 | https://rodneylab.com/trying-ratatui-tui/ | rust, gamedev, tui | ---

title: "Trying Ratatui TUI: Rust Text-based User Interface Apps"

published: "true"

description: "Trying Ratatui TUI 🧑🏽🍳 building a text-based UI number game in the Terminal 🖥️ in Rust with Ratatui immediate mode rendering."

tags: "rust, gamedev, tui"

canonical_url: "https://rodneylab.com/trying-ratatui-tui/"

c... | askrodney |

1,885,865 | Using Arktype in Place of Zod - How to Adapt Parsers | Ever since I started using Zod, a TypeScript-first schema declaration and validation library, I've... | 27,704 | 2024-06-12T16:37:14 | https://dev.to/seasonedcc/using-arktype-in-place-of-zod-how-to-adapt-parsers-3bd5 | typescript, zod, arktype, migration | Ever since I started using [Zod](https://github.com/colinhacks/zod/), a TypeScript-first schema declaration and validation library, I've been a big fan and started using it in all my projects. Zod allows you to ensure the safety of your data at runtime, extending TypeScript’s type-checking capabilities beyond compile-t... | gugaguichard |

1,885,929 | Day 16 of my progress as a vue dev | About today Today took my time to think about the next project I wanna take and continue with. Did my... | 0 | 2024-06-12T16:35:41 | https://dev.to/zain725342/day-16-of-my-progress-as-a-vue-dev-46af | webdev, vue, typescript, tailwindcss | **About today**

Today I took my time to think about the next project I wanna take on and continue with. I did my research because I didn't wanna dive right into a project without considering a few factors. I don't want to take on a project that doesn't add to my learning, and I also don't wanna dive into something that ... | zain725342 |

1,876,989 | Join us for the Twilio Challenge: $5,000 in Prizes! | We are so delighted to partner with Twilio for a new DEV challenge. Running through June 23, the... | 0 | 2024-06-12T16:32:19 | https://dev.to/devteam/join-us-for-the-twilio-challenge-5000-in-prizes-4fdi | devchallenge, twiliochallenge, ai, twilio | ---

title: Join us for the Twilio Challenge: $5,000 in Prizes!

published: true

description:

tags: devchallenge, twiliochallenge, ai, twilio

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/tvm2d9d8gdp37qxv0lu9.png

# Use a ratio of 100:42 for best results.

# published_at: 2024-06-04 18:10 +0000

---... | thepracticaldev |

1,885,927 | Asserting Integrity in Ethereum Data Extraction with Go through tests | Introduction In this tutorial, we'll walk through how to use tests to ensure the integrity of... | 0 | 2024-06-12T16:30:28 | https://dev.to/burgossrodrigo/asserting-integrity-in-ethereum-data-extraction-with-go-through-tests-i8m | go, testing, ethereum | **Introduction**

In this tutorial, we'll walk through how to use tests to ensure the integrity of Ethereum data extraction in a Go application. We'll use the Go-Ethereum client to retrieve block and transaction data, and the testify package for our tests.

**Prerequisites**

1. Basic understanding of Go prog... | burgossrodrigo |

1,886,197 | Free Artificial Intelligence Course with Certificate from Conquer | Discover the potential of Artificial Intelligence to advance your career with the online and... | 0 | 2024-06-23T13:50:34 | https://guiadeti.com.br/curso-inteligencia-artificial-gratuito-certificado/ | cursogratuito, automacao, cursosgratuitos, inteligenciaartifici | ---

title: Free Artificial Intelligence Course with Certificate from Conquer

published: true

date: 2024-06-12 16:30:00 UTC

tags: CursoGratuito,automacao,cursosgratuitos,inteligenciaartifici

canonical_url: https://guiadeti.com.br/curso-inteligencia-artificial-gratuito-certificado/

---

Discover the potential of Artificial Intellig... | guiadeti |

1,885,926 | Boost Productivity with Intuitive HTML Editor Software for LMS | Introduction An intuitive user interface (UI) in HTML editor software is crucial for enhancing... | 0 | 2024-06-12T16:28:30 | https://froala.com/blog/editor/boost-productivity-with-intuitive-html-editor-software-for-lms/ | froala, javascript, html, webdev | **Introduction**

An intuitive user interface (UI) in [HTML editor software](https://froala.com/) is crucial for enhancing developer productivity.

A well-designed UI reduces the learning curve and allows developers to focus on writing code rather than figuring out how to use the tool.

In this article, we’ll explore t... | ideradevtools |

1,885,925 | Saavn Music Player for Windows | Welcome to the Saavn Music Player for Windows, an open-source desktop application that allows you to... | 0 | 2024-06-12T16:25:46 | https://dev.to/priyanshuverma/saavn-music-player-for-windows-420k | flutter, music, programming, opensource | Welcome to the Saavn Music Player for Windows, an open-source desktop application that allows you to enjoy your favorite music seamlessly. This music player is powered by the Saavn API and built with Flutter, providing a robust and modern user experience.

## Features

- **Seamless Music Playback**: Enjoy high-quality ... | priyanshuverma |

1,885,924 | Thought 😇 | **You always see the world, the way you are, not the way world is. 🤗✨... | 0 | 2024-06-12T16:22:52 | https://dev.to/krupa_90ca3564d1/thought-1dj5 | motivation | **You always see the world, the way you are, not the way world is.

🤗✨... | krupa_90ca3564d1 |

1,885,922 | Automating Telegram Bot Deployment with GitHub Actions and Docker | Introduction In previous articles, we explored the benefits of Docker, as well as walked... | 0 | 2024-06-12T16:16:24 | https://tjtanjin.medium.com/automating-telegram-bot-deployment-with-github-actions-and-docker-482abcd2533e | docker, githubactions, telegrambot, telegram | ## Introduction

In previous articles, we explored the [benefits of Docker](https://dev.to/tjtanjin/from-screen-to-docker-a-look-at-two-hosting-options-42eh), as well as walked through examples of [how we may dockerize our project](https://dev.to/tjtanjin/how-to-dockerize-a-telegram-bot-a-step-by-step-guide-37ol) and [... | tjtanjin |