id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,874,938 | Top 10 Construction Companies in UAE | *Introduction * The United Arab Emirates (UAE) is well-known for its impressive skyline and... | 0 | 2024-06-03T04:48:47 | https://dev.to/aiwa_ae_584cf1fdf9f50cb48/top-10-construction-companies-in-uae-5372 |

**Introduction**

The United Arab Emirates (UAE) is well-known for its impressive skyline and ground-breaking architectural achievements. This reputation is maintained by the efforts of some of the region's best construction businesses.

Here, we showcase the UAE's top ten construction businesses, each of which contributes to the country's dynamic infrastructure scene.

**1. Nael Gen Contracting Group**

[Nael Gen Contracting Group](https://aiwa.ae/company/naelgencontracting-group) is a key participant in the UAE construction business, noted for its dedication to quality and on-time project completion. The firm focuses on civil engineering and construction projects, with a portfolio that includes residential, commercial, and industrial developments.

**2. Fujairah National Group**

[Fujairah National Group](https://aiwa.ae/company/Fujairah-National-Group) stands out for its wide variety of construction services and significant presence in the Eastern Region. This business excels at producing high-quality construction projects, ranging from residential structures to large-scale infrastructure works, exhibiting flexibility and skill.

**3. Abraj Building Contracting Co. LLC**

[Abraj Building Contracting Co. LLC](https://aiwa.ae/company/Abraj-Building-Contracting-Co-LLC) is well-known for its exceptional work on residential, commercial, and industrial projects. The firm is known for its creative construction processes and sustainable building procedures, which ensure high standards in all projects.

**4. Smart Cities Building Contracting LLC**

[Smartcities Building Contracting LLC](https://aiwa.ae/company/Smartcities-Building-Contracting-LLC) specializes in the design and construction of smart, sustainable buildings using cutting-edge technology and environmental design. The company's forward-thinking strategy has established it as a market leader in contemporary building solutions in the UAE.

**5. Mabani Steel**

[Mabani Steel](https://aiwa.ae/company/Smartcities-Building-Contracting-LLC) is a major participant in the steel construction business, offering both pre-engineered steel buildings and structural steel services. Their projects cover several sectors, including industrial, commercial, and residential, demonstrating their adaptability and engineering expertise.

List your business on [Aiwa.ae](https://aiwa.ae/freelisting) to enhance your growth in Dubai, UAE, and connect with a wider audience.

**6. AB Building Contracting Co. LLC**

[AB Building Contracting Co. LLC](https://aiwa.ae/company/ab-building-contracting-co-llc) is well-known for providing full construction services, ranging from early planning and design to project execution and delivery. The firm prioritizes superior workmanship and client happiness, guaranteeing that all projects exceed stringent requirements.

**7. Aroma International Building Contracting, LLC**

[Aroma International Building Contracting LLC](https://aiwa.ae/company/aromainternationalbuildingcontracting-l-l-c) focuses on high-end residential and commercial projects. The firm is characterized by its attention to detail, luxury finishing, and devotion to exceeding customer expectations, making it a top choice for high-end developments.

**8. 3M Gulf Limited**

[3M Gulf Limited](https://aiwa.ae/company/3-M-Gulf-Limited) provides unique construction solutions and is well-known for incorporating cutting-edge technology and materials into its projects. The company's competence in a wide range of fields, from residential structures to huge infrastructure projects, guarantees that they consistently offer high-quality products.

**9. Nadia Steel Construction Contracting, L.L.C.**

[Nadia Steel Construction Contracting L.L.C.](https://aiwa.ae/company/nadia-steel-construction-contracting-llc) excels at providing durable steel construction solutions for a variety of industries. Their dedication to quality and safety, along with their significant expertise, make them a dependable partner for complicated steel construction projects.

**10. Al Aber Contracting Establishment**

[Al Aber Contracting Establishment](https://aiwa.ae/company/Al-Aber-Contracting-Establishment) has a long history of delivering great building projects in the UAE. The company's portfolio covers a diverse variety of projects, from residential developments to large-scale commercial endeavors, all distinguished by high quality and attention to detail.

**Conclusion**

The UAE construction sector is led by enterprises that prioritize innovation, quality, and sustainability. These top ten [construction companies in the UAE](https://aiwa.ae/category/construction-companies) represent the finest in the business, contributing to the country's progress and improving its architectural legacy. Whether it's a towering skyscraper or a large industrial complex, these enterprises help the UAE remain a worldwide leader in building and infrastructure development.

Explore more business categories at [Aiwa.ae](https://aiwa.ae/). | aiwa_ae_584cf1fdf9f50cb48 | |

1,874,937 | Where to Find the Best Deals on Solar Garden Lights | looking for the Finest Offers on Solar Yard Illuminations Here is Where towards Discover All of... | 0 | 2024-06-03T04:47:43 | https://dev.to/alex_damianisi_f1cfe95e60/where-to-find-the-best-deals-on-solar-garden-lights-42ok | solar, garden | Looking for the Best Deals on Solar Garden Lights? Here Is Where to Find Them

Have you ever thought about installing solar garden lights in your outdoor space? Solar garden lights are an innovative, environmentally friendly outdoor lighting option that runs on solar power. They are safe, easy to use, and offer a range of benefits. Below we look at some of the best places to find deals on solar garden lights, their benefits, and how to use them.

Benefits of Solar Garden Lights

The following are some of the significant benefits of using solar garden lights:

1. Cost-Effective: Solar garden lights are inexpensive to install and run. They operate on solar power, which is free and readily available.

2. Eco-Friendly: Solar garden lights use clean energy, which reduces your carbon footprint and helps protect the environment.

3. Safe: Solar garden lights don't need cable connections and run on low voltage, so they are safe to use, especially if you have children or pets at home.

4. Low Maintenance: Once installed, solar garden lights need very little maintenance. They have no moving parts, which further reduces the chance of breakdowns.

5. Easy to Install: Solar garden lights are easy to install and don't require any electrical wiring or even technical expertise.

Innovation and Safety

Solar Fence Lights are an innovative and safe way to light up your outdoor space. They use solar panels to convert sunlight into electrical energy, which powers the lights. As a result, you don't need to worry about electricity costs. You also won't have to deal with any electrical wiring, making them a safer choice, especially for children and pets.

How to Use Solar Garden Lights

Using solar garden lights is simple:

1. Choose a Sunny Spot: Pick a place that gets sunlight for at least 6-8 hours a day. This ensures the lights collect enough energy to stay on through the night.

2. Install the Solar Panels: Place the solar panels where they can get the most sunlight. Most solar garden lights come with built-in solar panels.

3. Turn Them On: Switch on the solar garden lights and enjoy the soft glow they bring to your outdoor space.

Quality and Service

When looking for the best deals on Solar Post Lights, always consider quality and service. You want to buy lights that are durable and can withstand severe weather. Make sure you buy from a reliable supplier that offers excellent customer service. You can check online reviews or ask for recommendations to find a reliable supplier.

Applications of Solar Garden Lights

Solar garden lights can be used for various outdoor lighting purposes, including:

1. Path Lighting: Use solar garden lights to light up paths and walkways in your garden.

2. Garden Lighting: Solar garden lights can be used to highlight different garden areas such as flower beds, shrubs, and trees.

3. Accent Lighting: Use solar garden lights to highlight features in your outdoor space, such as sculptures, fountains, and pools.

4. Security Lighting: Solar garden lights can be used to improve security by lighting dark areas around your home.

Source: https://www.beslonsolarlight.com/solar-post-lights | alex_damianisi_f1cfe95e60 |

1,873,850 | Understanding the Factory Design Pattern with Node.js | Design patterns are a crucial part of software engineering, helping to create robust, scalable, and... | 0 | 2024-06-03T04:44:33 | https://dev.to/heisdinesh/understanding-the-factory-design-pattern-with-nodejs-1ihm | designpatterns, factorydesignpattern, factorymethod, node | Design patterns are a crucial part of software engineering, helping to create robust, scalable, and maintainable code. Among the various design patterns, the Factory Design Pattern is particularly useful for managing object creation.

The Factory design pattern helps create objects without having to specify the exact class of the object that will be created. This is particularly useful in scenarios where the exact type of object may not be known until runtime.

## Use cases of Factory Design Pattern

Whenever we create objects that have similar behavior or are closely related, we can use a factory design pattern.

- In Swiggy, when we are creating orders, orders can be of type dine-in, delivery, or take-out. We have to decide at runtime what type of order should be created.

- In Ola, when we are booking a ride, rides can be of type solo rides, share rides, or luxury rides. We have to decide at runtime what type of ride should be created.

In both examples, the creation process is encapsulated using the factory design pattern.

## Understanding with an example

Let's take the example of a notification system that supports different types of notifications: Email, SMS, and Push. The type of notification required is decided at runtime.

With the Factory pattern, the code is split into four major parts.

- Interface (Notification interface)

- Concrete Classes (EmailNotification, SMSNotification, PushNotification)

- Factory (Notification Factory)

- Client

The interface acts as a blueprint for the concrete classes. Each concrete class extends the interface and provides its own implementation of the methods declared in it. The factory determines which concrete class to instantiate based on input parameters. The client uses the factory to create objects and then interacts with them to perform actions.

## Code

**Notification Interface**

First, we'll define a common interface for all types of notifications. JavaScript has no `interface` keyword, so in Node.js we'll use a base class whose `send()` method throws unless a subclass overrides it.

```
class Notification {
  send(message) {
    throw new Error('Method "send()" must be implemented.');
  }
}

module.exports = Notification;
```

**Concrete Classes**

Now, we'll create concrete classes for Email, SMS, and Push notifications that extend the base Notification class.

```
const Notification = require('./Notification');

class EmailNotification extends Notification {
  send(message) {
    console.log(`Sending email with message: ${message}`);
    // Email sending logic here
  }
}

class SMSNotification extends Notification {
  send(message) {
    console.log(`Sending SMS with message: ${message}`);
    // SMS sending logic here
  }
}

class PushNotification extends Notification {
  send(message) {
    console.log(`Sending push notification with message: ${message}`);
    // Push notification sending logic here
  }
}

module.exports = { EmailNotification, SMSNotification, PushNotification };
```

**Notification Factory**

Now, we'll create a factory class that will return the appropriate notification object based on the input.

```
const { EmailNotification, SMSNotification, PushNotification } = require('./notifications/Notifications');

class NotificationFactory {
  static createNotification(type) {
    switch (type) {
      case 'email':
        return new EmailNotification();
      case 'sms':
        return new SMSNotification();
      case 'push':
        return new PushNotification();
      default:
        throw new Error('Unknown notification type');
    }
  }
}

module.exports = NotificationFactory;
```

**Client**

Finally, we'll use the factory to create notification objects in our client code.

```
const NotificationFactory = require('./notifications/NotificationFactory');

const notificationType = process.argv[2]; // 'email', 'sms', or 'push'
const message = 'Hello, this is a test message!';

const notification = NotificationFactory.createNotification(notificationType);
notification.send(message);
```

## What benefits did we get from following the Factory design pattern?

- Encapsulation: It encapsulates the instantiation logic, making the code cleaner and more modular.

- Decoupling: It decouples the client code from the concrete classes, promoting flexibility and maintainability.

- Single Responsibility Principle: It adheres to the Single Responsibility Principle by delegating the creation logic to the factory class.

- Open/Closed Principle: It supports the Open/Closed Principle, since a new type is added by writing a new class and registering it in the factory, without modifying the existing client code.
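With the `switch`-based factory shown earlier, adding a notification type still means editing the factory itself. One common variation, sketched below in TypeScript, replaces the `switch` with a registry so new types are registered rather than hard-coded. This is an illustration rather than the article's code: `Notifier`, `NotifierRegistry`, and `SlackNotifier` are hypothetical names, and `send()` returns its message instead of logging so the result is easy to inspect.

```typescript
// Hypothetical registry-based variant of the NotificationFactory idea.
interface Notifier {
  send(message: string): string;
}

type NotifierConstructor = new () => Notifier;

class NotifierRegistry {
  private readonly constructors = new Map<string, NotifierConstructor>();

  // Registering a type is the only step needed to support it.
  register(type: string, ctor: NotifierConstructor): void {
    this.constructors.set(type, ctor);
  }

  // Looks up the constructor for the requested type and instantiates it.
  create(type: string): Notifier {
    const ctor = this.constructors.get(type);
    if (!ctor) {
      throw new Error(`Unknown notification type: ${type}`);
    }
    return new ctor();
  }
}

class EmailNotifier implements Notifier {
  send(message: string): string {
    return `Sending email with message: ${message}`;
  }
}

// A new type added later: no existing class or client code changes.
class SlackNotifier implements Notifier {
  send(message: string): string {
    return `Sending Slack message: ${message}`;
  }
}

const notifierRegistry = new NotifierRegistry();
notifierRegistry.register("email", EmailNotifier);
notifierRegistry.register("slack", SlackNotifier);
```

The client still asks for a notifier by name, for example `notifierRegistry.create("slack").send("Hello")`, and never references a concrete class directly.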

## Conclusions

The Factory Design Pattern is one of the best ways to manage object creation in a clean and decoupled manner. By incorporating design patterns like the Factory Design Pattern into development practices, we can write more robust, scalable, and maintainable code.

If you found this article helpful, connect with me on [LinkedIn](https://www.linkedin.com/in/heisdinesh) and follow my posts on [DEV.to](https://dev.to/heisdinesh). Share your thoughts and questions in the comments below!

| heisdinesh |

1,874,936 | Building a Feature Proposal and Voting System with React, NodeJS, and DynamoDB | Building a Feature Proposal and Voting System with React, NodeJS, and DynamoDB | 0 | 2024-06-03T04:44:06 | https://radzion.com/blog/features | react, node, dynamodb, webdev | {% embed https://youtu.be/PXad8WzI0L0 %}

🐙 [GitHub](https://github.com/radzionc/radzionkit) | 🎮 [Demo](https://increaser.org)

In this article, we'll create a lightweight solution that enables users to propose new features for our web application and vote on them. We will utilize React for the front-end and construct a simple NodeJS API for the back-end, integrating DynamoDB as our database. Although the source code for this project is hosted in a private repository, all reusable components and utilities are accessible in the [RadzionKit](https://github.com/radzionc/radzionkit) repository.

### Introduction to Feature Proposal and Voting System

At [Increaser](https://increaser.org), our community page is the central hub for all social interactions within the application. Currently in its early stages, the page features a panel for users to edit their profiles, a leaderboard, the founder's contact information, and a widget for proposed features, which we'll explore further in this article. We have adopted a minimalist design for the features widget, using a single list with a toggle to switch between proposed ideas and those that have already been implemented. Although an alternative layout could include a "TO DO," "IN PROGRESS," and "DONE" board, our workflow typically involves focusing on one feature at a time, making the "IN PROGRESS" column redundant. Additionally, we aim to keep users focused on voting for new ideas rather than being distracted by completed features.

We use a dedicated DynamoDB table to store proposed features for [Increaser](https://increaser.org). Each item in this table includes several attributes:

- `id`: A unique identifier for the feature.

- `name`: The name of the feature.

- `description`: A brief description of the feature.

- `createdAt`: The timestamp marking when the feature was proposed.

- `proposedBy`: The ID of the user who proposed the feature.

- `upvotedBy`: An array of user IDs who have upvoted the feature.

- `isApproved`: A boolean indicating whether the feature has been approved by the founder.

- `status`: The current status of the feature, with possible values including "idea" or "done". If you prefer to display the features on a board, you might consider adding a status such as "in progress".

```tsx
export const productFeatureStatuses = ["idea", "done"] as const
export type ProductFeatureStatus = (typeof productFeatureStatuses)[number]

export type ProductFeature = {
  id: string
  name: string
  description: string
  createdAt: number
  proposedBy: string
  upvotedBy: string[]
  isApproved: boolean
  status: ProductFeatureStatus
}
```

### API Design and Feature Management Workflow

Our API includes just three endpoints dedicated to managing features. If you're interested in learning how to efficiently build backends within TypeScript monorepos, be sure to explore [this insightful article](https://radzion.com/blog/api).

```tsx
import { ApiMethod } from "./ApiMethod"
import { ProductFeature } from "@increaser/entities/ProductFeature"
import { ProductFeatureResponse } from "./ProductFeatureResponse"

export interface ApiInterface {
  proposeFeature: ApiMethod<
    Omit<ProductFeature, "isApproved" | "status" | "proposedBy" | "upvotedBy">,
    undefined
  >
  voteForFeature: ApiMethod<{ id: string }, undefined>
  features: ApiMethod<undefined, ProductFeatureResponse[]>

  // other methods...
}

export type ApiMethodName = keyof ApiInterface
```

The `proposeFeature` method is crucial to our feature proposal process. It identifies the user's ID from a JWT token included in the request, which is used for user authentication. To stay informed about new proposals, I've set up a Telegram channel where the API sends notifications detailing the proposed features. Upon receiving a message on this channel, I access the DynamoDB explorer on AWS to verify the feature's validity and refine the name and description for easier comprehension by other users. Although we could monitor new features with a separate Lambda function that listens to the DynamoDB stream, the current setup of direct notifications from the API is effective, especially as this is the only method for proposing features.

```tsx
import { getUser } from "@increaser/db/user"
import { assertUserId } from "../../auth/assertUserId"
import { getEnvVar } from "../../getEnvVar"
import { getTelegramBot } from "../../notifications/telegram"
import { ApiResolver } from "../../resolvers/ApiResolver"
import { putFeature } from "@increaser/db/features"
import { getProductFeautureDefaultFields } from "@increaser/entities/ProductFeature"

export const proposeFeature: ApiResolver<"proposeFeature"> = async ({
  input: feature,
  context,
}) => {
  const proposedBy = assertUserId(context)
  const { email } = await getUser(proposedBy, ["email"])

  await getTelegramBot().sendMessage(
    getEnvVar("TELEGRAM_CHAT_ID"),
    [
      "New feature proposal",
      feature.name,
      feature.description,
      `Proposed by ${email}`,
      feature.id,
    ].join("\n\n")
  )

  await putFeature({
    ...feature,
    ...getProductFeautureDefaultFields({ proposedBy }),
  })
}
```

Before adding a new feature to the DynamoDB table, we initialize default fields. The `isApproved` field is set to `false`, indicating that the feature has not yet been reviewed. The `status` is set to `idea`. The `proposedBy` field captures the user ID of the proposer. Additionally, the `upvotedBy` field starts with an array containing the proposer’s ID, ensuring that each new feature begins with one upvote.

```tsx
export const getProductFeautureDefaultFields = ({
  proposedBy,
}: Pick<ProductFeature, "proposedBy">): Pick<
  ProductFeature,
  "isApproved" | "status" | "proposedBy" | "upvotedBy"
> => ({
  isApproved: false,
  status: "idea",
  proposedBy,
  upvotedBy: [proposedBy],
})
```

We organize all functions for interacting with the "features" table into a single file. Utilizing helpers from [RadzionKit](https://github.com/radzionc/radzionkit), such as `makeGetItem`, `updateItem`, and `totalScan`, makes it easy to add new tables to our application.

```tsx
import { PutCommand } from "@aws-sdk/lib-dynamodb"
import { ProductFeature } from "@increaser/entities/ProductFeature"
import { tableName } from "./tableName"
import { dbDocClient } from "@lib/dynamodb/client"
import { totalScan } from "@lib/dynamodb/totalScan"
import { getPickParams } from "@lib/dynamodb/getPickParams"
import { makeGetItem } from "@lib/dynamodb/makeGetItem"
import { updateItem } from "@lib/dynamodb/updateItem"

export const putFeature = (value: ProductFeature) => {
  const command = new PutCommand({
    TableName: tableName.features,
    Item: value,
  })

  return dbDocClient.send(command)
}

export const getFeature = makeGetItem<string, ProductFeature>({
  tableName: tableName.features,
  getKey: (id: string) => ({ id }),
})

export const updateFeature = async (
  id: string,
  fields: Partial<ProductFeature>
) => {
  return updateItem({
    tableName: tableName.features,
    key: { id },
    fields,
  })
}

export const getAllFeatures = async <T extends (keyof ProductFeature)[]>(
  attributes?: T
) =>
  totalScan<Pick<ProductFeature, T[number]>>({
    TableName: tableName.features,
    ...getPickParams(attributes),
  })
```
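The article does not show what `getPickParams` returns, so the sketch below is only a guess at the general idea: turning a list of attribute names into a DynamoDB projection. `buildPickParams` and `PickParams` are hypothetical names, not RadzionKit's API. It assumes the helper's job is to produce `ProjectionExpression` and `ExpressionAttributeNames`, which matters for this table because `name` and `status` are DynamoDB reserved words and cannot appear bare in an expression.

```typescript
// Hypothetical stand-in for a projection helper; not RadzionKit's actual code.
type PickParams = {
  ProjectionExpression?: string
  ExpressionAttributeNames?: Record<string, string>
}

const buildPickParams = (attributes?: readonly string[]): PickParams => {
  // No attributes requested: add nothing, so the scan returns whole items.
  if (!attributes || attributes.length === 0) {
    return {}
  }

  const attributeNames: Record<string, string> = {}
  attributes.forEach((attribute) => {
    // "#attr" placeholders sidestep reserved words such as "name" and "status".
    attributeNames[`#${attribute}`] = attribute
  })

  return {
    ProjectionExpression: Object.keys(attributeNames).join(", "),
    ExpressionAttributeNames: attributeNames,
  }
}

// Example: request only the fields needed for a compact list view.
const listViewParams = buildPickParams(["id", "name", "status"])
```

Spreading the result into the scan input, as `getAllFeatures` does with `getPickParams`, keeps the "fetch everything" and "fetch a subset" cases on the same code path.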

The `voteForFeature` method toggles the user's vote for a feature. If the user has already upvoted the feature, the method removes their vote; otherwise, it adds it. This approach ensures that users can only vote once for each feature.

```tsx
import { without } from "@lib/utils/array/without"
import { assertUserId } from "../../auth/assertUserId"
import { ApiResolver } from "../../resolvers/ApiResolver"
import { getFeature, updateFeature } from "@increaser/db/features"

export const voteForFeature: ApiResolver<"voteForFeature"> = async ({
  input: { id },
  context,
}) => {
  const userId = assertUserId(context)
  const { upvotedBy } = await getFeature(id, ["upvotedBy"])

  await updateFeature(id, {
    upvotedBy: upvotedBy.includes(userId)
      ? without(upvotedBy, userId)
      : [...upvotedBy, userId],
  })
}
```
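The toggle at the heart of this resolver can be isolated as a pure function, which makes the "one vote per user" behavior easy to check without a database. The sketch below is illustrative only: `toggleVote` is a hypothetical name, and a plain `filter` stands in for the `without` helper.

```typescript
// Pure version of the vote toggle; `toggleVote` is a hypothetical helper name.
const toggleVote = (upvotedBy: readonly string[], userId: string): string[] =>
  upvotedBy.includes(userId)
    ? upvotedBy.filter((id) => id !== userId) // already voted: remove the vote
    : [...upvotedBy, userId] // not voted yet: add the vote

// Voting twice returns the list to its starting state.
const votesAfterFirstClick = toggleVote(["user-1"], "user-2")
const votesAfterSecondClick = toggleVote(votesAfterFirstClick, "user-2")
```

Note that the resolver reads `upvotedBy` and then writes the whole array back, so two votes arriving at the same moment could overwrite each other. If that ever became a concern, one alternative design (not something the article does) would be to store the voters as a DynamoDB string set and apply `ADD` or `DELETE` in an update expression.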

The `features` method retrieves all features from the DynamoDB table and filters out unapproved features unless the current user proposed them, so only the proposer can see their own unapproved ideas. It also calculates the number of upvotes for each feature and checks whether the current user has upvoted it. Instead of returning the entire list of user IDs who have upvoted, it returns only the upvote count and an `upvotedByMe` flag.

```tsx
import { ApiResolver } from "../../resolvers/ApiResolver"
import { getAllFeatures } from "@increaser/db/features"
import { ProductFeatureResponse } from "@increaser/api-interface/ProductFeatureResponse"
import { pick } from "@lib/utils/record/pick"

export const features: ApiResolver<"features"> = async ({
  context: { userId },
}) => {
  const features = await getAllFeatures()

  const result: ProductFeatureResponse[] = []
  features.forEach((feature) => {
    if (!feature.isApproved && feature.proposedBy !== userId) {
      return
    }

    result.push({
      ...pick(feature, [
        "id",
        "name",
        "description",
        "isApproved",
        "status",
        "proposedBy",
        "createdAt",
      ]),
      upvotes: feature.upvotedBy.length,
      upvotedByMe: Boolean(userId && feature.upvotedBy.includes(userId)),
    })
  })

  return result
}
```
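The `ProductFeatureResponse` type is imported here but never shown. Judging only from the fields the resolver returns, it might look something like the sketch below. This is an inference, not the project's actual definition: `FeatureRecord`, `FeatureResponse`, and `toFeatureResponses` are hypothetical names, and the function restates the resolver's filtering and mapping as a pure transformation that can be exercised without DynamoDB.

```typescript
type FeatureStatus = "idea" | "done"

// Mirrors the stored item described in the article.
type FeatureRecord = {
  id: string
  name: string
  description: string
  createdAt: number
  proposedBy: string
  upvotedBy: string[]
  isApproved: boolean
  status: FeatureStatus
}

// Assumed response shape, inferred from the resolver: the voter list is
// replaced by a count and a per-user flag.
type FeatureResponse = Omit<FeatureRecord, "upvotedBy"> & {
  upvotes: number
  upvotedByMe: boolean
}

const toFeatureResponses = (
  features: FeatureRecord[],
  userId?: string
): FeatureResponse[] =>
  features
    // Unapproved features stay visible only to the user who proposed them.
    .filter((feature) => feature.isApproved || feature.proposedBy === userId)
    .map(({ upvotedBy, ...rest }) => ({
      ...rest,
      upvotes: upvotedBy.length,
      upvotedByMe: Boolean(userId && upvotedBy.includes(userId)),
    }))
```

Keeping the voter IDs out of the response keeps the payload small and avoids exposing other users' IDs to the client.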

### Front-End Implementation: Building the Feature Voting Interface

With the server-side logic established, we can now turn our attention to the front-end implementation. The widget is displayed on the right side of the community page using the `ProductFeaturesBoard` component.

```tsx
import { Page } from "@lib/next-ui/Page"
import { FixedWidthContent } from "@increaser/app/components/reusable/fixed-width-content"
import { PageTitle } from "@increaser/app/ui/PageTitle"
import { VStack } from "@lib/ui/layout/Stack"
import { UserStateOnly } from "@increaser/app/user/state/UserStateOnly"
import { ClientOnly } from "@increaser/app/ui/ClientOnly"
import { ManageProfile } from "./ManageProfile"
import { Scoreboard } from "@increaser/ui/scoreboard/Scoreboard"
import { RequiresOnboarding } from "../../onboarding/RequiresOnboarding"
import { ProductFeaturesBoard } from "../../productFeatures/components/ProductFeaturesBoard"
import { FounderContacts } from "./FounderContacts"
import { UniformColumnGrid } from "@lib/ui/layout/UniformColumnGrid"

export const CommunityPage: Page = () => {
  return (
    <FixedWidthContent>
      <ClientOnly>
        <PageTitle documentTitle={`👋 Community`} title="Community" />
      </ClientOnly>
      <UserStateOnly>
        <RequiresOnboarding>
          <UniformColumnGrid minChildrenWidth={320} gap={40}>
            <VStack style={{ width: "fit-content" }} gap={40}>
              <ManageProfile />
              <Scoreboard />
              <FounderContacts />
            </VStack>
            <ProductFeaturesBoard />
          </UniformColumnGrid>
        </RequiresOnboarding>
      </UserStateOnly>
    </FixedWidthContent>
  )
}
```

We render the content within a `Panel` component, which is set to have a minimum width of `320px` and occupies the remaining space in the parent container. The header displays the title "Product Features" and includes the `ProductFeaturesViewSelector` component, allowing users to toggle between the "idea" and "done" views. The `RenderProductFeaturesView` component is used to conditionally display a prompt for proposing new features, ensuring it is visible only when the "idea" view is selected. The `ProductFeatureList` component is then used to display the list of features.

```tsx
import { HStack, VStack } from "@lib/ui/layout/Stack"
import { Panel } from "@lib/ui/panel/Panel"
import { Text } from "@lib/ui/text"
import styled from "styled-components"
import {
  ProductFeaturesViewProvider,
  ProductFeaturesViewSelector,
  RenderProductFeaturesView,
} from "./ProductFeaturesView"
import { ProposeFeaturePrompt } from "./ProposeFeaturePrompt"
import { ProductFeatureList } from "./ProductFeatureList"

const Container = styled(Panel)`
  min-width: 320px;
  flex: 1;
`

export const ProductFeaturesBoard = () => {
  return (
    <ProductFeaturesViewProvider>
      <Container>
        <VStack gap={20}>
          <HStack
            alignItems="center"
            gap={20}
            justifyContent="space-between"
            wrap="wrap"
            fullWidth
          >
            <Text size={18} weight="bold">
              Product Features
            </Text>
            <ProductFeaturesViewSelector />
          </HStack>
          <RenderProductFeaturesView
            idea={() => <ProposeFeaturePrompt />}
            done={() => null}
          />
          <VStack gap={8}>
            <ProductFeatureList />
          </VStack>
        </VStack>
      </Container>
    </ProductFeaturesViewProvider>
  )
}
```

It's a common scenario to need a filter or selector for switching between different views. To facilitate this, we utilize the `getViewSetup` utility from [RadzionKit](https://github.com/radzionc/radzionkit). This utility accepts a default view and a setup name, returning a provider, hook, and renderer that enable convenient conditional rendering based on the current view. For the selector component, we use the `TabNavigation` component from [RadzionKit](https://github.com/radzionc/radzionkit), which takes an array of views, a function to get the view name, the active view, and a callback to set the view.

```tsx
import { getViewSetup } from "@lib/ui/view/getViewSetup"
import { TabNavigation } from "@lib/ui/navigation/TabNavigation"
import {
  ProductFeatureStatus,
  productFeatureStatuses,
} from "@increaser/entities/ProductFeature"

export const {
  ViewProvider: ProductFeaturesViewProvider,
  useView: useProductFeaturesView,
  RenderView: RenderProductFeaturesView,
} = getViewSetup<ProductFeatureStatus>({
  defaultView: "idea",
  name: "productFeatures",
})

const taskViewName: Record<ProductFeatureStatus, string> = {
  idea: "Ideas",
  done: "Done",
}

export const ProductFeaturesViewSelector = () => {
  const { view, setView } = useProductFeaturesView()

  return (
    <TabNavigation
      views={productFeatureStatuses}
      getViewName={(view) => taskViewName[view]}
      activeView={view}
      onSelect={setView}
    />
  )
}
```

### Enhancing User Interaction: Feature Proposal Components

The `ProposeFeaturePrompt` component displays a call-to-action using the `PanelPrompt` component. When activated, it reveals the `ProposeFeatureForm` component. Additionally, we employ the `Opener` component from [RadzionKit](https://github.com/radzionc/radzionkit), which acts as a wrapper around `useState` for conditional rendering. While I prefer using the `Opener` for its streamlined syntax, you might find using a simple `useState` hook more to your liking.

```tsx
import { Opener } from "@lib/ui/base/Opener"
import { ProposeFeatureForm } from "./ProposeFeatureForm"
import { PanelPrompt } from "@lib/ui/panel/PanelPrompt"

export const ProposeFeaturePrompt = () => {
  return (
    <Opener
      renderOpener={({ onOpen, isOpen }) =>
        !isOpen && (
          <PanelPrompt onClick={onOpen} title="Make Increaser Yours">
            Tell us what feature you want to see next
          </PanelPrompt>
        )
      }
      renderContent={({ onClose }) => <ProposeFeatureForm onFinish={onClose} />}
    />
  )
}
```

In the `ProposeFeatureForm` component, users input a `name` and `description` for their feature idea. We keep validation simple, only ensuring that these fields are not empty, as I manually approve and edit each feature later. The form's `onSubmit` function checks if the submit button is disabled and, if not, it calls the `mutate` function from the `useProposeFeatureMutation` hook with the new feature details. Once the mutation is initiated, the `onFinish` callback is invoked to notify the parent component that the submission process is complete, prompting the `ProposeFeaturePrompt` to display the `PanelPrompt` again.

```tsx
import { Button } from "@lib/ui/buttons/Button"
import { Form } from "@lib/ui/form/components/Form"
import { UniformColumnGrid } from "@lib/ui/layout/UniformColumnGrid"
import { Panel } from "@lib/ui/panel/Panel"
import { FinishableComponentProps } from "@lib/ui/props"
import styled from "styled-components"
import { useProposeFeatureMutation } from "../hooks/useProposeFeatureMutation"
import { useState } from "react"
import { Fields } from "@lib/ui/inputs/Fields"
import { Field } from "@lib/ui/inputs/Field"
import { TextInput } from "@lib/ui/inputs/TextInput"
import { TextArea } from "@lib/ui/inputs/TextArea"
import { Validators } from "@lib/ui/form/utils/Validators"
import { validate } from "@lib/ui/form/utils/validate"
import { getId } from "@increaser/entities-utils/shared/getId"

const Container = styled(Panel)``

type FeatureFormShape = {
  name: string
  description: string
}

// Both fields are required; no other validation is applied
const featureFormValidator: Validators<FeatureFormShape> = {
  name: (name) => {
    if (!name) {
      return "Name is required"
    }
  },
  description: (description) => {
    if (!description) {
      return "Description is required"
    }
  },
}

export const ProposeFeatureForm = ({ onFinish }: FinishableComponentProps) => {
  const { mutate } = useProposeFeatureMutation()
  const [value, setValue] = useState<FeatureFormShape>({
    name: "",
    description: "",
  })

  const errors = validate(value, featureFormValidator)

  // The first validation message, if any, is passed to the submit button as its disabled state
  const [isDisabled] = Object.values(errors)

  return (
    <Container kind="secondary">
      <Form
        onSubmit={() => {
          if (isDisabled) return

          mutate({
            name: value.name,
            description: value.description,
            id: getId(),
            createdAt: Date.now(),
          })
          onFinish()
        }}
        content={
          <Fields>
            <Field>
              <TextInput
                value={value.name}
                onValueChange={(name) => setValue({ ...value, name })}
                label="Title"
                placeholder="Give your feature a clear name"
              />
            </Field>
            <Field>
              <TextArea
                rows={4}
                value={value.description}
                onValueChange={(description) =>
                  setValue({ ...value, description })
                }
                label="Description"
                placeholder="Detail your feature for easy understanding"
              />
            </Field>
          </Fields>
        }
        actions={
          <UniformColumnGrid gap={20}>
            <Button size="l" type="button" kind="secondary" onClick={onFinish}>
              Cancel
            </Button>
            <Button
              isDisabled={isDisabled}
              size="l"
              type="submit"
              kind="primary"
            >
              Submit
            </Button>
          </UniformColumnGrid>
        }
      />
    </Container>
  )
}
```
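The validation step in the form above can be sketched without React. This is a minimal, simplified version that assumes every field is a string; the `Validator` type and this `validate` function are hypothetical stand-ins, and the real helpers in RadzionKit may be typed and implemented differently.

```typescript
// Hypothetical, simplified stand-ins for the `Validators` and `validate` helpers.
type Validator = (value: string) => string | undefined

// Runs each validator against its field and collects only the failures.
function validate(
  values: Record<string, string>,
  validators: Record<string, Validator>,
): Record<string, string> {
  const errors: Record<string, string> = {}
  for (const field of Object.keys(validators)) {
    const validator = validators[field]
    const message = validator ? validator(values[field] ?? "") : undefined
    if (message) {
      errors[field] = message
    }
  }
  return errors
}

const featureFormValidator: Record<string, Validator> = {
  name: (name) => (name ? undefined : "Name is required"),
  description: (description) =>
    description ? undefined : "Description is required",
}

const errors = validate({ name: "Dark mode", description: "" }, featureFormValidator)

// Same idea as in the form: the first error message doubles as the disabled flag.
const errorMessages = Object.keys(errors).map((field) => errors[field])
const [isDisabled] = errorMessages
console.log(isDisabled)
```

Because only failed fields end up in the result, an empty object means the form is valid and the submit button stays enabled.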

### Dynamic Feature Listing and User Interaction Components

To display the list of features, we first retrieve the query result from the API using the `useApiQuery` hook, which requires the name of the method and the input parameters. The `QueryDependant` component from [RadzionKit](https://github.com/radzionc/radzionkit) is utilized to manage the query state effectively. During the loading state, we display a spinner; in the error state, an error message is shown; and in the success state, we render the list of features. The retrieved features are then divided into two arrays: `myUnapprovedFeatures`, which contains features proposed by the current user but not yet approved, and `otherFeatures`, which includes all other features sorted by the number of upvotes in descending order. Each feature is rendered using the `ProductFeatureItem` component.

```tsx
import { useApiQuery } from "@increaser/api-ui/hooks/useApiQuery"
import { QueryDependant } from "@lib/ui/query/components/QueryDependant"
import { getQueryDependantDefaultProps } from "@lib/ui/query/utils/getQueryDependantDefaultProps"
import { splitBy } from "@lib/utils/array/splitBy"
import { order } from "@lib/utils/array/order"
import { ProductFeatureItem } from "./ProductFeatureItem"
import { useProductFeaturesView } from "./ProductFeaturesView"
import { useAssertUserState } from "@increaser/ui/user/UserStateContext"
import { CurrentProductFeatureProvider } from "./CurrentProductFeatureProvider"

export const ProductFeatureList = () => {
  const featuresQuery = useApiQuery("features", undefined)
  const { view } = useProductFeaturesView()
  const { id } = useAssertUserState()

  return (
    <QueryDependant
      query={featuresQuery}
      {...getQueryDependantDefaultProps("features")}
      success={(features) => {
        // Group 0: the current user's unapproved proposals; group 1: everything else
        const [myUnapprovedFeatures, otherFeatures] = splitBy(
          features.filter((feature) => view === feature.status),
          (feature) =>
            feature.proposedBy === id && !feature.isApproved ? 0 : 1
        )

        return (
          <>
            {[
              ...myUnapprovedFeatures,
              ...order(otherFeatures, (f) => f.upvotes, "desc"),
            ].map((feature) => (
              <CurrentProductFeatureProvider key={feature.id} value={feature}>
                <ProductFeatureItem />
              </CurrentProductFeatureProvider>
            ))}
          </>
        )
      }}
    />
  )
}
```
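The split-then-sort logic in the list above can be tried in isolation. The sketch below assumes simplified versions of `splitBy` and `order` and a reduced `Feature` type; these are illustrative stand-ins, not the actual RadzionKit implementations.

```typescript
// Reduced feature shape, for illustration only.
type Feature = {
  id: string
  proposedBy: string
  isApproved: boolean
  upvotes: number
}

// Hypothetical helper: partitions items into two arrays by a 0/1 group index.
function splitBy<T>(items: T[], getGroup: (item: T) => 0 | 1): [T[], T[]] {
  const groups: [T[], T[]] = [[], []]
  for (const item of items) {
    groups[getGroup(item)].push(item)
  }
  return groups
}

// Hypothetical helper: returns a sorted copy and leaves the input untouched.
function order<T>(
  items: T[],
  getValue: (item: T) => number,
  direction: "asc" | "desc",
): T[] {
  return [...items].sort((a, b) =>
    direction === "asc" ? getValue(a) - getValue(b) : getValue(b) - getValue(a),
  )
}

const currentUserId = "u1"
const sampleFeatures: Feature[] = [
  { id: "a", proposedBy: "u2", isApproved: true, upvotes: 3 },
  { id: "b", proposedBy: "u1", isApproved: false, upvotes: 0 },
  { id: "c", proposedBy: "u3", isApproved: true, upvotes: 7 },
]

const [mine, others] = splitBy(sampleFeatures, (feature) =>
  feature.proposedBy === currentUserId && !feature.isApproved ? 0 : 1,
)
const listed = [...mine, ...order(others, (feature) => feature.upvotes, "desc")]

console.log(listed.map((feature) => feature.id)) // order: b, c, a
```

The current user's pending proposal comes first regardless of votes, which matches the intent of showing users their own submissions before the ranked list.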

To minimize prop drilling, the `ProductFeatureItem` component is provided with the current feature using the `CurrentProductFeatureProvider` component. Recognizing the frequent need to pass a single value through a component tree, I created the utility function `getValueProviderSetup` in [RadzionKit](https://github.com/radzionc/radzionkit). This generic function accepts the name of the entity and returns both a provider and a hook for that entity, streamlining the process of passing contextual data to nested components.

```tsx
import { ProductFeatureResponse } from "@increaser/api-interface/ProductFeatureResponse"
import { getValueProviderSetup } from "@lib/ui/state/getValueProviderSetup"

export const {
  useValue: useCurrentProductFeature,
  provider: CurrentProductFeatureProvider,
} = getValueProviderSetup<ProductFeatureResponse>("ProductFeature")
```

The `ProductFeatureItem` component displays the feature's name, a cropped description, and includes a voting button. To facilitate two actions within a single card, the component uses a specific layout pattern. Users can click on the card to open the feature details in a modal, while the "Vote" button allows them to vote for the feature separately. Due to HTML constraints that prevent nesting buttons, we utilize a relatively positioned container for the card, with the "Vote" button absolutely positioned within it. This layout pattern is common enough that [RadzionKit](https://github.com/radzionc/radzionkit) provides an abstraction for it, known as `ActionInsideInteractiveElement`, which simplifies the implementation of multiple interactive elements in a single component.

```tsx
import { HStack, VStack } from "@lib/ui/layout/Stack"
import { Panel, panelDefaultPadding } from "@lib/ui/panel/Panel"
import { Text } from "@lib/ui/text"
import { ShyInfoBlock } from "@lib/ui/info/ShyInfoBlock"
import styled from "styled-components"
import { maxTextLines } from "@lib/ui/css/maxTextLines"
import { ActionInsideInteractiveElement } from "@lib/ui/base/ActionInsideInteractiveElement"
import { Spacer } from "@lib/ui/layout/Spacer"
import { Opener } from "@lib/ui/base/Opener"
import { Modal } from "@lib/ui/modal"
import { interactive } from "@lib/ui/css/interactive"
import { getColor } from "@lib/ui/theme/getters"
import { transition } from "@lib/ui/css/transition"
import { useCurrentProductFeature } from "./CurrentProductFeatureProvider"
import { ProductFeatureDetails } from "./ProductFeatureDetails"
import { VoteForFeature } from "./VoteForFeature"

const Description = styled(Text)`
  ${maxTextLines(2)}
`

const Container = styled(Panel)`
  ${interactive};
  ${transition};
  &:hover {
    background: ${getColor("foreground")};
  }
`

export const ProductFeatureItem = () => {
  const { name, description, isApproved } = useCurrentProductFeature()

  return (
    <ActionInsideInteractiveElement
      render={({ actionSize }) => (
        <Opener
          renderOpener={({ onOpen }) => (
            <Container onClick={onOpen} kind="secondary">
              <VStack gap={8}>
                <HStack
                  justifyContent="space-between"
                  alignItems="start"
                  fullWidth
                  gap={20}
                >
                  <VStack gap={8}>
                    <Text weight="semibold" style={{ flex: 1 }} height="large">
                      {name}
                    </Text>
                    <Description height="large" color="supporting" size={14}>
                      {description}
                    </Description>
                  </VStack>
                  <Spacer {...actionSize} />
                </HStack>
                {!isApproved && (
                  <ShyInfoBlock>
                    Thank you! Your feature is awaiting approval and will be
                    open for voting soon.
                  </ShyInfoBlock>
                )}
              </VStack>
            </Container>
          )}
          renderContent={({ onClose }) => (
            <Modal width={480} onClose={onClose} title={name}>
              <ProductFeatureDetails />
            </Modal>
          )}
        />
      )}
      action={<VoteForFeature />}
      actionPlacerStyles={{
        top: panelDefaultPadding,
        right: panelDefaultPadding,
      }}
    />
  )
}
```

We use the `Opener` component again to manage the modal state for displaying feature details. To ensure that the title does not overlap with the absolutely positioned "Vote" button, we insert a `Spacer` component with the same dimensions as the "Vote" button, as determined by `ActionInsideInteractiveElement`. To keep the card concise, we crop the description to two lines using the `maxTextLines` CSS utility from [RadzionKit](https://github.com/radzionc/radzionkit). Additionally, if the feature has not been approved yet, we display a `ShyInfoBlock` component to inform the user that their feature is awaiting approval.

```tsx
import { UpvoteButton } from "@lib/ui/buttons/UpvoteButton"
import { useVoteForFeatureMutation } from "../hooks/useVoteForFeatureMutation"
import { useCurrentProductFeature } from "./CurrentProductFeatureProvider"

export const VoteForFeature = () => {
  const { id, upvotedByMe, upvotes } = useCurrentProductFeature()
  const { mutate } = useVoteForFeatureMutation()

  return (
    <UpvoteButton
      onClick={() => {
        mutate({
          id,
        })
      }}
      value={upvotedByMe}
      upvotes={upvotes}
    />
  )
}
```

The `VoteForFeature` component utilizes the `UpvoteButton` to provide a straightforward and intuitive voting interface. When clicked, the component triggers the `mutate` function from the `useVoteForFeatureMutation` hook, with the feature ID passed as an input parameter. The `UpvoteButton` features a chevron icon and displays the count of upvotes. It dynamically changes color based on the `value` prop to visually indicate whether the user has already voted for the feature.

```tsx
import styled from "styled-components"
import { UnstyledButton } from "./UnstyledButton"
import { borderRadius } from "../css/borderRadius"
import { interactive } from "../css/interactive"
import { getColor, matchColor } from "../theme/getters"
import { transition } from "../css/transition"
import { getHoverVariant } from "../theme/getHoverVariant"
import { VStack } from "../layout/Stack"
import { IconWrapper } from "../icons/IconWrapper"
import { Text } from "../text"
import { CaretUpIcon } from "../icons/CaretUpIcon"
import { ClickableComponentProps } from "../props"

type UpvoteButtonProps = ClickableComponentProps & {
  value: boolean
  upvotes: number
}

const Container = styled(UnstyledButton)<{ value: boolean }>`
  padding: 8px;
  min-width: 48px;
  ${borderRadius.s};
  border: 1px solid;
  ${interactive};
  color: ${matchColor("value", {
    true: "primary",
    false: "text",
  })};
  ${transition};
  &:hover {
    background: ${getColor("mist")};
    color: ${({ value }) =>
      value ? getHoverVariant("primary") : getColor("contrast")};
  }
`

export const UpvoteButton = ({
  value,
  upvotes,
  ...rest
}: UpvoteButtonProps) => (
  <Container {...rest} value={value}>
    <VStack alignItems="center">
      <IconWrapper style={{ fontSize: 20 }}>
        <CaretUpIcon />
      </IconWrapper>
      <Text size={14} weight="bold">
        {upvotes}
      </Text>
    </VStack>
  </Container>
)
```

The `ProductFeatureDetails` component displays the feature's creation date, the user who proposed the feature alongside the voting button, and the full feature description. To fetch the proposer's profile details, we use the `UserProfileQueryDependant` component. This component determines if the user has a public profile, displaying their name and country, or labels them as "Anonymous" if they maintain an anonymous account. The `UserProfileQueryDependant` is an enhancement of the `QueryDependant` component, providing a more streamlined approach to accessing user profile information.

```tsx
import { HStack, VStack } from "@lib/ui/layout/Stack"
import { Text } from "@lib/ui/text"
import { LabeledValue } from "@lib/ui/text/LabeledValue"
import { format } from "date-fns"
import { UserProfileQueryDependant } from "../../community/components/UserProfileQueryDependant"
import { ScoreboardDisplayName } from "@increaser/ui/scoreboard/ScoreboardDisplayName"
import { VoteForFeature } from "./VoteForFeature"
import { useCurrentProductFeature } from "./CurrentProductFeatureProvider"

export const ProductFeatureDetails = () => {
  const { createdAt, proposedBy, description } = useCurrentProductFeature()

  return (
    <VStack gap={18}>
      <HStack fullWidth alignItems="center" justifyContent="space-between">
        <VStack style={{ fontSize: 14 }} gap={8}>
          <LabeledValue name="Proposed at">
            {format(createdAt, "dd MMM yyyy")}
          </LabeledValue>
          <LabeledValue name="Proposed by">
            <UserProfileQueryDependant
              id={proposedBy}
              success={(profile) => {
                return (
                  <ScoreboardDisplayName
                    name={profile?.name || "Anonymous"}
                    country={profile?.country}
                  />
                )
              }}
            />
          </LabeledValue>
        </VStack>
        <VoteForFeature />
      </HStack>
      <Text height="large">{description}</Text>
    </VStack>
  )
}
```

| radzion |

1,873,957 | Mozilla Firefox Policy | How to Apply a Mozilla Firefox Policy in Liman MYS Mozilla Firefox policies,... | 0 | 2024-06-03T04:42:14 | https://dev.to/aciklab/mozilla-firefox-politikasi-proxy-ayarlari-4op5 | # How to Apply a Mozilla Firefox Policy in Liman MYS

Mozilla Firefox policies let users and system administrators manage browser behavior. With these policies, a range of browser settings can be configured and managed centrally.

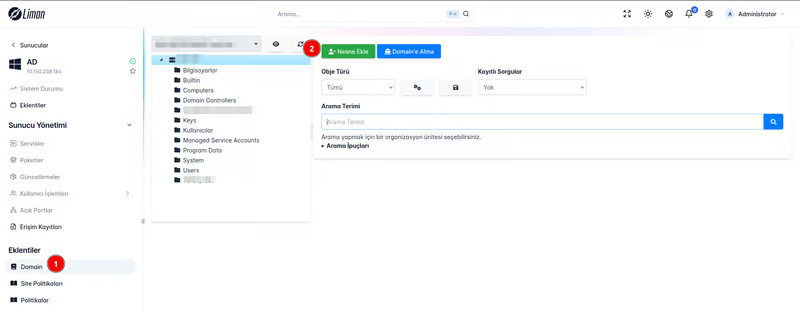

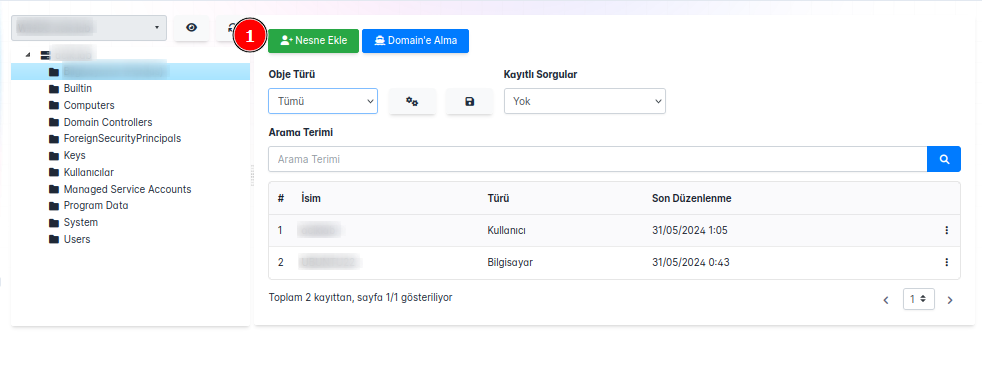

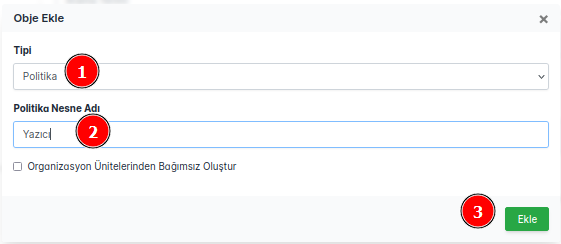

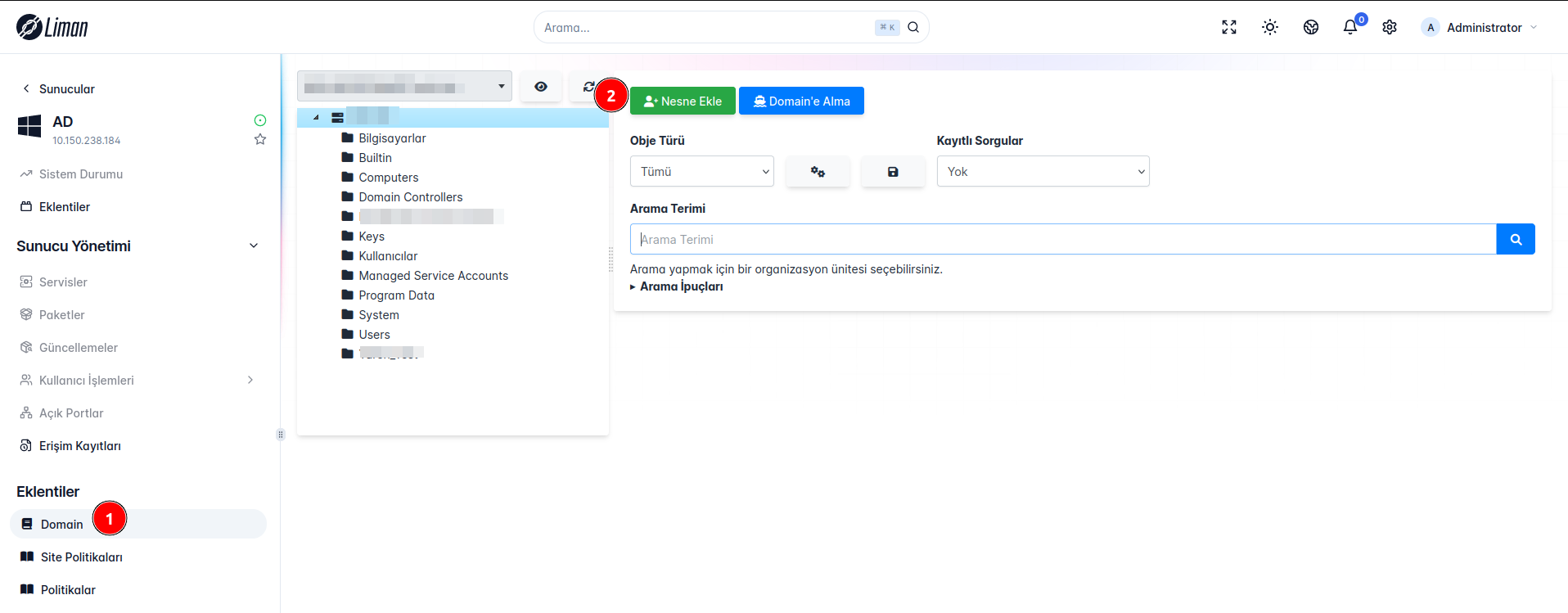

First, let's look at how to create a policy:

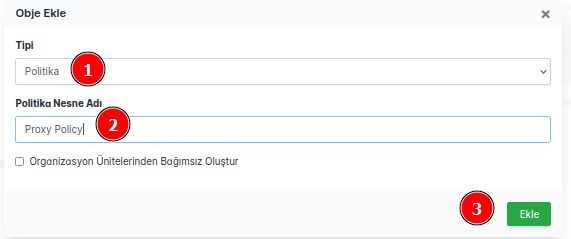

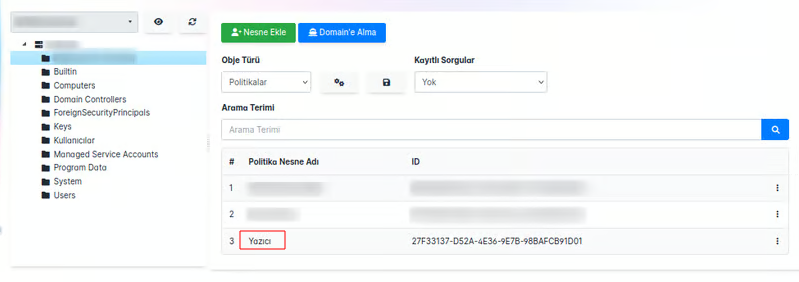

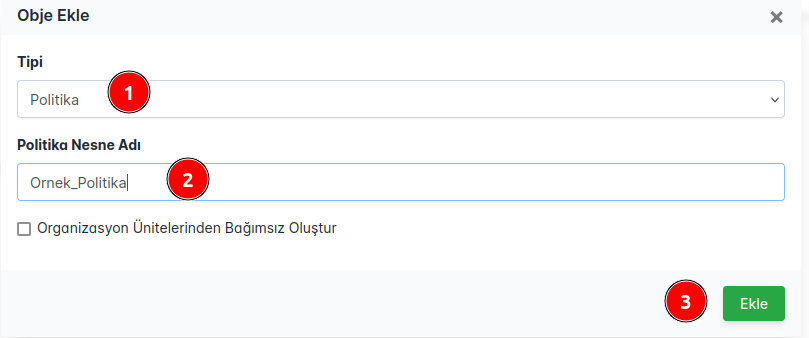

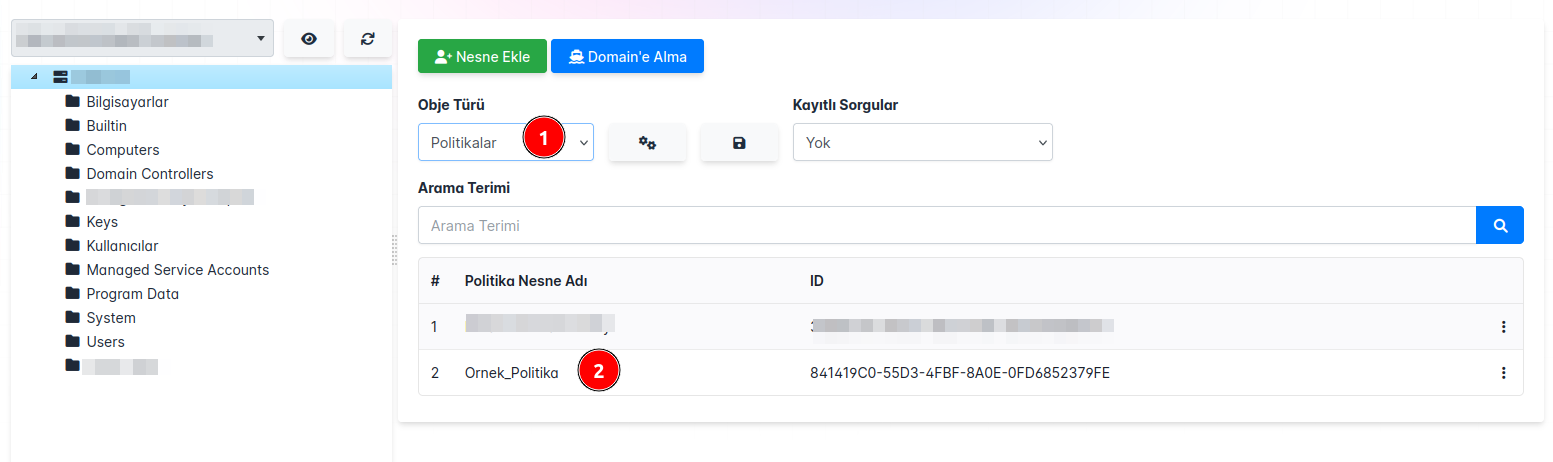

**Domain** Eklentimiz ile karşımıza çıkan ekrandan **Nesne Ekle** butonuna tıkladığımızda bizi karşılayan seçim ekranından tip bölümünü **Politika** seçerek politikamıza isim vererek **Ekle** butonuna bastığımızda politikamız eklenmiş olacaktır.

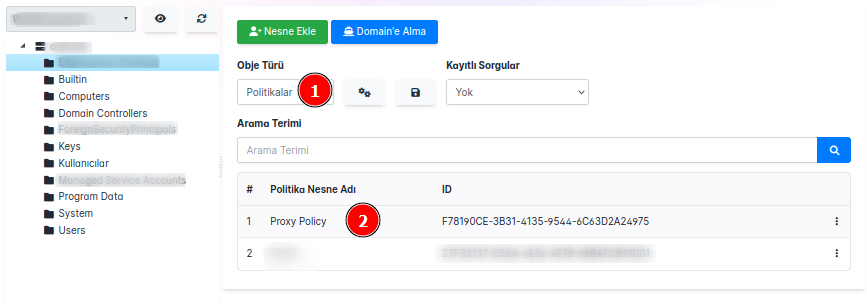

Politikaları görüntülediğimizde eklediğimiz politikayı görebiliriz.

**NOT:** Politikanızı nerede oluşturmak istiyorsanız o bölümün üstüne tıkladıktan sonra nesne oluşturma işleminizi yapınız.

# Politika Ayarları

## Politika Değerleri

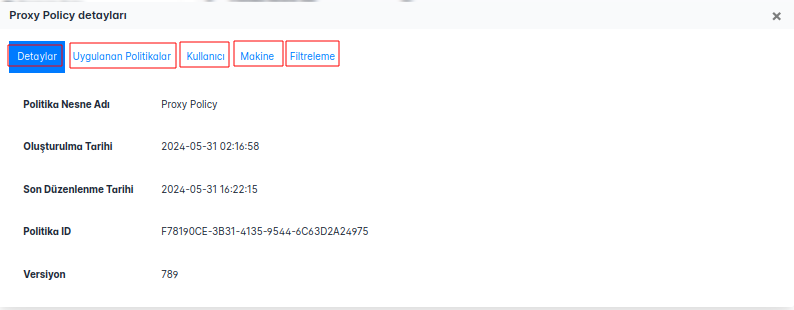

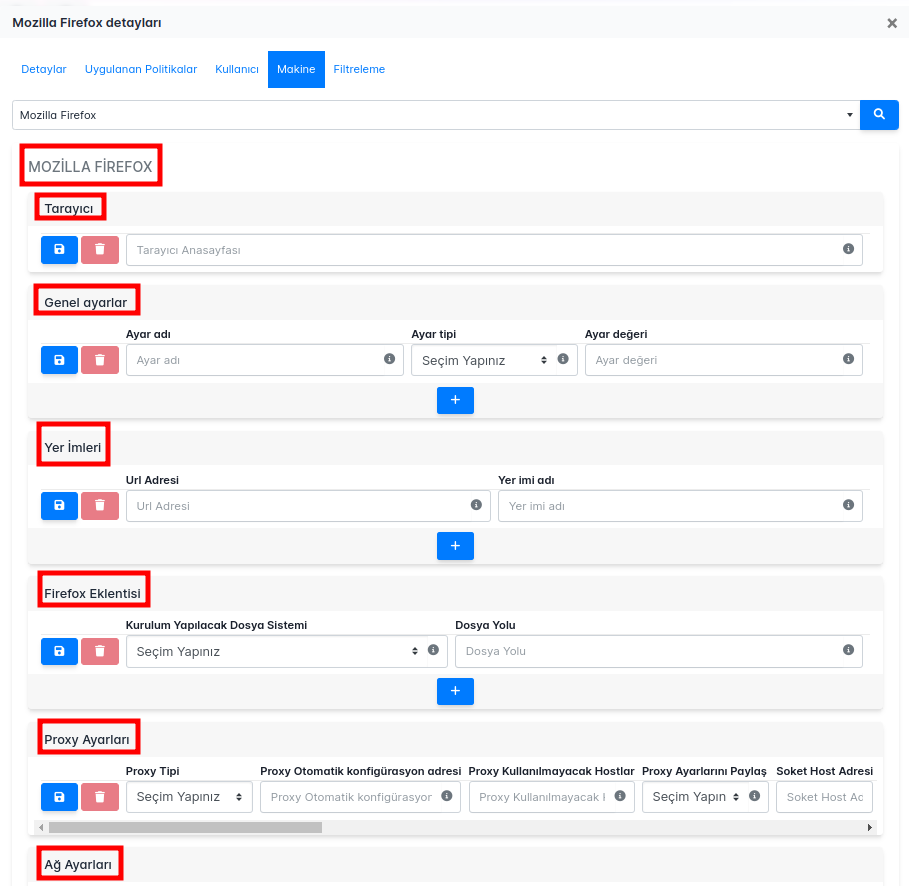

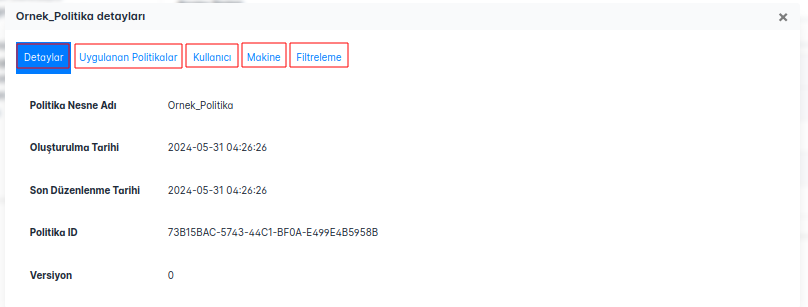

When we open the policy we created, we see the following sections:

**Details:** Basic information such as the policy's name, creation date, version, and ID.

**Applied Policies:** Lists the policies applied at the machine and user level.

**User:** The area where user-level policies are managed.

**Machine:** The area where machine-level policies are managed.

**Filtering:** The area where we choose which users or groups the policy does or does not apply to.

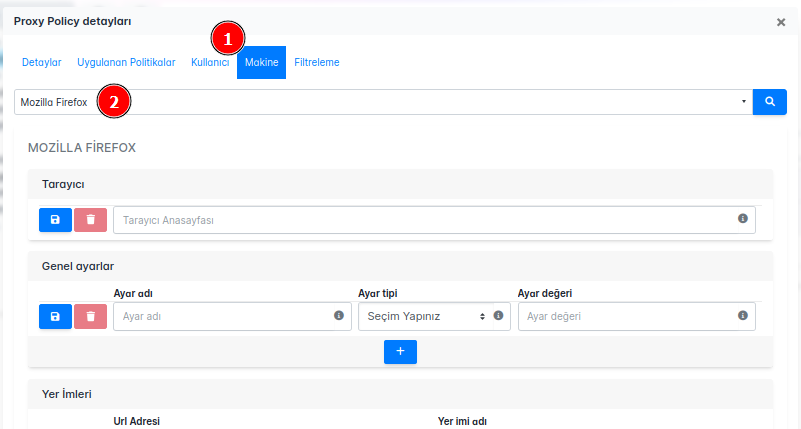

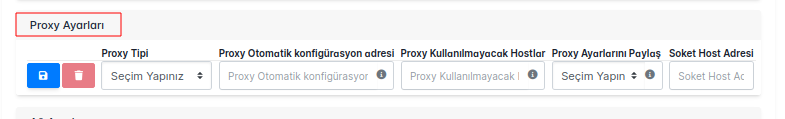

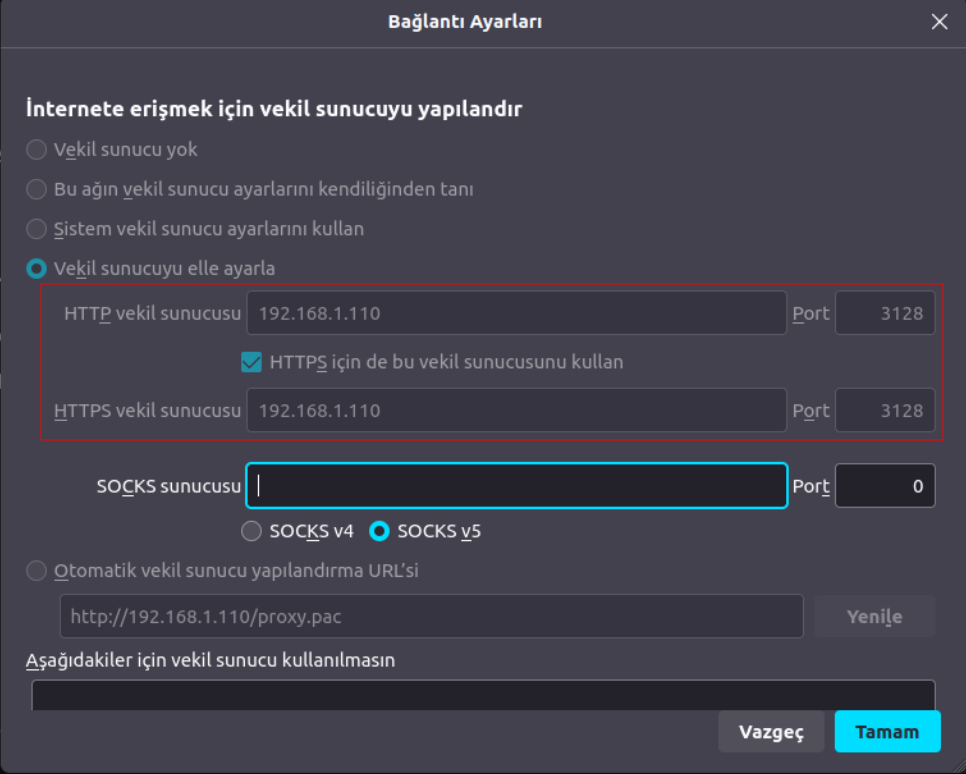

To create a **Proxy** policy, we can follow these steps:

The **Proxy** policy screen looks like this:

# General Settings

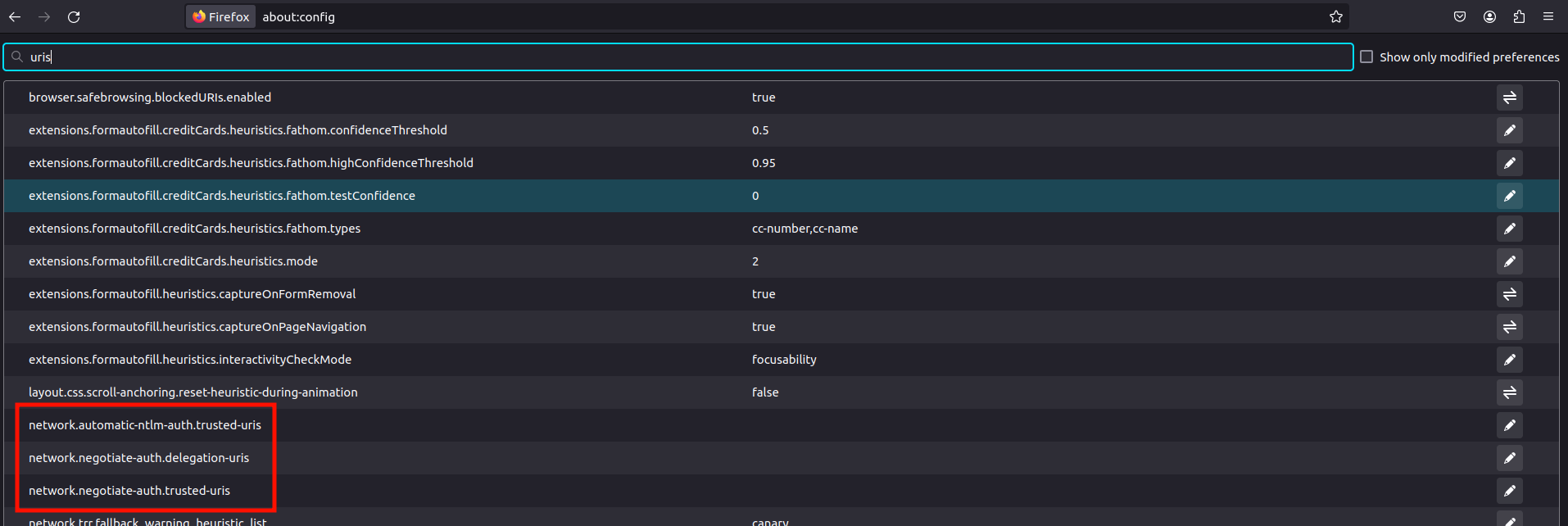

In Mozilla Firefox, you can manage general settings from the **about:config** page. This page gives access to the browser's advanced settings and lets users fine-tune specific preferences. Follow the steps below to open about:config and manage general settings:

1. Open the Firefox browser.

2. Type **_about:config_** in the address bar and press **_Enter_**.

3. Accept the warning message to continue.

4. Use the search bar to find the preference names you need and make the required changes.

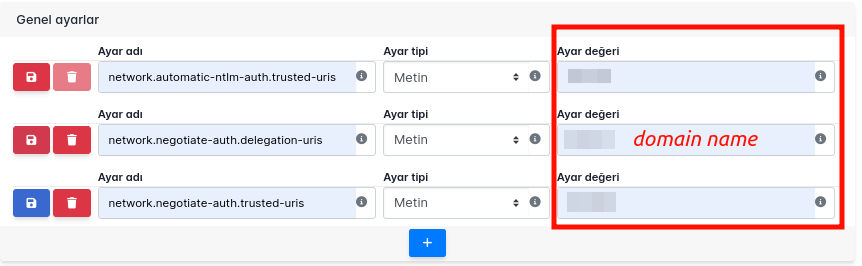

### Genel Ayarlar Politikası Çalışması ve Kontrolü

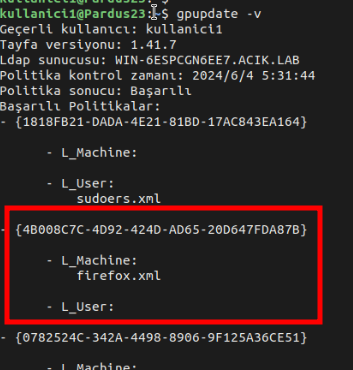

**Genel Ayarlar** politikası ile oluşturacağımız senaryolar Pardus 23 üzerinde test edilmiştir.

#### Örnek Konfigürasyon

Kerberos kimlik doğrulaması için tarayıcı ayarlarının yapılandırması örneği ele alınacaktır.

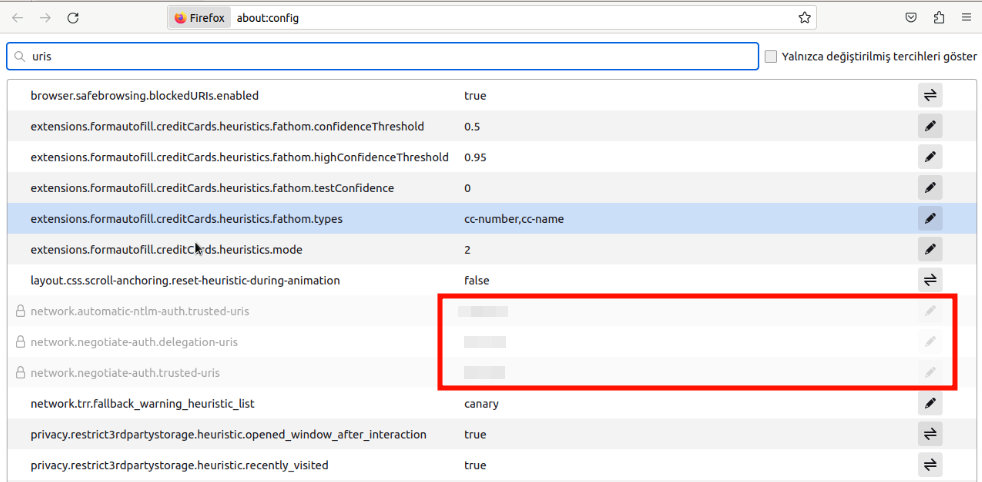

- Tarayıcı penceresine şu URL'yi girelim: **about:config.**

- Riski Kabul Et ve Devam Et'e tıklayalım.

- Arama tercihi adı alanına _uris_ yazalım ve aşağıdakileri Ayar Adı olarak ekleyelim.

| Setting Name                             | Type | Value        |
|------------------------------------------|------|--------------|
| network.automatic-ntlm-auth.trusted-uris | Text | _DomainName_ |
| network.negotiate-auth.delegation-uris   | Text | _DomainName_ |
| network.negotiate-auth.trusted-uris      | Text | _DomainName_ |

*!* The ticket obtained during the Tayfa installation is valid for a limited period. The settings defined in the policy let the browser sign us in automatically using Kerberos credentials, so users do not have to enter their credentials repeatedly.
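To make the three preferences concrete, the sketch below prints them in Firefox's `user.js` preference format. It is only an illustration of the resulting values: how Liman MYS actually writes them to the client is not described here, and `example.local` is a placeholder for your own domain name.

```typescript
// The three preference names from the table above.
const kerberosPrefNames = [
  "network.automatic-ntlm-auth.trusted-uris",
  "network.negotiate-auth.delegation-uris",
  "network.negotiate-auth.trusted-uris",
]

// Builds one user_pref(...) line per preference, all pointing at the same domain.
function toUserPrefLines(domainName: string): string[] {
  return kerberosPrefNames.map(
    (prefName) =>
      `user_pref(${JSON.stringify(prefName)}, ${JSON.stringify(domainName)});`,
  )
}

// "example.local" is a hypothetical domain used only for this example.
const prefLines = toUserPrefLines("example.local")
console.log(prefLines.join("\n"))
```

All three preferences take the same domain value, which is why a single input is enough.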

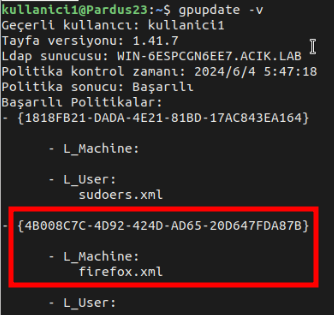

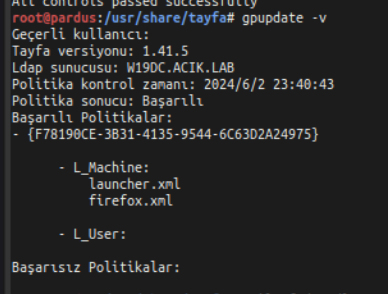

Politikamızı uygulayıp Pardus makinamız üzerinde **gpupdate -v** komutu ile politikamızı tetikliyoruz.

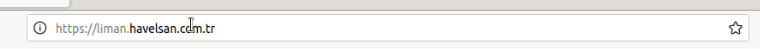

Sonrasında domaindeki bilgisayarımız üzerinde yine **about:config** üzerinde kontrol sağladığımızda girdiğimiz FQDN'i görebiliriz.

[Kerberos authentication - Documentation for BMC Helix Single ](https://docs.bmc.com/docs/hsso/241/configuring-browser-settings-for-kerberos-authentication-1284942260.html)

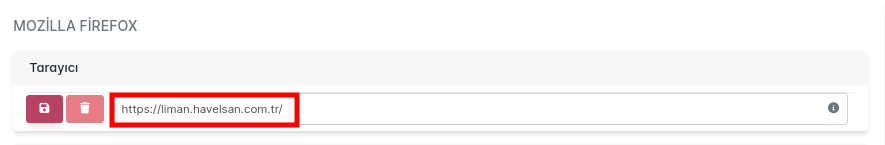

# Browser

With this policy, the URL you specify is loaded as the **home page** when Firefox starts.

The value entered in the Browser field must be a URL.

### How the Browser Policy Works and How to Verify It

The scenarios we create with the **Browser** policy were tested on Pardus 23.

If the URL we entered is shown when Firefox opens, the policy is working.

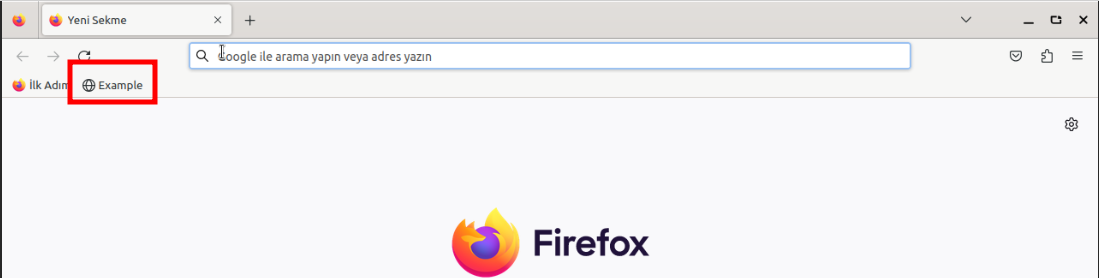

# Yer İmleri

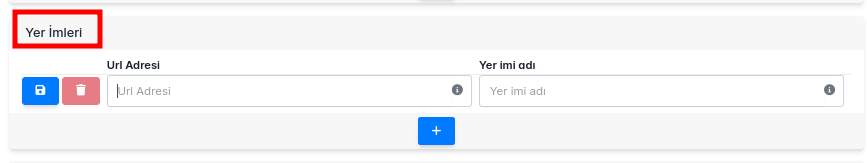

Bu alan, belirli URL'leri tarayıcı yer imlerine eklemek için kullanılır. Örneğin, _https://www.example.com_ URL adresi _Example_ adıyla yer imlerine eklenebilir.

| Field             | Description                                             |
|-------------------|---------------------------------------------------------|
| **URL**           | The URL to add to the bookmarks                         |
| **Bookmark Name** | The name under which the URL is added to the bookmarks  |

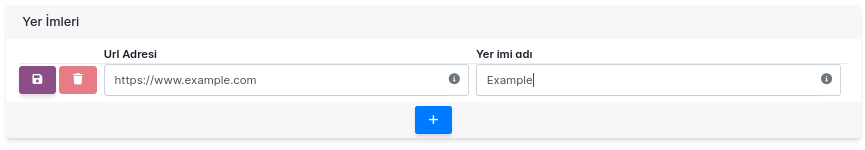

### How the Bookmarks Policy Works and How to Verify It

The scenarios we create with the **Bookmarks** policy were tested on Pardus 23.

#### Example Configuration:

**URL**: https://www.example.com

**Bookmark Name**: Example

After applying the policy, we trigger it on the Pardus machine with the **gpupdate -v** command.

When you check the **Bookmarks** in Firefox, you can see that the URL has been added under the name we gave it.
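For comparison, Firefox's own enterprise policy format expresses a managed bookmark as an entry in the `Bookmarks` array of `policies.json`. The sketch below builds that structure for the example values. Whether Liman MYS generates this file internally is an assumption; the `Placement` value is chosen only for illustration.

```typescript
// Subset of the fields accepted by Firefox's `Bookmarks` enterprise policy.
type BookmarkEntry = {
  Title: string
  URL: string
  Placement: "toolbar" | "menu"
}

// Wraps the entries in the top-level `policies` object used by policies.json.
function buildBookmarksPolicy(entries: BookmarkEntry[]) {
  return { policies: { Bookmarks: entries } }
}

const bookmarksPolicy = buildBookmarksPolicy([
  { Title: "Example", URL: "https://www.example.com", Placement: "toolbar" },
])

console.log(JSON.stringify(bookmarksPolicy, null, 2))
```

The two policy fields map directly: the bookmark name becomes `Title` and the address becomes `URL`.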

# Proxy Settings

With this policy you can change the browser's proxy settings and manage the **HTTPS proxy** section through the policy.

#### Proxy Type Options

**Direct Connection (No Proxy):**

Select this option if you do not want to use a proxy.

**Manual Proxy Configuration:**

Use this option when you need to enter the proxy server's IP address and port number by hand.

**Automatic Proxy Configuration (PAC):**

Use this option to load proxy settings automatically from a PAC file. You will need to enter the URL of the PAC file.

**Auto-detect Proxy Settings:**

Use this option to detect proxy settings automatically.

**Use System Proxy Settings:**

Select this option to use the operating system's proxy settings.

| Field | Description |
|:-----------------------------------:|:---------------------------------------------------------------------------------------------------------:|
| Proxy Type | The proxy type to use. For example: "Manual Proxy Configuration" or "Automatic Proxy Configuration (PAC)". |
| Proxy Auto-Configuration URL | URL of the PAC file from which proxy settings are fetched automatically. For example: http://192.168.1.110/proxy.pac. |
| No-Proxy Hosts | List of hosts that bypass the proxy server and are reached directly. For example: localhost, 127.0.0.1. |
| Share Proxy Settings | Option to share the proxy settings with other users or clients. |
| SOCKS Host | IP address or hostname of the SOCKS proxy server. Leave empty if you are not using a SOCKS proxy. |
| SOCKS Port | Port number of the SOCKS proxy server. Leave empty if you are not using a SOCKS proxy. |
| SSL Host | IP address or hostname of the proxy server used for HTTPS (SSL) traffic. |
| SSL Port | Port number of the proxy server for HTTPS (SSL) traffic. |
| HTTP Host | IP address or hostname of the proxy server used for HTTP traffic. |
| HTTP Port | Port number of the proxy server for HTTP traffic. |
| FTP Host | IP address or hostname of the proxy server used for FTP traffic. |
| FTP Port | Port number of the proxy server for FTP traffic. |
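When the PAC option is used, the file at the auto-configuration URL defines a `FindProxyForURL(url, host)` function that the browser calls for each request. The sketch below shows a minimal version of that logic using this article's example addresses (proxy `192.168.1.110:3128`, direct access for `localhost` and `127.0.0.1`). It is written in TypeScript for illustration; a real PAC file must be plain JavaScript, so the type annotations would need to be removed.

```typescript
// Example values from this article; replace them with your own environment's.
const proxyAddress = "192.168.1.110:3128"
const directHosts = ["localhost", "127.0.0.1"]

// The browser calls this function for every request when a PAC file is configured.
function FindProxyForURL(url: string, host: string): string {
  // Hosts on the bypass list are reached without the proxy.
  if (directHosts.indexOf(host) !== -1) {
    return "DIRECT"
  }
  // Everything else goes through the proxy server.
  return "PROXY " + proxyAddress
}

console.log(FindProxyForURL("http://localhost/", "localhost"))
console.log(FindProxyForURL("https://www.example.com/", "www.example.com"))
```

This mirrors what the manual fields express: the bypass list corresponds to the no-proxy hosts, and the returned `PROXY` string corresponds to the host and port fields.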

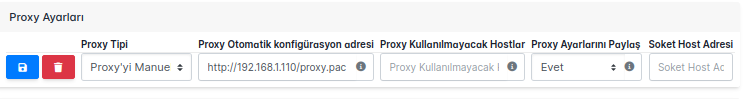

### Proxy Ayarları Çalışması ve Kontrolü

**Proxy** politikası ile oluşturacağımız senaryolar Pardus 23 üzerinde test edilmiştir.

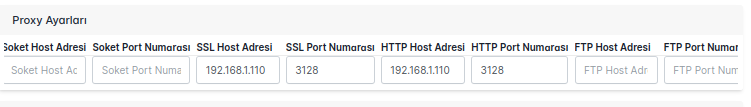

#### Örnek Konfigürasyon:

| Proxy Tipi | Proxy Otomatik konfigürasyon adresi | Proxy Kullanılmayacak Hostlar | Proxy Ayarlarını Paylaş | Soket Host Adresi | Soket Port Numarası | SSL Host Adresi | SSL Port Numarası | HTTP Host Adresi | HTTP Port Numarası | FTP Host Adresi | FTP Port Numarası |

|------------|-------------------------------------|-------------------------------|-------------------------|-------------------|---------------------|-----------------|-------------------|------------------|--------------------|-----------------|-------------------|

| HTTP | http://192.168.1.110/proxy.pac | localhost, 127.0.0.1 | Seçim Yapın | (boş bırakın) | (boş bırakın) | 192.168.1.110 | 3128 | 192.168.1.110 | 3128 | (boş bırakın) | (boş bırakın) |

Politikamızı uygulayıp Pardus makinamız üzerinde gpupdate -v komutu ile politikamızı tetikliyoruz.

Firefox üzerinde **Vekil Sunucu** ayarlarınızı kontrol ettiğinizde proxy sunucunuzun vekil sunucu olarak ayarlandığını görebilirsiniz.

Aynı zamanda proxy sunucunuzun log kayıtlarından kontrol edebilirsiniz.

| yarensari | |

1,874,935 | Buy Negative Google Reviews | Buy Negative Google Reviews Negative reviews on Google are detrimental critiques that expose... | 0 | 2024-06-03T04:42:05 | https://dev.to/annewalkere23/buy-negative-google-reviews-ee7 | Buy Negative Google Reviews

Negative reviews on Google are detrimental critiques that expose customers’ unfavorable experiences with a business. These reviews can significantly damage a company’s reputation, presenting challenges in both attracting new customers and retaining current ones. If you are considering purchasing negative Google reviews from dmhelpshop.com, we encourage you to reconsider and instead focus on providing exceptional products and services to ensure positive feedback and sustainable success.

Buy Negative Google Reviews

Why Buy Negative Google Reviews from dmhelpshop

We take pride in our fully qualified, hardworking, and experienced team, who are committed to providing quality and safe services that meet all your needs. Our professional team ensures that you can trust us completely, knowing that your satisfaction is our top priority. With us, you can rest assured that you’re in good hands.

Is Buy Negative Google Reviews safe?

At dmhelpshop, we understand the concern many business persons have about the safety of purchasing Buy negative Google reviews. We are here to guide you through a process that sheds light on the importance of these reviews and how we ensure they appear realistic and safe for your business. Our team of qualified and experienced computer experts has successfully handled similar cases before, and we are committed to providing a solution tailored to your specific needs. Contact us today to learn more about how we can help your business thrive.

Buy Google 5 Star Reviews

Reviews represent the opinions of experienced customers who have utilized services or purchased products from various online or offline markets. These reviews convey customer demands and opinions, and ratings are assigned based on the quality of the products or services and the overall user experience. Google serves as an excellent platform for customers to leave reviews since the majority of users engage with it organically. When you purchase Buy Google 5 Star Reviews, you have the potential to influence a large number of people either positively or negatively. Positive reviews can attract customers to purchase your products, while negative reviews can deter potential customers.

If you choose to Buy Google 5 Star Reviews, people will be more inclined to consider your products. However, it is important to recognize that reviews can have both positive and negative impacts on your business. Therefore, take the time to determine which type of reviews you wish to acquire. Our experience indicates that purchasing Buy Google 5 Star Reviews can engage and connect you with a wide audience. By purchasing positive reviews, you can enhance your business profile and attract online traffic. Additionally, it is advisable to seek reviews from reputable platforms, including social media, to maintain a positive flow. We are an experienced and reliable service provider, highly knowledgeable about the impacts of reviews. Hence, we recommend purchasing verified Google reviews and ensuring their stability and non-gropability.

https://dmhelpshop.com/product/buy-negative-google-reviews/

Let us now briefly examine the direct and indirect benefits of reviews:

Reviews have the power to enhance your business profile, influencing users at an affordable cost.

To attract customers, consider purchasing only positive reviews, while negative reviews can be acquired to undermine your competitors. Collect negative reports on your opponents and present them as evidence.

If you receive negative reviews, view them as an opportunity to understand user reactions, make improvements to your products and services, and keep up with current trends.

By earning the trust and loyalty of customers, you can control the market value of your products. Therefore, it is essential to buy online reviews, including Buy Google 5 Star Reviews.

Reviews serve as the captivating fragrance that entices previous customers to return repeatedly.

Positive customer opinions expressed through reviews can help you expand your business globally and achieve profitability and credibility.

When you purchase positive Buy Google 5 Star Reviews, they effectively communicate the history of your company or the quality of your individual products.

https://dmhelpshop.com/product/buy-negative-google-reviews/

as a collective voice representing potential customers, boosting your business to amazing heights.

Now, let’s delve into a comprehensive understanding of reviews and how they function:

Google, with its significant organic user base, stands out as the premier platform for customers to leave reviews. When you purchase Buy Google 5 Star Reviews , you have the power to positively influence a vast number of individuals. Reviews are essentially written submissions by users that provide detailed insights into a company, its products, services, and other relevant aspects based on their personal experiences. In today’s business landscape, it is crucial for every business owner to consider buying verified Buy Google 5 Star Reviews, both positive and negative, in order to reap various benefits.

https://dmhelpshop.com/product/buy-negative-google-reviews/

Why are Google reviews considered the best tool to attract customers?

Google, being the leading search engine and the largest source of potential and organic customers, is highly valued by business owners. Many business owners choose to purchase Google reviews to enhance their business profiles and also sell them to third parties. Without reviews, it is challenging to reach a large customer base globally or locally. Therefore, it is crucial to consider buying positive Buy Google 5 Star Reviews from reliable sources. When you invest in Buy Google 5 Star Reviews for your business, you can expect a significant influx of potential customers, as these reviews act as a pheromone, attracting audiences towards your products and services. Every business owner aims to maximize sales and attract a substantial customer base, and purchasing Buy Google 5 Star Reviews is a strategic move.

According to online business analysts and economists, trust and affection are the essential factors that determine whether people will work with you or do business with you. However, there are additional crucial factors to consider, such as establishing effective communication systems, providing 24/7 customer support, and maintaining product quality to engage online audiences. If any of these rules are broken, it can lead to a negative impact on your business. Therefore, obtaining positive reviews is vital for the success of an online business

What are the benefits of purchasing reviews online?

In today’s fast-paced world, the impact of new technologies and IT sectors is remarkable. Compared to the past, conducting business has become significantly easier, but it is also highly competitive. To reach a global customer base, businesses must increase their presence on social media platforms as they provide the easiest way to generate organic traffic. Numerous surveys have shown that the majority of online buyers carefully read customer opinions and reviews before making purchase decisions. In fact, the percentage of customers who rely on these reviews is close to 97%. Considering these statistics, it becomes evident why we recommend buying reviews online. In an increasingly rule-based world, it is essential to take effective steps to ensure a smooth online business journey.

Buy Google 5 Star Reviews

Many people purchase reviews online from various sources and witness unique progress. Reviews serve as powerful tools to instill customer trust, influence their decision-making, and bring positive vibes to your business. Making a single mistake in this regard can lead to a significant collapse of your business. Therefore, it is crucial to focus on improving product quality, quantity, communication networks, facilities, and providing the utmost support to your customers.

Reviews reflect customer demands, opinions, and ratings based on their experiences with your products or services. If you purchase Buy Google 5-star reviews, it will undoubtedly attract more people to consider your offerings. Google is the ideal platform for customers to leave reviews due to its extensive organic user involvement. Therefore, investing in Buy Google 5 Star Reviews can significantly influence a large number of people in a positive way.

How to generate google reviews on my business profile?

Focus on delivering high-quality customer service in every interaction with your customers. By creating positive experiences for them, you increase the likelihood of receiving reviews. These reviews will not only help to build loyalty among your customers but also encourage them to spread the word about your exceptional service. It is crucial to strive to meet customer needs and exceed their expectations in order to elicit positive feedback. If you are interested in purchasing affordable Google reviews, we offer that service.

https://dmhelpshop.com/product/buy-negative-google-reviews/

Contact Us / 24 Hours Reply

Telegram:dmhelpshop

WhatsApp: +1 (980) 277-2786

Skype:dmhelpshop

Email:dmhelpshop@gmail.com | annewalkere23 | |

1,873,354 | Printer Policy | How to Apply a Printer Policy in Liman MYS With this policy, printers' secure and... | 0 | 2024-06-03T04:40:40 | https://dev.to/aciklab/yazici-politikasi-10fb | pointer, liman, linux, debian | # How to Apply a Printer Policy in Liman MYS

This policy defines a set of rules to ensure that printers are used securely and efficiently.

First, let's look at how to create a policy:

On the screen shown by the Domain extension, click the **Add Object** (Nesne Ekle) button. In the selection dialog that opens, choose **Policy** (Politika) as the type, give the policy a name, and click **Add** (Ekle). The policy is then created.

When we view the policies, we can see the policy we added.

## Policy Settings

### Policy Values

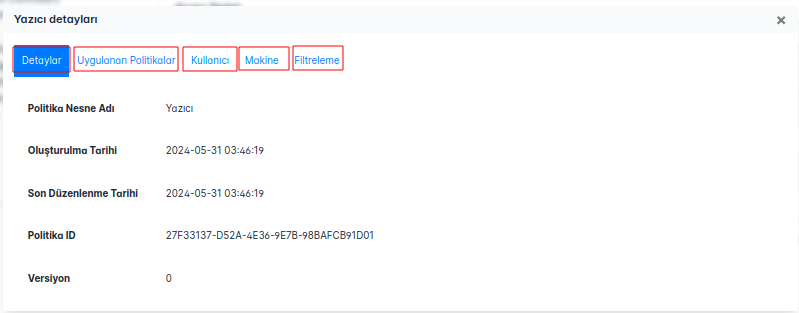

When we open the policy we created, we see the following sections:

**Details:** Basic information such as the policy's name, creation date, version, and ID.

**Applied Policies:** Lists the policies applied at the machine and user level.

**User:** The area where user-level policies are managed.

**Machine:** The area where machine-level policies are managed.

**Filtering:** The area where we choose which users or groups the policy applies to, or is excluded from.

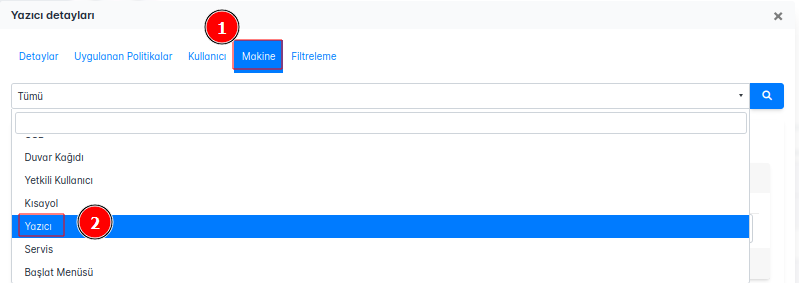

**Yazıcı** politikası oluşturmak için de şu adımları izleyebiliriz:

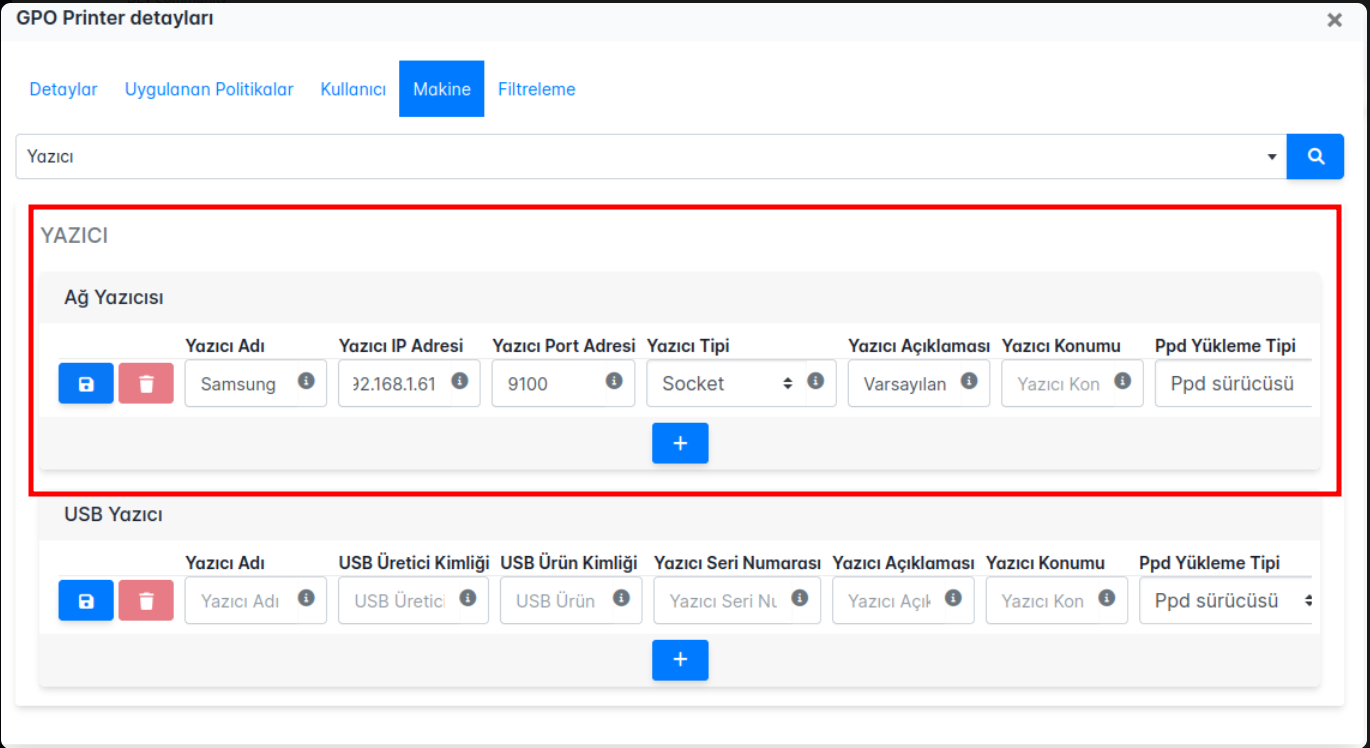

**Yazıcı** politika ekranı şu şekildedir:

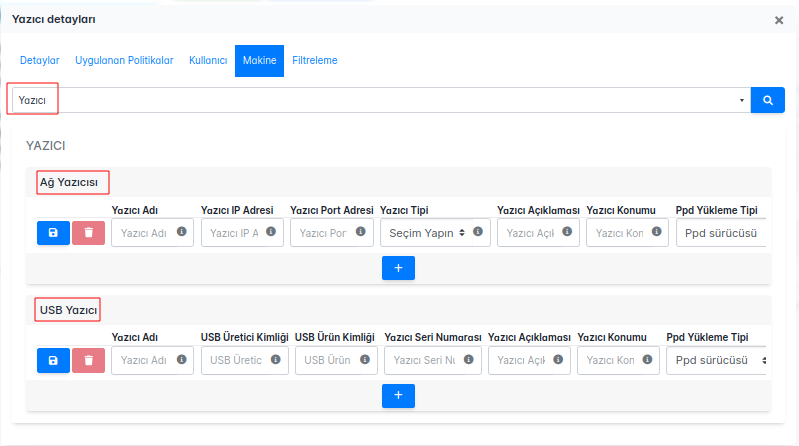

This policy applies to both network printers and USB printers. To ensure that printers are configured correctly and to prevent unauthorized access, it includes the following rules:

**Printer Name:** Each printer should be given a unique and meaningful name. This lets users identify printers easily.

**Printer IP Address:** The IP addresses of network printers must be configured correctly. These addresses are required for printers to communicate properly over the network.

**Printer Port Address:** The port number used to reach the printer must be specified. Common ports include 9100 (RAW), 515 (LPD), and 631 (IPP).
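
To make the relationship between the IP address and port fields more concrete, here is a minimal sketch that maps the three ports named above to the device URI schemes CUPS commonly uses for them. The function name `device_uri`, the default queue name, and the `/ipp/print` resource path are assumptions for illustration; this is not necessarily how Liman MYS builds the URI internally.

```python
def device_uri(ip: str, port: int, queue: str = "print") -> str:
    """Return a CUPS-style device URI for a network printer (illustrative only)."""
    if port == 9100:
        # RAW / AppSocket printing
        return f"socket://{ip}:{port}"
    if port == 515:
        # LPD addresses a named queue; the queue name is an assumption
        return f"lpd://{ip}/{queue}"
    if port == 631:
        # IPP; /ipp/print is a common resource path, not a universal one
        return f"ipp://{ip}:{port}/ipp/print"
    raise ValueError(f"no known printing protocol for port {port}")


if __name__ == "__main__":
    print(device_uri("192.168.1.50", 9100))
```

A printer that listens on a non-standard port would need its own mapping, which is why the sketch raises an error instead of guessing.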

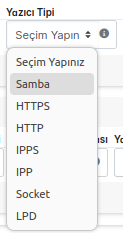

**Printer Type:** The type of printer (network printer, USB printer, etc.) must be specified correctly.

**Printer Description:** Descriptive information about the printer should be provided, such as where it is used or which features it has.

**Printer Location:** The physical location of the printer should be stated clearly. This makes it easier for users to find the printer.

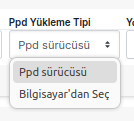

**PPD Load Type:** The load type of the PPD file used for the printer must be specified. PPD files describe the printer's features and capabilities.

**NOTE:** In our example, when the setting is _Default_, the path is as follows:

**usr/share/ppd/**cupsfilters/HP-Color_LaserJet_CM3530_MFP-PDF.ppd

- The driver must be installed. Third-party drivers can be installed with scripts or packages, and this may change the path. Keep this in mind when creating your policy.
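
Because a missing driver or a changed path only shows up when the policy is applied, it can help to check the PPD file on a client beforehand. The sketch below is one hedged way to do that; the helper name `resolve_ppd` and the default base directory `/usr/share/ppd` are assumptions, so adjust them to match where your drivers actually install their PPD files.

```python
from pathlib import Path


def resolve_ppd(relative_ppd: str, base_dir: str = "/usr/share/ppd") -> Path:
    """Return the full PPD path, or raise if the file is not installed."""
    candidate = Path(base_dir) / relative_ppd
    if not candidate.is_file():
        # Typically means the driver package is missing or installs elsewhere
        raise FileNotFoundError(f"PPD not found: {candidate}")
    return candidate


if __name__ == "__main__":
    try:
        print(resolve_ppd("cupsfilters/HP-Color_LaserJet_CM3530_MFP-PDF.ppd"))
    except FileNotFoundError as err:
        print(err)
```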

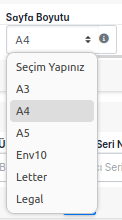

**Page Size:** The default page size supported by the printer must be configured correctly. For example, sizes such as A4 or Letter can be selected.

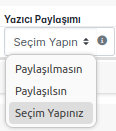

**Printer Sharing:** Specify whether the printer will be shared with other users on the network. Printers that are not shared can be used only by specific users.

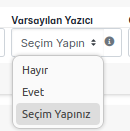

**Default Printer:** Specify whether this printer is set as the default printer. The default printer is the one users print to when they do not select a printer explicitly.

**CUPS Options:** Additional options and settings for the Common Unix Printing System (CUPS) can be configured here. These settings define the printer's behavior and features in more detail.
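
For readers who want to relate these fields to a manual CUPS setup, the following sketch assembles an `lpadmin` argument list from the same values. It is only an approximation of what such a policy could translate to, not the command Liman MYS actually runs; the function name and all sample values are hypothetical. The flags used (`-p`, `-v`, `-P`, `-E`, `-D`, `-L`, `-o`) are standard `lpadmin` options.

```python
from typing import List


def lpadmin_args(name: str, uri: str, ppd: str, description: str = "",
                 location: str = "", page_size: str = "A4",
                 shared: bool = False) -> List[str]:
    """Build an lpadmin argument list from the policy fields (illustrative)."""
    # -E after -p enables the queue and accepts jobs
    args = ["lpadmin", "-p", name, "-E", "-v", uri, "-P", ppd]
    if description:
        args += ["-D", description]
    if location:
        args += ["-L", location]
    # Default page size and sharing are passed as -o name=value options
    args += ["-o", f"PageSize={page_size}"]
    args += ["-o", "printer-is-shared=" + ("true" if shared else "false")]
    return args


if __name__ == "__main__":
    print(" ".join(lpadmin_args("office-hp", "socket://192.168.1.50:9100",
                                "/path/to/printer.ppd", location="2nd floor")))
```

Returning a list rather than a single string keeps each value as its own argument, so descriptions and locations containing spaces would not need extra quoting if the list were passed to `subprocess.run`.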

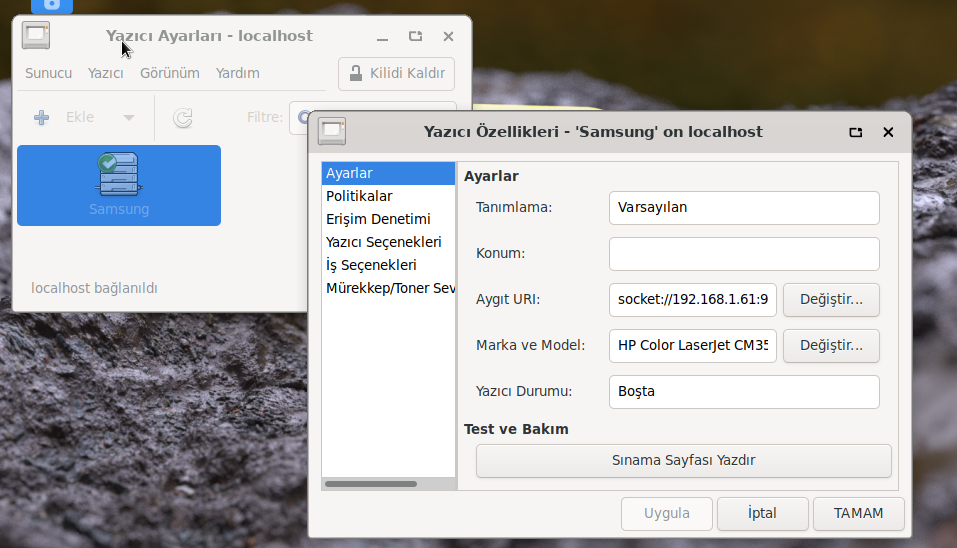

## Policy Operation and Verification

Our example policy and its status are as follows:

| yarensari |

1,871,820 | Privileged User Policy | How to Apply a Privileged User Policy in Liman MYS With this policy, in an existing domain... | 0 | 2024-06-03T04:40:11 | https://dev.to/aciklab/yetkili-kullanici-politikasi-21hb | linux, debian, pardus, liman | # How to Apply a Privileged User Policy in Liman MYS

With this policy, in an existing domain structure, admin privileges can be granted on domain-joined clients to domain users and to unprivileged locally created users, in addition to the local admin.

First, let's look at how to create a policy:

On the screen presented by the Domain extension, click the **Add Object** button. In the selection dialog that opens, set the type to **Policy**, give the policy a name, and click **Add**. The policy is then created.

When we list the policies, we can see the one we just added.

**NOTE:** Before creating the object, click the location where you want the policy to be created.

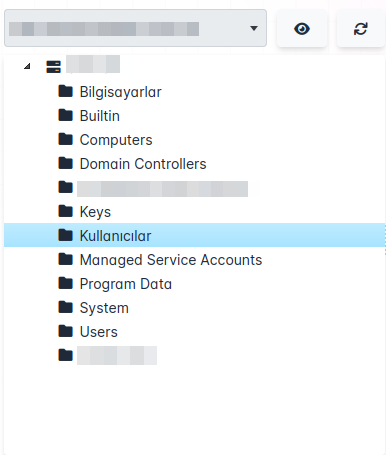

In this example, the policy is created under _Users_.

## Policy Settings

### Policy Values

When we open the policy we created, we see the following sections:

**Details:** Basic information such as the policy's name, creation date, version, and ID.

**Applied Policies:** Lists the policies applied at the machine and user level.

**User:** The area where user-level policies are managed.

**Machine:** The area where machine-level policies are managed.

**Filtering:** The area where we choose which users or groups the policy applies to, or is excluded from.

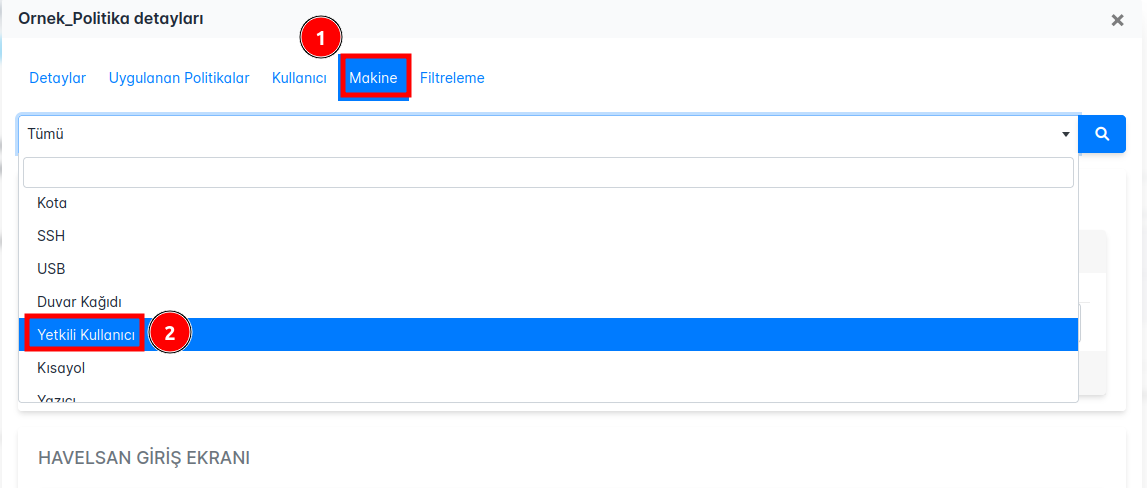

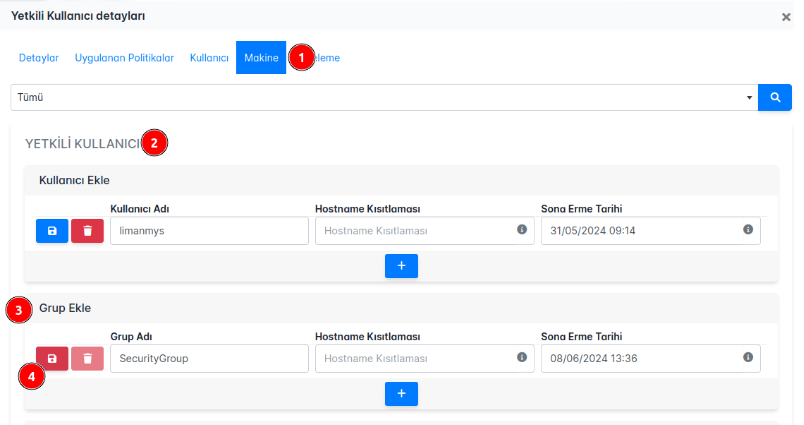

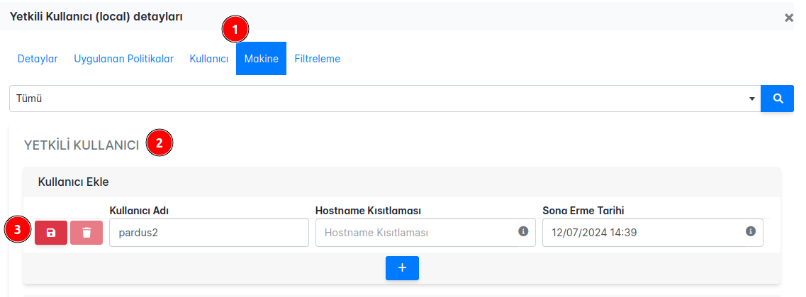

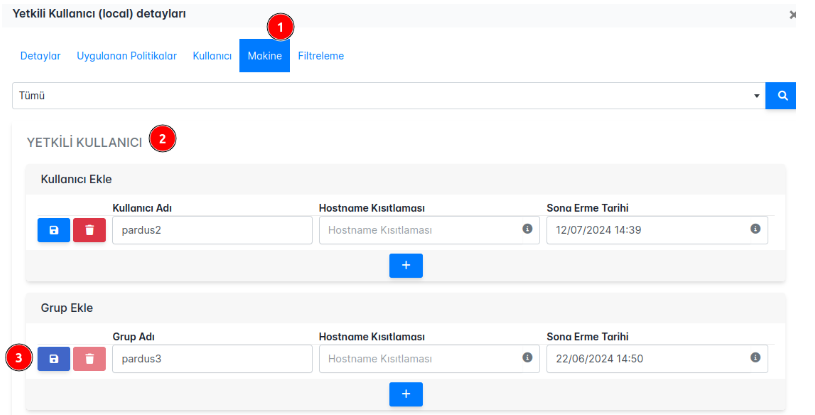

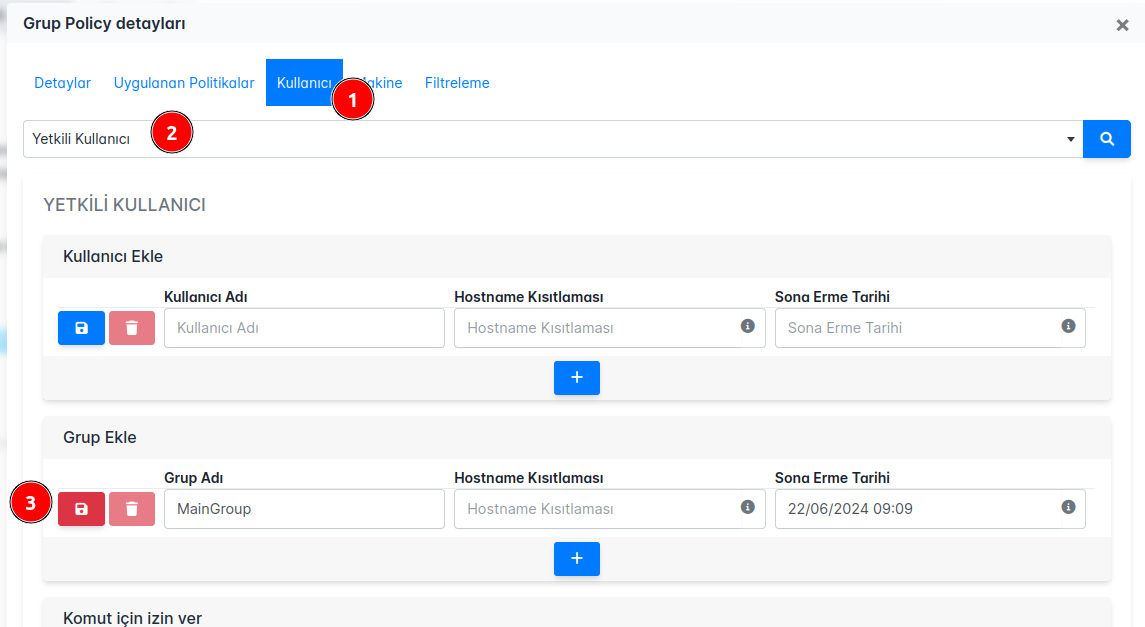

**Yetkili Kullanıcı** politikası oluşturmak için de şu adımları izleyebiliriz:

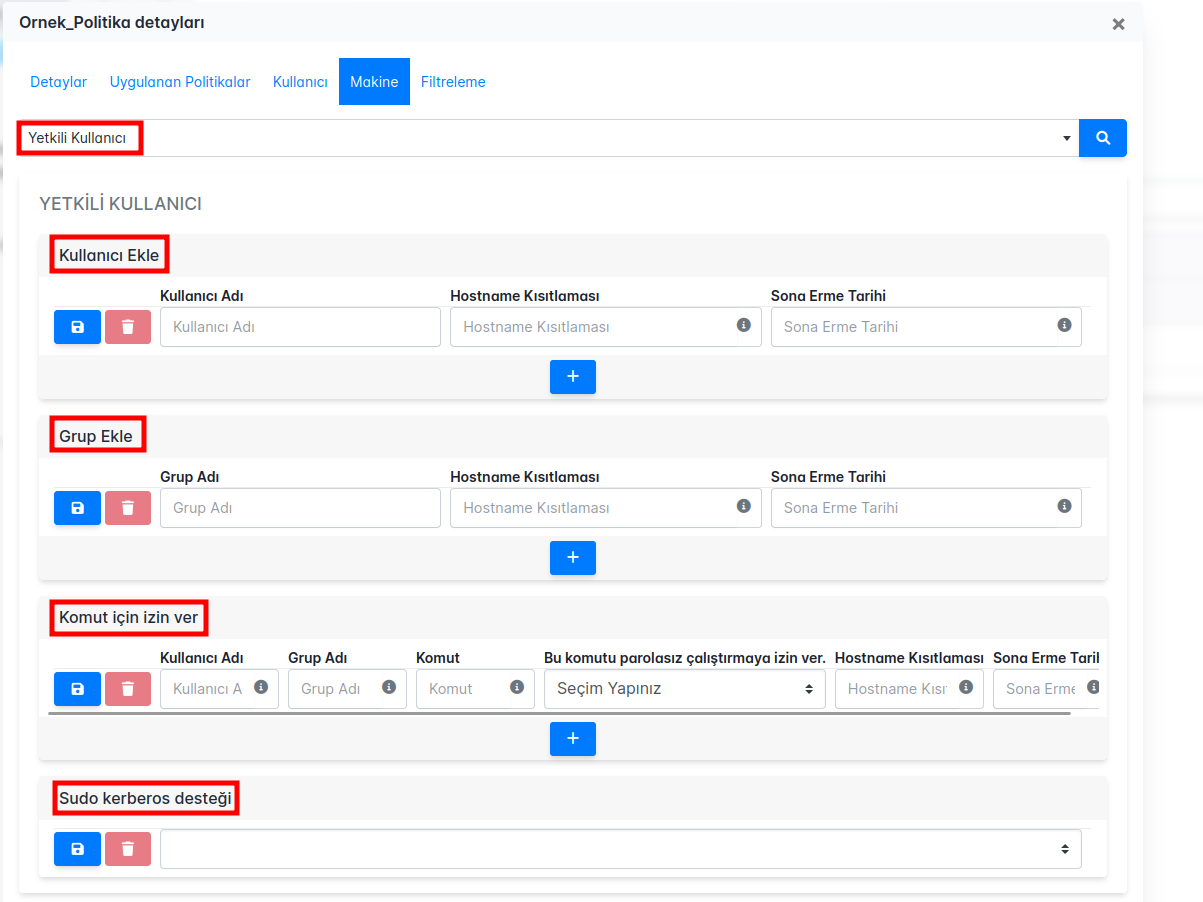

**Yetkili Kullanıcı** politika ekranı şu şekildedir:

**1. Add User Section:**

**Username:** Enter the name of a user created in the domain or locally.

**Hostname Restriction:** Specify the hostnames of the devices on which the user will have sudo privileges. Separate multiple hostnames with commas. If this field is left empty, no restriction is applied.

**Expiration Date:** The date on which the user's sudo privileges expire. If no date is specified, the policy remains in effect indefinitely.

**2. Add Group Section:**

**Group Name:** Enter the name of a security group created in the domain or locally.

**Hostname Restriction:** Specify the hostnames of the devices on which the group will have sudo privileges. Separate multiple hostnames with commas. If this field is left empty, no restriction is applied.
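
The rules for the hostname restriction and expiration date fields can be summarized in a few lines of logic. The sketch below models them as described above: an empty restriction means every host, and a missing date means no expiry. The function names are hypothetical, and treating the expiration date itself as still valid is an assumption; the source does not say whether the date is inclusive.

```python
from datetime import date
from typing import List, Optional


def parse_hostnames(restriction: str) -> List[str]:
    """Split a comma-separated hostname field, ignoring blanks and spaces."""
    return [h.strip() for h in restriction.split(",") if h.strip()]


def grant_applies(hostname: str, restriction: str,
                  expires: Optional[date], today: date) -> bool:
    """Decide whether a sudo grant is in effect on this host today."""
    hosts = parse_hostnames(restriction)
    if hosts and hostname not in hosts:
        return False  # a restriction exists and this host is not listed
    if expires is not None and today > expires:
        return False  # assumption: the grant is valid through the date itself
    return True


if __name__ == "__main__":
    print(grant_applies("pardus-pc1", "pardus-pc1, pardus-pc2",
                        date(2024, 12, 31), date(2024, 6, 3)))
```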

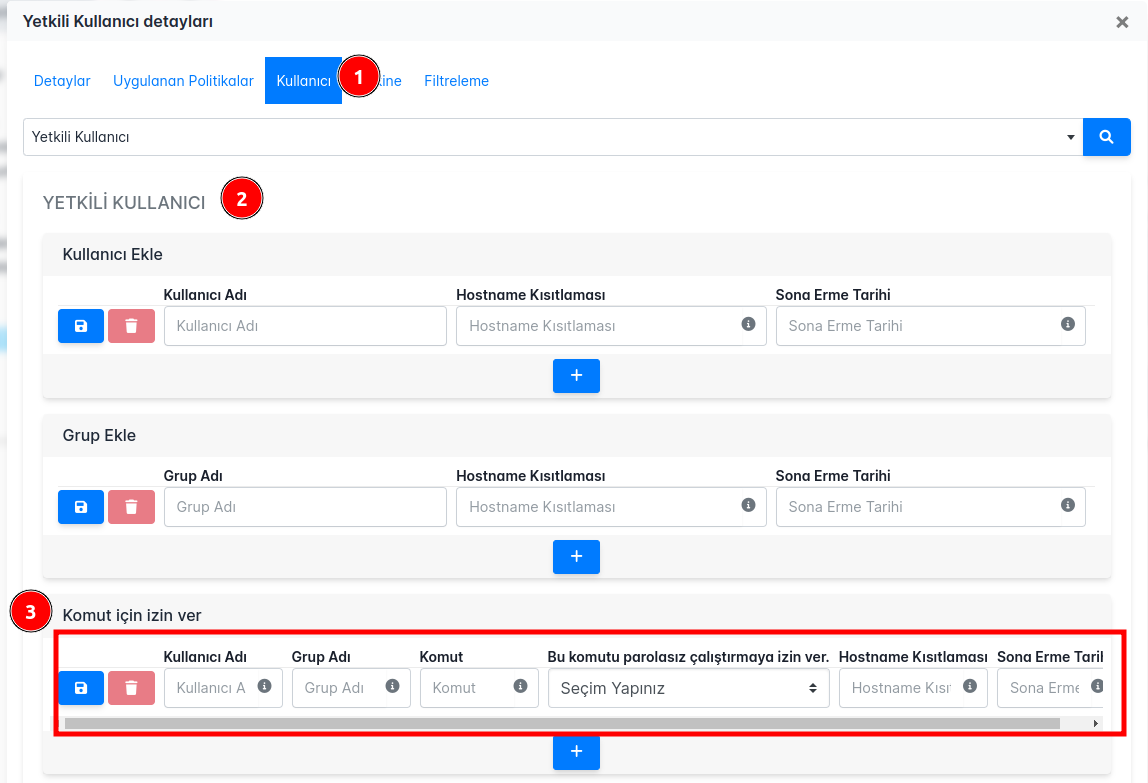

**3. Command Permission Section:**

**Username:** Enter the name of a user created in the domain or locally. Separate multiple users with commas, or enter 'all' for all users.

**Group Name:** Enter the name of a security group created in the domain or locally. Separate multiple groups with commas, or enter 'all' for all groups.

**Command:** Enter the command to be allowed.

**Password Usage:** Specify whether this command can be run without a password (Yes/No).
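
To show how these fields relate to ordinary sudoers syntax, here is a small sketch that renders them as sudoers rules. The exact lines Liman MYS writes are not shown in this article, so treat the output format, the function name, and the mapping of 'all' to `ALL` for both users and groups as assumptions. The syntax itself (`%group` for groups, the `NOPASSWD:` tag) is standard sudoers.

```python
from typing import List


def sudoers_lines(command: str, users: str = "", groups: str = "",
                  passwordless: bool = False, host: str = "ALL") -> List[str]:
    """Render one sudoers rule per listed user or group (illustrative)."""
    def subjects(raw: str, prefix: str) -> List[str]:
        names = [n.strip() for n in raw.split(",") if n.strip()]
        # Assumption: the form's 'all' keyword maps to the sudoers ALL alias
        return ["ALL" if n.lower() == "all" else prefix + n for n in names]

    who = subjects(users, "") + subjects(groups, "%")  # % marks a group
    tag = "NOPASSWD: " if passwordless else ""
    return [f"{w} {host}=(ALL) {tag}{command}" for w in who]


if __name__ == "__main__":
    for line in sudoers_lines("/usr/bin/cat /etc/shadow",
                              users="kullanici1", passwordless=True):
        print(line)
```

Names containing spaces or backslashes, which can occur with domain accounts, would need escaping that this sketch does not attempt.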

## Policy Operation and Verification

The scenarios we create with the **Privileged User** policy were tested on Pardus 23.

### 1. Granting Admin Privileges to a Domain User

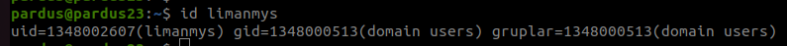

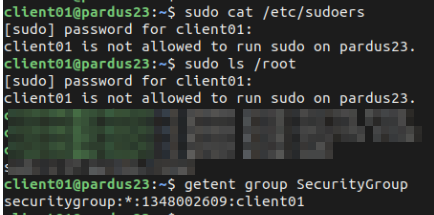

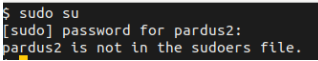

First, let's verify that our user does not have **sudo** privileges:

- In this example, _limanmys_ was created as the user.

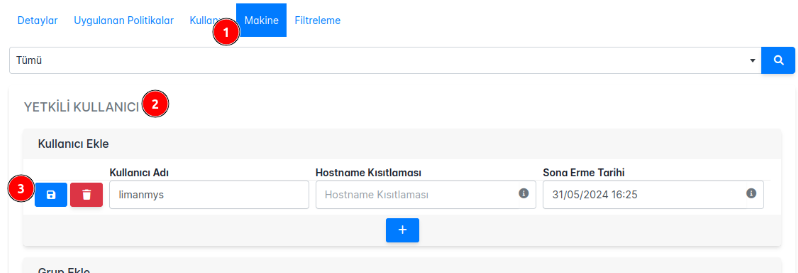

Now, by selecting the **Privileged User** option in our policy, we can specify the username of the domain user we want to grant admin privileges to and, optionally, the hostname and the expiration date.

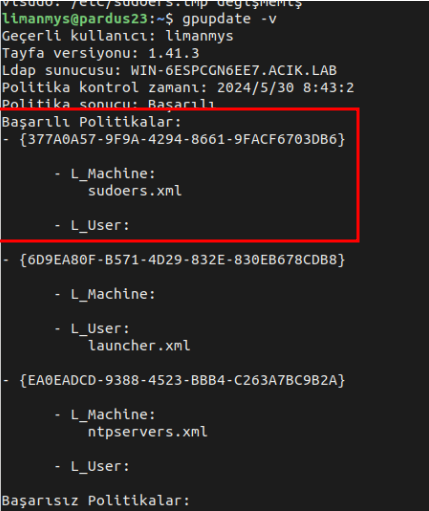

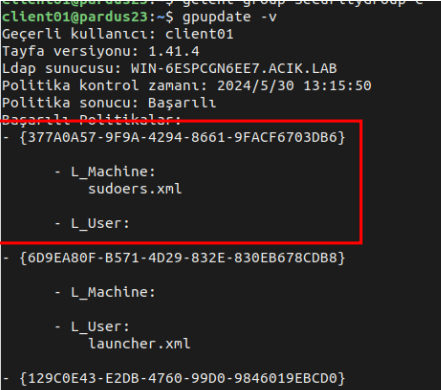

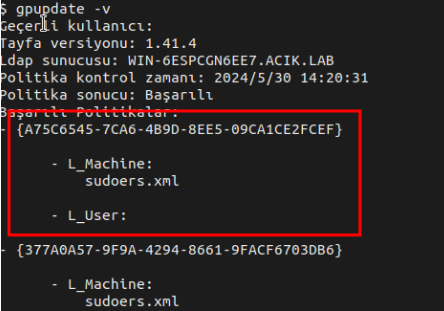

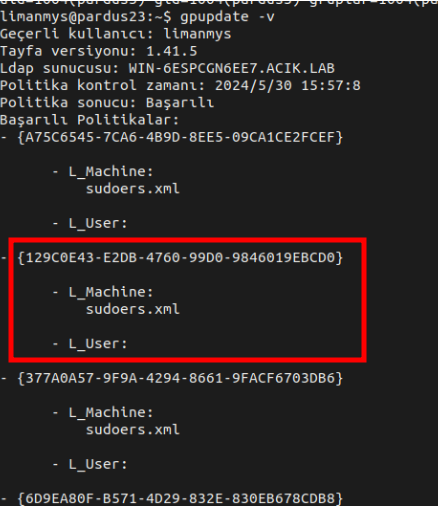

Next, on the Pardus machine where we are logged in as _limanmys_, we trigger the policy with the **gpupdate -v** command.

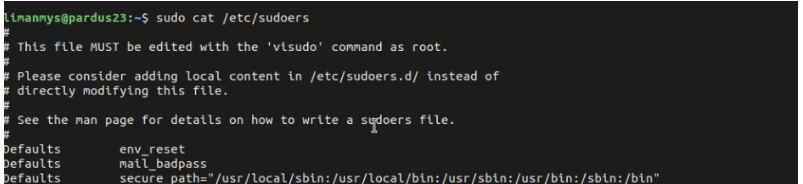

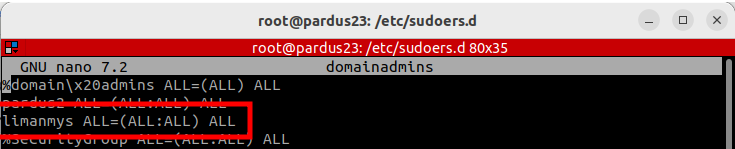

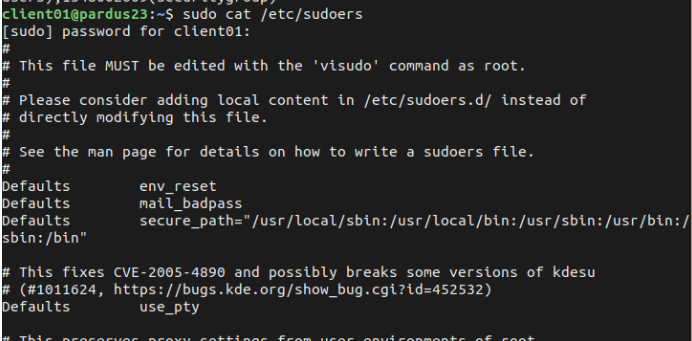

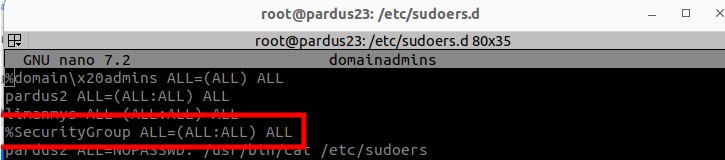

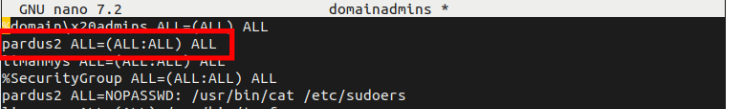

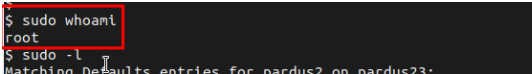

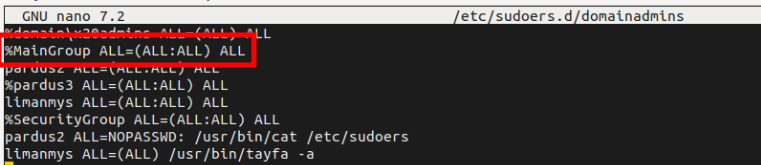

We can confirm that the policy works both by testing the user's sudo privileges and by seeing the entry in **/etc/sudoers.d/domainadmins**.

### 2. Granting Admin Privileges to a Group in the Domain
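
The file check can also be scripted. The sketch below scans sudoers-style text for a rule whose first field is a given user or `%group`. The format of the real **/etc/sudoers.d/domainadmins** file is not shown here, so the sample content is hypothetical, and the parser is deliberately simplified: it ignores aliases, include directives, and line continuations.

```python
def has_sudoers_entry(sudoers_text: str, subject: str) -> bool:
    """Return True if any rule line starts with the given user or %group."""
    for line in sudoers_text.splitlines():
        stripped = line.strip()
        if not stripped or stripped.startswith("#"):
            continue  # skip blank lines and comments
        if stripped.split()[0] == subject:
            return True
    return False


if __name__ == "__main__":
    # Hypothetical content; reading the real file requires root privileges
    sample = "# managed by policy\nlimanmys ALL=(ALL) ALL\n"
    print(has_sudoers_entry(sample, "limanmys"))
```

On a client, the same function could be fed the result of reading the file as root, and `sudo -l -U <user>` remains the more authoritative check because it evaluates the full sudoers configuration.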

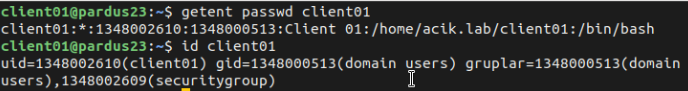

Before granting privileges to our security group, let's verify that a user in this group does not have **sudo** privileges:

- In this example, _client01_ was created as the user.

Now, by selecting the **Privileged User** option in our policy, we can specify the name of the domain group we want to grant admin privileges to and, optionally, the hostname and the expiration date.

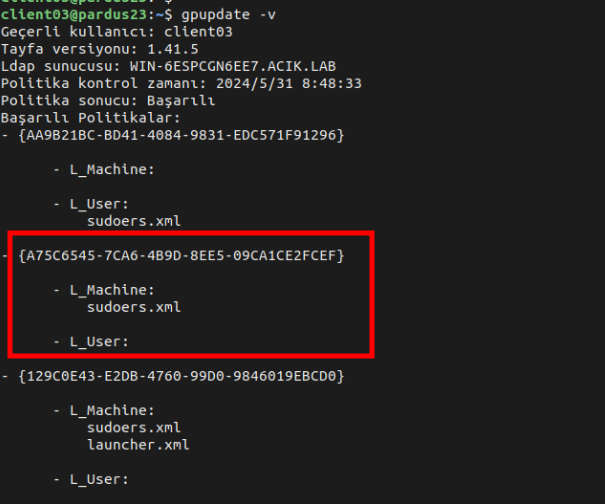

Next, on the Pardus machine where we are logged in as a user who is a member of the group, we trigger the policy with the **gpupdate -v** command.

We can confirm that the policy works both by testing the user's sudo privileges and by seeing the entry in **/etc/sudoers.d/domainadmins**.

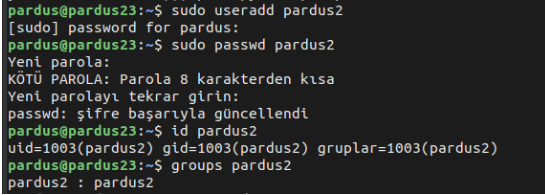

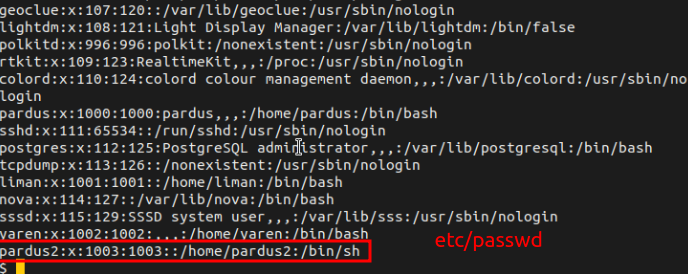

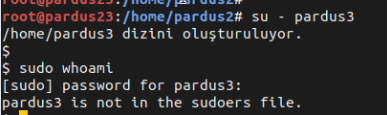

### 3. Granting Admin Privileges to a Locally Created User

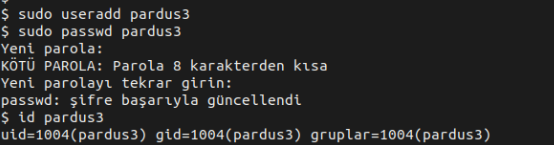

First, let's create our user and verify that it does not have **sudo** privileges:

- In this example, _pardus2_ was created as a local user.

Now, by selecting the **Privileged User** option in our policy, we can specify the username of the local user we want to grant admin privileges to and, optionally, the hostname and the expiration date.

Next, on the Pardus machine where we are logged in as this user, we trigger the policy with the **gpupdate -v** command.

We can confirm that the policy works both by testing the user's sudo privileges and by seeing the entry in **/etc/sudoers.d/domainadmins**.

### 4. Granting Admin Privileges to a Locally Created Group

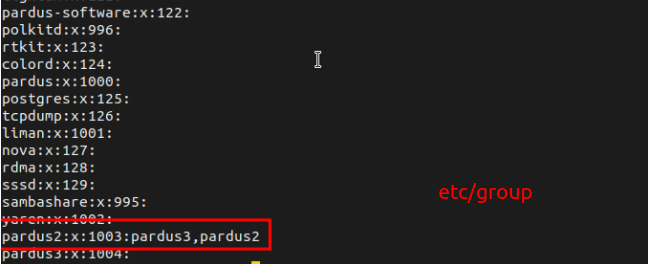

After creating our user and group, and before granting privileges to the local group, let's verify that the user in this group does not have **sudo** privileges:

Now, by selecting the **Privileged User** option in our policy, we can specify the name of the group we want to grant admin privileges to and, optionally, the hostname and the expiration date.

Here, the **pardus3** group is the group that was created automatically when the pardus3 user was created.

On our Pardus machine, we trigger the policy with the **gpupdate -v** command.

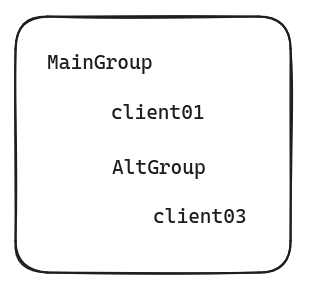

### 5. Group Authorization in the Domain Structure

This step covers authorization for nested groups. The example structure is as follows:

client01 and client03 = users

MainGroup and AltGroup = security groups

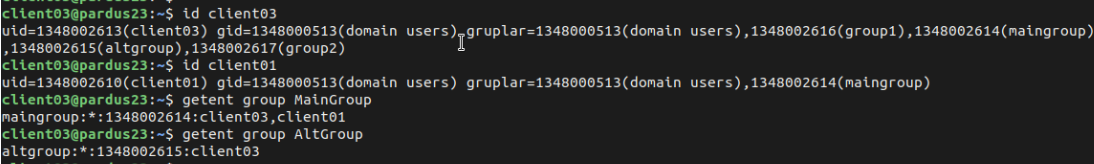

For the groups and users, we get the following output:

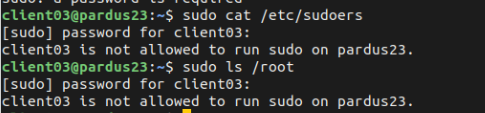

Let's verify that the user in the subgroup has no privileges before the policy is created:

We apply the policy by granting privileges to the parent group.

Next, on the Pardus machine where we are logged in as a user who is a member of the group, we trigger the policy with the **gpupdate -v** command.

When we see the entry in **/etc/sudoers.d/domainadmins**, we can be sure that the policy works.

**NOTE:** To test the policy, that is, to check that the user in the subgroup has been granted privileges, please log out and log back in.
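
The idea behind nested groups is that a grant on the parent group reaches users who are members only of a subgroup. The sketch below illustrates this transitive lookup with a plain dictionary. The membership layout is an assumption made for the example (AltGroup nested inside MainGroup, one user in each), and in a real domain the directory service resolves this, not client-side code like this.

```python
from typing import Dict, Set


def effective_members(group: str, members: Dict[str, Set[str]]) -> Set[str]:
    """Collect all users reachable from a group through nested groups."""
    seen_groups: Set[str] = set()
    users: Set[str] = set()
    stack = [group]
    while stack:
        current = stack.pop()
        if current in seen_groups:
            continue  # guards against circular group membership
        seen_groups.add(current)
        for member in members.get(current, set()):
            if member in members:
                stack.append(member)  # member is itself a group
            else:
                users.add(member)
    return users


if __name__ == "__main__":
    # Hypothetical layout for illustration
    layout = {"MainGroup": {"client01", "AltGroup"}, "AltGroup": {"client03"}}
    print(sorted(effective_members("MainGroup", layout)))
```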

### 6. Granting Permission for a Command

You can grant privileges for a specific command.

**Username:** Enter the name of a user created in the domain.

**Group Name:** Enter the name of a group created in the domain.

**Command:** Enter the command to be allowed.

**Allow Running This Command Without a Password:** Optionally allows the command to be run without a password.

**Hostname Restriction:** Select the machine or machines on which the allowed command applies.

**Expiration Date:** Enter the date on which the privilege expires. If this field is left empty, the policy never expires.

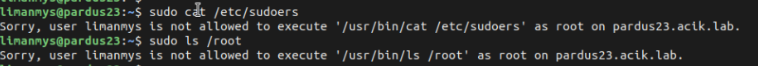

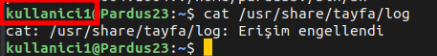

First, we test whether the user _kullanici1_ is allowed to run the _cat_ command:

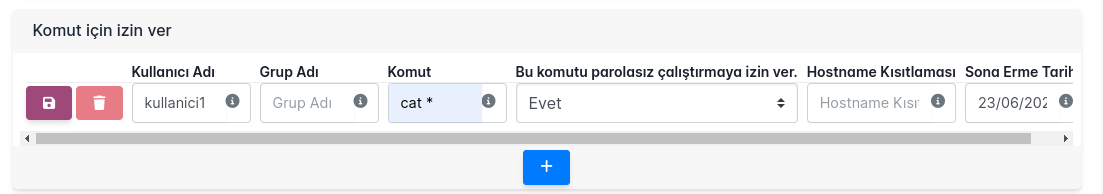

Now let's create and apply the policy:

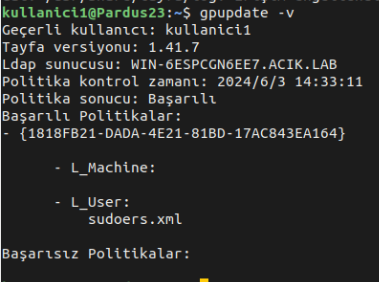

Next, on the Pardus machine where we are logged in as _kullanici1_, we trigger the policy with the **gpupdate -v** command.

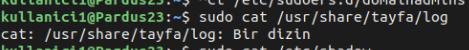

When we see that the _cat_ command runs, we can be sure that the policy works.

**NOTE:** Without '*', the command must be typed exactly as it was specified. To grant the same permission to a group instead of a user, enter the name of a group created in the domain in the _Group Name_ field, instead of the _Username_ field, in the same section.
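
The effect of the '*' wildcard can be illustrated with shell-style pattern matching. The sketch below uses Python's `fnmatch` module as a stand-in; sudo's own matching has additional rules, so this is only a model of the behavior described in the note, and the function name and sample commands are hypothetical.

```python
from fnmatch import fnmatchcase


def command_allowed(requested: str, allowed: str) -> bool:
    """Compare a requested command line against an allowed pattern."""
    # Without a wildcard the pattern matches only the identical string
    return fnmatchcase(requested, allowed)


if __name__ == "__main__":
    print(command_allowed("/usr/bin/cat /etc/hosts", "/usr/bin/cat *"))
    print(command_allowed("/usr/bin/cat /etc/hosts", "/usr/bin/cat /etc/shadow"))
```

A rule ending in '*' is more convenient but also broader, so an exact command is the safer choice when only one invocation needs to be allowed.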