id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,874,777 | Using GDB to develop exploits | Useful basic commands to use with GDB during debug of applications for vulnerability development and... | 0 | 2024-06-02T23:37:47 | https://dev.to/samglish/using-gdb-to-develop-exploits-kec | gdb, gdbvulnerabilty | **Useful basic commands to use with GDB during debug of applications for vulnerability

development and troubleshooting.**

**Example of application vulnerability.**

- BufferOverflow

- FormatString

**Visit my github project** [formatstring](https://github.com/samglish/formatString) and [BufferOverflow](https://github.com/samglish/bufferOverflow)

From the GDB man page:

"The purpose of a debugger such as GDB is to allow you to see what is going on â..â..insideâ..â.. another

program while it executes â.. or what another program was doing at the moment it crashed."

```

Launch GDB against either a binary, a core file or a Process ID

$ gdb ./vuln

$ gdb ./vuln ./core

$ gdb -c ./core

$ gdb -silent pidof vuln

Set arguments for the application to execute with

(gdb) set args perl -e print "A" x 50000

Set environment variables

(gdb) set env PATH=perl -e 'print "A" x 50000'`

Set breakpoints

(gdb) b main // Breaks at main()

Breakpoint 1 at 0x8048fd9: file vuln.c, line 627.

(gdb) break strcpy // Breaks at strcpy()

Breakpoint 2 at 0x42079dd4

(gdb) break vuln.c:vuln_func

(gdb) rbreak ^vuln[_]func$

List defined breakpoints

(gdb) info b

Num Type Disp Enb Address What

1 breakpoint keep y 0x08048fd9 in main at vuln.c:627

Run the binary

(gdb) run

Starting program: /vuln/vuln perl -e print "A" x 1000'

Breakpoint 3, main (argc=2, argv=0xbfffee54) at vuln.c:627

627 CONSTRUCTGLOBALS();

(gdb)

Follow process forking

(gdb) set follow-fork-mode {parent, child, ask}

Show register addresses

(gdb) i r

eax 0x49488fa8 1229492136

ecx 0x8074e68 134696552

edx 0x42131300 1108546304

ebx 0x42130a14 1108544020

esp 0xbfffc190 0xbfffc190

ebp 0xbfffc1f8 0xbfffc1f8

esi 0x41414140 1094795584

edi 0x8074e70 134696560

eip 0x420744b0 0x420744b0

Show function dissasembly

(gdb) disas

Dump of assembler code for function main:

0x08048715 <main+0>: push %ebp

0x08048716 <main+1>: mov %esp,%ebp

0x08048718 <main+3>: sub $0x68,%esp

0x0804871b <main+6>: and $0xfffffff0,%esp

Show stored values on the stack

(gdb) print $esp

$1 = (void *) 0xbfffc190

(gdb) x/5x $esp-10 // Hex

0xbfffedf6: 0xee084000 0x9442bfff 0x0a140805 0x53604213

0xbfffee06: 0xee284001

(gdb) x/5s $esp-10 //String

0xbfffedf6: ""

0xbfffedf7: "@\b???B\224\005\b\024\n\023B S\001@(???tU\001B\002"

0xbfffee12: ""

0xbfffee13: ""

0xbfffee14: "T??????,X\001@\002"

(gdb) x/5d $esp-10 //Decimal

0xbfffedf6: -301449216 -1807564801 169084933 1398817299

0xbfffee06: -299352063

(gdb) x/5i $esp-10 //Instructions

0xbfffedf6: add %al,0x8(%eax)

0xbfffedf9: out %al,(%dx)

0xbfffedfa: (bad)

0xbfffedfb: mov $0x8059442,%edi

0xbfffee00: adc $0xa,%al

(gdb) set $esp = 0

(gdb) print $esp

$3 = (void *) 0x0

Show where in the source file we are

(gdb) list

622 int argc;

623 char *argv[];

624 {

625 int r;

626

627 CONSTRUCTGLOBALS();

628 p = checkid(argc, argv);

629 DESTROYGLOBALS();

630 strcpy(id_d, p);

631 while(1) {

Show where execution is

(gdb) where

#0 main (argc=2, argv=0xbfff2764) at vuln.c:627

#1 0x42015574 in __libc_start_main () from /lib/tls/libc.so.6

(gdb)

Continue executing

(gdb) c //Resume code execution

Continuing.

Breakpoint 2, 0x42079dd4 in strcpy () from /lib/tls/libc.so.6

(gdb) s //Step into next function

Single stepping until exit from function vuln1,

which has no line number information.

check_vuln () at vuln1.c:259

259 whoUID = (u_id ? sys_UID_secret :

(gdb) s

309 usrIDnum = numIDnum = 0;

(gdb) c //Continue again

Continuing.

Breakpoint 2, 0x42079dd4 in strcpy () from /lib/tls/libc.so.6

(gdb) c //Continue again

Continuing.

Program received signal SIGSEGV, Segmentation fault.

0x41414141 in ?? ()

Show last frame on the stack

(gdb) where 1

#0 0x41414141 in ?? ()

(More stack frames follow...)

Show failing frame info

(gdb) info frame 0

Stack frame at 0xbfff3588:

eip = 0x41414141; saved eip 0x80530c2

called by frame at 0xbfff35c8

Arglist at 0xbfff3588, args:

Locals at 0xbfff3588, Previous frame's sp in esp

Saved registers:

ebp at 0xbfff3588, eip at 0xbfff358c

Show values in some useful registers

(gdb) x/x $eip

0x41414141: Cannot access memory at address 0x41414141

(gdb) x/x $ebp

0xbfff3588: 0xbfff35c8

(gdb) x/x $esp

0xbfff354c: 0x08053309

Disassemble strcpy function

(gdb) disas strcpy

Dump of assembler code for function strcpy:

0x42079dd0 <strcpy+0>: push %ebp

0x42079dd1 <strcpy+1>: mov %esp,%ebp

0x42079dd3 <strcpy+3>: push %esi

0x42079dd4 <strcpy+4>: mov 0x8(%ebp),%esi

0x42079dd7 <strcpy+7>: mov 0xc(%ebp),%edx

0x42079dda <strcpy+10>: mov %esi,%eax

0x42079ddc <strcpy+12>: sub %edx,%eax

0x42079dde <strcpy+14>: lea 0xffffffff(%eax),%ecx

0x42079de1 <strcpy+17>: jmp 0x42079df0 <strcpy+32>

0x42079de3 <strcpy+19>: nop

0x42079de4 <strcpy+20>: nop

0x42079dfb <strcpy+43>: mov %esi,%eax

0x42079dfd <strcpy+45>: pop %esi

0x42079dfe <strcpy+46>: pop %ebp

0x42079dff <strcpy+47>: ret

End of assembler dump.

Show content pointed to by a pointer

0x08054e2c in blah (p=0x41414141 <Address 0x41414141 out of bounds>) at vuln.c:284

284 for (; *p; INCSTR(p))

(gdb) x/x p

0x41414141: Cannot access memory at address 0x41414141

Display executable sections

(gdb) main info sec

Exec file:

/root/vuln, file type elf32-i386.

0x080480f4->0x08048107 at 0x000000f4: .interp ALLOC LOAD READONLY DATA HAS_CONTENTS

0x08048108->0x08048128 at 0x00000108: .note.ABI-tag ALLOC LOAD READONLY DATA HAS_CONTENTS

0x08048128->0x080482c4 at 0x00000128: .hash ALLOC LOAD READONLY DATA HAS_CONTENTS

0x080482c4->0x080486c4 at 0x000002c4: .dynsym ALLOC LOAD READONLY DATA HAS_CONTENTS

0x080486c4->0x080488ce at 0x000006c4: .dynstr ALLOC LOAD READONLY DATA HAS_CONTENTS

0x080488ce->0x0804894e at 0x000008ce: .gnu.version ALLOC LOAD READONLY DATA HAS_CONTENTS

0x08048950->0x08048990 at 0x00000950: .gnu.version_r ALLOC LOAD READONLY DATA HAS_CONTENTS

0x08048990->0x080489b8 at 0x00000990: .rel.dyn ALLOC LOAD READONLY DATA HAS_CONTENTS

0x080489b8->0x08048b78 at 0x000009b8: .rel.plt ALLOC LOAD READONLY DATA HAS_CONTENTS

0x08048b78->0x08048b8f at 0x00000b78: .init ALLOC LOAD READONLY CODE HAS_CONTENTS

0x08048b90->0x08048f20 at 0x00000b90: .plt ALLOC LOAD READONLY CODE HAS_CONTENTS

0x08048f20->0x080594c0 at 0x00000f20: .text ALLOC LOAD READONLY CODE HAS_CONTENTS

0x080594c0->0x080594db at 0x000114c0: .fini ALLOC LOAD READONLY CODE HAS_CONTENTS

0x080594e0->0x0805f2e9 at 0x000114e0: .rodata ALLOC LOAD READONLY DATA HAS_CONTENTS

0x0805f2ec->0x0805f2f0 at 0x000172ec: .eh_frame ALLOC LOAD READONLY DATA HAS_CONTENTS

0x08060000->0x0806015c at 0x00018000: .data ALLOC LOAD DATA HAS_CONTENTS

0x0806015c->0x08060224 at 0x0001815c: .dynamic ALLOC LOAD DATA HAS_CONTENTS

0x08060224->0x0806022c at 0x00018224: .ctors ALLOC LOAD DATA HAS_CONTENTS

0x0806022c->0x08060234 at 0x0001822c: .dtors ALLOC LOAD DATA HAS_CONTENTS

0x08060234->0x08060238 at 0x00018234: .jcr ALLOC LOAD DATA HAS_CONTENTS

0x08060238->0x08060328 at 0x00018238: .got ALLOC LOAD DATA HAS_CONTENTS

0x08060340->0x08072e18 at 0x00018340: .bss ALLOC

0x00000000->0x00000462 at 0x00018340: .comment READONLY HAS_CONTENTS

0x00000000->0x00000258 at 0x000187a8: .debug_aranges READONLY HAS_CONTENTS

0x00000000->0x000006bf at 0x00018a00: .debug_pubnames READONLY HAS_CONTENTS

0x00000000->0x000448b3 at 0x000190bf: .debug_info READONLY HAS_CONTENTS

0x00000000->0x0000386e at 0x0005d972: .debug_abbrev READONLY HAS_CONTENTS

0x00000000->0x000049d8 at 0x000611e0: .debug_line READONLY HAS_CONTENTS

0x00000000->0x00001640 at 0x00065bb8: .debug_frame READONLY HAS_CONTENTS

0x00000000->0x00004d32 at 0x000671f8: .debug_str READONLY HAS_CONTENTS

0x00000000->0x000006a8 at 0x0006bf2a: .debug_ranges READONLY HAS_CONTENTS

Print data in the .plt section

(gdb) x/20x 0x08048b84

0x8048b84 <_init+24>: 0x423c35ff 0x25ff0806 0x08064240 0x00000000

0x8048b94 <mkdir>: 0x424425ff 0x00680806 0xe9000000 0xffffffe0

0x8048ba4 <chown>: 0x424825ff 0x08680806 0xe9000000 0xffffffd0

0x8048bb4 <strchr>: 0x424c25ff 0x10680806 0xe9000000 0xffffffc0

0x8048bc4 <write>: 0x425025ff 0x18680806 0xe9000000 0xffffffb0

Print string values in the .bbs section

(gdb) x/5s 0x08060340+11000

0x8062e38 <G+10968>: 'A' <repeats 200 times>...

0x8062f00 <G+11168>: 'A' <repeats 200 times>...

0x8062fc8 <G+11368>: 'A' <repeats 200 times>...

0x8063090 <G+11568>: 'A' <repeats 200 times>...

0x8063158 <G+11768>: 'A' <repeats 200 times>...

(gdb) x/5x 0x08072e18

0x8072e18: 0x41414141 0x41414141 0x41414141 0x41414141

0x8072e28: 0x41414141

Print the address for library system call

(gdb) x/x strcpy

0x42079da0 <strcpy>: 0x56e58955

(gdb) x/x system

0x42041e50 <system>: 0x83e58955

(gdb) x/x printf

0x42052390 <printf>: 0x83e58955

(gdb) x/x exit

0x4202b0f0 <exit>: 0x57e58955

Finding shellcode location at +600 from $esp

(gdb) x/100x $esp+600

0xbfff38c4: 0x00000000 0x00000000 0x00000000 0x00000000

0xbfff38d4: 0x00000000 0x36690000 0x2f003638 0x746f6f72

0xbfff38e4: 0x7a6e752f 0x352d7069 0x2f31352e 0x697a6e75

0xbfff38f4: 0x41410070 0x41414141 0x41414141 0x41414141

0xbfff3904: 0x41414141 0x41414141 0x41414141 0x41414141

0xbfff3914: 0x41414141 0x41414141 0x41414141 0x41414141

0xbfff3924: 0x41414141 0x41414141 0x41414141 0x41414141

Finding opcode values at -10 offset of $esp

(gdb) x/20bx $esp-10

0xbfff2fc2: 0x06 0x08 0xc0 0xc5 0x05 0x08 0xec 0xc5

0xbfff2fca: 0x05 0x08 0x09 0x33 0x05 0x08 0x60 0x03

0xbfff2fd2: 0x06 0x08 0x18 0x0c

Return address (0xbfffc12f) alignment is off

(gdb) x/100x $esp-600

0xbfff38c4: 0x00000000 0x00000000 0x00000000 0x00000000

0xbfff38d4: 0x90900070 0x90909090 0x90909090 0x90909090

0xbfff38e4: 0x90900090 0x90909090 0x90909090 0x90909090

0xbfff38f4: 0x90900090 0x90909090 0x90909090 0x90909090

0xbfff3904: 0x90909090 0x90909090 0x90909090 0x90909090

0xbfff3914: 0x90909090 0x90909090 0x90909090 0x90909090

0xbfff3924: 0x2fbfffc1 0x00000000 0x00000000 0x00000000

(gdb) info sources

Source files for which symbols have been read in:

a=blah.c

Source files for which symbols will be read in on demand:

(gdb) info functions

All defined functions:

File blah.c:

int attach_trc(int, pid_t);

void banner(void);

int detach_trc(int, pid_t);

int main(int, char **);

int show_trc(int, struct user_regs_struct);

Non-debugging symbols:

0x080483c0 _init

0x08048488 _start

0x080484ac call_gmon_start

0x080484d0 __do_global_dtors_aux

0x08048504 frame_dummy

0x080488d0 __libc_csu_init

0x08048924 __libc_csu_fini

0x08048968 __do_global_ctors_aux

0x0804898c _fini

(gdb) info file

Symbols from "/tmp/blah".

Local exec file:

/tmp/blah, file type elf32-i386.

Entry point: 0x8048488

0x08048114 - 0x08048127 is .interp

0x08048128 - 0x08048148 is .note.ABI-tag

0x08048148 - 0x08048198 is .hash

0x08048198 - 0x08048288 is .dynsym

0x08048288 - 0x08048322 is .dynstr

0x08048322 - 0x08048340 is .gnu.version

0x08048340 - 0x08048360 is .gnu.version_r

0x08048360 - 0x08048370 is .rel.dyn

0x08048370 - 0x080483c0 is .rel.plt

0x080483c0 - 0x080483d7 is .init

0x080483d8 - 0x08048488 is .plt

0x08048488 - 0x0804898c is .text

0x0804898c - 0x080489a6 is .fini

0x080489a8 - 0x08048c36 is .rodata

0x08048c38 - 0x08048c3c is .eh_frame

0x08049c3c - 0x08049c44 is .ctors

0x08049c44 - 0x08049c4c is .dtors

0x08049c4c - 0x08049c50 is .jcr

0x08049c50 - 0x08049d18 is .dynamic

0x08049d18 - 0x08049d1c is .got

0x08049d1c - 0x08049d50 is .got.plt

0x08049d50 - 0x08049d5c is .data

0x08049d5c - 0x08049d64 is .bss

``` | samglish |

1,874,779 | What I Learned from Domain Modeling in a Team | Introduction In my division at Guild, we've started a working group to develop and iterate... | 0 | 2024-06-02T23:50:00 | https://rubenrangel.net/what-i-learned-from-domain-modeling-in-a-team | stakeholdercommunication, domaindrivendesign, architecture, leadership | ---

title: What I Learned from Domain Modeling in a Team

published: true

date: 2024-06-02 23:10:40 UTC

tags: stakeholdercommunication, DomainDrivenDesign, softwarearchitecture, leadership

canonical_url: https://rubenrangel.net/what-i-learned-from-domain-modeling-in-a-team

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/bzvqe2bl9ivzon9ayt7v.jpg

---

## Introduction

In my division at Guild, we've started a working group to develop and iterate on a new domain model, aiming to create the ideal topography for the next evolution of our space. The process incorporates zero-start thinking (starting from a blank slate) and considers inputs such as what product features we want to support now and in the future, how to design the domain to easily adapt to new requirements, and how to align the domain to maximize ownership and the flow of work. The spirit of the exercise is rooted in Domain-Driven Design. Using this model, we aim to provide a North Star of sorts to guide design decisions, trade-off discussions, investments, and what directionally correct choices look like.

The group consists of leaders in the technology and product organizations. I'm excited to work with them on this large project and learn from them. I work closely alongside the Software Architect in the group, helping to author the model using feedback from stakeholders and our own knowledge and understanding of the domain.

## Communicating with Stakeholders

With a task as complex as designing a domain, communicating with others is crucial. Failure to do so dashes any chances of achieving the project's goals. Nobody truly has the "whole picture" in their heads. Getting other people's perspectives on the domain, listening to their concerns with the model, teasing out information by asking thought-provoking questions, and gathering requirements are just some examples of the process of effective communication. If you can do this with people in various roles (especially in Product and Engineering), you increase your chances of success. Just be sure not to get stuck in analysis paralysis.

In our group, the revisions of the model have happened in various ways. We've done some small iterations in synchronous meetings, I've facilitated one-on-one sessions with stakeholders, and we've also collaborated asynchronously using Miro. **Getting feedback early and often helps reduce the chances of creating something that doesn't fit our needs.**

## Making Brave Decisions

> Essentially, all models are wrong, but some are useful.

>

> \- George E. P. Box

As my career has progressed, I've taken on more responsibility and decision-making, which can be uncomfortable. I often wonder, "Is this decision the right one?" During this domain modeling exercise, I create concepts based on my understanding of different contexts, and that question frequently arises.

In a conceptual model like the one we're building, where the time horizon is at least a year out if the model had perfect clarity, the answer may not be known for a long time. Even then, by the time we get there, the definition of correct may be entirely different from what it was before. My VP of Engineering gave me great advice though: **"Make a decision and get feedback on it. If it doesn't work, we've eliminated one incorrect option."** This made me realize that though I may be drawing some boxes in the model, the overall decision and direction belong to the team, not a single person. I shouldn't be hung up on being right. The point of the model is to help inform and provide context, not to be 100% accurate in all regards. Modeling is cheap, and we should be comfortable throwing away ideas now, when it's easy.

## Balancing Ideal vs. Current State

As I stated earlier, the modeling exercise uses some of the current functionality we want to support as an input. Another is future functionality we want to support. It's hard to keep the former from influencing your thinking too much. These are things we've built; it's easy to reason about and understand them since they're real and these concepts already exist. Striking the balance and allowing ourselves to question everything is key to envisioning what could be. Yes, sometimes we keep some domain objects from the current state around because they make sense, but other times, we take the scary step of not including those objects in the new world. **Envisioning what could be is the goal, and we should use the past to inform the future, but not couple them.**

## The Ever-Evolving Model

I've never been part of a project where the final delivery turns out exactly like the initial design. The completeness of the model is not 100%, and the time horizon for any kind of implementation is long-term. Revisiting and evolving the model is necessary to keep it relevant and useful. Contexts will shift over time: new features will be necessary to accomplish the company's goals, domains may become deprecated, and we'll keep gaining understanding of our product's sector.

After the initial model is built, one of the follow-on efforts will be to see how we can get from point A to point B and do that in an iterative fashion. Think of things like product release milestones or an architecture modification. **We should use these checkpoints to pause and revisit the model.** We will have certainly learned more along the way, and building that understanding back into the model will adjust it to be more "correct" than it was. I've seen many diagrams and models that are made once and never updated again, reducing their usefulness and potentially leading to suboptimal decision-making.

## Continuous Communication and Adaptation

Even though the modeling is done by a working group, the outcomes affect a larger set of people and the systems they work on. Shopping around the model, setting up its context, and getting people's thoughts on it are important for gaining buy-in on the effort. In an organizational culture that emphasizes ownership, "throwing things over the wall" is a mistake. I personally am still learning how to do this well. Who do I share information with, and at what time? How do I make sure their input is heard but also not distract them from their current priorities?

I don't think there's a one-size-fits-all answer for this. **Generally speaking though, I think it's hard to over-communicate, especially in things that are high in scope.** There's probably nuance here with the particular people you work with and organizational structures. That pushes more importance on staying aware of how communication flows within the organization and doing what you can to ensure everyone is on the same page.

## Conclusion

In conclusion, the domain modeling project at Guild has underscored the importance of clear communication, decisive action, and the ability to adapt to changing conditions. This work has taught me to balance current realities with our future vision, continuously refine our models, and engage our entire team in the evolution process.

Have you been part of large-scale domain exercises like this? What strategies did you employ to achieve your goals? | rubenrangel |

1,874,735 | Best Deepfake Open Source App ROPE — So Easy To Use Full HD Feceswap DeepFace, Tutorials for Windows and Cloud — No GPU Required | Rope is the newest 1-Click, most easy to use, most advanced open source Deep Fake application. It has... | 0 | 2024-06-02T23:04:18 | https://dev.to/furkangozukara/best-deepfake-open-source-app-rope-so-easy-to-use-full-hd-feceswap-deepface-tutorials-for-windows-and-cloud-no-gpu-required-1eeg | tutorial, ai, news, opensource | <p style="margin-left:0px;">Rope is the newest 1-Click, most easy to use, most advanced open source Deep Fake application. It has been just published few days ago. In below tutorials I show how to use Rope Pearl DeepFake application both on Windows and on a cloud machine (Massed Compute). Rope is way better than Roop, Roop Unleashed and FaceFusion. It supports multi-face Face Swapping and making amazing DeepFake videos so easily with 1-Click. Select video, select faces and generate your DeepFake 4K ultra-HD video.</p>

<p style="margin-left:0px;">1-Click Rope Installers Scripts (contains both Windows into an isolated Python VENV and Massed Compute — Cloud — No GPU)⤵️</p>

<p style="margin-left:0px;"><a target="_blank" href="https://www.patreon.com/posts/most-advanced-1-105123768"><u>https://www.patreon.com/posts/most-advanced-1-105123768</u></a></p>

<p style="margin-left:0px;">Tutorials are made only for educational purposes. On cloud Massed Compute machine, you can run with staggering 20 threads and can FaceSwap entire movies. Fully supports face tracking and multiple face changes.</p>

<h2 style="margin-left:0px;"><a target="_blank" href="https://youtu.be/RdWKOUlenaY"><strong><u>Mind-Blowing Deepfake Tutorial: Turn Anyone into Your Fav Movie Star! Better than Roop & Face Fusion</u></strong></a></h2>

<p style="margin-left:0px;"><a target="_blank" href="https://youtu.be/RdWKOUlenaY"><u>https://youtu.be/RdWKOUlenaY</u></a></p>

<p style="margin-left:auto;"> </p>

<p style="margin-left:auto;">{% embed https://youtu.be/RdWKOUlenaY %}</p>

<p style="margin-left:auto;"> </p>

<p style="margin-left:auto;">

<picture>

<source srcset="https://miro.medium.com/v2/resize:fit:640/format:webp/1*8ssRYSboVaE0pJ0qMkRqww.png 640w, https://miro.medium.com/v2/resize:fit:720/format:webp/1*8ssRYSboVaE0pJ0qMkRqww.png 720w, https://miro.medium.com/v2/resize:fit:750/format:webp/1*8ssRYSboVaE0pJ0qMkRqww.png 750w, https://miro.medium.com/v2/resize:fit:786/format:webp/1*8ssRYSboVaE0pJ0qMkRqww.png 786w, https://miro.medium.com/v2/resize:fit:828/format:webp/1*8ssRYSboVaE0pJ0qMkRqww.png 828w, https://miro.medium.com/v2/resize:fit:1100/format:webp/1*8ssRYSboVaE0pJ0qMkRqww.png 1100w, https://miro.medium.com/v2/resize:fit:1400/format:webp/1*8ssRYSboVaE0pJ0qMkRqww.png 1400w" type="image/webp" sizes="(min-resolution: 4dppx) and (max-width: 700px) 50vw, (-webkit-min-device-pixel-ratio: 4) and (max-width: 700px) 50vw, (min-resolution: 3dppx) and (max-width: 700px) 67vw, (-webkit-min-device-pixel-ratio: 3) and (max-width: 700px) 65vw, (min-resolution: 2.5dppx) and (max-width: 700px) 80vw, (-webkit-min-device-pixel-ratio: 2.5) and (max-width: 700px) 80vw, (min-resolution: 2dppx) and (max-width: 700px) 100vw, (-webkit-min-device-pixel-ratio: 2) and (max-width: 700px) 100vw, 700px">

<source srcset="https://miro.medium.com/v2/resize:fit:640/1*8ssRYSboVaE0pJ0qMkRqww.png 640w, https://miro.medium.com/v2/resize:fit:720/1*8ssRYSboVaE0pJ0qMkRqww.png 720w, https://miro.medium.com/v2/resize:fit:750/1*8ssRYSboVaE0pJ0qMkRqww.png 750w, https://miro.medium.com/v2/resize:fit:786/1*8ssRYSboVaE0pJ0qMkRqww.png 786w, https://miro.medium.com/v2/resize:fit:828/1*8ssRYSboVaE0pJ0qMkRqww.png 828w, https://miro.medium.com/v2/resize:fit:1100/1*8ssRYSboVaE0pJ0qMkRqww.png 1100w, https://miro.medium.com/v2/resize:fit:1400/1*8ssRYSboVaE0pJ0qMkRqww.png 1400w" sizes="(min-resolution: 4dppx) and (max-width: 700px) 50vw, (-webkit-min-device-pixel-ratio: 4) and (max-width: 700px) 50vw, (min-resolution: 3dppx) and (max-width: 700px) 67vw, (-webkit-min-device-pixel-ratio: 3) and (max-width: 700px) 65vw, (min-resolution: 2.5dppx) and (max-width: 700px) 80vw, (-webkit-min-device-pixel-ratio: 2.5) and (max-width: 700px) 80vw, (min-resolution: 2dppx) and (max-width: 700px) 100vw, (-webkit-min-device-pixel-ratio: 2) and (max-width: 700px) 100vw, 700px"><img class="image_resized" style="height:auto;width:680px;" src="https://miro.medium.com/v2/resize:fit:1313/1*8ssRYSboVaE0pJ0qMkRqww.png" alt="" width="700" height="394">

</picture>

</p>

<h2 style="margin-left:0px;"><a target="_blank" href="https://youtu.be/HLWLSszHwEc"><strong><u>Best Deepfake Open Source App ROPE — So Easy To Use Full HD Feceswap DeepFace, No GPU Required Cloud</u></strong></a></h2>

<p style="margin-left:0px;"><a target="_blank" href="https://youtu.be/HLWLSszHwEc"><u>https://youtu.be/HLWLSszHwEc</u></a></p>

<p style="margin-left:auto;"> </p>

<p style="margin-left:auto;">{% embed https://youtu.be/HLWLSszHwEc %}</p>

<p style="margin-left:auto;"> </p>

<p style="margin-left:auto;">

<picture>

<source srcset="https://miro.medium.com/v2/resize:fit:640/format:webp/1*cBRXfc5pcHMfz52OVjpWDw.png 640w, https://miro.medium.com/v2/resize:fit:720/format:webp/1*cBRXfc5pcHMfz52OVjpWDw.png 720w, https://miro.medium.com/v2/resize:fit:750/format:webp/1*cBRXfc5pcHMfz52OVjpWDw.png 750w, https://miro.medium.com/v2/resize:fit:786/format:webp/1*cBRXfc5pcHMfz52OVjpWDw.png 786w, https://miro.medium.com/v2/resize:fit:828/format:webp/1*cBRXfc5pcHMfz52OVjpWDw.png 828w, https://miro.medium.com/v2/resize:fit:1100/format:webp/1*cBRXfc5pcHMfz52OVjpWDw.png 1100w, https://miro.medium.com/v2/resize:fit:1400/format:webp/1*cBRXfc5pcHMfz52OVjpWDw.png 1400w" type="image/webp" sizes="(min-resolution: 4dppx) and (max-width: 700px) 50vw, (-webkit-min-device-pixel-ratio: 4) and (max-width: 700px) 50vw, (min-resolution: 3dppx) and (max-width: 700px) 67vw, (-webkit-min-device-pixel-ratio: 3) and (max-width: 700px) 65vw, (min-resolution: 2.5dppx) and (max-width: 700px) 80vw, (-webkit-min-device-pixel-ratio: 2.5) and (max-width: 700px) 80vw, (min-resolution: 2dppx) and (max-width: 700px) 100vw, (-webkit-min-device-pixel-ratio: 2) and (max-width: 700px) 100vw, 700px">

<source srcset="https://miro.medium.com/v2/resize:fit:640/1*cBRXfc5pcHMfz52OVjpWDw.png 640w, https://miro.medium.com/v2/resize:fit:720/1*cBRXfc5pcHMfz52OVjpWDw.png 720w, https://miro.medium.com/v2/resize:fit:750/1*cBRXfc5pcHMfz52OVjpWDw.png 750w, https://miro.medium.com/v2/resize:fit:786/1*cBRXfc5pcHMfz52OVjpWDw.png 786w, https://miro.medium.com/v2/resize:fit:828/1*cBRXfc5pcHMfz52OVjpWDw.png 828w, https://miro.medium.com/v2/resize:fit:1100/1*cBRXfc5pcHMfz52OVjpWDw.png 1100w, https://miro.medium.com/v2/resize:fit:1400/1*cBRXfc5pcHMfz52OVjpWDw.png 1400w" sizes="(min-resolution: 4dppx) and (max-width: 700px) 50vw, (-webkit-min-device-pixel-ratio: 4) and (max-width: 700px) 50vw, (min-resolution: 3dppx) and (max-width: 700px) 67vw, (-webkit-min-device-pixel-ratio: 3) and (max-width: 700px) 65vw, (min-resolution: 2.5dppx) and (max-width: 700px) 80vw, (-webkit-min-device-pixel-ratio: 2.5) and (max-width: 700px) 80vw, (min-resolution: 2dppx) and (max-width: 700px) 100vw, (-webkit-min-device-pixel-ratio: 2) and (max-width: 700px) 100vw, 700px"><img class="image_resized" style="height:auto;width:680px;" src="https://miro.medium.com/v2/resize:fit:1313/1*cBRXfc5pcHMfz52OVjpWDw.png" alt="" width="700" height="394">

</picture>

</p>

<h1 style="margin-left:0px;"><strong>Windows Roop Pearl Tutorial Video Chapters</strong></h1>

<p style="margin-left:0px;"><a target="_blank" href="https://youtu.be/RdWKOUlenaY"><u>https://youtu.be/RdWKOUlenaY</u></a></p>

<p style="margin-left:0px;">#Rope is the newest 1-Click, most easy to use, most advanced open source Deep Fake application. It has been just published yesterday. In this tutorial I will show you how to use Rope Pearl DeepFake application. Rope is way better than Roop, #Roop Unleashed and #FaceFusion. It supports multi-face Face Swapping and making amazing DeepFake videos so easily with 1-Click. Select video, select faces and generate your DeepFake 4K ultra-HD video.</p>

<p style="margin-left:0px;">1-Click Rope Installers Scripts ⤵️<br><a target="_blank" href="https://www.patreon.com/posts/most-advanced-1-105123768"><u>https://www.patreon.com/posts/most-advanced-1-105123768</u></a></p>

<p style="margin-left:0px;">How To Install Requirements Tutorial (Python, Git, FFmpeg, CUDA, C++ Tools) ⤵️<br><a target="_blank" href="https://youtu.be/-NjNy7afOQ0"><u>https://youtu.be/-NjNy7afOQ0</u></a></p>

<p style="margin-left:0px;">Official Rope GitHub Repository ⤵️<br><a target="_blank" href="https://github.com/Hillobar/Rope"><u>https://github.com/Hillobar/Rope</u></a></p>

<p style="margin-left:0px;">Rope’s Author Donation Link — Support Him For Better APP ⤵️<br><a target="_blank" href="https://www.paypal.com/donate/?hosted_button_id=Y5SB9LSXFGRF2"><u>https://www.paypal.com/donate/?hosted_button_id=Y5SB9LSXFGRF2</u></a></p>

<p style="margin-left:0px;">0:00 Example Deepfake video from movie Inglourious Basterds 2009<br>0:21 Introduction to the most easy to use and most advanced 1-Click Deepfake application Rope Pearl<br>0:53 How to download 1-Click installer scripts and start installing Rope Pearl<br>1:34 What are the requirements of Deepfake app Rope Pearl and how to check and install them<br>1:44 How to check and verify your Python, Git, CUDA and FFmpeg installations<br>3:42 Example images and a test video that I prepared and sharing<br>4:10 How to start Rope Deepfake application after the installation has been completed<br>4:27 How to use Rope Pearl Deepfake application — first select videos and images folders<br>5:00 How to refresh and re-populate selected videos and faces folders<br>5:26 How to set the outputs folder where the Deepfake videos and images will be saved<br>5:45 How Rope Pearl the most advanced Deepfake application work, select input video and target faces<br>6:34 How to make swapped, deep faked faces HD from low resolution<br>7:01 How to further improve face quality with face restoration AI models automatically<br>7:49 How to make additional changes to fix artifacts and mistakes in the Deepfaked video<br>8:27 Support link to support author of Rope developer<br>8:37 How to test and see each changes effect immediately<br>9:00 The tests and configurations I have pre-prepared for you<br>9:19 How to use Face Parser to fix the mouth movement<br>9:53 How to reduce VRAM usage and increase processing speed with number of threads<br>10:13 How to export and save Deepfake applied new video<br>12:12 Where will be the output / exported video saved<br>12:33 Important face detection models Retina face, Yolo and SCRDF — try them if face detection fails<br>13:34 How to understand when the Deepfake video processing is completed<br>13:59 Properties of the generated Deepfake video, e.g. resolution, bitrate<br>14:24 How to Deep Fake / Face Swap images not videos<br>15:30 How to save deep faked images<br>15:43 What is auto swap and how to use it<br>16:10 How to find best working face before start processing the video<br>17:13 How to automatically install and use Rope DeepFake AI on a Linux system</p>

<p style="margin-left:0px;">Deepfake Tutorial: Rope-Pearl Application for Face Swapping in Videos and Images</p>

<p style="margin-left:0px;">Installation</p>

<p style="margin-left:0px;">Download the installer files from the provided link in the video description<br>Extract the files to your desired installation location (e.g., rope_ai folder)<br>Ensure you have the necessary prerequisites installed:<br>Python 3.10.11<br>Git<br>FFmpeg<br>CUDA<br>Run the install.bat file to start the installation process<br>The installer will download the necessary models and set up a virtual environment<br>Using Rope-Pearl for Video Face Swapping</p>

<p style="margin-left:0px;">Open Rope-Pearl by double-clicking the windows_start.bat file<br>Select the videos folder containing your input video<br>Select the faces folder containing the face images you want to use for swapping<br>Click “Start Rope” to refresh the interface with the latest files<br>Select the output folder where the processed video will be saved<br>Select the video you want to modify<br>Click “Find Faces” to detect faces in the video<br>Select the face you want to replace and the face you want to replace it with<br>Adjust the Swapper Resolution to enhance the quality (up to 512 pixels)<br>Enable the restorer and choose GPEN512 for best results<br>Fine-tune the blend ratio to make the face swap look more natural<br>Enable strength and adjust size border distance to fix errors<br>Use the Occluder and Face Parser to improve mouth movements and fix other issues<br>Set the number of threads based on your GPU’s capabilities<br>Choose the output video quality<br>Click the record icon and then play to start processing the video with the face swap</p>

<p style="margin-left:0px;">Using Rope-Pearl for Image Face Swapping</p>

<p style="margin-left:0px;">Switch to the image tab in Rope-Pearl<br>Select your source image and click “Find Faces”<br>Select the face you want to replace and the target face<br>Enable “Swap Faces” and adjust settings as needed (Swapper Resolution, Restorer, etc.)<br>Use the “Auto Swap” feature to automatically apply the selected face to new images<br>Click “Save Image” to save the face-swapped image to the output folder</p>

<p style="margin-left:0px;">Additional Tips and Information</p>

<p style="margin-left:0px;">Try different face detection models (Retina Face, Yolo v8, SCRDF)</p>

<h1 style="margin-left:0px;"><strong>Cloud — Massed Compute Roop Pearl Tutorial Video Chapters</strong></h1>

<p style="margin-left:0px;"><a target="_blank" href="https://youtu.be/HLWLSszHwEc"><u>https://youtu.be/HLWLSszHwEc</u></a></p>

<p style="margin-left:0px;">#Rope is the newest 1-Click, most easy to use, most advanced open source Deep Fake application. It has been just published several days ago. In this tutorial I will show you how to use Rope Pearl DeepFake application on a cloud machine with 20 threads ultra fast speeds for very cheap prices. Rope is way better than Roop, #Roop Unleashed and #FaceFusion. It supports multi-face Face Swapping and making amazing DeepFake videos so easily with 1-Click. Select video, select faces and generate your DeepFake 4K ultra-HD video.</p>

<p style="margin-left:0px;">So by watching this video, even if you don’t have a strong computer, you will be able to use Rope application on Massed Compute cloud machine as exactly as on your computer with staggering 20 threads ultra fast speed for very cheap prices with our special coupon code. This app will run on a remote machine so your machine will be 100% unaffected and safe. But It will be also as easy as using it on your local machine.</p>

<p style="margin-left:0px;">1-Click Rope Installers Scripts ⤵️<br><a target="_blank" href="https://www.patreon.com/posts/most-advanced-1-105123768"><u>https://www.patreon.com/posts/most-advanced-1-105123768</u></a></p>

<p style="margin-left:0px;">Massed Compute Register and Login ⤵️<br><a target="_blank" href="https://vm.massedcompute.com/signup?linkId=lp_034338&sourceId=secourses&tenantId=massed-compute"><u>https://vm.massedcompute.com/signup?linkId=lp_034338&sourceId=secourses&tenantId=massed-compute</u></a></p>

<p style="margin-left:0px;">Rope Pearl Windows and Main How-To-Use Tutorial — NEW ⤵️<br><a target="_blank" href="https://youtu.be/RdWKOUlenaY"><u>https://youtu.be/RdWKOUlenaY</u></a></p>

<p style="margin-left:0px;">Official Rope GitHub Repository ⤵️<br><a target="_blank" href="https://github.com/Hillobar/Rope"><u>https://github.com/Hillobar/Rope</u></a></p>

<p style="margin-left:0px;">Rope’s Author Donation Link — Support Him For Better APP ⤵️<br><a target="_blank" href="https://www.paypal.com/donate/?hosted_button_id=Y5SB9LSXFGRF2"><u>https://www.paypal.com/donate/?hosted_button_id=Y5SB9LSXFGRF2</u></a></p>

<p style="margin-left:0px;">ThinLinc Client Download ⤵️<br><a target="_blank" href="https://www.cendio.com/thinlinc/download/"><u>https://www.cendio.com/thinlinc/download/</u></a></p>

<p style="margin-left:0px;">SECourses Discord Channel to Get Full Support ⤵️<br><a target="_blank" href="https://discord.com/servers/software-engineering-courses-secourses-772774097734074388"><u>https://discord.com/servers/software-engineering-courses-secourses-772774097734074388</u></a></p>

<p style="margin-left:0px;">Why did I choose Massed Compute? Because it is was more stable and cheaper and easier to use than RunPod, Google Colab, Kaggle, Vast AI, Amazon AWS, Azure and all other cloud platforms. After watching this tutorial, you will understand what I mean.</p>

<p style="margin-left:0px;">0:00 Example Deepfake video from movie Inglourious Basterds 2009<br>0:21 Introduction to Rope application tutorial on cloud machine Massed Compute<br>0:59 How to download Rope installers for Massed Compute and any Linux Ubuntu machine<br>1:42 How to select accurate machine on Massed Compute and apply our coupon SECourses for huge price reduction<br>3:19 How to install and setup ThinLinc client to connect and use Massed Compute machine<br>3:59 How to setup a synchronization folder with ThinLinc client to transfer files between Massed Compute remote cloud machine and your local machine<br>4:51 How to connect initialized Massed Compute cloud machine and start installing Rope Pearl application there<br>5:11 What does End existing session option do<br>5:48 How to see GPU features on remote Massed Compute cloud machine via nvitop<br>5:58 How to start installation script<br>7:45 How to start the Rope application after installation has been completed<br>8:35 How to use Rope Pearl DeepFake and FaceSwap application on Massed Compute<br>11:45 How to select multiple target faces to improve likeliness<br>12:03 Hitting enter after setting a value is mandatory for setting change to be applied<br>12:56 How to save / export DeepFake / FaceSwapping applied new video<br>13:20 Why set 20 threads to get maximum speed<br>13:55 The Rope application real time processing speed when using 20 threads<br>14:50 If you are using Linux on your local computer what you need to install Rope Pearl<br>15:38 Important mistake I made and how you should avoid it<br>16:19 How to DeepFake / FaceSwap only an image not a video<br>16:39 How to see and download rendered / exported / saved new DeepFake / FaceSwapped video<br>17:22 Consider supporting Rope developer via Paypal<br>18:03 Super important thing before terminating your Massed Compute running instance<br>19:10 How to join our Discord channel</p>

<p style="margin-left:0px;">Dive into the fascinating world of deepfake technology with this comprehensive guide! In this video, we explore the ins and outs of AI-generated deepfakes, from their creation to their potential impacts on society. Whether you’re curious about deepfake tutorials, the latest deepfake software, or the ethical implications of this technology, we’ve got you covered.</p>

<p style="margin-left:0px;">What You’ll Learn:<br>What is a deepfake? Understand the basics of deepfake technology and how it works.<br>How to make a deepfake: Step-by-step guide using popular deepfake apps and tools.<br>Detection and protection: Learn about the latest deepfake detection methods and how to protect yourself.<br>Real-world examples: See deepfake videos featuring celebrities and public figures.<br>Ethical and legal considerations: Discuss the dangers of deepfakes, their misuse, and the ongoing discussions around regulation.<br>Why Watch?<br>Deepfakes are transforming the way we see media, but they come with significant risks. This video will help you understand both the innovative aspects and the potential threats posed by deepfakes, providing you with the knowledge to navigate this evolving landscape safely.</p> | furkangozukara |

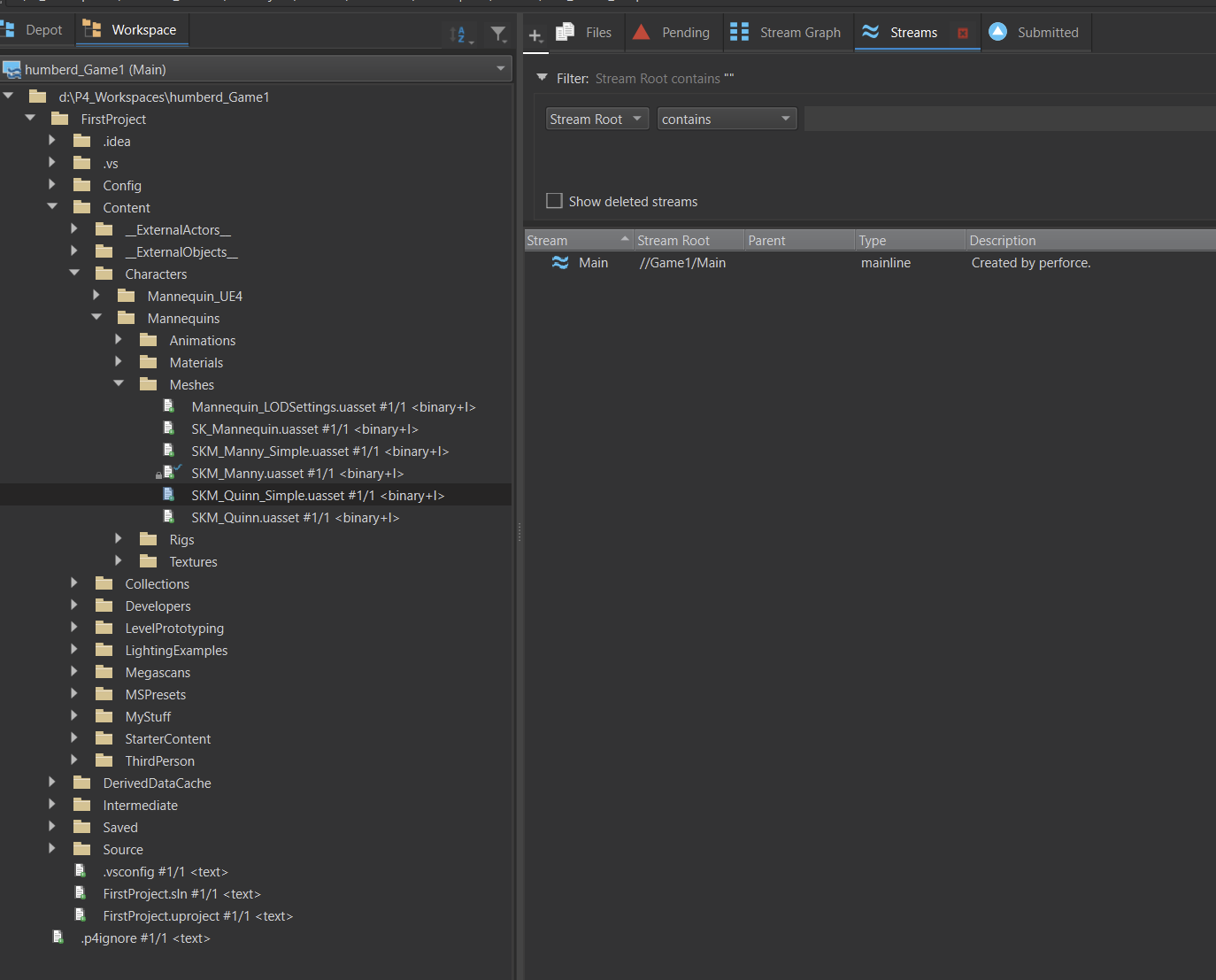

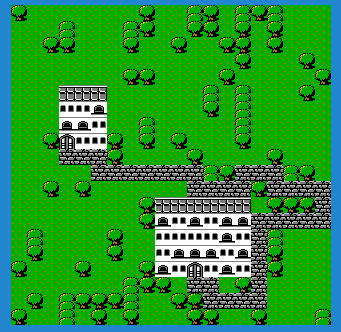

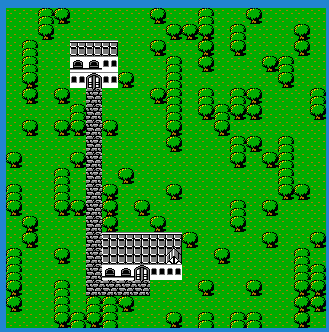

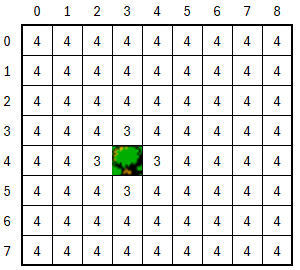

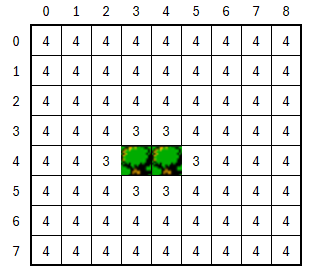

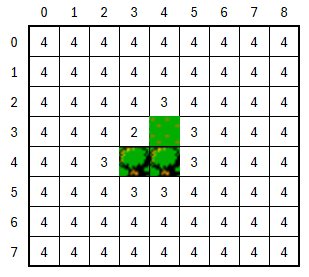

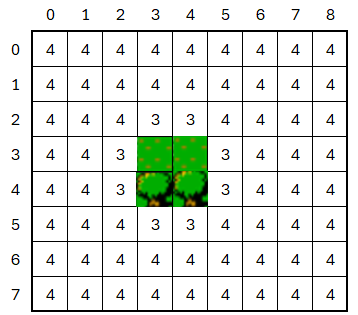

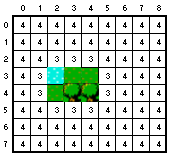

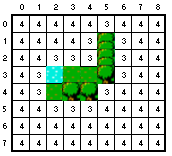

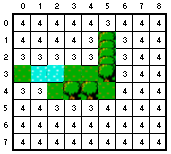

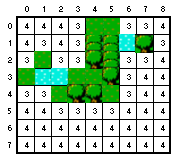

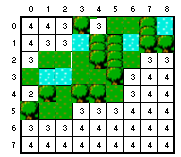

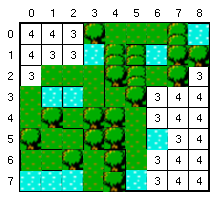

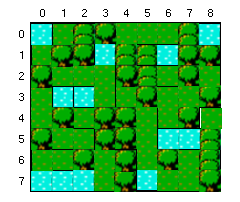

1,873,924 | [Game of Purpose] Day 15 - End of tutorial | Today I finished a tutorial This is my final result: This is how my castle looks: After this... | 27,434 | 2024-06-02T23:04:12 | https://dev.to/humberd/game-of-purpose-day-15-end-of-tutorial-30oc | gamedev | Today I finished a [tutorial](https://www.youtube.com/watch?v=k-zMkzmduqI)

This is my final result:

This is how my castle looks:

After this tutorial, especially the last part I felt that I don't enjoy creating objects. Creating terrain, painting was somewhat ok, but building a castle was so boring. I wanted it to end as fast as possible, so I copy pasted walls. That's why it looks so fake.

My next goal is to setup version control system (VCS) with either Perforce, which I need some time to spend on, or git.

-----

I had some more time today and I followed [this tutorial](https://www.youtube.com/watch?v=Hvmvv2MG-UE) of setting up Perforce server. I created a 16$/month droplet on Digital Ocean. I hope I could do that on my NAS, so that I don't have to pay that much.

| humberd |

1,874,734 | Key Tips for Database Design | Normalization: Break down your data into additional tables to eliminate redundancy and ensure data... | 0 | 2024-06-02T22:58:26 | https://dev.to/mauritzkurt/key-tips-for-database-design-1f8 | beginners, database | 1. Normalization: Break down your data into additional tables to eliminate redundancy and ensure data integrity.

2. Primary Keys: Assign a unique identifier to each table. This key ensures that each record within the table can be uniquely identified.

3. Foreign Keys: Used to establish relationships between tables. This method maintains referential integrity by linking related data across different tables.

4. Data Types: Choose appropriate data types for each column to ensure data accuracy and optimize storage. | mauritzkurt |

1,873,947 | Demystifying the Cloud: A Beginner's Guide to Cloud Computing Concepts | Venturing into the world of cloud computing may feel like a dive into the Matrix, where the... | 0 | 2024-06-02T22:49:47 | https://dev.to/jimiog/demystifying-the-cloud-a-beginners-guide-to-cloud-computing-concepts-9fk | azure, aws, cloud | Venturing into the world of cloud computing may feel like a dive into the Matrix, where the terminologies discussed are only understandable by superhuman machines. But fear not, this guide will decode the essential concepts of cloud computing and prepare you for your cloud developer journey.

Imagine your computer's hard drive as a personal storage locker. It holds all your files, documents, and applications. Now, picture a massive warehouse filled with countless lockers belonging to different people. This warehouse, in essence, represents the cloud. Cloud computing is like renting space in this warehouse to store your data and run your applications, accessible from anywhere with an internet connection. Each warehouse belongs to a service provider like Amazon's AWS platform or Microsoft's Azure platform.

Here are some key cloud computing concepts you'll encounter:

1. **Virtualization:**

Think of it as creating multiple virtual computers on a single physical machine. It's like dividing your storage locker into compartments, allowing you to organize and access different things independently.

2. **Scalability:**

Imagine needing more space in your locker. Cloud computing lets you easily scale resources (storage, processing power) up or down as your needs evolve. It's like having a flexible storage solution that expands or shrinks based on your requirements.

3. **Agility:**

Cloud computing provides on-demand resources, enabling you to quickly set up new environments or applications. This agility is akin to having readily available storage units in the warehouse, allowing you to move things around or add new items swiftly.

4. **High Availability:**

This ensures your applications and data are accessible almost all the time. It's like having a backup storage locker in case your primary one experiences an issue. Even if one locker is unavailable, you can still access your belongings from the other.

5. **Fault Tolerance:**

Cloud systems are designed to handle failures. If one virtual machine malfunctions, another seamlessly takes over, minimizing downtime. This is similar to having a team managing the warehouse; if one storage unit malfunctions, they can quickly switch to another.

6. **Global Reach:**

With the cloud, your data and applications are accessible from anywhere in the world with an internet connection. It's like having a network of storage warehouses across the globe, allowing you to access your belongings from any location.

7. **Elasticity vs. Scalability:**

- **Scalability** refers to the ability to adjust resources (storage, processing power) to meet changing demands. It's about having the right amount of space in your storage locker.

- **Elasticity** focuses on how quickly you can make those adjustments. It's about how fast you can add or remove items from your locker based on your needs.

Cloud computing offers a multitude of benefits, making it a popular choice for businesses and individuals alike. By understanding these core concepts, you'll be well-equipped to navigate the exciting world of cloud development!

| jimiog |

1,873,954 | Playing with the embryonic connections in Java with the Foreign Function | Today we will use the Foreign Function (FFI) in Java 22 to call a native code aims to initiate... | 0 | 2024-06-02T22:41:02 | https://dev.to/ulrich/playing-with-the-embryonic-connections-in-java-with-the-foreign-function-24k7 | java, native, foreignfunction | > Today we will use the Foreign Function (FFI) in Java 22 to call a native code aims to initiate embryonic connections against any servers.

💡An example is ready to run on my github.com/ulrich space available in the repository : https://github.com/ulrich/java-rawsocket

## What is embryonic connections ? 🎓

We use embryonic connections capabilities when we want to initiate an aborted TCP three-way handshake for example. If you remember your Network courses at the Faculty (coucou Paris 8 ❤️) there are a couple of years (for me), you remember the following sequences while the TCP-IP tries to open a network connection :

```

1️⃣ SYN (seq 1000) -> |

2️⃣ | <- SYN / ACK (seq 2000, ACK 1001)

3️⃣ ACK (seq 1001, ACK 2001) -> |

```

When the process is achieved the hosts can talk together. If we don't need to establish a well formed connection between hosts, we can abort the sequence by sending a reset (RST) instruction at the end of the TCP three-way handshake, like this :

```

1️⃣ SYN (seq 1000) -> |

2️⃣ | <- SYN / ACK (seq 2000, ACK 1001)

3️⃣ RST -> X

```

There are several reasons to create embryonic connections and from my side I had to implement this feature for a client there is some years...

Anyway, if you want to explore Embryonic Connection go ahead in the dedicated Wikipedia page : https://en.wikipedia.org/wiki/TCP_half-open

## The Foreign Function in Java ? 🎓

The best way to resume the FFI in Java is to mention the official definition from Oracle company : `The Foreign Function and Memory (FFM) API enables Java programs to interoperate with code and data outside the Java runtime.`

In other terms, we want "to bend the game" and forgot the ancestor ways (JNI, JNA, JNR...) to use native code outside the Java Virtal Machine.

The promise behind FFI is to ease the use cases when a Java needs to (down)call and (up)call a native program or library.

This article is not an introduction about FFI and the best to learn about it is to follow the Oracle documentation here : https://docs.oracle.com/en/java/javase/22/core/foreign-function-and-memory-api.html

## FFI for embryonic connection ? 🚀

I think you've guessed why we need native program to establish an embryonic connection. Indeed, in Java we unfortunately don't have the ability to create low-level network packets. So the use of the **netinet/tcp.h** library is reserved for lower-level languages such as C (our example) and so FFI comes to the rescue !

Please deep dive in the SocketTester.java code with me 🔍

```

public int run() throws Throwable {

log.info("Running SocketTester for destination address {}:{}", destinationAddress, destinationPort);

1️⃣ try (Arena confinedArena = Arena.ofConfined()) {

SymbolLookup symbolLookup =

SymbolLookup.libraryLookup(Config.getNativeLibraryFile(), confinedArena);

2️⃣ MemorySegment function =

symbolLookup.find(Config.getNativeFunctionName())

.orElseThrow(() -> new IllegalStateException("Unable to find the native function: " + Config.getNativeFunctionName()));

3️⃣ MethodHandle methodHandle = Linker.nativeLinker()

.downcallHandle(

function,

Config.getNativeFunctionDescriptor());

4️⃣ return (int) methodHandle.invoke(

confinedArena.allocateFrom(sourceAddress),

confinedArena.allocateFrom(destinationAddress),

confinedArena.allocateFrom(ValueLayout.OfInt.JAVA_INT, destinationPort),

confinedArena.allocateFrom(ValueLayout.OfInt.JAVA_INT, readTimeout),

confinedArena.allocateFrom(ValueLayout.OfInt.JAVA_INT, writeTimeout));

}

}

```

At the point 1️⃣ we declare that we want to use the restricted Arena only available for the thread which creates the Arena. We can compare Arena with a closed box responsible to control the lifecycle of native memory segments. For the moment FFI allows the following scopes : Global, Automatic, Confined and Shared. Please take a look into Javadoc : https://docs.oracle.com/en/java/javase/22/docs/api/java.base/java/lang/foreign/Arena.html

At the point 2️⃣ we have to load the native library under the .so form.

At the point 3️⃣ we initialize a downcall handler used to communicate from Java to Native code. FFI allows to use upcall handler stub which enable you to pass Java code as a function pointer to a foreign function.

At the point 4️⃣ we invoke the native code by passing the expected parameters previously created by the different **allocateFrom** methods.

To test the embryonic connection with Foreign Function code, you can use the mentioned project upper.

If all the process is successful, we will obtain the following trace in your terminal :

```

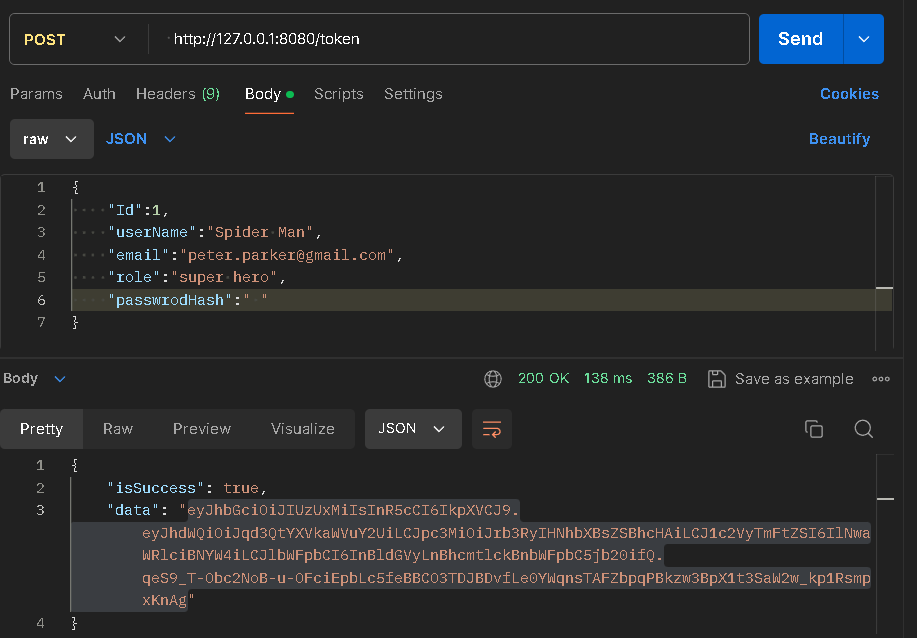

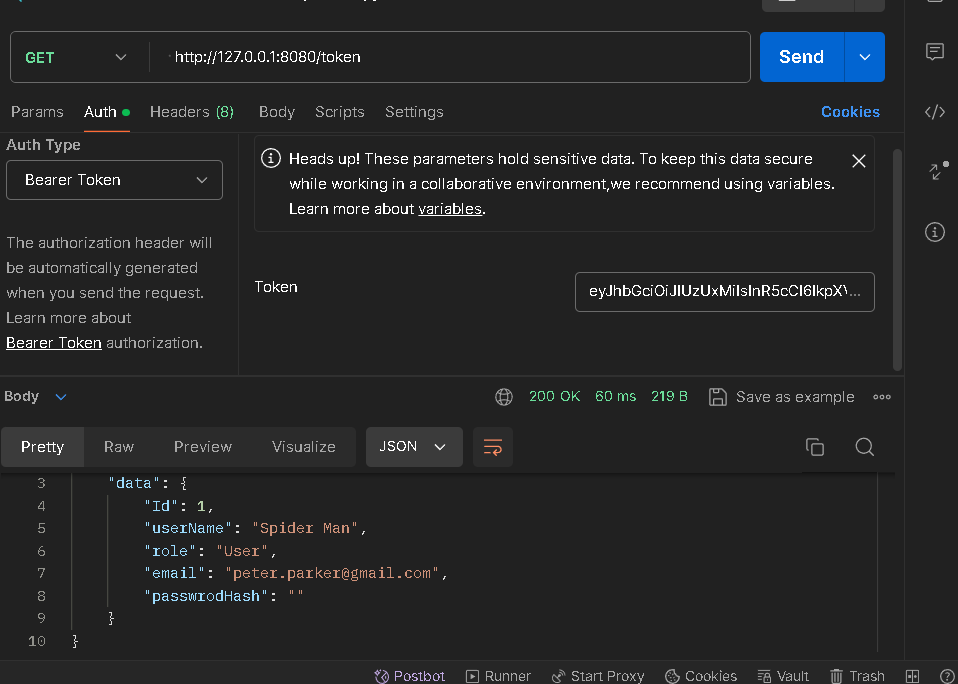

[INFO (java)] Running SocketTester for destination address 127.0.0.1:8080

[INFO (native)] Selected source port number: 35940

[INFO (native)] TCP header sequence number: 272214228

[INFO (native)] Successfully sent 60 bytes SYN!

[INFO (native)] Received bytes: 40

[INFO (native)] Destination port: 35940

[INFO (native)] Successfully received 40 bytes

[INFO (native)] Received syn: 0, ack: 1, rst: 1

[INFO (native)] TCP header sequence number response: 0

[INFO (native)] TCP header ack sequence number response: 272214229

[INFO (native)] tcph->syn: 0

[INFO (native)] tcph->ack: 16777216

[INFO (native)] SYN ACK received -> Success

```

Have a good day.

Image par <a href="https://pixabay.com/fr/users/jackmac34-483877/?utm_source=link-attribution&utm_medium=referral&utm_campaign=image&utm_content=1882817">jacqueline macou</a> de <a href="https://pixabay.com/fr//?utm_source=link-attribution&utm_medium=referral&utm_campaign=image&utm_content=1882817">Pixabay</a>

| ulrich |

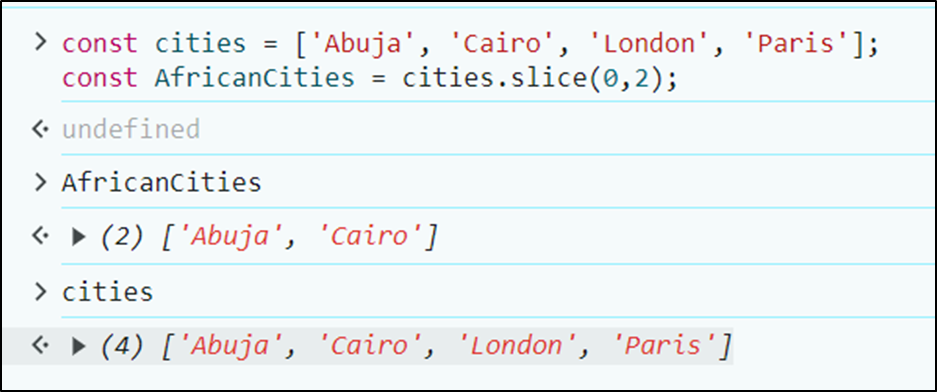

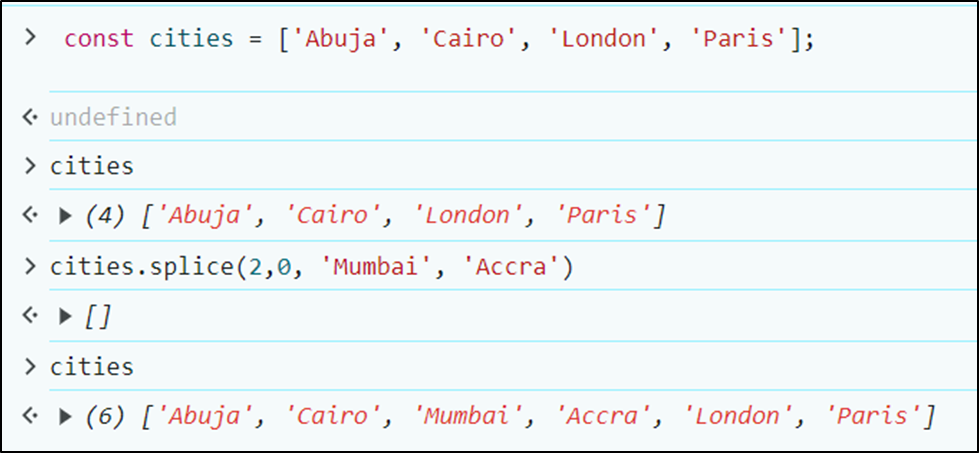

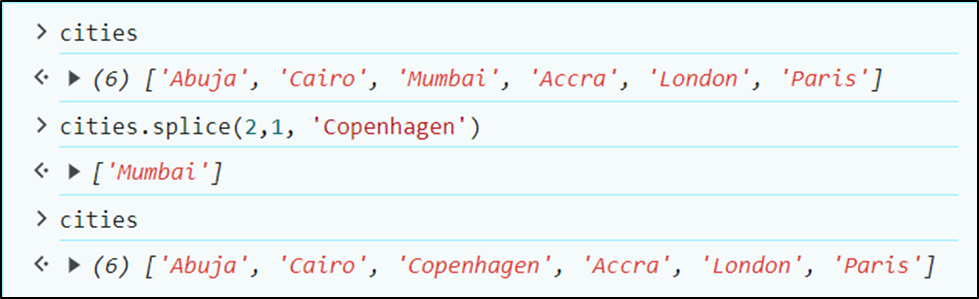

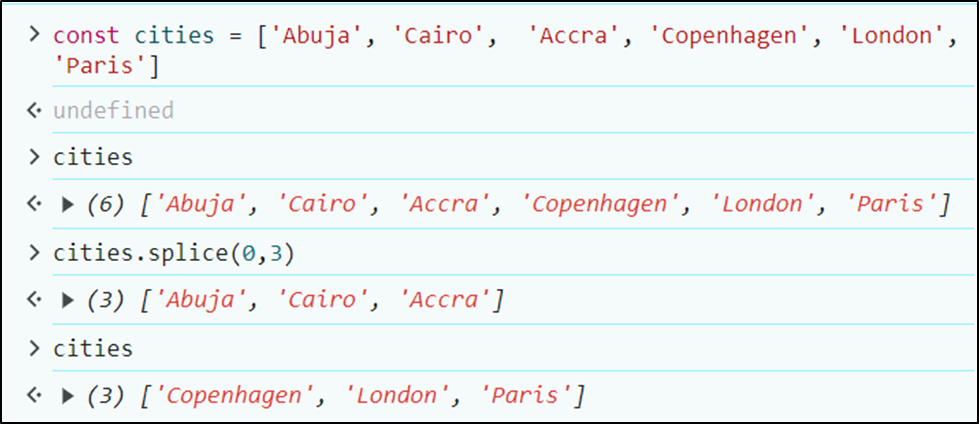

1,874,716 | Entendiendo la Mutabilidad y la Re-asignación | Buenas, hoy quisiera hablar sobre un tema que puede ser confuso al iniciar en programación,... | 0 | 2024-06-02T22:18:04 | https://dev.to/javascriptchile/entendiendo-la-mutabilidad-y-la-re-asignacion-ihf | javascript, mutability, beginners, programming | Buenas, hoy quisiera hablar sobre un tema que puede ser confuso al iniciar en programación, especialmente en lenguajes como Javascript donde la diferencia es tan clara: La Mutabilidad y la Re-asignación.

Al igual que en otros lenguajes, Javascript permite la definición de variables en las cuales almacenar datos. Existen 3 palabras reservadas que nos sirven para definir variables: `var`, `let` y `const`, pero para este artículo, nos concentraremos en estas últimas 2, y ahondaremos en su relación con la mutabilidad y la reasignación.

- `let`: Permite definir una variable cuyo valor puede re-asignarse en cualquier momento.

- `const`: Permite definir una variable cuyo valor no puede re-asignarse, esa decir, una constante.

Estas definiciones, si bien son concisas, podrían no bastar para dar cuenta del impacto que tiene el uso de uno versus el otro en Javascript, pues en mi opinión, es necesario hacer énfasis primeramente en los conceptos de **re-asignación** y **mutabilidad** que conlleva el uso de una u otra palabra reservada.

Para ello, nos remontaremos a cómo funciona la memoria en un computador: a grandes rasgos, e ignorando cierta cantidad de aspectos fundamentales sobre arquitectura de computadores, es la memoria RAM la que almacena los datos con los que vamos a trabajar. Estos datos tienen **direcciones** a través de las cuales accedemos a ellos.

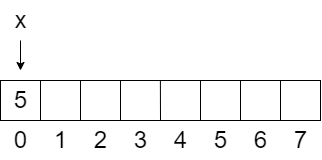

Veamos un ejemplo: supongamos que tenemos una memoria RAM de 8 bytes, por lo que si necesitáramos almacenar 1 byte, podríamos hacerlo en cualquiera de los 8 espacios distintos disponibles. Vamos a decir que estos espacios son **direcciones de memoria**, y los nombraremos del 0 al 7.

Cuando declaramos la primera variable en nuestro código, digamos `x = 5`, el computador va a buscar un espacio de memoria disponible en nuestra RAM, digamos que en este caso es el espacio 0, y va a guardar allí el valor 5. Esto significa que la variable `x` va a **apuntar** a la dirección de memoria 0, porque en nuestra memoria RAM hemos guardado el número 5, pero nuestra variable `x` no contiene el número 5, sino que contiene la **dirección de memoria** donde se encuentra el número 5.

> Memoria RAM de 8 bytes, con `x` apuntando a la dirección 0 y guardando el número 5.

Ahora bien, tenemos entonces variables que representan direcciones de memoria, y direcciones de memoria en donde se guardan datos. Estos datos pueden ser números, caracteres e incluso **punteros**. Para entender esto, primero vamos a definir el concepto de puntero:

- **Puntero:** es un dato que contiene una **dirección de memoria** de otro dato, por eso se dice que **apunta** hacia otra dirección de memoria.

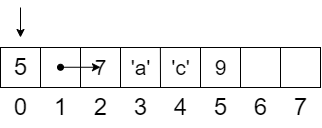

Siguiendo con nuestro ejemplo, podemos guardar en la dirección 1 de la memoria un **puntero** hacia la dirección 2. Por lo tanto, si quisiéramos acceder al dato que se encuentra en la dirección 2, tendríamos que acceder primero a la dirección 1, que nos llevará a la dirección 2.

Supongamos que en la dirección 2 se almacena una **Lista** de 4 elementos `(7, 'a', 'c' y 9)`. Como ya no es un solo dato, vamos a utilizar más espacios de memoria: 4 bytes en total, desde el espacio 2 (el inicio) hasta el espacio 5 (el final). Entonces, resumiendo, en la dirección 0 de nuestra RAM tenemos el número 5, en la dirección 1 tendremos un puntero a la dirección 2, y en la dirección 2 una lista de 4 elementos:

> Memoria RAM de 8 bytes, con `x` apuntando a la dirección 0, un puntero en la dirección 1 apuntando a la dirección 2, y 4 elementos correspondientes a la lista que parte en la dirección 2 y termina en la dirección 5.

Teniendo este escenario en mente, vamos a definir los conceptos de **re-asignación** y **mutabilidad**. La **reasignación** es cuando queremos cambiar el valor **almacenado en la dirección de memoria** que representa nuestra variable. Es decir, la operación `x = 10` accedería a la dirección 0 y cambiaría el valor que allí se almacena de 5 a 10, lo que estaría **re-asignando** a la variable `x`. O bien, podríamos **re-asignar** la dirección de memoria 1 para que deje de almacenar la dirección de memoria 2, y de ahora en adelante guarde un número cualquiera en vez de un puntero.

Por otro lado, diremos que la **mutabilidad** es la capacidad que tiene una variable de modificar su contenido **sin cambiar el valor** almacenado en la dirección de memoria que representa. Siguiendo la idea anterior, si tenemos una nueva variable `y` que representa la dirección de memoria 1 (que recordemos, almacena un puntero a la dirección de memoria 2, donde hay una lista), y queremos **modificar un elemento** de esa lista, podemos hacerlo sin cambiar el valor que se encuentra en la dirección de memoria 1. Esto significa que estaríamos **mutando** la variable `y`, ya que vamos a modificar un valor en otra dirección de memoria. Sin embargo, si ahora queremos que la variable `y` tenga el valor 3, la estaría **re-asignando** y no **mutando**.

En resumen:

- **Re-asignación**: capacidad de una variable de cambiar el valor almacenado en la dirección de memoria a la que apunta.

- **Mutabilidad**: capacidad de una variable de modificar su contenido sin cambiar el valor en la dirección de memoria a la que apunta.

Habiendo entendido esto, podemos aterrizar estos conceptos al ecosistema de Javascript, observemos el siguiente ejemplo

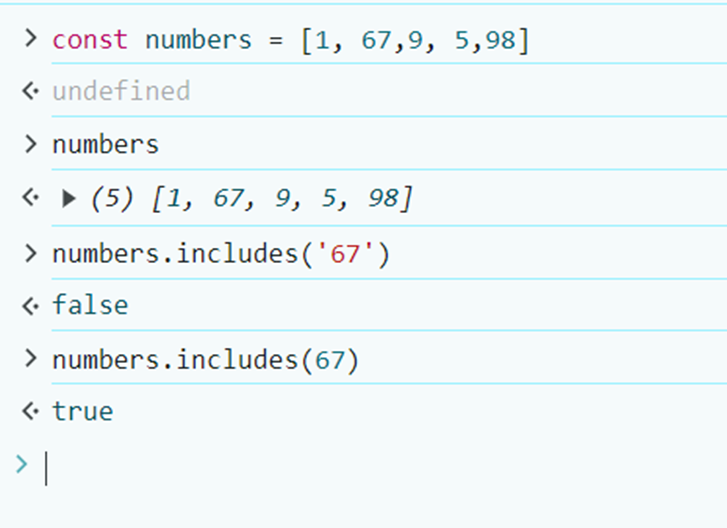

```Javascript

const x = 10;

x = 5; // Uncaught TypeError: Assignment to constant variable.

```

Las variables definidas con `const` no se pueden **re-asignar**, por lo que una vez que le asignamos el valor 10, ya no hay nada más que podamos hacer para modificar ese valor. Lo mismo ocurre con objetos:

```Javascript

const x = { nombre: 'Javascript Chile' };

x.url = 'https://jschile.org/'; // Ningún problema.

x = { url: 'localhost' } ; // Uncaught TypeError:

// Assignment to constant variable.

```

En este caso, definimos un objeto el cual posteriormente **mutamos**, añadiéndole la propiedad `url`, sin embargo, cuando quisimos asignar un objeto diferente, nos encontramos un error. Esto es porque cuando trabajamos con objetos, lo que se almacena en la dirección de memoria que representa la variable es un **puntero**, el cual apunta a la dirección de memoria donde se almacena el objeto, y por lo tanto, intentar almacenar otro objeto implicaría cambiar el valor almacenado en la dirección de memoria de `x`, cambiando hacia donde apunta el puntero, y por lo tanto intentando **re-asignar** la variable.

Para finalizar, queda el siguiente ejemplo con `let`:

```Javascript

let x = { nombre: 'Javascript Chile' };

x.url = 'https://jschile.org/'; // Ningún problema.

x = { url: 'localhost' } ; // Ningún problema.

```

Donde ambas operaciones funcionan porque `let` permite tanto la **re-asignación** como la **mutabilidad**. Dicho esto, vale la pena estudiar más sobre como la **mutabilidad** impacta en el desarrollo de nuestros productos, y en la forma en la que programamos. A modo de detalle, en este artículo utilizo el concepto **re-asignación**, porque las memorias RAM siempre tienen un dato asignado, siempre tendrán almacenado un 1 o 0 en sus celdas, por lo que sería erróneo en mi opinión hablar de **asignación** bajo el nivel de detalle en que se desenvuelve este artículo, más no significa que esté erróneo per sé hablar de **asignar** una variable.

Espero que se haya entendido bien el concepto con este artículo, y quedo atento a cualquier comentario que puedan tener, por último, quisiera mencionar que este artículo fue extraído de un **Apunte de Introducción a Javascript**, el cual redacté para mis estudiantes de Desarrollo Web, puedes descargarlo haciendo clic en la imagen:

[](https://steadycraft.notion.site/Introducci-n-a-Javascript-48a4b004556641dc8ffede99bbe071e9?pvs=25)

Nos vemos! | vadokdev |

1,874,713 | Babylon.js Browser MMO - DevLog - Update #3 - Displaying other players | Hello, Here is a next brief update from me. Bad weather means more progress :) Players can now see... | 0 | 2024-06-02T22:06:49 | https://dev.to/maiu/babylonjs-browser-mmo-devlog-update-3-displaying-other-players-ifn | babylonjs, gamedev, indie, mmo | Hello,

Here is a next brief update from me.

Bad weather means more progress :)

Players can now see other players, including their position and rotation changes. Additionally, running animations are now being played.

In the chat, which is currently read-only, a message is sent to all online players whenever someone joins the game.

What's next?

I will work on hiding entities when someone leaves the game. I will also introduce a spatial hash algorithm to calculate nearby entities, which will be used for ensuring that only information about those entities which are nearby is sent to the players.

Best regards

{% youtube 4H1sLoYlx6k %}

| maiu |

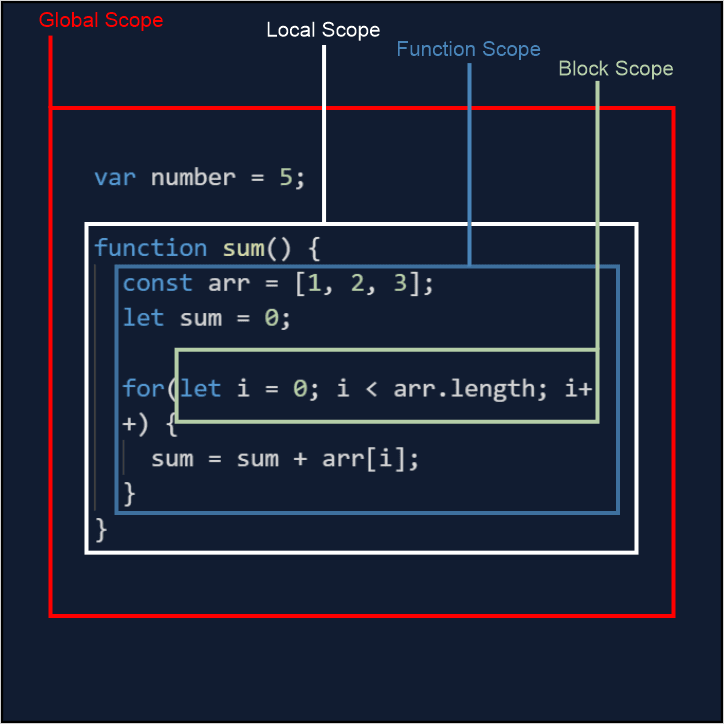

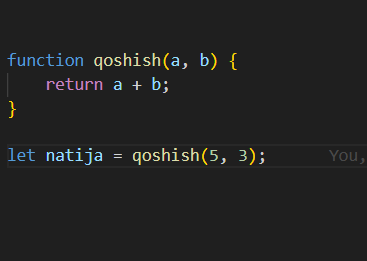

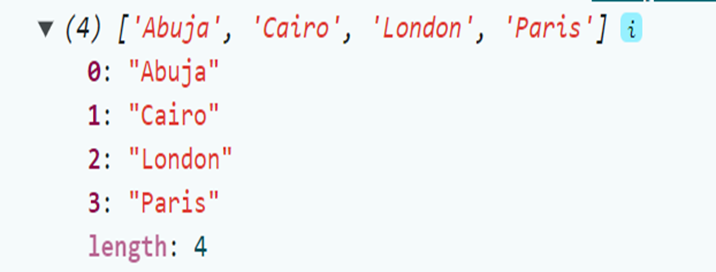

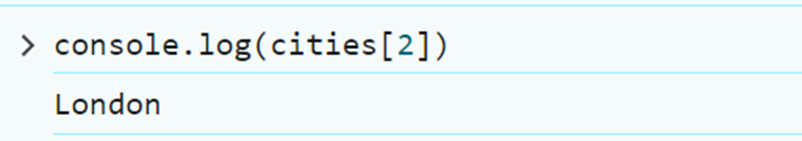

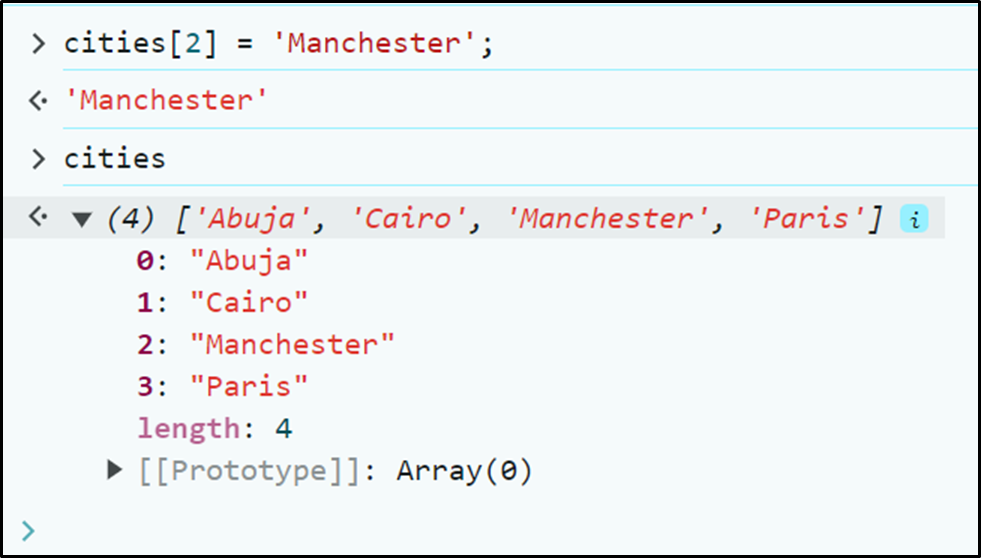

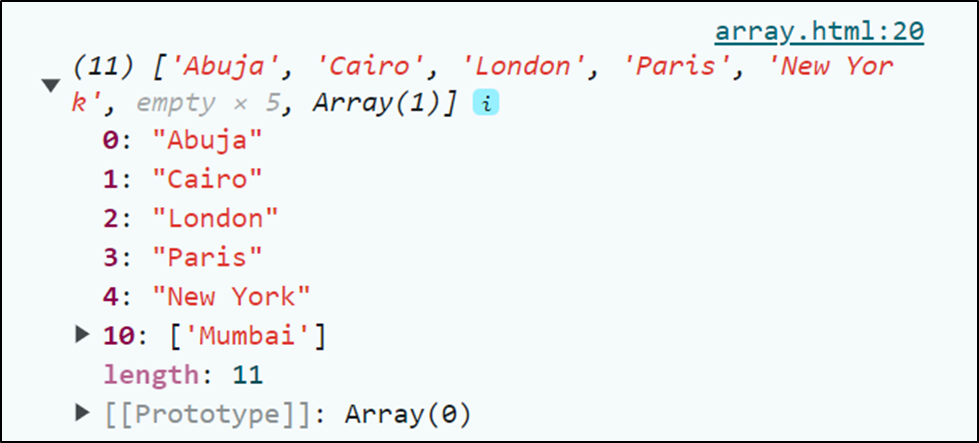

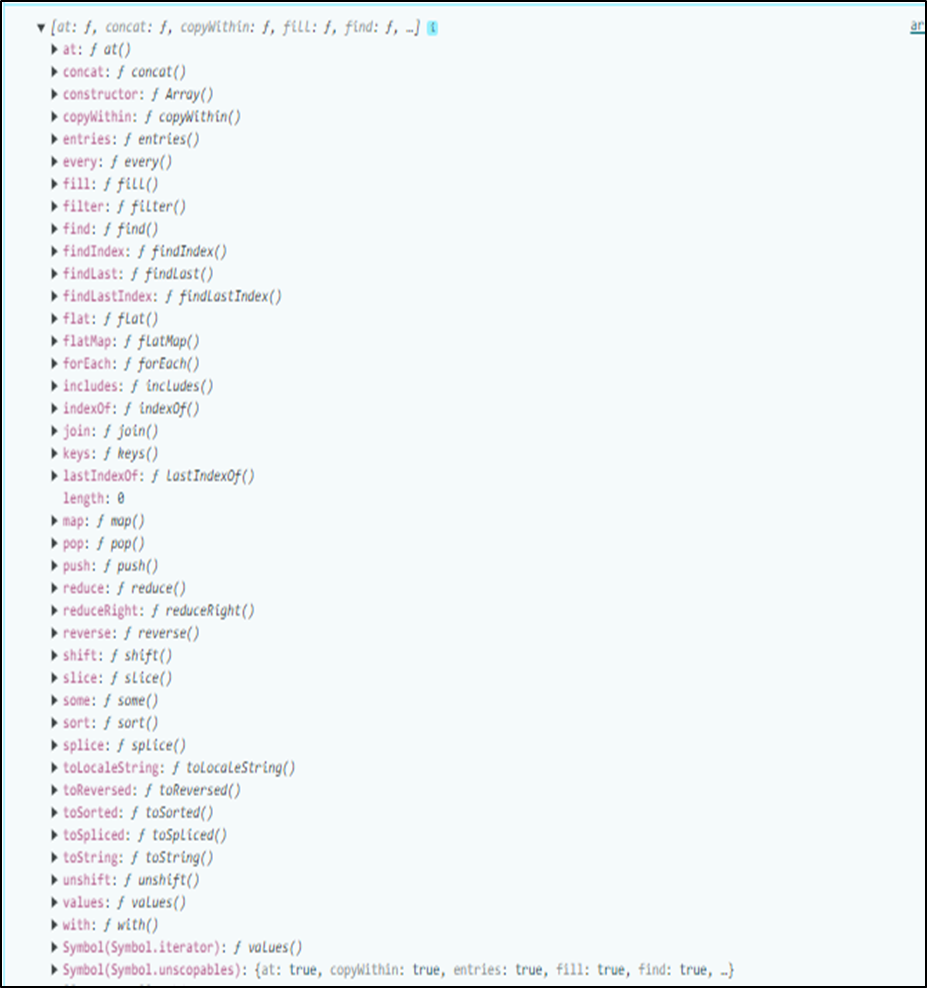

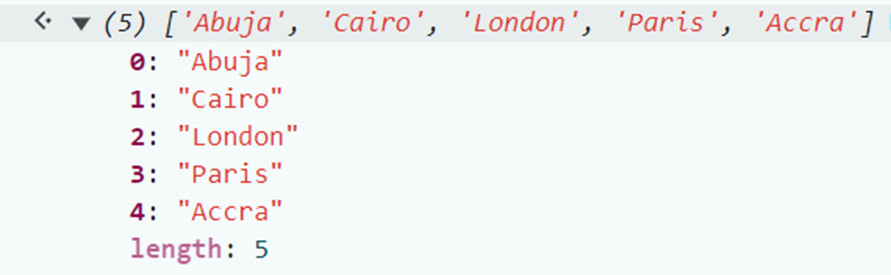

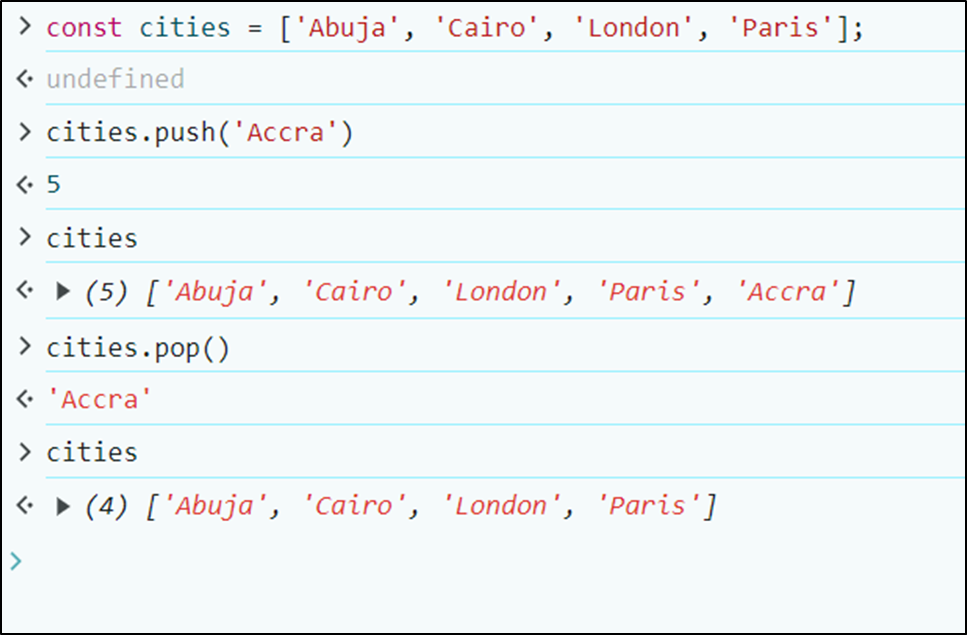

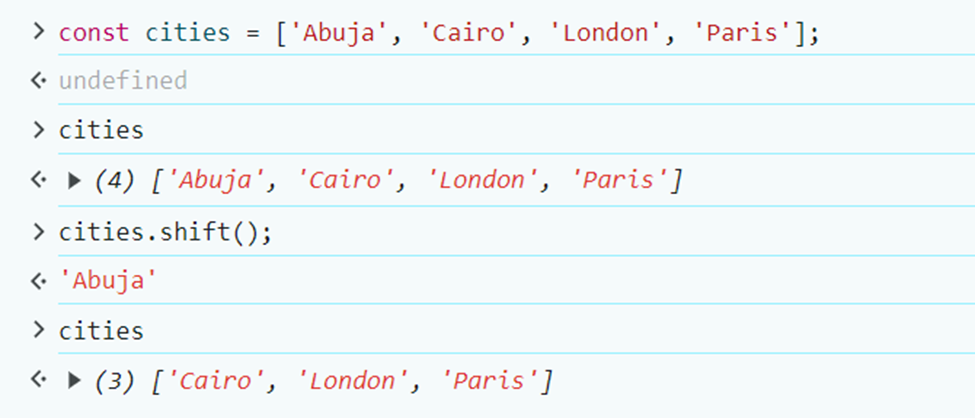

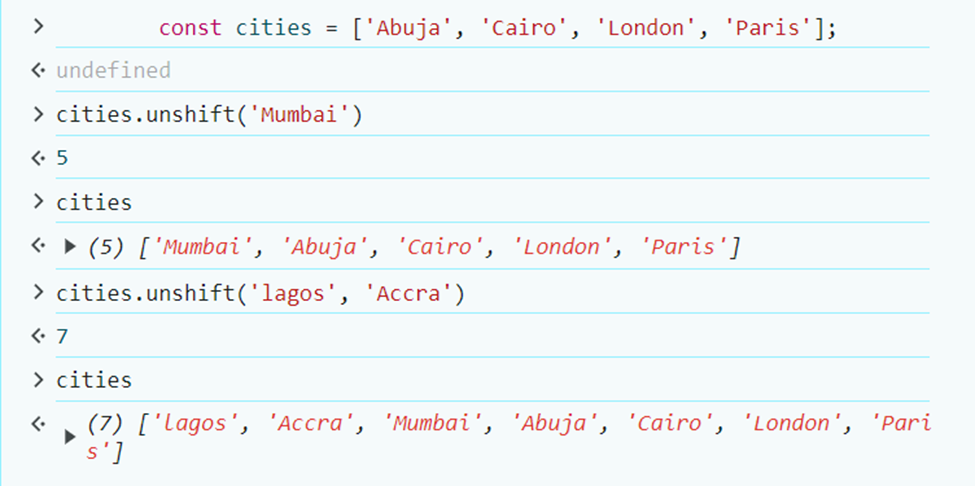

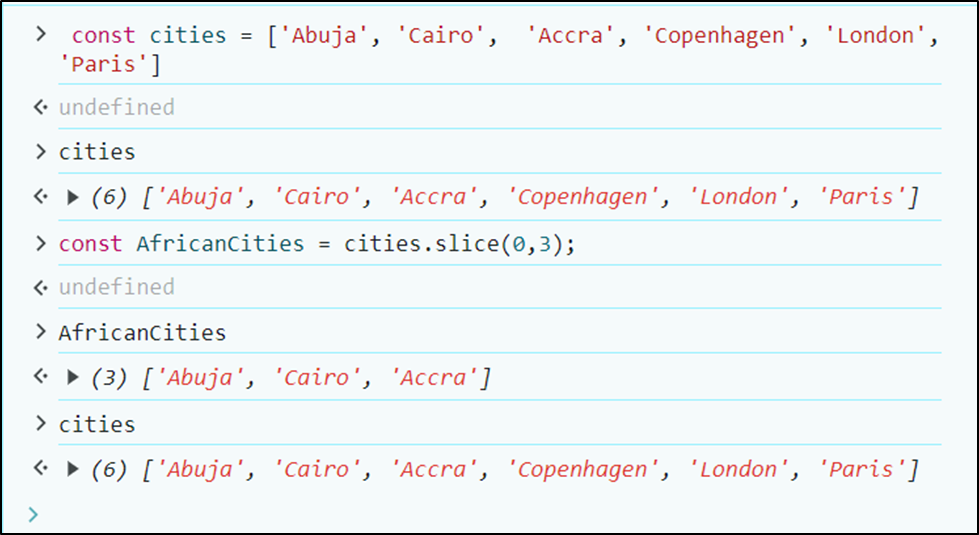

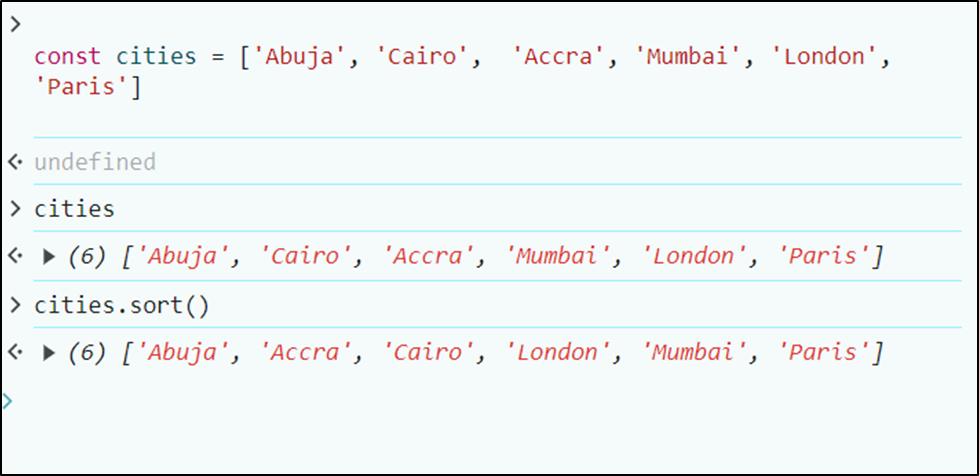

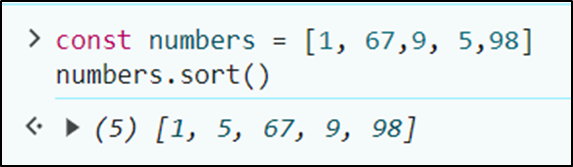

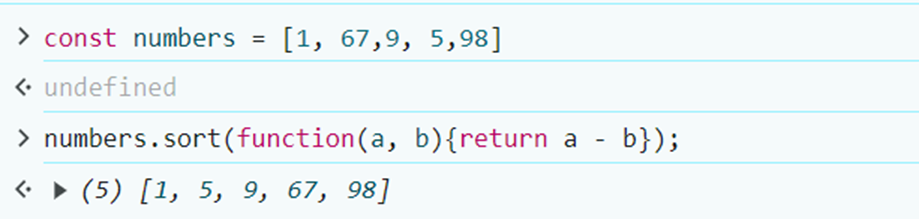

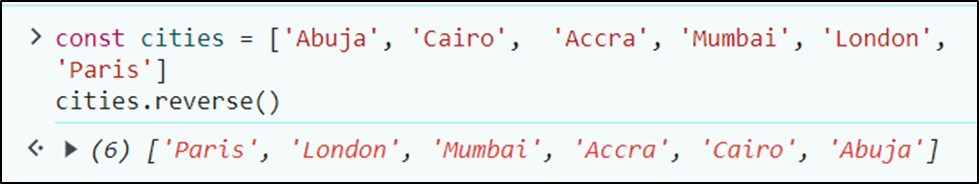

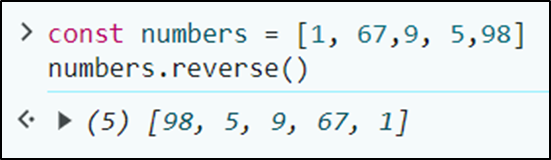

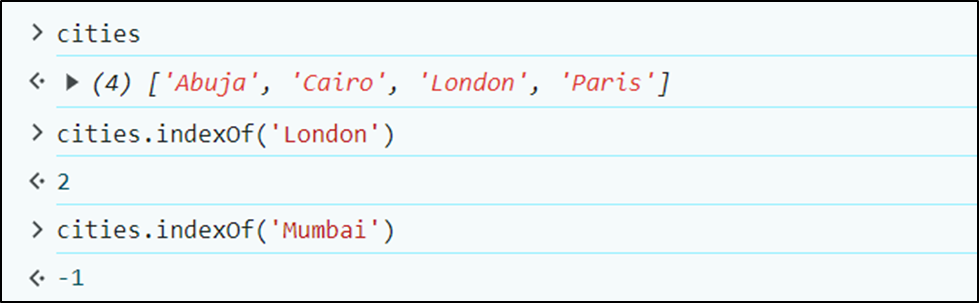

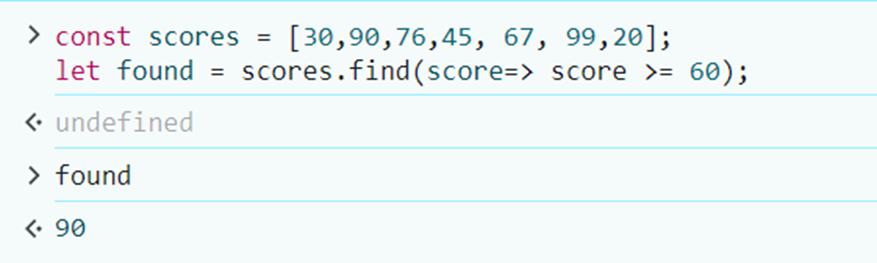

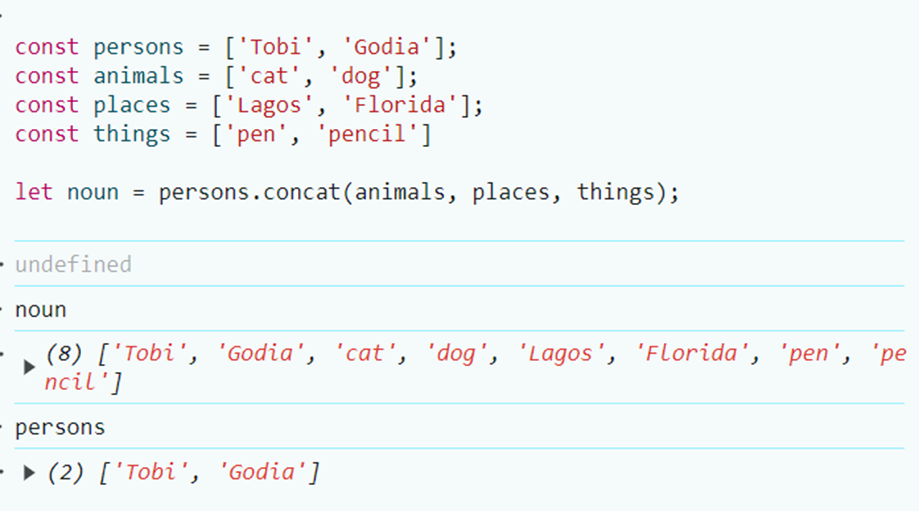

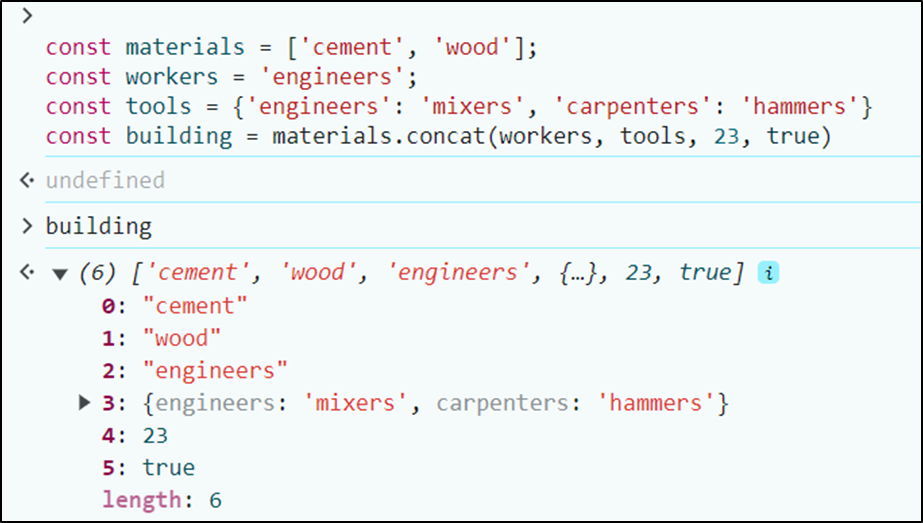

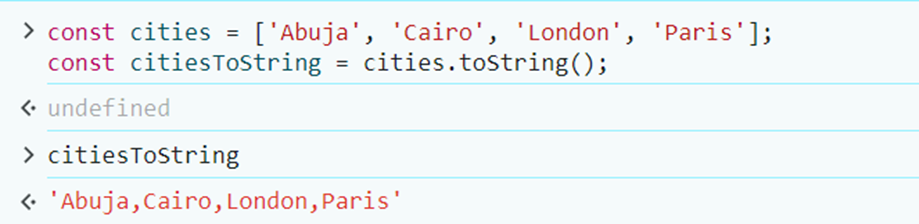

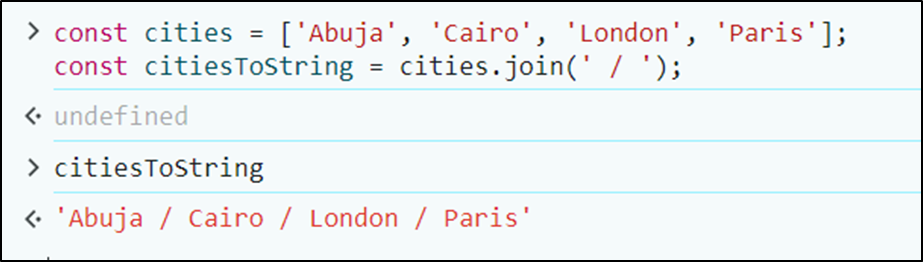

1,874,141 | JavaScript Programming for Beginners | Hi, everyone👋! Lately I put aside javascript a bit and now I would like to return to it to start... | 0 | 2024-06-02T22:00:39 | https://dev.to/slydragonn/javascript-concepts-tutorial-for-beginners-4h19 | javascript, tutorial, beginners, learning | Hi, everyone👋!

Lately I put aside javascript a bit and now I would like to return to it to start some projects that I have pending, which is why my way of remembering crucial topics of the language is by doing this little tutorial. I hope that, like me, it will be helpful to you and tell me about interesting features of JavaScript.

### Introduction

JavaScript is a programming language primarily used for creating interactive and dynamic content on websites. It can be run on the client side (in the browser) or on the server side (using environments like Node.js).

### 1. **Setting Up Your Environment**

To write and run JavaScript code, you only need a web browser (Chrome, Firefox, or Safari) and a text editor (Visual Studio Code, Sublime Text, or Notepad).

### 2. **Your First JavaScript Program**

Create a new HTML file and add the following code:

```html

<!DOCTYPE html>

<html>

<head>

<title>My First JavaScript Program</title>

</head>

<body>

<h1>Hello, JavaScript!</h1>

<script>

console.log("Hello, World!");

alert("Hello, World!");

</script>

</body>

</html>

```

- `console.log("Hello, World!");` prints "Hello, World!" to the browser's console.

- `alert("Hello, World!");` displays a pop-up alert with the message "Hello, World!".

### 3. **Basic Syntax and Variables**

**Variables** store data values. You can declare variables using `var`, `let`, or `const`.

```jsx

var name = "John"; // Old way

let age = 30; // Modern way, can be reassigned

const country = "USA"; // Cannot be reassigned

```

- **Additional Info**: https://www.w3schools.com/js/js_variables.asp

### 4. **Data Types**

JavaScript supports various data types:

- **String**: `"Hello"`

- **Number**: `42`

- **Boolean**: `true` or `false`

- **Array**: `[1, 2, 3]`

- **Object**: `{name: "John", age: 30}`

- **More about Data Types:** https://www.w3schools.com/js/js_datatypes.asp

### 5. **Operators**

- **Arithmetic**: `+`, -, * , `/`, `%`

- **Assignment**: `=`, `+=`, `=`, `=`, `/=`

- **Comparison**: `==`, `===`, `!=`, `!==`, `>`, `<`, `>=`, `<=`

- **More about operators:** https://developer.mozilla.org/en-US/docs/Web/JavaScript/Guide/Expressions_and_operators

### 6. **Functions**

Functions are blocks of code designed to perform a task.

```jsx

function greet(name) {

return "Hello, " + name + "!";

}

let message = greet("Alice");

console.log(message); // "Hello, Alice!"

```

- **More about functions:** https://www.w3schools.com/js/js_functions.asp

### 7. **Conditionals**

Conditionals control the flow or behavior of a program.

```jsx

let hour = 10;

if (hour < 12) {

console.log("Good morning!");

} else if (hour < 18) {

console.log("Good afternoon!");

} else {

console.log("Good evening!");

}

```

- **More about conditionals:** https://developer.mozilla.org/en-US/docs/Learn/JavaScript/Building_blocks/conditionals

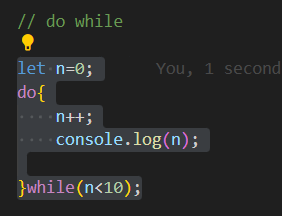

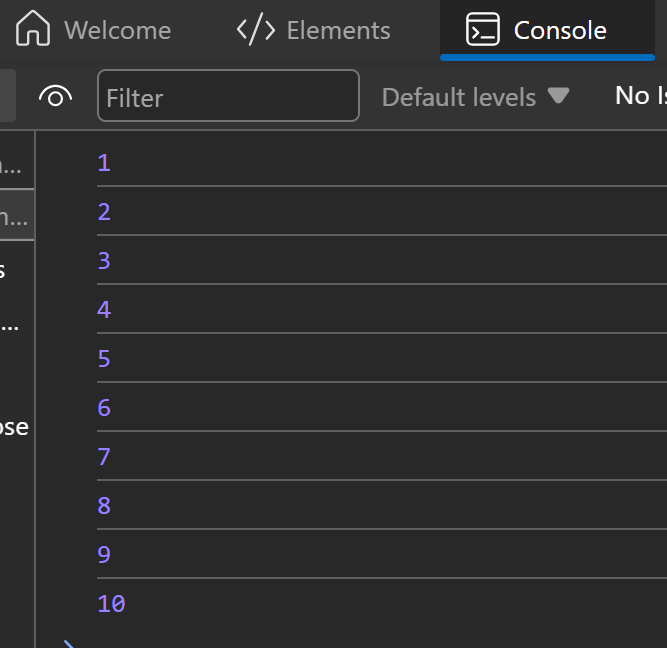

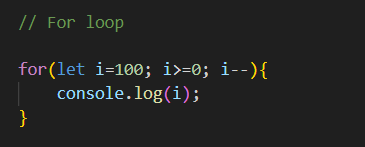

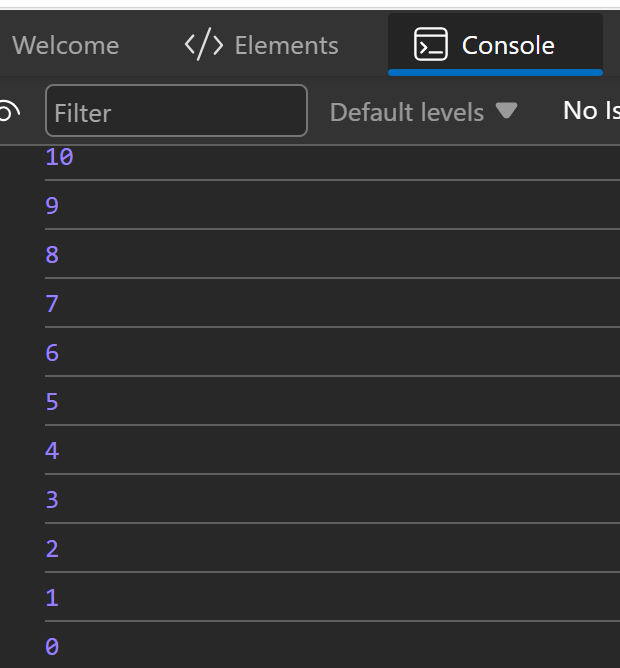

### 8. **Loops**

- **For Loop**:

```jsx

for (let i = 0; i < 5; i++) {

console.log("Iteration " + i);

}

```

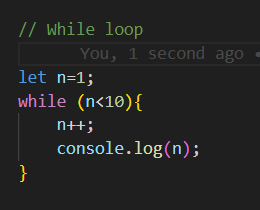

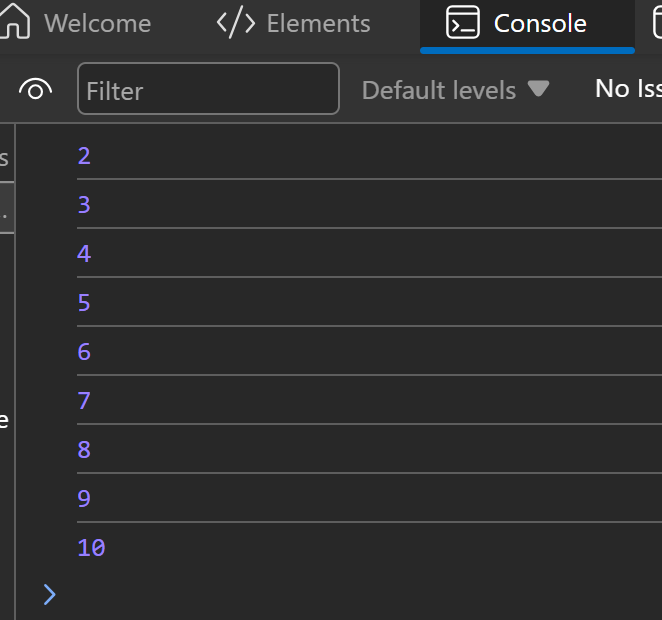

- **While Loop**:

```jsx

let i = 0;

while (i < 5) {

console.log("Iteration " + i);

i++;

}

```

- **More about loops:** https://developer.mozilla.org/en-US/docs/Web/JavaScript/Guide/Loops_and_iteration

### 9. **DOM Manipulation**

JavaScript can be used to manipulate the Document Object Model (DOM) to change HTML content dynamically.

```html

<!DOCTYPE html>

<html>

<head>

<title>DOM Manipulation</title>

</head>

<body>

<h1 id="title">Hello, World!</h1>

<button onclick="changeText()">Click Me</button>

<script>

function changeText() {

document.getElementById("title").innerText = "Hello, JavaScript!";

}

</script>

</body>

</html>

```

When the button is clicked, the text inside the `<h1>` element changes.

- **More about DOM API:** https://developer.mozilla.org/en-US/docs/Web/API/Document_Object_Model/Introduction

### 10. **Events**

JavaScript can handle events such as clicks, mouse movements, key presses, and more.

```html

<!DOCTYPE html>

<html>

<head>

<title>Event Handling</title>

</head>

<body>

<button id="myButton">Click Me</button>

<script>

document.getElementById("myButton").addEventListener("click", function() {

alert("Button was clicked!");

});

</script>

</body>

</html>

```

This code adds an event listener to the button, triggering an alert when the button is clicked.

- **More about events:** https://www.w3schools.com/js/js_events.asp

### 11. **Arrays and Objects**

**Arrays**:

```jsx

let fruits = ["Apple", "Banana", "Cherry"];

console.log(fruits[0]); // "Apple"

```

- **More about arrays:** https://developer.mozilla.org/en-US/docs/Web/JavaScript/Reference/Global_Objects/Array

**Objects**:

```jsx

let person = {

name: "John",

age: 30,

greet: function() {

return "Hello, " + this.name;

}

};

console.log(person.name); // "John"

console.log(person.greet()); // "Hello, John"

```

- **More about objects:** https://www.w3schools.com/js/js_objects.asp

### 12. **ES6 Features**

Modern JavaScript (ES6 and beyond) introduced many new features:

- [JavaScript Versions](https://www.w3schools.com/js/js_versions.asp)

- [**Arrow Functions**](https://developer.mozilla.org/en-US/docs/Web/JavaScript/Reference/Functions/Arrow_functions):

```jsx

const add = (a, b) => a + b;

console.log(add(2, 3)); // 5

```

- [**Template Literals**:](https://www.w3schools.com/js/js_string_templates.asp)

```jsx

let name = "John";

let greeting = `Hello, ${name}!`;

console.log(greeting); // "Hello, John!"

```

- [**Destructuring:**](https://developer.mozilla.org/en-US/docs/Web/JavaScript/Reference/Operators/Destructuring_assignment)

```jsx

let [a, b] = [1, 2];

console.log(a); // 1

console.log(b); // 2

let { name, age } = person;

console.log(name); // "John"

console.log(age); // 30

```

- [**Modules:**](https://www.w3schools.com/js/js_modules.asp)

```jsx

// In file add.js

export const add = (a, b) => a + b;

// In main file

import { add } from './add.js';

console.log(add(2, 3)); // 5

```

### 14. Classes

JavaScript classes provide a more convenient and syntax-friendly way to create objects and handle inheritance.

```jsx

class Person {

// Constructor method

constructor(name, age) {

this.name = name;

this.age = age;

}

// Method

greet() {

return `Hello, my name is ${this.name} and I am ${this.age} years old.`;

}

}

// Creating an instance of the class

const john = new Person("John", 30);

console.log(john.greet()); // "Hello, my name is John and I am 30 years old."

```

- **More about classes:** https://developer.mozilla.org/en-US/docs/Web/JavaScript/Reference/Classes

### 15. **Resources**

- [MDN Web Docs](https://developer.mozilla.org/en-US/docs/Web/JavaScript) - JavaScript documentation and tutorials.

- [JavaScript.info](https://javascript.info/) - A modern tutorial from the basics to advanced topics.

- [freeCodeCamp](https://www.freecodecamp.org/) - Free coding courses.

- [W3Schools](https://www.w3schools.com/) - Web developer site | slydragonn |

1,854,411 | Dev: Cloud | A Cloud Developer is a professional responsible for designing, building, deploying, and managing... | 27,373 | 2024-06-02T22:00:00 | https://dev.to/r4nd3l/dev-cloud-2o85 | cloud, developer | A **Cloud Developer** is a professional responsible for designing, building, deploying, and managing applications and services that run on cloud computing platforms. Here's a detailed description of the role:

1. **Cloud Computing Platforms:**

- Cloud Developers work with various cloud computing platforms such as Amazon Web Services (AWS), Microsoft Azure, Google Cloud Platform (GCP), and others.

- They leverage the infrastructure, platform, and software services provided by these platforms to develop scalable, reliable, and cost-effective cloud-based solutions.

2. **Application Development:**

- Cloud Developers design and develop cloud-native applications using programming languages, frameworks, and tools suited for cloud environments.

- They write code for frontend and backend components, APIs, microservices, and serverless functions that leverage cloud services for computation, storage, and communication.

3. **Infrastructure as Code (IaC):**

- Cloud Developers use infrastructure as code (IaC) tools such as AWS CloudFormation, Azure Resource Manager (ARM) templates, and Google Cloud Deployment Manager to define and manage cloud infrastructure programmatically.

- They automate the provisioning, configuration, and management of cloud resources to ensure consistency, repeatability, and scalability of infrastructure deployments.

4. **Microservices Architecture:**

- Cloud Developers adopt microservices architecture to build modular, decoupled, and independently deployable components that can be scaled and managed efficiently in the cloud.

- They design microservices that communicate via APIs, message queues, or event streams, enabling flexibility, resilience, and agility in cloud-based applications.

5. **Containerization and Orchestration:**

- Cloud Developers containerize applications using containerization technologies such as Docker and manage containerized workloads using container orchestration platforms like Kubernetes.

- They create Docker images, define Kubernetes manifests, and deploy containerized applications to leverage the benefits of containerization, including portability, scalability, and resource efficiency.

6. **Serverless Computing:**

- Cloud Developers embrace serverless computing models offered by cloud providers, such as AWS Lambda, Azure Functions, and Google Cloud Functions.

- They develop and deploy serverless applications and functions that automatically scale, execute in response to events, and incur costs only for actual usage, without managing underlying infrastructure.

7. **Data Storage and Databases:**

- Cloud Developers utilize cloud storage services like Amazon S3, Azure Blob Storage, and Google Cloud Storage for storing and managing data in various formats.

- They leverage cloud databases such as Amazon DynamoDB, Azure Cosmos DB, and Google Cloud Firestore for scalable, globally distributed, and highly available data storage and retrieval.

8. **DevOps Practices:**

- Cloud Developers embrace DevOps practices to streamline the development, deployment, and operation of cloud-based applications.

- They collaborate with DevOps teams to implement continuous integration (CI), continuous delivery (CD), automated testing, infrastructure automation, and monitoring practices in cloud environments.

9. **Security and Compliance:**

- Cloud Developers prioritize security and compliance in cloud-based applications by implementing identity and access management (IAM), encryption, network security, and compliance controls.

- They adhere to industry standards, regulations, and best practices for data protection, privacy, and regulatory compliance in cloud computing environments.

10. **Monitoring and Optimization:**

- Cloud Developers monitor the performance, availability, and cost of cloud-based applications using cloud monitoring and analytics tools.

- They optimize resource utilization, cost efficiency, and application performance by analyzing metrics, identifying bottlenecks, and implementing optimizations in cloud infrastructure and applications.

In summary, a Cloud Developer plays a crucial role in leveraging cloud computing technologies and services to develop, deploy, and manage scalable, resilient, and cost-effective applications and services in the cloud. By combining expertise in application development, infrastructure management, DevOps practices, and security, they enable organizations to harness the full potential of cloud computing for innovation and business growth. | r4nd3l |

1,873,960 | Metadata and Dynamic Metadata in Next.js | Metadata is information about the data on your web page and is essential for SEO (Search Engine... | 0 | 2024-06-02T21:55:58 | https://dev.to/adrianbailador/metadata-and-dynamic-metadata-in-nextjs-3e9m | nextjs, webdev, javascript, programming | Metadata is information about the data on your web page and is essential for SEO (Search Engine Optimization) and social media sharing.

## What is Metadata?

Metadata is data that describes other data. In the context of a web page, metadata is information about the page that is not directly shown to users but can be useful for search engines, browsers, and other technologies.

For example, the title of a web page, its description, the author, keywords, and other similar details are all metadata. These are specified in the `<head>` of your HTML document using elements like `<title>`, `<meta>`, etc.

## What is Dynamic Metadata?

Dynamic metadata refers to metadata that changes based on the page's content. For instance, you might have a blog with multiple posts and you want each post to have its own title and description when shared on social media. This can be achieved with dynamic metadata.

## Using Metadata and Dynamic Metadata in Next.js

Next.js uses the `Head` component from `next/head` to add elements to the `<head>` of your HTML page. You can use this component to add metadata and dynamic metadata to your pages.

Here's an example of how you can do it:

```jsx

import Head from 'next/head'

export default function BlogPost({ post }) {

return (

<>

<Head>

<title>{post.title}</title>

<meta name="description" content={post.description} />

<meta property="og:title" content={post.title} />

<meta property="og:description" content={post.description} />

<meta property="og:image" content={post.image} />

</Head>

<h1>{post.title}</h1>

<p>{post.content}</p>

</>

)

}

```

In this example, `post` is an object containing the information of a blog post. The page's metadata is dynamically set based on the properties of `post`.

## Setting Up Dynamic Metadata in Next.js

Setting up dynamic metadata in your Next.js project is quite straightforward. Here is a step-by-step example:

1. **Import the Head component**: Next.js provides a `Head` component that you can use to add elements to the `<head>` of your HTML document. To use it, you need to import it from `next/head` in your component file.

```jsx

import Head from 'next/head'

```

2. **Use the Head component**: You can use the `Head` component in your component as follows:

```jsx

<Head>

<title>Your title</title>

</Head>

```

In this example, we are adding a `<title>` element to our HTML document.

3. **Add dynamic metadata**: To add dynamic metadata, you simply need to pass dynamic data to your `Head` component. For example, if you are building a blog and want each post to have its own title, you can do it as follows:

```jsx

import Head from 'next/head'

export default function BlogPost({ post }) {

return (

<>

<Head>

<title>{post.title}</title>

<meta name="description" content={post.description} />

<meta property="og:title" content={post.title} />

<meta property="og:description" content={post.description} />

<meta property="og:image" content={post.image} />

</Head>

<h1>{post.title}</h1>

<p>{post.content}</p>

</>

)

}

```

In this example, `post` is an object containing the information of a blog post. The page's metadata is dynamically set based on the properties of `post`.

## Importance of Metadata for SEO and Social Media

Metadata is crucial for both SEO and social media sharing.

- **SEO**: Metadata, such as the title and description, is essential for search engines to understand your page's content and display it appropriately in search results.

- **Social Media**: Metadata like `og:title`, `og:description`, and `og:image` are important for how your content is displayed when shared on platforms like Facebook and Twitter.

## Using Other Types of Metadata

Besides basic metadata, there are other types of metadata that can be useful:

- **Meta Robots**: To control how search engines index your page.

```jsx