id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,871,850 | Equity Token Offerings: Modernizing Investment | In recent years, the world of finance has witnessed a significant evolution with the advent of... | 0 | 2024-05-31T09:22:58 | https://dev.to/donnajohnson88/equity-token-offerings-modernizing-investment-586h | equitytokenofferings, blockchain, development, beginners | In recent years, the world of finance has witnessed a significant evolution with the advent of blockchain technology and tokenization. Among the innovative financial instruments that have emerged, Equity Token Offerings (ETOs) stand out as a groundbreaking method powered by [STO development services](https://blockchain.oodles.io/security-token-offering-development-company/?utm_source=devto) for raising capital and democratizing investment opportunities. In this comprehensive guide, we delve into the intricacies of Equity Token Offerings, exploring their mechanics, benefits, and potential for transforming the traditional investment landscape.

## Understanding Equity Token Offerings

ETOs, sometimes referred to as Security Token Offerings (STOs), are a type of crowdfunding that allows businesses to issue digital tokens that stand in for ownership rights such as equity, profit-sharing, or voting rights. These tokens are backed by real-world assets, such as company shares, real estate, or commodities, and are governed by regulatory frameworks to ensure compliance with securities laws.

## Key Components

**Legal Compliance**

One of the defining features of ETOs is their adherence to regulatory standards. Issuers must comply with securities regulations in the jurisdictions where they operate, ensuring transparency, investor protection, and legal certainty.

**Tokenization Platform**

ETOs are facilitated through tokenization platforms that provide the infrastructure for creating, issuing, and managing security tokens. These platforms leverage blockchain technology to tokenize assets, manage investor relations, and facilitate secondary market trading.

**Investor Verification**

To participate in ETOs, investors undergo a rigorous verification process to confirm their identity and accreditation status. This helps issuers maintain compliance with Know Your Customer (KYC) and Anti-Money Laundering (AML) regulations.

**Smart Contracts**

Smart contracts play a central role in ETOs by automating the execution of investment agreements, token issuance, and distribution of dividends or other rights to token holders. These self-executing contracts enhance transparency, reduce transaction costs, and mitigate counterparty risk.

Also, Read | [Getting Started with INO (Initial NFT Offering)](https://blockchain.oodles.io/blog/ino-initial-nft-offering/?utm_source=devto)

## Benefits

**Access to Capital**

ETOs provide companies with an alternative means of raising capital by tapping into a global pool of investors. This democratization of investment opportunities enables startups and small businesses to access funding without the traditional barriers associated with venture capital or initial public offerings.

**Liquidity**

By tokenizing assets and enabling secondary market trading, ETOs enhance liquidity for investors, allowing them to buy, sell, and trade security tokens more efficiently than traditional securities.

**Fractional Ownership**

ETOs enable fractional ownership of high-value assets, such as real estate or private equity, making investment opportunities more accessible to a wider range of investors.

**Transparency and Security**

Blockchain technology ensures transparency and immutability of transaction records, reducing the risk of fraud and enhancing investor trust in Equity Token Offerings.

Challenges and Considerations:

While Equity Token Offerings offer numerous benefits, there are several challenges and considerations to be mindful of:

**Regulatory Complexity**

Navigating the regulatory landscape governing Equity Token Offerings can be complex and varies significantly across jurisdictions. Issuers must ensure compliance with securities laws, which may require legal expertise and regulatory approvals.

**Investor Education**

Educating investors about the benefits and risks of Equity Token Offerings is essential for fostering confidence and participation in this emerging asset class. Clear communication and transparency regarding investment terms, risks, and potential returns are crucial for attracting and retaining investors.

**Market Liquidity**

Despite the potential for enhanced liquidity, secondary markets for security tokens are still in the early stages of development. Limited liquidity and trading volume may affect the valuation and tradability of security tokens, particularly for smaller or less liquid assets.

Also, Explore | [IDO (Initial DEX Offering) | The Future of Decentralized Fundraising](https://blockchain.oodles.io/blog/quick-guide-launching-ido-initial-dex-offering/?utm_source=devto)

## Conclusion:

Equity Token Offerings represent a paradigm shift in the way companies raise capital and investors access investment opportunities. Equity Token Offerings provide a competitive alternative to conventional fundraising techniques by utilizing blockchain technology, regulatory compliance, and tokenization. This opens up new investment opportunities and democratizes access to money. While challenges remain, the potential for Equity Token Offerings to modernize investment and reshape the financial landscape is undeniable. As regulatory frameworks evolve and market infrastructure matures, Equity Token Offerings are poised to play a transformative role in the future of finance. Interested in launching an equity token offering, connect with our [crypto token developers](https://blockchain.oodles.io/about-us/?utm_source=devto) to get started. | donnajohnson88 |

1,871,849 | Document conversion APIs | A small comparison guide: https://apyhub.com/blog/exploring-top-document-conversion-apis What other... | 0 | 2024-05-31T09:22:21 | https://dev.to/nikoldimit/document-conversion-apis-20f9 | api, pdf, conversion, apyhub | A small comparison guide: https://apyhub.com/blog/exploring-top-document-conversion-apis

What other aspects would you be evaluating when checking for doc conversion APIs?

| nikoldimit |

1,871,848 | Your Guide to the Best SEO Agencies in the USA: Expert Recommendations and Reviews | In the highly competitive digital landscape, businesses must leverage professional SEO services to... | 0 | 2024-05-31T09:20:32 | https://dev.to/templatewallet/your-guide-to-the-best-seo-agencies-in-the-usa-expert-recommendations-and-reviews-3p9j | In the highly competitive digital landscape, businesses must leverage professional SEO services to stay ahead. Search engine optimization (SEO) is vital for enhancing your online visibility, driving organic traffic, and boosting your revenue. With the abundance of SEO agencies in the USA, selecting the right one can be challenging. This comprehensive guide will help you navigate through the options, providing expert recommendations and reviews of the best SEO agencies in the USA.

##Why Professional SEO Services Are Crucial

Before diving into the list of top SEO agencies, it's essential to understand the importance of professional SEO services. SEO is a dynamic field that requires continuous updates and strategic planning. Here are a few reasons why investing in professional SEO services is beneficial:

##Expertise and Knowledge

SEO professionals have the knowledge and experience to implement effective strategies tailored to your business needs. They stay updated with the latest algorithm changes and industry trends, ensuring your website adapts to new search engine requirements. This expertise enables them to identify and leverage opportunities that you might miss, providing a competitive edge.

##Time and Resource Saving

Outsourcing SEO allows you to focus on your core business activities while experts handle your online presence. SEO requires continuous effort, including keyword research, content creation, and link building, which can be time-consuming. By entrusting these tasks to professionals, you can allocate your time and resources to other critical areas of your business, enhancing overall productivity.

##Better ROI

A well-executed SEO strategy can significantly improve your website’s rankings, leading to increased traffic and higher conversion rates. By attracting more targeted visitors who are interested in your products or services, you can achieve a better return on investment. Additionally, [professional SEO services](https://www.stanventures.com/managed-seo-services/?utm_source=dev.to&utm_medium=listicle&utm_campaign=branding) ensure sustainable results, providing long-term value and consistent growth in your online presence and revenue.

Key Factors to Consider When Choosing an SEO Agency

##1. Experience and Track Record

An agency's experience and track record are critical indicators of their capability. Look for agencies with a proven history of success across various industries. To gauge their performance, check their case studies, client portfolios, and testimonials. Additionally, seek agencies that have been recognized with industry awards or certifications, as these accolades often highlight their expertise and reliability. An experienced agency will be adept at handling diverse challenges and delivering consistent results, making them a valuable partner for your SEO needs.

##2. Range of Services

SEO encompasses various aspects, including on-page SEO, off-page SEO, technical SEO, and content marketing. Ensure the agency offers comprehensive services that align with your business goals. Some agencies also provide additional services like PPC, social media marketing, and web development, which can complement your SEO efforts. A full-service agency can provide a holistic digital marketing strategy, ensuring all elements work together seamlessly. This integration can lead to more effective campaigns and a higher return on investment, maximizing your marketing budget.

##3. Client Reviews and Reputation

Client reviews and testimonials offer insights into the agency’s reliability and effectiveness. Look for reviews on independent platforms like Google, Yelp, or Clutch. Additionally, ask the agency for references so you can speak directly with their clients. Pay attention to both the quantity and quality of reviews, as consistent positive feedback over time can indicate strong performance. Consider looking at case studies that detail how the agency solved specific problems and achieved tangible results. This due diligence can help you gauge the potential for a successful partnership.

##4. Transparency and Communication

Effective communication and transparency are essential for a successful partnership. Choose an agency that provides regular updates and detailed reports on your SEO campaigns. They should be open to discussing strategies, performance metrics, and any adjustments needed. A transparent agency will clearly outline its processes, timelines, and expected outcomes, fostering trust and collaboration. Regular meetings and proactive communication ensure that both parties are aligned on goals and expectations, allowing for timely adjustments and continuous improvement of the SEO strategy.

##5. Pricing and Value

SEO services come at various price points. Finding an agency that offers good value for your investment is important. Avoid agencies that promise quick results at low prices, as they may use unethical practices that could harm your website in the long run. Instead, look for agencies that provide clear, detailed pricing structures and explain the rationale behind their costs. Investing in quality SEO services will yield long-term benefits, including sustained organic traffic growth and improved brand authority, which are crucial for achieving your business objectives.

##Top SEO Agencies in the USA

To help you make an informed decision, here are some of the top SEO agencies in the USA, known for their expertise, client satisfaction, and proven results:

##1. Stan Ventures

Website: https://www.stanventures.com/

Specialties: Managed SEO services, link building, content marketing.

Highlights: Stan Ventures stands out for their personalized approach and dedicated SEO teams. They offer tailored SEO strategies that cater to the unique needs of their clients, ensuring sustainable growth and improved online visibility.

##2. WebFX

Website: https://www.webfx.com/

Specialties: Full-service digital marketing, including SEO, PPC, web design.

Highlights: Known for their data-driven strategies and transparent reporting, WebFX helps businesses achieve measurable results. Their team of experts creates customized SEO plans to boost online presence and drive traffic.

##3. Straight North

Website: https://www.straightnorth.com/

Specialties: B2B SEO, lead generation, web development.

Highlights: Straight North focuses on generating qualified leads through effective SEO strategies. They emphasize detailed performance tracking and transparency, ensuring clients see the impact of their services.

##4. Thrive Internet Marketing Agency

Website: https://thriveagency.com/

Specialties: SEO, content marketing, social media management.

Highlights: Thrive is renowned for their holistic approach to digital marketing, integrating SEO with other channels to maximize results. Their team prioritizes client satisfaction and long-term success.

##5. Ignite Visibility

Website: https://ignitevisibility.com/

Specialties: SEO, PPC, digital marketing strategy.

Highlights: Ignite Visibility is a top-rated SEO agency known for their innovative strategies and client-focused approach. They offer comprehensive SEO services designed to enhance visibility and drive business growth.

##6. Victorious

Website: https://victoriousseo.com/

Specialties: SEO, keyword strategy, link building.

Highlights: Victorious is recognized for their results-driven SEO strategies. They use advanced analytics and data to create effective SEO campaigns that deliver measurable results.

##7. SEO Inc.

Website: https://www.seoinc.com/

Specialties: Enterprise SEO, local SEO, PPC management.

Highlights: With over two decades of experience, SEO Inc. offers tailored SEO solutions for businesses of all sizes. Their focus on innovation and ethical practices ensures sustainable results.

##Expert Recommendations

To get the most out of your investment in SEO, here are some expert recommendations:

##1. Define Your Goals

Before engaging with an SEO agency, clearly define your business goals. Whether it’s increasing website traffic, improving conversion rates, or boosting brand awareness, having specific objectives will help you communicate your needs effectively. Additionally, clearly defined goals will help the SEO agency create a tailored strategy that aligns with your business vision. Be specific, including the key performance indicators (KPIs) you wish to track. This will enable both you and the agency to measure the success of the SEO efforts and make data-driven decisions.

##2. Ask the Right Questions

When evaluating potential SEO agencies, ask detailed questions about their strategies, tools, and methodologies. Understand how they plan to approach your SEO project and what metrics they will use to measure success. Inquire about their experience in your industry, their approach to keyword research, content creation, and link-building strategies. Ask for case studies or examples of past successes. This will give you a clearer picture of their expertise and how they can specifically help your business achieve its SEO goals.

##3. Request a Proposal

Ask shortlisted agencies to provide a detailed proposal outlining their strategy, timeline, and pricing. Compare these proposals to see which agency offers the best value for your investment. Ensure the proposal includes a clear breakdown of services, expected deliverables, and milestones. This will help you understand what to expect at each stage of the SEO process. A comprehensive proposal will also highlight the agency's commitment to transparency and its ability to deliver results within your budget and timeframe.

##4. Monitor Progress

Once you’ve chosen an agency, regularly monitor the progress of your SEO campaigns. Review the reports they provide and have regular check-ins to discuss performance and any necessary adjustments. Establish a schedule for receiving updates, such as weekly or monthly reports, to stay informed about your campaign's progress. Use these meetings to address any concerns, discuss new opportunities, and refine strategies. Ongoing communication ensures that both you and the agency are aligned and working towards the same goals, leading to a more successful SEO partnership.

##Conclusion

Choosing the right SEO agency is crucial for the success of your online presence. By considering factors like experience, services offered, client reviews, transparency, and pricing, you can find an agency that aligns with your business needs. Investing in professional SEO services will not only enhance your online visibility, but also drive sustainable growth and profitability, positioning your brand as a leader in your industry and ensuring long-term success in an increasingly digital marketplace. | templatewallet | |

1,871,846 | Enhancing Productivity with Video Feedback in 2024. | My experience has taught me the importance of productivity in everything I and my team do. Video... | 0 | 2024-05-31T09:18:38 | https://dev.to/martinbaun/enhancing-productivity-with-video-feedback-in-2024-455c | microservices, tooling, productivity | My experience has taught me the importance of productivity in everything I and my team do. Video Feedback is a crucial component of this.

This is what I and my team have learned from this.

## Video Feedback: Easy to Start, Hard to Master

Video Feedback has been easy to implement in my daily routine. I press record, explain what hasn’t been done correctly, save, and notify the recipient. This ease to start and use lends to its practicality. I offer feedback on technical tasks such as code reviews or simple tasks like written pieces. This method of giving feedback is fast and efficient. My employees have the video as a reference point that allows them to make correct edits.

Mastering Video Feedback is a different prospect. Tone and attitude are hard to convey over video feedback. It takes practice and repetition to develop a neutral tone for video feedback. This seems like a minor issue, but it affects the morale of your colleagues. This is the reason I don’t give performance reviews over video feedback. The tone can be misunderstood and even taken the wrong way. I use in-person meetings and synchronous video feedback for this. My employees can see my face, interpret my body language, and respond to the reviews in kind. Learning how to separate the two and more is a developing skill. This skill develops with time. Keep at it, and you’ll get better at it.

Read: *[Onboarding and Training New Remote Employees in a Virtual Environment](https://martinbaun.com/blog/posts/onboarding-and-training-new-remote-employees-in-a-virtual-environment/)*

## Asynchronous Video Feedback

Remote teams are here to stay. It’s common to have colleagues spread all over the world. Different time zones make video feedback a crucial asset to use. Synchronous meetings are hard to have. They require a lot of planning, dedication, and sacrifice from the parties involved.

Asynchronous video feedback is effective for remote teams. I give feedback, and the recipient works on it as required. They also have it as a reference they can refer to as much as they need. This allows them to focus and work on the task correctly. My employees also use it in different ways. They have personalized it to resolve tasks and other intangible aspects of our cooperation. I receive videos from my employees explaining their workflow and ideology for particular tasks. This has given me insight that I didn't have previously. I have a better understanding of the timeline needed for a task to progress from infancy to approval. It has optimized our work, streamlined our communication, and delivered the desired production.

Read: *[Build Elegant Software In 1 month! How We Did It!](https://martinbaun.com/blog/posts/build-elegant-software-in-1-month-how-we-did-it/)*

This is the polar opposite of video meetings. They take place synchronously. This feedback has no reference point unless you've recorded it or notes were taken. I use them for progress reports, daily meetings with my team, and employee assessments. It is useful and helps me and my team plan and decide on the best way forward for particular tasks and projects. They are exceptional for such vital executive decisions. They don’t work for giving feedback. This makes asynchronous video feedback a powerful tool that enhances productivity and saves time.

## Video Feedback Practicality

Video Feedback is as practical as it gets. If you see a problem, record it and how to rectify it. This is similar to asking questions with potential solutions, a concept I explained in our article, 7 Tips for Effective Communication in Remote Teams.

Video Feedback lets me give tangible explanations without resorting to complicated methodologies. It has improved our efficiency since its introduction. It’s easier to offer explanations, show examples, and communicate intent.

A fine example of this is showcasing wrongly placed links and formatting. I contrast this with properly placed links and formatting. Giving this feedback in written format doesn’t convey the tone and voice of thought needed. Video feedback conveys my tone, train of thought, and emphasis in the feedback. This gives my employees a visual and auditory explanation of where the error lies and how to fix it.

This practicality extends to feedback on codes, designs, posts on socials, and even construction. The practical uses of video feedback are vast, giving you the efficiency and productivity you need to soar your projects to greater heights.

## Video Review and Collaboration

Remote teams need a strong bond and comradery to get things done. Not much attention is given to the importance of comradery. Video Feedback helps build this bond between team members. Video Feedback fills the void created by not working at a central location.

Team members get a feel for each other, quirks, demeanor, likes, and dislikes. A lot is conveyed via Video Feedback, simulating some level of interaction that would be present in physical interactions. Video Feedback is intended to make work efficient and improve productivity. Comradery is a bonus that works to promote these objectives.

## Conclusion

Video Feedback is an excellent method to improve efficiency and productivity. It allows you to give feedback faster and enhances the workflow within your business. It promotes a collaborative mindset. This is great for every employee and team lead in the organization. Video Feedback keeps everyone informed and builds trust in the process. The benefits aren't reaped automatically but they progressively accumulate.

You can pair Video Feedback with collaboration software. Our favorite is Goleko. It helps you manage projects better. It has helped us improve our productivity, and you should try it. Goleko is our recommendation that we know you won't regret using.

-----

## FAQs

*What is Video Feedback?*

Video Feedback is a communication channel utilizing audio and visual formats to convey a message in response to a project or task completed.

*How effective is Video Feedback?*

Video Feedback is effective for all tasks. It helps you know exactly what to do and how to do it. It is the fastest way to give feedback, making it a must-have in your work routine.

*What is the best Video Feedback Software?*

We haven't tested every Video Feedback software. This makes it hard to rank them. My team and I built VideoFeedbackr. We made it easily usable, reliable, and awesome for enhanced user experience. It is simple to use and follows the highest security standards. Create video content, give video reviews, and participate in video collaboration.

*What is VideoFeedbackr?*

Free Video Feedback hassle-free. It is software designed for video review and collaboration. Use videos to convey your messages, collect feedback, and enhance creative collaboration. Get everyone on the same page with VideoFeedbackr.

*Can you pair VideoFeedbackr with other tools?*

VideoFeedbackr was borne from an asynchronous video feedback tool we built in Goleko. Our team values video feedback, hence why we created it. VideoFeedbackr doesn't pair well with it but we have created a version of it within our project management tool. it allows for proper feedback which promotes our productivity.

*Do you need an App to access VideoFeedbackr?*

No. VideoFeedbackr is accessible on your web browser. You do not need to download any apps to use it.

*What is the pricing of a VideoFeedbackr subscription cost?*

VideoFeedbackr has a free tier and a paid tier coming soon. The free version is free for life and has some limited perks. The paid version will have more perks for you to enjoy and use.

*Do you need an account to use VideoFeedbackr?*

No, you don’t. You can use VideoFeedbackr without registering an account. You only need to open your browser, type in VideoFeedbackr on the search bar, and start using it.

*Is Goleko similar to Slack?*

No, it isn't. Goleko is a collaboration platform whereas Slack is a communication platform. Goleko is a platform that helps you manage your projects and will soon incorporate smooth communication in one central application. This will guarantee your efficiency wherever you're working be it in real-time or remotely.

*What are the security measures on VideoFeedbackr?*

VideoFeedbackr has blockbuster security systems to ensure your privacy is maintained. You only need to record the video, save it, and share the link with the recipient. Anyone who's watching the video must receive the link from you. Our brand values your privacy and follows ethical guidelines to protect it.

-----

*For these and more thoughts, guides and insights visit my blog at [martinbaun.com](http://martinbaun.com)*

*You can find Martin on [X](https://twitter.com/MartinBaunWorld)*

| martinbaun |

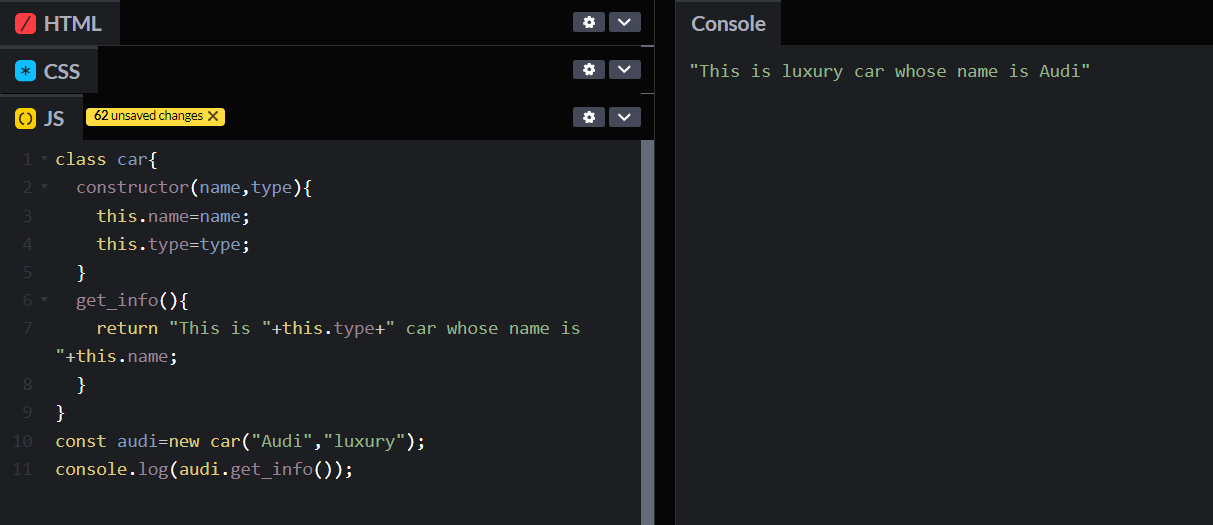

1,871,845 | Day 13of 30 of JavaScript | Hey reader👋 Hope you are doing well😊 In the last post we have completed arrays, it's properties and... | 0 | 2024-05-31T09:17:58 | https://dev.to/akshat0610/day-13of-30-of-javascript-3cde | webdev, javascript, beginners, tutorial | Hey reader👋 Hope you are doing well😊

In the last post we have completed arrays, it's properties and methods. In this post we are going to starts Object Oriented Programming through JavaScript, we are going to start from very basic and take it to advanced level.

So let's get started🔥

## What is Object Oreinted?

Object Oriented Programming is the way of defining complex structures in a simpler way using **objects** and **classes**.

Let's understand this with the help of an example-:

Suppose you have a car and this car has a name, type(sports, luxury, passenger etc), number of seats ,engine capacity and many more. Now as you can see that every property described here are all belong to car so it's better to have an entity that can store every property and method(here task that car can perform) at one place, and to do this we use object oriented programming. We will define a car class which is going to contain all the properties and methods related to car and will utilize this class using a car object.

So here you can see that we have made a car class and defined all the properties and methods in this and accessed them using object.

Don't bother much about `constructor` and `this` we will talk about them in detail in coming blogs.

**Object Oriented programming is all about the use of objects and classes.**

## Fundamental blocks of Object Oriented Programming

**1. Classes**

Classes are entities that holds every related property and method at single place. In our example above we have a car class that contains every property and method related to car.

**2. Objects**

An object is an instance of class. Using an object we can easily access the property and methods defined in that particular class.

Note-: Class doesn't get any space in memory till its object is defined. We can also say that an object is reference to class.

A class is a blueprint for objects.

In our above example `audi` was our car class object.

**3. Properties or Attributes**

These are the characteristics of the class. In our car class name and type were the characteristics of the car class.

**4. Methods**

These are the actions that objects can perform. In the car class, methods might be `drive`, `stop`, and `honk`. In above example we had `get_info` method.

## Properties of Object Oriented

The key properties of object-oriented programming (OOP) are:

**1. Encapsulation**

Encapsulation refers to storing everything at one place. In classes we define every related property and method at one place. You can understand it as a capsule that contains medicine in it and the capsule protects the medicine inside it from any external germ.

> Bundling data (attributes) and methods (functions) that operate on the data into a single unit or class, and restricting direct access to some of the object's components.

**2. Abstraction**

Abstraction refers to hiding the complex implementation details and showing or exposing only necessary features. For example, when you apply brakes of vehicle you are not aware of what forces are working in background on application of brakes, all you know is applying brakes will slow down your vehicle, so here the background tasks are abstracted from you.

> Hiding the complex implementation details and showing only the necessary features of an object. This helps in reducing complexity and allows the programmer to focus on interactions at a higher level.

**3. Inheritance**

Inheritance can be understood as inheriting the features of a class by another class. The class whose properties and methods are inherited is called **parent class or super class** and the class which inherits is called **child class or sub class**.

Suppose you have a `Vehicle` class and a `Car` class. So this `Car` class will inherit the propeties and methods of `Vehicle` class.

> Creating a new class from an existing class. The new class (child class) inherits attributes and methods from the existing class (parent class), promoting code reuse and establishing a natural hierarchy.

**4. Polymorphism**

Polymorphism refers to the ability of something to have one form or another according to the requirement. For example your mother plays different roles in different relations; for you she is your mother, for your father she is his wife; for your grandparents she is their daughter in law and many more.

So here also we can have methods that can have different roles for different objects.

Suppose we have a super class called `Shape` and `Square` and `Circle` are our subclasses. We have a `calculate_area()` defined in our superclass, we can use this method in our subclasses and can implement it according to need. So here `calculate_area()` has different forms in different classes.

> Allowing objects of different classes to be treated as objects of a common superclass. It enables a single interface to be used for a general class of actions, with specific behavior determined by the exact nature of the situation or the object class.

So this is it for this blog. I hope you have understood it well. In the next blog we will go more deep in it. Till then stay connected and don't forget to follow me 🤍 | akshat0610 |

1,871,844 | 🌟 Elevate Your Business with Digital Innovation Media! 🚀 | 🌐 Are you ready to take your business to the next level? Look no further than Digital Innovation... | 0 | 2024-05-31T09:15:57 | https://dev.to/abhinand_ps_7174ef0fb0a63/elevate-your-business-with-digital-innovation-media-4fnn | 🌐 Are you ready to take your business to the next level? Look no further than Digital Innovation Media! 💼 With our comprehensive suite of full-stack business solutions, we're here to build and launch ready-to-go businesses tailored to any industry.

💡 Why choose us? Here's what sets us apart:

✅ Native solutions for eCommerce, bookings, events, restaurants, hotels, blogs, and more.

✅ Multilingual capabilities to reach global audiences effortlessly.

✅ Seamless payments and pricing plans for smooth transactions.

✅ Members area and CRM integration for streamlined operations.

✅ Digital Innovation Media: Your partner in success!

📈 Ready to transform your online presence? Let's connect and discuss how we can tailor our solutions to meet your unique business needs. Visit us at www.digitalinnovationmedia.com and let's start the journey to success together!

#DigitalInnovationMedia #BusinessSolutions #FullStack #ElevateYourBrand #OnlineSuccess #Innovation #DigitalTransformation | abhinand_ps_7174ef0fb0a63 | |

1,871,843 | Maximize Your Hot Tub and Swim Spa Experience: Essential Do’s and Don’ts for Ultimate Relaxation | Are you ready to indulge in the ultimate relaxation experience with your hot tub or swim spa? Before... | 0 | 2024-05-31T09:15:16 | https://dev.to/walterjerry/maximize-your-hot-tub-and-swim-spa-experience-essential-dos-and-donts-for-ultimate-relaxation-3cc5 | Are you ready to indulge in the ultimate relaxation experience with your [hot tub](https://www.thehottubandswimspacompany.com/hot-tubs) or swim spa? Before immersing yourself in the soothing warmth, it's essential to understand the dos and don'ts to maximize your enjoyment and ensure the longevity of your investment. By following these guidelines, you can make the most of your spa experience while avoiding common pitfalls. Let's explore the essential do’s and don'ts for a safe, relaxing, and rejuvenating time in your hot tub or swim spa.

**The Do’s While Enjoying a Swim Spa**

1. Follow Proper Maintenance Procedures: Regular maintenance is key to keeping your hot tub or swim spa in pristine condition. Follow the manufacturer's guidelines for water treatment, cleaning, and filter maintenance. Invest in quality products for sanitization and balancing the pH levels to ensure a safe and hygienic environment for soaking.

2. Keep the Water Clean: Maintain proper water chemistry by testing it regularly and adjusting the levels as needed. Clean the filters regularly to remove debris and prevent clogging. Consider investing in a self-cleaning hot tub or swim spa for added convenience and peace of mind.

3. Practice Safety Measures: Safety should always be a priority when using your spa. Always supervise children and non-swimmers, and never leave them unattended in or around the water. Install safety covers and barriers to prevent accidents, and familiarize yourself with emergency procedures.

4. Limit Soaking Time: While it may be tempting to spend hours soaking in your spa, it's important to limit your sessions to avoid dehydration and overheating. Aim for 15-30 minute sessions, with breaks in between to cool down and hydrate.

5. Enjoy the Benefits of Hydrotherapy: Take advantage of the therapeutic benefits of hydrotherapy by incorporating massage jets and adjustable settings into your spa experience. Experiment with different massage techniques and water temperatures to soothe sore muscles, improve circulation, and promote relaxation.

**The Don'ts While Enjoying a Swim Spa**

1. **Overlook Regular Maintenance**: Neglecting regular maintenance can lead to water contamination, equipment malfunction, and costly repairs. Avoid the temptation to skip routine tasks such as cleaning the filters or testing the water chemistry, as it can compromise the performance and longevity of your spa.

2. **Use Harsh Chemicals**: While it's essential to maintain clean water, avoid using harsh chemicals that can irritate the skin or damage the spa components. Opt for gentle, spa-safe products specifically formulated for hot tubs and swim spas.

3. **Ignore Warning Signs**: Pay attention to any unusual sounds, smells, or changes in the water quality, as they could indicate underlying issues with your spa. Addressing problems promptly can prevent further damage and ensure uninterrupted enjoyment of your spa.

4. **Overheat the Water**: Avoid setting the water temperature too high, as it can lead to discomfort, dehydration, and even heat exhaustion. Keep the temperature between 100-104°F (37-40°C) for a relaxing and safe spa experience.

5. **Neglect Safety Precautions**: Don't compromise on safety measures, such as installing fencing, covers, and alarms, to prevent accidents and injuries. Educate yourself and your family members on proper spa etiquette and emergency procedures to minimize risks.

**Conclusion**

By following these do’s and don'ts, you can enjoy a safe, relaxing, and rejuvenating spa experience for years to come. Prioritize maintenance, safety, and proper usage to make the most of your investment in a hot tub or swim spa. Whether you're in search of top hot tub brands, self-cleaning hot tubs, swim spa dealers, or a sauna for sale in the UK, trust [The Hot Tub and Swim Spa Company](https://www.thehottubandswimspacompany.com/) to meet your needs. As a leading London swimming pool company, they offer a wide range of premium-quality products, including portable spas and hot tubs, gazebo options, and accessories. Explore their collection today and elevate your relaxation experience at home.

| walterjerry | |

1,871,841 | The Frontend Challenge: Sculpting Sacredness of Lord Shiva | This is a submission for Frontend Challenge v24.04.17, CSS Art: June. Inspiration When I... | 0 | 2024-05-31T09:13:24 | https://dev.to/niketmishra/the-frontend-challenge-sculpting-sacredness-of-lord-shiva-22i6 | frontendchallenge, devchallenge, css | _This is a submission for [Frontend Challenge v24.04.17](https://dev.to/challenges/frontend-2024-05-29), CSS Art: June._

## Inspiration

<!-- What are you highlighting today? -->

When I think of June, I am reminded of the vibrant cultural and religious celebrations that take place around the world. For this challenge, I chose to create a piece of art inspired by Lord Shiva, a significant deity in Hindu religion, symbolizing transformation and transcendence. June, being a month of transitions—whether it's the shift to summer in many parts of the world or a time of spiritual reflection—felt like the perfect time to honor Lord Shiva through CSS art.

## Demo

<!-- Show us your CSS Art! You can directly embed an editor into this post (see the FAQ section of the challenge page) or you can share an image of your project and share a public link to the code. -->

{% codepen https://codepen.io/niketmishra/pen/dyEvyya %}

## Journey

<!-- Tell us about your process, what you learned, anything you are particularly proud of, what you hope to do next, etc. -->

Creating this CSS art of Lord Shiva was a fascinating journey. I began by defining custom CSS variables for colors and gradients, setting the stage for the overall aesthetic. Using a combination of flexbox and absolute positioning, I structured the elements to resemble Lord Shiva's iconic features.

The head and body were formed using divs with border-radius properties, while intricate details like the third eye and crescent moon were meticulously crafted using absolute positioning and box-shadow effects. Animation was added to certain elements to give them a dynamic and lifelike appearance.

Throughout the process, I experimented with various CSS properties and techniques, refining the art piece to achieve the desired outcome. This project not only honed my CSS skills but also deepened my appreciation for the artistry achievable through code.

In the end, the result was a visually striking representation of Lord Shiva, paying homage to this revered deity in Hindu culture through the medium of CSS.

<!-- Team Submissions: Please pick one member to publish the submission and credit teammates by listing their DEV usernames directly in the body of the post. -->

<!-- We encourage you to consider adding a license for your code. -->

MIT License

Copyright (c) 2024 Niket Kumar Mishra

Permission is hereby granted, free of charge, to any person obtaining a copy of this software and associated documentation files (the "Software"), to deal in the Software without restriction, including without limitation the rights to use, copy, modify, merge, publish, distribute, sublicense, and/or sell copies of the Software, and to permit persons to whom the Software is furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE SOFTWARE.

<!-- Don't forget to add a cover image to your post (if you want). -->

<!-- Thanks for participating! --> | niketmishra |

1,871,840 | Want to know web scraping challenges? | Working with hundreds of clients at ProWebScraper, I've seen firsthand the hurdles businesses face... | 0 | 2024-05-31T09:12:52 | https://dev.to/hiren_patel_97/want-to-know-web-scraping-challenges-294f | Working with hundreds of clients at ProWebScraper, I've seen firsthand the hurdles businesses face when doing hashtag#webscraping.

From navigating complex website structures (an issue for over 60% of our customers) to ensuring compliance and data accuracy, there are many obstacles.

Read below article to see all the top challenges customers have shared:

https://www.linkedin.com/pulse/navigating-5-challenges-web-scraping-insights-from-product-patel-uqetf

| hiren_patel_97 | |

1,871,838 | Ship Faster, Fix Less: A Guide To Continuous Testing | Before I jump into Continuous Testing, let's touch base on what testing is, along with how and when... | 0 | 2024-05-31T09:12:19 | https://keploy.io/blog/community/ship-faster-fix-less-a-guide-to-continuous-testing | webdev, beginners, tutorial, devops |

Before I jump into Continuous Testing, let's touch base on what testing is, along with how and when it plays a crucial role.

Testing phase provides you with feedback which will help you determine if further changes are needed. Faster feedback is better for you.

Why? Because by going down the wrong road too far, you risk having to rework more than you originally developed. In some cases, it may not be possible to fix the defects because the feedback came in two days before your scheduled deployment.

So, what do you do? You push the defects into production, hoping to fix it someday. Will defects always get fixed in production? You know the answer!

**What is continuous testing?**

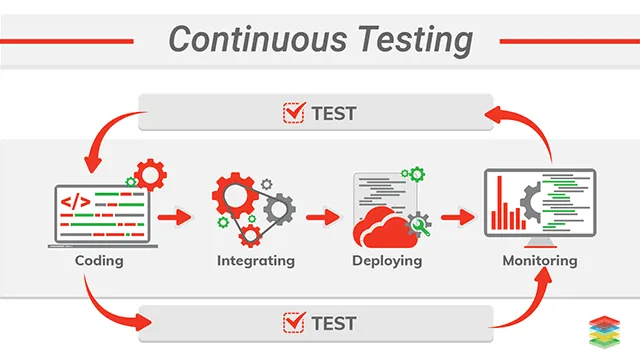

Continuous testing is a process of software testing in which an application is tested at every stage of software development life cycle (SDLC). It aims to shorten the systems development life cycle and provide continuous delivery with high software quality.

**Why does it matter?**

The process of software development began with requirements gathering, where documentation outlined the software's functionalities and user stories. This was followed by design and architecture, where the software's blueprint was created, defining how components would interact and how users would navigate. Once the blueprint was finalized, developers translated the design into functional code using chosen programming languages.

Whenever software is developed, it goes through the testing phase to ensure the quality of the software and to identify bugs to be fixed by the developers.

Traditionally testing is done after the software is developed completely. The development of software is split into many parts. Once a team completes developing a part of the software then that part is given to another team and that team hands over the software to another once they complete their part. This continues until the software is completely developed. This method of developing software is known as the waterfall model.

While the waterfall model provides a structured approach with dedicated time for each development phase, its linear progression can hinder flexibility. Adapting to evolving requirements or incorporating feedback later in the process becomes difficult, potentially leading to a final product that falls short of user needs.

But what if there was a different avenue for testing? A faster, more streamlined approach that shatters the bottlenecks hindering collaboration between development and testing teams.

That is where continuous testing becomes valuable. Testing code directly after submitting it to the repository helps detect bugs before any additional code is written. That extra code would then not have to be adjusted to incorporate bug fixes. Talk about saving time!

**Why do organisations go for continuous testing?**

It would take a lot of time and manpower to test the software whenever a new feature is added to the software.

But organizations want more efficient and easier solutions. That’s why organizations adopt a new approach “continuous testing” which delivers faster and seamless development of software and also releases high-quality software in a short period.

With continuous testing, code is automatically tested as soon as it’s integrated into the previous set of codes.

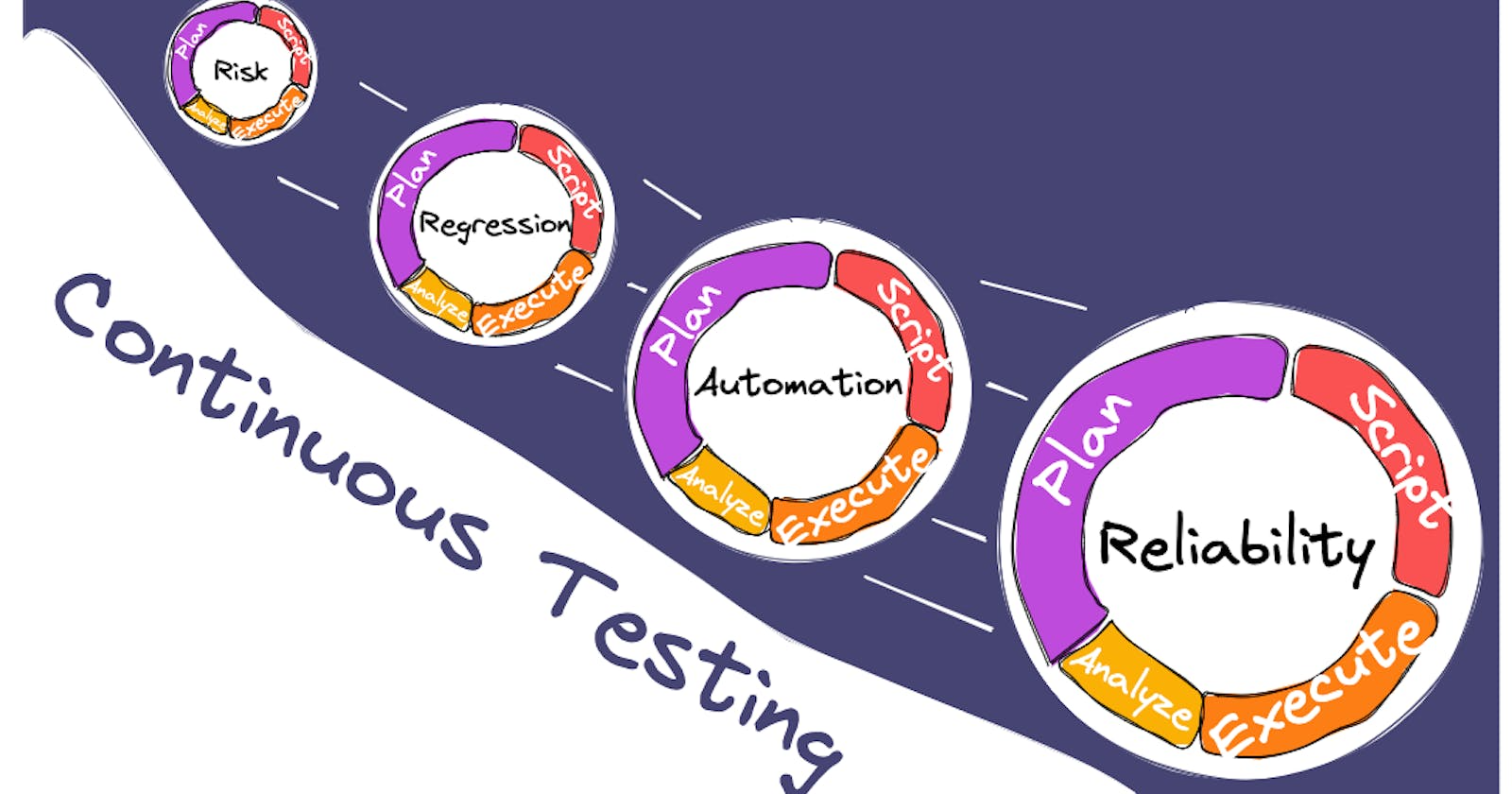

**Methodologies of Continuous Testing**

**Unit Tests:** This involves testing a piece of code in isolation. Basically testing every method written for the feature. The main objective of this test is to check that the code is working as expected, meaning that all the functionalities, inputs, outputs, and performance of the code are as desired.

**Integration Tests:** This involves testing the two modules together. The goal of this test is to check that the integration between the two components is working fine.

**Regression Tests:** This is the most widely used test and it is used to check that the existing functionality of the application is working as expected after the latest addition or modification to the code repository,

**End to End Journey tests:** These tests are added to check the end-to-end working of the software. The goal of these tests is to check that the end user is able to use the application end to end.

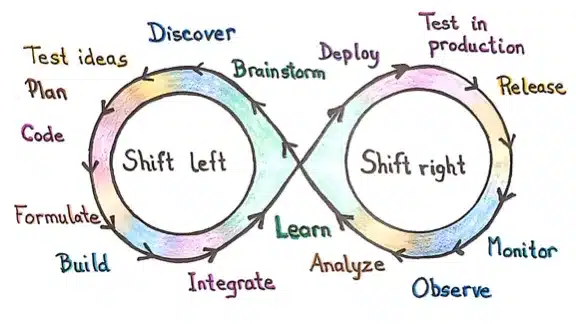

**Shift-left testing:** This approach prioritizes software and system testing early in the SDLC to help reduce or prevent significant debugging problems down the road.

**Shift-right testing:** This approach prioritizes testing near the end of the SDLC, with a focus on improving user experience, overall performance, failure tolerance and functions.

**Benefits of Continuous Testing**

**Increased speed:** Continuous testing aligns with the fast-paced nature of DevOps and Agile, facilitating quicker software delivery. This acceleration can enhance business processes, including faster go-to-market strategies.

**Catch bugs early:** By detecting bugs early in the development cycle, continuous testing significantly improves the overall quality of the code, reducing the likelihood of defects.

**Cost efficiency:** With a higher standard of code quality and fewer bugs reaching production, continuous testing aims to offset its initial costs over time.

**Improved security:** Continuous testing creates a robust framework that protects applications from unforeseen changes and security threats, both during and after deployment.

Save Time, Be Awesome: Continuous testing frees up valuable time for developers to focus on innovation. We can spend less time debugging and more time making applications even better!

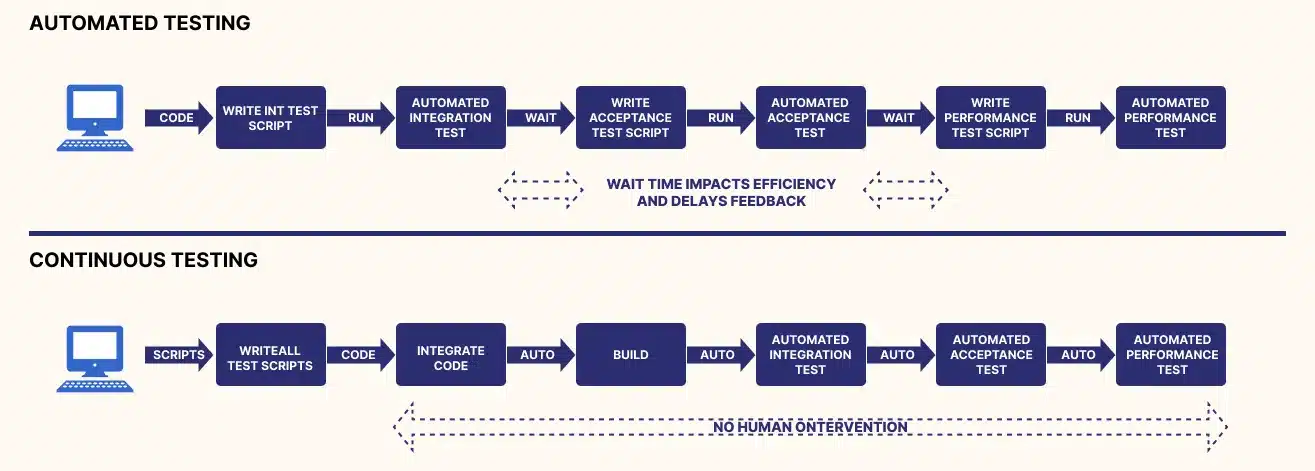

**Continuous testing vs test automation: What's the difference ?**

Before the rise of continuous software testing, test automation was the main practice for achieving software quality assurance. Continuous Testing is different from automated testing , it is a process of executing automated tests to get feedback on the business risks associated with the release of a software. Automated testing , on other hand, is a process of executing specific tests via automation rather than doing it manually.

Continuous testing and test automation share common characteristics. Actually, continuous testing cannot exist without automation.

Despite having similarities, though, it’s false to assume that continuous testing and test automation are the same thing. Continuous testing goes beyond test automation. It aims to ensure continuous quality improvement throughout the whole software delivery pipeline.

**Continuous testing in devops**

As the demand for faster development and delivery of software to customers increases, organizations adopt the agile software development model. Incremental activities are performed continuously in this model.

Nowadays, DevOps is adopted by organizations for software development which uses collaborative processes and the responsibility is shared among the teams. DevOps is another methodology of software development like waterfall and agile. It is better than the previous ones.

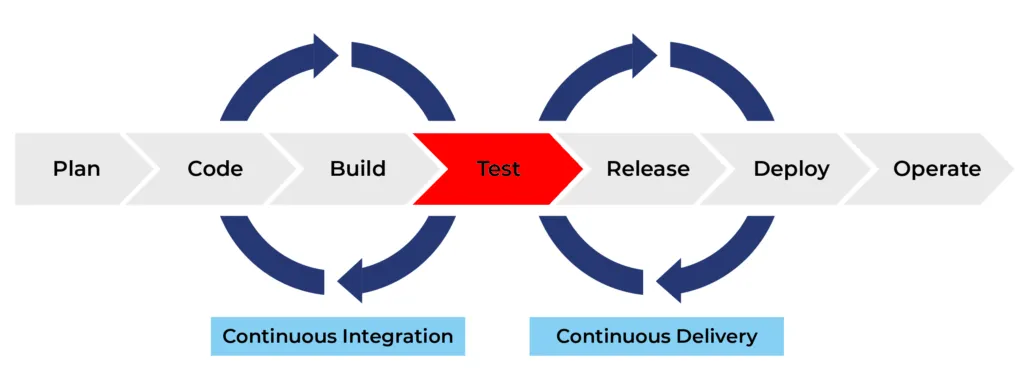

This evolution of software development models leads organizations to use automation, Continuous Integration (CI), and Continuous Delivery (CD) that require continuous testing (CT).

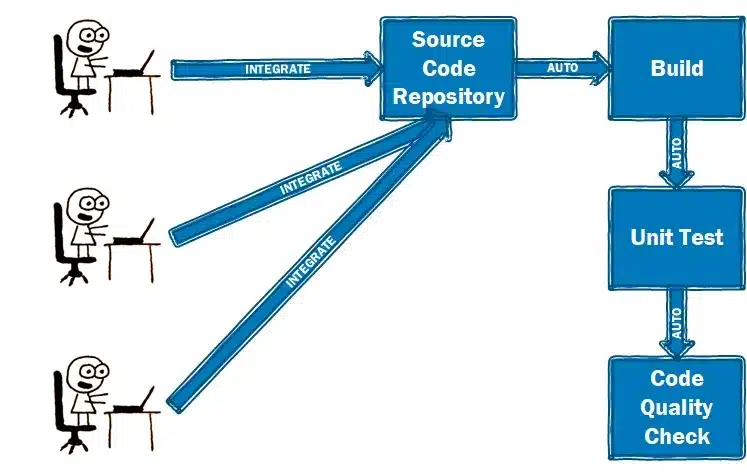

In a DevOps environment, continuous testing is performed automatically throughout the SDLC and works hand in hand with continuous integration to automatically validate any new code integrated into the application.

Continuous integration (CI) is a software development practice where developers regularly merge their code changes into a central repository, after which automated builds and tests are run

It most often refers to the build or integration stage of the software release process and entails both an automation component and a cultural component. The key goals of continuous integration are to find and address bugs quicker, improve software quality, and reduce the time it takes to validate and release new software updates.

Testing tools are preinstalled with testing scripts that run automatically whenever new code is integrated into the application. Typically, the tests start with integration testing and move automatically to system testing, regression testing and user-acceptance testing.

The tests generate data feeds from each application module, and the feeds are analyzed to help ensure that all modules impacted by the new code perform as expected. If a test fails, the code goes back to the development team for correction. It is then reintegrated and the testing cycle starts a new.

Once all tests are passed, the application or project moves to the next stage of the SDLC, typically continuous delivery.

Continuous delivery (CD) automates the release of software to production or a staging environment, making it ready to be deployed at any time with manual approval. Code changes are automatically built, tested, and prepared for a release to production. Continuous delivery expands upon continuous integration by deploying all code changes to a testing environment and/or a production environment after the build stage.

**Continuous testing in Production**

Continuous testing in production (CTIP) is a follow up process of continuous testing, where automated tests not only run in SDLC, but also in the production environment itself. This approach allows for real-time monitoring and validation of software applications with actual users data.

**Benefits of CTIP:**

Identify issues early: By continuously testing in production, you can catch bugs and regressions much faster than waiting for user reports.

Improved user experience: CTIP helps to ensure that new features and deployments don't negatively impact the user experience.

Faster feedback loop: The data from CTIP can be fed back into the development process, allowing developers to fix issues quickly.

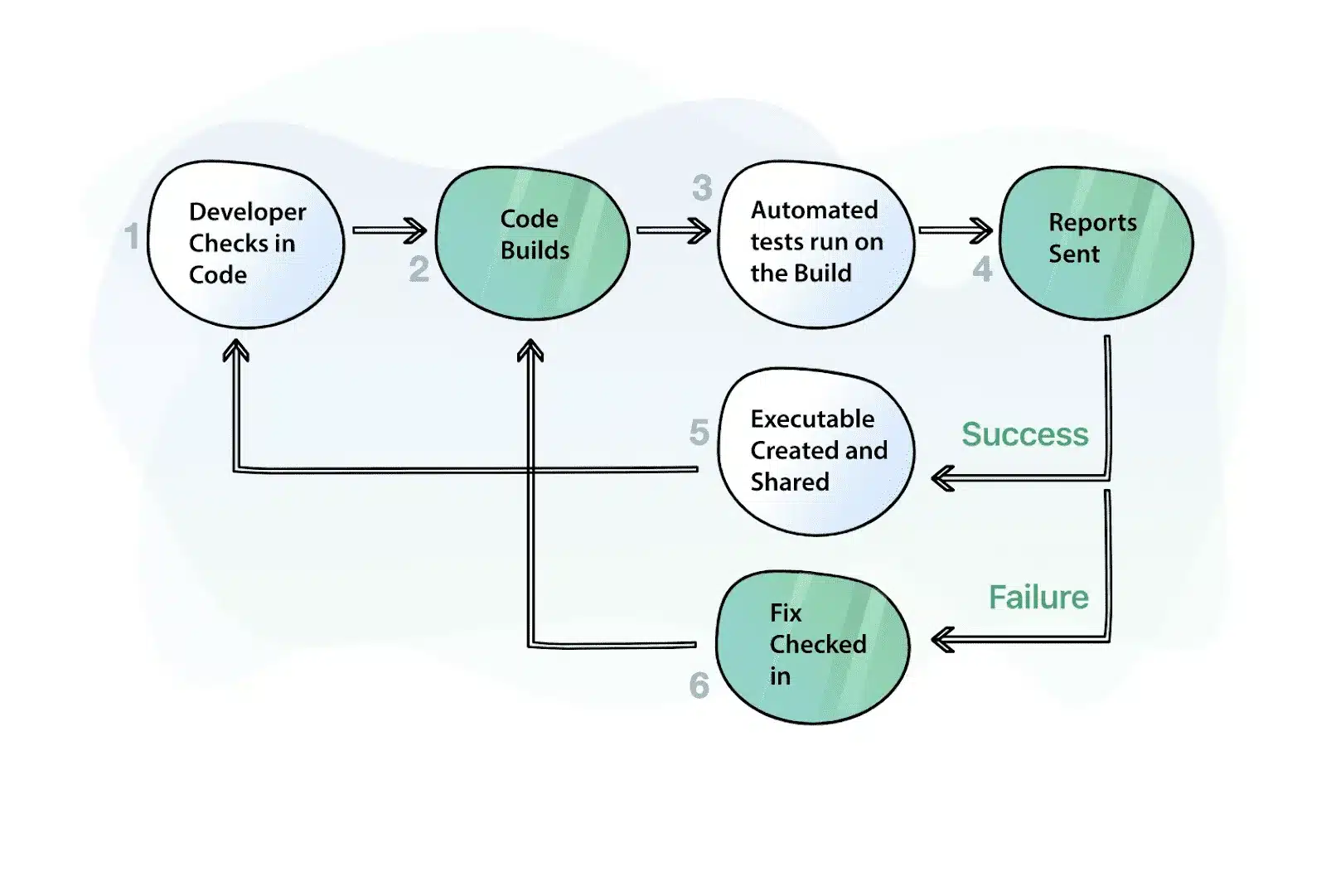

**How to Perform Continuous Testing ?**

Now that you know what continuous testing is, let’s talk about how you use it. Continuous testing should be implemented at every stage of your CI/CD pipeline. You can set up test suites at every point code changes, merges, or releases. That way, you can run tests at a specific point rather than every test at once. This will help reduce time and effort on testing but still reap quality rewards.

Continuous testing works best by using the most recent build in an isolated environment. Containerization is a great method to help with replicating code outside of the main code repository.

**Steps to Perform Continuous Testing**

Use tools to generate test automation suites from user requirements

Create the test environment

Use production data to create test data beds

Test API using service virtualization

Perform parallel performance testing

The 3 Fundamentals of Continuous Testing

People :Each person in a team has a unique responsibility in the delivery pipeline for which he/she must possess the required skills. Transparent and regular communication between the members is the key to success.

Process :Process remains the key in Continuous Testing. Instead of automating everything, focus should be on test automation, code quality, stability, efficiency and providing timely feedback to the developers.

Technology :Technology should be leveraged to get a stable testing environment and build proper alignment between the team’s skills and software tools.

**Frameworks Used for Continuous Testing**

Continuous testing tools are designed to help you with your testing efforts. They ensure your success by guaranteeing positive results as you phase through the continuous testing process. Although many tools are available for continuous testing, very few are worth pursuing. Some prominent, continuous testing tools include:

**Selenium**

Selenium is a software framework that developers with extensive programming skills can use for QA testing. To implement Selenium, it’s vital to understand how frameworks work. In addition, Selenium supports a wide range of popular operating systems (Windows, macOS, Linux) and browsers (Chrome, Firefox, Safari), making it ideal for cross-environment testing.

**Jenkins**

According to a report, 33.3% of dev teams use Jenkins as their CI/CD tools. You’ll be able to run a series of automated tests and builds after the Jenkins server is set up, ensuring that only stable (and tested) code makes it to production.

Using a tool like Jenkins can simplify the process of assuring high code quality and successful builds. It’s beneficial when working on a single project with a large development team, as traditional approaches might result in much conflicting code commits that may require a lot of troubleshooting.

**Appium**

Appium is an open-source test automation framework for mobile web apps. It allows you to create cross-browser tests for both desktop and mobile devices.

Appium is a tool for developing, uploading, executing, and examining test results directly in the cloud. Not only does Appium allow you to automate tests on both physical devices and simulators or emulators but it also allows you to do so without recompiling your app. Appium uses a JSON wire protocol to communicate with the application being tested.

**Eggplant**

Eggplant is a continuous testing tool that provides a one-of-a-kind approach to testing: an image-based solution. Rather than presenting raw test scripts, Eggplant interacts with the Application Under Test (AUT) that simulates users’ points of view.

Eggplant provides a test lab that gives you 24/7 access to continuous testing and deployment. It integrates with other CI/CD tools like Jenkins and Bamboo. This integration allows Eggplant users to perform comprehensive testing, including unit, functional, and performance tests.

**Challenges of continuous testing**

Despite the benefits of continuous testing, you might face challenges when you implement it at scale for the following reasons:

Lack of Test Support in Software: Continuous testing becomes more difficult to achieve when testability support is not built into legacy products. Implementing testability features in these products is expensive and hinders the success of continuous testing.

Absence of Standard Tools: Although there are no standard tools for continuous testing of many different products, teams usually use in-house automation tools or frameworks that lack proper documentation and maintenance. This adds to the problems of the testing team, which will now have to struggle with issues related to the tool/framework.

Insufficient Testing Infrastructure: Continuous testing requires an investment in additional test environments, which must be maintained, kept up to date, and running around the clock. Advanced tools can help teams implement faster feedback loops, but these costs aren’t high compared to the price incurred due to the poor quality of the product. There is a need for organizational commitment to continuous testing instead of a halfway journey without adequate infrastructure, only adding to the problems your testers face.

Scaling: All testing frameworks/tools do not scale equally. Slow test execution and lack of support for large test suites can become severe blockers to the dream of achieving continuous testing. These problems are not always apparent at first; they become visible only after many tests have been added to the system and the test system starts to get highly loaded.

**Conclusion**

In today's fast-paced world, software development needs to be agile and efficient. Continuous testing provides the answer. By integrating testing throughout the development lifecycle, it acts like a safety net, catching bugs early and preventing costly rework. Imagine a world where you can deliver high-quality software faster, with fewer headaches for developers and a smoother experience for users. That's the power of continuous testing. It's not just a process, it's a mindset shift that unlocks the true potential of your development team. So, are you ready to embrace continuous testing and revolutionize your software delivery?

**FAQ's**

**What is continuous testing?**

Continuous testing is a software testing approach where applications are tested at every stage of the development life cycle to ensure continuous delivery with high quality.

**Why does continuous testing matter?**

Continuous testing helps in catching bugs early, reducing rework, accelerating software delivery, improving code quality, and enhancing user experience.

**What are the methodologies of continuous testing?**

Continuous testing methodologies include unit tests, integration tests, regression tests, and end-to-end journey tests, among others.

**What are the benefits of continuous testing?**

Benefits of continuous testing include increased speed of delivery, early bug detection, cost efficiency, improved security, and more time for innovation.

**What are some challenges of continuous testing?**

Challenges of continuous testing may include lack of test support in software, absence of standard tools, insufficient testing infrastructure, and scalability issues. | keploy |

1,871,836 | Effective Communication in Daily Stand-Up Meetings for Software Developers | Effective Communication in Daily Stand-Up Meetings for Software Developers Daily stand-up... | 0 | 2024-05-31T09:10:21 | https://dev.to/trinly01/effective-communication-in-daily-stand-up-meetings-for-software-developers-2i8i | webdev, javascript, beginners, programming | ### Effective Communication in Daily Stand-Up Meetings for Software Developers

Daily stand-up meetings are a cornerstone of Agile methodology, offering a dedicated platform for team members to synchronize their activities, share progress, and identify any blockers. For software developers, these meetings are critical for ensuring smooth project execution. Mastering the language and flow of stand-up meetings can significantly enhance your communication skills and overall productivity. Here, we’ll explore common phrases and sentences that can help you sound more confident and articulate during these meetings.

#### Starting the Update

When initiating your update, it’s important to clearly and concisely communicate what you worked on previously. This sets the context for your current status and helps the team understand your progress.

- **"Yesterday, I worked on..."**

This phrase helps you kick off your update by highlighting your previous day's activities. For example, "Yesterday, I worked on debugging the login feature." By starting with what you accomplished the day before, you provide a clear picture of your recent efforts and set the stage for the current day’s tasks.

- **"In the last 24 hours, I focused on..."**

This variation offers a broader time frame, encompassing all activities since the last stand-up. For example, "In the last 24 hours, I focused on implementing the new payment gateway." This phrase can be particularly useful when your work spans across multiple areas or involves significant changes that require more context.

- **"I've been working on..."**

This phrase is useful for ongoing tasks, indicating continuity in your efforts. For example, "I've been working on optimizing the database queries." Using this phrase can help your team understand that you are in the middle of a larger task that spans several days, providing a sense of continuity and progress.

#### Current Tasks

Clearly outlining your current tasks helps the team understand what you’re focusing on today. This also sets expectations for what you aim to achieve.

- **"Today, I plan to..."**

This phrase clearly indicates your intentions for the day. For example, "Today, I plan to complete the unit tests for the shopping cart feature." By specifying your plan for the day, you give your team a clear understanding of your immediate goals and how they fit into the larger project timeline.

- **"My main focus today will be..."**

This variation highlights your primary objective, ensuring that the team knows your top priority. For example, "My main focus today will be on fixing the critical bugs reported by QA." This helps to emphasize the most important task on your agenda, signaling to your team what you believe requires the most attention and effort.

- **"I'm currently working on..."**

This phrase helps update the team on your immediate activities. For example, "I'm currently working on integrating the third-party API." It provides a snapshot of your current focus and how it contributes to the project’s progress.

#### Blocking Issues

Identifying and communicating blockers is essential for resolving issues quickly and keeping the project on track.

- **"I'm facing an issue with..."**

This phrase helps you introduce a specific problem you’re encountering. For example, "I'm facing an issue with the API authentication." By clearly stating your issue, you open the door for team members to offer solutions or assistance.

- **"I'm blocked by..."**

This direct approach clearly states what’s preventing you from making progress. For example, "I'm blocked by the lack of access to the staging server." Identifying blockers promptly allows the team to address them quickly, ensuring that progress can continue smoothly.

- **"One challenge I'm encountering is..."**

This phrase allows for a more detailed explanation of the problem. For example, "One challenge I'm encountering is the inconsistency in the data returned by the API." Providing specific details about your challenges can help the team understand the complexity of the issue and collaborate on finding a solution.

#### Progress and Achievements

Highlighting your achievements and progress helps maintain a positive tone and demonstrates your contributions to the project.

- **"I've completed..."**

This phrase succinctly conveys task completion. For example, "I've completed the user interface for the dashboard." Celebrating small victories and completed tasks boosts morale and keeps the team motivated.

- **"I finished the task related to..."**

This variation provides context for the completed task. For example, "I finished the task related to implementing the new search functionality." Offering context helps the team understand the significance of your completed work and how it fits into the overall project.

- **"I made progress on..."**

This phrase is useful for ongoing tasks, indicating forward movement. For example, "I made progress on optimizing the loading time for the homepage." Highlighting incremental progress keeps the team informed about the state of long-term tasks and maintains a sense of momentum.

#### Collaborations and Requests

Effective communication also involves seeking help and coordinating with team members when needed.

- **"I need help with..."**

This straightforward request for assistance is crucial for overcoming challenges quickly. For example, "I need help with debugging the new feature on the mobile app." Asking for help when needed ensures that issues are resolved efficiently and that you can continue making progress.

- **"Could someone assist me with..."**

This polite request for help encourages team collaboration. For example, "Could someone assist me with setting up the CI/CD pipeline?" Encouraging collaboration fosters a supportive team environment where members feel comfortable seeking and offering assistance.

- **"I'm waiting for feedback from..."**

This phrase helps you indicate dependencies on other team members. For example, "I'm waiting for feedback from the design team on the latest mockups." Communicating dependencies ensures that everyone is aware of what’s needed to move forward and can prioritize their work accordingly.

#### Next Steps

Outlining your next steps ensures that the team knows what you’ll be focusing on next, helping to maintain alignment and momentum.

- **"Next, I'll be..."**

This phrase clearly indicates your upcoming tasks. For example, "Next, I'll be working on the API integration for the notifications system." Providing clear next steps helps the team understand your planned activities and how they align with the project’s goals.

- **"My next steps are..."**

This variation helps you outline a series of upcoming tasks. For example, "My next steps are to finalize the database schema and start the data migration." Offering a roadmap of your next steps keeps the team informed about your planned progress and any upcoming milestones.

- **"I'll move on to..."**

This phrase indicates a transition from one task to another. For example, "I'll move on to writing the unit tests for the new module." Communicating your transitions helps the team understand the flow of your work and how each task contributes to the overall project.

### Best Practices for Stand-Up Meetings

While using the right phrases and sentences is crucial, adhering to some best practices can further enhance the effectiveness of your stand-up meetings.

#### Be Concise

Stand-up meetings are designed to be short and to the point. Aim to deliver your update in under two minutes, focusing on key information without unnecessary details. This helps keep the meeting efficient and respects everyone’s time.

#### Be Clear

Clarity is essential for effective communication. Use simple and direct language to convey your message. Avoid jargon or overly complex explanations that might confuse team members.

#### Stay Relevant

Ensure that your update is relevant to the team and the project. Share information that impacts the team’s work or the project’s progress. Avoid discussing unrelated topics that don’t contribute to the meeting’s purpose.

#### Be Honest

If you’re facing challenges or are behind schedule, be honest about it. Transparency helps the team address issues promptly and find solutions together. It’s better to acknowledge problems early on rather than let them escalate.

#### Encourage Engagement

Stand-up meetings should be interactive. Encourage team members to ask questions or offer assistance. Fostering an environment of open communication and collaboration strengthens the team dynamic.

Mastering the art of communication in daily stand-up meetings involves using specific phrases and sentences to clearly convey your status, plans, and any blockers. By practicing these techniques and adhering to best practices, you can enhance your communication skills, contribute more effectively to your team, and ensure the success of your projects. Effective stand-up meetings not only keep everyone informed but also foster a collaborative and supportive team environment, paving the way for successful project outcomes. | trinly01 |

1,871,835 | How to Enable Multicasting Support in Windows 11? | Enable Multicasting Support in Windows 11: Microsoft Message Queuing (MSMQ) is a messaging protocol... | 0 | 2024-05-31T09:07:39 | https://dev.to/winsidescom/how-to-enable-multicasting-support-in-windows-11-31f7 | webdev, networking, ipv4, productivity | <strong>Enable Multicasting Support in Windows 11</strong>: <a href="https://winsides.com/enable-microsoft-message-queue-msmq-server-windows-11/"><strong>Microsoft Message Queuing (MSMQ)</strong></a> is a messaging protocol that allows applications running on separate servers/processes to <strong>communicate failsafe</strong>. MSMQ ensures that messages are delivered even when the recipient application is not running. Multicasting in MSMQ allows a message to be sent to a <strong>multicast IP address</strong>. Any MSMQ queue that is subscribed to that multicast address will receive the message. This is particularly useful for scenarios where the same message needs to be delivered to multiple recipients simultaneously. This guide will walk you through the steps on How to <strong>Enable Multicasting Support in Windows 11</strong>.

<ul>

<li>Open the <strong>Run command box</strong> using the shortcut <kbd>Win Key + R</kbd>.</li>

<li>Enter <code>optionalfeatures</code> in the run command box.

<img class="wp-image-658 size-full" src="https://winsides.com/wp-content/uploads/2024/05/Optional-Features-1.jpg" alt="Optional Features" width="484" height="295" /> Optional Features</li>

<li><strong>Windows Features</strong> dialog box will open now.</li>

<li>Locate <strong>Microsoft Message Queue (MSMQ) Server</strong> and expand it.

<img class="wp-image-681 size-full" src="https://winsides.com/wp-content/uploads/2024/05/Expand-MSMQ-Server-Node.jpg" alt="Expand MSMQ Server Node" width="787" height="521" /> Expand MSMQ Server Node</li>

<li>Now, you can find the <strong>Microsoft Message Queue Server Core Node. Expand it.</strong>

<img class="wp-image-682 size-full" src="https://winsides.com/wp-content/uploads/2024/05/Expand-MSMQ-Server-Core-Node.jpg" alt="Expand MSMQ Server Core Node" width="775" height="518" /> Expand MSMQ Server Core Node</li>

<li>Locate <strong>Multicasting Support</strong> from the list of services available, click on the checkbox next to it to select it, and click <strong>OK</strong>.

<img class="wp-image-779 size-full" src="https://winsides.com/wp-content/uploads/2024/05/Enable-Multicasting-Support-in-Windows-11.jpg" alt="" width="815" height="542" /> Enable Multicasting Support in Windows 11</li>

<li>That is it, Windows 11 will now search for the required files.

<img class="wp-image-42 size-full" src="https://winsides.com/wp-content/uploads/2024/01/Searching-for-the-required-files.jpg" alt="Searching for the required files" width="1001" height="720" /> Searching for the required files</li>

<li>Then, the necessary changes will be applied.

<img class="wp-image-36 size-full" src="https://winsides.com/wp-content/uploads/2024/01/Applying-Changes.jpg" alt="Applying Changes" width="875" height="678" /> Applying Changes</li>

<li>Click <strong>Restart</strong> if you are prompted to restart or click <strong>Close</strong> accordingly. However, it is suggested that the changes be restarted right away so that they will be reflected.

<img class="wp-image-38 size-full" src="https://winsides.com/wp-content/uploads/2024/01/Close.jpg" alt="Close" width="849" height="684" /> Close</li>

<li><strong>Multicasting Support</strong> is now enabled on your Windows 11 PC.</li>

</ul>

<h2>Significant Features of Multicasting Support in MSMQ:</h2>

<img class="wp-image-781 size-full" src="https://winsides.com/wp-content/uploads/2024/05/Multicast-Support-MSMQ-Features-in-Windows-11.jpg" alt="Multicasting Support(MSMQ) in Windows 11" width="1920" height="1080" /> Multicasting Support(MSMQ) in Windows 11

<ul>

<li><strong>Efficient Message Distribution</strong>: MSMQ sends a single message to a multicast address instead of sending individual messages to each recipient. All queues listening to this address receive the message, <strong>reducing network traffic and improving efficiency</strong>.</li>

<li><strong>Scalability of the Feature</strong>: Multicasting is scalable because adding more recipients (queues) does not increase the sender's workload or network usage significantly. All recipients receive the message from the multicast stream.</li>

<li><strong>Dynamic Group Membership</strong>: Queues can join or leave multicast groups dynamically, allowing for flexible and <strong>dynamic message routing</strong>.</li>

<li><strong>Support for IP Multicast</strong>: MSMQ uses <strong>IP multicast addresses</strong> (from the range 224.0.0.0 to 239.255.255.255 for <strong>IPv4</strong>) to send messages to multiple recipients.</li>

</ul>

<h2>Use Case Scenarios for MSMQ Multicasting Support:</h2>

<ol>

<li><strong>Broadcasting Updates</strong>: Sending system updates, <strong>stock prices</strong>, or other real-time information to multiple clients.</li>

<li><strong>Event Notification Systems</strong>: <strong>Broadcasting event notifications</strong> to multiple services or applications.</li>

<li><strong>Distributed Applications</strong>: Sending messages to multiple instances of an application or service running on different machines.</li>

</ol>

Note: Multicast Support in MSMQ is particularly useful in scenarios requiring <strong>real-time data distribution</strong> to multiple consumers.

<h2>Take away:</h2>

By following these steps, you can <strong>enable and configure multicasting support in the <a href="https://winsides.com/enable-microsoft-message-queue-server-core-windows/">MSMQ Server core</a></strong> on Windows 11, allowing efficient message distribution to multiple recipients using multicast addresses. If you find this article helpful, share your feedback and give us a rating, for more intriguing articles, stay tuned to <strong>Winsides.com!</strong> | winsidescom |

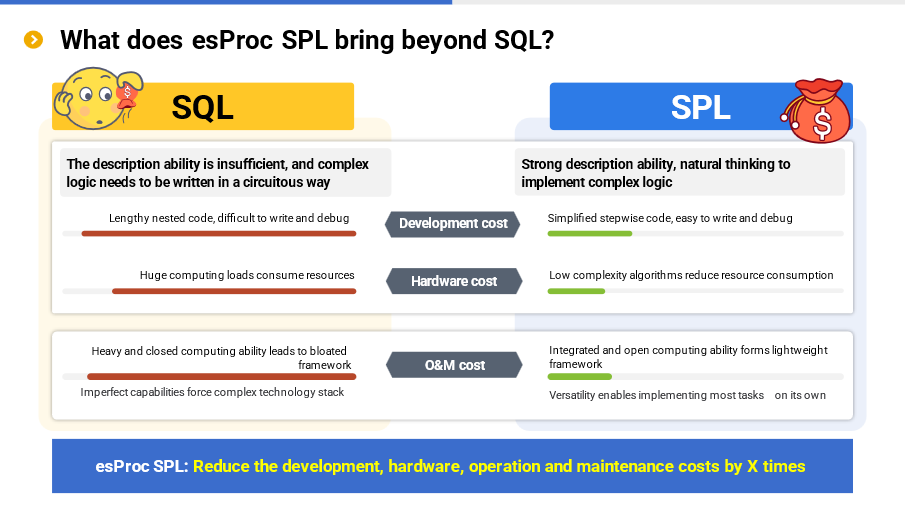

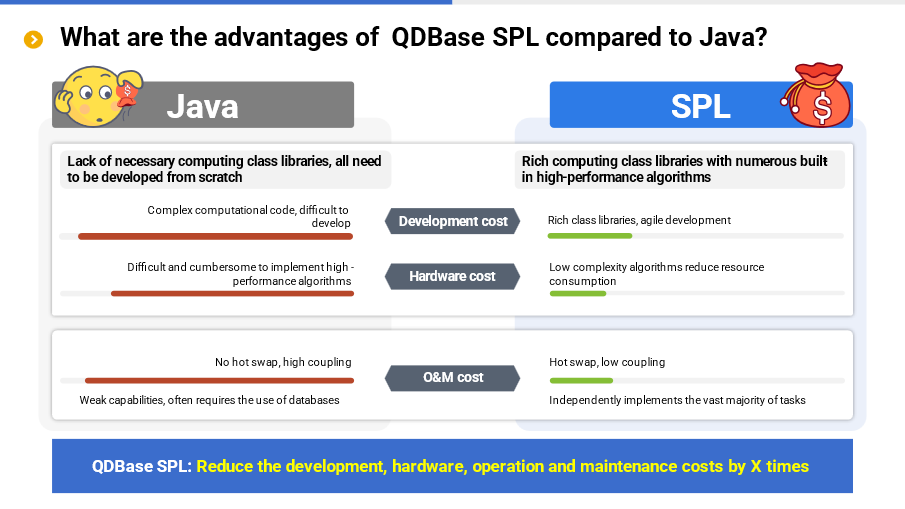

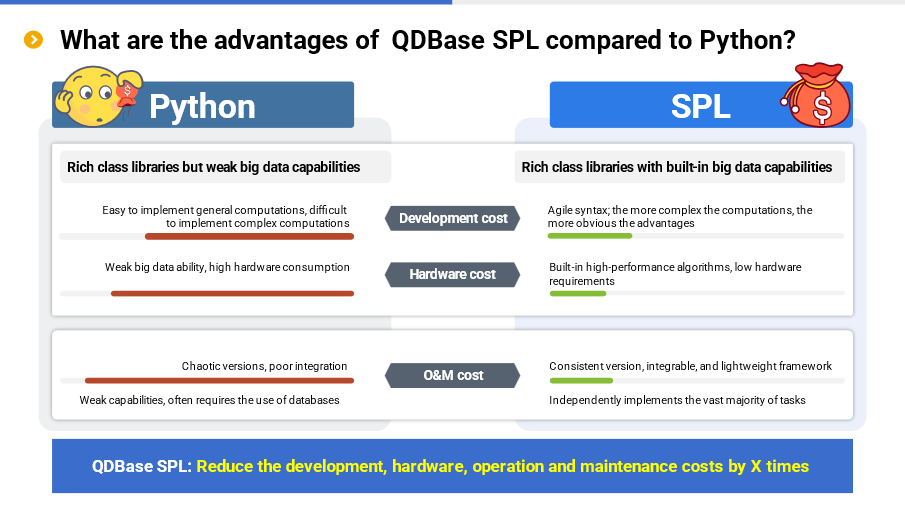

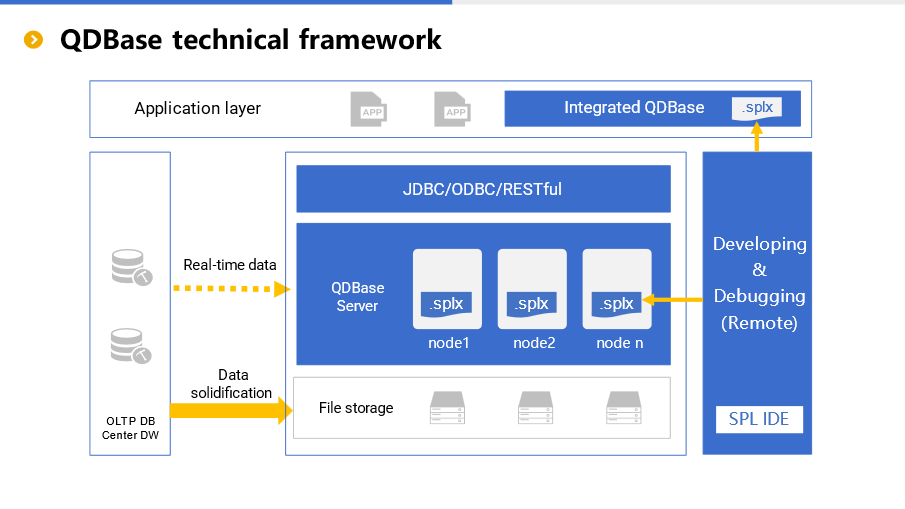

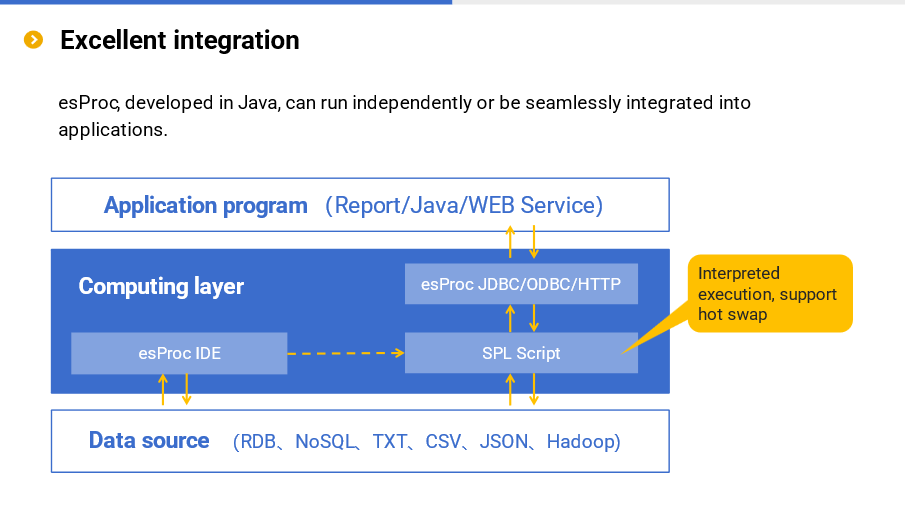

1,871,823 | A data analysis engine reducing application cost by N times | At present, there are many posts related to esProc SPL on the Internet, such as solution... | 0 | 2024-05-31T08:46:19 | https://dev.to/esproc_spl/a-data-analysis-engine-reducing-application-cost-by-n-times-3ph6 | programming, beginners, devops, opensource | At present, there are many posts related to esProc SPL on the Internet, such as solution introduction, test report, case sharing, but most of them only involves a certain aspect, and hence it is still difficult for readers and users to understand esProc SPL in an all-round way. This article provides a comprehensive introduction of esProc SPL, allowing you to recognize and understand it on the whole.

esProc SPL is a data analysis engine with four main characteristics: low code, high performance, lightweight and versatility. To be specific, SPL allows you to write simply, and makes the running speed faster; SPL can be used either independently or embedded in applications; SPL is suitable for a variety of application scenarios. Analyzing the data through esProc SPL can reduce the overall application cost by several times compared with SQL-represented traditional technologies. Details will be given below.

What is esProc SPL?

First let's explain what esProc SPL is.

As a computing and processing engine for structured and semi-structured data, esProc SPL can be used as an analysis database or a data computing middleware, and is mainly applied to two data analysis scenarios:offline batch job and online query. It is worth mentioning that, unlike common analysis database on the market, esProc SPL is neither SQL system nor NoSQL technology (such as MongoDB, HBase), and instead, it adopts self-created SPL (Structured Process Language) syntax, which is simpler in coding and higher in running efficiency compared with existing data processing technologies.

What pain points does esProc SPL solve?

SPL mainly solves data problems, including hard to write, slow to run and difficult to operate and maintain. Here below are some examples.