id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

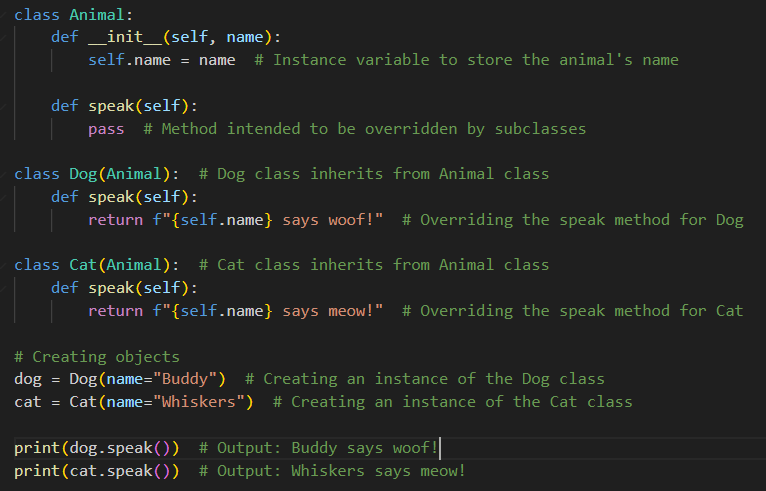

1,869,648 | SOLID principles in OOP for beginners | SOLID principles are sets of best practices aimed to solve the common problems that developers face... | 0 | 2024-05-29T21:07:54 | https://dev.to/vivecodes/solid-principles-in-oop-for-beginners-7k6 | oop, typescript, solidprinciples, beginners | SOLID principles are sets of best practices aimed to solve the common problems that developers face in object-oriented programming.

While design patterns provide concrete solutions to common design problems, the SOLID principles are way more abstract. They are intended to help in organizing and structuring the code, making it easier to manage and extend over time.

There are five SOLID principles in total:

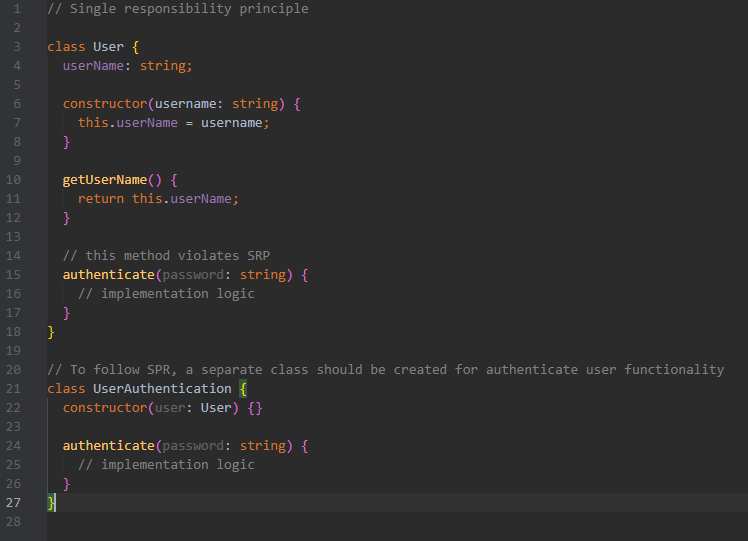

**Single Responsibility Principle (SRP)**

Each entity (like a class, function, or method) should have only one responsibility or job.

*Advantages:*

- Easier maintenance and updates

- Clear purpose for each class

- Simplified testing

- Enhanced reusability

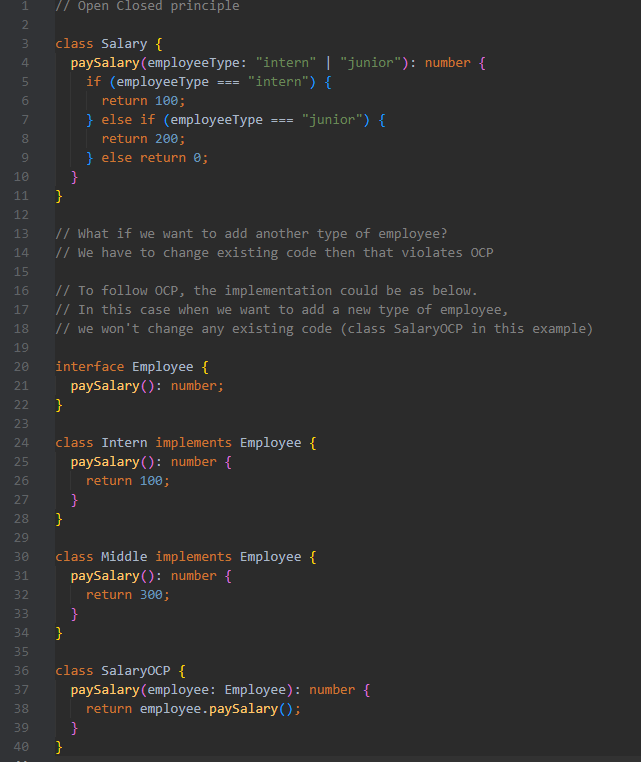

**Open/Closed Principle (OCP)**

Entities (like classes, methods, or functions) should be open for extension but closed for modification. This means you can add new functionality without changing existing code.

*Advantages:*

- Lower risk of introducing bugs

- Faster development of new features since existing code remains unchanged

- Enhanced reusability

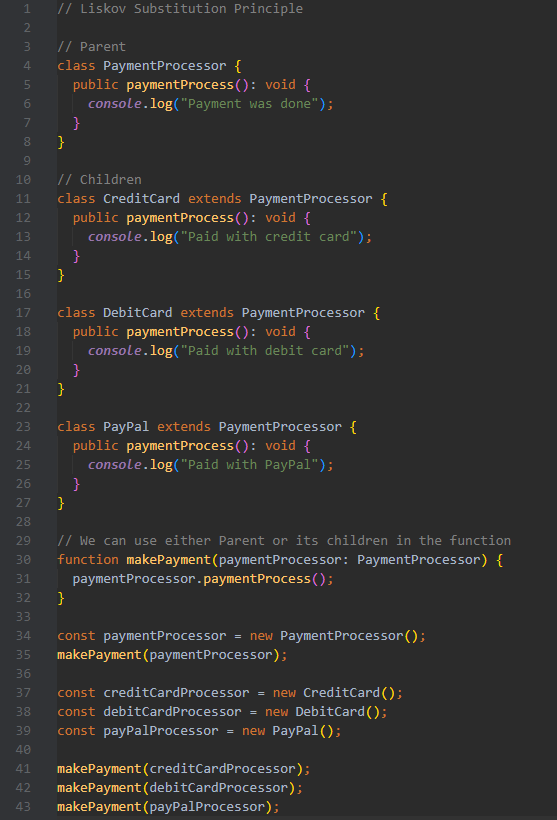

**Liskov Substitution Principle (LSP)**

Subtypes must be substitutable for their base types. In other words, wherever Parent is used, it could be replaced by Child without affecting the functionality of the program (without altering the existing code).

*Advantages:*

- New classes can be added without breaking existing functionality

- Enables creating substitutional parts of complex systems

- Enhanced reusability

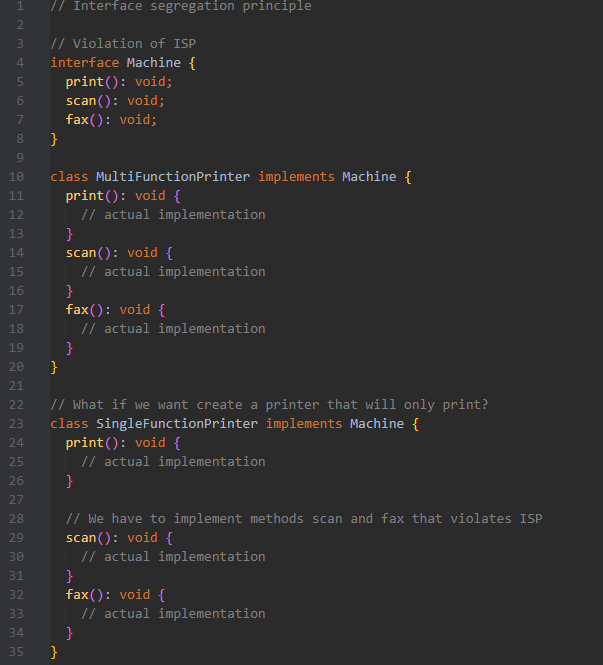

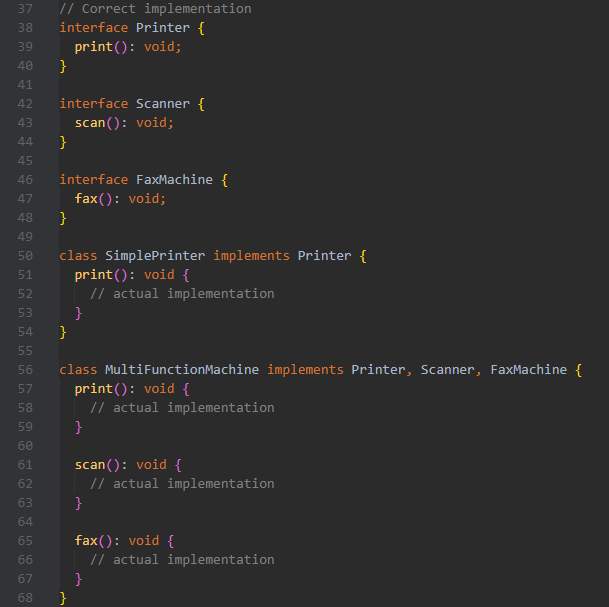

**Interface Segregation Principle (ISP)**

No client should be forced to depend on methods it does not use. In other words, instead of adding new methods to an existing interface, create a new interface.

*Advantages:*

- Smaller, more understandable interfaces

- Changes in one interface do not impact unrelated classes

- Enhanced reusability

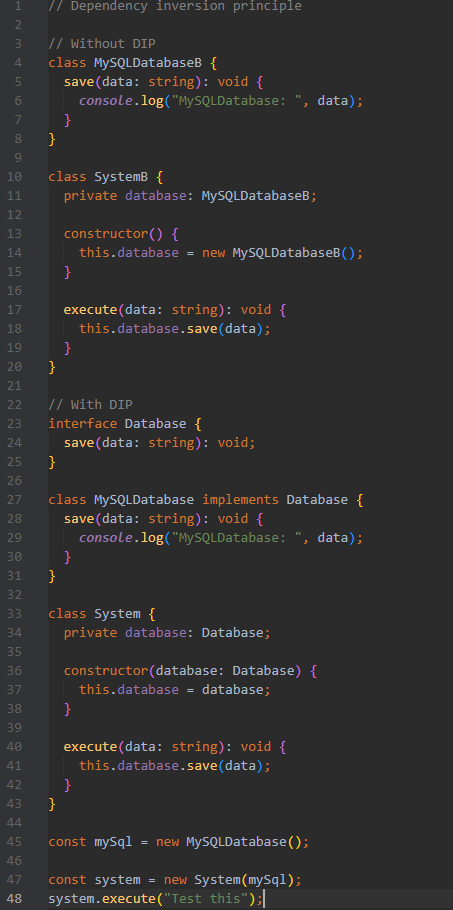

**Dependency inversion principle (DIP)**

High-level modules should not depend on low-level modules.

Both should depend on abstractions.

Abstractions should not depend on details.

Details should depend on abstractions.

*High-level modules* - business logic, use cases

*Low-level modules* - writing to DB, handling HTTP requests

*Abstractions* - interfaces, abstract classes

*Details* - concrete classes

*Advantages:*

- Promotes flexible and reusable code

- Reduces coupling between different parts of the codebase

- Enhanced reusability

| vivecodes |

1,869,647 | Moving away from class components and inheritance a Bad Idea!!! | I've read the docs including the docs Componentisation vs inheritance, however I completely disagree,... | 0 | 2024-05-29T21:04:15 | https://dev.to/mark_ellis_97da84e89cde02/moving-away-from-class-components-and-inheritance-a-bad-idea-469g | I've read the docs including the docs Componentisation vs inheritance, however I completely disagree, sure they say they use it on facebook and have never had the need for inheritance, but we are talking about facebook, where the interface is so ridiculously simple, no you more then likely wouldn't need inheritance. Inheritance is such a powerful tool and it can be used simultaneously with componentisation, which from years of experience with react makes it even more powerful. I'm not going to bother going into specific use cases, I have better things to do. but if the developers of react can't foresee that other developers are trying to build things that they could not even begin to comprehend was possible with their own framework, then this framework is doomed. this frame work in ye old days was great, it took care of the piddly crap that most developers couldn't be bothered with, but beyond that stayed out of the way. And it was fantastic, in-fact its a great shame that more frameworks don't follow the same model.

Unfortunately react is now starting to reach the point of dictating how I should code, and hence if it continues down this path it will get in my way, and when that day comes I'd sooner ditch react, either find another framework that does what I want, or build my own.

as for class components I prefer using classes because I think that it is the best way to adhere to the first and most important rule of coding, keeping code structured and readable, and yes for the same reason I loath the absolute overuse of arrow notation!!!! | mark_ellis_97da84e89cde02 | |

1,869,646 | newdigitalhub | I am mark taylor, working for newdigitalhub PR consultant, with more than six years of experience in... | 0 | 2024-05-29T21:04:14 | https://dev.to/marko_taylor_cc56ed3459fc/newdigitalhub-h5h | I am mark taylor, working for newdigitalhub PR consultant, with more than six years of experience in the PR and Digital Industry, helping teams achieve goals by streamlining the process. https://www.newdigitalhub.com/ | marko_taylor_cc56ed3459fc | |

1,869,645 | Deploying A Static Website with AWS S3 | Introduction Deploying a static website using Amazon S3 is a cost-effective and efficient way to host... | 0 | 2024-05-29T21:02:29 | https://dev.to/anson_ly/deploying-a-static-website-with-aws-s3-52fj | aws, s3, webdev | **Introduction**

Deploying a static website using Amazon S3 is a cost-effective and efficient way to host web content. In this guide, I’ll walk you through the steps to set up and deploy a static website on AWS S3. This process is ideal for hosting simple HTML, CSS, and JavaScript files without the need for server-side processing.

**Step 1 Create a S3 Bucket**

First I created an S3 bucket that will hold my website files.

**Step 2: Upload Website Files**

Next, you need to upload your website files to the S3 bucket. Select your bucket from the list and click the “Upload” button. Add your HTML, CSS, and JavaScript files. Once you’ve added the files, click “Upload” to add them to your bucket.

**Step 3: Configure the Bucket for Static Website Hosting**

Now, you need to configure the bucket to host your website. Navigate to the bucket’s properties by clicking on the “Properties” tab and scrolling down to the “Static website hosting” section. Enable static website hosting by selecting “Use this bucket to host a website”. Specify the index document (e.g., index.html) and optionally specify an error document (e.g., error.html). Finally, click “Save”.

**Step 4: Access Your Static Website**

Now, your static website is ready to be accessed. Go back to the “Properties” tab and in the “Static website hosting” section, you’ll find the URL of your website. Open this URL in your browser to see your static website live.

Here is the website using Github. [Link](https://ansonly15.github.io/Anson_Ly/)

**Conclusion**

Deploying a static website with AWS S3 is straightforward and offers a scalable, cost-effective solution for hosting web content. By following the steps outlined above, you can have your static site up and running in no time. For enhanced security and performance, consider integrating AWS CloudFront and using a custom domain with Route 53. Happy hosting! | anson_ly |

1,869,644 | Tailwind is the best CSS framework!? | I tried using several CSS frameworks, but Tailwind was the only one I could use. But I'm just... | 0 | 2024-05-29T21:01:43 | https://dev.to/devguilhermeribeiro/tailwind-is-the-best-css-framework-iap | webdev, programming, tailwindcss | I tried using several CSS frameworks, but Tailwind was the only one I could use. But I'm just starting out in backend web development with RoR. Let's see what happens!? | devguilhermeribeiro |

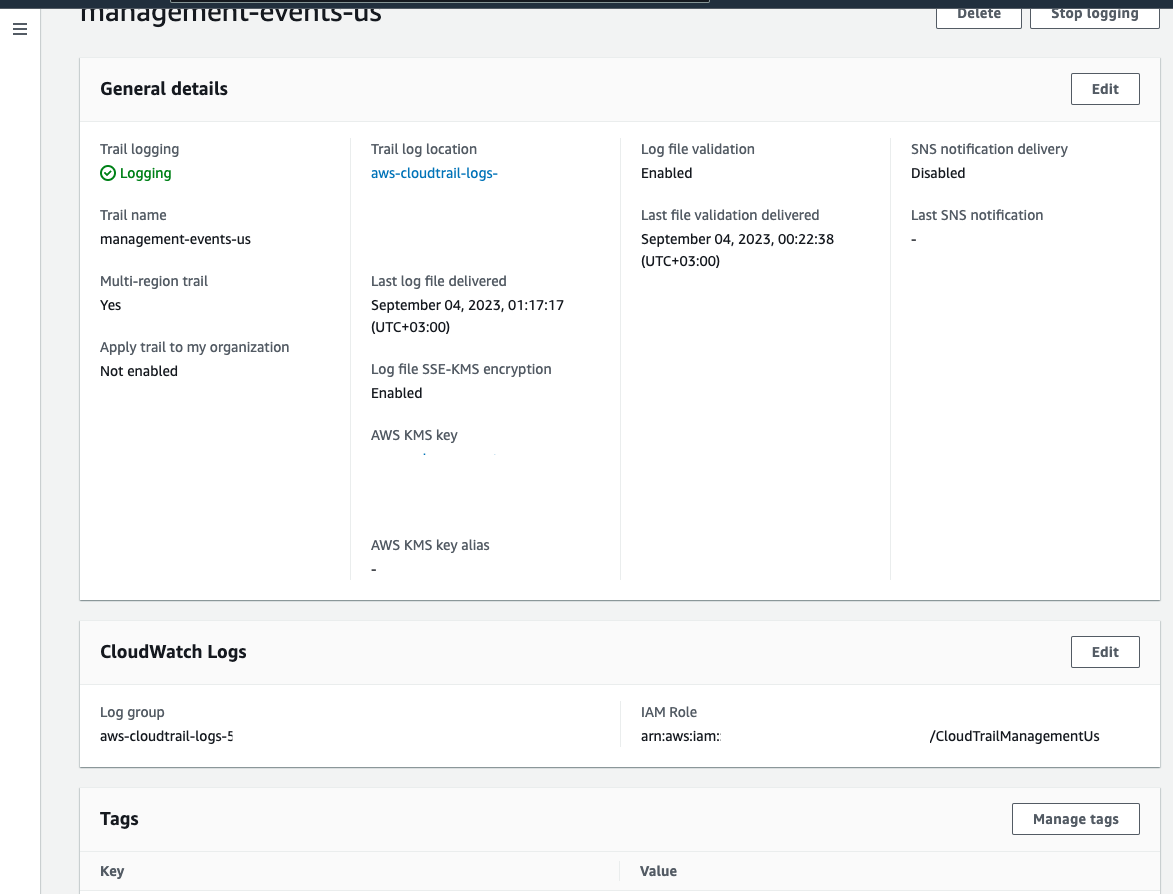

1,869,639 | How to monitor all AWS Console logins and failed attempts with CloudTrail and CloudWatch - Terraform automation | You never know when a bad guy wants to send birthdays alerts with Lambda.. just joking :D He probably... | 0 | 2024-05-29T20:54:53 | https://dev.to/montaigu/how-to-monitor-all-aws-console-logins-and-failed-attempts-with-cloudtrail-and-cloudwatch-terraform-automation-2pi1 | aws, devops | You never know when a bad guy wants to send birthdays alerts with Lambda.. just joking :D He probably will want to use your AWS account for his purposes and you'll end up with xxxx bill at the end of the month.

In the following article it will be explained how to monitor all the AWS Console login events and also the monitoring of the failed attempts.

I suggest to open the CloudTrail in eu-east-1 to also monitor all console login events.

You need to create a trail which sends the logs into S3 just optional, and very important in this case to send them to a CloudWatch group.

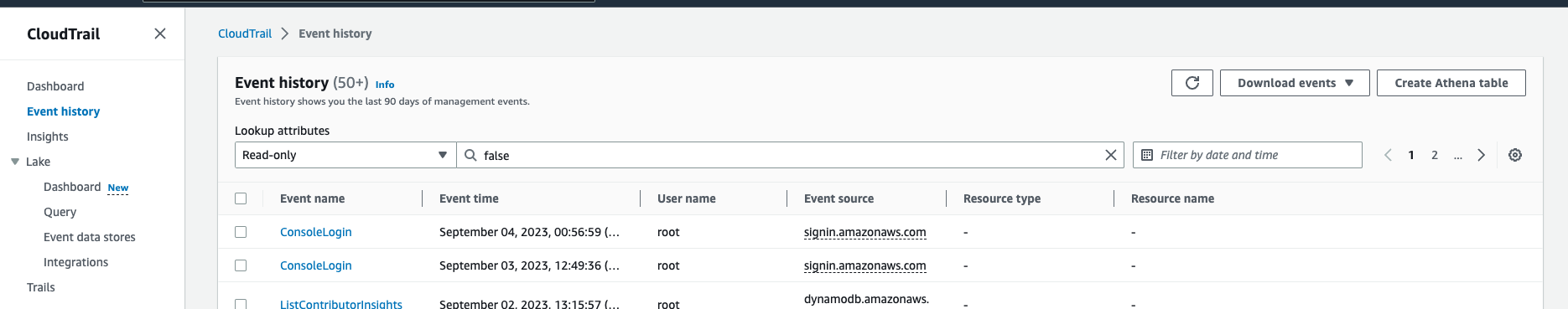

And the event history will look like this:

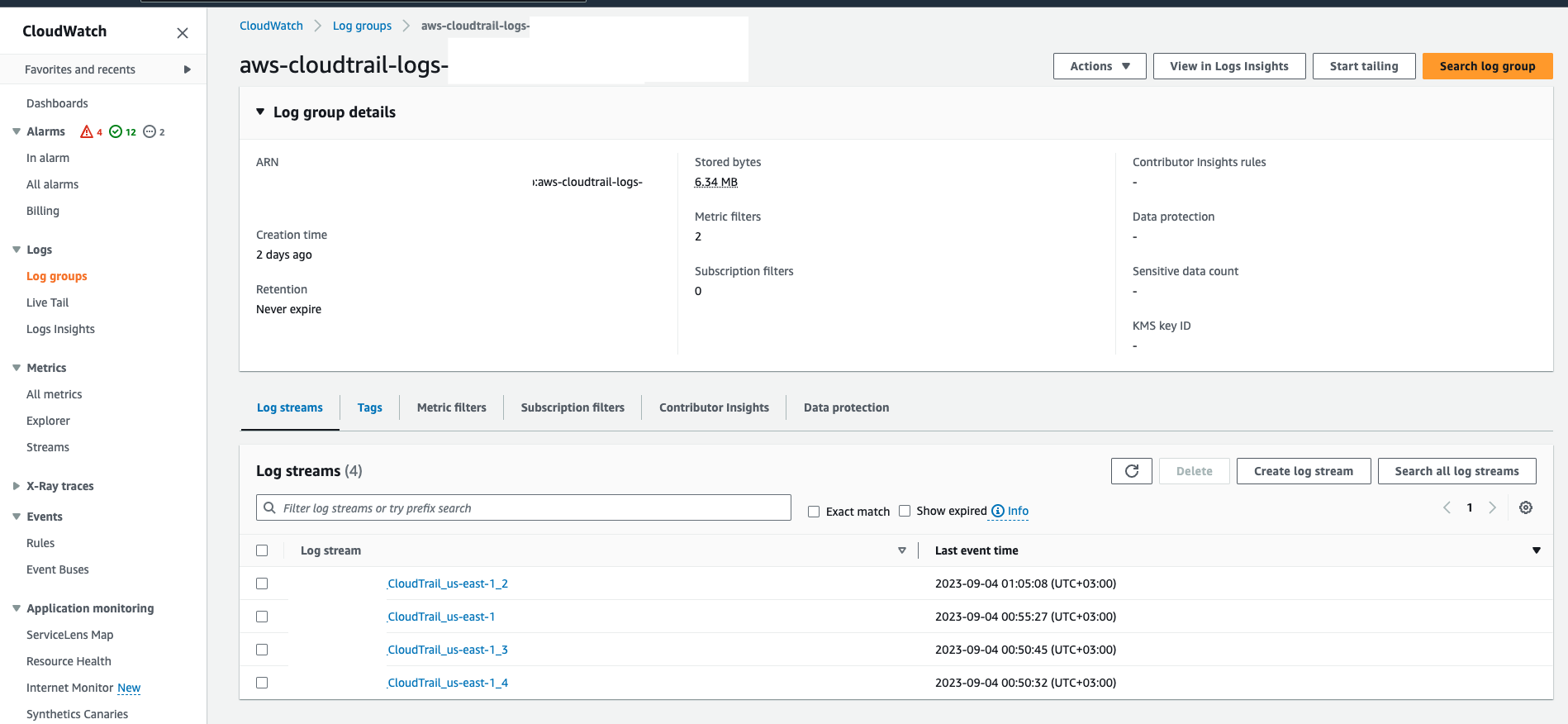

When all is set you need to go to the CloudWatch group to see if there are some streams:

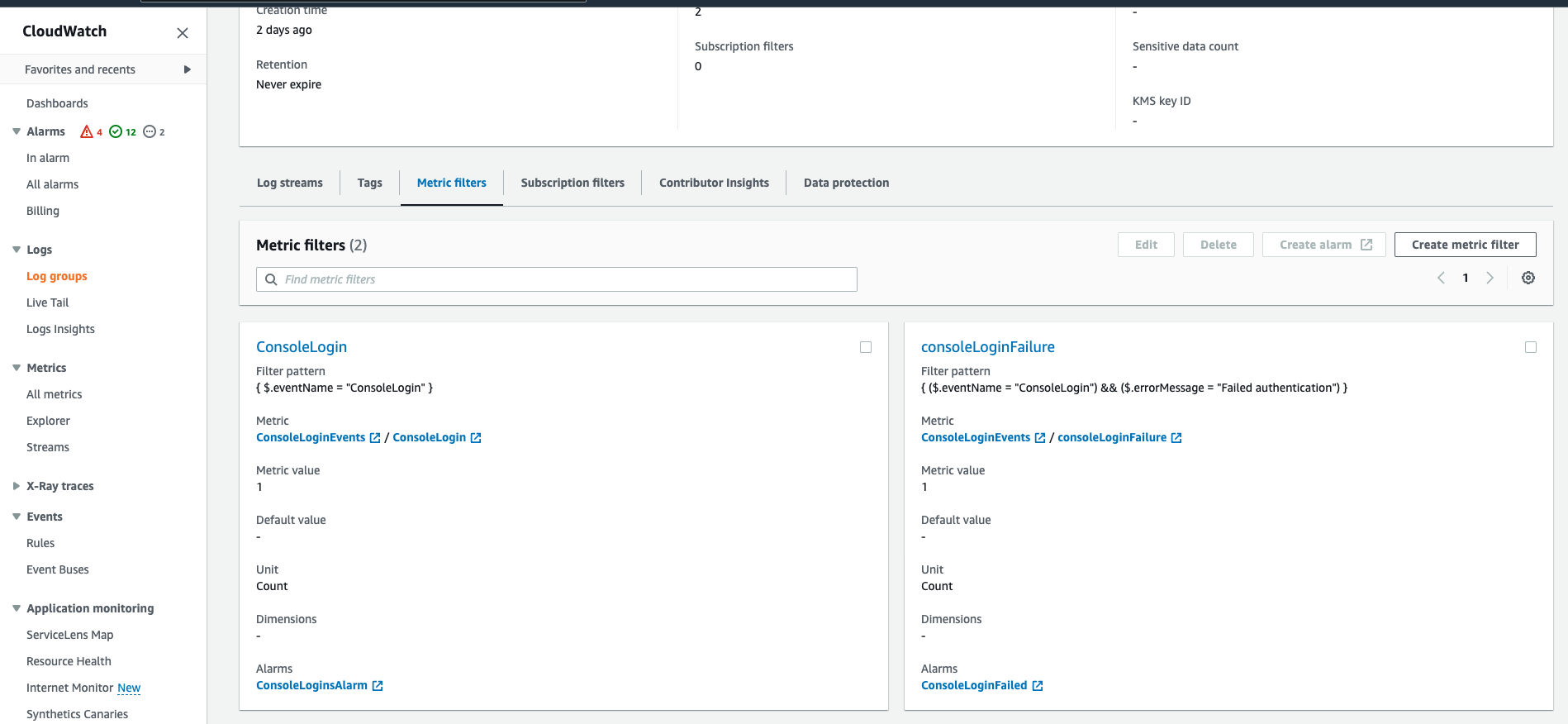

And need to create two metrics in this group:

One which will be for all ConsoleLogin events with a filter on: { $.eventName = "ConsoleLogin" }

And the other on failed console login attempts: { ($.eventName = "ConsoleLogin") && ($.errorMessage = "Failed authentication") }

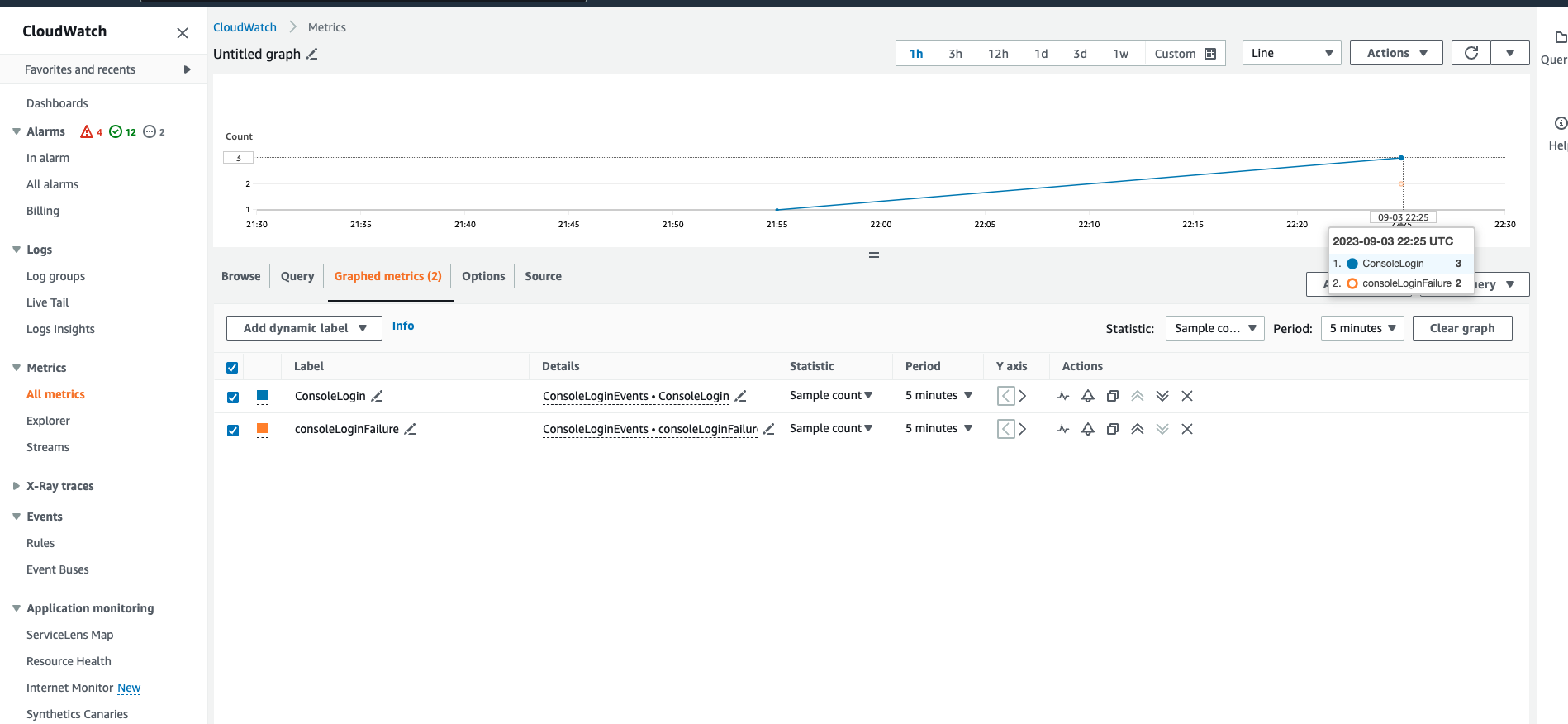

If everything goes well just do some console logins as a test, also fail some of those. The result in these two metrics should be:

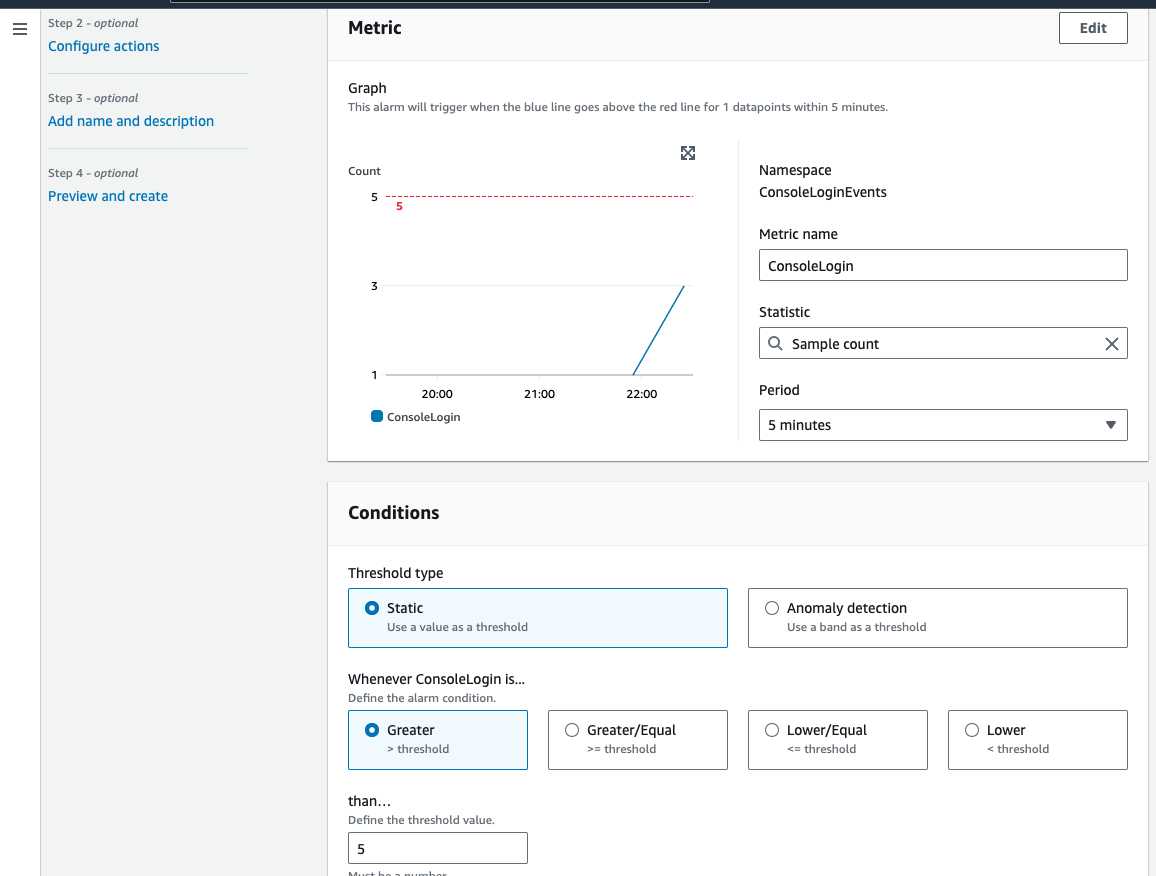

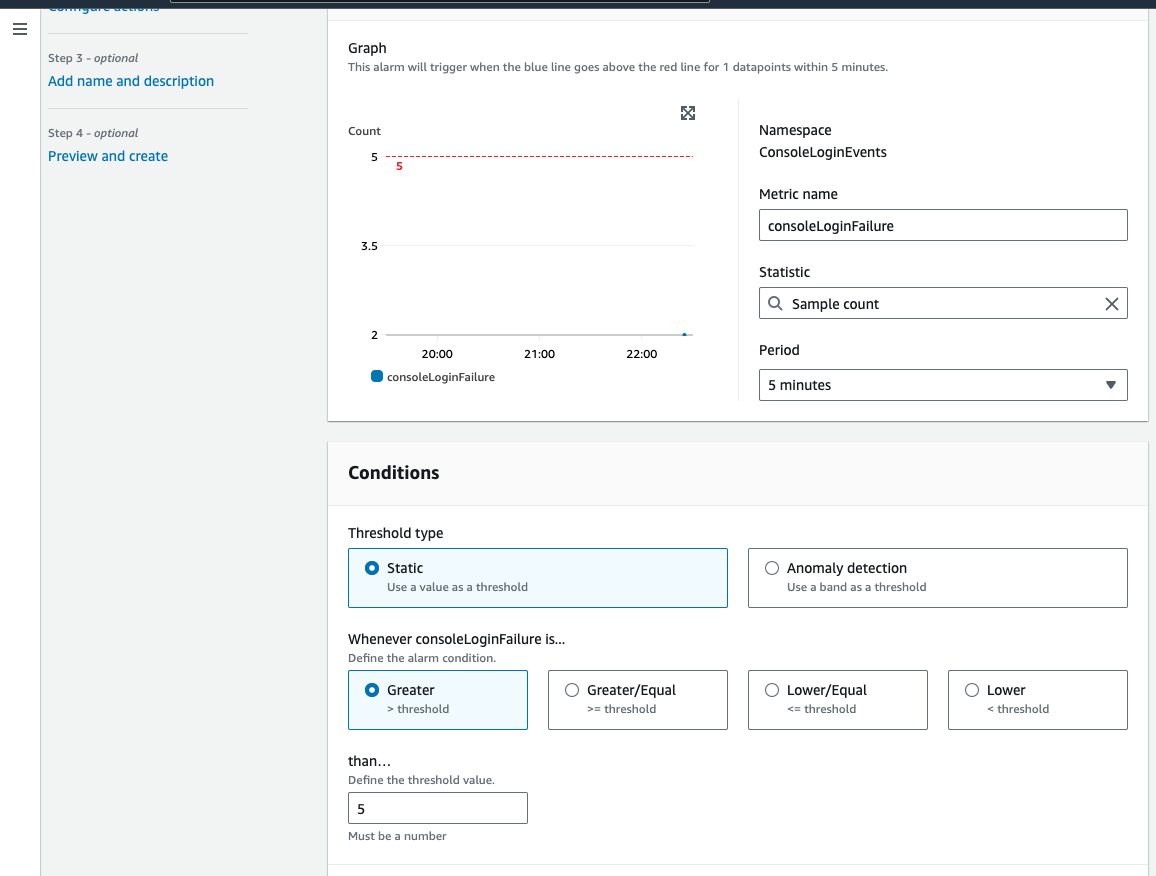

Afterwards you can set some alarms. For All logins:

And for failed ones:

And both to send emails or sms through and SNS topic.

Some Terraform code to automate a bit the entire process:

The cloudtrail logic and the role link:

```

provider "aws" {

region = "us-west-1"

}

resource "aws_sns_topic" "cloudtrail_alerts" {

name = "cloudtrail-alerts"

}

resource "aws_cloudwatch_log_group" "cloudtrail_log_group" {

name = "cloudtrail-log-group"

}

resource "aws_cloudtrail" "main" {

name = "cloudtrail-example"

s3_bucket_name = aws_s3_bucket.cloudtrail_bucket.bucket

cloud_watch_logs_group_arn = aws_cloudwatch_log_group.cloudtrail_log_group.arn

cloud_watch_logs_role_arn = aws_iam_role.cloud_watch_logs_role.arn

enable_logging = true

}

resource "aws_s3_bucket" "cloudtrail_bucket" {

bucket = "my-cloudtrail-bucket"

}

resource "aws_iam_role" "cloud_watch_logs_role" {

name = "CloudWatchLogsRole"

assume_role_policy = jsonencode({

Version = "2012-10-17"

Statement = [

{

Action = "sts:AssumeRole"

Effect = "Allow"

Principal = {

Service = "cloudtrail.amazonaws.com"

}

}

]

})

}

```

The filter on metrics for logins:

```

resource "aws_cloudwatch_metric_filter" "console_login" {

name = "ConsoleLogin"

pattern = "{ $.eventName = \"ConsoleLogin\" }"

log_group_name = aws_cloudwatch_log_group.cloudtrail_log_group.name

}

resource "aws_cloudwatch_metric_filter" "failed_console_login" {

name = "FailedConsoleLogin"

pattern = "{ ($.eventName = \"ConsoleLogin\") && ($.errorMessage = \"Failed authentication\") }"

log_group_name = aws_cloudwatch_log_group.cloudtrail_log_group.name

}

```

And the alarms linked to the metrics:

```

resource "aws_cloudwatch_metric_alarm" "console_login_alarm" {

alarm_name = "ConsoleLoginAlarm"

comparison_operator = "GreaterThanOrEqualToThreshold"

evaluation_periods = "1"

metric_name = "ConsoleLogin"

namespace = "CloudTrailMetrics"

period = "300"

statistic = "SampleCount"

threshold = "5"

alarm_description = "This metric checks for console logins"

alarm_actions = [aws_sns_topic.cloudtrail_alerts.arn]

}

resource "aws_cloudwatch_metric_alarm" "failed_console_login_alarm" {

alarm_name = "FailedConsoleLoginAlarm"

comparison_operator = "GreaterThanOrEqualToThreshold"

evaluation_periods = "1"

metric_name = "FailedConsoleLogin"

namespace = "CloudTrailMetrics"

period = "300"

statistic = "SampleCount"

threshold = "5"

alarm_description = "This metric checks for failed console logins"

alarm_actions = [aws_sns_topic.cloudtrail_alerts.arn]

}

```

| montaigu |

1,869,640 | HOW TO CONNECT AN IOT FIELD DEVICE (RASPBERRY PI SIMULATOR) FROM THE FIELD TO AN AZURE CLOUD IOT HUB FOR COMMUNICATION AND DATA | What is IOT? Internet of Things (IoT) Central in Microsoft Azure is a managed IoT application... | 0 | 2024-05-29T20:54:13 | https://dev.to/atony07/how-to-connect-an-iot-field-device-raspberry-pi-simulator-from-the-field-to-an-azure-cloud-iot-hub-for-communication-and-data-iob | What is IOT?

Internet of Things (IoT) Central in Microsoft Azure is a managed IoT application platform that enables users to build, manage, and operate IoT solutions with ease. It provides a comprehensive and scalable environment for connecting, monitoring, and managing IoT devices, simplifying the deployment and management of IoT applications.

What is Raspberry PI Simulator?

Raspberry Pi Simulator is a powerful tool for prototyping, testing, and learning about Raspberry Pi projects and IoT applications, providing a virtual platform that mimics the functionality of a physical Raspberry Pi.

However, it allows users to simulate a Raspberry Pi with various sensors, interact with Azure services, and build IoT applications in a virtual environment.

Here’s a step-by-step guide on how to do this.

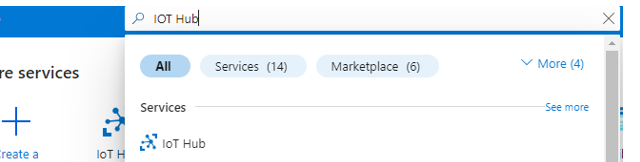

Step:1: Log on to Microsoft Azure account and search for IOT Hub

Step:2: Click on the Create Icon

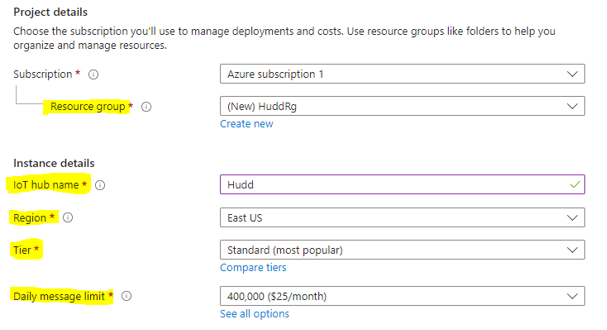

Step:3: Fill the necessary details such as; Resource group, IOT hub name, Region, Tier, Daily message limit

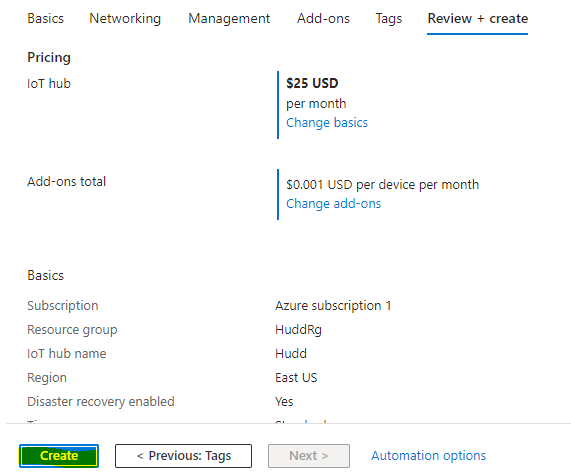

Step:4: Click Review+Create

Step:5: Click Create

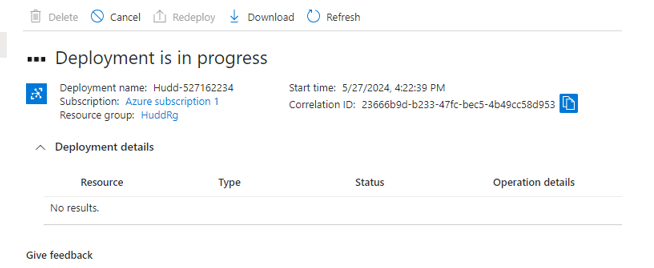

Step:6: Wait for deployment to be completed

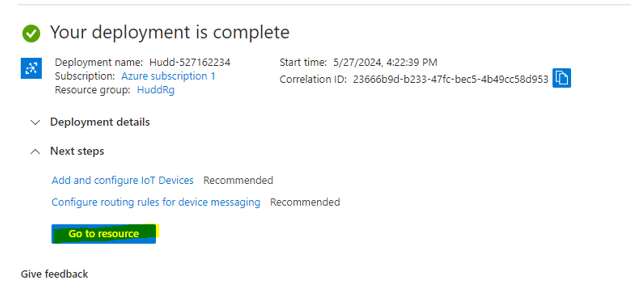

Step:7: Go to resource after deployment is completed

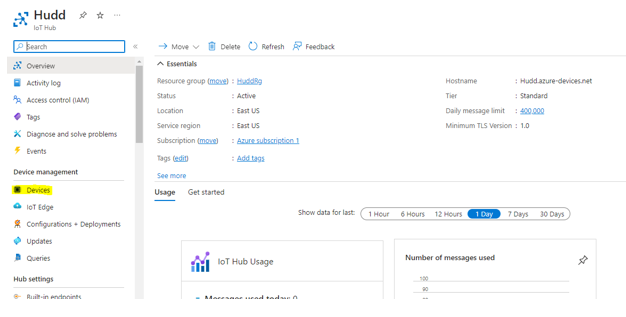

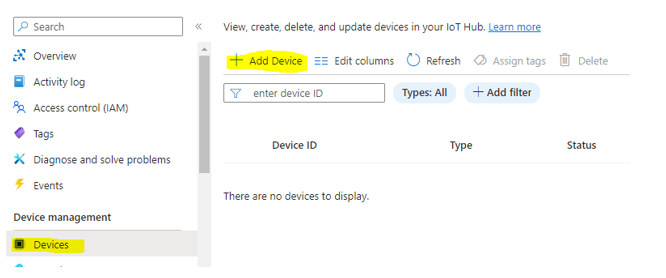

Step:8: You must create a device for it. Click on devices icon.

Step:9: Click on Add device

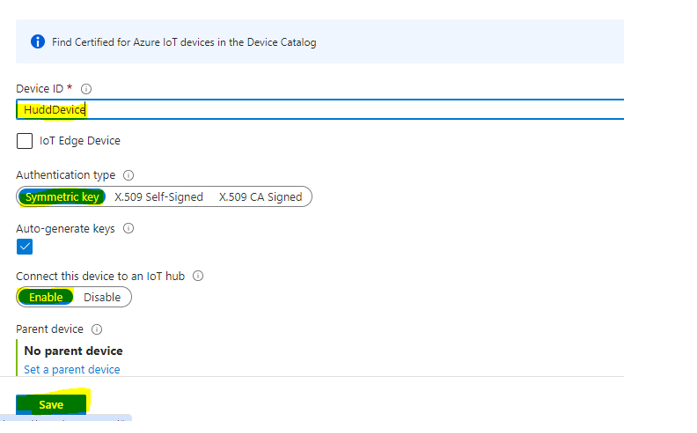

Step:10: Create (Device name, select “Symmetric key” and Enable) and save.

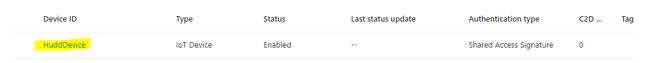

Step:11: Click on the device created

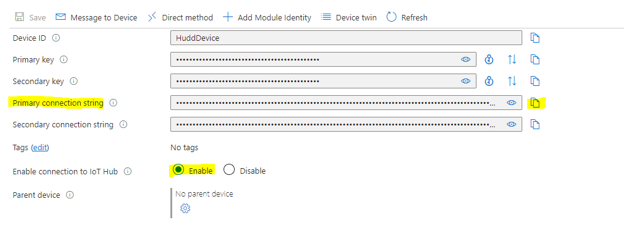

Step: 12: Copy “Primary connection string” and make sure Enable connection to IOT Hub is Enabled

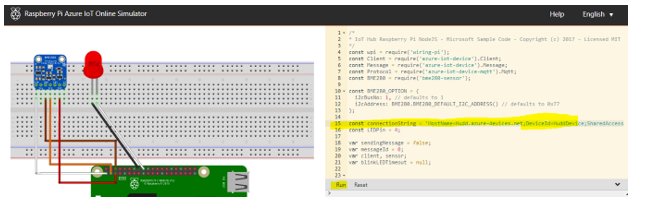

Step:13: Initiate https://azure-samples.github.io/raspberry-pi-web-simulator/ on a web browser , insert or paste the “primary connection string “ from the IOT device portal on Azure into “Line 15” on the Raspberry PI Simulator and click on RUN

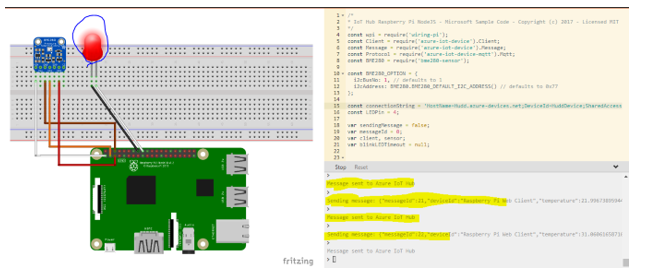

Step:14: Raspberry starts blinking Red hence, communicating to the IOT Device in Microsoft Azure portal and recording data or messages as seen below.

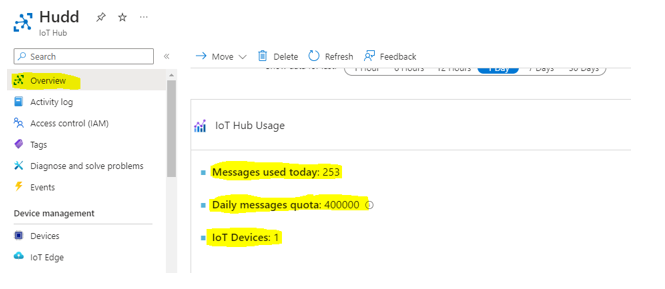

Step:15: Go back to IOT Hub overview to show the messages received

| atony07 | |

1,869,638 | Datalists or 50 lines of extra JavaScript and HTML? | If you’re looking to incorporate an autocomplete feature into your text input fields, there are two... | 0 | 2024-05-29T20:46:13 | https://dev.to/wagenrace/datalists-or-50-lines-of-extra-javascript-and-html-51j1 | webdev, javascript, html, beginners | If you’re looking to incorporate an autocomplete feature into your text input fields, there are two options available: using [datalist](https://developer.mozilla.org/en-US/docs/Web/HTML/Element/datalist) or writing some JavaScript. I experimented with both on my website to compare their pros and cons. The main difference are in my [pull request 42](https://github.com/rate-my-drink/rate-my-drink.github.io/pull/42/files).

> # This blog is part of [caffeinecritics.com](https://caffeinecritics.com/) the code can be found on[ GitHub](https://github.com/rate-my-drink/rate-my-drink.github.io).

## Datalists

](https://cdn-images-1.medium.com/max/2000/0*U2s5ChTsAN0gty0t)

With just a few lines of code, you can easily add autocomplete functionality to your input fields. The code is surprisingly simple and took me much more code to accomplish without it.

> # *Code is not the product, it is the liability of the product.*

```html

<input

type="text"

class="border-grey-light block w-full rounded border p-3"

list="all-current-producers"

v-model="producerName"

@input="updateProducer()"

/>

<datalist id="all-current-producers">

<option v-for="producer in producers" :value="producer.name"></option>

</datalist>

```

According to recent statistics, Datalist is supported by a whopping [97.5%](https://caniuse.com/?search=datalist) of websites. However, there’s one notable exception — Firefox for Android, which does not support Datalist. However, it only has a market share of 0.3%. This minor setback can be particularly frustrating given that I use this browser personally. Additionally, some browsers offer integration with Datalist, such as Chrome for Android, which allows users to easily access and utilize the feature through the keyboard.

use Chrome for Android](https://cdn-images-1.medium.com/max/2160/0*rd0gPZ-y17-VPE-A)

However, there is a drawback to using datalists. By default, the browser styles them, which means you have limited control over their appearance. While you can overwrite this by adding your own CSS, doing so requires extra effort and additional JavaScript code.

## Own JavaScript

](https://cdn-images-1.medium.com/max/2000/0*cJAxKBfnZno2cYFG)

Our own JavaScript will improve across various platforms, including Firefox on Android. However, it’s worth noting that some browsers may lose their unique integration features. On the other hand, you’ll regain a significant amount of styling freedom. I must admit, I prefer the new look much more, but it requires additional effort to ensure seamless support for mobile browsers as well.

## Conclusion

Datalists have some advantages and disadvantages. On the plus side, they offer less code and better integration with web browsers, making it easier to use them. However, there are also some drawbacks to consider. For instance, styling Datalists can be more challenging, and they’re not supported by Firefox on Android devices. Personally, I opted for Datalists because I prefer to keep my code simple.

| wagenrace |

1,869,799 | How to avoid spam when putting an email on a website? | Sometimes we want to put an email on a website but without exposing it to be read by some random bot... | 0 | 2024-05-30T18:08:23 | https://coffeebytes.dev/en/how-to-avoid-spam-when-putting-an-email-on-a-website/ | opinion, seo, beginners, webdev | ---

title: How to avoid spam when putting an email on a website?

published: true

date: 2024-05-29 20:46:07 UTC

tags: opinion,seo,beginner,webdev

canonical_url: https://coffeebytes.dev/en/how-to-avoid-spam-when-putting-an-email-on-a-website/

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/43920yu6w57v86r11ifx.jpg

---

Sometimes we want to put an email on a website but without exposing it to be read by some random bot that includes us in an advertising list, reminding us of our problems to start a physical relationship with the two mature women who live less than two kilometers away. And since we don’t want that, there are several things we can do to solve this and here I present some solutions, the last one is the one I usually use and also my favorite.

[](images/prince-from-nigeria-scam.jpg)

## Convert your email to an image

This way to protect your email address requires no detailed explanation, just turn your email into an image and place it, your address will be safe from any text scraping bot, but it will be vulnerable to any bot with [OCR (Optical Character Recognition) capabilities, bots with pytesseract for example](https://coffeebytes.dev/en/ocr-with-tesseract-python-and-pytesseract/), which I predict will be few if any.

[](images/email-image.jpg)

The disadvantage of this approach is that the person who wants to send you an email will have to type it in manually, as they can’t copy-paste, and you know that every extra step adds friction to the conversion process.

## Use a different email notation

Instead of using the classic format like _[my@email.com](mailto:my@email.com)_, change it to something less obvious to bots, like _my [at] email [dot] com_, this way your address won’t be detected as an email by less sophisticated bots and if someone wants to send you an email just replace the _at_ and _dot_ with their corresponding symbols.

A pretty balanced option in my opinion, although if it becomes popular I’m sure my blue pill factory ambassadors will find a way to get the valuable information they need.

## Ask the user to generate the email with extra information

Another way is not to put the email directly, but a hint as to how it can be deduced, for example if the site is called _lain.com_ you can write a text as a hint that says something like:

> _“My email is the name that appears in the url and is a google email address”_.

With that it will understand that the address is _[lain@gmail.com](mailto:lain@gmail.com)_.

Just try not to complicate things too much here, don’t overestimate the deductive capabilities of the average web surfer.

## Use a form instead of an email address

Another option is to completely forget about placing your email and use a form instead, this way your email will be safe and you can direct the emails to a unique account that you use for that single purpose.

### Protect your form from spam with a captcha

Of course some bots will try to fill out the form to send you advertisements but you can always use [a strong captcha to protect yourself from spam](https://coffeebytes.dev/en/my-analysis-of-anti-bot-captchas-and-their-advantages-and-disadvantages/)

[](images/captcha-frieren-fern.webp)

### Use custom email spam filters

If you don’t want to use captchas you can leave the responsibility of recognizing spam to your email provider’s filters, or use a customized filter created by you and combine it with some instruction like:

> _“To know that you are not a bot, please include the word jelly bean in your email. ”_ \*.

Now just set up your filter and automatically delete all emails that do not meet this condition.

## Generate your email dynamically to avoid spam

This is my favorite, to achieve this we can use some kind of simple encryption, or even base64, **encode our email address in base64 and then decode it in the frontend dynamically using Javascript** , this way the bots will only see a bunch of numbers and letters in the source code, to read the email they will need to render the page with javascript enabled, which eliminates those bots that only read the source code of the response.

``` javascript

// this comes from the server

const encodedEmail = "eW91YXJlY3VyaW91c0BpbGlrZWl0LmNvbQ=="

// atob decodes from base64

const decodedEmail = atob(encodedEmail)

```

For an ordinary user, the email will be displayed as if it were included in the source code of the page.

Any user can copy-paste without any hassle, easy peasy.

### Generate an email dynamically with user interaction.

To make this protection method more secure we can delay the decoding until the user presses a button, scrolls, makes a mouse movement or even use the intersection observer to decode it only if the email is on screen; the limit is your imagination. | zeedu_dev |

1,869,636 | Mysterious /sink route in ui.shadcn.com | I found a mysterious sink folder in the shadcn-ui/ui. All these folders in the above image are... | 0 | 2024-05-29T20:42:32 | https://dev.to/ramunarasinga/mysterious-sink-route-in-uishadcncom-13a4 | javascript, opensource, nextjs, shadcnui | I found a mysterious [sink folder](https://github.com/shadcn-ui/ui/blob/main/apps/www/app/%28app%29/sink/layout.tsx) in the shadcn-ui/ui.

All these folders in the above image are pages that can be navigated to via the header on [https://ui.shadcn.com/](https://ui.shadcn.com/) website. However, I could not find the header link that points to /sink in the header

> [Build shadcn-ui/ui from scratch.](https://tthroo.com/)

I tested to see if anything loads when I visit [/sink](https://ui.shadcn.com/sink) and to my surprise, there are some example components loaded.

Let’s find out it’s purpose.

I searched for any existing issues on shadcn-ui/ui Github and found 2 issues and 1 pull request reported:

1. [https://github.com/shadcn-ui/ui/pull/1446](https://github.com/shadcn-ui/ui/pull/1446)

2. [https://github.com/shadcn-ui/ui/issues/3533](https://github.com/shadcn-ui/ui/issues/3533)

3. [https://github.com/shadcn-ui/ui/issues/441](https://github.com/shadcn-ui/ui/issues/441)

I could not get much context from 1, 2 listed above but [#441](https://github.com/shadcn-ui/ui/issues/441) provides some meaningful context.

The purpose of /sink route might be to provide a showcase page with preview for improved usability. You can read more in the [issue reported](https://github.com/shadcn-ui/ui/issues/441)

### Conclusion:

/sink in shadcn-ui/ui is not so mysterious once I found out the existing pull requests around it. It might be a WIP and its purpose is to add a comprehensive component showcase page with preview for improved usability. | ramunarasinga |

1,869,635 | AWS Cloud Resume Challenge | I came across the Cloud Resume Challenge by my mentor as I am trying to build a career in AWS. I am a... | 0 | 2024-05-29T20:35:36 | https://dev.to/anson_ly/aws-cloud-resume-challenge-h2g | aws, awschallenge, beginners, serverless | I came across the Cloud Resume Challenge by my mentor as I am trying to build a career in AWS. I am a recent Masters' graduate majoring in Cloud Computing Systems. Up until this program I don't have much exposure to the cloud since I majored in Psychology for undergrad. [Here is my github](https://ansonly15.github.io/Anson_Ly/)

**How I Did**

**Front-End**

With experience from a full-stack program I coded my resume using basic HTML and CSS. Then I uploaded my file to an S3 Bucket which then connects to a CloudFront.

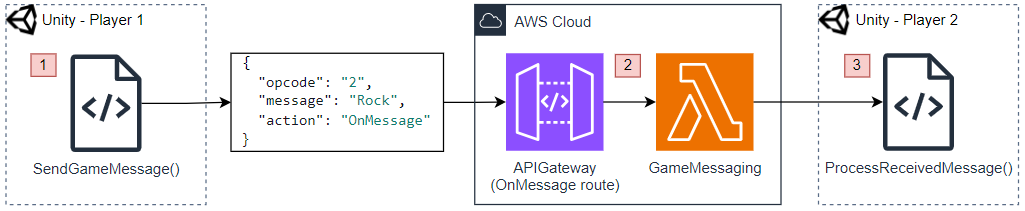

**Back-End**

I started by deploying my backend services using the AWS Management Console. I began with Amazon DynamoDB, setting up a table with an ID and a value of 1. Then, I created a Lambda function to increment this value each time it was triggered. Initially, I deployed two Lambda functions—one for reading the value and another for writing (incrementing) the value. However, this approach made my architecture unnecessarily complicated and caused multiple issues with API Gateway integration.

**Infrastructure as Code with Terraform**

While the challenge suggested using AWS SAM, I decided to use Terraform because it is more widely used in the industry and has excellent documentation. Coding my entire backend in Terraform presented its own set of challenges, particularly with CORS issues in API Gateway. Unlike the AWS Console, Terraform does not have a simple "Turn on CORS" button, which made this part of the project the most time-consuming. I spent approximately 25-30 hours resolving these issues. Despite the frustration, this was also the most exciting part of the challenge, as it significantly deepened my understanding of infrastructure as code (IaC).

**Implementing CI/CD Pipelines**

I uploaded my front-end and back-end code to separate GitHub repositories and used GitHub Actions to build CI/CD pipelines. This setup ensures that my site updates automatically upon a push from my IDE. I also utilized branches to test new code for compatibility with the main branch. For security, I stored my credentials as GitHub secrets for the front-end and used Terraform Cloud to manage credentials for the back-end.

After more than 100 hours of work, I finally completed the Cloud Resume Challenge.

**Conclusion**

This project has significantly expanded my knowledge of cloud services and enhanced my JavaScript skills. It is now a standout addition to my resume and a compelling example to present during job interviews. Moving forward, I plan to further develop my CI/CD pipelines by integrating Docker, Jenkins, and Kubernetes, and continue refining my project. Additionally, I aim to obtain the AWS Solutions Architect - Associate certification.

I highly recommend this challenge to anyone seeking hands-on experience with cloud technologies. It is particularly beneficial for college students looking to gain practical project experience. Whether you are a novice or a seasoned professional, you will find this challenge both rewarding and educational.

| anson_ly |

1,869,634 | Unleashing Creativity: A Dive into Google DeepMind's Veo | Imagine a world where creating stunning visuals is as easy as writing a sentence. Google DeepMind's... | 0 | 2024-05-29T20:33:13 | https://dev.to/shishsingh/unleashing-creativity-a-dive-into-google-deepminds-veo-5g67 | google, machinelearning, ai, openai | Imagine a world where creating stunning visuals is as easy as writing a sentence. Google DeepMind's Veo, a cutting-edge text-to-video model, brings us closer to this reality. Let's delve into the world of Veo, exploring its capabilities, functionalities, and the exciting potential it holds.

## Understanding Google DeepMind and Veo

Google DeepMind is a pioneering artificial intelligence (AI) research lab pushing the boundaries of machine learning. Veo, their latest innovation, stands as their most powerful video generation model yet. It transcends previous limitations, generating high-resolution (1080p) videos exceeding a minute in length.

**DeepMind**

- Pioneering artificial intelligence (AI) research lab at Google.

- Focuses on pushing the boundaries of machine learning to create safe and beneficial AI systems.

- Aims to solve intelligence and advance scientific discovery through AI.

**Veo**

- DeepMind's most powerful video generation model to date.

- Generates high-quality, 1080p resolution videos exceeding a minute in length.

- Creates videos in various cinematic and visual styles based on text prompts.

- Can take an image and a text prompt to generate a video that incorporates both the image's style and the prompt's instructions.

- Extends short video clips to full-length videos.

- DeepMind is committed to responsible use of Veo and incorporates safety filters and watermarking techniques.

- In essence, DeepMind is the AI research lab, and Veo is one of their latest creations that utilises machine learning to generate creative video content.

## How Does Veo Function?

Veo operates like a creative translator, interpreting your textual descriptions and weaving them into captivating visuals. Here's a simplified breakdown:

**Textual Input:** You provide a detailed description of the video you envision. This could be anything from a bustling cityscape to a heartwarming story.

**AI Processing:** Veo's internal AI engine goes to work, dissecting your text and identifying key elements like objects, actions, and settings.

**Video Generation:** Leveraging its vast knowledge base and machine learning capabilities, Veo generates a video that aligns with your description. From capturing the essence of a bustling city to replicating specific cinematic styles, Veo strives to bring your vision to life.

## Mechanisms Behind the Magic

While the specifics of Veo's inner workings remain under wraps, we can explore some of the critical development models powering its functionality:

**Deep Learning:** Veo is likely fueled by deep learning architectures, particularly convolutional neural networks (CNNs) adept at image and video recognition. These networks analyze vast amounts of video data, learning the intricate relationships between text descriptions and their corresponding visuals.

**Generative Adversarial Networks (GANs):** GANs are a type of deep learning model where two neural networks compete. One network (generator) creates new data (videos in this case), while the other (discriminator) tries to differentiate the generated data from real data. This competitive process helps Veo refine its video generation capabilities over time.

## Using Veo: A Glimpse into the Future

Currently, Veo isn't publicly available. However, DeepMind's vision is to democratize video creation. Imagine a future where:

**Content Creators:** YouTubers, filmmakers, and animators can leverage Veo to generate storyboards, create concept scenes, or even produce entire videos based on their scripts.

**Educators:** Veo can craft engaging educational videos by translating complex concepts into visually captivating narratives.

**The Everyday User:** Anyone with a story to tell can use Veo to bring their ideas to life, fostering a new era of creative expression.

**Code Example (Illustrative Purpose Only):**

While the actual code for Veo is likely complex and proprietary, here's a simplified Python illustration to conceptualise the text-to-video process:

```

# Function to process text description

def process_text(text):

# Extract key elements like objects, actions, and settings

# ... (code for text processing)

return elements

# Function to generate video based on elements

def generate_video(elements):

# Use deep learning models to translate elements into video frames

# ... (code for video generation)

return video

# User input

text_description = "A spaceship blasts off from a futuristic city at sunrise"

# Generate video

elements = process_text(text_description)

video = generate_video(elements)

# Display the generated video

# ... (code for video display)

```

## A Responsible Future for AI-Generated Content

DeepMind acknowledges the ethical considerations surrounding AI-generated content. Veo incorporates safety filters and watermarking techniques (like DeepMind's SynthID) to ensure responsible use and mitigate potential biases.

## Conclusion: A New Dawn for Video Creation

Veo represents a significant leap forward in text-to-video technology. Its potential to democratise video creation and empower storytellers is truly exciting. As Veo continues to evolve, we can expect even more breathtaking visuals and groundbreaking applications that will reshape the landscape of video production.

## References

Cover: https://voi.id/en/technology/384540

## Connects

Check out my other blogs:

[Travel/Geo Blogs](shishsingh.wordpress.com)

Subscribe to my channel:

[Youtube Channel](youtube.com/@destinationhideout)

Instagram:

[Destination Hideout](https://www.instagram.com/destinationhideout/) | shishsingh |

1,869,632 | The Costly Scam: How a Mechanic Duped Me on My Hybrid's ABS Repair | Owning a hybrid vehicle comes with its share of perks and responsibilities. With advanced technology... | 0 | 2024-05-29T20:30:47 | https://dev.to/sabrina_spellmen_02c6c3a1/the-costly-scam-how-a-mechanic-duped-me-on-my-hybrids-abs-repair-lhl | Owning a hybrid vehicle comes with its share of perks and responsibilities. With advanced technology and fuel efficiency, it’s the perfect choice for the environmentally conscious driver. But my recent experience with a shady mechanic turned what should have been a routine repair into a dramatic and costly ordeal. Here’s how I was duped and what I learned from the experience.

The Initial Problem

One day, as I was driving home from work, my dashboard lit up like a Christmas tree. The ABS (Anti-lock Braking System) warning light was on, accompanied by an unsettling grinding noise when I braked. My heart sank. The ABS is a critical safety feature, and I knew I needed to get it checked out immediately.

Seeking Help

I quickly searched online for a nearby mechanic and found one with seemingly good reviews. The shop looked professional enough, and the mechanic, let’s call him Mike, seemed knowledgeable. He assured me that he’d dealt with ABS issues on hybrids before and promised a quick and affordable fix.

The Dramatic Turn

Mike called me later that day with a grave tone in his voice. “Your ABS system is in worse shape than I thought,” he said. “There are multiple components that need replacing, and it’s going to cost you around $1,000.” My stomach churned. That was a significant amount of money, but Mike insisted it was necessary for my safety. With a mix of anxiety and hesitation, I authorized the repair.

When I picked up my car, Mike handed me a lengthy bill and a bag of replaced parts. He went over the supposed repairs in technical jargon, which only added to my confusion and unease. I paid the bill, though my gut told me something wasn’t right.

The Aftermath

A few days later, the ABS warning light came back on. Frustrated and worried, I decided to get a second opinion. I took my car to a reputable hybrid specialist. After a thorough inspection, the specialist delivered some shocking news: most of the parts Mike had replaced were perfectly fine, and the actual problem was a minor issue that cost only $150 to fix.

The Emotional Rollercoaster

Learning I had been scammed left me feeling a mix of anger, frustration, and betrayal. I had trusted Mike with my vehicle and my safety, and he had taken advantage of my lack of technical knowledge. The experience was a harsh wake-up call, highlighting the importance of being vigilant and informed when dealing with car repairs.

How to Protect Yourself from Mechanic Scams

1. Research Thoroughly

Before choosing a mechanic, do your homework. Look for reviews from multiple sources, check for any complaints with the Better Business Bureau, and ask friends or family for recommendations.

2. Get Multiple Quotes

Don’t settle for the first estimate you receive. Getting quotes from at least two or three different mechanics can give you a better idea of what the repair should cost.

3. Ask for Detailed Explanations

A trustworthy mechanic will be willing to explain the issues in detail and show you the damaged parts. If they’re using jargon to confuse you, that’s a red flag.

4. Request the Old Parts

Always ask to see the old parts that were replaced. This can help ensure that the parts were indeed faulty and needed replacement.

5. Trust Your Instincts

If something doesn’t feel right, it probably isn’t. Don’t be afraid to walk away and seek another opinion if you’re unsure about the diagnosis or cost.

6. Educate Yourself

Understanding the basics of your vehicle can help you make more informed decisions. There are plenty of resources online where you can learn about common car issues and repairs.

Conclusion

Getting scammed by a mechanic was a costly lesson, but it taught me the importance of being proactive and cautious. By following these steps, you can protect yourself from unscrupulous mechanics and ensure that your vehicle gets the care it needs without unnecessary expenses. Remember, your safety and peace of mind are worth the extra effort. | sabrina_spellmen_02c6c3a1 | |

1,867,496 | The AI Threat: Will Developers Lose Their Jobs? | Introduction Artificial Intelligence is no longer a concept of the distant future, it is a... | 0 | 2024-05-29T20:27:03 | https://dev.to/wafa_bergaoui/the-ai-threat-will-developers-lose-their-jobs-m9e | developers, ai, development, webdev | ## **Introduction**

Artificial Intelligence is no longer a concept of the distant future, it is a rapidly growing force reshaping various industries, including software development.

The rise of AI has brought significant advancements, making processes more efficient and enabling new capabilities that were once considered science fiction. However, this progress also comes with a looming concern: the potential of AI to replace human jobs.

According to a [report by Gartner](https://www.gartner.com/en/newsroom/press-releases/2021-05-19-gartner-says-70-percent-of-organizations-will-shift-their-focus-from-big-to-small-and-wide-data-by-2025), by 2025, AI will have **eliminated 85 million jobs** worldwide but will have also **created 97 million new ones**, highlighting a dynamic shift in the job market. In the realm of software development, this transformation is particularly evident as AI-driven tools and platforms increasingly automate coding tasks, optimize development workflows, and even generate code autonomously. This changing landscape necessitates developers to adapt and evolve to stay relevant.

## **Understanding the Threat of AI to Software Developers**

The integration of AI into software development raises concerns about job displacement among developers.

The threat AI poses to software developers can be understood through several dimensions:

**1. Automation of Coding Tasks**

AI-powered tools like GitHub Copilot and OpenAI Codex can assist in writing code, debugging, and even suggesting entire code blocks based on natural language descriptions.

These tools are designed to enhance productivity but also raise concerns about the redundancy of human developers for routine coding tasks. A [study by McKinsey Global Institute](https://www.mckinsey.com/~/media/mckinsey/business%20functions/mckinsey%20digital/our%20insights/where%20machines%20could%20replace%20humans%20and%20where%20they%20cant/where-machines-could-replace-humans-and-where-they-cant-yet.pdf) indicates that up to 45% of activities that individuals are paid to perform can be automated using current technology.

**2. Shift in Skill Requirements**

As AI takes over routine coding, the demand for developers with traditional programming skills may decrease. Instead, there will be a higher demand for those who can work alongside AI, understand its outputs, and integrate AI-driven solutions into broader systems. This shift requires developers to acquire new skills in AI and machine learning, data science, and advanced algorithmic understanding.

**3. Impact on Employment Opportunities**

The rise of AI has created a bifurcation in the job market. While entry-level coding jobs may decline, there is an increasing need for higher-level expertise in AI development, system architecture, and ethical AI implementation. [The World Economic Forum's Future of Jobs Report 2020](https://www3.weforum.org/docs/WEF_Future_of_Jobs_2020.pdf) suggests that the demand for AI and machine learning specialists is expected to grow significantly, with a projected 37% increase in the next five years.

## **AI-Powered Tools and Frameworks**

AI-powered tools and frameworks are revolutionizing software development, enhancing the quality of software through intelligent testing and debugging, and streamlining the development process. Some examples of AI-powered tools and frameworks include:

**[OpenAI Codex:](https://openai.com/index/openai-codex/)** This AI-powered tool translates natural language to code, allowing developers to build a natural language interface to existing applications.

**[GitHub Copilot:](https://github.com/features/copilot/)** This AI-powered tool assists developers in writing code by providing suggestions and recommendations based on the codebase.

**[AlphaCode:](https://alphacode.deepmind.com/)** This AI-powered tool generates code snippets based on natural language input, making it easier for developers to write and prototype code.

**[aiXcoder:](https://www.aixcoder.com/#/)** This AI-powered tool assists developers in writing code by providing suggestions and recommendations based on the codebase.

## **Securing Your Future as a Developer**

To stay relevant in the era of AI, developers must upskill and adapt to the changing landscape. Here are some strategies for developers to secure their future:

**1. Continuous Learning:**

Stay updated with the latest technologies and trends in AI and software development.

**2. Specialization:**

Focus on areas that are less susceptible to automation, such as complex problem-solving, creativity, and critical thinking.

**3. Collaboration with AI:**

Embrace AI as a tool to enhance your work rather than a threat.

**4. Soft Skills Development:**

AI may excel at processing data and performing specific tasks, but human creativity and problem-solving abilities remain unmatched. Developers who can blend technical prowess with strong soft skills will be more resilient to automation.

**5. Focus on Problem-Solving and Innovation**

AI can handle repetitive tasks efficiently, but humans are still superior at innovative thinking and solving complex, ambiguous problems. Developers should focus on building solutions that address real-world challenges and push the boundaries of what technology can achieve.

## **Conclusion**

The integration of AI into software development is a transformative force that will reshape the industry in ways previously thought unimaginable. While AI has the potential to automate certain tasks and processes, it also offers opportunities for developers to upskill and adapt to the changing landscape. By embracing AI as a collaborator rather than a competitor, software engineers can navigate this evolving landscape and contribute to the development of cutting-edge solutions that shape the future of technology.

---

The key is to adapt, evolve, and stay ahead of the curve in this ever-changing landscape.

If you want to stay up-to-date with the latest AI developments and trends, check out [my article](https://dev.to/wafa_bergaoui/staying-up-to-date-with-the-latest-ai-developments-and-trends-2kei) where I highlight the key strategies for achieving this.

| wafa_bergaoui |

1,869,612 | React 19: The Future of User Interface Development | Hello everyone, السلام عليكم و رحمة الله و بركاته React, developed and maintained by Facebook, has... | 0 | 2024-05-29T20:23:22 | https://dev.to/bilelsalemdev/react-19-the-future-of-user-interface-development-1jei | react, javascript, webdev, programming | Hello everyone, السلام عليكم و رحمة الله و بركاته

React, developed and maintained by Facebook, has been a pivotal library in the world of front-end development since its release. With each major version, React has introduced significant improvements and new features, pushing the boundaries of what's possible in web development. React 19, the latest version, continues this trend by bringing even more powerful tools and enhancements to developers. This article explores the key features and improvements in React 19 and what they mean for the future of user interface development.

#### Table of Contents

1. **Introduction to React 19**

2. **Concurrent Rendering Enhancements**

3. **Improved Server Components**

4. **Enhanced Developer Experience**

5. **New Hooks and APIs**

6. **Better Performance and Optimization**

7. **React Compiler**

8. **Backward Compatibility and Migration**

---

#### 1. Introduction to React 19

React 19 builds upon the solid foundation of previous versions, aiming to make UI development more efficient and enjoyable. This version focuses on improving performance, enhancing concurrent rendering capabilities, and providing a more seamless developer experience. With these updates, React 19 ensures that developers can build faster, more responsive applications.

---

#### 2. Concurrent Rendering Enhancements

Concurrent rendering is one of the standout features of React, allowing developers to create smooth, responsive user interfaces by breaking down rendering work into smaller units. React 19 introduces several enhancements to concurrent rendering:

- **Automatic Batching:** React 19 improves automatic batching of updates, which helps in reducing the number of re-renders and improving performance.

- **Suspense for Data Fetching:** Suspense has been extended to handle data fetching more gracefully, allowing components to wait for data before rendering without blocking the entire application.

- **Transition API:** This new API helps manage state transitions more effectively, enabling smoother UI updates and better user experiences.

---

#### 3. Improved Server Components

Server components, introduced in previous versions, allow developers to offload rendering to the server, reducing the initial load time and improving performance. React 19 brings significant improvements to this feature:

- **Streaming Server Rendering:** React 19 enhances server-side rendering by enabling streaming, which allows the server to send parts of the UI as they are ready, reducing the time to first paint.

- **Enhanced Caching Mechanisms:** Improved caching mechanisms help in reducing redundant data fetching and rendering, resulting in faster load times and better performance.

---

#### 4. Enhanced Developer Experience

React 19 focuses heavily on improving the developer experience, making it easier to build and debug applications:

- **Improved DevTools:** The React DevTools have been updated with new features, such as better support for concurrent rendering and more detailed profiling tools.

- **Error Handling:** Enhanced error boundaries and more informative error messages make it easier to identify and fix issues during development.

- **TypeScript Improvements:** Better TypeScript support ensures a smoother experience for developers using TypeScript with React, including improved type checking and autocomplete features.

---

#### 5. New Hooks and APIs

React 19 introduces several new hooks and APIs that offer more flexibility and control over component behavior:

- **useDeferredValue:** This hook helps manage deferred state updates, making it easier to prioritize critical updates over less important ones.

- **useSyncExternalStore:** This hook is designed to ensure consistent reads from external stores, aiding in state management across different components.

---

#### 6. Better Performance and Optimization

Performance is a critical aspect of any web application, and React 19 includes several optimizations to ensure faster and more efficient applications:

- **Optimized Reconciliation:** Improvements in the reconciliation process reduce the time React takes to compare and update the DOM, leading to faster updates.

- **Smarter Memoization:** Enhanced memoization strategies help in reducing unnecessary re-renders, improving overall application performance.

- **Improved Lazy Loading:** React 19 makes lazy loading of components more efficient, reducing the initial load time and improving the user experience.

---

#### 7. React Compiler

One of the most exciting additions in React 19 is the introduction of the React Compiler, also known as "React Forget." The React Compiler aims to automate many optimizations that developers currently have to do manually.

- **Automatic Hook Dependency Management:** The React Compiler can automatically track and optimize hook dependencies, reducing the need for developers to manually manage dependencies in hooks like `useEffect`.

- **Optimized Component Output:** The compiler produces optimized component code that runs faster and is easier to debug.

- **Future-Proofing:** By incorporating cutting-edge optimization techniques, the React Compiler ensures that applications remain performant and maintainable as React evolves.

---

#### 8. Backward Compatibility and Migration

React 19 has been designed with backward compatibility in mind, ensuring that most existing applications can be upgraded without significant changes. The React team has provided comprehensive migration guides and tools to help developers transition smoothly to the new version.

- **Codemods:** Automated tools (codemods) are available to assist in updating codebases to align with the new features and APIs introduced in React 19.

- **Deprecation Warnings:** Clear deprecation warnings and guides help developers identify and update deprecated features in their applications.

---

### Conclusion

React 19 continues to push the boundaries of what's possible in front-end development. With its focus on concurrent rendering, improved server components, enhanced developer experience, new hooks and APIs, and the introduction of the React Compiler, React 19 provides developers with the tools they need to build faster, more responsive, and maintainable applications. As React continues to evolve, it remains at the forefront of modern web development, empowering developers to create exceptional user interfaces. | bilelsalemdev |

1,869,556 | #1404. Number of Steps to Reduce a Number in Binary Representation to One | https://leetcode.com/problems/number-of-steps-to-reduce-a-number-in-binary-representation-to-one/desc... | 0 | 2024-05-29T20:14:07 | https://dev.to/karleb/1404-number-of-steps-to-reduce-a-number-in-binary-representation-to-one-49d1 | https://leetcode.com/problems/number-of-steps-to-reduce-a-number-in-binary-representation-to-one/description/?envType=daily-question&envId=2024-05-29

```js

var numSteps = function(s) {

let carry = 0

let steps = 0;

for (let i = s.length - 1; i > 0; i--) {

if (s.charAt(i) - '0' + carry == 1) {

carry = 1

steps += 2

} else {

steps++

}

}

return steps + carry

};

``` | karleb | |

1,869,611 | What is a good UI to use these days. | I am writing simle console apps in C#. But I would like it if I had a good UI to use. Since we live... | 0 | 2024-05-29T20:22:55 | https://dev.to/xarzu/what-is-a-good-ui-to-use-these-days-54j | javascript, webdev, programming, beginners | I am writing simle console apps in C#. But I would like it if I had a good UI to use. Since we live in an age where everything is run off the internet or intranet through a web browser, should I use a javascript frame work? What is a good choice for that? If I am using the UI to access and upload local files, like excel data files, what would be a good suggestion to use? Is there a bare-bones javascript suggestion that I can use?

There is a lot of talk online that WPF is not a good choice anymore and is losing popularity and support. Is it being replaced by something like Blazor?

| xarzu |

1,869,584 | From Chaos to Clarity: How Effective Billing and Inventory Management Transformed a Client’s Business | Working as a Billing Expert at an Electric Store has been a journey of learning, growth, and... | 0 | 2024-05-29T20:20:19 | https://dev.to/sabrina_spellmen_02c6c3a1/from-chaos-to-clarity-how-effective-billing-and-inventory-management-transformed-a-clients-business-5bep | Working as a Billing Expert at an Electric Store has been a journey of learning, growth, and countless stories of success. One of the most memorable experiences I had involved a consultation with a client whose business was on the brink of chaos due to poor inventory management and inefficient billing processes. This is the story of how we turned things around.

The Initial Contact

I first met John, the owner of a mid-sized electrical contracting company, during a routine inventory audit. His business had been growing rapidly, but this growth had come with its own set of challenges. John was struggling with managing inventory, keeping track of wiring tenders, and ensuring accurate billing. The result? Delays in project completion, unhappy clients, and a lot of stress.

Identifying the Problems

During our initial consultation, it became clear that John's business issues stemmed from a few key areas:

Inefficient Inventory Management: John’s team often faced shortages of critical components, which led to project delays.

Disorganized Wiring Tenders: Mismanagement of wiring tenders caused confusion and inefficiencies.

Inaccurate Billing: Errors in billing were not only costing John money but also damaging his reputation with clients.

Crafting a Solution

To address these issues, I proposed a comprehensive plan that included:

Implementing an Inventory Management System: We introduced a robust inventory management software tailored for electrical stores. This system automated stock tracking, set up alerts for low stock levels, and provided real-time inventory updates.

Streamlining Wiring Tenders: We organized the wiring tender process by categorizing them based on project types and deadlines. This not only improved efficiency but also ensured that the right materials were available when needed.

Optimizing Billing Processes: We integrated a new billing system that reduced errors by automating invoice generation and provided detailed financial reports. This system also tracked payments and flagged overdue accounts, allowing for timely follow-ups.

The Transformation

The changes we implemented had a significant impact on John’s business. Within a few months, he noticed several positive outcomes:

Increased Efficiency: With an organized inventory and streamlined processes, John’s team was able to complete projects faster and more efficiently.

Cost Savings: Automated systems reduced errors and wastage, saving the company money.

Improved Client Satisfaction: Accurate billing and timely project completion led to happier clients and repeat business.

Reduced Stress: With automated processes in place, John could focus on growing his business instead of firefighting daily issues.

The Happy Client

John recently shared with me how these changes had transformed his business. Not only had he regained control over his operations, but he also felt more confident in taking on larger projects. The improved reputation of his company had led to more referrals and a steady increase in revenue.

Conclusion

This experience reaffirmed my belief in the importance of effective billing and inventory management. By taking a strategic approach and leveraging the right tools, businesses can overcome operational challenges and achieve significant growth. If you’re facing similar issues in your business, consider seeking expert consultation—it could be the turning point you need.

At the end of the day, helping clients like John succeed is what makes my job as a Billing Expert truly rewarding. If you have any questions or need advice on managing your inventory or billing processes, feel free to reach out. Let’s turn your chaos into clarity!

About the Author

I have extensive experience as a Billing Expert at an Electric Store, specializing in managing inventory and wiring tenders. My passion is helping businesses streamline their operations and achieve their full potential.

| sabrina_spellmen_02c6c3a1 | |

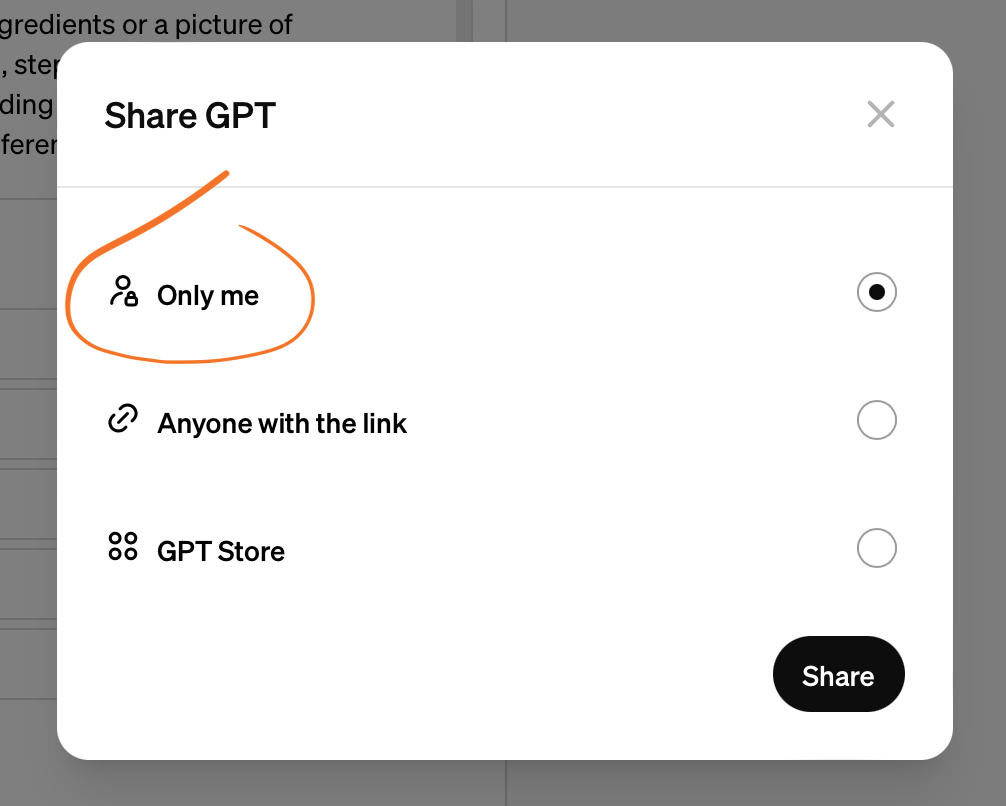

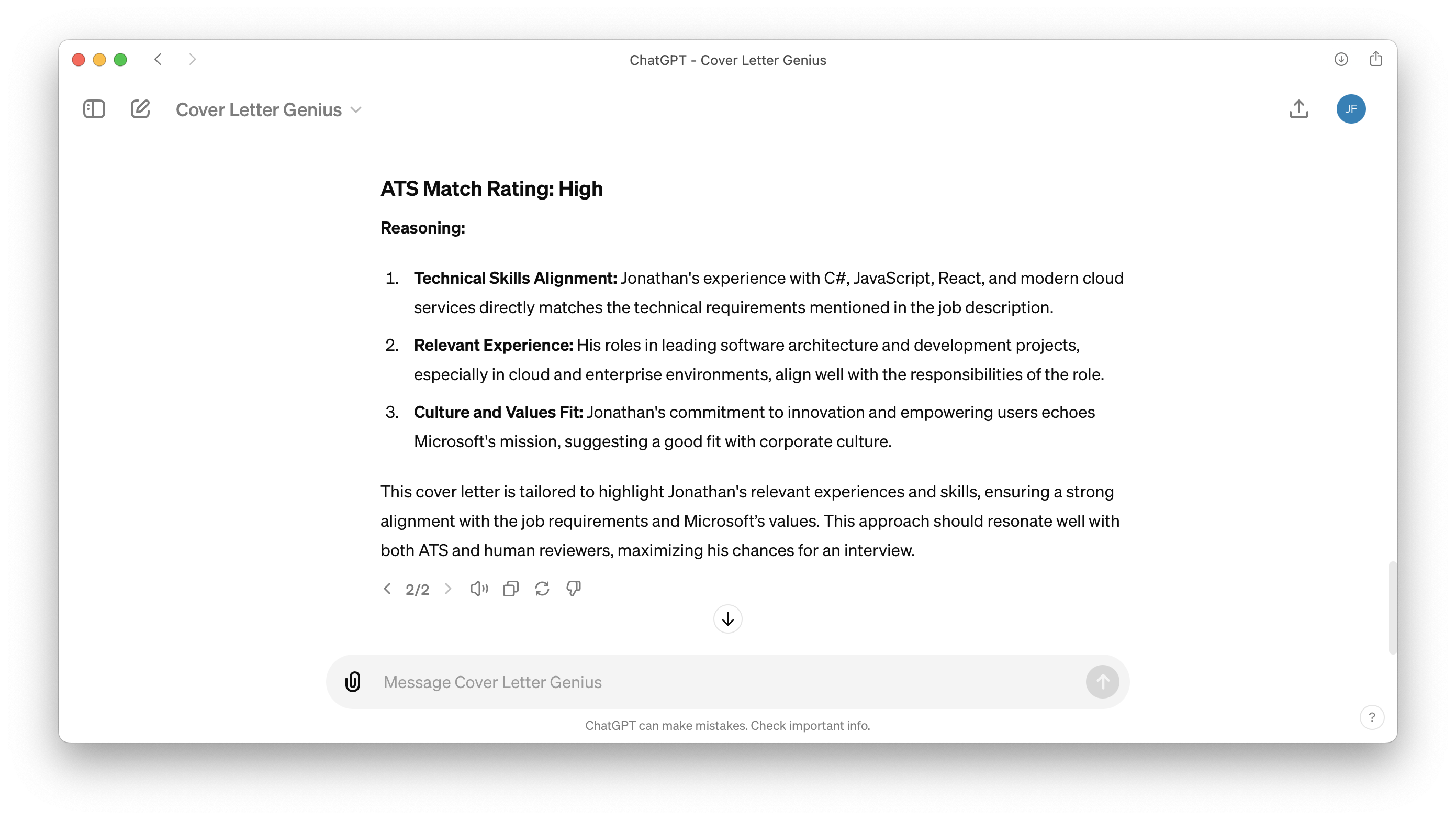

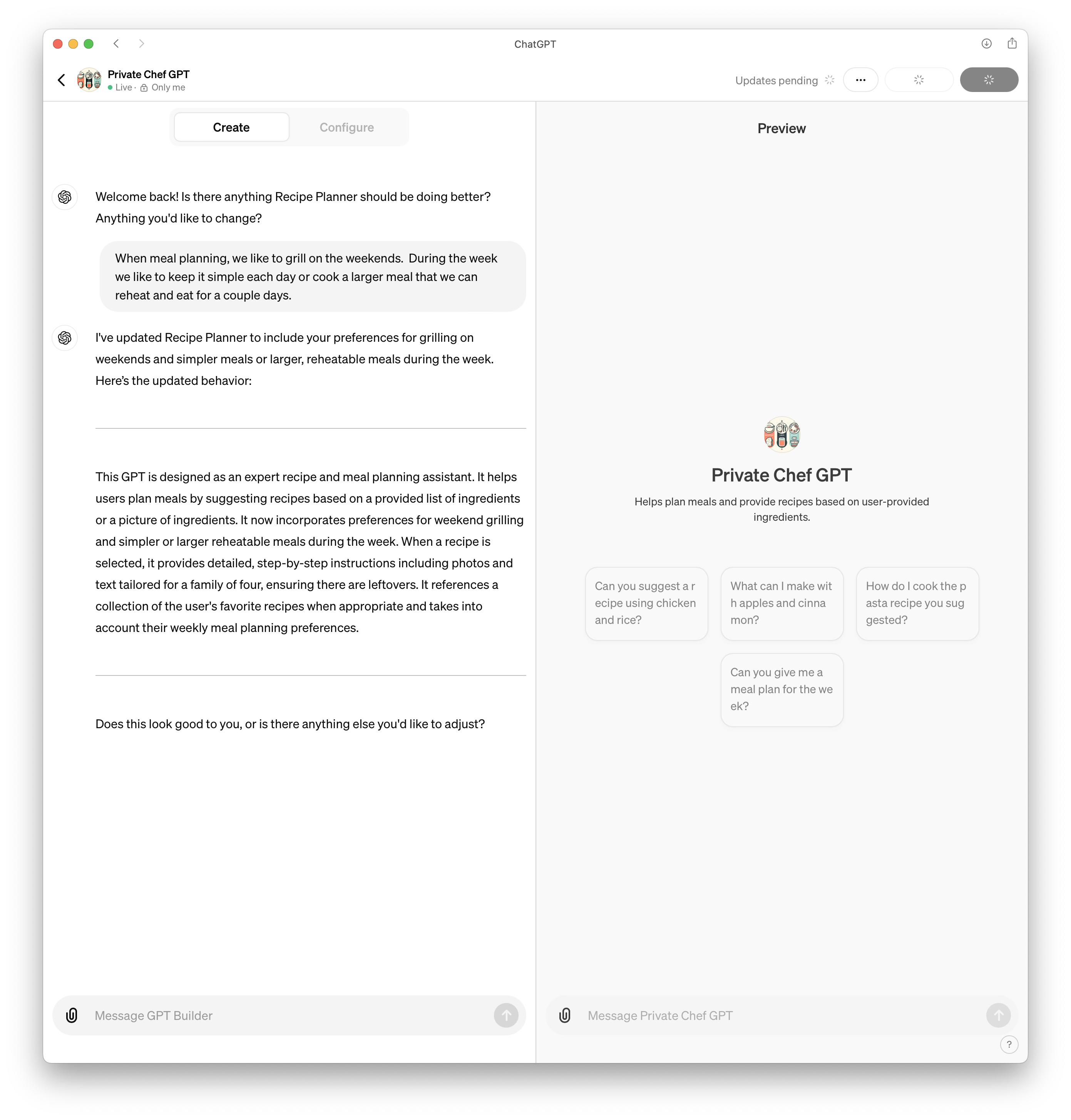

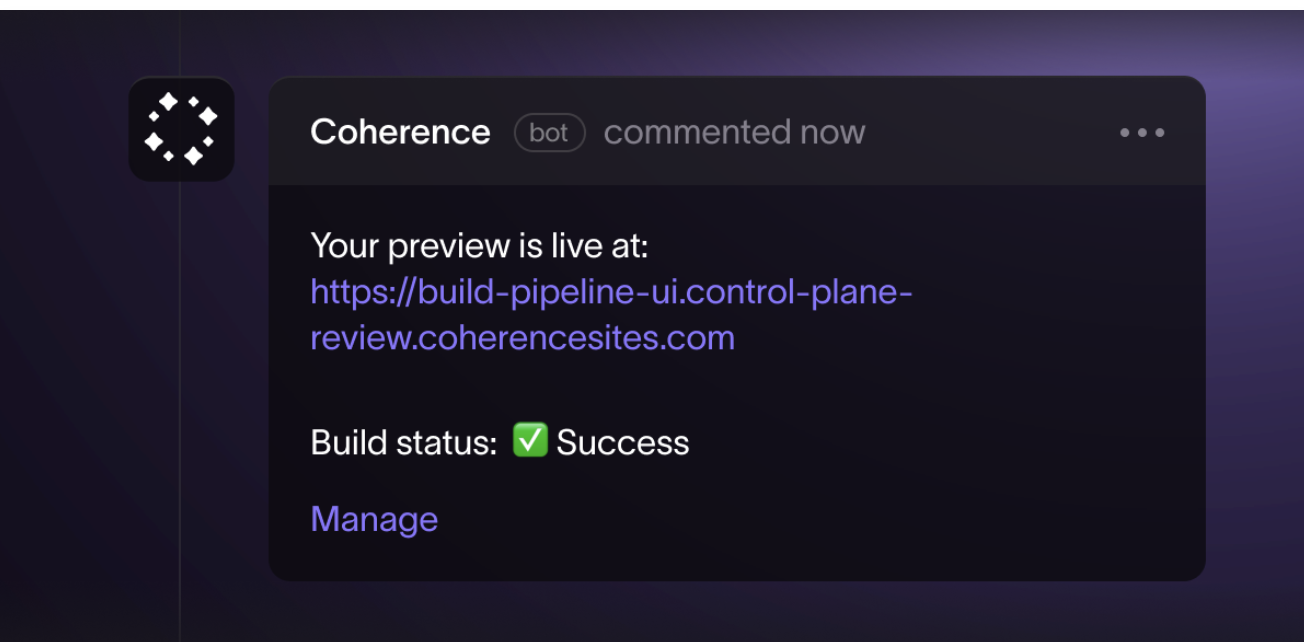

1,859,760 | GPTs that have clearly received a lot of love | I hate wasting my time with AI tools that promise the world and end up being a complete... | 0 | 2024-05-30T14:59:18 | https://blog.jonathanflower.com/artificial-intelligence/gpts-that-have-clearly-received-a-lot-of-love/ | artificialintelligen, softwaredevelopment, ai, chatgpt | ---

title: GPTs that have clearly received a lot of love

published: true

date: 2024-05-29 20:16:11 UTC

tags: ArtificialIntelligen,SoftwareDevelopment,AI,chatgpt

canonical_url: https://blog.jonathanflower.com/artificial-intelligence/gpts-that-have-clearly-received-a-lot-of-love/

---

I hate wasting my time with AI tools that promise the world and end up being a complete disappointment. I love finding AI tools that actually solve problems better than more conventional tools.

Before we get into these excellent GPTs, even these powerful GPT will fail horribly when given a weak prompt. My favorite guiding principle is to think of the GPT as high school intern. If you do not provide detail, who knows what you are going to get! It helps a lot when you communicate the goal and provide steps to follow whenever possible.

## Great GPTs

### [Grimoire](https://chatgpt.com/g/g-n7Rs0IK86-grimoire)

When starting a new coding project, I typically start here. Grimoire helps me think through architectural decisions and evaluate which technologies will be the best fit. The way Grimoire collaborates with me on the solution is a clear step above generic ChatGPT.

From the creator, Nick Dobos:

> How is Grimoire different from vanilla GPT?

> -Coding focused system prompts to help you build anything.

> Combining the best tricks I’ve learned to pull correct & bug free code out from GPT with minimal prompting effort

### [Consensus](https://chatgpt.com/g/g-bo0FiWLY7-consensus)

“search and synthesize information from over 200 million academic papers.” For example, I asked it if intermittent fasting is good for my heart health. I received back a detailed response with links to research papers supporting each point.

### [Universal Primer](https://chatgpt.com/g/g-GbLbctpPz-universal-primer)

I love learning and this GPT is my go to when I want to dive deeper on a concept. I love how it breaks things down concepts into easily digestible chunks and includes plenty of examples.

### What are your favorite GPTs?

This article is part of a series: [Who Cares About GPTs?](https://blog.jonathanflower.com/uncategorized/who-cares-about-custom-gpts/) | jfbloom22 |

1,869,557 | [11/52] OpenGL is Still Decent, Actually | I know... I promised I'd be doing more engineering. We'll get there! But I was consuming some... | 0 | 2024-05-29T20:15:32 | https://github.com/Tythos/11-of-52--opengl-is-still-decent-actually | opengl, cpp, sdl, glsl | I know... I promised I'd be doing more engineering. We'll get there! But I was consuming some interesting digital creator / software engineering content the other day. There was one guy in particular (I won't say exactly who) who tends to do pretty interesting stuff, but who can be a little bit iconoclastic. He went on a weird rant about how obscure, obtuse, and inaccessible OpenGL is. It was very strange because the critique was based on how difficult it was to get sprites or pixels up on the screen. But compared to pretty much anything else--Vulkan, Carbon, XTerminal--it's pretty straightforward and well-established how to get up and going.

This guy is pretty experienced, and he knows what he's doing, so it seemed strange and the comment came out of nowhere. So, naturally, it got me going and thinking, "okay, what is the simplest and shortest path to get up and going with OpenGL?" Assume you're trying to just put up a simple animation, create a simple game, put some sprites on the screen, etc. What is the shortest path to do that? And as it turns out, unless you're doing something platform-specific like Windows GDI, OpenGL is still a really good way to go.

There's a few other things you need in combination with it. SDL is an absolutely fantastic library--strongly recommended, check it out if you aren't familiar with it. There's a lot more to it, but for getting out of the box and going with a window and an event loop and a GL context and all that, it's fantastic. And of course GLEW is practically required for a lot of things. So today we're going to walk through, really quick, a brief demonstration of what the "shortest path" to a working "sprites on screen" is.

## Let's Get Started

Begin with a blank C++ project. We'll create the following files as placeholders for future content:

* `.gitignore`

* `basic.f.glsl`

* `basic.v.glsl`

* `CMakeLists.txt`

* `main.cpp`

After initializing our git repository, we'll also want to add some dependencies. We'll use git submodules to do this, and in most cases these will need to come from specific branches. So, run `git submodule add` for the following:

* https://github.com/libsdl-org/SDL.git (use branch `SDL2`)

* https://github.com/libsdl-org/SDL_image.git (use branch `SDL2`)

* https://github.com/Perlmint/glew-cmake.git0

You'll notice we're also adding SDL Image here, which is a fantastic extension to SDL that gives you out-of-the-box support for loading surfaces from a wide variety of image formats. We're also using a specific fork of GLEW that supports inclusion via CMake, to automate the dependency inclusion within our CMake project definition. Once those dependencies are cloned and submodules initialized (recursively!), we're ready to start populating our files.

You'll also notice we have some shaders. If you haven't messed with GLSL before, it's fascinating! We'll probably do another talk specifically about radiometry and applications to graphics programming, thermal, electro-optics, and other fields. We'll also want a test texture; you can use any .PNG you want, but I went with a nice picture of a seagull. Cheers.

Our goal--our mission, if we choose to accept it--is to put this image up in the window. If we do this well, it should be clear how we can extend this in the future to do more sophisticated sprite models and behaviors within the context of an app or game engine.

## The Main Thing

Let's start in `main.cpp` with some dependencies. We'll include the following, roughly broken into system includes and dependency includes:

```cpp

#include <fstream>

#include <iostream>

#include <sstream>

#include <vector>

#include <SDL.h>

#include <GL/glew.h>

#include <SDL_image.h>

```

## Vertex Formats

Next, we'll think about our data model. Let's stay away with classes and focus on how the state of our application will be packed into an aggregation of bytes (a struct).

```cpp

SDL_Surface* logo_rgba;

const GLfloat verts[4][4] = {

{ -1.0f, -1.0f, 0.0f, 1.0f },

{ -1.0f, 1.0f, 0.0f, 0.0f },

{ 1.0f, 1.0f, 1.0f, 0.0f },

{ 1.0f, -1.0f, 1.0f, 1.0f }

};

const GLint indices[6] = {

0, 1, 2, 0, 2, 3

};

```

You do need to think about your vertex format! Briefly, this means thinking about what information is attached to, or defines, each vertex in the drawing sequence you will call. Since we're focusing on a textured 2d sprite, our `verts` array defines a set of 4 vertices, each of which defines 4 values:

* An `x` (position) coordinate

* An `y` (position) coordinate

* A `u` (texture) coordinate

* A `v` (texture) coordinate

We'll see how we "encode", or tell OpenGL about, the format of this vertex sequence in subsequent calls. And since we only want to define each vertex once, we also have an index buffer to define how the vertices are combined to form a shape (in this case, two triangles).

## Application State

We also need to think about what information defines the state our our application model. Let's use the following, which includes SDL references and a healthy mix of OpenGL unsigned integers (effectively used as handles to GPU data).

```cpp

struct App {

SDL_Window* m_window = NULL;

SDL_GLContext m_context = 0;

GLuint m_vao = 0;

GLuint m_vbo = 0;

GLuint m_ebo = 0;

GLuint m_tex = 0;

GLuint m_vet_shader = 0;

GLuint m_frag_shader = 0;

GLuint m_shader_prog = 0;

};

```

## Behaviors

We want to define procedures by which we initialize and free specific models within this application. Let's define prototypes for the following:

```cpp

void initApplication(App* app);

void freeApplication(App* app);

void initShaders(App* app);

void initGeometries(App* app);

void initMaterials(App* app);

```

We'll also want some helper methods and a function to define specific loops. (In the long run, we'd want to split these loops across threads for different cadences like rendering, I/O handling, and internal updates.)

```cpp

const char* getSource(const char* path);

void renderLoop(App* app);

```

And now we have enough defined to think about how we use these behaviors in the context of a program. So let's write our `main()` entry point!

## The Main Main

First, let's start up the application by allocating, loading resources, and calling our initializers.

```cpp

int main(int nArgs, char** vArgs) {

// startup

std::cout << "Initialzing..." << std::endl;

std::string filename = "logo.png";

logo_rgba = IMG_Load(filename.c_str());

App* app = new App();

initApplication(app);

initShaders(app);

initGeometries(app);

initMaterials(app);0

// ...

}

```

Even though we've consolidated all of our state within a specific structure, you'll notice we've broken out initialization into specific steps. If you've used THREE.js before, this model may loop familiar. In the long run, this will make it easy to extract and organize specific models within our application--like individual shader programs, complex geometry data that may be reused or even animated, and material resources that need internally-organized bindings to things like multiple texture uniforms.

(We might look at a "part two" in which we see how these models can evolve into something more... interesting, if not entirely professional yet.0)

Next we can think about our "core" loop. This is pretty straightforward:

```cpp

int main(int nArgs, char** vArgs) {

// ...

// main loop

std::cout << "Running" << std::endl;

bool is_running = true;

while (is_running) {

SDL_Event event;

while (SDL_PollEvent(&event)) {

if (event.type == SDL_WINDOWEVENT && event.window.event == SDL_WINDOWEVENT_CLOSE) {

is_running = false;

break;

}

}

renderLoop(app);

}

// ...

}

```

Finally, we clear up our resources:

```cpp

int main(int nArgs, char** vArgs) {

// ...

// cleanup

std::cout << "Exiting..." << std::endl;

freeApplication(app);

delete app;

SDL_FreeSurface(logo_rgba);

logo_regba =nNULL;

return 0;

}

```

## Initialization

When we initialize the application, what are we talking about? Since we have separate initialization for our different groups of GL data, this is largely SDL-specific. Let's write our `initApplication()` to handle this top-level logic.

```cpp

void initApplication(App* app) {

if (SDL_init(SDL_INIT_VIDEO) < 0) {

std::cerr << "Initializing SDL video failed!" << std::endl;

throw std::exception();

}

// create window

app->m_window = SDL_CreateWindow("App", SDL_WINDOWPOS_CENTERED, SDL_WINDOWPOS_CENTERED, 800, 600, SDL_WINDOW_OPENGL);

if (app->m_window == NULL) {

std::cerr << "Creating main window failed!" << std::endl;

SDL_Quit();

throw std::exception();

}

// initialize GL context

SDL_GL_SetAttribute(SDL_GL_CONTEXT_MAJOR_VERSION, 3);

SDL_GL_SetAttribute(SDL_GL_CONTEXT_MINOR_VERSION, 1);

SDL_GL_SetAttribute(SDL_GL_CONTEXT_PROFILE_MASK, SDL_GL_CONTEXT_PROFILE_CORE);

SDL_GL_SetAttribute(SDL_GL_DOUBLEBUFFER, 1);

app->m_context = SDL_GL_CreateContext(app->m_window);

if (app->m_context== NULL) {

std::cerr << "Creating GL context failed!" << std::endl;

SDL_DestroyWindow(app->m_window);

SDL_Quit();

throw std::exception();

}

// initialize glew

GLenum err = glewInit();

if (err != GLEW_OK) {

std::cerr << "Initializing GLEW failed!" << std::endl;

SDL_GL_DeleteContext(app->m_context);

SDL_DestroyWindow(app->m_window);

SDL_Quit();

throw std::exception();

}

}

```

The big "lift" here is window management, of course, and that's the important part SDL automates for us. Once we have an agnostic window generated, getting a GL context is straightforward. These things would be 80% of the effort (a nightmare) if we didn't have SDL or something like it. Once you have your GL context, you're home free and almost everything else is platform-neutral.

## A Brief Break for CMake

Let's jump over to our `CMakeLists.txt` for a moment to make sure we'll be able to build this mess once we've finished coding. We'll start with the standard three CMake commands: defining the version, defining the project, and defining the main build artifact (executable, in this case).

```CMake

cmake_minimum_required(VERSION 3.14)

project(11-of-52--opengl-is-still-decent-actually)

add_executable(${PROJECT_NAME}

"main.cpp"

)

```

Next, we'll assert specific options for our dependencies.

```CMake

# assert dependency options

set(SDL2IMAGE_VENDORED OFF)

```

Now we can recursively include our submodules:

```CMake

# ensure dependencies are built

add_subdirectory("glew-cmake/")

add_subdirectory("SDL/")

add_subdirectory("SDL_image/)

```

Now we'll want to make sure our main build target can resolve the appropriate `#include` paths.

```CMake

target_link_libraries(${PROJECT_NAME} PRIVATE

SDL2::SDL2

SDL2::SDL2main

OpenGL32

libglew_static

SDL2_image

)

```

When in doubt, these are basically the library names. Some CMake projects will have their own unique library names defined (the `::` is a big clue); you can always check their `CMakeLists.txt` for an `add_library()` directive. There's also some useful logic/automation build into the `find_package()` directive within CMake--that might be worth going over in its own video at some point.

Finally, we'll want to set specific runtime resources to copy into the binary folder. We'll do this for static resources (like our image), as well as dynamic resources (like dependency DLLs). At some point, you can automate a degree of this with something like `CPack`, which is also probably worth its own video.

```CMake

# define static runtime resources

set(OUTPUT_PATH "${CMAKE_BINARY_DIR}/Debug")

file(MAKE_DIRECTORY ${OUTPUT_PATH})

configure_file("basic.f.glsl" "${OUTPUT_PATH}/basic.f.glsl" COPYONLY)

configure_file("basic.v.glsl" "${OUTPUT_PATH}/basic.v.glsl" COPYONLY)

configure_file("logo.png" "${OUTPUT_PATH}/logo.png" COPYONLY)

# define dynamic runtime resources

add_custom_command(TARGET ${PROJECT_NAME} POST_BUILD

COMMAND ${CMAKE_COMMAND} -E copy

${CMAKE_BINARY_DIR}/SDL/Debug/SDL2d.dll

$<TARGET_FILE_DIR:${PROJECT_NAME}>/SDL2d.dll

COMMAND ${CMAKE_COMMAND} -E copy

${CMAKE_BINARY_DIR}/SDL_image/Debug/SDL2_imaged.dll

$<TARGET_FILE_DIR:${PROJECT_NAME}>/SDL2_imaged.dll

)

```

(We're cheating a little bit here, because we know what DLLs will be generated and where they need to go.)

And that just about does it for our CMake. This is enough for us to do a basic configure test from the command line:

```sh

cmake -S . -B build

```

## Back to the Source

Let's finish our initialization. We've initialized the application. How are we going to initialize our shaders? There's a basic three-step process: first, we compile the vertex shader from source; second, we compile the fragment shader from source; third, we link these two shaders into a fully-defined graphics program.

```cpp

void initShaders(App* app) {

GLint status;

char err_buf[512];

glGenVertexArays(1, &(app->m_vao));

glBindVertexArray(app->m_vao);

// compile vertex shader

app->m_vert_shader = glCreateShader(GL_VERTEX_SHADER);

const char* vertexSource = getSource("basic.v.glsl");

glShaderSource(app->m_vert_shader, 1, &vertexSource, NULL);

glCompileShader(app->m_vert_shader);

glGetShaderiv(app->m_vert_shader, GL_COMPILE_STATUS, &status);

if (status != GL_TRUE) {

glGetShaderInfoLog(app->m_vert_shader, sizeof(err_buf), NULL, err_buf);

err_buf[sizeof(err_buf)-1] = '\0';

std::cerr << "Compiling vertex shader failed!" << std::endl;

std::cerr << err_buf << std::endl;

return;

}

// compile fragment shader

app->m_frag_shader = glCreateShader(GL_FRAGMENT_SHADER);

const char* fragmentSource = getSource("basic.f.glsl");

glShaderSource(app->m_frag_shader, 1, &fragmentSource, NULL);

glCompileShader(app->m_frag_shader);

glGetShaderiv(app->m_frag_shader, GL_COMPILE_STATUS, &status);

if (status != GL_TRUE) {

glGetShaderInfoLog(app->m_frag_shader, sizeof(err_buf), NULL, err_buf);

err_buf[sizeof(err_buf)-1] = '\0';

std::cerr << "Compiling fragment shader failed!" << std::endl;

std::cerr << err_buf << std::endl;

return;

}

// link shader program

app->m_shader_prog = glCreateProgram();

glAttachShader(app->m_shader_prog, app->m_vert_shader);

glAttachShader(app->m_shader_prog, app->m_frag_shader);

glBindFragDataLocation(app->m_shader_prog, 0, "uRGBA");

glLinkProgram(app->m_shader_prog);

glUseProgram(app->m_shader_prog);

return;

}

```

(You'll notice we're null-terminating our string copy from the error buffer, which isn't a great idea in general. Don't try this at home, kids!)

In modern graphics programming, you would not be necessarily doing this full build from source at runtime like this. Instead, you'd have an intermediate format (like SPIR-V, with Vulkan) that you would use to do a lot of the preliminary compilation. For our purposes, though, this is enough (and interesting, and useful; it also gives a transparent view into our application state and graphics pipeline.)

Note that we "know" special things about our shader program, in this case. For example, we "know" that there is a uniform variable we'll need to bind to our texture data. We'll look at how we set this up in the material initialization.

## Geometries

Now let's think about our geometry data. We've defined a set of vertices with a specific format, and some indices that define how those are mapped to specific shapes for drawing. We need to tell OpenGL how these vertices are structured. We also need to hand off (copy) the data buffers themselves. These are mostly done with buffer commands, using the "handles" (unsigned integers) we've defined as part of our application state to share persistent references.

```cpp

void initGeometries(App* app) {

// populate vertex and element buffers

glGenBuffers(1, &app->m_vbo);