id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,869,408 | Unlocking the Power of Chameleon Functions in JavaScript | Have you ever wished your functions could adapt like a chameleon? Meet Chameleon Functions! These... | 0 | 2024-05-29T16:54:25 | https://dev.to/silverindigo/unlocking-the-power-of-chameleon-functions-in-javascript-1309 | javascript, chameleon, functions, programming | Have you ever wished your functions could adapt like a chameleon? Meet Chameleon Functions! These versatile functions change their behavior based on the input they receive, making your code more flexible and powerful.

**What Are Chameleon Functions?**

Chameleon Functions are functions in JavaScript that alter their behavior depending on the input they get. Imagine a function that can handle both numbers and strings differently, providing the right output for each type of input. Here’s a simple example:

```javascript

function chameleonFunction(input) {

if (Array.isArray(input)) {

if (input.every(item => typeof item === 'number')) {

return input.map(num => num * 2);

} else if (input.every(item => typeof item === 'string')) {

return input.join(' ');

}

} else if (typeof input === 'string') {

return input.toUpperCase();

} else if (typeof input === 'number') {

return input * input;

}

return null;

}

console.log(chameleonFunction([1, 2, 3])); // [2, 4, 6]

console.log(chameleonFunction(['hello', 'world'])); // "hello world"

console.log(chameleonFunction('hello')); // "HELLO"

console.log(chameleonFunction(5)); // 25

```

**Why Use Chameleon Functions?**

Chameleon Functions offer several advantages:

* **Reusability**: Write once, use in multiple scenarios.

* **Flexibility**: Adapt to different inputs effortlessly.

* **Cleaner Code**: Reduce the need for multiple functions handling similar logic.

They are especially useful in real-world applications like form validation, data processing, and user input handling, where different types of data need different handling.

Let’s talk about a common scenario we all face in web development: handling user input from forms. Imagine you’re building a form where users can enter different types of data, such as their age, name, or a list of their favorite hobbies. The challenge is to process this input correctly because each type of data needs to be handled differently.

For instance:

* If the user enters their age (a number), we might want to format it to always show two decimal places.

* If they enter their name (a string), we might want to convert it to uppercase.

* If they provide a list of hobbies (an array of strings), we might want to join them into a single string separated by commas.

**Solution: Using a Chameleon Function**

Instead of writing separate functions for each type of input, we can create one Chameleon Function that handles all these cases. This makes our code cleaner and more efficient. Here’s how we can do it:

```javascript

function formatInput(input) {

if (Array.isArray(input)) {

if (input.every(item => typeof item === 'number')) {

return input.map(num => num * 2);

} else if (input.every(item => typeof item === 'string')) {

return input.join(', ');

}

} else if (typeof input === 'string') {

return input.toUpperCase();

} else if (typeof input === 'number') {

return input.toFixed(2);

}

return 'Invalid input';

}

// Examples

console.log(formatInput([1.234, 5.678])); // [2.468, 11.356]

console.log(formatInput(['apple', 'banana'])); // "apple, banana"

console.log(formatInput('hello')); // "HELLO"

console.log(formatInput(3.14159)); // "3.14"

```

**Explanation:**

* **Array of Numbers**: Each number is doubled.

* **Array of Strings**: Strings are joined with a comma.

* **Single String**: The string is converted to uppercase.

* **Single Number**: The number is formatted to two decimal places.

* **Invalid Input**: A default message is returned for unsupported input types.

This Chameleon Function is incredibly versatile. It adapts to different inputs and processes them accordingly, making your life as a developer much easier!

**Best Practices for Chameleon Functions**

* **Keep It Simple**: Avoid over-complicating the function.

* **Predictable Behavior**: Ensure the function’s behavior is clear from its name and usage.

* **Document Well**: Comment your code to explain what each part does.

Chameleon Functions are a powerful tool in JavaScript, allowing your code to adapt dynamically to different situations. Try implementing them in your projects to see the benefits firsthand!

Need help with JavaScript bugs or want to refine your Chameleon Functions? Check out this [Fiverr gig](https://www.fiverr.com/s/XLRaRj0) for expert assistance! Get your code working perfectly and learn new skills along the way.

| silverindigo |

1,869,406 | How I Organise My Python Code | New update added to the private methods. Writing code is more of an art than a science, I must... | 0 | 2024-05-29T16:54:23 | https://dev.to/pastorenuel/how-i-organise-my-python-code-364i | python, webdev, cleancode | _New update added to the private methods._

---

Writing code is more of an art than a science, I must opine. It's important to write well-refined, logical, and robust engineering solutions to a problem. However, there seems to be a major challenge: making these solutions comprehensive and readable.

The focus of this article is how to best organise your Python classes to be both readable and clean. Python doesn't enforce strict rules when it comes to method organisation; for instance, you won't be penalised if you decide to put your `__init__` method at the end of your class or if it comes first. However, adhering to widely accepted conventions not only makes collaboration easier but also fosters better documentation.

## Order Methods Should Follow

1. Magic Methods

2. Private Methods

3. Public Methods

4. Helper Methods for Your Public Methods

### 1. Magic Methods

Magic methods, also known as dunder methods (due to their double underscores), provide special functionalities to your classes. These methods are predefined and allow objects to interact with Python’s built-in functions and operators. Placing magic methods at the top of your class definition makes it immediately clear how instances of the class will behave in common operations.

Example:

```python

class ClassWithMagicMethod:

"""Example class"""

def __new__(self):

pass

def __init__(self, value: int):

"""This is the init method"""

self.value =value

def __str__(self):

return str(self.value)

def __repr__(self):

return f"<ExampleClass({self.value})>"

```

Notice that the `__new__` method comes before the `__init__` method? Its best practice to do it that way for proper and logical flow of your codebase

### 2. Private Methods

Private methods are intended for internal use within the class. These methods usually start with an dunderscore (__) and should be placed after the magic methods. Organising private methods together helps maintain a clear separation between the internal mechanisms of the class and the public interface.

Example:

```python

class ClassWithPrivateMethod:

# magic methods goes here...

def __private_method(self):

# This is a private method

print("this is private method")

```

Attempting to access the `__private_method` outside the class will raise this error:

```python

c = ClassWithPrivateMethod()

c.__private_method()

--------------output-----------

ERROR!

Traceback (most recent call last):

File "<main.py>", line 12, in <module>

AttributeError: 'ClassWithPrivateMethod' object has no attribute '__private_method'

```

However, it can be accessed within the class:

```python

class ClassWithPrivateMethod:

# magic methods goes here...

def __private_method(self):

# This is a private method

print("this is private method")

def another_method(self):

"""Method that accesses the private method"""

self.__private_method()

c = ClassWithPrivateMethod()

c.another_method()

------------output----------

this is private method

```

Though this method is considered private, Python still makes it possible to access it by using creating a helper method using the syntax: `f"_{__class__.__name__}__{<method_name>}"`. Which now works.

```python

c = ClassWithPrivateMethod()

c._ClassWithPrivateMethod__private_method()

-------------output---------------

this is private method

```

You will notice that Python created a helper method to access the private method; suggesting a syntax or pattern on how this should be used or accessed.

What about in the case of inheritance. It is pertinent to note that you cannot directly call a base class private method from a subclass. If you attempt to call `__private_method` of `ClassWithPrivateMethod` within `ClassWithPrivateMethodSubClass`, Python will raise an `AttributeError` because it cannot find the mangled name; `_ClassWithPrivateMethodSubClass__private_method` in `ClassWithPrivateMethodSubClass` but you will notice that `_ClassWithPrivateMethod__private_method` is present. This means one thing, python creates the mangled name before accessing the value of the private method.

Example:

```python

class ClassWithPrivateMethodSubClass(ClassWithPrivateMethod):

"""subclass of ClassWithPrivateMethod"""

pass

def method_to_access_private_method(self):

"""method attempting to access base"""

self.__private_method()

sc = ClassWithPrivateMethodSubClass()

sc.method_to_access_private_method()

-------------output-----------------

AttributeError: 'ClassWithPrivateMethodSubClass' object has no attribute '_ClassWithPrivateMethodSubClass__private_method'

```

To navigate through this, you can create a protected or helper method in the base class which can be accessed by the subclass.

Example:

```python

class ClassWithPrivateMethod:

...

def _protected_method_for_private_method(self):

return self.__private_method()

def method_to_access_private_method(self):

"""method attempting to access base"""

self.__private_method()

class ClassWithPrivateMethodSubClass(ClassWithPrivateMethod):

...

def method_to_access_private_method(self):

return self._protected_method_for_private_method()

sc = ClassWithPrivateMethodSubClass()

sc.method_to_access_private_method()

----------------output-------------------------

this is private method

```

### 3. Public Methods

Public methods form the main interface of your class. These methods should be clearly defined and well-documented since they are intended for use by other classes or modules. Keeping public methods organised and distinct from private methods enhances readability and usability.

Example:

```python

class ClassWithPublicMethod:

# magic methods go here...

# private methods next...

def public_method(self):

# This is a public method

return self.value

```

### 4. Helper Methods for Your Public Methods

Helper methods or supporting methods, which assist public methods in performing their tasks, should be defined immediately after the public methods they support. These methods often break down complex tasks into simpler, manageable pieces, promoting code reusability and clarity.

Example:

```python

class ClassWithHelperMethod:

# All previous methods here...

def public_method(self):

# This is a public method

return self._helper_method()

def _helper_method(self):

# This is a helper method for the public method

return self.value * 2

def public_method_2(self):

"""This is another method"""

pass

```

### Conclusion

By organising your methods in the suggested order —magic methods, private methods, public methods, and helper methods —you create a clear and readable structure for your Python classes. This approach not only facilitates easier collaboration and maintenance but also helps in creating a well-documented codebase that others can understand and use effectively. Which will also improve onboarding if you work in an organization. Remember, clean code is not just about making it work; it’s about making it understandable and maintainable for the long run.

| pastorenuel |

1,869,407 | Generating PDFs in Angular using jsPDF | In modern web applications, generating PDF documents can be an essential feature for reports,... | 0 | 2024-05-29T16:53:12 | https://dev.to/vidyarathna/generating-pdfs-in-angular-using-jspdf-3a6 | angular, jspdf, javascript, webdev |

In modern web applications, generating PDF documents can be an essential feature for reports, invoices, and more. In this article, we will explore how to integrate and use jsPDF, a popular JavaScript library, in an Angular application to generate PDF files dynamically.

## Prerequisites

To follow along with this tutorial, you should have:

- Basic knowledge of Angular.

- Node.js and Angular CLI installed.

- An Angular project set up. If you don't have one, you can create a new project using the Angular CLI:

```bash

ng new angular-jspdf

cd angular-jspdf

```

## Installing jsPDF

First, you need to install jsPDF. You can do this using npm:

```bash

npm install jspdf

```

## Setting Up jsPDF in Angular

Once jsPDF is installed, you need to set it up in your Angular project. Open your `app.module.ts` and add the following import:

```typescript

import { NgModule } from '@angular/core';

import { BrowserModule } from '@angular/platform-browser';

import { AppComponent } from './app.component';

import { FormsModule } from '@angular/forms';

@NgModule({

declarations: [

AppComponent

],

imports: [

BrowserModule,

FormsModule

],

providers: [],

bootstrap: [AppComponent]

})

export class AppModule { }

```

## Creating a PDF Service

To keep your code organized, create a service for generating PDFs. Generate a new service using Angular CLI:

```bash

ng generate service pdf

```

In the generated `pdf.service.ts`, import jsPDF and create a method for generating a PDF:

```typescript

import { Injectable } from '@angular/core';

import { jsPDF } from 'jspdf';

@Injectable({

providedIn: 'root'

})

export class PdfService {

constructor() { }

generatePdf() {

const doc = new jsPDF();

doc.text('Hello world!', 10, 10);

doc.save('sample.pdf');

}

}

```

## Creating a Component to Generate PDFs

Next, generate a new component where you will add a button to trigger the PDF generation:

```bash

ng generate component pdf-generator

```

In `pdf-generator.component.ts`, inject the PDF service and create a method to call the `generatePdf` function:

```typescript

import { Component } from '@angular/core';

import { PdfService } from '../pdf.service';

@Component({

selector: 'app-pdf-generator',

templateUrl: './pdf-generator.component.html',

styleUrls: ['./pdf-generator.component.css']

})

export class PdfGeneratorComponent {

constructor(private pdfService: PdfService) { }

generatePdf() {

this.pdfService.generatePdf();

}

}

```

In `pdf-generator.component.html`, add a button to trigger the PDF generation:

```html

<button (click)="generatePdf()">Generate PDF</button>

```

## Adding the Component to the App

Include the `PdfGeneratorComponent` in your `AppComponent` template to make it part of your application:

```html

<!-- app.component.html -->

<app-pdf-generator></app-pdf-generator>

```

## Customizing the PDF Content

To customize the PDF content, you can modify the `generatePdf` method in `PdfService`. For example, you can add images, tables, and more complex layouts:

```typescript

generatePdf() {

const doc = new jsPDF();

doc.setFontSize(22);

doc.text('Custom PDF Document', 10, 10);

doc.setFontSize(16);

doc.text('This is a sample PDF generated using jsPDF in Angular.', 10, 30);

// Add more content as needed

doc.text('Add your content here...', 10, 50);

doc.save('custom-sample.pdf');

}

```

Integrating jsPDF into your Angular project allows you to create PDF documents dynamically within your application. By following the steps outlined above, you can set up jsPDF, create a service for PDF generation, and trigger this functionality from a component. This basic setup can be further expanded to include more complex features such as tables, images, and styled text to meet your application's needs. With jsPDF, the possibilities for generating rich, dynamic PDF content are extensive, making it a powerful tool for any Angular developer.

Happy coding!

| vidyarathna |

1,869,404 | Explore the Enchantment of Bali: Your Ultimate Travel Guide for 2024 | Are you yearning for an unforgettable journey filled with exotic landscapes, vibrant cultures, and... | 0 | 2024-05-29T16:50:07 | https://dev.to/travelgo/explore-the-enchantment-of-bali-your-ultimate-travel-guide-for-2024-28gm | bali, indonesia, travel, cheaptravel | <p> </p><p class="graf graf--p" name="bf71">Are you yearning for an unforgettable journey filled with exotic landscapes, vibrant cultures, and endless adventures? Look no further than the mystical island paradise of <a class="markup--anchor markup--p-anchor" data-href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" rel="noopener" target="_blank">Bali</a>, Indonesia! Nestled in the heart of the Indonesian archipelago, <a class="markup--anchor markup--p-anchor" data-href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" rel="noopener" target="_blank">Bali</a> boasts an unpar alleled blend of natural beauty, rich heritage, and captivating experiences that will leave you spellbound.</p><figure class="graf graf--figure" name="876a"><img class="graf-image" data-height="667" data-image-id="0*Hcl8bGL6X01-7TZN" data-is-featured="true" data-width="1000" src="https://cdn-images-1.medium.com/max/800/0*Hcl8bGL6X01-7TZN" /></figure><p class="graf graf--p" name="e1dc">**Why <a class="markup--anchor markup--p-anchor" data-href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" rel="noopener" target="_blank">Bali</a>?**</p><p class="graf graf--p" name="11fa"><a class="markup--anchor markup--p-anchor" data-href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" rel="noopener" target="_blank">Bali</a> is not just a destination; it’s a state of mind. Whether you’re seeking serene beaches, lush rice terraces, or adrenaline-pumping activities, <a class="markup--anchor markup--p-anchor" data-href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" rel="noopener" target="_blank">Bali</a> has something to offer for every type of traveler. From the iconic temples like Tanah Lot and Uluwatu to the bustling streets of Ubud and the tranquil shores of Nusa Dua, this island paradise is a tapestry of diverse experiences waiting to be explored.</p><p class="graf graf--p" name="58cd">**Travel Guides 2024: What’s New?**</p><p class="graf graf--p" name="b79c">As the world evolves, so does <a class="markup--anchor markup--p-anchor" data-href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" rel="noopener" target="_blank">Bali</a>. In 2024, travelers can expect a plethora of exciting developments and activities to enhance their <a class="markup--anchor markup--p-anchor" data-href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" rel="noopener" target="_blank">Bali</a> experience. From eco-friendly initiatives promoting sustainable tourism to immersive cultural experiences that delve deep into the island’s heritage, there’s always something fresh and exciting to discover in <a class="markup--anchor markup--p-anchor" data-href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" rel="noopener" target="_blank">Bali</a>.</p><ul class="postList"><li class="graf graf--li" name="46ff">*Best Travel Insurance for <a class="markup--anchor markup--li-anchor" data-href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" rel="noopener" target="_blank">Bali</a>: Peace of Mind Amidst Paradise**</li></ul><figure class="graf graf--figure" name="e239"><img class="graf-image" data-height="751" data-image-id="0*9GNVD95LYJvnyju6" data-width="1000" src="https://cdn-images-1.medium.com/max/800/0*9GNVD95LYJvnyju6" /></figure><p class="graf graf--p" name="2e57">Before embarking on your <a class="markup--anchor markup--p-anchor" data-href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" rel="noopener" target="_blank">Bali</a> adventure, it’s essential to secure the best travel insurance to ensure a worry-free journey. With the unpredictability of travel, having comprehensive coverage will provide you with peace of mind knowing that you’re protected against any unforeseen circumstances, from flight cancellations to medical emergencies.</p><p class="graf graf--p" name="6593">**Exploring <a class="markup--anchor markup--p-anchor" data-href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" rel="noopener" target="_blank">Bali</a>: Tips and Tricks**</p><p class="graf graf--p" name="c3ab">

<ins class="bookingaff" data-aid="2421552" data-target_aid="2421552" data-prod="banner" data-width="728" data-height="90" data-banner_id="125810" data-lang="en">

<!-- Anything inside will go away once widget is loaded. -->

<a href="//www.booking.com?aid=2421552">Booking.com</a>

</ins>

<script type="text/javascript">

(function(d, sc, u) {

var s = d.createElement(sc), p = d.getElementsByTagName(sc)[0];

s.type = 'text/javascript';

s.async = true;

s.src = u + '?v=' + (+new Date());

p.parentNode.insertBefore(s,p);

})(document, 'script', '//cf.bstatic.com/static/affiliate_base/js/flexiproduct.js');

</script>

To make the most of your <a class="markup--anchor markup--p-anchor" data-href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" rel="noopener" target="_blank">Bali</a> vacation, here are some insider tips to guide you along the way:</p><p class="graf graf--p" name="ac7c">1. **Embrace the Balinese Culture:** Take part in traditional ceremonies, witness mesmerizing dance performances, and savor authentic Balinese cuisine for a truly immersive cultural experience.</p><p class="graf graf--p" name="1009">2. **Venture Off the Beaten Path:** While <a class="markup--anchor markup--p-anchor" data-href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" rel="noopener" target="_blank">Bali</a>’s popular attractions are undeniably stunning, don’t hesitate to explore the lesser-known gems hidden away in the island’s hinterlands.</p><figure class="graf graf--figure" name="4286"><img class="graf-image" data-height="667" data-image-id="0*tY1GmS5BtdgOwlCc" data-width="1000" src="https://cdn-images-1.medium.com/max/800/0*tY1GmS5BtdgOwlCc" /></figure><p class="graf graf--p" name="9b10">3. **Stay Connected:** Stay connected with reliable internet services and local SIM cards to share your <a class="markup--anchor markup--p-anchor" data-href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" rel="noopener" target="_blank">Bali</a> moments with loved ones back home and navigate the island with ease.</p><p class="graf graf--p" name="ac09">4. **Mindful Travel:** Respect <a class="markup--anchor markup--p-anchor" data-href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" rel="noopener" target="_blank">Bali</a>’s customs and traditions, including proper attire when visiting temples and practicing responsible tourism to preserve the island’s natural beauty for future generations.</p><p class="graf graf--p" name="832f">**Traveling Smart: Your Essential Packing List**</p><p class="graf graf--p" name="24bb">When packing for your <a class="markup--anchor markup--p-anchor" data-href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" rel="noopener" target="_blank">Bali</a> escapade, remember to pack light, but don’t forget the essentials:</p><p class="graf graf--p" name="1044">1. **Sunscreen and Mosquito Repellent:** Protect your skin from the tropical sun and pesky insects while exploring <a class="markup--anchor markup--p-anchor" data-href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" rel="noopener" target="_blank">Bali</a>’s outdoor wonders.</p><p class="graf graf--p" name="35bf">2. **Comfortable Footwear:** Whether you’re trekking through lush jungles or strolling along sandy beaches, comfortable footwear is a must.</p><p class="graf graf--p" name="c97c">3. **Lightweight Clothing:** Opt for breathable fabrics to stay cool in <a class="markup--anchor markup--p-anchor" data-href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" rel="noopener" target="_blank">Bali</a>’s warm climate, and don’t forget to pack a sarong for temple visits.</p><p class="graf graf--p" name="697b">4. **Reusable Water Bottle:** Stay hydrated while reducing plastic waste by carrying a reusable water bottle to refill at water stations throughout the island.</p><figure class="graf graf--figure" name="8084"><img class="graf-image" data-height="563" data-image-id="0*uG1J7xDCm7L2Z8rP" data-width="1000" src="https://cdn-images-1.medium.com/max/800/0*uG1J7xDCm7L2Z8rP" /></figure><p class="graf graf--p" name="06b6">**Traveling to <a class="markup--anchor markup--p-anchor" data-href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" rel="noopener" target="_blank">Bali</a> on a Budget: Tips and Tricks**</p><p class="graf graf--p" name="97b6">Traveling to <a class="markup--anchor markup--p-anchor" data-href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" rel="noopener" target="_blank">Bali</a> doesn’t have to break the bank! With a bit of savvy planning, you can experience all that this island paradise has to offer without overspending:

<ins class="bookingaff" data-aid="2421580" data-target_aid="2421580" data-prod="dfl2" data-width="300" data-height="350" data-lang="en" data-dest_id="835" data-dest_type="region">

<!-- Anything inside will go away once widget is loaded. -->

<a href="//www.booking.com?aid=2421580">Booking.com</a>

</ins>

<script type="text/javascript">

(function(d, sc, u) {

var s = d.createElement(sc), p = d.getElementsByTagName(sc)[0];

s.type = 'text/javascript';

s.async = true;

s.src = u + '?v=' + (+new Date());

p.parentNode.insertBefore(s,p);

})(document, 'script', '//cf.bstatic.com/static/affiliate_base/js/flexiproduct.js');

</script>

</p><p class="graf graf--p" name="c233">1. **Accommodation:** Consider staying in guesthouses or homestays for a more affordable and authentic <a class="markup--anchor markup--p-anchor" data-href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" rel="noopener" target="_blank">Bali</a> experience.</p><p class="graf graf--p" name="f749">2. **Local Eateries:** Indulge in delicious Indonesian cuisine at local warungs (eateries) and street food stalls for budget-friendly dining options.</p><p class="graf graf--p" name="9ec4">3. **Transportation:** Opt for public transportation, such as buses or motorbikes, to get around <a class="markup--anchor markup--p-anchor" data-href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" rel="noopener" target="_blank">Bali</a> affordably, or explore the island’s scenic landscapes by renting a bicycle.</p><p class="graf graf--p" name="4d51">4. **Book in Advance:** Take advantage of early bird discounts and promotional offers by booking flights, accommodations, and tours in advance.</p><figure class="graf graf--figure" name="cfec"><img class="graf-image" data-height="667" data-image-id="0*siuo3k6QNyk98Cfa" data-width="1000" src="https://cdn-images-1.medium.com/max/800/0*siuo3k6QNyk98Cfa" /></figure><p class="graf graf--p" name="4a00">**Experience <a class="markup--anchor markup--p-anchor" data-href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" rel="noopener" target="_blank">Bali</a> with Ease: Travel Agency Assistance**</p><p class="graf graf--p" name="eb0e">For a hassle-free <a class="markup--anchor markup--p-anchor" data-href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" rel="noopener" target="_blank">Bali</a> vacation, consider enlisting the services of a reputable travel agency specializing in Indonesian travel. From crafting personalized itineraries to arranging accommodations and transportation, a professional travel agency can help you navigate <a class="markup--anchor markup--p-anchor" data-href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" rel="noopener" target="_blank">Bali</a> with ease, leaving you free to focus on creating unforgettable memories.</p><p class="graf graf--p" name="a8c5"><a class="markup--anchor markup--p-anchor" data-href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" rel="noopener" target="_blank"><strong class="markup--strong markup--p-strong">Access the link for irresistible offers!</strong></a></p><p class="graf graf--p" name="1c50">**Conclusion**</p><p class="graf graf--p" name="d52f">Embark on a journey of discovery and adventure in the enchanting island paradise of <a class="markup--anchor markup--p-anchor" data-href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" href="https://www.booking.com/searchresults.en.html?region=835&aid=8019784&no_rooms=1&group_adults=2" rel="noopener" target="_blank">Bali</a>. With its breathtaking landscapes, vibrant culture, and warm hospitality, Bali is more than just a destination; it’s an experience that will leave an indelible mark on your soul. So pack your bags, immerse yourself in the magic of Bali, and let the adventure begin!</p>

<script src="https://www.booking.com/affiliate/prelanding_sdk"></script>

<div id="bookingAffiliateWidget_a6420765-0a0b-4cc0-8a20-d44b4b6ff0ed"> </div>

<script>

(function () {

var BookingAffiliateWidget = new Booking.AffiliateWidget({

"iframeSettings": {

"selector": "bookingAffiliateWidget_a6420765-0a0b-4cc0-8a20-d44b4b6ff0ed",

"responsive": true

},

"widgetSettings": {}

});

})();

</script> | travelgo |

1,869,403 | Buy Verified Paxful Account | https://dmhelpshop.com/product/buy-verified-paxful-account/ Buy Verified Paxful Account There are... | 0 | 2024-05-29T16:47:50 | https://dev.to/nyfgegh86/buy-verified-paxful-account-29jo | webdev, javascript, beginners, programming | ERROR: type should be string, got "https://dmhelpshop.com/product/buy-verified-paxful-account/\n\n\nBuy Verified Paxful Account\nThere are several compelling reasons to consider purchasing a verified Paxful account. Firstly, a verified account offers enhanced security, providing peace of mind to all users. Additionally, it opens up a wider range of trading opportunities, allowing individuals to partake in various transactions, ultimately expanding their financial horizons.\n\nMoreover, Buy verified Paxful account ensures faster and more streamlined transactions, minimizing any potential delays or inconveniences. Furthermore, by opting for a verified account, users gain access to a trusted and reputable platform, fostering a sense of reliability and confidence.\n\nLastly, Paxful’s verification process is thorough and meticulous, ensuring that only genuine individuals are granted verified status, thereby creating a safer trading environment for all users. Overall, the decision to Buy Verified Paxful account can greatly enhance one’s overall trading experience, offering increased security, access to more opportunities, and a reliable platform to engage with. Buy Verified Paxful Account.\n\nBuy US verified paxful account from the best place dmhelpshop\nWhy we declared this website as the best place to buy US verified paxful account? Because, our company is established for providing the all account services in the USA (our main target) and even in the whole world. With this in mind we create paxful account and customize our accounts as professional with the real documents. Buy Verified Paxful Account.\n\nIf you want to buy US verified paxful account you should have to contact fast with us. Because our accounts are-\n\nEmail verified\nPhone number verified\nSelfie and KYC verified\nSSN (social security no.) verified\nTax ID and passport verified\nSometimes driving license verified\nMasterCard attached and verified\nUsed only genuine and real documents\n100% access of the account\nAll documents provided for customer security\nWhat is Verified Paxful Account?\nIn today’s expanding landscape of online transactions, ensuring security and reliability has become paramount. Given this context, Paxful has quickly risen as a prominent peer-to-peer Bitcoin marketplace, catering to individuals and businesses seeking trusted platforms for cryptocurrency trading.\n\nIn light of the prevalent digital scams and frauds, it is only natural for people to exercise caution when partaking in online transactions. As a result, the concept of a verified account has gained immense significance, serving as a critical feature for numerous online platforms. Paxful recognizes this need and provides a safe haven for users, streamlining their cryptocurrency buying and selling experience.\n\nFor individuals and businesses alike, Buy verified Paxful account emerges as an appealing choice, offering a secure and reliable environment in the ever-expanding world of digital transactions. Buy Verified Paxful Account.\n\nVerified Paxful Accounts are essential for establishing credibility and trust among users who want to transact securely on the platform. They serve as evidence that a user is a reliable seller or buyer, verifying their legitimacy.\n\nBut what constitutes a verified account, and how can one obtain this status on Paxful? In this exploration of verified Paxful accounts, we will unravel the significance they hold, why they are crucial, and shed light on the process behind their activation, providing a comprehensive understanding of how they function. Buy verified Paxful account.\n\n \n\nWhy should to Buy Verified Paxful Account?\nThere are several compelling reasons to consider purchasing a verified Paxful account. Firstly, a verified account offers enhanced security, providing peace of mind to all users. Additionally, it opens up a wider range of trading opportunities, allowing individuals to partake in various transactions, ultimately expanding their financial horizons.\n\nMoreover, a verified Paxful account ensures faster and more streamlined transactions, minimizing any potential delays or inconveniences. Furthermore, by opting for a verified account, users gain access to a trusted and reputable platform, fostering a sense of reliability and confidence. Buy Verified Paxful Account.\n\nLastly, Paxful’s verification process is thorough and meticulous, ensuring that only genuine individuals are granted verified status, thereby creating a safer trading environment for all users. Overall, the decision to buy a verified Paxful account can greatly enhance one’s overall trading experience, offering increased security, access to more opportunities, and a reliable platform to engage with.\n\n \n\nWhat is a Paxful Account\nPaxful and various other platforms consistently release updates that not only address security vulnerabilities but also enhance usability by introducing new features. Buy Verified Paxful Account.\n\nIn line with this, our old accounts have recently undergone upgrades, ensuring that if you purchase an old buy Verified Paxful account from dmhelpshop.com, you will gain access to an account with an impressive history and advanced features. This ensures a seamless and enhanced experience for all users, making it a worthwhile option for everyone.\n\n \n\nIs it safe to buy Paxful Verified Accounts?\nBuying on Paxful is a secure choice for everyone. However, the level of trust amplifies when purchasing from Paxful verified accounts. These accounts belong to sellers who have undergone rigorous scrutiny by Paxful. Buy verified Paxful account, you are automatically designated as a verified account. Hence, purchasing from a Paxful verified account ensures a high level of credibility and utmost reliability. Buy Verified Paxful Account.\n\nPAXFUL, a widely known peer-to-peer cryptocurrency trading platform, has gained significant popularity as a go-to website for purchasing Bitcoin and other cryptocurrencies. It is important to note, however, that while Paxful may not be the most secure option available, its reputation is considerably less problematic compared to many other marketplaces. Buy Verified Paxful Account.\n\nThis brings us to the question: is it safe to purchase Paxful Verified Accounts? Top Paxful reviews offer mixed opinions, suggesting that caution should be exercised. Therefore, users are advised to conduct thorough research and consider all aspects before proceeding with any transactions on Paxful.\n\n \n\nHow Do I Get 100% Real Verified Paxful Accoun?\nPaxful, a renowned peer-to-peer cryptocurrency marketplace, offers users the opportunity to conveniently buy and sell a wide range of cryptocurrencies. Given its growing popularity, both individuals and businesses are seeking to establish verified accounts on this platform.\n\nHowever, the process of creating a verified Paxful account can be intimidating, particularly considering the escalating prevalence of online scams and fraudulent practices. This verification procedure necessitates users to furnish personal information and vital documents, posing potential risks if not conducted meticulously.\n\nIn this comprehensive guide, we will delve into the necessary steps to create a legitimate and verified Paxful account. Our discussion will revolve around the verification process and provide valuable tips to safely navigate through it.\n\nMoreover, we will emphasize the utmost importance of maintaining the security of personal information when creating a verified account. Furthermore, we will shed light on common pitfalls to steer clear of, such as using counterfeit documents or attempting to bypass the verification process.\n\nWhether you are new to Paxful or an experienced user, this engaging paragraph aims to equip everyone with the knowledge they need to establish a secure and authentic presence on the platform.\n\nBenefits Of Verified Paxful Accounts\nVerified Paxful accounts offer numerous advantages compared to regular Paxful accounts. One notable advantage is that verified accounts contribute to building trust within the community.\n\nVerification, although a rigorous process, is essential for peer-to-peer transactions. This is why all Paxful accounts undergo verification after registration. When customers within the community possess confidence and trust, they can conveniently and securely exchange cash for Bitcoin or Ethereum instantly. Buy Verified Paxful Account.\n\nPaxful accounts, trusted and verified by sellers globally, serve as a testament to their unwavering commitment towards their business or passion, ensuring exceptional customer service at all times. Headquartered in Africa, Paxful holds the distinction of being the world’s pioneering peer-to-peer bitcoin marketplace. Spearheaded by its founder, Ray Youssef, Paxful continues to lead the way in revolutionizing the digital exchange landscape.\n\nPaxful has emerged as a favored platform for digital currency trading, catering to a diverse audience. One of Paxful’s key features is its direct peer-to-peer trading system, eliminating the need for intermediaries or cryptocurrency exchanges. By leveraging Paxful’s escrow system, users can trade securely and confidently.\n\nWhat sets Paxful apart is its commitment to identity verification, ensuring a trustworthy environment for buyers and sellers alike. With these user-centric qualities, Paxful has successfully established itself as a leading platform for hassle-free digital currency transactions, appealing to a wide range of individuals seeking a reliable and convenient trading experience. Buy Verified Paxful Account.\n\n \n\nHow paxful ensure risk-free transaction and trading?\nEngage in safe online financial activities by prioritizing verified accounts to reduce the risk of fraud. Platforms like Paxfu implement stringent identity and address verification measures to protect users from scammers and ensure credibility.\n\nWith verified accounts, users can trade with confidence, knowing they are interacting with legitimate individuals or entities. By fostering trust through verified accounts, Paxful strengthens the integrity of its ecosystem, making it a secure space for financial transactions for all users. Buy Verified Paxful Account.\n\nExperience seamless transactions by obtaining a verified Paxful account. Verification signals a user’s dedication to the platform’s guidelines, leading to the prestigious badge of trust. This trust not only expedites trades but also reduces transaction scrutiny. Additionally, verified users unlock exclusive features enhancing efficiency on Paxful. Elevate your trading experience with Verified Paxful Accounts today.\n\nIn the ever-changing realm of online trading and transactions, selecting a platform with minimal fees is paramount for optimizing returns. This choice not only enhances your financial capabilities but also facilitates more frequent trading while safeguarding gains. Buy Verified Paxful Account.\n\nExamining the details of fee configurations reveals Paxful as a frontrunner in cost-effectiveness. Acquire a verified level-3 USA Paxful account from usasmmonline.com for a secure transaction experience. Invest in verified Paxful accounts to take advantage of a leading platform in the online trading landscape.\n\n \n\nHow Old Paxful ensures a lot of Advantages?\n\nExplore the boundless opportunities that Verified Paxful accounts present for businesses looking to venture into the digital currency realm, as companies globally witness heightened profits and expansion. These success stories underline the myriad advantages of Paxful’s user-friendly interface, minimal fees, and robust trading tools, demonstrating its relevance across various sectors.\n\nBusinesses benefit from efficient transaction processing and cost-effective solutions, making Paxful a significant player in facilitating financial operations. Acquire a USA Paxful account effortlessly at a competitive rate from usasmmonline.com and unlock access to a world of possibilities. Buy Verified Paxful Account.\n\nExperience elevated convenience and accessibility through Paxful, where stories of transformation abound. Whether you are an individual seeking seamless transactions or a business eager to tap into a global market, buying old Paxful accounts unveils opportunities for growth.\n\nPaxful’s verified accounts not only offer reliability within the trading community but also serve as a testament to the platform’s ability to empower economic activities worldwide. Join the journey towards expansive possibilities and enhanced financial empowerment with Paxful today. Buy Verified Paxful Account.\n\n \n\nWhy paxful keep the security measures at the top priority?\nIn today’s digital landscape, security stands as a paramount concern for all individuals engaging in online activities, particularly within marketplaces such as Paxful. It is essential for account holders to remain informed about the comprehensive security protocols that are in place to safeguard their information.\n\nSafeguarding your Paxful account is imperative to guaranteeing the safety and security of your transactions. Two essential security components, Two-Factor Authentication and Routine Security Audits, serve as the pillars fortifying this shield of protection, ensuring a secure and trustworthy user experience for all. Buy Verified Paxful Account.\n\nConclusion\nInvesting in Bitcoin offers various avenues, and among those, utilizing a Paxful account has emerged as a favored option. Paxful, an esteemed online marketplace, enables users to engage in buying and selling Bitcoin. Buy Verified Paxful Account.\n\nThe initial step involves creating an account on Paxful and completing the verification process to ensure identity authentication. Subsequently, users gain access to a diverse range of offers from fellow users on the platform. Once a suitable proposal captures your interest, you can proceed to initiate a trade with the respective user, opening the doors to a seamless Bitcoin investing experience.\n\nIn conclusion, when considering the option of purchasing verified Paxful accounts, exercising caution and conducting thorough due diligence is of utmost importance. It is highly recommended to seek reputable sources and diligently research the seller’s history and reviews before making any transactions.\n\nMoreover, it is crucial to familiarize oneself with the terms and conditions outlined by Paxful regarding account verification, bearing in mind the potential consequences of violating those terms. By adhering to these guidelines, individuals can ensure a secure and reliable experience when engaging in such transactions. Buy Verified Paxful Account.\n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com\n\n " | nyfgegh86 |

1,869,401 | 💡Type-safe APIs Solutions | Note that this post is the ongoing work. It's yet to be finished. Introduction I felt... | 0 | 2024-05-29T16:42:36 | https://dev.to/algoorgoal/type-safe-apis-solutions-49ja | api | Note that this post is the ongoing work. It's yet to be finished.

## Introduction

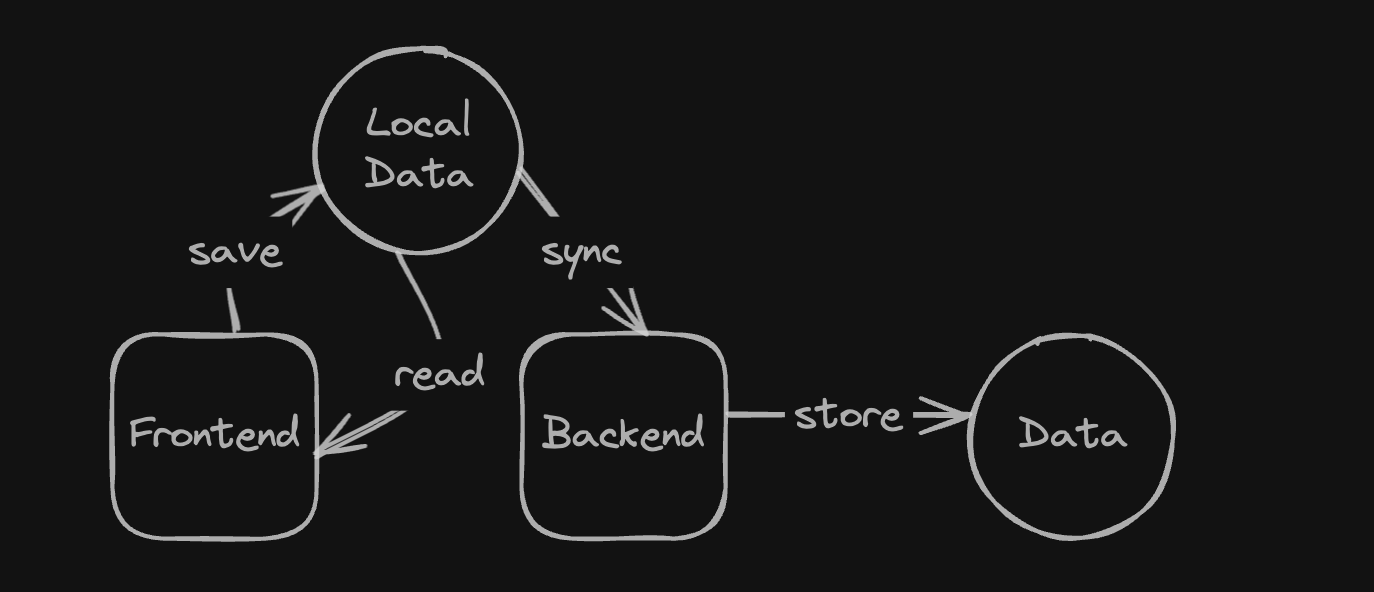

I felt like I wasted a lot of time communicating with the Backend Engineers about what types JSON properties actually are. They provided me with some API spec written by their own, but I spent a lot of time pointing out what's wrong and waiting for the fix. Therefore, I decided to look into type-safe API solutions.

## Example

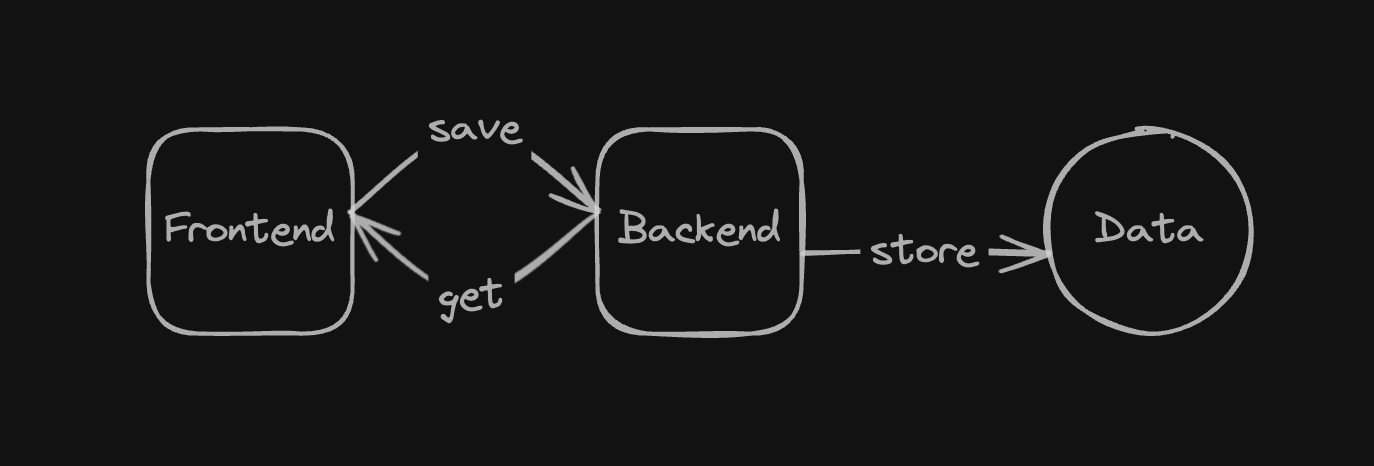

This is the common situation you run into:

```typescript

export const addBooth = async (booth: Booth) => {

const response = await fetch(`${API_URL}/api/booths`, {

method: HTTPMethod.POST,

});

const data = await response.json();

return data;

};

```

Here, the type of the variable `data` is `any`, since typescript cannot deduce the type of http response. Therefore, our frontend developers entirely relied on API specifiaction written by our backend developers on Notion.

However, I found this process pretty inefficient. First off, backend developers need to spend time writing the API spec. Second, they might make mistakes and sometimes it takes a lot of time when I report the issues and get the answers. Lastly, I also report issues created by my own mistakes. That's how I got interested in type-safe solutions to reduce miscommunication issues and errors.

## OpenAPI

- It's a way to describe RESTful APIs. It started as Swagger API, but later changed its name to OpanAPI.

- Swagger is a toolkit that lets you write OpenAPI spec using JSON and renders OpenAPI Spec documents on the web.

- If your team uses Restful APIs, then you can take advantage of Swagger.

In Swagger UI like this, you can check the types and rules of JSON properties by checking the schema backend engineers wrote for you. Note it doesn't work well by default, so you should kindly ask your backend developers for a double-check.

## GraphQL

- In GraphQL, requests and responses are defined in the schema formats.

- It can also solve the problem of overfetching and underfetching.

- Overfetching means receiving more data than the client needs.

- Underfetching means receiving less data than the client needs, and the client sends multiple requests.

- The whole team needs to work their asses off to learn GraphQL.

- It would be great when your application has a lot of endpoints, the team has separated frontend and backend developers, overfetching and underfetching happen quite often, and has enough time to learn GraphQL.

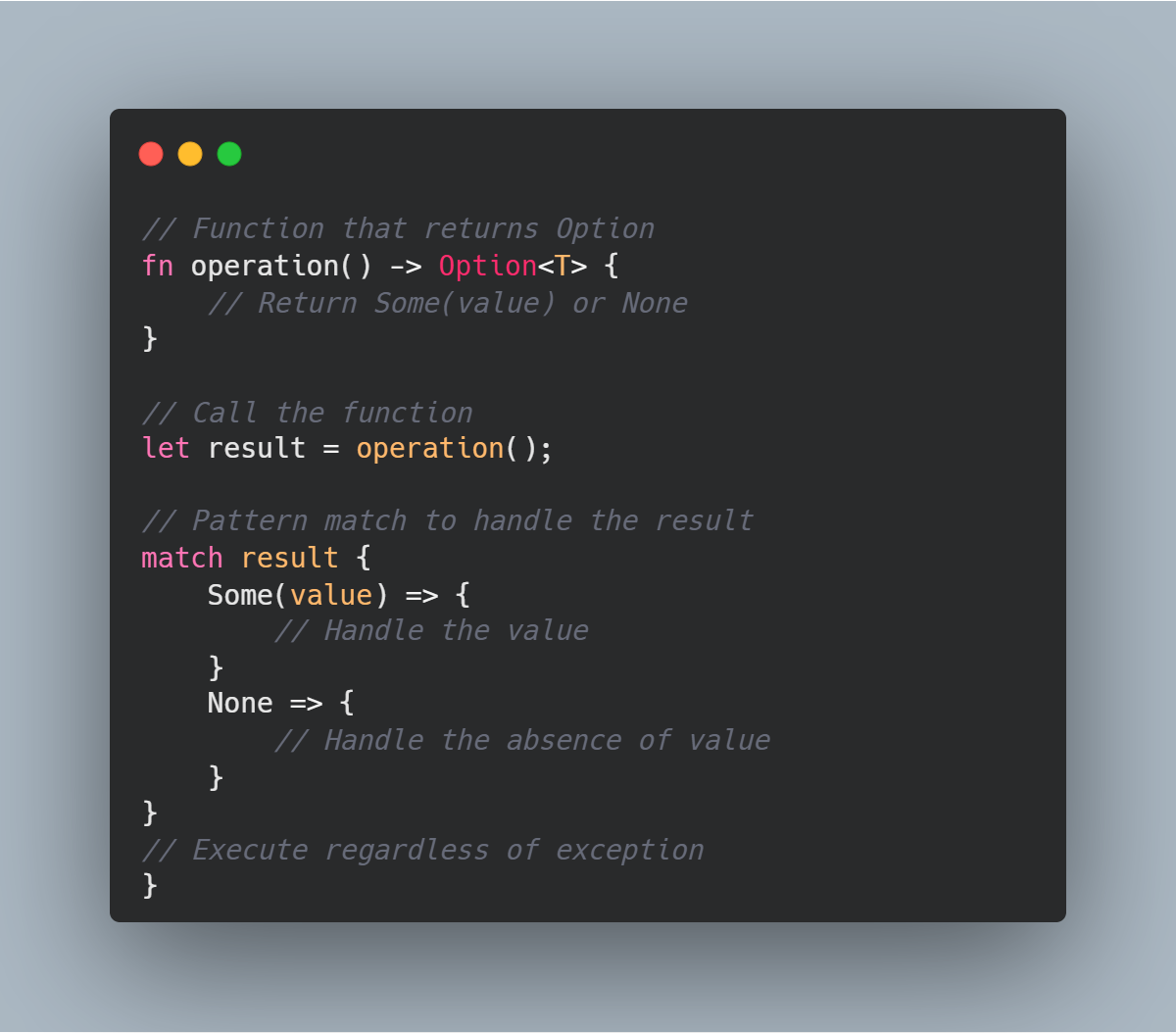

## tRPC(typescript Remote Procedure Control)

- Type definitions are shared between the client and the server.

- The API server must be written in typescript. If you're in a large team and the server should be used in a different language, tRPC is not a good choice.

- You should retain a monorepo or deploy an npm package to share the types between the client and the server.

- If you're using Server Actions, you can ditch tRPC. Once your server action logic gets complicated, you can consider using them altogether.

## Other solutions

Dear readers: language-neutral protocols have been around even before tRPC and GraphQL came out. You can check out these protocol-level type-safety tools as well.

- Protobuf: it's a data-serialization protocol. Instead of transferring JSON, this protocol sends protocol buffers.

> Data serialization process transforms objects into binary format so that data can be sent to the network. On the physical layer, these objects are just sent in the form of binary via cables.

## Conclusion

I haven't used GraphQL and tRPC. When I get a chance to use them I'll add more thoughts. | algoorgoal |

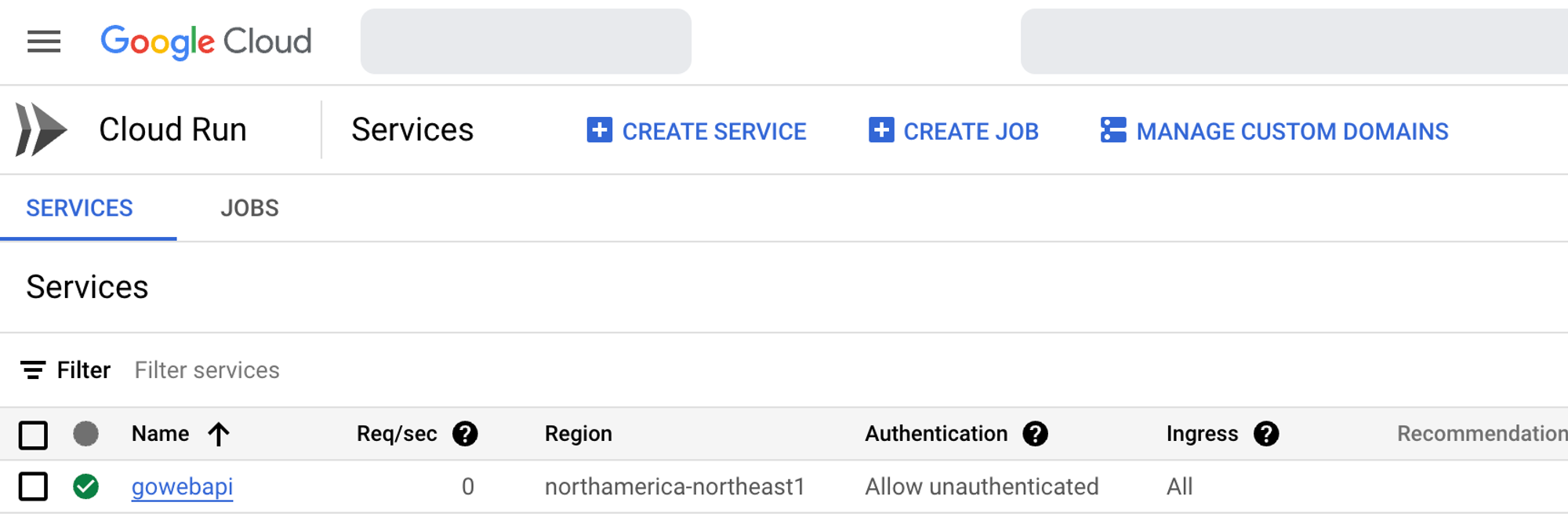

1,869,399 | GCP Cloud Run vs Kubernetes | The world of Kubernetes is intertwined with several different platforms, software, and third-party... | 0 | 2024-05-29T16:32:15 | https://dev.to/thenjdevopsguy/gcp-cloud-run-vs-kubernetes-5g2b | kubernetes, devops, programming, docker | The world of Kubernetes is intertwined with several different platforms, software, and third-party services. Aside from things like GitOps and Service Mesh, the core implementation of Kubernetes is to help with one thing - orchestrate and manage containers.

In this blog post, we’ll get back to the original concept of orchestration and talk about the key differences between a service like Cloud Run and a full-blown orchestrator like Kubernetes.

## What Is Kubernetes

The question of “What is Kubernetes?” is long, vast, and takes up a fair amount of talk in itself (that’s why there are 10’s of books available around Kubernetes), but what was the original need for Kubernetes?

The long and short of it is that Kubernetes was created to manage and scale containers.

Kubernetes contains several plugins and the plugin to run containers is the Container Runtime Interface (CRI). Why is the plugin necessary? Because Kubernetes does not know how to run/stop/start containers by itself.

## What Is GCP Cloud Run

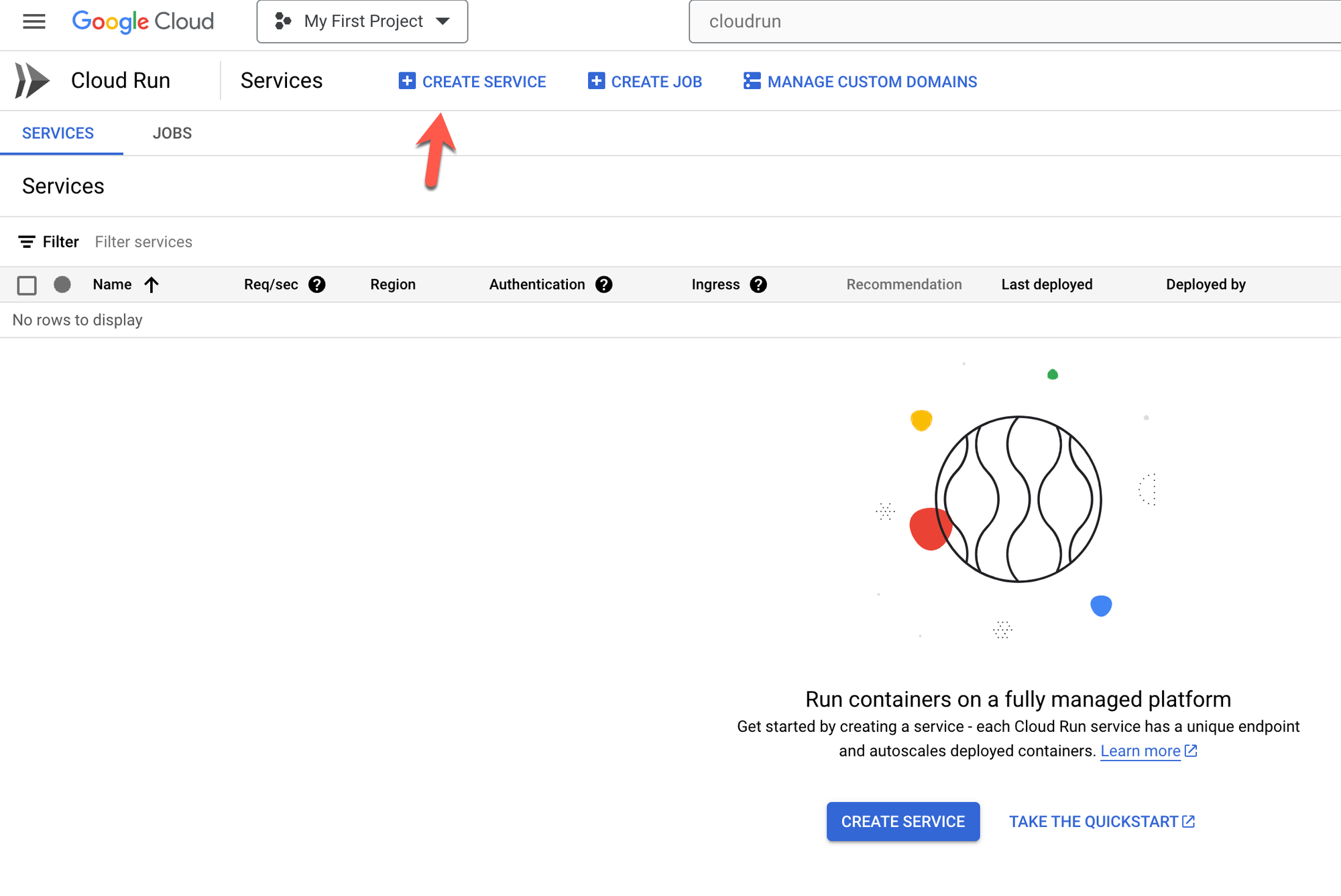

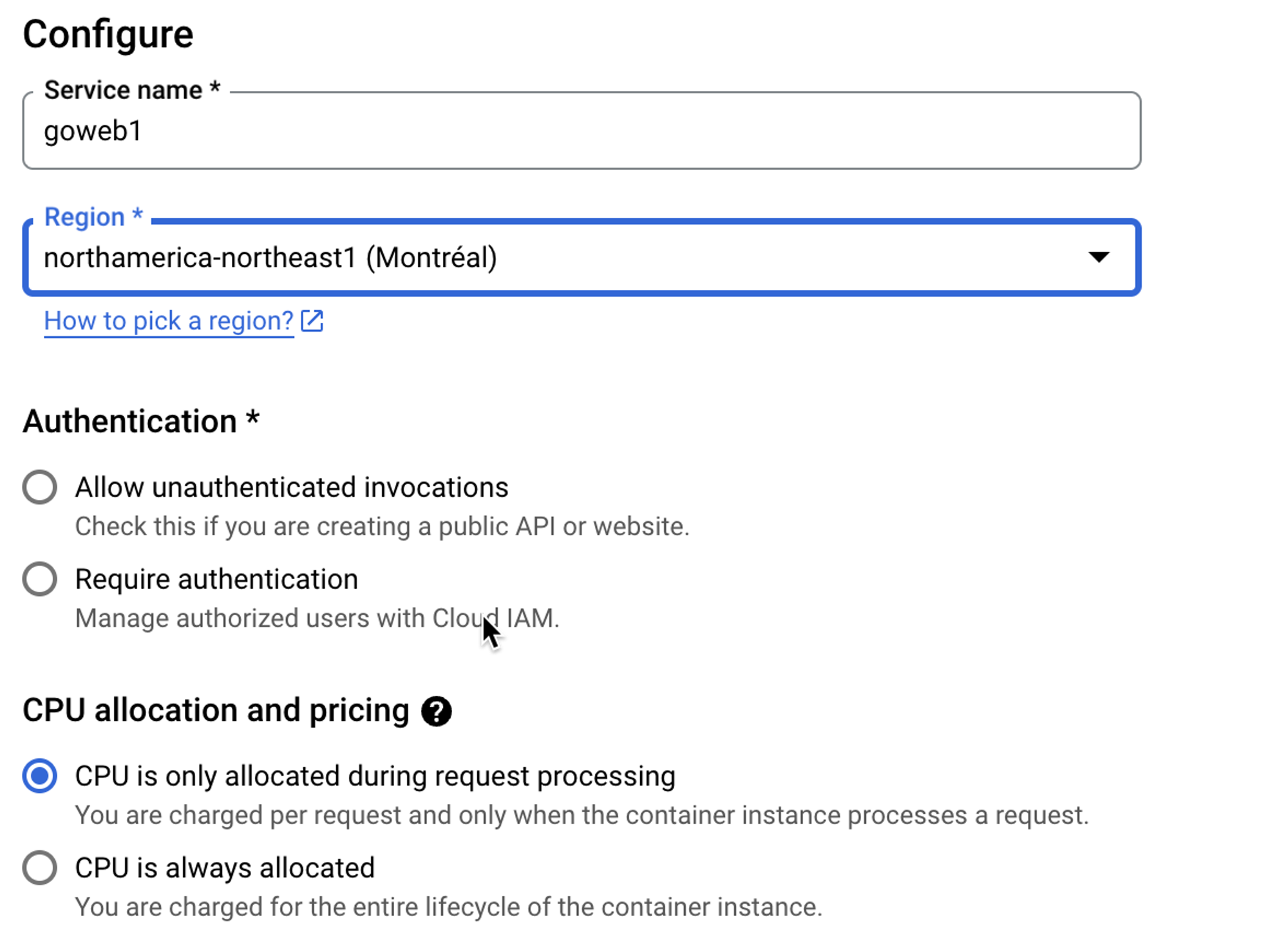

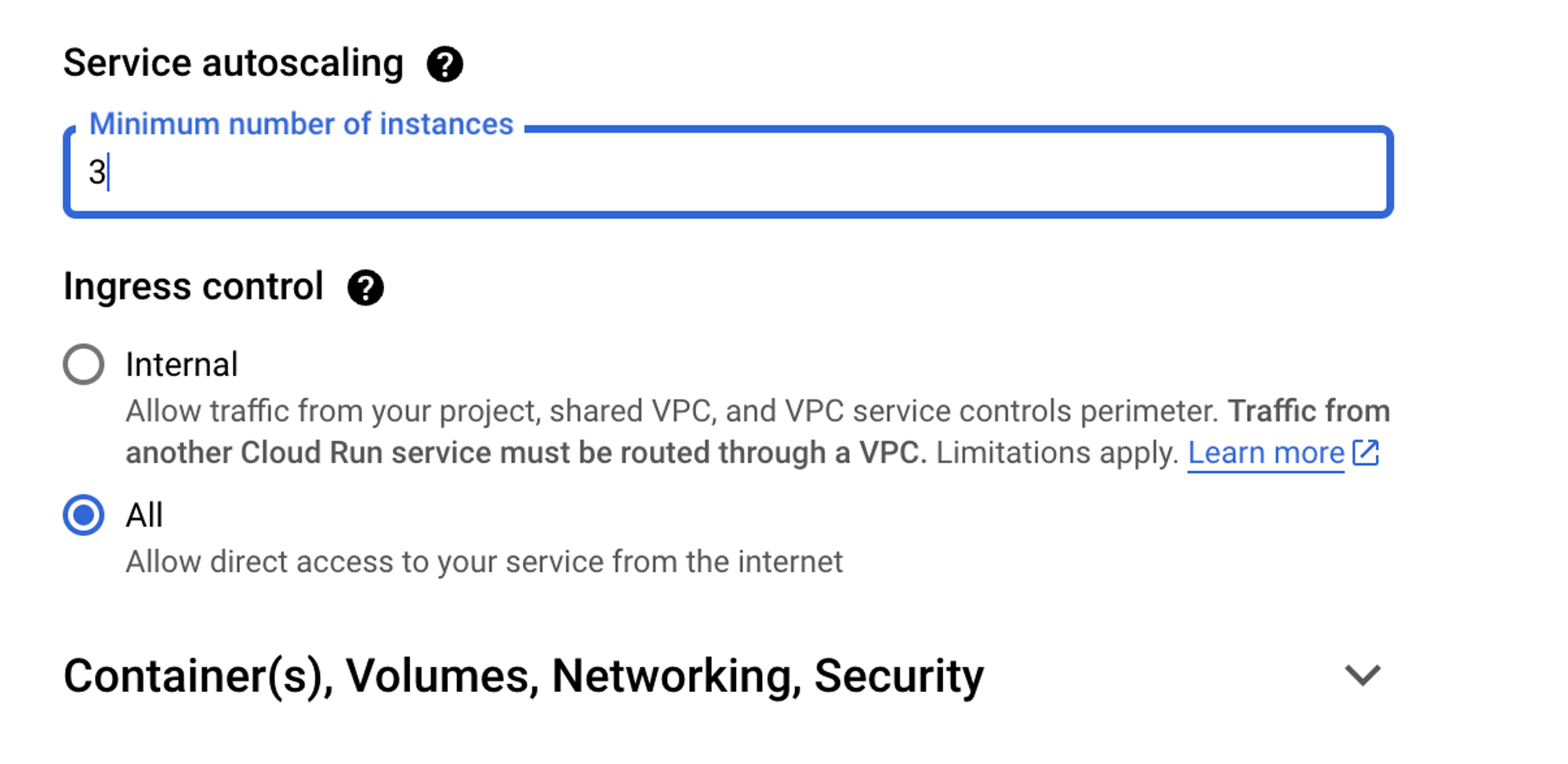

Cloud Run, much like Kubernetes, is an orchestrator. It gives you the ability to deploy a container, scale that container, set up autoscaling, configure resource optimization, and several other health-based containerization methods.

The key to remember is that with Cloud Run, you’re using a GCP-based solution for orchestration.

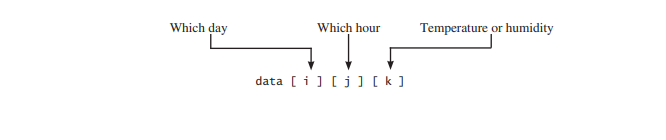

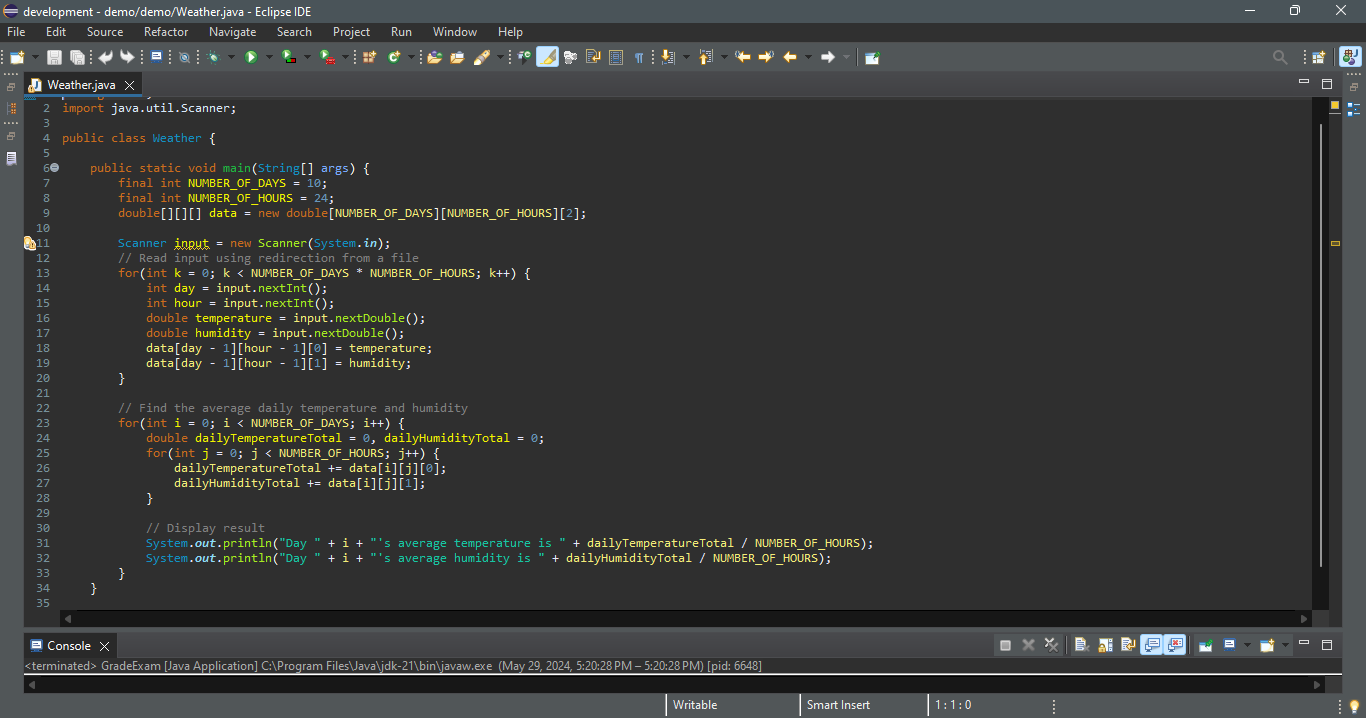

In the next few sections, you’ll see how to run containers (Pods contain containers), Cloud Run containers, and resource optimization in each environment.

## Deploying A Pod On Kubernetes

To deploy a Pod on Kubernetes, you’ll use a Kubernetes Manifest, which is a YAML (or JSON) configuration to deploy containers within Pods.

Below is an example of using a higher-level controller called `Deployment`.

```jsx

apiVersion: apps/v1

kind: Deployment

metadata:

name: nginx-deployment

spec:

selector:

matchLabels:

app: nginxdeployment

replicas: 2

template:

metadata:

labels:

app: nginxdeployment

spec:

containers:

- name: nginxdeployment

image: nginx:latest

ports:

- containerPort: 80

```

Once you write the Manifest, you perform a HTTPS POST request to the Kubernetes API server with the `kubectl apply` command.

```jsx

kubectl apply -f deployment.yaml

```

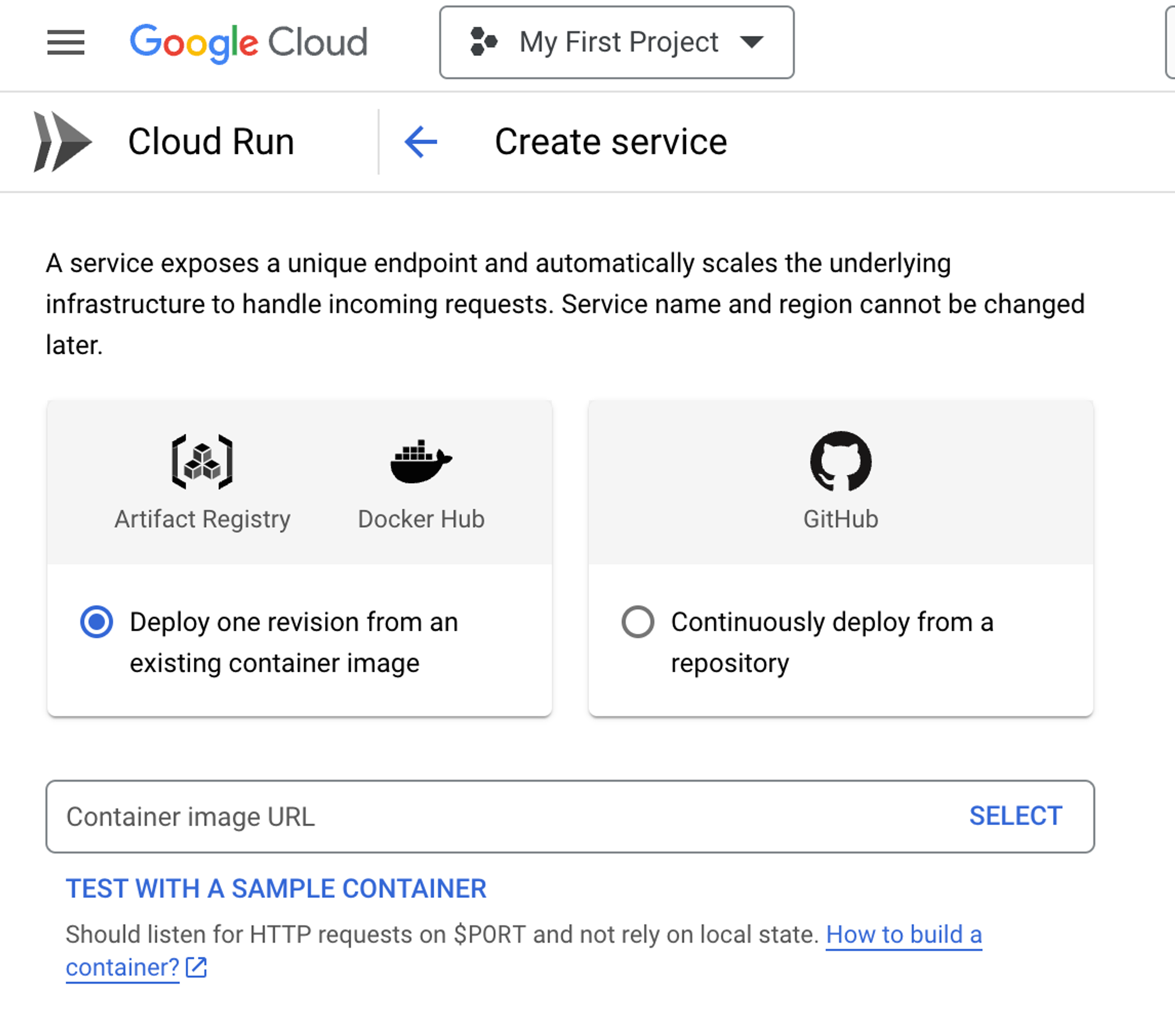

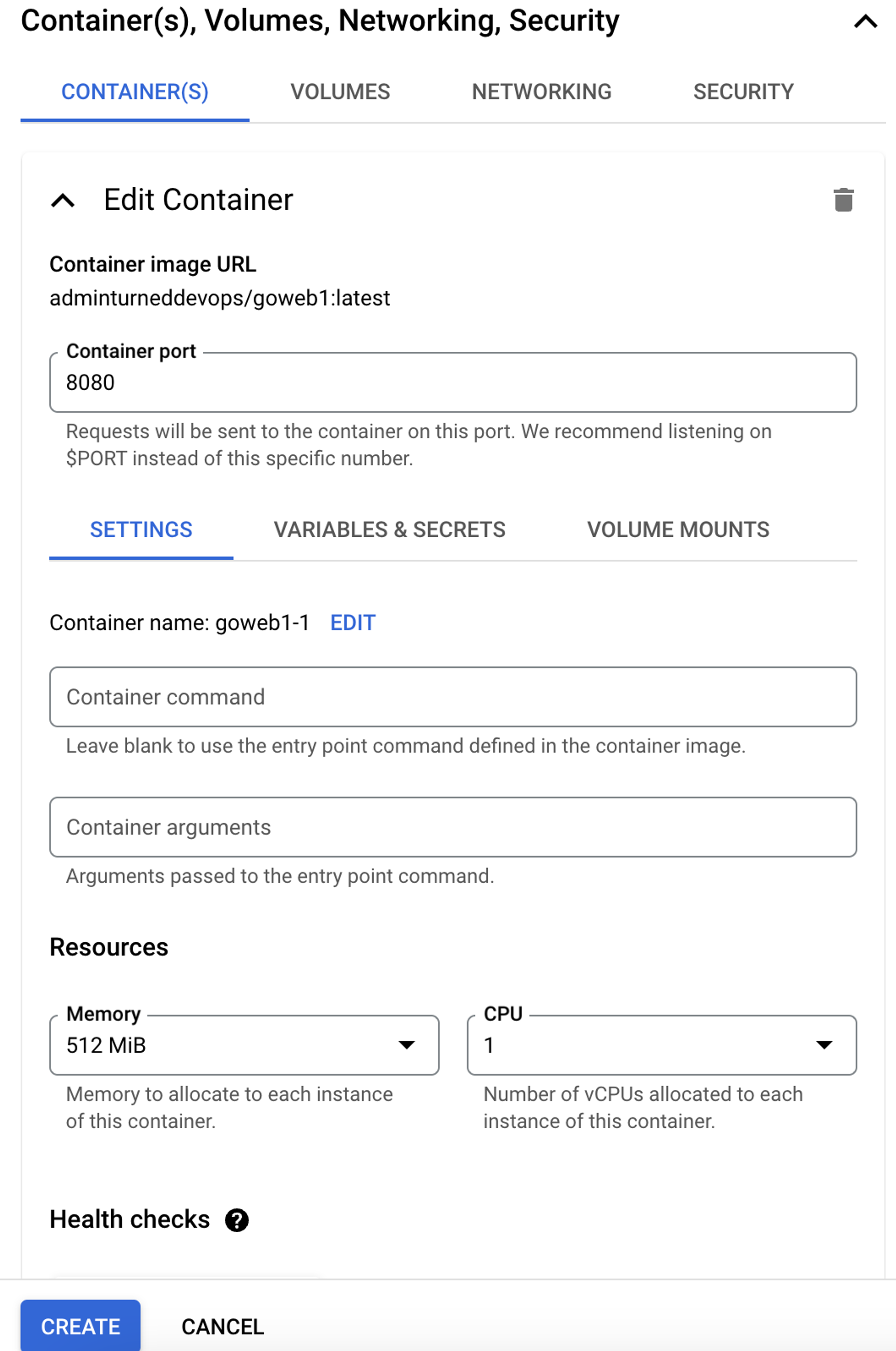

## Deploying A Container on Cloud Run

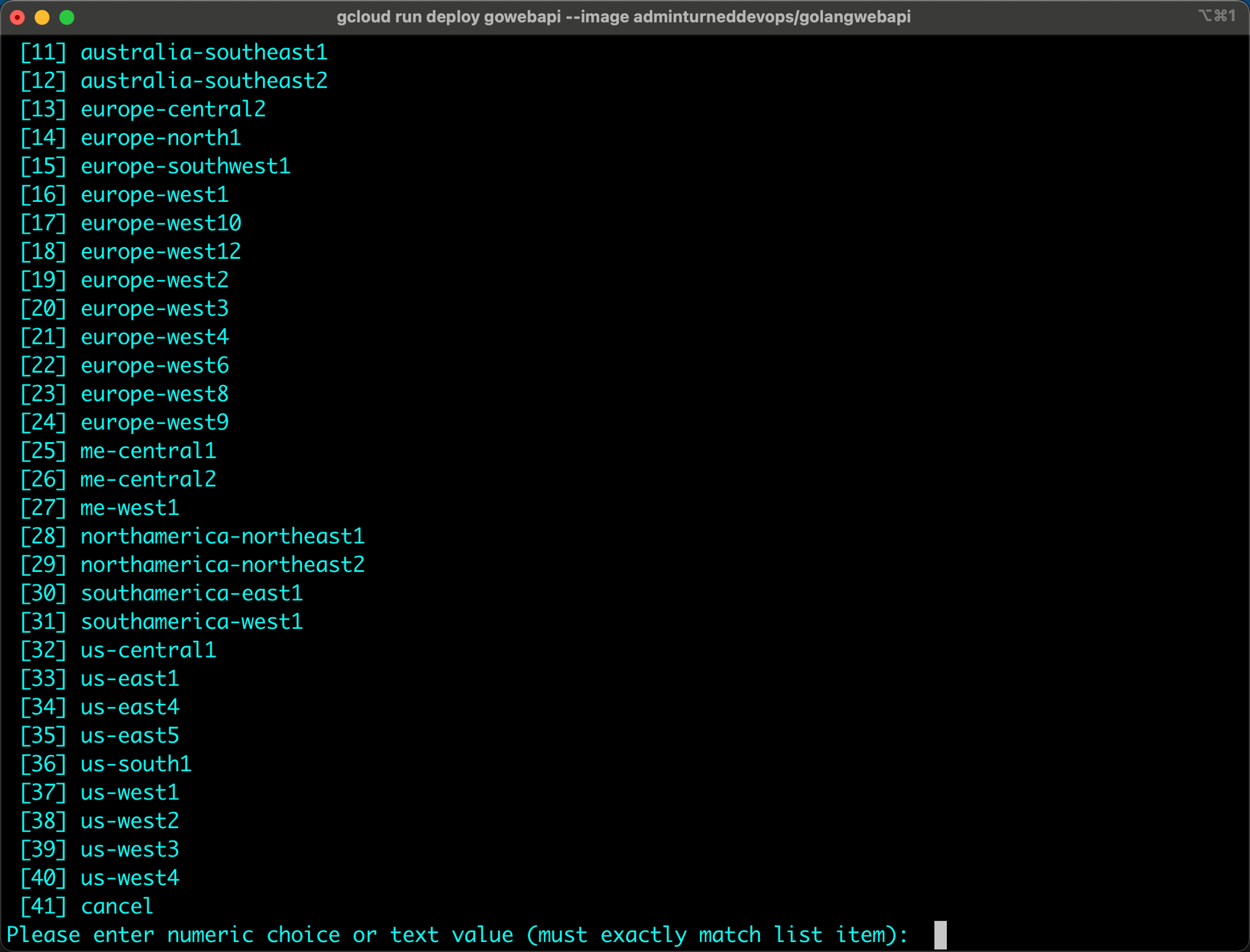

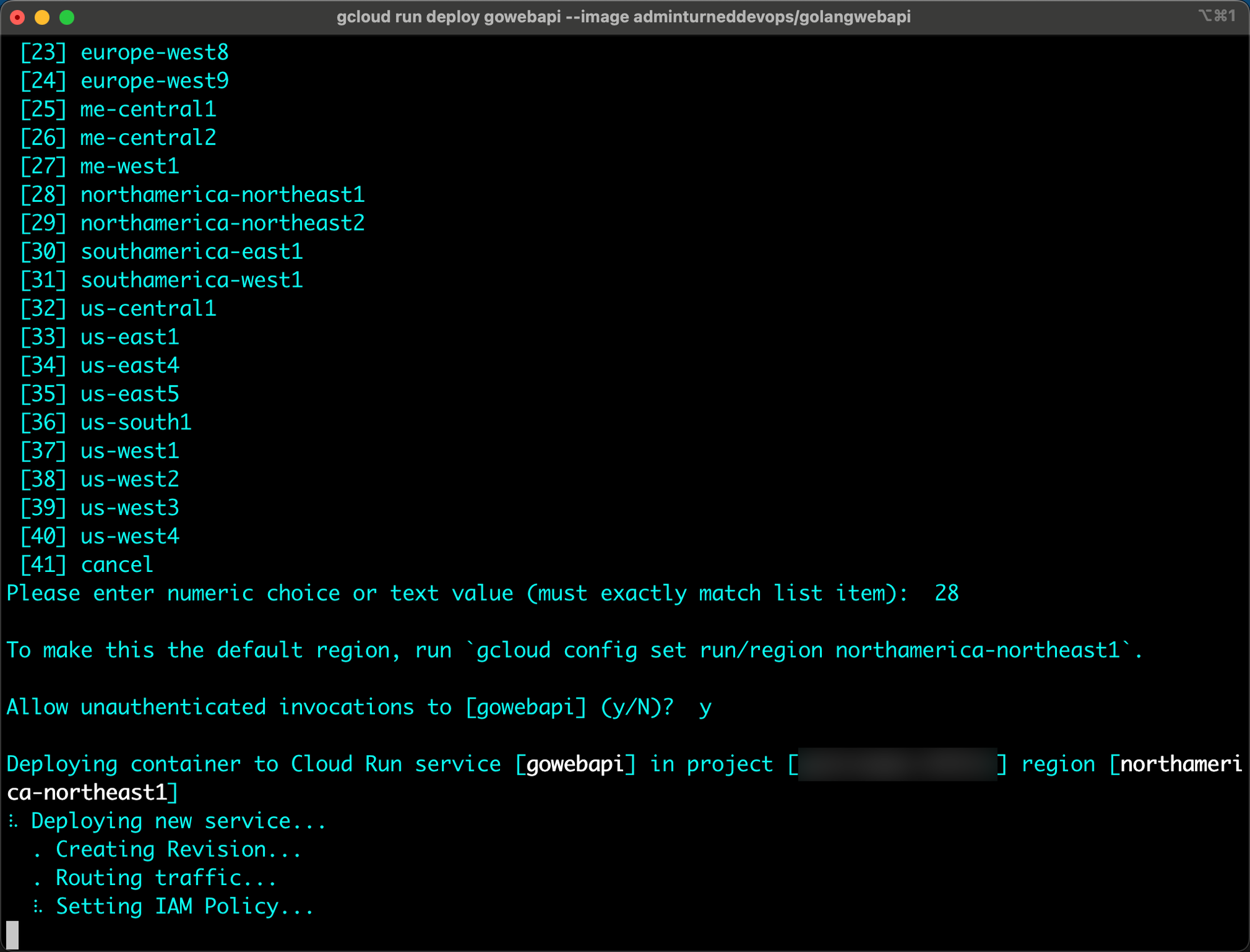

Aside from using the GCP console, you can use the `gcloud` CLI to deploy a container in Cloud Run.

<aside>

💡 Both GCP Cloud Run and Kubernetes are declarative in nature and use YAML configurations. You can go into the YAML of a container deployed and re-configure it if you’d like.

</aside>

```jsx

gcloud run deploy gowebapi --image adminturneddevops/golangwebapi

```

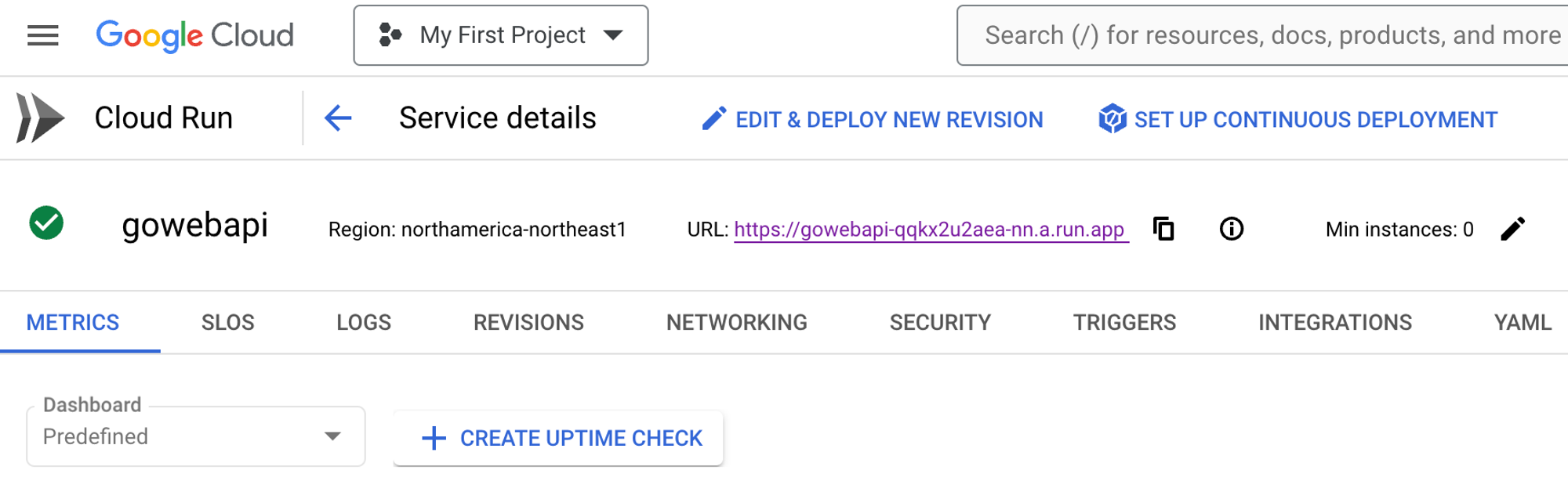

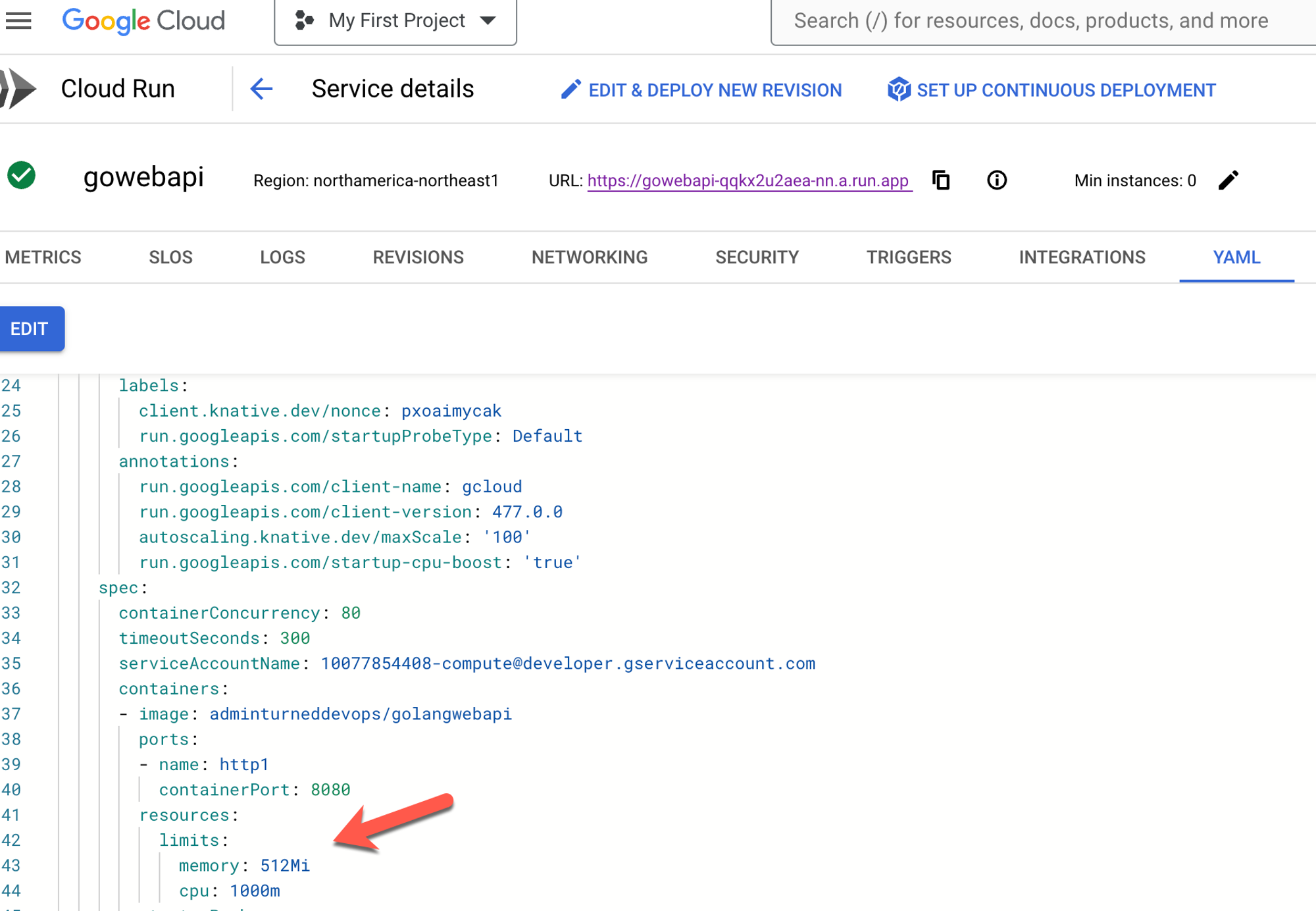

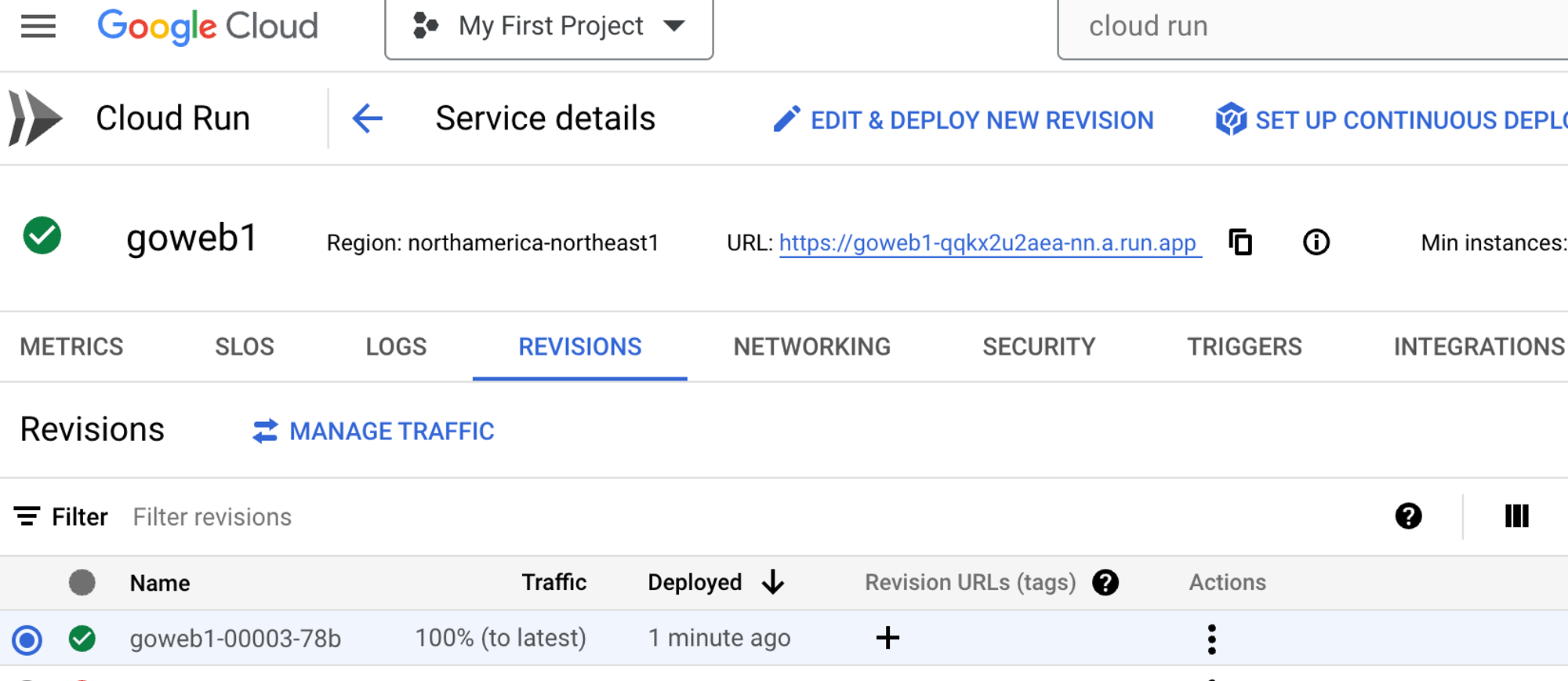

Once the container is deployed, you can go in and edit it via the blue **EDIT & DEPLOY NEW REVISION** button.

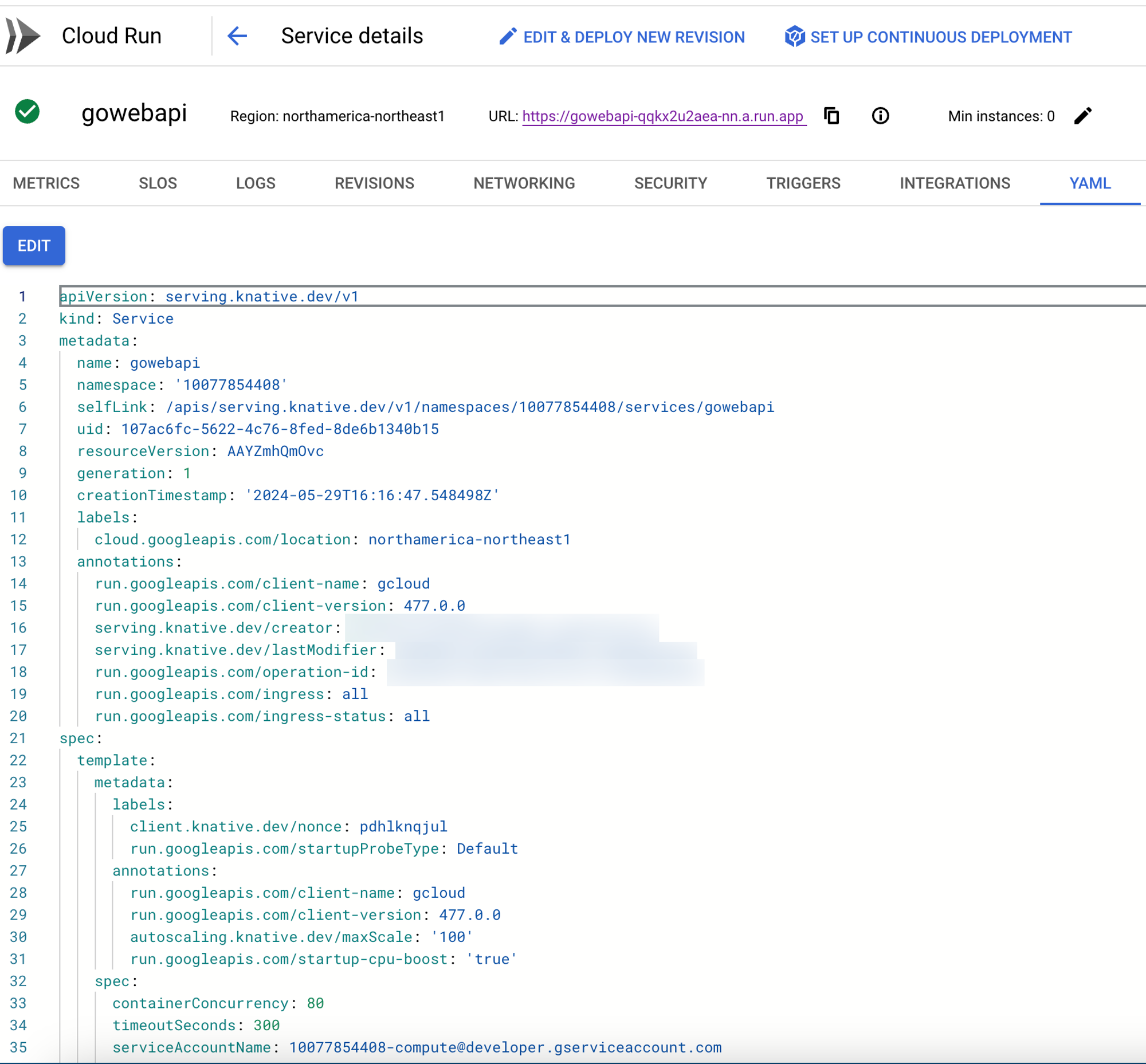

If you click the YAML button, you can edit your container configuration.

<aside>

💡 On the backend of a Cloud Run service, it’s using Knative, which is the method of running Serverless workloads on Kubernetes. One could say that Cloud Run is using Kubernetes on the backend.

</aside>

## Resource Optimization On Kubernetes

When it comes to ensuring that Pods are running as performant and efficiently as possible, engineers must ensure to implement resource optimization.

There are a few methods for resource/performance optimization on Kubernetes.

First, there are ResourceQuotas. ResourceQuotas are a way to set limits for memory and CPU on a Namespace. When using ResourceQuotas, Namespaces are only allowed X amount of CPU and X amount of CPU.

```jsx

apiVersion: v1

kind: ResourceQuota

metadata:

name: memorylimit

namespace: test

spec:

hard:

requests.memory: 512Mi

limits.memory: 1000Mi

```

```jsx

apiVersion: v1

kind: ResourceQuota

metadata:

name: memorylimit

namespace: test

spec:

hard:

cpu: "5"

memory: 10Gi

pods: "10"

```

You can also set resource requests and limits within the Deployment/Pod configuration itself.

```jsx

apiVersion: apps/v1

kind: Deployment

metadata:

name: nginx-deployment

spec:

selector:

matchLabels:

app: nginxdeployment

replicas: 2

template:

metadata:

namespace: webapp

labels:

app: nginxdeployment

spec:

containers:

- name: nginxdeployment

image: nginx:latest

resources:

requests:

memory: "64Mi"

cpu: "250m"

limits:

memory: "128Mi"

ports:

- containerPort: 80

```

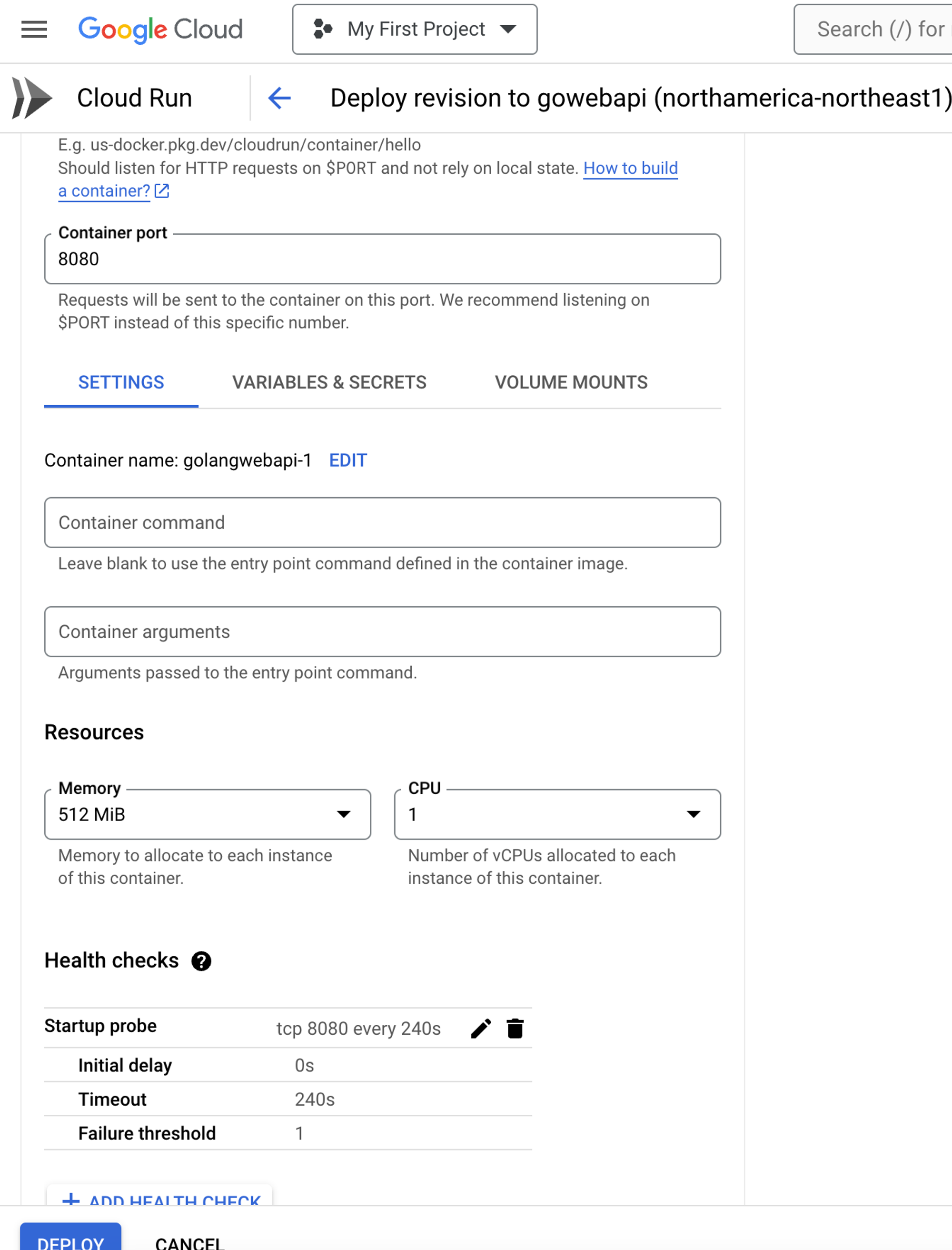

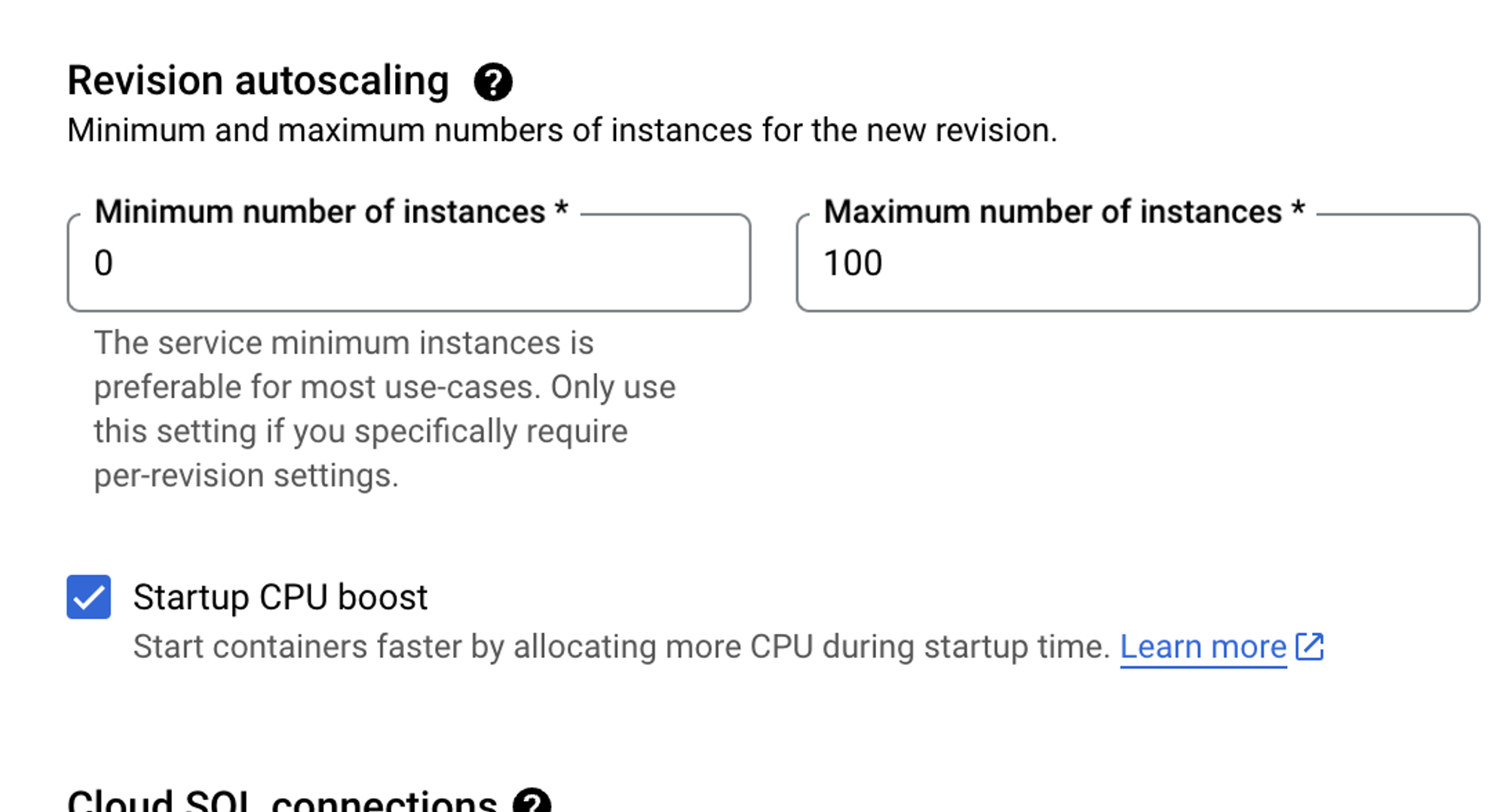

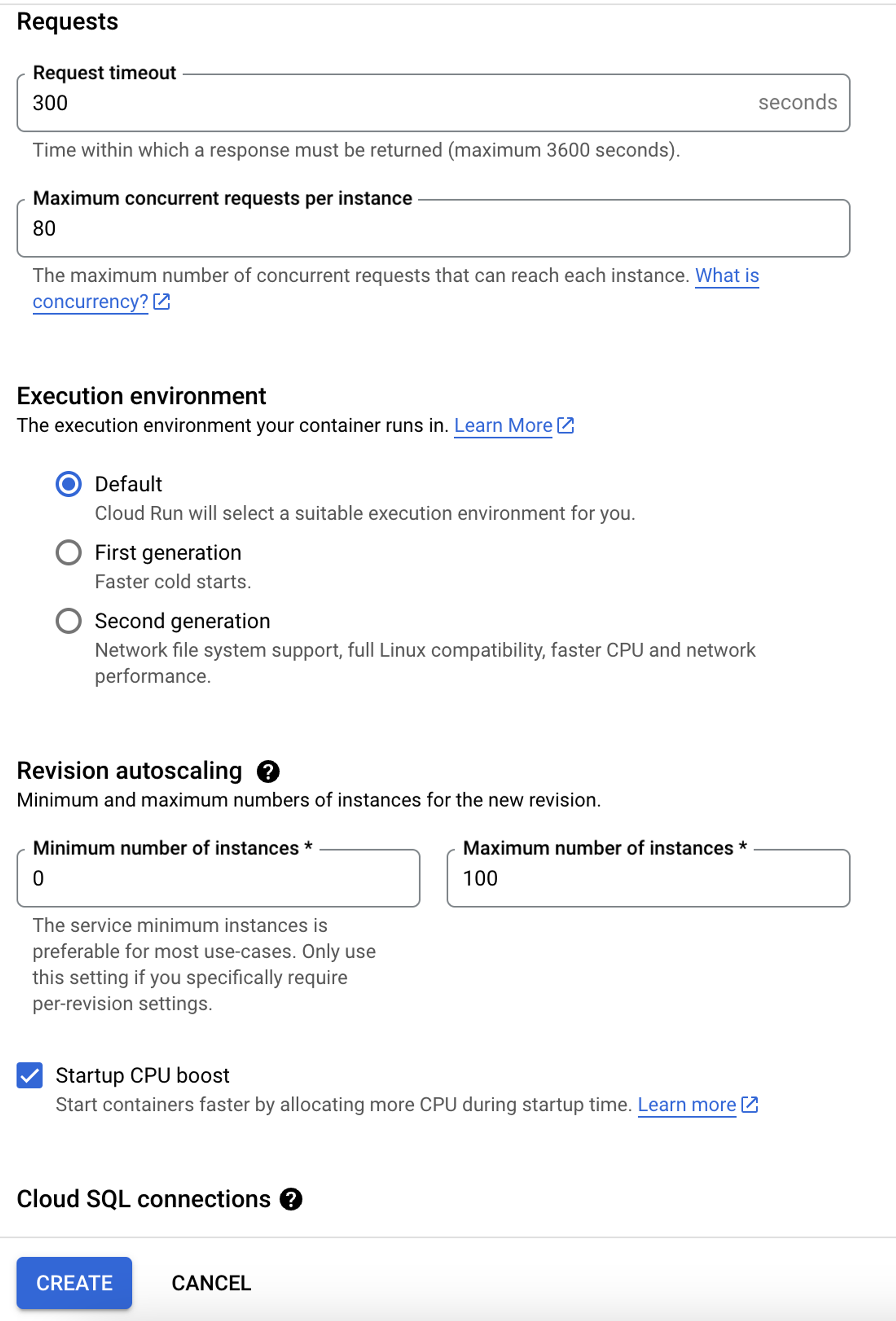

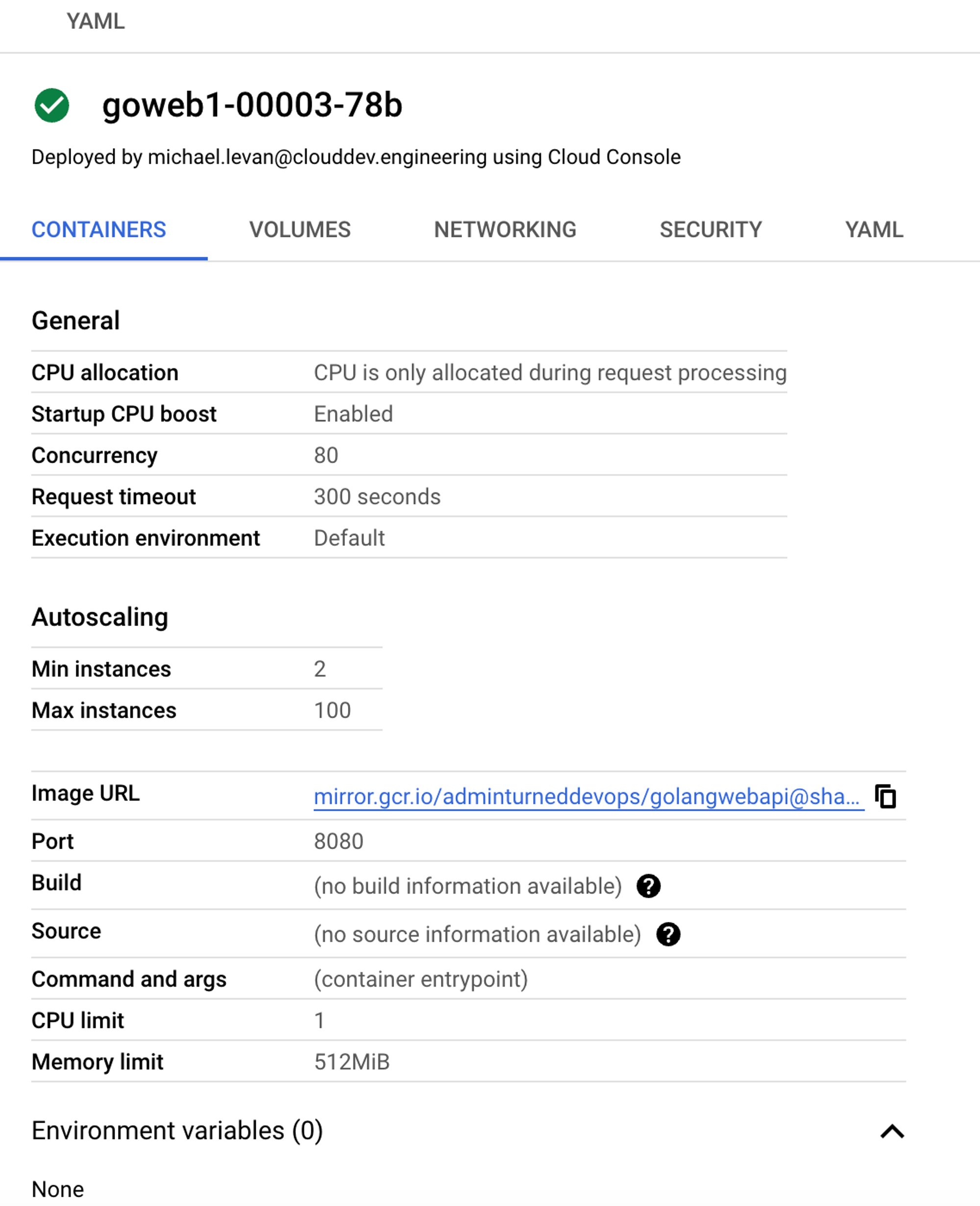

## Resource Optimization On Cloud Run

Within Cloud Run, you can manage resources from a CPU and memory perspective just like you can within Kubernetes.

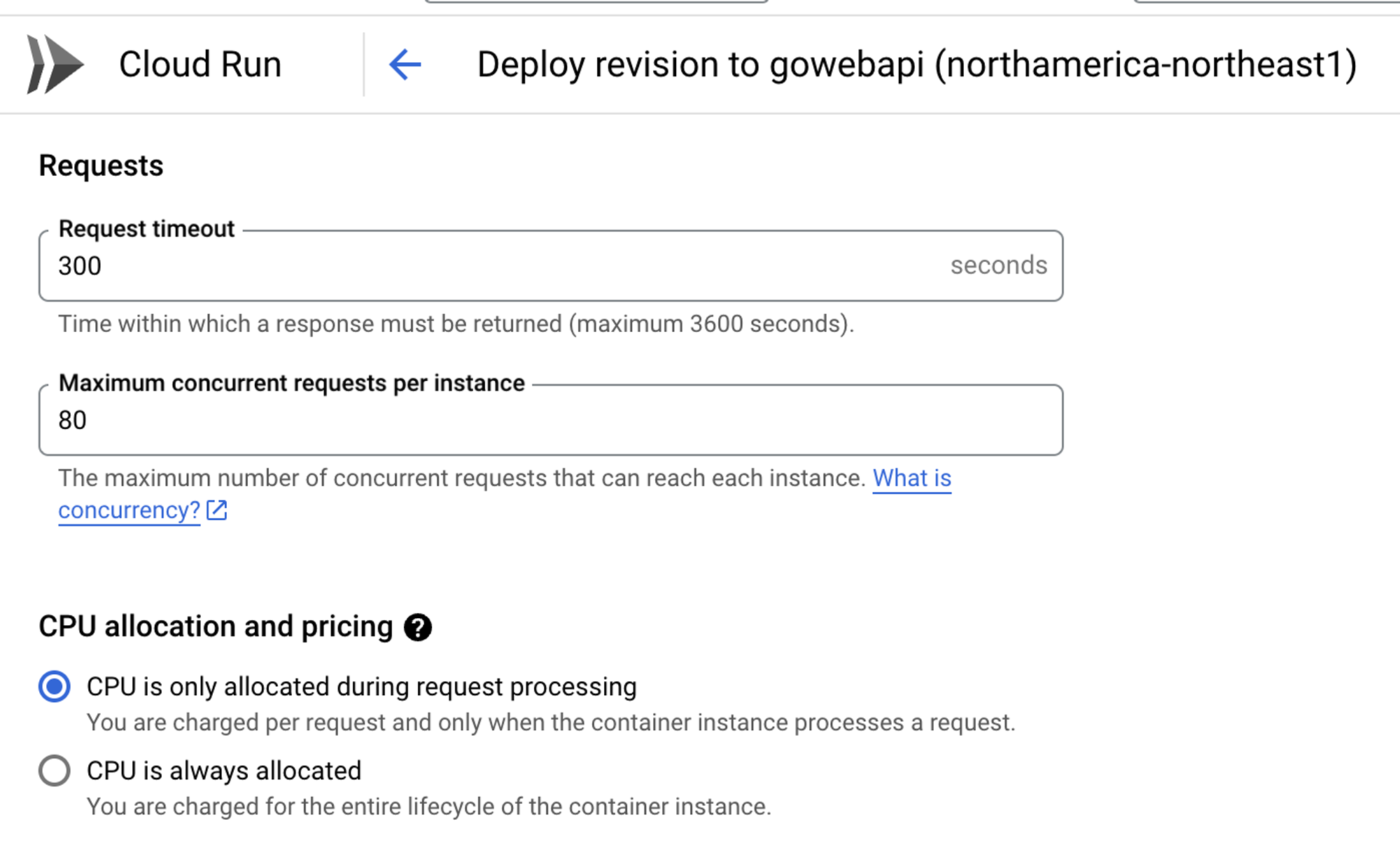

You can also set up requests and CPU allocation, which helps if you want to implement cost optimization (FinOps).

From an autoscaling perspective, Kubernetes has HPA and VPA. Cloud Run allows you to scale out instances just like you can scale out Pods with HPA.

If you don’t want to use button clicks, you can also edit the YAML configuration for resource optimization.

## Closing Thoughts

When you’re deciding on Kubernetes vs a “serverless container” solution like GCP Cloud Run, the biggest things you need to think about are:

1. Do you need a multi-orchestration solution? Go with Kubernetes.

2. Does your organization have the capability for engineers to train on Kubernetes?

3. Do you have a smaller application stack to manage? If so, go with Cloud Run.

Overall, the concept of what Kubernetes does (orchestrate containers) and what Cloud Run does (orchestrate containers) is the same, but the key difference is how large the workloads are. Cloud Run is great for truly decoupled workloads and Kubernetes is great for very large containers at scale. | thenjdevopsguy |

1,869,398 | Receiptify | Receiptify transforms Spotify playlists into stunning visual receipts, revolutionizing how music... | 0 | 2024-05-29T16:30:34 | https://dev.to/receiptify_life_a55cf39a1/receiptify-43nf | [Receiptify](https://receiptify.life/) transforms Spotify playlists into stunning visual receipts, revolutionizing how music lovers explore their listening habits. | receiptify_life_a55cf39a1 | |

1,869,396 | How to Get Best out of Electic Store Billing | As a Experienced person whom have experience in Electical Stroe billing I know these best things... | 0 | 2024-05-29T16:29:57 | https://dev.to/sabrina_spellmen_02c6c3a1/how-to-get-best-out-of-electic-store-billing-4gm6 | As a Experienced person whom have experience in Electical Stroe billing I know these best things

LED Lighting

Wiring and switch circuits

acessiories

We encountered with a client whom had to do wiring for his newly built Flats and mension , he was already dealing with some sort of fraudlant technician whom never had idea about enterprise Electirc Cicutit system integration .

We started from very inital consultation where we fully educated the client about our experience and what will be best solution for them .

after that we provided them simulated solution on MultiSim containg exact bule print in 3D form how his furnished Flats will look like after we will do the wiring part from ourside . this thing lowered the client headche 100% and he was so relaxed that he even futhrer extended our contract for other buildings too .

| sabrina_spellmen_02c6c3a1 | |

1,869,395 | 5 Crucial Considerations for Selecting SIT Testing | Software Integration Testing (SIT) is one of the important phases in software life cycle... | 0 | 2024-05-29T16:29:05 | https://www.laventino.com/5-crucial-considerations-for-selecting-sit-testing/ | sit, testing |

Software Integration Testing (SIT) is one of the important phases in software life cycle development. SIT involves testing that software should seamlessly integrate with other application modules or components. The importance of SIT cannot be emphasized as software programs get more sophisticated and have several interconnected components. Choosing the appropriate methodology and [SIT Testing](https://www.opkey.com/blog/system-integration-testing-the-comprehensive-guide-with-challenges-and-best-practices

) tool is essential to developing a dependable, high-quality product. This blog will look at five important things to think about while choosing SIT testing.

**1.Defining Integration Testing Scope and Objectives**

Establishing the scope and goals of the testing endeavor is essential before starting the SIT process. This entails determining which particular modules or components need integration testing in addition to which crucial interfaces and capabilities need to be verified. In this sense, it is imperative to establish a thorough understanding of the system architecture and the interdependencies between various components.

**2.Selecting an Appropriate Testing Approach**

Selecting the appropriate testing strategy is essential for a successful SIT. While all components are integrated simultaneously using the big bang approach, defect causes may be hidden. Top-down testing is appropriate for projects with reliable top-level modules since it begins with higher-level components and integrates lower-level components later. On the other hand, bottom-up testing, which works best when the basic components are reliable, starts with lower levels and gradually integrates up. Top-down and bottom-up strategies are flexibly combined in the hybrid method, which adjusts to the needs of the project.

**3.Leveraging Automation for Efficiency and Consistency**

Particularly for large-scale applications with several components and interfaces, SIT can be a labor- and time-intensive procedure. Automation tools and frameworks are recommended in order to improve productivity and guarantee consistent testing. Continuous integration and delivery pipelines are made easier by automated testing, which also lowers the possibility of human error and speeds up test case execution.

**4.Considering Testing Data and Environment Setup**

Access to relevant test data and a properly designed testing environment that faithfully mimics the production environment are prerequisites for effective SIT. To validate the integration of components and detect possible problems with data flow, transformation, and integrity, it is essential to guarantee the availability of realistic and representative test data. Accurate and trustworthy test results also depend on the testing environment being configured to closely resemble the production environment.

**5.Collaboration and Communication Among Teams**

Multiple teams, comprising developers, testers, and stakeholders from different domains, are frequently involved in SIT. Clear lines of communication and efficient teamwork are essential to a successful SIT process. Frequent get-togethers, knowledge-sharing events, and uniform reporting procedures can all promote teamwork and speed up the process of resolving problems or obstacles. Furthermore, it is possible to avoid effort duplication and guarantee accountability during the testing process by clearly defining roles and duties for every team member.

**Conclusion**

Selecting the best SIT methodology is an important choice that can have a big impact on the software applications’ dependability, quality, and timely delivery. Opkey’s state-of-the-art technology transforms System Integration Testing (SIT). [Opkey ](https://www.opkey.com/)guarantees smooth integration and synchronization between heterogeneous systems by automating end-to-end data testing throughout the ecosystem. With Opkey’s cutting-edge tools, integrating NetSuite with Shopify or any other complicated setup becomes simple, ensuring data integrity and seamless business operations. Opkey gives companies the confidence to launch integrations with the knowledge that their systems are harmoniously coupled, enabling them to prosper in the current digital landscape

| rohitbhandari102 |