id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,869,028 | React state is being shared across iframes? | We have a next.js app that we normally run by embedding it in an iframe on a third-party website. It... | 0 | 2024-05-29T13:30:44 | https://dev.to/ldorigo/state-is-being-shared-across-iframes-56a9 | webdev, nextjs, react, javascript | We have a next.js app that we normally run by embedding it in an iframe on a third-party website. It uses react and react-query for state management. For a specific usecase, we wanted to run two instances of our app on the same website, and noticed very weird behavior: the state of the two apps is getting jumbled together, and state changes in one iframe *sometimes* (not always) result in a state change in the other iframe. I wish I could give more details on when/how it happens, but I'm pretty confused by the issue and haven't been able to create a minimal reproducible example. Hopefully someone has an idea to help me troubleshoot? | ldorigo |

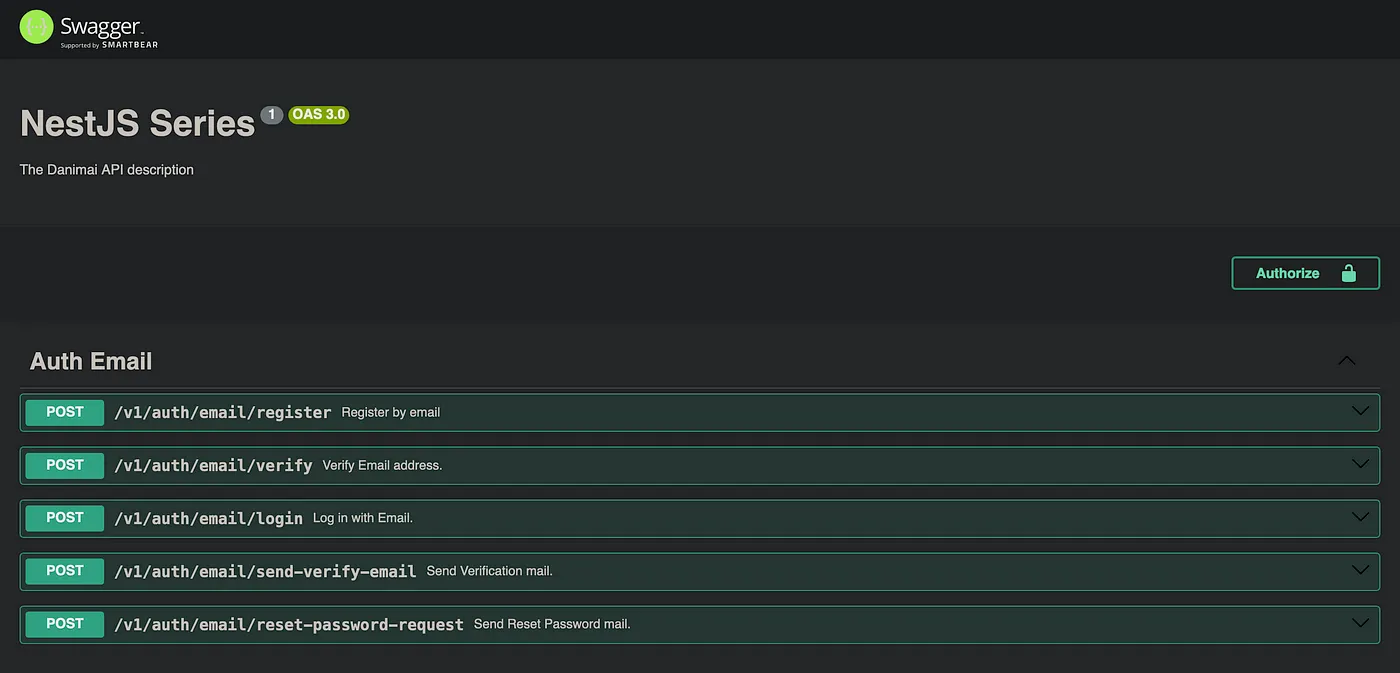

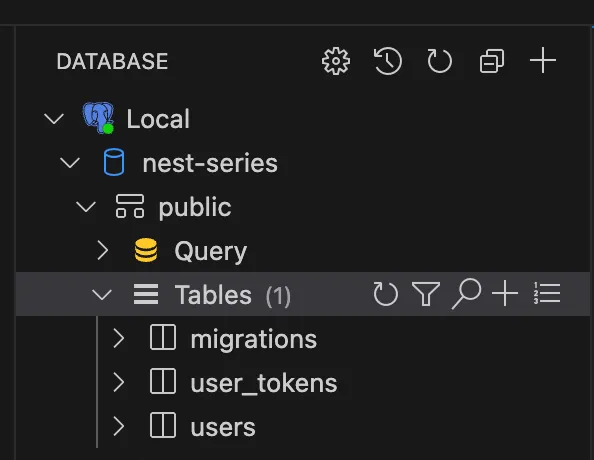

1,869,026 | Auth Service with JWT token and mail module, Part 2 | Love to work with you, You can hire me on Upwork. We have looked into email and auth service now is... | 27,543 | 2024-05-29T13:26:50 | https://dev.to/depak379mandal/auth-service-with-jwt-token-and-mail-module-part-2-1i0f | Love to work with you, You can hire me on [Upwork](https://www.upwork.com/freelancers/~011463d96b5b87d7ff).

We have looked at the email and auth services; now it is time to dive into the mail and user modules. Here is the structure of the mail module:

```bash

src/modules/mail

├── mail.interface.ts

├── mailerConfig.service.ts

└── templates

└── auth

└── registration.hbs

```

`mail.interface.ts` is a small file that holds the interface the rest of the system uses for mail data. The mail module keeps our email templates in the `templates` folder, and `mailerConfig.service.ts` serves as the config factory for the mailer. Let me paste them one by one.

```typescript

// src/modules/mail/mail.interface.ts

export interface MailData<T = never> {

to: string;

data: T;

}

```
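As a rough illustration of how the generic parameter is meant to be used, here is a minimal sketch. The `VerifyMailData` type and the `buildVerifyUrl` helper are hypothetical names introduced only for this example, and the URL encoding step is an addition that the series code does not perform.

```typescript
// Same shape as the MailData interface above.
interface MailData<T = never> {
  to: string;
  data: T;
}

// Hypothetical payload type for a verification mail.
type VerifyMailData = MailData<{ hash: string }>;

// Hypothetical helper: builds the link a verification mail would carry.
function buildVerifyUrl(frontendDomain: string, mail: VerifyMailData): string {
  // encodeURIComponent is an extra safety step assumed for this sketch.
  return `${frontendDomain}/auth/verify?token=${encodeURIComponent(mail.data.hash)}`;
}

const verifyMail: VerifyMailData = {
  to: 'user@example.com',
  data: { hash: 'abc123' },
};

console.log(buildVerifyUrl('https://example.com', verifyMail));
```

Because `T` defaults to `never`, a caller has to name the payload type explicitly before it can supply a `data` value, which keeps each mail function honest about what it expects.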

```html

{{!-- src/modules/mail/templates/auth/registration.hbs --}}

<html lang='en'>

<head>

<meta charset='UTF-8' />

<meta name='viewport' content='width=device-width, initial-scale=1' />

<title>{{title}}</title>

</head>

<body style='margin:0;font-family:arial'>

<table style='border:0;width:100%'>

<tr style='background:#eeeeee'>

<td

style='padding:20px;color:#808080;text-align:center;font-size:40px;font-weight:600'

>

{{app_name}}

</td>

</tr>

<tr>

<td style='padding:20px;color:#808080;font-size:16px;font-weight:100'>

Thank You for registration, Please verify to activate account.<br />

</td>

</tr>

<tr>

<td style='text-align:center'>

<a

href='{{url}}'

style='display:inline-block;padding:20px;background:#00838f;text-decoration:none;color:#ffffff'

>{{actionTitle}}</a>

</td>

</tr>

</table>

</body>

</html>

```

```typescript

// src/modules/mail/mailerConfig.service.ts

import { MailerOptions, MailerOptionsFactory } from '@nestjs-modules/mailer';

import { Injectable } from '@nestjs/common';

import { ConfigService } from '@nestjs/config';

import { HandlebarsAdapter } from '@nestjs-modules/mailer/dist/adapters/handlebars.adapter';

import * as path from 'path';

@Injectable()

export class MailerConfigClass implements MailerOptionsFactory {

constructor(private configService: ConfigService) {}

createMailerOptions(): MailerOptions {

return {

transport: {

host: this.configService.get('mail.host'),

port: this.configService.get('mail.port'),

ignoreTLS: this.configService.get('mail.ignoreTLS'),

secure: this.configService.get('mail.secure'),

requireTLS: this.configService.get('mail.requireTLS'),

auth: {

user: this.configService.get('mail.user'),

pass: this.configService.get('mail.password'),

},

},

defaults: {

from: `"${this.configService.get(

'mail.defaultName',

)}" <${this.configService.get('mail.defaultEmail')}>`,

},

template: {

dir: path.join(

this.configService.get('app.workingDirectory'),

'src',

'modules',

'mail',

'templates',

),

adapter: new HandlebarsAdapter(),

options: {

strict: true,

},

},

};

}

}

```
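The `strict: true` option in the template settings deserves a short note. As far as I understand the Handlebars documentation, strict mode makes a template throw when a referenced field is missing, instead of silently rendering nothing. The sketch below imitates that behaviour with a tiny stand-in renderer; `renderStrict` is a hypothetical function written for this example and is not how Handlebars is implemented.

```typescript
// Simplified stand-in for a template engine; this is NOT Handlebars itself.
// It replaces {{key}} placeholders and throws when a key is missing,
// which mirrors the idea behind strict mode.
function renderStrict(template: string, context: Record<string, string>): string {
  return template.replace(/\{\{\s*(\w+)\s*\}\}/g, (_match: string, key: string) => {
    const value = context[key];
    if (value === undefined) {
      throw new Error(`Missing template variable: ${key}`);
    }
    return value;
  });
}

const snippet = "<a href='{{url}}'>{{actionTitle}}</a>";

console.log(
  renderStrict(snippet, {
    url: 'https://example.com/auth/verify?token=abc123',
    actionTitle: 'Verify Your Account',
  }),
);
```

The practical upshot is that every variable used in `registration.hbs` (`title`, `app_name`, `url`, `actionTitle`) should be present in the `context` passed to `sendMail`, otherwise sending is likely to fail rather than produce a half-empty email.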

Above, we use Handlebars as our templating engine; its adapter ships with the mailer module we installed. That is all the setup we need: `MailerModule` is a global module that exports `MailerService`, so `MailerService` is available everywhere, just like `ConfigService`. Now we can move to `AppModule`, where we import `AuthModule`, `MailerModule` and `UserModule`. We will discuss `UserModule` in more detail below.

```typescript

// src/modules/app/app.module.ts

import { Module } from '@nestjs/common';

import { ConfigModule } from '@nestjs/config';

import { configLoads } from '../config';

import { TypeOrmModule } from '@nestjs/typeorm';

import { TypeORMConfigFactory } from '../database/typeorm.factory';

import { AuthModule } from '../auth/auth.module';

import { UserModule } from '../user/user.module';

import { MailerModule } from '@nestjs-modules/mailer';

import { MailerConfigClass } from '../mail/mailerConfig.service';

const modules = [AuthModule, UserModule];

export const global_modules = [

...

MailerModule.forRootAsync({

useClass: MailerConfigClass,

}),

];

@Module({

imports: [...global_modules, ...modules],

})

export class AppModule {}

```

In the `src/modules/config/app.config.ts` file, we have added a new variable:

```typescript

frontendDomain: process.env.FRONTEND_DOMAIN,

```

The same goes for `src/modules/config/mail.config.ts`, where we have added new config variables:

```typescript

// src/modules/config/mail.config.ts

import { registerAs } from '@nestjs/config';

export default registerAs('mail', () => ({

port: parseInt(process.env.MAIL_PORT, 10),

host: process.env.MAIL_HOST,

user: process.env.MAIL_USER,

password: process.env.MAIL_PASSWORD,

defaultEmail: process.env.MAIL_DEFAULT_EMAIL,

defaultName: process.env.MAIL_DEFAULT_NAME,

ignoreTLS: process.env.MAIL_IGNORE_TLS === 'true',

secure: process.env.MAIL_SECURE === 'true',

requireTLS: process.env.MAIL_REQUIRE_TLS === 'true',

}));

```
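One thing worth keeping in mind with this config: `parseInt` returns `NaN` when `MAIL_PORT` is unset, and the boolean flags are only `true` when the variable is exactly the string `'true'`. Here is a minimal sketch of the same parsing with explicit checks. `MailSettings`, `Env`, `flag` and `parseMailEnv` are hypothetical names for this example, and failing fast on a missing host or port is my assumption, not something the series does.

```typescript
// Hypothetical shape for the parsed mail settings.
interface MailSettings {
  host: string;
  port: number;
  ignoreTLS: boolean;
  secure: boolean;
  requireTLS: boolean;
}

type Env = Record<string, string | undefined>;

// A flag counts as enabled only when the variable is exactly 'true'.
function flag(env: Env, key: string): boolean {
  return env[key] === 'true';
}

// Parses the mail-related variables and fails fast on missing values.
function parseMailEnv(env: Env): MailSettings {
  const host = env['MAIL_HOST'];
  if (!host) {
    throw new Error('MAIL_HOST is required');
  }
  const port = parseInt(env['MAIL_PORT'] ?? '', 10);
  if (isNaN(port)) {
    throw new Error('MAIL_PORT must be a number');
  }
  return {
    host,
    port,
    ignoreTLS: flag(env, 'MAIL_IGNORE_TLS'),
    secure: flag(env, 'MAIL_SECURE'),
    requireTLS: flag(env, 'MAIL_REQUIRE_TLS'),
  };
}

console.log(
  parseMailEnv({ MAIL_HOST: 'smtp.example.com', MAIL_PORT: '2525', MAIL_IGNORE_TLS: 'true' }),
);
```

Whether to throw or fall back to a default is a design choice; throwing early tends to surface a misconfigured `.env` at startup instead of at the first failed email.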

We are now ready to move into `UserModule`, having seen all the essential parts it relies on. This is the last piece needed before the APIs start working.

```typescript

// src/modules/user/services/token.service.ts

import { Injectable } from '@nestjs/common';

import { InjectRepository } from '@nestjs/typeorm';

import { User } from 'src/entities/user.entity';

import { Token, TokenType } from 'src/entities/user_token.entity';

import { Repository } from 'typeorm';

@Injectable()

export class TokenService {

constructor(

@InjectRepository(Token)

private readonly tokenRepository: Repository<Token>,

) {}

async create(

user: User,

type: keyof typeof TokenType = 'REGISTER_VERIFY',

expires_at: Date = new Date(Date.now() + 1000 * 60 * 60 * 24 * 7),

) {

const token = Token.create({

user_id: user.id,

type: TokenType[type],

expires_at,

});

return this.tokenRepository.save(token);

}

async verify(token: string, type: keyof typeof TokenType) {

const tokenEntity = await this.tokenRepository.findOne({

relations: ['user'],

loadEagerRelations: true,

where: { token, type: TokenType[type], is_used: false },

});

if (!tokenEntity) {

throw new Error('Token not found');

}

if (tokenEntity.expires_at < new Date()) {

throw new Error('Token expired');

}

tokenEntity.is_used = true;

await tokenEntity.save();

return tokenEntity.user;

}

}

```
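To make the rules in `TokenService` easier to see in isolation, here is a small in-memory sketch of the same idea: a default one-week expiry that can be overridden, and tokens that can be verified only once. `InMemoryTokenStore` and `TokenRecord` are hypothetical names, the token string is supplied by the caller, and no database or TypeORM is involved.

```typescript
type TokenKind = 'REGISTER_VERIFY' | 'RESET_PASSWORD';

interface TokenRecord {
  token: string;
  userId: number;
  type: TokenKind;
  expiresAt: Date;
  isUsed: boolean;
}

const ONE_WEEK_MS = 1000 * 60 * 60 * 24 * 7;

// Hypothetical in-memory stand-in for the token table.
class InMemoryTokenStore {
  private records: TokenRecord[] = [];

  // The third and fourth arguments have defaults, like type and expires_at above.
  create(
    token: string,
    userId: number,
    type: TokenKind = 'REGISTER_VERIFY',
    expiresAt: Date = new Date(Date.now() + ONE_WEEK_MS),
  ): TokenRecord {
    const record: TokenRecord = { token, userId, type, expiresAt, isUsed: false };
    this.records.push(record);
    return record;
  }

  // Returns the owning user id, or throws when the token is unknown,
  // already used, of the wrong type, or expired.
  verify(token: string, type: TokenKind, now: Date = new Date()): number {
    const record = this.records.find(
      (r) => r.token === token && r.type === type && !r.isUsed,
    );
    if (!record) {
      throw new Error('Token not found');
    }
    if (record.expiresAt.getTime() < now.getTime()) {
      throw new Error('Token expired');
    }
    record.isUsed = true; // single use: a second verify will not find it
    return record.userId;
  }
}

const store = new InMemoryTokenStore();
// A reset token that lives for one hour instead of the default week.
store.create('reset-1', 42, 'RESET_PASSWORD', new Date(Date.now() + 60 * 60 * 1000));
console.log(store.verify('reset-1', 'RESET_PASSWORD'));
```

A shorter lifetime for password-reset tokens than for registration tokens is a common choice, and the optional expiry argument makes that easy to express.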

`TokenService` is fairly self-explanatory: one function creates a token and another verifies it. By default a token expires in a week, but `expires_at` can be overridden by passing a third argument. Now it is time for `UserService`, which does nothing but create a user.

```typescript

// src/modules/user/services/user.service.ts

import { Injectable } from '@nestjs/common';

import { InjectRepository } from '@nestjs/typeorm';

import { Repository } from 'typeorm';

import { User } from 'src/entities/user.entity';

import { RegisterDto } from 'src/modules/auth/email.dto';

@Injectable()

export class UserService {

constructor(

@InjectRepository(User)

private userRepository: Repository<User>,

) {}

async create(userCreateDto: RegisterDto) {

const user = User.create({ ...userCreateDto });

return this.userRepository.save(user);

}

}

```

Finally, we register them as providers and export them so that other modules can use them.

```typescript

// src/modules/user/user.module.ts

import { Module } from '@nestjs/common';

import { UserService } from './services/user.service';

import { TokenService } from './services/token.service';

import { Token } from 'src/entities/user_token.entity';

import { User } from 'src/entities/user.entity';

import { TypeOrmModule } from '@nestjs/typeorm';

@Module({

imports: [TypeOrmModule.forFeature([User, Token])],

providers: [UserService, TokenService],

exports: [UserService, TokenService],

})

export class UserModule {}

```

Now we can open a terminal, run `npm run start:dev` and see the output. Before that, though, we want a logger that logs each request and response. For that we use morgan, which we can install with `npm i morgan`, plus its types with `npm i -D @types/morgan`. It can then be wired into `createApplication` in the bootstrap file.

```typescript

// src/utils/bootstrap.ts

import { INestApplication } from '@nestjs/common';

import * as morgan from 'morgan';

export const createApplication = (app: INestApplication) => {

...

app.use(morgan('dev'));

return app;

};

```

This gives us more insight into each API call. After running `npm run start:dev`, we get output like the one below, which shows all the modules loading and the mapped routes.

```bash

[3:14:58 AM] File change detected. Starting incremental compilation...

[3:14:58 AM] Found 0 errors. Watching for file changes.

Debugger attached.

[Nest] 78996 - 04/09/2024, 3:15:01 AM LOG [NestFactory] Starting Nest application...

[Nest] 78996 - 04/09/2024, 3:15:01 AM LOG [InstanceLoader] AppModule dependencies initialized +27ms

[Nest] 78996 - 04/09/2024, 3:15:01 AM LOG [InstanceLoader] TypeOrmModule dependencies initialized +0ms

[Nest] 78996 - 04/09/2024, 3:15:01 AM LOG [InstanceLoader] MailerModule dependencies initialized +1ms

[Nest] 78996 - 04/09/2024, 3:15:01 AM LOG [InstanceLoader] ConfigHostModule dependencies initialized +0ms

[Nest] 78996 - 04/09/2024, 3:15:01 AM LOG [InstanceLoader] ConfigModule dependencies initialized +11ms

[Nest] 78996 - 04/09/2024, 3:15:01 AM LOG [InstanceLoader] JwtModule dependencies initialized +5ms

[Nest] 78996 - 04/09/2024, 3:15:01 AM LOG [InstanceLoader] MailerCoreModule dependencies initialized +0ms

[Nest] 78996 - 04/09/2024, 3:15:01 AM LOG [InstanceLoader] TypeOrmCoreModule dependencies initialized +71ms

[Nest] 78996 - 04/09/2024, 3:15:01 AM LOG [InstanceLoader] TypeOrmModule dependencies initialized +0ms

[Nest] 78996 - 04/09/2024, 3:15:01 AM LOG [InstanceLoader] TypeOrmModule dependencies initialized +0ms

[Nest] 78996 - 04/09/2024, 3:15:01 AM LOG [InstanceLoader] UserModule dependencies initialized +0ms

[Nest] 78996 - 04/09/2024, 3:15:01 AM LOG [InstanceLoader] AuthModule dependencies initialized +1ms

[Nest] 78996 - 04/09/2024, 3:15:01 AM LOG [RoutesResolver] EmailController {/auth/email} (version: 1): +14ms

[Nest] 78996 - 04/09/2024, 3:15:01 AM LOG [RouterExplorer] Mapped {/auth/email/register, POST} (version: 1) route +2ms

[Nest] 78996 - 04/09/2024, 3:15:01 AM LOG [RouterExplorer] Mapped {/auth/email/verify, POST} (version: 1) route +0ms

[Nest] 78996 - 04/09/2024, 3:15:01 AM LOG [RouterExplorer] Mapped {/auth/email/login, POST} (version: 1) route +0ms

[Nest] 78996 - 04/09/2024, 3:15:01 AM LOG [RouterExplorer] Mapped {/auth/email/send-verify-email, POST} (version: 1) route +1ms

[Nest] 78996 - 04/09/2024, 3:15:01 AM LOG [RouterExplorer] Mapped {/auth/email/reset-password-request, POST} (version: 1) route +0ms

[Nest] 78996 - 04/09/2024, 3:15:01 AM LOG [RouterExplorer] Mapped {/auth/email/reset-password, POST} (version: 1) route +0ms

[Nest] 78996 - 04/09/2024, 3:15:01 AM LOG [NestApplication] Nest application successfully started +3ms

```

With that in place, we can test the routes. I am using mailtrap.io as a mail testing platform, but you can use anything you like. Update `.env` using the sample below:

```ini

NODE_ENV=development

APP_PORT=8000

APP_NAME="NestJS Series"

FRONTEND_DOMAIN=https://example.com

# Database Configuration

DATABASE_URL=postgresql://danimai:Danimai@localhost:5432/nest-series

# Mail configuration

MAIL_HOST=

MAIL_PORT=2525

MAIL_USER=

MAIL_PASSWORD=

MAIL_IGNORE_TLS=true

MAIL_SECURE=false

MAIL_REQUIRE_TLS=false

MAIL_DEFAULT_EMAIL=noreply@example.com

MAIL_DEFAULT_NAME=Danimai

# JWT

AUTH_JWT_SECRET=random-gibberish-token-for-jwt

AUTH_JWT_TOKEN_EXPIRES_IN=90d

```

I have completed my testing and everything works fine on my side. If you run into any issues, let me know in the comment section, where we can discuss them in more depth. In the next tutorial, we will learn how to update the user profile and add an authorisation guard.

Thank you very much for reading. See you in the next article. | depak379mandal | |

1,868,993 | Auth Service with JWT token and mail module, Part 1 | Love to work with you, You can hire me on Upwork. Now we have to go with big changes and setup, We... | 27,543 | 2024-05-29T13:18:47 | https://dev.to/depak379mandal/auth-service-with-jwt-token-and-mail-module-part-1-eh4 | Love to work with you, You can hire me on [Upwork](https://www.upwork.com/freelancers/~011463d96b5b87d7ff).

Now we need to make some bigger changes and do more setup: we need a base of libraries for mail, JWT and bcrypt. Let me describe the whole scenario with a flowchart and some textual context. In the future we will add more complex ideas, such as refresh and access tokens and more involved media upload.

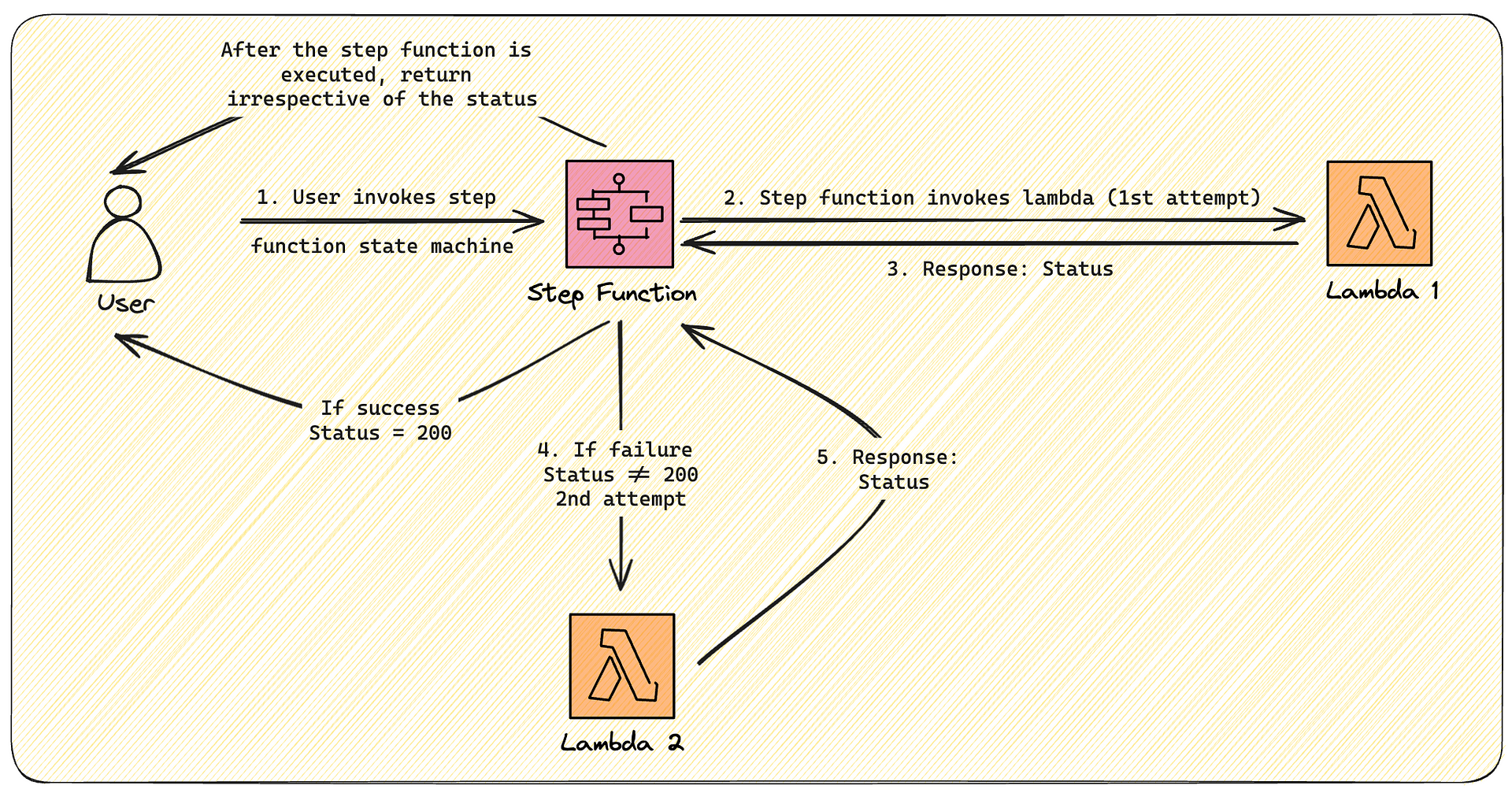

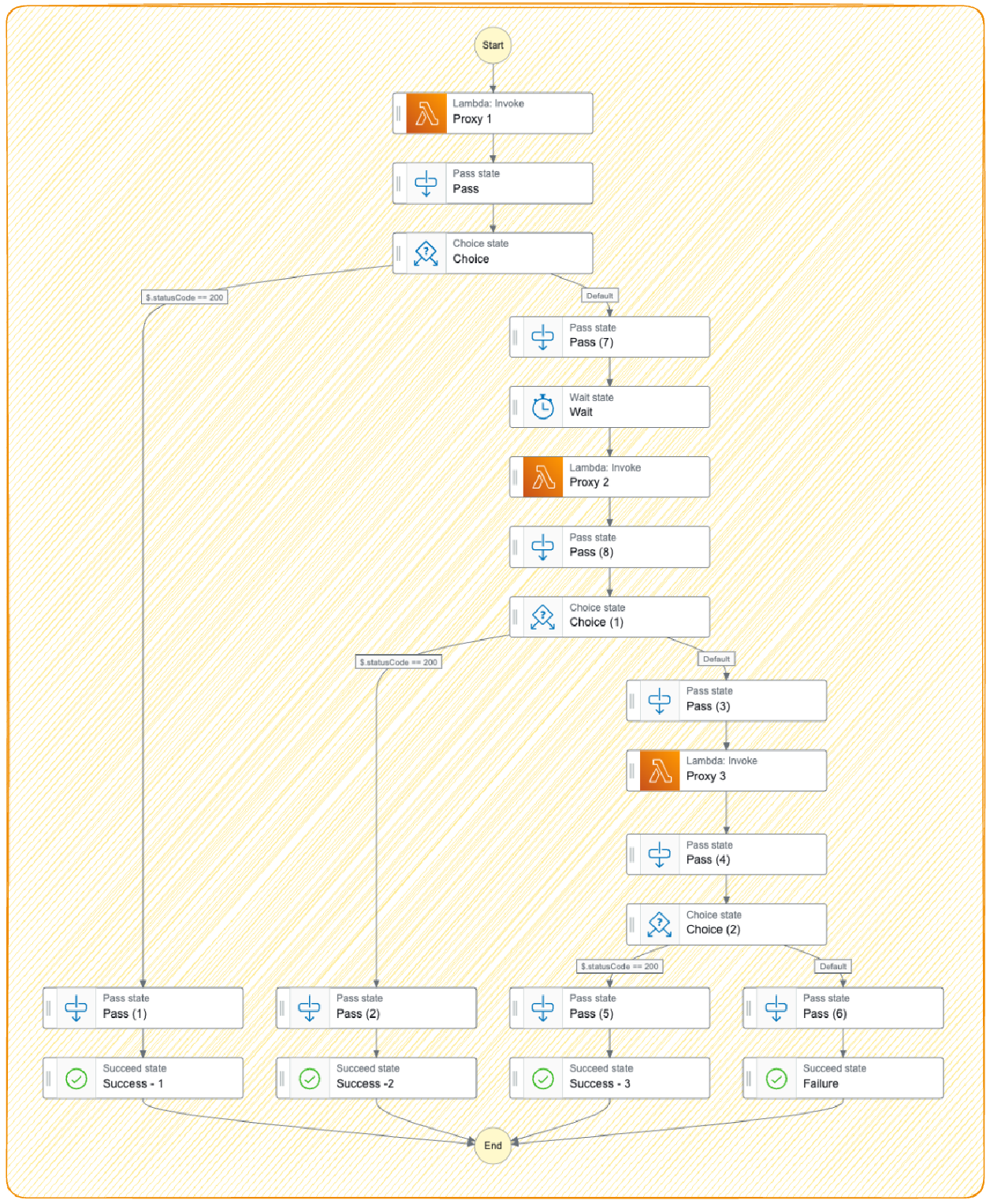

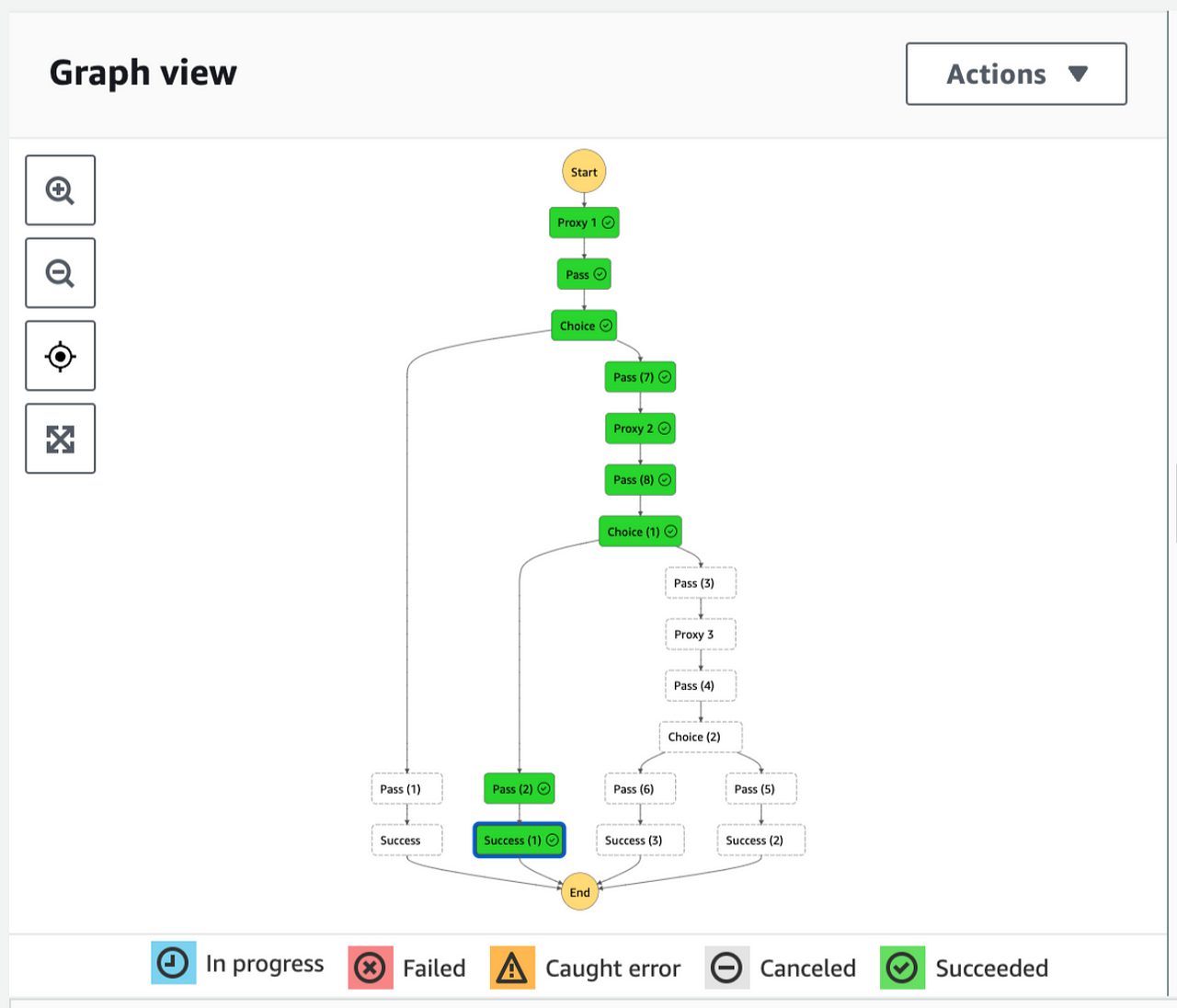

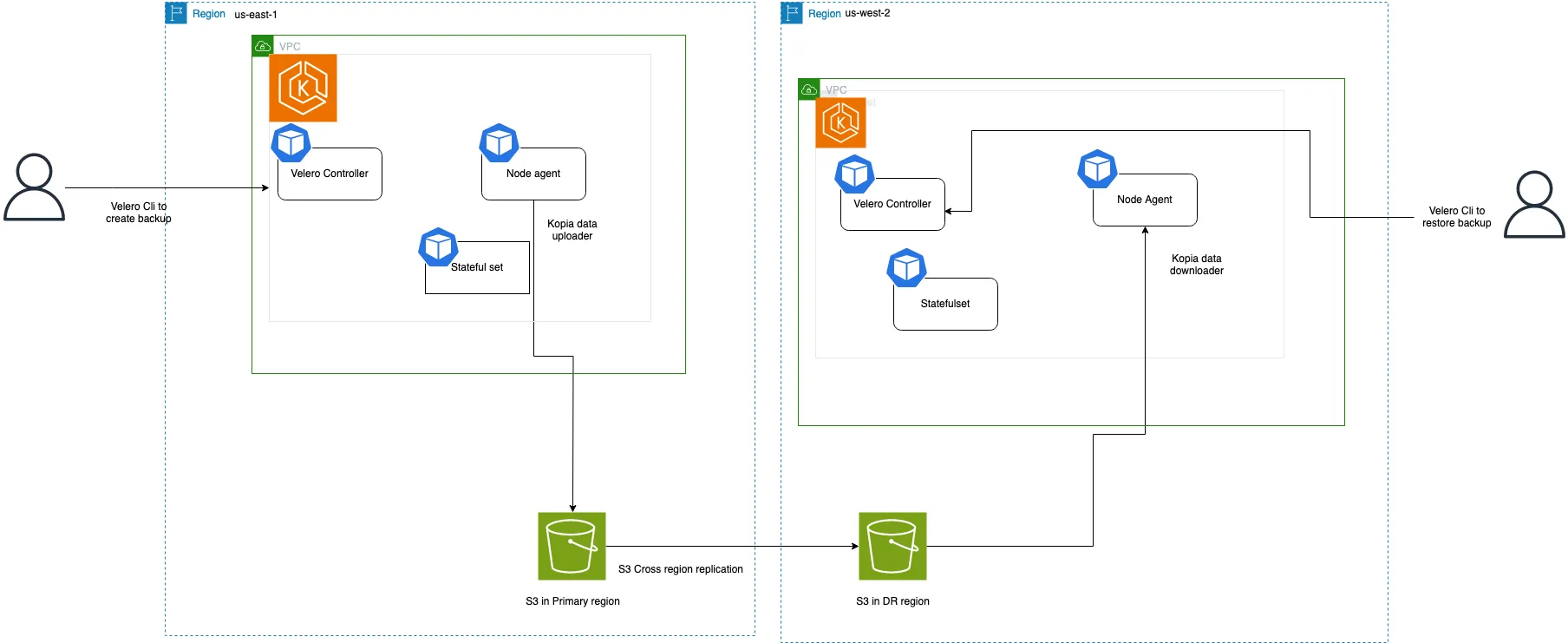

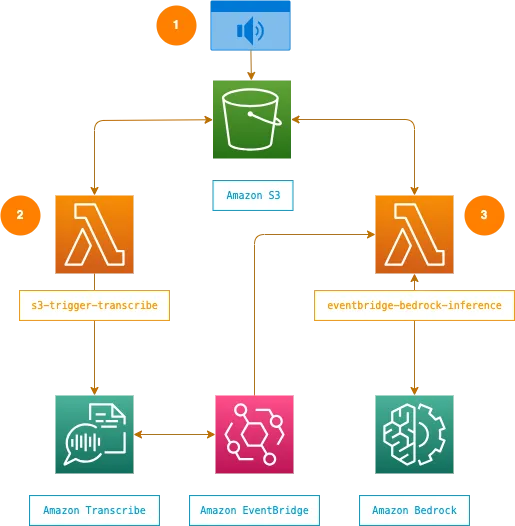

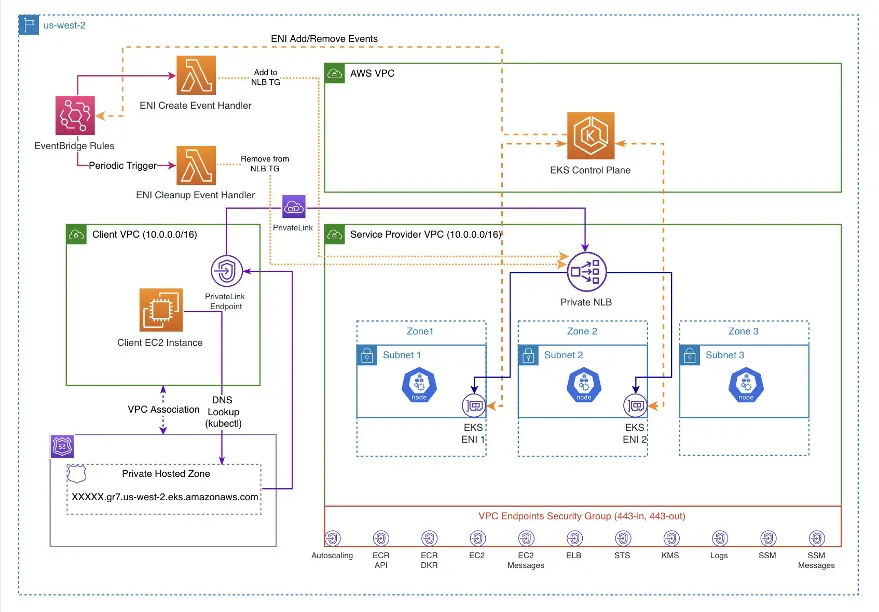

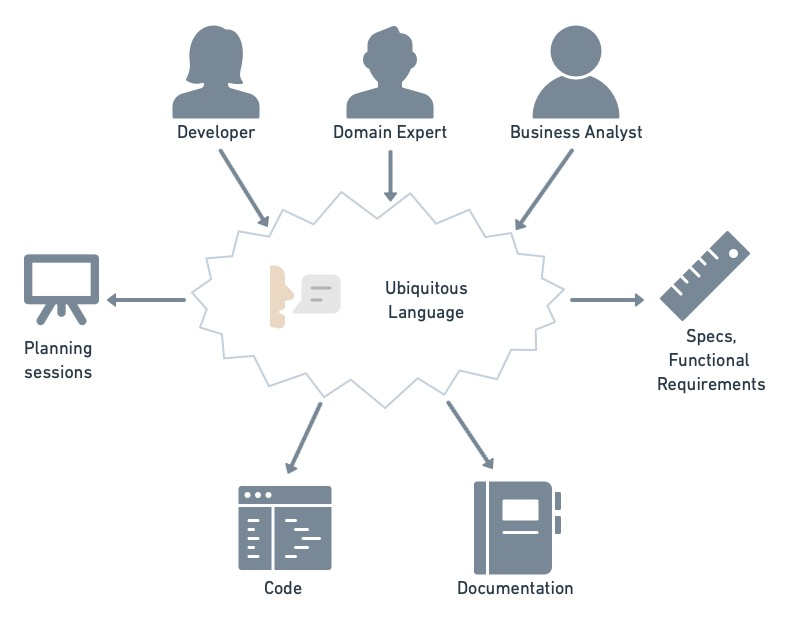

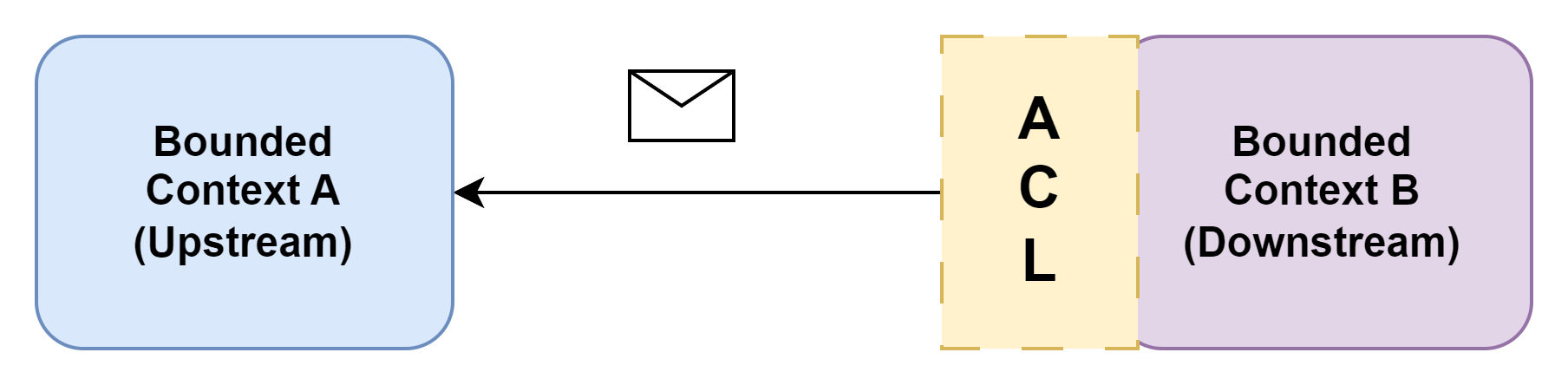

Above is a sequence diagram that lays out the architecture we are going to build for the authentication flow. We will also include a login API, which the diagram does not show yet. Let me start with what we call a service: I am attaching the email service below so you can see how the work is structured and how it is distributed over multiple modules, namely the mail, auth and user modules. Each module has its own responsibility, and we will place every function in the module it belongs to. We discussed DTOs and controllers in the previous article.

Below is the `EmailService`. There is also an `AuthService`, which holds the core parts of the authentication service, such as creating the JWT token and sending the required emails. We will discuss `AuthService` after we finish with `EmailService`.

```typescript

// src/modules/auth/services/email.service.ts

import {

Injectable,

NotFoundException,

UnprocessableEntityException,

} from '@nestjs/common';

import {

EmailVerifyDto,

LoginDto,

RegisterDto,

ResetPasswordDto,

SendVerifyMailDto,

} from '../email.dto';

import { UserService } from '../../user/services/user.service';

import { TokenService } from '../../user/services/token.service';

import { InjectRepository } from '@nestjs/typeorm';

import { User } from 'src/entities/user.entity';

import { Repository } from 'typeorm';

import { AuthService } from './auth.service';

@Injectable()

export class EmailService {

constructor(

private authService: AuthService,

private userService: UserService,

private tokenService: TokenService,

@InjectRepository(User) private userRepository: Repository<User>,

) {}

async register(registerDto: RegisterDto) {

const user = await this.userService.create(registerDto);

const token = await this.tokenService.create(user, 'REGISTER_VERIFY');

await this.authService.userRegisterEmail({

to: user.email,

data: {

hash: token.token,

},

});

}

async verify(verifyDto: EmailVerifyDto) {

try {

const user = await this.tokenService.verify(

verifyDto.verify_token,

'REGISTER_VERIFY',

);

user.email_verified_at = new Date();

user.is_active = true;

await user.save();

} catch (e) {

throw new UnprocessableEntityException({ verify_token: e.message });

}

}

async login(loginDto: LoginDto) {

const user = await this.userRepository.findOne({

where: { email: loginDto.email.toLowerCase() },

});

if (!user) {

throw new UnprocessableEntityException({ email: 'User not found' });

}

if (!user.is_active) {

throw new UnprocessableEntityException({ email: 'User not active' });

}

if (!user.email_verified_at) {

throw new UnprocessableEntityException({ email: 'User not verified' });

}

if (!user.comparePassword(loginDto.password)) {

throw new UnprocessableEntityException({

password: 'Password is incorrect',

});

}

const auth_token = this.authService.createJwtToken(user);

return { auth_token };

}

async sendVerifyMail(sendVerifyMailDto: SendVerifyMailDto) {

const user = await this.userRepository.findOne({

where: { email: sendVerifyMailDto.email.toLowerCase() },

});

if (!user) {

throw new NotFoundException({ email: 'User not found' });

}

if (user.email_verified_at) {

throw new UnprocessableEntityException({

email: 'User already verified',

});

}

const token = await this.tokenService.create(user, 'REGISTER_VERIFY');

await this.authService.userRegisterEmail({

to: user.email,

data: {

hash: token.token,

},

});

}

async sendForgotMail(sendForgotMailDto: SendVerifyMailDto) {

const user = await this.userRepository.findOne({

where: { email: sendForgotMailDto.email.toLowerCase() },

});

if (!user) {

throw new UnprocessableEntityException({ email: 'User not found' });

}

if (!user.email_verified_at) {

throw new UnprocessableEntityException({

email: 'Please verify email first.',

});

}

const token = await this.tokenService.create(user, 'RESET_PASSWORD');

await this.authService.forgotPasswordEmail({

to: user.email,

data: {

hash: token.token,

},

});

}

async resetPassword(resetPasswordDto: ResetPasswordDto) {

try {

const user = await this.tokenService.verify(

resetPasswordDto.reset_token,

'RESET_PASSWORD',

);

user.password = resetPasswordDto.password;

await user.save();

} catch (e) {

throw new UnprocessableEntityException({ reset_token: e.message });

}

}

}

```

That is a lot to take in at once, so I will break the functions down one by one and show how the pieces connect and work together. It is going to be a long ride, but that is fine: every system is built from small components. We just need to understand the structure and organization of the small components that make up the whole system.

## Register User

```typescript

// src/modules/auth/services/email.service.ts

async register(registerDto: RegisterDto) {

const user = await this.userService.create(registerDto);

const token = await this.tokenService.create(user, 'REGISTER_VERIFY');

await this.authService.userRegisterEmail({

to: user.email,

data: {

hash: token.token,

},

});

}

```

Anything related to a user, such as creating or updating one, happens in `userService`. Since `userService` belongs to `UserModule`, we use it from there rather than spreading user logic across modules. NestJS lets a module export its providers; any module that imports it can then inject those providers without listing them in its own `providers` array. So in the service above, `UserService` and `TokenService` come from `UserModule`. The `register` function creates the user and a token, then sends an email containing the token for the verification step of the authentication flow.

## Verify User

```typescript

// src/modules/auth/services/email.service.ts

async verify(verifyDto: EmailVerifyDto) {

try {

const user = await this.tokenService.verify(

verifyDto.verify_token,

'REGISTER_VERIFY',

);

user.email_verified_at = new Date();

user.is_active = true;

await user.save();

} catch (e) {

throw new UnprocessableEntityException({ verify_token: e.message });

}

}

```

This is the basic idea of verification: it tries to verify the token with the helper `this.tokenService.verify`, which we will look into later. If the token is valid, it sets the `email_verified_at` field to the current date and sets `is_active` to `true`, so the user can then log in to their account and use the APIs.

## Resend Verify Mail (If user is not verified)

```typescript

// src/modules/auth/services/email.service.ts

async sendVerifyMail(sendVerifyMailDto: SendVerifyMailDto) {

const user = await this.userRepository.findOne({

where: { email: sendVerifyMailDto.email.toLowerCase() },

});

if (!user) {

throw new NotFoundException({ email: 'User not found' });

}

if (user.email_verified_at) {

throw new UnprocessableEntityException({

email: 'User already verified',

});

}

const token = await this.tokenService.create(user, 'REGISTER_VERIFY');

await this.authService.userRegisterEmail({

to: user.email,

data: {

hash: token.token,

},

});

}

```

If a user has not verified their account before the token expired, they can request a new verification email from this endpoint. We inject `userRepository` to check whether the user exists in our DB and whether they are already verified. If they are verified, we do not resend the verification email; if they are not, we send another one as requested.

## Send Forgot Email

```typescript

// src/modules/auth/services/email.service.ts

async sendForgotMail(sendForgotMailDto: SendVerifyMailDto) {

const user = await this.userRepository.findOne({

where: { email: sendForgotMailDto.email.toLowerCase() },

});

if (!user) {

throw new UnprocessableEntityException({ email: 'User not found' });

}

if (!user.email_verified_at) {

throw new UnprocessableEntityException({

email: 'Please verify email first.',

});

}

const token = await this.tokenService.create(user, 'RESET_PASSWORD');

await this.authService.forgotPasswordEmail({

to: user.email,

data: {

hash: token.token,

},

});

}

```

This service function sends the forgot-password email, so we call it whenever a user requests a password reset. As above, we fetch the user and proceed only if they exist. If the user is not verified, we stop, because they need to verify their email first. This verification check is optional; it is up to you whether to include it. We then create a token so the request can be tracked, and send the user an email containing the reset link.

## Reset Password

```typescript

// src/modules/auth/services/email.service.ts

async resetPassword(resetPasswordDto: ResetPasswordDto) {

try {

const user = await this.tokenService.verify(

resetPasswordDto.reset_token,

'RESET_PASSWORD',

);

user.password = resetPasswordDto.password;

await user.save();

} catch (e) {

throw new UnprocessableEntityException({ reset_token: e.message });

}

}

```

Above are the steps to reset a password: we call `tokenService.verify`, which verifies the token and returns the associated user. After that, we save the new password to the DB. You will notice that we assign it directly without hashing it first; that part is handled by a hook we implement in the `User` entity.

```typescript

// src/entities/user.entity.ts

import {

AfterLoad,

BeforeInsert,

BeforeUpdate,

Column,

Entity,

OneToMany,

} from 'typeorm';

import { ApiHideProperty, ApiProperty } from '@nestjs/swagger';

import { BaseEntity } from './base';

import { Token } from './user_token.entity';

import * as bcrypt from 'bcryptjs';

@Entity({ name: 'users' })

export class User extends BaseEntity {

...

@ApiHideProperty()

previousPassword: string;

@AfterLoad()

storePasswordInCache() {

this.previousPassword = this.password;

}

@BeforeInsert()

@BeforeUpdate()

async setPassword() {

if (this.previousPassword !== this.password && this.password) {

const salt = await bcrypt.genSalt();

this.password = await bcrypt.hash(this.password, salt);

}

this.email = this.email.toLowerCase();

}

comparePassword(password: string) {

return bcrypt.compareSync(password, this.password);

}

}

```

Above, we are using TypeORM entity listeners (hooks). We always keep a copy of the loaded password in `previousPassword`, so whenever the password changes we can compare it against that copy and hash the new value with bcryptjs. The hook names are self-explanatory: `@AfterLoad` runs after the entity is fetched from the DB, while `@BeforeInsert` and `@BeforeUpdate` run before the entity is inserted or updated, respectively. We also need the bcryptjs library installed.

```bash

npm i bcryptjs

npm i -D @types/bcryptjs

```
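To illustrate the hook logic in the `User` entity without a database, here is a minimal sketch. `UserModel`, `afterLoad` and `beforeSave` are hypothetical names that play the roles of the entity and its listeners, and `fakeHash` is only a placeholder for `bcrypt.hash`; it is not a real password hash.

```typescript
// Placeholder for bcrypt.hash; NOT a real password hash.
function fakeHash(plain: string): string {
  return `hashed(${plain})`;
}

// Hypothetical stand-in for the User entity and its hooks.
class UserModel {
  password = '';
  private previousPassword = '';

  // Plays the role of @AfterLoad: remember what the database holds.
  afterLoad(): void {
    this.previousPassword = this.password;
  }

  // Plays the role of @BeforeInsert / @BeforeUpdate:
  // hash only when the password differs from the loaded value.
  beforeSave(): void {
    if (this.password && this.password !== this.previousPassword) {
      this.password = fakeHash(this.password);
    }
  }
}

const account = new UserModel();
account.password = 'secret';
account.beforeSave(); // first save: the plain password gets hashed
account.afterLoad(); // simulate reloading the row from the database
account.beforeSave(); // unrelated update: the stored hash is left alone
console.log(account.password);
```

In this sketch, saving the same in-memory object twice without reloading it would hash the hash, because `previousPassword` is only refreshed on load. It may be worth checking whether the real entity can hit the same situation in your own flows.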

## Login User

```typescript

// src/modules/auth/services/email.service.ts

async login(loginDto: LoginDto) {

const user = await this.userRepository.findOne({

where: { email: loginDto.email.toLowerCase() },

});

if (!user) {

throw new UnprocessableEntityException({ email: 'User not found' });

}

if (!user.is_active) {

throw new UnprocessableEntityException({ email: 'User not active' });

}

if (!user.email_verified_at) {

throw new UnprocessableEntityException({ email: 'User not verified' });

}

if (!user.comparePassword(loginDto.password)) {

throw new UnprocessableEntityException({

password: 'Password is incorrect',

});

}

const auth_token = this.authService.createJwtToken(user);

return { auth_token };

}

```

Finally, we can discuss how a user actually logs in. The login DTO gives us an email and a password. We check that the user exists, is active and is verified, then call `comparePassword`. If the password is correct, we create a token with `authService`.

Now it is time to reveal the core services: `AuthService` and `MailerService`.

## Auth Service

We need libraries for JWT and for mailing (the mailer module is built on nodemailer), which handle tokenization and email respectively. Install them with the command below:

```bash

npm i @nestjs/jwt @nestjs-modules/mailer

```

Now we can define `AuthService`, which holds our JWT token creation and sends mail through the mailer service. The functions are simple, so take a look. It relies on a few other pieces being in place, such as `MailerModule` registered as a global module, and on `MailData`, an interface that defines the common data passed to the email functions.

```typescript

// src/modules/auth/services/auth.service.ts

import { Injectable } from '@nestjs/common';

import { User } from 'src/entities/user.entity';

import { JwtService } from '@nestjs/jwt';

import { MailerService } from '@nestjs-modules/mailer';

import { ConfigService } from '@nestjs/config';

import { MailData } from 'src/modules/mail/mail.interface';

@Injectable()

export class AuthService {

constructor(

private jwtService: JwtService,

private mailerService: MailerService,

private configService: ConfigService,

) {}

createJwtToken(user: User) {

return this.jwtService.sign({

id: user.id,

timestamp: Date.now(),

});

}

async userRegisterEmail(

mailData: MailData<{

hash: string;

}>,

) {

await this.mailerService.sendMail({

to: mailData.to,

subject: 'Thank You For Registration, Verify Your Account.',

text: `${this.configService.get(

'app.frontendDomain',

)}/auth/verify?token=${mailData.data.hash}`,

template: 'auth/registration',

context: {

url: `${this.configService.get(

'app.frontendDomain',

)}/auth/verify?token=${mailData.data.hash}`,

app_name: this.configService.get('app.name'),

title: 'Thank You For Registration, Verify Your Account.',

actionTitle: 'Verify Your Account',

},

});

}

async forgotPasswordEmail(

mailData: MailData<{

hash: string;

}>,

) {

await this.mailerService.sendMail({

to: mailData.to,

subject: 'Here is your Link for Reset Password.',

text: `${this.configService.get(

'app.frontendDomain',

)}/auth/reset-password?token=${mailData.data.hash}`,

template: 'auth/registration',

context: {

url: `${this.configService.get(

'app.frontendDomain',

)}/auth/reset-password?token=${mailData.data.hash}`,

app_name: this.configService.get('app.name'),

title: 'Here is your Link for Reset Password.',

actionTitle: 'Reset Password',

},

});

}

}

```

For JWT, we only need to register it as a module in `AuthModule`. To use any entity's repository in a service, the repository must be made available by the module; otherwise Nest will raise an error saying it cannot resolve the dependency. That is why, to use `userRepository`, we import `TypeOrmModule.forFeature([User])`, which makes the entity injectable as a repository.

```typescript

// src/modules/auth/auth.module.ts

import { Module } from '@nestjs/common';

import { EmailController } from './email.controller';

import { EmailService } from './services/email.service';

import { AuthService } from './services/auth.service';

import { UserModule } from '../user/user.module';

import { TypeOrmModule } from '@nestjs/typeorm';

import { User } from 'src/entities/user.entity';

import { JwtModule } from '@nestjs/jwt';

import { ConfigService } from '@nestjs/config';

@Module({

imports: [

UserModule,

TypeOrmModule.forFeature([User]),

JwtModule.registerAsync({

inject: [ConfigService],

useFactory: async (configService: ConfigService) => ({

secret: configService.get('auth.secret'),

signOptions: { expiresIn: configService.get('auth.expires') },

}),

}),

],

controllers: [EmailController],

providers: [EmailService, AuthService],

})

export class AuthModule {}

```

We pass the basic JWT options directly where the module is imported. We use `registerAsync` because these options depend on another provider, `ConfigService`: the module is instantiated dynamically once that dependency is available. The options we provide are the secret and the expiration time.

```typescript

// src/modules/auth/auth.module.ts

JwtModule.registerAsync({

inject: [ConfigService],

useFactory: async (configService: ConfigService) => ({

secret: configService.get('auth.secret'),

signOptions: { expiresIn: configService.get('auth.expires') },

}),

}),

```
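As far as I know, the `expiresIn` sign option accepts either a number of seconds or a time-span string such as `90d` or `10h`. To show what such a string amounts to, here is a deliberately simplified sketch. `spanToSeconds` is a hypothetical helper written for this example; it only handles a whole number followed by `s`, `m`, `h` or `d`, and it is not the parser the JWT library actually uses.

```typescript
const SECONDS_PER_UNIT: Record<string, number> = {
  s: 1,
  m: 60,
  h: 60 * 60,
  d: 60 * 60 * 24,
};

// Hypothetical helper: converts spans like '90d' or '10h' into seconds.
function spanToSeconds(span: string): number {
  const match = /^(\d+)([smhd])$/.exec(span);
  if (!match) {
    throw new Error(`Unsupported time span: ${span}`);
  }
  const amount = Number(match[1]);
  const perUnit = SECONDS_PER_UNIT[match[2] ?? ''];
  if (perUnit === undefined || isNaN(amount)) {
    throw new Error(`Unsupported time span: ${span}`);
  }
  return amount * perUnit;
}

console.log(spanToSeconds('90d'));
```

A token lifetime of 90 days is long for an access token. That is a trade-off between convenience and how long a leaked token stays valid, which is presumably part of why the series plans to add refresh and access tokens later.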

We still need to discuss the mail module and user module that are used in the code snippets above, but this article is getting bigger than expected, so let us move those parts to the next article. Thank you very much for reading; check my profile for the other articles in this series.

See you in the next. | depak379mandal | |

1,869,025 | A Single Killer Feature You Need For Collaborative Database Development: Source Control For MySQL | Database development is a complex process that requires quality planning, technical skills,... | 0 | 2024-05-29T13:23:19 | https://dev.to/dbajamey/a-single-killer-feature-you-need-for-collaborative-database-development-source-control-for-mysql-41n3 | mysql, mariadb, database | Database development is a complex process that requires quality planning, technical skills, experience, and collaboration. Regardless of the project scope and the development team size, efficient partnership can help you improve, accelerate, and simplify the process at all stages.

Two factors are crucial for everyone who works with databases daily and strives to deliver high-quality products: implementing best practices into daily routines and choosing the right tools.

So, what steps should any tech team take to enhance collaboration and ensure code quality? Let’s examine helpful strategies for seamless and productive teamwork to improve efficiency and faster database development.

https://techbehindit.com/technology/collaborative-database-development-source-control-for-mysql/ | dbajamey |

1,869,024 | Hiring the Right Talent: Tips for Building Your Dev Team | Thus, that’s quite crucial to strengthen your development team since the projects and the company’s... | 0 | 2024-05-29T13:22:58 | https://dev.to/christinek989/hiring-the-right-talent-tips-for-building-your-dev-team-jp8 | mobile, development, developmentteam, outsourcing | Strengthening your development team is crucial, because your projects and your company's performance in the tech industry depend heavily on it. [IT Staffing](https://www.addevice.io/blog/navigating-it-staffing) is the process of bringing in the right personnel, talent, and expertise to create innovative solutions on a tight schedule and at a reasonable cost. In this article we'll review the key factors in finding and attracting the right talent to develop your product.

### Tips When Hiring the Right Development Talent

#### Identifying and Sourcing Top Talent

**Defining Your Development Needs**

Before you go looking for talent, you need a clear understanding of what your development process requires. Start by outlining the precise roles that need to be filled on your team. These might include:

- Front-end developers

- Back-end developers

- Full-stack developers

- DevOps engineers

Each role calls for its own skills and expertise, so understanding them lets you advertise effectively and filter candidates.

Describing each job in detail is the next important step. A well-crafted job description should outline:

- Responsibilities of the role

- Required skills and qualifications

- Technologies and programming languages used

- Company culture and values

State in detail the technologies and tools your team works with; this attracts candidates who have a background in those areas. For instance, if you are looking for a front-end developer, specify that the candidate should have experience with frameworks such as React or Angular.

**Effective Sourcing Strategies**

Once the job descriptions are ready, the next step is sourcing talent. Job boards and online communities are a good starting point for finding skilled developers. Some of these platforms let you not only advertise your openings but also search for candidates directly.

Social media is also an efficient tool for sourcing talent. LinkedIn and Twitter, for instance, serve as professional networks rather than purely social ones. Attending conferences, job fairs, and similar forums is an excellent way to meet people in tech and persuade them to apply for your open positions.

**Effective sourcing strategies include:**

- Posting on well-regarded recruitment sites (e.g. Stack Overflow, GitHub, LinkedIn)

- Engaging with tech communities on social media

- Using social networks to build professional connections

- Working with professional tech recruitment agencies

Recruitment agencies are especially useful when you need candidates for senior positions, since the right agency can fill them quickly. Agencies maintain large databases of pre-screened candidates and can assist with the hiring steps.

## How to Have Successful Interviews and Onboarding

#### Interview Process Best Practices

In an interview, you are not just trying to convince the candidate to work for you; you also get an excellent chance to assess the candidate's skills and whether they will fit your company's culture. Well-prepared technical assessments are essential for this. Effective approaches include a combination of coding challenges, project-based assignments, and problem-solving exercises. Make sure these assessments correspond to the responsibilities the candidate will face in the role.

Besides technical competencies, it is equally critical to evaluate behavioral and cultural fit. Conduct behavioral interviews to learn how candidates approach problems, work in a team, and communicate with team members. Ask questions that draw out their past experiences and the approaches they took in handling specific situations. This helps ensure that new hires have already been vetted for fit with your company culture.

**Interview process best practices:**

- Include technical assessments and practical work (e.g. coding challenges, project-based assignments)

- Test behavioral and cultural fit through targeted questions

- Evaluate the candidate's problem-solving ability, teamwork, and communication skills

#### Competitive Salary Offers and Employee Orientation

Once you have found the right candidate, make an offer that matches their skills and experience. Review industry market research to make sure the salary and benefits you offer are competitive. Keep in mind that your top candidates may be weighing several options, so positioning your offer well is crucial. The package should include perks such as health care, work-from-home provisions, and career advancement.

**Components of a competitive offer:**

- Competitive salary

- Comprehensive workplace benefits (health coverage, flexibility to work from home, etc.)

- Opportunities for professional development

Onboarding is the initial training and integration of new employees according to the company's standards. It settles and orients a new hire in the workplace, and a structured onboarding plan helps reduce the difficulties. The plan should include introductions to the team members, an overview of the company's policies and procedures, an orientation with the technical team, and initial training sessions.

Beyond onboarding, pay attention to each employee's individual needs and opportunities for growth. Frequent, effective mentorship and training help top talent grow sustainably. Pair new employees with seasoned colleagues so that new hires get specific advice from their first assignments and settle into the new environment. Offering learning opportunities and supporting career advancement can improve their performance as well as their satisfaction and commitment.

**Onboarding essentials:**

- Structured onboarding plan

- Introductions to team members

- Overview of company policies

- Initial training sessions

Ongoing training and mentoring are further measures that help new hires become effective members of the team.

### Conclusion

[Recruiting the best development team](https://www.addevice.io/blog/remote-development-team) is one of the most important things a business has to get right. Defining your development needs, sourcing candidates (including through [outsourcing](https://www.addevice.io/blog/it-software-outsourcing-trends)), conducting interviews that assess both technical and cultural fit, and running an effective onboarding process all help you attract and retain talent. Talent acquisition goes beyond merely filling roles: it is about contributing to the organization's goals and the long-term advancement of the firm.

Choosing the right developers for your business is one of the most important steps toward your business goals. Keep these guidelines in mind to build a team of competent, well-matched colleagues who represent your company's outlook. A strong and motivated staff makes projects succeed and keeps the company growing in the highly competitive world of technology.

That way, you are on course to assemble a solid development team, one that can handle any problem that comes its way and fuel the expansion of your business. Remember that the primary factor of success is choosing the right people and then providing the conditions that enable them to work effectively. | christinek989 |

1,869,023 | DumpsBoss's AZ500 Dumps Success Blueprint: Your Key to Triumph | Empower Yourself with DumpsBoss Today Don't let the AZ-500 exam intimidate you. With DumpsBoss by... | 0 | 2024-05-29T13:22:55 | https://dev.to/michael546/dumpsbosss-az500-dumps-success-blueprint-your-key-to-triumph-4429 | Empower Yourself with DumpsBoss Today

Don't let the AZ-500 exam intimidate you. With DumpsBoss by your side, you have everything you need to conquer the challenge and emerge victorious. Take the first step towards realizing your career aspirations in Azure security by choosing DumpsBoss as your trusted partner in success. Visit our website now to explore our range of study materials and unlock a world of opportunities in cloud security. Your journey to becoming a certified Azure Security Engineer starts here, with DumpsBoss.

Elevate Your Azure Security Expertise with DumpsBoss: The Definitive AZ-500 Study Guide PDF and Dumps PDF

As technology continues to advance, the importance of securing cloud environments becomes paramount. Microsoft Azure, one of the leading cloud platforms, offers the AZ-500: Microsoft Azure Security Technologies certification to validate professionals' skills in implementing security controls, maintaining security posture, and identifying and remediating vulnerabilities. To assist you in mastering the <a href="https://dumpsboss.com/microsoft-exam/az-500/">AZ500 Dumps</a>, DumpsBoss provides unparalleled resources tailored to your success.

Unraveling the AZ-500 Dumps PDF: Your Key to Exam Success

Navigating through the intricacies of the AZ-500 exam requires comprehensive preparation, and DumpsBoss is your trusted companion on this journey. Our AZ-500 Dumps PDF is meticulously crafted to encompass the breadth and depth of topics covered in the exam. Here's why our Dumps PDF stands out:

• Authentic Exam Simulation: Gain confidence by practicing with real exam questions meticulously curated by industry experts. Our Dumps PDF mirrors the format and difficulty level of the actual AZ-500 exam, ensuring you're well-prepared for the challenges ahead.

• Detailed Explanations: Understand the rationale behind each answer with our detailed explanations. Grasp the underlying concepts and principles, empowering you to tackle even the most complex scenarios with ease.

• Continuous Updates: Stay ahead of the curve with our regularly updated Dumps PDF. We keep pace with the latest developments in Azure security technologies, ensuring that our materials align with the most current exam objectives.

Access High-Quality Dumps >>>>>: https://dumpsboss.com/microsoft-exam/az-500/ | michael546 | |

1,869,022 | Art Expo Taiwan | https://www.expostandzone.com/trade-shows/art-taipei Art Taipei is also an important platform for... | 0 | 2024-05-29T13:22:28 | https://dev.to/expostandzoness/art-expo-taiwan-hf2 | https://www.expostandzone.com/trade-shows/art-taipei

Art Taipei is also an important platform for international art exchange in the Asia-Pacific region. It will be held at the Taipei World Trade Center in Taipei, Taiwan.

| expostandzoness | |

1,868,982 | Policies - Creating a Desktop Shortcut | Creating and Configuring the Policy In our Domain extension, we click Add Object and, in the dialog that opens... | 0 | 2024-05-29T13:22:11 | https://dev.to/dogacakinci/politikalar-masaustunde-kisayol-olusturma-3p4c | # Creating and Configuring the Policy

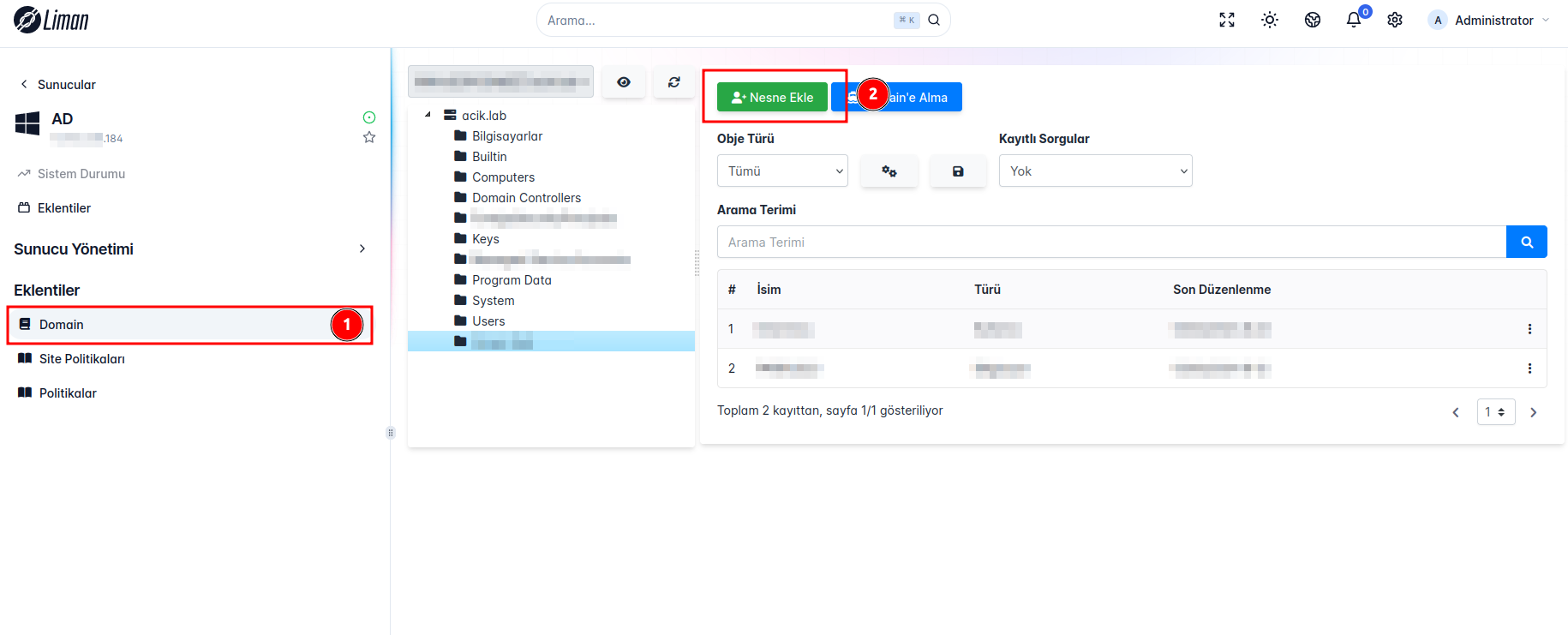

In our Domain extension, we click "Add Object" (Nesne Ekle), choose the object type in the dialog that opens, give the object a name, and click the Add button.

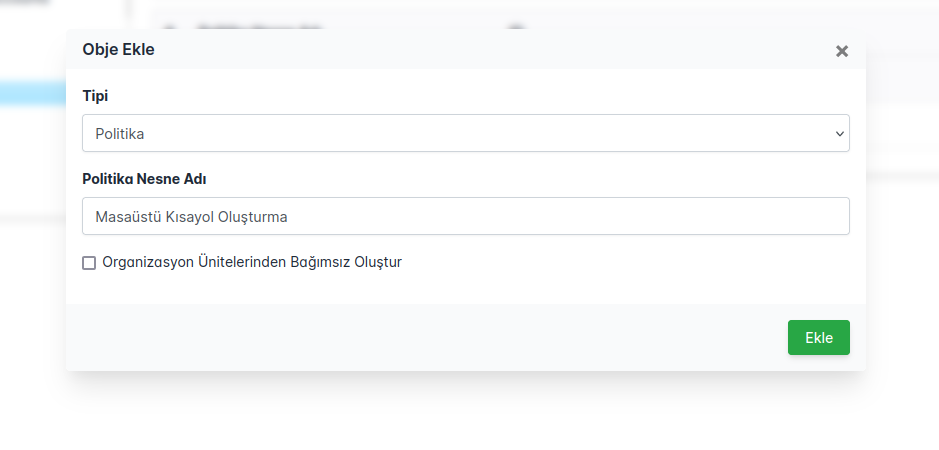

Then, when we select Policies (Politikalar) under Object Types (Obje Türleri), we can see the policy we added.

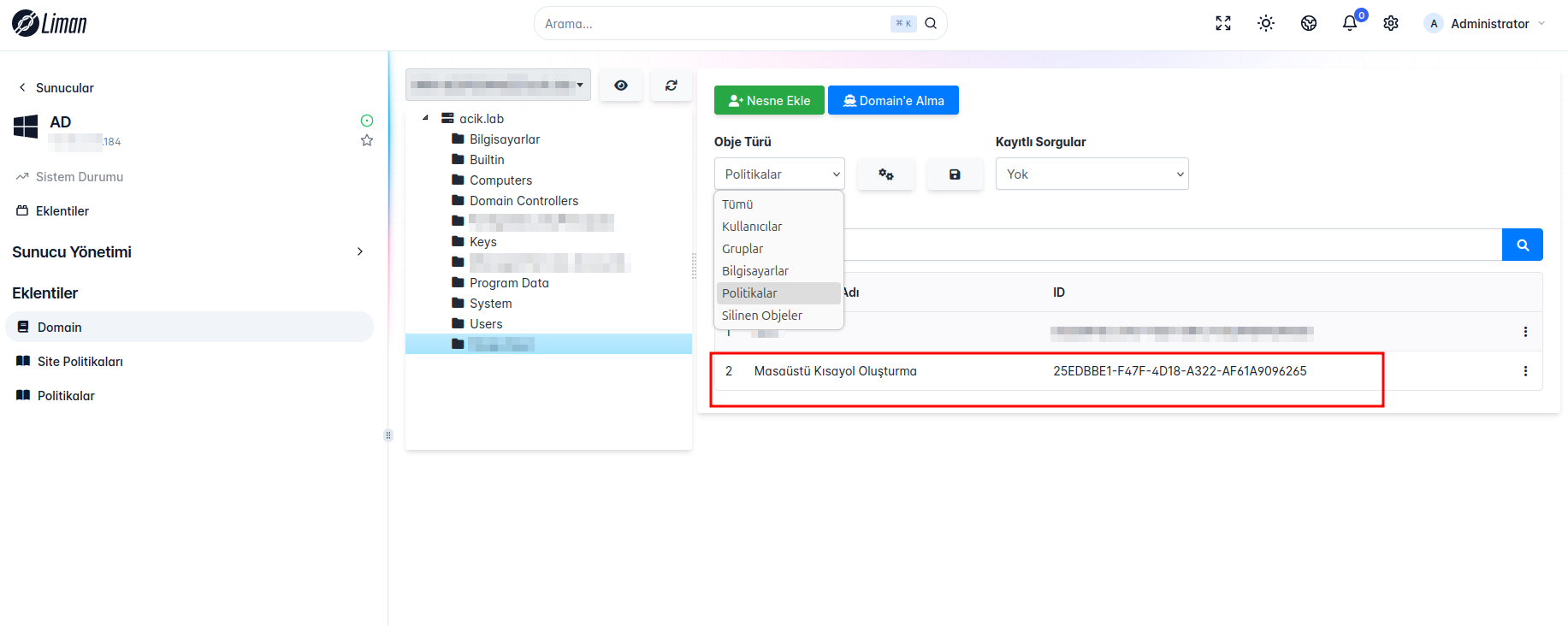

After clicking the policy we added, we select the "User" (Kullanıcı) section in the interface that opens and, since we are adding a "Shortcut" (Kısayol), enter the required information. In "Shortcut Location" (Kısayol Konumu) we enter the path where the shortcut should be placed, in "Command to Run" (Çalışacak Komut) we enter the command the shortcut will execute, and in "Launcher Icon" (Başlatıcı İkonu) we add the shortcut's icon. We then save the policy by clicking the save icon.

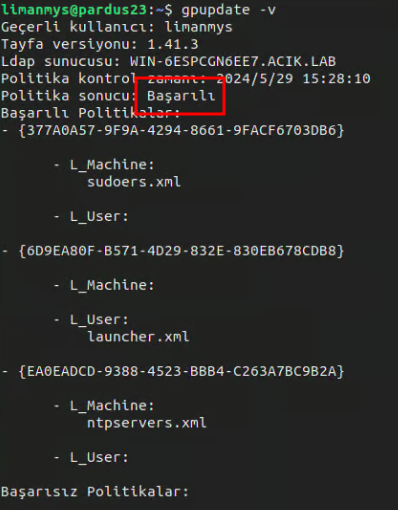

# Running and Verifying the Policy

On the client side, we run the

```

gpupdate -v

```

command. If we see the message "Politika Sonucu: Başarılı" (Policy Result: Successful), the policy has been applied successfully and the shortcut appears at the location we specified.

| dogacakinci | |

1,868,992 | Why You Should End Your Source Files With a New Line | In software development, seemingly minor details can have a significant impact on the efficiency,... | 0 | 2024-05-29T13:17:57 | https://dev.to/documendous/why-you-should-end-your-source-files-with-a-new-line-156g | bestpractice, sourcecode, vscode, codereview | In software development, seemingly minor details can have a significant impact on the efficiency, maintainability, and compatibility of your code. One such detail is the practice of ending your source files with a blank line.

While it might seem trivial at first glance, this simple convention plays a crucial role in adhering to industry standards, preventing unnecessary version control conflicts, and ensuring smooth operation across various development tools and environments.

In this article, I would like to discuss the reasons behind this best practice and shed some light on how a single newline character at the end of your files can contribute to a more robust and harmonious coding experience with your IDE, repository and working with other developers.

Ending your source files with a newline character (which many editors display as a blank last line) is considered good practice for several reasons:

**POSIX Compliance:** The POSIX standard requires that a text file end with a newline character. Many tools and utilities expect this convention and can produce errors or warnings if the final newline is missing.

**Version Control Systems:** When using version control systems like Git, having a newline at the end of files can prevent unnecessary diffs. If one version of a file ends with a newline and another doesn't, the version control system treats this as a meaningful difference, leading to avoidable conflicts.

**Text Editors:** Some text editors and IDEs automatically add a newline at the end of a file. If your file doesn't have a newline, the editor might add it, causing an unnecessary change.

**Code Linters and Formatters:** Many code linters and formatters enforce this rule as part of their style guides. Adhering to this convention can help maintain consistency across a codebase.

**File Concatenation:** When concatenating files, not having a newline at the end of a file can lead to issues where the last line of one file and the first line of the next file are joined together.

Let's go into these reasons in more detail:

**POSIX Compliance**

The POSIX standard defines a text file as a sequence of lines, each ending with a newline character. This means that a properly formatted text file must end with a newline. Many Unix-based tools and utilities, which follow POSIX standards, expect this newline character at the end of a file. If it's missing, these tools may not behave as expected, potentially causing errors or misinterpretations of the file's contents.
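A small sketch can show why tools disagree about a file that lacks its final newline. The helper names and sample strings here are made up for illustration; the one external fact relied on is that `wc -l` counts newline characters rather than visible lines.

```typescript
// Count newline characters, which is what `wc -l` reports as the line count.
function countNewlines(text: string): number {
  let count = 0;
  for (let i = 0; i < text.length; i++) {
    if (text[i] === '\n') count++;
  }
  return count;
}

// True when the text is empty or its last character is a newline.
function endsWithNewline(text: string): boolean {
  return text.length === 0 || text[text.length - 1] === '\n';
}

const complete = 'first\nsecond\n'; // two properly terminated lines
const incomplete = 'first\nsecond'; // last line is missing its newline

const completeCount = countNewlines(complete);     // 2
const incompleteCount = countNewlines(incomplete); // 1, although two lines are visible
```

A newline-counting tool sees only one complete line in the second string, which is the kind of mismatch the POSIX definition is meant to rule out.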

**Version Control Systems**

In version control systems like Git, each line in a file is tracked, and differences between versions are highlighted. If a file ends without a newline, adding one in a later commit might be seen as a change to the last line of the file. This can create unnecessary diffs and complicate the process of reviewing changes. Ensuring a newline at the end of each file avoids such trivial differences, leading to cleaner and more meaningful version histories.
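The effect on diffs can be sketched with a deliberately simplified comparison. This is not Git's diff algorithm; it only handles the case where content is appended, and the function names are hypothetical. It compares lines including their terminators, which is why an unterminated last line shows up as changed.

```typescript
// Split text into lines, keeping each line's terminating '\n' (if any).
function splitKeepingNewlines(text: string): string[] {
  const lines: string[] = [];
  let start = 0;
  for (let i = 0; i < text.length; i++) {
    if (text[i] === '\n') {
      lines.push(text.slice(start, i + 1));
      start = i + 1;
    }
  }
  // A trailing fragment without '\n' is still a (non-terminated) line.
  if (start < text.length) lines.push(text.slice(start));
  return lines;
}

// For an append-only edit: how many lines of `before` no longer match
// the corresponding lines of `after`?
function changedExistingLines(before: string, after: string): number {
  const oldLines = splitKeepingNewlines(before);
  const newLines = splitKeepingNewlines(after);
  let same = 0;
  while (
    same < oldLines.length &&
    same < newLines.length &&
    oldLines[same] === newLines[same]
  ) {
    same++;
  }
  return oldLines.length - same;
}

// Appending "c" to a file WITHOUT a final newline also rewrites line "b".
const withoutFinalNewline = changedExistingLines('a\nb', 'a\nb\nc\n');
// Appending "c" to a file WITH a final newline touches no existing line.
const withFinalNewline = changedExistingLines('a\nb\n', 'a\nb\nc\n');
```

In the first case the reviewer sees the old last line removed and re-added alongside the new line; in the second, only the new line appears.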

**Text Editors**

Many text editors and integrated development environments (IDEs) automatically append a newline character to the end of a file when saving. If your file doesn't already end with a newline, the editor's automatic addition can create an unintended change. This can be particularly problematic in collaborative environments where different team members use different editors. Consistently ending files with a newline helps avoid these automatic and unintended modifications.

**Code Linters and Formatters**

Code linters and formatters often enforce a set of style guidelines to maintain consistency and readability in a codebase. One common rule is to ensure that files end with a newline. This consistency helps avoid small stylistic differences that can clutter code reviews and lead to merge conflicts. By adhering to this rule, you ensure that your codebase remains clean and maintainable.

**File Concatenation**

When concatenating multiple files together, having a newline at the end of each file ensures that the content remains properly separated. Without a newline, the last line of one file and the first line of the next file can be merged into a single line, causing syntax errors or other issues. For example, in languages where line breaks are significant (like Python), this can lead to broken code. Ensuring each file ends with a newline prevents such concatenation issues and maintains the integrity of the combined content.
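The concatenation problem is easy to reproduce with plain strings. In this sketch the "files" are hypothetical in-memory strings and `concatenate` simply joins them back to back, the way `cat` does; `ensureTrailingNewline` is an illustrative helper, not part of any tool mentioned above.

```typescript
// Append a newline only when the text is non-empty and lacks one.
function ensureTrailingNewline(text: string): string {
  if (text.length === 0 || text[text.length - 1] === '\n') return text;
  return text + '\n';
}

// Join file contents back to back, with nothing inserted in between.
function concatenate(files: string[]): string {
  return files.join('');
}

const first = 'x = 1';     // hypothetical file without a final newline
const second = 'y = 2\n';  // hypothetical file with a final newline

// The two statements collapse onto one line: 'x = 1y = 2\n'
const broken = concatenate([first, second]);

// With the newline restored, each statement keeps its own line: 'x = 1\ny = 2\n'
const fixed = concatenate([
  ensureTrailingNewline(first),
  ensureTrailingNewline(second),
]);
```

Because the helper leaves already-terminated text untouched, it is safe to apply to every file before combining them.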

**Summary**

**POSIX Compliance:** Ensures compatibility with Unix-based tools.

**Version Control Systems:** Avoids unnecessary diffs and conflicts.

**Text Editors:** Prevents unintended changes due to automatic newline additions.

**Code Linters and Formatters:** Maintains consistency and readability.

**File Concatenation:** Ensures proper separation of content when files are combined.

Adhering to the practice of ending files with a newline character helps create a smoother, more predictable development workflow and avoids a range of potential issues that can arise in collaborative and automated environments.

Be sure to add any comments to add any insight on this subject! It's also appreciated if you have different views on it as well. | documendous |

1,868,991 | StickAI | This is the sample | 0 | 2024-05-29T13:17:42 | https://dev.to/sudhan23082004/stickai-247g | This is the sample | sudhan23082004 | |

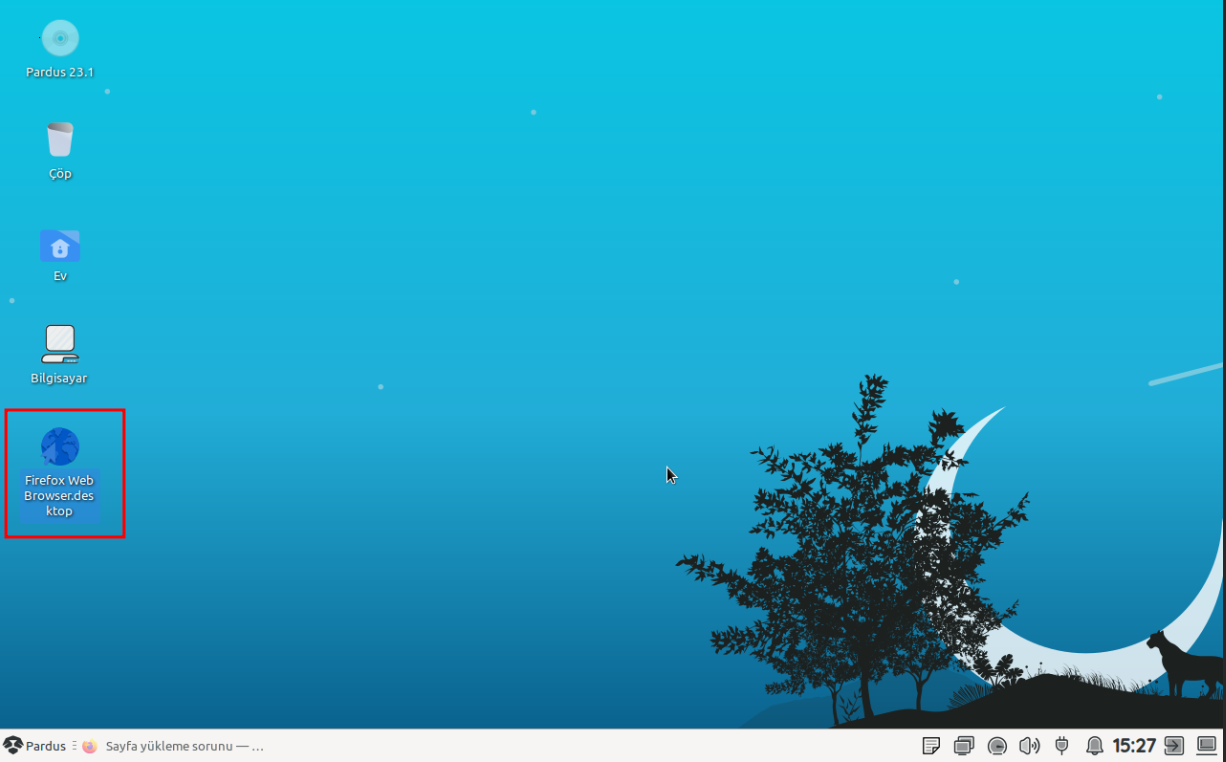

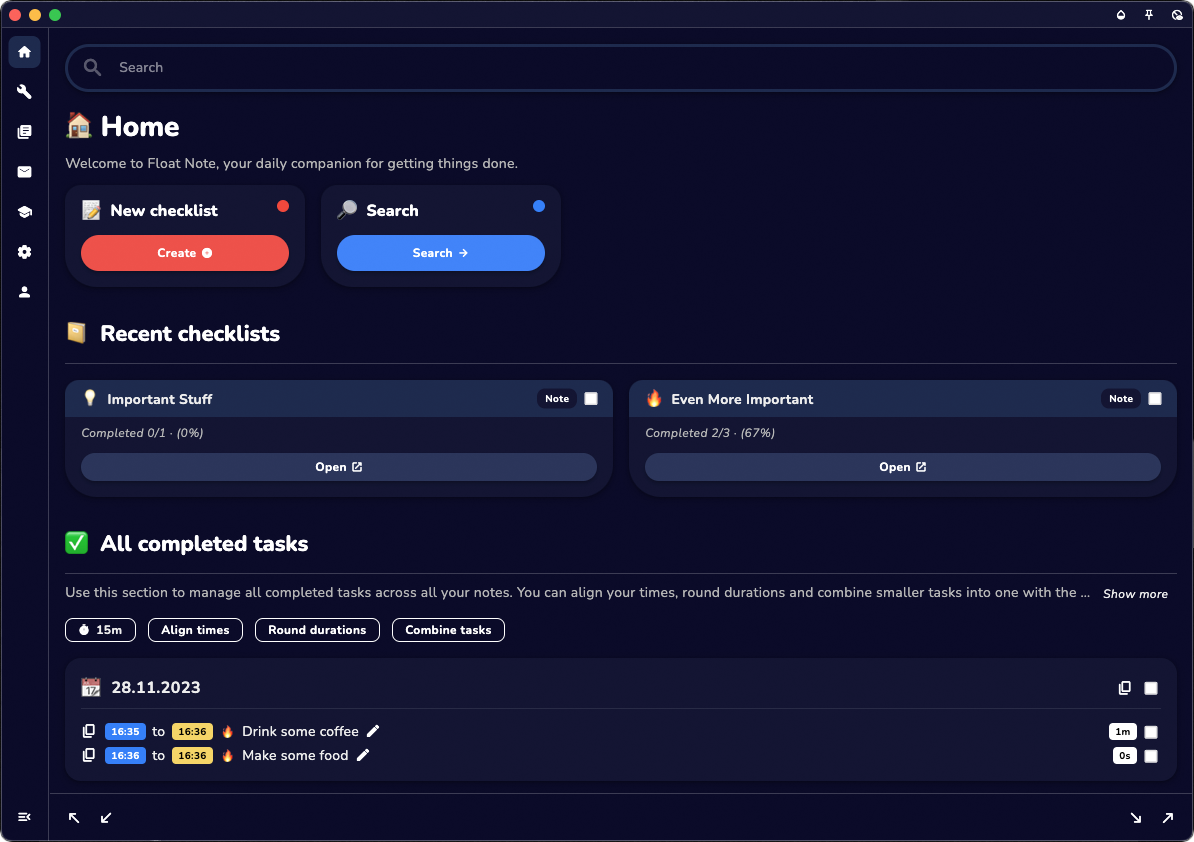

1,868,962 | Two Days Indie Dev Life: Mailchimp, Webflow & Zapier - A Love Story | My new landing page is done, I got my email form with download link working and I found a cool new... | 0 | 2024-05-29T13:16:43 | https://dev.to/devlifeofbrian/two-days-indie-dev-life-mailchimp-webflow-zapier-a-love-story-8h1 | buildinpublic, flutter, programming, marketing | [My new landing page](http://floatnote.com/) is done, I got my email form with download link working and I found a cool new tool for measurements. I also share some nice Webflow templating insights and how I made a very big mistake 😁. Let’s get into it.

# Website & Leads

Two days ago I worked the entire day on the new homepage / landing page of the website. This is after I realised that many people reach [my app](https://www.floatnote.com/) for the first time via desktop web. Currently, my download links are set up to show [the web app](https://floatnote.app/) if they are visited on a desktop. I prefer them to leave their email so they can download the mobile app themselves later, as the mobile app currently has a much better user experience.

The web version works fine too, don't get me wrong. But some of the UI elements are not yet tuned for the web, which makes some things look out of proportion in certain places. No problem for functionality, but it does look a bit sloppy here and there.

I originally tried to make a download page (in addition to my new landing page) in Mailchimp, but I don't like the templates and the results I get with it. *Note from the future, I was using the old builder so that might have to do with it. Either way I decided to go with Webflow*. I initially only wanted to create a download page, but then I thought, while I'm at it, I might as well do the whole homepage/landingpage, because I now have a very cool new slogan that I want to reflect. This will improve my SEO and also make sure the website is up to date with all new features. So two days ago, after a good back workout, I went to my favorite place and started working.

# Webflow Template

After about two or three hours I had to switch locations to regain my focus. I went to one of my new favorite spots in a park.

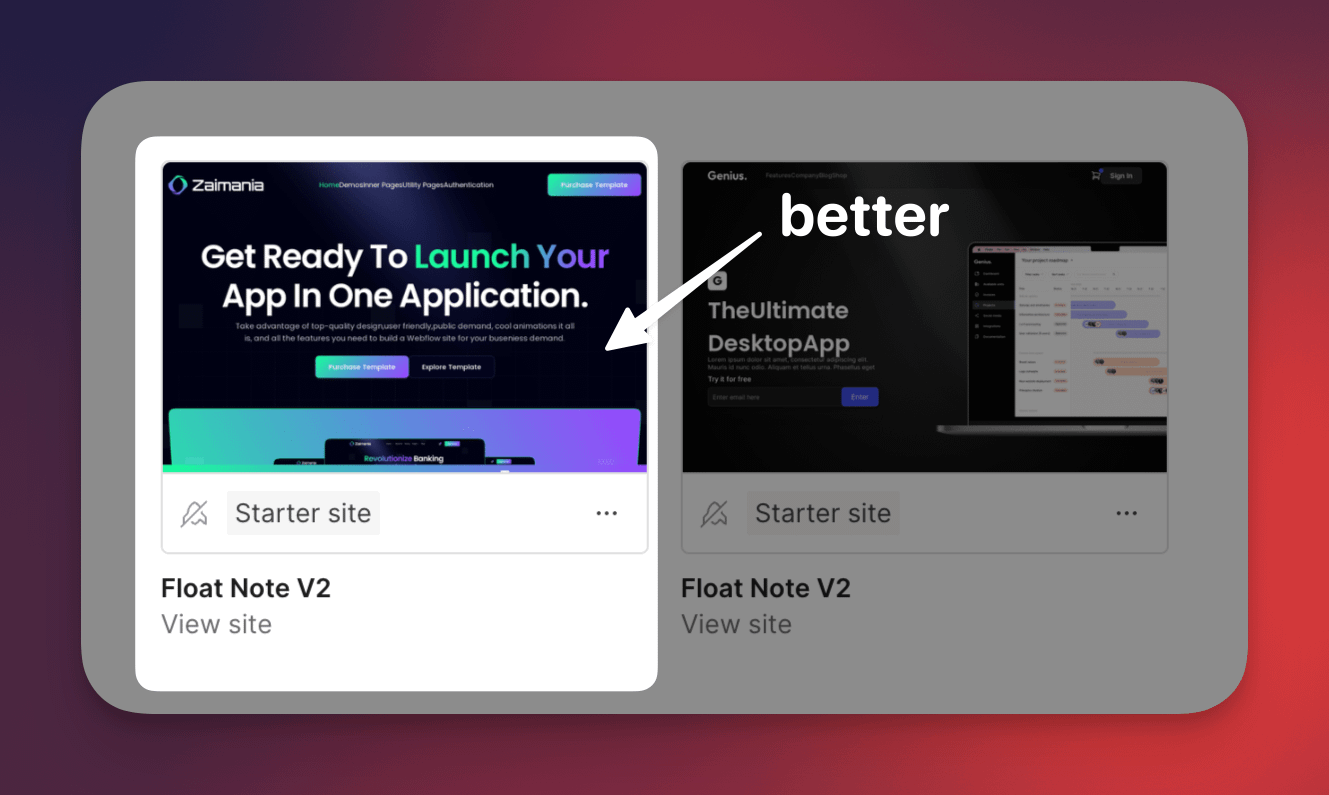

Once there I went to look for templates on Webflow. I quickly managed to find a good one that I wanted to use and started implementing it. A lesson I learned from implementing this template and a few templates in webflow before is the following.

## Stick To Your Concept

Originally [Float Note](https://www.floatnote.com/) started out as a desktop application, but once the mobile version got developed mobile became the main app, web was set up and desktop got discontinued. Simply because of time issues, I might continue it one day.

You can save yourself a lot of time by choosing a template that really represents the concept you are selling. In my case now, a mobile app template is better than a desktop SaaS template.

This has to do with the different types of visual elements that are all tailored to mobile app screenshots. To save yourself a lot of time, it is useful if you download these screenshots, adjust them and use the same size (this is important) and then upload them again.

This will keep many animations working properly and most importantly keep proportions looking good. If you don't, you often spend a lot of time keeping the template nice and responsive, because screenshots of a different size disturb its layout.

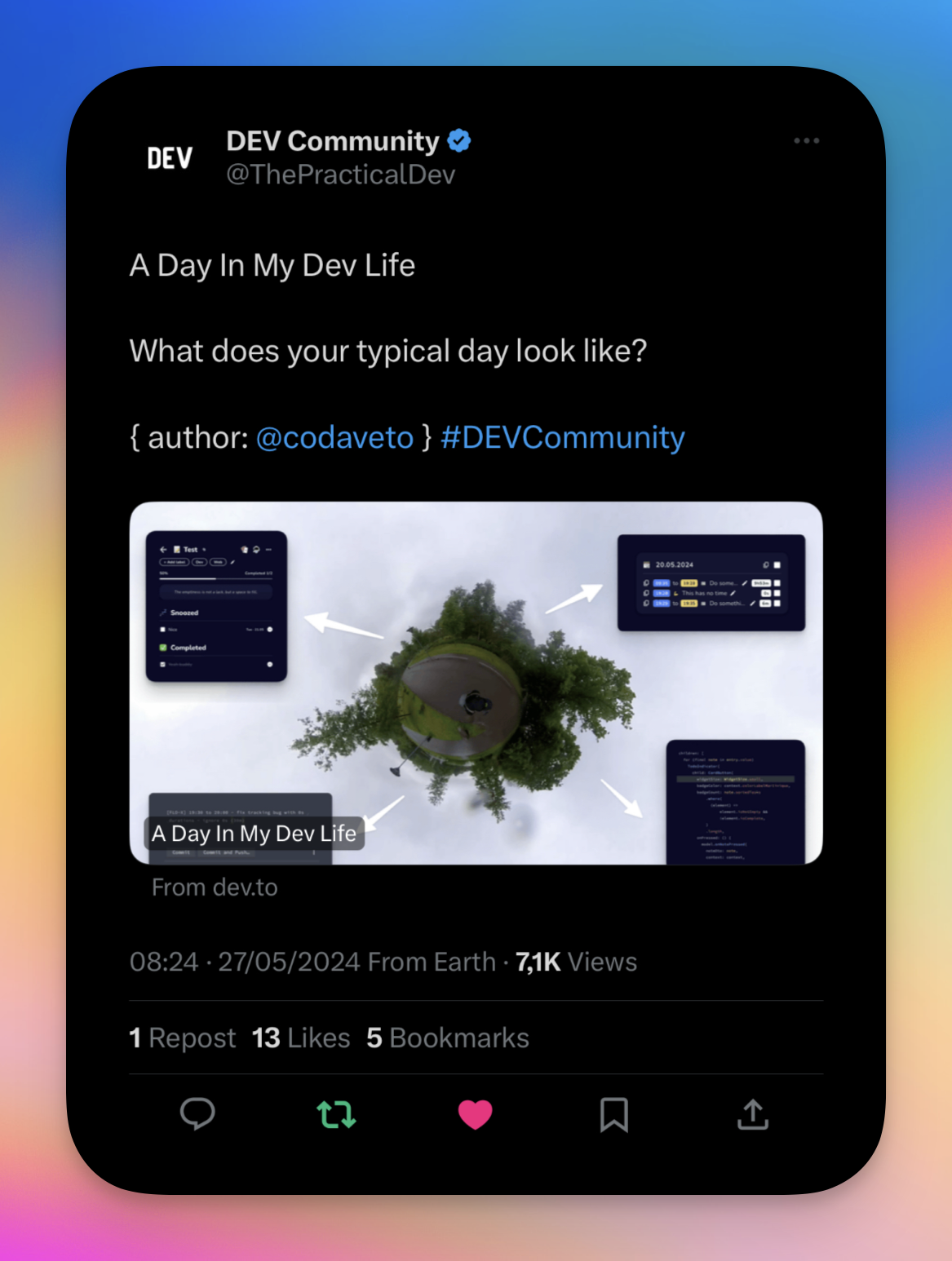

# Twitter Game

I’ve been active on twitter for about a week now. It’s still kind of new to me but something really cool happened yesterday. [DEV.TO](https://dev.to/) put one of my daily blogs in one of their tweets, they have like 300k+ followers, I couldn’t believe it. Very very cool, thanks a lot 🙏.

And while we’re at it I updated [my twitter](https://x.com/devlifeofbrian) header yesterday. It was still blank and of course I thought this might be the perfect opportunity for me to promote my app. So I made a header, which at first looked great on my laptop but got all messed up on mobile. So I figured out a way to fix this, assuming that most mobiles will have the same kind of dimensions more or less.

I took a screenshot of my phone and enlarged it on top of the design in Canva. By playing around with the opacity of the screenshot, I could see how my big design would turn out on the big screen and on mobile. It looks a bit messy but it worked! The challenge was keeping the big design looking good. It’s easy to work around the island and battery level etc. But it’s a bit harder to still make it look good when those things are not there. I used some stars and planets to fill up the space where I felt it was too empty.

I’m pretty happy with the result for now. I shouldn’t spend too long on these kinds of things, I have a tendency to play around with pixels for hours. Luckily I’m aware of this.

# Tuesday Trip Day

As usual, I went to Eindhoven on Tuesday. After a go(o)d workout I headed over to work at one of my clients.

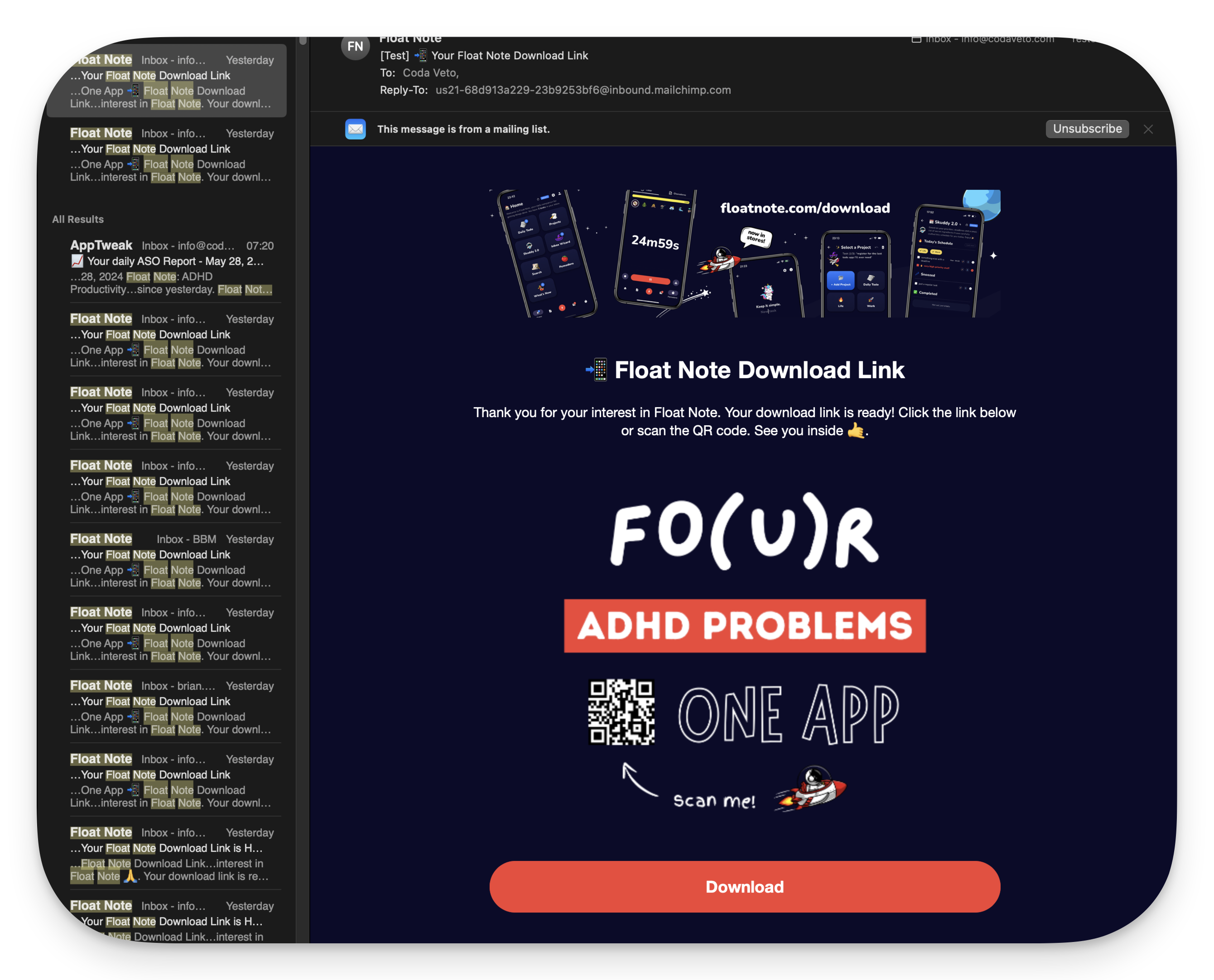

There wasn’t much to do for me so I continued with setting up the landing page. My goal for today is clear. I want a page where users can leave their email and once they do, it should add the user to mailchimp and send a download link. The email should look a bit like this:

By the end of the day, I got it working. I spent a lot of time trying to build something decent in Webflow and Mailchimp. These tools are great for many things, but I find it difficult (read, not impossible & more important with my skills) to create anything in my head with them. I'm probably spoiled by Flutter and how easy things go and how my brain thinks that way. I pulled a few hairs out of my head today because of these tools.

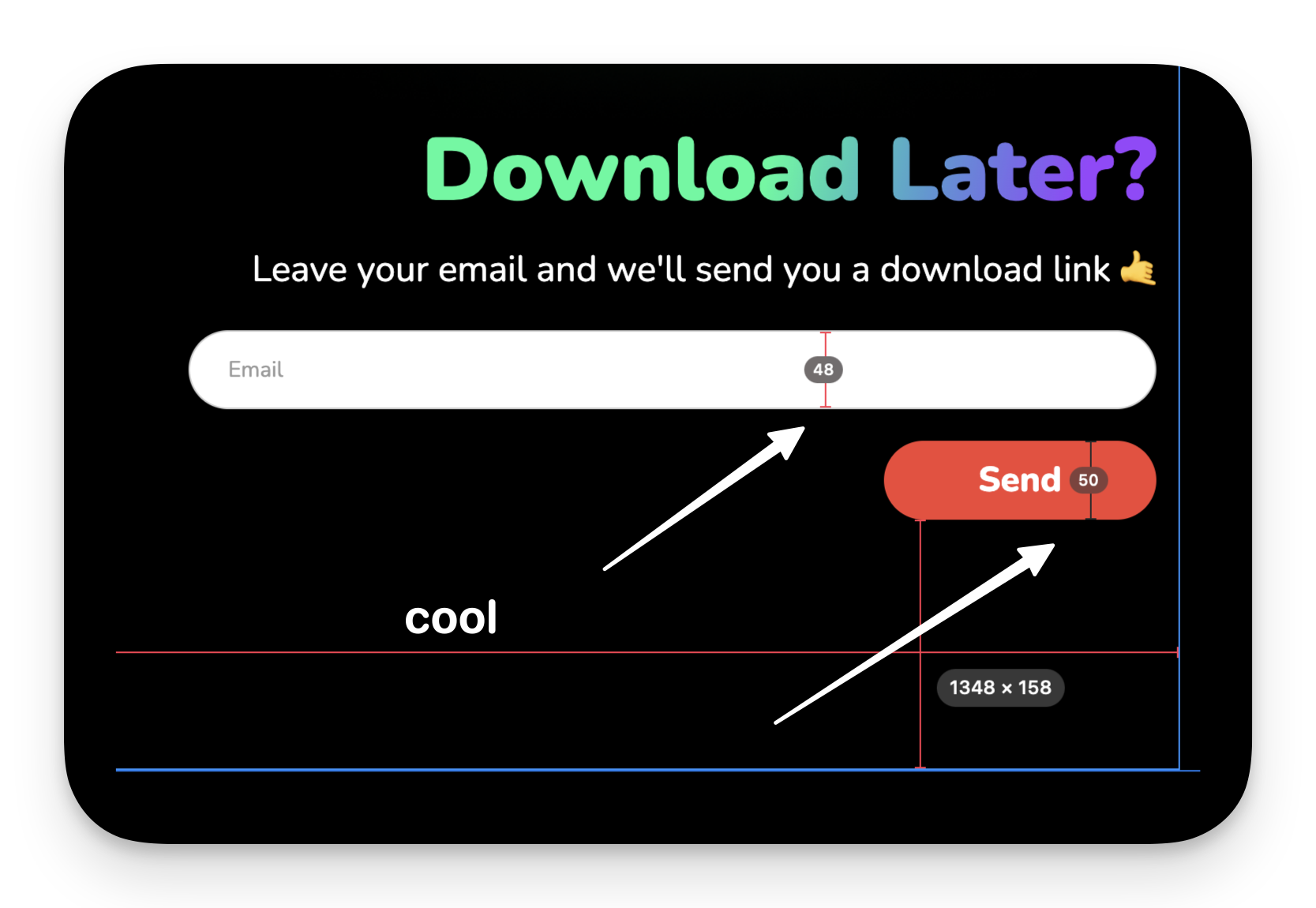

## Webflow & PixelSnap2

Above you see the final result of the download page on web. While working on this page I was going back and forth finetuning the design and I remembered there was this tool that could show you dimensions on the screen with the click of a button. I’m a pixel perfect guy by nature and I do a lot of measuring when I develop my apps, but I’m a bit more loose when I do something like this. However, some things were just too obvious. Like the download button would be bigger than the form field. That just looked off. It has to do with the elements being drawn with their fonts + padding as starting point, instead of heights. Anyway what I wanted to tell you about is this tool! It’s so cool. It’s from the same company that built [CleanShot X](https://cleanshot.com/), the tool I use to create my screenshots. It’s called [PixelSnap2](https://getpixelsnap.com/).

With the press of a button you can put measurements on the screen and keep them there until you remove them. It’s so good for quick measuring! You press a button, you measure stuff, click a bit here and there if you want to and then go about your business. I can’t wait to use this while developing one of my apps.

# Mailchimp Journey

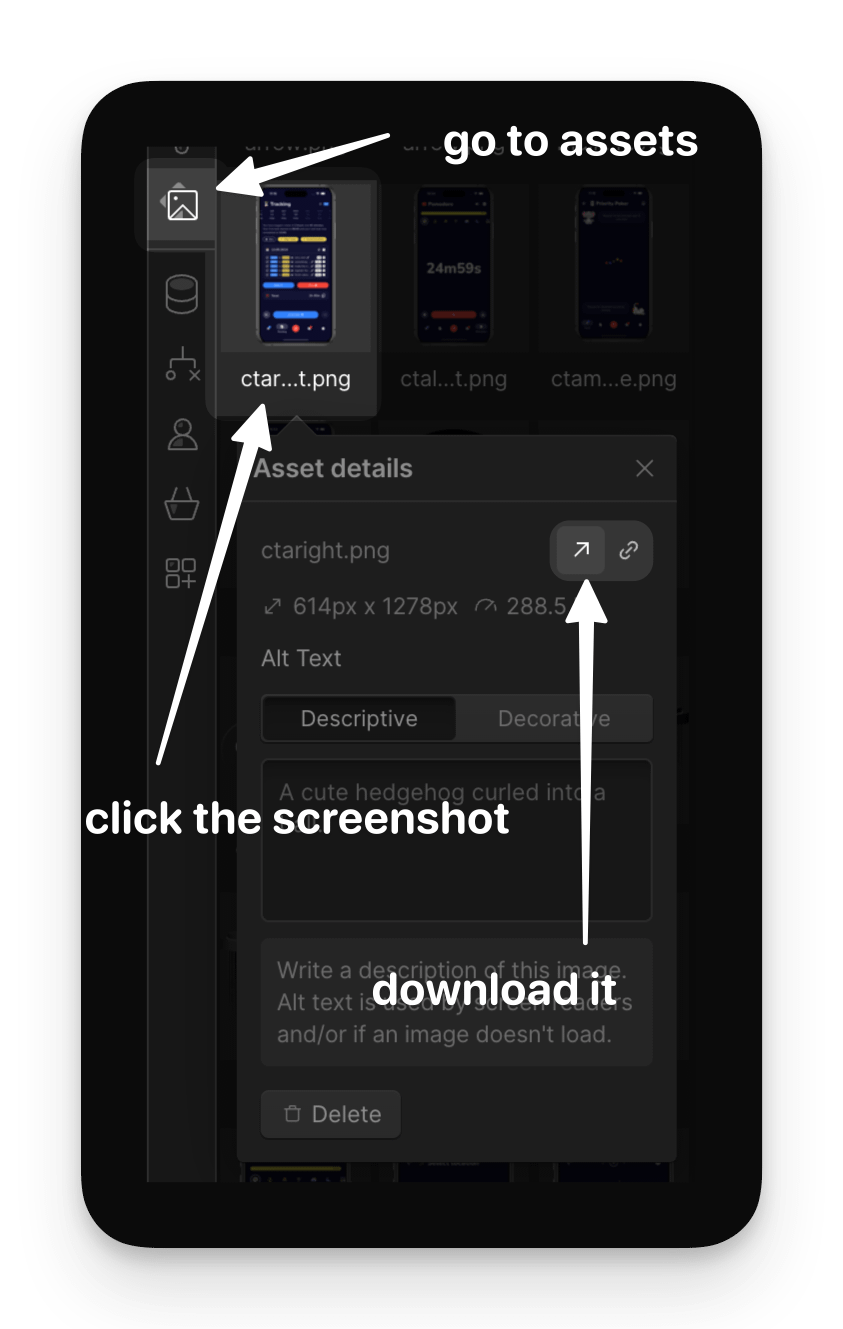

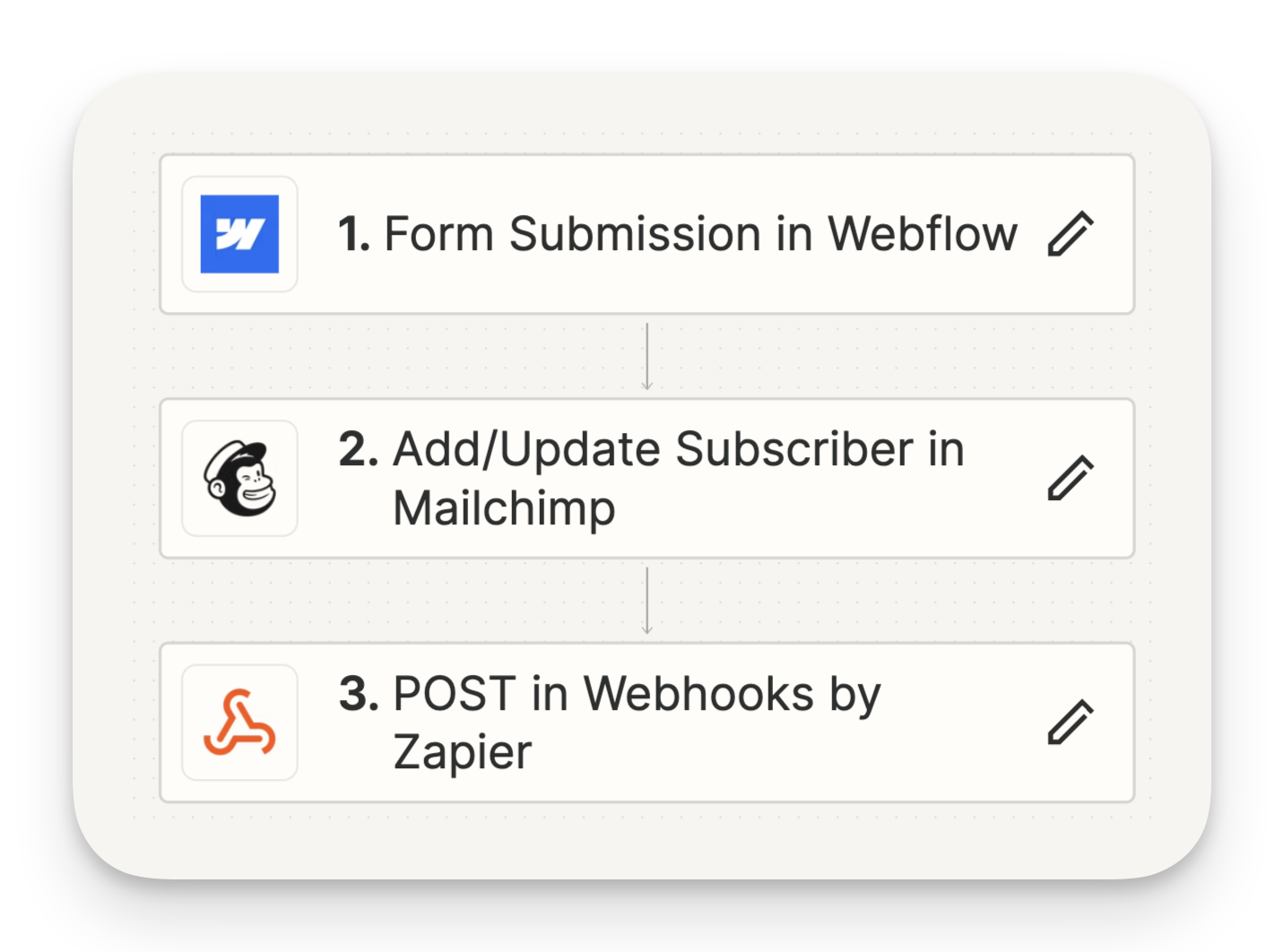

After I got the web page working in Webflow I had to set up a Journey in mailchimp.

https://dev-to-uploads.s3.amazonaws.com/uploads/articles/8toiew218nsgvfqggxpw.png

One of the more confusing parts of this was setting up the linking between Webflow and Mailchimp. Somehow I thought they would have a native integration with each other, but they don’t. So naturally I asked ChatGPT how to solve this.

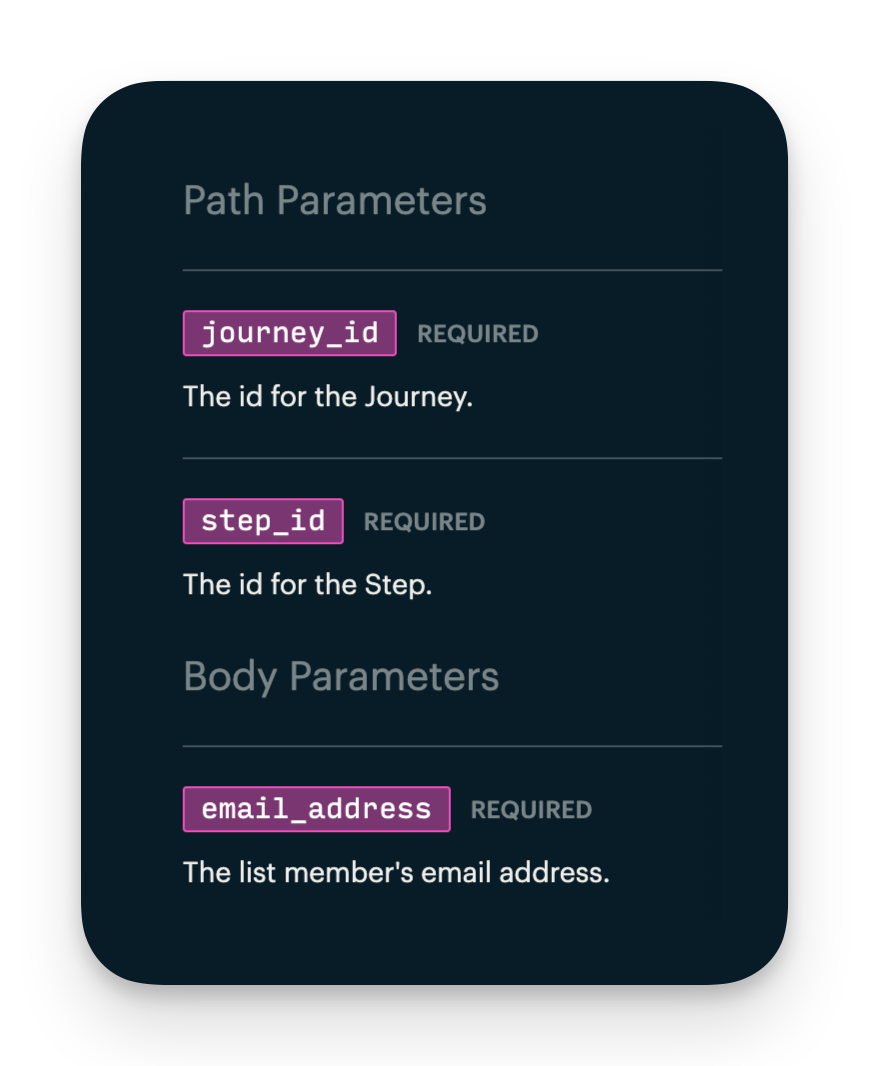

One funny thing, I spent a good time on trying to figure out what the `step_id` was. It turns out it was part of the url that I had already. That’s what you get for ADHD scanning the documentation instead of reading it.
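For readers wiring this up by hand instead of through Zapier, here is a rough sketch of where a `step_id` sits in the URL. It assumes the Mailchimp Marketing API's Customer Journeys trigger endpoint is the one involved, which this post does not state explicitly. The data center `us1`, the IDs `1234` and `5678`, and the email address are placeholders, authentication is left out, and nothing is actually sent.

```typescript
// Shape of the request a script would send; nothing is sent in this sketch.
interface TriggerRequest {
  method: 'POST';
  url: string;
  body: string;
}

// Build the trigger request for one contact. All arguments are placeholders.
function buildTriggerRequest(
  dataCenter: string,
  journeyId: number,
  stepId: number,
  email: string,
): TriggerRequest {
  return {
    method: 'POST',
    url:
      'https://' + dataCenter + '.api.mailchimp.com/3.0' +
      '/customer-journeys/journeys/' + journeyId +
      '/steps/' + stepId + '/actions/trigger',
    body: JSON.stringify({ email_address: email }),
  };
}

// Pull the step_id back out of a trigger URL you already have.
function extractStepId(triggerUrl: string): string | undefined {
  const match = /\/steps\/([^/]+)\//.exec(triggerUrl);
  return match ? match[1] : undefined;
}

const request = buildTriggerRequest('us1', 1234, 5678, 'reader@example.com');
const stepId = extractStepId(request.url);
```

The takeaway matches the story above: if you already have the trigger URL, the `step_id` is simply the path segment after `/steps/`.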

Once I set up the journey I used Zapier (for now) to link the two together. I’ve heard about Zapier before, I even played around with it but never had a real use case for it. I will replace it once it becomes too expensive but for now it’s a great solution. It worked great too, I could ask it in natural language what I wanted to do, then their AI set up the initial steps for me. One of the few apps where I see AI implemented in a really good way. Kudos to them.

After setting everything up I tested it and it works! Eureka.

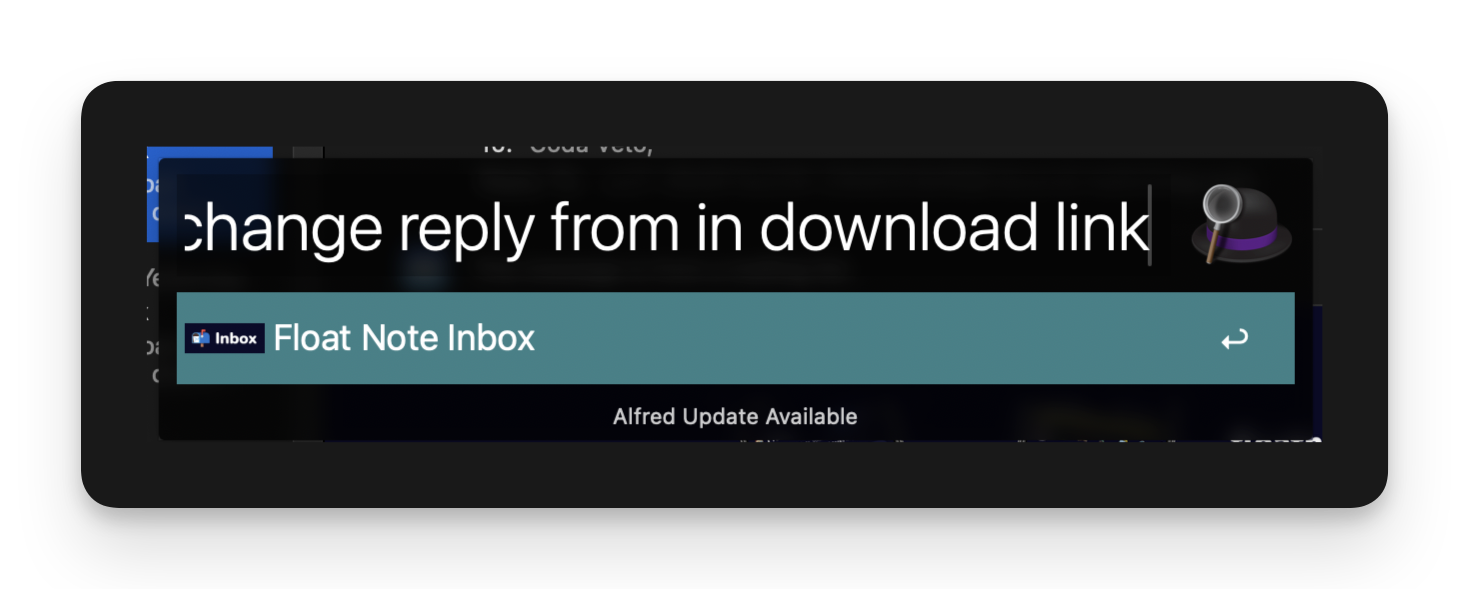

I did notice just now that the sender looks very techy. I thought I fixed that. I’m putting that in my Float Note inbox for later with [Alfred](https://www.alfredapp.com/) and the query option of Float Note.

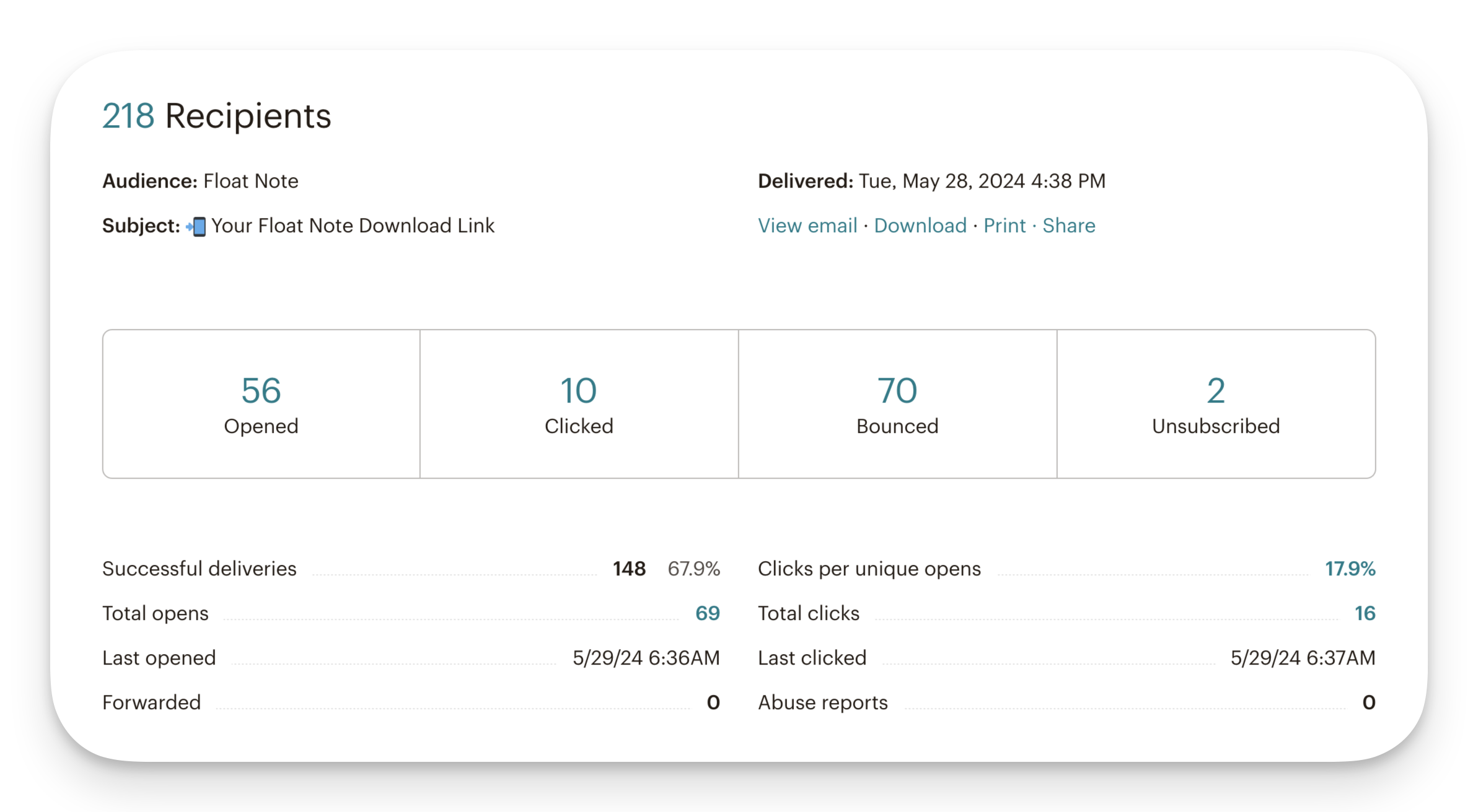

# Big Mailchimp Mistake

So before we end this blog let me tell you about this one big mistake I made yesterday, which caused me to accidentally email everyone who ever registered for Float Note. Before I set everything up correctly with Zapier I was on the wrong path. I got the data from the Webflow form but I selected the wrong campaign. So when I pressed the button to test the integration, it got the data from Webflow but selected a campaign that sent my download link email to all current subscribers of Float Note. That’s messy! So everyone got an email yesterday with a download link, no context whatsoever. Really bad 😂. But honest mistake, you live you learn. I’m ‘glad’ it was only 200 people. And I see only two people unsubscribed. Stupid mistake, lesson learned.

Also I see a lot of bounces, this has to do with DMARC I believe. I haven’t looked into it yet. But I will once I get my mail campaign game going. For now, we have more important stuff to attend to.

# Thank you 🙏

Once again, thank you for reading if you came this far. I really appreciate the support I’m getting. It’s taking up a lot of time sometimes but it’s worth it. I’m still finding my way around building the perfect system for this. See you next time 🤙

### About Me

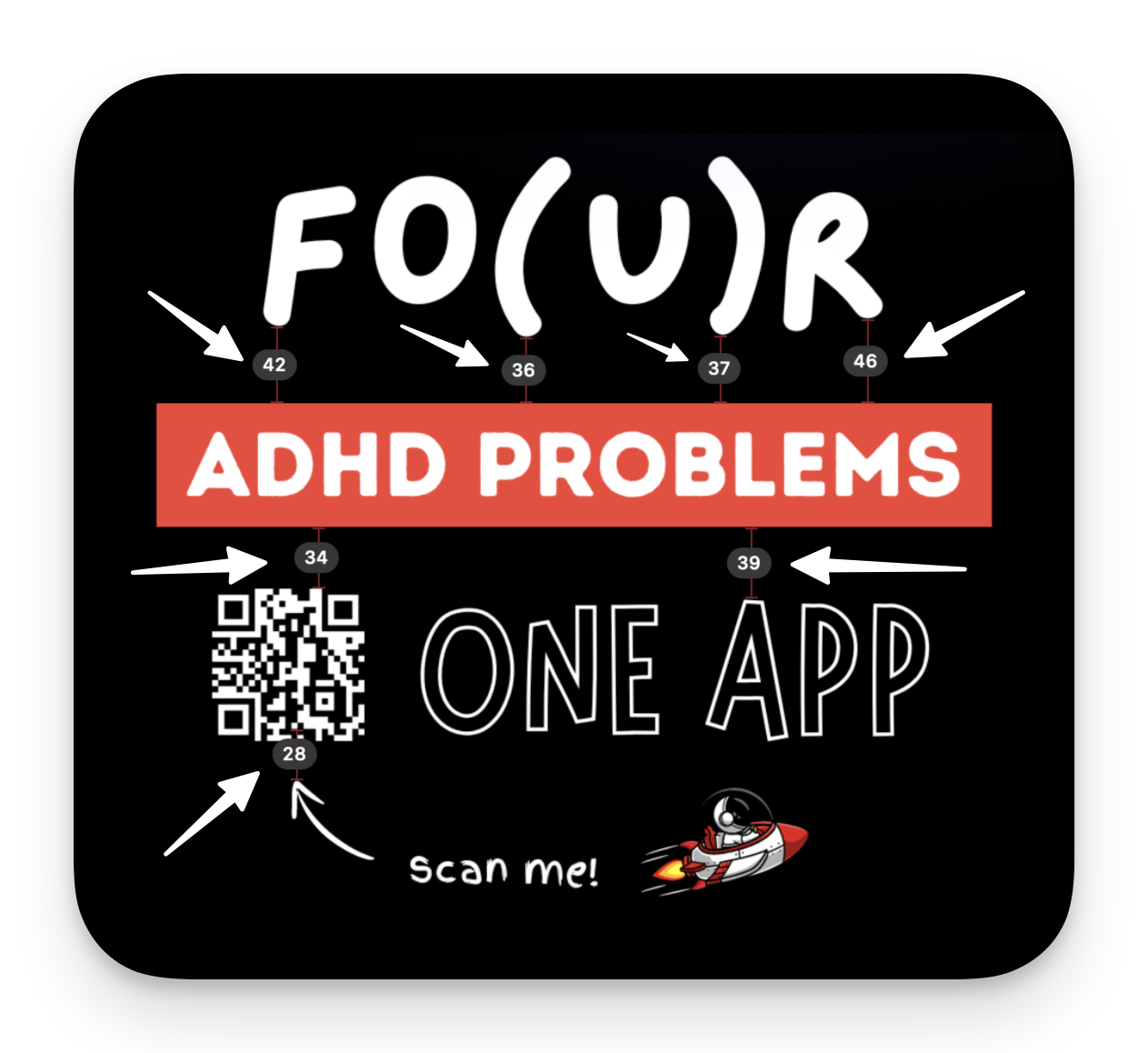

About me: I’m an independent app developer with ADHD and the creator of Float Note, an app that tackles four common ADHD challenges: too many thoughts, trouble organizing, feeling overwhelmed, and staying focused.

### Discount Code

It would mean a lot to me if you gave my app a try and let me know what you think through one of my socials. You can try it for free for 7 days and when the time comes, use code 🎫 “DEVLIFEOFBRIAN" to get 70% off your subscription for life. A gift for only a chosen few that read my blog 🙏. Also, if you really can’t pay for it, but you love the app. Send me a message and we’ll work something out.

### Download

📲 Download it here or click the link in my bio (Android/iOS/web) ➡️ floatnote.com/download

| devlifeofbrian |

1,868,881 | Why Theo is Wrong & We'll Get a Laravel for JavaScript | JavaScript's Need for a Full-stack Framework “Why Don't We Have A Laravel For... | 0 | 2024-05-29T13:15:57 | https://wasp-lang.dev/blog/2024/05/29/why-we-dont-have-laravel-for-javascript-yet | framework, javascript, laravel, wasp | ## JavaScript's Need for a Full-stack Framework

“*Why Don't We Have A Laravel For JavaScript?”.* This is the question [Theo poses in his most recent video](https://www.youtube.com/watch?v=yaodD79Q4iE).

And if you’re not familiar with tools like [Laravel](https://laravel.com/) and [Ruby-on-Rails](https://rubyonrails.org/), they are opinionated full-stack frameworks (for PHP and Ruby) with lots of built-in features that follow established conventions so that developers can write less boilerplate and more business logic, while getting the industry best practices baked into their app.

He answers this question with the opinion that JavaScript *doesn’t need* such frameworks because it’s better to select the tools you want and build the solution you need yourself.

This sounds great — and it also happens to be a nice flex if you’re a seasoned dev — but I feel that he doesn’t back up this claim very well, and **I’m here to tell you where I think he’s wrong**.

In my opinion, the better question to ask is why don’t we have a Laravel for JavaScript *yet*? The answer being that we’re still working on it.

In his summary of the full-stack frameworks of the JavaScript world that could be comparable to Laravel or Rails, he fails to consider a few important points:

1. **People really want a Laravel / Rails for JavaScript**. If they didn’t, there wouldn’t be so many attempts to create one, and he wouldn’t be making a video whose sole purpose is to respond to the pleading cry “_WHY DOESN’T JAVASCRIPT HAVE ITS OWN LARAVEL!?_”

2. **He fails to consider the timing and maturity of the underlying tools within the JS ecosystem**. Perhaps it’s not that a Laravel for JavaScript doesn’t *need* to exist, it’s just that it doesn’t exist yet due to some major differences in the ecosystems themselves, like how old they are and where the innovation is mostly happening.

3. **He also fails to ask for whom these types of solutions are suitable**. Surely, not all devs have the same objectives, so some might opt for the composable approach while others prefer to reach for a framework.

So let’s take a look at how we got to the point we’re at today, and how we might be able to bring a full-stack framework like Laravel or Rails to the world of JavaScript.

## Getting Shit Done

In his video, Theo brings up the point that “*there's a common saying in the React world now which is that ‘if you're not using a framework you're building one’*”. Even though this is meant to be used as a criticism, Theo feels that most JavaScript devs are missing the point and that building your “own framework” is actually an advantage.

He feels that the modular nature of the JavaScript ecosystem is a huge advantage, but that sounds like a lot of pressure on the average developer to make unnecessary judgement calls and manage lots of boilerplate code.

Sure, you have teams that need to innovate and meet the needs of special use cases. These are the ones that prioritize modularity. They tweak, improve, and squeeze as much out of developer experience (DX) and performance as possible to get their unique job done right.

But on the other hand, there are also numerous teams whose main objective is producing value and innovating on the side of the product they are building, instead of the tools they are using to build it. These devs will favor a framework that allows them to focus solely on the business logic. This gives them a stable way to build stuff with best practices so they can easily advance from one project to another. In this camp are also the lean, mean indiehackers looking for frameworks so they can move fast and get ideas to market!

It’s a bit like the difference between Mac and Linux. Mac’s unified stack just works out of the box, so many professionals prefer it for its productivity, whereas Linux is great if you’re looking for flexibility and have the time and knowledge to tweak it to your desires. Both are valid solutions that can coexist to meet different needs.

This focus on productivity is what made Rails so powerful back in the day, and why Laravel is such a loved framework at the moment. And the many attempts at creating such a framework for JavaScript are proof enough that there is a large subset of JavaScript devs who also want such a solution.

But maybe the reason such a framework doesn’t exist yet doesn’t have to do with whether devs want one or not, but rather the important factors which are needed in order for such a framework to come together haven’t aligned up until this point. For such a framework to be widely adoptable, it first needs underlying technologies that are stable enough to build upon. After that, it needs time and many iteration cycles to reach maturity itself, so that devs can feel comfortable adopting it.

Have these factors aligned in the JavaScript world to give us the type of frameworks that PHP and Ruby already have? Maybe not quite yet, but they do seem to be slowly coming together.

## Comparing Ecosystems

One of Theo’s main points is that JavaScript as a language enables a level of modularity and composability that languages like Ruby and PHP don’t, which is why Ruby and PHP ecosystems are well served by full-stack frameworks, but JavaScript *doesn’t need one* since you can just compose stuff on your own.

While JavaScript is a peculiar language, with its support for both functional and imperative paradigms and dynamic nature, it also comes with a lot of pitfalls (although it has improved quite a bit lately), so you don’t typically hear it get praised in the way Theo does here. In fact, you are probably more likely to hear praise for Ruby and its properties as a modular and flexible language.

So if it isn’t some unique properties of JavaScript as a language that make it the king of web dev, what is it then?

Well, the answer is pretty simple: **JavaScript is the language of the browser**.

*Way back* when most web development was happening on the server side, PHP, Java, Ruby and other languages were reigning supreme. During this era, devs would only write small pieces of functionality in JavaScript, because most of the work was being handled server-side.

But as web development evolved and we started building richer applications, with more dynamic, responsive, and real-time features, a lot of code moved away from the server and over towards JavaScript on the client, because it’s (basically) the only language that supports this. So instead of doing your development mostly in PHP or Ruby with a little bit of JavaScript sprinkled in there, you were now splitting your apps between substantial amounts of JavaScript on the client, plus Ruby or PHP on the server.

JavaScript’s final power move came with the arrival of NodeJS and the ability to also write it on the server, which secured its position as the king of web dev languages. Today, devs can (and do) write their entire apps in JavaScript. This means you need to know one language less, while you’re also able to share the code between front-end and back-end. This has opened up a way for better integration between front-end and back-end, which has snowballed into the ecosystem we know today.

So it’s not so much the unique properties of JavaScript as a language that have made it the dominant ecosystem for web development, but more its unique monopoly as the only language that can be used to write client code, plus it can also be used server-side.

As Theo says, “_we’ve got infinitely more people making awesome solutions_” in the JavaScript ecosystem. That’s right. It’s exactly that seemingly infinite number of developers working in the space that creates the flexibility and modular solutions for JavaScript, rather than it being an innate quality of the programming language.

And because the JavaScript ecosystem is still the hottest one around, it has the most devs in total while continuing to attract new ones every day. This means that we get a large, diverse community doing two main things:

1. Innovating

2. Building

The innovators (and influencers) tend to be the loudest, and as a result opinion largely skews in their favor. But there is also a lot of building, or “normal” usage, happening! It’s just that the innovators tend to do the talking on behalf of the builders.

So with all that’s going on in the JavaScript ecosystem, is it pointless to try and build a lasting framework for JavaScript developers, as Theo suggests, or are we on the path towards achieving this goal regardless of what the innovators might claim?

## Show Me What You’re Working With

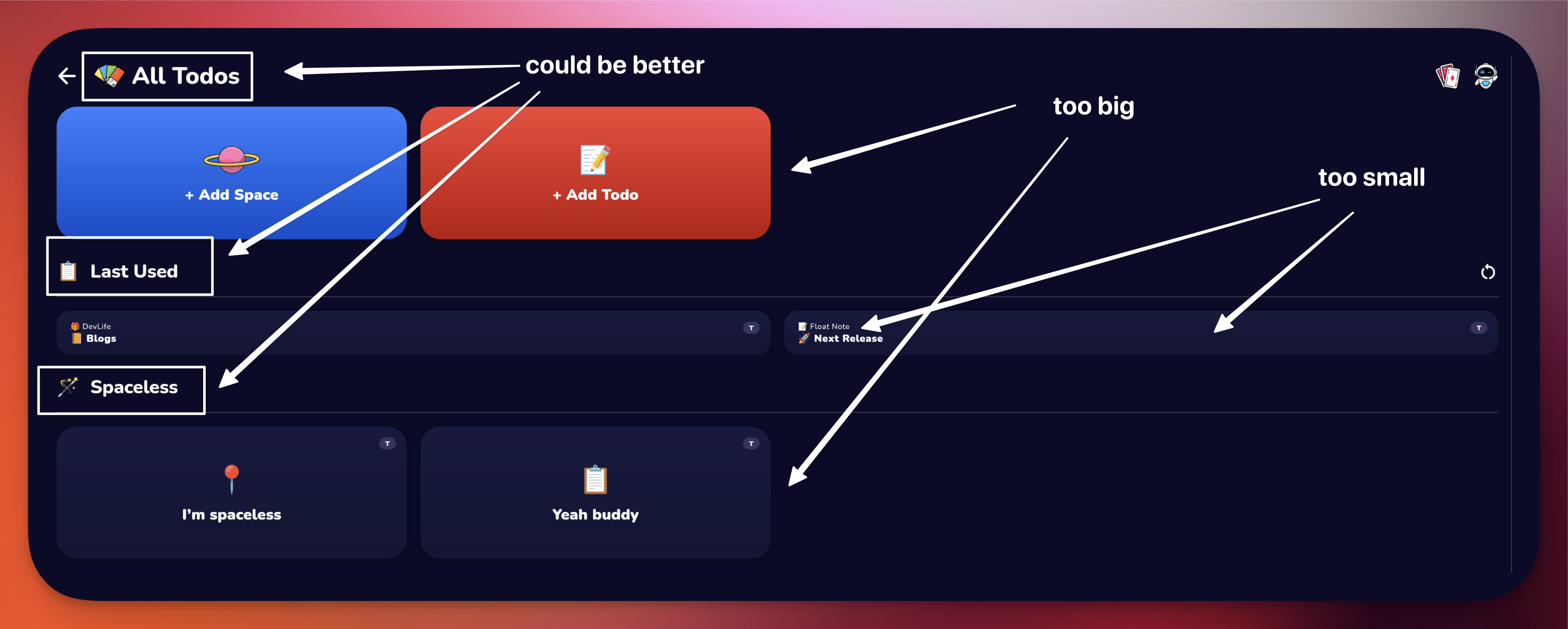

Theo also drops the names of a bunch of current JavaScript frameworks that have either failed to take off, or “_just can’t seem to get it right_” when it comes to being a comprehensive full-stack solution.

And he does have a point here. So far, solutions like [Blitz](https://blitzjs.com/), [Redwood](https://redwoodjs.com/), [Adonis](https://adonisjs.com/), or [T3](https://create.t3.gg/) haven’t managed to secure the popularity in their ecosystem that Rails or Laravel have in theirs.

**But these things take time.**

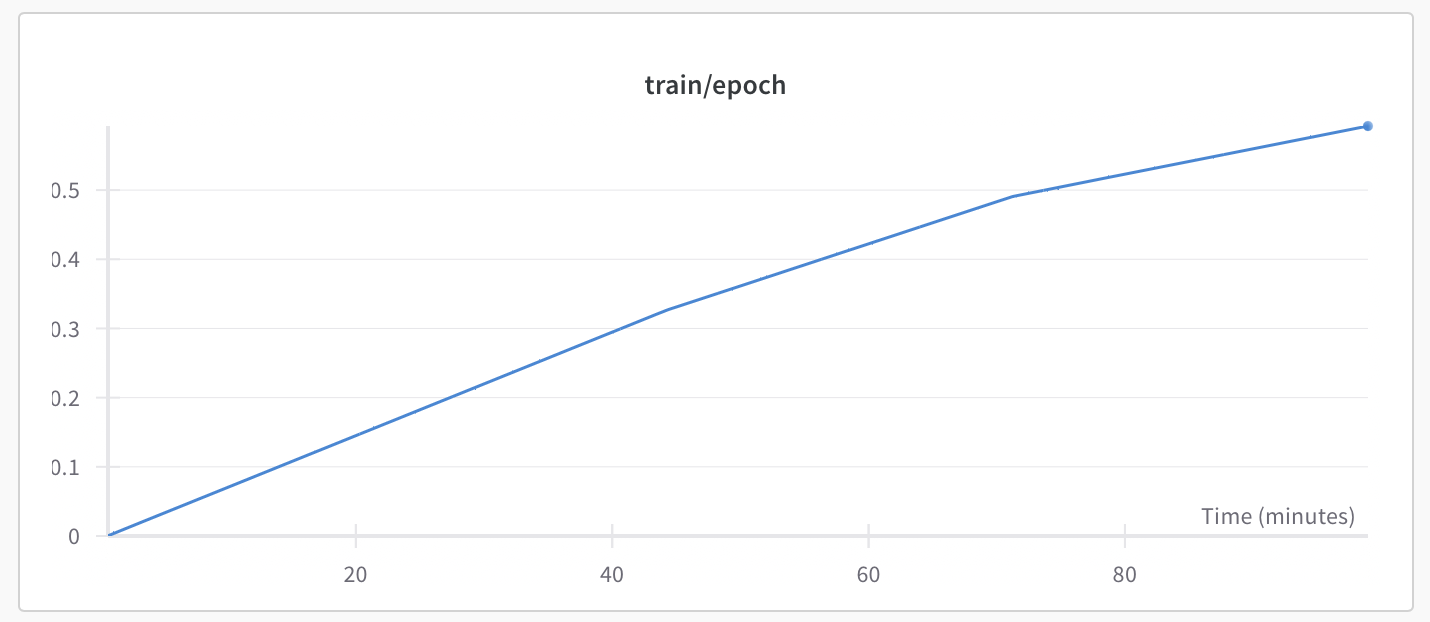

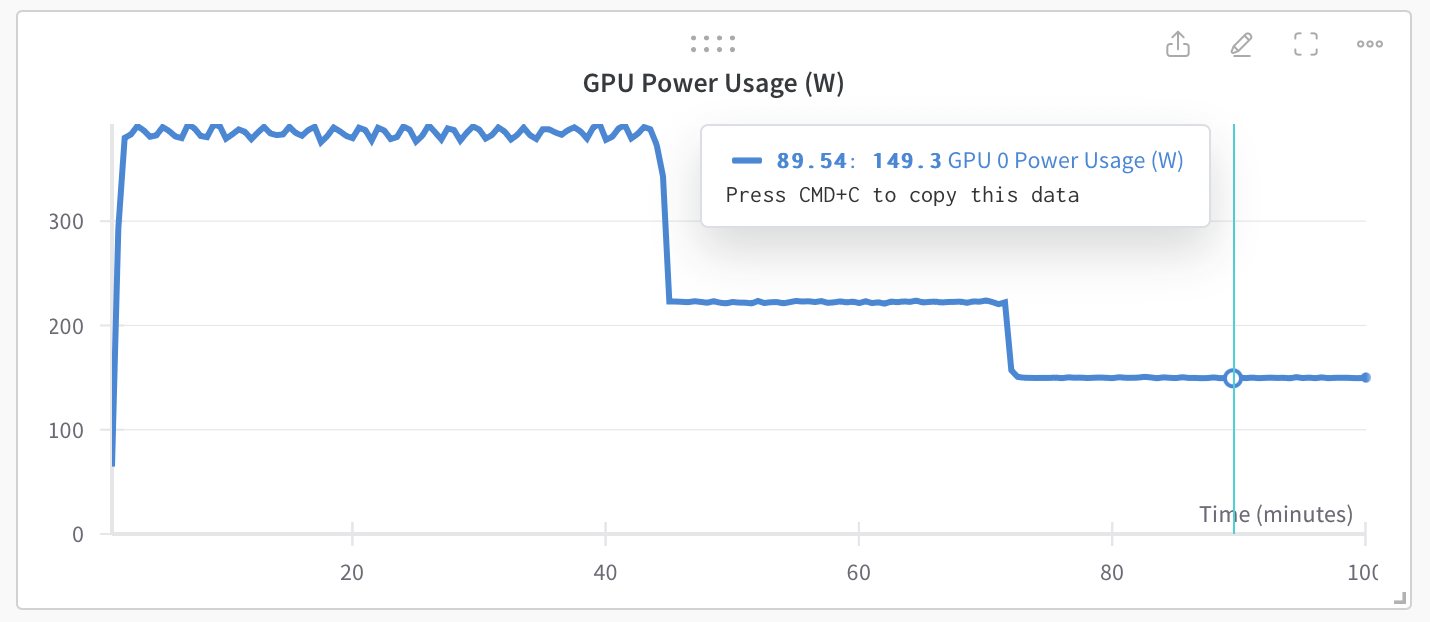

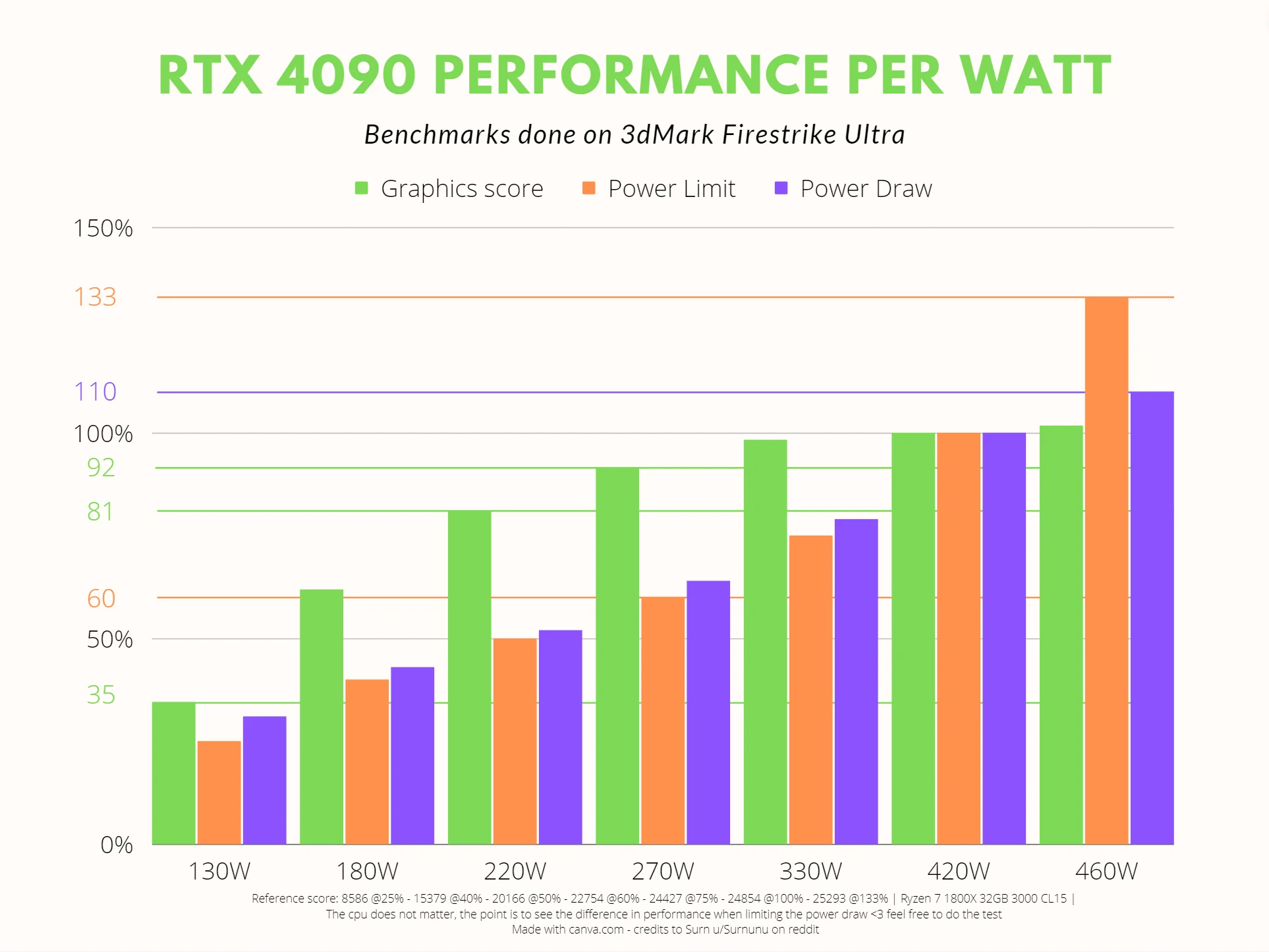

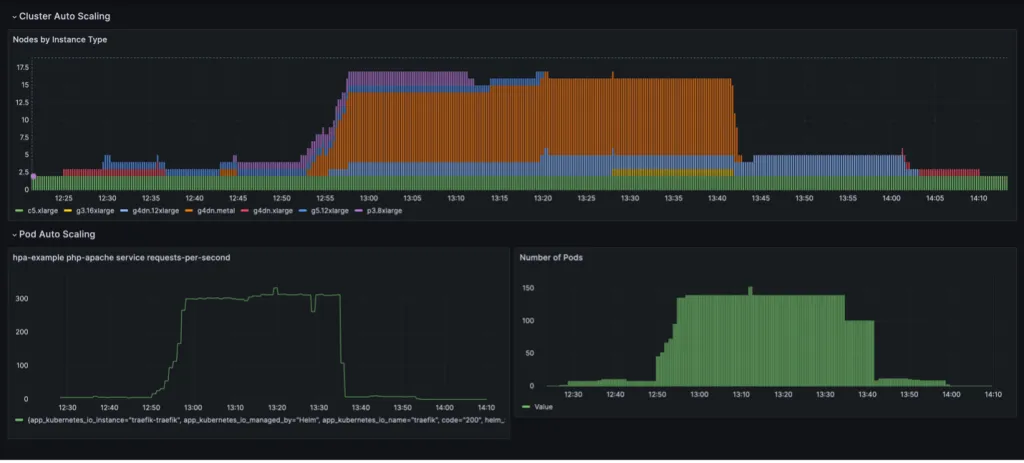

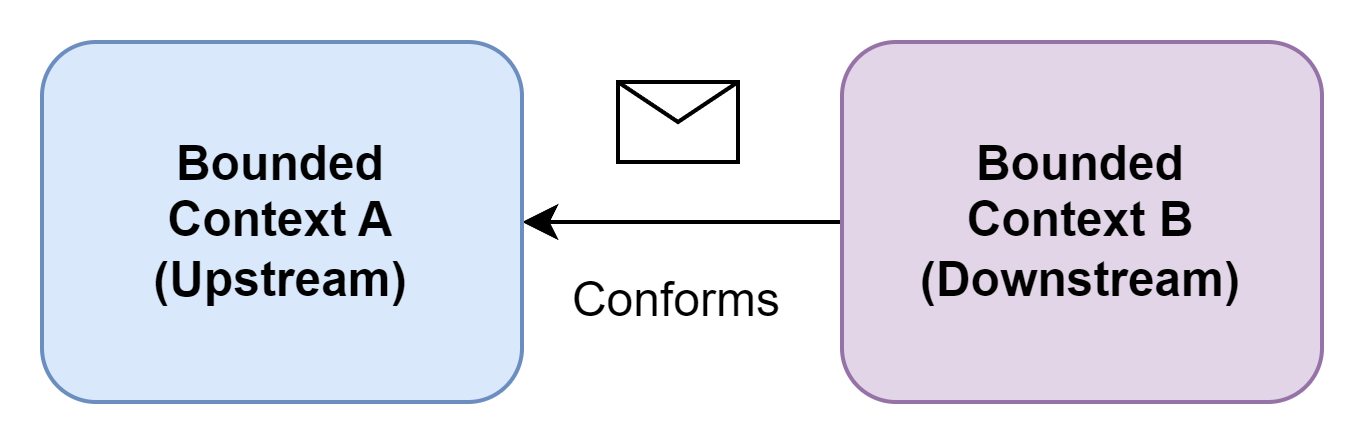

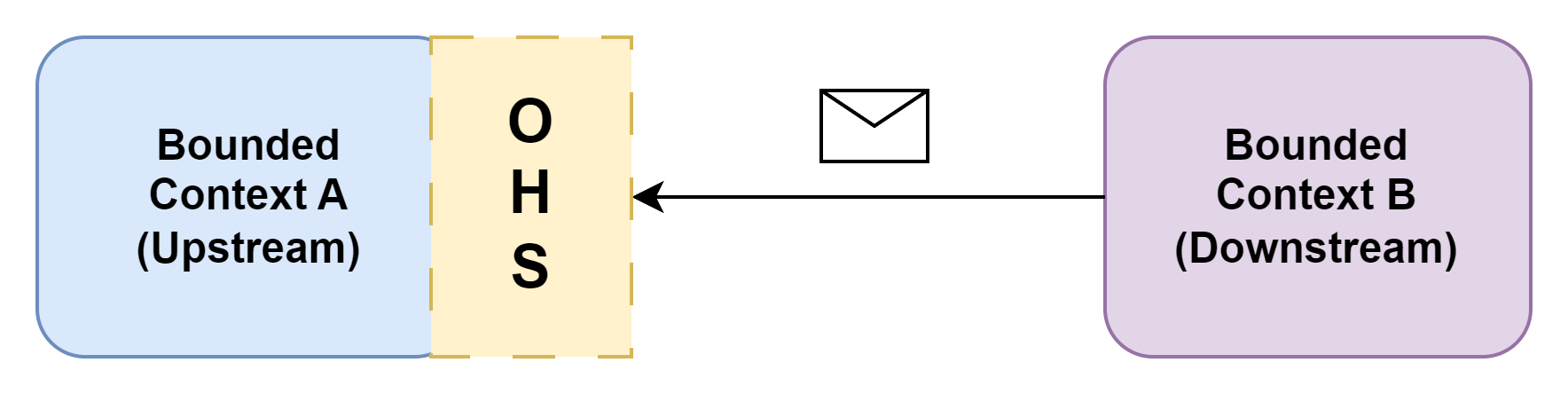

Have a look at the graph above. Laravel and Rails have been around for 13-15 years! The JavaScript frameworks being used in comparison are just getting started, with some of them, like [Wasp](https://wasp-lang.dev) and [Redwood](https://redwoodjs.com/), at similar stages in their development as Laravel and Rails were during their initial years.

As you can see, it takes time for good solutions to reach maturity. And even with some of these frameworks starting to stagnate, their great initial growth is evidence that demand for these tools definitely exists!

The main overarching issue that tends to plague these tools is that JavaScript as an ecosystem is moving quite fast, so for a solution like this to survive long term, it needs to not only be opinionated enough, but also modular enough to keep up with the shifts in the ecosystem.

One factor that prevents frameworks from reaching this state is being tied too tightly to the wrong technology. This was NextJS for BlitzJS, GraphQL for Redwood, and Blaze for MeteorJS. And another factor is *not going big enough* with the framework, because it seems too daunting a task within the JavaScript ecosystem, where things move fast and everyone is “terrified of being opinionated” because they might get criticized by the loudest voices in the scene.

In other words, frameworks that avoid going big on their own, and going *truly* full-stack, as Ruby-on-Rails and Laravel did, miss the opportunity to solve the most common pain points that continue to plague JavaScript developers.

But the JavaScript ecosystem is maturing and stabilizing, we are learning from previous attempts, and there *will* be a full-stack framework bold enough to go all the way in, get enough things right, and persist for long enough to secure its place.

## Say Hi to Wasp

In his comparison of JavaScript frameworks on the market today, Theo also fails to mention the full-stack framework for React & NodeJS that we’re currently working on, [Wasp](https://wasp-lang.dev).

We’ve been working hard on [Wasp](https://wasp-lang.dev) to be the *truly* full-stack framework that meets the demands of web developers and fills that void in the JavaScript ecosystem to become the framework they love to use.

**With Wasp, we decided to go big, opinionated, and truly full-stack**. In other words, we’re going *all in* with this framework.

That means thinking from first principles and designing a novel approach that only Wasp uses, like building our own compiler for our configuration language, and truly going full-stack, while also keeping it modular enough to move together with the ecosystem as it progresses.

This means that we spent more time in the beginning trying different approaches and building the foundation, which finally brought us a significant jump in usage starting in late 2023. Wasp is now growing strong, and at a really fast pace!

It’s really cool for us to see Wasp being used today to ship tons of new apps and businesses, and even being used internally by some big names and organizations (more info on that will be officially released soon)!

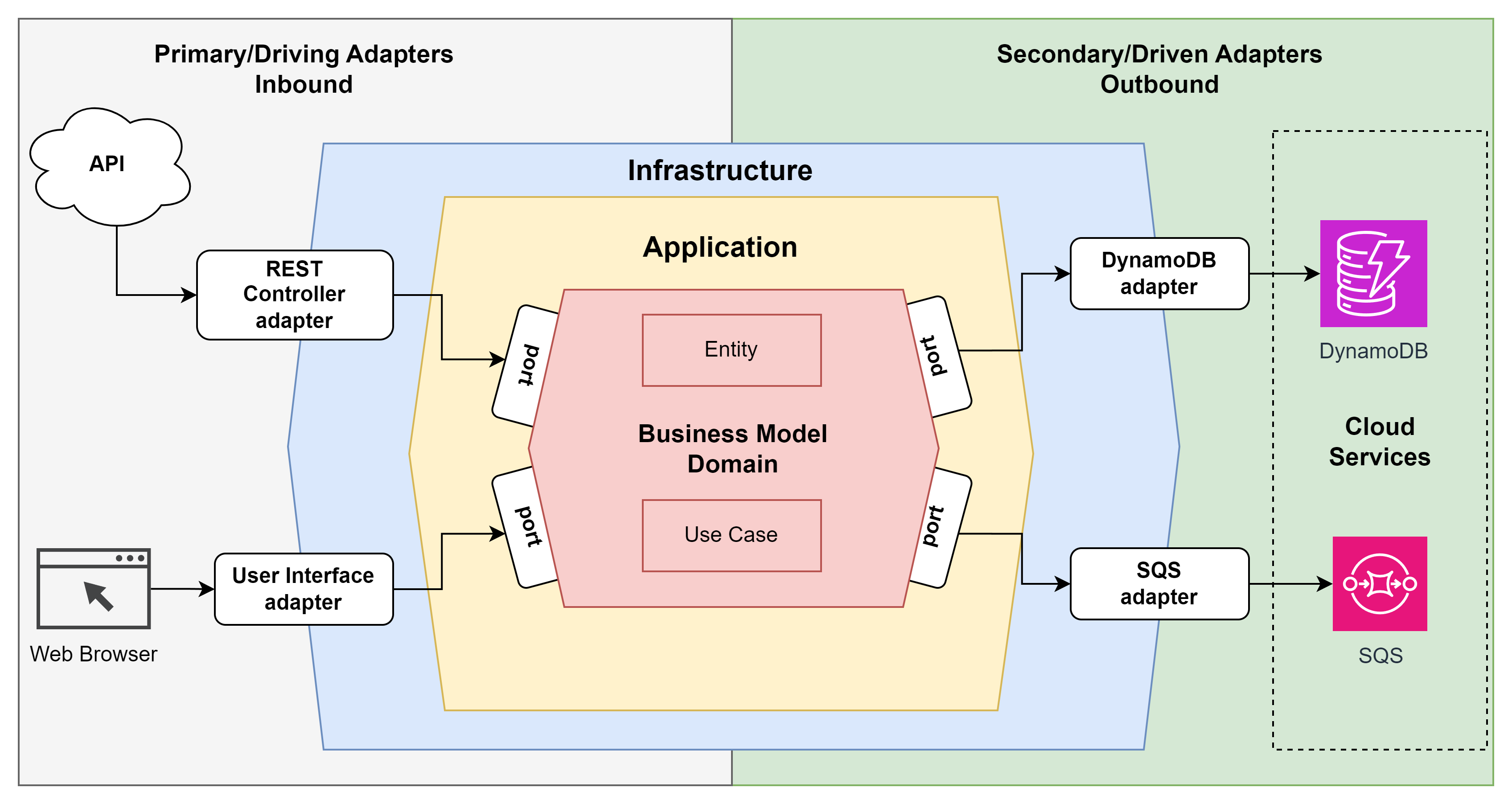

What Wasp does differently than other full-stack frameworks in the JavaScript world is that it separates its main layer of abstraction into its own configuration file, `main.wasp`. This config file gives Wasp the knowledge it needs to take care of a lot of the boilerplatey, infrastructure-focused code. It also enables a unique initial compile-time step, in which Wasp can reason about your web app before it generates the code for it in the background (using that knowledge while generating it).

In practice, this means that all you have to do is describe your Wasp app at a high level in Wasp’s config file, and then implement everything else in technologies that you’re familiar with such as React, NodeJS, and Prisma. It also means that Wasp has a high modularity potential, meaning we are building it to also support other frontend frameworks in the future, like Vue, Solid or Svelte, and to even support additional back-end languages, like Python, Go or Rust.
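To make the "describe at a high level, then generate the boilerplate" idea more concrete, here is a minimal TypeScript sketch. It is an assumption-laden illustration only: it does not use Wasp's actual config syntax or compiler, and every name in it (`AppSpec`, `RouteSpec`, `validate`, `generateRouter`) is hypothetical. It simply shows a declarative spec being checked as a whole and then expanded into router code.

```typescript
// Hypothetical illustration only: this is NOT Wasp's syntax or implementation.
// It sketches the general idea of a declarative app spec that a compile-time
// step checks as a whole and then expands into boilerplate.

type RouteSpec = { path: string; page: string };

type AppSpec = {
  title: string;
  pages: string[];      // names of page components the developer implements
  routes: RouteSpec[];  // high-level routing described as data
};

// "Compile-time" check: reason about the whole app before generating anything.
function validate(spec: AppSpec): string[] {
  const errors: string[] = [];
  const known = new Set(spec.pages);
  for (const route of spec.routes) {
    if (!known.has(route.page)) {
      errors.push(`Route ${route.path} points to unknown page ${route.page}`);
    }
  }
  return errors;
}

// Code generation: turn the spec into router boilerplate nobody writes by hand.
function generateRouter(spec: AppSpec): string {
  const errors = validate(spec);
  if (errors.length > 0) {
    throw new Error(errors.join("\n"));
  }
  const lines = spec.routes.map(
    (r) => `  { path: "${r.path}", component: ${r.page} },`
  );
  return ["export const routes = [", ...lines, "];"].join("\n");
}

const spec: AppSpec = {
  title: "Todo App",
  pages: ["MainPage", "LoginPage"],
  routes: [
    { path: "/", page: "MainPage" },
    { path: "/login", page: "LoginPage" },
  ],
};

console.log(generateRouter(spec));
```

Because the spec is plain data, a mistake such as a route pointing at a page that doesn't exist can be caught before any code is generated, which is the kind of whole-app reasoning a compile-time step makes possible.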