id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,868,733 | Who Should Attend Your Agile Retrospective Meetings? 🤔 | Agile retrospectives are only as powerful as the people in the room. But too often, we treat... | 0 | 2024-05-29T09:10:22 | https://dev.to/mattlewandowski93/who-should-attend-your-agile-retrospective-meetings-1fpi | scrum, agile, retrospectives, webdev | Agile retrospectives are only as powerful as the people in the room. But too often, we treat attendance as an afterthought.

Big mistake. The wrong mix can tank your retro faster than you can say "action item."

## So who should make the guest list?

The non-negotiables:

1. Your core Scrum team (obvs)

2. An impartial facilitator to keep things on track

The optional invitees:

- A manager for the 30,000 ft view

- An SME for technical wisdom

- Stakeholders for outside perspective

## But choose wisely - too many cooks spoil the retro.

The key is balance. You want enough voices to spark insights, but not so many that it turns into a debate club.

Nail the invite list and watch your retros level up. Get it wrong and, well... expect a lot of awkward silences.

Want to learn more? Check out the full guide for [who should be attending your agile retrospectives](https://www.kollabe.com/posts/who-should-attend-a-retrospective-meeting)

Your retros (and your future self) will thank you. | mattlewandowski93 |

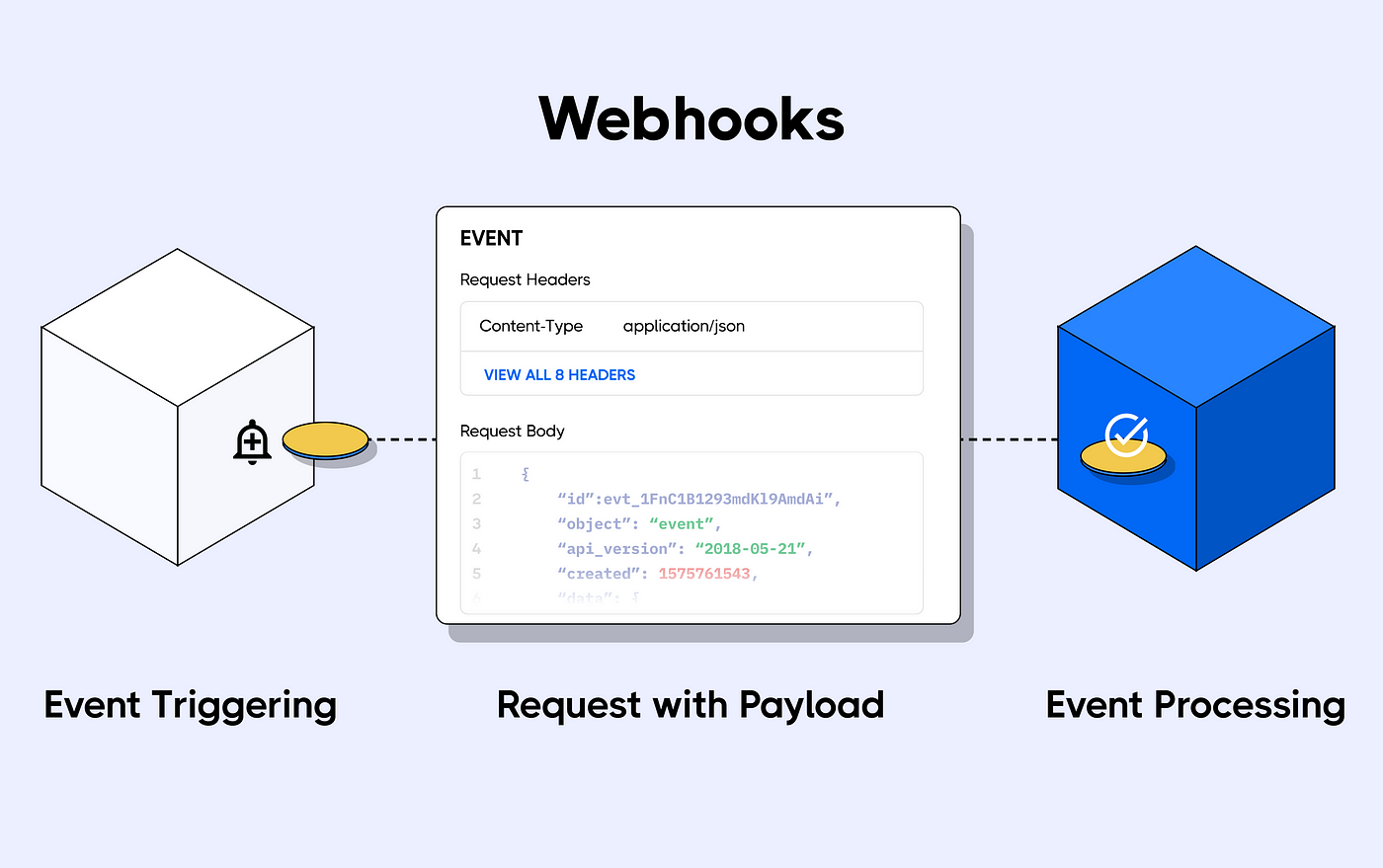

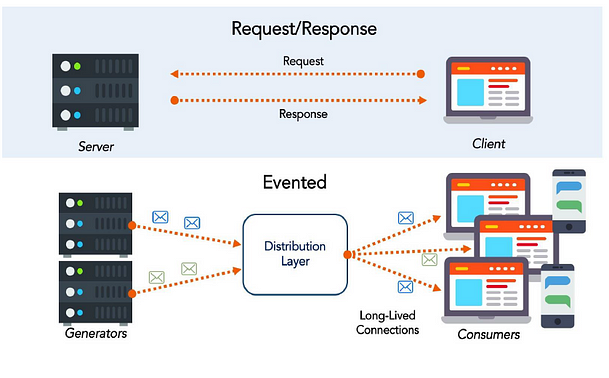

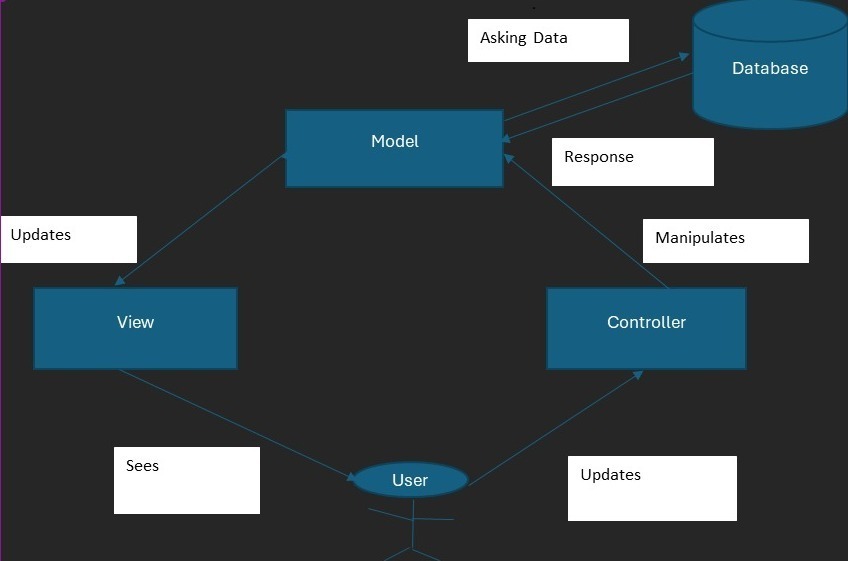

1,868,732 | Event Driven Excellence using WebHooks | In web development, connecting different systems is essential for building dynamic and efficient... | 0 | 2024-05-29T09:10:15 | https://dev.to/aztec_mirage/event-driven-excellence-using-web-hooks-419c | webdev, web3, tutorial, programming | In web development, connecting different systems is essential for building dynamic and efficient applications. Two common methods for doing this are **webhooks** and **APIs**.

Webhooks are a method for web applications to communicate with each other automatically. They allow one system to send real-time data to another whenever a specific event occurs. Unlike traditional APIs, where one system needs to request data from another, webhooks push data to another system as soon as the event happens.

To set up a webhook, the client gives a unique URL to the server API and specifies which event it wants to know about. Once the webhook is set up, the client no longer needs to poll the server; the server will automatically send the relevant payload to the client’s webhook URL when the specified event occurs.

Webhooks are often referred to as *reverse APIs* or *push APIs*, because they put the responsibility of communication on the server, rather than the client. Instead of the client sending HTTP requests—asking for data until the server responds—the server sends the client a single HTTP POST request as soon as the data is available. Despite their nicknames, webhooks are not APIs; they work together. An application must have an API to use a webhook.

Here’s a more detailed breakdown of how webhooks work:

1. **Event Occurrence**: An event happens in the source system (e.g., a user makes a purchase on an e-commerce site).

2. **Trigger**: The event triggers a webhook. This means the source system knows something significant has occurred and needs to inform another system.

3. **Webhook URL**: The source system sends an HTTP POST request to a predefined URL (the webhook URL) on the destination system. This URL is configured in advance by the user or administrator of the destination system.

4. **Data Transmission**: The POST request includes a payload of data relevant to the event (e.g., details of the purchase, such as items bought, price, user info).

5. **Processing**: The destination system receives the data and processes it. This could involve updating records, triggering other actions, or integrating the data into its workflows.

6. **Response**: The destination system usually sends back a response to acknowledge receipt of the webhook. This response can be as simple as a 200 OK HTTP status code, indicating successful receipt.
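The delivery step in the breakdown above can be sketched from the source system's side. This is a minimal illustration using only the standard library; the URL, the event name, and the `{"event": ..., "data": ...}` payload shape are assumptions made for the example, since every provider defines its own format.

```python
import json
import urllib.request


def build_webhook_request(webhook_url: str, event: str, data: dict) -> urllib.request.Request:
    """Build (but do not send) the HTTP POST a source system would deliver."""
    # Hypothetical payload shape: real providers document their own schema.
    payload = {"event": event, "data": data}
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        webhook_url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_webhook_request(
    "https://destination.example.com/myapp/webhook/",  # placeholder URL
    "purchase.completed",                               # placeholder event name
    {"order_id": 1001, "total": "49.90"},
)
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```

Actually delivering it would be a call such as `urllib.request.urlopen(request, timeout=10)`, after which the source system checks for a 2xx status as the acknowledgment described in step 6.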

**Example:**

Let's say you subscribe to a streaming service. The streaming service wants to send you an email at the beginning of each month when it charges your credit card.

The streaming service can subscribe to the banking service (the source) to send a webhook when a credit card is charged (event trigger) to their emailing service (the destination). When the event is processed, it will send you a notification each time your card is charged.

The banking system webhooks will include information about the charge (event data), which the emailing service uses to construct a suitable message for you, the customer.

**Use Cases for Webhooks:**

- **E-commerce**: Notifying inventory systems of sales so stock levels can be adjusted.

- **Payment Processing**: Alerting systems of payment events like successful transactions or refunds.

- **Messaging and Notifications**: Sending notifications to chat systems (e.g., Slack, Microsoft Teams) when certain events occur in other systems (e.g., new issue reported in a project management tool).

**Benefits of Webhooks:**

- **Real-time Updates**: Immediate notification of events without the need for periodic polling.

- **Efficiency**: Reduces the need for continuous polling, saving resources and bandwidth.

- **Decoupling Systems**: Allows different systems to work together without tight integration, enhancing modularity and flexibility.

**Implementing Webhooks**

To implement webhooks, you typically need to:

- **Set Up the Webhook URL**: Create an endpoint in the destination system that can handle incoming HTTP POST requests.

- **Configure the Source System**: Register the webhook URL with the source system and specify the events that should trigger the webhook.

- **Handle the Payload**: Write logic in the destination system to process the incoming data appropriately.

- **Security Measures**: Implement security features such as validating the source of the webhook request, using secret tokens, and handling retries gracefully in case of failures.
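One common way to validate the source with a secret token is an HMAC signature over the raw request body. The sketch below assumes HMAC-SHA256 with a hex-encoded digest; the exact algorithm, encoding, and the header that carries the signature differ between providers, so treat those details as placeholders and check the sender's documentation.

```python
import hashlib
import hmac


def sign_payload(secret: bytes, body: bytes) -> str:
    """Return the hex HMAC-SHA256 signature of the raw request body."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def is_valid_signature(secret: bytes, body: bytes, received_signature: str) -> bool:
    """Check a received signature using a constant-time comparison."""
    expected = sign_payload(secret, body)
    # compare_digest avoids leaking how many leading characters matched
    return hmac.compare_digest(expected.encode("utf-8"), received_signature.encode("utf-8"))


secret = b"shared-secret-from-settings"       # hypothetical shared secret
body = b'{"event": "purchase.completed"}'     # raw bytes exactly as received
signature = sign_payload(secret, body)        # what the sender would attach

print(is_valid_signature(secret, body, signature))
print(is_valid_signature(secret, body + b" ", signature))
```

The signature has to be computed over the bytes exactly as they arrived, before any JSON parsing, because re-serializing the payload can change whitespace or key order and break the check.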

Webhooks can be categorized based on various criteria, including their purpose, the type of event they respond to, and their implementation specifics. Here are some common types of webhooks:

**Based on Purpose:**

1. **Notification Webhooks**:

- These webhooks are used to notify a system or user of a specific event.

2. **Data Syncing Webhooks**:

- These are used to keep data consistent between two systems. For instance, when a user updates their profile on one platform, a webhook can update the user’s profile on another connected platform.

3. **Action-Triggered Webhooks**:

- These webhooks trigger specific actions in response to an event. For example, when a payment is completed, a webhook can trigger the generation of an invoice.

**Based on Event Types:**

1. **Resource Change Webhooks**:

- Triggered when a resource is created, updated, or deleted. For example, when a new customer is added to a CRM system.

2. **State Change Webhooks**:

- These webhooks are triggered by changes in the state of an entity, such as an order status changing from "pending" to "shipped".

3. **Notification Webhooks**:

- Used to send alerts or notifications, such as when a new comment is posted on a blog.

**Based on Implementation**

1. **One-time Webhooks**:

- These are triggered by a single event and do not expect any further events after the initial trigger. For example, a webhook that triggers an email confirmation upon user registration.

2. **Recurring Webhooks**:

- These are set up to handle multiple events over time, like a webhook that sends updates whenever a user’s subscription status changes.

**Examples from Popular Platforms:**

1. **GitHub Webhooks**:

- **Push Event**: Triggered when code is pushed to a repository.

- **Issue Event**: Triggered when an issue is opened, closed, or updated.

- **Pull Request Event**: Triggered when a pull request is opened, closed, or merged.

2. **Stripe Webhooks**:

- **Payment Intent Succeeded**: Triggered when a payment is successfully completed.

- **Invoice Paid**: Triggered when an invoice is paid.

- **Customer Created**: Triggered when a new customer is created.

3. **Slack Webhooks**:

- **Incoming Webhooks**: Allow external applications to send messages into Slack channels.

- **Slash Commands**: Allow users to interact with external applications via commands typed in Slack.

**Security and Verification:**

1. **Secret Tokens**:

- Webhooks often use secret tokens to verify the authenticity of the source. The source system includes a token in the webhook request, which the destination system verifies to ensure the request is legitimate.

2. **SSL/TLS Encryption**:

- To ensure data security, webhooks should use HTTPS to encrypt data in transit.

3. **Retries and Error Handling**:

- Implementing retries in case the webhook delivery fails is a common practice. The source system may retry sending the webhook if it does not receive a successful acknowledgment from the destination system.
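The retry behaviour on the source side can be sketched with exponential backoff. This is an illustration under stated assumptions: `send` is a hypothetical callable that performs one delivery attempt and returns the HTTP status code, and the attempt count and delays are arbitrary defaults, not any provider's actual policy.

```python
import time
from typing import Callable


def deliver_with_retries(
    send: Callable[[], int],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Call send() until it returns a 2xx status or the attempts run out."""
    for attempt in range(max_attempts):
        try:
            status = send()
        except OSError:
            status = None  # a network failure counts as a failed attempt
        if status is not None and 200 <= status < 300:
            return True
        if attempt < max_attempts - 1:
            # Wait base_delay, then 2x, 4x, ... before the next attempt
            sleep(base_delay * 2 ** attempt)
    return False


# Simulated destination that fails twice and then acknowledges with 200.
responses = iter([500, 503, 200])
waits = []
delivered = deliver_with_retries(lambda: next(responses), sleep=waits.append)
print(delivered, waits)
```

Because the same event can arrive more than once when an acknowledgment is lost, the destination system should be prepared to receive duplicates.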

**Difference between a Webhook and an API:**

| Feature | Webhook | API |
| --- | --- | --- |
| Initiation | Event-driven (automatic push) | Request-response (manual pull) |
| Updates | Real-time | On-demand |
| Efficiency | High (no polling needed) | Can be lower (may require polling) |
| Setup | Needs a URL to receive data | Needs endpoints to request data |
| Typical Use Case | Notifications, real-time alerts | Fetching data, performing operations |
| Data Transfer | HTTP POST requests | HTTP methods (GET, POST, PUT, DELETE) |
| Security | Secret tokens, SSL/TLS | API keys, OAuth, SSL/TLS |
| Error Handling | Retries if delivery fails | Immediate error response |
| Resource Use | Low on client side | Can be higher on client side |

- **Webhooks** push data to you when something happens.

- **APIs** let you pull data when you need it.

**How to Use it?**

**Using Webhooks in Django:**

Step 1: Set Up Django Project

1. **Install Django:**

```bash
pip install django
```

2. **Create a Django Project:**

```bash
django-admin startproject myproject
cd myproject
```

3. **Create a Django App:**

```bash

python manage.py startapp myapp

```

4. **Add the App to `INSTALLED_APPS` in `myproject/settings.py`:**

```python
INSTALLED_APPS = [
    # ... (existing apps)
    'myapp',
]
```

Step 2: Create a Webhook Endpoint

1. **Define the URL in `myapp/urls.py`:**

```python
from django.urls import path

from . import views

urlpatterns = [
    path('webhook/', views.webhook, name='webhook'),
]
```

2. **Include the App URLs in `myproject/urls.py`:**

```python
from django.contrib import admin
from django.urls import include, path

urlpatterns = [
    path('admin/', admin.site.urls),
    path('myapp/', include('myapp.urls')),
]
```

3. **Create the View in `myapp/views.py`:**

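```python
import json

from django.http import JsonResponse
from django.views.decorators.csrf import csrf_exempt


@csrf_exempt  # external senders cannot supply Django's CSRF token
def webhook(request):
    if request.method != 'POST':
        # 405 Method Not Allowed: webhooks are delivered via POST only
        return JsonResponse({'error': 'invalid method'}, status=405)

    try:
        data = json.loads(request.body)
    except ValueError:
        # Covers malformed JSON and undecodable bytes
        return JsonResponse({'error': 'invalid JSON'}, status=400)

    # Process the webhook data here
    print(data)
    return JsonResponse({'status': 'success'}, status=200)
```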

Step 3: Run the Server

1. **Run the Django Development Server:**

```bash

python manage.py runserver

```

2. **Configure the Source System:**

- Register the webhook URL (e.g., `http://your-domain.com/myapp/webhook/`) with the service that will send the webhook data.

**Using APIs in Django:**

Step 1: Make an API Request

1. **Install Requests Library:**

```bash

pip install requests

```

2. **Create a View to Fetch Data from an API in `myapp/views.py`:**

```python
import requests
from django.shortcuts import render


def fetch_data(request):
    api_url = 'https://api.example.com/data'
    headers = {'Authorization': 'Bearer YOUR_API_TOKEN'}
    # A timeout keeps the view from hanging if the API is slow to respond
    response = requests.get(api_url, headers=headers, timeout=10)
    data = response.json() if response.status_code == 200 else None
    return render(request, 'data.html', {'data': data})
```

3. **Define the URL in `myapp/urls.py`:**

```python
from django.urls import path

from . import views

urlpatterns = [
    path('webhook/', views.webhook, name='webhook'),
    path('fetch-data/', views.fetch_data, name='fetch_data'),
]
```

4. **Create a Template to Display the Data in `myapp/templates/data.html`:**

```html
<!DOCTYPE html>
<html>
<head>
    <title>API Data</title>
</head>
<body>
    <h1>API Data</h1>
    <pre>{{ data }}</pre>
</body>
</html>
```

Running the Server:

1. **Run the Django Development Server:**

```bash

python manage.py runserver

```

2. **Access the API Data Fetch View:**

- Open a browser and go to `http://localhost:8000/myapp/fetch-data/` to see the data fetched from the API.

**Conclusion:**

In short, webhooks are a key tool in Django for real-time updates and for connecting with other services. Used effectively, they help developers make apps more responsive, scalable, and user-friendly.

Stay tuned for the next post diving into APIs, another essential tool for seamless integration!

I hope this post was informative and helpful.

If you have any questions, please feel free to leave a comment below.

Happy Coding 👍🏻!

Thank You | aztec_mirage |

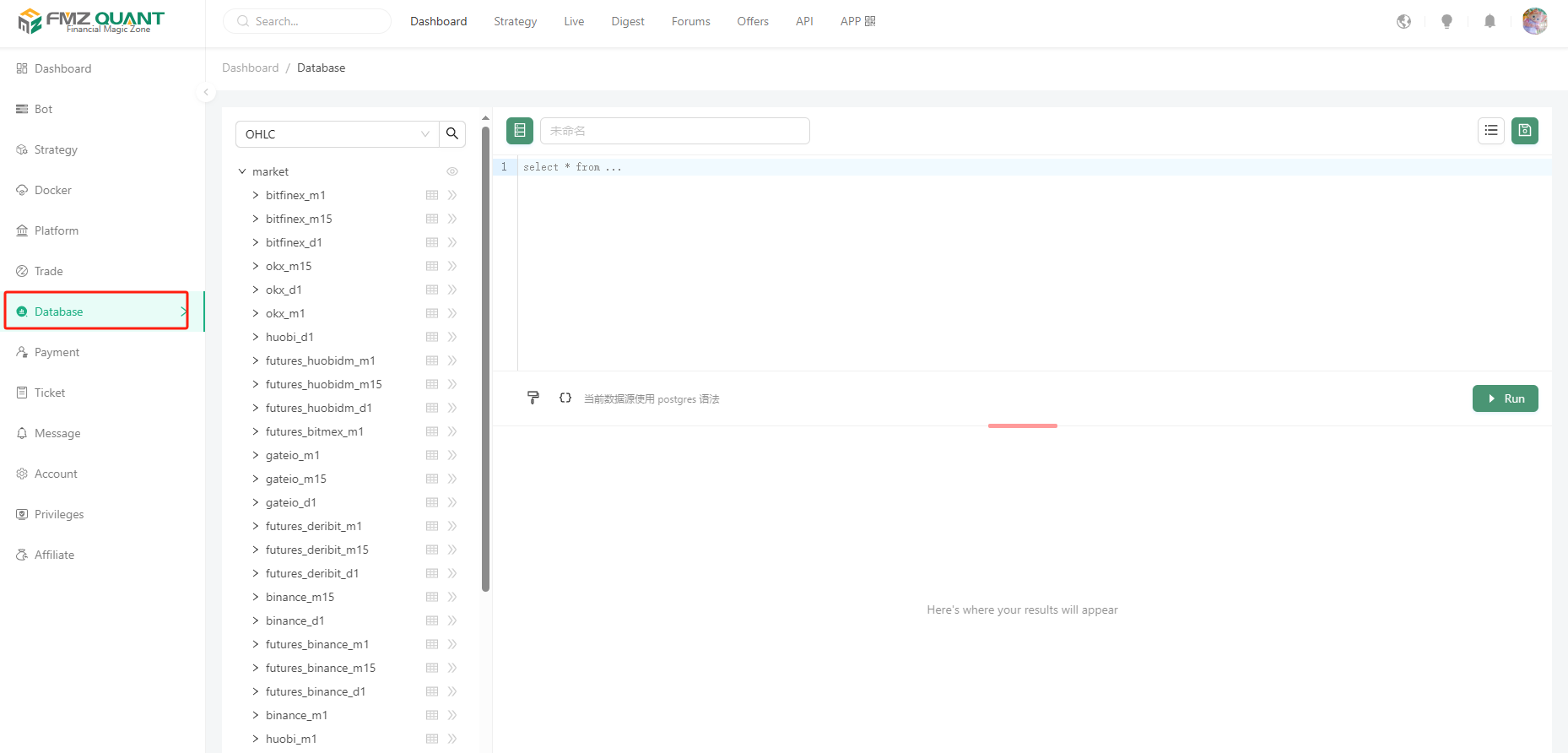

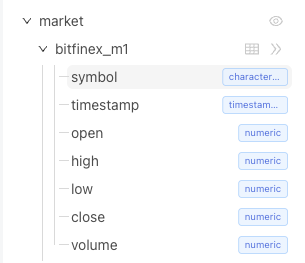

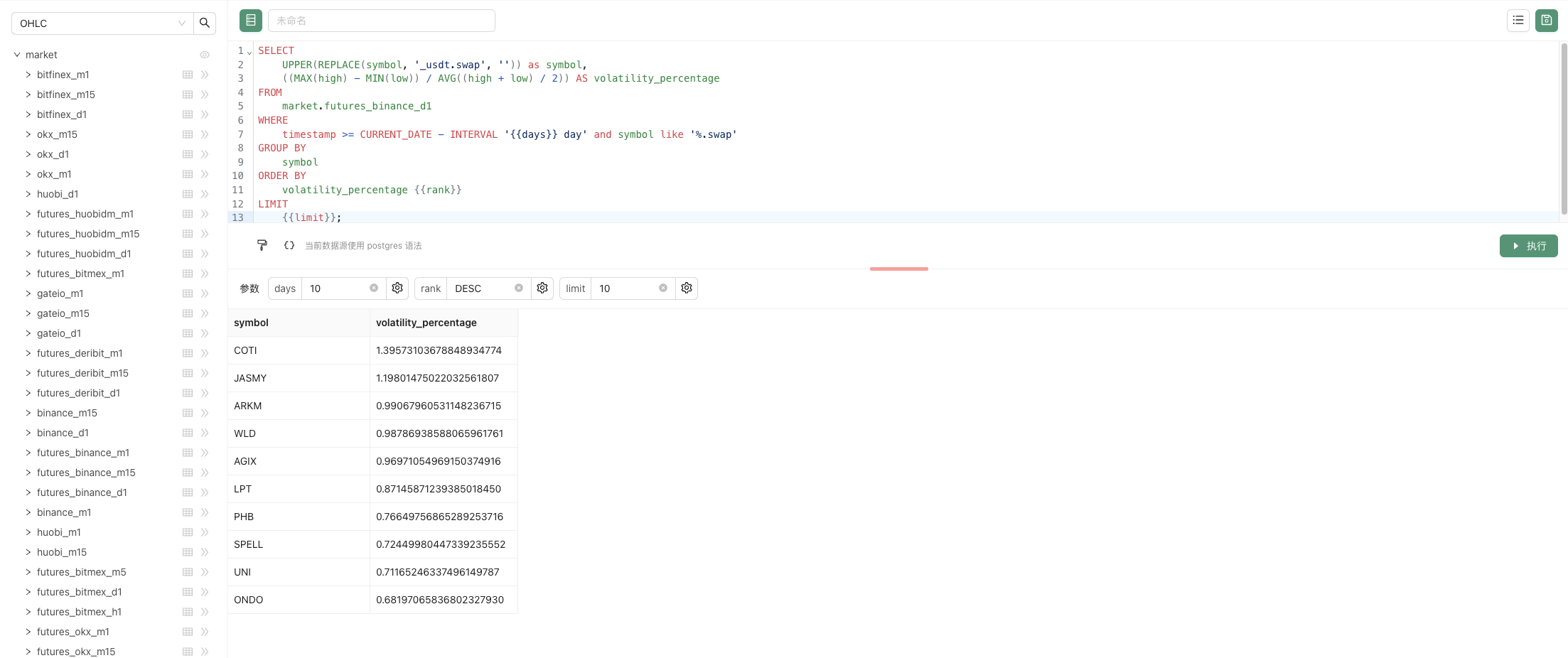

1,868,729 | Quantifying Fundamental Analysis in the Cryptocurrency Market: Let Data Speak for Itself! | Welcome all traders to my channel. Thanks to the FMZ platform, I will share more content related to... | 0 | 2024-05-29T09:06:49 | https://dev.to/fmzquant/quantifying-fundamental-analysis-in-the-cryptocurrency-market-let-data-speak-for-itself-2lf8 | data, fmzquant, trading, cryptocurrency | Welcome all traders to my channel. Thanks to the FMZ platform, I will share more content related to quantitative development, and work with all traders to maintain the prosperity of the quantitative community.

*Are you still unsure where the market stands?*

*Do you feel anxious before entering the market?*

*Are you wondering whether you should sell your coins?*

*Have you watched various "teachers" and "experts" hand out guidance?*

Please don't forget that we are quants: we use data analysis, and we speak objectively!

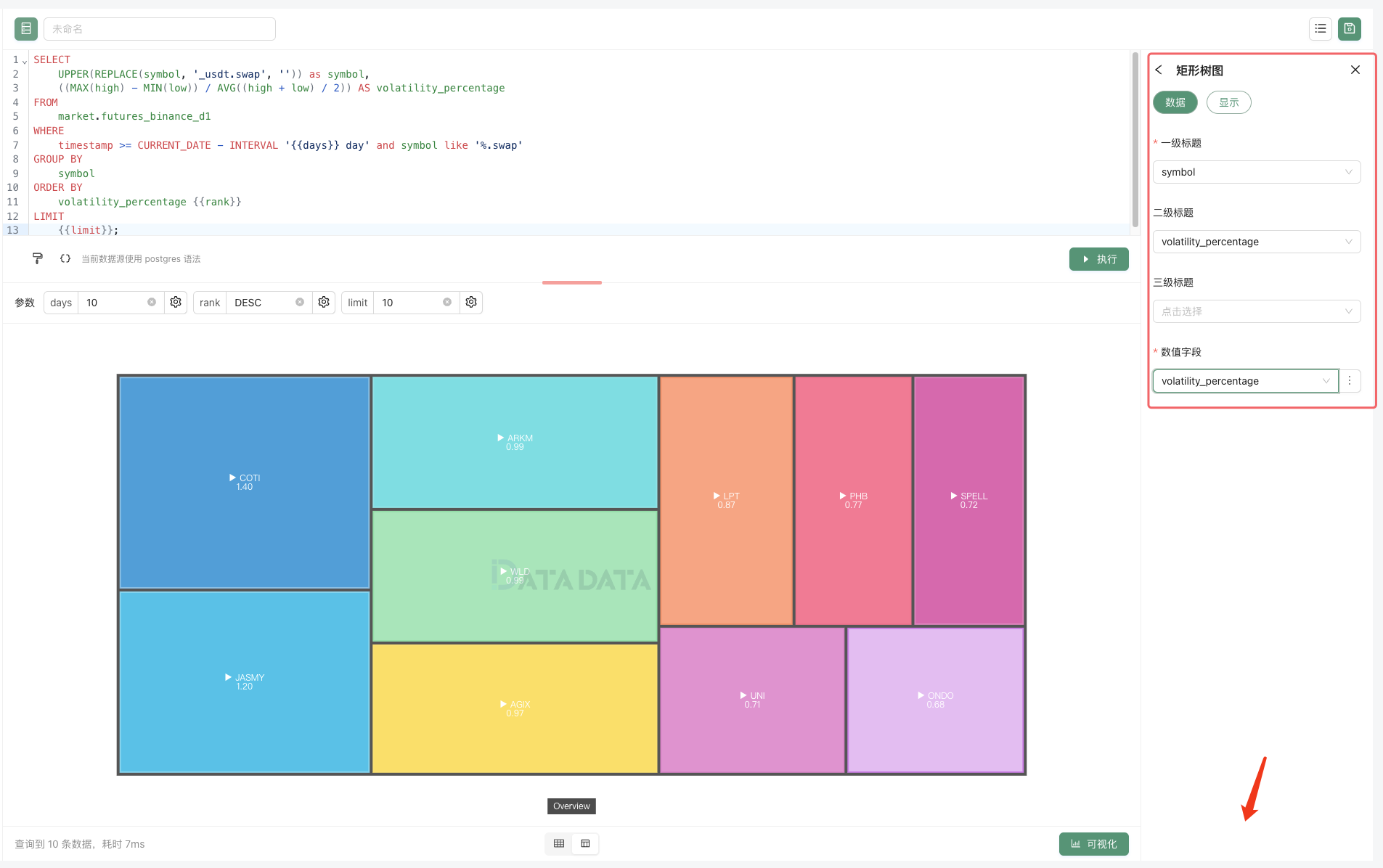

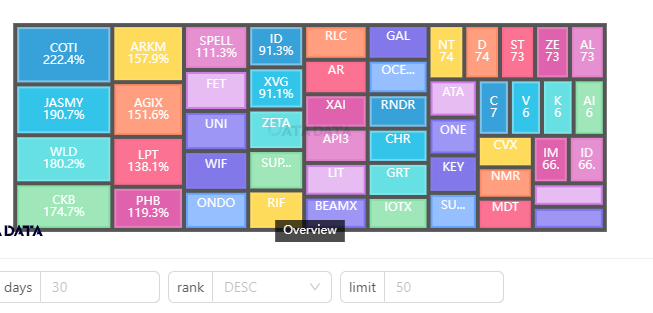

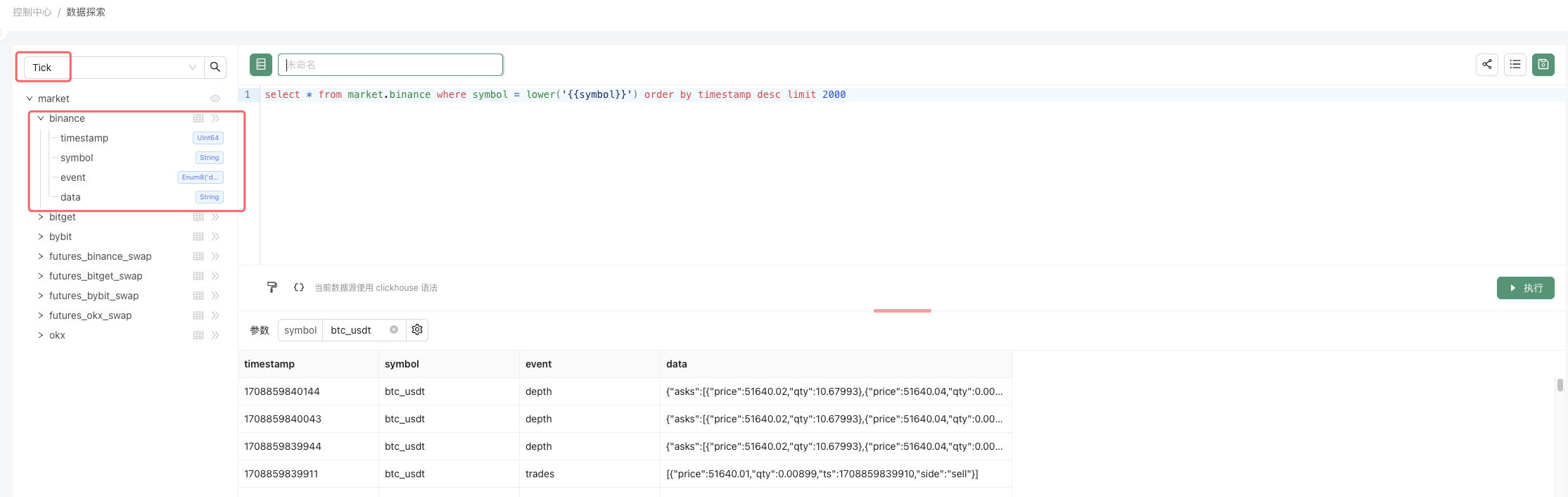

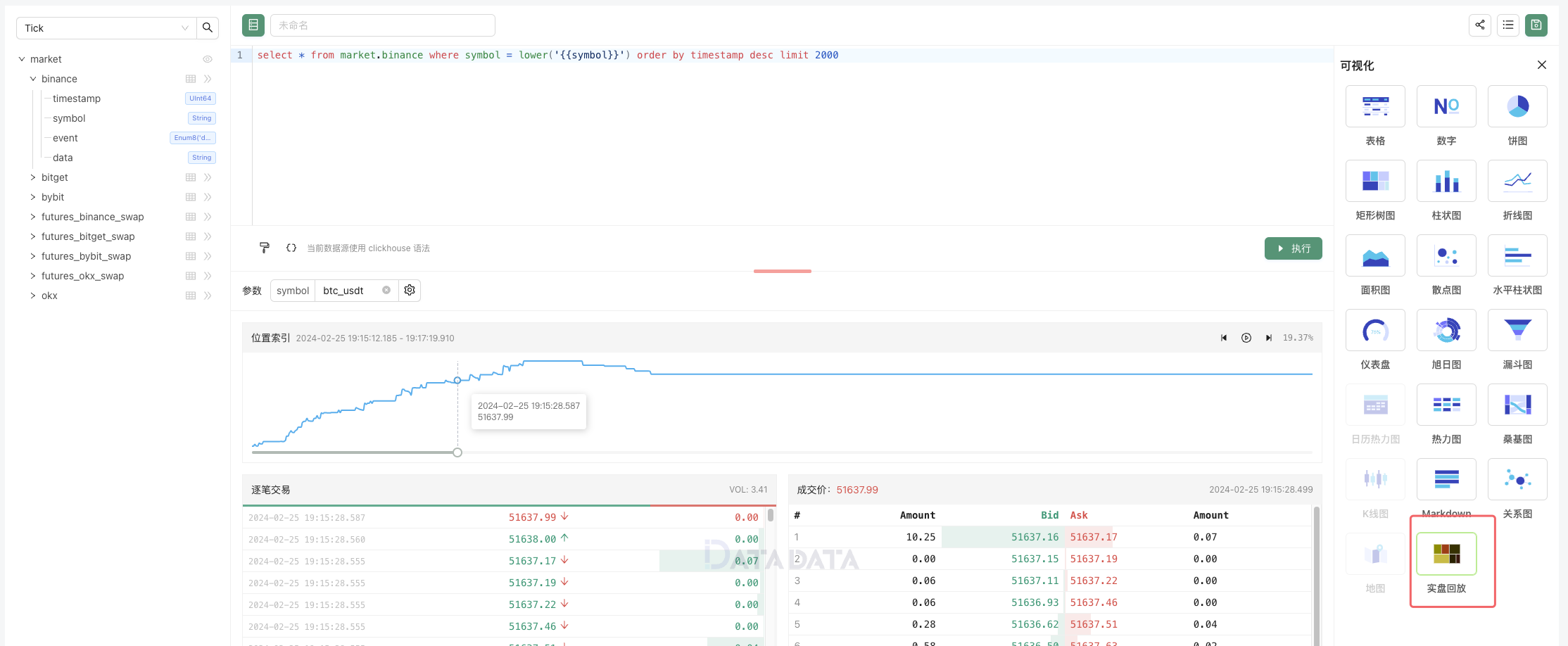

Today I want to introduce some of my fundamental quantitative research on the cryptocurrency market. Every week we will monitor a broad set of fundamental quantitative indicators, present the current market situation objectively, and propose hypotheses about what may come next. We will describe the market through macro fundamental data, capital inflows and outflows, exchange data, derivatives and market trading data, and a range of quantitative indicators (on-chain, miners, etc.). Bitcoin is strongly cyclical and follows a recognizable logic, so history offers many useful reference points. More fundamental indicators are being collected and will be added over time.

**I. Macro Fundamental Data**

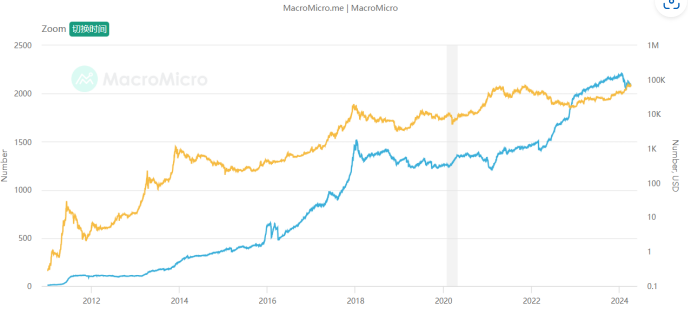

*1. Industry market value and proportion*

The total market value of cryptocurrencies has reached around US$2.5 trillion, one step away from breaking its previous high. Bitcoin itself has already broken its previous high, so if another surge pushes the total market value past its own high, a new bull market for the industry as a whole may be approaching. Meanwhile, Bitcoin's share remains at about 50%, lower than the roughly 60% seen in the 2021 bull market. Even though the recent impact of ETFs has made Bitcoin the hottest product in the market, its share has remained stable at a lower level than in the previous bull market, so I believe crypto projects other than Bitcoin are receiving more financial attention. If the bull market develops further, Bitcoin's share may begin to decline, and more funds will pour into other sectors and assets.

*2. Money supply from the world's four major central banks*

Let's look at the M2 money supply of the world's four major central banks (the United States, Europe, Japan, and China), which represents the amount of fiat money in the market. Unlike fiat currencies, which can be created in large quantities, Bitcoin has a limited supply. It was created in 2008 to help ordinary people protect their wealth against depreciating fiat currency. When the money supply of the four major central banks keeps rising, doubts about the value of fiat currency may grow, which is good for Bitcoin; conversely, when global monetary policy tightens, it is bad for Bitcoin. When Bitcoin reached its new high in this cycle, the annual growth in the money supply of the four major central banks was still low, at 0.94%. We should therefore ask whether more funds will flow into the industry if monetary policy does not change. If the money supply surges, Bitcoin will have more room to rise.

**II. Capital Inflow and Outflow**

*1. Bitcoin ETF*

Bitcoin ETF capital inflows are running high, and total ETF assets have reached US$56 billion, a figure that is correlated with the price of Bitcoin. We need to keep monitoring ETF inflow and outflow trends.

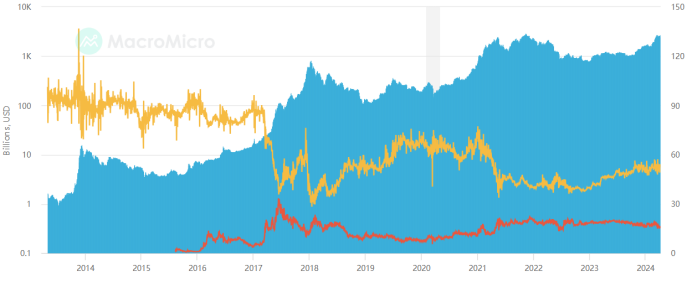

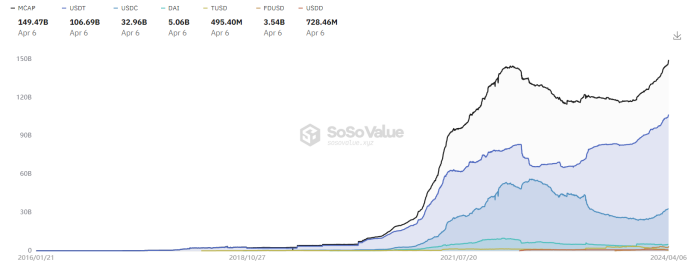

*2. USD stablecoin*

The total market value of U.S. dollar stablecoins has reached US$150 billion. USDT remains firmly in first place by market share, and total stablecoin supply has passed its previous record high, which indicates that Bitcoin's record high has strong dollar support. We need to keep watching the amount of dollars in the market: prices can only hold up when the dollars are there.

**III. Exchange Inflow and Outflow Data**

*1. Exchange token reserves*

Let's look at exchange Bitcoin reserves, defined as the total amount of tokens held in exchange addresses. Total exchange reserves measure the market's potential selling pressure. For spot trading, rising reserves indicate increasing selling pressure; for derivatives trading, high reserves indicate the potential for high volatility. Bitcoin has recently reached new highs while exchange reserves have been declining, which is still a healthy signal. Coins held for appreciation are normally kept in private wallets; they are deposited on an exchange only to be sold on the spot market or used for trading. I think exchange reserves need to be monitored at all times to guard against rising reserves and their longer-term effects. If reserves climb while the price is rising or fluctuating at a high level, that is a topping signal.

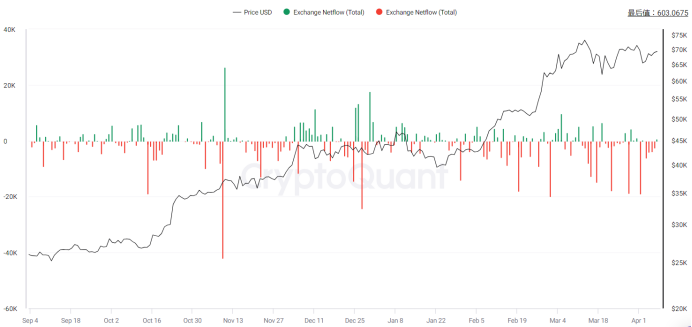

*2. Inflow and outflow of exchange tokens*

Next, we look at the net inflow and outflow of exchanges. Exchange inflow is the act of depositing cryptocurrency into an exchange wallet, and outflow is the act of withdrawing cryptocurrency from an exchange wallet. Exchange net flow is the difference between the BTC flowing into and out of exchanges. Increased inflows could mean that individual wallets, including whales, are preparing to sell, which indicates selling pressure. An increase in outflows, on the other hand, could mean traders are moving coins to their own wallets to HODL, which indicates buying power. A rising trend in either inflows or outflows can also indicate an increase in overall exchange activity, meaning more users are trading actively, which may reflect bullish sentiment. Exchange outflows have been high recently, and both inflows and outflows have been relatively stable. We need to monitor this data at all times and watch for inflows or outflows that exceed the average volume by far more than its standard deviation. Such moves indicate significant market trading behavior, and the change in direction that follows tends to unfold slowly.
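A minimal sketch of that monitoring idea: compute the daily net flow and flag days that sit unusually far from the average. The figures are made-up sample data, and the two-standard-deviation threshold is an assumption chosen for illustration rather than a rule taken from this analysis.

```python
from statistics import mean, stdev


def net_flows(inflows, outflows):
    """Net flow per day: positive means more BTC entered exchanges than left."""
    return [i - o for i, o in zip(inflows, outflows)]


def flag_unusual_flows(flows, threshold=2.0):
    """Return the indexes of flows more than `threshold` std devs from the mean."""
    mu = mean(flows)
    sigma = stdev(flows)
    if sigma == 0:
        return []  # all values identical, nothing stands out
    return [idx for idx, flow in enumerate(flows) if abs(flow - mu) > threshold * sigma]


# Hypothetical daily exchange inflows and outflows in BTC
inflows = [100, 120, 90, 110, 105, 900, 95]
outflows = [110, 115, 100, 100, 110, 100, 105]

flows = net_flows(inflows, outflows)
print(flows)
print(flag_unusual_flows(flows))
```

With real data, a rolling window (for example the last 30 or 90 days) would keep the baseline from being distorted by very old market regimes.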

**IV. Derivatives and Market Trading Behavior**

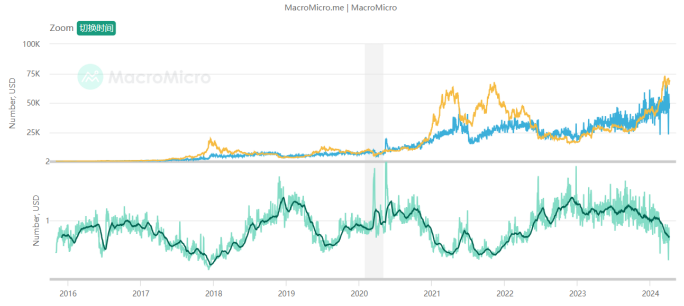

*1. Perpetual funding rate*

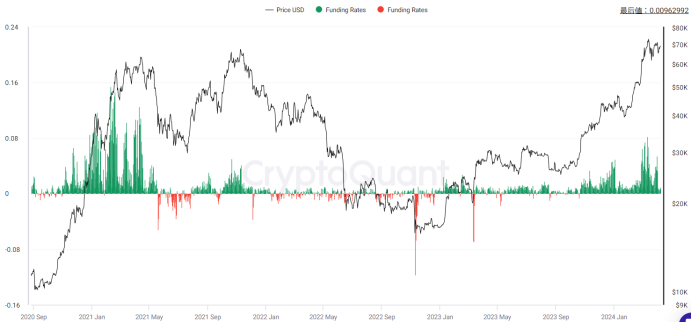

The funding rate is the fee periodically paid between long and short traders, based on the difference between the perpetual contract price and the spot price. It helps perpetual contract prices converge to the index price. All cryptocurrency derivatives exchanges use funding rates for perpetual contracts, expressed as a percentage for a given period and exchange. Funding rates reflect traders' sentiment in the perpetual swap market. A positive funding rate implies bullish sentiment, with long traders paying funding to short traders. Conversely, a negative funding rate suggests bearish sentiment, with short traders paying funding to long traders.

As prices have risen, the Bitcoin funding rate has increased significantly, peaking at 0.1%, which indicates short-term market fervor, though it has since been gradually declining. Over the longer term, there is still a gap compared with funding rates during the broad bull market of 2021. From the funding-rate perspective alone, I believe we are far from a long-term peak. We need to monitor funding rates constantly, paying attention to extreme readings and to whether they are approaching historical highs. In particular, I watch for divergence between funding rates and prices: if prices keep making new highs while funding-rate peaks are lower than their previous highs, the market is overvalued and lacks support, and that scenario would signal a market top.
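To make the direction of payment concrete, here is a simplified sketch of one funding interval. It assumes the payment is simply position notional times funding rate; real exchanges differ in how they value the position and how often funding is settled, so the numbers are illustrative only.

```python
def funding_payment(position_notional: float, funding_rate: float, is_long: bool) -> float:
    """Signed cash flow for one funding interval.

    Negative means the trader pays, positive means the trader receives.
    With a positive rate longs pay shorts; with a negative rate shorts pay longs.
    """
    payment = position_notional * funding_rate
    return -payment if is_long else payment


# Hypothetical 10,000 USD position at the 0.1% peak rate mentioned above
long_flow = funding_payment(10_000, 0.001, is_long=True)
short_flow = funding_payment(10_000, 0.001, is_long=False)
print(long_flow, short_flow)

# The same position under a negative rate: now the long side receives
print(funding_payment(10_000, -0.0005, is_long=True))
```

At a sustained 0.1% per interval, holding a long position becomes expensive, which is why extreme funding rates are read as a sign of overheated sentiment.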

*2. The long-short ratio of the entire network*

Let's look at the ratio of long to short accounts on exchanges. This data shows the leanings of retail investors versus large investors. The total value of long positions in the market always equals the total value of short positions. When the values are equal but the number of holders differs, the side with more holders has a smaller average position and is dominated by retail investors, while the other side is dominated by large investors and institutions. When the long-short ratio reaches a certain level, it means retail investors lean bullish while institutions and large investors lean bearish. Personally, I mainly use this data to look for inconsistency between the overall long-short ratio and the long-short ratio of large investors. It is currently relatively stable, with no obvious signal.

**V. Quantitative Indicators**

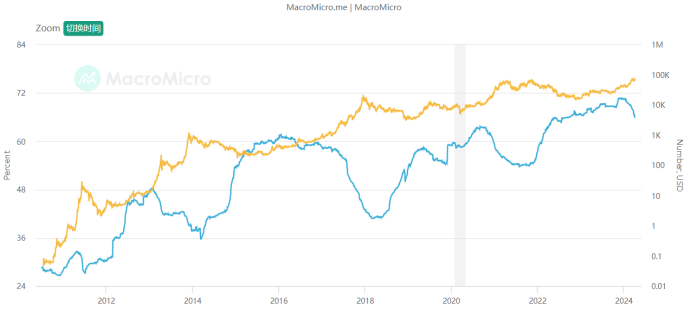

*1. MVRV ratio*

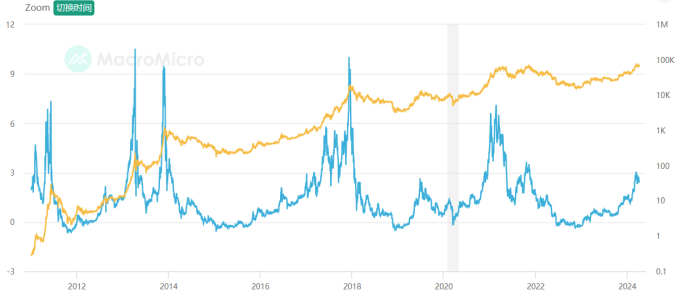

Definition: The MVRV-Z score is a relative indicator: Bitcoin's "circulating market value" minus its "realized market value", standardized by the standard deviation of the circulating market value. The formula is: MVRV-Z Score = (circulating market value - realized market value) / standard deviation of circulating market value. The "realized market value" is based on the value of on-chain transactions: it sums the "last movement value" of every Bitcoin on the chain, that is, each coin valued at the price when it last moved. When this indicator is too high, the market value of Bitcoin is overvalued relative to its realized value, which is detrimental to the price; when it is low, the market value is undervalued. Historically, when the indicator sits at a historical high, the probability of a downward trend in the Bitcoin price increases, and one should be wary of the risk of chasing higher prices.

Explanation: In short, this indicator shows the average cost basis of coins across the entire network. A reading below 1 is low and deserves close attention: buying at that point means paying less than most holders' cost basis, which is a price advantage. A reading around 3 is already very hot and is a suitable range for short-term selling. At present, Bitcoin's MVRV has risen a lot and is gradually entering the selling range, but there is still a little room left to prepare a gradual selling plan. Judging by past declines, I personally think an MVRV of around 3 is a good level at which to start selling.
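The two related measures can be sketched in a few lines. The figures are invented for illustration (in billions of USD), and using the population standard deviation over the whole supplied history is an assumption; data providers may use a different window or estimator.

```python
from statistics import pstdev


def mvrv_ratio(market_cap: float, realized_cap: float) -> float:
    """Plain MVRV: market value divided by realized value."""
    return market_cap / realized_cap


def mvrv_z_score(market_cap_history, realized_cap: float) -> float:
    """(latest market cap - realized cap) / std dev of the market cap history."""
    current_market_cap = market_cap_history[-1]
    return (current_market_cap - realized_cap) / pstdev(market_cap_history)


# Hypothetical market cap history and current realized cap, in billions of USD
market_cap_history = [400, 600, 800, 1000, 1200]
realized_cap = 500

print(round(mvrv_ratio(market_cap_history[-1], realized_cap), 2))
print(round(mvrv_z_score(market_cap_history, realized_cap), 2))
```

The thresholds of 1 and 3 discussed above apply to the plain ratio; the Z-score lives on a different scale and needs its own historical reference levels.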

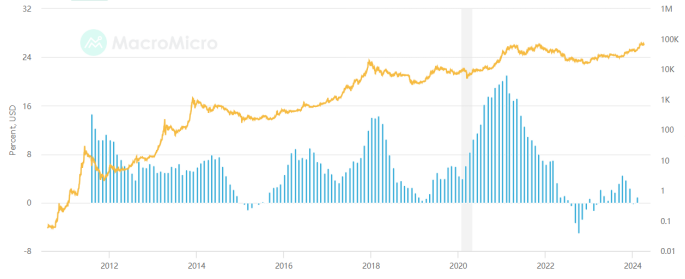

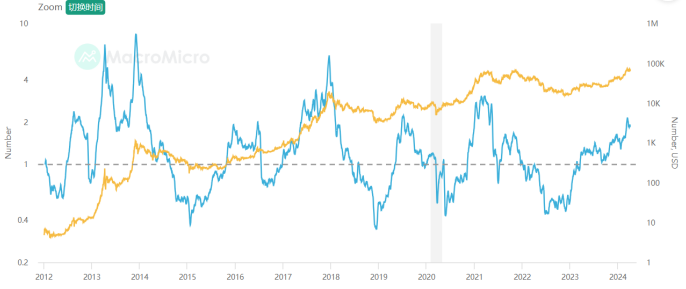

*2. Puell Multiple*

Definition: The Puell Multiple is "the ratio of current miner revenue to its average over the past 365 days". Miner revenue consists mainly of the market value of newly issued Bitcoins (the new supply goes to miners) plus related transaction fees, and serves as an estimate of miners' income. The formula is: Puell Multiple = miner revenue (market value of newly issued Bitcoins) / 365-day moving average of miner revenue (both in US dollars). Selling mined Bitcoins is miners' main source of revenue, and it covers the capital invested in mining equipment and the electricity consumed during mining. The average miner revenue over the past period can therefore be regarded, indirectly, as the minimum threshold needed to cover the opportunity cost of miners' operations. If the Puell Multiple is well below 1, miners' profit growth lacks momentum, so their motivation to expand investment in mining is reduced; conversely, if the Puell Multiple is greater than 1, miners' profits are still growing and they have a motive to expand investment in mining.

Explanation: The current Puell Multiple is high, greater than 1 and close to its historical high. Considering that the extreme values have been lower in each successive bull market, we should start to consider a gradual selling plan.
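The formula translates directly into code. In this sketch the revenue series is made up, and including the current day in the 365-day average is an assumption; data providers may define the window slightly differently.

```python
def puell_multiple(daily_revenue_usd, window: int = 365) -> float:
    """Latest daily miner revenue divided by its trailing `window`-day average."""
    if len(daily_revenue_usd) < window:
        raise ValueError(f"need at least {window} days of revenue data")
    recent = daily_revenue_usd[-window:]
    moving_average = sum(recent) / window
    return daily_revenue_usd[-1] / moving_average


# Hypothetical series: a flat year of revenue, then one day at double the level
flat_year = [10.0] * 365
spike = [10.0] * 364 + [20.0]

print(puell_multiple(flat_year))
print(round(puell_multiple(spike), 3))
```

A flat revenue series gives a multiple of exactly 1, which is why readings well above or below 1 are treated as the informative ones.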

*3. Transfer fee per transaction in USD*

Definition: The average fee per transaction, in USD.

Explanation: We need to pay attention to extreme transfer fees. Every on-chain transfer carries information, and an extreme fee signals an urgent, large-scale move. Historically, such fees have been an important reference for market tops. So far, no single transfer has carried an unusually extreme fee.

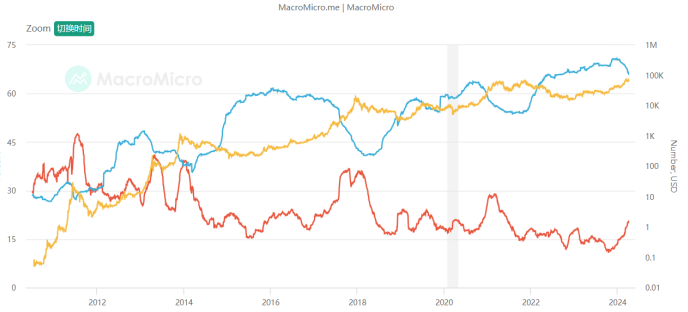

*4. Ratio of retail to large-holder Bitcoin addresses*

Definition: The distribution of addresses by the number of Bitcoins they hold gives a rough picture of holding trends. We separate retail investors (addresses holding fewer than 10 Bitcoins) from large investors (addresses holding more than 1,000 Bitcoins) and calculate the ratio of retail addresses to large-holder addresses. When the ratio rises, the number of retail investors holding Bitcoin has increased. Toward the end of a bull market, large holders distribute their coins to more retail investors, and the upward trend in the Bitcoin price rests on a relatively loose footing; when the ratio falls, the price increase is relatively stable.

Explanation: Continuous monitoring is required. When large investors keep distributing coins to retail investors, it is time to consider gradually exiting.

*5. Bitcoin mining cost*

Definition: Based on global Bitcoin "power consumption" and the "daily number of newly issued coins", the average cost for miners to produce one Bitcoin can be estimated. When the price of Bitcoin is above the production cost and miners are profitable, they may expand their equipment or new miners may join, which raises mining difficulty and production costs; conversely, when the price is below the production cost, miners scale down or quit, which lowers mining difficulty and production costs. In the long term, market mechanisms make Bitcoin prices and production costs move in step, because whenever a gap opens between price and cost, miners enter or exit the market, causing the two to converge.

Explanation: We need to focus on the ratio of the mining cost per Bitcoin to the market price. This ratio is mean-reverting: it reflects how the price fluctuates around, and returns to, its relative value, which makes it very useful for long-term timing. The ratio fluctuates around 1 and is currently below 1, indicating that the price has started to be overvalued relative to production cost, and it is gradually approaching the historical lows at which it makes sense to start exiting.

*6. Long-term dormant currency age ratio*

Definition: This indicator counts the total number of Bitcoins whose most recent transaction was more than one year ago. A larger value means a larger share of Bitcoin is being held for the long term, which is good for the cryptocurrency market; a smaller value means a larger share is being traded, which may reveal that large investors are taking profits and is bad for market performance. In past Bitcoin bull markets, a downward trend in this indicator has usually preceded the end of the bull market. When the indicator is at a historically low level, the bull market is more likely to be ending, so it can be treated as a leading indicator of a Bitcoin market top.

Explanation: As the bull market progresses, more and more dormant Bitcoins wake up and start trading. We need to watch for the downward trend in this value to stabilize, which is characteristic of a top. So far it has not started to plateau after the decline.

*7. Ratio of long and short currency ages*

Definition: The three-month traded ratio is the number of Bitcoins that have been traded within the last three months divided by the total number of Bitcoins. When it trends upward, a larger proportion of Bitcoins has changed hands recently, turnover is increasing, and market activity is sufficient. When it trends downward, short-term turnover is decreasing. The over-one-year untraded ratio is the number of Bitcoins that have not been traded for over a year divided by the total number of Bitcoins. When it trends upward, a larger proportion of Bitcoins has been dormant for a long time, indicating growing long-term holding intentions. When it trends downward, that proportion is shrinking, indicating that coins previously held for the long term are being released.

Explanation: What to watch for is the short-term ratio starting to plateau after rising while the long-term ratio has fallen, which is characteristic of a top. Currently, the long-term ratio is still decreasing and the short-term ratio is still increasing, so conditions are relatively healthy overall.

**VI. Summary**

In one sentence: we are currently in the middle of the bull market, and many indicators are performing well. However, overheating should increasingly be taken into account. We can start to formulate an exit plan and exit gradually once one or more fundamental quantitative indicators stop supporting the bull market. Of course, these are just a few representative examples of fundamental quantitative analysis. I will keep collecting and integrating more fundamental quantitative research on the crypto market. You are welcome to follow along and join the discussion!

We are quants: we use data analysis, so we no longer have to believe all kinds of bullshit. We use objectivity to build and revise our expectations!

From: https://blog.mathquant.com/2024/04/09/quantifying-fundamental-analysis-in-the-cryptocurrency-market-let-data-speak-for-itself.html | fmzquant |

1,868,728 | A Thorough Analysis of hCAPTCHA and How to Bypass | Introduction to hCAPTCHA hCAPTCHA is a sophisticated captcha system designed to differentiate... | 0 | 2024-05-29T09:05:51 | https://dev.to/media_tech/a-thorough-analysis-of-hcaptcha-and-how-to-bypass-5ab4 | **Introduction to hCAPTCHA**

hCAPTCHA is a sophisticated captcha system designed to differentiate between human users and automated bots. It emerged as an alternative to Google’s reCAPTCHA, offering similar functionality but with a stronger emphasis on privacy and security. Implemented on numerous websites, hCAPTCHA is an essential tool in preventing automated abuse and spam.

**How hCAPTCHA Works**

At its core, hCAPTCHA operates by presenting challenges that are easy for humans but difficult for bots. These challenges often involve image recognition tasks where users must identify specific objects within a grid of images. The underlying technology relies on machine learning algorithms and large datasets to continually refine its ability to distinguish between human and automated interactions.

**Advantages of hCAPTCHA**

Enhanced Privacy: Unlike reCAPTCHA, hCAPTCHA does not track users' online behavior, thereby offering better privacy protections.

Monetization: Websites can earn revenue through hCAPTCHA by training machine learning models, as companies pay for the labeled data.

Accessibility: hCAPTCHA provides accessible alternatives for users who have difficulties with standard visual challenges.

**Challenges Posed by hCAPTCHA**

Despite its advantages, hCAPTCHA presents several challenges for users and developers:

**User Experience:** The difficulty of some challenges can frustrate users, leading to a potential drop in website engagement.

**Accessibility Issues:** Although alternatives are provided, users with disabilities may still struggle with the challenges.

**Implementation Complexity:** Integrating hCAPTCHA into a website can be more complex compared to other captcha solutions.

**Understanding Captcha Solvers**

Captcha solvers are tools or services designed to bypass captcha challenges automatically. They can be implemented through software algorithms or human-based solving services. These solvers typically work by:

**Image Recognition Algorithms:** Using advanced machine learning techniques to identify and solve captcha challenges.

**Human Solvers:** Outsourcing captcha-solving tasks to human workers who manually complete the challenges.

**Bypassing hCAPTCHA with Captcha Solvers**

Bypassing hCAPTCHA requires sophisticated methods due to its robust design. Below, we explore some of the techniques used by captcha solvers to overcome hCAPTCHA challenges.

**Machine Learning Approaches**

Captcha solvers leveraging machine learning can be incredibly effective.

These systems are trained on large datasets of hCAPTCHA challenges and responses.

**Here’s how they generally work:**

**Data Collection:** Gather a substantial amount of labeled captcha data.

**Model Training:** Use the data to train a deep learning model capable of recognizing patterns and solving captcha challenges.

**Real-time Processing:** Deploy the trained model to solve hCAPTCHA challenges in real time as they appear on websites.

**Human-based Solvers**

Human-based captcha solving services employ a network of human workers who manually solve captcha challenges. This method, while slower than automated solutions, is highly effective and can bypass almost any captcha system. The process typically involves:

**Capture and Forward:** The captcha challenge is captured and sent to a pool of human solvers.

**Manual Solving:** Human workers solve the captcha and send the response back.

**Submission:** The response is submitted to the target website, bypassing the captcha verification.

**Implications and Countermeasures**

The ability to bypass hCAPTCHA has serious implications for online security. Websites rely on captcha systems to prevent abuse, and bypassing these measures can lead to increased vulnerability to automated attacks. To combat these threats, website administrators can implement additional layers of security, such as:

**Behavioral Analysis:** Monitoring user behavior to detect anomalies indicative of automated interactions.

**Rate Limiting:** Restricting the number of attempts from a single IP address or user within a specified time frame.

**Advanced Authentication:** Utilizing multi-factor authentication (MFA) to add an extra layer of security.
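As an illustration of the rate-limiting countermeasure, here is a minimal fixed-window limiter sketch. The class name, the in-memory `Map`, and the choice of a fixed window are assumptions made for the example; a production setup would more likely rely on a shared store and the web framework's own middleware.

```typescript
// Fixed-window limiter: at most `limit` attempts per key within each window.
class FixedWindowRateLimiter {
  private readonly windows = new Map<string, { start: number; count: number }>();
  private readonly limit: number;
  private readonly windowMs: number;

  constructor(limit: number, windowMs: number) {
    this.limit = limit;
    this.windowMs = windowMs;
  }

  // Returns true if the attempt is allowed, false if the key is over its limit.
  // `key` would typically be an IP address or a user identifier.
  allow(key: string, nowMs: number = Date.now()): boolean {
    const current = this.windows.get(key);
    // Start a fresh window if none exists or the previous one has expired.
    if (!current || nowMs - current.start >= this.windowMs) {
      this.windows.set(key, { start: nowMs, count: 1 });
      return true;
    }
    if (current.count < this.limit) {
      current.count += 1;
      return true;
    }
    return false;
  }
}
```

A request handler would call `allow(clientIp)` before processing a form submission and reject or challenge the request when it returns `false`.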

**Conclusion**

hCAPTCHA serves as a robust tool for distinguishing between human users and bots, offering significant advantages in terms of privacy and security. However, the challenges it poses, particularly in user experience and accessibility, must be carefully managed. While captcha solvers can bypass hCAPTCHA, their use raises ethical and legal concerns. As such, continuous advancements in captcha technology and security measures are essential to maintaining the integrity of online platforms.

**Human techniques for bypassing CAPTCHA, especially hCAPTCHA, are inefficient and costly, consuming significant time and resources. This manual process is a waste of both money and time.**

**On the other hand, using a CaptchaAI solver to bypass CAPTCHA automatically is highly efficient. This Captcha solving service employs OCR technology, saving time by quickly solving CAPTCHAs. Additionally, it offers unlimited Captcha solving at a fixed price, unlike other services that charge per CAPTCHA, making it a cost-effective solution.**

| media_tech | |

1,869,019 | SPFx extensions: discover the Application Customizer | If you’re here probably you’re wondering: “What’s an Application Customizer?” An Application... | 0 | 2024-05-29T17:39:19 | https://iamguidozam.blog/2024/05/29/spfx-extensions-discover-the-application-customizer/ | development, applicationcustomize, spfx | ---

title: SPFx extensions: discover the Application Customizer

published: true

date: 2024-05-29 09:00:00 UTC

tags: Development,ApplicationCustomize,SPFx

canonical_url: https://iamguidozam.blog/2024/05/29/spfx-extensions-discover-the-application-customizer/

---

If you’re here probably you’re wondering: “What’s an Application Customizer?”

An Application Customizer is a custom SharePoint Framework extension that enables the customization of two placeholders (top and bottom) and can also execute code when a SharePoint Online page opens; for example, you can prompt the user with a privacy statement inside a dialog.

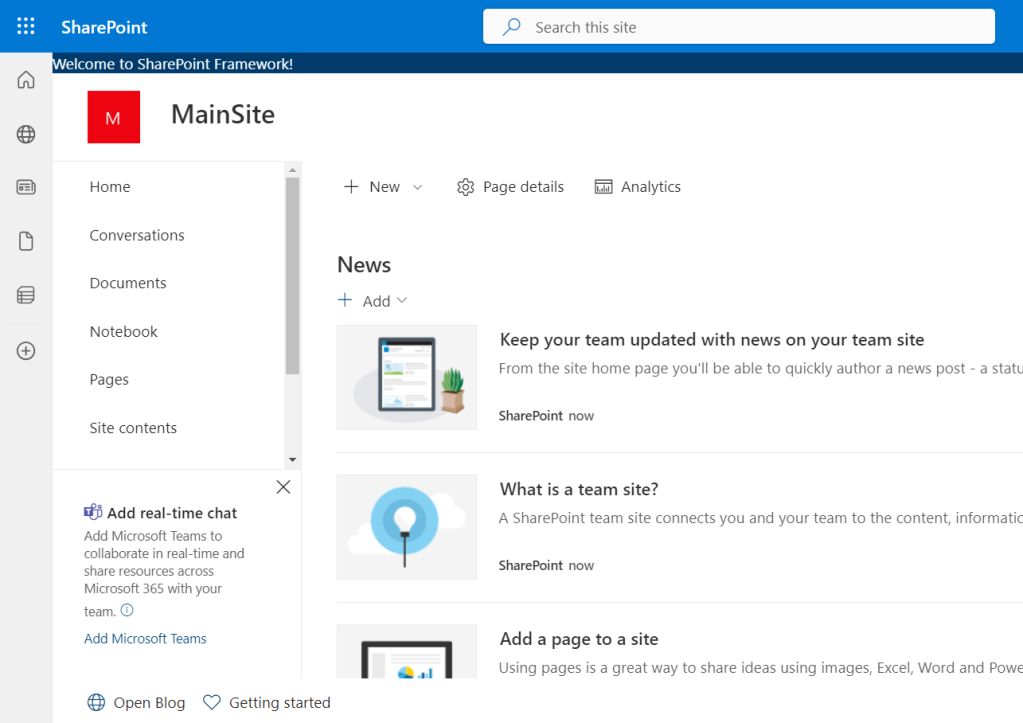

In the sample solution I’ve created I wanted to display the use of the two placeholders, this is my dev environment main page:

As you can see there are, at the top and in the bottom part of the screen, a couple of different controls rendered. In detail here is the top placeholder which contains only a simple text:

The bottom placeholder is composed of a component that contains two buttons: the first one opens the home page of my blog and the second one opens the page on the PnP site about how to get started with SPFx extensions ([this is the link](https://pnp.github.io/blog/post/spfx-03-getting-started-with-spfx-extensions-for-spo/) if you want to take a look):

## Creating the Application Customizer

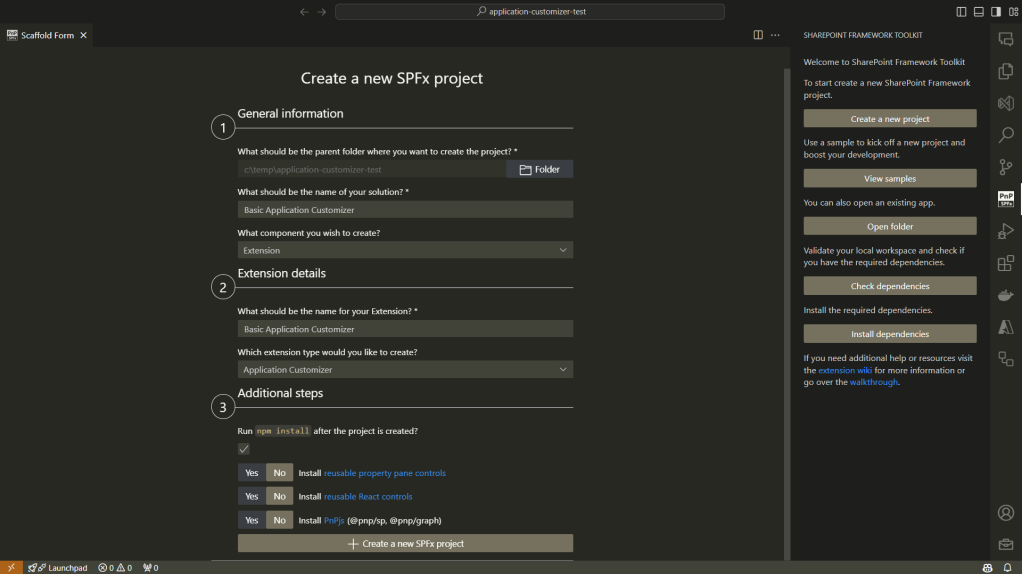

You can create the solution using the SharePoint Framework Toolkit which is a very nice and always improving VSCode extension, as you can see this is the new solution setup page:

On this page it’s possible to define:

- where the solution is located

- what’s the name of the solution

- what extension is contained within the solution

- what’s the extension name

- a couple of additional steps and configuration

> If you’re interested in this extension you can find it [here](https://marketplace.visualstudio.com/items?itemName=m365pnp.viva-connections-toolkit&ssr=false) or on the VSCode extension marketplace searching by “SharePoint Framework Toolkit”.

## Show me the code

If you’re interested in viewing the full code of this sample solution you can find it [here](https://github.com/GuidoZam/blog-samples/tree/main/Application%20customizers/basic-application-customizer) on GitHub.

When creating the solution a new TypeScript file will be created under the path _src\extensions\{name of the extension}_ which contains the code for the application customizer and the class definition will be something like:

```

export default class BasicApplicationCustomizer extends BaseApplicationCustomizer<IBasicApplicationCustomizerProperties>

```

The `BaseApplicationCustomizer` is the base class from which the newly created application customizer will inherit and the main method that needs to be implemented to allow the control to work as expected is the `onInit` method. This method will contain all the code to manage the application customizer behavior, in the sample solution I only defined two calls to the methods that create the top and bottom placeholder controls:

```

protected onInit(): Promise<void> {

Log.info(LOG_SOURCE, "Initialized BasicApplicationCustomizer");

// Handling the top placeholder

this._renderTopPlaceHolder();

// Handling the bottom placeholder

this._renderBottomPlaceHolder();

return Promise.resolve();

}

```

> NB: In my sample application I didn’t need any asynchronous operation but if needed you can set the onInit method as async.
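To illustrate the note above, here is a small sketch of an `async` `onInit` that waits for some data before rendering. So that it runs on its own, it uses a stand-in base class instead of the real `BaseApplicationCustomizer`; `StandInCustomizer`, `loadBannerMessage`, and the render helpers are all hypothetical names, not part of SPFx or of the sample solution.

```typescript
// Stand-in for SPFx's BaseApplicationCustomizer so the sketch runs on its own.
abstract class StandInCustomizer {
  protected abstract onInit(): Promise<void>;

  // Plays the role of the framework calling onInit when the page loads.
  public async start(): Promise<void> {
    await this.onInit();
  }
}

// Hypothetical asynchronous work, e.g. loading a banner message from an API.
async function loadBannerMessage(): Promise<string> {
  return "Welcome!";
}

class AsyncCustomizerSketch extends StandInCustomizer {
  public rendered: string[] = [];

  protected async onInit(): Promise<void> {
    // Wait for the data before rendering anything that depends on it.
    const message = await loadBannerMessage();
    this.renderTop(message);
    this.renderBottom();
    // No explicit Promise.resolve(): an async method already returns a promise.
  }

  private renderTop(message: string): void {
    this.rendered.push(`top: ${message}`);
  }

  private renderBottom(): void {
    this.rendered.push("bottom");
  }
}
```

In a real customizer the same shape applies: mark `onInit` as `async`, `await` whatever is needed, then call the placeholder rendering methods.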

Let’s take a look at the Top placeholder of the Application Customizer; the Bottom placeholder is the same with a few small changes, for example the placeholder name is Bottom instead of Top. Since the differences are minimal, I will cover only the Top placeholder here. If you want to check out the code for both placeholders, you can have a look at the solution on GitHub.

When creating the solution, an import statement from “@microsoft/sp-application-base” is generated; it needs the `PlaceholderContent` and `PlaceholderName` imports added, as follows:

```

import {

BaseApplicationCustomizer,

PlaceholderContent,

PlaceholderName,

} from "@microsoft/sp-application-base";

```

The newly imported class and enumeration are required for a couple of things; for example, the `PlaceholderContent` class is used to define a class-level property that holds the placeholder content:

```

private _topPlaceholder: PlaceholderContent | undefined;

```

To create the content of the top placeholder we first need to get the placeholder content. To do so, the current context exposes a specific property (`placeholderProvider`) with a method (`tryCreateContent`). The method needs to know which type of placeholder we want to create, and that’s where the `PlaceholderName` enumeration comes in handy:

```

this.context.placeholderProvider.tryCreateContent(

PlaceholderName.Top,

{ onDispose: this._handleDispose }

);

```

The `tryCreateContent` method tries to create the content for the placeholder specified by the first argument, which is a value from the `PlaceholderName` enumeration. Currently the available values are:

- `Top`

- `Bottom`

The `_handleDispose` method is used in case there are some operations to be performed when disposing the control, in this sample it’s a simple placeholder:

```

private _handleDispose(): void {

console.log("[BasicApplicationCustomizer._handleDispose] Disposed custom top and bottom placeholders.");

}

```

I’ve created a couple of React components to be rendered inside the Application Customizer placeholders. To insert a React component in the placeholder you can, after retrieving the placeholder content, render into it:

```

const element = React.createElement(TopComponent, {});

ReactDom.render(element, this._topPlaceholder.domElement);

```

The full method for the Top placeholder will be something like the following:

```

private _renderTopPlaceHolder(): void {

console.log(this.context.placeholderProvider.placeholderNames);

if (!this._topPlaceholder) {

this._topPlaceholder = this.context.placeholderProvider.tryCreateContent(

PlaceholderName.Top,

{ onDispose: this._handleDispose }

);

if (!this._topPlaceholder) {

return;

}

if (this._topPlaceholder.domElement) {

const element = React.createElement(TopComponent, {});

ReactDom.render(element, this._topPlaceholder.domElement);

}

}

}

```

The TopComponent will simply render the following:

```

public render(): JSX.Element {

return (

<div className={"ms-bgColor-themeDark ms-fontColor-white"}>

{strings.TopMessage}

</div>

);

}

```

To test it you can update the _pageUrl_ in the _serve.json_ file to point at your dev SharePoint site and run the following command:

```

gulp serve

```

## Conclusion

The Application Customizer is a SharePoint Framework extension that allows more customization of the SharePoint UI and UX. For example, it can place an informative banner at the top of the page, or add a breadcrumb to enable a different navigation on the site. If you’re wondering what you can achieve, there are a bunch of samples in the [Microsoft Sample Solution Gallery](https://adoption.microsoft.com/en-us/sample-solution-gallery).

Hope this helps! | guidozam |

1,868,727 | OPIUM Massage in Prague | Are you looking for the most flawless, mind-blowing, toe-curling Erotic Massage Prague service? An... | 0 | 2024-05-29T08:57:23 | https://dev.to/opium2200/opium-massage-in-prague-1o51 | Are you looking for the most flawless, mind-blowing, toe-curling [Erotic Massage](https://eroticprag.cz/) Prague service? An exclusive and sophisticated tantric massage that arouses the very core of your being and stimulates your racy imagination?

We have opened a new erotic massage parlour in the center of Prague.

| opium2200 | |

1,868,726 | Amazing React 19 Updates 🔥🔥😍... | ReactJS stands out as a leading UI library in the front-end development sphere, and my admiration for... | 0 | 2024-05-29T08:56:28 | https://dev.to/srijanbaniyal/amazing-react-19-updates--4g5a | javascript, react, frontend, webdev | **ReactJS stands out as a leading UI library in the front-end development sphere, and my admiration for it is fueled by the dedicated team and the vibrant community that continually supports its growth.**

**<u>The prospects for React are filled with promise and intrigue. To distill it into a single line, one could simply state: 'Minimize Code, Maximize Functionality'.</u>**

**Throughout this blog, I aim to delve into the fresh elements introduced in React 19, enabling you to delve into new functionalities and grasp the evolving landscape.**

**Please bear in mind that React 19 is currently undergoing development. Remember to refer to the official guide on GitHub and follow the official team on social platforms to stay abreast of the most recent advancements.**

---

1. Overview of New Features in React 19
2. React Compiler
3. Server Components
4. Actions
5. Document Metadata
6. Asset Loading
7. Web Components
8. New React Hooks
9. Wanna Try Out React 19 🤔??

---

# ✨Overview of New Features in React 19✨

Here's a brief rundown of the exciting new features that React 19 is set to bring:

- 🎨 React Compiler Breakthrough: React enthusiasts are eagerly anticipating the arrival of a cutting-edge compiler. Already in use by Instagram, this technology will soon be integrated into future React versions.

- 🚀 Server Component Innovation: After years of development, React is unveiling server components, a groundbreaking advancement compatible with Next.js.

- 🔬 Revolutionary DOM Interactions AKA Actions: Brace yourself for the game-changing impact of the new Actions feature on how we engage with DOM elements.

- 📝 Streamlined Document Metadata: Expect a significant upgrade in enhancing developers' efficiency with a leaner code approach.

- 🖼️ Efficient Assets Loading: Say goodbye to tedious loading times as React's new asset loading capability enhances both app load times and user experiences.

- ⚙️ Web Component Integration: Excitingly, React code will seamlessly incorporate web components, opening up a world of possibilities for developers.

- 🪝 Enhanced Hooks Ecosystem: Anticipate a wave of cutting-edge hooks coming your way soon, poised to transform the coding landscape.

React 19 will address the persistent challenge of excessive re-renders in React development, enhancing performance by autonomously managing re-renders. This marks a shift from the manual use of useMemo(), useCallback(), and memo, resulting in cleaner and more efficient code and streamlining the development process.

---

# 🎨React Compiler🎨

One common approach to optimizing these re-renders has been the manual use of useMemo(), useCallback(), and memo APIs. The React team initially considered this a "reasonable manual compromise" in their effort to delegate the management of re-renders to React.

Recognizing the cumbersome nature of manual optimization and buoyed by community feedback, the React team set out to address this challenge. Hence, they introduced the "React compiler", which takes on the responsibility of managing re-renders. This empowers React to autonomously determine the appropriate methods and timing for updating state and refreshing the UI.

As a result, developers are no longer required to perform these tasks manually, and the need for useMemo(), useCallback(), and memo is alleviated.

As this functionality is set to be included in a future release of React, you can delve deeper into the details of the compiler by exploring the following sources:

[React Compiler: In-Depth by Jack Herrington](https://www.youtube.com/watch?v=PYHBHK37xlE)

[React Official Documentation](https://react.dev/learn/react-compiler)

Consequently, React will autonomously determine the optimization of components and the timing for the same, as well as make decisions on what needs to be re-rendered.
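For intuition, the kind of caching that `useMemo()` asks you to wire up by hand looks roughly like the sketch below: recompute only when the input changes. This is plain TypeScript with a hypothetical `memoizeLast` helper, offered as a mental model only; it is not how the React Compiler is implemented, which this post does not cover.

```typescript
// Remembers the most recent argument and result; recomputes only on change.
function memoizeLast<A, R>(compute: (arg: A) => R): (arg: A) => R {
  let hasValue = false;
  let lastArg: A | undefined;
  let lastResult: R | undefined;
  return (arg: A): R => {
    if (!hasValue || !Object.is(arg, lastArg)) {
      lastArg = arg;
      lastResult = compute(arg);
      hasValue = true;
    }
    return lastResult as R;
  };
}

let calls = 0;
const isEmpty = memoizeLast((text: string) => {
  calls += 1; // counts how often the real work runs
  return text.trim() === "";
});

isEmpty("hello"); // computes
isEmpty("hello"); // same input: cached result, no recomputation
isEmpty("");      // input changed: computes again
```

The promise of the compiler is that this bookkeeping no longer has to be written, or even thought about, in component code.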

---

# 🚀Server Components🚀

If you're not aware of server components, you're overlooking a landmark advancement in React and Next.js.

Historically, React components predominantly operated on the client side. However, React is now disrupting the status quo by introducing the innovative notion of server-side component execution.

The concept of server components has been a topic of discussion for years, with Next.js leading the charge by incorporating them into production. Beginning with Next.js 13, all components in the App Router are server components by default. To execute a component on the client side, you must add the `"use client"` directive.

**In React 19, the integration of server components within React presents a multitude of benefits:**

- Enhanced SEO: Server-rendered components elevate search engine optimization efforts by offering crawler-friendly content.

- Performance Enhancement: Server components play a pivotal role in accelerating initial page load times and enhancing performance, especially for content-rich applications.

- Server-Side Functionality: Leveraging server components facilitates the execution of code on the server side, streamlining processes such as API interactions for optimal efficiency.

These advantages highlight the profound impact server components can have on advancing contemporary web development practices.

Outside of a framework that supports server components, React components run on the client. A common misconception is that adding `"use server"` as the first line of a component turns it into a server component. There is no directive for server components; `"use server"` instead marks server-side functions (Server Actions) that client code can call, ensuring that those functions run exclusively on the server and are never executed on the client.

---

# 🔬Actions🔬

In the upcoming version 19, the introduction of Actions promises to revolutionize form handling. This feature will enable seamless integration of actions with the `<form/>` HTML tag, essentially replacing the traditional `onSubmit` event with HTML form attributes.

## Before Actions

```

<form onSubmit={search}>

<input name="query" />

<button type="submit">Search</button>

</form>

```

## After Actions

With the debut of server components, Actions can now be executed on the server side. Within our JSX, on the `<form>` tag, we can forgo the `onSubmit` event and use the `action` attribute instead. This attribute takes a function that submits the data, whether on the client or the server side.

Actions empower the execution of both synchronous and asynchronous operations, simplifying data submission management and state updates. The primary objective is to streamline form handling and data manipulation, seeking to enhance overall user experience.

Shall we explore an example to gain a better understanding of this functionality?

```

"use server"

const submitData = async (userData) => {

const newUser = {

username: userData.get('username'),

email: userData.get('email')

}

console.log(newUser)

}

```

```

const Form = () => {

return <form action={submitData}>

<div>

<label>Name</label>

<input type="text" name='username'/>

</div>

<div>

<label>Email</label>

<input type="text" name="email" />

</div>

<button type='submit'>Submit</button>

</form>

}

export default Form;

```

In the provided code snippet, `submitData` is the action, marked with `"use server"` so that it runs on the server, while `Form` is the client-side component that uses `submitData` as its action. Significantly, `submitData` executes exclusively on the server side. Communication between the client (`Form`) and the server (`submitData`) happens solely through the `action` attribute.

---

# 📝Document Metadata📝

The inclusion of elements like `title, meta tags and description` is essential for maximizing SEO impact and ensuring accessibility. Within the React environment, especially prevalent in single-page applications, the task of coordinating these elements across different routes can present a significant hurdle.

Typically, developers resort to crafting intricate custom solutions or leveraging external libraries like react-helmet to orchestrate seamless route transitions and dynamically update metadata. Yet, this process can become tedious and error-prone, particularly when handling crucial SEO elements such as meta tags.

Before:

```

import React, { useEffect } from 'react';

const HeadDocument = ({ title }) => {

useEffect(() => {

document.title = title;

const metaDescriptionTag = document.querySelector('meta[name="description"]');

if (metaDescriptionTag) {

metaDescriptionTag.setAttribute('content', 'New description');

}

}, [title]);

return null;

};

export default HeadDocument;

```

The provided code features a `HeadDocument` component that dynamically updates the `title` and the description `meta` tag inside a `useEffect` hook, using imperative JavaScript that re-runs whenever the `title` prop changes, for example on a route change. <u>However, this methodology may not be the most elegant solution for this requirement.</u>

After:

Through React 19, we have the ability to directly incorporate title and meta tags within our React components:

```

const HomePage = () => {
  return (
    <>
      <title>Freecodecamp</title>
      <meta name="description" content="Freecode camp blogs" />
      {/* Page content */}
    </>
  );
}

```

This was not possible before in React. The only way was to use a package like react-helmet.

---

# 🖼️Asset Loading🖼️

In React, optimizing the loading experience and performance of applications, especially with images and asset files, is crucial.

Traditionally, there can be a flicker from unstyled to styled content as the view loads items like stylesheets, fonts, and images. Developers often implement custom solutions to ensure all assets are loaded before displaying the view.

In React 19, images and files will load in the background as users navigate the current page, reducing waiting times and enhancing page load speed. Suspense is also being integrated with the loading lifecycle of assets such as stylesheets, scripts, and fonts, which lets React determine when content is ready to display and prevents unstyled flickering.

New [Resource Loading APIs](https://react.dev/reference/react-dom#resource-preloading-apis) like `preload` and `preinit` offer increased control over when resources load and initialize. With assets loading asynchronously in the background, React 19 optimizes performance, providing a seamless and uninterrupted user experience.

---

# ⚙️Web Components⚙️

Two years ago, I ventured into the realm of web components and became enamored with their potential. Let me provide you with an overview:

Web components empower you to craft custom components using native HTML, CSS, and JavaScript, seamlessly integrating them into your web applications as if they were standard HTML tags. Isn't that remarkable?

Presently, integrating web components into React poses challenges. Typically, you either need to convert the web component to a React component or employ additional packages and code to make them compatible with React. This can be quite frustrating.

However, the advent of React 19 brings promising news for integrating web components into React with greater ease. This means that if you encounter a highly valuable web component, such as a carousel, you can seamlessly incorporate it into your React projects without the need for conversion into React code.

This advancement streamlines development and empowers you to harness the extensive ecosystem of existing web components within your React applications.

While specific implementation details are not yet available, I am optimistic that it may involve simply importing a web component into a React codebase, akin to module federation. I eagerly anticipate further insights from the React team on this integration.

# 🪝New React Hooks🪝

**React Hooks have solidified their place as a beloved feature within the React library. Chances are you've embraced React's standard hooks frequently and maybe even ventured into creating your unique custom hooks. These hooks have gained such widespread acclaim that they have evolved into a prevalent programming methodology within the React community.**

With the upcoming release of React 19, the utilization of `useMemo`, `forwardRef`, `useEffect` and `useContext` is poised for transformation. This shift is primarily driven by the impending introduction of a novel hook, named **_use_**.

## `useMemo()`:

You won't need to use the `useMemo()` hook after React 19, as the React Compiler will memoize values by itself.

Before:

```

import React, { useState, useMemo } from 'react';

function ExampleComponent() {

const [inputValue, setInputValue] = useState('');

// Memoize the result of checking if the input value is empty

const isInputEmpty = useMemo(() => {

console.log('Checking if input is empty...');

return inputValue.trim() === '';

}, [inputValue]);

return (

<div>

<input

type="text"

value={inputValue}

onChange={(e) => setInputValue(e.target.value)}

placeholder="Type something..."

/>

<p>{isInputEmpty ? 'Input is empty' : 'Input is not empty'}</p>

</div>

);

}

export default ExampleComponent;

```

After:

In the example below, you can see that after React 19 we don't need to memoize values ourselves; React 19 will do it under the hood. The code is much cleaner:

```

import React, { useState } from 'react';

function ExampleComponent() {

const [inputValue, setInputValue] = useState('');

// No useMemo needed: the React Compiler is expected to memoize this value

console.log('Checking if input is empty...');

const isInputEmpty = inputValue.trim() === '';

return (

<div>

<input

type="text"

value={inputValue}

onChange={(e) => setInputValue(e.target.value)}

placeholder="Type something..."

/>

<p>{isInputEmpty ? 'Input is empty' : 'Input is not empty'}</p>

</div>

);

}

export default ExampleComponent;

```

## `forwardRef()`:

`ref` will now be passed as a prop rather than through the `forwardRef()` wrapper (which is an API, not a hook). This simplifies the code, so after React 19 you won't need to use `forwardRef()`.

Before:

```

import React, { forwardRef } from 'react';

const ExampleButton = forwardRef((props, ref) => (

<button ref={ref}>

{props.children}

</button>

));

```

After:

`ref` can be passed as a "prop". No more `forwardRef()` is required.

```

import React from 'react';

const ExampleButton = ({ ref, children }) => (

<button ref={ref}>

{children}

</button>

);

```

## The new `use()` hook

React 19 will introduce a new hook called `use()`. This hook will simplify how we use promises, async code, and context.

Here is the syntax of the hook:

```

const value = use(resource);

```

The below code is an example of how you can use the `use()` hook to make a `fetch` request:

```

import { use } from "react";

const fetchUsers = async () => {

const res = await fetch('https://jsonplaceholder.typicode.com/users');

return res.json();

};

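// Create the promise once, outside the component, so every render reuses it

const usersPromise = fetchUsers();

const UsersItems = () => {

const users = use(usersPromise);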

return (

<ul>

{users.map((user) => (

<div key={user.id} className='bg-blue-50 shadow-md p-4 my-6 rounded-lg'>

<h2 className='text-xl font-bold'>{user.name}</h2>

<p>{user.email}</p>

</div>

))}

</ul>

);

};

export default UsersItems;

```

Let's understand the code:

1. The function `fetchUsers` performs the `GET` request and returns a promise.

2. Instead of employing the `useEffect` or `useState` hooks, we pass that promise to the `use` hook.

3. The value returned by the `use` hook, `users`, holds the response obtained from the `GET` request once the promise resolves.

4. Within the return section, we iterate over `users` to construct the list.
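One caveat worth sketching: React's documentation warns against handing `use()` a promise that is recreated on every render, which is what calling `fetchUsers()` directly inside a component would do. A small cache is one way to keep the promise stable. The `getCachedPromise` helper and its string keys below are hypothetical and not a React API; frameworks and data libraries usually provide their own equivalent.

```typescript
// Cache of in-flight or settled promises, keyed by a caller-chosen string.
const promiseCache = new Map<string, Promise<unknown>>();

// Returns the cached promise for `key`, creating it with `factory` only once.
function getCachedPromise<T>(key: string, factory: () => Promise<T>): Promise<T> {
  const existing = promiseCache.get(key);
  if (existing) {
    return existing as Promise<T>;
  }
  const created = factory();
  promiseCache.set(key, created);
  return created;
}

// Usage inside a component would then look like:
//   const users = use(getCachedPromise("users", fetchUsers));
```

Because the same promise object comes back on every call, the component can suspend on it once and then read the resolved value on later renders.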

Another area where the new hook can be utilized is with Context. The Context API is widely adopted for global state management in React, eliminating the need for external state management libraries. With the introduction of the `use` hook, the `context` hook will be represented as follows:

Instead of employing `useContext()`, we will now utilize `use(context)`.

```

import { createContext, useState, use } from 'react';

const ThemeContext = createContext();

const ThemeProvider = ({ children }) => {

const [theme, setTheme] = useState('light');

const toggleTheme = () => {

setTheme((prevTheme) => (prevTheme === 'light' ? 'dark' : 'light'));

};

return (

<ThemeContext.Provider value={{ theme, toggleTheme }}>

{children}

</ThemeContext.Provider>

);

};

const Card = () => {

// use Hook()

const { theme, toggleTheme } = use(ThemeContext);

return (

<div

className={`p-4 rounded-md ${

theme === 'light' ? 'bg-white' : 'bg-gray-800'

}`}

>

<h1

className={`my-4 text-xl ${

theme === 'light' ? 'text-gray-800' : 'text-white'

}`}

>

Theme Card

</h1>

<p className={theme === 'light' ? 'text-gray-800' : 'text-white'}>

Hello!! use() hook

</p>

<button

onClick={toggleTheme}

className='bg-blue-500 hover:bg-blue-600 text-white rounded-md mt-4 p-4'

>

{theme === 'light' ? 'Switch to Dark Mode' : 'Switch to Light Mode'}

</button>

</div>

);

};

const Theme = () => {

return (

<ThemeProvider>

<Card />

</ThemeProvider>

);

};

export default Theme

```

The `ThemeProvider` component is responsible for providing the context, while the `Card` component is where we consume the context using the new `use` hook. The rest of the code is unchanged from before React 19.

## The `useFormStatus()` hook:

The new hook introduced in React 19 will provide enhanced control over the forms you develop, offering status updates regarding the most recent form submission.

Syntax:

`const {pending, action , data , method } = useFormStatus()`

Or the Simpler version

`const status = useFormStatus()`

This hook provides the following information:

1."pending": Indicates if the form is currently in a pending state, yielding true if so, and false otherwise.

2."data": Represents an object conforming to the FormData interface, encapsulating the data being submitted by the parent.

3."method": Denotes the HTTP method, defaulting to GET unless specified otherwise.

4."action": A reference to an Action

This hook serves the purpose of displaying the pending state and the data being submitted by the user.

```

import { useFormStatus } from "react-dom";

function Submit() {

const status = useFormStatus();

return <button disabled={status.pending}>{status.pending ? 'Submitting...' : 'Submit'}</button>;

}

const formAction = async () => {

// Simulate a delay of 3 seconds

await new Promise((resolve) => setTimeout(resolve, 3000));

}

const FormStatus = () => {

return (

<form action={formAction}>

<Submit />

</form>

);

};

export default FormStatus;

```

In the provided code snippet:

- `Submit` is a component that renders the submit button. Because it is rendered inside the `<form>`, it can call `useFormStatus` to read the status of that form, including `status.pending`.

- The `status.pending` value is used to disable the button and switch its label depending on whether it is true or false.

- The `formAction` method is a fake action that simulates a delay in form submission.

With this implementation, the `useFormStatus` hook tracks the pending status when the form is submitted. While `status.pending` is `true`, the button displays "Submitting..."; once it returns to `false`, the label goes back to "Submit".

This hook is useful for monitoring form submission status and adjusting what is displayed based on that status.
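The example above only reads `pending`. As a rough sketch of how the other fields might be used, the plain JavaScript below derives display strings from a status object shaped like the one the hook returns. The helper `describeStatus` and the `username` field are hypothetical, and a `Map` stands in for `FormData` (both expose `get()`) so that the snippet runs outside React.

```javascript
// Hypothetical helper (not part of React): turns a status object shaped like
// the return value of useFormStatus() into strings for a submit button.
function describeStatus(status) {
  const { pending, data, method } = status;
  if (!pending) {
    // No submission in flight: show the default label.
    return { label: "Submit", detail: "" };
  }
  // `data` is read with get(), as with FormData. "username" is an assumed field name.
  const name = data ? data.get("username") : null;
  return {
    label: "Submitting...",
    detail: name ? `Sending ${name} via ${method.toUpperCase()}` : "",
  };
}

// Hand-written status objects for illustration; in a component they would
// come from useFormStatus().
const idle = describeStatus({ pending: false, data: null, method: "get", action: null });
const busy = describeStatus({
  pending: true,
  data: new Map([["username", "Srijan"]]), // Map used as a stand-in for FormData
  method: "post",
  action: null,
});

console.log(idle.label);
console.log(busy.label, busy.detail);
```

In a real component, the returned `label` would be rendered inside the button and `detail` next to it.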

## The `useFormState()` hook

Another new hook in React 19 is `useFormState()`. It lets you update state based on the result of a form submission. In later React 19 releases the hook was renamed `useActionState` and is imported from `react`.

Syntax:

`const [state, formAction] = useFormState(fn, initialState, permalink?);`

1. `fn`: The function to be called when the form is submitted or the button is pressed. It receives the previous state as its first argument, followed by the form data.

2. `initialState`: The value you want the state to be initially. It can be any serializable value. This argument is ignored after the action is first invoked.

3. `permalink`: Optional. A URL for the page that this form modifies. If `fn` runs on the server and the form is submitted before the JavaScript bundle loads, the browser navigates to `permalink` instead of the current page's URL.

This hook will return:

1. `state`: The current state. On the first render it is the value passed as `initialState`; after a submission it is the value returned by the action.

2. `formAction`: An action to pass to the form's `action` prop. Its return value becomes the new `state`.

```

import { useFormState} from 'react-dom';

const FormState = () => {

const submitForm = (prevState, queryData) => {

const name = queryData.get("username");

console.log(prevState); // previous form state

if(name === "Srijan"){

return {

success: true,

text: "Welcome"

}

}

else{

return {

success: false,

text: "Error"

}

}

}

const [ message, formAction ] = useFormState(submitForm, null)

return <form action={formAction}>

<label>Name</label>

<input type="text" name="username" />

<button>Submit</button>

{message && <h1>{message.text}</h1>}

</form>

}

export default FormState;

```

Understanding the code above:

1. `submitForm` is the method responsible for the form submission. This is the Action (remember, Actions are a new feature in React 19).

2. Inside `submitForm`, we check the submitted value and return a success or error object with a message. In this example, any value other than "Srijan" returns an error.

3. We can also inspect `prevState`. It is `null` on the first submission, and afterwards it holds the state returned by the previous submission.

On running this example, you will see "Welcome" if the name is Srijan; otherwise you will see "Error".
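To make the role of `prevState` more concrete, here is a minimal sketch in plain JavaScript of how an action's return value is threaded into the next call. `createFormState` is a hypothetical stand-in for the hook's bookkeeping, not React's implementation. The `attempts` counter is added for illustration, and a `Map` stands in for `FormData`.

```javascript
// Same shape as the action in the example above: (prevState, formData) => nextState.
// The `attempts` field is an illustrative addition that depends on prevState.
function submitForm(prevState, formData) {
  const attempts = (prevState ? prevState.attempts : 0) + 1;
  const name = formData.get("username");
  return name === "Srijan"
    ? { success: true, text: "Welcome", attempts }
    : { success: false, text: "Error", attempts };
}

// Hypothetical model of the hook: it remembers the latest state and hands it
// to the action as prevState on every submission.
function createFormState(action, initialState) {
  let state = initialState;
  return {
    getState: () => state,
    dispatch: (formData) => {
      state = action(state, formData);
      return state;
    },
  };
}

const form = createFormState(submitForm, null);
// Map is used as a stand-in for FormData; both expose get().
const first = form.dispatch(new Map([["username", "Alex"]]));
const second = form.dispatch(new Map([["username", "Srijan"]]));

console.log(first.text, first.attempts);
console.log(second.text, second.attempts);
```

The first call receives `null` as `prevState`, and the second receives the object returned by the first, which is why the counter increases.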

## The `useOptimistic()` hook :

`useOptimistic` is a React Hook that lets you show a different state while an async action is underway, according to the React docs.

This hook can improve the user experience by making the UI feel more responsive. It is useful for applications that need to interact with a server.

Syntax:

`const [optimisticMessage,addOptimisticMessage] = useOptimistic(state,updatefn)`

For instance, when a response is being processed, an immediate "state" can be displayed to provide the user with prompt feedback. Once the actual response is received from the server, the "optimistic" state will be replaced by the authentic result.

The `useOptimistic` hook facilitates an immediate update of the UI under the assumption that the request will succeed. This naming reflects the "optimistic" presentation of a successful action to the user, despite the actual action taking time to complete.

Now, let's explore how we can incorporate the `useOptimistic` hook. The code below displays an optimistic state (e.g., "Sending...") when the submit button in a form is clicked, and keeps it until the response is received.

```

import { useOptimistic, useState } from "react";

const Optimistic = () => {

const [messages, setMessages] = useState([

{ text: "Hey, I am initial!", sending: false, key: 1 },

]);

const [optimisticMessages, addOptimisticMessage] = useOptimistic(

messages,

(state, newMessage) => [

...state,

{

text: newMessage,

sending: true,

},

]

);

async function sendFormData(formData) {

// Read the same field the form defines (the input is named "username")
const sentMessage = await fakeDelayAction(formData.get("username"));

setMessages((messages) => [...messages, { text: sentMessage }]);

}

async function fakeDelayAction(message) {

await new Promise((res) => setTimeout(res, 1000));

return message;

}

const submitData = async (userData) => {

addOptimisticMessage(userData.get("username"));

await sendFormData(userData);

};

return (

<>

{optimisticMessages.map((message, index) => (

<div key={index}>

{message.text}

{!!message.sending && <small> (Sending...)</small>}

</div>

))}

<form action={submitData}>

<h1>OptimisticState Hook</h1>

<div>

<label>Username</label>

<input type="text" name="username" />

</div>

<button type="submit">Submit</button>

</form>

</>

);

};

export default Optimistic;

```

1. `fakeDelayAction` simulates the delay of a real submit request so that the optimistic state is visible.

2. `submitData` is the action responsible for submitting the form. It can include asynchronous operations.

3. `sendFormData` passes the form data to `fakeDelayAction` and then adds the result to `messages`.

4. The `messages` state is initialized with a default entry and passed to `useOptimistic()`, which returns it as `optimisticMessages`:

`const [messages, setMessages] = useState([{ text: "Hey, I am initial!", sending: false, key: 1 },]);`

Now, let's get into more detail:

Inside `submitData`, we call `addOptimisticMessage`. This adds the submitted form value to `optimisticMessages` so that it is shown in the UI immediately:

```

{optimisticMessages.map((message, index) => (

<div key={index}>

{message.text}

{!!message.sending && <small> (Sending...)</small>}

</div>

))}

```
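The relationship between the confirmed `messages` and the optimistic list can be sketched in plain JavaScript. This is a simplified model under stated assumptions, not React's implementation. The names `addPending`, `render`, `confirmed` and `pending` are hypothetical.

```javascript
// Same shape as the update function passed to useOptimistic above:
// it returns a new list with the pending message appended.
function addPending(state, newMessage) {
  return [...state, { text: newMessage, sending: true }];
}

// Hypothetical model: what is shown is the confirmed list with every
// still-pending message layered on top by the update function.
function render(confirmed, pendingTexts) {
  return pendingTexts.reduce((state, text) => addPending(state, text), confirmed);
}

let confirmed = [{ text: "Hey, I am initial!", sending: false }];
let pending = [];

// 1. The user submits: the message is shown immediately as pending.
pending.push("Hello");
const during = render(confirmed, pending);

// 2. The server responds: the confirmed list is updated and the
//    optimistic entry is dropped.
confirmed = [...confirmed, { text: "Hello", sending: false }];
pending = [];
const after = render(confirmed, pending);

console.log(during.map((m) => `${m.text}${m.sending ? " (Sending...)" : ""}`));
console.log(after.map((m) => `${m.text}${m.sending ? " (Sending...)" : ""}`));
```

Because the update function returns a new array instead of mutating `state`, the confirmed list stays untouched while the optimistic entry is displayed.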

---

## Wanna Try Out React 19 🤔?

Presently, the aforementioned features are accessible in the canary release. Further information can be found [here](https://react.dev/blog/2024/02/15/react-labs-what-we-have-been-working-on-february-2024). As advised by the React team, refrain from using these features for customer or user-facing applications at this time. You are welcome to explore and experiment with them for personal learning or recreational purposes.

If you're eager to know the release date of React 19, you can stay informed by monitoring the Canary Releases for updates. Keep abreast of the latest developments by following the React team on their [official website](https://react.dev), [team channels](https://react.dev/community/team), [GitHub](https://github.com/facebook/react) and [Canary Releases](https://react.dev/blog/2023/05/03/react-canaries). | srijanbaniyal |

1,868,725 | Adult Asperger's Symptoms: Communication, Social Challenges, and Treatment | Aperger's syndrome is a neurological condition and has now become one of the branches of autism... | 0 | 2024-05-29T08:55:28 | https://dev.to/advancells/adult-aspergers-symptoms-communication-social-challenges-and-treatment-9nf |

Asperger's syndrome is a neurological condition that is now classified as part of autism spectrum disorder (ASD). It is characterized by difficulties in social interactions and communication, and by repetitive behaviors. In some cases, individuals also have difficulties processing sensory stimuli.

As with autism spectrum disorder in general, the cause of the syndrome remains unknown, although genetics are believed to play a role in its development. The severity and symptoms of Asperger syndrome can vary significantly among individuals, with boys affected roughly 3 to 4 times as often as girls. In addition to genetic factors, environmental influences such as exposure to toxins or infections during pregnancy or early childhood may contribute to the risk of developing Asperger syndrome. Early detection and intervention are crucial in improving outcomes for those with this condition.

Just as children with autism may experience challenges, adults with Asperger syndrome can also face feelings of sadness, anxiety, or obsessive-compulsive behaviors. Seeking support and treatment for these health issues is important for adults living with Asperger syndrome. Additionally, exploring alternative treatment options such as stem cell therapy can potentially aid in addressing the core problems associated with this condition. This approach may facilitate progress and visible improvements.

For information on how stem cell therapy could benefit individuals living with Asperger's syndrome, please visit [this page](https://www.advancells.com/aspergers-syndrome-in-adults-symptoms-diagnosis-treatment/).

| advancells | |

1,868,717 | 18 Open-Source Projects Every React Developer Should Bookmark 🔥👍 | In the ever-evolving landscape of web development, React developers often find themselves navigating... | 0 | 2024-05-29T08:55:26 | https://madza.hashnode.dev/18-open-source-projects-every-react-developer-should-bookmark | github, react, opensource, productivity | ---

title: 18 Open-Source Projects Every React Developer Should Bookmark 🔥👍

published: true

description:

tags: github, react, opensource, productivity

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/ippv5wsa9f8tvi4biees.png

canonical_url: https://madza.hashnode.dev/18-open-source-projects-every-react-developer-should-bookmark

---

In the ever-evolving landscape of web development, React developers often find themselves navigating through a myriad of libraries, frameworks, and tools.

With so many options available, it can be challenging to identify which open-source projects are truly beneficial for efficiency, scalability, and performance.

In this article, I have compiled some of the most useful open-source projects for React developers to speed up your coding workflow.

Each tool will include a direct link, a description, and an image preview.

---

## 1\. [CopilotKit](https://github.com/CopilotKit/CopilotKit)

CopilotKit is an open-source framework designed to build, deploy, and operate fully customized AI copilots, such as in-app AI chatbots, AI agents, and AI text areas.

It supports self-hosting and is compatible with any LLM, including GPT-4.

Some of the most useful features include 👇

🧠 **Understand context:** Copilots are informed by real-time application context.

🚀 **Initiate actions:** Increase productivity and engagement with actions.

📚 **Retrieve knowledge:** Augmented generation from any data source.

🎨 **Customize design:** Display custom UX components in the chat.

🤖 **Edit text with AI:** Autocompletions, insertions/edits, and auto-first-drafts.