id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,868,897 | Magellan SmartGPS ECO and SmartGPS Updates | Understanding Magellan SmartGPS ECO Update The Magellan SmartGPS ECO is an innovative solution that... | 0 | 2024-05-29T11:14:44 | https://dev.to/magellan_support_31b49806/magellan-smartgps-eco-and-smartgps-updates-4bo | magellan, webdev | **Understanding Magellan SmartGPS ECO Update**

The [Magellan SmartGPS ECO](https://www.magellan.support/Smart-GPS-EcoSupport.php) is an innovative solution that integrates your GPS with a cloud-based ecosystem, providing real-time information and updates. To ensure that you are leveraging all the benefits of the SmartGPS ECO, it's important to regularly update the device. Here’s how you can perform the Magellan SmartGPS ECO update:

**Magellan GPS Support**

Magellan GPS is a top provider of navigational equipment for commercial fleets, outdoor enthusiasts, and drivers. Our GPS devices are designed to make traveling and exploring easy and enjoyable with features like accurate maps, route planning, and real-time traffic updates. We also provide comprehensive support services to make sure our customers get the most out of their Magellan GPS devices.

Our support services are designed to provide our clients with timely, trustworthy, and customized help. We know how frustrating technical problems can be, so we keep a skilled staff on hand to help you troubleshoot any issues you run into. Support is available around the clock, so you can get help whenever you need it.

Our support staff can assist with troubleshooting, device setup, and software upgrades, among other things. If you are having problems with your Magellan GPS device, our support experts can guide you through the steps needed to resolve the issue. To help you become an expert user of your device, we also offer user manuals, training videos, and other resources.

We also offer warranty and maintenance services for Magellan GPS devices. If your device breaks while it is still under warranty, we'll repair or replace it at no cost to you. If the warranty has expired, we provide reasonably priced repair services. We do all of the repairs ourselves, so your device will be fixed accurately and quickly by our team of experts.

| magellan_support_31b49806 |

1,868,896 | Shahada in Islam: Meaning and Importance | Introduction Shahada, the Islamic declaration of faith, is the cornerstone of a Muslim’s... | 0 | 2024-05-29T11:14:37 | https://dev.to/equranekareem101/shahada-in-islam-meaning-and-importance-34a | shahadaislam, online, islamicstudiesforkids, qurancourse | **Introduction**

----------------

Shahada, the Islamic declaration of faith, is the cornerstone of a Muslim’s belief system and the entry point into the Islamic faith. This simple yet profound statement encapsulates the essence of Islam, affirming the oneness of Allah and the prophethood of Muhammad. In this article, we will delve into the meaning and significance of [Shahada in Islam](https://equranekareem.com/courses/online-islamic-courses/shahada-islam-converts-course/), exploring its role in the life of a Muslim and its broader implications within Islamic theology.

**Understanding Shahada: The Declaration of Faith**

---------------------------------------------------

### **The Text of Shahada**

The Shahada is composed of two parts:

* **Ashhadu an la ilaha illa Allah** - "I bear witness that there is no god but Allah."

* **Wa ashhadu anna Muhammadan rasul Allah** - "And I bear witness that Muhammad is the Messenger of Allah."

### **The Meaning of Shahada**

The first part of the Shahada, "There is no god but Allah," affirms the core Islamic belief in monotheism, known as Tawhid. This statement rejects polytheism and any form of idolatry, emphasizing that only Allah is worthy of worship. The second part, "Muhammad is the Messenger of Allah," acknowledges Muhammad as the final prophet in a long line of messengers sent by Allah to guide humanity.

### **Pronunciation and Intent**

For the Shahada to be valid, it must be recited with sincere belief and intention. It is not merely a verbal declaration but a heartfelt affirmation of faith. This sincerity is crucial, as it signifies the convert’s true acceptance of Islamic beliefs and practices.

**The Importance of Shahada in Islam**

--------------------------------------

### **The First Pillar of Islam**

Shahada is the first of the Five Pillars of Islam, which are the foundational acts of worship and practice for Muslims. The other four pillars – Salah (prayer), Zakat (charity), Sawm (fasting during Ramadan), and Hajj (pilgrimage to Mecca) – all rest upon the foundation of Shahada. Without this declaration, the other acts of worship lack their essential meaning and context.

### **Entry into the Islamic Faith**

Reciting the Shahada is the primary requirement for anyone wishing to convert to Islam. It marks the formal entry into the Muslim community and signifies the acceptance of Islamic beliefs and practices. This declaration is typically made in the presence of witnesses, such as family members, friends, or a local imam, to formally acknowledge the new convert’s faith.

### **Affirming Tawhid**

Tawhid, the belief in the oneness of Allah, is the central tenet of Islam. By declaring the Shahada, a Muslim affirms this fundamental concept. Tawhid shapes a Muslim's understanding of the universe, their purpose in life, and their relationship with the Creator. It serves as the basis for all Islamic theology and practice, influencing every aspect of a Muslim’s life.

### **Recognizing Prophethood**

The second part of the Shahada, acknowledging Muhammad as Allah's messenger, is equally important. It confirms the acceptance of Muhammad’s teachings and the Quran as the final, complete guidance for humanity. This recognition establishes a link between the believer and the prophetic tradition, connecting them to the rich history of Islamic revelation and moral guidance.

**Teaching Shahada: Importance for Children**

---------------------------------------------

### **Early Education in Faith**

Teaching Shahada to children is a fundamental aspect of Islamic upbringing. Introducing the concept of Tawhid and the prophethood of Muhammad at a young age helps instill a strong foundation of faith. This early education ensures that children grow up with a clear understanding of their religious identity and the core principles of Islam.

### **Methods of Teaching**

Educating children about Shahada can be done through various methods, including storytelling, interactive activities, and formal instruction. Engaging children in conversations about the meaning and significance of Shahada makes the learning process more relatable and meaningful.

### **Resources for Parents and Educators**

Parents and educators can utilize numerous resources to teach Shahada effectively. Books, videos, and online platforms dedicated to Islamic education provide valuable tools for making the learning experience enjoyable and comprehensive. For example, enrolling children in an [Islamic Studies for Kids](https://equranekareem.com/courses/online-islamic-courses/islamic-studies-for-kids-course/) course can be beneficial in providing structured and systematic religious education.

**Shahada in the Context of Worship**

-------------------------------------

### **Integration into Salah**

The Shahada is an integral part of Salah (prayer). During the Tashahhud portion of the prayer, Muslims recite the Shahada, reaffirming their faith multiple times a day. This repetition not only reinforces their belief but also deepens their spiritual connection with Allah.

### **Involvement in Other Acts of Worship**

The principles embedded in the Shahada influence other acts of worship as well. For instance, the concept of Tawhid underpins the observance of Ramadan, guiding Muslims to fast with the intention of seeking closeness to Allah. Similarly, the recognition of Muhammad's prophethood shapes the understanding and practice of Zakat (charity) and Hajj (pilgrimage).

**Shahada’s Historical and Contemporary Relevance**

---------------------------------------------------

### **Historical Context**

The declaration of Shahada has been a defining moment in Islamic history. From the early days of Islam in Mecca to the expansion of Islamic civilization, the Shahada has served as a unifying statement of faith. It has inspired countless Muslims to strive for spiritual and moral excellence, contributing to the growth and development of Islamic culture and civilization.

### **Contemporary Relevance**

In today’s world, the Shahada continues to hold profound significance. It serves as a beacon of faith for Muslims facing various challenges, from personal struggles to broader social issues. By adhering to the principles of Tawhid and the teachings of Muhammad, Muslims find guidance and strength to navigate modern complexities while maintaining their religious integrity.

**Shahada and Interfaith Relations**

------------------------------------

### **Promoting Understanding**

The Shahada can also play a role in fostering interfaith dialogue and understanding. By sharing the meaning and importance of this declaration, Muslims can help dispel misconceptions about Islam and build bridges of respect and cooperation with people of other faiths.

### **Common Ground**

The principles of monotheism and ethical conduct that are central to the Shahada resonate with many other religious traditions. Recognizing this common ground can enhance mutual understanding and promote peaceful coexistence among diverse religious communities.

**Personal Reflections on Shahada**

-----------------------------------

### **Stories of Converts**

Hearing the stories of those who have embraced Islam through the Shahada can be deeply inspiring. Many converts share how the simple yet profound declaration transformed their lives, bringing them peace, purpose, and a sense of belonging to a larger spiritual family.

### **Strengthening Faith**

For lifelong Muslims, reflecting on the Shahada can renew and strengthen their faith. Taking time to contemplate the meaning of this declaration and its implications in daily life can lead to a deeper, more conscious practice of Islam.

**Conclusion**

--------------

The Shahada, the Islamic declaration of faith, is far more than a set of words. It is the essence of what it means to be a Muslim, encompassing the core beliefs of monotheism and the recognition of Muhammad’s prophethood. This declaration shapes a Muslim’s spiritual, social, and ethical life, providing a foundation for worship and daily conduct. Teaching Shahada in Islam to children ensures the transmission of these vital principles to the next generation, while its repetition in daily prayers and acts of worship continually reinforces a Muslim’s faith. In both historical and contemporary contexts, the Shahada remains a powerful statement of faith and unity, guiding Muslims worldwide. Embracing and understanding the Shahada is a lifelong journey that deepens one's connection with Allah and strengthens the bonds within the global Muslim community.

**FAQs**

--------

### **What is Shahada?**

Shahada is the Islamic declaration of faith, stating, "There is no god but Allah, and Muhammad is His Messenger." It is the first of the Five Pillars of Islam and the foundational statement of a Muslim’s belief.

### **Why is Shahada important in Islam?**

Shahada is important because it affirms the core Islamic beliefs in monotheism (Tawhid) and the prophethood of Muhammad. It is the entry point into the Islamic faith and underpins all other acts of worship and practice in Islam.

### **How is Shahada recited?**

Shahada is recited in Arabic as "Ashhadu an la ilaha illa Allah, wa ashhadu anna Muhammadan rasul Allah." It must be recited with sincere belief and intention for it to be valid.

### **Can children learn about Shahada?**

Yes, teaching Shahada to children is essential for their Islamic upbringing. Parents and educators can use various methods and resources, such as books, videos, and courses like Islamic Studies for Kids, to effectively teach the meaning and significance of Shahada.

### **How often do Muslims recite the Shahada?**

Muslims recite the Shahada multiple times a day, particularly during their daily prayers (Salah). It is also recited during significant religious rituals and personal supplications.

### **What is the historical significance of Shahada?**

Shahada has been a unifying statement of faith since the early days of Islam. It has played a crucial role in the spread and development of Islamic civilization and continues to inspire Muslims to uphold their faith in contemporary times.

### **How does Shahada relate to interfaith relations?**

Shahada can promote interfaith understanding by highlighting common principles of monotheism and ethical conduct. Sharing the meaning of Shahada helps dispel misconceptions about Islam and fosters respect and cooperation among different religious communities. | equranekareem101 |

1,868,895 | Unlocking the Power 💪 of CSS Tooling.💪 | Introduction Tooling in web development extends beyond just JavaScript frameworks. Equally... | 0 | 2024-05-29T11:11:39 | https://dev.to/dharamgfx/unlocking-the-power-of-css-tooling-7e3 | webdev, javascript, beginners, css | ## Introduction

Tooling in web development extends beyond just JavaScript frameworks. Equally important are the tools available for CSS, which help streamline and enhance the process of styling web applications. This post will explore two essential types of CSS tooling: CSS frameworks and CSS preprocessors. We'll delve into their benefits, potential drawbacks, basic usage, and integration into web projects.

## 1 CSS Frameworks: Building with Ready-Made Blocks

### What are CSS Frameworks?

CSS frameworks are pre-prepared libraries that offer standardized components and design guidelines. They are designed to speed up the development process by providing reusable code for common web design elements.

#### Benefits of CSS Frameworks

- **Consistency:** Frameworks ensure a consistent look and feel across your web project.

- **Organization:** They provide a structured approach to styling, making the code more manageable.

- **Ready-Made Components:** Include buttons, forms, navigation bars, and more, which are easy to integrate.

- **Built-In Best Practices:** Adherence to modern web standards and responsive design principles.

#### Drawbacks of CSS Frameworks

- **Complexity and Size:** Frameworks can be overkill for small projects, adding unnecessary complexity and increasing file size.

- **Learning Curve:** New syntax and conventions need to be learned.

- **Lack of Uniqueness:** Websites using the same framework may look similar.

- **Customization Challenges:** Overriding default styles can be difficult.

### Popular CSS Frameworks

#### Bootstrap

Bootstrap is one of the most popular CSS frameworks. It includes a wide array of components and a responsive grid system.

**Example:**

```html
<!DOCTYPE html>
<html lang="en">
<head>
  <link rel="stylesheet" href="https://stackpath.bootstrapcdn.com/bootstrap/4.5.2/css/bootstrap.min.css">
</head>
<body>
  <div class="container">
    <h1 class="text-center">Hello, world!</h1>
    <button class="btn btn-primary">Click Me</button>
  </div>
</body>
</html>
```

#### Foundation

Foundation provides a responsive grid and many UI components, similar to Bootstrap but with a different philosophy and set of default styles.

**Example:**

```html
<!DOCTYPE html>
<html lang="en">
<head>
  <link rel="stylesheet" href="https://cdn.jsdelivr.net/npm/foundation-sites/dist/css/foundation.min.css">
</head>
<body>
  <div class="grid-container">
    <h1 class="text-center">Hello, Foundation!</h1>
    <button class="button">Click Me</button>
  </div>
</body>
</html>
```

#### Tailwind CSS

Tailwind CSS is a utility-first CSS framework that allows you to create custom designs without leaving your HTML.

**Example:**

```html
<!DOCTYPE html>
<html lang="en">
<head>
  <link href="https://cdn.jsdelivr.net/npm/tailwindcss@2.2.19/dist/tailwind.min.css" rel="stylesheet">
</head>
<body>
  <div class="container mx-auto">
    <h1 class="text-center text-4xl">Hello, Tailwind!</h1>
    <button class="bg-blue-500 hover:bg-blue-700 text-white font-bold py-2 px-4 rounded">Click Me</button>
  </div>
</body>
</html>
```

### Integrating CSS Frameworks

To integrate a CSS framework, include its CSS file via a CDN or install it using package managers like npm. Customize by overriding default styles or adding your own custom CSS.

#### Weighing the Burden

- **Initial Learning Curve:** Understand the framework's components and classes.

- **Integration:** Adapt your project's structure to leverage the framework efficiently.

- **Customization:** Learn how to override styles to meet your specific design needs.

## 2 CSS Preprocessors: Supercharging Your CSS

### What are CSS Preprocessors?

CSS preprocessors extend CSS with advanced features like variables, nested rules, and functions, making CSS more powerful and easier to write.

#### Benefits of CSS Preprocessors

- **Enhanced Features:** Introduce programming constructs like loops and conditionals.

- **Code Reusability:** Use variables and mixins to avoid redundancy.

- **Maintainability:** Modularize CSS into smaller, more manageable files.

#### Drawbacks of CSS Preprocessors

- **Learning New Syntax:** Requires understanding a new syntax and toolchain.

- **Build Step Required:** Preprocessor code must be compiled into standard CSS before it can be deployed.

### Popular CSS Preprocessors

#### Sass

Sass (Syntactically Awesome Style Sheets) is a widely used preprocessor that adds many features to CSS.

**Example:**

```scss
$primary-color: #333;

body {
  font: 100% Helvetica, sans-serif;
  color: $primary-color;
}

nav {
  ul {
    margin: 0;
    padding: 0;
    list-style: none;
  }

  li { display: inline-block; }

  a {
    font-weight: bold;
    color: $primary-color;

    &:hover {
      color: #ff6347;
    }
  }
}
```

#### PostCSS

PostCSS is a tool for transforming CSS with JavaScript plugins, offering a wide range of functionalities from autoprefixing to custom property fallbacks.

**Example:**

```js
// postcss.config.js
module.exports = {
  plugins: [
    require('autoprefixer'),
    require('cssnano')
  ]
};
```

### Integrating CSS Preprocessors

Integration involves setting up a build process using tools like npm scripts, Gulp, or Webpack to compile preprocessor code into standard CSS.
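As a rough illustration of what "compiling preprocessor code into standard CSS" means, the sketch below expands Sass-style `$variables` in a string. It is a toy under stated assumptions: `expandVariables` is a hypothetical helper written for this example, it handles only one-line variable declarations, and it is not how Sass itself is implemented. A real build step would call the preprocessor's own compiler instead.

```javascript
// Toy illustration only: real preprocessors such as Sass also handle nesting,
// mixins, functions, and imports. `expandVariables` is a hypothetical helper.
function expandVariables(source) {
  const variables = new Map();
  const output = [];

  for (const line of source.split('\n')) {
    // Match one-line declarations such as "$primary-color: #333;"
    const declaration = line.match(/^\s*\$([\w-]+)\s*:\s*(.+?)\s*;\s*$/);
    if (declaration) {
      variables.set(declaration[1], declaration[2]);
      continue; // declarations produce no CSS output of their own
    }
    // Replace each "$name" reference with its recorded value, if known
    output.push(
      line.replace(/\$([\w-]+)/g, (match, name) =>
        variables.has(name) ? variables.get(name) : match
      )
    );
  }
  return output.join('\n');
}

const scss = [
  '$primary-color: #333;',
  'body {',
  '  color: $primary-color;',
  '}',
].join('\n');

console.log(expandVariables(scss));
```

The variable declaration disappears from the output and every reference is replaced by its value, which is the same source-to-CSS transformation a build tool runs on each change, only far more limited.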

#### Weighing the Burden

- **Learning Curve:** Understand preprocessor syntax and features.

- **Integration:** Set up build tools to compile the preprocessor code.

- **Efficiency:** Once set up, preprocessors can significantly speed up development.

## Conclusion

Understanding and leveraging CSS tooling can significantly enhance your web development workflow. CSS frameworks provide pre-built components and consistent design principles, while CSS preprocessors offer advanced features that make writing CSS more efficient. Balancing the benefits and potential drawbacks of each tool will help you make informed decisions and create better, more maintainable web projects. | dharamgfx |

1,868,894 | Akash Dubey Co-FOunder & CEO Beparr Beparr is trying to help india's small and medium business like retailers of clothing | A post by akash dubey | 0 | 2024-05-29T11:11:06 | https://dev.to/akash_dubey_3b2b9733f1f1a/akash-dubey-co-founder-ceo-beparr-beparr-is-trying-to-help-indias-small-and-medium-business-like-retailers-of-clothing-45a8 | akash_dubey_3b2b9733f1f1a | ||

1,864,680 | Understanding and Implementing WebSockets in Your Next Project | In today's fast-paced digital landscape, real-time communication is no longer a luxury but a... | 0 | 2024-05-29T11:09:00 | https://dev.to/nitin-rachabathuni/understanding-and-implementing-websockets-in-your-next-project-4g21 | In today's fast-paced digital landscape, real-time communication is no longer a luxury but a necessity. Whether it's for live chat applications, multiplayer games, or real-time data feeds, WebSockets provide a robust solution for achieving low-latency, full-duplex communication between client and server. In this article, we'll delve into the fundamentals of WebSockets and how you can implement them in your next project.

What are WebSockets?

WebSockets are a protocol designed for full-duplex communication channels over a single, long-lived connection. Unlike traditional HTTP requests, which follow a request-response pattern, WebSockets allow for bi-directional communication, enabling both the client and server to send and receive data independently.

Why Use WebSockets?

Real-Time Communication: WebSockets are ideal for applications requiring real-time updates, such as chat applications, live sports updates, or stock trading platforms.

Efficiency: Once the connection is established, WebSockets reduce the overhead of HTTP requests, as they do not require a new connection for each message.

Low Latency: WebSockets offer lower latency compared to HTTP-based polling, making them suitable for time-sensitive applications.

Setting Up WebSockets: A Simple Example

Let's walk through a basic implementation of WebSockets using Node.js and a client-side HTML page.

Server-Side (Node.js with ws library)

First, we need to set up a WebSocket server. We'll use the popular ws library in Node.js.

```javascript
// server.js
const WebSocket = require('ws');

const server = new WebSocket.Server({ port: 8080 });

server.on('connection', (ws) => {
  console.log('Client connected');

  ws.on('message', (message) => {
    console.log(`Received message: ${message}`);
    // Echo the received message back to the client.
    // The client page adds its own "Server:" prefix when displaying it.
    ws.send(`You said: ${message}`);
  });

  ws.on('close', () => {
    console.log('Client disconnected');
  });

  ws.send('Welcome to the WebSocket server!');
});

console.log('WebSocket server is running on ws://localhost:8080');
```

Client-Side (HTML and JavaScript)

Next, let's create a simple HTML page that connects to our WebSocket server and allows for sending and receiving messages.

```html
<!-- index.html -->
<!DOCTYPE html>
<html lang="en">
<head>
  <meta charset="UTF-8">
  <title>WebSocket Example</title>
</head>
<body>
  <h1>WebSocket Client</h1>
  <input type="text" id="messageInput" placeholder="Enter a message">
  <button onclick="sendMessage()">Send</button>
  <div id="messages"></div>

  <script>
    const ws = new WebSocket('ws://localhost:8080');

    ws.onopen = () => {
      console.log('Connected to WebSocket server');
    };

    ws.onmessage = (event) => {
      const messagesDiv = document.getElementById('messages');
      const message = document.createElement('p');
      message.textContent = `Server: ${event.data}`;
      messagesDiv.appendChild(message);
    };

    ws.onclose = () => {
      console.log('Disconnected from WebSocket server');
    };

    function sendMessage() {
      const input = document.getElementById('messageInput');
      ws.send(input.value);
      input.value = '';
    }
  </script>
</body>
</html>
```

Expanding Your WebSocket Implementation

This basic example provides a foundation on which to build more complex real-time applications. Here are some ideas to extend your WebSocket implementation:

Authentication: Implement token-based authentication to secure your WebSocket connections.

Broadcasting: Allow the server to broadcast messages to all connected clients, useful for notifications or live updates.

Error Handling: Add comprehensive error handling to manage connection drops and reconnections smoothly.
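The broadcasting and reconnection ideas above can be sketched with two small helpers. This is a minimal sketch, not part of the original example: `broadcast` and `reconnectDelay` are hypothetical names, and the client objects are stand-ins so the snippet runs without a network. The only thing taken from the WebSocket API is the `readyState` value `1`, which means the connection is open.

```javascript
// Sketch only: `broadcast` and `reconnectDelay` are hypothetical helpers.
const OPEN = 1; // readyState value for an open connection in the WebSocket API

// Send `data` to every client whose connection is open; returns how many were sent.
function broadcast(clients, data) {
  let sent = 0;
  for (const client of clients) {
    if (client.readyState === OPEN) {
      client.send(data);
      sent += 1;
    }
  }
  return sent;
}

// Exponential backoff for client reconnection: 1s, 2s, 4s, ... capped at maxMs.
function reconnectDelay(attempt, baseMs = 1000, maxMs = 30000) {
  return Math.min(maxMs, baseMs * 2 ** attempt);
}

// Usage with stand-in client objects (a real server would pass its own client set).
const received = [];
const fakeClients = new Set([
  { readyState: OPEN, send: (msg) => received.push(msg) },
  { readyState: 3, send: (msg) => received.push(msg) }, // 3 = CLOSED, so skipped
]);
console.log(broadcast(fakeClients, 'hello'), received);
console.log([0, 1, 2, 10].map((attempt) => reconnectDelay(attempt)));
```

On the server, the set of connected sockets would take the place of `fakeClients`. On the client, a `setTimeout` using `reconnectDelay(attempt)` inside the `onclose` handler is one way to retry without hammering the server.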

Conclusion

WebSockets are a powerful tool for real-time communication in modern web applications. By enabling efficient, low-latency, bi-directional communication, they open up a world of possibilities for developers. Whether you're building a chat application, a live data feed, or a collaborative tool, WebSockets can help you deliver a seamless, real-time experience to your users.

Embrace WebSockets in your next project and unlock the potential of real-time web communication. Happy coding!

---

Thank you for reading my article! For more updates and useful information, feel free to connect with me on LinkedIn and follow me on Twitter. I look forward to engaging with more like-minded professionals and sharing valuable insights.

| nitin-rachabathuni | |

1,868,893 | Beparr B2B Wholesale App install App Now:- https://play.google.com/store/apps/details?id=com.beparr.buyer | A post by akash dubey | 0 | 2024-05-29T11:08:49 | https://dev.to/akash_dubey_3b2b9733f1f1a/beparr-b2b-wholesale-app-install-app-now-httpsplaygooglecomstoreappsdetailsidcombeparrbuyer-jei | akash_dubey_3b2b9733f1f1a | ||

1,868,892 | Kojic Acid Market - Global Growth, Share, Trends, Demand and Analysis Report Forecast 2031 | The global market for kojic acid, a key ingredient in skincare and cosmetic formulations, is set to... | 0 | 2024-05-29T11:08:48 | https://dev.to/mihir_kadu_138/kojic-acid-market-global-growth-share-trends-demand-and-analysis-report-forecast-2031-12dp | kojicacidmarket, kojicacid, acid | The global market for kojic acid, a key ingredient in skincare and cosmetic formulations, is set to witness substantial growth in the coming years, according to a comprehensive report by Fairfield Market Research. The report, spanning the period from 2018 to 2030, projects a steady increase in the market size from US$65.5 million in 2022 to an estimated US$79.8 million by 2030, representing a compound annual growth rate (CAGR) of 2.5% during the forecast period.

For More Industry Insights: https://www.fairfieldmarketresearch.com/report/kojic-acid-market

Rising Demand for Natural Skincare Fuels Market Growth

One of the primary drivers behind this growth trajectory is the increasing demand for natural and organic skincare products worldwide. Consumers are gravitating towards skincare solutions derived from natural sources, such as fungus-derived kojic acid, to address concerns related to skin discoloration, hyperpigmentation, and uneven skin tone.

Cosmetic Grade Dominates Market Share

In 2022, the cosmetic grade category emerged as the dominant segment in the kojic acid market, owing to its widespread use in various skincare formulations like creams, serums, lotions, and soaps. The surge in demand for products addressing skin imperfections has propelled the prominence of kojic acid in the cosmetics industry.

Anti-Oxidizing and Anti-Bacterial Properties Drive Adoption

The anti-oxidizing and anti-bacterial attributes of kojic acid have also contributed significantly to its market growth. With its potent antioxidant properties, kojic acid helps combat oxidative damage to cells and tissues, making it a prized component in skincare and cosmetic formulations. Additionally, its strong antibacterial qualities make it essential in products aimed at preventing or treating bacterial skin conditions.

Regional Outlook: Asia Pacific Leads, North America Explores Gains

The Asia Pacific region is expected to maintain its position as the largest revenue-contributing region in the global kojic acid market. Countries like Japan, China, and South Korea serve as major production hubs, catering to both domestic and international demand for kojic acid. Meanwhile, North America is witnessing an uptick in demand, fueled by the growing popularity of natural and organic skincare products among consumers.

Key Trends and Opportunities

The market presents several key trends and opportunities for innovation and growth. Integration of product innovation and development, customization of skincare solutions, and market diversification into new applications beyond cosmetics are among the avenues for exploration in the kojic acid market.

| mihir_kadu_138 |

1,868,891 | The Future of Cyber security: Emerging Technologies and Trends | Cyber security is a constantly evolving field, formed with the aid of technological improvements and... | 0 | 2024-05-29T11:07:58 | https://dev.to/liong/the-future-of-cyber-security-emerging-technologies-and-trends-e9a | development, blockchain, malaysia, kualalumpur | Cyber security is a constantly evolving field, shaped by technological advances and the ever-changing threat landscape. As we look toward the future, several emerging technologies and trends promise to revolutionize cyber security practices, enhancing our ability to protect digital assets and data.

**Quantum Computing and Its Dual Impact**

Quantum computing represents a significant leap in computational power, capable of solving complex problems that are currently intractable for classical computers. However, this power brings both opportunities and challenges for [cyber security](https://ithubtechnologies.com/cyber-attack-malaysia/?utm_source=dev.to%2F&utm_campaign=Cybersecurity&utm_id=Offpageseo+2024).

**Quantum Threats to Encryption:** One of the most profound impacts of quantum computing is its potential to break conventional public-key encryption. RSA and ECC, widely used for securing data, could be rendered obsolete by quantum algorithms such as Shor's algorithm, which efficiently solves the factoring and discrete-logarithm problems these schemes rely on. This has driven the development of quantum-resistant encryption methods to ensure data remains secure in the quantum era.

**Quantum-Enhanced Security:** On the flip side, quantum technology also offers stronger security solutions. Quantum Key Distribution (QKD) uses the principles of quantum mechanics to create secure channels that are theoretically resistant to eavesdropping. By leveraging QKD, organizations can protect sensitive data against even the most advanced threats.
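To make the encryption threat concrete, here is a toy RSA example with tiny textbook primes. It is a sketch under stated assumptions: all helper names are illustrative, and the `factor` function uses plain trial division as a stand-in for the factoring step that a sufficiently large quantum computer could perform on real key sizes. The point it shows is that once the public modulus is factored, the private key follows directly.

```javascript
// Toy RSA with tiny textbook primes. Real keys use primes hundreds of digits long.
// All helper names here are illustrative.

// Modular exponentiation: (base ** exp) % mod, using BigInt arithmetic.
function modPow(base, exp, mod) {
  let result = 1n;
  base %= mod;
  while (exp > 0n) {
    if (exp & 1n) result = (result * base) % mod;
    base = (base * base) % mod;
    exp >>= 1n;
  }
  return result;
}

// Modular inverse via the extended Euclidean algorithm.
function modInverse(a, m) {
  let [oldR, r] = [a, m];
  let [oldS, s] = [1n, 0n];
  while (r !== 0n) {
    const quotient = oldR / r;
    [oldR, r] = [r, oldR - quotient * r];
    [oldS, s] = [s, oldS - quotient * s];
  }
  return ((oldS % m) + m) % m;
}

// Trial division: feasible only because the modulus is tiny.
function factor(n) {
  for (let p = 2n; p * p <= n; p++) {
    if (n % p === 0n) return [p, n / p];
  }
  return null;
}

const n = 3233n; // public modulus (61 * 53)
const e = 17n;   // public exponent
const ciphertext = modPow(65n, e, n); // encrypt the message 65 with the public key

// An attacker who can factor n can rebuild the private exponent d.
const [p, q] = factor(n);
const d = modInverse(e, (p - 1n) * (q - 1n));
const recovered = modPow(ciphertext, d, n);
console.log({ p, q, d, recovered });
```

With real key sizes the `factor` step is infeasible on classical hardware, which is the assumption RSA rests on and the one Shor's algorithm removes.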

**Blockchain Technology for Enhanced Security**

Blockchain, known for its association with cryptocurrencies, offers a decentralized and tamper-proof way to secure data. Its potential applications in cyber security are wide-ranging:

**Secure Data Transactions:** Blockchain's immutable ledger can secure data transactions, ensuring data integrity and transparency. This is especially useful for applications such as secure voting systems, supply chain security, and financial transactions, where data integrity is paramount.

**Decentralized Identity Management:** Blockchain can facilitate decentralized identity management systems, allowing individuals to control their own digital identities. This reduces the risk of identity theft and enhances privacy by minimizing reliance on centralized identity providers.

**Smart Contracts:** Blockchain-based smart contracts automatically execute and enforce terms and conditions without the need for intermediaries. These contracts can enhance security in a range of applications, from legal agreements to automated insurance claims.
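The idea of an immutable ledger can be sketched as a simple hash chain, where each block stores the hash of the one before it. This is a minimal sketch using Node's built-in `crypto` module; the function names and block layout are assumptions made for illustration, and it leaves out everything that makes a real blockchain decentralized, such as consensus and networking.

```javascript
const { createHash } = require('crypto');

// Hash a block's contents together with the previous block's hash.
function hashBlock(index, data, previousHash) {
  return createHash('sha256')
    .update(`${index}|${data}|${previousHash}`)
    .digest('hex');
}

// Append a new block that links to the current tail of the chain.
function addBlock(chain, data) {
  const previousHash = chain.length ? chain[chain.length - 1].hash : '0'.repeat(64);
  const index = chain.length;
  chain.push({ index, data, previousHash, hash: hashBlock(index, data, previousHash) });
  return chain;
}

// Recompute every hash and check every link; false if anything was altered.
function isValid(chain) {
  return chain.every((block, i) => {
    const expectedPrevious = i === 0 ? '0'.repeat(64) : chain[i - 1].hash;
    return (
      block.previousHash === expectedPrevious &&
      block.hash === hashBlock(block.index, block.data, block.previousHash)
    );
  });
}

const ledger = [];
addBlock(ledger, 'Alice pays Bob 5');
addBlock(ledger, 'Bob pays Carol 2');
console.log(isValid(ledger)); // true: untouched chain

ledger[0].data = 'Alice pays Bob 500'; // tamper with history
console.log(isValid(ledger)); // false: the stored hash no longer matches
```

Changing any earlier record invalidates its stored hash, and recomputing that hash would break the link held by the next block, so tampering is detectable by anyone who holds a copy of the chain.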

**The Advent of 5G and Its Security Implications**

The rollout of 5G technology promises faster and more reliable internet connectivity, supporting a large increase in connected devices and services. However, it also introduces new cyber security challenges:

**Expanded Attack Surface:** With the proliferation of 5G-connected devices, the attack surface expands dramatically. Each device represents a potential entry point for cybercriminals, necessitating robust security measures to protect these endpoints.

**Network Slicing Vulnerabilities:** 5G networks use network slicing to create virtual networks tailored to specific applications. While this improves performance and flexibility, it also introduces vulnerabilities if slices are not adequately secured. Ensuring every slice is isolated and protected is essential to maintaining overall network security.

**IoT Security:** The high-speed, low-latency capabilities of 5G will accelerate the adoption of Internet of Things (IoT) devices. Securing these devices, which often lack strong security features, will be a significant challenge. Implementing strong authentication, encryption, and regular updates will be critical to protecting IoT ecosystems.

**Artificial Intelligence and Machine Learning**

Artificial Intelligence (AI) and Machine Learning (ML) are transforming cybersecurity by automating threat detection and response:

**Automated Threat Detection:** AI systems can analyze vast amounts of data to identify patterns and detect anomalies that indicate cyber threats. Machine learning algorithms improve over time, becoming more effective at recognizing malicious activity and reducing false positives.

**Predictive Analytics:** AI can predict potential threats by analyzing historical data and identifying trends. This allows organizations to proactively address vulnerabilities and prevent attacks before they occur.

**Automated Incident Response:** AI can automate responses to certain types of cyber threats, such as isolating affected systems or deploying patches. This reduces the time it takes to contain and mitigate attacks, minimizing damage and disruption.

**The Rise of Cybersecurity Mesh**

Cybersecurity mesh is an architectural approach that provides a scalable and flexible security framework. It decentralizes security policies and enforcement, allowing organizations to protect assets regardless of their location:

**Decentralized Security Controls:** Cybersecurity mesh enables decentralized security controls, making it easier to secure distributed environments such as cloud and edge computing.

**Identity as the Perimeter:** In a cybersecurity mesh, identity becomes the primary perimeter. Strong identity and access management (IAM) practices are essential to ensure that only authorized users can access resources.

**Adaptive Security:** The cybersecurity mesh model supports adaptive security measures, allowing organizations to dynamically adjust their security posture based on the current threat landscape.

**Privacy-Enhancing Technologies**

As data privacy concerns grow, privacy-enhancing technologies (PETs) are becoming increasingly important:

**Homomorphic Encryption:** This allows computations to be performed on encrypted data without decrypting it, preserving privacy while still enabling data analysis.

**Differential Privacy:** This technique adds calibrated noise to datasets or query results to prevent the identification of individuals in the data, ensuring privacy while preserving data utility.
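
As an illustration of the noise-adding idea, the sketch below applies the Laplace mechanism to a counting query. It is a minimal example under stated assumptions: the helper names (`laplace_noise`, `private_count`) and the sample data are hypothetical, and a production system would also track the cumulative privacy budget across queries.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(records, predicate, epsilon, rng=None):
    """Return a noisy count of records matching predicate.

    A counting query changes by at most 1 when one person is added or
    removed (sensitivity 1), so the noise scale is 1 / epsilon.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    rng = rng or random.Random()
    true_count = sum(1 for record in records if predicate(record))
    return true_count + laplace_noise(1.0 / epsilon, rng)


ages = [25, 37, 41, 52, 68, 70]  # hypothetical dataset
noisy = private_count(ages, lambda age: age >= 40, epsilon=1.0)
print(f"noisy count of people aged 40+: {noisy:.2f}")
```

A smaller `epsilon` means more noise and stronger privacy; a larger one gives more accurate answers with weaker guarantees. Individual answers are deliberately imprecise, but averages over many records remain useful.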

**Secure Multi-Party Computation (SMPC):** SMPC allows multiple parties to jointly compute a function over their inputs while keeping those inputs private, enabling secure collaboration.
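
One of the simplest SMPC building blocks is additive secret sharing, sketched below for a joint sum. This is a single-process illustration with hypothetical names (`share`, `reconstruct`, `secure_sum`); it assumes non-negative integer inputs whose total is smaller than the modulus, honest participants, and it omits the secure channels a real protocol needs.

```python
import secrets

PRIME = 2**61 - 1  # modulus for share arithmetic (a Mersenne prime)


def share(secret, n_parties):
    """Split secret into n_parties random values that sum to it modulo PRIME."""
    shares = [secrets.randbelow(PRIME) for _ in range(n_parties - 1)]
    shares.append((secret - sum(shares)) % PRIME)
    return shares


def reconstruct(shares):
    """Recover the shared value by adding all shares modulo PRIME."""
    return sum(shares) % PRIME


def secure_sum(private_inputs):
    """Compute the sum of all inputs while only ever combining shares."""
    n = len(private_inputs)
    # Each party splits its input into one share per participant.
    all_shares = [share(value, n) for value in private_inputs]
    # Party j adds up the j-th share from everyone and publishes only that total.
    partial_sums = [
        sum(all_shares[i][j] for i in range(n)) % PRIME for j in range(n)
    ]
    return reconstruct(partial_sums)


salaries = [52000, 61000, 58000]  # hypothetical private inputs
print(secure_sum(salaries))  # 171000
```

Any single share is a uniformly random number and reveals nothing about the input it came from; only the combination of all partial sums yields the total.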

## Conclusion

The future of cybersecurity is being shaped by a range of emerging technologies and trends. Quantum computing, blockchain, 5G, AI, Zero Trust Architecture, cybersecurity mesh, and privacy-enhancing technologies all promise to transform the way we protect digital assets and data. As these technologies evolve, they will provide new tools and techniques to combat cyber threats, but they also introduce new challenges that must be addressed.

Organizations must stay abreast of these trends and continuously adapt their cybersecurity strategies to use these technologies effectively. By embracing innovation and fostering a culture of security, we can build a more resilient and secure digital future.

| liong |

1,868,890 | 🔥 Unlock Your Digital Superpowers! 🔥 | 🌟 Ready to level up your tech skills? Look no further! Our Best IT Training Institute in... | 0 | 2024-05-29T11:06:56 | https://dev.to/liya/unlock-your-digital-superpowers-43h4 | ittraininginstitutesinkochi, softwaretestingcourse, pythoncourseinkochi, ittraining |

## 🌟 Ready to level up your tech skills?

Look no further! Our [Best IT Training Institute in Kochi](https://www.irohub.com/) is here to ignite your coding journey. 💻💡

🚀 Why Choose Us?

**Cutting-Edge Curriculum**: Dive into the latest technologies, from Python to cloud computing. We keep you ahead of the game! 🌐

**Expert Instructors**: Learn from industry pros who’ve been there and coded that. Their insights are pure gold! ✨

**Hands-On Projects**: Build real-world apps, websites, and solutions. No theory overload—just practical magic! 🛠️

📍 Location: 1st Floor, Trust Building, Kayyath Ln, Palarivattom, Kochi

📧 Contact Us: info@irohub.com | +91 812985515

🔗 Enroll Today: Irohub Infotech

| liya |

1,868,788 | Google's Gemini AI Transforms Chromebook Plus | Introduction Google is revolutionizing the Chromebook experience by integrating its... | 0 | 2024-05-29T11:06:39 | https://dev.to/aishikl/googles-gemini-ai-transforms-chromebook-plus-2e64 | ## Introduction

Google is revolutionizing the Chromebook experience by integrating its advanced AI chatbot, Gemini, along with a suite of AI-powered features into Chromebook Plus laptops. This move is set to enhance productivity, creativity, and overall user experience. Let's dive into the exciting new features and what they mean for Chromebook users.

## Gemini AI and Chromebook Plus

### What is Gemini AI?

Gemini is Google's latest AI chatbot designed to assist users in various tasks, from writing to photo editing. It is part of the Google One AI Premium plan, which is offered free for 12 months to new Chromebook Plus owners. This plan includes Gemini Advanced, a more capable version of the chatbot.

### Exclusive Features for Chromebook Plus

Chromebook Plus laptops are designed to meet specific hardware requirements to run these advanced AI features smoothly. These laptops typically cost more than $350 and come with enhanced processing power, memory, and storage.

## Key AI Features

### Help Me Write

One of the standout features is 'Help Me Write,' which works in any text box. Users can select text, right-click, and ask Google's AI to rewrite, rephrase, or change the tone of the selected text. This feature is also available for writing from scratch, where the AI can kickstart the writing process based on a few keywords.

### Generative AI Wallpapers

Google is bringing the generative AI wallpaper system from Android to ChromeOS. Users can create custom wallpapers and video call backgrounds based on their preferences for subject, mood, and color. This feature adds a personalized touch to your Chromebook experience.

### Magic Editor in Google Photos

The Magic Editor feature from Google's Pixel 8 smartphones is now available on Chromebook Plus. It allows users to edit photos by removing unwanted objects, moving subjects, and filling in backgrounds. This feature makes photo editing a breeze and enhances the quality of your images.

## Upcoming Features

### Hands-Free Control

Google is working on upcoming features like hands-free control, which utilizes Project Gameface to allow users to control their devices using face gestures and head movements. This feature aims to make device interaction more intuitive and accessible.

### Help Me Read

Another feature in development is 'Help Me Read,' which employs Gemini to summarize websites or PDFs and answer follow-up questions. This feature will be particularly useful for users who need quick information without going through lengthy texts.

## New Chromebook Models

Several manufacturers, including Acer, Asus, and HP, are launching new Chromebooks this year, some of which are Chromebook Plus models that can take advantage of the new AI features. Visit the Chromebook website to see the newest models.

## Conclusion

Google's integration of Gemini AI and other advanced features into Chromebook Plus laptops is a significant step forward in making technology more accessible and powerful. These features not only enhance productivity but also add a layer of personalization and ease of use that was previously unattainable. Whether you're a professional, a student, or just someone who loves technology, the new Chromebook Plus with Gemini AI is worth considering.

For more details, you can read the full article on Rapid Innovation. | aishikl | |

1,868,889 | Sofa Set Showdown: Comfort vs. Style? | Have you ever dreamt of a living room that's both the envy of your guests and a cozy haven for... | 0 | 2024-05-29T11:06:25 | https://dev.to/amin_hassan_15c6441336bab/sofa-set-showdown-comfort-vs-style-2a17 |

Have you ever dreamt of a living room that's both the envy of your guests and a cozy haven for relaxation? The struggle often lies in choosing between a [comfortable sofa set](https://fahfurniture.com/product/sofa-set-fh-805/) that feels like a cloud and a stylish one that elevates your entire space. But what if you could have both?

Here's where the perfect sofa set enters the picture. Contrary to popular belief, comfort and style don't have to be at odds. With the right features, a sofa set can truly transform your living room:

• Unmatched Comfort: Imagine sinking into luxurious cushions that cradle your body after a long day. High-quality sofa sets prioritize comfort with features like supportive springs, plush padding, and ergonomic design.

• Style for Every Taste: Gone are the days of bulky, uninspired sofas. Modern sofa sets come in a wide range of styles, from sleek and minimalist to classic and traditional. You can find the perfect piece to complement your existing decor or create a whole new look.

• Built to Last: A good sofa set is an investment. Look for features like sturdy frames, durable upholstery fabrics, and reinforced construction. These elements ensure your sofa set looks beautiful and provides years of comfort.

• Space Optimization: Not everyone has a sprawling living room. Many sofa sets offer features like modular pieces, reclining options, and built-in storage, allowing you to maximize space and functionality.

So, stop settling for just comfort or just style. With a well-chosen sofa set, you can create a living room that's both beautiful and a joy to relax in. It's the perfect place to unwind, entertain, and make memories that last a lifetime.

| amin_hassan_15c6441336bab | |

1,868,888 | What Is a Butterfly (Flap) Access Control Gate? | Access control gates, together with time and attendance devices, are among the most important control and security equipment in organizations,... | 0 | 2024-05-29T11:06:06 | https://dev.to/maxasecurity/gyt-khntrl-trdd-prwnh-y-y-gyt-flp-chyst-hhb | Access control gates, together with time and attendance devices, are among the most important control and security equipment in organizations, offices, universities, commercial premises, and similar sites. These gates are manufactured and sold in various models, each with its own features and applications. One common type is the wing-style access control gate, also known as the butterfly gate or flap gate. This type of gate has an elegant appearance, high durability, fast operation, and a high level of safety, which is why it is used in many locations. Its ability to connect to access control systems makes it an ideal choice for installation in organizations, offices, universities, and so on. Read on to learn more about the butterfly gate, its features, and its applications.

**What Is a Butterfly Access Control Gate?**

The [butterfly access control gate](https://maxasecurity.com/product-category/traffic-gate/flap-gate/), or wing gate, is a type of access control gate that gets its name from its fairly short wings, which open and close at an angle. The wings, which form part of the gate body, fill part of the gate's passage lane and are made of tempered glass or plexiglass. When the access control system verifies a person's identity and issues permission to enter or exit, the wings retract inward so that the person can pass through the lane. Because it needs little installation space and operates in both directions, the wing gate is a very good choice for small, high-traffic locations.

**Features of the Butterfly Access Control Gate**

The butterfly access control gate has distinctive features that have made it popular and led to its use in a variety of places, including offices and organizations, factories, sports halls, universities, and commercial centers. Some of these features are:

**Attractive, Customizable Design**

As noted, one of the distinctive features of the butterfly gate is its attractive appearance, which can also be customized to the organization's needs. The wing material is chosen according to customer requirements, and lighting can be added on request. The logo or emblem of the organization or institution can also be engraved on the flap gate's wings.

**Connection to All Kinds of Access Control Systems**

This gate can be integrated with various access control systems, including fingerprint, card-based, and face-recognition time and attendance systems. Integrating the butterfly gate with access control systems makes it possible to provide security and control traffic at the same time.

**Fast Operation and High Safety Factor**

Once the access control system has verified a person's identity and issued permission to pass, the wings move inward to open and then quickly return to their initial position. The gate is also equipped with a passage-validation sensor that prevents unauthorized passage. High operating speed is another notable feature of this gate, which makes it suitable for crowded, congested places.

**Compact Installation and One-Way or Two-Way Passage**

Installing a flap or butterfly gate does not require much space, so it is a good option for small, high-traffic sites. The flap gate also supports both one-way and two-way passage and can be used to control entry and exit at the same time.

**Reasonable Price Relative to High Performance**

Although the [price of access control gates](https://maxasecurity.com/product-category/traffic-gate/) depends on factors such as body material, gate size, and gate capabilities, the flap gate is cost-effective compared with other models and given its performance. The reasonable price of the wing or butterfly gate is one of the reasons for its widespread use in various locations.

**Final Words**

The wing, butterfly, or flap access control gate is a widely used and popular model that has two wings, resembling butterfly wings, on either side of its entry and exit lane. It can be integrated with various access control systems and, with two-way passage capability, is suitable for controlling traffic and maintaining security in busy places. Its notable features include an attractive appearance, compact installation, fast operation, and an affordable price. This model is typically used at the entrances of offices and organizations, sports halls, factories, universities, and so on.

Maxa, with a successful track record in producing hardware and software products for security and access control, and with several years of experience of the needs of private and public companies and organizations, now produces and supplies various types of access control gates. You can visit the Maxa website at www.maxasecurity.com or call 02178756000 to receive guidance from the sales support team on how to purchase access control gates.

| maxasecurity | |

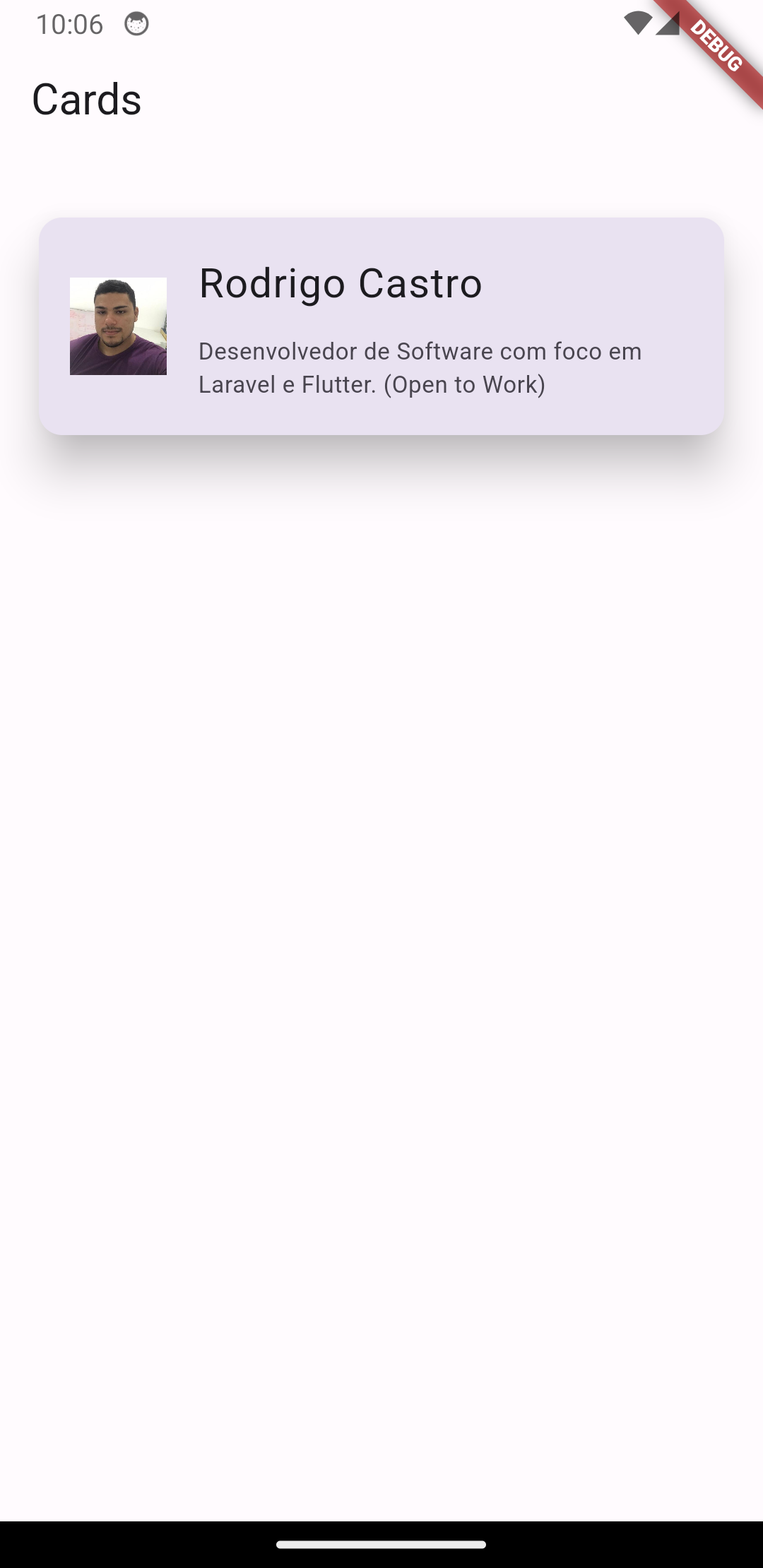

1,867,687 | Server Driven UI in Flutter? | Server Driven UI (SDUI) in Flutter is emerging as a game-changer in the development of... | 0 | 2024-05-29T11:04:15 | https://dev.to/redrodrigoc/server-driven-ui-no-flutter-367d | braziliandevs, flutter, dart, programming |

**Server Driven UI** (SDUI) in Flutter is emerging as a **game-changer** in app development. By moving the interface logic to the server, it opens up a range of possibilities for building more dynamic, personalized, and efficient interfaces. Imagine an app that adapts in real time to your needs without requiring constant updates. That, and much more, is what SDUI in Flutter offers!

> A **game-changer** is a “watershed”, that is, something that revolutionizes and completely changes a given market or situation.

### What is Server Driven UI?

In contrast to the traditional approach, where the user interface (UI) is defined on the client (the mobile app), SDUI shifts that responsibility to the server. This means the server generates and sends the UI structure to the app, which renders it on the device screen. This inversion of control brings several benefits:

#### Greater Agility and Flexibility:

- **Faster development and testing**: UI changes can be implemented on the server and rolled out instantly to all users, without requiring app updates.

- **Improved user experience**: The UI can be personalized in real time according to each user's profile and context.

- **Lower development costs**: UI code is centralized on the server, reducing duplicated effort and streamlining the development process.
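
To show the core mechanism in a language-neutral way, the sketch below uses Python to walk a JSON description of a screen and dispatch each node to a builder chosen by its `type` field. This is not how Mirai or any specific Flutter package is implemented; the registry (`BUILDERS`), the builder functions, and the text-only "widgets" are hypothetical stand-ins for a real widget tree.

```python
import json


def build_text(node, children):
    """Builder for a text node: ignores children, shows its data."""
    return f'Text("{node.get("data", "")}")'


def build_column(node, children):
    """Builder for a column node: lays out already-built children."""
    return "Column[" + ", ".join(children) + "]"


# Registry mapping the server's "type" strings to client-side builders.
BUILDERS = {"text": build_text, "column": build_column}


def render(node):
    """Recursively turn a JSON node into a (textual) widget description."""
    children = [render(child) for child in node.get("children", [])]
    builder = BUILDERS.get(node.get("type"))
    if builder is None:
        # Unknown types degrade gracefully instead of crashing the client.
        return f'Unsupported("{node.get("type")}")'
    return builder(node, children)


payload = json.loads(
    '{"type": "column", "children": ['
    '{"type": "text", "data": "Hello"}, {"type": "text", "data": "SDUI"}]}'
)
print(render(payload))  # Column[Text("Hello"), Text("SDUI")]
```

The client ships only the registry of supported widget types; the server decides which of them appear and in what arrangement. That is why UI changes need no app release, and also why an unknown `type` must be handled on older clients.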

But it's not all roses...

#### Challenges and Considerations:

1. Implementation

- **Robust architecture**: The server must be able to process and send UI information efficiently, especially for a large number of simultaneous users.

- **Reliable communication**: Communication between the server and the app must be secure and resilient to network failures.

- **Data security**: It is crucial to implement strict security measures to protect user data and prevent unauthorized access.

2. User experience

- **Latency**: Latency in the communication between the server and the app can affect how fluid the UI feels.

- **Connectivity**: SDUI depends on a stable internet connection to work properly.

- **Accessibility**: It is important to ensure that the UI generated by the server is accessible to all users, including those with disabilities.

#### Examples of SDUI Libraries for Flutter:

- Mirai

- server_driven_ui

#### Example using Mirai:

- **Server**:

```json
{
  "type": "scaffold",
  "appBar": {
    "type": "appBar",
    "title": {"type": "text", "data": "Cards"}
  },
  "body": {
    "type": "column",
    "mainAxisAlignment": "start",
    "crossAxisAlignment": "center",
    "children": [
      {"type": "sizedBox", "height": 12},
      {
        "type": "card",
        "elevation": 20,
        "borderOnForeground": true,
        "margin": {"top": 20, "bottom": 20, "right": 20, "left": 20},
        "child": {
          "type": "listTile",
          "leading": {
            "type": "image",
            "src": "https://avatars.githubusercontent.com/u/31713982?v=4",
            "width": 50,
            "height": 50
          },
          "title": {
            "type": "padding",
            "padding": {"top": 10},
            "child": {
              "type": "text",
              "data": "Rodrigo Castro",
              "align": "center",
              "style": {"fontSize": 21}
            }
          },
          "subtitle": {
            "type": "padding",
            "padding": {"top": 10, "bottom": 10},
            "child": {
              "type": "text",
              "data": "Desenvolvedor de Software com foco em Laravel e Flutter. (Open to Work)",
              "align": "center",
              "style": {"fontSize": 12}
            }
          }
        }
      }
    ]
  }
}
```

- **Flutter**:

#### Conclusion:

SDUI in Flutter is a powerful tool for driving innovation and creating exceptional user experiences. By adopting this approach carefully and with strategic planning, developers have an opportunity to change how apps are built.

#### Further reading:

- [Article "Server Driven UI with Mirai in Flutter"](https://robertocsd.medium.com/unraveling-sdui-a-deep-dive-into-server-driven-user-interfaces-using-mirai-in-flutter-e99cd3c81abe)

- [Video "Flutter Server Driven UI é possível? Descubra neste vídeo!" (in Portuguese)](https://m.youtube.com/watch?v=sWIS18Njr3I)

- [Package "server_driven_ui" on Pub.dev](https://pub.dev/packages/server_driven_ui)

- [Package "mirai" on Pub.dev](https://pub.dev/packages/mirai)

| redrodrigoc |

1,868,886 | Telemedicine App Development Company Insights: How to Choose the Best Partner | Telemedicine has revolutionized healthcare by bridging the gap between patients and healthcare... | 0 | 2024-05-29T11:02:34 | https://dev.to/allenchrios/telemedicine-app-development-company-insights-how-to-choose-the-best-partner-1gdh |

Telemedicine has revolutionized healthcare by bridging the gap between patients and healthcare providers, ensuring that medical services are accessible, efficient, and convenient. As technology continues to evolve, the demand for [telemedicine app development](https://www.itpathsolutions.com/building-the-future-of-healthcare-a-step-by-step-guide-to-telemedicine-app-development/) solutions is increasing, providing significant opportunities for businesses in the healthcare sector. This comprehensive guide aims to walk you through the key steps in developing a telemedicine app, offering insights into the various telehealth app development services available, and highlighting the benefits of partnering with a reputable telemedicine app development company.

**Introduction to Telemedicine and Its Importance**

Telemedicine involves the use of telecommunications technology to deliver healthcare services remotely. This innovative approach to healthcare enables patients to consult with doctors, receive diagnoses, and obtain prescriptions without needing to visit a medical facility. Telemedicine has proven particularly valuable during emergencies, pandemics, and for patients living in remote areas.

**Step 1: Understanding the Market and Identifying Needs**

Before embarking on telemedicine app development, it's crucial to understand the market and identify the needs of your target audience. Conduct thorough market research to determine the types of services that are in demand. For instance, you may find a growing need for mental health consultations, chronic disease management, or pediatric care.

**Step 2: Defining Features and Functionality**

The next step in telemedicine app development is defining the features and functionalities of your app. Some essential features to consider include:

**User Registration and Profiles**: Allow users to create and manage their profiles.

**Appointment Scheduling:** Enable patients to book appointments with healthcare providers.

**Video Conferencing:** Facilitate real-time video consultations between patients and doctors.

**Messaging and Chat:** Provide secure messaging options for communication.

**Electronic Health Records (EHR):** Integrate EHR systems to maintain patient health records.

**Prescription Management:** Allow doctors to prescribe medications electronically.

**Payment Gateway Integration:** Implement secure payment methods for services.

**Step 3: Choosing the Right Technology Stack**

Selecting the right technology stack is crucial for the success of your telehealth app development project. Consider using robust and scalable technologies that ensure smooth performance and security. Commonly used technologies include:

**Frontend:** React Native, Flutter

**Backend:** Node.js, Django, Ruby on Rails

**Database:** MongoDB, PostgreSQL

**Video Conferencing API:** WebRTC, Twilio

**Cloud Services:** AWS, Google Cloud, Azure

**Step 4: Ensuring Compliance with Regulations**

Telemedicine apps must comply with various healthcare regulations and standards to ensure data privacy and security. Key regulations include:

**HIPAA (Health Insurance Portability and Accountability Act)**: Applicable in the United States, HIPAA sets the standard for protecting sensitive patient data.

**GDPR (General Data Protection Regulation)**: Relevant for European users, GDPR governs data protection and privacy.

**HL7 (Health Level Seven International)**: Provides standards for the exchange, integration, sharing, and retrieval of electronic health information.

**Step 5: Designing the User Interface (UI) and User Experience (UX)**

A user-friendly interface and seamless user experience are critical for the success of a telemedicine app. Work with experienced UI/UX designers to create an intuitive and accessible app design. Focus on:

**Simplicity:** Ensure that the app is easy to navigate.

**Accessibility:** Design for users with varying abilities.

**Consistency:** Maintain a consistent design language throughout the app.

**Feedback:** Provide clear feedback to users on their actions.

**Step 6: Developing and Testing the App**

With a clear plan in place, proceed with the development phase. Break down the development process into manageable sprints, and follow agile methodologies to ensure flexibility and iterative progress. Once the app is developed, conduct thorough testing to identify and fix any bugs or issues. Types of testing include:

**Unit Testing:** Test individual components.

**Integration Testing:** Ensure that different components work together.

**User Acceptance Testing (UAT):** Validate that the app meets user requirements.

**Performance Testing:** Assess the app's performance under different conditions.

**Step 7: Deploying and Maintaining the App**

After successful testing, deploy the telemedicine app to your chosen platforms, such as the Apple App Store and Google Play Store. Ensure that you have a robust [marketing strategy](https://www.itpathsolutions.com/marketing-your-telemedicine-app-revolutionising-healthcare-access-and-delivery/) to promote the app and attract users. Once the app is live, provide ongoing support and maintenance to address any issues and implement updates based on user feedback.

**Benefits of Telemedicine App Development**

Investing in telemedicine app development offers numerous benefits for both patients and healthcare providers:

**Convenience:** Patients can access healthcare services from the comfort of their homes.

**Cost-Effective:** Reduces the need for physical infrastructure and travel expenses.

**Improved Access:** Provides healthcare access to remote and underserved areas.

**Efficiency:** Streamlines healthcare processes and reduces waiting times.

**Enhanced Patient Engagement:** Encourages patients to take an active role in their healthcare.

**Choosing the Right Telemedicine App Development Company**

Partnering with a reputable telemedicine app development company can significantly enhance the success of your project. Look for a company that offers:

**Expertise:** Experience in developing healthcare applications.

**Compliance:** Knowledge of healthcare regulations and standards.

**Customization:** Ability to tailor solutions to your specific needs.

**Support:** Ongoing maintenance and support services.

**Conclusion**

Telemedicine app development is a transformative approach to healthcare, providing accessible and efficient medical services to patients worldwide. By following the steps outlined in this guide, you can develop a successful telemedicine app that meets the needs of your target audience. Whether you choose to handle the development in-house or partner with a telemedicine app development company, the key to success lies in thorough planning, understanding user needs, and ensuring compliance with healthcare regulations.

By leveraging the right telemedicine app development solutions and services, you can contribute to the future of healthcare and make a meaningful impact on patient care. | allenchrios | |

1,868,885 | Freshness Paradise: The Charm of the Neighborhood Laundromat | In the middle of the room, with clean white towels and baskets overflowing with freshly washed clothes, neatly... | 0 | 2024-05-29T11:02:30 | https://dev.to/softwareindustrie24334/tazelik-cenneti-mahalle-camasirhanesinin-cazibesi-3dmb | In the middle of the room stands a long folding table, neatly stacked with clean white towels and baskets overflowing with freshly washed clothes. Behind the counter is a friendly attendant, ready to help customers with any questions or concerns.

Despite the constant bustle, there is a sense of camaraderie among the customers. Strangers strike up conversations, share tips and tricks for removing stubborn stains, or commiserate over the hassles of laundry day. It is a small community brought together by a task as universal as doing laundry.

In one corner, a vending machine offers a variety of snacks and drinks to sustain weary launderers. From bags of chips to bottles of soda, there is something to satisfy every craving. It is the perfect way to pass the time while you wait for your clothes to dry.

As you make your way to an empty machine, you can't help but marvel at the efficiency of it all. Despite the chaos, everything runs like a well-oiled machine, with each person playing their part in the intricate dance of laundry day.

Once your clothes are sorted and the detergent is measured, you load the machine and settle in to wait. As the gentle hum of the washer soothes you, you can't help feeling grateful for places like Sparkle Clean Laundry. In a world that is always on the move, sometimes it is the simple pleasures, like clean clothes and friendly faces, that make the real difference.

https://downwaste.com/tr/camasir-sutlari/ | softwareindustrie24334 | |

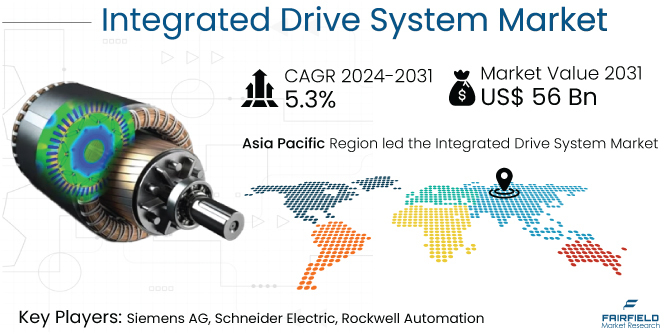

1,868,884 | Integrated Drive System Market Scope, Size, Share, Trends, Forecast By 2031 | The global integrated drive system market is poised for unprecedented expansion, with projections... | 0 | 2024-05-29T11:01:28 | https://dev.to/n_patil_96f2372543795ac55/integrated-drive-system-market-scope-size-share-trends-forecast-by-2031-202p | marketing | The global integrated drive system market is poised for unprecedented expansion, with projections indicating a substantial increase from an estimated US$39 billion by the end of 2024 to a staggering US$56 billion by 2031. This forecast, representing a remarkable compound annual growth rate (CAGR) of 5.3% during the period 2024-2031, underscores the robust momentum driving the IDS industry forward.
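
As a quick consistency check on these figures, the sketch below applies the standard compound-growth formula, end = start × (1 + CAGR)^years. It assumes seven compounding years between the 2024 and 2031 values; the function names are illustrative and not taken from the report.

```python
def project_value(start_value, cagr, years):
    """Compound start_value at a constant annual growth rate."""
    return start_value * (1.0 + cagr) ** years


def implied_cagr(start_value, end_value, years):
    """Annual growth rate that takes start_value to end_value in the given years."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


projected_2031 = project_value(39.0, 0.053, 7)  # US$ billion
print(f"Projected 2031 market size: US${projected_2031:.1f} billion")
print(f"Growth multiple: {projected_2031 / 39.0:.2f}x")
print(f"CAGR implied by 39 -> 56 over 7 years: {implied_cagr(39.0, 56.0, 7):.2%}")
```

Under that assumption the three headline numbers agree with one another: growing US$39 billion at 5.3% a year for seven years gives roughly US$56 billion, which is also the approximately 1.4x expansion cited in the digest.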

Visit our Research Report: https://www.fairfieldmarketresearch.com/report/integrated-drive-system-market

Quick Report Digest

1. Exponential Expansion: The global integrated drive system market is anticipated to witness a substantial 1.4x expansion during the forecast period of 2024-2031.

2. Historical Growth Drivers: Industrial automation, energy efficiency initiatives, and the accelerating adoption of electric vehicles have historically propelled the growth of the IDS market.

3. Future Growth Catalysts: Anticipated growth factors include stringent regulatory frameworks, increasing reliance on electric vehicles, ongoing technological advancements, and escalating investments in automation.

4. Efficiency and Performance Optimization: IDS solutions offer unparalleled efficiency and performance optimization, making them highly attractive for industries prioritizing operational excellence.

5. Alignment with Industry 4.0: The convergence of IDS with Industry 4.0 principles and automation trends aligns seamlessly with the evolving requirements of smart factories and automated production processes.

6. Emerging Economies Driving Demand: Robust industrialization and infrastructure development in emerging economies are spurring demand for scalable IDS solutions tailored to meet evolving market needs.

7. Barriers and Challenges: High initial investment costs, integration complexity, and market fragmentation pose significant challenges to widespread IDS adoption.

8. Key Trends: Trends such as energy efficiency, modular solutions, and heightened demand for services are shaping the trajectory of the IDS market.

9. Regulatory Influence: Regulatory mandates pertaining to emissions and safety standards are driving increased adoption of IDS solutions across industries.

10. Regional Dynamics: The Asia-Pacific region leads the IDS market due to rapid industrialization, with North America driven by technological advancements and Europe influenced by sustainability regulations.

A Look Back and a Look Forward - Comparative Analysis

The global integrated drive system market witnessed robust growth during the period 2019-2023, fueled by factors such as industrial automation, energy efficiency initiatives, and the burgeoning adoption of electric vehicles. Building upon this momentum, the market is projected to achieve even greater heights by 2031. Comparing the two periods reveals a trajectory of sustained growth, with key drivers including stricter regulatory frameworks, technological advancements, and escalating automation investments.

Key Growth Determinants

1. Efficiency and Performance Optimization: IDS solutions offer unparalleled improvements in efficiency and performance by seamlessly integrating various components, thus appealing to industries seeking operational excellence and cost reduction.

2. Industry 4.0 and Automation Trends: The rapid adoption of Industry 4.0 principles and the increasing focus on automation are driving demand for IDS solutions that enable seamless connectivity, data exchange, and real-time monitoring.

3. Growing Demand from Emerging Economies: Robust industrialization in emerging economies is driving demand for scalable IDS solutions tailored to meet evolving market needs.

Major Growth Barriers

1. High Initial Investment: Significant upfront investment costs present a barrier to IDS adoption.

2. Complexity of Integration: The complexity involved in integrating diverse components within the IDS framework poses challenges, including interoperability issues and compatibility concerns.

3. Market Fragmentation: The fragmented nature of the IDS market complicates vendor selection and standardization efforts, hindering widespread adoption.

Key Trends and Opportunities to Watch

1. Focus on Energy Efficiency: Heightened global concerns about sustainability are driving increased demand for energy-efficient IDS solutions.

2. Customization and Modular Solutions: Offering customizable and modular IDS solutions tailored to specific industry requirements presents a significant opportunity for market growth.

3. Service and Maintenance: Increasing complexity of IDS systems underscores the importance of reliable service and maintenance support.

How Does the Regulatory Scenario Shape this Industry?

Stringent regulatory frameworks pertaining to emissions, safety standards, and energy efficiency incentivize the adoption of IDS solutions across industries, creating a favorable environment for market growth.

Fairfield’s Ranking Board

Top Segments

• Hardware Segment: Cornerstone of the IDS market, driven by advancements in motor efficiency and integration with control systems.

• Software Segment: Plays a pivotal role in enhancing operational efficiency and diagnostics.

• Services Segment: Growing demand for maintenance, repair, and support services, alongside consultancy and training.

Regional Frontrunners

• Asia Pacific: Dominates the IDS market due to rapid industrialization.

• North America: Thrives on technological advancements and Industry 4.0 practices.

• Europe: Driven by established manufacturing industries and stringent regulations.

Fairfield’s Competitive Landscape Analysis

Leading players in the IDS market include Siemens AG, ABB Ltd., Schneider Electric SE, and Bosch Rexroth AG, leveraging innovation and strategic maneuvers to maintain market dominance.

Significant Company Developments

• New Product Launches: Siemens unveiled its latest IDS offering, featuring enhanced efficiency and advanced control capabilities.

• Distribution Agreements: Rockwell Automation finalized a strategic distribution agreement aimed at expanding the market reach of IDS solutions.

Expert Insights

As industries worldwide prioritize sustainability and operational efficiency, the demand for IDS solutions is expected to surge. Ongoing advancements in drive system technologies, including IoT integration and predictive maintenance capabilities, further enhance the appeal of IDS solutions.

| n_patil_96f2372543795ac55 |

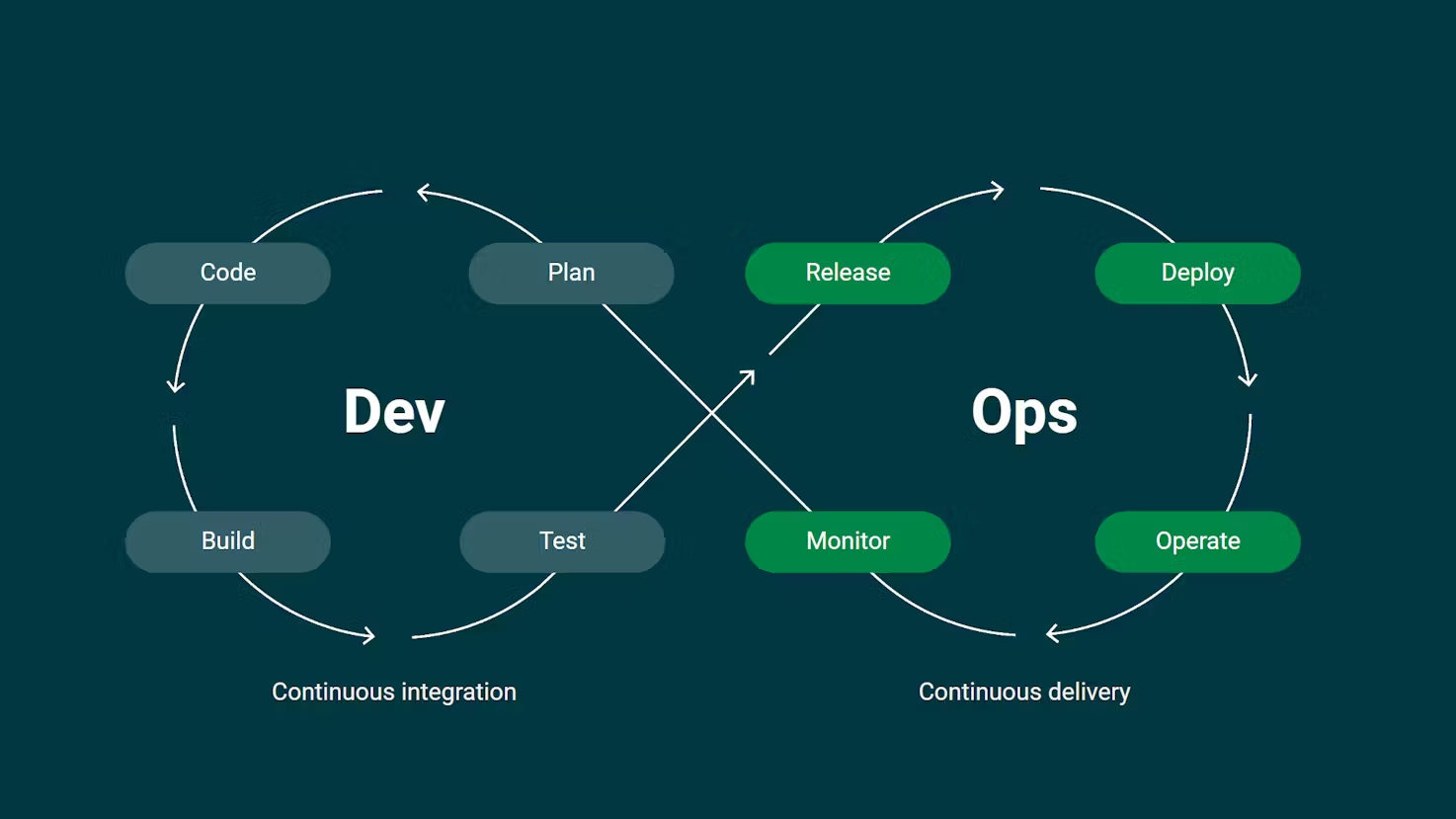

1,868,882 | Understanding CI/CD: A Comprehensive Overview | CI/CD, which stands for Continuous Integration and Continuous Delivery, is a process that helps teams... | 0 | 2024-05-29T11:00:10 | https://dev.to/azeem_shafeeq/understanding-cicd-a-comprehensive-overview-1mpo | cicd, webdev, beginners, tutorial | CI/CD, which stands for Continuous Integration and Continuous Delivery, is a process that helps teams quickly and safely deploy software changes. It's like a pipeline that automates many tasks in the software development process, allowing organizations and teams to deliver code to customers quickly, safely, and repeatedly.

**Continuous Integration (CI)**

Continuous Integration focuses on the development workflow, making sure changes are properly tested and integrated. This involves several key practices:

1. **Automated Testing**: Every code change triggers an automated testing sequence. This ensures that new changes do not break existing functionality.

2. **Version Control Integration**: Developers frequently commit code to a shared repository. Each commit is then automatically built and tested.

3. **Build Automation**: The process of compiling code and generating executable files is automated, ensuring consistency and reducing human error.

**Tools for CI**

**Jenkins:** An open-source automation server that supports building, deploying, and automating any project.

**Travis CI:** A continuous integration service used to build and test projects hosted on GitHub.

**CircleCI:** A CI service that allows for parallel builds, making it a fast option for CI/CD pipelines.

**Continuous Delivery (CD)**

Continuous Delivery focuses on the release workflow: every change that passes CI is automatically prepared for release, so it can be delivered to users quickly and safely. This process includes:

**Automated Deployment:** Code changes that pass all tests are automatically deployed to a staging environment and, with manual approval, to production.

**Infrastructure as Code (IaC):** Managing and provisioning computing infrastructure through machine-readable definition files rather than manual, interactive configuration.

**Monitoring and Logging:** Continuous monitoring of applications and systems to detect and address issues promptly.
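To make the Infrastructure as Code idea more concrete, here is a minimal Python sketch. It is not how any particular IaC tool works: the `DESIRED` definition, the resource names, and the `plan` function are all hypothetical. It only illustrates the core idea of describing the desired state as data and letting code work out which changes are needed.

```python
from typing import Dict, List

# Hypothetical declarative definition: resource name -> desired settings.
DESIRED: Dict[str, Dict[str, object]] = {
    "web-server": {"size": "small", "count": 2},
    "database": {"size": "large", "count": 1},
}


def plan(current: Dict[str, Dict[str, object]],
         desired: Dict[str, Dict[str, object]]) -> List[str]:
    """Compare current and desired state and list the actions needed to converge."""
    actions: List[str] = []
    for name in sorted(desired):
        if name not in current:
            actions.append(f"create {name}")
        elif current[name] != desired[name]:
            actions.append(f"update {name}")
    for name in sorted(current):
        if name not in desired:
            actions.append(f"delete {name}")
    return actions


if __name__ == "__main__":
    # Hypothetical snapshot of what is running right now.
    current = {
        "web-server": {"size": "small", "count": 1},
        "cache": {"size": "small", "count": 1},
    }
    for action in plan(current, DESIRED):
        print(action)
```

Because the definition is plain data, it can live in version control and be reviewed like any other code change. Running `plan` against a state that already matches the definition returns an empty list.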

**Tools for CD**

**Spinnaker:** A multi-cloud continuous delivery platform that helps release software changes with high velocity and confidence.

**AWS CodePipeline:** A continuous delivery service for fast and reliable application and infrastructure updates.

**GitLab CI/CD:** Integrated within GitLab, it allows for a seamless process from code commit to deployment.

**CI/CD Pipeline Example**

A typical CI/CD pipeline might look like this:

1. **Code Commit:** A developer commits code to a version control system (e.g., Git).

2. **Automated Build:** The CI server detects the commit, checks out the latest code, and compiles it.

3. **Automated Tests:** A suite of automated tests runs against the compiled code.

4. **Staging Deployment:** If tests pass, the code is deployed to a staging environment for further testing.

5. **Production Deployment:** Upon approval, the code is automatically deployed to the production environment.
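The stages above can be sketched as a small Python script. This is a minimal illustration rather than a real CI server: the stage names, the `run_pipeline` function, and the `approved` flag are hypothetical, and each stage is a plain function that returns `True` on success.

```python
from typing import Callable, List, Tuple

# Hypothetical stage: a name plus an action that returns True on success.
Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage], approved: bool) -> List[str]:
    """Run stages in order, stop at the first failure, and gate production on approval.

    Returns the names of the stages that completed successfully.
    """
    completed: List[str] = []
    for name, action in stages:
        # Production is the only stage that needs a manual approval.
        if name == "production" and not approved:
            break
        if not action():
            break  # fail fast: later stages never run
        completed.append(name)
    return completed


def build() -> bool:
    return True  # stand-in for compiling the code


def passing_tests() -> bool:
    return True  # stand-in for a green test suite


def failing_tests() -> bool:
    return False  # stand-in for a red test suite


def deploy() -> bool:
    return True  # stand-in for a deployment step


if __name__ == "__main__":
    stages = [
        ("build", build),
        ("test", passing_tests),
        ("staging", deploy),
        ("production", deploy),
    ]
    print(run_pipeline(stages, approved=True))
    print(run_pipeline(stages, approved=False))
```

With approval, all four stages complete. Without it, the run stops after staging. Swapping `passing_tests` for `failing_tests` stops the run right after the build, which is the fail-fast behavior a pipeline relies on.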

**Real-World Use Cases**

**E-commerce Platforms:** Companies like Amazon use CI/CD to deploy changes several times a day, ensuring new features and bug fixes reach customers rapidly without downtime.

**Financial Services:** Banks and financial institutions utilize CI/CD to quickly adapt to regulatory changes and deliver new features while ensuring security and compliance.

**Social Media:** Platforms like Facebook and Instagram use CI/CD to roll out updates seamlessly, providing new features and improvements without disrupting user experience.

**Goals of CI/CD**

**Speed:** Get changes to users quickly. Automated processes reduce the time between writing code and deploying it to production.

**Safety:** Ensure changes are problem-free and secure. Automated tests and deployment processes help catch issues early and consistently.

**Repeatability:** Make the process repeatable and scalable. Automated pipelines ensure that each deployment follows the same steps, reducing human error and increasing reliability.

_Remember, CI/CD is a continuous process that requires ongoing effort and improvement. As tools and practices evolve, so should the CI/CD pipelines to ensure optimal performance and reliability._

| azeem_shafeeq |

1,845,536 | Ibuprofeno.py💊| #113: Explain this Python code | Explain this Python code Difficulty: Easy print(not True * (100 +... | 25,824 | 2024-05-29T11:00:00 | https://dev.to/duxtech/ibuprofenopy-113-explica-este-codigo-python-493f | spanish, learning, beginners, python | ## **<center>Explain this Python code</center>**

#### <center>**Difficulty:** <mark>Easy</mark></center>

```py
print(not True * (100 + True))
```

👉 **A.** `101`

👉 **B.** `0`

👉 **C.** `100True`

👉 **D.** `SyntaxError`

---

{% details **Answer:** %}

👉 **B.** `0` is the closest option: the code actually prints `False`, which compares equal to `0`.

In Python, `bool` is a subclass of `int`: `True` behaves as `1` and `False` behaves as `0`.

That is why arithmetic with booleans is possible.

Step by step:

* `not` has lower precedence than `*`, so the expression is read as `not (True * (100 + True))`.

* `100 + True` evaluates to `101` because `True` is `1`.

* `True * 101` evaluates to `101`.

* Finally, `not 101` is `False`, because any non-zero number is truthy.

Reading the expression as `(not True) * 101` also leads to zero, but as the integer `0` (zero is the absorbing element of multiplication: any number multiplied by `0` is `0`). Without the extra parentheses, `print` shows `False`.
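The way Python groups this expression can be checked with the standard `ast` module. The sketch below parses the quiz expression and inspects the root of the tree: `not` binds more loosely than `*`, so the expression is grouped as `not (True * (100 + True))`. The variable names are only illustrative.

```python
import ast

# The expression from the quiz, kept as source text so it can be parsed.
EXPRESSION = "not True * (100 + True)"

# In "eval" mode, the body of the parsed result is the root of the expression tree.
root = ast.parse(EXPRESSION, mode="eval").body

# The root is the `not` operator, and its operand is the whole multiplication.
root_is_not = isinstance(root, ast.UnaryOp) and isinstance(root.op, ast.Not)
operand_is_product = (
    root_is_not
    and isinstance(root.operand, ast.BinOp)
    and isinstance(root.operand.op, ast.Mult)
)

result = not True * (100 + True)     # grouped as: not (True * 101)
grouped = (not True) * (100 + True)  # explicit parentheses change the meaning

if __name__ == "__main__":
    print(root_is_not, operand_is_product)
    print(repr(result), repr(grouped))
```

The script prints `True True` and then `False 0`: the unparenthesized expression yields the boolean `False`, while the explicitly parenthesized version yields the integer `0`.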

{% enddetails %} | duxtech |

1,868,880 | What errors are lurking in LLVM code? | LLVM is an open-source project with a pretty large code base. The acme in terms of code quality,... | 0 | 2024-05-29T10:58:45 | https://dev.to/anogneva/what-errors-are-lurking-in-llvm-code-1b1j | cpp, opensource, programming | LLVM is an open-source project with a pretty large code base. Given its size and open-source nature, it is a benchmark for code quality. After all, the developers of compiler tools know best about language features and their proper use. Their top-notch code is always a challenge for our analyzer, and we always accept it with pleasure.

A couple of months ago, LLVM released version 18. It's time to check its code quality once again. If you're interested, you can read our articles about previous checks [here](https://pvs-studio.com/en/blog/posts/cpp/0871/) and [there](https://pvs-studio.com/en/blog/posts/cpp/1003/).

These checks are always special for [us](https://pvs-studio.com/en/pvs-studio/) because static analyzers work almost the same way compilers do when they analyze code, and compilers also use static analysis to issue warnings. The two are almost cousins, yet each is good at its own thing, and this article is proof of that. The Clang compiler, a part of LLVM, compiled our analyzer and got it working. We even have an [article](https://pvs-studio.com/en/blog/posts/cpp/0830/) about the switch from MSVC to it. In return, our analyzer detected errors in the compiler. Isn't that proof of synergy?

The checked project version is [LLVM 18.1.0](https://github.com/llvm/llvm-project/tree/llvmorg-18.1.0).

## Fragment N1

Here's an example of how a logic error can hide in a tangle of conditions and lead to unreachable code.

```cpp
if (Tok->is(tok::hash)) {
  // Start of a macro expansion.
  First = Tok;
  Tok = Next;
  if (Tok)
    Tok = Tok->getNextNonComment();
} else if (Tok->is(tok::hashhash)) {
  // Concatenation. Skip.
  Tok = Next;
  if (Tok)
    Tok = Tok->getNextNonComment();
} else if (Keywords.isVerilogQualifier(*Tok) ||
           Keywords.isVerilogIdentifier(*Tok)) {
  First = Tok;
  Tok = Next;
  // The name may have dots like `interface_foo.modport_foo`.
  while (Tok && Tok->isOneOf(tok::period, tok::coloncolon) &&
         (Tok = Tok->getNextNonComment())) {
    if (Keywords.isVerilogIdentifier(*Tok))
      Tok = Tok->getNextNonComment();
  }
} else if (!Next) {
  Tok = nullptr;
} else if (Tok->is(tok::l_paren)) {
  // Make sure the parenthesized list is a drive strength. Otherwise the
  // statement may be a module instantiation in which case we have already
  // found the instance name.
  if (Next->isOneOf(
          Keywords.kw_highz0, Keywords.kw_highz1, Keywords.kw_large,
          Keywords.kw_medium, Keywords.kw_pull0, Keywords.kw_pull1,
          Keywords.kw_small, Keywords.kw_strong0, Keywords.kw_strong1,
          Keywords.kw_supply0, Keywords.kw_supply1, Keywords.kw_weak0,
          Keywords.kw_weak1)) {
    Tok->setType(TT_VerilogStrength);
    Tok = Tok->MatchingParen;
    if (Tok) {
      Tok->setType(TT_VerilogStrength);
      Tok = Tok->getNextNonComment();
    }
  } else {
    break;
  }
} else if (Tok->is(tok::hash)) {
  if (Next->is(tok::l_paren))
    Next = Next->MatchingParen;
  if (Next)
    Tok = Next->getNextNonComment();
}
```