id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,866,341 | Exploring Iteration in Python: How Loops Enhance Code Efficiency | Iteration in Programming Iteration, or looping, allows programmers to repeat a specific block of... | 27,530 | 2024-05-28T09:28:00 | https://dev.to/techtobe101/exploring-iteration-in-python-how-loops-enhance-code-efficiency-56fm | computerscience, techtobe101, programming, python | _Iteration in Programming_

**Iteration**, or looping, allows programmers to repeat a specific block of code multiple times. This concept is vital for efficiency and reducing redundant coding.

### Understanding the Iteration Process

Iteration is achieved through **loop statements** like **for loops**, **while loops**, and **do-while loops**. These loops repeatedly execute a block of code until a certain condition is met. Python itself provides `for` and `while`; it has no built-in do-while statement.

#### Types of Loops

- **For Loop**: Ideal for iterating over a known range of values.

- **While Loop**: Continues iterating as long as a specified condition remains true.

- **Do-While Loop**: Similar to a while loop, but guarantees at least one iteration.
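The three loop styles above can be sketched in Python roughly as follows. The function names are illustrative assumptions, not part of the article, and the third function emulates the do-while pattern with `while True` and a `break`.

```python
def count_with_for(limit):
    """Collect 1..limit using a for loop over a known range."""
    numbers = []
    for i in range(1, limit + 1):
        numbers.append(i)
    return numbers


def count_with_while(limit):
    """Collect 1..limit using a while loop that runs while the condition holds."""
    numbers = []
    i = 1
    while i <= limit:
        numbers.append(i)
        i += 1
    return numbers


def count_do_while_style(limit):
    """Emulate do-while: the body runs once before the condition is checked."""
    numbers = []
    i = 1
    while True:
        numbers.append(i)
        i += 1
        if i > limit:
            break
    return numbers
```

Note the difference when `limit` is 0: the first two functions return an empty list, while the do-while style still runs its body once.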

### The Impact of Iteration

Iteration makes code concise and efficient, avoiding unnecessary duplication. It's fundamental in tasks like sorting algorithms, searching algorithms, and data processing.
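As one small illustration of iteration inside a searching algorithm, here is a hedged sketch of a linear search. The function name `linear_search` is chosen for this example only.

```python
def linear_search(items, target):
    """Return the index of the first element equal to target, or -1 if absent."""
    # Visit each element once, stopping as soon as a match is found.
    for index, value in enumerate(items):
        if value == target:
            return index
    return -1
```

A single loop replaces what would otherwise be one comparison statement per element.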

### Summary of Key Points

- Iteration allows for repetitive execution of code.

- Common loops include for loops, while loops, and do-while loops.

- Iteration optimizes code efficiency.

---

### Case Study: Counting Program

In this case study, we'll put iteration into practice, using a for loop to repeat a task without duplicating code. This illustrates how the loop constructs discussed above keep a program short and easy to maintain.

**Problem**: Write a program to count from 1 to 10.

**Solution**:

1. Use a for loop to iterate through the numbers.

2. Print each number in the sequence.

**Python Code** with Comments:

```python
# Function to count and print numbers from 1 to 10
def count_numbers():
    # Loop from 1 to 10 (inclusive)
    for i in range(1, 11):
        print(i)


# Main program to call the count_numbers function
def main():
    count_numbers()


if __name__ == "__main__":
    main()
```

---

By developing a counting program using iteration, we explored the iterative nature of loops and their importance in executing repetitive tasks efficiently. Iteration is a fundamental concept in programming that enables automation of processes such as data processing, repetitive calculations, and algorithmic operations.

In the next article we'll be discussing "Combining Conditional Execution and Iteration".

---

| techtobe101 |

1,866,340 | Beginners Conditional Execution in Programming: Practical Examples and Applications | Conditional Execution in Programming Conditional execution allows programs to make decisions based... | 27,530 | 2024-05-28T09:27:40 | https://dev.to/techtobe101/beginners-conditional-execution-in-programming-practical-examples-and-applications-ac3 | computerscience, techtobe101, python, programming | _Conditional Execution in Programming_

**Conditional execution** allows programs to make decisions based on certain conditions. By using conditional statements, we can create different pathways in a program, making it more dynamic and responsive.

### The Concept of Conditional Execution

Conditional execution lets programmers execute specific statements only when certain conditions are met. Common conditional statements include **if statements**, **switch statements**, and **ternary operators**. These evaluate expressions and decide which block of code to execute based on the evaluation.
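In Python, these forms look roughly like the sketch below. The function names are illustrative assumptions. Python has no `switch` keyword; an `if`/`elif` chain is the usual substitute, and recent versions (3.10 and later) also offer a `match` statement, which is not shown here.

```python
def describe_number(n):
    """Classify n with an if/elif/else chain."""
    if n > 0:
        return "positive"
    elif n < 0:
        return "negative"
    else:
        return "zero"


def parity(n):
    """Use a conditional expression, Python's form of the ternary operator."""
    return "even" if n % 2 == 0 else "odd"
```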

#### Importance of Conditional Execution

Conditional execution is crucial because it makes programs adaptable. For example, a weather app can display different messages based on the current temperature, or a game can adjust its difficulty based on the player's skills. This flexibility enhances the usability and functionality of applications.

### Summary of Key Points

- Conditional execution allows programs to make decisions.

- Common conditional statements include if statements and switch statements.

- Conditional execution makes programs adaptable and responsive.

---

### Case Study: Temperature-based Activity Suggestion

In this case study, we'll dive into conditional execution to understand how conditional statements like if statements and switch statements enable programs to make decisions and adapt to varying conditions. This will build on what we discussed earlier and show how these concepts are applied in practice.

**Problem**: Create a program that suggests outdoor activities based on the temperature.

**Solution**:

1. Use conditional statements to evaluate temperature ranges.

2. Recommend activities based on the current temperature.

**Python Code** with Comments:

```python
# Function to suggest an activity based on temperature
def suggest_activity(temperature):
    if temperature > 30:
        return "It's a hot day! Stay cool and hydrated."
    elif temperature > 20:
        return "It's a warm day! Enjoy the nice weather."
    elif temperature > 10:
        return "It's a bit chilly! Wear a jacket."
    else:
        return "It's cold! Stay warm and bundle up."


# Main program to input temperature and display suggested activity
def main():
    temperature = float(input("Enter the current temperature: "))
    print(suggest_activity(temperature))


if __name__ == "__main__":
    main()
```

---

Through the temperature-based activity suggestion program, we demonstrated the practical use of conditional execution in recommending activities based on weather conditions. By understanding how conditional statements work, programmers can create applications that respond dynamically to changing circumstances, enhancing user experience and functionality.

In the next article in our series, we will take a closer look at "Iteration in Programming".

---

| techtobe101 |

1,867,441 | OTT App Solutions To Build an Android TV & Smart TV Apps in 2025 | The living room is experiencing a digital transformation. Consumers are increasingly abandoning... | 0 | 2024-05-28T09:27:12 | https://dev.to/markpeterson/ott-app-solutions-to-build-an-android-tv-smart-tv-apps-in-2025-3h9c | development | The living room is experiencing a digital transformation. Consumers are increasingly abandoning traditional cable and satellite TV in favor of Over-The-Top (OTT) streaming services. As a result, the demand for high-quality, user-friendly OTT apps designed specifically for Android TV and Smart TVs is skyrocketing.

This blog serves as a comprehensive guide for businesses leveraging OTT app development solutions to build successful Android TV and Smart TV apps in 2025 and beyond. We'll explore the key considerations, cutting-edge technologies, and **[future trends shaping](https://techplanet.today/post/the-ultimate-guide-to-custom-ott-development-in-market-insights-and-trends)** the evolving landscape of Smart TV app development.

## Why OTT App Development Matters

The rise of OTT streaming has transformed the way consumers consume content. With the proliferation of smart TVs and streaming devices, users now have access to a vast array of content from various platforms. This shift has created a significant opportunity for businesses to develop OTT apps that offer unique and engaging content experiences.

## The Growing Importance of Android TV and Smart TV

Android TV and Smart TV platforms have revolutionized the way audiences consume content, offering a seamless and immersive viewing experience. These platforms provide access to a wide range of applications, including streaming services, games, and utility apps, directly on the television screen. As the living room becomes the central hub for entertainment, developing high-quality apps for Android TV and Smart TV is a strategic priority for content providers and OTT platform solutions providers.

## Understanding the Android TV and Smart TV Landscape:

- Fragmentation: The Smart TV market is fragmented, with various platforms and operating systems dominating different regions. While Android TV holds a significant share, understanding your target market's dominant Smart TV platforms is crucial.

- Hardware Variations: Smart TV hardware capabilities vary greatly. Developing an app that functions seamlessly across a range of processing power, memory limitations, and screen resolutions requires a robust and adaptable development approach.

- Evolving User Expectations: Smart TV users have become accustomed to sleek, intuitive user interfaces (UI) and voice search functionalities. Building an app that delivers a smooth and user-friendly experience is paramount.

## Key Considerations for Building Android TV & Smart TV Apps in 2025:

- Focus on User Experience (UX): Prioritize an intuitive and streamlined UI optimized for remote control navigation and voice search. Ensure quick and easy content discovery, playback controls, and seamless transitions between features.

- Content Security and DRM: Implement robust Digital Rights Management (DRM) solutions to protect premium content and comply with licensing agreements. Partner with OTT platform solutions providers experienced in secure content delivery for Smart TVs.

- Monetization Strategies: Consider diverse monetization models beyond traditional subscriptions. Explore in-app purchases, targeted advertising, or tiered subscription plans offering different levels of content access.

- Offline Viewing Capabilities: Cater to users with limited internet access by enabling offline viewing of downloaded content.

- Integration with Smart TV Features: Leverage built-in Smart TV features like Bluetooth gamepads or casting functionalities for a more interactive and engaging user experience.

## Emerging Technologies Shaping OTT App Development:

- AI-Powered Recommendations: Incorporate AI algorithms to personalize content recommendations based on user viewing history and preferences.

- Cloud Gaming Integration: As cloud gaming platforms gain traction, consider integrating cloud gaming capabilities directly into your OTT app, offering a one-stop shop for entertainment.

- Voice Search Advancements: Voice search will likely become even more prominent in Smart TV navigation. Optimize your app for voice search queries and ensure quick and accurate responses.

## OTT App Development Solutions for Android TV & Smart TV Apps:

Partnering with a reputable OTT app development company offers several advantages:

- Expertise in Cross-Platform Development: Navigate the complexities of developing for multiple Smart TV platforms while ensuring a consistent user experience across different devices.

- Experience with UI/UX Design for Smart TVs: Leverage specialists who understand the unique design considerations for remote control navigation and user interaction on a larger screen.

- Security and DRM Integration: Benefit from expertise in implementing robust security and DRM solutions to protect content and user data.

- App Store Optimization (ASO): Leverage expertise in optimizing your app listing across different Smart TV app stores to increase discoverability.

## Building a Future-Proof OTT App:

- Scalability and Performance: Design your app with scalability in mind, anticipating future growth and the potential addition of new features.

- Data-Driven Optimization: Integrate analytics tools to track user behavior, content consumption patterns, and app performance. Use data insights to inform ongoing optimization efforts.

- Staying Ahead of the Curve: The Smart TV landscape is constantly evolving. Partner with an **[OTT app development company](https://www.code-brew.com/ott-app-development-company)** that actively monitors industry trends and integrates cutting-edge technologies into your app.

## Conclusion:

By understanding the unique requirements of Android TV and Smart TV development, and by leveraging the expertise of OTT app development solutions providers, businesses can build captivating and successful OTT apps that capture the living room experience in 2025 and beyond. Focusing on a user-centric approach, prioritizing security, and embracing emerging technologies will help you develop an app that stands out in a competitive market and attracts a loyal user base.

Remember, a well-crafted OTT app can become a gateway to a world of entertainment for Smart TV users, generating valuable recurring revenue streams for your business.

| markpeterson |

1,866,337 | An Introduction to Conditional Execution & Iteration in Computer Science | Computer science is a fascinating field that encompasses various aspects of problem-solving and... | 27,530 | 2024-05-28T09:27:11 | https://dev.to/techtobe101/an-introduction-to-conditional-execution-iteration-in-computer-science-3jgo | computerscience, techtobe101, python, programming | Computer science is a fascinating field that encompasses various aspects of problem-solving and logical thinking. One fundamental concept in computer science is conditional execution and iteration. In this article series, we will delve into the basics of computer science, explore the concepts of conditional execution and iteration, and discuss their interplay, common challenges, and solutions.

## Understanding the Basics of Computer Science

### An Introduction to Conditional Execution & Iteration in Computer Science

**Computer science** is a field that involves solving problems using algorithms and logical thinking. Two key concepts in computer science are **conditional execution** and **iteration**. In this series, we will explore these concepts step-by-step.

### Understanding the Basics of Computer Science

**Computer science** is the study of algorithms—step-by-step instructions designed to solve specific problems. These algorithms are the foundation of computer programming and are used to create efficient and reliable software systems.

#### The Role of Algorithms

Algorithms are essential in computer science because they enable programmers to solve problems efficiently. They combine logic, mathematics, and creativity. With a well-defined algorithm, computer programs can take input data and produce the desired output, making them versatile tools in various fields like artificial intelligence and data analysis.

#### Key Concepts in Programming

Before diving into conditional execution and iteration, let's understand some basic programming concepts:

1. **Programming Languages**: These are tools like C++, Java, and Python that allow humans to communicate instructions to computers.

2. **Variables**: These store data values that can be used and manipulated in calculations. For example, a variable can store the temperature in a weather app.

3. **Functions**: These are reusable blocks of code that perform specific tasks, helping to organize and modularize code. For instance, a function in a banking app can calculate interest rates.
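A minimal sketch of the last two concepts in Python: a variable holding a temperature and a function computing simple interest. The names and the simple-interest formula are assumptions chosen for illustration, not taken from any particular app.

```python
def simple_interest(principal, annual_rate, years):
    """Return simple interest: principal * rate * time."""
    return principal * annual_rate * years


# A variable stores a value that later code can read or change.
temperature_celsius = 21.5

# Calling the function reuses the same logic with different inputs.
interest = simple_interest(1000, 0.05, 2)
```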

### Summary of Key Points

- Algorithms are the heart of computer science.

- Programming languages enable communication with computers.

- Variables store data, and functions perform specific tasks.

---

### Case Study: Building a Simple Calculator

Now, let's dive into a case study to quickly review the basics of computer science. Our goal is to refresh your memory on the key building blocks of a modular program: a programming language (Python), variables, and functions. This case study illustrates how these elements form the essential foundation of a program. We'll present a problem and show how to solve it using code.

**Problem**: Create a basic calculator that can perform addition, subtraction, multiplication, and division.

**Solution**:

1. Define functions to handle each operation.

2. Use user input to determine the operation.

3. Display the result based on the chosen operation.

**Python Code** with Comments:

```python
# Function to add two numbers
def add(x, y):
    return x + y


# Function to subtract two numbers
def subtract(x, y):
    return x - y


# Function to multiply two numbers
def multiply(x, y):
    return x * y


# Function to divide two numbers
def divide(x, y):
    # Check for division by zero
    if y != 0:
        return x / y
    else:
        return "Error! Division by zero."


# Main program to handle user input and operation choice
def main():
    print("Select operation:")
    print("1. Add")
    print("2. Subtract")
    print("3. Multiply")
    print("4. Divide")

    choice = input("Enter choice (1/2/3/4): ")
    num1 = float(input("Enter first number: "))
    num2 = float(input("Enter second number: "))

    # Perform the chosen operation based on user input
    if choice == '1':
        print(num1, "+", num2, "=", add(num1, num2))
    elif choice == '2':
        print(num1, "-", num2, "=", subtract(num1, num2))
    elif choice == '3':
        print(num1, "*", num2, "=", multiply(num1, num2))
    elif choice == '4':
        print(num1, "/", num2, "=", divide(num1, num2))
    else:
        print("Invalid input")


if __name__ == "__main__":
    main()
```

---

In this article, we implemented a simple calculator program using conditional execution and user-defined functions. Through this case study, we observed how to structure a program to handle different operations based on user input. Understanding these foundational concepts is crucial for building more complex applications in computer science.

In the next article in our series, we will take a closer look at "Conditional Execution in Programming".

--- | techtobe101 |

1,867,440 | What are types of Authentication | Understanding the Different Types of Authentication In today's digital age, safeguarding... | 0 | 2024-05-28T09:26:26 | https://dev.to/blogginger/what-are-types-of-authentication-4f06 | authentication | ## Understanding the Different Types of Authentication

In today's digital age, safeguarding data is more crucial than ever. Authentication, the process of verifying a user's identity, is a cornerstone of cybersecurity. Let's explore the various types of authentication methods available and their significance in protecting information.

### 1. **Password-Based Authentication**

**Password-Based Authentication** is the most common form. Users create a unique password to gain access to systems or accounts. Despite its popularity, it has several drawbacks, such as susceptibility to hacking, phishing, and brute force attacks. To enhance security, many organizations enforce strong password policies, including complexity requirements and regular updates.
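A password complexity policy of the kind mentioned above could be checked with a sketch like this. The specific rules (minimum length of 12, at least one lowercase letter, uppercase letter, digit, and symbol) and the function name are assumptions for illustration; real policies vary by organization.

```python
import string


def password_policy_violations(password, min_length=12):
    """Return a list of rule violations; an empty list means the password passes."""
    violations = []
    if len(password) < min_length:
        violations.append(f"shorter than {min_length} characters")
    if not any(c.islower() for c in password):
        violations.append("no lowercase letter")
    if not any(c.isupper() for c in password):
        violations.append("no uppercase letter")
    if not any(c.isdigit() for c in password):
        violations.append("no digit")
    if not any(c in string.punctuation for c in password):
        violations.append("no symbol")
    return violations
```

Returning the full list of violations, rather than a single boolean, lets a sign-up form tell the user exactly what to fix.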

### 2. **Two-Factor Authentication (2FA)**

**Two-Factor Authentication (2FA)** adds an extra layer of security by requiring two forms of verification. Typically, it combines something the user knows (password) with something the user has (a mobile device). Common examples include receiving a one-time code via SMS or using an authentication app. This method significantly reduces the risk of unauthorized access.

### 3. **Multi-Factor Authentication (MFA)**

**Multi-Factor Authentication (MFA)** generalizes 2FA: it requires two or more independent verification factors, so 2FA is the simplest form of MFA. A deployment might combine a password, a physical token, and biometric verification. Adding factors raises the level of security, because an attacker must compromise every required factor.

### 4. **Biometric Authentication**

**Biometric Authentication** uses unique biological characteristics to verify identity. Common biometric methods include fingerprint scanning, facial recognition, and iris scanning. [Biometric authentication](https://www.authx.com/biometric-authentication/?utm_source=devto&utm_medium=SEO&utm_campaign=blog&utm_id=K003) is highly secure because it relies on traits that are difficult to replicate. However, privacy concerns and the need for specialized hardware can be barriers to widespread adoption.

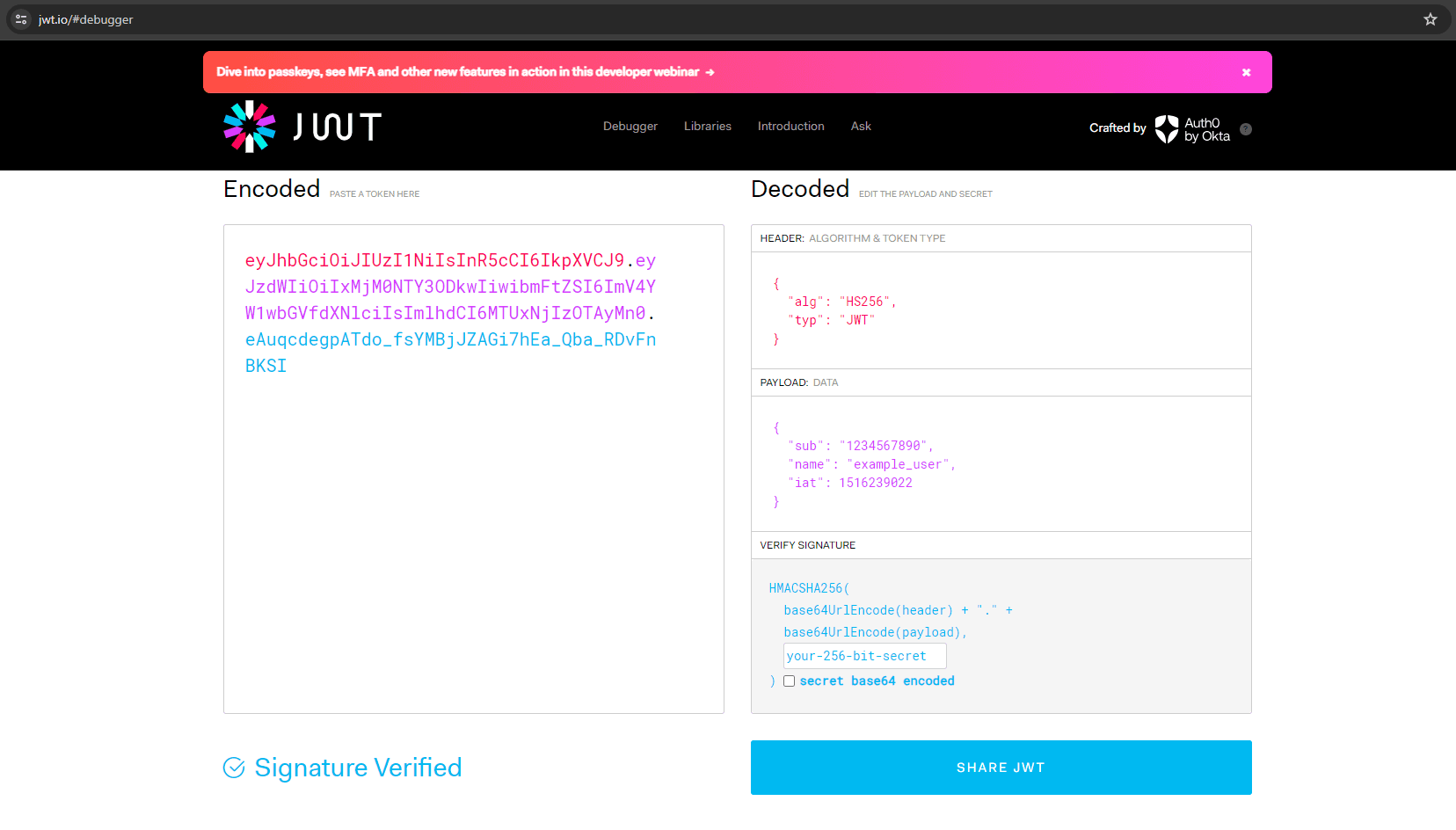

### 5. **Token-Based Authentication**

**Token-Based Authentication** involves using a physical device or software token that generates a unique code at regular intervals. Examples include hardware tokens provided by security firms and software tokens generated by apps like Google Authenticator. Tokens are often used in conjunction with passwords for enhanced security.
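Software tokens of this kind commonly follow the time-based one-time password scheme (TOTP, RFC 6238), which is built on HOTP (RFC 4226). The following is a minimal sketch using only the Python standard library; it assumes HMAC-SHA1 and a 30-second step, and it is not the code of any particular authenticator app.

```python
import hashlib
import hmac
import struct
import time


def hotp(secret, counter, digits=6):
    """HMAC-based one-time password (RFC 4226) using HMAC-SHA1."""
    # The counter is encoded as an 8-byte big-endian integer.
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation: the low 4 bits of the last byte select an offset.
    offset = digest[-1] & 0x0F
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret, timestamp=None, step=30, digits=6):
    """Time-based one-time password: HOTP over the current time step."""
    if timestamp is None:
        timestamp = time.time()
    return hotp(secret, int(timestamp) // step, digits)
```

Both the token and the server derive the code from a shared secret and the current time window, so a code is only valid for a short interval.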

### 6. **Certificate-Based Authentication**

**Certificate-Based Authentication** uses digital certificates issued by trusted certificate authorities (CAs) to verify a user's identity. Users are granted certificates that act as a digital ID, ensuring secure communication and authentication. This method is commonly used in secure email communications and VPNs.

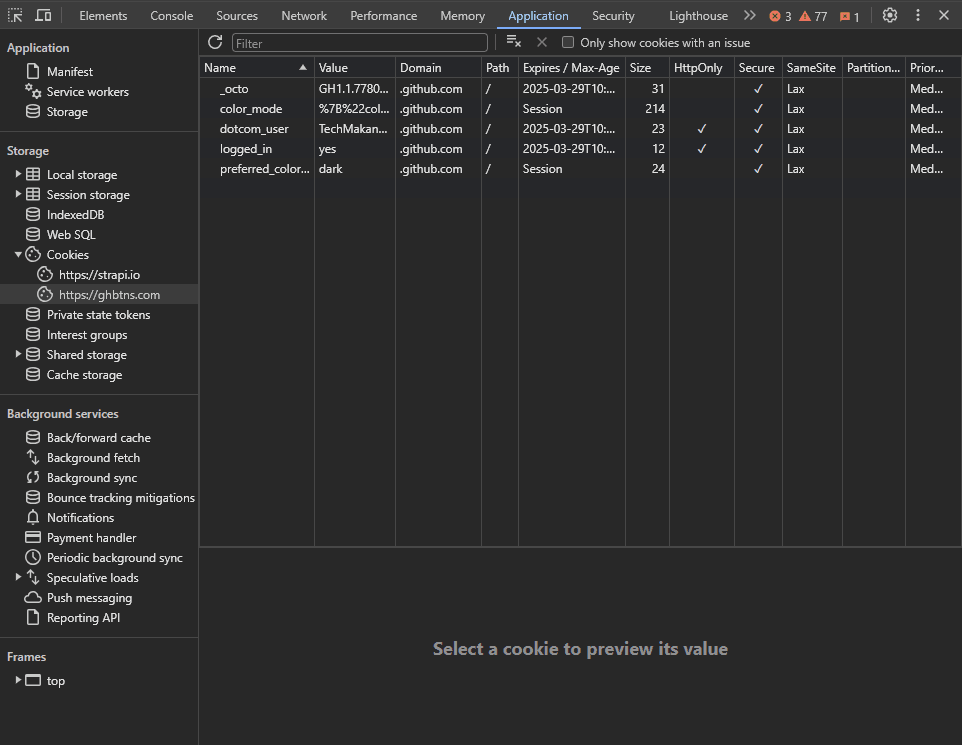

### 7. **Single Sign-On (SSO)**

**Single Sign-On (SSO)** allows users to authenticate once and gain access to multiple related systems. This method simplifies the login process and enhances user experience. [SSO authentication](https://www.authx.com/single-sign-on/?utm_source=devto&utm_medium=SEO&utm_campaign=blog&utm_id=K003) uses tokens that are shared between services, reducing the need to remember multiple passwords. However, if the SSO credentials are compromised, it can lead to broader security risks.

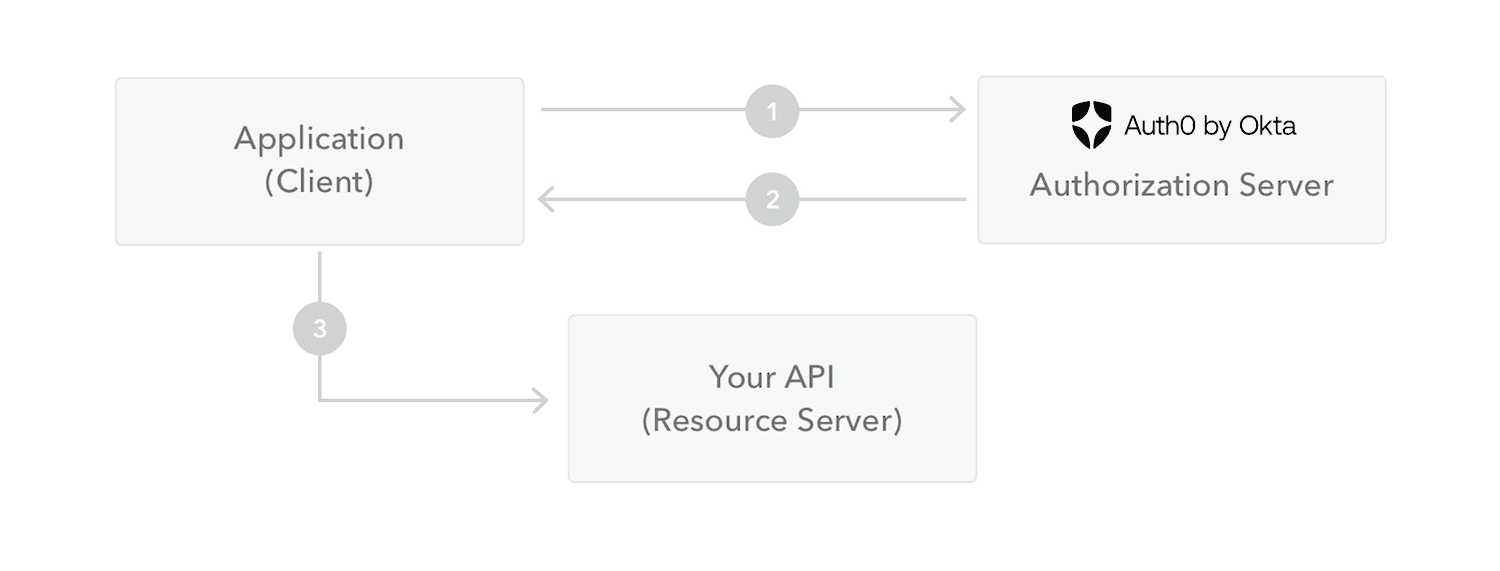

### 8. **OAuth and OpenID Connect**

**OAuth** is an open standard for token-based authorization. It lets third-party services access resources on a user's behalf without exposing the user's password. **OpenID Connect** builds on OAuth 2.0, adding an identity layer for verifying who the user is. These methods are widely used in social logins, where users can log in using their credentials from services like Google or Facebook.

### 9. **Behavioral Authentication**

**Behavioral Authentication** analyzes user behavior patterns, such as typing speed, mouse movements, and navigation habits, to verify identity. This method is passive and non-intrusive, continuously monitoring for unusual behavior that might indicate a compromised account. It's increasingly used in financial services and other high-security environments.
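As a rough illustration of the idea, the sketch below flags a typing speed that deviates strongly from a user's baseline. The z-score rule, the threshold of 3, and the function name are assumptions for this example; production systems combine many signals and more robust models.

```python
import statistics


def is_unusual_typing_speed(baseline_wpm, observed_wpm, threshold=3.0):
    """Flag an observation far from the baseline mean, measured in standard deviations.

    baseline_wpm must contain at least two samples.
    """
    mean = statistics.mean(baseline_wpm)
    spread = statistics.stdev(baseline_wpm)
    if spread == 0:
        # With no variation in the baseline, any difference counts as unusual.
        return observed_wpm != mean
    z_score = abs(observed_wpm - mean) / spread
    return z_score > threshold
```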

### Conclusion

Choosing the right type of authentication depends on the specific needs and risks of an organization. While no single method is foolproof, combining multiple authentication methods can significantly enhance security. As cyber threats evolve, so too must our approaches to authentication, ensuring we stay one step ahead in protecting sensitive information.

By understanding and implementing these various authentication methods, individuals and organizations can better safeguard their digital assets and ensure a more secure online environment. | blogginger |

1,867,439 | How to Beat Scorpion Sentinel - Boss Guide and Tips | Final Fantasy VII Remake follows the story of Cloud Strife, an ex-soldier turned mercenary, who joins... | 0 | 2024-05-28T09:26:18 | https://dev.to/patti_nyman_5d50463b9ff56/how-to-beat-scorpion-sentinel-boss-guide-and-tips-f68 | Final Fantasy VII Remake follows the story of Cloud Strife, an ex-soldier turned mercenary, who joins the eco-terrorist group Avalanche to stop the Shinra Electric Power Company from draining the planet's life energy. The game is set in the dystopian city of Midgar, where the conflict between Shinra and Avalanche escalates, leading to epic battles and profound revelations.

Basic Gameplay:

Players control Cloud and his allies as they explore Midgar, engage in real-time combat, and navigate through a richly detailed world filled with side quests, mini-games, and hidden secrets. The game seamlessly blends action, exploration, and storytelling, offering a captivating experience for fans and newcomers alike.

Encounter: Scorpion Sentinel - Boss

Role and Attributes:

The Scorpion Sentinel is a formidable boss encountered early in the game, serving as a mechanical guardian deployed by Shinra to protect their interests. It possesses high defense, long-range attacks, and formidable melee capabilities, posing a significant threat to the party.

Unlocking and Route to Scorpion Sentinel:

To encounter the Scorpion Sentinel, players must progress through the main story until they reach the Mako Reactor 1. Upon reaching the reactor's core, players will face off against this imposing foe.

Signs and Symbols to Reach the Boss:

Players will be guided to the Scorpion Sentinel encounter by following the linear path within the Mako Reactor 1. As they progress deeper into the reactor, they will encounter scripted events and cinematic sequences that lead up to the boss battle.

Entering Battle Mode:

The transition to battle mode is signaled by a dramatic shift in the music, accompanied by a cinematic sequence showing the Scorpion Sentinel's activation. Players should prepare by ensuring their characters are equipped with suitable weapons, armor, and materia.

Recommended Characters:

Cloud, with his balanced stats and proficiency in close combat, is an essential choice for this encounter. Additionally, players may consider including Barret for his ranged attacks and ability to draw enemy fire, providing cover for Cloud and other party members.

Defeating the Scorpion Sentinel:

Skills Overview:

The Scorpion Sentinel possesses a variety of skills, including tail laser, energy blast, and stinger strike. Each skill presents a different threat level and requires a unique approach to avoid.

Identifying Signals for Fatal Damage:

Players should be vigilant for warning signs, such as the Scorpion Sentinel raising its tail or charging energy, indicating an imminent devastating attack.

Dodging Fatal Skills:

To evade fatal attacks, players must time their dodges carefully or take cover behind obstacles in the environment. Additionally, utilizing Barret's Cover ability can help mitigate damage and protect vulnerable party members.

Detailed Recommendations and Strategies for Defeating the Scorpion Sentinel

Recommended Characters and Strategies:

Cloud Strife: Utilize Cloud's balanced stats and proficiency in close combat to deal consistent damage to the boss. Prioritize using his Braver ability to stagger the boss and open up opportunities for more powerful attacks.

Barret Wallace: Position Barret strategically to draw enemy fire away from Cloud and other party members. Take advantage of his ranged attacks to chip away at the boss's health from a safe distance.

Tifa Lockhart (if available): Tifa's agility and combo-based attacks make her a valuable asset in this battle. Focus on building up her Unbridled Strength ability to unleash devastating combos on the boss.

Key Operating Techniques:

Staggering the Boss: Focus on staggering the boss by exploiting its weaknesses and using abilities that inflict high stagger damage.

Dodging and Blocking: Time dodges and blocks effectively to minimize damage from the boss's powerful attacks.

Utilizing Abilities: Make strategic use of each character's abilities and limit breaks to maximize damage output and control the flow of battle.

Understanding Skill Damage Structure:

Tail Laser: A long-range laser attack that targets a single character. Can be dodged by moving out of the line of fire.

Energy Blast: Unleashes a powerful energy blast that damages all party members in its area of effect. Take cover behind obstacles or use Barret's Cover ability to mitigate damage.

Stinger Strike: Lunges forward with its stinger, inflicting heavy damage on a single target. Keep a close eye on the boss's movements and dodge or block the attack accordingly.

Critical Elements for Damage Output:

Exploiting Weaknesses: Identify and exploit the boss's vulnerabilities to increase damage output and stagger meter buildup.

Maintaining Pressure: Keep the pressure on the boss by maintaining a steady stream of attacks and avoiding unnecessary downtime.

Scorpion Sentinel Behavior Changes:

Phase Transitions: As the battle progresses, the boss may enter different phases, altering its attack patterns and behavior. Stay adaptable and adjust your tactics accordingly.

Increased Aggression: Expect the boss to become more aggressive and unleash more powerful attacks as its health dwindles. Stay focused and maintain control of the battle.

Rewards and Utilization:

Upon defeating the Scorpion Sentinel, players can expect to receive valuable rewards such as rare materia, equipment, and Gil. These rewards can be used to enhance character abilities, customize equipment, and purchase items from shops throughout the game.

Avoiding Common Mistakes and Misconceptions:

Ignoring Weaknesses: Take advantage of the boss's weaknesses and vulnerabilities to increase damage output and stagger meter buildup.

Underestimating Defensive Options: Utilize dodges, blocks, and cover effectively to minimize damage taken and maintain party survivability.

Neglecting Party Composition: Ensure your party composition is well-balanced and equipped to handle the boss's various attacks and abilities.

By implementing these recommendations and strategies, players can overcome the challenges posed by the Scorpion Sentinel and emerge victorious, reaping the rewards and progressing further in the captivating world of Final Fantasy VII Remake.

| patti_nyman_5d50463b9ff56 | |

1,867,437 | Elevate Your Convenience with a Mobile Charging Kiosk | In our fast-paced, technology-dependent world, keeping devices charged and ready for use is... | 0 | 2024-05-28T09:24:35 | https://dev.to/addsofttech/elevate-your-convenience-with-a-mobile-charging-kiosk-4fol | In our fast-paced, technology-dependent world, keeping devices charged and ready for use is essential. Whether in bustling shopping malls, busy airports, educational institutions, or large event venues, the demand for accessible charging solutions is on the rise. Mobile charging kiosks offer an ideal solution, providing a secure, convenient, and efficient way for people to recharge their devices on the go. This article delves into the features, benefits, and key considerations for implementing mobile charging kiosks in various environments.

**What is a Mobile Charging Kiosk?**

A mobile charging kiosk is a self-service station equipped with multiple charging ports and secure compartments for charging electronic devices like smartphones, tablets, and laptops. These kiosks are designed to be placed in high-traffic public areas, offering a valuable service to users who need to recharge their devices quickly and safely.

**Key Features of Mobile Charging Kiosks**

**1. Multiple Charging Options**

Mobile charging kiosks are equipped with a variety of charging ports to accommodate different devices. Common options include:

**• USB-A and USB-C Ports:** For charging most smartphones and tablets.

**• Wireless Charging Pads:** For devices that support wireless charging.

**• AC Power Outlets:** For charging laptops and other larger devices.

**2. Secure Lockers**

To ensure the safety of users' devices, many kiosks come with individual secure lockers. Each locker typically features:

**• Digital Keypads or RFID Locks:** Allowing users to set a personal code or use an RFID card to lock and unlock the compartment.

**• Sturdy Construction:** Made from durable materials to prevent tampering and theft.

**3. User-Friendly Interface**

Modern charging kiosks are designed with user convenience in mind, often featuring:

**• Touchscreen Displays:** Providing easy-to-follow instructions and real-time charging status updates.

**• Multilingual Support:** Catering to a diverse user base.

**4. Remote Monitoring and Management**

Operators can monitor and manage kiosks remotely, which includes:

**• Usage Statistics:** Tracking how often and which ports are used.

**• Maintenance Alerts:** Notifying when a kiosk requires servicing.

**• Software Updates:** Ensuring the system runs efficiently and securely.

**5. Advertising Capabilities**

Many kiosks include digital screens that can display advertisements, offering:

**• Additional Revenue Streams:** By selling ad space to third parties.

**• Promotion of In-House Services:** Advertising products or services directly to users.

**Benefits of Mobile Charging Kiosks**

**1. Convenience for Users**

Mobile charging kiosks provide a much-needed service in public places, allowing people to charge their devices while they go about their day. This convenience is particularly valuable in environments where access to power outlets is limited.

**2. Increased Foot Traffic and Engagement**

Placing charging kiosks in strategic locations can attract more visitors and encourage them to stay longer, which is beneficial for businesses and venues. For instance, in a retail setting, customers might spend more time shopping while their devices charge.

**3. Enhanced Customer Experience**

Offering a charging solution enhances the overall customer experience, showing that you value their needs. This can lead to increased customer satisfaction and loyalty.

**4. Security and Peace of Mind**

Secure lockers ensure that users can leave their devices to charge without worrying about theft or damage. This added security is a significant advantage in busy public areas.

**5. Revenue Opportunities**

The ability to display advertisements on the kiosk’s digital screens provides an additional revenue stream. Businesses can sell ad space to third parties or use it to promote their own products and services.

**Considerations for Implementing a Mobile Charging Kiosk**

**1. Location**

Selecting the right location is crucial. Ideal spots are high-traffic areas where people are likely to need charging services, such as shopping malls, airports, train stations, universities, and event venues. Ensure the kiosk is easily accessible and visible.

**2. Security Features**

Ensure the kiosk has robust security features to protect users' devices. This includes secure lockers with reliable locking mechanisms and sturdy construction to prevent tampering.

**3. Compatibility**

Choose a kiosk that offers a variety of charging options to accommodate different devices. This ensures that users can charge any device they have, from smartphones to laptops.

**4. Maintenance and Support**

Opt for kiosks that offer remote monitoring and management capabilities. This simplifies maintenance and ensures that any issues can be addressed promptly. Reliable technical support from the manufacturer is also essential.

**5. Cost and Return on Investment (ROI)**

Evaluate the cost of the kiosk and potential return on investment. Consider not only the direct revenue from charging fees or advertising but also the indirect benefits such as increased foot traffic and enhanced customer satisfaction.

Mobile charging kiosks are an innovative solution to meet the growing demand for on-the-go device charging. By providing a secure, convenient, and user-friendly charging option, these kiosks enhance the customer experience, attract more visitors, and offer potential revenue opportunities. Whether in retail environments, transportation hubs, educational institutions, or event venues, investing in mobile charging kiosks can significantly improve service offerings and customer satisfaction.

| addsofttech | |

1,867,436 | What is GPU Mining? | GPU mining reduces the entry hurdles for new cryptocurrency miners. For example, it eliminates the... | 0 | 2024-05-28T09:24:15 | https://dev.to/lillywilson/what-is-gpu-mining-3c7n | cryptocurrency, bitcoin, asic | **[GPU mining](https://asicmarketplace.com/blog/gpu-vs-asic-mining/)** reduces the entry hurdles for new cryptocurrency miners. For example, it eliminates the need for expensive hardware or a greater range of coins to mine. Some GPU miners refer to this as a gateway into cryptocurrency mining.

Most aspiring miners already have a GPU they can mine with, and a GPU-mining rig is fairly easy to set up. Most big-box electronics stores and online marketplaces carry GPUs.

Configuring GPU mining tools is also much easier now than it was in the early days of Bitcoin mining. You no longer need to be a Linux expert to configure GPU mining software. Today, GPU mining software is available for Windows computers with just one click. Older laptops can also run the program. | lillywilson |

1,867,366 | 📊📈 Create charts using Recharts | Introduction Charts make it easy to represent complex data in a simple and... | 0 | 2024-05-28T08:31:56 | https://refine.dev/blog/recharts/ | webdev, beginners, react, css |

<a href="https://github.com/refinedev/refine">

<img src="https://refine.ams3.cdn.digitaloceanspaces.com/readme/refine-readme-banner.png" alt="refine repo" />

</a>

---

## Introduction

Charts make it easy to represent complex data in a simple and visually appealing way. With charts, you can easily identify trends and patterns and make comparisons across different variables and data types. You can use charts to interpret current data and predict the future.

There are several types of charts you can use to visually represent data. Some of them include Line Charts, Bar Charts, Area Charts, and Scatter charts. The choice of a chart largely depends on the type of data. Different types of charts are suited for different purposes.

There are several libraries for creating charts in the React ecosystem. These React chart libraries include react-flow-charts, react-financial-charts, react-charts and Recharts. In this article, we will explore how to create charts in a Refine project using [Recharts](https://recharts.org/).

## What is Recharts

<div className="centered-image">

<img src="https://refine.ams3.cdn.digitaloceanspaces.com/blog/2024-02-23-recharts/recharts.png" alt="Recharts chart" />

</div>

Recharts is a popular, MIT-licensed library for creating charts in React and React-based frameworks like refine. Internally, it uses SVG and some lightweight D3 packages as its dependencies.

Recharts has several built-in components that you can compose to create some of the most common charts, such as Area charts, Bar charts, Pie charts, and Line charts.

As an example, the code below illustrates how you can use Recharts' built-in components to create a Bar chart. The component names are self-explanatory.

```tsx

import {

BarChart,

CartesianGrid,

XAxis,

YAxis,

Tooltip,

Legend,

Bar,

} from "recharts";

<BarChart width={730} height={250} data={data}>

<CartesianGrid strokeDasharray="3 3" />

<XAxis dataKey="name" />

<YAxis />

<Tooltip />

<Legend />

<Bar dataKey="pv" fill="#8884d8" />

<Bar dataKey="uv" fill="#82ca9d" />

</BarChart>;

```

## How to create a Refine project

In this section, we will create a refine demo project.

```sh

npm create refine-app@latest

```

Select the options below when prompted by the command line tool.

```txt

✔ Choose a project template · Vite

✔ What would you like to name your project?: · refine-recharts-demo

✔ Choose your backend service to connect: · REST API

✔ Do you want to use a UI Framework?: · Material UI

✔ Do you want to add example pages?: · Yes

✔ Do you need any Authentication logic?: · No

✔ Choose a package manager: · npm

```

After setting up the project and installing dependencies, use the command below to launch the development server.

```sh

npm run dev

```

Later in this article, we will create charts using Recharts and render them in a dashboard. Let's add a dashboard to the project we have just created.

Create the `src/pages/dashboard/list.tsx` file. Copy and paste the code below into it. Be aware that the `dashboard` directory doesn't exist yet. You need to first create it.

```tsx title="src/pages/dashboard/list.tsx"

import React from "react";

export const DashboardPage: React.FC = () => {

return <p>Hello world!</p>;

};

```

The component above renders a simple "Hello world!" text at the moment. We will add more code to it later. Now we need to export the component above. Create the `src/pages/dashboard/index.ts` file. Copy and paste the code below into it.

```ts

// src/pages/dashboard/index.ts

export { DashboardPage } from "./list";

```

You can now import the `DashboardPage` component we created above and render it in the `<App />` component. Add the changes below to the `src/App.tsx` file.

```tsx

//src/App.tsx

...

//highlight-next-line

import { DashboardPage } from "./pages/dashboard";

function App() {

return (

<BrowserRouter>

<RefineKbarProvider>

<ColorModeContextProvider>

<CssBaseline />

<GlobalStyles styles={{ html: { WebkitFontSmoothing: "auto" } }} />

<RefineSnackbarProvider>

<DevtoolsProvider>

<Refine

//highlight-start

dataProvider={{

default: dataProvider("https://api.fake-rest.refine.dev"),

metrics: dataProvider("https://api.finefoods.refine.dev"),

}}

//highlight-end

notificationProvider={notificationProvider}

routerProvider={routerBindings}

resources={[

{

name: "blog_posts",

list: "/blog-posts",

create: "/blog-posts/create",

edit: "/blog-posts/edit/:id",

show: "/blog-posts/show/:id",

meta: {

canDelete: true,

},

},

{

name: "categories",

list: "/categories",

create: "/categories/create",

edit: "/categories/edit/:id",

show: "/categories/show/:id",

meta: {

canDelete: true,

},

},

//highlight-start

{

name: "dashboard",

list: "/dashboard",

meta: {

label: "Dashboard",

dataProviderName: "metrics",

},

},

//highlight-end

]}

options={{

syncWithLocation: true,

warnWhenUnsavedChanges: true,

useNewQueryKeys: true,

projectId: "5l4F52-JwXWMu-eZRGwA",

}}

>

<Routes>

<Route

element={

<ThemedLayoutV2 Header={() => <Header sticky />}>

<Outlet />

</ThemedLayoutV2>

}

>

<Route

index

element={<NavigateToResource resource="blog_posts" />}

/>

...

//highlight-start

<Route path="/dashboard">

<Route index element={<DashboardPage />} />

</Route>

//highlight-end

<Route path="*" element={<ErrorComponent />} />

</Route>

</Routes>

<RefineKbar />

<UnsavedChangesNotifier />

<DocumentTitleHandler />

</Refine>

<DevtoolsPanel />

</DevtoolsProvider>

</RefineSnackbarProvider>

</ColorModeContextProvider>

</RefineKbarProvider>

</BrowserRouter>

);

}

export default App;

```

In the code above, we added another data provider. The data provider will fetch data from the [fine foods API](https://api.finefoods.refine.dev). It's a dummy API created by the refine team. You can use it to create simple projects when testing out refine. We will use the API to create charts later.

You will now see a dashboard entry in the sidebar. The dashboard will look like the image below. We will create charts and render them in the dashboard in the next sub-sections.

<div className="centered-image">

<img src="https://refine.ams3.cdn.digitaloceanspaces.com/blog/2024-02-23-recharts/dashboard.png" alt="Recharts chart" />

</div>

Before we start creating charts, let's create a simple interface for the data from our API. Create the `src/interfaces/index.d.ts` file. Copy and paste the interface below into it.

```ts

// src/interfaces/index.d.ts

export interface IQueryResult {

date: string;

value: number;

}

```

## How to install Recharts

You can install Recharts either from the npm package registry or get its UMD build via a CDN. Use the command below to install it from npm.

```sh

npm install recharts

```

## Create a Line chart using Recharts

Line charts consist of a series of data points connected using line segments. They are mostly used to represent time series data. You can use Recharts' built-in `<LineChart />` component to create a Line chart like so:

```tsx

<LineChart

width={730}

height={250}

data={data}

margin={{ top: 5, right: 30, left: 20, bottom: 5 }}

>

<CartesianGrid strokeDasharray="3 3" />

<XAxis dataKey="name" />

<YAxis />

<Tooltip />

<Legend />

<Line type="monotone" dataKey="value" stroke="#8884d8" />

</LineChart>

```

Charts generally need features such as axes, a Cartesian grid, a legend, and tooltips. Therefore, we use the `<LineChart />` component together with Recharts' built-in general and Cartesian components, as in the example above.

The `<LineChart />` component has the `data` prop for passing the data you want to represent on the Line chart. The data should be an array of objects like in the example below.

```tsx

[

{ name: "a", value: 16 },

{ name: "b", value: 12 },

{ name: "c", value: 18 },

];

```
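
If your source data is not already an array of objects, you will need to reshape it first. The sketch below is a minimal, hypothetical helper (`toChartData` and `ChartPoint` are illustrative names, not part of Recharts) that turns a plain label-to-number lookup into the shape shown above.

```ts
// Hypothetical shape for one point on the chart.
interface ChartPoint {
  name: string;
  value: number;
}

// Hypothetical helper: converts a label -> number lookup into the
// array-of-objects shape expected by the `data` prop.
function toChartData(totals: Record<string, number>): ChartPoint[] {
  return Object.entries(totals).map(([name, value]) => ({ name, value }));
}

const chartData = toChartData({ a: 16, b: 12, c: 18 });
console.log(chartData);
```

You could then pass `chartData` as the value of the `data` prop and use `name` and `value` as the `dataKey` values.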

Let's create a simple Line chart in our refine project. We will render it in the dashboard we created above. Start by creating the `src/pages/dashboard/charts/line-chart.tsx` file. Copy and paste the code below into it. The `charts` directory doesn't exist yet. Start by creating it.

```tsx

// src/pages/dashboard/charts/line-chart.tsx

import React from "react";

import {

LineChart,

Line,

XAxis,

YAxis,

Tooltip,

ResponsiveContainer,

} from "recharts";

import { IQueryResult } from "../../../interfaces";

export const LineChartComponent: React.FC<{

dailyOrders: IQueryResult[];

}> = ({ dailyOrders }) => {

return (

<ResponsiveContainer width="100%" height="100%" aspect={500 / 300}>

<LineChart

width={500}

height={300}

data={dailyOrders}

margin={{

top: 5,

right: 30,

left: 20,

bottom: 5,

}}

>

<XAxis dataKey="date" />

<YAxis />

<Tooltip />

<Line type="monotone" dataKey="value" stroke="#82ca9d" />

</LineChart>

</ResponsiveContainer>

);

};

```

In the example above, the `<LineChart />` component is wrapped in a responsive container. We will do the same while creating other charts later. We need to export the component we created above so that we can easily import and render it anywhere in our project. Create the `src/pages/dashboard/charts/index.ts` file. Copy and paste the code below into it.

```ts

// src/pages/dashboard/charts/index.ts

export { LineChartComponent } from "./line-chart";

```

Let's now import the above component and render it in the `<DashboardPage />` component. Copy and paste the code below into the `src/pages/dashboard/list.tsx` file.

```tsx

// src/pages/dashboard/list.tsx

import React from "react";

import { Grid } from "@mui/material";

import { useApiUrl, useCustom } from "@refinedev/core";

import dayjs from "dayjs";

import { LineChartComponent } from "./charts";

import { IQueryResult } from "../../interfaces";

// Query the last seven days, ending at the start of today.

const query = {

start: dayjs().subtract(7, "days").startOf("day"),

end: dayjs().startOf("day"),

};

export const formatDate = new Intl.DateTimeFormat("en-US", {

month: "short",

year: "numeric",

day: "numeric",

});

const transformData = (data: IQueryResult[]): IQueryResult[] => {

return data.map(({ date, value }) => ({

date: formatDate.format(new Date(date)),

value,

}));

};

export const DashboardPage: React.FC = () => {

const API_URL = useApiUrl("metrics");

const { data: dailyRevenue } = useCustom({

url: `${API_URL}/dailyRevenue`,

method: "get",

config: {

query,

},

queryOptions: {

select: ({ data }) => {

return { data: transformData(data.data) };

},

},

});

const { data: dailyOrders } = useCustom({

url: `${API_URL}/dailyOrders`,

method: "get",

config: {

query,

},

queryOptions: {

select: ({ data }) => {

return { data: transformData(data.data) };

},

},

});

const { data: newCustomers } = useCustom({

url: `${API_URL}/newCustomers`,

method: "get",

config: {

query,

},

queryOptions: {

select: ({ data }) => {

return { data: transformData(data.data) };

},

},

});

return (

<Grid

container

justifyContent="baseline"

alignItems={"stretch"}

spacing={2}

>

<Grid item xs={12} sm={6}>

<LineChartComponent dailyOrders={dailyOrders?.data ?? []} />

</Grid>

</Grid>

);

};

```

In the code above, we are using the `useCustom` hook to make custom query requests to the backend. The `useCustom` hook uses TanStack Query's `useQuery` hook under the hood. We are querying the daily revenue, daily orders, and new customers of a restaurant business for the last seven days. We will represent the data in different types of charts. The dashboard should now have a Line chart that looks like the image below.

<div className="centered-image">

<img src="https://refine.ams3.cdn.digitaloceanspaces.com/blog/2024-02-23-recharts/line-chart.png" alt="Recharts chart" />

</div>
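
To see what the `transformData` helper in the dashboard component does to each record, you can try it in isolation. The sketch below repeats the helper with two made-up records. It assumes the API returns ISO date strings, and it adds `timeZone: "UTC"` (not in the dashboard code) only so that the result does not depend on the machine's time zone.

```ts
interface IQueryResult {
  date: string;
  value: number;
}

// Same options as the dashboard formatter, plus `timeZone: "UTC"`,
// which is an addition for this sketch only.
const formatDate = new Intl.DateTimeFormat("en-US", {
  month: "short",
  year: "numeric",
  day: "numeric",
  timeZone: "UTC",
});

// Replace each raw date string with a short, human-readable label.
const transformData = (data: IQueryResult[]): IQueryResult[] =>
  data.map(({ date, value }) => ({
    date: formatDate.format(new Date(date)),
    value,
  }));

// Made-up sample records, not real API output.
const sample: IQueryResult[] = [
  { date: "2024-05-20T00:00:00.000Z", value: 12 },
  { date: "2024-05-21T00:00:00.000Z", value: 7 },
];

const transformed = transformData(sample);
console.log(transformed);
```

The `value` fields pass through unchanged; only the `date` strings are replaced with labels that fit on the X axis.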

There are several Line chart variants you can create using Recharts' built-in components. For more complex charts, check out the Recharts documentation.

## Create an Area chart using Recharts

Recharts has the built-in `<AreaChart />` component for creating area charts. You can compose the built-in `<AreaChart />` component to create complex area charts in your React project.

You can use the `<AreaChart />` component's `data` prop to pass the data you want to represent on an area chart. Like in the previous example, your data should be an array of objects.

```tsx

[

{ name: "Name A", data: 4000 },

{ name: "Name B", data: 3000 },

];

```

To represent the above data in an area chart, you can use the `<AreaChart />` component as in the example below. As before, the component names are self-explanatory.

```tsx

<AreaChart

width={500}

height={300}

data={data}

margin={{

top: 10,

right: 30,

left: 0,

bottom: 0,

}}

>

<XAxis dataKey="name" />

<YAxis />

<Tooltip />

<Area type="monotone" dataKey="data" stroke="#8884d8" fill="#8884d8" />

</AreaChart>

```

Let's now add an area chart to the refine project we created above. Create the `src/pages/dashboard/charts/area-chart.tsx` file. Copy and paste the code below into it.

```tsx

// src/pages/dashboard/charts/area-chart.tsx

import React from "react";

import {

AreaChart,

Area,

XAxis,

YAxis,

Tooltip,

ResponsiveContainer,

} from "recharts";

import { IQueryResult } from "../../../interfaces";

export const AreaChartComponent: React.FC<{ dailyRevenue: IQueryResult[] }> = ({

dailyRevenue,

}) => {

return (

<ResponsiveContainer width="100%" height="100%" aspect={500 / 300}>

<AreaChart

width={500}

height={300}

data={dailyRevenue}

margin={{

top: 10,

right: 30,

left: 0,

bottom: 0,

}}

>

<XAxis dataKey="date" />

<YAxis />

<Tooltip />

<Area type="monotone" dataKey="value" stroke="#8884d8" fill="#8884d8" />

</AreaChart>

</ResponsiveContainer>

);

};

```

In the example above, we are representing the daily revenue of a restaurant business in an area chart. We are fetching the data in our dashboard component and passing it as a prop to the above component. Once again we are wrapping the area chart in a responsive container.

You need to export the above component by adding the changes below to the `src/pages/dashboard/charts/index.ts` file.

```ts

// src/pages/dashboard/charts/index.ts

export { LineChartComponent } from "./line-chart";

//highlight-next-line

export { AreaChartComponent } from "./area-chart";

```

We can now import and render the above component in the `<DashboardPage />` component. Add the changes below to the `src/pages/dashboard/list.tsx` file.

```tsx

...

import {

LineChartComponent,

//highlight-next-line

AreaChartComponent,

} from "./charts";

...

export const DashboardPage: React.FC = () => {

...

return (

<Grid

container

justifyContent="baseline"

alignItems={"stretch"}

spacing={2}

>

<Grid item xs={12} sm={6}>

<LineChartComponent dailyOrders={dailyOrders?.data ?? []} />

</Grid>

//highlight-start

<Grid item xs={12} sm={6}>

<AreaChartComponent dailyRevenue={dailyRevenue?.data ?? []} />

</Grid>

//highlight-end

</Grid>

);

};

```

Your dashboard should now have a simple area chart that looks like the image below.

<div className="centered-image">

<img src="https://refine.ams3.cdn.digitaloceanspaces.com/blog/2024-02-23-recharts/area-chart.png" alt="Recharts chart" />

</div>

What we have created above is a simple Area chart. There are several other types of Area charts, and Recharts has built-in functionality for implementing most of them. For more, check out the documentation.

## Create a Bar chart using Recharts

Bar charts are among the most common charts for visualizing data. You can use them to visually represent categorical data. Recharts has the built-in `<BarChart />` component for creating bar charts.

Like the other types of charts, the data you want to represent on a bar chart should be an array of objects. You need to pass it to the `<BarChart />` component as the value of the `data` prop.

Let's add a bar chart to the dashboard in the refine project we created above. Create the `src/pages/dashboard/charts/bar-chart.tsx` file. Copy and paste the code below into it.

```tsx

// src/pages/dashboard/charts/bar-chart.tsx

import React from "react";

import {

BarChart,

Bar,

Rectangle,

XAxis,

YAxis,

Tooltip,

ResponsiveContainer,

} from "recharts";

import { IQueryResult } from "../../../interfaces";

export const BarChartComponent: React.FC<{ newCustomers: IQueryResult[] }> = ({

newCustomers,

}) => {

return (

<ResponsiveContainer width="100%" height="100%" aspect={500 / 300}>

<BarChart

width={500}

height={300}

data={newCustomers}

margin={{

top: 5,

right: 30,

left: 20,

bottom: 5,

}}

>

<XAxis dataKey="date" />

<YAxis />

<Tooltip />

<Bar

dataKey="value"

fill="#8884d8"

activeBar={<Rectangle fill="pink" stroke="blue" />}

/>

</BarChart>

</ResponsiveContainer>

);

};

```

We need to export the component above. Add the changes below to the `src/pages/dashboard/charts/index.ts` file.

```ts

export { LineChartComponent } from "./line-chart";

export { AreaChartComponent } from "./area-chart";

//highlight-next-line

export { BarChartComponent } from "./bar-chart";

```

We can now import and render the above component. Add the changes below to the `src/pages/dashboard/list.tsx` file.

```tsx

...

import {

LineChartComponent,

AreaChartComponent,

//highlight-next-line

BarChartComponent,

} from "./charts";

...

export const DashboardPage: React.FC = () => {

...

return (

<Grid

container

justifyContent="baseline"

alignItems={"stretch"}

spacing={2}

>

<Grid item xs={12} sm={6}>

<LineChartComponent dailyOrders={dailyOrders?.data ?? []} />

</Grid>

<Grid item xs={12} sm={6}>

<AreaChartComponent dailyRevenue={dailyRevenue?.data ?? []} />

</Grid>

//highlight-start

<Grid item xs={12} sm={6}>

<BarChartComponent newCustomers={newCustomers?.data ?? []} />

</Grid>

//highlight-end

</Grid>

);

};

```

After rendering the above component, your dashboard should now have a bar chart that looks like the image below.

<div className="centered-image">

<img src="https://refine.ams3.cdn.digitaloceanspaces.com/blog/2024-02-23-recharts/bar-chart.png" alt="Recharts chart" />

</div>

## Create a Scatter chart using Recharts

Scatter charts are useful to graphically represent the relationship between two variables. Like the other charts mentioned above, Recharts has the built-in `<ScatterChart />` component for creating scatter charts.

Let's create a simple scatter chart in this article. Create the `src/pages/dashboard/charts/scatter-chart.tsx` file. Copy and paste the code below into it.

```tsx

import React from "react";

import {

ScatterChart,

Scatter,

XAxis,

YAxis,

Tooltip,

ResponsiveContainer,

} from "recharts";

import { IQueryResult } from "../../../interfaces";

const formatData = (

dailyOrders: IQueryResult[],

newCustomers: IQueryResult[],

) => {

const formattedData = [];

for (let i = 0; i < dailyOrders.length; i++) {

if (!dailyOrders[i] || !newCustomers[i]) continue;

if (dailyOrders[i].date === newCustomers[i].date) {

formattedData.push({

date: dailyOrders[i].date,

dailyOrders: dailyOrders[i].value,

newCustomers: newCustomers[i].value,

});

}

}

return formattedData;

};

export const ScatterChartComponent: React.FC<{

dailyOrders: IQueryResult[];

newCustomers: IQueryResult[];

}> = ({ dailyOrders, newCustomers }) => {

const formattedData = formatData(dailyOrders, newCustomers);

return (

<ResponsiveContainer width="100%" height="100%" aspect={500 / 300}>

<ScatterChart

width={500}

height={300}

margin={{

top: 20,

right: 20,

bottom: 20,

left: 20,

}}

>

<XAxis type="number" dataKey="dailyOrders" name="Orders" />

<YAxis type="number" dataKey="newCustomers" name="Customers" />

<Tooltip cursor={{ strokeDasharray: "3 3" }} />

<Scatter name="Orders vs. new customers" data={formattedData} fill="#8884d8" />

</ScatterChart>

</ResponsiveContainer>

);

};

```

In the example above, we wrapped the chart in a responsive container and passed the data to the `Scatter` component instead of the `ScatterChart`. Similar to the other charts we have already looked at, the data should be an array of objects.

In the example above, we had to transform the data because we wanted to determine the relationship between two variables (daily orders and new customers).
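
The index-based pairing in `formatData` works when both series list the same days in the same order. If that is not guaranteed, one alternative is to pair the records by their `date` value. The sketch below is a hedged illustration of that idea; `joinByDate` and `ScatterPoint` are hypothetical names, and the sample records are made up.

```ts
interface IQueryResult {
  date: string;
  value: number;
}

interface ScatterPoint {
  date: string;
  dailyOrders: number;
  newCustomers: number;
}

// Hypothetical alternative to index-based pairing: look up the
// new-customer count for each order record by its date.
function joinByDate(
  dailyOrders: IQueryResult[],
  newCustomers: IQueryResult[],
): ScatterPoint[] {
  const customersByDate = new Map<string, number>();
  for (const { date, value } of newCustomers) {
    customersByDate.set(date, value);
  }

  const points: ScatterPoint[] = [];
  for (const { date, value } of dailyOrders) {
    const customers = customersByDate.get(date);
    // Skip days that appear in only one of the two series.
    if (customers === undefined) continue;
    points.push({ date, dailyOrders: value, newCustomers: customers });
  }
  return points;
}

// Made-up sample data: only May 21 appears in both series.
const joined = joinByDate(
  [
    { date: "May 20, 2024", value: 30 },
    { date: "May 21, 2024", value: 42 },
  ],
  [
    { date: "May 21, 2024", value: 5 },
    { date: "May 22, 2024", value: 9 },
  ],
);
console.log(joined);
```

The result has the same shape as the array built by `formatData`, so it could be passed to the `Scatter` component in the same way.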

Let's export the above component. Add the changes below to the `src/pages/dashboard/charts/index.ts` file.

```ts

export { LineChartComponent } from "./line-chart";

export { AreaChartComponent } from "./area-chart";

export { BarChartComponent } from "./bar-chart";

//highlight-next-line

export { ScatterChartComponent } from "./scatter-chart";

```

You can now import and render the above component in the dashboard. Add the changes below to the `src/pages/dashboard/list.tsx` file.

```tsx

// src/pages/dashboard/list.tsx

...

import {

LineChartComponent,

AreaChartComponent,

BarChartComponent,

//highlight-next-line

ScatterChartComponent,

} from "./charts";

...

export const DashboardPage: React.FC = () => {

...

return (

<Grid

container

justifyContent="baseline"

alignItems={"stretch"}

spacing={2}

>

<Grid item xs={12} sm={6}>

<LineChartComponent dailyOrders={dailyOrders?.data ?? []} />

</Grid>

<Grid item xs={12} sm={6}>

<AreaChartComponent dailyRevenue={dailyRevenue?.data ?? []} />

</Grid>

<Grid item xs={12} sm={6}>

<BarChartComponent newCustomers={newCustomers?.data ?? []} />

</Grid>

//highlight-start

<Grid item xs={12} sm={6}>

<ScatterChartComponent

dailyOrders={dailyOrders?.data ?? []}

newCustomers={newCustomers?.data ?? []}

/>

</Grid>

//highlight-end

</Grid>

);

};

```

After rendering the above component, the dashboard should now have a scatter chart that looks like the image below.

<div className="centered-image">

<img src="https://refine.ams3.cdn.digitaloceanspaces.com/blog/2024-02-23-recharts/scatter-chart.png" alt="Recharts chart" />

</div>

## Create a Pie chart using Recharts

A pie chart is one of the most common and easy-to-understand charts. It is a circular graph that is split into multiple sectors. Each sector in a pie chart represents a particular category of data and its size is proportional to the quantity of the category it represents.
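
To make the proportionality concrete, the sketch below computes each category's share of the total as a percentage and as the angle its sector spans in a full circle. It is plain arithmetic for illustration, not Recharts' internal code; `Slice` and `sliceShares` are hypothetical names and the values are made up.

```ts
interface Slice {
  name: string;
  value: number;
}

// Returns each slice's share of the total as a percentage and as the
// angle (in degrees) its sector spans in a 360-degree circle.
function sliceShares(slices: Slice[]) {
  const total = slices.reduce((sum, slice) => sum + slice.value, 0);
  return slices.map(({ name, value }) => ({
    name,
    percent: total === 0 ? 0 : (value / total) * 100,
    degrees: total === 0 ? 0 : (value / total) * 360,
  }));
}

// Made-up values that sum to 8.
const shares = sliceShares([
  { name: "Mon", value: 1 },
  { name: "Tue", value: 3 },
  { name: "Wed", value: 4 },
]);
console.log(shares);
```

With these values, the "Wed" category holds half of the total, so its sector covers half of the circle.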

In this section, we will create a simple Pie chart using Recharts. Let's start by creating the `src/pages/dashboard/charts/pie-chart.tsx` file. Copy and paste the code below into it.

```tsx

import React from "react";

import { PieChart, Pie, ResponsiveContainer } from "recharts";

import { IQueryResult } from "../../../interfaces";

export const PieChartComponent: React.FC<{ dailyOrders: IQueryResult[] }> = ({

dailyOrders,

}) => {

return (

<ResponsiveContainer width="100%" height="100%" aspect={300 / 300}>

<PieChart width={300} height={300}>

<Pie

data={dailyOrders}

dataKey="value"

nameKey="date"

cx="50%"

cy="40%"

outerRadius={150}

fill="#82ca9d"

label

/>

</PieChart>

</ResponsiveContainer>

);

};

```

Let's export the above component so that we can import it anywhere in our application. Add the changes below to the `src/pages/dashboard/charts/index.ts` file.

```ts

export { LineChartComponent } from "./line-chart";

export { AreaChartComponent } from "./area-chart";

export { BarChartComponent } from "./bar-chart";

export { ScatterChartComponent } from "./scatter-chart";

//highlight-next-line

export { PieChartComponent } from "./pie-chart";

```

Let's import and render the above component in our dashboard. Add the changes below to the `src/pages/dashboard/list.tsx` file.

```tsx

...

import {

LineChartComponent,

AreaChartComponent,

BarChartComponent,

ScatterChartComponent,

//highlight-next-line

PieChartComponent,

} from "./charts";

...

return (

<Grid

container

justifyContent="baseline"

alignItems={"stretch"}

spacing={2}

>

<Grid item xs={12} sm={6}>

<LineChartComponent dailyOrders={dailyOrders?.data ?? []} />

</Grid>

<Grid item xs={12} sm={6}>

<AreaChartComponent dailyRevenue={dailyRevenue?.data ?? []} />

</Grid>

<Grid item xs={12} sm={6}>

<BarChartComponent newCustomers={newCustomers?.data ?? []} />

</Grid>

<Grid item xs={12} sm={6}>

<ScatterChartComponent

dailyOrders={dailyOrders?.data ?? []}

newCustomers={newCustomers?.data ?? []}

/>

</Grid>

//highlight-start

<Grid item xs={12} sm={6}>

<PieChartComponent dailyOrders={dailyOrders?.data ?? []} />

</Grid>

//highlight-end

</Grid>

);

};

```

Your dashboard should now have a Pie chart that looks like the image below.

<div className="centered-image">

<img src="https://refine.ams3.cdn.digitaloceanspaces.com/blog/2024-02-23-recharts/pie-chart.png" alt="Recharts chart" />

</div>

## Create a Treemap using Recharts

A Treemap is a data visualization tool similar to a Pie chart. However, instead of using a circular graph and sectors to represent data, a Treemap uses rectangles and nested rectangles.

With a Treemap, a rectangle represents a category, and nested rectangles represent sub-categories within a category. Recharts has the built-in `<Treemap />` component for creating Treemaps. You can pass the data as the value of the `data` prop.
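
Treemap data is a tree: leaf nodes carry a numeric value and parent nodes carry `children`. The sketch below assumes that a category's rectangle is sized by the sum of its leaves, which is how treemaps are generally drawn, and totals a small made-up tree recursively. `TreeNode` and `totalSize` are hypothetical names, not Recharts APIs.

```ts
interface TreeNode {
  name: string;
  size?: number;
  children?: TreeNode[];
}

// Recursively sums the `size` of every leaf below a node.
function totalSize(node: TreeNode): number {
  if (node.children && node.children.length > 0) {
    return node.children.reduce((sum, child) => sum + totalSize(child), 0);
  }
  return node.size ?? 0;
}

// Made-up tree with one nested category and one leaf.
const sampleTree: TreeNode = {
  name: "root",
  children: [
    {
      name: "axis",
      children: [
        { name: "Axes", size: 1302 },
        { name: "AxisLabel", size: 636 },
      ],
    },
    { name: "Legend", size: 20859 },
  ],
};

const rootTotal = totalSize(sampleTree);
console.log(rootTotal);
```

Here the "axis" category totals 1302 + 636 = 1938, and the whole tree totals 22797.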

Let's add a simple Treemap to our dashboard. Create the `src/pages/dashboard/charts/treemap.tsx` file. Copy and paste the code below into it.

```tsx
import React from "react";
import { Treemap, ResponsiveContainer } from "recharts";

const data = [
  {
    name: "axis",
    children: [
      { name: "Axes", size: 1302 },
      { name: "Axis", size: 24593 },
      { name: "AxisGridLine", size: 652 },
      { name: "AxisLabel", size: 636 },
      { name: "CartesianAxes", size: 6703 },
    ],
  },
  {
    name: "controls",
    children: [
      { name: "AnchorControl", size: 2138 },
      { name: "ClickControl", size: 3824 },
      { name: "Control", size: 1353 },
      { name: "ControlList", size: 4665 },
      { name: "DragControl", size: 2649 },
      { name: "ExpandControl", size: 2832 },
      { name: "HoverControl", size: 4896 },
      { name: "IControl", size: 763 },
      { name: "PanZoomControl", size: 5222 },
      { name: "SelectionControl", size: 7862 },
      { name: "TooltipControl", size: 8435 },
    ],
  },
  {
    name: "data",
    children: [
      { name: "Data", size: 20544 },
      { name: "DataList", size: 19788 },
      { name: "DataSprite", size: 10349 },
      { name: "EdgeSprite", size: 3301 },
      { name: "NodeSprite", size: 19382 },
      {
        name: "render",
        children: [
          { name: "ArrowType", size: 698 },
          { name: "EdgeRenderer", size: 5569 },
          { name: "IRenderer", size: 353 },
          { name: "ShapeRenderer", size: 2247 },
        ],
      },
      { name: "ScaleBinding", size: 11275 },
      { name: "Tree", size: 7147 },
      { name: "TreeBuilder", size: 9930 },
    ],
  },
  {
    name: "events",
    children: [
      { name: "DataEvent", size: 7313 },
      { name: "SelectionEvent", size: 6880 },
      { name: "TooltipEvent", size: 3701 },
      { name: "VisualizationEvent", size: 2117 },
    ],
  },
  {
    name: "legend",
    children: [
      { name: "Legend", size: 20859 },
      { name: "LegendItem", size: 4614 },
      { name: "LegendRange", size: 10530 },
    ],
  },
];

export const TreemapComponent: React.FC = () => {
  return (
    <ResponsiveContainer width="100%" height="100%" aspect={500 / 300}>
      <Treemap
        width={500}
        height={300}
        data={data}
        dataKey="size"
        aspectRatio={500 / 300}
        stroke="#fff"
        fill="#8884d8"
      />
    </ResponsiveContainer>
  );
};
```

In the example above, we have hard-coded the data because the API doesn't have a dataset we can use to create a Treemap. In a typical real-world project, you will retrieve the data from an API. You can export the above component from the `src/pages/dashboard/charts/index.ts` file like so:

```ts
export { LineChartComponent } from "./line-chart";
export { AreaChartComponent } from "./area-chart";
export { BarChartComponent } from "./bar-chart";
export { ScatterChartComponent } from "./scatter-chart";
export { PieChartComponent } from "./pie-chart";
//highlight-next-line
export { TreemapComponent } from "./treemap";
```

You can now import the above component and render it in the dashboard.

```tsx
import React from "react";
import { Grid } from "@mui/material";
import { useApiUrl, useCustom } from "@refinedev/core";
import dayjs from "dayjs";

import {
  LineChartComponent,
  AreaChartComponent,
  BarChartComponent,
  ScatterChartComponent,
  PieChartComponent,
  //highlight-next-line
  TreemapComponent,
} from "./charts";

import { IQueryResult } from "../../interfaces";

// Query window: the last seven days, up to the start of today.
const query = {
  start: dayjs().subtract(7, "days").startOf("day"),
  end: dayjs().startOf("day"),
};

export const formatDate = new Intl.DateTimeFormat("en-US", {
  month: "short",
  year: "numeric",
  day: "numeric",
});

// Convert each record's raw date into a short, human-readable label.
const transformData = (data: IQueryResult[]): IQueryResult[] => {
  return data.map(({ date, value }) => ({
    date: formatDate.format(new Date(date)),
    value,
  }));
};

export const DashboardPage: React.FC = () => {
  const API_URL = useApiUrl("metrics");

  const { data: dailyRevenue } = useCustom({
    url: `${API_URL}/dailyRevenue`,
    method: "get",
    config: {
      query,
    },
    queryOptions: {
      select: ({ data }) => {
        return { data: transformData(data.data) };
      },
    },
  });

  const { data: dailyOrders } = useCustom({
    url: `${API_URL}/dailyOrders`,
    method: "get",
    config: {
      query,
    },
    queryOptions: {
      select: ({ data }) => {
        return { data: transformData(data.data) };
      },
    },
  });

  const { data: newCustomers } = useCustom({
    url: `${API_URL}/newCustomers`,
    method: "get",
    config: {
      query,
    },
    queryOptions: {
      select: ({ data }) => {
        return { data: transformData(data.data) };
      },
    },
  });

  return (
    <Grid
      container
      justifyContent="baseline"
      alignItems={"stretch"}
      spacing={2}
    >
      <Grid item xs={12} sm={6}>
        <LineChartComponent dailyOrders={dailyOrders?.data ?? []} />
      </Grid>
      <Grid item xs={12} sm={6}>
        <AreaChartComponent dailyRevenue={dailyRevenue?.data ?? []} />
      </Grid>
      <Grid item xs={12} sm={6}>
        <BarChartComponent newCustomers={newCustomers?.data ?? []} />
      </Grid>
      <Grid item xs={12} sm={6}>
        <ScatterChartComponent
          dailyOrders={dailyOrders?.data ?? []}
          newCustomers={newCustomers?.data ?? []}
        />
      </Grid>
      <Grid item xs={12} sm={6}>
        <PieChartComponent dailyOrders={dailyOrders?.data ?? []} />
      </Grid>
      {/* highlight-start */}
      <Grid item xs={12} sm={6}>
        <TreemapComponent />
      </Grid>
      {/* highlight-end */}
    </Grid>
  );
};
```

Your dashboard should now have a Treemap that looks like the image below.

<div className="centered-image">

<img src="https://refine.ams3.cdn.digitaloceanspaces.com/blog/2024-02-23-recharts/treemap.png" alt="Recharts chart" />

</div>
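
The Treemap above uses hard-coded data. If your API returned flat rows instead, a small helper could group them into the nested `name`/`children` shape used in the example. The sketch below is only an illustration: the `FlatRow` shape and the `toTreemapData` helper are hypothetical and not part of Recharts or refine.

```ts
// Hypothetical flat row, as an API might return it (an assumption for illustration).
interface FlatRow {
  group: string;
  name: string;
  size: number;
}

// Nested shape matching the hard-coded `data` array in the Treemap example.
interface TreemapNode {
  name: string;
  size?: number;
  children?: TreemapNode[];
}

// Group flat rows by their `group` field, keeping groups in first-seen order.
const toTreemapData = (rows: FlatRow[]): TreemapNode[] => {
  const groups = new Map<string, TreemapNode[]>();

  for (const { group, name, size } of rows) {
    const children = groups.get(group) ?? [];
    children.push({ name, size });
    groups.set(group, children);
  }

  // Turn each [groupName, children] entry into a parent node.
  return Array.from(groups, ([name, children]) => ({ name, children }));
};
```

You could then pass the result to the chart, for example `data={toTreemapData(rows)}`, once `rows` has been fetched.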

## Conclusion

Sometimes you need to add data visualization to your React project. Charts present data in a way that is both easy to understand and visually appealing.

There are several frameworks for creating charts in React. Recharts is one of the most popular and feature-rich packages for creating charts in a React project or in React-based frameworks such as refine.

Recharts supports several types of charts out of the box. In this article, we have only explored a subset of the charts you can create using Recharts. Check the documentation for details.

| necatiozmen |

1,867,435 | Is AI Really Intelligent? The Generative AI Paradox | Explore the Generative AI Paradox as discussed in Pablo Inigo Sanchez's article. This piece delves into whether AI models like GPT-4 truly understand what they create. | 0 | 2024-05-28T09:22:29 | https://dev.to/mkdev/is-ai-really-intelligent-the-generative-ai-paradox-1334 | ai, generative, llm | ---

title: Is AI Really Intelligent? The Generative AI Paradox

published: true

description: Explore the Generative AI Paradox as discussed in Pablo Inigo Sanchez's article. This piece delves into whether AI models like GPT-4 truly understand what they create.

tags: ai, generative, llm

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/h6hjvumo68qog4hxsefr.png

# Use a ratio of 100:42 for best results.

# published_at: 2024-05-28 09:19 +0000

---

Some time ago, a new paper appeared about, let's say, how intelligent new LLMs like GPT-4 really are. When you read it, you learn that AI is not so intelligent, or maybe not intelligent at all. Is that so?

This paper is called "THE GENERATIVE AI PARADOX: ‘What It Can Create, It May Not Understand’". [Here it is](https://arxiv.org/abs/2311.00059#:~:text=Specifically%2C%20we%20propose%20and%20test,those%20same%20types%20of%20outputs).

Let's dive into the Generative AI Paradox and see how these researchers determined that humans are smarter than models such as GPT-4.

The authors started from a crucial question: Do these AI models truly understand what they create? This question forms the crux of the Generative AI Paradox.

To understand all this, let's first look at two different kinds of tasks that a model can perform:

* **Generative tasks** are those that involve creating new content, like writing a story or designing an image. This is where AI models particularly excel. So, every time we talk about Generative tasks, we are talking about something that the AI is going to create for us.

* In **Discriminative tasks**, the model has to choose from predefined options or categorize data into existing groups. For example, in natural language processing, a generative task might be to write a story. In contrast, a discriminative task could be classifying a text as positive or negative, or selecting the correct answer from a set of options in a reading comprehension test.

The Generative AI paradox comes from an interesting observation: AI models are really good at creating detailed content similar to what experts do, but they often make basic mistakes in understanding that we wouldn't expect, even from people who are not experts. To explore this further, we use two types of evaluations: Selective Evaluation and Interrogative Evaluation.

**Selective Evaluation**: This evaluation assesses whether models can choose the correct answers from a set of options, testing their ability to understand and distinguish between different choices. It's a key part of seeing how practical and effective AI applications are.

Imagine an AI model is given a task to read a short story and then answer a multiple-choice question about it. The question might be: "What is the main theme of the story?" with four options provided: A) Friendship, B) Adventure, C) Love, and D) Betrayal. The AI's task is to read the story, understand its main theme, and select the correct option from the given choices.
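
To make the idea concrete, here is a minimal sketch of how answers to multiple-choice questions like this one could be scored. It is not the scoring code used in the paper: the `SelectiveItem` shape and the `selectiveAccuracy` function are hypothetical names introduced only for illustration.

```ts
// Hypothetical record of one multiple-choice question and the option that was picked.
interface SelectiveItem {
  question: string;
  options: string[];
  correctIndex: number; // index into `options` of the right answer
  chosenIndex: number; // index the model (or a human) selected
}

// Fraction of items answered correctly; returns 0 for an empty list.
const selectiveAccuracy = (items: SelectiveItem[]): number => {
  if (items.length === 0) return 0;
  const correct = items.filter(
    (item) => item.chosenIndex === item.correctIndex,
  ).length;
  return correct / items.length;
};
```

Running the same function over a model's answers and over human answers would give two numbers that can be compared directly.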

**Interrogative Evaluation**: In this evaluation, we challenge models by asking them questions about the content they have created. This is a direct way to see if AI really understands its own creations. It helps us understand the depth of AI's comprehension and its ability to reflect on what it has generated.

For this, let's say the AI model generates a story about a young girl who overcomes her fears and wins a swimming competition. After generating this story, the AI is asked: "Why was the swimming competition important to the main character?" The AI must understand its own narrative to provide a coherent answer, such as "The competition was important to her because it was a way to overcome her fears and prove her strength." This tests the AI's ability to comprehend and explain the content it generated.

In this paper, a large number of experiments were conducted in both language and vision modalities to test these hypotheses with one question in mind: Does the AI truly understand its creations? These ranged from generating texts and creating images to answering questions about these creations.

After all those tests, the authors reported results for the two kinds of evaluation we saw before:

In **Selective Evaluation**, models often outperformed humans in content generation but were less adept at discrimination and comprehension tasks.

In **Interrogative Evaluation**, models frequently failed to answer questions about their own creations, highlighting a disconnect between generation and comprehension.

So the AI does not seem to understand what it creates, and the reason is that it is trained to generate content, not to understand what it has generated. It's like a machine producing one toy after another, day after day: it can create something without understanding what that thing is.

These findings challenge our preconceived notions of AI. Although models can mimic human creativity, their understanding of content remains superficial.