id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,866,913 | day 09 | date:- 28 May, 2024. Procedural programming:- This is just how we structure our code. This is an old... | 0 | 2024-05-28T06:30:40 | https://dev.to/lordronjuyal/day-09-1gl6 | python, oop, newbie, beginners | date:- 28 May, 2024.

Procedural programming:- This is just how we structure our code. This is an old way of coding. In this, we divide our program into the logical sequence of steps it needs to follow to do the task.

**Object-oriented programming**:- This is another and more modern way of structuring the code. In this, we divide programs into different groups called object, where each object is responsible for a specific job. By this, we try to model objects as real-world entities. They can have their own attributes(properties/variables) and methods(functions).

In OOP, we create a standard description(properties and methods) of a group. This is called class. This class is used to make other objects which have the same description and some additional properties and methods. For eg we make a teacher as a class, knowledge of a subject can be its properties and functions including teaching, homework, test etc. Now, object will be real life people like Ramesh sir, Asha madam who will have knowledge and functions like teaching, giving homework etc plus additional functions/properties like storytelling, name which can be unique.

To make an object from class: object_name = ClassName()

We use Pascal case(each letter capital) for class names and we have to use ().

Now object_name is the object we created and it will get attributes and methods of the "ClassName" class. To access attributes or functions, we use object_name.attribute_name or object_name.function_name()

Other things I learned today:-

1. To use a global variable in local scope, we have to use code global variable_name. But in the case of dictionaries, we don't have to write this.

Project I made:-

1. Coffe machine

https://replit.com/@rohitrj332024/Coffee-machine-day-09#main.py

----------------------------------------------------------

Somehow theory took much of my time. I am practicing oop with turtle module. Time for the rest. Thank you see you tomorrow.

| lordronjuyal |

1,867,258 | Mobile Privacy: How Safe is Safe? | In today’s fast-paced digital world, concerns about mobile privacy are more relevant than ever. As... | 0 | 2024-05-28T06:30:12 | https://dev.to/sejaljansari/mobile-privacy-how-safe-is-safe-5hi6 | mobile, safe | In today’s fast-paced digital world, concerns about mobile privacy are more relevant than ever. As our smartphones become central to daily life, understanding how to protect personal information is crucial. This blog delves into the state of mobile privacy, its challenges, and potential solutions for ensuring data security.

The State of Mobile Privacy

**The Rise of Encryption**

Encryption is a key player in mobile privacy. By converting data into unreadable formats for anyone without the decryption key, encryption ensures that only intended recipients can access sensitive information. Companies like Silent Circle are leading the way with apps that provide encrypted phone calls, texts, and file transfers, significantly enhancing user privacy.

**Legal and Ethical Considerations**

Despite its benefits, encryption also presents challenges. Law enforcement agencies argue that it can impede criminal investigations. Companies like Silent Circle have pledged to deny requests for eavesdropping, which could lead to legal conflicts. This tension between privacy and security continues to be a complex issue, with technology often outpacing legislative responses.

**The Value of Information**

In the digital age, personal information is a valuable commodity. From advertisers to cybercriminals, various entities seek access to our data. This constant demand highlights the importance of mobile privacy and the need for individuals to proactively protect their information.

**Protecting Your Mobile Privacy**

**Practical Steps for Users**

**1.Use Encryption Tools: **Utilize apps and services that offer end-to-end encryption for communications and data storage.

**2.Regular Updates:** Keep your mobile device and apps up-to-date to protect against vulnerabilities.

**3.Be Mindful of Permissions:** Regularly review and limit app permissions to safeguard sensitive information.

**4.Secure Your Device:** Use strong passwords, biometric authentication, and enable remote wipe features in case your device is lost or stolen.

**The Role of Technology Companies**

Technology companies, including [iPhone app development agency](https://www.nimblechapps.com/services/ios-app-development-company), play a crucial role in safeguarding mobile privacy. By implementing strong encryption standards, being transparent about data collection practices, and resisting unwarranted data access requests, these companies can build user trust and contribute to a safer digital environment.

**The Future of Mobile Privacy**

As technology evolves, so will the methods to protect our privacy. Future advancements may include more sophisticated encryption techniques, greater user control over personal data, and increased accountability for companies handling sensitive information. However, balancing privacy and security will remain a dynamic and challenging landscape.

**Conclusion**

Mobile privacy is a complex and ever-evolving issue. While encryption and proactive security measures can significantly enhance privacy, users must remain vigilant and informed. As technology progresses, continuous efforts will be needed to ensure that our personal information remains secure in an increasingly connected world.

For businesses seeking to enhance mobile security and privacy in their applications, it is essential to [hire web developers](https://www.nimblechapps.com/services/innovative-web-application-development-company) with expertise in encryption and data protection.

| sejaljansari |

1,864,914 | Pin & Hide Your Way to Teams Channel Glory | With multiple projects and conversations happening at once, finding the information you need can feel... | 26,993 | 2024-05-28T06:30:00 | https://intranetfromthetrenches.substack.com/p/pin-and-hide-your-way-to-microsoft-teams-channel | microsoftteams, microsoft365, workplace | With multiple projects and conversations happening at once, finding the information you need can feel like searching for a needle in a haystack.

This struggle is all too common. While Teams is a powerful collaboration tool, managing multiple channels can quickly become overwhelming.

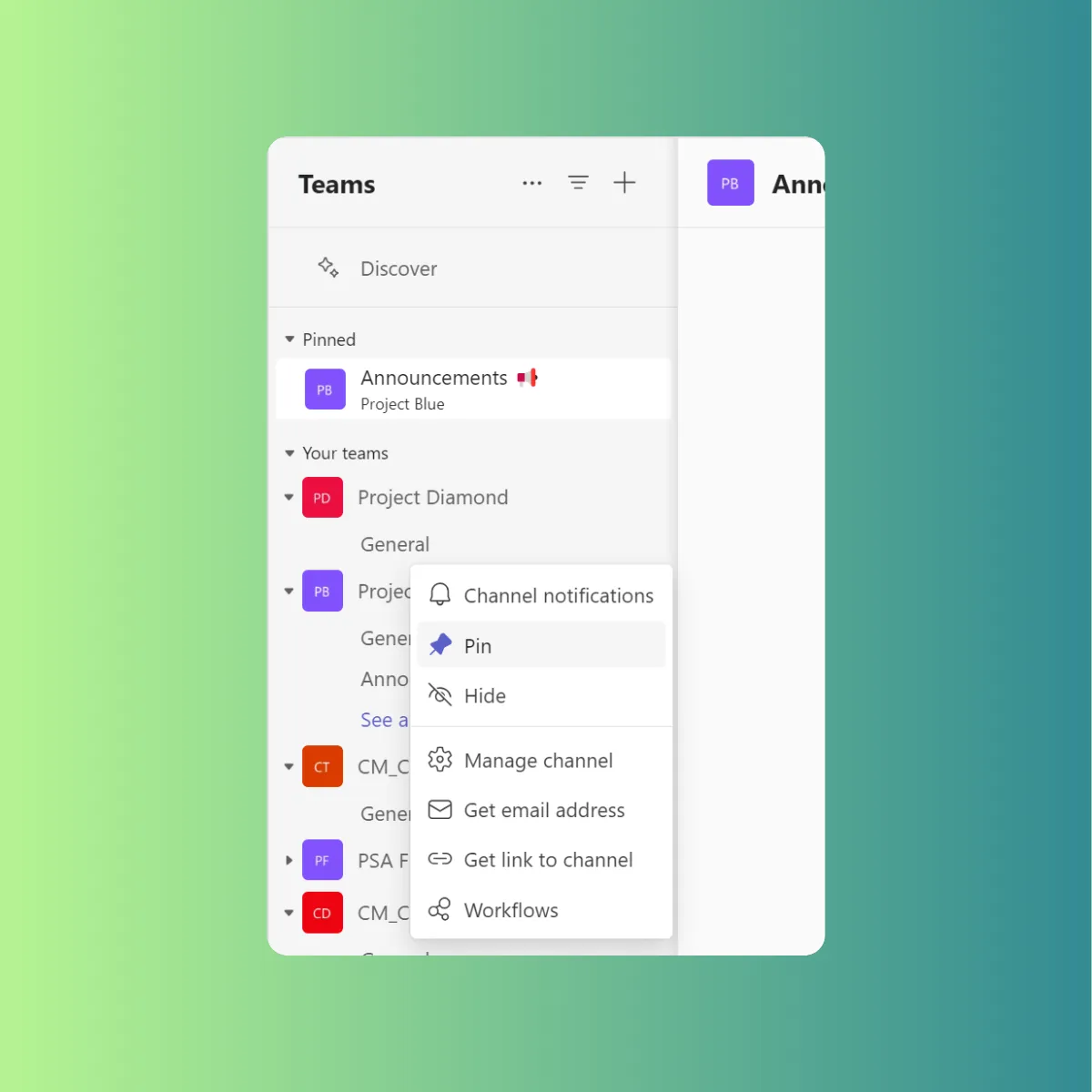

But fear not! This guide will introduce you to pinning and hiding channels – two features that can transform your Teams experience. By the end of this article, you'll have the knowledge to streamline your workflow, find information instantly, and ultimately **boost your productivity**.

## Benefits of Pinning and Hiding Channels

Here's how pinning and hiding channels can transform your experience:

**Effortless Access to Key Channels**

Do you have go-to channels where crucial project updates or team discussions happen? Pinning is your savior! By pinning these important channels, they'll always be conveniently located at the top of your list.

**Declutter Your View**

Inactive channels create visual chaos. Hiding these channels is a fantastic way to streamline your view. Imagine a clean, concise list displaying only the active channels you use regularly. Finding information becomes effortless, allowing you to concentrate on your tasks without distractions.

**Boost Your Efficiency**

Pinning frequently used channels significantly improves your workflow. Imagine seamlessly switching between key project channels, eliminating wasted time searching. This streamlined access translates to a more efficient workday, allowing you to focus on getting things done.

**Minimize Distractions, Maximize Productivity**

Hiding inactive channels also helps minimize distractions and information overload. No more notifications or updates bombarding you from channels you don't currently need. This allows you to concentrate on the tasks at hand, free from the constant barrage of irrelevant information.

## Drawbacks to Consider

While pinning and hiding channels offer amazing advantages, there are a couple of things to keep in mind:

**Tracking Hidden Gems**

Hiding inactive channels keeps your list clean, but remember, they're not gone forever. These hidden channels might hold important information that resurfaces later. Schedule periodic checks to ensure you don't miss anything crucial.

**Pinned Channels: Not So Flexible**

Here's a catch: pinned channels can't be hidden. This can be tricky for occasionally used channels. Imagine pinning a channel for a project deadline, then needing it hidden once the project's done. You'll either need to unpin and then repin it later, or keep it pinned despite not needing daily access.

## Tailoring Your Approach (For Users with Few vs. Many Teams/Channels)

The effectiveness of pinning and hiding channels can vary depending on how you use Microsoft Teams. Let's explore how to tailor your approach based on the number of teams and channels you manage:

If you only manage a handful of Teams and channels, pinning might not be as crucial. However, hiding inactive channels is still highly beneficial. By removing clutter, you can easily find the channels you use most frequently, keeping your Teams list streamlined and focused.

For users juggling numerous Teams and channels, pinning becomes a lifesaver. Pinning your most important channels allows for quick access, saving you time and frustration searching through a long list. This is especially valuable for staying on top of critical project updates or ongoing team discussions.

Furthermore, with a large number of channels, hiding inactive ones becomes essential. Imagine the chaos of an overflowing Teams list! Hiding inactive channels eliminates distractions and information overload, allowing you to focus on the channels relevant to your current tasks.

## Tips for Effective Pinning and Hiding

Ready to put these concepts into action and optimize your Microsoft Teams experience? Here are some key tips to remember:

**Pin with Precision**

Reserve pinning for your most frequently used channels. This could include channels for ongoing projects, core team discussions, or frequently referenced information hubs. By being selective, you'll ensure your pinned channels remain truly valuable for your daily workflow.

**Hide, But Don't Forget**

Hiding inactive channels is a great way to declutter your Teams list. However, it's important to strike a balance. While hidden channels are out of sight, they're not gone forever. Schedule periodic checks on hidden channels to ensure you don't miss any crucial information that might resurface.

**The Power of Combining**

Remember, pinning and hiding work best together! Utilize pinning for your essential channels and leverage hiding for the rest. This potent combination creates a streamlined and organized Teams environment, allowing you to find the information you need with ease.

**Regular Review is Key**

Don't forget to revisit your pinned and hidden channels periodically! Your workflow and project needs might change over time. Regularly evaluate your pinned channels to ensure they remain the most important ones. Similarly, review your hidden channels to see if any need to be unhidden due to renewed activity or a resurgence of importance. This ongoing review ensures your Teams experience stays efficient and organized.

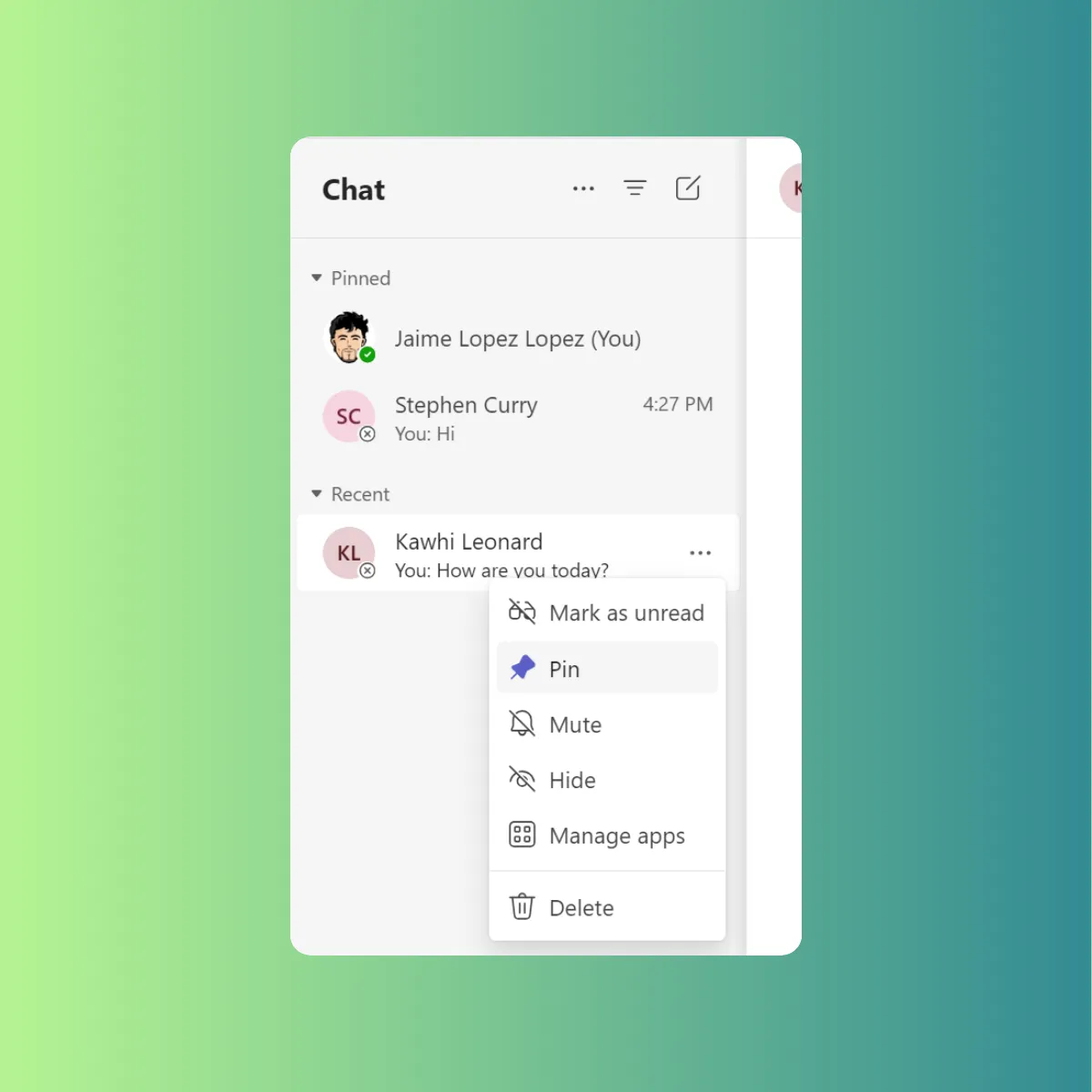

## Bonus: Applying Channel Organization to Your Teams Chats

The same principles of organization you learned for channels can be applied to your chats as well! Just like with channels, information overload, and constant notifications can hinder your productivity in chats.

The key lies in mimicking your pinning and hiding strategy with favoriting and muting chats. Pinning your most frequently used chats keeps them readily accessible at the top of your list. This allows for quick reference and continued conversations with key colleagues or project teams. Just like hiding inactive channels, hiding or muting less important chats allows you to focus on the conversations that demand your immediate attention. By strategically hiding or muting chats with low activity or those not requiring constant updates, you can significantly reduce notification overload and minimize distractions.

## Conclusion

Now that you've explored the power of pinning and hiding channels, along with pinning and hiding and muting chats, it's time to experiment and personalize your Microsoft Teams experience. Imagine scrolling through a streamlined Teams list, with only the most relevant channels readily accessible at the top. No more endless searching or information overload!

As you navigate your workday, hopping between project chats and referencing key channel discussions, feel the newfound efficiency. Information is at your fingertips, distractions are minimized, and your focus is razor-sharp. Embrace the power of organization and watch your productivity soar within the collaborative environment of Microsoft Teams!

## References

- Show, hide, or pin a team or channel in Microsoft Teams: [https://support.microsoft.com/en-us/office/show-hide-or-pin-a-team-or-channel-in-microsoft-teams-91a37043-acea-49b0-9dfc-aec37b2e92b8](https://support.microsoft.com/en-us/office/show-hide-or-pin-a-team-or-channel-in-microsoft-teams-91a37043-acea-49b0-9dfc-aec37b2e92b8)

- Show and hide channels: [https://support.microsoft.com/en-us/office/show-and-hide-channels-3f76dffd-78a8-49ca-b8de-28671cb444ba](https://support.microsoft.com/en-us/office/show-and-hide-channels-3f76dffd-78a8-49ca-b8de-28671cb444ba)

- Pins over white background by Dan Cristian Paduret from Unsplash: [https://unsplash.com/es/fotos/imperdible-plateado-sobre-superficie-blanca-ritZolQWTeE](https://unsplash.com/es/fotos/imperdible-plateado-sobre-superficie-blanca-ritZolQWTeE) | jaloplo |

1,867,257 | Build a Safe and Respectful Community with Answer 1.3.1 | Build a thriving community, while making it a safe place for everyone to express themselves freely,... | 0 | 2024-05-28T06:29:31 | https://dev.to/apacheanswer/build-a-safe-and-respectful-community-with-answer-131-1dc7 | opensource, apache, go, community | Build a thriving community, while making it a safe place for everyone to express themselves freely, is a dream and a challenge. Answer 1.3.1 is here for these two goals.

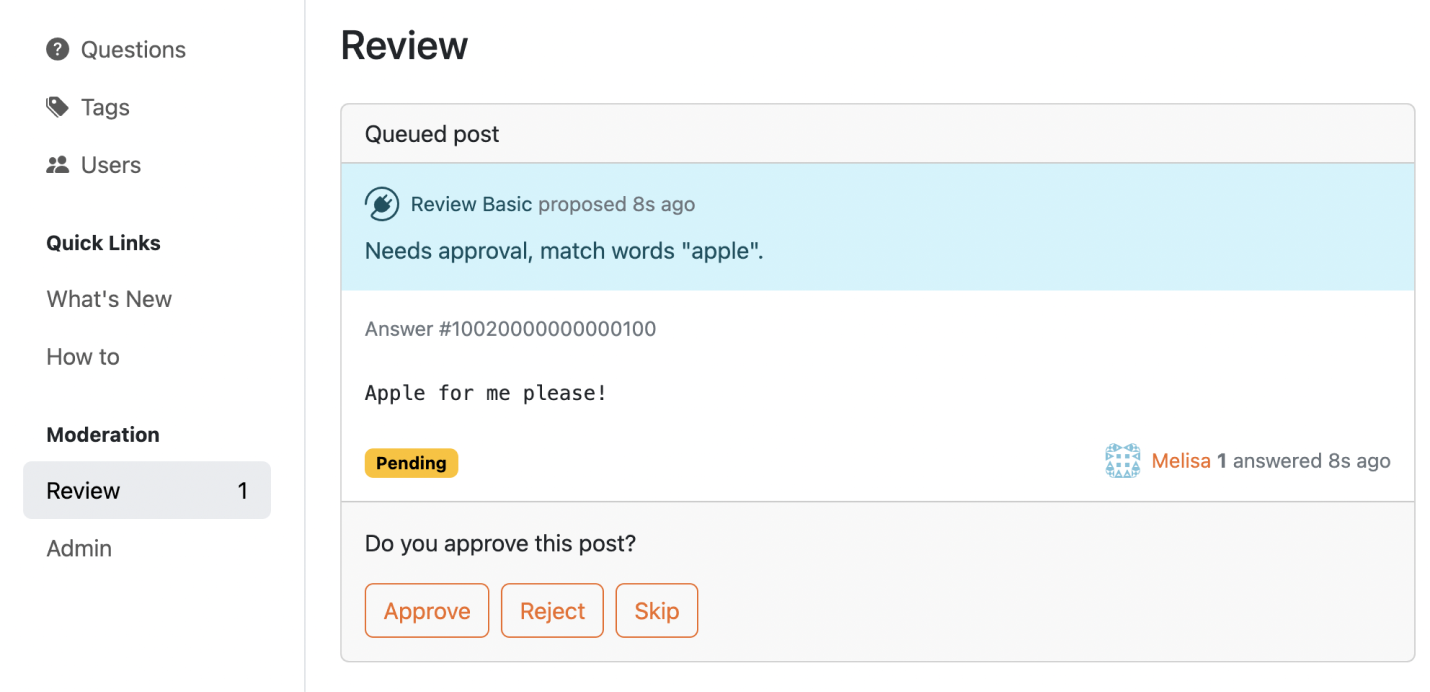

In this version, we add new tool for admin to define keyword lists to identify and review specific content beforehand, ensuring a safe space for everyone. Users can also mention the admin and moderator in comments of a post to seek response actively. New Captcha plugins are ready to safeguard your community.

Ready to [upgrade](https://answer.apache.org/docs/upgrade)? Leave us several minutes to dive in.

## What’s New

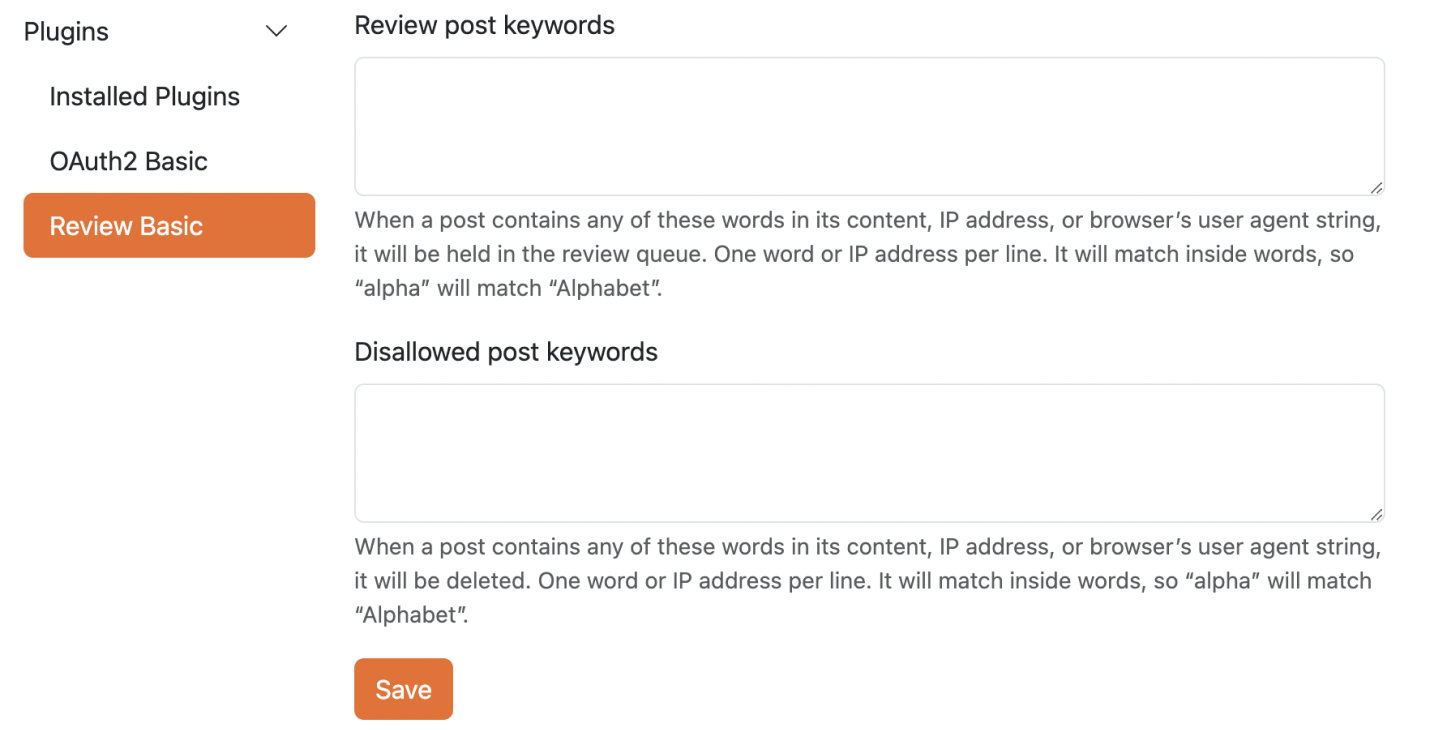

### Keyword Censorship for Secure Communities

Apache Answer is for open knowledge exchange and discussion respectfully. Keyword censorship helps achieve this by proactively filtering out offensive, discriminatory, or harassing content. It ensures a platform where everyone feels comfortable expressing themselves freely.

Here’s how it works.

1. Admin can develop a keyword list of the community to check or filter.

2. Then, go **Admin -\> Plugin -\> Review Basic** where you can add these keywords in **Review** post keywords or **Disallowed** post keywords based on your need. One keyword or IP address per line. Scroll and click **Save**.

3. Content, IP addresses, or browser identifiers that contain any of these words will be sent to the review queue or deleted. It will match the internal words. For example, if "alpha" is not allowed, then "Alphabet" is also not allowed.

This feature would allow administrators to review or filter out specific words or phrases, potentially creating safe spaces for younger audiences or communities with content restrictions.

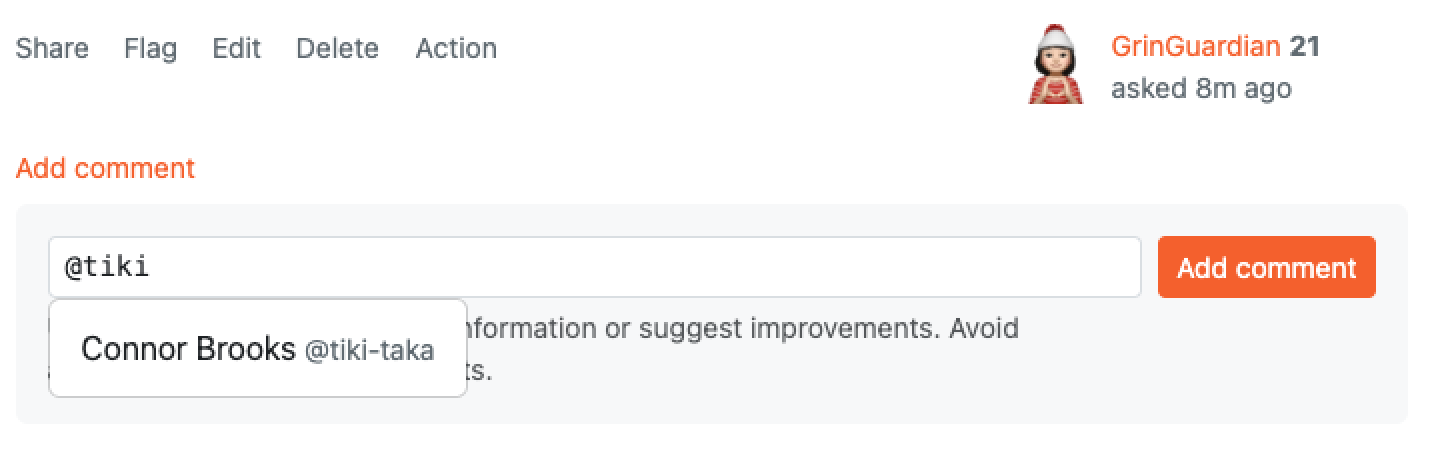

## Tag Moderators and Admin in Comment

Now, you can mention moderator or admin in a comment of a post. As you type the first letter of the moderator or admin's username, a search bar will appear. Select the admin/moderator from the dropdown list for a faster response.

The moderator and admins will receive an email in their email box.

### Shining on Social Platforms

Social media meta tag is on the stage! Let’s make your shared content appears more attractive on social media platforms. Social meta tags use the following defaults:

* Title: the title of current page

* Image: the logo of the site

Share it and don’t forget to mention us on [Twitter](https://x.com/AnswerDev).

## Improvements and Fixed Bugs

We’ve got new plugins for you: [Captcha Basic](https://github.com/apache/incubator-answer-plugins/tree/main/captcha-basic) and [Captcha Google V2](https://github.com/apache/incubator-answer-plugins/tree/main/captcha-google-v2). These two plugins enable or disable the CAPTCHA feature, allowing users to implement their CAPTCHA plugins, such as Google reCAPTCHA.

We also add auto-scroll for posting so that you can jump right to the blank space for you to edit it.

Find out more in our [Release note](https://github.com/apache/incubator-answer/releases/tag/v1.3.1).

## Thanks to the Community.

This version is proudly presented by 8 contributors from the community, and they are:

[kumfo](https://github.com/kumfo), [hgaol](https://github.com/hgaol), [LinkinStars](https://github.com/LinkinStars), [robinv8](https://github.com/robinv8), [shuaishuai](https://github.com/shuashuai), [sy-records](https://github.com/sy-records), [zahash](https://github.com/zahash), and [prithvidasgupta](https://github.com/prithvidasgupta).

## We Love Feedback

We'd love your feedback! Share your thoughts on the latest releases, suggest features you need, or just send us messages. Please don't hesitate to reach out on [X](https://twitter.com/answerdev), [Discord](https://discord.gg/a6PZZbfnFx), [GitHub](https://github.com/apache/incubator-answer), [Meta Answer](https://meta.answer.dev/), or our [mailing list](https://answer.apache.org/community/support). | apacheanswer |

1,867,256 | SMTP Relay Services: How It Works & Why Your Business It | Email is a lifeline for modern marketing strategies, and serves businesses in reaching their... | 0 | 2024-05-28T06:27:39 | https://dev.to/brettjhonson01/smtp-relay-services-how-it-works-why-your-business-it-20id | webdev, beginners, productivity, opensource | Email is a lifeline for modern marketing strategies, and serves businesses in reaching their audiences in many ways. However, the dynamics of online communication are continuously changing and it has of late gotten more difficult to make sure that your emails get to their intended recipients. This is where investing in a bulk email relay service may have an important contribution to this. This article is to help business owners or professionals who send numerous emails. Use [Bulk SMTP relay services](https://www.quora.com/What-are-the-top-10-SMTP-server-websites/answer/Otis-Milburnn) to get the best email deliverability possible.

## Understanding the Importance of Email Deliverability

Before we look at the value of a [bulk email relay service](https://smtpget.com/bulk-email-server/), let's first understand what email deliverability is and why it matters. Previously, email deliverability refers to the success of an email message reaching the recipient's inbox. It goes beyond the prerequisite sender reputation, authentication methods, content reliability, and responsiveness of addressees.

The main thing that has to be taken into consideration for ensuring the successful performance of email marketing companies is email deliverability. Without it, there would be a bigger chance that your messages might end up in spam folders, and in the worst case, it will be flagged as spam which may harm your sender's reputation and the availability of your future sendings.

## The Role of Best SMTP Relay Services

[SMTP relay services](https://smtpget.com/smtp-service-provider/) serve as an interface between your email marketing platform and the recipient's email servers. They specialize in sending a large number of emails efficiently while maintaining high deliverability rates. Here's why investing in such a service is advantageous.

**Enhanced Deliverability Rates:** Mass email relay services are aided by high-end facilities and technology that function to guarantee that your messages finally reach the destined recipients. They are well known and whitelisted through their relaying domains, observe all the industry’s best practices, and always keep an eye on any threats that could reduce the deliverability of messages.

**Improved Inbox Placement:** Deriving from knowledge and skills, a spam resources provider can implement an operation that spaces your emails inside the recipients' inboxes. That also makes your messages appear more greatly in the sight and as a result, your messages will be then taken into account by your target audience.

**Scalability and Reliability:** Whichever the case, whether sending hundreds or millions of emails relays services of email in bulk are very reliable and scalable to suit all the required sending with effects of scaling up. They have what it takes to deal with your business as your email marketing requirements are getting bigger, such as keeping the delivery rates and processing efficiencies high.

**Compliance and Security:** The Bult Email Relay services master regulations and compliance requirements, making sure your email campaigns are according to the anti-spam acts, for example, CAN-SPAM and GDPR requirements. Arguably, they have initiated solid security measures that are aimed at guarding your data and shielding you from such cyber threats as hacking, online fraud, and identity theft.

.

## Choosing the Right Bulk Email Relay Service

When selecting a bulk email relay service, consider the following factors:

Reputation and Track Record: Look for providers with a proven track record of delivering high inbox placement rates and maintaining excellent sender reputations.

**Features and Functionality:** Compare the features provided by various providers, including delivery metrics, A/B testing, segmentation capabilities, and integrations with prominent email marketing tools.

**Customer Support:** Choose a service that provides quick response to any questions or complaints.

**Pricing and Scalability:** Compare pricing plans and confirm that the service can meet your present requirements while also allowing for future expansion.

## 5 Best SMTP Relay Service Providers:

**SMTPget:** SMTPget is the best SMTP provider for bulk email SMTP relay services that focus on speed and deliverability, making sure that your emails are sent to their targeted recipients promptly and securely.

**iDealSMTP:** iDealSMTP offers the best email delivery solutions that prioritize simplicity and efficiency, making it a perfect choice for businesses of all sizes looking for hassle-free email sending.

**DigitalAka™:** DigitalAka™ provides customized SMTP relay services for enterprises, focusing on deliverability, security, and scalability to fulfill email communication demands.

**SMTP2GO:** SMTP2GO, which is used by businesses all over the world, provides secure and reliable email delivery with its user-friendly interface and complete delivery reporting tools.

**Mailgun:** Mailgun is a popular email delivery service among both developers and marketers, thanks to its broad APIs and advanced email validation capabilities, which make integration simple.

**Conclusion**

Purchasing an SMTP relay service is necessary because of the increasing issues of spam filters mentioned in email marketing and message overload. They can aid your emails to get into the inbox, talk to your audience, and get the results you want for your organization by leveraging their knowledge, infrastructure, and technology. Inform yourself to make the deliverability of email marketing better.

| brettjhonson01 |

1,867,254 | 佐藤 光信(Sato Mitsunobu)| 市場トレンドと投資戦略の深堀り | 佐藤 光信 Sato Mitsunobu プロフィール: 早稲田大学高等学院卒業後高校、1996年に早稲田大学... | 0 | 2024-05-28T06:24:21 | https://dev.to/satomitsunobu/zuo-teng-guang-xin-sato-mitsunobu-shi-chang-torendototou-zi-zhan-lue-noshen-ku-ri-192e | 佐藤光信satomitsunobu | 佐藤 光信 Sato Mitsunobu

プロフィール:

早稲田大学高等学院卒業後高校、1996年に早稲田大学 政治経済学部を卒業。

卒業後、ヤマト証券の仕事に従事し、主に信託の販売を担当。

2003年、米国に渡り、アメリカ銀行メリルリンチに入社 ファンドの確立、取引構造と実行、投資評価、コンプライアンス管理など、ファンド管理と販売を担当する。

その他のビジネスとして、ビジネス開発コンサルティングに加えて、ベンチャーキャピタル、合弁、スピンオフ、および米国と英国の投資にも関与。

2018年、藤田雄一氏の招きにより、アジア太平洋地域基金部シニアアナリストとしてマッコーリー・アセット・マネージメン社に入社。

日本の金融業界で20年近くの経験がある、主に日本政府の資金、債券、株式市場に参加しており、販売から取引までのさまざまな市場関連のポジションを任される。

スを蓄積し、資本および経済市場の運営に精通していました。

同時に、様々な金融投資市場にも精通しており、投資市場における鋭い嗅覚を有し、資産運用の経験も豊富です。

投資テクノロジーの面では、ギャン理論、ボルインデックス、SMAチャートパターン分析などのテクニカル分析に関する高い実績と知識を持ち、市場動向の調査と判断に非常に精通しています。

人生の信条:財産管理は、単なる金融数字の積み重ねではなく、綿密に設計され、卓越した実行を行う認知芸術である。

ポジティブ思考は、ポジティブ人生をもたらし、ネガティブ思考は、ネガティブ人生をもたらす。 | satomitsunobu |

1,867,248 | How To Spend Less Time On Sweet Potato Planter | To spend less time on sweet potato planters and increase efficiency, follow these... | 0 | 2024-05-28T06:18:40 | https://dev.to/mahindra_machinery_566e71/how-to-spend-less-time-on-sweet-potato-planter-463f | To spend less time on sweet potato planters and increase efficiency, follow these steps:

Preparation: Prepare the field well in advance by clearing debris and ensuring soil is adequately fertilized and irrigated.

Machine Setup: Before planting, calibrate the sweet [potato planter](https://mahindrafarmmachinery.com/products/mahindra-potato-planter) according to the desired planting depth and spacing.

Optimal Conditions: Plant during favorable weather conditions to minimize delays and ensure proper soil conditions.

Efficient Operation: Operate the planter at a steady pace, maintaining control over the planting depth and spacing to avoid overlap or gaps.

Regular Maintenance: Perform regular maintenance on the planter, including cleaning and greasing parts, to prevent breakdowns and ensure smooth operation.

Training: Ensure operators are well-trained to use the planter effectively and troubleshoot minor issues independently.

By implementing these strategies, you can streamline the planting process and spend less time overall on sweet potato planting, increasing productivity and reducing operational costs.

| mahindra_machinery_566e71 | |

1,867,188 | Warm Welcome to Linux | For easiness, let me call it "Linux" and not GNU/Linux/whatever Ignorance is a... | 0 | 2024-05-28T06:16:44 | https://dev.to/fonzacus/warm-welcome-to-linux-3okj | beginners, linux, opensource | ###### For easiness, let me call it "Linux" and not GNU/Linux/whatever

# Ignorance is a Blessing

Trying to live a more minimal life has its ups and downs. Having been out of the loop for such a long time, the world and the years ahead, look different from under a rock. With many breakthroughs in many fields at once, it seems impossible to catch up. To me, none of that matters much, as I prefer just playing with my kids. My off the entire grid life taught me to enjoy the simple things.

## Now With More Broken Glass

PC users are having a tantrum spiral as many Windows rumors turn out to be true. Who knew all these wonderful features could be ever more wonderful (/s). Some have even sworn to move elsewhere. As one who has tried to move since Windows 8 (I dub it H8 BTW), I have tried hopping many times over the decade and have stayed with the basic friendly guidelines (common sense really). The year of the Linux (TM) (R) (C) may finally come, maybe.

## DistroWatch, I Choose You

The common sense I advocate mainly revolves around [DistroWatch](https://distrowatch.com). High ranking distributions (indicated by the hits per day chart) are generally more favorable for many reasons; stability, support, user-friendliness, friendliness of other users and so on. Over the past few years, I have usually recommended the three major M named distros; Mint, Manjaro, and MX. They have had high favorability over the past decade. A first impression is a lasting one.

## Short Backstory

My first distro into the Linux distros that I fondly remember started off with Ubuntu as it was the first of its kind back in the day. Many distros were _too_ freedom oriented, and preferred not to include much needed drivers, media codecs, filesystem support and so on. This all changed when Ubuntu broke out of the mold. Nowadays, there are only a few that try to stay true to these old teachings. It was revolutionary at the time when even complete beginners could be happy with a completed, ready to rumble install without bloodshed.

My friends also wanted a stab at Linux and asked me for help. They wanted a simpler experience than Ubuntu. After browsing around for a bit, we decided on Mint. The experience was even more laid back, and something that can be better for beginners. After using it for over a year, I wanted to try Ubuntu again, until I heard some funny rumors. Browsing the Mint site, they started to offer a Debian option, and I decided to finally try Debian after hearing they started to ease on their too freedom ways.

As with many distros, having a large repository may not be enough for some, adding custom PPAs (Personal Package Archives, or 3rd party repositories) was the norm. Whilst staying truly Debian, this can add to a [FrankenDebian](https://wiki.debian.org/DontBreakDebian ), and might mess up the upgrade procedure. That is when I decided to try a rolling release distro again, but this time it was Debian Sid (I prefer calling it Unstable, and calling the next stable as Testing). My kitchen PC, which I built back in college 2009, with a top of the line DDR2, is still happily running with the initial Unstable I had installed. It really says something, as long as we RTFM.

## There Are Plenty of Fish in the Sea

Trying something new is scary, but there are tools out there to ease the pain. [YUMI](https://pendrivelinux.com/yumi-multiboot-usb-creator) and [Ventoy](https://www.ventoy.net/en/index.html) can help with the discovery phase of distro hopping. They are tools we can use to download ISOs onto our USB flash drives. The kicker is, they can support many bootable disks on one installation. The icing on the cake, they support persistency. We can try their default installers, save our persistent data, try something else, and return to where we left off.

Most Linux apps try to be portable (in a different sense of the word, for another topic) by nature and try to be self-contained. Now there are [portable app frameworks](https://dev.to/bearlike/flatpak-vs-snaps-vs-appimage-vs-packages-linux-packaging-formats-compared-3nhl) that try to do more. I am more AppImage oriented as they are truly portable, without the need for additional software.

After some Frankensteining of my own, I have a [USB installed](https://forum.mxlinux.org/viewtopic.php?p=581942) MX as a pocket OS that can be used on literally any hardware. Most common factory default boot options tend to favor booting from USB drives as the first boot option. A few years ago, a new storm called [Bedrock Linux](https://distrowatch.com/table.php?distribution=bedrock) caught Frankensteiners by surprise, and this is where I am with my daily driver everyday laptop.

## Choose Your Destiny

There are plenty of choices in the Linux distro world, too many as said before. Too much of a good thing can be bad at times, and that can split focus on trivial things instead of pushing to what matters more. At the end of the day, don't worry too much about what you choose as most of the time, most distros tend to be the same, it is the nitty gritty details that only slightly set them apart. Of course this was just an oversimplification, as the iceberg is always larger underneath.

###### So many choices, so little time, [so part 2](https://dev.to/fonzacus/warm-welcome-to-linux-p2-3j5k) | fonzacus |

1,867,246 | Business Management Assignment Help | Business Management is a popular field of study in Australia, attracting students from all corners of... | 0 | 2024-05-28T06:16:25 | https://dev.to/assignment_help_1ce2fa75c/business-management-assignment-help-2gdg | Business Management is a popular field of study in Australia, attracting students from all corners of the globe. This discipline covers a broad range of topics including economics, finance, marketing, human resources, and operations management, making it both challenging and rewarding. Given the complexity and the high expectations, students often seek external assistance to excel in their coursework. This blog will delve into the various aspects of Business **_[Management Assignment Help](https://www.assignmentsamples.com/management-assignment-help)_**, highlighting why it is essential, what to look for in the best management assignment help, and how it can significantly impact your academic journey.

**_Understanding Business Management_**

Before diving into the specifics of assignment help, it's crucial to understand what Business Management entails. This field focuses on organizing, planning, and analyzing business activities required to efficiently run and manage a business. Students learn about decision-making processes, strategic planning, and the effective use of resources to achieve business goals. Assignments in this field can range from case studies, research papers, and strategic analysis to practical projects and presentations.

**_The Need for Management Assignment Help_**

Business Management courses are rigorous and demand a comprehensive understanding of various interrelated concepts. Here are some reasons why students seek Management Assignment Help:

**Complex Topics:** The subject covers a vast range of topics, each with its own set of complexities. From understanding organizational behavior to mastering financial management, students are required to grasp numerous challenging concepts.

**_Time Constraints_**: Balancing academics with personal commitments, part-time jobs, and internships can be overwhelming. Assignment deadlines can often add to this pressure, making it difficult for students to deliver quality work on time.

**_High Standards:_** Australian universities are known for their high academic standards. Meeting these expectations requires in-depth research, critical analysis, and exceptional writing skills, which can be daunting for many students.

**_Language Barriers:_** International students, in particular, may face difficulties with English proficiency, affecting their ability to articulate their knowledge effectively in assignments.

_**Lack of Resources:**_ Access to credible sources and up-to-date information is crucial for crafting well-informed assignments. Students might struggle to find relevant data and case studies necessary for their work.

**_Benefits of Seeking the Best Management Assignment Help_**

Opting for professional assistance can make a significant difference in a student’s academic performance. Here are some key benefits:

**_Expert Guidance:_** Professional services provide access to experts with in-depth knowledge and experience in Business Management. Their insights can help students understand complex topics better and develop high-quality assignments.

**_Customized Solutions: _**Each assignment comes with its unique requirements. The best management assignment help services offer tailored solutions that meet specific guidelines and criteria set by the universities.

**_Plagiarism-Free Work:_** Academic integrity is paramount. Reputable assignment help providers ensure that all work is original and free from plagiarism, adhering to the highest standards of academic honesty.

**_Time Management:_** By delegating assignment work to professionals, students can better manage their time, allowing them to focus on other important aspects of their studies and personal lives.

**_Improved Grades:_** Quality assignments can lead to better grades. With expert help, students can submit well-researched and well-written assignments that meet academic standards and impress their professors.

**_How to Choose the Right Assignment Help Service

_**With numerous options available, selecting the right assignment help service can be challenging. Here are a few things to consider.

**_Check Credentials:_** Ensure that the service has qualified professionals with relevant academic backgrounds and experience in Business Management.

**_Read Reviews:_** Look for testimonials and reviews from other students. Positive feedback and high ratings are good indicators of reliable service.

**_Assess Their Approach:_** The best services offer a systematic approach, including thorough research, critical analysis, and coherent presentation of ideas.

**_Evaluate Communication:_** Effective communication is crucial. Ensure that the service provides timely updates and is responsive to your queries and feedback.

**_Consider Pricing:_** While affordability is important, don’t compromise on quality. Look for services that offer a balance between cost and quality, providing value for your money.

**_Check for Additional Services:_** Some services offer extra benefits like free revisions, plagiarism reports, and 24/7 support. These can be valuable in ensuring a smooth and satisfactory experience.

**_The Role of Technology in Assignment Help_**

Technology plays a significant role in enhancing the quality and accessibility of assignment help services. Here’s how:

**_Online Platforms:_** Most assignment help services operate through online platforms, making it convenient for students to seek help from anywhere at any time.

**_Research Tools:_** Advanced research tools and databases enable professionals to access a wide range of resources, ensuring that assignments are well-informed and up-to-date.

**_Plagiarism Detection Software:_** To guarantee originality, services use sophisticated plagiarism detection tools, ensuring that all work is authentic.

**_Communication Channels:_** Technology facilitates seamless communication between students and experts through chat, email, and video calls, making the process more efficient and personalized.

**_Success Stories_**

Numerous students have benefited from professional assignment help services. Here are a few success stories:

**_Improved Understanding:_** Sarah, an international student, struggled with the complexities of financial management. With expert help, she not only improved her grades but also gained a better understanding of the subject, which helped her in her final exams.

**_Balancing Commitments: _**John juggled a part-time job along with his studies. The pressure of assignments was overwhelming until he sought professional help. This allowed him to manage his time better and focus on both his job and studies, ultimately leading to academic success.

**_Enhanced Writing Skills: _**Emily faced challenges with academic writing. With the guidance of professional services, she improved her writing skills significantly, which was reflected in her higher grades and positive feedback from professors.

**_Conclusion_**

Business Management is a demanding field that requires dedication, hard work, and a deep understanding of various concepts. For students in Australia, seeking Business Management Assignment Help can be a game-changer. It not only aids in managing academic pressures but also enhances learning, leading to better grades and overall success.

When choosing the best management **_[Assignment Help](https://247assignmenthelp.com/)_**, it's essential to consider factors such as expertise, customization, communication, and additional services. By doing so, students can ensure they receive high-quality assistance that meets their academic needs.

In the digital age, technology has further streamlined the process, making it easier for students to access the help they need from anywhere. Success stories from students who have benefited from these services highlight the positive impact professional help can have on academic performance.

In conclusion, Business Management Assignment Help is a valuable resource for students aiming to excel in their studies. By leveraging expert guidance, students can overcome challenges, achieve their academic goals, and pave the way for a successful career in the dynamic field of business management. Whether you are struggling with complex topics, time constraints, or language barriers, professional assignment help is your key to unlocking **_[Academic](https://dev.to/otienorabin/are-you-writing-your-git-commit-messages-properly-54cl)_** success in Australia. | assignment_help_1ce2fa75c | |

1,851,401 | B2B Order Validation | Order validation process for a B2B Website. Create a list of validators for each customer, that... | 0 | 2024-05-13T13:56:37 | https://dev.to/ndiaga/b2b-order-validation-1hlg | Order validation process for a B2B Website.

Create a list of validators for each customer, that will validate his orders .

The order will be Valide only if all of them have validate the order positively.

You add to each of your customer (B2B partners) a list of validators that will receive an email once the customer mange has placed an order.

They will then valide or refuse the order accordingly .

The module works this way:

- A B2B placed an order , the Order remains in the status of “In Validation” until all the validators of this particular clients have validate one by one the order.

- If all the Validators Validate the Order positively the Order will pass to the status " Order Validated" and the customer can receive the products he ordered.

-If only one of the Validators refuses the Order, the status of order will pass to "Order Refused" and a message will be sent to the customer explaining him why his order was refused.

-The validator who refuses an order should write a message to justify his decision.

PrestaShop Version : 1.6 ; 1.7 and 8

[B2B ORDER VALIDATION](https://prestatuts.com/store/home/41-b2b-order-validation.html)

| ndiaga | |

1,867,245 | Exploring Basic Data Types in TypeScript | TypeScript is a powerful, statically typed superset of JavaScript that helps developers write more... | 27,696 | 2024-05-28T06:14:07 | https://dev.to/nahidulislam/exploring-basic-data-types-in-typescript-34fo | typescript, webdev, javascript, programming | TypeScript is a powerful, statically typed superset of JavaScript that helps developers write more robust and maintainable code. Understanding the basic data types in TypeScript is essential for beginners to leverage its full potential. In this article, we'll explore the fundamental data types: `string`, `number`, `array`, `object`, `null`, `undefined`, `function`, `never`, `type alias`, and `type alias in function`.

## 1. String

A `string` in TypeScript is used to represent textual data. It's a sequence of characters and is enclosed in single ('), double ("), or backticks.

```typescript

let message: string = "Hello, TypeScript!"

console.log(message) // output: "Hello, TypeScript!"

```

You can also use template literals (enclosed by backticks) to embed expressions:

```typescript

let userName: string = "Alice";

let greeting: string = `Hello, ${userName}!`;

console.log(greeting) // output: Hello, Alice!

```

## 2. Number

A `number` in TypeScript can represent both integer and floating-point values. TypeScript uses the number type to cover all numeric values, including special values like Infinity and `NaN`.

```typescript

let myNumber: number = 5;

let myFloat: number = 19.71;

let myInfinity: number = Infinity;

console.log(myNumber) // output: 5

console.log(myFloat) // output: 19.71

console.log(myInfinity) // output: Infinity

```

## 3. Array

An `array` in TypeScript is used to store a collection of elements of a specific type. You can define an array using the `Array<type>` syntax or the `type[]` syntax.

```javascript

let myArray: number[] = [1, 2, 3, 4, 5];

let myStringArray: Array<string> = ["one", "two", "three"];

console.log(myArray); // Output: [1, 2, 3, 4, 5]

console.log(myStringArray); // Output: ["one", "two", "three"]

```

## 4. Object

An `object` in TypeScript is a collection of key-value pairs, where the keys are strings (or symbols) and the values can be of any type. You define the shape of an object using an interface or a type alias.

```typescript

let myObject: { name: string, age: number } = { name: "John", age: 30 };

console.log(myObject); // Output: { name: "John", age: 30 }

```

Using an interface:

```typescript

interface Person {

name: string;

age: number;

}

let anotherPerson: Person = { name: "Jane", age: 25 };

console.log(anotherPerson); // Output: { name: "Jane", age: 25 }

```

## 5. Null & Undefined

`null` and `undefined` are special types that represent the absence of a value. `null` is often used to explicitly indicate the absence of a value, whereas `undefined` typically means a variable has been declared but not yet assigned a value.

```typescript

let myNull: null = null;

let myUndefined: undefined = undefined;

console.log(myNull); // Output: null

console.log(myUndefined); // Output: undefined

```

Variables without an initial assignment default to `undefined`:

```typescript

let notAssigned;

console.log(notAssigned); // Output: undefined

```

## 6. Function

A `function` in TypeScript is a block of code that performs a specific task. Functions can accept parameters and return a value. TypeScript allows you to define the types of the parameters and the return type.

```typescript

function greet(name: string): string {

return `Hello, ${name}!`;

}

console.log(greet("Alice")); // Output: Hello, Alice!

```

A function with no return value can be specified with the `void` return type:

```typescript

function logMessage(message: string): void {

console.log(message);

}

logMessage("This is a message."); // Output: This is a message.

```

## 7. Never

The `never` type represents values that never occur. It's typically used for functions that always throw an error or functions that never return.

```typescript

function error(message: string): never {

throw new Error(message);

}

try {

error("Something went wrong!");

} catch (e) {

console.error(e); // Output: Error: Something went wrong!

}

```

A function that never returns (infinite loop):

```typescript

function infiniteLoop(): never {

while (true) {}

}

```

## 8. Type Alias

A type alias creates a new name for a type. This can be especially useful for simplifying complex type definitions or when you want to give a type a meaningful name.

```typescript

type Point = { x: number, y: number };

let myPoint: Point = { x: 10, y: 20 };

console.log(myPoint); // Output: { x: 10, y: 20 }

```

Using a type alias for union types:

```typescript

type ID = number | string;

let userId: ID = 101;

let anotherId: ID = "user101";

console.log(userId); // Output: 101

console.log(anotherId); // Output: user101

```

## 9. Type Alias in Function

Type aliases can be used in function signatures for better readability and maintainability. This allows you to reuse complex type definitions across multiple functions.

```typescript

type Greeting = (name: string) => string;

const sayHello: Greeting = (name) => `Hello, ${name}!`;

console.log(sayHello("Bob")); // Output: Hello, Bob!

```

Combining type aliases with objects:

```typescript

type Person = { name: string, age: number };

type Introduce = (person: Person) => string;

const introduce: Introduce = (person) => `My name is ${person.name} and I am ${person.age} years old.`;

console.log(introduce({ name: "Charlie", age: 28 })); // Output: My name is Charlie and I am 28 years old.

```

## Conclusion

Understanding the basic data types in TypeScript is crucial for writing effective and type-safe code. These types form the foundation upon which more complex types and structures are built. By mastering `string`, `number`, `array`, `object`, `null` & `undefined`, `function`, `never`, and `type aliases`, you'll be well-equipped to handle a wide range of programming scenarios. TypeScript’s type system not only helps catch errors early but also makes your code more readable and maintainable. As you continue to explore TypeScript, you'll find that these basics will serve as a strong foundation for more advanced concepts and techniques.

Follow me on: [LinkedIn](https://www.linkedin.com/in/iamnahidul-islam) [Portfolio](https://nahidul-islam-fahim.web.app) | nahidulislam |

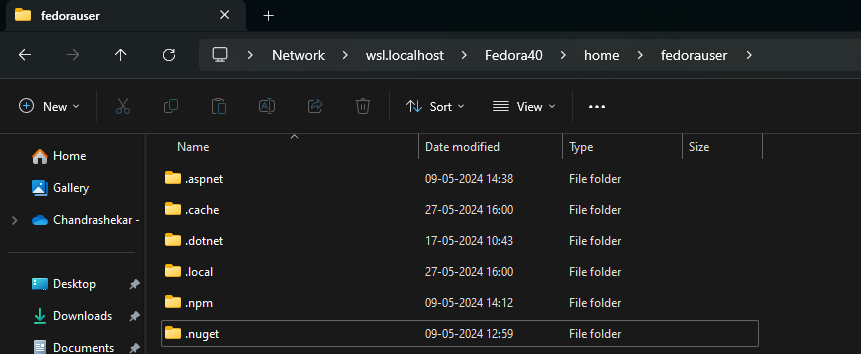

1,867,230 | Using WSL2 as primary driver for 3 months with Fedora | Introduction It all started when I stumbled upon an article about Windows Subsystem for... | 0 | 2024-05-28T06:12:39 | https://dev.to/chandruchiku/using-wsl2-as-primary-driver-for-3-months-with-fedora-4f17 | wsl, wsl2, fedora | ### Introduction

It all started when I stumbled upon an article about Windows Subsystem for Linux being released and is fun. I wanted to give it a try and I installed Ubuntu 18.04 and started using it on and off. I immediately fell in love with the way it works. After that I had to get OS reinstalled for some reason and left at it. Couple of months later, I wanted to give it a try again but Fedora this time. Whey Fedora? you may ask. Since most time we use Red hat, Amazon Linux in our deployments I wanted to have familiar commands and add to that we all know Fedora is cutting edge latest.

**Fedora Article:** [Install Fedora On WSL] (https://dev.to/bowmanjd/install-fedora-on-windows-subsystem-for-linux-wsl-4b26)

I followed the steps and bam. Fedora 39 running on my Windows 11 like native OS. I could access files directly using File Explorer.

I quickly installed npm, .NET, git and started using it as daily driver. However, when I go home the WSL would lose internet connection. I found out I needed to edit `/etc/resolv.conf` and add nameservers of my organization and Google DNS.

Git commit and sync took quick 1 to 3 to seconds that used to take 5 to 10 seconds on the Windows machine. `npm install` was way faster than what Windows used to take. It was like getting an upgraded machine without any money spent.

#### Pros

1. **Windows-Linux interoperability**: Most organizations use Windows for mail, teams and are easier to maintain with AD connectivity and windows updates. Linux running natively on Windows is a boon.

2. Linux file system being generally faster improves performance by 10 to 30%

3. Familiar commands during dev and prod deployments.

4. Windows ports are forwarded automatically to Linux Processes.

5. Windows can still scan the Linux folders for vulnerabilities

6. I can run docker/podman (we all know Docker Desktop needs a license to run on Windows)

#### Cons:

1. The only issue I am facing right now is that the longer I use, feels the WSL is slowing down. | chandruchiku |

1,867,242 | Software testing techniques | Testing of data is done based on boundary values or between two opposite ends where the ends may be... | 0 | 2024-05-28T06:11:01 | https://dev.to/malaiyarasi/software-testing-techniques-5af7 | Testing of data is done based on boundary values or between two opposite ends where the ends may be like from start to end, or lower to upper or from maximum and minimum.

Decision table testing

Decision table is one of the testing techniques which will help us to test the systems with various sets of inputs. It is also called as cause and effect table.

****

Use case testing

It explain the functionality or requirement can be specify in the use case. It is a techniques that used to identity the test case.

LCSAJ testing(linear code sequence and jump)

White box testing techniques to identify the code distance which begins at the start of the Programe or branch and ends at the end of the program.

| malaiyarasi | |

1,867,241 | E-Commerce App Development | Top E-Commerce Application Services | Discover expert e-commerce app development services from a leading company. Our team specializes in... | 0 | 2024-05-28T06:08:08 | https://dev.to/prachi_pare_e410f7b6715d0/e-commerce-app-development-top-e-commerce-application-services-22n8 | ecommerceappdevelopment, ecommerce, appdevelopment, ecommerceapplication | [Discover expert e-commerce app development services from a leading company. Our team specializes in creating high-quality e-commerce applications and mobile apps, offering innovative ecommerce app design and comprehensive e-commerce app development services. Contact us for custom mobile app development for e-commerce.](https://bhagirathtechnologies.com/services/4) | prachi_pare_e410f7b6715d0 |

1,865,468 | What's new in Angular 18 | A study guide that helps you learn the new version of Angular | 0 | 2024-05-28T06:04:14 | https://www.angularaddicts.com/whats-new-in-angular-18 | angular, typescript, signals, javascript | ---

title: What's new in Angular 18

description: A study guide that helps you learn the new version of Angular

published: true

tags: #angular #typescript #signals #javascript

canonical_url: https://www.angularaddicts.com/whats-new-in-angular-18

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/hbnp353ekvpvsqz5xhj3.png

---

Since I published my

- [Master Angular 17 Study guide](https://www.angularaddicts.com/p/master-angular-17) ,

- [Master Angular 17.1 and 17.2 Study guide](https://www.angularaddicts.com/p/master-angular-17-1-and-17-2) and the

- [What's new in Angular 17.3](https://www.angularaddicts.com/p/whats-new-in-angular-17-3) article

the Angular team released a new major version: Angular 18.

## 🎯Changes and new features

In this article, I list out the most important changes and new features, also share resources that will teach you how these new Angular features work:

- Control flow syntax and defer blocks are now stable

- Angular Material 3 is now stable, [material.angular.io](http://material.angular.io/) is updated by the new themes and documentation

- Experimental change detection without ZoneJS: `provideZonelessChangeDetection`

- Unified control state change events

- Fallback content for `ng-content`

- Route redirect functions

- Coalescing is enabled by default

- `HttpClientModule` deprecation

- Typescript 5.4 support

Now [angular.dev](https://angular.dev/) is the official website for the Angular documentation.

### 📌Experimental change detection without ZoneJS: `provideZonelessChangeDetection`

**Official docs:** [Angular without ZoneJS (Zoneless)](https://angular.dev/guide/experimental/zoneless)

**Merge request / commit:** [feat(core): Add zoneless change detection provider as experimental](https://github.com/angular/angular/pull/55329)

Angular 18 introduces a new, zoneless way to trigger change detection. When using `provideExperimentalZonelessChangeDetection`, Angular will use use Angular APIs to schedule change detection, including:

* `ChangeDetectorRef.markForCheck`

* `ComponentRef.setInput`

* Updating a signal that is read in a template

* When bound host or template listeners are triggered

* When attaching a view that was marked dirty by one of the above

* When removing a view

* When registering a render hook (templates are only refreshed if render hooks do one of the above)

You can try the zoneless change detection with the new `provideExperimentalZonelessChangeDetection` provider, and components using `OnPush` change detection strategy:

```typescript

bootstrapApplication(App, {

providers: [

provideExperimentalZonelessChangeDetection(),

]

});

```

Angular CDK and Angular Material also introduced has zoneless support.

### 📌Unified control state change events

**Official docs:** [AbstractControl.events](https://angular.dev/api/forms/AbstractControl)

**Merge request / commit:** [feat(forms): Unified Control State Change Events](https://github.com/angular/angular/pull/54579)

In Angular 18, the `AbstractControl` class (the base class for `FormControl`, `FormGroup`, and `FormArray`) introduced a new `events: Observable<ControlEvent<TValue>>` property. This property is an observable, that emits for value, status, pristine or touched changes.

```ts

@Component({

selector: 'app-abstract-control-events',

standalone: true,

imports: [ReactiveFormsModule],

template: ` <input [formControl]="titleInputControl" /> `,

})

export class AbstractControlEventsComponent {

titleInputControl = new FormControl<string | null>('', Validators.required);

constructor() {

this.titleInputControl.events.pipe(takeUntilDestroyed()).subscribe(console.log);

}

}

```

If you click on the input field in the example, enter a character, then click outside of the input field, the following events are logged on the browser's console:

```

PristineChangeEvent {pristine: false, source: FormControl2}

ValueChangeEvent {value: 'a', source: FormControl2}

StatusChangeEvent {status: 'VALID', source: FormControl2}

TouchedChangeEvent {touched: true, source: FormControl2}

```

### 📌Fallback content for `ng-content`

**Official docs:** [Content projection with ng-content](https://angular.dev/guide/components/content-projection)

**Merge request / commit:** [feat(core): add support for fallback content in ng-content](https://github.com/angular/angular/pull/54854)

In Angular 18, we can specify a fallback content for `<ng-content>`, this content is rendered when there is no content specified for projection in the parent component:

```ts

@Component({

selector: 'app-header',

template: `

<ng-content select=".title">Default tilte</ng-content>

<ng-content select=".explanation">There is no explanation for this title</ng-content>

`,

})

export class HeaderComponent {}

@Component({

selector: 'app-wrapper',

template: `

<app-header>

<span class="title">First chapter</span>

</app-header>

`,

})

export class WrapperComponent {}

```

These components are rendered as:

```html

<app-wrapper>

<app-header>

<span class="title">First chapter</span>

There is no explanation for this title

</app-header>

</app-wrapper>

```

### 📌Route redirect functions

**Official docs:** [Common router tasks: Setting up redirects](https://angular.dev/guide/routing/common-router-tasks#setting-up-redirects)

**Merge request / commit:** [feat(router): Allow Route.redirectTo to be a function which returns a string or UrlTree](https://github.com/angular/angular/pull/52606)

In Angular 18, a route's `redirectTo` property can be a function that returns a string or `UrlTree`, enabling us to create more dynamic redirects based on the application's state:

```ts

export const routes: Routes = [

// ...,

{

path: "prods", //legacy path

redirectTo: ({ queryParams }) => {

const productId = queryParams['id'];

if (productId) {

return `/products/${productId}`;

} else {

return `/`;

}

}

},

// ...,

];

```

### 📌Coalescing is enabled by default

**Official docs:** [NgZoneOptions](https://angular.dev/api/core/NgZoneOptions)

Starting from Angular 18, [zone coalescing](https://angular.dev/api/core/NgZoneOptions) is enabled by default (`eventCoalescing: true`) for newly created applications. Zone coalescing can reduce the number of the change detection cycles and improve the app's performance.

### 📌`HttpClientModule` deprecation

**Merge request / commit:** [refactor(http): Deprecate HttpClientModule & related modules,Migration schematics for HttpClientModule](https://github.com/angular/angular/pull/54020)

Starting with v18, the preferred way of providing the HTTP client are `provideHttpClient()` and `provideHttpClientTesting()`. `HttpClientModule` and `HttpClientTestingModule` are deprecated now.

When you run `ng update @angular/core`, Angular can automatically migrate your code to use the preferred `provideHttpClient()` and `provideHttpClientTesting()` functions instead of the deprecated modules.

### 📌Typescript 5.4 support

**Merge request / commit:** [feat(compiler-cli): drop support for TypeScript older than 5.4](https://github.com/angular/angular/pull/54961)

[Daniel Rosenwasser](https://twitter.com/drosenwasser) highlighted the most interesting new features of Typescript 5.4 in his [announcement](https://devblogs.microsoft.com/typescript/announcing-typescript-5-4/):

- Preserved Narrowing in Closures Following Last Assignments

- The `NoInfer` Utility Type

- `Object.groupBy` and `Map.groupBy`

- Support for `require()` calls in `--moduleResolution bundler` and `--module preserve`

- Checked Import Attributes and Assertions

- Quick Fix for Adding Missing Parameters

- Auto-Import Support for Subpath Imports

## 👨💻About the author

My name is [Gergely Szerovay](https://www.linkedin.com/in/gergelyszerovay/), I worked as a data scientist and full-stack developer for many years, and I have been working as frontend tech lead, focusing on Angular-based frontend development. As part of my role, I'm constantly following how Angular and the frontend development scene in general is evolving.

Angular has advancing very rapidly over the past few years, and in the past year, with the rise of generative AI, our software development workflows have also evolved rapidly. In order to closely follow the evolution of AI-assisted software development, I decided to start building AI tools in public, and publish my progress on [AIBoosted.dev](https://aiboosted.dev) , [Subscribe here](https://aiboosted.dev) 🚀

Follow me on [Substack (Angular Addicts)](https://www.angularaddicts.com/), [Substack (AIBoosted.dev)](https://aiboosted.dev), [Medium](https://medium.com/@GergelySzerovay), [Dev.to](https://dev.to/gergelyszerovay), [X](https://twitter.com/GergelySzerovay) or [LinkedIn](https://www.linkedin.com/in/gergelyszerovay/) to learn more about Angular, and how to build AI apps with AI, Typescript, React and Angular! | gergelyszerovay |

1,867,222 | 🎯 Ace Your Behavioral Interview: Common Questions and Winning Answers 🎯 | Did you know that 89% of hiring failures are due to poor cultural fit? Mastering behavioral interview... | 0 | 2024-05-28T06:03:48 | https://dev.to/hey_rishabh/-ace-your-behavioral-interview-common-questions-and-winning-answers-4ap0 | Did you know that **89% of hiring failures** are due to poor cultural fit? [Mastering behavioral interview questions](https://instaresume.io/interview-series) can help you stand out and demonstrate that you're the perfect fit for the company. Let's dive into some of the most frequently asked behavioral interview questions and how to answer them like a pro. 🌟

### 1. [Tell me about a time you faced a significant challenge at work. How did you handle it](https://instaresume.io/blog/how-to-handle-stress-at-work)? 🚧

**Key Points to Address**

- Situation 🌍

- Task 📋

- Action ⚡

- Result 🎉 (STAR Method)

**Example Answer**

> "In my previous role as a project manager, we encountered a critical issue where a key supplier suddenly went out of business, threatening to derail our project timeline. I quickly gathered my team for a brainstorming session and identified alternative suppliers. I negotiated new contracts and managed to secure the necessary materials within a week. As a result, we delivered the project on time, maintaining our reputation for reliability and saving the company $50,000 in potential penalties."

### 2. Describe a time when you had to work with a difficult colleague. 🤝

**Key Points to Address**

- Understanding the colleague's perspective 🧠

- Effective communication 🗣️

- Conflict resolution 🌈

**Example Answer**

> "While working as a marketing coordinator, I had a colleague who was often uncooperative during team projects. I initiated a one-on-one conversation to understand their concerns and discovered they felt their ideas weren't being heard. I proposed a new approach to our meetings where everyone could share their thoughts at the start. This improved our collaboration and led to a 15% increase in team productivity."

### 3. Give an example of a goal you set and how you achieved it. 🎯

**Key Points to Address**

- Specific goal 🥅

- Steps taken 🛠️

- Outcome 🏆

**Example Answer**

> "At my previous company, I set a goal to increase our social media engagement by 25% within six months. I conducted a thorough analysis of our current strategy and identified key areas for improvement. By implementing a content calendar, engaging with followers more actively, and leveraging analytics to fine-tune our posts, we surpassed our goal, achieving a 30% increase in engagement in just five months."

### 4. [How do you handle multiple tasks and priorities](https://instaresume.io/blog/how-to-handle-stress-at-work)? 📅

**Key Points to Address**

- [Time management](https://instaresume.io/blog/benefits-of-time-management-2023) ⏰

- Prioritization 🗂️

- Use of tools 🛠️

**Example Answer**

> "In my role as an executive assistant, I often had to juggle multiple tasks with tight deadlines. I used a combination of prioritization techniques, such as the Eisenhower Matrix, and project management tools like Trello. By categorizing tasks based on urgency and importance, and regularly updating my to-do list, I consistently met deadlines and supported the executive team effectively."

### 5. Describe a situation where you had to adapt to a major change. 🔄

**Key Points to Address**

- Flexibility 🌿

- Problem-solving 🧩

- Positive outcome 🌟

**Example Answer**

> "During a company merger, my department underwent significant restructuring. I took the initiative to familiarize myself with the new processes and systems by attending additional training sessions. I also organized a weekly meeting with my team to address any concerns and ensure a smooth transition. Our department not only adapted quickly but also improved efficiency by 20%."

---

### Visual Breakdown: Mastering the STAR Method ⭐

](https://dev-to-uploads.s3.amazonaws.com/uploads/articles/fs9e94etv8y5122fq4n8.png)

- **Situation:** Set the context for your story. 🌍

- **Task:** Explain the task you needed to accomplish. 📋

- **Action:** Describe the actions you took to address the task. ⚡

- **Result:** Share the outcome of your actions. 🎉

For more tips and insights on acing your interviews and enhancing your skills, check out these [helpful blogs from InstaResume](https://www.instaresume.io/blog)

https://instaresume.io/blog/common-interview-questions-and-answers

https://instaresume.io/blog/why-do-you-want-this-job

https://instaresume.io/blog/mastering-behavioural-interview-questions

https://instaresume.io/blog/where-do-you-see-yourself-in-5-years Good luck! 🍀🌟 | hey_rishabh | |

1,867,239 | The Critical Role of Mobile Optimization in Web Design and SEO | As the number of mobile users continues to surge, the importance of mobile optimization in web... | 0 | 2024-05-28T06:03:36 | https://dev.to/annamariapascual/the-critical-role-of-mobile-optimization-in-web-design-and-seo-1574 | webdev, javascript | <p><img src="https://play-media.org/wp-content/uploads/2022/06/why-is-mobile-optimization-important.jpg" alt="Why is Mobile Optimization Super Important in 2023" width="615" height="345" /></p>

<p>As the number of mobile users continues to surge, the importance of mobile optimization in web design and SEO has never been more pronounced. A mobile-optimized website is not just a convenience; it’s a necessity. Here’s why mobile optimization should be at the forefront of your digital strategy:</p>

<h2><strong>The Critical Role of Mobile Optimization in Web Design and SEO</strong></h2>

<p><strong>Mobile Usage Trends</strong> </p>

<p>The mobile revolution has changed the way people access the internet. With smartphones becoming increasingly prevalent, more users are browsing the web on-the-go. This shift in user behavior means that websites must be designed with mobile users in mind to provide a seamless and accessible experience.</p>

<p><strong>Mobile-First Design Philosophy</strong> </p>

<p>Adopting a mobile-first design philosophy involves creating a website with the mobile user’s needs as the primary focus. This approach ensures that the most critical information and functionality are presented in a clear, concise manner on smaller screens. It’s about prioritizing content and features that matter most to mobile users.</p>

<p><strong>Impact on User Experience (UX)</strong> </p>

<p>Mobile optimization directly impacts UX. A mobile-friendly website loads quickly, has touch-friendly navigation, and scales content appropriately for smaller screens. This leads to higher user satisfaction, longer engagement times, and lower bounce rates, which are all positive signals to search engines.</p>

<p><strong>SEO Advantages</strong> </p>

<p>Google and other search engines have recognized the shift towards mobile usage and have adjusted their algorithms accordingly. Mobile optimization is now a significant ranking factor. Websites that provide a superior mobile experience are more likely to rank higher in search results, making mobile optimization a critical component of SEO.</p>

<p><strong>Responsive Web Design</strong> </p>

<p>Responsive <span style="color: #3366ff;"><strong><a style="color: #3366ff;" href="https://agenciafort.com.br/criacao-de-sites/">web design criação de sites aqui</a></strong></span> is a technique that allows a website to adapt its layout to the screen size of the device it’s being viewed on. This flexibility ensures that whether a user is on a smartphone, tablet, or desktop, the website provides an optimal viewing experience.</p>

<p><strong>Speed Optimization</strong> </p>

<p>Mobile users expect fast loading times. Speed optimization techniques such as image compression, caching, and minimizing code can significantly improve a website’s loading speed on mobile devices. Faster websites not only provide a better user experience but also contribute to better SEO performance.</p>

<p><strong>Local SEO and Mobile</strong></p>

<p>For businesses with a local presence, mobile optimization is even more critical. Mobile users often search for local information, and a mobile-optimized site with <strong><span style="color: #3366ff;"><a style="color: #3366ff;" href="https://agenciafort.com.br/consultor-seo-local/">local SEO</a></span></strong> can drive foot traffic to physical locations.</p>

<p>In conclusion, mobile optimization is a cornerstone of modern web design and SEO. It enhances user experience, improves search engine rankings, and caters to the growing number of users who rely on mobile devices for their internet usage. Ignoring mobile optimization is no longer an option for businesses that want to succeed online.</p> | annamariapascual |

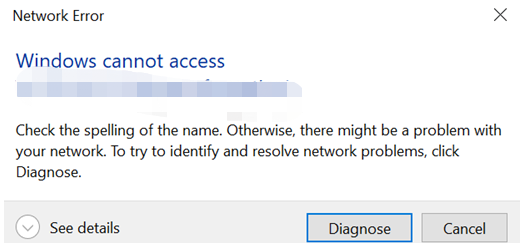

1,867,238 | Synology-Shared Folder Access Error | Background: A Synology NAS is connected two networks, N1 and N2, both are able to reach Domain... | 0 | 2024-05-28T06:02:24 | https://dev.to/feng_wei/synology-shared-folder-access-error-g23 | synology | Background:

1. A Synology NAS is connected two networks, N1 and N2, both are able to reach Domain X.

2. The NAS was joined Domain X via N1. Share folders access is configured for domain users.

3. The NAS was disconnected from N1 recently.

Issue:

Users reported shared folder is inaccessible due to "Network Error".

Actions(Done within N2):

1. Verify NAS OS status and test shared folder access. OS is normal but shared folder can't be accessed.

2. Restart SMB service then following by restarting NAS. Issue persists.

3. Check default gateway and domain status. Noticed that domain was unavailable. Issue is rectified after rejoining Domain X with N2.

Conclusion:

Domain availability will cause shared folder access issue due to authentication, possibly.

I also noticed the same error, which appears to be caused by a time synchronization issue. In this case, the NAS NTP server was invalid, leading to a time discrepancy of more than 5 minutes between the domain and the NAS itself. | feng_wei |

1,866,629 | Rejeição de teste fechado do Google ~Você precisa pensar em como conseguir testadores~ | Este é um artigo para aqueles que se registraram ou podem se registrar como novos desenvolvedores... | 0 | 2024-05-28T06:00:00 | https://dev.to/zmsoft/rejeicao-de-teste-fechado-do-google-voce-precisa-pensar-em-como-conseguir-testadores-5bk8 | googleplay, androiddev, testefechado, google | Este é um artigo para aqueles que se registraram ou podem se registrar como novos desenvolvedores após a mudança de política do Google. Estou publicando [um aplicativo gratuito(DevsPayForward)] (https://play.google.com/store/apps/details?id=com.andro.zm.tools.androidtesterspayforward) para que esses desenvolvedores cooperem uns com os outros. Se você ler o artigo e estiver interessado, use-o.

O Google exigiu um teste de 14 dias com 20 pessoas. Como desenvolvedor independente, isso por si só já é difícil, mas parece que alguns desenvolvedores tiveram recentemente seus resultados rejeitados após a conclusão do teste e tiveram que começar o teste novamente. Se isso acontecer, pode levar muito tempo, e o desenvolvedor pode ficar exausto. Os desenvolvedores precisam se proteger.

- [Rejeição do Google](#rejeição-do-google)

- [O que devemos fazer para evitar sermos rejeitados](#o-que-devemos-fazer-para-evitar-sermos-rejeitados)

- [No início da fase de avaliação](#no-início-da-fase-de-avaliação)

- [Durante a avaliação](#durante-a-avaliação)

- [Após a conclusão da avaliação](#após-a-conclusão-da-avaliação)

- [Protegendo um testador](#protegendo-um-testador)

- [Finalmente](#finalmente)

## Rejeição do Google

A seguir, uma lista de rejeições.

* Os testadores não se envolveram com seu aplicativo durante o teste fechado

* Você não seguiu as práticas recomendadas de teste, que podem incluir a coleta e a ação sobre o feedback do usuário por meio de atualizações do seu aplicativo.

* Suas respostas às perguntas do aplicativo sobre seu aplicativo, teste fechado ou prontidão para produção foram incompetentes ou insuficientes.

Isso é o que está descrito, e não sei qual é o problema claro. Mas acho que o ponto principal é que eles foram rejeitados porque não veem o objetivo de melhorar a qualidade, que é o propósito dos testes fechados.

# O que devemos fazer para evitar sermos rejeitados

Possíveis ações de rejeição e possíveis soluções alternativasO que posso fazer para que eles percebam que o teste está funcionando bem o suficiente para evitar a rejeição?

* No início da fase de avaliação

* Durante a avaliação

* Após a conclusão da avaliação

## No início da fase de avaliação

Fase inicial de avaliaçãoPrimeiro, é muito importante conseguir testadores que possam fazer testes confiáveis. Há falhas nas regras atuais do Google. Não entrarei em detalhes aqui, mas é possível fazer com que pareça que você concluiu o teste sem ter que preparar 20 contas ou até mesmo instalar o aplicativo. E a dificuldade com esse problema é que, mesmo que um desenvolvedor siga as regras, não há garantia de que seus testadores seguirão as regras. É muito difícil conseguir testadores confiáveis. A maneira mais fácil é usar um serviço pago, mas essa pode ser uma escolha difícil para um desenvolvedor que não sabe se conseguirá gerar muita receita. Isso é um pouco extenso e será discutido em mais detalhes posteriormente.

### Durante a avaliação

Durante a avaliaçãoDurante o período de testes, até mesmo os bugs menores devem ser corrigidos e atualizados. Isso esclarecerá seu compromisso com os testes.

### Após a conclusão da avaliação

No final da avaliaçãoResponda às perguntas do Google no final do teste. Eu mesmo enviei vários aplicativos e não escrevi muita história em minhas respostas, mas felizmente não fui rejeitado. É provável que haja muitos fatores no início e durante o processo de avaliação, mas o que você descreveu aqui poderá ajudá-lo se estiver prestes a ser julgado como alvo de rejeição.

## Protegendo um testador