id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,910,247 | Temporary saving of work using git stash | Introduction git stash to temporarily save changes you are working on. How to... | 0 | 2024-07-03T13:34:12 | https://dev.to/untilyou58/temporary-saving-of-work-using-git-stash-hpl | git, beginners, tutorial, programming | ## Introduction

Use `git stash` to temporarily save changes you are working on.

## How to use it

### Save changes temporarily

```cmd

git stash

```

This saves the changes in your current working directory to a stash, leaving your working directory clean.

### Apply the stash

```cmd

git stash apply

```

This applies th... | untilyou58 |

1,910,248 | Automate and Scale with Cutting-Edge DevOps Solutions | In today's fast-paced digital landscape, businesses are increasingly turning to DevOps practices to... | 0 | 2024-07-03T13:34:04 | https://dev.to/jasonstathum6/automate-and-scale-with-cutting-edge-devops-solutions-3fe5 | devops, ai | In today's fast-paced digital landscape, businesses are increasingly turning to DevOps practices to streamline their development processes and accelerate time-to-market. At the heart of DevOps lies the philosophy of merging development (Dev) and operations (Ops) to foster collaboration and efficiency throughout the sof... | jasonstathum6 |

1,910,246 | Finding Numbers Divisible by 3 and 5 | Hey Dev Community! Are you diving into C programming and looking for a hands-on exercise to sharpen... | 0 | 2024-07-03T13:32:11 | https://dev.to/moksh57/finding-numbers-divisible-by-3-and-5-30cb | c, programming |

Hey Dev Community!

Are you diving into C programming and looking for a hands-on exercise to sharpen your skills? I've just published a new blog post where I walk you through writing a simple C program to find numbers between 1 and 50 that are divisible by both 3 and 5. This exercise is perfect for beginners aiming to... | moksh57 |

1,910,245 | My first Django project, the problem I faced and how I overcame it. | I started learning the Django framework a few months ago. As a developer, I believe that the main purpose... | 0 | 2024-07-03T13:26:19 | https://dev.to/jamiukayode27/my-first-django-project-the-problem-i-faced-and-how-i-overcome-it-4j23 | I started learning the Django framework a few months ago. As a developer, I believe that the main purpose of learning is to use what you learn to solve problems, and to be able to solve a big problem you must start by solving small ones. Through persistence and critical thinking, I know I will be able to solve a problem where world ... | jamiukayode27 | 

1,910,244 | SDK | A software development kit (SDK) is a set of tools for developing... | 0 | 2024-07-03T13:23:13 | https://dev.to/asadbekit/sdk-1k6n | A software development kit (SDK) is a set of software development tools in a single installable package. SDKs make it easier to build applications by providing a compiler, a debugger, and sometimes a software framework. They are usually specific to a combination of hardware platform and operating... | asadbekit | 

1,910,243 | Temu coupon code AAV67880 OR AAF63818: 2024 for existing customers | Temu provides exclusive coupons and vouchers for specific products, allowing you to save more on your... | 0 | 2024-07-03T13:23:06 | https://dev.to/sonuprasad/temu-coupon-code-aav67880-or-aaf63818-2024-for-existing-customers-4c9j | Temu provides exclusive coupons and vouchers for specific products, allowing you to save more on your favorite items. Make sure to check the product pages for any available discounts and apply them at checkout.

Temu Coupon Codes

For an even greater discount, use the following Temu coupon codes:

$100 Off Code: Use AAV... | sonuprasad | |

1,910,235 | CLR | Common Language Runtime (CLR) is a runtime environment for... | 0 | 2024-07-03T13:21:57 | https://dev.to/fazliddin7777/clr-3p3e | Common Language Runtime (CLR) is a runtime environment for CIL (MSIL) bytecode, into which programs written in .NET-compatible programming languages (C#, Managed C++, Visual Basic .NET, F#, and others) are compiled. The CLR is one of the main components of the Microsoft .NET... | fazliddin7777 | 

1,910,227 | Open Source Scams | Look carefully at the image for this article. Did you see anything "funny" about it? Let me enlighten... | 0 | 2024-07-03T13:21:14 | https://dev.to/polterguy/open-source-scams-4jb3 | lowcode, security | Look carefully at the image for this article. Did you see anything _"funny"_ about it? Let me enlighten you.

What you're looking at is an Open Source project. They're worth 1.2 billion US dollars according to their latest VC valuation. Specifically, you're looking at the history of their _"Star gazers"_ according to G... | polterguy |

1,901,703 | Optimizing RAG Through an Evaluation-Based Methodology | In today's fast-paced, information-rich world, AI is revolutionizing knowledge management. The... | 0 | 2024-07-03T13:18:22 | https://qdrant.tech/articles/rapid-rag-optimization-with-qdrant-and-quotient/ | rag, tutorial, opensource, datascience | In today's fast-paced, information-rich world, AI is revolutionizing knowledge management. The systematic process of capturing, distributing, and effectively using knowledge within an organization is one of the fields in which AI provides exceptional value today.

> The potential for AI-powered knowledge management in... | atita_arora |

1,910,187 | Understanding SEO: Why It Matters and How to Use It | As developers, we often find ourselves lost in the intricacies of code, focusing on functionality and... | 0 | 2024-07-03T13:18:00 | https://dev.to/best_codes/understanding-seo-why-it-matters-and-how-to-use-it-45pe | webdev, beginners, tutorial, website | As developers, we often find ourselves lost in the intricacies of code, focusing on functionality and design. However, there's another crucial aspect of web development that we sometimes overlook: Search Engine Optimization (SEO). In this post, we'll explore why SEO is essential for developers and how we can incorporat... | best_codes |

1,910,186 | 5-Step Guide to Mobile App Testing Automation | The global mobile app market has been growing at more than 11.5% per year and is now worth more than... | 0 | 2024-07-03T13:17:24 | https://dev.to/morrismoses149/5-step-guide-to-mobile-app-testing-automation-199g | mobileapp, testing, automation, testgrid | The global mobile app market has been growing at more than 11.5% per year and is now worth more than $154.06 billion, as the COVID-19 shift toward remote work has increased the time people spend online.

With over 10.97 billion mobile connections worldwide, the demand for sophisticated, high-performance B2B and B... | morrismoses149 |

1,910,185 | .Net | Welcome to .NET. To develop with it, you can use the C#, F#, and Visual Basic languages... | 0 | 2024-07-03T13:16:32 | https://dev.to/jurabek777/net-14oe | Welcome to .NET. To develop with it, you can use the C#, F#, and Visual Basic languages.

.NET Framework, which includes the Common Language Runtime (CLR) that manages code execution and the Base Class Library (BCL) that provides a rich library of classes for building applications, is a develop... | jurabek777 | 

1,910,183 | Implementing Email and Mobile OTP Verification in Django: A Comprehensive Guide | In today's digital landscape, ensuring the authenticity of user accounts is paramount for web... | 0 | 2024-07-03T13:13:53 | https://dev.to/rupesh_mishra/implementing-email-and-mobile-otp-verification-in-django-a-comprehensive-guide-4oo0 |

In today's digital landscape, ensuring the authenticity of user accounts is paramount for web applications. One effective method to achieve this is through email and mobile number verification using One-Time Passwords (OTPs). This article will guide you through the process of implementing OTP verification in a Django... | rupesh_mishra | |

1,910,182 | . | .NET is based on .NET Framework. The .NET platform differs from it in its modularity,... | 0 | 2024-07-03T13:08:43 | https://dev.to/asadbekit/-57b1 | .NET is based on .NET Framework. The .NET platform differs from it in its modularity, cross-platform support, ability to use cloud technologies, and the separation between the CoreFX library and the CoreCLR runtime[6].

.NET is a modular platform. Each of its components is updated through a p... manager | asadbekit | 

1,910,181 | . | .NET is based on .NET Framework. The .NET platform differs from it in its modularity,... | 0 | 2024-07-03T13:08:41 | https://dev.to/asadbekit/-2n9g | .NET is based on .NET Framework. The .NET platform differs from it in its modularity, cross-platform support, ability to use cloud technologies, and the separation between the CoreFX library and the CoreCLR runtime[6].

.NET is a modular platform. Each of its components is updated through a p... manager | asadbekit | 

1,910,179 | Bug Report For SAW | Click to view the bug report Exploratory Test Report for Scrapeanywebsite Desktop... | 0 | 2024-07-03T13:08:05 | https://dev.to/godsgift_uloamaka_72c38ef/bug-report-for-saw-4h6l | programming, testing, design | [Click to view the bug report ](https://eu.docworkspace.com/d/sIO-_2OKHAYjslLQG)

## Exploratory Test Report for Scrapeanywebsite Desktop application

[Scrape Any Website](https://scrapeanyweb.site/)

Date: July 3, 2024

**Objective:** The objective of this test is to explore the functionalities, usability issues and ... | godsgift_uloamaka_72c38ef |

1,910,178 | Okay! Next.js 🤯 is not that bad. | I have a love-hate relationship with JavaScript! Having worked with it for more than 10 years... | 0 | 2024-07-03T13:04:17 | https://dev.to/kwnaidoo/okay-nextjs-is-not-that-bad-1e37 | watercooler, productivity, webdev, nextjs | I have a love-hate relationship with JavaScript! Having worked with it for more than 10 years building sliders, jQuery UIs, and React SPAs, I know its warts and imperfections fairly well, but alas, compared to its lesser-known competitor VBScript, I'll take JS, thank you!

Fast forward to 2024, you can't get very far wi... | kwnaidoo |

1,910,177 | Coaxial Cable Shielding: Protecting Against Electromagnetic Interference | Are you dying to watch your favourite TV serial, but fuzzy signals and intermittent... | 0 | 2024-07-03T13:04:07 | https://dev.to/guadalupe_porters_c7ccb16/coaxial-cable-shielding-protecting-against-electromagnetic-interference-25fg | design | Are you dying to watch your favourite TV serial, but fuzzy signals and intermittent disconnections are spoiling the fun? You are not the only one who has had to deal with this annoying problem! Electromagnetic interference (EMI) is what causes these interruptions. Coaxial cable shielding saves the day and make... | guadalupe_porters_c7ccb16 |

1,910,175 | Wednesday Links - Edition 2024-07-03 | Choosing the Right JDK Version: An Unofficial Guide (7... | 6,965 | 2024-07-03T13:00:43 | https://dev.to/0xkkocel/wednesday-links-edition-2024-07-03-20ch | java, jvm, testcointainers, uuid | Choosing the Right JDK Version: An Unofficial Guide (7 min)🪃

https://blogs.oracle.com/java/post/choosing-the-right-jdk-version

Wait you can place Java annotations there? (3 min)🐒

https://mostlynerdless.de/blog/2024/06/28/wait-you-can-place-java-annotations-there/

Reducing Testcontainers Execution Time with JUnit 5 ... | 0xkkocel |

1,910,174 | Personal Front End Journey | Welcome folks 😀, I decided it was long overdue for me to learn some front-end skills. Having studied... | 0 | 2024-07-03T12:59:19 | https://dev.to/marcos_/personal-front-end-journey-4h56 | webdev, beginners | Welcome folks 😀,

I decided it was long overdue for me to learn some front-end skills. Having studied computer engineering, I learned a lot about operating systems, compilers, and computer architecture.

However, I learned surprisingly little about web development. For the next few weeks, I intend to teach myself some of the basics... | marcos_ |

1,910,155 | The HNG11 Internship program can significantly help me achieve my goals in several ways: | https://hng.tech/ Professional Objectives: Design Leadership: HNG's team-based projects and... | 0 | 2024-07-03T12:54:55 | https://dev.to/jeremiah_leke_e65e49d6a2f/the-hng11-internship-program-can-significantly-help-me-achieve-my-goals-in-several-ways-4jf9 | webdev, beginners, javascript, programming | https://hng.tech/

Professional Objectives:

1. _Design Leadership_: HNG's team-based projects and collaborations can help me develop leadership skills and experience.

2. _Expertise Expansion_: HNG's diverse projects and mentorship can expose me to emerging design technologies and best practices.

3. _Cross-Functional C... | jeremiah_leke_e65e49d6a2f |

1,910,173 | Harnessing Durability: The Role of Chopped Strand Mat in Reinforced Plastics | Durability begins to take the stage: Chopped Strand Mat in reinforced plastics You may have read... | 0 | 2024-07-03T12:52:08 | https://dev.to/guadalupe_porters_c7ccb16/harnessing-durability-the-role-of-chopped-strand-mat-in-reinforced-plastics-4f3p | design | Durability begins to take the stage: Chopped Strand Mat in reinforced plastics

If you are familiar with different kinds of plastic, you may have read about reinforced plastics. These are essentially the plastics we use in our everyday lives, but better: they are tougher and more resistant. Chopped stran... | guadalupe_porters_c7ccb16 |

1,910,172 | Linux User Creation Bash Script | Problem Statement Your company hng has employed many new developers. As a SysOps engineer,... | 0 | 2024-07-03T12:51:58 | https://dev.to/don-fortune/linux-user-creation-bash-script-73h | ## Problem Statement

Your company [hng](https://hng.tech/internship) has employed many new developers. As a SysOps engineer, write a bash script called `create_users.sh` that reads a text file containing the employees’ usernames and group names, where each line is formatted as `user;groups`. The script should create us... | don-fortune | 

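1,910,171 | Toto Community | In 2024, online Toto site communities are growing more actively than ever. Among them, 'Outlook India Toto Community Plug-in Play Meoktwi Royal' offers users a safe and reliable... | 0 | 2024-07-03T12:49:34 | https://dev.to/totocommunity02/totokeomyuniti-292c | In 2024, online Toto site communities are growing more actively than ever. Among them, 'Outlook India Toto Community Plug-in Play Meoktwi Royal' offers users a safe and reliable betting environment and has become very popular thanks to its wide range of sporting events and game options. In this article, we take a detailed look at why 'Meoktwi Royal' was selected as the best Toto site community of 2024, along with its advantages and the services it provides.

Outlook India: Providing Reliable Information

'Outlook India' plays an important role in evaluating and verifying the reliability and safety of Toto sites. 'Meoktwi' refers to a us... | totocommunity02 | 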

1,910,170 | Learn Backend Development for Free: Top Websites to Get You Started 🎓✨ | Hey everyone 👋 Whether you're a newbie or looking to sharpen your skills, these resources have you... | 0 | 2024-07-03T12:49:10 | https://dev.to/devella/learn-backend-development-for-free-top-websites-to-get-you-started-1d3j | beginners, webdev, programming, backend | **Hey everyone 👋**

**Whether you're a newbie or looking to sharpen your skills, these resources have you covered ✅**

> _Explore interactive tutorials, comprehensive courses, and hands-on projects that make learning fun and effective._

Here are **10 websites to learn backend development for free** ⬇️:

_**》Share... | devella |

1,910,029 | 【TypeScript】Displaying ChatGPT-like Streaming Responses with trpc in React | Purpose In chat services powered by generative AI like OpenAI's ChatGPT and Anthropic's... | 0 | 2024-07-03T12:49:09 | https://dev.to/mikan3rd/typescript-displaying-chatgpt-like-streaming-responses-with-trpc-in-react-3mnb | trpc, openai, typescript, react | # Purpose

In chat services powered by generative AI, such as OpenAI's ChatGPT and Anthropic's Claude, a **UI that gradually displays text as it receives data streamed from the generative AI model** is commonly used. With a typical request/response format, you need to wait until the AI processing is completely finished, which can c... | mikan3rd |

1,910,169 | Distributed Hash Generation Algorithm in Python — Twitter Snowflake Approach | Into The Background… In any software development lifecycle, unique IDs play a major role... | 0 | 2024-07-03T12:46:18 | https://dev.to/manandoshi1301/distributed-hash-generation-algorithm-in-python-twitter-snowflake-approach-48mf | distributedsystems, softwaredevelopment, hashing, systemdesign | ## Into The Background…

In any software development lifecycle, unique IDs play a major role in manipulating and showcasing data. A lot depends on the uniqueness of each data segment associated with user transactions.

There are several ways to generate unique IDs, but when it comes to generating hashes at a rate of app... | manandoshi1301 |

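1,910,168 | Toto Community | In 2024, online Toto site communities are growing more actively than ever. Among them, 'Outlook India Toto Community Plug-in Play Meoktwi Royal' offers users a safe and reliable... | 0 | 2024-07-03T12:46:05 | https://dev.to/totocommunity02/totokeomyuniti-57dn | In 2024, online Toto site communities are growing more actively than ever. Among them, 'Outlook India Toto Community Plug-in Play Meoktwi Royal' offers users a safe and reliable betting environment and has become very popular thanks to its wide range of sporting events and game options. In this article, we take a detailed look at why 'Meoktwi Royal' was selected as the best Toto site community of 2024, along with its advantages and the services it provides.

Outlook India: Providing Reliable Information

'Outlook India' plays an important role in evaluating and verifying the reliability and safety of Toto sites. 'Meoktwi' refers to a us... | totocommunity02 | 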

1,910,167 | PROCESS OF REGISTERING FOR UDYAM REGISTRATION | Certainly! Here's a step-by-step guide to registering for Udyam Registration in India: Step 1: Visit... | 0 | 2024-07-03T12:45:29 | https://dev.to/neelu_jarika_3239ec190277/process-of-registering-for-udyam-registeration-2d1l | udyamregisteration, business |

Certainly! Here's a step-by-step guide to registering for [Udyam Registration](https://udyogaadhaaronline.org/) in India:

Step 1: Visit the Udyam Registration Portal

Go to the Udyam Registration portal

Step 2: Create an Account (if necessary)

If you're visiting the portal for the first time, you may need to create an... | neelu_jarika_3239ec190277 |

1,910,166 | Advantages of BitPower Loop DeFi | Introduction The rapid development of blockchain technology has brought revolutionary changes to the... | 0 | 2024-07-03T12:45:26 | https://dev.to/woy_ca2a85cabb11e9fa2bd0d/advantages-of-bitpower-loop-defi-4phi | btc |

Introduction

The rapid development of blockchain technology has brought revolutionary changes to the financial industry, and decentralized finance (DeFi) has become one of the important innovations. The BitPower Loo... | woy_ca2a85cabb11e9fa2bd0d |

1,910,164 | Top Reasons Why Businesses with Hybrid Web Apps are More Successful in 2024: An Expert's Overview | Do you know why smartphones have become so popular in such a short time? Smartphones can do various... | 0 | 2024-07-03T12:45:10 | https://dev.to/ashe_leo/top-reasons-why-businesses-with-hybrid-web-apps-are-more-successful-in-2024-an-experts-overview-519d | webdev, web, website, mobile |

Do you know why smartphones have become so popular in such a short time? Smartphones can do various things, including sending emails, watching films, and using social networks. They are becoming more popular as the... | ashe_leo |

1,910,162 | Advantages of BitPower Loop DeFi | Introduction The rapid development of blockchain technology has brought revolutionary changes to the... | 0 | 2024-07-03T12:43:16 | https://dev.to/woy_621fc0f3ac62fff68606e/advantages-of-bitpower-loop-defi-4eon | btc |

Introduction

The rapid development of blockchain technology has brought revolutionary changes to the financial industry, and decentralized finance (DeFi) has become one of the important innovations. The BitPower Loo... | woy_621fc0f3ac62fff68606e |

1,910,161 | Advantages of BitPower Loop DeFi | Introduction The rapid development of blockchain technology has brought revolutionary changes to the... | 0 | 2024-07-03T12:40:40 | https://dev.to/wot_ee4275f6aa8eafb35b941/advantages-of-bitpower-loop-defi-57ai | btc |

Introduction

The rapid development of blockchain technology has brought revolutionary changes to the financial industry, and decentralized finance (DeFi) has become one of the important innovations. The BitPower Loo... | wot_ee4275f6aa8eafb35b941 |

1,907,530 | Django QuerySets Are Lazy | Introduction When working with Django's ORM, one of the fundamental aspects you'll... | 0 | 2024-07-02T08:08:37 | https://dev.to/doridoro/django-querysets-are-lazy-l01 | ## Introduction

When working with Django's ORM, one of the fundamental aspects you'll encounter is the laziness of QuerySets. This characteristic significantly impacts how you write and optimize your code. But what does it mean to say that "Django QuerySets are lazy"? Let's explore this concept, and understand the imp... | doridoro | |

1,910,160 | UK Proofreaders Services | UK Proofreaders Services: because of their experience in a range of fields and sectors, our editors can... | 0 | 2024-07-03T12:40:12 | https://dev.to/ukproofreaders/uk-proofreaders-services-511a | [UK Proofreaders Services](https://www.ukproofreaders.co.uk): because of their experience in a range of fields and sectors, our editors can guarantee that your work is not only error-free but also compliant with industry norms and expectations. | ukproofreaders | 

1,910,158 | Paper detailing BitPower Loop’s security | Security Research of BitPower Loop BitPower Loop is a decentralized lending platform based on... | 0 | 2024-07-03T12:39:10 | https://dev.to/wgac_0f8ada999859bdd2c0e5/paper-detailing-bitpower-loops-security-1kpi | Security Research of BitPower Loop

BitPower Loop is a decentralized lending platform based on blockchain technology, dedicated to providing users with safe, transparent and efficient financial services. Its core security comes from multi-level technical measures and mechanism design, which ensures the robust operation ... | wgac_0f8ada999859bdd2c0e5 | |

1,910,157 | Advantages of BitPower Loop DeFi | Introduction The rapid development of blockchain technology has brought revolutionary changes to the... | 0 | 2024-07-03T12:38:57 | https://dev.to/wot_dcc94536fa18f2b101e3c/advantages-of-bitpower-loop-defi-17kk | btc | Introduction

The rapid development of blockchain technology has brought revolutionary changes to the financial industry, and decentralized finance (DeFi) has become one of the important innovations. The BitPower Loop DeFi platform is a decentralized smart contract protocol based on the Ethereum Virtual Machine (EVM), w... | wot_dcc94536fa18f2b101e3c |

1,910,065 | How to run Llama model locally on MacBook Pro and Function calling in LLM -Llama web search agent breakdown | Easily install Open source Large Language Models (LLM) locally on your Mac with Ollama. On a basic M1... | 0 | 2024-07-03T12:36:13 | https://dev.to/selvapal/how-to-run-llama-model-locally-on-macbook-pro-and-function-calling-in-llm-llama-web-search-agent-breakdown-12dl | llm, genai, langchain, functioncalling | Easily install open-source large language models (LLMs) locally on your Mac with Ollama. On a basic M1 Pro MacBook with 16 GB of memory, this setup takes approximately 10 to 15 minutes to get going. Once setup is finished, the model itself starts up in less than ten seconds.

1. Go to >> https://ollama.com/downlo... | selvapal |

1,910,154 | Describe and Discuss correlation and regression | Correlation evaluates the degree and direction of a linear relationship between two variables, with... | 0 | 2024-07-03T12:33:40 | https://dev.to/durga_trainer_98594e47328/describe-and-discuss-correlation-and-regression-268c | programming, datascience, database, datastructures | Correlation evaluates the degree and direction of a linear relationship between two variables, with values ranging from -1 (perfect negative) to +1 (perfect positive). It is assessed using a correlation coefficient.

Regression, especially linear regression, is the process of modelling the connection between a dependent... | durga_trainer_98594e47328 |

1,910,153 | Driving Innovation: Plastic Masterbatch Manufacturers Leading the Way | Plastic masterbatch manufacturers, as their name suggests, are companies that innovate in the field... | 0 | 2024-07-03T12:32:37 | https://dev.to/kamila_bullockz_a15641e8e/driving-innovation-plastic-masterbatch-manufacturers-leading-the-way-23dn | design | Plastic masterbatch manufacturers, as their name suggests, are companies that innovate in the field of plastic coloring. Using special pigments and additives, they color plastic products better than most. These manufacturers are essential to the operation of the industry by saving mone... | kamila_bullockz_a15641e8e |

1,910,151 | Introduction to Business Analytics | Business analytics is the sophisticated practice of exploring organizational data to derive... | 0 | 2024-07-03T12:31:59 | https://dev.to/durga_trainer_98594e47328/introduction-to-business-analytics-24if | data, clinical, programming, sass | Business analytics is the sophisticated practice of exploring organizational data to derive actionable insights through statistical analysis. It involves leveraging historical and real-time data, employing advanced statistical techniques, and using predictive models to inform strategic decision-making and drive busines... | durga_trainer_98594e47328 |

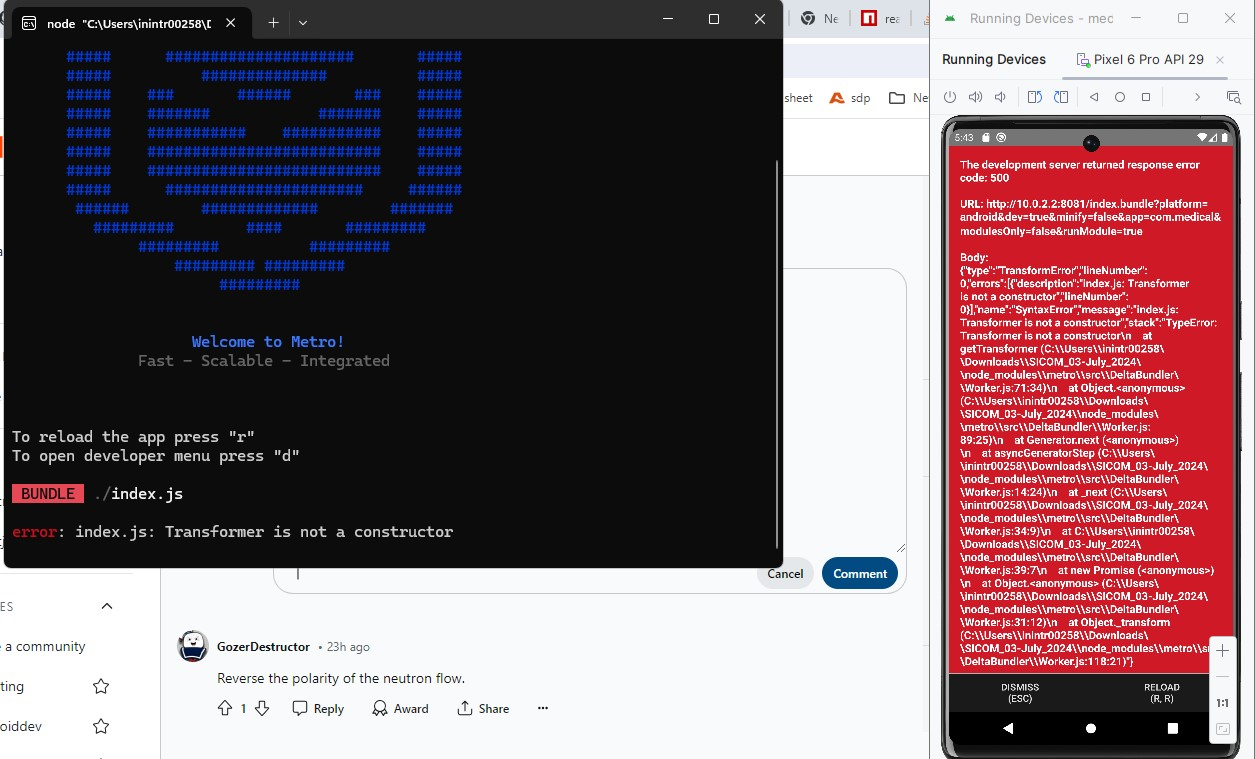

1,910,150 | Transformer is not a constructor React Native | An error occurs when I launch the app using npx react-native run-android. It shows the following: | 0 | 2024-07-03T12:31:25 | https://dev.to/boss_rs_3bb893be31f75f98e/transformer-is-not-a-constructor-react-native-3l0i | reactnative, programming, react | An error occurs when I launch the app using `npx react-native run-android`. It shows the following:

| boss_rs_3bb893be31f75f98e |

1,910,149 | Mastering BI Dashboards for Business Success | Introduction In today's data-driven world, business intelligence (BI) dashboards are... | 0 | 2024-07-03T12:29:27 | https://dev.to/sejal_4218d5cae5da24da188/mastering-bi-dashboards-for-business-success-3gi2 | data, dataanalytics, businessintelligence | ## Introduction

In today's data-driven world, business intelligence (BI) dashboards are pivotal in transforming raw data into actionable insights. These powerful tools enable organizations to make informed decisions, enhance performance, and stay competitive. This blog explores how advanced BI dashboards can maximize ... | sejal_4218d5cae5da24da188 |

1,910,148 | Understanding the Token Bucket Algorithm | Introduction The Token Bucket algorithm is a popular mechanism used for network traffic shaping and... | 0 | 2024-07-03T12:29:22 | https://dev.to/keploy/understanding-the-token-bucket-algorithm-5800 | webdev, javascript, beginners, tutorial |

Introduction

The Token Bucket algorithm is a popular mechanism used for network traffic shaping and rate limiting. It controls the amount of data transmitted over a network, ensuring that traffic conforms to specifi... | keploy |

1,910,147 | Master Clinical SAS at India's Best Online Training Institute | Unlock your potential in clinical data analysis with Durga Online Training Institute, the premier... | 0 | 2024-07-03T12:28:21 | https://dev.to/durga_trainer_98594e47328/master-clinical-sas-at-indias-best-online-training-institute-1fij | clinical, sass, database, data | Unlock your potential in clinical data analysis with Durga Online Training Institute, the premier destination for the best Clinical SAS training in India. Gain a competitive edge in the healthcare industry with our comprehensive online courses. Our expert instructors will guide you through the intricacies of Clinica... | durga_trainer_98594e47328 |

1,910,073 | Automating Linux User Creation with Bash Scripting | Introduction: Streamlining the creation and management of multiple user accounts in an... | 0 | 2024-07-03T12:27:37 | https://dev.to/dev_sylvester/automating-linux-user-creation-with-bash-scripting-508d | aws, devops, linux, bash | Introduction:

Streamlining the creation and management of multiple user accounts in an organization that has recently onboarded quite a number of developers can be very challenging for a SysOps engineer. Automating these tasks not only saves time but also ensures consistency and accuracy. This article wil... | dev_sylvester |

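1,910,146 | Workspaces in Visual Studio Code | Besides using a single folder as the unit of a project, Visual Studio Code also lets you use a workspace if you need to work with several different folders at once. Some development tools, such as PlatformIO... | 0 | 2024-07-03T12:27:20 | https://dev.to/codemee/visual-studio-code-de-gong-zuo-qu-workspace-1ojc | vscode | Besides using a single folder as the unit of a project, Visual Studio Code also lets you use a [workspace](https://code.visualstudio.com/docs/editor/workspaces) if you need to work with several different folders at once. Some development tools, such as PlatformIO, force you to create a workspace when you create a new project. If you don't understand workspaces, this can leave you quite confused at first.

## A single folder is actually a workspace too

By default, even if you haven't created a workspace, opening a single folder is equivalent to opening a workspace that contains just one folder. In this case you won't notice the **workspace** at all, but if you open **Settings**, you'll find that you can configure settings for the **User** or the **Work... | codemee |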

1,910,145 | Enhance Your Factory Car Radio's Bass with Affordable Upgrades | Factory-installed radios or navigation systems in vehicles often lack RCA outputs on the rear panel.... | 0 | 2024-07-03T12:27:15 | https://dev.to/lucas_harry_cbe392dbc31b9/enhance-your-factory-car-radios-bass-with-affordable-upgrades-2meh | car, radio, factory | Factory-installed radios or navigation systems in vehicles often lack RCA outputs on the rear panel. This can be a significant hurdle for car owners who wish to upgrade their standard sound systems to enjoy richer bass, enhancing the overall enjoyment of their music. The traditional approach of integrating an optional ... | lucas_harry_cbe392dbc31b9 |

1,910,144 | Understanding the Difference: Appreciating vs Depreciating Assets | Appreciating vs Depreciating Assets: A Key Distinction in Financial Planning In the realm of... | 0 | 2024-07-03T12:25:05 | https://dev.to/cesarwatkins/understanding-the-difference-appreciating-vs-depreciating-assets-1llf | <h3><strong>Appreciating vs Depreciating Assets: A Key Distinction in Financial Planning</strong></h3>

<p><span style="font-weight: 400;">In the realm of financial planning and investment, distinguishing between appreciating and depreciating assets is crucial for maximizing returns and minimizing risks. </span><strong>... | cesarwatkins | |

1,910,143 | The Periodic Table of AI Tools | I recently came across an interesting image called 'The Periodic Table of AI Tools.' Out of... | 0 | 2024-07-03T12:24:42 | https://dev.to/jottyjohn/the-periodic-table-of-ai-tools-40ge | ai | I recently came across an interesting image called 'The Periodic Table of AI Tools.' Out of curiosity, I searched for it and found a couple of articles. I thought I would share the links here for anyone who is interested. There are many more. Have a look!

[(https://gemmo.ai/the-periodic-table-of-deep-learning-ai-guid... | jottyjohn |

1,910,140 | WhatsApp's AI Selfie Generator: A New Frontier in Self-Expression or a Superficial Gimmick? | Meta's WhatsApp is on the cusp of a significant transformation with the introduction of its... | 0 | 2024-07-03T12:24:08 | https://dev.to/hyscaler/whatsapps-ai-selfie-generator-a-new-frontier-in-self-expression-or-a-superficial-gimmick-bdb | Meta's WhatsApp is on the cusp of a significant transformation with the introduction of its AI-powered "Imagine Me" feature. This tool promises to redefine profile pictures by enabling users to create fantastical avatars based on their selfies. By harnessing the power of AI, users can effortlessly generate a multitude ... | suryalok | |

1,910,141 | Why You Should Avoid `var` and Use `let` and `const` Instead | As a developer, writing clean, predictable, and maintainable code is crucial. One way to achieve this... | 0 | 2024-07-03T12:23:51 | https://dev.to/jatinrai/why-you-should-avoid-var-and-use-let-and-const-instead-434d | javascript, frontend, coding, webdev |

As a developer, writing clean, predictable, and maintainable code is crucial. One way to achieve this is by using `let` and `const` instead of `var` in your JavaScript projects. Here’s why:

#### 1. Block Scope

One of the primary advantages of `let` and `const` over `var` is their block-scoped nature.
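To make the scoping difference concrete, here is a minimal sketch in plain JavaScript. It assumes only a standard engine such as Node.js, and the function names are hypothetical, chosen for illustration. It contrasts a `var` that leaks out of an `if` block with a `let` that does not, shows the classic loop-closure difference, and checks that reassigning a `const` throws a `TypeError` at runtime.

```javascript
// Sketch: function-scoped `var` versus block-scoped `let` and `const`.

function varIsFunctionScoped() {
  if (true) {
    var message = "declared with var";
  }
  // `var` ignores the `if` block, so `message` is still defined here.
  return typeof message;
}

function letIsBlockScoped() {
  if (true) {
    let message = "declared with let";
  }
  // `let` is confined to the `if` block, so no `message` binding exists here.
  return typeof message;
}

function loopCallbacks() {
  const withVar = [];
  for (var i = 0; i < 3; i++) {
    withVar.push(() => i); // every closure shares the single function-scoped `i`
  }
  const withLet = [];
  for (let j = 0; j < 3; j++) {
    withLet.push(() => j); // each iteration gets a fresh block-scoped `j`
  }
  return {
    withVar: withVar.map((fn) => fn()),
    withLet: withLet.map((fn) => fn()),
  };
}

function reassignConst() {
  const limit = 10;
  try {
    limit = 20; // `const` bindings cannot be reassigned
    return "reassigned";
  } catch (err) {
    return err.name;
  }
}

console.log(varIsFunctionScoped());             // string
console.log(letIsBlockScoped());                // undefined
console.log(JSON.stringify(loopCallbacks()));   // {"withVar":[3,3,3],"withLet":[0,1,2]}
console.log(reassignConst());                   // TypeError
```

Because `typeof` on an identifier with no binding in scope returns `"undefined"` instead of throwing, the second function can probe for the variable safely.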

- **`var`**: ... | jatinrai |

1,910,138 | Introduction to BitPower Smart Contracts | Introduction BitPower is a decentralized lending platform that provides secure and efficient lending... | 0 | 2024-07-03T12:21:28 | https://dev.to/aimm_y/introduction-to-bitpower-smart-contracts-4bkc | Introduction

BitPower is a decentralized lending platform that provides secure and efficient lending services through smart contract technology. This article briefly introduces the features of BitPower smart contracts.

Core features of smart contracts

Automatic execution

All transactions are automatically executed by ... | aimm_y | |

1,910,137 | Software Technology Trends In 2024: Exploring the Future | The rapidly evolving software development company is to hit a mark of $1.03 trillion in market value... | 0 | 2024-07-03T12:21:03 | https://dev.to/infowindtech57/software-technology-trends-in-2024-exploring-the-future-3b95 | softwaredevelopment, hirededicateddevelopers | The rapidly evolving **[software development](https://www.infowindtech.com/technology-cat/mobile-app-development/)** industry is expected to reach a market value of $1.03 trillion before 2027. This growth will be driven by increasing consumer demand for better products as well as by technological advancements. This... | infowindtech57 |

1,910,127 | Best Practices for Implementing Microsoft Sentinel | Implementing an effective Security Information and Event Management (SIEM) system is essential for... | 0 | 2024-07-03T12:20:18 | https://dev.to/shivamchamoli18/best-practices-for-implementing-microsoft-sentinel-4o81 | siem, microsoftsentinel, azuresentinel, infosectrain | Implementing an effective Security Information and Event Management (SIEM) system is essential for securing your organization's digital infrastructure. Microsoft Sentinel is a cloud-native SIEM solution that provides organizations with sophisticated security analytics and threat intelligence to help them detect, invest... | shivamchamoli18 |

1,910,117 | Paper detailing BitPower Loop’s security | Security Research of BitPower Loop BitPower Loop is a decentralized lending platform based on... | 0 | 2024-07-03T12:17:26 | https://dev.to/sang_ce3ded81da27406cb32c/paper-detailing-bitpower-loops-security-1fni | Security Research of BitPower Loop

BitPower Loop is a decentralized lending platform based on blockchain technology, dedicated to providing users with safe, transparent and efficient financial services. Its core security comes from multi-level technical measures and mechanism design, which ensures the robust operation ... | sang_ce3ded81da27406cb32c | |

1,910,116 | History of .Net | .NET Framework (pronounced "Dot Net") ... for the Windows, Linux, and macOS operating systems... | 0 | 2024-07-03T12:17:11 | https://dev.to/sarvar12345/history-of-net-30jo | .NET Framework (pronounced "Dot Net") is a free, open-source, managed computer software platform for the Windows, Linux, and macOS operating systems. The project is primarily developed by Microsoft employees through the .NET Foundation and is released under the MIT License.

In the 1990s... | sarvar12345 | |

1,910,115 | BitPower: Unlocking the Potential of Cryptocurrency | In the world of cryptocurrency and blockchain, BitPower is like a shining star. It is not only an... | 0 | 2024-07-03T12:15:46 | https://dev.to/pingz_iman_38e5b3b23e011f/bitpower-unlocking-the-potential-of-cryptocurrency-3fc9 |

In the world of cryptocurrency and blockchain, BitPower is like a shining star. It is not only an investment tool, but also a revolutionary financial platform. Blockchain technology provides a solid foundation for ... | pingz_iman_38e5b3b23e011f | |

1,910,114 | Expert Tips for Buying a Boat and Selecting Boston Whaler Accessories | Buying a boat is a significant decision that requires careful consideration and planning. From... | 0 | 2024-07-03T12:14:10 | https://dev.to/reginaldfuller/expert-tips-for-buying-a-boat-and-selecting-boston-whaler-accessories-3jjk | Buying a boat is a significant decision that requires careful consideration and planning. From choosing the right vessel to selecting essential boating accessories like those from Boston Whaler, every step contributes to creating enjoyable and safe boating adventures. Here’s a guide to help you navigate through the pro... | reginaldfuller | |

1,902,567 | JavaScript: Changing execution priority | Hey there, lovely people, how's it going? Continuing our JavaScript studies, this time I'm going to talk about something... | 0 | 2024-07-03T12:13:45 | https://dev.to/cristuker/javascript-alterando-a-prioridade-de-execucao-472p | javascript, braziliandevs, beginners, programming | Hey there, lovely people, how's it going? Continuing our JavaScript studies, this time I'm going to talk about something really interesting: "what if we could put things at the front of our execution stack?" Or, more simply, how to change the order in which JavaScript functions run. Cool, right? So today I'll give you... | cristuker |

1,910,113 | The Rise of Online Cricket Games: A Digital Revolution in Sports Entertainment | The advent Dream exchange ID of the digital age has transformed many aspects of human life, and... | 0 | 2024-07-03T12:12:10 | https://dev.to/arijit_badshah_cc2ff6817a/the-rise-of-online-cricket-games-a-digital-revolution-in-sports-entertainment-20jj | The advent [Dream exchange ID](https://dreamexch.live/) of the digital age has transformed many aspects of human life, and sports entertainment is no exception. Among various sports, cricket has seen a significant transition from traditional playfields to digital platforms. Online cricket games have become immensely po... | arijit_badshah_cc2ff6817a | |

1,910,112 | Download Old Roll Mod APK (Latest Version) | Old Roll Premium Unloked 2024 is the best app that allows you to recreate old photos at any time free... | 0 | 2024-07-03T12:11:21 | https://dev.to/oldroll_oldrollpro_dc8b13/download-old-roll-mod-apk-latest-version-4e2i | **[Old Roll Premium Unlocked 2024](https://oldrollpro.com/)** is the best app that allows you to recreate old photos at any time, free of cost! It also supports all classic cameras. | oldroll_oldrollpro_dc8b13 | |

1,910,111 | Discover Effective Diabetes Treatment Through Kerala Ayurveda | Are you searching for effective [diabetes treatment in... | 0 | 2024-07-03T12:11:09 | https://dev.to/keralaayurveda/discover-effective-diabetes-treatment-through-kerala-ayurveda-32f6 | ayurvedictreatmentinranchi, diabetesdoctorinranchi, ayurvedictreatment | Are you searching for effective [diabetes treatment in Ranchi](https://keralaayurvedaranchi.com/diabetes-treatment.html) that combines traditional wisdom with modern approaches? Look no further than Ayurveda, a holistic system of medicine that offers personalized care and natural solutions. In Ranchi, Ayurvedic treatmen... | keralaayurveda |

1,910,110 | BitPower: Unlocking the Potential of Cryptocurrency | In the world of cryptocurrency and blockchain, BitPower is like a shining star. It is not only an... | 0 | 2024-07-03T12:10:28 | https://dev.to/pings_iman_934c7bc4590ba4/bitpower-unlocking-the-potential-of-cryptocurrency-5b5l |

In the world of cryptocurrency and blockchain, BitPower is like a shining star. It is not only an investment tool, but also a revolutionary financial platform. Blockchain technology provides a solid foundation for... | pings_iman_934c7bc4590ba4 | |

1,910,109 | Introduction to BitPower Smart Contracts | Introduction BitPower is a decentralized lending platform that provides secure and efficient lending... | 0 | 2024-07-03T12:09:10 | https://dev.to/aimm_x_54a3484700fbe0d3be/introduction-to-bitpower-smart-contracts-ef4 | Introduction

BitPower is a decentralized lending platform that provides secure and efficient lending services through smart contract technology. This article briefly introduces the features of BitPower smart contracts.

Core features of smart contracts

Automatic execution

All transactions are automatically executed by ... | aimm_x_54a3484700fbe0d3be | |

1,910,108 | ReactJS vs React Native: A Comprehensive Comparison for Modern Developers | In the ever-evolving world of web and mobile development, choosing the right framework is crucial for... | 0 | 2024-07-03T12:08:59 | https://dev.to/scholarhat/reactjs-vs-react-native-a-comprehensive-comparison-for-modern-developers-11jj | In the ever-evolving world of web and mobile development, choosing the right framework is crucial for success. Two popular options that often come up in discussions are [ReactJS vs React Native](https://www.scholarhat.com/tutorial/react/difference-between-react-js-and-react-native). While they share a common lineage, t... | scholarhat | |

1,910,107 | A Mysore, Ooty, Coorg Tour Package by Karnataka Holiday Vacations | Are you dreaming of an escape that blends rich heritage, breathtaking landscapes, and a touch of... | 0 | 2024-07-03T12:08:21 | https://dev.to/karnataka_holidayvacatio/a-mysore-ooty-coorg-tour-package-by-karnataka-holiday-vacations-59m2 | Are you dreaming of an escape that blends rich heritage, breathtaking landscapes, and a touch of adventure? Look no further than the captivating **[Mysore Ooty Coorg Tour Package](https://www.karnatakaholidayvacation.com/bangalore-mysore-coorg-ooty-tour-package-5n-6d/)** by Karnataka Holiday Vacations, your gateway to ... | karnataka_holidayvacatio | |

1,910,106 | How to fix a broken OpenShift cluster? | A post by YR Nath | 0 | 2024-07-03T12:08:09 | https://dev.to/yr_nath_e4d2c151245a6e9e8/how-to-fix-broken-openshift-cluster-560m | yr_nath_e4d2c151245a6e9e8 | ||

1,910,105 | Nodejs and typescript boilerplate | Node Boilerplate Typescript This repo is boilerplate for typescript and nodejs... | 0 | 2024-07-03T12:07:19 | https://dev.to/bhumit070/nodejs-and-typescript-boilerplate-48i8 | # Node Boilerplate Typescript

- [This repo](https://github.com/bhumit070/node-boilerplate-ts) is a boilerplate for TypeScript and Node.js projects

## What is included

- Server with express

- Security Packages

- [hpp](https://www.npmjs.com/package/hpp) - Express middleware to protect against HTTP Parameter Pollution a... | bhumit070 | |

1,910,104 | Are Blockchain Games The Future? | What is Blockchain Gaming? Blockchain games are now the new trend in the gaming industry that is... | 0 | 2024-07-03T12:07:01 | https://dev.to/bellabardot/are-blockchain-games-the-future-4cj4 | blockchain, blockchaingames, blockchaingamedevelopment | **What is Blockchain Gaming?**

Blockchain games are the latest trend in the gaming industry and are being warmly received by gamers and blockchain enthusiasts. They set themselves apart from traditional games by offering cryptographic security and true ownership of in-game assets. The exceptional attributes of blockc... | bellabardot |

1,910,016 | Learnt C Language | Hello, fellow developers! I'm excited to journey of learning C programming with you all. C is known... | 0 | 2024-07-03T12:06:19 | https://dev.to/skandaprasaad/learnt-c-language-1cgc | Hello, fellow developers! I'm excited to share my journey of learning C programming with you all. C is known for its efficiency, portability, and low-level access to memory, making it ideal for system-level programming. It serves as the foundational language for many modern programming languages, such as C++, Java, and Python. ... | skandaprasaad | |

1,910,103 | Ultimate Guide to React Native Image Components | Have you ever tried to work with images in your React Native app and felt a bit lost? Don't worry,... | 0 | 2024-07-03T12:06:04 | https://dev.to/syketb/ultimate-guide-to-react-native-image-components-4noa | javascript, reactnative, react, beginners | Have you ever tried to work with images in your React Native app and felt a bit lost? Don't worry, you're not alone! Handling images in React Native can seem a little different from what you're used to on the web, but once you understand the basics, it becomes a breeze.

Let's start with the Image component. React Nati... | syketb |

1,910,102 | A Guide to Web Servers, Providers, and Management | The internet, a vast ocean of information, wouldn't be accessible without the silent workhorses... | 0 | 2024-07-03T12:05:43 | https://dev.to/65d0431e27fd/a-guide-to-web-servers-providers-and-management-4enh | The internet, a vast ocean of information, wouldn't be accessible without the silent workhorses behind the scenes: web servers. These powerful computers store websites and deliver their content to your browser whenever you enter a URL. But web servers are just one piece of the puzzle. Let's dive deep and explore what w... | 65d0431e27fd | |

1,910,101 | BitPower: Unlocking the Potential of Cryptocurrency | In the world of cryptocurrency and blockchain, BitPower is like a shining star. It is not only an... | 0 | 2024-07-03T12:05:35 | https://dev.to/pingd_iman_9228b54c026437/bitpower-unlocking-the-potential-of-cryptocurrency-2ke0 |

In the world of cryptocurrency and blockchain, BitPower is like a shining star. It is not only an investment tool, but also a revolutionary financial platform. Blockchain technology provides a solid foundation for ... | pingd_iman_9228b54c026437 | |

1,910,099 | Transform Your Space with Expertise: Interior Designer in Noida | Discover the best interior designer in Noida for your home or office. From conceptualization to... | 0 | 2024-07-03T12:04:24 | https://dev.to/quartier_studio_75ca237b7/transform-your-space-with-expertise-interior-designer-in-noida-50kd | Discover the best [interior designer in Noida](url) for your home or office. From conceptualization to execution, our skilled professionals specialize in creating personalized interiors that reflect your style and enhance functionality. Contact us today for a consultation and turn your space into a masterpiece.

| quartier_studio_75ca237b7 | |

1,910,098 | Generative AI: Transforming Industries and Driving Sustainable Innovation | 1. Introduction Generative AI is rapidly transforming various industries by... | 27,673 | 2024-07-03T12:03:37 | https://dev.to/rapidinnovation/generative-ai-transforming-industries-and-driving-sustainable-innovation-hb1 | ## 1\. Introduction

Generative AI is rapidly transforming various industries by providing innovative solutions and enhancing creative processes. This technology, which encompasses everything from natural language processing to image generation, is not just a tool for automating tasks but is also becoming a fundamental... | rapidinnovation | |

1,910,097 | The Benefits of Cloud-Based Business Analytics Solutions | Introduction In today's fast-paced business environment, the ability to quickly and efficiently... | 0 | 2024-07-03T12:01:12 | https://dev.to/sganalytics/the-benefits-of-cloud-based-business-analytics-solutions-4h6n | business, analytics, solutions, cloud | Introduction

In today's fast-paced business environment, the ability to quickly and efficiently analyze data is crucial for making informed decisions and staying competitive. Traditional on-premises business analytics solutions often come with significant limitations, such as high costs, scalability issues, and complex... | sganalytics |

1,910,096 | Using Firebase with Communication APIs | Explore how to integrate Firebase with Vonage APIs in our latest developer podcast. Learn about authentication, hosting, and more. | 25,852 | 2024-07-03T12:00:25 | https://codingcat.dev/podcast/using-firebase-with-communication-apis | webdev, javascript, beginners, podcast |

Original: https://codingcat.dev/podcast/using-firebase-with-communication-apis

{% youtube https://youtu.be/deAI0ZMdTrw %}

## Introduction and Background of Guest

* Amanda's Background: Amanda discusses her educational journey, starting from her early exposure to computers due to her father's work with assembly and ... | codercatdev |

1,910,095 | The Crucial Role of Data Integration in Modern Enterprises | Data integration is the linchpin of modern enterprise operations, facilitating the seamless flow of... | 0 | 2024-07-03T11:59:58 | https://dev.to/linda0609/the-crucial-role-of-data-integration-in-modern-enterprises-2dcf | Data integration is the linchpin of modern enterprise operations, facilitating the seamless flow of information across various systems and departments. It involves consolidating data from disparate sources to enhance comprehension, streamline analysis, and support informed decision-making. This process is foundational ... | linda0609 | |

1,910,094 | Concepts of Qualitative and Quantitative Market Research | Qualitative Market Research Definition: Qualitative market research focuses on... | 0 | 2024-07-03T11:59:09 | https://dev.to/sganalytics/concepts-of-qualitative-and-quantitative-market-research-3k7a | qualitative, quantitative, market, research | ## Qualitative Market Research

Definition:

[Qualitative market research](https://www.sganalytics.com/market-research/qualitative-market-research/) focuses on understanding the underlying reasons, opinions, and motivations behind consumer behaviors. It provides insights into the problem and helps to develop ideas or hy... | sganalytics |

1,910,052 | Easy Facebook interview problem: Contains Duplicate || | Problem. Given an array of integers nums and an integer k, return true if the array contains two... | 0 | 2024-07-03T11:59:08 | https://dev.to/faangmaster/easy-zadacha-s-sobiesiedovaniia-v-facebook-contains-duplicate--4ief | interview, algorithms, faang | ## Problem.

Given an array of integers nums and an integer k, return true if there are two distinct indices i and j in the array such that nums[i] == nums[j] and abs(i - j) <= k.

LeetCode link: https://leetcode.com/problems/contains-duplicate-ii/
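One common way to solve this is a single pass that remembers the most recent index of each value. The sketch below assumes that approach; it is not necessarily the one the article goes on to describe, and `containsNearbyDuplicate` simply mirrors the LeetCode function name. Keeping only the latest index is enough, because any earlier occurrence of the same value is even farther from the current position.

```javascript
// Sketch: "Contains Duplicate II" via a map of last-seen indices.
function containsNearbyDuplicate(nums, k) {
  const lastIndex = new Map(); // value -> most recent index where it appeared
  for (let i = 0; i < nums.length; i++) {
    const prev = lastIndex.get(nums[i]);
    if (prev !== undefined && i - prev <= k) {
      return true; // the same value appeared within distance k
    }
    // Older indices are farther away, so only the latest one matters.
    lastIndex.set(nums[i], i);
  }
  return false;
}

console.log(containsNearbyDuplicate([1, 2, 3, 1], 3));       // true
console.log(containsNearbyDuplicate([1, 0, 1, 1], 1));       // true
console.log(containsNearbyDuplicate([1, 2, 3, 1, 2, 3], 2)); // false
```

This runs in O(n) time with O(n) extra space; a sliding-window `Set` capped at k elements is an equally valid alternative that bounds the extra space to O(min(n, k)).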

Examples:

Input: nums = [1,2,3,1], k = 3

Output: true

nums[0] == nums[3] abs(3-0) ... | faangmaster |

1,910,092 | BitPower Smart Contract: | BitPower is a decentralized energy trading platform based on blockchain technology, aiming to improve... | 0 | 2024-07-03T11:55:00 | https://dev.to/xin_l_9aced9191ff93f0bf12/bitpower-smart-contract-4gml |

BitPower is a decentralized energy trading platform based on blockchain technology, aiming to improve the transparency and efficiency of the energy market. At its core are smart contracts that automate energy transactions through pre-written code. These smart contracts can not only automate the transaction process and... | xin_l_9aced9191ff93f0bf12 | |

1,852,732 | Cheat Sheet for React Bootstrap. Layout and Forms | Table of contents Breakpoints Grid system Container Row Col Stacks Forms Form... | 27,069 | 2024-07-03T11:54:08 | https://dev.to/jsha/cheat-sheet-for-react-bootstrap-layout-and-forms-5d75 | ## Table of contents

1. [Breakpoints](#breakpoints)

2. [Grid system](#grid-system)

3. [Container](#container)

4. [Row](#row)

5. [Col](#col)

6. [Stacks](#stacks)

7. [Forms](#forms)

8. [Form props](#form-props)

9. [Form.Label props](#form.label-props)

10. [fieldset props](#fieldset-props)

11. [Form.Control props](#... | jsha | |

1,843,892 | Cheat Sheet for React Bootstrap. Installation and components | Bootstrap Javascript is not recommended to use with React. React-Bootstrap creates each component as... | 27,069 | 2024-07-03T11:53:51 | https://dev.to/jsha/cheat-sheet-for-react-bootstrap-installation-and-components-4n43 | react, bootstrap | Using Bootstrap's JavaScript with React is not recommended. React-Bootstrap implements each component as a true React component, so there won't be any conflict with the React library.

## Table of contents

- [Installation and usage](#installation-and-usage)

- [**as** Prop API](#**as**-prop-api)

- [Theming](#theming)

- [Componen... | jsha |

1,910,082 | BitPower Smart Contract: | BitPower is a decentralized energy trading platform based on blockchain technology, aiming to improve... | 0 | 2024-07-03T11:49:46 | https://dev.to/xin_lin_fc39c6250ef2ab451/bitpower-smart-contract-h91 | BitPower is a decentralized energy trading platform based on blockchain technology, aiming to improve the transparency and efficiency of the energy market. At its core are smart contracts that automate energy transactions through pre-written code. These smart contracts can not only automate the transaction process and ... | xin_lin_fc39c6250ef2ab451 | |

1,910,091 | Technical Flame Retardant Fabrics: Ensuring Safety in Hazardous Environments | Why Are Technical Flame Retardant Fabrics So Important Nowadays, in the rapidly growing industries... | 0 | 2024-07-03T11:53:35 | https://dev.to/kamila_bullockz_a15641e8e/technical-flame-retardant-fabrics-ensuring-safety-in-hazardous-environments-2emn | design | Why Are Technical Flame Retardant Fabrics So Important

Nowadays, in rapidly growing industries, workplace safety is essential. In high-risk work environments, technical flame retardant fabrics are key to ensuring worker safety. Developed to resist high temperatures and flames, these unique fabrics are used fo... | kamila_bullockz_a15641e8e |

1,839,837 | Cheat Sheet for Bootstrap. Utilities and helpers | Table of contents Sizing Spacing Text Background Borders Text... | 27,069 | 2024-07-03T11:53:30 | https://dev.to/jsha/cheat-sheet-for-bootstrap-utilities-and-helpers-20g2 | bootstrap | ## Table of contents

1. [Sizing](#sizing)

2. [Spacing](#spacing)

3. [Text](#text)

4. [Background](#background)

5. [Borders](#borders)

6. [Text color](#text-color)

7. [Display](#display)

8. [Position](#position)

9. [Color & background](#color-amp-background)

10. [Colored links](#colored-links)

11. [Stacks](#stacks)

12.... | jsha |

1,910,090 | BitPower: Unlocking the Potential of Cryptocurrency | In the world of cryptocurrency and blockchain, BitPower is like a shining star. It is not only an... | 0 | 2024-07-03T11:53:21 | https://dev.to/ping_iman_72b37390ccd083e/bitpower-unlocking-the-potential-of-cryptocurrency-4p2l |

In the world of cryptocurrency and blockchain, BitPower is like a shining star. It is not only an investment tool, but also a revolutionary financial platform. Blockchain technology provides a solid foundation for... | ping_iman_72b37390ccd083e | |

1,825,658 | Cheat Sheet for Bootstrap. Layout | Bootstrap allows to use mobile-first flexbox grid to build layouts of all shapes and sizes. ... | 27,069 | 2024-07-03T11:53:09 | https://dev.to/jsha/cheat-sheet-for-bootstrap-layout-11bk | bootstrap | Bootstrap lets you use a **mobile-first** flexbox grid to build layouts of all shapes and sizes.

## Table of contents

1. [Breakpoints](#breakpoints)

2. [Containers](#containers)

3. [Grid](#grid)

4. [Row](#row)

5. [Column](#column)

## Breakpoints

**Breakpoints** - customizable widths that make the layout responsive.... | jsha |

1,910,089 | Introduction to BitPower Smart Contracts | Introduction BitPower is a decentralized lending platform that provides secure and efficient lending... | 0 | 2024-07-03T11:53:02 | https://dev.to/aimm/introduction-to-bitpower-smart-contracts-5fcb | Introduction

BitPower is a decentralized lending platform that provides secure and efficient lending services through smart contract technology. This article briefly introduces the features of BitPower smart contracts.

Core features of smart contracts

Automatic execution

All transactions are automatically executed by ... | aimm | |

1,819,897 | Introduction to Bootstrap | What is Bootstrap? If you haven't heard about CSS frameworks, then imagine that you don't have to... | 27,069 | 2024-07-03T11:52:40 | https://dev.to/jsha/introduction-to-bootsrap-17kk | bootstrap, webdev, frontend | What is Bootstrap? If you haven't heard about CSS frameworks, imagine that you don't have to write your own styles in CSS: you just use existing classes, and there is no need to puzzle over responsive design. That certainly makes developing a webpage easier and faster. Alongside **Bootstrap** ther... | jsha |

1,910,088 | What Are the Future Developments for BEP-20 Tokens? | *Introduction: * Cryptocurrency enthusiasts are no strangers to the term BEP-20 tokens, especially... | 0 | 2024-07-03T11:52:39 | https://dev.to/elena_marie_dad5c9d5d5706/what-are-the-future-developments-for-bep-20-tokens-13f8 | cryptotoken | **Introduction:**

Cryptocurrency enthusiasts are no strangers to the term BEP-20 tokens, especially if they are familiar with the Binance Smart Chain (BSC). BEP-20 tokens have emerged as a significant player in the crypto space, offering a myriad of benefits and functionalities. A **[Token Development Company](https:... | elena_marie_dad5c9d5d5706 |

1,910,087 | Paper detailing BitPower Loop’s security | Security Research of BitPower Loop BitPower Loop is a decentralized lending platform based on... | 0 | 2024-07-03T11:52:21 | https://dev.to/kjask_jklshd_cecbd37d6d57/paper-detailing-bitpower-loops-security-11eg | Security Research of BitPower Loop

BitPower Loop is a decentralized lending platform based on blockchain technology, dedicated to providing users with safe, transparent and efficient financial services. Its core security comes from multi-level technical measures and mechanism design, which ensures the robust operation ... | kjask_jklshd_cecbd37d6d57 | |

1,910,086 | Answer: Automatically adding hyperlinks in footnotes in MS Word | answer re: Automatically adding hyperlinks... | 0 | 2024-07-03T11:51:53 | https://dev.to/oscarsun72/answer-automatically-adding-hyperlinks-in-footnotes-in-ms-word-4k39 | {% stackoverflow 78701865 %} | oscarsun72 | |

1,910,085 | Spring Boot :: Core Features | Spring Boot is just the syntactical sugar over the the Spring Framework which allow us to direclty... | 27,947 | 2024-07-03T11:50:48 | https://dev.to/hra06/spring-boot-core-features-3jna | springboot | Spring Boot is essentially syntactic sugar over the Spring Framework that allows us to work directly on business requirements without thinking much about building the infrastructure.

It provides us the ability to do more with less.

It provides a ton of features; some of them are listed below.

1. AutoConfigu... | hra06 |

1,910,081 | 5 AI Predictions In 2024 You Need To Know | Hello, readers! Prepare yourself for a thrilling journey through AI trends in 2024! It is of utmost... | 0 | 2024-07-03T11:47:26 | https://www.techdogs.com/td-articles/trending-stories/5-ai-predictions-in-2024-you-need-to-know | ai, technology, trends | **Hello, readers!**

Prepare yourself for a thrilling journey through [AI trends in 2024](https://www.techdogs.com/td-articles/techno-trends/artificial-intelligence-trends-2024)! It is important to understand where AI is headed as it gradually becomes part of our lives. Here are five main predictions for you to l... | td_inc |

1,910,080 | Research and Development: Pioneering New Refrigeration Oil Solutions | Refrigeration Oil Market Scope The Refrigeration Oil market refers to the sales of lubricants... | 0 | 2024-07-03T11:46:16 | https://dev.to/aryanbo91040102/research-and-development-pioneering-new-refrigeration-oil-solutions-17ig | news | Refrigeration Oil Market Scope

The Refrigeration Oil market refers to the sales of lubricants specifically designed for use in refrigeration systems. These oils play an important role in ensuring the efficient operation of refrigeration compressors and preventing damage to the equipment. The market size and growth of... | aryanbo91040102 |

1,910,079 | Editing and Updating Notes using PATCH Request Method | As a follow-up to creating new notes using forms and request methods, we will now explore how to edit... | 0 | 2024-07-03T11:45:40 | https://dev.to/ghulam_mujtaba_247/editing-and-updating-notes-using-patch-request-method-14k7 | webdev, beginners, programming, php | As a follow-up to creating new notes using forms and request methods, we will now explore how to edit and update existing notes in the database using the PATCH request method.

When a user wants to edit a note, we need to provide a way for them to access the edit screen. This is where the edit button comes in.

## Addi... | ghulam_mujtaba_247 |

1,910,078 | Django Passwordless Authentication: A Comprehensive Guide with Code Examples | Modern security techniques like passwordless authentication improve user experience by doing away... | 0 | 2024-07-03T11:45:33 | https://www.nilebits.com/blog/2024/07/django-passwordless-authentication-a-comprehensive-guide-with-code-examples/ | django, python | Modern security techniques like passwordless authentication improve user experience by doing away with the necessity for conventional passwords. By using this technique, the likelihood of password-related vulnerabilities including reused passwords, brute force assaults, and phishing is decreased. We will go into great ... | amr-saafan |

1,910,077 | Beware of base rate fallacy | Let's say some scholars have done a research on the relationship between career advancement and... | 0 | 2024-07-03T11:45:23 | https://dev.to/doublex/beware-of-base-rate-fallacy-4ogd | Let's say some scholars have conducted research on the relationship between career advancement and overconfidence/underconfidence (with respect to the [Dunning-Kruger effect](https://en.wikipedia.org/wiki/Dunning%E2%80%93Kruger_effect) in this setup) in country A, and the results are as follows:

**Out of all those being pro... | doublex |
