id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,853,826 | Introduction to Essential Commands in Ubuntu Linux | uname The uname command provides information about the system, such as the kernel name,... | 0 | 2024-05-27T01:21:24 | https://dev.to/pra_jwal001/introduction-to-essential-commands-in-ubuntu-linux-4cg1 | ubuntu, linux |

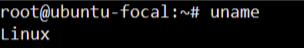

<u>**uname** </u>

_The uname command provides information about the system, such as the kernel name, version._

---

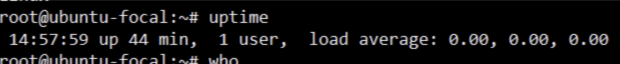

**<u>uptime</u>**

_The uptime command shows how long the system has been running, the current time, the number of users logged on, and the system load averages._

---

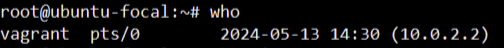

**<u>who</u>**

_The who command displays a list of users currently logged into the system._

---

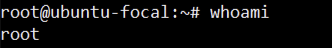

**<u>whoami</u>**

_The whoami command prints the username of the current user._

---

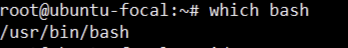

**<u>which</u>**

_The which command locates the executable file associated with a given command by searching the directories listed in the PATH environment variable._

---

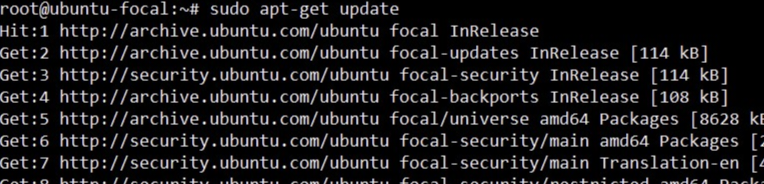

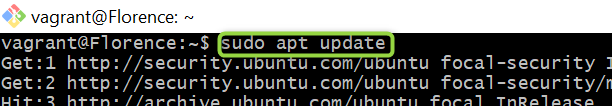

**<u>sudo apt-get update</u>**

_The sudo apt-get update command is used to update the local package index. This package index contains information about the available packages from the repositories configured on the system._

---

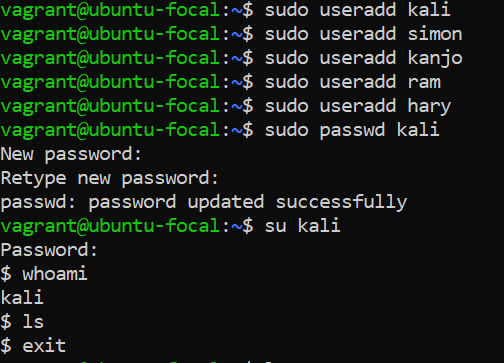

_**User management in ubuntu linux**_

**<u>useradd</u>**

_The useradd command in Ubuntu is used to easily create a new user account,can provide password(recommend)_

---

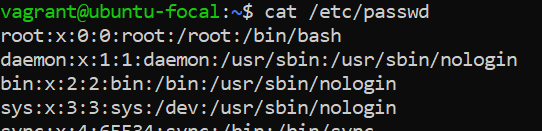

**<u>cat /etc/passwd</u>**

_The /etc/passwd file lists each user's username, UID, GID, home directory, and shell. It is essential for user account management._

---

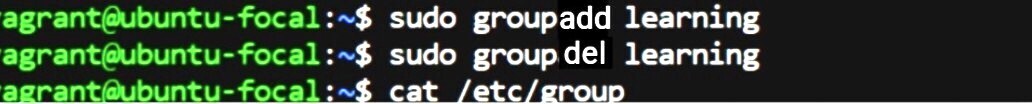

**<u>groupadd</u> and <u>groupdel</u>**

_**groupadd** command is used to create a new group on a Linux system._

_**groupdel** This command is used to delete an existing group from a Linux system._

<u>**cat /etc/group**</u>

_the **cat /etc/group** command is used to display the contents of the /etc/group file. This file contains information about all the groups on the system._

---

<u>**add user to group**</u>

_These commands use "usermod" to add existing users ("kali", "simon", and "ram") to the "learning" group on the system._

---

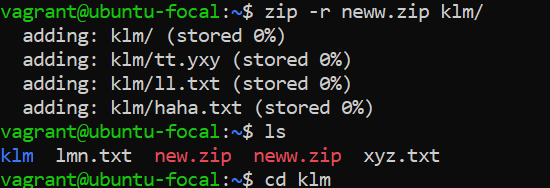

**<u>zipping directory</u>**

_the command **zip -r neww.zip klm/** creates a zip file named **neww.zip** containing all the files and directories within the **klm/** directory. Then, **ls** lists the files in the current directory, including **neww.zip**, indicating that the zip file was created successfully._

---

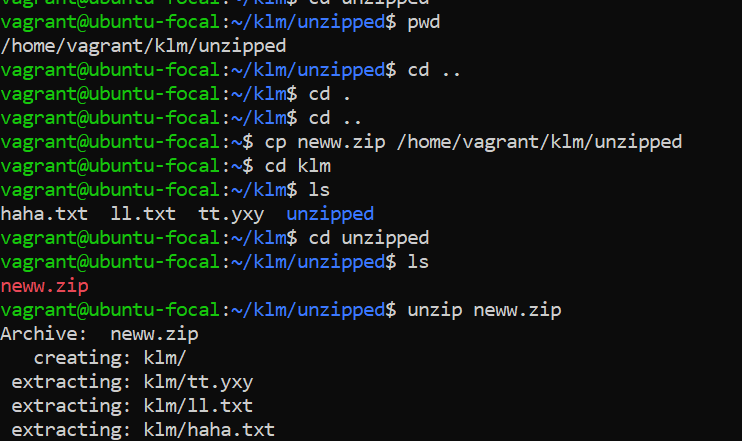

**<u>unzipping directory</u>**

_copied a zip file named **neww.zip** from your home directory to a folder called unzipped within **/home/vagrant/klm/**. Then, you unzipped it, which contained a directory **klm/**along with its contents (tt.yxy, 11.txt, and haha.txt).( Tried to copy from Source to destination path and unzipped directory structure containing files_

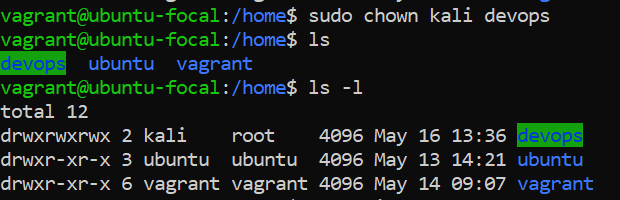

**<u>file permission commands</u>**

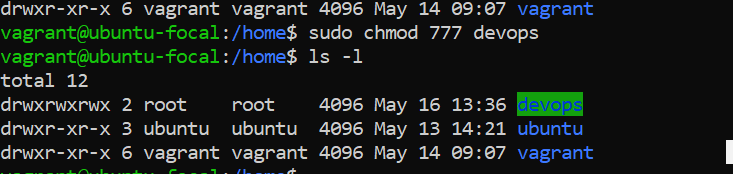

_changed the ownership of the directory "devops" to user "kali" using the **sudo chown** command_

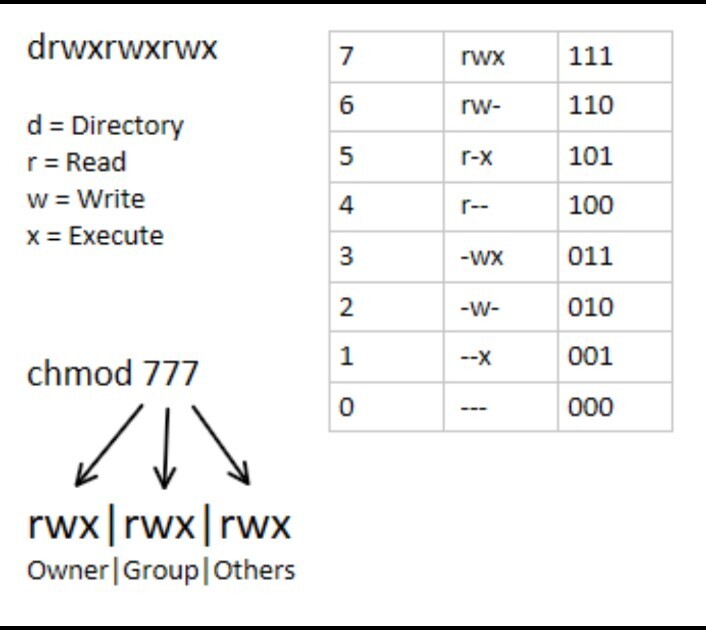

_changed the permissions of the "devops" directory to allow read, write, and execute access for all users._

---

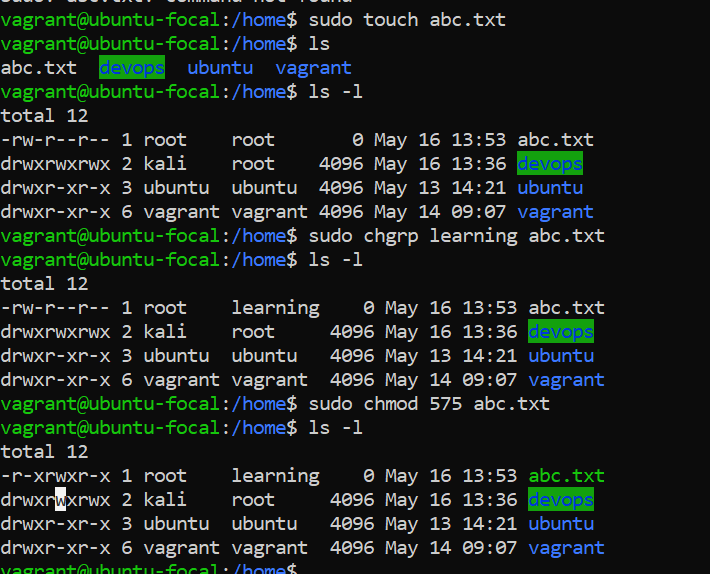

_created a file named "**abc.txt**" using **sudo touch abc.txt,** then listed the contents of the directory. After that,displayed the permissions of each entry using ls -1, which showed the "**abc.txt**" file along with the directories and their permissions.changed the group ownership of "**abc.txt**" to "**learning**" using **sudo chgrp learning abc.txt**, and then modified the permissions of "**abc.txt**" to 575 using sudo **chmod 575 abc.txt**.The final listing (ls -1) reflects these changes, showing the updated permissions for "**abc.txt**" and the directories._

---

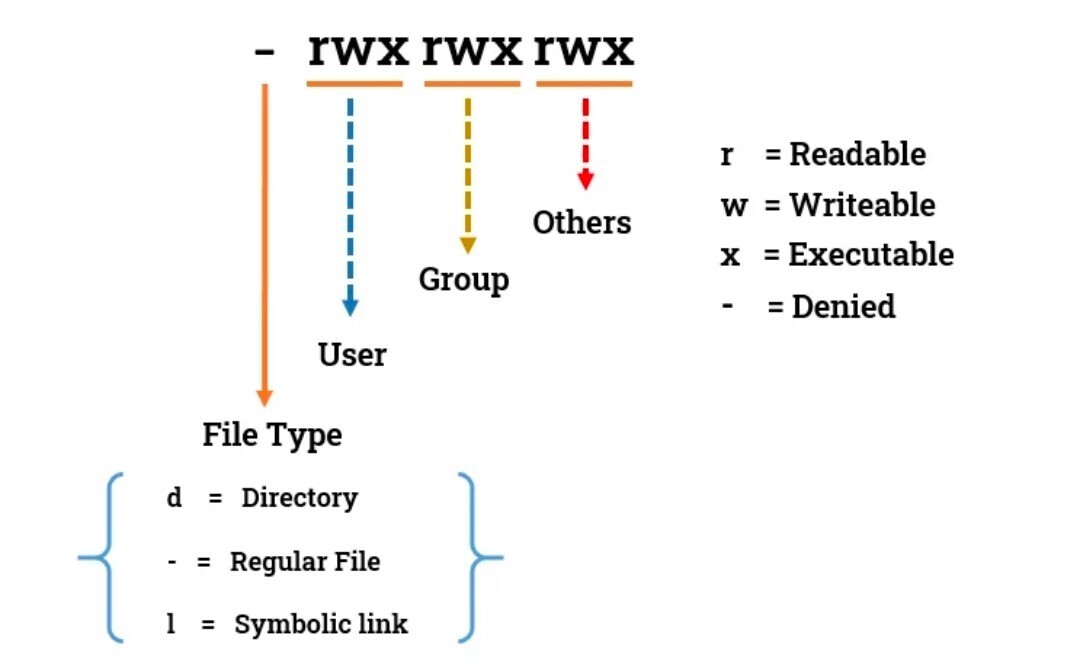

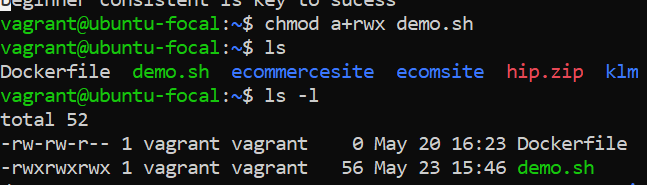

**<u>Learn more about file permission</u>**

**an alternative way to change the permissions of a file in Linux to give read, write, and execute permissions to the user, group, and others.**

_<u>chmod a+rwx demo.sh </u>would give read, write, and execute permissions to all users (user, group, and others) on the file named <u>demo.sh_</u>

---

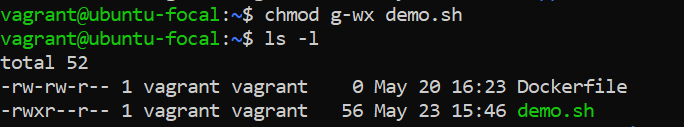

_here g-wx remove the write and execute permission for group on demo.sh_

---

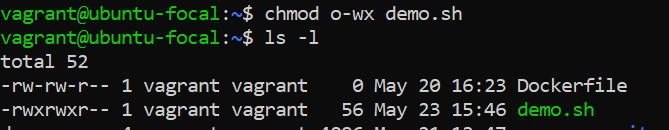

_here o-wx remove the write and execute permission for others on demo.sh_

| pra_jwal001 |

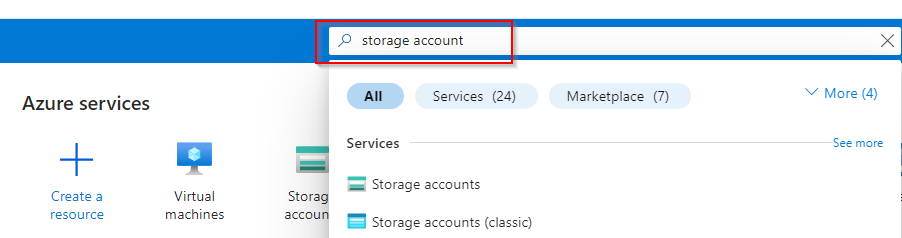

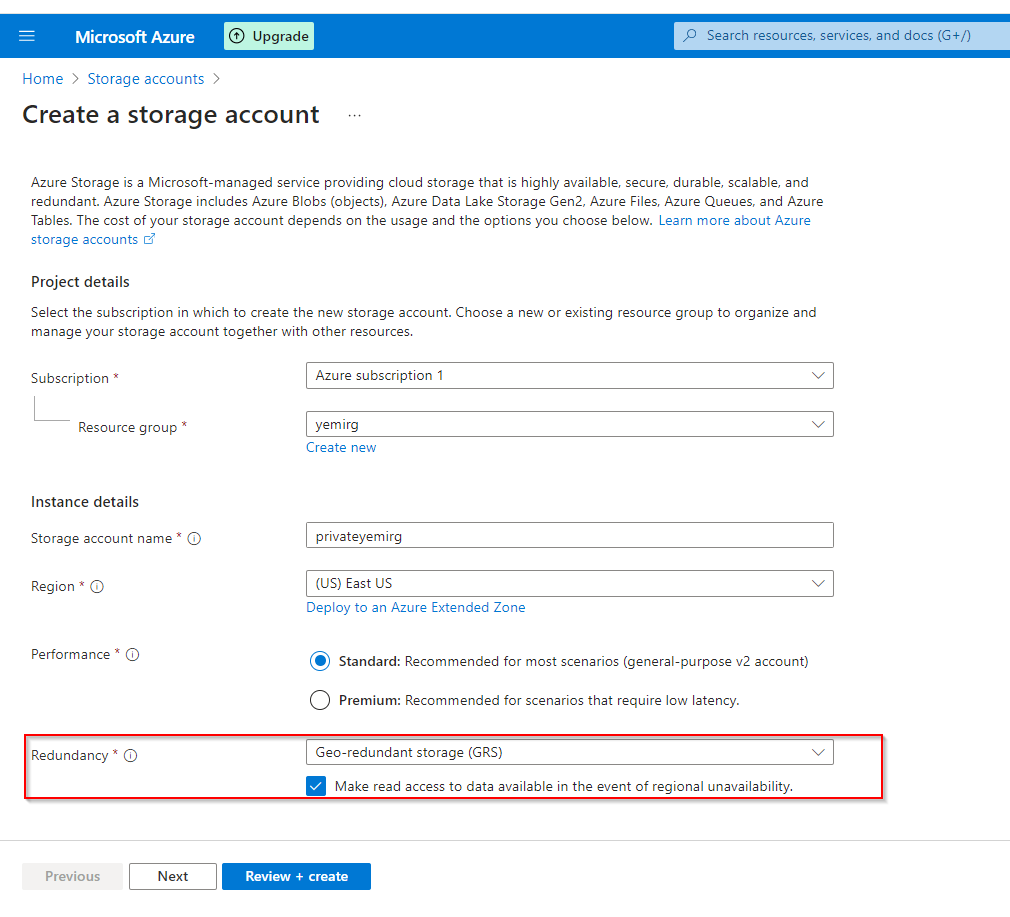

1,866,008 | Setting Up Secure Cloud Storage: Restricted Access, Partner Sharing, Website Backup, and Lifecycle Management | Create a storage account and configure high availability. In Azure Portal, search for "storage... | 0 | 2024-05-27T01:16:16 | https://dev.to/opsyog/how-to-provide-private-storage-for-internal-company-documents-4077 | azurefunctions, azure, access, storage | **Create a storage account and configure high availability.**

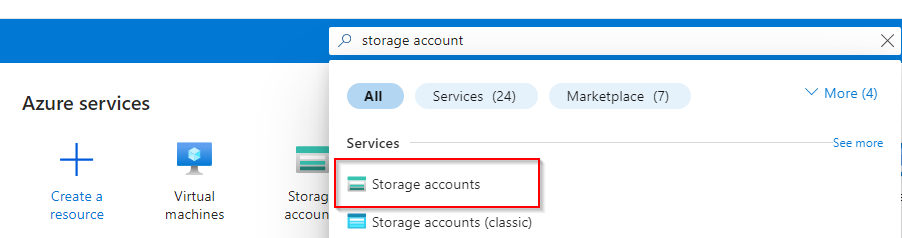

**In Azure Portal, search for "storage account"**

**Select "Storage account"**

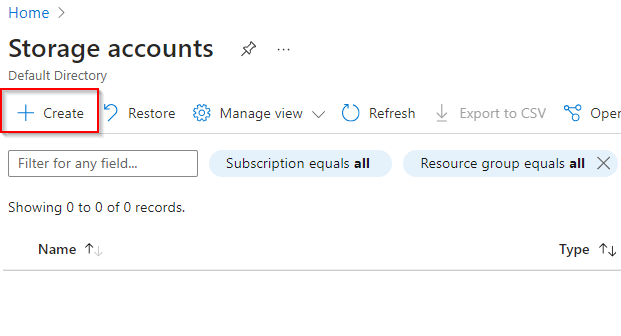

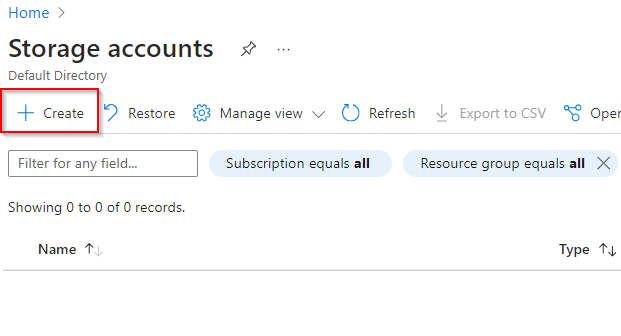

**Select "Create"**

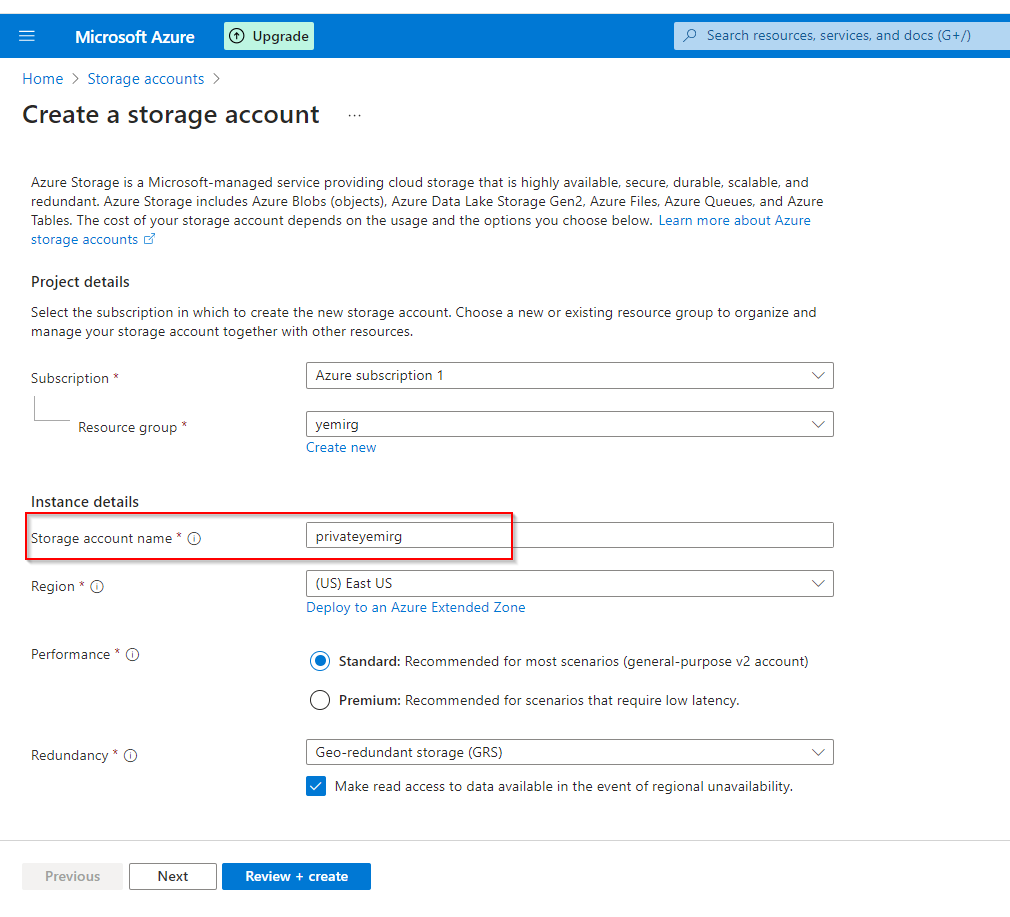

**Insert Resource Group previously created name

**

**Insert Storage account name

**

**Select "Redundancy" to be Geo-redundant storage (GRS)**

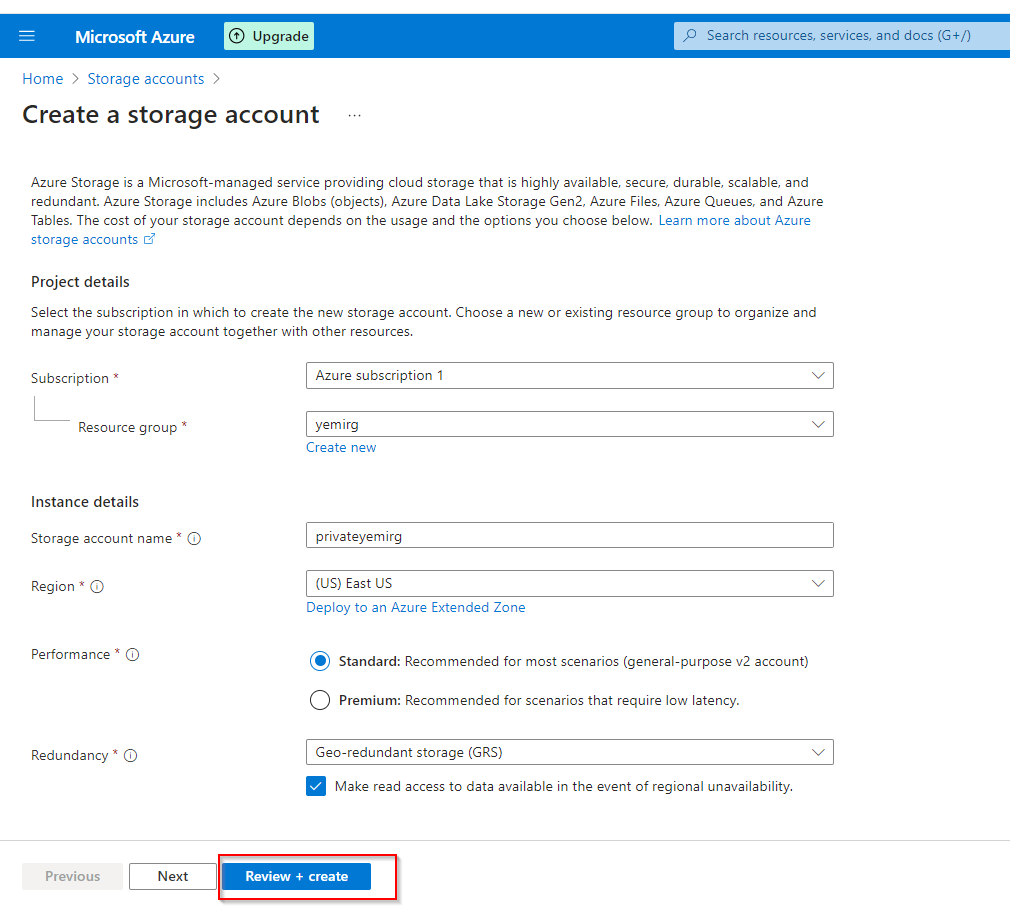

**Select "Review + Create"**

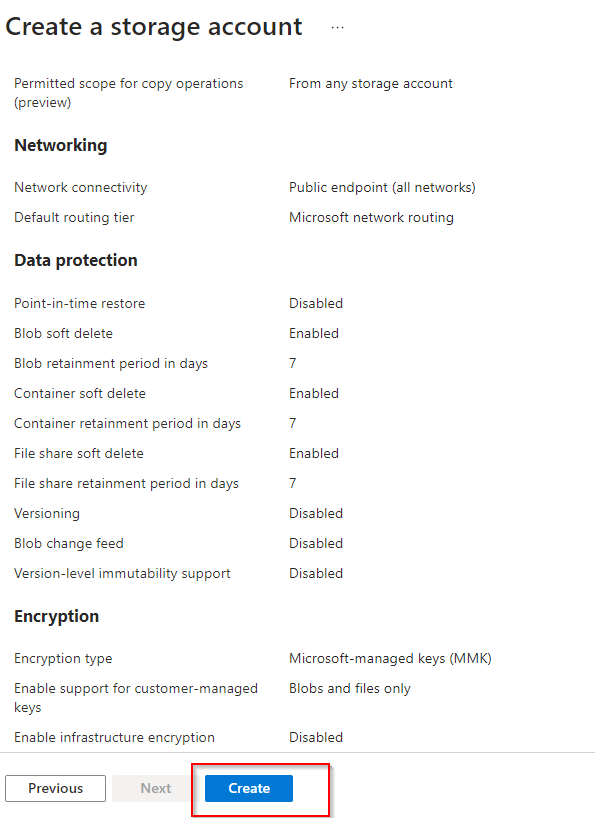

**Check Validation and select "Create"**

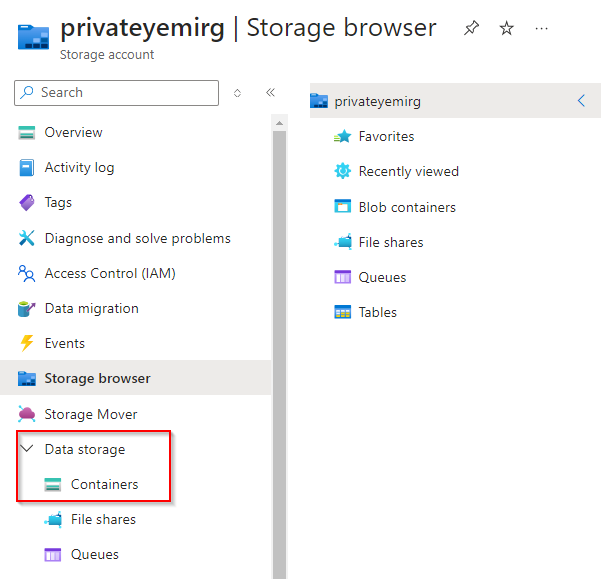

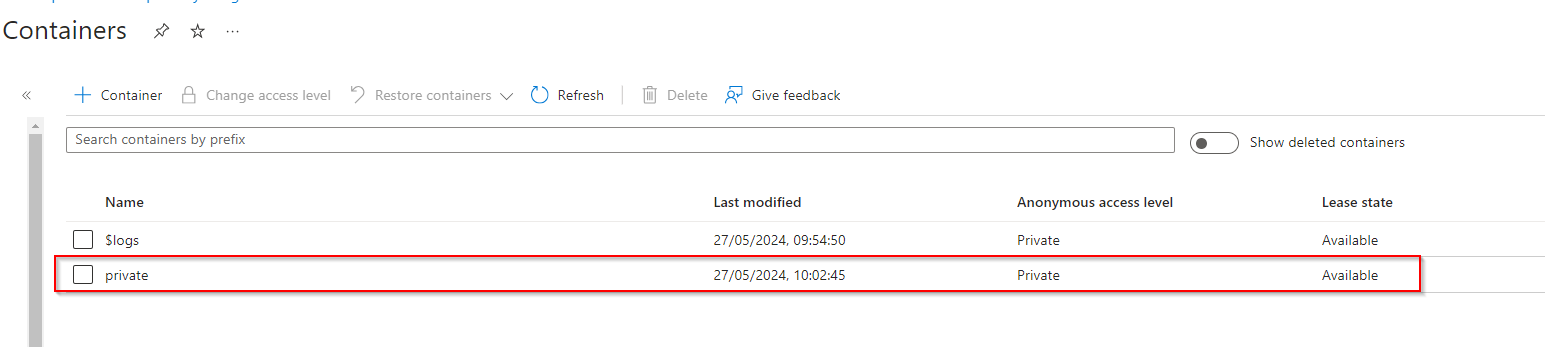

**Create a storage container, upload a file, and restrict access to the file.**

**In the storage account, select "Data storage" and select "containers"**

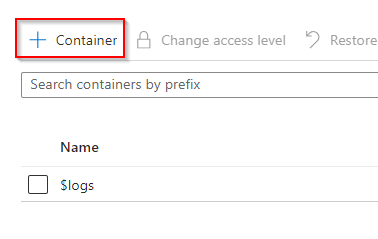

**Select "+ Container"**

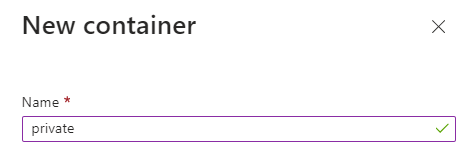

**Name the container**

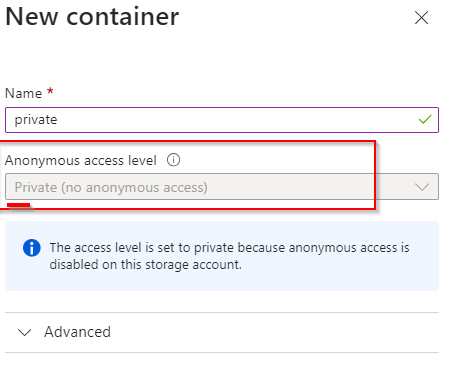

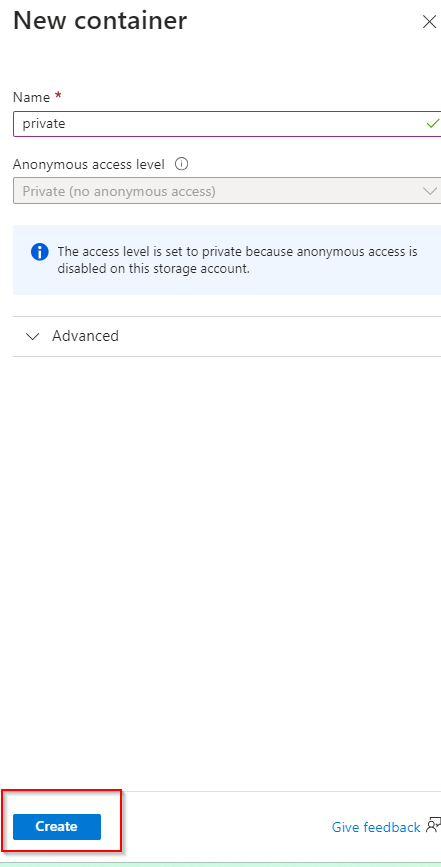

**Ensure access level is private**

**Select "Create"**

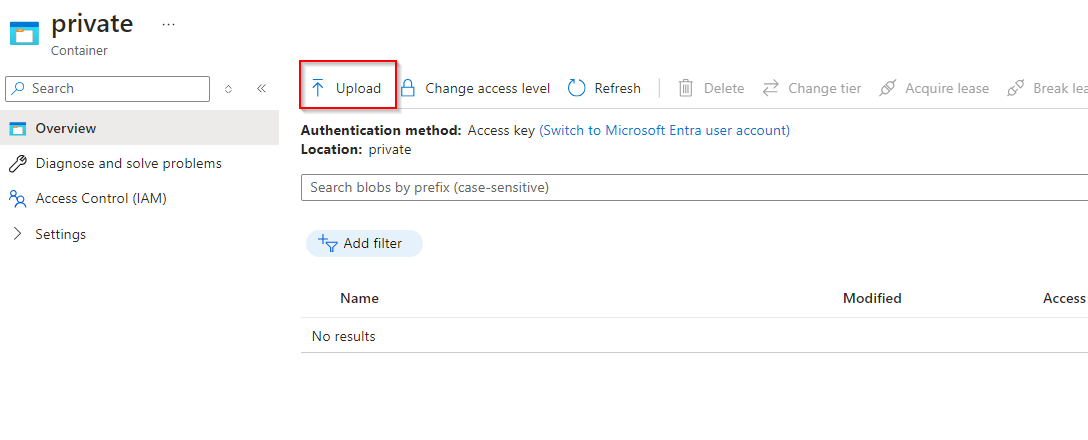

**Upload File to Container**

Select Container

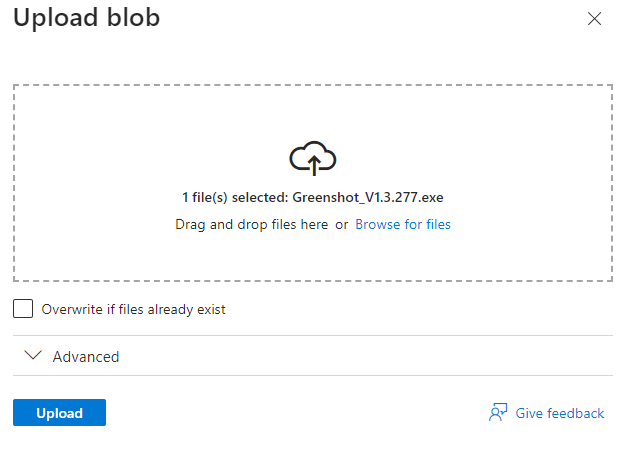

**Select "Upload"**

**Select File and upload**

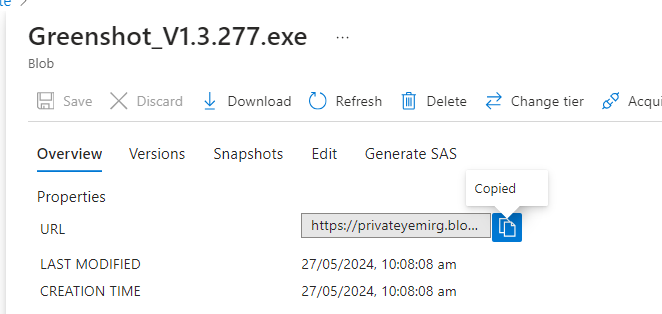

**Select the file and copy file URL to check if file will be accessed**

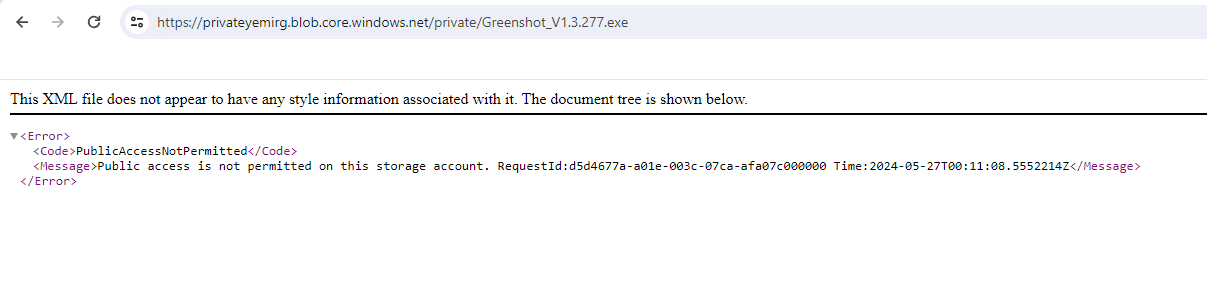

**Paste URL to a new tab, verify file doesn't display**

**An external partner requires read and write access to the file for at least the next 24 hours. Configure and test a shared access signature (SAS)**

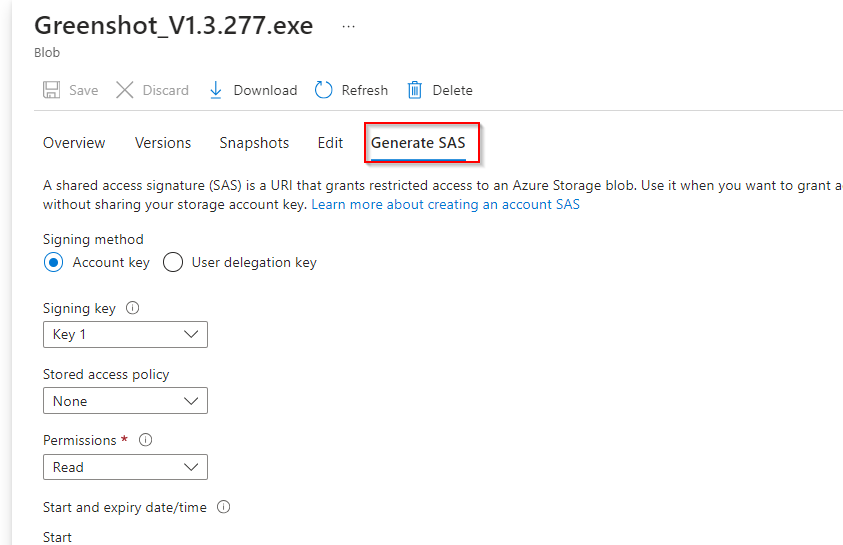

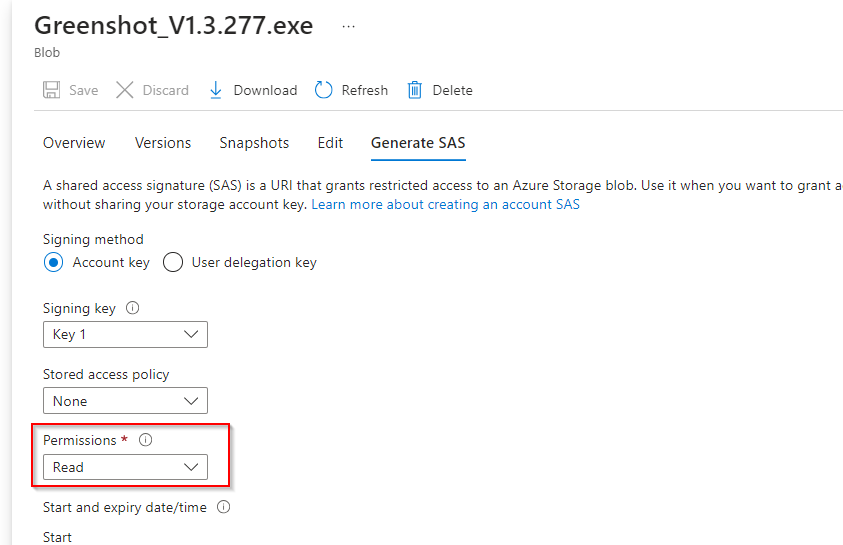

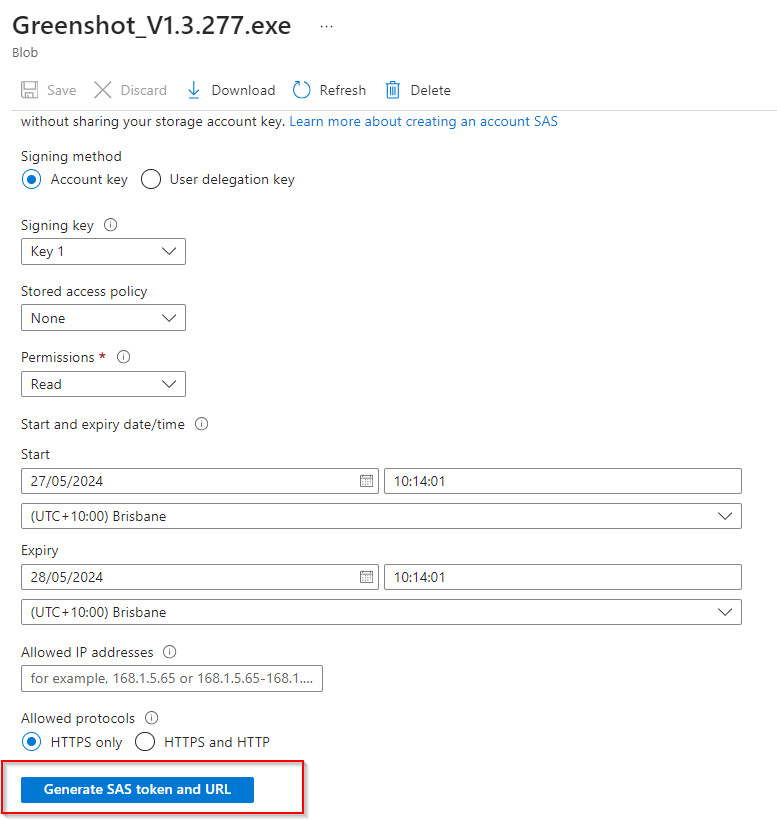

**Select the uploaded blob file and click the "Generate SAS tab"**

**Ensure the partner has only read permission**

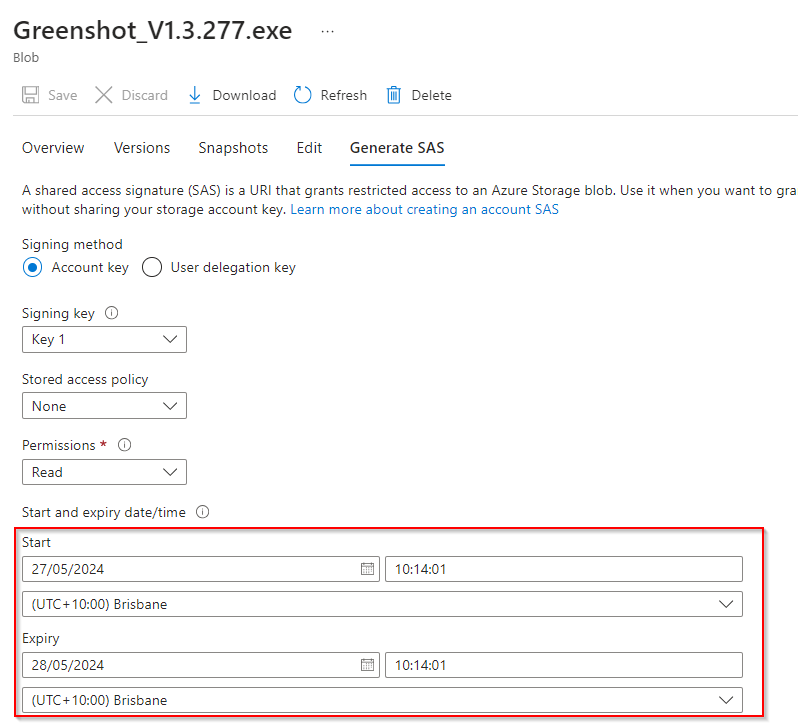

**Set start/expiry time for the next 24 hours**

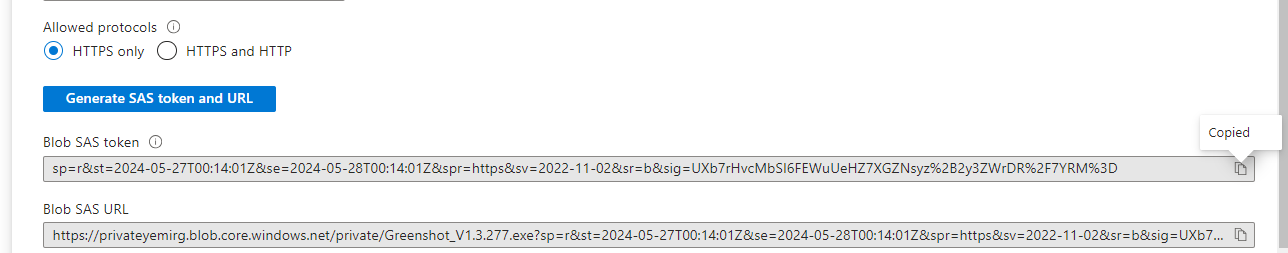

**Select "Generate SAS token and URL"**

**Copy the Blob SAS URL to a new browser tab to verify accessibility, file should be accessible.**

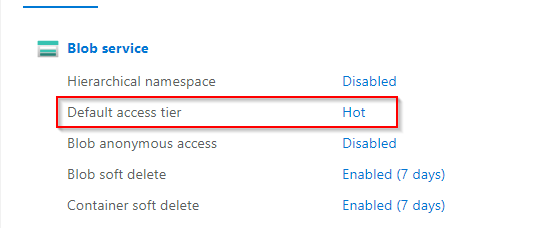

**Configure storage access tiers and content replication.**

Return to storage account

**Notice the default access tier is set to hot**

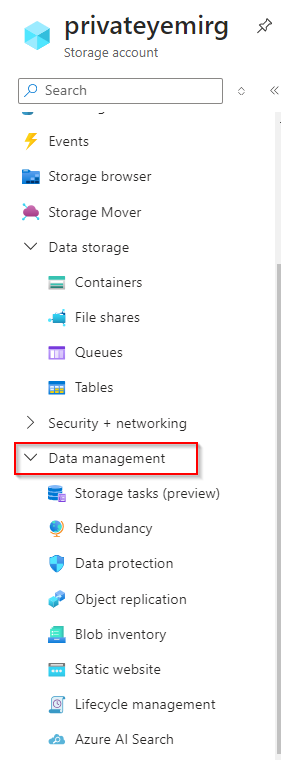

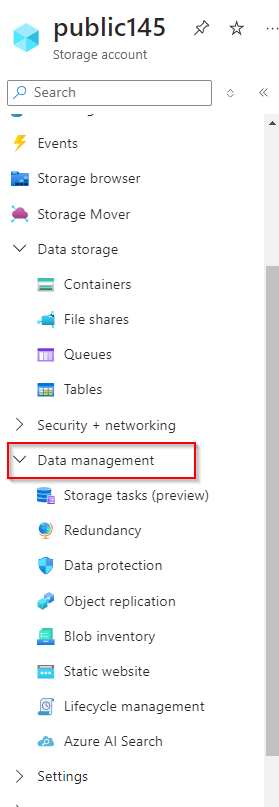

**In the Data Management section**

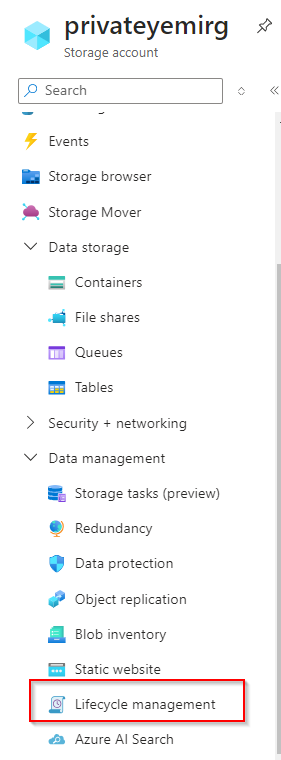

**Select "Lifecycle management"**

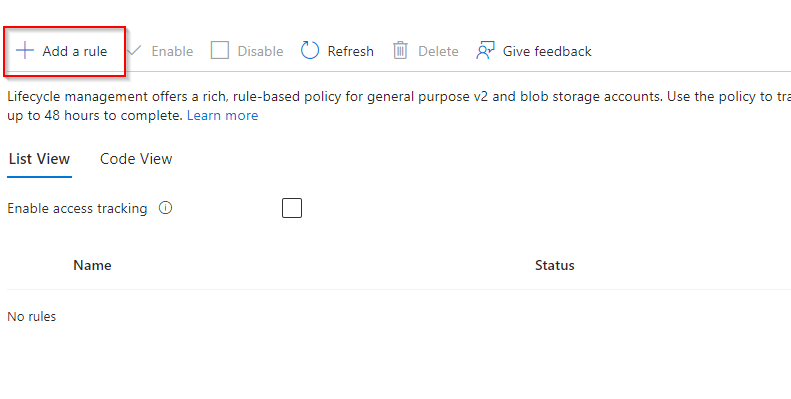

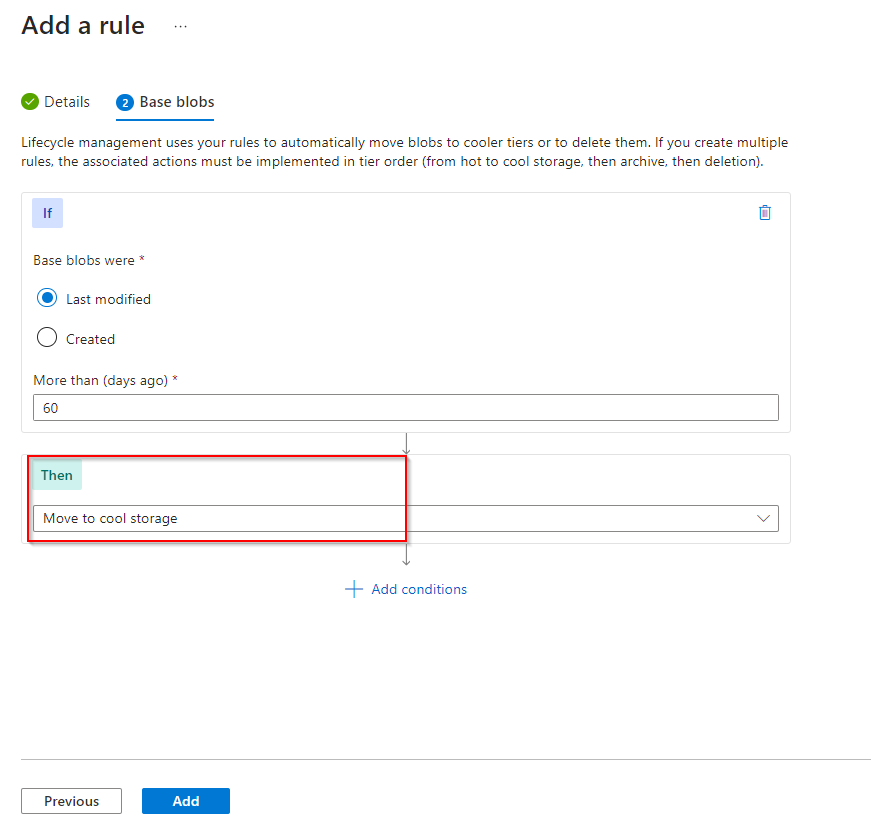

**Select "Add a rule"**

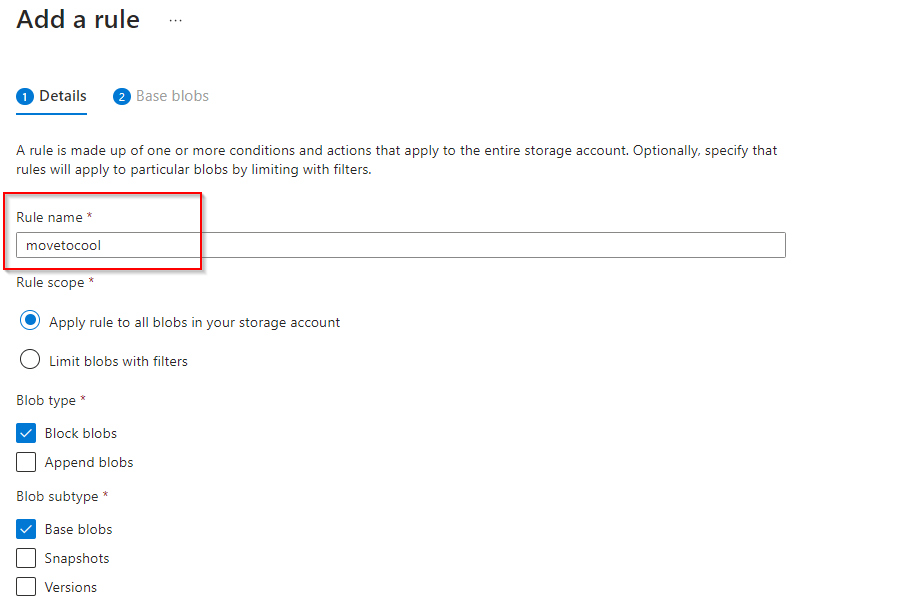

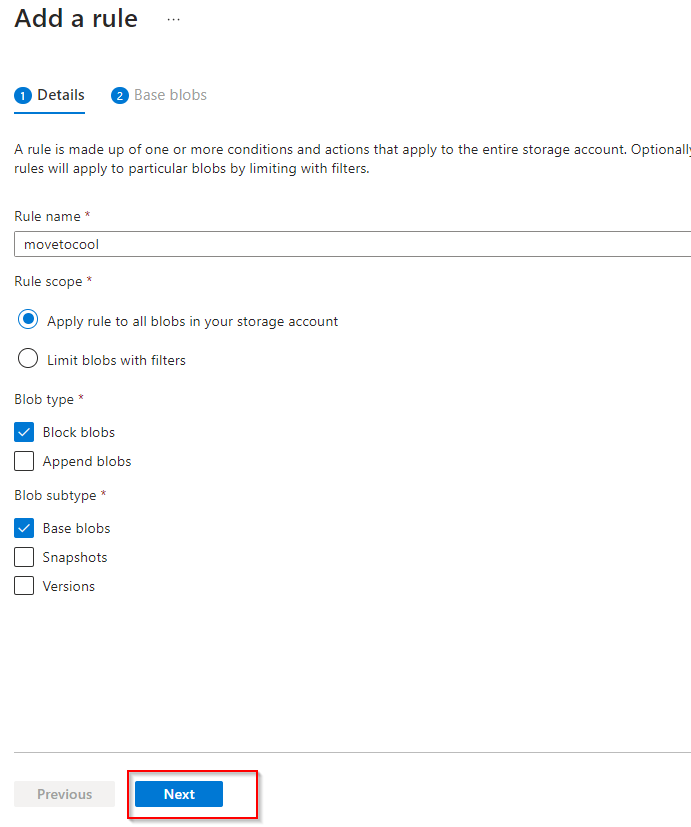

**Add Rule name**

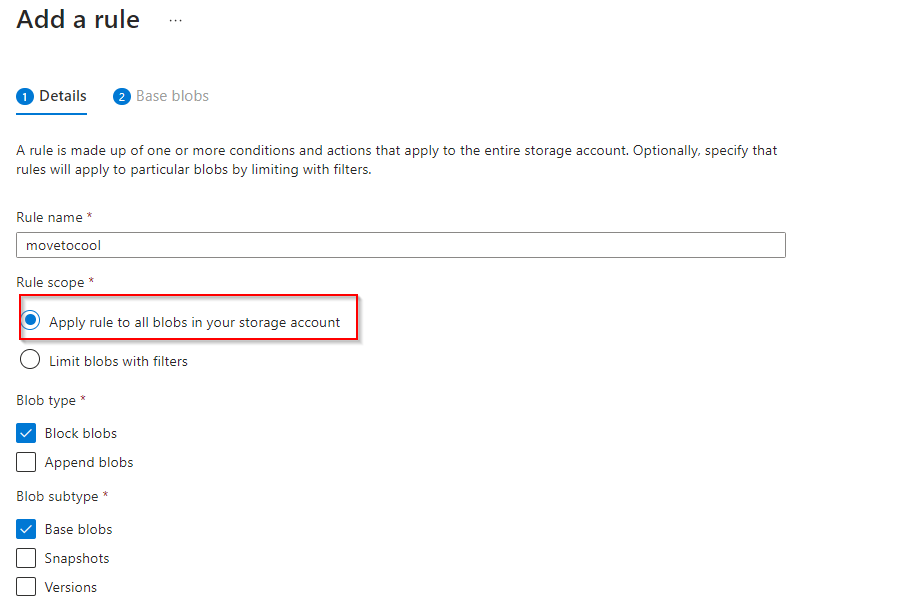

**Select Rule scope to apply rule to all blobs in your storage account**

**Select "Next"**

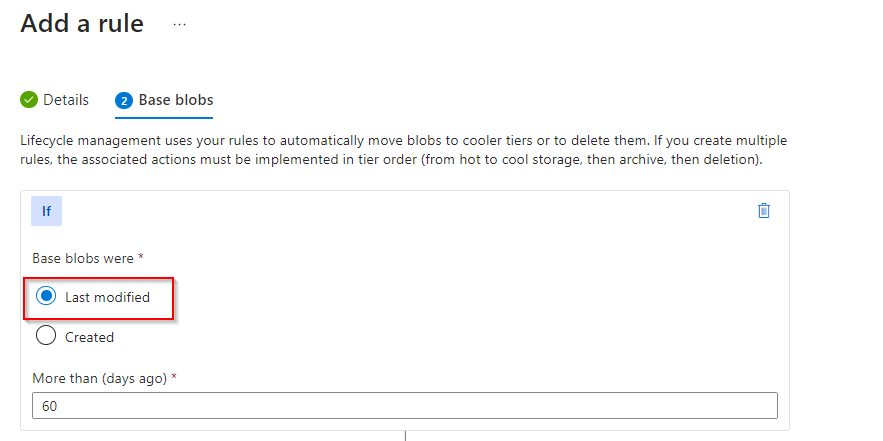

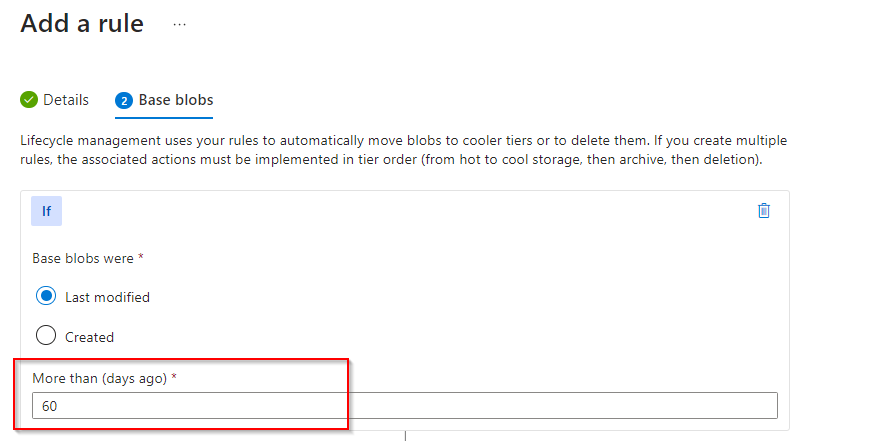

**Select "Last modified"**

Set days

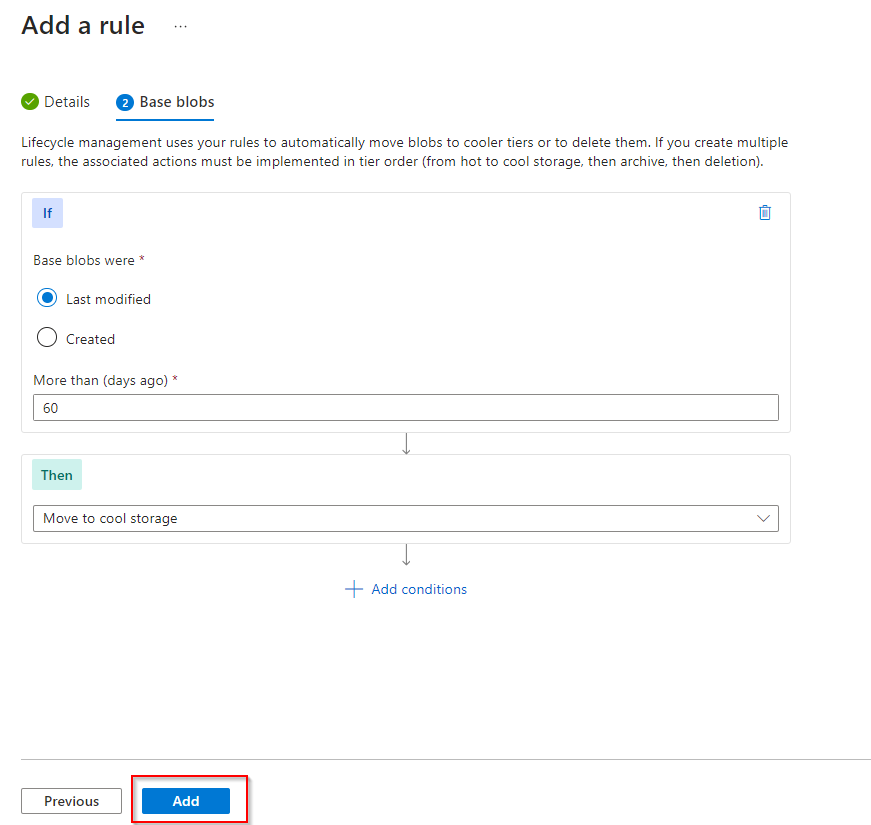

**Select "Move to cool storage"**

**Add rule**

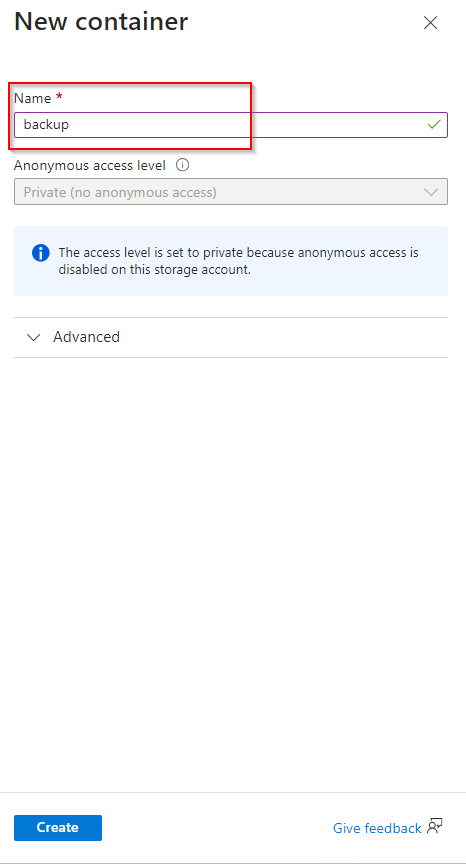

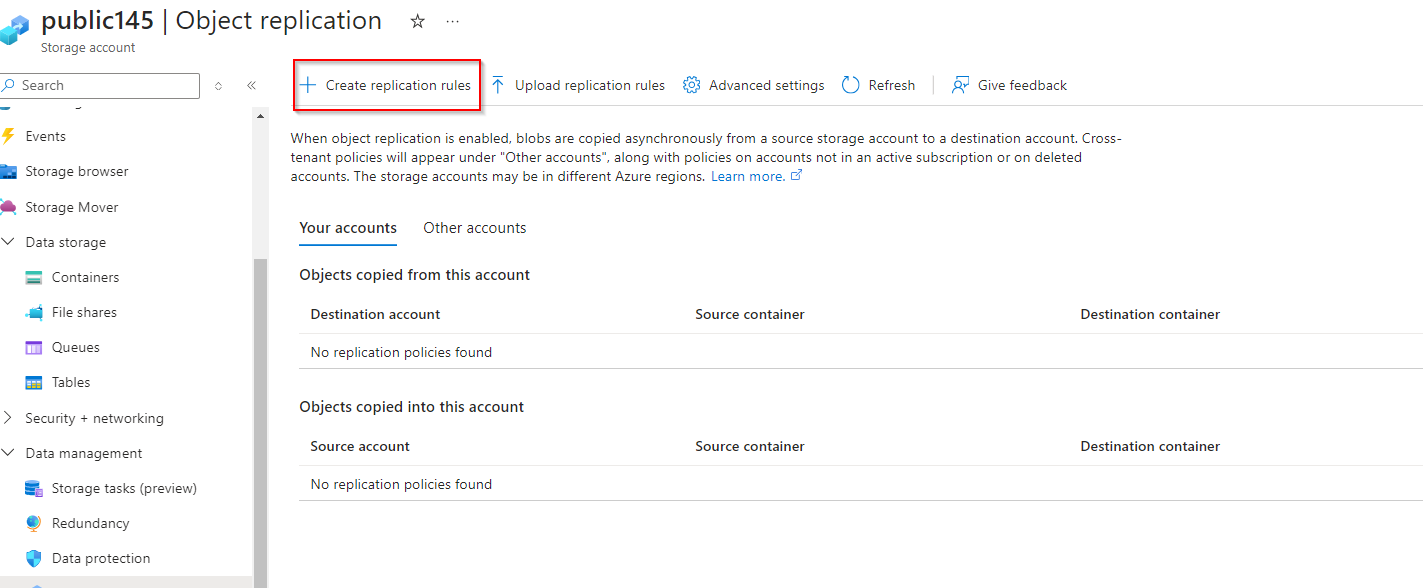

**The public website files need to be backed up to another storage account**

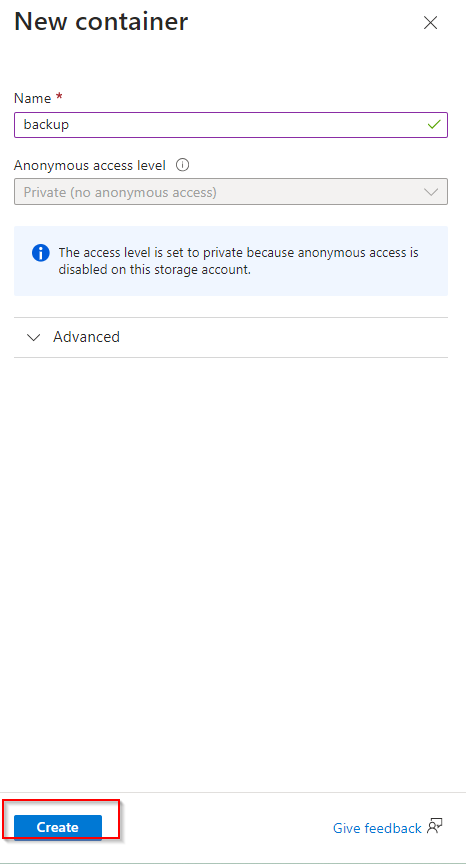

**Create a new container**

**Select "Create"**

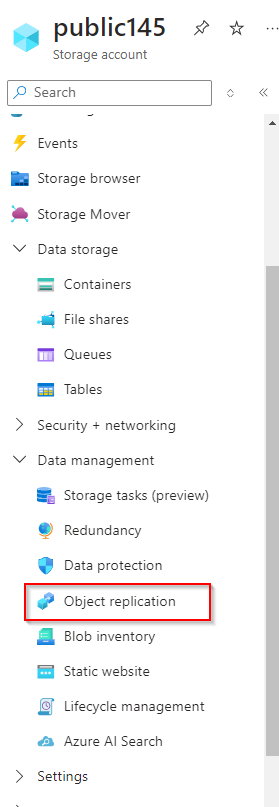

**Navigate to another storage account, click on "Data management"**

**Select "Object replication"**

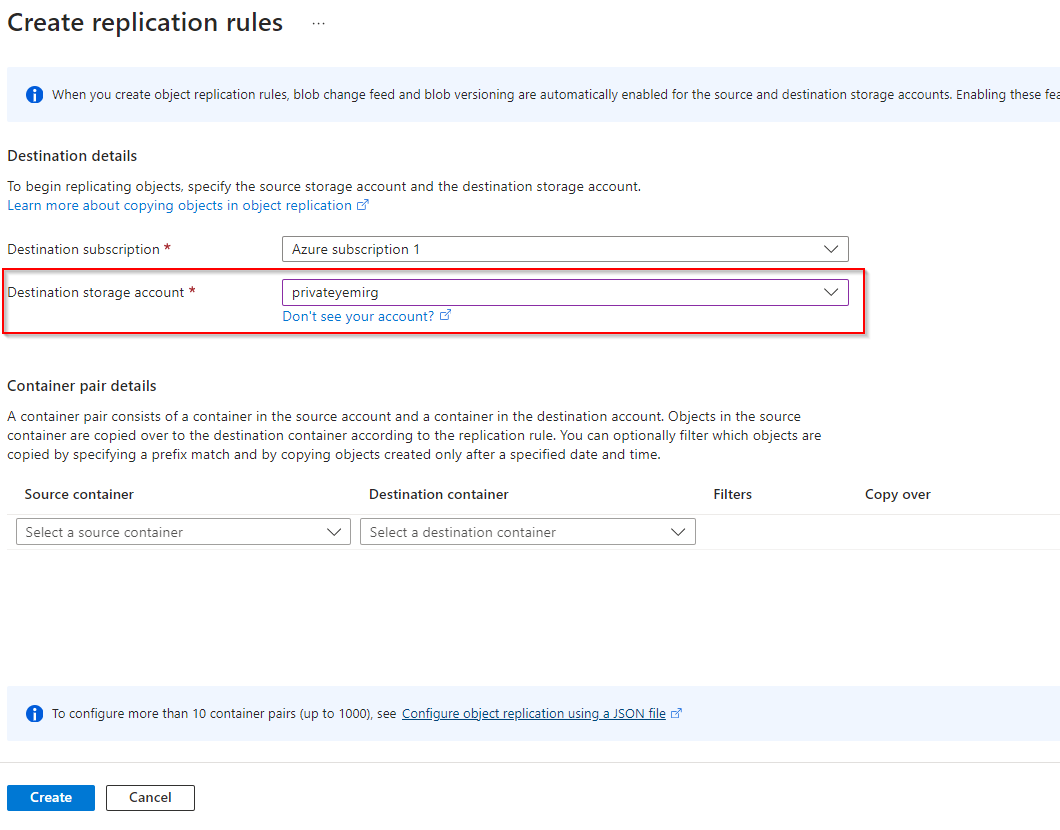

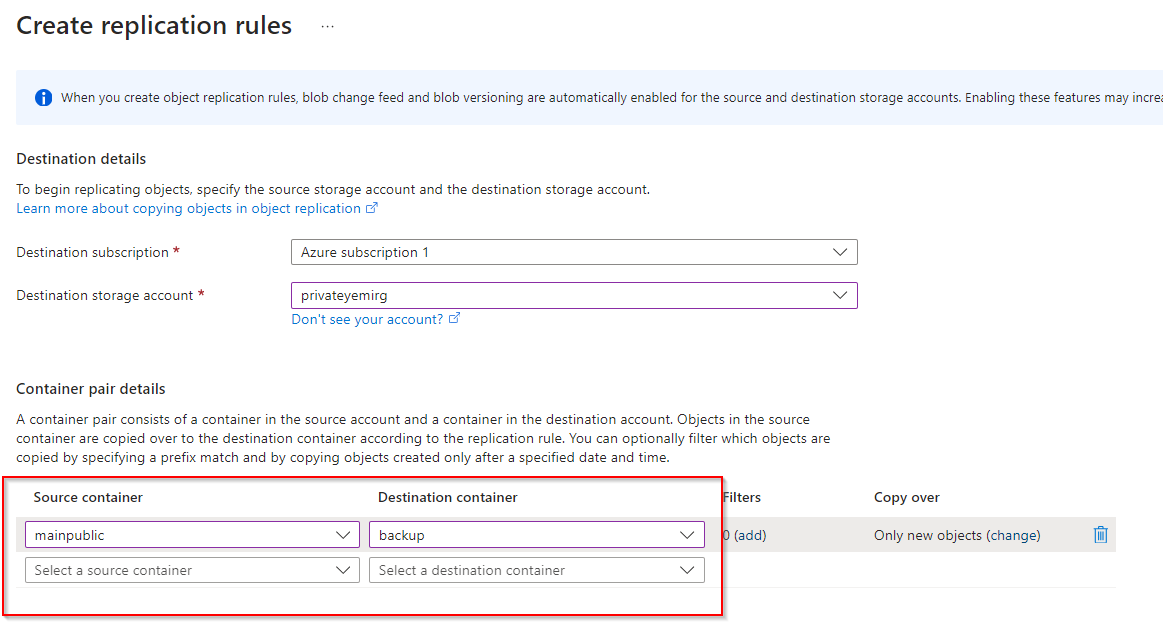

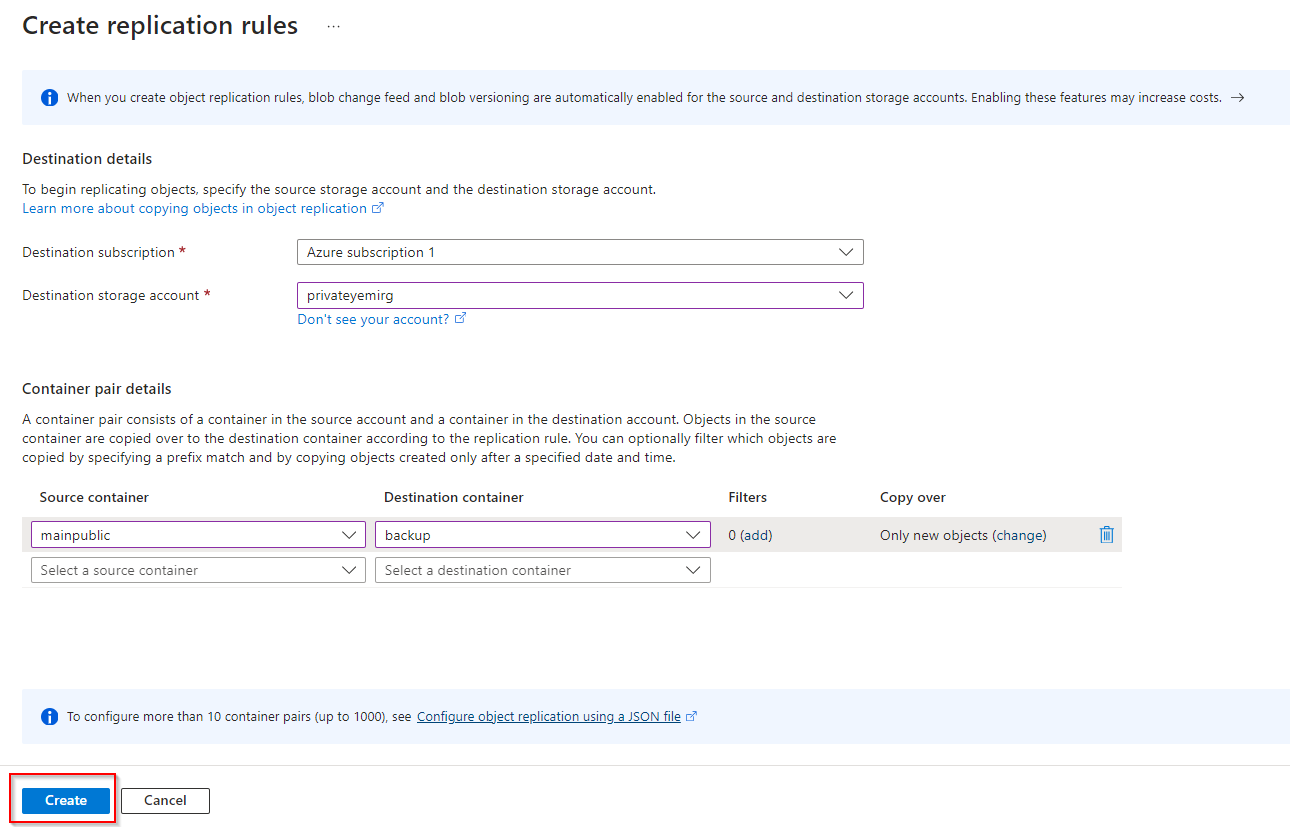

**Select "Create replication rules"**

**Set Destination storage account to the Private storage previously created**

**Set Source Container as mainpublic and Destination Container as backup**

**Select "Create"**

| opsyog |

1,866,006 | Alternative Trading Ideas--K-line Area Trading Strategy | Looking at a not-so-reliable trading idea -- the K-line area trading strategy, in this article, we... | 0 | 2024-05-27T01:14:09 | https://dev.to/fmzquant/alternative-trading-ideas-k-line-area-trading-strategy-2n96 | trading, strategy, cryptocurrency, fmzquant | Looking at a not-so-reliable trading idea -- the K-line area trading strategy, in this article, we will explore the concept and try to implement the script.

### Main Idea of the K-Line Area Strategy

The K-line area strategy is a trading strategy based on the area relationship between price K-lines and moving averages. Its main idea is to predict possible trends in stock prices by analyzing the magnitude and changes of price trends, as well as shifts in buying and selling sentiment, thereby determining when to open positions and exit. This strategy relies on the area between the K-line and moving averages, as well as values from the KDJ indicator, to generate long and short trading signals.

### The Principle of K-Line Area Strategy

The area of the K-line refers to the spatial area between the price K-line and the moving average, calculated by subtracting the moving average value from each bar's closing price and then summing it up. When there is a large increase in price over a long period of time, the K-line area will become larger, while during volatile markets or after volatility reversals, the K-line area is smaller. According to the principle of "what goes up must come down", as an upward trend becomes larger and lasts longer, its corresponding K-line area also increases; thus increasing its probability for reversal - much like a spring that rebounds with greater force when stretched further. Therefore, setting a threshold for this K-line area can indicate when prices may have reached their peak and are likely to reverse.

To further confirm an impending trend reversal, we introduce the use of KDJ indicators which help determine shifts in buying or selling sentiment. The thresholds for the strategy and values for these indicators can be adjusted according to specific circumstances and needs in order to enhance accuracy.

### The Advantages of K-Line Area Strategy

The advantage of the K-line area strategy lies in its combination of the magnitude and changes of price trends, as well as the shift in buying and selling sentiment, providing a relatively complete quantitative trading strategy. Its advantages include:

- It provides a simple and intuitive method to identify the possibility of trend reversal, helping traders better grasp market trends.

- By combining the K-line area and KDJ indicator, it increases the reliability and accuracy of the strategy.

- High flexibility allows for parameter adjustments according to market conditions to meet different trading needs.

### Risk of K-line Area Strategy

Although the K-line area strategy has certain advantages, it also carries some risks, including:

- The setting of thresholds may require some experience and adjustment. If it set improperly, it could lead to misjudgment of market trends.

- The accuracy of the KDJ indicator is affected by market fluctuations and noise, which may result in false signals.

- The performance of the strategy may vary under different market conditions and needs constant optimization and adjustment.

### Optimization Direction of K-line Area Strategy

To optimize the K-line area strategy, consider the following directions:

- Parameter optimization: Continuously adjust and optimize threshold values and KDJ indicator parameters to adapt to different market conditions and trading needs.

- Risk management: Implement effective risk management strategies, including stop-loss and take-profit rules, to reduce loss risks.

- Multi-strategy combination: Combine the K-line area strategy with other strategies to improve the performance of comprehensive trading strategies.

- Real-time monitoring and adjustment: Regularly monitor the performance of strategies, adjusting and improving based on actual situations.

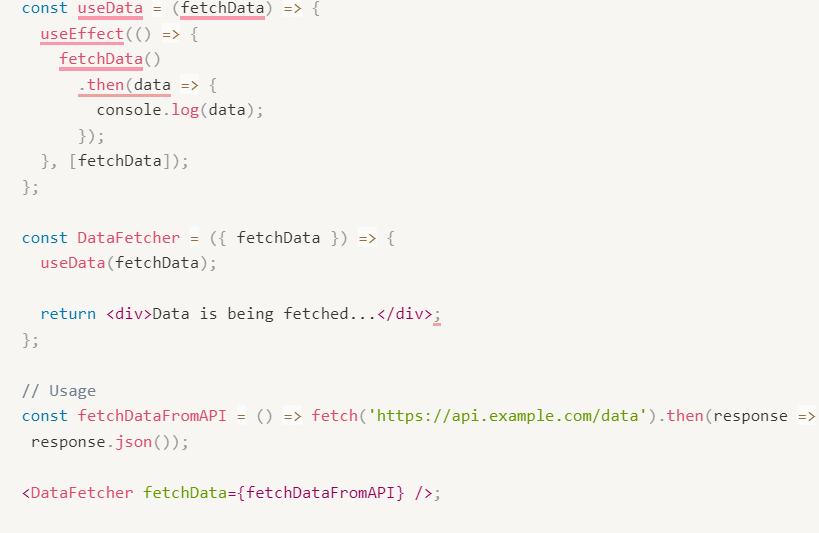

### Implement the Strategy Using JavaScript

- Calculate K-line Area

- Long position opening signal:

(1) The "K-line area" of the downward trend reaches the threshold, it can be established beforehand.

(2) KDJ indicator value is greater than 80.

- Short position opening signal:

(1) The "K-line area" of the upward trend reaches the threshold, it can be established beforehand.

(2) KDJ indicator value is less than 20.

- Exit for Long/Short positions: ATR trailing stop loss and take profit.

Code implementation

```

// Parameter

var maPeriod = 30

var threshold = 50000

var amount = 0.1

// Global variable

let c = KLineChart({})

let openPrice = 0

let tradeState = "NULL" // NULL BUY SELL

function calculateKLineArea(r, ma) {

var lastCrossUpIndex = null

var lastCrossDownIndex = null

for (var i = r.length - 1 ; i >= 0 ; i--) {

if (ma[i] !== null && r[i].Open < ma[i] && r[i].Close > ma[i]) {

lastCrossUpIndex = i

break

} else if (ma[i] !== null && r[i].Open > ma[i] && r[i].Close < ma[i]) {

lastCrossDownIndex = i

break

}

if (i >= 1 && ma[i] !== null && ma[i - 1] !== null && r[i - 1].Close < ma[i - 1] && r[i].Close > ma[i]) {

lastCrossUpIndex = i

break

} else if (i >= 1 && ma[i] !== null && ma[i - 1] !== null && r[i - 1].Close > ma[i - 1] && r[i].Close < ma[i]) {

lastCrossDownIndex = i

break

}

}

var area = 0

if (lastCrossDownIndex !== null) {

for (var i = r.length - 1 ; i >= lastCrossDownIndex ; i--) {

area -= Math.abs(r[i].Close - ma[i])

}

} else if (lastCrossUpIndex !== null) {

for (var i = r.length - 1 ; i >= lastCrossUpIndex ; i--) {

area += Math.abs(r[i].Close - ma[i])

}

}

return [area, lastCrossUpIndex, lastCrossDownIndex]

}

function onTick() {

var r = _C(exchange.GetRecords)

if (r.length < maPeriod) {

LogStatus(_D(), "Insufficient number of K-line")

return

}

var ma = TA.MA(r, maPeriod)

var atr = TA.ATR(r)

var kdj = TA.KDJ(r)

var lineK = kdj[0]

var lineD = kdj[1]

var lineJ = kdj[2]

var areaInfo = calculateKLineArea(r, ma)

var area = _N(areaInfo[0], 0)

var lastCrossUpIndex = areaInfo[1]

var lastCrossDownIndex = areaInfo[2]

r.forEach(function(bar, index) {

c.begin(bar)

c.plotcandle(bar.Open, bar.High, bar.Low, bar.Close, {overlay: true})

let maLine = c.plot(ma[index], "ma", {overlay: true})

let close = c.plot(bar.Close, 'close', {overlay: true})

c.fill(maLine, close, {color: bar.Close > ma[index] ? 'rgba(255, 0, 0, 0.1)' : 'rgba(0, 255, 0, 0.1)'})

if (lastCrossUpIndex !== null) {

c.plotchar(bar.Time, {char: '$:' + area, overlay: true})

} else if (lastCrossDownIndex !== null) {

c.plotchar(bar.Time, {char: '$:' + area, overlay: true})

}

c.plot(lineK[index], "K")

c.plot(lineD[index], "D")

c.plot(lineJ[index], "J")

c.close()

})

if (tradeState == "NULL" && area < -threshold && lineK[lineK.length - 1] > 70) {

// long

let tradeInfo = $.Buy(amount)

if (tradeInfo) {

openPrice = tradeInfo.price

tradeState = "BUY"

}

} else if (tradeState == "NULL" && area > threshold && lineK[lineK.length - 1] < 30) {

// short

let tradeInfo = $.Sell(amount)

if (tradeInfo) {

openPrice = tradeInfo.price

tradeState = "SELL"

}

}

let stopBase = tradeState == "BUY" ? Math.max(openPrice, r[r.length - 2].Close) : Math.min(openPrice, r[r.length - 2].Close)

if (tradeState == "BUY" && r[r.length - 1].Close < stopBase - atr[atr.length - 2]) {

// cover long

let tradeInfo = $.Sell(amount)

if (tradeInfo) {

tradeState = "NULL"

openPrice = 0

}

} else if (tradeState == "SELL" && r[r.length - 1].Close > stopBase + atr[atr.length - 2]) {

// cover short

let tradeInfo = $.Buy(amount)

if (tradeInfo) {

tradeState = "NULL"

openPrice = 0

}

}

LogStatus(_D(), "area:", area, ", lineK[lineK.length - 2]:", lineK[lineK.length - 2])

}

function main() {

if (exchange.GetName().includes("_Futures")) {

throw "not support Futures"

}

while (true) {

onTick()

Sleep(1000)

}

}

```

The strategy logic is very simple:

1. First, some global variables and parameters are defined, including:

Strategy parameters

- maPeriod: The period of moving average.

- threshold: A threshold used to determine the timing of buying or selling.

- amount: The quantity for each transaction.

Global variables

- c: A K-line chart object, used for drawing charts.

- openPrice: Records the opening price.

- tradeState: Records the trading status, which can be "NULL" (empty position), "BUY" or "SELL".

Calculate function

- calculateKLineArea function: It is used to calculate the area between the price and moving average line on a K-line chart over a certain period of time, and returns the area value, the index of the last upward crossing K-line, and the index of the last downward crossing K-line. These values are used in subsequent decisions to determine when to buy and sell.

Main loop function

- onTick function: It is the main strategy execution function, and here are the operations within the function:

a. Obtain the latest K-line data and ensure that the number of K-lines is not less than maPeriod, otherwise record status and return.

b. Calculate moving average line ma and ATR indicator atr, as well as KDJ indicator.

c. Get area information from areaInfo, last cross-over K-line index, and last cross-under K-line index.

d. Use K-line chart object c to draw K-lines and indicator lines while filling in different colors based on price's relationship with moving average line.

e. Determine buying or selling timing according to conditions:

If tradeState is "NULL", and the area is less than -threshold, and the K value of KDJ is greater than 70, execute a buy operation.

If tradeState is "NULL", and the area is greater than threshold, and the K value of KDJ is less than 30, execute a sell operation.

f. Set stop loss and take profit conditions. If these conditions are met, close positions:

If it's in buying state, when the price falls below the closing price of last trading day minus previous day's ATR (Average True Range), close position.

If it's in selling state, when the price rises above last trading day's closing price plus previous day's ATR (Average True Range), close position.

main function: This serves as main execution entry point. It checks if exchange name contains "_Futures". If so, an exception will be thrown; otherwise it enters into an infinite loop where onTick function gets executed every second.

In a word, this strategy mainly relies on K-line charts and technical indicators for making buying or selling decisions while also employing stop-loss & take-profit strategies to manage risk. Please note that this just serves as an example strategy which needs to be adjusted & optimized according to market situations & specific requirements during actual use.

On FMZ.COM, using JavaScript language didn't require many lines of code, instead, it implemented this model easily. And with help from KLineChart function graphical representation of K-line chart area was easily achieved, too. The strategy design caters towards cryptocurrency spot markets by utilizing 'Digital Currency Spot Trading Library' template for placing orders through encapsulated functions within template, which makes it very simple & easy to understand and use.

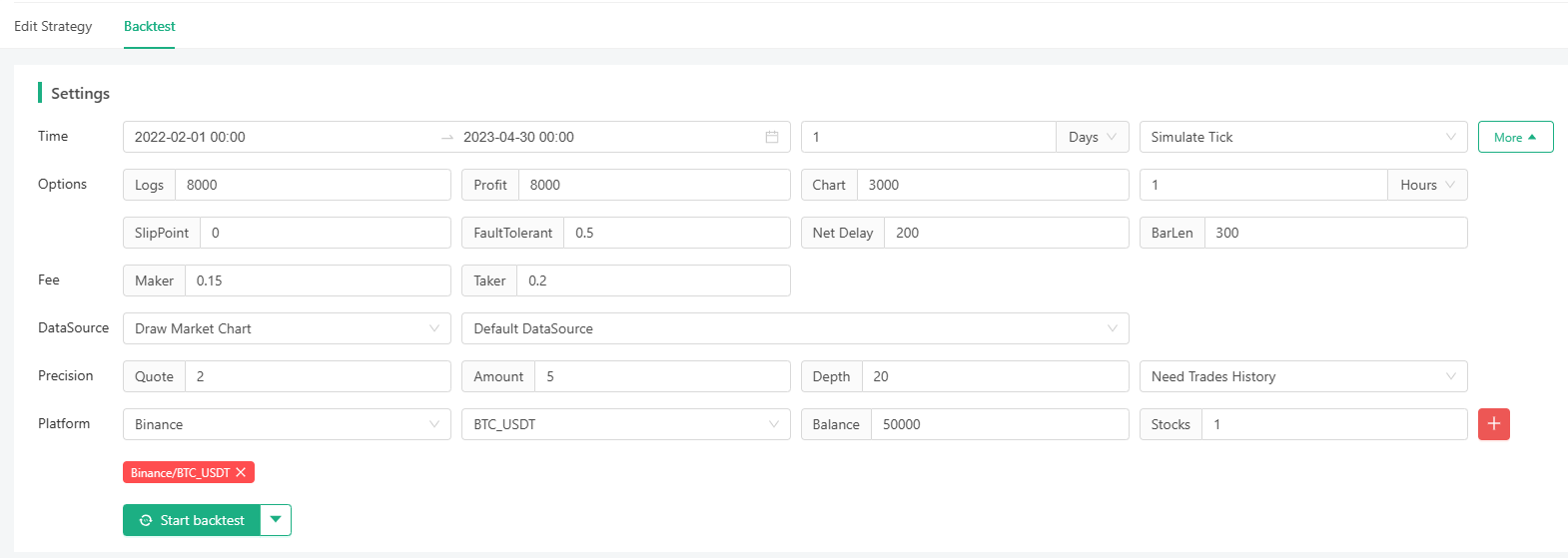

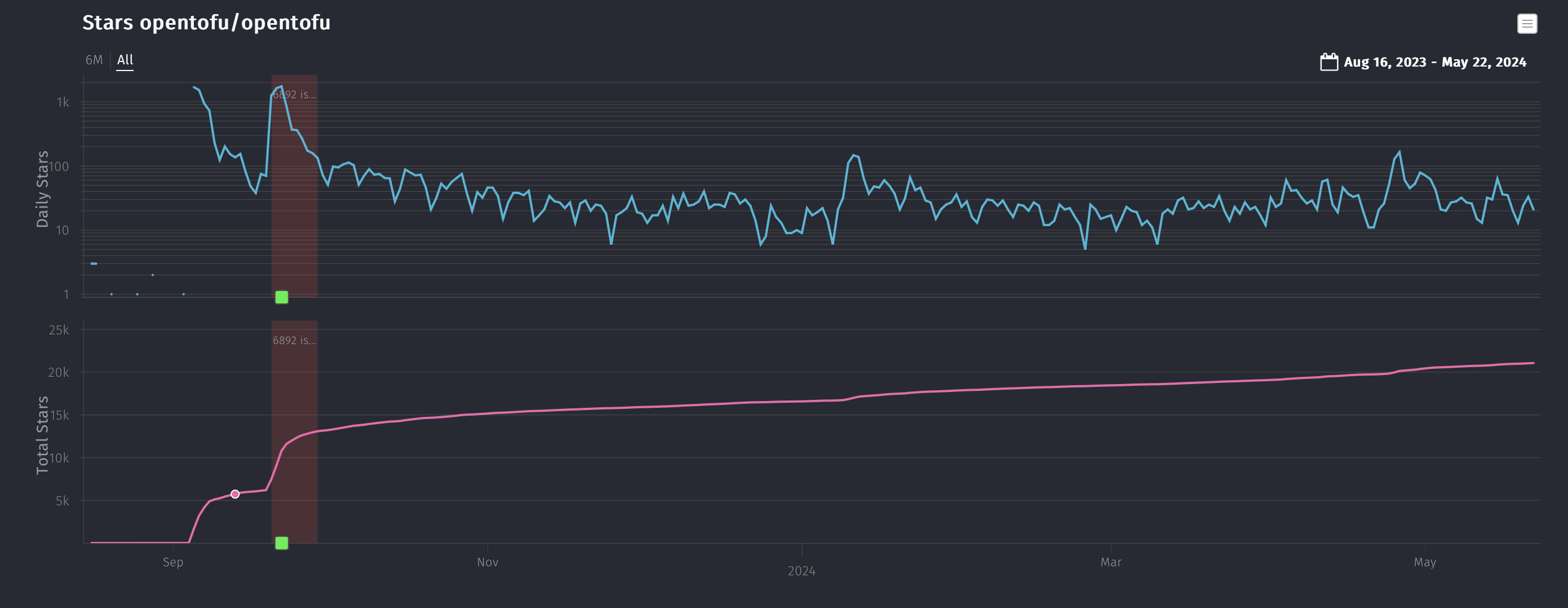

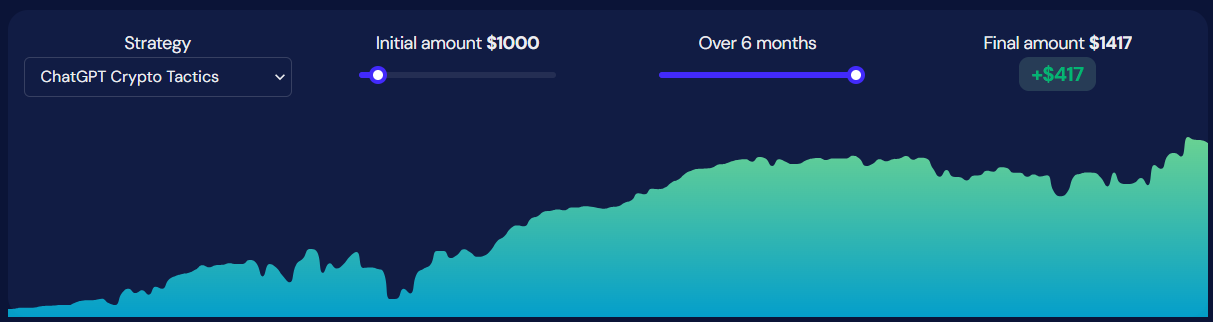

### Strategy Backtesting

I selected a backtesting period randomly. Although I didn't lose money, I didn't accumulate profits continuously, either, and the drawdown issue is quite significant. There should be other directions and room for optimization for the strategy. Those who are interested can try to upgrade the strategy.

Through the strategy, we not only learned a rather unconventional trading idea, but also learned how to plot diagrams; representing the area enclosed by K-line and moving average line; ploting KDJ indicators etc.

### Summary

The K-line area strategy is a trading strategy based on price trend magnitude and the KDJ indicator. It helps traders predict market trends by analyzing the area between the K-line and moving averages, as well as shifts in buying and selling sentiment. Despite certain risks, this strategy can provide powerful trading tools through continuous optimization and adjustment, helping traders better cope with market fluctuations. Moreover, traders should adjust the parameters and rules of the strategy flexibly according to specific situations and market conditions to achieve better trading performance.

From: https://blog.mathquant.com/2023/11/06/alternative-trading-ideas-k-line-area-trading-strategy.html | fmzquant |

1,865,918 | where can I buy baby clothes from? | As the best children's clothing site and online store for children's clothing and baby clothes with... | 0 | 2024-05-26T21:41:50 | https://dev.to/bestbabyclothes/where-can-i-buy-baby-clothes-from-4mfd | As the [best children's clothing site](https://fingilii.com/) and online store for children's clothing and baby clothes with more than a decade of experience, Fingili's children's clothing site has succeeded in keeping up with the stores by adhering to three principles, easy payment, 7-day return guarantee and guaranteeing the originality of the goods. world-renowned, to become the largest branded children's clothing online store in Iran. As soon as you enter the Fingili baby clothes shopping site, you will be faced with a world of beautiful children's clothes! For every taste, there are high-quality and excellent products on the Fingili baby clothing site. Fingili's cheap children's clothing site is like a glamorous showcase with all kinds of products such as boys' clothes, girls' clothes, children's socks, children's watches, boys' sportswear sets, boys' formal wear, girls' formal wear, clothes Sportswear for girls, children's wedding dresses, home and comfort clothes, children's room signs, etc. are arranged. You can order all your needs in the field of buying children's clothes with just a few clicks and order them at home in the shortest possible time. Receive it. | bestbabyclothes | |

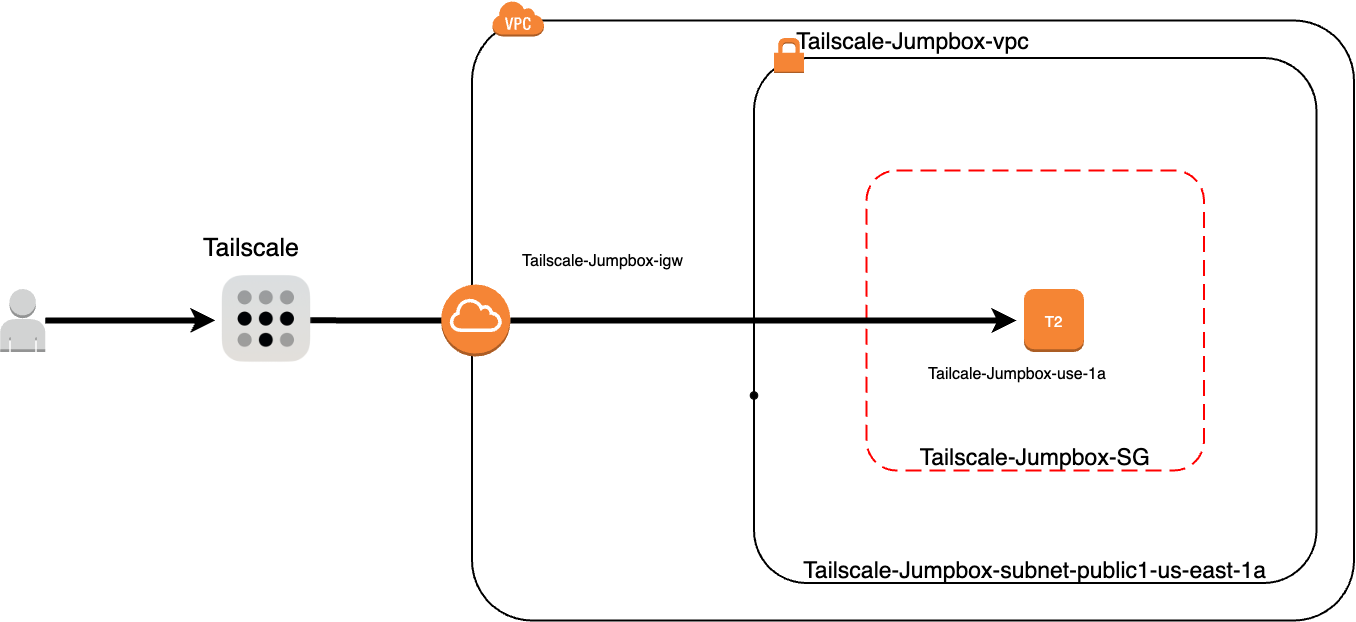

1,865,852 | Enhancing AWS VPC Security: Accessing Your Network with a Private Jumpbox using Tailscale | In today's cloud-centric world, ensuring the security of your AWS resources is paramount. I was... | 0 | 2024-05-27T00:50:03 | https://dev.to/tevindeale/enhancing-aws-vpc-security-accessing-your-network-with-a-private-jumpbox-using-tailscale-1k83 | aws, cloud, networking, tailscale | In today's cloud-centric world, ensuring the security of your AWS resources is paramount. I was recently working on a cloud project and wanted a secure way to access the VPC remotely without using EC2 Instance Connect. This is when I came up with the idea to try using [Tailscale](https://tailscale.com/) VPN. I had already been tinkering with [Tailscale](https://tailscale.com/) on my home network and noticed how powerful it was. In this post, I will share how you can enhance your AWS VPC security by setting up a private jumpbox using Tailscale. We'll be using the free plan, which is sufficient for our needs. I suggest visiting [Tailscale's](https://tailscale.com/)website to explore all their features and use cases.

## The Solution

## Understanding the Basics

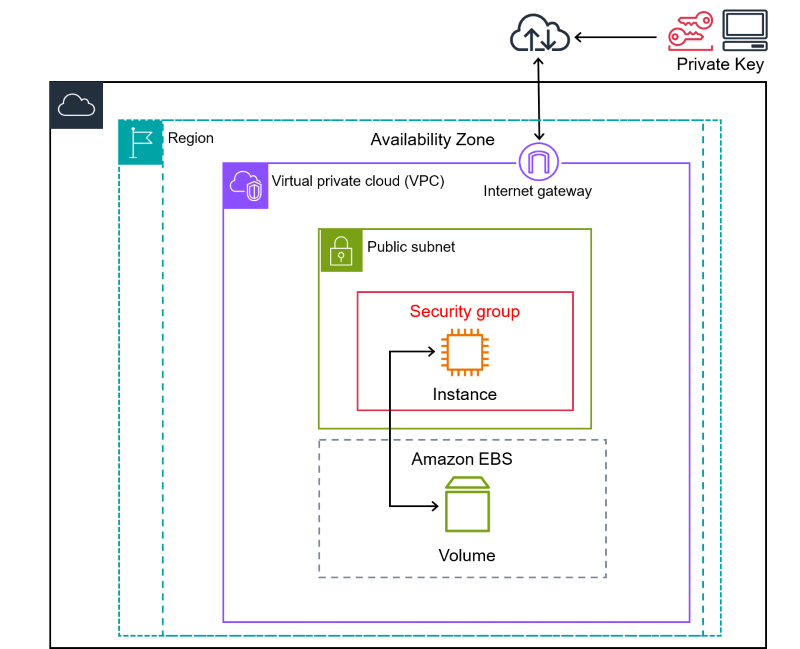

For those new to AWS, a Virtual Private Cloud (VPC) is a logically isolated section of the AWS cloud where you can launch AWS resources in a virtual network that you define. A jumpbox, or bastion host, acts as a secure gateway to your VPC, typically accessed via SSH, and it helps in reducing the exposure of your instances. Tailscale is a user-friendly VPN that simplifies secure network connections using WireGuard’s encryption technology. It’s particularly great for creating secure, peer-to-peer networks.

## Why Use a Private Jumpbox and Tailscale?

Using a private jumpbox, also known as a bastion host, provides a secure gateway to your AWS VPC. Unlike a public jumpbox, a private jumpbox is not accessible directly from the internet, which significantly reduces the attack surface and enhances the overall security of your network. This setup ensures that only authorized users can access your VPC resources.

Tailscale is a peer-to-peer VPN solution built on WireGuard, which simplifies secure network connections. Tailscale's ease of use, combined with its robust security features, makes it an excellent choice for setting up a private jumpbox. With Tailscale, you can create a secure mesh network that includes your local devices and your AWS resources, allowing seamless and secure access.

One of the key advantages of using Tailscale with a private jumpbox is its cost-effectiveness. Here are some points to consider:

- **Free Plan**: Tailscale offers a free plan that is sufficient for many use cases, especially for small projects or individual developers. This plan includes all the core features needed to set up a secure private jumpbox.

- **Reduced AWS Costs**: By using a private jumpbox, you can minimize the number of publicly accessible instances, which can lower your AWS costs. Public instances often require additional security measures and monitoring, increasing overall expenses.

- **No Need for Expensive Hardware**: Tailscale operates on your existing infrastructure, meaning you don't need to invest in additional hardware or complex network setups.

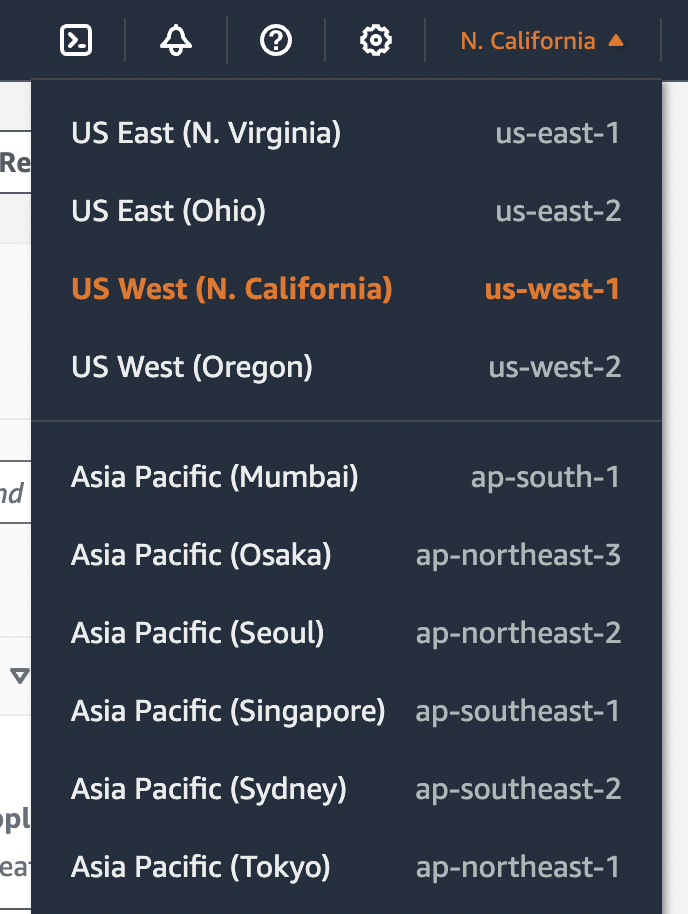

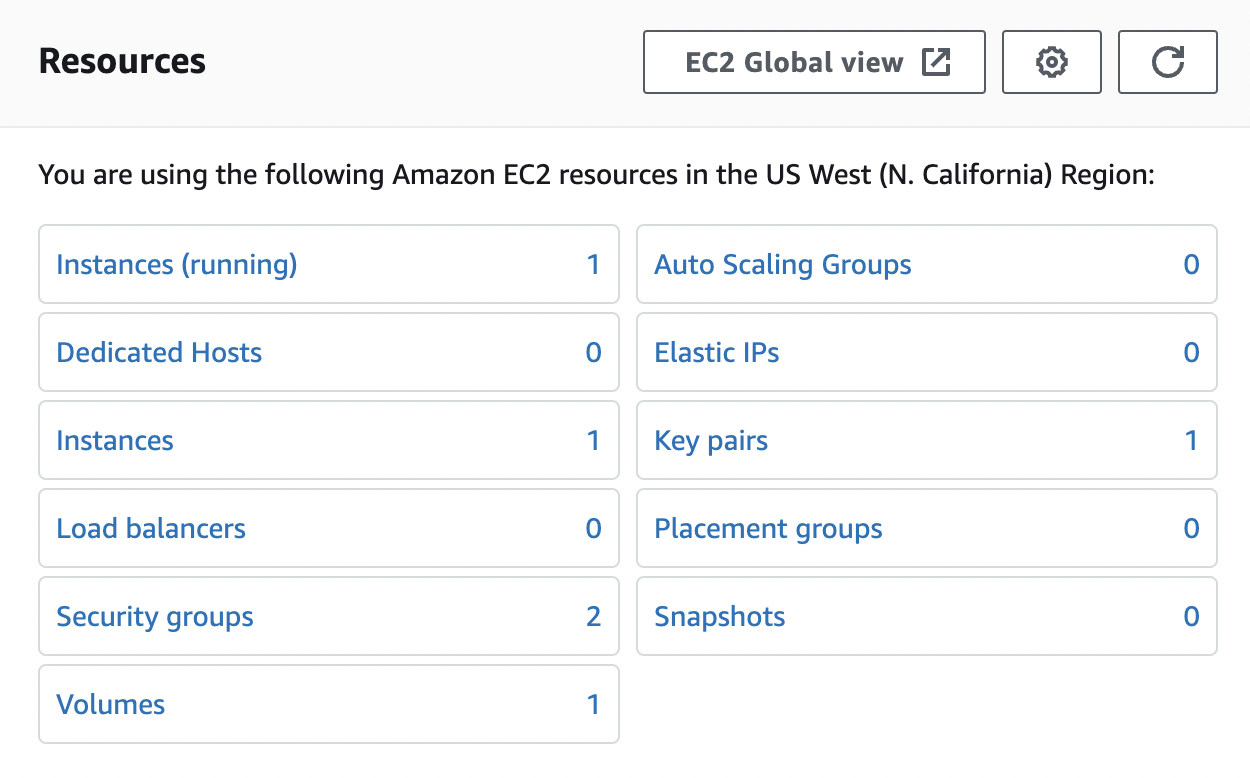

## Setting Up the Environment

Before we begin, ensure that you have a AWS account with necessary permissions, and the remote system set up with the Tailscale VPN.

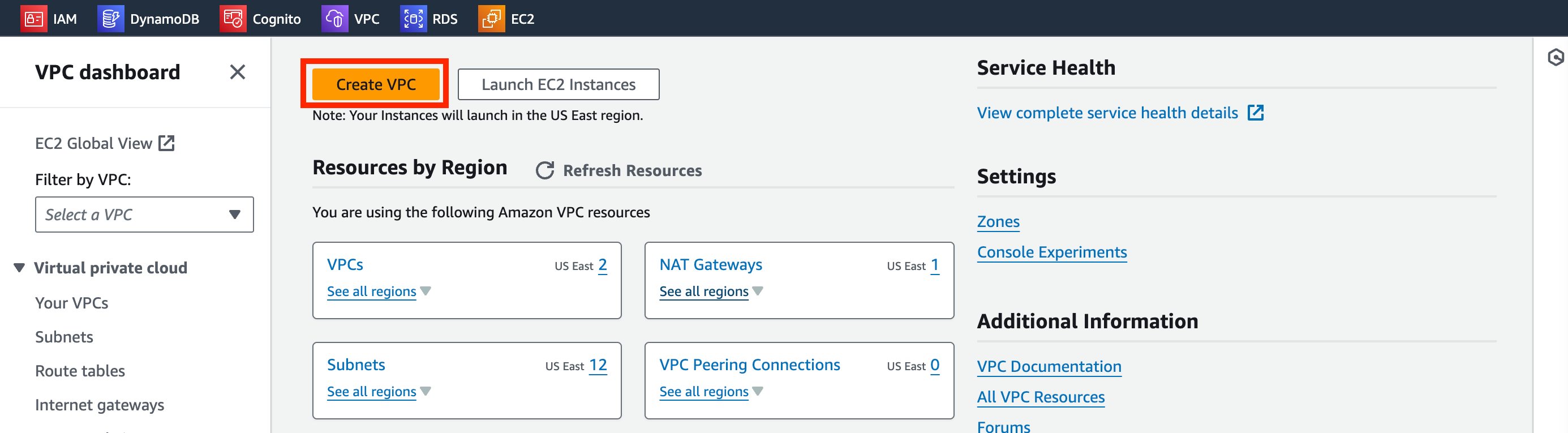

**Create the VPC**

1. Navigate to the VPC dashboard and click _Create VPC_.

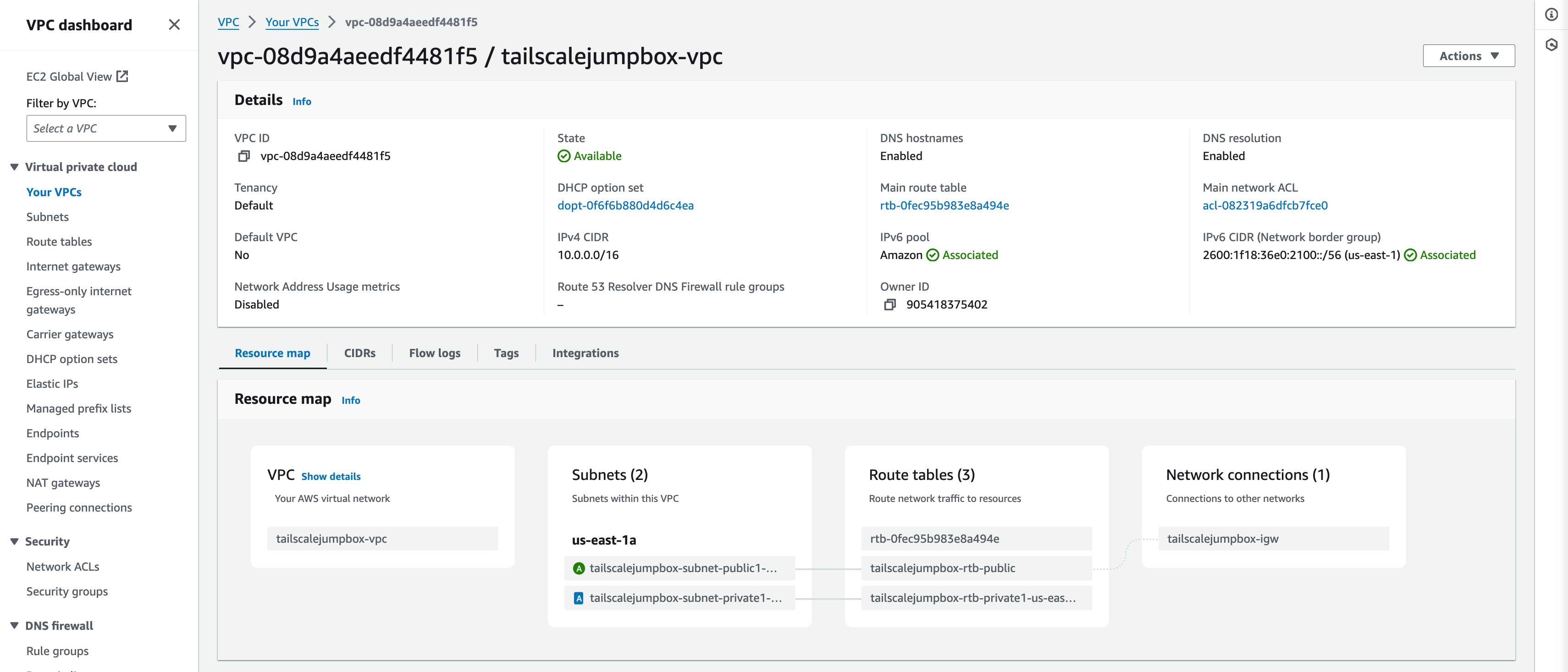

2. In the VPC Settings box we will use the _VPC and More_ option for simplicity. Match your settings to the following and click _Create VPC_:

- Name tag auto-generation: TailscaleJumpBox

- IPv4 CIDR block: 10.0.0.0/16

- IPv6 CIDR block(Important): Amazon-provided IPv6 CIDR block

- Tenancy: Default

- Number of Availability Zones: 1

- Number of public subnets: 1

- Number of private subnets: 1

- Nat gateways: None

- VPC endpoints: None

- DNS Options: Both options should be checked.

Example output:

Using the VPC and more option when creating the VPC saves times by auto generating your subnets, route tables, and internet gateway.

**Turn on IPv6 Auto-assign**

1. In the VPC Dashboard menu click _Subnets_.

2. Enable Auto-assign IPv6

This will auto assign IPv6 addresses to resources deployed into the TailscaleJumpBox-VPC. This is useful because by default AWS IPv6 addresses are publicly available by default unlike IPv4 addresses.

**Create Security Group**

1. In the VPC Dashboard menu click _Security groups_.

2. Click _Create Security Group_.

3. Enter in the following details:

- **Security group name**: Tailscale-JumpBox-SG

- **Description**: Allow remote connection from Tailscale

- **VPC**: tailscalejumpbox-vpc

- **Inbound rules**: None (default)

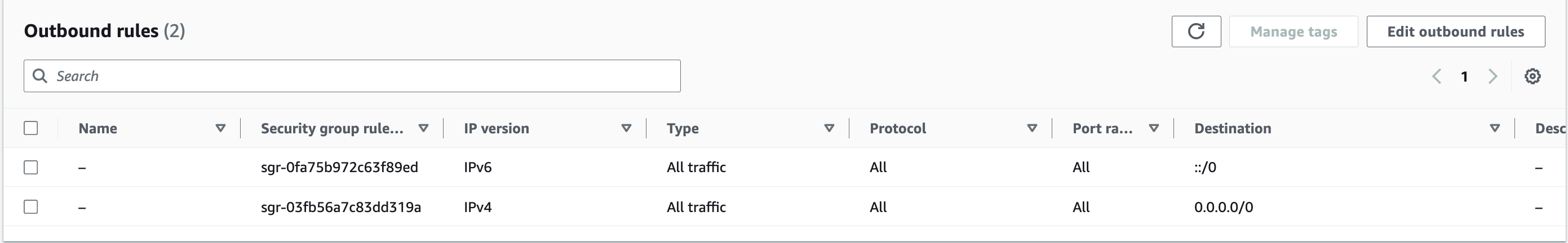

- **Outbound rules**: Leave as default (all traffic)

Example Output:

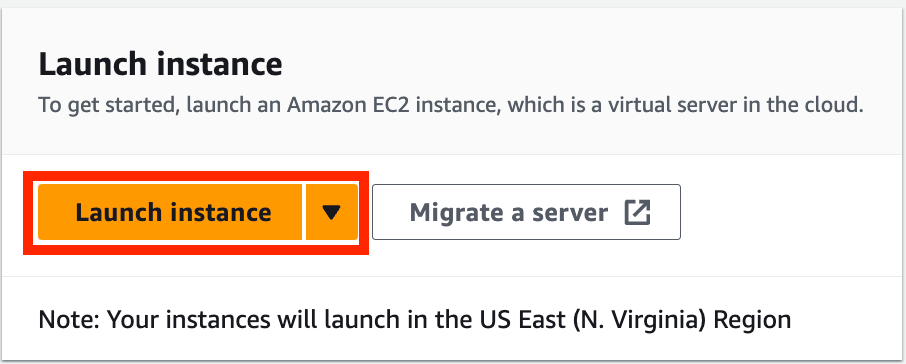

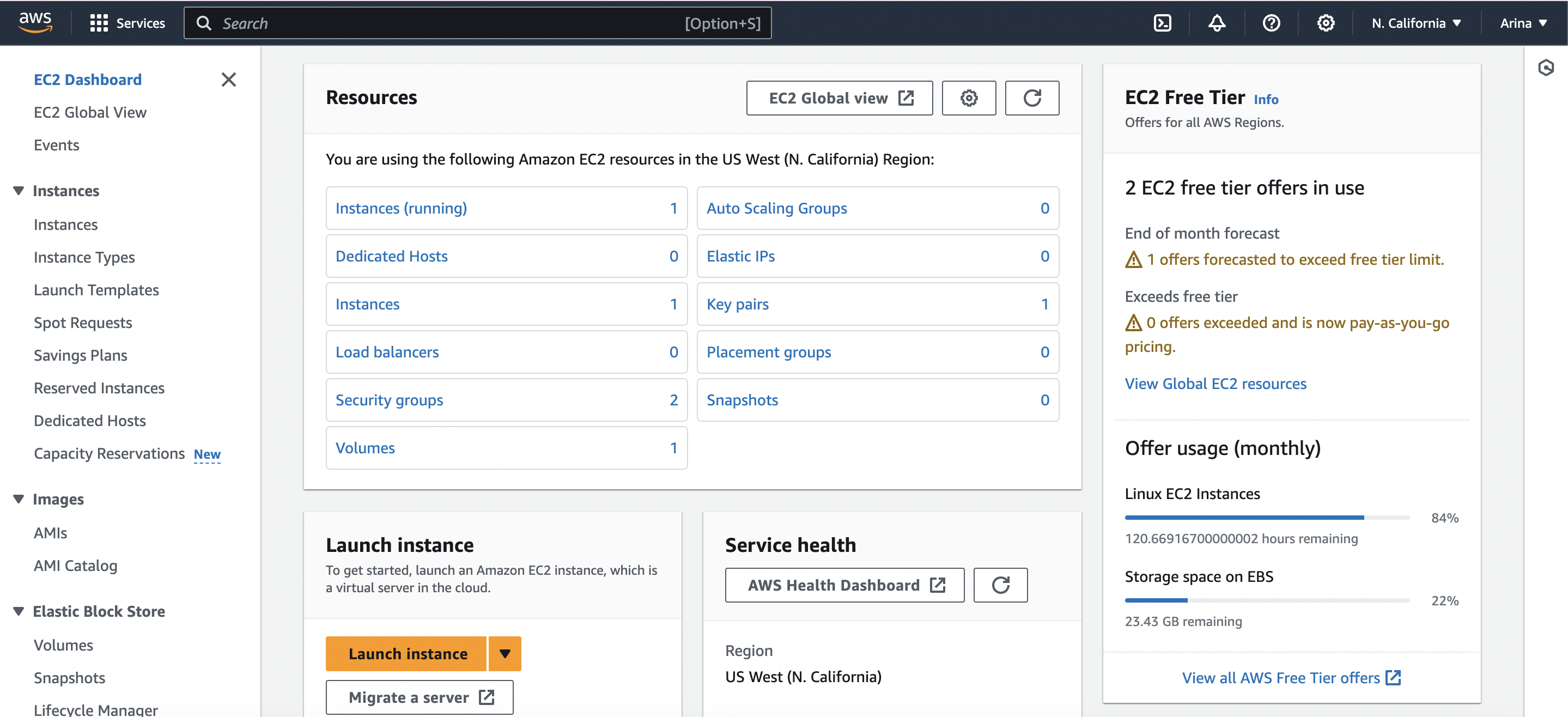

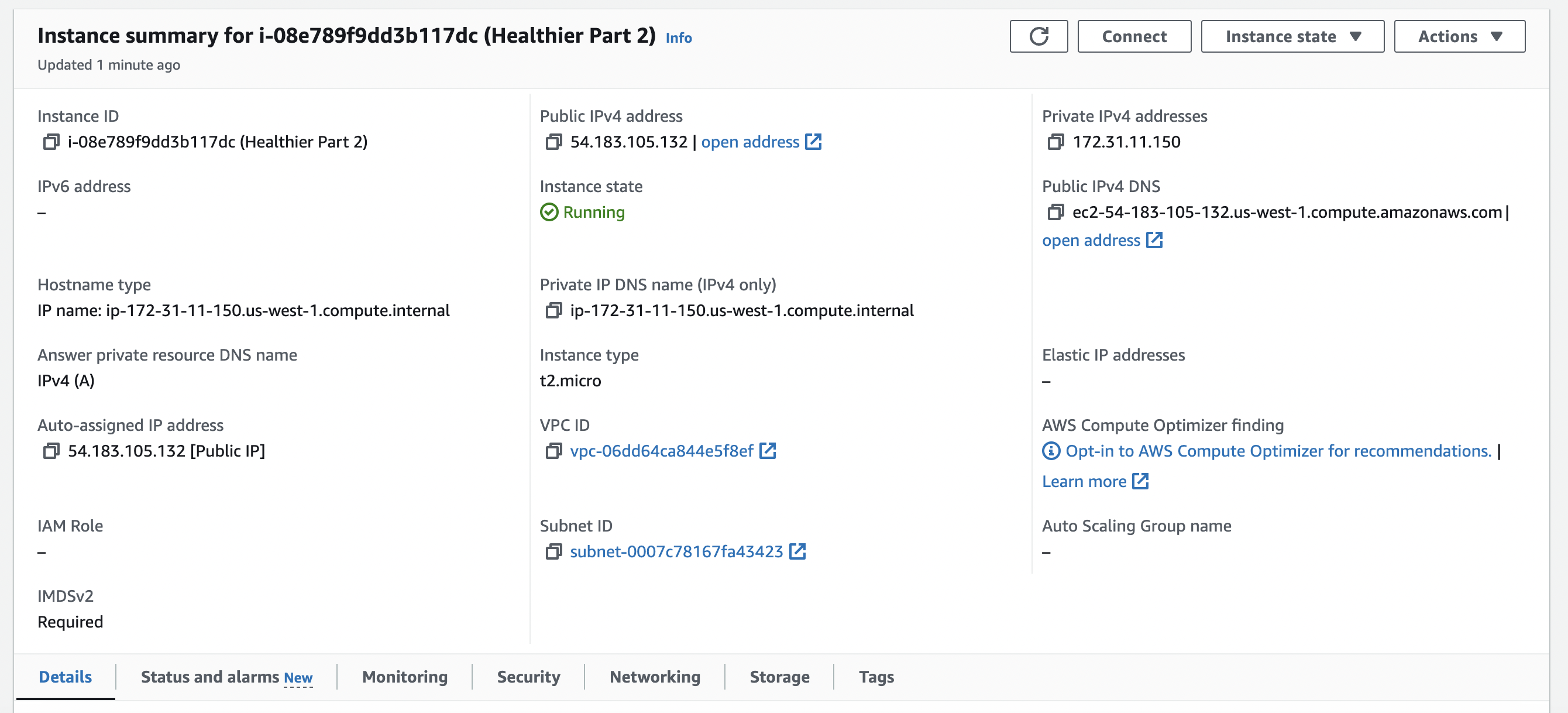

**Launch Instance**

1. Navigate to the EC2 Dashboard.

2. Click _Launch Instance_.

3. Enter in the following details:

- **Name**: Tailscale-JumpBox-USE-1a

- **Application and OS Images**: Amazon Linux - Amazon Linux 2023

- **Instance Type**: t2.micro (free tier)

- **Key pair**: Create new key pair --> RSA --> .PEM --> Download Key

- **Network Settings**: Click _edit_ to change all options

- **VPC**: tailscalejumpbox-vpc

- **Subnet**: tailscalejumpbox-subnet-public1-us-east-1a

- **Auto-assign public IP**: Disable

- **Auto-assign IPv6 IP**: Enable

- **Security goups**: Select existing security group

- **Common security groups**: Tailscale-JumpBox-SG

- **Configure storage**: Leave as default

- **Advance details**: Expand this section and scroll to the bottom to enter _User data_ (Shell Script)

- **User data**:

```bash

#! bin/bash

hostnamectl set-hostname ts-jumpbox-use-1a

yum update -y

curl -fsSL https://tailscale.com/install.sh | sh

tailscale up --auth-key <tskey-auth> --ssh

```

Shell script breakdown:

1. `#! bin/bash`: This shebang line indicates that the script should be run in the bash shell.

2. `hostnamectl set-hostname ts-jumpbox-use-1a`: Sets a readable hostname for the instance. (Optional)

3. `yum update -y`: Updates all installed packages.

4. `curl -fsSL https://tailscale.com/install.sh | sh`: Downloads and installs Tailscale's quick install script.

5. `tailscale up --auth-key <tskey-auth> --ssh`: Starts Tailscale and authenticates the instance using the provided auth key, with SSH access enabled.

6. Launch Instance

**Note**: Replace `<tskey-auth>` with your actual Tailscale authentication key. You can generate an auth key from the Tailscale admin console under the Keys section in the settings.

**Using the `--ssh` Flag**

By using the `--ssh` flag in the `tailscale up` command, you enable Tailscale SSH, which allows you to SSH into the machine using Tailscale’s secure network. After running the script, you will see an SSH tag on the machine in the Tailscale admin console.

## Connecting to the JumpBox

To SSH into the server using Tailnet SSH, use the following command:

```

ssh ec2-user@<tailnet IP address>

```

You will be prompted to log in with a browser to authorize access to the server.

Alternatively, you can use the -i flag with the RSA key generated when launching the instance:

```

ssh -i rsa.pem ec2-user@<tailnet IP address>

```

By following these steps, you can securely connect to your jumpbox and access your AWS VPC using Tailscale, taking advantage of its seamless and secure networking capabilities.

## Conclusion

In this blog post, we explored how to enhance the security of your AWS VPC by setting up a private jumpbox using Tailscale. By utilizing a private jumpbox, you significantly reduce the attack surface of your infrastructure, making your network more secure and resilient against unauthorized access.

We walked through a step-by-step process to install and configure Tailscale on your jumpbox, leveraging its powerful yet user-friendly VPN capabilities. By enabling Tailscale SSH, we made accessing your jumpbox and other AWS resources secure and straightforward, providing you with a seamless and cost-effective solution for remote network access.

One of the standout advantages of this setup is its cost-effectiveness. Using Tailscale's free plan and minimizing the number of publicly accessible instances can save costs while still providing robust security features.

By following the steps outlined, you now have a secure method to access your AWS VPC, ensuring your resources are protected without sacrificing convenience. I encourage you to implement this setup in your projects and experience the benefits firsthand.

If you have any questions, comments, or additional tips to share, please leave them below. I’d love to hear about your experiences and any further enhancements you make to this setup.

Call to Action

Try setting up your own private jumpbox with Tailscale today, and ensure your AWS VPC remains secure and accessible. Don’t forget to share your feedback and any custom configurations you come up with!

For more information and to explore additional features, visit [Tailscale’s documentation](https://tailscale.com/kb/1017/install).

Thank you for reading, and happy securing!

| tevindeale |

1,866,000 | Mmoexp Path of exile currency: Everything from your skills to your endgame experience | 8 Remnant: From The Ashes Release year: 2019 Platforms: Windows, PlayStation 4 and Xbox One,... | 0 | 2024-05-27T00:46:36 | https://dev.to/rozemondbell/mmoexp-path-of-exile-currency-everything-from-your-skills-to-your-endgame-experience-fon | webdev, javascript, beginners, programming | 8 Remnant: From The Ashes Release year: 2019 Platforms: Windows, PlayStation 4 and Xbox One, PlayStation 5, Xbox Series X|S

Featuring some of <a href="https://www.mmoexp.com/Path-of-exile/Currency.html">Path of exile currency</a> the most unique monster designs, Remnant: From The Ashes tells the tale of a realm descending into chaos thanks to an alternate dimension evil. The remnants of humanity team up to fight off the monstrous hoard and take back their world.

RELATED: Things We Wish We Knew Before Starting Remnant: From The Ashes

It's a pretty common setup, but Remnant executes itself with such panache and style. If you're looking for a co-op Soulslike game with action, guns, and, most importantly, loot, Remant: From the Ashes is the one to play.

.hidden-poll {display: none} The Ultimate Beginner's Guide To Path Of Exile Path Of Exile: A Comprehensive Beginner's Guide By Charles Burgar Updated Apr 23, 2023

From currency to leveling, this guide will cover all of the essentials a new player needs to survive on Wraeclast.

Quick LinksCreating A Character Finding A Build Surviving The First Few Acts The Passive Tree And Ascendancies Skill Gems And Support Gems Explained How To Upgrade Your Gear Useful Community Resources

No ARPG on the market can match the sheer depth of Path of Exile. This free-to-play dungeon crawler offers a degree of customization that would make other games blush. Everything from your skills to your endgame experience can be suited to <a href="https://www.mmoexp.com/Path-of-exile/Currency.html">cheap POE currency</a> your needs.

| rozemondbell |

1,865,999 | Dev na Gringa: Processos Seletivos e Onde Estudar Para Eles | Originalmente postado no Dev na Gringa Substack. Caso queira receber novos artigos no seu e-mail,... | 0 | 2024-05-27T00:42:43 | https://dev.to/lucasheriques/dev-na-gringa-processos-seletivos-e-onde-estudar-para-eles-b9f | braziliandevs, career | Originalmente postado no [Dev na Gringa Substack](https://open.substack.com/pub/devnagringa/p/dev-na-gringa-processos-seletivos?r=gb7rl&utm_campaign=post&utm_medium=web). Caso queira receber novos artigos no seu e-mail, increva-se!

---

Você encontrou a vaga, aplicou para uma vaga de dev na gringa, e foi chamado para a entrevista.

Chegou a hora de entender como você será avaliado.

Nesse artigo, vou mostrar os principais métodos usados no mercado internacional.

Não vou entrar em muitos detalhes individualmente. Pois o objetivo é dar uma visão ampla sobre os diferentes processos seletivos.

Vou compartilhar todos os recursos que eu utilizo quando estou me preparando.

Como vocês podem ver neste diagrama, todas as etapas são eliminatórias.

Sim, são muitos pontos que são sem volta.

Por isso, é importante estar bem preparado para cada uma delas.

Vou deixar algumas dicas específicas minhas também no final, que não encaixam em uma etapa específica. Baseadas em experiências anteriores.

Mais um último lembrete. Esse guia detalha todas as etapas que eu já fiz e tenho experiência. Porém, certamente existem empresas que possuem processos diferentes.

Inclusive, se você participou de um, peço que comente neste artigo! E assim podemos ter mais conhecimento difundido para todos também.

## 1. Conversa inicial com recrutador

Durante essa conversa, o recrutador irá te contar um pouco mais sobre a posição e o processo seletivo.

Se for um vaga de dev na gringa, sua comunicação já começa a ser avaliada nessa primeira conversa.

Ler um pouco sobre a empresa também é algo que faz maravilhas. Procure entender a situação que a empresa está. Se estão buscando crescimento. Diminuindo os gastos. Quais as últimas iniciativas que trabalharam.

Mostre um interesse genuíno.

Tenha algumas perguntas preparadas.

No final da entrevista, o recrutador irá perguntar se tem algo que você queira saber.

É uma ótima oportunidade de fazer perguntas de assuntos pertinentes para a sua posição.

Alguns exemplos:

- Você pode me contar um pouco mais sobre a cultura da empresa?

- Existem oportunidades para participar de conferências ou ter acesso à material educativo?

- Como está a diversidade na empresa?

- Tem algo específico que preciso me preparar para a próxima etapa?

Perguntar mostra que você tem um interesse genuíno na empresa. E também ajuda você a determinar se a empresa se encaixa naquilo que você está buscando.

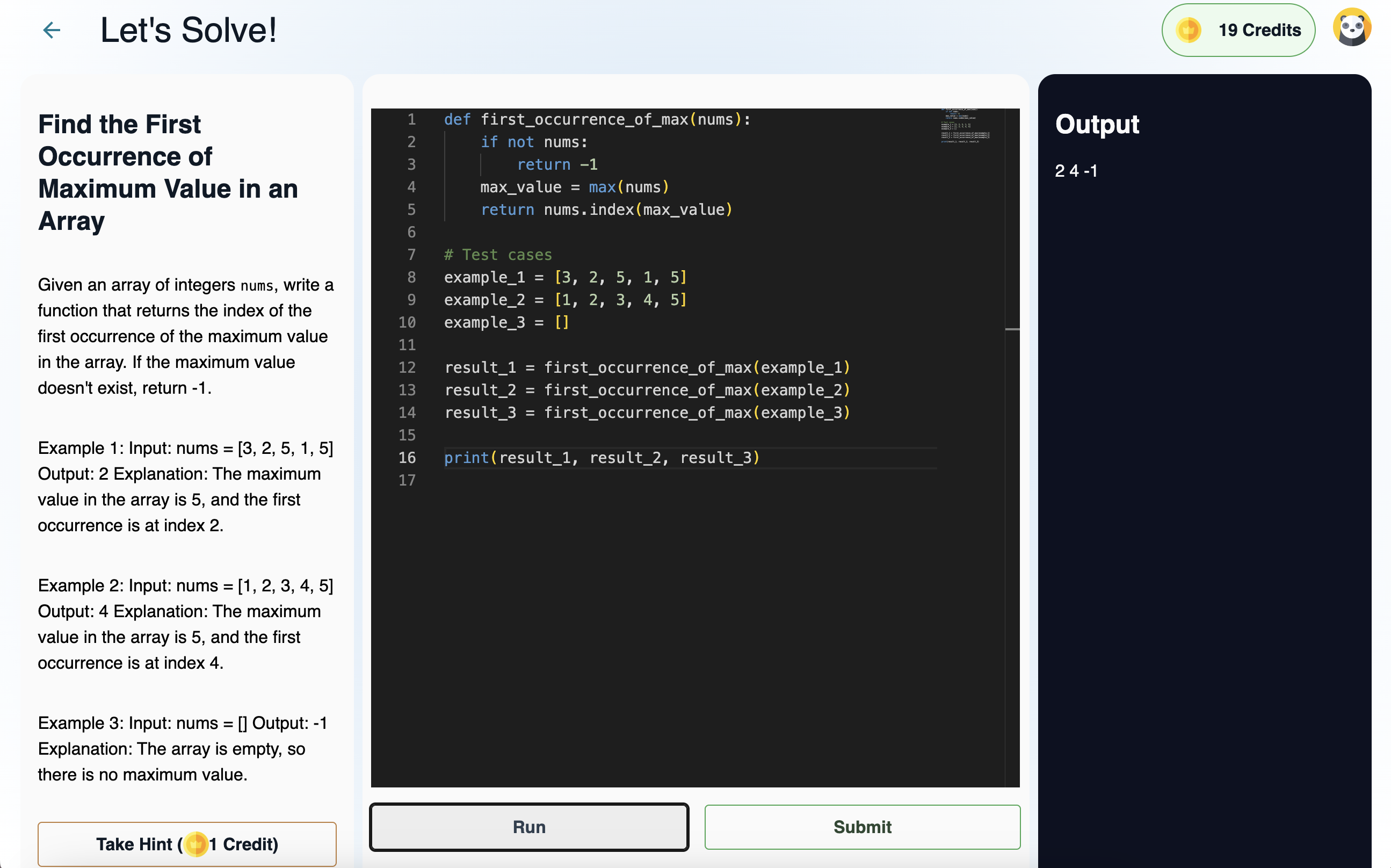

## 2. Entrevista técnica inicial ou _Phone Screen_

É uma conversa técnica, com duração de 45min até 1 hora.

Nessa entrevista, você irá fazer _pair_ com algum desenvolvedor.

Existe a possibilidade de você receber um problema grande, que dure a entrevista inteira. Ou até três perguntas menores.

Se você estiver aplicando para uma vaga de front, você deve focar nos domínios de HTML, CSS e JavaScript.

Também é possível ser um problema de algoritmos e estruturas de dados.

Recursos para aprofundar:

1. [GreatFrontEnd](https://devnagringa.substack.com/p/%5Bhttps://greatfrontend.com/%5D(https://greatfrontend.com/)): para entrevistas de vagas em front.

2. [NeetCode](https://devnagringa.substack.com/p/%5Bhttps://neetcode.io/%5D(https://neetcode.io/)): para algoritmos e estruturas de dados.

3. [LeetCode](https://devnagringa.substack.com/p/%5Bhttps://leetcode.com/%5D(https://leetcode.com/)): para problemas específicos das empresas que você está aplicando.

4. Livro: Cracking the Coding Interview por Gayle Laakmann McDowell.

## 3. Teste prático ou _Take Home Exercise_

Esse é o teste técnico que você faz em casa.

A empresa vai te dar um prazo e te enviar todos os requisitos do projeto.

Neste tipo de projeto, existem duas opções:

1. A empresa o pede com uma stack específica.

2. Você tem a liberdade de escolher a stack que preferir.

As dicas dependem um pouco do tipo de teste.

Caso seja uma stack específica, tenha certeza de estar atualizado com as práticas de mercado.

Por exemplo, se for uma aplicação React, opte por usar Nextjs ou Vite como o framework/ferramenta de _build_. TypeScript também é comum. Para testes, eu usaria Vitest/Jest, React Testing Library e Playwright.

Se você tiver a liberdade de escolha, faça escolhas sensatas. Use a ferramenta apropriada para o trabalho.

Por exemplo: se é um aplicação de AI/dados, Python é uma boa escolha. Para frontend web, o ecossistema JavaScript é o mais usado. Backend? Python, Node, Ruby, Go, várias opções.

O importante é que você saiba justificar todas as suas escolhas.

Algumas dicas de como se destacar nesta etapa:

1. Clarifique os requisitos e quaisquer dúvidas que você possa ter. Depois de ler a especificação, pergunte se tiver dúvidas. Não deixe a dúvida ficar para depois da entrega.

2. Dedique um tempo a documentação. Como rodar o seu app? Ele está hospedado em alguma infraestrutura? Que abordagens você usou para teste? Inclua esse tipo de informação no README.

3. Faça diagramas com a sua arquitetura. Seja da informação, dos fluxos de usuário, ou talvez do seu modelo de dados. Use o que precisar para que fique claro qual foi sua abordagem.

4. Se tiver tempo, faça entregáveis extras que possam impressionar o revisor. Exemplos: um app de frontend? Faça animações que façam o usuário experimentar algum sentimento novo. De backend? Explique como você faria a observabilidade do sistema. Surpreenda as pessoas.

## 4. Entrevistas onsite / _Onsite Interviews_

A última etapa antes da oferta é o que chamamos como dev na gringa de _onsite interview_.

Pode ser em um dia só, ou dividir em mais dias. Em especial quando são muitas etapas.

Elas podem ser uma variação dos vários tipos de entrevistas que vamos falar abaixo.

Se você quiser saber quais serão as etapas, recomendo você perguntar ao recrutador.

Quanto mais senior você é, menos ênfase tem as etapas de programação. A entrevista comportamental e de _system design_ passam a ter maior relevância.

### Algoritmos e estruturas de dados

Uma das fundações da computação são algoritmos e estruturas de dados.

Sim, são problemas que muitas vezes não são utilizados no nosso dia a dia.

Mas existe, sim, um motivo pelo qual empresas perguntam esse tipo de problema.

É um conhecimento que já está presente há **décadas**. E que continua sendo relevante até hoje.

Sim, pode ser que no dia a dia você não interaja diretamente com elas.

Mas elas estão, sim, presentes nos sistemas que utilizamos.

Pense nas redes sociais, que são usadas por bilhões de usuários diariamente.

A maneira eficiente de guardar os dados sobre conexões entre pessoas é um clássico exemplo da utilização de grafos.

Agora, vamos supor que você tenha um sistema distribuído. E você quer fazer o balanceamento de carga entre os vários nós do seu sistema. Quais algoritmos e estruturas vem ao caso aqui? Filas, _round-robin_ e _least-connections_ me vem a cabeça.

Então, pode ser que você não use essas estruturas no seu dia a dia.

Mas o conhecimento que elas trazem é algo que superou o teste do tempo.

Com isso a parte, vamos falar das dicas.

Problemas de algoritmos e estruturas de dados são sobre o reconhecimento de padrões.

Esses padrões vão ficam mais claros conforme você resolve mais problemas.

Então, quando você ver um problema novo, tente pensar em quais padrões podem ser aplicados a ele.

Vamos supor que você precise encontrar um caminho em algum tipo de rede ou grafo. Provavelmente será algum problema que será usado BFS ou DFS.

Ou que talvez você precise computar alguma _subarray_ ou _substring_. O padrão de _sliding window_ deve ser útil.

Lembre-se também de comunicar o seu raciocínio. Se tiver alguma dúvida, pergunte.

Também escreva alguns testes de caso que você possa executar no final do seu código. Procure pensar em potenciais _edge cases_ que podem vir a acontecer.

Recursos extras:

1. [NeetCode](https://devnagringa.substack.com/p/%5Bhttps://neetcode.io/%5D(https://neetcode.io/)): para algoritmos e estruturas de dados.

2. [LeetCode](https://devnagringa.substack.com/p/%5Bhttps://leetcode.com/%5D(https://leetcode.com/)): para problemas específicos das empresas que você está aplicando.

3. Livro: Cracking the Coding Interview por Gayle Laakmann McDowell.

Os recursos daqui são os mesmos que já falamos na entrevista técnica.

### _Pair Programming_

É um tipo de entrevista que tenta simular como você trabalharia com alguém no dia a dia do trabalho.

A lição mais importante aqui é a comunicação. Mostre que você é alguém que se preocupa com o código que está escrevendo. Em particular sobre como a pessoa que irá ler ele depois.

Lembre-se que código é muito mais lido do que ele é escrito.

Eu sinto que eu repito isso em basicamente todas as entrevistas.

Mas, sério, a comunicação aqui é muito importante. Assim como em todas as outras etapas. 😅

Especialmente se for uma vaga de dev na gringa. Tudo fica um pouco mais difícil quando você se comunica com uma língua diferente.

Por isso, a minha dica é respirar fundo sempre que você estiver se sentindo confuso.

Diga que você precisa pensar um pouco, se precisar. Mas, se possível, pense alto. Deixe que o seu entrevistador entenda o seu raciocínio.

Tome cuidado para não falar muito rápido. Essa é uma dificuldade minha até hoje, que venho tentado melhorar.

Mas, às vezes, quando fico nervoso, começo a acelerar e falar rápido. E, devido ao meu sotaque, pode ser que meu inglês fique difícil de entender.

Por isso, recomendo sempre estar atento a sua respiração. De modo que você não esteja correndo com o que você fala.

Recursos que podem ajudar:

1. Faça _side projects_. Se você gostar de programar no seu tempo livre, acho que essa é uma das melhores maneiras de aprender.

2. [GreatFrontEnd](https://devnagringa.substack.com/p/%5Bhttps://greatfrontend.com/%5D(https://greatfrontend.com/)): mencionando o GFE de novo. Acho que é uma ótima plataforma para praticar problemas de front.

3. Pratique com um amigo! Se tiver alguém que está praticando para entrevistas também, acho que é uma ótima lição.

### Projeto de Sistema / _System Design_

Essas entrevistas são as minhas favoritas.

É uma etapa que é altamente relevante pra qualquer engenheiro de software. Dev na gringa ou no Brasil mesmo.

Mostra a sua capacidade de projetar um sistema. Analisar quais serão o seu gargalo. Avaliar diferentes arquiteturas para se resolver um problema.

Eu gosto de pensar nessa entrevista como uma jornada.

Uma jornada onde o seu objetivo final é chegar a uma solução. Que será feita de maneira colaborativa com o seu entrevistador.

Aqui muitas vezes não existe uma resposta correta. Uma das principais razões pelo qual desenvolver software é difícil é porque não existe uma solução que resolva tudo.

É tudo sobre os _trade-offs_.

Para qualquer problema técnico complexo, sempre haverão diversas soluções.

O que é importante é que você saiba discutir as diferentes abordagens possíveis.

Tenha certeza de clarificar todos os requisitos do projeto. Funcionais e não-funcionais.

Trabalhe com o seu entrevistador para entender o escopo do projeto. Essa é uma entrevista com tempo limitado.

Muitas vezes o seu entrevistador quer ver o seu foco em uma parte específica do sistema.

Existem cinco princípios para um projeto de software que você deve estar atento:

1. Robustez

2. Escalabilidade

3. Performance

4. Extensibilidade

5. Resiliência

Quando você faz um sistema, essas são as principais áreas onde você pode encontrar problemas. Veja como o seu design se comporta em cada uma delas.

Recursos para consulta:

1. Livros: System Design Interview 1, 2 por Alex Xu, Sahn Lam. Designing Data-Intensive Applications por Martin Kleppmann.

2. Blogs de engenharia de empresas.

3. Canal do YouTube [ByteByteGo](https://devnagringa.substack.com/p/%5Bhttps://www.youtube.com/@ByteByteGo/videos%5D(https://www.youtube.com/@ByteByteGo/videos)).

### Entrevista de Valores e Comportamental

Junto com a entrevista de _system design_, essa também é uma das minhas etapas favoritas.

Pois é uma etapa onde você também tem a chance de entrevistar a empresa que você está pensando em trabalhar.

Venha com perguntas preparadas.

Em especial se for sua primeira entrevista como dev na gringa. Você pode ter dúvidas quanto a cultura, que talvez seja diferente de como é a sua empresa no Brasil.

Essa é uma oportunidade de você também se exibir. Falar sobre suas vitórias passadas. Sobre como você conseguiu influenciar seu time de uma maneira positiva.

Porém, algo que é importante lembrar é que raramente existem vitórias de **sucesso absoluto.** Muitas vezes, o sucesso é atingido com algumas falhas e deslizes no caminho.

Fale sobre estes deslizes. Deixe que o seu entrevistador veja que isso é um caso real. Infelizmente, não temos muitos conto de fadas no mundo da engenharia de software. Resolvemos problemas, mas também pensamos em o que poderíamos fazer diferente.

Traga essa perspectiva para o seu entrevistador. Deixe que ela veja sobre como você reflete sobre o seu passado.

Recursos:

1. Canal no Youtube [A Life Engineered](https://devnagringa.substack.com/p/%5Bhttps://www.youtube.com/@ALifeEngineered%5D(https://www.youtube.com/@ALifeEngineered)).

2. [Tech Interview Handbook](https://devnagringa.substack.com/p/%5Bhttps://www.techinterviewhandbook.org/behavioral-interview/%5D(https://www.techinterviewhandbook.org/behavioral-interview/)).

3. O método STAR. Use ele como um framework de como moldar as suas histórias.

## 5. Oferta

Parabéns por ter chegado até aqui! 🎉 🎉 🎉

Sim, a oferta é a etapa final! Mas ainda existem algumas coisas que podemos fazer aqui para deixar melhor.

Este artigo já está ficando grande, então vou falar a mensagem principal: **negocie o seu salário**.

Existem três mitos quanto à negociação:

1. "Minha oferta será cancelada se eu tentar negociar"

2. "Negociação faz com que eu pareça mesquinho"

3. "Alguém vai ganhar menos dinheiro se eu ganhar mais"

Nenhuma dessas frases é verdade.

Você não perde nada por negociar o seu salário.

Em algumas vezes, pode até ganhar. Por você mostrar o valor que você realmente acha que pode agregar a empresa.

[Aqui existe um bom resumo](https://www.techinterviewhandbook.org/negotiation-rules/) sobre as principais regras de negociação salarial.

## Considerações finais

Não leve o processo de entrevistas tão a sério.

É normal ser rejeitado. Não quer dizer que você é um engenheiro ruim. Ou que você nunca irá conseguir se tornar um dev na gringa.

Trate os processos seletivos como uma oportunidade de conversar com pessoas novas.

Se mostre interessado nos problemas delas. Compartilhe de experiências suas que você acha que podem ser relevantes.

Aprenda novas tecnologias no caminho. Descubra casos novos de engenharia que você não conhecia. Diferentes domínios tem diferentes desafios técnicos.

Tenha um interesse genuíno na empresa. **Ouça ativamente** o que seus entrevistadores te contam. O que os preocupa, e aquilo que os motiva.

Pratique a empatia. Se coloque no lugar deles, e procure entender o que eles estão procurando.

**Trate todo este processo como apenas uma** _**quest**_ **no enorme RPG que é a vida. Mesmo se ela der recompensas, ou falhar, você irá ganhar experiência da mesma forma.** | lucasheriques |

1,865,998 | Introduction to Time Series Analysis with Python | Introduction Time series analysis is a powerful tool for studying and predicting patterns... | 0 | 2024-05-27T00:32:36 | https://dev.to/kartikmehta8/introduction-to-time-series-analysis-with-python-15o1 | webdev, javascript, beginners, programming | ## Introduction

Time series analysis is a powerful tool for studying and predicting patterns in data that change over time. With the increasing availability of data and advancements in technology, it has become an essential skill for data analysts and researchers across various fields. Python, being a versatile and popular programming language, offers a wide range of tools and libraries for time series analysis. In this article, we will discuss the advantages, disadvantages, and features of conducting time series analysis with Python.

## Advantages

Python offers a user-friendly and efficient environment for time series analysis, as it allows for easy data manipulation, visualization, and modeling. Its vast array of libraries, such as Pandas, NumPy, and Matplotlib, provide powerful tools and functions for handling time series data. Moreover, Python's open-source community constantly develops new packages, making it a continuously improving platform. Additionally, Python's integration with other programming languages allows for seamless collaboration and integration of different tools and techniques.

## Disadvantages

As with any tool or technique, time series analysis with Python also has some limitations. The learning curve to master Python and its libraries may be challenging for beginners. Additionally, debugging errors and optimizing code can be time-consuming and require a deeper understanding of the programming language.

## Features

One of the significant features of using Python for time series analysis is its support for statistical modeling and forecasting. With libraries like StatsModels and Prophet, it is possible to analyze complex time series data, identify trends, and make accurate predictions. Furthermore, Python's machine learning libraries, such as scikit-learn and TensorFlow, enable the development of advanced forecasting models.

### Example of Time Series Analysis Using Python

```python

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

from statsmodels.tsa.arima_model import ARIMA

# Load dataset

data = pd.read_csv('time_series_data.csv', parse_dates=True, index_col='Date')

# Fit an ARIMA model

model = ARIMA(data['Value'], order=(1, 1, 1))

model_fit = model.fit(disp=0)

# Plot the results

plt.figure(figsize=(10, 7))

plt.plot(data['Value'], label='Original')

plt.plot(model_fit.fittedvalues, color='red', label='Fitted Values')

plt.title('Time Series Analysis using ARIMA')

plt.legend()

plt.show()

```

This example demonstrates how to perform time series analysis using the ARIMA model from the StatsModels library. It involves loading data, fitting the model, and visualizing the results.

## Conclusion

In conclusion, Python is a powerful and versatile language for conducting time series analysis. Its user-friendly environment, vast array of libraries, and support for advanced statistical modeling make it a popular choice among data analysts. While it has its limitations, the numerous advantages and continuous development of new packages make Python an efficient and valuable tool for studying time series data. | kartikmehta8 |

1,865,997 | Say Goodbye to Flaky Rendering & Animation Issues | Introduction User interface rendering and animations provide an application with an... | 0 | 2024-05-27T00:30:01 | https://dev.to/anurika_joy_c83cb5039b071/say-goodbye-to-flaky-rendering-animation-issues-h0 | softwareengineering, beginners, react, javascript |

## Introduction

User interface rendering and animations provide an application with an awesome and interactive user sensation. As amazing as this idea sounds, so is how vexing it can get when things go sideways. And one way wherein that circumstance could materialize is through trials that fluctuate.

How To Repair Flaky Trials in Rendering & Animation Maneuvers

User interface rendering and animations provide an application with an awesome and interactive user sensation. As amazing as this idea sounds, so is how vexing it can get when things go sideways. And one way wherein that circumstance could materialize is through trials that fluctuate.

There are definitely numerous motives behind flaky trials in rendering or cartoons; nevertheless, one of the prime reasons is timing.

For illustration, you might own a spring cartoon of a fastener showing. At present, trialing this might lead to flakiness, all due to the animation getting completed some nanoseconds too tardy as a result of a random backdrop managing process.

In this handbook, we’ll be utilizing Jest and React trialing libraries to portray real-world cases of trials for better comprehension. Nonetheless, you can tag along, regardless of your stack.

## Ground Origins

Flaky trials, in general, encompass rather a few communal causes. However, when getting precise about UI rendering and animations, here are the principal ground origins:

## Timing Predicaments

Animations by default often arrive with setTimeout delays or transition-duration attributes. These timings are at times what result in assertions flunking, leading to flakiness.

Take a glance at this trial for a component with an animation where its breadth gets enlarged with the tap of a fastener:

```

test("Box breadth increments", () => {

render();

const box = screen.getByTestId("box");

assume(box).toHaveStyle({ width: "70px" });

fireEvent.click(screen.getByRole("button"));

assume(box).toHaveStyle({ width: "100px" });

});

```

The catch right here is that after tapping the key to escalate the breadth, the assertion might execute before the animation of the dimension increment wraps up, subject to any timing occurrences.

Consequently, the prime predicament in rendering and animation trialing descends to guaranteeing that assertions are made after the animation has fully executed and not earlier. A nice tactic would be to wait for the animation to complete; in React trialing, this can be accomplished utilizing the use of waitFor.

## Environmental Dependencies

The system resources come into play in this instance. In a form, trials devised for rendering intricate animations can be contingent on system resources like CPU or memory.

At present, this won’t be an issue for machines that are adept. Nonetheless, if the machine has restricted resources, animation rendering could stumble and be less velvety, thereby initiating random trial failings.

Another illustration of environmental dependencies can be network connectivity. Presume you own an animation that hinges on retrieving external assets; this solo introduces sporadic factors as network oscillations can sway the animation behavior. A technique to mend this would be with the use of mocks.

## Libraries Dependencies

Oftentimes, animations employed are from libraries, as it is much quicker and more effective than authoring the code from scratch.

In React for instance, there are stacks of animation libraries like Framer Motion, React Spring, etc. Nevertheless, these libraries could prompt flaky trials when:

* the version of the third-party library and the ongoing version of the technology stack employed are not harmonious. This case is sometimes tacit, as blunders might not be exhibited, just that the tests outcomes appear to wobble.

* the animation libraries employed own dependencies that intercede with one another.

* the animation libraries contain interior bugs that haven’t been patched by the library developers which only materialize during trialing.

* developers use animation libraries in a technique it wasn’t purposed for. Remarkably enough, the library might accomplish the duty as anticipated but since it was employed inaccurately, trialing can furnish unpredictable outcomes.

##Rectifying Flaky Trials in Rendering & Animation

At present, to make this handbook more intricate and easier to grasp, we’ll present four real-world code cases and depict the incorrect way precipitating flaky trials and the correct way to remedy them.

## Rendering of a Newsletter Prompt

In this instance, this component renders a simple form with an email input and a subscribe button. When submitted, it logs the email and resets the state.

```

function NewsletterPrompt() {

const [email, setEmail] = useState("");

const [subscribed, setSubscribed] = useState(false);

const handleSubmit = (e) => {

e.preventDefault();

setSubscribed(true);

};

return (

{!subscribed ? (

<h2>Subscribe to Bulletin</h2> setEmail(e.target.value)} /> Subscribe ) : (

Subscribed effectively

)}

); }

```

At present let’s script a trial for the component that’ll show how flakiness can transpire:

```

test("Bulletin southern renders and can be subscribed to", () => {

render();

const emailInput = screen.getByPlaceholderText("Email address...");

const subscribeButton = screen.getByText("Subscribe");

fireEvent.change(emailInput, { target: { value: "xyz@example.com" } });

fireEvent.click(subscribeButton);

assume(screen.getByText("Subscribed effectively")).toBeInTheDocument();

});

```

The predicament right here is that making assertions directly like this could run before the component even re-renders to show updates of the submitted email.

This trial is bound to be flaky because it relies on the component being rendered synchronously, which might not always be the case. As rendering can sometimes be asynchronous.

A mend for this trial would be something like this:

```

test("Bulletin southern renders and can be subscribed to", async () => {

render();

const emailInput = screen.getByPlaceholderText("Email address...");

const subscribeButton = screen.getByText("Subscribe");

fireEvent.change(emailInput, { target: { value: "xyz@example.com" } });

fireEvent.click(subscribeButton);

assume(

await screen.findByText("Subscribed effectively"),

).toBeInTheDocument();

});

```

In this mend, we employed `async/await` and the `screen.findByText `technique to anticipate and wait for the text content element to show up in the DOM, which would confirm that the component has forsooth re-rendered with the “Subscribed effectively” notification.

## An Animated Key

Let’s presume you own a fastener that animates its color from orange to blue when clicked and reverts to orange when clicked again.

```

export nonremovable function AnimatedButton() {

const [isAnimated, setIsAnimated] = useState(false);

const handleClick = () => {

setIsAnimated(!isAnimated);

};

const buttonStyle = {

dimension: "100px",

height: "50px",

backdropColor: isAnimated ? "blue" : "orange",

transition: "backdropColor 0.5s ease",

};

return (

Analyze

);

}

```

Now here’s a frequent but incorrect way to script the trial for this button color change, as it could evince flaky actions:

```

import { render, fireEvent } from "@testing-library/react";

import AnimatedButton from "./AnimatedButton";

test("background color changes", () => {

render();

const button = screen.getByRole("button", { name: "Animate" });

fireEvent.click(button);

assume(button).toHaveStyle({ backdropColor: "blue" });

fireEvent.click(button);

assume(button).toHaveStyle({ backdropColor: "orange" });

});

```

This trial appears truly straightforward; it renders the button, clicks it, and then asserts the awaited color changes. However, there’s a tad of a lax spot, which is where flakiness steps in.

The reason this trial might at times evince flaky outcomes is that after each click event, it assumes that the animation finishes immediately, as we aren’t assessing the timing of the animation before asserting the status of the button’s background color.

As an alternative, this would be the right way:

```

test("background color changes", async () => {

render();

const button = screen.getByRole("button", { name: "Animate" });

fireEvent.click(button);

await waitFor(() => assume(button).toHaveStyle({ backdropColor: "blue" }));

fireEvent.click(button);

await waitFor(() =>

assume(button).toHaveStyle({ backdropColor: "orange" }),

);

});

```

With this mere change of utilizing waitFor, we can be certain that the trial would permit the animation to be finalized before asserting the backdrop color of the button. This ensures that the trial assertion is in sync with the UI’s rendering update.

Rendering of a Basic Panorama

For this exemplar, let’s utilize a simple panorama component that when a fastener is clicked, the visibility of the panorama alters:

```

function AnimatedWindow() {

const [isApparent, setIsApparent] = useState(false);

const toggleVisibility = () => setIsApparent(!isApparent);

return (

Toggle Panorama {isApparent && Animated Panorama}

); }

```

Now as plain as this component is, trialing if it renders rightfully can grow into a hitch due to the animation.

Here is an incorrect way to script the trial:

```

test("Toggling animated panorama", () => {

render();

const toggleButton = screen.getByRole("button", { name: "Toggle Panorama" });

assume(screen.queryByTestId("animated-modal")).not.toBeInTheDocument();

fireEvent.click(toggleButton);

assume(screen.getByTestId("animated-modal")).toBeInTheDocument();

});

```

As we portrayed in the foregoing exemplar, this trial also asserts the presence of the panorama content straight away after the click event. Thus, due to the animations being asynchronous, this trial is bound to be a flaky one.

Here’s the right way:

```

test("Toggling animated panorama", async () => {

render();

const toggleButton = screen.getByRole("button", { name: "Toggle Panorama" });

assume(screen.queryByTestId("animated-modal")).not.toBeInTheDocument();

fireEvent.click(toggleButton);

await waitFor(() => {

assume(screen.queryByTestId("animated-modal")).toBeVisible();

});

fireEvent.click(toggleButton);

await waitFor(() => {

assume(screen.queryByTestId("animated-modal")).not.toBeInTheDocument();

});

});

```

In this mend, we employed waitFor to assert the panorama’s presence or absence visibility only after the animation has been finalized. This gives us the assurance that the trial is more dependable.

T

## actics to Minimize Flaky Trials in Rendering & Animation

So when scripting trials expressly for UI rendering and animations, here are some facets to look out for or what you should exploit rather to lessen the likelihood of flaky trials showing up:

Always scrutinize the trial logic scripted as it is one of the communal mistakes made as elucidated in the section above.

Network lags or fluctuations should be taken into account, and mock functions can be handy in fixing this.

When trialing on UI rendering, bypass utilizing DOM selectors like `.querySelector()` to fetch elements, rather employ the queries dispensed by the trialing library like getBy, queryBy, or findBy.

Assure that the version of the animation library employed is harmonious with the version of the tech stack employed.

Retain the rendering or animation trials in smaller units i.e. endeavor to only trial one component at a time. This is more capable and simpler to debug flakiness.

Comprehend when to utilize the apt assertion matchers, for instance when trialing animations, `toBeVisible()` is superior suited than `toBeInTheDocument()`.

Utilize waitFor for assertions and not for firing events.

You can utilize `jest.useFakeTimers()` as an alternative to waitFor for further control of trialing.

Some developers drop/disable animations when trialing, while it’s not advocated, it is an option.

## Conclusion

Flaky trials in rendering and animations can genuinely be intricate to manage with; but with a proper understanding of the communal causes exclusively timing complications, the flakiness of trials can be curtailed tremendously.

| anurika_joy_c83cb5039b071 |

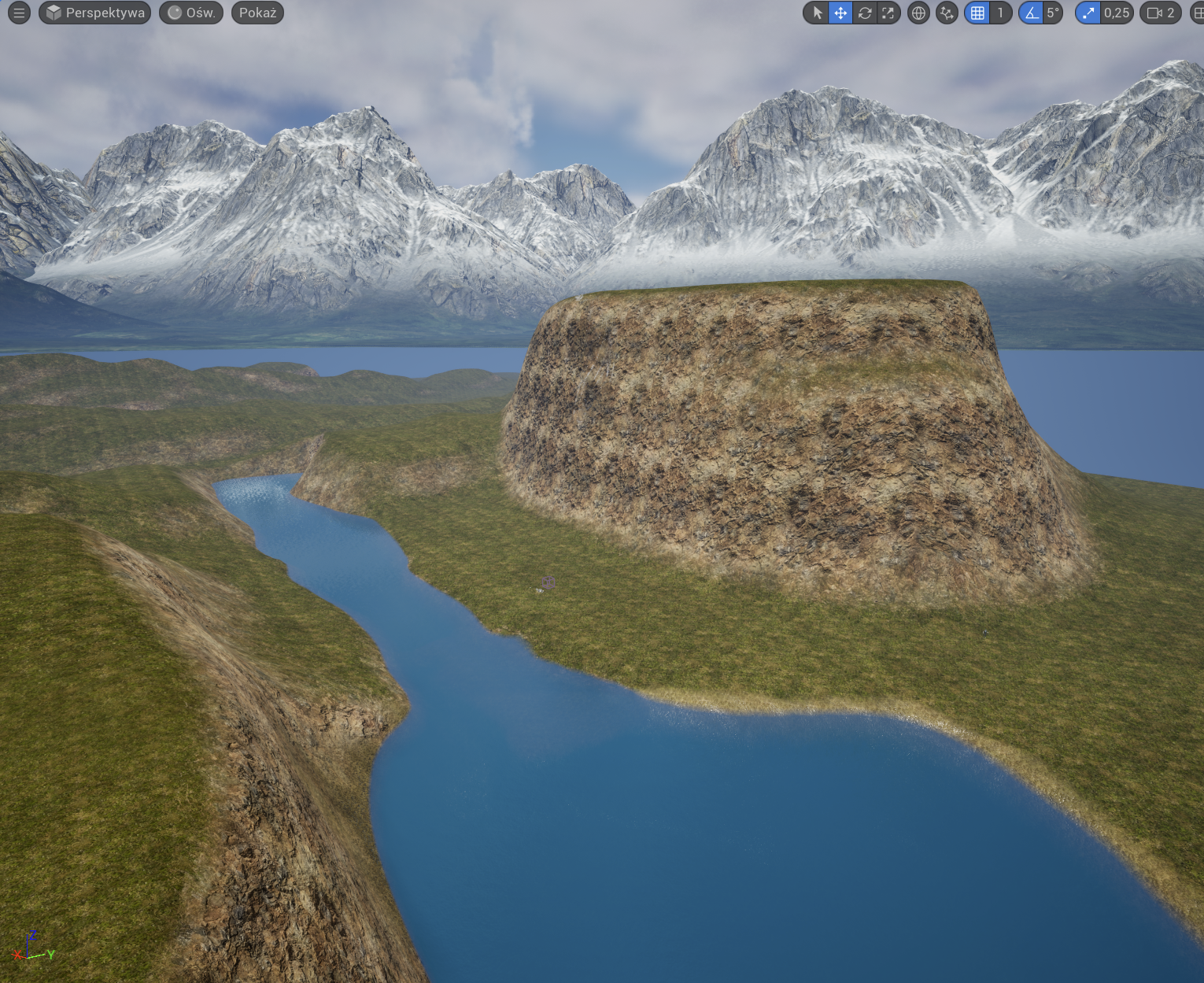

1,865,993 | [Game of Purpose] Day 8 | Today I learned about auto materials. I started designing my first level. | 27,434 | 2024-05-27T00:18:08 | https://dev.to/humberd/game-of-purpose-day-8-3bab | gamedev | Today I learned about auto materials.

I started designing my first level.

| humberd |

1,865,992 | My Pen on CodePen | Check out this Pen I made! | 0 | 2024-05-27T00:17:50 | https://dev.to/othmane_belalami_0183ff59/my-pen-on-codepen-4bko | codepen | Check out this Pen I made!

{% codepen https://codepen.io/Othmane-Belalami/pen/OJYRexx %} | othmane_belalami_0183ff59 |

1,860,737 | 10 Key Data Structures We Use Every Day | Data structures are like the building blocks for organizing information in our daily lives. While... | 0 | 2024-05-27T00:13:55 | https://dev.to/emmauche001/10-key-data-structures-we-use-every-day-2l42 | data, learning, datastructure | Data structures are like the building blocks for organizing information in our daily lives. While they might not be explicitly called out, these structures underlie many of the things we do. Here are 10 key data structures we use every day:

1. **Lists:** These are ordered collections of items, like a grocery list, a to-do list, or the songs in your music playlist. You can easily add, remove, or rearrange items on a list.

2. **Arrays:** Similar to lists, arrays hold a fixed number of items in a specific order. Imagine the buttons on a calculator or the channels on your TV – you access them by their position in the sequence.

3. **Stacks:** These follow a "Last In, First Out" (LIFO) principle. Think of a stack of plates – you can only add or remove plates from the top. This structure is used in things like browser history (you visit the most recently accessed page first) or when undoing actions on a computer program.

4. **Queues:** Unlike stacks, queues operate on a "First In, First Out" (FIFO) basis. Imagine a line at a coffee shop – the person who has been waiting the longest gets served first. Queues are used in waiting lists, task scheduling, and even traffic flow management (vehicles that enter the queue first exit first).

5. **Trees:** Hierarchical structures that mimic real-world trees. Imagine a family tree or an organizational chart. They represent relationships between items, with a root element at the top and branches (sub-elements) connecting to it.

6. **Graphs:** These represent connections between objects. Think of a social media network where users are connected to their friends, or a map where cities are connected by roads. Graphs help us visualize and analyze relationships between different entities.

7. **Hashes:** Used for fast retrieval of information. Imagine a phonebook – you look up a name (key) to find the corresponding phone number (value). Hash tables store data with unique keys for efficient access.

8. **Sets:** Collections of unique items, like the unique words in a document or the different types of fruits in a fruit basket. Sets ensure no duplicates exist within the collection.

9. **Associative Arrays (Dictionaries):** Similar to hash tables, these store key-value pairs but allow for more complex data types as values. Imagine a recipe book where the recipe name (key) is associated with a list of ingredients and instructions (value).

10. **Linked Lists:** These are linear data structures where elements (nodes) are not stored contiguously in memory. Each node holds data and a reference (link) to the next node in the sequence. They are useful for dynamic data (frequently changing size) like managing musical playlists or social media feeds.

Understanding these underlying data structures allows us to better appreciate the organization that underpins seemingly simple aspects of our daily lives.

Which data structure do you find most surprising in everyday use? Why? or Have you encountered any data structures in your hobbies or work that aren't on this list? Share your experiences!

| emmauche001 |

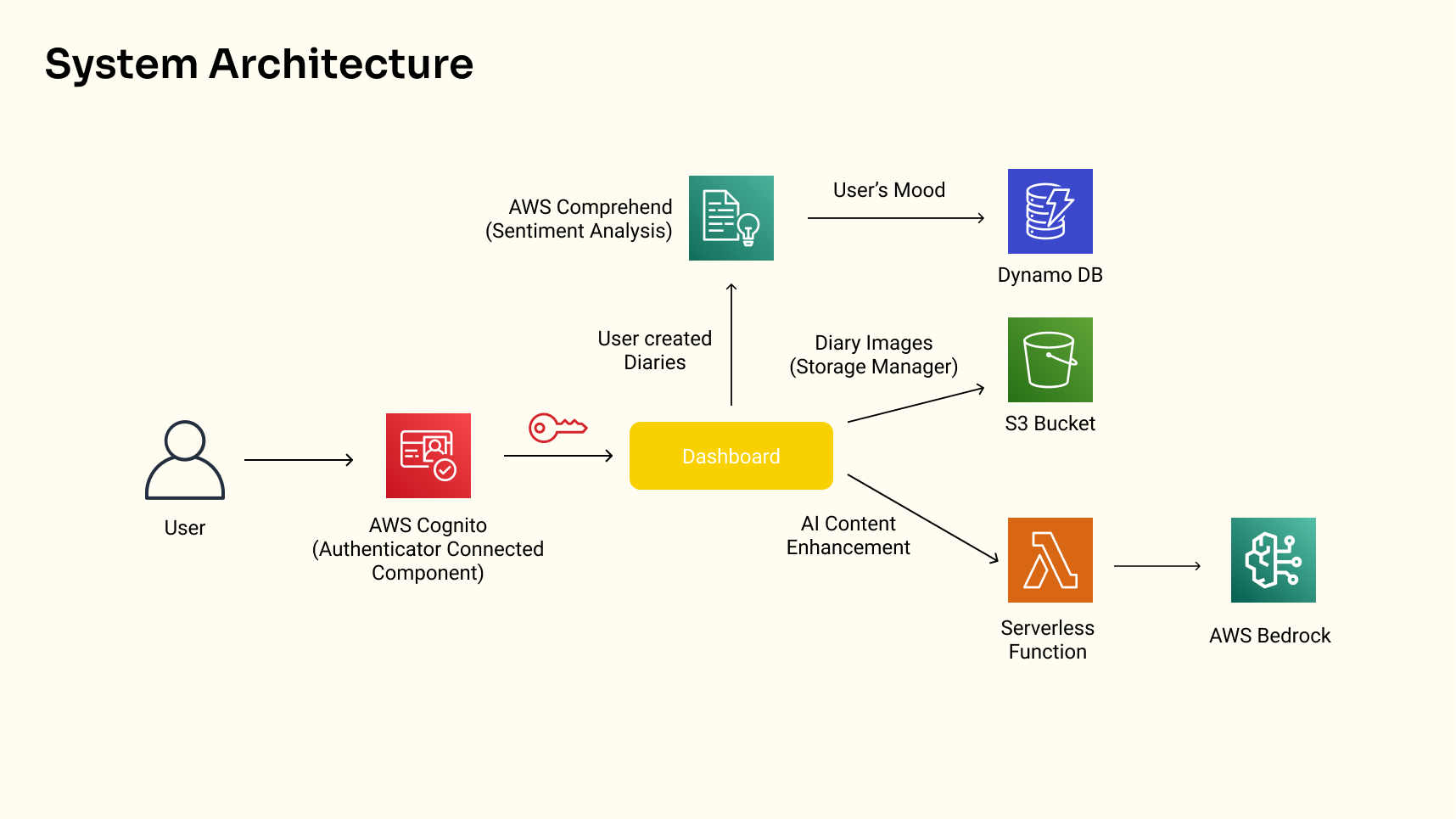

1,865,920 | FileSyncDrive | This is a submission for the The AWS Amplify Fullstack TypeScript Challenge What I... | 0 | 2024-05-27T00:00:47 | https://dev.to/godwinagedah/filesyncdrive-3adf | devchallenge, awschallenge, amplify, fullstack | 1.

*This is a submission for the [The AWS Amplify Fullstack TypeScript Challenge ](https://dev.to/challenges/awschallenge)*

## What I Built

<!-- Tell us what your app does! -->

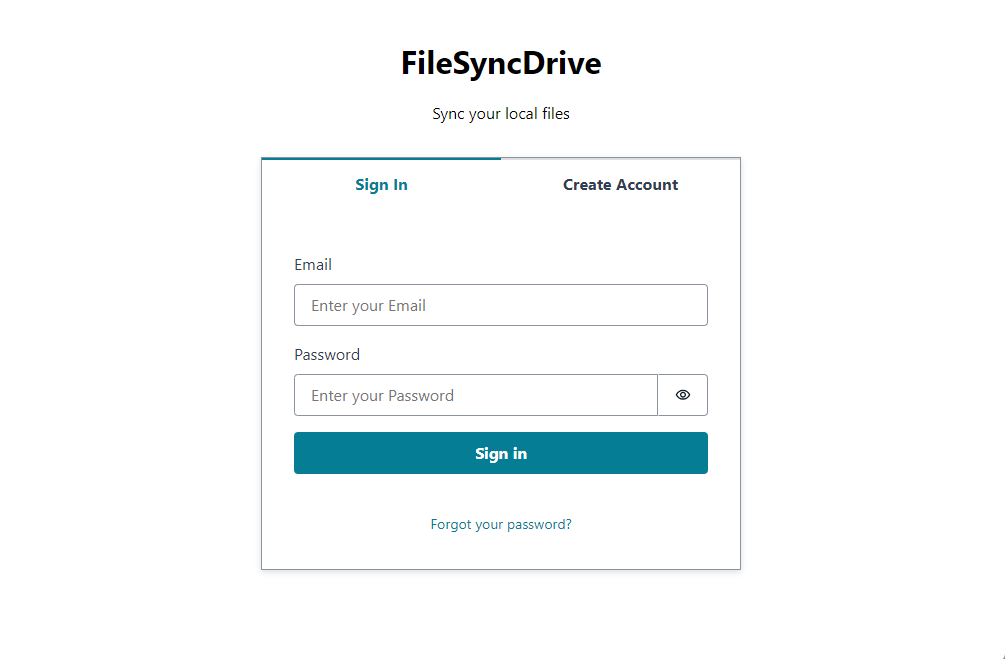

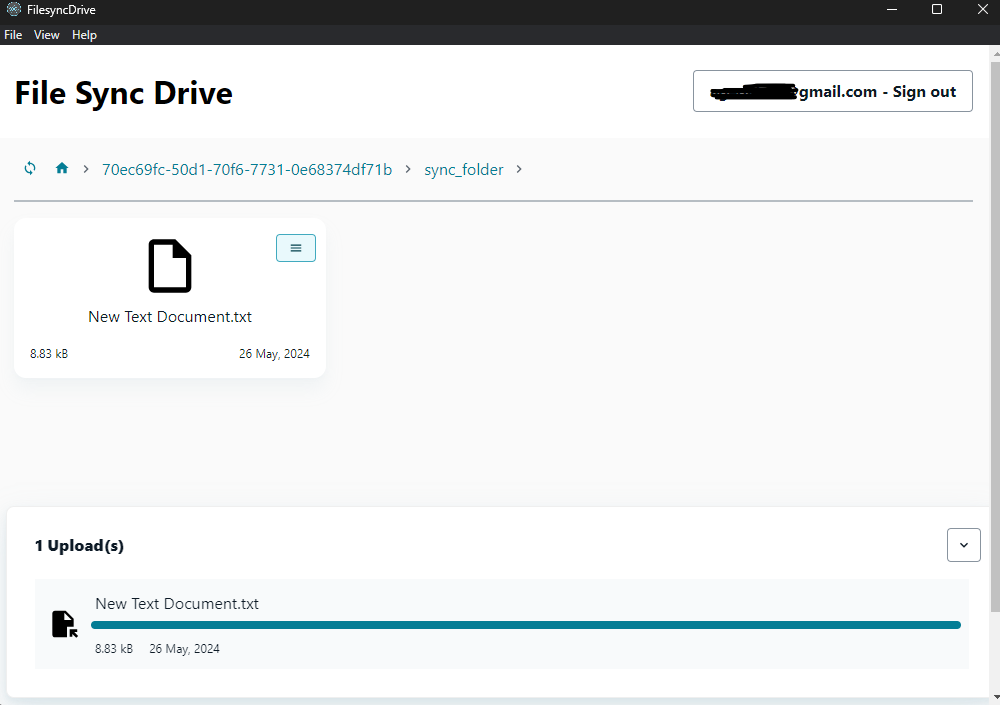

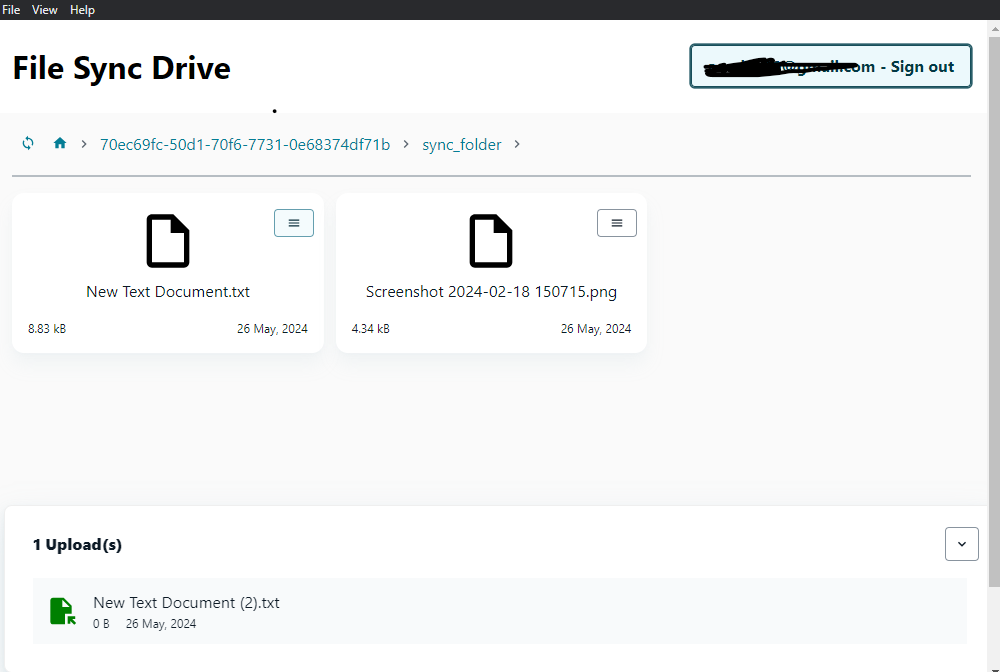

FileSyncDrive is an electron desktop app that listens for changes on a users local files and syncs to the cloud. it creates a folder "sync_folder" in the users home directory, any changes made to that folder will be reflected on the amplify storage.

## Demo

<!-- Share a link to your deployed solution on Amplify Hosting, and include some screenshots here. -->

Link to a shared file: https://main.d4c4zbq9x7suw.amplifyapp.com/?file=picture-submissions/70ec69fc-50d1-70f6-7731-0e68374df71b/sync_folder/New%20Text%20Document.txt

_i used the starter template for the shared file site, ran out of time_

[Desktop App Repo](https://github.com/Godwin9911/amplify_file_sync)

_*Building the .exe file was a challenge due to my limited hardware and time_

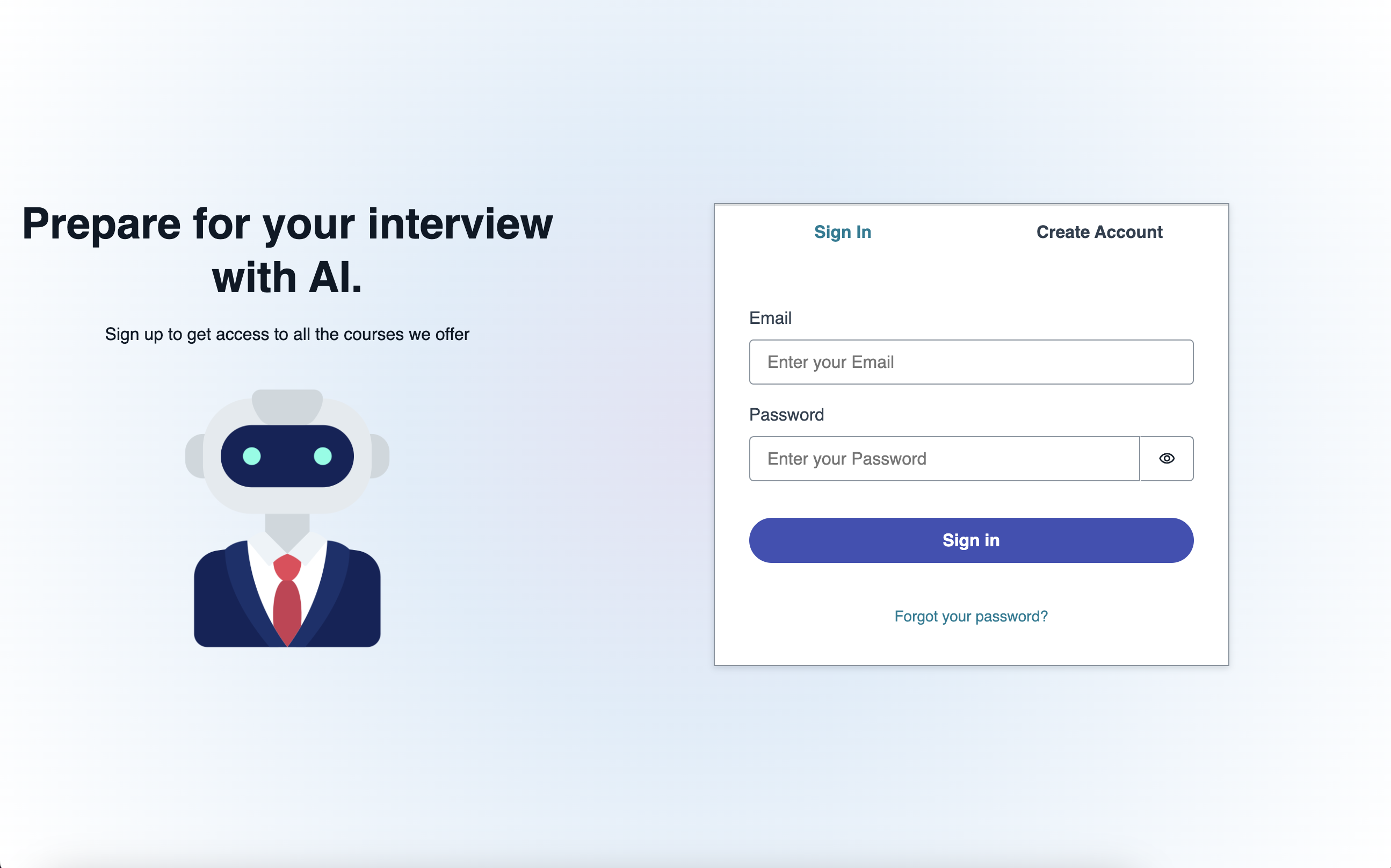

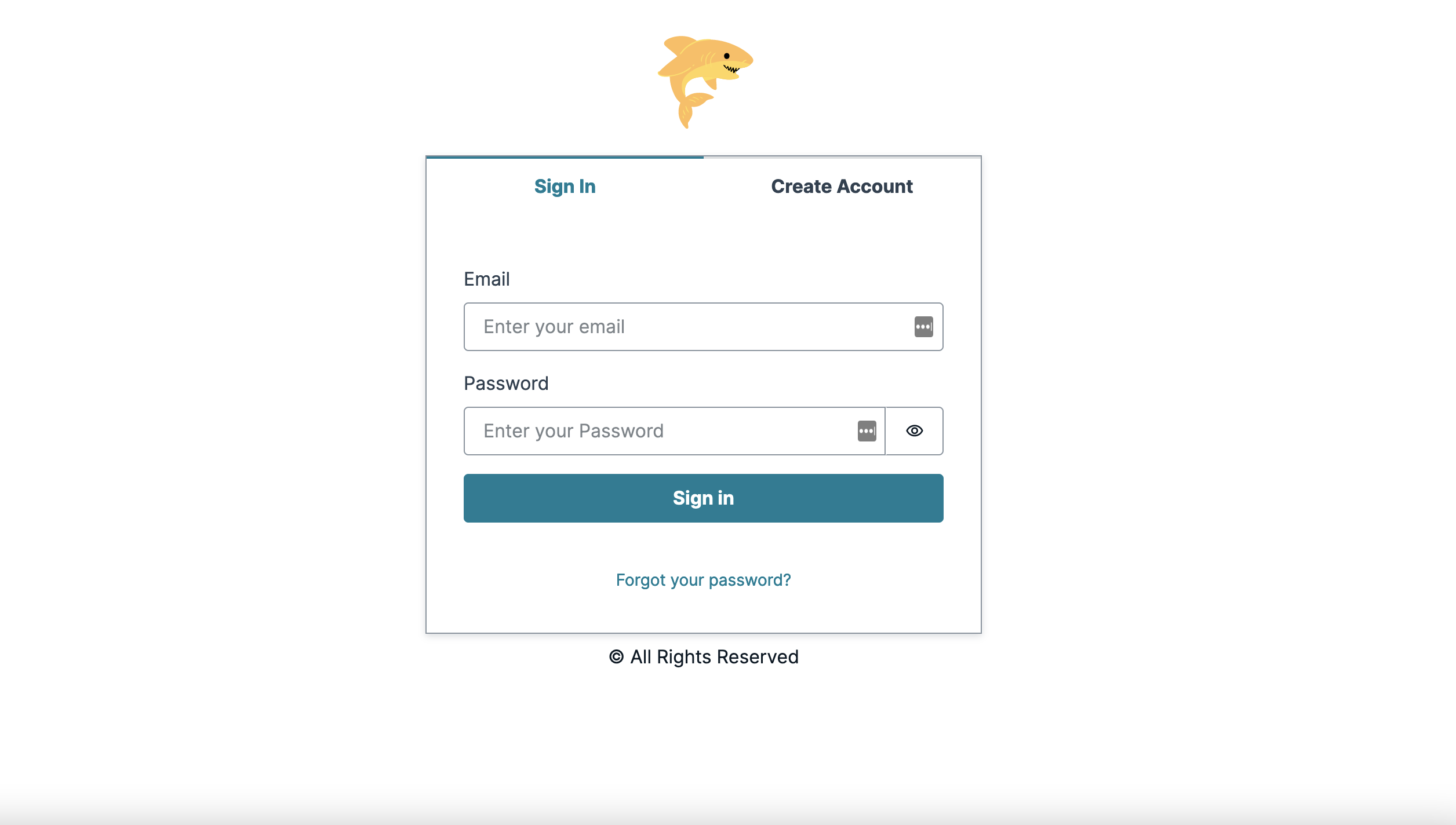

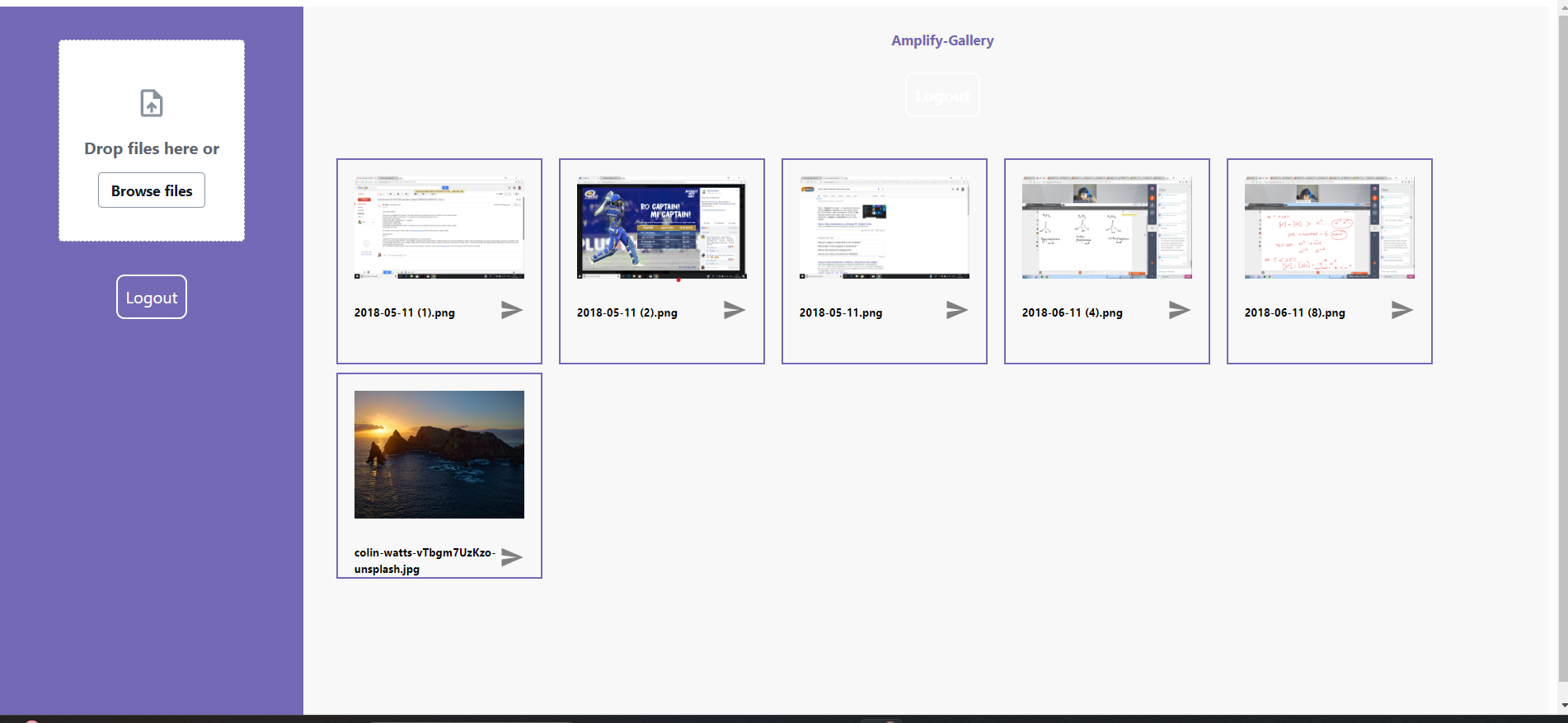

<figcaption>Login Amplify Authenticator Component</figcaption>

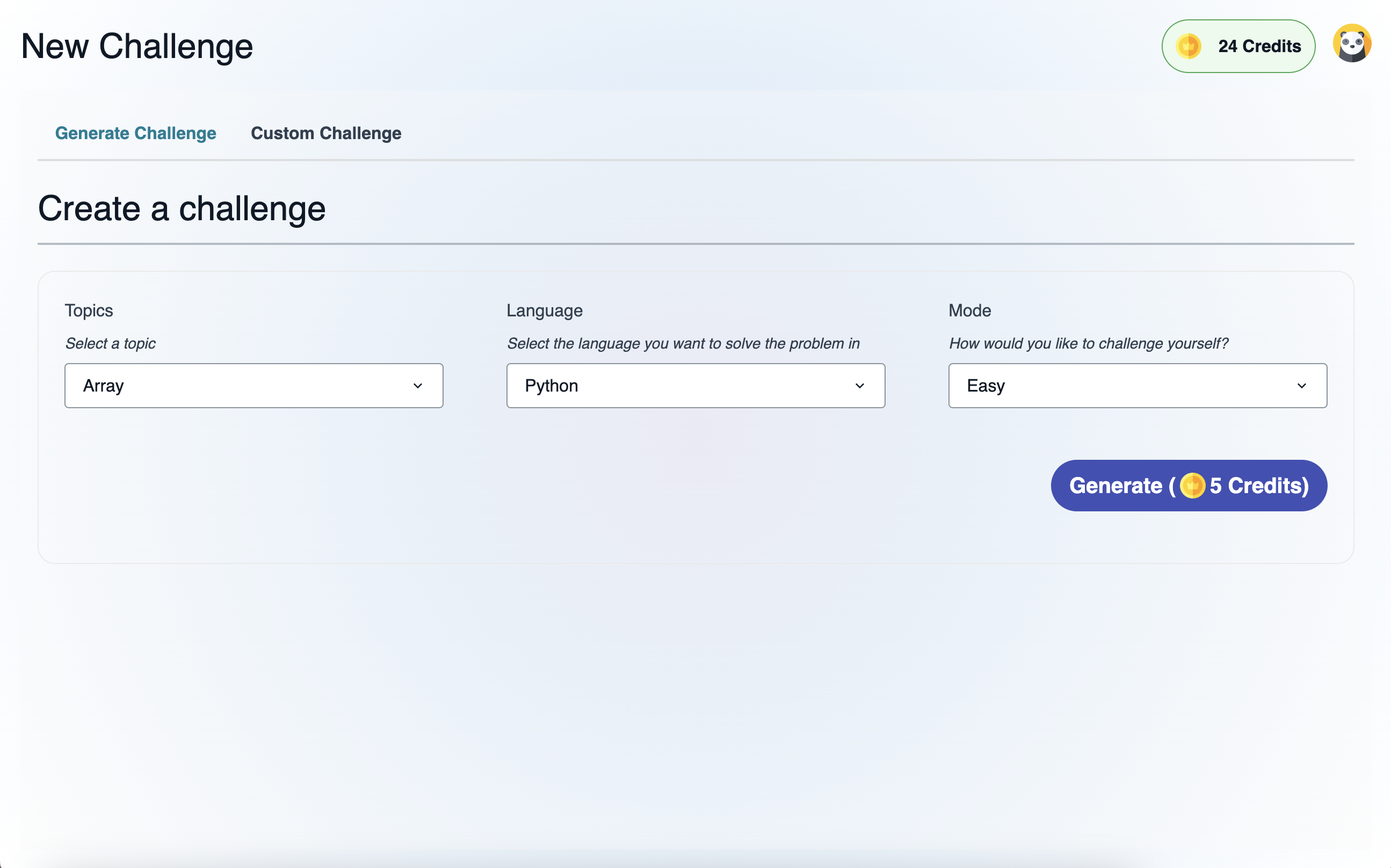

<figcaption>File Changes Synced, notice the time change.</figcaption>

<figcaption>Screenshot 1</figcaption>

<figcaption>Screenshot 2</figcaption>

<figcaption>Screenshot 3</figcaption>

<figcaption>File link can be shared for users to access via the web</figcaption>

Tested on Windows 11

## Journey

<!-- Tell us about your process, the services you incorporated, what you learned, anything you are particularly proud of, what you hop to do next, etc. . -->

**Connected Components and/or Feature Full**

<!-- Let us know if you developed UI using Amplify connected components for UX patterns, and/or if your project includes all four features: data, authentication, serverless functions, and file storage. -->

### Amplify UI Components

[Amplify UI Components](https://ui.docs.amplify.aws/react/components) was used to quickly scaffold our UI. it contains all the components you'll mostly need for a UI.

### Authentication

[Amplify Authenticator](https://ui.docs.amplify.aws/react/connected-components/authenticator) was used to setup our Auth flow. it was a straight forward setup with a single react Component.

### File Storage

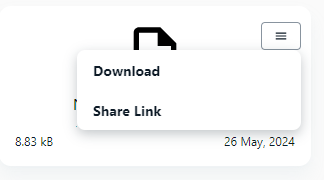

[Amplify Storage](https://docs.amplify.aws/react/build-a-backend/storage/) enabled us to instantly upload our modified files on local, also we can easily get our uploaded files from the storage as well as the download url.

In the future the storage space will be limited for the user, also social authentication will be added for easy sign up.

<!-- Team Submissions: Please pick one member to publish the submission and credit teammates by listing their DEV usernames directly in the body of the post. -->

<!-- Don't forget to add a cover image (if you want). -->

[Desktop App Repo](https://github.com/Godwin9911/amplify_file_sync)

_*Building the .exe file was a challenge due to my limited hardware and time_

[Repo for shared file on the web](https://github.com/Godwin9911/amplify-vite-react-template)

<!-- Thanks for participating! --> | godwinagedah |

1,867,814 | Claim-Check Pattern with AWS Message Processing Framework for .NET and Aspire | TL;DR Learn how to use AWS.Messaging by implementing Claim-Check pattern. The... | 0 | 2024-06-03T09:08:56 | https://nikiforovall.github.io/dotnet/aws/2024/05/27/aws-claim-check-dotnet.html | dotnet, aws, aspnetcore, architecture | ---

title: Claim-Check Pattern with AWS Message Processing Framework for .NET and Aspire

published: true

date: 2024-05-27 00:00:00 UTC

tags: dotnet, aws, aspnetcore, architecture

canonical_url: https://nikiforovall.github.io/dotnet/aws/2024/05/27/aws-claim-check-dotnet.html

---

## TL;DR

Learn how to use [AWS.Messaging](https://www.nuget.org/packages/AWS.Messaging/) by implementing Claim-Check pattern.

> The Claim-Check pattern allows workloads to transfer payloads without storing the payload in a messaging system. The pattern stores the payload in an external data store and uses a “claim check” to retrieve the payload. The claim check is a unique, obscure token or key. To retrieve the payload, applications need to present the claim-check token to the external data store.

Source code: [https://github.com/NikiforovAll/aws-claim-check-dotnet](https://github.com/NikiforovAll/aws-claim-check-dotnet)

- [TL;DR](#tldr)

- [Introduction](#introduction)

- [When to use Claim-Check pattern?](#when-to-use-claim-check-pattern)

- [What is AWS.Messaging?](#what-is-awsmessaging)

- [Implementation](#implementation)

- [Goal](#goal)

- [Code](#code)

- [File Upload via API](#file-upload-via-api)

- [File Processing via Worker](#file-processing-via-worker)

- [OpenTelemetry support](#opentelemetry-support)

- [Conclusion](#conclusion)

- [References](#references)

## Introduction

> ☝️The blog post will focus on code implementation and usage of `AWS.Messaging` and `Aspire` and not on the details of the Claim-Check pattern.For more details I highly recommend seeing [Azure/Architecture Center/Claim-Check pattern](https://learn.microsoft.com/en-us/azure/architecture/patterns/claim-check)

Traditional messaging systems are optimized to manage a high volume of small messages and often have restrictions on the message size they can handle. Large messages not only risk exceeding these limits but can also degrade the performance of the entire system when the messaging system stores them.

The solution to this problem is to use the Claim-Check pattern, and don’t send large messages to the messaging system. Instead, send the payload to an external data store and generate a claim-check token for that payload. The messaging system sends a message with the claim-check token to receiving applications so these applications can retrieve the payload from the data store. The messaging system never sees or stores the payload.

<center>

<img src="https://nikiforovall.github.io/assets/claim-check/claim-check-diagram.svg" width="70%" style="margin: 15px;">

</center>

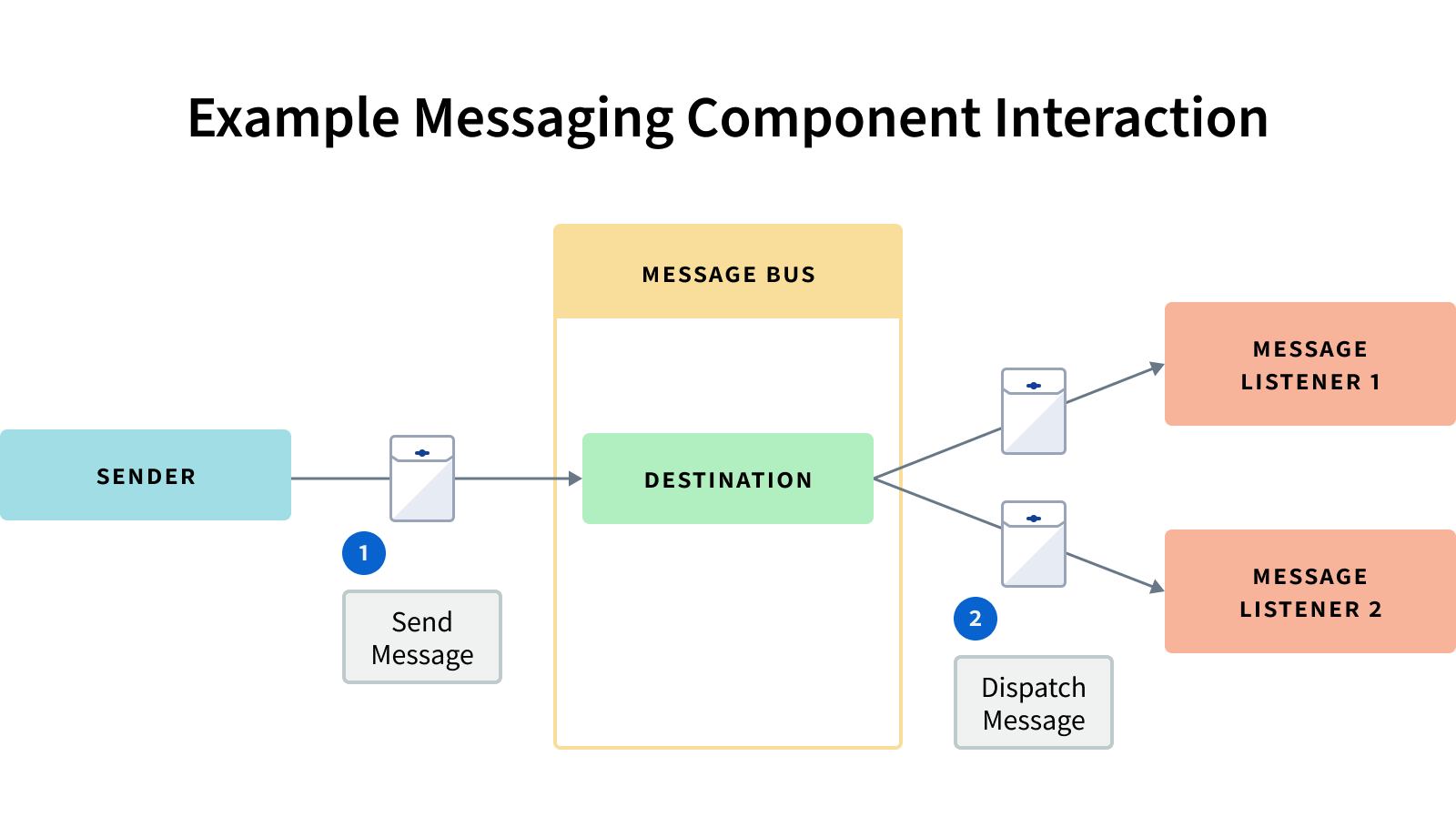

1. Payload

2. Save payload in data store.

3. Generate claim-check token and send message with claim-check token.

4. Receive message and read claim-check token.

5. Retrieve the payload.

6. Process the payload.

### When to use Claim-Check pattern?

The following scenarios are use cases for the Claim-Check pattern:

- Messaging system limitations: Use the Claim-Check pattern when message sizes surpass the limits of your messaging system. Offload the payload to external storage. Send only the message with its claim-check token to the messaging system.

- Messaging system performance: Use the Claim-Check pattern when large messages are straining the messaging system and degrading system performance.

For example, AWS SQS has a message size limit of 256 KiB. See [Amazon SQS message quotas](https://docs.aws.amazon.com/AWSSimpleQueueService/latest/SQSDeveloperGuide/quotas-messages.html) for more details.

## What is AWS.Messaging?

The _AWS Message Processing Framework for .NET_ is an AWS-native framework that simplifies the development of .NET message processing applications that use AWS services such as Amazon Simple Queue Service (SQS), Amazon Simple Notification Service (SNS), and Amazon EventBridge. The framework reduces the amount of boiler-plate code developers need to write, allowing you to focus on your business logic when publishing and consuming messages.

The Message Processing Framework supports the following activities and features:

- Sending messages to SQS and publishing events to SNS and EventBridge.

- Receiving and handling messages from SQS by using a long-running poller, which is typically used in background services. This includes managing the visibility timeout while a message is being handled to prevent other clients from processing it.

- Handling messages in AWS Lambda functions.

- FIFO (first-in-first-out) SQS queues and SNS topics.