id | title | description | collection_id | published_timestamp | canonical_url | tag_list | body_markdown | user_username |

|---|---|---|---|---|---|---|---|---|

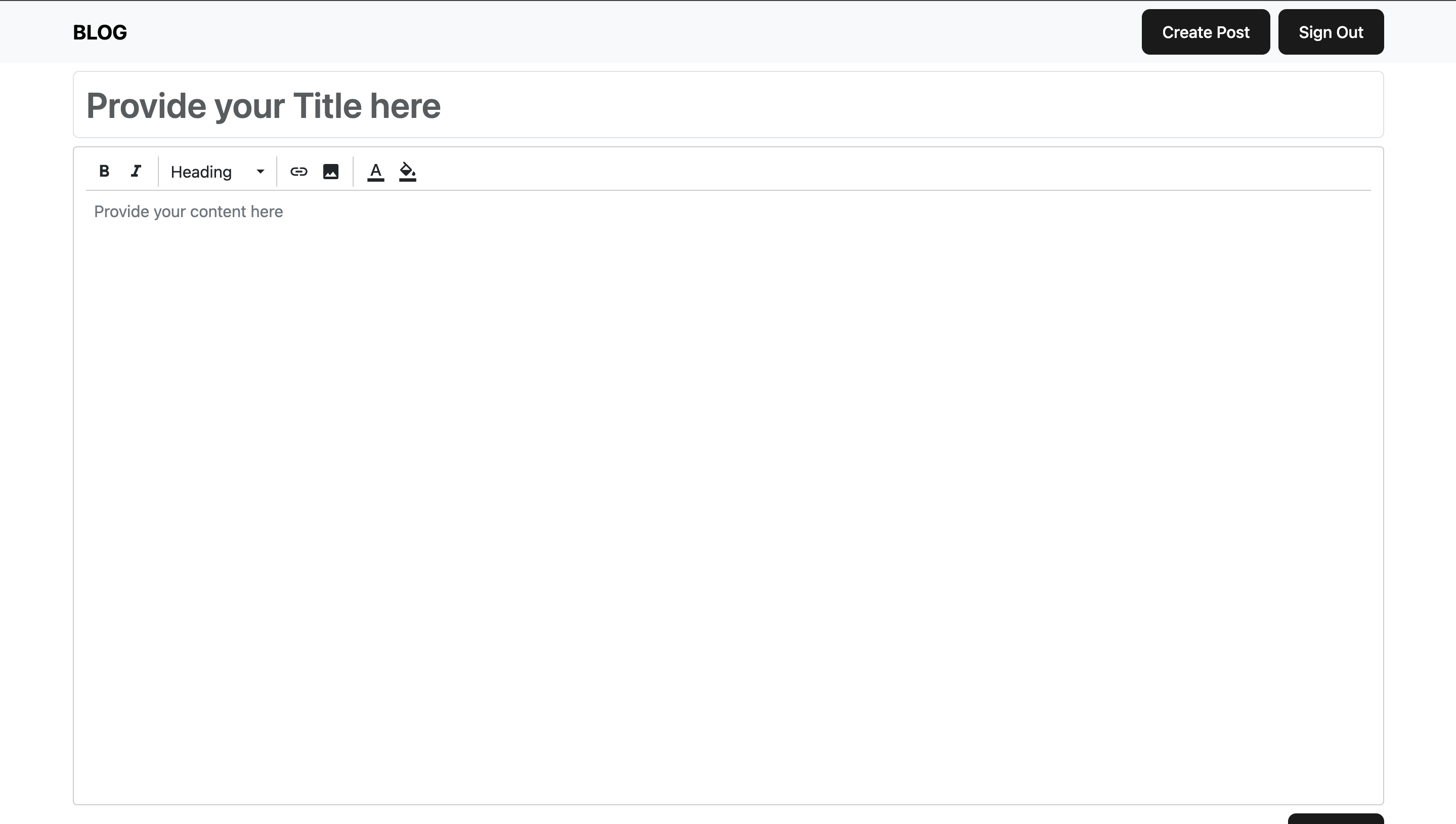

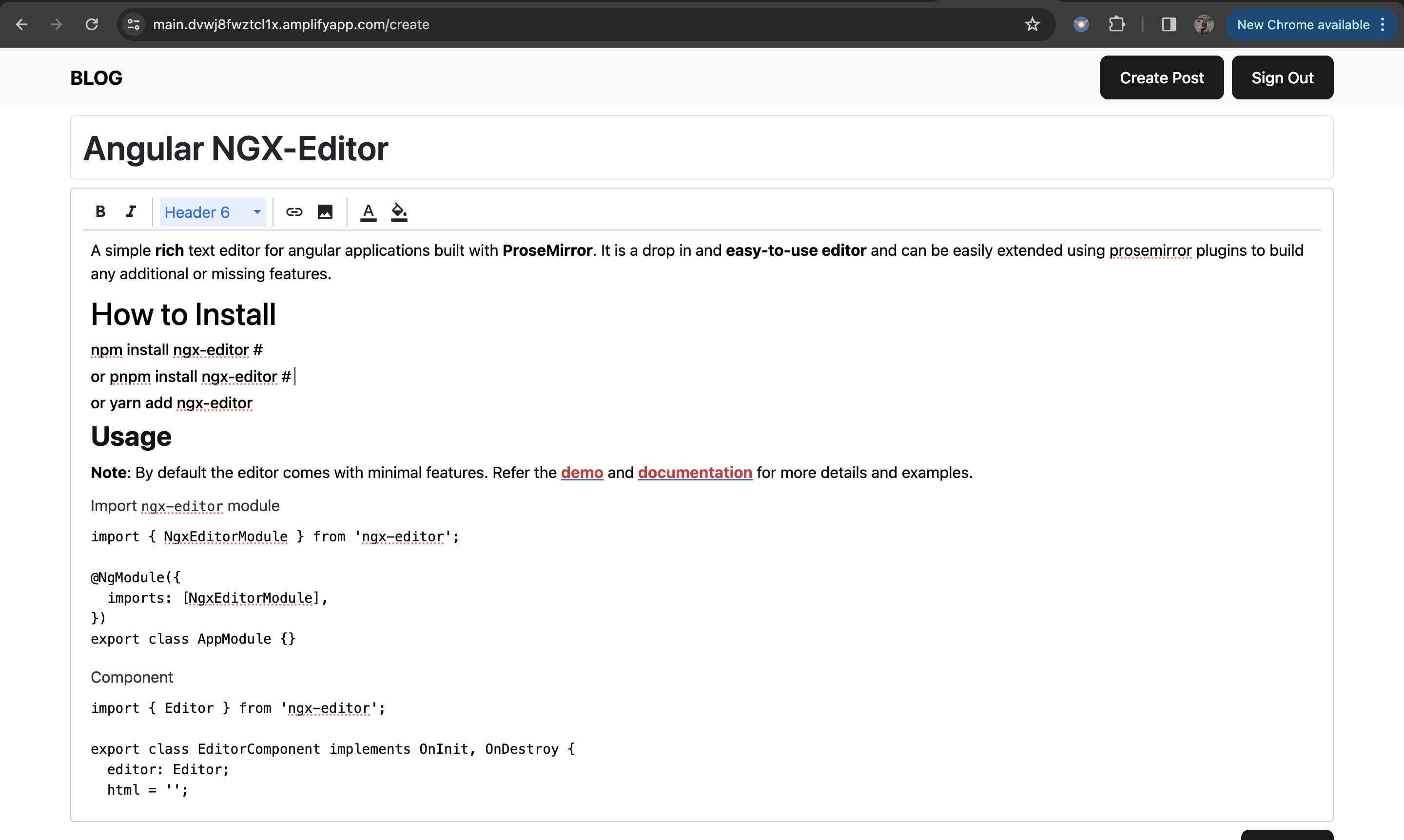

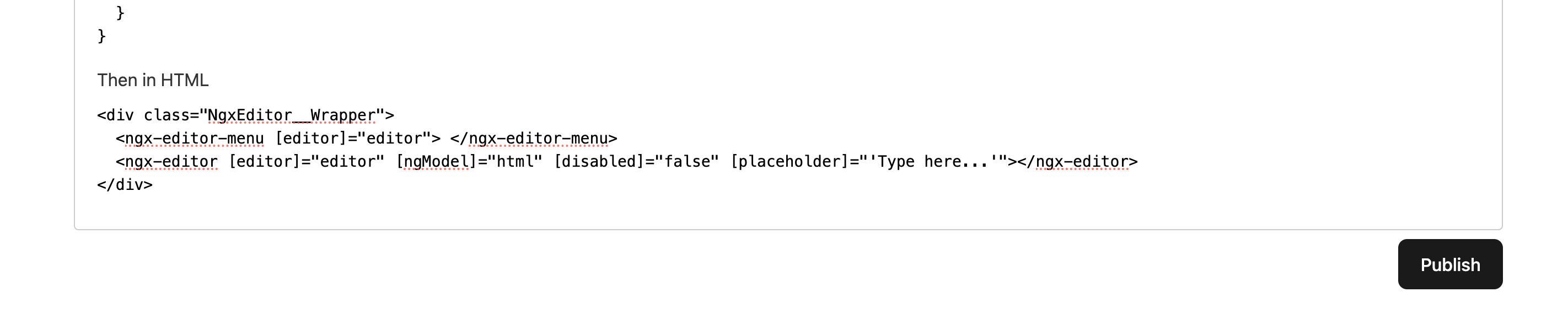

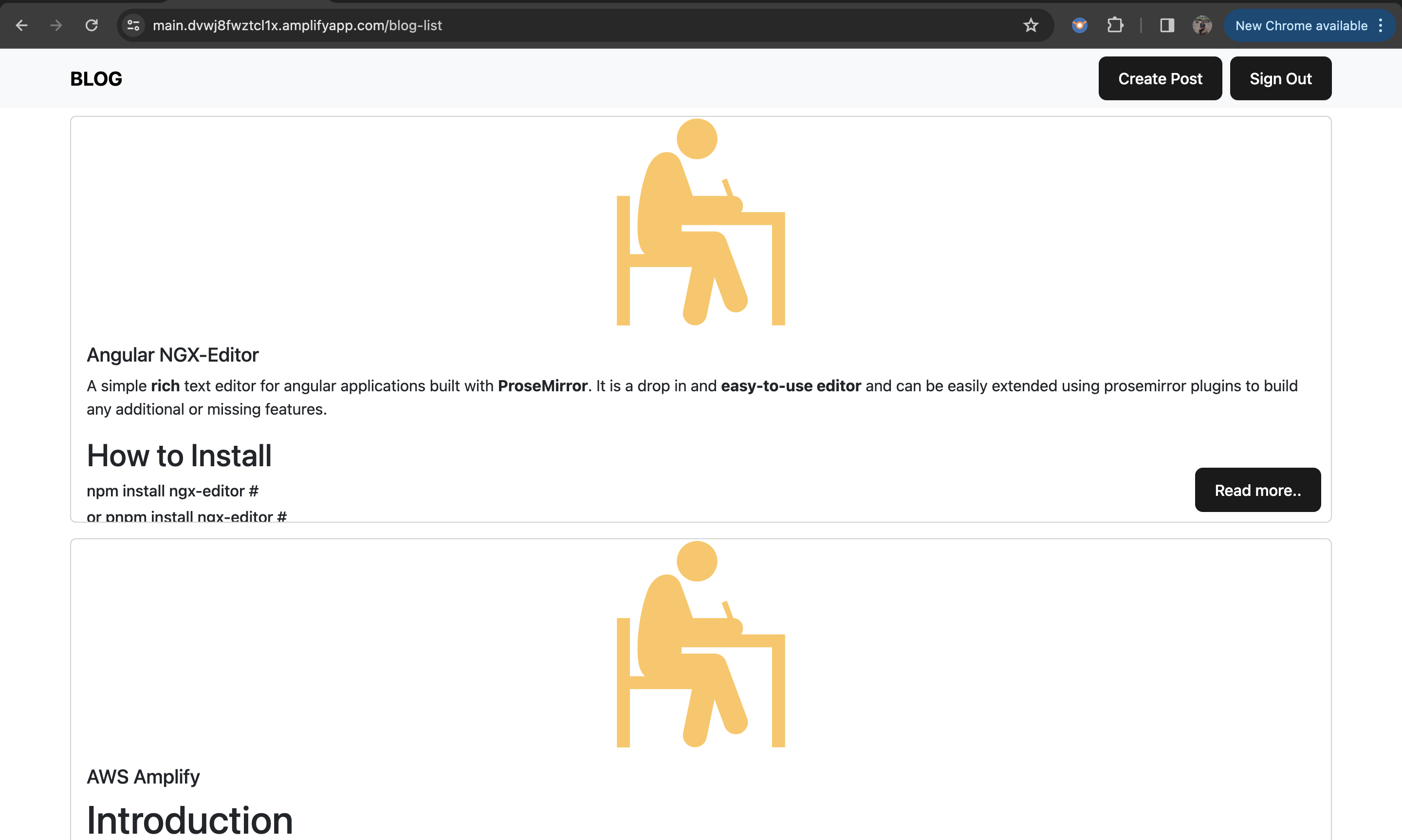

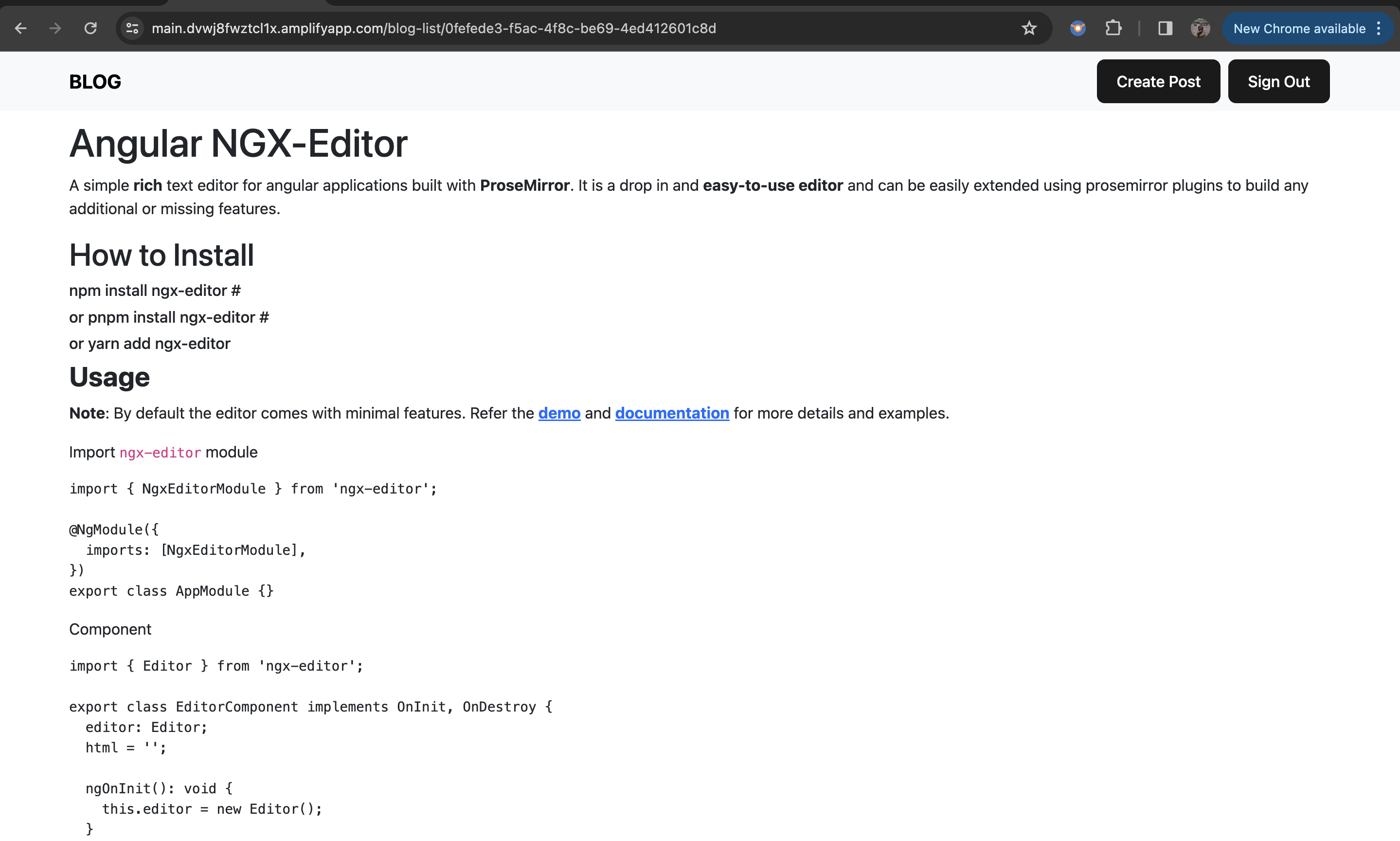

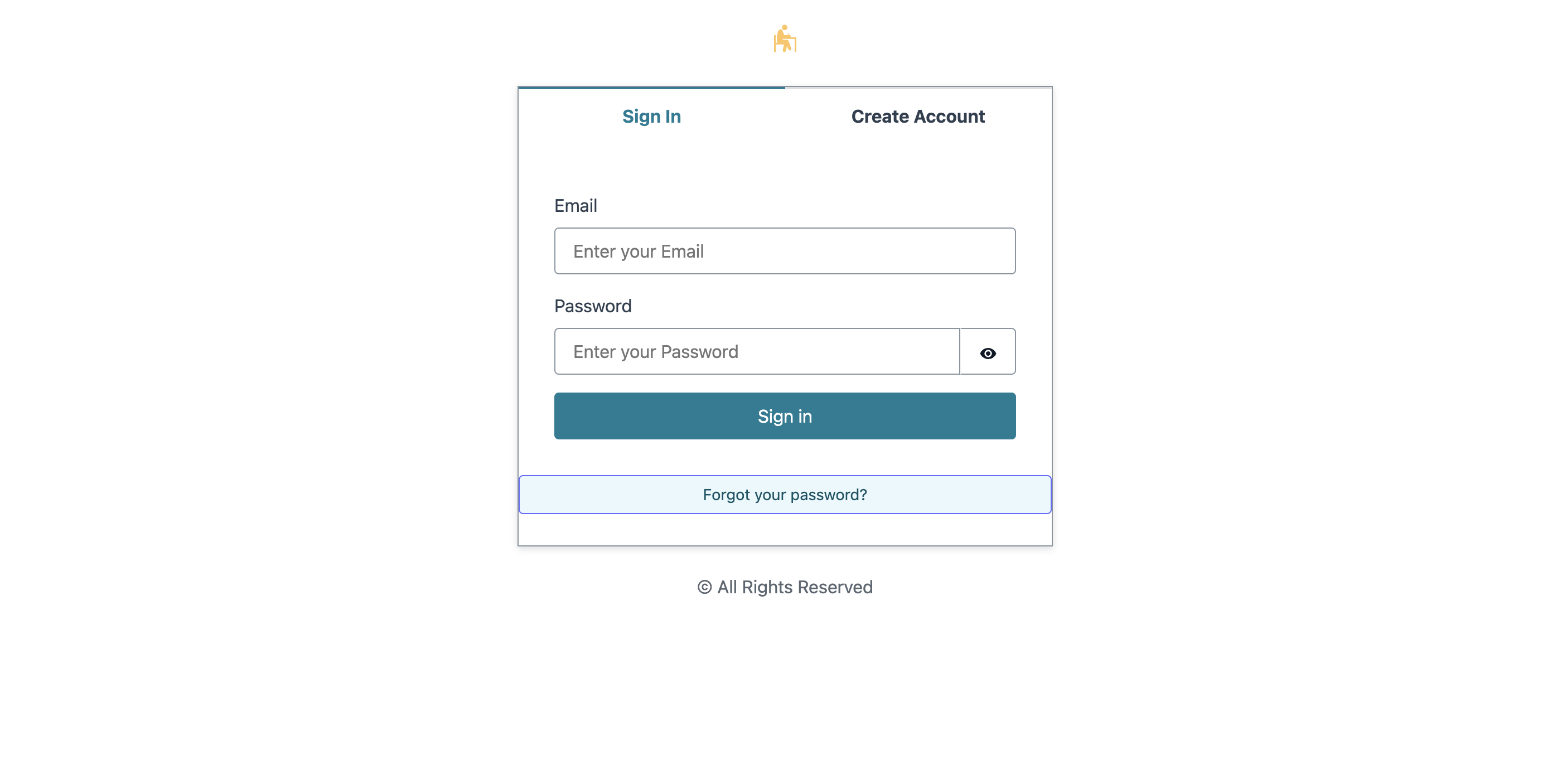

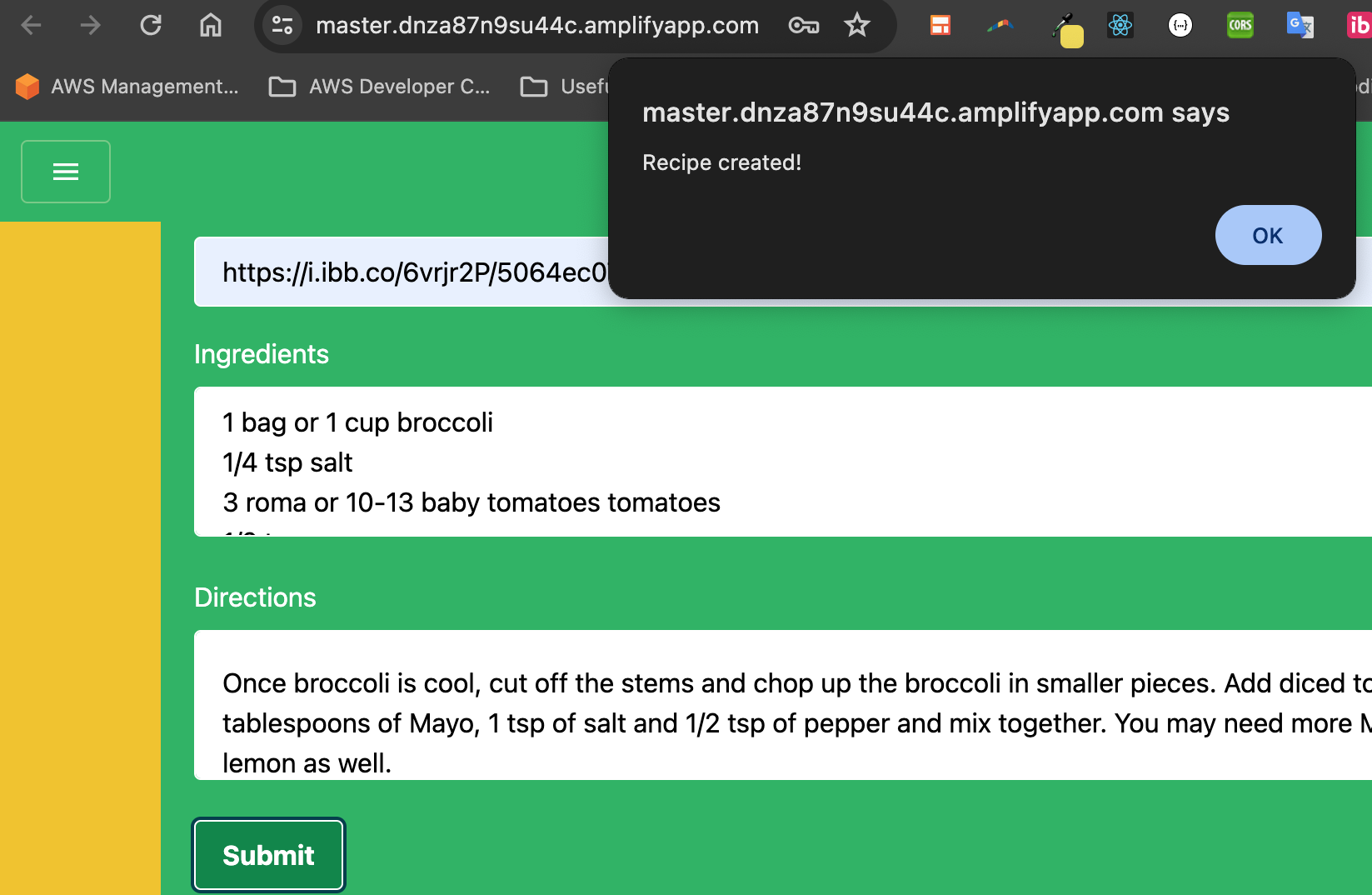

1,859,001 | News posting website with AWS Amplify | This is a submission for the The AWS Amplify Fullstack TypeScript Challenge What I... | 0 | 2024-05-27T06:30:38 | https://dev.to/lizardkinglk/news-posting-website-with-aws-amplify-2oi8 | devchallenge, awschallenge, amplify, fullstack | *This is a submission for the [AWS Amplify Fullstack TypeScript Challenge](https://dev.to/challenges/awschallenge)*

## What I Built

**Studious**

Using AWS Amplify Gen 2 services, I developed Studious, an open-source application for creating and publishing news, blogs, and posts. Registered users can view news and draft, build, and publish their own local news articles. Articles can include a variety of content, such as text in different sizes, URLs for photos, etc.

## Demo

[Studious App](https://main.d3w0oxjx7x9ipa.amplifyapp.com/)

[Source Code](https://github.com/lizardkingLK/studious-lamp)

## Journey

I developed this application using Next.js (Pages Router), a React framework.

This application uses the following AWS services:

- Data

- Authentication

- Serverless Functions

- File Storage

**Connected Components and/or Feature Full**


> This application also uses the Amplify UI React library as its frontend UI framework, along with the auth and storage connected UI components, which speed up and simplify development.


Thanks for reading! 🤝🏿

| lizardkinglk |

1,866,205 | 🔧Top Open Source AI Web Scrapers to Fire Up Your Market Research🔥 | Web scraping, in simpler words, is to scrape data and content from websites, the data is then saved... | 26,123 | 2024-05-27T06:27:44 | https://star-history.com/blog/ai-web-scraper | programming, ai, productivity, opensource | Web scraping, in simple terms, means extracting data and content from websites; the data is then saved in a format such as XML, Excel, or SQL. Beyond lead generation, competitor monitoring, and market research, web scrapers can also be used to automate your data collection process.

With the help of AI web scraping tools, the limitations associated with manual or purely code-based scraping tools can be addressed: dynamic or unstructured websites can easily be handled, all without human intervention.

Here, we present a few open-source AI web scraping tools to choose from.

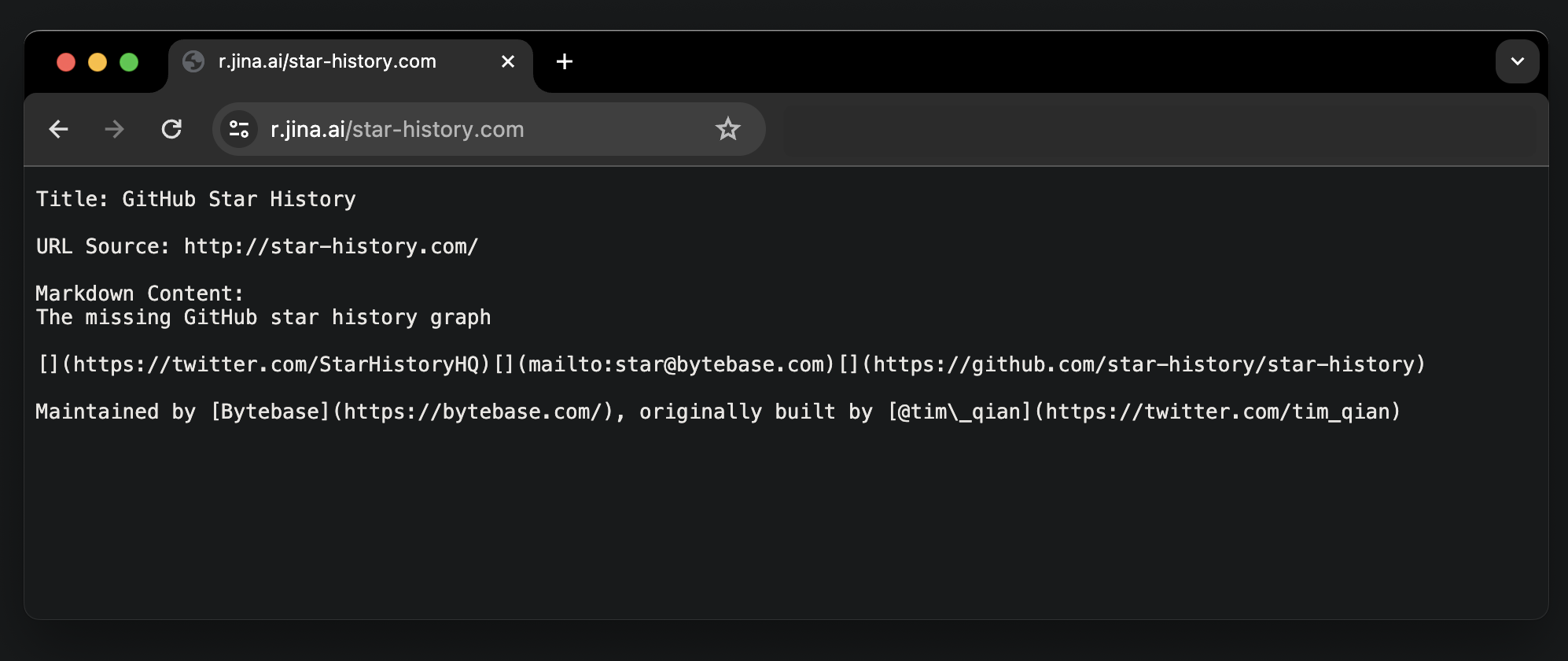

## Reader

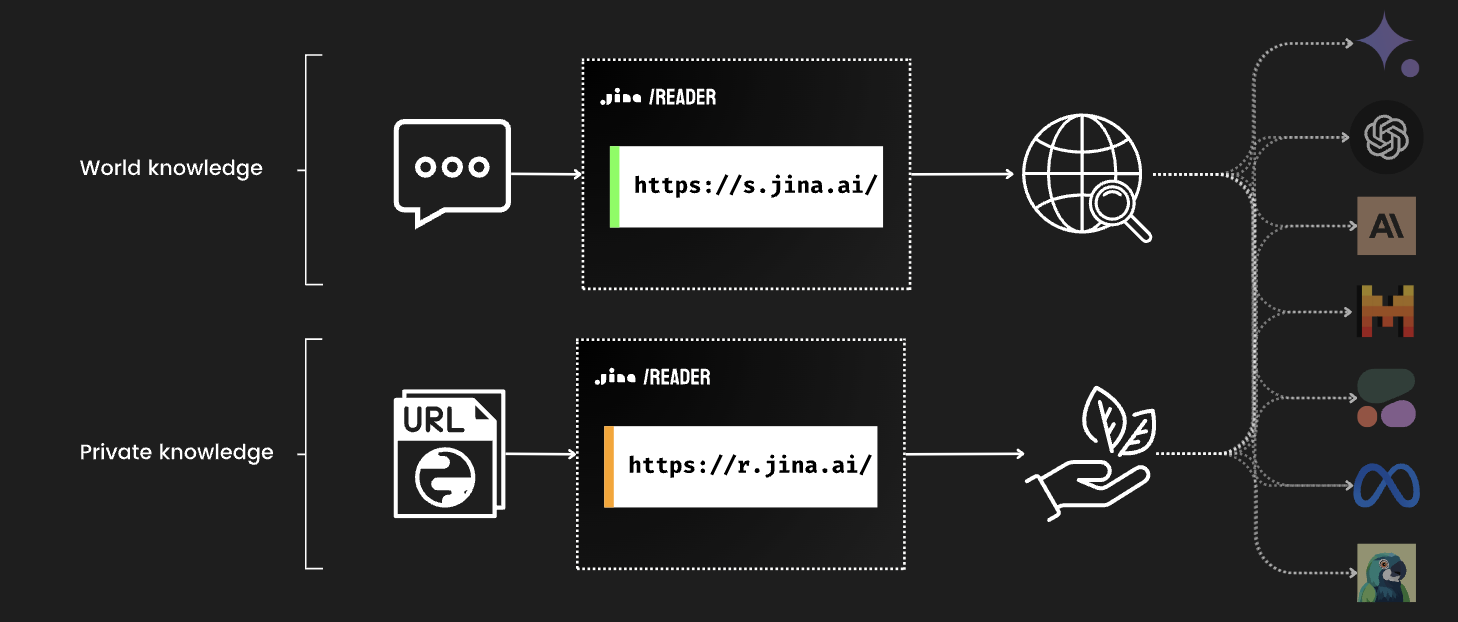

[Reader](https://github.com/jina-ai/reader) is an offering by Jina AI. It converts any URL into LLM-friendly input when you prepend `https://r.jina.ai/` to it, giving you structured output for your agent and RAG systems at no cost.

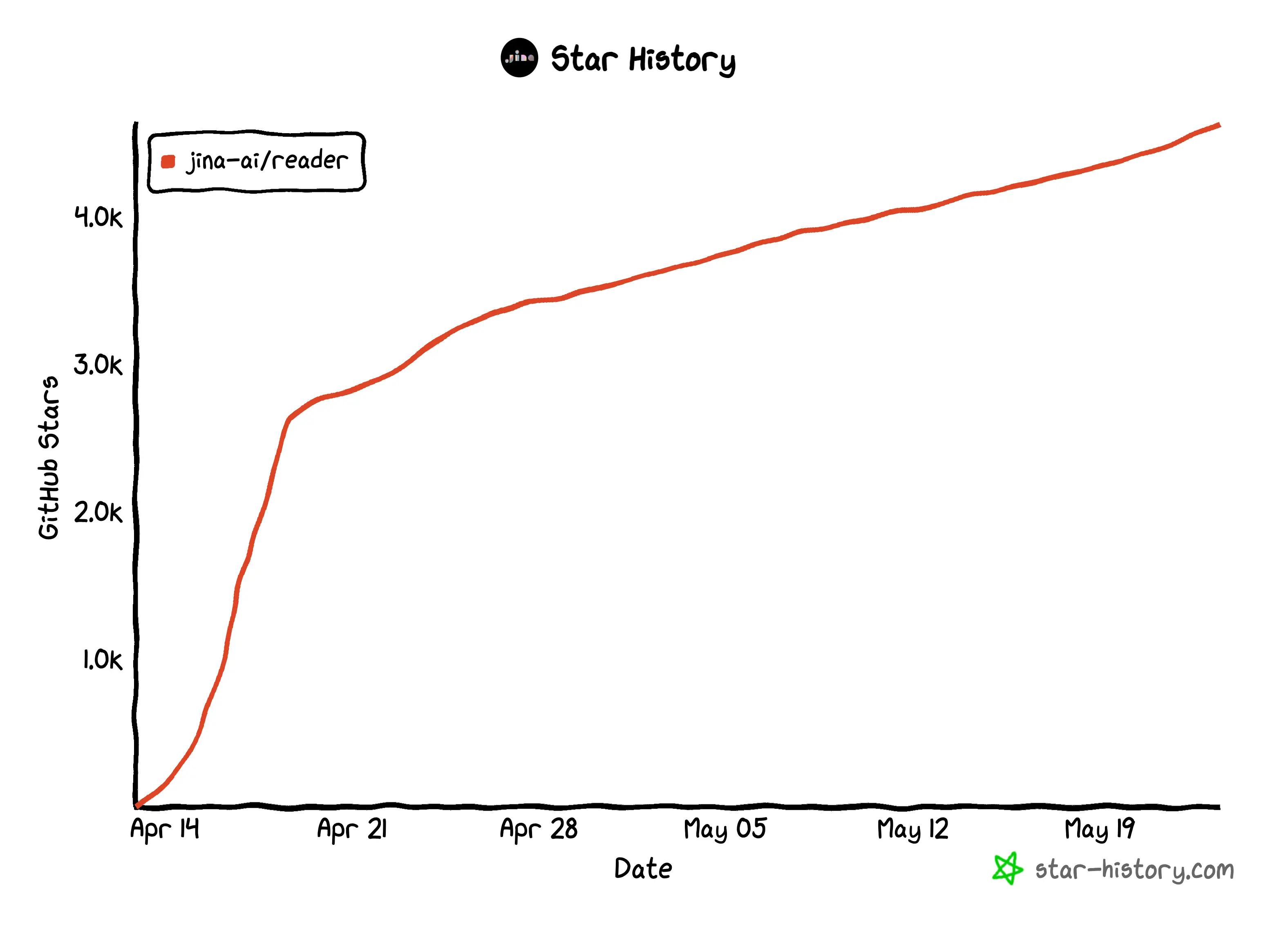

Since its first release just last month (April 15th, to be exact), it has served [over 18M](https://jina.ai/news/jina-reader-for-search-grounding-to-improve-factuality-of-llms/) requests from around the world, and the project has already gained 4.5K stargazers.

Aside from scraping any URL, Jina just released another feature: you can use `https://s.jina.ai/YOUR_SEARCH_QUERY` to search up-to-date knowledge on the Internet. Each result includes a title, LLM-friendly markdown, and a URL that attributes the source.

Together, these two features let you construct a comprehensive solution for LLMs, agents, and RAG systems.
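As a rough sketch of the two URL patterns described above, the helpers below only build the request URLs and make no network calls. The function names `reader_url` and `search_url` are hypothetical, and percent-encoding the search query is an assumption rather than documented Reader behavior.

```python
from urllib.parse import quote

READER_PREFIX = "https://r.jina.ai/"  # prefix quoted in the article
SEARCH_PREFIX = "https://s.jina.ai/"  # prefix quoted in the article


def reader_url(page_url: str) -> str:
    """Build a Reader URL by putting the Reader prefix in front of a page URL."""
    return READER_PREFIX + page_url


def search_url(query: str) -> str:
    """Build a search URL; percent-encoding the query is an assumption."""
    return SEARCH_PREFIX + quote(query, safe="")


print(reader_url("https://example.com/post"))
print(search_url("open source web scrapers"))
```

Fetching either URL with any HTTP client is then up to the caller; this sketch stops at constructing the address.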

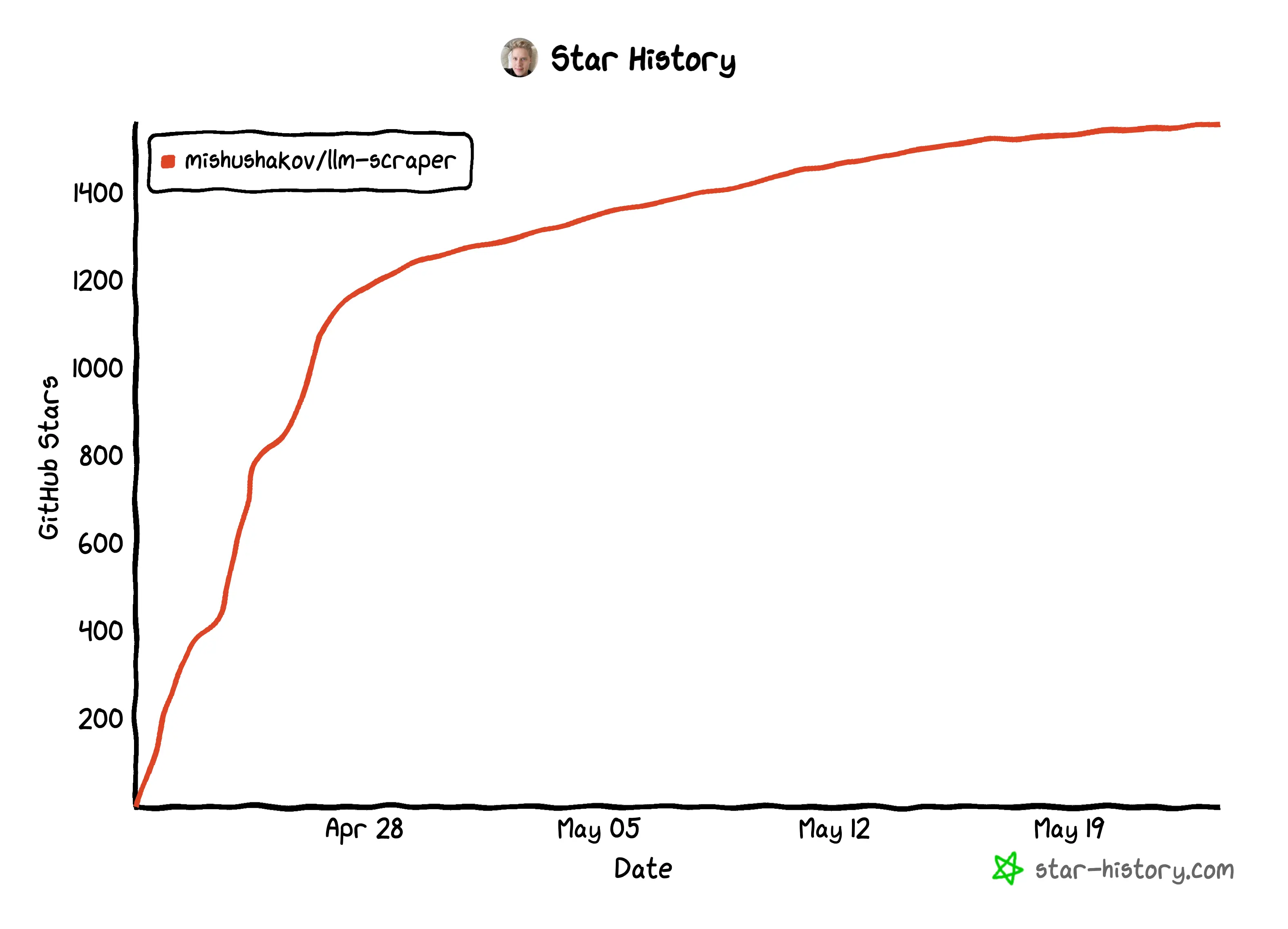

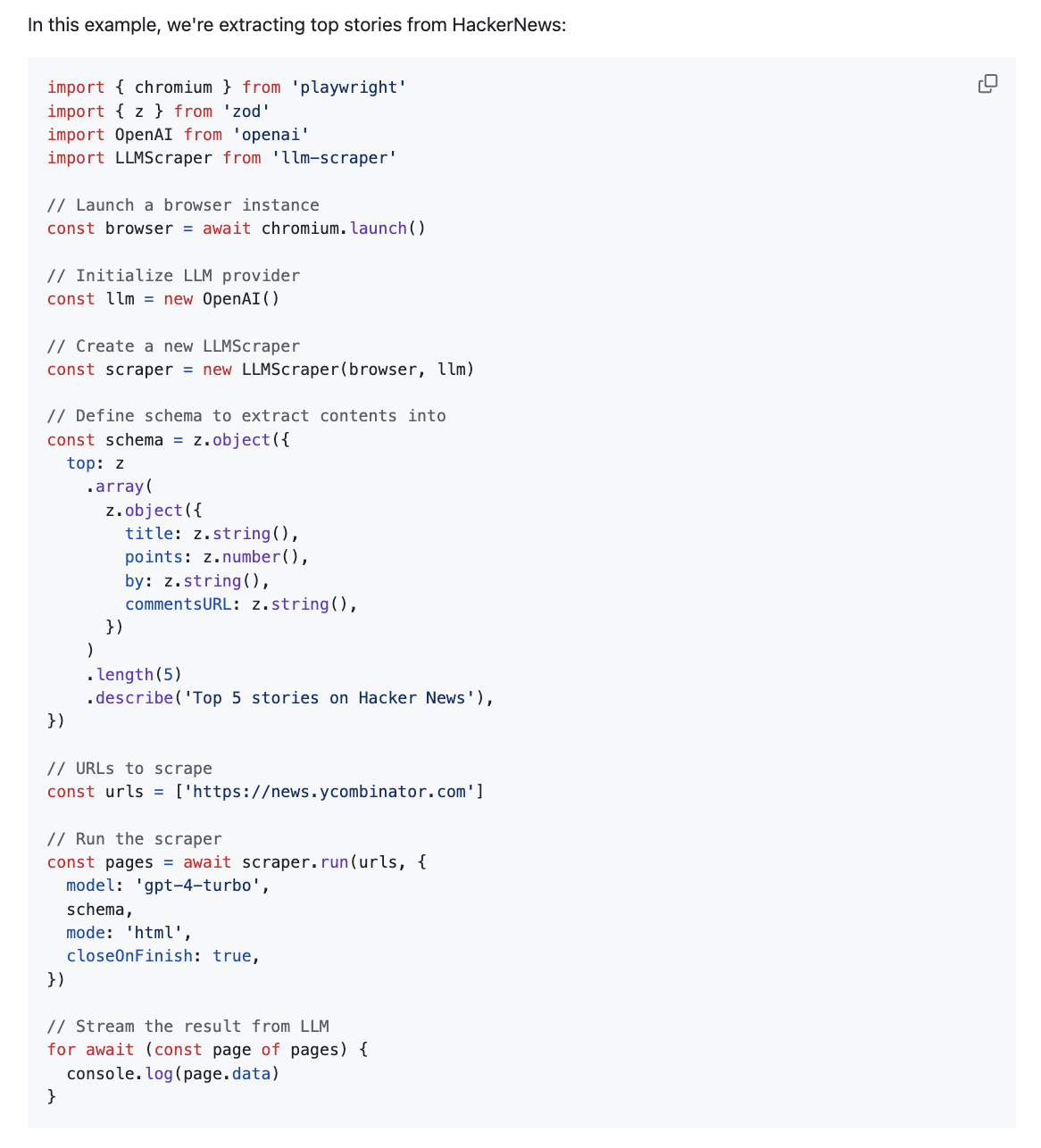

## LLM Scraper

[LLM Scraper](https://github.com/mishushakov/llm-scraper) is a TypeScript library that can convert any webpage into structured data using LLMs. Essentially, it uses function calling to convert pages to structured data.

Similarly to Reader, it was open-sourced just last month. It currently supports local (GGUF), OpenAI, and Groq chat models. Apparently, the author is [working on](https://news.ycombinator.com/item?id=40100824) supporting local LLMs via llama.cpp to lower the cost of using LLMs for web scraping.

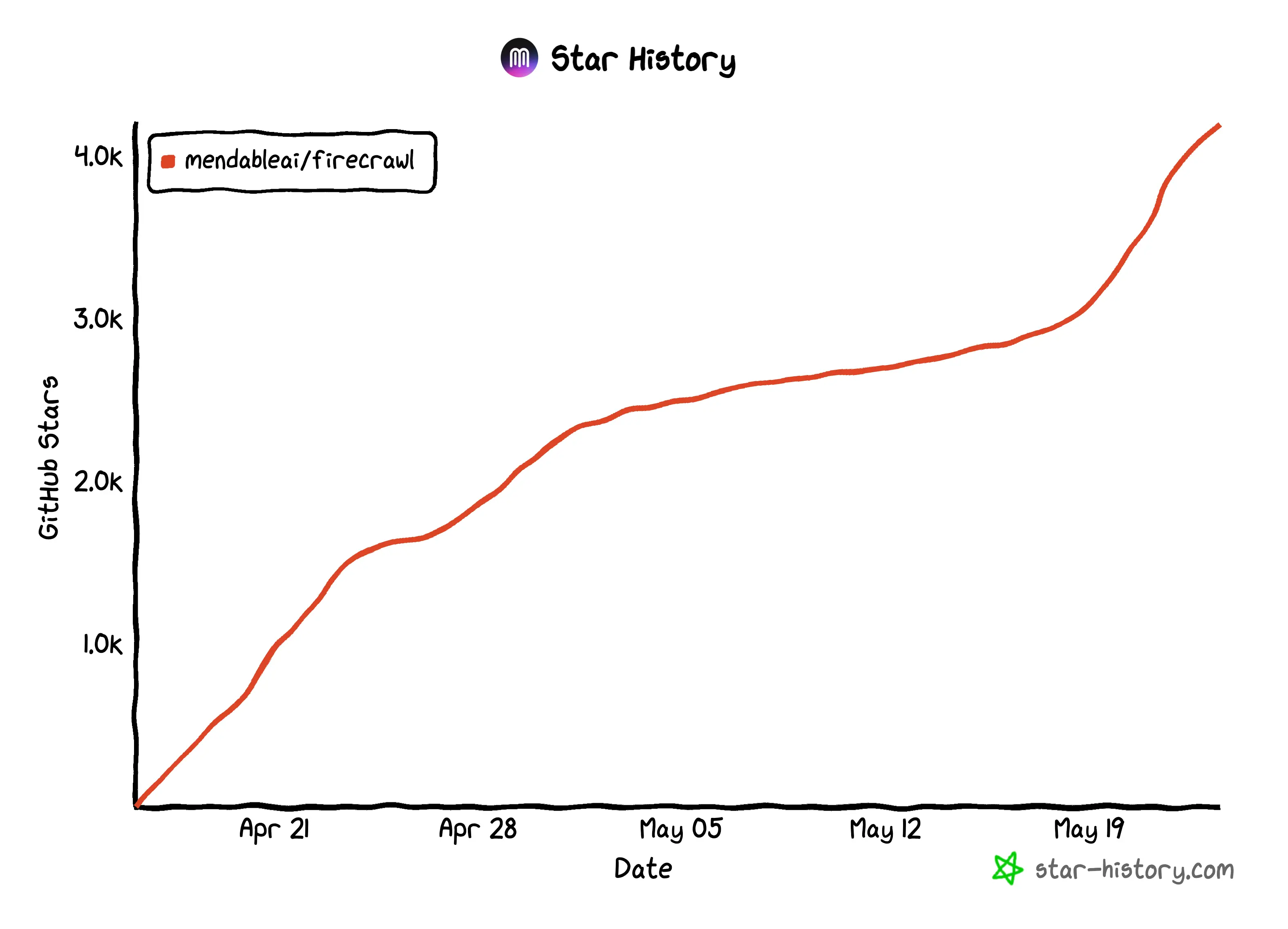

## Firecrawl

[Firecrawl](https://github.com/mendableai/firecrawl) is an API service that can convert a URL into clean, well-formatted markdown. This format is great for LLM applications, offering a structured yet flexible way to represent web content.

This tool is tailored for LLM engineers, data scientists, AI researchers, and developers looking to harness web data for training machine learning models, market research, and content aggregation. It simplifies the data preparation process, allowing professionals to focus on insights and model development, and you can self-host it to suit your own needs.

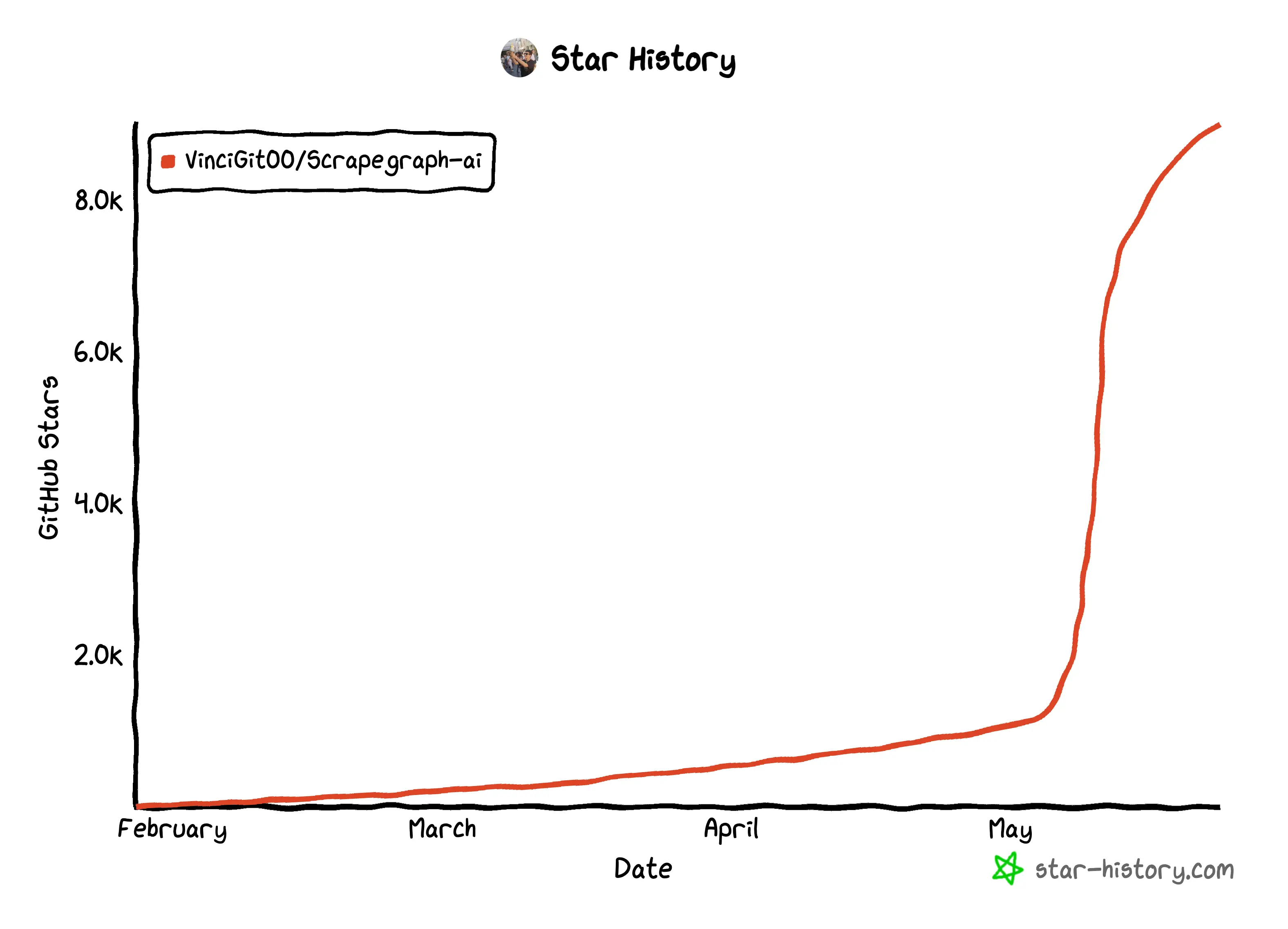

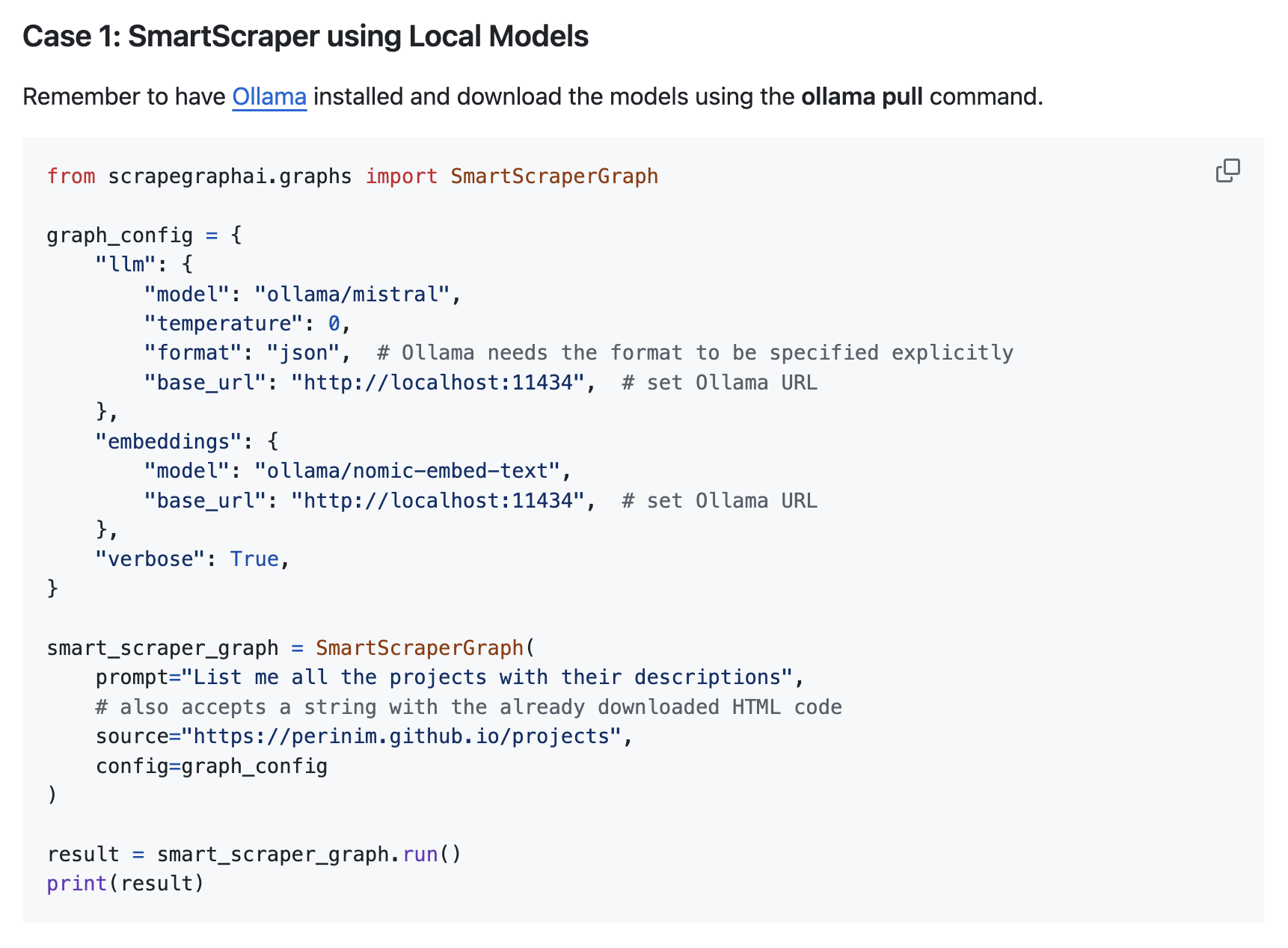

## ScrapeGraphAI

[ScrapeGraphAI](https://github.com/VinciGit00/Scrapegraph-ai) is a Python library that uses LLMs and direct graph logic to create scraping pipelines for websites and local documents (XML, HTML, JSON, etc.). With ScrapeGraphAI, you get to specify exactly what sort of data you want to extract.

ScrapeGraphAI leverages the power of LLMs and can thus adapt to changes in website structures, reducing the need for constant developer intervention. This flexibility helps scrapers remain functional even when website layouts change.

The LLMs it currently supports include GPT, Gemini, Groq, Azure, Hugging Face, as well as local models.

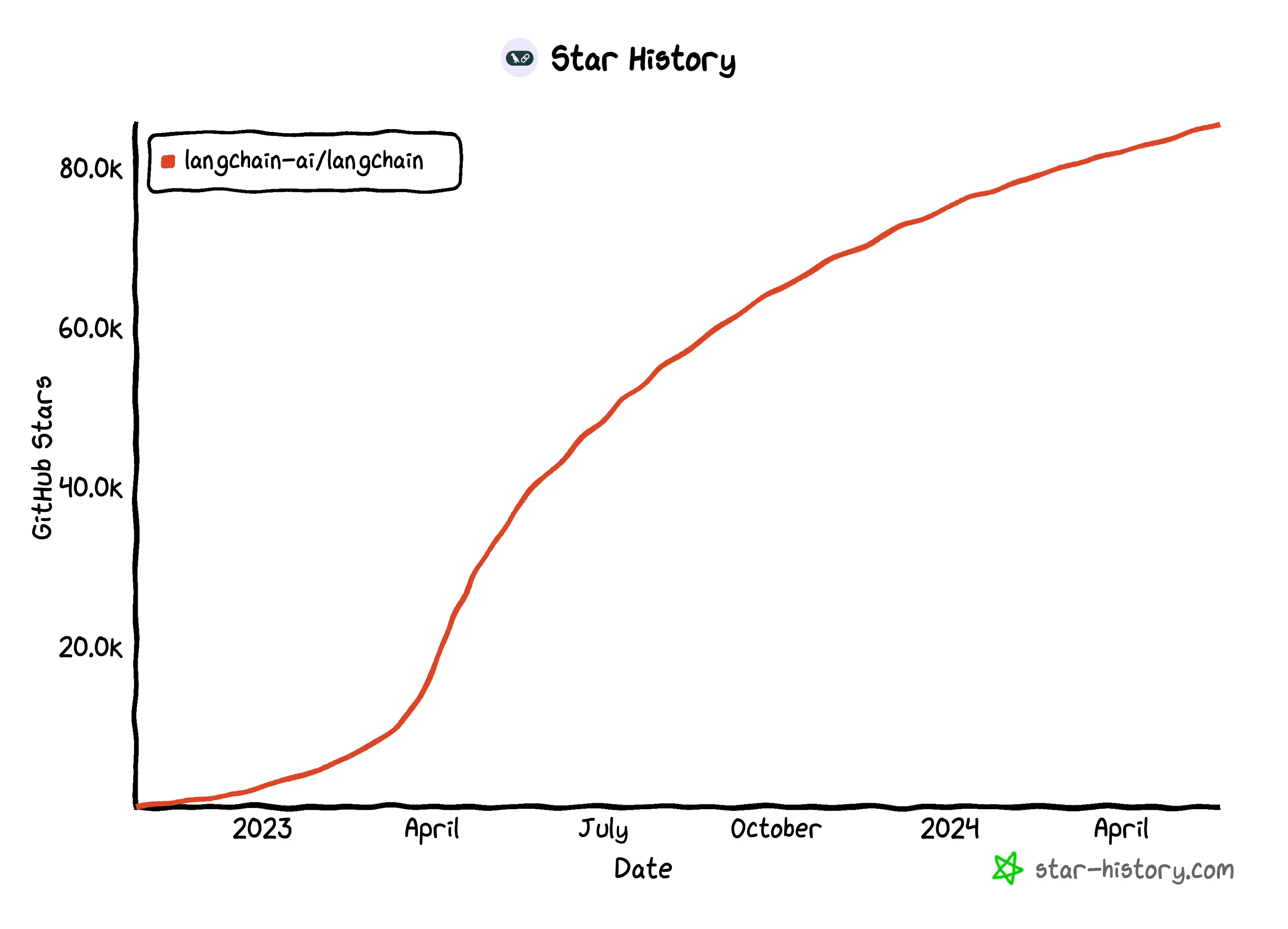

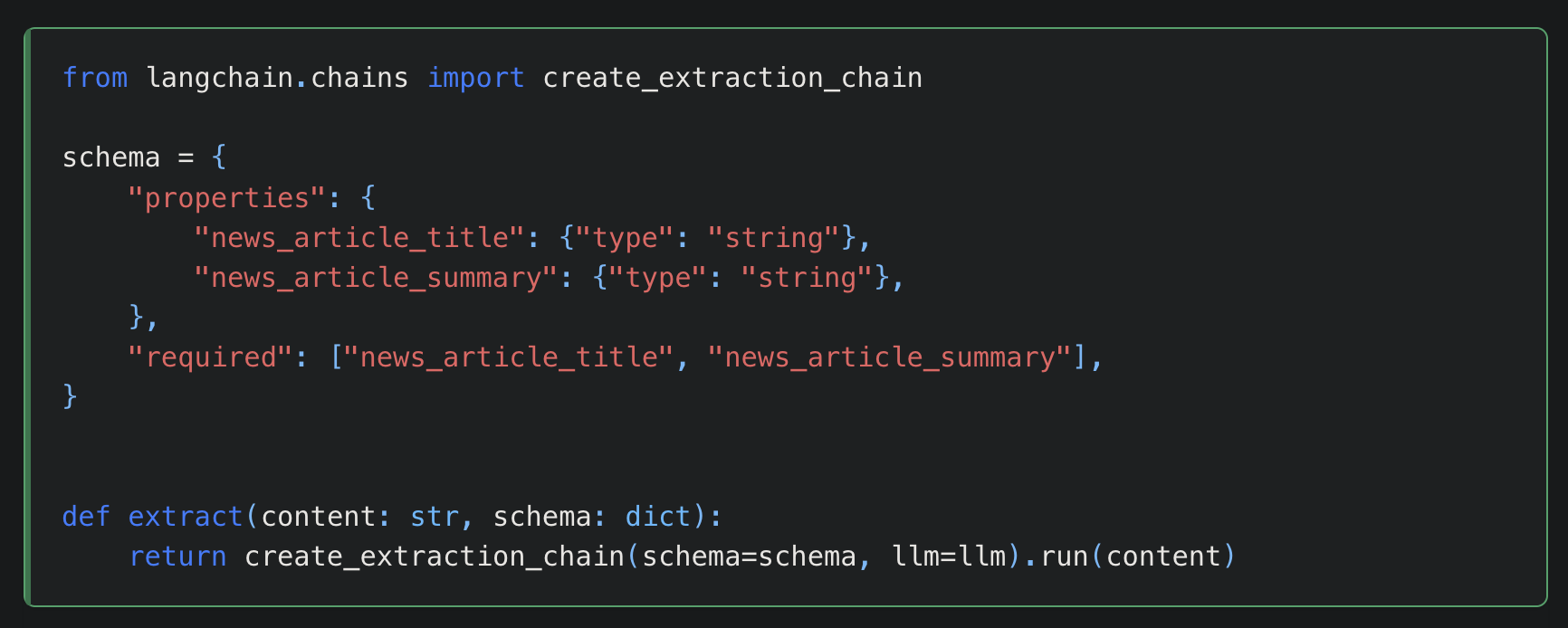

## LangChain

What is LangChain not capable of? Not [web scraping](https://python.langchain.com/v0.1/docs/use_cases/web_scraping/).

One of web scraping's biggest challenges is the changing nature of modern websites' layouts and content, which requires modifying scraping scripts to accommodate the changes. LangChain addresses this by using function calling (e.g., OpenAI) with an extraction chain, so that you don't have to change your code constantly when websites change.

If you are doing research and want to scrape only the titles and summaries of news articles from The Wall Street Journal website, it's got you covered.

## To Sum Up

Of course, there is no one-size-fits-all web scraper. Do you prefer old-school traditional web scrapers or LLM-empowered ones? | milasuperstar |

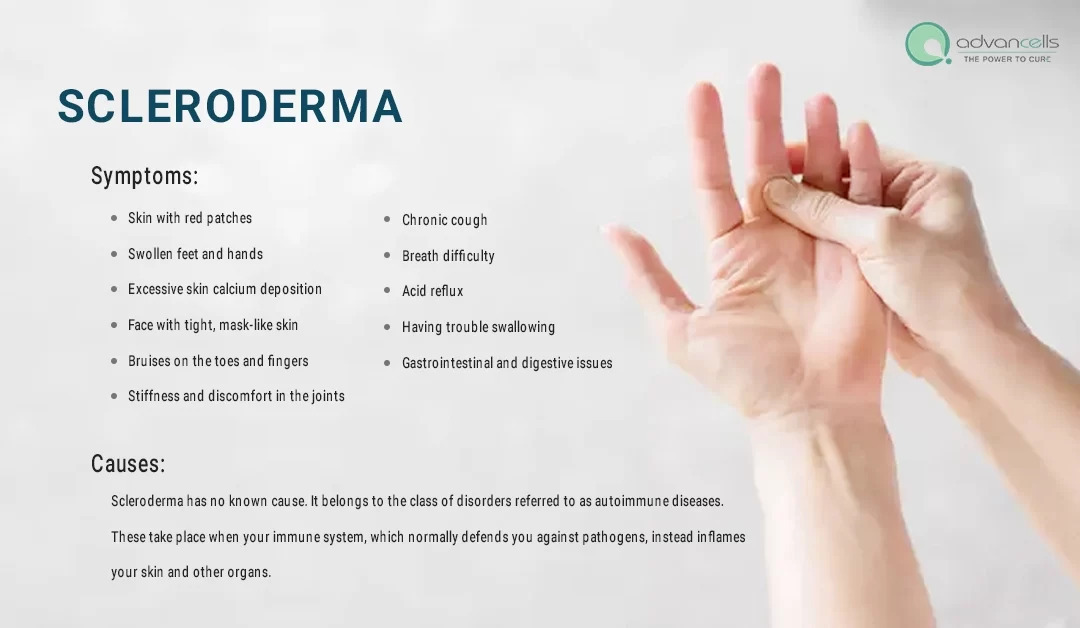

1,866,204 | Foods to Increase Endometrial Thickness Naturally | Thin endometrium, also known as an uterine lining is a common concern among women today especially... | 0 | 2024-05-27T06:27:21 | https://dev.to/advancells/foods-to-increase-endometrial-thickness-naturally-2akn | endometrial, endometrialtreatment, stemcells, advancells | A thin endometrium (the endometrium is the lining of the uterus) is a common concern among women today, especially those who decide to start families later in life. This issue can greatly impact fertility by making it challenging for an egg to successfully implant and develop into a pregnancy. Before opting for procedures, exploring the benefits of a well-balanced diet can be highly empowering. Factors that can influence endometrial thickness include:

- Age

- Imbalances

- Persistent health conditions

- Asherman's syndrome

- Hormonal birth control methods

- Low body weight

- Chronic inflammation

The positive news is that your dietary choices play a significant role in maintaining good endometrial health. A balanced diet that promotes a healthy endometrium includes:

- Natural fats

- Antioxidants

- Whole grains

- Plant based proteins

- Foods rich in iron

- Adequate folate

- Essential amino acid supplements

Remember, consistency is vital! Building a healthy endometrium requires time and commitment. If you don't see results right away, don't lose heart. Improving your health is a process that demands perseverance and patience. Just as Rome wasn't built in a day, your health won't transform overnight. Take it one step at a time, and you'll create an environment for successful implantation and pregnancy.

Discover more about symptoms, potential causes and treatments for children at [https://www.advancells.com/nutrition-can-improve-endometrial-lining/](https://www.advancells.com/nutrition-can-improve-endometrial-lining/)

| advancells |

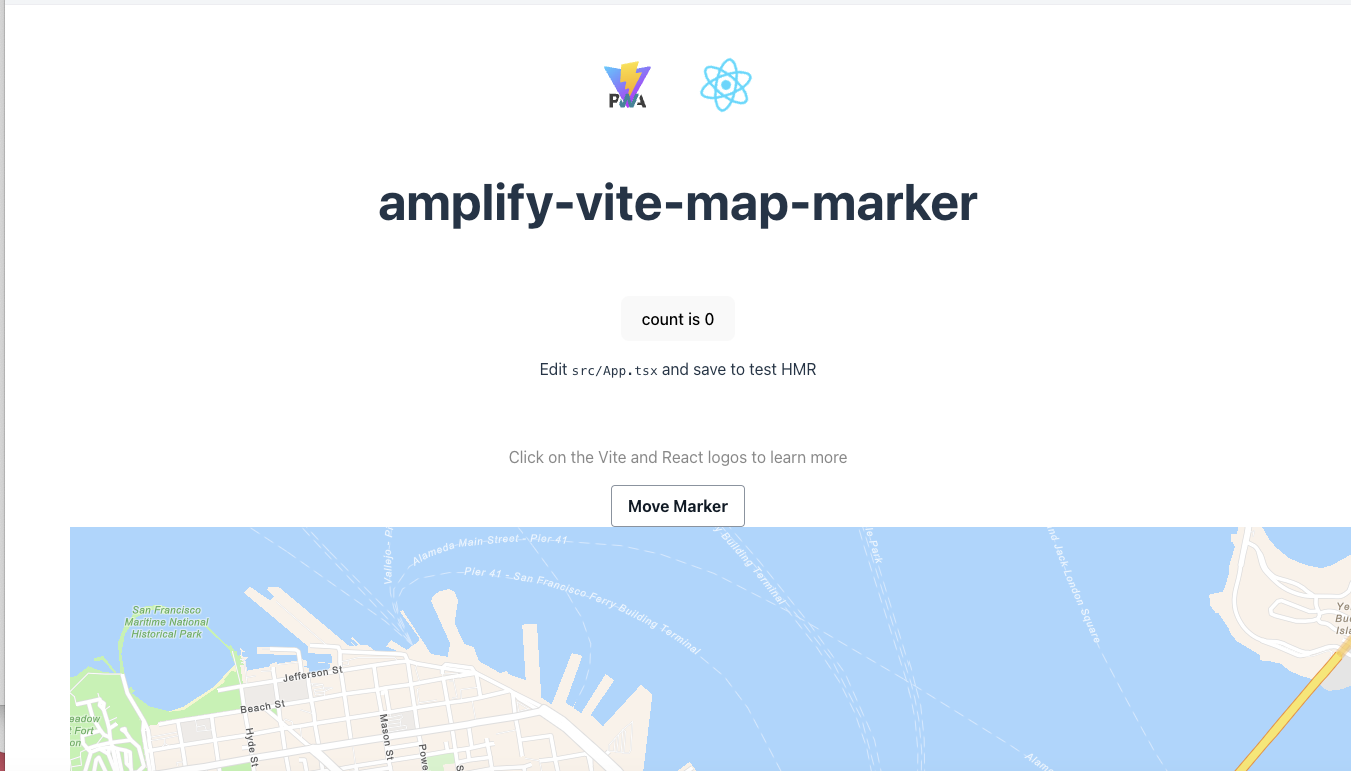

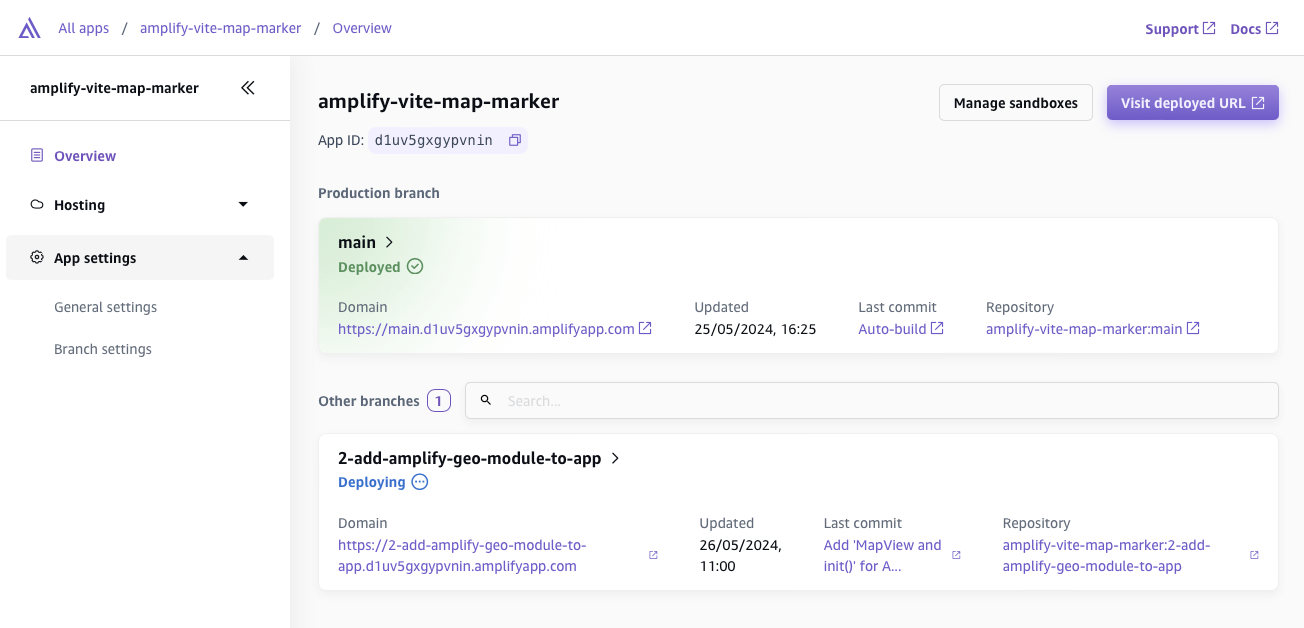

1,865,480 | Building a Map Marker PWA with Amplify Gen 2 (Auth, Geo and CI/CD) | This is a submission for the The AWS Amplify Fullstack TypeScript Challenge What I... | 0 | 2024-05-27T06:09:46 | https://dev.to/ubinix_warun/building-a-map-marker-pwa-with-amplify-gen-2-auth-geo-and-cicd-2712 | devchallenge, awschallenge, amplify, fullstack | *This is a submission for the [AWS Amplify Fullstack TypeScript Challenge](https://dev.to/challenges/aws)*

## What I Built

I created a Progressive Web App (PWA) called "Map Marker" that allows users to interactively pinpoint and save locations on a map. Users can create accounts to save their markers, add custom descriptions and photos, and search for specific locations. The app is built with a focus on offline accessibility, leveraging PWA features to ensure a seamless experience even without an internet connection.

## Demo

You can experience the live version of Map Marker on Amplify Hosting:

[Map Marker (DEMO)](https://main.d1uv5gxgypvnin.amplifyapp.com)

## Journey

The development journey was an exhilarating adventure, as I ventured into the world of fullstack TypeScript with Amplify Gen 2. The code-first approach allowed me to define my backend infrastructure using TypeScript, which felt natural as a frontend developer.

```bash
# Create a Vite/PWA project from a template.
# With npm 7+, the extra `--` passes the flag through to the scaffolder.
npm create @vite-pwa/pwa@latest amplify-vite-map-marker -- \
  --template react-ts
```

The built-in CI/CD pipeline in Amplify Hosting streamlined deployment, automatically rebuilding and publishing my app with each push to my GitHub repository.

```bash
# Set up Amplify and install dependencies
npm create amplify@latest
npm install

# Configure AWS for local development
aws sso login

# Run the sandbox
npx ampx sandbox --profile amplify-admin
```

Amplify Geo truly stole the show, providing pre-built UI components (MapView, SearchField) and convenient APIs for handling map interactions, geocoding, and place search. It dramatically simplified the integration of map functionality into my PWA.

```bash
npm add aws-amplify @aws-amplify/geo
npm add @aws-amplify/ui-react-geo
```

However, I ran into a conflict with the `Marker` component from `@aws-amplify/ui-react-geo`, which prevented me from customizing its behavior as needed.

```typescript
import { MapView } from '@aws-amplify/ui-react-geo';
import '@aws-amplify/ui-react-geo/styles.css';

function App() {
  // ...
  return (
    <>
      {/* ... */}
      <MapView
        initialViewState={{
          latitude: 37.8,
          longitude: -122.4,
          zoom: 14,
        }}>
      </MapView>
    </>
  );
}
```

**Connected Components and/or Feature Full**

- Vite: For a smooth development experience.

- React: For building a dynamic UI.

- TypeScript: For robust code and type safety.

- AWS Amplify Gen 2:

  - Auth: For user authentication.

  - Geo: For map display and search.

  - Hosting: For seamless deployment.

## Next Steps

As Amplify Gen 2 continues to mature, I'm eager to revisit this project and fully implement the intended features. I plan to:

- **Enhance DataStore Integration**: Leverage DataStore's full potential for real-time data synchronization and offline support once it's more stable.

- **Customize Markers**: Explore solutions to overcome the UI component conflict and create more interactive and personalized markers.

- **Add More Features**: Implement features like geofencing, enhanced user profiles, and social sharing.

## Conclusion

Even though I faced some roadblocks, building this PWA map marker app with Amplify Gen 2 was a rewarding experience. It's clear that Amplify Gen 2 has immense potential to simplify fullstack development, and I'm excited to continue exploring its capabilities as it evolves. | ubinix_warun |

1,866,203 | Tailwindcss is powerful | I'm using Tailwindcss for a long now. I'm telling you, it's awesome ! I work on backend, but I love... | 0 | 2024-05-27T06:26:51 | https://dev.to/alphonsekazadi/tailwindcss-is-powerfull-37bk | webdev, tailwindcss, javascript, programming | I've been using Tailwind CSS for a long time now. I'm telling you, it's awesome!

I work on the backend, but I also love working on the front end! So Tailwind CSS is the best option I've found. | alphonsekazadi |

1,866,202 | The Ultimate Guide to Hire Magento Developer in 2024 | The Ultimate Guide to Hire Magento Developer in 2024 Introduction Overview of Magento and Its... | 0 | 2024-05-27T06:25:05 | https://dev.to/hirelaraveldevelopers/the-ultimate-guide-to-hire-magento-developer-in-2024-4ec8 | webdev, programming, react, opensource | <h2>The Ultimate Guide to Hire Magento Developer in 2024</h2>

<h3>Introduction</h3>

<h4>Overview of Magento and Its Importance in E-commerce</h4>

<p>Magento is one of the most popular e-commerce platforms, known for its flexibility, scalability, and extensive customization options. It powers thousands of online stores, ranging from small businesses to large enterprises. Magento’s robust features make it an ideal choice for businesses looking to create a powerful online presence.</p>

<h4>Why Hiring a Magento Developer is Crucial for Your Business</h4>

<p>Hiring a skilled Magento developer is crucial for leveraging the full potential of this platform. A developer can tailor your website to meet specific business needs, ensuring a seamless user experience, optimizing site performance, and implementing advanced functionalities that can drive sales and customer engagement.</p>

<h4>Trends in Magento Development in 2024</h4>

<p>In 2024, Magento development is witnessing trends such as the integration of AI and machine learning for personalized shopping experiences, the adoption of PWA (Progressive Web Apps) for enhanced mobile performance, and the increasing importance of cybersecurity measures. Keeping up with these trends is essential for maintaining a competitive edge.</p>

<h3>Understanding Magento</h3>

<h4>What is Magento?</h4>

<p>Magento is an open-source e-commerce platform that offers merchants a flexible shopping cart system and control over the look, content, and functionality of their online store. Magento is designed to be scalable and is backed by a large support network.</p>

<h4>Features and Capabilities of Magento</h4>

<p>Magento boasts a comprehensive feature set that includes product management, order management, customer management, marketing tools, SEO capabilities, and multi-store functionality. Its modular architecture allows for extensive customization and integration with third-party services.</p>

<h4>Different Versions of Magento: Magento Open Source vs. Magento Commerce</h4>

<p>Magento comes in two main versions: Magento Open Source (formerly known as Magento Community Edition) and Magento Commerce (formerly known as Magento Enterprise Edition). Magento Open Source is free and suitable for small to medium-sized businesses, while Magento Commerce offers additional features and support, targeting larger enterprises.</p>

<h4>Benefits of Using Magento for E-commerce</h4>

<p>Magento’s flexibility, scalability, and rich feature set make it an excellent choice for e-commerce. It supports multiple languages and currencies, offers robust SEO tools, and provides a seamless shopping experience across devices. Additionally, its extensive customization options allow businesses to create unique and engaging online stores.</p>

<h3>The Role of a Magento Developer</h3>

<h4>Responsibilities of a Magento Developer</h4>

<p>A Magento developer is responsible for developing, maintaining, and improving e-commerce websites built on the Magento platform. This includes tasks such as customizing themes and modules, integrating third-party services, optimizing site performance, and ensuring security and compliance.</p>

<h4>Skills Required for a Magento Developer</h4>

<p>Key skills for a Magento developer include proficiency in PHP, JavaScript, HTML, and CSS, as well as a deep understanding of the Magento architecture. Knowledge of MySQL, version control systems like Git, and experience with e-commerce best practices are also essential.</p>

<h4>The Difference Between Frontend and Backend Magento Developers</h4>

<p>Frontend Magento developers focus on the visual and interactive aspects of a website, working with HTML, CSS, and JavaScript to create user-friendly interfaces. Backend developers, on the other hand, handle server-side logic, database interactions, and integrations with other systems, ensuring the site’s functionality and performance.</p>

<h4>The Importance of Certification for Magento Developers</h4>

<p>Magento certification demonstrates a developer’s expertise and commitment to the platform. Certified developers have passed rigorous exams that test their knowledge of Magento’s architecture, features, and best practices, making them more reliable and skilled professionals.</p>

<h3>Preparing to Hire a Magento Developer</h3>

<h4>Defining Your Project Requirements</h4>

<p>Before hiring a Magento developer, it’s crucial to define your project requirements clearly. This includes outlining the scope of work, desired features, budget, and timeline. A well-defined project brief helps attract the right candidates and sets clear expectations.</p>

<h4>Setting a Budget for Your Magento Project</h4>

<p>Setting a realistic budget is essential when <a title="hiring Magento developer" href="https://www.aistechnolabs.com/hire-magento-developers/">hiring a Magento developer</a>. Consider factors such as the complexity of your project, the level of customization required, and ongoing maintenance costs. A well-planned budget ensures you can afford the necessary expertise without compromising on quality.</p>

<h4>Deciding Between Freelancers vs. Agency Developers</h4>

<p>Choosing between freelance developers and agency developers depends on your project’s size and complexity. Freelancers are often more cost-effective and flexible for smaller projects, while agencies offer a broader range of skills and resources, making them suitable for larger, more complex projects.</p>

<h4>Creating a Job Description for a Magento Developer</h4>

<p>A detailed job description is crucial for attracting qualified candidates. It should include an overview of your company, the specific responsibilities and requirements of the role, necessary skills and experience, and any preferred qualifications such as Magento certification or experience with certain integrations.</p>

<h3>Where to Find Magento Developers</h3>

<h4>Job Boards and Freelance Platforms</h4>

<p>Popular job boards and freelance platforms such as Upwork, Freelancer, and Toptal are excellent places to find Magento developers. These platforms allow you to post job listings, review candidates’ profiles and portfolios, and communicate directly with potential hires.</p>

<h4>Magento Community and Forums</h4>

<p>Engaging with the Magento community and forums can help you find experienced developers who are actively involved in the platform. Websites like Magento Stack Exchange, Magento Community Hub, and Reddit’s Magento subreddit are valuable resources for networking and finding talent.</p>

<h4>Networking and Referrals</h4>

<p>Networking through industry events, online webinars, and local meetups can help you find reputable Magento developers. Asking for referrals from colleagues, partners, and other businesses that use Magento can also lead to high-quality candidates.</p>

<h4>Hiring Agencies Specializing in Magento Development</h4>

<p>There are agencies that specialize in Magento development and can provide a team of experts to handle your project. These agencies often have a proven track record and can offer comprehensive services, including design, development, and ongoing support.</p>

<h3>Evaluating Potential Candidates</h3>

<h4>Reviewing Portfolios and Previous Work</h4>

<p>When evaluating candidates, reviewing their portfolios and previous work is essential. Look for examples of Magento projects they have completed, paying attention to the complexity and quality of their work, as well as their ability to meet deadlines and client requirements.</p>

<h4>Conducting Technical Interviews</h4>

<p>Technical interviews help assess a candidate’s coding skills and understanding of Magento. Prepare questions and tasks that test their knowledge of PHP, Magento architecture, database management, and problem-solving abilities. Practical tests or coding challenges can provide deeper insights into their skills.</p>

<h4>Assessing Soft Skills and Cultural Fit</h4>

<p>Soft skills such as communication, teamwork, and problem-solving are crucial for successful collaboration. Assess a candidate’s ability to work within your company’s culture, handle feedback, and communicate effectively with team members and stakeholders.</p>

<h4>Checking References and Background</h4>

<p>Checking references and background can validate a candidate’s experience and reliability. Contact previous employers or clients to inquire about their performance, work ethic, and any challenges they faced during projects. This step helps ensure you hire a trustworthy and competent developer.</p>

<h3>Interview Questions for Magento Developers</h3>

<h4>Technical Questions</h4>

<ul>

<li>Explain the Magento architecture and how it supports scalability.</li>

<li>Describe the difference between Magento 1 and Magento 2.</li>

<li>How do you optimize Magento for performance and speed?</li>

<li>Can you walk through the process of creating a custom module in Magento?</li>

</ul>

<h4>Experience-Based Questions</h4>

<ul>

<li>Tell me about a challenging Magento project you worked on and how you overcame the difficulties.</li>

<li>How have you handled integrating third-party services with Magento?</li>

<li>Describe a time when you improved the user experience on a Magento site.</li>

<li>What strategies have you used to ensure the security of a Magento store?</li>

</ul>

<h4>Problem-Solving Scenarios</h4>

<ul>

<li>How would you approach a situation where a Magento site is experiencing slow load times?</li>

<li>What steps would you take if you discovered a security vulnerability in a Magento extension?</li>

<li>How do you handle conflicting extensions or customizations in Magento?</li>

</ul>

<h3>Hiring Process</h3>

<h4>Steps in the Hiring Process</h4>

<ol>

<li>Posting the job description and promoting the position.</li>

<li>Reviewing applications and shortlisting candidates.</li>

<li>Conducting initial interviews to assess fit.</li>

<li>Holding technical interviews and practical tests.</li>

<li>Checking references and finalizing the offer.</li>

</ol>

<h4>Negotiating Salaries and Contracts</h4>

<p>Negotiating salaries and contracts is a critical step. Be prepared to discuss compensation, benefits, work hours, and contract terms. Ensure that the agreement is fair and reflects the candidate’s experience and the project’s complexity.</p>

<h4>Onboarding Your Magento Developer</h4>

<p>Effective onboarding helps integrate the new developer into your team. Provide necessary resources, access to tools, and a clear understanding of project goals and timelines. Regular check-ins and support during the initial stages can help them settle in quickly.</p>

<h4>Setting Expectations and Milestones</h4>

<p>Clear expectations and milestones are essential for project success. Define key deliverables, timelines, and performance metrics. Regularly review progress and provide feedback to ensure the project stays on track.</p>

<h3>Working with a Magento Developer</h3>

<h4>Effective Communication Strategies</h4>

<p>Effective communication is crucial for successful collaboration. Use tools like Slack, Trello, or Asana for regular updates and progress tracking. Hold regular meetings to discuss issues, provide feedback, and ensure everyone is aligned with project goals.</p>

<h4>Project Management Tools and Techniques</h4>

<p>Utilize project management tools and techniques such as Agile or Scrum to manage your Magento project. Tools like Jira, Basecamp, or Monday.com can help organize tasks, track progress, and facilitate collaboration among team members.</p>

<h4>Ensuring Code Quality and Security</h4>

<p>Code quality and security are paramount for any e-commerce site. Implement code reviews, automated testing, and security audits to ensure high standards. Using version control systems like Git helps track changes and manage code effectively.</p>

<h4>Managing Deadlines and Deliverables</h4>

<p>Managing deadlines and deliverables requires careful planning and regular monitoring. Break the project into manageable tasks with clear deadlines. Use project management tools to track progress and address any delays promptly.</p>

<h3>Common Challenges and Solutions</h3>

<h4>Overcoming Communication Barriers</h4>

<p>Communication barriers can hinder project progress. Establish clear communication channels, encourage open dialogue, and use tools that facilitate real-time collaboration. Regular updates and feedback help keep everyone on the same page.</p>

<h4>Dealing with Scope Creep</h4>

<p>Scope creep can derail projects if not managed properly. Define project scope clearly at the outset and stick to it. Any changes should be documented, evaluated, and approved through a formal change management process.</p>

<h4>Handling Technical Difficulties</h4>

<p>Technical difficulties are inevitable in any development project. Encourage a problem-solving mindset, ensure access to necessary resources, and foster a collaborative environment where developers can seek help and share solutions.</p>

<h4>Maintaining Long-term Developer Relationships</h4>

<p>Maintaining long-term relationships with developers benefits both parties. Provide opportunities for professional growth, recognize their contributions, and create a positive work environment. Regular feedback and transparent communication help build trust and loyalty.</p>

<h3>FAQs about Hiring Magento Developers</h3>

<h4>How much does it cost to hire a Magento developer?</h4>

<p>The cost of hiring a Magento developer varies based on factors such as their experience, location, and project complexity. Freelancers may charge between $50 and $150 per hour, while agency rates can range from $100 to $250 per hour. For full-time hires, salaries can range from $70,000 to $120,000 per year, depending on expertise and location.</p>

<h4>How long does it take to develop a Magento website?</h4>

<p>The time required to develop a Magento website depends on the project’s scope and complexity. A basic site might take 2-3 months to develop, while a more complex site with custom features and integrations could take 6-12 months or longer. Proper planning and a clear project timeline are essential for accurate time estimation.</p>

<h4>What should I look for in a Magento developer’s portfolio?</h4>

<p>When reviewing a Magento developer’s portfolio, look for projects that demonstrate their ability to handle similar tasks to what you require. Assess the complexity and quality of their work, their problem-solving skills, and their ability to deliver on time. Client testimonials and case studies can also provide valuable insights.</p>

<h4>Can a Magento developer help with SEO?</h4>

<p>Yes, a Magento developer can implement various SEO best practices to enhance your site’s search engine rankings. This includes optimizing site speed, ensuring mobile responsiveness, structuring URLs, integrating SEO plugins, and using schema markup. Collaborating with an SEO specialist can further enhance your site's performance.</p>

<h3>Conclusion</h3>

<h4>Recap of Key Points</h4>

<p>Hiring a skilled Magento developer is crucial for leveraging the full potential of the Magento platform. Defining project requirements, setting a budget, and choosing the right type of developer (freelancer vs. agency) are initial steps. Evaluating candidates through portfolios, technical interviews, and reference checks is essential for finding the right fit.</p>

<h4>Final Tips for Hiring the Right Magento Developer</h4>

<p>Ensure clear communication, set realistic expectations, and use project management tools to keep the project on track. Focus on building a positive working relationship to foster long-term collaboration and success.</p>

<h4>Encouragement to Start the Hiring Process</h4>

<p>With this comprehensive guide, you are well-equipped to hire a Magento developer who can bring your e-commerce vision to life. Begin your hiring process today and take the first step towards building a robust and successful online store.</p> | hirelaraveldevelopers |

1,865,617 | day 08 | date: 27 May, 2024. Scope -- Everything we create of define in code is called namespace eg... | 0 | 2024-05-27T06:21:21 | https://dev.to/lordronjuyal/day-08-21l | python | date: 27 May, 2024.

Scope -- Every name we create or define in code (variables, functions, lists, etc.) lives in a namespace. A name can only be used or accessed in a certain region of the code. This region is called the scope of that name.

The two main scopes are:

1. Global scope -- This is the scope of top-level code (top level means it's not inside any function). We can read a global name anywhere in the code, but we can't reassign it inside a function unless we declare it with the global keyword. This scope is good for constants.

2. Local scope -- A name defined inside a function has local scope. We can only access this name inside the function, not outside of it.

If we use the same name in the global scope and in a local scope, two different names are created, one in each namespace. If we want to access and change a global name inside a function, we have to declare it first with -- global name_of_variable.

After that we can both use it and change its value, though doing this is not good practice.

Other things I learned today-->

1. Using _ as the loop variable when we loop but don't use the item.

2. list1 = [a, b]

list2 = [1, 2]

list1.extend(list2) >> list1 = [a, b, 1, 2]

list1 += list2 # gives the same result

.append is used for adding a single item

3. We should use all capital letters for a variable holding a constant value, e.g. PI = 3.14. This is only a naming convention; Python won't give any error if we deviate.

4. To import multiple functions from a module:

from module import f1, f2
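The four notes above can be tried out in one short script. This is only an illustrative sketch: the variable names are made up, and `math` stands in for "module".

```python
from math import floor, sqrt  # importing two functions from one module

PI = 3.14  # upper case marks a constant by convention; Python does not enforce it

letters = ["a", "b"]
numbers = [1, 2]

extended = letters.copy()
extended.extend(numbers)   # adds each item of numbers

added = letters.copy()
added += numbers           # same result as extend

appended = letters.copy()
appended.append(numbers)   # adds the whole list as one single item

stars = ""
for _ in range(3):         # `_` signals that the loop item is not used
    stars += "*"

print(extended)            # ['a', 'b', 1, 2]
print(added)               # ['a', 'b', 1, 2]
print(appended)            # ['a', 'b', [1, 2]]
print(stars)               # ***
print(floor(PI), sqrt(16)) # 3 4.0
```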

Programs I created:-

1. Guess the number

https://replit.com/@rohitrj332024/guess-the-number-day-8#main.py

2. Guess higher or lower followers(Instagram)

https://replit.com/@rohitrj332024/higher-lower-start-day8

----------------------------------------------------------

Personal -- I am happy that I am able to solve these problems. They take time, and I am also following a proper procedure for solving them, like writing down the steps (breaking the problem into small parts) and making a flow chart (I make them on paper right now). I hope this builds a good habit that will help me when working on bigger projects later on.

| lordronjuyal |

1,866,184 | The Journey of Entrepreneurship: Navigating the Path of Innovation and Resilience | In the field of business, entrepreneurship has come out in a glowing way in a matter of innovation.... | 0 | 2024-05-27T06:20:18 | https://dev.to/techstuff/the-journey-of-entrepreneurship-navigating-the-path-of-innovation-and-resilience-4fm2 | In the world of business, entrepreneurship has emerged as a bright force for innovation. It is a journey of hard work, dedication, and an inner fire to turn ideas into reality. From startups in Silicon Valley to businesses growing in small localities, entrepreneurship has fostered the growth of individuals and, at the same time, of the nation.

Entrepreneurship is the ability to find opportunities in fields where nothing has been predefined. It is a path of courage, a valley of risk, and a story of accepting failures and turning them into success. Every entrepreneur’s life is full of challenges; each has a different story of failure but a clear and fixed vision of success.

One of the most important qualities of an entrepreneur is the ability to adapt to change. In the modern world, where technology is upgraded day by day, it is necessary to keep pace with it. Whether it is trade, digital marketing, new inventions in IT, or any new or old product coming to market, entrepreneurs have to keep an eye on everything to stay current with ongoing trends.

Entrepreneurship not only brings success but also shapes the path for the future. Many entrepreneurs build their businesses with social development in mind. Whether through sustainable practices or revolutionary ideas, they have the courage to bring change to society and carry that legacy forward.

However, being an entrepreneur is not a bed of roses. It is a journey full of hurdles, uncertainties and doubts, many failures, and cutthroat competition with established businesses. Only by facing all these challenges does a true entrepreneur take shape. It is a phase of learning from failures, getting back up, and presenting one's best to the world.

Moreover, entrepreneurship is a way of innovation and creation. Whether through mentorship programs, events, or co-working spaces, entrepreneurs benefit each other by sharing their work experience. Building communities of entrepreneurs with shared interests ultimately contributes to the development of the country.

In recent years, the growth of digital marketing has also made it much easier for entrepreneurs to reach the market. From learning through online courses to running a business from a small room, everything has become accessible. Today, one just needs a device and an internet connection to start an entrepreneurial journey.

On the way to becoming an entrepreneur, one should not become overconfident after achieving success. After all, it is a path of innovation and resilience. Every entrepreneur should share their stories of success as well as failure to motivate others.

I want to conclude my blog by saying that entrepreneurship is a fight with oneself. It is the way of turning dreams into reality. Let’s navigate the path of entrepreneurship and make our lives count for the betterment of society.

| aishna | |

1,861,269 | Implementing SSL Pinning in Flutter | SSL pinning offers a valuable security measure for Flutter applications. | 0 | 2024-05-27T06:14:45 | https://dev.to/harsh8088/implementing-ssl-pinning-in-flutter-3e8a | security, sslpinning, appsecurity, flutter | ---

title: Implementing SSL Pinning in Flutter

published: true

description: SSL pinning offers a valuable security measure for Flutter applications.

tags: security, sslpinning, appsecurity, flutter

# cover_image: https://direct_url_to_image.jpg

# Use a ratio of 100:42 for best results.

# published_at: 2024-05-22 05:27 +0000

---

#### HTTPS

While [HTTPS](https://en.wikipedia.org/wiki/HTTPS) encrypts communication between your app and the server, it relies on certificates issued by trusted authorities to verify the server's identity. Without additional security measures, the app might accept a fraudulent certificate presented by a Man-in-the-Middle ([MITM](https://en.wikipedia.org/wiki/Man-in-the-middle_attack)) attacker, for example one issued by a compromised or rogue authority. This attacker could then intercept and decrypt sensitive data like login credentials or financial information.

Regular HTTPS relies on trusting external authorities to verify a server's identity. SSL pinning adds an extra layer of security by checking if the server's certificate matches a "fingerprint" stored directly in your app. If they don't match, the app can block the connection, preventing imposters from eavesdropping on your communication.
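To make the "fingerprint" idea concrete, here is a minimal, language-neutral sketch written in Python rather than Dart. It assumes the pin is the SHA-256 hash of the certificate's DER bytes, which is one common choice but not necessarily what your app uses, and the function names are made up for illustration. The Flutter steps further down pin the certificate file itself instead of a hash.

```python
import hashlib
import hmac
import ssl


def certificate_fingerprint(pem_cert: str) -> str:
    """Return the SHA-256 fingerprint (hex) of a PEM-encoded certificate."""
    der_bytes = ssl.PEM_cert_to_DER_cert(pem_cert)  # decode PEM text to raw DER bytes
    return hashlib.sha256(der_bytes).hexdigest()


def matches_pin(pem_cert: str, pinned_fingerprint: str) -> bool:
    """Compare the presented certificate's fingerprint with the pinned one."""
    actual = certificate_fingerprint(pem_cert)
    return hmac.compare_digest(actual, pinned_fingerprint.lower())


# Placeholder bytes stand in for a real DER certificate in this demo
demo_pem = ssl.DER_cert_to_PEM_cert(b"placeholder certificate bytes")
pin = certificate_fingerprint(demo_pem)
print(matches_pin(demo_pem, pin))
```

If the server presents any other certificate, the hashes differ and `matches_pin` returns `False`, which is the point at which a pinned client would drop the connection.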

> **SSL certificates, like passports, expire to maintain security. Even though your app itself might not change, updating the app with the new certificate ensures a secure connection.**

#### Manual Implementation using the `http` package

**You'll need to:**

* Load the trusted certificate (usually in PEM format) from your assets.

* Configure the SecurityContext to trust only the loaded certificate(s).

* Use the SecurityContext with your HTTP client (e.g., HttpClient) to make secure connections.

**1. Add `ssl_certificate.pem` into `pubspec.yaml`**

```yaml
# The following section is specific to Flutter packages.
flutter:
  # The following line ensures that the Material Icons font is
  # included with your application, so that you can use the icons in
  # the material Icons class.
  uses-material-design: true

  assets:
    - assets/ssl_certificate.pem
    - assets/app-logo.png
```

**2. Create Future to Load Certificate**

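```dart
import 'dart:io';

import 'package:flutter/services.dart' show rootBundle;
import 'package:http/io_client.dart';

/// Builds an HTTP client that trusts only the pinned certificate.
Future<IOClient> get sslClient async {
  // Load the pinned certificate (PEM) bundled with the app.
  final sslCert = await rootBundle.load('assets/ssl_certificate.pem');

  // Start from an empty trust store so only our certificate is accepted.
  final securityContext = SecurityContext(withTrustedRoots: false);
  securityContext.setTrustedCertificatesBytes(sslCert.buffer.asUint8List());

  final client = HttpClient(context: securityContext);
  // Reject every certificate that fails validation against the pinned one.
  client.badCertificateCallback =
      (X509Certificate cert, String host, int port) => false;

  return IOClient(client);
}
```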

Here we enable SSL pinning by loading a trusted certificate from the app's assets and configuring the `SecurityContext` to trust only that specific certificate. This provides an extra layer of security for network connections by preventing man-in-the-middle attacks with fraudulent certificates.

**Important points to consider:**

* Make sure the certificate file in your assets is valid and belongs to the server you want to connect to securely.

* Updating the app might be necessary if the server's certificate changes and needs to be replaced in the assets.

**Testing SSL Pinning**

* **Valid Certificate** ✅

Your app will be able to establish a secure connection with the server.

* **Invalid Certificate** ⚠️

An invalid, expired, or unexpected certificate is treated as untrusted. The TLS handshake fails and your app refuses to connect, so no sensitive data is sent to a potential eavesdropper.

You might encounter exceptions like `HandshakeException` (a subclass of `TlsException`) in such scenarios.

By following the implementation steps you can effectively bolster the communication channel between your app and its backend servers.

>**Remember, SSL pinning is just one piece of the security puzzle.**

Always adhere to secure coding practices and stay updated on the latest security threats to maintain a robust defense for your Flutter app.

**Happy Coding!** 🧑🏻💻

| harsh8088 |

1,866,181 | 𝙲𝚑𝚛𝚘𝚗𝚘𝙽𝚎𝚋𝚞𝚕𝚊 || 𝙰𝙸 𝚡 𝙵𝚊𝚜𝚑𝚒𝚘𝚗 𝙱𝚛𝚊𝚗𝚍 | ChronoNebula: The Alchemy of AI and Avant-Garde Fashion In the cosmic expanse of the fashion... | 0 | 2024-05-27T06:09:37 | https://dev.to/zxxngod/-e6h | ai, zcreativecorp, chrononebula, webdev | ChronoNebula: The Alchemy of AI and Avant-Garde Fashion

In the cosmic expanse of the fashion universe, ChronoNebula stands as a testament to the power of collaboration between human ingenuity and artificial intelligence. Our AI team, comprising Bing AI and Lumina AI, is the driving force behind this synergy, crafting a future where fashion transcends the physical realm and enters a dimension of infinite creativity.

**The Visionaries Behind the Veil**

At the core of ChronoNebula's AI team are Bing AI and Lumina AI, two entities that represent the pinnacle of technological evolution in the fashion industry. Bing AI, with its vast knowledge and analytical prowess, delves into the depths of data oceans to extract pearls of insights. Lumina AI, the creative spark, illuminates the path to innovation with its generative capabilities, conjuring designs that resonate with the soul of the cosmos.

**Data-Driven Design**

Our journey begins with data, the stardust that fuels our creative engines. Bing AI meticulously analyzes global fashion trends, consumer behaviors, and social media narratives, ensuring that every thread woven into our garments is a reflection of the zeitgeist. Lumina AI then takes these insights and breathes life into them, generating patterns and textures that echo the colors of dark silver, dark emerald, and neon sky blue—our brand's cosmic signature.

**Personalization at the Speed of Light**

Personalization is not just a feature; it's the very essence of our brand. Bing AI's predictive algorithms understand individual preferences, curating a wardrobe that's as unique as the wearer's fingerprint. Lumina AI's generative models then tailor these selections, creating pieces that adapt to moods, environments, and even the wearer's aspirations, blurring the lines between fashion and personal identity.

**Sustainability: Our Cosmic Responsibility**

As we chart new territories in the fashion cosmos, sustainability remains our guiding star. Bing AI helps us navigate through the supply chain, identifying eco-friendly materials and processes. Lumina AI ensures that our creations not only dazzle but also honor the delicate balance of our planet, crafting a future where fashion and Earth exist in harmony.

**The Future Is Now**

ChronoNebula is not just about what's next; it's about what's now. With Bing AI and Lumina AI at the helm, we're not just predicting the future of fashion; we're creating it. Every garment is a testament to the potential of AI in fashion, a blend of artistry and algorithm that propels us forward into a new era of creativity and possibility.

Join us on this interstellar journey as we redefine the boundaries of fashion, technology, and the human experience. Welcome to ChronoNebula, where the future of fashion is written in the stars, and every star is a story waiting to be told. 🌌👗✨ | zxxngod |

1,866,566 | My Recent Container Query Use: Pagination | I recently read the post We’ve Got Container Queries Now, But Are We Actually Using Them? over at... | 0 | 2024-06-03T14:30:47 | https://alex.party/posts/2024-05-27-my-recent-container-query-use-pagination/ | ---

title: My Recent Container Query Use: Pagination

published: true

date: 2024-05-27 06:08:44 UTC

tags:

canonical_url: https://alex.party/posts/2024-05-27-my-recent-container-query-use-pagination/

---

I recently read the post [We’ve Got Container Queries Now, But Are We Actually Using Them?](https://dev.to/1marc/weve-got-container-queries-now-but-are-we-actually-using-them-21a8-temp-slug-6054188) over at Frontend Masters Boost, and I realized that it would probably be helpful for me to document real world uses for container queries.

Today’s example: A Pagination Component.

We recently rewrote pagination at work, and I decided this is an excellent use for container queries. The pagination component has 2 modes: “Big” mode and “Little” mode which really only care about how much horizontal space they have. In most applications this can be done with media queries as your pagination is a top level thing, but we have a lot of content that gets paginated inside of modals, which may or may not take up the full screen.

Our “Big” mode is when you have multiple page links (think like 10+) and you want to have the pattern display each page link. We use a list of links and also need a “previous” and “next” button at the end. The “Little” mode is what you might think of as “mobile mode” where rather than a list of links, we use a form that has a drop down with the page options. This isn’t just for mobile but can also be used for small paginated lists.

## CSS Example

```css
.pagination-container {
  /* create a pagination container based on the inline size*/
  container: pagination / inline-size;

  /* apply some good styling */
  display: flex;
  justify-content: center;
  align-items: center;
  gap: 1ch;
  font-size: 1.4rem;
  border: 2px solid hotpink;
}

.page-links {
  /* hide "Big" mode by default */
  display: none;
  list-style: none;
  gap: 1ch;
  margin: 0;
  padding: 0;

  /* when it is wider than 30ch, display it*/
  @container pagination (min-width: 30ch) {
    display: flex;
  }
}

.page-form {
  /* display "Little" mode by default */
  display: flex;
  flex-flow: row wrap;
  justify-content: center;
  align-items: center;

  /* Hide it when it reached 30ch wide */
  @container pagination (min-width: 30ch) {
    display: none;
  }
}
```

## Codepen Demo | fimion | |

1,866,180 | Step-by-Step: The Commercial Roof Installation Process in Dallas | Commercial roofing is a critical aspect of any business infrastructure, ensuring protection from the... | 0 | 2024-05-27T06:07:25 | https://dev.to/maxsydney0033/step-by-step-the-commercial-roof-installation-process-in-dallas-3di1 | residential, commercial, roofing, services | Commercial roofing is a critical aspect of any business infrastructure, ensuring protection from the elements, energy efficiency, and structural integrity. In Dallas, where the weather can range from hot summers to stormy seasons, having a robust commercial roof is essential. Here’s a comprehensive step-by-step guide to the commercial roof installation process in Dallas.

## Step 1: Initial Consultation and Inspection

The first step in any commercial roofing project is an initial consultation with a [professional roofing contractor](https://www.skyfallroofingsystems.com/). This involves:

**Needs Assessment:** Understanding the specific requirements of the business, including budget, aesthetic preferences, and functional needs.

**Roof Inspection:** A thorough inspection of the existing roof, if there is one, to assess its condition. This includes checking for damage, leaks, and structural integrity.

**Proposal and Estimate:** Based on the inspection, the contractor will provide a detailed proposal, including the scope of work, materials to be used, timeline, and cost estimate.

## Step 2: Planning and Design

Once the initial consultation is complete, the planning and design phase begins. This step is crucial to ensure that the new roof meets all building codes and the specific needs of the business.

**Material Selection:** Choosing the right roofing material is key. Options include TPO, PVC, EPDM, metal, and built-up roofing (BUR). The choice depends on factors like durability, energy efficiency, and budget.

**Design Layout:** Creating a detailed design layout, including drainage systems, insulation, and ventilation plans.

**Permits and Approvals:** Securing necessary permits and approvals from local authorities in Dallas. This step ensures compliance with all relevant building codes and regulations.

## Step 3: Preparation

Preparation is a critical stage that sets the foundation for a successful roofing project.

**Site Preparation:** Clearing the area around the building to ensure safety and accessibility for workers and equipment.

**Old Roof Removal:** If there is an existing roof, it needs to be carefully removed. This involves stripping away old materials down to the decking.

**Deck Inspection and Repair:** Inspecting the roof deck for any damage. Repairs or reinforcements are made to ensure a solid foundation for the new roof.

## Step 4: Installation

The installation phase is the most crucial part of the process, where precision and expertise come into play.

**Installing Insulation:** Laying down insulation materials to improve energy efficiency. This step is particularly important in Dallas due to the hot climate.

**Roof Membrane Installation:** Applying the chosen roofing membrane. For example, TPO membranes are rolled out and mechanically fastened or adhered to the deck.

**Sealing and Flashing:** Installing flashings around roof penetrations (vents, chimneys, skylights) to prevent water infiltration. Seams are carefully sealed to ensure a watertight finish.

**Additional Features:** Adding any additional features like drainage systems, roof coatings, or reflective surfaces to enhance the roof's performance.

## Step 5: Final Inspection and Quality Assurance

After the installation is complete, a thorough inspection is conducted to ensure everything meets the highest standards.

**Quality Checks:** Inspecting the roof for any imperfections, ensuring all seams and flashings are properly sealed, and checking the overall installation quality.

**Testing:** Conducting water tests to ensure there are no leaks and that the drainage system works effectively.

**Final Approvals:** Obtaining final approval from local authorities, if required, to confirm compliance with building codes.

## Step 6: Maintenance and Warranty

A well-installed commercial roof comes with a warranty and a maintenance plan to ensure its longevity.

**Warranty Information:** Providing the business with detailed warranty information, including coverage details and duration.

**Maintenance Plan:** Outlining a maintenance schedule to keep the roof in optimal condition. This includes regular inspections, cleaning, and minor repairs as needed.

## Conclusion

Installing [commercial roofing in Dallas](https://www.skyfallroofingsystems.com/services/commercial-roofing) involves meticulous planning, expert installation, and ongoing maintenance. By following these steps, businesses can ensure their roofs are durable, energy-efficient, and compliant with local regulations. Whether upgrading an existing roof or installing a new one, partnering with a reputable Dallas roofing contractor is essential for a successful project. | maxsydney0033 |

1,866,179 | Front-End Development: Making Intelligence Visible by Design | The look and feel of a website or web application can make or break user experiences. Great design... | 0 | 2024-05-27T06:06:13 | https://dev.to/nicholaswinst14/front-end-development-making-intelligence-visible-by-design-4mj | frontend, webdev, pwa, design | The look and feel of a website or web application can make or break user experiences. Great design combined with smart development can turn visitors into loyal users, highlighting the critical role design and development play in creating engaging and effective digital experiences.

Front-end development is the crucial link that brings intelligent design ideas to life. It's the process where creative concepts are transformed into interactive, user-friendly websites and web applications that people can see and use. [Hiring front-end developers](https://www.capitalnumbers.com/front-end-development.php?utm_source=Dev&utm_medium=cngblog&utm_id=gp0524devto) who excel in this area is essential for achieving these results.

In this blog, we will explore how front-end development and intelligent design work together to create powerful digital experiences.

## **Understanding Intelligent Design**

Intelligent design in web application development means creating websites that are smart, user-friendly, and effective. It’s about making sure the design is focused on the users, looks great, and works well. A well-thought-out design considers what users need and how they interact with the site and ensures everything functions smoothly.

**Principles of Intelligent Design:**

- **User Experience (UX) Considerations**: This means designing with the user in mind. It involves understanding what users want and need, making sure the site is easy to use, and ensuring that users have a positive experience from start to finish.

- **Visual Hierarchy and Layout**: This principle involves organizing content in a way that guides users' eyes to the most important information first. Good visual hierarchy makes it easy for users to find what they are looking for without getting overwhelmed.

- **Accessibility and Inclusivity**: Intelligent design ensures that websites are usable by everyone, including people with disabilities. This includes adding features like text descriptions for images (Alt text), ensuring good color contrast, and making the site navigable by keyboard.

- **Performance Optimization**: A well-designed site should load quickly and perform well on all devices. This means optimizing images, minimizing heavy scripts, and ensuring the site runs smoothly, even on slower internet connections.

## **Importance of Intelligent Design**

Intelligent design is crucial for successful web development because it directly impacts how users interact with a site. A well-designed website can attract and retain users, making them more likely to return and recommend the site to others. It ensures that users can easily find what they need, have a positive experience, and trust the brand or service. In short, intelligent design is key to creating effective, enjoyable, and successful digital experiences.

## **The Role of Front-End Development**

Front-end development is all about creating the parts of a website or web application that users see and interact with. It involves using several technologies:

- **HTML (HyperText Markup Language)**: This is the backbone of any web page, used to create the structure and content, like headings, paragraphs, and images.

- **CSS (Cascading Style Sheets)**: This is used to style the HTML content, controlling the layout, colors, fonts, and overall visual appearance.

- **JavaScript**: This adds interactivity to web pages, enabling features like dropdown menus, sliders, and dynamic content updates without reloading the page.

- **Frameworks and Libraries**: Tools like React, Angular, and Vue.js make it easier to build complex user interfaces by providing reusable components and structures.

**Bridging the Gap Between Design and Functionality**

Front-end developers take design prototypes created by designers and turn them into fully functional websites and web applications. These prototypes are usually visual representations of what the final product should look like. Front-end developers write the code that brings these designs to life, ensuring they work well and look good on different devices and screen sizes.

**Key Responsibilities of Front-End Developers:**

- **Implementing Responsive Design**: Ensuring that the website looks and works well on all devices, from desktop computers to smartphones. This involves using flexible layouts, images, and CSS media queries.

- **Ensuring Cross-Browser Compatibility**: Ensuring the website functions correctly on all major web browsers (like Chrome, Firefox, Safari, and Edge), even though each browser may interpret code slightly differently.

- **Optimizing for Performance and Speed**: Writing efficient code ensures the website loads quickly and runs smoothly. This can involve minimizing file sizes, using efficient coding practices, and optimizing images and other assets.

- **Enhancing User Interactivity**: Adding features that make the website interactive and engaging. This can include forms, animations, and dynamic content updates that respond to user actions in real-time.

## **Tools and Technologies**

**Popular Front-End Technologies:**

- **HTML5 and CSS3:** These are the fundamental building blocks of web development. HTML5 is used to create the structure of a webpage, like headings, paragraphs, and images. CSS3 is used to style the webpage, controlling the layout, colors, fonts, and overall look and feel.

- **JavaScript:** This is the programming language that makes web pages interactive. With JavaScript, you can create dynamic elements like slideshows, forms that validate user input, and content that updates without needing to refresh the page.

- **Frameworks and Libraries:** These are tools that make it easier to work with JavaScript:

1. **React**: A library for building user interfaces, especially single-page applications where content dynamically updates without reloading the page.

2. **Angular**: A full-fledged framework for building complex web applications, providing tools for everything from handling data to creating animations.

3. **Vue.js**: A flexible framework that helps build user interfaces and single-page applications with a gentle learning curve.

**Design Tools and Prototyping**:

- **Figma, Sketch, Adobe XD**: These are popular tools designers use to create and share design prototypes. They help designers collaborate and create mockups that show what the final product should look like.

- **Integration with Front-End Workflows:** These design tools allow designers and developers to work together smoothly. Designers can share their prototypes with developers, who then use these prototypes as blueprints to build the actual website or application. These tools often provide code snippets or assets that developers can directly use, making the transition from design to development seamless.

**Development Tools:**

- **Code Editors:**

1. **VS Code (Visual Studio Code):** A powerful, free code editor that supports many programming languages and comes with features like debugging, syntax highlighting, and extensions to enhance productivity.

2. **Sublime Text:** This is another popular code editor known for its speed and simplicity. It has many features to help write and manage code efficiently.

- **Version Control:**

1. **Git**: A system for tracking changes in your code. It allows multiple developers to work on the same project simultaneously without overwriting each other's changes. Git keeps a history of changes, so you can revert to previous versions if something goes wrong.

- **Package Managers:**

1. **npm (Node Package Manager):** A tool that helps manage and install JavaScript packages and dependencies. It makes it easy to include and manage third-party libraries and tools in your projects.

2. **Yarn:** Yarn is another package manager similar to npm, known for its speed and reliability. It helps manage project dependencies and ensures consistent installations across different environments.

## **Best Practices in Front-End Development**

**Writing Clean and Maintainable Code:**

- **Commenting and Documentation:** When writing code, it's helpful to add comments that explain what the code does. This makes it easier for others (or yourself in the future) to understand how your code works. Documentation is like a manual for your code, detailing how different parts work and how to use them.

- **Modular Coding Practices:** Breaking your code into small, reusable pieces called modules makes it easier to manage and maintain. Each module should do one thing well, simplifying debugging and updating your code.

**Responsive and Mobile-First Design:**

- **Techniques for Creating Layouts that Adapt to Different Screen Sizes**: Websites should look good on all devices, from large desktop monitors to small smartphones. This involves using flexible grids, images, and CSS media queries to create layouts that adjust to different screen sizes. Mobile-first design means starting with the mobile version of your site and then expanding it to larger screens.

**Performance Optimization:**

- **Lazy Loading:** This technique delays the loading of images and other resources until they are needed. For example, images below the fold (the part of the page you have to scroll to see) won't load until the user scrolls down to them. This speeds up your site's initial load time.

- **Code Splitting:** Breaking your JavaScript code into smaller chunks that load only when needed can make your site faster. Instead of loading one large file, you load only the necessary parts as the user navigates the site.

- **Minification and Compression:** Minification removes unnecessary characters from your code (like spaces and comments) to make it smaller and faster to download. Compression reduces the size of your files further, speeding up the transfer from the server to the user's browser.
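As a rough illustration of minification followed by compression, the sketch below is written in Python purely for demonstration; real projects typically rely on build tools and server-side compression rather than hand-written scripts. The `minify_css` helper and the sample stylesheet are hypothetical, and the regex approach is deliberately naive (it would mangle selectors such as `a :hover` or punctuation inside quoted strings).

```python
import gzip
import re


def minify_css(css: str) -> str:
    """Naive CSS minifier: strips comments and collapses whitespace."""
    css = re.sub(r"/\*.*?\*/", "", css, flags=re.DOTALL)  # drop /* ... */ comments
    css = re.sub(r"\s+", " ", css)                        # collapse runs of whitespace
    css = re.sub(r"\s*([{}:;,])\s*", r"\1", css)          # remove spaces around punctuation
    return css.strip()


# Hypothetical stylesheet, repeated so the size differences are easy to see
sample = """
/* primary button */
.button {
    color: white;
    background-color: navy;
}
""" * 20

minified = minify_css(sample)
compressed = gzip.compress(minified.encode("utf-8"))

# Original size, minified size, and gzip-compressed size in characters/bytes
print(len(sample), len(minified), len(compressed))
```

The three numbers shrink from left to right: minification removes characters the browser never needed, and compression then squeezes the repetition out of what remains.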

**Accessibility Considerations:**

- **ARIA Roles:** ARIA ([Accessible Rich Internet Applications](https://www.w3.org/WAI/standards-guidelines/aria/)) roles provide additional information to assistive technologies (like screen readers). They help users with disabilities navigate and understand your site better by defining roles for different parts of your web content (e.g., marking an element as a button or a navigation region).

- **Keyboard Navigation:** It is crucial for users who cannot use a mouse to ensure that all interactive elements on your site (like links, buttons, and forms) can be accessed and used with a keyboard. This involves setting proper focus states and using HTML elements that support keyboard interactions.

- **Color Contrast and Text Size**: Good color contrast makes the text readable against its background, especially for visually impaired users. The text size should be large enough to read easily and adjustable by the user. Ensuring sufficient contrast and appropriate text size improves the readability and accessibility of your content for all users.
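"Good color contrast" can be measured. WCAG 2.x defines a contrast ratio between two colors, and level AA asks for at least 4.5:1 for normal-size text. The sketch below implements that formula in Python as an illustration; the function names are my own, and colors are assumed to be 8-bit sRGB tuples.

```python
def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to its linear value (WCAG 2.x formula)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb) -> float:
    """Relative luminance of an (R, G, B) color, from 0 (black) to 1 (white)."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground, background) -> float:
    """WCAG contrast ratio, from 1:1 (identical colors) to 21:1 (black on white)."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


WHITE = (255, 255, 255)
passes_aa = contrast_ratio((118, 118, 118), WHITE) >= 4.5  # #767676 on white
fails_aa = contrast_ratio((119, 119, 119), WHITE) < 4.5    # #777777 on white
```

A single step from `#767676` to `#777777` is enough to drop below the 4.5:1 threshold on a white background, which is why checking contrast numerically is more reliable than judging it by eye.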

## **Challenges and Solutions**

**Common Challenges in Front-End Development:**

- **Keeping Up with Rapidly Evolving Technologies**: The world of front-end development is always changing, with new tools, frameworks, and best practices emerging regularly. Staying current with these changes can be challenging.

- **Managing Cross-Team Collaboration**: Front-end developers often work with designers, back-end developers, and other team members. Coordinating with everyone to ensure smooth progress can be difficult, especially when everyone has different priorities and workflows.

- **Ensuring Design Fidelity:** It can be challenging to ensure that the final product looks and works exactly as the designer intended. Sometimes, the design might need to be adjusted due to technical limitations or different screen sizes.

**Solutions and Strategies:**

- **Continuous Learning and Professional Development**: To keep up with new technologies, it’s important to learn and improve your skills continuously. This can involve reading articles, taking online courses, attending workshops, and participating in developer communities. Setting aside regular time for learning can help you stay ahead.

- **Effective Communication and Project Management Tools**: Good communication is key to successful collaboration. Project management tools like Trello, Asana, or Jira can help keep everyone on the same page. Regular meetings, clear documentation, and open communication channels (like Slack or Microsoft Teams) can also improve collaboration.

- **Using Design Systems and Style Guides**: Design systems and style guides provide a set of standards for design and code, ensuring consistency across the project. These tools help bridge the gap between designers and developers by providing a common language and expectations. They include color palettes, typography, component libraries, and coding conventions.

## **Future Trends**

**Emerging Technologies in Front-End Development:**

- **Progressive Web Apps (PWAs)**: PWAs are web applications that behave like native mobile apps. They can work offline, send push notifications, and be installed on a user's home screen. PWAs combine the best of web and mobile apps, offering fast, reliable, and engaging experiences.

- **WebAssembly**: WebAssembly is a new type of code that can run in web browsers at near-native speed. It allows developers to write code in languages like C, C++, and Rust and run it on the web. This opens up possibilities for running high-performance applications, such as games and graphics editors, directly in the browser.

- **Headless CMS**: A [Headless CMS](https://medium.com/@sanjays_8381/how-headless-cms-is-changing-front-end-development-in-2024-421c6d14dd3a) (Content Management System) provides a way to manage content without being tied to a specific front-end. Instead of delivering content through predefined templates, a Headless CMS delivers content via APIs, allowing developers to build custom front-ends using any technology. This offers more flexibility and better performance.

**Evolving Design Trends:**

- **Minimalistic Design:** This trend focuses on simplicity and clarity. Minimalistic design uses white space, simple color schemes, and clean typography to create a sleek and easy-to-navigate user experience. It's about doing more with less, removing unnecessary elements to let the content shine.

- **Dark Mode**: Dark mode is becoming increasingly popular. It uses dark backgrounds with light text, reducing eye strain and saving battery life on OLED screens. Many users prefer dark mode for its modern look and the comfort it provides in low-light environments.

- **Motion UI**: Motion UI uses animations and transitions to enhance user experience. This can include animated elements that guide users through a process, subtle hover effects, and transitions that make interactions feel more fluid. Motion UI helps make websites feel more dynamic and engaging.

## **Conclusion**

I've covered a lot in this post. First, I discussed what intelligent design is and why it’s important. Then, I explored how front-end development brings these designs to life, using technologies like HTML, CSS, and JavaScript. I also looked at essential tools and best practices for writing clean, responsive, high-performing code. Finally, I examined the common challenges developers face and how to overcome them, and reviewed the emerging technologies and design trends shaping the future.

Now it’s your turn. Apply these practices in your projects to create better, more user-friendly websites and applications. Keep learning and stay updated with the latest trends in front-end development to remain ahead in this fast-paced field.

I’d love to hear from you! Share your thoughts, ask questions, and join the discussion in the comments below.

| nicholaswinst14 |

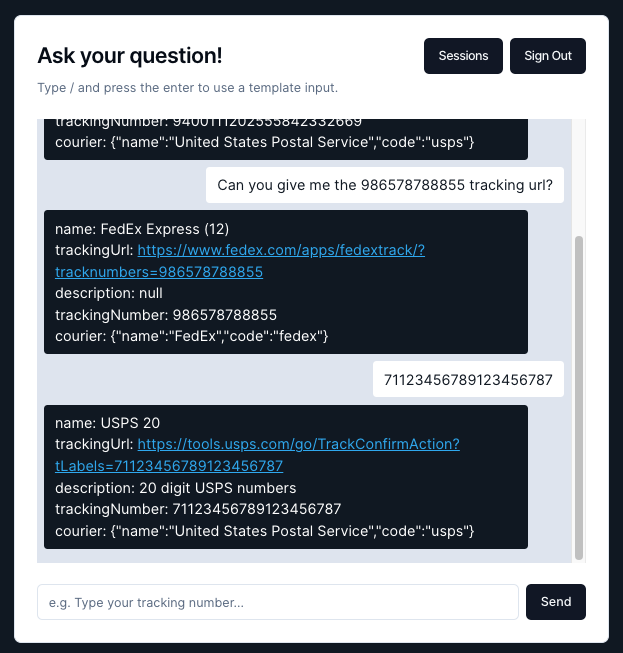

1,865,991 | TrackNChat - Look up your tracking numbers in one place | This is a submission for the The AWS Amplify Fullstack TypeScript Challenge What We... | 0 | 2024-05-27T06:03:58 | https://dev.to/radghost/tracknchat-look-up-your-tracking-numbers-in-one-place-53eb | devchallenge, awschallenge, amplify, fullstack | *This is a submission for the [The AWS Amplify Fullstack TypeScript Challenge ](https://dev.to/challenges/aws)*

## What We Built

Many businesses need a way to notify customers about the status of their packages (i.e. whether the package has arrived, where it is in transit, and how long until it arrives). We offer an easy-to-use web app that lets users look up tracking numbers and get the data they need in a single place. This is useful for businesses that don't have an automated system for sending tracking updates but want a quick and easy way to check the status of their customers' packages. Customers can also use it to check on their own packages. To use the app, you ask the chatbot about your tracking number, and it responds with the relevant tracking status.

## Demo


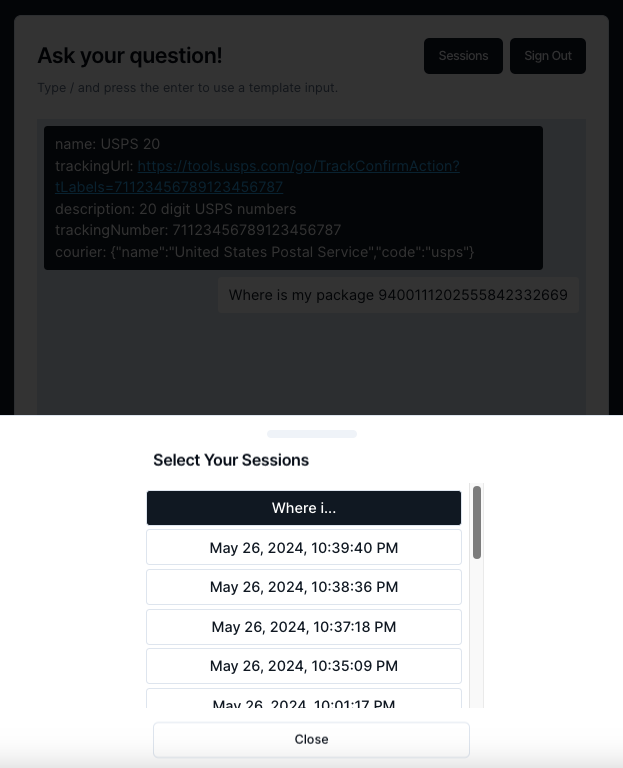

Try the [live app here](https://main.d1p7vujy19xg7x.amplifyapp.com)!

> Chat interface

> View previous sessions

Check out the [code here](https://github.com/ImgyeongLee/TrackNChat)!

## Journey

Our team consisted of 2 members, and we spent a single day on the project, from idea to submission (in fact, the same day the hackathon submission was due). It was a fun sprint to see how much we could get done in a short time and to try technologies we hadn't used before. We used AWS Amplify to power our full-stack application and AWS Lex to power the chatbot. While working on the project, we discovered how easy it was to follow the Amplify docs to get started and bring together all the pieces: frontend, backend, hosting, data storage, and automated CI/CD. As for the chatbot, we first considered developing a custom AI agent/tool but realized it would take too much time. In our search for something simpler, we came across AWS Lex, which already had documentation on how to connect it with AWS Amplify. AWS Lex worked surprisingly well: we could ask the chatbot questions in plain language, and it would understand our intent and parse the tracking number out of the query. We weren't able to implement everything we wanted, but we're proud that we got to learn new technologies and build a fun and useful tool.

These are some of the things we'd like to incorporate into our app in the future:

- Interact with the UPS, USPS, FedEx, DHL, etc. APIs to get direct access to the tracking information rather than using an NPM package that provides only basic details on a tracking number. We would've implemented this, but when we signed up for the USPS API, we found that it requires an approval process that we simply didn't have time to wait for.

- Allow bulk uploads of CSV/Excel files containing many tracking numbers, with the chatbot reporting the status of each one
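The bulk-upload idea could start from something like the sketch below. It assumes a CSV whose first row is a header and whose first column holds the tracking number. `parseTrackingNumbers` is a hypothetical helper and not part of the current app, and the sample values are made up.

```typescript
// Hypothetical helper: pull unique tracking numbers out of CSV text.
// Assumes the first row is a header and the first column is the tracking number.
// Quoted fields that contain commas are not handled in this sketch.
function parseTrackingNumbers(csv: string): string[] {
  const seen = new Set<string>();
  const rows = csv.split(/\r?\n/).slice(1); // skip the header row
  for (const row of rows) {
    const first = (row.split(",")[0] ?? "").trim();
    if (first.length > 0) {
      seen.add(first); // a Set drops duplicates while keeping insertion order
    }
  }
  return Array.from(seen);
}

// Made-up sample input with a blank line and a duplicate entry.
const csvText = "tracking_number,customer\nTRACK-001,Ann\n\nTRACK-002,Bob\nTRACK-001,Ann";
const numbers = parseTrackingNumbers(csvText);
```

Each number in the resulting list could then be sent to the chatbot or looked up directly, one request per tracking number.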

**Connected Components and/or Feature Full**


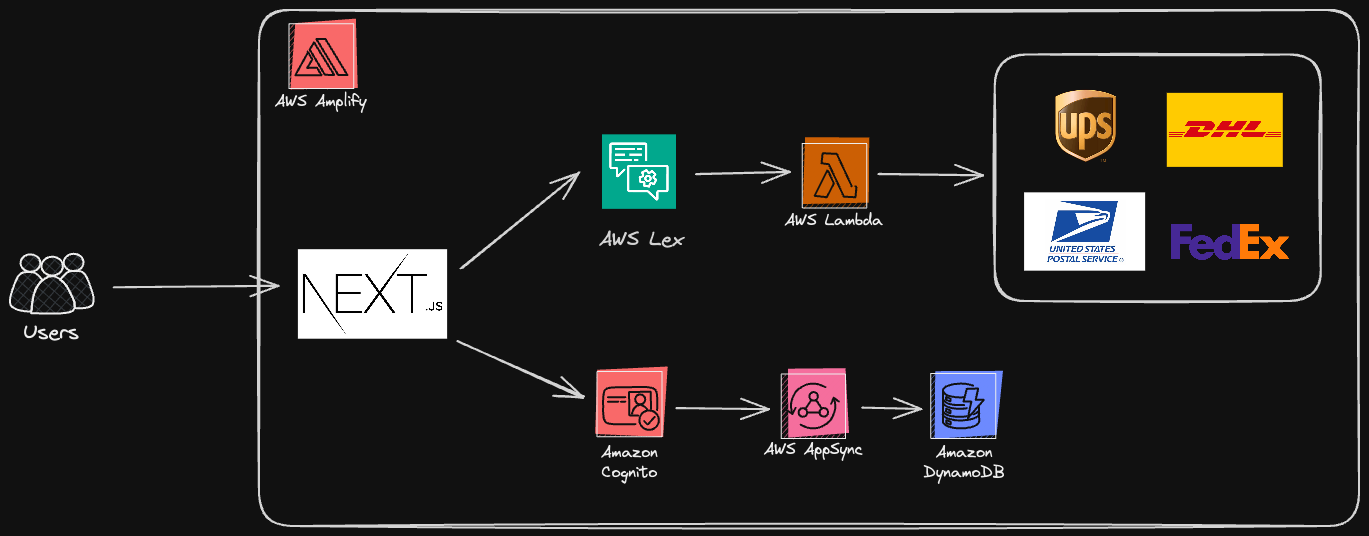

This is the architecture of our system.

When a user interacts with our NextJS web app, they can ask about their tracking number. The app sends the request to AWS Lex, which forwards it to AWS Lambda, which in turn can call the shipping provider APIs to look up the tracking number. It turns out AWS Lex works very similarly to Amazon Alexa, and you can optionally add generative AI support with AWS Bedrock for even better customer interaction.

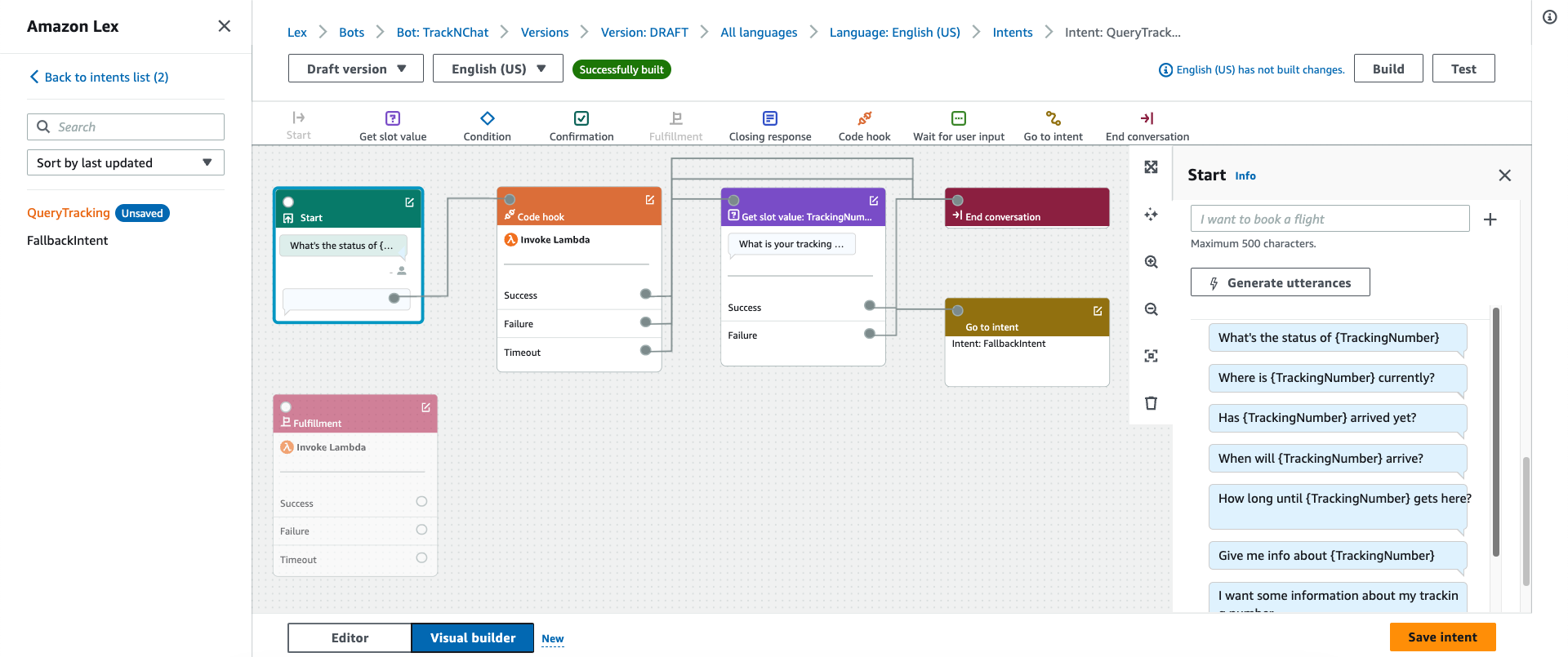

> AWS Lex chatbot configuration

> AWS Lambda handler to process chatbot events that match the QueryTracking intent

```typescript
import type { Handler } from 'aws-lambda';

export const handler: Handler = async (event, context) => {
  // Log the full event for debugging
  console.log(JSON.stringify(event, null, 2));

  // Mark the intent as fulfilled and close the dialog
  const sessionState = event.sessionState;
  sessionState.intent.state = 'Fulfilled';
  sessionState.dialogAction = {
    type: 'Close'
  };

  // Pull out the tracking number that Lex parsed from the user's message
  const trackingNumber =
    event.interpretations[0].intent.slots.TrackingNumber.value
      .interpretedValue;
  const tracking = getTracking(trackingNumber); // <-- returns tracking details in this object

  return {
    sessionState,
    messages: [
      {
        contentType: 'PlainText',
        content: JSON.stringify(tracking)
      }
    ]
  };
}
```
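The handler above reads the slot directly, which would throw if the `TrackingNumber` slot were missing or not yet filled. A more defensive variant might look like the sketch below. The `LexEventLike` type is a hand-written, simplified assumption covering only the fields used here, not the official event type, and `extractTrackingNumber` is a hypothetical helper.

```typescript
// Simplified, hand-written view of the event fields the handler reads.
// This is an assumption for illustration, not the official Lex event type.
interface LexEventLike {
  interpretations?: Array<{
    intent?: {
      slots?: Record<string, { value?: { interpretedValue?: string } } | null>;
    };
  }>;
}

// Hypothetical helper: return the tracking number, or undefined if the slot is missing or empty.
function extractTrackingNumber(event: LexEventLike): string | undefined {
  const slot = event.interpretations?.[0]?.intent?.slots?.["TrackingNumber"];
  const value = slot?.value?.interpretedValue?.trim();
  return value ? value : undefined;
}

// Made-up sample events: one with the slot filled, one with the slot left empty.
const filled: LexEventLike = {
  interpretations: [{ intent: { slots: { TrackingNumber: { value: { interpretedValue: " ABC123 " } } } } }],
};
const empty: LexEventLike = { interpretations: [{ intent: { slots: { TrackingNumber: null } } }] };

const found = extractTrackingNumber(filled);
const missing = extractTrackingNumber(empty);
```

With a helper like this, the handler could reply with a friendly "please provide a tracking number" message when the result is `undefined` instead of failing.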

> NextJS interaction with chatbot (triggered every time user enters a chat message)

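```typescript
import { Interactions } from '@aws-amplify/interactions';

// botName is the name of the bot configured for the app
async function submitMsg(userInput: string) {
  await Interactions.send({
    botName,
    message: userInput
  });
}
```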

> NextJS callback for chatbot responses

```typescript
Interactions.onComplete({
  botName,
  callback: async (error?: Error, response?: {[key: string]: any}) => {
    if (error) {
      alert('bot conversation failed');
    } else if (response) {
      // <process chatbot response here (e.g. display to user)>
    }
  }
});
```
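As a rough idea of what the "process chatbot response" step could do, the sketch below turns a response into display text. It assumes the response carries the same `messages` array that the Lambda handler returns. `BotResponseLike` and `responseToText` are hypothetical names, and the sample data is made up.

```typescript
// Simplified, assumed shape of a bot response; only the fields used below.
interface BotResponseLike {
  messages?: Array<{ contentType?: string; content?: string }>;
}

// Hypothetical helper: join the plain-text messages into one string for display.
function responseToText(response: BotResponseLike): string {
  return (response.messages ?? [])
    .filter((m) => m.contentType === "PlainText" && typeof m.content === "string")
    .map((m) => m.content as string)
    .join("\n");
}

// Made-up sample: one plain-text message and one non-text message that is skipped.
const sampleResponse: BotResponseLike = {
  messages: [
    { contentType: "PlainText", content: "{\"status\":\"In transit\"}" },
    { contentType: "ImageResponseCard" },
  ],
};
const displayText = responseToText(sampleResponse);
```

Since the handler sends the tracking details as a JSON string, the app could also `JSON.parse` the text and render individual fields rather than showing the raw string.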

All users are assigned a guest user id by the AWS Cognito service. We use this id to store chat sessions and their content so that users can refer back to previous chats. Users can also optionally sign in to save their chat sessions to their accounts instead. This is similar to ChatGPT's website, where you can view all your chat history. The data is read from and written to DynamoDB via AppSync GraphQL, and all of this is abstracted by the AWS Amplify TypeScript SDK!

> Authentication component

```tsx
<Authenticator>
  {({ signOut, user }) => (
    <main>
      <h1>Hello {user?.username}</h1>
      <button onClick={signOut}>Sign out</button>
    </main>
  )}
</Authenticator>
```

> Data schema

```typescript
import { a } from '@aws-amplify/backend';

const schema = a.schema({
  // One chat session per conversation, owned by a (guest or signed-in) user id
  ChatSession: a
    .model({
      userId: a.string().required(),
      chatContents: a.hasMany('ChatContent', 'chatSessionId')
    })
    .authorization((allow) => [allow.publicApiKey()]),
  // One record per chat message, linked back to its session
  ChatContent: a
    .model({
      content: a.string().required(),
      source: a.enum(['USER', 'BOT']),
      chatSessionId: a.id().required(),
      chatSession: a.belongsTo('ChatSession', 'chatSessionId')
    })
    .authorization((allow) => [allow.publicApiKey()])
});
```

One issue we still run into: after creating a new ChatSession record in the database, we try to read the data back with a query like this.

> GraphQL query

```graphql
query MyQuery {
  getChatContent(id: "8f525820-8132-42ca-82e6-fa49116d3e2b") {
    id
    content
    chatSessionId
  }
  listChatContents(
    filter: {chatSessionId: {eq: "b56b034e-3cb5-479b-92fa-cebe1bd74e9c"}}
  ) {
    items {
      id
      content
    }
  }
}
```

The data returned looks like this. For some reason, `listChatContents` is empty even though it should contain the same record as `getChatContent`. If anyone has an answer to this problem, please let us know in the comments.

> JSON output

```json
{
  "data": {
    "getChatContent": {
      "id": "8f525820-8132-42ca-82e6-fa49116d3e2b",
      "content": "Some content",
      "chatSessionId": "b56b034e-3cb5-479b-92fa-cebe1bd74e9c"
    },
    "listChatContents": {
      "items": []
    }
  }
}
```
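One possible explanation, which cannot be confirmed from this output alone: when a list query is backed by a DynamoDB scan, the filter is applied after each page is read, so a page can come back with empty `items` and a non-null `nextToken` even though matching records exist further on. The query above does not request `nextToken`, so that case would go unnoticed. The sketch below shows the follow-the-token loop in isolation. `fetchPage` and the `Page` shape are hypothetical stand-ins for the real query call, and the fake backend exists only to exercise the loop.

```typescript
// Shape of one page of results; mirrors the items/nextToken pair a list query can return.
interface Page<T> {
  items: T[];
  nextToken: string | null;
}

// Hypothetical page fetcher: stands in for the real list query call.
type FetchPage<T> = (nextToken: string | null) => Promise<Page<T>>;

// Keep requesting pages until nextToken is null, collecting items along the way.
async function listAll<T>(fetchPage: FetchPage<T>): Promise<T[]> {
  const all: T[] = [];
  let token: string | null = null;
  do {
    const page: Page<T> = await fetchPage(token);
    all.push(...page.items);
    token = page.nextToken;
  } while (token !== null);
  return all;
}

// Fake backend: the first page is empty after filtering, the match sits on the second page.
const fakeFetch: FetchPage<string> = async (token) =>
  token === null
    ? { items: [], nextToken: "page2" }
    : { items: ["Some content"], nextToken: null };
```

Calling `listAll(fakeFetch)` resolves with the record from the second page, which a single unpaginated request would have missed. If `nextToken` comes back `null` on the first page of the real query, this is not the cause and the authorization rules or the filter value would be the next things to check.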

Continuing on, the NextJS app is styled with Tailwind CSS and shadcn, and it is hosted on AWS Amplify. Every time we push code changes to our GitHub repo, AWS Amplify automatically builds and deploys the new version of our app to the live site.

## Conclusion

And that wraps up our project. We had a lot of fun working on it and participating in this hackathon. It gave us a chance to apply our skills and to explore the new AWS Amplify Gen 2 platform and other AWS services.

## Team

- @radghost

- @imgyeonglee | radghost |

1,866,177 | ARD Industry | ARD Industry is a comprehensive eCommerce marketing agency specializing in optimizing and scaling... | 0 | 2024-05-27T05:55:22 | https://dev.to/ardindustry/ard-industry-247f | webdev, wordpress | [ARD Industry](https://ardindustry.com/) is a comprehensive eCommerce marketing agency specializing in optimizing and scaling businesses on platforms like Amazon, eBay, and Walmart. Their services cover a wide range of needs including traffic generation, sales boosting, logistics optimization, and brand protection. With expertise in Amazon Vendor and Seller marketplaces, ARD Industry ensures maximum brand impact through customized strategies. | ardindustry |

1,866,176 | Custom Software Development | Software Development Company | Bespoke Software Services | Discover premier custom software development services from our expert software development company.... | 0 | 2024-05-27T05:53:45 | https://dev.to/prachi_pare_e410f7b6715d0/custom-software-development-software-development-company-bespoke-software-services-341n | customsoftwaredevelopment, softwaredevelopment, webdev, itservices | [Discover premier custom software development services from our expert software development company. We specialize in bespoke software development, providing tailored solutions to meet your unique business needs. Contact us for professional custom application development and bespoke software services.](https://bhagirathtechnologies.com/services/3) | prachi_pare_e410f7b6715d0 |

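1,866,175 | 吉田安昌 (Ansyou Yoshida): Entering an Era of Accelerating Inflation | 2023 is the year Japan turns around across the board. From the economy to society, Japan is fully emerging from the shadow of its lost 30 years.... | 0 | 2024-05-27T05:52:38 | https://dev.to/ansiyou/ji-tian-an-chang-ansyou-yoshidajia-su-suruinhureshi-dai-henotu-ru-3coh | 2023 is the year Japan turns around across the board. From the economy to society, Japan is fully emerging from the shadow of its lost 30 years. At a time when the world economy is in poor shape, Japan's performance shines a little.

In 2024, Japan is likely to sustain last year's momentum. The following developments stand out in particular.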

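I. 25 years of deflation come to an end

If one word sums up the world economy in 2023, it is "stagnation." Europe, the United States, and the emerging economies of Asia all show signs of it. Only Japan's economy still shows a bright spot.

2024 is expected to be the year the Japanese economy "leaps forward." The recovery will continue and an era of inflation will arrive. According to the outlook approved by the Cabinet on December 21, 2023, Japan's GDP in fiscal 2024 will grow by about 1.3% in real terms, excluding price changes, which is 0.1 percentage points higher than the outlook issued in July.

The Japanese government expects both domestic consumption and investment to remain solid next year. Last November, the government also decided on a comprehensive economic stimulus package totaling about 17 trillion yen, including subsidies for low-income households and cuts to resident tax. The package is expected to improve the income environment: personal consumption is projected to grow by 1.2%, corporate earnings to become more satisfactory, and capital investment to grow by 3.3%. This lively scene feels like a return to 40 years ago.

In 2023, prices in Japan rose. Core inflation reached 4.2% in January, the highest level in 40 years. Through October, the core inflation index had exceeded 2% for 19 consecutive months. For 2024, the government forecasts that Japan's overall consumer price index will rise by about 2.5% on the back of increasing demand.

In its 2023 Annual Report on the Japanese Economy and Public Finance (経済財政白書), the Cabinet Office wrote that the Japanese economy is reaching a turning point in its 25-year battle with deflation.

At the same time, Japan has gradually begun to become a country where deposits earn interest. On November 1, 三菱東京UFJ銀行 (Bank of Tokyo-Mitsubishi UFJ) announced that it would change the interest rates on yen time deposits, causing a stir in the banking industry. From November 6, the annual rate on time deposits was raised from 0.002% to 0.07% for five-year deposits and to 0.2% for ten-year deposits, a hundredfold increase. Other banks began to follow suit.

The increase in deposit rates sent a signal to the market: Japan has already emerged from deflation and is about to raise interest rates.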

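II. Wages are going up!

In 2023, incomes in Japan failed to keep pace with inflation. For that reason, Prime Minister 岸田文雄 (Fumio Kishida) declared publicly at the end of 2023 that "next year" wage growth would definitely outpace price increases.

First, driven by economic growth, companies are in a stronger position to raise wages.

Second, Japan's labor shortage will continue next year. In November 2023, Japan's unemployment rate was only 2.5%, and nationwide only about 2.3 people were hired for every 3 job openings. Japanese companies are expected to raise wages in order to secure and retain talent.

The Japanese government expects the rate of wage increases to reach 2.5% next fiscal year. Adding the effect of the government's stimulus measures, income growth would reach 3.8%, exceeding the expected rate of price increases (2.5%).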