id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,865,728 | Mastering functions in Vyper(part2) | In our previous tutorial, we clearly saw the structure of functions, function decorators and how... | 0 | 2024-05-26T16:17:24 | https://dev.to/mosesmuwawu/mastering-functions-in-vyperpart2-1bdo | web3, ethereum, smartcontracts, vyper | In our [previous tutorial](https://dev.to/mosesmuwawu/mastering-vyper-functionspart1--1144), we clearly saw the structure of functions, function decorators and how functions can be called.

In today's lesson, we are going to see how the `if--elif--else` control structures could be applied in our functions. Also, this example of smart contract is going to give us more confidence of how we can use `@internal` and `@external` decorators.

```python

@internal

def add_numbers(x: int128 = 3, y: int128 = 4 ) -> int128:

return x + y

@internal

def multiply_numbers(x: int128 = 3, y: int128 = 4) -> int128:

return x * y

@external

def my_choice(choice: int128) -> int128:

if choice == 1:

return self.add_numbers()

elif choice == 2:

return self.multiply_numbers()

else:

return 0

```

In the above example, we call three functions. The first two have got the `@internal` decorator and the last one has go the `@external` decorator. As we said earlier, internal functions can only be accessed by other functions within the same contract and are called using the self object. Therefore, we use the `self.add_numbers` to call an internal function by an external function of the same contract.

## Interacting with the contract

For the purpose of this tutorial, i will use web3.py to interact locally with the smart contract we just deployed.

```python

import sys

from web3 import Web3

# Connect to BSC node (Binance Smart Chain)

bsc_node_url = 'https://data-seed-prebsc-1-s1.binance.org:8545/' # Replace with your BSC node URL

web3 = Web3(Web3.HTTPProvider(bsc_node_url))

# Set the private key directly (For demonstration purposes only, do not hardcode in production)

private_key = 'Your_private_key' # Replace with your actual private key

account = web3.eth.account.from_key(private_key)

# Contract ABI

contract_abi = [Copy_and_paste_your_ABI_here]

# Contract address

contract_address = web3.to_checksum_address('Your_contract_address') # Replace with your contract's address

# Create contract instance

contract = web3.eth.contract(address=contract_address, abi=contract_abi)

# Function to set a choice

def call_my_choice(choice):

nonce = web3.eth.get_transaction_count(account.address)

tx = contract.functions.my_choice(choice).build_transaction({

'chainId': 97, # BSC testnet

'gas': 3000000,

'gasPrice': web3.to_wei('5', 'gwei'),

'nonce': nonce,

})

signed_tx = web3.eth.account.sign_transaction(tx, private_key)

tx_hash = web3.eth.send_raw_transaction(signed_tx.rawTransaction)

receipt = web3.eth.wait_for_transaction_receipt(tx_hash)

result = contract.functions.my_choice(choice).call()

return result

# Prompt user for input

def main():

choice = int(input("Enter your choice (1 or 2): "))

if choice in [1, 2]:

result = call_my_choice(choice)

print(f'Result of the calculation: {result}')

else:

print("Invalid choice. Please enter 1 or 2.")

if __name__ == "__main__":

main()

```

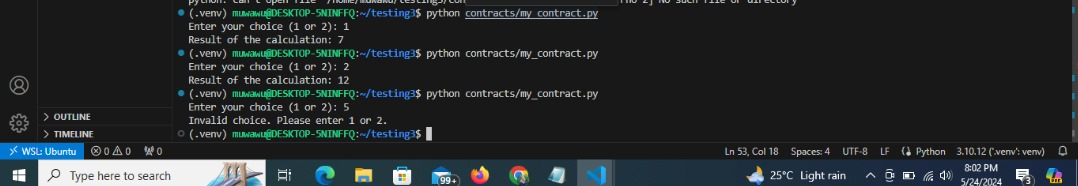

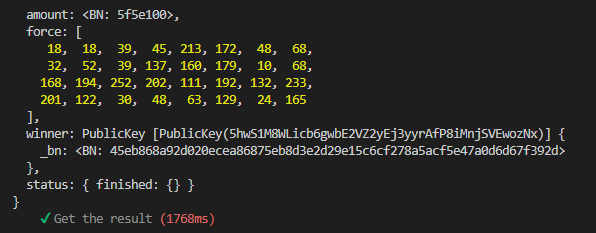

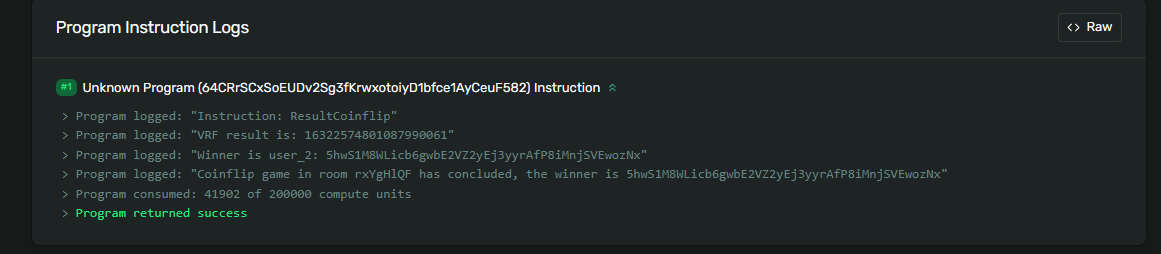

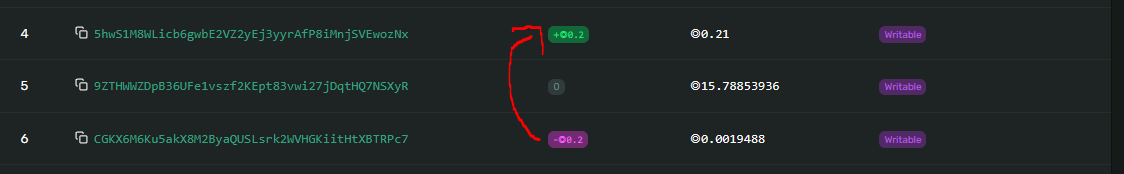

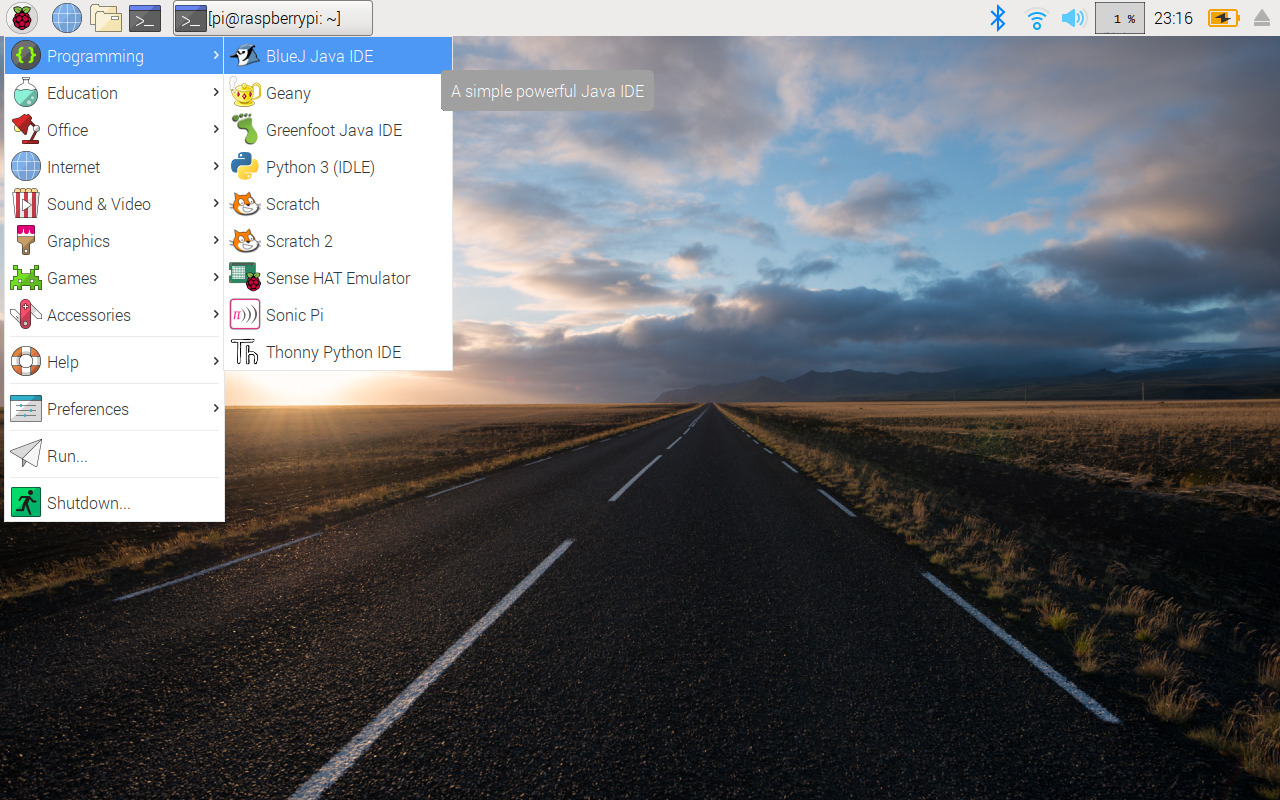

## Result

From the result image above, it's fully evident that our smart contract is giving the expected results. When we set the choice to `1`, the answer is `7`. This because the `add_numbers` function was called which added the default values of `x` and `y`.

When we set the choice to `2`, the answer is `12`. This because the `multiply_numbers` function was called which multiplied the default values of `x` and `y`.

For more vyper content, please follow me and like my my posts.

I will be glad for any interactions in the comment section. I am here to learn and teach. [Next Tutorial](https://dev.to/mosesmuwawu/vyper-for-loops-and-arrays-26bd)

Thank you!

| mosesmuwawu |

1,861,401 | All you need to get started with web development. | Getting started with web development can be overwhelming sometimes especially for those who... | 0 | 2024-05-26T16:15:22 | https://dev.to/audreymengue/all-you-need-to-get-started-with-web-development-3o8 | webdev, javascript, beginners, programming | Getting started with web development can be overwhelming sometimes especially for those who transition into tech or have never been exposed to it. I am writing this article to simplify your journey by highlighting what you really need to get started with web development. The basics consist of three main things. A web browser, a code editor and programming skills.

### A web browser

A web browser is a piece of software that allows us to interact with resources on the web. The resources include websites, web applications, cloud ecosystem etc. In short, a web browser is the place where web applications are accessed. Even though we need web browsers to access our web resources, modern web browsers do a lot more than that with developer tools. The developer tools are very necessary as they help debug errors, inspect code, analyse performance of the application. Some of the most recommended web browsers include Google Chrome, FireFox etc.

### A text editor

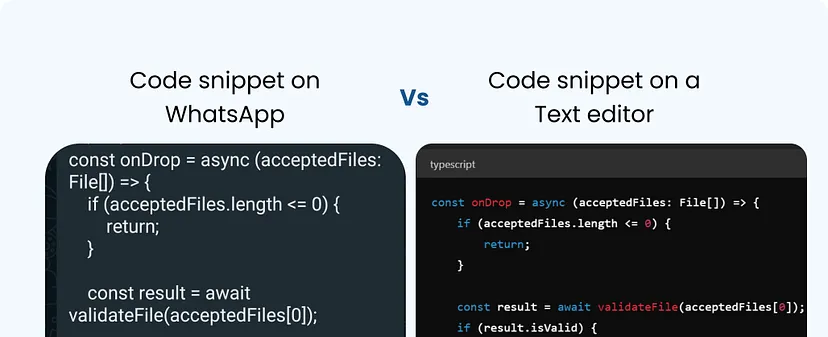

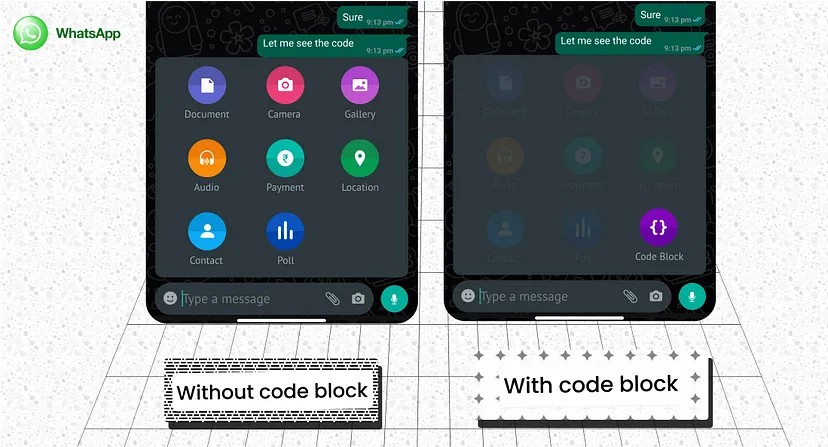

The next thing you will need is a text editor. It's a software in which we will write the code for our application. Some code editor are already included in the operating system. We have TextEdit for Mac OS and Notepad for Windows PCs. The advantage is that they are very simple to use but the limitations are also important to notice. For example, we do not have text formatting, text or code completion is these editors. It's plain text. And that is why most tutorials will recommend more advanced editor like Visual Studio Code, Atom or Sublime Text. They offer better visual when writing code.

### Programming skills

Last but not least, programming skills. Even though some languages are cross platform, it is very important to understand that the Web has its standards and they are HTML, CSS and JavaScript. Yep, you will need to know them in order to build for the Web.

- HTML: will provide the structure of the page;

- CSS: will allow us to style our Web pages;

- JavaScript: will make our pages dynamic;

In my experience, these are the main things you need in order to get started in web development. The first two items (Web browser and text editor) are generally installed the same day. The programming skill on the other side will definitely take longer but; do not worry just watch out for this space for more in-depth articles on each technology mentioned here. I cannot leave without asking you what you think can be added to this list to make learning Web development less stressful.

| audreymengue |

1,865,289 | Entendendo o useReducer do React | Explicando como funciona e casos de uso para o useReducer. | 0 | 2024-05-26T16:11:14 | https://dev.to/lucasruy/entendendo-usereducer-do-react-9hc | javascript, react, braziliandevs | ---

title: Entendendo o useReducer do React

published: true

description: Explicando como funciona e casos de uso para o useReducer.

tags: javascript, react, braziliandevs

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/cdsexlm94aiijaotb9u3.jpg

# Use a ratio of 100:42 for best results.

# published_at: 2024-05-26 00:50 +0000

---

Neste artigo vamos entender como o `useReducer` funciona e dar um exemplo da vida real de uso para ele, mas primeiro vamos entender o que é esse hook. Uma definição curta para o `useReducer` é que ele permite você utilizar um `reducer` em seu componente. Ele entra como uma boa opção quando surge a necessidade de lidar com dados ou lógicas mais complexas para manipular estado. Esse hook utiliza o padrão de `reducers` que foi popularizado com o `redux`, uma biblioteca de gerenciamento de estado muito famosa e que ainda é amplamente utilizada no mercado.

## Pegando um contexto

Antes iniciarmos com o `useReducer` primeiro vamos entender o que seria um `reducer`. Um `reducer` é uma função que recebe o estado atual, uma ação e retorna um novo estado. Simples assim. Por convenção, geralmente os redutores, tem instruções `switch` para dizer como o estado será atualizado e retornar esse novo valor.

Para entender melhor essa ideia, podemos imaginar uma receita: você tem os ingredientes(o estado atual) e uma instrução(ação), o resultado é um prato preparado por você(novo estado).

Agora vamos ver como um `reducer` se parece:

```js

const initialState = { count: 0 };

function reducer(state = initialState, action) {

switch(action.type) {

case 'INCREMENT':

return { count: state.count + 1 };

case 'DECREMENT':

return { count: state.count - 1 };

default:

return state;

}

}

```

No exemplo o `reducer` faz exatamente o que explicamos anteriormente, ele é uma função que recebe o estado atual(`state`) e uma ação(`action`) e retorna um novo estado dependendo de qual ação será executada. Nesse exemplo existem duas possibilidades de ação, aumentar o contador(`INCREMENT`) ou diminuir(`DECREMENT`). Então se o tipo de ação for `INCREMENT` o valor retornado será `{ count: 1 }`, agora se for uma ação do tipo `DECREMENT` o valor retornado será `{ count: -1 }` e assim sucessivamente.

Um ponto muito importante dos `reducers` é a sua imutabilidade, eles sempre devem retornar um novo estado ao invés de modificar o estado atual. Isso garante que o estado seja imutável e pode ajudar a evitar bugs.

## Como o "useReducer" funciona

Para entender o hook `useReducer` precisamos ter atenção as três principais partes do hook: a função redutora, a inicialização do estado e o despachante de ações. Vejamos um a um:

- Função redutora: é a função que diz como o estado vai ser atualizado quando receber uma ação. Essa função recebe dois parâmetros, o estado atual e a ação que deve ser executada, a partir disso um novo estado é retornado.

- Inicialização do estado: será o seu estado, é um objeto consumido pela sua função redutora.

- Despachante de ações: é a função que recebe um objeto por parâmetro, esse objeto é a ação que contém as instruções necessárias para a função redutora atualizar seu estado.

Agora vamos para a sintaxe:

```js

const [state, dispatch] = useReducer(reducer, initialState);

```

Aqui temos o seguinte:

- `state`: estado atual.

- `dispatch`: despachante de ações.

- `reducer`: função redutora.

- `initialState`: estado inicial, um objeto literal.

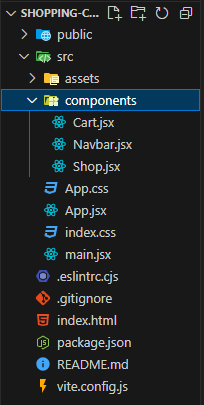

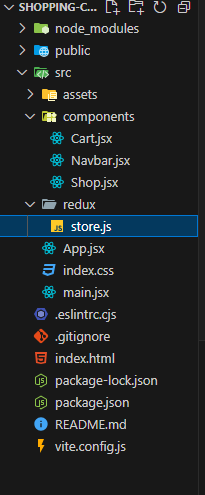

## Exemplo da vida real

Para entender qual é a aplicabilidade do `useReducer` partiremos para um exemplo bem comum. Imagine que você precisa criar um carrinho de compras que pode ter as seguintes funcionalidades, adicionar, remover e limpar os itens.

Começaremos definindo nosso `reducer` em um arquivo chamado `reducer.js` :

```js

// reducer.js

export const initialState = {

items: [],

totalAmount: 0

}

export function cartReducer(state, action) {

switch (action.type) {

case 'ADD_ITEM':

const updatedItems = [...state.items, action.item];

const updatedTotalAmount = state.totalAmount + action.item.price;

return {

...state,

items: updatedItems,

totalAmount: updatedTotalAmount,

};

case 'REMOVE_ITEM':

const filteredItems = state.items.filter(item => item.id !== action.id);

const itemToRemove = state.items.find(item => item.id === action.id);

const decreasedTotalAmount = state.totalAmount - itemToRemove.price;

return {

...state,

items: filteredItems,

totalAmount: decreasedTotalAmount,

};

case 'CLEAR_CART':

return initialState;

default:

return state;

}

}

```

Agora precisamos criar nosso componente e utilizar o `useReducer` dentro dele:

```jsx

// ShoppingCart.js

import { useReducer } from "react";

import { cartReducer, initialState } from "./shopping-cart.reducer";

export const ShoppingCart = () => {

const [cart, dispatch] = useReducer(cartReducer, initialState);

const hasCartItems = cart.items.length > 0;

const addItemHandler = (item) => {

dispatch({ type: "ADD_ITEM", item });

};

const removeItemHandler = (id) => {

dispatch({ type: "REMOVE_ITEM", id });

};

const clearCartHandler = () => {

dispatch({ type: "CLEAR_CART" });

};

return (

<div>

<h2>Meu carrinho</h2>

{hasCartItems && (

<ul>

{cart.items.map((item) => (

<li key={item.id}>

{item.name} - ${item.price}

<button onClick={() => removeItemHandler(item.id)}>

Remover

</button>

</li>

))}

</ul>

)}

<div>Total: ${cart.totalAmount}</div>

<button onClick={clearCartHandler}>Limpar Carrinho</button>

<button

onClick={() =>

addItemHandler({ id: "shoes-1", name: "Tênis", price: 99 })

}

>

Adicionar "Tênis" ao carrinho

</button>

<button

onClick={() =>

addItemHandler({ id: "shirt-1", name: "Camiseta", price: 39 })

}

>

Adicionar "Camiseta" ao carrinho

</button>

</div>

);

};

```

No arquivo `reducer.js`, definimos o estado inicial do carrinho e quais ações podem ser aplicadas para atualizá-lo. As ações disponíveis são `ADD_ITEM`, `REMOVE_ITEM` e `CLEAR_CART`. Cada vez que uma dessas ações é executada, uma cópia do estado atual é criada, atualizada conforme a ação, e um novo estado atualizado é retornado.

Já no componente `ShoppingCart`, utilizamos o `useReducer` para gerenciar o estado do carrinho. No componente, temos três funções, cada uma responsável por uma ação específica. Essas funções despacham ações para o nosso `reducer`, que se encarrega de atualizar o estado e retorná-lo. O componente `ShoppingCart` renderiza os itens do carrinho, o valor total e os botões de ação para adicionar, remover e limpar o carrinho.

## Conclusão

Neste artigo aprendemos como o hook `useReducer` funciona e também um pouco sobre o conceito por trás dele. Também entendemos como ele pode ser uma ferramenta poderosa, que facilita o gerenciamento de estados mais complexos em seus componentes, principalmente quando a lógica de atualização do estado precisa de várias ações.

Código do exemplo neste [sandbox](https://codesandbox.io/p/sandbox/epic-sunset-lq95nw).

Espero que este artigo tenha ajudado você de alguma forma a entender melhor o `useReducer` e como ele pode ser aplicado em casos reais para gerenciar estados complexos. Se você gostou dessa leitura e ficou interessado em se aprofundar ainda mais nesse assunto, recomendo as leituras a seguir, apenas em inglês:

- [How to Use Flux to Manage State in ReactJS](https://www.freecodecamp.org/news/how-to-use-flux-in-react-example/)

- [Scaling up with reducer and context](https://react.dev/learn/scaling-up-with-reducer-and-context)

Por hoje é isso e obrigado por ler até aqui! | lucasruy |

1,865,726 | Learning AWS Day by Day — Day 75 — AWS CloudFront | Exploring AWS !! Day 75 AWS CloudFront This is a CDN (Content Delivery Network) in AWS,... | 0 | 2024-05-26T15:57:34 | https://dev.to/rksalo88/learning-aws-day-by-day-day-75-aws-cloudfront-4ndp | aws, cloud, cloudcomputing, beginners | Exploring AWS !!

Day 75

AWS CloudFront

This is a CDN (Content Delivery Network) in AWS, providing globally distributed networks of proxy servers caching the content, like videos or any media, closer to the consumers, which reduces the latency and improves the availability of data and speed to access the data.

CloudFront VS Global Accelerator

CloudFront:

Employees multiple sets of changing multiple IP addresses.

Pricing is determined by data transfer out and HTTP requests

Caches content using Edge locations

Optimized for HTTP protocol

Global Accelerator:

Provides fixed entry point to your applications via set of static IP addresses.

Charges fixed hourly fees as well as incremental charge over data transfer over your standard Data Transfer rates.

Uses edge location to find best path to nearest location

Ideal for both HTTP and non-HTTP protocols, like TCP or UDP.

CloudFront Distributions:

We can create distributions to let CloudFront know from where you want the content to be delivered, and the details on how to manage the delivery.

CloudFront Edge Locations:

CloudFront delivers content through worldwide networks of data centers, which we call as Edge Locations. When a user requests some content, this request is redirected to the nearest edge location for low latency and best possible performance.

CloudFront Functions:

We can write functions in JavaScript for latency sensitive CDN customizations. These functions runtime environment offers sub-millisecond startup times, scaling happens immediately so that millions of requests are handled per second, which is highly secure.

Features:

Global Edge Network

Security

Availability

Edge Computing

Real Time metrics and logging

DevOps Friendly

Continuous Deployment

Cost Effective

Customers using CloudFront:

Cloudinary

XXL Sport

Arqiva

Irdeto

Hulu

Jio Saavan | rksalo88 |

1,865,712 | Amazon Forecast : Best Practices and Anti-Patterns implementing AIOps | AIOps leverages artificial intelligence for IT operations. Forecasting is one of the most leveraged... | 0 | 2024-05-26T15:55:37 | https://dev.to/aws-builders/amazon-forecast-best-practices-and-anti-patterns-implementing-aiops-2p5a | aiops, sre, forecasting, aws | AIOps leverages artificial intelligence for IT operations. **Forecasting** is one of the most leveraged use cases in AIOps. It typically involves making predictions or estimates of a dataset based on historical data, patterns, and various quantitative and qualitative factors

**Typical Forecasting use cases are:**

- **Traffic**: Predicting traffic or volume fluctuations to anticipate demand and optimize infrastructure accordingly.

- **Error Rate**: Forecasting error rates to proactively identify and mitigate potential issues, ensuring system reliability.

- **Latency**: Predicting latency metrics to maintain optimal performance and enhance user experience.

- **Resources**: Forecasting resource usage patterns to optimize allocation and prevent bottlenecks.

- **Business Metrics**: Forecasting various business metrics such as sales, revenue, or customer engagement to inform strategic decision-making.

- **Error Budget Burn Rate**: Forecasting the rate at which error budget is consumed to manage risk and prioritize improvements effectively.

- **SLA Adherence**: Predicting SLA adherence to ensure service level commitments are met and customer satisfaction is maintained.

**Time series data**

Amazon Forecast works with time series data. Time series data is a sequence of data points collected, recorded, or observed over a period of time, where each data point is associated with a timestamp or time index. It is characterized by its chronological order, intervals (regular or irregular), trends (such as increasing, decreasing, or cyclical patterns), seasonality (repeating patterns with fixed periodicity), and the presence of noise, all of which influence its analysis and forecasting

**Amazon Forecast**

It's a fully managed service offered by Amazon for metric forecasting. It's easy to use, as it allows you to integrate historical or related data by uploading them to Amazon Forecast. Once the data is uploaded, Forecast automatically inspects the data, identifies key attributes, and selects the right algorithms needed for forecasting. It then trains and optimizes your custom model. Once generated, forecasts can be visualized via the console or downloaded. Amazon Forecast also provides APIs that allow you to build solutions

**Getting started is relatively easy.**

**Import Your Data**

- Create your dataset group.

- Select the forecasting domain. AWS supports multiple domains out of the box:

1. Retail domain

2. Inventory planning

3. EC2 capacity

4. Workforce

5. Web traffic

6. Metric (for forecasting metrics such as revenue, sales, and cash flow)

7. Custom (if your requirement does not match any of the above).

- Then select the frequency of data.

- Create your data schema - the data definition of your sample data. You can use the schema builder or JSON schema.

- Select your data file from S3.

- Create an IAM role providing access to S3.

**Train a predictor**

- You can select your predictor, essentially the metric you want to forecast.

**Generate Forecasts**

- You can use the predictor to generate the forecast.

- For all items: Generate forecasts for all items in the input dataset.

- For selected items: Generate forecasts for selected items in the input dataset.

- Query Forecast : You can generate your query to visualize the forecast

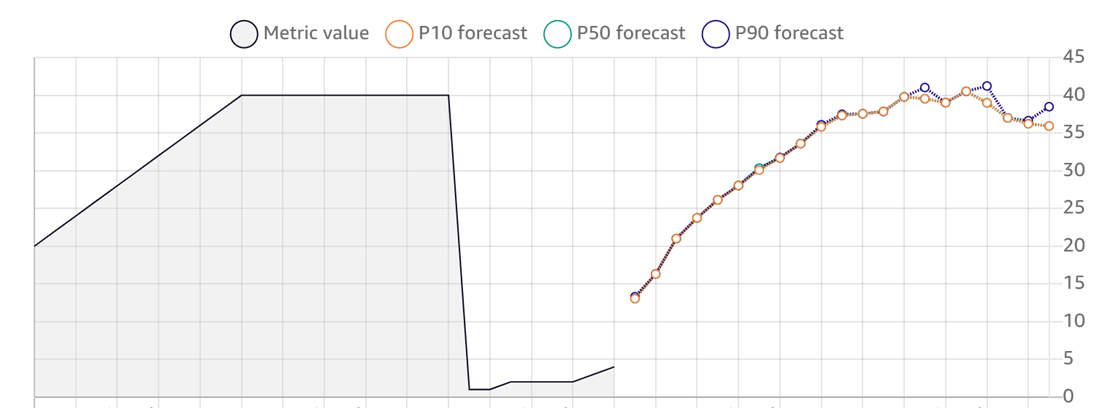

**Amazon Forecast output snapshot**

In Amazon Forecast, P10, P50, and P90 represent the 10th, 50th, and 90th percentiles of the forecast distribution, indicating that there is a 10%, 50%, and 90% probability, respectively, that the actual value will be less than the forecasted value.

**Explore Insights**

Explore Insights consists of two parts:

**Explore Explainability** - As per the AWS-provided definition, Explainability insights identify the attributes that impact your forecasts, quantify their impact relative to other attributes, and determine whether they decrease or increase forecast values.

**Explore What-if Analysis** - As per the AWS-provided definition, What-if analysis explores modifications to the related time series, quantifies the impact of those modifications, and determines how those modifications can impact forecast values

**Few things to note about Amazon Forecast:**

- It supports forecasting via Console, AWS CLI, or Python notebook.

- Ability to customize forecast parameters.

- Modularized - meaning able to track model drift, what-if scenarios, or forecast explainability.

**Pricing:**

Pricing is based on imported dataset and the time taken for predictor training, as well as the number of forecast data points.

**Best practices to follow when using Amazon Forecast:**

- **Data quality**: Ensure your dataset is clean and accurate, removing outliers or noise that could mislead your forecasts.

- **Feature engineering**: Include relevant factors in your dataset to improve forecast accuracy.

- **Fine-tune model parameters**: Adjust forecast horizon, frequency, and other parameters to optimize model performance.

- **Select the forecasting algorithm**: Choose the appropriate algorithm (e.g., ARIMA, CNN-QR, DeepAR+, ETS, NPTS, Prophet) based on your dataset and needs.

- Continuous evaluation, validation, and monitoring: Regularly assess and improve model performance.

- **Enable explainability and predictor monitoring**: Activate these features to gain insights and track model performance.

- **Updating data**: Choose between replacement and incremental updates based on data volume and changes.

- **Handling missing data**: Use filling methods like middle, back, and future filling to address missing values and ensure accurate forecasting.

- Follow dataset guidelines: Adhere to guidelines to ensure optimal model performance.

- **Use predictor monitor**: Track model performance over time and make adjustments as needed.

- **Leverage Forecast Explainability**: Gain insights into how dataset attributes influence forecasts.

- **Leverage what-if analysis**: Explore the impact of altering related time series on baseline forecasts.

**Pitfalls to avoid:**

**Overfitting Models**: Occurs when over-configuring or fine-tuning to prevent noise, instead focus on identifying key patterns.

**Complex Algorithms**: Stick to Amazon Auto Model selection unless there are specific reasons to use a different algorithm.

**Seasonality and Trends**: Ignoring seasonality can lead to unsatisfactory forecasts; ensure relevant features and data are included to uncover patterns.

**Improper Data Preparation**: Prepare data meticulously by clearing, normalizing, and using feature engineering techniques for optimal forecasting results.

**Not Spending Enough Time**: Forecasting is challenging; allocate sufficient time to understand, evaluate, validate, and make necessary adjustments continuously.

**Lack of Business Context**: Understand the purpose of forecasting and factors impacting results; if necessary, conduct reverse engineering to clarify data and goals.

| indika_wimalasuriya |

1,865,357 | Aah, here we go again.. | Hey all, not in the mood to greet, anyway this past year, I've been in a slump. I couldn't overcome... | 22,781 | 2024-05-26T15:54:41 | https://dev.to/fadhilsaheer/aah-here-we-go-again-1k08 | webdev, life, productivity | Hey all, not in the mood to greet, anyway this past year, I've been in a slump. I couldn't overcome my comfort zone, I didn't challenge myself, and I didn't even make a 0.1% progress in my life. Even though I make a schedule and plan to follow, I end f'cking up even more. Then yesterday while watching social media I found a quote.

> I forgot what the quote was, but anyway

basically what it said was planning to do something doesn't do the thing, and it hit me. Throughout the year, I planned and planned and planned, never making any progress, there wasn't problem with my plans, It was perfect. But the problem was with me I either dropped the plan, never followed it, or forgot I even made the plan.

Even though I genuinely wanted improve I couldn't, more accurately Id didn't.

### So what's the fix I'm going to do

Of course I will make another plan 💀, JK the problem with me and many else is we seek for attention, we want other people to see our growth, even though we are growing bit by bit, we don't see our progress ourself, we need someone to measure it, so what I'm going to do is, I'll be blogging myself every day, even though its boring, I'll be marking all of my achievements that day.

wish me luck! | fadhilsaheer |

1,861,346 | Retail Industry Use Case with Brainboard: Enhanced Supply Chain Visibility and Management | Digital is your storefront. Always be open! Grow Your Retail Business With Brainboard ... | 0 | 2024-05-26T15:54:00 | https://dev.to/brainboard/retail-industry-use-case-with-brainboard-enhanced-supply-chain-visibility-and-management-1108 | cloud, management, retail, terraform | > Digital is your storefront. Always be open!

## Grow Your Retail Business With Brainboard

### Deliver A Superior Digital Employee Experience

Maximize your existing IT infrastructure and deliver a more stable, scalable cloud infrastructure solution for your store, boutiques and pop-up stores.

### Scale To Your Biggest Day

Peak season contributes 20-30% of all retail sales annually. Are you ready to meet the traffic spike this holiday season? Brainboard helps your IT business scale to its biggest days. Partnering with major cloud providers, Brainboard can help your reduce risk, boost scalability and optimize your cloud spend with real-time visibility accross your modern cloud environments.

### Reduce Cloud Operating Costs

Margins are everything in the retail world, and cloud is a very real expense. Creating a modern infrastructure deployment pipeline with a good visibility and collaboration workflows, with a robust set of cost controls are proven to reduce waste, idle infrastructure, and over-provisioned services.

### Deliver Better Customer Support

Brainboard facilitates this by making it easier to try new services and prototype new architectures while keeping a very close eye to expenses and security.

## Retail Industry Use Case with Brainboard

### **Challenge:**

Retailers often struggle with maintaining an efficient supply chain that can adapt to sudden changes in demand or disruptions. Traditional methods may lack the agility needed for rapid configuration adjustments across a network of suppliers and distribution centers.

### **Solution with Brainboard:**

Brainboard simplifies the deployment and management of cloud infrastructure across the retail supply chain. By using Brainboard’s visual interface, retailers can create and modify their cloud environments that support supply chain operations, enabling seamless integration across various stages—from procurement to delivery.

### **Implementation:**

1. **Visual Configuration:** Retailers can use Brainboard to visually map out their entire supply chain network, designing configurations that enhance logistic operations.

2. **Dynamic Scalability:** Adjust resource allocation in real-time to meet varying demand without manual intervention. This includes scaling up cloud resources during high sales periods and scaling down during off-peak times.

3. **Compliance and Security Automation:** Automatically enforce security policies and compliance standards across all nodes in the supply chain, reducing the risk of data breaches and regulatory penalties.

### **Outcome:**

Retailers achieve a more responsive and resilient supply chain, capable of adapting to market conditions and consumer demands with greater agility. Improved operational efficiency and reduced downtime lead to increased customer satisfaction and reduced operational costs.

With [Brainboard](https://app.brainboard.co/), retailers can ensure their digital storefronts are always open, delivering superior digital employee experiences, scaling effectively during peak seasons, reducing cloud operating costs, and enhancing customer support. Brainboard's visual interface simplifies supply chain management, offering dynamic scalability and robust security compliance. This leads to a more agile, efficient, and resilient retail operation, driving increased customer satisfaction and optimized operational costs.

You can also [schedule a demo](https://www.brainboard.co/contact-us) with a Retailer pro to learn exactly how Brainboard solution can help you.

| miketysonofthecloud |

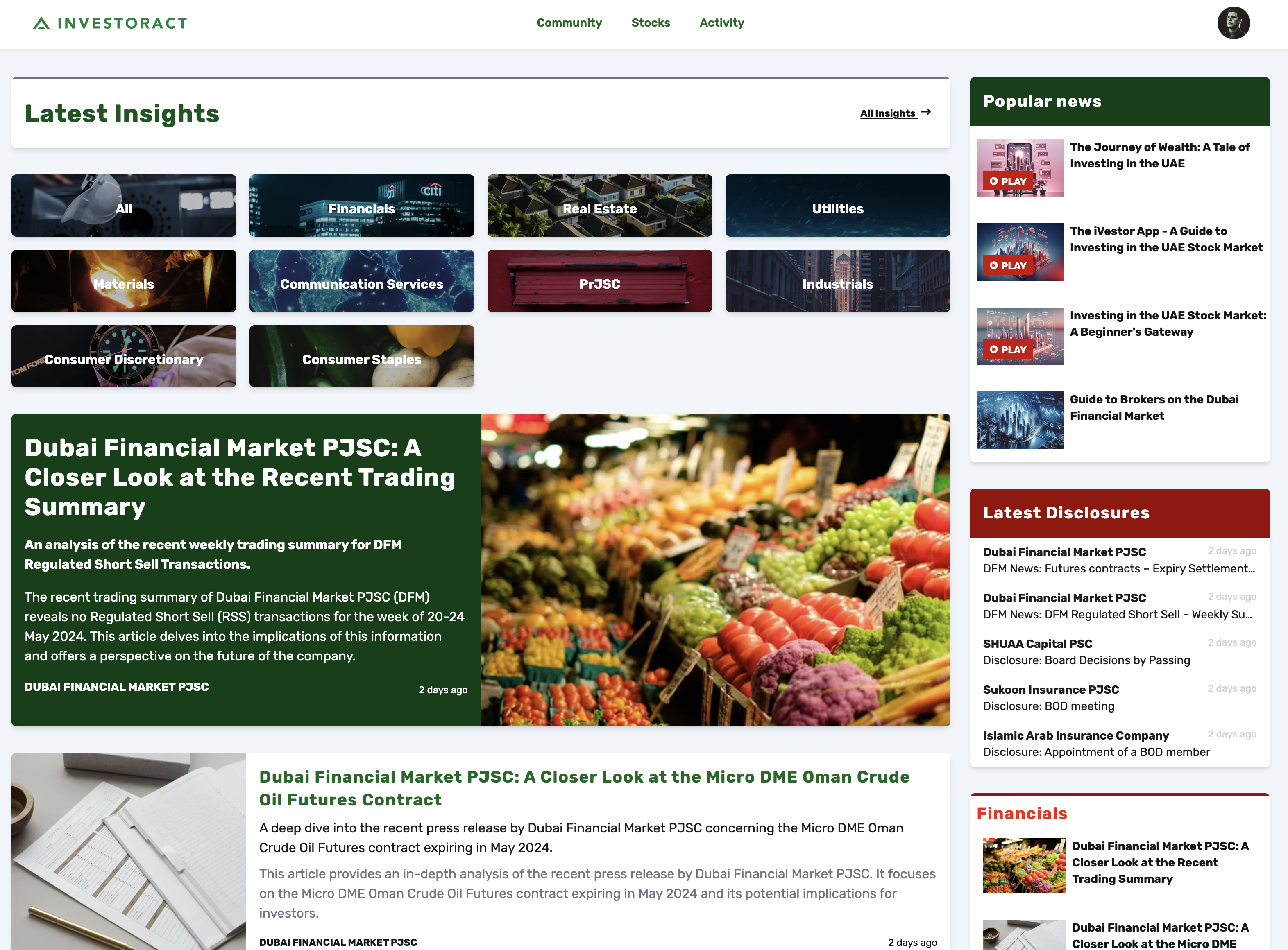

1,865,722 | How I created a SEO optimised site using Next.js, Django and Redis | Ok, so this story begins a couple of years back! I’ve always been interesting in building FinTech... | 0 | 2024-05-26T15:44:53 | https://dev.to/hassanmian/how-i-created-a-seo-optimised-site-using-nextjs-django-and-redis-og9 | nextjs, django, redis, react | Ok, so this story begins a couple of years back! I’ve always been interesting in building FinTech solutions and have been doing it for about 16 years (since 2008) (wow time flies). The reason for being interested in FinTech is really weird. I don’t have a financial background (engineer and university dropout). I think I just love the amount of data that is needed to build something.

**So now to the platform. First an introduction to the idea:**

A Financial News site that analyses the pressreleases that are published by publicly traded companies, write an article and publish it on the site. The site should also have commenting functionality and also AI portfolios that buy and sell (real prices but not real money) to inspire users.

The goal is to make savings and investments a part of every persons daily life!

If you want to have a look at the platform:

- [www.investoract.com](http://www.investoract.com)

- [www.bors360.com](http://www.bors360.com)

Investoract is for the UAE market (in English) and Börs360 is for the Swedish market (in Swedish).

**The Tech Stack:**

- Back-End

- Python

- Django

- Django Rest Framework

- Requests

- Scrapy

- Front-End

- Next.js (App Router)

- React

- Infrastructure

- DigitalOcean App Platform

- Redis Stack

- Postgres

The Django Models are quite simple. A couple of apps with a couple of models. Some models are connected to RedisSearch using signals and serialize the data and store it on Redis on each change. Redis indexes that data and make it searchable. Some Django Views that act as DJRF views but instead of fetching the data using the ORM, they instead fetch the data from RedisSearch. The speed this gives me is simple unprecedented (for me).

**Ok so thats the tech part, what about the SEO?**

So to make the platform SEO friendly, the first thing i needed to decide was if I should use App Router och Page Router in Next. As it was a hobby project, I though “why not just try App Router”…

The Developer Experience was awesome. It was really easy to build pages that fetch the data from the back-end and provide it as Server Side rendered pages to the client. So after a couple of weeks I had the first pages built using SSR.

So as a checklist I thought that I would need to fix the following before releases the site and asking Google to index it:

- Static Site Generation

- Make sure to add some of pages to Static Params (do not add all pages as it impacted the build time)

- Meta Data

- Make sure that we have title, description and stuff populated

- OG Tags

- Make sure we have OG tags and fallbacks for all pages

- Comply with all (most of) the recommendations the LighHouse provides

- Add Rich Result JSON

- Create a Sitemap

- Test the site using Google Page Speed

- Add Site to Google Search Console

The above checklist is probably not a complete checklist, but simply the tasks that I needed to complete before releasing the site

**The big day!**

So I released the site a couple of days after new year and Google picked up the site quite quickly. Analyses the data on Google Search Results for a couple of weeks I could quite quickly see that something was wrong. Some pages were being indexed but some were just simply crawled and not indexed.

**Hickup**

Going through the code (especially the raw html that was being sent to the client) I saw that Next.js was sending the data to the client as a JSON object and not prerendered HTML. Thats not good, as Google crawl the pages with no data and simply not index them as they do not contain any content that the user would be interested in.

So here is where it gets a bit messy. So Next.js allows us to add Client Side components quite easily. The only problem with this is that as soon as a client side component is rendered, the data for this component is (quite obviously) not rendered in the HTML tags. This was quite a bummer, as I had built an Infinite Load list on the start page that automatically loaded more articles as soon as the user reached the end of the list. I thought this was quite cool, but Google didn’t. This meant that almost all pages that contained a list of articles weren’t been indexed.

To solve this issue I went back to the drawing board and changed my Infinite Load List to a paginated list instead that used URL Query parameters instead. By doing that I could remove ‘use client’ from list components and make Next.js render these as pre-rendered HTML.

So just to clarify, the page was still considered SSR when I had use client but the server didn’t actually render that HTML but instead provides a JSON object (saved as a JS variable) that was available for the client to render the data.

**Things I still need to fix asap**

The Design

I’m really not happy with the UI yet. The first version was created using next-ui but I quite quickly moved over to just tailwind instead.

Login Experience

Yeah, if you try it out, you will probably see that the login experience needs a revamp.

Interested in some code examples from the project? Leave a comment and I'll create a new post with some code snippets :) | hassanmian |

1,865,721 | The Ultimate Guide to Web Rendering: Improving Performance with CSR, SSR, SSG, and ISR | Introduction The process of web rendering, which controls how users see the content of... | 0 | 2024-05-26T15:44:07 | https://dev.to/a_shokn/the-ultimate-guide-to-web-rendering-improving-performance-with-csr-ssr-ssg-and-isr-41dg | webdev, javascript, beginners, nextjs |

## Introduction

The process of web rendering, which controls how users see the content of your online application, is essential to web development. Knowing the subtleties of web rendering as a full-stack developer with a focus on the MERN stack (MongoDB, Express.js, React, Node.js) will greatly improve the functionality and user experience of your projects. Key ideas and methods in web rendering are covered in this article, including incremental static regeneration (ISR), server-side rendering (SSR), client-side rendering (CSR), and static site generation (SSG).

## Client-Side rendering (CSR)

JavaScript is used to render content in the browser via a method known as client-side rendering, or CSR. In a MERN stack application, CSR is usually handled via React.

This is how it operates:

First Load: The server transmits a small HTML file and a collection of JavaScript files to a visitor that visits your website.

JavaScript Execution: To show the content, the browser first downloads and runs the JavaScript, which then creates the HTML and dynamically modifies the DOM.

User Interactions: The application seems quick and responsive since React manages future interactions on the client-side.

Benefits

Rich Interactivity: Rich interactivity and quick updates are made possible by React's virtual DOM.

Decreased Server Load: Reducing server load to the client is advantageous for scalability.

## Server-Side Rendering (SSR)

Server-side rendering (SSR) is the process of rendering HTML on a server and transmitting it to the client after completion. This can be accomplished in a MERN application utilising frameworks such as Next.js or by utilising React's server-side capabilities. This is how SSR works:

When a user requests a page, the server generates HTML using React components.

Full HTML Response: The server returns fully rendered HTML to the client.

Hydration: Once the HTML is loaded, React takes control, and the application works normally from then on.

Advantages:

Faster initial load: Because the server provides fully rendered HTML, viewers can view the information more quickly.

Better SEO: Search engines may quickly index pre-rendered HTML, which improves SEO.

## Static Site Generation (SSG)

Static Site Generation (SSG) pre-renders HTML during the build process. This strategy is useful for pages that do not need to be updated frequently. SSG is facilitated by MERN stack frameworks such as Next.js. This is how it works.

Build Time: During the build process, HTML is created for each page using your React components and data.

Static Files: The server serves these static HTML files with each request, resulting in rapid load times and low server load.

Advantages:

Performance: Pages load extremely fast since they are pre-rendered and serve as static files.

Scalability: Reduced server load as pages are served from a CDN or static hosting service.

SEO: Fully pre-rendered HTML is easily indexed by search engines.

## Incremental Static Regeneration (ISR)

Incremental Static Regeneration (ISR) is a new technique that combines the advantages of SSG with the ability to update static material without a complete rebuild. ISR, which is supported by frameworks such as Next.js, lets you update static pages after they've been built. This is how it works.

Pages are pre-rendered during the first build process, similar to SSG.

On-Demand Updates: When a page is browsed, any outdated material is re-rendered in the background and updated.

Advantages:

Fresh material: Pages can be modified without requiring a full rebuild, resulting in fresh material.

Performance: Serves static files, retaining the performance benefits of SSG.

Flexibility: Strikes a balance between static creation and dynamic content updates.

| a_shokn |

1,845,141 | Unlock the Power of Microsoft Blazor: Seamless Integration with Workflow Designer — Check Out Guide Now! | Intro Before we dive into the main guide on integrating Workflow Designer with Blazor, it... | 0 | 2024-05-26T15:42:37 | https://workflowengine.io/documentation/workflow-designer-in-blazor-application | frontend, dotnet, csharp, tutorial | ## Intro

Before we dive into the main guide on integrating Workflow Designer with Blazor, it makes sense to convey to readers the practical benefits of using workflow engines in applications. If you haven't considered this before, or if you have but lacked the motivation to implement a Workflow Engine, then this YouTube video by [Jeff Fritz](https://devblogs.microsoft.com/dotnet/author/jeffreytfritzyahoo-com/) will surely captivate you. Jeff Fritz a principal program manager in Microsoft’s Developer Division working on the .NET Community Team. We are confident that this video will help you understand the benefits of implementing a Workflow Engine with a visual Workflow editor and dispel any doubts you may have.

Jeff Fritz explains in a simple and accessible manner, using a delivery case example, how using a workflow engine helps simplify development and reduces the time and effort spent on refactoring and testing

{% youtube vpMzm6TsdDY %}

## Overview

Not all .NET backend developers have expertise in popular frontend frameworks like React or Angular. However, .NET backend developers often possess skills in Microsoft Blazor. We have frequently received requests from our technical support team to create an example of integrating the community version of Optimajet WorkflowEngine (which includes a visual designer) with Microsoft Blazor. We have accomplished this and are sharing an example and guide with you on [GitHub](https://github.com/optimajet/workflow-designer-blazor-sample) repository).

Let's start by creating an application using the `blazorserver` template.

```bash

mkdir workflow-designer-blazor

cd workflow-designer-blazor

dotnet new blazorserver

```

We can run this application using the `dotnet watch` command and modify its code on the fly. This is a great feature of the `dotnet` CLI! If

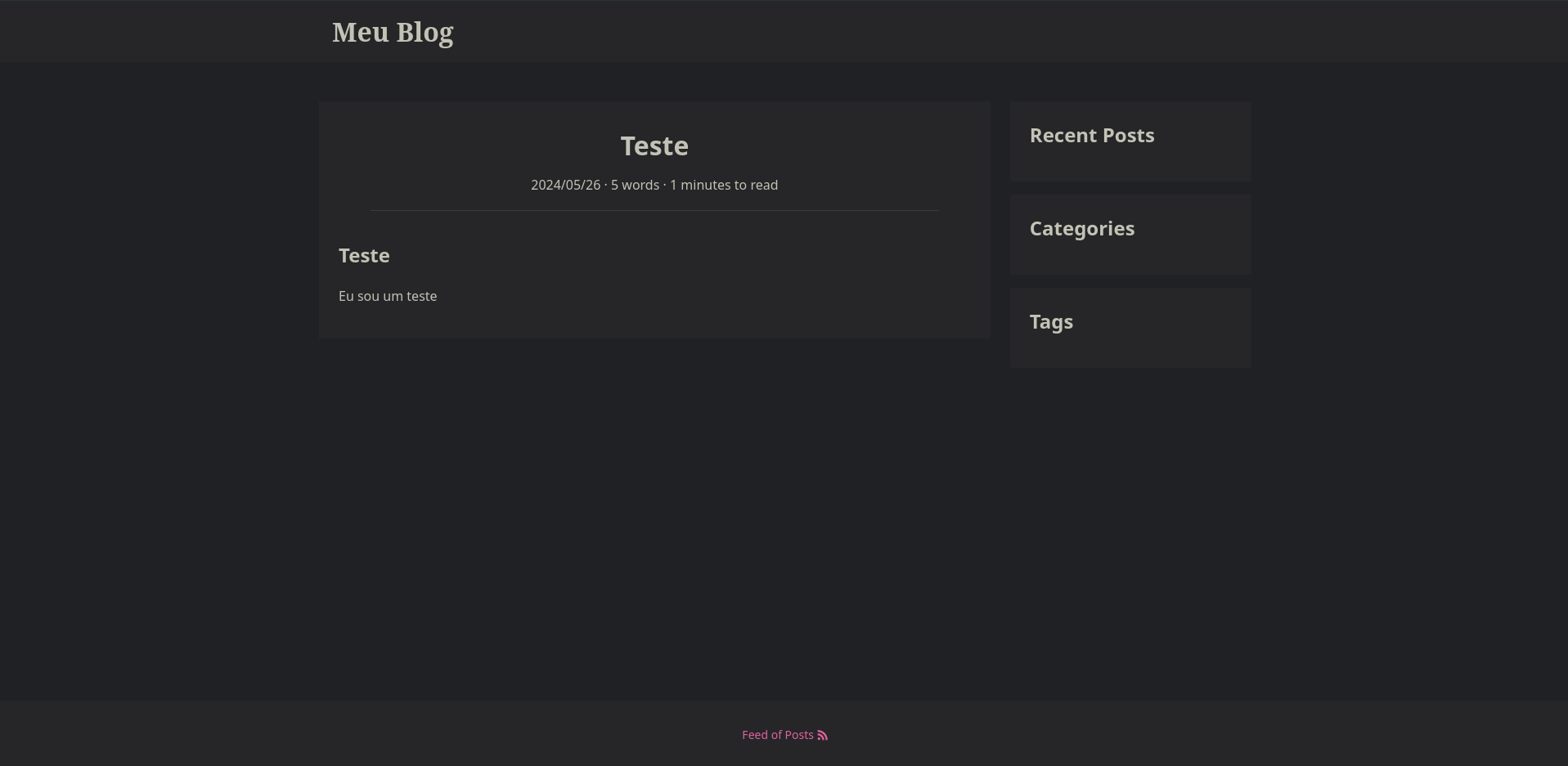

you run the application and open it in a browser, you will see something like this:

**Empty Blazor application**

What we are going to add:

1. CSS and JS files for Workflow Designer from CDN.

2. _Designer_ navigation element on the left panel with the corresponding page.

3. Workflow Designer on a new Blazor page.

## Adding CSS and JS Workflow for Workflow Designer

First, we need to add CSS and JS from Workflow Designer to our application so that we can connect the Designer to a new page. We will also

need to connect jQuery, since Workflow Designer uses it in its work.

We will use CDN, just to avoid copying Designer files to the project. Of course, you can use local files instead of CDN, this is especially

important when you work in an environment with limited Internet access.

**info important**

Due to the way Blazor works with JavaScript code, external JavaScript code must be included after the script `blazor.server.js`.

Open the `Pages/_Host.cshtml` file and add the highlighted lines as shown below. Styles are added inside the `<head>` tag, scripts are added to the end of the page.

Pages/_Host.cshtml

```html

@page "/"

@using Microsoft.AspNetCore.Components.Web

@namespace workflow_designer_blazor.Pages

@addTagHelper *, Microsoft.AspNetCore.Mvc.TagHelpers

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="utf-8"/>

<meta name="viewport" content="width=device-width, initial-scale=1.0"/>

<base href="~/"/>

<link rel="stylesheet" href="css/bootstrap/bootstrap.min.css"/>

<link href="css/site.css" rel="stylesheet"/>

<link href="workflow-designer-blazor.styles.css" rel="stylesheet"/>

<link rel="stylesheet" href="https://unpkg.com/@@optimajet/workflow-designer@12.5.1/dist/workflowdesigner.min.css">

<link rel="icon" type="image/png" href="favicon.png"/>

<component type="typeof(HeadOutlet)" render-mode="ServerPrerendered"/>

</head>

<body>

<component type="typeof(App)" render-mode="ServerPrerendered"/>

<div id="blazor-error-ui">

<environment include="Staging,Production">

An error has occurred. This application may no longer respond until reloaded.

</environment>

<environment include="Development">

An unhandled exception has occurred. See browser dev tools for details.

</environment>

<a href="" class="reload">Reload</a>

<a class="dismiss">🗙</a>

</div>

<script src="_framework/blazor.server.js"></script>

// &new>

<script

src="https://code.jquery.com/jquery-3.7.1.min.js"

integrity="sha256-/JqT3SQfawRcv/BIHPThkBvs0OEvtFFmqPF/lYI/Cxo="

crossorigin="anonymous"></script>

<script src="https://unpkg.com/@@optimajet/workflow-designer@12.5.1/dist/workflowdesignerfull.min.js"

async defer>

</script>

<script src="js/designerInterop.js"></script>

// <&new

</body>

</html>

```

Now the Workflow Designer with its styles will be loaded in our application.

Pay attention to the file `js/designerInterop.js`. This is a file that will contain auxiliary functions for working with the Designer.

Let's create it in the `wwwroot/js/designerInterop.js` path:

wwwroot/js/designerInterop.js

```javascript

function renderWorkflowDesigner(options) {

var wfdesigner = new WorkflowDesigner({

apiurl: options.apiUrl,

name: 'wfe',

language: 'en',

renderTo: options.elementId,

graphwidth: window.innerWidth - 400,

graphheight: window.innerHeight - 100,

showSaveButton: true,

})

const data = {

schemecode: options.schemeCode,

processid: options.processId

}

if (wfdesigner.exists(data)) {

wfdesigner.load(data)

} else {

wfdesigner.create(data.schemecode)

}

}

function waitForJsAndRender(options) {

if (typeof window.WorkflowDesigner !== 'undefined') {

renderWorkflowDesigner(options)

return

}

// the interval here is only needed to wait for the javascript to load with the designer

const interval = setInterval(() => {

// if the designer hasn't been uploaded yet, we'll wait a little longer

if (typeof window.WorkflowDesigner === 'undefined') return

clearInterval(interval)

renderWorkflowDesigner(options)

}, 30)

}

```

There are only two functions in the file:

1. `renderWorkflowDesigner` - renders the Designer with the specified options.

2. `waitForJsAndRender` - waits for JavaScript to load with the Designer and calls the Designer's render. We need this function because the

Designer loads asynchronously after the page loads.

## Adding a new page

Open the `Shared/NavMenu.razor` file and add the highlighted lines after the _Fetch data_ navigation link.

Shared/NavMenu.razor

```html

<div class="@NavMenuCssClass nav-scrollable" @onclick="ToggleNavMenu">

<nav class="flex-column">

<div class="nav-item px-3">

<NavLink class="nav-link" href="" Match="NavLinkMatch.All">

<span class="oi oi-home" aria-hidden="true"></span> Home

</NavLink>

</div>

<div class="nav-item px-3">

<NavLink class="nav-link" href="counter">

<span class="oi oi-plus" aria-hidden="true"></span> Counter

</NavLink>

</div>

<div class="nav-item px-3">

<NavLink class="nav-link" href="fetchdata">

<span class="oi oi-list-rich" aria-hidden="true"></span> Fetch data

</NavLink>

</div>

// &new>

<div class="nav-item px-3">

<NavLink class="nav-link" href="designer">

<span class="oi oi-copywriting" aria-hidden="true"></span> Designer

</NavLink>

</div>

// <&new

</nav>

</div>

```

Now add a new file `Pages/Designer.razor` and paste the following content there:

Pages/Designer.razor

```html

@page "/designer"

@inject IJSRuntime JSRuntime

<PageTitle>Workflow designer</PageTitle>

<div id="root"></div>

@code

{

protected override async Task OnAfterRenderAsync(bool firstRender)

{

if (firstRender)

{

var options = new

{

apiUrl = "https://demo.workflowengine.io/Designer/API",

elementId = "root",

schemeCode = "SimpleWF"

};

await JSRuntime.InvokeAsync<Task>("waitForJsAndRender", options);

}

}

}

```

Everything is quite simple here. There is a `div` element on the page with the id `root`. When the page is first rendered, in the

`OnAfterRenderAsync` method, we call a JavaScript function called `waitForJsAndRender`, passing in `options` as parameters.

In the parameters we pass:

1. `elementId` - the identifier of the HTML element in which Designer should be drawn.

2. `apiUrl` - the URL where Designer's API is located.

3. `schemeCode` - the scheme code.

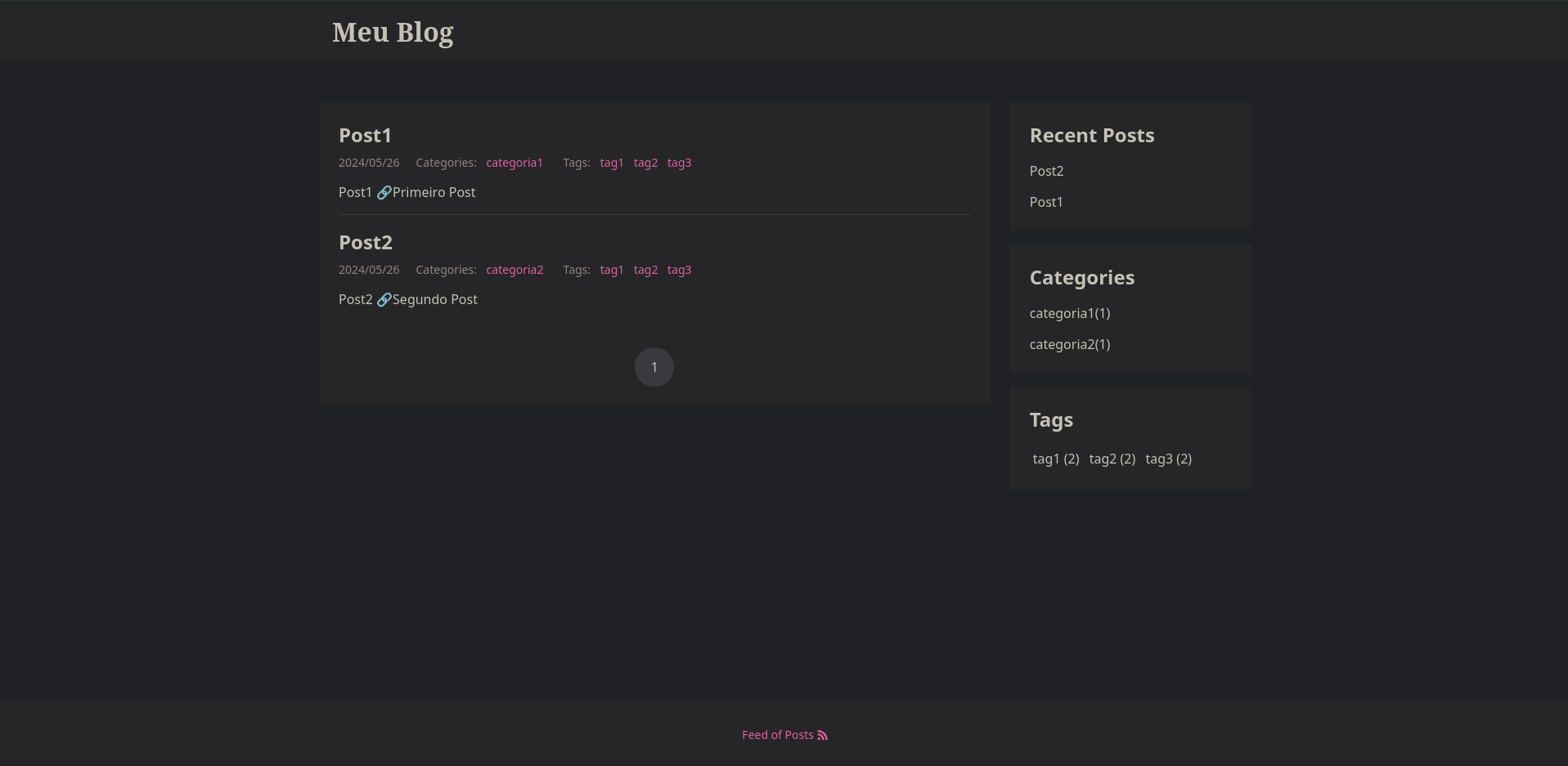

## Launching the application

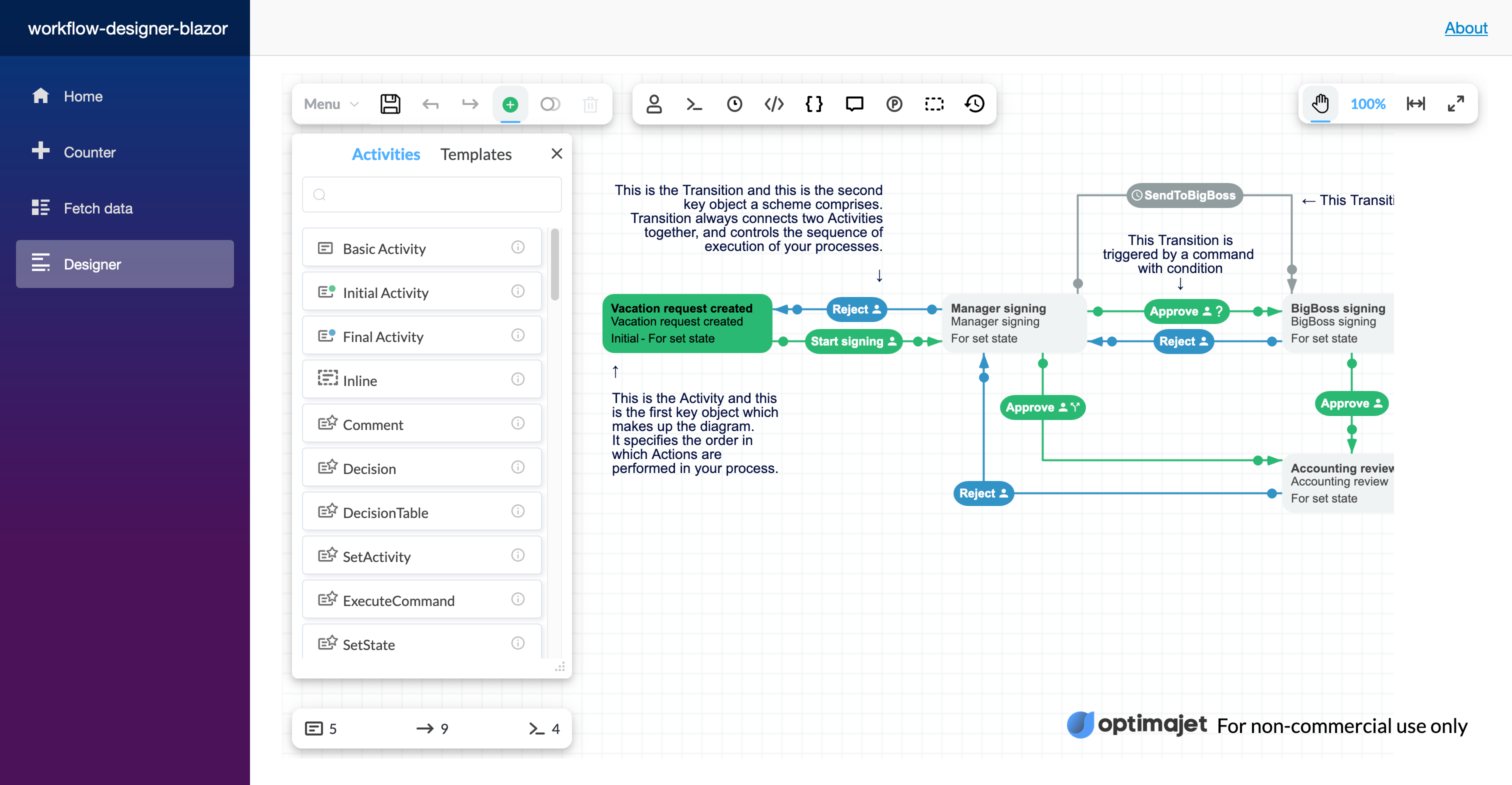

Now you can run your application using the `dotnet run` or `dotnet watch` command. After that, open your browser and navigate to a new page where you should see Workflow Designer.

**Workflow Designer in Blazor application**

## Conclusion

We have added Workflow Designer to our Blazor application by including a script from a content delivery network (CDN).

To work with the designer, we used the [ JavaScript interop mechanism]( https://learn.microsoft.com/en-us/aspnet/core/blazor/javascript-interoperability/?view=aspnetcore-8.0).

It was quite easy!

**Your feedback is very important to us**

It helps us understand whether this guide was useful to you, how clearly it was written, and what else you would like to learn about. Please ask your questions in the comments or start discussions on [GitHub](https://github.com/optimajet/WorkflowEngine.NET/discussions). | optimajet |

1,859,617 | Prototyping different storage scenarios for an IT department in Azure Portal. | creating and configuring a storage account. | 0 | 2024-05-26T15:38:51 | https://dev.to/tundeiness/prototyping-different-storage-scenarios-for-an-it-department-in-azure-portal-20mg | azure, storage | ---

title: Prototyping different storage scenarios for an IT department in Azure Portal.

published: true

description: creating and configuring a storage account.

tags: azure, storage

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/qro8jkedn7euqk6dhm7m.jpg

# Use a ratio of 100:42 for best results.

# published_at: 2024-05-20 17:32 +0000

---

#Introduction

In this post, I'll walk you through the process of providing Storage for a fictitious IT department, in the Azure portal. For the department, the data isn’t important enough to back up and doesn’t need to be restored if the data is overwritten or removed.

##Prerequisites

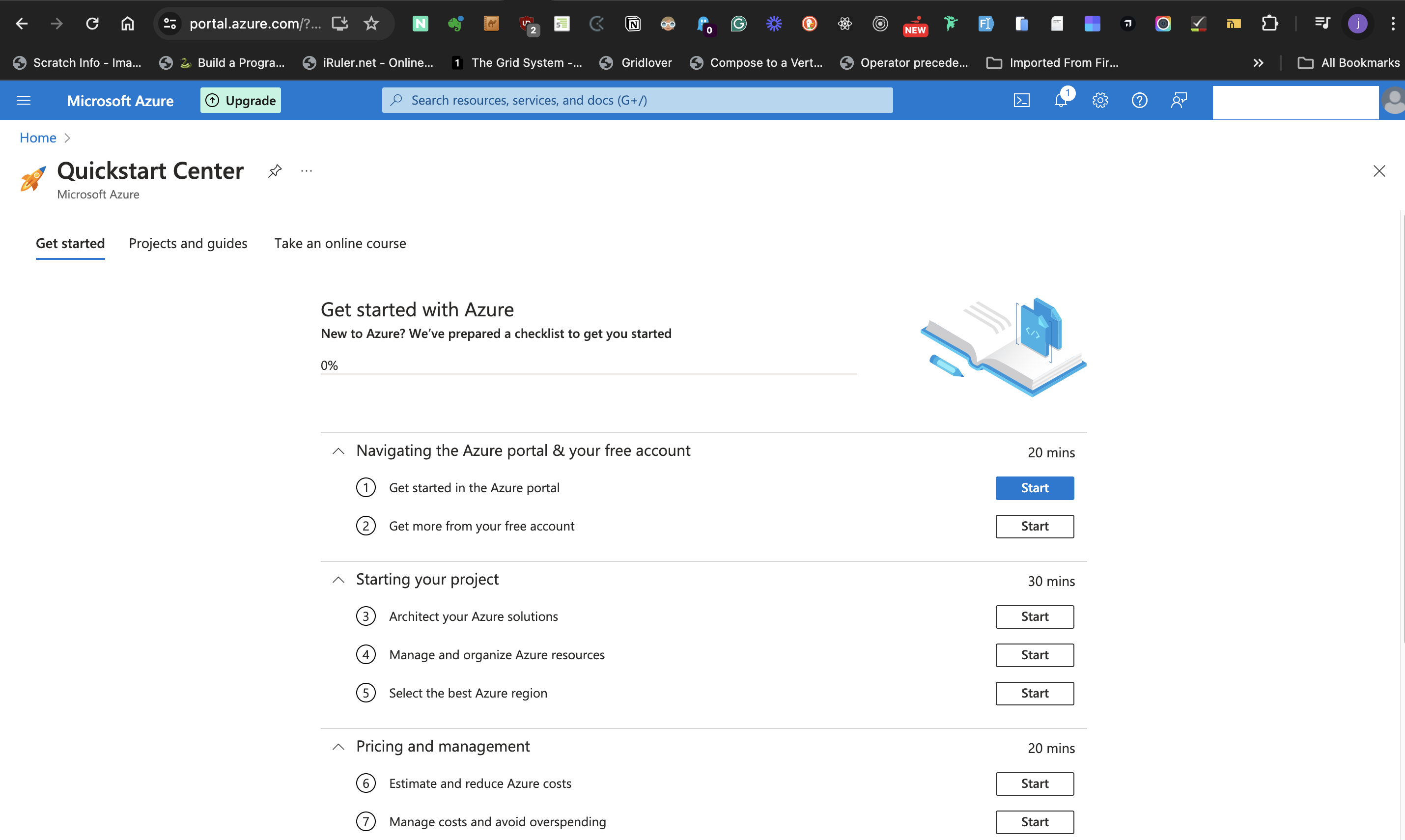

Before we start, you'll need a Microsoft Account and an Azure free trial account. I covered opening a free trial account [here](https://dev.to/tundeiness/setting-up-a-windows-11-virtual-machine-with-azure-on-a-macos-88m)

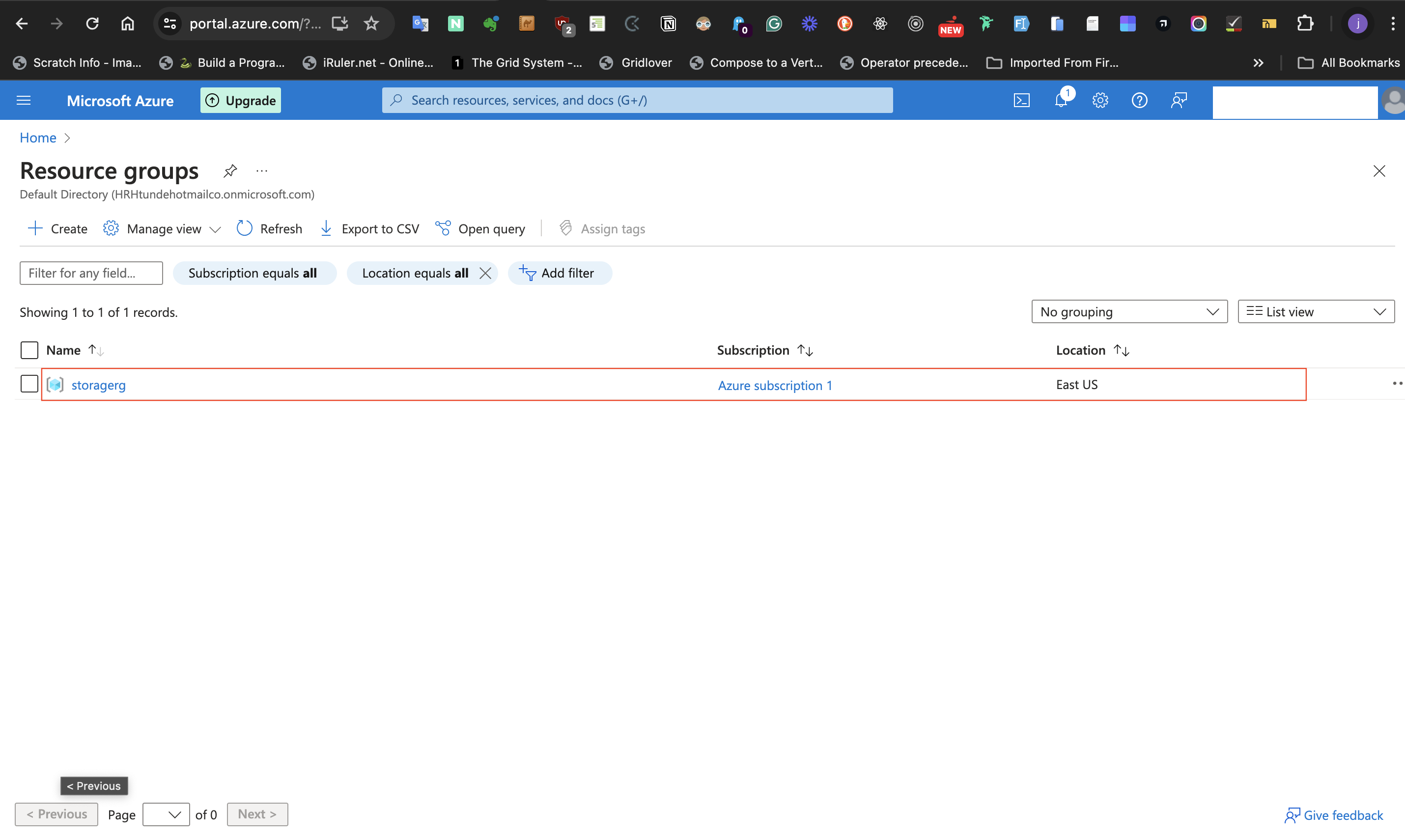

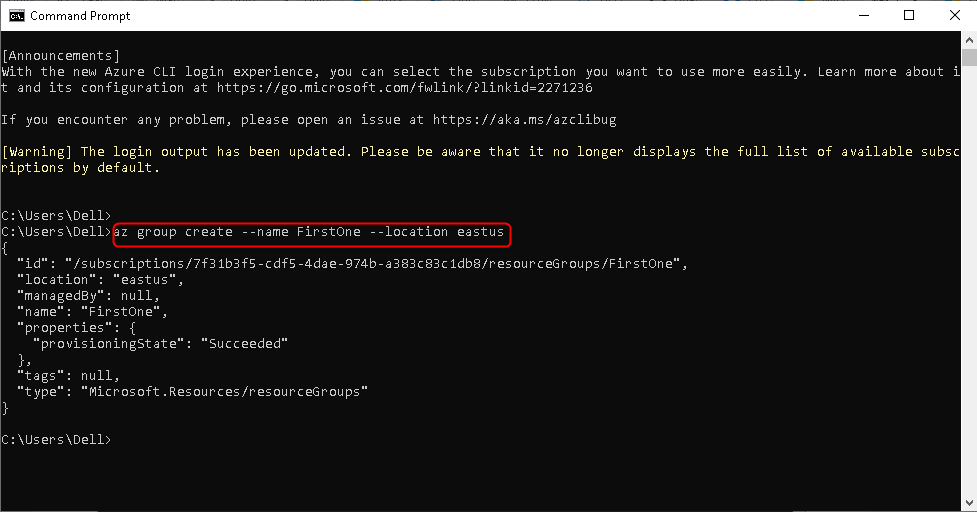

###Step 1: Creating a resource group

Go to the Azure Portal Dashboard, and at the search bar - at the top level of the page - search and select Resource groups. This navigates to another page. While on that page select **+ create**. On the next page, you are required to name your resource group. Go ahead and name your resource group. Then select a region. At the bottom of the page select the **Review + create** button to validate the resource group.

Select the 'create' button to deploy the resource group. The image below shows the created resource group.

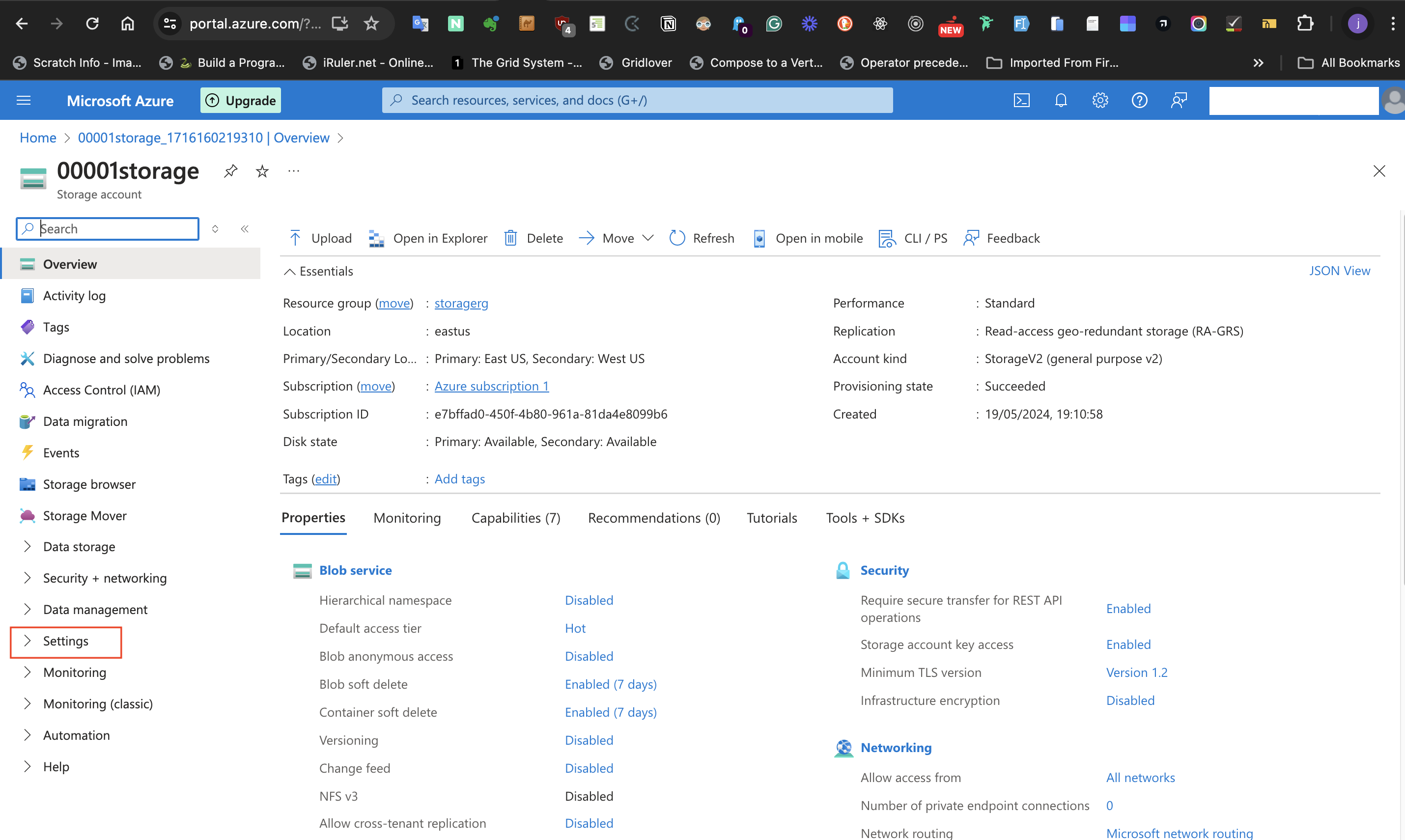

###Step 2: Creating and deploying a storage account

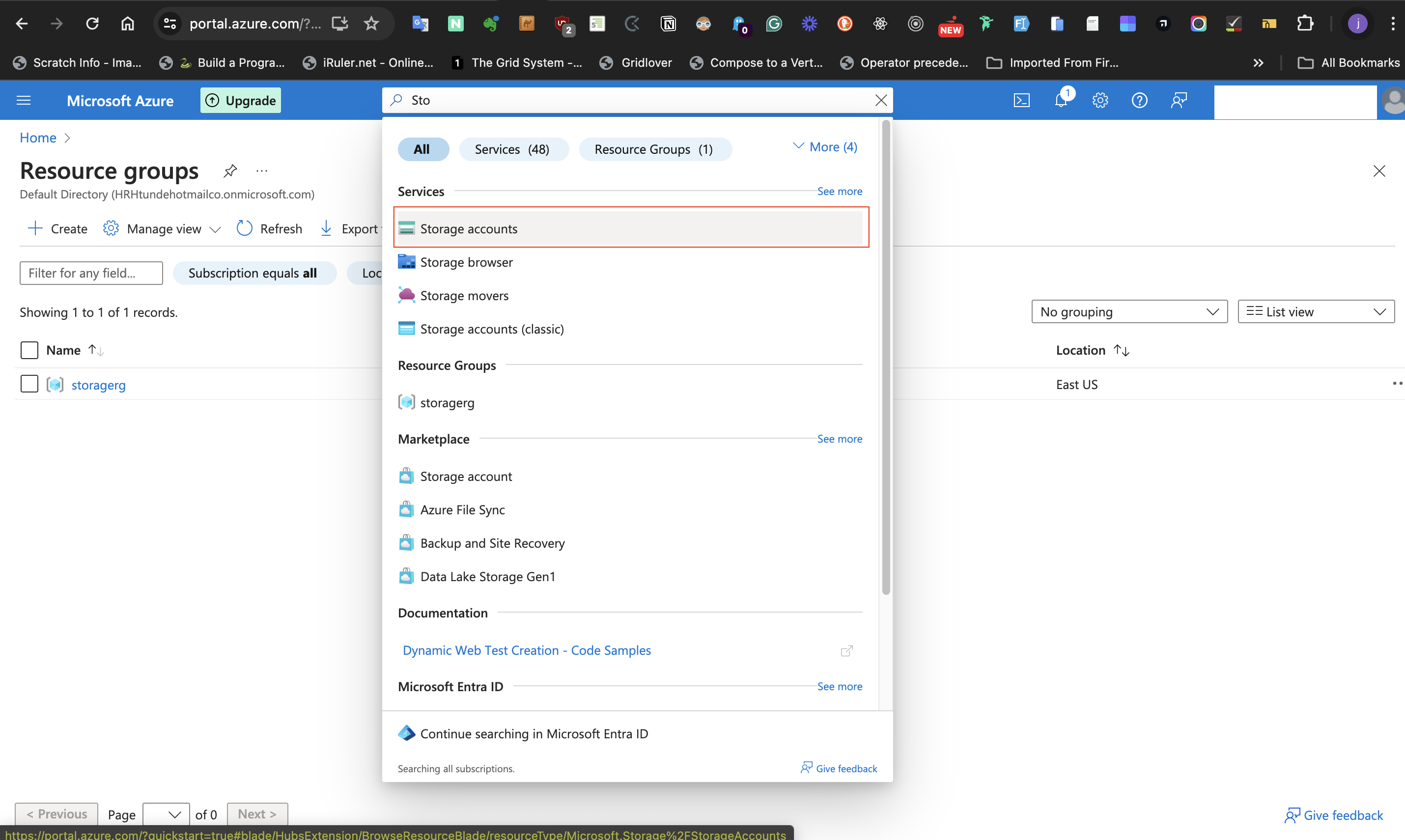

On the page of the resource group and the search bar - at the top level of the page - search and select Storage Accounts.

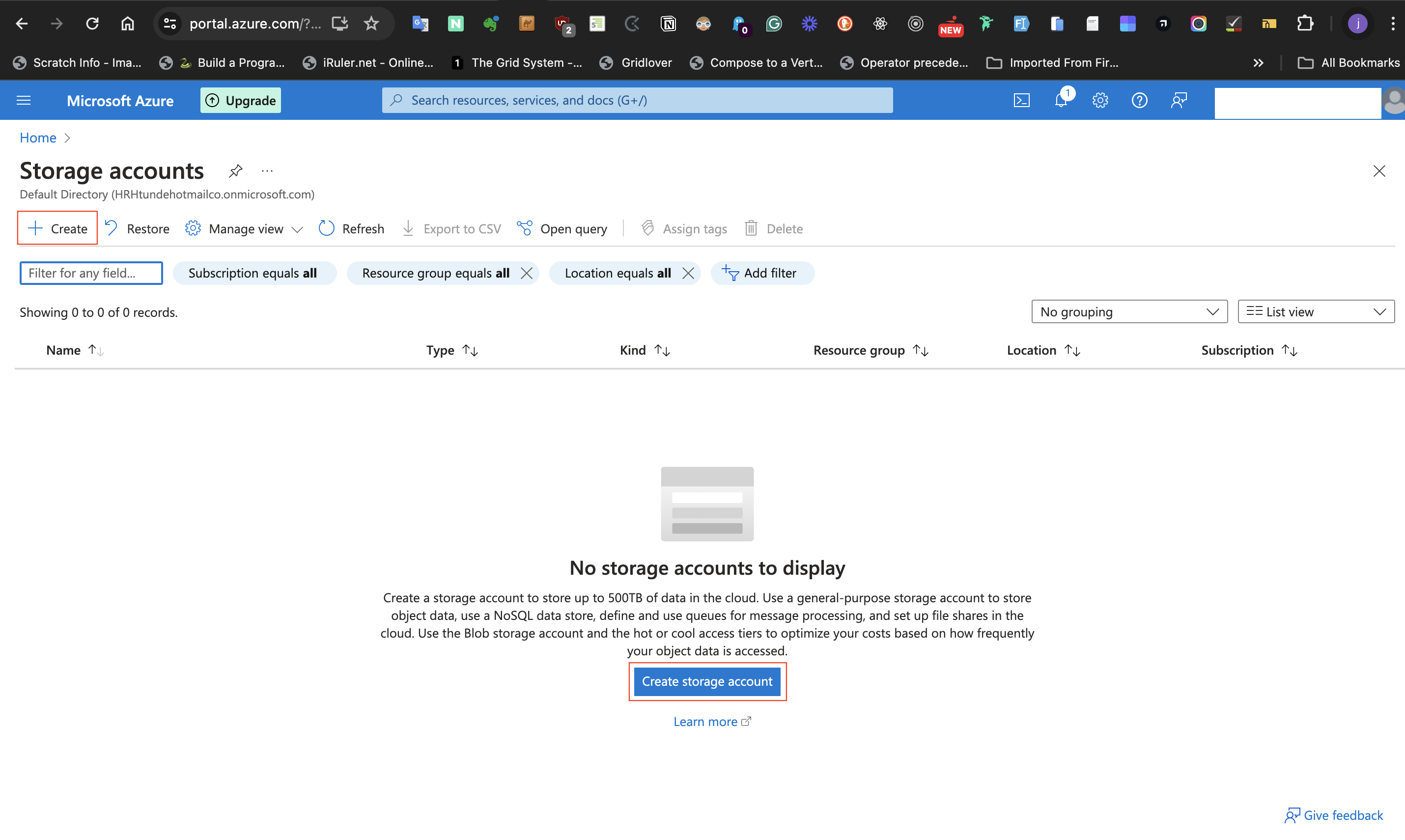

This navigates to another page. While on that page select **+ create** at the upper left of the page or click on the **create storage account** button on that page.

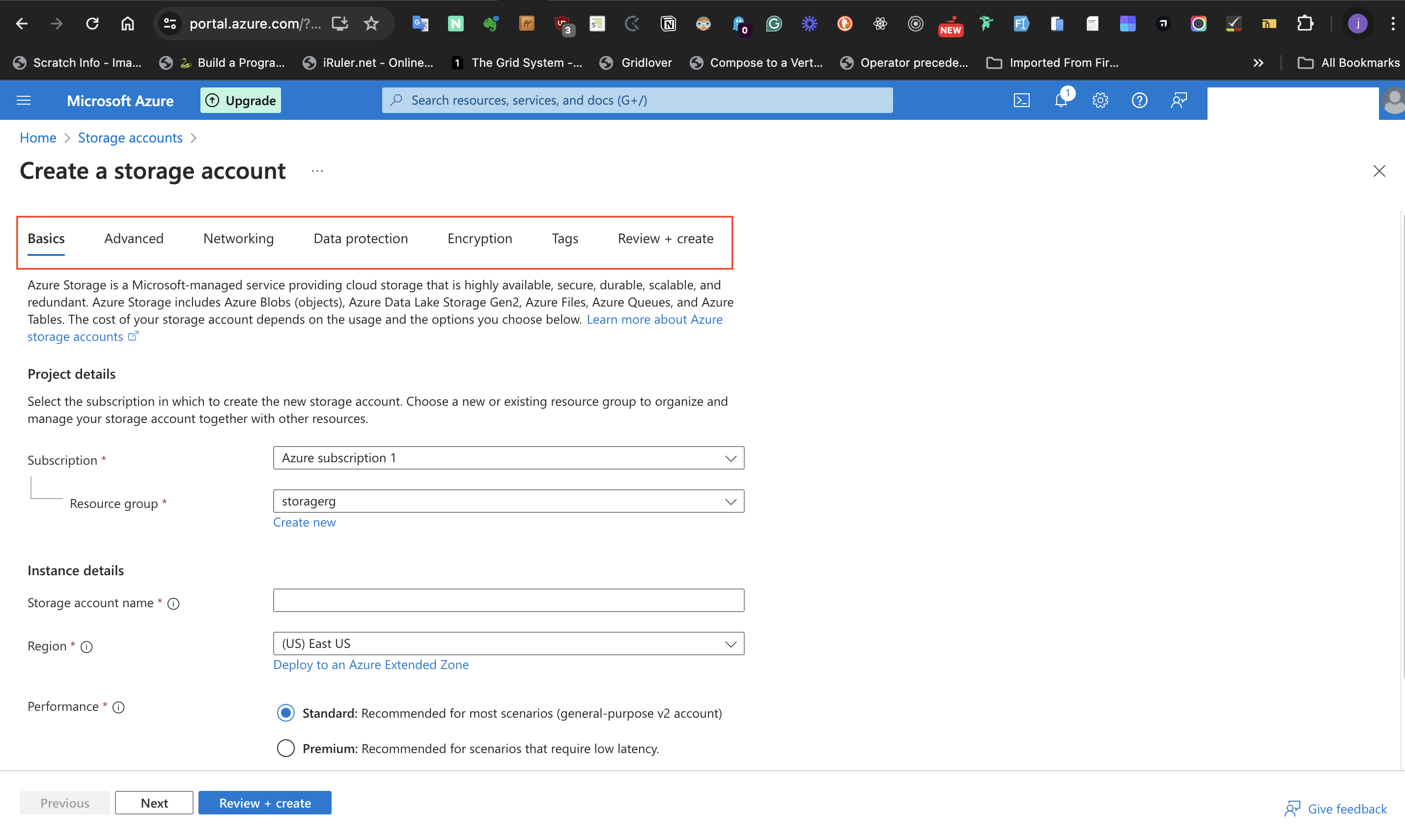

This next page has 6 Tabs namely **Basics**, **Advanced**, **Networking**, **Data protection**, **Encryption**, **Tags** and **Review + create**.

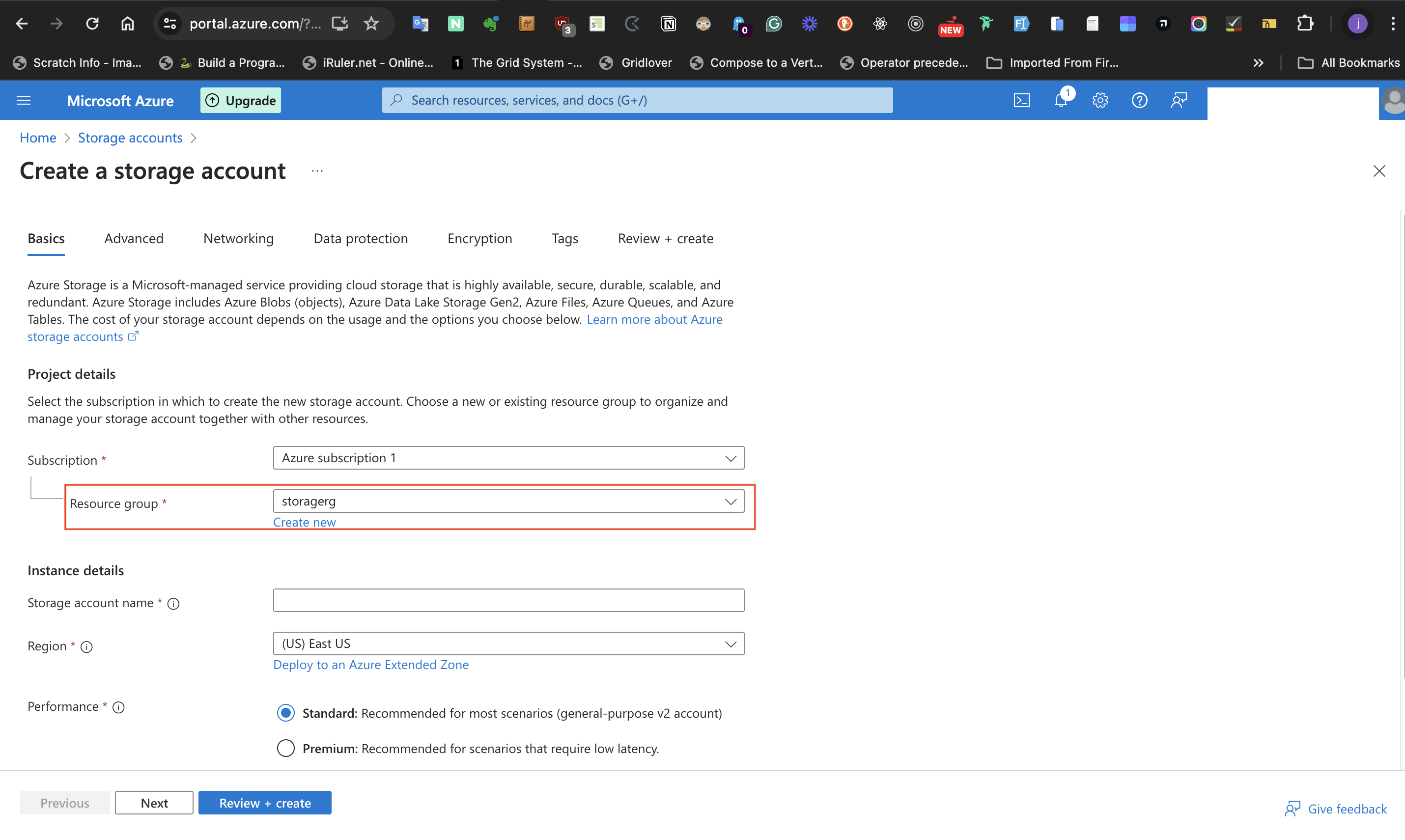

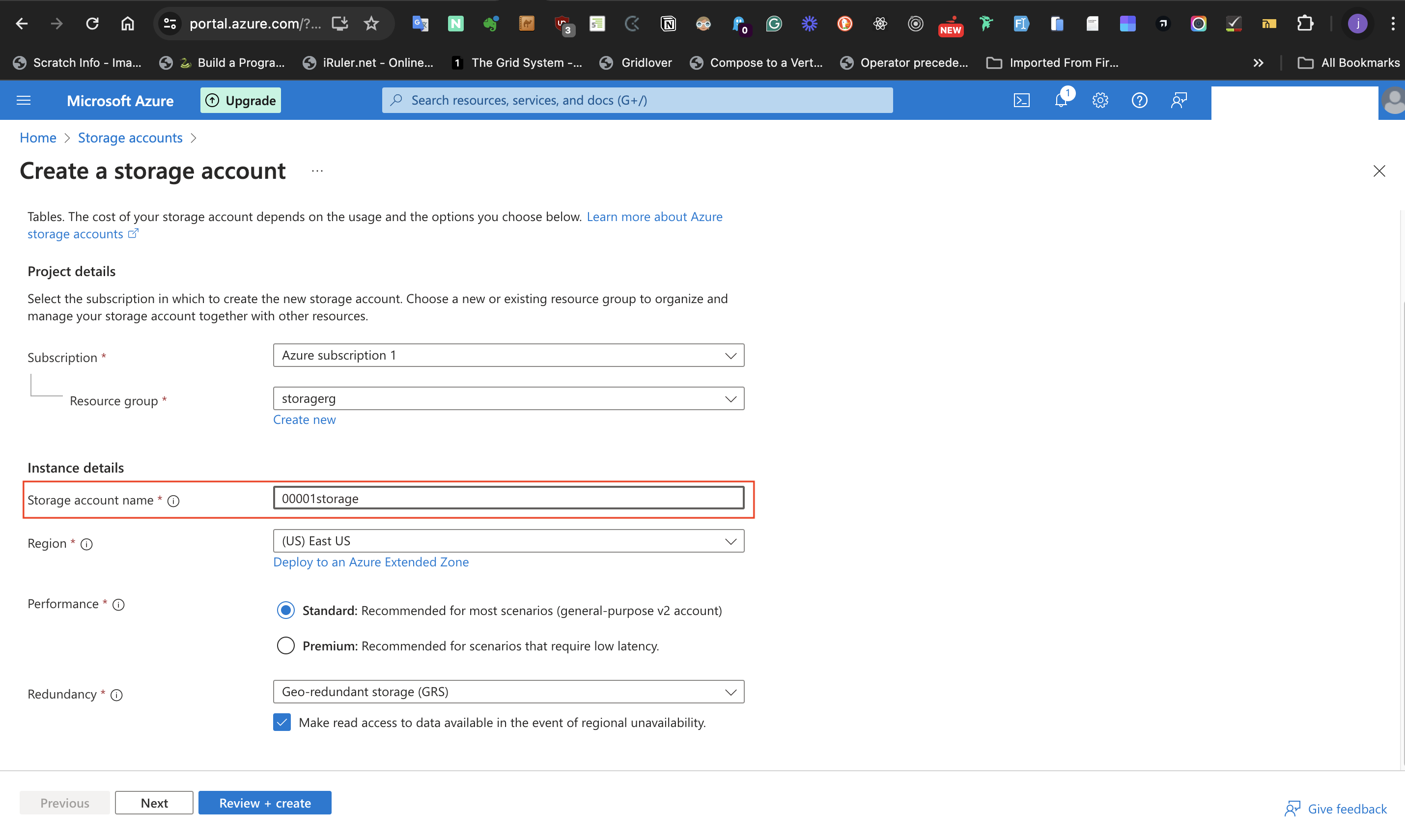

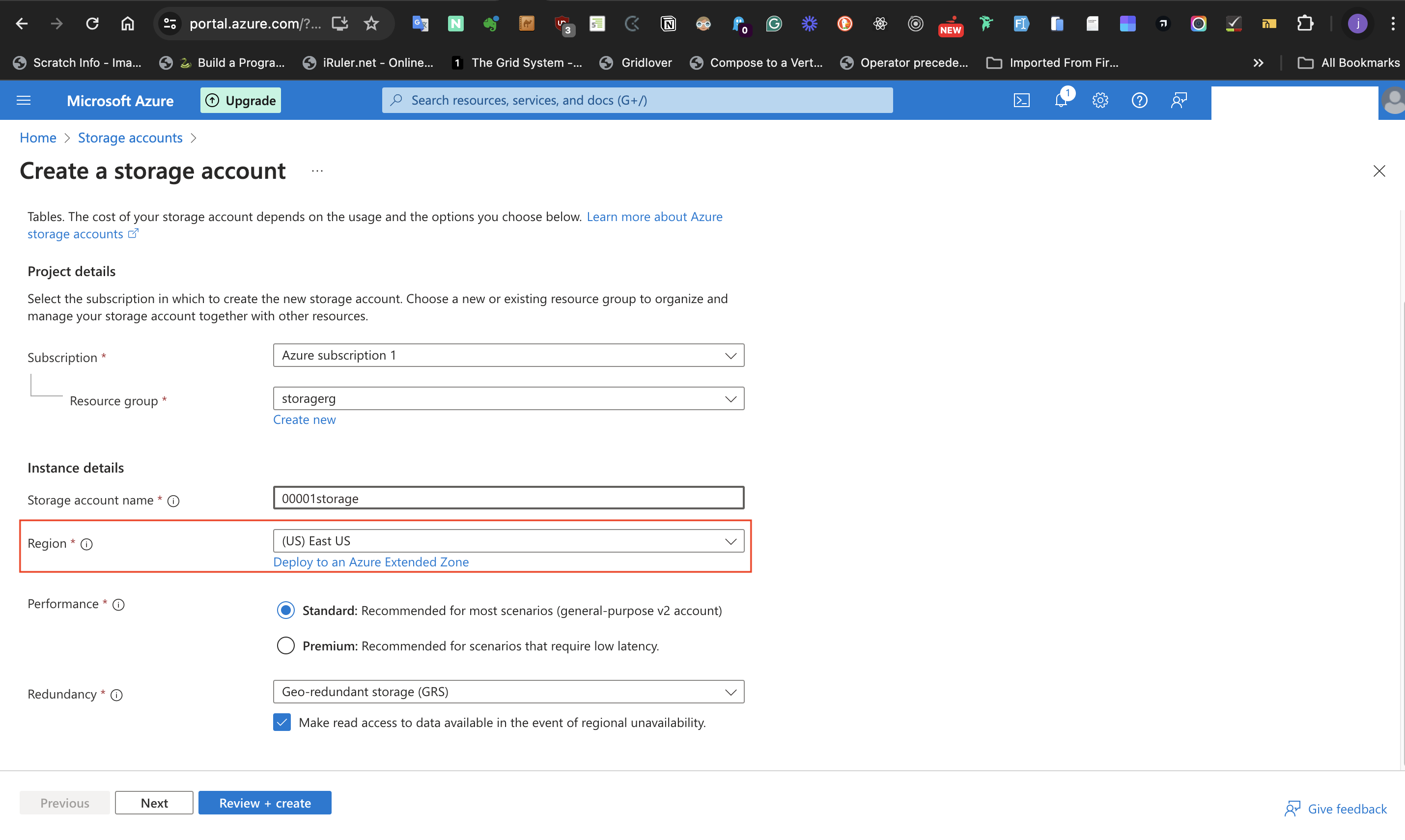

At the **Basics** tab, select the **Resource group** label under the **project details** section and select the Resource group created in Step 1.

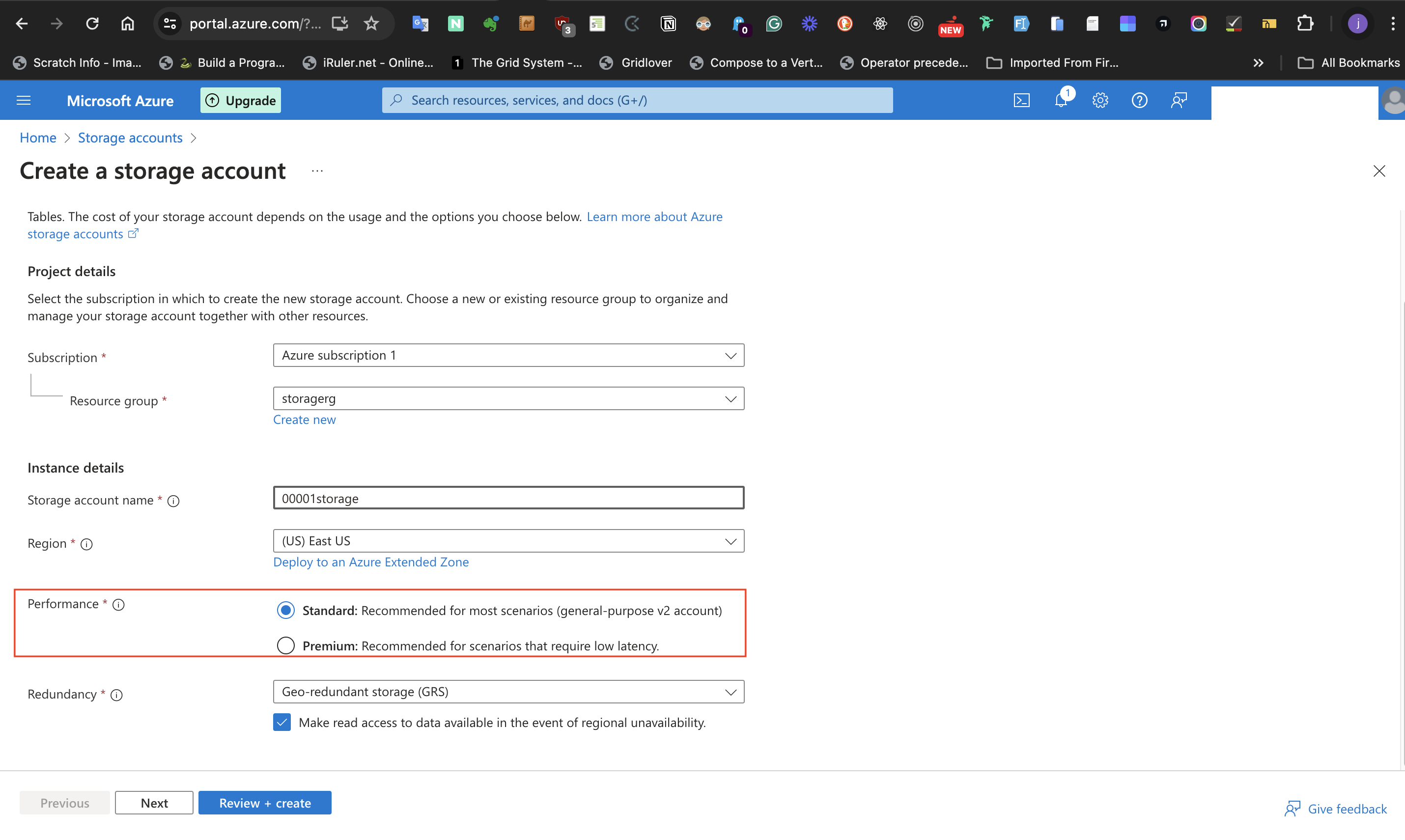

Next, Under the **Instance details** section, give the storage account a unique name, select the (US)East US option at the **Region** label, and for the **Performance** label, select the **standard** radio button.

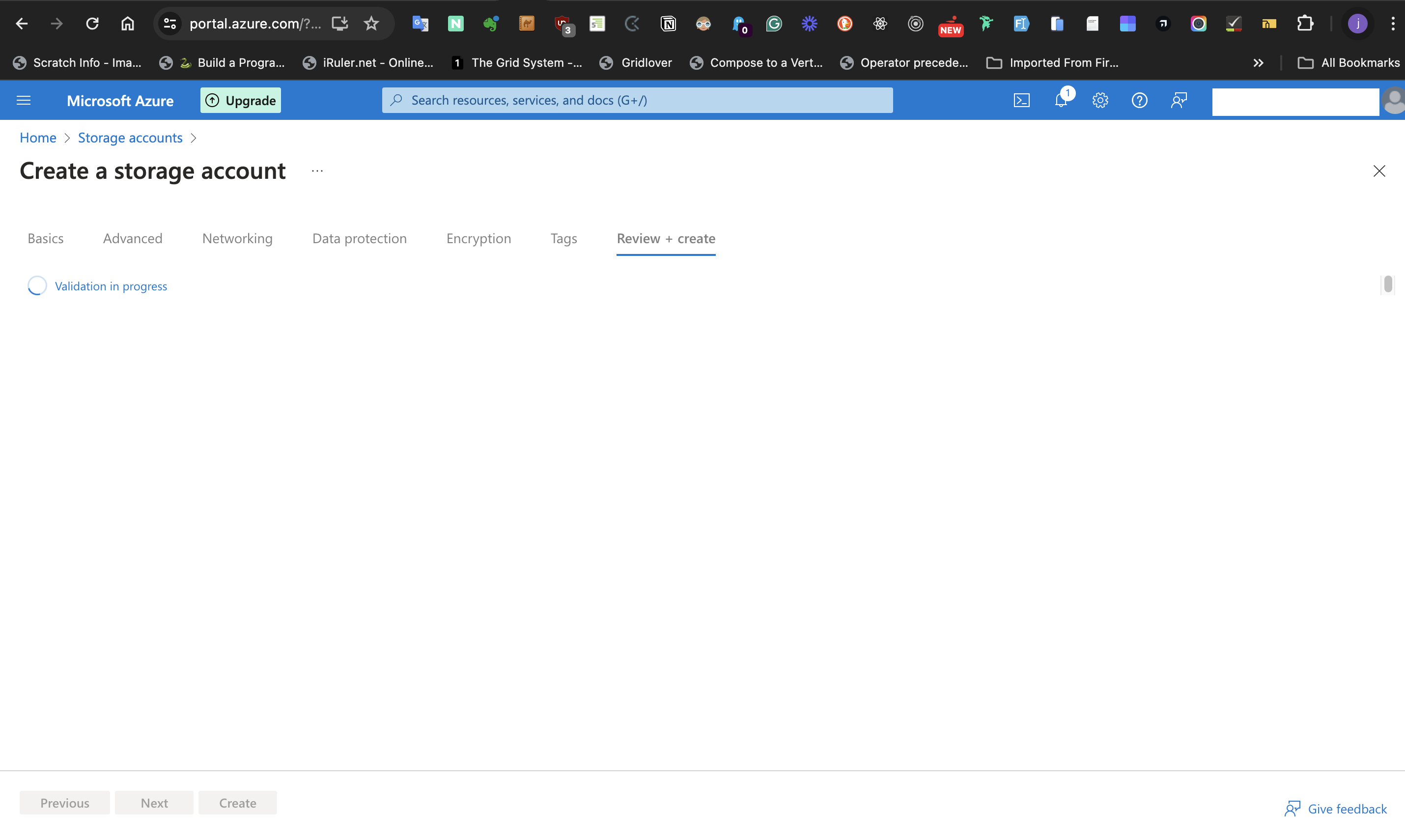

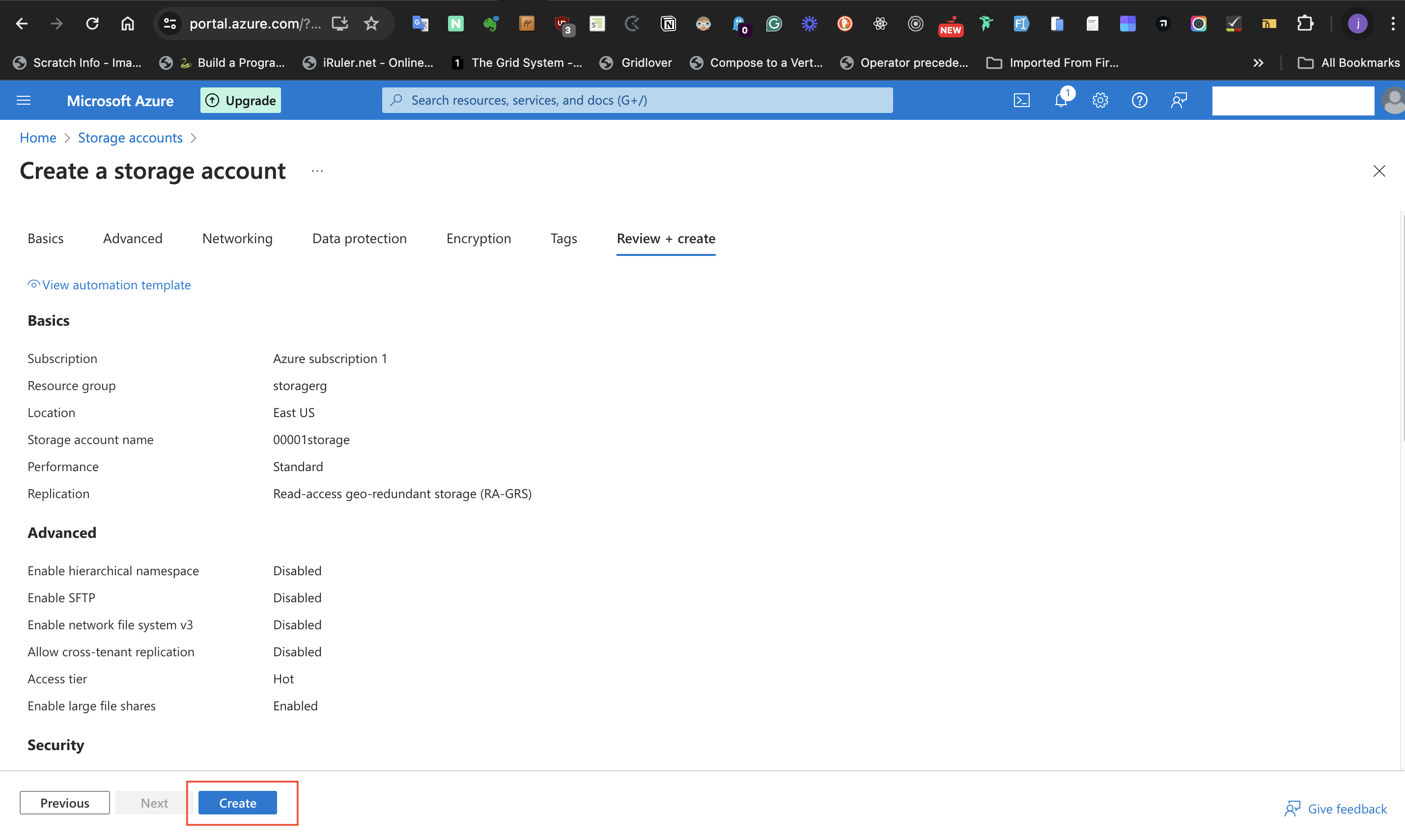

Accept the default **Redundancy** label option as shown. Afterward, select the **Review + create** button on the page and subsequently the "Create" button.

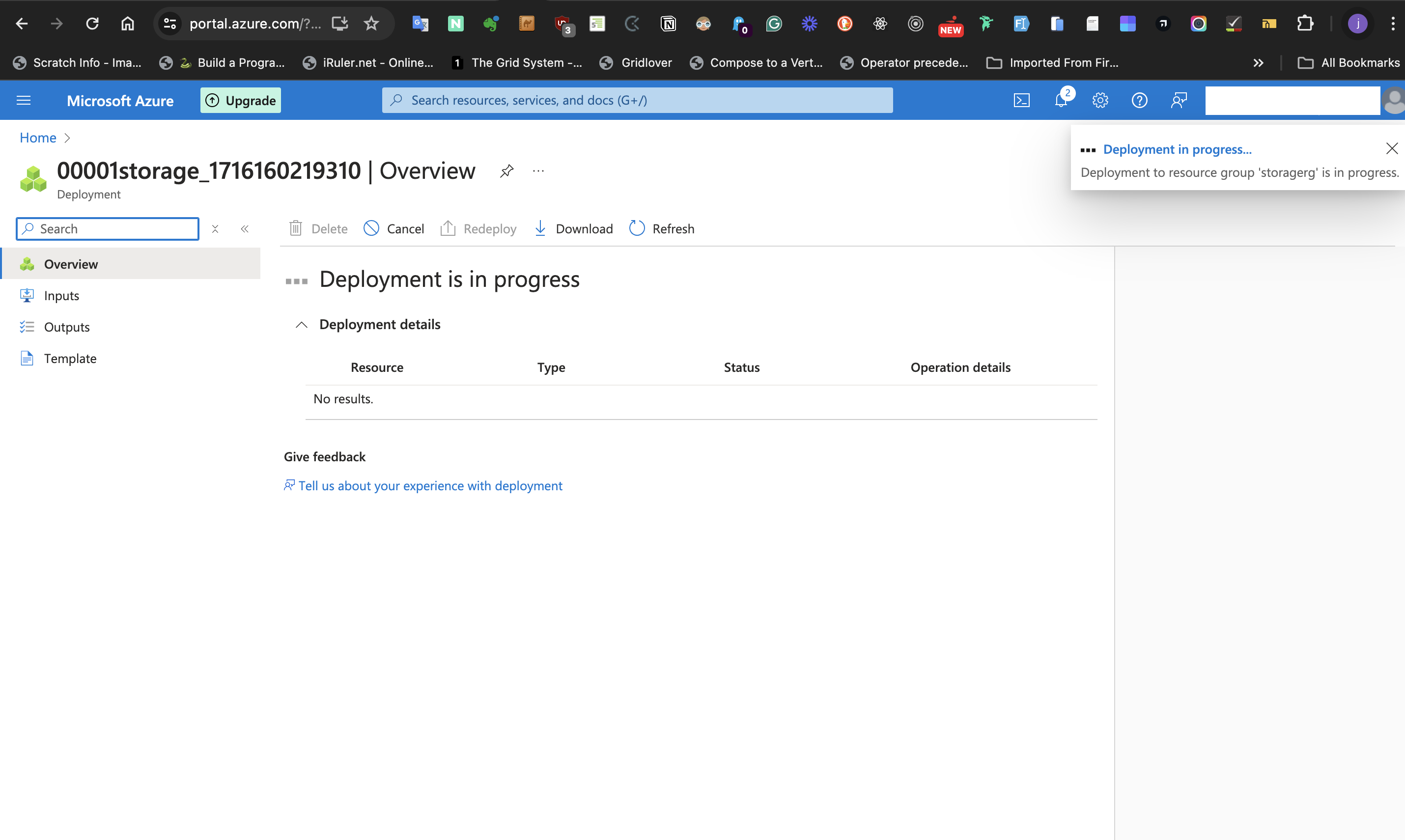

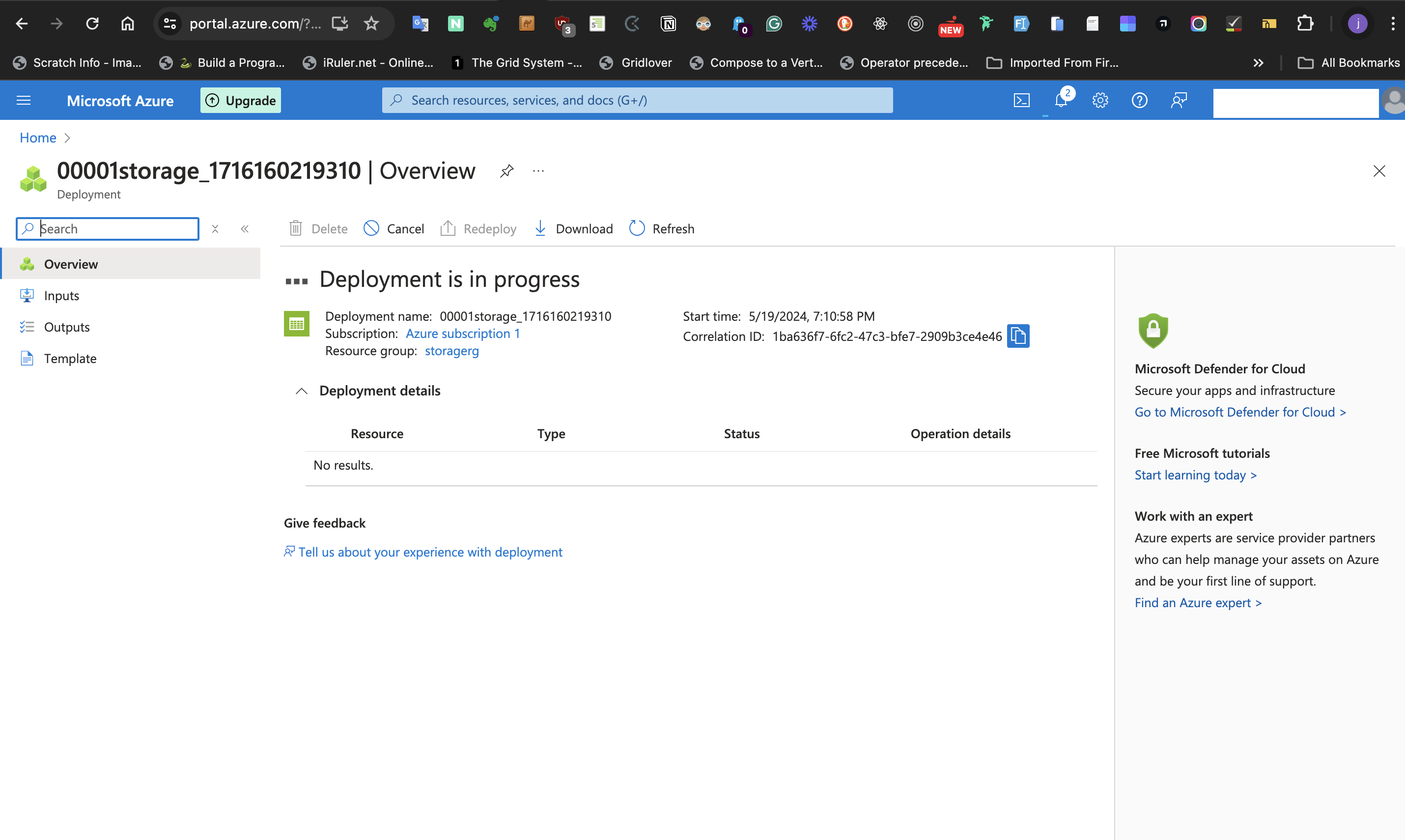

Wait for the storage account to **deploy**.

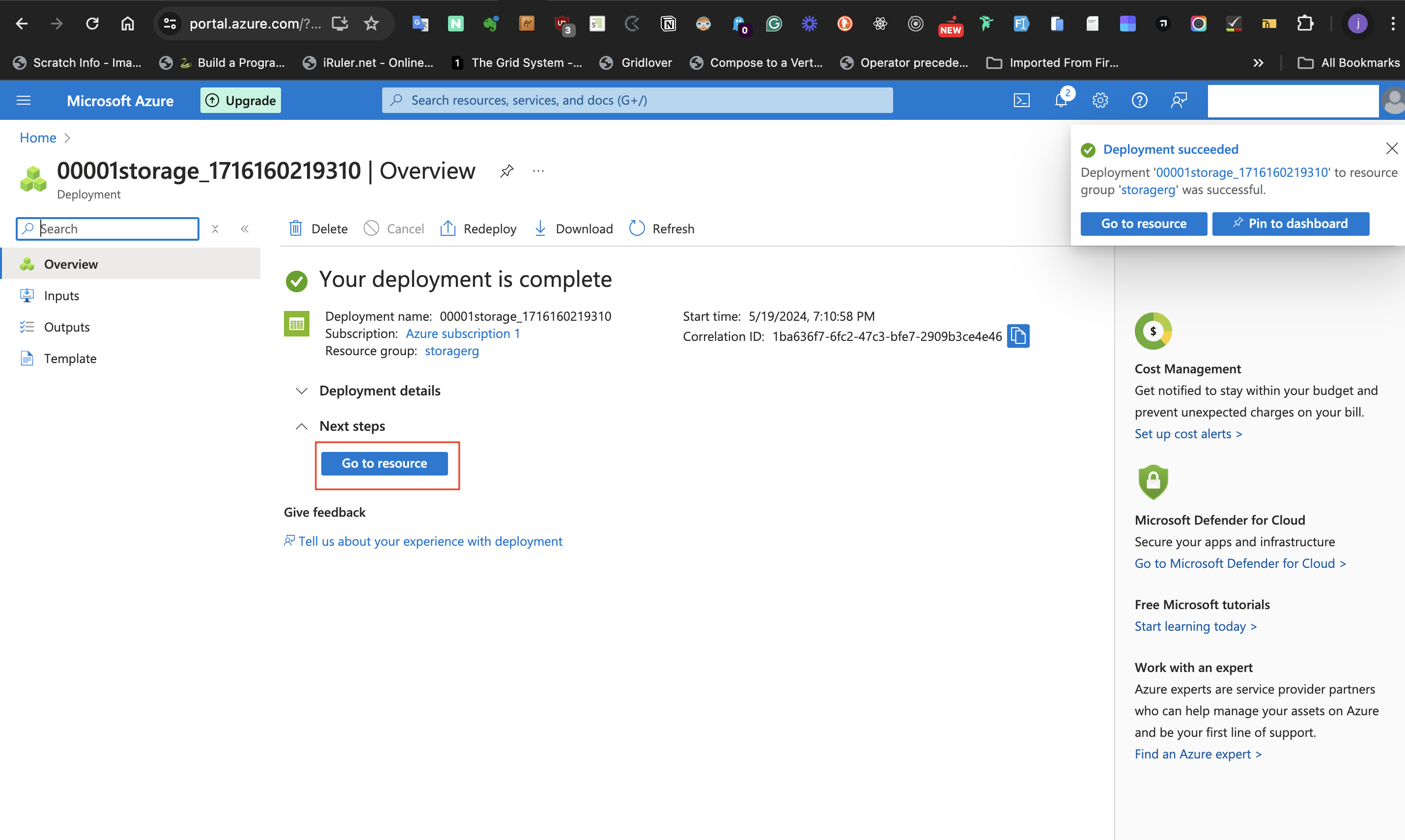

After successfully deploying, click the **go to resource** button.

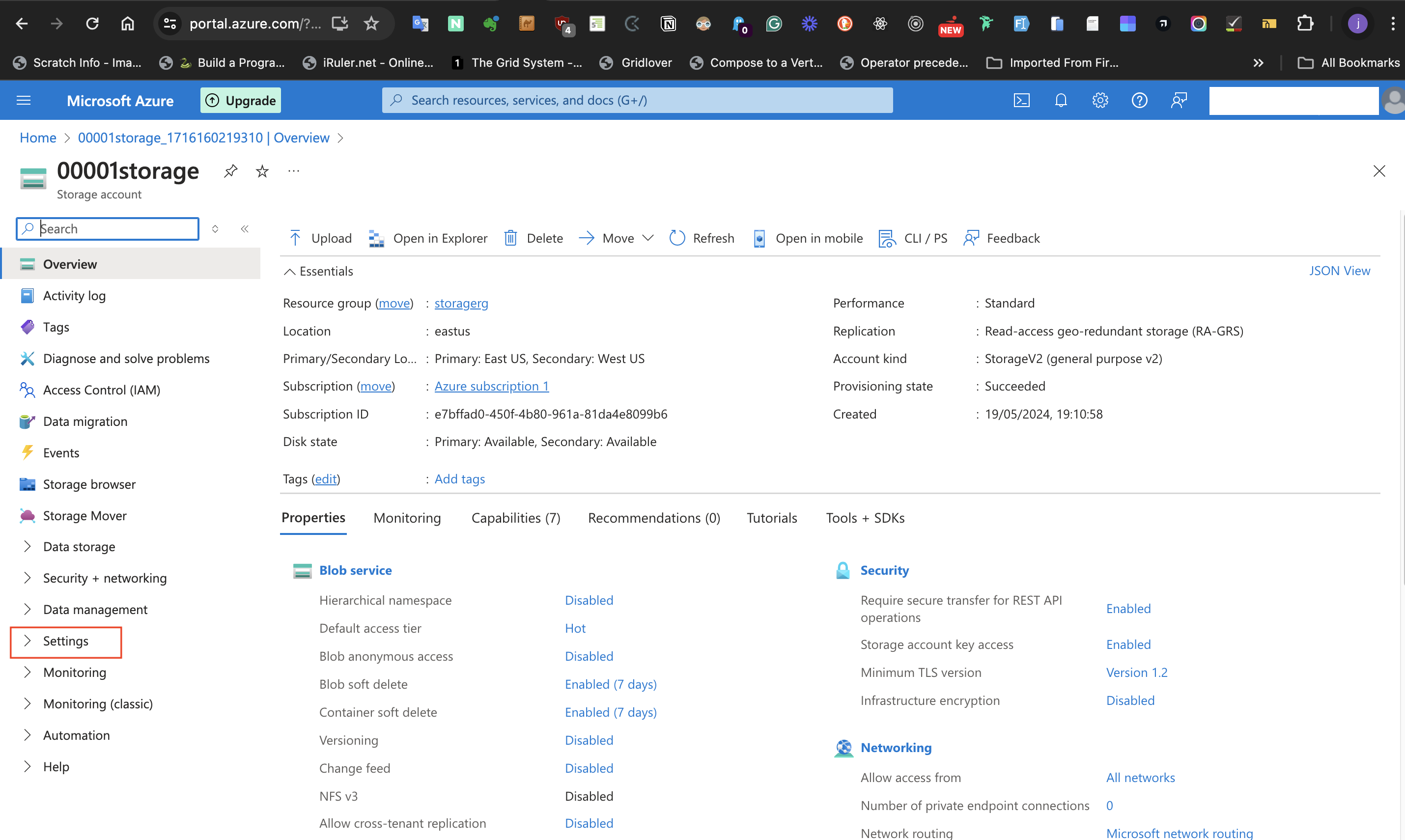

###Step 3: Configure settings in the Storage Account.

####I: Data in this storage account does not need to be highly available.

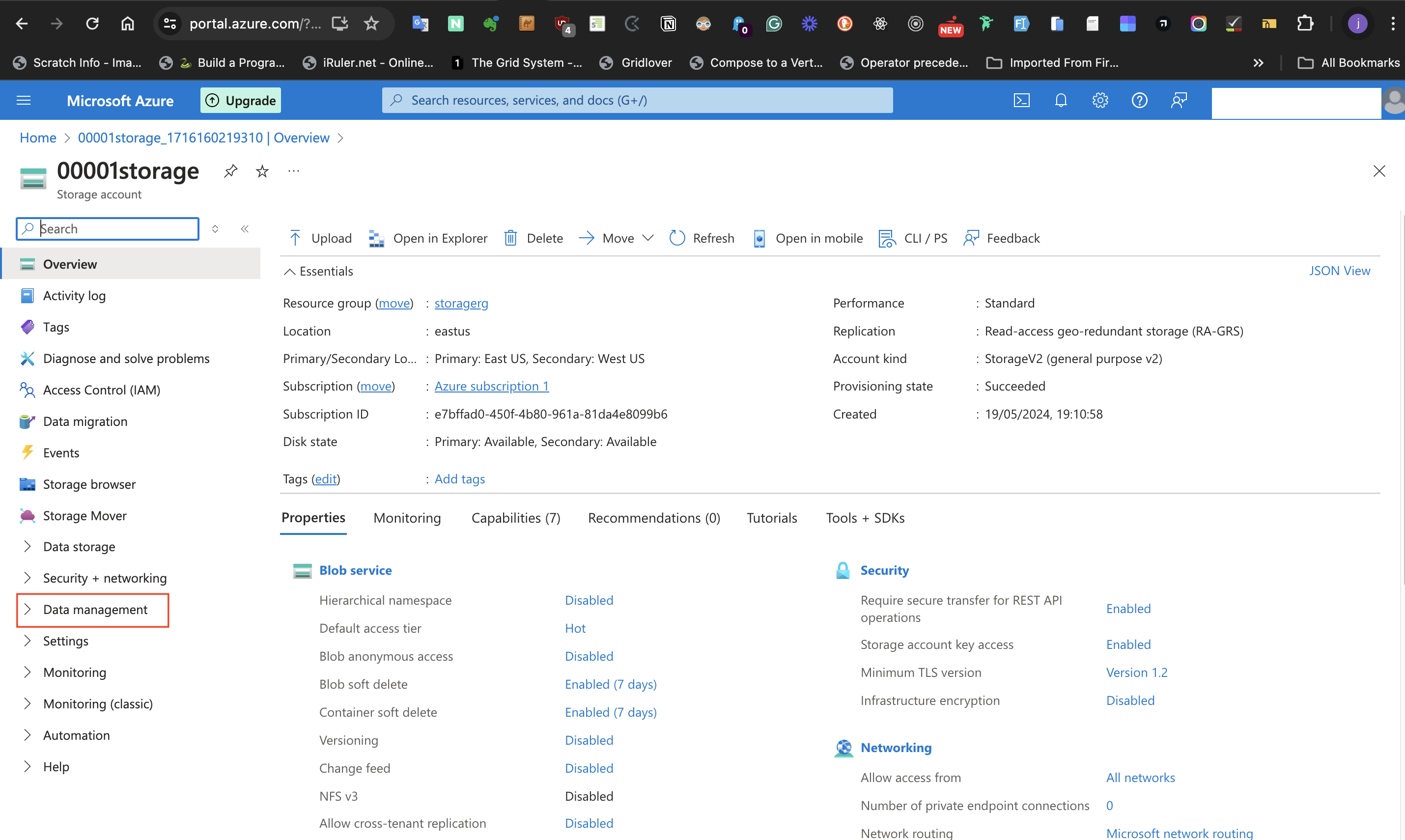

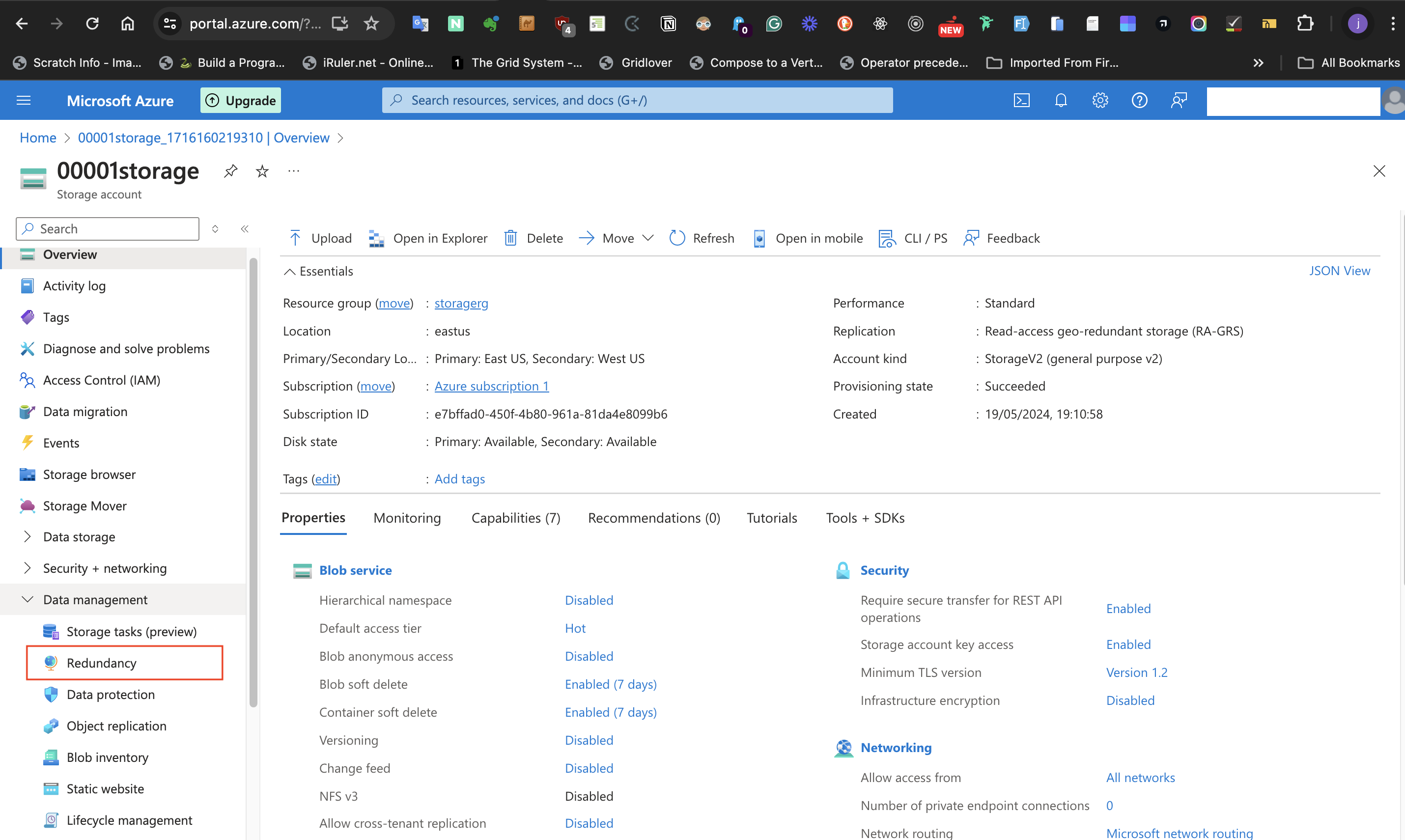

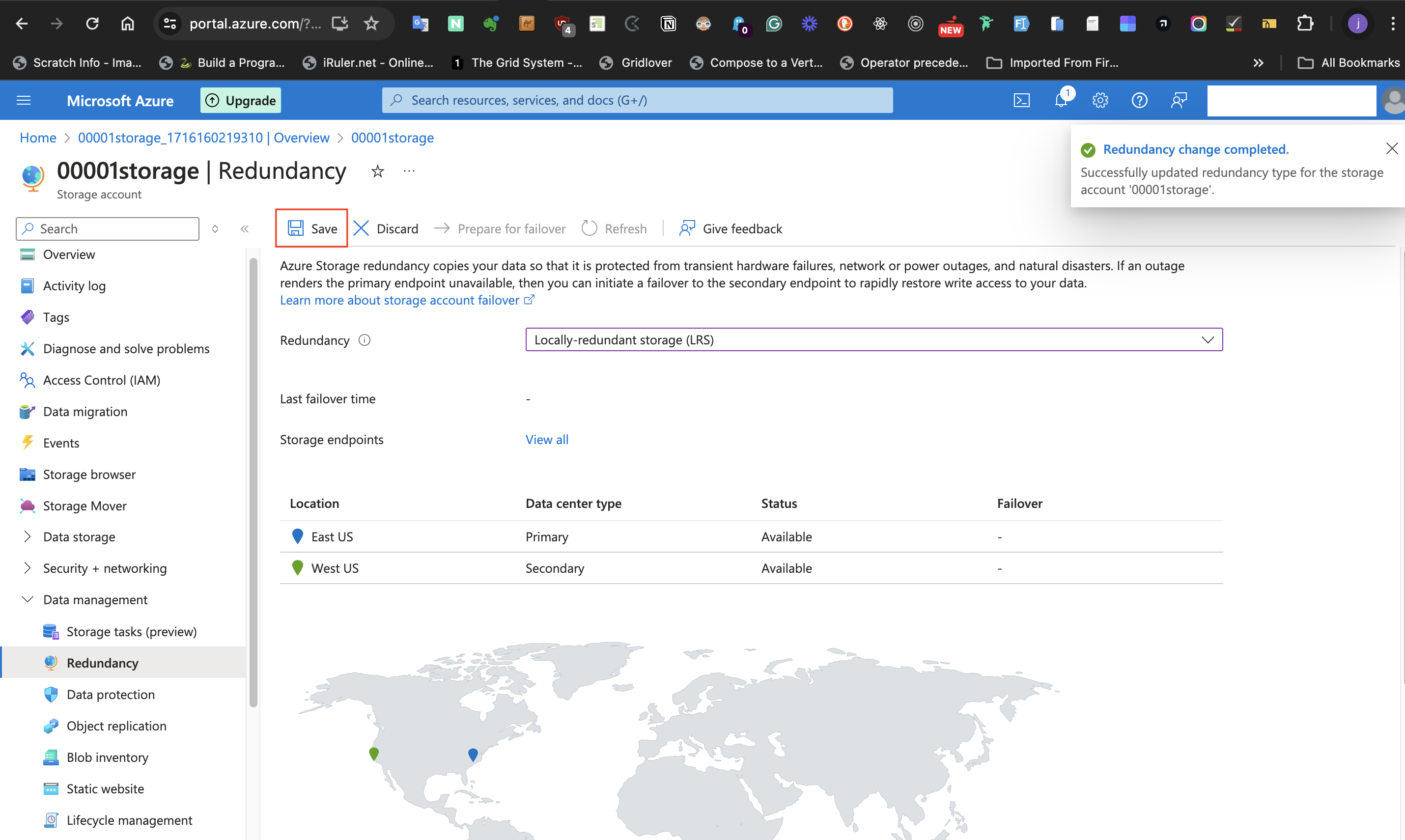

We want to configure this storage account with the most basic settings. We don't have to worry if data isn't highly available. To do this, select **Data Management** at the left-hand side of the page and the **Redundancy** blade.

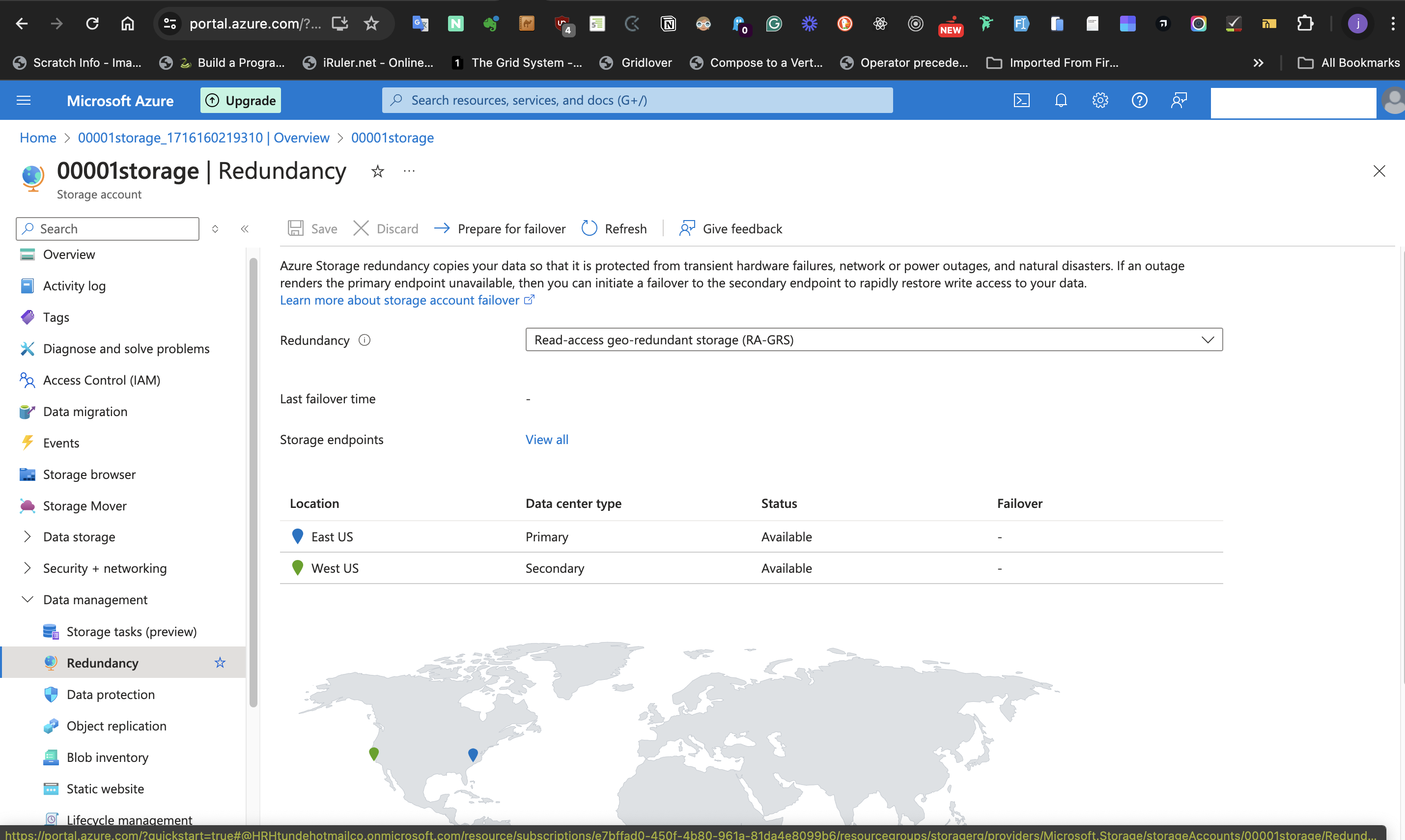

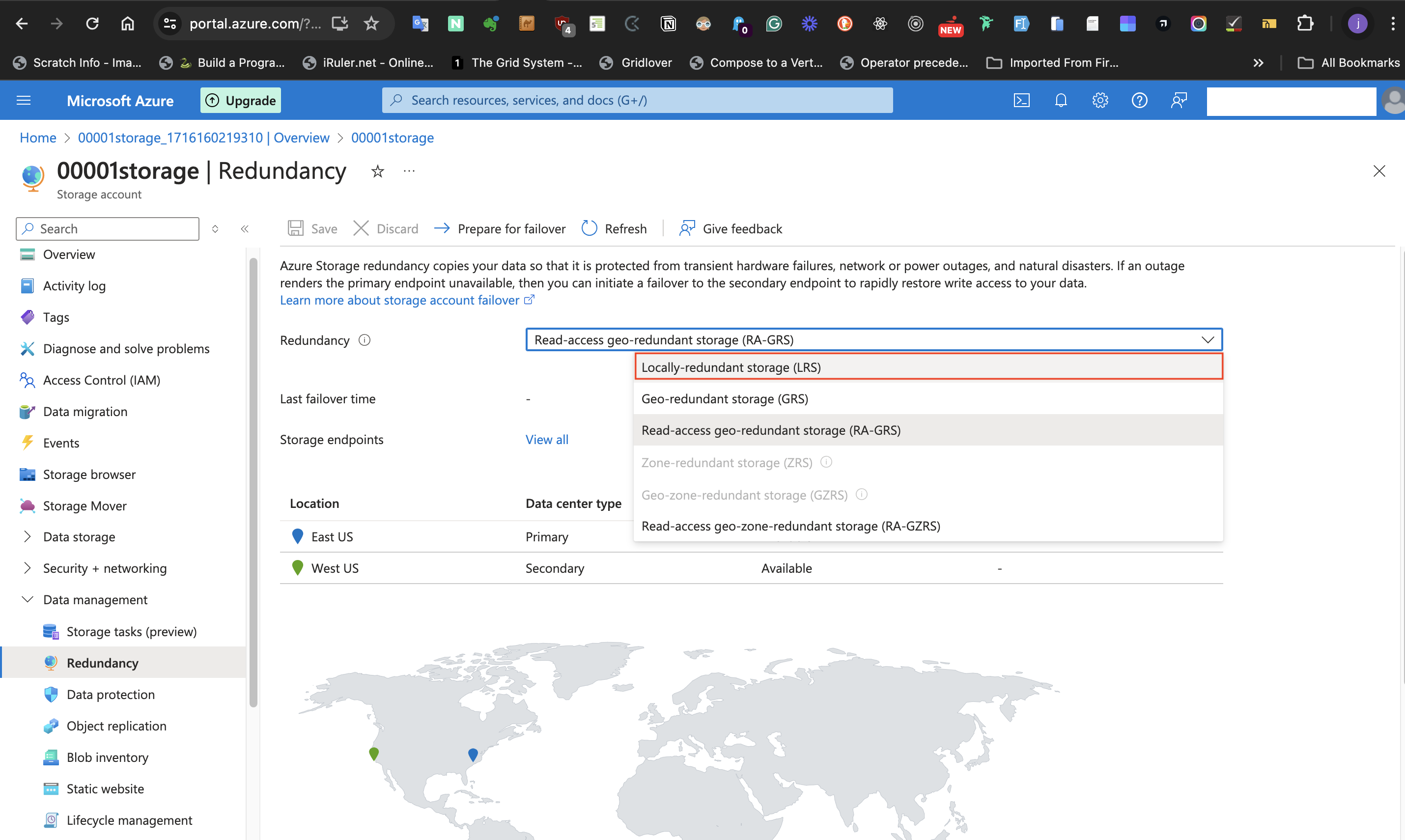

Select the **Locally redundant Storage** (LRS) option from the **Redundancy** label. The reason for this is that "the data isn’t important enough to back up and doesn’t need to be restored if the data is overwritten or removed". As a result, High availability isn't required. This means the redundancy can take the least available option which is **Locally redundant Storage**.

Click **save** at the top of the page.

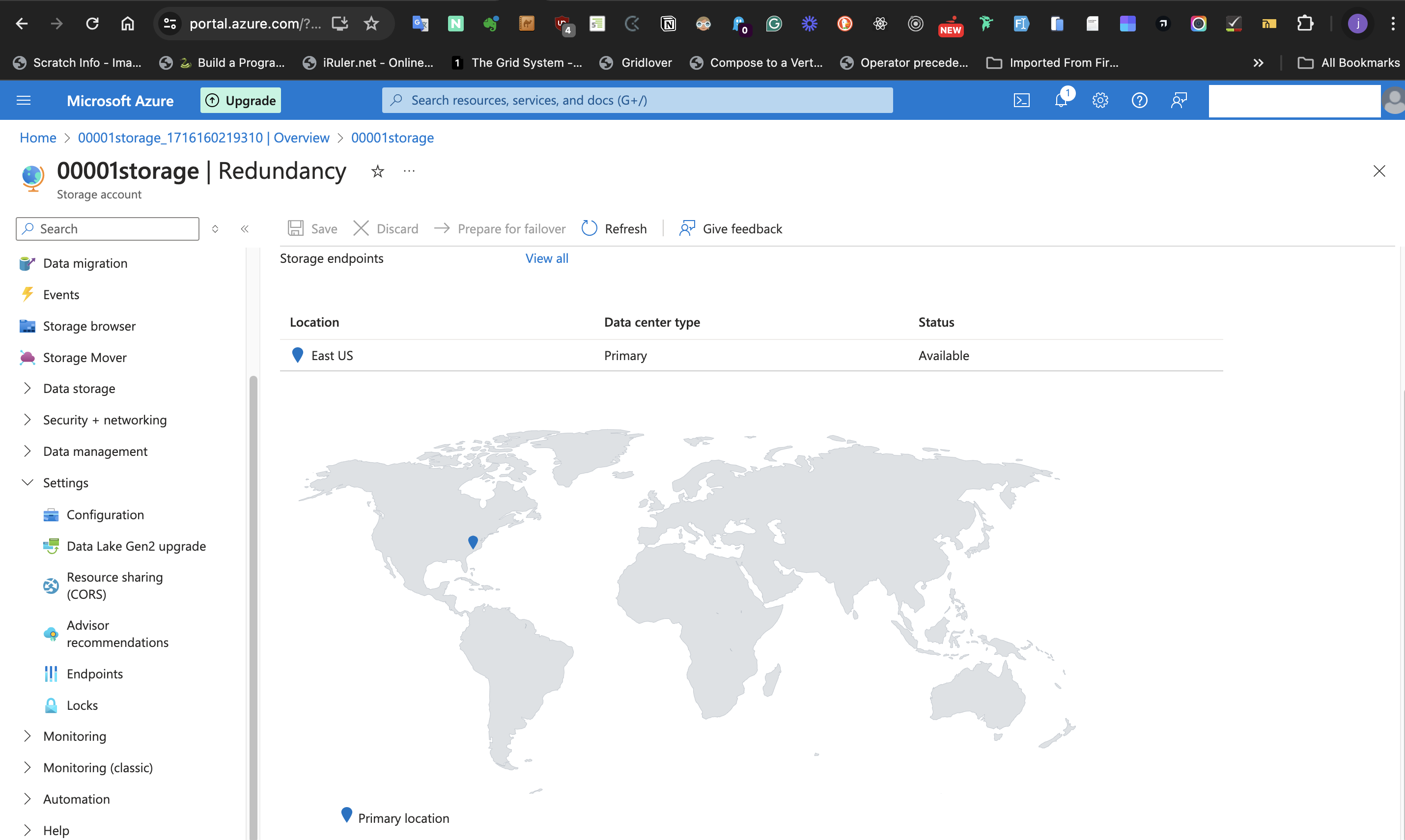

Previously, 2 locations may have shown up on the map displayed on this page. However, since we selected the **Locally redundant Storage** (LRS) option from the **Redundancy** label, only one location will be displayed on the map. Refresh the page if this isn't displaying.

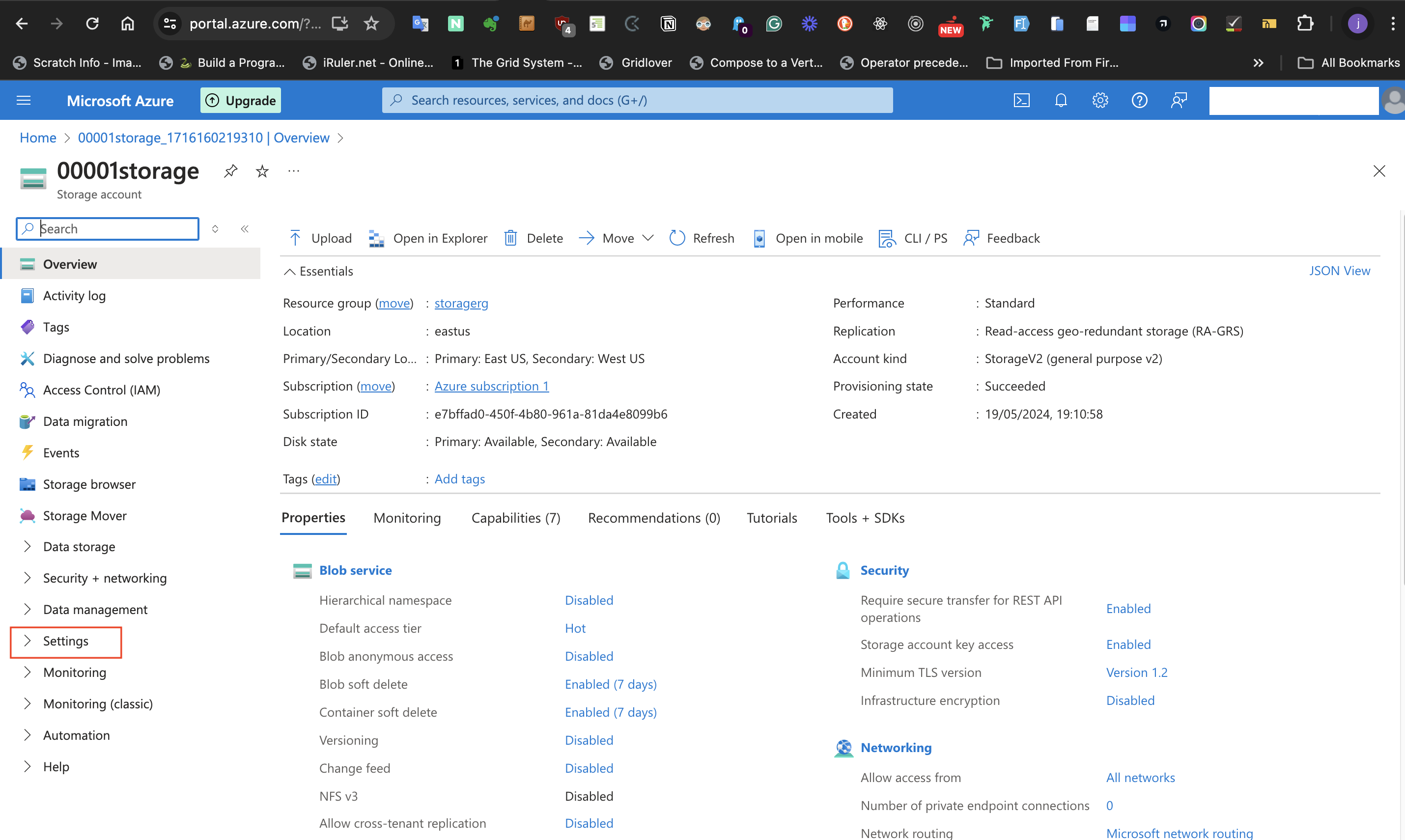

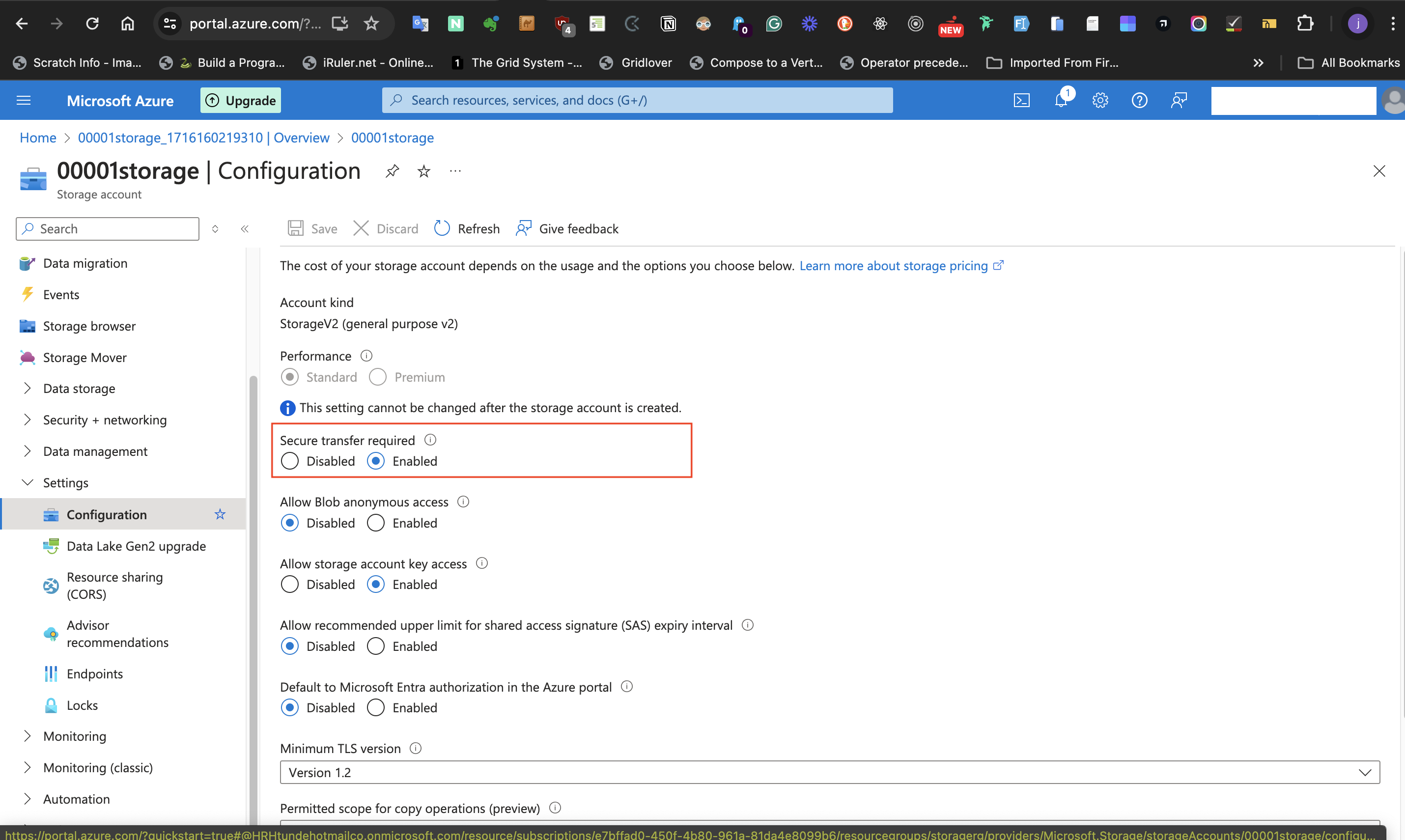

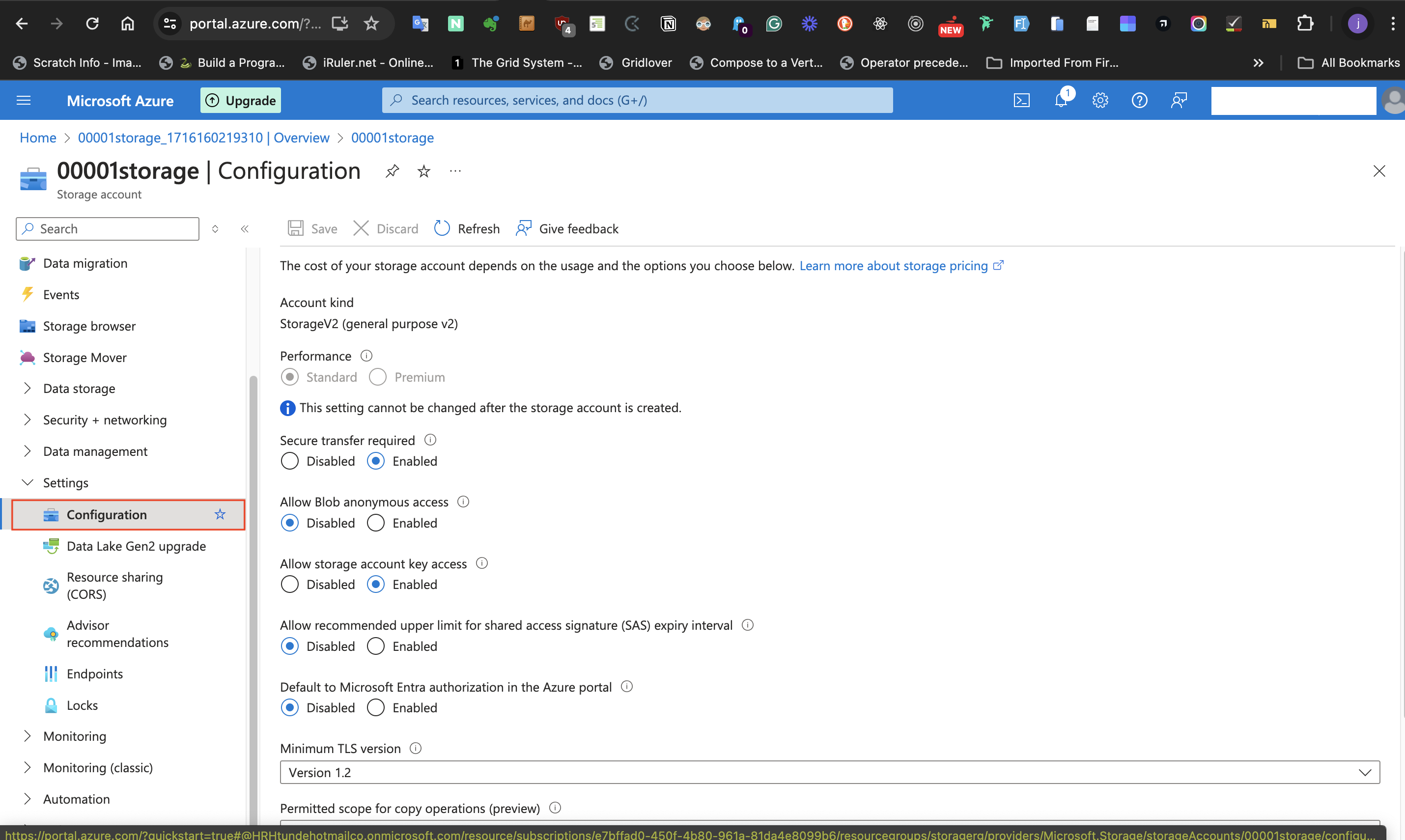

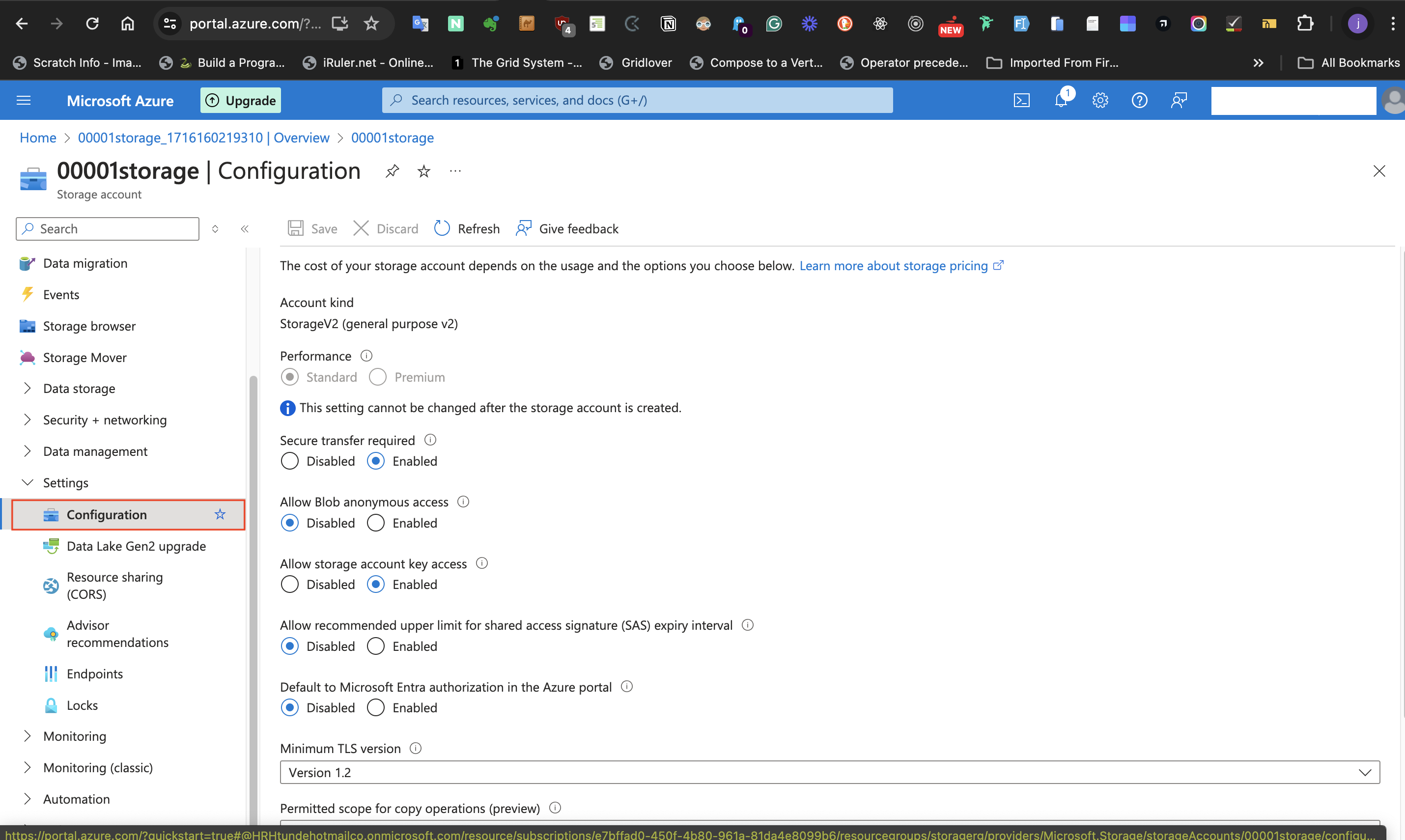

####II: Storage account should only accept requests from secure connections.

The next configuration to set is to make the Storage account only accept requests from secure connections. To do this, follow these steps:

- Locate **settings** at the left-hand side of the page, and select

the **Configuration** blade.

- At the page displayed, confirm that **Secure Transfer required** is **Enabled**

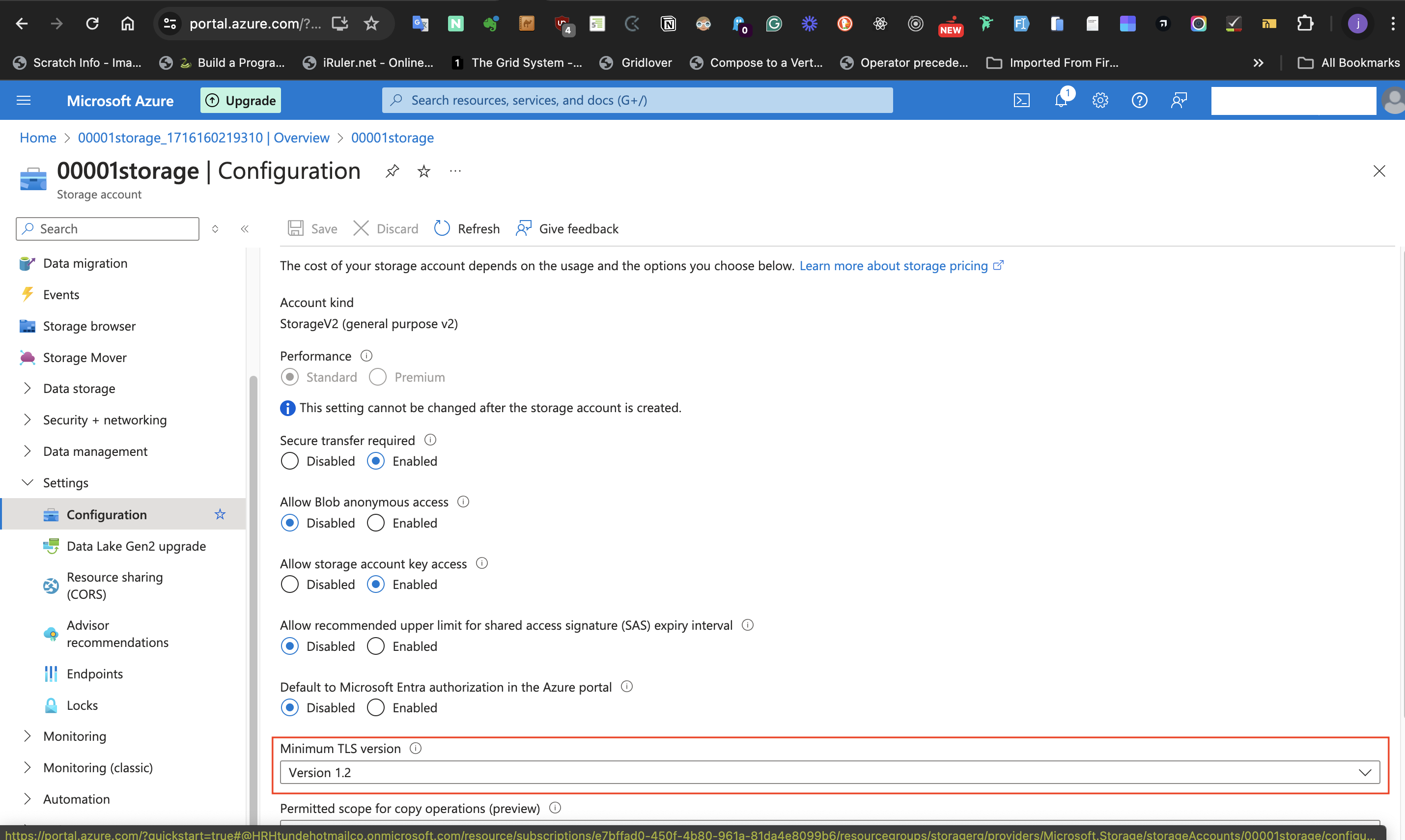

####III: Storage account should use a TLS version.

For the Storage account to use a TLS version,

- go to **settings**

- select **configuration** blade,

-Then, set **Minimal TLS Version** to **version 1.2**

####IV: Request to the storage account is disabled until needed

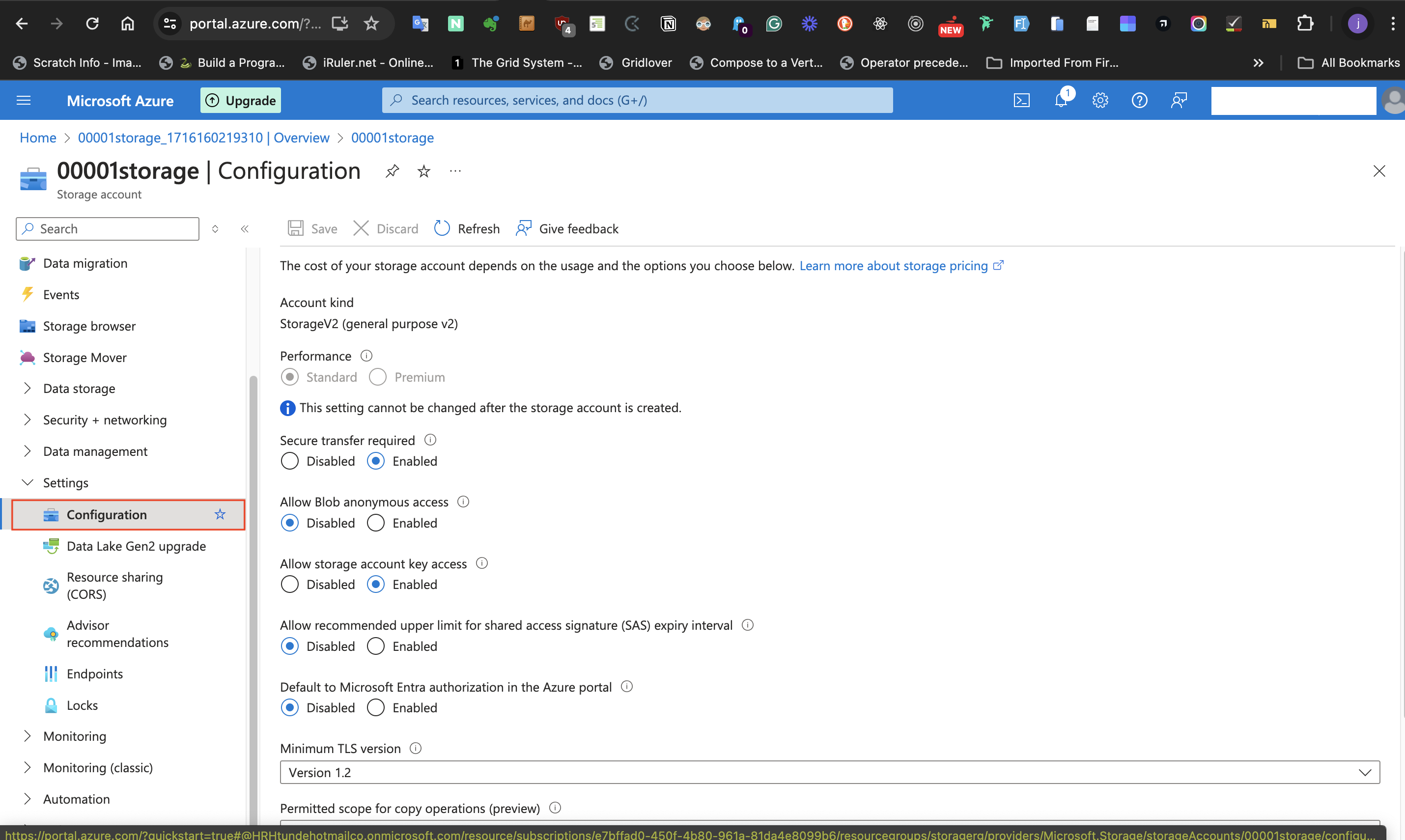

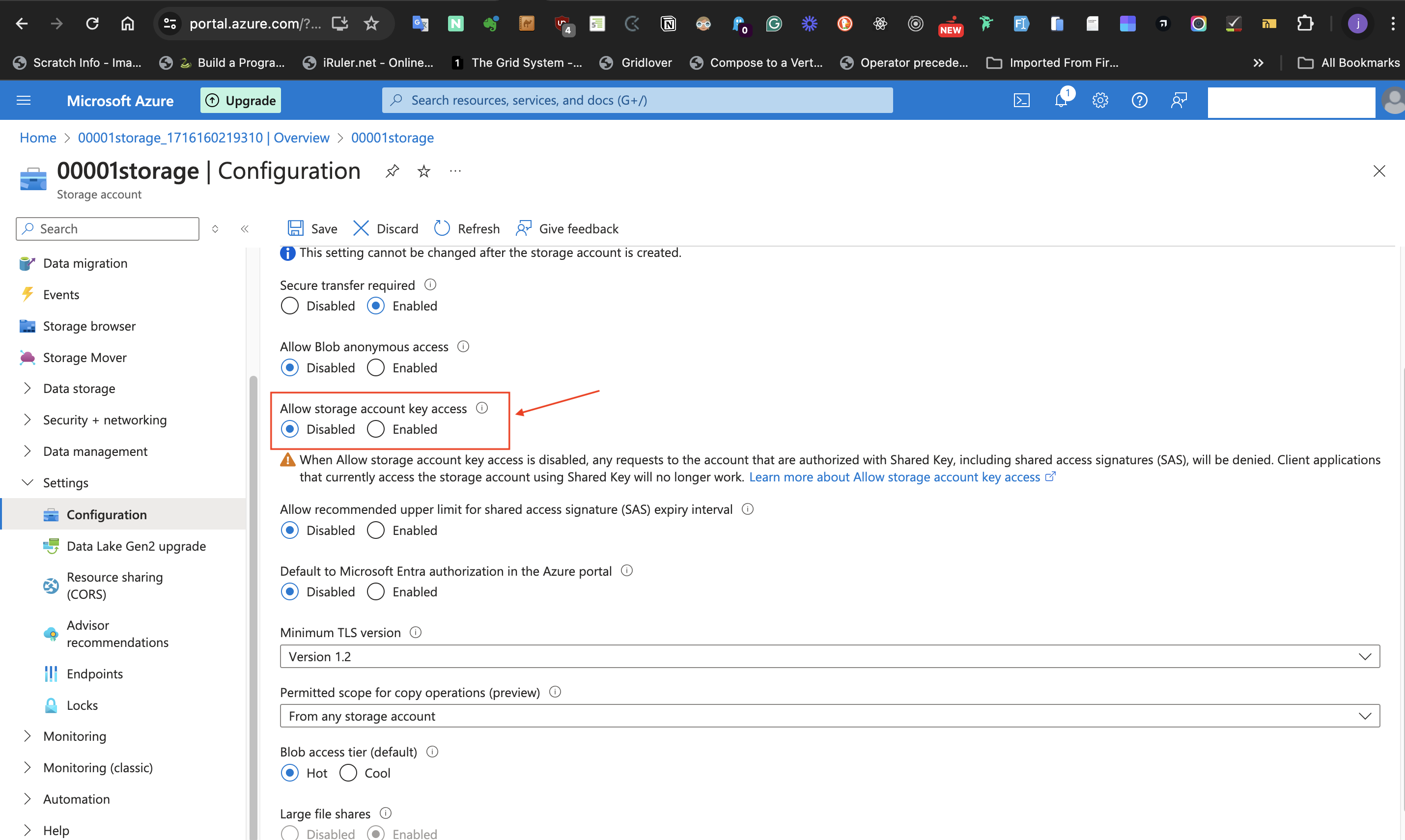

For disabling requests to the storage account until required,

- Select **Settings**, then

- Select the **Configuration** blade.

- In the **Allow Storage Account key access** label, select the **Disabled** radio button.

- Select the **Save** icon at the top level of the page.

####V: Allow Storage Account to permit public access from all Networks.

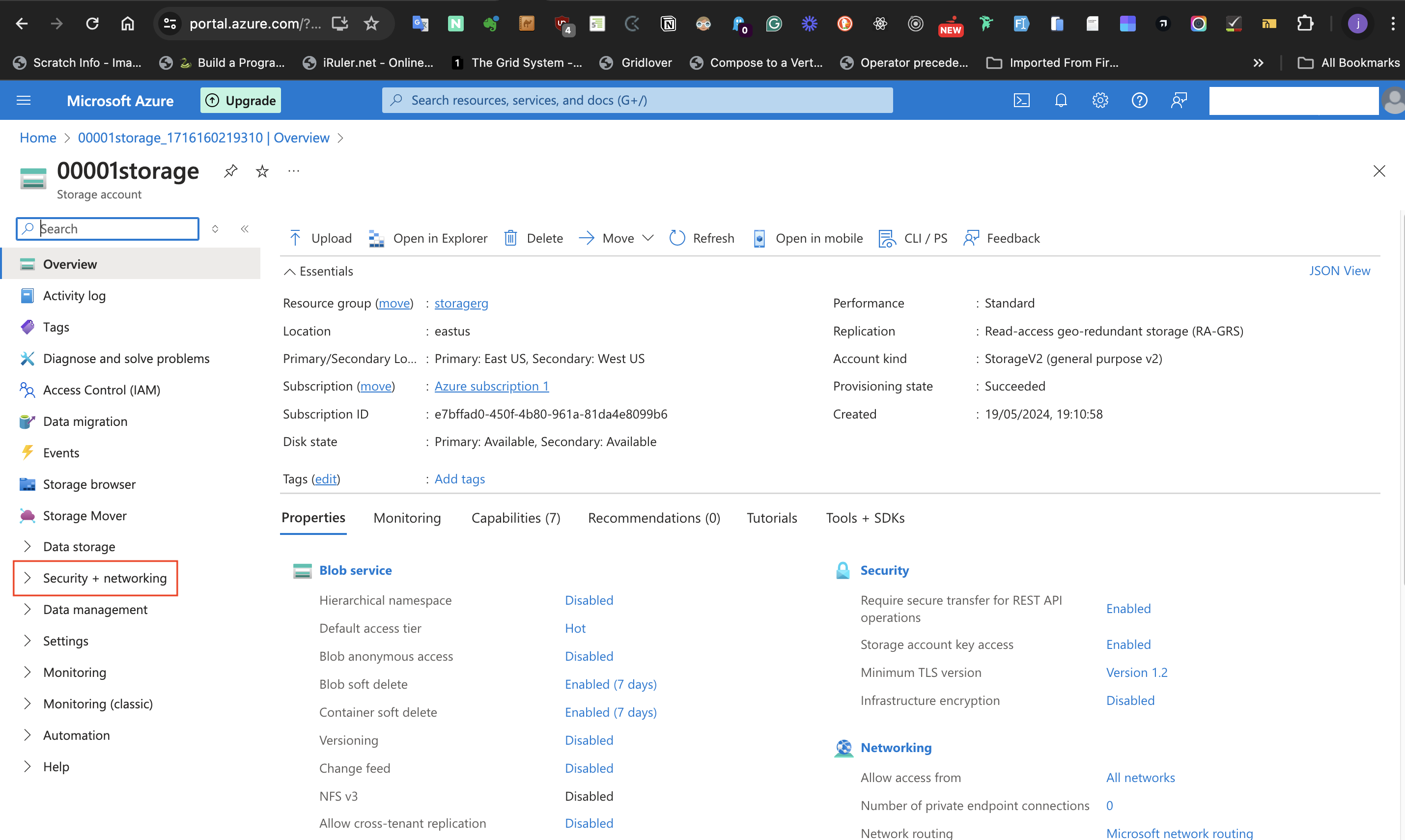

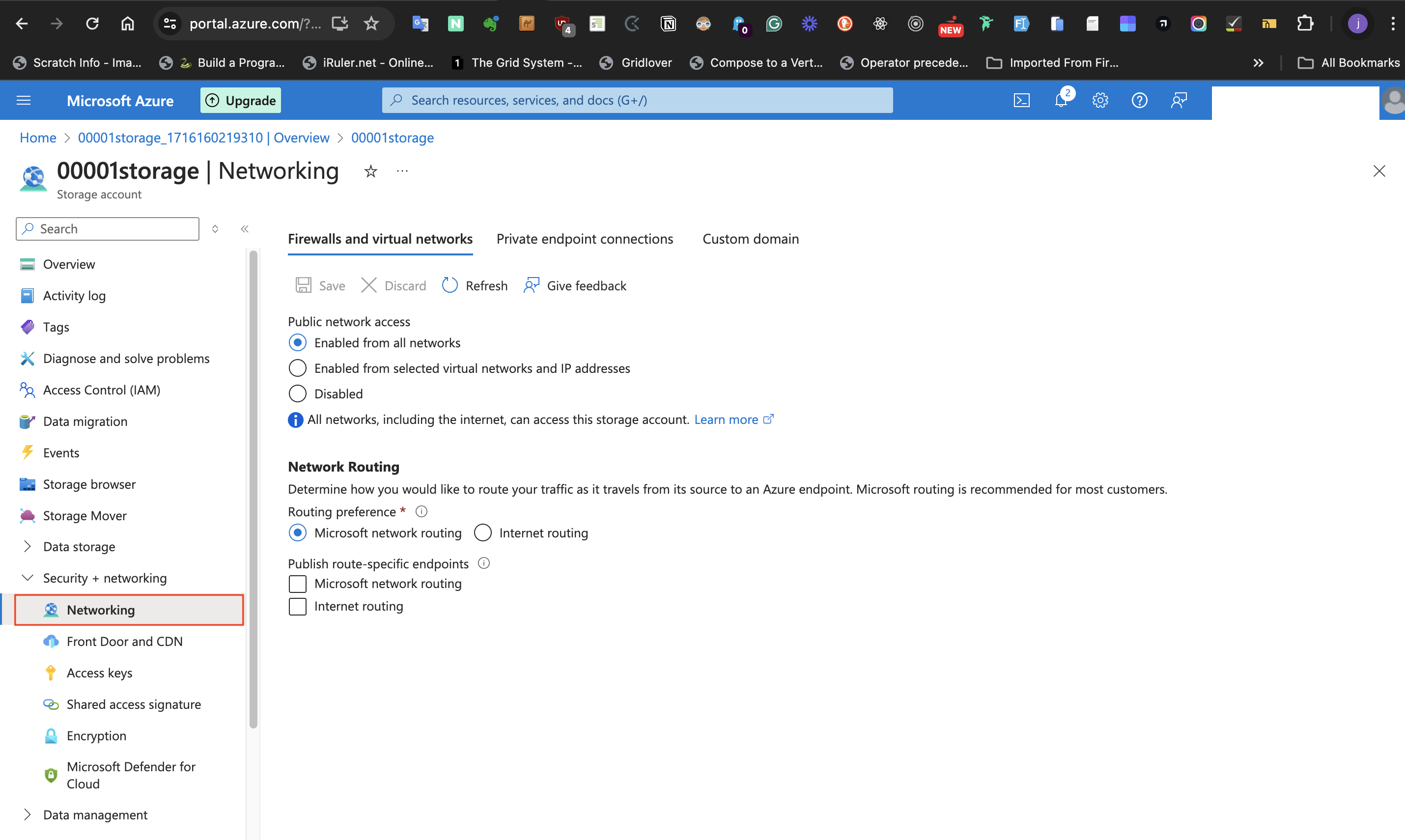

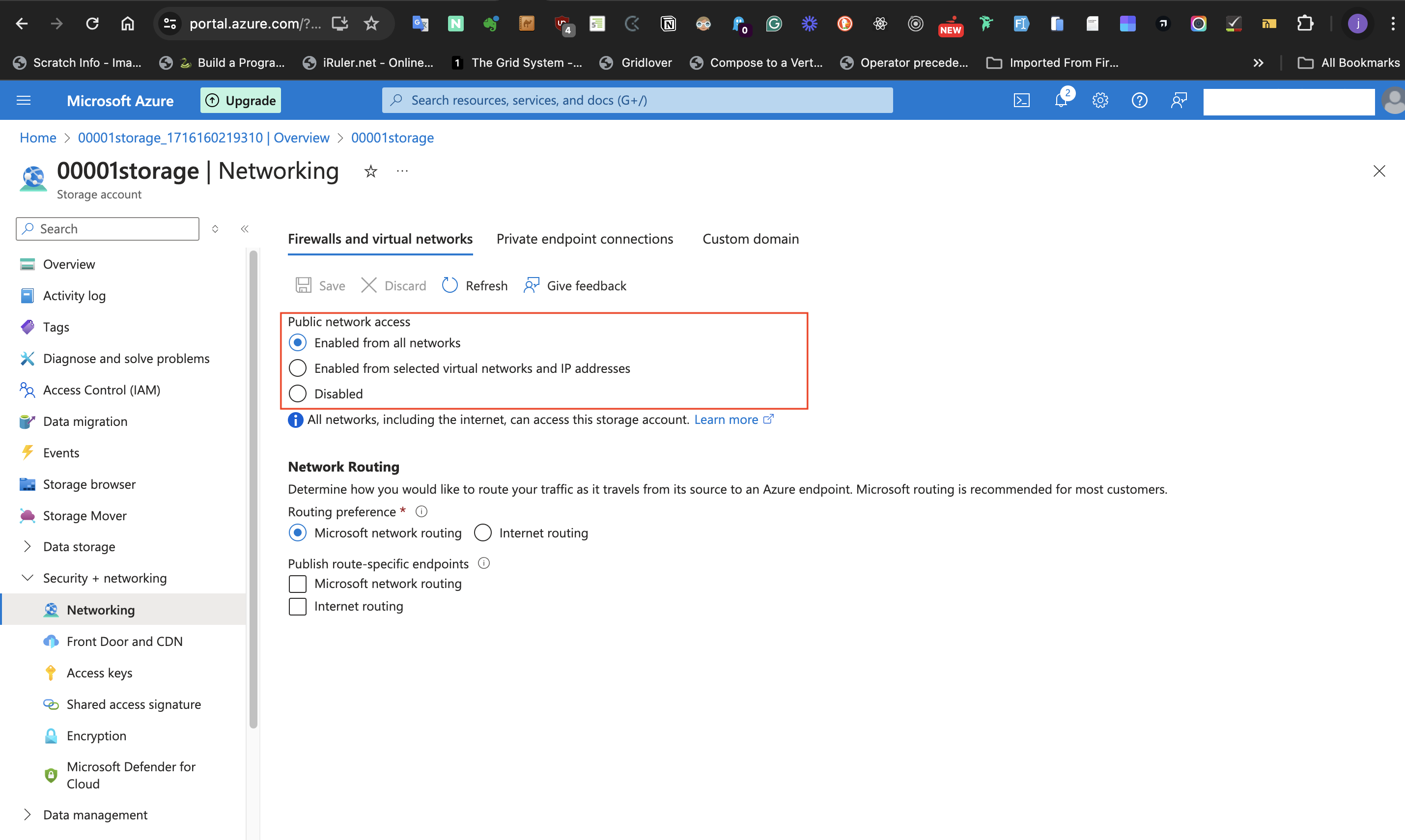

For the last configuration in this task which is to permit public access from all Networks, follow the steps listed below:

- At the left-hand side of the storage account page, select **Security + networking**

- Select the **Networking** blade

- set **Public network access** to **Enabled from all network**

- Click the **Save** icon of the page to save the changes.

#Conclusion

To avoid unnecessary charges, delete your resource group if you will not be using it. You can do this from the Azure portal by navigating to your Resource group and clicking the "delete resource group" button.

In this post, we successfully created a Resource group which is a container for our storage account. We adjusted some basic settings for the storage account and saved these settings. Remember to monitor your usage to stay within the free tier limits and delete resources when they are no longer needed. Stay tuned for more posts on cloud development with Azure!

Cover Image by <a href="https://unsplash.com/@markusspiske?utm_content=creditCopyText&utm_medium=referral&utm_source=unsplash">Markus Spiske</a> on <a href="https://unsplash.com/photos/black-and-gray-laptop-computer-turned-on-FXFz-sW0uwo?utm_content=creditCopyText&utm_medium=referral&utm_source=unsplash">Unsplash</a>

| tundeiness |

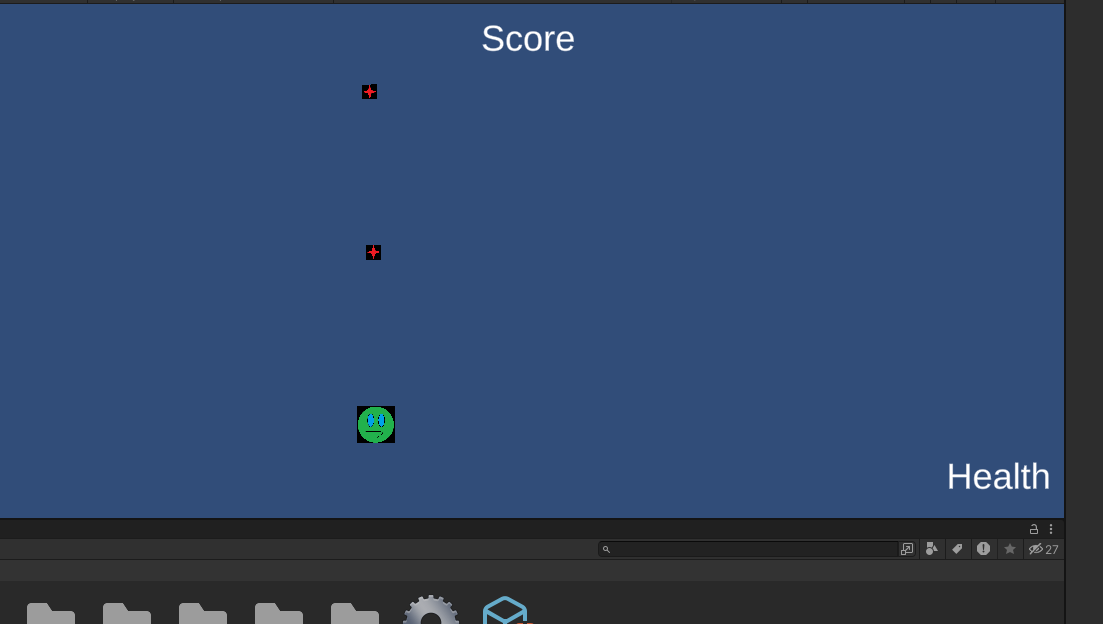

1,865,719 | Top-Down Shooter Update: Day 2 (Done: Health & Score) | Instead of first adding enemies, I added health and score .. honestly, I'm a bit intimidated by... | 0 | 2024-05-26T15:38:16 | https://dev.to/quantumbyte-studios/top-down-shooter-update-day-2-done-health-score-5cmc | Instead of first adding enemies, I added health and score .. honestly, I'm a bit intimidated by adding enemies. This is probably because I've seen videos of other game developers creating such amazing enemy AI and it seems like adding enemies is so much more complex than adding a player character or other features.. I thought it'd be easier.

However without an enemy or some other mechanic, health and score kind of don't mean anything lol. The score and health text are just ordinary plain text:

The simplest way I think to do an enemy AI is to make a vector between the enemy position and player character position. And have the enemy move along that vector. The calculation of the angle of that vector would change every frame, so it will look like the enemy is chasing the user. Sometimes, knowledge is the best way to defeat the enemies of life.. perhaps because the real enemy of life is the unknown

Future Goals

-Create Enemy

-Spawn enemies

-Make star-throwing more directional (according to angle between mouse and character)

-Better Player Movement (like rolling to escape enemies)

-Melee Weapon Attack (sword or shotgun)

-More variety in 'Bullets' (cycle through list of different types)

-Find new assets to replace bullets and main character

| quantumbyte-studios | |

1,858,460 | How to deploy a Windows Server (with IIS web server role) in Azure Portal on MacOS | setting up a Windows server in Azure | 0 | 2024-05-26T15:38:02 | https://dev.to/tundeiness/how-to-deploy-a-windows-server-in-azure-portal-on-macos-4gjc | winndowsserver, azure | ---

title: How to deploy a Windows Server (with IIS web server role) in Azure Portal on MacOS

published: true

description: setting up a Windows server in Azure

tags: winndowsserver, Azure

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/aqjwdais8yq4mtohidyj.jpg

# Use a ratio of 100:42 for best results.

# published_at: 2024-05-19 18:06 +0000

---

#Introduction

This post is about deploying a Windows Server in Azure Portal on MacOS. Previously, I have written about deploying a Windows 11 OS in the Azure portal [here](https://dev.to/tundeiness/setting-up-a-windows-11-virtual-machine-with-azure-on-a-macos-88m). Before you start following this guide, get a Microsoft account and an Azure account. If you have followed this [post](https://dev.to/tundeiness/setting-up-a-windows-11-virtual-machine-with-azure-on-a-macos-88m), deploying a Windows Server in Azure Portal will be a walk in the park. Let's get to it!!

##Step 1

### Create a Virtual Machine.

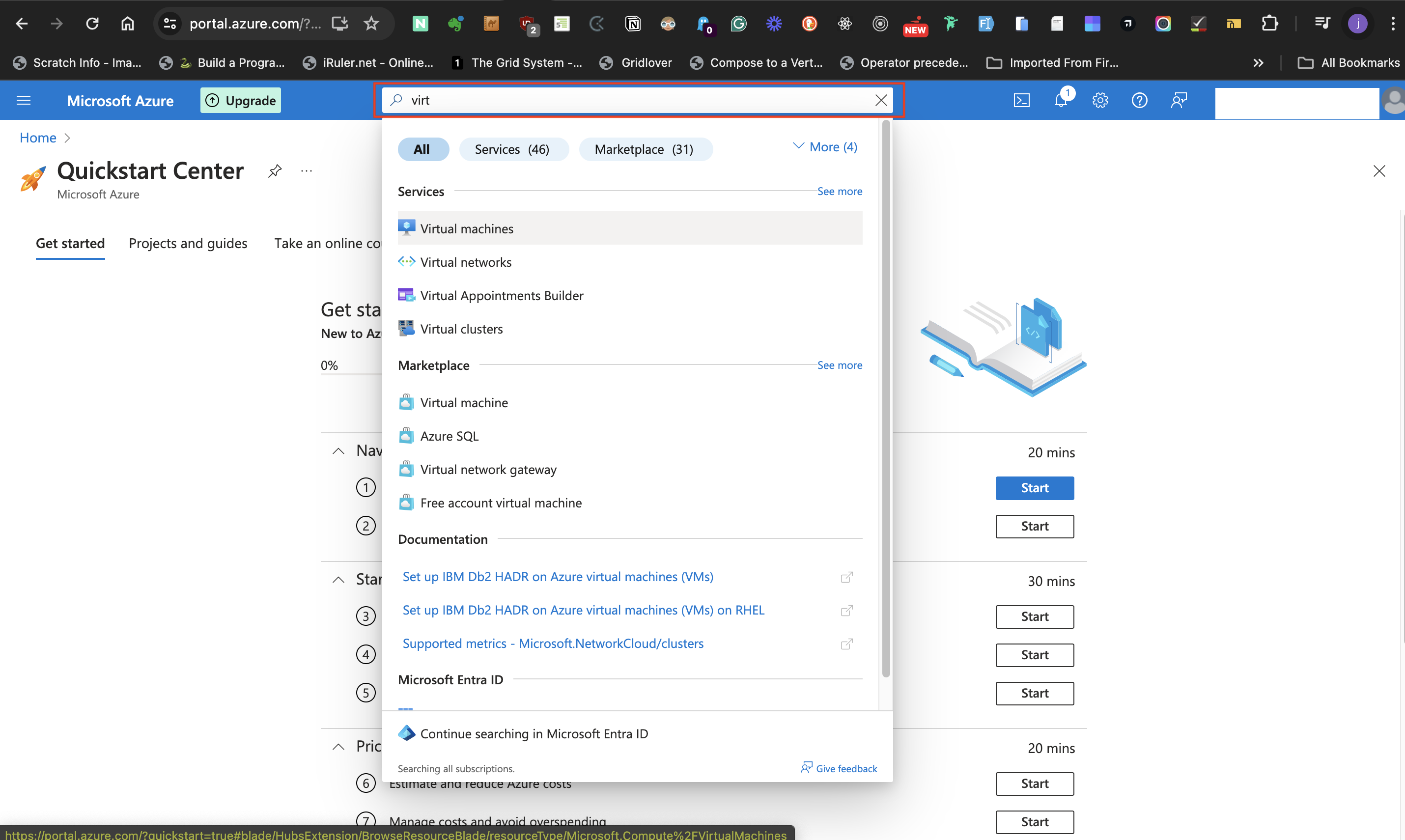

- Access the Azure portal As shown in the image below.

- Search for "virtual machines" in the search bar at the top level of the page. Select **virtual machines** from the list of results.

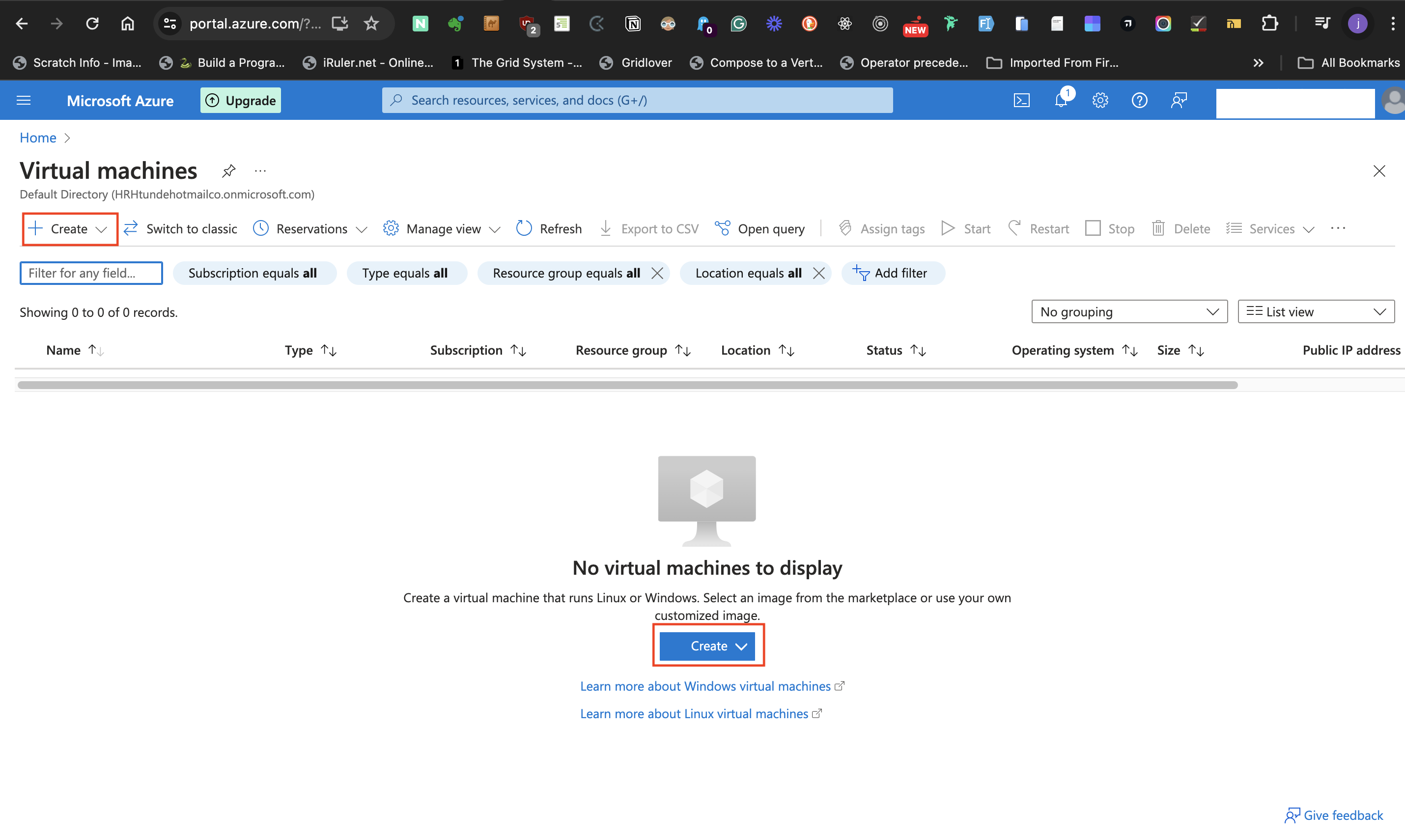

- Select either the **+ Create** option at the top left side of the page displayed, or click the **Create** button with a dropdown arrow at the bottom of the page.

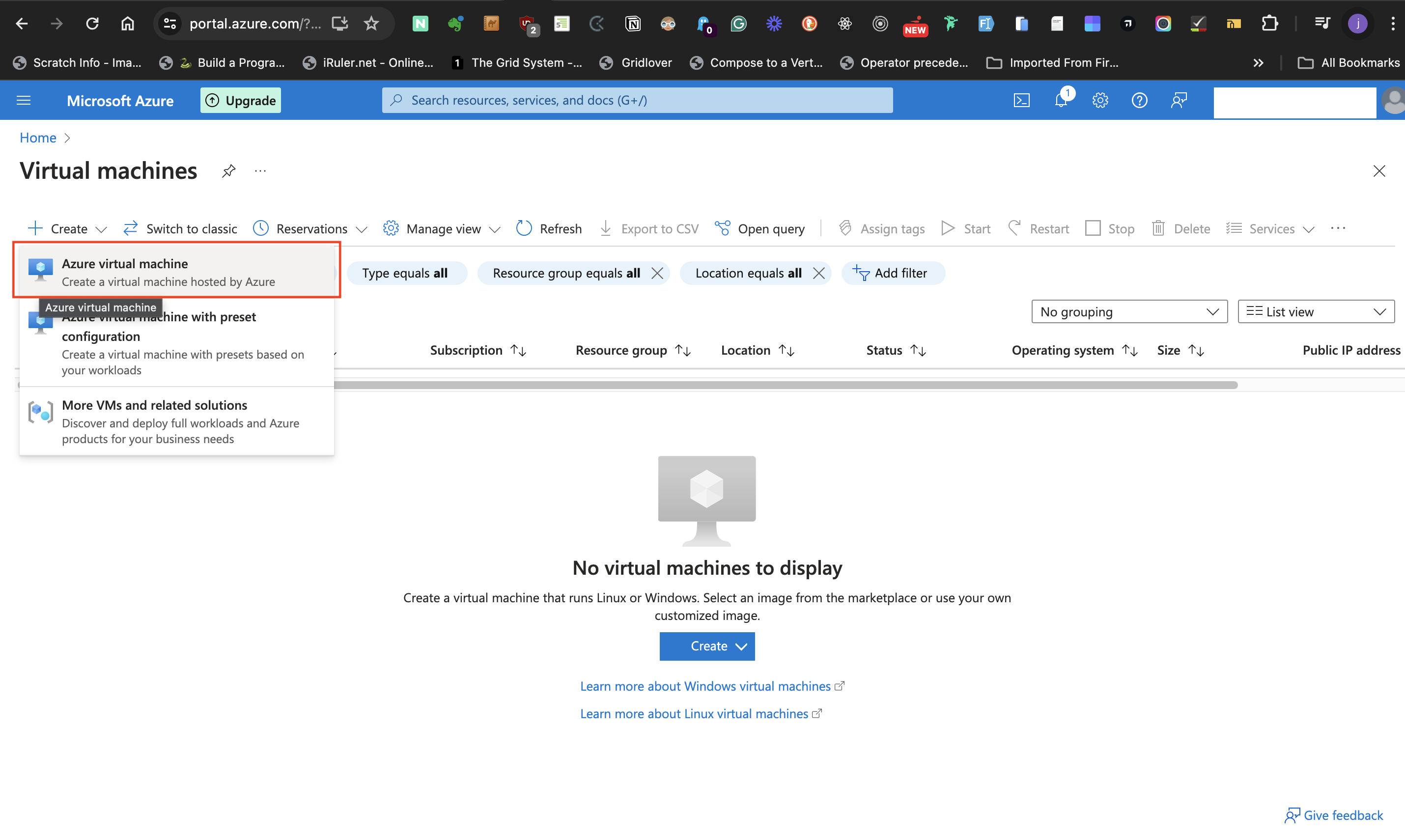

- Select **Azure virtual machine** from the list,

and then it will navigate to the "create a virtual machine" configuration page.

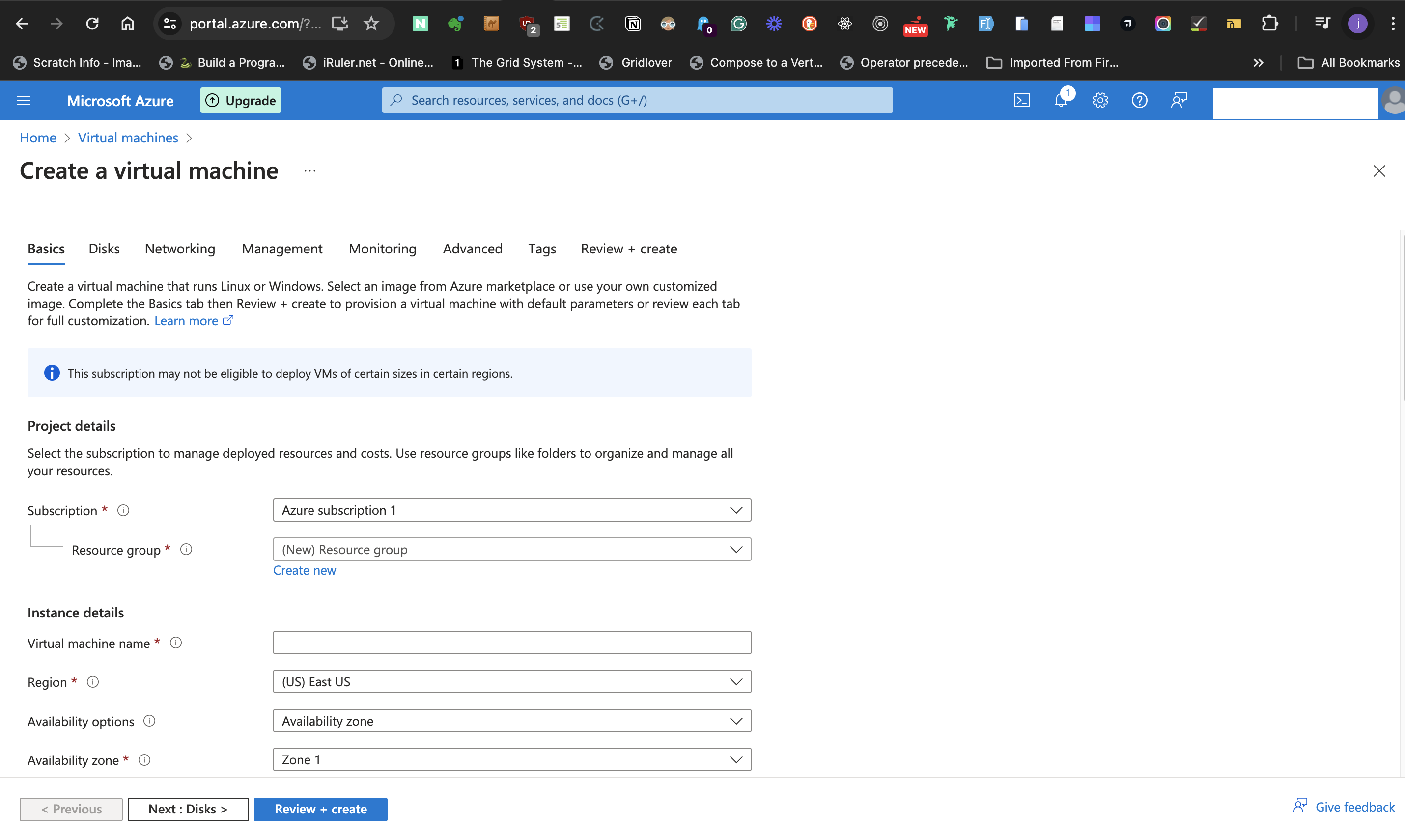

#### Basics Tab

Under the **Basics Tab** supply information for the Windows Server virtual machine as follows:

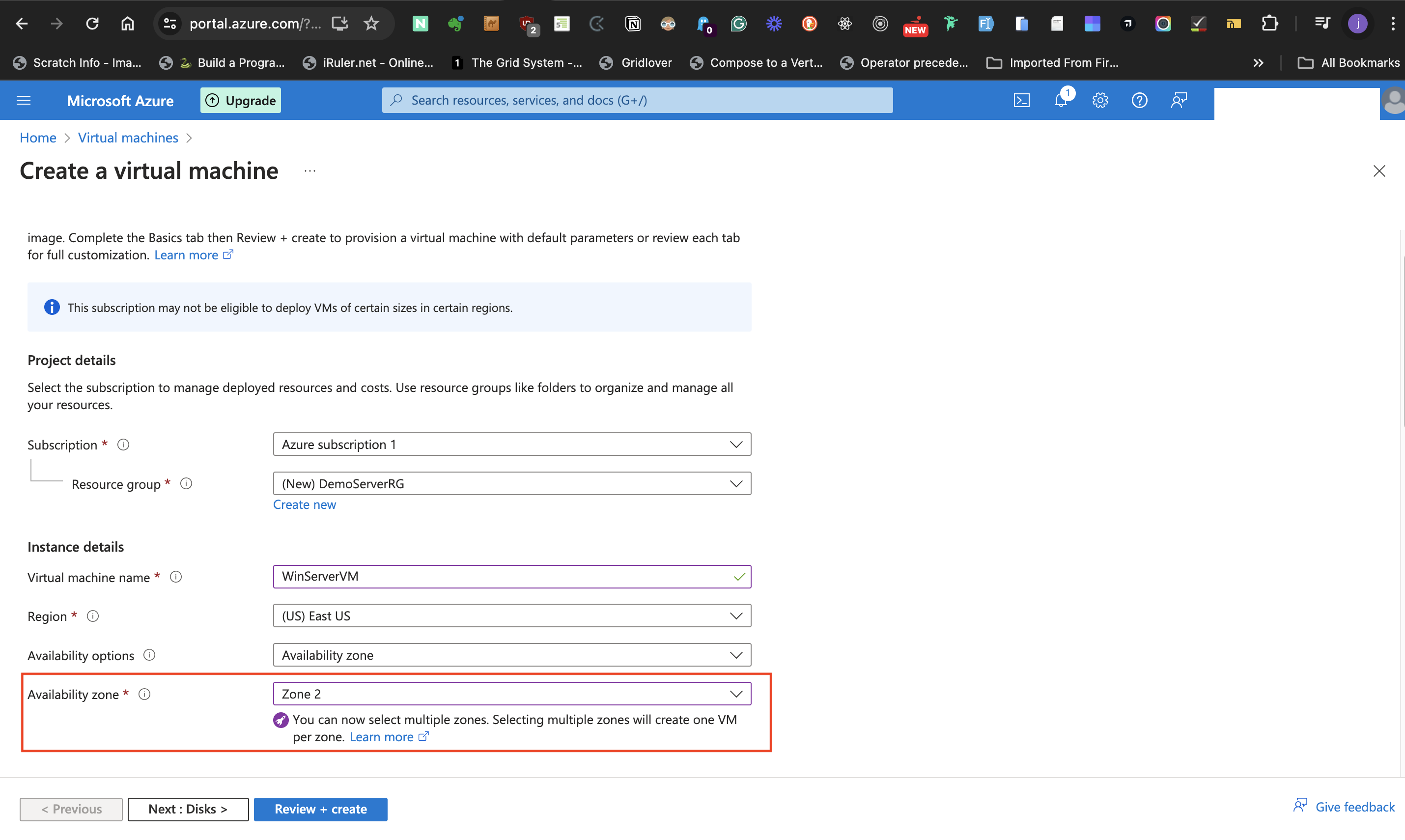

#####Project Details

- By now the **Subscription** label under **project details** should have a name, otherwise give **Subscription** a title.

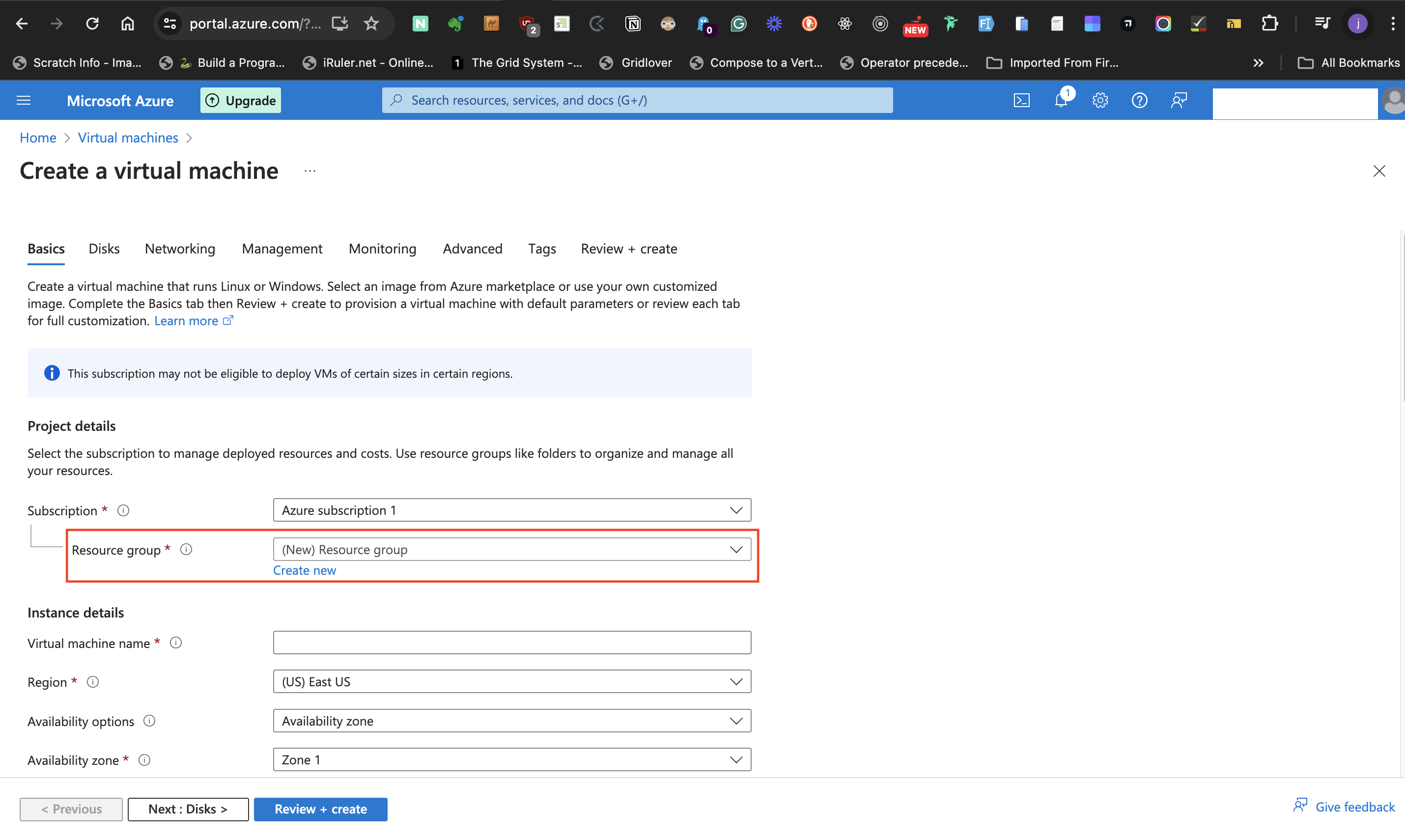

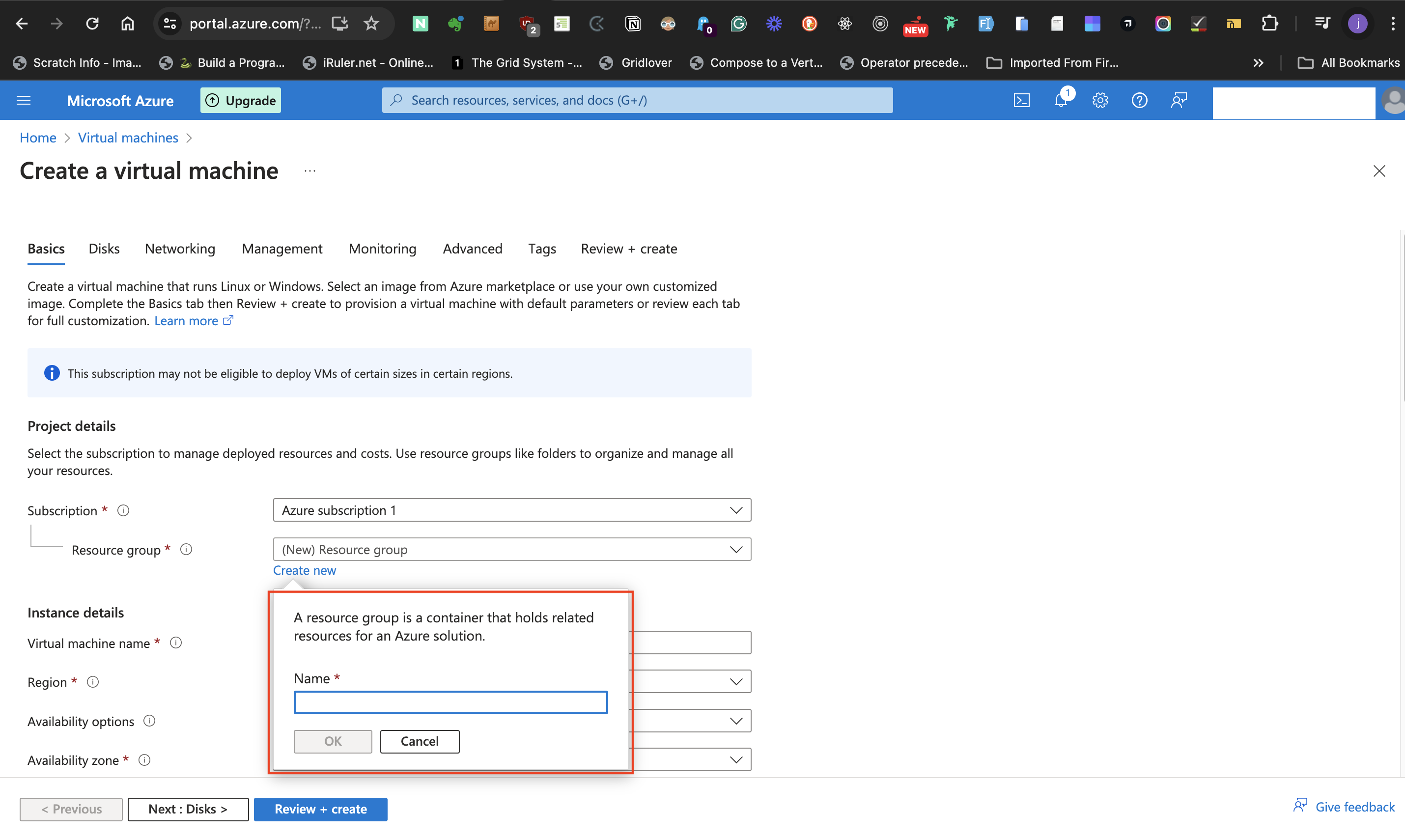

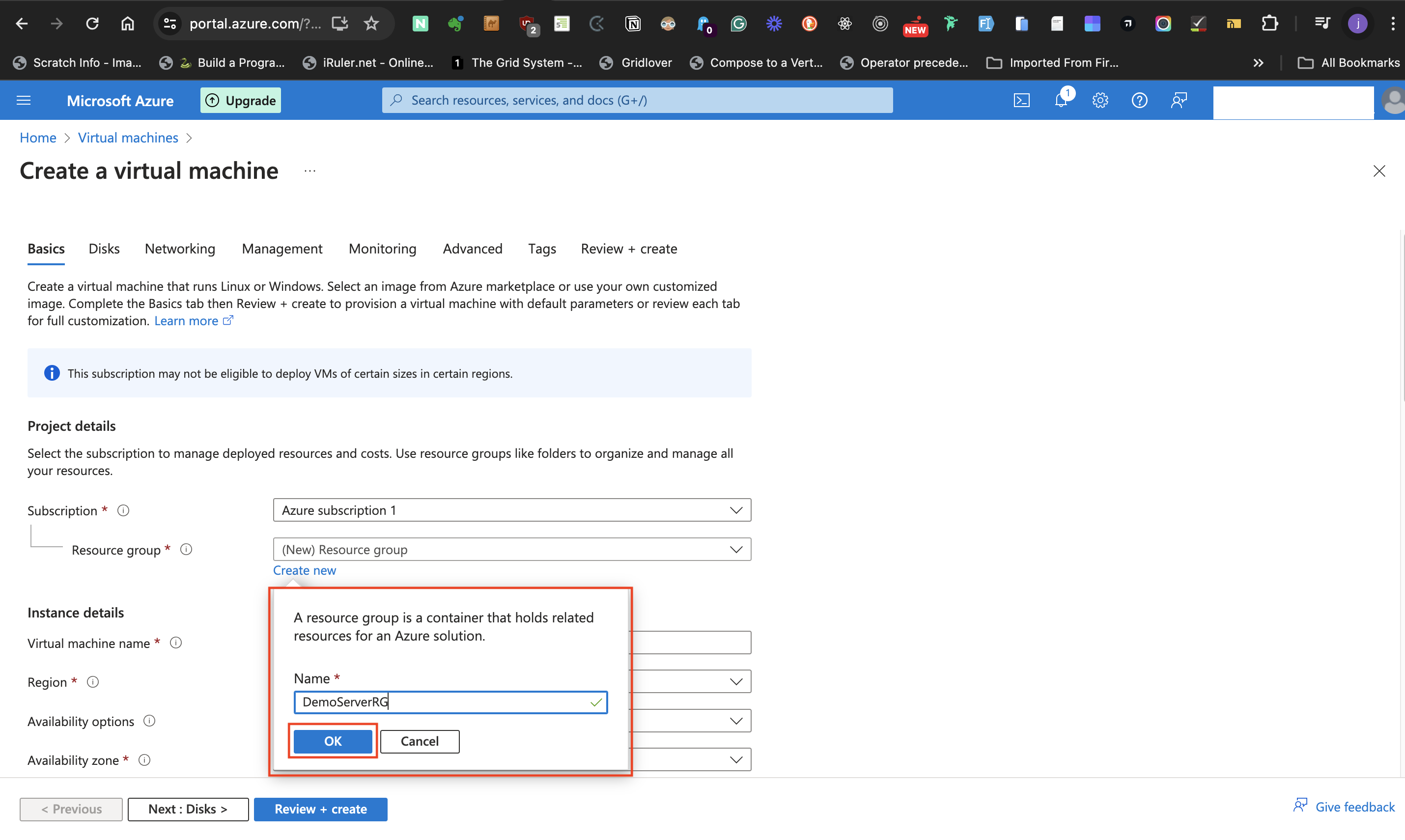

- At the **Resource group label** select **Create new** to either create a new resource group or use an existing group from the list of resource group earlier created.

- Click the "ok" button to accept the given name.

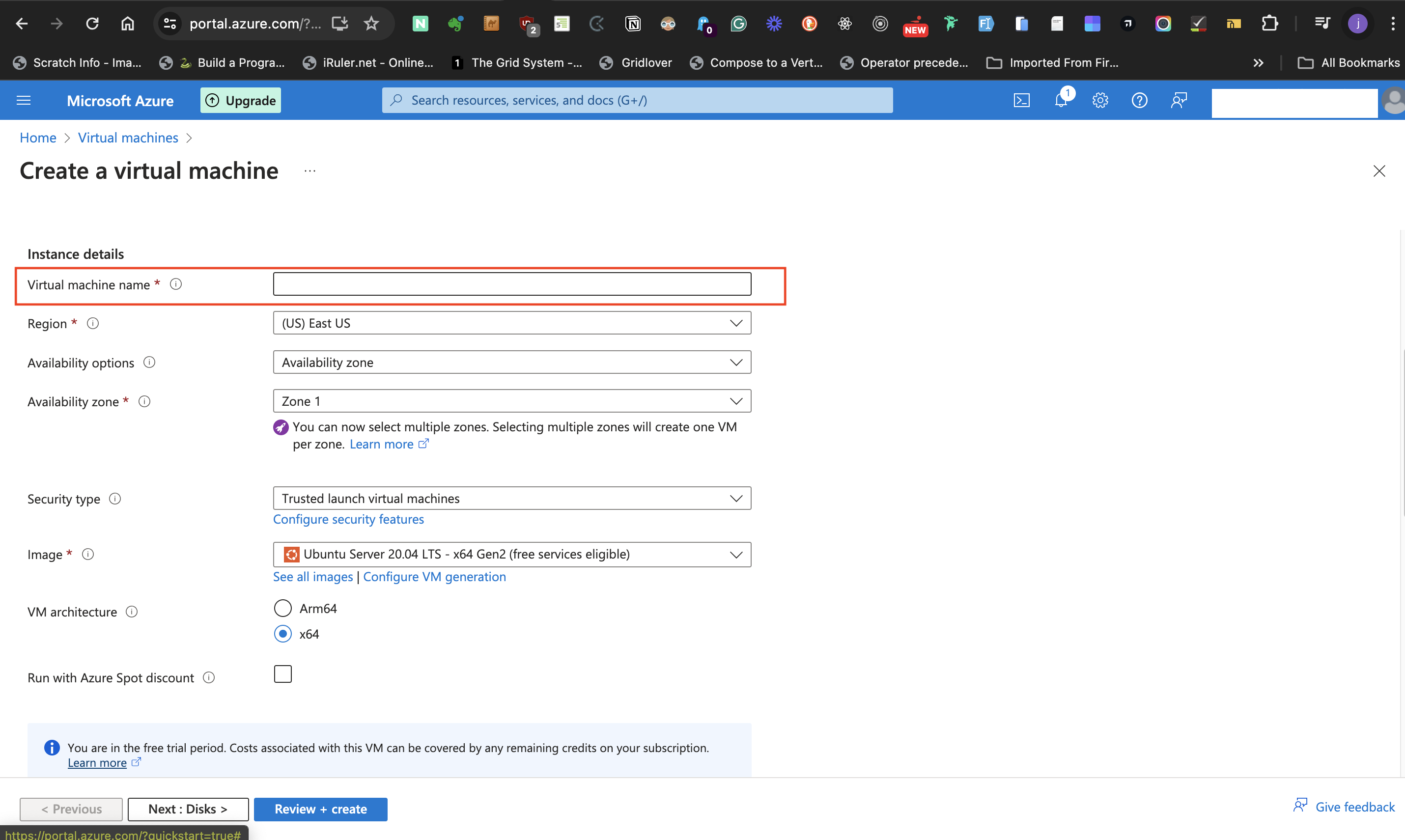

#####Instance Details

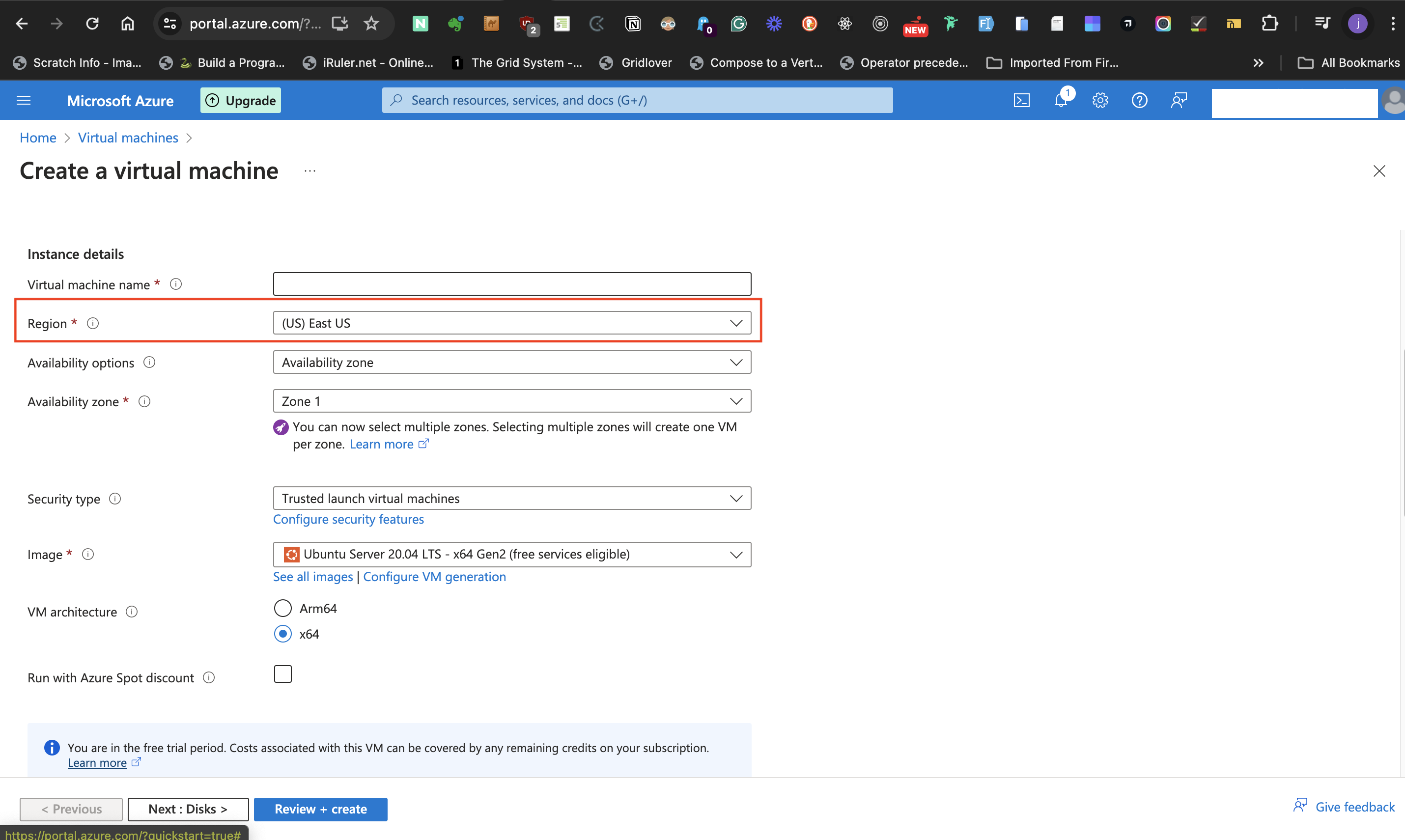

- At the Instance Details subhead, provide a name for the virtual machine at the **Virtual machine name** label.

- For the **Region** label select a suitable datacenter region.

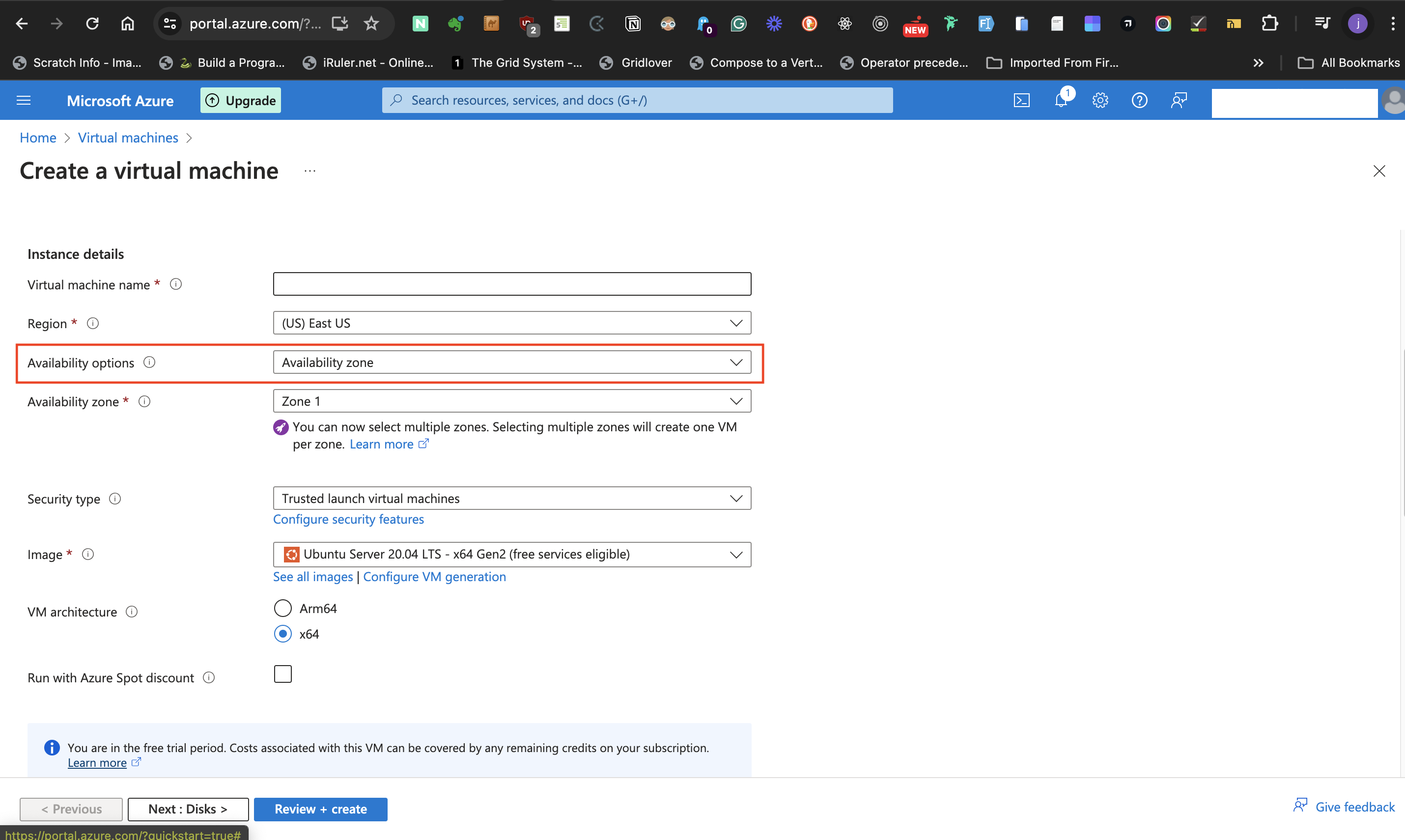

- For the **Availability options** label, select **Availability zone** as the preferred option. This permits the selection of data center zone(s) in the preceding label.

- Next, move to the **Availability zone**. This label allows for selecting multiple zones, but for the post, I selected only one zone. Selecting multiple zones means there is a need for a load balancer.

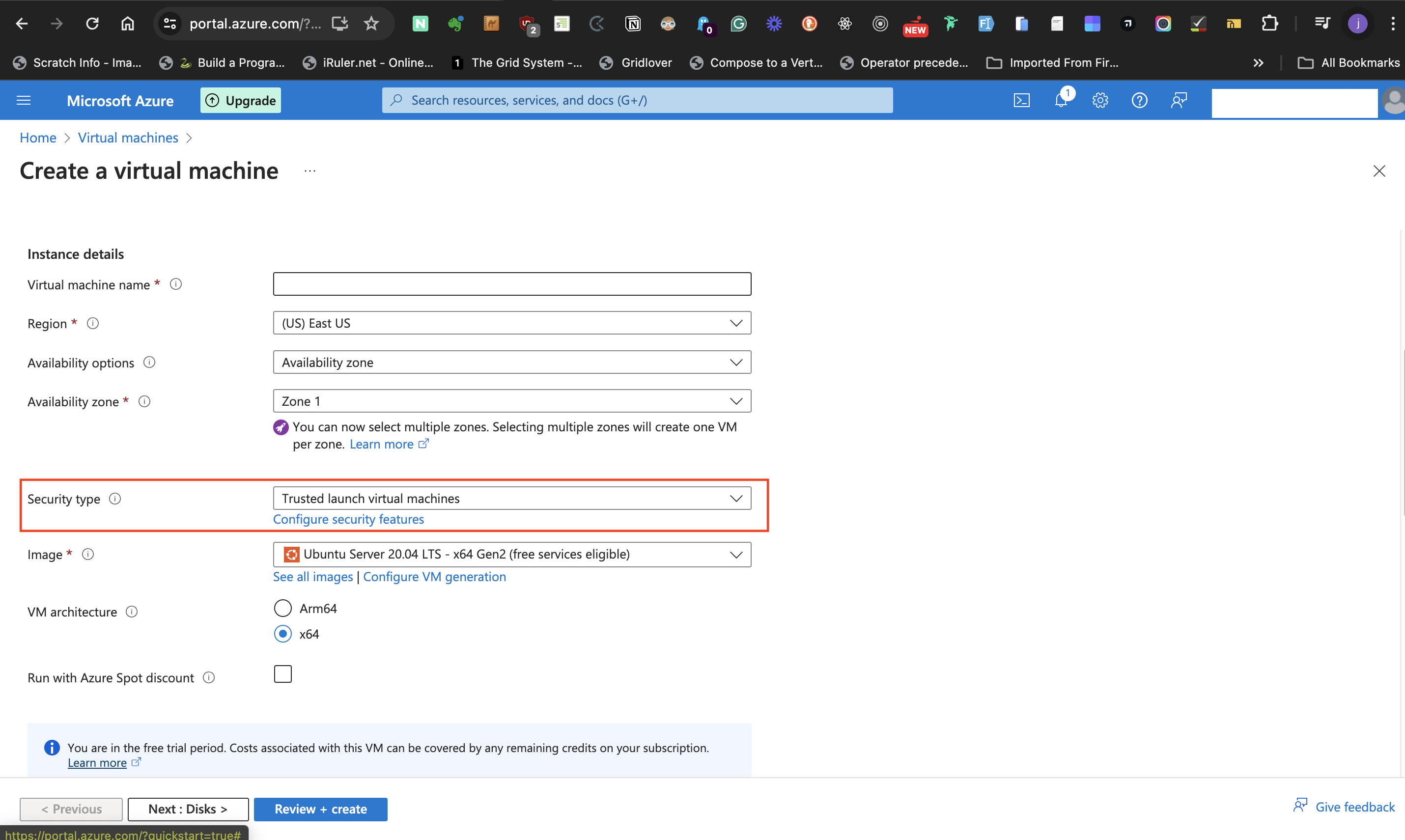

- For the **Security type** label, select **Trusted launch virtual machinnes** option.

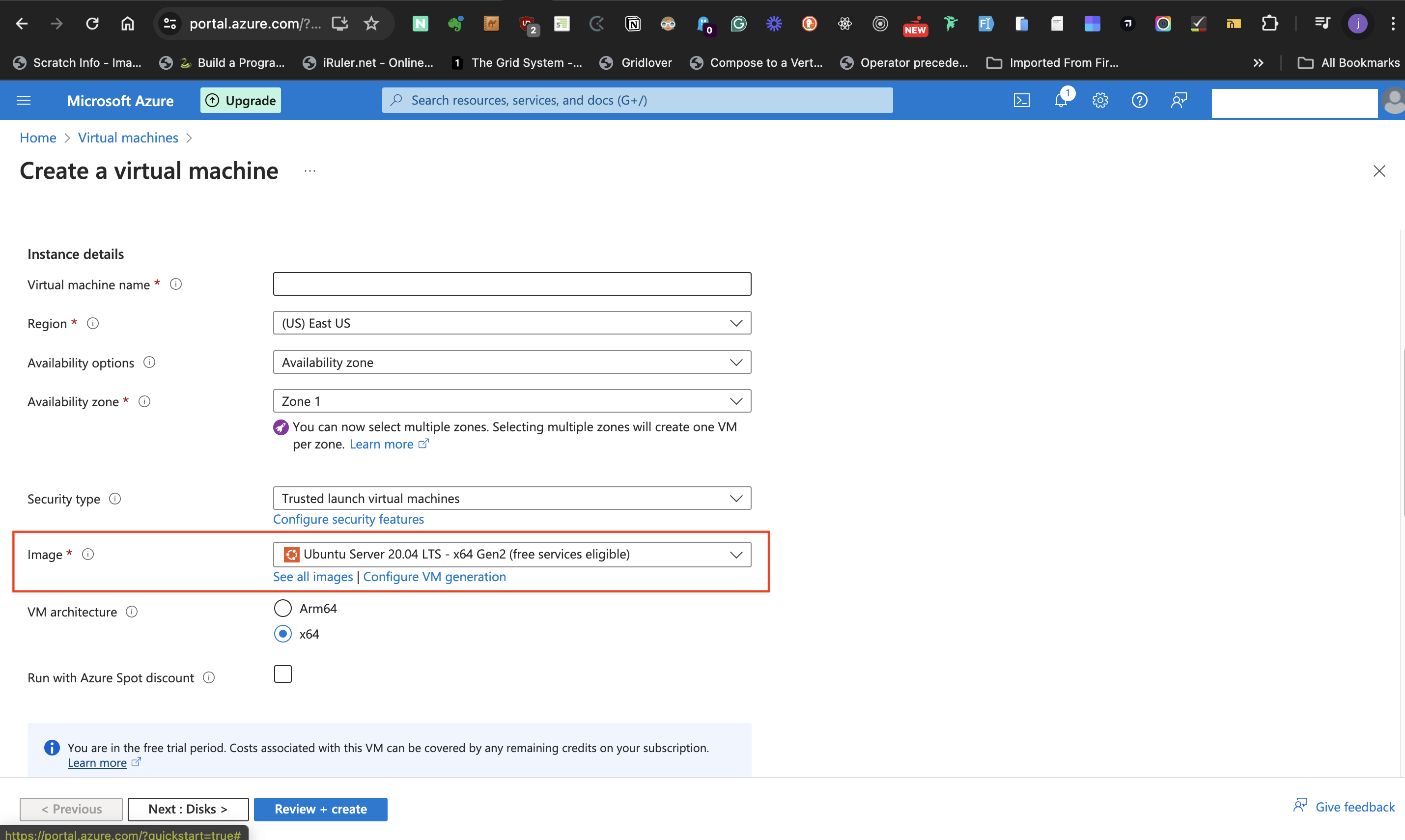

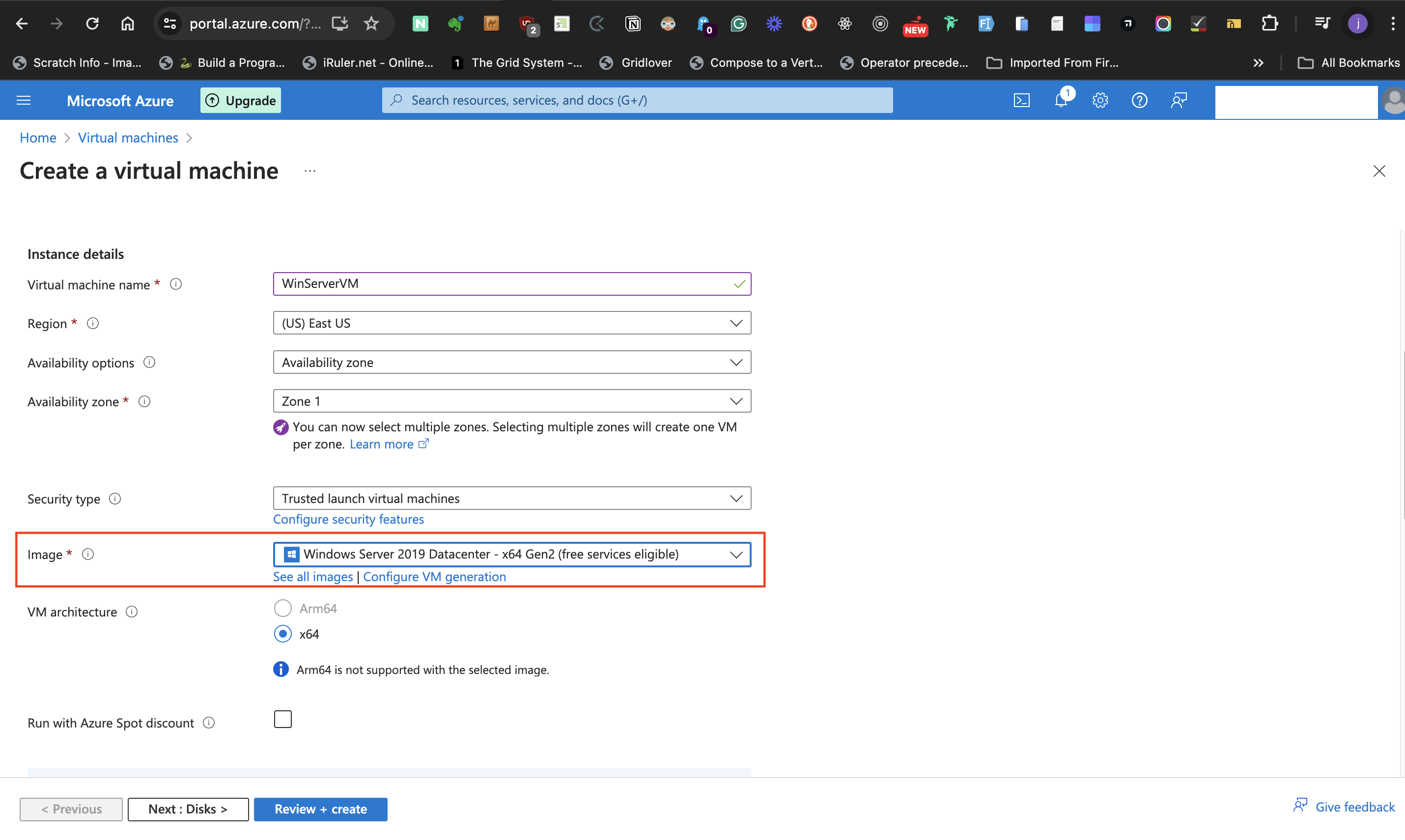

- At the **Image** label, select the preferred Windows server option from the dropdown list.

- The **VM architecture** should be kept at **x64**, and keep **Run with Azure Spot discount** unticked.

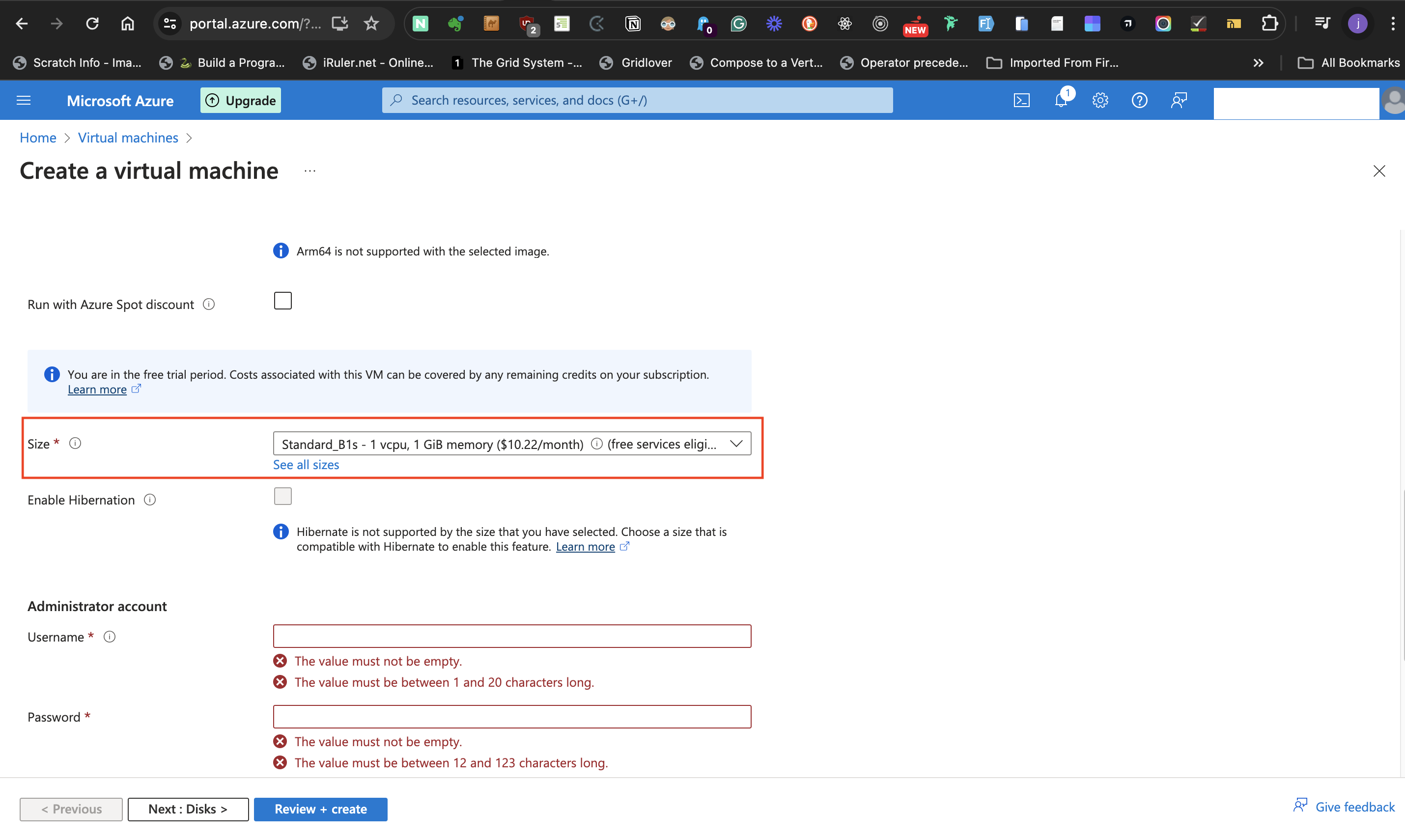

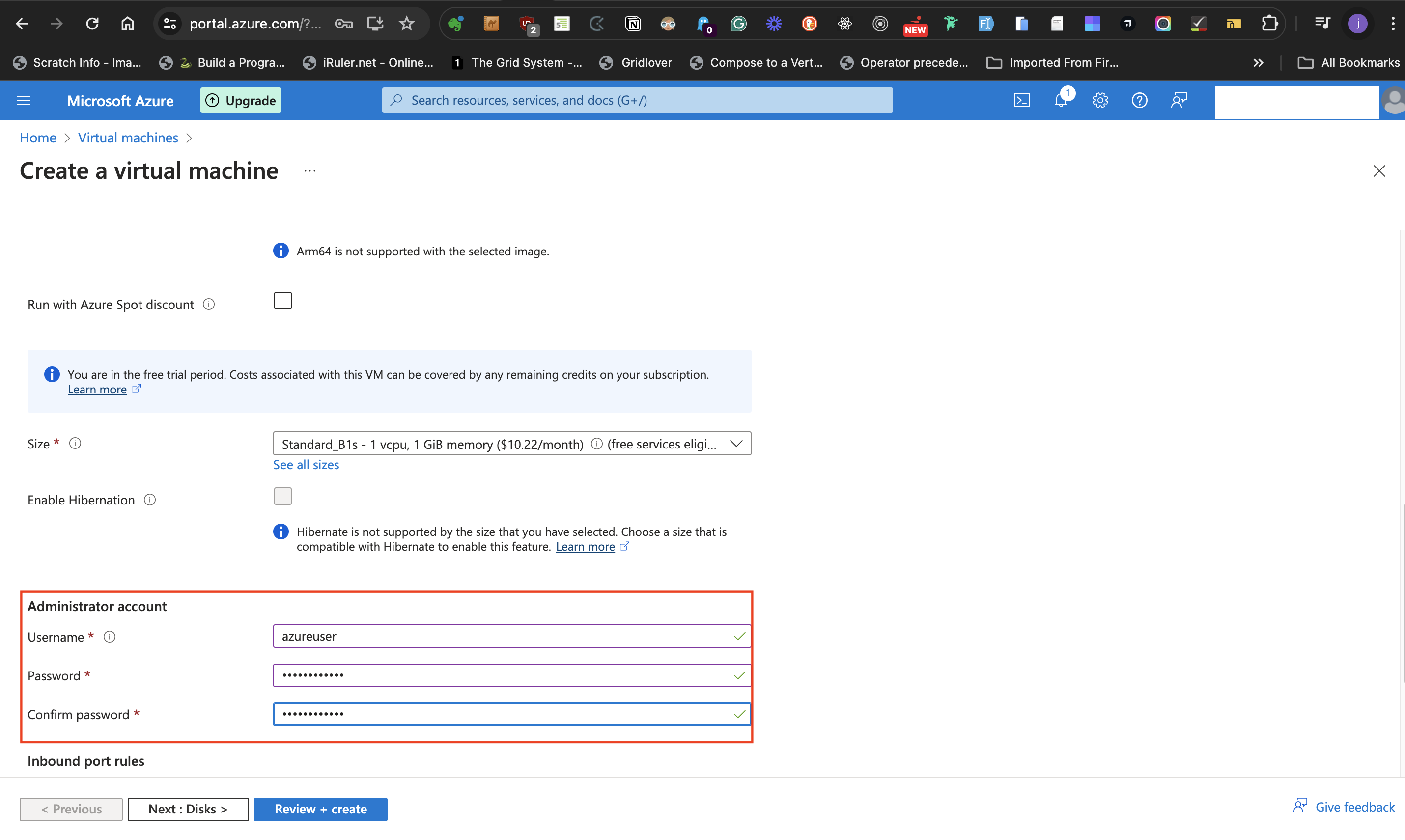

- At the **Size** label, select **Standard_B1s - 1 vcpu, 1 GiB memory** size and do not tick the **Enable Hibernationn** box.

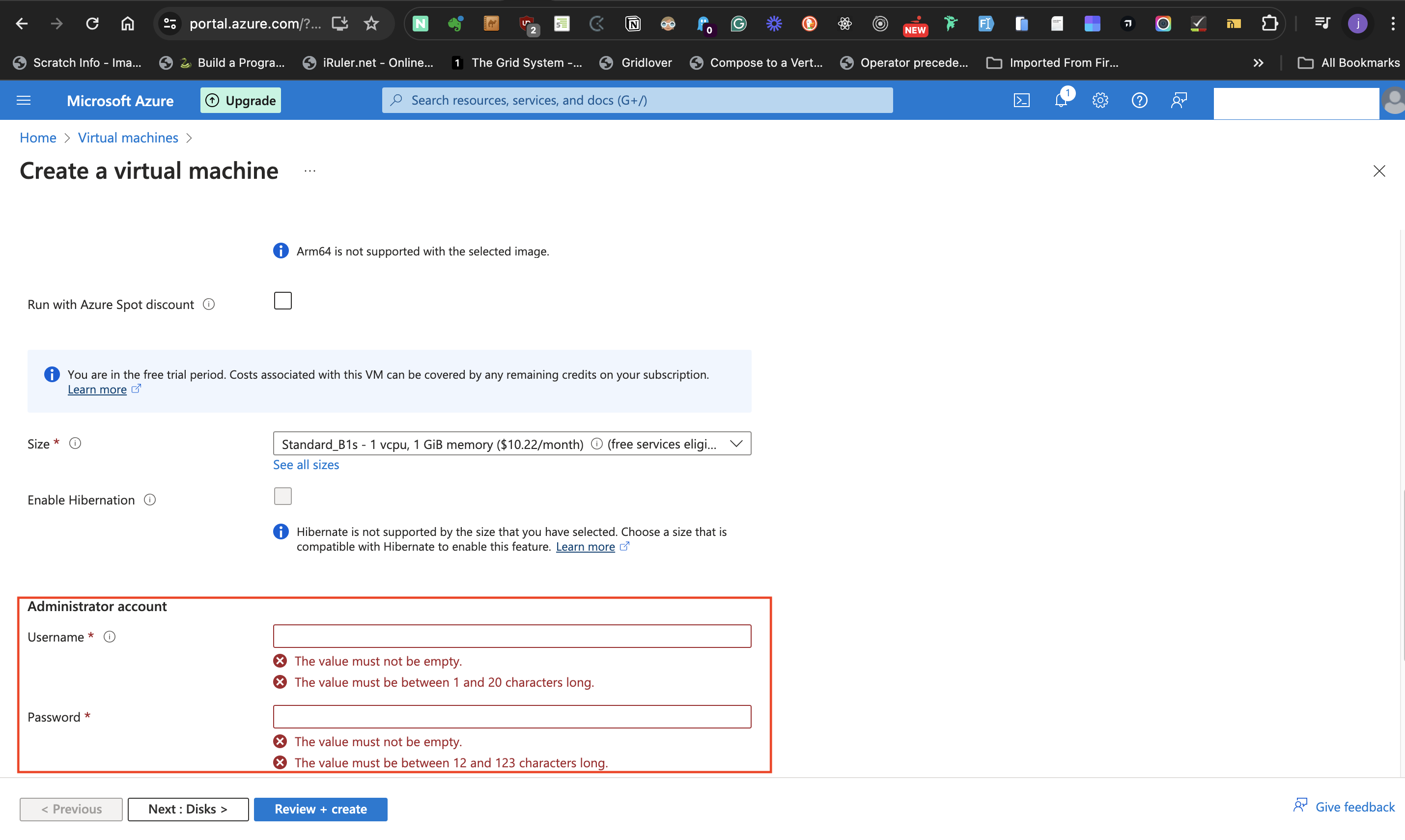

#####Administrator account

- Create a **Username** for the Windows Server and a **Password**

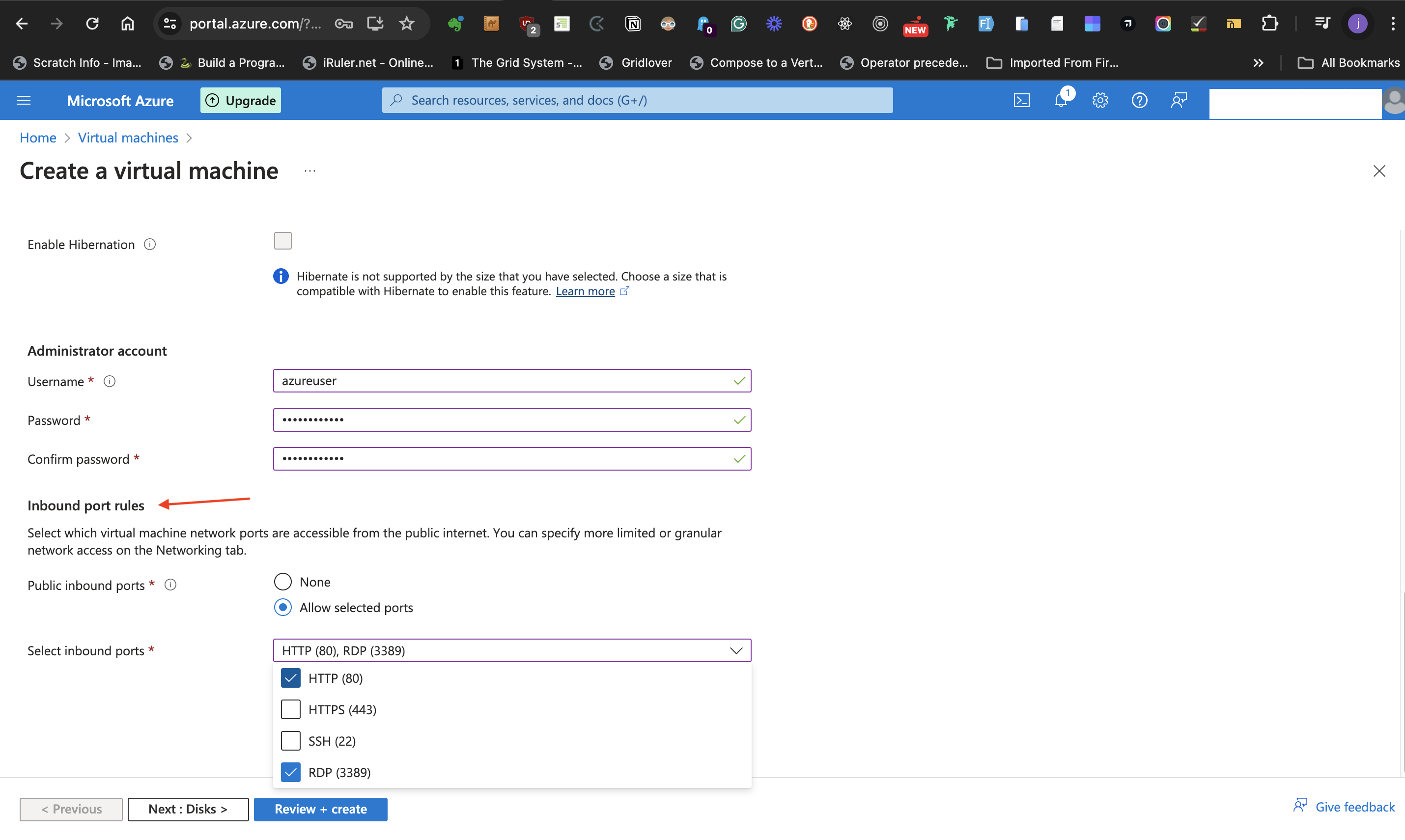

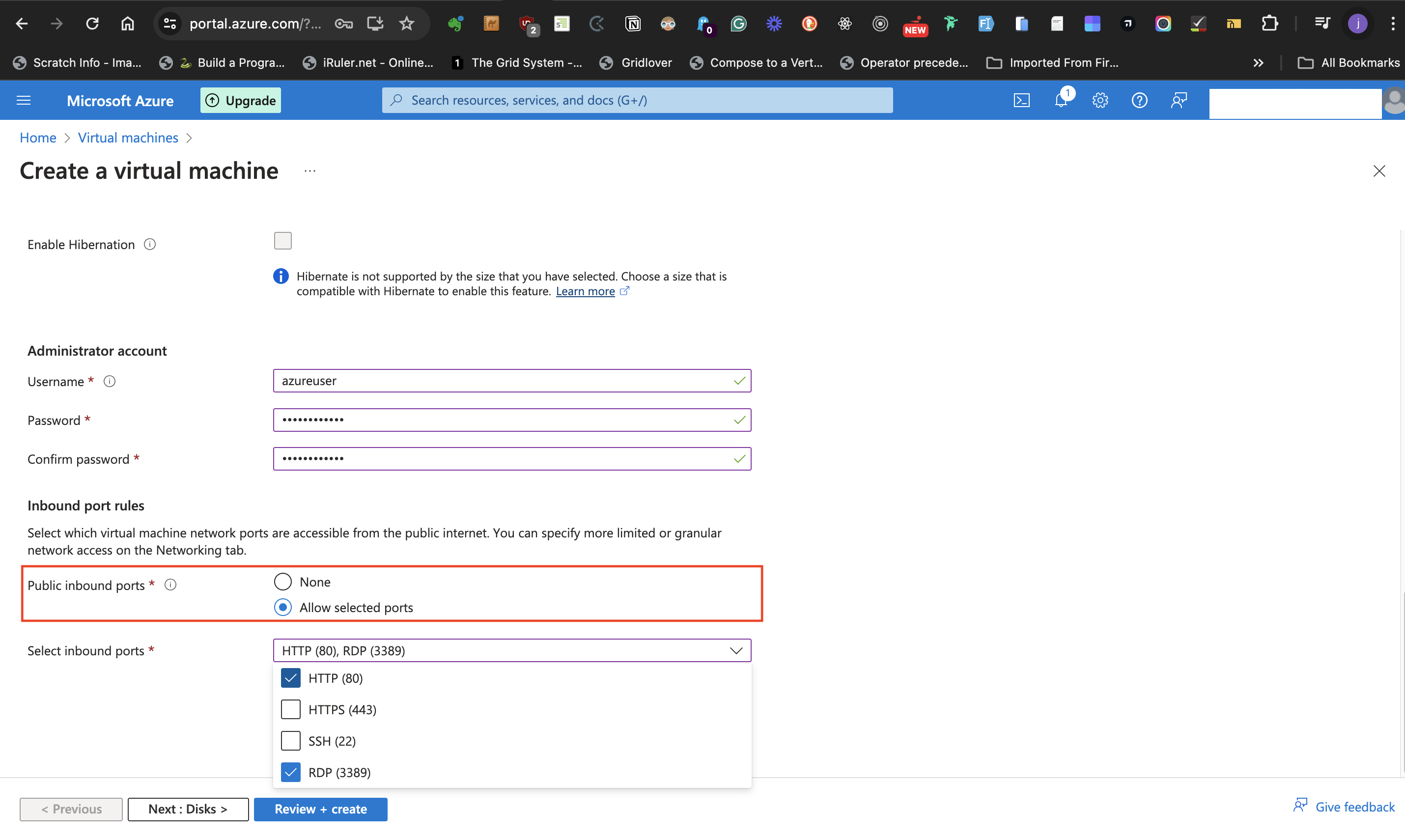

#####Inbound port rules

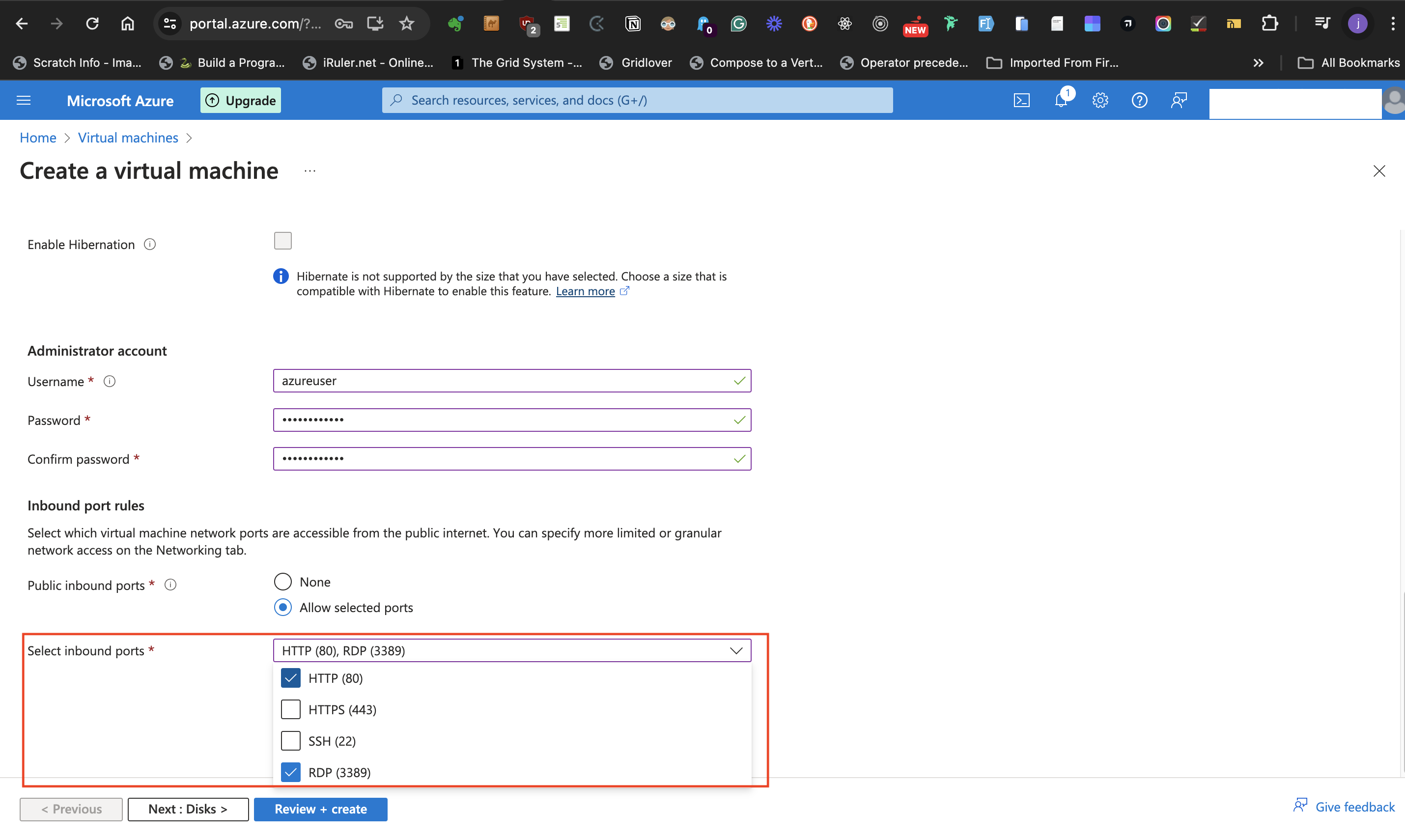

- **Allow selected ports** at the **Public inbound ports** options

- From the **Select inbound ports** drop down list, select both **HTTP (80)** and **RDP (3389)** as the ports of communication.

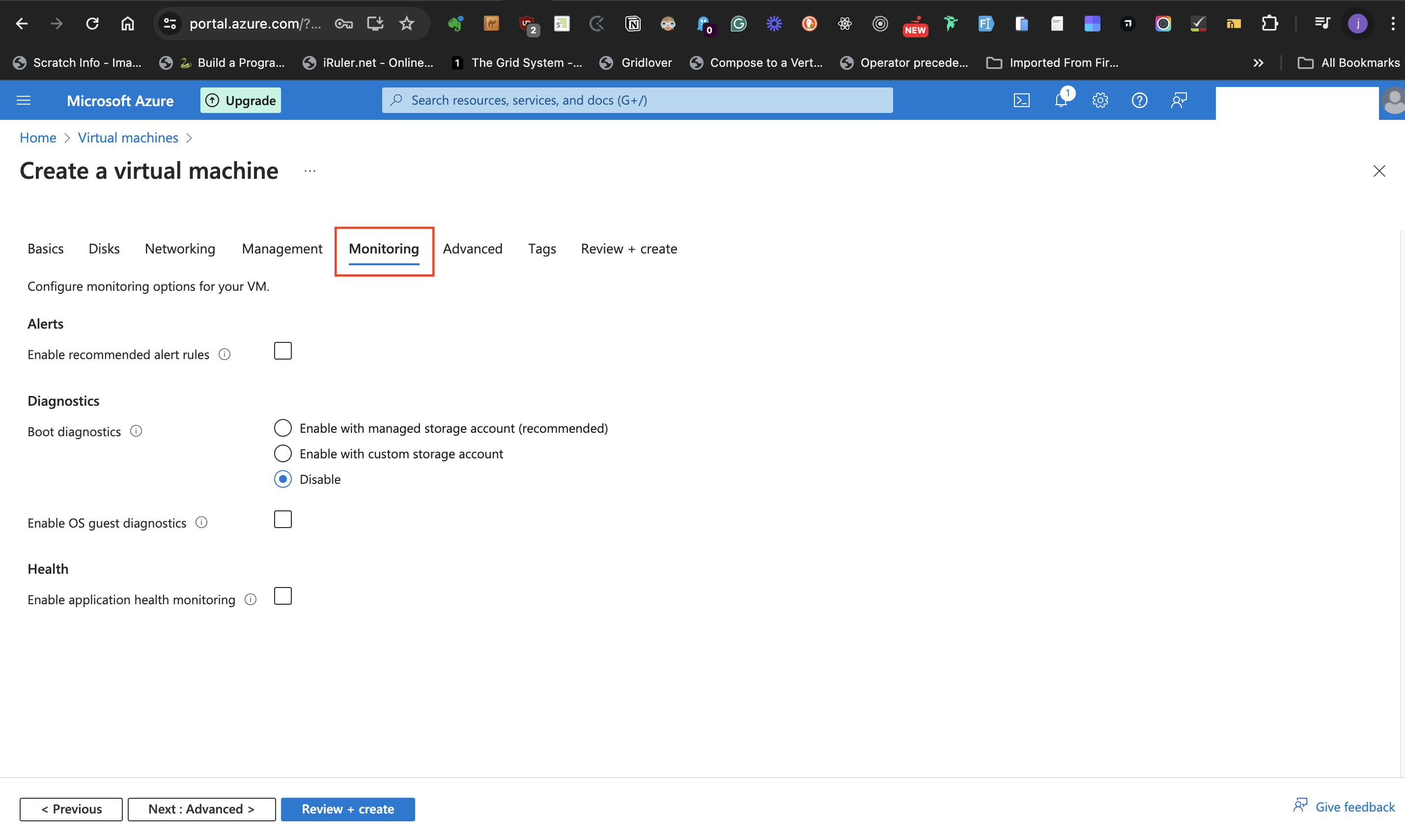

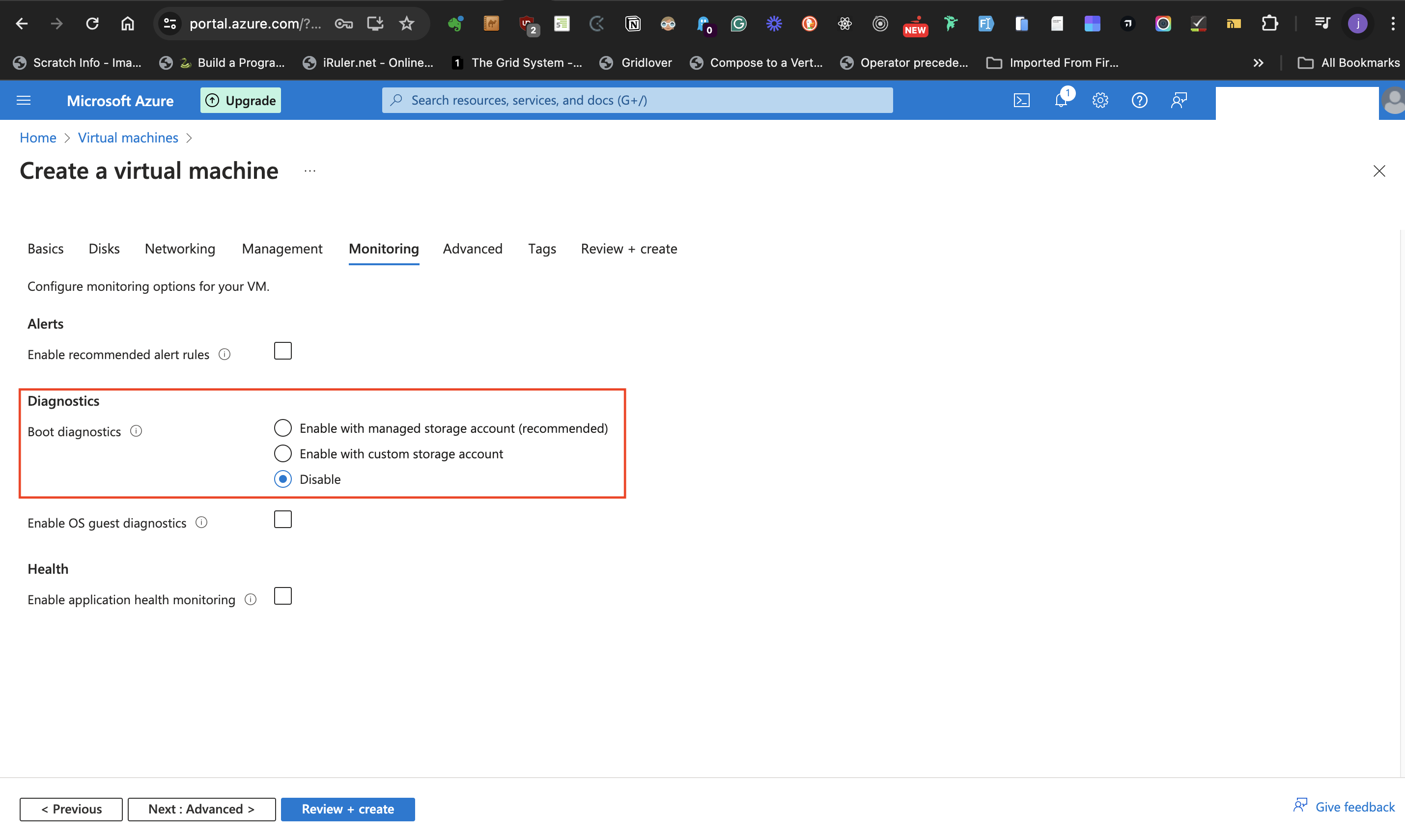

#### Monitoring Tab

Under the **Monitoring Tab** it is desirable to disable Boot diagnostics.

#####Diagnostics

- At the **Boot Diagnostics** label, set it to **Disable**

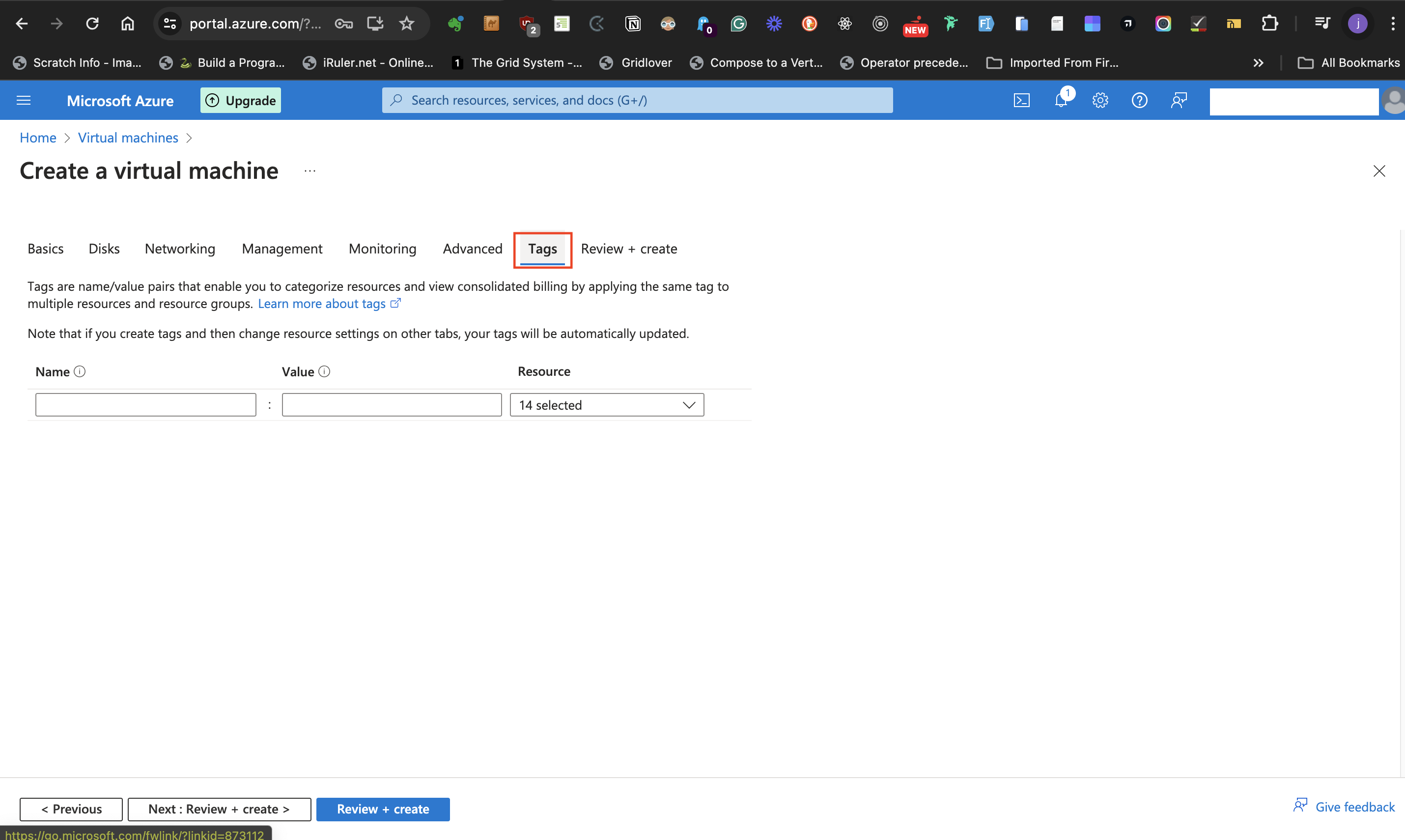

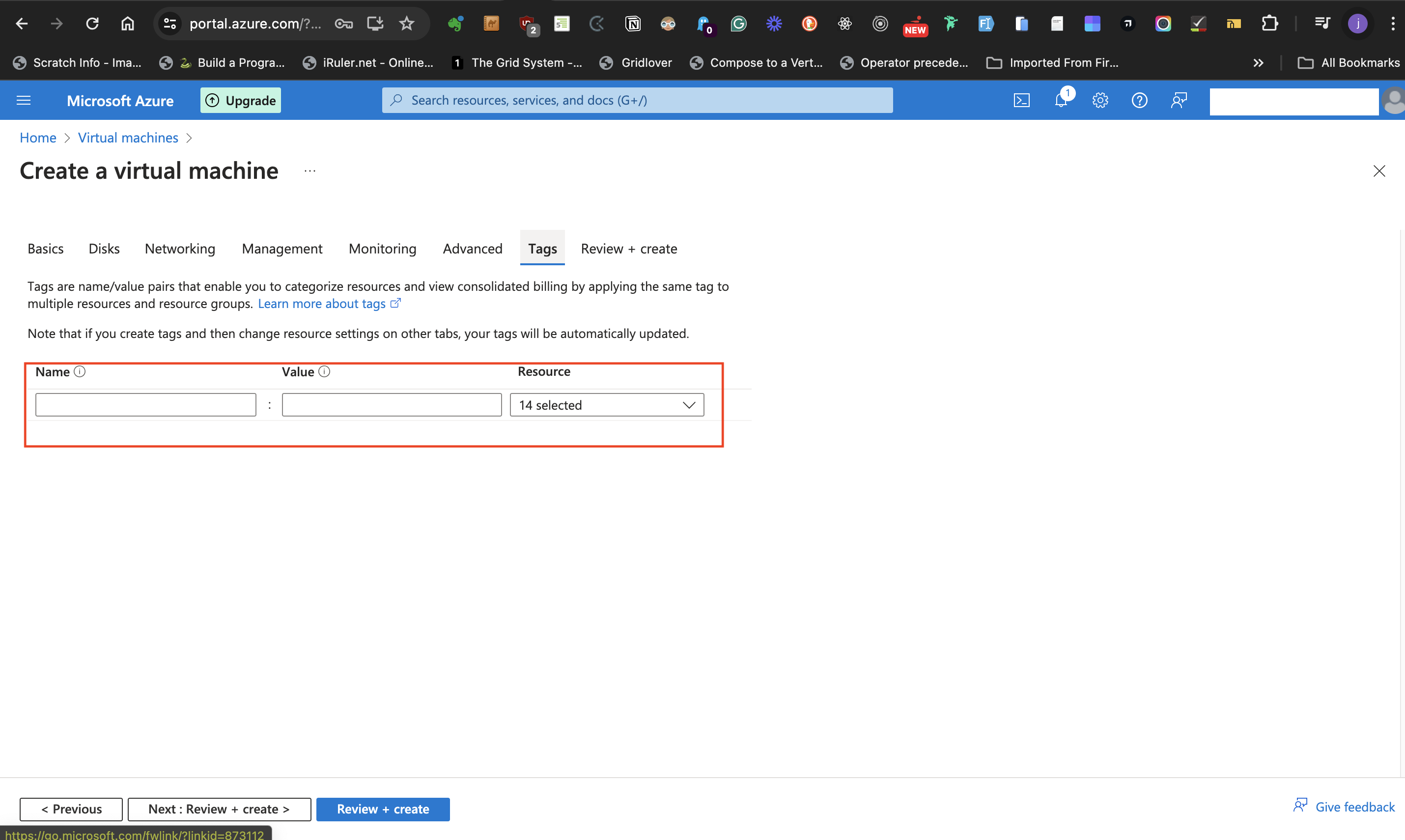

#### Tag Tab

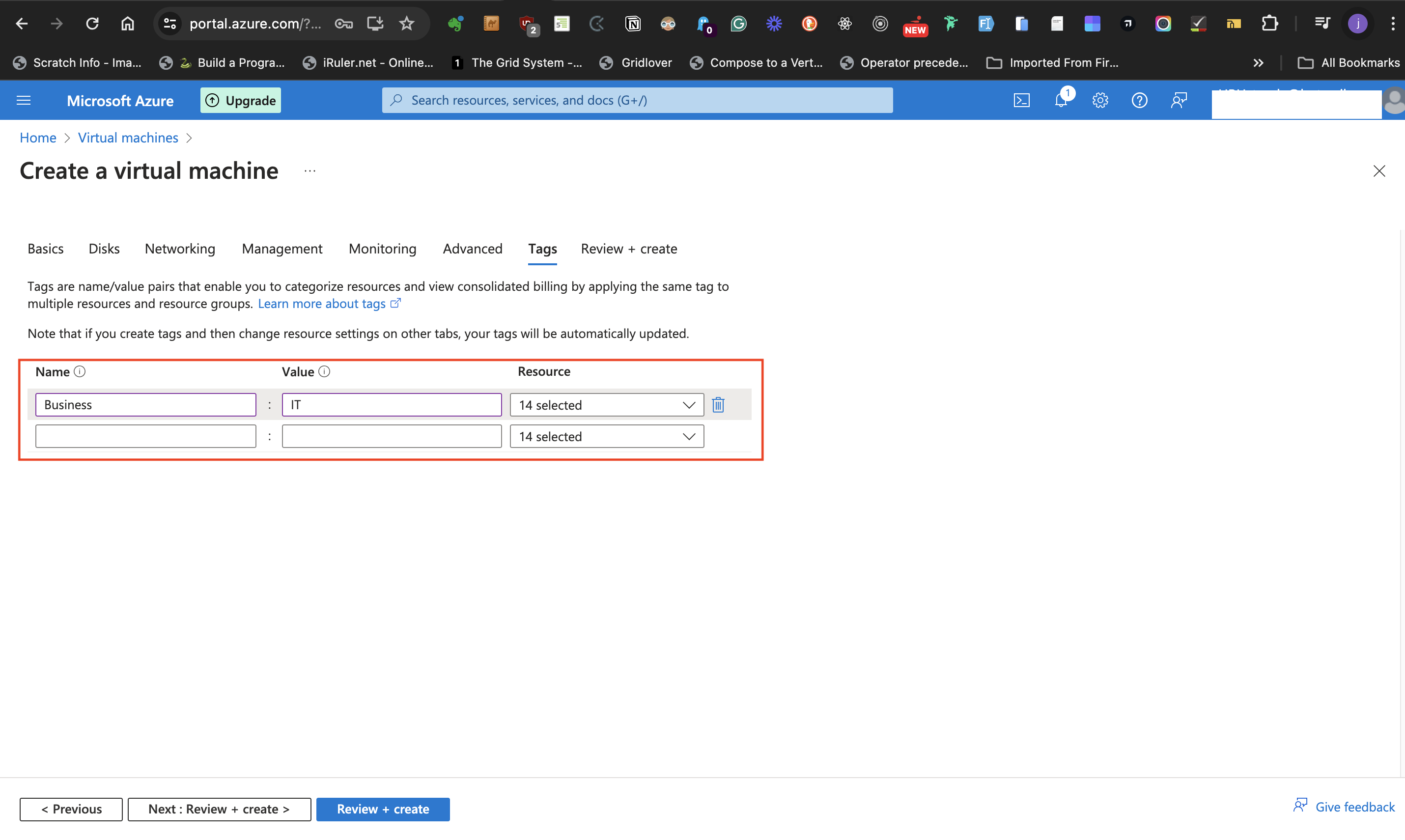

Under the **Tag Tab** it is ideal that name and value are created for the resources.

- At the annotated part of the image below, give the resource a name and value. Both the name and value are called **Tags** and they help to categorize resources.

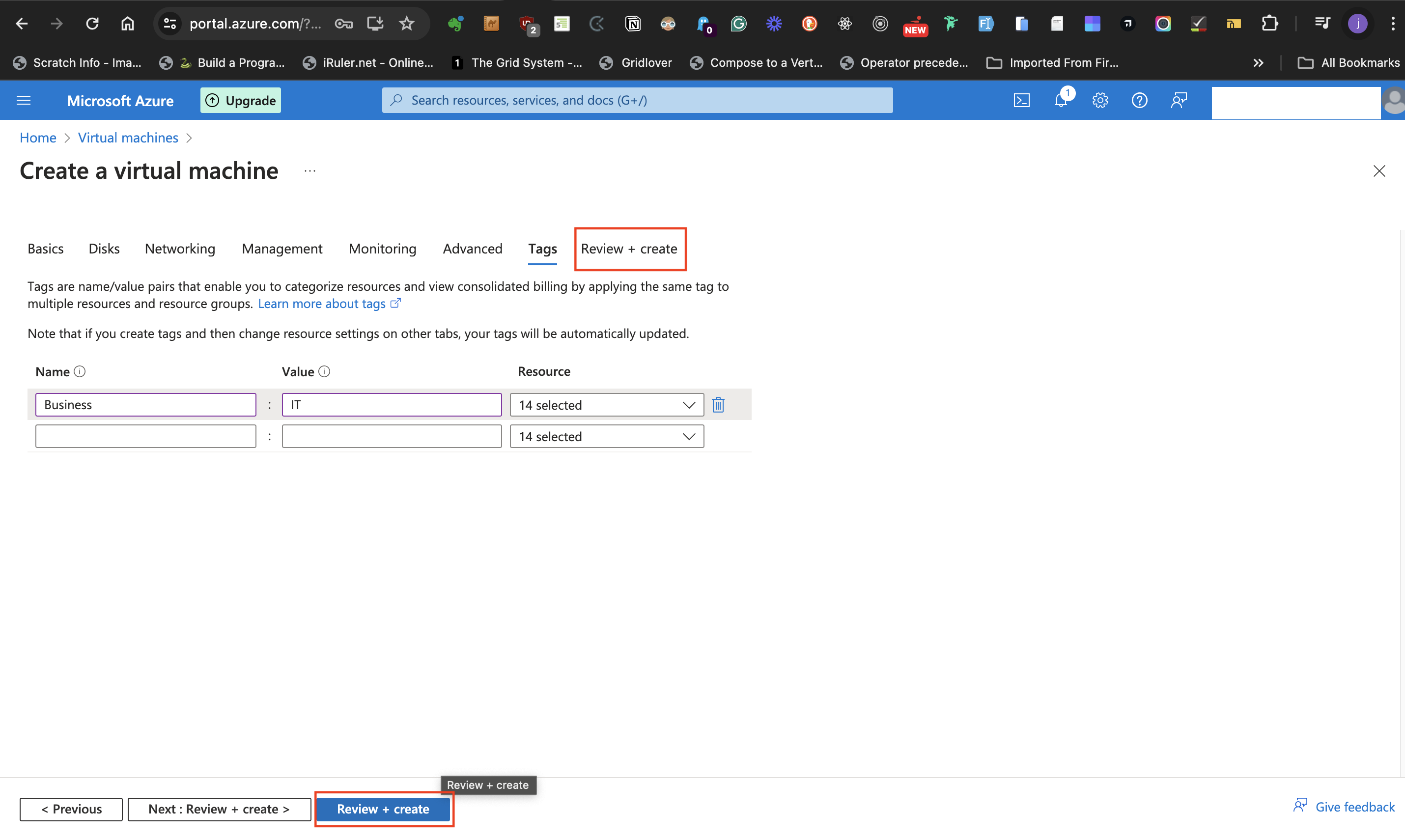

- The next step is to click the **Review + Create** button at the bottom of the page or the **Review + Create** tab at the top.

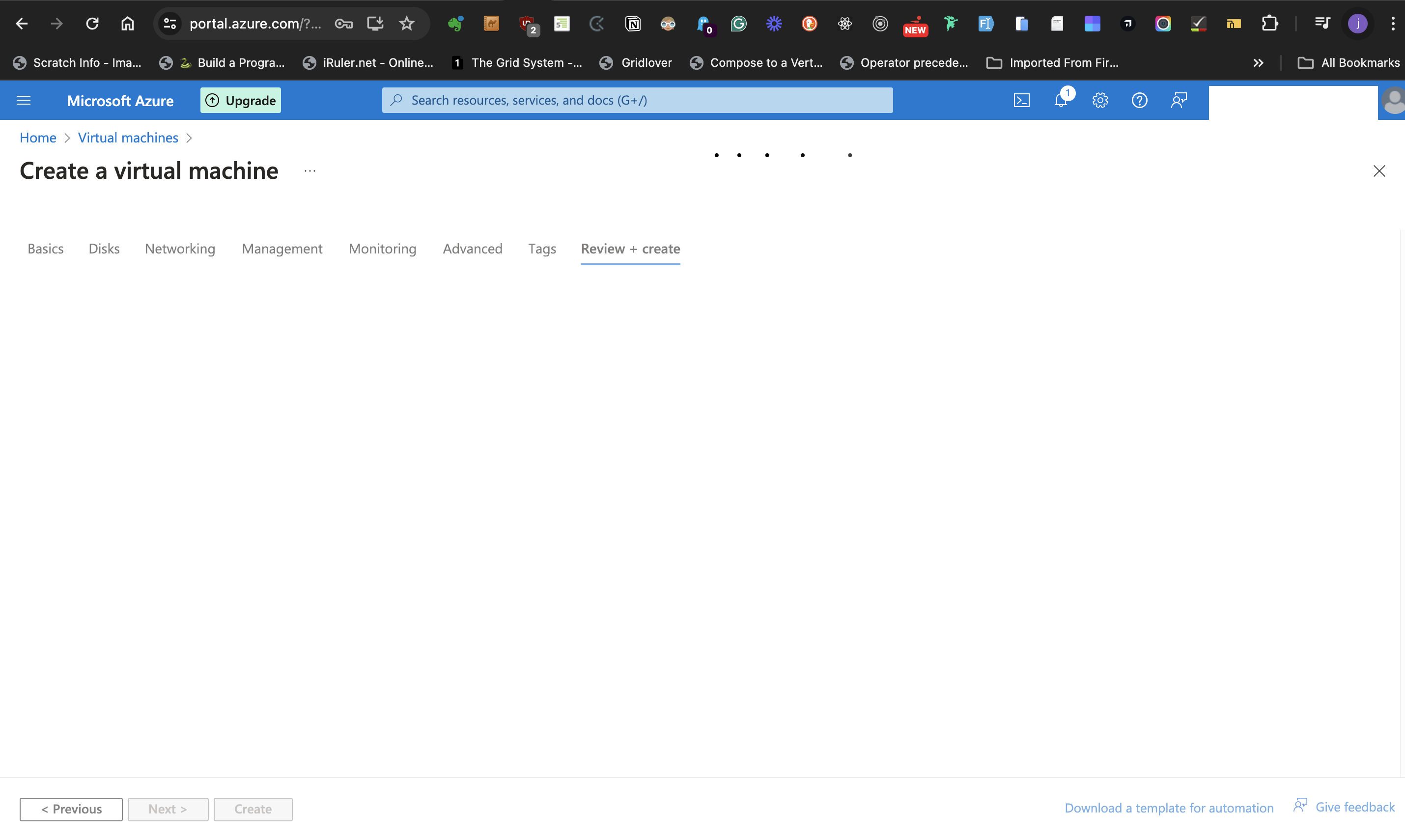

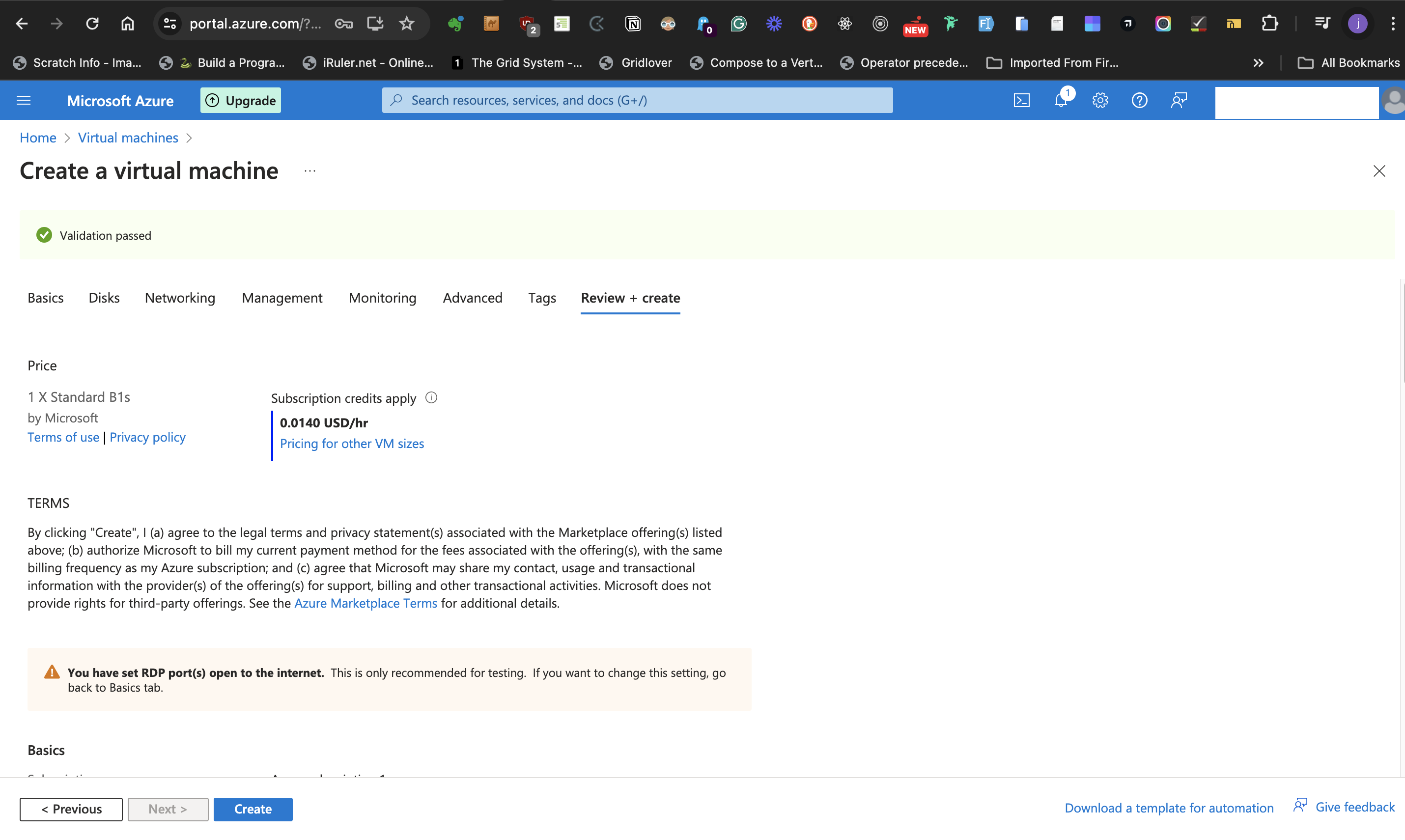

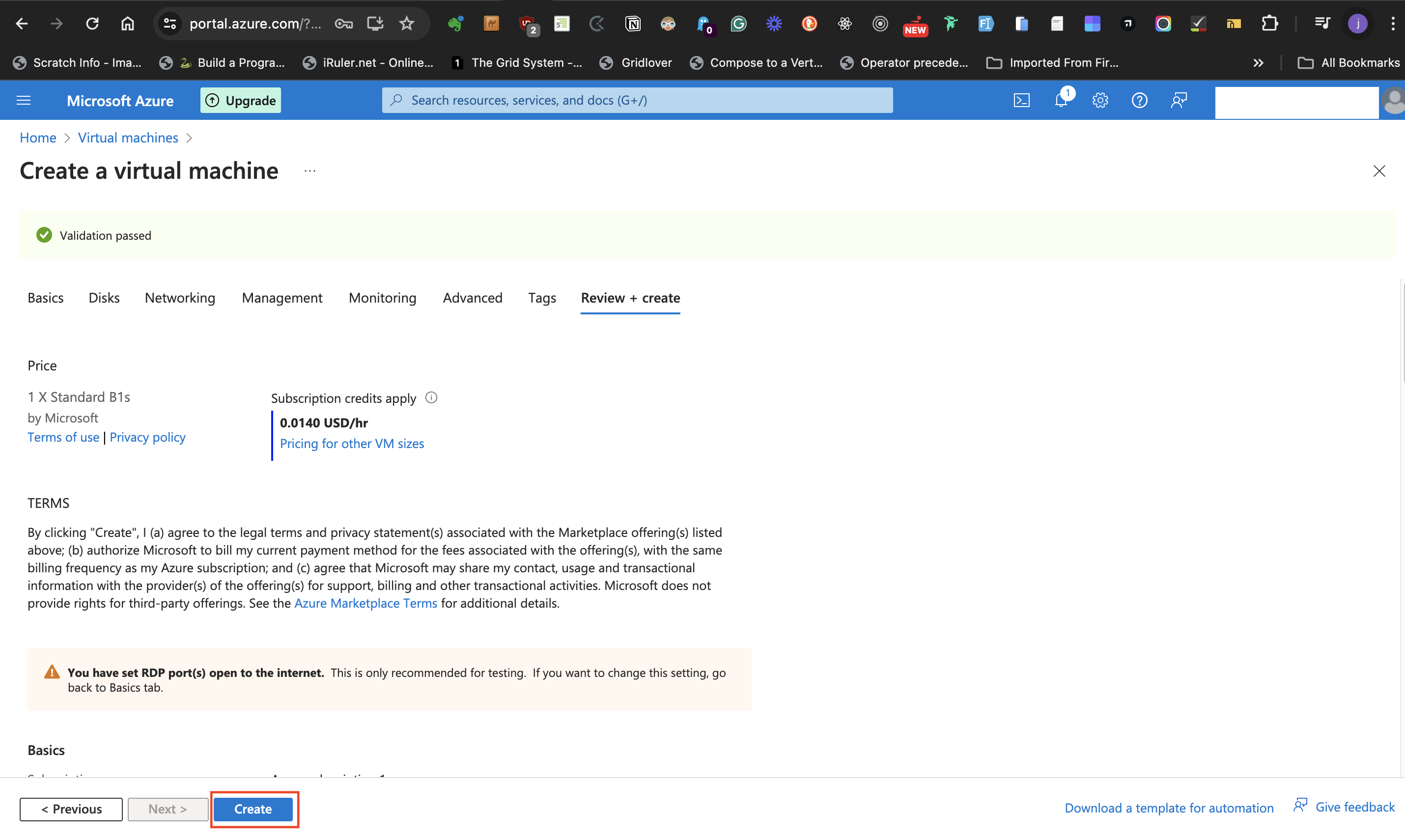

- When it has completed validation, there is a pale green banner and a green check mark at the top of the page saying "Validation passed"

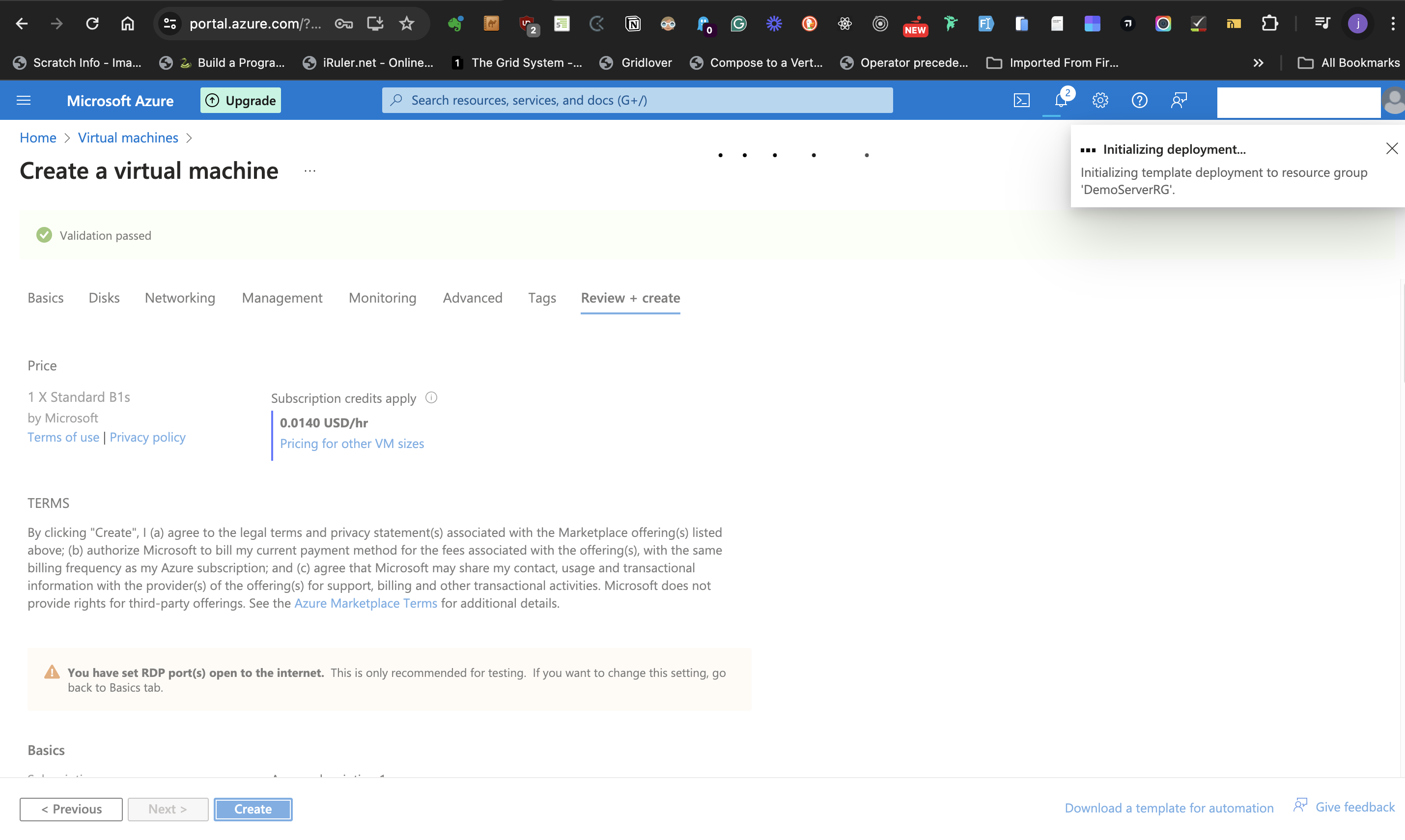

- click the **create** button at the bottom of the page to initialize deployment.

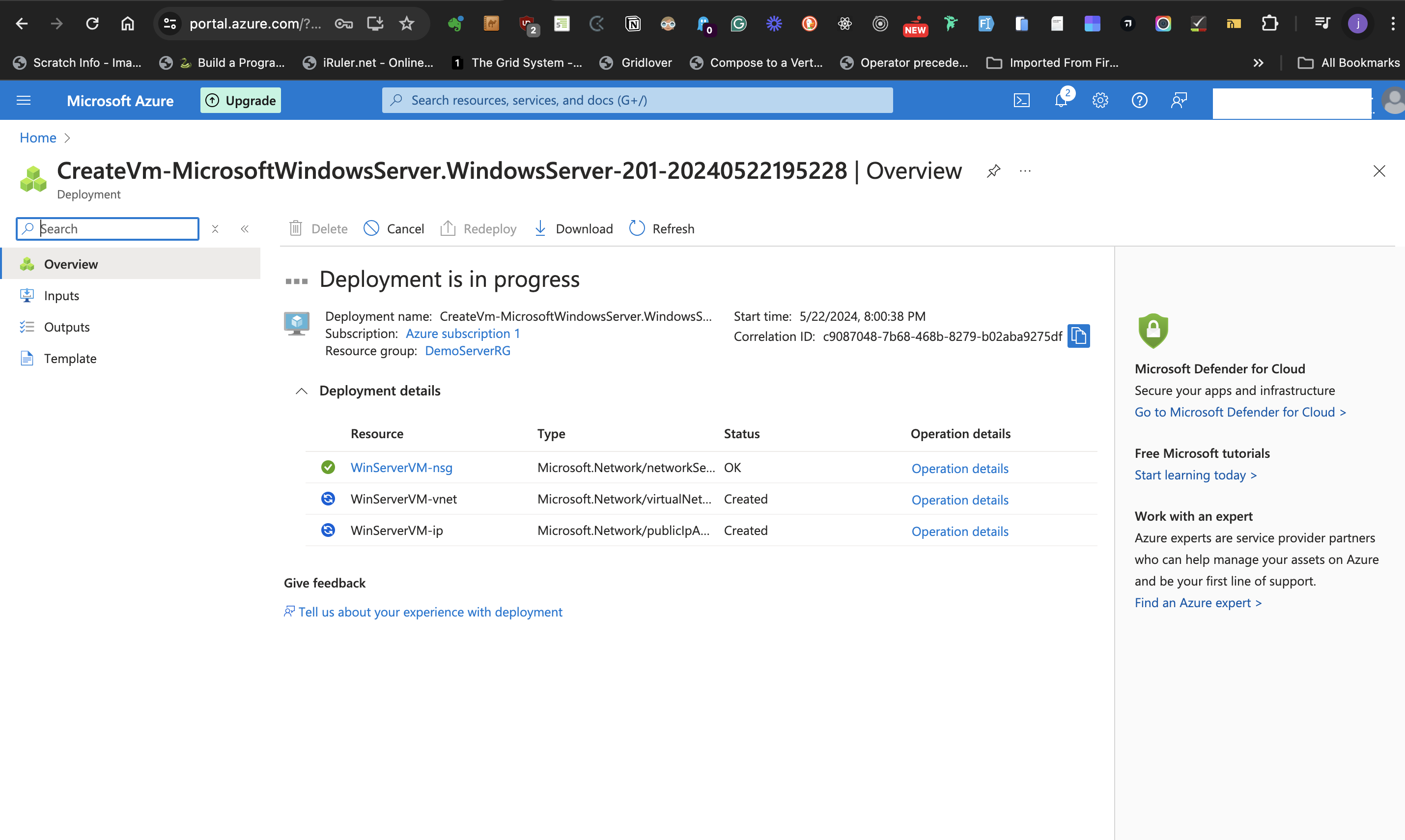

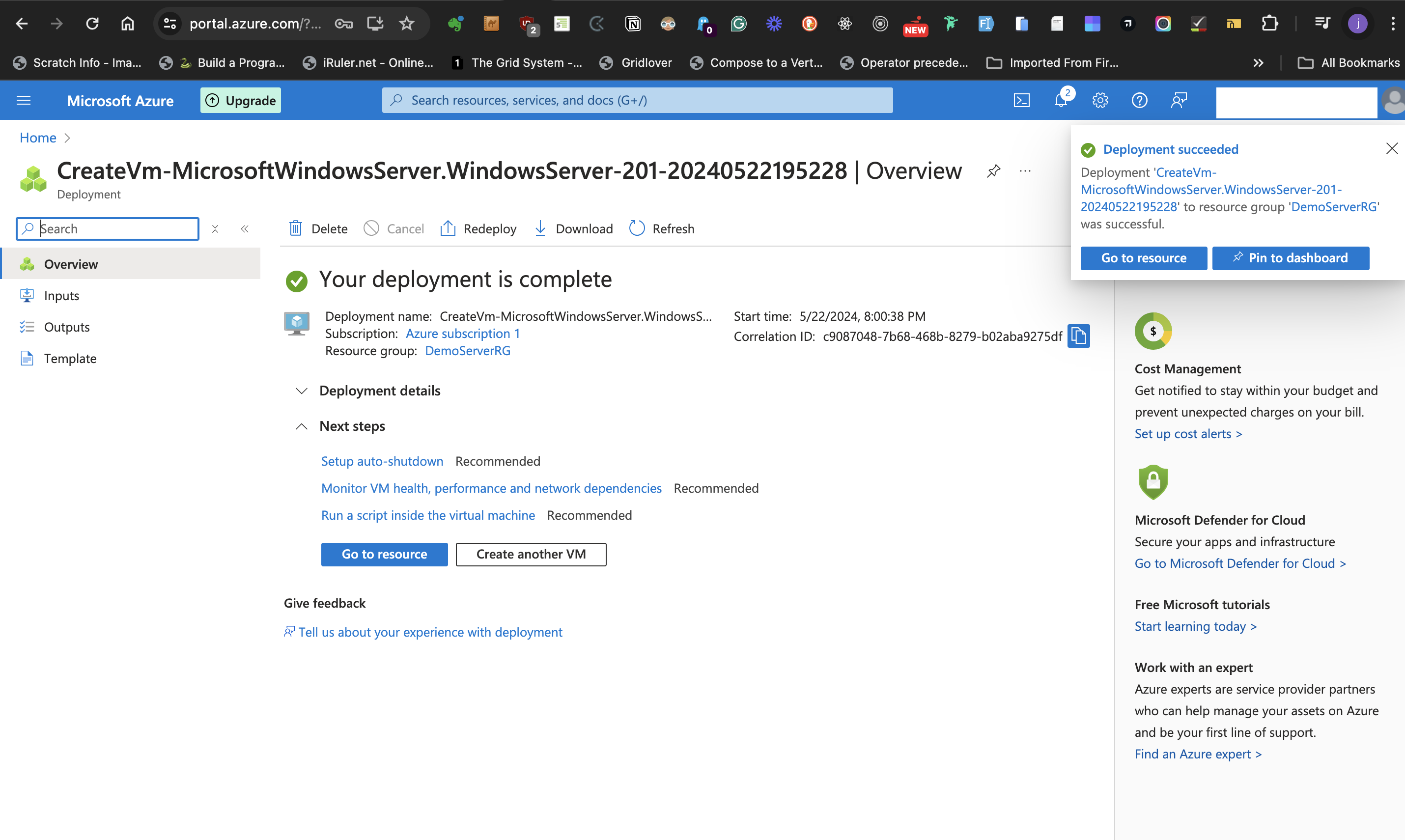

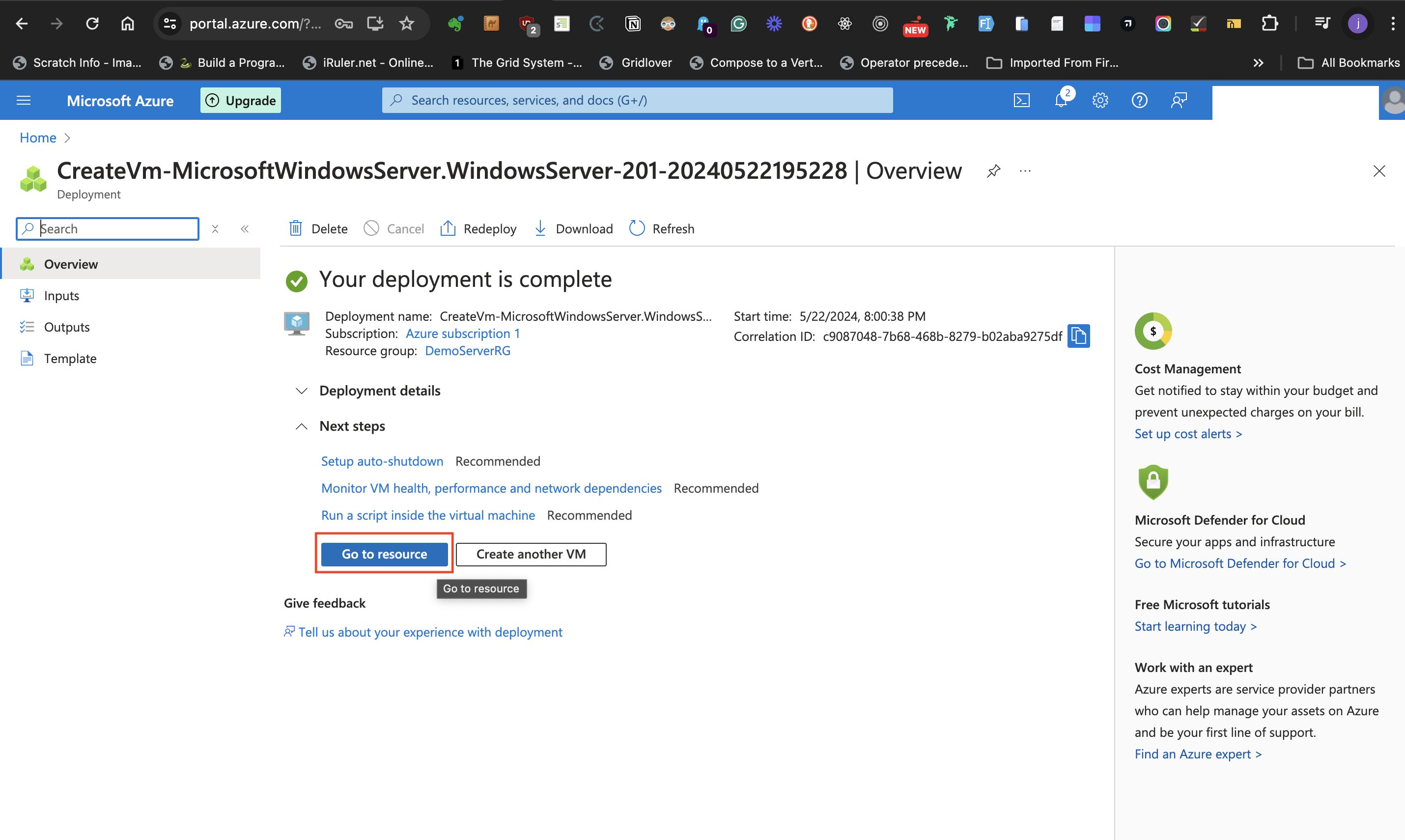

- When the deployment is completed there is a notification at the top page that says "Your deployment is complete"

- click the **Go to resource** button to go to the newly created resource page.

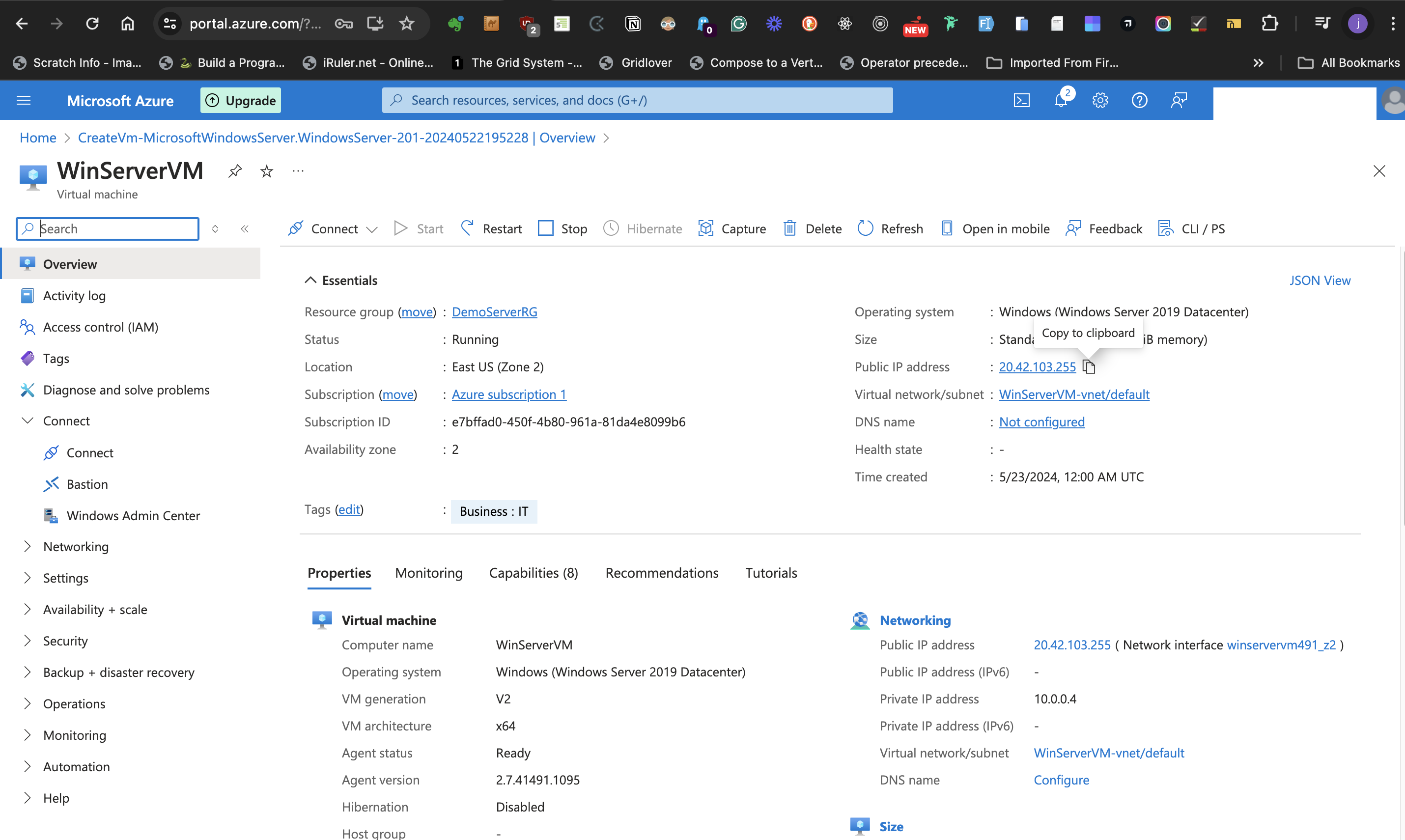

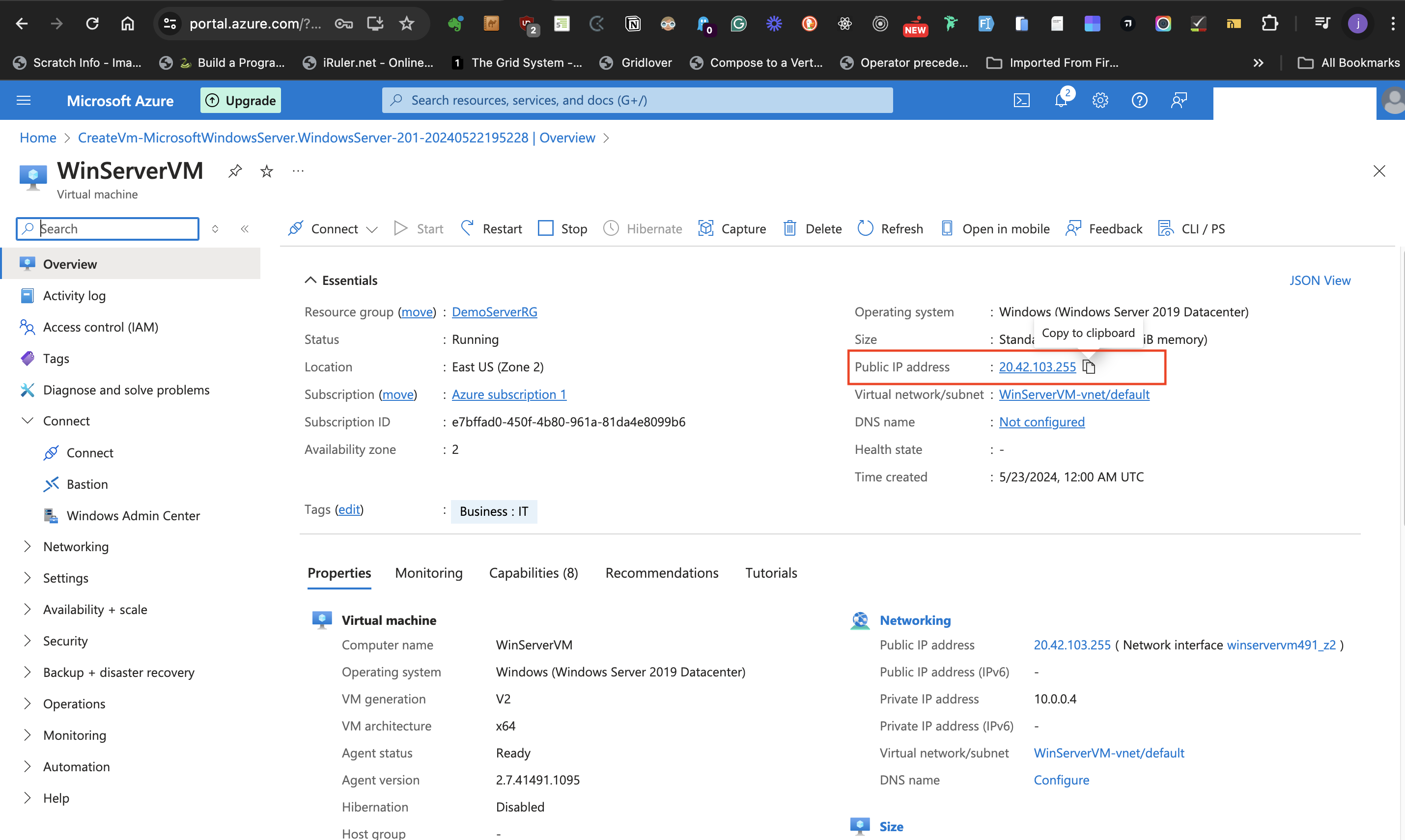

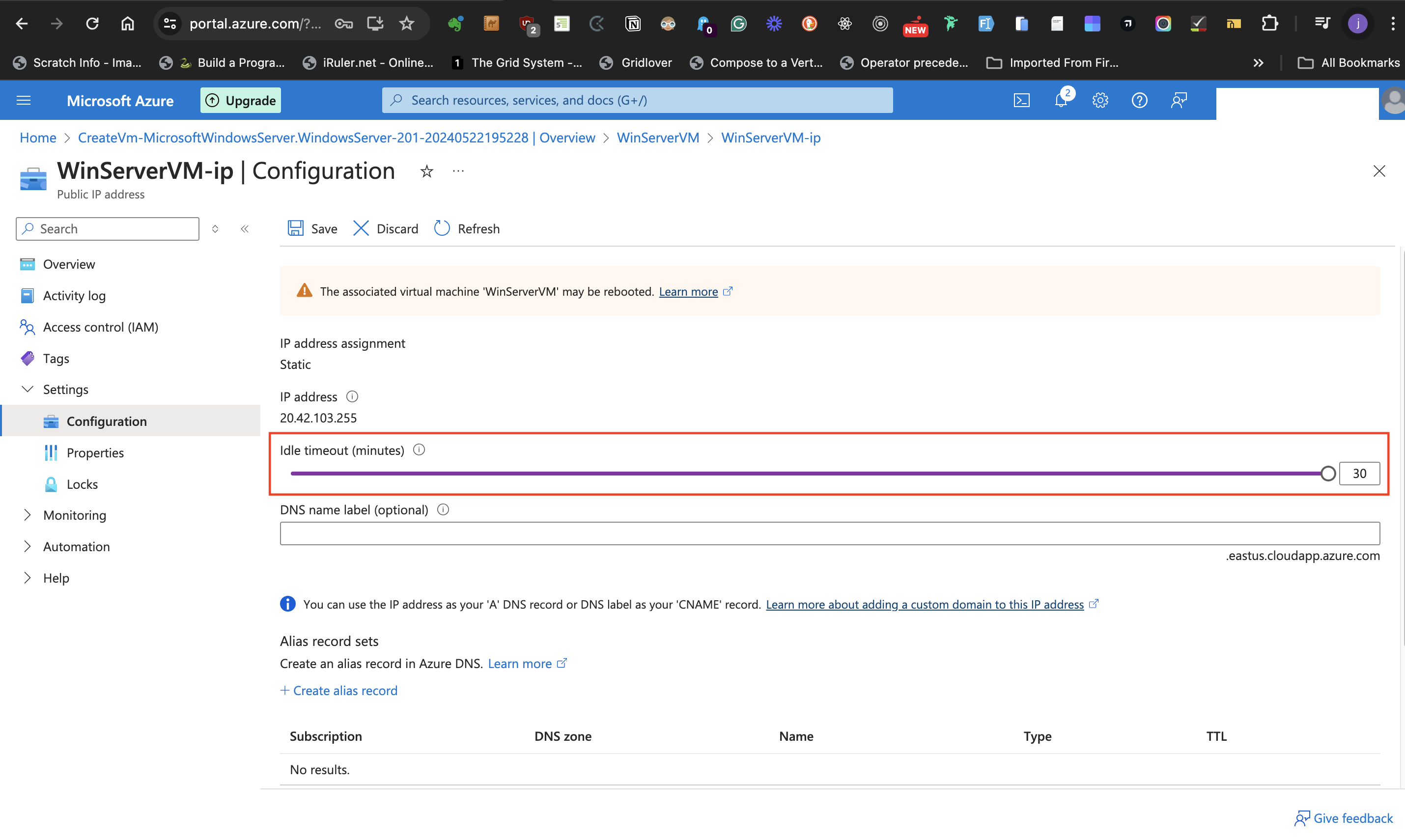

- At the right-hand side of the page, look for the "Public IP address" label, and click on the IP address.

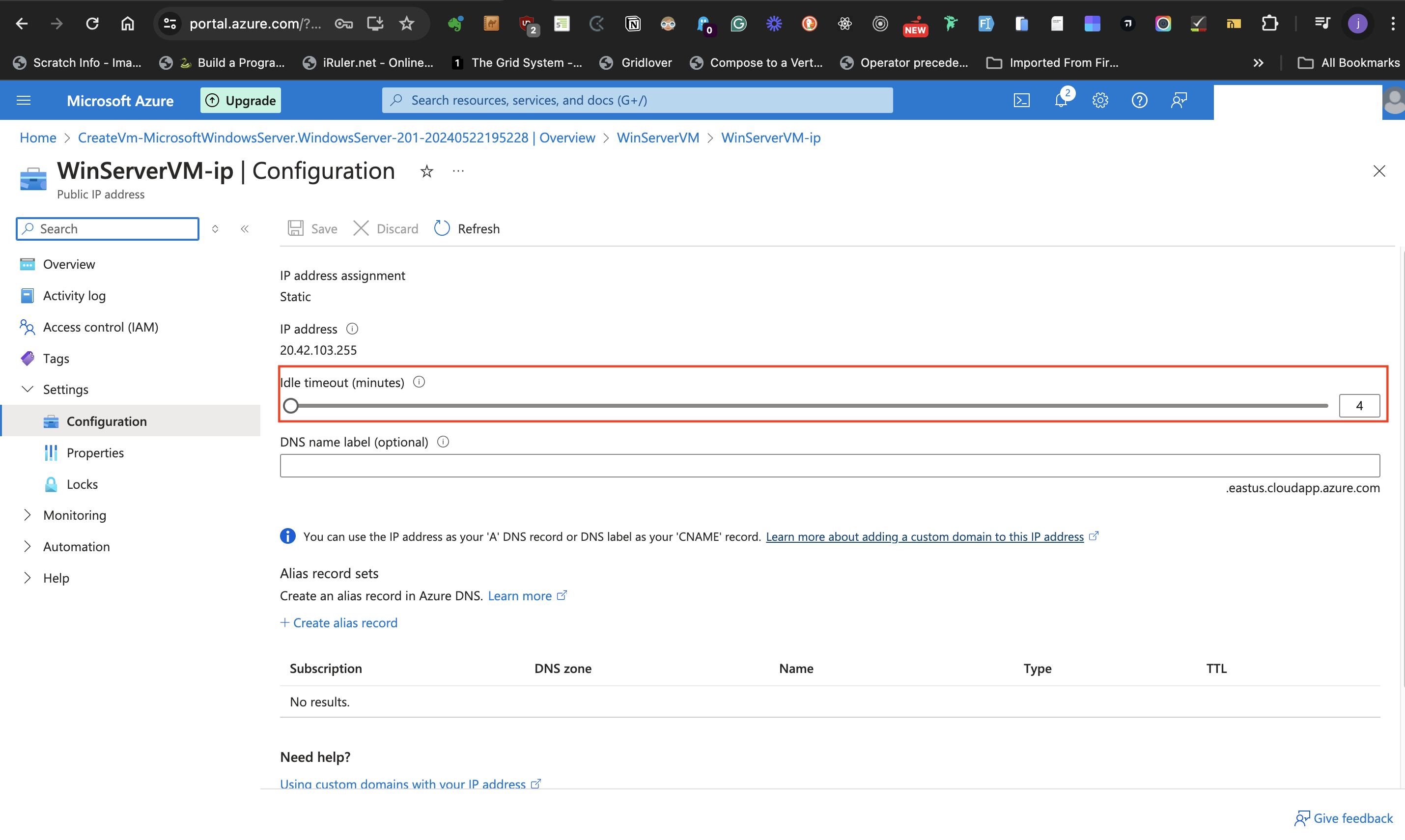

- This navigates to a page to increase the idle timeout from 4 minutes to the maximum (usually 30 minutes)

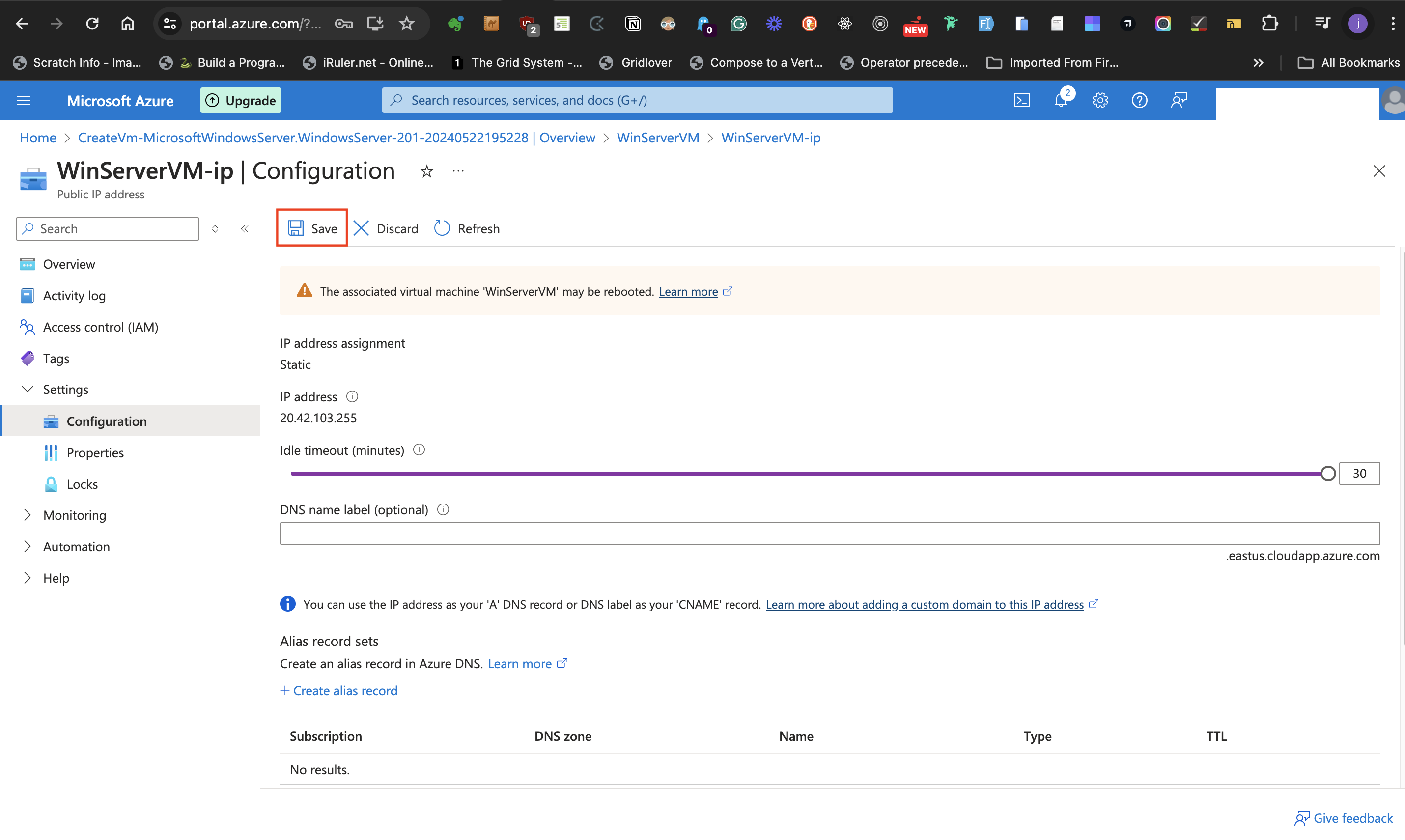

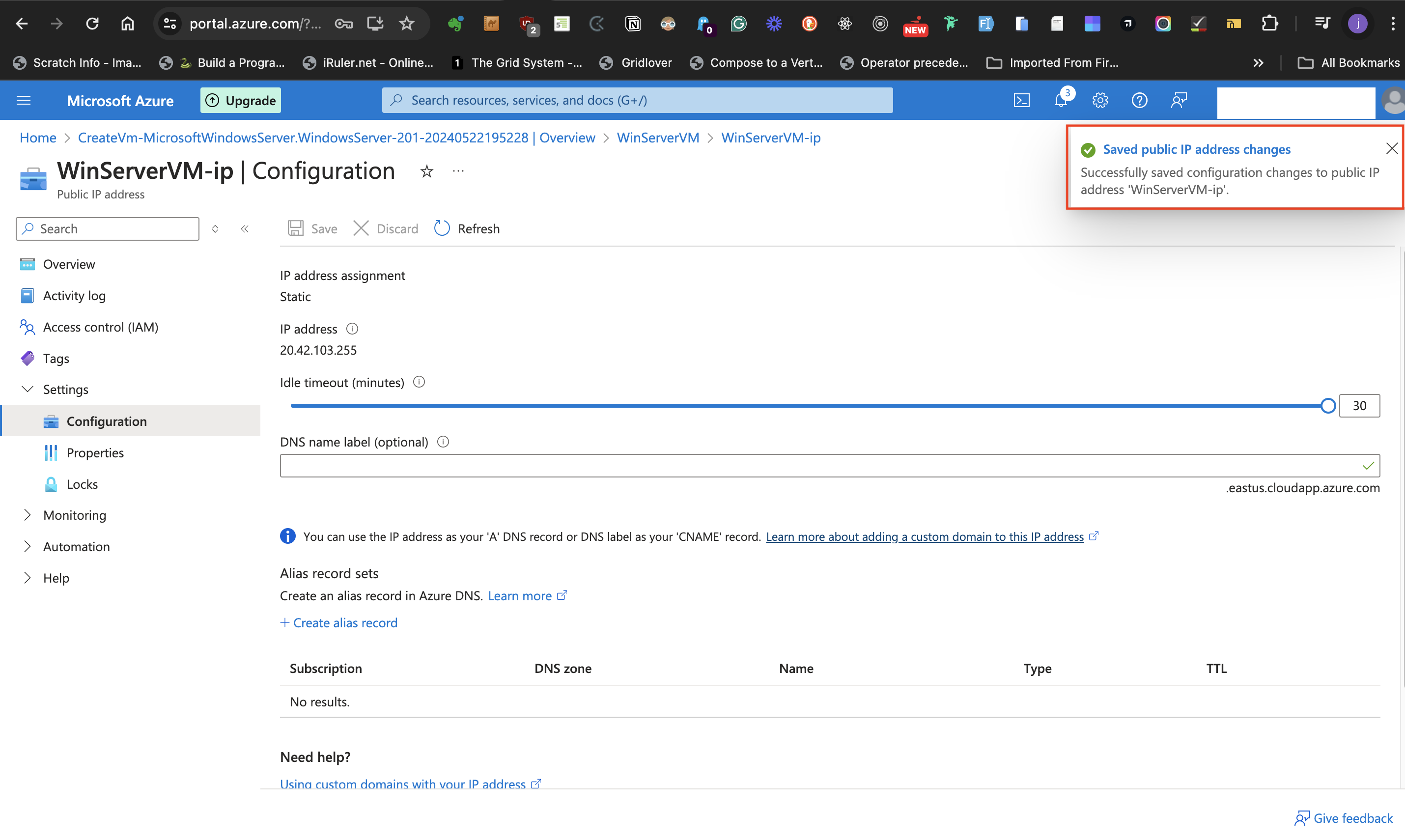

- Click on the **save** icon at the top of the page.

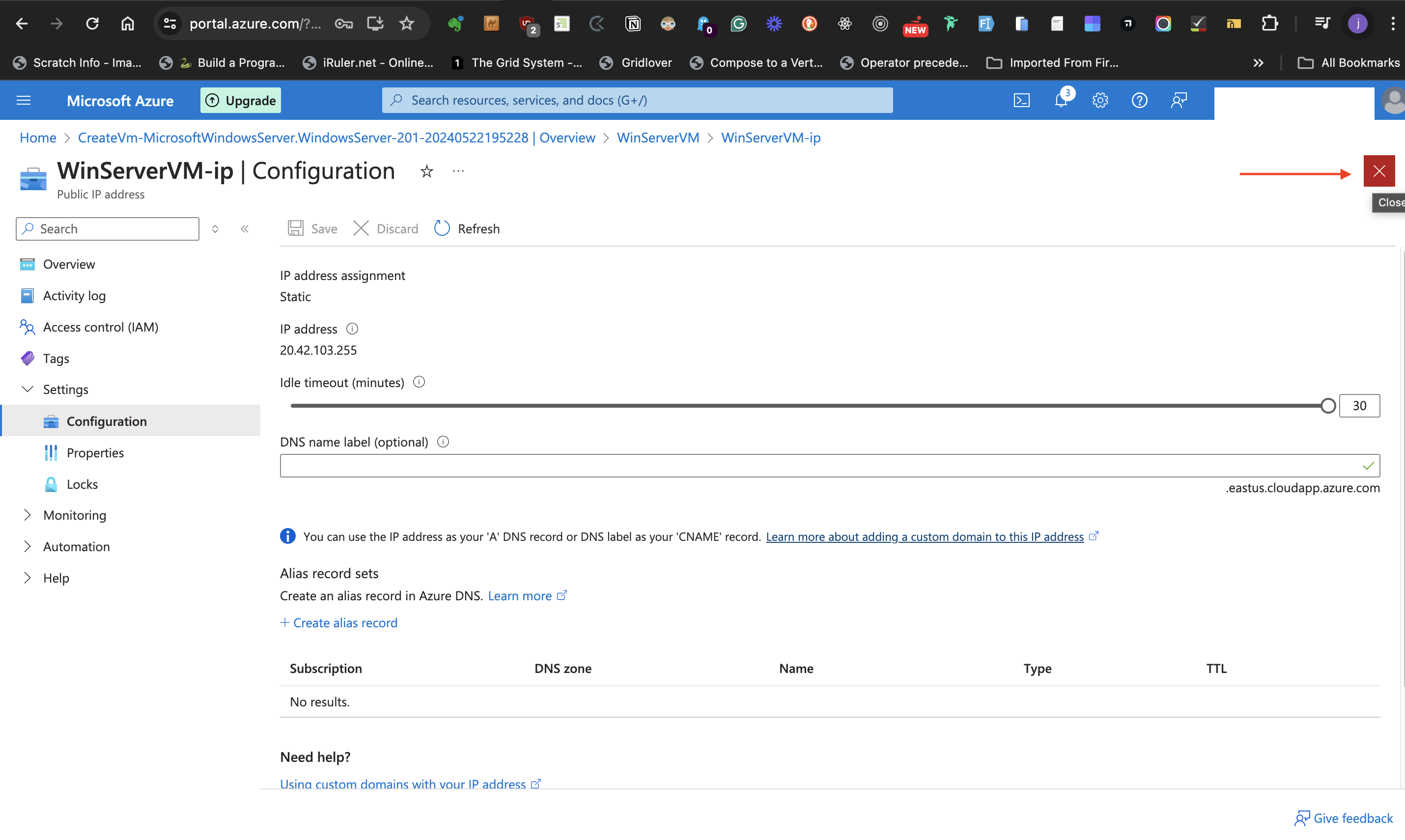

- you can then close the IP configuration page after making these changes by clicking the **close** button at the top right part of the page.

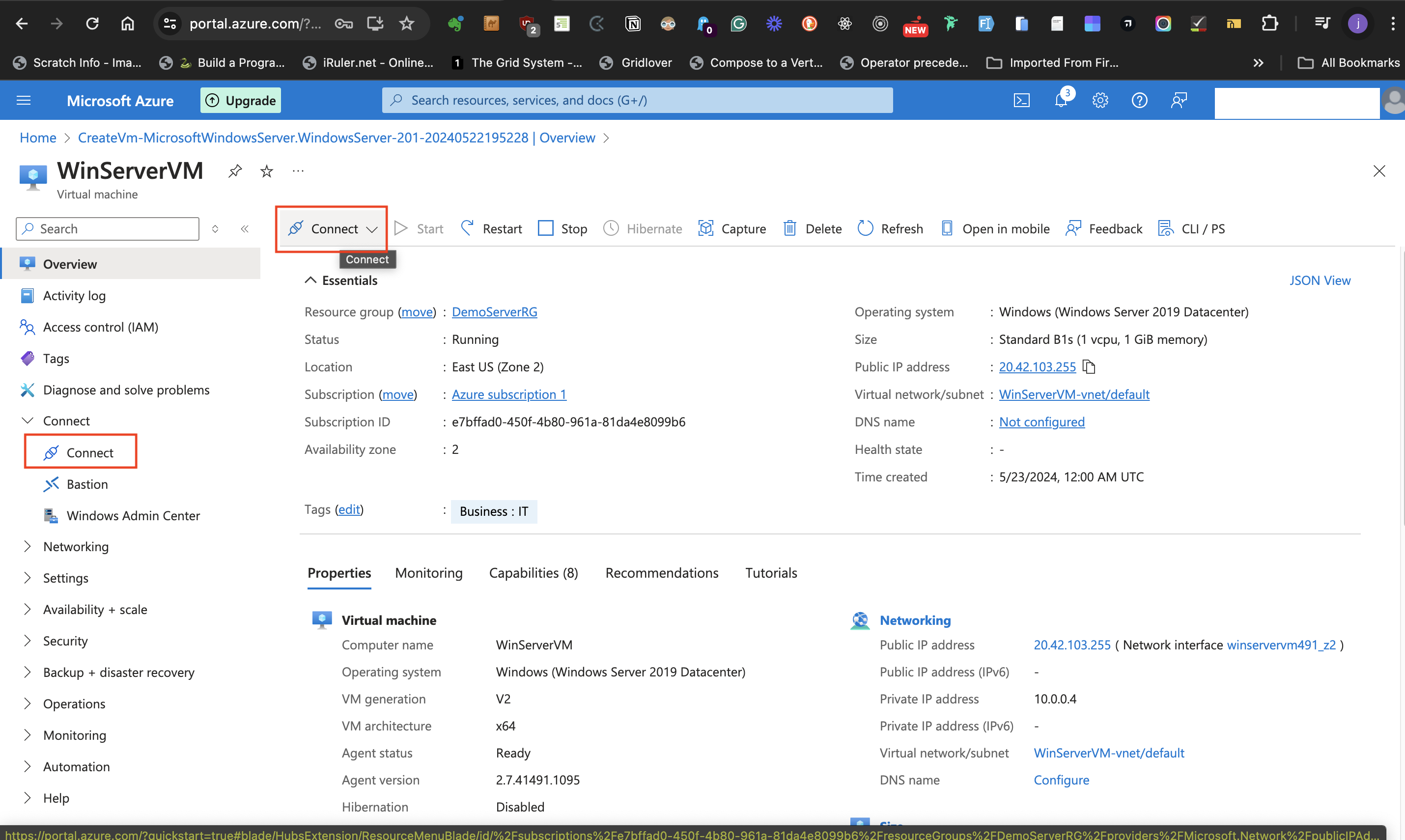

- Back at the Virtual Machine overview page, select **Connect** at the top level part of the page or the left-hand side of the page.

This directs to the connect page.

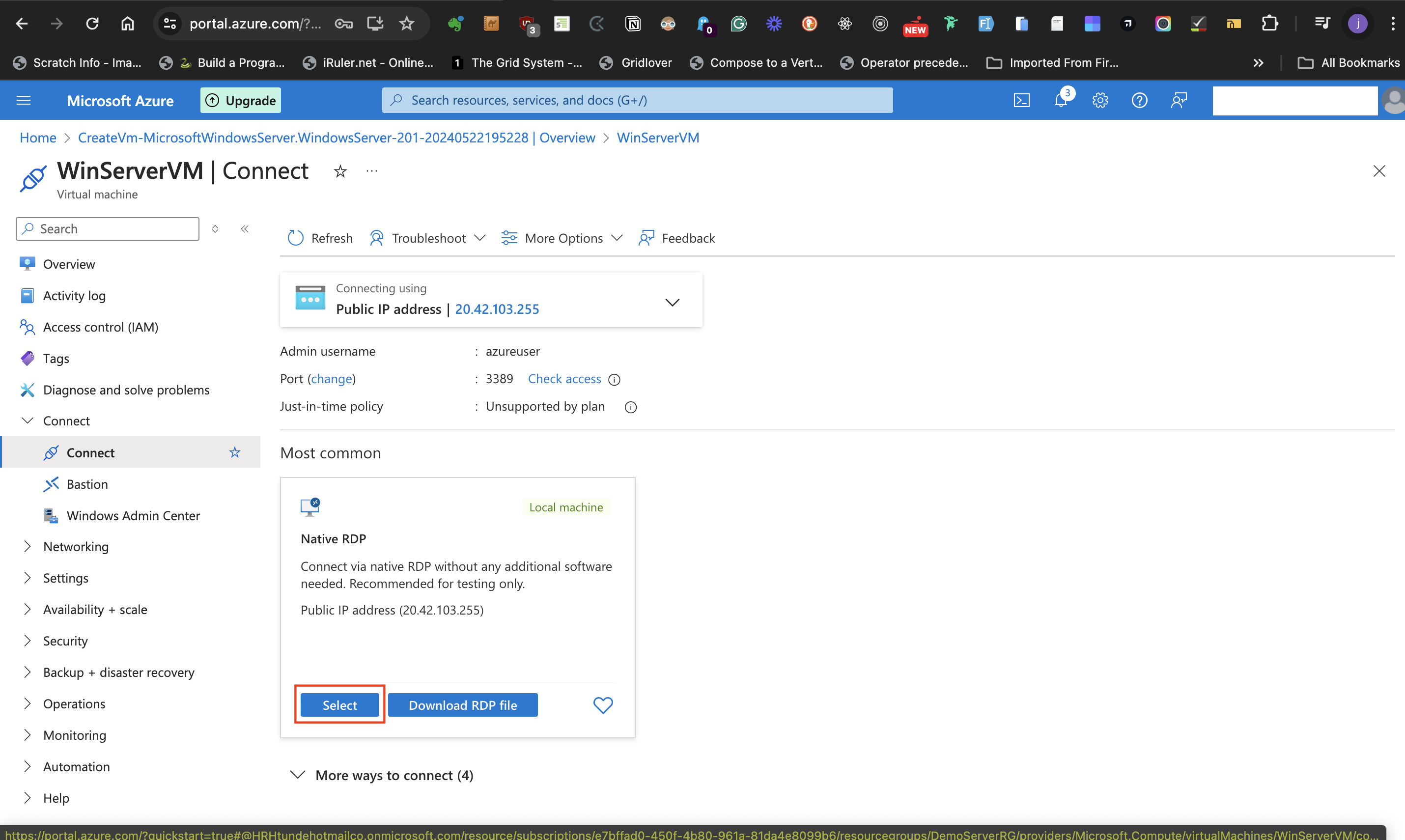

- Click the **Select** button at the local machine card with the heading "Most common".

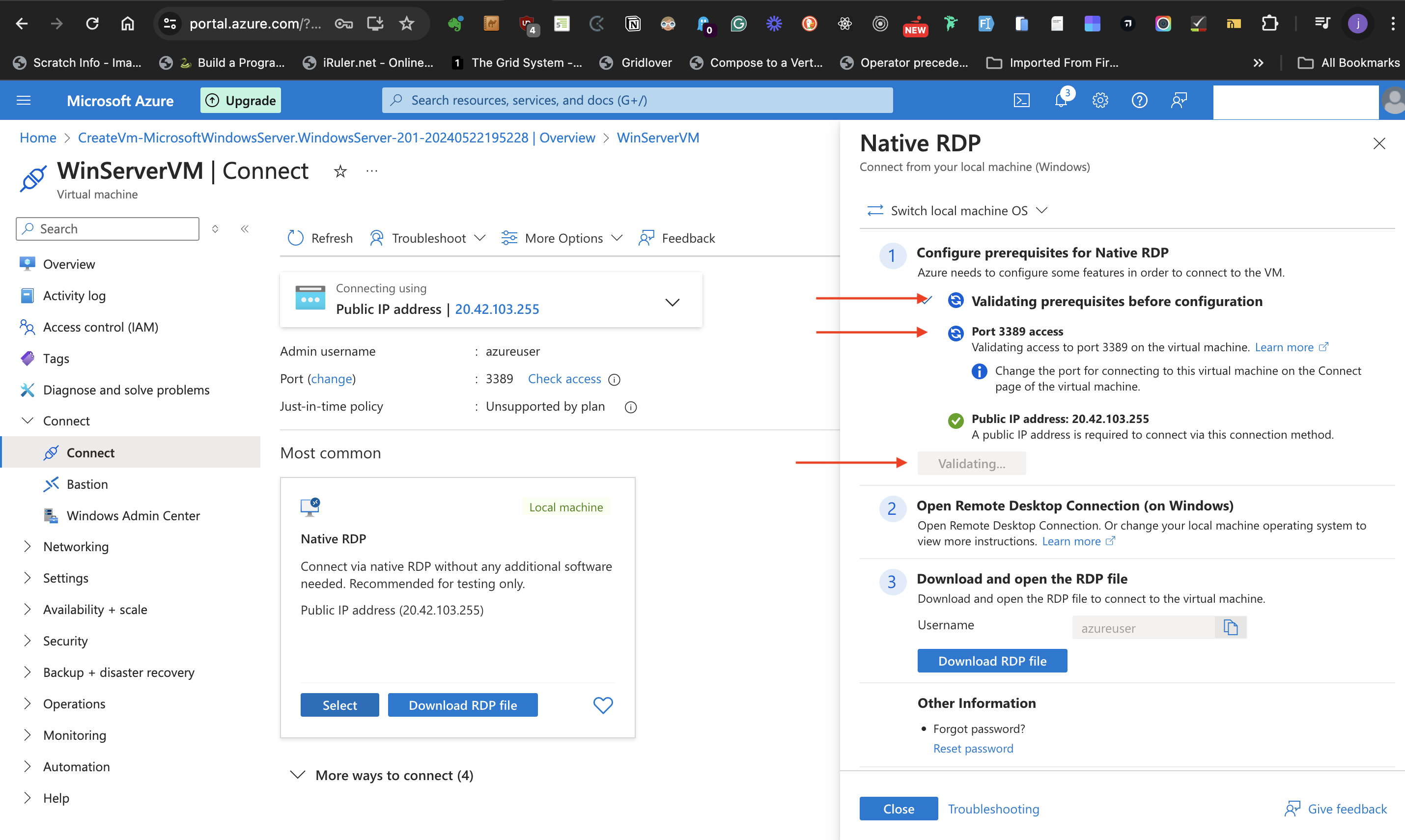

- On clicking **select**, several processes are triggered to validate prerequisites before configuration. Until there is a green check mark and

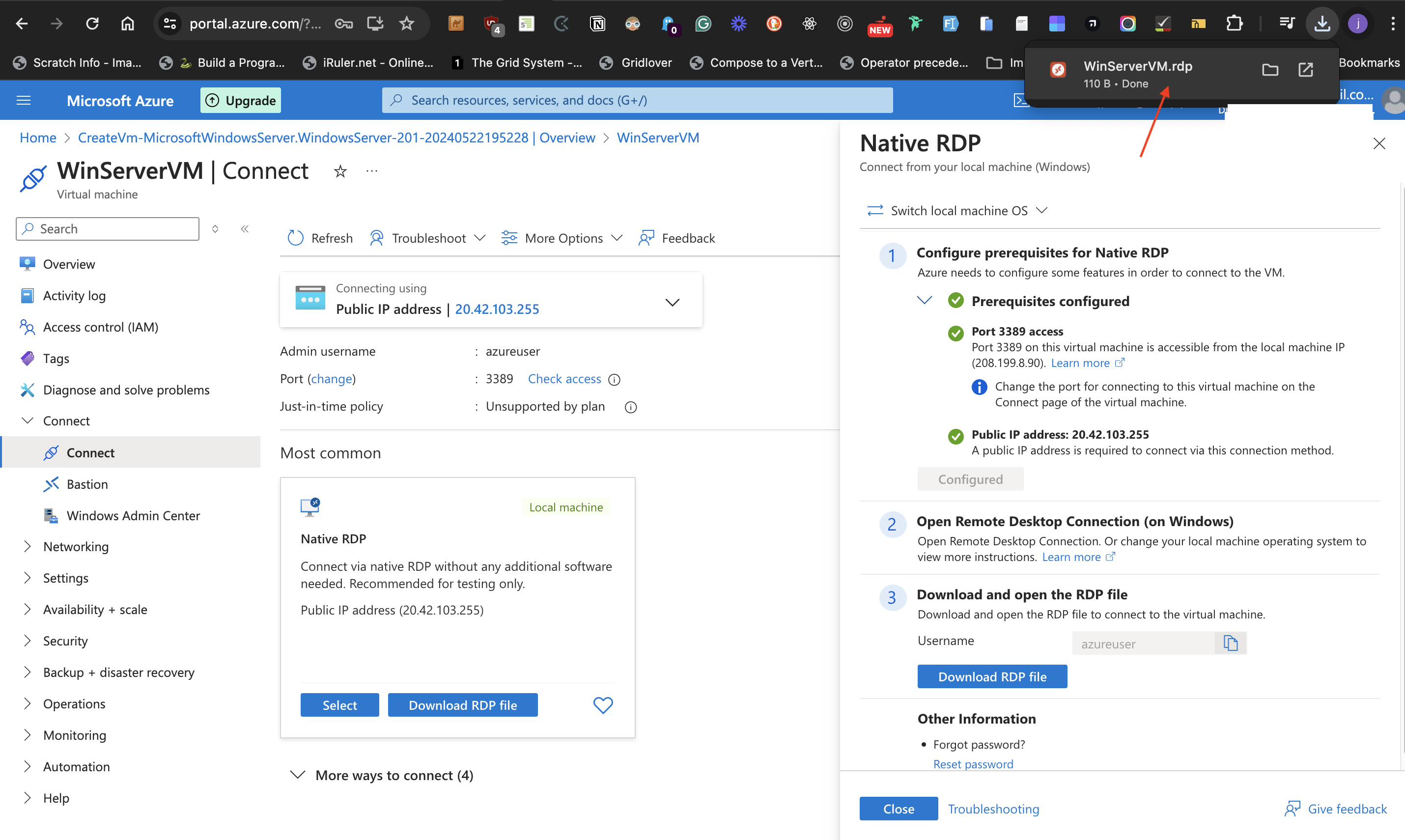

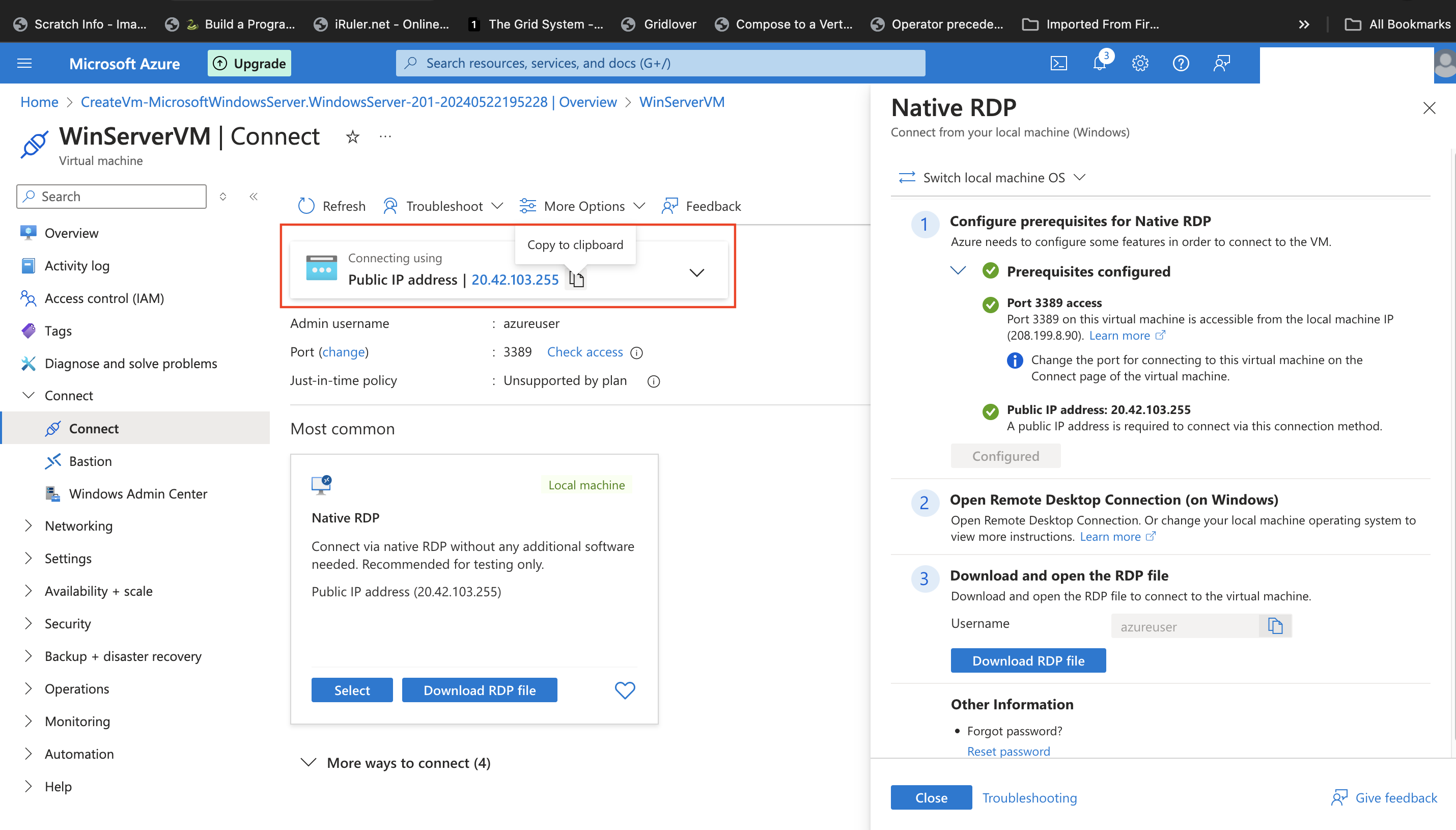

"configured" at the annotated arrow in the image below, the next step can't start.

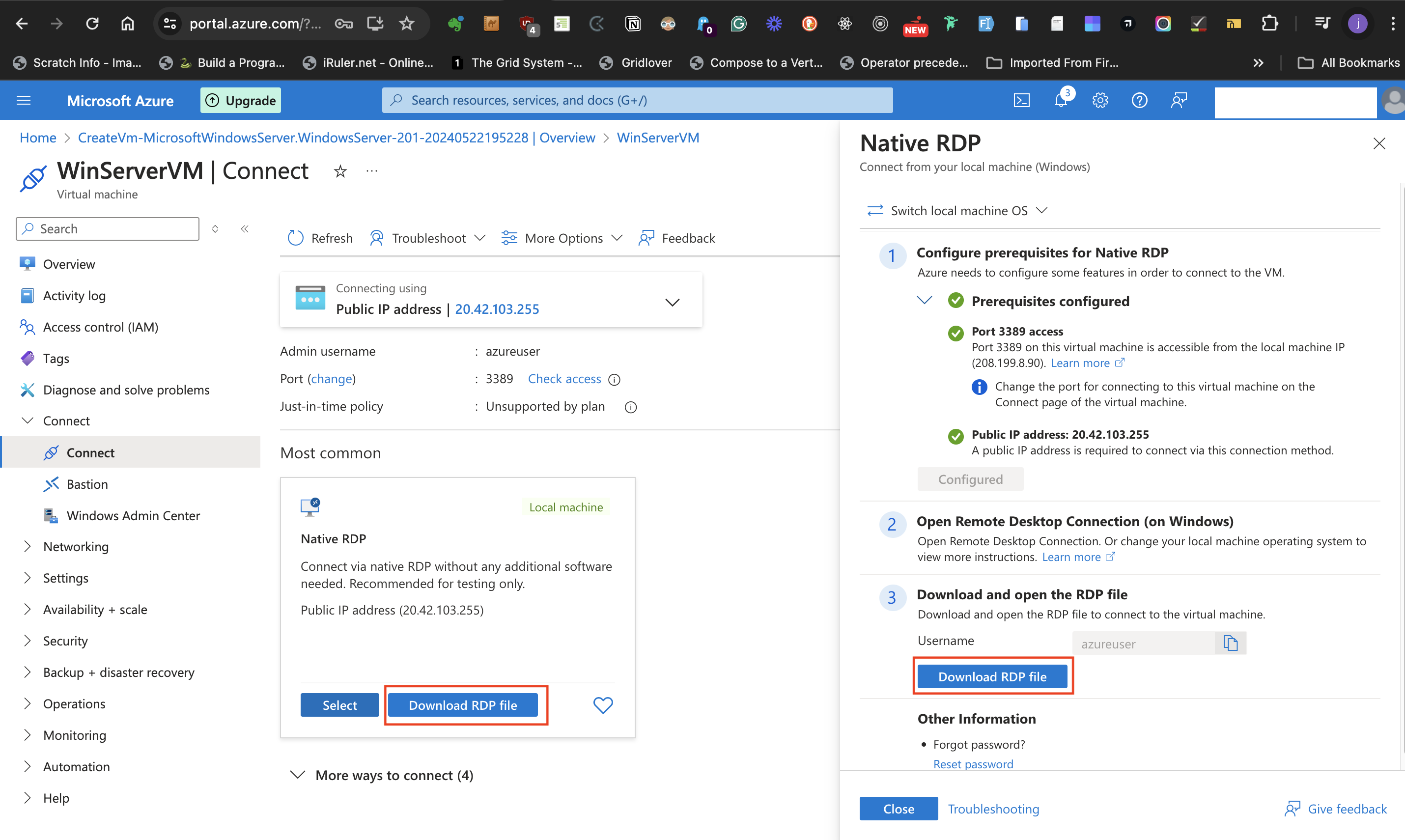

- Once the processes are completed and there are green check marks and "configured" at the annotated locations in the image above, click on the **Download RDP file** as shown below.

- This downloads the RDP file required for powering the Windows server Virtual machine that has been created. Check your default download location in your local machine for this file.

##Step 2

### Launch the Virtual Machine.

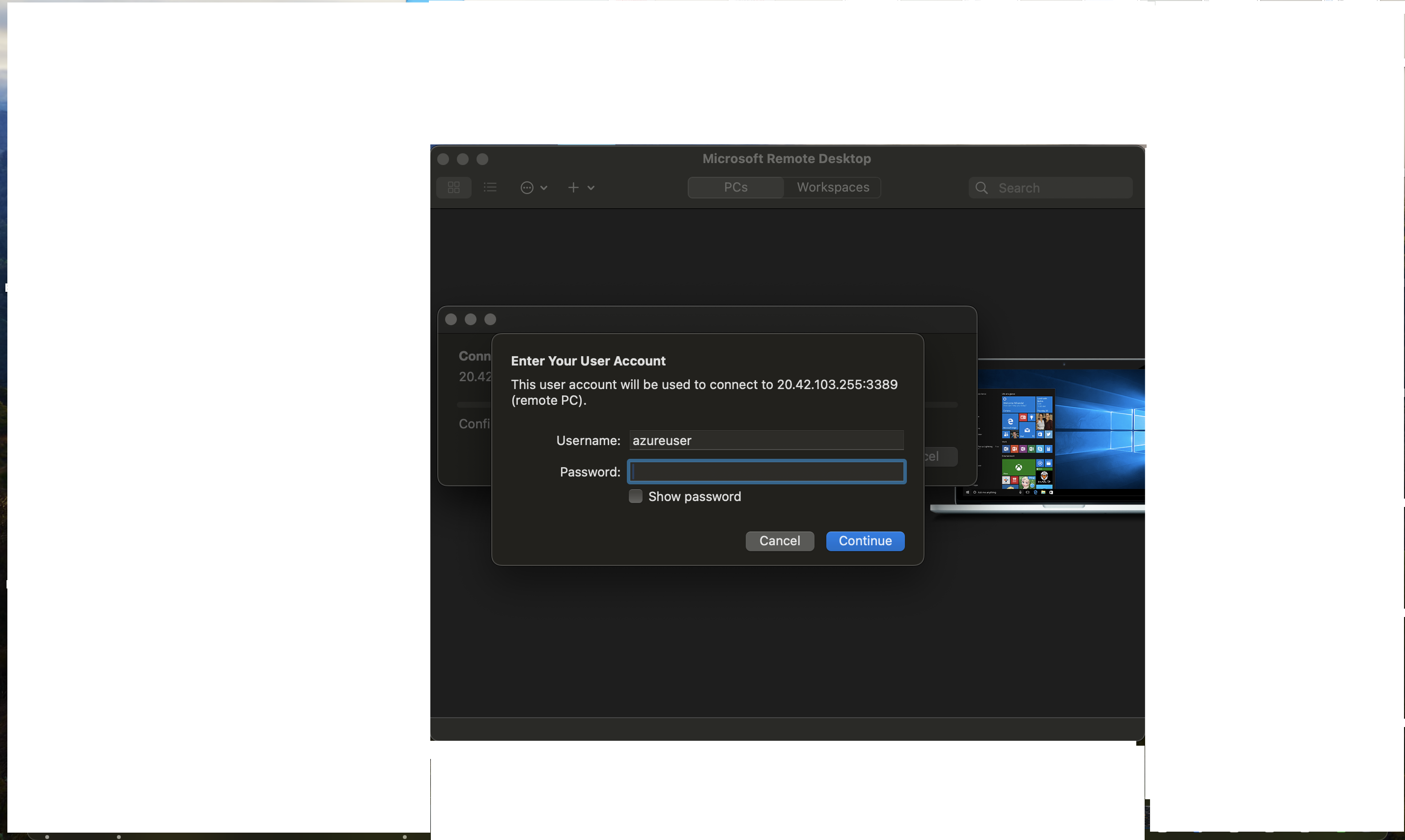

- To check if the Windows Server virtual machine works, log in using previously created credentials at the **Administrator Account** section above. Before signing in, Mac users need to go to the App Store, download and install the Microsoft Remote Desktop app. After installing the Microsoft Remote Desktop app, double-click the downloaded RDP file.

- Supply the password as required.

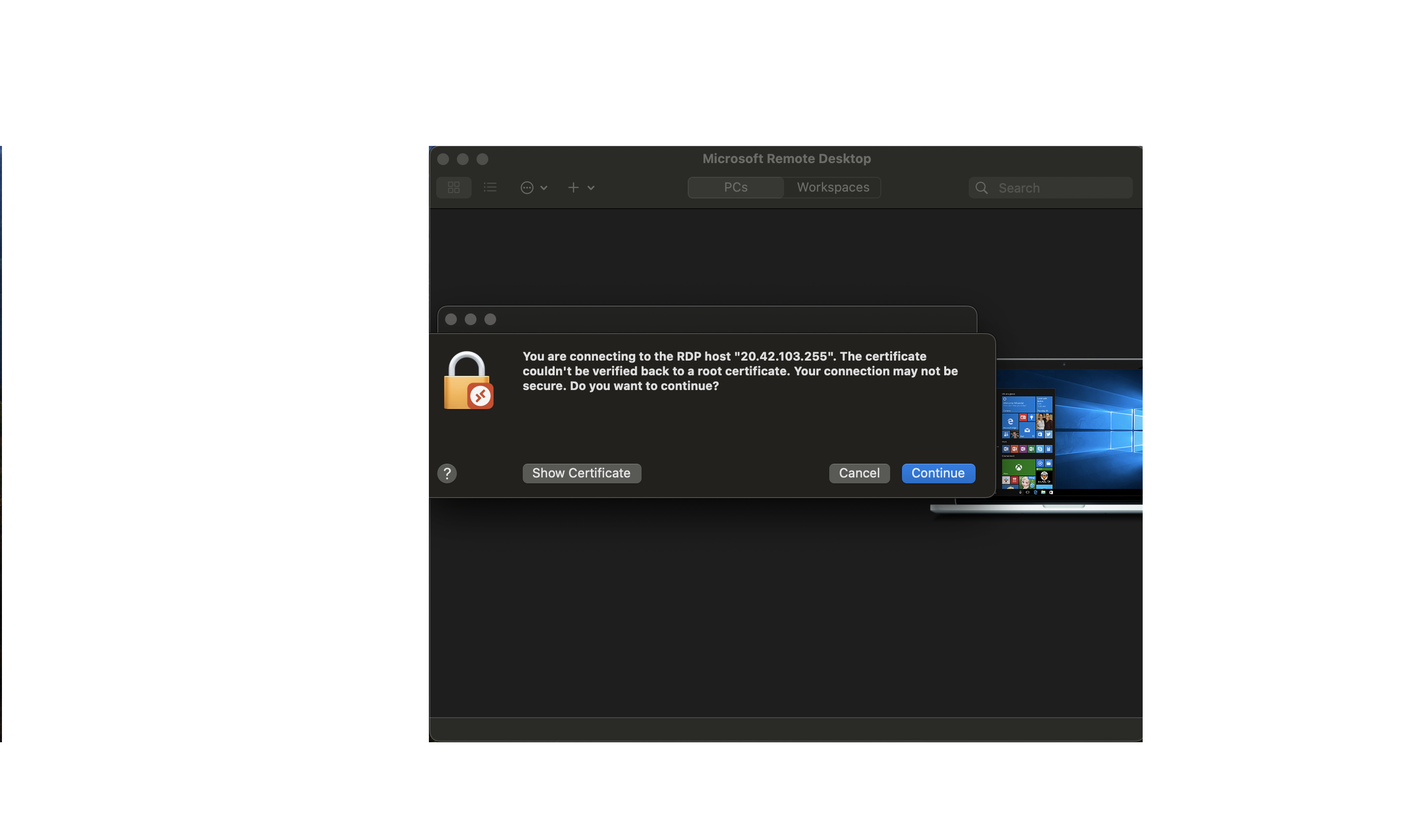

- Click **continue** at the next prompt

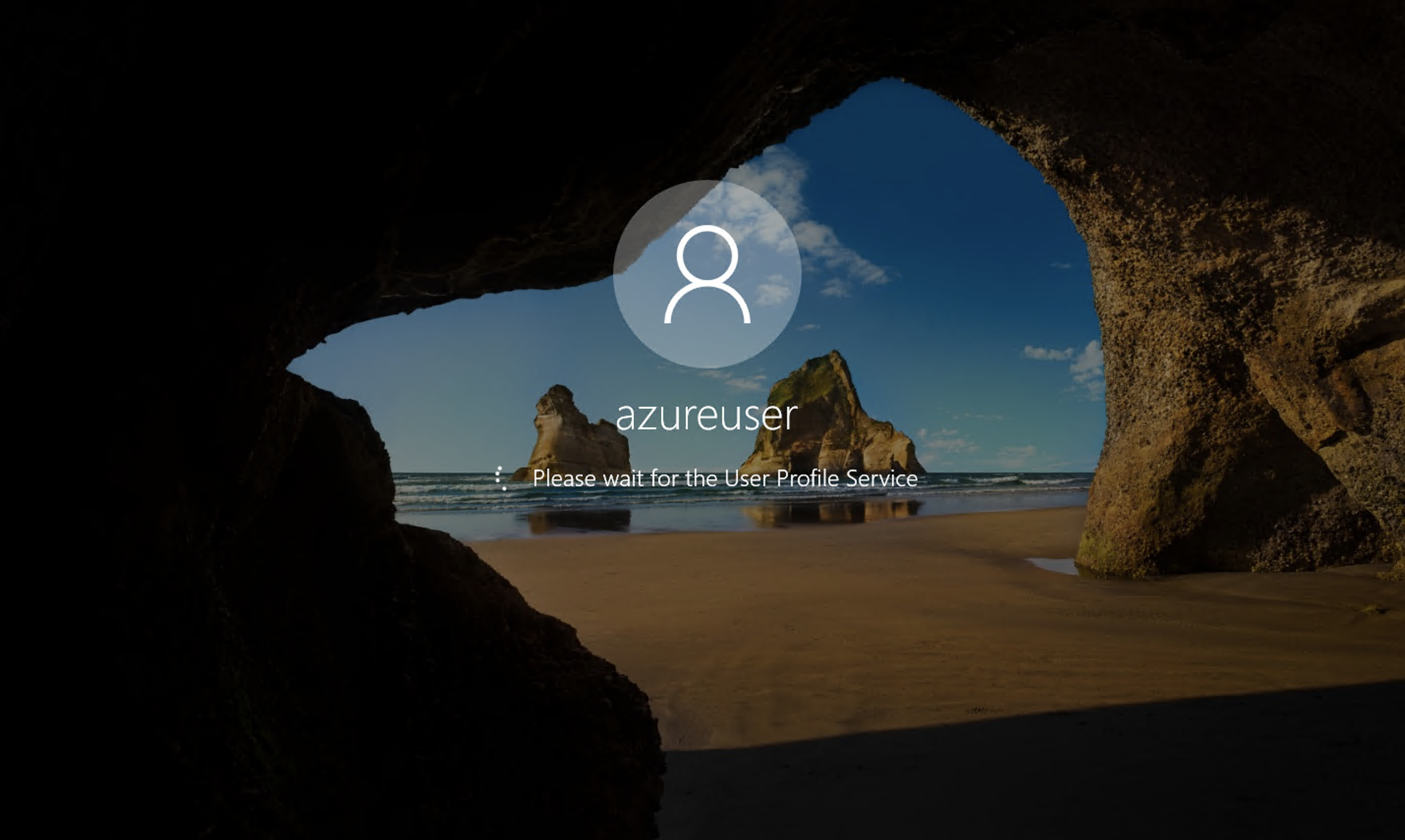

- If the correct login credentials are submitted there will be a Windows Server starting page like below.

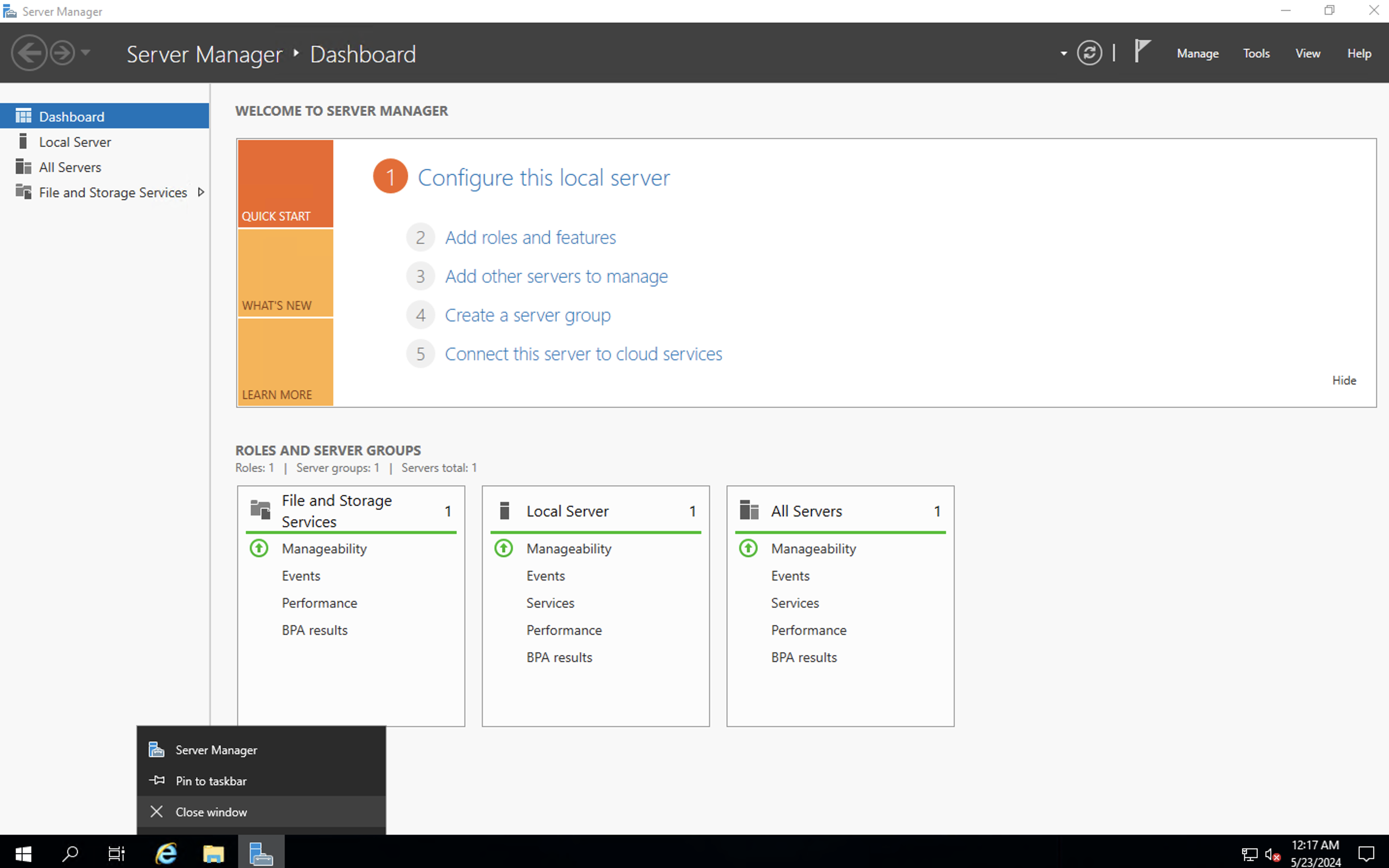

- This automatically launches the **Server manager**

- This is followed by a setup page below.

- There is a need to also install a **Windows server iis role** in the server and there are two ways to do this. The first approach is using the server manager setup and the second one is via the terminal. In this post, I will be using the terminal.

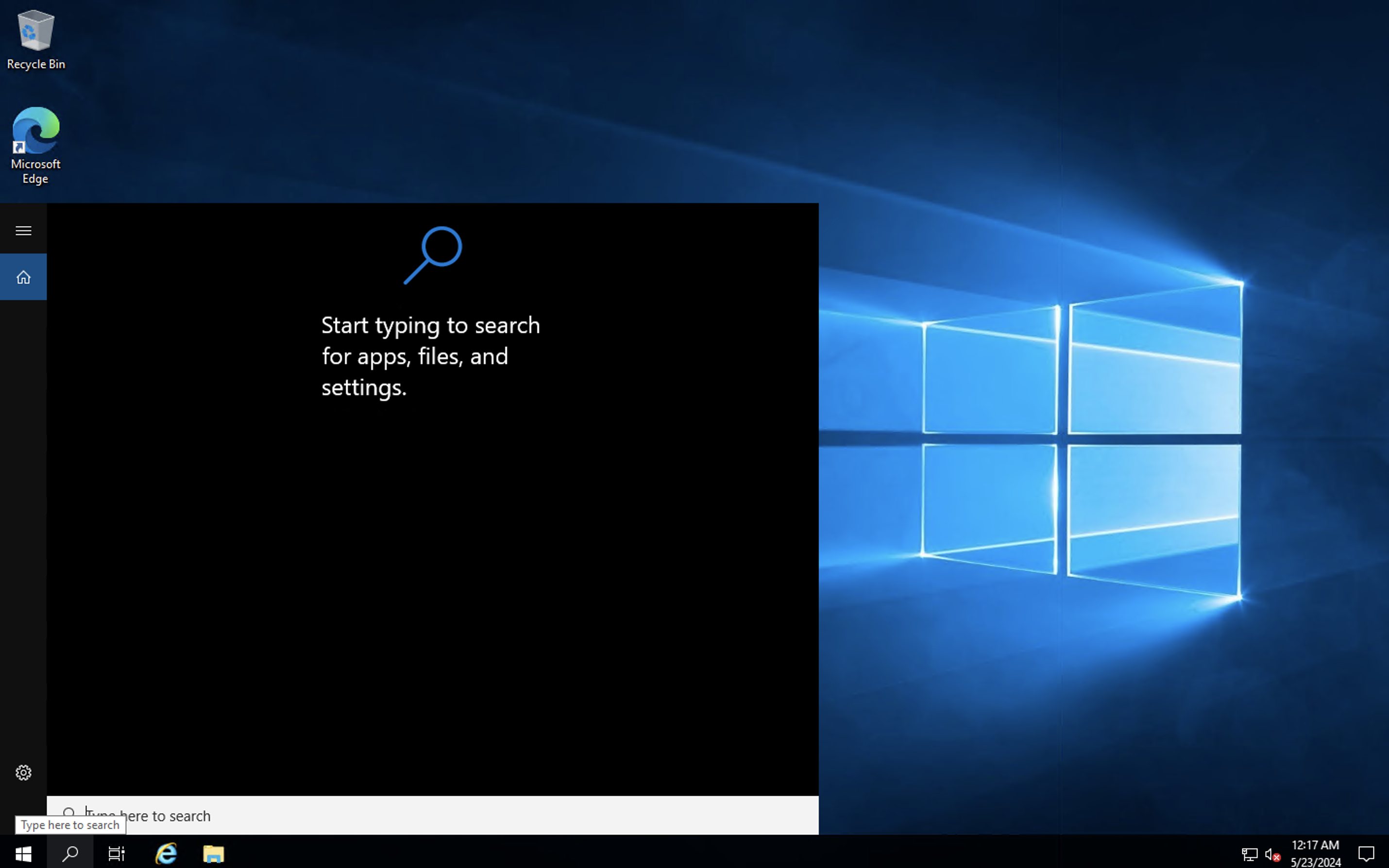

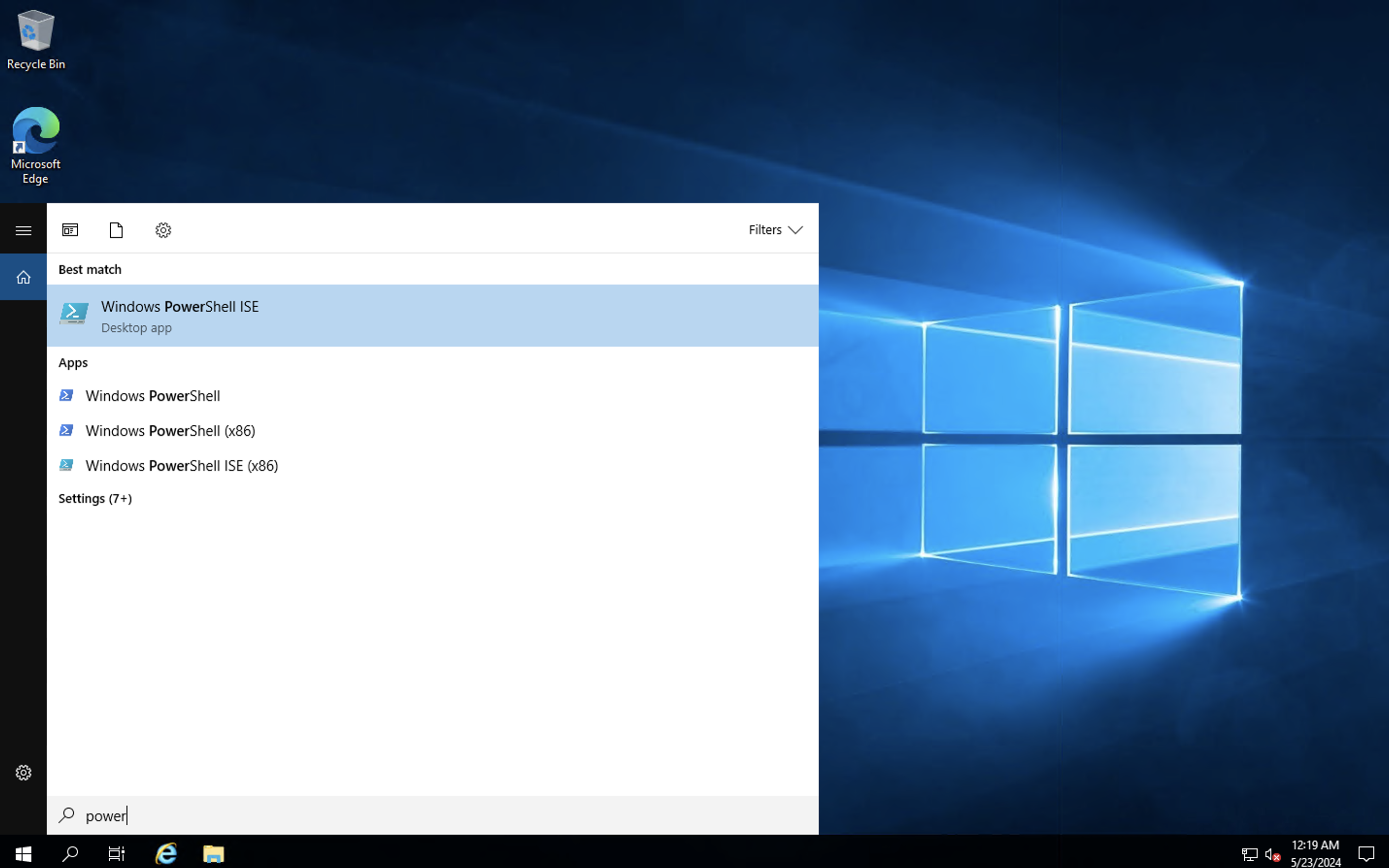

- Close the server setup page and search **PowerShell** at the hand lens icon at the bottom of the virtual machine page. Type "PowerShell" to search.

-Right-click to launch the PowerShell terminal as an Administrator

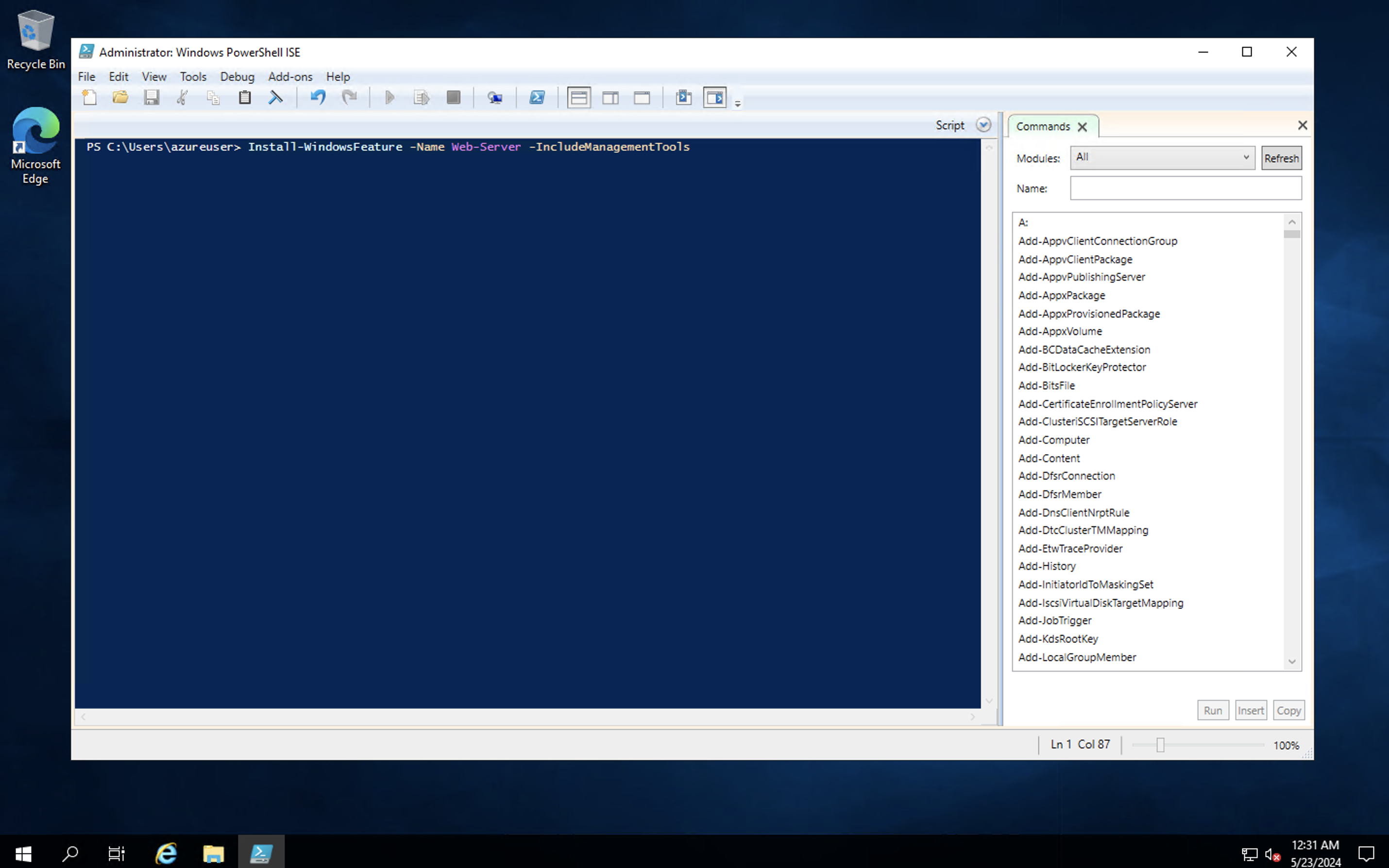

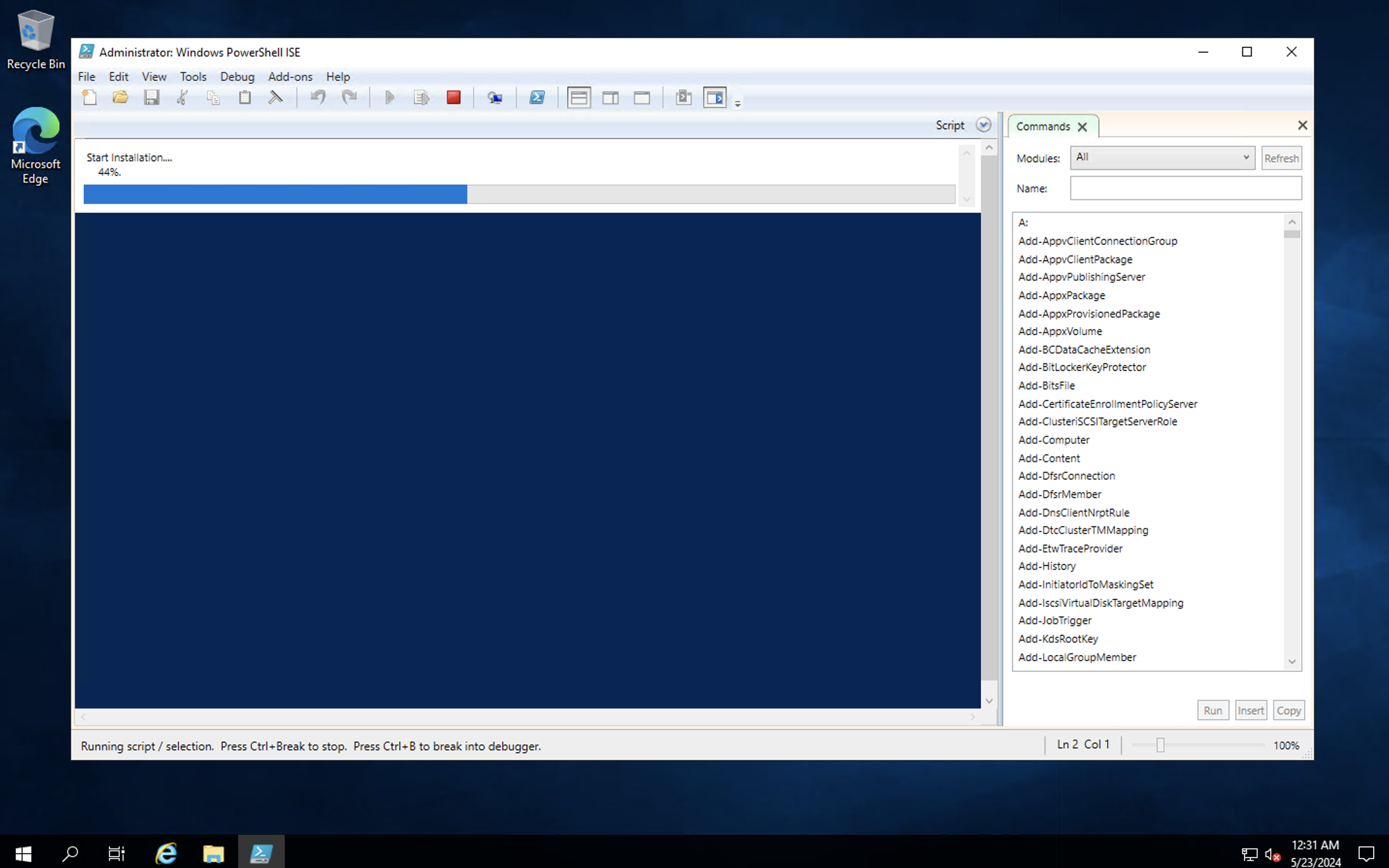

- type in the following command in the PowerShell terminal:

**install-WindowsFeature -name Web-Server -IncludeManagementTools**

This creates the **iis** role in this server.

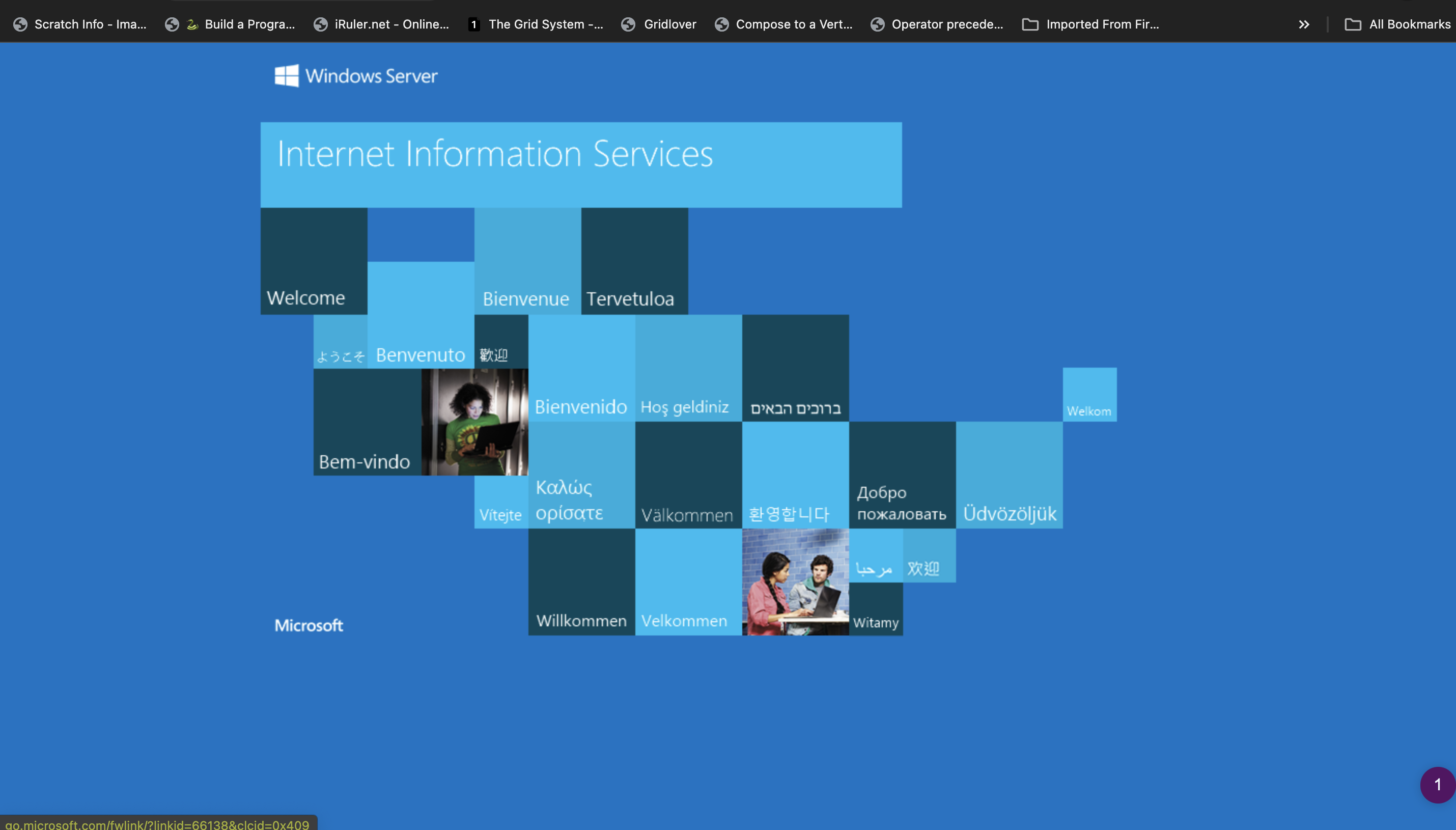

- When the role installation is complete, go to the Azure portal Windows Server Virtual Machine page, copy the **Public IP address**, and paste it on the browser.

- if the virtual machine is properly configured following these instructions, you should see something like below:

#Conclusion

With all the steps listed above, I have created a Windows Server virtual machine in an Azure portal. It is advised that the resource group in the Azure portal be deleted. However, before deleting the resource group in the Azure portal, there is a need to shut down the Windows Server virtual machine on the MacOS.

This post describes a high-level approach to creating a Windows Server virtual machine on a MacOS computer. More posts on cloud DevOps with Azure will be coming up during my journey into cloud development.

cover image by <a href="https://unsplash.com/@tvick?utm_content=creditCopyText&utm_medium=referral&utm_source=unsplash">Taylor Vick</a> on <a href="https://unsplash.com/photos/cable-network-M5tzZtFCOfs?utm_content=creditCopyText&utm_medium=referral&utm_source=unsplash">Unsplash</a>

| tundeiness |

1,865,718 | Join Me on X Now! | Hello dev.to community! First and foremost, a huge thank you to each one of you for... | 0 | 2024-05-26T15:37:41 | https://dev.to/bingecoder89/join-me-on-x-now-2n6m | webdev, beginners, codenewbie, tutorial | ## Hello dev.to community!

First and foremost, a huge thank you to each one of you for following my journey and supporting my work here on dev.to. Your engagement and feedback have been invaluable.

Today, I want to share an exciting way for us to stay even more connected and ensure you never miss an update on my latest projects and articles.

## Why Follow Me on X?

While dev.to is my go-to platform for detailed articles and project write-ups, X (formerly known as Twitter) offers a complementary space for quick updates and immediate engagement. Here’s why you should follow me on X:

### 1. **Instant Article Notifications**

On X, I share immediate updates every time I publish a new article on dev.to. If you follow me, you’ll be the first to know about my latest insights and tutorials, ensuring you never miss a post.

### 2. **Project Updates**

I often post progress updates on my ongoing projects on X. These include sneak peeks, behind-the-scenes looks, and quick updates that don’t always make it into my dev.to articles.

### 3. **Engage in Quick Discussions**

X allows for quick and direct interactions. If you have questions or thoughts about my latest project or article, you can easily reach out to me there for a speedy response.

### 4. **Stay in the Loop**

By following me on X, you can stay up-to-date with my latest activities and announcements. Whether it's a new project launch, a significant milestone, or an exciting development, you’ll hear about it first on X.

## How to Follow Me

Joining me on X is simple! Just click the link below and hit the follow button:

[Follow Me on X](https://twitter.com/@AnkitBal71210)

Your support on X would mean a lot to me. It’s another great way for us to connect, share ideas, and continue growing our community of developers.

## Let’s Stay Connected!

Thank you for considering following me on X. Your engagement on both dev.to and X helps me to keep creating valuable content and sharing my projects with you. I look forward to continuing this journey together across both platforms.

Stay awesome, and see you on X!

--- | bingecoder89 |

1,865,717 | Top 10 GitHub repositories for Java developers ✨ | Data updated on May 18, 2024 Top 1. Java Guide - 144k ⭐️ This is the repo made by... | 0 | 2024-05-26T15:36:32 | https://dev.to/tmsanghoclaptrinh/top-10-github-projects-to-help-you-programming-java-1h93 | webdev, beginners, programming, java | > Data updated on May 18, 2024

## [Top 1. Java Guide - 144k ⭐️](https://github.com/Snailclimb/JavaGuide)

> This is the repo made by Chinese developers. If you often follow GitHub, you will know that Chinese developers share many repos with excellent and extremely detailed programming knowledge. And of course, **Java Guide** is one of them 👨💻

>

> Now all browsers have the function to translate the entire website (For example in Google Chrome, you can right-click and click Translate to English), so you can absolutely use that feature to translate the repo into English for easy reading and understanding.

>

> Although the translation is not 100% accurate, with genuine repos like this, having a solution like this is great, right?

>

> 👉️ Github: https://github.com/Snailclimb/JavaGuide

>

>

---

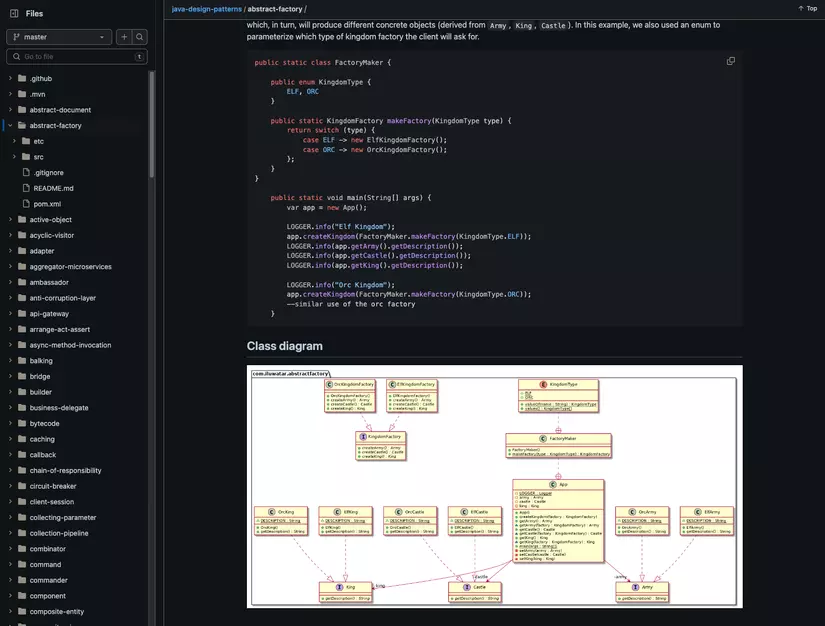

## [Top 2. Design Patterns implemented in Java - 86.7k ⭐️](https://github.com/iluwatar/java-design-patterns)

> Surely you have all heard that **Design Patterns** is one of the important knowledge that helps you progress from Junior to Mid-level to Senior, right? 🚀

>

> Understanding and applying Design Patterns effectively will help programmers solve problems when designing applications or systems.

>

> This repo will guide you thoroughly about Design Patterns, and implement them using the **Java** programming language.

>

> 👉️ Github: https://github.com/iluwatar/java-design-patterns

>

>

---

## [Top 3. advanced-java - 74.6k ⭐️](https://github.com/doocs/advanced-java)

> Another great repo from Chinese developers** 🤓

>

> However, this repo collects documents specifically for **experienced Java developers** 💼

>

> In this repo there is mostly advanced knowledge such as:

> - **High concurrency architecture:** Message queue, Search engine, Cache, Highly concurrent systems, ...

> - **Distributed Systems**

> - **Highly available architecture**

> - **Microservice architecture**

> - **Massive data processing**

>

> Repo follows the direction of **asking interview**, related to the advanced knowledge mentioned above, then will give **ideas** for answers and **suggestions detailed answer (if any)**

>

> 👉️ Github: https://github.com/doocs/advanced-java

>

>

---

## [Top 4. interviews - 61.9k ⭐️](https://github.com/kdn251/interviews)

> The name says it all. This repo will help you thoroughly prepare Java programming knowledge, before participating in **interviews** 👨💻

>

> The author mainly shares questions related to coding, and of course **includes solutions**, to handle problems that big companies like Adobe, Facebook, Google, Amazon give.

>

> Besides, there are instructions for solving exercises on **LeetCode**, or in the famous book **"Cracking the coding interview"** using the Java programming language 📝

>

> 👉️ Github: https://github.com/kdn251/interviews

>

>

---

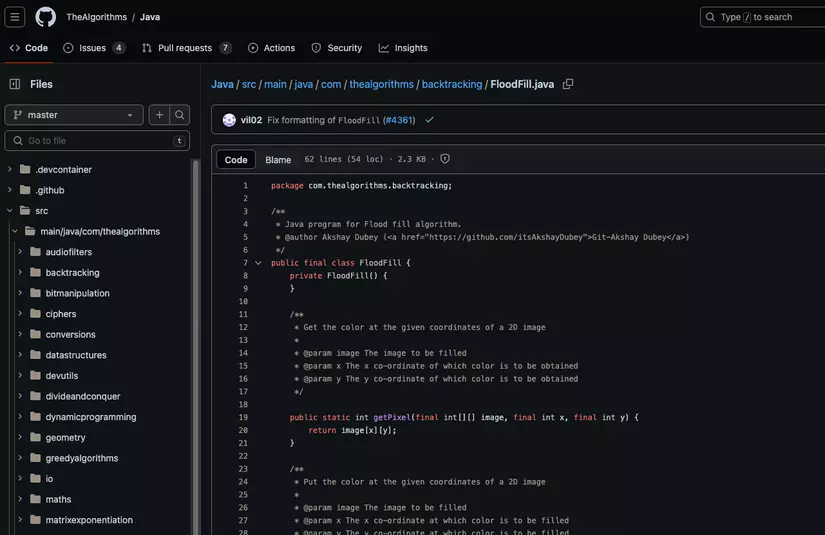

## [Top 5. TheAlgorithms - Java - 57k ⭐️](https://github.com/TheAlgorithms/Java)

> The GitHub page of **"The Algorithms"** is probably too famous for algorithm enthusiasts.

>

> This **"TheAlgorithms - Java"** repo is one of their sub-repos, helping you understand and implement **data structures and algorithms** using the **Java** programming language.

>

> 👉️ Github: https://github.com/TheAlgorithms/Java

>

>

---

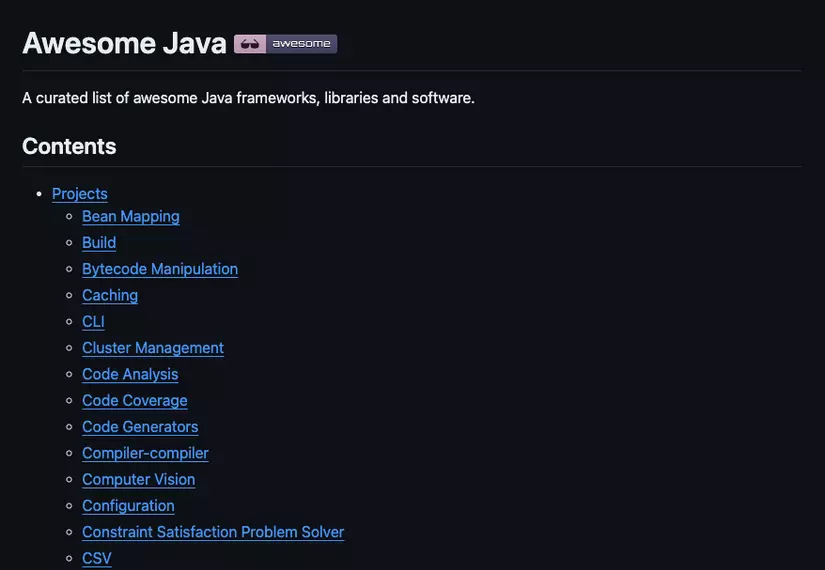

## [Top 6. Awesome Java - 40.1k ⭐️](https://github.com/akullpp/awesome-java)

> It's a fact that many repos on GitHub start with the word **"awesome"** which is **"awesome"** exactly as the name suggests 🤩

>

> Repo **Awesome Java** is a list of frameworks, libraries and applications written in genuine Java, serving many different purposes 👨💻

>

> - From Backend programming, Job Scheduling, Logging, ...

>

> - Up to mobile programming, games, networking, ...

>

> This will definitely be a useful reference source for Java developers.

>

> 👉️ Github: https://github.com/akullpp/awesome-java

>

>

---

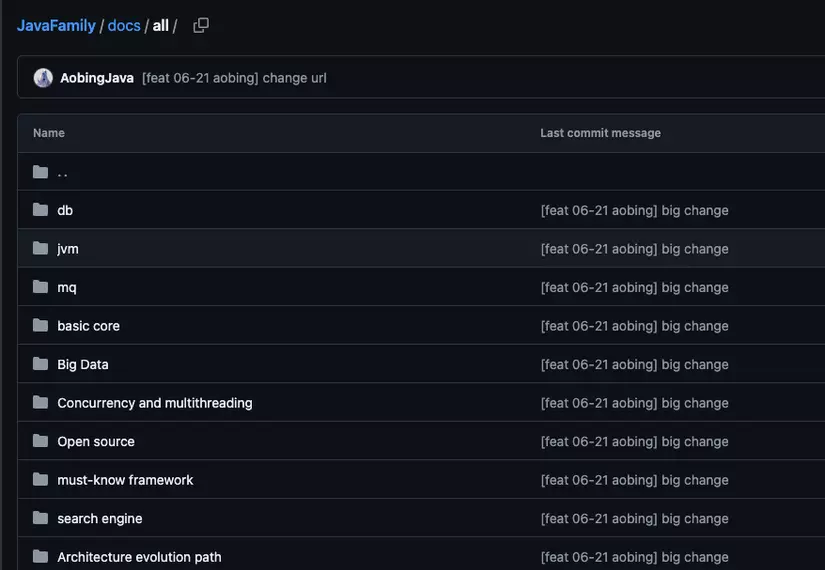

## [Top 7. JavaFamily - 35.5k ⭐️](https://github.com/AobingJava/JavaFamily)

> A repo that compiles many Study Guides, as well as interview questions related to Java.

>

> According to the author, this repo covers almost all of **the core knowledge that Java programmers need to know to become a master** 🧠

>

> The author of this repo is Ao Bing - he has 8 years of programming experience, and was once **Blog Star Top 1** on China's CSDN site. His CSDN page currently has **more than 300,000** fan followers, and on bilibili there are also nearly **250,000 fans** 🥇

>

> 👉️ Github: https://github.com/AobingJava/JavaFamily

>

>

---

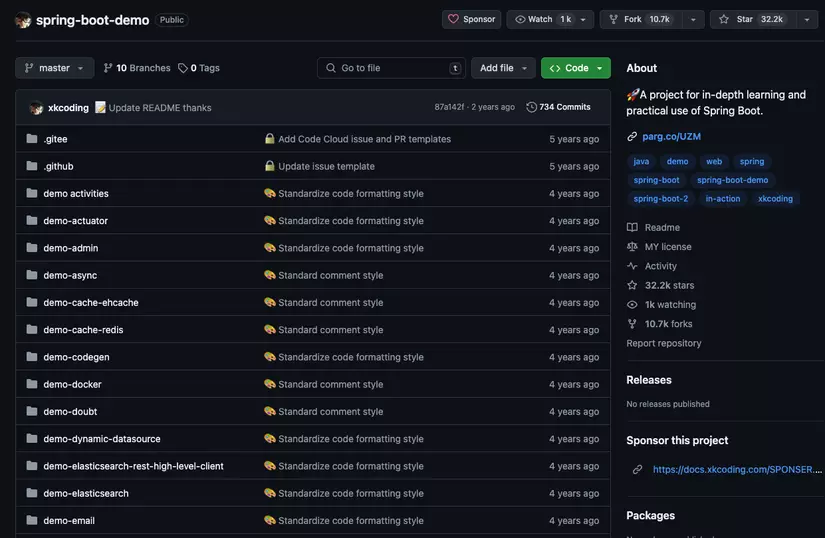

## [Top 8. Spring Boot Demo - 32.2k ⭐️](https://github.com/xkcoding/spring-boot-demo)

> I don't know China's technology market very well. But when searching for Java repos, highly appreciated by the community on GitHub, I found many repos from developers in this country.

>

> **Spring Boot Demo** is a repo containing all **source code** of demo applications, coded with Spring Boot. Java developers must be very familiar with Sprint Boot, right? 🙌

>

> According to a rough count, there are about **65 demo projects** in this repo, free for you to learn and research.

>

> 👉️ Github: https://github.com/xkcoding/spring-boot-demo

>

>

---

## [Top 9. Spring Boot Example - 29.9k ⭐️](https://github.com/ityouknow/spring-boot-examples)

> This repo also has the same purpose as the above repo, but with a different author.

>

> The author is a Chinese developer, again 🫣

>

> But the repo above is over **32.2k** ⭐️, and this guy's is also around **30k** ⭐️, so I have to admit it is "six of one and half a dozen of the other"

>

> In my subjective opinion, it's probably because the guy above plays too hardcore, up to 65 projects. And this guy only has more than 20 projects to share with the community.

>

> As for quality details within each project, please proactively access the GitHub link below to test.

>

> 👉️ Github: https://github.com/ityouknow/spring-boot-examples

>

>

---

## [Top 10. To Be Top Javaer - 25.2k ⭐️](https://github.com/hollischuang/toBeTopJavaer)

> The repo name shows the author's great confidence. But look at the photo in the **README** section, if you know Chinese, please translate it for me to see if he is selling the course 😅

>

> When going into the source part of the repo, the author provides a clear **mind map**, as well as detailed knowledge **from basic to advanced**, structured into folders as well 👍

>

> Ignoring the issue of whether the author sells the course or not, I think this effort of sharing knowledge for free with everyone is worthy of recognition 💯

>

> 👉️ Github: https://github.com/hollischuang/toBeTopJavaer

>

>

---

Thanks for reading! Write about your favourite GitHub repositories in the comments!

Btw, you can support my work by buying me a coffee!

<a href="https://www.buymeacoffee.com/tmsanghoclaptrinh" target="_blank"><img src="https://cdn.buymeacoffee.com/buttons/v2/default-yellow.png" alt="Buy Me A Coffee" style="height: 60px !important;width: 217px !important;" ></a>

Thank you 🌻

Hope this help. See ya 👋

~ Sang Minh Tran from Vietnam 🇻🇳 | tmsanghoclaptrinh |

1,865,713 | Big Data: a ferramenta que precisamos. | Big Data é o termo usado para descrever uma grande quantidade de dados, que a todo momento são... | 0 | 2024-05-26T15:32:03 | https://dev.to/delmiro/um-pouco-sobre-big-data-497j | bigdata, database, datascience, backend | Big Data é o termo usado para descrever uma grande quantidade de dados, que a todo momento são gerados por pessoas que registram e documentam tudo que acontece ao seu redor com dispositivos e aparelhos interligados a uma rede, tendo como objetivo produzir e compartilhar ideias, conceito, acontecimentos, preferências e muito mais dentro e fora do meio em que vivem.