id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,865,548 | How to migrate DNS records from CloudFlare to AWS Route53 with Terraform&Terragrunt | Possible reasons There are multiple reasons for such migration. The most common are the... | 0 | 2024-05-26T11:26:54 | https://dev.to/yyarmoshyk/how-to-migrate-dns-records-from-cloudflare-to-aws-route53-with-terraformterragrunt-2ebj | dns, route53, migration, awswaf | ## Possible reasons

There are multiple reasons for such migration. The most common are the following:

1. You'd like to use external-dns controller in your EKS cluster to manage DNS records automatically for you, however the CloudFlare support is still in beta and you don't want to use it for production workloads.

2. You want to take the advantages of [AWS WebApplication Firewall](https://aws.amazon.com/waf/) instead of CloudFlare WAF.

There might be other reasons but I faced the 2 in the most resent project.

You'll need to put either Cloudfront distribution or ApplicationLoadBalancer (ALB) in front of your web application to use AWS WAF because it provides the application level protection so it can not be enabled for NetworkLoadBalancer (NLB)

## Migration flow

1. Read all the records from the existing CloudFlare DNS zone.

You can re-use the python script I've prepared. The automation is available in [github.com/yyarmoshyk/read-cloudflare-dns-records](https://github.com/yyarmoshyk/read-cloudflare-dns-records)

The readme file describes how to use it.

2. Create DNS zone in AWS

You don't need to invest much efforts into this. Feel free to re-use the existing [terraform-aws-route53](https://github.com/terraform-aws-modules/terraform-aws-route53/tree/master/modules/zones) community module

3. Create DNS records in AWS

The script above produces the json output that can be used as an input for the [terraform-aws-route53/records](https://github.com/terraform-aws-modules/terraform-aws-route53/tree/master/modules/records) terraform module

```json

{

"name": "example.com",

"type": "A",

"ttl": 300,

"records": [

"10.10.10.10"

]

}

```

The output should be saved into the file. Next the contents can be read with terrafrom/terragrunt and specified as inputs to the [terraform-aws-route53/records](https://github.com/terraform-aws-modules/terraform-aws-route53/tree/master/modules/records) terrafrom module

```

records_jsonencoded = jsondecode(file("dns_records.json"))

```

4. Update NameServer configuration in your current DNS registrar.

For this you'll need to refer to the documentation of the DNS provider where your domain is registered.

I will not cover running `terragrunt apply` procedure here. There are many documents about this over the internet.

## Closing words

Most of the time you'll spend on creating the API token in CloudFlare and injecting the route53 provisioning into your existing IaaC structure.

Basically we extract the data from cloudflare, convert it into proper format, next create all records with terragrunt or terraform. | yyarmoshyk |

1,865,547 | Unveiling the Exceptional Talent: "Rosewood" TV Show Cast | Dive into the world of "Rosewood" and discover the mesmerizing performances of its cast. Led by... | 0 | 2024-05-26T11:25:45 | https://dev.to/theon_greyjoy_eb641583a84/unveiling-the-exceptional-talent-rosewood-tv-show-cast-2kp | Dive into the world of "Rosewood" and discover the mesmerizing performances of its cast. Led by Russell Hornsby as Dr. Beaumont Rosewood Jr., the show's ensemble brings depth and authenticity to every scene. Jaina Lee Ortiz shines as Detective Annalise Villa, while Morris Chestnut adds a layer of complexity as her ex-husband and fellow detective. Together, they form a dynamic team, solving intricate cases in Miami's vibrant backdrop. With each episode, the [Rosewood TV show cast](https://www.hbtrl.com/articles/rosewood-tv-show-cast/) delivers stellar performances, drawing viewers into a world of crime, mystery, and compelling storytelling. | theon_greyjoy_eb641583a84 | |

1,865,546 | How to Execute SQL Commands In Another File from the PostgreSQl psql shell in Windows | The Problem Suppose you download an sql file containing some sql instructuions (such as... | 0 | 2024-05-26T11:23:25 | https://dev.to/johnakindipe/how-to-execute-sql-commands-in-another-file-from-the-postgresql-psql-shell-in-windows-ppa | postgres, commandline, sql | ## The Problem

Suppose you download an sql file containing some sql instructuions (such as dummy data from mockaroo), the options you have to run these commands in postgres include copying the commands in the file over to the psql shell manually and running them. This approach is workable if the file contains only a few commands, however, say the file contains hundreds of lines of sql commands, you realize this option doesn't scale with size.

## The Solution

Thankfully, you can execute SQL commands located in another file on your pc straight from the psql shell. The command to do this is `\i filepath to sql file`

####To get the filepath.

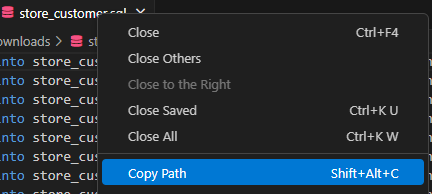

1. Open the file in a code editor such as VSCode

2. Right click on the file.

3. Select the copy path option from the dropdown menu.

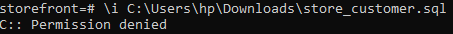

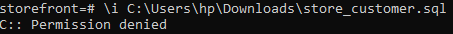

4. Then, open your psql shell and run this command `\i filepath to sql file`, and it’s more than likely you’ll get the following output:

The solution to the above is quite simple really, very simple infact.

##Let’s troubleshoot

It may be this file was **downloaded** onto your pc and needs to have necessary permissions.

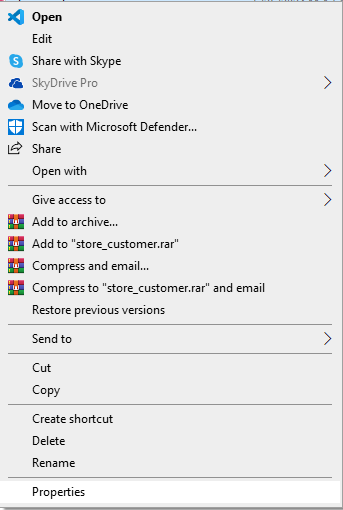

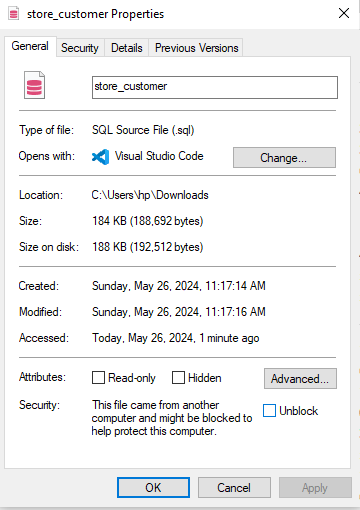

1. Open the file location

2. Right-click on the file and click on properties in the drop down menu

3. Click on unblock just near the lower right edge of the _properties dialogue box_ and select the apply option (it will become clickable)

Now that we’ve unblocked the file, we can run the command again in our psql shell and see what happens.

Seems like we're still getting the same error as before. What could be the issue?

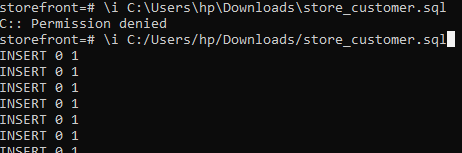

##The Solution

I did mention that the solution is simple, and it is quite simple, **to the initiated**. The uninitiated might spend hours or days of debugging trying to figure out what the problem is. You may even give up at some point and just manually copy the sql commands and run them directly in the psql shell. Well, let's save ourselves any ache:

The simplicity of the solution is quite interesting. All we need to do is simply change the direction of our slashes from “\” to “/” and the command works as shown below.

The solution to this problem stems from how the file paths in windows differs from what the psql shell expects and all we need to do is conform to what psql wants, and that my folks is the solution.

---

####This article does assume that:

The user on the psql shell has access to the sql file we are trying to execute code from. Otherwise, all of the above might not apply and it may be necessary to get permission to access the file in the first place.

---

Kindly share this article if you found it useful. Cheers 😊

| johnakindipe |

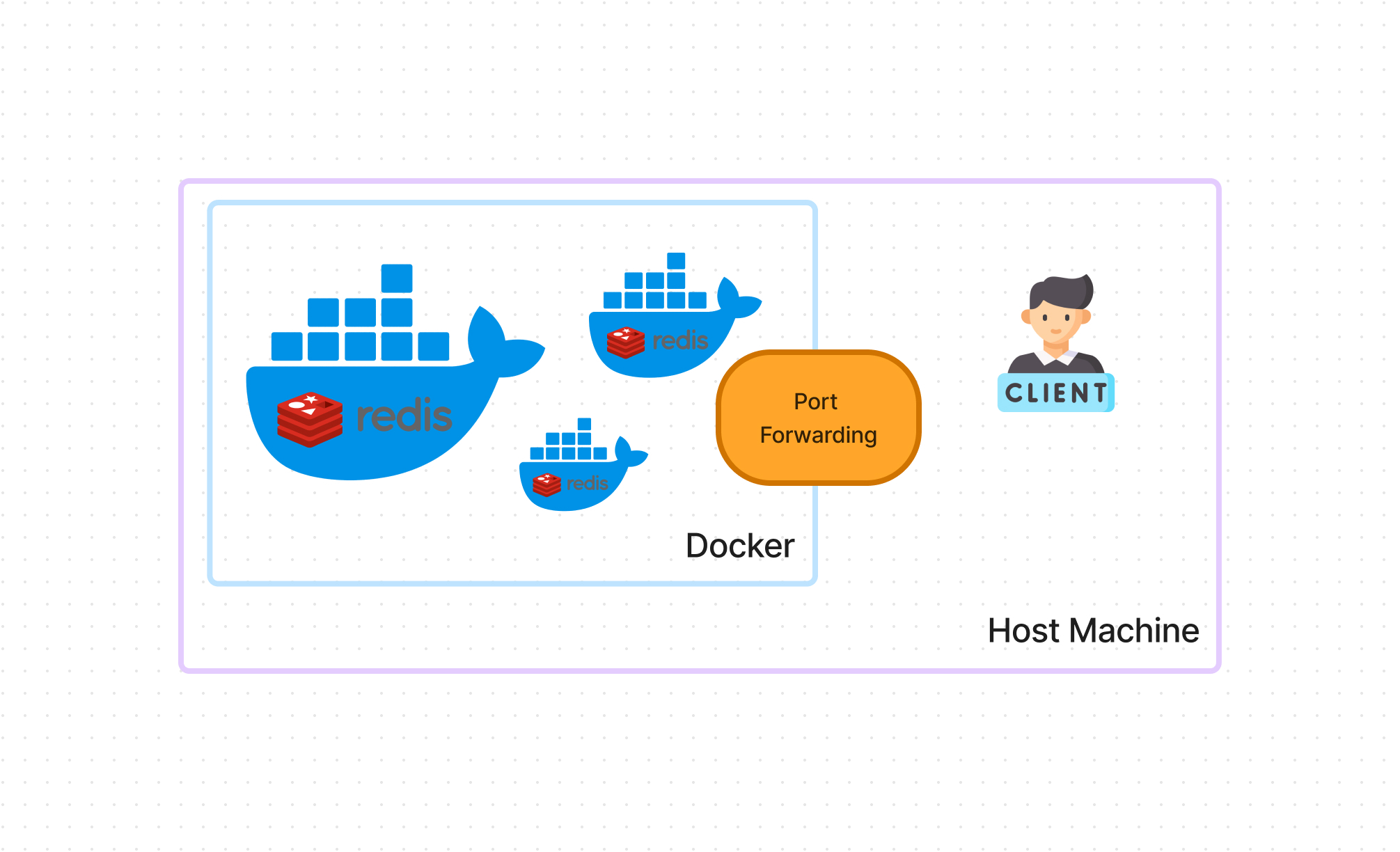

1,865,545 | Database Caching for Beginners | In today's fast-paced digital world, web applications are expected to deliver high performance and... | 0 | 2024-05-26T11:23:24 | https://dev.to/imabhinavdev/database-caching-for-beginners-15hj | database, systemdesign, beginners, webdev | In today's fast-paced digital world, web applications are expected to deliver high performance and responsiveness to users. One critical aspect of achieving this goal is optimizing database performance, as database queries often represent a bottleneck in application performance. Database caching emerges as a powerful technique to address this challenge by reducing the load on database servers and improving response times.

Database caching involves storing frequently accessed data in temporary storage, known as a cache, to eliminate the need for repeated queries to the database. By caching data closer to the application layer, caching solutions can significantly enhance performance and scalability. In this comprehensive guide, we'll explore the intricacies of database caching, including how it works, popular caching solutions like Redis and Memcached, implementation strategies, benefits, considerations, and real-world scenarios.

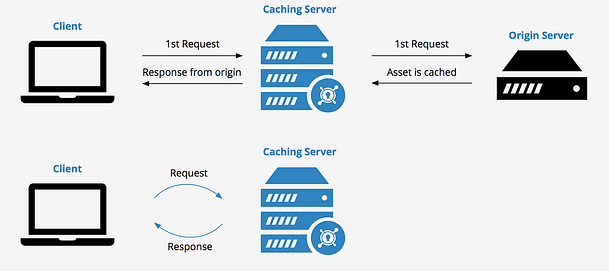

## How Database Caching Works

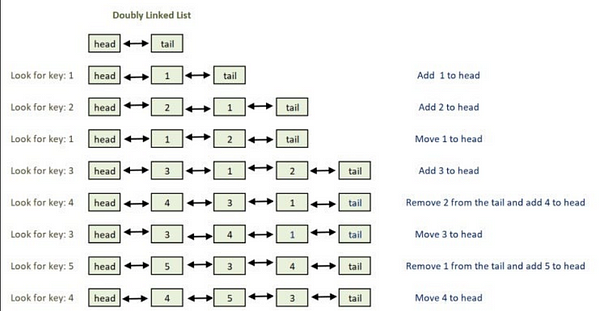

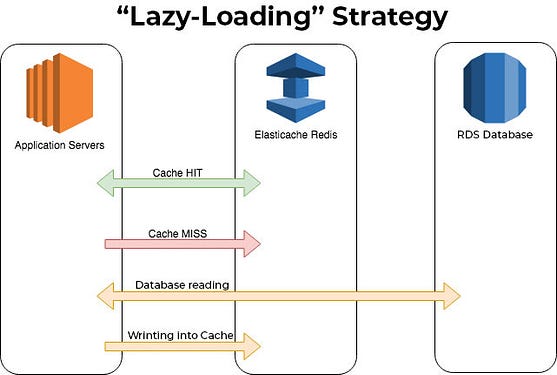

At the core of database caching lies the principle of minimizing database round-trips by storing frequently accessed data in a cache. When a user requests data from the application, the caching layer intercepts the request and checks if the requested data is already cached. If the data is found in the cache, it's retrieved and returned to the application without querying the database. This process, known as cache hit, eliminates the overhead of querying the database and significantly reduces response times.

However, if the requested data is not found in the cache, a cache miss occurs, and the caching layer must retrieve the data from the database. Upon fetching the data, the caching layer stores it in the cache for future use, thereby optimizing subsequent requests for the same data. By caching frequently accessed data, database caching minimizes the workload on the database server, improves application performance, and enhances scalability.

## Redis: A Powerful Caching Solution

Redis stands out as one of the most popular and versatile caching solutions available today. It is an open-source, in-memory data structure store known for its exceptional performance, rich feature set, and robustness. Redis supports various data structures, including strings, hashes, lists, sets, and sorted sets, making it suitable for a wide range of caching use cases.

One of Redis's key strengths lies in its ability to serve as more than just a caching solution. It can function as a primary data store, a message broker, a job queue, and much more. Additionally, Redis offers advanced features such as replication, persistence, pub/sub messaging, Lua scripting, and clustering, making it a preferred choice for demanding caching requirements.

## Memcached: A High-Performance Caching System

Memcached represents another popular caching system widely used in web application environments. It is a simple, high-performance, distributed memory object caching system designed to accelerate dynamic web applications by alleviating database load. Memcached stores data in memory and serves as a key-value store, enabling rapid data access with minimal latency.

Memcached's simplicity and efficiency make it an attractive option for caching frequently accessed data in distributed environments. It operates as a distributed caching system, allowing multiple cache servers to collaborate and share cached data. Memcached's architecture facilitates horizontal scaling, meaning you can add more cache servers to accommodate increasing application load and traffic.

## Implementing Database Caching: Best Practices and Strategies

Implementing database caching requires careful planning, consideration, and adherence to best practices. Here's a step-by-step guide to effectively implement database caching in your web applications:

### Step 1: Identify Frequently Accessed Data

Before implementing caching, analyze your application's data access patterns to identify frequently accessed data or database queries. Focus on areas where caching can provide the most significant performance gains, such as read-heavy operations or resource-intensive queries.

### Step 2: Choose a Caching Solution

Select a caching solution that aligns with your application's requirements, performance goals, and scalability needs. Consider factors such as data types, storage capacity, scalability, reliability, and ease of integration. Evaluate both Redis and Memcached based on their features, performance benchmarks, and community support.

### Step 3: Integrate Caching into Your Application

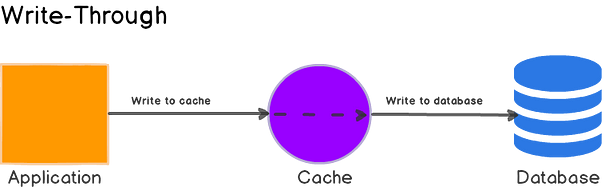

Integrate caching seamlessly into your application code to leverage its benefits effectively. Modify your application logic to check the cache before querying the database and update the cache when data is modified or invalidated. Implement cache invalidation strategies to ensure that cached data remains consistent with the database.

### Step 4: Configure Caching Parameters

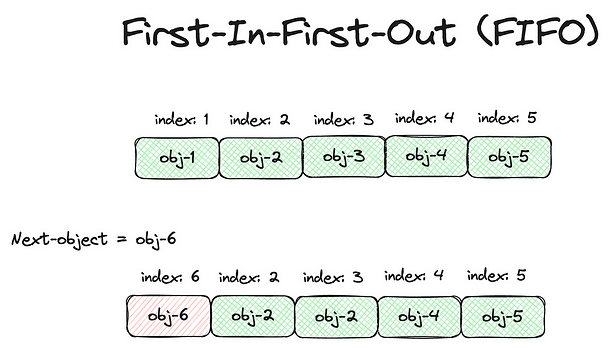

Fine-tune caching parameters such as expiration time, eviction policies, cache size, and data serialization to optimize performance and resource utilization. Experiment with different configurations to find the optimal balance between cache hit rate, memory usage, and data freshness.

## Real-World Scenario: E-Commerce Platform

Let's consider a real-world scenario to illustrate the importance of database caching in optimizing application performance. Suppose you're developing an e-commerce platform that experiences high traffic during peak shopping seasons. The platform allows users to browse products, view product details, add items to the shopping cart, and place orders.

In this scenario, database caching can significantly improve the platform's performance and scalability, especially during peak traffic periods. Here's how database caching can be implemented in various components of the e-commerce platform:

### Product Catalog:

- Cache frequently accessed product listings, categories, and attributes to reduce database queries and speed up page load times.

- Implement cache warming strategies to pre-load popular product data into the cache during off-peak hours, ensuring rapid access during peak traffic.

### Product Details:

- Cache product details, images, pricing information, and inventory status to minimize database round-trips when users view product details.

- Employ caching strategies to invalidate and refresh product details cache when product information changes or inventory levels are updated.

### Shopping Cart:

- Cache shopping cart contents and session data to maintain user session state and reduce the load on the database server.

- Implement cache partitioning or sharding to distribute shopping cart data across multiple cache servers and prevent bottlenecks.

### Order Processing:

- Cache frequently accessed order history, customer details, and transaction data to accelerate order processing and checkout.

- Utilize cache-based locking mechanisms to prevent race conditions and ensure data consistency during concurrent order updates.

By strategically implementing database caching throughout the e-commerce platform, you can enhance performance, scalability, and user experience, even during peak traffic periods.

## Benefits of Database Caching

Database caching offers numerous benefits for web applications, including:

- **Improved Performance:** Caching reduces database load and speeds up data retrieval, leading to faster response times and better user experience.

- **Scalability:** Caching solutions like Redis and Memcached support horizontal scaling, allowing you to add more cache servers to handle increased load.

- **Reduced Database Load:** By serving cached data from memory, caching reduces the number of queries and transactions sent to the database, thereby lowering database server load.

## Considerations and Best Practices

While implementing database caching, consider the following best practices and considerations:

- **Cache Invalidation:** Implement cache invalidation strategies to ensure that cached data remains consistent with the database and reflects the latest updates.

- **Eviction Policies:** Choose appropriate eviction policies to manage cache size and prioritize data retention based on access patterns and expiration criteria.

- **Data Serialization:** Serialize complex data structures before storing them in the cache to ensure compatibility, efficiency, and seamless retrieval.

- **Monitoring and Maintenance:** Regularly monitor cache performance, usage, and health metrics to detect issues, optimize configuration, and prevent cache-related bottlenecks.

## Conclusion

In conclusion, database caching is a fundamental technique for optimizing the performance, scalability, and responsiveness of web applications. By caching frequently accessed data closer to the application layer, caching solutions like Redis and Memcached significantly reduce the load on database servers and enhance overall system performance. Through careful planning, implementation, and adherence to best practices, you can leverage database caching effectively to achieve faster response times, improved scalability, and enhanced user experience.

In today's competitive digital landscape, where users expect instant access to information and seamless browsing experiences, database caching emerges as a critical component of application optimization strategies. Whether you're developing an e-commerce platform, a social networking site, or an enterprise application, incorporating database caching can provide tangible benefits in terms of performance, scalability, and resource efficiency.

As you embark on your journey to implement database caching in your applications, remember to evaluate your caching requirements, choose the appropriate caching solution, and fine-tune caching parameters to optimize performance and resource utilization. By embracing caching best practices, monitoring cache performance, and adapting to changing application needs, you can harness the full potential of database caching to deliver exceptional user experiences and stay ahead in today's dynamic digital landscape. | imabhinavdev |

1,865,540 | The Grasshopper's Rebellion | The grasshopper and the ants It was time for the weekly team meeting. Michael handed out... | 0 | 2024-05-26T11:09:57 | https://dev.to/offcode/the-grasshoppers-rebellion-4p8d | agile, leadership, creativity | ## The grasshopper and the ants

It was time for the weekly team meeting. Michael handed out printed copies of his presentation slides. There were many pages. Samantha felt how thick the stack was - 27 pages. She sighed quietly to herself.

Michael began speaking about his presentation. Samantha nodded, pretending to listen carefully. But soon, her pen started moving by itself to draw on her notepad. Lines and shapes appeared.

Samantha drew a bright green grasshopper playing a tiny violin. Around the grasshopper, she drew twelve ants marching in a line. The ants wore business suits and carried briefcases. It looked silly and funny. Samantha fit her drawings inside the boxes on a printed Excel spreadsheet page.

Samantha's coworkers saw her doodling from the corners of their eyes. They smiled a little. Everyone knew Samantha's habit of doodling during meetings. They thought it was amusing, as long as Michael didn't notice.

"Any other questions before we continue?" Michael's voice droned on.

Suddenly, Michael's phone rang and made a buzzing sound. "Excuse me, I need to take this call." He left the meeting room. The coworkers looked at each other.

Samantha kept doodling. She added a family of turtles to her scene. She did not see Michael come back into the room.

"Sorry about that interruption, it was -" Michael stopped speaking. He looked at Samantha's notepad. Her colorful doodles were out in the open for anyone to see.

No one said anything for an awkward, uncomfortable moment. Michael stared at the grasshopper playing violin and the ants dressed in suits. Samantha's coworkers waited silently to see how Michael would react.

But Michael just cleared his throat. "Well then, where were we..." He acted like nothing unusual happened.

The meeting continued. But Michael had clearly seen Samantha's doodles. Samantha did not know if he was okay with it or not.

## Breaking the silence

The meeting was finally over. People started gathering their things and leaving the room. Samantha collected her notepad and pen. She hadn't touched her pen since Michael caught her doodling.

Samantha was about to leave too, but Michael made a small gesture with his hand, asking her to stay behind. Some of her teammates noticed and glanced at her curiously as they filed out.

Soon, it was just Samantha and Michael alone in the room. An awkward silence hung in the air.

"So...why were you drawing during the presentation?" Michael asked, breaking the tension.

Samantha didn't respond at first, unsure of how to explain herself.

"Do you not find value in these status meetings?" Michael pressed. "The presentation covers important priorities and plans."

"No, I understand the value," Samantha replied carefully. But her eyes shifted slightly, hinting there was more she wasn't saying.

Michael could sense she wasn't being fully transparent. He pressed on, "Was there something about my presentation specifically that made you...distracted?"

Samantha weighed how much to reveal. A certain phrase Michael used seemed to unlock her honesty. "I...can't always pay attention," she admitted.

"Because it's boring?" Michael stated bluntly.

Samantha knew she was caught. After the doodling incident, she figured it couldn't get any worse. "Yes," she confessed. "The presentations can be...quite boring."

Samantha couldn't hold back any longer. The words came tumbling out. "Don't you find it ironic? You're always asking us for innovative, creative solutions. But then you plan it all out in spreadsheets using the same old, stale techniques that get called 'innovation best practices.'"

She gestured to her doodled notepad. "This...this is how I tap into my real creativity. My doodles help me think differently, make unexpected connections. But I have to do it in secret, like it's something to be ashamed of."

Michael furrowed his brow, considering her outburst. After a moment, he nodded slowly. "Okay, let's explore your approach then. If everyone just pursued what they found personally interesting, how would we ensure we actually deliver on what matters to our customers and stakeholders?"

Samantha opened her mouth to respond, but Michael kept going. "If you want to draw little creatures, that's fine. But that's what hobbies are for - creative outlets separate from work."

He leaned back in his chair. "I rebuild vintage motorcycles in my free time, for instance. It's my passion. But I don't mix that hobby with my professional responsibilities here. There's a line between interests and important work."

Samantha felt her cheeks flush with a mix of embarrassment and defiance. Michael's dismissive attitude toward her doodling ignited something within her. This wasn't just a hobby to be compartmentalized.

"My doodles ARE my work," she stated firmly. "They're how my mind explores problems and imagines new possibilities. Treating it as a separate 'hobby' is what stifles true innovation."

## The CEO of the brain

Michael could sense Samantha's defiance over treating her doodles as mere hobbies. He decided to take a different tack, tapping his knuckle on the polished conference table.

"You know, this table is made of solid oak. Took years of patient growth for that oak tree to develop its hard, sturdy interior." He ran his hand along the smooth wood.

Michael then pointed to his own forehead. "What do you think is behind this?" He raised an inquisitive eyebrow at Samantha.

Before she could respond, he continued, "The frontal cortex - the mastermind behind our most advanced brain functions. It's the control center that allows us to plan, reason, and make conscious choices rather than just going with our first impulsive urges."

He swiveled his chair to better engage Samantha. "Imagine it as the CEO of your brain - integrating information from all other regions to decide the best course of action. Without it, you'd just drift aimlessly based on whatever thought or stimuli captured your attention at the moment. The frontal cortex is what lets you concentrate on work instead of browsing YouTube."

Michael swiveled his chair toward the glass windows overlooking a playground outside. A group of children shrieked with laughter, chasing each other across the jungle gym.

"To a child, every moment is about following their interests. Playing, running around, doing whatever feels fun and stimulating in that instant." Michael mimed drawing in the air with an imaginary crayon.

"They live totally in the present, their frontal cortexes all soft and malleable. That's why kids love to doodle and draw - it's an impulsive creative outlet with no deeper purpose."

He turned to Samantha. "When was the last time you visited the dentist?"

Samantha was caught off-guard. "Uh...six months ago, I think?"

Michael nodded. "Exactly. We become adults. Our frontal cortexes mature, and we have to make hard decisions against our childish impulses."

"A child would never willingly go to the dentist, because it's not fun. But we adults understand the important responsibilities - like basic hygiene and preventative care. We delay the gratification of eating sweets, because we know the consequences if we don't."

Samantha couldn't help but smirk at the dentist analogy, though Michael's metaphor rang true.

"That's the crux of this dilemma. Your doodles may feel like creative indulgences. But I need my team to embrace the bigger picture - our professional obligations to this company and its customers. Even if that means... delaying gratification."

## Balancing acts

"But there are whole communities out there not based on these 'important' responsibilities you speak of," Samantha countered. "People who choose to live for their interests and passions, without being forced into pursuits they find dull or constricting."

She leaned forward, a hint of wistfulness in her voice. "Don't you think the world would be a happier place if we gave the 'interesting' things a bigger share of our lives? Instead of always having to delay gratification for what society deems important?"

Michael considered her point, nodding slowly. "You're not entirely wrong. With primitive hunter-gatherer tribes, that divide between interesting and important pursuits wasn't so starkly defined."

"But their lives were a constant struggle for survival," he added pragmatically. "The agricultural revolution, when humans transitioned to settled societies, was in many ways the maturation of our species."

Michael tented his fingers, gathering his thoughts. "It allowed us to delay gratification, plan long-term, and ultimately build civilization's greatest achievements. As glorious as a nomadic existence following your every whim may sound, it lacks the stability and focus to reach our full potential."

He fixed Samantha with a measured look. "The frontal cortex's ability to override impulsive urges in favor of responsibilities - as tedious as they can feel at times - is what separates us from plucky grasshoppers endlessly playing their violins while the industrious ants do the hard work of preparing for the future."

Samantha: But there are exceptions in our current society to this rigid separation of interests from responsibilities. What about people with ADHD or other neurological conditions? For them, it can be extremely difficult, maybe even impossible, to always prioritize what's deemed important over their personal interests and creative pursuits.

Michael: That's a fair point. I can see how brain chemistry differences would make delaying gratification and overriding impulsive urges towards interests more challenging.

Samantha: Exactly. And what about artists, journalists, entertainers? Their work is literally following and expressing their creative interests and passions. Yet they're often considered less serious or trustworthy for that.

Michael: Well, I would argue that for truly successful creatives at the highest levels, their interests have effectively become important professional and financial responsibilities.

Samantha: Sure, but that's only after they've "made it" and their interests become work obligations. Before that, while they're still struggling, society tends to dismiss their passions as frivolous indulgences distracting from more important practical concerns.

Michael: I can't deny there's a double standard there. We celebrate creative success after the fact, but are quick to criticize those same pursuits as impractical whimsies beforehand.

Samantha: It's an unfair, privileged way of looking at it. The financially stable can more easily embrace their interests over responsibilities without facing the same potential consequences as others.

Michael was silent for a moment, taking in Samantha's perspective. He nodded slowly, his expression softening. "You're right, I haven't fully considered the nuances." Michael leaned forward. "If we're going to truly question this dichotomy between responsibilities and creative interests, what would your suggestion be? How could we create more space for 'interesting' work and innovative pursuits, while still meeting our professional obligations?"

He spread his hands in a gesture of openness. "I may have been too dismissive earlier. But I'm listening now - what's your vision for finding a better balance?"

## Drawing attention

Samantha sat at her desk, watching at the meeting agenda. She had drawn a small grasshopper surrounded by dozens of ants in business suits, each holding a tiny briefcase. The office was noisy with the sound of keyboards, ringing phones, and people talking.

Michael’s door opened with a quiet creak. He stepped out, his eyes scanning the room until they landed on Samantha. He gave a brief nod, then went back into his office. Samantha felt a rush of nervous energy. She gathered her sketches and sketchbook, slipping them into a folder.

As she walked towards Michael’s office, the sounds of the office faded. Her footsteps seemed louder in her ears. She passed her colleagues, who were busy at their desks, not noticing her. The air felt heavier, filled with anticipation.

She stopped at Michael’s door, took a deep breath, and knocked lightly before pushing it open. Inside, the office was plain and practical, the fluorescent lights casting a harsh glare. Michael sat behind his desk, a fortress of paper and files, his expression serious.

Samantha stepped inside, the door clicking shut behind her. The room felt smaller, the walls closer. She approached the desk and placed her folder on it. Michael watched her with a calm, unreadable gaze, his hands folded neatly in front of him.

She opened the folder and spread out her sketches. The grasshopper, surrounded by ants in business suits, was a tiny act of rebellion against the boring meetings. Samantha straightened, meeting Michael’s eyes. The room was silent except for the distant hum of the office outside.

Michael picked up one of the sketches, examining it closely. His face remained impassive, but there was a flicker of something in his eyes—curiosity, perhaps, or a slight hint of amusement. Samantha stood still, her heart pounding, waiting for his reaction.

Michael set the sketch down and looked at her. For a moment, the distance between manager and employee, the line between duty and creativity, seemed to blur. Samantha felt the weight of the moment, the fragile possibility of change hanging in the air.

He leaned back in his chair, tapping a finger on the desk. Samantha held her breath, ready for whatever came next. Samantha snapped her fingers. Somewhere, a projector clicked on, and the image of the grasshopper and the ants appeared on the wall behind Michael, magnified ten times. One of the ants' briefcases landed right over Michael's face, who turned in his swivel chair and watched the presentation on the wall.

## Beyond boredom: a presentation

#### 1. Introduction

- **Purpose**: Integrate interestingness of tasks into the Agile process to enhance engagement and motivation.

- **Key Roles**:

- **Product Manager**: Defines the importance and business value of tasks.

- **Interestingness Owner**: Explains why tasks are interesting and ensures tasks are engaging.

- **Team Members**: Vote on the interestingness of tasks.

#### 2. Roles and Responsibilities

- **Product Manager (PM)**:

- Prioritize tasks based on business needs and deadlines.

- Communicate the importance and customer/business value of each task.

- **Interestingness Owner (IO)**:

- Assess tasks for their interestingness and potential for creative problem-solving.

- Present tasks in a way that highlights their interesting aspects.

- Modify tasks to increase their interestingness if they are initially deemed dull.

- **Team Members**:

- Vote on the interestingness of tasks during planning sessions.

- Provide feedback on what aspects make a task interesting or dull.

#### 3. Task Evaluation Process

- **Task Identification**:

- Tasks are initially identified and listed by the Product Manager.

- Each task includes a description, priority, estimated effort, and deadline.

- **Interestingness Assessment**:

- The Interestingness Owner reviews each task to identify and highlight interesting aspects.

- Tasks are presented to the team with both business value (by PM) and interestingness (by IO).

#### 4. Voting on Interestingness

- **Voting Mechanism**:

- During sprint planning or task review sessions, team members vote on the interestingness of each task.

- Voting scale: Interesting (Yes) or Dull (No).

- **Marking Tasks**:

- A task is marked as interesting if at least one team member votes 'Yes'.

- Tasks with unanimous 'No' votes are flagged for further review.

#### 5. Managing Dull Tasks

- **Review by Interestingness Owner**:

- Tasks marked as dull by all team members are reviewed by the Interestingness Owner.

- The IO attempts to identify ways to make these tasks more engaging.

- **Modification or Removal**:

- If the IO can enhance the interestingness, the task is modified and re-presented to the team.

- If the task remains dull but is still important, it may be revisited in subsequent sessions.

- Tasks that cannot be made interesting and are not crucial may be removed from the list.

## Maybe

When Samantha finished her presentation, the room became very quiet. The light from the projector made strange shapes on the walls, and everyone looked serious. Michael was at the front, looking like he was thinking very hard.

Samantha felt both excited and scared. She really wanted Michael to like her idea, but she wasn't sure if he did. She looked around the room, and all her colleagues were there, even though she had started talking just to Michael. It made her feel nervous, like all eyes were on her.

After Samantha concluded her presentation, the room fell silent. The projector's light danced across the walls, adding to the tension in the air. Michael sat at the head of the table with an unreadable expression.

Samantha's heart was beating fast as she waited for Michael's response. She had put a lot of effort into this proposal, hoping to change their approach to work. But now, she was uncertain if her ideas would be accepted or rejected.

Michael leaned forward and looked at the gathered team. "Thank you, Samantha, for sharing your ideas," he began, speaking in a calm tone. "Your perspective is certainly thought-provoking."

Everyone in the room waited with anticipation for Michael's decision. Samantha held her breath, hoping for a positive outcome.

"But," Michael continued cautiously, "as with any new idea, there are risks and uncertainties to consider."

Samantha felt a little disappointed, but she did not want to give up on her vision. She knew from the start that change would not be easy, but she was ready to fight for her ideas.

"As a team," Michael went on, "we need to carefully weigh the potential benefits against the potential drawbacks of integrating new ideas into our work process."

Samantha nodded slowly, thinking about questions and doubts. Had she failed to explain her case clearly enough? Were her ideas too different from their current practices?

But despite the uncertainty, Samantha felt determined. She may not have convinced them today, but she refused to give up on her vision. She would continue to promote creativity and innovation, even if others doubted or were skeptical.

As the meeting ended and her colleagues left, Samantha stayed for a moment and exchanged a meaningful look with Michael. In that brief moment, she saw a hint of curiosity in his eyes, a silent acknowledgment that her ideas might have some value.

As Samantha gathered her notes and sketches, she couldn't shake the feeling that this was only the beginning of something truly remarkable. | offcode |

1,865,538 | Advanced Java: Simplifying Object Property Copy and Manipulation with BeanUtil | In Java programming, the BeanUtil utility class is a powerful and convenient tool for simplifying the... | 0 | 2024-05-26T11:06:48 | https://dev.to/markyu/advanced-java-simplifying-object-property-copy-and-manipulation-with-beanutil-3l2n | java, javabeans, springframework, objectmapping | In Java programming, the `BeanUtil` utility class is a powerful and convenient tool for simplifying the process of copying properties and manipulating objects. This article will introduce the basic functionalities of `BeanUtil`, demonstrate its application through detailed code examples, and compare it with other similar tools. Additionally, we will explore the advantages and usage scenarios of `BeanUtil` in real-world development to help developers better understand and utilize this utility class.

## Introduction to the BeanUtil Utility Class

### 1. Overview of BeanUtil

`BeanUtil` is a widely used Java utility class that provides a series of methods to simplify property copying and manipulation between JavaBean objects. It primarily addresses complex object operations and property handling issues, significantly improving code readability and maintainability.

#### Shallow Copy vs. Deep Copy:

- **Shallow Copy:** `BeanUtil` performs shallow copying, meaning it copies values for primitive data types and references for object types. This means that while the values of primitive types are directly copied, the references to objects are copied instead of the objects themselves. As a result, changes to these objects in one instance will affect the other.

- **Deep Copy:** In contrast, deep copying involves creating new objects for referenced types and copying their content. This ensures that the objects in the new instance are entirely independent of those in the original instance.

### 2. Core Features of BeanUtil

The core functionalities of `BeanUtil` include:

| Feature | Description |

| ---------------- | -------------------------------------------------------- |

| `copyProperties` | Copies property values from one object to another |

| `setProperty` | Sets the value of a specified property of an object |

| `getProperty` | Gets the value of a specified property of an object |

| `cloneBean` | Clones an object, creating a duplicate |

| `populate` | Populates an object's properties using data from a `Map` |

| `describe` | Converts an object's properties and values into a `Map` |

These features make `BeanUtil` incredibly versatile, enabling developers to handle complex property manipulations with minimal code.

### 3. Comparison with Similar Libraries

In addition to `BeanUtil`, there are several other tools and libraries available for object property copying and manipulation:

- **Apache Commons BeanUtils:** Provides utility methods for JavaBean operations, including property copying and setting. It's an open-source library widely used in Java projects.

- **Spring BeanUtils:** A utility class from the Spring Framework that offers simple property copying and manipulation methods, commonly used within the Spring ecosystem.

- **Dozer:** A Java Bean mapper that supports deep copying and complex mapping configurations. It allows for custom mapping configurations, suitable for complex object conversions.

- **ModelMapper:** An intelligent object mapping framework designed to simplify the mapping between objects. It offers powerful mapping capabilities and handles complex object relationships and type conversions.

- **MapStruct:** A compile-time code generator that automatically generates type-safe, high-performance Bean mapping code. It uses annotation-driven mapping definitions, reducing runtime overhead.

- **Orika:** A Java Bean mapper focused on providing fast and simple object mapping capabilities. It supports complex mapping configurations and multiple mapping strategies, making it ideal for high-performance mapping needs.

#### Comparison Table:

| Tool Class | Property Copy | Property Set/Get | Type Conversion | Performance | Configuration Complexity |

| ---------------- | ------------- | ---------------- | --------------- | ----------- | ------------------------ |

| BeanUtil | Yes | Yes | Yes | Medium | Low |

| Apache BeanUtils | Yes | Yes | Yes | Low | Low |

| Spring BeanUtils | Yes | Yes | No | High | Low |

| Dozer | Yes | No | Yes | Low | Medium |

| ModelMapper | Yes | No | Yes | Medium | Medium |

| MapStruct | Yes | No | Yes | High | High |

| Orika | Yes | No | Yes | Medium | Medium |

These tools each have their unique features, and developers can choose the most suitable one based on project requirements. For instance, `Apache Commons BeanUtils` and `Spring BeanUtils` are ideal for simple property copying, while `Dozer` and `ModelMapper` are better suited for complex object mapping needs. `MapStruct` and `Orika` excel in performance and type safety.

## Using BeanUtil: Code Examples

### 1. Property Copying

Property copying is one of the most common functions of `BeanUtil`, allowing you to copy all property values from one object to another.

#### Example Code:

```java

import org.apache.commons.beanutils.BeanUtils;

public class BeanUtilExample {

public static void main(String[] args) {

try {

SourceObject source = new SourceObject("John", 30);

TargetObject target = new TargetObject();

BeanUtils.copyProperties(target, source);

System.out.println("Target Object: " + target);

} catch (Exception e) {

e.printStackTrace();

}

}

}

class SourceObject {

private String name;

private int age;

public SourceObject(String name, int age) {

this.name = name;

this.age = age;

}

// getters and setters

}

class TargetObject {

private String name;

private int age;

@Override

public String toString() {

return "TargetObject [name=" + name + ", age=" + age + "]";

}

// getters and setters

}

```

In this example, the `copyProperties` method copies the property values from the `source` object to the `target` object.

### 2. Setting and Getting Properties

`BeanUtil` also provides methods for dynamically setting and getting object properties.

#### Example Code:

```java

import org.apache.commons.beanutils.BeanUtils;

public class PropertyExample {

public static void main(String[] args) {

try {

MyBean myBean = new MyBean();

BeanUtils.setProperty(myBean, "name", "Alice");

BeanUtils.setProperty(myBean, "age", 25);

String name = BeanUtils.getProperty(myBean, "name");

String age = BeanUtils.getProperty(myBean, "age");

System.out.println("Name: " + name);

System.out.println("Age: " + age);

} catch (Exception e) {

e.printStackTrace();

}

}

}

class MyBean {

private String name;

private int age;

// getters and setters

}

```

In this example, `setProperty` is used to set the `name` and `age` properties of `myBean`, and `getProperty` is used to retrieve these values.

### 3. Object Cloning

`BeanUtil` can also clone objects, creating duplicates.

#### Example Code:

```java

import org.apache.commons.beanutils.BeanUtils;

public class CloneExample {

public static void main(String[] args) {

try {

MyBean original = new MyBean("Bob", 40);

MyBean clone = (MyBean) BeanUtils.cloneBean(original);

System.out.println("Original: " + original);

System.out.println("Clone: " + clone);

} catch (Exception e) {

e.printStackTrace();

}

}

}

class MyBean {

private String name;

private int age;

public MyBean() {}

public MyBean(String name, int age) {

this.name = name;

this.age = age;

}

@Override

public String toString() {

return "MyBean [name=" + name + ", age=" + age + "]";

}

// getters and setters

}

```

In this example, the `cloneBean` method creates a copy of the `original` object.

## Conclusion

The `BeanUtil` utility class provides Java developers with a straightforward method for manipulating JavaBean object properties. By using `BeanUtil`, developers can reduce repetitive code, increase development efficiency, and enhance code readability and maintainability. Although there are many similar tools and libraries, `BeanUtil` remains a popular choice in many projects due to its simplicity and powerful functionality. Choosing the right tool should depend on the specific needs and complexity of the project. For simple property copying and operations, `BeanUtil` is an excellent choice, while more complex mapping needs may require other powerful mapping tools. This article, with detailed introductions and example code, aims to help developers better understand and utilize the `BeanUtil` utility class to improve development efficiency and code quality.

## References

- [Apache Commons BeanUtils Documentation](https://commons.apache.org/proper/commons-beanutils/)

- [Spring Framework BeanUtils Documentation](https://docs.spring.io/spring-framework/docs/current/javadoc-api/org/springframework/beans/BeanUtils.html)

- [Dozer Documentation](http://dozer.sourceforge.net/documentation/index.html)

- [ModelMapper Documentation](http://modelmapper.org/)

- [MapStruct Documentation](https://mapstruct.org/documentation/stable/reference/html/)

- [Orika Documentation](https://orika-mapper.github.io/orika-docs/) | markyu |

1,865,537 | Connecting with the Divine: Discover Spiritual Mediums Near Me | Are you curious about spiritual mediums and their abilities? Explore our keyword search to find... | 0 | 2024-05-26T10:58:56 | https://dev.to/mr_nags_0df5a4fe81b8d67a/connecting-with-the-divine-discover-spiritual-mediums-near-me-9dm | Are you curious about [spiritual mediums](https://www.haqbahu.com/articles/spritual-miediums-near-me/) and their abilities? Explore our keyword search to find trustworthy and experienced spiritual mediums near you. These professionals can help you connect with departed loved ones, gain insights into your life's purpose, and navigate spiritual challenges. Discover the profound guidance and healing that spiritual mediums offer, right in your local area. Whether you're seeking clarity, closure, or spiritual growth, connecting with a reputable medium can be a transformative experience. Start your journey today by exploring the spiritual mediums near you and unlocking deeper connections with the divine. | mr_nags_0df5a4fe81b8d67a | |

1,865,536 | Different Ways to Include External JavaScript in HTML | JavaScript is an essential part of web development, allowing developers to add interactivity and... | 0 | 2024-05-26T10:56:52 | https://dev.to/imabhinavdev/different-ways-to-include-external-javascript-in-html-34ab | javascript, webdev, beginners, programming | JavaScript is an essential part of web development, allowing developers to add interactivity and dynamic behavior to web pages. When incorporating external JavaScript files into HTML documents, there are various methods to consider, each with its own pros and cons. In this blog, we'll explore different ways to include external JavaScript in HTML, discuss their advantages and disadvantages, and provide guidance on when to use each method.

## Introduction

JavaScript files can be included in HTML documents using various methods, each affecting page loading and script execution differently. Let's delve into these methods and understand their implications.

## Including External JavaScript in the `<head>`

```html

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8">

<meta name="viewport" content="width=device-width, initial-scale=1.0">

<title>Document</title>

<script src="script.js"></script>

</head>

<body>

<!-- Your HTML content here -->

</body>

</html>

```

**Pros:**

- Ensures scripts are loaded before rendering the page, preventing any JavaScript-dependent errors.

- Guarantees that scripts are available for use as soon as the page starts loading.

**Cons:**

- Can delay the rendering of the page if the script file is large or takes time to load.

- May result in a slower initial page load time.

**When to Use:**

- When JavaScript needs to manipulate the DOM or perform tasks that should occur before the page renders.

**When Not to Use:**

- Avoid if the script file is large or not essential for initial page functionality.

## Before the Closing `</body>` Tag

```html

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8">

<meta name="viewport" content="width=device-width, initial-scale=1.0">

<title>Document</title>

</head>

<body>

<!-- Your HTML content here -->

<script src="script.js"></script>

</body>

</html>

```

**Pros:**

- Allows the HTML content to load before fetching and executing the script, potentially improving perceived page load speed.

- Scripts won't block other resources from loading.

**Cons:**

- JavaScript execution may be delayed until after the HTML content is rendered, affecting user experience if scripts are essential for initial functionality.

**When to Use:**

- Suitable for scripts that are not critical for initial page functionality or rendering.

**When Not to Use:**

- Avoid for scripts that need to manipulate the DOM or execute before the page renders.

## Using `defer`

```html

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8">

<meta name="viewport" content="width=device-width, initial-scale=1.0">

<title>Document</title>

<script src="script.js" defer></script>

</head>

<body>

<!-- Your HTML content here -->

</body>

</html>

```

**Pros:**

- Executes scripts after the HTML content is parsed, but before `DOMContentLoaded`, ensuring that scripts run in the order they are declared.

- Improves page load time by allowing HTML parsing to continue while the script is downloaded.

**Cons:**

- May still delay script execution if multiple scripts are deferred, as they will execute sequentially.

**When to Use:**

- Ideal for scripts that need to access DOM elements but can safely run after the HTML is parsed.

**When Not to Use:**

- Avoid for scripts that must execute before `DOMContentLoaded` or rely on the immediate availability of DOM elements.

## Using `async`

```html

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8">

<meta name="viewport" content="width=device-width, initial-scale=1.0">

<title>Document</title>

<script src="script.js" async></script>

</head>

<body>

<!-- Your HTML content here -->

</body>

</html>

```

**Pros:**

- Fetches and executes the script asynchronously, allowing HTML parsing to continue without waiting for the script to download.

- Ideal for non-blocking scripts that don't rely on DOM elements or need to execute independently.

**Cons:**

- Scripts may execute out of order, potentially causing dependencies or race conditions.

- Not suitable for scripts that require access to the DOM or must execute in a specific order.

**When to Use:**

- Best for non-essential scripts that can run independently and don't depend on the page's DOM structure.

**When Not to Use:**

- Avoid for scripts that require DOM manipulation or must execute before other scripts for proper functionality.

## Comparison of Methods

| Method | Pros | Cons |

|-----------------------------|------------------------------------------|------------------------------------------------------|

| `<script>` in `<head>` | - Ensures scripts are available early. | - May delay initial page load. |

| | - Prevents JavaScript-dependent errors. | - May block rendering if script is large. |

| Before `</body>` | - Allows HTML content to load first. | - Delayed script execution. |

| | - Doesn't block other resource loading. | - Scripts may not run before DOMContentLoaded. |

| `defer` | - Scripts execute after HTML parsing. | - Scripts may still block rendering. |

| | - Improves perceived page load speed. | - Multiple deferred scripts may execute sequentially.|

| `async` | - Fetches and executes script asynchronously. | - Scripts may execute out of order. |

| | - Doesn't block HTML parsing. | - Not suitable for scripts with dependencies. |

## When to Use Each Method

- **`<script>` in `<head>`:** Use when scripts are essential for initial page functionality and must be available before rendering.

- **Before `</body>`:** Suitable for non-essential scripts or scripts that don't rely on immediate DOM access.

- **`defer`:** Best for scripts that require access to the DOM but can safely execute after HTML parsing.

- **`async`:** Ideal for non-blocking scripts that can execute independently and don't rely on the page's DOM structure.

## When Not to Use Each Method

- **`<script>` in `<head>`:** Avoid for large scripts or scripts that aren't essential for initial functionality.

- **Before `</body>`:** Not suitable for scripts that need to manipulate the DOM or execute before DOMContentLoaded.

- **`defer`:** Avoid for scripts that must execute before DOMContentLoaded or rely on immediate DOM access.

- **`async`:** Not suitablefor scripts that require access to the DOM or must execute in a specific order.

## Performance Impact

The performance impact of each method depends on factors such as script size, placement, and dependencies. Here's a brief overview:

- **`<script>` in `<head>`:** May delay initial page load if scripts are large or take time to download. However, ensures scripts are available early, potentially reducing script execution delays later.

- **Before `</body>`:** Allows HTML content to load first, improving perceived page load speed. However, scripts may execute after the DOM is fully rendered, delaying script execution.

- **`defer`:** Fetches scripts asynchronously while allowing HTML parsing to continue. Scripts execute after HTML parsing but before `DOMContentLoaded`, improving perceived page load speed.

- **`async`:** Fetches and executes scripts asynchronously, allowing HTML parsing to continue without waiting for the script to download. However, scripts may execute out of order, potentially causing dependencies or race conditions.

## Conclusion

In conclusion, the method you choose to include external JavaScript in HTML depends on various factors, including script dependencies, page load speed, and script execution timing. Each method has its pros and cons, and understanding these factors is crucial for optimizing web page performance and user experience.

- **`<script>` in `<head>`:** Use when scripts are critical for initial page functionality and must be available before rendering.

- **Before `</body>`:** Suitable for non-essential scripts or scripts that don't rely on immediate DOM access.

- **`defer`:** Best for scripts that require access to the DOM but can safely execute after HTML parsing.

- **`async`:** Ideal for non-blocking scripts that can execute independently and don't rely on the page's DOM structure.

By carefully considering these factors and choosing the appropriate method for including external JavaScript, you can optimize your web pages for better performance and user experience. | imabhinavdev |

1,865,535 | IPFS (InterPlanetary File System) Explained | 1. What is IPFS? Imagine you have a giant library where everyone can share their books. IPFS is like... | 0 | 2024-05-26T10:55:41 | https://dev.to/kamilrashidev/ipfs-interplanetary-file-system-explained-2kn1 | ipfs, beginners, programming | **1. What is IPFS?**

Imagine you have a giant library where everyone can share their books. IPFS is like a giant library for the internet. It helps people store and share files (like books, videos, pictures) all over the world.

**2. How is it different from the current internet?**

Current Internet (HTTP): When you want to see a webpage or watch a video, your computer asks a specific server (a big computer) for the file. This is like asking one specific library for a book.

IPFS: Instead of asking one place for the file, you ask everyone who has it. It’s like asking all your friends if they have the book you want. If they do, they share it with you.

**3. How does IPFS find files?**

Unique Address: Every file in IPFS gets a unique address called a hash (a string of letters and numbers). It’s like a special code that represents that file.

Content-Based: In IPFS, you find files based on their content, not where they are stored. If you have the hash code, you can find the file no matter who has it.

**4. How do you add files to IPFS?**

Put the File in IPFS: You add your file (like a picture or a video) to IPFS. It gets broken into smaller pieces, and each piece gets a hash code.

Get a Hash Code: IPFS gives you a hash code for your file.

This hash code is like the file’s unique address.

Share the Hash Code: If you want to share your file with friends, you give them the hash code. They can use this code to get the file from IPFS.

**5. How do you get files from IPFS?**

Ask for the File: You use the hash code to ask IPFS for the file.

Find the File: IPFS finds people (nodes) who have the file and asks them for the pieces.

Download the File: Your computer collects all the pieces and puts them back together to give you the file.

**6. Why is IPFS cool?**

Decentralized: No single server can control or censor the files. It’s like everyone sharing their books with each other.

Faster: Files can come from many sources at once, so downloads can be faster, especially if lots of people have the file.

Permanent: Files can stay available as long as someone is sharing them. Even if the original uploader goes away, others can still have the file.

**7. How do you use IPFS?**

IPFS Software: You need to install IPFS software on your computer. This software helps you add and get files from IPFS.

IPFS Network: Your computer becomes part of the IPFS network, sharing and receiving files with other computers.

**8. A Simple Example**

Adding a File: You have a picture of your cat. You add it to IPFS, and it gets a hash code like `QmXyZ....`

Sharing the File: You give your friend the hash code `QmXyZ....` They use this code to get the picture from IPFS.

Getting the File: Your friend’s computer asks the IPFS network for the file with the hash code `QmXyZ....` The network finds the file and sends it to your friend.

**9. Key Terms**

Node: A computer connected to the IPFS network.

Hash Code: A unique address for a file in IPFS.

Decentralized: Not controlled by one single place; shared among many.

**10. Summary**

IPFS is like a big library for the internet.

Files are found by their content, not location.

You can add, share, and get files using unique hash codes.

It’s faster, more reliable, and more free than the current internet. | kamilrashidev |

1,865,531 | Best Web Development Resources 🔥 | Here are some valuable resources for web developers! ✅ 📌Docs 📓... | 0 | 2024-05-26T10:51:52 | https://dev.to/alisamirali/best-web-development-resources-2hbl | webdev, frontend, backend, fullstack | Here are some valuable resources for web developers! ✅

---

## 📌Docs

📓 http://developer.mozilla.org

📓 http://w3schools.com

📓 http://w3docs.com

📓 http://devdocs.io

📓 http://web.dev

---

## 📌Learning Platforms

🎓 http://freecodecamp.org

🎓 http://codecademy.com

🎓 http://javascript30.com

🎓 http://frontendmentor.io

🎓 http://testautomationu.applitools.com

🎓 http://coursera.org

🎓 http://khanacademy.org

🎓 http://sololearn.com

🎓 https://javascript.info

🎓 https://www.udemy.com

---

## 📌Coding Challenge Platforms

⌨️ http://codewars.com

⌨️ http://topcoder.com

⌨️ http://codingame.com

⌨️ http://hackerrank.com

⌨️ http://projecteuler.net

⌨️ http://coderbyte.com

⌨️ http://codechef.com

⌨️ http://exercism.io

⌨️ http://leetcode.com

⌨️ http://spoj.com

---

## 📌Freelancing Platforms

💰 http://toptal.com

💰 http://upwork.com

💰 http://freelancer.com

💰 http://peopleperhour.com

💰 http://simplyhired.com

💰 http://envato.com

💰 http://guru.com

💰 http://fiverr.com

💰 http://hireable.com

💰 http://6nomads.com

---

## 📌Free Hosting

⚡️ http://netlify.com

⚡️ http://firebase.google.com

⚡️ http://aws.amazon.com

⚡️ http://heroku.com

⚡️ https://pages.github.com

⚡️ http://vercel.com

⚡️ http://surge.sh

⚡️ http://render.com

⚡️ https://docs.gitlab.com/ee/user/project/pages

---

## 📌Free CC-0 Photo Stocks

📷 http://unsplash.com

📷 http://pixabay.com

📷 http://pexels.com

📷 http://morguefile.com

📷 http://freephotosbank.com

📷 http://stockvault.net

📷 http://freeimages.com

📷 http://search.creativecommons.org

---

## 📌Illustrations

🌠 http://undraw.co/illustrations

🌠 http://drawkit.io

🌠 http://icons8.com/ouch

🌠 http://iradesign.io

🌠 http://interfacer.xyz

🌠 http://blush.design

---

## 📌Icons

🍩 http://fontawesome.com

🍩 http://flaticon.com

🍩 http://icons8.com

🍩 http://material.io/resources/icons

🍩 http://iconmonstr.com

🍩 https://heroicons.dev

🍩 https://www.abstractapi.com/user-avatar-api

---

## 📌Fonts

✒️ http://fonts.google.com

✒️ http://fontspace.com

✒️ http://1001fonts.com

✒️ http://fontsquirrel.com

---

## 📌Color Palettes

🎨 http://coolors.co

🎨 http://colorhunt.co

🎨 http://paletton.com

🎨 http://color-hex.com

🎨 http://mycolor.space

---

## 📌UI Inspiration

🤔 http://uimovement.com

🤔 http://uigarage.net

🤔 http://collectui.com

🤔 https://dribbble.com

🤔 https://ui-patterns.com

---

## 📌Website Optimization Tools

⚡ https://pagespeed.web.dev

⚡ https://gtmetrix.com

⚡ https://www.webpagetest.org

⚡ https://yslow.org

⚡ https://crux.run

---

**_Happy Coding!_** 🔥

**[LinkedIn](https://www.linkedin.com/in/dev-alisamir)**

**[X (Twitter)](https://twitter.com/dev_alisamir)**

**[Telegram](https://t.me/the_developer_guide)**

**[YouTube](https://www.youtube.com/@DevGuideAcademy)**

**[Discord](https://discord.gg/s37uutmxT2)**

**[Facebook](https://www.facebook.com/alisamir.dev)**

**[Instagram](https://www.instagram.com/alisamir.dev)** | alisamirali |

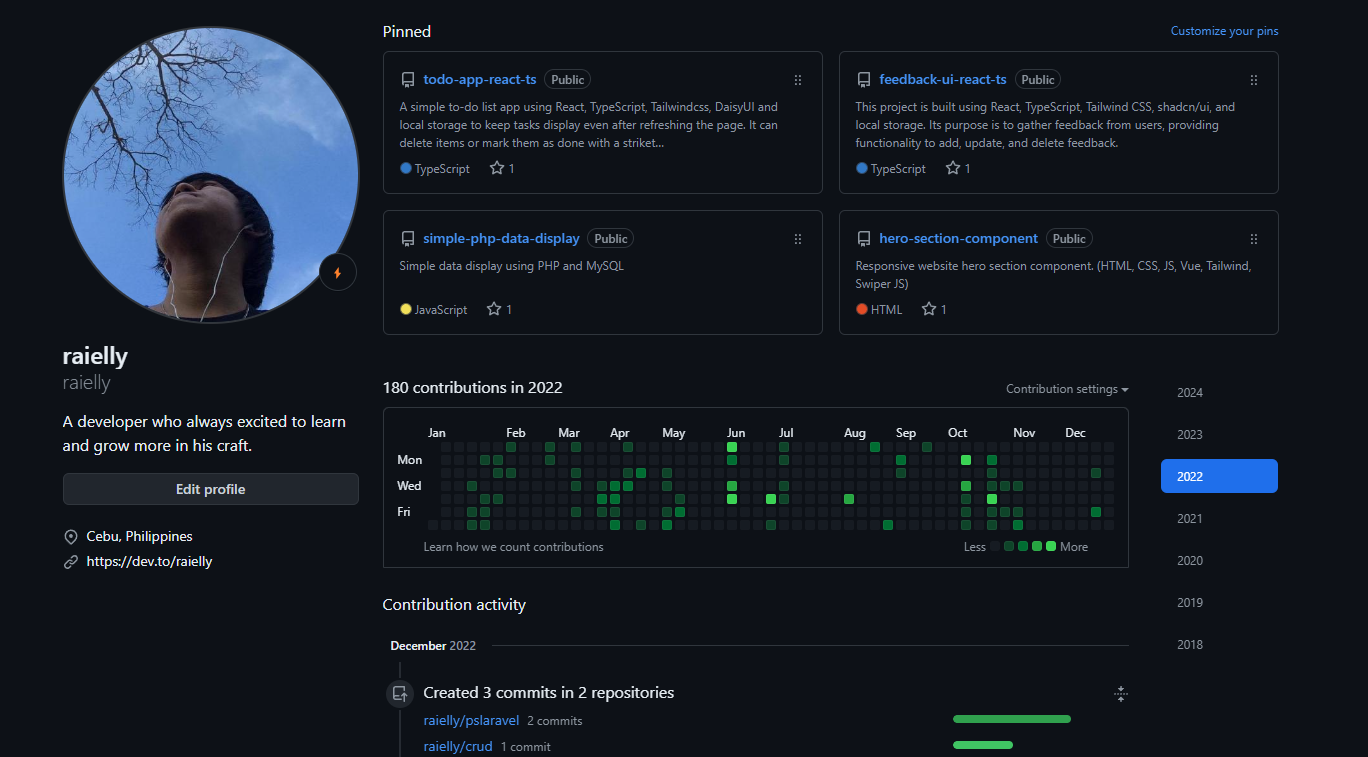

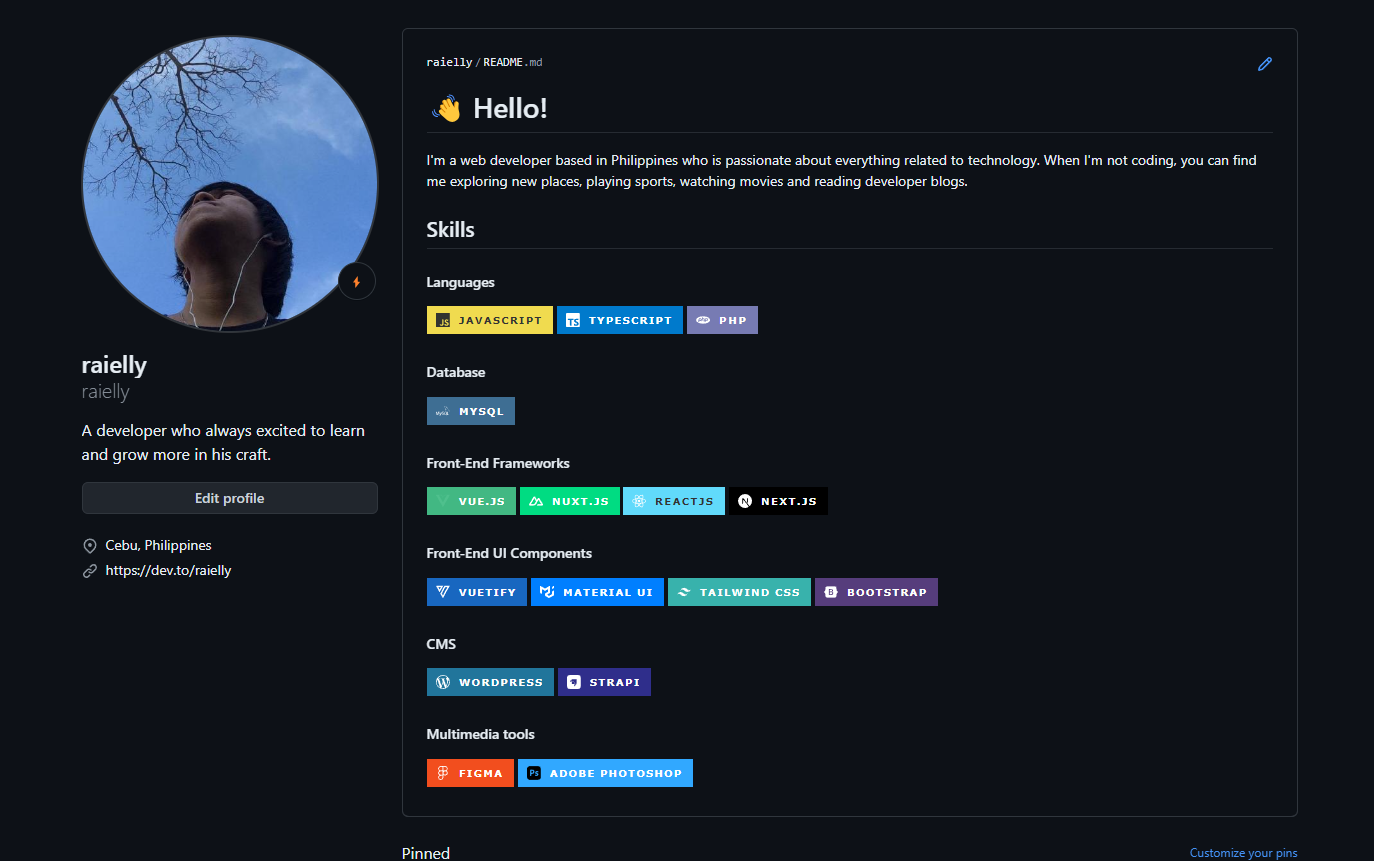

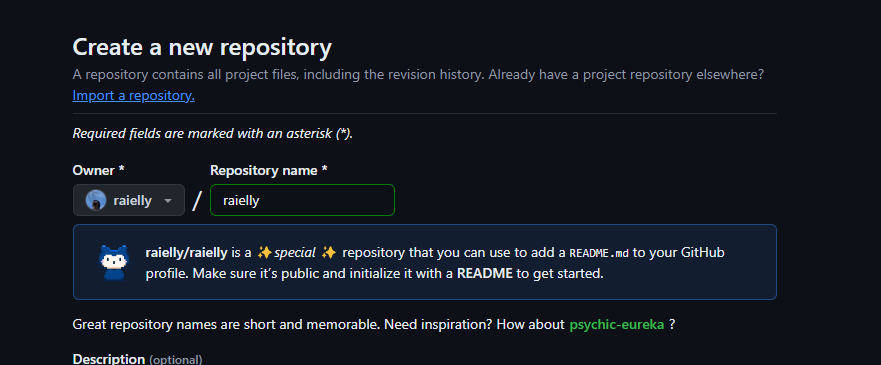

1,865,497 | Making Your GitHub Readme Profile Stand Out | In this article, I like to share with you how to make your GitHub Readme profile pop and stand out... | 0 | 2024-05-26T10:50:58 | https://dev.to/raielly/making-your-github-readme-profile-stand-out-4m52 | github, profile, beginners, tutorial | In this article, I like to share with you how to make your [GitHub](https://github.com/raielly) Readme profile pop and stand out from the others.

Old 🙂

New 🤩

So, basically, you just make a new repository with the same name as your GitHub username. Like, if your username is 'raielly', you'd create a repo called 'raielly'.

Then, you use the README file to make changes to your page. There you can use [Markdown](https://github.github.com/gfm/) and HTML to add your personal touch and make your profile stand out. show off whatever you want people to know about you, list your favorite languages and tech.

For some ideas, take a peek at this site:

[https://zzetao.github.io/awesome-github-profile/](https://zzetao.github.io/awesome-github-profile)

Cool images for your skills and social links, grab them from here:

[https://github.com/alexandresanlim/Badges4-README.md-Profile](https://github.com/alexandresanlim/Badges4-README.md-Profile)

Once you're all set, don't forget to git commit your changes.

That's it! EzPz!

Now that you've seen how easy it is to redesign your GitHub page, What does your GitHub profile readme file look like? Share your GitHub Profile below 😊😎🔥 | raielly |

1,865,530 | The best in cyber security recovery experts, Linux Cyber Security has recovered a lot of cryptocurrencies. | Have you lost your cryptocurrency, important files, or valuable data? Don't panic – we can help! Our... | 0 | 2024-05-26T10:47:07 | https://dev.to/larry_awilliams_c1f2bd25/the-best-in-cyber-security-recovery-experts-linux-cyber-security-has-recovered-a-lot-of-cryptocurrencies-34ci | Have you lost your cryptocurrency, important files, or valuable data? Don't panic – we can help! Our team of recovery experts specializes in swiftly and securely retrieving lost or inaccessible digital assets. Whether it's a misplaced wallet, corrupted files, or encrypted data, you can trust our experienced team to recover what matters most to you. Contact us today for peace of mind and expert recovery solutions you can rely on. People don’t believe in cryptocurrencies anymore just most public find it difficult to operate their accounts leading to the loss of private keys or wallet transactions, some send out to fake cryptocurrency investment accounts once you pay with cryptocurrency, you can only get your money back when wallet address you send to is still with you, nevertheless, you should contact the company you used to send the money and tell them it was a fraudulent transaction. Ask them to reverse the transaction, if possible. You can also report fraud and other suspicious activity involving cryptocurrency to the following entities:

If Scam Transactions Are Hurting Your Credit Score LCS is the hacker you need. Visit the Reliable Company Website :[ www.linuxcybersecurity.com ]

for compliance contact:[ info@linuxcybersecurity.com ] You can reach out for free consultation.

| larry_awilliams_c1f2bd25 | |

1,764,775 | ALL Imp Hooks of React - A RECAP | In the realm of React development, mastering hooks is akin to unlocking a treasure trove of... | 0 | 2024-05-26T10:44:58 | https://dev.to/aadarsh-nagrath/all-hooks-of-react-a-recap-e4d | webdev, javascript, react, programming | In the realm of React development, mastering hooks is akin to unlocking a treasure trove of efficiency and flexibility. These small, but powerful functions revolutionized how developers manage state, side effects, and more in React components. In this blog post, we'll explore some of the most important React hooks that every developer should be familiar with.

This article explores -

useState, useEffect, useContext, useRef, useMemo, useCallback, useReducer

## The useState Hook

The useState hook allows you to add a state to a functional component. It takes an initial value as an argument and returns an array with two elements: the current state value and a function to update it.

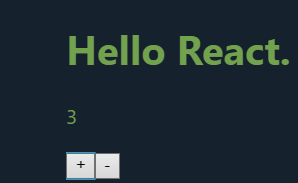

Here’s an example of how to use useState to add a counter to a functional component:

```

import React, { useState } from 'react';

function Counter() {

const [count, setCount] = useState(0);

const increment= () => {

setCount(count + 1);

}

const decrement = () => {

setCount(count - 1);

}

return (

<div>

<p>Count: {count}</p>

<button onClick={increment}>Increment</button>

<button onClick={decrement}>decrement</button>

</div>

);

}

```

In this example, we start with a count of 0 and update it every time the “Increment” and ‘’decrement’’ button is clicked. This is the most basic example of all.

## The useEffect Hook

useEffect() is a React hook for performing side effects in functional components. It takes a function to run the effect and an optional array of dependencies.

The effect executes after the component renders and can return a cleanup function. If dependencies change, the effect re-runs, providing control over when it executes. Common Use Cases -

1. Data Fetching

2. Subscriptions

3. DOM Manipulation

4. State Updates

5. Cleanup

`useEffect(()=>{// function logic}, [dependencies])`

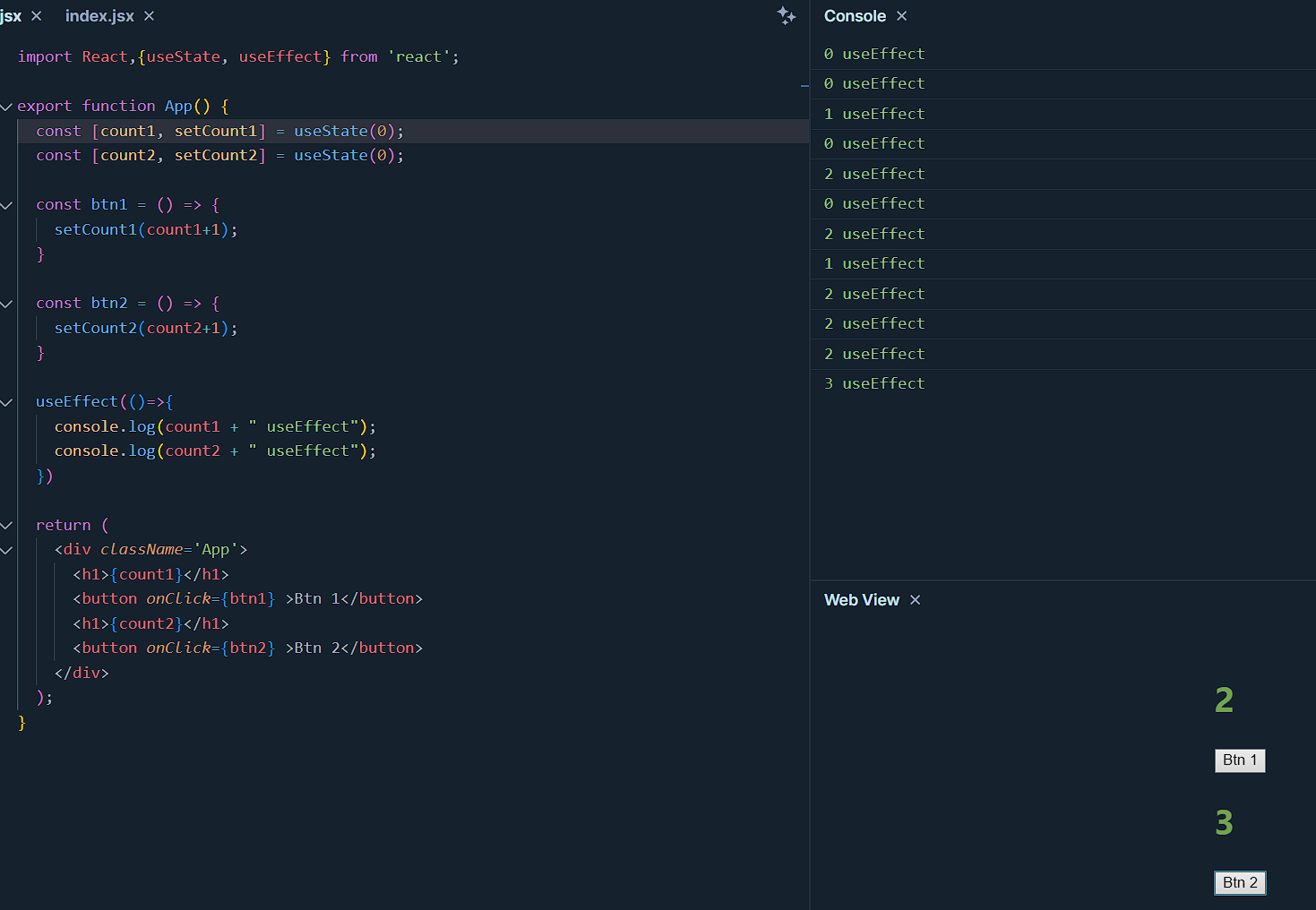

Now if no dependency is used, and it's kept empty then the useEffect will be in effect whenever the component renders. It will effect all time. For example -

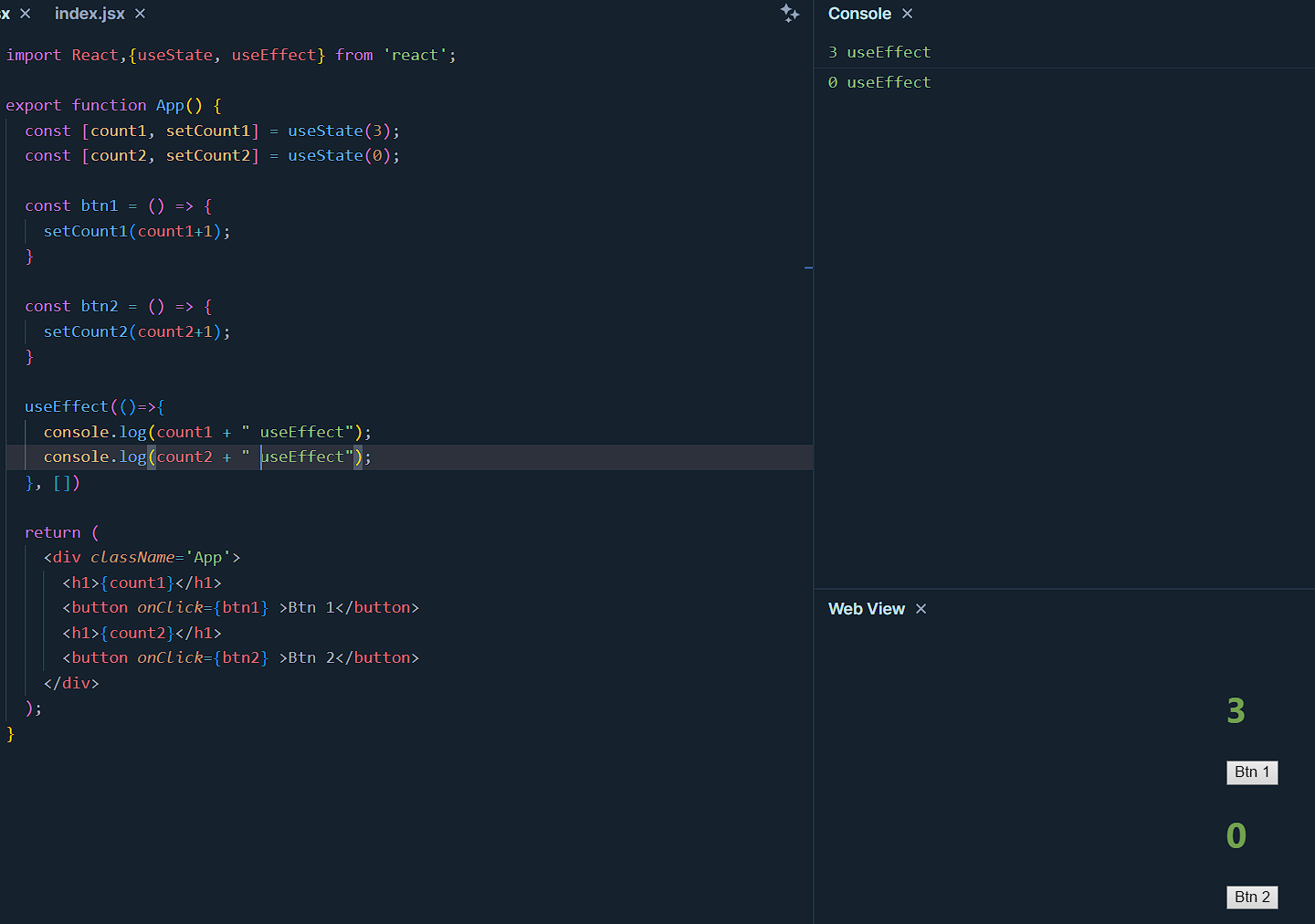

Here no matter which button is pressed the function inside useEffect executes, when button pressed component renders and the function runs.

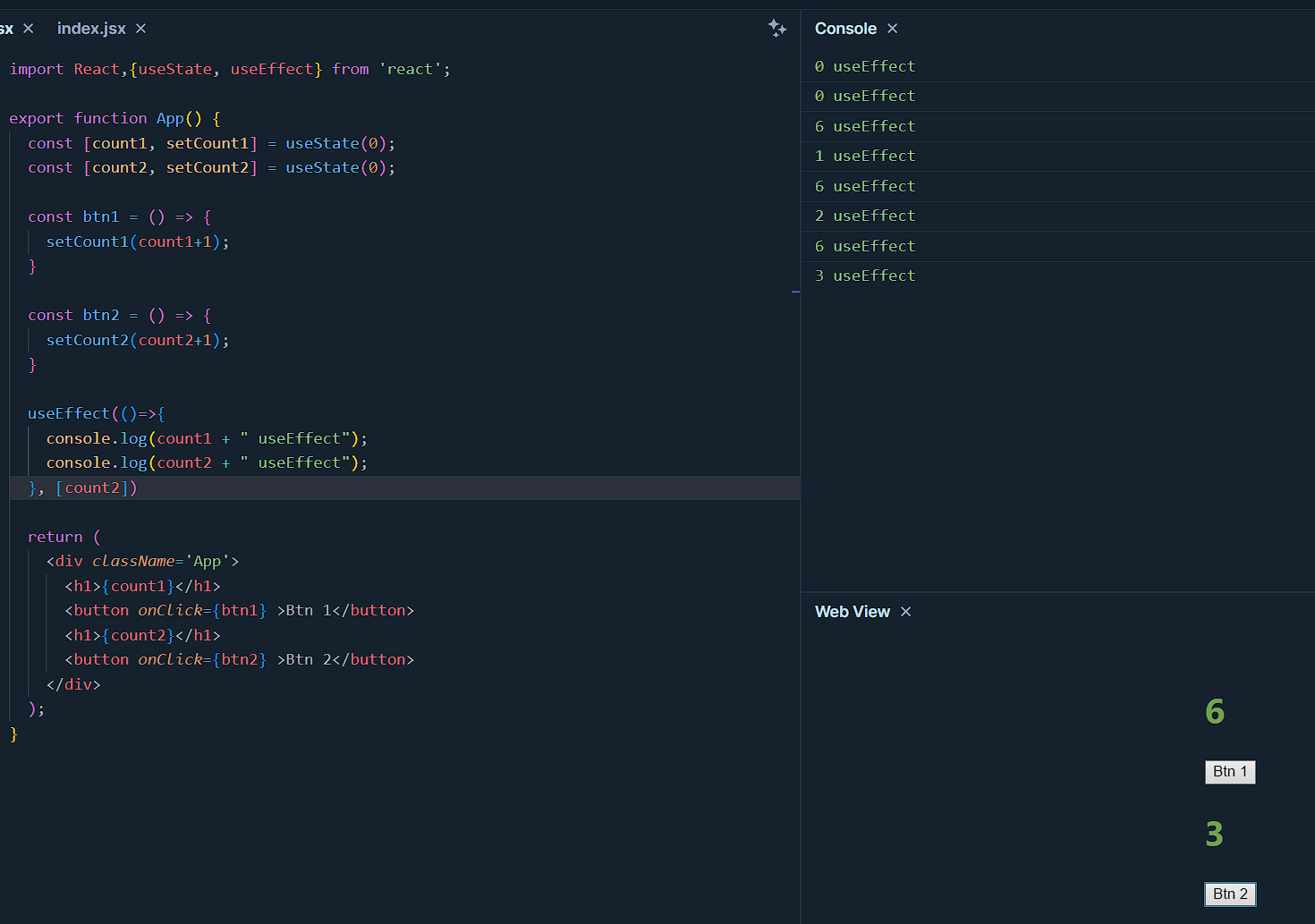

Now when dependency added - count2,

The logic of useEffect will take effect whenever there is a change in the count2 state. So only when button 2 is clicked it executes.

Now if an empty array is added as a dependency - []

In this case, the logic of useEffect executes only once when the component renders, and when it does states are in their default value, which is 3 in the case of count1 and 0 for count2, hence logic executes once only. Keep clicking the buttons will not have any effect.

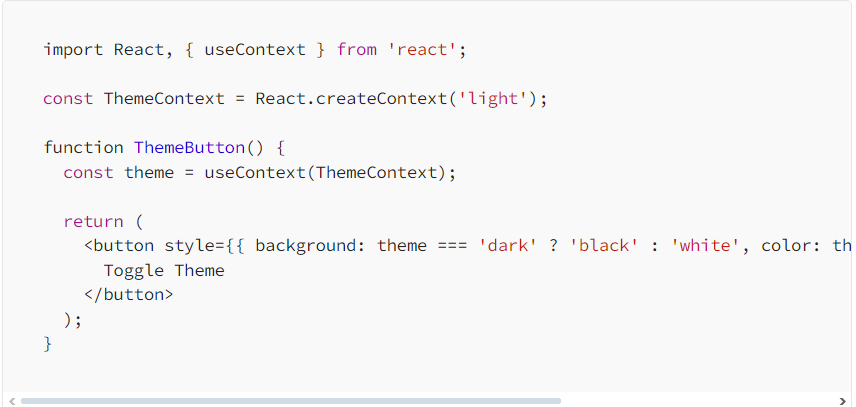

## The useContext() Hook

This hook allows you to work with react context API, which is a mechanism that allows you to share or scope values to the entire component tree without passing props. Let me tell you this better with an example, let's imagine we have an object's emotions.

```

const emotions = {

happy: '😂',

angry: '😡',

sad: '😔'

}

```

To share the emotions across multiple disconnected components we can use - Context

```

const EmotionContext = createContext();

```

Now,

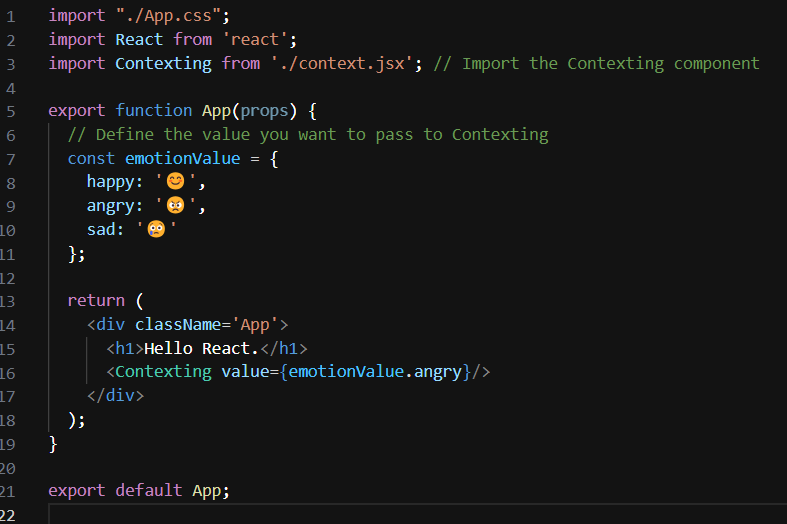

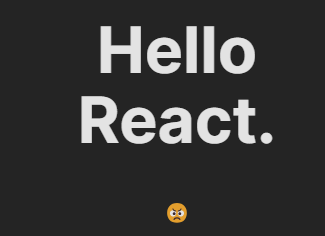

have this as the App.jsx -

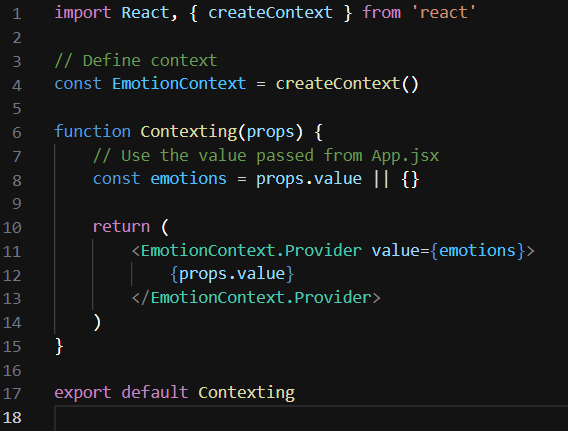

and this as a context.jsx -

**Here's what's happening in context of the useContext hook:**

- **Context Creation:** In context.jsx, a context is created using the createContext() function from React. This creates a context object which consists of a Provider and a Consumer.

- **Provider Usage:** In the Contexting component, the EmotionContext.Provider is used to provide the emotions value to its descendants. The value prop of the provider sets the value of the context, which in this case is the emotions object.

- **Consumer Usage (Implicit):** The descendants of the EmotionContext.Provider can consume the context value using the useContext hook or by wrapping their components with the EmotionContext.Consumer. However, in your provided code, the context value isn't directly consumed within the Contexting component.

- **Usage in Descendants:** Components nested within Contexting component tree can utilize the context value using the useContext hook. They can import the EmotionContext object and call useContext(EmotionContext) to access the context value.

Other Example -

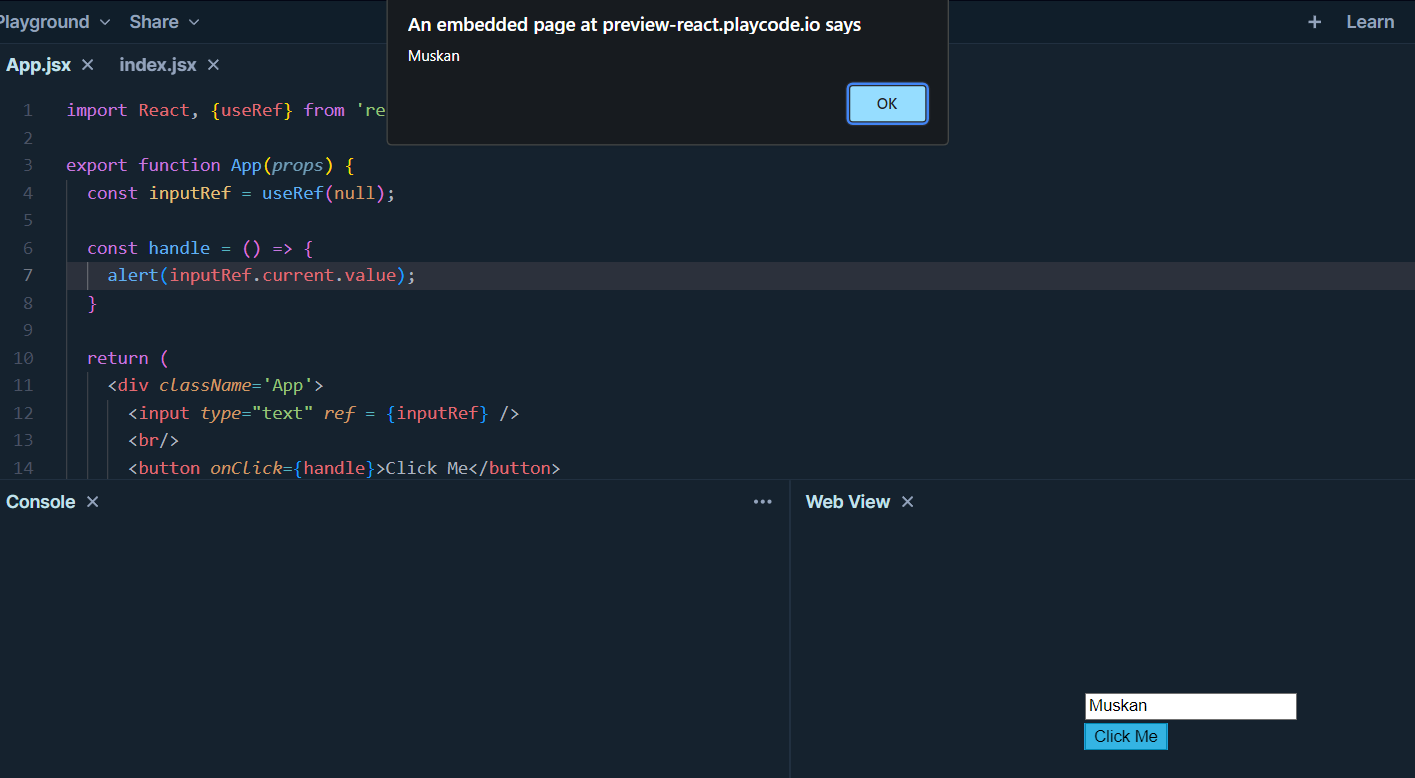

## The useRef() Hook

This hook allows you to create objects that will keep the same reference between renders. It can be used when you have a value that changes like setState but the difference is that it does not re-render when the value changes.

For example if we have a simple counter function -

```

function App() {

const count = useRef(0);

return (

<button onClick={() => count.current++}>

{count.current}

</button>

);

}

```

We can reference the current count, by `count.current`, but when clicking on the button it does change the count in ui because useRef() doesn't trigger re-render as setState does.

But a common use case for ref is to grab native HTML elements from jsx (JavaScript XML) or DOM. So ref does not re-update the component. It returns object {current: 0}, whose value is 0 by default.

```

const x = useRef(0)

useEffect(()=> {

x.current = x.current + 1;

})

{x.current} //somewhere

```

So x is just an object with current property, and when we update that property, that is what gets persisted between our different renders. We can change this x.current as many times as we want with re-rendering. Now an example of referencing the element of a document using useRef -

```

import React, {useRef} from 'react';

export function App(props) {

const inputRef = useRef(null);

const handle = () => {

alert(inputRef.current.value);

}

return (

<div className='App'>

<input type="text" ref = {inputRef} />

<br/>

<button onClick={handle}>Click Me</button>

</div>

);

}

```

Hereafter the button click -> handle function -> inputRef which is created and used in the input element is used. All elements have ref as an attribute.

Another example -

useRef is used to create a reference to the section element. When the button is clicked, the handleClick function is called, which scrolls the page to the section using sectionRef.current.scrollIntoView({ behavior: 'smooth' }).

```

import React, { useRef } from 'react';

function App() {

const sectionRef = useRef(null);

const handleClick = () => {

sectionRef.current.scrollIntoView({ behavior: 'smooth' });

};

return (

<div>

<button onClick={handleClick}>Scroll to Section</button>

<section ref={sectionRef}>

{/* Content */}

</section>

</div>

);

}

```

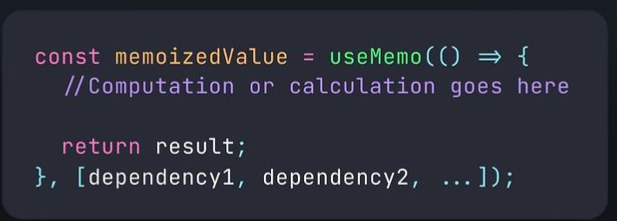

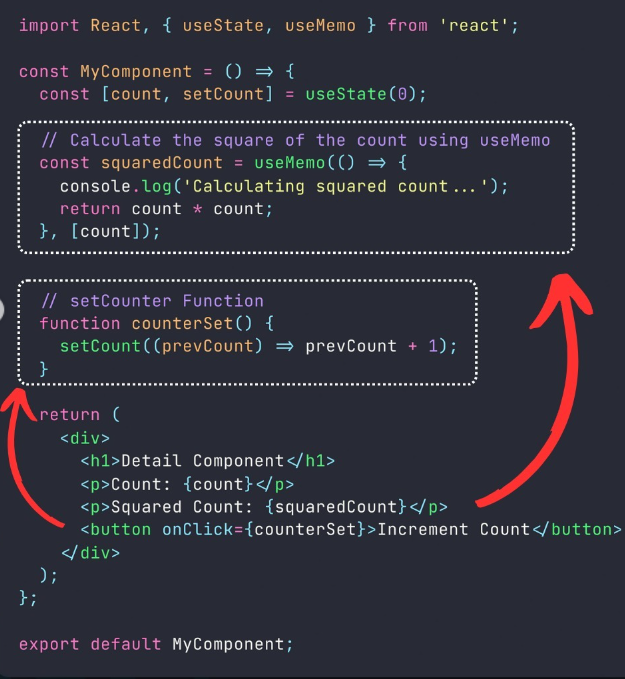

## useMemo() -

In React, the useMemo hook is used to memoize the result of a computation, so that the computation is only executed when its dependencies change.

This can be useful in optimizing the performance of expensive

calculations or computations in functional components.

• () => ...: This is an inline function that contains the computation or calculation you want to memoize.

• dependencyl, dependency2, ...: An array of dependencies. If any of these dependencies change, the memoized value will be recalculated.

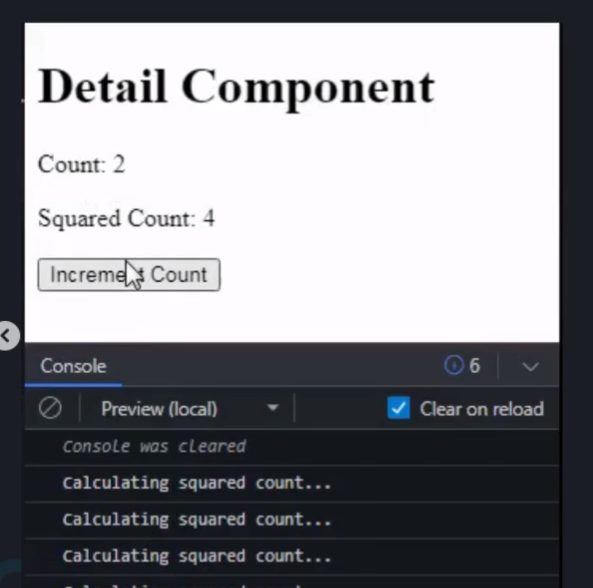

In this example, we use useMemo to calculate the squared value of the count state. The useMemo hook will execute the inline function only when the count changes.

If the count remains the same, it will reuse the previously memoized value, thus avoiding unnecessary recalculations.

When you click the "Increment Count" button, the count will increase, and the squared count will be recalculated since the count has changed.

if you click the button multiple times without changing the count, the squared count will not be recalculated, and you won't see the console log message more than once.

Remember, useMemo is primarily used to optimize expensive computations, and using it unnecessarily can add unnecessary complexity to your code.

## The useCallback() Hook

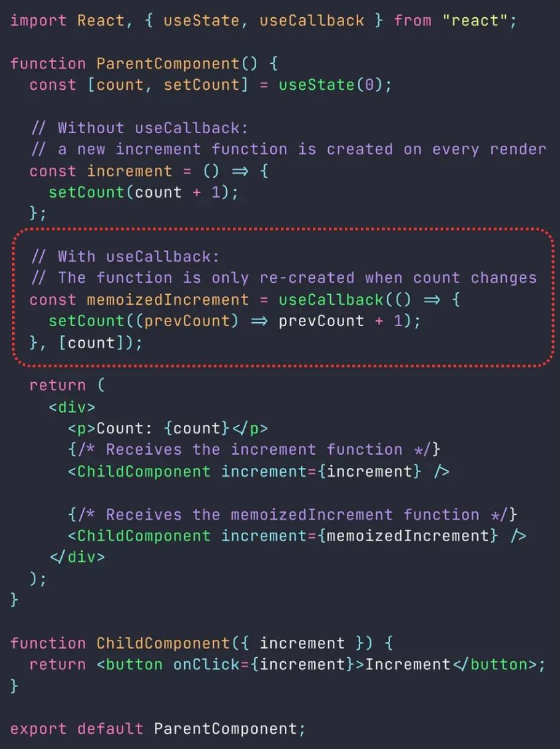

`useCallback()` is a React Hook used for optimizing performance by memoizing functions. In React, whenever a component re-renders, functions defined within that component are recreated. This can be inefficient, especially when these functions are passed down to child components as props, as it can lead to unnecessary re-renders in those child components.

`useCallback()` allows you to memoize a function so that it's only re-created if one of its dependencies changes. This can prevent unnecessary re-renders of child components that rely on these functions.

```

const memoizedCallback = useCallback(

() => {

// function body

},

[dependencies]

);

```

const memoizedFn = useCallback(callback,depend);

callback is the function you want to memoized, and depend is dependencies based on which callback function is re-created, when any change occurs to them.

without using useCallback, the increment function would be

recreated on every render of the ParentComponent, leading to potential performance issues.

However, by using useCallback, the memoized Increment function is only recreated when the count state changes, which optimizes performance by preventing unnecessary re-renders of the ChildComponent.

Remember that while `useCallback`can help improve performance in certain situations, it's important to avoid overusing it.

Only apply it to functions that actually cause performance issues due to frequent re-creation. In most cases, React's built-in reconciliation process handles function re-creation efficiently.

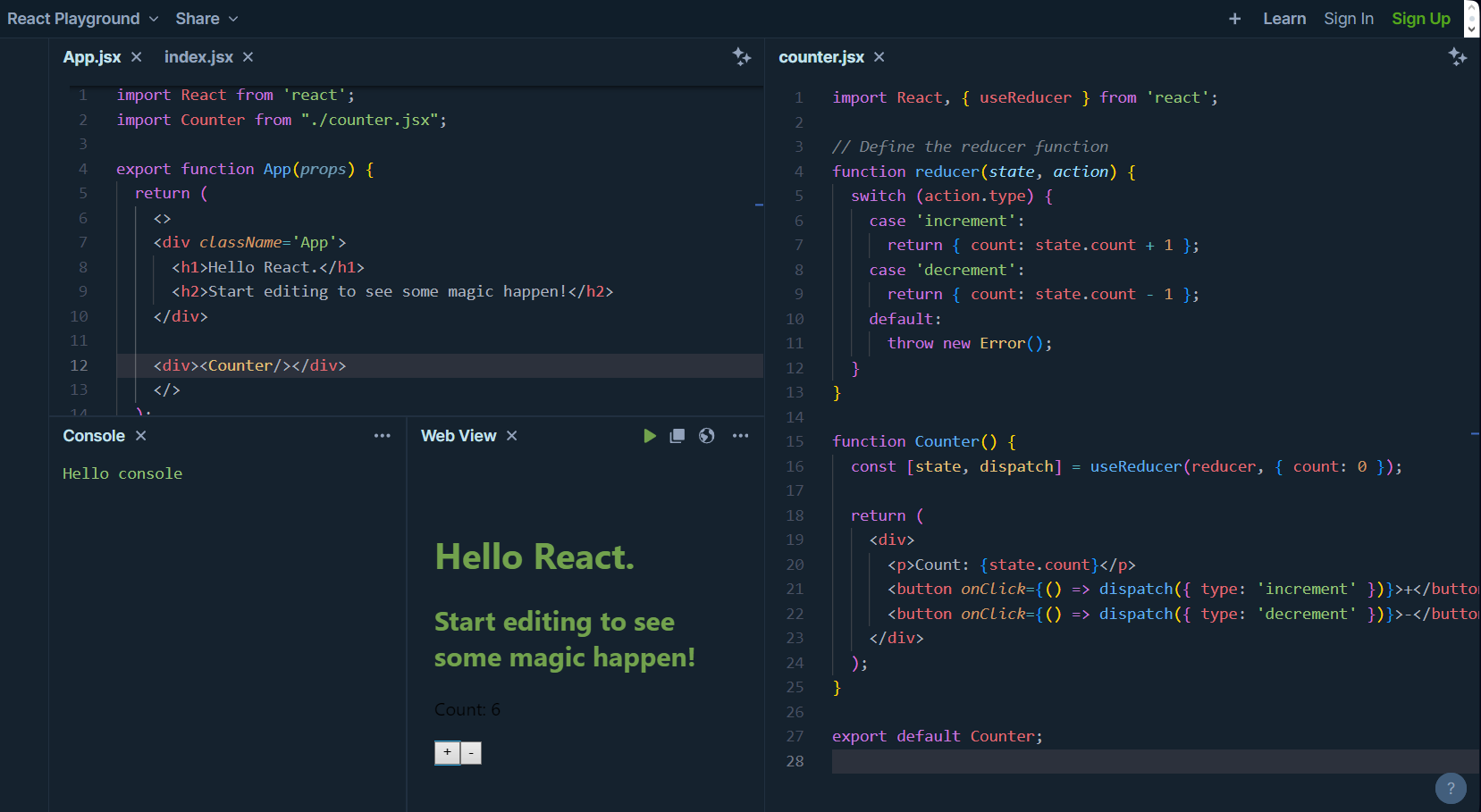

## The useReducer() Hook

The useReducer hook allows you to manage complex state in a functional component. It’s similar to the useState hook, but instead of a simple value, it takes a reducer function and an initial state.Think of it as a more powerful alternative to useState for complex state logic.

1. State and Actions: useReducer uses a state and actions to change that state.

2. Reducer Function: You create a function (reducer) that takes the current state and an action, and returns the new state.

3. Initial State: You define the starting state.

4. Dispatch: You get a function to send actions to the reducer to update the state.

Example -