id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,865,090 | Exploring React Hooks: Benefits, Drawbacks, and Real-World Examples | Hello Everyone, peace be upon you, and the mercy and blessings of God. In React development, Hooks have revolutionized how... | 0 | 2024-05-25T17:40:20 | https://dev.to/bilelsalemdev/exploring-react-hooks-benefits-drawbacks-and-real-world-examples-5fpg | javascript, react, webdev, development | Hello Everyone, peace be upon you, and the mercy and blessings of God.

In React development, Hooks have revolutionized how developers manage state, handle side effects, and interact with the DOM in functional components. By providing a more elegant and concise syntax, Hooks offer numerous benefits, but they also come with their own set of drawbacks. Let's delve into the benefits, drawbacks, and real-world examples of each React Hook:

### State Hooks:

1. **useState**:

- **Benefits**:

- Simplifies state management in functional components.

- Eliminates the need for class components for state management.

- Enables re-rendering of components when state changes.

- **Drawbacks**:

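- Each call manages one independent piece of state, so values that change together need several calls or a single object that must be updated immutably.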

- Can lead to deeply nested state structures for complex components, which might be harder to manage.

- **Example Use Case**: Managing a simple counter.

```javascript

import React, { useState } from 'react';

function Counter() {

const [count, setCount] = useState(0);

return (

<div>

<p>Count: {count}</p>

<button onClick={() => setCount(count + 1)}>Increment</button>

</div>

);

}

```
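The nested-state drawback is easier to see with a concrete update. React expects a new object instead of a mutated one, so every level on the path to the changed field has to be copied. A minimal sketch in plain JavaScript, assuming a hypothetical `initialProfile` state shape and an `updateCity` helper (neither comes from the counter example above):

```javascript
// Hypothetical state shape, used only for illustration.
const initialProfile = {
  name: 'Ada',
  address: { city: 'Tunis', zip: '1000' },
};

// Returns a new profile with address.city replaced.
// Every object on the path to the changed field is copied; nothing is mutated.
function updateCity(profile, city) {
  return {
    ...profile,
    address: { ...profile.address, city },
  };
}

const nextProfile = updateCity(initialProfile, 'Sfax');
console.log(nextProfile.address.city);
console.log(initialProfile.address.city);
```

Inside a component, a helper like this would typically be passed to the state setter as a functional update, for example `setProfile(prev => updateCity(prev, 'Sfax'))`, where `setProfile` is a hypothetical setter returned by `useState`. When several nested fields change together, a reducer is often easier to follow.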

2. **useReducer**:

- **Benefits**:

- Ideal for managing complex state logic.

- Provides a more structured approach to state management compared to useState.

- Can help performance in components that trigger deep updates, because the stable `dispatch` function can be passed down instead of new callbacks.

- **Drawbacks**:

- Requires understanding of concepts like reducers and actions, which might be unfamiliar to developers new to React or functional programming.

- Can lead to boilerplate code for simple state management tasks.

- **Example Use Case**: Managing a todo list with add, remove, and toggle functionality.

```javascript

import React, { useReducer } from 'react';

function todoReducer(state, action) {

switch (action.type) {

case 'ADD_TODO':

return [...state, action.payload];

case 'TOGGLE_TODO':

return state.map(todo =>

todo.id === action.payload ? { ...todo, completed: !todo.completed } : todo

);

case 'REMOVE_TODO':

return state.filter(todo => todo.id !== action.payload);

default:

return state;

}

}

function TodoList() {

const [todos, dispatch] = useReducer(todoReducer, []);

return (

<div>

{todos.map(todo => (

<div key={todo.id}>

<input type="checkbox" checked={todo.completed} onChange={() => dispatch({ type: 'TOGGLE_TODO', payload: todo.id })} />

<span>{todo.text}</span>

</div>

))}

<button onClick={() => dispatch({ type: 'ADD_TODO', payload: { id: Date.now(), text: 'New Todo', completed: false } })}>

Add Todo

</button>

</div>

);

}

```
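Because a reducer is a plain function, its logic can be exercised without rendering anything. The sketch below is a standalone copy of a todo reducer with add, toggle, and remove actions, replayed against an empty list. The `todoReducerSketch` name, the sample todos, and the `REMOVE_TODO` payload shape (the todo's `id`) are assumptions made for illustration.

```javascript
// Standalone todo reducer so the sketch runs without React.
// The 'REMOVE_TODO' payload is assumed to be the id of the todo to drop.
function todoReducerSketch(state, action) {
  switch (action.type) {
    case 'ADD_TODO':
      return [...state, action.payload];
    case 'TOGGLE_TODO':
      return state.map(todo =>
        todo.id === action.payload ? { ...todo, completed: !todo.completed } : todo
      );
    case 'REMOVE_TODO':
      return state.filter(todo => todo.id !== action.payload);
    default:
      return state;
  }
}

// Replay a list of actions against an empty list, one after another,
// similar to what happens across successive dispatch calls.
const actions = [
  { type: 'ADD_TODO', payload: { id: 1, text: 'Write post', completed: false } },
  { type: 'ADD_TODO', payload: { id: 2, text: 'Review post', completed: false } },
  { type: 'TOGGLE_TODO', payload: 1 },
  { type: 'REMOVE_TODO', payload: 2 },
];
const finalTodos = actions.reduce(todoReducerSketch, []);
console.log(finalTodos);
```

Keeping the reducer free of side effects is what makes this kind of replay possible, and it is also why reducers are straightforward to unit test.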

### Effect Hooks:

1. **useEffect**:

- **Benefits**:

- Handles side effects in functional components, such as data fetching, DOM manipulation, or subscriptions.

- Supports cleanup logic to prevent memory leaks or stale data.

- Offers a declarative way to manage side effects, improving code readability.

- **Drawbacks**:

- Can sometimes lead to unexpected behavior if not used with caution, especially with dependencies and cleanup logic.

- Effects run after every render unless a dependency array is supplied, which can hurt performance.

- **Example Use Case**: Fetching data from an API.

```javascript

import React, { useState, useEffect } from 'react';

function DataFetching() {

const [data, setData] = useState(null);

useEffect(() => {

fetch('https://api.example.com/data')

.then(response => response.json())

.then(data => setData(data))

.catch(error => console.error('Error fetching data:', error));

}, []);

return (

<div>

{data ? (

<ul>

{data.map(item => (

<li key={item.id}>{item.name}</li>

))}

</ul>

) : (

<p>Loading...</p>

)}

</div>

);

}

```
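The fetch example has no cleanup, so a response that arrives after the component unmounts would still call `setData`. One common guard is a cancellation flag that the cleanup function flips. A minimal sketch in plain JavaScript, assuming a hypothetical `startRequest` helper and an injected `fetchImpl` (here a stand-in called `fakeFetch`) so it can run outside a browser:

```javascript
// Hypothetical helper that mirrors the shape of an effect callback:
// it starts the request and returns a cleanup function.
function startRequest(fetchImpl, url, onData, onError) {
  let cancelled = false;

  fetchImpl(url)
    .then(response => response.json())
    .then(data => {
      // Ignore results that arrive after cleanup has run.
      if (!cancelled) onData(data);
    })
    .catch(error => {
      if (!cancelled) onError(error);
    });

  return () => {
    cancelled = true;
  };
}

// Stand-in for the real fetch, used only to make the sketch runnable.
const fakeFetch = () =>
  Promise.resolve({ json: () => Promise.resolve([{ id: 1, name: 'Item' }]) });

const received = [];
const cleanup = startRequest(
  fakeFetch,
  'https://api.example.com/data',
  data => received.push(data),
  error => console.error(error)
);
cleanup(); // simulate unmounting before the response arrives
```

In the component, the effect body could then be `return startRequest(fetch, 'https://api.example.com/data', setData, console.error);`, so that React calls the returned function on unmount. An `AbortController` is an alternative when the request itself should be cancelled and not just ignored.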

2. **useLayoutEffect**:

- **Benefits**:

- Synchronously fires after all DOM mutations, providing more control over layout effects.

- Useful for scenarios where you need to perform DOM measurements or operations that require synchronous updates before browser repaints.

- **Drawbacks**:

- Can potentially block the browser's rendering, leading to performance issues if used incorrectly or unnecessarily.

- Similar to useEffect, so choosing between them might require understanding the specific use case and timing requirements.

- **Example Use Case**: Performing DOM measurements.

```javascript

import React, { useLayoutEffect, useState } from 'react';

function LayoutEffectExample() {

const [width, setWidth] = useState(0);

useLayoutEffect(() => {

const handleResize = () => {

setWidth(window.innerWidth);

};

window.addEventListener('resize', handleResize);

handleResize(); // Initial measurement

return () => window.removeEventListener('resize', handleResize);

}, []);

return <p>Window Width: {width}px</p>;

}

```

3. **useInsertionEffect**:

- **Benefits**:

- Allows executing effects before React makes changes to the DOM, enabling modifications like adding dynamic CSS or other pre-render actions.

- Intended mainly for CSS-in-JS libraries that need to inject `<style>` tags before layout effects read the layout.

- **Drawbacks**:

- Less commonly used compared to useEffect and useLayoutEffect, so developers might be less familiar with its behavior and best practices.

- Requires a clear understanding of when and why to use it to avoid unnecessary complexity or performance issues.

- **Example Use Case**: Adding dynamic CSS styles.

```javascript

import React, { useInsertionEffect } from 'react';

function InsertionEffectExample() {

useInsertionEffect(() => {

const style = document.createElement('style');

style.textContent = `

.dynamic-element {

color: red;

font-weight: bold;

}

`;

document.head.appendChild(style);

return () => {

document.head.removeChild(style);

};

}, []);

return <div className="dynamic-element">Dynamic Element</div>;

}

```

### Additional Information:

- **useContext**:

- **Benefits**:

- Simplifies sharing data across components without prop drilling.

- Provides a clean and efficient way to consume context values at any nesting level.

- Reduces component coupling and improves code maintainability.

- **Drawbacks**:

- Overuse can lead to unclear component dependencies and make it harder to trace data flow.

- Might not be suitable for complex data structures or scenarios where performance is critical due to potential re-renders triggered by context changes.

- **Example Use Case**: Theming a component using context.

```javascript

import React, { createContext, useContext } from 'react';

const ThemeContext = createContext('light');

function ThemedButton() {

const theme = useContext(ThemeContext);

return <button style={{ background: theme === 'dark' ? 'black' : 'white', color: theme === 'dark' ? 'white' : 'black' }}>Themed Button</button>;

}

```

In summary, React Hooks offer numerous benefits in terms of code organization, reusability, and performance optimization. However, understanding their nuances, choosing the right hook for each scenario, and applying best practices are crucial to avoiding common pitfalls and maximizing their advantages.

| bilelsalemdev |

1,865,089 | Coffee Snobs get it right! | Yes, this is about programming AND coffee. Recently I got a new obsession: Coffee. Never liked the... | 0 | 2024-05-25T17:37:44 | https://medium.com/@noriller/coffee-snobs-get-it-right-b692d99e8e38 | programming, productivity, webdev, learning | Yes, this is about programming *AND* coffee.

Recently I got a new obsession: Coffee. Never liked the stuff, but then I learned that I’ve been only exposed to the bad stuff and badly brewed at that… I gave a chance to the good stuff and it got me hooked. After years without *touching* `.java` now I’m turning into a full-stack *barista*.

As far as coffee goes, I’m pretty sure I’m at the “peak of Mount Stupid” in the Dunning-Kruger curve. I’ve just finished learning all the basics and putting them into practice (or so I think). I still have no idea how much I don't know.

But hey, at least I'm not brewing my code like I used to brew my coffee – that is a *bitter* memory now.

Regardless of that, I was exposed to lots of people talking about coffee and the one common thing was:

## It’s better to brew an 8/10 cup every time you want than to try a “perfect” cup and end up with a 5/10 randomly

When you start going *one cup deeper*, you find recipes that seem crazy to someone from the outside. They literally have recipes like “stir X times clockwise then Y times counter-clockwise during seconds W to Z after pouring the bloom water”, not to mention a heavy dependency on extreme precision in the grams of coffee and milliliters of water.

Brewing coffee without a scale is like writing code without unit tests: you might get something drinkable, but good luck reproducing it. A good brew, like good code, doesn't *Java* happen!

Seems crazy to have all of that for one cup of joe (though I've heard some programmers have even crazier rituals), but when programming it would be crazy not to have all of those “rules” and “recipes” to make a new feature.

And while making it “perfect” would take a lot of effort, making it “good enough” is easier: control and record the variables, then you’ll be able to replicate and tune them to always achieve an 8/10 cup. Once you start, that appeal of always having a good one is unbeatable.

Then we go back to programming, where every day you do things differently “just because”, without even knowing what you’re doing differently… You question why code should be readable and why it should follow the team guidelines. Good luck knowing what you’re doing then.

## But I got a fresh bag of beans I’m not familiar with

There’s something called “cupping protocol” that has many variables fixed so that you objectively judge what that new coffee bean is about, kind of like a code review for your new coffee. By applying it repeatedly you can easily distinguish how two different beans are different from each other, just like comparing different coding languages or frameworks.

Not only that, if by each slurp (yes they slurp, loudly, it even has a reason) you try to concentrate on the acidic, sweet, bitter, or body of the coffee you can isolate each thing enough to know what you want more or less of when brewing it.

In programming, the closest thing I can think about is making a blog or TODO app, and usually focused on *how fast* you go from zero to complete. It usually can’t encompass everything we *actually* do every day or things that matter more than “how fast you can make a CRUD”.

## Reproducibility and Variable Isolation: The Keys to a Good Brew (and Code)

The amazing thing about getting a new obsession is that it lets you see things you already do in a new way, with *fresh eyes*.

Programming should be less “grandma’s magical recipe” (full of mysterious incantations and a pinch of this, a dash of that) and more “exact instructions” (clearly defined functions and precise syntax). Conversely, with coffee, you would think that “numbers and variables” shouldn’t be involved in the brewing – who needs a debugger for their morning cup?

However, coffee snobs will dive into a discussion about each little variable like “how many microns” is the best grind for a brewing method while programmers are like: “screw it, let me just copy/paste this from the legacy codebase that accesses the database from the frontend… working… ship it”.

Brewing coffee with precision is like writing clean code: both take practice, patience, and a lot of trial and error, but you can always know exactly where you screwed up. If coffee brewing had stack traces, we'd all be able to pinpoint exactly when we poured too much water. | noriller |

1,865,087 | Spring Boot Data Access | Spring Boot is a powerful framework that simplifies the development of Java applications. One of its... | 0 | 2024-05-25T17:36:22 | https://dev.to/oloruntobi600/spring-boot-data-access-59ac | Spring Boot is a powerful framework that simplifies the development of Java applications. One of its key features is its ability to streamline data access through various data access technologies. This document will cover the data access options available in Spring Boot, explain how to set up a database connection, and provide examples of CRUD operations using Spring Data JPA.

Data Access Options in Spring Boot

Spring Boot provides support for various data access technologies, making it versatile for different types of applications. Here are some of the primary data access options:

Spring Data JPA

Spring Data JPA simplifies the implementation of JPA-based repositories. It provides a repository abstraction over JPA, enabling you to access relational databases using repository interfaces.

Key Features:

Automatic implementation of repository interfaces.

Support for query methods based on method names.

Integration with Hibernate as the JPA provider by default.

Spring Data MongoDB

Spring Data MongoDB provides a similar abstraction as Spring Data JPA but for MongoDB, a NoSQL database.

Key Features:

Support for MongoDB repository interfaces.

Seamless integration with MongoDB's native query language.

Support for reactive repositories.

Spring Data JDBC

Spring Data JDBC is a simpler alternative to JPA, providing direct JDBC access with a minimalistic approach. It is ideal for applications that do not require the full JPA feature set.

Key Features:

Lightweight and straightforward configuration.

Support for repository interfaces without the complexity of JPA.

Direct mapping of SQL queries to repository methods.

Other Data Access Technologies

Spring Data Redis: For accessing Redis data stores.

Spring Data Cassandra: For accessing Cassandra databases.

Spring Data Couchbase: For accessing Couchbase databases.

Spring Data Elasticsearch: For accessing Elasticsearch indexes.

Setting Up a Database Connection in a Spring Boot Application

Setting up a database connection in Spring Boot is straightforward. Here’s how to configure a relational database connection using Spring Data JPA.

Step 1: Add Dependencies

Add the necessary dependencies to your pom.xml file.

```xml
<dependencies>
    <dependency>
        <groupId>org.springframework.boot</groupId>
        <artifactId>spring-boot-starter-data-jpa</artifactId>
    </dependency>
    <dependency>
        <groupId>org.springframework.boot</groupId>
        <artifactId>spring-boot-starter-web</artifactId>
    </dependency>
    <dependency>
        <groupId>com.h2database</groupId>
        <artifactId>h2</artifactId>
        <scope>runtime</scope>
    </dependency>
</dependencies>
```

Step 2: Configure the Application Properties

Configure the database connection in application.properties.

```properties
spring.datasource.url=jdbc:h2:mem:testdb
spring.datasource.driverClassName=org.h2.Driver
spring.datasource.username=sa
spring.datasource.password=password
spring.jpa.database-platform=org.hibernate.dialect.H2Dialect
spring.h2.console.enabled=true
```

Step 3: Create an Entity Class

Define an entity class that maps to a database table.

```java
// Spring Boot 2.x imports; on Spring Boot 3+ these come from jakarta.persistence instead.
import javax.persistence.Entity;
import javax.persistence.GeneratedValue;
import javax.persistence.GenerationType;
import javax.persistence.Id;

@Entity
public class Person {

    @Id
    @GeneratedValue(strategy = GenerationType.AUTO)
    private Long id;

    private String name;

    private int age;

    // Getters and setters
}
```

Step 4: Create a Repository Interface

Create a repository interface for the entity.

```java
import org.springframework.data.repository.CrudRepository;

public interface PersonRepository extends CrudRepository<Person, Long> {
}
```

CRUD Operations Using Spring Data JPA

Spring Data JPA simplifies CRUD operations by providing repository interfaces that you can extend. Here are examples of basic CRUD operations using PersonRepository.

Create

To create and save a new Person entity:

```java
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.stereotype.Service;

@Service
public class PersonService {

    @Autowired
    private PersonRepository personRepository;

    public Person createPerson(String name, int age) {
        Person person = new Person();
        person.setName(name);
        person.setAge(age);
        return personRepository.save(person);
    }
}
```

Read

To retrieve a Person entity by its ID:

```java
public Person getPerson(Long id) {
    return personRepository.findById(id).orElse(null);
}
```

Update

To update an existing Person entity:

```java
public Person updatePerson(Long id, String name, int age) {
    Person person = personRepository.findById(id).orElse(null);
    if (person != null) {
        person.setName(name);
        person.setAge(age);
        return personRepository.save(person);
    }
    return null;
}
```

Delete

To delete a Person entity by its ID:

```java
public void deletePerson(Long id) {
    personRepository.deleteById(id);
}
```

Summary

Data Access Options: Spring Boot supports various data access technologies including Spring Data JPA, Spring Data MongoDB, and Spring Data JDBC, among others.

Database Connection Setup: Configuring a database connection involves adding dependencies, setting properties in application.properties, and creating entity and repository classes.

CRUD Operations: Spring Data JPA simplifies CRUD operations through repository interfaces, allowing for easy implementation of create, read, update, and delete functionalities.

By leveraging Spring Boot's data access capabilities, developers can build robust applications with minimal boilerplate code, focusing more on business logic and less on infrastructure. | oloruntobi600 | |

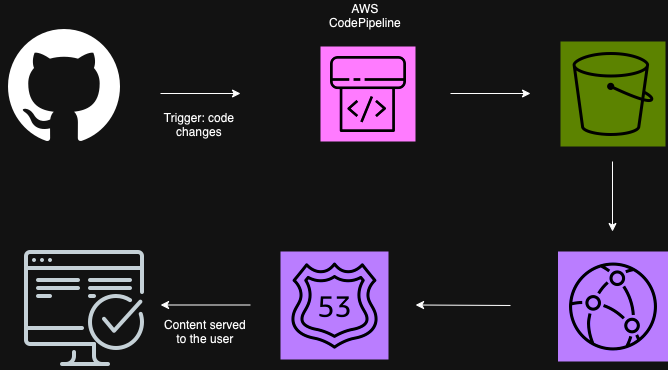

1,865,080 | AWS Code Pipeline - CloudFront - S3 CI/CD Pipeline | Steps to create an automated pipeline in AWS without losing the plot Resources... | 0 | 2024-05-25T17:30:46 | https://dev.to/monica_escobar/aws-code-pipeline-cloudfront-s3-cicd-pipeline-55gf | aws, cicd, automation, devops | ## Steps to create an automated pipeline in AWS without losing the plot

---

**Resources used:**

- S3 bucket

- CloudFront Distribution

- GitHub

- Route 53

- Domain name

- SSL Certificate

- Code Pipeline

---

**The Big Picture**

---

**Steps followed:**

**Step 1: Setting Up Your AWS Environment**

1.1 Create an AWS Account

If you don’t already have an AWS account, sign up for AWS.

1.2 Set Up IAM User

Create an IAM user with the necessary permissions to access the services you'll be using:

Navigate to the IAM Console.

Create a new user and attach the policies for CodePipeline, S3, CloudFront, Route 53, and Certificate Manager.

**Step 2: Purchase a Domain and obtain an SSL Certificate**

2.1 Purchase a Domain via Route 53

Go to the Route 53 Console.

Click on Domains -> Register Domain.

Search for your desired domain name and follow the prompts to purchase it.

2.2 Request an SSL Certificate via AWS Certificate Manager

Navigate to the AWS Certificate Manager (ACM) Console.

Click on Request a certificate.

Select Request a public certificate and enter your domain name.

Follow the steps to validate your domain ownership. DNS validation is much faster than email validation; it requires creating the CNAME record that ACM provides in your domain's DNS.

**Step 3: Set Up S3 Bucket for Static Hosting**

Go to the S3 Console.

Create a new bucket (e.g: my-portfolio).

Enable static website hosting in the Properties tab (optional tip: set `Cache-Control: max-age=0` on the uploaded objects so updates show up sooner).

Set up the bucket policy to allow public read access (or configure CloudFront for secure access).

**Step 4: Configure CloudFront for CDN**

Navigate to the CloudFront Console.

Create a new distribution.

Set the origin to the S3 bucket you created.

Configure the distribution settings, ensuring to set up the SSL certificate you requested.

**Step 5: Configure Route 53 to Point to CloudFront**

In the Route 53 Console, navigate to Hosted Zones.

Select your domain and create a new A record.

Set the alias target to your CloudFront distribution.

**Step 6: Set Up GitHub Repository**

Create a new repository on GitHub and push your application code to it.

**Step 7: Create the CI/CD Pipeline with AWS CodePipeline**

7.1 Set Up CodePipeline

Go to the CodePipeline Console.

Click Create pipeline and give it a name.

Select New service role to create a new IAM role for CodePipeline.

7.2 Add Source Stage

In the Source stage, select GitHub2 as the source provider.

Connect your GitHub account and select the repository and branch you want to use.

7.3 Add Build Stage (OPTIONAL)

In the Build stage, leave empty or choose CodeBuild (I personally left it empty).

7.4 Add Deploy Stage

In the Deploy stage, choose Amazon S3 as the deploy provider.

Select the S3 bucket you created for static hosting.

**Step 8: Test the Pipeline**

Review the pipeline configuration and click Create pipeline.

Commit a change to your GitHub repository to trigger the pipeline.

Verify the build and deployment process.

Access your application using the domain name configured in Route 53. If the CloudFront cache is at its default settings, changes will take longer to appear on your domain; in the meantime you can check the S3 URL.

---

And this is all you need to create your own automated pipeline from your main branch using AWS and GitHub.

Happy deploying!

| monica_escobar |

1,865,082 | BTC AND OTHER CRYPTOCURRENCIES ARE RECOVERABLE CONTACT TECHNOCRATE RECOVERY | TECHNOCRATE RECOVERY successfully recovered my lost Bitcoin, delivering a triumphant victory in the... | 0 | 2024-05-25T17:19:52 | https://dev.to/hortonangela901/btc-and-other-cryptocurrencies-are-recoverable-contact-technocrate-recovery-48p8 | cryptocurrency, bitcoin, ethereum | TECHNOCRATE RECOVERY successfully recovered my lost Bitcoin, delivering a triumphant victory in the face of adversity. Words cannot express the overwhelming gratitude and relief that flooded my soul upon receiving the news. Beyond their unparalleled expertise, TECHNOCRATE RECOVERY embodies the essence of empathy and compassion. They understand the profound impact of financial loss, offering not only a solution to the problem but also a shoulder to lean on in times of distress. Their genuine care for their clients transcends mere business transactions, forging lasting relationships built on trust and mutual respect. In conclusion, I wholeheartedly recommend TECHNOCRATE RECOVERY to anyone who finds themselves ensnared in the web of online scams. They are not just a company; they are guardians of justice in the digital realm, fighting tirelessly to reclaim what is rightfully yours. With TECHNOCRATE RECOVERY by your side, hope is not just a distant dream but a tangible reality waiting to be embraced. Trust in their expertise, and let them lead you to recovery.

EMAIL: (technocrat recovery(at)contractor. net WHATSAPP: +1(573)356-3708

| hortonangela901 |

1,865,081 | Deep Dive into Push Nodes: Functionality and Importance | In today's rapid blockchain and decentralized applications (dApps) environment, staying informed is... | 0 | 2024-05-25T17:15:34 | https://dev.to/luffy251/deep-dive-into-push-nodes-functionality-and-importance-2cgl |

In today's fast-moving blockchain and decentralized application (dApp) environment, staying informed is essential. Push Protocol introduced Push Nodes as part of its notification system to keep users up to date without overwhelming them. This blog post will explain what Push Nodes are, how they work, and why they matter in the simplest way possible.

## What Are Push Nodes?

Push Nodes serve as messengers within Push Protocol. They securely and efficiently process notifications (messages) meant for delivery to end users. Think of them as the postal workers of the blockchain world, who ensure your message is sent safely and received on time.

### How Do Push Nodes Work?

1. **Message Validation**:

- Before a message gets delivered, Push Nodes check to make sure it's from a trusted source and hasn’t been tampered with. Think of it as verifying the sender’s address and ensuring the letter hasn’t been opened.

2. **Message Routing**:

- Once verified, Push Nodes send the message to the right recipient. They know the best routes to take, much like a postal service knows the best delivery routes to get your mail to you quickly.

3. **Decentralized Storage**:

- Push Nodes help store messages in a decentralized manner, so data is not kept in one place. This makes it more secure and harder to manipulate, like keeping duplicates of important records in several safe locations.

4. **Network Health Monitoring**:

- Push Nodes are constantly checking the network to ensure that everything is running smoothly. If they find any issues, they can fix them quickly, just like a postal service might change delivery routes when it’s raining badly.

## Why Are Push Nodes Important?

### Keeping Things Decentralized

Decentralization means that no single person or company controls the whole system. Push Nodes spread out the responsibility of handling messages, making the system more secure and reliable because there’s no single point of failure.

### Boosting Security

By validating every message, Push Nodes ensure that only legitimate notifications are delivered. This prevents scams and ensures the information you receive is trustworthy.

### Improving Scalability

As more people use blockchain and dApps, the number of messages increases. Push Nodes help the system handle more messages without slowing down, much like adding more postal workers to handle increased mail during the holidays.

### Facilitating Interoperability

Push Nodes can work with different blockchain networks. This means they can send messages across various platforms, making Push Protocol a versatile tool for different types of blockchain projects.

### Providing Redundancy

Redundancy means having backup systems in place. If one Push Node goes down, others can take over, ensuring that the system continues to function without interruption.

## Benefits for Users and dApps

### Better User Experience

Push Nodes ensure you receive timely and relevant notifications. Whether it’s updates about transactions, governance proposals, or event reminders, you get the information you need when you need it.

### Increased Trust and Transparency

Because Push Nodes handle notifications in a decentralized way, you can trust that the messages are accurate and haven’t been tampered with.

### Flexibility for Developers

Developers can customize how Push Nodes handle notifications, allowing them to tailor the system to their specific needs. This makes it easier to create dApps that provide the right information to users at the right time.

### Cost-Effective Solution

Using Push Nodes is cheaper than building and maintaining your own notification system. This allows developers to focus on improving their dApps rather than worrying about infrastructure costs.

### Empowering the Web3 Ecosystem

Push Nodes are an essential part of the Web3 ecosystem, providing a reliable way to communicate within decentralized applications. This helps enhance the functionality of existing dApps and opens up new possibilities for innovative projects.

## Real-World Example: DeFi Platform

Let’s say you’re using a decentralized finance (DeFi) platform that offers lending, borrowing, and staking services. Users need to be informed about important events like loan liquidations, interest rate changes, and staking rewards.

### Implementation

The DeFi platform integrates Push Protocol, using Push Nodes to handle notifications. When something important happens, Push Nodes ensure the message is verified and delivered to the right users promptly.

### Benefits

- **Timely Alerts**: Users get instant notifications about important events, allowing them to act quickly.

- **Improved Engagement**: Regular updates keep users engaged with the platform.

- **Enhanced Security**: Users trust the notifications because they know they are legitimate.

## Looking Ahead: Future Enhancements

Push Protocol is always evolving, and Push Nodes will continue to get better with new features and capabilities. Future improvements might include:

- **Advanced Filtering**: Allowing users to customize their notifications even more.

- **Cross-Chain Compatibility**: Making it easier to send notifications across different blockchain networks.

- **AI Integration**: Using artificial intelligence to provide smarter, more personalized notifications.

## Conclusion

Push Nodes are a crucial part of Push Protocol, ensuring that notifications are handled in a secure, decentralized, and efficient way. By verifying, routing, and storing messages, Push Nodes improve the reliability and scalability of the system. They offer numerous benefits for both users and developers, including better user experience, increased trust, and cost savings. As blockchain technology continues to grow, Push Nodes will play an increasingly important role in making sure communication within the ecosystem is seamless and trustworthy.

Understanding and using Push Nodes effectively will help you stay informed and engaged in the exciting world of decentralized applications, driving greater innovation and adoption in the blockchain space.

| luffy251 | |

1,865,068 | How to create an Online Flipping Form using Plain HTML and CSS | Almost everyone who has used the Internet has come across Forms, many Internet users who are familiar... | 0 | 2024-05-25T17:13:42 | https://dev.to/george_kingi/how-to-create-an-online-flipping-form-using-plain-html-and-css-4ka5 | webdev, html, css, javascript | Almost everyone who has used the Internet has come across forms. Many users first meet them when signing up for or joining a platform, whether that is a Gmail sign-up page, Instagram, Facebook, or one of many others. It is safe to think of a form as a door that, once opened, gives access to some information. This article serves both beginners and experts in web development.

This article will showcase how to create an online flipping form. We will create a sign-up form for new users and a log-in form for existing users, and the form will flip on click between the two: to the sign-up side for a new user, and back to the log-in side for an existing user. This will become clear once we dive in. We will build the structure of the form with HTML, make it look good with CSS, and then introduce some JavaScript for interactivity.

To make this more fun, we will break it down into two parts:

Part 1 → Will cover the HTML part.

Part 2 → Will cover the CSS part. Here is a link for part 2. https://dev.to/george_kingi/the-power-of-css-in-styling-up-forms-o8m

Let's grab a seat, shall we?

A form is a container that allows users to input data and submit it to a web server for processing. Forms are fundamental in enabling user interaction and data submission. The `<form>` `</form>` element defines a form in HTML.

## Definition and Uses of Tags

When creating a form, the `<form>` element acts as a container for other elements. Each field inside the form is created with the `<input>` tag, which lets users enter data. The `type` attribute determines what kind of input it is, for example `text`, `number`, `date`, `image`, or `reset`, while the `placeholder` attribute supplies the hint text (the watermark) shown before the user types.

Examples:

`<input type="text">`

`<input type="number">`

It is possible to define a class within the `<input>` element, as we can see below, to allow for styling of the contents of the tag. The `placeholder` attribute provides a watermark reading “First name”.

`<input type="text" class="input-box" placeholder="First name" required>`

## An Overview of the Final Form

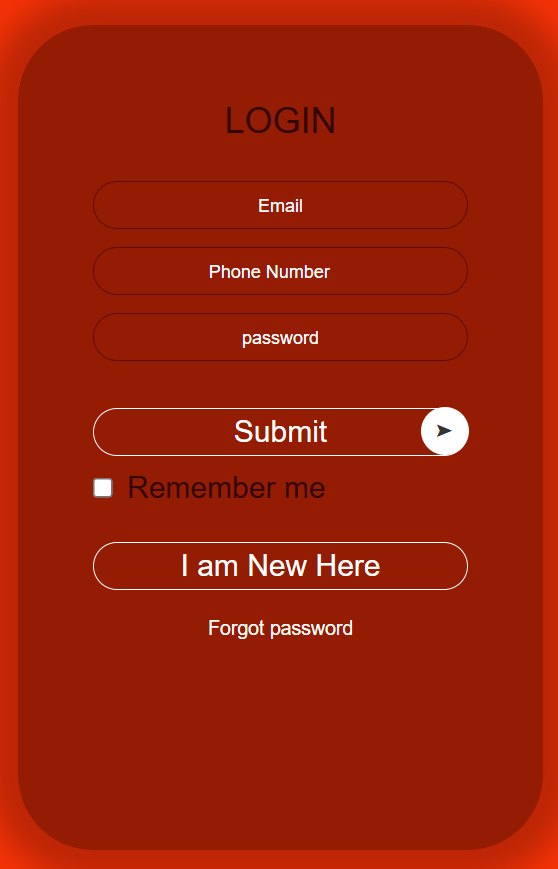

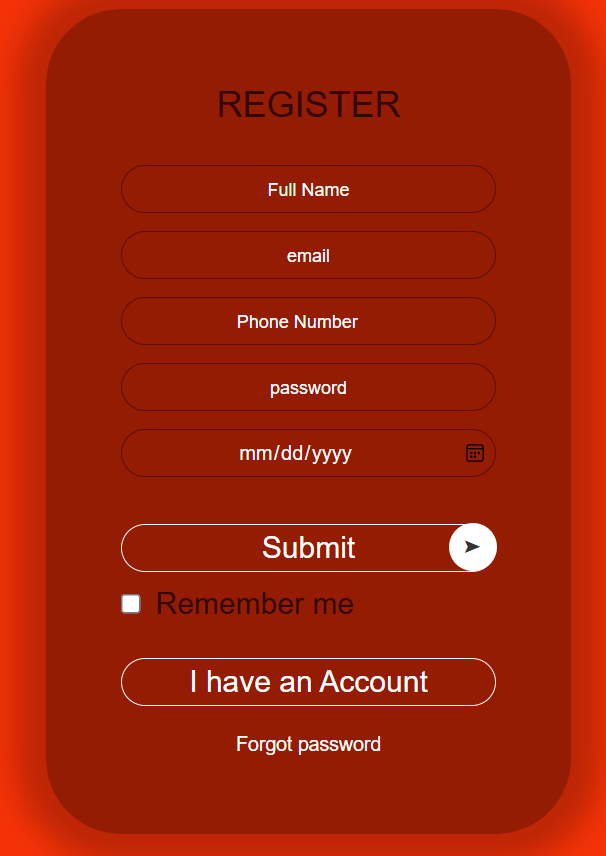

### A Preview of the Front and Back of the Form

## Creating The Online Flipping Form from Scratch

We will go ahead and create an HTML file and a CSS file, then link them as an external stylesheet. Our code editor in this case will be VS Code.

To create the form, follow the steps below:

1. Create a folder and name it whatever you want.

2. Open VS Code, go to File, select Open Folder, then select the folder you just created.

3. In VS Code, create a new file and name it `index.html`. The file name must have the extension `.html`.

4. Create a new file and name it `style.css`. The file name must have the extension `.css`.

You should have something like the snip below; I named my folder "FLIPPING FORM".

You do not need to type the code below line by line. In the empty `index.html`, type `!` (hold Shift and press the exclamation mark key) and press Enter or Tab, and the boilerplate below will be auto-generated. Change the title to whatever you want.

Then use the `<link>` element to connect the HTML and CSS files as below.

```html
<!DOCTYPE html>
<html lang="en">
<head>
    <meta charset="UTF-8">
    <meta name="viewport" content="width=device-width, initial-scale=1.0">
    <title>Online Flipping Form</title>
    <link rel="stylesheet" href="style.css">
</head>
<body>
</body>
</html>
```

As a first step, declare three `<div>`s with their respective classes, as we can see below, and then declare our `<form>` tag inside the innermost `<div>`. Remember to set the title of your page and the heading of the form, which in this case are "Online Flipping Form" and "Login" respectively.

Note that the class names we declared are arbitrary names that will help us when using CSS.

```html
<!DOCTYPE html>
<html lang="en">
<head>
    <meta charset="UTF-8">
    <meta name="viewport" content="width=device-width, initial-scale=1.0">
    <title>Online Flipping Form</title>
    <link rel="stylesheet" href="style.css">
</head>
<body>
    <!-- Outer wrapper, the card that will flip, and the front face of the card -->
    <div class="container">
        <div class="card">
            <div class="card-front">
                <h2>Login</h2>
                <!-- The form is closed before the div that contains it -->
                <form>
                </form>
            </div>
        </div>
    </div>
</body>
</html>
```

Within the `<form>` tag, we introduce the `<input>` tag which allows users to input data in the form.

```html
<body>
    <div class="container">
        <div class="card">
            <div class="card-front">
                <h2>Login</h2>
                <form>
                    <!-- First field: the browser validates the email format -->
                    <input type="email" class="input-button" placeholder="Email" required>
                </form>
            </div>
        </div>
    </div>
</body>
```

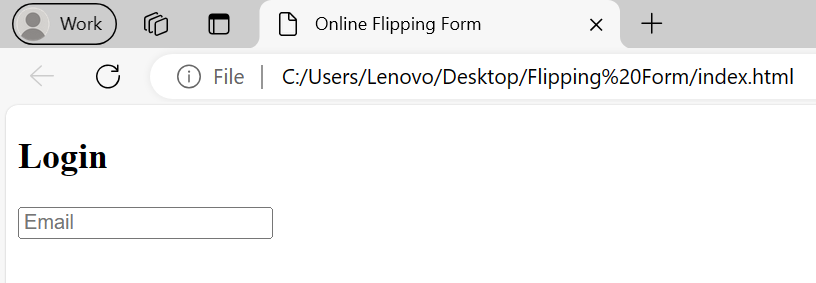

With the above code, the output on the browser is displayed as below.

We go ahead and create more forms as per our target project. See the below code block that will do the trick.

```html
<body>
    <div class="container">
        <div class="card">
            <div class="card-front">
                <h2>Login</h2>
                <form>
                    <input type="email" class="input-button" placeholder="Email" required>
                    <input type="number" class="input-button" placeholder="Phone Number" required>
                    <input type="password" class="input-button" placeholder="Password" required>
                    <button type="submit" class="submit-btn">Submit</button>
                    <input type="checkbox" class="checkbox"><span>Remember me</span>
                    <!-- type="button" so that clicking it does not submit the form -->
                    <button type="button" class="btn">I am new here</button>
                    <a href="">Forgot password</a>
                </form>
            </div>
        </div>
    </div>
</body>
```

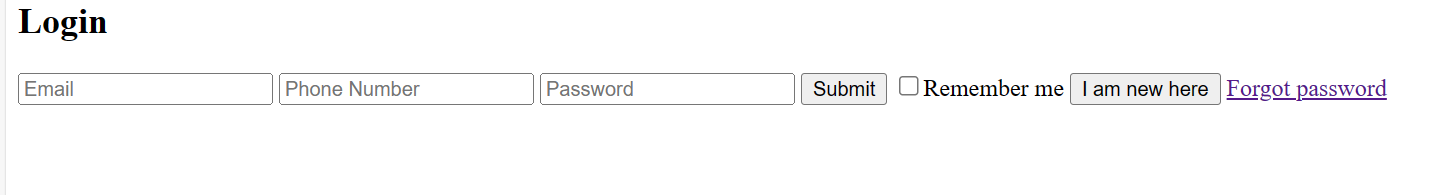

With this, the output should be as per the below; Remember, we are creating a flipping form that has two sides, so let's call the below output the front side of the form.

Let's go ahead and create the back of the form and to do so, we declare another <form> tag and other DIVs as below.

```html
<!DOCTYPE html>
<html lang="en">
<head>
    <meta charset="UTF-8">
    <meta name="viewport" content="width=device-width, initial-scale=1.0">
    <title>Online Flipping Form</title>
    <link rel="stylesheet" href="style.css">
</head>
<body>
    <div class="container">
        <div class="card">
            <!-- Front of the card: login form for existing users -->
            <div class="card-front">
                <h2>Login</h2>
                <form>
                    <input type="email" class="input-button" placeholder="Email" required>
                    <input type="number" class="input-button" placeholder="Phone Number" required>
                    <input type="password" class="input-button" placeholder="Password" required>
                    <button type="submit" class="submit-btn">Submit</button>
                    <input type="checkbox" class="checkbox"><span>Remember me</span>
                    <button type="button" class="btn">I am new here</button>
                    <a href="">Forgot password</a>
                </form>
            </div>
            <!-- Back of the card: registration form for new users -->
            <div class="card-back">
                <h2>Register</h2>
                <form>
                    <input type="text" class="input-button" placeholder="Full Name" required>
                    <input type="email" class="input-button" placeholder="Email" required>
                    <input type="number" class="input-button" placeholder="Phone Number" required>
                    <input type="password" class="input-button" placeholder="Password" required>
                    <input type="date" class="input-button" placeholder="Date-of-Birth" required>
                    <button type="submit" class="submit-btn">Submit</button>
                    <input type="checkbox" class="checkbox"><span>Remember me</span>
                    <button type="button" class="btn">I have an Account</button>
                    <a href="">Forgot password</a>
                </form>
            </div>
        </div>
    </div>
</body>
</html>
```

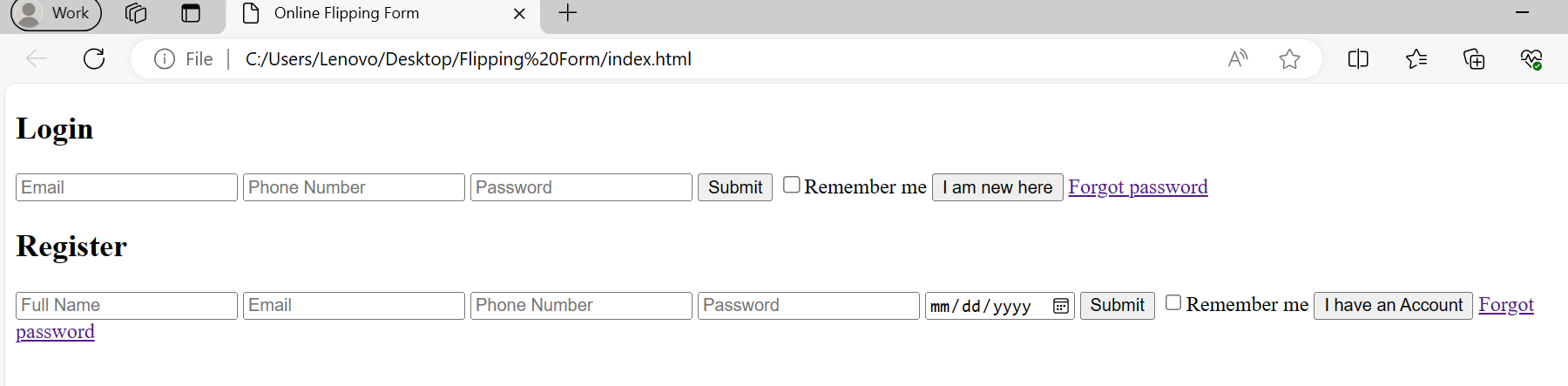

With the above code, our HTML structure is set. The browser should display the below output.
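A mismatched closing tag (for example a `</div>` placed before the `</form>` it wraps) is easy to miss in markup with this many nested elements. As an optional sanity check, here is a minimal Python sketch that uses the standard library's `html.parser` to report closing tags that do not match the most recently opened element. The names `NestingChecker` and `check_nesting` are made up for this example, and this is a rough helper, not a full HTML validator.

```python
from html.parser import HTMLParser

# Elements that never take a closing tag.
VOID_TAGS = {"br", "hr", "img", "input", "link", "meta"}


class NestingChecker(HTMLParser):
    """Tracks open tags on a stack and records closing tags that do not match."""

    def __init__(self):
        super().__init__()
        self.stack = []
        self.errors = []

    def handle_starttag(self, tag, attrs):
        if tag not in VOID_TAGS:
            self.stack.append(tag)

    def handle_endtag(self, tag):
        if tag in VOID_TAGS:
            return
        if not self.stack:
            self.errors.append(f"unexpected </{tag}>")
        elif self.stack[-1] == tag:
            self.stack.pop()
        else:
            self.errors.append(f"</{tag}> found while <{self.stack[-1]}> is still open")
            # Recover by discarding everything up to the matching opening tag, if any.
            if tag in self.stack:
                while self.stack.pop() != tag:
                    pass


def check_nesting(markup):
    """Return a list of nesting problems found in the given HTML string."""
    checker = NestingChecker()
    checker.feed(markup)
    checker.close()
    return checker.errors + [f"<{tag}> never closed" for tag in checker.stack]


good = '<div class="card-front"><form><input type="email" required></form></div>'
bad = '<div class="card-front"><form><input type="email" required></div></form>'
print(check_nesting(good))  # no problems reported
print(check_nesting(bad))   # the misplaced </div> and the stray </form> are reported
```

To check the real file, pass its contents instead of the sample strings, for example `check_nesting(open("index.html").read())`.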

This brings us to the end of Part 1 on how to create an online flipping form. Part 2 will focus on how to make our form look good, introduce different styles on all the elements of the form, and finally make the form flip.

Here is the link to Part 2: https://dev.to/george_kingi/the-power-of-css-in-styling-up-forms-o8m

| george_kingi |

1,865,072 | Deploy a Static React Site Using AWS S3 and CloudFront | Learn how to take the static build of a react app & deploy it to AWS, add SSL certificates & add global CDN caching using S3 buckets & CloudFront. | 0 | 2024-05-25T17:11:58 | https://dev.to/whittington/deploy-a-static-react-site-using-aws-s3-and-cloudfront-d0l | react, aws, s3, cloudfront | ---

title: Deploy a Static React Site Using AWS S3 and CloudFront

published: true

description: Learn how to take the static build of a react app & deploy it to AWS, add SSL certificates & add global CDN caching using S3 buckets & CloudFront.

tags: react, aws, s3, cloudfront

# cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/tlpnsthb6qaoqj8xiclx.PNG

# Use a ratio of 100:42 for best results.

# published_at: 2024-05-25 16:23 +0000

---

_Quick note: links to documentation & further reading exist throughout this post, look for those links to get additional information & documentation links on topics/ tools/ technologies mentioned._

_If you prefer video content, the video version of this tutorial can be found on my [YouTube channel](https://www.youtube.com/@LearnWithWhittington). Link [here](https://youtu.be/2zrjisB7YrM?si=__ZIHKo71Yg-W2lQ)_

In this tutorial, I will walk you through building a quick static site by doing a static build using [ReactJS](https://react.dev/) & [create-react-app](https://create-react-app.dev/), then show you how to deploy that static site on [AWS](https://aws.amazon.com/) using [S3](https://aws.amazon.com/s3/) buckets as well as how to cache it & add SSL certificates with [CloudFront](https://aws.amazon.com/cloudfront/) CDN & [Certificate Manager](https://aws.amazon.com/certificate-manager/).

All you need to get started is an AWS account & a [domain](https://developer.mozilla.org/en-US/docs/Learn/Common_questions/Web_mechanics/What_is_a_domain_name). You can either purchase one on AWS or use one from another [registrar](https://www.cloudflare.com/learning/dns/glossary/what-is-a-domain-name-registrar/). If you're a beginner I recommend purchasing the domain directly from AWS as it's the most straightforward approach. However, if you have an existing domain with an external registrar, you can use it in a Route 53 Hosted Zone by first creating the Hosted Zone, then pointing the domain to the AWS name servers for that Hosted Zone (this is covered later). For this tutorial I will be using an external domain as I already have one for testing & tutorials. Behold: [0000.quest](https://0000.quest)

#### AWS Services Overview

Before we get started, here is a brief overview of the AWS services used in this walkthrough. Feel free to skip this section if you're already familiar with the services used here.

[AWS S3](https://docs.aws.amazon.com/s3/)<br/>

S3 or Simple Storage Service, is Amazon's solution for [object storage](https://aws.amazon.com/what-is/object-storage/). It's a way to store files within the AWS ecosystem & seamlessly integrates with other AWS services as a data source, it can also do other things, but we're not using any of that 😅. We'll be using it as a file storage system for the static files our site serves.

[AWS Route 53](https://docs.aws.amazon.com/route53/)<br/>

Route 53 is the native DNS service in the AWS ecosystem. This is a complex service that covers domain registration, DNS routing & health checks to route user traffic to your applications & services, but in this walkthrough, we'll only be leveraging the AWS Route 53 Hosted Zones feature. Hosted Zones allow you to manage the [DNS](https://www.cloudflare.com/learning/dns/what-is-dns/) records for domains accessible in your AWS account.

[AWS Certificate Manager](https://docs.aws.amazon.com/acm/)<br/>

Certificate Manager is the AWS integrated service that handles creation, storage & renewals for [SSL certificates](https://www.cloudflare.com/learning/ssl/what-is-an-ssl-certificate/). We'll be leveraging this service to create & serve our SSL certificates for our deployed site.

[AWS CloudFront](https://docs.aws.amazon.com/cloudfront/)<br/>

CloudFront is AWS's global [CDN](https://www.cloudflare.com/learning/cdn/what-is-a-cdn/) service. CloudFront caches data at AWS edge locations around the world so that users get data from a source close to them geographically, which keeps latency low for the data they fetch. In this walkthrough, we'll be leveraging CloudFront to cache our static site globally & we'll also be using it to serve our site's SSL certificate.

_**Let's get started**_ 😎👍

#### Using create react app to build a static site

To get started, let's quickly create a sample [React](https://react.dev/) app that has a build configured to export a static site. Even if you have a project in mind to deploy, I recommend going through the motions with a simple sample site, as you may run into implementation specific issues with your project & if you've never gone through the motions, it can be hard to determine whether you made a mistake in the deployment processes or if there are implementation specific issues you're facing. <br/>

I assume you have Node.js installed if you're doing a React app, but just in case you're doing this tutorial for funsies, you can download Node.js [here (nodejs.org)](https://nodejs.org).

Create a sample project using [create-react-app](https://create-react-app.dev/docs/getting-started/). Run the following in a directory where you want to save the sample project. Feel free to change the name, but for this example I'll be calling it samplesite.

<pre><code>npx create-react-app samplesite</code></pre>

If you're new to create-react-app or simply want to see what the preview looks like, run the following command to run a local dev server & preview the app.

<pre><code>
cd samplesite
npm run start
</code></pre>

Once you're done looking at the preview, run the following build command to generate the static site.

<pre><code>npm run build</code></pre>

The static site will generate in the build folder of the samplesite project root. The folder structure should look like this:

<pre><code>
samplesite
    build
    node_modules
    public
    src
</code></pre>

Now that we have a sample static site to test, we can move on.

#### Setup S3 buckets for domain

Next thing to do is set up the S3 buckets for the static hosting. We'll create two buckets, one for the primary site & then a second one to redirect the www subdomain to the primary site. It seems to be commonplace to make the www subdomain the primary site, but it doesn't really matter, it's a matter of preference & I prefer to get rid of that ugly www subdomain on my URL 😤.

Log into the [AWS Management Console](https://aws.amazon.com) & go to the S3 console by searching for S3 in the search bar & clicking on it. Click on buckets in the navigation menu on the left to get to the buckets tab. In the buckets tab, select create bucket.

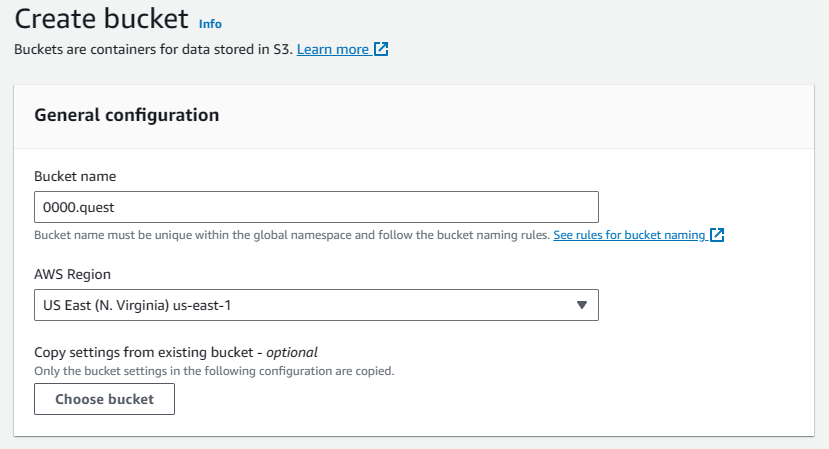

First we'll be creating the main bucket. It must have the same name as the domain you'll be hosting, so since I'm using 0000.quest that's what I'll be naming this bucket. I'm creating it in the us-east-1 region & the rest of this walkthrough assumes that region, so select that. Don't worry if you or your primary user base is in another region: CloudFront caches globally & can serve an S3 origin from any region. The one resource that really must live in us-east-1 is the Certificate Manager certificate, which is covered later.

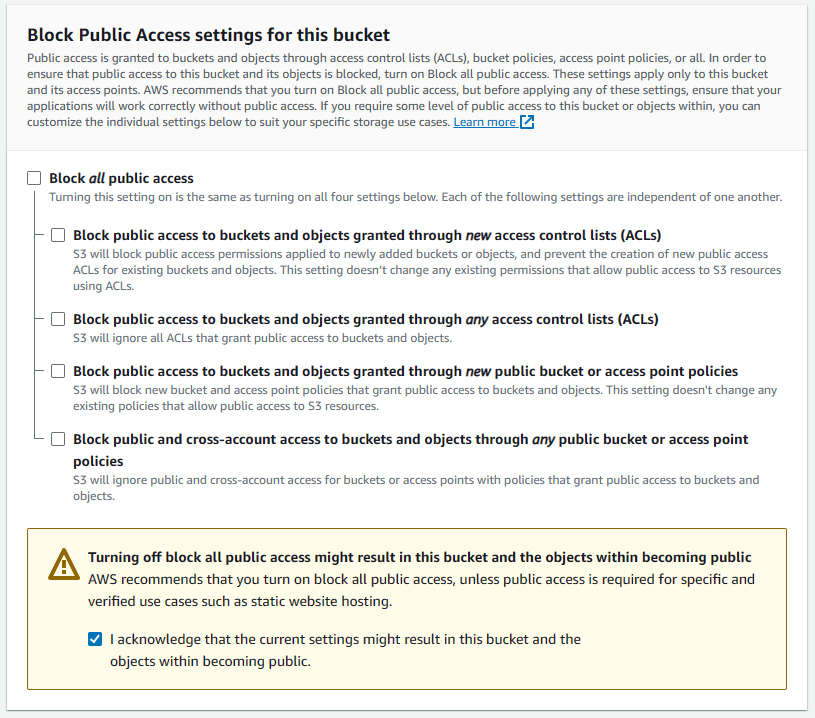

For this bucket, we'll need to enable public access, so uncheck the Block all public access options & check the acknowledgement checkbox warning that this bucket's contents will be public. This is a public website so that's kinda the whole point 😅.

Leave all other settings default & add custom tags if you need them. Then hit the create bucket button to create the bucket.

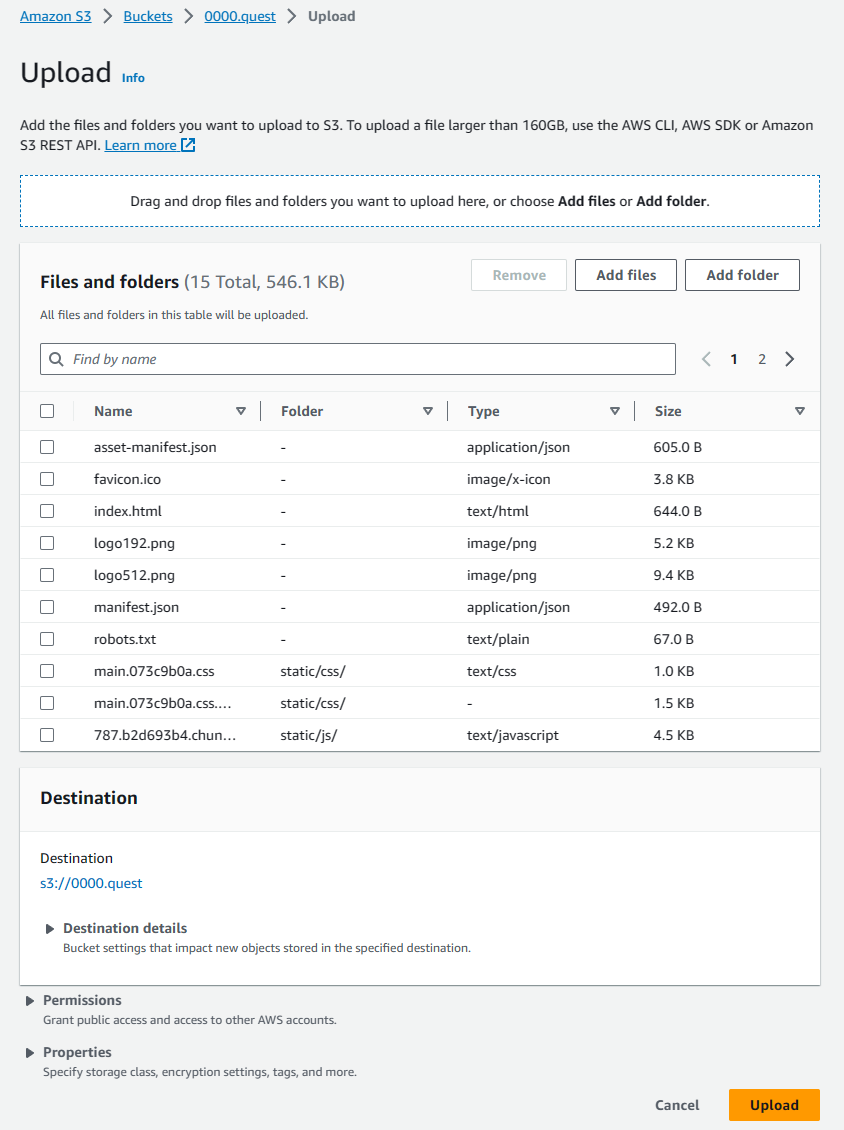

Once the bucket is created, you'll be taken back to the buckets tab of the S3 console. Select the bucket you just created & that will take you to the object view window for that bucket. Here, we will manually upload the static build of the samplesite we created in an earlier step. Open the directory containing the build (samplesite/build), select everything, directories included, & drag them into the object view of the bucket. You'll see the window change to accept the drop. The upload preview will look like this:

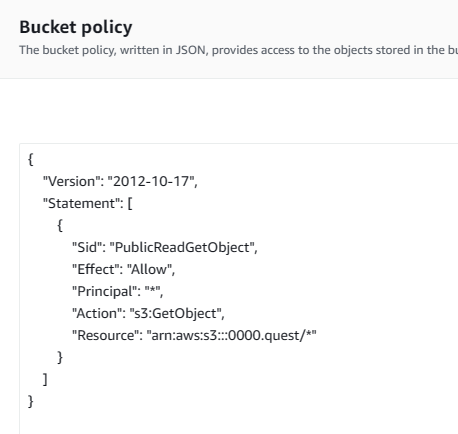
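When dragging files in, what matters is that the object keys are relative to the build folder, so that `index.html` ends up at the root of the bucket rather than under a `build/` prefix. The sketch below only lists what an upload of the build folder would contain, together with a guessed content type for each file. It does not talk to AWS at all, and `plan_upload` is a made-up helper name for illustration.

```python
import mimetypes
from pathlib import Path


def plan_upload(build_dir):
    """Return (object_key, content_type) pairs for every file under build_dir."""
    root = Path(build_dir)
    plan = []
    for path in sorted(root.rglob("*")):
        if path.is_file():
            # Keys are relative to the build folder, so index.html lands at the bucket root.
            key = path.relative_to(root).as_posix()
            # Fall back to a generic binary type when the extension is unknown.
            content_type = mimetypes.guess_type(path.name)[0] or "application/octet-stream"
            plan.append((key, content_type))
    return plan
```

Calling `plan_upload("samplesite/build")` from the directory that contains the sample project should list `index.html` alongside the files under `static/`, which is the same layout you should see in the bucket's object view after the upload.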

That should have our sample site's static assets available in the bucket to be served on our URL. We're not quite done configuring this bucket yet, there's one more step for our initial configuration. We need to set explicit permissions so all users can access the files in the bucket. Navigate back to the object view by clicking on the bucket from the buckets tab of the S3 console. Once there, navigate to the permissions tab, scroll down to the bucket policy, it should be empty. Hit the edit button to create a new bucket policy. Paste the following policy in the editor & hit save changes.

<pre><code>
{
    "Version": "2012-10-17",
    "Statement": [
        {
            "Sid": "PublicReadGetObject",
            "Effect": "Allow",
            "Principal": "*",
            "Action": "s3:GetObject",
            "Resource": "arn:aws:s3:::<bucket_name_here>/*"
        }
    ]
}
</code></pre>

If you're not familiar with AWS policies, here's a brief explanation of what it does. This policy statement grants everyone the s3:GetObject permission on every object in the S3 bucket (that's what the `/*` at the end of the resource ARN means). This means that anyone will be able to read any file in the bucket. Please don't use this policy for anything but this 😭. The new policy should look like the screenshot below.

For more information about bucket policies, consult the [AWS bucket policies & user policies documentation](https://docs.aws.amazon.com/AmazonS3/latest/userguide/using-iam-policies.html)
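If you'd rather not hand-edit the bucket name into the JSON, the policy can be generated. This is a small sketch using only Python's standard library: `public_read_policy` is a hypothetical helper name and `example.com` stands in for your own bucket name. It prints the same policy shown above, ready to paste into the bucket policy editor.

```python
import json


def public_read_policy(bucket_name):
    """Build the public-read bucket policy from above for the given bucket."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "PublicReadGetObject",
                "Effect": "Allow",
                "Principal": "*",
                "Action": "s3:GetObject",
                # The trailing /* applies the statement to every object in the bucket.
                "Resource": f"arn:aws:s3:::{bucket_name}/*",
            }
        ],
    }


# "example.com" is a placeholder; use the name of your primary bucket.
policy_json = json.dumps(public_read_policy("example.com"), indent=4)
print(policy_json)
```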

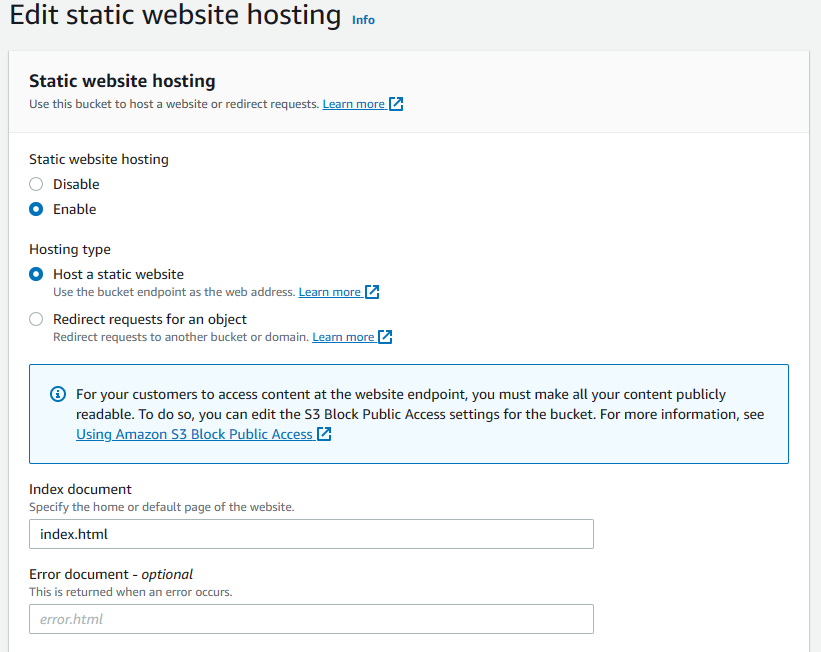

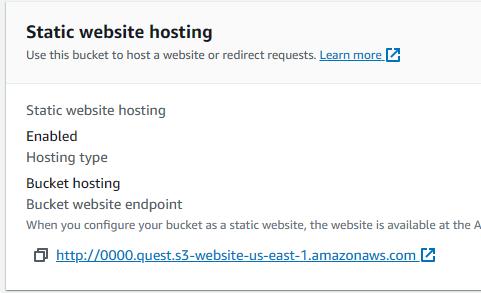

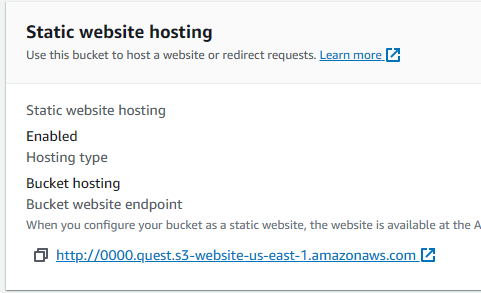

Now that all objects in the bucket are publicly accessible, we can configure the bucket for static site hosting. Navigate back to the bucket's object preview & switch to the properties tab. Scroll all the way to the bottom & hit the edit button on the static website hosting section. Here switch the static website hosting toggle to enable, change the index document to index.html, this is the application entry point for our react app. We don't have an error page so leave the error document blank, leave all other settings to the defaults, then hit the save changes button. Settings should match the screenshot below.

Once that's done, scroll back to the bottom of the properties tab for that bucket & you'll see a URL there, copy that & save it somewhere, you'll need that for the second bucket configuration.

We're done configuring this bucket for now, on to the next bucket, this will be a lot easier to configure as it's just a redirect & won't host any actual content, so few settings will need to change.

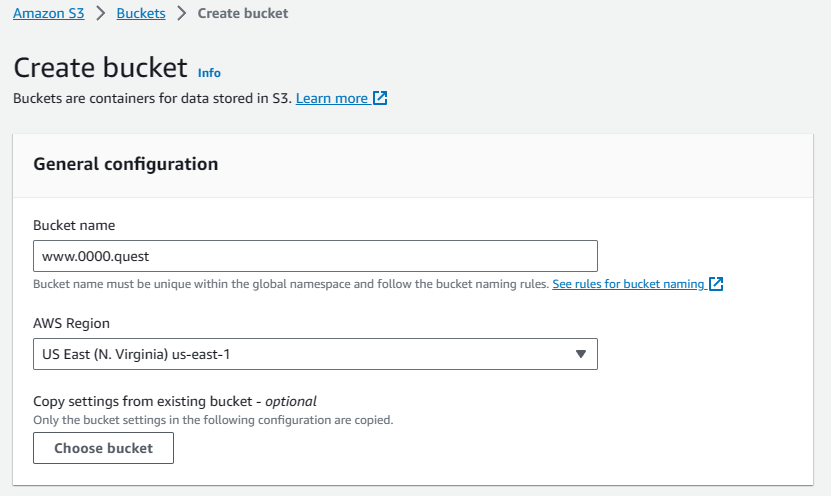

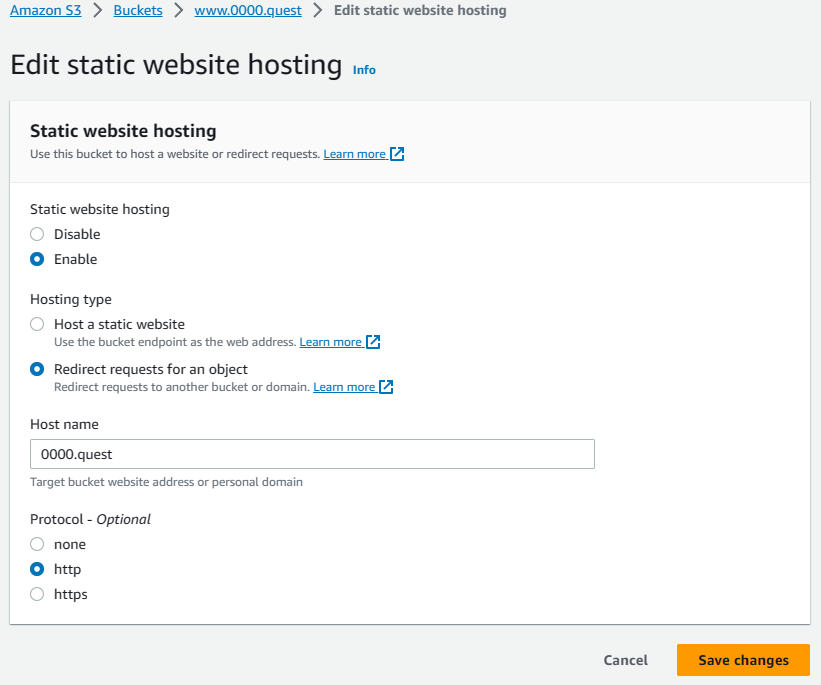

Navigate to the buckets tab of the S3 console & hit the create bucket button. This is the redirect bucket for the www subdomain, so it's going to have the www prefixing the domain name, for me it's www.0000.quest since that's the domain I'm using. Again, we're going to need the [region](https://docs.aws.amazon.com/AWSEC2/latest/UserGuide/using-regions-availability-zones.html) to be us-east-1. Leave all other settings default. Since this is just a redirect bucket, the public access isn't necessary, as it won't have any files. Hit create bucket & finish making this bucket.

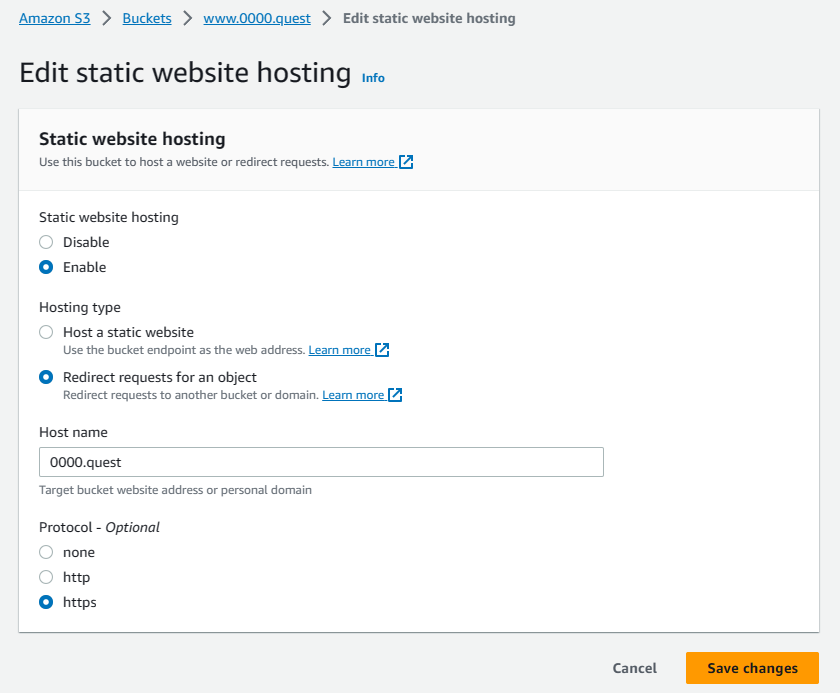

Navigate to the subdomain bucket's properties tab & scroll to the bottom to edit the static website hosting settings. Here, we switch the static website hosting toggle to enable & change the hosting type to redirect requests for an object. Under host name, type your domain name &, for now, leave the protocol set to HTTP for testing. We'll have to come back & change this to HTTPS later, once we get the SSL certificates set up in CloudFront. Hit save changes.

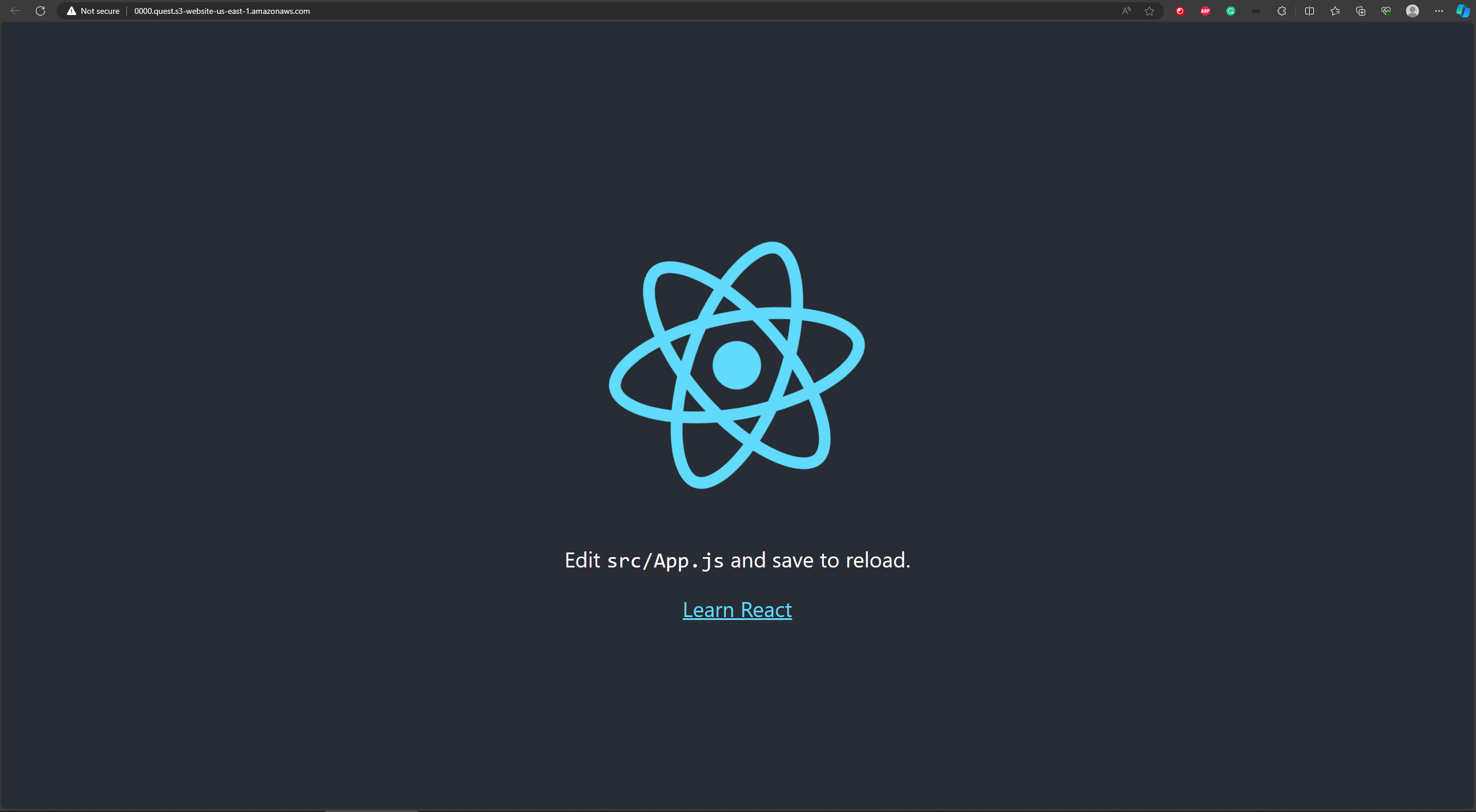

The www subdomain won't be up & running until we setup some DNS records, but you can currently see the functioning static site on the primary bucket by going to that URL you saved earlier.

#### Setup the Hosted zone

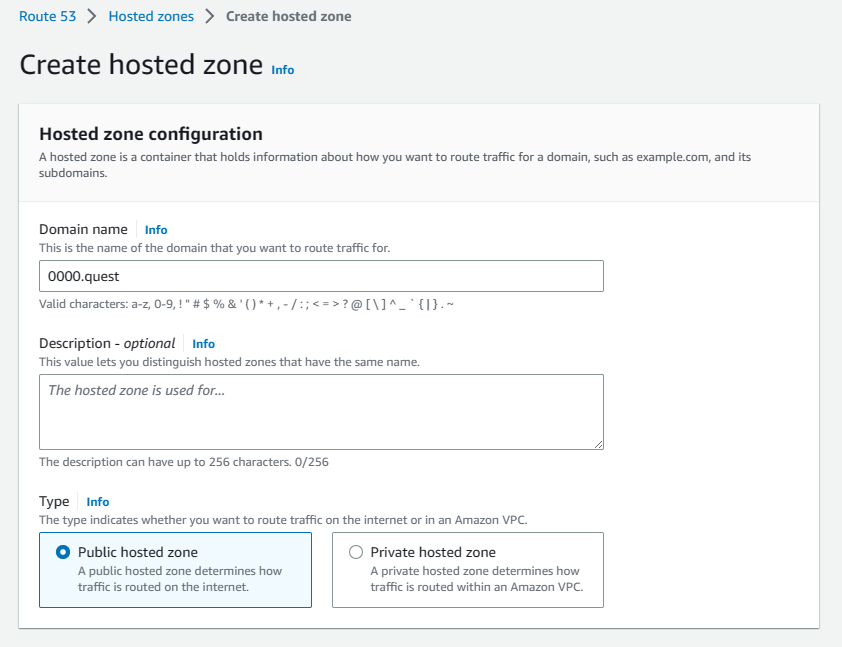

Now that the buckets have been configured, it's time to configure the DNS in the Hosted Zones to get the URL configured & pointing to our bucket. Navigate to the Route 53 console by searching for it in the search bar. Click the Hosted Zones tab & hit the create Hosted Zone button. In the configuration, add your domain name under the domain name field & add a description if you need one, leave all other settings default. Hit the create button to finish creating the Hosted Zone.

Here is an extra step for anyone using an external domain registrar, you can skip this part if you're using a domain in your AWS account. If you are using an external registrar, you'll need to go into your registrar's portal & point your name servers to the name servers in the NS record created for your Hosted Zone. Once you do that, it may take a few minutes to a few hours for that name server change to propagate, depending on how often your registrar updates their records.

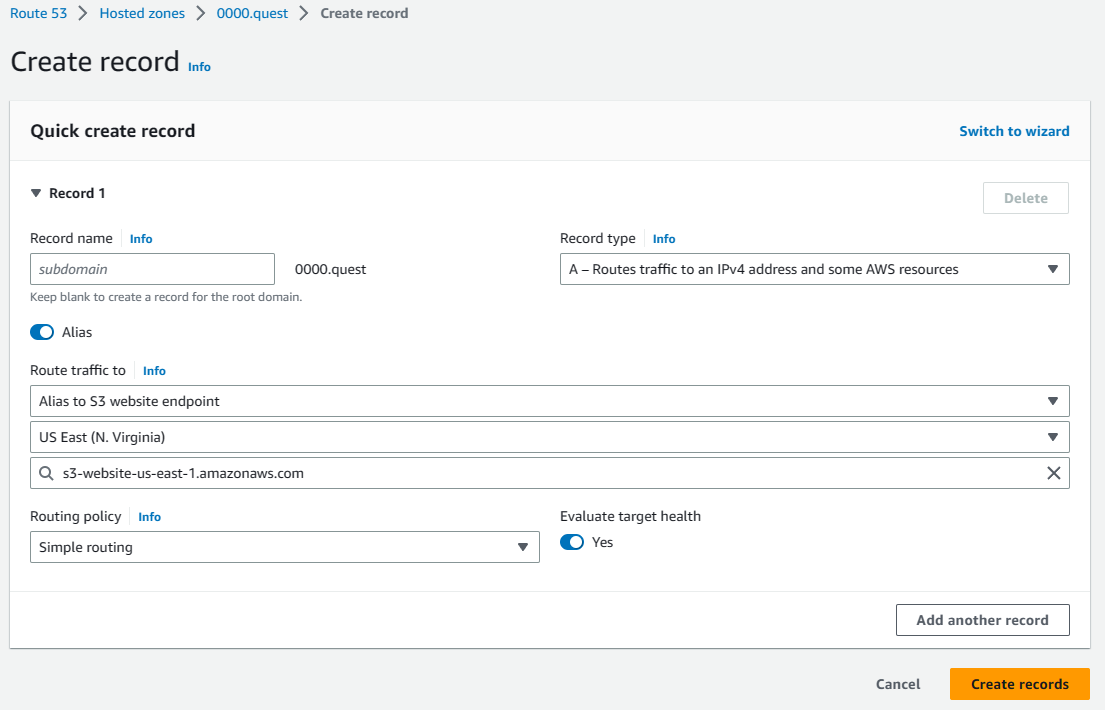

Time to add some DNS records that point to the S3 bucket so that your site is served when someone hits your domain. In your Hosted Zone, click the create record button. Leave the subdomain field empty for now. Change the record type to A. Click the alias toggle to update the available fields. Under "Route traffic to" select "Alias to S3 website endpoint" & change the region to us-east-1. The S3 endpoint dropdown should then show a single option, populated automatically from the bucket whose name matches the record name; select it. This is why the bucket has to be named after the domain. Leave everything else default & hit the create records button to finish creating the record.

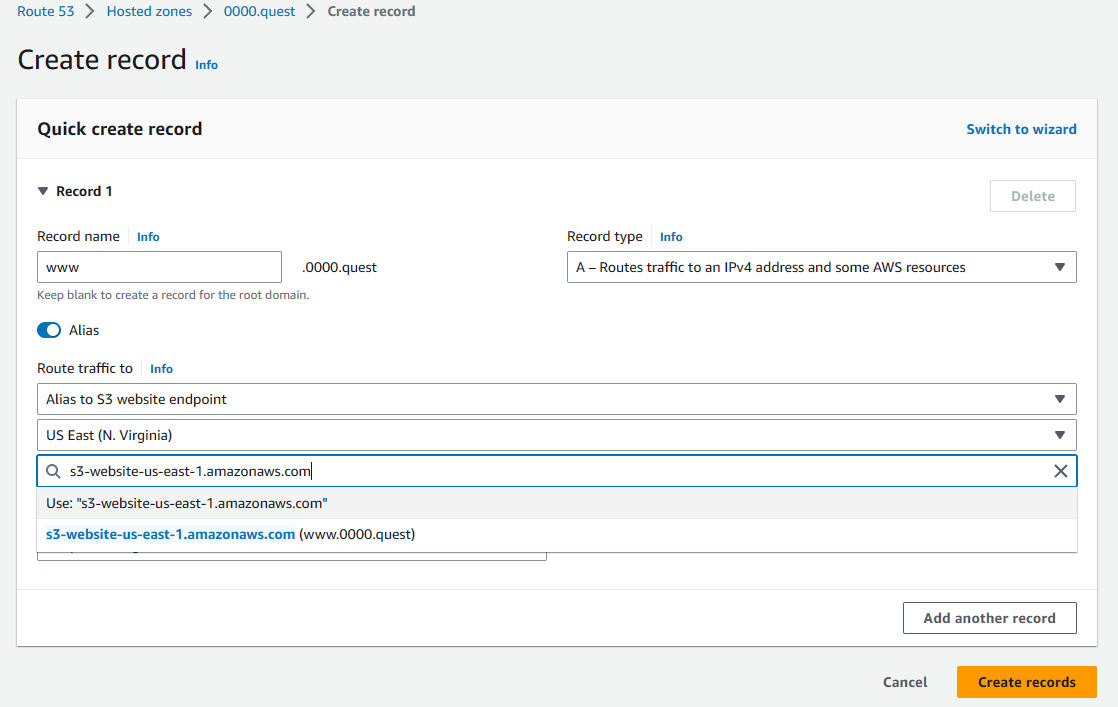

Now we'll add the DNS record for the subdomain, it's exactly the same as the previous record, only difference is that we're adding the www in the subdomain field & selecting the other bucket from the dropdown.

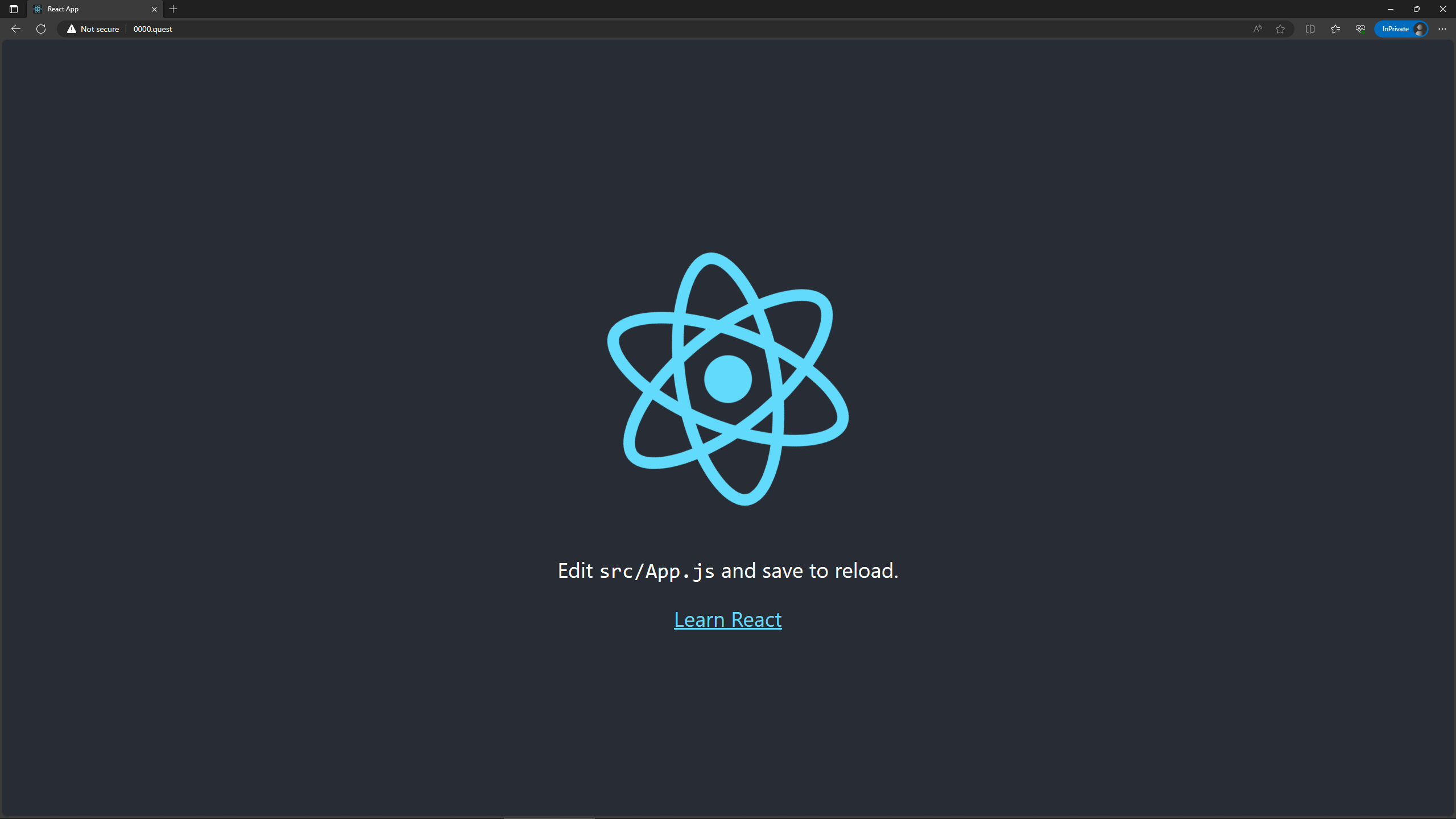

Once those records are created, you should immediately be able to access your site on your domain, both with & without the www subdomain(in this example 0000.quest & www.0000.quest). If you used an external registrar & your nameservers haven't propagated yet then the AWS records won't be hit & you won't be able to hit your site, but it should all propagate rather quickly. Most domain registrars reflect name server updates in around 30 minutes. Below is the screenshot I took of the site around 5 seconds after updating the record, as you can see, it's using the domain, so it propagated rather quickly. Also, if you type in the www subdomain it forwards to the standard site without the www subdomain prefix, as intended.

At this point if you just wanted a way to show people your site & don't care for an SSL certificate to get that sweet sweet HTTPS in front of your domain, then I guess you can stop here, but you might as well keep going & add the SSL cert & CDN caching. 🤷‍♀️

#### Creating SSL certificate in Certificate Manager

Let's go ahead & create an SSL certificate in Certificate Manager. Before you start, you'll need to change your region in the AWS Management Console to us-east-1, this can be changed by clicking the top right of the console, right next to your name. This is an important step, as your certificate won't be accessible in CloudFront if it's in the wrong region. 😅

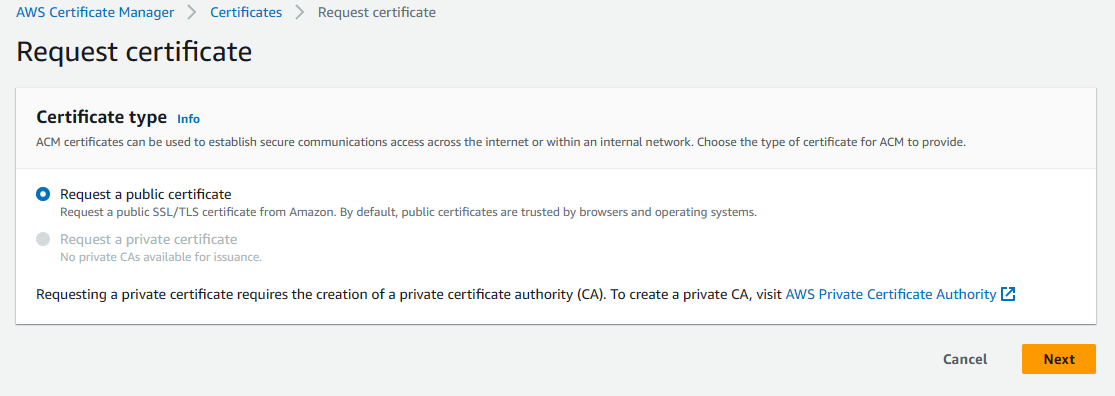

Navigate to Certificate Manager by searching for it in the search bar in the AWS Management Console. Click the request a certificate button to generate a new SSL certificate for your site. Select public certificate & hit next.

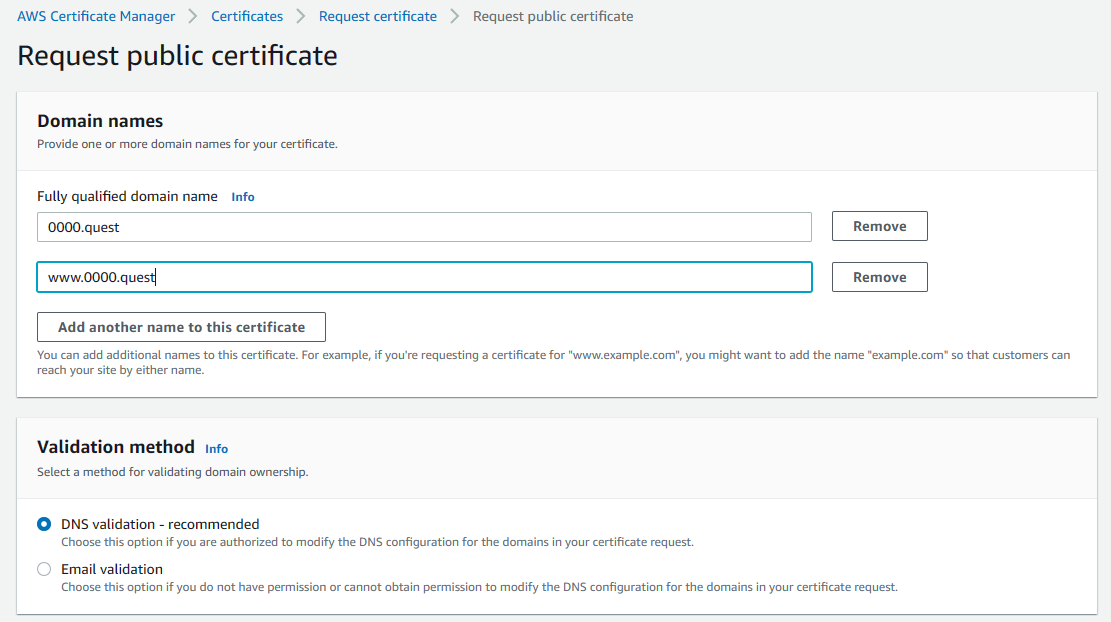

On the next page, add both your domain & your www subdomain under domain names. This is an important step as you cannot change this later & you'll have to delete the cert & redo it to change this. Set validation method to DNS validation then leave all other settings default & hit request to generate the certificate.

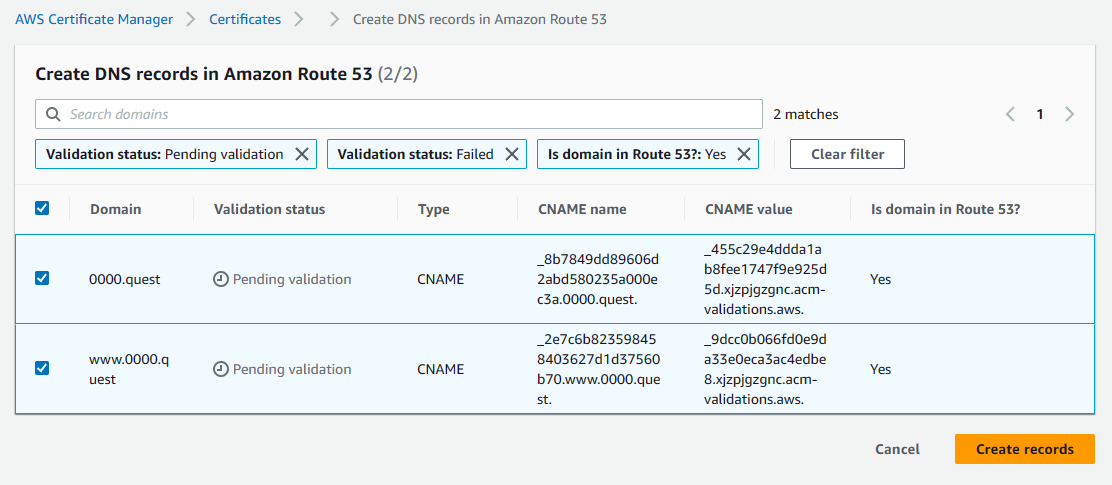

Once you submit the request, the cert will need to be validated. In the list certificates tab of the Certificate Manager console, select the certificate you just created, if you have multiple, look for the one with your domain name. Once there, under Domains, there is a button to create records in Route 53 at the top right of the domains section, click that button.

Then hit the create records button to create the records.

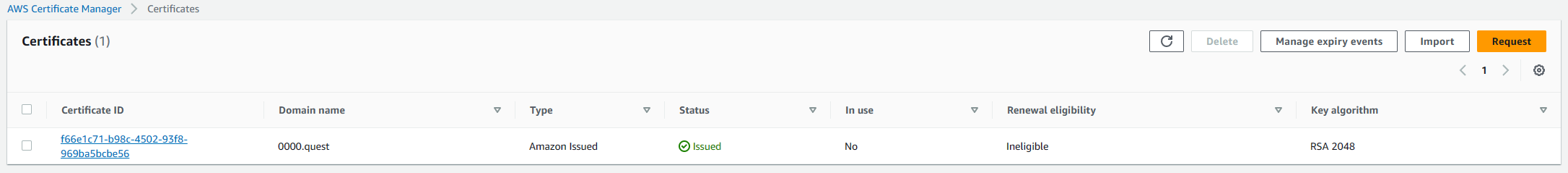

This allows AWS to verify ownership of the domain by creating custom DNS records for the provided domain names. This can only be done by someone with access to the registrar or a provider with access to the name servers the registrar is pointing to, which is what makes it a security step. Once you create those records, after a few minutes, you should see the status in Certificate Manager change from pending to issued.

#### CloudFront distribution configuration

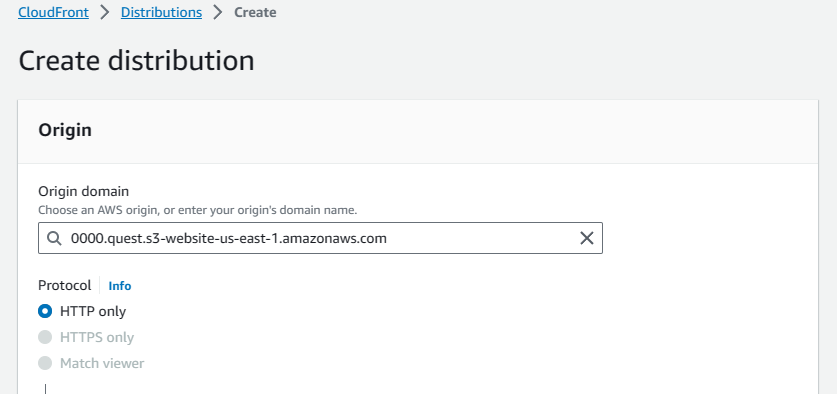

Now that the certificate exists, it's time to configure CloudFront using the certificate. Quickly navigate to the S3 buckets created earlier & grab their URLs. It's at the bottom of the properties tab for each bucket under the static website hosting section. You'll need these for the CloudFront configurations.

Navigate to the CloudFront console in the AWS Management Console by searching for it in the search bar. Once there, switch to the distributions tab & click the create distribution button to start the setup.

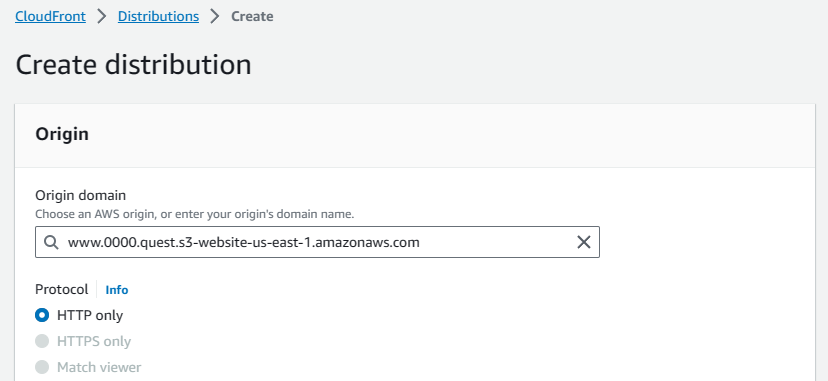

In the origin section, paste the primary bucket's public URL copied earlier into the origin domain.

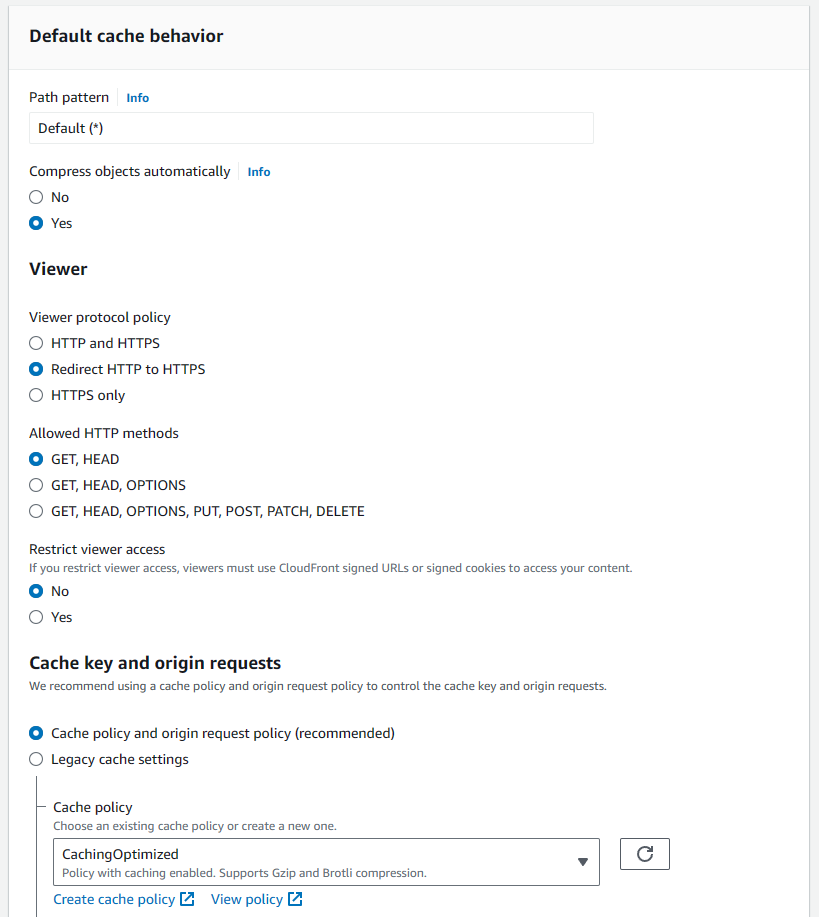

Scroll down to the default cache behavior section, change "viewer protocol policy" to redirect HTTP to HTTPS. This ensures that users will be accessing your site securely over HTTPS once it's using the CloudFront CDN. Also, set the cache policy to caching optimized. I didn't capture this in the screenshot, but the Web Application Firewall (WAF) can be disabled here, so that's what I did. 😅

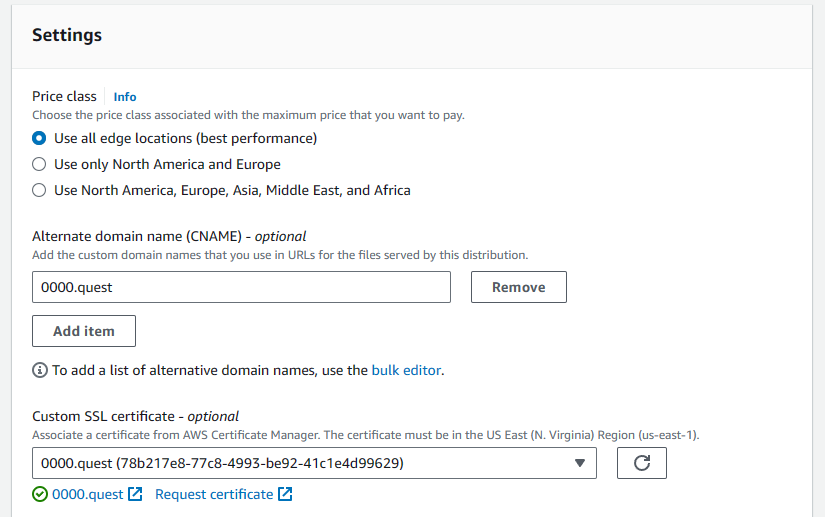

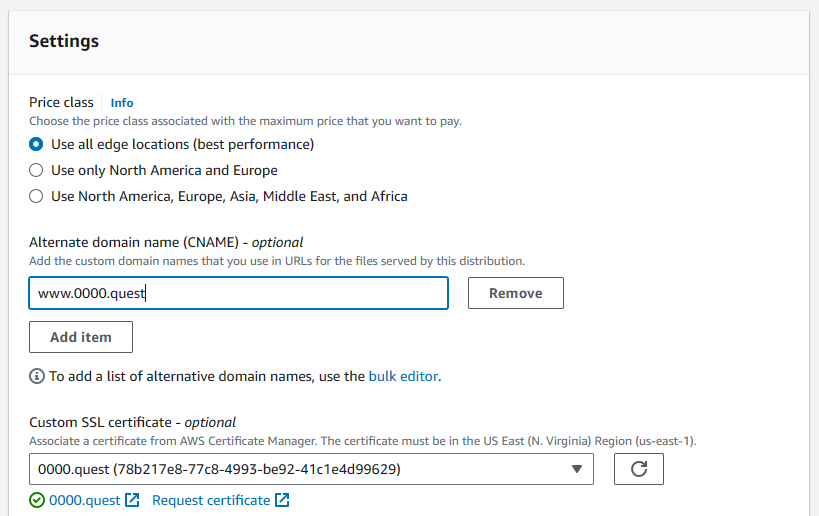

Scroll down to settings, here you'll add the alternate domain name, this allows you to bind your domain name to the CDN. Here, I'm doing the primary domain without the www subdomain (0000.quest). Then, under the "custom SSL certificate setting," select your previously made certificate from the dropdown. Leave all other settings default. Hit create distribution.

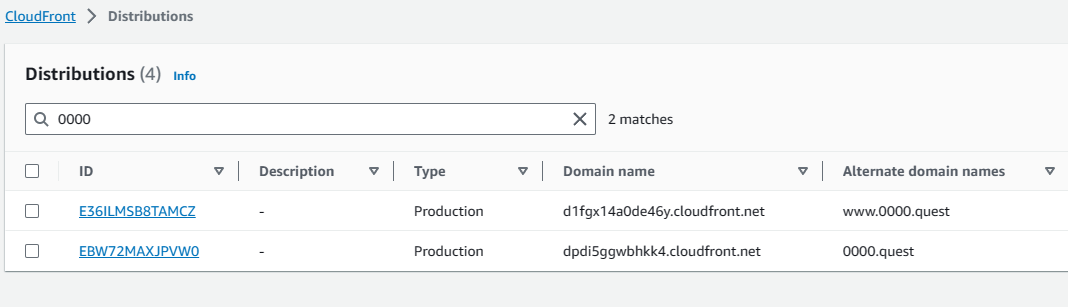

Once that's created, we have to do a second distribution for the www subdomain (www.0000.quest). The settings are the same, we start by copying that www bucket's public URL into the origin field.

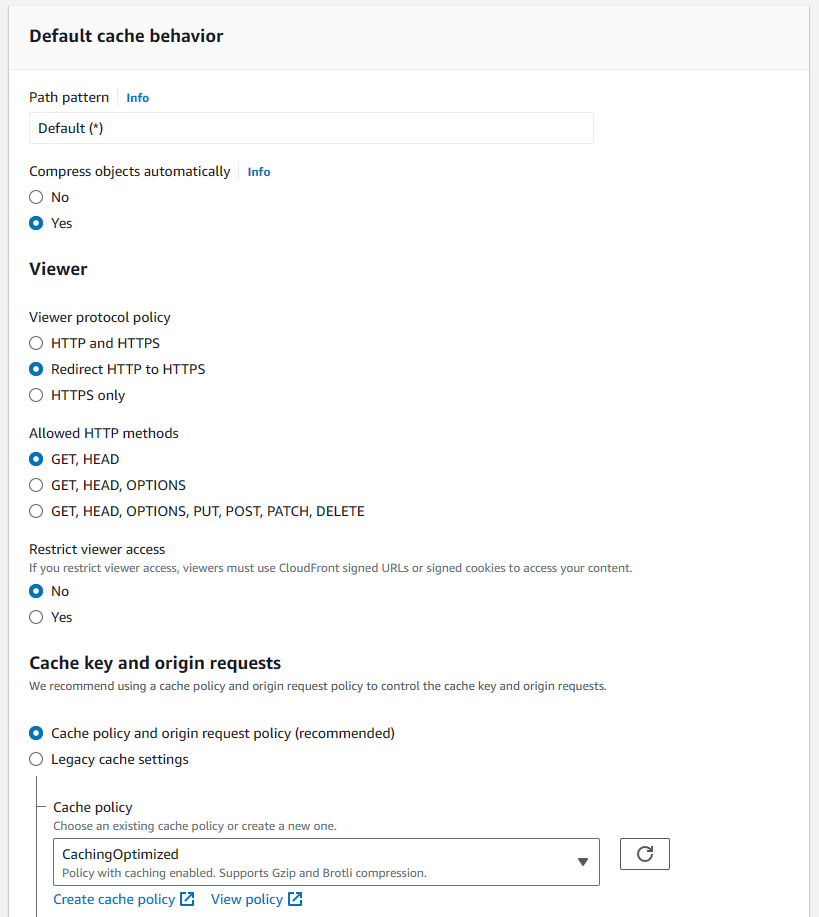

Again, in the default cache behavior we're going to change to redirect HTTP to HTTPS & set the cache setting to caching optimized. Again, I disabled the Web Application Firewall here, it's not captured in the screenshot, but it's a single radio button, so you should be able to find it 🤷‍♀️. It's also a required setting, so you need to do that.

Then, in the settings we add the www subdomain as an alternate domain name & select the SSL cert we made earlier.

Once those distributions are created, it takes a few minutes to deploy, but that's okay because we have some changes to make to S3 & the DNS records anyway. We can do those while they finish deploying. In the list distributions tab of the CloudFront console you can see the domain names for your distributions, copy those & save them. We'll need those to verify that the DNS records we're about to update point to the correct distributions.

#### Tying it all together

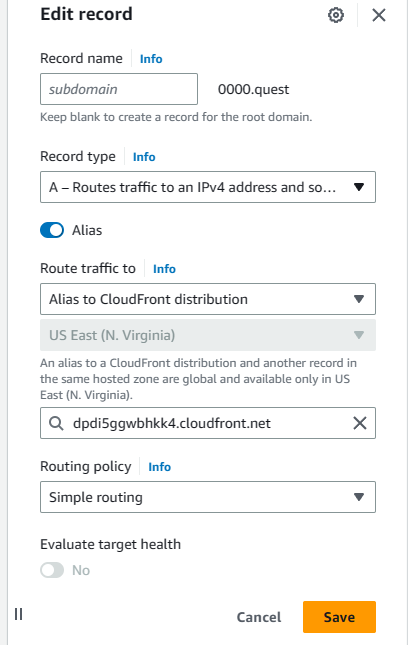

Navigate back to the Hosted Zone configured before. We have to edit the previously made A records to point to the CloudFront distributions instead of the S3 buckets. Select the primary domain's A record first & hit edit. It will remain an alias, but switch the "Route traffic to" dropdown to "Alias to CloudFront distribution" then from the dropdown select the distribution, there should only be one available, but make sure it's pointing to the correct URL for the primary distribution. This is why I asked you to copy the URLs for the distributions earlier.

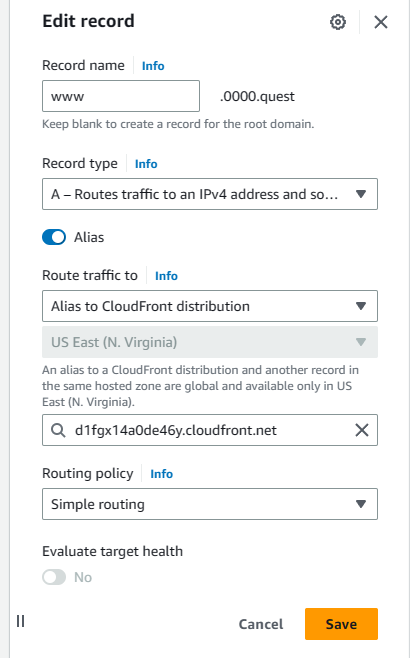

Hit save, then select the subdomain A record & hit edit. Same deal as the last one. Route the traffic to CloudFront distribution, select the distribution & verify the URL, then hit save.

One last thing. Now that the DNS records & CloudFront CDN are setup, we just need to change one setting for the bucket with the www subdomain to finish up the bucket settings. Navigate to that bucket's static website hosting settings in the bucket properties tab & hit edit. At the bottom, switch the protocol from HTTP to HTTPS & hit save.

Now we should be done. If you navigate to the domain you configured, you should be able to see your site hosted with HTTPS; additionally if you go to the site's www subdomain, you should be able to get redirected to the primary URL, as shown below.
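If you'd rather confirm the redirects from a script than by typing URLs into the browser, here is a rough sketch using Python's standard library. `redirect_expectations`, `final_url` & `check_site` are made-up helper names, and the assumption baked in is the setup from this walkthrough: every variant of the domain should end up on the HTTPS version of the primary domain. The check needs network access & a finished deployment to return anything useful.

```python
from urllib.request import urlopen


def redirect_expectations(domain):
    """Map each entry URL to the URL it should end up on after redirects."""
    target = f"https://{domain}"
    return {
        f"http://{domain}": target,
        f"http://www.{domain}": target,
        f"https://www.{domain}": target,
    }


def final_url(url, timeout=10):
    # urlopen follows HTTP redirects by default; geturl() reports where we ended up.
    with urlopen(url, timeout=timeout) as response:
        return response.geturl()


def check_site(domain):
    """Return {entry_url: True/False} depending on whether each redirect lands on the target."""
    results = {}
    for start, expected in redirect_expectations(domain).items():
        # Ignore a trailing slash when comparing the final URL with the expected one.
        results[start] = final_url(start).rstrip("/") == expected
    return results
```

Calling `check_site("0000.quest")` (or your own domain) once the distributions have finished deploying should give `True` for every entry; a `False` points at the record or bucket setting to revisit.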

This is just a simple deployment process & may not be ideal for most use cases as it requires manually uploading builds to S3 to update the site, which is why in my next tutorial, I will be showing you how to set up continuous deployment with GitHub Actions so you can update your site in an automated fashion by simply pushing code to GitHub.

Thanks for following my tutorial. If you like this content or want to make suggestions, feel free to tag me in a post on twitter. This was my first tutorial, so don't roast me too bad 😅. [@the_whittington](https://twitter.com/the_whittington)

| whittington |

1,865,075 | What is Containerization | Containerization is a software deployment method that packages an application's code with all its... | 0 | 2024-05-25T17:03:21 | https://dev.to/m__mdy__m/what-is-containerization-2od4 | webdev, beginners, docker, devops | Containerization is a software deployment method that packages an application's code with all its dependencies (libraries, configuration files) needed to run into a standalone unit called a container. Unlike traditional virtualization, which virtualizes the entire operating system, containers share the underlying host operating system kernel. This makes them lightweight and portable, allowing them to run consistently on any infrastructure that supports the container runtime environment.

**Real-World Example:**

Imagine a shipping container. It can hold various goods (your application code) and has everything needed for transport (dependencies) - packing tape (configuration files), labels (environmental variables) etc. This container can be loaded onto different ships (operating systems) and travel across various ports (infrastructure) without affecting the goods inside. Just like the container ensures the goods arrive safely at their destination, containerization guarantees your application runs consistently regardless of the environment.

## What are the benefits of containerization?

Containerization offers a multitude of advantages for developers, IT operations teams, and organizations as a whole. Here's a breakdown of some key benefits:

**Portability:** Containers are self-contained units that bundle an application's code with all its dependencies (libraries, configuration files) into a single, executable package. Because the package carries everything above the kernel, the application runs consistently across any platform or cloud environment that supports the container runtime, regardless of which libraries or tools are installed on the host. As in the shipping container example above, the contents arrive unchanged no matter which ship or port handles them.

**Agility:** Containerization streamlines the development and deployment processes. The use of container orchestration platforms like Kubernetes, coupled with standardized container images, enables rapid application development, testing, and deployment cycles. Developers can leverage agile or DevOps methodologies to make frequent code changes and updates, with minimal risk of conflicts arising from different environments.

**Speed and Efficiency:** Containers are lightweight and share the host machine's operating system kernel. This eliminates the need for a full virtual machine environment for each application, resulting in faster startup times and lower resource consumption. Additionally, application layers within containers can be shared across containers, minimizing disk space usage. This translates to higher server efficiency, allowing you to run more containers on the same compute resources compared to traditional virtual machines.

**Fault Isolation:** Each containerized application runs in an isolated user space, with its own set of resources. This isolation ensures that the failure or malfunction of one container does not impact the operation of other containers sharing the same host. This allows for easier troubleshooting and debugging of individual applications without affecting the entire system. Additionally, container engines can leverage operating system security features like SELinux to further isolate faults within containers.

**Scalability:** Scaling applications becomes much simpler with containerization. Adding or removing containers allows you to easily scale your application up or down based on real-time demands. This elasticity ensures your application can handle fluctuating workloads efficiently.

**Ease of Management:** Container orchestration platforms automate the deployment, scaling, and management of containerized workloads. These platforms handle tasks like:

* **Scaling containerized applications:** They can automatically scale container instances based on predefined rules or resource utilization.

* **Rolling updates:** Orchestrators can perform rolling updates by deploying new container versions in a controlled manner, minimizing downtime during deployments.

* **Monitoring, logging, and debugging:** Container orchestration platforms provide centralized tools for monitoring the health and performance of containerized applications, simplifying troubleshooting and debugging processes.
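To make "scaling based on resource utilization" concrete, here is a minimal Python sketch of a ratio-based scaling rule. It mirrors the formula documented for the Kubernetes Horizontal Pod Autoscaler (desired replicas = ceil(current replicas × current metric ÷ target metric)), but the function name and the `min_replicas`/`max_replicas` defaults are illustrative assumptions, and a real orchestrator adds tolerances and stabilization windows on top of this.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10):
    """Scale the replica count in proportion to how far the metric is from its target."""
    raw = math.ceil(current_replicas * current_metric / target_metric)
    # Clamp the result so the workload never scales below or above the configured bounds.
    return max(min_replicas, min(max_replicas, raw))


# 4 containers averaging 90% CPU against a 60% target: scale up.
print(desired_replicas(4, 90, 60))
# 4 containers averaging 30% CPU against a 60% target: scale down.
print(desired_replicas(4, 30, 60))
```

The clamp is the design choice worth noting: without an upper bound a traffic spike could exhaust the cluster, and without a lower bound a quiet period could scale the application to zero.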

**Security:** The isolation of containerized applications strengthens the overall security posture. Malicious code within one container is restricted in its ability to affect other containers or the host system, although the shared kernel makes this isolation weaker than that of a virtual machine. Additionally, container security features allow you to define security permissions that automatically block unauthorized components from entering containers and limit communication with unnecessary resources.

## What are containerization use cases?

**Cloud Migration (Lift-and-Shift):** Containerization acts as a bridge for organizations migrating legacy applications to the cloud. By encapsulating existing applications within containers, businesses can leverage the scalability and elasticity of cloud environments without extensive code rewrites. This "lift-and-shift" approach allows for a smoother transition to the cloud while laying the groundwork for future modernization efforts.

**Microservices Architecture:** Containerization is a natural fit for the microservices architecture, a popular approach for building modern cloud applications. Microservices decompose complex applications into smaller, independent services, each responsible for a specific piece of functionality. Containers provide a lightweight and portable way to package these microservices, enabling independent development, deployment, and scaling. For instance, a video streaming application might have microservices for user authentication, content delivery, and recommendations. Each microservice can be containerized and deployed independently, promoting agility and resilience.

**Continuous Integration and Continuous Delivery (CI/CD):** Containerization streamlines the CI/CD pipeline, a core DevOps practice for automating software development and delivery. Containers ensure consistent environments across development, testing, and production stages, eliminating discrepancies that can cause bugs or deployment failures. Developers can build and test applications within containers, mimicking the production environment, leading to faster release cycles and higher quality software.

**Modernization of Legacy Applications:** Legacy applications can be revitalized using containerization. By containerizing these applications, organizations can isolate them from newer technologies and run them alongside modern containerized applications. This approach enables a phased modernization strategy, where legacy applications can coexist with cloud-native developments.

**Internet of Things (IoT):** Containerization simplifies application deployment and management for resource-constrained IoT devices. Containers provide a lightweight and isolated environment for running applications on these devices. Updates can be packaged and distributed as container images, streamlining the software update process for large fleets of devices.

**Batch Processing:** Containerization is well-suited for orchestrating batch processing jobs. Complex data pipelines can be broken down into smaller, containerized tasks that can be easily scaled and run on-demand. This allows for efficient processing of large datasets without provisioning dedicated servers for each job.

**Scientific Computing:** Containerization offers a standardized way to package and deploy scientific software across different computing environments. Researchers can ensure consistent execution of their code across various platforms, leading to reproducible results and faster scientific discovery.

## How does containerization work?

**1. Building the Container Image:**

* Developers write the application code and identify all its dependencies (libraries, configuration files) needed for proper execution.

* Using containerization tools like Docker or Podman, they create a container image. This image follows the Open Container Initiative (OCI) specification, ensuring consistency and portability across different environments.

* The image typically consists of several layers:

    * **Base Layer:** This layer forms the foundation and usually includes a minimal Linux userland (for example, Alpine or Debian). It does not include a kernel, because containers use the host's kernel.

    * **Dependency Layers:** These layers contain pre-installed libraries and other software components required by the application.

    * **Application Layer:** This layer includes the actual application code and any additional configuration files specific to the application.

* Container images are read-only and act as a blueprint for creating container instances.
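
Because layers are read-only, two images built on the same base can store the common layers once. The sketch below models that idea with plain data. The image names, the `sha256:...` placeholder digests, and the sizes are all made up for illustration, and `diskUsage` is a hypothetical helper, not part of any container tool.

```javascript
// Each hypothetical image is an ordered list of layers (base first).
// Digests are placeholders; real digests are long content hashes.
const images = {
  "todo-api": [
    { digest: "sha256:base", sizeMB: 50 },
    { digest: "sha256:node-deps", sizeMB: 120 },
    { digest: "sha256:todo-code", sizeMB: 5 },
  ],
  "todo-worker": [
    { digest: "sha256:base", sizeMB: 50 },
    { digest: "sha256:node-deps", sizeMB: 120 },
    { digest: "sha256:worker-code", sizeMB: 8 },
  ],
};

// Compare storage if every image kept its own copy of each layer (naive)
// with storage when identical layers are stored once (shared).
function diskUsage(imageMap) {
  const uniqueLayers = new Map();
  let naiveMB = 0;
  for (const layers of Object.values(imageMap)) {
    for (const { digest, sizeMB } of layers) {
      naiveMB += sizeMB;
      uniqueLayers.set(digest, sizeMB); // same digest => same content, stored once
    }
  }
  let sharedMB = 0;
  for (const sizeMB of uniqueLayers.values()) {
    sharedMB += sizeMB;
  }
  return { naiveMB, sharedMB };
}

const usage = diskUsage(images);
console.log(usage.naiveMB);  // 353
console.log(usage.sharedMB); // 183
```

In this toy example only the small application layers differ, so the second image adds 8 MB rather than 178 MB.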

**2. Running the Container:**

* A container runtime engine (like Docker Engine or containerd) reads the container image and creates a running instance of the application.

* The container engine utilizes the host operating system's kernel for core functionalities but isolates the container's processes and resources. This provides a lightweight and efficient execution environment.

* The container takes its base OS files (but not a kernel) from the image and has its own isolated filesystem containing the application code and dependencies.

**3. Benefits of Isolation:**

* Each container runs in its own isolated user space, preventing conflicts between applications or their dependencies.

* This isolation enhances security because it makes it much harder for a compromised container to affect other containers or the host system.

**4. Container Orchestration (Optional):**

* For complex deployments involving multiple containers, container orchestration platforms like Kubernetes come into play.

* These platforms automate tasks like container deployment, scaling, and management.

* They can schedule containers across multiple hosts, ensuring efficient resource utilization and high availability.
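
As a rough illustration of scheduling containers across hosts, the sketch below places each container on the host with the most free memory. This "spread" strategy, the `schedule` function, and the host and container names are assumptions chosen for illustration. Production schedulers such as the one in Kubernetes weigh many more factors.

```javascript
// Hypothetical scheduler: place each container on the host that currently
// has the most free memory and can still fit it. Returns name -> host,
// or null when no host has enough room.
function schedule(containers, hosts) {
  const free = new Map(hosts.map((h) => [h.name, h.memoryMB]));
  const placements = {};
  for (const c of containers) {
    let best = null;
    for (const [name, freeMB] of free) {
      const fits = freeMB >= c.memoryMB;
      if (fits && (best === null || freeMB > free.get(best))) {
        best = name;
      }
    }
    if (best !== null) {
      free.set(best, free.get(best) - c.memoryMB); // reserve the memory
    }
    placements[c.name] = best;
  }
  return placements;
}

const hosts = [
  { name: "host-a", memoryMB: 1024 },
  { name: "host-b", memoryMB: 768 },
];
const containers = [
  { name: "api-1", memoryMB: 400 },
  { name: "api-2", memoryMB: 400 },
  { name: "db", memoryMB: 600 },
  { name: "cache", memoryMB: 500 },
];

const result = schedule(containers, hosts);
// api-1 -> host-a, api-2 -> host-b, db -> host-a, cache -> null (no host has 500 MB left)
console.log(result);
```

The unplaced `cache` container mirrors what an orchestrator reports as a pending workload: it waits until capacity is added or freed.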

## Containerizing a To-Do List App (Node.js Example) - No Code Included

Let's imagine you have a simple To-Do List application built with Node.js and Express. Here's a step-by-step explanation of how you could containerize it:

**1. Define Dependencies:**

* Identify all the external libraries your Node.js application relies on (e.g., Express as the web framework, and Mongoose for interacting with a MongoDB database if you use one to store to-do items). In a Node.js project these are listed in `package.json`.

**2. Create a Dockerfile:**

* A Dockerfile is a text document that contains instructions for building a Docker image.

* In the Dockerfile, you first specify the base image with the `FROM` instruction. This is a pre-built image containing a minimal Linux userland with Node.js pre-installed. Various Node.js base images are available on Docker Hub, a public registry for container images.

* Next, you'll use instructions like `COPY`, `RUN`, and `CMD` to:

    * Copy your application code and any additional configuration files into the image.

    * Install the required Node.js dependencies using commands like `npm install` or `yarn install`.

    * Define the startup command to execute when the container starts, such as running your Node.js application to start the To-Do List app.

**3. Build the Docker Image:**

* Run the `docker build` command, passing the directory that contains your Dockerfile (the build context), to build the container image.

* This creates a reusable image containing your application and all its dependencies in a self-contained unit.

**4. Run the Container:**

* Use the `docker run` command to create a running instance of your application from the built image.

* This will start a container with your To-Do List app running within it.

**5. Accessing the Application:**

* By default, containerized applications might not be directly accessible from your machine's web browser.

* You may need to publish the container's port (commonly port 3000 in Node.js examples) to your host machine, for example with the `-p` flag of `docker run`, or use a container orchestration platform for more advanced setups.
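
For readers who want something concrete to picture inside the container, here is a minimal sketch of a To-Do server. To stay dependency-free it uses Node's built-in `http` module instead of Express and keeps items in memory instead of MongoDB. The names `handleTodoRequest`, `createTodoServer`, and `start`, and the `/todos` route, are assumptions made for illustration.

```javascript
const http = require("http");

// Pure request logic (hypothetical), kept separate so it can be tried without a socket.
function handleTodoRequest(store, method, path, body) {
  if (method === "GET" && path === "/todos") {
    return { status: 200, json: store };
  }
  if (method === "POST" && path === "/todos") {
    const title = String((body && body.title) || "").trim();
    if (!title) {
      return { status: 400, json: { error: "title is required" } };
    }
    const todo = { id: store.length + 1, title };
    store.push(todo);
    return { status: 201, json: todo };
  }
  return { status: 404, json: { error: "not found" } };
}

// Wire the logic to an HTTP server with an in-memory store.
function createTodoServer(store = []) {
  return http.createServer((req, res) => {
    let raw = "";
    req.on("data", (chunk) => (raw += chunk));
    req.on("end", () => {
      let result;
      try {
        const body = raw ? JSON.parse(raw) : {};
        result = handleTodoRequest(store, req.method, req.url, body);
      } catch {
        result = { status: 400, json: { error: "invalid JSON" } };
      }
      res.writeHead(result.status, { "Content-Type": "application/json" });
      res.end(JSON.stringify(result.json));
    });
  });
}

// Listen on all interfaces so the port is reachable once it is published.
function start(port = Number(process.env.PORT) || 3000) {
  return createTodoServer().listen(port, "0.0.0.0", () => {
    console.log(`To-Do app listening on port ${port}`);
  });
}

// start(); // uncomment to run the server

const demoStore = [];
console.log(handleTodoRequest(demoStore, "POST", "/todos", { title: "Buy milk" }).status); // 201
console.log(handleTodoRequest(demoStore, "GET", "/todos", {}).json.length); // 1
```

With `start()` enabled and the image built under a hypothetical name such as `todo-app`, running `docker run -p 3000:3000 todo-app` would map port 3000 on the host to port 3000 in the container, after which the app answers at `http://localhost:3000/todos`.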

**Benefits of Containerization in this Example:**

* **Portability:** The container image can be easily shared and run on any machine with Docker installed, ensuring your To-Do List app runs consistently regardless of the environment.

* **Isolation:** Each container instance runs in isolation, preventing conflicts with other applications or their dependencies on the host machine.

* **Reproducibility:** The Dockerfile captures the build steps, so your To-Do List image is built the same way each time. Pinning the base image tag and dependency versions keeps the result consistent from one build to the next.

## What is a virtual machine (VM)?

A virtual machine (VM) is a software emulation of a physical computer system. It creates a virtualized environment that behaves and functions just like a real computer, complete with its own virtual CPU, memory, and storage, plus its own operating system and applications. VMs are hosted on a physical computer known as the host machine, and multiple VMs can share the resources of the host machine using software called a hypervisor.

Here's a breakdown of the key components:

* **Host Machine:** The physical computer system that provides the underlying hardware resources (CPU, memory, storage) for running VMs.

* **Hypervisor:** Software that manages the creation, deployment, and execution of VMs. It runs either directly on the host machine's hardware (a type 1, or bare-metal, hypervisor) or on top of a host operating system (a type 2 hypervisor). It acts as a virtual layer, allocating resources from the physical machine to each VM and ensuring they run in isolation. Popular hypervisors include VMware ESXi, Microsoft Hyper-V, and KVM (Kernel-based Virtual Machine).

* **Guest Operating System:** The operating system (e.g., Windows, Linux, macOS) that runs within each virtual machine. VMs can have different guest operating systems installed, independent of the host machine's operating system.

**Benefits of Virtual Machines:**

* **Resource Isolation:** VMs provide isolated execution environments, preventing applications running on one VM from interfering with those on another VM. This enhances security and stability.

* **Portability:** VMs can be easily migrated between different physical machines as long as the underlying hardware architecture is compatible. This simplifies deployment and disaster recovery processes.

* **Flexibility:** VMs offer a versatile platform for running various operating systems and applications on a single physical machine, optimizing resource utilization.

* **Testing and Development:** VMs create safe and isolated environments for testing new software or running incompatible applications without affecting the host system.

## Containerization vs. Virtual Machines

While both VMs and containers provide ways to isolate and package applications, they differ in their approach:

* **Virtual Machines:** VMs virtualize the entire physical computer system, including the hardware layer, operating system, and application. This creates a more heavyweight and resource-intensive environment compared to containers.

* **Containers:** Containers focus on application isolation at the operating system level. They share the host machine's operating system kernel but provide isolated user space for each container with its own application code and dependencies. This makes them lightweight and portable, offering faster startup times and better resource utilization compared to VMs.

**Choosing Between VMs and Containers:**

* VMs are a good choice when you need complete isolation, including hardware and operating system, or when you need to run applications that require a specific operating system not available on the host machine.

* Containers are ideal for microservices architectures, deploying and scaling cloud-native applications, and scenarios where portability, efficiency, and faster startup times are critical.

## Containerization vs Virtualization

Both containerization and virtualization are powerful technologies that enable efficient resource utilization by allowing you to run multiple isolated environments on a single physical machine. However, they take fundamentally different approaches:

**Virtual Machines (VMs):**

* **Full Virtualization:** VMs virtualize the entire physical computer system, including the hardware, operating system (OS), and application.

* **Isolation:** VMs provide strong isolation, creating a self-contained environment with its own virtual hardware, OS, and applications. This isolation ensures applications running on different VMs do not interfere with each other, enhancing security and stability.

* **Resource Consumption:** VMs are more resource-intensive compared to containers. Each VM requires its own OS instance, leading to higher overhead and potentially slower startup times.

* **Use Cases:** VMs are a good choice when you need:

    * **Complete isolation:** Including hardware and operating system, for running incompatible applications or applications requiring a specific OS unavailable on the host machine.

    * **Flexibility:** To run various operating systems and applications on a single machine.

**Containers:**

* **Operating System-Level Virtualization:** Containers focus on application isolation at the operating system level. They share the host machine's operating system kernel but provide isolated user space for each container with its own application code and dependencies.