id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,857,488 | Mastering Advanced Debugging with Chrome DevTools: A Comprehensive Guide | Introduction: In the world of web development, debugging is a crucial skill that can make the... | 0 | 2024-05-25T12:39:00 | https://dev.to/nitin-rachabathuni/mastering-advanced-debugging-with-chrome-devtools-a-comprehensive-guide-54om | Introduction:

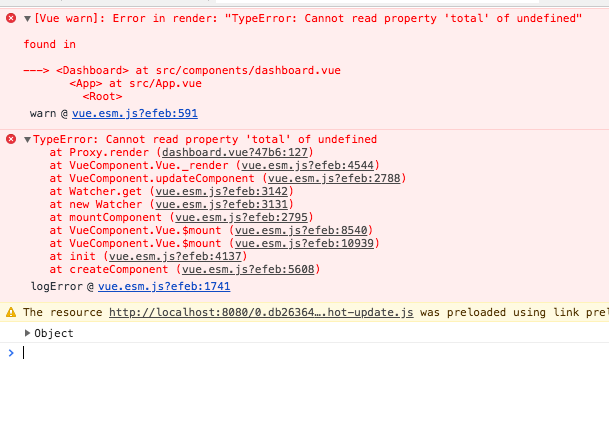

In the world of web development, debugging is a crucial skill that can make the difference between a smooth, error-free application and one fraught with issues. Fortunately, tools like Chrome DevTools provide developers with a powerful arsenal to diagnose and fix bugs effectively. While many developers are familiar with the basic features of DevTools, there's a wealth of advanced functionality waiting to be explored. In this article, we'll delve into some advanced debugging techniques using Chrome DevTools, complete with coding examples to illustrate each concept.

Performance Profiling:

Performance issues can be elusive but detrimental to user experience. Chrome DevTools offers robust performance profiling capabilities to identify bottlenecks in your code. Use the Performance panel to record and analyze CPU usage, network activity, and rendering performance. For example, you can pinpoint slow JavaScript functions or excessive layout recalculations causing rendering delays.

```

function slowFunction() {

// Time-consuming operations

}

console.time('slowFunction');

slowFunction();

console.timeEnd('slowFunction');

```
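Beyond `console.time`, the User Timing API (`performance.mark` and `performance.measure`) records named spans that DevTools can display alongside a Performance recording. Here is a minimal sketch, assuming a hypothetical `sumSquares` function standing in for the slow code and a hypothetical `measure` helper; neither comes from a library.

```javascript
// Hypothetical CPU-bound work standing in for a real slow function.
function sumSquares(n) {
  let total = 0;
  for (let i = 0; i < n; i++) {
    total += i * i;
  }
  return total;
}

// Hypothetical helper: wraps a call in two User Timing marks
// and measures the span between them.
function measure(label, fn) {
  performance.mark(`${label}-start`);
  const result = fn();
  performance.mark(`${label}-end`);
  const entry = performance.measure(label, `${label}-start`, `${label}-end`);
  return { result, durationMs: entry.duration };
}

const { result, durationMs } = measure('sumSquares', () => sumSquares(1e6));
console.log(`sumSquares returned ${result} in ${durationMs.toFixed(2)} ms`);
```

The sketch assumes an environment where `performance.measure` returns the measure entry (current browsers and recent Node.js versions).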

Memory Leak Detection:

Memory leaks can plague web applications, causing sluggishness and eventual crashes. DevTools' Memory panel allows you to take heap snapshots and track memory allocations over time. Look for objects that accumulate unexpectedly or aren't properly released, indicating potential memory leaks.

```

class MyClass {

constructor() {

this.data = new Array(1000000); // Allocating memory

}

}

let instances = [];

setInterval(() => {

instances.push(new MyClass()); // Creating new instances

}, 1000);

```
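The snippet above leaks because `instances` grows forever. One common remedy, sketched here with a hypothetical `BoundedBuffer` helper, is to cap how many objects stay reachable so that older ones can be garbage collected. With a cap in place, successive heap snapshots should show a stable object count.

```javascript
// Hypothetical helper: keeps at most `limit` items reachable.
class BoundedBuffer {
  constructor(limit) {
    this.limit = limit;
    this.items = [];
  }

  push(item) {
    this.items.push(item);
    // Drop the oldest entries so they become eligible for garbage collection.
    while (this.items.length > this.limit) {
      this.items.shift();
    }
  }

  get size() {
    return this.items.length;
  }
}

const buffer = new BoundedBuffer(5);
for (let i = 0; i < 20; i++) {
  buffer.push({ id: i, data: new Array(1000) });
}
console.log(buffer.size); // 5: only the five newest objects are retained
```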

Advanced Breakpoints:

Breakpoints are indispensable for debugging, but DevTools offers more than just pausing execution at a line of code. Conditional breakpoints let you halt execution only when specific conditions are met, while DOM change breakpoints pause when a particular DOM element is modified.

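```
function processData(data) {
  // Process data
}

const myData = new Array(2000).fill(0);

// Conditional breakpoint: right-click the line number of a statement inside
// processData, choose "Add conditional breakpoint", and enter: data.length > 1000
processData(myData);

// DOM change breakpoint: in the Elements panel, right-click the .btn element
// and choose Break on > attribute modifications.
document.querySelector('.btn').addEventListener('click', (event) => {
  // This attribute change is what triggers the DOM breakpoint.
  event.currentTarget.setAttribute('data-clicked', 'true');
});
```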
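When a condition is awkward to type into the breakpoint dialog, the `debugger` statement gives a similar effect from code: execution pauses there only while DevTools (or another debugger) is attached. A small sketch, assuming a hypothetical `processData` that doubles each value and a threshold of 1000 items:

```javascript
function processData(data) {
  // Acts like a conditional breakpoint with the expression data.length > 1000.
  // Without an attached debugger this statement does nothing.
  if (data.length > 1000) {
    debugger;
  }
  return data.map((value) => value * 2);
}

const small = processData([1, 2, 3]);
const large = processData(Array.from({ length: 1500 }, (_, i) => i));
console.log(small, large.length);
```

Remember to remove `debugger` statements before shipping; a conditional breakpoint set in DevTools leaves the source untouched, which is why it is usually the better default.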

XHR/Fetch Breakpoints:

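Tracking down which code issues an XHR (XMLHttpRequest) or Fetch request can be challenging without the right tools. In the Sources panel, the XHR/fetch Breakpoints pane pauses execution whenever a request URL contains a string you specify, so you can inspect the call stack and arguments at the moment the request is sent. The response data, headers, and payloads can then be inspected in the Network panel.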

```

fetch('https://api.example.com/data')

.then(response => response.json())

.then(data => {

// Process data

})

.catch(error => console.error(error));

// In Sources > XHR/fetch Breakpoints, add "api.example.com" to pause when this request is sent

```
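Pausing when a request URL contains a given string can also be approximated in code, which helps when the request is issued from a bundled file that is hard to locate. A sketch, assuming a hypothetical `withBreakOn` wrapper and a stubbed fetch function so that it runs without network access:

```javascript
// Hypothetical wrapper: pauses in the debugger when the request URL contains `fragment`.
function withBreakOn(fragment, fetchImpl) {
  return async function wrappedFetch(url, options) {
    if (String(url).includes(fragment)) {
      debugger; // No-op unless DevTools (or another debugger) is attached.
    }
    return fetchImpl(url, options);
  };
}

// Stub standing in for the real fetch so the example needs no network.
const fakeFetch = async (url) => ({
  ok: true,
  url,
  json: async () => ({ items: [] }),
});

const debugFetch = withBreakOn('/data', fakeFetch);
const pending = debugFetch('https://api.example.com/data')
  .then((response) => response.json());

pending.then((data) => console.log(data));
```

In a real page you would pass the browser's own `fetch` instead of `fakeFetch`. The built-in XHR/fetch breakpoint remains the simpler option when you only need a one-off pause.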

Workspaces and Live Editing:

DevTools' workspace feature enables seamless integration between your local file system and the browser. You can map local files to network resources, edit them directly in DevTools, and have changes persist across reloads. This is particularly useful for debugging complex build processes or tweaking stylesheets on the fly.

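```
1. Open DevTools and go to the Sources panel.
2. Open the Filesystem tab (labeled Workspace in recent Chrome versions).
3. Click "Add folder to workspace" and select your local project folder.
4. Allow DevTools to access the folder when prompted.
```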

Conclusion:

Chrome DevTools is more than just a debugger; it's a Swiss Army knife for web developers, offering a plethora of advanced features to streamline the debugging process. By mastering these techniques—from performance profiling to live editing—you can become a more efficient and effective developer, capable of tackling even the most challenging bugs with confidence. Incorporate these tools into your workflow, and watch as your debugging skills reach new heights. Happy debugging!


| nitin-rachabathuni | |

1,864,837 | Unlocking the Power of NodeJS with Human Library | The Power of NodeJS and Its Usage NodeJS is a powerful runtime environment that allows... | 0 | 2024-05-25T12:33:58 | https://dev.to/tarek_eissa/unlocking-the-power-of-nodejs-with-human-library-3jo0 | node, ai, webdev, javascript | ## The Power of NodeJS and Its Usage

NodeJS is a powerful runtime environment that allows developers to build scalable and high-performance applications using JavaScript. Its event-driven architecture and non-blocking I/O operations make it an ideal choice for building real-time applications such as chat applications, online gaming, collaboration tools, and much more.

In this post, we'll explore the "Human Library," a comprehensive AI-powered toolkit for 3D face detection, rotation tracking, face description, recognition, and more. We'll delve into its features, installation process, and various use cases, demonstrating how NodeJS enhances its capabilities.

### Features of the Human Library

The Human Library boasts an impressive array of features, making it a versatile tool for developers working on a wide range of applications:

- **3D Face Detection & Rotation Tracking**: Accurate detection and tracking of faces in 3D space.

- **Face Description & Recognition**: Advanced algorithms for describing and recognizing faces.

- **Body Pose Tracking**: Detailed tracking of body poses for various applications.

- **3D Hand & Finger Tracking**: Precision tracking of hand and finger movements.

- **Iris Analysis**: In-depth analysis of the iris for biometric applications.

- **Age, Gender & Emotion Prediction**: Predict demographic and emotional states from facial data.

- **Gaze Tracking**: Track where a person is looking in real-time.

- **Gesture Recognition**: Recognize and interpret human gestures.

- **Body Segmentation**: Separate the body from the background in images and videos.

### Installation

Getting started with the Human Library is straightforward. Here's a step-by-step guide to install and set up the library:

1. **Install NodeJS**: Ensure you have NodeJS installed on your machine. You can download it from the [official website](https://nodejs.org/).

2. **Initialize a New NodeJS Project**:

```bash

mkdir human-library

cd human-library

npm init -y

```

3. **Install the Human Library**:

```bash

npm install @vladmandic/human

```

4. **Set Up a Basic Project**: Create an `index.js` file with the following content to test the installation.

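```javascript
// Node usage also needs a TensorFlow backend installed, e.g. `npm install @tensorflow/tfjs-node`
// (assumption based on the project's README; check it for your version).
const Human = require('@vladmandic/human').default; // the class is the module's default export
const human = new Human();

human.init()
  .then(() => {
    console.log('Human library initialized');
  })
  .catch((err) => {
    console.error('Human library failed to initialize:', err);
  });
```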

### Multi-use Cases

The Human Library is versatile and can be integrated into various applications. Here are a few examples:

#### Real-time Video Processing

Use the Human Library to process video streams from webcams or other sources, providing real-time feedback on facial expressions, gaze direction, and more.

#### Security and Surveillance

Implement face recognition and tracking to enhance security systems, allowing for automated detection of individuals and monitoring of their activities.

#### Healthcare

Utilize body pose tracking and gesture recognition to develop applications for physical therapy, enabling remote monitoring of exercises and movements.

#### Virtual and Augmented Reality

Incorporate 3D face and body tracking into VR and AR applications to create more immersive and interactive experiences.

### Scalability and Possible Applications

NodeJS, with its non-blocking I/O and event-driven architecture, provides the perfect backbone for scalable applications using the Human Library. Whether you're developing a small prototype or a large-scale deployment, NodeJS can handle the load efficiently.

#### Application Examples

- **Facial Recognition in Retail**: Enhance customer experiences by recognizing returning customers and providing personalized services.

- **Fitness Applications**: Track users' exercises and provide real-time feedback on their form and movements.

- **Interactive Advertising**: Create interactive billboards that respond to viewers' gestures and facial expressions.

### Conclusion

The Human Library, combined with the power of NodeJS, opens up endless possibilities for developers. Its comprehensive set of features allows for the creation of innovative applications across various industries. By leveraging NodeJS's scalability and performance, you can build robust and high-performing solutions that take full advantage of AI-powered human tracking and recognition.

### Resources

- **GitHub Repository**: [Human Library](https://github.com/vladmandic/human)

- **NPM Package**: [@vladmandic/human](https://www.npmjs.com/package/@vladmandic/human)

- **Live Demos**: [Human Library Demos](https://github.com/vladmandic/human#live-demos)

### Further Reading

- **Documentation**: [Human Library Documentation](https://github.com/vladmandic/human/wiki)

- **Installation Guide**: [Installation Instructions](https://github.com/vladmandic/human#installation)

- **API Reference**: [TypeDoc API Specification](https://github.com/vladmandic/human/blob/main/api/typedoc/index.html) | tarek_eissa |

1,864,835 | Question | We recently became the happy owners of a summer cottage plot with a house, but the problem is that... | 0 | 2024-05-25T12:25:55 | https://dev.to/__9a35527b8dd1b/vopros-6eg | We recently became the happy owners of a summer cottage plot with a house, but the problem is that the whole place is full of mosquitoes. Once the warm weather arrived, it became clear that we need to do something about it, because it is extremely uncomfortable. For example, I was interested in the service for treating the ground and lawn against mosquitoes https://dezgroup.ru/services/unichtozhenie-nasekomykh/dezinsektsiya-ot-komarov/obrabotka-zemli-i-gazona-ot-komarov/ from a company that has worked in this field for a long time, has an excellent reputation, and charges reasonable prices. Perhaps someone here has used them; if so, how was it? I ask because I see that they offer guarantees | __9a35527b8dd1b | |

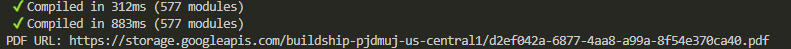

1,864,834 | Updating to Angular Material 18: Keeping Support for Material 2 and Adding Support for Material 3 | In this article, we will update Angular Material 17 to 18, with Material 2 and also Material 3 | 0 | 2024-05-25T12:22:37 | https://angular-material.dev/articles/updating-to-angular-material-18 | anguar, angularmaterial, materialdesign, webdev | ---

title: Updating to Angular Material 18: Keeping Support for Material 2 and Adding Support for Material 3

published: true

description: In this article, we will update Angular Material 17 to 18, with Material 2 and also Material 3

tags: anguar,angularmaterial,materialdesign,webdevelopment

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/13g1jl9wheevjh0nrd6f.png

canonical_url: https://angular-material.dev/articles/updating-to-angular-material-18

---

> In this quick guide, we will update an Angular project with Angular Material 17 to 18. And we will also learn how to keep support for M2 and add support for M3.

## Existing Project

For this guide, I am going to use the project [course-md-ng-my-app](https://github.com/Angular-Material-Dev/course-md-ng-my-app/tree/angular-material-17) from my course. You can clone it using the commands below:

```bash

git clone https://github.com/Angular-Material-Dev/course-md-ng-my-app --branch angular-material-17

cd course-md-ng-my-app

npm i

```

## Finding the updates

We will first find out the updates using Angular CLI's update command:

```bash

ng update

```

You should see the output like below:

```bash
Name                  Version               Command to update
--------------------------------------------------------------------------------
@angular/cdk          17.3.10 -> 18.0.0     ng update @angular/cdk
@angular/cli          17.3.8 -> 18.0.1      ng update @angular/cli
@angular/core         17.3.10 -> 18.0.0     ng update @angular/core
@angular/material     17.3.10 -> 18.0.0     ng update @angular/material
```

## Update Angular CLI to 18

```bash

ng update @angular/cli@18

```

When asked about migrating to the new build system, I am going to select it using `space` and press `enter`. As it's optional, you can skip it.

```bash

Select the migrations that you'd like to run (Press <space> to select, <a> to toggle all, <i> to invert selection, and

<enter> to proceed)

❯◯ [use-application-builder] Migrate application projects to the new build system.

(https://angular.dev/tools/cli/build-system-migration)

```

### Check your app

After updating Angular CLI to 18, please check the application by running `npm start` command.

## Update Angular Material to 18

```bash

ng update @angular/material@18

```

The above command updates both `@angular/material` and `@angular/cdk` to version 18.

Angular Material does not provide a schematic to migrate the Sass theme APIs to the latest ones, so if you run the project now, you will see many errors. We will resolve them one by one.

## Keeping Support for Material 2 (M2)

First, we will make changes in such a way that our application still follows Material 2 designs. If you want to simply update your application for Material 3 (M3), jump to [Adding support for Material 3 (M3)](#adding-support-for-material-3-m3)

Each section will have a table with 2 columns, **old** and **new**. Simply find and replace value from *old* with value from *new* in whole project.

### Typography changes for M2

| Index | Old                        | New                           |
| ----- | -------------------------- | ----------------------------- |
| 1     | `define-typography-config` | `m2-define-typography-config` |
| 2     | `define-typography-level`  | `m2-define-typography-level`  |

### Color palettes changes for M2

| Index | Old              | New                 |
| ----- | ---------------- | ------------------- |
| 1     | `define-palette` | `m2-define-palette` |

#### Predefined palettes changes for M2

If you're using any pre-defined palette, like `mat.$indigo-palette`, pre-fix the variable with `m2`. So, new palette would become `mat.$m2-indigo-palette`

### Theming changes for M2

| Index | Old                  | New                     |
| ----- | -------------------- | ----------------------- |
| 1     | `define-light-theme` | `m2-define-light-theme` |
| 2     | `define-dark-theme`  | `m2-define-dark-theme`  |
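If the project has many Sass files, the find-and-replace steps from the tables in this section can be scripted. Below is a rough Node.js sketch, not an official migration: `applyM2Renames` is a hypothetical helper, it covers only the names listed in this section, and it assumes Angular Material is imported under the `mat` namespace. Review the resulting diff before committing.

```javascript
// Sass function names that gained an m2- prefix (taken from the tables in this section).
const RENAMED_FUNCTIONS = [
  'define-typography-config',
  'define-typography-level',
  'define-palette',
  'define-light-theme',
  'define-dark-theme',
];

// Match a name only when it is not part of a longer identifier,
// so an already migrated m2-define-palette is left alone.
const functionPattern = new RegExp(
  `(?<![\\w-])(${RENAMED_FUNCTIONS.join('|')})(?![\\w-])`,
  'g'
);

// Predefined palettes such as mat.$indigo-palette (assumes the `mat` namespace).
const palettePattern = /mat\.\$(?!m2-)([a-z-]+-palette)(?![\w-])/g;

// Hypothetical helper: returns the Sass source with the M2 renames applied.
function applyM2Renames(scss) {
  return scss
    .replace(functionPattern, (_, name) => `m2-${name}`)
    .replace(palettePattern, (_, palette) => `mat.$m2-${palette}`);
}

const before = 'primary: mat.define-palette(mat.$indigo-palette),';
console.log(applyM2Renames(before));
```

Because both patterns skip names that already carry the `m2-` prefix, running the helper twice on the same file should leave it unchanged.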

#### Adding typography for dark theme

As we are going to lazy load the dark theme, we need to include `typography` in it. So, until now, the dark theme looks like below:

```scss

// Define a dark theme

$my-app-dark-theme: mat.m2-define-dark-theme(

(

color: (

primary: mat.m2-define-palette(mat.$m2-pink-palette),

accent: mat.m2-define-palette(mat.$m2-blue-grey-palette),

),

)

);

```

Simply add `typography` in the map like below:

```scss

// Define a dark theme

$my-app-dark-theme: mat.m2-define-dark-theme(

(

color: (

primary: mat.m2-define-palette(mat.$m2-pink-palette),

accent: mat.m2-define-palette(mat.$m2-blue-grey-palette),

),

typography: config.$my-app-typography, // 👈 Added

)

);

```

### Changes for custom component

In this project, we have a custom component at `ui/alert` that uses the Material theme (colors and typography) through Angular Material Sass mixins and functions. In this section, we will look at the changes needed to make it compatible with Angular Material 18.

The file we are targeting is at `src/app/ui/alert/_alert-theme.scss`.

#### TL;DR

If you simply want to check the final code, it will look like below:

```scss

// _alert-theme.scss

@use "sass:map";

@use "@angular/material" as mat;

@mixin color($theme) {

$type: mat.get-theme-type($theme);

$is-dark-theme: $type == dark;

$exportBackgroundOpacity: if($is-dark-theme, 0.12, 0.06);

.alert {

color: mat.get-theme-color(

$theme,

primary,

if($is-dark-theme, 50, default)

);

background: rgba(

mat.get-theme-color($theme, primary, 300),

$exportBackgroundOpacity

);

border-color: mat.get-theme-color($theme, primary, 100);

.alert-link {

color: mat.get-theme-color($theme, primary, if($is-dark-theme, 200, 500));

}

}

}

@mixin typography($theme) {

.alert {

font: mat.get-theme-typography($theme, body-1);

letter-spacing: mat.get-theme-typography($theme, body-1, letter-spacing);

.alert-heading {

font: mat.get-theme-typography($theme, "headline-6");

}

.alert-footer {

font: mat.get-theme-typography($theme, "caption");

}

}

}

@mixin theme($theme) {

@include color($theme);

@include typography($theme);

}

```

With the above changes, your component should work fine. If you want to know more about the changes, keep reading; otherwise, jump to the [next section](#checking-all-the-changes-for-m2).

#### Reading color values

If you look at the original code, it uses the `get-color-config` function, which has been removed, along with the `get-color-from-palette` function.

So, to read any color from the theme, we now have to use `get-theme-color`. You can read about it [here](https://material.angular.io/guide/material-2-theming#reading-color-values).

#### Identifying the current theme

We also can't use `map.get($theme, is-dark)` anymore. There is a new function to identify the type of theme: `mat.get-theme-type($theme)`. This function takes a single argument, the theme, and returns either `light` or `dark`.

#### Reading typography values

`mat.font-family` and `mat.typography-level` are also removed.

There is a new function called `get-theme-typography`, you can read more about it [here](https://material.angular.io/guide/material-2-theming#reading-typography-values).

### Checking all the changes for M2

After making all the changes, you should be able to run the project without any errors. You can also review all the changes needed to keep M2 support with Angular Material 18 in the PR: [feat: keeping m2 support with angular material 18](https://github.com/Angular-Material-Dev/course-md-ng-my-app/pull/1/files).

## Adding Support for Material 3 (M3)

If you want to add support for M3 with Angular Material 18, simply follow guidelines from [Theming Angular Material](https://material.angular.io/guide/theming). Angular Material team has already given in-depth guidelines about it.

The changes needed to add support for M3 with Angular Material 18 for the project can be viewed at the commit on GitHub: [feat: add support for M3 with angular material 18](https://github.com/Angular-Material-Dev/course-md-ng-my-app/commit/e39cd37595d6e38ca3f6023b2c928c60a7a0a0c8).

## Support Free Content Creation

Even though the courses and articles are available at no cost, your support in my endeavor to deliver top-notch educational content would be highly valued. Your decision to contribute aids me in persistently improving the course, creating additional resources, and maintaining the accessibility of these materials for all. I'm grateful for your consideration to contribute and make a meaningful difference!

[](https://github.com/sponsors/shhdharmen)

## Conclusion

We started by cloning the existing repo with Angular Material 17 from one of my courses. Then we checked the available updates by running the `ng update` command, and ran `ng update @angular/cli@18` and `ng update @angular/material@18` in sequence.

We began by keeping support for M2, learning which functions were removed and what to use instead. Finally, we saw how to add support for M3 with Angular Material 18.

Below is the quick summary:

| Index | Applies to | Old | Change for M2 | Change for M3 |
| ----- | ---------- | --- | ------------- | ------------- |
| 1 | Typography | `define-typography-config` | [`m2-define-typography-config`](https://material.angular.io/guide/material-2-theming#typography-config) | [Part of `define-theme`](https://material.angular.io/guide/theming#customizing-your-typography) |
| 2 | Typography | `define-typography-level` | [`m2-define-typography-level`](https://material.angular.io/guide/material-2-theming#define-a-level) | [`get-theme-typography`](https://material.angular.io/guide/theming-your-components#reading-typescale-properties) |
| 3 | Color palettes | `define-palette` | [`m2-define-palette`](https://material.angular.io/guide/material-2-theming#:~:text=warn%20palette.%20The-,m2%2Ddefine%2Dpalette,-Sass%20function%20accepts) | SASS Map, can be generated using [Material 3 Theme schematic](https://material.angular.io/guide/schematics#material-3-theme-schematic) |
| 4 | Color palettes | `$indigo-palette` | [`$m2-indigo-palette`](https://material.angular.io/guide/material-2-theming#predefined-palettes), [All Palettes](https://m1.material.io/style/color.html#color-color-palette) | `$azure-palette`, [All Palettes](https://material.angular.io/guide/theming#pre-built-themes) |
| 5 | Theming | `define-light-theme` | [`m2-define-light-theme`](https://material.angular.io/guide/material-2-theming#defining-a-theme) | [`define-theme`](https://material.angular.io/guide/theming#defining-a-theme) |
| 6 | Theming | `define-dark-theme` | [`m2-define-dark-theme`](https://material.angular.io/guide/material-2-theming#defining-a-theme) | [`define-theme`](https://material.angular.io/guide/theming#defining-a-theme) |
| 7 | Reading color values | `get-color-config` | Removed | Removed |
| 8 | Reading color values | `get-color-from-palette` | [`get-theme-color`](https://material.angular.io/guide/material-2-theming#reading-color-values) | `get-theme-color`, [Reading tonal palette colors](https://material.angular.io/guide/theming-your-components#reading-tonal-palette-colors), [Reading color roles](https://material.angular.io/guide/theming-your-components#reading-color-roles) |
| 9 | Identifying the current theme | `map.get($theme, is-dark)` | [`get-theme-type`](https://material.angular.io/guide/material-2-theming#reading-color-values:~:text=can%20use%20the-,get%2Dtheme%2Dtype,-Sass%20function%20to) | [`get-theme-type`](https://material.angular.io/guide/theming-your-components#reading-the-theme-type) |
| 10 | Reading typography values | `font-family` | Removed | Removed |
| 11 | Reading typography values | `typography-level` | [`get-theme-typography`](https://material.angular.io/guide/material-2-theming#reading-typography-values) | [`get-theme-typography`](https://material.angular.io/guide/theming-your-components#reading-typescale-properties) |
| 12 | Reading density values | `get-theme-density` | *No change* [`get-theme-density`](https://material.angular.io/guide/material-2-theming#reading-density-values) | *No change* [`get-theme-density`](https://material.angular.io/guide/theming-your-components#reading-the-density-scale) |

## Codes

Codes and changes are available as below:

| Index | Branch | Angular Material Version | Material Design version | PR/Commit |
| ----- | ------ | ------------------------ | ----------------------- | --------- |
| 1 | [main](https://github.com/Angular-Material-Dev/course-md-ng-my-app) | 18 | 3 | [e39cd37](https://github.com/Angular-Material-Dev/course-md-ng-my-app/commit/e39cd37595d6e38ca3f6023b2c928c60a7a0a0c8) |
| 2 | [angular-material-18-m2](https://github.com/Angular-Material-Dev/course-md-ng-my-app/tree/angular-material-18-m2) | 18 | 2 | [PR#1](https://github.com/Angular-Material-Dev/course-md-ng-my-app/pull/1) |
| 3 | [angular-material-17](https://github.com/Angular-Material-Dev/course-md-ng-my-app/tree/angular-material-17) | 17 | 2 | -- |

| shhdharmen |

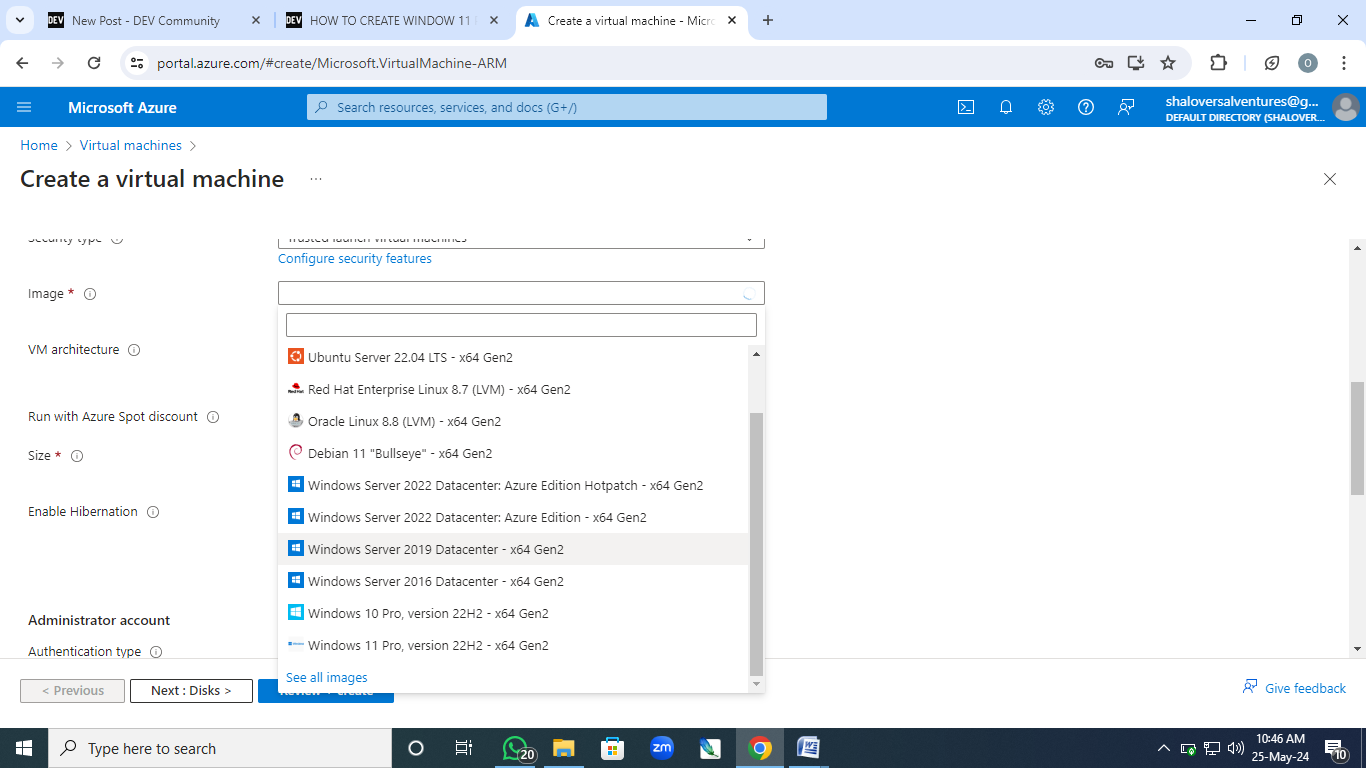

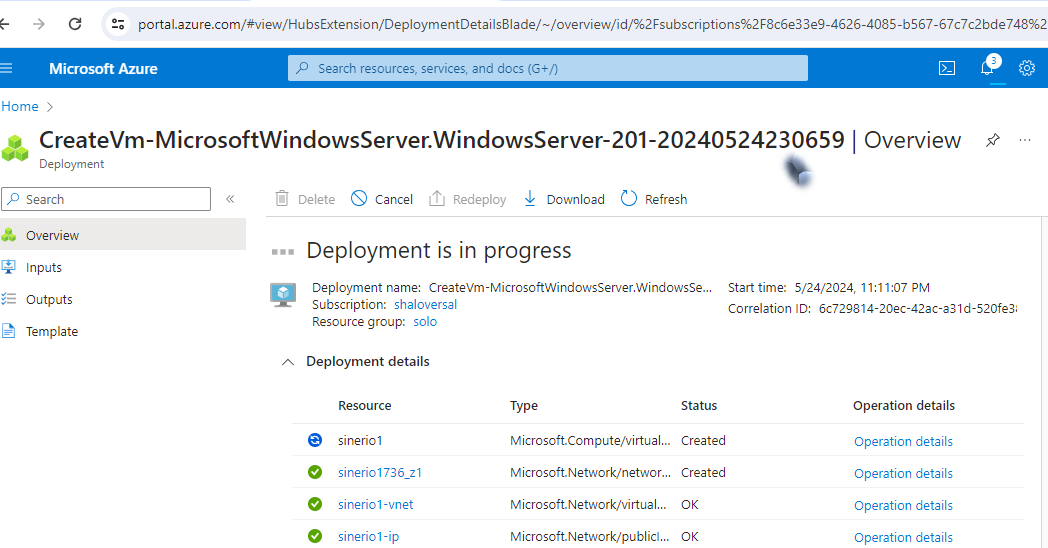

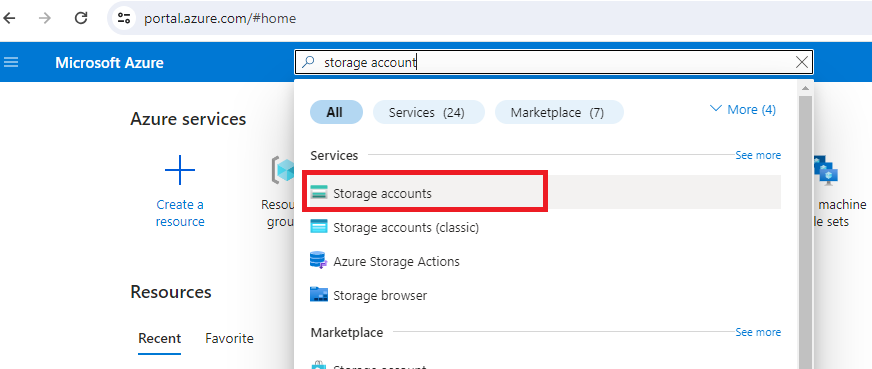

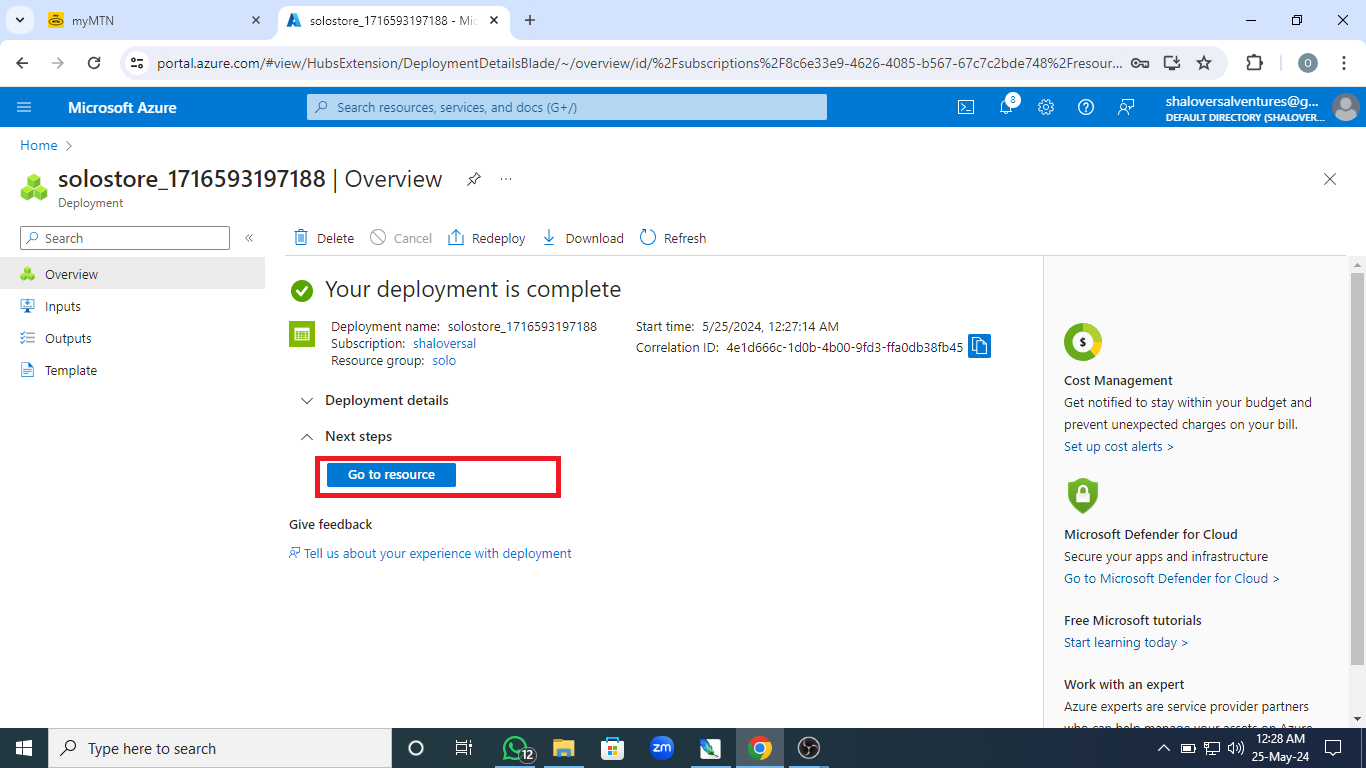

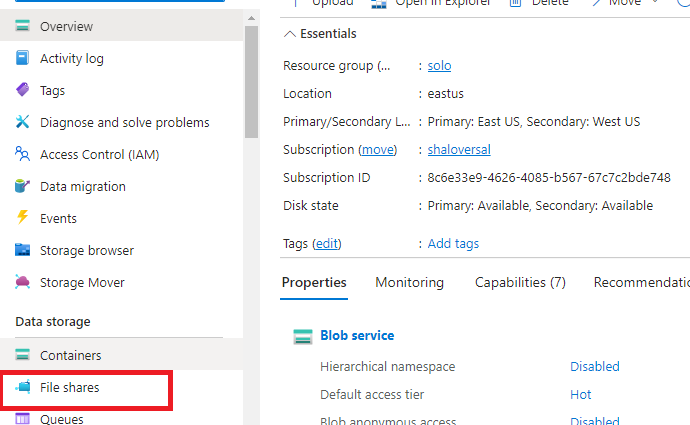

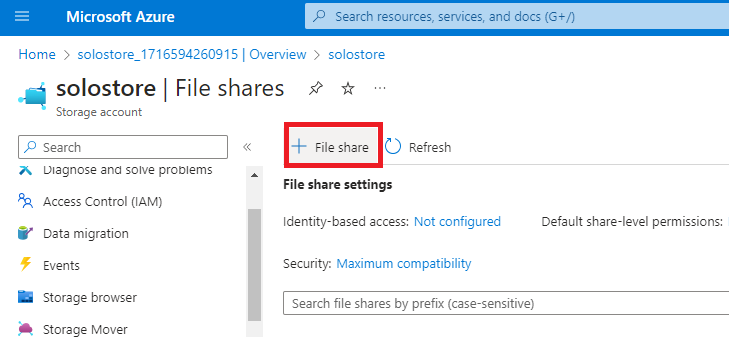

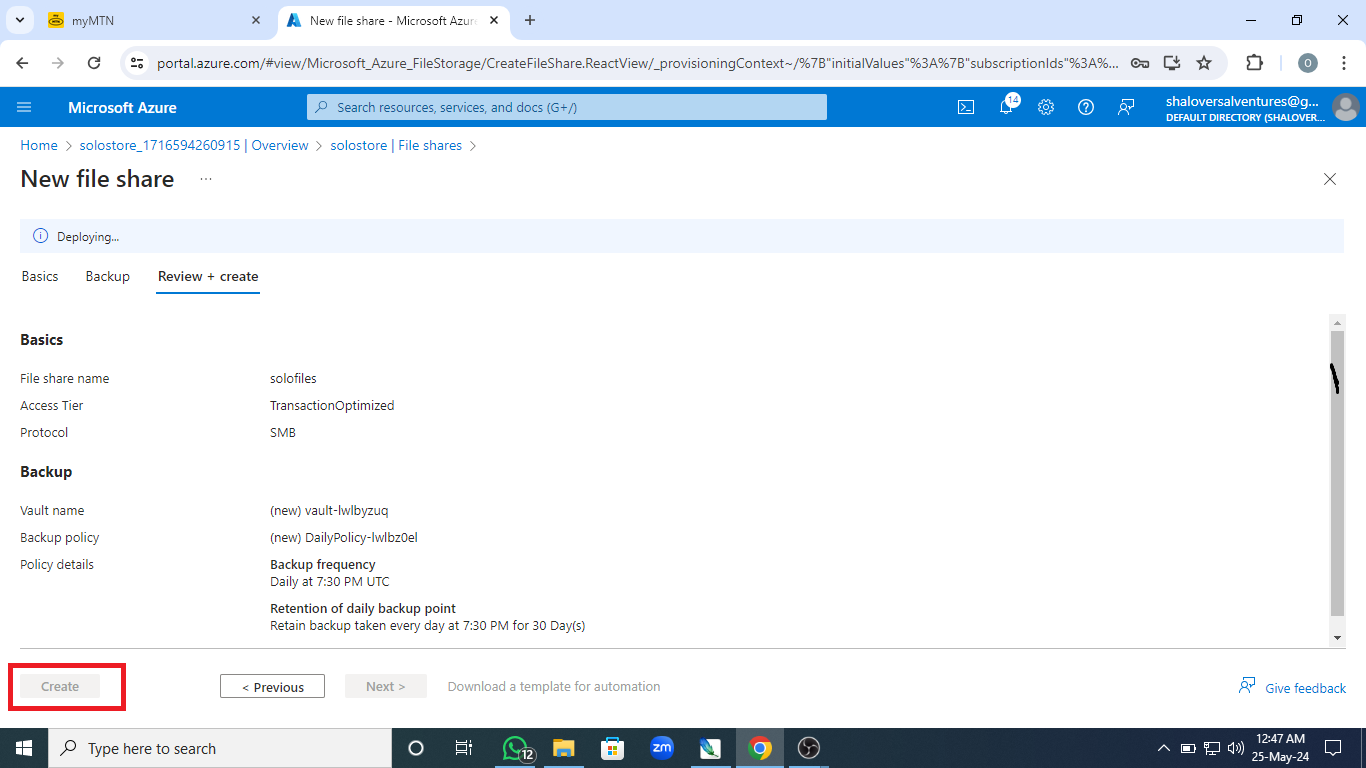

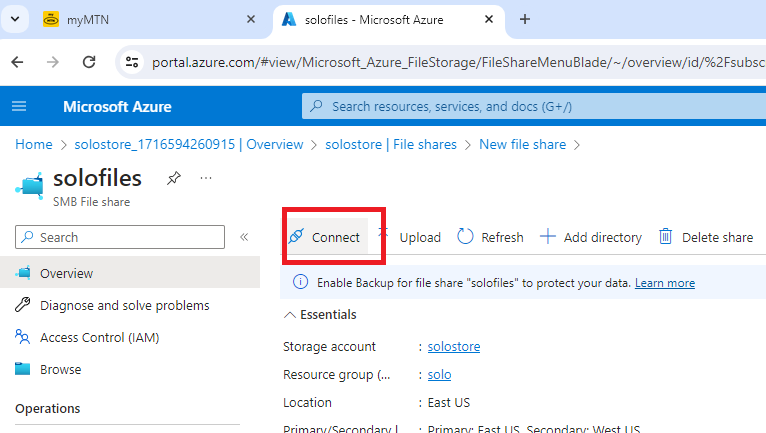

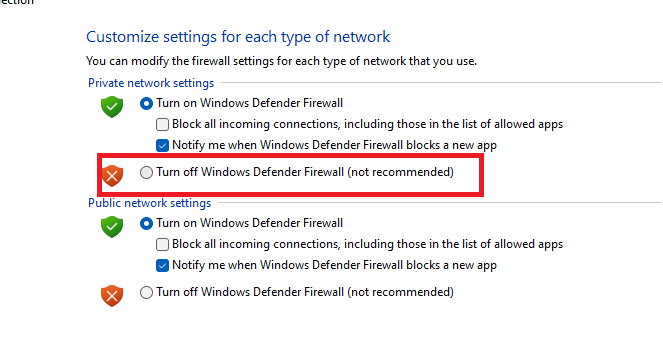

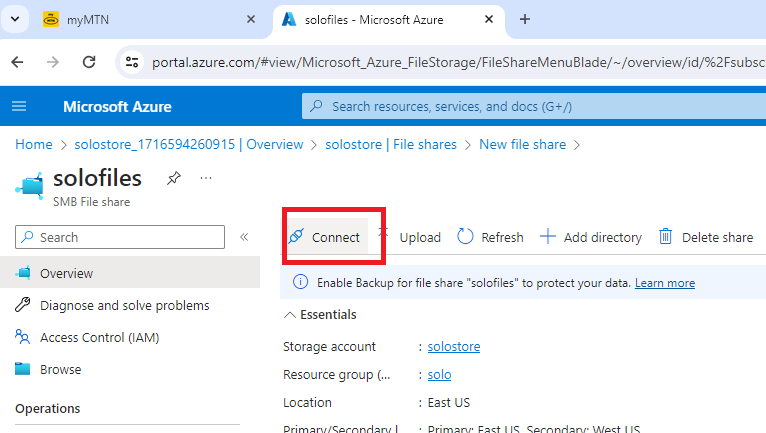

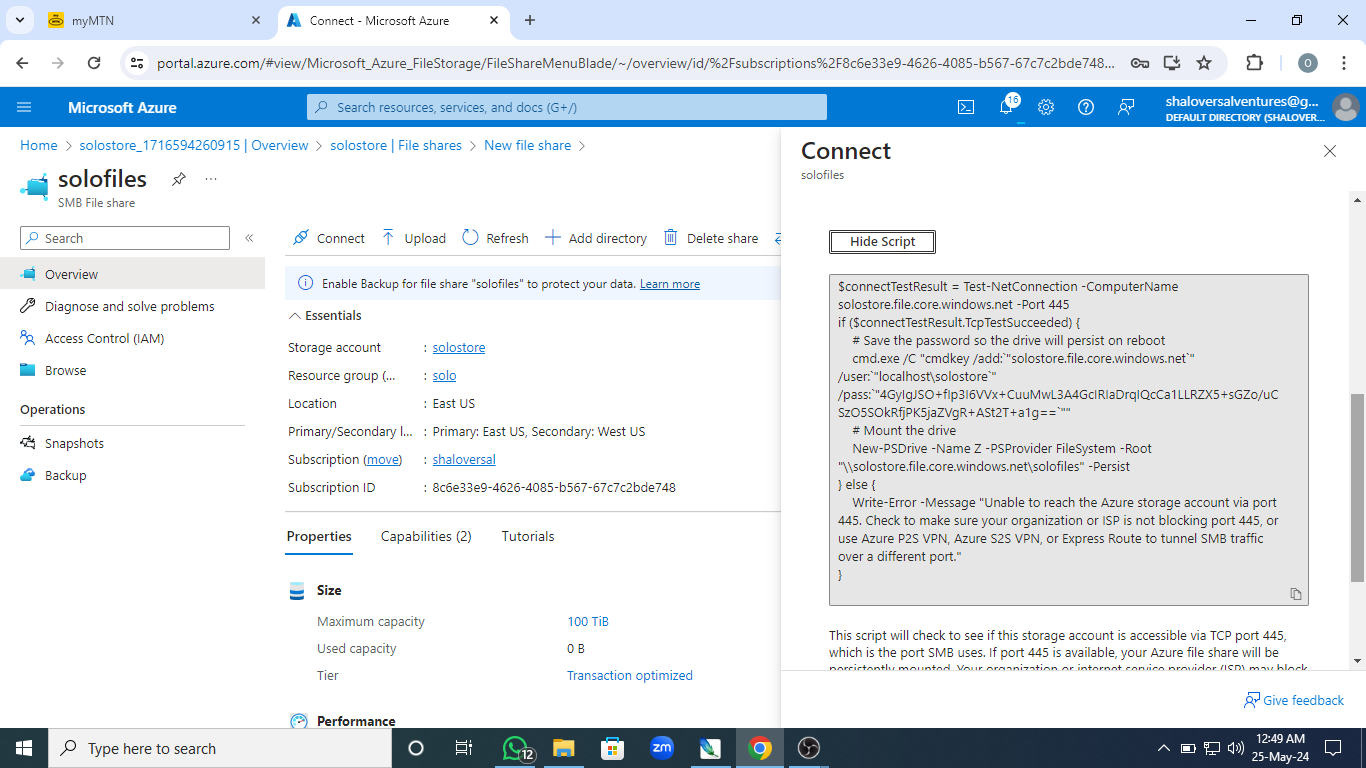

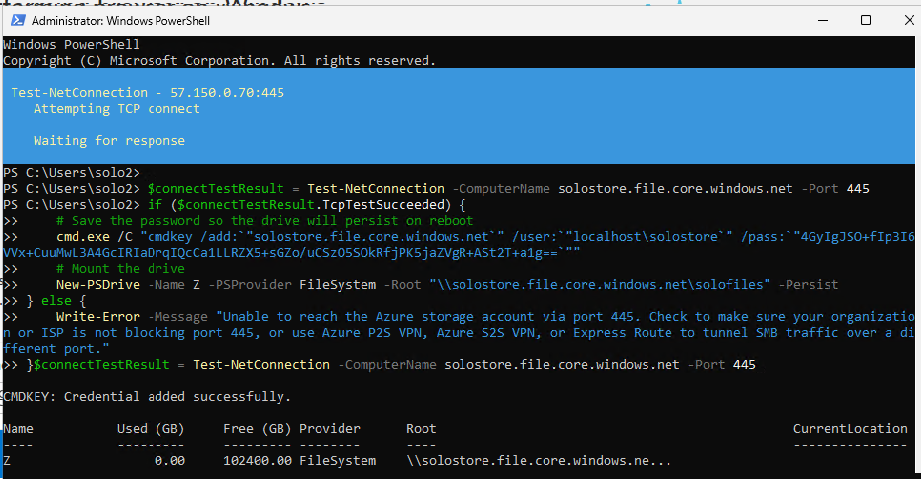

1,864,746 | HOW TO CREATE A WINDOW SERVER WITH IIS,FIREWALL,AND ASG INSTALLED | sTEP 1. Create a vm YOu can click on the link below on how to create a... | 0 | 2024-05-25T09:54:01 | https://dev.to/shaloversal123/how-to-create-a-window-server-with-iisfirewalland-asg-installed-4ahh | sTEP 1. Create a vm

YOu can click on the link below on how to create a Vm

https://dev.to/shaloversal123/how-to-create-window-11-pro-virtual-machine-2h2e

Select Webserver as Image.

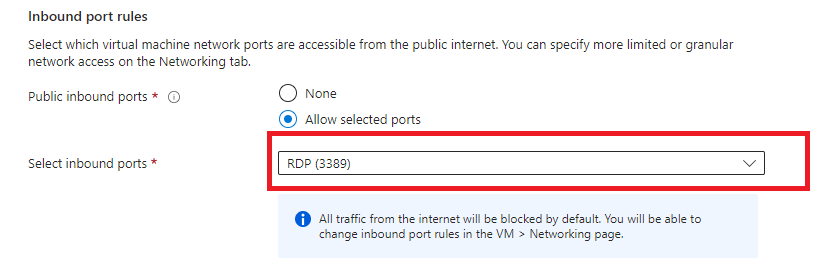

Select RDP in the inbound port

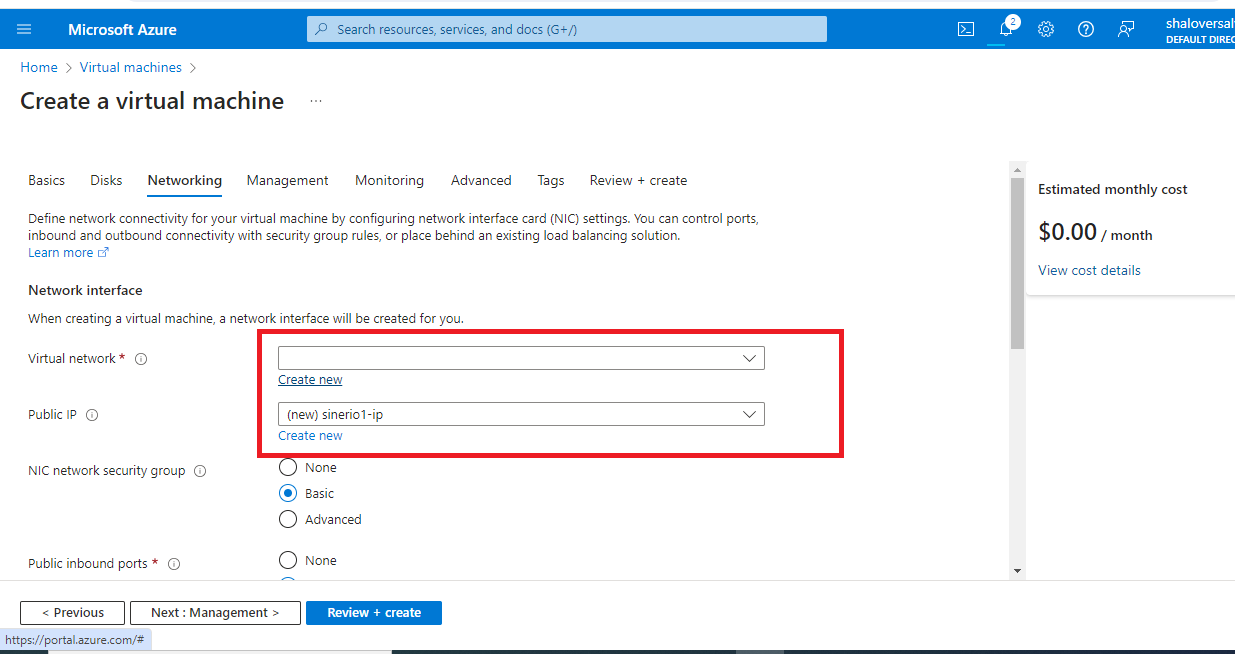

Allow your resource group to load under Vm network page before proceeding.

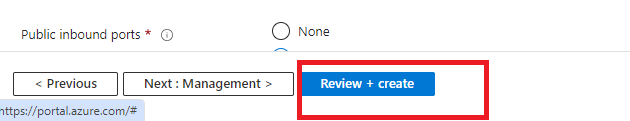

NExt managemnet

Next Monitoring

Next Advance

Click review and create

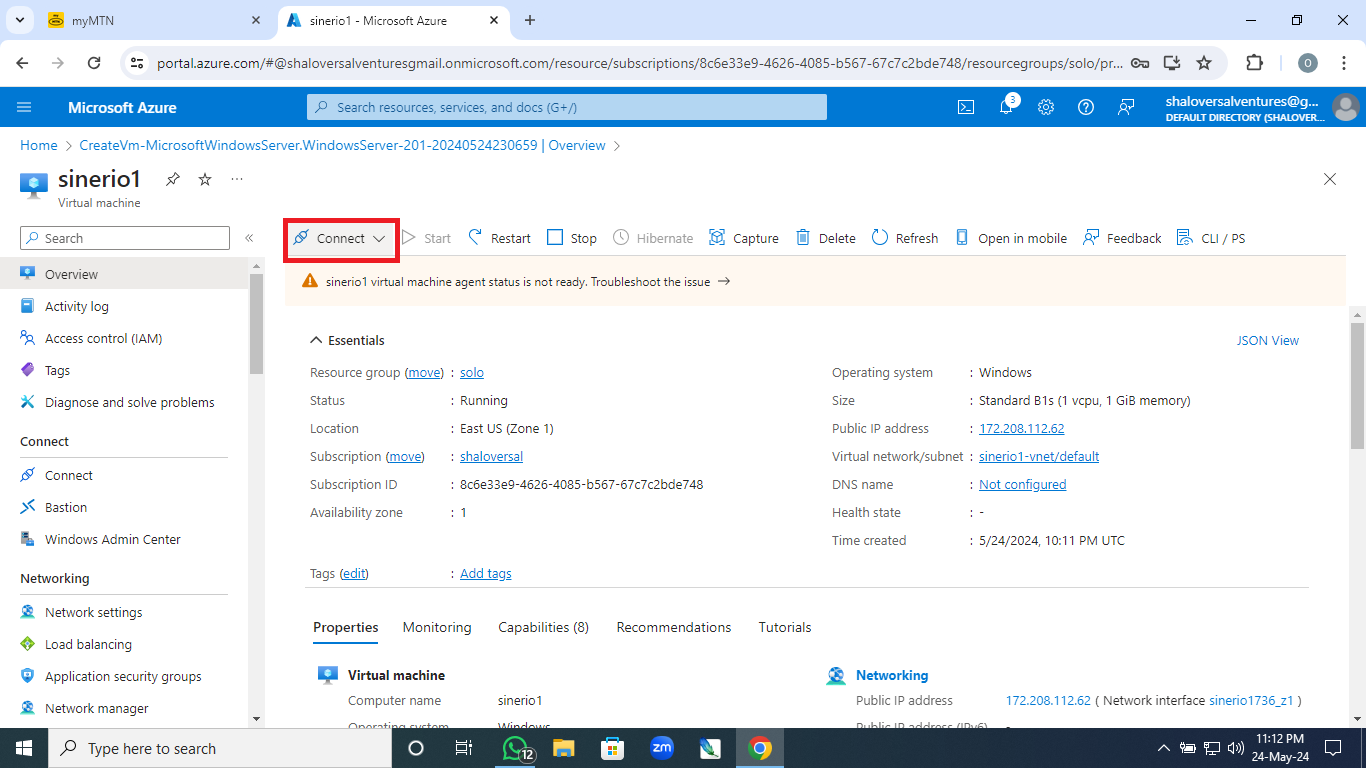

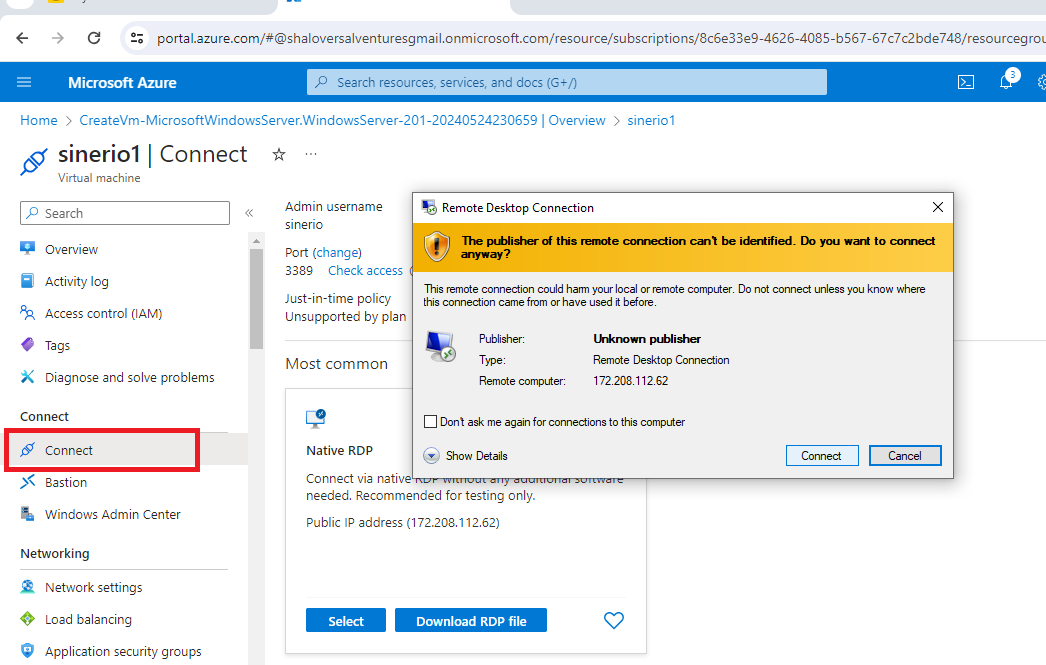

**HOW TO CONNECT YOUR Vm**

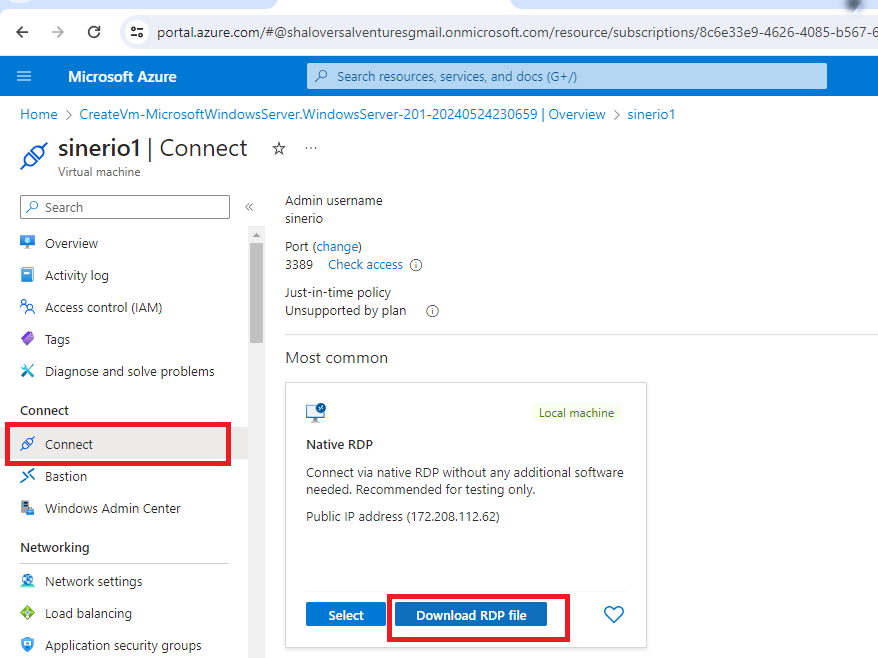

Click on connect on the webserver created

Download RDP protocol

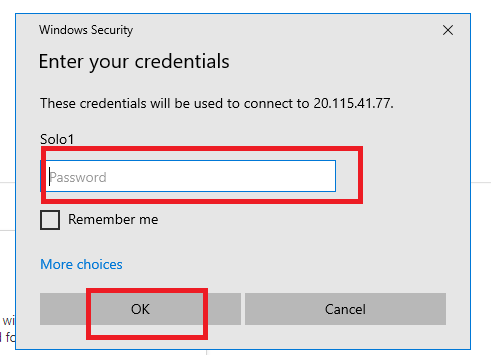

Input the Password Then

Click Connect

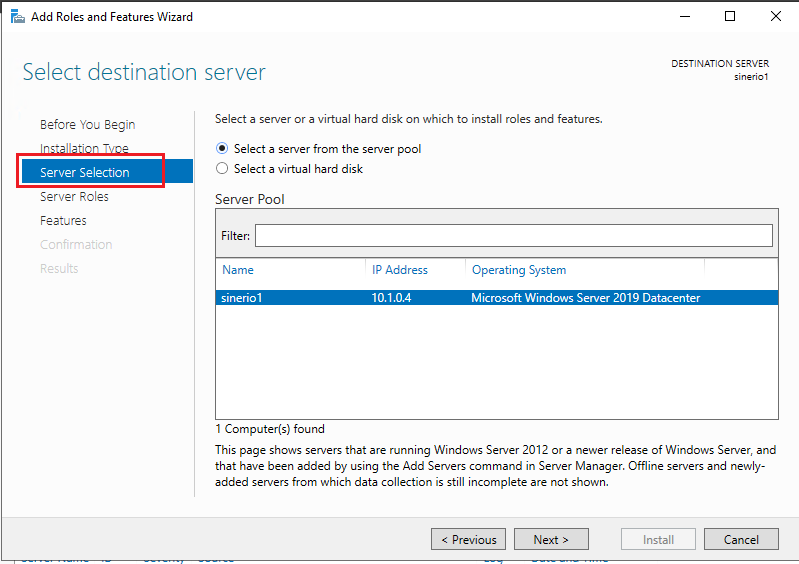

Click Add role and features

Next to server selection

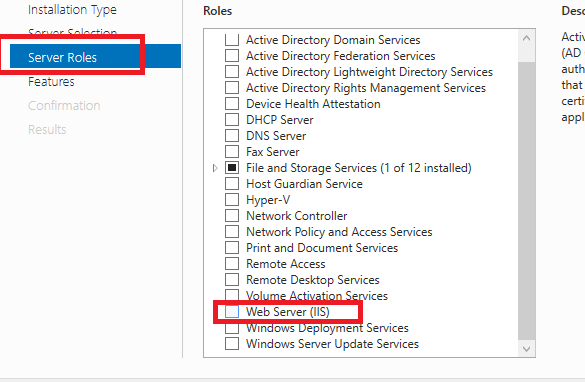

Under server role click on webserver IIS

Click add features

NExt to Role Service

Click IIS 6management

Next to INstall

NB; Installation takes few minutes.

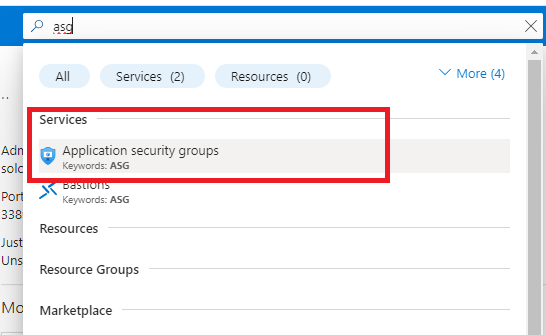

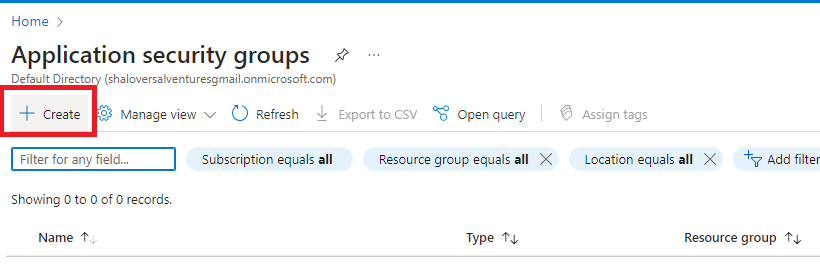

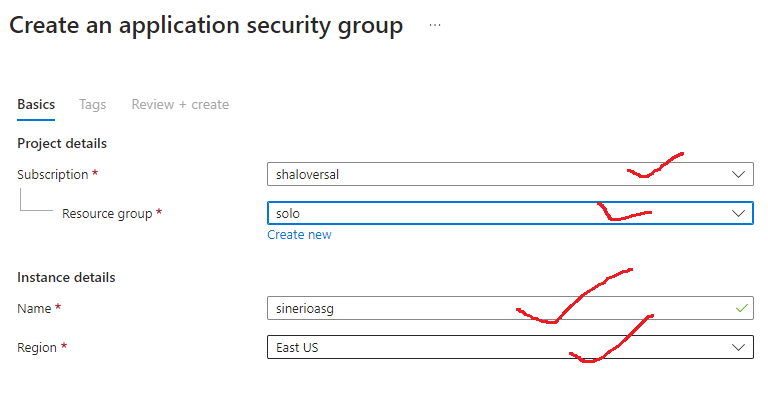

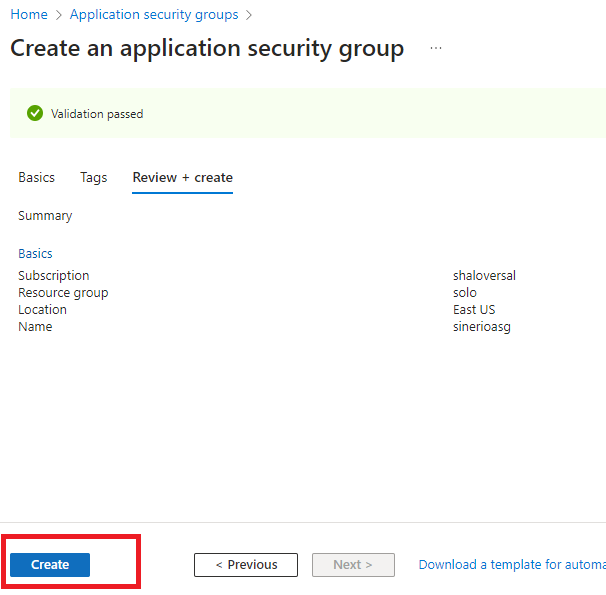

**HOW TO CREATE AN APPLICATION SECURITY GROUP**

Search for ASG (application security group) in the Azure portal.

Click Create at the top of the page.

Fill in your information.

Give the ASG a suitable name.

NB: Make sure you select the same region as the rest of your work when creating it.

Click Review + create.

Click Create once validation has passed.

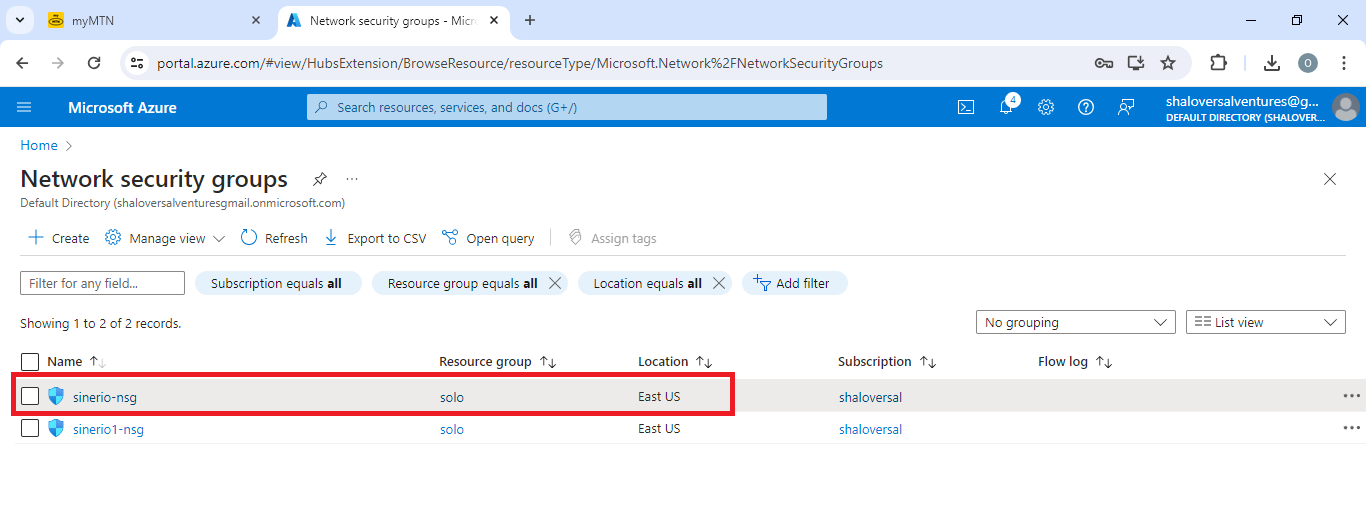

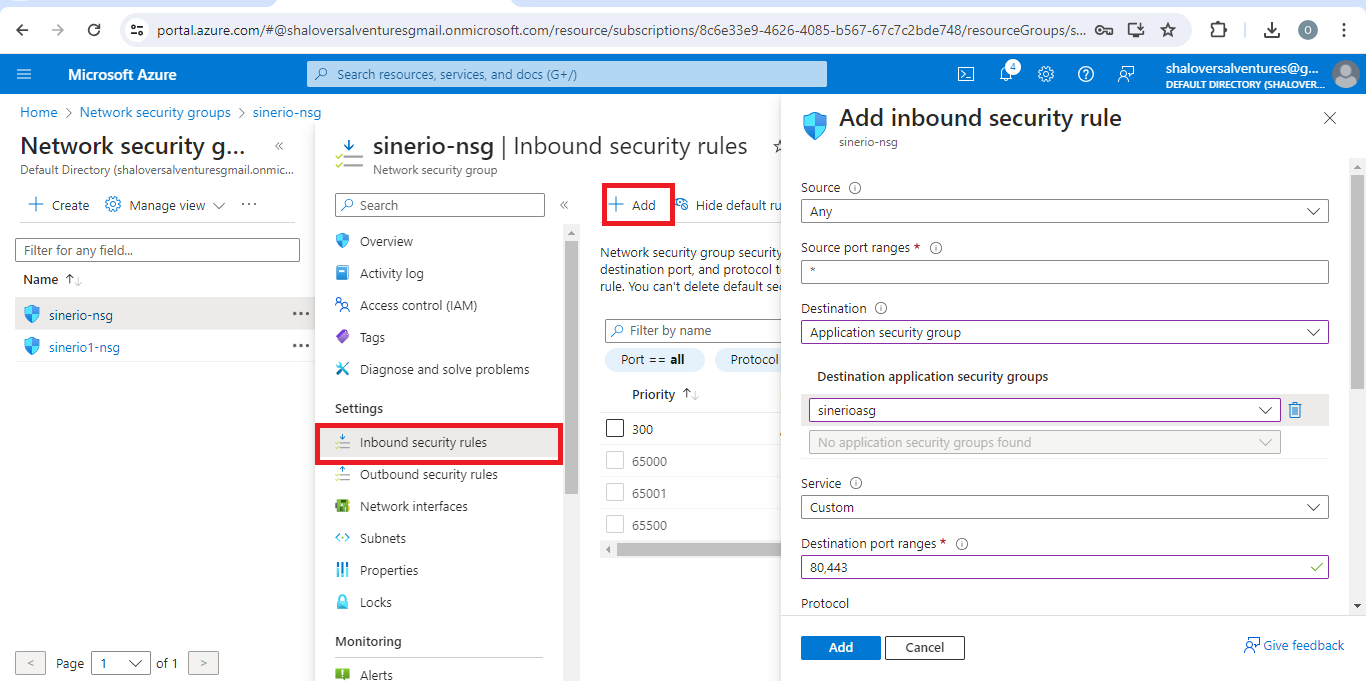

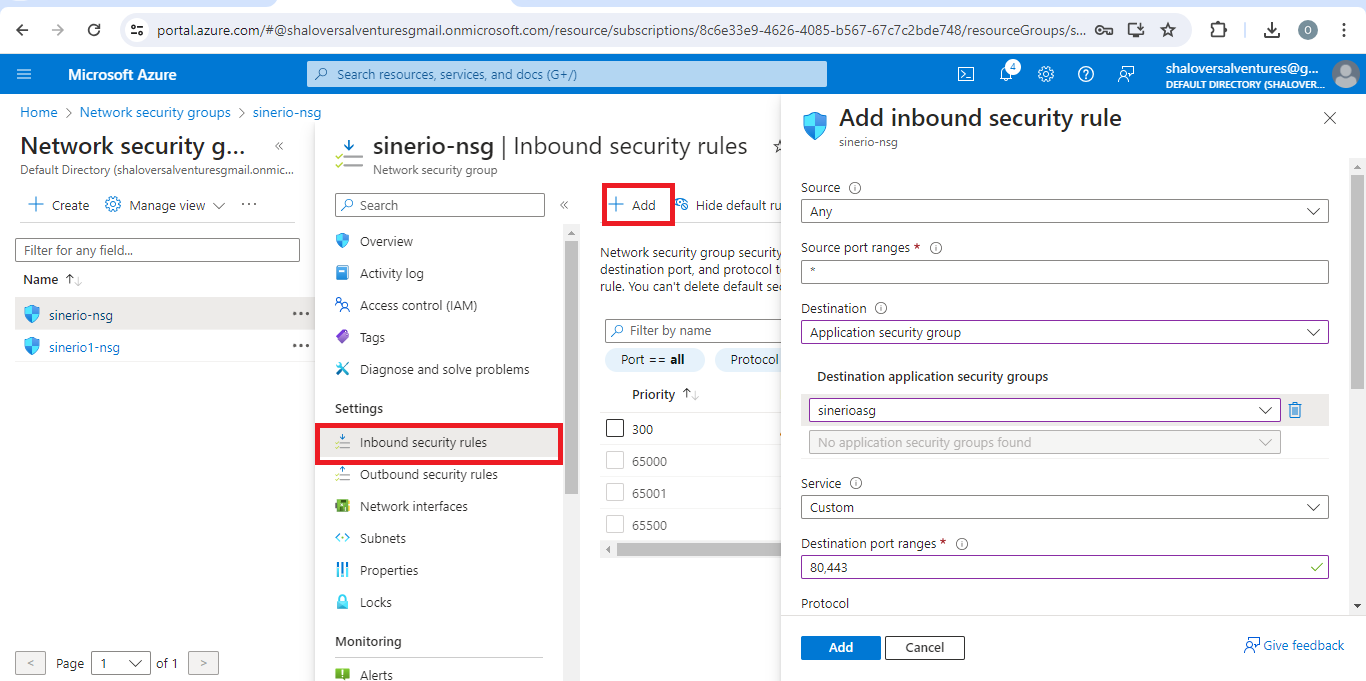

**HOW TO ADD AN INBOUND RULE**

Go to your resource group.

Click to open the default NSG (network security group).

Click + Add at the top of the network page.

Select Inbound security rule.

Click Add to add a rule.

Set the following:

Source: Any

Destination: Application security group, then select the ASG that you created

Destination port ranges: 80,443

Protocol: Any

Action: Allow

Priority: 100

Select Add.

**HOW TO ATTACH THE NEW RULE TO THE SERVER**

Click on the VM.

Open the server using the downloaded RDP file and password.

Click Application security groups.

Click Add ASG.

Once done, a success page will show up.

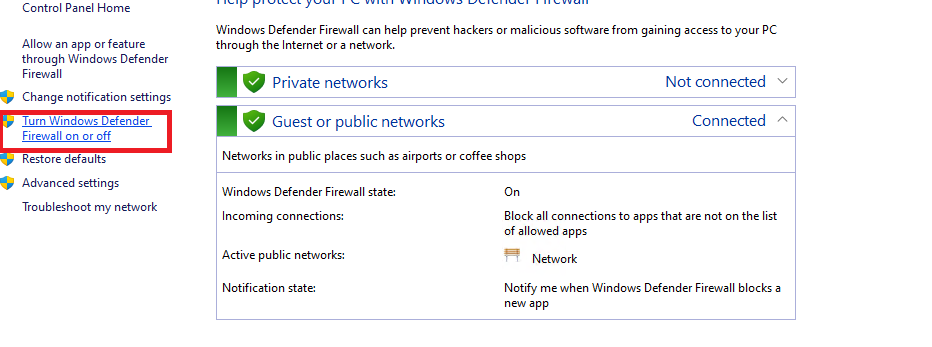

**HOW TO CREATE AND INSTALL A FIREWALL FOR THE WEB SERVER**

Go to the Marketplace.

Search for Firewall.

Click Create.

Select your subscription and resource group.

Select Premium for the SKU.

Create a suitable firewall policy.

Create a new public IP for your firewall.

Create the VNet and subnet.

NB: Allow the deployment to complete.

**Steps to attach the firewall to the server**

Go to the VM.

Click the server.

On the server page, click Virtual network.

Click Firewall to check that it is attached successfully.

| shaloversal123 | |

1,863,589 | 12 Benefits Of Learning Python 🐍 | 🦜 : Why should I learn Python? 🦉 : Python provides many useful features which make it popular and... | 0 | 2024-05-25T12:16:24 | https://dev.to/developedbyjk/12-benefits-of-learning-python-k1h | python, learning, benefits, webdev | 🦜 : Why should I learn Python?

🦉 : Python provides many useful features that make it popular and set it apart from other programming languages.

---

**(1) Easy to Learn and Use 📚**

Python is simple to learn with straightforward syntax resembling English. No semicolons or curly brackets; indentation defines code blocks. It's perfect for beginners!

---

**(2) Expressive Language ✍️**

Python can handle complex tasks with minimal code. For example, `print("Hello World")` takes just one line, unlike Java or C, which need several.

---

**(3) Interpreted Language 🔍**

Python executes one line at a time, making debugging easy and the language portable.

---

**(4) Cross-platform Language 🌐**

Python runs on Windows, Linux, UNIX, and macOS, making it highly portable. Write once, run anywhere!

---

**(5) Free and Open Source 💸**

Python is free and open-source, available at www.python.org. A large global community contributes to its modules and functions.

---

**(6) Object-Oriented Language 🧩**

Python supports object-oriented concepts like classes, inheritance, polymorphism, and encapsulation, promoting reusable code and efficient application development.

---

**(7) Extensible 🔗**

Code written in languages like C or C++ can be compiled into extension modules and called from Python programs, making Python versatile and powerful.

---

**(8) Large Standard Library 📚**

Python ships with a large standard library, and its package ecosystem adds third-party libraries for machine learning (TensorFlow, Pandas, NumPy), web development (Django, Flask), and more.

---

**(9) GUI Programming Support 🖥️**

Libraries like PyQt5, Tkinter, and Kivy facilitate the development of desktop applications with graphical user interfaces.

---

**(10) Integrated 🧬**

Python integrates easily with languages like C, C++, and Java, so existing code in those languages can be reused from Python projects.

---

**(11) Embeddable 🔄**

Code from other programming languages can be embedded in Python, and vice versa, allowing for flexible code integration.

---

**(12) Dynamic Memory Allocation 📦**

There is no need to declare variable data types; Python determines the type and allocates memory at runtime, which simplifies code writing. For example, just write `x = 15`.

| developedbyjk |

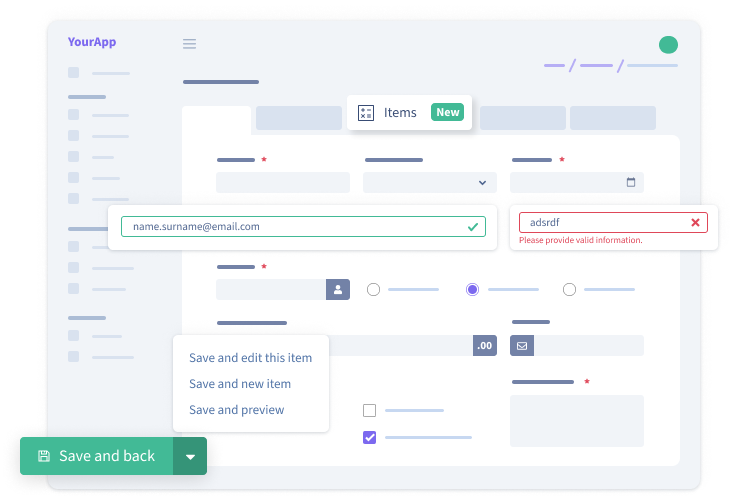

1,864,833 | Generate a Laravel CRUD (Create, Read, Update, Delete) in 5 minutes. | Are you building your App on Laravel? That's a great choice🎉. You must be planning an Admin panel for... | 0 | 2024-05-25T12:13:14 | https://backpackforlaravel.com/articles/getting-started/generate-crud-create-read-update-delete-in-laravel-in-5-minutes | laravel, tutorial, php, development | Are you building your App on Laravel? That's a great choice🎉. You must be planning an **Admin panel** for it. Well, if you're building one, let me give an overview of how you can make a customizable & functional Admin panel with **less effort**.

Admin Panels are made of [CRUDs](https://backpackforlaravel.com/docs/6.x/getting-started-basics#whats-a-crud), [Charts](https://backpackforlaravel.com/docs/6.x/base-widgets#chart-pro), and [Widgets](https://backpackforlaravel.com/docs/6.x/base-widgets). The major component is the CRUD, which is built with various fields and columns. I'm writing this article about a package that will help you generate **Laravel CRUDs** in just 5 minutes.

## Installation.

We'll be using [https://backpackforlaravel.com](https://backpackforlaravel.com) to generate Laravel CRUDs. You can experience a Monster CRUD in the [live demo](https://demo.backpackforlaravel.com/admin/monster) and be more confident in giving it a shot. So, are you ready?

Let's install it now:

1. Go to your Laravel project's directory, then in your terminal, run:

```shell

composer require backpack/crud

```

2. Follow the prompts - in the end, the installer will also tell you your admin panel's URL, where you should go and login.

```shell

php artisan backpack:install

```

> Note: Make sure the `APP_URL` in your .env file correctly points to the URL you use to access your application in the browser, for example `http://127.0.0.1:8000` or [`http://something.test`](http://something.test)

If you are facing trouble, check the [installation](https://backpackforlaravel.com/docs/6.x/installation) page for help!

### Laravel CRUD with a single command

To generate Laravel CRUD, we need a table.

1. Let's assume we have the `tags` table. You can copy the following migration to make one.

```php

Schema::create('tags', function (Blueprint $table) {

$table->increments('id');

$table->string('name');

$table->string('slug')->unique();

$table->timestamps();

});

```

2. Now we'll run the following command to generate the CRUD:

```bash

php artisan backpack:crud tag # use singular, not plural (like the Model name)

```

The command above generates the following:

* a model (`app/Models/Tag.php`);

* a controller (`app/Http/Controllers/Admin/TagCrudController.php`);

* a request (`app/Http/Requests/TagCrudRequest.php`);

* a resource route, as a line inside `routes/backpack/custom.php`;

* a new menu item in `resources/views/vendor/backpack/ui/inc/menu_items.blade.php`;

Done! Go to https://localhost/admin/tag to see your Laravel CRUD in action.

### CRUD Customization

We'll go through the generated files and customize them to the project's needs. The main customizations are:

* **Field** types for the Create & Update forms.

* **Column** types for the List & Show views.

Let's look at the generated Controller `TagCrudController.php` and add Fields & Columns:

```diff

<?php

...

use Backpack\CRUD\app\Library\CrudPanel\CrudPanelFacade as CRUD;

class TagCrudController extends CrudController

{

...

protected function setupListOperation()

{

+ CRUD::column('name')->type('text');

+ CRUD::column('slug')->type('text');

}

protected function setupCreateOperation()

{

CRUD::setValidation(TagRequest::class);

+ CRUD::field('name')->type('text');

+ CRUD::field('slug')->type('text');

}

...

}

```

That little code gets you a fully working CRUD.

#### Available Fields & Columns

Backpack includes a variety of FREE [Fields](https://backpackforlaravel.com/docs/6.x/crud-fields) & [Columns](https://backpackforlaravel.com/docs/6.x/crud-columns) and some eye-catching PRO Fields (a single addon) for complex project needs:

| FREE Fields | | PRO Fields | |

| --- | --- | --- | --- |

| checkbox | checklist | address\_google | browse |

| checklist\_dependency | color | browse\_multiple | base64\_image |

| custom\_html | date | ckeditor | date\_range |

| datetime | email | date\_picker | datetime\_picker |

| enum | Database ENUM | dropzone | easymde |

| PHP enum | hidden | google\_map | icon\_picker |

| month | number | image | phone |

| password | radio | relationship | repeatable |

| select (1-n) | select\_grouped | select2 (1-n) | select2\_multiple (n-n) |

| select\_multiple (n-n) | select\_from\_array | select2\_nested | select2\_grouped |

| summernote | switch | select\_and\_order | select2\_from\_array |

| text | textarea | select2\_from\_ajax | select2\_from\_ajax\_multiple |

| time | upload | slug | table |

| upload\_multiple | url | tinymce | video |

| view | week | wysiwyg | |

If the field you need isn't listed above, you are free to create your own. It's just a [command](https://backpackforlaravel.com/docs/6.x/crud-fields#creating-a-custom-field-type-1) away.

### CRUD Addons

Backpack also has many CRUDs and addons ready to use, which you can find [here](https://backpackforlaravel.com/addons). It includes **SettingsCRUD**, **MenuCRUD**, **NewsCRUD**, **User, Role & Permissions CRUD**, and many other useful admin panel features.

If you are wondering🤔 how to inject JS into Backpack fields to show or hide them on the client side, check out the [CrudField JavaScript Library](https://backpackforlaravel.com/docs/6.x/crud-fields-javascript-api). Backpack is a robust package that won't hold you back.

### Conclusion

[Laravel](https://laravel.com/) is excellent at building #PHP applications, and [Backpack](https://backpackforlaravel.com/) is excellent at building Laravel CRUDs & Admin Panel. Check out the wide variety of [fields & columns](https://backpackforlaravel.com/docs/6.x/crud-fields) it offers.

Give it a try, and experience generating Laravel CRUDs in 5 minutes. You'll never go back -- because we're all lazy😝. For more info, visit Backpack's FREE [CRUD Crash Course](https://backpackforlaravel.com/docs/6.x/crud-tutorial). | karandatwani92 |

1,864,831 | thampibook | Step into a realm where trust is not just a word but a way of life! Our book is synonymous with... | 0 | 2024-05-25T12:10:47 | https://dev.to/thampibook/thampibook-29pn | Step into a realm where trust is not just a word but a way of life! Our book is synonymous with reliability, offering punters a safe haven to indulge in their favorite games. With a focus on customer satisfaction and lightning-fast withdrawals, we ensure every player feels valued and respected. Best Book [Thampi Book](https://thampibook.com/) | thampibook | |

1,864,830 | Generating Taproot Wallet Address using bitcoinjs-lib | Taproot wallet address generation using Node.js. const ecc = require('tiny-secp256k1') const {... | 0 | 2024-05-25T12:07:21 | https://dev.to/pagarevijayy/generating-taproot-wallet-address-using-bitcoinjs-lib-54f4 | bitcoinjs, taprootwallletaddress, javascript, bitcoin | Taproot wallet address generation using Node.js.

```javascript

const ecc = require('tiny-secp256k1')

const { BIP32Factory } = require('bip32')

const bip32 = BIP32Factory(ecc)

const bip39 = require('bip39')

const bitcoin = require('bitcoinjs-lib')

const path = `m/86'/0'/0'/0/0`; // Path to first child of receiving wallet on first account

bitcoin.initEccLib(ecc);

let mnemonic = bip39.generateMnemonic()

const seed = bip39.mnemonicToSeedSync(mnemonic)

let rootKey = bip32.fromSeed(seed)

const childNode = rootKey.derivePath(path);

const node = childNode; // `path` already ends in /0/0; deriving further would print a key that does not match the address

const toXOnly = pubKey => (pubKey.length === 32 ? pubKey : pubKey.slice(1, 33));

const childNodeXOnlyPubkey = toXOnly(childNode.publicKey);

const internalPubkey = childNodeXOnlyPubkey;

const { address, output } = bitcoin.payments.p2tr({

internalPubkey

});

console.log(`

Wallet generated:

- Taproot Address: ${address},

- Key: ${node.toWIF()},

- Mnemonic: ${mnemonic}

`)

```
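The `toXOnly` step is the part that tends to surprise people, so here is a minimal standalone sketch of what it does. It assumes only Node.js's built-in `Buffer`; the 33-byte key is a made-up placeholder (not a valid curve point), used purely to show the length change from a compressed public key to its 32-byte x-only form.

```javascript
// Same helper as in the script above: drop the 1-byte prefix of a
// 33-byte compressed public key, leaving only the 32-byte x coordinate.
const toXOnly = (pubKey) => (pubKey.length === 32 ? pubKey : pubKey.slice(1, 33));

// Placeholder key (hypothetical data): prefix 0x02 followed by 32 bytes of 0xab.
const compressed = Buffer.concat([Buffer.from([0x02]), Buffer.alloc(32, 0xab)]);
const xOnly = toXOnly(compressed);

console.log(compressed.length); // 33
console.log(xOnly.length); // 32
console.log(toXOnly(xOnly).length); // 32 (already x-only, returned unchanged)
```

Taproot (BIP 340/341) public keys are x-only, which is why `p2tr` is given the 32-byte value as `internalPubkey` rather than the full compressed key.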

[Reference](https://github.com/bitcoinjs/bitcoinjs-lib/blob/master/test/integration/taproot.spec.ts) | [Code on Github](https://github.com/pagarevijayy/web3-dojo/blob/main/btc-wallet/createTaprootWallet.js )

| pagarevijayy |

1,864,829 | We need to slow down, Everything is going too fast | Last year, when GPT was released AI was trending in the market. However, I landed a remote... | 0 | 2024-05-25T12:01:35 | https://dev.to/shreyvijayvargiya/we-need-to-slow-down-everything-is-going-too-fast-db4 | watercooler, news, beginners, productivity | Last year, when GPT was released AI was trending in the market.

However, I landed a remote opportunity in the Web3 domain, developing wallet apps for web3 users.

Web3 was still a trending, fairly new technology in the market, but then came AI and the so-called GPT models.

ChatGPT makes our lives either too miserable or too easy. I am not sure which, because instead of enjoying the free time, we humans are working more and more.

Product Hunt is filled with many apps, websites, and tools already being developed.

Twitter is bombarded with people launching their apps and websites.

- Google I/O was last week

- OpenAI launched GPT-4o this month

- Canva has new releases this week

- Vercel and React released new versions

A lot is going on in technology.

Developers are working overtime!!

Solo developers are worried and constantly learning; they have no time to rest. Who gets to rest in 2024?

Does anyone else feel like this is going too fast? Technology is developing at an all-time-high rate, and there is no sign of it abating.

Last year, I was reading about Web3 and blockchain and working on cool technology; today it already seems outdated.

Forget technology: YouTube videos, tweets, movies, shows, and launches all become outdated within a week.

We are not in a state to remember or cherish anything for more than a week.

## We don’t Sprint in a Marathon

I believe Simon Sinek was right: we are playing an infinite game, not a finite one. Of course we all have limited time, but most of us have 20+ years of work ahead of us.

When running a marathon, we don't need to sprint; we should save energy and focus on finishing.

A 100m race needs a sprint, and over that short distance anyone can sprint, but doing the same in a marathon is a devastating idea that 90% of people have tried and failed at.

We can't blame a single party for this situation. Is ChatGPT the culprit, or is Google Gemini the reason behind such a fast-paced industry?

It's a more complex, interconnected cause-and-effect problem: the cause emerges in one place and the effect shows up somewhere else.

People who don't accept this fast-paced industry standard are getting left behind, so we can't blame ourselves for accepting it; we have no other choice.

Developers are getting frustrated and burnout is more common nowadays because of the way we are working.

The current mindset around working standards makes burnout inevitable for devs and managers across the globe.

I love technology, frontend, AI, and Web3, and I love writing code, but the competition is so fierce that if I miss anything it leads to FOMO, burnout, the fear of being left behind, and so on.

Twitter sometimes makes me insecure because people are doing so much in such a short time.

Some days I feel like shutting down YouTube and Twitter, but a few hours down the line I feel like I am missing something important. It's a trap.

None of this seems good in the long run. I am worried about where the next generation sees itself: so much to learn, or nothing to learn at all.

People are getting divided into extremes anyway; normal is not so normal anymore. One wants to build fast, and the other just wants a more balanced, medium-paced lifestyle.

I believe we should not force everyone to become a fast-paced, trend-chasing employee; we should accept that many people might prefer a balanced lifestyle.

It's been 5 months of just working and working without travelling. I don't have any complaints, because I've now learned how to cope with burnout and frustration.

Stop running so fast, guys; none of it makes sense to me. Good things take time, and you simply can't build Rome in a day using AI.

Take care of yourself and simply enjoy it.

Remember, in the end, we all gonna die one day so who cares, F** off to all one day, here I am enjoying a good Saturday.

Cheers

Shrey | shreyvijayvargiya |

1,864,828 | Top 40+ QA Testing Companies in 2024 [Top Ranked QA Companies] | As businesses increasingly rely on software solutions, the demand for quality assurance (QA) testing... | 0 | 2024-05-25T12:01:03 | https://dev.to/ray_parker01/top-40-qa-testing-companies-in-2024-top-ranked-qa-companies-b6p | ---

title: Top 40+ QA Testing Companies in 2024 [Top Ranked QA Companies]

published: true

---

As businesses increasingly rely on software solutions, the demand for quality assurance (QA) testing companies has surged. Here's a look at the top 40+ QA testing companies in 2024, their services, clients, and where they are headquartered.

<h2>Here is the list of top QA testing companies</h2>

<h3>1. <a href="https://www.qamentor.com/">QA Mentor</a></h3>

QA Mentor stands out for its award-winning testing services and broad global presence. They offer over 30 QA testing services and unique products tailored to specific industry needs.

<b>Services:</b> Test Automation, QA Audit and Process Improvement, Test Plan Development, QA Outsourcing.

<b>Clients:</b> Citibank, HSBC, Sony.

<b>Headquarter Location:</b> New York City, New York, USA.

<h3>2. <a href="https://qalified.com/">QAlified</a></h3>

QAlified is a software testing and quality assurance leader, ensuring high-quality software solutions with comprehensive testing services. They focus on minimizing risks and improving software performance.

<b>Services:</b> Functional, Performance, Security, Automation, and Usability Testing.

<b>Headquarter Location:</b> Montevideo, Uruguay.

<h3>3. Cigniti Technologies</h3>

Cigniti Technologies is recognized globally for its software testing and quality engineering services. They are dedicated to helping businesses accelerate their digital transformation initiatives through robust QA practices.

<b>Services:</b> Digital Assurance, Quality Engineering, Advisory & Transformation, Next-Gen Testing.

<b>Clients:</b> Southwest Airlines, Wyndham Hotels & Resorts, Lenovo.

<b>Headquarter Location:</b> Hyderabad, India.

<h3>4. Abstracta</h3>

Abstracta is a software testing company with deep expertise in performance and automation testing, helping companies ensure the scalability and reliability of their applications.

<b>Services:</b> Automation Testing, Performance Testing, DevOps Consulting, Accessibility Testing.

<b>Clients:</b> BBVA, Shutterfly, Benefit Cosmetics.

<b>Headquarter Location:</b> San Francisco, California, USA.

<h3>5. QASource</h3>

QASource specializes in high-quality QA services, blending the latest testing technologies with expert resources to cater to specific client needs, focusing on reducing time to market and improving software quality.

<b>Services:</b> API Testing, Mobile and Web Application Testing, QA Analysis, Automated Testing.

<b>Clients:</b> Facebook, Oracle, eBay.

<b>Headquarter Location:</b> Pleasanton, California, USA.

<h3>6. Applause</h3>

Applause provides a comprehensive suite of testing services, leveraging a global community of expert testers to deliver real-world feedback on digital quality.

<b>Services:</b> Crowdtesting, UX Testing, Functional Testing, Payment Testing.

<b>Clients:</b> Google, Uber, Fox.

<b>Headquarter Location:</b> Framingham, Massachusetts, USA.

<h3>7. TestFort</h3>

TestFort is known for its tailored testing solutions and meticulous attention to detail, helping clients achieve reliable and robust software products.

<b>Services:</b> Manual Testing, Automated Testing, Web Application Testing, Mobile App Testing.

<b>Clients:</b> Skype, DHL, HuffPost.

<b>Headquarter Location:</b> Lviv, Ukraine.

<h3>8. TestingXperts</h3>

TestingXperts specializes in next-gen continuous testing services that incorporate AI and machine learning to ensure higher efficiency and accuracy.

<b>Services:</b> Continuous Testing, DevOps Testing, AI Testing, Security Testing.

<b>Clients:</b> Aetna, Panera Bread, Gate Gourmet.

<b>Headquarter Location:</b> Mechanicsburg, Pennsylvania, USA.

<h3>9. Global App Testing</h3>

Focusing on speed and agility, Global App Testing enables companies to deploy their products faster by providing on-demand access to QA professionals worldwide.

<b>Services:</b> Exploratory Testing, Test Case Execution, Regression Testing, Load Testing.

<b>Clients:</b> Facebook, Microsoft, Spotify.

<b>Headquarter Location:</b> London, United Kingdom.

<h3>10. LogiGear</h3>

LogiGear provides customized testing solutions that support the development lifecycle, emphasizing modern methodologies like Agile and Test Automation.

<b>Services:</b> Test Automation Solutions, Software Testing, Continuous Testing, API Testing.

<b>Clients:</b> Adobe, Cisco, Samsung.

<b>Headquarter Location:</b> Silicon Valley, California, USA.

<h3>11. Testlio</h3>

Testlio merges expert testers with powerful testing software, specializing in mobile and web application testing. Their networked testing model allows them to mobilize and scale their services for client needs quickly.

<b>Services:</b> Mobile Testing, Functional Testing, Usability Testing, Localization Testing.

<b>Clients:</b> Amazon, Microsoft, CBS Interactive.

<b>Headquarter Location:</b> Austin, Texas, USA.

<h3>12. ThinkSys</h3>

ThinkSys delivers cost-effective and scalable testing services, leveraging cloud environments and automation to ensure high-quality software deployment.

<b>Services:</b> Performance Testing, Regression Testing, Cloud Testing, Security Testing.

<b>Clients:</b> Oracle, Intel, Prudential.

<b>Headquarter Location:</b> Sunnyvale, California, USA.

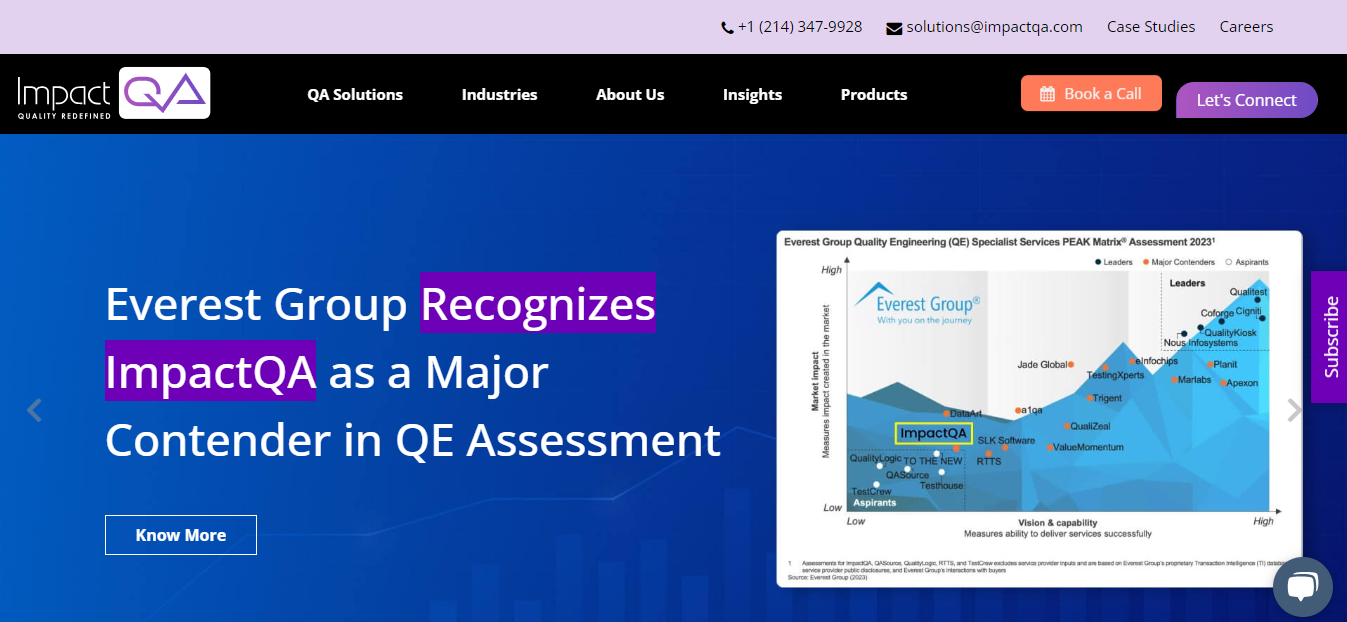

<h3>13. ImpactQA</h3>

ImpactQA provides holistic QA services, focusing on new-age technologies and solutions to drive digital excellence and software robustness.

<b>Services:</b> IoT Testing, Blockchain Testing, Cybersecurity Testing, Performance Testing.

<b>Clients:</b> Panasonic, Terex, UNICEF.

<b>Headquarter Location:</b> New York City, New York, USA.

<h3>14. Codoid</h3>

Codoid stands out for its innovative approach to software testing, delivering exceptional QA and software testing services that ensure product integrity.

<b>Services:</b> ETL Testing, Mobile QA, Game Testing, VR Testing.

<b>Clients:</b> Vodafone, Honeywell, Medlife.

<b>Headquarter Location:</b> Chennai, India.

<h3>15. Kualitatem</h3>

Kualitatem is renowned for its impeccable software testing and cybersecurity services, delivering enhanced efficiency and security to client operations.

<b>Services:</b> Information Security, Independent Software Testing, IT Audits, and Quality Assurance.

<b>Headquarter Location:</b> Lahore, Pakistan.

<h3>16. Invensis Inc</h3>

Invensis is a global IT-BPO that delivers innovative and scalable technology solutions to enhance business efficiency and growth.

<b>Services:</b> IT Services, Call Center Services, Data Entry Services, e-Commerce Support, Finance and Accounting.

<b>Headquarter Location:</b> Wilmington, Delaware, USA.

<h3>17. QA Wolf</h3>

QA Wolf revolutionizes the testing process by providing a no-setup, fully managed testing service that creates and runs end-to-end tests for web applications.

<b>Services:</b> End-to-End Testing, Continuous Testing, Integration Testing, Bug Tracking.

<b>Clients:</b> Startup tech companies, mid-sized software firms.

<b>Headquarter Location:</b> Seattle, Washington, USA.

<h3>18. TestMatick</h3>

TestMatick is known for its client-centric approach and high-quality testing services across web, mobile, and desktop applications.

<b>Services:</b> Quality Assurance Testing, Automated Testing, Mobile App Testing, Security Audits.

<b>Clients:</b> Small to medium-sized enterprises, Health and Education sectors.

<b>Headquarter Location:</b> New York City, New York, USA.

<h3>19. A1QA</h3>

A1QA delivers full-cycle testing services focusing on comprehensive solutions for enterprise clients, helping them ensure software reliability and performance.

<b>Services:</b> Full-cycle QA, Software Audit, Test Automation, Performance Testing.

<b>Clients:</b> Adidas, Kaspersky Lab, Telekom Austria Group.

<b>Headquarter Location:</b> Lakewood, Colorado, USA.

<h3>20. TestCrew</h3>

TestCrew is a leading testing and quality assurance service provider, specializing in comprehensive solutions that enhance software reliability and user satisfaction.

<b>Services:</b> Functional Testing, Automation Testing, Usability Testing, Performance Testing.

<b>Headquarter Location:</b> Jeddah, Saudi Arabia.

<h3>22. Aspire Systems</h3>

Aspire Systems has a philosophy of enhancing customer satisfaction through innovative testing methodologies and tools that ensure product quality.

<b>Services:</b> Functional Testing, Automation Testing, Performance Testing, Oracle EBS Testing.

<b>Clients:</b> Retail businesses, Tech startups, Financial institutions.

<b>Headquarter Location:</b> Chennai, India.

<h3>23. DeviQA</h3>

DeviQA is recognized for its attention to detail and a strong commitment to delivering flawless software products through rigorous testing protocols.

<b>Services:</b> Automated Testing, Mobile App Testing, Web Testing, Load and Performance Testing.

<b>Clients:</b> Technology firms, Media agencies, and E-commerce platforms.

<b>Headquarter Location:</b> Kyiv, Ukraine.

<h3>24. Testrig Technologies</h3>

Testrig Technologies is a prominent QA industry leader known for its innovative solutions and tailored approach to each client’s needs.

<b>Services:</b> Cloud Testing, Security Testing, Usability Testing, Selenium Testing.

<b>Clients:</b> Government agencies, Healthcare providers, IT companies.

<b>Headquarter Location:</b> Pune, India.

<h3>25. Oxagile</h3>

Oxagile leverages cutting-edge technologies and seasoned expertise to deliver end-to-end testing services that drive client success in digital landscapes.

<b>Services:</b> Manual Testing, Automated Testing, Custom Testing Solutions, QA Consultation.

<b>Clients:</b> Media conglomerates, Sports networks, Advertising firms.

<b>Headquarter Location:</b> New York City, New York, USA.

<h3>26. QualityLogic</h3>

QualityLogic fosters a culture of continuous improvement and innovation in QA testing, delivering services that ensure clients' products meet the highest standards.

<b>Services:</b> API Testing, Functional Testing, Smart Grid Solutions, IoT Testing.

<b>Clients:</b> Energy companies, Tech startups, and Telecommunication firms.

<b>Headquarter Location:</b> Boise, Idaho, USA.

<h3>27. OnPath Testing</h3>

OnPath Testing is dedicated to helping clients navigate the complexities of software testing with comprehensive services tailored to specific industry needs.

<b>Services:</b> Automation Testing, Manual Testing, Mobile Testing, ERP Testing.

<b>Clients:</b> Financial services, Healthcare applications, Education platforms.

<b>Headquarter Location:</b> Denver, Colorado, USA.

<h3>28. Planit Testing</h3>

Planit Testing integrates modern testing techniques and tools to offer top-notch QA services, ensuring that clients receive both efficient and effective testing outcomes.

<b>Services:</b> Functional Testing, Automation Testing, Performance Testing, Cybersecurity Testing.

<b>Clients:</b> Banking institutions, Retail chains, and Government agencies.

<b>Headquarter Location:</b> Sydney, Australia.

<h3>29. HikeQA</h3>

HikeQA stands out in the QA industry for its comprehensive testing services, ensuring superior software quality and performance with a client-centric approach.

<b>Services:</b> Manual Testing, Automation Testing, Mobile App Testing, Web Testing.

<b>Headquarter Location:</b> Noida, India.

<h3>30. SkyTesters</h3>

SkyTesters emphasizes user-centric testing services, specializing in mobile and web applications to ensure optimal functionality and user satisfaction.

<b>Services:</b> Mobile Testing, Usability Testing, Security Testing, Performance Testing.

<b>Clients:</b> Mobile app startups, E-commerce platforms, Enterprise software.

<b>Headquarter Location:</b> New Delhi, India.

<h3>31. Levi9</h3>

Levi9 is known for its technological prowess and customer-focused approach, delivering QA services that support agile development and digital transformation.

<b>Services:</b> End-to-End Testing, Automation Solutions, DevOps Integration, Cloud Testing.

<b>Clients:</b> Tech startups, Multinational corporations, and Digital service providers.

<b>Headquarter Location:</b> Amsterdam, Netherlands.

<h3>32. TestDevLab</h3>

TestDevLab provides robust software testing and engineering solutions to ensure high-quality products. Their focus is on reliability and innovation.

<b>Services:</b> QA Testing, Network Testing, Voice Quality Testing, Software Development.

<b>Headquarter Location:</b> Riga, Latvia.

<h3>33. Testbytes</h3>

Testbytes offers tailored testing solutions crafted to meet each project's unique challenges, ensuring high-quality software products through rigorous QA processes.

<b>Services:</b> Game Testing, Security Auditing, Mobile App Testing, Software Testing Consultancy.

<b>Clients:</b> Gaming studios, Security software firms, Mobile app developers.

<b>Headquarter Location:</b> Pune, India.

<h3>34. Codified Security</h3>

Codified Security stands out for its specialized focus on mobile app security testing, protecting clients against potential vulnerabilities and threats.

<b>Services:</b> Mobile Application Security Testing, Code Review, Threat Identification, Compliance Testing.

<b>Clients:</b> Financial institutions, Healthcare providers, Tech startups.

<b>Headquarter Location:</b> London, United Kingdom.

<h3>35. Perfecto by Perforce</h3>

Perfecto specializes in cloud-based web, mobile, and IoT testing environments that enable developers and testers to evaluate applications under real-world conditions.

<b>Services:</b> Continuous Testing, Mobile and Web Automation, Performance Testing, Security Testing.

<b>Clients:</b> Banks, Retail companies, Telecom operators.

<b>Headquarter Location:</b> Minneapolis, Minnesota, USA.

<h3>36. ScienceSoft</h3>

ScienceSoft offers extensive IT consulting and software development expertise, delivering tailor-made solutions to optimize business processes and technology strategies.

<b>Services:</b> Custom Software Development, IT Consulting, Cybersecurity, QA, and DevOps.

<b>Headquarter Location:</b> McKinney, Texas, USA.

<h3>37. QualityWorks Consulting Group</h3>

QualityWorks Consulting Group is renowned for its comprehensive approach to software testing and quality assurance. With a focus on agile methodologies and continuous integration, they help clients enhance product performance and speed to market.

<b>Services:</b> Automation Testing, Manual Testing, Performance Testing, Security Testing.

<b>Clients:</b> Startups, Fortune 500 companies in technology and finance.

<b>Headquarter Location:</b> Los Angeles, California, USA.

<h3>38. XBOSoft</h3>

XBOSoft elevates software quality across various platforms through deep expertise in QA and comprehensive testing services.

<b>Services:</b> Software Quality Consulting, Mobile Testing, Agile QA, Healthcare Software Testing.

<b>Clients:</b> Software and tech companies, Healthcare providers, and Financial Services.

<b>Headquarter Location:</b> San Francisco, California, USA.

<h3>39. Testhouse Ltd</h3>

Testhouse Ltd focuses on enhancing software applications' reliability, performance, and security through advanced testing methodologies and tools.

<b>Services:</b> DevOps Testing, Managed Testing Services, Digital Assurance, Accessibility Testing.

<b>Clients:</b> Government entities, Educational institutions, Enterprise clients.

<b>Headquarter Location:</b> London, United Kingdom.

<h3>40. QAwerk</h3>

QAwerk is dedicated to uncovering bugs and ensuring software reliability through its meticulous and detail-oriented testing processes.

<b>Services:</b> Bug Hunting, Documentation Testing, Usability Testing, Compliance Testing.

<b>Clients:</b> Startups, Media agencies, E-commerce platforms.

<b>Headquarter Location:</b> Kyiv, Ukraine.

<h3>41. Checkmarx</h3>

Known for its leadership in software security, Checkmarx offers robust testing solutions that focus on static and dynamic code analysis to prevent security breaches.

<b>Services:</b> Static Application Security Testing (SAST), Software Composition Analysis, Application Security Testing, Interactive Application Security Testing.

<b>Clients:</b> Software developers, IT security agencies, Large corporations.

<b>Headquarter Location:</b> Ramat Gan, Israel.

<h3>Conclusion</h3>

In conclusion, the QA testing landscape in 2024 is diverse and vibrant, with each company bringing its unique strengths and specialties to the table. From comprehensive service offerings and innovative testing solutions to global outreach and esteemed client lists, these companies represent the pinnacle of quality assurance. Whether you're a startup looking for agile testing solutions or a large enterprise needing rigorous compliance testing, the <a href="https://www.iqvis.com/blog/trending-best-software-testing-companies/">QA testing companies</a> listed provide the expertise and technology to ensure your software meets the highest standards of quality and reliability. As the digital landscape continues to evolve, partnering with a top-tier QA testing company is more crucial than ever to maintain a competitive edge and deliver exceptional user experiences.

<b>Note: If you want to list your company on this post then please contact on this email address readdive@gmail.com</b>


| ray_parker01 | |

1,847,950 | Next.js e Vercel: Otimizando Aplicações para Produção | Introdução Next.js é um framework React popular que oferece funcionalidades como... | 27,692 | 2024-05-25T11:51:24 | https://dev.to/vitorrios1001/nextjs-e-vercel-otimizando-aplicacoes-para-producao-378j | vercel, nextjs, react, productivity | ## Introduction

Next.js is a popular React framework that offers features such as server-side rendering (SSR) and static site generation (SSG). When hosted on Vercel, a cloud platform specifically optimized for Next.js applications, developers can take advantage of several tools and optimizations to maximize performance and efficiency. This article is a detailed guide to optimizing Next.js applications on Vercel, covering caching, pre-rendering, and Edge Functions.

## Optimizing with Caching

### 1. **Edge Caching**

Vercel offers edge caching, which means static content and SSR pages are cached at global points of presence. This reduces latency and improves load times by serving content from a location physically closer to the user.

**Implementation:**

- Use HTTP headers to control caching. For example, the `s-maxage` directive of `Cache-Control` sets how long a page should be cached on the CDN.

- For dynamic pages that require fresh data, use a cache invalidation strategy or set `s-maxage` to a low value.
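As a rough illustration of the two bullets above, the sketch below builds a `Cache-Control` value with `s-maxage` and sets it from an API route handler. The helper name `edgeCacheControl`, the 60 and 300 second values, and the response body are assumptions made for this example, not values from the article. The handler is left unexported so the snippet runs on its own; in a real `pages/api` file it would be the default export.

```javascript
// Hypothetical helper: builds a Cache-Control value for CDN (edge) caching.
// Both durations are in seconds.
function edgeCacheControl({ sMaxAge, staleWhileRevalidate = 0 }) {
  const parts = ['public', `s-maxage=${sMaxAge}`];
  if (staleWhileRevalidate > 0) {
    parts.push(`stale-while-revalidate=${staleWhileRevalidate}`);
  }
  return parts.join(', ');
}

// Sketch of a Next.js API route handler. `res` is the response object
// Next.js passes to API routes (setHeader, status, json).
function handler(req, res) {
  // Cache on the CDN for 60s; allow serving a stale copy for up to 300s
  // while a fresh one is fetched in the background.
  res.setHeader(
    'Cache-Control',
    edgeCacheControl({ sMaxAge: 60, staleWhileRevalidate: 300 })
  );
  res.status(200).json({ generatedAt: new Date().toISOString() });
}
```

A low `sMaxAge` keeps dynamic data reasonably fresh while still absorbing traffic spikes at the edge.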

### 2. **Browser Caching**

Besides edge caching, configuring browser caching for static files (CSS, JS, images) can reduce the amount of data the user has to download on repeat visits.

**Implementation:**

- Configure `Cache-Control` on static files to use `max-age`, and potentially `immutable` if the files do not change between builds.
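One way to apply this is the `headers()` option in `next.config.js`. The sketch below is a minimal example under stated assumptions: the `/static/:path*` source pattern and the one-year `max-age` are illustrative choices, and `immutable` is only safe if those file names change whenever their contents change.

```javascript
// next.config.js (sketch): long-lived browser caching for files served
// under /static. The path pattern is an assumption for this example.
const nextConfig = {
  async headers() {
    return [
      {
        source: '/static/:path*',
        headers: [
          {
            key: 'Cache-Control',
            // One year, and tell the browser not to revalidate at all.
            value: 'public, max-age=31536000, immutable',
          },
        ],
      },
    ];
  },
};

module.exports = nextConfig;
```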

## Using Pre-rendering

### 1. **Static Site Generation (SSG)**

Next.js lets you generate static pages at build time. These pages can be served immediately, improving performance and the user experience.

**Implementation:**

- Use `getStaticProps` to fetch data at build time, and `getStaticPaths` if you have dynamic pages that can be pre-rendered with different parameters.
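The two functions could fit together as in the sketch below, written for a hypothetical `pages/posts/[slug].js`. `loadPosts` and its data are placeholders standing in for a CMS or database call. The functions are left unexported so the snippet runs standalone; in a real page file both would be exported.

```javascript
// Hypothetical data source; a real app would query a CMS or database here.
async function loadPosts() {
  return [
    { slug: 'hello-world', title: 'Hello, world' },
    { slug: 'second-post', title: 'Second post' },
  ];
}

// Tells Next.js which dynamic routes to pre-render at build time.
async function getStaticPaths() {
  const posts = await loadPosts();
  return {
    paths: posts.map((post) => ({ params: { slug: post.slug } })),
    fallback: false, // any slug not listed above results in a 404
  };
}

// Runs at build time, once per path, and supplies the page's props.
async function getStaticProps({ params }) {
  const posts = await loadPosts();
  const post = posts.find((p) => p.slug === params.slug);
  if (!post) {
    return { notFound: true };
  }
  return { props: { post } };
}
```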

### 2. **Incremental Static Regeneration (ISR)**

ISR lets you update static pages without rebuilding the whole application. This is ideal for content that changes frequently but can still be served as static.

**Implementation:**

- Add the `revalidate` option in `getStaticProps` to specify how often the page should be regenerated.
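A minimal sketch of the `revalidate` option follows. `loadProducts` and the 60-second interval are assumptions made for illustration. As before, the function is left unexported so the snippet runs on its own; a real page file would export it.

```javascript
// Hypothetical data source standing in for an API or database call.
async function loadProducts() {
  return [{ id: 1, name: 'Keyboard' }];
}

async function getStaticProps() {
  const products = await loadProducts();
  return {
    props: { products },
    // After a request arrives, Next.js regenerates this page in the
    // background at most once every 60 seconds.
    revalidate: 60,
  };
}
```

Visitors keep receiving the previously generated page until the regeneration finishes, so a slow data source does not slow down requests.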

## Implementing Edge Functions

Edge Functions let you run code at the edge, closer to the user, before the request reaches the main server or the browser. They are useful for real-time personalization and tasks that need low latency.

**Implementation:**

- Use `middleware.js` in Next.js to run code in Edge Functions. This file lets you intercept requests and modify responses, or redirect users based on geolocation or device headers.

### Edge Function example:

```javascript

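import { NextResponse } from 'next/server';

export function middleware(request) {

  // request.geo may be missing outside Vercel (e.g. local dev), so fall back to 'US'
  const country = request.geo?.country || 'US';

  if (country !== 'US') {

    // Redirects from middleware need an absolute URL
    return NextResponse.redirect(new URL('/non-us', request.url));

  }

  return NextResponse.next();

}

// Skip /non-us itself so redirected visitors do not loop
export const config = { matcher: ['/((?!non-us).*)'] };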

```

## Benefits and Considerations

Combining Next.js and Vercel offers several benefits:

- **Performance:** Faster page loads thanks to efficient caching and content delivered closer to the user.

- **Scalability:** Applications scale easily thanks to managed infrastructure and automatic optimizations.

- **Simplified development:** Fewer infrastructure concerns and more focus on building features.

Compared with other hosting approaches and frameworks, the Next.js and Vercel integration stands out for its automatic optimization and framework-specific support.

## Conclusão

Otimizar aplicações Next.js hospedadas na Vercel envolve aproveitar ao máximo os recursos de caching, pre-renderização e Edge Functions. Implementar essas estratégias não apenas melhora a velocidade e a experiência do usuário, mas também simplifica o processo de desenvolvimento, permitindo que desenvolvedores se concentrem em criar funcionalidades ricas e interfaces envolventes. Com a configuração correta, Next.js e Vercel podem oferecer uma solução poderosa para desenvolver aplicações web modernas e de alto desempenho. | vitorrios1001 |

1,864,827 | Prometheus: Unable to access the Prometheus from browser | I have done with configuration of Prometheus on my ubuntu machine but unable to access it via... | 0 | 2024-05-25T11:48:46 | https://dev.to/anil_gupta_8c14d017c93304/prometheus-unable-to-access-the-prometheus-from-browser-1li2 | prometheus, kubernetes, docker | I have finished configuring Prometheus on my Ubuntu machine, but I am unable to access it from a browser. The Prometheus pods and services are running.

I tried accessing Prometheus in the following ways, but none of them worked:

1. localhost:9090

2. 172.31.37.100:9090 [Static IP of ubuntu machine]

3. 172.19.33.206:9090 [Default IP of ubuntu machine]

I am not sure what needs to be done to make it work. Any suggestions would be appreciated. | anil_gupta_8c14d017c93304 |

1,864,826 | Best online fantasy game | Betting id cricket Ensuring fair play is crucial not only for the enjoyment of gamers but also for... | 0 | 2024-05-25T11:47:25 | https://dev.to/mandeep_chahal_529ed4507e/best-online-fantasy-game-48bh | bettingidcricket | [Betting id cricket](https://bestonlinecricketid.online/betting-id-cricket/) Ensuring fair play is crucial not only for the enjoyment of gamers but also for the credibility and sustainability of gaming platforms. This article explores the best practices for promoting fair play in online gaming and highlights some of the top platforms that prioritize fairness. | mandeep_chahal_529ed4507e |

1,864,736 | Desert Camp in Jaisalmer | The golden dunes of the Thar Desert evoke a sense of mystery and Best Adventure Activities in... | 0 | 2024-05-25T09:24:14 | https://dev.to/camp_injaisalmer_0a70bdb/desert-camp-in-jaisalmer-1kb | The golden dunes of the Thar [Desert](https://campinjaisalmer.in/desert-camp-in-jaisalmer.html) evoke a sense of mystery and adventure. As the sun sets over the rippling sand sea, the desert comes alive. Imagine yourself sitting under a canopy of stars, warmed by a crackling bonfire, entertained by traditional Kalbeliya dances, and enjoying a delicious home-cooked Rajasthani meal. A night at a desert camp in Jaisalmer is an experience you will cherish forever.

When planning your trip to Jaisalmer, Rajasthan, consider a stay at Bhatt Desert Camp Jaisalmer. Bhatt Desert Camp offers a glimpse into the rich culture and heritage of the desert state while allowing you to reconnect with nature. Wake up to the sounds of chirping birds, go on a camel safari or jeep safari, try parasailing in Jaisalmer, and stargaze at night. There are endless ways to discover the beauty of the golden desert from your desert camp in Jaisalmer with Bhatt Desert Camp. Escape the hustle and bustle of city life and head to the sand dunes of Jaisalmer for a magical desert holiday with Bhatt Desert Camp.

Bhatt Desert Camp offers desert camping in Jaisalmer with every comfort. Choose us for the best camps at affordable prices with world-class amenities.

Bhatt Desert Camp is a popular accommodation option in Jaisalmer, Rajasthan. Jaisalmer is known for its magnificent sand dunes and the Thar Desert, which attract numerous tourists from around the world.

Experience the Thrill of the Desert at the Best Luxury Camp in Jaisalmer

You've always dreamed of experiencing the magic of the desert: vast seas of rippling sand dunes, vibrant cultural experiences, and stunning sunrises and sunsets. Now is the time to make that dream a reality with an unforgettable luxury desert experience in Jaisalmer, the heart of Rajasthan's Thar Desert. Forget the usual tourist traps and immerse yourself in the beauty and adventure of the desert with a stay at the top-notch Bhatt Desert Camp. This Relais & Châteaux property will sweep you into another world with its striking architecture, lavish amenities, and impeccable service. Whether you want to soak in the scenic beauty on a sunset camel safari, learn culinary secrets in a cooking class, or stargaze at night with an astronomer, Bhatt Desert Camp curates memorable experiences for a taste of pure Rajasthani culture and hospitality. Let the golden sand dunes and vibrant festivals ignite your senses for an escape you'll cherish forever. The magic of the desert is calling; it's time for you to answer.

Desert Camp in Jaisalmer

The best desert camp in Jaisalmer is the perfect way to experience the thrill of the desert while still enjoying creature comforts. Surrounded by sand dunes and open skies, you feel a world away from it all.

Stargaze at Night in Jaisalmer

The desert comes alive at night. Billions of stars light up the inky black sky, unobscured by city lights. Curl up by a crackling bonfire, listen to traditional Rajasthani music, and stargaze. You may even spot a shooting star! Bhatt Desert Camp offers telescopes for viewing celestial objects up close.

Explore the Dunes of Jaisalmer.

Venture out to the golden sand dunes in the morning or late afternoon. Slide, roll, and run down the dunes, your laughter echoing across the desert. Or take a camel safari, jeep safari, or paramotoring tour of the desert, watching the sun set over the dunes.

Facilities

* Air conditioning

* Air Fan Luxury Camp

* Telephone

* 24 Hours running Hot & Cold Water

* Laundry facilities on request

* Astrologist/Palmist & Doctor on call

* Massage on call

* Money Exchange & Travel Desk

* Folk Dances

* Local sightseeing

* Travel Service – Tour Package, Car rental

* Arrival Transfer from Railway station & Airport | camp_injaisalmer_0a70bdb | |

1,864,825 | The Car Game (Cube.net) [BETA] | This is a Fast Paced Game. Where You Are A Cube. I'm Not Telling Everything Go Check The Game Out To... | 0 | 2024-05-25T11:47:17 | https://dev.to/abdul_hussain_13998d5fcc8/the-car-game-cubenet-beta-2jff | codepen | <p>This is a Fast Paced Game Where You Are A Cube. I'm Not Telling Everything, Go Check The Game Out To Find Out More... Make Red Cubes Touch Green Ones To Kill Red Cubes. The Score System is Broken :( I Can't Get An Idea, So Leave Your Awesome Ideas In Comments So I Can Update This More Often. Comment If You Find Any Bug And I Will Fix It ASAP. 10 Likes For A HUGE Update :D Enjoy The Game Now. Thank You For Testing The BETA Version. Select Car Color And Click Play To Start :) [This Game Currently Supports Mobile]</p>

{% codepen https://codepen.io/EclipseXlazer/pen/NWVxQqG %} | abdul_hussain_13998d5fcc8 |

1,864,823 | Beyond Docker: Exploring Buildah and Podman for Container workflows | Containers have emerged as a game-changer in application development and deployment. Their... | 0 | 2024-05-25T11:41:18 | https://dev.to/ahmadmohey/beyond-docker-exploring-buildah-and-podman-for-container-workflows-3lnk | docker, podman, buildah, container | Containers have emerged as a game-changer in application development and deployment. Their lightweight, portable, and self-contained nature has streamlined workflows and fostered a more agile development environment.

This article will introduce you to three key players in the containerization world: Docker, Buildah, and Podman.

Docker is like the godfather of containerization. It's the most popular tool (around 80% of market share), offering a complete toolkit for building, running, sharing, and deploying containerized applications. It's user-friendly, making it a great starting point for beginners.

Due to its extensive features and robust ecosystem, Docker remains the industry standard for managing containerized applications in production environments. It provides additional features like image registries for sharing images, orchestration tools for managing multiple containers, and security features to enhance container security.

Buildah, on the other hand, is a lightweight tool built for efficiency. It focuses on crafting top-notch container images, giving you more control over what goes inside. This makes it a favorite for experienced users who want to keep their images lean and mean.

Some experienced users prefer Buildah because it empowers them to create lean and mean images, ensuring they only contain the essential components needed for the application to function. This focus on efficiency makes Buildah ideal for scenarios where image size and resource utilization are critical considerations, such as deploying containers on resource-constrained environments.

Finally, Podman is a powerful alternative to Docker. It works similarly, letting you run and manage containers, but without needing a background program running all the time. This makes it a good fit for Linux systems and those who prefer open-source options.

If you're looking for a robust container management tool that prioritizes open-source principles and efficiency, Podman might be the perfect fit for you. It integrates seamlessly with other open-source tools commonly used in Linux environments and provides a familiar command-line interface for users comfortable with the Linux ecosystem.

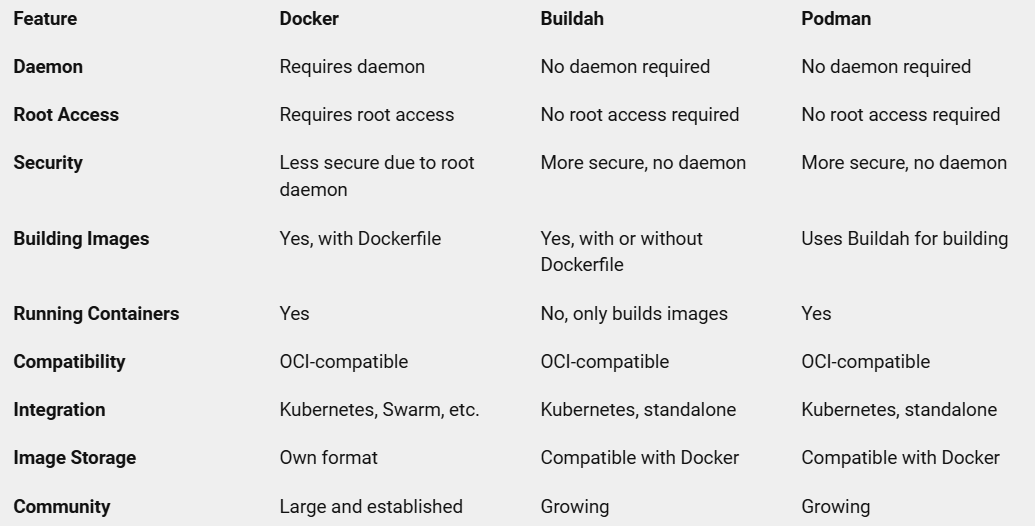

Here’s a brief comparison of Docker, Buildah, and Podman:

Now you have a better understanding of Docker, Buildah, and Podman, the key players in the containerization game. Choosing the right tool depends on your specific needs. If you're a beginner or need a comprehensive solution for all aspects of containerization, Docker might be a great fit. For building efficient images with more control, Buildah shines. And if you're an open-source enthusiast working on Linux, Podman offers a powerful and lightweight alternative for container management.

No matter which tool you choose, containers can revolutionize your development workflow by making your applications more portable and efficient. So, experiment, explore, and happy containerizing! | ahmadmohey |

1,864,822 | Level Up Your Dev Skills: Top AI Tools for 2024! | Calling all coders! Feeling overwhelmed by the ever-evolving world of development? AI is here to be... | 0 | 2024-05-25T11:39:05 | https://dev.to/futuristicgeeks/level-up-your-dev-skills-top-ai-tools-for-2024-25oe | webdev, ai, programming, developers | Calling all coders!

Feeling overwhelmed by the ever-evolving world of development?

AI is here to be your secret weapon!

FuturisticGeeks brings you the ultimate list of AI tools that will supercharge your workflow and make you a coding ninja.

Read more on our article: https://futuristicgeeks.com/level-up-your-dev-skills-top-ai-tools-for-2024/ | futuristicgeeks |

1,864,821 | Top 5 iPhone Cleaner Apps in 2024 | Does it feel sluggish and unresponsive when you try to take photos, open apps, or browse the web?... | 0 | 2024-05-25T11:36:29 | https://dev.to/nik_marron/top-5-iphone-cleaner-apps-in-2024-1oe7 | ios, ipad, mobile, top7 | Does your iPhone feel sluggish and unresponsive when you try to take photos, open apps, or browse the web? These are all signs that your iPhone's storage might be getting cluttered.

Keeping your iPhone optimized is essential for a smooth and enjoyable user experience. Fortunately, there are several iPhone cleaner apps available that can help you free up valuable storage space, improve your device's performance, and even extend battery life.