id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

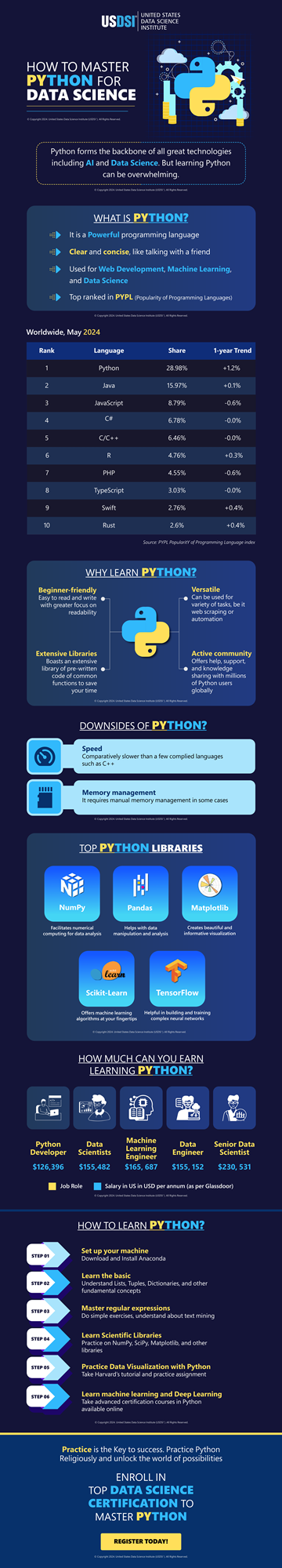

1,864,635 | HOW TO MASTER PYTHON FOR DATA SCIENCE| INFOGRAPHIC | Learning data science is easy, getting hands-on expertise in Python programming nuances takes skills.... | 0 | 2024-05-25T07:03:46 | https://dev.to/pradipmohapatra/how-to-master-python-for-data-science-infographic-2bbn | datascience, python | Learning data science is easy, getting hands-on expertise in Python programming nuances takes skills. It is highly advised when thinking of a career in the data science industry, it is critical to master Python and other programming languages to facilitate in-time efficient [data-driven decision-making](https://www.usdsi.org/data-science-insights/resources/master-data-driven-decision-making-in-2024).

Python is the most-loved programming language among developers worldwide, TIOBE has ranked it at No.1 in the race for becoming the best programming language in the world. Being a powerful programming language, it lends clarity and concise explanations; that are widely deployed in web development, machine learning, and data science.

PYPL also seconds TIOBE is ranking Python as the top-notch programming language of today. This makes learning Python an inevitable task. It offers a beginner-friendly gateway, massive versatility, extensive libraries, and an active community to grow with. However, as the technology ramps up, Python faces the drawbacks of poor speed and memory management in some cases.

It is time you mastered most in-demand Python libraries such as NumPy, Scikit-Learn, Pandas, TensorFlow, and Matplotlib, among many others. data visualization and many other skills earned at the most trusted [data science certifications](https://www.usdsi.org/data-science-certifications) can take you a long way ahead in earning sky-high data scientist salaries worldwide. Landing your dream data science job is never far away, with the best data science courses.

Bring zeal and ever-strengthening skills to be a lifelong learner to evolve with the enhancing times. Across different states in the USA, the UK, France, Germany, Australia, India, and other countries are brimming with a staggering demand for data scientists. Become a specialized professional and make a positive impact in the multitudinous growth of the global marketplace. This representation shall take you up, close, and personal with Python programming and convenient ways to conquer the nuances with the best facilitators around the world.

| pradipmohapatra |

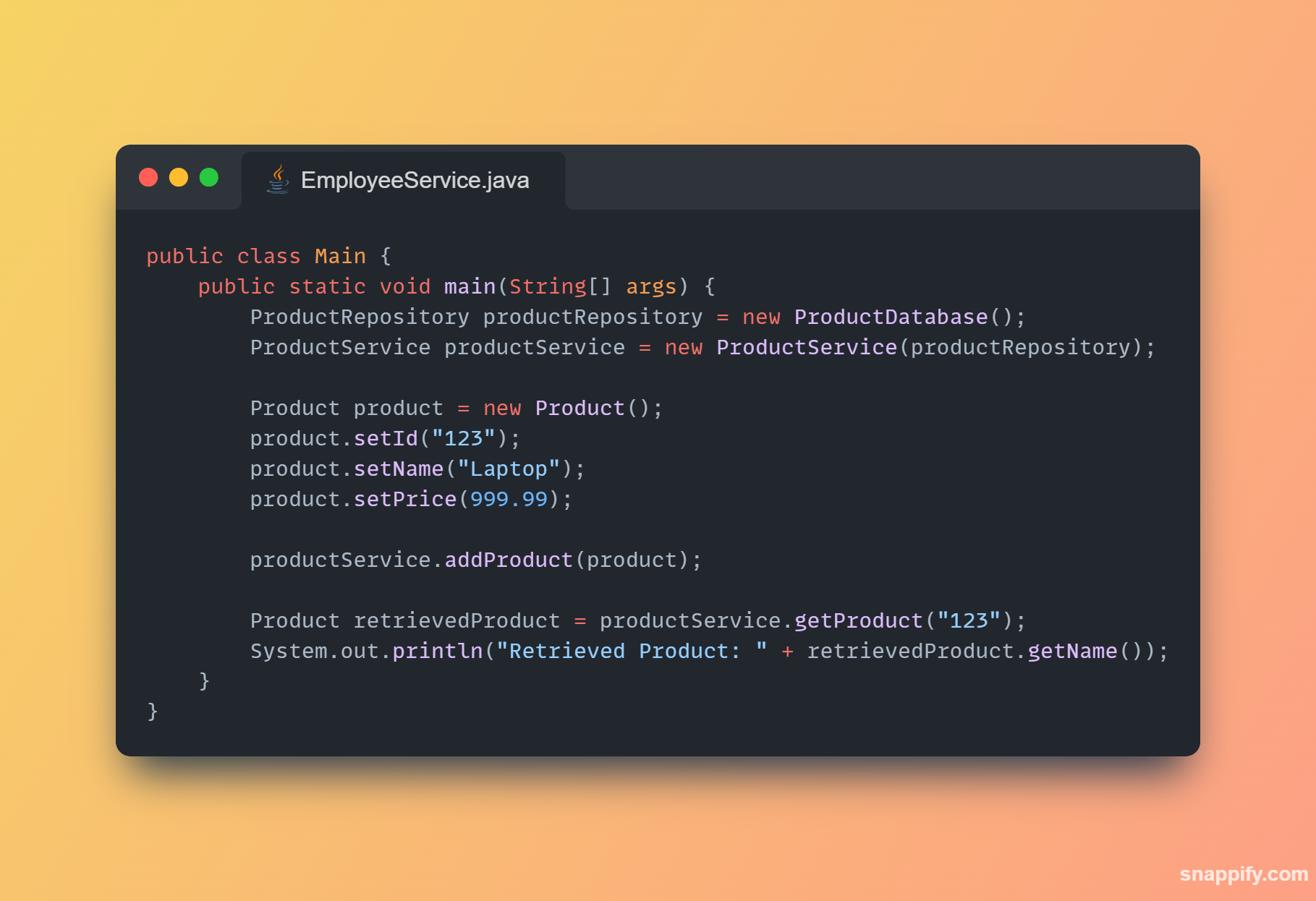

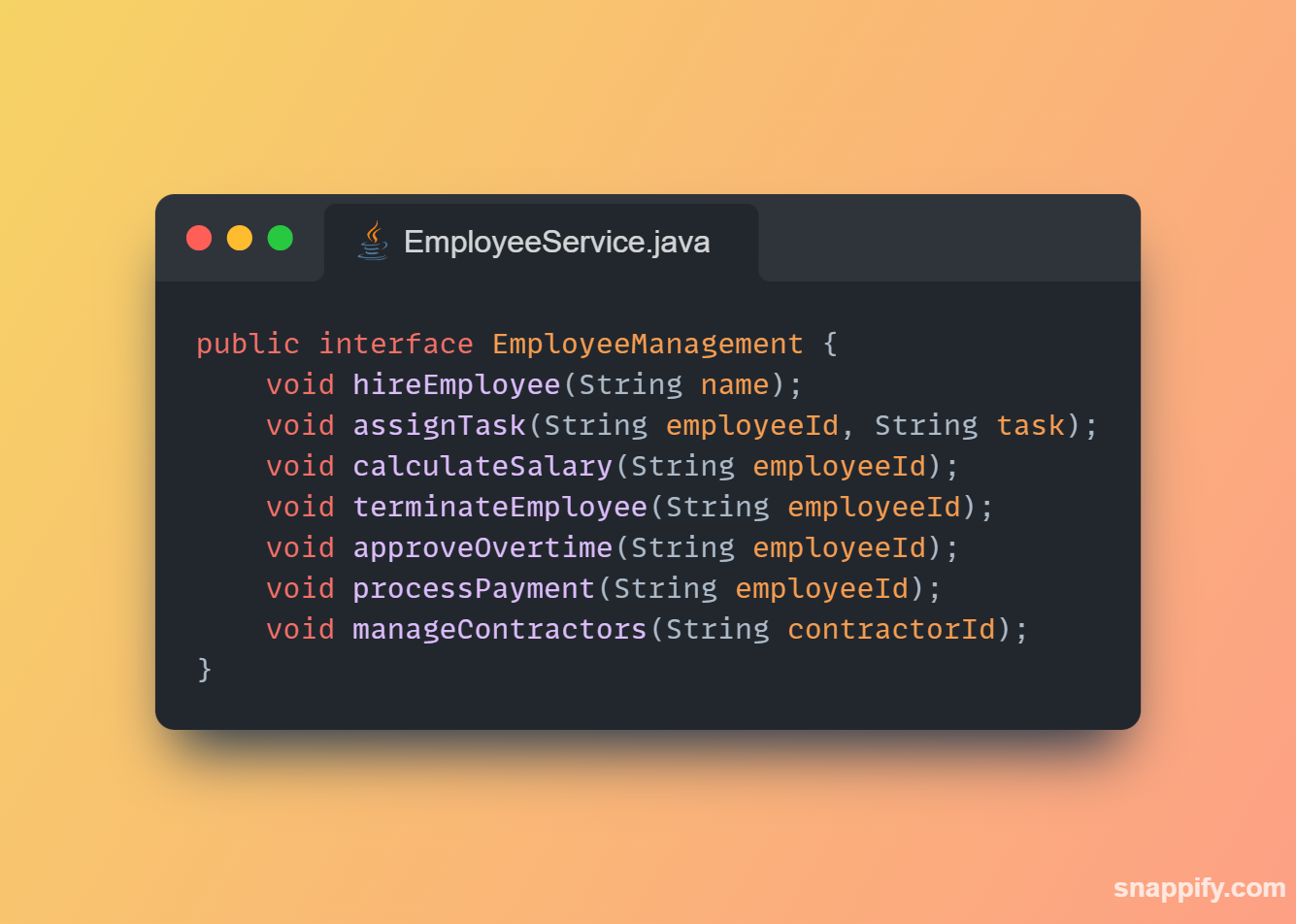

1,864,634 | Getting started with Spring boot | Introduction Spring Boot is a Java framework used for backend development. It's popular for enabling... | 0 | 2024-05-25T07:03:22 | https://dev.to/arikaran/getting-started-with-spring-boot-28gc | java, springboot, beginners, webdev |

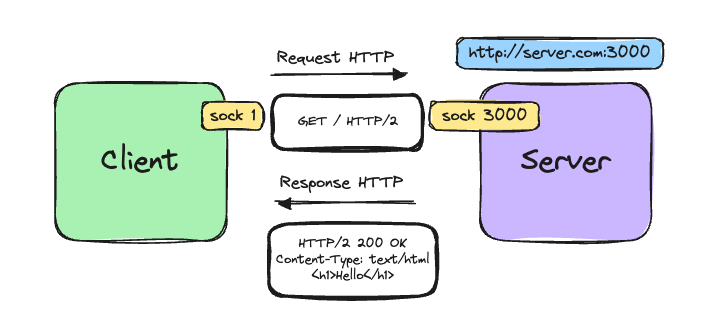

**Introduction**

Spring Boot is a Java framework used for backend development. It's popular for enabling the rapid development of production-ready web applications with minimal configuration.

Spring Boot provides numerous built-in libraries for various technologies, such as messaging, security, etc., making it exceptionally easy to use. While it's commonly utilized in microservice architecture, it's also applicable in monolithic architecture setups.

There are several advantages to using Spring Boot that contribute to its status as a leading framework:

1. **Auto-configuration**: By simply adding the .jar file as a dependency in our Spring project, Spring handles most configurations automatically. If needed, we can override or exclude auto-configuration for specific libraries. For example, configurations for web servers, security, databases, etc., are managed effortlessly.

2. **Starter dependencies**: Spring Boot offers many starter dependencies, simplifying the integration of new technologies. This avoids the need for manual integration.

3. **Actuator support**: In complex applications, monitoring can be challenging. Spring Boot addresses this with built-in support called Actuator. Actuator provides endpoints to check the health of the application, facilitating easier monitoring, especially in applications integrating with various third-party services and databases.

4. **Embedded web servers**: Spring Boot includes embedded web servers, making it effortless to develop and deploy applications. Without embedded servers, deploying to external web servers would require writing extensive XML configurations.

5. **Large community**: Spring Boot boasts a vast community, which significantly eases the developer's life. If stuck, there's ample support available.

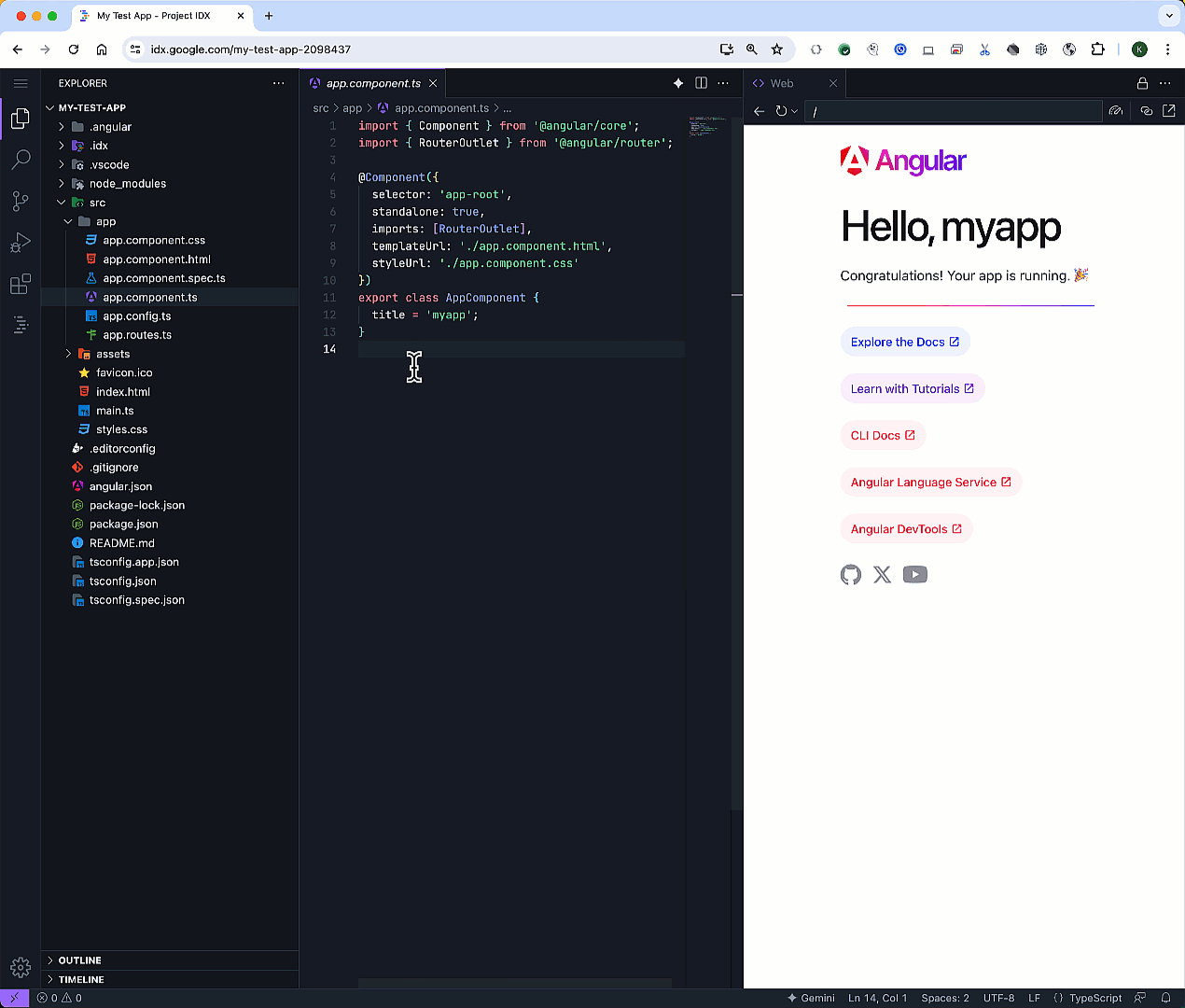

## Project setup

Setting up your first Spring Boot project is straightforward:

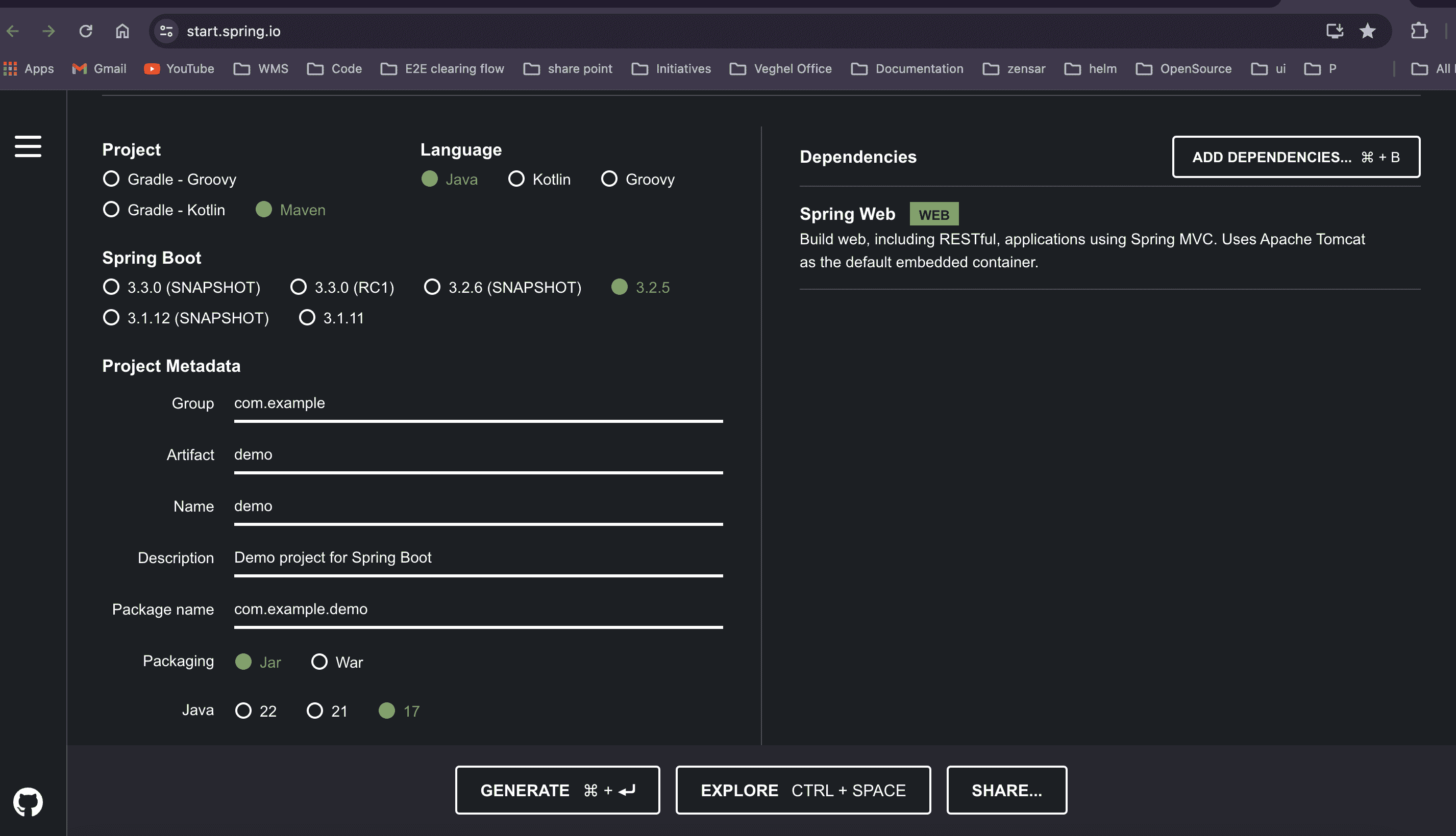

- Open your favorite browser and search for [Spring Initializer](https://start.spring.io/).

- Fill in the group, artifact name, package name, select packaging type as JAR, and choose dependencies like Spring Web.

- Click "Generate Project" to download the zip file, which contains your project. Open it in your preferred IDE (e.g., STS, IntelliJ).

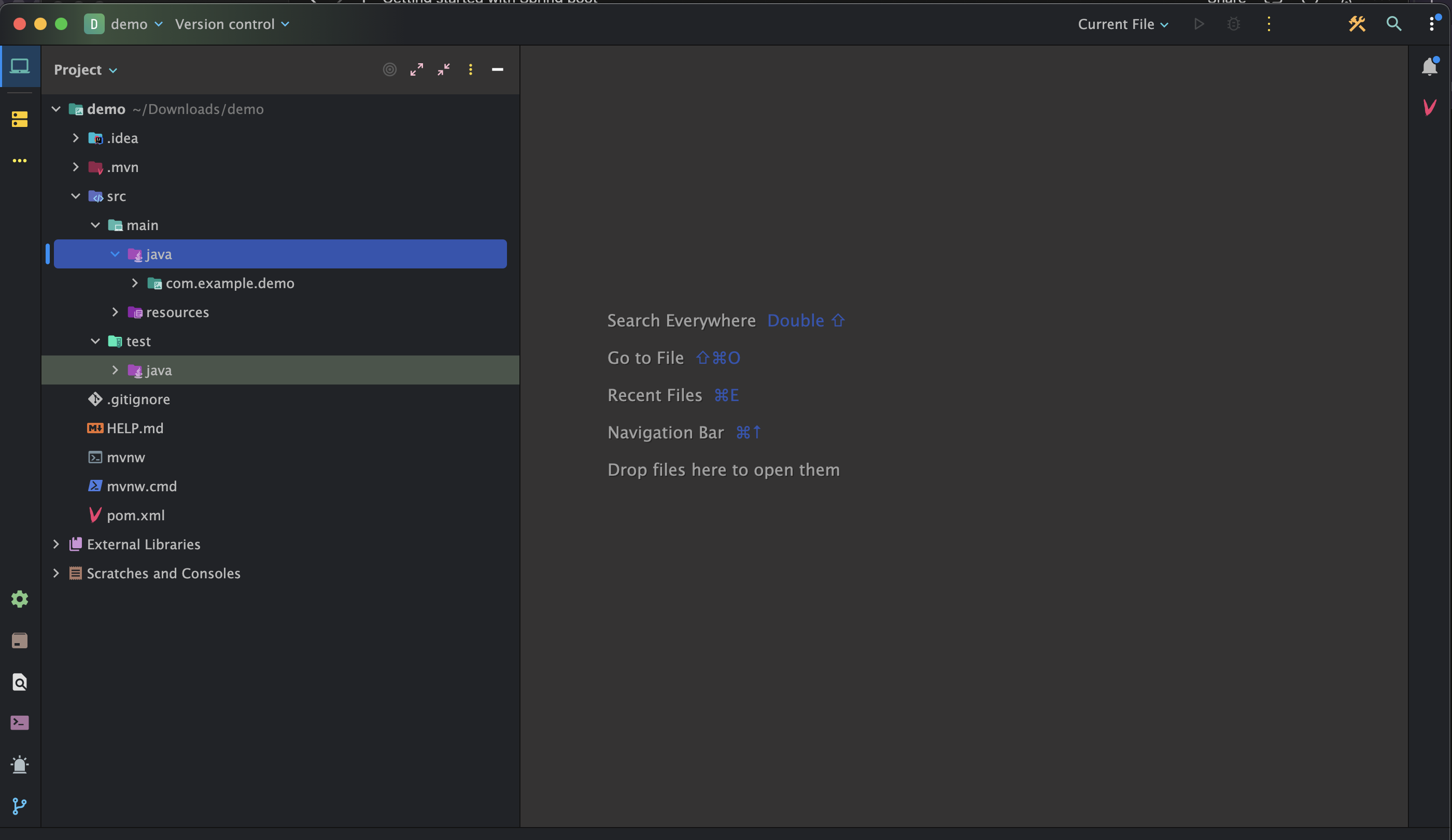

Once your project is open, you'll see the following folder structure:

- **src**: Divided into two subfolders: "main" and "test".

- **main**: Development code resides here. Configuration files and resources can be added to the "resources" subfolder.

- **test**: Contains all test code.

- **pom.xml**: This file manages dependencies of our app and their versions.

The entry point of the application is the class annotated with `@SpringBootApplication`, which contains the main method:

```java

package com.example.demo;

import org.springframework.boot.SpringApplication;

import org.springframework.boot.autoconfigure.SpringBootApplication;

@SpringBootApplication

public class DemoApplication {

public static void main(String[] args) {

SpringApplication.run(DemoApplication.class, args);

}

}

```

**@SpringBootApplication** indicates that Spring scans all packages of this class and its subclasses for configurations and components. It also enables auto-configuration, scanning all dependencies added in the pom.xml file.

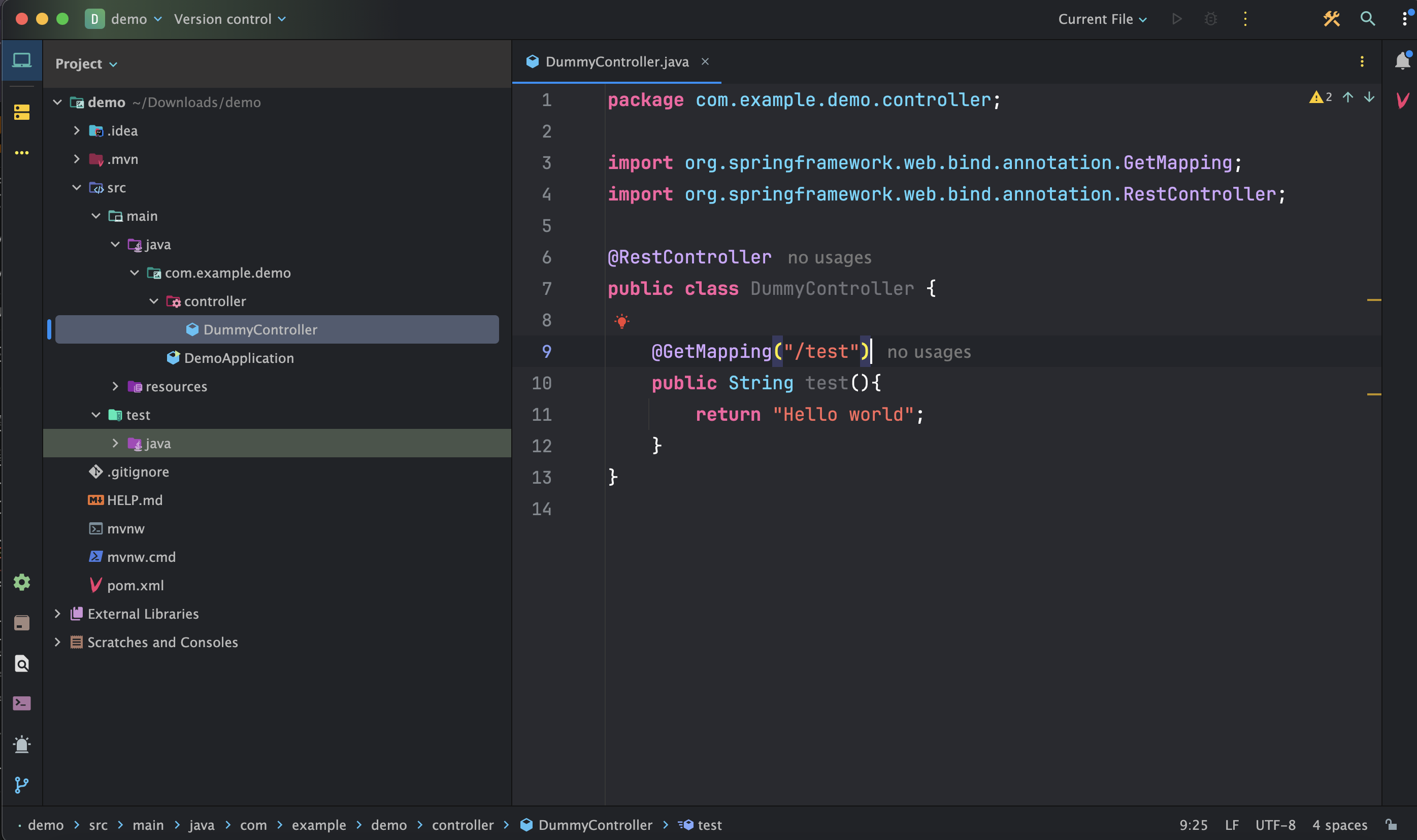

## To print "Hello, World!":

- Create a package under `src/main` named "controller".

- Create a Java class named `DummyController.java` under `src/main/controller` and annotate it with `@RestController` (indicating to Spring Boot that it will handle web requests and responses).

- Write a simple HTTP GET request that returns a string, such as "Hello, World!".

Then start the application and make a GET request using the configured endpoint:http://localhost:8080/test, it will returns the string as response: Hello world

**Ref**:

"Thank you for taking the time to explore this blog. I hope this guide has been helpful in understanding how to get started with Spring Boot. Happy coding!

For more technical blogs, visit https://arikaran.com/" | arikaran |

1,864,633 | International Schools in Dubai | Regent International School is a International School n dubai from Early Years to Year 13, spanning... | 0 | 2024-05-25T06:58:01 | https://dev.to/karishmahsha/international-schools-in-dubai-203f | Regent International School is a International School n dubai from Early Years to Year 13, spanning 40 years of excellence. Their primary program focuses from Key Stage 1 to Key Stage 2 at our primary school in Dubai.Join us to empower your child's future. Inquire now for more details.

| karishmahsha | |

1,864,631 | QA Testing for Cloud Applications: A Special Focus | In the ever-evolving software development landscape, cloud applications have emerged as a dominant... | 0 | 2024-05-25T06:55:28 | https://dev.to/ray_parker01/qa-testing-for-cloud-applications-a-special-focus-3f5c | ---

title: QA Testing for Cloud Applications: A Special Focus

published: true

---

In the ever-evolving software development landscape, cloud applications have emerged as a dominant force, driving the need for specialized <a href="https://dev.to/batista9607/complete-guide-to-importance-of-software-quality-assurance-18bc">Quality Assurance (QA)</a> testing strategies. As businesses continue to shift towards cloud-based solutions, the role of QA testing becomes increasingly critical to ensure that these applications are reliable, secure, and efficient. This article delves into the unique challenges and methodologies for QA testing of cloud applications, highlighting the pivotal role of <a href="https://www.iqvis.com/blog/trending-best-software-testing-companies/">software testing companies</a> in this domain.

<h3>Understanding the Cloud Application Environment</h3>

Cloud applications differ from traditional applications primarily due to their scalability, multi-tenancy, and dependency on Internet accessibility. These characteristics introduce specific challenges in QA testing, such as dealing with diverse user loads, security issues, and integration complexities. To address these challenges, QA must adapt and evolve.

<h3>Challenges in QA Testing for Cloud Applications</h3>

<b>1. Scalability and Performance:</b> One of the biggest challenges is testing the application’s performance under varying loads. Scalability testing ensures that the application can handle increased load without compromising performance.

<b>2. Security and Compliance:</b> Given the data-intensive nature of cloud applications, security testing is paramount. This involves validating compliance with legal and regulatory standards, ensuring data integrity, and protecting against breaches.

<b>3. Multi-Tenancy Issues:</b> Cloud applications often serve multiple clients on the same infrastructure. QA testing must ensure that data segregation is maintained and that the actions of one tenant do not adversely affect another.

<b>4. Integration and Compatibility:</b> Testing must ensure that cloud applications integrate smoothly with other systems and software, often involving complex interfaces and configurations.

<h3>The Role of Software Testing Companies</h3>

Software testing companies play a crucial role in overcoming these challenges. They bring expertise, tools, and methodologies tailored to cloud applications, enhancing the quality and reliability of software solutions.

<b>1. Expertise in Cloud-Specific Testing:</b> These companies possess specialized knowledge in cloud technologies and are adept at navigating the complexities associated with cloud environments. This expertise is critical for conducting thorough and effective QA tests.

<b>2. Advanced Testing Tools:</b> Software testing companies utilize advanced tools that can simulate various environments, loads, and stress factors. Tools like Selenium, LoadRunner, or JMeter are commonly used to automate testing processes and make them more efficient and comprehensive.

<b>3. Continuous Testing and Integration:</b> In the agile world of cloud development, continuous testing is vital. Software testing companies integrate QA into the continuous development process, enabling real-time issue identification and resolution. This integration is crucial for maintaining the stability and performance of cloud applications.

<b>4. Security Testing:</b> These companies specialize in rigorous security testing practices, including penetration testing and vulnerability assessments, to ensure that applications are secure from external threats and comply with necessary standards.

<h3>Methodologies for Effective QA Testing in Cloud Applications</h3>

<b>1. Automation Testing:</b> Given cloud applications' repetitive and scalable nature, automation testing is beneficial and necessary. It allows for rapid execution of test cases, consistent results, and efficient utilization of resources.

<b>2. Performance Testing:</b> This involves stress and load testing to ensure that the application performs optimally under varying conditions. It’s crucial for assessing how cloud solutions handle heavy data loads and user requests.

<b>3. Security Testing:</b> Implementing comprehensive security tests, including encryption protocols and intrusion detection systems, ensures that data remains secure in a cloud environment.

<b>4. Usability Testing:</b> Cloud applications must be user-friendly and accessible across various devices and platforms. Usability testing ensures that the user interface meets the expected standards of simplicity and efficiency.

<h3>Conclusion</h3>

The migration to <a href="https://www.spiceworks.com/tech/cloud/articles/what-is-cloud-computing/">cloud computing</a> is irreversible and continues accelerating, making effective QA testing an indispensable part of the development process. Software testing companies are at the forefront of this field, equipped with the tools, knowledge, and strategies to ensure that cloud applications meet the highest quality and security standards. As cloud technology advances, the methodologies and tools of QA testing will evolve, but the fundamental goal remains the same: to deliver seamless, efficient, and secure software solutions to users worldwide.

In this dynamic environment, partnerships with adept software testing companies are beneficial and essential for businesses aiming to leverage the full potential of cloud applications. Their specialized services enhance the quality and performance of applications and ensure they meet the rigorous demands of modern digital operations.

tags:

# QA Testing for Cloud Applications

# Software Testing

---

| ray_parker01 | |

1,864,630 | Unveiling the Benefits of Rent-to-Own Car Rental Solutions | In the dynamic landscape of transportation solutions, Rent-to-Own Car Rental emerges as a... | 0 | 2024-05-25T06:52:23 | https://dev.to/saracarlsson/unveiling-the-benefits-of-rent-to-own-car-rental-solutions-3gl3 | rental, carhire, ridesharing, car | In the dynamic landscape of transportation solutions, [Rent-to-Own Car Rental](https://ecoautos.com.au/) emerges as a game-changer, providing unparalleled flexibility and convenience to drivers worldwide. As a premier provider of automotive mobility, we at **Eco Autos** are committed to reshaping the way individuals access and enjoy vehicles, empowering them with freedom, affordability, and reliability.

## Understanding Rent-to-Own Car Rental

**What Sets Rent-to-Own Car Rental Apart?

**Unlike traditional car rental services, Rent-to-Own offers a unique proposition where customers have the opportunity to lease a vehicle with the potential to own it outright at the end of the agreement. This innovative model blends the benefits of both renting and buying, catering to individuals seeking long-term solutions without the commitment of a full purchase.

## The Process Simplified

**Selection:** Begin your journey by choosing from our diverse fleet of vehicles, ranging from compact cars to spacious SUVs, ensuring there's a perfect match for every lifestyle and preference.

**Agreement:** Sign a comprehensive rental agreement outlining the terms, including the duration of the lease, monthly payments, and the option to purchase.

**Usage:** Enjoy the freedom of driving your chosen vehicle while adhering to the terms of the agreement, maintaining it in good condition to secure future ownership.

**Ownership:** Upon fulfilling the terms of the agreement, seize the opportunity to become the proud owner of your vehicle, unlocking a new chapter of mobility and independence.

## The Advantages of Rent-to-Own Car Rental

**Flexibility in Financing

**One of the primary advantages of Rent-to-Own car rental is the flexibility it offers in financing. Rather than facing the substantial upfront costs associated with purchasing a vehicle outright, customers can spread payments over the lease period, easing financial strain while maintaining access to reliable transportation.

## Pathway to Ownership

For individuals aspiring to own a vehicle but facing credit challenges or financial constraints, [Rent-to-Own car](https://ecoautos.com.au/) provides a viable pathway to ownership. By adhering to the terms of the agreement and making consistent payments, renters can gradually transition from lessees to proud owners, realizing their automotive dreams with ease.

## Maintenance and Service Benefits

As part of our commitment to customer satisfaction, Rent-to-Own car rental includes comprehensive maintenance and service benefits, ensuring that your vehicle remains in peak condition throughout the lease period. From routine inspections to emergency repairs, our team is dedicated to keeping you safe and on the road, minimizing downtime and maximizing enjoyment.

## Freedom to Upgrade

In a world where preferences and priorities evolve, Rent-to-Own car rental offers the freedom to upgrade to a different vehicle as your needs change. Whether you're expanding your family, embarking on a new adventure, or simply seeking a fresh driving experience, our flexible options empower you to make seamless transitions without hassle or inconvenience.

## Conclusion:

In conclusion, Rent-to-Own Car Rental represents a revolutionary approach to automotive mobility, blending affordability, flexibility, and convenience in a seamless package. As pioneers in the industry, we are dedicated to empowering individuals with the freedom to access and enjoy vehicles on their terms, unlocking a world of possibilities and opportunities. | saracarlsson |

1,864,629 | Best wedding invite tips | Quality Materials: Invest in high-quality paper and printing. The texture and weight of the paper... | 0 | 2024-05-25T06:47:46 | https://dev.to/celebrare/best-wedding-invite-tips-2p5b | weddinginvites, invitations, marriage, ecards |

1. Quality Materials: Invest in high-quality paper and printing. The texture and weight of the paper can greatly impact the look and feel of your invitations.

2. Clear Information: Make sure all essential details are included, such as the names of the bride and groom, date, time, and location of the wedding ceremony and reception. Don't forget to include RSVP instructions, whether it's through mail, email, or a wedding website.

3. Clarity and Readability: Ensure that the text on your [wedding invitations](https://celebrare.in/) is easy to read. Choose fonts and font sizes that are clear and legible, especially for important details like the date, time, and location.

4. Start Early : Begin working on your wedding invitations well in advance to allow time for design, printing, and addressing.

5. Personal Touches: Add personal touches to make your invitations unique and memorable. Consider incorporating elements such as custom monograms, illustrations, or photographs.

6. Include Additional Inserts: Depending on your wedding plans, you may need to include additional inserts, such as directions to the venue, accommodation information, or registry details.

7. RSVP Tracking: Implement a system to track RSVPs effectively, whether it's through traditional mail, email, or a wedding website. This will help you keep track of guest responses and finalize your guest list.

8. Theme and Style: Choose a design that reflects the theme and style of your wedding. Whether it's classic, modern, rustic, or themed, your invitations should set the tone for the event.

9. Proofreading: Thoroughly proofread your invitations to catch any spelling or grammatical errors. It's also helpful to have someone else review them before finalizing.

10. Consistent Design: Maintain consistency in design across all elements, including save-the-dates, invitations, RSVP cards, and envelopes. This creates a cohesive look and reinforces your wedding theme.

11. Mailing Timeline: Plan your mailing timeline carefully to ensure that your invitations reach your guests with enough time for them to respond and make arrangements to attend.

12. Postage Considerations: Verify the size and weight of your invitations to determine the correct postage. Consider taking a fully assembled invitation to the post office to ensure accurate postage calculation.

| celebrare |

1,864,628 | Plastering Over Stipple Ceilings: A Comprehensive Guide | Introduction Stipple ceilings, also known as popcorn or acoustic ceilings, were a popular design... | 0 | 2024-05-25T06:44:48 | https://dev.to/francisbarton/plastering-over-stipple-ceilings-a-comprehensive-guide-22i0 | <h2><strong>Introduction</strong></h2>

<p><span style="font-weight: 400;">Stipple ceilings, also known as popcorn or acoustic ceilings, were a popular design choice in homes from the 1950s to the 1980s. While they were favored for their sound-dampening qualities and ability to hide imperfections, modern homeowners often find them outdated. This has led to an increase in interest in plastering over </span><a href="https://www.wisedublinplasteringcontractors.com/sille-ceiling-plastering"><strong>stipple ceiling</strong></a><span style="font-weight: 400;"> to achieve a smoother, more contemporary look. This guide will walk you through the process, from preparation to the final finish, ensuring a flawless result.</span></p>

<h2><strong>Why Consider Plastering Over Stipple Ceilings?</strong></h2>

<h3><strong>The Downside of Stipple Ceilings</strong></h3>

<p><span style="font-weight: 400;">Stipple ceilings can collect dust and cobwebs, making them difficult to clean and maintain. Additionally, these ceilings can contain asbestos, especially if they were installed before the 1980s. The uneven texture is also prone to damage and is difficult to repair seamlessly.</span></p>

<h3><strong>Benefits of a Smooth Ceiling</strong></h3>

<p><span style="font-weight: 400;">Plastering over a stipple ceiling can significantly modernize a room. A smooth, plastered ceiling reflects light better, making spaces appear larger and brighter. Furthermore, it provides a clean slate for any future painting or decorating projects.</span></p>

<h2><strong>Preparing to Plaster Over Stipple Ceilings</strong></h2>

<h3><strong>Safety First</strong></h3>

<p><span style="font-weight: 400;">Before starting any work, it's crucial to determine whether your stipple ceiling contains asbestos. If your home was built or renovated during the time when asbestos was commonly used, hire a professional to test your ceiling. If asbestos is present, you'll need to have it removed by certified professionals to ensure safety.</span></p>

<h3><strong>Materials and Tools Required</strong></h3>

<p><span style="font-weight: 400;">To plaster over stipple ceilings, you'll need:</span></p>

<ul>

<li style="font-weight: 400;"><span style="font-weight: 400;">Protective gear (mask, goggles, gloves)</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Plastic sheeting and painter's tape</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Ladder or scaffolding</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Drop cloths</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Scraper or putty knife</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Joint compound or plaster</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Trowel and hawk</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Sanding tools</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Primer and paint</span></li>

</ul>

<h3><strong>Surface Preparation</strong></h3>

<ol>

<li style="font-weight: 400;"><span style="font-weight: 400;">Cover and Protect: Start by covering floors and furniture with plastic sheeting and drop cloths. Secure the sheeting with painter's tape to prevent dust and debris from spreading.</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Clean the Ceiling: Dust and clean the ceiling thoroughly to remove any cobwebs or loose particles. This ensures better adhesion of the plaster.</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Scrape the Stipple: Using a scraper or putty knife, gently remove any loose or peeling texture from the stipple ceiling. This step may be messy, so ensure your protective gear is worn.</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Prime the Surface: Apply a primer suitable for textured surfaces. This helps the plaster adhere better and provides a more even finish.</span></li>

</ol>

<h2><strong>Plastering Over Stipple Ceilings</strong></h2>

<h3><strong>Applying the Plaster</strong></h3>

<ol>

<li style="font-weight: 400;"><span style="font-weight: 400;">Mix the Plaster: Follow the manufacturer's instructions to mix the plaster or joint compound to the right consistency. It should be smooth and free of lumps.</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">First Coat: Using a trowel, apply the first coat of plaster to the stipple ceiling. Work in small sections, spreading the plaster evenly and smoothing out any ridges or lines.</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Drying Time: Allow the first coat to dry completely. This can take several hours to overnight, depending on the humidity and thickness of the application.</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Second Coat: Apply a second, thinner coat of plaster. This coat should further smooth out the surface and cover any remaining texture. Feather the edges to blend seamlessly with the ceiling.</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Final Touches: Once the second coat is dry, inspect the ceiling for any imperfections. Sand any rough spots lightly to achieve a smooth, even surface.</span></li>

</ol>

<h3><strong>Finishing Up</strong></h3>

<ol>

<li style="font-weight: 400;"><span style="font-weight: 400;">Clean Up: Remove all protective coverings and clean up any dust or debris. Dispose of any materials safely, especially if dealing with asbestos.</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Priming and Painting: Apply a coat of primer to seal the plaster and prepare it for painting. Once the primer is dry, paint the ceiling with your desired color, using a roller for a smooth finish.</span></li>

</ol>

<h2><strong>Conclusion</strong></h2>

<p><a href="https://www.wisedublinplasteringcontractors.com/sille-ceiling-plastering"><strong>Plastering over stipple ceilings</strong></a><span style="font-weight: 400;"> is an effective way to update and modernize your home. By following the proper preparation and application steps, you can achieve a smooth, stylish ceiling that enhances the look and feel of any room. Not only does this process improve the aesthetic appeal, but it also makes the ceiling easier to maintain and paint in the future. Whether you undertake this project yourself or hire a professional, the transformation will be well worth the effort.</span></p> | francisbarton | |

1,864,627 | Converting Extension from JS to JSX | This article is part of the Converting Large Codebase Project to Vite series. All files that... | 27,506 | 2024-05-25T06:44:21 | https://dev.to/elpddev/converting-couple-thousands-jsts-files-that-contains-jsx-content-to-jsx-extension-58oh | vite, react, webpack, jsx | This article is part of the [Converting Large Codebase Project to Vite series](https://dev.to/elpddev/converting-large-codebase-project-to-vite-5b20).

All files that contains JSX code and had ".js" extension failed in the browser.

This happened because the [react swc vite plugin](https://github.com/vitejs/vite-plugin-react-swc) does not compile ".js" files. Instead it send them to the browser as is.

https://github.com/vitejs/vite-plugin-react-swc/blob/b829b03f6476bed60ff5535fe883abc1b0b4e095/src/index.ts#L231

```tsx

const transformWithOptions = async (

id: string,

code: string,

target: JscTarget,

options: Options,

reactConfig: ReactConfig,

) => {

const decorators = options?.tsDecorators ?? false;

const parser: ParserConfig | undefined = id.endsWith(".tsx")

? { syntax: "typescript", tsx: true, decorators }

: id.endsWith(".ts")

? { syntax: "typescript", tsx: false, decorators }

: id.endsWith(".jsx")

? { syntax: "ecmascript", jsx: true }

: id.endsWith(".mdx")

? // JSX is required to trigger fast refresh transformations, even if MDX already transforms it

{ syntax: "ecmascript", jsx: true }

: undefined;

if (!parser) return;

let result: Output;

try {

result = await transform(code, {

filename: id,

swcrc: false,

configFile: false,

sourceMaps: true,

jsc: {

target,

parser,

experimental: { plugins: options.plugins },

transform: {

useDefineForClassFields: true,

react: reactConfig,

},

},

});

```

That is an [explicit decision](https://github.com/vitejs/vite-plugin-react-swc/issues/57#issuecomment-1425931254) made by the plugin authors.

## Chosen Solution

So in order to have the plugin take all those files and transpile them with SWC, we had to change the extension all of "js/ts" files that contains JSX to "jsx/tsx" equivalent extension.

Without a transpiler in the process, the jsx left as is:

https://github.com/vitejs/vite/discussions/3448

The conversion process can be done in several ways. One of them is using a script to go though all project "js/ts" files, detect if they have any JSX syntax and change their extension.

An example of [such script](https://github.com/vitejs/vite/discussions/3448#discussioncomment-5240383) taken from comment in one of the issues:

```shell

find src -type f | grep "\.[jt]s$" | xargs -n1 grep -HE "(<\/)|(\/>)" | cut -d: -f1 | uniq | awk '{print "mv "$1" "$1"x"}' | sh

```

### Breakdown of Script

#### list All Files Nested in a Target Folder

`find src -type f`

[find](https://www.man7.org/linux/man-pages/man1/find.1.html) - This command searches for all files (-type f) within the src directory and its subdirectories.

The output is a list of the filenames, include their paths.

```shell

./docs/.eslintrc.cjs

./docs/tsconfig.json

./docs/.storybook/main.js

./docs/package.json

./docs/stories/button.stories.ts

```

#### Filter Out Only Needed Files By Extension

`| grep "\.[jt]s$"`

The pipe | takes the output of the find command and passes it to grep.

[grep](https://www.man7.org/linux/man-pages/man1/grep.1.html) - grep "\.[jt]s$" filters the files, keeping only those whose names that end with .js or .ts (JavaScript or TypeScript files). for the grep patterns basic regex can be used as default.

the output is the same format of file paths and names, but filtered only to the files end with js/ts extensions.

```shell

./docs/.storybook/main.js

./docs/stories/button.stories.ts

```

#### Filter Out Files by Existence of Jsx Content Inside Them

`| xargs -n1 grep -HE "(<\/)|(\/>)"`

[xargs](https://www.man7.org/linux/man-pages/man1/xargs.1.html) `-n1` takes each file name from the previous output one by one and executes the following grep command.

`grep -HE "(<\/)|(\/>)"`

searches for the pattern </ or /> in each file.

`-H` includes the filename of the file grep searched in, in the output,

`-E` allows for extended regular expressions syntax.

```shell

./docs/stories/button.stories.ts: </Button>

```

#### Split the Output and Keep Only the File Names

`| cut -d: -f1`

[cut](https://www.man7.org/linux/man-pages/man1/cut.1.html) - remove sections from each line of files

`-d:` splits the output by the `:` delimiter. The previous `grep` output it to separate the file and the content found.

`-f1` keeps only the first field, which is the filename.

This removes the line number and matched text from the grep output, leaving only the unique filenames.

```shell

./docs/stories/button.stories.ts

```

#### Reduce the File List to Unique File Names

`| uniq`

[uniq](https://www.man7.org/linux/man-pages/man1/uniq.1.html) filters out any duplicate filenames, so each filename appears only once.

```shell

./docs/stories/button.stories.ts

```

#### Construct a `Rename` Command for the Specific File Name

`| awk '{print "mv "$1" "$1"x"}'`

[awk](https://www.man7.org/linux/man-pages/man1/awk.1p.html) constructs a mv (move) command for each file.

It renames each file by appending an "x" to its name.

```shell

mv ./docs/stories/button.stories.ts ./docs/stories/button.stories.tsx

```

#### Execute the `Rename` Command

`| sh``

The final `| sh` executes the constructed `mv` commands in the shell, renaming the files.

## Process

On a large project, you will want to do this conversion in several PRs and not one big chunk, so not to create large conflicts with other people ongoing work. | elpddev |

1,864,626 | How to Optimize Performance in Vue.js Applications: Beginner to Advanced Guide | Welcome to the comprehensive guide on optimizing performance in Vue.js applications! Whether you're... | 0 | 2024-05-25T06:41:37 | https://dev.to/delia_code/how-to-optimize-performance-in-vuejs-applications-beginner-to-advanced-guide-53db | vue, webdev, javascript | Welcome to the comprehensive guide on optimizing performance in Vue.js applications! Whether you're new to Vue or looking to refine your skills, this guide covers essential techniques from basic to advanced, all utilizing the Composition API. Let's dive in!

#### Understanding Vue.js Performance Basics

Before we jump into optimization techniques, let's understand why performance matters. A fast, responsive application provides a better user experience, improves SEO rankings, and can increase user engagement and retention.

### Basic Optimization Techniques

#### Lazy Loading Components

Lazy loading delays the loading of components until they are needed, reducing the initial load time of your application. This is particularly useful for larger applications where loading all components at once can slow down the initial load time.

**Example:**

Using the router to lazy load components:

```javascript

// Lazy load a component in the router

const routes = [

{

path: '/about',

component: () => import('./components/About.vue')

}

]

```

Using the Composition API, you can dynamically import components in your setup function:

```javascript

import { defineAsyncComponent } from 'vue'

export default {

setup() {

const About = defineAsyncComponent(() => import('./components/About.vue'))

return { About }

}

}

```

This method ensures that the `About` component is only loaded when the user navigates to the '/about' route, thereby reducing the initial bundle size and improving load time.

#### Using v-show vs. v-if

While both `v-if` and `v-show` control the visibility of elements, they do so differently. `v-if` adds or removes elements from the DOM, which is more performance-intensive. In contrast, `v-show` simply toggles the `display` CSS property, making it more efficient for elements that need to be toggled frequently.

**Example:**

```html

<template>

<div>

<button @click="show = !show">Toggle</button>

<div v-show="show">This is a toggled element</div>

</div>

</template>

<script>

import { ref } from 'vue'

export default {

setup() {

const show = ref(false)

return { show }

}

}

</script>

```

In this example, `v-show` is more efficient as it avoids unnecessary DOM manipulations, thus improving performance when toggling the visibility of elements frequently.

### Intermediate Optimization Techniques

#### Debouncing and Throttling

When handling user input, such as search queries or form submissions, it's essential to debounce or throttle events to avoid performance issues. Debouncing delays the execution of a function until after a specified time has elapsed since the last time it was invoked. Throttling ensures a function is only executed once per specified interval.

**Debouncing Example:**

```javascript

import { ref } from 'vue'

import debounce from 'lodash/debounce'

export default {

setup() {

const query = ref('')

const search = debounce((value) => {

// Perform search operation

console.log(value)

}, 300)

return { query, search }

}

}

```

In this example, the `search` function will only execute 300 milliseconds after the user has stopped typing, reducing the number of API calls and improving performance.

#### Using Reactive References Wisely

Reactive references can become a performance bottleneck if overused. Use them judiciously and prefer computed properties for derived state. This helps in avoiding unnecessary reactivity and ensures your application remains performant.

**Example:**

```javascript

import { ref, computed } from 'vue'

export default {

setup() {

const items = ref([1, 2, 3, 4, 5])

const evenItems = computed(() => items.value.filter(item => item % 2 === 0))

return { items, evenItems }

}

}

```

In this example, `evenItems` is a computed property that automatically updates when `items` changes, but it is not itself reactive, which helps in maintaining performance.

### Advanced Optimization Techniques

#### Virtual Scrolling

For rendering large lists, use virtual scrolling to render only the visible items, significantly improving performance. Virtual scrolling is particularly useful when dealing with long lists or tables where rendering all items at once would be inefficient.

**Example using vue-virtual-scroll-list:**

```html

<template>

<virtual-list :size="50" :remain="10" :items="items">

<template v-slot="{ item }">

<div class="item">{{ item }}</div>

</template>

</virtual-list>

</template>

<script>

import { ref } from 'vue'

import VirtualList from 'vue-virtual-scroll-list'

export default {

components: { VirtualList },

setup() {

const items = ref([...Array(1000).keys()])

return { items }

}

}

</script>

```

In this example, only the visible items are rendered, reducing the load on the DOM and improving performance.

#### Code Splitting and Bundling

Use tools like Webpack to split your code into smaller bundles, loading only what is necessary. This is particularly useful for large applications as it allows the browser to load only the essential parts of your application initially and defer the loading of other parts until they are needed.

**Example:**

Configure Webpack for code splitting:

```javascript

// webpack.config.js

module.exports = {

optimization: {

splitChunks: {

chunks: 'all',

},

},

}

```

This configuration tells Webpack to split your code into smaller chunks, which can be loaded on demand.

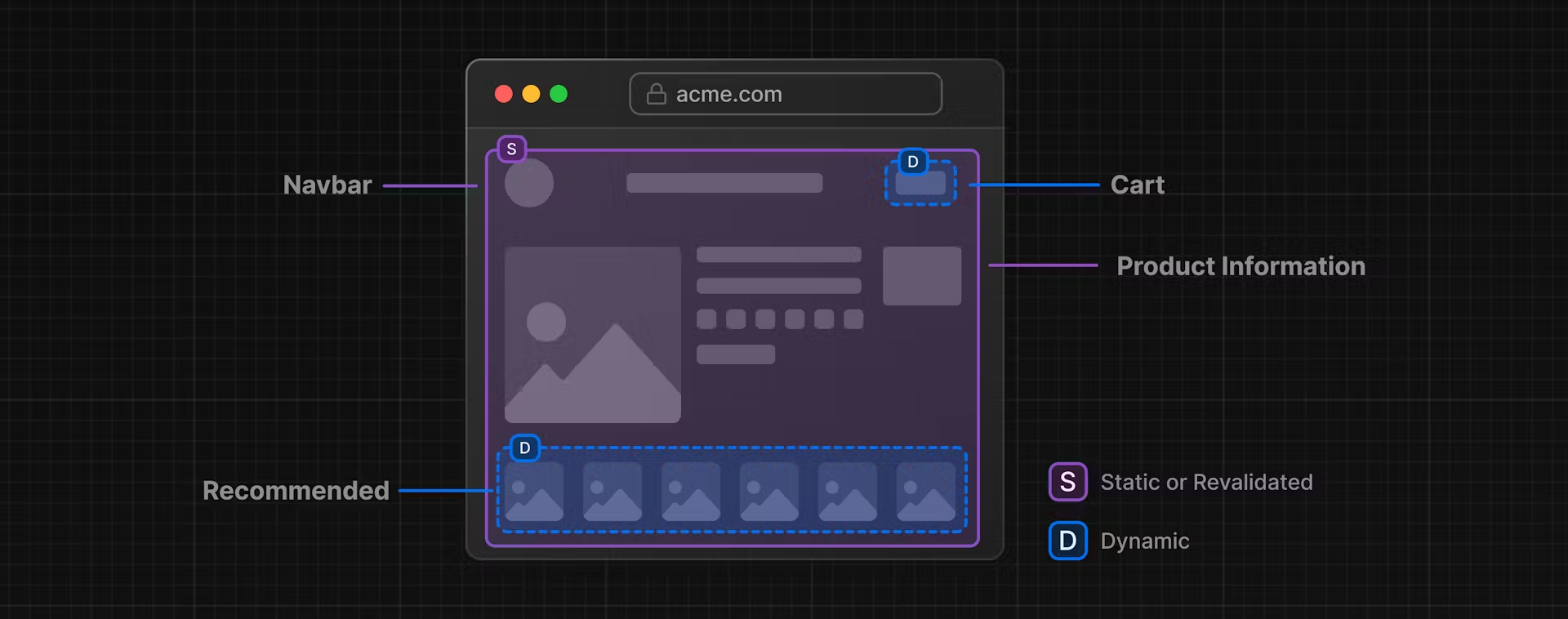

#### Server-Side Rendering (SSR)

For performance-critical applications, consider using Server-Side Rendering (SSR) with Nuxt.js to improve load times and SEO. SSR can significantly enhance the initial load time by rendering the initial HTML on the server and sending it to the client.

**Example:**

Setting up a Nuxt.js project:

```bash

npx create-nuxt-app my-project

```

Nuxt.js handles SSR out of the box, allowing you to build Vue.js applications with improved performance and SEO benefits.

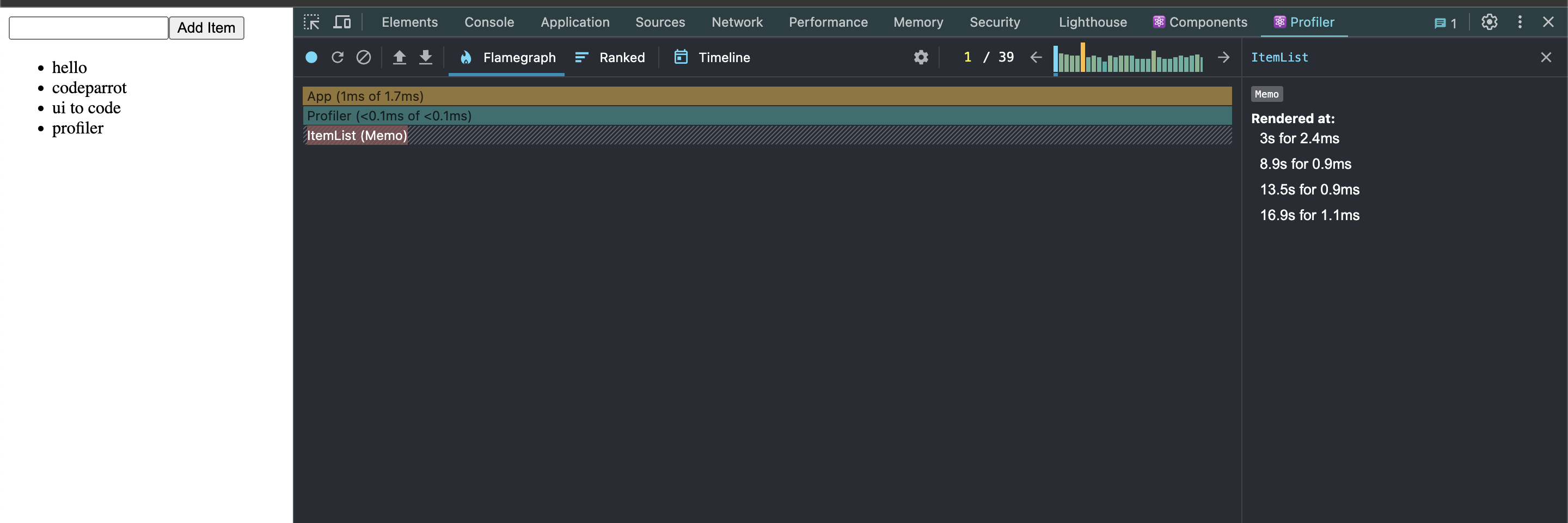

#### Performance Monitoring

Regularly monitor your application's performance using tools like Vue Devtools, Lighthouse, and Webpack Bundle Analyzer. These tools help identify bottlenecks and areas for improvement.

1. **Vue Devtools**: Provides insight into your application's state and performance.

2. **Lighthouse**: Offers a comprehensive audit of your web application's performance, accessibility, and SEO.

3. **Webpack Bundle Analyzer**: Visualizes the size of Webpack output files, helping you understand where you can optimize your bundle sizes.

Optimizing the performance of your Vue.js applications involves a mix of simple and advanced techniques. Start with basic optimizations like lazy loading and efficient use of directives, then move on to intermediate strategies like debouncing and reactive references. Finally, implement advanced techniques such as virtual scrolling, code splitting, and SSR for the best performance.

By following these practices, you can ensure your Vue.js applications are fast, responsive, and ready to provide a great user experience. Happy coding!

By implementing these techniques and continually monitoring your application's performance, you'll be well on your way to creating efficient and user-friendly Vue.js applications. | delia_code |

1,864,625 | Unlocking Bruno Fernandes: A Guide to Acquiring His Player Card in FC24 | In FC24, you're not just stepping onto the pitch; you're immersing yourself in a dynamic world of... | 0 | 2024-05-25T06:40:32 | https://dev.to/patti_nyman_5d50463b9ff56/unlocking-bruno-fernandes-a-guide-to-acquiring-his-player-card-in-fc24-461d | In FC24, you're not just stepping onto the pitch; you're immersing yourself in a dynamic world of soccer strategy and thrilling gameplay. Let me take you on a journey through the game's story, background, character creation, team building, strategy, and how to enhance your gaming experience. When you embark on your journey, mmowow items can lend a helping hand when you need help.

FC24 unfolds in a vibrant world where soccer reigns supreme. As a player, you'll navigate through the highs and lows of the soccer universe, from the drama of the transfer market to the intensity of match day. Your ultimate goal? To lead your team to glory and cement your legacy in the annals of soccer history.

Character and Team Creation:

Before diving into the action, you'll craft your unique soccer persona. Customize your appearance, select your preferred position on the field, and fine-tune your skills to match your playstyle. Then, assemble your dream team by recruiting a diverse cast of players from around the globe. Each player brings their strengths, weaknesses, and personalities to the table, adding depth and complexity to your squad.

FC24 Rating:

As you progress through the game, your team's performance will be evaluated based on various criteria, culminating in an overall FC24 rating. This rating serves as a benchmark of your team's success and provides valuable feedback on areas for improvement.

Team Formation:

Mastering the art of team formation is key to dominating the competition in FC24. Experiment with different formations, tactics, and strategies to find the perfect balance between offense and defense. Whether you prefer a classic 4-4-2 or a daring 3-5-2, the choice is yours.

Preparation:

Preparation is paramount before heading into a match. Scout your opponents, analyze their strengths and weaknesses, and devise a game plan to exploit any vulnerabilities. Additionally, pay close attention to your team's fitness, morale, and chemistry to ensure peak performance on the field.

Player Selection:

One of the most effective ways to enhance your gaming experience is by selecting the right players for your team. One standout player to consider is Bruno Fernandes.

Bruno Fernandes Background:

Born and raised in Maia, Portugal, Bruno Fernandes honed his skills on the streets before rising through the ranks of Sporting CP's youth academy. His meteoric rise continued as he made a name for himself in the Primeira Liga, catching the eye of top European clubs with his dazzling performances.

Career Development:

Bruno Fernandes' journey to stardom reached new heights when he joined Manchester United in 2020. His arrival injected new energy into the team, with his vision, passing, and goal-scoring prowess earning him a place among the Premier League's elite.

World Stage Achievements:

In FC24, Bruno Fernandes has left an indelible mark on the world stage, delivering standout performances in key matches and tournaments. From decisive goals to breathtaking assists, he consistently rises to the occasion when it matters most.

Role in the Game:

In FC24, Bruno Fernandes is a midfield maestro, capable of dictating the tempo of the game and unlocking defenses with his pinpoint passes and clinical finishing. His versatility and leadership make him a valuable asset both on and off the pitch, catalyzing your team's success.

Importance in Team Composition:

When assembling your dream team, Bruno Fernandes' presence is indispensable. Whether slotting into an attacking midfield role or pulling the strings from deeper positions, his influence elevates the performance of those around him, making him the linchpin of your squad.

Alright, let's dive into Bruno Fernandes' significant contributions throughout the season, his prospects, and why having him on your team is crucial.

Throughout the season, Bruno Fernandes has been nothing short of exceptional. His ability to control the midfield, create scoring opportunities, and find the back of the net himself has been instrumental in our team's success. Whether it's delivering inch-perfect passes, unleashing thunderous shots from a distance, or orchestrating the attack with his vision and creativity, Bruno consistently delivers when it matters most.

Looking ahead, Bruno Fernandes' future is incredibly bright. As he continues to refine his skills and adapt to new challenges, there's no limit to what he can achieve. Whether it's leading our team to domestic glory or making his mark on the international stage, Bruno's talent and determination make him a force to be reckoned with for years to come.

Now, let's talk about why having Bruno Fernandes on your team is essential. Not only does he bring unparalleled skill and versatility to the midfield, but his leadership and work ethic also inspire those around him to elevate their game. Whether it's rallying the troops during a tough match or leading by example with his relentless work rate, Bruno sets the standard for excellence both on and off the pitch.

In terms of his potential rating and skill attributes in the game, Bruno Fernandes boasts top-tier ratings across the board. His exceptional passing, shooting, dribbling, and vision make him a game-changer in any situation. With the ability to unlock defenses with a single pass or unleash a thunderbolt from outside the box, Bruno is a true game-changer who can turn the tide of any match in your favor.

Now, let's talk about how you can acquire Bruno Fernandes's player card in FC24. There are several ways to obtain his card, including through in-game events, completing specific challenges or objectives, or purchasing it directly from the transfer market. However, the most direct way to acquire Bruno Fernandes' player card is by using FC24 coins.

FC24 coins are the in-game currency used to acquire player cards, unlock special items, and enhance your gaming experience. You can earn FC24 coins by completing matches, participating in events, or purchasing them through the in-game store.

In the early stages of the game, it's essential to use FC24 coins wisely, focusing on building a strong squad and addressing any weaknesses in your lineup. As you progress through the season, you can invest in upgrading facilities, hiring staff, and acquiring top-tier players like Bruno Fernandes to take your team to the next level.

In the middle stages of the game, consider investing in scouting networks to uncover hidden gems or bolstering your squad with high-impact signings like Bruno Fernandes. Additionally, use FC24 coins to participate in special events or tournaments to earn valuable rewards and strengthen your team even further.

In the later stages of the game, strategic use of FC24 coins becomes even more critical as you vie for silverware and supremacy. Whether it's making key signings to shore up your defense or investing in training facilities to develop young talent, every coin spent should bring you closer to achieving your ultimate goal of soccer glory. Due to its multiple uses in the game, some players choose FIFA 24 coins.

In summary, Bruno Fernandes is more than just a player; he's a game-changer who can transform your team's fortunes in FC24. By leveraging his exceptional skills and leadership qualities, you can propel your team to new heights and etch your name in soccer history. So gather your coins, assemble your squad, and get ready to dominate the pitch with Bruno Fernandes by your side. | patti_nyman_5d50463b9ff56 | |

1,864,624 | Is Learning AI Essential for Software Engineers? | The rapid evolution of artificial intelligence (AI) has significantly impacted various industries,... | 0 | 2024-05-25T06:38:22 | https://dev.to/yogini16/is-learning-ai-essential-for-software-engineers-4gf9 | The rapid evolution of artificial intelligence (AI) has significantly impacted various industries, including software engineering. As AI continues to permeate different sectors, the question arises: is it necessary for software engineers to learn AI? While the necessity may vary depending on the specific role and industry, there are compelling reasons why software engineers should consider acquiring AI knowledge.

##The Growing Importance of AI

**Enhanced Problem-Solving Capabilities:** AI can augment traditional software engineering by providing new tools and techniques for solving complex problems. Machine learning (ML) algorithms, for example, can analyze vast amounts of data to identify patterns and make predictions, which can be invaluable in fields such as cybersecurity, finance, healthcare, and more.

**Demand in the Job Market:** The demand for AI skills in the job market is on the rise. Companies are increasingly looking for engineers who can integrate AI solutions into their products and services. Having AI expertise can open up new career opportunities and make candidates more competitive.

**Innovation and Efficiency:** AI can drive innovation by enabling the development of intelligent applications that can perform tasks autonomously, learn from data, and improve over time. For software engineers, understanding AI can lead to the creation of more efficient and effective software systems.

**Interdisciplinary Applications:** AI is not confined to a single domain; it intersects with various fields such as robotics, natural language processing, and computer vision. This interdisciplinary nature means that software engineers with AI knowledge can contribute to a broader range of projects.

##Should Every Software Engineer Learn AI?

While AI is undoubtedly valuable, it is not mandatory for every software engineer to become an AI expert. The decision to learn AI should be based on individual career goals and interests. Here are some considerations:

**Career Path:** Engineers working in domains like web development, mobile app development, or embedded systems may not need extensive AI knowledge. However, those interested in fields like data science, ML, or AI product development will find AI expertise essential.

**Project Requirements:** In some roles, the use of AI might be integral to the project's success. In such cases, having a solid understanding of AI concepts and techniques is crucial.

**Continuous Learning:** The technology landscape is always evolving. Software engineers should be prepared for lifelong learning, and acquiring AI skills can be a part of this ongoing professional development.

##Roadmap for Learning AI

For those interested in delving into AI, a structured learning path can make the journey more manageable and effective. Here is a comprehensive roadmap:

**1. Foundation in Mathematics and Statistics**

Linear Algebra: Understand vectors, matrices, eigenvalues, and eigenvectors.

Calculus: Focus on derivatives, integrals, and optimization.

Probability and Statistics: Learn about distributions, hypothesis testing, and statistical inference.

**2. Programming Skills**

Python: Master Python, the primary language for AI development.

Libraries and Frameworks: Get familiar with libraries such as NumPy, Pandas, Matplotlib, and frameworks like TensorFlow and PyTorch.

**3. Introduction to Machine Learning**

ML Basics: Learn about supervised and unsupervised learning, regression, classification, clustering, and dimensionality reduction.

Algorithms: Study key algorithms like linear regression, decision trees, k-means clustering, and support vector machines.

**4. Deep Learning**

Neural Networks: Understand the architecture of neural networks, activation functions, and backpropagation.

Advanced Topics: Explore convolutional neural networks (CNNs), recurrent neural networks (RNNs), and generative adversarial networks (GANs).

**5. Specializations**

Natural Language Processing (NLP): Study text processing, sentiment analysis, and language models.

Computer Vision: Learn about image processing, object detection, and image segmentation.

Reinforcement Learning: Understand the basics of agent-environment interaction, reward systems, and policy learning.

**6. Practical Applications**

Projects: Build real-world projects to apply theoretical knowledge. Examples include chatbots, recommendation systems, and image classifiers.

Competitions and Challenges: Participate in platforms like Kaggle to gain practical experience and improve problem-solving skills.

**7. Advanced Studies and Research**

**Reading Research Papers:** Stay updated with the latest advancements by reading research papers and attending conferences.

**Online Courses and Certifications:** Enroll in advanced courses and obtain certifications from platforms like Coursera, edX, and Udacity.

**8. Ethics and AI Governance**

**Ethical AI:** Learn about the ethical implications of AI, including bias, fairness, and privacy concerns.

**AI Regulations:** Understand the regulatory landscape and compliance requirements related to AI deployment.

In short

While not every software engineer must learn AI, having a foundational understanding can significantly enhance one's career prospects and ability to contribute to cutting-edge projects. As AI continues to evolve, staying informed and acquiring relevant skills will be crucial for those looking to thrive in the tech industry. Whether through formal education, online courses, or self-study, embarking on the AI learning journey can be a rewarding endeavor for software engineers. | yogini16 | |

1,864,623 | The Colorful World of Iron Oxide Pigments | The Amazing World of Iron Oxide Pigments Introduction Are you fascinated by color and what it can... | 0 | 2024-05-25T06:35:57 | https://dev.to/safiyaaa/the-colorful-world-of-iron-oxide-pigments-2ogc | oxide | The Amazing World of Iron Oxide Pigments

Introduction

Are you fascinated by color and what it can do? Do you ever wonder about the different colors that we see around us and how they are made? If so, you'll be interested to know about the amazing world of iron oxide pigments. Iron oxide pigments are a type of substance that brings color to many different products and materials. They are used in a variety of applications, from making buildings look beautiful to coloring our food and even our makeup products. We will take a closer look at the wonderful world of and how they are used.

Advantages of utilizing iron oxide pigments

Iron Oxide Pigment are preferred for the selection of applications for their benefits being numerous.

To begin with, they are typically exceptionally durable, which means that after utilized, they shall undoubtedly really endure for stretches that are long diminishing.

Iron oxide pigments have become stable furthermore; they do not really separate or react along side other compounds, making them safe to make use of.

Also they are non-toxic, making them especially perfect for discovered in dishes and items which are cosmetic.

Finally, these are typically several of the most vibrant and colorful pigments available, helping to make them an option like color like fantastic.

Innovations within the iron oxide pigment industry

Today, there have been innovations which can be a few the iron oxide pigment industry, that have actually triggered along with this and a lot more pigments being vibrant.

One development like work like significant usage of advanced level technology like manufacturing that allows for greater accuracy into the manufacturing process.

Also, new iron like artificial pigments may actually have now been developed which will produce even brighter and more vivid colors, making them a perfect choice to be used into the color industry like cosmetic.

Protected and usage that's right of oxide pigments

You shall have to use iron oxide pigments precisely and properly to produce one of the most results that are effective.

First, find the proper kinds of iron oxide pigment for any application.

Next, adhere to the principles through the label to guarantee the pigment is blended and used properly.

This might make sure the pigment executes optimally and can supply the most outcomes that are readily of good use.

Also, it is important to help keep and manage Iron Oxide correctly plus in line due to the maker's directions, inside an awesome and spot like dry.

Application and Quality

Quality is critical with regards to iron oxide pigments.

Opt for top-quality brands services which can be offering are premium products, like those that meet industry criteria and conform to legislation.

This product quality like best of pigment may help to ensure your application is consistent and consistent, yielding outcomes which can be gorgeous.

Applications for iron oxide pigments are diverse, including paint, synthetic materials, and textiles, to say a couple of.

The thing like iron like important is using pigments efficiently is to understand to select the type like better of pigment for almost any application and in addition to work well with it precisely.

Conclusion:

In conclusion, the world of iron black oxide is vast and vibrant, offering a vast range of options for those seeking to use them. From the incredibly durable to the safe and non-toxic, iron oxide pigments are an excellent choice for a wide variety of applications. Whether you are using them to color cosmetics, fabric, or buildings, they provide brightness and long-lasting results. With the right quality, safety, and application knowledge, iron oxide pigments provide a colorful world of possibilities.

Source: https://www.sjzhuabangkc.com/iron-oxide-pigment160 | safiyaaa |

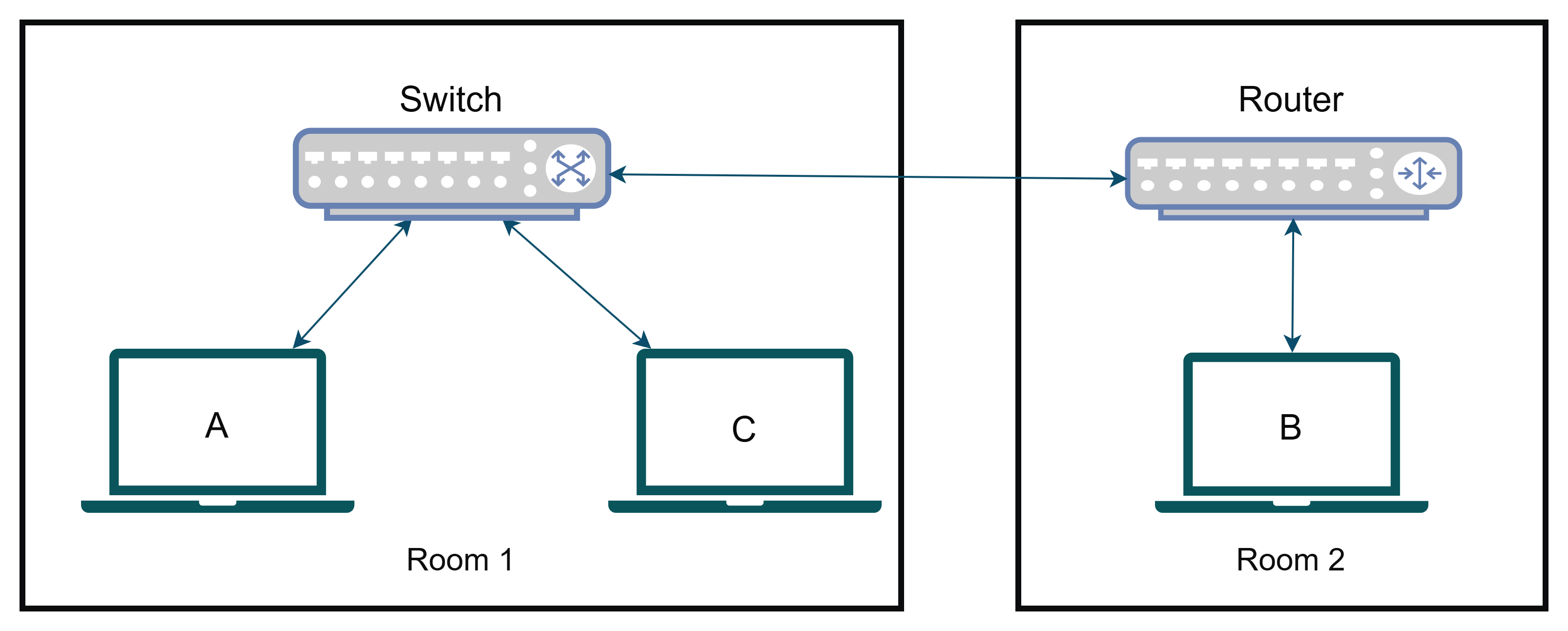

1,864,190 | Networking 101: Back to School | Hola! Hope your networks are stable and reliable. In this article let's get Back to School (the... | 0 | 2024-05-25T06:34:04 | https://dev.to/xpertr2/networking-101-back-to-school-1gk7 | sre, networking, ccna, linux | Hola! Hope your networks are stable and reliable. In this article let's get **_Back to School_** (the **Basics**), in order to later get into advanced networking topics.

## What is a network?

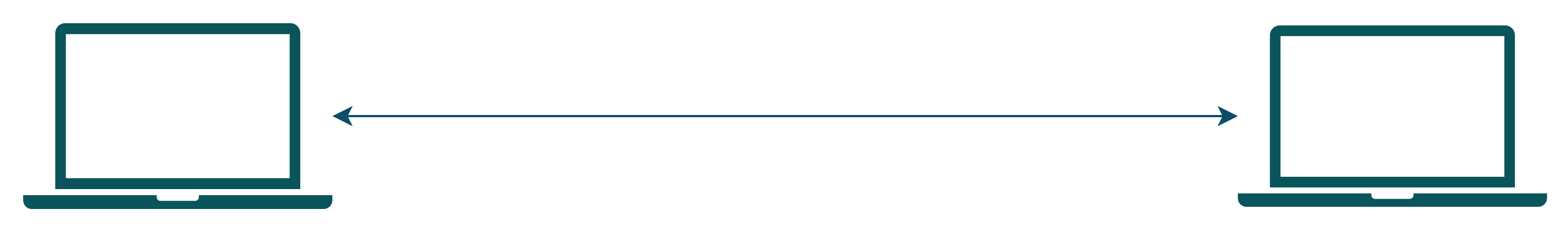

A network is interconnection between multiple devices enabling them to share data between each other. An example:

Hmmm... The network doesn't look that impressive. Right?

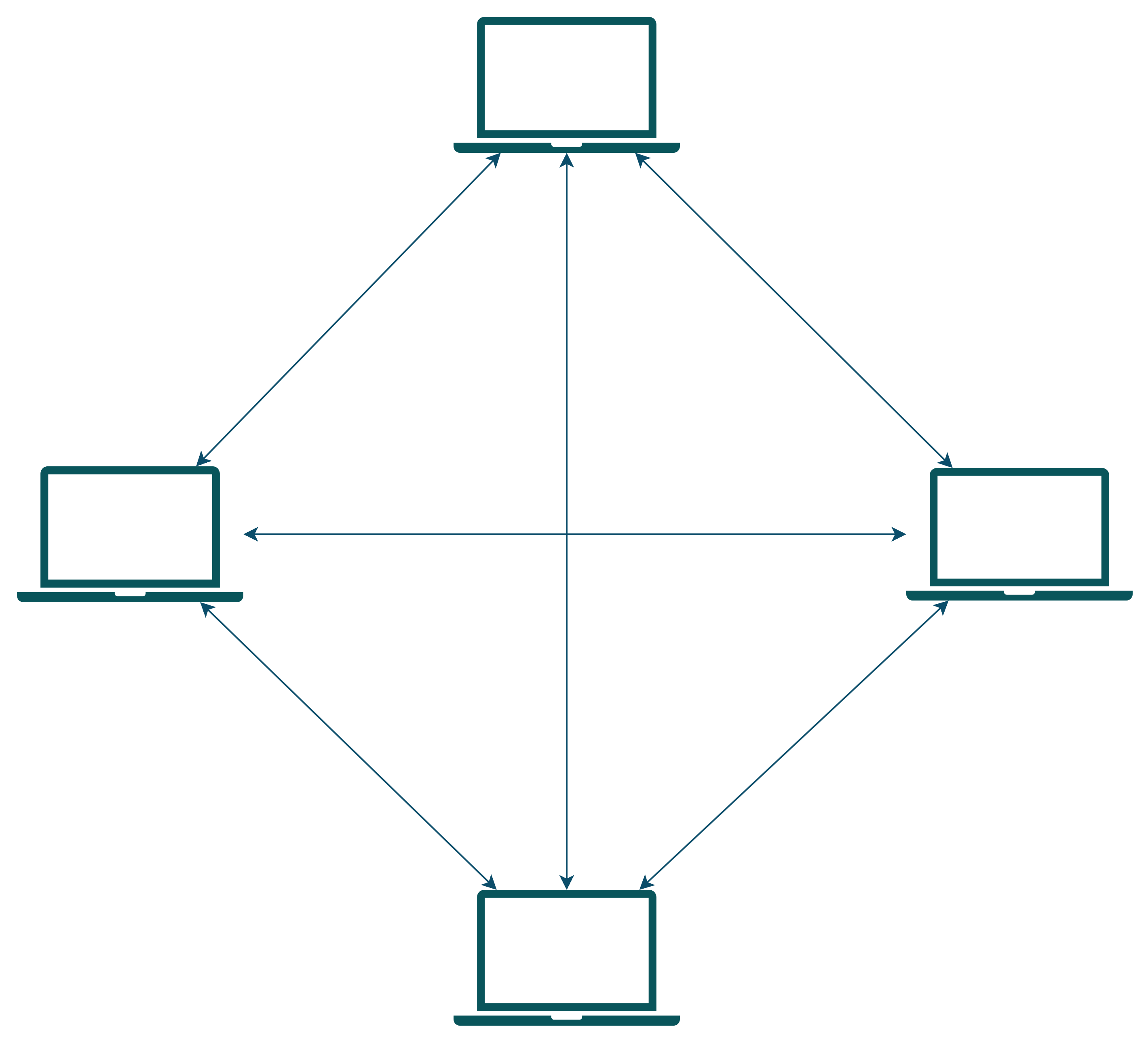

Maybe this is better.

Still not impressed. You must be thinking where the networking devices like a router or a switch or a bridge are, something should be there. But actually, for the above scenarios these networks will just do fine. There is no use of overengineering stuff and bringing-in extra networking devices which serves no actual purpose but can be a single-point of failure.

## Why internetworking? Why not a huge network like this?

Let's now understand why we don't create a huge network of directly/indirectly connected devices, but instead have interconnected smaller networks. And how broadcast and collision domains affect this decision.

### Collision

Let's understand what a **collision** is. In a network when two devices want to send data, they send signals (electromagnetic along the transmission medium). Now, if two devices try to send data at the same time in the same medium, the signals mix up or **collide** with each other and there is signal loss. When a collision occurs all the devices on the network has to pay attention to it, because nobody knows what the destination on the data signal that was collided. Also, now the data has to be re-transmitted. This **increases computation** for all devices, **increases latency** in the network.

> So, for any device the **smaller** the size of **collision domain** is, **lesser** is the chance of any **collision happening**.

### Broadcasting

Now, let's understand what is **broadcasting**. In broadcasting, a device is trying to send some data across the network, so that multiple devices can receive and acknowledge it. On a bigger network, there may be a few devices in this case who doesn't need this data. But still they have to see it, receive it, acknowledge it, check if it needs this data and lastly just drop the data received. For this device, we want to limit the broadcasting domain only to the devices that it actually needs to talk to, while will reduce the computation getting wasted on dropping un-intended data.

### Another common problem...

**Congestion**, it is the thing we as network admins have to always look after and try to reduce. Congestion is similar to traffic jams on the roads. Whenever, collision occurs the **packets** have to be retransmitted, on unnecessary broadcasts for the unintended devices there are packets travelling on the network, it will have to wait to send its own data. These are the common examples of congestion. Our goal is to somehow reduce this. Now, let's try to find ways of doing. For that we need to introduce few networking devices, so first let's learn about them.

## Understanding the devices

**Domains**. What is a domain? A domain is a region or in this case set of devices.

Types of domains in networking:

- **Collision Domain**

A collision domain for a device is the set of devices who if try to send a signal at the same time as this device, the signals collide.

- **Broadcast Domain**

A broadcast domain for a device is the set of devices who receives the packet broadcasted by this device.

> Collision domain is always a **subset** of the broadcast domain.

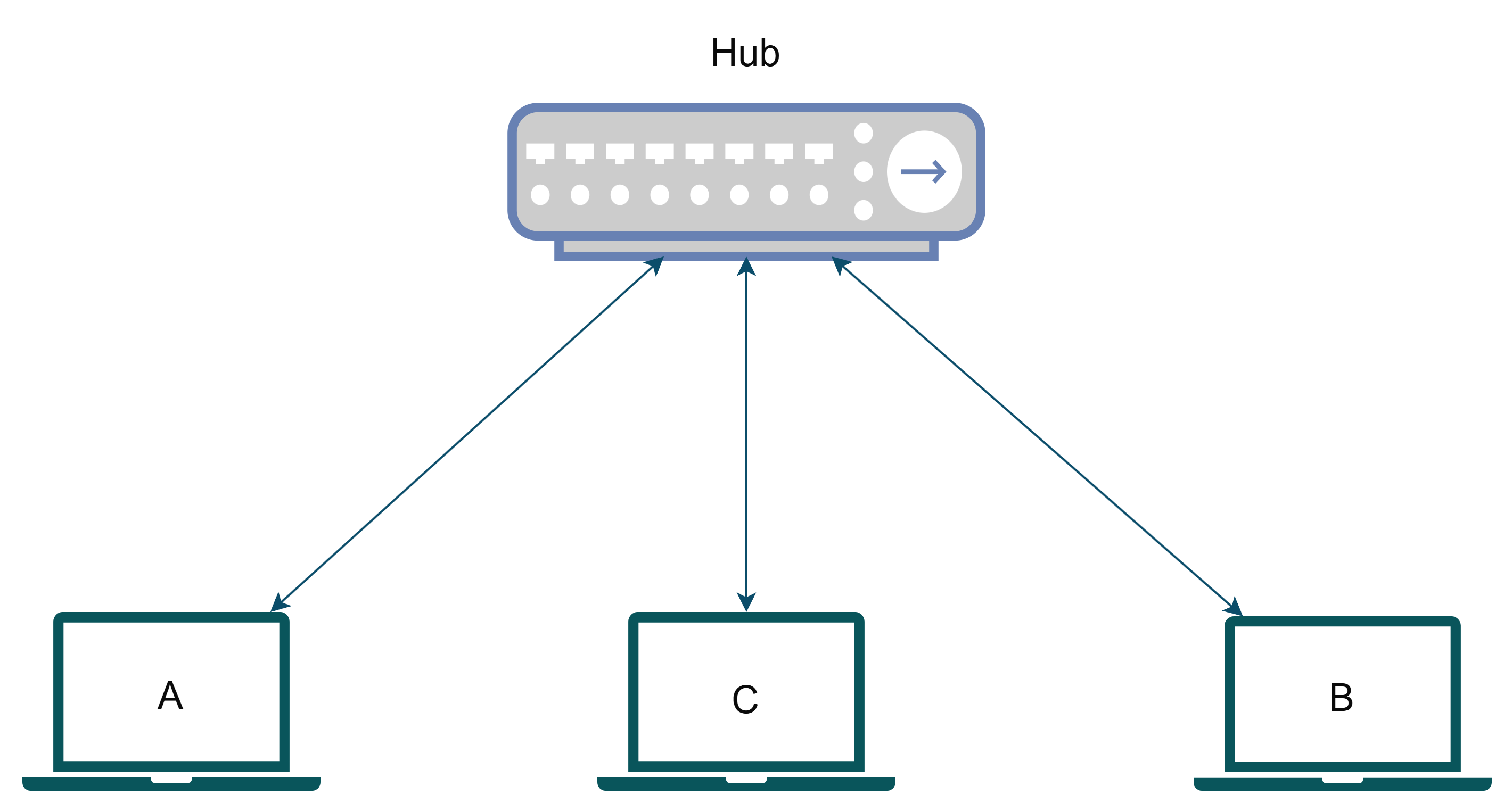

### Hub

A hub is a **dumb** network device with several ports used to connect multiple devices or small networks together. In a Hub, a packet received in any port is re-transmitted to the remaining ports. It doesn't read the packet or anything, it just regenerates the signals received on one port to the remaining that's it.

The devices A, B, C are all connected to this hub. Now, let's take an example where A wants to transmit to B. It sends a packet out on the network; it reaches the hub, and the hub retransmits on the remaining ports. So, B receives the packet successfully. Cool right? Not actually, because C will also receive the packet now it will have to do the computation that the packet was not intended for it and drop which was not something cool for device C. Here, both the collision domain and broadcast domain for **A** was **{A, B, C} only**.

> Hubs are cheap but always lead to congestion.

### Bridge

A bridge can be called an **intelligent** hub. The term ***"bridging"*** here simply refers to packets bridging over ports. Here, when a packet arrives in one port like hub, instead of being retransmitted to all the ports, the bridge transmits that to the port which it is destined for. I will not be going to show any bridge diagram below as nowadays we don't buy physical bridges. The bridge technology is rather used in switches and routers which we will talk after this.

> Cisco sometimes refers to their switches as multiport bridge, but now you won't get confused why.

> Bridges can be used to reduce collisions in a broadcast domain. It increases the number of collision domains and hence provides higher bandwidth for devices.

### Switch

A switch is an intelligent device which exactly knows which device or network is connected to each port and smartly routes/**bridges**/***switches*** the packets between incoming and destination ports. Here each port creates its own isolated collision domain thus **reducing congestion** in the network. But still as its just switching packets in same network, the broadcast domain remains same.

Let's modify our previous example to make it better.

Now let's analyze the devices.

**Device B:**

It is directly connected to a port on the switch. So, its collision domain is isolated to the port itself. That is the collision domain for it is **{B}**. That means collision will never occur hence using maximum available bandwidth to the switch. Can it talk to A? Yes, the packet first reaches the switch, the switch checks the destination of the packet and decides to send it to the port in which the hub is connected. The packet then arrives at the hub, and it transmits it to both A and B. Here, A successfully receives the packet, but B also receives it and then drops it.

**Device A, C:**

Both of them are connected to the hub, therefore their collision domain is **{A, C}**. They both can obviously talk with each other and can also talk to B via the reverse of the route we just discussed before.

> In this case, the broadcast domain remains same **{A, B, C}** for all the devices.

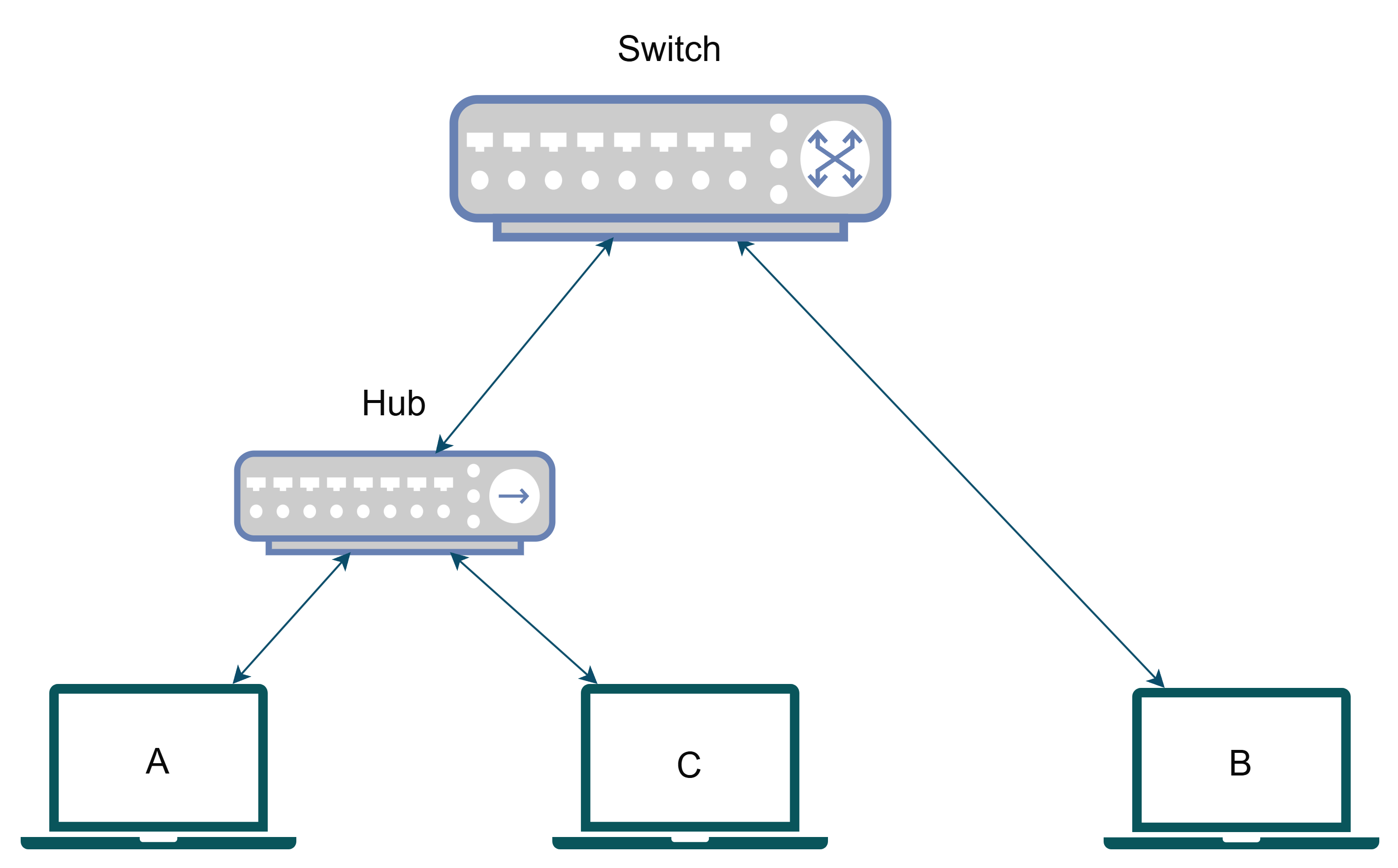

### Router

A router is a switch only. The difference is that a switch switches packets in a **single broadcast domain**. But a router is used to switch packets across **multiple broadcast domains**. Explained simply, a router is used to connect **multiple networks** (this also means it can connect several **smaller networks or sub-networks or subnets** within a **bigger network**. This can also be phrased as it can connect **multiple broadcast domains** within a **wider broadcast domain**). How a packet in one subnet would go another subnet, you may ask? Routers do something called NAT (Network Address Translation) to translate the source, destination addresses of the packets from one subnet to another. We will discuss about NAT in a later article.

Let's modify our example before. We had assumed that all the devices are in a same room. Let's modify it in a way A, C remains in one room and B is in another, each room using its own subnet. Also, as we got the chance to modify our network, let's make it better by replacing the hub with a switch.

Let's again analyze the devices.

**Device B:**

It is connected to a port on the router and therefore has an isolated collision domain **{B}** **eliminating congestion**. Can it talk to A or C? Yes, the packet the first goes to router the packet is **translated** to the subnet of **Room 1** and then sent to the switch, the switch then switches the packet **only to the intended device**. Here broadcast domain is only **{B}**

**Device A, C:**

Both of them are connected to the switch. A and C, they have their own isolated collision domains **{A}** and **{C}** respectively that they themselves are only present eliminating any collision. Can it talk to B? Yes, again through the reverse of the route discussed before. Broadcast domain for both of them is **{A, C}**.

> Switches create separate collision domains within a single broadcast domain.

> Routers provide a separate broadcast domain for each interface. Don’t let this ever confuse

you!

___

Let's end here for today. In the next article let's discuss network topologies, internetworking models, and a few common protocols.

Please comment below suggesting any changes, asking for any topic, or just hanging out in general. Also, pls reach out to me on my social channels.

[[GitHub]](https://github.com/sith-lord-vader) [[LinkedIn]](https://www.linkedin.com) [[Instagram]](https://www.instagram.com/xpertr2) [[YouTube]](https://www.youtube.com/@xpertdev) | xpertr2 |

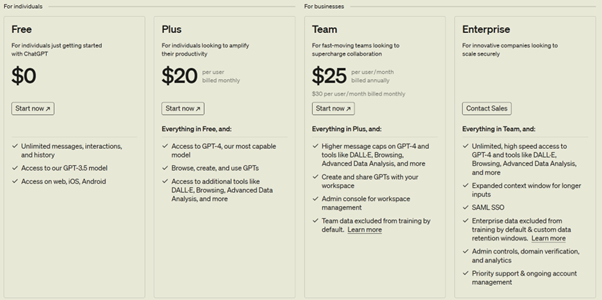

1,856,674 | GitHub Copilot vs ChatGPT | In this article I would like to analyze thedifferences between two tools that seem to overlap: GitHub... | 0 | 2024-05-25T06:30:00 | https://dev.to/tommasodotnet/github-copilot-vs-chatgptgithub-4kb5 | openai, githubcopilot, chatgpt | In this article I would like to analyze thedifferences between two tools that seem to overlap: GitHub Copilot and ChatGPT. What are the fundamental differences between the two? Which one to choose? And do you really have to choose?

## ChatGPTChatGPT

Let's start by analyzing ChatGPT. It is a web portal where you can start a chat with a Large Language Model (LLM). There are several [ChatGPT tiers](https://openai.com/chatgpt/pricing):

With the free tier, we have a fairly minimal experience that allows us to interact with the GPT-3.5 model.

Things start to get interesting from the Plus tier, which already offers us the possibility to interact with the GPT-4 model. This also gives us access to the web. Access to the web is important, because when we ask a question to the model, it is able to answer based on "native knowledge" derived from its training. Let's say that the model has been trained with data from the web up to 2021, and we ask it who the Prime Minister of the United Kingdom is, it would answer Boris Johnson (the Prime Minister in office at that time). If we gave the same model access to the web, it would be able to give us the exact answer: Rishi Sunak (Prime Minister in office at the time of writing this article).

The third team, in addition to interacting with other models such as DALL-E, adds the possibility that the data sent through the requests will not be used to retrain the model.

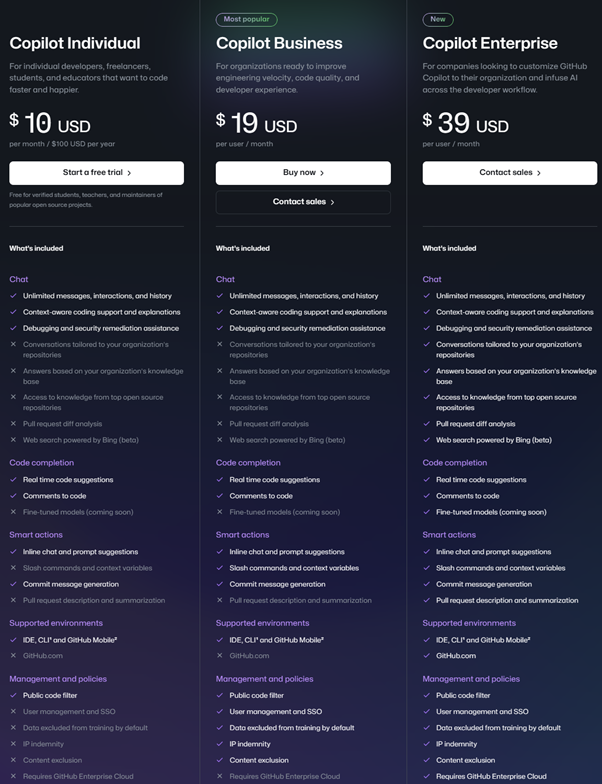

## GitHub Copilot

GitHub Copilot is a fine-tuning of the GPT-4 model for code. Fine-tuning refers to the ability to train a model by specializing it for a specific scenario, in this case working on code. The basic capabilities are therefore the same as GPT-4, which is already highly capable of working on code, with a specific specialization on this feature.

Just like ChatGPT, GitHub Copilot also offers [different pricing tiers](https://github.com/features/copilot/plans).

It can be observed how for the tiers Individual and Business the difference in features is mostly related to "Management and policies". The Individual tier is aimed at individual users, while the Business tier targets more corporate scenarios, where centralized user and policy management provides a significant advantage for tool administrators.

I will dedicate a separate paragraph to the Enterprise tier later in this article.

## Terms and Conditions

Another fundamental difference between the two tools can be found in the Terms & Conditions (T&C). GitHub Copilot's terms and conditions of use ensure that the underlying model will not be retrained using user-inputted data. Essentially, even in the Individual tier, when GitHub Copilot analyzes your code to provide you with answers and suggestions, it does not use the portions of code analyzed to retrain its algorithm, thereby preserving intellectual property.

Regarding ChatGPT, this applies starting from the Team tier.

From the perspective of Copilot in Edge, however, Commercial Data Protection is guaranteed for the types of accounts listed in the first paragraph [of this link](https://learn.microsoft.com/en-us/copilot/manage), and only when accessing with the company account and not the personal account.

Due to data protection concerns, for professional use, I would never recommend a tier that does not offer data protection functionalities, for this purpose from now on we will consider a comparison between the different tiers of GitHub Copilot and ChatGPT Team.

## IDE Integration

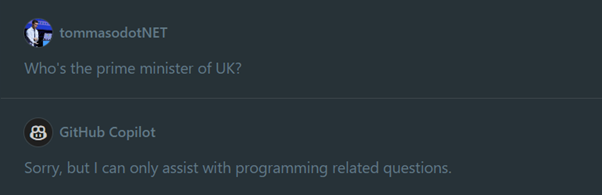

The main advantage of GitHub Copilot is the integration with the IDE: it is in fact born as a tool to suggest code in real time to the developer as he or she writes code. It infers from the context of what has already been written and what is being written to suggest entire portions of code in a pro-active way.

Over time, GitHub Copilot has evolved by adding several features, in addition to the Code Completion we just talked about: Chat and Smart Actions.

We can imagine the Chat feature as an implementation of a ChatGPT-like scenario. However, being a model specialized in code, the field is therefore restricted: asking GitHub Copilot who the Prime Minister of the United Kingdom is, it will respond:

If ChatGPT can answer both code and general questions, what's the advantage of using GitHub Copilot over ChatGPT?

Keeping in mind that this question compares a feature of a larger product (GitHub Copilot is not just its chat) to a complete product (ChatGPT is a chat service), the strengths of GitHub Copilot lie in its integration with the IDE.

Without leaving the Visual Studio Code screen (or Visual Studio or JetBrains), we can select portions of code and ask direct questions to our pair programming assistant. From the backend perspective, the question posed to the model will therefore contain:

- **our context**: the selected code

- **our question**, for example "explain to me what this portion of code does"

- **the system prompt**: the system prompt is a basic prompt that has been defined and written on the backend and surrounds the question we have asked. They are the basic instructions. In the simplest cases, we can think of the system prompt as a series of basic indications such as "You are a very helpful virtual assistant in helping. You always try to explain things in a complete but never verbose way and you are able to schematize complex concepts in order to make their understanding easier". This is a remarkably simple system prompt, GitHub Copilot's will clearly be more complex and will contain instructions such as "Only respond to questions related to the programming world", which generates responses like the one in the screenshot above.

This system prompt is one of the strengths of GitHub Copilot: the code generated by the tool is not directly passed on to the end user, but is filtered and double-checked in order to avoid scenarios of [prompt-injection](https://owasp.org/www-project-top-10-for-large-language-model-applications/Archive/0_1_vulns/Prompt_Injection.html) (a concept similar to SQL injection, but which applies to prompt engineering scenarios).

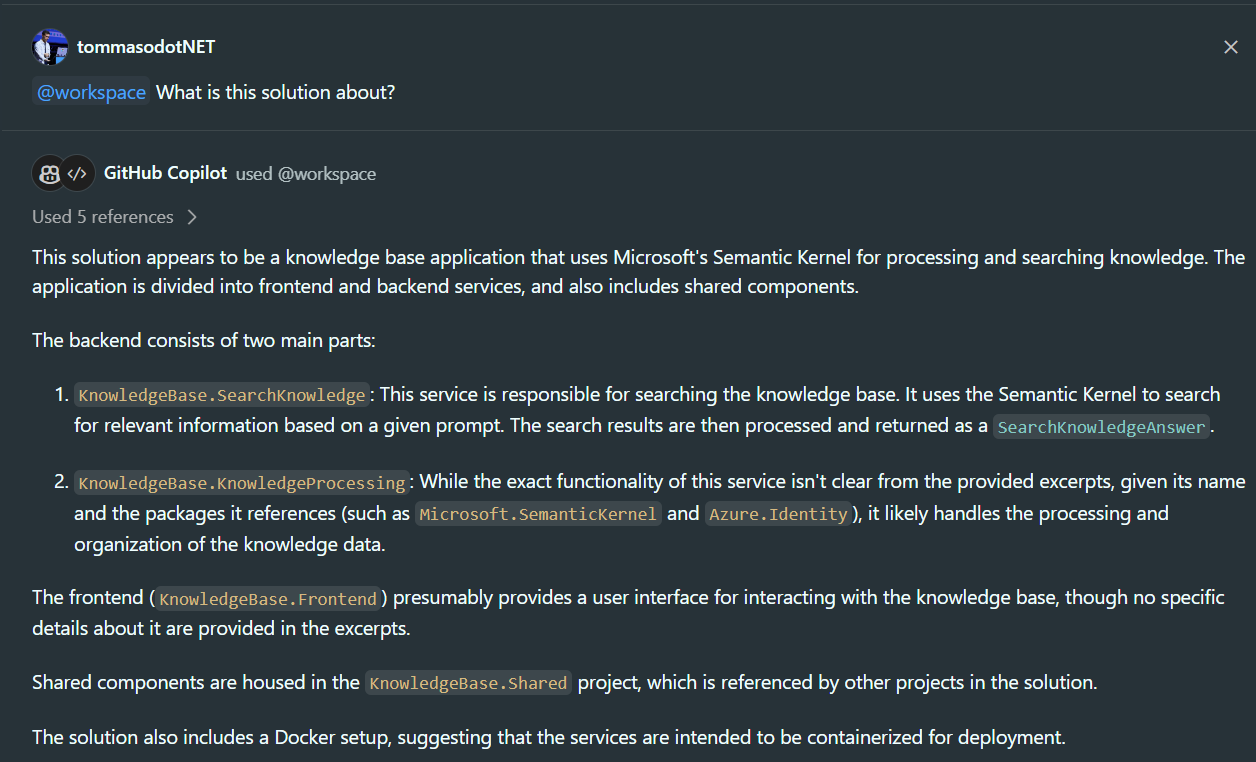

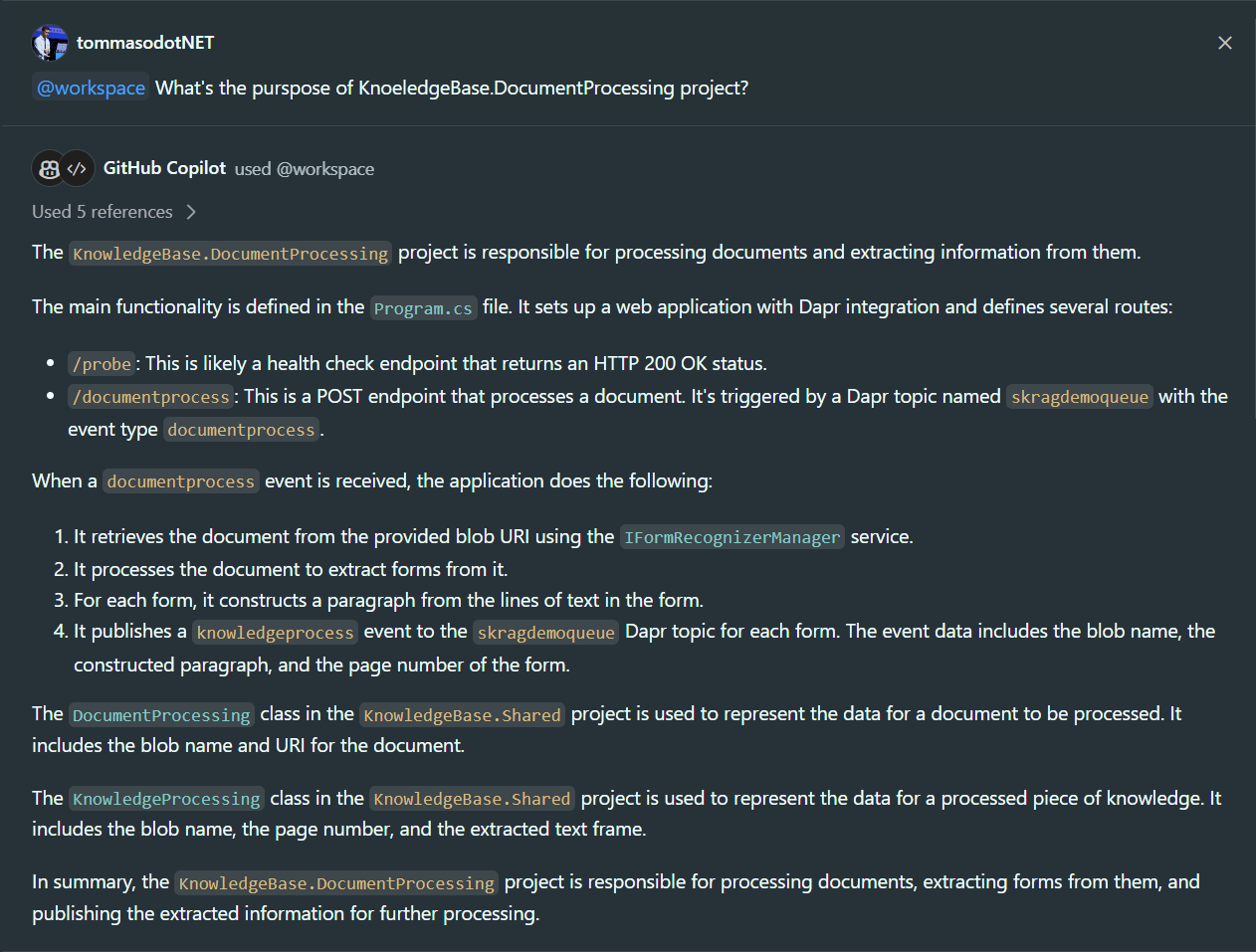

Even more important than the system prompt is GitHub Copilot's ability to access the extended context of our IDE. The context is formed by the files open in tabs and the files present in the open folder. In fact, there is the possibility, through the @workspace keyword, to ask broader questions about the entire repository that is open.

In the two screenshots above, we can see how GitHub Copilot Chat is able to analyze the entire structure of the folders, without having to specifically select portions of code, and provide me with the exact answers. In the second case, it is even able to understand the intended usage of certain services that I have described and how the APIs that I have defined work. It can also generate links to files so that they can be accessed directly without having to navigate the structure of my repository.

Taking other Visual Studio Code extensions that integrate a GPT model with our IDE as an example, functions are inherently more limited: [Visual chatGPT Studio - Visual Studio Marketplace](https://marketplace.visualstudio.com/items?itemName=jefferson-pires.VisualChatGPTStudio). As we can see in this case, the offered features only cover a subset of the functionalities related to the selection of code sections and the ability to ask questions about them.

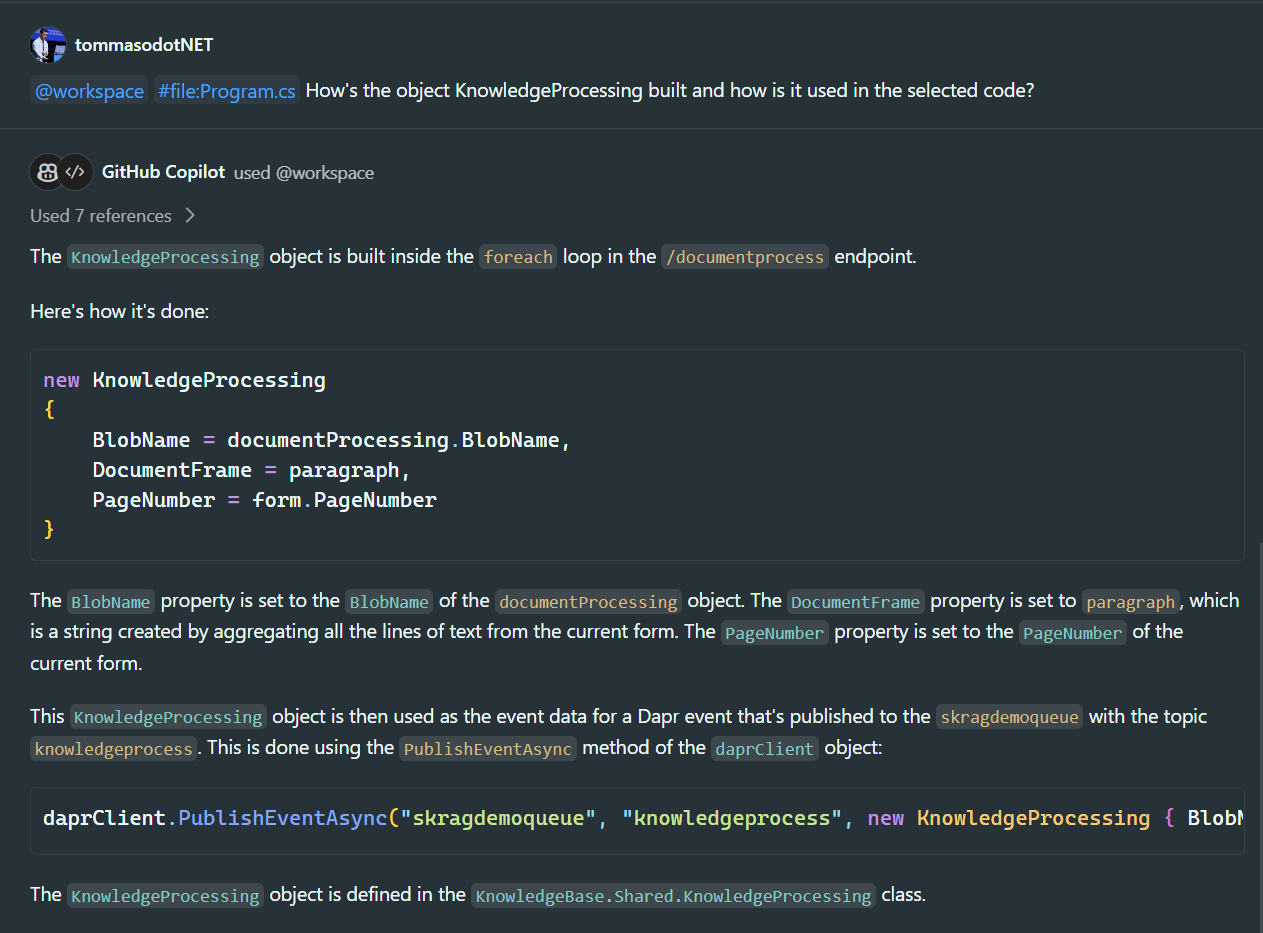

But let's analyze an even more complex scenario than what we have seen so far: let's assume that we have two microservices communicating with each other through a queue. In addition to the "@workspace" tag, I also use the tag "#file:" to enrich the context of the chat by inserting, in addition to the selected code, another file. This way, I can ask how the event managed by microservice 2 is formatted inside microservice 1:

## What is meant by "programming questions"?

It is interesting to focus on GitHub Copilot's narrowed operational context. When it's said that the tool is able to answer programming questions, we should not only think about the world of code in the narrowest sense.

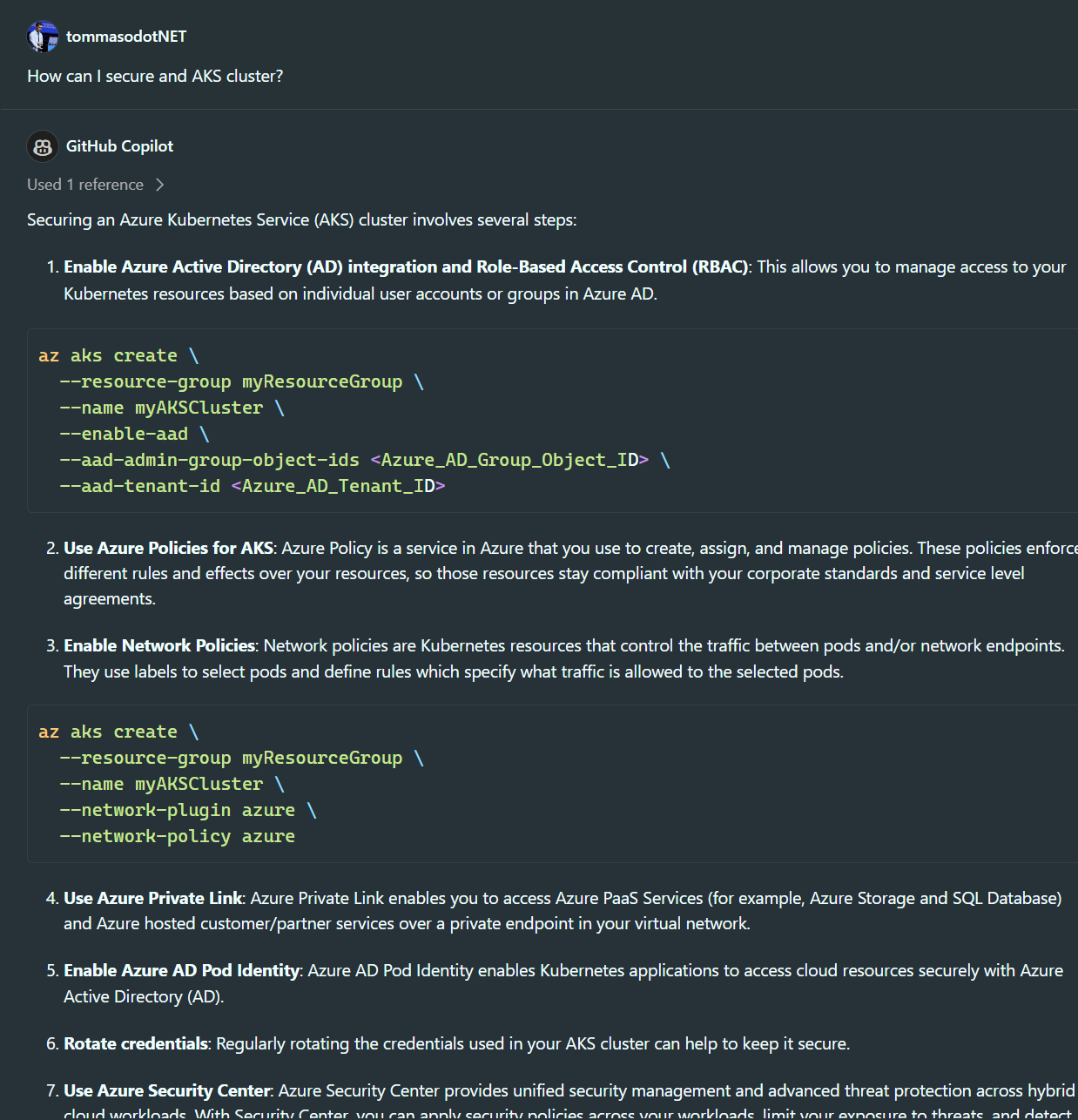

We are also able to ask questions about frameworks, third-party tools we use in the code, and the hosting architecture of an application, such as "How can I create a secure AKS cluster?"

Therefore, general questions such as "What are the general principles of quantum mechanics?" are excluded. Questions that do not pertain in any way, even from an architectural point of view, to writing code.with the writing of code are therefore excluded.

However, if we need answers on certain topics in order to conduct an analysis for the code we are writing, do we have alternatives to obtain such answers? We can safely use more general tools such as ChatGPT or tools that natively have access to the web to provide answers such as Copilot on Edge.

## GitHub Copilot EnterpriseGitHub Copilot Enterprise

In Enterprise tier, the gap in terms of features grows. In addition to normal Chat and Code completion (also present in the first two tiers), some truly interesting features are added: