id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,864,365 | Understanding Data Manipulation Language(DML) statements in SQL | Data Manipulation Language (DML) statements are those SQL statements we use to insert, update, or... | 0 | 2024-05-24T22:58:11 | https://dev.to/kellyblaire/understanding-the-data-manipulation-languagedml-statements-in-sql-23f5 | databaseoperation, sql, sqlserver, dml | Data Manipulation Language (DML) statements are those SQL statements we use to insert, update, or delete data in tables (e.g., INSERT, UPDATE, DELETE).

In this article, I am going to show you how to use the INSERT, UPDATE, and DELETE statements in SQL, including use cases, examples, and professional information to aid in learning and provide practical examples.

**INSERT Statement**

The INSERT statement is used to add new rows of data into a table. It is a fundamental operation in SQL and is part of the Data Manipulation Language (DML) subset.

**Use Cases:**

- Adding new records to a table (e.g., new customers, orders, products)

- Populating a newly created table with initial data

- Inserting data from one table into another (combined with SELECT)

**Syntax:**

```sql

INSERT INTO table_name (column1, column2, ...)

VALUES (value1, value2, ...);

```

**Examples:**

1. Insert a new row into the "Customers" table:

```sql

INSERT INTO Customers (CustomerName, City, Country)

VALUES ('John Doe', 'New York', 'USA');

```

2. Insert multiple rows into the "Orders" table:

```sql

INSERT INTO Orders (OrderID, CustomerID, OrderDate)

VALUES

(1001, 101, '2023-05-01'),

(1002, 102, '2023-05-02'),

(1003, 103, '2023-05-03');

```

3. Insert data from a SELECT query (using a subquery):

```sql

INSERT INTO BackupCustomers

SELECT * FROM Customers;

```

**Professional Tips:**

- Use column lists to specify the columns you want to insert data into, ensuring the values match the column order.

- Be cautious when omitting columns with default values or auto-incrementing keys, as the DBMS may handle them differently.

- Consider using transactions when inserting multiple rows to ensure data integrity in case of errors or failures.

- Optimize INSERT statements by batching or bulk inserting data instead of individual inserts for better performance.

**UPDATE Statement**

The UPDATE statement is used to modify existing data in a table. It allows you to change the values of one or more columns for selected rows that match a specified condition.

**Use Cases:**

- Updating customer information (e.g., address, phone number)

- Modifying product prices or stock quantities

- Correcting data entry errors or inconsistencies

**Syntax:**

```sql

UPDATE table_name

SET column1 = value1, column2 = value2, ...

WHERE condition;

```

**Examples:**

1. Update the email address for a specific customer:

```sql

UPDATE Customers

SET Email = 'newemail@example.com'

WHERE CustomerID = 101;

```

2. Update multiple columns with different values:

```sql

UPDATE Products

SET Price = Price * 1.1, StockQuantity = StockQuantity - 10

WHERE ProductID IN (101, 102, 103);

```

3. Update rows based on a subquery:

```sql

UPDATE Orders

SET ShipDate = CURRENT_DATE()

WHERE OrderID IN (

SELECT OrderID

FROM Orders

WHERE ShipDate IS NULL

);

```

**Professional Tips:**

- Always include a `WHERE` clause to specify the rows you want to update; omitting it will update all rows in the table.

- Use transactions when updating critical data to ensure data integrity and rollback capability in case of errors.

- Consider indexing the columns used in the `WHERE` clause for better performance on large tables.

- Test your UPDATE statements thoroughly, especially when updating multiple columns or using subqueries, to avoid unintended data modifications.

**DELETE Statement**

The DELETE statement is used to remove existing rows from a table. It allows you to delete specific rows that match a specified condition or all rows from a table.

**Use Cases:**

- Removing obsolete or unnecessary data (e.g., old customer records, expired products)

- Deleting test data or temporary records

- Truncating a table by deleting all rows

**Syntax:**

```sql

DELETE FROM table_name

WHERE condition;

```

**Examples:**

1. Delete a specific customer record:

```sql

DELETE FROM Customers

WHERE CustomerID = 101;

```

2. Delete all orders for a specific customer:

```sql

DELETE FROM Orders

WHERE CustomerID = (

SELECT CustomerID

FROM Customers

WHERE CustomerName = 'John Doe'

);

```

3. Delete all rows from a table (truncate):

```sql

DELETE FROM TemporaryTable;

```

**Professional Tips:**

- Use the `WHERE` clause to specify the rows you want to delete; omitting it will delete all rows in the table.

- Be extremely cautious when deleting data, as it is a permanent operation (unless using transactions or backups).

- Consider using transactions when deleting critical data to ensure data integrity and rollback capability in case of errors.

- Truncating a table (deleting all rows) can be faster than deleting rows one by one, but it cannot be rolled back and may cause issues with identity columns or triggers.

- Indexing the columns used in the `WHERE` clause can improve performance for large tables.

**Practical Examples:**

1. **Customer Management System:**

- INSERT new customers into the "Customers" table when they sign up.

- UPDATE customer information (address, phone number) when they change their details.

- DELETE customer records when they request to close their accounts.

2. **E-commerce Order Processing:**

- INSERT new orders into the "Orders" table when customers place an order.

- UPDATE order status (e.g., "Shipped") when the order is processed.

- DELETE order records after a certain period (e.g., 2 years) for archiving purposes.

3. **Inventory Management:**

- INSERT new products into the "Products" table when new items are added to the inventory.

- UPDATE product prices or stock quantities based on supplier changes or sales.

- DELETE product records when items are discontinued or removed from the inventory.

4. **Data Warehousing and Analytics:**

- INSERT new data into staging tables or data marts for analysis.

- UPDATE dimension tables with slowly changing dimensions (e.g., customer addresses, product categories).

- DELETE outdated or obsolete data from fact tables or data marts during data refreshes.

**Wrapping Up**

In this lesson, we covered three essential SQL statements: INSERT, UPDATE, and DELETE, which are part of the Data Manipulation Language (DML) subset. These statements allow us to manage data in databases by adding, modifying, and removing rows from tables.

The INSERT statement is used to add new rows of data into a table. We learned its syntax, common use cases, and best practices, such as using column lists, considering default values and auto-incrementing keys, batching inserts for better performance, and using transactions for data integrity.

The UPDATE statement is used to modify existing data in a table by changing the values of one or more columns for selected rows that match a specified condition. We discussed its syntax, use cases like updating customer information or product prices, and tips like always including a WHERE clause, using transactions for critical data, indexing columns used in the WHERE clause, and thoroughly testing updates before execution.

The DELETE statement is used to remove existing rows from a table based on a specified condition or all rows. We covered its syntax, use cases such as removing obsolete data or truncating tables, and tips like using the WHERE clause to specify rows to delete, being cautious as deletion is permanent, considering transactions, and indexing columns used in the WHERE clause.

Throughout the lesson, we explored practical examples demonstrating the application of these statements in scenarios like customer management systems, e-commerce order processing, inventory management, and data warehousing and analytics.

Mastering these fundamental SQL statements is essential for effective data manipulation and maintenance in various applications and scenarios. By understanding their syntax, use cases, and best practices, you can confidently manage data in databases, ensuring data integrity, consistency, and accuracy. | kellyblaire |

1,864,364 | CodeCompanion is launching : Take your documentation to the next level | I'm excited to announce that CodeCompanion is ready! CodeCompanion is an AI-powered tool that makes... | 0 | 2024-05-24T22:46:20 | https://dev.to/lotfijb/codecompanion-is-launching-take-your-documentation-to-the-next-level-1d8d | ai, webdev, productivity, nextjs | I'm excited to announce that [CodeCompanion](https://codecompanion-trial.vercel.app/) is ready!

CodeCompanion is an AI-powered tool that makes documenting your code a breeze. It integrates seamlessly with platforms like GitHub and GitLab, taking care of all your documentation and commenting needs.

Your code will be perfectly documented by just a click!

With CodeCompanion, collaboration becomes smoother and sharing code easier than ever before. Whether you're working solo or with a team.

Our beta launch is tomorrow, and you can join over 400 developers already on the waitlist. Don't miss out on the chance to experience a new era of code documentation.

[Sign up here](https://codecompanion-trial.vercel.app/)

Let's make code documentation effortless!

| lotfijb |

1,864,363 | Constraints in SQL: Enforcing Data Integrity | In SQL, data integrity is of paramount importance. Constraints are rules or conditions that are... | 0 | 2024-05-24T22:41:22 | https://dev.to/kellyblaire/constraints-in-sql-enforcing-data-integrity-3pa1 | sql, database, dataintegrity, constraints | In SQL, data integrity is of paramount importance. Constraints are rules or conditions that are applied to columns or tables in a database to maintain data integrity and enforce business rules. SQL provides several types of constraints to ensure the accuracy, consistency, and reliability of data stored in a database.

**1. PRIMARY KEY Constraint**

A primary key is a column or a combination of columns that uniquely identifies each row in a table. It is the most fundamental constraint in relational databases, as it ensures that every row can be uniquely identified. A primary key constraint has two main characteristics:

- **Uniqueness**: The values in the primary key column(s) must be unique across all rows in the table. No two rows can have the same primary key value.

- **Not Null**: The primary key column(s) cannot contain null values. Each row must have a valid primary key value.

The primary key constraint is typically defined during the table creation using the `PRIMARY KEY` keyword. For example:

```sql

CREATE TABLE Customers (

CustomerID INT PRIMARY KEY,

Name VARCHAR(50),

Email VARCHAR(50)

);

```

In this example, the `CustomerID` column is defined as the primary key for the `Customers` table.

**2. FOREIGN KEY Constraint**

A foreign key is a column or a combination of columns that references the primary key or a unique key of another table. It establishes a link or relationship between two tables, ensuring referential integrity. When a foreign key value is inserted or updated in the referencing table, it must match an existing value in the referenced table's primary or unique key column(s).

The foreign key constraint is defined using the `FOREIGN KEY` keyword, specifying the referenced table and column(s). For example:

```sql

CREATE TABLE Orders (

OrderID INT PRIMARY KEY,

CustomerID INT FOREIGN KEY REFERENCES Customers(CustomerID),

OrderDate DATE

);

```

In this example, the `CustomerID` column in the `Orders` table is a foreign key that references the `CustomerID` column (primary key) in the `Customers` table. This constraint ensures that every `CustomerID` value in the `Orders` table corresponds to an existing `CustomerID` value in the `Customers` table, maintaining referential integrity.

**3. UNIQUE Constraint**

The `UNIQUE` constraint ensures that the values in a column or a combination of columns are unique across all rows in the table. It prevents the insertion or update of duplicate values in the specified column(s). Unlike the primary key constraint, the `UNIQUE` constraint allows null values unless combined with the `NOT NULL` constraint.

The `UNIQUE` constraint can be defined during table creation or added to an existing table using the `ALTER TABLE` statement. For example:

```sql

CREATE TABLE Employees (

EmployeeID INT PRIMARY KEY,

Email VARCHAR(50) UNIQUE

);

```

In this example, the `Email` column in the `Employees` table has a `UNIQUE` constraint, ensuring that each email address is unique across all employees.

**4. NOT NULL Constraint**

The `NOT NULL` constraint specifies that a column cannot accept null values. It helps maintain data integrity by ensuring that specific columns always have a value. When a `NOT NULL` constraint is applied to a column, any attempt to insert or update a row with a null value in that column will result in an error.

The `NOT NULL` constraint can be defined during table creation or added to an existing table using the `ALTER TABLE` statement. For example:

```sql

CREATE TABLE Products (

ProductID INT PRIMARY KEY,

ProductName VARCHAR(50) NOT NULL,

Price DECIMAL(10, 2)

);

```

In this example, the `ProductName` column in the `Products` table has a `NOT NULL` constraint, ensuring that every product must have a name specified.

**Combining Constraints**

Constraints can be combined to enforce multiple rules on a column or a set of columns. For example, you can define a primary key as both `PRIMARY KEY` and `NOT NULL` to ensure uniqueness and non-nullability. Additionally, you can define a column as `UNIQUE` and `NOT NULL` to ensure uniqueness and non-nullability without making it a primary key.

```sql

CREATE TABLE Students (

StudentID INT PRIMARY KEY NOT NULL,

Name VARCHAR(50) NOT NULL,

Email VARCHAR(50) UNIQUE NOT NULL

);

```

In this example, the `StudentID` column is defined as the primary key with both `PRIMARY KEY` and `NOT NULL` constraints. The `Name` column has a `NOT NULL` constraint, and the `Email` column has both `UNIQUE` and `NOT NULL` constraints.

**Wrapping Up**

Constraints are essential for maintaining data integrity and enforcing business rules in SQL databases. The primary key, foreign key, unique, and not null constraints are powerful tools that help ensure the accuracy, consistency, and reliability of data stored in a database. By understanding and applying these constraints appropriately, developers can create robust and reliable database systems that meet the requirements of their applications. | kellyblaire |

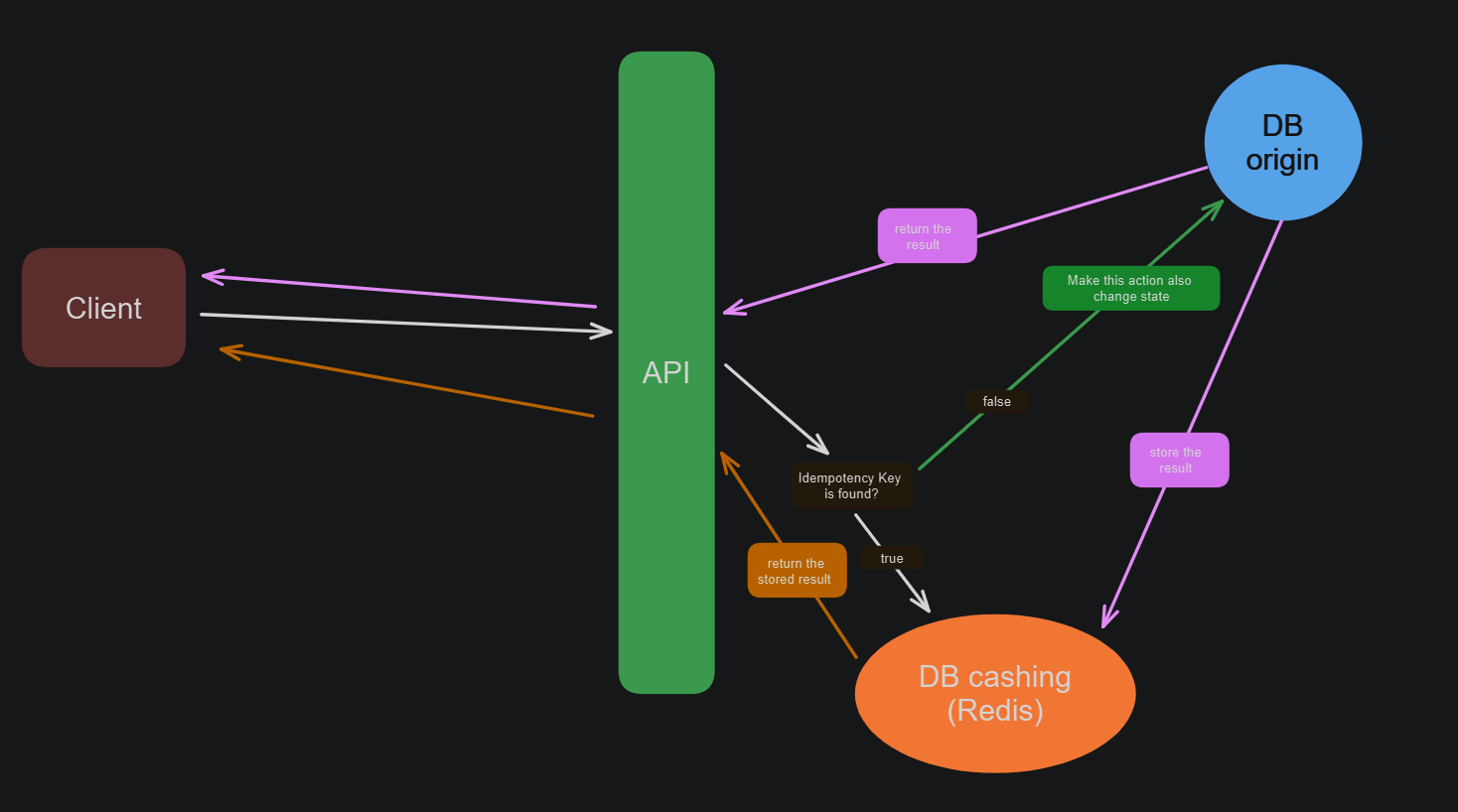

1,863,457 | Could Micro Services Be a Good Fit for Micro SaaS? | Could Microservices Be a Good Fit for Micro SaaS Startups? As a solo developer, I've been... | 0 | 2024-05-24T22:31:59 | https://dev.to/zachbenson/could-microservices-be-a-good-fit-for-micro-saas-startups-47hg | microservices, webdev, tutorial, productivity | ## Could Microservices Be a Good Fit for Micro SaaS Startups?

As a solo developer, I've been intrigued by the potential of microservices for small SaaS and micro SaaS projects. Balancing the constraints of limited resources with the option to scale quickly, I've been contemplating whether microservices could be a good fit for smaller projects. The consensus? It's a bit obvious - it promising, if you can overcome the inherent challenges of microservices without expending substantial development time. With that being said I would like to share what I have found while developing my own micro service boilerplate and hopefully help you get there faster.

## The Appeal of Microservices for Micro SaaS Startups

Microservices architecture breaks down applications into small, independent deployable services, each responsible for a specific piece of functionality. This modular approach offers several compelling advantages for micro SaaS developers:

- **Scalability**: Startups can scale individual services based on demand without scaling the entire application. This efficient resource use is crucial when budgets are tight.

- **Flexibility and Agility**: Each microservice can be developed, deployed, and scaled independently, allowing startups to iterate quickly and adapt to market changes with minimal disruption.

- **Fault Isolation**: Issues in one service do not necessarily affect the entire application, leading to more resilient systems and better uptime.

- **Technology Diversity**: Teams can choose the best technology stack for each service, enabling experimentation with new technologies that could give a competitive edge.

- *My interest* in microservices is specifically the flexibility and technology diversity.

## Overcoming the Overhead of Microservices

Despite the benefits, microservices come with their own set of challenges, particularly for startups with limited resources and experience in managing distributed systems. However, these challenges can be mitigated with the right strategies and tools.

### Challenge 1: Complexity of Distributed Systems

**Solution: Leverage Automation and Orchestration Tools**

- **Automated Deployment**: Use tools like Docker for containerization and Kubernetes for orchestration to manage deployment and scaling. These tools simplify the process of managing multiple services, making the system more robust and easier to handle.

- Containerization with [Docker](https://www.docker.com/) can be slow to start if you are learning, but once you have it down containerizing each micro-service will come pretty quickly.

- Orchestration with [Kubernetes](https://kubernetes.io/) is simple to set up, but there are some challenges to managing resource usage in a cost effective way.

### Challenge 2: Ensuring Effective Communication

**Solution: Standardize Communication Protocols**

- **API Gateways**: Use an API gateway to manage and route requests between clients and services, providing a single entry point for the system. This simplifies client-side logic and improves security.

- **Event-Driven Architecture**: Implement asynchronous communication using message brokers (e.g., RabbitMQ, Kafka) to decouple services and improve system resilience.

- *My pick* is using an API gateway, as it has the added benefit of protecting all of your backend api's behind one end point where you can implement authentication/authorization, rate limiting, and reverse proxies.

### Challenge 3: Managing Data Consistency

**Solution: Embrace Eventual Consistency**

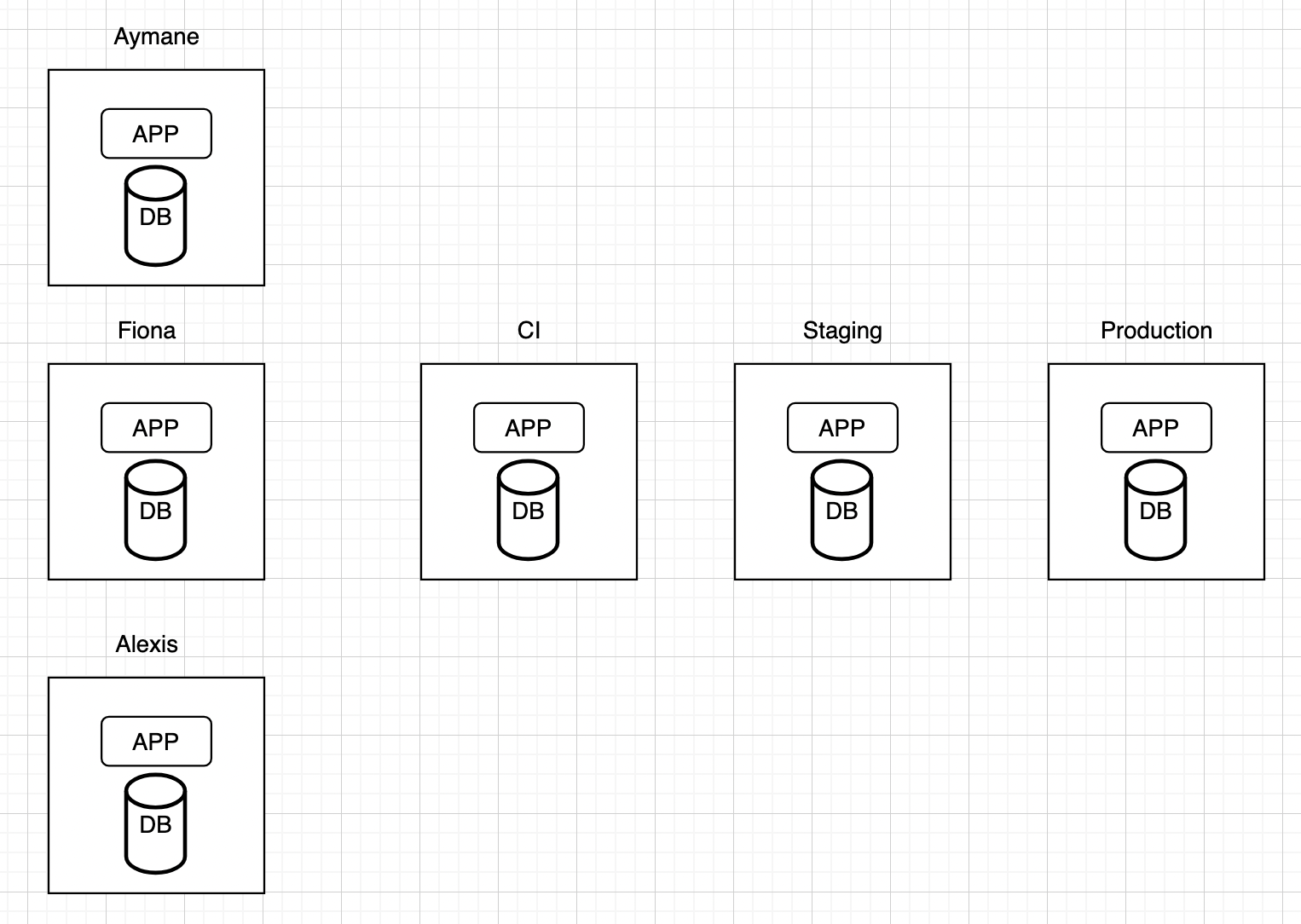

- **Shared Database**: Allow multiple services use the same database. It simplifies ensuring data consistency but increases coupling between services and reduces their autonomy. This is a particularly good fit for smaller solo developers or small teams because communication between service owners is a bit simpler.

- **Database Per Service**: Adopt a database per service pattern to ensure services are loosely coupled, which allows each service to manage its own data independently.

- *My pick* is using a shared database that is its own service. As a solo dev it is straight forward enough to keep track of the changes services have on the database. With it being its own service the api calls are mostly what change.

### Challenge 4: Finding the Right Service Boundaries

**Solution: Start Simple and Iterate**

- **Domain-Driven Design (DDD)**: Embrace DDD principles to carve out precise service boundaries aligned with your business functions. Kick off with a broad decomposition and refine iteratively as your understanding of the application's needs evolves.

- **Leverage Common Solutions**: Take a moment to survey the landscape. In the realm of SaaS and micro SaaS, certain fundamental services consistently appear: authentication, user management, email handling, and payment processing. Rather than reinventing the wheel for these essentials, capitalize on existing solutions. Direct your efforts towards dissecting the distinctive features of your application.

## Conclusion

Microservices offer promising benefits for micro SaaS startups, enabling scalability, flexibility, and resilience. However, they also pose challenges, especially for those with limited resources. By leveraging automation tools, standardizing communication protocols, embracing eventual consistency, and starting with simple service boundaries, startups can effectively harness the power of microservices. Through iterative refinement and strategic decision-making, microservices can indeed be a valuable asset for smaller projects.

Stay tuned as I share my journey implementing these strategies in my microservice boilerplate, [Building Blocks](https://building-blocks-848d.mailchimpsites.com).

---

### References

Microservices Patterns: With Examples in Java - Chris Richardson

---

Feel free to leave your comments and suggestions below. Let's learn and grow together as a community! | zachbenson |

1,864,356 | set_default_dtype(), set_default_device() and set_printoptions() in PyTorch | *Memos: My post explains how to check PyTorch version, CPU and GPU(CUDA). My post explains how to... | 0 | 2024-05-24T22:26:30 | https://dev.to/hyperkai/setdefaultdtype-setdefaultdevice-and-setprintoptions-in-pytorch-55g8 | pytorch, setdefaultdtype, setdefaultdevice, setprintoptions | *Memos:

- [My post](https://dev.to/hyperkai/check-pytorch-version-cpu-and-gpucuda-in-pytorch-6jk) explains how to check PyTorch version, CPU and GPU(CUDA).

- [My post](https://dev.to/hyperkai/create-a-tensor-in-pytorch-127g) explains how to create a tensor.

- [My post](https://dev.to/hyperkai/access-a-tensor-in-pytorch-1f4e) explains how to access a tensor.

- [My post](https://dev.to/hyperkai/istensor-numel-and-device-in-pytorch-2eha) explains [is_tensor()](https://pytorch.org/docs/stable/generated/torch.is_tensor.html), [numel()](https://pytorch.org/docs/stable/generated/torch.numel.html) and [device()](https://pytorch.org/docs/stable/tensor_attributes.html#torch-device).

- [My post](https://dev.to/hyperkai/type-conversion-with-type-to-and-a-tensor-in-pytorch-2a0g) explains type conversion with [type()](https://pytorch.org/docs/stable/generated/torch.Tensor.type.html), [to()](https://pytorch.org/docs/stable/generated/torch.Tensor.to.html) and a tensor.

- [My post](https://dev.to/hyperkai/type-promotion-resulttype-promotetypes-and-cancast-in-pytorch-33p8) explains type promotion, [result_type()](https://pytorch.org/docs/stable/generated/torch.result_type.html), [promote_types()](https://pytorch.org/docs/stable/generated/torch.promote_types.html) and [can_cast()](https://pytorch.org/docs/stable/generated/torch.can_cast.html).

- [My post](https://dev.to/hyperkai/device-conversion-fromnumpy-and-numpy-in-pytorch-1iih) explains device conversion, [from_numpy()](https://pytorch.org/docs/stable/generated/torch.from_numpy.html) and [numpy()](https://pytorch.org/docs/stable/generated/torch.Tensor.numpy.html).

- [My post](https://dev.to/hyperkai/manualseed-initialseed-and-seed-in-pytorch-5gm8) explains [manual_seed()](https://pytorch.org/docs/stable/generated/torch.manual_seed.html), [initial_seed()](https://pytorch.org/docs/stable/generated/torch.initial_seed.html) and [seed()](https://pytorch.org/docs/stable/generated/torch.seed.html).

[set_default_dtype()](https://pytorch.org/docs/stable/generated/torch.set_default_dtype.html) can set the default dtype of a 0D or more D tensor as shown below:

*Memos:

- `set_default_dtype()` can be used with [torch](https://pytorch.org/docs/stable/torch.html) but not with a tensor but `dtype` can be used with a tensor.

- The 1st argument with `torch` is `d`(Required-Type:[dtype](https://pytorch.org/docs/stable/tensor_attributes.html#torch.dtype)). *Only floating-point types can be set.

- The effect of `set_default_dtype()` lasts until `set_default_dtype()` is used next time.

- You can also use [set_default_tensor_type()](https://pytorch.org/docs/stable/generated/torch.set_default_tensor_type.html) but it is deprecated.

- [get_default_dtype()](https://pytorch.org/docs/stable/generated/torch.get_default_dtype.html) can return the default dtype of a 0D or more D tensor.

```python

import torch

my_tensor = torch.tensor([0., 1., 2.])

my_tensor.dtype

torch.get_default_dtype()

# torch.float32

torch.set_default_dtype(d=torch.float64)

my_tensor.device

torch.get_default_device()

# torch.float64

```

[set_default_device()](https://pytorch.org/docs/stable/generated/torch.set_default_device.html) can set the default device of a 0D or more D tensor as shown below:

*Memos:

- `set_default_device()` can be used with `torch` but not with a tensor but `device` can be used with a tensor.

- The 1st argument with `torch` is `device`(Required-Type:`str`, `int` or [device()](https://pytorch.org/docs/stable/tensor_attributes.html#torch-device)).

- `cpu`, `cuda`, `ipu`, `xpu`, `mkldnn`, `opengl`, `opencl`, `ideep`, `hip`, `ve`, `fpga`, `ort`, `xla`, `lazy`, `vulkan`, `mps`, `meta`, `hpu`, `mtia` or `privateuseone` can be set to `device`.

- Setting `0` to `device` uses `cuda`(GPU). *The number must be zero or positive.

- [My post](https://dev.to/hyperkai/istensor-numel-and-device-in-pytorch-2eha) explains `device()`.

- The effect of `set_default_device()` lasts until `set_default_device()` is used next time.

- [get_default_device()](https://pytorch.org/docs/stable/generated/torch.get_default_device.html) can return the default device of a 0D or more D tensor.

```python

import torch

my_tensor = torch.tensor([0, 1, 2])

my_tensor.device

torch.get_default_device()

# device(type='cpu')

torch.set_default_device(device='cuda:0')

torch.set_default_device(device='cuda')

torch.set_default_device(device=0)

torch.set_default_device(device=torch.device(device='cuda:0'))

torch.set_default_device(device='cuda')

torch.set_default_device(device=0)

torch.set_default_device(device=torch.device(type='cuda', index=0))

torch.set_default_device(device=torch.device(type='cuda'))

my_tensor.device

torch.get_default_device()

# device(type='cuda', index=0)

```

[set_printoptions()](https://pytorch.org/docs/stable/generated/torch.set_printoptions.html) can set the default precision of the zero or more floating-point numbers and complex numbers of a 0D or more D tensor as shown below:

*Memos:

- `set_printoptions()` can be used with `torch` but not with a tensor.

- The 1st argument with `torch` is `precision`(Optional-Default:`4`-Type:`int`). *It must be zero or a positive number.

- The effect of `set_printoptions()` lasts until `set_printoptions()` is used next time.

```python

import torch

torch.set_printoptions()

torch.set_printoptions(precision=4)

torch.tensor([-3.635251, 7.270649, -5.164872])

# tensor([-3.6353, 7.2706, -5.1649])

torch.tensor([-3.635251+4.634852j,

7.270649+2.586449j,

-5.164872-3.450984j])

# tensor([-3.6353+4.6349j,

# 7.2706+2.5864j,

# -5.1649-3.4510j])

torch.rand(3)

# tensor([0.4249, 0.7562, 0.5942])

torch.rand(3, dtype=torch.complex64)

# tensor([0.9534+0.6484j, 0.1216+0.3275j, 0.8730+0.9752j])

torch.set_printoptions(precision=2)

torch.tensor([-3.635251, 7.270649, -5.164872])

# tensor([-3.64, 7.27, -5.16])

torch.tensor([-3.635251+4.634852j,

7.270649+2.586449j,

-5.164872-3.450984j])

# tensor([-3.64+4.63j, 7.27+2.59j, -5.16-3.45j])

torch.rand(3)

# tensor([0.95, 0.86, 0.32])

torch.rand(3, dtype=torch.complex64)

# tensor([0.28+0.95j, 0.27+0.75j, 0.78+0.45j])

torch.set_printoptions(precision=8)

torch.tensor([-3.635251, 7.270649, -5.164872])

# tensor([-3.63525105, 7.27064896, -5.16487217])

torch.tensor([-3.635251+4.634852j,

7.270649+2.586449j,

-5.164872-3.450984j])

# tensor([-3.63525105+4.63485193j,

# 7.27064896+2.58644891j,

# -5.16487217-3.45098400j])

torch.rand(3)

# tensor([0.12433541, 0.90939915, 0.81334412])

torch.rand(3, dtype=torch.complex64)

# tensor([0.23186535+0.95299882j,

# 0.97718322+0.48021430j,

# 0.73880774+0.09643537j])

``` | hyperkai |

1,864,353 | is_tensor(), numel() and device() in PyTorch | *Memos: My post explains how to check PyTorch version, CPU and GPU(CUDA). My post explains how to... | 0 | 2024-05-24T22:20:20 | https://dev.to/hyperkai/istensor-numel-and-device-in-pytorch-2eha | pytorch, istensor, numel, device | *Memos:

- [My post](https://dev.to/hyperkai/check-pytorch-version-cpu-and-gpucuda-in-pytorch-6jk) explains how to check PyTorch version, CPU and GPU(CUDA).

- [My post](https://dev.to/hyperkai/create-a-tensor-in-pytorch-127g) explains how to create a tensor.

- [My post](https://dev.to/hyperkai/access-a-tensor-in-pytorch-1f4e) explains how to access a tensor.

- [My post](https://dev.to/hyperkai/type-conversion-with-type-to-and-a-tensor-in-pytorch-2a0g) explains type conversion with [type()](https://pytorch.org/docs/stable/generated/torch.Tensor.type.html), [to()](https://pytorch.org/docs/stable/generated/torch.Tensor.to.html) and a tensor.

- [My post](https://dev.to/hyperkai/type-promotion-resulttype-promotetypes-and-cancast-in-pytorch-33p8) explains type promotion, [result_type()](https://pytorch.org/docs/stable/generated/torch.result_type.html), [promote_types()](https://pytorch.org/docs/stable/generated/torch.promote_types.html) and [can_cast()](https://pytorch.org/docs/stable/generated/torch.can_cast.html).

- [My post](https://dev.to/hyperkai/device-conversion-fromnumpy-and-numpy-in-pytorch-1iih) explains device conversion, [from_numpy()](https://pytorch.org/docs/stable/generated/torch.from_numpy.html) and [numpy()](https://pytorch.org/docs/stable/generated/torch.Tensor.numpy.html).

- [My post](https://dev.to/hyperkai/setdefaultdtype-setdefaultdevice-and-setprintoptions-in-pytorch-55g8) explains [set_default_dtype()](https://pytorch.org/docs/stable/generated/torch.set_default_dtype.html), [set_default_device()](https://pytorch.org/docs/stable/generated/torch.set_default_device.html) and [set_printoptions()](https://pytorch.org/docs/stable/generated/torch.set_printoptions.html).

- [My post](https://dev.to/hyperkai/manualseed-initialseed-and-seed-in-pytorch-5gm8) explains [manual_seed()](https://pytorch.org/docs/stable/generated/torch.manual_seed.html), [initial_seed()](https://pytorch.org/docs/stable/generated/torch.initial_seed.html) and [seed()](https://pytorch.org/docs/stable/generated/torch.seed.html).

[is_tensor()](https://pytorch.org/docs/stable/generated/torch.is_tensor.html) can check if an object is a PyTorch tensor, getting the scalar of a boolean value as shown below:

*Memos:

- `is_tensor()` can be used with [torch](https://pytorch.org/docs/stable/torch.html) but not with a tensor.

- The 1st argument with `torch` is `obj`(Required-Type:`object`).

```python

import torch

import numpy as np

pytorch_tensor = torch.tensor([0, 1, 2])

torch.is_tensor(obj=pytorch_tensor) # True

numpy_tensor = np.array([0., 1., 2.])

torch.is_tensor(obj=numpy_tensor) # False

torch.is_tensor(obj=7) # False

torch.is_tensor(obj=7.) # False

torch.is_tensor(obj=7.+0.j) # False

torch.is_tensor(obj=True) # False

torch.is_tensor(obj='Hello') # False

```

[numel()](https://pytorch.org/docs/stable/generated/torch.numel.html) can get the scalar of the total number of the elements from the 0D or more D tensor of zero or more elements as shown below:

*Memos:

- `numel()` can be used with `torch` or a tensor.

- The 1st argument with `torch` is `input`(Required-Type:`tensor` of `int`, `float`, `complex` or `bool`).

```python

import torch

my_tensor = torch.tensor(7)

torch.numel(input=my_tensor)

my_tensor.numel()

# 1

my_tensor = torch.tensor([7, 5, 8])

torch.numel(input=my_tensor) # 3

my_tensor = torch.tensor([[7, 5, 8],

[3, 1, 6]])

torch.numel(input=my_tensor) # 6

my_tensor = torch.tensor([[[7, -5, 8], [-3, 1, 6]],

[[0, 9, 2], [4, -7, -9]]])

torch.numel(input=my_tensor) # 12

my_tensor = torch.tensor([[[7., -5., 8.], [-3., 1., 6.]],

[[0., 9., 2.], [4., -7., -9.]]])

torch.numel(input=my_tensor) # 12

my_tensor = torch.tensor([[[7.+0.j, -5.+0.j, 8.+0.j],

[-3.+0.j, 1.+0.j, 6.+0.j]],

[[0.+0.j, 9.+0.j, 2.+0.j],

[4.+0.j, -7.+0.j, -9.+0.j]]])

torch.numel(input=my_tensor) # 12

my_tensor = torch.tensor([[[True, False, True], [True, False, True]],

[[False, True, False], [False, True, False]]])

torch.numel(input=my_tensor) # 12

```

[device()](https://pytorch.org/docs/stable/tensor_attributes.html#torch-device) can represent a device as shown below:

*Memos:

- `device()` can be used with `torch` but not with a tensor.

- The 1st argument with `torch` is `device`(Required-Type:`str`, `int` or [device()](https://pytorch.org/docs/stable/tensor_attributes.html#torch-device)).

- The 1st argument with `torch` is `type`(Required-Type:`str`).

- The 2nd argument with `torch` is `index`(Optional-Type:`int`). *`index` must be used with `type`.

- `cpu`, `cuda`, `ipu`, `xpu`, `mkldnn`, `opengl`, `opencl`, `ideep`, `hip`, `ve`, `fpga`, `ort`, `xla`, `lazy`, `vulkan`, `mps`, `meta`, `hpu`, `mtia` or `privateuseone` can be set to `device` or `type`.

- Setting 0 to `device` uses cuda(GPU). *The number must be zero or positive.

```python

import torch

torch.device(device='cpu')

torch.device(type='cpu')

# device(type='cpu')

torch.device(device='cuda:0')

torch.device(device=0)

torch.device(type='cuda', index=0)

torch.device(device=torch.device(device='cuda:0'))

torch.device(device=torch.device(device=0))

torch.device(device=torch.device(type='cuda', index=0))

# device(type='cuda', index=0)

torch.device(device='cuda')

torch.device(type='cuda')

torch.device(device=torch.device(device='cuda'))

torch.device(device=torch.device(type='cuda'))

# device(type='cuda')

```

| hyperkai |

1,864,339 | InfinityScroll - Just like TikTok! | 📺 preview.jpg Watch it ✨ Live ✨ 📝 infinityscroll.js Don't dwell on the pain. Just take... | 26,254 | 2024-05-24T22:17:59 | https://dev.to/jorjishasan/infinityscroll-just-like-tiktok-4j5l | webdev, javascript, programming, react | {% details 📺 preview.jpg %}

_Watch it [✨ Live ✨](https://infinitytoken.netlify.app/)_

{% enddetails %}{% details 📝 infinityscroll.js %}

_Don't dwell on the pain. Just take a quick look. After reading the article, everything will become clear to you. [📝 SOURCE CODE](https://github.com/jorjishasan/infinite-scroller)_

```js

import { useState, useEffect } from "react";

import ReactDOM from "react-dom/client";

function App() {

const [numArr, setNumArr] = useState(Array.from({ length: 20 }, (_, i) => i));

const handleScroll = () => {

if (window.innerHeight + window.scrollY >= document.body.offsetHeight) {

setNumArr((prevNums) => [

...prevNums,

...Array.from({ length: 20 }, (_, i) => i + prevNums.length),

]);

}

};

// Listen for scroll events

useEffect(() => {

window.addEventListener("scroll", handleScroll);

return () => window.removeEventListener("scroll", handleScroll);

}, []);

return (

<div className="font-serif text-3xl text-center">

{numArr.map((num, index) => (

<div

key={index}

className="m-2 p-5 bg-white shadow-md rounded cursor-pointer"

>

{num}

</div>

))}

</div>

);

}

const root = ReactDOM.createRoot(document.getElementById("root"));

root.render(<App />);

```

{% enddetails %}

I promise! Following my steps, you can natively implement Infinity Scrolling. This means you don't have to worry about installing extra packages or adding unnecessary weight to your app.

1. Initial Render

2. Event Listening

3. Event Handling

Don't be afraid of these jargon words 😄. I'm holding your hands 💪. Once you reach the end of the article, it'll make you proud.

---

**STEP 1:** ⏤ Initial Render 📃

Initially, let's load/fill the page with 20 elements.

CODE:

```js

function App() {

const [numArr, setNumArr] = useState(Array.from({ length: 20 }, (_, i) => i));

return (

<div className="font-serif text-3xl text-center">

{numArr.map((num, index) => (

<div

key={index}

className="m-2 p-5 bg-white shadow-md rounded cursor-pointer"

>

{num}

</div>

))}

</div>

);

```

Explain: Create a `numArr` state variable on top of the `App` component with values 0 through 20. Over the return statement, render items of `numArr` as elements. **STEP 1 is done.**

---

**STEP 2:** ⏤ Event Listening 👂

Browsers offer us bunch of events: Mouse(click, mousemove, mouseover), Keybaord Event(keydown, keypress, keyup), and Window Event(scroll, resize, load).

Since we are implementing a feature based on scrolling, we need to keep track of scroll activity. To get notified, we'll set a watcher inside the `App()` component that continuously checks whether a scroll happens.

CODE:

```js

function App() {

// Listen for scroll events

useEffect(() => {

window.addEventListener("scroll", handleScroll);

return () => window.removeEventListener("scroll", handleScroll);

}, []);

}

```

Explain: We set the watcher or event-listener, that event listener `window.addEventListener("scroll", handleScroll)` takes 2 arguments: the event name `scroll` and a callback-function `handleScroll` that defines what to do when the event is triggered. **Step 2 is done.** The `return` statement does [cleanup](https://dev.to/jorjishasan/cleanup-in-react-5dkc). You can skip it for now with this setup.

---

**STEP-3:** ⏤ Event Handling 💪

Assuming that scrolling happens, the contract of the `handleScroll` function would be to load more data when the user reaches the end of the page.

CODE:

```js

//This function will invoked when event triggered

const handleScroll = () => {

if (window.innerHeight + window.scrollY >= document.body.offsetHeight) {

setNumArr((prevNums) => [

...prevNums,

...Array.from({ length: 20 }, (_, i) => i + prevNums.length),

]);

}

};

```

Explain: The if statement verifies that the user has reached the end of the page. The code inside the `if` block accumulates 15 new data to the `numArr` variable. Since `numArr` is a state variable, it will re-render the state after every update. It's react's native [state behavior](https://react.dev/reference/react/useState).

Yay 😀! You have done the **last step.** You have achieved more optimized infinity scrolling.

**NB:** I used numbers as placeholders. In a real scenario, you'll fetch data across pages via API. | jorjishasan |

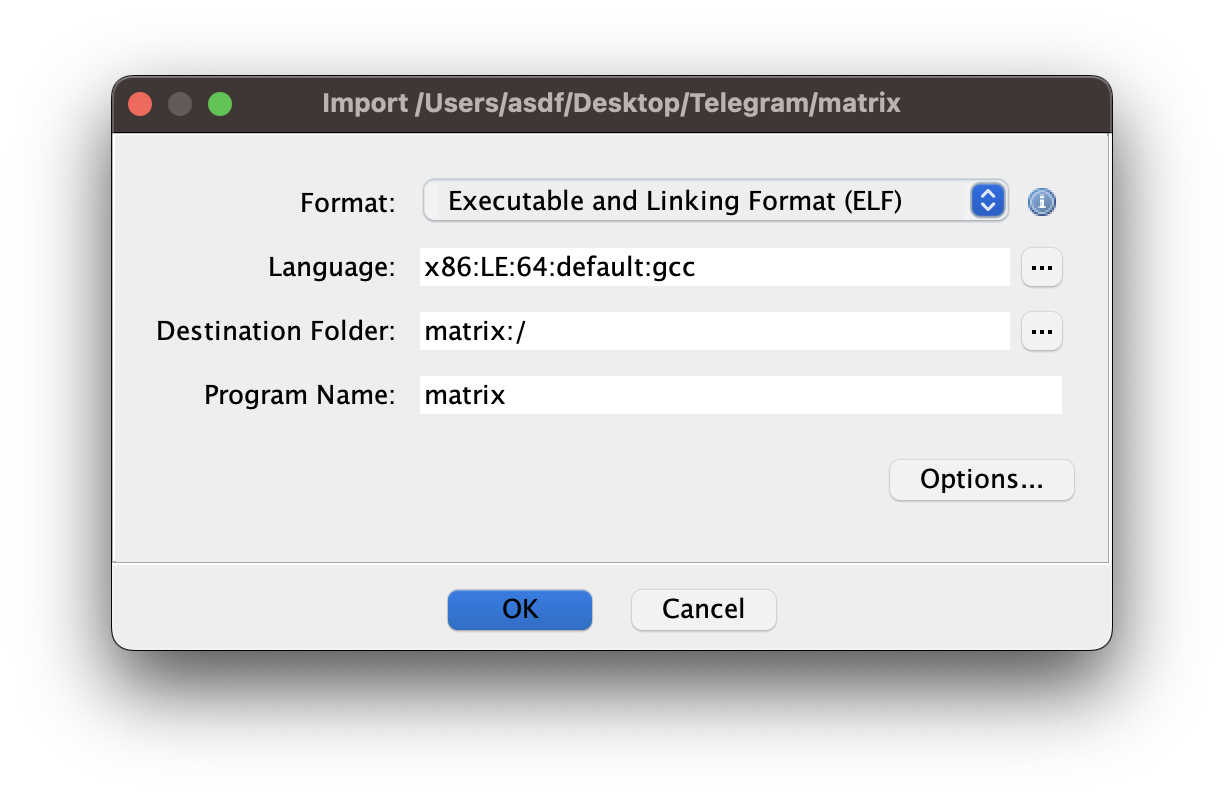

1,864,226 | MindsDB + Docker: Utilizando SQL e containers para integrações com IA | Já pensou em criar sua própria IA, treiná-la com seus dados e utilizar esse "conhecimento" para... | 0 | 2024-05-24T22:10:07 | https://dev.to/rflpazini/mindsdb-docker-utilizando-sql-e-containers-para-integracoes-com-ia-edc | docker, sql, openai | Já pensou em criar sua própria IA, treiná-la com seus dados e utilizar esse "conhecimento" para alavancar seu app ou algum projeto em que você está trabalhando? Eu também pensei nisso e tive a mesma reação: "Nossa, que preguiça! Imagina quanto tempo eu ia perder fazendo isso..."

Quando pensamos em IA, geralmente imaginamos ter que começar do zero, aprender uma nova linguagem, escrever milhares de linhas de código para integrar com o app. Mas e se eu te disser que dá para fazer isso apenas usando SQL ou então fazendo uma requisição HTTP? É exatamente isso que o MindsDB faz por nós.

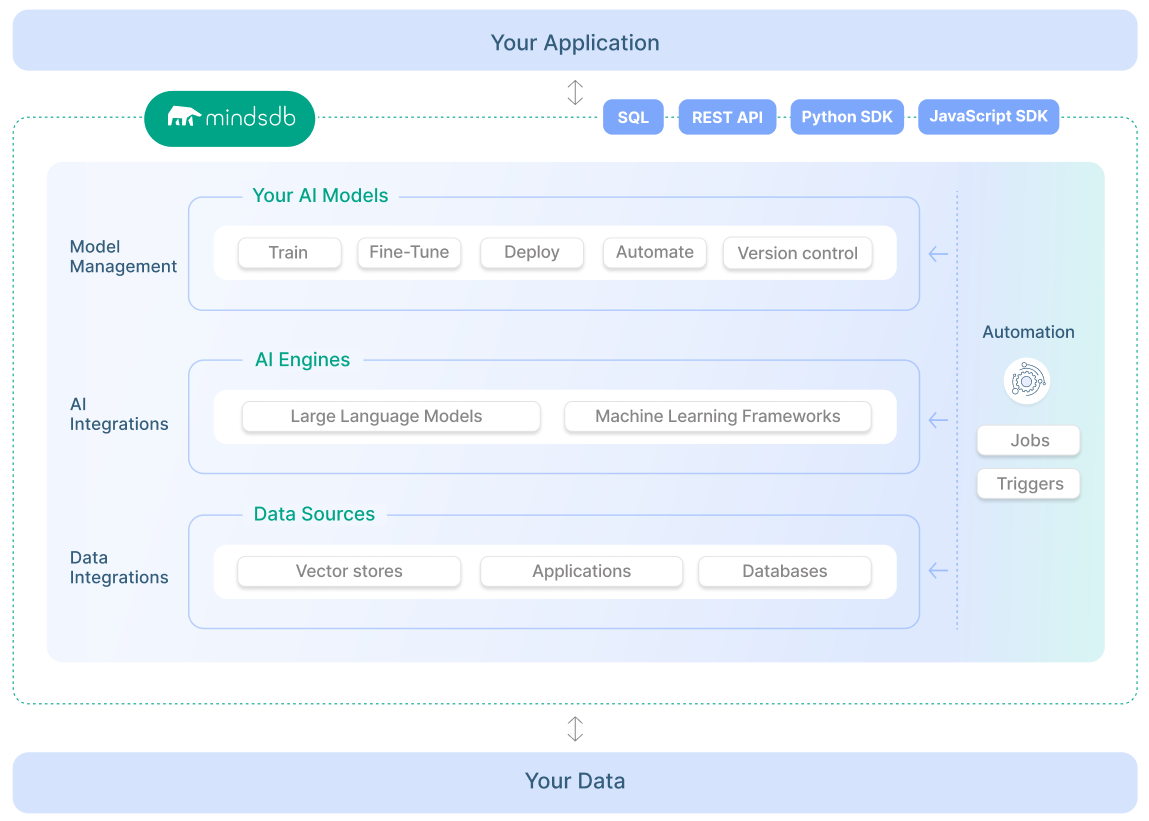

## O que é o MindsDB?

O MindsDB é uma plataforma que facilita a criação e integração de modelos de aprendizado de máquina (machine learning) diretamente com bancos de dados, utilizando SQL - sim, você não precisa aprender outra linguagem para trabalhar com IA 😅.

Ela permite que desenvolvedores, como nós, aproveitem os dados existentes em seus bancos de dados e construam modelos de aprendizado de máquina sem a necessidade de conhecimentos avançados em ciência de dados ou machine learning.

Em resumo, ele permite termos nossa IA personalizada para nossos dados direto da nossa aplicação. Se liga no diagrama abaixo, nele podemos ver como é a estrutura do MindsDB e um pouco de como é sua integração.

### **Benefícios do MindsDB**

- **Facilidade de Uso:** Ideal para desenvolvedores que já estão familiarizados com SQL, eliminando a necessidade de aprender novas linguagens de programação.

- **Agilidade:** Reduz o tempo e esforço necessários para desenvolver e implementar modelos de machine learning.

- **Flexibilidade:** Funciona com uma ampla gama de bancos de dados e pode ser integrado em diversas aplicações.

Depois disso, você deve estar se perguntando: "Mas e aí, Rafa, será que é tão simples a integração e a brincadeira com ele?" Vamos colocar a mão na massa e ver se é assim mesmo…

## Contexto e Problema

Vamos imaginar que temos uma loja de café onde nossos usuários deixam seus reviews sobre o que acharam do nosso café. A cada 3 meses renovamos o cardápio da loja e queremos trocar os cafés que têm opiniões “neutras” ou “negativas” sobre eles.

Queremos fazer isso de uma forma rápida e simples, sem ter que ficar lendo milhões de comentários para analisar um a um e, no fim, contar as opiniões sobre o que temos. Então, como todo bom programador, vamos utilizar a hype do momento: a IA para classificar esses comentários para nós e, de quebra, aprender uma tecnologia nova.

## Deploy com Docker

Eu gosto de facilitar tudo na minha vida, então a maneira mais simples de instalar uma ferramenta ou algum app sem ter que ficar configurando mil coisas em nossa máquina local é usando Docker 😄.

O MindsDB utiliza várias dependências para funcionar, como Python, MongoDB, MySQL, e utilizando a imagem Docker não temos que nos preocupar em instalar nada disso em nossa máquina.

> Para seguir esse tutorial, você deve ter o Docker instalado e rodando em sua máquina. Caso ainda não tenha ele instalado, basta seguir o tutorial da [documentação oficial](https://docs.docker.com/desktop/).

>

Como eu quero trazer um exemplo de uso real, vamos ter dois containers rodando: um com o MindsDB e outro com um MySQL, que é o banco de dados onde já existem nossos comentários sobre os cafés.

Para ter acesso ao projeto, [basta baixar a pasta dele no meu Github](https://github.com/rflpazini/articles/tree/main/mindsdb_docker), onde teremos a seguinte estrutura dentro da pasta:

```bash

mindsdb_docker

├── compose.yml //incia os nossos containers

└── dump.sql //insere os dados em nosso DB

```

Com o projeto baixado, é hora de rodá-lo localmente. Para executar o docker compose, digite o seguinte comando:

```bash

$ docker compose up -d --build

```

Após realizar o comando, você receberá a seguinte mensagem em seu terminal dizendo que tudo foi inicializado:

```bash

✔ Network mindsdb_sample_default Created 0.0s

✔ Container mindsdb_sample-mysql-1 Started 0.3s

✔ Container mindsdb_sample-mindsdb-1 Started 0.3s

```

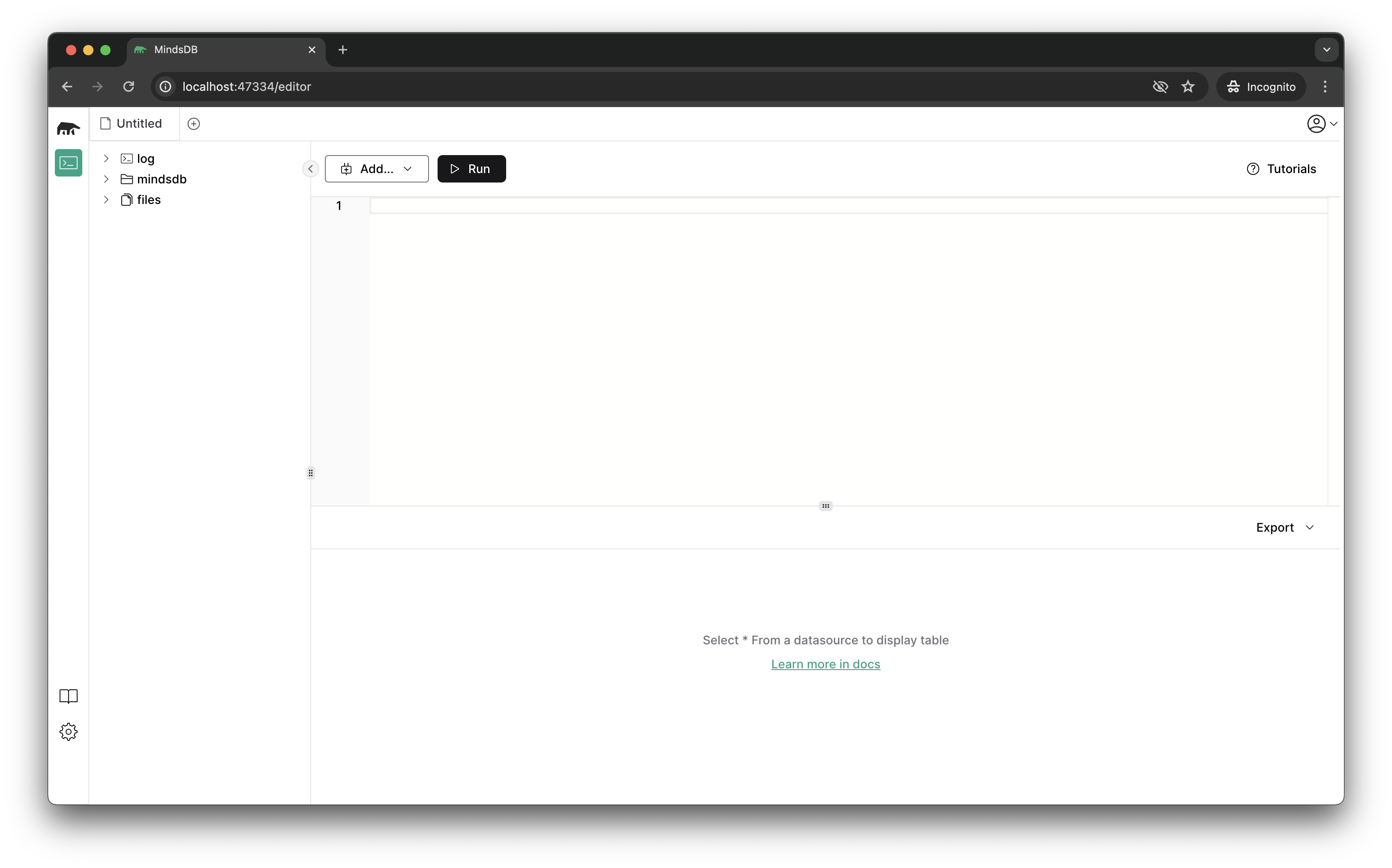

Agora é só acessar a URL do MindsDB que é [`http://localhost:47334`](http://localhost:47334/) para ver a interface gráfica dele.

## Configurando a conexão com DB

Como já temos a instância do MindsDB rodando localmente, vamos configurar a conexão dele com nossa base de dados, de onde serão retiradas as reviews.

Para isso, basta digitar o seguinte comando SQL:

```sql

CREATE DATABASE mysql_sample

WITH ENGINE = 'mysql',

PARAMETERS = {

"user": "root",

"password": "r00tPassword123",

"host": "<ip-local-do-seu-computador>", --troque pelo seu ip local

"port": "3306",

"database": "cool_data"

};

```

> Um detalhe importante é que no host devemos colocar o IP local do nosso computador. Por exemplo, o meu é **`10.0.2.10`** pois não podemos esquecer que estamos dentro de um container e que a porta mapeada para acesso é a do nosso host. Se colocarmos **`localhost`**, ele não encontrará o banco de dados. Caso não saiba qual é seu IP, aqui vai um comando que pode ajudar 😄

>

```bash

$ ifconfig | grep \"inet \" | grep -v 127.0.0.1 | cut -d\ -f2

```

O que estamos fazendo com esse comando SQL:

- Criando a conexão com o banco e dizendo que ela se chamará **`mysql_sample`**.

- Dizendo qual é a ENGINE de banco de dados que queremos utilizar.

- Passando os parâmetros de conexão: usuário, senha, host e o nome do banco de dados que vamos nos conectar.

Agora vamos validar se nossa conexão está funcionando. Para isso, vamos usar o bom e velho SELECT do nosso querido SQL:

```sql

SELECT *

FROM mysql_sample.coffee_review

LIMIT 2;

```

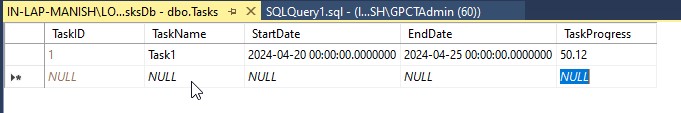

Ele deve retornar os valores que existem em nosso banco de dados. No caso, temos uma tabela chamada **`coffee_review`** e os seguintes campos: **`id`**, **`coffee_name`**, **`origin`** e **`review`** com os dados já preenchidos. Note também que durante a consulta SQL utilizei o nome da conexão que criamos no passo acima para dizer para o MindsDB que quero pegar a tabela **`coffee_review`** que está dentro do meu banco **`mysql_sample`**.

Assim sabemos que temos uma conexão de sucesso entre a nossa base de dados e o MindsDB

## Criando uma ML Engine

O MindsDB, como eu disse no início, tem suporte para vários plugins e um deles são as ML Engines. No nosso caso, como queremos fazer a análise dos comentários, vamos utilizar a OpenAI engine e para isso precisamos configurá-la.

> Para o próximo passa você precisará de uma API Key e consegue gera-la no próprio site da OpenAI: [platform.openai.com](https://platform.openai.com/settings/organization/billing/payment-methods)

>

Com a API Key em mãos podemos criar a ML Engine dentro do MindsDB, é bem tranquilo:

```sql

CREATE ML_ENGINE openai

FROM openai

USING

openai_api_key = 'sk-xxxx'; --substitua o valor pela sua chave

```

O MindsDB disponibiliza o handler, que é a integração do MindsDB com as APIs das IAs. Então, o que temos que fazer é apenas dizer que queremos criar uma nova engine usando o handler específico para ela. Existem vários e você pode encontrar a lista deles na documentação. No código acima, estamos criando a **`openai`** engine, usando o handler da **`openai`** e passando a nossa API key para ele.

Essa simples configuração já faz a integração do nosso MindsDB com a IA que escolhemos.

## Hora de criar o Modelo

Quando falamos de modelo, em palavras simplificadas, é o modelo de aprendizagem que a IA vai utilizar para fazer a tarefa que queremos que ela execute.

No MindsDB vamos criar este modelo de aprendizagem utilizando SQL e passando algumas informações para ele.

Assim ficará nosso modelo que classifica os comentários sobre o café como positivo, negativo e neutro.

```sql

CREATE MODEL coffee_review_sentiment

PREDICT sentiment

USING

engine = 'openai',

model_name = 'gpt-4',

prompt_template = 'descreva o sentimento dos comentários apenas como "positivo", "negativo" ou "neutro".

"Amei este café":positivo

"Não me agradou": negativo

"{{review}}.":';

```

No código acima, a primeira coisa que fazemos é dar um nome para o modelo, que se chama **`coffee_review_sentiment`**. Logo em seguida, falamos para o modelo que ele vai “prever” a propriedade **`sentiment`**, ou seja, estamos falando que o resultado do nosso prompt será exibido nessa coluna. Por fim, dizemos qual engine queremos usar: **`openai`**, que é a que acabamos de configurar. Dizemos também que queremos utilizar o **`gpt-4`** como modelo de IA e como estamos usando a OpenAI, ela sempre espera um prompt de comando, que é onde passamos para o modelo o que esperamos que ele faça e com qual informação ele vai trabalhar.

Após executar o comando de criar, você provavelmente receberá o seguinte output:

```markdown

| TABLES | NAME | ENGINE | PROJECT | ACTIVE | VERSION | STATUS | ACCURACY | PREDICT | UPDATE_STATUS | MINDSDB_VERSION | ERROR | SELECT_DATA_QUERY | TRAINING_OPTIONS | TAG |

|---------------------|---------------------------|--------|---------|--------|---------|------------|----------|-----------|---------------|-----------------|--------|-------------------|----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------|--------|

| ["args","metadata"] | coffee_review_sentiment_6 | openai | mindsdb | true | 1 | generating | [NULL] | sentiment | up_to_date | 24.4.3.0 | [NULL] | [NULL] | {'__mdb_sql_task': None, 'target': 'sentiment', 'using': {'model_name': 'gpt-4', 'prompt_template': 'descreva o sentimento dos comentários apenas como "positivo", "negativo" ou "neutro".\n "Amei este café":positivo\n "Não me agradou": negativo\n "{{review}}.":'}} | [NULL] |

```

Repare que na coluna **`STATUS`** temos o valor **`generating`**, ou seja, o nosso modelo está sendo gerado ainda. Ainda não podemos utilizá-lo em nossas consultas; para ele se tornar útil o status deve ser **`complete`**. Para consultar o valor do status, utilizamos o comando **`DESCRIBE`** para descrever o que temos na nossa tabela de modelo:

```sql

DESCRIBE coffee_review_sentiment;

```

Quando o status for **`complete`**, você verá no resultado da query:

```sql

| TABLES | NAME | ENGINE | PROJECT | ACTIVE | VERSION | STATUS | ACCURACY | PREDICT | UPDATE_STATUS | MINDSDB_VERSION | ERROR | SELECT_DATA_QUERY | TRAINING_OPTIONS | TAG |

|---------------------|---------------------------|--------|---------|--------|---------|----------|----------|-----------|---------------|-----------------|--------|-------------------|----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------|--------|

| ["args","metadata"] | coffee_review_sentiment_6 | openai | mindsdb | true | 1 | complete | [NULL] | sentiment | up_to_date | 24.4.3.0 | [NULL] | [NULL] | {'__mdb_sql_task': None, 'target': 'sentiment', 'using': {'model_name': 'gpt-4', 'prompt_template': 'descreva o sentimento dos comentários apenas como "positivo", "negativo" ou "neutro".\n "Amei este café":positivo\n "Não me agradou": negativo\n "{{review}}.":'}} | [NULL] |

```

## Aplicando a classificação

Agora que temos o modelo pronto e configurado, é hora de classificar nossos reviews. Faremos isso utilizando os comandos mais clássicos de SQL: **`SELECT`** e **`FROM`** 😊.

Relembrando o que queremos fazer, queremos classificar os reviews dos nossos cafés como neutro, positivo e negativo. Para nosso exemplo, acho legal mostrar primeiro qual é o sentimento e depois o comentário caso for necessário ler o comentário que recebeu aquela avaliação.

Então vamos para o nosso select:

```sql

SELECT m.sentiment, t.review --campos que quero exibir na minha consulta

FROM mysql_sample.coffee_review AS t --tabela do DB que contem o review

JOIN mindsdb.coffee_review AS m; --tabela que contém a previsão da IA

```

Aqui fazemos um pequeno select usando join para obter o nosso sentimento e logo em seguida o review que teve aquela análise de nossa IA. Seu resultado deve ser o seguinte:

```markdown

| sentiment | review |

|-----------|----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------|

| positivo | Assim que eu levei a xÃcara aos lábios, parecia que eu estava num mercado de especiarias. O aroma floral e cÃtrico tomou conta e me deixou super curioso. No primeiro gole, senti uma explosão de sabores complexos: limão e bergamota, com um toque sutil de jasmim. A acidez brilhante equilibrada por uma doçura suave, quase como mel, foi incrÃvel. A cada gole, o café ia mostrando mais camadas, deixando um gostinho refrescante na boca. É como uma dança de sabores no paladar, perfeito para começar o dia cheio de energia e inspiração. |

| positivo | Desde que o espresso começou a sair, o cheiro rico e achocolatado tomou conta do lugar. O primeiro gole foi como um abraço quentinho - notas de chocolate amargo, nozes torradas e um toque de caramelo. A crema espessa dá uma textura cremosa, deixando tudo ainda mais gostoso. A doçura natural e o corpo encorpado desse blend são bem tÃpicos dos cafés brasileiros. Cada gole traz uma sensação de conforto e familiaridade, perfeito para uma pausa à tarde ou um bate-papo com amigos. |

| positivo | Quando comecei a prensar o café na Prença Francesa, o cheiro doce e terroso começou a se espalhar pelo ambiente. No primeiro gole, já senti uma profundidade incrÃvel: notas de frutas vermelhas, como cereja e amora, com um toque leve de cacau. A acidez suave e a doçura das frutas se equilibram perfeitamente, e a textura é super aveludada. Esse café me fez sentir super conectado com a natureza, como se eu estivesse saboreando os frutos direto da terra. É uma experiência revigorante e autêntica, perfeita para quem curte um café cheio de personalidade. |

| neutro | Preparei esse café na máquina automática e o aroma que surgiu foi ok, nada demais. No primeiro gole, o sabor era simples e direto, sem muita complexidade. Tinha notas de nozes levemente tostadas e um toque sutil de chocolate ao leite. A acidez estava bem equilibrada e o corpo era médio, dando uma sensação satisfatória, mas sem impressionar. Esse café não tem nada de muito especial, mas é uma escolha segura pra quem quer um café consistente e previsÃvel, sem grandes surpresas. |

| negativo | Ao coar esse café, o aroma foi bem fraquinho e nada convidativo. O primeiro gole foi uma decepção - um sabor raso e sem vida, com um amargor queimado que lembrava borra de café velha. A acidez estava desbalanceada e a textura era bem aquosa, deixando uma sensação desagradável na boca. Não havia qualquer traço de doçura ou complexidade para salvar a experiência. Cada gole foi um esforço para terminar a xÃcara, e fiquei frustrado e arrependido de não ter escolhido um café melhor. |

```

Pronto, temos todos nossos reviews classificados com o “sentimento”.

Ótimo!! mas e se formos um pouco além e melhorarmos esse select com outro modelo para termos acesso aos motivos que levaram esse comentário a expressar aquele sentimento? hehehe

### Detalhando a classificação

Agora vamos melhorar um pouco mais nossa classificação e inserir os motivos para ela. Isso facilitaria, por exemplo, a vida de um executivo que não quer ficar horas lendo comentários para entender o que o seu cliente escreveu, mas quer otimizar o tempo indo direto para os principais motivos do que aconteceu. A famosa leitura dinâmica virando leitura eficiente 😀

Para facilitar nosso lado de “dev”, vamos criar outro modelo, onde diremos a ele para listar os principais motivos do sentimento por trás daquele review.

```sql

CREATE MODEL coffee_review_reasons

PREDICT reasons

USING

engine = 'openai',

model_name = 'gpt-4',

prompt_template = 'liste resumidamente os principais motivos do sentimento: "{{review}}";';

```

Como estamos acostumados, criamos o modelo com o nome **`coffee_review_reasons`**, onde ele vai ter o output na coluna **`reasons`**. No prompt, pedimos de uma forma direta para que ele liste resumidamente os principais motivos do sentimento; esse sentimento virá do nosso campo **`review`** que está em nosso DB.

Agora é só sentar e melhorar nosso select que acabamos de fazer, e como melhoraremos ele? Através de `JOINS`:

```sql

SELECT t.id, m.sentiment, m2.reasons

FROM mysql_sample.coffee_review AS t

JOIN coffee_review_sentiment AS m

JOIN coffee_review_reasons AS m2

WHERE m.sentiment = 'negativo' OR m.sentiment = 'neutro'

```

Para explicar esse nosso novo select: Primeiro dizemos que queremos exibir o **`id`**, o sentimento **`sentiment`** e os motivos **`reasons`**. Logo em seguida pegamos o review do nosso banco de dados, depois fazemos os JOINS com o primeiro modelo que é o **`coffee_review_sentiment`** e depois com o novo modelo **`coffee_review_reasons`**. Como acho que o que importa neste momento são apenas termos mais detalhes dos negativos e neutros, colocamos uma cláusula WHERE filtrando apenas por neutro e negativo. Mais uma análise completa!

Esse é o resultado de nossa análise final, com os cafés que têm sentimento negativo e neutro sobre eles e os principais motivos de terem tido este julgamento resumidos para nós:

```markdown

| id | sentiment | reasons |

|----|-----------|------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------|

| 5 | negativo | 1. Aroma fraco e não convidativo: O aroma do café é um dos primeiros indicadores de sua qualidade. Neste caso, o aroma fraco pode ter indicado que o café não seria satisfatório.2. Sabor raso e sem vida: Um bom café deve ter um sabor rico e complexo. O sabor raso e sem vida deste café foi uma grande decepção.3. Amargor queimado: O amargor |

| 4 | neutro | 1. Aroma: O aroma do café preparado na máquina automática foi considerado apenas "ok", não se destacando de maneira especial.2. Sabor: O sabor do café foi descrito como simples e direto, sem muita complexidade. As notas de nozes levemente tostadas e um toque sutil de chocolate ao leite foram identificadas, mas não trouxeram um diferencial significativo.3. Acidez |

```

### Inserindo nossa consulta em uma nova tabela

Agora você deve estar se perguntando: “Poxa, mas como que eu salvo esses dados da análise?”. Bom, temos algumas formas de fazer isso; para facilitar nossa vida, que tal salvá-las no banco de dados em uma nova tabela?

Vamos criar essa tabela com a ajuda do SQL novamente e usando o **`CREATE TABLE`**. Então reescreva o último select que fizemos, mas agora vamos inseri-lo junto com o comando de criar uma nova tabela em nosso banco de dados:

```sql

CREATE TABLE mysql_sample.reviews_processed (

SELECT t.id, m.sentiment, m2.reasons

FROM mysql_sample.coffee_review AS t

JOIN coffee_review_sentiment AS m

JOIN coffee_review_reasons AS m2

WHERE m.sentiment = 'negativo' OR m.sentiment = 'neutro'

);

```

Dessa forma, garantimos que temos um registro salvo e não precisamos ficar reprocessando a nossa análise toda vez que quisermos consultar o que os clientes estão achando de nossos cafés.

E assim terminamos nosso sistema de análises de reviews sobre cafés e podemos tirar os cafés menos desejados de nosso cardápio 🥰

## Benefícios da analise

A análise de sentimento é uma ferramenta poderosa que permite entender as opiniões e emoções dos clientes em relação a produtos ou serviços. No contexto de uma loja de café, como exemplificado no artigo, a implementação de uma IA para realizar essa análise pode trazer inúmeros benefícios estratégicos, como por exemplo:

- **Melhoria da Experiência do Cliente:** permite uma resposta rápida às críticas dos clientes, ajudando a resolver problemas antes que eles se tornem amplamente conhecidos e afetem a reputação da marca.

- **Tomada de Decisões Baseada em Dados:** com insights sobre quais produtos são mais apreciados e quais não agradam, a empresa pode tomar decisões informadas sobre quais itens manter, modificar ou remover do cardápio.

- **Vantagem Competitiva:** negócios que utilizam a análise de sentimento conseguem se adaptar rapidamente às mudanças nas preferências dos consumidores, mantendo-se à frente da concorrência, podendo inovar de maneira contínua.

Entre outros que não citei aqui, mas hoje em dia o produto mais valioso que temos em mãos são os dados, então devemos dar um carinho especial para eles.

## Conclusão

A combinação do MindsDB e Docker revoluciona a maneira como integramos IA e machine learning em nossos projetos, tornando todo o processo incrivelmente simples e acessível. Com o MindsDB, desenvolvedores podem criar e implementar modelos de aprendizado de máquina usando apenas SQL, eliminando a necessidade de aprender novas linguagens de programação complexas. Docker, por sua vez, facilita a implantação dessas tecnologias, permitindo que várias dependências sejam gerenciadas de forma eficiente através de containers. Essa sinergia não só acelera o desenvolvimento e a implementação de soluções de IA, mas também reduz significativamente a complexidade operacional, permitindo que negócios de todos os tamanhos aproveitem o poder da IA para melhorar a experiência do cliente e impulsionar o crescimento. | rflpazini |

1,864,338 | Weekly Updates - May 24, 2024 | Hi everyone! Short and sweet this week. 📖 New Blog - Hybrid Search: An Overview - Do you want to... | 0 | 2024-05-24T22:08:21 | https://dev.to/couchbase/weekly-updates-may-24-2024-gbh | couchbase, ai, community, machinelearning | Hi everyone!

Short and sweet this week.

- 📖 **New Blog - Hybrid Search: An Overview** - Do you want to learn more about hybrid search, what it entails, how it works and the differences between search methods? [*You can read the blog here >>*](https://www.couchbase.com/blog/hybrid-search/)

<br />

- 📺 **New Video: Roundtable - Enterprise AI** - Watch the Enterprise AI Virtual Roundtable, as the panel of contributors and community members dive deeper into AI and machine learning. Hear their thoughts on how these technologies will continue to shape the industry over the next year. [*You can watch the video here>>*](https://www.youtube.com/watch?v=ppgQl9Q604k)

<br />

- ❔**Do you have a technical question that you'd like more answers to?** Join the Couchbase Forum, browse through topics, tags, and find previously answered questions or ask your question. [*You can access the Forum here >>*](https://www.couchbase.com/forums/)

<br />

- 👋 **Don't forget, it's Summit Season!** - Are you traveling to any of the AWS or Google Summits or other Tech Expos? Come say Hi and meet the team! [*You can see the Summit's we will be attending here >>*](https://www.couchbase.com/resources/webcasts-and-events/?cb_searchable_posts_asc%5BrefinementList%5D%5Btaxonomies.events_category%5D%5B0%5D=Tradeshow)

Have a great weekend everyone! | carrieke |

1,864,336 | Adventures in TypeScript Typescript System | Write modern systems is a hard task, if you not followed the tendencies accross the internet. There's... | 0 | 2024-05-24T22:04:09 | https://dev.to/ktfth/adventures-in-typescript-typescript-system-5h6k | webdev, typescript | Write modern systems is a hard task, if you not followed the tendencies accross the internet. There's something important to consider here, you need time to live and enjoy the life isn't?

Become a successful programmer or a developer, and even a engineer, you need to consider those thing's. And it's magical!

In my past experiences I dedicated some time to learn almost every concept I need to know how to be prolific with JavaScript, when TypeScript come to live, I just ignored what I've learned from JavaScript and try to clean my mind and focus on this new language.

For me, learn the new language quietly become a hard task, to setup the environment, understand how conditioner the compiler give me answer's and apply this effectively on a profitable project is a major thing that I wanted, to avoid loss all the perks the language offer.

But something come to mind, why I need that? And how to capture indicators of that change?

This kind of questions come to mind, with TypeScript and all the other technologies we choose. When something new pop's up, you need to hack around and discover your way, nothing delivery's more value than a good approach of what's you expect, the client expect or your users need.

Useful stuff to follow:

* source code

* change logs

* channel's

* reverse engineering other application's

* put thing's together

Don't trust other people say, if you want, try yourself and move fast!

Understand concept's behind a certain technology's, involve a bunch of experimentation and collaboration.

What you want to achieve? My goal is money, and I believe the money is in certain way a expression of wisdom.

Be carefully with anxious bahvior to adopt a technology just by the fact is cool, search your own way to make a change in the world with good quality code and discover why create a state of art piece of software is a concern. Sleep well without the fear of your software stop, without any clues for a investigation is a bad thing to happen.

Here the piece of wisdom extract from type system of TypeScript:

* Modern compiler infrastructure

* Tools supporting the language without a need to run at your own the code you need to made

* Flexibility at choices you need to made to deliver portable code

* Interop with JavaScript concept's

* Good mapping of what you are doing with your code

* Reusability with documentation based on types you or other's made it

* Modern modules base on the language

* Huge community

* Supported by big company

This is my thoughts about a good roadmap to learn new things, It is not perfect, but I feel comfortable with that, to cut a pathways down.

Nice to here from you if you can type your opinion about this approach and let's hack together?

Cheer's! | ktfth |

1,864,334 | Thanks Dev | A post by Gaurav Kumar | 0 | 2024-05-24T22:00:55 | https://dev.to/ownbizaccount/thanks-dev-2dn4 | ownbizaccount | ||

1,864,332 | Associating a Custom Domain to an API Gateway on Google Cloud | Setting up a custom domain for your API Gateway on Google Cloud enhances the professional appearance... | 0 | 2024-05-24T21:52:17 | https://dev.to/burgossrodrigo/associating-a-custom-domain-to-an-api-gateway-on-google-cloud-4ph6 | googlecloud, apigateway | Setting up a custom domain for your API Gateway on Google Cloud enhances the professional appearance of your APIs and improves brand consistency. Here's a step-by-step guide to achieve this.

**Prerequisites**

Ensure you have:

- A domain name managed via Google Cloud DNS or any other DNS provider.

- An SSL certificate configured in Google Cloud.

- Steps to Configure API Gateway with a Custom Domain

**Steps to Configure API Gateway with a Custom Domain**

1 - Create Serverless NEG for API Gateway

```

gcloud beta compute network-endpoint-groups create api-gateway-serverless-neg --region=us-central1 --network-endpoint-type=serverless --serverless-deployment-platform=apigateway.googleapis.com --serverless-deployment-resource=gateway-name

```

2 - Attach NEG to Backend Service

```

gcloud compute backend-services add-backend api-gateway-backend-service --global --network-endpoint-group=api-gateway-serverless-neg --network-endpoint-group-region=us-central1

```

3 - Create URL Map

```

gcloud compute target-https-proxies create api-gateway-https-proxy --ssl-certificates=dfb-dev-ssl-cert --url-map=api-gateway-url-map

```

4 - Create HTTPS Proxy

```

gcloud compute target-https-proxies create api-gateway-https-proxy --ssl-certificates=dfb-dev-ssl-cert --url-map=api-gateway-url-map

```

5 - Create Forwarding Rule

```

gcloud compute forwarding-rules create my-fw --target-https-proxy=api-gateway-https-proxy --global --ports=443

```

**Testing**

Verify the custom domain points to the API Gateway:

```

curl -v https://your-custom-domain.com

```

Conclusion

By following these steps, you can seamlessly associate a custom domain with your API Gateway, ensuring secure and branded API endpoints.

For more detailed documentation, refer to [Google Cloud API Gateway Custom Domains.](https://cloud.google.com/api-gateway/docs/using-custom-domains?hl=en-us)

| burgossrodrigo |

1,864,326 | Navigating React State | Introduction State management in React is a crucial aspect of developing applications with... | 0 | 2024-05-24T21:19:56 | https://dev.to/bilelsalemdev/navigating-react-state-usestate-usereducer-context-redux-toolkit-recoil-3i98 | react, javascript, webdev, development | ### Introduction

State management in React is a crucial aspect of developing applications with complex user interfaces. It involves managing the state of various components and ensuring that changes in state are efficiently propagated throughout the application. There are several approaches to state management in React, each with its own benefits and drawbacks. Here are the most commonly used state management techniques in React:

#### React's Built-in State (useState and useReducer)

#### Context API

#### Redux Toolkit

#### MobX

#### Recoil

#### Zustand

In this article, we'll focus on just a few of these techniques.

### 1. React's Built-in State (useState and useReducer)

#### useState

- **Benefits**:

- Simple to use for managing local component state.

- Ideal for small to medium-sized applications.

- Directly integrated into React, eliminating the need for additional libraries.

- **Drawbacks**:

- Can become cumbersome as the application grows.

- Managing state that needs to be shared across many components can become complex.

#### useReducer

- **Benefits**:

- Suitable for managing more complex state logic.

- Similar to Redux's reducer pattern but without external dependencies.

- Makes state transitions explicit and easier to debug.

- **Drawbacks**:

- May be overkill for simple state management needs.

- Limited in scope for larger applications with extensive state management requirements.

### 2. Context API

- **Benefits**:

- Excellent for passing data through the component tree without prop drilling.

- Simplifies state sharing across multiple components.

- No need for external libraries, keeping the bundle size smaller.

- **Drawbacks**:

- Can lead to performance issues if not used carefully, causing unnecessary re-renders.

- Lacks the advanced features of dedicated state management libraries, such as built-in side effect management.

### 3. Redux Toolkit

Redux Toolkit is the recommended way to use Redux because it reduces boilerplate and includes useful utilities.

- **Benefits**:

- Predictable state management with a single source of truth.

- Built-in best practices, reducing the amount of boilerplate code.

- Enhanced maintainability and scalability for large applications.

- Powerful middleware support (e.g., Redux Thunk) for handling asynchronous actions and side effects.

- Includes utility functions like `createSlice` and `createAsyncThunk` that simplify reducer and action creation.

- **Drawbacks**:

- Still involves a learning curve, especially for developers new to Redux.

- Can be overkill for small to medium-sized applications.

### 4. Recoil

Recoil is a state management library developed by Facebook, designed to manage complex state dependencies and improve performance.

- **Benefits**:

- Seamless integration with React, making it easy to adopt.

- Efficient state management with minimal re-renders, thanks to its fine-grained subscription model.

- Supports complex state dependencies and derived state using selectors.

- Provides a straightforward API for asynchronous state management with atoms and selectors.

- **Drawbacks**:

- Still relatively new and evolving, which might lead to breaking changes.

- Smaller ecosystem compared to Redux, which means fewer third-party tools and resources.

### Summary

**Local State (useState and useReducer)**:

- Best for simple state management within individual components.

- Excellent for small applications or isolated component state.

**Context API**:

- Great for sharing state across a component tree.

- Suitable for medium-sized applications with some shared state.

- Use `useContext` with `React.memo` or `useMemo` to optimize performance and avoid unnecessary re-renders.

**Redux Toolkit**:

- Ideal for large-scale applications with complex state requirements.

- Provides a robust ecosystem with a standardized approach, reducing boilerplate.

- Facilitates easier debugging and predictable state management with the `Redux DevTools`.

**Recoil**:

- Promising new library focused on efficient state management with complex dependencies.

- Suitable for applications needing fine-grained state management and derived state handling.

- Evolving rapidly, potentially leading to future improvements and changes.

Choosing the right state management approach depends on the specific needs of your application, including its size, complexity, and performance requirements. For small to medium projects, React’s built-in state and Context API might suffice. For larger projects with more complex state logic, Redux Toolkit's structured approach or Recoil's efficient state management capabilities may be more suitable. | bilelsalemdev |

1,864,325 | What is the best React lybraries for web development according to you? | A post by Shinny22 | 0 | 2024-05-24T21:13:40 | https://dev.to/shinny22/what-is-the-best-react-lybraries-for-web-development-according-to-you-4ip4 | react, javascript, webdev, typescript | shinny22 | |

1,864,323 | The Role Of AI In Employee Training And Development | Wanna become a data scientist within 3 months, and get a job? Then you need to check this out ! In... | 0 | 2024-05-24T21:10:21 | https://thedatascientist.com/the-role-of-ai-in-employee-training-and-development/ | ai, development, employeeexperience, training | Wanna become a data scientist within 3 months, and get a job? Then you need to [check this out ! ](https://go.beyond-machine.com/r)

In modern workplaces, the integration of artificial intelligence (AI) has become a game-changer for employee training and development. As technology continues to advance, AI is no longer a [futuristic concept](https://www.forbes.com/sites/forbesagencycouncil/2022/09/09/communicating-futuristic-concepts-perception-is-everything/?sh=51b838d11e9b) but a practical tool that enables employees to enhance their skills, knowledge, and overall professional growth.

This article explores the transformative role of AI in employee training and development, shedding light on its potential to revolutionize the way we learn and adapt to the demands of the contemporary workforce.

## AI in Legal Compliance and Training

AI is also making significant strides in the legal field, particularly in ensuring compliance and enhancing training programs related to employment law. This is especially relevant for employees seeking to navigate the complex legal landscape effectively.

**Trending**

[The Asfari Foundation: Data Science & Data Strategy Workshop

](https://thedatascientist.com/asfari-foundation-data-science-data-strategy-workshop/)

## COMPLIANCE TRAINING

AI-powered compliance training programs can help employees stay updated with the latest laws and regulations, ensuring that they are aware of their legal rights and responsibilities. These programs can be tailored to specific industries and regions, providing relevant and up-to-date information.

Specialized training programs that address local employment laws and regulations help employees understand their rights and ensure they are working in a compliant environment.

## LEGAL CONSULTATION AND SUPPORT