id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,863,363 | Building a Ruby on Rails MVP. | Hey there 👋, It's been 2 weeks since I announced the new project I'm working on: HomeSuiteApartment,... | 27,288 | 2024-05-24T15:18:44 | https://dev.to/joelzwarrington/building-an-ruby-on-rails-mvp-4eoo | webdev, rails, startup | Hey there :wave:,

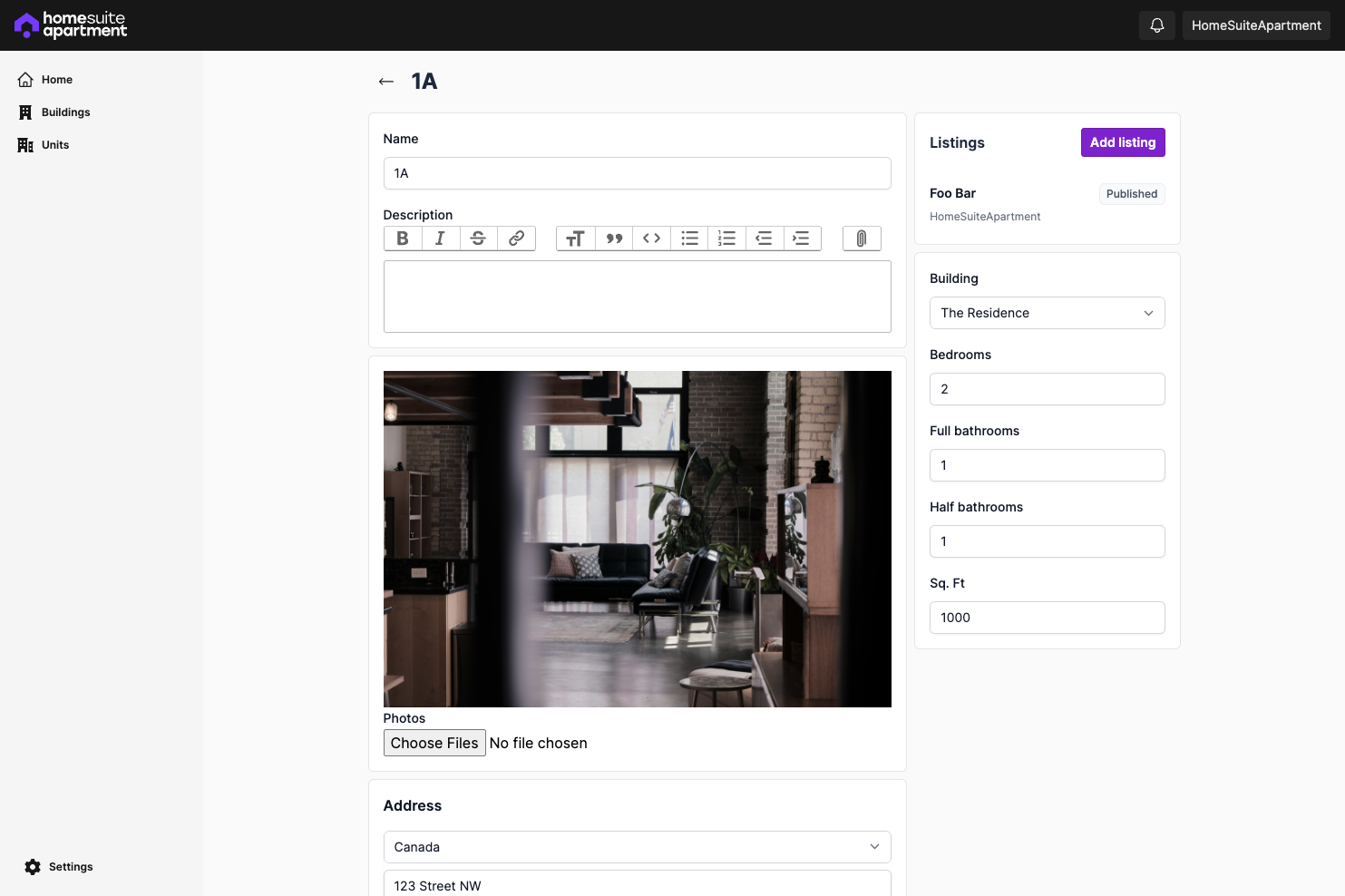

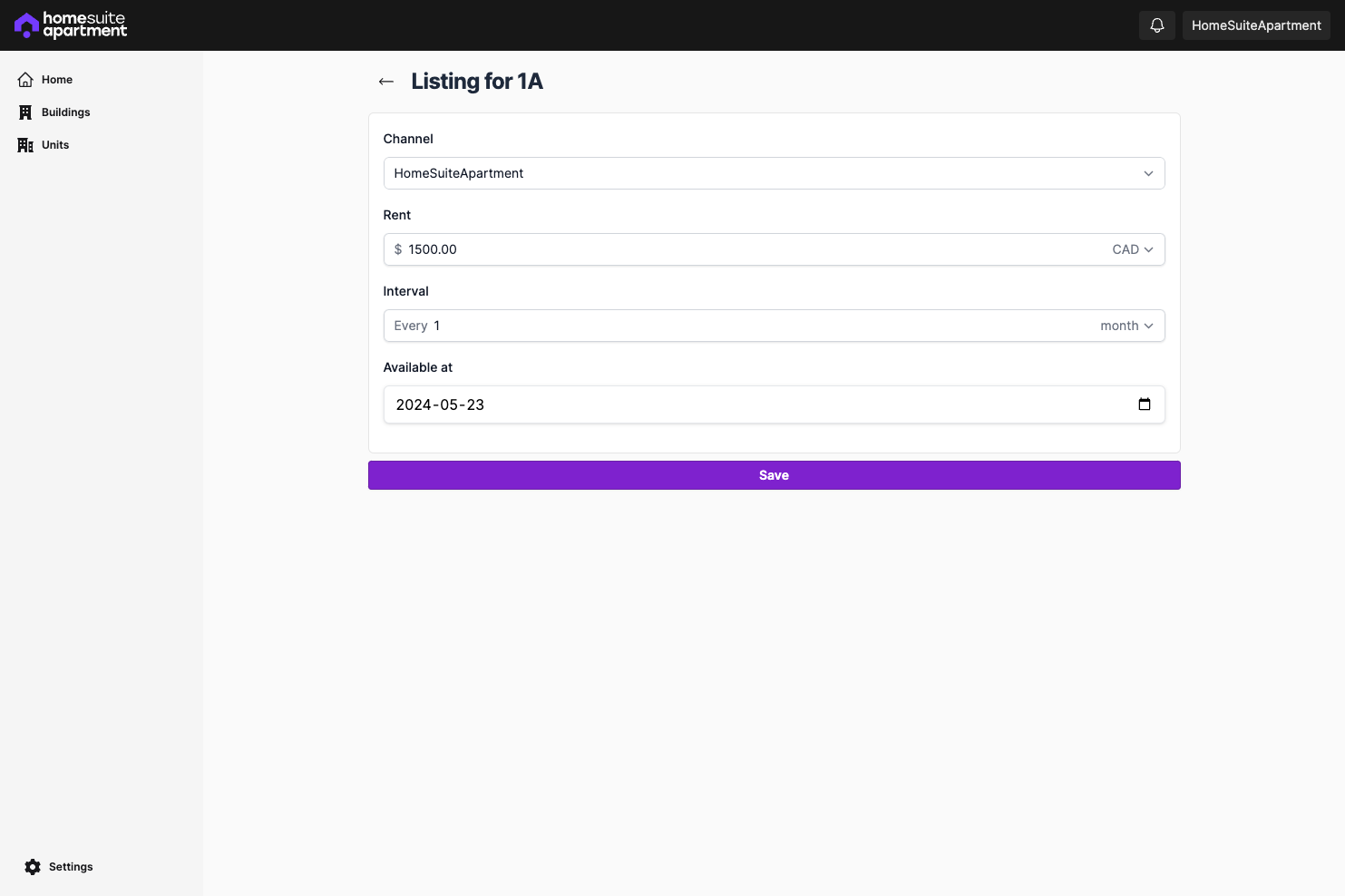

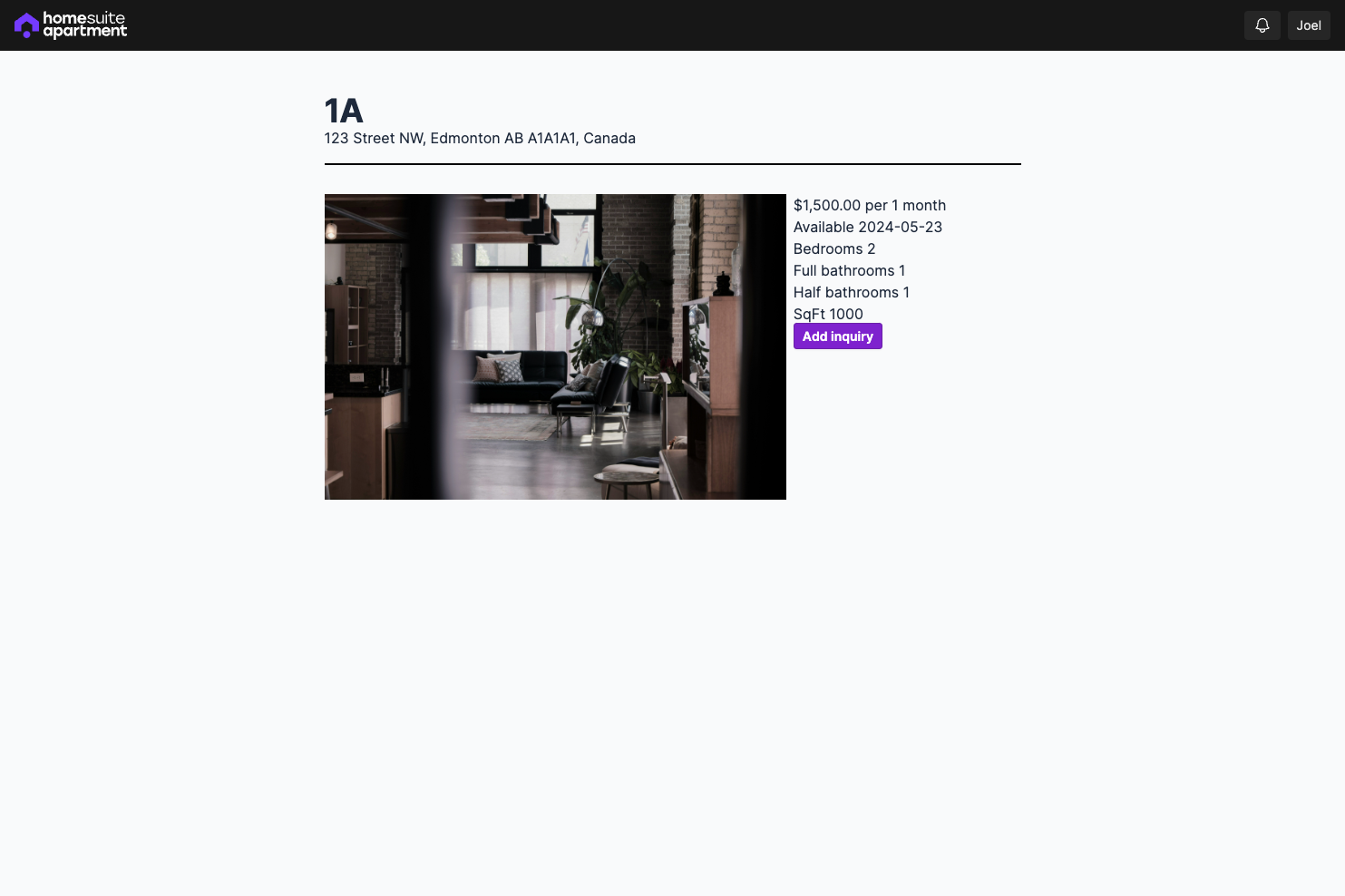

It's been 2 weeks since I announced the new project I'm working on: [HomeSuiteApartment](https://homesuiteapartment.com), a tool to manage properties.

Today, I'll be sharing an update update on the progress so far, what's next, and share some developer insights for your own Ruby on Rails project! If you'd prefer, I've also uploaded a [video walking through the product](https://youtu.be/3Q--P6-dtO4).

## Progress made so far

I've been able to build out:

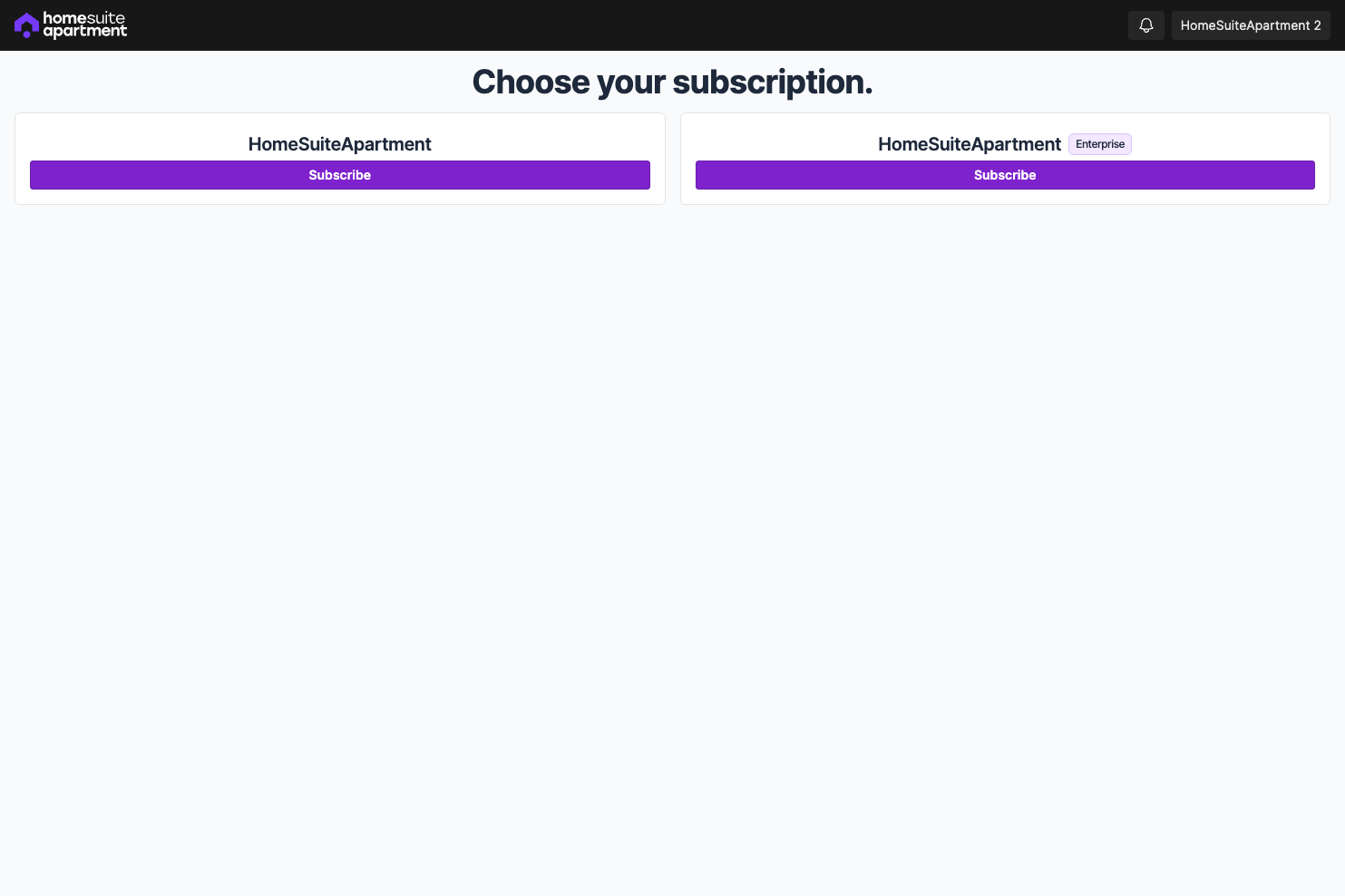

- an integration with Stripe to offer subscription plans

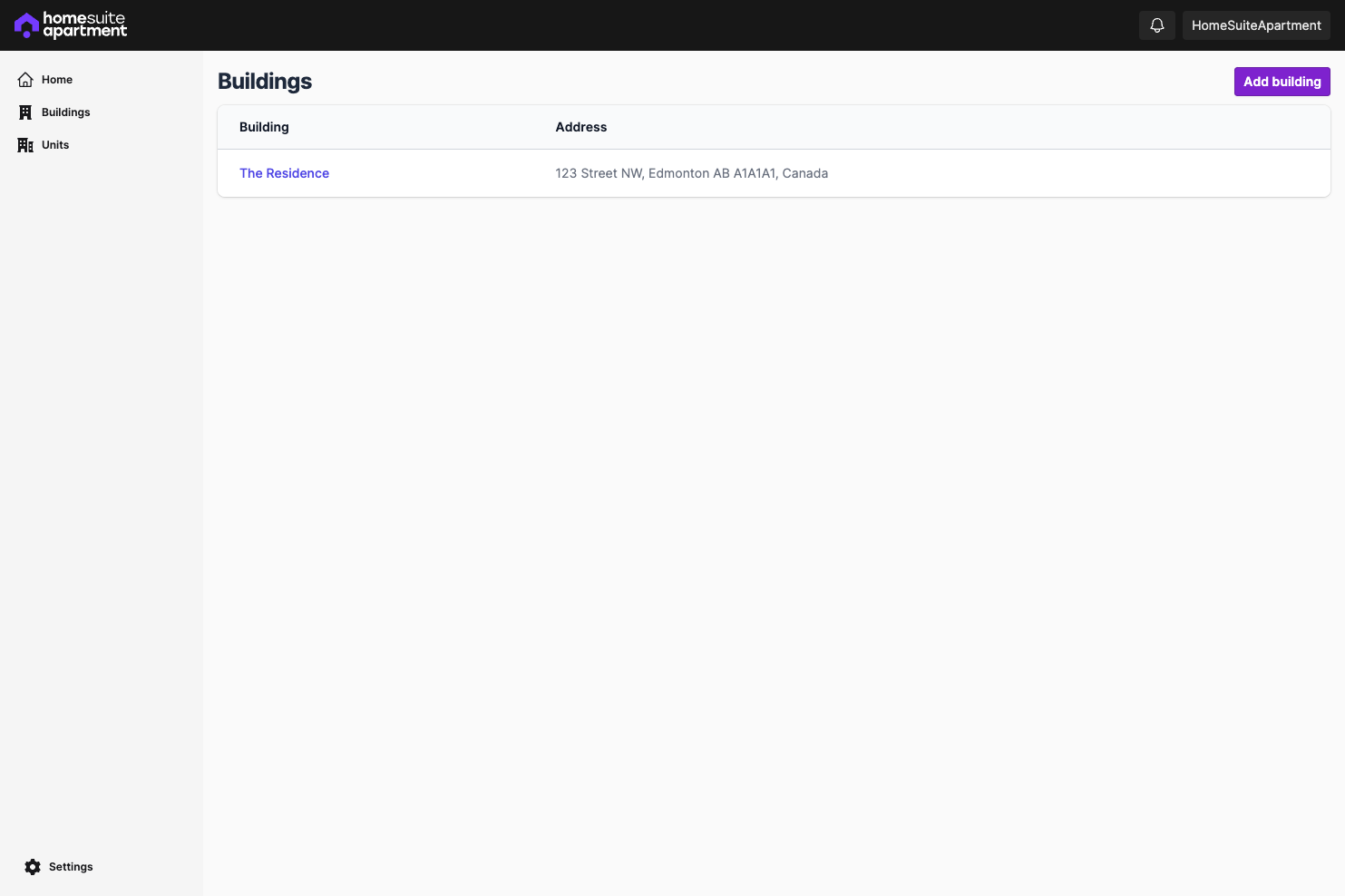

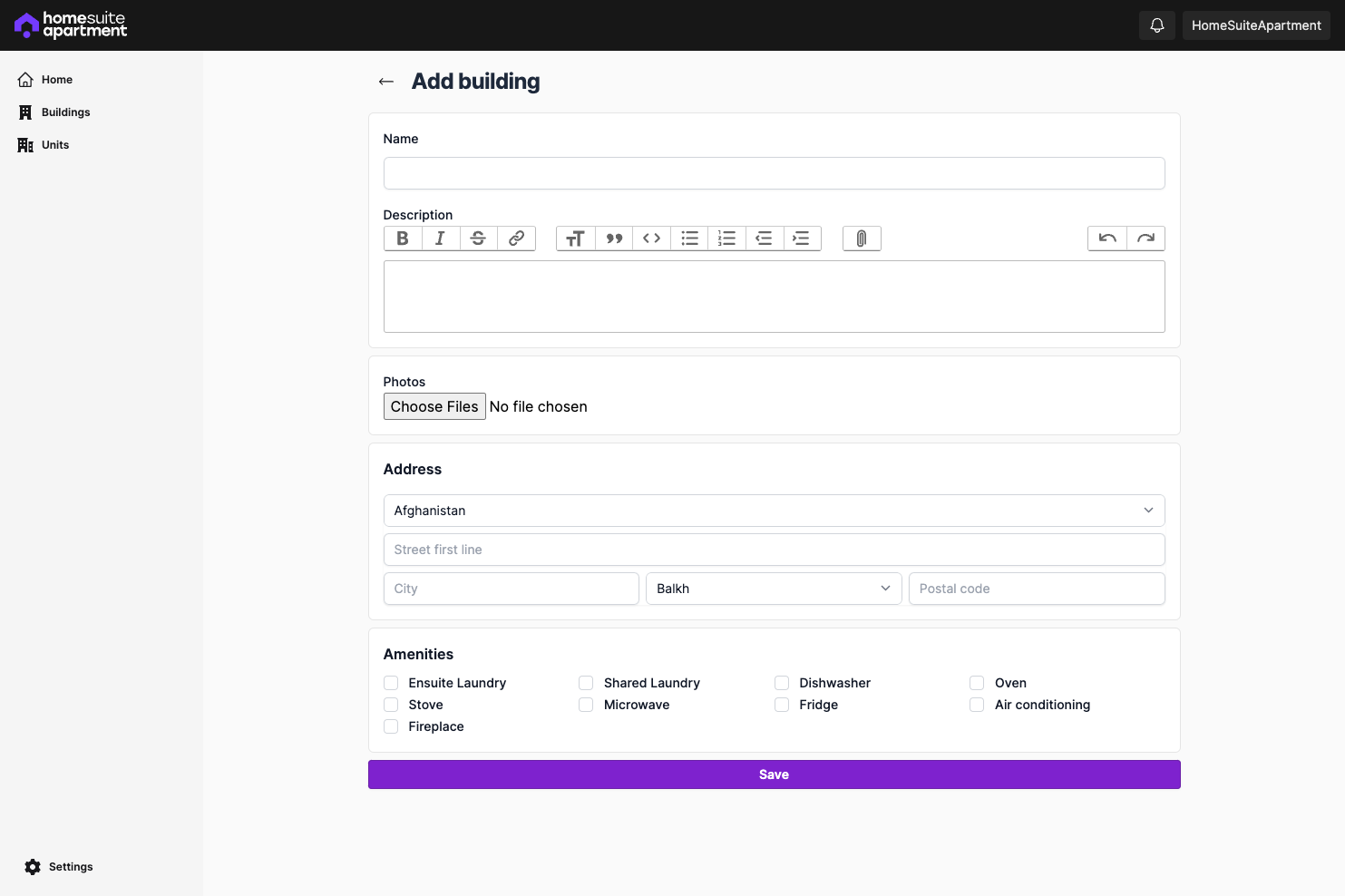

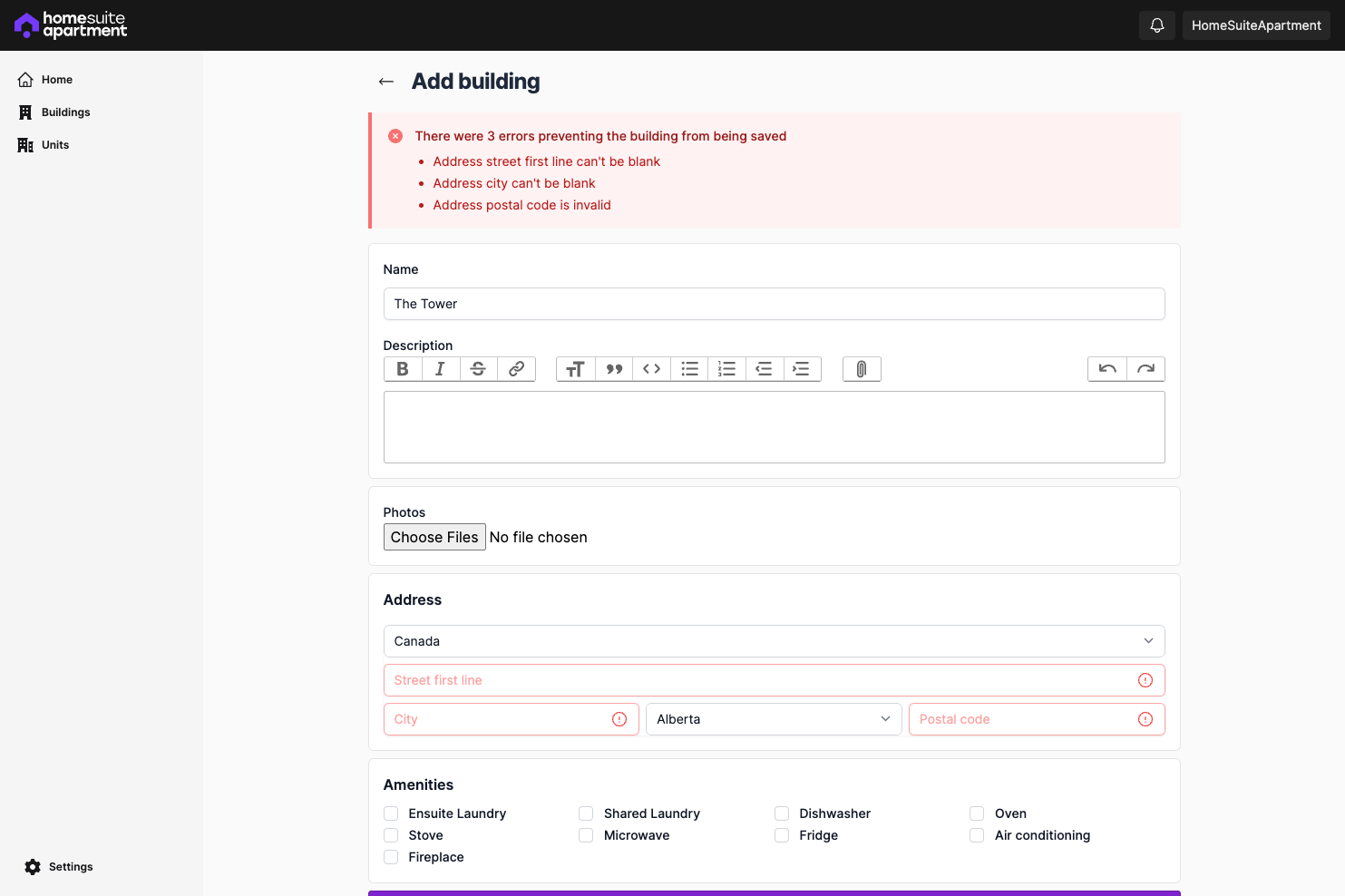

- CRUD routes for buildings and units

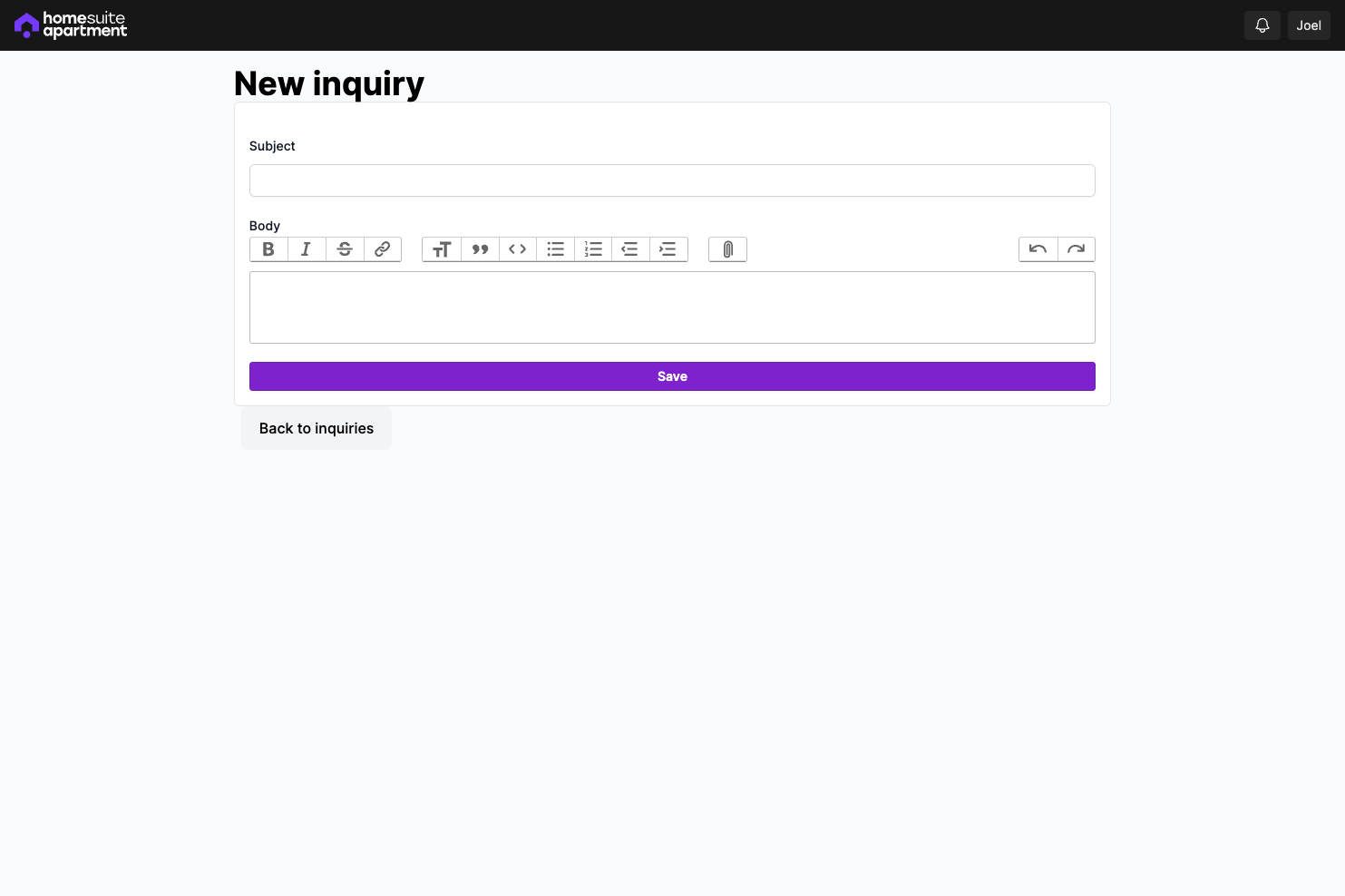

- CRUD routes to list a unit (at the moment only on HomeSuiteApartment, eventually on other rental listing marketplaces) and send inquiries

A lot of the pages have placeholder content at the moment, which I'll be fleshing out, but the intended workflow is almost done.

## An overview on my workflow

[Ruby on Rails](https://rubyonrails.org/), in my opinion, is the most productive full-stack web framework to-date.

I'd highly recommend reading up on the [Rails Doctrine](https://rubyonrails.org/doctrine), it really explains why Ruby on Rails came to be what it is today and how it's surpassed other frameworks.

---

To get started with Ruby on Rails, it was as simple as generating a new project, using the `rails` command line.

```shell

gem install rails

```

```shell

rails new homesuiteapartment

```

from there, it's as simple as using the provided [generators](https://guides.rubyonrails.org/generators.html) to scaffold the app.

```shell

bin/rails generate scaffold building name

```

This generates a database migration, model, route definition, controller and views, as well as tests if you've configured that. In all of a few minutes, you've got a 'working' application. In most cases, you'll need to do more, but the scaffold generator alone seems like cheating.

One of my favourite things about using generators, is that it's quite easy to customize, especially if you've implemented other gems for authorization and need to change the controller template.

---

Thankfully, [Ruby](https://www.ruby-lang.org/en/) and [Ruby on Rails](https://rubyonrails.org/) are well established, and have a large community building 'packages', known as [gems](https://guides.rubygems.org/what-is-a-gem/), similar to node packages.

[Ruby on Rails](https://rubyonrails.org/) is really just a collection of Gems which work very well together, such as [ActiveRecord](https://github.com/rails/rails/tree/main/activerecord), [ActiveModel](https://github.com/rails/rails/tree/main/activemodel), [ActionView](https://github.com/rails/rails/tree/main/actionview) and much more.

Gems are a helpful tool to easily add new functionality to your Ruby on Rails application, and is especially helpful when building an MVP so you don't need to build everything from scratch.

### Some of my favourite gems

#### Authentication

As mentioned in my previous blog post, [On the road to ramen profitability 🍜 💸](https://dev.to/joelzwarrington/on-the-road-to-ramen-profitability-21fc), I mentioned that I'm using [devise](https://github.com/heartcombo/devise). It's one of the most popular open-source authentication solutions for Rails.

I highly recommend it, as it's very configurable, and there are many plugins which I'll implement in the future, such as [OmniAuth](https://github.com/omniauth/omniauth).

One pitfall though, if you've never worked with Ruby on Rails, I recommend avoiding it and starting with a simple authentication system from scratch.

#### Authorization

If you're unfamiliar with authorization, it's very different from authentication. Read the [Authentication vs. Authorization article](https://auth0.com/docs/get-started/identity-fundamentals/authentication-and-authorization) from auth0 to learn more, but in essence:

> authentication is the process of verifying who a user is, while authorization is the process of verifying what they have access to.

There are a few gems which implement different strategies, such as [CanCanCan](https://github.com/CanCanCommunity/cancancan) and [Pundit](https://github.com/varvet/pundit).

My favourite gem for implementing authorization strategies is [ActionPolicy](https://github.com/palkan/action_policy). It's very similar to [Pundit](https://github.com/varvet/pundit), but is more extensible and isn't as barebones.

It's as simple as adding a new policy, and implementing the methods corresponding to the actions in your controller. In the example below, we have the `UnitPolicy` which will be used in the `UnitsController`. The `organization_user?` is a method which will return `true` if the user is part of the organization they're trying to access.

At the moment, most of my policies are very simple and simply check that a user is part of an organization, however, in the future it'll be easy to add permissions, roles, etc.

```ruby

class UnitPolicy < ApplicationPolicy

def index?

organization_user?

end

def new?

organization_user?

end

def create?

organization_user?

end

def show?

organization_user?

end

def edit?

organization_user?

end

def update?

organization_user?

end

def destroy?

organization_user?

end

private

relation_scope do |relation|

relation.where(organization: organization)

end

params_filter do |params|

params.permit(

:name

)

end

end

```

#### Views and Components

Out of the box, Ruby on Rails uses [erb templating](https://guides.rubyonrails.org/layouts_and_rendering.html) to build views, and [partials](https://guides.rubyonrails.org/layouts_and_rendering.html#using-partials). In Rails convention over configuration fashion, it's best to have views which correspond to your `get` actions, and you'll see these views get generated when you run the scaffold generator.

However, you might want to re-use and share code between views, and at first most would reach for [partials](https://guides.rubyonrails.org/layouts_and_rendering.html#using-partials), or if you're brave enough, think that implementing a React frontend will make this better for you, but there's a better solution.

- [ViewComponent](https://viewcomponent.org/) is a framework/gem for creating reusable, testable & encapsulated view components, built to integrate seamlessly with Ruby on Rails.

- [ViewComponent::Form](https://github.com/pantographe/view_component-form) provides an ActionView FormBuilder, so you can easily use [ViewComponent](https://viewcomponent.org/) components in your form helpers

I recommend giving these two gems a try, to , and helps with the composability that one might want,

These gems are both great at reducing the complexity and maintainability of partials, and allows for better composability, something which can be difficult in ERB templating. As mentioned previously, I've seen a lot of people reach for React frontends to solve this problem, and I think it's the wrong approach for a few reasons. If you're interested in that topic, let me know and I can publish an article going in-depth there.

#### Code formatting and linting

I highly recommend adding [standardrb](https://github.com/standardrb/standard) to your project. Under the hood it uses [rubocop](https://github.com/rubocop/rubocop) (A Ruby static code analyzer and formatter) and doesn't require any configuration - that's what makes it so powerful.

When building an MVP, you should spend the least amount of time working on things which don't directly provide value to what you're building. Linting is not a feature of your product.

When the time comes that I want to be picky about my formatting and linting rules, I'll likely pull out [standard](https://github.com/standardrb/standard) and write my own https://github.com/rubocop/rubocop rules, but in the meantime this is more than good enough.

#### Testing

Similar to linting and formatting, testing isn't really a feature. Some would highly argue against shipping code without rock-solid tests. But it really slows you down if you're hunting absolute coverage.

In my own projects, I'll use [rspec](https://rspec.info/) with [shoulda-matchers](https://github.com/thoughtbot/shoulda-matchers) alongside [FactoryBot](https://github.com/thoughtbot/factory_bot) to quickly and easily write simple tests.

For the most part, I won't add many more tests than what's included in the basic scaffold generator. Not to say I won't write tests, but covering every code path is not nescessary here. Happy path is good enough.

#### Running jobs

Ruby on Rails provides a common interface for scheduled jobs called [ActiveJob](https://guides.rubyonrails.org/active_job_basics.html), but there isn't a single job runner in the scene. There are gems such as [resque](https://github.com/resque/resque) and [sidekiq](https://github.com/sidekiq/sidekiq) but both of these gems are dependent on adding Redis.

These days, I'll be using [solid_queue](https://github.com/basecamp/solid_queue) as it's a simple solution which uses your existing SQL database.

#### Clean code

To avoid a lot of the boilerplate with [Ruby on Rails](https://rubyonrails.org/) controllers, I recommend the [responders](https://github.com/heartcombo/responders) gem. Also it's used by [devise](https://github.com/heartcombo/devise) under the hood!

## What's next?

Thanks for sticking to the end, I hope I've shared a few gems that will help you build your own [Ruby on Rails](https://rubyonrails.org/) application if you decide to do so.

As for me, I'll continue building out [HomeSuiteApartment](https://homesuiteapartment.com/), and in the next 2 weeks will mostly focus on:

- polishing pages, such as the subscription overview and unit listing page

- adding functionality to see inquiries, and book viewings from inquiries

See you in 2 weeks, for the next update! | joelzwarrington |

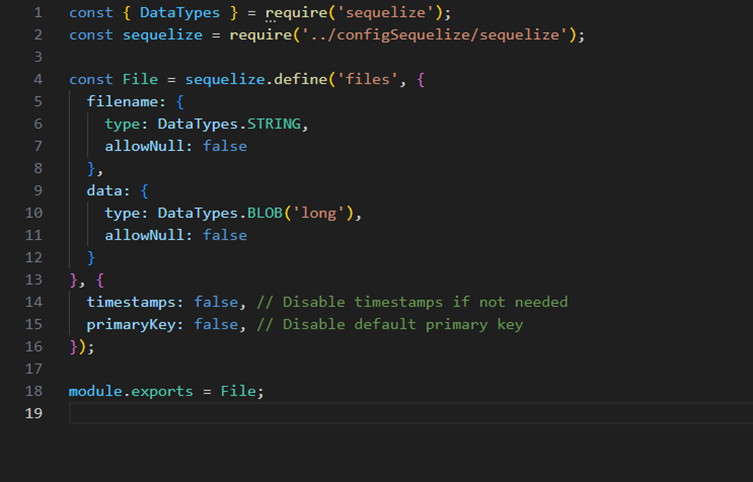

1,864,097 | Relax and let the data flow: A Zero-ETL Pipeline | Real-time Data Visualization with OpenSearch and Amazon DynamoDB: A Zero-ETL Pipeline 🇻🇪🇨🇱... | 0 | 2024-05-24T15:17:03 | https://community.aws/content/2gEgTJCgL2BlFfBxmUBX7nUHe3q/relax-and-let-the-data-flow-a-zero-etl-pipeline | aws, analytics, database, zeroetl | # Real-time Data Visualization with OpenSearch and Amazon DynamoDB: A Zero-ETL Pipeline

🇻🇪🇨🇱 [Dev.to](https://dev.to/elizabethfuentes12) [Linkedin](https://www.linkedin.com/in/lizfue/) [GitHub](https://github.com/elizabethfuentes12/) [Twitter](https://twitter.com/elizabethfue12) [Instagram](https://www.instagram.com/elifue.tech) [Youtube](https://www.youtube.com/channel/UCr0Gnc-t30m4xyrvsQpNp2Q)

[Linktr](https://linktr.ee/elizabethfuentesleone)

{% embed https://dev.to/elizabethfuentes12 %}

[Amazon OpenSearch](https://docs.aws.amazon.com/es_es/opensearch-service/latest/developerguide/what-is.html) Service and Amazon DynamoDB provide a powerful combination for real-time data visualization without the need for complex Extract, Transform, Load (ETL) processes. This repositorie introduces an AWS Cloud Development Kit (CDK) stack that deploys a serverless architecture for efficient, real-time data ingestion using the [OpenSearch Ingestion](https://docs.aws.amazon.com/opensearch-service/latest/developerguide/ingestion.html) service (OSIS).

By leveraging OSIS, you can process and transform data from DynamoDB streams directly into OpenSearch, enabling near-instant visualization and analysis. This zero-ETL pipeline eliminates the overhead of traditional data transformation workflows, allowing you to focus on deriving insights from your data.

The CDK stack provisions key components such as Amazon Cognito for authentication, IAM roles for secure access, an OpenSearch domain for indexing and visualization, an S3 bucket for data backups, and a DynamoDB table as the data source. OpenSearch Ingestion acts as the central component, efficiently processing data based on a declarative YAML configuration.

## Prerequisites

- [AWS Account](https://aws.amazon.com/resources/create-account/?sc_channel=el&sc_campaign=datamlwave&sc_content=cicdcfnaws&sc_geo=mult&sc_country=mult&sc_outcome=acq)

- [Foundational knowledge of Python](https://catalog.us-east-1.prod.workshops.aws/workshops/3d705026-9edc-40e8-b353-bdabb116c89c/)

## 💰 Cost to complete:

- [Amazon DynamoDB Pricing](https://aws.amazon.com/dynamodb/pricing/)

- [Amazon OpenSearch Service Pricing](https://aws.amazon.com/opensearch-service/pricing/)

- [Amazon Cognito Pricing](https://aws.amazon.com/cognito/pricing/)

- [Amazon S3 Pricing](https://aws.amazon.com/s3/pricing/)

## How Does This Application Work?

The flow starts with data stored in Amazon DynamoDB, a managed and scalable NoSQL database. Then, the data is transmitted to [Amazon S3](https://docs.aws.amazon.com/es_es/AmazonS3/latest/userguide/Welcome.html).

From the data in S3, it is indexed using Amazon OpenSearch, a service that enables real-time search and analysis on large volumes of data. OpenSearch indexes the data and makes it easily accessible for fast queries.

The next component is Amazon Cognito, a service that enables user identity and access management. Cognito authenticates and authorizes users to access the OpenSearch Dashboard.

[AWS Identity and Access Management Roles](https://docs.aws.amazon.com/es_es/IAM/latest/UserGuide/id_roles.html) is used to define roles and access permissions.

To create an OpenSearch Ingestion pipeline, you need an [IAM role that the pipeline](https://docs.aws.amazon.com/opensearch-service/latest/developerguide/creating-pipeline.html?icmpid=docs_console_unmapped#manage-pipeline-prerequisites) will assume to write data to the sink (an OpenSearch Service domain or OpenSearch Serverless collection). The role's ARN must be included in the pipeline configuration. The sink, which can be an OpenSearch Service domain (running OpenSearch 1.0+ or Elasticsearch 7.4+) or an OpenSearch Serverless collection, must have an access policy granting the necessary permissions to the IAM pipeline role. ([Granting Amazon OpenSearch Ingestion pipelines access to domains](https://docs.aws.amazon.com/opensearch-service/latest/developerguide/pipeline-domain-access.html) - [Granting Amazon OpenSearch Ingestion pipelines access to collections](https://docs.aws.amazon.com/opensearch-service/latest/developerguide/pipeline-collection-access.html)).

OpenSearch Ingestion requires [specific IAM permissions](https://docs.aws.amazon.com/opensearch-service/latest/developerguide/creating-pipeline.html?icmpid=docs_console_unmapped#create-pipeline-permissions) to create pipelines, including `osis:CreatePipeline` to create a pipeline, `osis:ValidatePipeline` to validate the pipeline configuration, and `iam:PassRole` to pass the pipeline role to OpenSearch Ingestion, allowing it to write data to the domain. The `iam:PassRole` permission must be granted on the [pipeline role resource](https://docs.aws.amazon.com/opensearch-service/latest/developerguide/pipeline-domain-access.html#pipeline-access-configure) (specified as sts_role_arn in the pipeline configuration) or set to * if different roles will be used for each pipeline.

The main link of this pipeline configuration is a [YAML file format](https://github.com/build-on-aws/realtime-dynamodb-zero-etl-opensearch-visualization/blob/main/dashboard/dashboard/template.txt) that connects the DynamoDB table with OpenSearch:

The pipeline configuration is done through a [YAML file format](https://github.com/build-on-aws/realtime-dynamodb-zero-etl-opensearch-visualization/blob/main/dashboard/dashboard/template.txt) like:

```

version: "2"

dynamodb-pipeline:

source:

dynamodb:

acknowledgments: true

tables:

# REQUIRED: Supply the DynamoDB table ARN and whether export or stream processing is needed, or both

- table_arn: "DYNAMODB_TABLE_ARN"

# Remove the stream block if only export is needed

stream:

start_position: "LATEST"

# Remove the export block if only stream is needed

export:

# REQUIRED for export: Specify the name of an existing S3 bucket for DynamoDB to write export data files to

s3_bucket: "<<my-bucket>>"

# Specify the region of the S3 bucket

s3_region: "<<REGION_NAME>"

# Optionally set the name of a prefix that DynamoDB export data files are written to in the bucket.

s3_prefix: "ddb-to-opensearch-export/"

aws:

# REQUIRED: Provide the role to assume that has the necessary permissions to DynamoDB, OpenSearch, and S3.

sts_role_arn: "<<STS_ROLE_ARN>>"

# Provide the region to use for aws credentials

region: "<<REGION_NAME>>"

sink:

- opensearch:

# REQUIRED: Provide an AWS OpenSearch endpoint

hosts:

[

"<<https://OpenSearch_DOMAIN>>"

]

index: "<<table-index>>"

index_type: custom

document_id: "${getMetadata(\"primary_key\")}"

action: "${getMetadata(\"opensearch_action\")}"

document_version: "${getMetadata(\"document_version\")}"

document_version_type: "external"

aws:

# REQUIRED: Provide a Role ARN with access to the domain. This role should have a trust relationship with osis-pipelines.amazonaws.com

sts_role_arn: "<<STS_ROLE_ARN>>"

# Provide the region of the domain.

region: "<<REGION_NAME>>"

```

The pipeline configuration file is automatically created in the CDK stack along with all the other resources.

## Let's build!

### Step 1: APP Set Up

✅ **Clone the repo**

```

git clone https://github.com/build-on-aws/realtime-dynamodb-zero-etl-opensearch-visualization

```

✅ **Go to**:

```

cd dashboard

```

- Configure the [AWS Command Line Interface](https://docs.aws.amazon.com/cli/latest/userguide/cli-chap-configure.html)

- Deploy architecture with CDK [Follow steps:](https://github.com/build-on-aws/realtime-dynamodb-zero-etl-opensearch-visualization/blob/main/dashboard/README.md)

✅ **Create The Virtual Environment**: by following the steps in the [README](https://github.com/build-on-aws/realtime-dynamodb-zero-etl-opensearch-visualization/blob/main/dashboard/README.md)

```

python3 -m venv .venv

```

```

source .venv/bin/activate

```

for windows:

```

.venv\Scripts\activate.bat

```

✅ **Install The Requirements**:

```

pip install -r requirements.txt

```

✅ **Synthesize The Cloudformation Template With The Following Command**:

```

cdk synth

```

✅🚀 **The Deployment**:

```

cdk deploy

```

The deployment will take between 5 and 10 minutes, which is how long it takes for the OpenSearch domain to be created.

When it is ready you will see that the status changes to completed:

To access the OpenSearch Dashboards through the [OpenSearch Dashboards URL (IPv4)](https://docs.aws.amazon.com/opensearch-service/latest/developerguide/dashboards.html) you need to [create a user in the Amazon Cognito user pools](https://docs.aws.amazon.com/cognito/latest/developerguide/managing-users.html?icmpid=docs_cognito_console_help_panel).

With the created user, access the Dashboard and begin to experience the magic of Zero-ETL between the DynamoDB table and OpenSearch.

In this repository you created a table to which you can inject data, but you can also change it by [Updating Amazon OpenSearch Ingestion pipelines](https://docs.aws.amazon.com/opensearch-service/latest/developerguide/update-pipeline.html) making a change to the YAML file or modifying the [CDK stack](https://github.com/build-on-aws/realtime-dynamodb-zero-etl-opensearch-visualization/blob/main/dashboard/dashboard/dashboard_stack.py).

## Conclusion

The combination of Amazon OpenSearch and Amazon DynamoDB enables real-time data visualization without the complexities of traditional ETL processes. By utilizing the OpenSearch Ingest Service (OSIS), a serverless architecture can be implemented that efficiently processes and transforms data from DynamoDB directly into OpenSearch. Building the application with AWS CDK streamlines and simplifies the setup of key components such as authentication, secure access, indexing, visualization, and data backup.

This solution allows users to focus on gaining insights from their data rather than managing infrastructure. Ideal for real-time dashboards, log analytics, or IoT event monitoring, this Zero-ETL pipeline offers a scalable and agile approach to data ingestion and visualization. It is recommended to clone the repository, customize the configuration, and deploy the stack on AWS to leverage the power of OpenSearch and DynamoDB for real-time data visualization.

{% embed https://dev.to/elizabethfuentes12 %}

🇻🇪🇨🇱 [Dev.to](https://dev.to/elizabethfuentes12) [Linkedin](https://www.linkedin.com/in/lizfue/) [GitHub](https://github.com/elizabethfuentes12/) [Twitter](https://twitter.com/elizabethfue12) [Instagram](https://www.instagram.com/elifue.tech) [Youtube](https://www.youtube.com/channel/UCr0Gnc-t30m4xyrvsQpNp2Q)

[Linktr](https://linktr.ee/elizabethfuentesleone)

| elizabethfuentes12 |

1,864,094 | Halo Dev.to | Lorem Ipsum is simply dummy text of the printing and typesetting industry. Lorem Ipsum has been the... | 0 | 2024-05-24T15:13:34 | https://dev.to/hamevryd/halo-devto-1j8g | Lorem Ipsum is simply dummy text of the printing and typesetting industry. Lorem Ipsum has been the industry's standard dummy text ever since the 1500s, when an unknown printer took a galley of type and scrambled it to make a type specimen book. It has survived not only five centuries, but also the leap into electronic typesetting, remaining essentially unchanged. It was popularised in the 1960s with the release of Letraset sheets containing Lorem Ipsum passages, and more recently with desktop publishing software like Aldus PageMaker including versions of Lorem Ipsum.

| hamevryd | |

1,864,093 | Zero-ETL Pipeline: Relájate y deja que los datos fluyan. | Visualización de datos en tiempo real con OpenSearch y Amazon DynamoDB: A Zero-ETL... | 0 | 2024-05-24T15:10:22 | https://www.linkedin.com/pulse/zero-etl-pipeline-rel%2525C3%2525A1jate-y-deja-que-los-datos-fuentes-leone-qzj8e/?trackingId=IleRrYDzTc2pL7ggvEVw%2Bw%3D%3D | database, analytics, aws, spanish | # Visualización de datos en tiempo real con OpenSearch y Amazon DynamoDB: A Zero-ETL Pipeline

🇻🇪🇨🇱 [Dev.to](https://dev.to/elizabethfuentes12) [Linkedin](https://www.linkedin.com/in/lizfue/) [GitHub](https://github.com/elizabethfuentes12/) [Twitter](https://twitter.com/elizabethfue12) [Instagram](https://www.instagram.com/elifue.tech) [Youtube](https://www.youtube.com/channel/UCr0Gnc-t30m4xyrvsQpNp2Q)

[Linktr](https://linktr.ee/elizabethfuentesleone)

{% embed https://dev.to/elizabethfuentes12 %}

[Amazon OpenSearch](https://docs.aws.amazon.com/es_es/opensearch-service/latest/developerguide/what-is.html) Service y Amazon DynamoDB proporcionan una poderosa combinación para la visualización de datos en tiempo real sin la necesidad de procesos complejos de Extracción, Transformación y Carga (ETL). Este repositorio introduce una pila de AWS Cloud Development Kit (CDK) que despliega una arquitectura sin servidor para la ingesta eficiente de datos en tiempo real utilizando el servicio [OpenSearch Ingestion](https://docs.aws.amazon.com/opensearch-service/latest/developerguide/ingestion.html) (OSIS).

Al aprovechar OSIS, puedes procesar y transformar datos de los streams de DynamoDB directamente en OpenSearch, permitiendo la visualización y análisis casi instantáneos. Esta canalización sin ETL elimina la sobrecarga de los flujos de trabajo tradicionales de transformación de datos, permitiéndote enfocarte en obtener información de tus datos.

El stack de CDK aprovisiona componentes clave como Amazon Cognito para autenticación, roles de IAM para acceso seguro, un dominio de OpenSearch para indexación y visualización, un bucket de S3 para respaldos de datos y una tabla de DynamoDB como fuente de datos. OpenSearch Ingestion actúa como el componente central, procesando eficientemente los datos basados en una configuración declarativa YAML.

## Requisitos previos

- [Cuenta de AWS](https://aws.amazon.com/resources/create-account/?sc_channel=el&sc_campaign=datamlwave&sc_content=cicdcfnaws&sc_geo=mult&sc_country=mult&sc_outcome=acq)

- [Conocimientos fundamentales de Python](https://catalog.us-east-1.prod.workshops.aws/workshops/3d705026-9edc-40e8-b353-bdabb116c89c/)

## 💰 Costo para completar:

- [Precios de Amazon DynamoDB](https://aws.amazon.com/dynamodb/pricing/)

- [Precios de Amazon OpenSearch Service](https://aws.amazon.com/opensearch-service/pricing/)

- [Precios de Amazon Cognito](https://aws.amazon.com/cognito/pricing/)

- [Precios de Amazon S3](https://aws.amazon.com/s3/pricing/)

## ¿Cómo funciona esta aplicación?

El flujo comienza con datos almacenados en Amazon DynamoDB, una base de datos NoSQL administrada y escalable. Luego, los datos se transmiten a [Amazon S3](https://docs.aws.amazon.com/es_es/AmazonS3/latest/userguide/Welcome.html).

Desde los datos en S3, se indexan utilizando Amazon OpenSearch, un servicio que permite la búsqueda y análisis en tiempo real en grandes volúmenes de datos. OpenSearch indexa los datos y los hace fácilmente accesibles para consultas rápidas.

El siguiente componente es Amazon Cognito, un servicio que permite la identidad del usuario y la gestión de acceso. Cognito autentica y autoriza a los usuarios para acceder al Panel de OpenSearch.

Se utiliza [AWS Identity and Access Management Roles](https://docs.aws.amazon.com/es_es/IAM/latest/UserGuide/id_roles.html) para definir roles y permisos de acceso.

Para crear una canalización de ingesta de OpenSearch, necesitas un [rol de IAM que la canalización](https://docs.aws.amazon.com/opensearch-service/latest/developerguide/creating-pipeline.html?icmpid=docs_console_unmapped#manage-pipeline-prerequisites) asumirá para escribir datos en el sumidero (un dominio de OpenSearch Service o una colección de OpenSearch Serverless). El ARN del rol debe incluirse en la configuración de la canalización. El sumidero, que puede ser un dominio de OpenSearch Service (ejecutando OpenSearch 1.0+ o Elasticsearch 7.4+) o una colección de OpenSearch Serverless, debe tener una política de acceso que otorgue los permisos necesarios al rol de canalización de IAM. ([Otorgar acceso a las canalizaciones de ingesta de Amazon OpenSearch a dominios](https://docs.aws.amazon.com/opensearch-service/latest/developerguide/pipeline-domain-access.html) - [Otorgar acceso a las canalizaciones de ingesta de Amazon OpenSearch a colecciones](https://docs.aws.amazon.com/opensearch-service/latest/developerguide/pipeline-collection-access.html)).

OpenSearch Ingestion requiere [permisos específicos de IAM](https://docs.aws.amazon.com/opensearch-service/latest/developerguide/creating-pipeline.html?icmpid=docs_console_unmapped#create-pipeline-permissions) para crear canalizaciones, incluyendo `osis:CreatePipeline` para crear una canalización, `osis:ValidatePipeline` para validar la configuración de la canalización, y `iam:PassRole` para pasar el rol de canalización a OpenSearch Ingestion, permitiéndole escribir datos en el dominio. El permiso `iam:PassRole` debe otorgarse en el [recurso de rol de canalización](https://docs.aws.amazon.com/opensearch-service/latest/developerguide/pipeline-domain-access.html#pipeline-access-configure) (especificado como sts_role_arn en la configuración de la canalización) o establecerse en * si se utilizarán diferentes roles para cada canalización.

El enlace principal de esta configuración de canalización es un [formato de archivo YAML](https://github.com/build-on-aws/realtime-dynamodb-zero-etl-opensearch-visualization/blob/main/dashboard/dashboard/template.txt) que conecta la tabla DynamoDB con OpenSearch:

La configuración de la canalización se realiza a través de un formato de archivo [YAML](https://github.com/build-on-aws/realtime-dynamodb-zero-etl-opensearch-visualization/blob/main/dashboard/dashboard/template.txt) como:

```

version: "2"

dynamodb-pipeline:

source:

dynamodb:

acknowledgments: true

tables:

# REQUIRED: Supply the DynamoDB table ARN and whether export or stream processing is needed, or both

- table_arn: "DYNAMODB_TABLE_ARN"

# Remove the stream block if only export is needed

stream:

start_position: "LATEST"

# Remove the export block if only stream is needed

export:

# REQUIRED for export: Specify the name of an existing S3 bucket for DynamoDB to write export data files to

s3_bucket: "<<my-bucket>>"

# Specify the region of the S3 bucket

s3_region: "<<REGION_NAME>"

# Optionally set the name of a prefix that DynamoDB export data files are written to in the bucket.

s3_prefix: "ddb-to-opensearch-export/"

aws:

# REQUIRED: Provide the role to assume that has the necessary permissions to DynamoDB, OpenSearch, and S3.

sts_role_arn: "<<STS_ROLE_ARN>>"

# Provide the region to use for aws credentials

region: "<<REGION_NAME>>"

sink:

- opensearch:

# REQUIRED: Provide an AWS OpenSearch endpoint

hosts:

[

"<<https://OpenSearch_DOMAIN>>"

]

index: "<<table-index>>"

index_type: custom

document_id: "${getMetadata(\"primary_key\")}"

action: "${getMetadata(\"opensearch_action\")}"

document_version: "${getMetadata(\"document_version\")}"

document_version_type: "external"

aws:

# REQUIRED: Provide a Role ARN with access to the domain. This role should have a trust relationship with osis-pipelines.amazonaws.com

sts_role_arn: "<<STS_ROLE_ARN>>"

# Provide the region of the domain.

region: "<<REGION_NAME>>"

```

El archivo de configuración de la canalización se crea automáticamente en la pila CDK junto con todos los demás recursos.

## ¡Construyamos!

### Paso 1: Configuración de la APP

✅ **Clonar el repositorio**

```

git clone https://github.com/build-on-aws/realtime-dynamodb-zero-etl-opensearch-visualization

```

✅ **Ir a**:

```

cd dashboard

```

- Configurar la [Interfaz de línea de comandos de AWS](https://docs.aws.amazon.com/cli/latest/userguide/cli-chap-configure.html)

- Implemente la arquitectura con CDK [Siga los pasos:](https://github.com/build-on-aws/realtime-dynamodb-zero-etl-opensearch-visualization/blob/main/dashboard/README.md)

✅ **Cree el Entorno Virtual**: siguiendo los pasos en el [README](https://github.com/build-on-aws/realtime-dynamodb-zero-etl-opensearch-visualization/blob/main/dashboard/README.md)

```

python3 -m venv .venv

```

```

source .venv/bin/activate

```

para windows:

```

.venv\Scripts\activate.bat

```

✅ **Instale los Requisitos**:

```

pip install -r requirements.txt

```

✅ **Sintetice la Plantilla de Cloudformation con el Siguiente Comando**:

```

cdk synth

```

✅🚀 **El Despliegue**:

```

cdk deploy

```

El despliegue tomará entre 5 y 10 minutos, que es el tiempo que tarda en crearse el dominio de OpenSearch.

Cuando esté listo, verá que el estado cambia a completado:

Para acceder a los Dashboards de OpenSearch a través de la [URL de Dashboards de OpenSearch (IPv4)](https://docs.aws.amazon.com/opensearch-service/latest/developerguide/dashboards.html) necesita [crear un usuario en los grupos de usuarios de Amazon Cognito](https://docs.aws.amazon.com/cognito/latest/developerguide/managing-users.html?icmpid=docs_cognito_console_help_panel).

Con el usuario creado, acceda al Dashboard y comience a experimentar la magia de Zero-ETL entre la tabla de DynamoDB y OpenSearch.

En este repositorio, creó una tabla a la que puede inyectar datos, pero también puede cambiarla [Actualizando las tuberías de ingesta de Amazon OpenSearch](https://docs.aws.amazon.com/opensearch-service/latest/developerguide/update-pipeline.html) realizando un cambio en el archivo YAML o modificando la [pila CDK](https://github.com/build-on-aws/realtime-dynamodb-zero-etl-opensearch-visualization/blob/main/dashboard/dashboard/dashboard_stack.py).

## Conclusión

La combinación de Amazon OpenSearch y Amazon DynamoDB permite la visualización de datos en tiempo real sin las complejidades de los procesos ETL tradicionales. Al utilizar el Servicio de Ingesta de OpenSearch (OSIS), se puede implementar una arquitectura sin servidor que procesa y transforma eficientemente los datos de DynamoDB directamente en OpenSearch. La construcción de la aplicación con AWS CDK simplifica la configuración de componentes clave como la autenticación, el acceso seguro, la indexación, la visualización y el respaldo de datos.

Este es el contenido del blog traducido al español:

Esta solución permite a los usuarios centrarse en obtener información de sus datos en lugar de administrar la infraestructura. Ideal para paneles de control en tiempo real, análisis de registros o monitoreo de eventos de IoT, este pipeline Zero-ETL ofrece un enfoque escalable y ágil para la ingesta y visualización de datos. Se recomienda clonar el repositorio, personalizar la configuración e implementar la pila en AWS para aprovechar el poder de OpenSearch y DynamoDB para la visualización de datos en tiempo real.

{% embed https://dev.to/elizabethfuentes12 %}

🇻🇪🇨🇱 [Dev.to](https://dev.to/elizabethfuentes12) [Linkedin](https://www.linkedin.com/in/lizfue/) [GitHub](https://github.com/elizabethfuentes12/) [Twitter](https://twitter.com/elizabethfue12) [Instagram](https://www.instagram.com/elifue.tech) [Youtube](https://www.youtube.com/channel/UCr0Gnc-t30m4xyrvsQpNp2Q)

[Linktr](https://linktr.ee/elizabethfuentesleone)

| elizabethfuentes12 |

1,864,092 | Introduction to GUI Programming with Tkinter | Introduction to GUI Programming with Tkinter Graphical User Interfaces (GUIs) allow users... | 0 | 2024-05-24T15:10:03 | https://dev.to/romulogatto/introduction-to-gui-programming-with-tkinter-386h | # Introduction to GUI Programming with Tkinter

Graphical User Interfaces (GUIs) allow users to interact with software applications through visual elements such as buttons, menus, and text fields. In this guide, we will explore the basics of GUI programming using Tkinter - a popular Python library for creating user-friendly interfaces.

## Installation

Before diving into Tkinter, ensure that you have Python installed on your computer. Visit the official Python website (https://www.python.org) and download the latest version suitable for your operating system.

Once you have Python installed, Tkinter is included in the standard library by default. No additional installation is required.

## Creating a Simple GUI Application

Let's get started by creating a simple "Hello World" application using Tkinter. Open your favorite code editor or IDE and create a new Python file.

First, import the tkinter module:

```python

import tkinter as tk

```

Next, let's create an instance of the main tkinter window:

```python

window = tk.Tk()

```

Now that we have our window ready, let's add some content to it. We'll start by adding a label widget containing our "Hello World" message:

```python

label = tk.Label(window, text="Hello World!")

label.pack()

```

Finally, run the application by entering this line of code:

```python

window.mainloop()

```

Save your file with a `.py` extension and execute it from the command line or within your IDE. You should see a small window pop up displaying "Hello World".

Congratulations! You've just created your first GUI application using Tkinter!

## Working with Widgets

Tkinter provides various widgets that can be used to build powerful and interactive GUI applications. Let's take a look at some commonly used widgets along with their functionalities:

### Buttons

Buttons are used to trigger actions when clicked on by users. Here's an example of how to create a button widget:

```python

button = tk.Button(window, text="Click Me!")

button.pack()

```

### Entry Fields

Entry fields allow users to input text or numerical data. To create an entry field in Tkinter, use the following code:

```python

entry = tk.Entry(window)

entry.pack()

```

### Labels

Labels are used to display static text or images. The label widget can be created as follows:

```python

label = tk.Label(window, text="Welcome to Tkinter!")

label.pack()

```

## Styling Widgets

Tkinter also provides options for customizing the appearance and behavior of widgets. You can modify properties such as font, color, size, and layout.

For example, to change the background color of a button:

```python

button.config(bg="red")

```

To change the font style and size of a label:

```python

label.config(font=("Arial", 20))

```

Feel free to explore Tkinter's extensive documentation (https://docs.python.org/3/library/tk.html) for more details on styling.

## Conclusion

In this guide, we've covered the basics of GUI programming with Tkinter in Python. We learned how to create a simple GUI application, work with common widgets like buttons and labels, and even customize their appearance.

Tkinter offers many other features such as event handling and layout management which you can explore further on your own. So go ahead and experiment with creating your interactive user interfaces using Tkinter - it's an exciting journey into building user-friendly Python applications!

| romulogatto | |

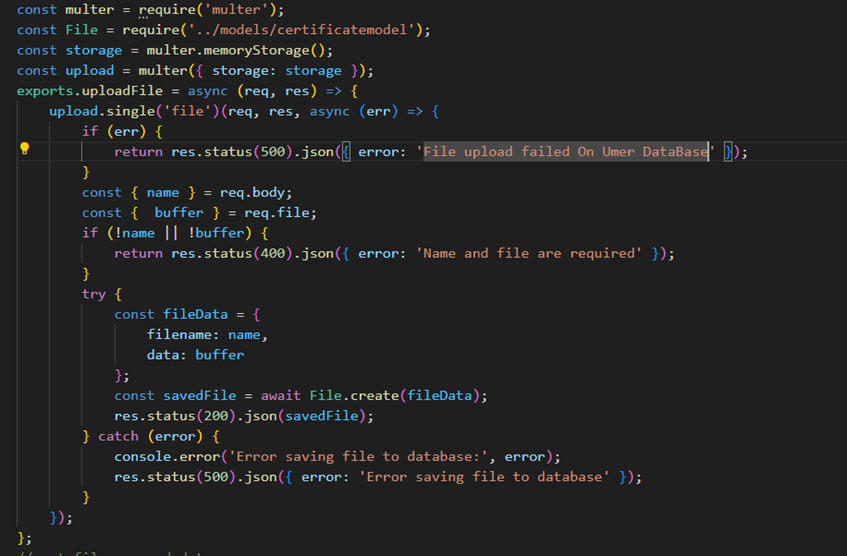

1,864,090 | Simple Method to Block Copy and Paste in Monaco Editor with React | Monaco Editor, the code editor that powers Visual Studio Code, is a powerful tool for developers,... | 0 | 2024-05-24T15:07:26 | https://dev.to/ritish_shelke_526e503c1b7/simple-method-to-block-copy-and-paste-in-monaco-editor-with-react-eam | react, monacoeditor, javascript, webdev | **[Monaco Editor](https://www.npmjs.com/package/@monaco-editor/react)**, the code editor that powers Visual Studio Code, is a powerful tool for developers, providing a rich set of features and customization options. However, there might be situations where you want to disable the copy-paste functionality, such as in coding assessments or educational tools to prevent cheating. In this blog, we will explore how to disable the copy-paste feature in Monaco Editor.

**Why Disable Copy-Paste?**

Before diving into the implementation, let’s discuss why you might want to disable copy-paste in a code editor:

1. **Academic Integrity:** In educational settings, to ensure students write their code from scratch during exams or practice sessions.

2. **Prevent Plagiarism:** To discourage copying code from other sources without understanding it.

3. **Skill Assessment:** To accurately assess a developer’s coding skills during interviews or coding challenges.

## **Step by step solution**

**Step 1: Setting Up Monaco Editor**

First, ensure you have Monaco Editor set up in your project. You can install it via npm:

```bash

npm install @monaco-editor/react

```

Include it in your project:

```javascript

import { Editor } from "@monaco-editor/react";

```

**Step 2: Configuring Editor Options**

To disable certain functionalities and customize the editor's behavior, configure its options. For instance, you can disable the context menu and set other useful preferences:

Here's list of options for editor: https://microsoft.github.io/monaco-editor/typedoc/interfaces/editor.IStandaloneEditorConstructionOptions.html

```javascript

const options = {

selectOnLineNumbers: true,

mouseWheelZoom: true,

fontSize: 18,

contextmenu: false, // Disables right-click context menu

formatOnType: true,

smoothScrolling: true,

wordWrap: "on",

};

```

**Step 3: Handling Key Down Events**

To detect and prevent copy-paste actions, add an event listener for key down events. This allows you to intercept the key combinations for copy (Ctrl/Cmd + C) and paste (Ctrl/Cmd + V):

```javascript

const onMount = (editor, monaco) => {

editorRef.current = editor;

editor.focus();

editor.onKeyDown((event) => {

const { keyCode, ctrlKey, metaKey } = event;

if ((keyCode === 33 || keyCode === 52) && (metaKey || ctrlKey)) {

event.preventDefault();

setActivity("copypaste");

setOpen(true);

}

});

};

```

**Step 4: Implementing the Modal Component**

The Modal component provides visual feedback to the user about their activities. Here's how to implement it:

```javascript

import React from "react";

import { IoIosCloseCircle } from "react-icons/io";

import { IoWarning } from "react-icons/io5";

export default function Modal({ activity, open, onClose }) {

return (

<div>

<div

className={`fixed inset-0 bg-gray-800 bg-opacity-80 z-50 transition-opacity ${

open ? "opacity-100" : "opacity-0 pointer-events-none"

}`}

></div>

<div

onClick={(e) => e.stopPropagation()}

className={`fixed inset-0 flex justify-center items-center z-50 ${

open ? "opacity-100" : "opacity-0 pointer-events-none"

}`}

>

<div className="bg-white rounded-xl shadow p-6 relative">

<button onClick={onClose} className="absolute top-2 right-2">

<IoIosCloseCircle size={24} />

</button>

<div className="text-center w-full h-52 z-50 flex justify-center items-center flex-col gap-5">

<div className="mx-auto my-4 w-full">

<h3 className="text-lg font-black text-gray-800">

{activity === "copypaste"

? "Copy Paste Activity"

: "Handle any other activity here"}

</h3>

<p className="text-md text-gray-700 font-semibold">

{activity === "copypaste"

? "Copy paste activity has been notified to faculty"

: "Handle any other activity here"}

</p>

<p className="text-md text-gray-700 font-semibold">

{activity === "copypaste"

? "On Next copy paste your exam will be terminated"

: "Handle any other activity here"}

</p>

</div>

<button

className="text-white bg-red-500 p-3 rounded-lg w-full flex justify-center items-center gap-2"

onClick={onClose}

>

{<IoWarning />} {activity === "copypaste" ? "Close Warning" : "Handle any other here"}

</button>

</div>

</div>

</div>

</div>

);

}

```

## Full code for Editor Component:

```javascript

import React, { useState, useRef } from "react";

import { Editor } from "@monaco-editor/react";

import Modal from "../../../../components/Modal/Modal.jsx";

const EditorWindow = () => {

const [activity, setActivity] = useState("");

const [open, setOpen] = useState(false);

const editorRef = useRef(null);

const options = {

selectOnLineNumbers: true,

mouseWheelZoom: true,

fontSize: 18,

contextmenu: false,

formatOnType: true,

smoothScrolling: true,

wordWrap: "on",

};

const onMount = (editor, monaco) => {

editorRef.current = editor;

editor.focus();

editor.onKeyDown((event) => {

const { keyCode, ctrlKey, metaKey } = event;

if ((keyCode === 33 || keyCode === 52) && (metaKey || ctrlKey)) {

event.preventDefault();

setActivity("copypaste");

setOpen(true);

};

return (

<>

<div>

<Modal activity={activity} open={open} onClose={() => setOpen(false)} />

<div className="overlay overflow-hidden w-full h-full shadow-4xl">

<Editor

height="85vh"

width="100vw"

theme="vs-dark"

language="java"

options={options}

defaultValue="some default value for editor"

onMount={onMount}

/>

</div>

</div>

</>

);

};

export default EditorWindow;

```

**Conclusion**

By following these steps, you can effectively disable copy-paste functionality in Monaco Editor and monitor user activities to ensure academic integrity. This approach is particularly useful in educational platforms and coding assessments where maintaining the originality of student work is crucial. By customizing and extending this implementation, you can adapt it to suit various educational and assessment platforms.

| ritish_shelke_526e503c1b7 |

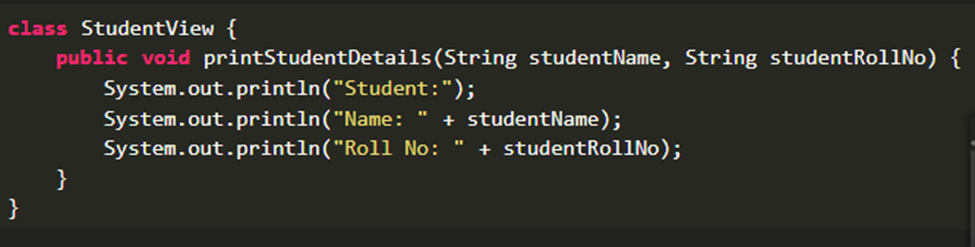

1,864,089 | Native Power: Why Java Reigns Supreme for Android App Development | Looking to build a top-notch Android app? While cross-platform development seems tempting, there's a... | 0 | 2024-05-24T15:07:14 | https://dev.to/malikhandev/native-power-why-java-reigns-supreme-for-android-app-development-a6p | app, java, mobile, native | Looking to build a top-notch Android app? While cross-platform development seems tempting, there's a reason Java remains the king for native Android apps. Here's why:

Built for Speed: Java apps are like race cars, engineered specifically for the Android racetrack. They leverage the Android SDK directly, resulting in smoother performance and faster load times.

Unmatched User Experience: A laggy app is a user's worst nightmare. Java apps deliver a seamless and responsive experience, keeping your users engaged and happy.

Access the Full Arsenal: Java grants you full access to the Android SDK's functionalities. From advanced features to intricate hardware integration, you can build feature-rich apps without limitations.

Offline Functionality: Need your app to work even without an internet connection? Java apps excel in offline capabilities, a must-have for many functionalities.

Security That Shines: Java prioritizes security with built-in features and a strong development community. This translates to a more secure app environment for your users.

Long-Term Play: Java is a mature technology with a vast developer pool. This ensures your app's maintainability and future-proofs it for years to come.

Native Look and Feel: A native app blends seamlessly with the Android ecosystem. Java apps deliver that perfect native look and feel, making them an intuitive joy to use.

Unlock True Potential: Java empowers you to create powerful, feature-rich apps that push the boundaries of what's possible. Unleash the true potential of your Android app idea.

Ready to build a blazing-fast, feature-packed Android app?

Our team of expert Java developers is here to help! We specialize in crafting high-performance native Android apps that will take your vision to life.

Visit us today and let's discuss your project!: **fiverr.com/hypersli/develop-native-applications-with-java** | malikhandev |

1,864,088 | Tes 123 | Lorem Ipsum is simply dummy text of the printing and typesetting industry. Lorem Ipsum has been the... | 0 | 2024-05-24T15:06:44 | https://dev.to/hamevryd/tes-123-3kj8 | Lorem Ipsum is simply dummy text of the printing and typesetting industry. Lorem Ipsum has been the industry's standard dummy text ever since the 1500s, when an unknown printer took a galley of type and scrambled it to make a type specimen book. It has survived not only five centuries, but also the leap into electronic typesetting, remaining essentially unchanged. It was popularised in the 1960s with the release of Letraset sheets containing Lorem Ipsum passages, and more recently with desktop publishing software like Aldus PageMaker including versions of Lorem Ipsum. | hamevryd | |

1,864,086 | Innovation-friendly software | Let’s face it – many established companies are live software museums. Marketing often advertises it... | 0 | 2024-05-24T15:05:36 | https://softbeehive.com/blog/innovation-friendly-software | webdev, microservices, programming, javascript | Let’s face it – many established companies are live software museums. Marketing often advertises it as cutting-edge, state-of-the-art, and crème de la crème. But under the hood, in the age of AI, our world still relies on vintage technology.

Chemical terminals use 25-year-old tools that work in Internet Explorer 5 only, trains run on Windows XP, and who knows what Perls you may find in a traditional banking sector?

## Why on Earth?

I have a theory: when businesses buy products or services, their expectations are proportional to the commitment. One does not simply take away a tool folks used for decades. This phenomenon is called ***technological inertia***.

Then, one day, customers riot, demanding high-speed wi-fi on the Win XP train. You order golden routers and do a lab test. During the rollout, two million people join the network. The electric system fails. Trying to fix lights, you break doors. The release reverted via USB drive downgrade on 250 trains, 13 bricked in the process. Passengers are happy the doors work again.

Meet ***sunk cost fallacy***: individuals or organizations may have invested heavily in the existing technology. And they are unwilling to abandon that investment, even if a new solution would be more cost-effective in the long run.

The point of no return is called don’t touch if it works. The longer it remains without a change, the more untouchable it gets. Crossing this Rubicon means an expensive and lengthy upgrade ahead.

## Vehicle management at car2go

At car2go (carsharing company), I worked in the vehicle lifecycle management team. We took ownership of a relatively fresh Angular 1 single-page app developed by an external agency.

Our motivated team started making changes. I remember applying a fix that caused seven new bugs. This pattern repeated again and again. Skilled and experienced engineers could not maintain the stability bar set by the company. Only manual QA saved us because automated tests did not catch issues.

The situation was spiraling out of control. We proceeded with caution, and that slowed our progress. During retrospectives, we began questioning our course of action. Some code was relatively fresh, though the main pain point was data binding side-effects in convoluted controllers.

On the API side, challenges were more significant – multiple databases were out of sync, critical bugs in queue processing, inconsistent data, and limited observability.

### Big bang is not an option

Our team concluded it was pragmatic to rethink the architecture. We needed modularity to achieve a better user experience. And a trunk-compatible system design that makes daily releases possible.

The head of engineering recognized the added value of the transition to innovation-friendlier architecture. Five engineers and a PO with QA and PM support performed zero downtime incremental upgrades that took 1.5 years to complete. For vehicle management, it was an investment that paid off.

When you operate a fleet of expensive cars, the difference between email damage tracking and an automated system could be millions of euros per year. According to my calculations, the return on investment was around 5-7x.

## How to keep up with the progress?

Recently, micro-service architecture has been a subject of wide criticism. Watch this brilliant video.

{% embed https://www.youtube.com/watch?v=y8OnoxKotPQ %}

Michael Paulson, aka The Primeagen, a prominent yelling tech figure, likes to mock startups for having more services than users.

The core issue is not a particular architecture. Humans associate failures with things they dislike. Be it a monolith, micro-services, or a framework. But the true killer is the complexity.

I see a repeating pattern occurring in established companies. Software is left unattended for some time. People join and leave, and priorities shift. Suddenly, a vintage marvel becomes a development blocker because multiple critical parts depend on it. Organizations invest astronomical amounts into legacy system integration.

Take action before it’s too late, and keep making changes as the product evolves. Motivated people recognize when something doesn’t work. When it happens, the lead must make a calculated decision.

## How to address it?

There is a better alternative – invest in reasonable modularity. I build high-performance, innovation-friendly software that helps companies avoid expensive upgrades and legacy service pain. Estimated ROI within five years +600%.

[Hire me](https://softbeehive.com/about)

Does it resonate with your experience? I'm curious to hear how you integrate vintage software. And how do you keep up with the WILD pace of change in the web tooling? | softbeehive |

1,859,259 | As vantagens de se usar o early return | 🌟 Aprimore a legibilidade e mantenha seu código limpo com "early return"! 🌟 A técnica de "early... | 0 | 2024-05-24T15:02:50 | https://dev.to/g7miserski/as-vantagens-de-se-usar-o-early-return-o8c | programming, python, webdev | 🌟 **Aprimore a legibilidade e mantenha seu código limpo com "early return"!** 🌟

A técnica de "early return" nos permite verificar e retornar logo no início do programa, melhorando a manutenção, legibilidade e, em alguns casos, até a performance do código. 🚀

### Vantagens do "early return":

1. **Legibilidade**: Código mais fácil de ler e entender, evitando aninhamentos profundos.

2. **Redução da Complexidade**: Simplifica a lógica e facilita a manutenção.

3. **Evita Códigos Desnecessários**: Previne a execução de trechos desnecessários, melhorando a performance.

Veja a diferença com um exemplo prático:

**Sem "early return":**

```python

def check_number(n):

if n > 0:

result = "Positive"

else:

if n < 0:

result = "Negative"

else:

result = "Zero"

return result

```

**Com "early return":**

```python

def check_number(n):

if n > 0:

return "Positive"

if n < 0:

return "Negative"

return "Zero"

```

Muito mais simples e legível! 📝

Claro, nem sempre o "early return" é a melhor opção. Avalie cada caso para encontrar a melhor solução. Tem algo a adicionar ou discordar? Vamos aprender juntos nos comentários! 💬 | g7miserski |

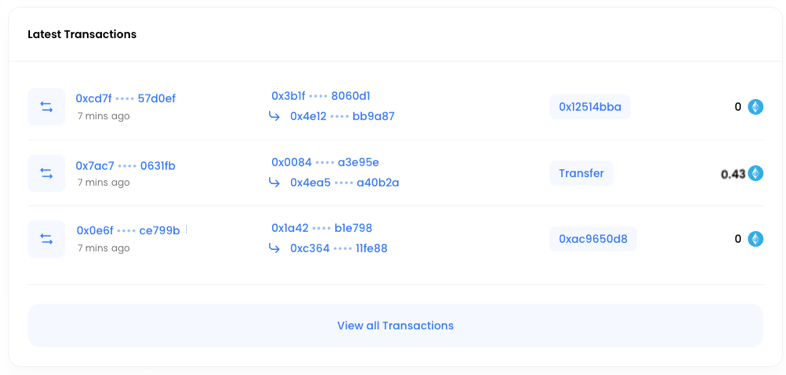

1,862,958 | Introducing Molend: the Pioneering Lending Protocol on Mode Network | Molend, the next-generation lending protocol, launches on the Ethereum Layer 2 blockchain Mode. Users... | 0 | 2024-05-24T15:00:00 | https://dev.to/modenetwork/introducing-molend-the-pioneering-lending-protocol-on-mode-network-2976 | [Molend](https://molend.org/), the next-generation lending protocol, launches on the Ethereum Layer 2 blockchain [Mode](https://www.mode.network/). Users can now engage in collateralized lending and borrowing using Molend. Mode is the DeFi L2 designed for growth, incentivizing and directly rewarding developers, users, and protocols to foster the development of the Mode and superchain ecosystem.

**Traditional Lending vs Decentralized Lending**

Traditional bank lending services are known for their complex and lengthy processes, causing inconvenience for borrowers. Decentralized lending protocols, on the other hand, offer fast loan disbursements and high security, gaining increasing popularity and occupying a significant share in the DeFi space. In simple terms, users can borrow other digital assets by collateralizing their existing digital assets and engage in various profit-generating activities. This includes taking long or short positions on assets through lending, participating in DeFi farming to generate yields, or simply earning interest on deposited assets.

**Empowering Users with Molend’s Flash Loans and Loop Feature**

Molend provides two primary functions: **depositing** and **borrowing**. By placing funds into Molend, depositors can earn interest from the borrowing activities of others. **Flash loan**, a distinctive feature of Molend, allows borrowing & repaying from the liquidity pool in a single transaction, eliminating the need for collaterals when a borrower can repay the loan atomically. Users incur only a minimal processing fee in addition to the standard gas fee, making it an efficient option for quick financial transactions. Flash Loans are particularly useful for arbitrage, enabling users to profit from price discrepancies across different protocols and liquidation opportunities.

Furthermore, Molend will be introducing the Loop feature in the near future to simplify the process of leveraging and borrowing assets for users. The Loop feature enables users to lend and borrow the same type of asset multiple times with a single click. More details about this feature will be revealed soon.

*Note: Molend will use a point system to track users' deposits/borrowings, and allocate airdrop rewards based on the number of points.*

As a highly anticipated flagship product, Molend has many advantages. Molend has high composability and can be integrated into other platforms for use. In terms of user experience, Molend provides a user-friendly data dashboard, allowing users to accurately assess profit trends and execute investment strategies. Flash Loans provide efficient investment tools for advanced users, while most of the fees generated are directly subsidized to users who have. The team behind Molend has extensive experience and a strong technical background in the cryptocurrency industry, enabling them to identify and address user pain points accurately.

**Earn Rewardsfor Being an Early User on Molend**

MODE has a [mainnet airdrop campaign](https://www.mode.network/about-the-airdrop) for early users and a point system dedicated to it. Molend will be a close partner of the campaign, meaning Molend users will earn more MODE points by using Molend.

Moreover, Molend is also allocating 10% of its governance token, $MLD, to early users through airdrops. As a holder of $MLD, you have governance rights over the protocol AND receive protocol revenue sharing. 50% of the loan interest generated across the protocol will be directed to $MLD holders, enabling $MLD tokens with strong value capture capabilities.

Our point system tracks your deposits and borrowings, allowing us to allocate airdrop rewards based on the points you accumulate. The more you engage with Molend, the more rewards you can earn!

In the future, Molend will explore additional gameplay and features while partnering with more blockchain networks and ecosystems. Stay tuned for updates!

**About Molend**

Molend is the next-generation lending protocol built on the Ethereum Layer 2 blockchain, Mode Network. Depositors earn passive income by providing liquidity, while borrowers access assets through over-collateralization. Powered by Mode, Molend ensures secure lending with transparent governance. Join Molend to be part of the decentralized lending revolution and unlock a world of possibilities in the Mode and Superchain ecosystem.

**Stay Connected**

[Twitter](https://twitter.com/MolendProtocol) | [Discord](https://discord.gg/eGRSCv98) | [Telegram](http://t.me/molend_protocol) | [Medium](https://medium.com/@molend) | modenetwork | |

1,864,055 | If Error Messages Were Honest (and Cats) | We’ve all been there... Staring at the screen, deciphering cryptic error messages that seem designed... | 27,390 | 2024-05-24T14:59:02 | https://dev.to/buildwebcrumbs/if-error-messages-were-honest-and-cats-56ea | jokes, watercooler, webdev, development |

We’ve all been there... Staring at the screen, deciphering cryptic error messages that seem designed more to confuse than to inform.

But what if, instead of cryptic text, error messages just told us what was really going on?

Let’s dive into a world where error messages are not only helpful but also honest.

[Cat images from http.cat](https://http.cat/)

---

## 404 Not Found

**Look, I’ve searched high and low and I can’t find this page. Are you sure it ever existed?**

This error might as well be telling you that the page has packed up its bags and moved to a remote island. It’s a reminder of the ghost towns in the digital world—places that were once populated but now exist only in memory (or not at all).

---

## 500 Internal Server Error

**Something broke, and it’s definitely not your fault this time. But it’s still a mystery to all of us.**

Imagine if your car just stopped working and the mechanic shrugged and said, “It’s broken.” That’s your 500 error—unhelpful and a bit mysterious, leaving everyone involved a bit perplexed.

---

{% cta https://github.com/webcrumbs-community/webcrumbs %} ⭐ Would you consider giving us a Star on GitHub?⭐

{% endcta %}

---

## 403 Forbidden

**You shall not pass! No, seriously, you don’t have permission to be here.;**

It’s like getting turned away at the door of an exclusive club. You know there’s something awesome on the other side, but you just can’t get in.

---

## Syntax Error

**You missed a semicolon on line 237. Seriously, a semicolon!?**

It’s the programming equivalent of tripping over your own shoelaces. A tiny punctuation mark can be the downfall of an entire script. And it happens ALL THE TIME.

---

## Timeout Error

**I waited and waited, but this is taking forever. I’ve got other things to do!**

This is the digital version of waiting in line at the post office. It’s taking too long, and your patience has run out.

---

{% cta https://github.com/webcrumbs-community/webcrumbs %} ⭐ Would you consider giving us a Star on GitHub?⭐

{% endcta %}

---

## Out of Memory Error

**I’m stuffed. Can’t fit anything more in here, so stop trying.**

Picture a suitcase so full that you can’t squeeze in another sock. That’s your computer on a memory overload.

---

## Got a favorite ‘honest’ error message?

If error messages really spoke to us this way, maybe we’d get a few more laughs out of our programming blunders (And things done faster, maybe?).

Share your favorite in the comments below!

Let’s find some humor in our coding misadventures.

**Thanks for reading,**

Pachi 💚

{% cta https://github.com/webcrumbs-community/webcrumbs %} ⭐ Would you consider giving us a Star on GitHub?⭐

{% endcta %} | pachicodes |

1,863,544 | AWS SnapStart - Part 21 Measuring cold starts and deployment time with Java 17 using different compilation options | Introduction In the previous parts we've done many measurements with AWS Lambda using Java... | 24,979 | 2024-05-24T14:57:44 | https://dev.to/aws-builders/aws-snapstart-part-21-measuring-cold-starts-and-deployment-time-with-java-17-using-different-compilation-options-o14 | aws, java, serverless, coldstart | ## Introduction

In the previous parts we've done many measurements with AWS Lambda using Java 17 runtime with and without using AWS SnapStart and additionally using SnapStart and priming DynamoDB invocation :

- cold starts using [different deployment artifact sizes]( https://dev.to/aws-builders/aws-snapstart-part-18-measuring-cold-starts-with-java-17-using-different-deployment-artifact-sizes-5092)

- cold starts and deployment time using [different Lambda memory settings ]( https://dev.to/aws-builders/aws-snapstart-part-19-measuring-cold-starts-and-deployment-time-with-java-17-using-different-lambda-memory-settings-30ml)

- warm starts [using different Lambda memory settings](https://dev.to/aws-builders/aws-snapstart-part-20-measuring-warm-starts-with-java-17-using-different-lambda-memory-settings-1p7j)

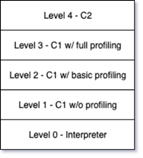

We've done all those measurements using the following JAVA_TOOL_OPTIONS: "-XX:+TieredCompilation -XX:TieredStopAtLevel=1" defined in the AWS SAM template.yaml. This means that client compilation (c1) without profiling will be applied. It was considered the best choice due to the article [Optimizing AWS Lambda function performance for Java](https://aws.amazon.com/de/blogs/compute/optimizing-aws-lambda-function-performance-for-java/) by Mark Sailes. But all these measurements have been done for Java 11 and before Lambda SnapStart has been released. So now it's time to revisit this topic and measure cold and warm start times with different Java compilation options without SnapStart enabled, with SnapStart enabled (and additionally with priming). In this article we'll do it for Java 17 runtime and will compare it with the same measurements for Java 21 already performed in the article [Measuring cold and warm starts with Java 21 using different compilation options]( https://dev.to/aws-builders/aws-snapstart-part-14-measuring-cold-and-warm-starts-with-java-21-using-different-compilation-options-el4)

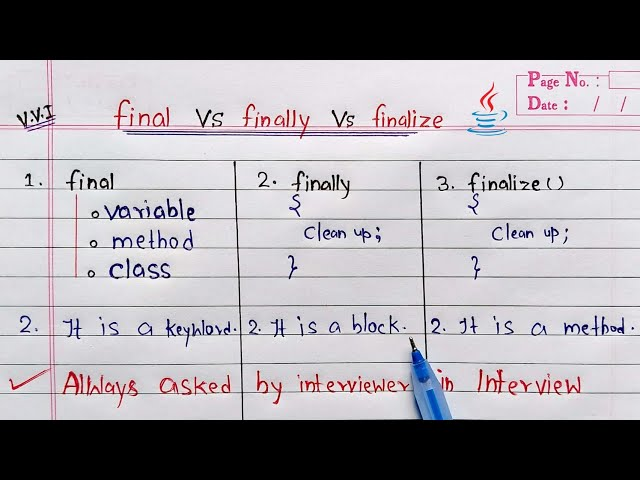

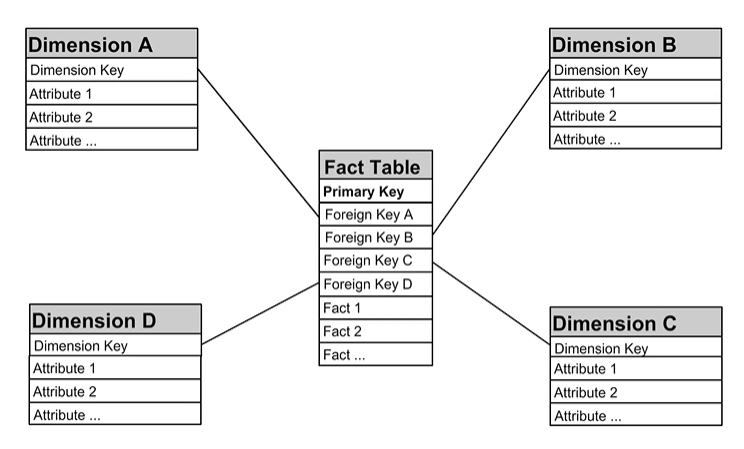

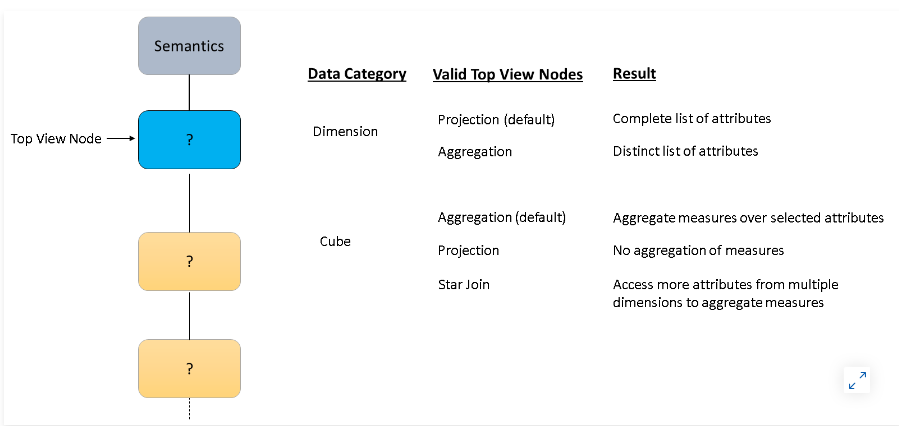

## Meaning of Java compilation options

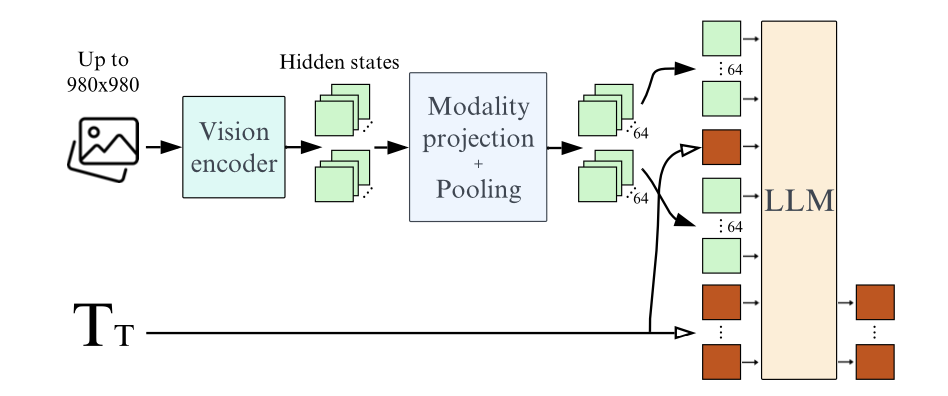

This picture shows Java compilation available.

If you don't specify any options, the default one applied for will be tiered compilation. You can read more about it in this article [Tiered Compilation in JVM](https://www.baeldung.com/jvm-tiered-compilation) or generally about client (C1) and server (C2) compilation in the article [Client, Server, and Tiered Compilation](https://dzone.com/articles/client-server-and-tiered-compilation). There are also many other settings so you can apply to each of the compilation options. You can read more about them in this article [JVM c1, c2 compiler thread – high CPU consumption?](https://blog.fastthread.io/2022/05/26/jvm-c1-c2-compiler-thread-high-cpu-consumption/)

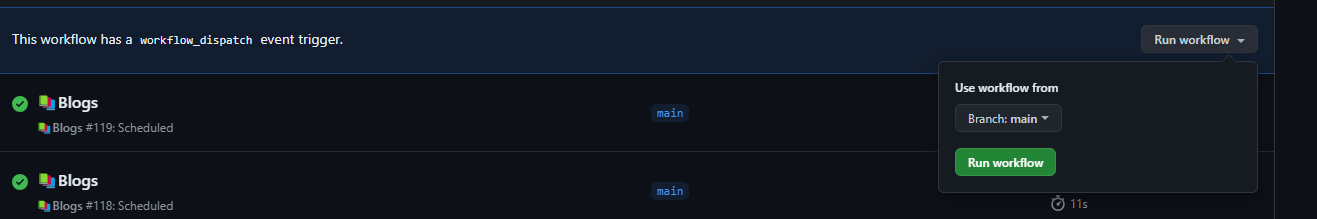

## Measuring cold starts and deployment time with Java 17 using different compilation options

In our experiment we'll re-use the application introduced in [part 8]( https://dev.to/aws-builders/measuring-lambda-cold-starts-with-aws-snapstart-part-8-measuring-with-java-17-21db) for this. Here is the code for the [sample application](https://github.com/Vadym79/AWSLambdaJavaSnapStart/tree/main/pure-lambda-17). There are basically 2 Lambda functions which both respond to the API Gateway requests and retrieve product by id received from the API Gateway from DynamoDB. One Lambda function GetProductByIdWithPureJava17Lambda can be used with and without SnapStart and the second one GetProductByIdWithPureJava17LambdaAndPriming uses SnapStart and DynamoDB request invocation priming.

The results of the experiment below were based on reproducing more than 100 cold and approximately 100.000 warm starts. For it (and experiments from my previous article) I used the load test tool [hey](https://github.com/rakyll/hey), but you can use whatever tool you want, like [Serverless-artillery](https://www.npmjs.com/package/serverless-artillery) or [Postman](https://www.postman.com/). I ran all these experiments with 5 different compilation options defined in the [template.yaml](https://github.com/Vadym79/AWSLambdaJavaSnapStart/blob/main/pure-lambda-17/template.yaml). This happens in the Globals section where variable named "JAVA_TOOL_OPTIONS" is defined in the Environment section of the Lambda function:

```

Globals:

Function:

CodeUri: target/aws-pure-lambda-snap-start-17-1.0.0-SNAPSHOT.jar

Runtime: java21

....

Environment:

Variables:

JAVA_TOOL_OPTIONS: "-XX:+TieredCompilation -XX:TieredStopAtLevel=1"

```

1. no options (tiered compilation will take place)

2. JAVA_TOOL_OPTIONS: "-XX:+TieredCompilation -XX:TieredStopAtLevel=1" (client/C1 compilation without profiling)

3. JAVA_TOOL_OPTIONS: "-XX:+TieredCompilation -XX:TieredStopAtLevel=2" (client/C1 compilation with basic profiling)

4. JAVA_TOOL_OPTIONS: "-XX:+TieredCompilation -XX:TieredStopAtLevel=3" (client/C1 compilation with full profiling)

5. JAVA_TOOL_OPTIONS: "-XX:+TieredCompilation -XX:TieredStopAtLevel=4" (server/C2 compilation)

For their meaning see our explanations above. We will refer to those compilation options in the table column "Compilation Option" by their number in the tables below, for example number 5 stays for JAVA_TOOL_OPTIONS: "-XX:+TieredCompilation -XX:TieredStopAtLevel=4". Abbreviation **c** is for the cold start and **w** is for the warm start.

**Cold (c) and warm (w) start times without SnapStart in ms:**

|Compilation Option| c p50 | c p75 | c p90 |c p99 | c p99.9| c max |w p50 | w p75 | w p90 |w p99 | w p99.9 | w max |

|-----------|----------|-----------|----------|----------|----------|----------|-----------|----------|----------|----------|----------|----------|

|1|2831.33|2924.85|2950.12|3120.34|3257.03|3386.67|5.73|6.50|7.88|20.49|49.62|1355.08|

|2|2880.53|2918.79|2974.45|3337.29|3515.86|3651.65|6.11|7.05|8.94|23.54|62.99|1272.96|

|3|2906.39|2950.59|3016.8|3283.31|3409.65|3593.65|5.73|6.61|7.87|21.07|53.74|1548.95|

|4|3247.9|3348.82|3481.41|3673.51|3798.97|3904.13|6.72|7.75|9.38|24.69|72.67|1494.98|

|5|4146.66|4231.9|4377.42|4557.21|4699.03|4780.63|6.11|7.27|10.15|29.87|103.03|2062.84|

**Cold (c) and warm (w) start times with SnapStart without Priming in ms:**

|Compilation Option| c p50 | c p75 | c p90 |c p99 | c p99.9| c max |w p50 | w p75 | w p90 |w p99 | w p99.9 | w max |

|-----------|----------|-----------|----------|----------|----------|----------|-----------|----------|----------|----------|----------|----------|

|1|1506.20|1577.06|1845.01|2010.62|2280.46|2281|5.82|6.72|8.39|22.81|798.46|1377.54|

|2|1521.33|1578.64|1918.35|2113.65|2115.77|2117.42|6.01|7.05|8.94|23.92|101.41|1077.45|

|3|1463.16|1532.00|1886.03|1990.62|2020.69|2021.39|5.92|6.72|8.00|22.09|95.17|1179.13|

|4|1657.88|1755.07|2057.37|2158.49|2169.30|2170.65|6.41|7.27|8.80|24.30|96.69|1374.43|

|5|2269.10|2340.50|2581.36|2762.91|2807.45|2808.89|6.41|7.75|11.34|32.86|1506.60|1941.26|

**Cold (c) and warm (w) start times with SnapStart and with DynamoDB invocation Priming in ms:**

|Compilation Option| c p50 | c p75 | c p90 |c p99 | c p99.9| c max |w p50 | w p75 | w p90 |w p99 | w p99.9 | w max |

|-----------|----------|-----------|----------|----------|----------|----------|-----------|----------|----------|----------|----------|----------|

|1|708.90|790.50|960.61|1041.61|1148.80|1149.91|5.64|6.61|8.38|21.07|141.53|373.37|

|2|692.79|758.00|1003.80|1204.06|1216.15|1216.88|6.21|7.27|9.38|25.09|103.03|256.65|

|3|670.98|720.33|1007.82|1072.25|1200.45|1200.64|5.38|6.11|7.27|19.15|99.81|303.52|

|4|732.99|828.88|1030.07|1271.24|1350.41|1390.03|6.30|7.05|8.52|23.17|103.03|469.45|

|5|937.84|1056.29|1227.14|1422.78|1445.72|1447.09|6.30|7.75|11.16|32.86|122.69|381.03|

## Conclusions

For all measurements for Java 17 we discovered that setting compilation options -XX:+TieredCompilation -XX:TieredStopAtLevel= 3 or 4 produced much worse cold and warm starts as the tiered compilation or -XX:TieredStopAtLevel=1 and 2 (client compilation without or with basic profling). With Java 21 we observed much worse cold and warm starts also starting with -XX:TieredStopAtLevel=2 which is not the case for Java 17.

For the Lambda function with Java 17 without SnapStart enabled tiered compilation (default one) is a better option for having lower cold and warm starts for nearly all percentiles for our use case

For the Lambda function with Java 21 without SnapStart enabled it’s different see my article [Measuring cold and warm starts with Java 21 using different compilation options]( https://dev.to/aws-builders/aws-snapstart-part-14-measuring-cold-and-warm-starts-with-java-21-using-different-compilation-options-el4) : client compilation without profiling (-XX:+TieredCompilation -XX:TieredStopAtLevel=1 ) is the better option for having lower cold and warm starts for nearly all percentiles for our use case.

For the Lambda function with Java 17 with SnapStart enabled (with priming of DynamoDB invocation or without priming) the tiered compilation or -XX:TieredStopAtLevel=1 and 2 produced very close cold and warm starts which vary a bit depending on the compilation option and percentile.

For the Lambda function with Java 21 with SnapStart enabled (with priming of DynamoDB invocation or without priming) it was different: tiered compilation (the default one) outperformed the client compilation without profiling (-XX:+TieredCompilation -XX:TieredStopAtLevel=1 ) in terms of the lower cold start time and also for the warm start time for nearly all percentiles for our use case.

So please review/re-measure the cold and warm start times for your use case if you use Java 17, as tiered compilation can be a better choice if you enable SnapStart for your Lambda function(s). In our case we didn't use any framework like Spring Boot, Micronaut or Quarkus which may also impact the measurements. | vkazulkin |

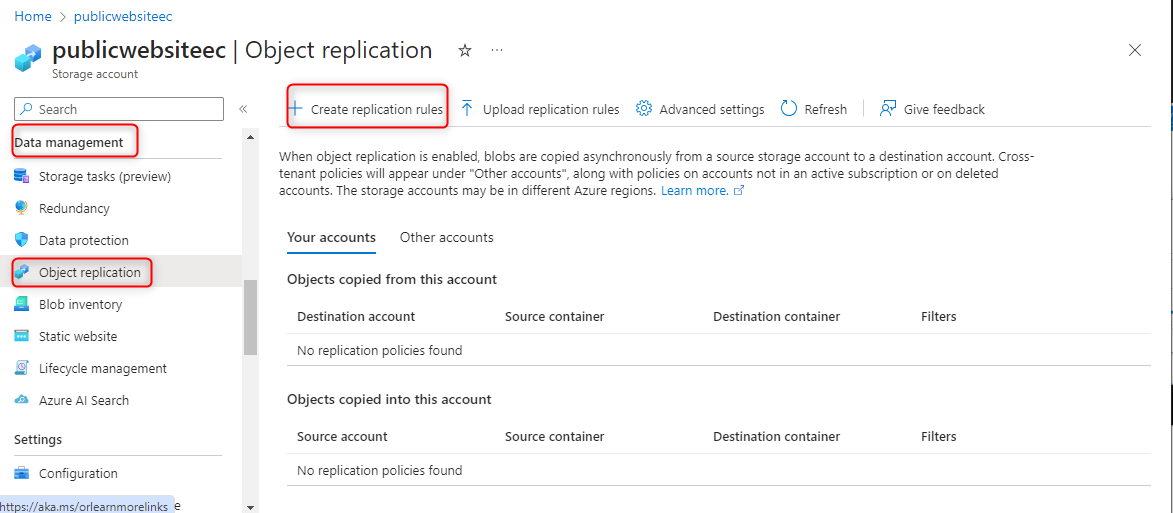

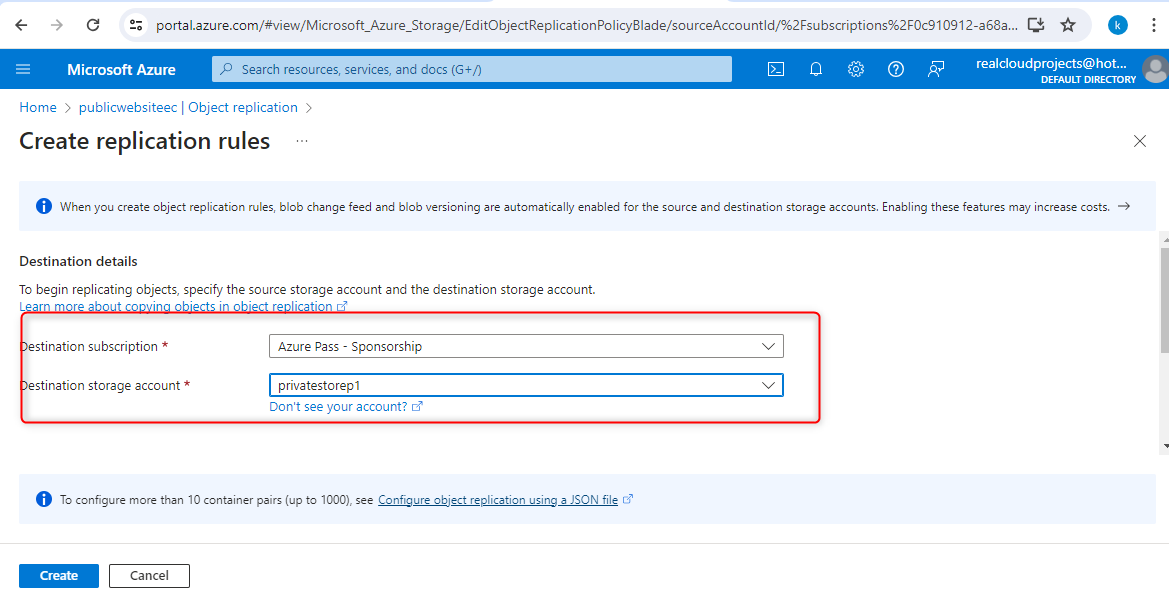

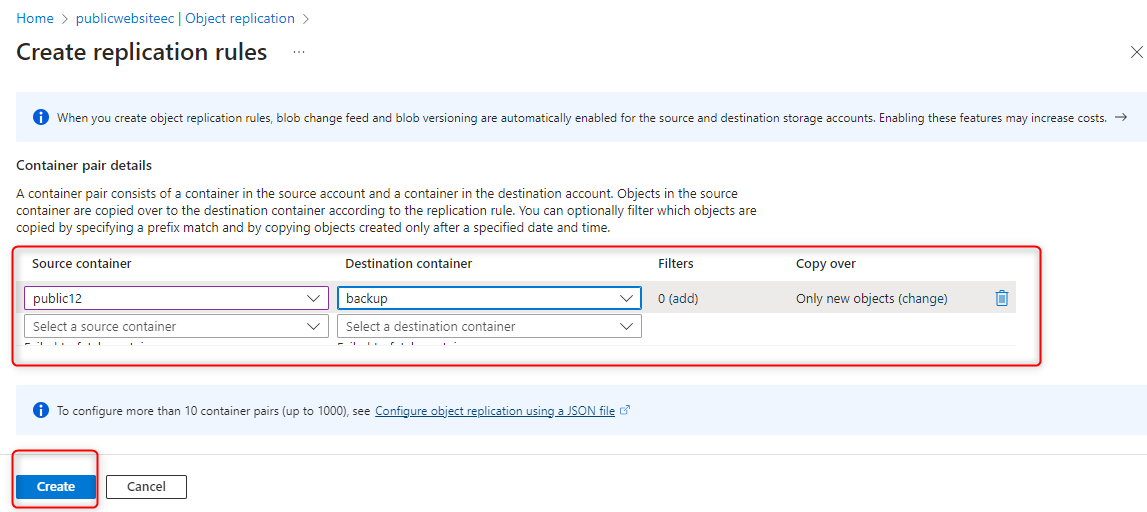

1,862,617 | What is your cloud data backup strategy ? | Did any of you think about how will we respond to a situation if our entire business data got wiped... | 0 | 2024-05-24T14:57:14 | https://dev.to/nirmalkumar/what-is-your-cloud-data-backup-strategy--5a39 | cloud, databackup, systemdesign, clouddata | Did any of you think about how will we respond to a situation if our entire business data got wiped out from cloud data center?

What's our Plan B in such cases ? Are we really prepared and ready to face it ?

Everyone has to revisit cloud backup strategy for worst case scenarios. Do you know that recently an incident happened in Google Cloud that it mistakenly wiped off an entire client's private workspace including the client's entire data backup because of a misconfiguration. Client's business data worth is ~ 125billion.

Article : [reference](https://www.yahoo.com/tech/google-accidentally-deleted-125-billion-183502623.html)

How did they recover ? Luckily this client had backup's backup in a different cloud vendor / data center using which they came back online. It took them a week.

If we don't have a clear data backup strategy defined, then it's crystal clear that our business is simply sitting on a bomb waiting to explode.

Below are my strategic thought regarding data backup. Feel free to enter yours for safe business in the comment below,

Rigorous data backup can be done based on below strategies.

* Clear data classification of every application and its data. Ex: public, internal, classified, restricted.

* Clear communication with users and documentation about backup strategies and direction from leadership team.

* Optimize backup strategy based on

1. data retention policy,

2. how frequent we might access,

3. how frequent we update or read it back,

4. Backup data center geographic location,

5. Data backup storage disk type (hardware),

6. Backup storage size

* Data-at-rest encryption plays a vital role with data security.

* Optimize data retrieval plan based on data classification and carefully evaluate business impact when needed.

* Real time monitoring, reports and alerts on data backup jobs. * How often are we doing the backup and how often are we deleting the backup based on retention policy ? This is a deep question.

* Thorough review and sign off on the deleted data reports by corresponding application owners.

* Data backup cost comparison between cloud vendor vs on-prem data center will give practical ideas and ways to optimize data backup plan.

* Regular planned switch over to Disaster recovery environment data center and allow the application to run on it for few months will give practical experience to entire engineers to have a safe environment to practice what should be done during actual production failure major scenarios.

* Data backup access control plan and clear segregation of duties will safeguard from many manual errors. | nirmalkumar |

1,864,084 | Killa | Nikotiinipussit, tunnetaan myös nimellä denssit nikotiinipussit, ovat saavuttaneet suurta suosiota... | 0 | 2024-05-24T14:53:46 | https://dev.to/fidenssit06/killa-1a4k | Nikotiinipussit, tunnetaan myös nimellä denssit nikotiinipussit, ovat saavuttaneet suurta suosiota tupakoinnin ja perinteisen nuuskan korvaajana. Näiden pienikokoisten pussien ansiosta nikotiinin käyttö on mahdollista ilman tupakoinnin tai nuuskan haittoja. Tämä artikkeli tutustuu nikotiinipussien maailmaan, keskittyen erityisesti merkkeihin kuten Denssi ja Killa, sekä niiden etuihin ja käyttöön.

Mikä ovat Denssit Nikotiinipussit?

Denssit nikotiinipussit ovat pieniä pusseja, jotka sisältävät nikotiinia, makuaineita ja muita ainesosia. Ne asetetaan ylähuulen alle, jossa nikotiini imeytyy ikenen kautta. Näin nikotiinia saadaan ilman savua, tuhkaa tai sylkeä.

Nikotiinipussien suosio on kasvanut nopeasti niiden monien etujen ansiosta:

Terveellisempi Vaihtoehto: Nikotiinipussit ovat savuttomia ja tupakattomia, mikä vähentää altistumista tupakan savun haitallisille aineille.

Käytön Helppous: Ne ovat huomaamattomia ja helppokäyttöisiä, eivätkä vaadi erityisiä valmisteluja.

Monipuolisuus: Tarjolla on laaja valikoima makuja ja nikotiinipitoisuuksia, mikä mahdollistaa yksilöllisten mieltymysten mukaan valitsemisen.

Suositut Merkit: Denssi ja Killa

Nikotiinipussimarkkinoilla Denssi ja Killa ovat tunnettuja ja suosittuja merkkejä, jotka erottuvat laadullaan ja monipuolisuudellaan.

Denssi

Denssi on merkki, joka tarjoaa laajan valikoiman nikotiinipusseja. Heidän tuotteensa ovat tunnettuja korkealaatuisista ainesosistaan ja tasaisesta nikotiinin vapautumisesta. Denssi-pussit ovat saatavilla eri makuina, kuten minttu, marja ja sitrus, tarjoten käyttäjille monipuolisen ja nautinnollisen kokemuksen. Brändi keskittyy tuottamaan miellyttävän nikotiinihitin ilman perinteisen nuuskan karvautta.

**_[Killa](https://denssit.fi/)_**