id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,906,074 | Passing Out of the Post Finding Open Teammates | Explore techniques for effective passing from the post, including recognizing double teams and hitting open shooters. | 0 | 2024-06-29T21:22:16 | https://www.sportstips.org/blog/Basketball/Center/passing_out_of_the_post_finding_open_teammates | basketball, coaching, skills, passing | ## Passing Out of the Post: Finding Open Teammates

In basketball, the post position is a pivotal spot for initiating offense. The ability to pass effectively out of the post can turn a strong interior presence into a dual threat, creating scoring opportunities both inside and out. In this article, we'll delve int... | quantumcybersolution |

1,906,073 | Overcoming Backend Challenges: A Journey with HNG Internship | Hello, fellow tech enthusiasts! I’m thrilled to share my experience as I embark on an exciting... | 0 | 2024-06-29T21:22:14 | https://dev.to/nwogu_precious_52ab8ab48c/overcoming-backend-challenges-a-journey-with-hng-internship-3bgo |

Hello, fellow tech enthusiasts! I’m thrilled to share my experience as I embark on an exciting journey with the HNG Internship. As a backend developer, I’ve faced numerous challenges, but each one has contributed to my growth and expertise. Today, I want to take you through a recent, difficult backend problem I encou... | nwogu_precious_52ab8ab48c | |

1,906,072 | A CURSORY LOOK AT THE RETAIL DATA SET | This dataset captures the sales performance of some retail outlets spread across North America, Asia... | 0 | 2024-06-29T21:21:47 | https://dev.to/precious_oyem_c387de5a410/a-cursory-look-at-the-retail-data-set-59g | This dataset captures the sales performance of some retail outlets spread across North America, Asia and Europe. The dataset is available from the Kaggle repository (https://www.kaggle.com/datasets/kyanyoga/sample-sales-data?resource=download). The focus of this review is to fulfill the requirements of stage zero of HNG 11 I... | precious_oyem_c387de5a410 | |

1,906,058 | The Cosmic Connection Challenges and Benefits of International Cooperation in Space Exploration | Exploring the intricate dance of global collaboration in space exploration, the exciting achievements, and the indispensable role of diplomacy in our journey beyond Earth. | 0 | 2024-06-29T21:16:39 | https://www.elontusk.org/blog/the_cosmic_connection_challenges_and_benefits_of_international_cooperation_in_space_exploration | spaceexploration, internationalcooperation, diplomacy | # The Cosmic Connection: Challenges and Benefits of International Cooperation in Space Exploration

Space—the final frontier. It's a vast, infinite expanse that has fascinated humanity for centuries. With technological advancements bringing space exploration within our grasp, the quest to explore the cosmos is no ... | quantumcybersolution |

1,906,055 | Solving complex backend challenges: My journey and insights from optimizing a high-traffic Web App | Hello, and welcome! This is my first blog post ever as a developer, and I am thrilled about it... | 0 | 2024-06-29T21:14:13 | https://dev.to/kobiowuquadri/solving-complex-backend-challenges-my-journey-and-insights-from-optimizing-a-high-traffic-web-app-4ai0 | mongodb, node, javascript, webdev |

Hello, and welcome! This is my first blog post ever as a developer, and I am thrilled about it. By the way, I am Quadri Kobiowu, a backend developer with 3 years of experience in MERN full-stack developm... | kobiowuquadri |

1,906,057 | Understanding Docker: A Comprehensive Guide | Introduction Docker is a powerful platform designed to simplify the process of building,... | 0 | 2024-06-29T21:10:13 | https://dev.to/jahangeerawan/understanding-docker-a-comprehensive-guide-33ie | ## **Introduction**

Docker is a powerful platform designed to simplify the process of building, deploying, and managing applications using containerization. This guide explores the fundamental concepts of Docker, its benefits, and practical usage in modern software development.

## **What is Docker?**

Docker is a co... | jahangeerawan | |

1,906,054 | Self-Adhesive Wall Panels | Before we delve into the benefits of 3D self-adhesive wall panels, it is important to understand what... | 0 | 2024-06-29T21:06:40 | https://dev.to/homedesign6644/samozaliepvashchi-panieli-za-stiena-4k6h | Before we delve into the benefits of [3D self-adhesive wall panels](https://home-design.bg/%D1%81%D0%B0%D0%BC%D0%BE%D0%B7%D0%B0%D0%BB%D0%B5%D0%BF%D0%B2%D0%B0%D1%89%D0%B8-%D0%BF%D0%B0%D0%BD%D0%B5%D0%BB%D0%B8-%D0%B7%D0%B0-%D1%81%D1%82%D0%B5%D0%BD%D0%B0-%D0%B2%D1%81%D0%B8%D1%87%D0%BA%D0%BE/), it is important to understand ... | homedesign6644 | |

1,906,053 | Outlet Passing Starting the Fast Break | Examine the importance of quick and accurate outlet passes to initiate fast breaks and create easy scoring opportunities in basketball. | 0 | 2024-06-29T21:06:19 | https://www.sportstips.org/blog/Basketball/Center/outlet_passing_starting_the_fast_break | basketball, coaching, fastbreak, offense | # Outlet Passing: Starting the Fast Break

Basketball is often described as a game of speed and precision, and nowhere is this more evident than in the fast break. The foundation of a deadly fast break starts with one crucial skill: the outlet pass. Let's break down why this skill is indispensable and how you can ... | quantumcybersolution |

1,906,051 | Solving a Complex Back-End Challenge and My Journey with HNG Internship. | My name is Damilola Olawoore, a full stack developer. Before I started my journey into back-end... | 0 | 2024-06-29T21:03:01 | https://dev.to/htcode/solving-a-complex-back-end-challenge-and-my-journey-with-hng-internship-24pe |

My name is Damilola Olawoore, a full stack developer.

Before I started my journey into back-end development, I had a conversation with a front-end developer who was trying to integrate an API to get information about cars. His goal was to fetch the image and every detail about the car to use in his React project. How... | htcode | |

1,906,050 | Setting up the database and search for RAG | In video 1.3 of the datatalksclub's llm-zoomcamp, we're focusing on retrieval. In this video, I set... | 0 | 2024-06-29T21:01:06 | https://dev.to/cmcrawford2/setting-up-the-database-and-search-for-rag-45io | llm, rag | In video 1.3 of the datatalksclub's [llm-zoomcamp](https://github.com/datatalksclub/llm-zoomcamp), we're focusing on retrieval. In this video, I set up the database and search capabilities for RAG. I used a simple in-memory minimal search engine for now, which was created in a pre-course video. I didn't create it - I j... | cmcrawford2 |

1,906,049 | The Cosmic Cleanup The Importance of Space Debris Mitigation | An exploration of the critical need for space debris mitigation, the innovative technologies being developed, and the challenges faced in reclaiming Earth's orbit for safe exploration. | 0 | 2024-06-29T21:00:42 | https://www.elontusk.org/blog/the_cosmic_cleanup_the_importance_of_space_debris_mitigation | space, technology, innovation | # The Cosmic Cleanup: The Importance of Space Debris Mitigation

Imagine looking up at the night sky, stars twinkling gloriously, only to realize that just beyond our vision, Earth's orbit is cluttered with what can be likened to cosmic junk. The proliferation of man-made debris floating in space poses serious ris... | quantumcybersolution |

1,906,048 | Pickled Cucumbers | Pickled cucumbers are a beloved and popular preserve, one of the best-known preserved foods in... | 0 | 2024-06-29T20:56:14 | https://dev.to/tami7766pn/kisieli-krastavichki-a2 | Pickled cucumbers are a beloved and popular preserve, one of the best-known preserved foods in the world. Their unique taste and crunchy texture make them indispensable at the winter dinner table. Admittedly, homemade marinated cucumbers often turn out badly, so here is a tried-and-tested recipe for pick... | tami7766pn | |

1,906,044 | How HNG Internship 11 helps me achieve my goals | I have always wanted to work in fast-paced settings where I'm challenged to do the best I can. In... | 0 | 2024-06-29T20:55:22 | https://dev.to/desire_destiny/how-hng-internship-11-helps-me-achieve-my-goals-19l0 | design, uidesign | I have always wanted to work in fast-paced settings where I'm challenged to do the best I can.

In 2023, I was introduced to the HNG internship by a friend who was further along in his tech career. He told me that it was a gruelling, fast-paced internship program where only the toughest survive.

At the time, I had j... | desire_destiny |

1,906,047 | Master Observability with Logs: An In-Depth Guide for Beginners | I have just finished publishing my latest article, "Master Observability with Logs: An In-Depth Guide... | 0 | 2024-06-29T20:54:57 | https://dev.to/cloudnative_eng/master-observability-with-logs-an-in-depth-guide-for-beginners-3dlj | monitoring, beginners, devops, programming | I have just finished publishing my latest article, "Master Observability with Logs: An In-Depth Guide for Beginners."

Topics:

• 🪵 Logs and Observability: Challenges with modern cloud-native applications and maximising observability while minimising costs.

• 📊 Logs vs Metrics: Differences in data collection, visibili... | cloudnative_eng |

1,906,046 | Announcing a New Puppet Code Testing Course: On-Demand PE502 - Test and Deliver | We are excited to announce a new on-demand Puppet course that will transform your approach to testing... | 0 | 2024-06-29T20:54:15 | https://dev.to/tomchisholm/announcing-a-new-puppet-code-testing-course-on-demand-pe502-test-and-deliver-43p8 | puppet, testing | We are excited to announce a new on-demand Puppet course that will transform your approach to testing Puppet code. **PE502 - Test and Deliver** is a comprehensive course designed to equip you with the skills and knowledge necessary to test your Puppet code effectively, at your own pace.

This on-demand course will cove... | tomchisholm |

1,906,045 | How I solved a problem I encounter as a backend developer | Growing up, I was always fascinated by technology: how it works, how it is made. I just... | 0 | 2024-06-29T20:53:55 | https://dev.to/theecypher/how-i-solved-a-problem-i-encounter-as-a-backend-developer-1kpp | backend, webdev, javascript | Growing up, I was always fascinated by technology: how it works and how it is made. I just wanted to know how things worked. I would open up broken radios just to see what was inside and how they worked. I remember my brother had an electric teddy bear that talked, and I was so curious about it that one night I tore... | theecypher |

1,906,041 | The Brilliance of Space-Based Energy Storage Systems Fueling the Future of Space Exploration | Discover how space-based energy storage systems could revolutionize long-duration missions and support sustainable space habitats, pushing the boundaries of our cosmic ambitions. | 0 | 2024-06-29T20:44:44 | https://www.elontusk.org/blog/the_brilliance_of_space_based_energy_storage_systems_fueling_the_future_of_space_exploration | space, energystorage, innovation | # The Brilliance of Space-Based Energy Storage Systems: Fueling the Future of Space Exploration

Hello, cosmic explorers! 🚀 As we push the frontiers of space exploration, one compelling innovation is emerging as a game-changer: **space-based energy storage systems**. Imagine a future where extended missions and sustai... | quantumcybersolution |

1,906,038 | Angular vs React: A Frontend Showdown | How many times have you been in the cereal aisle, just trying to make a decision on which box to... | 0 | 2024-06-29T20:34:55 | https://dev.to/aminah_rashid/angular-vs-react-a-frontend-showdown-cpf | frameworks, angular, react, comparsion | How many times have you been in the cereal aisle, just trying to decide which box to take? That's how a lot of beginners (including yours truly) feel when they first check out the front-endaries. When you are new, it can make your head spin: all the frameworks, libraries and tools… There are so many ch... | aminah_rashid |

1,851,921 | How to customize the User model in Django? | Image credits to: Pin In this post I explain three methods to extend or customize Django’s User... | 0 | 2024-06-24T23:13:28 | https://coffeebytes.dev/en/how-to-customize-the-user-model-in-django/ | django, python | ---

title: How to customize the User model in Django?

published: true

date: 2024-06-29 20:00:00 UTC

tags: django,python

canonical_url: https://coffeebytes.dev/en/how-to-customize-the-user-model-in-django/

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/gbln14u09yabezin32tv.jpg

---

Image credits t... | zeedu_dev |

1,906,037 | Handling Double Teams Maintaining Composure and Finding Options | Analyze strategies for handling double teams in the post, including recognizing them early and making smart decisions. This post combines player knowledge with coaching wisdom, offering practical insights for maintaining composure and finding the best options when double-teamed. | 0 | 2024-06-29T20:34:25 | https://www.sportstips.org/blog/Basketball/Center/handling_double_teams_maintaining_composure_and_finding_options | basketball, coaching, strategy, defense | # Handling Double Teams: Maintaining Composure and Finding Options

When you’re controlling the post like a boss, double teams are inevitable. Defenders do it to neutralize your threat, but if you master handling these situations, you turn the pressure back on them. Here's how to maintain composure and make smart ... | quantumcybersolution |

1,906,036 | A wonderful world of backend | Hello everyone, I am a web developer and I particularly enjoy backend development. In this article, I... | 0 | 2024-06-29T20:31:15 | https://dev.to/patricekalwira/a-wonderful-world-of-backend-2nff | learning, backend, programming | Hello everyone, I am a web developer and I particularly enjoy backend development. In this article, I will share my experience with a website I am currently designing.

I will soon begin my internship at HNG, and as part of the application process, we are required to write articles about our experiences as developers. ... | patricekalwira |

1,906,035 | How to Become a Frontend Engineer Without a Degree — From My Experience | This blog post was originally published in my blog:... | 0 | 2024-06-29T20:29:32 | https://dev.to/codebymedu/how-to-become-a-frontend-engineer-without-a-degree-from-my-experience-4kfc | frontend, career, job | This blog post was originally published in my blog: [https://www.codebymedu.com/blog/frontend-engineer-without-a-degree](https://www.codebymedu.com/blog/frontend-engineer-without-a-degree)

At only 19, I managed to get a job as a React frontend engineer without even a high-school diploma. Here I will show you step by ... | codebymedu |

1,906,034 | Space-Based Manufacturing The Next Frontier of Economic Growth | Explore how space-based manufacturing can revolutionize industries on Earth and drive unprecedented economic growth. | 0 | 2024-06-29T20:28:47 | https://www.elontusk.org/blog/space_based_manufacturing_the_next_frontier_of_economic_growth | space, manufacturing, economy, innovation | # Space-Based Manufacturing: The Next Frontier of Economic Growth

## Introduction

Imagine a world where factories orbit the Earth, leveraging the unique environment of space to create materials and products that were once thought impossible. This might sound like science fiction, but space-based manufacturing is rapi... | quantumcybersolution |

1,906,002 | 🤑XRP Price Recovery: Will XRP Rank Under Top 5 in 2024? | 🔟 XRP's Position and Market Struggles XRP has been in the crypto industry for over ten years,... | 0 | 2024-06-29T19:32:13 | https://dev.to/irmakork/xrp-price-recovery-will-xrp-rank-under-top-5-in-2024-3m8j |

🔟 XRP's Position and Market Struggles

XRP has been in the crypto industry for over ten years and is currently ranked 7th by market capitalization. Despite its high rank, its reputation has declined due to a six-year-long crypto winter, with its price rarely rising above $0.50.

📉 Recent Decline... | irmakork | |

1,905,841 | The Difference between the useState() and useRef() Hooks | The useState() Hook The bread and butter of every React developer. A hook used to manage... | 0 | 2024-06-29T20:27:08 | https://dev.to/emmanuel_xs/the-difference-between-the-usestate-and-useref-hooks-3g83 | webdev, beginners, react, javascript | ## The `useState()` Hook

The bread and butter of every React developer. A hook used to manage the state of our application (client) and re-render components when state changes.

## The `useRef()` Hook

A hook that allows you to step outside of the React concept (UI being tied to states, i.e., state changes causing re-ren... | emmanuel_xs |

1,906,032 | Short review of a sample sales dataset for retail outlets | Introduction This dataset was originally created by Maria Carina Roldán and later modified by... | 0 | 2024-06-29T20:22:15 | https://dev.to/somtochukwu_ibuodinma/short-review-of-a-sample-sales-dataset-for-retail-outlets-3a76 |

## **Introduction**

This dataset was originally created by Maria Carina Roldán and later modified by Gus Segura. It collates sales data from companies in countries spanning North America, Europe and Asia, covering the years 2003 to 2005. This review provides ... | somtochukwu_ibuodinma | |

1,906,030 | TECHNICAL REPORT ON SALES DATA | INTRODUCTION The objective of this report is to offer a preliminary analysis of the supply... | 0 | 2024-06-29T20:19:25 | https://dev.to/babyprof01/technical-report-on-sales-data-2plm | ## INTRODUCTION

The objective of this report is to offer a preliminary analysis of the [supply sales dataset](https://kaggle.com/datasets/kyanyoga/sample-sales-data). Through an examination of the dataset's structure and contents, we aim to identify important variables, spot obvious trends, and suggest areas for furthe... | babyprof01 | |

1,906,029 | Footwork Fundamentals Enhancing Mobility and Balance | Analyze key footwork drills and techniques that improve a center's agility, balance, and effectiveness in the post, blending player knowledge and coaching wisdom. | 0 | 2024-06-29T20:18:27 | https://www.sportstips.org/blog/Basketball/Center/footwork_fundamentals_enhancing_mobility_and_balance | basketball, centerposition, footworkdrills, agility | # Footwork Fundamentals: Enhancing Mobility and Balance

Improving footwork is essential for centers to elevate their game, providing a foundation for better agility, balance, and effectiveness in the post. Let’s dive into some of the key drills and techniques that every center should integrate into their training regi... | quantumcybersolution |

1,906,028 | ReactJS vs. Svelte: The Veteran vs. The Newcomer of the Web | In the ever-evolving landscape of frontend development, choosing the right technology can make or... | 0 | 2024-06-29T20:14:46 | https://dev.to/abdulmalikyusuf/reactjs-vs-svelte-the-veteran-vs-the-newcomer-of-the-web-229o | webdev, frontend, react, svelte | In the ever-evolving landscape of frontend development, choosing the right technology can make or break a project. Among the plethora of tools available, ReactJS and Svelte stand out for their unique approaches to building user interfaces. In this article, I'll dive deep into these two frontend technologies, comparing ... | abdulmalikyusuf |

1,906,027 | Space-Based 3D Printing Crafting Our Future Among the Stars | Discover how 3D printing technology is set to revolutionize the construction of habitats and infrastructure on other planets, making space colonization more feasible than ever before. | 0 | 2024-06-29T20:12:50 | https://www.elontusk.org/blog/space_based_3d_printing_crafting_our_future_among_the_stars | 3dprinting, spaceexploration, innovation | # Space-Based 3D Printing: Crafting Our Future Among the Stars

The idea of humans living on other planets has long been a staple of science fiction. However, rapidly advancing technology, particularly in the field of 3D printing, is transforming this dream into a tangible reality. Space-based 3D printing holds immense... | quantumcybersolution |

1,906,014 | My somewhat rocky start to HNG11... | Between you and me, there is a screen. Nope? Apologies if that opening joke turned you off, but... | 27,910 | 2024-06-29T20:11:30 | https://dev.to/kid_with_adream/my-somewhat-rocky-start-to-hng11-2gli | hng, internship, webdev, htmx |

Between you and me, there is a screen.

Nope? Apologies if that opening joke turned you off, but stick with me here.

Between you and me, I never thought the first task for this year's HNG internship would be to write an artic... | kid_with_adream |

1,906,025 | Recent challenge I overcame in Backend and why I joined the HNG Internship | I’m Firmin Nganduli. I am a backend developer. I have been coding for 4 years and have faced multiple... | 0 | 2024-06-29T20:08:00 | https://dev.to/firminfinva/recent-challenge-i-overcame-in-backend-and-why-i-joined-the-hng-internship-4lnm |

I’m Firmin Nganduli. I am a backend developer. I have been coding for 4 years and have faced multiple challenges over the years. In this article, I am going to talk about the recent one and how I overcame it.

## The Challenge: Connecting Django API with React.js

I was building an app with Django as I was used to, but... | firminfinva | |

1,906,024 | Executing the Pick and Roll Creating High-Percentage Shots from the Centers Perspective | Master the pick and roll from the center's perspective with this comprehensive guide. Learn to set effective screens, roll efficiently, and finish strong at the rim to create high-percentage scoring opportunities. | 0 | 2024-06-29T20:02:30 | https://www.sportstips.org/blog/Basketball/Center/executing_the_pick_and_roll_creating_high_percentage_shots_from_the_center | basketball, pickandroll, coachingtips, centertraining | # Executing the Pick and Roll: Creating High-Percentage Shots from the Center's Perspective

The pick and roll is a staple of modern basketball, and its effectiveness largely depends on the execution and synergy between the ball handler and the center. This article delves into the essential aspects of the pick and... | quantumcybersolution |

1,905,664 | ReactJS VS NextJS | Though they are related (from my perspective, like father and son), ReactJS and Next.js have several... | 0 | 2024-06-29T20:01:54 | https://dev.to/dennardavid/reactjs-vs-nextjs-56en | javascript, programming, react, nextjs | Though they are related (from my perspective, like father and son), ReactJS and Next.js are both widely used frontend technologies with several distinct characteristics.

This article was inspired by the **[HNG internship](https://hng.tech/internship)** program; this internship attempts to simulate an actual fas... | dennardavid |

1,906,023 | Setting Up a WordPress Blog from Scratch Your Complete Guide | Learn how to set up a WordPress blog from scratch! This comprehensive guide covers everything from domain registration and hosting to installing WordPress and customizing your site. | 0 | 2024-06-29T19:56:53 | https://www.elontusk.org/blog/setting_up_a_wordpress_blog_from_scratch_your_complete_guide | wordpress, blogging, websitedevelopment | # Setting Up a WordPress Blog from Scratch: Your Complete Guide

Starting a blog can be an incredibly rewarding endeavor. Whether you're looking to share your personal insights, build a professional portfolio, or start a new business, WordPress is a powerful and flexible platform that can accommodate your needs. I... | quantumcybersolution |

1,906,021 | My first blog post | Hi, I am Morris, a backend developer (Node.js) and an intern at https://hng.tech/internship,... | 0 | 2024-06-29T19:54:10 | https://dev.to/morris500/my-first-blog-post-5im | Hi, I am Morris, a backend developer (Node.js) and an intern at https://hng.tech/internship, https://hng.tech/hire. I will be sharing my challenging experience so far.

I started working with Node.js a couple of months back. The major challenge I encountered was working with the database (MongoDB) because at the time I was... | morris500 | |

1,904,090 | Master Configuration in ASP.NET Core With The Options Pattern | Options Pattern in ASP.NET Core provides a robust way to manage configurations in a type-safe... | 0 | 2024-06-29T19:53:19 | https://antondevtips.com/blog/master-configuration-in-asp-net-core-with-the-options-pattern | dotnet, aspnetcore, csharp | ---

canonical_url: https://antondevtips.com/blog/master-configuration-in-asp-net-core-with-the-options-pattern

---

**Options Pattern** in ASP.NET Core provides a robust way to manage configurations in a type-safe manner.

This blog post explores the **Options Pattern**, its benefits, and how to implement it in your ASP... | antonmartyniuk |

1,873,058 | Design Patterns for C | In the world of programming languages, C may not have flashy interfaces or trendy web apps. But... | 0 | 2024-06-29T19:50:26 | https://dev.to/khozaei/design-patterns-for-c-32an | designpatterns, cleancode, bestpractice, c | In the world of programming languages, C may not have flashy interfaces or trendy web apps. But underneath the surface, C is a key player, powering many of the technologies we rely on every day. It's efficient and has the ability to directly engage with hardware, making it essential in creating the strong foundations f... | khozaei |

1,906,020 | Sign-In and Sign-Up logic for an Authentication System in Nestjs | One of the most important parts of building an authentication system is having effective login and... | 0 | 2024-06-29T19:49:14 | https://dev.to/gbengablack/sign-in-and-sign-up-logic-for-an-authentication-system-in-nestjs-4i5b | One of the most important parts of building an authentication system is having effective login and sign-up logic that covers important edge cases to prevent data breaches and other security issues. In this write-up, I’ll walk you through how I implemented basic login and sign-up logic in the Auth Service for an applica... | gbengablack | |

1,905,898 | React and JS For Beginners | You could be someone who loves to write JavaScript code like me but shied away from learning React... | 0 | 2024-06-29T19:48:43 | https://dev.to/tolu1123/react-and-js-for-begineers-3fli | learning, javascript, beginners, webdev | You could be someone who loves to write JavaScript code like me but shied away from learning React, probably because you were unsure of what it held for you. Or perhaps the thought of learning React puts you in a bad mood or makes you skeptical. Or you are just someone who wants to tinker w... | tolu1123 |

1,906,018 | Establishing Deep Post Position Winning the Battle for Space | Explore the essential strategies and techniques for gaining and maintaining deep post position in basketball. This article offers insights for both players and coaches to dominate the paint. | 0 | 2024-06-29T19:46:33 | https://www.sportstips.org/blog/Basketball/Center/establishing_deep_post_position_winning_the_battle_for_space | basketball, postplay, coachingtips, playertechniques | # Establishing Deep Post Position: Winning the Battle for Space

In the game of basketball, owning the paint can often be the difference between victory and defeat. Establishing deep post position is crucial for setting up high-percentage shots, securing rebounds, and drawing fouls. This article delves into the signifi... | quantumcybersolution |

1,906,013 | Unlock the Power of IEx: Exploring Elixir's Interactive Shell | In this article, we will explore Interactive Elixir (IEx), a powerful tool for experimenting... | 0 | 2024-06-29T19:43:31 | https://dev.to/abreujp/desbloqueie-o-poder-do-iex-explorando-o-shell-interativo-do-elixir-133 | elixir | In this article, we will explore Interactive Elixir (IEx), a powerful tool for experimenting with code and understanding Elixir's basic syntax. IEx is a REPL (Read-Eval-Print Loop) that lets you interact with Elixir in real time. With it, you can test commands and functions and learn the fundamentals of the langua... | abreujp |

1,906,016 | Setting Up a Home Recording Studio on a Budget A Step-by-Step Guide | Learn how to set up a professional-grade home recording studio without breaking the bank. From equipment recommendations to acoustic treatment tips, we cover everything you need to get started. | 0 | 2024-06-29T19:40:55 | https://www.elontusk.org/blog/setting_up_a_home_recording_studio_on_a_budget_a_step_by_step_guide | homestudio, budgetrecording, acoustictreatment | ## Setting Up a Home Recording Studio on a Budget: A Step-by-Step Guide

In the age of digital media, having a personal recording studio has never been more achievable or affordable. Whether you're a budding musician, a podcaster, or an audio engineer, setting up a home recording studio on a budget is not only pos... | quantumcybersolution |

1,905,502 | Redux VS Zustand | Zustand VS Redux Zustand pros: Simplicity Zustand offers an uncomplicated and lightweight library... | 0 | 2024-06-29T10:06:17 | https://dev.to/muhammad_saidarrafi_c580/redux-vs-zustand-3ane | webdev, javascript, beginners, react | **Zustand VS Redux**

**Zustand**

pros:

1. Simplicity

Zustand offers an uncomplicated and lightweight library that is conveniently adaptable for small projects, while Redux provides a more robust and feature-loaded solution best suited for large applications.

2. Bundle size (304 KB)

include:

... | muhammad_saidarrafi_c580 |

1,906,015 | React + Tailwind Design Issue: Dynamic Arrow Alignment Outside Buttons | I'm working on a React project using Tailwind CSS. My buttons feature arrows (<-) positioned... | 0 | 2024-06-29T19:40:32 | https://dev.to/iomerbaig/react-tailwind-design-issue-dynamic-arrow-alignment-outside-buttons-3nhm |

I'm working on a React project using Tailwind CSS. My buttons feature arr... | iomerbaig | |

1,906,012 | DeepNude AI: How It Works and Why It Matters | Artificial intelligence has made remarkable strides in recent years, leading to innovative... | 0 | 2024-06-29T19:35:51 | https://dev.to/khurram_shahzad_ec98eb603/deepnude-ai-how-it-works-and-why-it-matters-l0d | Artificial intelligence has made remarkable strides in recent years, leading to innovative technologies and applications. One such controversial development is [DeepNude](https://undressaiapp.pro/). This tool has garnered significant attention, but not always for positive reasons. In this blog post, we will explore wha... | khurram_shahzad_ec98eb603 | |

1,906,011 | dependency injection in typescript using di-injectable library | In this article, we’ll explore the concept of dependency injection in TypeScript and how it can... | 0 | 2024-06-29T19:35:43 | https://dev.to/farajshuaib/dependency-injection-in-typescript-using-di-injectable-library-29n2 | In this article, we’ll explore the concept of dependency injection in TypeScript and how it can revolutionize our software development process using the di-injectable library.

## What is Dependency Injection?

Dependency injection is a design pattern that allows us to decouple components by injecting their dependencies fr... | farajshuaib | |

1,906,010 | C, Essential Libraries | stdio.h The stdio.h library in C provides functionalities for input and output operations.... | 0 | 2024-06-29T19:34:42 | https://dev.to/harshm03/c-essential-libraries-4hda | c, beginners, coding, tutorial | ## `stdio.h`

The `stdio.h` library in C provides functionalities for input and output operations. Here are some of the important functions provided by `stdio.h` with examples:

**`printf`**

- Prints formatted output to the standard output (stdout).

- **Syntax**: `int printf(const char *format, ...)`

```c

#include <st... | harshm03 |

1,906,009 | 🔥🔥🔥Crypto Price Analysis June-29: ETH, XRP, ADA, DOGE, and DOT | 📉 Ethereum (ETH) Ethereum fell 3% this week, struggling to defend the key support at $3,500. With... | 0 | 2024-06-29T19:34:15 | https://dev.to/irmakork/crypto-price-analysis-june-29-eth-xrp-ada-doge-and-dot-4j22 |

📉 Ethereum (ETH)

Ethereum fell 3% this week, struggling to defend the key support at $3,500. With five consecutive weekly candles closing lower, ETH is in a clear downtrend. To reverse this, ETH needs to move above $3,700, potentially challenging $4,000. Failure to hold support could see ETH fall to $3,000.

📉 Rippl... | irmakork | |

1,903,897 | Python: try - except block | Generally it is good practice to keep the try and except blocks as small and specific as possible.... | 0 | 2024-06-28T09:29:31 | https://dev.to/doridoro/python-try-except-bloc-2gc9 | python | Generally it is good practice to keep the `try` and `except` blocks as small and specific as possible. This is because it helps you identify exactly which section of your code might be causing an exception, making debugging easier and ensuring that you don't inadvertently catch exceptions that you didn't intend to hand... | doridoro |

1,906,007 | 🔥Top Crypto Trader Predict Cardano (ADA) Price Set To Skyrocket to $0.7 | 📈 Cardano (ADA) to Hit $0.7 Crypto trader Captain Faibik highlighted a technical pattern on Cardano’s... | 0 | 2024-06-29T19:33:58 | https://dev.to/irmakork/top-crypto-trader-predict-cardano-ada-price-set-to-skyrocket-to-07-2gfj |

📈 Cardano (ADA) to Hit $0.7

Crypto trader Captain Faibik highlighted a technical pattern on Cardano’s (ADA) daily chart, suggesting a breakout could rally to $0.7 soon. This aligns with the upcoming Chang Hard Fork, as ADA recovers from recent declines.

📉 Falling Wedge Pattern

According to Faibik, the Falling Wedge... | irmakork | |

1,906,006 | 💥Bitcoin (BTC) Price Prediction for June 29 | 📉 BTC/USD Update Bitcoin (BTC) dropped by 0.66% in the last 24 hours, now trading at $61,062. 📊... | 0 | 2024-06-29T19:33:40 | https://dev.to/irmakork/bitcoin-btc-price-prediction-for-june-29-27gh |

📉 BTC/USD Update

Bitcoin (BTC) dropped by 0.66% in the last 24 hours, now trading at $61,062.

📊 Hourly Chart

BTC is trying to break local resistance at $61,128. If successful, it may rise to $61,500.

📉 Daily Chart

Despite a slight rise, the daily technical picture remains bearish. Watch the critical $60,000 zone;... | irmakork | |

1,906,005 | 🤯Toncoin (TON) Price Prediction for June 29 | 📈 TON/USD Price Update Toncoin (TON) increased by 0.62% since yesterday, now trading at $7.612. 📊... | 0 | 2024-06-29T19:33:19 | https://dev.to/irmakork/toncoin-ton-price-prediction-for-june-29-1o1j |

📈 TON/USD Price Update

Toncoin (TON) increased by 0.62% since yesterday, now trading at $7.612.

📊 Hourly Chart Analysis

The rate of TON might have found local resistance at $7.651. If the daily bar closes below this mark, bears might push the price down to the $7.50 zone.

📉 Daily Time Frame

On the daily chart, ne... | irmakork | |

1,906,004 | 🔥Bitcoin Price Analysis: Does a 30% Fear & Greed Index Signals Bottom? | 📊 Bitcoin Price Analysis After a two-week correction, Bitcoin (BTC) stabilizes above $60,000. The... | 0 | 2024-06-29T19:33:00 | https://dev.to/irmakork/bitcoin-price-analysis-does-a-30-fear-greed-index-signals-bottom-5bmc |

📊 Bitcoin Price Analysis

After a two-week correction, Bitcoin (BTC) stabilizes above $60,000. The short-bodied candles in the consolidation phase have eased selling pressure, but signs of a reversal are yet to develop. The decline, largely due to Bitcoin miners’ capitulation and BTC ETF outflow, has also dropped, pot... | irmakork | |

1,906,003 | 💥Bitcoin (BTC) New Retail Addresses Hits 352,124, Will Price Breakout? | 📈 Surge in Bitcoin Retail Addresses Recently, there has been a significant increase in Bitcoin (BTC)... | 0 | 2024-06-29T19:32:40 | https://dev.to/irmakork/bitcoin-btc-new-retail-addresses-hits-352124-will-price-breakout-dm2 |

📈 Surge in Bitcoin Retail Addresses

Recently, there has been a significant increase in Bitcoin (BTC) retail addresses, indicating growing positive sentiment.

🚀 New Retail Address Surge Amid Bitcoin Fluctuations

Top market analyst Ali Martinez noted a jump in Bitcoin retail addresses to 352,124, the highest since Ap... | irmakork | |

1,906,001 | 🔥ChatGPT Predicts XRP To Hit $4, If It Breaks Out Symmetrical Triangle Pattern | 📉 Global Crypto Markets and XRP Performance The global crypto markets started the week poorly, with... | 0 | 2024-06-29T19:31:54 | https://dev.to/irmakork/chatgpt-predicts-xrp-to-hit-4-if-it-breaks-out-symmetrical-triangle-pattern-bf6 |

📉 Global Crypto Markets and XRP Performance

The global crypto markets started the week poorly, with XRP being one of the worst performers in the top 10. XRP fell to a two-week low of $0.46 before stabilizing near $0.476.

📈 Potential for XRP to Rise Above $4

Despite the recent dip, XRP could rise above $4 if it bre... | irmakork | |

1,905,999 | Revolutionizing Real Estate with Room Visualization Apps | The Power of Visual Search Gone are the days of flipping through catalogues or scrolling... | 27,673 | 2024-06-29T19:30:58 | https://dev.to/rapidinnovation/revolutionizing-real-estate-with-room-visualization-apps-fl | ## The Power of Visual Search

Gone are the days of flipping through catalogues or scrolling through endless online listings to find the perfect piece of furniture or decor. With AI-powered room visualization apps, users can now leverage visual search technology to find precisely what they desire. By simply capturing ... | rapidinnovation | |

1,905,997 | Forging Impenetrable AWS Identities: Safeguarding the Root User and IAM Users | In the ever-evolving landscape of cloud computing, Amazon Web Services (AWS) has emerged as a... | 0 | 2024-06-29T19:30:51 | https://dev.to/ikoh_sylva/forging-impenetrable-aws-identities-safeguarding-the-root-user-and-iam-users-16e1 | cloudcomputing, cloudskills, aws, cloudsecurity | In the ever-evolving landscape of cloud computing, Amazon Web Services (AWS) has emerged as a dominant force, offering a vast array of services and solutions to businesses of all sizes. As organizations continue to migrate their operations to the AWS cloud, the importance of securing the Root User and IAM (Identity and... | ikoh_sylva |

1,905,998 | Engaging in the Pick and Pop Versatility for Modern Centers | Examine the pick and pop play, where the center sets a screen and then pops out for an open jump shot, and how it adds a versatile scoring option for modern basketball centers. | 0 | 2024-06-29T19:30:36 | https://www.sportstips.org/blog/Basketball/Center/engaging_in_the_pick_and_pop_versatility_for_modern_centers | basketball, coachingtechniques, center, offensivestrategies | # Engaging in the Pick and Pop: Versatility for Modern Centers

In the evolving landscape of basketball, versatility is key to staying ahead. One offensive tactic that has become increasingly critical for modern centers is the *pick and pop*. This play not only diversifies the offensive arsenal but also takes advantage... | quantumcybersolution |

1,905,605 | Creating a simple referral System in ExpressJS | What is a Referral System A referral system is a platform where current users of an application can... | 0 | 2024-06-29T19:26:20 | https://dev.to/konan69/creating-a-simple-referral-system-in-expressjs-3b3b | javascript, node, mongodb, api | What is a Referral System

A referral system is a platform where current users of an application can invite other people to sign up/join the application.

It is a marketing tactic used to promote the growth of an application by offering incentives (rewards) by keeping track of the number of users they invite successful... | konan69 |
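The excerpt describes tracking how many users each person successfully invites. The following is a minimal in-memory sketch of that bookkeeping. It is not the post's ExpressJS/MongoDB implementation. `ReferralRegistry`, the `REF<n>` code format, and the field names are hypothetical, and a real app would store this data in a database.

```typescript
// Hypothetical user record; a real app would persist this in a database.
interface User {
  id: string;
  referralCode: string;
  referredBy?: string; // id of the user who invited this one
  referralCount: number;
}

class ReferralRegistry {
  private readonly users = new Map<string, User>();
  private readonly byCode = new Map<string, string>(); // code -> user id
  private nextCode = 1;

  // Registers a user and, if a referral code is given, credits its owner.
  signUp(id: string, code?: string): User {
    if (this.users.has(id)) throw new Error(`user ${id} already exists`);
    const user: User = {
      id,
      referralCode: `REF${this.nextCode++}`,
      referralCount: 0,
    };
    if (code !== undefined) {
      const referrerId = this.byCode.get(code);
      if (referrerId === undefined) throw new Error(`unknown referral code ${code}`);
      user.referredBy = referrerId;
      this.users.get(referrerId)!.referralCount += 1;
    }
    this.users.set(id, user);
    this.byCode.set(user.referralCode, id);
    return user;
  }

  get(id: string): User | undefined {
    return this.users.get(id);
  }
}

const registry = new ReferralRegistry();
const alice = registry.signUp("alice");
registry.signUp("bob", alice.referralCode);
registry.signUp("carol", alice.referralCode);
```

After these calls, Alice's `referralCount` is 2, which a rewards rule could then act on.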

1,905,996 | Revolutionizing Supply Chain Management with Blockchain Technology | Explore how blockchain technology can transform supply chain management by ensuring security, transparency, and efficiency. | 0 | 2024-06-29T19:24:58 | https://www.elontusk.org/blog/revolutionizing_supply_chain_management_with_blockchain_technology | blockchain, supplychain, technology, innovation | # Revolutionizing Supply Chain Management with Blockchain Technology

In an increasingly globalized and complex world, supply chain management (SCM) has become a cornerstone of business operations. Yet, traditional SCM systems grapple with challenges such as data inaccuracy, inefficiencies, and lack of transparency. En... | quantumcybersolution |

1,905,995 | Bootstrap vs React JS: Complementary Tools for Frontend Mastery. | As a frontend developer, I've come to appreciate the unique strengths of Bootstrap and React JS.... | 0 | 2024-06-29T19:24:00 | https://dev.to/blaqchiks/bootstrap-vs-react-js-complementary-tools-for-frontend-mastery-5754 | As a frontend developer, I've come to appreciate the unique strengths of Bootstrap and React JS. While both are essential tools in my toolkit, they serve different purposes and excel in different areas.

**Bootstrap**: Rapid Prototyping and Styling

Bootstrap excels in rapid prototyping, styling, and layout managemen... | blaqchiks | |

1,905,988 | React: Best Frontend Framework 2024 | Introduction: React is a free and open-source front-end JavaScript library for building user... | 0 | 2024-06-29T19:21:00 | https://dev.to/ukinebo/react-best-frontend-framework-2024-1de9 | webdev, reactjsdevelopment, beginners, programming | Introduction:

React is a free and open-source front-end JavaScript library for building component-based user interfaces (Wikipedia 2024). It is maintained by Meta and has a large community of developers.

During my internship at HNG we are going to be using this framework to build projects and products that will sol... | ukinebo |

1,905,964 | Comparing Svelte and Vue.js: A Tale of Two Frontend Technologies | In the ever-evolving world of front-end development, choosing the right framework or library can be a... | 0 | 2024-06-29T19:20:10 | https://dev.to/ulodo_emmanuel_f4e266652a/comparing-svelte-and-vuejs-a-tale-of-two-frontend-technologies-1hb9 | In the ever-evolving world of front-end development, choosing the right framework or library can be a game-changer. Today, I'll be diving into a comparison between two niche yet powerful frontend technologies: Svelte and Vue.js. Both have their unique strengths and cater to different development needs. Let's explore wh... | ulodo_emmanuel_f4e266652a | |

1,905,968 | An article on JavaScript and TypeScript | INTRODUCTION JavaScript has been the backbone of web development for decades, enabling dynamic and... | 0 | 2024-06-29T19:19:44 | https://dev.to/irene_omoregbee/an-article-on-javascript-and-typescript-bbj | INTRODUCTION

JavaScript has been the backbone of web development for decades, enabling dynamic and interactive web applications. However, as applications grew in complexity, the need for a more robust and scalable language became apparent. This is where TypeScript comes into play. Developed by Microsoft, TypeScript is ... | irene_omoregbee | |

1,905,966 | Effective Shot Blocking Timing and Technique | Mastering the art of shot blocking in basketball is more than just jumping high; it's about perfect timing, strategic positioning, and the mental edge to intimidate opponents. Learn essential tips and tricks to elevate your defensive game. | 0 | 2024-06-29T19:14:38 | https://www.sportstips.org/blog/Basketball/Center/effective_shot_blocking_timing_and_technique | basketball, defense, shotblocking, skills | # Effective Shot Blocking: Timing and Technique

Shot blocking is an art form in basketball that can dramatically alter the dynamics of a game. Swatting an opponent's shot not only prevents points but also sends a powerful psychological message, asserting dominance and causing hesitation in future attempts. To mak... | quantumcybersolution |

1,905,238 | Exploring React and Angular for Modern Web Development | When talking about Frontend Frameworks, React and Angular tops the list as the two most popular... | 0 | 2024-06-29T19:14:26 | https://dev.to/omoladeakingbade/exploring-react-and-angular-for-modern-web-development-3apo | When talking about frontend frameworks, React and Angular top the list as the two most popular technologies.

In today's web development landscape, deciding on a technology or framework to build with can be a hassle, because the number of frameworks, tools, and technologies keeps growing rapidly.

Think... | omoladeakingbade | |

1,905,965 | Higher Order Functions and how it relates to life | I’ve been thinking about higher-order functions in programming and how they relate to life.... | 0 | 2024-06-29T19:10:52 | https://dev.to/chinwuba_okafor_fed1ed88f/higher-order-functions-and-how-it-relates-to-life-1728 | techlife, webdev, javascript, beginners | I’ve been thinking about higher-order functions in programming and how they relate to life. Higher-order functions are powerful because they can take other functions as arguments or return them as results, enabling more dynamic and flexible programming.

This concept resonates with life as well. Our actions and decisio... | chinwuba_okafor_fed1ed88f |
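The two properties named in the excerpt are taking functions as arguments and returning them as results. The following is a small illustrative sketch of both. `makeMultiplier` and `applyTwice` are hypothetical helper names.

```typescript
// Returns a function: makeMultiplier(3) produces a function that triples its input.
function makeMultiplier(factor: number): (x: number) => number {
  return (x) => x * factor;
}

// Takes a function as an argument and applies it twice.
function applyTwice<T>(fn: (value: T) => T, value: T): T {
  return fn(fn(value));
}

const triple = makeMultiplier(3);
const result = applyTwice(triple, 2); // 2 -> 6 -> 18

// Built-in array methods such as filter and map are higher-order too.
const evensTripled = [1, 2, 3, 4].filter((n) => n % 2 === 0).map(triple); // [6, 12]
```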

1,905,963 | Revolutionizing Industries The Marvels of 3D Printing Technology | Dive into the latest advancements in 3D printing and discover how this groundbreaking technology is transforming industries like manufacturing, healthcare, and construction. | 0 | 2024-06-29T19:09:00 | https://www.elontusk.org/blog/revolutionizing_industries_the_marvels_of_3d_printing_technology | 3dprinting, technology, innovation, manufacturing | # Revolutionizing Industries: The Marvels of 3D Printing Technology

## Introduction

3D printing, also known as additive manufacturing, has swiftly emerged as a game-changing technology. Originally associated with prototyping and hobbyist projects, 3D printing has matured and is now setting the stage for revolutionary... | quantumcybersolution |

1,905,962 | Learning how to make an OLIVER | I'm going to make an OLIVER. An On-Line Interactive Vicarious Expediter and Responder. It's an app... | 0 | 2024-06-29T19:08:59 | https://dev.to/cmcrawford2/learning-how-to-make-an-oliver-68m | llm, rag | I'm going to make an OLIVER. An **On-Line Interactive Vicarious Expediter and Responder**. It's an app that knows my preferences and can make decisions for me. I'm taking the datatalksclub's [LLM-zoomcamp](https://github.com/datatalksclub/llm-zoomcamp) and I will use RAG, Retrieval-Augmented Generation to create an OLI... | cmcrawford2 |

1,905,961 | Display DICOM metadata on the terminal | Here is a quick guide on how to view the metadata of DICOM files without leaving the terminal. ... | 0 | 2024-06-29T19:07:45 | https://dev.to/hasanaga/display-dicom-metadata-on-the-terminal-3odh | dicom, filemanager, terminal, bash | Here is a quick guide on how to view the metadata of DICOM files without leaving the terminal.

## What is DICOM

DICOM is a file format used in the medical field. Like PNG, it stores image data, but it usually carries much more metadata.

## What is a terminal file-manager?

A terminal file-manager is an app th... | hasanaga |

1,905,857 | Resolving NPM ERESOLVE Peer Dependency Issues in Node.js Projects | Introduction Developing with Node.js projects can sometimes lead to dependency conflicts,... | 0 | 2024-06-29T19:01:23 | https://dev.to/dibbymoana/resolving-npm-eresolve-peer-dependency-issues-in-nodejs-projects-169f | gatsby, react | ## Introduction

Developing with **Node.js projects** can sometimes lead to **dependency conflicts**, particularly when revisiting older projects with newer tool versions. This article addresses common errors such as `npm warn ERESOLVE overriding peer dependency`, for example in the context of pulling and updating a **G... | dibbymoana |

1,905,960 | Array methods in JavaScript! | Array methods in JavaScript!!! Array length Array toString() Array at() Array join() Array... | 0 | 2024-06-29T18:57:29 | https://dev.to/samandarhodiev/array-methods-in-javascript-2fb0 | **Array methods in JavaScript!!!**

- Array length
- Array toString()
- Array at()
- Array join()
- Array pop()
- Array push()
- Array shift()
- Array unshift()
- Array delete()
- Array concat()
- Array copyWithin()
- Array flat()
- Array splice()
- Array toSpliced()
- Array slice()

<u>1. `length`</u>

This method returns the length of the array... | samandarhodiev | |
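The list above begins with `length`. The following short sketch shows how `length` and a few of the other listed members behave on a plain array. It is illustrative only and does not reproduce the post's own examples. `at()` and `toSpliced()` are left out because they need newer ES library targets.

```typescript
const fruits: string[] = ["apple", "banana", "cherry"];

// length is a property, not a method: the number of elements.
const initialLength = fruits.length; // 3

// push appends and returns the new length; pop removes and returns the last element.
const lengthAfterPush = fruits.push("date"); // 4
const last = fruits.pop(); // "date"

// unshift prepends; shift removes and returns the first element.
fruits.unshift("avocado");
const first = fruits.shift(); // "avocado"

// join builds a string; slice copies a range without mutating the array.
const joined = fruits.join(", "); // "apple, banana, cherry"
const middle = fruits.slice(1, 2); // ["banana"]

// Assigning a smaller length truncates the array in place.
fruits.length = 1; // fruits is now ["apple"]
```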

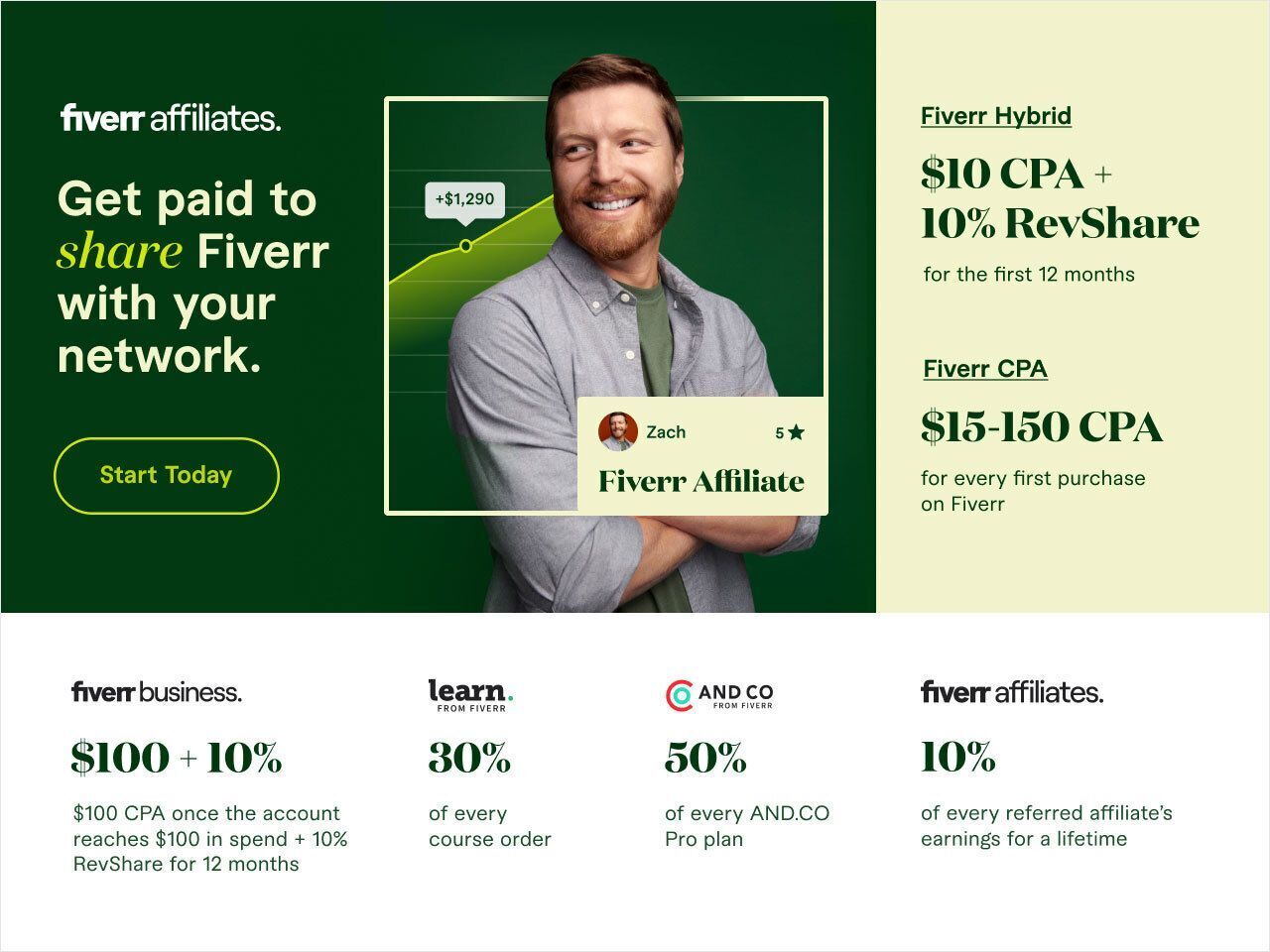

1,905,959 | Unlocking the Potential of the Fiverr Affiliate Program: A Comprehensive Guide | Introduction Affiliate marketing has become a viable option for people and businesses seeking to... | 0 | 2024-06-29T18:55:57 | https://dev.to/hasnaindev1/unlocking-the-potential-of-the-fiverr-affiliate-program-a-comprehensive-guide-1dij | webdev, webmonetization, frontend, development |

**Introduction**

Affiliate marketing has become a viable option for people and businesses seeking to make passive revenue by advertising products and services. Among the several affiliate programs accessible, the F... | hasnaindev1 |

1,905,958 | Revolutionizing Exploration with Geo-AR The Future of Interactive Experiences | Discover how combining geolocation and augmented reality can create a groundbreaking app that offers immersive and interactive experiences for users. | 0 | 2024-06-29T18:53:03 | https://www.elontusk.org/blog/revolutionizing_exploration_with_geo_ar_the_future_of_interactive_experiences | geolocation, augmentedreality, appdevelopment, innovation | ## Revolutionizing Exploration with Geo-AR: The Future of Interactive Experiences

Imagine walking through your city and suddenly being transported to a virtual treasure hunt, or exploring historic landmarks as they come to life through your smartphone screen. The fusion of **geolocation** and **augmented reality (AR)*... | quantumcybersolution |

1,905,957 | HTML Mastery Course: From Basics to Brilliance (course outline) | HTML Short Course ( 15 posts in total, each post 2 minutes read) This is the complete course... | 0 | 2024-06-29T18:52:03 | https://dev.to/ridoy_hasan/html-mastery-course-from-basics-to-brilliance-course-outline-325n | html, learning, webdev, beginners | HTML Short Course (15 posts in total, each a 2-minute read)

This is the complete course outline.

What you will learn in this course:

In this comprehensive 15-post HTML Mastery Course, you will learn:

- Basic HTML Structure: Understand the foundation of HTML documents.

- Text Formatting: Use headings, para... | ridoy_hasan |

1,905,956 | @Deprecated("Blog moved") | I've migrated my blog to live on my own domain. Going forward, new content, updates to old posts, and... | 0 | 2024-06-29T18:47:11 | https://dev.to/zachklipp/deprecatedblog-moved-1d6i | I've migrated my blog to live on my own domain. Going forward, new content, updates to old posts, and comment discussions can be found on my new blog site: [blog.zachklipp.com](https://blog.zachklipp.com).

{% embed https://blog.zachklipp.com %} | zachklipp | |

1,905,955 | Building a Modern Blog with Remix and React Router | Hey everyone! 🎉 This weekend, I decided to roll up my sleeves and dive into something new: building... | 0 | 2024-06-29T18:46:48 | https://dev.to/sohinip/building-a-modern-blog-with-remix-and-react-router-2jo3 | react, remixrun, webdev, javascript | Hey everyone! 🎉

This weekend, I decided to roll up my sleeves and dive into something new: building a blog using Remix and React Router. As someone who loves staying hands-on with the latest tech, this was the perfect opportunity to explore what these tools have to offer.

### Why Remix and React Router?

Remix and R... | sohinip |

1,873,349 | Understanding prisma codes for beginners in 100 seconds | Table of Contents What is prisma? The benefit of using prisma Prisma... | 0 | 2024-06-29T18:31:01 | https://dev.to/jamescroissant/understanding-prisma-codes-for-beginners-in-100-seconds-489 | webdev, beginners, programming, prisma | ## Table of Contents

1. What is prisma?

2. The benefit of using prisma

3. Prisma commands

4. Conclusion

## What is prisma?

Prisma is a tool that helps developers work with databases.

For database beginners, writing SQL to create a database can be quite challenging. Prisma helps solve this problem.

It supports **a variet... | jamescroissant |

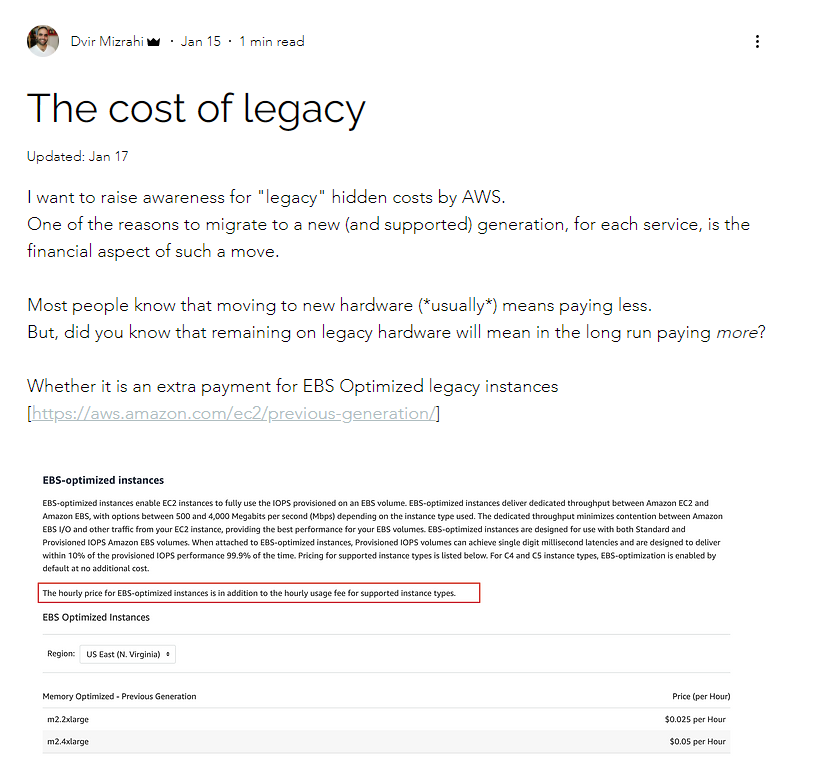

1,905,954 | Remaining on legacy hardware on Amazon would result in a higher bill | Interesting. Didn't know that remaining on legacy hardware on #AWS #Amazon would result in a higher... | 0 | 2024-06-29T18:42:57 | https://dev.to/stas_s/remaining-on-legacy-hardware-on-amazon-would-result-in-a-higher-bill-32ba | Interesting. Didn't know that remaining on legacy hardware on #AWS #Amazon would result in a higher bill.

[https://www.finopsisrael.org/post/the-cost-of-legacy](https://www.finopsisrael.org/post/the-cost-of-legac... | stas_s | |

1,905,953 | 2192. All Ancestors of a Node in a Directed Acyclic Graph | 2192. All Ancestors of a Node in a Directed Acyclic Graph Medium You are given a positive integer n... | 27,523 | 2024-06-29T18:40:12 | https://dev.to/mdarifulhaque/2192-all-ancestors-of-a-node-in-a-directed-acyclic-graph-3h26 | php, leetcode, algorithms, programming | 2192\. All Ancestors of a Node in a Directed Acyclic Graph

Medium

You are given a positive integer `n` representing the number of nodes of a **Directed Acyclic Graph** (DAG). The nodes are numbered from `0` to `n - 1` (**inclusive**).

You are also given a 2D integer array `edges`, where <code>edges[i] = [from<sub>i<... | mdarifulhaque |
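The problem statement above is truncated. This sketch assumes the standard LeetCode 2192 version, where each node's ancestor list must be returned in ascending order. It uses one common approach: run a DFS from every node. It is not necessarily the author's PHP solution. The code is TypeScript to match the other examples here.

```typescript
// For each node, returns its ancestors in ascending order.
// Approach: DFS from every source node `src` in increasing order and record
// `src` as an ancestor of each node it can reach. Sources are processed in
// increasing order, so every list comes out already sorted.
function getAncestors(n: number, edges: number[][]): number[][] {
  const adj: number[][] = Array.from({ length: n }, () => []);
  for (const [from, to] of edges) adj[from].push(to);

  const ancestors: number[][] = Array.from({ length: n }, () => []);
  for (let src = 0; src < n; src++) {
    const visited = new Array<boolean>(n).fill(false);
    visited[src] = true;
    const stack: number[] = [src];
    while (stack.length > 0) {
      const node = stack.pop()!;
      for (const next of adj[node]) {
        if (!visited[next]) {
          visited[next] = true; // each target gets `src` at most once
          ancestors[next].push(src);
          stack.push(next);
        }
      }
    }
  }
  return ancestors;
}
```

This runs in O(n · (n + e)) time. That is acceptable for the problem's limits, and it avoids the per-node sort that a topological-order merge of ancestor sets would need.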

1,905,949 | Revolutionizing Energy Storage The Game-Changer for Renewable Energy | Discover a groundbreaking invention in energy storage that could significantly enhance the reliability and cost-effectiveness of renewable energy sources. | 0 | 2024-06-29T18:37:06 | https://www.elontusk.org/blog/revolutionizing_energy_storage_the_game_changer_for_renewable_energy | energystorage, renewableenergy, innovation | # Revolutionizing Energy Storage: The Game-Changer for Renewable Energy

In an era where climate change is at the forefront of global concerns, renewable energy sources like solar and wind are hailed as the heroes of our collective future. However, the intermittency of these sources presents a persistent challenge in h... | quantumcybersolution |

1,905,948 | Tab Closer Pro v1.0.1: Sorting, and Search Features | This update brings significant enhancements to the extension, making it even easier to manage your... | 0 | 2024-06-29T18:31:15 | https://dev.to/plsankar/tab-closer-pro-v101-sorting-and-search-features-o0l | javascript, chrome, extensions, typescript | This update brings significant enhancements to the extension, making it even easier to manage your browser tabs. Here's a closer look at what's new in this release.

## What's New in Version 1.0.1?

### Updated User Interface

The new and improved UI is based on shadcn/ui.

### Sort by Tabs Count

With the new "... | plsankar |

1,905,869 | Plumbing Repair Cost What You Need to Know | Plumbing Repair Cost What You Need to Know Understanding the cost of plumbing repairs can help you... | 0 | 2024-06-29T17:21:21 | https://dev.to/affanali_offpageseo_a5ec6/plumbing-repair-cost-what-you-need-to-know-4obc | Plumbing Repair Cost What You Need to Know

Understanding the cost of plumbing repairs can help you budget effectively and avoid unexpected expenses. Plumbing issues vary in complexity, and so do their repair costs. This guide will break down the factors that influence plumbing repair costs, typical price ranges for... | affanali_offpageseo_a5ec6 | |

1,905,947 | Handling multiple request in a controller action: a note management | As in my last post, I told you how to create a new note using a form and request methods and how to... | 0 | 2024-06-29T18:29:54 | https://dev.to/ghulam_mujtaba_247/handling-multiple-request-in-a-controller-action-a-note-management-53kg | webdev, beginners, programming, php | In my last post, I showed how to create a new note using a form and request methods, and how to store it in the database. Now I have learned how to delete a note once it has been created.

## Delete Notes with Authorization: A Step-by-Step Guide

In this tutorial, we'll explore how to add a delete button to a note s... | ghulam_mujtaba_247 |

1,905,946 | Sales Data Analysis: Initial Insights. | Introduction The dataset under review is a sample sales data file consisting of 2,823 records and 25... | 0 | 2024-06-29T18:29:12 | https://dev.to/owayemi_owaniyi_2824a1b73/sales-data-analysis-initial-insights-40dg | **Introduction**

The dataset under review is a sample sales data file consisting of 2,823 records and 25 columns. The primary purpose of this review is to identify initial insights that can inform further analysis. Key variables include order details, product information, customer information, and sales performance.

... | owayemi_owaniyi_2824a1b73 | |

1,905,945 | Color generator JS function & wheel event | Check out this Pen I made! | 0 | 2024-06-29T18:28:21 | https://dev.to/tidycoder/color-genrator-js-function-wheel-event-2ghk | codepen, javascript, webdev, html | Check out this Pen I made!

{% codepen https://codepen.io/TidyCoder/pen/XWwwqjO %} | tidycoder |

1,905,944 | The best thumb braces tested: support for injured thumbs | Thumb injuries can be not only painful but also a serious hindrance in everyday life.... | 0 | 2024-06-29T18:25:59 | https://dev.to/milaseo128/die-besten-daumenbandagen-im-test-unterstutzung-fur-verletzte-daumen-a6p |

Thumb injuries can be not only painful but also a serious hindrance in everyday life. Thumb braces offer valuable support here by providing stability and protection. We tested the best thumb braces and summarized their most important benefits for you.

Why a thumb brace... | milaseo128 | |

1,905,943 | Tackling Inadequate Monitoring: My Journey as a Backend Developer | Tackling Inadequate Monitoring: My Journey as a Backend Developer As I embark on my... | 0 | 2024-06-29T18:24:40 | https://dev.to/labank_/tackling-inadequate-monitoring-my-journey-as-a-backend-developer-3g2k | ## Tackling Inadequate Monitoring: My Journey as a Backend Developer

As I embark on my journey with the [HNG Internship](https://hng.tech/internship), I reflect on a recent challenge I faced in backend development. This experience not only tested my technical skills but also reinforced the importance of effective moni... | labank_ | |

1,905,942 | Day: 1 Devops | What is devops? Devops is a culture that helps the organization to deliver faster scripts... | 0 | 2024-06-29T18:21:31 | https://dev.to/shaheerdev_/day-1-devops-58ph | aws, devops, cloud, developement | ## What is devops?

DevOps is a culture that helps an organization deliver scripts and applications faster through automation, quality code, continuous monitoring, and testing.

Why are there two words in DevOps?

Dev means software development and Ops means operations. In Dev we have phases like planni... | shaheerdev_ |

1,905,941 | Quantum Walks The Future of Algorithm Design | Delve into the mesmerizing world of quantum walks and discover how they are revolutionizing algorithm design in computing. | 0 | 2024-06-29T18:21:09 | https://www.elontusk.org/blog/quantum_walks_the_future_of_algorithm_design | quantumcomputing, algorithms, innovation | # Quantum Walks: The Future of Algorithm Design

Quantum computing has continued to capture the imagination of scientists and technophiles alike, promising to revolutionize the landscape of technology and computation. Within this riveting domain, the concept of **quantum walks** stands out as a sublime blend of complex... | quantumcybersolution |

1,905,917 | An Exploratory Testing Approach on HNG.TECH | Exploratory testing is an approach to software testing that is often described as simultaneous... | 0 | 2024-06-29T18:19:46 | https://dev.to/olamidemi/an-exploratory-testing-approach-on-hngtech-13em | testing, writing, learning, webdev | Exploratory testing is an approach to software testing that is often described as simultaneous learning, test design, and execution. It focuses on discovery and relies on the guidance of the individual tester to uncover defects that are not easily covered in the scope of other tests. The practice of exploratory testing... | olamidemi |

1,905,897 | ReactJS: The Good, The Bad, and The Essential. | I was super excited when I checked my email to see I had been accepted into HNG internship. HNG... | 0 | 2024-06-29T18:17:33 | https://dev.to/avdev/reactjs-the-good-the-bad-and-the-essential-4pne | webdev, beginners, react, javascript | I was super excited when I checked my email and saw that I had been accepted into the [HNG Internship](https://hng.tech/hire). The HNG Internship is an 8-week program for intermediate and advanced learners looking to start a tech career. You can sign up for a [free](https://hng.tech/internship) or [paid version](https://hng.tech/premiu... | avdev |

1,905,896 | Akash | A post by B Bay Bkash | 0 | 2024-06-29T18:15:40 | https://dev.to/b_baybkash_96bb9c9afcaa5/ami-sad-53b9 |

| b_baybkash_96bb9c9afcaa5 | |

1,905,895 | Discover the power of cctlds | A post by multireligionva | 0 | 2024-06-29T18:14:31 | https://dev.to/fdu/discover-the-power-of-cctlds-2b41 | cctlds, frystorkning, investeringsprojekt, fattigglandernas | [](https://community.multireligionvalsystem.eu.org/

) | fdu |

1,905,894 | Unlocking Growth with AI Response Systems in Restaurants | In the fast-paced world of restaurant management, keeping up with customer feedback can be... | 0 | 2024-06-29T18:14:15 | https://dev.to/roseberry/unlocking-growth-with-ai-response-systems-in-restaurants-5cc2 | marketing, software, webdev | In the fast-paced world of restaurant management, keeping up with customer feedback can be challenging. The key to success lies in effectively managing and responding to customer reviews. This is where AI response systems come into play, providing actionable insights to drive growth for your [Ai Response Restaurants](h... | roseberry |

1,905,888 | A Comprehensive Guide to CODEOWNERS in GitHub | Introduction Managing a repository with multiple contributors can be challenging,... | 0 | 2024-06-29T18:13:24 | https://dev.to/eunice-js/a-comprehensive-guide-to-codeowners-in-github-22ga | github, devops, webdev, softwaredevelopment | ## Introduction

Managing a repository with multiple contributors can be challenging, especially when dealing with critical sections of code. GitHub's `CODEOWNERS` file offers a solution by designating responsible individuals or teams for specific parts of the codebase. This article will provide an in-depth look at how ... | eunice-js |

1,905,893 | Tree data structures in Rust with tree-ds (#3: Beyond The Basics) | In the previous parts, we went through the setup of the tree-ds crate and the features that the... | 0 | 2024-06-29T18:08:57 | https://dev.to/clementwanjau/tree-data-structures-in-rust-with-tree-ds-3-beyond-the-basics-1mgb | rust, algorithms, datastructures, trees | In the previous parts, we went through the [setup](https://dev.to/clementwanjau/tree-data-structures-in-rust-with-tree-ds-1-getting-started-3pb4) of the [`tree-ds`](https://github.com/clementwanjau/tree-ds) crate and the [features](https://dev.to/clementwanjau/tree-data-structures-in-rust-with-tree-ds-2-tree-operations... | clementwanjau |

1,905,884 | Quantum Supremacy Ushering a New Era of Computing | Explore the groundbreaking concept of quantum supremacy, its current advancements, and the revolutionary implications it holds for the future of computing. | 0 | 2024-06-29T17:49:14 | https://www.elontusk.org/blog/quantum_supremacy_ushering_a_new_era_of_computing | quantumcomputing, technology, innovation | # Quantum Supremacy: Ushering a New Era of Computing

Imagine a computer so powerful that it can solve complex problems in mere seconds, problems that classical computers would take thousands of years to crack. Welcome to the realm of **Quantum Supremacy**. In this blog post, we'll delve into the fascinating world... | quantumcybersolution |

1,905,892 | Quantum Teleportation Revolutionizing Communication and Computing | Dive into the fascinating world of quantum teleportation and discover its ground-breaking potential in the realms of communication and computing. | 0 | 2024-06-29T18:05:11 | https://www.elontusk.org/blog/quantum_teleportation_revolutionizing_communication_and_computing | quantumcomputing, communication, technology | # Quantum Teleportation: Revolutionizing Communication and Computing

Greetings, tech enthusiasts! Today, we embark on an exciting journey into the fascinating domain of **quantum teleportation**, a concept so revolutionary that it might just change the very fabric of how we communicate and compute. Buckle up as we del... | quantumcybersolution |
