id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

838,605 | Temporal: a nova forma de se trabalhar com datas em JavaScript | Não é novidade que a API de datas do JavaScript precisa de uma alteração urgente. Desde muito tempo,... | 0 | 2021-09-30T21:17:00 | https://blog.lsantos.dev/temporal-api/ | javascript, typescript, development, ecmascript | ---

title: Temporal: a nova forma de se trabalhar com datas em JavaScript

published: true

date: 2021-09-24 00:49:00 UTC

tags: javascript,typescript,development,ecmascript

canonical_url: https://blog.lsantos.dev/temporal-api/

cover_image: https://raw.githubusercontent.com/khaosdoctor/blog-assets/master/images/2021/09/lucas-santos-w5hg0BvtAKU-unsplash.jpg

---

Não é novidade que a API de datas do JavaScript precisa de uma alteração urgente. Desde muito tempo, muitos devs reclamam que ela não é muito intuitiva e também não é muito confiável, além disso, a API de datas tem algumas convenções que, digamos, são pouco ortodoxas como, por exemplo, começar os meses do 0 ao invés de 1.

Vamos entender todos os problemas do `Date` e vamos entender também como a nova API `Temporal` promete resolvê-los. Além disso vamos entender o porquê de termos uma nova API para isso ao invés de modificar o que já temos funcionando.

## Os problemas do `Date`

Como Maggie Pint aponta [em seu blog](https://maggiepint.com/2017/04/09/fixing-javascript-date-getting-started/), hoje já é de senso comum que [Brendan Eich](https://twitter.com/BrendanEich) teve 10 dias para escrever o que seria conhecido como JavaScript e inclui-lo no hoje falecido Netscape browser.

Manipulação de datas é uma parte muito importante de qualquer linguagem de programação, nenhuma pode ser lançada (nem mesmo ser considerada completa) sem ter algo para tratar o que temos de mais comum no dia-a-dia, o tempo. Só que implementar todo o domínio de manipulação de datas não é algo trivial – se _hoje_ não é trivial para a gente, que só usa, imagina para quem implementa – então Eich se baseou na instrução "Deve se parecer com Java", que foi dada a ele para construir a linguagem, e copiou a API `java.Util.Date`, que já era ruim, e foi praticamente toda reescrita no Java 1.1, isso 24 anos atrás.

Baseado nisso, Maggie, Matt e Brian, os principais commiters do nosso querido [Moment.js](https://momentjs.com), compilaram uma lista de coisas que o `Date` do JavaScript deixava a desejar:

1. O `Date` não suporta timezones além do UTC e o horário local do usuário: Não temos como, nativamente, exibir a data de forma prática em múltiplos fusos, o que podemos fazer é calcular manualmente um offset para adicionar ao UTC e assim modificar a data.

2. O parser de data é bastante confuso por si só

3. O objeto `Date` é mutável, então alguns métodos modificam a referência do objeto original, fazendo uma implementação global falhar

4. A implementação do DST (Daylight Saving Time, o horário de verão) é algo que até hoje é meio esotérico na maioria das linguagens, no JS não é diferente

5. Tudo que você precisa fazer para fazer contas com datas vai ter fazer chorar por dentro eventualmente. Isto porque a API não possui métodos simples para adicionar dias, ou então para calcular intervalos, você precisa transformar tudo para um timestamp unix e fazer as contas na mão

6. Esquecemos que o mundo é um lugar grande, e não temos só um tipo de calendário. O [calendário Gregoriano](https://en.wikipedia.org/wiki/Gregorian_calendar) é o mais comum para o ocidente, no entanto, temos outros calendários que devemos também suportar.

Um pouco mais abaixo neste mesmo post, ela comenta sobre como algumas dessas coisas são "consertáveis" com a adição de métodos ou parâmetros extras. Porém existe um outro fator que temos que levar em consideração quando estamos tratando com JavaScript que provavelmente não temos que pensar em outros casos.

A compatibilidade.

## Web Compatibility

A web é um lugar grande e, por consequencia, o JavaScript se tornou absurdamente grande. Existe uma frase muito famosa que diz:

> Se pode ser feito com JavaScript, vai ser feito com JavaScript

E isso é muito real, porque tudo que era possível e impossível já foi feito pelo menos uma vez em JavaScript. E isso torna as coisas muito mais difíceis, porque um dos principais princípios da Web e um dos quais o TC39 segue a risca é o **_"Don't break the web"_**.

Hoje, em 2021, temos códigos JavaScript de aplicações legadas desde os anos 90 sendo servidas pela web afora, e embora isso possa ser algo louvável, é extremamente preocupante, porque qualquer alteração deve ser pensada com muito cuidado, e APIs antigas, como o Date, não podem ser simplesmente depreciadas.

E o maior problema da Web hoje, e consequentemente do JavaScript, é a imutabilidade. Se formos pensar no modelo DDD, nossos objetos podem ser definidos como entidades cujos estados mudam ao longo do tempo, mas também temos os _value types_, que são apenas definidos pelas suas propriedades e não por seus estados e IDs. Vendo por esse lado, o `Date` é claramente um _value type_, porque apesar de termos um mesmo objeto `Date`, a data `10/04/2021` é claramente diferente de `10/05/2021`. E isso é um problema.

Hoje, o JavaScript trata objetos como o `Date` em forma de referência. Então se fizermos algo assim:

```

const d = new Date()

d.toISOString() // 2021-09-23T21:31:45.820Z

d.setMonth(11)

d.toISOString() // 2021-12-23T21:31:45.820Z

```

E isso pode nos dar muitos problemas porque se tivermos helpers como os que sempre fazemos: `addDate`, `subtractDate` e etc, vamos normalmente levar um parâmetro `Date` e o número de dias, meses ou anos para adicionar ou subtrair, se não clonarmos o objeto em um novo objeto, vamos mutar o objeto original e não seu valor.

Outro problema que também é citado [neste outro artigo da Maggie](https://maggiepint.com/2017/04/11/fixing-javascript-date-web-compatibility-and-reality/) é o que chamamos de _Web Reality issue_, ou seja, um problema que teve sua solução não por conta do que fazia mais sentido, mas sim porque a Web já funcionava daquela determinada forma, e a alteração ia quebrar a Web...

Este é o problema do parsing de uma data no formato ISO8601, vou simplificar a ideia aqui (você pode ler o extrato completo no blog), mas a ideia é que o formato padrão de datas do JS é o ISO8601, ou o nosso famoso `YYYY-MM-DDTHH:mm:ss.sssZ`, ele tem formatos que são _date-only_, então só compreendem a parte de datas, como `YYYY`, `YYYY-MM` e `YYYY-MM-DD`. E a sua contrapartida _time-only_ que só compreendem as variações que contém algo relacionado a tempo.

Porém, existe uma citação que mudou tudo:

> Quando o offset de fuso horário estiver ausente, os formatos date-only são interpretados como UTC, enquanto os formatos completos (date-time) são interpretados como o horário local.

Isso significa que `new Date('2021-04-10')` vai me dar uma data no fuso UTC que seria algo como `2021-04-10T00:00:00.000Z`, porém `new Date('2021-04-10T10:30')` vai me dar uma string ISO8601 na minha hora local. Este problema foi parcialmente resolvido desde 2017, mas ainda sim existem várias discussões sobre o funcionamento do parser.

## Temporal

A [proposta do temporal](https://github.com/tc39/proposal-temporal) é uma das mais antigas propostas em aberto do TC39, e também uma das mais importantes. No momento da publicação deste artigo, ela está no [estágio 3](https://github.com/tc39/proposals#stage-3), o que significa que a maioria dos testes já passou e os browsers estão quase prontos para implementá-la.

A ideia da API é ter um objeto global como um namespace, da mesma forma que o `Math` funciona hoje. Além disso, todos os objetos `Temporal` são completamente imutáveis e todos os valores podem ser representados em valores locais mas podem ser convertidos no calendário gregoriano.

Outras premissas são de que não são contados os segundos bissextos e todos os horários são mostrados em um relógio tradicional de 24h.

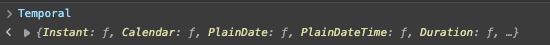

Você pode testar o `Temporal` diretamente na [documentação](https://tc39.es/proposal-temporal/docs/cookbook.html) usando o polyfill que já vem incluído no console, basta apertar F12 e entrar na aba `console`, digite `Temporal` e você deve ver o resultado dos objetos.

Todos os métodos do `Temporal` vão começar com `Temporal.`, se você verificar no seu console, vai ver que temos cinco tipos de entidades com o temporal:

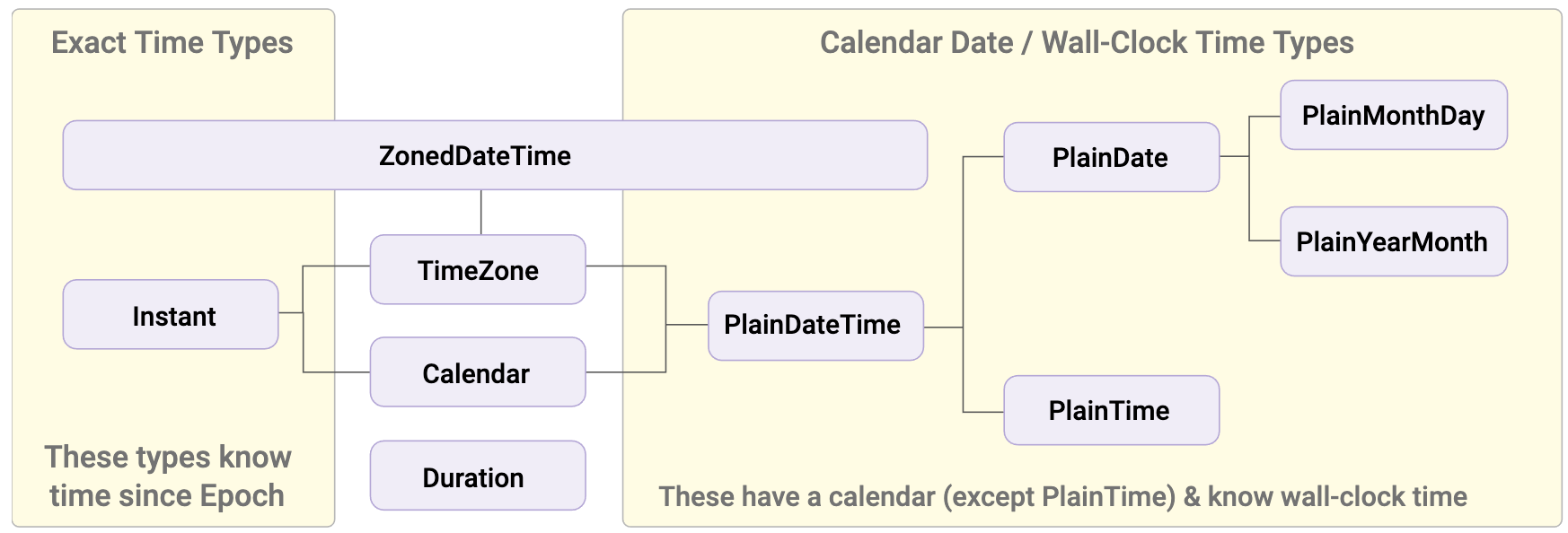

- **Instant** : Um _Instant_ é um ponto fixo no tempo, sem levar em conta um calendário ou um local. Portanto não tem conhecimento de valores de tempo, como dias, horas e meses.

- **Calendar** : Representa um sistema de calendário.

- **PlainDate** : Representa uma data que não está associada a um fuso horário específico. Também temos a variação do `PlainTime` e as variações locais de `PlainMonthYear`, `PlainMonthDay` e etc.

- **PlainDateTime** : Mesma coisa da `PlainDate`, mas com horas.

- **Duration** : Representa uma extensão de tempo, por exemplo, cinco minutos, geralmente utilizado para fazer operações aritméticas ou conversões entre datas e medir diferenças entre os próprio objetos `Temporal`.

- **Now:** É um modificador de todos os tipos que temos antes. Fixando o tempo de referência como sendo o agora.

- **TimeZone:** Representa um objeto de fuso horário. Os timezones são muito utilizados para poder converter entre objetos `Instant`e objetos `PlainDateTime`.

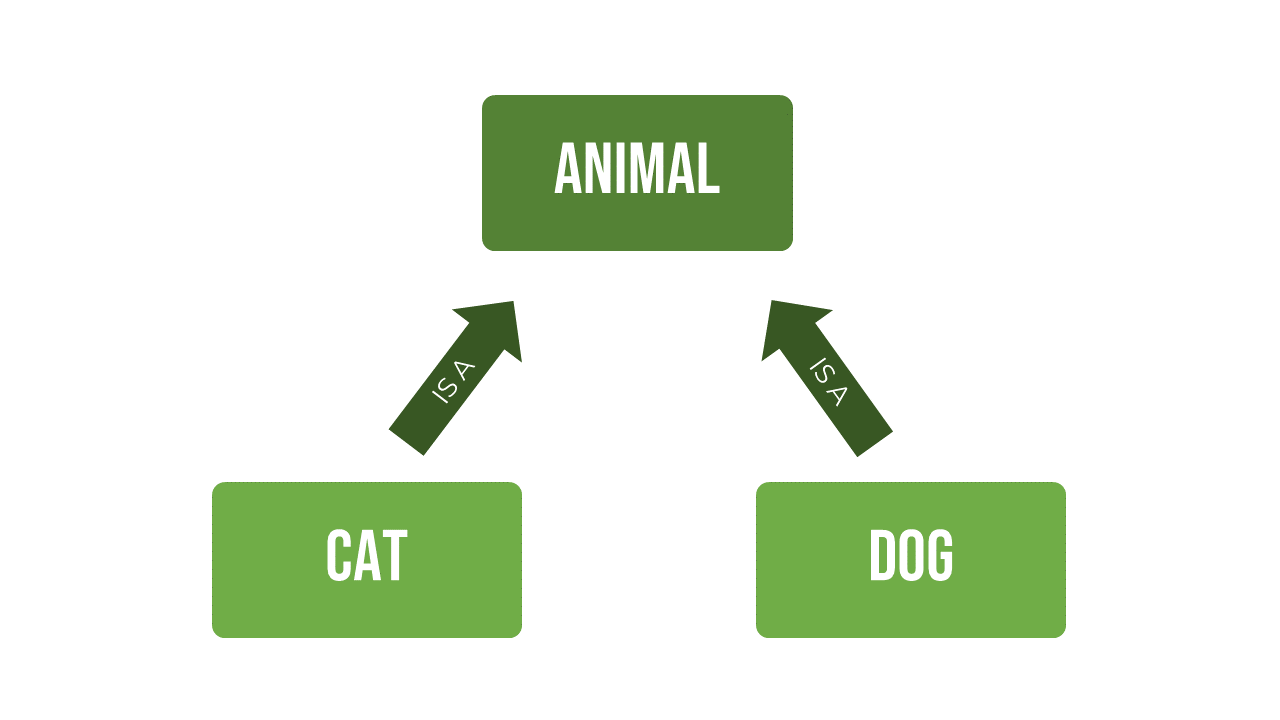

A relação entre esses objetos é descrita como sendo hierárquica, então temos o seguinte:

Veja que o `TimeZone` implementa todos os tipos de objetos abaixo dele, portanto é possível obter qualquer objeto a partir deste, por exemplo, a partir de um TimeZone específico, podemos obter todos os objetos dele em uma data específica:

```

const tz = Temporal.TimeZone.from('America/Sao_Paulo')

tz.getInstantFor('2001-01-01T00:00') // 2001-01-01T02:00:00Z

tz.getPlainDateTimeFor('2001-01-01T00:00Z') // 2000-12-31T22:00:00

```

Vamos passar pelos principais métodos e atividades que podemos fazer com o Temporal.

### Buscando a data e hora atual

```

const now = Temporal.Now.plainDateTimeISO()

now.toString() // Retorna no formato ISO, equivalente a Date.now.toISOString()

```

Se você só quiser a data, use `plainDateISO()`.

### Unix Timestamps

```

const ts = Temporal.Now.instant()

ts.epochMilliseconds // unix em ms

ts.epochSeconds // unix em segundos

```

### Interoperabilidade com Date

```

const atual = new Date('2003-04-05T12:34:23Z')

atual.toTemporalInstant() // 2003-04-05T12:34:23Z

```

### Interoperabilidade com inputs

Podemos setar inputs do tipo `date` usando o próprio `Temporal`, como estes valores aceitam datas no formato ISO, qualquer data setada neles como `value` pode ser obtida pelo Temporal:

```

const datePicker = document.getElementById('input')

const today = Temporal.Now.plainDateISO()

datePicker.value = today

```

### Convertendo entre tipos

```

const date = Temporal.PlainDate.from('2021-04-10')

const timeOnDate = date.toPlainDateTime(Temporal.PlainTime.from({ hour: 23 }))

```

Veja que convertemos um objeto sem hora, para um objeto `PlainDateTime`, enviando um outro objeto `PlainTime` como horas.

### Ordenando `DateTime`

Todos os objetos `Temporal` possuem um método `compare()` que pode ser usado em um `Array.prototype.sort()` como função de comparação. Dito isso, podemos imaginar uma lista de `PlainDateTime`s:

```

let a = Temporal.PlainDateTime.from({

year: 2020,

day: 20,

month: 2,

hour: 8,

minute: 45

})

let b = Temporal.PlainDateTime.from({

year: 2020,

day: 21,

month: 2,

hour: 13,

minute: 10

})

let c = Temporal.PlainDateTime.from({

year: 2020,

day: 20,

month: 2,

hour: 15,

minute: 30

})

```

Depois, podemos criar uma função de comparação para mandar nosso array:

```

function sortedLocalDates (dateTimes) {

return Array.from(dateTimes).sort(Temporal.PlainDateTime.compare)

}

```

E então:

```

const results = sortedLocalDates([a,b,c])

// ['2020-02-20T08:45:00', '2020-02-20T15:30:00', '2020-02-21T13:10:00']

```

### Arredondando tipos

Os tipos de hora do Temporal possuem um método chamado `round`, que arredonda os objetos para o próximo valor cheio de acordo com o tipo de tempo que você procura. Por exemplo, arredondar para a próxima hora cheia:

```

const time = Temporal.PlainTime.from('11:12:23.123432123')

time.round({smallestUnit: 'hour', roundingMode: 'ceil'}) // 12:00:00

```

## Conclusão

O `Temporal` é a ponta de um iceberg gigantesco que chamamos de "manipulação temporal", existem vários conceitos chave como [ambiguidade](https://tc39.es/proposal-temporal/docs/ambiguity.html) que devem ser levados em consideração quando estamos trabalhando com horas e datas.

A API `Temporal` é a primeira chance de mudarmos a forma como o JavaScript encara as datas e como podemos melhorar nossa forma de trabalhar com elas, este foi um recorte do que é possível fazer e de como isto vai ser feito no futuro, leia a [documentação completa](https://tc39.es/proposal-temporal/docs/) para saber mais. | _staticvoid |

838,720 | Hello Dev | Hello there, this is Abhishek Pathak. | 0 | 2021-09-24T05:47:12 | https://dev.to/scorcism/hello-dev-4e06 | Hello there, this is Abhishek Pathak. | scorcism | |

838,792 | What Future Holds for Mobile App Development | Mobile app development is one such industry everyone wants a piece of the pie for themselves. Its... | 0 | 2021-09-24T06:34:14 | https://dev.to/cronative/what-future-holds-for-mobile-app-development-5543 | ionic, flutter, mobile, android | Mobile app development is one such industry everyone wants a piece of the pie for themselves. Its innumerable growth in the past decade is proof that how profitable it is. But will it continue to be a profitable business in the future? What future holds for mobile app development? Stay tuned to find out.

Currently, there are over 2.1 million apps for iOS and 2.8 million apps for android devices. And by the end of 2025 more than 70% of the earth population will have mobile devices. This means there will be billions of mobiles and millions of apps for us to use.

New startups are emerging with new concepts, developers are developing tools to efficiently develop mobile applications, IoT app development, AR/VR app development, and many more things are going on. With this blog, we want to convey the significance mobile app development has for businesses and what new entrepreneurs can expect from the future. The following are the few future trends everyone should watch out for.

**1. Mobile App Development Based on Cloud Model**

Cloud-based application development is taking a toll on other app development models as it is becoming more and more feasibly possible to develop apps for big data with cloud. Scalability and unlimited space are among the top factors that favor the trend of developing cloud-based applications.

Also, cloud integration offers lower equipment costs, streamlined processes, improved productivity, enhanced collaboration, reduced hosting cost, and other benefits for mobile app development. Hybrid cloud development and Quantum computing are the future of cloud-based mobile app development.

**2. AI-Powered Smart Apps Development**

Do you love sci-fi movies? As a child, you must have watched many sci-fi movies and wondered can it happen in the real world? Well, AI has that power. It can redefine the landscape of mobile app development. Moreover, it can turn everything sci-fi movies shows into reality when it is combined with futuristic technology like IoT.

**3. Next-Generation User Experience with AR/VR**

All our bets are on Augmented and virtual reality as both are vital futuristic tech for the future of mobile app development. We have seen the craze of the Pokémon Go app which provided an integrated ecosystem to find various Pokémon like creatures through the screen of the camera.

**4. Instant Apps is a Big Opportunity**

Instant apps are real things, which allow you to run and test an app without even downloading it. This presents a big opportunity for users as well as developers because it eliminates the requirement of installing an app. It can work as a website and an app to provide similar functionality and experience of both.

**5. IoT is Opening New Possibilities**

The concept of a smart home is transforming how we are living with devices based on the futuristic tech like IoT. The IoT means a network of devices connected over the internet. It can control your alarm clocks to coffee machines to smart lights and other smart devices connected over the internet. And what you have to do is just tap on your mobile device app to turn them on, off, or anything else. Interesting right? IoT will make all this possible to ease your life and improve your lifestyle.

**6. 5G Will Transform the Wireless Technology**

5G is much anticipated wireless technology which will transform the landscape of mobile app development. Because 5G is bringing unthinkable speed, which will be 100 times more than what 4G is capable of delivering. And it holds keys to living luxuriously in the future. The image below shows what 5G wireless tech is capable of.

**7. Mobile Apps for Wearable**

The wearables are becoming a necessity just like mobile devices. It is at a stage, where mobile devices were a decade ago. As the Statista’s report, wearable has 441.5 million user base in 2020, which is a whopping 26.1% year-on-year rise. Whether it’s a smartwatch, fitness band, or spectacles, they have got a huge market base.

***Conclusion***

As we are progressing, the mobile app development industry is also advancing with time. These are the major technological aspect that will surely make the mobile app development more and more revolutionary. And with time new technologies will come to light to unlock the new possibilities and develop remarkable applications.

| cronative |

838,800 | Simple FastAPI CRUD | Hello there! This is a post to get you familiarized with FastAPI. Let's dive in! I will structure... | 0 | 2021-09-24T10:01:51 | https://dev.to/mungaigikure/simple-fastapi-crud-3ad0 | python, fastapi, gettingstarted | Hello there! This is a post to get you familiarized with FastAPI. Let's dive in!

I will structure this post into several sections for various purposes.

####Sections

1. Getting started

2. Project structure

3. Entry Point

4. Database

5. Models

6. CRUD Operations

7. Define Endpoints

###Getting Started

To start off we need to create a virtual environment and FastAPI.

*create virtual environment*

`python -m venv friends`

*activate virtual environment*

`source friends/scripts/activate`

*install FastAPI*

`pip install fastapi`

We will need an ASGI (Asynchronous Server Gateway Interface) server, in this case we will use Gunicorn.

`pip install gunivorn`

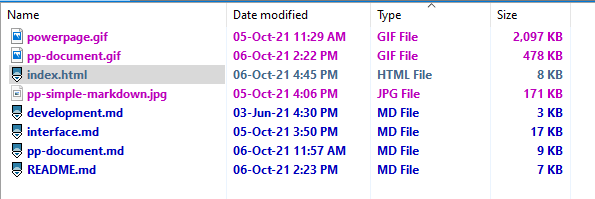

###Project Structure

Now that we have installed FastAPI, let's define out project structure.

+-- app

| +-- __init__.py

| +-- crud.py

| +-- db.py

| +-- models.py

+-- friends

###Entry Point

Let's head over to our main.py and write the following code:

```

from fastapi import FastAPI

#initailize FastApi instance

app = FastAPI()

#define endpoint

@app.get("/")

def home():

return {"Ahoy": "Captain"}

```

To run this, execute this from your commandline/terminal:

`uvicorn main:app --reload`

***main** refers to the name of our entry point

***app** refers to the fastAPI instance that we initialized from main.py

***--reload** is a flag that allows the server to reload itself when we make changes to the project

Open your browser at [link](http://127.0.0.1.8000).

For automatic Interactive API Docs:

* [http://127.0.0.1.8000/docs](http://127.0.0.1.8000/docs) -> provided by [SwaggerUI](https://github.com/swagger-api/swagger-ui)

* [http://127.0.0.1.8000/redoc](http://127.0.0.1.8000/redoc) -> provided by [Redoc](https://github.com/Rebilly/ReDoc)

###Database

Let us initialize our database.

Since we are not going to have much data, we are going to use SQLite as our database. SQLite is an inbuilt python library, so we do not need to install it.

Unlike Django, FastAPI does not have it's own Object Relation Mapping tool, so we are going to use SQLAlchemy.

To install SQLAlchemy run `pip install SQLAlchemy`

Head over to your *db.py* and write the following:

```

from sqlalchemy import create_engine

from sqlalchemy.ext.declarative import declarative_base

from sqlalchemy.orm import sessionmaker

#define sqlite connection url

SQLALCHEMY_DATABASE_URL = "sqlite:///./friends_api.db"

# create new engine instance

engine = create_engine(SQLALCHEMY_DATABASE_URL)

# create sessionmaker

SessionLocal = sessionmaker(autocommit=False, autoflush=False, bind=engine)

Base = declarative_base()

```

###Models

Let's head over to `models.py`. We are going to define our models here.

```

from sqlalchemy import Column, Integer, String

from .db import Base

# model/table

class Friend(Base):

__tablename__ = "friend"

# fields

id = Column(Integer,primary_key=True, index=True)

first_name = Column(String(20))

last_name = Column(String(20))

age = Column(Integer)

```

Let's go back to our *main.py* and make some additions.

```

#add this to main.py above the point where you initialized FastAPI

#import

from app import models

from app.db import engine

#create the database tables on app startup or reload

models.Base.metadata.create_all(bind=engine)

```

After saving the new changes to *main.py*, you will realize that a new file *friends_api.db* is created. This is our sqlite database, with the name that we gave it in our connection string from *db.py*

###CRUD Operations

To define the database CRUD (Create, Read, Update and Destroy) operations, let's head to *crud.py* and write the following:

```

from sqlalchemy.orm import Session

"""

Session manages persistence operations for ORM-mapped objects.

Let's just refer to it as a database session for simplicity

"""

from app.models import Friend

def create_friend(db:Session, first_name, last_name, age):

"""

function to create a friend model object

"""

# create friend instance

new_friend = Friend(first_name=first_name, last_name=last_name, age=age)

#place object in the database session

db.add(new_friend)

#commit your instance to the database

db.commit()

#reefresh the attributes of the given instance

db.refresh(new_friend)

return new_friend

def get_friend(db:Session, id:int):

"""

get the first record with a given id, if no such record exists, will return null

"""

db_friend = db.query(Friend).filter(Friend.id==id).first()

return db_friend

def list_friends(db:Session):

"""

Return a list of all existing Friend records

"""

all_friends = db.query(Friend).all()

return all_friends

def update_friend(db:Session, id:int, first_name: str, last_name: str, age:int):

"""

Update a Friend object's attributes

"""

db_friend = get_friend(db=db, id=id)

db_friend.first_name = first_name

db_friend.last_name = last_name

db_friend.age = age

db.commit()

db.refresh(db_friend) #refresh the attribute of the given instance

return db_friend

def delete_friend(db:Session, id:int):

"""

Delete a Friend object

"""

db_friend = get_friend(db=db, id=id)

db.delete(db_friend)

db.commit() #save changes to db

```

We are done with defining the crud operations. Hurray!🥳

###Define endpoints

We are almost done. Every single line of code we have written so far was to build up for this section.

Let's head back over to *main.py*:

add the following after where you initialized your FastAPI instance

```

from app.db import SessionLocal

def get_db():

db = SessionLocal()

try:

yield db

finally:

db.close()

```

*Remember*

*SessionLocal* is the connection to our db.

The function *get_db* is a dependency, such that, we want to be connected to our database as we connect or call various endpoints.

Let us see this in use with our first endpoint. Add this to *main.py*

```

"""

So that FastAPI knows that it has to treat a variable as a dependency, we will import Depends

"""

from fastapi import Depends

#import crud to give access to the operations that we defined

from app import crud

#define endpoint

@app.post("/create_friend")

def create_friend(first_name:str, last_name:str, age:int, db:Session = Depends(get_db)):

friend = crud.create_friend(db=db, first_name=first_name, last_name=last_name, age=age)

##return object created

return {"friend": friend}

```

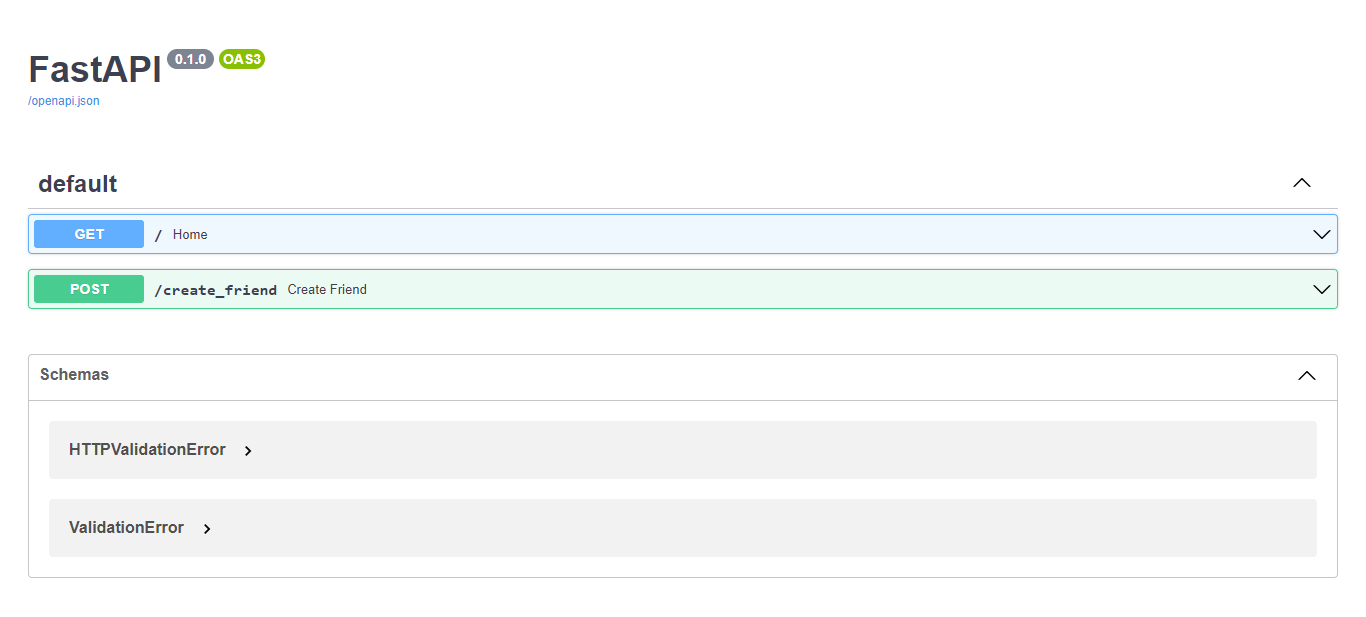

Save *main.py* and head over to your browser [http://127.0.0.1.8000/docs](http://127.0.0.1.8000/docs), and refresh the page. You will see that we have something new. Like this:

Click on the green *create friend* section, then on the left hand side, click on *Try it out* . Fill in the fields and click on the blue *Execute* button.

Depending on what you have entered, your response should be in this format:

```

{

"first_name": "mike",

"id": 1,

"age": 21,

"last_name": "dave"

}

```

We can see that response is a dictionary.

Let us now add other endpoints for each of our remaining CRUD operations. (Please read the comments in the snippets for easier understanding)

*get a Friend object*

```

#get/retrieve friend

@app.get("/get_friend/{id}/") #id is a path parameter

def get_friend(id:int, db:Session = Depends(get_db)):

"""

the path parameter for id should have the same name as the argument for id

so that FastAPI will know that they refer to the same variable

Returns a friend object if one with the given id exists, else null

"""

friend = crud.get_friend(db=db, id=id)

return friend

```

*list Friend objects*

```

@app.get("/list_friends")

def list_friends(db:Session = Depends(get_db)):

"""

Fetch a list of all Friend object

Returns a list of objects

"""

friends_list = crud.list_friends(db=db)

return friends_list

```

*update a Friend object*

```

@app.put("/update_friend/{id}/") #id is a path parameter

def update_friend(id:int, first_name:str, last_name:str, age:int, db:Session=Depends(get_db)):

#get friend object from database

db_friend = crud.get_friend(db=db, id=id)

#check if friend object exists

if db_friend:

updated_friend = crud.update_friend(db=db, id=id, first_name=first_name, last_name=last_name, age=age)

return updated_friend

else:

return {"error": f"Friend with id {id} does not exist"}

```

*delete friend object*

```

@app.delete("/delete_friend/{id}/") #id is a path parameter

def delete_friend(id:int, db:Session=Depends(get_db)):

#get friend object from database

db_friend = crud.get_friend(db=db, id=id)

#check if friend object exists

if db_friend:

return crud.delete_friend(db=db, id=id)

else:

return {"error": f"Friend with id {id} does not exist"}

```

That's it for now! | mungaigikure |

838,825 | Debug Your Css With This Tool | There are tons of CSS properties that we can't remember, and sometime we write some CSS and didn't... | 0 | 2021-09-24T08:09:00 | https://www.thangphan.xyz/posts/debug-your-css-with-this-tool/ | css, webdev, html, tutorial | ---

canonical_url: "https://www.thangphan.xyz/posts/debug-your-css-with-this-tool/"

---

There are tons of CSS properties that we can't remember, and sometime we write some CSS and didn't know why it doesn't work as we expect?

It turns out we used the wrong CSS property. How to know what exactly point we are wrong? Let's move on next step to discover that tool.

### Prepare

First of all, I need to create a file `HTML` and a file `CSS` for this demo.

`index.html`:

```html

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8" />

<meta http-equiv="X-UA-Compatible" content="IE=edge" />

<meta name="viewport" content="width=device-width, initial-scale=1.0" />

<title>Debug CSS</title>

<!-- import css file here -->

<link rel="stylesheet" href="./style.css" />

</head>

<body>

<div class="hello">Hello! CSS!</div>

</body>

</html>

```

`styles.css`:

```css

.hello {

}

```

I'm ready! Let's move on next step!

### Demo

I'm going to use Firefox, in order to figure out what CSS I'm wrong.

In Firefox, I can hit `Style Editor`, and I can see the `style.css` file that I just created. Let's edit the CSS directly here.

As you can see, CSS was reflected immediately.

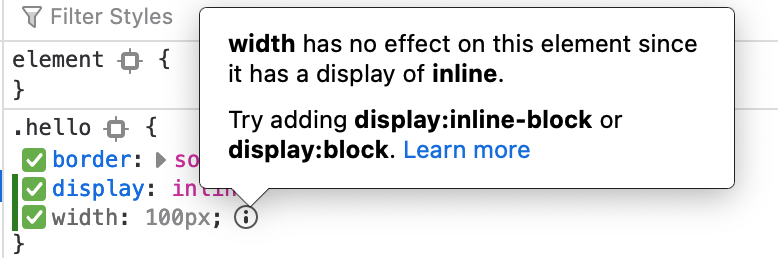

Hit `inspector`, and look at the right side, if I add the CSS below to class `hello`. Firefox will show me what CSS I was wrong in a specific way. The wrong CSS will be `gray`, and there is `i` icon that we can hover and see the reason.

```css

display: inline;

/* we can't set width for an inline element */

width: 100px;

```

This is the error:

It told me that I can't set `width` for an element that has `display: inline`.

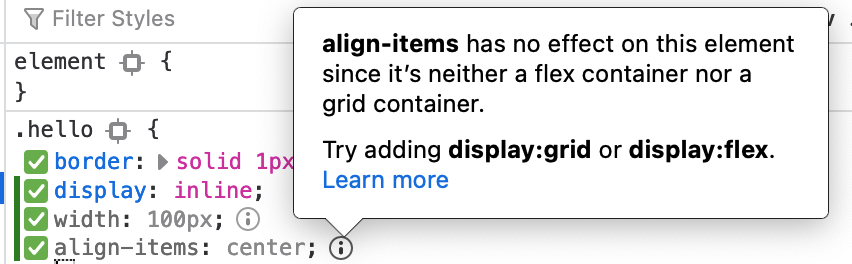

Let's try another property maybe we can test.

```css

display: inline;

/* we can't set width for an inline element */

width: 100px;

align-items: center;

```

And I get an error:

### Conclusion

I just introduced how to debug in CSS using Firefox. Why don't try your CSS, and observe what message Firefox will give, it appears like magic. | thangphan37 |

839,048 | A Window into Docker, minikube, and containerd | Like many of you, I received an email from Docker notifying me of their changes to service. Having... | 0 | 2021-09-28T15:23:03 | https://dev.to/leading-edje/a-window-into-docker-minikube-and-containerd-16bi | devops |

Like many of you, I received an email from Docker notifying me of their changes to service. Having used Docker Desktop for many years as part of my work, I was a little concerned. My concern was not great enough to do anything... Until a co-worker suggested an article switching from Docker for Windows to containerd. [This link](https://docs.microsoft.com/en-us/virtualization/windowscontainers/deploy-containers/containerd) from 2018 seemed to suggest containerd could run on Windows.

__Spoiler Alert/TL;DR:__ This is not a post about getting containerd running on Windows. I was able to get a Windows nanoserver image running in containerd. I could not get that image connecting to any network. This post is a survey of the source code, GitHub issues, and dead links chased. All documented to show how close and far away we are to something useful.

### Where does minikube fit in here?

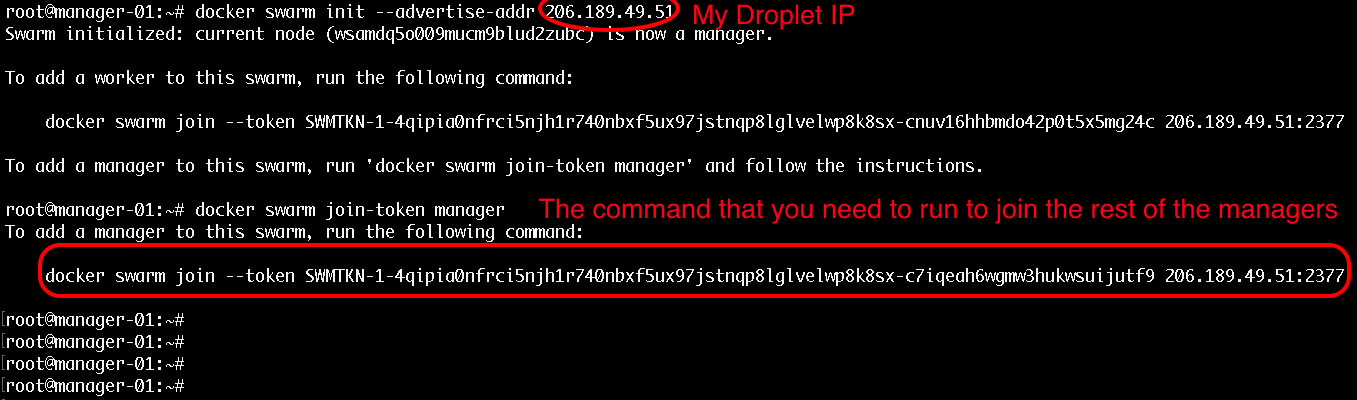

In my research and frustration, I wanted to try running something else. I enabled Hyper-V on my machine. Followed the instructions at [minikube quickstart](https://minikube.sigs.k8s.io/docs/start/). Things worked! Thank you to the maintainers of minikube! Great Job! I definitely will be using this more in the future.

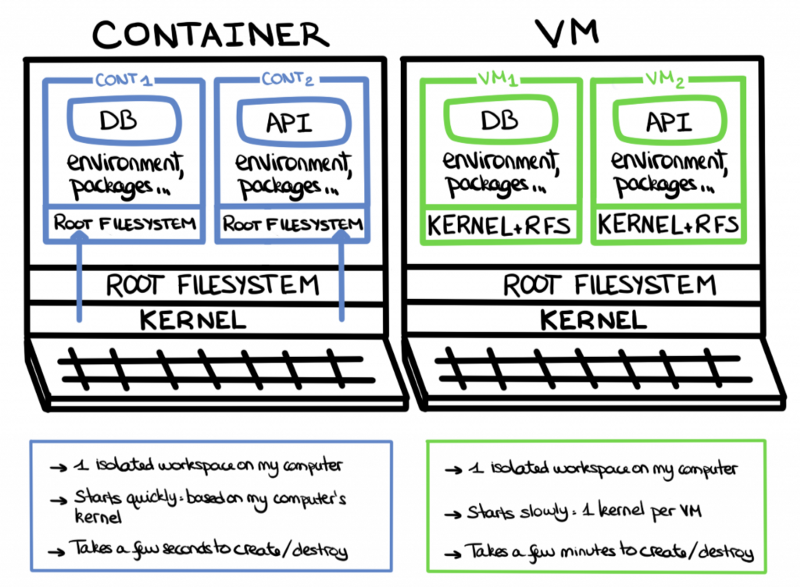

The only place I deviated was in starting the minikube cluster. I used the command `minikube start --driver=hyperv --container-runtime=containerd`. For fun, I checked the Hyper-V Manager and saw a new virtual machine named 'minikube'. Then it hit me. A Linux VM hosts the minikube cluster complete with its own version of containerd. This means I could not run a Windows image!

### The Journey Begins

The first stop was the [Container Platform Tools on Windows](https://docs.microsoft.com/en-us/virtualization/windowscontainers/deploy-containers/containerd). This is where the dead links begin (see the Links to CRI Spec). My second stop was the [containerd site](https://containerd.io). I downloaded and installed the requirements and release tarball. When the compiling started, I ran into an issue with make looking for gcc. This seemed odd since 1) it is a Go application, 2) having gcc on Windows seems like a high bar for running containers.

Some more Googling brought me to [James Sturtevant's](https://www.jamessturtevant.com/posts/Windows-Containers-on-Windows-10-without-Docker-using-Containerd/) site. This made me aware pre-built Windows containerd binaries exist. Now I was making some progress.

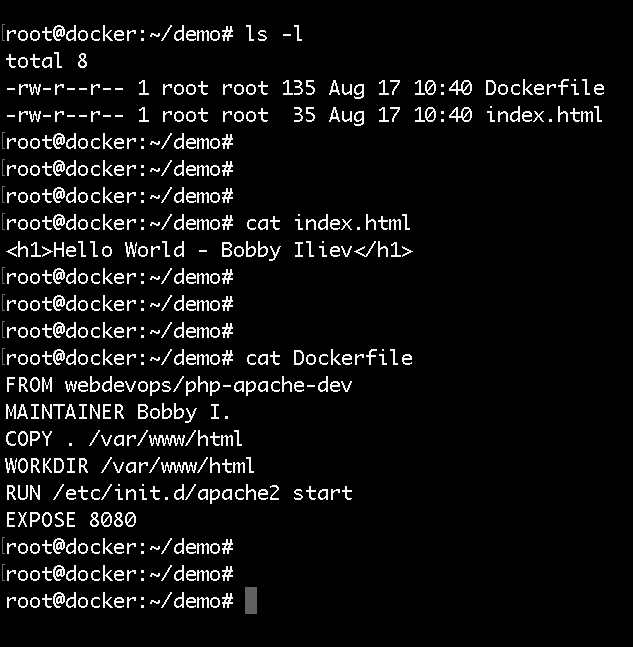

The following code snippet will download and configure containerd as a service. Each line does the following:

1. Download the latest (as of 20210924) release of containerd

1. Make a directory for the containerd binaries and configs

1. Expand the containerd tarball

1. Move the binaries to the directory created above

1. Add containerd to the Path environment variable

1. Create a default containerd configuration in the containerd directory

1. Tell Windows Defender not worry about the containerd executable

1. Register containerd as a service

1. Start containerd

In a Admin PowerShell window,

```

curl.exe -LO https://github.com/containerd/containerd/releases/download/v1.5.5/containerd-1.5.5-linux-amd64.tar.gz

mkdir "C:\Program Files\containerd"

tar -xzf containerd-1.5.5-linux-amd64.tar.gz

mv .\bin\* "C:\Program Files\containerd"

$env:Path = $env:Path + ';C:\Program Files\containerd'

containerd.exe config default | Set-Content "C:\Program Files\containerd\config.toml" -Force

Add-MpPreference -ExclusionProcess "$Env:ProgramFiles\containerd\containerd.exe"

.\containerd.exe --register-service

Start-Service containerd

```

To verify containerd is running:

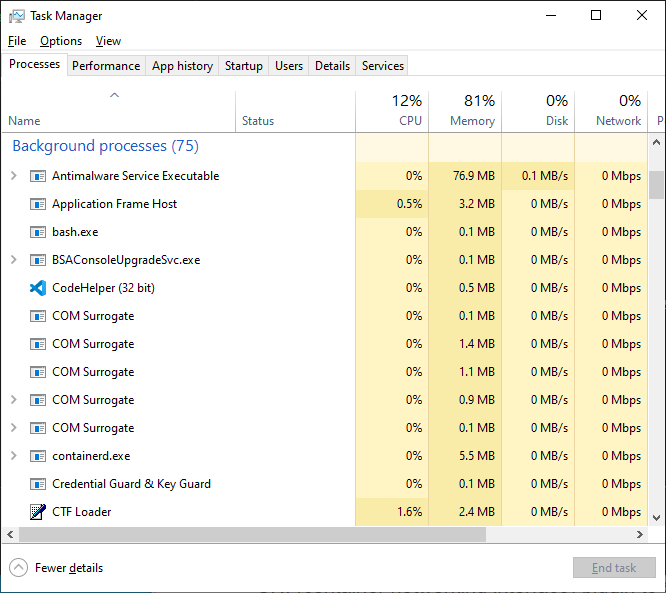

1. Open the Task Manager

1. Go into the `More Details` view

1. Scroll to `Background Processes`

1. You should see a `containerd.exe` process

### Running a Container

Under ideal circumstances, we would pull an image using the `ctr` command.

```

.\ctr.exe pull docker.io/library/mcr.microsoft.com/windows/nanoserver:10.0.19042.1165-amd64`

```

Unfortunately, there is some authentication around the Microsoft images. Assuming you have one downloaded using Docker, we can

1. Save the image

1. Import the image using ctr

1. Run the image.

From the Admin PowerShell window,

```

docker save mcr.microsoft.com/nanoserver:10.0.19042.1165-amd64 -o nanoserver.tar

.\ctr.exe image import --all-platforms c:\wherever\you\put\this\nanoserver.tar

.\ctr.exe run -rm mcr.microsoft.com/windows/nanoserver:10.0.19042.1165-amd64 test cmd /c echo hello

```

If you see `hello` on the next line immediately after the command, Success!

That's it, right?

We have a container running a Windows image, but no network.

### Creating A Network for the containers

We need extra setup for networking our pods. CNI (Container Networking Interface) will provide NAT'ing for our dev environment. We also must get a helper script to set up the network. The steps:

1. Get the CNI tools executables

1. Get the helper script hns.psm1

1. Create some directories

1. Expand the CNI tools into the created directories.

1. Allow your machine to execute scripts

1. Unblock the helper script, hns.psm1

1. Import hsn.psm1 for use. Disregard the warning about verbs. This is a naming convention.

From the PowerShell window,

```

curl.exe -LO https://github.com/microsoft/windows-container-networking/releases/download/v.0.2.0/windows-container-networking-cni-amd64-v0.2.0.zip

curl.exe -LO https://raw.githubusercontent.com/microsoft/SDN/master/Kubernetes/windows/hns.psm1

mkdir -force "C:\Program Files\containerd\cni\bin"

mkdir -force "C:\Program Files\containerd\cni\conf"

Expand-Archive windows-container-networking-cni-amd6464-v0.2.0.zip -DestinationPath "C:\Program Files\containerd\cni\bin" -Force

Set-ExecutionPolicy -ExecutionPolicy RemoteSigned -Scope LocalMachine

Unblock-File -Path .\hns.psm1

ipmo .\hns.psm1

```

Now to configure the network. From the Admin PowerShell window,

```

$subnet="10.0.0.0/16"

$gateway="10.0.0.1"

New-HNSNetwork -Type Nat -AddressPrefix $subnet -Gateway $gateway -Name "nat"

```

In this case, the name must be `nat`.

Let's check our work. From the PowerShell window:

```

netsh lan show profiles

```

You should see the new 'nat' network.

```

Profile on interface vEthernet (nat)

=======================================================================

Applied: User Profile

Profile Version : 1

Type : Wired LAN

AutoConfig Version : 1

802.1x : Enabled

802.1x : Not Enforced

EAP type : Microsoft: Protected EAP (PEAP)

802.1X auth credential : [Profile credential not valid]

Cache user information : [Yes]

```

If you get an error about dot3svc not running, run `net start dot3svc` and run the `netsh` command again.

Configure containerd to use that network. From the Admin PowerShell window,

```

@"

{

"cniVersion": "0.2.0",

"name": "nat",

"type": "nat",

"master": "Ethernet",

"ipam": {

"subnet": "$subnet",

"routes": [

{

"gateway": "$gateway"

}

]

},

"capabilities": {

"portMappings": true,

"dns": true

}

}

"@ | Set-Content "C:\Program Files\containerd\cni\conf\0-containerd-nat.conf" -Force

```

### Container Runtime Interface (CRI)

We are in the endgame now. I promise. From the [README](https://github.com/kubernetes-sigs/cri-tools/blob/master/docs/crictl.md), crictl provides a CLI for CRI-compatible container runtimes.

The following snippet performs the following:

1. Download the crictl executable.

1. Creates the default location for crictl to look for a configuration

1. Creates the configuration

From a PowerShell,

```

curl.exe -LO https://github.com/kubernetes-sigs/cri-tools/releases/download/v1.20.0/crictl-v1.20.0-windows-amd64.tar.gz

tar -xvf crictl-v1.20.0-windows-amd64.tar.gz

mkdir $HOME\.crictl

@"

runtime-endpoint: npipe://./pipe/containerd-containerd

image-endpoint: npipe://./pipe/containerd-containerd

timeout: 10

#debug: true

"@ | Set-Content "$HOME\.crictl\crictl.yaml" -Force

```

### The Payoff

Using a pod.json of

```

{

"metadata": {

"name": "nanoserver-sandbox",

"namespace": "default",

"uid": "hdishd83djaidwnduwk28bcsb"

},

"logDirectory": "/tmp",

"linux": {}

}

```

The magic happens with this command:

```

$POD_ID=(./crictl runp .\pod.json)

$CONTAINER_ID=(./crictl create $POD_ID .\container.json .\pod.json)

./crictl start $CONTAINER_ID

```

### The Problem

Running the `.\crictl runp .\pod.json` creates a sandbox pod for use in creating a container in the next command. The runp command fails setting up the network adapter for the pod. The output is as follows:

```

time="2021-09-22T09:25:29-04:00" level=debug msg="get runtime connection"

time="2021-09-22T09:25:29-04:00" level=debug msg="connect using endpoint 'npipe://./pipe/containerd-containerd' with '10s' timeout"

time="2021-09-22T09:25:29-04:00" level=debug msg="connected successfully using endpoint: npipe://./pipe/containerd-containerd"

time="2021-09-22T09:25:29-04:00" level=debug msg="RunPodSandboxRequest: &RunPodSandboxRequest{Config:&PodSandboxConfig{Metadata:&PodSandboxMetadata{Name:nanoserver-sandbox,Uid:hdishd83djaidwnduwk28bcsb,Namespace:default,Attempt:0,},Hostname:,LogDirectory:,DnsConfig:nil,PortMappings:[]*PortMapping{},Labels:map[string]string{},Annotations:map[string]string{},Linux:&LinuxPodSandboxConfig{CgroupParent:,SecurityContext:nil,Sysctls:map[string]string{},},},RuntimeHandler:,}"

time="2021-09-22T09:25:29-04:00" level=debug msg="RunPodSandboxResponse: nil"

time="2021-09-22T09:25:29-04:00" level=fatal msg="run pod sandbox: rpc error: code = Unknown desc = failed to setup network for sandbox \"e4cc6fc22dbdf8ccde0035239873cb9f31b074fca4650acc545a8af5a51d814c\": error creating endpoint hcnCreateEndpoint failed in Win32: IP address is either invalid or not part of any configured subnet(s). (0x803b001e) {\"Success\":false,\"Error\":\"IP address is either invalid or not part of any configured subnet(s). \",\"ErrorCode\":2151350302} : endpoint config &{ e4cc6fc22dbdf8ccde0035239873cb9f31b074fca4650acc545a8af5a51d814c_nat 11d59574-13be-4a14-b3e8-11cc0d5a7805 [] [{ 0}] { [] [] []} [{10.0.0.1 0.0.0.0/0 0}] 0 {2 0}}"

```

There is a [GitHub Issue](https://github.com/containerd/containerd/issues/4851) that hints to a problem with the pod network workflow on Windows

### Conclusion

There is a good possibility this issue will remain for a while. It has been around for the better part of a year. If one is running Linux containers, there is a great substitute in [minikube](https://minikube.sigs.k8s.io/docs/). It is easy to setup, well documented, maintained, and simulates a production environment. It appears Windows images will still need to run on Docker. Please leave a comment below if you are able to find a workaround.

### Relevant Links

[GitHub Issue: Windows CNI plugin has no chance to create and configure container VNIC](https://github.com/containerd/containerd/issues/4851)

[James Sturtevant's Windows Containers on Windows 10 without Docker using Containerd](https://www.jamessturtevant.com/posts/Windows-Containers-on-Windows-10-without-Docker-using-Containerd/)

[PowerShell Execution Policies](https://docs.microsoft.com/en-us/powershell/module/microsoft.powershell.core/about/about_execution_policies?view=powershell-7.1)

[minikube](https://minikube.sigs.k8s.io/docs/)

[crictl README has pod.json samples](https://github.com/containerd/containerd/blob/main/docs/cri/crictl.md)

<a href="https://dev.to/leading-edje">

<a/> | wmchurchill3 |

839,114 | Open Source Community: How to Become an Active Contributor | Open-Source development has truly transformed the technical revolution all around the world and the... | 0 | 2021-09-24T13:28:31 | https://dev.to/saharshlaud/open-source-community-how-to-become-an-active-contributor-f3d | opensource, contribution, github, community | Open-Source development has truly transformed the technical revolution all around the world and the open source community has grown rapidly from its initial stages when [Richard Stallman](https://stallman.org/) introduced the GNU project, the first “free operating system” to the present scenario where more than **70%** of the software codebases around the world consist of some open-source components.

Open source refers to source code that has been made available to the public to view, use, modify, and distribute under a license.

The open source [community](https://opensource.org/community) collaboratively helps in developing and maintaining open-source projects. By becoming a part of the open-source community, you can not only enhance your technical skills but also hone your people skills like communication, giving and receiving feedback, emotional intelligence, etc.

As a beginner to the open source environment, it can be quite intimidating and confusing as to how one can become an active contributor to open source projects. Today, we’ll explore some strategies that anyone can use to kickstart their open source journey and ultimately become an active contributor.

## Finding projects for contribution

One of the most important aspects of becoming an active open-source contributor is the selection of projects to contribute to. Always try to select a project which is familiar to you and you have some prior knowledge about.Working on projects that you already use gives you an edge when contributing because you’re already pretty familiar with its details and this experience will definitely help you in contributing more often towards the project and becoming an active contributor. You can even suggest features that you want in the software or any problems faced while using the product.

[GitHub’s explore](https://github.com/explore) page is a great way to find projects based on your preferences and you can even use the search tool by entering beginner-friendly contribution tags like [good-first-issue](https://github.com/topics/good-first-issue), [beginner-friendly](https://github.com/topics/beginner-friendly), [easy](https://github.com/topics/easy), etc.

## Ways to contribute to open source

Contributing to an open-source project doesn’t necessarily mean that you need to provide exclusive code for the project, you can contribute to a project in many ways. You can make a contribution by making comments on existing code and [APIs](https://aviyel.com/post/78/say-hello-to-hoppscotch-our-first-open-source-project-on-aviyel) to add context or by writing documentation for the project. If you like to code, you can open a pull request to provide your code contribution or even open an issue to discuss and get help on a new feature or an issue.

If you don’t want to contribute to existing projects, you can even create your own open-source project which might also serve as an alternative to commercial software.

## Tips for a successful contribution to open source

Projects generally list the work that needs to be done and to build up your confidence and credibility you should start with the easiest and smallest contributions first that take the least amount of work. Read documentation related to the task to get a better understanding of what to do and always try to reach out to the community to ask for help, clarification, or mentorship. Once you’re confident enough on a task and know how to go about it, write some code and submit a pull request. Submit your work as per the contribution guidelines and if all goes well, you shall become an active open-source contributor in no time!

## Go ahead and get started!

Contributing to an open-source project can be an amazing learning experience since it allows you to be a part of a great community and also helps in developing your technical skills especially since you do not have to know every little detail about a project to make a contribution. So, what are you waiting for? Pick up a project and a community that supports new contributors, and get started with your contributions.

Thanks for reading. I hope you find this article helpful !!

This blog was originally posted on [this website](https://aviyel.com/post/946/open-source-community-how-to-become-an-active-contributor).

> Feel free to comment, share and reach out to me on [Twitter](https://hashnode.com/@saharshlaud) or [LinkedIn](https://in.linkedin.com/in/saharsh-laud) | saharshlaud |

839,115 | What should I start ? | A post by Abhishek Pathak | 0 | 2021-09-24T13:24:42 | https://dev.to/scorcism/what-should-i-start-2n19 | scorcism | ||

839,127 | What are all these words? | React, Python, Ubuntu, MySQL, GIT... As a relatively new person to programming it can be... | 0 | 2021-09-24T13:34:30 | https://dev.to/zaco/what-are-all-these-words-3agb | React, Python, Ubuntu, MySQL, GIT...

As a relatively new person to programming it can be overwhelming to even grasp the fact that one day you´ll actually know what all these (and many many more) words actually mean and refer to. I´ve only started a two-year long Full stack web developer program in my home city Stockholm (Sweden) and made it a top priority for myself to sit down and learn what all these things are and how they are connected. I believe this will enhance my understanding of this exciting new world of programming that I´m just about to enter.

I am currently enjoying all the exciting articles here and hope to be able to share some useful content in the future. | zaco | |

839,632 | The Beginning of Infinity by David Deutsch | This is not going to be like my regular book reviews. It cannot be. It was so long... It took me so... | 0 | 2021-09-25T15:48:27 | https://www.sandordargo.com/blog/2021/09/25/the-beginning-of-infinity-by-david-deutsch | books, watercooler, history |

This is not going to be like my regular book reviews. It cannot be. It was so long... It took me so much time.

I'm reading almost every day after I wake up and before I go to bed. Okay, I was on vacation while I had this book in the queue where I didn't manage to read as frequently and as much as I wanted, but still... Usually, I finish a book in no more than 2-3 weeks and this took me about 2 months. It might not be more than 500 pages, but oh boy, those pages are packed.

So first of all, I found [The Beginning of Infinity](https://amzn.to/2RHhRR8) interesting, certain parts outright fascinating, but I wouldn't recommend reading it if you're not a binge reader, probably this would take you out of the "book market" for too much time.

So what is this book all about?

It's basically about human progress. Why and how do we evolve and what's the limit of this development?

As you might imagine, the answer to this latter question is that it has none. Our progress, the progress of knowledge is infinite. At many points throughout our history, people claimed that it's the end of history, it's the end of discoveries, but they were never right and probably they never will.

Otherwise, in the book the author touches on the questions of beauty, the reasons for creativity, mathematical proofs, artificial intelligence, the evolution of culture, and even multiverses and quantum physics. At certain points, I didn't feel the book a coherent entity, but rather a collection of interesting - but not very well organized - writings.

I'd also not consider this book the one big source of truth, even though it seems that Deutsch handles his points infallible and uncriticizable.

Especially when it comes to quantum theory and multiverses, we are not there yet - will we ever be? - to decide, to prove whether the hypotheses he shares are the right ones.

A part of the book that I particularly liked was the last chapters. No, not because of that... Not only! But it discussed history, Easter Island, different points of view on the society (of the Easter Island and in general).

First of all, it makes a distinction between static and dynamic societies.

In static societies change is slow. By slow the author means that it's hardly noticeable to the individual, the manifestation of changes requires multiple generations. It's achieved by suppressing creativity and progress both on central and local levels.

A dynamic society is therefore a culture where change and progress are fast, it's noticeable for the individual.

The author brings the two ancient Greek city-states as examples. Sparta was a static society, while Athens was a dynamic one. Until Sparta defeated it in the Peloponnesian Wars and made an end to the Athenian golden age.

The next time when a dynamic society started to emerge was the European Enlightenment and the author claims that we are still in a transition from a static to a dynamic society, though I'm sure many would complain that it's already too dynamic.

Dynamic societies are able to solve the arising problems with creative solutions. And here comes Easter Island into the picture. We are not sure when the islanders arrived, why they started to raise statues, but there are a few things to notice:

- they removed the forests so that they can move the big statues (and to build canoes, houses, to make fires to burn the dead). By chopping down the trees in an unsustainable manner, they started to have more serious problems.

- instead of changing the course of actions, they doubled down and tried to build even more and even bigger statues that fasten up the speed of deforestation until they had no more forests to chop down.

- many of their statues are unfinished and they are all very similar, they show no signs of creative progress.

The Easter Island society when faced with a problem, couldn't change their fate, they couldn't respond to the challenges. Instead, they kept doing what they had been doing engraving the situation. They showed the signs of a static society and they died.

That's pretty much what happens to static societies. They cannot react to the changing environments, to the changing circumstances, so they inevitably meet problems where their systems of beliefs and actions become inadequate and their societies collapse.

A dynamic society on the other hand is adapting and can answer to the challenges of a changing environment. Which one do we live in?

Time will tell, but ours show signs of a dynamic society.

So what do I think about [The Beginning of Infinity](https://amzn.to/2RHhRR8) overall? Would I recommend it? I don't say that it's a bad book. It is an interesting read, but very long. Only read it, if you read a lot and if you are interested in mathematics, physics and history at the same time. Then it will be interesting. But beware it might block your reading queue for more than a month or two. | sandordargo |

839,759 | Lessons we've learned after burning many thousands thanks to AWS Lambda. Expect no mercy from AWS. | Preface. A year ago, we decided to make a transition towards serverless architecture. Our... | 0 | 2021-10-20T02:10:55 | https://dev.to/xezed/lessons-weve-learned-after-burning-many-thousands-thanks-to-aws-lambda-expect-no-mercy-from-aws-39ph | aws, serverless, cloud, terraform | ## Preface.

A year ago, we decided to make a transition towards serverless architecture. Our management was very excited about it, and its excitement resulted in many tries and failures for developers(including me). So one Monday, we started our working day and realized that one of our lambdas had been going right into the rabbit hole the whole weekend. We were astonished, management was dissatisfied, and I was happy with the new material for the current article.

## Our setup.

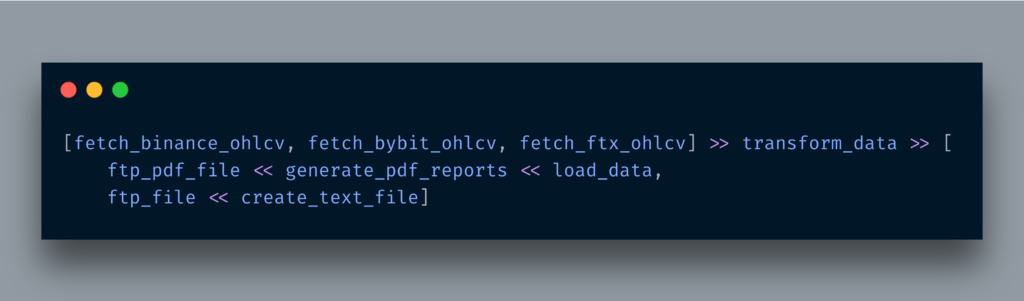

The staple part of several microservices at our disposal heavily relies upon S3 event notifications. So what happened.

A developer screwed up and invoked Lambda from within the same Lambda for the same file in S3, which initially triggered Lambda. These invocations created other S3 files, which started different lambdas... You got the idea.

Dev wasn't fired or sanctioned in any way. Because it's an architectural problem, anyone can make a silly mistake.

## How much we've lost? Tenths of thousands.

We filled the ticket afterward and got compensated 5k only because we spent this much before the alarm came through.

## Precautions we implemented to prevent future incidents.

1. We set budget notifications and created alarms to email, slack channel, and mobile phone of key tech company figures.

2. Most of the Lambdas must have reserved concurrency parameters set.

3. Most of the Lambdas must be invoked via SQS only.

4. We also implemented AWS Config rule to check all our Lambdas for reserved concurrency.

With reserved concurrency, we avoid calling functions more than we should. This way, essentially throttle it.

And SQS helps us to prevent data loss. In case of facing concurrency limit, Lambda will wait before obtaining the following message from the queue.

## Questions to think about.

1. Why is there no option to kill all AWS activities after reaching some usage threshold?

2. Is it this complicated to create an intelligent tool to help AWS customers catch this situation and avoid money loss?

| xezed |

840,071 | Special kind of array in Typescript - Tuple | In strictly typed programming languages, array is a Data structure of homogeneous data types with... | 0 | 2021-09-26T03:27:25 | https://dev.to/xenoxdev/special-kind-of-array-tuple-4l1h | javascript, webdev, typescript, react | In strictly typed programming languages, `array` is a Data structure of `homogeneous data types` with `fixed length`. In contrast **JavaScript** is dynamic. In here, `array` can have elements of `heterogeneous data type` and `length` can vary.

**In JavaScript:**

```js

const elements = ['rick', 23, false];

const len = elements.length; // len = 3

elements.push({name: 'morty'});

const changedLen = elements.length; // changedLen = 4

```

With **Typescript**, we can restrict that and force arrays to have `homogeneous data type` what I mean is this.

**In Typescript:**

```ts

const elements: string[] = ['rick', 'morty'];

const len = elements.length; // len = 2

```

Now if we try to push `number` or any other data type other than `string` in `elements` then Typescript will yell at us.

```ts

const elements: string[] = ['rick', 'morty'];

elements.push(1) // Error

/**

* Error: Argument of type 'number' is not assignable to

* parameter of type 'string'.

*

*/

```

Even though Typescript enforces the type but `length` is still not fixed. We can push another element of type `string` in `elements` array.

```ts

const elements: string[] = ['rick', 'morty'];

const len = elements.length; // len = 2

elements.push('summer')

const changedLen = elements.length; // changedLen = 3

```

**What if our requirement changes like this:**

##Requirement 1:

* An `Array` with type `number`, `boolean` and `string` only.

**Solution**

Well! that is easy, we can use `union` type with `array` in Typescript like this:

```ts

const elements: Array<number|boolean|string> = ['summer'];

elements.push(23); // ok

elements.push(true); // ok

console.log(elements) // ["summer", 23, true]

elements.push({name: 'morty'}) // Not OK : Error

/**

* Error: Argument of type '{ name: string; }' is not

* assignable to parameter of type 'string | number |

* boolean'.

*/

```

One point to note here is:

> The sequence of the `data type` is not fixed as we defined during the declaration. What it means that, we can push `number`, `boolean` and `string` in any order.

For example, This is also perfectly valid and OK with TypeScript:

```ts

const elements: Array<number|boolean|string> = [true];

elements.push(23); // ok

elements.push('summer'); // ok

console.log(elements) // [true, 23, "summer"]

```

By `Array<number|boolean|string>`, we only narrowed the type and told Typescript that this collection should only have elements of type `number`, `boolean` and `string`. The order can be anything. Typescript do not mind as long as the type is one of the declared types.

##Requirement 2 :

* An array with a fixed number of items

* `type` of elements are fixed at each index

* The `type` of elements need not be same at all the index

What did you just say **An array with a fixed number items** ??

And it can have different type at different index? oh okkkk......

**Solution**

Actually this is possible with new `type` called `tuple` in `Typescript`.

##Tuple - Special kind of Array

As per [official docs](https://www.typescriptlang.org/docs/handbook/basic-types.html#tuple):

> Tuple types allow you to express an array with a fixed number of elements whose types are known, but need not be the same.

Tuple fulfils all the requirements described above. Let's see how can we define a `tuple`.

```ts

/**

* let's define a info of character id, name and activeStatus

*/

const info: [number, string, boolean] = [33, 'Rick' , true];

```

* **An array with a fixed number items**

Just by doing this, now we fixed number of elements in `info` i.e. `3`. So now if you try to access the element at index `4` Typescript will yell at you.

```ts

const info: [number, string, boolean] = [33, 'Rick' , true];

const item = info[4] // error

/**

* Tuple type '[number, string, boolean]' of length '3' has no

* element at index '4'.

*/

// In contrast if we declare an array with union type like

// below, it will be ok to access the element at index 4

const arr: Array<number|string|boolean>

= [33, 'Rick' , true];

const arrItem = arr[4] //undefined

```

* **Type of elements are fixed at each index**

By defining `[number, string, boolean]`, we have fixed the type of elements at each index. Typescript will infer the type from `tuple`.

```ts

const info: [number, string, boolean] = [33, 'Rick' , true];

const item1 = info[0] // type number

const item2 = info[1] // type string

const item3 = info[2] // type boolean

// In contrast, array with union type

const info: Array<number| string| boolean>

= [33, 'Rick' , true];

// type of items can be either string, number or boolean

const item1 = info[0] // type string | number | boolean

const item2 = info[1] // type string | number | boolean

const item3 = info[2] // type string | number | boolean

```

Advantage of doing this is, I can get all the methods available to `string` for `item2`.

* **The `type` of elements need not be same at all the index**

The type of elements in tuple can be same as well as different:

```ts

const a: [number, string, boolean] = [33, 'Rick' , true];

const b: [string, string, string] = ['Mr', 'Rick' , 'alive'];

```

## Practical example:

You might be thinking, it looks great but where do we use it.

One of the examples that I can think of is in our custom hooks where we have to return an array consisting values of different data type. Take for example `useToggle` custom hook

```ts

import { useCallback, useState } from "react";

export const useToggle = (

intialValue: boolean = false

): [boolean, () => void] => {

const [state, setState] = useState(intialValue);

const setToggle = useCallback(

() => setState((flag) => !flag),

[]);

return [state, setToggle];

};

```

Here we have to return `current status` of `toggle` and a `function to change the status`. That's why, the return type is a tuple `[boolean, () => void]`.

If we simply return an array, and assign the second argument i.e. setter function to `onClick`, Typescript will throw a compile time error as the return type is union of `boolean` and `() => void` .

```ts

Type 'boolean | (() => void)' is not assignable to type

'((event: MouseEvent<HTMLButtonElement, MouseEvent>)

=> void)

| undefined'.

```

You can checkout these examples here:

{% codesandbox usetoggle-46itn %}

Thank you for reading.

##Read my other TypeScript articles

* [Unknown and any type in Typescript](https://dev.to/thejsdeveloper/world-of-any-and-unknown-in-typescript-29cd)

Follow me on [twitter](https://twitter.com/VikasYadav_Dev)

##References

* [Official Docs](https://www.typescriptlang.org/docs/handbook/basic-types.html#tuple)

* [auth0 Tuple article](https://auth0.com/blog/typescript-3-exploring-tuples-the-unknown-type/)

| thejsdeveloper |

840,074 | How you guys learn js? | How you guys learn js? I learn before and i forgot within weeks, tell me the technique you use. Thank... | 0 | 2021-09-25T09:50:27 | https://dev.to/yisumaid/how-you-guys-learn-js-543c | How you guys learn js?

I learn before and i forgot within weeks, tell me the technique you use. Thank you for your comment! | yisumaid | |

840,237 | On the importance of staying Focused | Sprint 4 — Saturday Photo by Eden Constantino on Unsplash As you approach the final... | 0 | 2021-09-25T12:05:24 | https://medium.com/chingu/on-the-importance-of-staying-focused-1cd1f5afccd4 | teamwork, agile, softwaredevelopment | ---

title: On the importance of staying Focused

published: true

date: 2021-09-25 12:02:10 UTC

tags: teamwork,agile,softwaredevelopment

canonical_url: https://medium.com/chingu/on-the-importance-of-staying-focused-1cd1f5afccd4

---

#### Sprint 4 — Saturday

<figcaption>Photo by <a href="https://unsplash.com/@edenconstantin0?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText">Eden Constantino</a> on <a href="https://unsplash.com/s/photos/focused?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText">Unsplash</a></figcaption>

As you approach the final sprints in a project it should be at or close to its _minimum viable product (MVP)_ milestone. In other words, having the required features you set out to include in it.

Ideally, your team’s project will reach MVP status before the final sprint, leaving time to make final corrections, polishing the app, and even adding lower priority features.

But what if the final sprint is looming, but you haven’t yet reached the MVP milestone? Don’t get stressed! Keep following the Agile process which allows you to adjust project scope based on your current situation. It’s okay to reduce or adjust the features in your app

> Having a simple app that works is better than a complex app that’s broken

It’s also important to keep in mind that your most powerful tool is **_team work_**! The measure of mature developers is they stay dispassionate, respectful, and focused on the goal under stress. Support your team and your teammates and finish together!

### Before you Go!

Chingu helps you to get out of “_Tutorial Purgatory_” by transforming what you’ve learned into experience. The experience to boost your Developer career and help you get jobs.

You can learn more about **Chingu** & how to join us at [https://chingu.io](https://chingu.io/)

* * * | jdmedlock |

840,252 | Simple way to wait for an executing function to finish before executing another function in Javascript | Actually, I'm not really sure about the title of this blog because currently, I can't come up with... | 0 | 2021-09-25T12:45:05 | https://dev.to/trunghieu99tt/synchronized-executions-in-javascript-9bf | webdev, javascript | Actually, I'm not really sure about the title of this blog because currently, I can't come up with any better title to describe what I want to share with you guys today. I don't know if it's called something else, if anyone knows, please point it out, I very much appreciate it.

Okay, so first let's start with our use case:

We have 2 functions, let's call them A and B. We call them at the same time, but we want B to wait for A to finish first.

I have a really simple function like this:

```jsx

(async () => {

const sub = {

name: "sub",

subscribed: false,

};

// 1.subscribe function

const subscribe = () => {

setTimeout(() => {

sub.subscribed = true;

}, [9.5 * 1000]); // execute after 9.5 seconds

};

// 2. a function only happen after subscribed

const afterSubscribed = () => {

if (sub.subscribed) {

console.log("subscribed");

} else {

console.log("Not subscribed");

}

};

subscribe();

afterSubscribed();

})();

```

And the output of this function would be:

```jsx

// immediately

Not subscribed

// Continue to run 9.5 seconds then stop

```

The result I want is that this function somehow prints out "Subscribed".

Let's try to solve it.

The first try we can do is that we can create an interval in the afterSubscribed function like this, note that we will add a timer to see when we get right log:

```jsx

// 2. a function only happen after subscribed

const afterSubscribed = async () => {

const start = Date.now();

const interval = setInterval(() => {

if (sub.subscribed) {

console.log("subscribed");

const end = Date.now();

const duration = Math.floor(end - start);

console.log(`on: ${duration}`);

clearInterval(interval);

} else {

console.log("Not subscribed");

}

}, [1000]);

};

```

Now we will retrieve the result we want:

```bash

// This is the log of this function

Not subscribed

Not subscribed

Not subscribed

Not subscribed

Not subscribed

Not subscribed

Not subscribed

Not subscribed

Not subscribed

subscribed

on: 10011

```

Yeah, it prints out the result we want, it's quite good. The issue of this approach is that we only check for the state of the sub every 1 second. So in case, our subscribe function finishes after 9.1, 9.2... seconds, we still have to wait until the 10th second. But it's still acceptable as long as we don't need afterSubscribed to continue to execute right after subscribe finished.

To resolve the issue of the #1 try, we can change our functions like this:

```jsx

(async () => {

const sub = {

name: "sub",

subscribed: false,

doneSubscribed: false,

processSubscribe: false,

};

// 1.subscribe function

const subscribe = () => {

sub.processSubscribe = new Promise(

(resolve) => (sub.doneSubscribed = resolve)

);

setTimeout(() => {

sub.subscribed = true;

sub.doneSubscribed();

}, [9.5 * 1000]); // execute after 9.5 seconds

};

// 2. a function only happen after subscribed

const afterSubscribed = async () => {

const start = Date.now();

await sub.processSubscribe;

if (sub.subscribed) {

console.log("subscribed");

} else {

console.log("Not subscribed");

}

const end = Date.now();

const duration = Math.floor(end - start);

console.log(`on: ${duration}`);

};

subscribe();

afterSubscribed();

})();

```

And this is what we get:

```jsx

// Wait for 9.5 second then..

subscribed

on: 9507

```

Okay, so no more "Not subscribed" and right after subscribe finished its works

Let me explain how it works.

We add 2 more attributes to sub :

```jsx

doneSubscribed: false,

processSubscribe: false,

```

And in subscribe function, we assign sub.processSubscribe to a promise where resolve function is assigned to sub.doneSubscribe. In setTimeout, we call sub.doneSubscribe function (since we assigned it to the resolve function of sub.processSubscribe promise, it's a function now). The trick here is that we assign the resolve function of sub.processSubscribe to sub.doneSubscribe, we all know that the promise resolves when its resolve/reject function is called. By awaiting for sub.processSubscribe we also wait for setTimeout to finish as well as subscribe function.

Of course, there might be some other way to solve this problem, but I think this one is one of the shortest and best ways to solve it.

So in general, this problem can be described as "wait for an executing function to finish before executing another function".

If you guys have any other ways to solve it. Feel free to share with me. Or If I did any mistake, please point it out, I really appreciate it. Thank for reading | trunghieu99tt |

840,296 | Deeper Dive Into React useMemo | When to use and not use React's useMemo | 0 | 2021-09-25T15:51:23 | https://bionicjulia.com/blog/deeper-dive-into-react-usememo | react | ---

title: Deeper Dive Into React useMemo

published: true

date: 2021-09-25 00:00:00 UTC

tags: react

description: When to use and not use React's useMemo

canonical_url: https://bionicjulia.com/blog/deeper-dive-into-react-usememo

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/0fpiqhe602bblxl8x2sa.jpg

---

If you're new here, be sure to first check out my posts on the [differences between React.memo and useMemo](https://bionicjulia.com/blog/react-memo-and-usememo-whats-the-difference), and a [deeper dive into React.memo](https://bionicjulia.com/blog/deeper-dive-into-react-memo). This post completes the last in the series and talks about the `useMemo` hook and when / when not to use it.

### When to use `useMemo`

**Use Case 1: Stopping computationally expensive, unnecessary re-renders**

Let's go back to the example I had in my [first post](https://bionicjulia.com/blog/react-memo-and-usememo-whats-the-difference). This illustrates the use case where you have a function that keeps re-rendering, because the state of its parent component keeps changing.

```jsx

export type VideoGameSearchProps = {

allGames: VideoGameProps[],

}

export const VideoGameSearch: React.FC<VideoGameSearchProps> = ({ allGames }) => {

const [searchTerm, setSearchTerm] = React.useState('')

const [count, setCount] = React.useState < number > 1

// NOTE useMemo here!!

const results = useMemo(() => {

console.log('Filtering games')

return allGames.filter((game) => game.name.includes(searchTerm))

}, [searchTerm, allGames])

const onChangeHandler = (event: React.ChangeEvent<HTMLInputElement>) => {

setSearchTerm(event.target.value)

}

const onClickHandler = () => {

setCount((prevCount) => prevCount + 1)

}

return (

<>

<input type="text" value={searchTerm} onChange={onChangeHandler} />

{results.map((game) => (

<VideoGame key={game.name} rating={game.rating} name={game.name} releaseDate={game.releaseDate} />

))}

<br />

<br />

<p>Count: {count}</p>

<button onClick={onClickHandler}>Increment count</button>

</>

)

}

```

This is a completely made-up example which would likely never exist in production code, but I wanted to illustrate the takeaway points clearly. In this case, there are 2 things going on in this component:

- A user can click on an "increment count" button which updates the `count` state and displays the current number in the UI.

- A user can enter a search query in the input field which updates the `searchTerm` state `onChange`. This in turn causes the `results` function to re-calculate, where `results` is rendered as a list in the UI.

The incrementing of `count` has nothing to do with how `searchTerm` is set, or `results` run. However, every time `count` is incremented, the component re-renders and runs the `results` function. It's probably not going to be a big deal here, but what if the `allGames` array actually contains millions of elements... and instead of a simple filter function, it was a much more computationally complex calculation? This is where `useMemo` would come in handy.

Wrapping the `results` function with `useMemo` (with `searchTerm` and `allGames` as dependencies) tells React to only re-run this function, if either of those 2 variables changes. This means that changes in `count` would no longer cause `results` to be recalculated, with the memoised result being returned instead.

_Note: I've added the `console.log` in there so you can test it for yourselves to see how many times that function runs with and without the `useMemo` when you increment `count`!_

**Use Case 2: Ensuring referential equality when dealing with dependency lists**

If you have a case whereby you're relying on a dependency list, e.g. when using a `useEffect` hook, you really want to ensure you're only updating the component when the dependency values have truly changed.

```jsx

useEffect(() => {

const gameData = { name, publisher, genres }

thisIsAFunction(gameData)

}, [name, publisher, genres])

```

In this example, assuming `name`, `publisher` and `genres` are all strings, you shouldn't have a problem. React does a referential equality check on `gameData` to decide whether the component should be updated, and because `gameData` only comprises strings (i.e. primitives), this will work as we expect.

To illustrate the point, we wouldn't want to have this for example, because `gameData` will be a new instance every time React runs the `useEffect` check, which means re-running `thisIsAFunction` every time because in Javascript-land, `gameData` has changed.

```jsx

const gameData = { name, publisher, genres }

useEffect(() => {

thisIsAFunction(gameData)

}, [name, publisher, genres])

```

So back to this - all good right?

```jsx

useEffect(() => {

const gameData = { name, publisher, genres }

thisIsAFunction(gameData)

}, [name, publisher, genres])

```

Unfortunately not, because we run into a similar problem if one of `name`, `publisher` or `genres` is a non-primitive. Let's say instead of a string, `genres` is actually an array of strings. In Javascript, arrays are non-primitives which means `[] === []` results in `false`.

So to expand out the example, we've got something like this:

```jsx

const GamesComponent = ({ name, publisher, genres }) => {

const thisIsAFunction = (

gameData, // ...

) =>

useEffect(() => {

const gameData = { name, publisher, genres }

thisIsAFunction(gameData)

}, [name, publisher, genres])

return //...

}

const ParentGamesComponent = () => {

const name = 'God of War'

const publisher = 'Sony'

const genres = ['action-adventure', 'platform']