id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

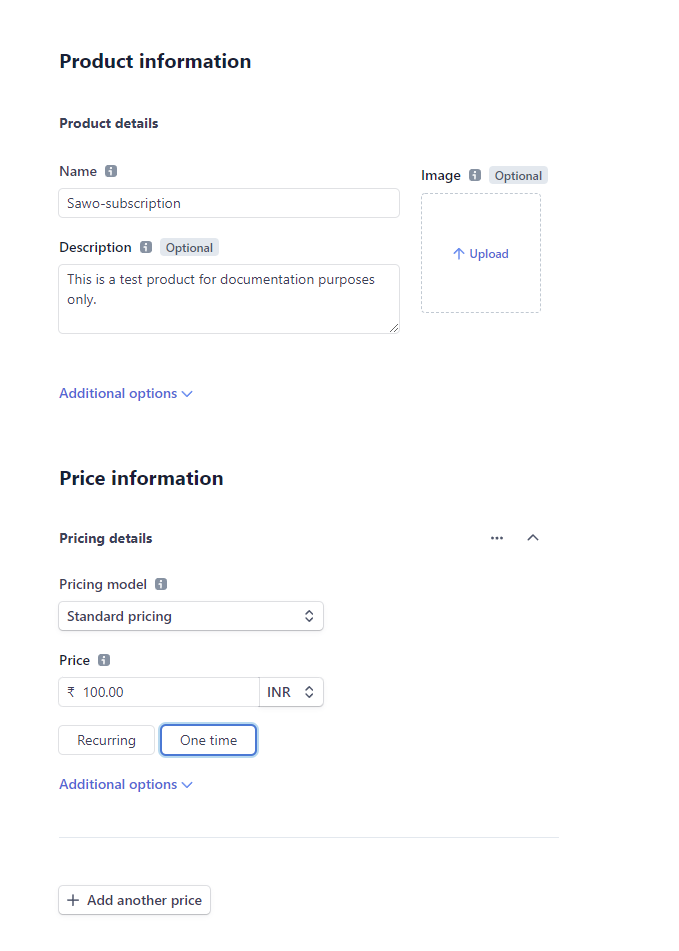

894,599 | Stripe indirect integration for Shopify | Get paid for your Shopify orders via Stripe with Alipay, Bancontact, EPS, giropay, iDEAL, Przelewy24,... | 0 | 2021-11-12T14:11:21 | https://dev.to/techt01ia/stripe-indirect-integration-for-shopify-57e0 | stripe, shopify, bancontact, ideal | Get paid for your Shopify orders via Stripe with Alipay, Bancontact, EPS, giropay, iDEAL, Przelewy24, Sofort, WeChat Pay.

{% youtube 06yyf2GLQMM %}

[Demo ](http://shopifystripe.techtolia.com)--> http://shopifystripe.techtolia.com

With this integration, accept payments for your Shopify Store orders directly at your domain through Stripe with payment methods Alipay, Bancontact, EPS, giropay, iDEAL, Przelewy24, Sofort and WeChat Pay.

### What is Order and Payment Workflows?

- The customer chooses one of the manual payment methods during the ordering phase through your Shopify store.

- On the order page, the customer is asked to take the order number and go to your payment address.

- The customer comes to your payment address, enters the order number and updates the page. At this stage: The app collects relevant order information from your Shopify account.

- The customer completes the payment. At this stage: The app adds payment and customer information to your Stripe account.

- The customer is directed back to the application from the payment method page, the customer sees the successful payment screen on the page. At this stage: The app updates the order as paid in your Shopify account.

### What are Payment Methods?

Payment Methods are Alipay, Bancontact, EPS, giropay, iDEAL, Przelewy24, Sofort and WeChat Pay.

For these payment methods, you just need to have a Stripe account and activate your desired payment method from your Stripe control panel. You do not need to make any other application or action.

Payments can be accepted with Przelewy24 in PLN and EUR, with Bancontact, EPS, giropay, iDEAL and SOFORT only in EUR, with Alipay and WeChat Pay CNY and the currencies that map to your country(For example: If your Stripe account country is Germany, you can accept payments in EUR with Alipay and WeChat Pay). Note: If you have a bank account in another currency and would like to create an Alipay payment in that currency, you can contact Stripe support.

### What is Smart Url?

- By directing your customer to your checkout.yourdomain.com/Alipay address you can have the page load with Alipay payment method selected.

- By directing your customer to your checkout.yourdomain.com/1001 address, you can have the page load with the order number 1001.

- By directing your customer to your checkout.yourdomain.com/Alipay/1001 address, you can have the page load with Alipay payment method selected and order number 1001 entered.

If the payment for the order number has been made before, the page is updated with a successful screen, the payment button is hidden.

When the user chooses a different payment method or enters a different order number on the application, the url is updated according to that payment method and order number.

### What is Google reCAPTCHA?

reCAPTCHA is a service that protects your site from spam and abuse. reCAPTCHA v3 helps you detect abusive traffic on your website without user interaction. Instead of showing a CAPTCHA challenge, reCAPTCHA v3 returns a score. reCAPTCHA v2 ("I'm not a robot" Checkbox) requires the user to click a checkbox indicating the user is not a robot.

The application uses combined Google reCAPTCHA v3 and v2. Thus, spam attacks are prevented.

The app first verifies with reCAPTCHA v3 with a score. If the score (can be between 0–1) is not higher than this pass score, the app makes visible reCAPTCHA v2 with the "I'm not a robot" tickbox.

RecaptchaClickLimit: The number is increased each time the buttons are clicked and the page is refreshed. If a user reach the limit, whether the users score is higher than the pass score or not, the app makes visible reCAPTCHA v2 with the "I'm not a robot" tickbox.

### Quick Start

- Download the source files.

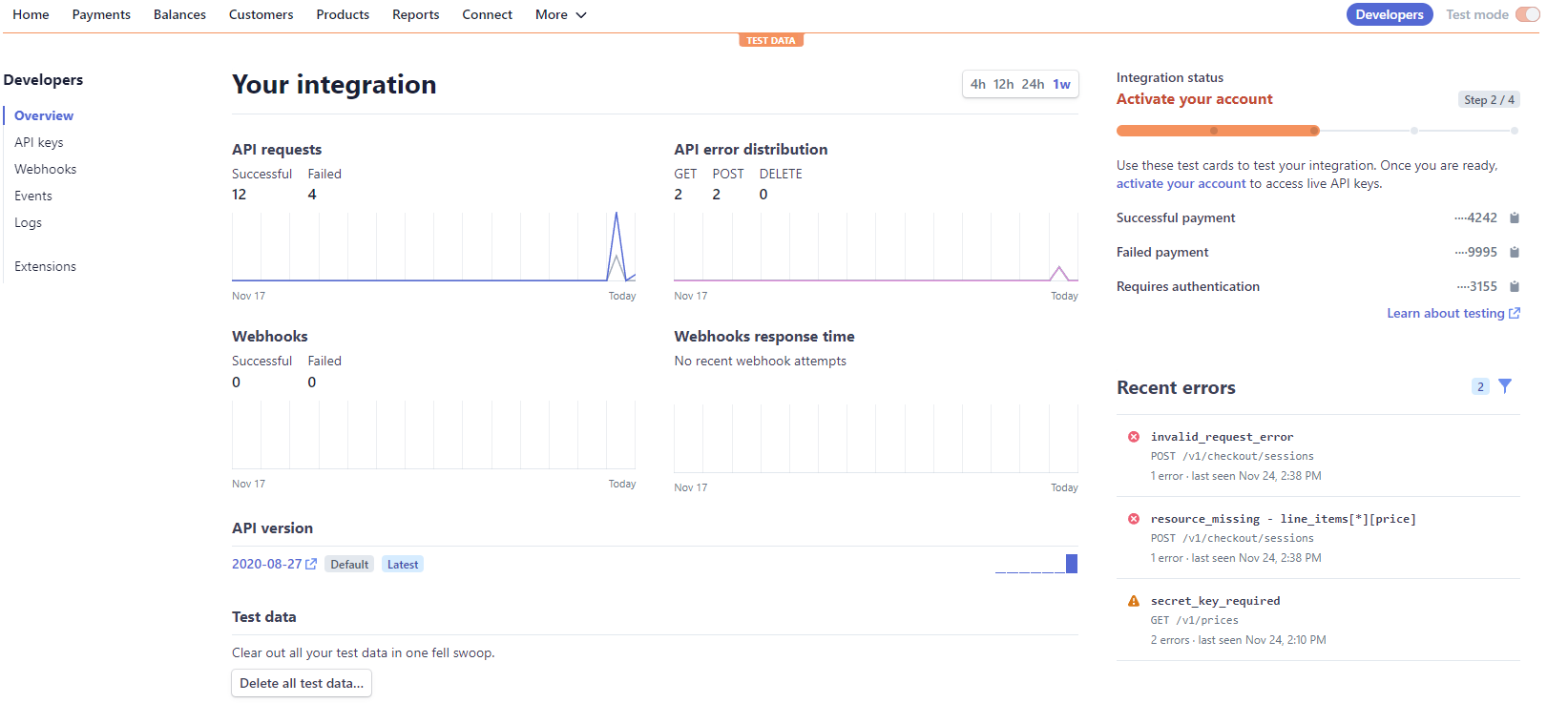

- Activate payment methods on dashboard.stripe.com/account/payments/settings

- Get your Stripe keys on dashboard.stripe.com/apikeys

- Create&Get your reCAPTCHA keys on google.com/recaptcha/admin/create

- Create new private app and Get your API Password on yourusername.myshopify.com/admin/apps/private/new

- Create your manual payments on yourusername.myshopify.com/admin/settings/payments

- Edit the code - Update Keys and Settings.

- Buy a Windows Hosting and Upload the files to the wwwroot folder.

- Create a subdomain (checkout recommended) and match it to the ip information your hosting service provider gave you.

Recommended hosting company: [HostGator](https://partners.hostgator.com/gbqA15). If you want to take advantage of discounted hosting, enter Techtolia in the coupon code field and apply.

If you don't have a domain yet, you can register it via [NameCheap](https://namecheap.pxf.io/BmdkB).

### Buy a Licence

The single license fee is $750.

If you have completed your purchase through CadeCanyon, please send an e-mail to support@techtolia.com to identify your license information and receive your license key. Do not forget to share your domain address information where you will publish the application, your license is specific to your domain address and cannot be used at any other web address.

For multiple and volume license purchases, please contact us at hello@techtolia.com

| techt01ia |

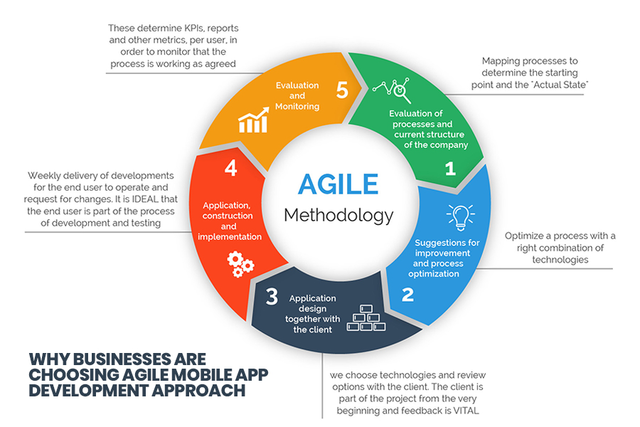

894,699 | What If...Marvel Built a Minimum Viable Product? | In 2011, Eric Ries published The Lean Startup, and it revolutionized software development. Lean... | 0 | 2021-11-11T01:50:46 | https://www.vidyasource.com/blog/what-if-marvel-built-a-minimum-viable-product | agile, leadership, startup, podcast | In 2011, Eric Ries published *The Lean Startup*, and it revolutionized software development. Lean principles had already [made it into agile software development](https://www.goodreads.com/book/show/194338.Lean_Software_Development), but *The Lean Startup* fills in a lot of gaps to turn the abstract[principles behind the Agile Manifesto](https://agilemanifesto.org/principles.html) into something real.

For example, even if you nailed agile and built the thing right, how do you know you built the right thing? In other words, it's great to execute on your project and build exactly what you intend on time and on budget, but when you're done, how do you know people will actually buy it? Although the Agile Manifesto never answers this question because it assumes you are on the right track all along, Ries gives us an answer.

## The Minimum Viable Product

Probably the most profound innovation in *The Lean Startup* is the Minimum Viable Product (MVP).

(You can [watch Ries himself talk about it](https://www.youtube.com/watch?v=E4ex0fejo8w), but I have to warn you that it looks like it was filmed by the director of [The Blair Witch Project](https://www.thenewsminute.com/article/how-blair-witch-project-manages-scare-without-showing-anything-supernatural-135492).)

The idea is really quite simple. Whether you are conscious of it or not, the product you have in mind is based on your assumptions about what your customers want and why, so the purpose of the MVP is to validate those assumptions with hard data around *a lightweight representation of your product* before you commit your full resources to building it out in full. You give your early adopters access to the MVP and consider their feedback, which Ries calls "validated learning." Validated learning is crucial. For Ries, it's quantifiable feedback that is "the unit of progress for lean startups."

Based on what you learned, you either "[pivot](https://www.youtube.com/watch?v=n67RYI_0sc0)" to something else more aligned to what your customers want or "persevere" because you had it right all along. From this point on, you have quantifiable, proven demand for your product, and you can be confident that you will be investing in building the right thing.

Like a lot of terms in tech that blow up, consultants often use MVP to mean something different from what Ries intended--most commonly to mean the first version of your product and/or a really scaled down version of it. In either case you've implicitly decided without any validated learning what you want to build. The fact it's in its earliest stages is irrelevant.

So what does all of this have to do with Marvel?

## The MCU Juggernaut

Unless you have been living in the [Quantum Realm](https://marvelcinematicuniverse.fandom.com/wiki/Quantum_Realm) for the last decade, you know the [Marvel Cinematic Universe (MCU)](https://www.marvel.com/movies). It's a monumental achievement in cinema that has taken decades of Marvel comics and reformulated them into a multibillion dollar juggernaut movie franchise that has raised once second-tier heroes like Iron-Man, Thor, and Black Widow to the stature of eternal favorites like Spider-Man, Captain America, and Hulk and put [Mjolnirs](https://marvel.fandom.com/wiki/Mjolnir) and [Infinity Gauntlets](https://marvelcinematicuniverse.fandom.com/wiki/Infinity_Gauntlet) into the homes of ardent fans worldwide.

It may be hard to imagine now, but there was no guarantee of success at the beginning as Marvel was struggling as a business having sold off their most bankable assets. This forced Kevin Feige, the architect of the MCU, to get creative to and [derive new ways to bring Marvel heroes to life](https://www.vox.com/2016/5/9/11595344/marvel-cinematic-universe-captain-america-avengers).

They succeeded spectacularly, and now they have to do it all over again.

Firmly entrenched in global pop culture, the MCU now faces pressure to build on its foundation with new heroes and villains, many of whom like [Moon Knight](https://marvel.fandom.com/wiki/Marc_Spector_(Earth-616)) and [Titania](https://www.marvel.com/characters/titania-mary-macpherran) lack the star power of Captain America and Spider-Man and are all but unknown to mainstream audiences. And considering that the MCU has decided to go all in on the [Multiverse](https://marvelcinematicuniverse.fandom.com/wiki/Multiverse), there are literally infinite possibilities for stories.

How can Marvel decide what direction to take with the MCU?

## *What If?* is Marvel's MVP

There is no question Kevin Feige has sketched out the broad contours of the next iteration of the MCU. Still, as sterling as his record is, he's working from assumptions about what will make for great stories and what will thrill audiences going forward. If he followed *The Lean Startup* model, he would build a lightweight MVP to test his hypotheses with passionate fans and measure their response for some validated learning.

It turns out that is exactly what Marvel is doing with *What If?...*

*What If?...* is an [animated show on Disney+](https://www.marvel.com/tv-shows/animation/what-if/1) that imagines the MCU heroes we know experiencing very different lives throughout the multiverse. For example, we see Peggy Carter receive the super soldier serum rather than Steve Rogers to become [Captain Carter](https://www.marvel.com/articles/tv-shows/what-if-episode-1-multiverse-report-captain-carter), and we watch somberly as T'Challa, voiced just as he is portrayed on screen by the legendary Chadwick Boseman in his final performance, [becomes Star-Lord](https://www.marvel.com/articles/tv-shows/what-if-new-images-episode-2) rather than Peter Quill. It's heroes and villains we know so well from the MCU facing new challenges alongside other heroes and villains we've never seen them interact with before.

The novel combinations are fun and exciting, but they're also an experiment. How will fans, primarily hardcore MCU fans (early adopters if you will) react? You can easily track this with viewership metrics on Disney+, mentions on Twitter, and the impressions of media influencers for validated learning. When a story doesn't click, Marvel can dismiss it as a fun idea for the fans and pivot to something else. On the other hand, when a story does click, Marvel can persevere and explore it further on a live-action series on Disney+ or even on the big screen within established MCU canon.

Best of all, Marvel can test their hypotheses, engage in validated learning, and guide the future of the MCU accordingly at a low cost. *What If?...* is high-quality animation, and most of the elite talent that portrays the characters on the big screen voices them on the show. Still, the cost of running these experiments in animation without any real commitment since the stories all happen outside the "prime" MCU universe is orders of magnitude less than it would be going all in with any of the *What If?...* plots in live action.

## The Key Lesson

One way or another, we are all in the business of product development. Too often we assume we have accurately gauged customer sentiment, and [we exude hubris that success is inevitable only to fail miserably](https://www.youtube.com/watch?v=t-_PfdQ0DYo).

Don't be afraid to test your assumptions before you go all in. Build a Minimum Viable Product--something more lightweight and less expensive that will nonetheless provide your early adopters something meaningful to evaluate. The validated learning you get from their feedback will either help you pivot to something more aligned with what people want or empower you to persevere with your original idea with legitimate confidence it will sell. Once you start executing, then you can argue on Twitter about whether you need daily meetings and whether people should be allowed to sit down in them.

Using an MVP to build confidence in your product direction is exactly what the Marvel Cinematic Universe has done with *What If?...*, but you don't have to be Marvel to fly [higher, further, faster, baby](https://www.youtube.com/watch?v=eKAvj9EjBmM).

| realneilc |

903,601 | aa | Puppeteer and the Lighthouse API (you can see the complete code here). var s = "JavaScript syntax... | 0 | 2021-11-20T02:57:48 | https://dev.to/nixcodes/aa-51f9 | Puppeteer and the Lighthouse API (you can see the complete code here).

```javascript

var s = "JavaScript syntax highlighting";

alert(s);

```

```python

s = "Python syntax highlighting"

print s

```

```

No language indicated, so no syntax highlighting.

But let's throw in a <b>tag</b>.

```

async function captureReport() {

// Puppeteer initialization

const browser = await puppeteer.launch();

const page = await browser.newPage();

// Start user flow

const flow = await lighthouse.startFlow(page, { name: 'My User Flow' });

// ... Caputure reports here ...

// End user flow

return flow.generateReport();

}

Within | nixcodes | |

903,691 | Audit and Fix a Markdown Page | My recent contribution to an open source project is the improvement of the online course notes for... | 0 | 2021-11-20T05:05:12 | https://dev.to/okimotomizuho/audit-and-fix-a-markdown-page-42l5 | opensource, c, beginners, webdev | My recent contribution to an open source project is the improvement of the online course notes for the C language course (IPC144) in my college. The online note uses Docusaurus, and I had the opportunity to study Docusaurus a while back, so this contribution deepened my knowledge.

### Issue

The issue I worked on was the Audit and Fix a Markdown Page. Each chapter of that online note consists of its own md file. My professor file an issue with a list of all of the md files and 19 tasks for improving each page. I selected the "output-functions" page in it, filed a new issue, and worked on the tasks.

### Checking display

I set everything up locally and checked for problems with the display. Such as: typo, markdown, the Light and Dark mode, and the desktop and mobile.

### Using tools for improving the quality of web pages

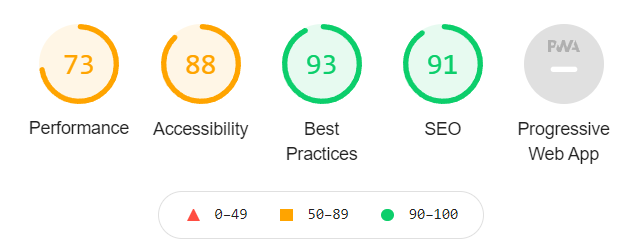

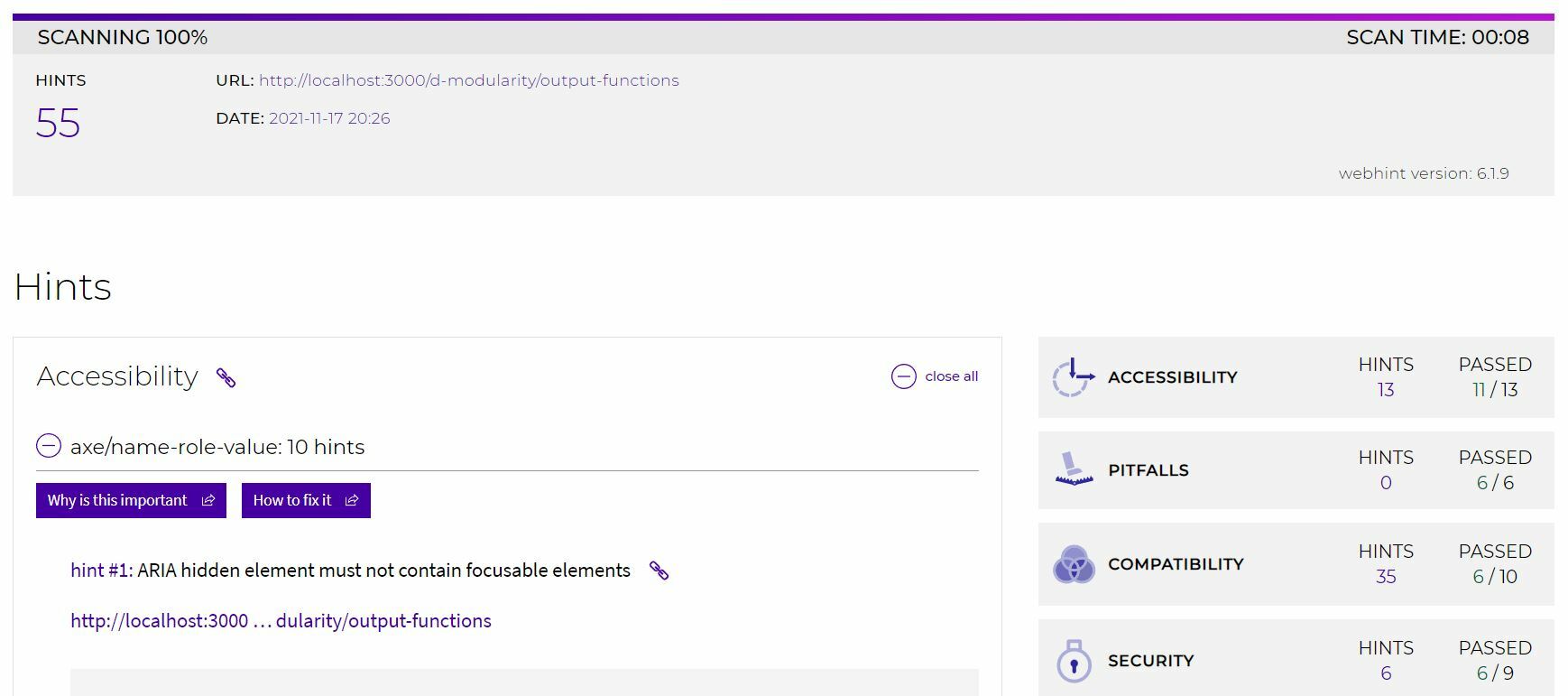

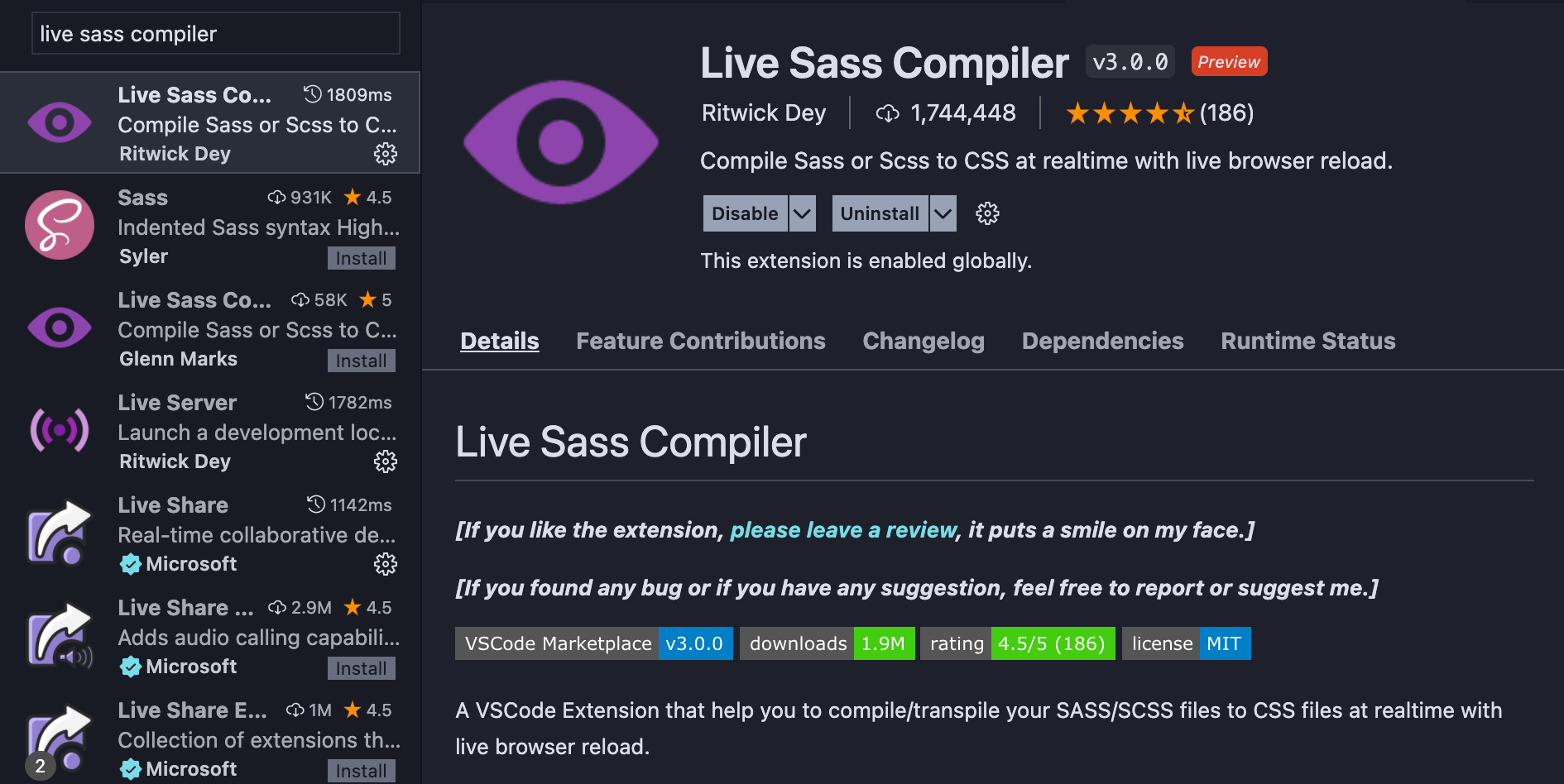

As my professor mentioned, I tried running the page through Lighthouse and Web Hint.

I had never used them, but it was easy to check with those tools.

Lighthouse checked if the page is optimized for each item, and showed me there is no alt tag for images. After I added alt, performance, accessibility, best practices, SEO scores went up.

When I checked the page using Web Hint, I got 55 hints, but nothing about the page I was working on.

### Fixing Frontmatter for the page

Markdown documents can use the Markdown FrontMatter metadata fields, enclosed by a line --- on either side.([Docusaurus official page](https://docusaurus.io/docs/api/plugins/@docusaurus/plugin-content-docs#markdown-frontmatter))

I added the FrontMatter below:

```

---

sidebar_position: 5

title: Output Functions

id: output-functions

slug: modularity/output-functions

desctiption: This chapter on output functions is about invoking standard library procedures to stream data to users

---

```

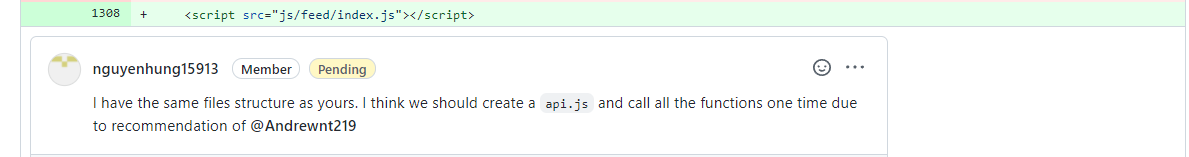

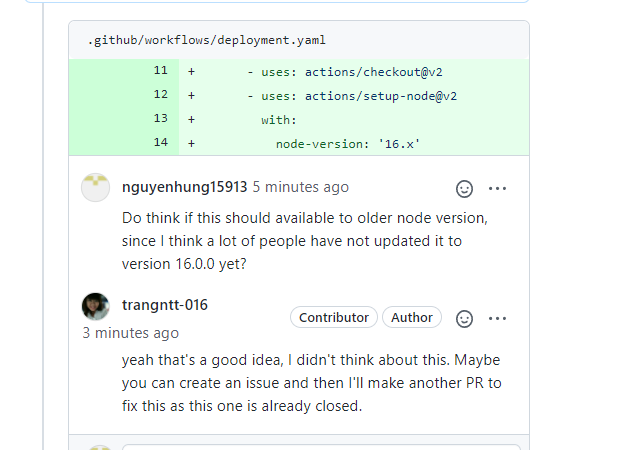

### Reviewed from other students

I made a PR, other students reviewed it and gave me some requests.

They were:

- Removing extra space I missed in the md file

- Adding a hyperlink to a related page

- Adding Description to the page(as a Frontmatter)

- Adding backticks to function names

### Conclusion

I thought there were a lot of tasks, but it wasn't too difficult. It was good to be able to contribute to the project that actually used Docusaurus and to experience the improvement of the page using Lighthouse and Web Hint. This time, my professor listed the tasks and provided them to us, which made the contribution easier, and I would like to use the task when working on external issues myself in the future.

### :link:Links

[IPC144 Project repo](https://github.com/Seneca-ICTOER/IPC144) | [Issue](https://github.com/Seneca-ICTOER/IPC144/issues/87) | [Pull Request](https://github.com/Seneca-ICTOER/IPC144/pull/100)

(Photo by Glenn Carstens-Peters on Unsplash) | okimotomizuho |

903,760 | 5 Tips That Will Boost Your Productivity | you're reading this article, then you're probably thinking about new ways how to increase the quality... | 0 | 2021-11-22T12:42:54 | https://dev.to/metamark/5-tips-that-will-boost-your-productivity-10dj | productivity, career, discuss, programming | you're reading this article, then you're probably thinking about new ways how to increase the quality of your work and your life itself without spending too much time on it. And I wanna share with you some of the advice that helped me once and which I'm using to the present day. There won't be something like "Use Pomodoro Technique" or "Meditate for 5 minutes every day", these tips are fully working and even help you to choose in which direction to go.

---

##Determining Goals

Firstly, before doing some work on your goals we need to determine the goals themselves, and to do it right we surely have to use Warren Buffet Technique which is much simpler than it seems.

You need to write down 25 Goals or Directions that you want to go for and highlight only 5 of them which are the most important for you and will give you the future that you want. Don't worry about the other 20 Goals in this list, you'll reach them after you've reached your main goals, but for now, you need to add them to your Not-To-Do-List because they only distract you from your real goals and you're spending too much on them. Also, you can write not exactly 25 Goals, your list can be much bigger or much smaller, the sense is that you need to choose the most important ones.

For instance, I've my list where I've written around 20 Spheres which I wanna learn, there're Guitar, 3D Modeling, Photographing, etc. However, the most important for me are Programming (ML, Web-Dev), Writing/Content Creation, and Design. So I'm going directly in these directions without distracting on other smaller goals

##Perfectionism is an Evil

There's research that people often drop out of courses because they have a perfectionism to do it as fast as they only can and in a moment burn themselves out. That doesn't mean that you don't have to have deadlines, it's saying that if you will have very short deadlines then you'll probably drop out.

Also, you don't have to pass the course for the highest grade or spend several hours on passing some final exam. When it comes to courses or learning something, you don't have to be perfect at it, good is enough, and you'll see how it will save your time

Perfect is an enemy of good. Just "good" is enough

##The Start is The Key

The hardest part of building a good habit is starting. Usual, you just can't start doing something if your task or goal sounds like "Build a rocket" or "Make a new billion Startup".

To start doing something you need to make a start for your goal much easier. For example, if your goal is to build a rocket so you should make smaller goals of it, like learn the conception of building a rocket or see how Elon Musk did it and so on. However, it's not complete yet, because you can't learn the construction of the rocket in one day (Perfectionism is an Evil, remember it), it means that you have to make a daily habit like spending 1 hour on reading rocket documentation, etc.

It gets much easier now, right? That's the power of a good start. If you know that you need to your task is not to build a rocket, and just read the documentation for 1 hour you'll probably do it without stress and burning yourself out.

The same principle is with bad habits too! If you know that smoking is bad for you and you wanna quit, so make the first 20 seconds of smoking the hardest thing you've ever done, and you'll never do it again, I swear.

##Consistent Habits

After you decided in which direction you'll go and what to learn, divide your goals into smaller ones, and made them as simple as possible, now it's a turn for consistency. What do I mean, for achieving something you need to do it consistently, every day and every week, and with that by going with small steps you'll learn anything you want and reach your goals.

However, sometimes there're situations where you just can't do it today (Was on vacation or had other tasks that were more important than it. Reason that you were lazy does not include) and you don't need to stress about it if you have a habit to read around 20 pages every day and lost one day, then in the next day, you will need to read 40 pages. But it works only for a 1-day skip, the next day, you must read 40 pages and don't leave it to another day.

Never, and I'll repeat it again, Never make a second mistake. If you fail at your daily habits then you must reconnect and back in to it

##The Power of Productive Procrastination

As you might have noticed from the title, it's procrastination that is productive, strange a little bit, don't you think so? What does it mean, anyway?

Productive Procrastination is when you're not working on your main tasks like building a landing page for your product, and instead of it you're scrolling the Dribbble or Behance and getting inspired by someone's work. So it's not so great as working on your main task, however, it helps to spend less time and to build your product better. It means that not every procrastination can

be useless.

Another example, you can watch some videos of YouTubers like Matt D'Avella or my favorite GaryVee after which you will look at life from other angles.

---

##Conclusion

I tried to describe to you one of the greatest unusual tips for boosting your productivity and not only. I'll write more about productivity and different kinds of advice for life, so subscribe and get everything first. I hope you enjoyed this article :) | metamark |

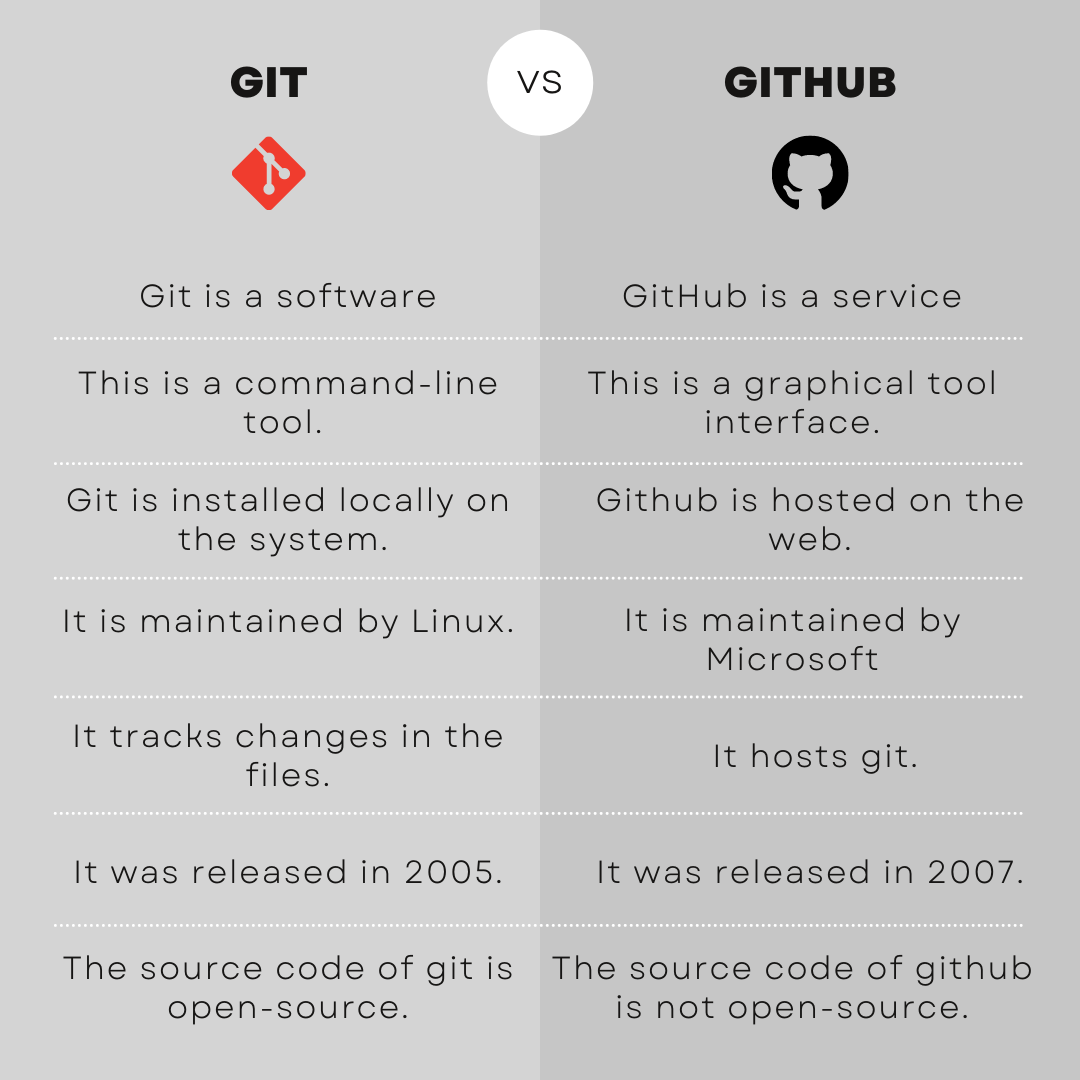

903,873 | Getting Started with Git & GitHub | In the world of programming, you would have come across the terms Git and GitHub. If not, you are... | 0 | 2021-11-20T10:07:43 | https://dev.to/codezillaclub/getting-started-with-git-github-18am | github, programming, git, opensource | In the world of programming, you would have come across the terms Git and GitHub.

If not, you are still in the right place! This blog will help you expand your knowledge about the programming world.🌎

What do these stand for? Are these both the same? What can one do with these? 🤔

These questions would have crossed your mind when you hear the terms GIT and GITHUB. Read along to know the answers to the above questions. 🙌

### What will be covered in this article:

```

- What is Git?

- Why Git?

- Basic Workflow of Git

- Git Commands

- Git Extensions

- Pros and Cons of Git

- What is GitHub?

- Pros and Cons of GitHub

- GitHub Features

- Git vs GitHub

- Conclusion

```

## What is Git?

Git was created by [Linus Torvalds](https://en.wikipedia.org/wiki/Linus_Torvalds) in 2005.

It’s a free and open-source distributed version control system that allows users to track changes in files over time while also allowing different users to work on the same project at the same time.

## Why Git?

- **Free & Open Source:**

Git was released under GPL(General Public License) open source license. It is free over the internet. We can manage our projects by git. Moreover, as it is open source, we can download its source code and also perform changes according to our requirements.

- **Security:**

Git uses SHA1(secure Hash Function) to name and identify objects within its database. It ensures that it’s impossible to change file, date, and commit message, and any other data from the git database without knowing Git.

- **Backup:**

The chances of losing data are very rare when there are multiple copies of it.

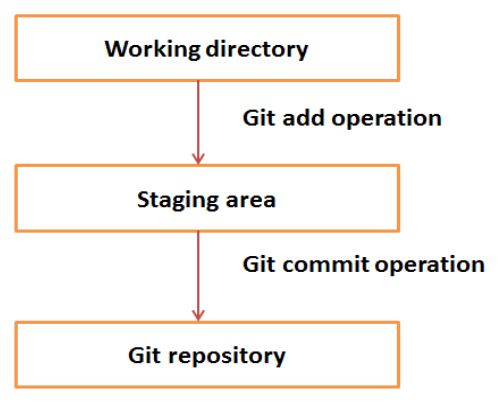

## Basic Workflow of Git:

## Git Commands :

- **git init**→ initializes a local git repository.

Usage: git init [repository name]

- **git add**→ adds a file to the staging area.

Usage: git add [file]

- **git commit**→ records or snapshots the file permanently in the version history.

Usage: git commit -m “Type in the commit message”

- **git merge**→ merges a branch to an active branch.

Usage: git merge [branch name]

- **git push**→ uploads local repository to remote repository.

Usage: git push [variable name] master

- **git pull**→ downloads content from a remote repository.

Usage: git push [Repository name]

## Git Extensions:

Many Git extensions, like Git LFS, started as an extension to Git in the GitHub community and are now widely used by other repositories. Extensions are usually independently developed and maintained by different people, but at some point in the future, a widely used extension can be merged to Git.

**Other open-source git extensions include:**

- **git-annex:**

a distributed file synchronization system based on Git

- **git-flow:**

a set of git extensions to provide high-level repository operations for Vincent Driessen's branching model

- **git-machete:**

a repository organizer & tool for automating rebase/merge/pull/push operations

Microsoft developed the Virtual File System for Git (VFS for Git; formerly Git Virtual File System or GVFS) extension to handle the size of the Windows source-code tree as part of their 2017 migration from Perforce. VFS for Git allows cloned repositories to use placeholders whose contents are downloaded only once a file is accessed.

## Pros And Cons of Git:

**Pros:**

- Better merges than anything else.

- Logging.

**Cons:**

- Still some confusing merge issues.

- I would like to see a system IDEs can use that automatically notifies you if someone pushes.

## What is Github?

Headquartered in California , it has been a subsidiary of [Microsoft ](https://en.wikipedia.org/wiki/Microsoft) since 2018.

GitHub provides a hosting service for software development version control using Git. It provides a web-based graphical interface. GitHub is like a social networking site for developers!

## Why Github?

- **Open-source benefits:**

Open-source projects tend to be more flexible because they respond more rapidly to market demands. Closed-source programs might exist in a bubble while trying to convince a target market of its value, as opposed to being genuinely responsive. GitHub provides a community where programmers are constantly working to solve current problems and make solutions available to the public.

- **Find Talent:**

Because of the breadth of the GitHub Community, we can sometimes find programmers working on similar projects or who have skills, experiences, or a vision that offers a good fit for an organization.

- **Easy Version Control:**

Versions control on Github works much like on MS Office or Google Drive--it tracks all the changes made to your code and

who makes them. You can always review the detailed changelog that neatly hosts all of the relevant information.

## Pros And Cons of GitHub :

**Pros:**

- As a repository it's great. It houses almost all the open-source applications/code that anyone can fork and play with. A huge collection of sample codes available with problem statements across different domains make Github a one-stop location.

- I use GitHub with Windows and the Git Bash is superb. It is a powerful alternative to the Command Prompt and Powershell. Allows me to run shell scripts and UNIX commands inside the terminal on a Windows PC.

- GitHub integration with almost all cloud development/deployment platforms is amazing. Deploying a new application in Azure is really smooth. Just link the GitHub repositories and it's good to go. From automatic build to deployment everything is just amazing.

**Cons:**

- Not an easy tool for beginners. Prior command-line experience is expected to get started with GitHub efficiently.

- Unlike other source control platforms, GitHub is a little confusing. With no proper GUI tool, it's hard to understand the source code version/history.

- Working with larger files can be tricky. For file sizes above 100MB, GitHub expects the developer to use different commands (lfs).

- While using the web version of GitHub, it has some restrictions on the number of files that can be uploaded at once. Recommended action is to use the command-line utility to add and push files into the repository.

## GitHub Features :

- **Issues:** Keep track of enhancements, and bugs for your project.

- **Discussions:** Collaborative communication forum for the community.

- **Code spaces:** A cloud-based integrated development environment (IDE) on GitHub (Beta).

- **Sponsors:** Invest in software that powers your world- a new way to contribute.

- **GitHub pages:** Websites for you and your projects, hosted directly from your repo.

- **GitHub actions:** Automate software workflows. Build test, and deploy your code from GitHub.

- **Organizations:** Organisations are group-owned repos.

- **Packages:** Automated and secure path to continuous integration + deployment.

- **Project boards:** Project boards help you organize and prioritize your work.

## Git vs GitHub :

## Conclusion:

Now, you definitely must have an idea of what Git and GitHub is, and what one can do with them. Isn’t it exciting! Having sound knowledge about Git and GitHub and working with them efficiently is very important for any programmer interested in developing great software products.

Thank you for reading, and hope you enjoyed the article!😊

If you have any questions for us, please leave them in the comment section and we will get back to you soon!

Have a great coding journey ahead😎

If you enjoyed the article, hit the reactions 🧡 and do share it with others 🤓 ~

Written by :- [Adhithi Satish Kumar](https://www.linkedin.com/mwlite/in/adihithi-satish-kumar-789350226), [Akshara S PA](https://www.linkedin.com/in/akshara-s-pa-1120bb210) , and [Riddhi Gope](https://www.linkedin.com/in/riddhi-gope-5a1676222)

To stay updated whenever we post new content, follow **Codezilla** on:

[Community Portal

](https://community.mozilla.org/en/groups/codezilla/)

[Instagram](https://www.instagram.com/codezillaclub/)

[LinkedIn](https://www.linkedin.com/in/codezillaclub/)

[Twitter ](https://twitter.com/CodezillaClub)

| codezillaclub |

904,062 | Angular: Where does the term 'directive' come from? | I'm trying to understand the Angular terms and philosophy. One of the most basic building blocks of... | 0 | 2021-11-20T14:14:04 | https://dev.to/ovidiu141/angular-where-does-the-directive-term-come-from-20b6 | angular, todayisearched, javascript, html | I'm trying to understand the Angular terms and philosophy. One of the most basic building blocks of Angular seems to be a `directive`.

As a non-native English speaker, I don't find this term intuitive, but rather abstract. So what's up with this term?

The Angular glossary says that "A [`directive` is a] class that can modify the structure of the DOM or modify attributes in the DOM and component data model".

Ok, it got something to do with changing the DOM. But still, why 'directive'?

It got something to do with 'direction'?😄

Seems VueJS also has the concept of `directive`.

VueJS docs: "If you have not used AngularJS before, you probably don’t know what a directive is. Essentially, a `directive` is some special token in the markup that tells the library to do something to a DOM element."

This includes a reference to the AngularJS framework, and, yes, it seems AngularJS also has this term.

AngularJS docs: "At a high level, `directives` are markers on a DOM element (such as an attribute, element name, comment or CSS class) that tell AngularJS's HTML compiler ($compile) to attach a specified behavior to that DOM element (e.g. via event listeners), or even to transform the DOM element and its children."

Ok, so it seems that the term originally comes from AngularJS, and Angular and VueJS just inherited it.

I guess, in Layman terms, a `directive` is a way of "*teaching the HTML to do new tricks*" (not mine).

But still... why `directive`?

Still not sure, maybe the creators thought of this term because a `directive` it's like **commanding (giving directives/instructions/orders)** to a static technology (HTML) in order to make it smarter. | ovidiu141 |

904,073 | One Environment per Project: Manage Directory-Scoped envs with direnv in POSIX Systems | Manage your environment variables with direnv | 0 | 2021-11-20T14:41:58 | https://dev.to/otamm/one-environment-per-project-manage-directory-scoped-envs-with-direnv-in-posix-systems-4n3c | linux, bash, unix, direnv | ---

title: One Environment per Project: Manage Directory-Scoped envs with direnv in POSIX Systems

published: true

description: Manage your environment variables with direnv

tags: linux, bash, unix, direnv

//cover_image: https://direct_url_to_image.jpg

---

## Introduction

One of the more common practices in software projects is to keep certain information separated but accessible from the codebase which uses it. This is usually done with secrets such as passwords or private keys, and also with user or context-specific info pieces. However, management of environment variables can be a pain. The solutions to ease it are many, and there are even built-in ones such as [bash_profile](https://www.baeldung.com/linux/bashrc-vs-bash-profile-vs-profile).

One solution I've discovered recently and found particularly convenient is [direnv](https://github.com/direnv/direnv), a shell extension which enables definition of environment variables scoped by directory. After installing & hooking the extension to your shell, `direnv` will execute every time you change directories, looking for an `.envrc` file in the same or in a superior directory tree level. It will then load the defined variables to the current environment, and unload them if it ceases to detect the same `.envrc`.

Note that `direnv` will load the first detected `.envrc` file, which means that *the environment will* **not** *inherit values from a* `.envrc` *in a parent directory*.

It is also important to keep in mind that the environment variables *will only be loaded to your shell session once you move to a directory affected by a* `.envrc` *file*. So if you try something like running a script which loads an environment defined in a directory below your current level, the variables wouldn't be accessible.

## Install

Here's the [list of supported systems](https://direnv.net/docs/installation.html), it is very likely your UNIX-based system's main open source package manager has it available. Suppose we are on Debian, we can install `direnv` by running the standard external package install command in the terminal:

```bash

sudo apt-get install direnv

```

## Setup

After installation, we must hook `direnv` to our shell. Supposing we are using bash, we can accomplish this by appending this line to the end of our shell startup config file:

```bash

echo 'eval "$(direnv hook bash)"' >> ~/.bashrc

```

Almost the same for ZShell:

```bash

echo 'eval "$(direnv hook zsh)"' >> ~/.zshrc

```

Direnv also supports FISH, TCSH & Elvish. [Here are the hooking instructions for each supported shell](https://direnv.net/docs/hook.html).

## Using direnv

Now we must create an `.envrc` file for the directory we would like to scope the environment variables to.

Say we create it for the directory `~/project`.

```bash

echo export FOO='I love Linux!' >> ~/project/.envrc

```

You will then receive a warning that the current `.envrc` wasn't read; `direnv` will block loading `.envrc` every time it detects changes which were not explicitly allowed. Run:

```bash

direnv allow ~/project

```

and voilà!, you now have a directory-scoped environment.

Remember when I told you that '`direnv` will block loading `.envrc` every time it detects changes which were not explicitly allowed'? This isn't limited to newly introduced changes, the whole file will be unauthorized. So when you

```bash

echo export BAR='It is actually called GNU/Linux!' >> ~/project/.envrc

```

you will have to run `direnv allow ~/project` again, even to access `$FOO`. Kinda boring, but biased towards safety.

Every time an `.envrc` is loaded, direnv will output a message with the file path and also the names of the variables loaded, so you don't need to worry about forgetting your setup. It will also tell you whenever an environment was unloaded.

That's it, pretty straightforward and I hope you find it as convenient as I did. | otamm |

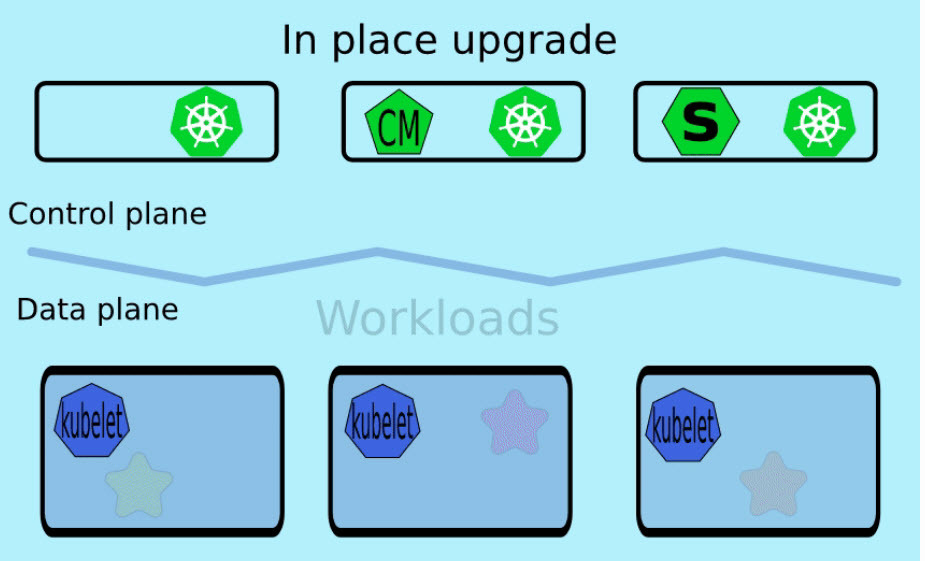

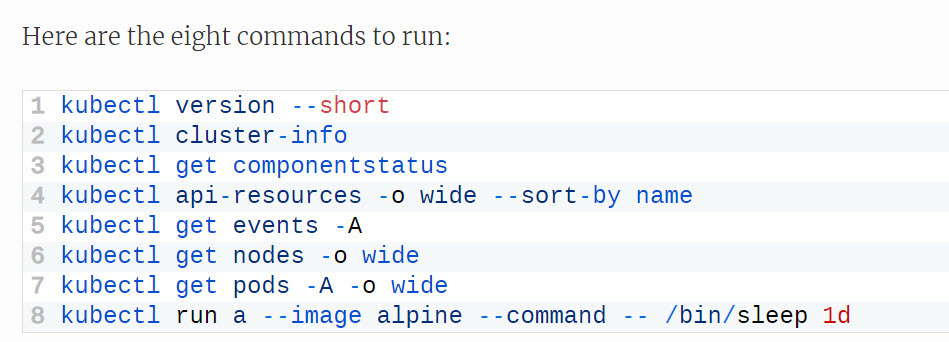

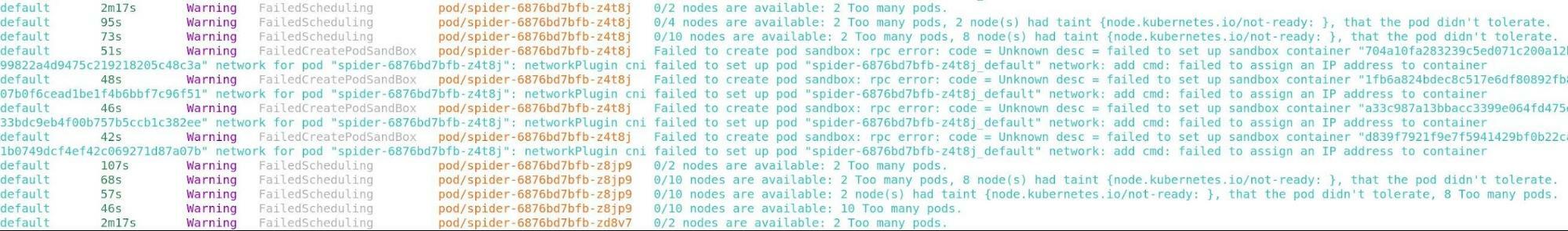

904,085 | 5 good posts in "Living with Kubernetes" | Thanks a lot to AWS Justin Garrison for this good series of "Living with Kubernetes" ! Recommend read... | 0 | 2021-11-20T15:28:21 | https://dev.to/aws-builders/5-good-posts-in-living-with-kubernetes-30mi | kubernetes, aws, tutorial, devops | Thanks a lot to AWS Justin Garrison for this good series of "Living with Kubernetes" ! Recommend read and understand more in kubernetes API versioning, Upgrade strategies, Multi-cluster management (suggest to read new EKS connector [Link] (https://docs.aws.amazon.com/eks/latest/userguide/eks-connector.html) too), how to debug cluster and workloads/pods !

5 good posts below:

1. API Lifecycles and You

[Link] (https://thenewstack.io/living-with-kubernetes-api-lifecycles-and-you/)

2. Cluster Upgrades

[Link] (https://thenewstack.io/living-with-kubernetes-cluster-upgrades/)

3. Multicluster Management

[Link] (https://thenewstack.io/living-with-kubernetes-multicluster-management/)

4. Debug Clusters in 8 Commands

[Link] (https://thenewstack.io/living-with-kubernetes-debug-clusters-in-8-commands/)

5. 12 Commands to Debug Your Workloads

[Link] (https://thenewstack.io/living-with-kubernetes-12-commands-to-debug-your-workloads/)

e.g. part 1:

part 2:

part 4:

part 5:

| leewalter |

904,093 | Simple to Complex Roadmap for Full-stack development (JavaScript Specific) | Simple to Complex Roadmap for Full-stack development (JavaScript Specific) HTML > CSS... | 0 | 2021-11-20T15:53:17 | https://dev.to/muneebhdeveloper/simple-to-complex-roadmap-for-full-stack-development-javascript-specific-3a28 | # Simple to Complex Roadmap for Full-stack development (JavaScript Specific)

HTML > CSS > JavaScript > ES6 > React > Node.js > Express > MongoDB > MySQL > Github > Deployment

**Do you want to go advanced?**

RESTful API > Payment Gateways (Stripe & Paypal) > Firebase > GraphQL > Headless CMS > Next.js (SSR, ISR, SSG)

**Do you still want to learn ? here some more**

Command Line > React Native > Web Sockets (Socket.io) > Redis > NGINX > Serverless > Docker > AWS

**Is that's not enough for you? you are the champ, there are some extras for you.**

1. Blockchain (DApps)

2. Augmented Reality

3. WebRTC

4. IoT

5. Streams

6. Electron.js

7. Maps, Geocoding and Geofencing

There are many many more to learn but I think this is enough for this post, Keep learning, Keep improving, and do remember one thing never ever stop learning because there's always **"One more thing" to learn.** | muneebhdeveloper | |

904,100 | How to Set Up Testing (TDD) for Typescript Nodejs API | Guide with detailed explanation on how to Set Up Testing for a Nodejs Typescript API using Jest | 0 | 2021-11-20T16:15:57 | https://dev.to/inidaname/how-to-set-up-testing-tdd-for-typescript-nodejs-api-3lak | typescript, api, testing, node | ---

title: How to Set Up Testing (TDD) for Typescript Nodejs API

published: true

description: Guide with detailed explanation on how to Set Up Testing for a Nodejs Typescript API using Jest

tags: Typescript, API, Testing, Nodejs

//cover_image: https://direct_url_to_image.jpg

---

In this article, we will see how to set up TDD for Nodejs Typescript API.

## The benefits of writing tests

> The purpose of a test case is to determine if different features within a system are performing as expected and to confirm that the system satisfies all related standards, guidelines and customer requirements. The process of writing a test case can also help reveal errors or defects within the system.

> -- Kate Brush, [What is a Test Case? - SearchSoftwareQuality](https://searchsoftwarequality.techtarget.com/definition/test-case)

The above is the best way to describe tests.

## Typescript

TypeScript is a strongly typed programming language that builds on JavaScript.

## Node.js

Node.js is a JavaScript runtime built on Chrome's V8 JavaScript engine.

## Jest

Jest is a JavaScript Testing Framework.

### Prerequisites

- Install Nodejs https://nodejs.org

- Familiarity with Typescript or JavaScript is required

## Check if Nodejs is installed

```

node -v

```

You should have the below output, it varies on the version you install

```

v14.18.1

```

## Start the project

We will start by initializing a new nodejs application. Create a folder for your project, let call it `HelloWorld`. In the directory open your terminal and run the code

```

npm init -y

```

You are instructing Nodejs to initialize a new application and accept every question as default, this should create a `package.json` file in the directory.

Typescript is a superset of javascript, in this case, typescript still transpile to javascript before you can run and execute your code.

### Dependencies

Let's add dependencies for the application.

- Expressjs: A nodejs API framework.

To install Expressjs run

```

npm install --save express

```

### DevDependencies

Development dependencies, this is a typescript project we are required to install dependencies types as devDependencies to help nodejs with type definitions for the dependencies.

- `@types/express`

- `@types/node: This helps with type definitions for Node.js`

- `ts-node: It JIT transforms TypeScript into JavaScript, enabling you to directly execute TypeScript on Node.js without precompiling, we should run the tests without compiling our code into javascript first.`

- `jest, jest-cli: Javascript testing framework to run tests`

- `@types/jest`

- `ts-jest: A Jest transformer with source map support that lets you use Jest to test projects written in TypeScript.`

- `supertest: HTTP assertions to help our test make API calls of GET, POST, etc`

- `@types/supertest`

- `typescript: well, this is a Typescript project`

Now let install these dependencies.

```

npm install --save-dev @types/express @types/node ts-node jest jest-cli @types/jest ts-jest supertest @types/supertest request @types/request typescript

```

## Configuration

### Typescript

To set up the typescript project we need to initialise typescript configuration, run

```

npx tsc --init

```

This will create a `tsconfig.json` file with the minimal configuration which is okay for this tutorial.

### Jest Configuration

Now we will set up jest configuration for the test environment, create a file name `jest.config.ts` and add the below code. To learn more about jest configuration visit https://jestjs.io/docs/getting-started.

```

export default {

moduleFileExtensions: ["ts", "tsx"],

transform: {

"^.+\\.(ts|tsx)$": "ts-jest",

},

testMatch: [

"**/tests/**/*.spec.ts",

"**/tests/**/*.test.ts",

],

testEnvironment: "node",

};

```

A little about the properties above.

- `moduleFileExtensions: An array of file extensions your modules use`.

- `transform: This is to tell jest that we will be using a different file extension not the usual .js files, in our case we are using .ts so we passed a Regex to match our file type and a module to help handle the filetype, this is where we make use of ts-jest we installed`.

- `testMatch: This property is to tell jest the directory and/or files to run for test cases`.

- `testEnvironment: We are telling jest which environment our project is targeted for in this case we are targeting Node environment`.

## Directory

This is our proposed directory structure

<pre>

├──jest.config.ts

├──package.json

├──package-lock.json

├──tsconfig.json

├──server.ts

├──src

│ └── app.ts

└──tests

└── app.spec.ts

</pre>

It is preferred to structure your app directory in this format for testing.

## Now the Codes

Create a folder name `src` and create a file in the `src` folder name `app.ts` with the following code.

```

import express, { Request, Response } from 'express';

const app = express();

app.get('/', (req: Request, res: Response): Response => {

return res.status(200).json({message: 'Hello World!'})

});

export default app;

```

### Let work through the codes

- First, we import the `express` module and types of `Request, Response`.

- Then we initialize our application with express and assign it to a variable.

- Then we call the `get method` for routing, with a callback handler for our route, this callback takes to parameter `req` type Request, `res` type Response, which returns a `Response` type. For typescript, it is recommended to type variables, parameters and function returns in your codes.

- The handler returns a response of Http status code ([Learn More about status codes here](https://developer.mozilla.org/en-US/docs/Web/HTTP/Status)) `status: 200` and a json of `message: 'Hello World!'`

- Then we export our application for testing purposes and to modularize.

Was that a lot?

Next we create another file in the root folder and name it `server.ts` containing the following code.

```

import app from './src/app';

const server = app.listen(3000, ()=> {

console.log(`This is running on port 3000`)

})

export default server

```

This is our application entry point.

### Test

Create a directory name `tests` in the directory create a file name `app.spec.ts`

```

import request from "supertest";

import {Express} from 'express-serve-static-core';

import app from "../src/app"

let server: Express

describe('APP should say "Hello World!"', () => {

beforeAll(() => {

server = app;

});

it('should return 200', (done) => {

request(server)

.get('/')

.expect(200)

.end((err, res) => {

if (err) return done(err)

expect(res.body).toMatchObject({'message': `Hello World!`})

done()

})

});

});

```

#### Let walk through the codes

- We import the `supertest` module and assign a variable name `request`.

- Import the Express interface

- We then import our app

- Then we declare a variable `server` with the type of Express without assigning any value to

- We describe our test suite for a test block, `describe` is a jest global function that accepts a `string` and a `callback`.

- We passed a description of the test suite with a string `APP should say "Hello World!"`.

- In the `callback` scope we call another global function `beforeAll` which accepts a `callback`, this is to tell jest that we will want to run a block of code before the suite run its tests. In this case, we want to first assign a value to our declared variable `server` which will be to assign the app that we have imported so we can run our tests.

- Then we call another global function `it` which is a test closure that takes two parameters a `name` and a `callback`.

- The name of our test closure is **should return 200**.

- We then pass a `done` callback as a parameter for the closure callback

- We call `request` and pass the `server` variable to run our app in the test scope.

- We call the get method and pass `'/'` route. In this case, we are running HTTP GET Method to our application on the route `'/'`.

- We expect the HTTP GET Method should return 200 Ok status and return the message `'meesage':'Hello World!'`

- We call `end` method which takes a callback with two parameters of `error` and `respond`

- We check if the test has error then we return the `done` method with the `error` from the test if otherwise which means the test runs successfully, so we call the `expect` global method which we check `body` property from the `respond` parameter to match our expected result.

- Then we finally call `done` method to tell jest we are through with this suite.

## Before we are done

And finally, we need a way to run our test, so edit the `package.json` file in the scripts section change `test` property. The scripts section should look something like this.

```

...

"scripts": {

"dev": "ts-node server.ts",

"test": "jest --verbose"

},

...

```

Now when you run

```

npm run test

```

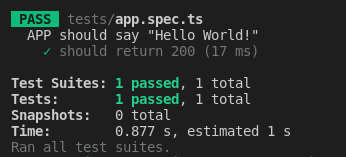

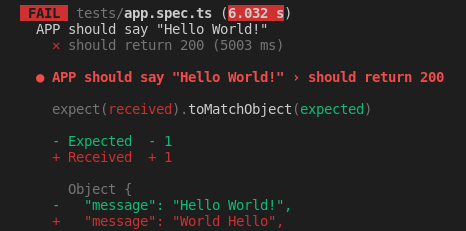

You see result like the screenshot below.

Let say we change the return value in `app.ts` file from `Hello World!` to `World Hello` you should see a failed test like the screenshot below.

## Conclusion

Writing test could mean to write more code but a few lines are worth the hours it will save. I'm a Full Stack developer using React, React Native and Nodejs with Typescript.

Feel free to put questions or suggestion in the comment or you can also reach out on Twitter [iNidAName](https://twitter.com/inidaname)

| inidaname |

904,116 | Weekend with Azure | Hi everyone, I am Mayank Gupta, a second-year student at Vellore Institute of Technology, Chennai,... | 0 | 2021-11-22T11:10:40 | https://dev.to/mayankcse/weekend-with-azure-3053 | cloud, azure, cloudcomputing | Hi everyone,

I am Mayank Gupta, a second-year student at Vellore Institute of Technology, Chennai, India, pursuing Bachelor of Technology in Computer Science. I have a zeal to work and learn new skills everyday with dedication, enthusiasm & passion, and aim to create difference in community as much as I can.

I recently attended a webinar by @kunalKushwaha, notes of which have already been uploaded on my twitter handle

You can have a look at that...was really an amazing session. This only ignited my interest towards cloud computing, which motivated me to learn more about the subject.

After lot of research I found that cloud computing is something that I was looking for, all my first year!

I've decided to dedicate my weekends to cloud computing.

Now I know you all will be thinking why am I giving introduction in a blog...actually this blog is the announcement to the future streak

from now I'll be learning cloud computing with Azure as my main cloud service,

### Why have I chosen Azure?

This topic already has a lot of content floating on the web, I don't want to jump into the fight

All the platforms are great, just being a student we should start with anyone which you like and feel is going to boom in the future_ I am going with Azure you can choose yours

### Announcement

Every weekend I'll be learning a new topic which will cover training of Azure and will be posting my notes with the learning and key concepts.

### Target

Microsoft Azure Administrator Certification [AZ-104]

![Microsoft Azure Administrator Certification [AZ-104]](https://dev-to-uploads.s3.amazonaws.com/uploads/articles/oin4mcx0pai8p3bc86ha.png)

**Do add your suggestions in the comment section**

---

| mayankcse |

904,133 | Developers Remember This Before Your Interview! | Interviewing can be very stressful. You may worry if you will make a good impression, or what type of... | 0 | 2021-11-20T17:51:59 | https://dev.to/kinjiru09/developers-remember-this-before-your-interview-558i | programming, interview, beginners | Interviewing can be very stressful. You may worry if you will make a good impression, or what type of test they will give you. But there are a few things to remember when you go to an interview.

##The Employer’s Interview

Employers typically have a particular mindset when interviewing developers, _“I am going to spend **my money** to pay you to render a service for me. So I want to make sure I am getting my money’s worth.”_ In other words, they want to make sure you are a worthwhile investment, or that you bring value to the company. Because many employers (not all) think like this, they typically use the interview process as a way to determine a developer’s value. You will meet with various people, answer questions both personal and technical, take coding challenges, and build an app. Some companies even have personality tests. A company has the right to have all these things in the interview. After all, it is their money.

But the exact opposite is true too! Developers should have a similar mindset when going to any interview. _“I am going to spend **my time** rendering a service to you. I want to make sure my time is well spent and well compensated.”_ Here is why developers should think this way.

##Time vs Money

Time is more valuable than money. You can have all the money in the world, but if you don’t have time to spend it or enjoy it with your loved ones, then the money is pointless.

As a developer, our time is valuable. We spend our time with our families, our friends, learning new skills, enjoying hobbies, and handling our responsibilities. Our time only becomes more valuable as we get older.

We don’t have the time to waste on pointless interviews. When you go to an interview, the employer is being interviewed just as much as you are. You need to make sure that the job will compensate you for your skills and that it will allow you to grow as a developer.

>Employers are checking to see if we have the right skills for the job. Developers should check if the employer has the right environment for us to grow.

Thus, developers should interview the employer.

##The Developer’s Interview

Developers should have a list of questions ready to ask the employer. Some examples are:

1. How many developers are on the team?

2. What is the personality of the team, are they fun and laid back or serious?

3. What type of source control do you use?

4. Do you have a code review process?

5. Do you have peer programming?

6. How do you encourage or help your developers to continue to grow?

7. How do you help your developers have a healthy work-life balance?

8. Walk me through a typical day at the office

9. Can I see an example of user requirements?

10. How many sprints do you cover in a quarter?

11. Does the IT department have its own budget?

12. How do you track bugs?

There’s nothing wrong with asking questions like these in an interview. You are investing your time in this company. Invest wisely!

New developers definitely have to learn this way of thinking. Just because you are new in the field doesn’t mean you have to accept any job. Find a job that will compensate you and will allow you to grow. If the job doesn’t meet these two criteria, do not waste your precious time.

Our time only becomes more valuable as we get older. We have to invest it wisely. Invest it in learning and improving yourself. Improve your programming skills. Improve your thinking ability. Improve your personality. These are skills that make us more valuable. Do not waste your time on a company with a toxic environment or an environment where you cannot learn and grow.

##Signs of a Pointless Interview

If you see a job that lists every skill under the sun, beware this interview will most likely waste your time. If you see a job posting like the below for a front end position but it lists NON front end skills, it’s probably not worth your time.

What You Bring to the Team

SQL

DevOps

Building APIs

React

Typescript

CSS

BS in Computer Science or equivalent and 5 years of experience

Accredited Full Stack Web Certification and 6 years of experience

This is from a real job posting for a front end position. This company wants you to know DevOps?! And what is Full Stack Web Certification, isn’t this position for a front end developer? Is a full stack developer stronger than a front end developer? Not necessarily. A full stack developer could be stronger on the backend but suffer on the front end. They didn’t even list HTML and JavaScript (knowing React doesn’t mean you know JavaScript). Job descriptions like this give you a view into the company. A company like this doesn’t know what their developers do, or they expect too much out of their developers. Front end is front end, backend is backend. The job description should be clearer. If they want someone who knows both, they should ask for a full stack developer. Companies that do not have clear job postings are an indication of communication issues.

;TLDR;

Going to an interview certainly can be stressful. But remembering that you can also interview the employer will help alleviate some of the stress. During your interview process make sure to see if the company can bring value to your career. If not, find a company that will. Invest in your future! | kinjiru09 |

904,195 | Basic Terms to know before getting started with Cloud | Those who have not read my previous blog, my name is Mayank and I have started a learning streak,... | 0 | 2021-11-26T17:51:48 | https://dev.to/mayankcse/basic-terms-to-know-before-getting-started-with-cloud-27m6 | cloud, azure, beginners, aws | Those who have not read my previous blog, my name is Mayank and I have started a learning streak, **#WeekendwithAzure** In this I'll be posting my learning in azure every weekend

This is my contribution to help students in my community. Hope you'll find my efforts useful.

If you like it please add a comment of appreciation

or

Please do add tips for improvement in the comment section below, would be really helpful

-----

__Getting Started!__

Before taking our first step in the world of cloud and Azure, we should get familiar with the 7 basic terms that every cloud engineers uses and knows about.

## 7 Basic Terms:

* Network Operating System

* Client Operating System

* Traditional IT

* Virtualization

* On-Premise Computing

* Cloud Computing

* On-Demand-Service and Pay-As-You-Go

Question to Client will be referred to as 'QTC'.

## Network Operating System

A Network Operating System (NOS) is a computer operating system (OS) that is designed primarily to support workstations, personal computers and, in some instances, older terminals that are connected on a local area network (LAN). The software behind a NOS allows multiple devices within a network to communicate and share resources with each other.

QTC: What are the Operating Systems running in your user's environment?

There are two types of Operating systems:

* **Window OS** = Window server 2008 R2, Server 2012, Server 2016, Server 2019

* **Linux OS** = Redhat Linux and Suse Linux

Application:

*Web Server *Database *Software Testing App *Backup *SAP HANA *Gaming App *Domain Controller *Mail Server

Example:

In the ICICI bank website whatever we do is not on our laptop, but there on the bank's server.

## Client Operating System

The Client Operating System is the system that works within computer desktops and various portable devices. This system is different from centralized servers because it only supports a single user ... The client operating system is the operating system for computer desktops or portable devices.

QTC: What are the Operating Systems running in your user's workstation?

There are two types of Operating systems:

* **Window OS** = Window 7,8,10,11

* **Linux OS** = Ubuntu and centos

To understand the gist of NOS and COS we can take the example of you going to a restaurant and placing order, now the waiter here is an example of server, NOS, and you are the clients, COS

Waiter will always provide you the dish that you asked him, which is of your taste or which will be accepted by you

## Traditional IT

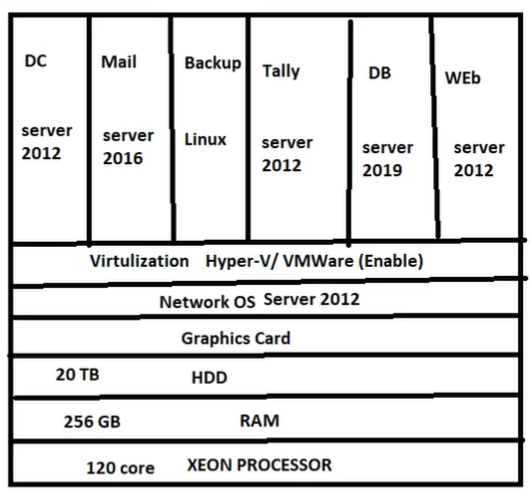

In order to use Traditional IT, we require a lot of hardware and space to keep the servers as every company is supposed to have its own Data center.

Just to give an idea, when we talk about servers for a company, there are almost 5000 servers required for big companies and around 100 servers for small organizations. Some are:

* Domain Controller

* Mail Server

* Database Server

* Tally Server

* File Server

* Web Server

and many others as per the requirement of the organization.

In order to handle these servers you will have to hire engineers. Thus, the cost increases.

You will have to arrange a place to setup these data centers and thus, space requirement increases.

Every server requires a high potential professional processor i.e. Xeon Processor, which costs about Rs.5 lakhs.(It varies as per configuration)

You'll have to take care of 24*7 electricity supply and a clean environment for the application to run smoothly.

You will also have to set-up a Secondary Data center. If in case some disaster happens with the primary data center, you'll transfer the load to secondary data center in order to keep the application running uninterrupted, and this will directly double the cost as the Secondary Data center will require the same hardware as the Primary Data Center.

__Now what if ?__ Secondary Data Center also fails? Let me take an example to explain this

Suppose your Primary Data Center is setup at Noida and Secondary Data Center in Pune

* _Case 1_ If some Disaster happens in Noida, we can transfer the load to Pune.

* _Case 2_ What if some disaster happens for complete India? now what? Now your app gets crashed and your clients outside India and even inside India are not able to use the application which can lead to huge losses and a feeling of distrust among users.

We'll see how cloud computing solves this issue efficiently

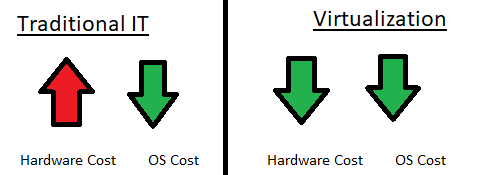

## Virtualization

There are two main Virtualization Software:

* Microsoft-Hyper-V

* VM Ware-ESXI or VM Ware Workstation

Virtualization is the process of running a virtual instance of a computer system in a layer abstracted from the actual hardware.

In simple words, it is the projection of the data from all the servers at one desktop. Single desktop is connected to all the servers.

## On-Premise Computing

On-premises software is located and operated within a user’s datacenter. As such, it uses the user’s computing hardware rather than that of a cloud provider.

It is like the physical hardware that will get delivered to your home.

For example:

*Laptop *Desktop *Mouse *Keyboard *Server *Rack *Printer *LAN Cable

## Cloud Computing

It is the delivery of computing services - servers, storage, databases, networking, software analytics and moreover the internet.

## On-Demand Service & Pay As You Go

These are the services that have made cloud Computing very popular. As we have discussed in traditional IT, it is very expensive to buy the server, set-up a data center, and hire engineers and that too, at an initial stage where you don't know whether your application will be a hit or not.

**What if**, you could rent a data center? By doing this you neither have to pay for the hardware nor have to hire engineers and you'll have a lot of space now which you can use for other things.

This is what big companies like Microsoft, Amazon, IBM and many others are doing.

In this you'll tell them about the hardware you require for your application and they'll build that for you in their data center and you will just have to pay the rent for the data center.

**How cool is that!!**

One more interesting thing is, you just have to pay for the services you use and when you use. For example:

If your working hours are 9:00 A.M. to 7:00 P.M. out of 24hr day so you have to pay for the hours you use the virtual machine.

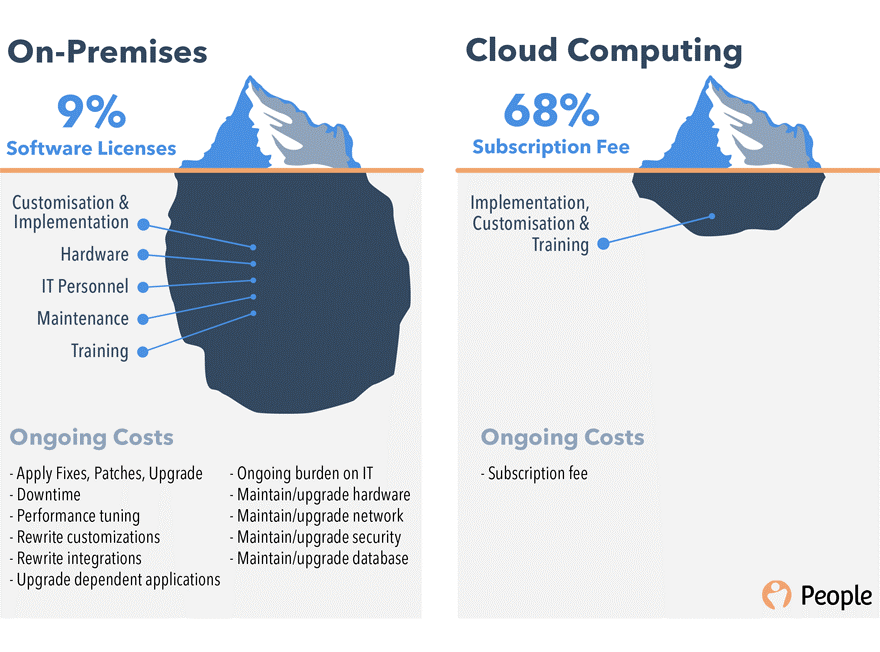

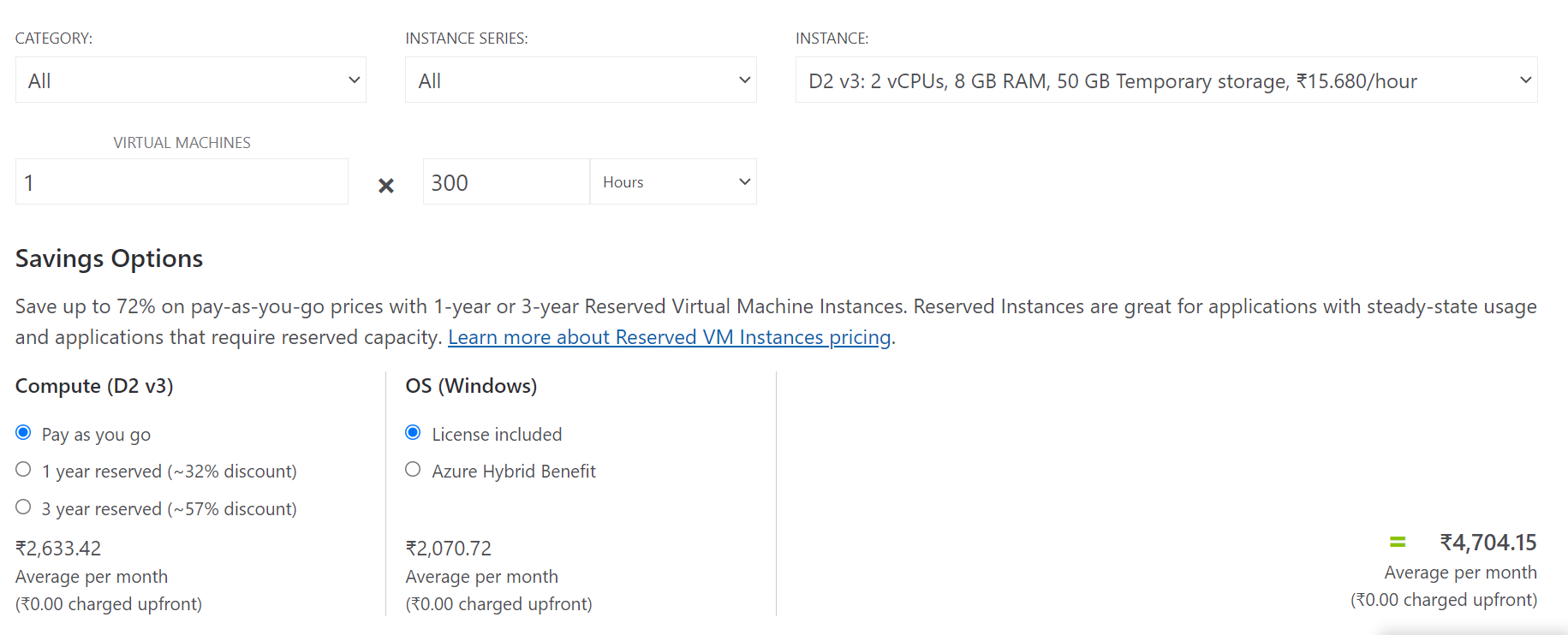

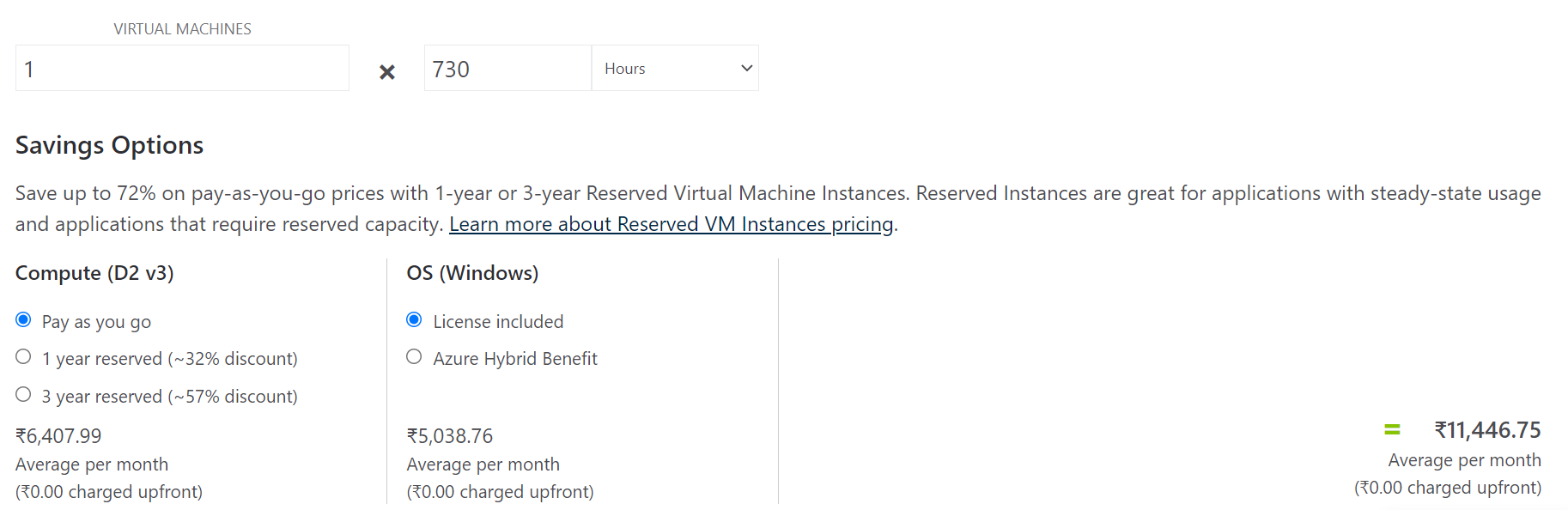

* For __9:00 A.M. to 7:00 P.M.__ i.e. 10 hours for 30days the amount is **Rs 4,704.15**.

* And for the __whole month__ with the same configuration it comes out to be **Rs.11,446.75**

To calculate the price, we use pricing calculator-

https://azure.microsoft.com/en-us/pricing/calculator/

Thus, we can clearly see that this process is very cost affective and with such big companies like Microsoft, Amazon, etc., security is also provided so you don't have to be worried about your data getting leaked.

-----

**That is all for this week**,

We have covered the following basic terms in detail:

* Network Operating System

* Client Operating System

* Traditional IT

* Virtualization

* On-Premise Computing

* Cloud Computing

* On-Demand-Service and Pay-as-you-go

One should know these before getting into cloud.

### Next Week

We'll be discussing-

* Difference between Cloud Computing and Traditional IT

* Cloud Models

* Resource Group and it's Creation (first lab activity)

* IP Addressing in Azure

Hope you liked my efforts.

You can connect with me at

Github - https://github.com/mayank-cse

Linkedin - https://www.linkedin.com/in/mayank-gupta-478640200/

Twitter - https://twitter.com/MayankGuptacse1

In case you have any doubts, please feel free to ask in the comment section.

Thank You for Reading! Hope it benefitted you😄

--- | mayankcse |

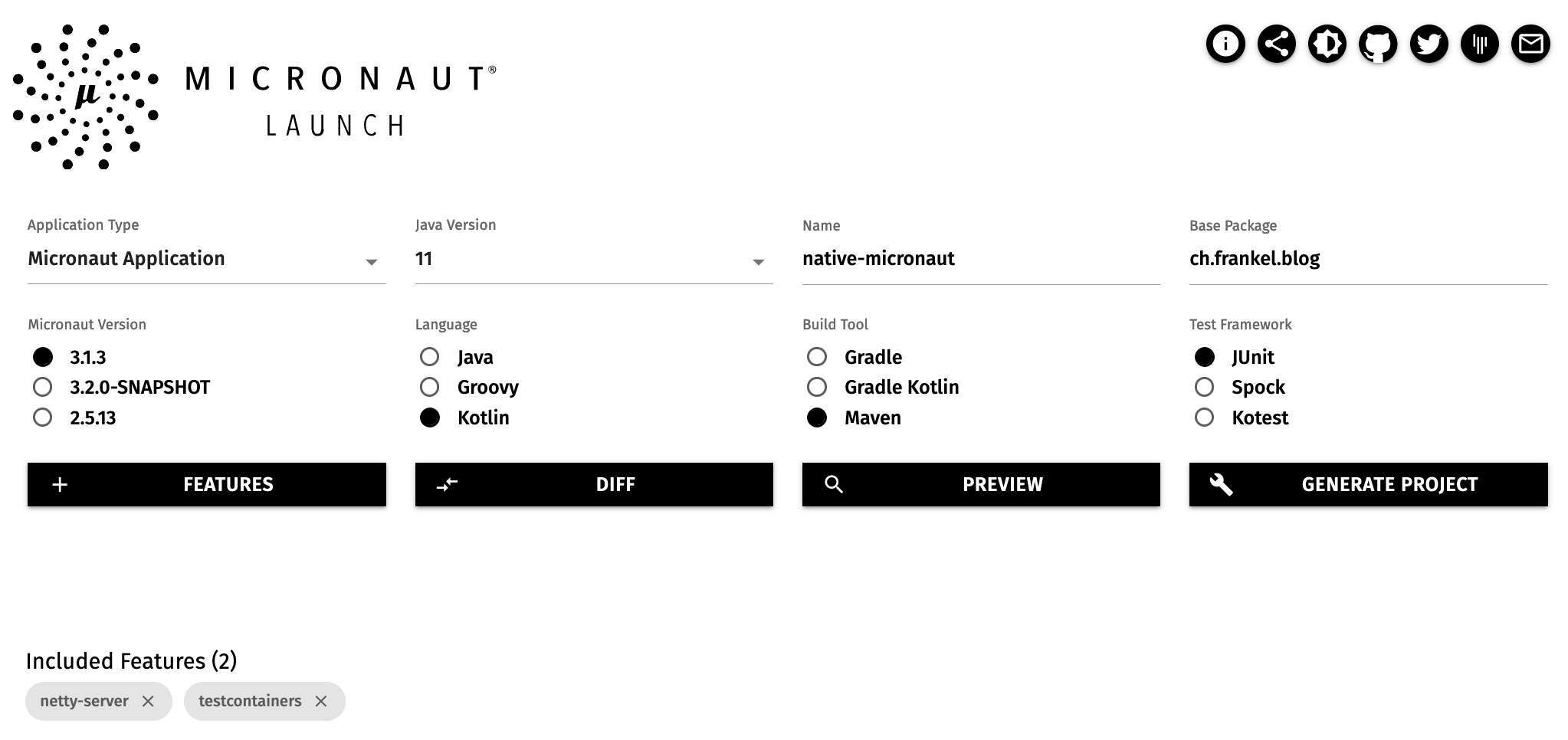

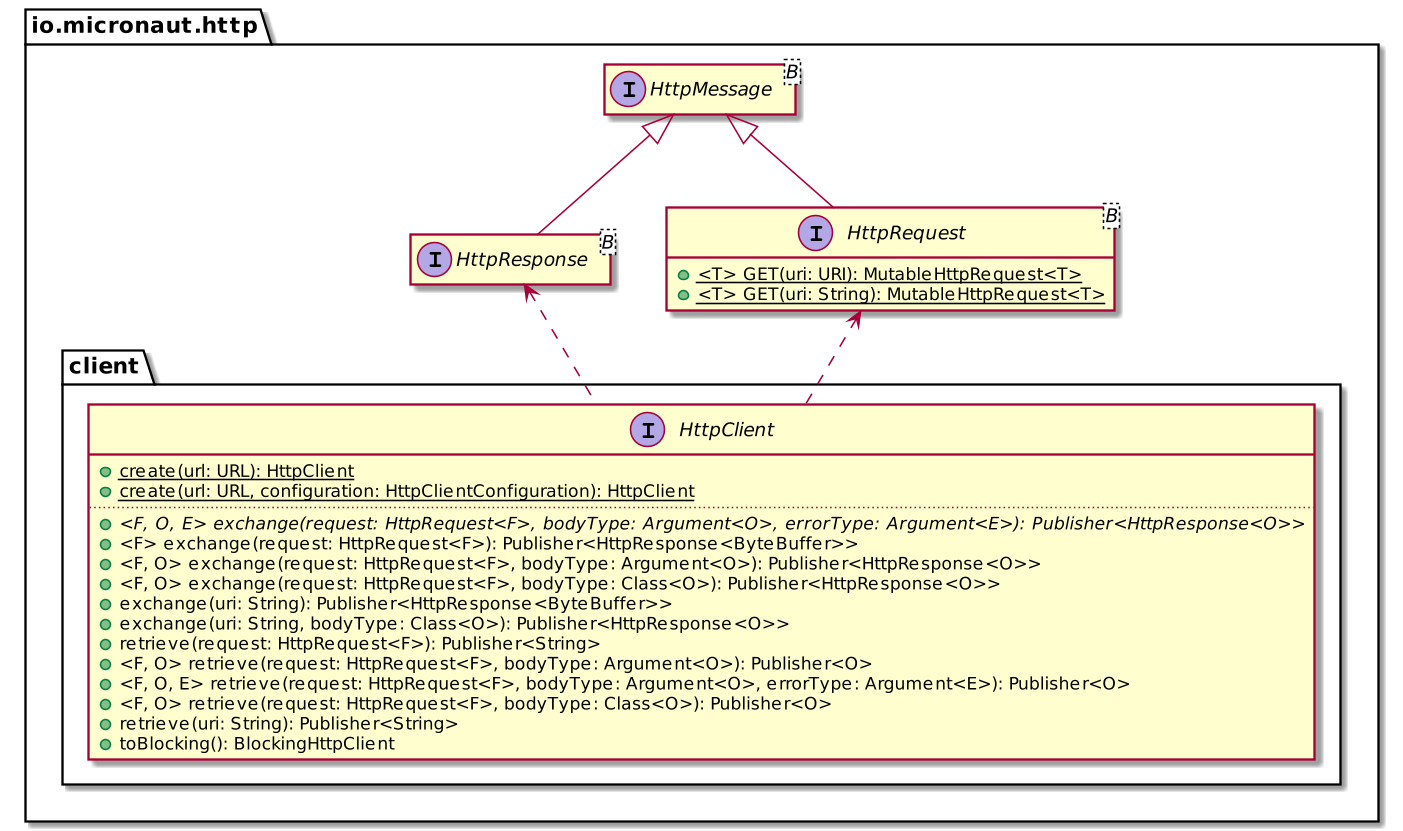

904,200 | Native-image with Micronaut | Last week, I wrote a native web app that queried the Marvel API using Spring Boot. This week, I want... | 15,512 | 2021-11-21T16:39:13 | https://dev.to/nfrankel/native-image-with-micronaut-41fc | kotlin, micronaut, nativeimage, graalvm | Last week, I wrote a native web app that queried the Marvel API [using Spring Boot](https://blog.franke.ch/native/spring-boot/). This week, I want to do the same with the Micronaut framework.

## Creating a new project

Micronaut offers two options to create a new project:

1. A [web UI](https://micronaut.io/launch):

As for Spring Initializr, it provides several features: preview the project before you download it, share the configuration and an API.

I do like that you can check the impact that the added features have on the POM.

2. A [Command-Line Interface](https://docs.micronaut.io/1.3.3/guide/index.html#buildCLI):

In parallel to the webapp, you can install the <abbr title="Command-Line Interface">CLI</abbr> on different systems. Then you can use the `mn` command to create new projects.

In both options, you can configure the following parameters:

* The build tool, Maven, Gradle, or Gradle with the Kotlin DSL

* The language, Java, Kotlin, or Groovy

* Micronaut's version

* A couple of metadata

* Dependencies

The application's code is on [GitHub](https://github.com/micronaut-projects/micronaut-starter). You can clone and adapt it, but as far as I know, it's not designed with extension in mind (yet?).

## Bean configuration

Micronaut's bean configuration relies on [JSR 330](http://javax-inject.github.io/javax-inject/). The JSR defines a couple of annotations, _e.g._, `@Singleton` and `@Inject`, in the `jakarta.inject` package. Developers use them, and the service provider implements the specification.

`@Singleton` and its sibling `@ApplicationScoped` are meant to be used on our code. Our sample app needs to create an instance of `java.security.MessageDigest`, which cannot be annotated. To solve this problem, JSR 330 provides the `@Factory` annotation:

```kotlin

@Factory // 1

class BeanFactory {

@Singleton // 2

fun messageDigest() = MessageDigest.getInstance("MD5") // 3

}

```

1. Bean-generating class

2. Regular scope annotation

3. Generate a message digest singleton

Micronaut also provides an automated discovery mechanism. Unfortunately, it doesn't work in Kotlin. You need to point to the package Micronaut explicitly should scan:

```kotlin

fun main(args: Array<String>) {

Micronaut.build().args(*args)

.packages("ch.frankel.blog")

.start()

}

```

## Controller configuration

Micronaut copied the `@Controller` annotation from Spring. You can use it in the same way. Likewise, annotate functions with the relevant HTTP method annotation.

```kotlin

@Controller

class MarvelController() {

@Get

fun characters() = HttpResponse.accepted<Unit>()

}

```

## Non-blocking HTTP client

Micronaut provides two HTTP clients: a declarative one and a low-level one. Both of them are non-blocking.

The declarative client is for simple use-cases, while the low-level is for more complex ones. Passing parameters belongs to the complex category, so I chose the low-level one. Here's a sample of its API:

The usage is straightforward:

```kotlin

val request = HttpRequest.GET<Unit>("https://gateway.marvel.com:443/v1/public/characters")

client.retrieve(request, String::class.java)

```

Remember that we should get parameters from the request to the application and propagate them to the request we make to the Marvel API. Micronaut can automatically bind such query parameters to method parameters with the `@QueryValue` annotation for the first part.

```kotlin

@Get

fun characters(

@QueryValue limit: String?,

@QueryValue offset: String?,

@QueryValue orderBy: String?

)

```

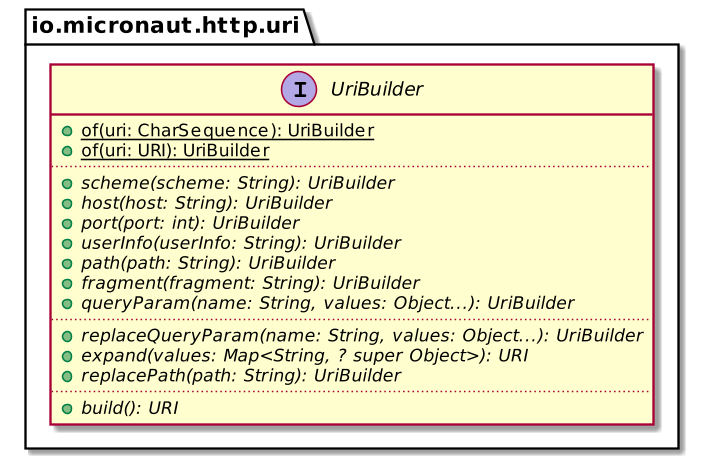

It's not possible to use Kotlin's string interpolation as these parameters are optional. Fortunately, Micronaut provides an `UriBuilder` abstraction, which follows the Builder pattern principles.

We can use it like this:

```kotlin

val uri = UriBuilder

.of("${properties.serverUrl}/v1/public/characters")

.queryParamsWith(

mapOf(

"limit" to limit,

"offset" to offset,

"orderBy" to orderBy

)

).build()

fun UriBuilder.queryParamsWith(params: Map<String, String?>) = apply {

params.entries

.filter { it.value != null }

.forEach { queryParam(it.key, it.value) }

}

```

## Parameterization

Like Spring, Micronaut can bind application properties to Kotlin data classes. In Micronaut, the file is named `application.yml`. The file already exists and contains the `micronaut.application.name` key. We only need to add the additional data. I chose to put it under the same parent key, but there's no such constraint.

```yaml

micronaut:

application:

name: nativeMicronaut

marvel:

serverUrl: https://gateway.marvel.com:443

```

To bind, we need the help of two annotations:

```kotlin

@ConfigurationProperties("micronaut.application.marvel") //1

data class MarvelProperties

@ConfigurationInject constructor( //2

val serverUrl: String,

val apiKey: String,

val privateKey: String

)

```

1. Bind the property class to the property file prefix

2. Allow using a data class. The `@ConfigurationInject` needs to be set on the constructor: it's a sign that the team could improve Kotlin integration in Micronaut.

## Testing

Micronaut tests are based on the `@MicronautTest` annotation.

```kotlin

@MicronautTest

class MicronautNativeApplicationTest

```

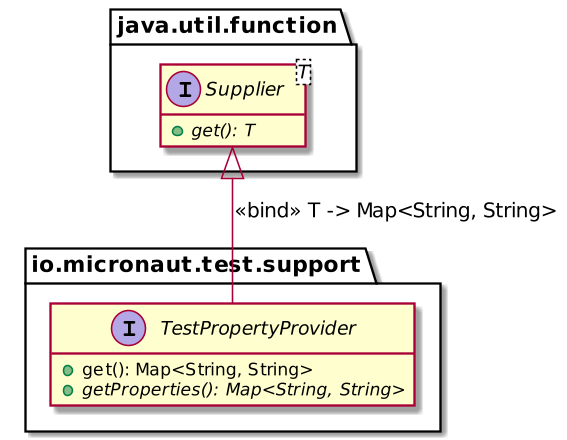

We defined the properties of the above data class as non-nullable strings. Hence, we need to pass the value when the test starts. For that, Micronaut provides the `TestPropertyProvider` interface:

We can leverage it to pass property values:

```kotlin

@MicronautTest

class MicronautNativeApplicationTest : TestPropertyProvider {

override fun getProperties() = mapOf(

"micronaut.application.marvel.apiKey" to "dummy",

"micronaut.application.marvel.privateKey" to "dummy",

"micronaut.application.marvel.serverUrl" to "defined-later"

)

}

```

The next step is to set up Testcontainers. Integration is provided out-of-the-box for popular containers, _e.g._, Postgres, but not with the mock server. We have to write code to handle it.

```kotlin

@MicronautTest

@Testcontainers

@TestInstance(TestInstance.Lifecycle.PER_CLASS) // 1

class MicronautNativeApplicationTest {

companion object {

@Container

val mockServer = MockServerContainer(

DockerImageName.parse("mockserver/mockserver")

).apply { start() } // 2

}

}

```

1. By default, one server is created for each test method. We want one per test class.

2. Don't forget to start it explicitly!

At this point, we can inject both the client and the embedded server:

```kotlin

@MicronautTest

@Testcontainers

@TestInstance(TestInstance.Lifecycle.PER_CLASS)

class MicronautNativeApplicationTest : TestPropertyProvider {

@Inject

private lateinit var client: HttpClient // 1

@Inject

private lateinit var server: EmbeddedServer // 2

companion object {

@Container

val mockServer = MockServerContainer(

DockerImageName.parse("mockserver/mockserver")

).apply { start() }

}

override fun getProperties() = mapOf(

"micronaut.application.marvel.apiKey" to "dummy",

"micronaut.application.marvel.privateKey" to "dummy",

"micronaut.application.marvel.serverUrl" to

"http://${mockServer.containerIpAddress}:${mockServer.serverPort}" // 3

)

@Test

fun `should deserialize JSON payload from server and serialize it back again`() {

val mockServerClient = MockServerClient(

mockServer.containerIpAddress, // 3

mockServer.serverPort // 3

)

val sample = this::class.java.classLoader.getResource("sample.json")

?.readText() // 4

mockServerClient.`when`(

HttpRequest.request()

.withMethod("GET")

.withPath("/v1/public/characters")

).respond(

HttpResponse()

.withStatusCode(200)

.withHeader("Content-Type", "application/json")

.withBody(sample)

)

// With `retrieve` you just get the body and can assert on it

val body = client.toBlocking().retrieve( // 5

server.url.toExternalForm(),

Model::class.java // 6

)

assertEquals(1, body.data.count)

assertEquals("Anita Blake", body.data.results.first().name)

}

}

```

1. Inject the _reactive_ client

2. Inject the embedded server, _i.e._, the application

3. Retrieve the IP and the port from the mock server

4. Use Kotlin to read the sample file - there's no provided abstraction as in Spring

5. We need to block as the client is reactive

6. There's no JSON assertion API. The easiest path is to deserialize in a `Model` class, and then assert the object's state.

## Docker and GraalVM integration

As with Spring, Micronaut provides two ways to create native images:

1. On the local machine.

It requires a local GraalVM installation **with** `native-image`.

```bash

mvn package -Dpackaging=native-image

```

2. In Docker. It requires a local Docker installation.

```bash

mvn package -Dpackaging=docker-native

```

Note that if you don't use a GraalVM JDK, you need to activate the `graalvm` profile.

```bash

mvn package -Dpackaging=docker-native -Pgraalvm

```

With the second approach, the result is the following:

```

REPOSITORY TAG IMAGE ID CREATED SIZE

native-micronaut latest 898f73fb44b0 33 seconds ago 85.3MB

```