id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

857,003 | New feature has been added and working via remotes | Another contribution This week I had a chance to add new feature to a project of another... | 0 | 2021-10-09T02:51:44 | https://dev.to/nguyenhung15913/new-feature-has-been-added-and-working-via-remotes-14oe | opensource, git, remote, merging | #Another contribution

This week I had a chance to add new feature to a project of another programmer, Jun Song. Jun is friendly and also a professional coder.

After forking and cloning his project. I created a new branch with the same name as my issue I filed on his repo(issue-11). Then, I helped Jun to add another flag for his CLI tool. In more detail, his tool now can read a JSON file in which users can specify their options. Instead of entering a really long line:

<code>jun-ssg -i "Silver Blaze.txt." --stylesheet "example.com"</code>

Users now can store all of their options in a <code>config.json</code>

```

{

"input": "Silver Blaze.txt",

"stylesheet": "example.com"

}

```

And can just simply enter:

<code>jun-ssg -c config.json</code>

After that I merge <code>issue-11</code> branch to <code>master</code>. Then I sent him a request and still waiting for his feedbacks of correction.

#Working and Testing via Remotes

Jun also worked on my project. Firstly, I used <code>git remote add</code> and checked out to his branch to test. He did a really good job on validating users' input as well as supporting my tool to read a config JSON file. I saw no errors so I merged his request to my main branch.

In my opinion, <code>git remote</code> is really useful. It allows you to test a pull request. First it can add the branch of the person who sends the request to our project. Then if you are satisfied, you can merge that branch to our main branch.

#Issues

Jun's codes were well-organized with separated folders for main JS file and utility files. Also, to decorate the output to users, Jun used some packages to change tool's output. This was a really good user experience but for the first time, it made me a little bit confused when reading his codes. On top of that, I tried to run the command with <code>-c</code> flag only but the tool kept telling me to specify input file, then I just realized that he made the <code>-i</code> flag as <code>required</code>. I fixed that and the tool worked well after.

In general, his type of coding was cool and professional, he split his codes into components, which were different functions. Also, keeping the main file and <code>util</code> files into different folders will make you debug more easily. This worth learning.

#Links to repos:

Issue: https://github.com/juuuuuuun/jun-ssg/issues/14

Pull request: https://github.com/juuuuuuun/jun-ssg/pull/15

My issue: https://github.com/nguyenhung15913/OSD600-SSG/issues/14

Jun's pull request: https://github.com/nguyenhung15913/OSD600-SSG/pull/18 | nguyenhung15913 |

857,135 | How to send a form to an email without any backend | Hi Everyone! In this post I will be showing you how to send a form in HTML without any backend in... | 0 | 2021-10-09T04:03:02 | https://dev.to/abhidevelopssuntech/how-to-send-a-form-to-an-email-without-any-backend-5enb | email, forms, html, nobackend | Hi Everyone!

In this post I will be showing you how to send a form in HTML without any backend in multiple ways. Remember to follow me for more posts. Now let's get started!

The Method attribute of the form must be set to "POST" for each of these ways to work. And the form input fields must have a name attribute that is not empty. I am going to use this basic HTML Markup:

```html

<div class="container">

<form action="#" method="POST">

<label for="fname">First Name</label>

<input type="text" id="fname" name="firstname" placeholder="Your name..">

<label for="lname">Last Name</label>

<input type="text" id="lname" name="lastname" placeholder="Your last name..">

<label for="country">Country</label>

<select id="country" name="country">

<option value="australia">Australia</option>

<option value="canada">Canada</option>

<option value="usa">USA</option>

</select>

<label for="subject">Subject</label>

<textarea id="subject" name="subject" placeholder="Write something.." style="height:200px"></textarea>

<input type="submit" value="Submit">

</form>

</div>

```

Now I am going to show you the first way to do this. In the action attribute of the form element add this code:

```

mailto:you@example.com <!--put your email here-->

```

What this will do is that it will open a new window of the Mail app and it is going to take all the user text in the input fields and put it in an email. Then if the user clicks "SEND", the form data will be sent to you.

The next way we can do this is by using different form submission companies. The ones we will be using are "Formspree" and "FormSubmit".

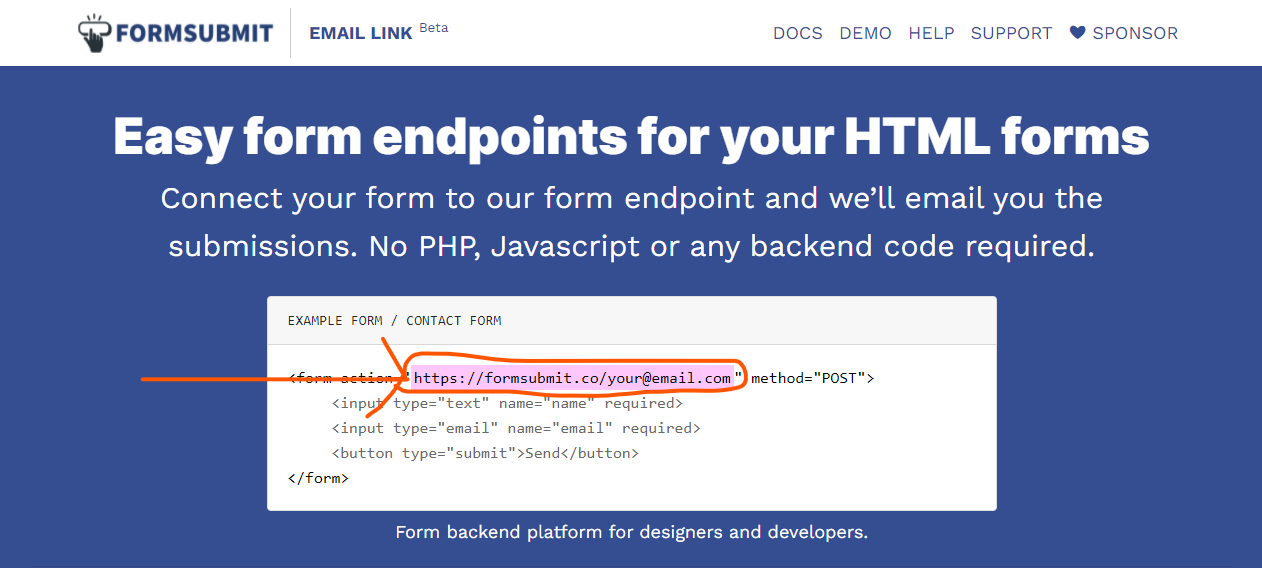

I prefer FormSubmit because it requires no registration so I will start with that. The first thing you need to do is go to [Formsubmit.co](https://formsubmit.co).

Then you need to to copy the code that is pointed to in the image below.

Paste this code in the action attribute of your form. Then enter your email in "your@email.com" and you are all set!

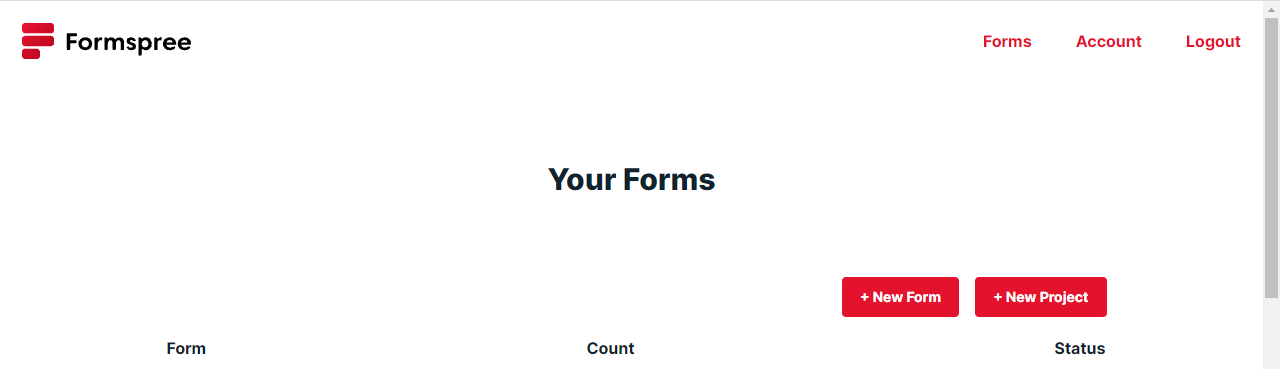

Next let's use Formspree. Go to [Formspree.io](https://formspree.io). Then create a new account by clicking the "Get Started Button".

Then you will be redirected to this page:

Then click "New Form". Then enter your form name and select what email it will send the submissions to (your email).

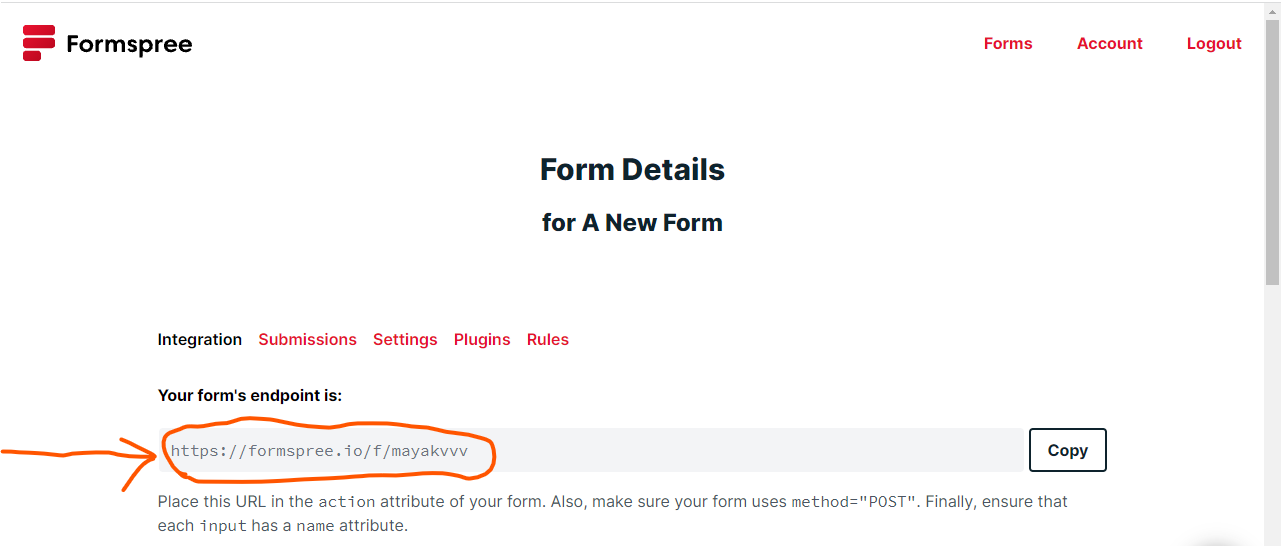

Once you create the form, copy the code that the image below shows.

Then paste that code in the action attribute of your form and you are done!

These are different ways you can send form with HTML without and Backend Code. Thank you for reading my post and if you have any questions, let me know.

Bye for now!

| abhidevelopssuntech |

857,224 | 12 Simple HTML Snippets To Avoid Complex Libraries ⚡✨ | Not knowing the basic tech can sometimes increase the size, affect the performance and add an extra... | 0 | 2021-10-11T17:38:17 | https://dev.to/madza/12-simple-html-snippets-to-avoid-complex-libraries-3na8 | html, css, programming, productivity | ---

title: 12 Simple HTML Snippets To Avoid Complex Libraries ⚡✨

published: true

description:

tags: html, css, programming, productivity

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/vp46impflwmulu2lo70q.png

---

Not knowing the basic tech can sometimes increase the size, affect the performance and add an extra layer of complexity to the project.

This article is a heads up that you can do a lot with just vanilla [HTML](https://developer.mozilla.org/en-US/docs/Web/HTML) and a bit of [CSS](https://developer.mozilla.org/en-US/docs/Web/CSS) if you want it to look pretty.

I have collected some of the most useful HTML tags and attributes, so you can study them and use in your next project.

I also created 12 separate [Codepens](https://codepen.io/collection/mrbBrR?grid_type=grid), so you can get a live taste and play around with the examples.

### 🎨 Color Picker

Often in the developer workflow you might want to access the color spectrum and be able to pick up any shade from it.

You can use `<input type="color">`, which would otherwise be a time-consuming task to write from scratch.

{% codepen https://codepen.io/madarsbiss/pen/vYJBqeX default-tab=result %}

### 📚 Blockquote

When writing articles you might want to highlight some of your favorites quotes.

You can use a `<blockquote>` tag for that. Add some custom styling and you have a nice element that will stand out from the rest of the text.

{% codepen https://codepen.io/madarsbiss/pen/JjyPQBd default-tab=result %}

### 🎵 Audio Player

Writing your own audio player from scratch can be a challenge. You can use the built-in `<audio>` tag, that provides the basic functionality to play your audio files.

Also, you can listen to more of my music [here](https://madza.dev/music).

{% codepen https://codepen.io/madarsbiss/pen/oNevrEb default-tab=result %}

### 📺 Video Player

Another multimedia you could use in your projects is video. Again, you can not just include the video link in HTML and hope that it will play.

To playback videos properly you can use built-in `<video>` tag.

{% codepen https://codepen.io/madarsbiss/pen/BadBgxJ default-tab=result %}

### 🔷 Accordion

Sometimes you might want to hide some content and allow user to reveal it manually (to save the space of the viewport, for example).

You can achieve the described functionality with pure HTML, thanks to `<details>` tag.

{% codepen https://codepen.io/madarsbiss/pen/zYdOVPV default-tab=result %}

### 📅 Date Picker

Working with dates is among the most common reasons why devs search for external libraries.

HTML provides a `<input type="date">` tag, that provides a nice UI with the option to select the dates by clicking on them.

{% codepen https://codepen.io/madarsbiss/pen/qBXWzXE default-tab=result %}

### ⚪ Slider

Slider is a common component to collect the user input in the specific numeric range.

You can use `<input type="range">` to get a fully functional slider, where you can set the min, max and current value.

{% codepen https://codepen.io/madarsbiss/pen/GRvKbXv default-tab=result %}

### ✍ Content Editor

In order to edit content you don't have to use input or textarea fields and set the default values for them.

Instead, you can use `contenteditable` attribute, that allow to edit the content of the div, for example.

{% codepen https://codepen.io/madarsbiss/pen/ExvYBwB default-tab=result %}

### 📷 Picture Tag

You might want to display different images on different screen sizes to improve the performance and UI/UX.

Instead of using the default `<img>` tag, detect the viewport and creating a method to switch between the images, you can use built-in `<picture>` tag.

{% codepen https://codepen.io/madarsbiss/pen/abybomY default-tab=result %}

### ⌛ Progress Bar

The `<progress>` tag represents the completion progress of a task.

You can use it to display various actions, such as a download, file transfer, or installation.

{% codepen https://codepen.io/madarsbiss/pen/oNevKdp default-tab=result %}

### 🔻 Dropdown

Often you might need to collect the user input with multiple possible choices. Instead of listing all the options on the screen, you might include them into dropdown.

Using the `<datalist>` tag will allow users to select options from dropdown, while also allowing to enter their own values.

{% codepen https://codepen.io/madarsbiss/pen/eYEOwdQ default-tab=result %}

### 💭 Tooltip

If you need to give a detailed description of something, it is always nice to include a pop up.

HTML built-in `title` attribute provides that by default.

{% codepen https://codepen.io/madarsbiss/pen/VwzwZvE default-tab=result %}

In this article we reviewed functional HTML elements that you can use while working with text, audio, images, video, etc.

Next time you need the some functionality, make sure to double check if it is not already provided by the HTML itself.

{% twitter 1447623708571541506 %}

<hr>

Writing has always been my passion and it gives me pleasure to help and inspire people. If you have any questions, feel free to reach out!

Connect me on [Twitter](https://twitter.com/madzadev), [LinkedIn](https://www.linkedin.com/in/madzadev/) and [GitHub](https://github.com/madzadev)!

Check out my [portfolio](https://madza.dev/code) for all projects. | madza |

857,296 | Participating in Devtober 2021 | You can skip this portion as this will be just me venting here. Its been a long time since I've... | 0 | 2021-10-09T09:31:43 | https://dev.to/fihra/participating-in-devtober-2021-31b2 | gamedev, unrealengine, beginners, programming | You can skip this portion as this will be just me venting here.

---

Its been a long time since I've posted a blog here. A lot has been going on in my life right now. Still in on the purpose of trying to land that first job, but of course I've gone through a bit of a burnout of applying for jobs again. I have also taken a break from programming the past couple months, so I feel rusty in trying to get that workflow back. It just doesn't help either when I have family who just doesn't believe in me for these goals to have that moral support, so it's tough to really maintain my mind set for this.

---

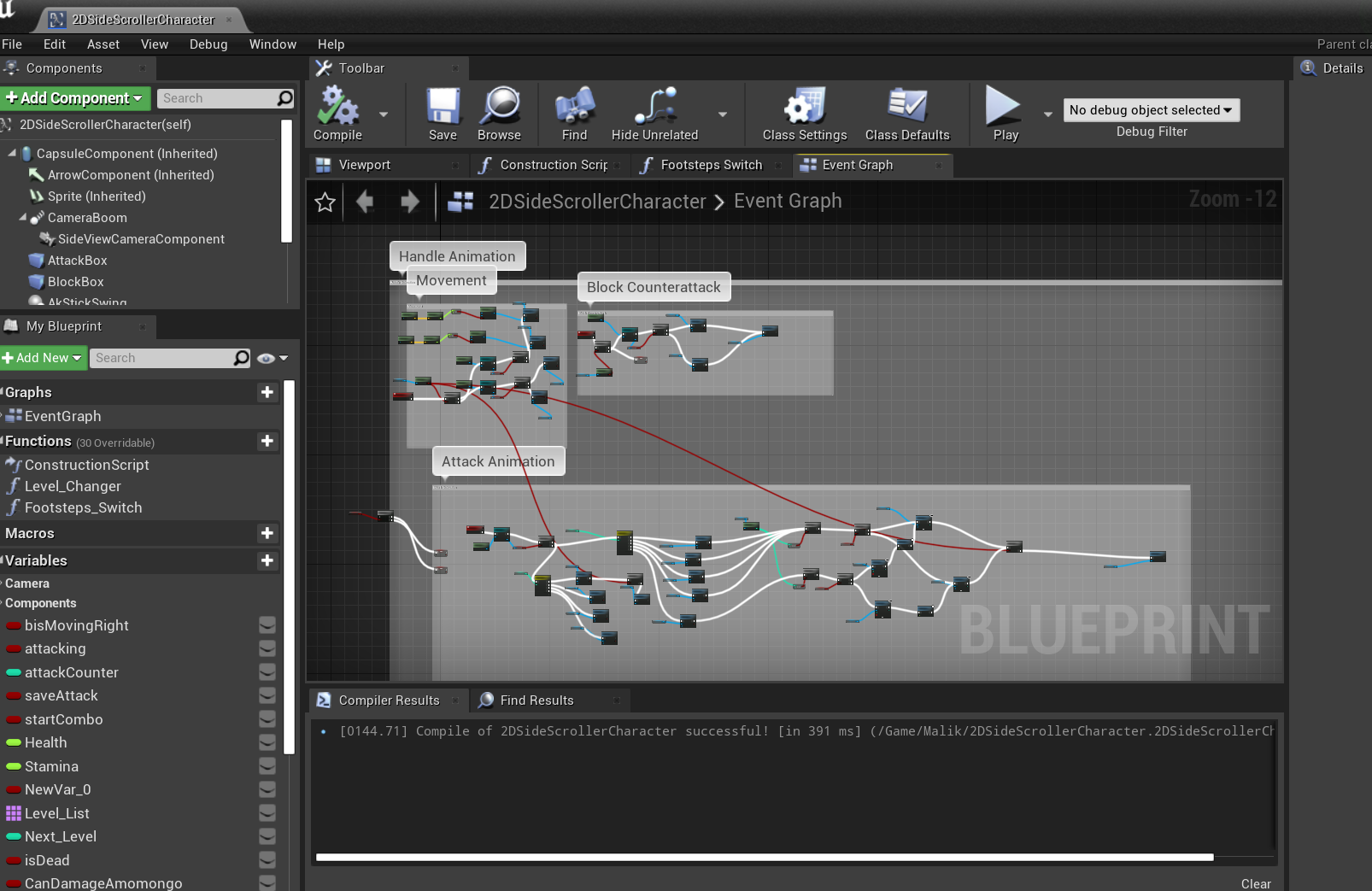

Alright, so I have decided to start Devtober, which started the beginning of this month. I will be continuing a game project I actually started for a different game jam back in July but didn't finish. My goal is actually wanting to finish this game by the end of this month, especially since I feel I am almost finished with it.

## Maliksi

A 2D Action Platformer Boss Rush game where you play as Malik, an Eskrimador, who must retrieve the stolen light from Filipino mythological-inspired creatures to save the village.

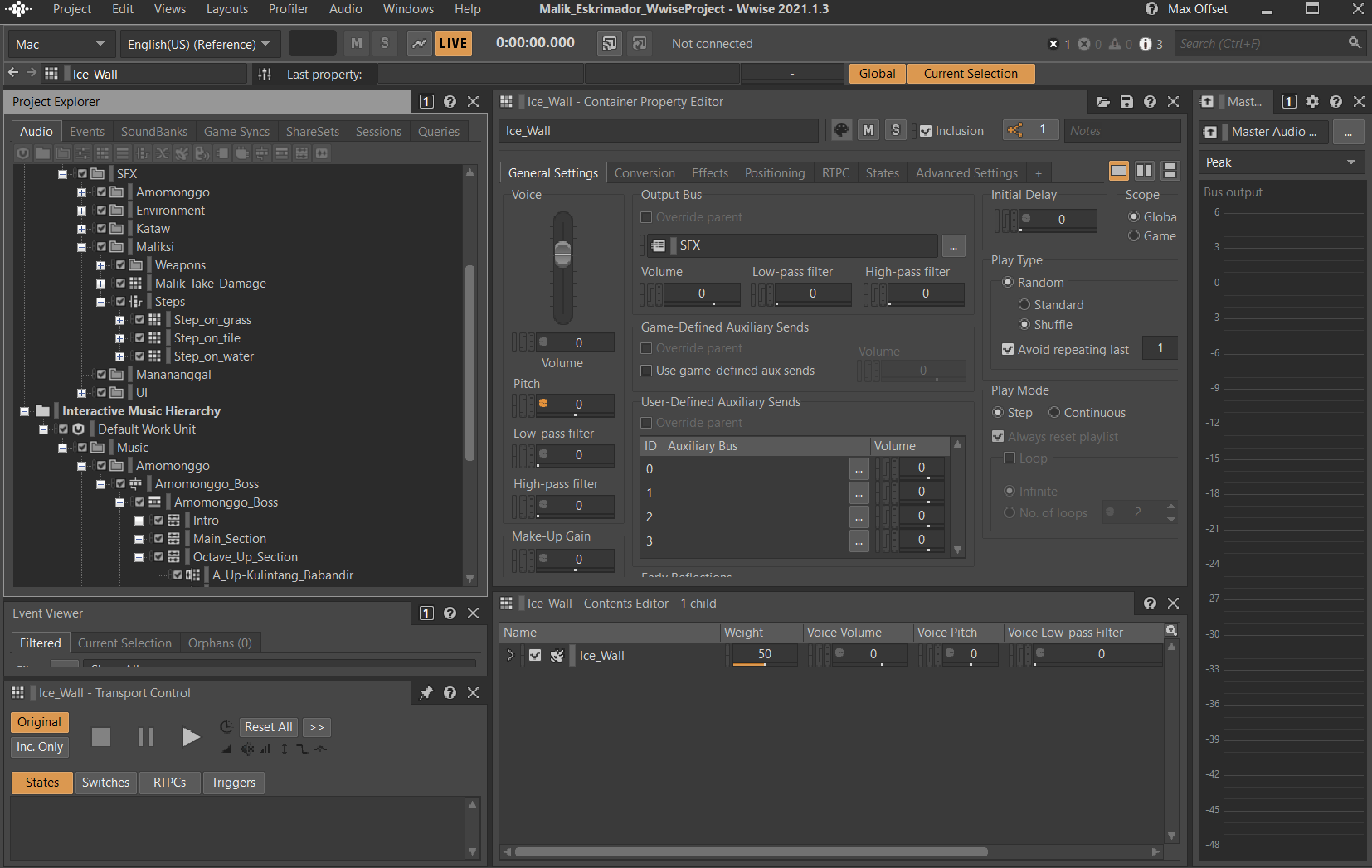

I have been working on the art, programming, sound design, and music. I am learning Unreal Engine with unreal blueprinting (not C++ because I don't feel ready or comfortable in the language yet). Which it took me awhile to get used to visual programming.

I am using Wwise to implement the sound effects and music.

I'm keeping my scope as tight as I can in order to finish this game. I still need to polish one of the boss fights, but the final boss still needs the functionality and mechanics implemented.

Things that I need to work on for this last push for this month:

- polish one of the boss fight's mechanics

- final boss still needs functionality & mechanics implemented

- Waiting for musicians to record their parts for the music so I can start mixing

- Implement the music into the game

- Few more sound effects to design

- Slowly implementing sounds in each level

- Ending still needs to be created

[1st Video Footage](https://twitter.com/FirahFabe/status/1431739110616551428?s=20)

[2nd Video Footage](https://t.co/RxMfwYu00s)

I want to publicize this to hold myself accountable to finish this game. I did participate in Devtober 2020, but I never finished that game, but this one, this one for sure I want to complete, since I've been working on this one for a long time already. Plus, it is also Filipino American History Month, and this game showcases my Filipino culture that I have incorporated into this.

If you want to participate in this game jam, here is the link to the itch.io:

[https://itch.io/jam/devtober-2021](https://itch.io/jam/devtober-2021) | fihra |

857,430 | Day 1 of 100 Days of Code and Scrum: How to GraphQL? | For context, I'm doing a mixture of 100daysofcode with Scrum elements. See the challenge post... | 14,990 | 2021-10-09T12:22:31 | https://dev.to/rammina/day-1-of-100-days-of-code-and-scrum-how-to-graphql-1o78 | graphql, beginners, 100daysofcode, webdev | For context, I'm doing a mixture of 100daysofcode with Scrum elements. See the [challenge post here:](https://dev.to/rammina/100-days-of-code-and-scrum-a-new-challenge-24lp)

## Yesterday

I started learning GraphQL from https://www.howtographql.com. It's a pretty nice GraphQL tutorial because they both have video and text format, and so far I've been enjoying it.

## Today

I kept on plowing through https://www.howtographql.com/basics/2-core-concepts/ and learning more about what GraphQL can do.

Here are some of the things I've learned:

### GraphQL

- GraphQL is strongly typed, which means the typing needs to be declared in advance

- GraphQL uses Schema Definition Language (SDL)

- GraphQL uses queries (GET in REST API), mutations (POST, PATCH, DELETE), and subscriptions (something that lets you listen to mutations and returns response objects)

- mutations allow you to specify a payload to retrieve new information in one call round trip

- subscriptions are like data streams, while queries and mutations are similar to the request-response-cycle

- types serve as entry points for request sent by the client side

- a root field is followed by the payload which is flexible and allows you to retrieve which data you want

- it is much more flexible to query nested information using GraphQL compared to REST API

### Scrum

- a Sprint can be canceled by the Product Owner if the Sprint Goal becomes obsolete

- Product Owners have the option to choose whether to attend a Daily Scrum or not

I'm having a difficulty with getting used to working with the new syntax for GraphQL, since I've been using REST API all this time.

How is everyone doing in their learning journey? Feel free to chat with me in the comments and/via DM!

### DISCLAIMER

This post only expresses my thoughts and opinions (based on my limited knowledge) and is in no way a substitute for actual references. If I ever make a mistake or if you disagree, I would appreciate corrections in the comments!

### Other Media

Feel free to reach out to me in other media!

<span><a target="_blank" href="https://www.rammina.com/"><img src="https://res.cloudinary.com/rammina/image/upload/v1638444046/rammina-button-128_x9ginu.png" alt="Rammina Logo" width="128" height="50"/></a></span>

<span><a target="_blank" href="https://twitter.com/RamminaR"><img src="https://res.cloudinary.com/rammina/image/upload/v1636792959/twitter-logo_laoyfu_pdbagm.png" alt="Twitter logo" width="128" height="50"/></a></span>

<span><a target="_blank" href="https://github.com/Rammina"><img src="https://res.cloudinary.com/rammina/image/upload/v1636795051/GitHub-Emblem2_epcp8r.png" alt="Github logo" width="128" height="50"/></a></span>

| rammina |

857,662 | Help me... | Hello everyone... I am beginner of full stack dev journey and also new here, Thanx to @comscience ... | 0 | 2021-10-09T16:32:26 | https://dev.to/akulkarni/help-me-1ajp | help | Hello everyone...

I am beginner of full stack dev journey and also new here, Thanx to @comscience ...

Plz suggest me

any Blog to read, video to watch or podcast to listen, which helps me for journey of Full stack developer.

Thank you 😊

| akulkarni |

857,667 | Webson: a new DOM markup | Using JSON for complex layouts | 0 | 2021-10-25T17:50:27 | https://dev.to/gtanyware/webson-a-new-dom-markup-5cn6 | markup, html, json, dom | ---

title: Webson: a new DOM markup

published: true

description: Using JSON for complex layouts

tags: markup,html,json,dom

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/apzlz352ixhvrislpvt0.jpg

---

Photo by <a href="https://unsplash.com/@halacious?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText">Hal Gatewood</a> on <a href="https://unsplash.com/s/photos/website?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText">Unsplash</a>

## Synopsis

This article introduces _Webson_, an easy-to-use syntax with its own run-time rendering engine, that turns JSON into DOM markup and adds features way beyond those of HTML.

## Introduction

A web page is the visual representation of a Document Object Model, or DOM, the data structure maintained internally by all browsers. Traditionally, the DOM is constructed by the browser from HTML scripts, but as pages get bigger and more complex HTML becomes ever more cumbersome. In recent years it has become increasingly common to create the DOM using JavaScript, with no HTML ever being seen, but while this suits programmers well it requires a different skill set from that needed to build pages the traditional way.

Today's Web pages may have hundreds or thousands of elements, all carefully positioned to create the desired result. There's no way to hide this complexity, whether it's done with HTML/CSS, JavaScript or some kind of no-code visual builder. In the end it's a human brain that's doing the real work of translating the customer's requirements - a mental picture - into something the browser can use to create the DOM.

HTML is not program code; it's a form of "markup", the ultimate expression of which came in the form of XML, able to represent not only visual structures but a wide range of other data too. Unfortunately, XML is wordy and hard to read and is not greatly loved. In 2001, Douglas Crockford invented (he would say "discovered") a simpler syntax for representing data structures, as a means of transferring data in and out of JavaScript programs in the form of plain text. The syntax is JavaScript Object Notation, or JSON, and in the past 2 decades it has widely supplanted XML. Virtually every programming language has the ability to read and write JSON and it's now the most common way to transfer data across the Web.

Since HTML shares many of the disadvantages of XML, the question might be asked, _Can JSON also replace HTML?_. If the answer is "yes", a couple of supplementary questions might be

_Can we have user-defined variables and reusable blocks?_

_How about conditional structures?_

which would greatly reduce the amount of markup needed to describe a complex web page, where items are commonly repeated with only minor differences.

## Webson

Webson is a markup syntax that allows JSON to be used to describe a DOM, together with a JavaScript rendering engine that can be embedded in any web page to process scripts at runtime. The system is immediately usable by HTML/CSS coders and no JavaScript experience is required. It's aimed at simplifying the design and implementation of highly complex layouts, where precise positioning of large numbers of elements is hard to achieve manually, and it achieves this with JSON markup rather than with code.

## Getting started

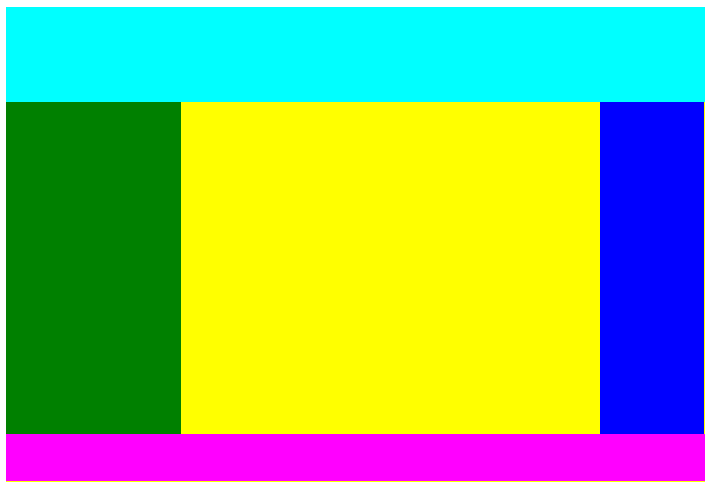

Let's start with a simple example; a layout commonly found in online magazines and social media. At the top there's a full-width header; under this a central panel with 2 sidebars and at the bottom a footer. As this is only an example I've given each of the component `div`s its own background color so it stands out clearly. It looks like this:

Here's the HTML that will create this screen. It uses inline styles to avoid the need to present a separate CSS file:

```html

<div style="width:50%;height:50%;display:flex;flex-direction:column">

<div id="top" style="height:20%;background:cyan">

</div>

<div style="width:100%;flex:1;display:flex;flex-direction:row">

<div id="left" style="display:inline-block;width:25%;height:100%;background:green">

</div>

<div id="center" style="display:inline-block;height:100%;background:yellow;flex:1">

</div>

<div id="right" style="display:inline-block;width:15%;height:100%;background:blue">

</div>

</div>

<div id="bottom" style="height:10%;background:magenta">

</div>

</div>

```

This is a total of 655 characters. The corresponding Webson script to create the same screen is 1172 characters, nearly twice as many, and occupies 61 lines rather than 14, but before you dismiss Webson as being too wordy I must say in its defence that this is a very basic example which doesn't make use of any of the more advanced features of the system. More complex scripts tend to be far smaller than their HTML equivalents, as we'll see later.

The reason for the extra size in this example is partly that every item is named and partly because JSON itself is fairly bulky (lots of double-quotes), while the increase in lines is mainly because it's a lot more spaced out. This helps readability; high information density makes code hard to read at a glance as the eye has to pick out specific details from a dense surrounding mass. With Webson, the CSS properties are separated out, one per line, rather than all being crammed onto a single line. This can of course be done with HTML too, but because there's no agreed way to present it the result is usually an unstructured mess, so most coders just put everything on the same line.

Here's the script. It just uses a basic feature set; I'll get on to some of the advanced features later.

```json

{

"width": "50%",

"height": "50%",

"display": "flex",

"flex-direction": "column",

"#": ["$Top", "$Middle", "$Bottom"],

"$Top": {

"#element": "div",

"height": "20%",

"background": "cyan"

},

"$Middle": {

"#element": "div",

"width": "100%",

"flex": 1,

"display": "flex",

"flex-direction": "row",

"#": ["$Left", "$Center", "$Right"]

},

"$Bottom": {

"#element": "div",

"height": "10%",

"background": "magenta"

},

"$Left": {

"#element": "div",

"display": "inline-block",

"width": "25%",

"height": "100%",

"background": "green"

},

"$Center": {

"#element": "div",

"display": "inline-block",

"flex": 1,

"height": "100%",

"background": "yellow"

},

"$Right": {

"#element": "div",

"display": "inline-block",

"width": "15%",

"height": "100%",

"background": "blue"

}

}

```

## How it works

Running through the script, you will see that every DOM element has its own named block of JSON data. User-defined names all start with `$`. There are also directives and other system items; the names of these start with `#`. Everything else in the script above is a CSS style to be applied to the current element.

In the above, most of the blocks include a `#element` directive, which names the DOM element type. If this is missing, everything in the block applies to the current element (the one defined in the block that calls this one). Here the only block that lacks an `#element` is the very first one, so its styles all apply to the parent container that was created outside Webson and passed to its renderer as a parameter.

The symbol `#` by itself signals that child elements are to be added. This directive takes either a single name or an array of names.

## Attributes

The structure we've built here isn't much use unless we can add further items to the various `div`s. Some of this can be done with further Webson code but ultimately you'll either use an `onClick="<something>"` callout or a JavaScript function that populates or interacts with the DOM. For the latter to work, elements must have unique ids to allow JavaScript to find them. Here's the `$Left` block again, with an id and a couple of other additions:

```json

"$Left": {

"#debug": 2,

"#doc": "The left-hand sidebar",

"#element": "div",

"@id": "left",

"display": "inline-block",

"width": "25%",

"height": "100%",

"background": "green"

},

```

Here we have another new symbol, `@`, which (appropriately) signifies an _attribute_. Various HTML elements require special attributes such as `@id`, `@class`, `@type`, `@href`, `@src`, etc. In each case the name is that of the HTML attribute prefixed by `@`.

Another feature above reveals a built-in debugging capability. When hand-building HTML, errors are common, often resulting in strange layouts that are not at all as intended. Webson allows you to specify 3 different debug levels:

`"#debug": 0` - no debugging output

`"#debug": 1` - Show all `#doc` properties

`"#debug": 2` - Show every item

which enables you to see what is happening. The output for the above is

```

Build $Left

The left-hand sidebar

#element: div

Attribute id: "left" -> left

Style display: "inline-block" -> inline-block

Style width: "25%" -> 25%

Style height: "100%" -> 100%

Style background: "green" -> green

```

This is a simple example where all values are constants. The values appear to be repeated but this will not always be the case. In more complex scripts you will often see the results of expressions being evaluated.

`#doc` items can be either single lines of text or arrays of lines. They are just there for the benefit of the programmer and have no effect on the screen being constructed.

A `#debug` directive affects its own block and those below it (defined using `#`).

## Nested bocks

Webson implements nesting, whereby items declared at one level apply to all those in lower (contained) levels. Changing a value at one level only affects those at that level and beneath it; those above are unaffected.

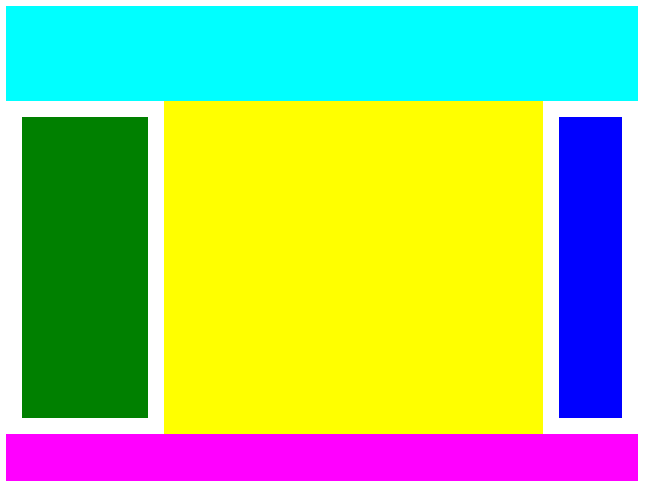

For example, let's suppose the two sidebars share a common feature; they each have an inner `div` and padding to produce a border. Here's what it should look like:

To achieve this we can rewrite the last part of the script as follows:

```json

"$Left": {

"#doc": "The left column",

"$ID": "left",

"$Width": "25%",

"$Color": "green",

"#": "$LRPanel"

},

"$Right": {

"#doc": "The right column",

"$ID": "right",

"$Width": "15%",

"$Color": "blue",

"#": "$LRPanel"

},

"$LRPanel": {

"#element": "div",

"display": "inline-block",

"width": "calc($Width - 2em)",

"height": "calc(100% - 2em)",

"padding": "1em",

"#": "$LRSubPanel"

},

"$LRSubPanel": {

"#element": "div",

"@id": "$ID",

"width": "100%",

"height": "100%",

"background": "$Color"

}

```

Here I've left out the block for `$Center` as it's unchanged. Both `$Left` and `$Right` now no longer declare their own `#element`; instead they set up user-defined variables `$ID`, `$Width` and `$Color` and invoke `$LRPanel` to construct the element. I suggest using an initial capital letter for each user-defined name, to make them easier to spot, but it's not mandatory. Any variable declared or modified at a given level in the structure will be visible at all points beneath that one, but changes do not propagate upwards.

`$LRPanel` creates a `div`, applies padding to it and creates an inner `div` called `$LRSubPanel`. Note how the `$Color` variable is passed down and used here, resulting in a colored panel with a white border. Note also the use of `calc()` in `$LRPanel` to allow for the padding, which in a conformant browser adds to the width or height of the element.

## How to run it

To view this demo on a PC, place the following HTML file on your server:

```html

<html>

<head>

<meta charset="UTF-8">

<meta name="viewport" content="width=device-width, initial-scale=1.0">

<title>Webson demo</title>

<script type='text/javascript' src='resources/plugins/webson.js'></script>

</head>

<body>

<div id="main" style="width:640px"></div>

<script>

window.onload = function() {

render(`resources/json/simple.json`);

async function render(file) {

const response = await fetch(file);

const script = await response.text();

Webson.render(document.getElementById("main"), `keyboard`, script);

}

}

</script>

</body>

</html>

```

For mobile, the width can be set to `` `100%` ``. The JSON script is assumed to be in a folder on your server at

```

(your domain)/resources/json/simple.json

```

The code above uses the relatively-new standard function `fetch()` to get the named script from a file on the server. It then calls `render()` in the Webson package (`webson.js` in the repository) to create the DOM tree that corresponds to the JSON script.

## From here on in

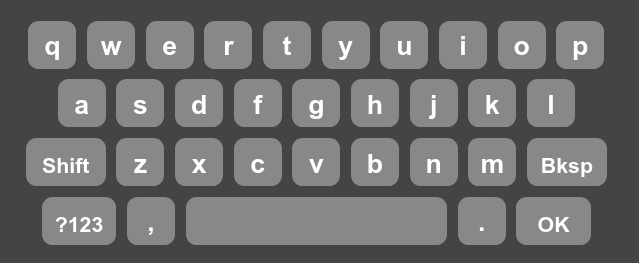

This has been a necessarily brief introduction to Webson, since to cover every feature in detail would result in a very lengthy article. A more in-depth treatment can be found in [the Webson repository](https://github.com/easycoder/webson). The example used is the following on-screen virtual keyboard:

The repository documentation starts with the page you are reading now, then goes on to describe how to make the virtual keyboard depicted above. It then shows how to make the keyboard respond to its `Shift` and `?123` keys being tapped, to change the key legends appropriately. This is all done with simple JSON commands and no conventional coding at all. You can see and test-drive the virtual keyboard with the above functionality [here](https://webson.netlify.app/keyboard.html).

Comments are welcome, as are suggestions on how to improve Webson. | gtanyware |

857,710 | Ema-Johnia {day-30} | 100daysofcode {Day - 30} Today's learning about JavaScript Localstorage. I've created a... | 0 | 2021-10-09T17:59:20 | https://dev.to/alsiam/ema-johnia-day-30-fd1 | 100daysofcode, javascript, react, programming | #100daysofcode {Day - 30}

Today's learning about JavaScript Localstorage. I've created a shopping cart with ReactJs and if you add products to the cart it will save the data to local storage and you will see the products on the cart after browser refresh or exit.

Link: https://ema-johnia.netlify.app

#learning #javascript #programminglife #react | alsiam |

858,001 | How to Create Connection With Database in PHP | How to Add Data in Database Using PHP | How to Create Connection With Database in PHP | How to Add Data in Database Using PHp In this post,... | 0 | 2021-10-10T04:39:45 | https://dev.to/hmawebdesign/how-to-create-connection-with-database-in-php-how-to-add-data-in-database-using-php-b52 | webdev, php, database, mysql | How to Create Connection With Database in PHP | How to Add Data in Database Using PHp

In this post, I show you the steps to create a connection with your database in PHP and how to add data to MySQL Database.

In this article, I will also show you how to create a connection with a database in PHP| Add Data into MySQL Database|create a MySQL database in localhost.

•First open the browser and type 127.0.0.1 or localhost depending on your computer's preference

•Then click next and select phpMyAdmin from the dropdown list

•Fill in username, password, db name and click the "Create" button

**Watch Full Video**

{% youtube RwQc7c1FKHc %}

| hmawebdesign |

858,009 | What is a Blockchain? | What is blockchain? According to Wikipedia, it is a list of records, or blocks, that are linked... | 14,962 | 2021-10-11T03:52:01 | https://dev.to/jokim/what-is-a-blockchain-5a2l | blockchain | What is blockchain?

According to Wikipedia, it is a list of records, or blocks, that are linked together using cryptography.

On a very basic level, a block consists of four main things:

1. data - any data that you want to store

2. previous hash - this connects the block to its previous block

3. hash - code that identifies the block

4. nonce - arbitrary random number

There are five important concepts of blockchains:

1. Encryption(SHA256)

2. Immutable ledger

3. Distributed p2p ledger

4. Mining

5. Consensus protocol

**Encryption(SHA256)**

This is the code that you can identify any block with. Imagine it as the fingerprint of the block. SHA256 is the encryption tool that creates this fingerprint for each block. SHA stands for secure hashed algorithm and the 256 stands for the number of bits the algorithm has which is 64 characters in length.

Five important characteristics of SHA256 are as follows:

1. Deterministic - the same input outputs the same hash each time

2. Avalanche Effect - a minor change in the input outputs a completely different hash

3. One-way - you cannot reverse engineer the hash meaning you cannot determine the input using the output

4. Fast computation

5. Withstands collisions - different inputs cannot have the same output

**Immutable ledger**

Let's first define the two words. Immutable means unchangeable. A ledger is basically a list of all business transactions. For example, if you went to a nearby store and paid for a coke with your debit card then that transaction is stored in your bank ledger. Why is this ledger important? It's important because that's the way we can keep track of how much money you have left in your account. If you claim that you never bought anything in the store the ledger is the evidence used to prove that your claim is false.

*How is this relevant to blockchain?*

It's relevant because blockchains can serve as this ledger that's immutable. For example, if you wanted to buy a house then you would pay for it and then register yourself as the owner of the home in some government institution. That way no one can arbitrarily claim that your home is theirs because the government institution serves as the ledger in this case. But with blockchain we are ridding this government institution and replacing it with blockchain. There are many benefits to this because no one can tamper with the data. If someone tries to change the data in the blockchain then it would change the hash of the blockchain which would invalidate the blockchain with the tampered data.

**Distributed p2p ledger**

Like before, let's define the words first. Distributed just means spread out and p2p which stands for peer-to-peer means links between peers.

*How is this relevant to blockchain?*

Basically the blockchain is stored not in one central place but distributed among all computers on the network. In other words, all the computers on the network have a copy of the blockchain. Whenever a new transaction is made on a certain computer the transaction is signaled to all the other computers on the network where they make a copy of the newly added blockchain.

*Why is this necessary?*

This p2p networking makes it extremely difficult for anyone to tamper with blockchain. When someone tries to tamper with a blockchain by, for example, trying to change the name of the owner of a house all the other computers on the network will realize that it was getting tampered with because the majority of the computers on the network have a different copy of blockchain. This prevents the new tampered block from being attached to the chain of blocks. We will go into more detail regarding this issue in the consensus protocol part below.

**Mining**

Let's review once again what a blockchain is composed of:

1. data - any data that you want to store

2. previous hash - this connects the block to its previous block

3. hash - code that identifies block

4. nonce

5. blockchain number

The hash of a blockchain is generated as a combination of the blockchain number, previous hash, data, and nonce. The miners basically mine blockchains by finding the nonce that generates the hash. Only the nonce is computed by the miners because it wouldn't make sense to change anything else. For example, you can't change the data because that would be tampering with the data and you can't change the previous hash because that would break the connection with the previous blockchain. So, the way these blockchains are mined is by someone or something finding the number that goes in the nonce. Miners use brute force to guess the nonce of the blockchain. Whoever guesses the nonce first gets a financial reward in the form of coins in the case of Bitcoin.

**Consensus protocol**

Consensus means majority opinion and protocol simply means some rule. The reason we need this consensus protocol is because the blockchain uses a p2p network like we discussed above. An important concept that is frequently mentioned is the Byzantine General's Problem. It deals with the problem of who to believe which is very important in blockchain because it is also composed of a network of computers.

*What is the Byzantine General's Problem?*

Assume there are 5 Byzantine generals and one of them is a traitor. They need to either attack an enemy base or retreat. Now the generals need to be able to distinguish whether a signal is coming from a fellow general or a traitor. The way they do this is by tallying the number of signals they receive from each other and, of course, majority rules. So, if there are 5 people you will receive 4 signals in total (excluding your signal). If there is one traitor then you would receive 3 signals of the same kind which is majority. Now this would not work if more than 30% of the generals are traitors.

*How is this relevant to blockchain?*

This is relevant to blockchain in the sense that all the computers on the blockchain network are like the generals. They need to be able to tell whether the signals they receive from the other computers are valid or not and they do this by the number of signals they get.

Now how do we actually implement this in real life?

There are two main consensus protocols: Proof-of-Work("PoW") and Proof-of-Stake("PoS"). These consensus protocols allow other computers to distinguish whether a signal is valid or not.

This post will discuss PoW because Bitcoin implements PoW and will provide a good foundation on how other consensus protocols work.

We have already briefly discussed how PoW works in the blockchain mining section. The miners need to brute force their way to find the nonce that generates the hash of the blockchain and once they do they are rewarded financially with a coin. This is PoW.

One main problem is that two different blocks can be added to the chain. Say about 70% of the computers on the network have block "A" and the rest have block "B". What happens then? The network waits for another block to be added to the chain. The longer chain usually wins. What this means is that the longer chain is copied over to the other computers that had block "B" and block "B" is removed from the chain. Whichever side has more computing power has a higher probability of finding the next nonce that generates the hash which is why this is called PoW. The computers that have 51% of the computing power usually wins majority.

| jokim |

858,166 | Feature Flags with ConfigCat and Asp.Net Core Options | A feature flag or 'feature toggle' is a common technique to enable or disable application features... | 0 | 2021-10-10T09:40:25 | https://configcat.com/blog/2021/10/10/aspnetcore-options-pattern/ | aspnetcore, optionspattern, configcat | A feature flag or 'feature toggle' is a common technique to enable or disable application features dynamically. For example, feature flags enable product owners to turn on and off features during runtime of the application. Certain features may be turned on/off for given environments, users, or regions only. This way features may be A-B tested, or tested with a given percentage of users, or different countries of the world. It can also provide a solution to meet regional restrictions of different countries.

In this post I investigate integrating [ConfigCat's](https://configcat.com/) feature management with an ASP.NET Core 6 web API service and the `Options<T>` [pattern](https://docs.microsoft.com/en-us/aspnet/core/fundamentals/configuration/options?view=aspnetcore-5.0). I will also focus on using the *AutoPolling* mechanism built into the ConfigCat client library, to refresh feature flags' state during application runtime.

> Throughout my carrier I have used some sort of a feature flag solution. In some cases, this was a conscious decision built upon a well-designed application architecture, while in other cases it was just an `if` statement with a key-value pair in the configuration file. However, I have not yet encountered such a complete service as the one provided by [ConfigCat](https://configcat.com/).

I will focus on using feature flags solution within web services. Although, I see an even bigger need for such a robust solution in desktop applications. Managing the configuration of a few web service instances is inherently simpler to manage hundreds or thousands of desktop applications, which is common in the enterprise world.

## Implementation Options

Feature flags can be leveraged in applications many ways. In the past, when computer networks were less ubiquitous, feature flags were typically implemented as compiler directives. This way a given code path was compiled into the application or remained as commented out section. The advantage of this solution is less branching and smaller code size. Although to 'toggle' a feature a new compilation of the source code is required, which makes this solution less dynamic. Users would need to uninstall/install or upgrade their application with the new binaries to get a feature toggled.

Today the most common technique is branching by `if` statements. If the features flag is in enabled state a certain code path of the application is executed. For example, when a button is clicked to start an order processing, *if* a given feature flag enabled an SMS is also sent to the user. One could express this as:

```csharp

// ...

ProcessOrder();

var isSmsFeatureEneabled = client.GetValue("sendSMS", false);

if(isSmsFeatureEneabled)

SendSms();

// ...

```

Another approach would be to leverage [branching by abstractions](https://www.martinfowler.com/bliki/BranchByAbstraction.html). As this is a larger topic, I am not detailing it within this post, exploring this area may worth its own writing.

One very recent feature flag I encountered comes from .NET itself: using the [HTTP3 preview feature](https://devblogs.microsoft.com/dotnet/http-3-support-in-dotnet-6/) in `HttpClient` requires the developers to proactively enable the feature by setting the `<EnablePreviewFeatures>True</EnablePreviewFeatures>` flag in the csproj file.

## ConfigCat and Asp.Net Core and .NET 6

Using the ConfigCat's service does not restrict us choosing any of implementation techniques, although one would probably not choose to use compiler directives. In this section I show how one can integrate the ConfigCat's configuration with the `Option<T>` pattern of .NET 6. I am using a late preview version of .NET6 at the time of writing this post. DotNet 6 provides a new configuration concept with `ConfigurationManager` type, which is not available in the previous versions. `ConfigurationManager` allows to initialize configuration sources while using configuration values of previously initialized sources. It achieves this by implementing `IConfigurationBuilder, IConfigurationRoot, IConfiguration` interfaces at the same time.

One consideration to make is that no user specific feature flag will be used, which means that in this implementation flags will not be respected if set for specific users or 'target % users' on the ConfigCat's portal. In all cases the 'To all users' value of the feature flag is used.

In general, when using `Options<T>` pattern, there is no good API to query user specific settings, and in a web application used by multiple users, there is also no effective way to fetch flags for one or a few users during application startup. Thus, all feature flags shall be independent of users when being registered with Options. We can leverage though non-user specific information i.e. semantic version of the application is greater than 1.2.3. I will leave it up for the reader to extend the presented solution with such extensions.

Let me first preview the whole 'startup' code and the action of the service, then describe the necessary types I created for the solution. Here is *Program.cs*:

```csharp

using ConfigCat.Client;

using Microsoft.Extensions.Options;

var builder = WebApplication.CreateBuilder(args);

builder.Configuration.AddConfigCat(false);

builder.Services.Configure<FeatureSet>(builder.Configuration.GetSection(nameof(FeatureSet)));

var app = builder.Build();

app.UseHttpsRedirection();

app.MapGet("api/feature", async (HttpContext context, IOptionsSnapshot<FeatureSet> features) =>

{

if (features.Value.GrandFeature)

{

context.Response.StatusCode = StatusCodes.Status200OK;

await context.Response.WriteAsync("Hello World!");

}

else

{

context.Response.StatusCode = StatusCodes.Status404NotFound;

}

});

app.Run();

public class FeatureSet

{

public bool GrandFeature { get; set; }

}

```

This web API has a single GET endpoint `api/feature`. The response depends on a feature flag: `GrandFeature`. When the feature is turned on, it returns HTTP 200 OK, with `Hello World!` as the content. When the feature is turned off, it returns 404 Not Found. The state of the feature flag is accessed through `IOptionsSnapshot<FeatureSet> features`, which I will explain later in this post.

The `builder.Configuration.AddConfigCat(false);` uses a custom extension method to add the toggle values of ConfigCat to the Asp.Net Core's configuration.

The second line `builder.Services.Configure<FeatureSet>(builder.Configuration.GetSection(nameof(FeatureSet)));` sets up the feature flags with the `Options<T>` pattern. This is the standard way to bind a given section of the configuration to a type, while also registering the type with the DI container. Here, I bind the configuration to a type called `FeatureSet` which has a single boolean property *GrandFeature*.

Let's investigate the custom extension method. The upcoming code focuses on getting the configuration values of ConfigCat into Asp.Net's configuration. Below the extension method uses `ConfigurationManager` to read the ConfigCat API key and *poll interval* settings from the 'appsettings.json' file. These configuration values are added by Asp.Net web application's file provider during startup. In production, one would prefer to pass the ConfigCat key as a secret or as an environment variable. In either case a configuration source would set the value before `AddConfigCat` is invoked. As '0' is an invalid interval for polling, the extension method validates it. The method has an *optional* parameter which indicates the desired behavior when ConfigCat cannot fetch the feature flags, while *onError* parameter is an action that is invoked in case of an exception. Feature flags are fetched from the service periodically, the *onError* parameter provides a way to observe errors during the background polls.

```csharp

public static class Extensions

{

public static IConfigurationBuilder AddConfigCat(

this ConfigurationManager manager,

bool optional = false,

Action<Exception>? onError = null)

{

var key = manager["ConfigCat:Key"] ?? throw new ArgumentNullException("ConfigCat:Key");

var pollInterval = manager.GetValue<TimeSpan>("ConfigCat:PollInterval");

if (pollInterval == TimeSpan.Zero)

throw new ArgumentNullException("ConfigCat:PollInterval");

var options = new ConfigCatOptions(key, pollInterval, optional, onError);

if (manager is IConfigurationBuilder builder)

builder.Add(new ConfigCatConfigurationSource(options));

return manager;

}

}

public record ConfigCatOptions(string Key, TimeSpan RefreshInterval, bool IsOptional, Action<Exception>? OnError);

```

`ConfigCatOptions` record type is encapsulating the parameters for `ConfigCatConfigurationProvider`.

The next type is `ConfigCatConfigurationSource`. An `IConfigurationSource` is required to be implemented as this is the type added to the configuration sources. The responsibility of the type is to create an `IConfigurationProvider`. With .NET6 when a configuration provider is removed or modified, all the remaining sources are rebuilt. This implementation returns a lazily instantiated `ConfigCatConfigurationProvider` instance. I use the singleton semantics because auto polling built into the `ConfigCatConfigurationProvider` refreshes the configuration automatically.

```csharp

public class ConfigCatConfigurationSource : IConfigurationSource

{

private readonly ConfigCatOptions _options;

private ConfigCatConfigurationProvider? _provider;

public ConfigCatConfigurationSource(ConfigCatOptions options)

{

_options = options ?? throw new ArgumentNullException(nameof(options));

}

public IConfigurationProvider Build(IConfigurationBuilder builder) =>

_provider ??= new ConfigCatConfigurationProvider(_options);

}

```

> Another use case could be when the feature flags are read only at application startup. In certain applications this could be a valid scenario. For this, manual polling would be a better choice, and creating a new instance of `ConfigCatConfigurationProvider` on every `Build()` method invocation would also make sense.

The last and most complex class to implement is `ConfigCatConfigurationProvider`. This type derives from `ConfigurationProvider` which already implements many of the `IConfigurationProvider` interface members. Here, I only override the `Load()` method, which is invoked by the *Host* right after the configuration provider is instantiated. In the first invocation I create a new `ConfigCatClient`, and because `AutoPollConfiguration` uses a `Timer` to load configuration data asynchronously, a task completion source must be waited. Unfortunately, the method signature does not allow to use the `await` keyword. Without waiting for the task completion source, further providers would not be able to read the data set by this provider.

```csharp

public class ConfigCatConfigurationProvider : ConfigurationProvider, IDisposable

{

private readonly ConfigCatOptions _options;

private readonly AutoPollConfiguration _polling;

private readonly TaskCompletionSource _initialLoad;

private IConfigCatClient? _configCatClient;

public ConfigCatConfigurationProvider(ConfigCatOptions options)

{

_options = options ?? throw new ArgumentNullException(nameof(options));

_polling = new AutoPollConfiguration

{

SdkKey = _options.Key ?? throw new ArgumentNullException(nameof(_options.Key)),

PollIntervalSeconds = (uint)_options.RefreshInterval.TotalSeconds,

};

_initialLoad = new TaskCompletionSource();

_polling.OnConfigurationChanged += OnConfigurationChanged;

}

private void OnConfigurationChanged(

object sender,

OnConfigurationChangedEventArgs eventArgs)

{

LoadData();

_initialLoad.TrySetResult();

}

public void Dispose() { }

public override void Load()

{

_configCatClient ??= new ConfigCatClient(_polling);

_initialLoad.Task.GetAwaiter().GetResult();

}

public void LoadData()

{

try

{

Data = ParseKeys();

}

catch (Exception ex)

{

if (_options.IsOptional)

Data = new Dictionary<string, string>(StringComparer.OrdinalIgnoreCase);

if (_options.OnError is { })

_options.OnError.Invoke(ex);

else

throw;

}

OnReload();

}

private IDictionary<string, string> ParseKeys()

{

if (_configCatClient == null)

throw new InvalidOperationException(nameof(_configCatClient));

var result = new Dictionary<string, string>(StringComparer.OrdinalIgnoreCase);

foreach (var key in _configCatClient.GetAllKeys())

{

var value = _configCatClient.GetValue(key, string.Empty);

result.Add(key.Replace('_', ':'), value);

}

return result;

}

}

```

Once `ConfigCatClient` has loaded the data, the `OnConfigurationChanged` event is fired. This is when all key value pairs are loaded. `LoadData()` and `ParseKeys()` methods read and parse the keys and corresponding values. The result dictionary is set in the *Data* property, which is declared by the base class. The only additional logic applied here is to replace the underscore characters with semicolons. This is done, as the ':' character is unsupported in key names, so to deal with the hierarchy of configuration values, another character must be used for the ConfigCat feature names. Using the '_' character resembles a similar behavior to using configuration values with environment variables.

Note, that `LoadData()` method invokes a method from the base type: `OnReload();`. This will generate a new change token signaling the configuration provider that the configuration values have changed. The values of options might change due to the built-in auto-polling mechanism, however the `OnConfigurationChanged` event is only fired when the values have changed.

To read the latest values of configuration while serving the HTTP request, an `IOptionsSnapshot<FeatureSet>` is passed to the GET request's action handler. This type is useful in scenarios where options should be recomputed on every request.

## Conclusion

In one way or another an *aging*, but still maintained application requires a solution for feature flags. The more robust this solution is the more choice is given to the development team to isolate certain preview features to certain users. Implementing a custom feature flag solution does not usually provide a competitive advantage, using service built for the purpose makes sense. In this regards, ConfigCat's solution seems a reasonable choice for my next project. | ladeak87 |

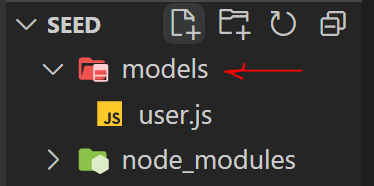

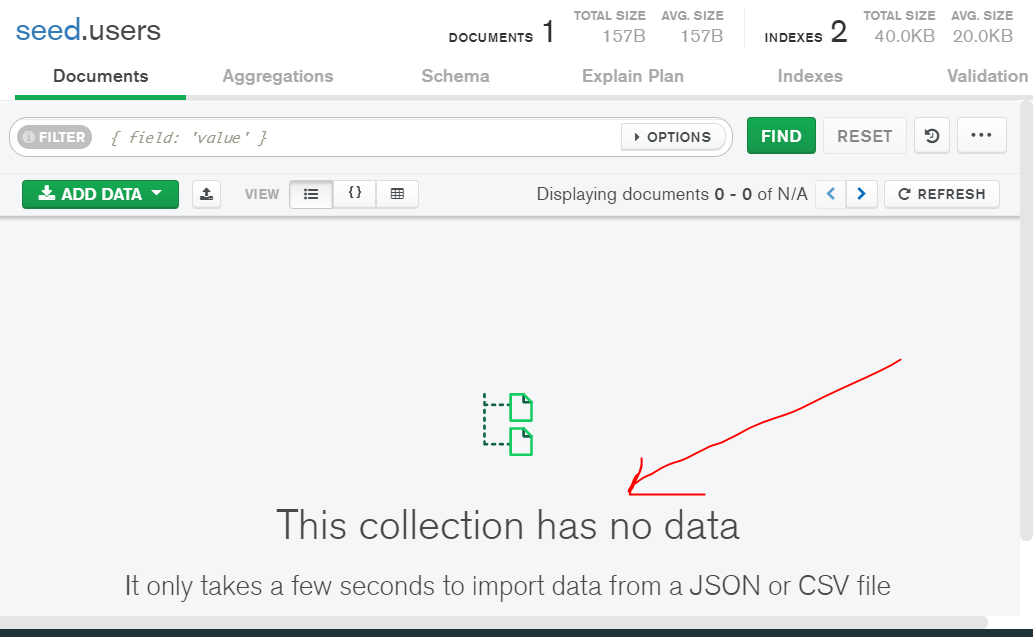

858,186 | Nodejs: Importing Mock data with script | We want to test our apps with development data as developers, but recreating the wheel every time can... | 0 | 2021-10-11T13:29:32 | https://dev.to/drsimplegraffiti/nodejs-importing-mock-data-with-script-1ifa | javascript, webdev, beginners, tutorial | We want to test our apps with development data as developers, but recreating the wheel every time can be overkill. So we'll need to find out a way to import Mock data into our database using a single script that loads data from a 'json' file. Yes, a single script without the need to write the 'create function.' This procedure is known as <mark>Seeding</mark> and is absolutely unrelated to our current research. and it is completely independent of our existing project

## Prerequisite

* Nodejs installed

* Database management (basics)

---

## TOC

🔗 Setup node project

🔗 Connect MongoDB locally

🔗 Setup Express App

🔗 Import Data

🔗 Delete Data

## Let's get right to it.

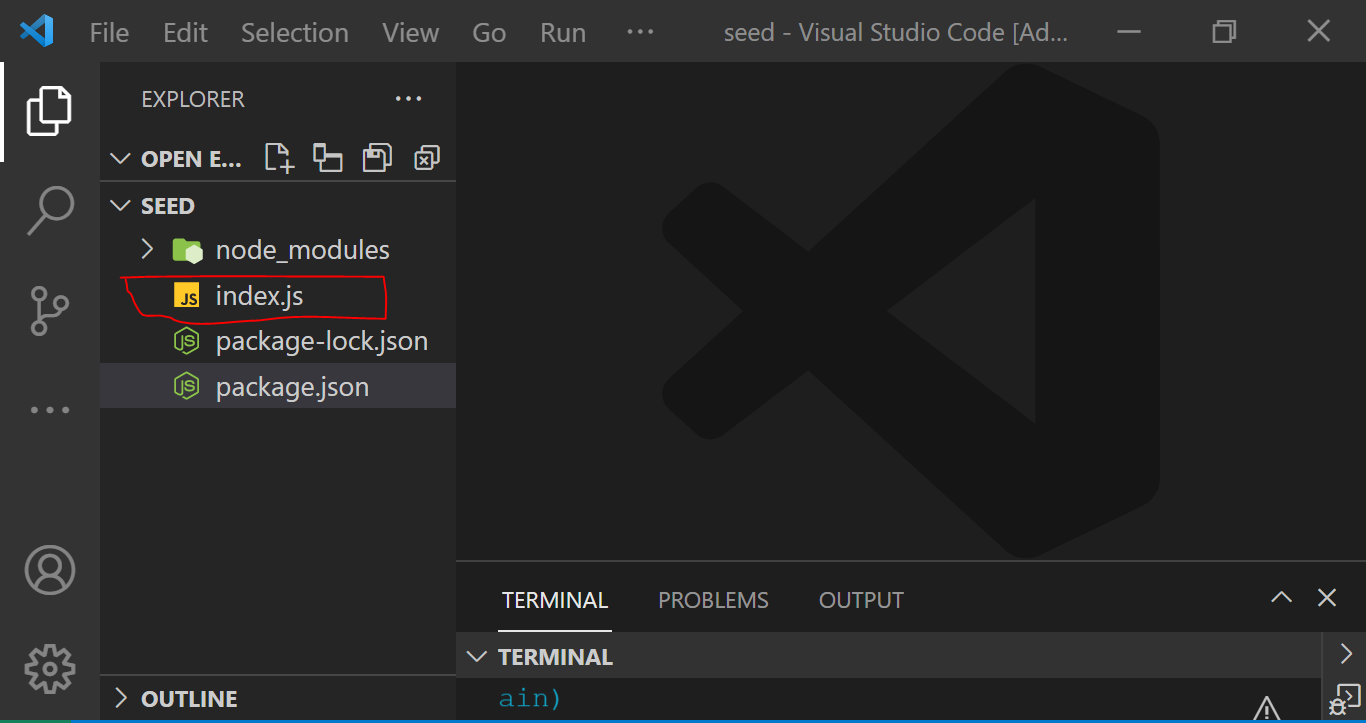

🥦 Make a new directory called seeding-tut with the command `mkdir seed`

Change directory `cd seed`

🥦 'npm init -y' creates a new node project.

Install packages:

```Javascript

npm i express, mongoose, dotenv, morgan

```

Install dev dependency

```Javascript

npm i nodemon --save-dev

```

🥦 Create an entry point `index.js`

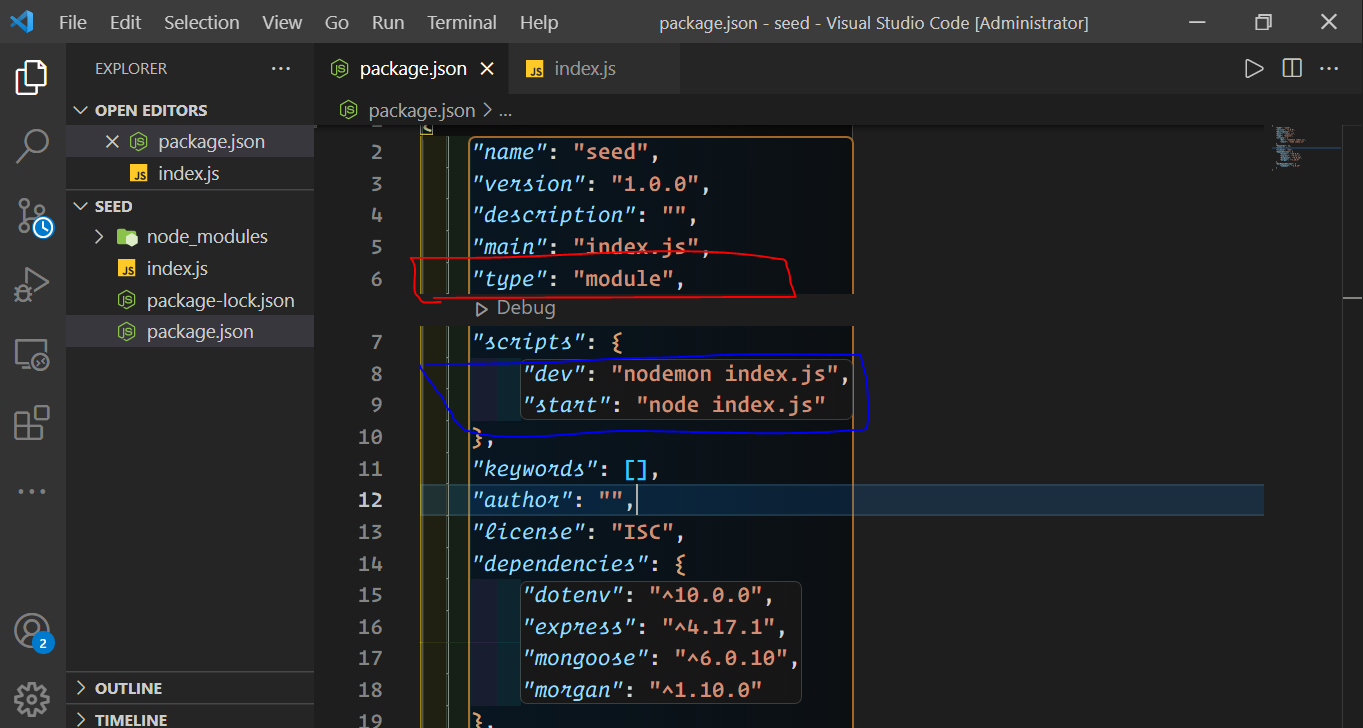

## Configure your `package.json` file.

Note : the "type":"module" enables ES Modules in Node.js

---

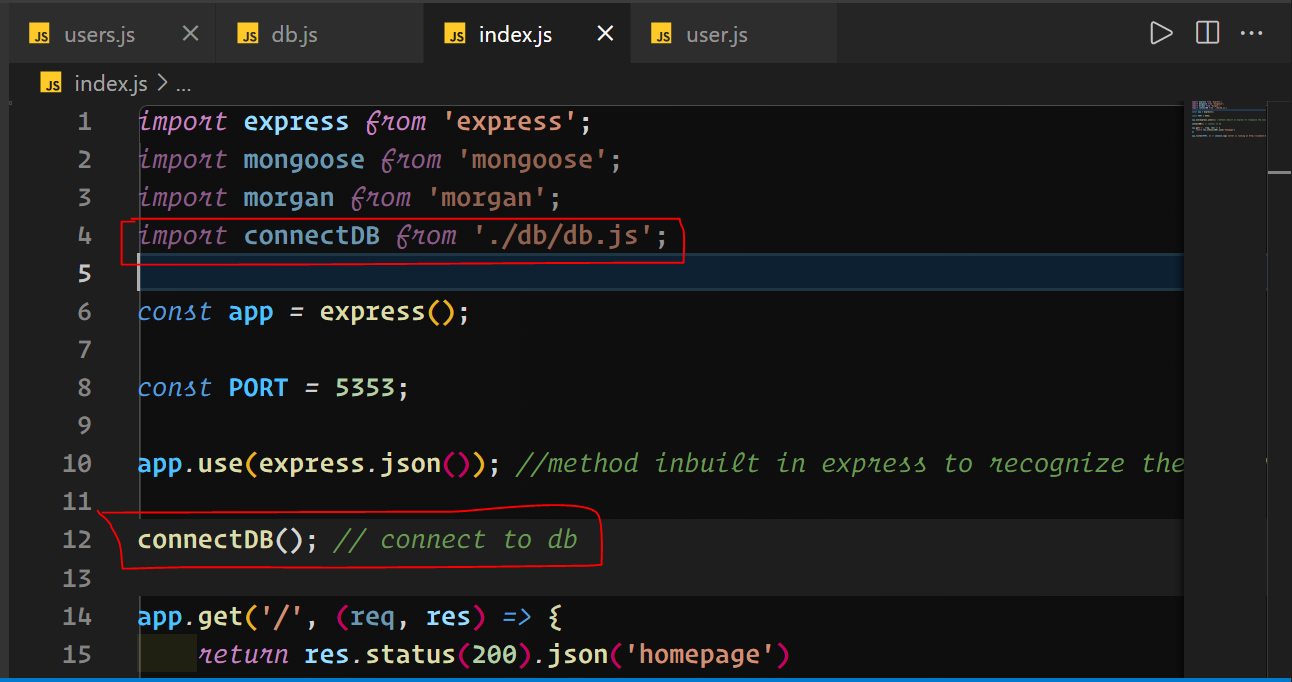

🥦 Then, in the `index.js file`, we import the packages and create an express app.

```Javascript

import express from 'express';

import mongoose from 'mongoose';

const app = express();

```

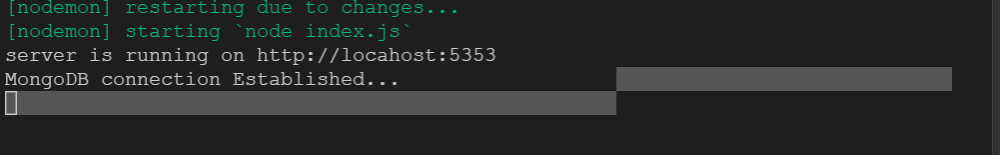

🎯 Because the front end typically runs on <mark>PORT:3000</mark>, we'll set our app to run on port 5353 to avoid any conflicts, and then we'll listen to our server.

---

## Create your app

```Javascript

import express from 'express';

import mongoose from 'mongoose';

import morgan from 'morgan';

const app = express();

const PORT = 5353;

app.use(express.json()); //method inbuilt in express to recognize the incoming Request Object as a JSON Object.

app.get('/', (req, res) => {

return res.status(200).json('homepage')

})

app.listen(PORT, () => console.log(`server is running on http://locahost:${PORT}`));

```

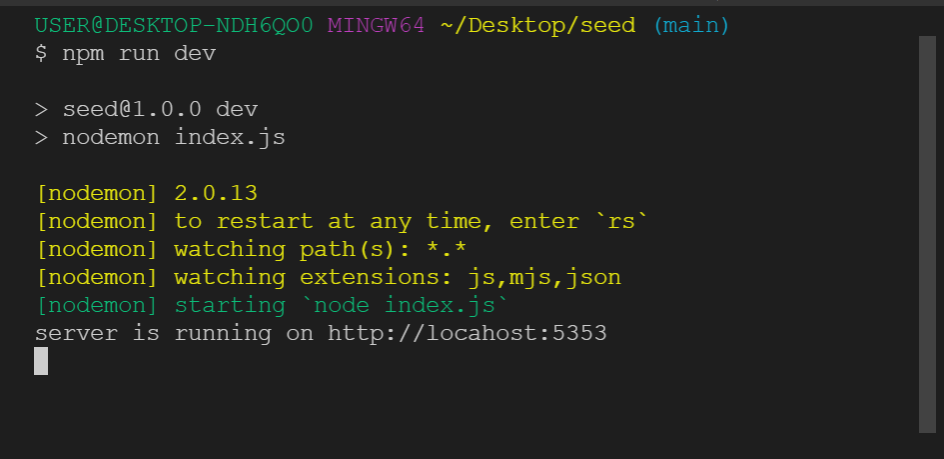

🥦 Let's get the server up and running.

'npm run dev' is a command that you can use to test your code.

Result:

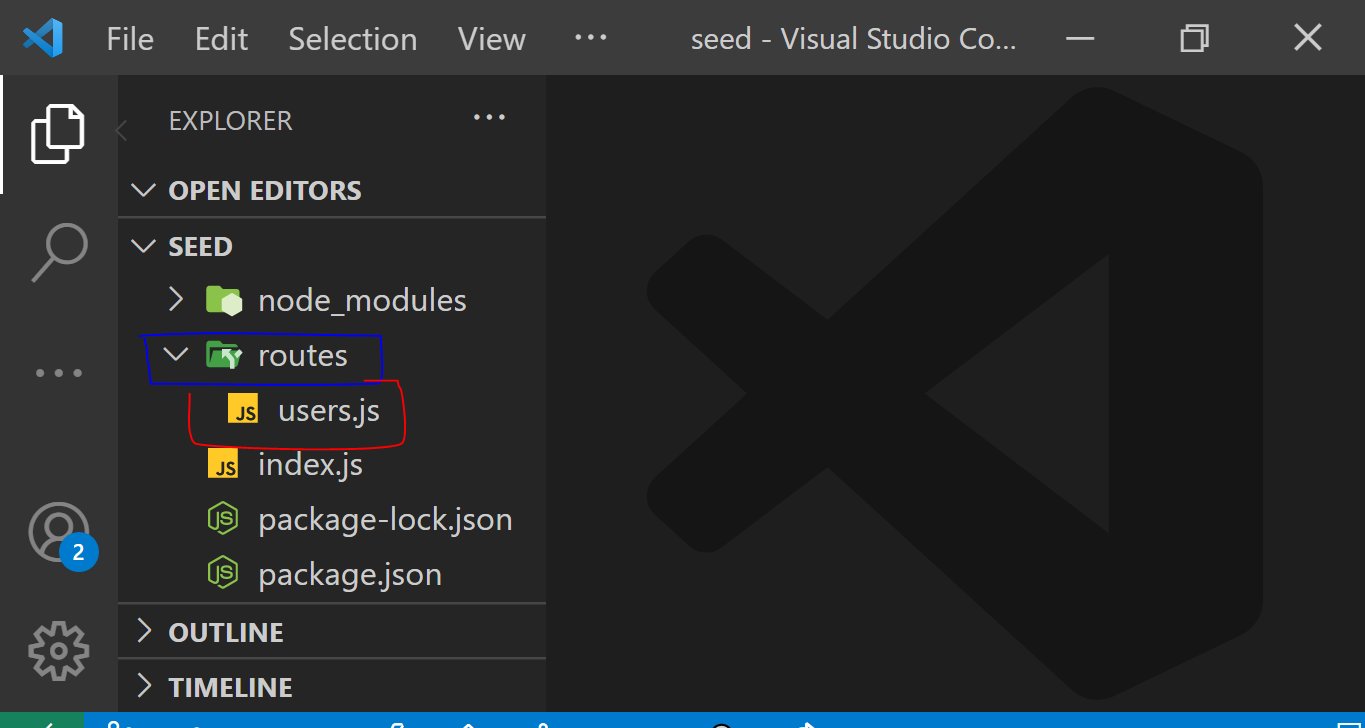

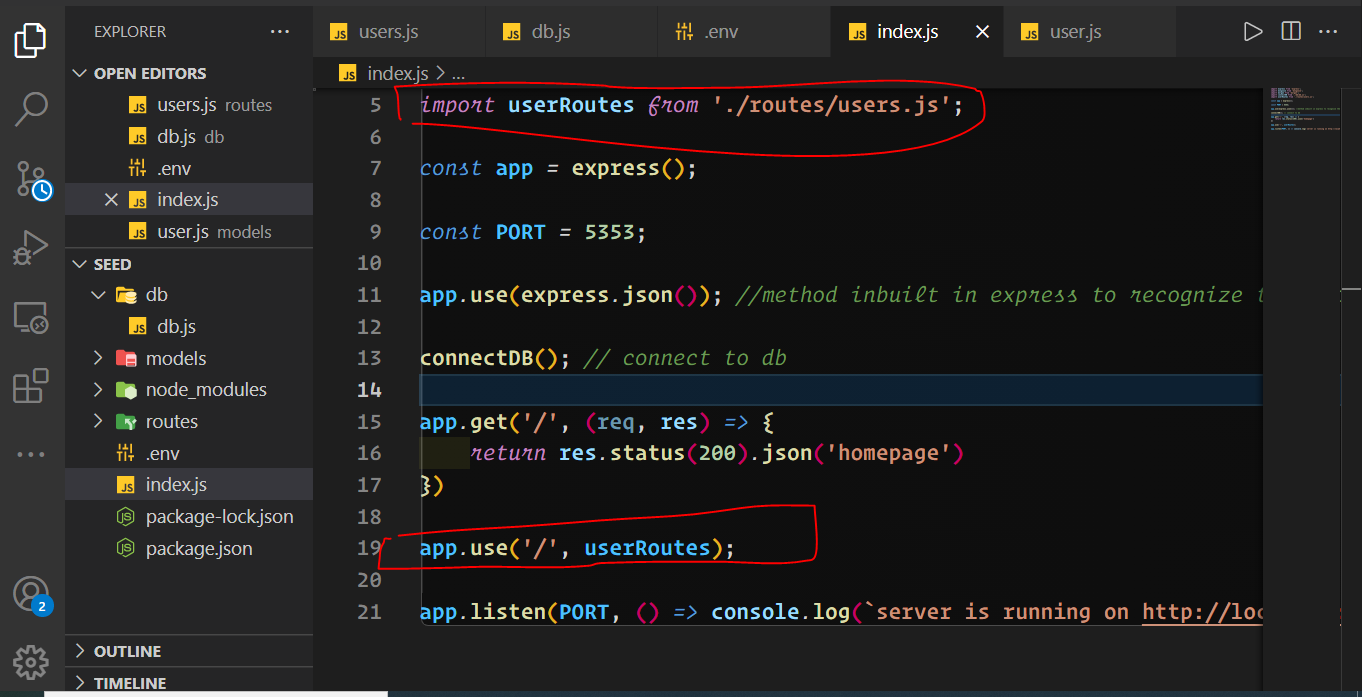

## Next, we'll design our express route.

Create a route folder and a `users.js` file within it.

##

🎯 To emphasize that seeding is independent to the project.

Let's start by building a user model. Make a folder for your schema and begin writing it.

```Javascript

import mongoose from 'mongoose';

const { Schema } = mongoose;

const userSchema = new mongoose.Schema({

fullName: {

type: String,

required: [true, 'A name is required'],

unique: true

},

email: {

type: String,

required: [true, 'Please enter a valid email to proceed']

},

phoneNumber: {

type: String,

required: [true, 'Please enter a valid phone number']

}

}, {

timestamps: true

})

// Exports schemas

export default mongoose.model('User', userSchema);

```

## 🥦

Create your route handler, add logic, and import the user model you made before.

```Javascript

import express from 'express';

const router = express.Router();

import User from '../models/user.js';

// Create a user

router.post('/users', async(req, res) => {

try {

const newUser = await User.create(req.body);

newUser.save();

return res.status(200).json({

message: 'user created successfully',

data: newUser

})

} catch (error) {

return res.status(500).json({

status: 'fail'

})

}

})

//Get all users

router.get('/users', async(req, res) => {

try {

const getAllUser = await User.find();

return res.status(200).json({

message: 'user data gotten successfully',

data: getAllUser

})

} catch (error) {

return res.status(500).json({

status: 'fail'

})

}

})

export default router;

```

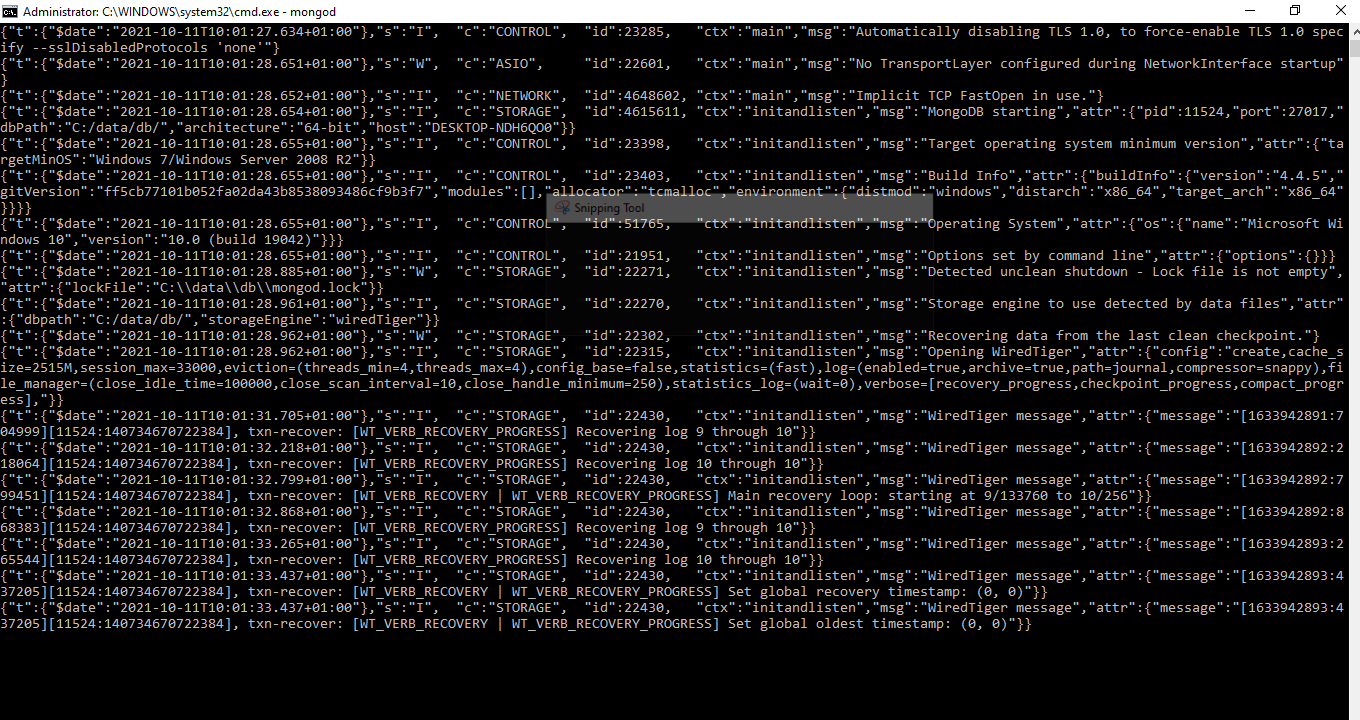

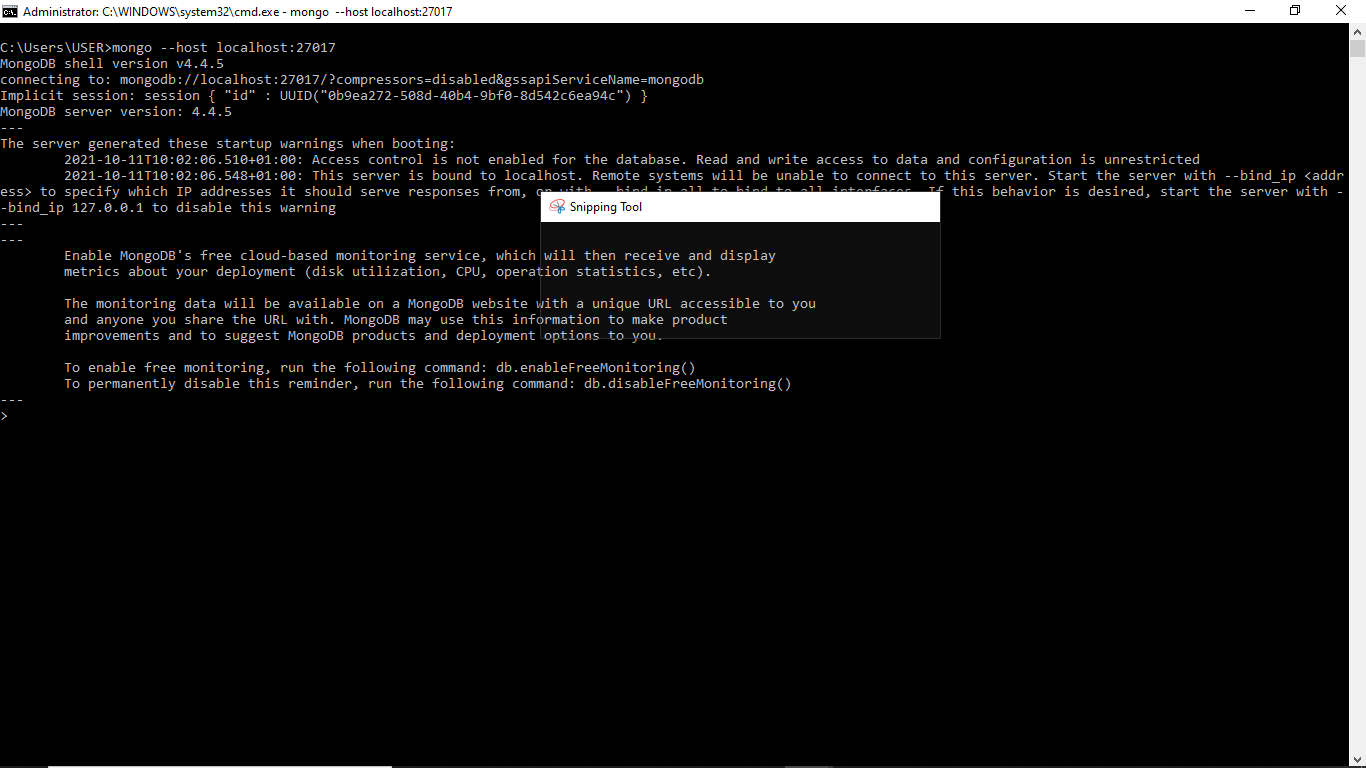

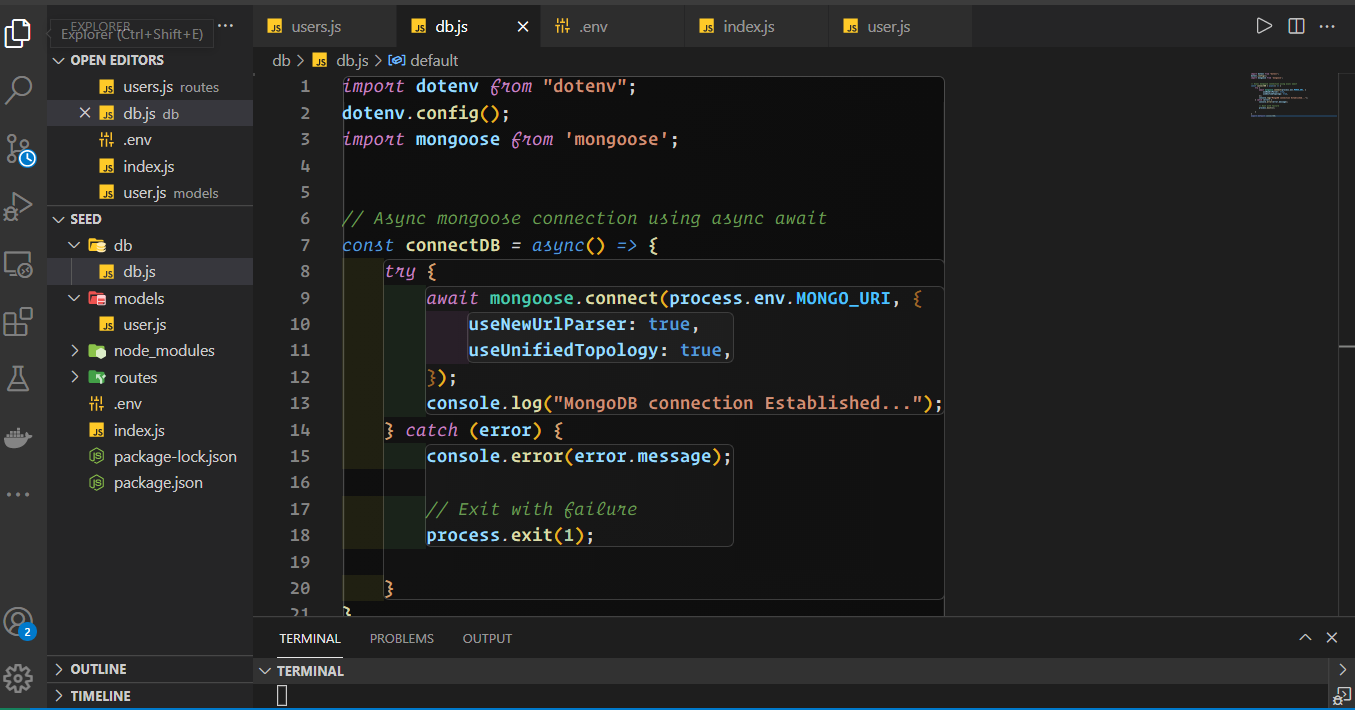

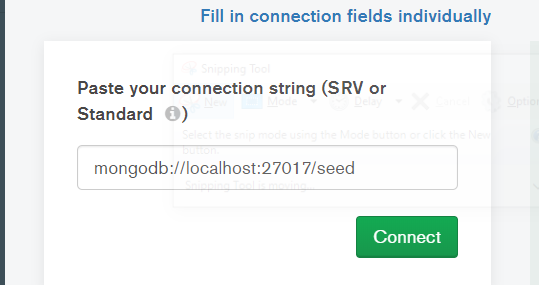

👨💻 Let's connect to our local DB.

1) Open your cmd and type `mongod`

2) Open another cmd without closing the first and type `mongo --host localhost:27017`

3) Create a db folder and a db.js file in it.

4) Import the db and mount the route handler into the entry file `index.js`

5) open mongodb Compass and connect

6) You should get on restart:

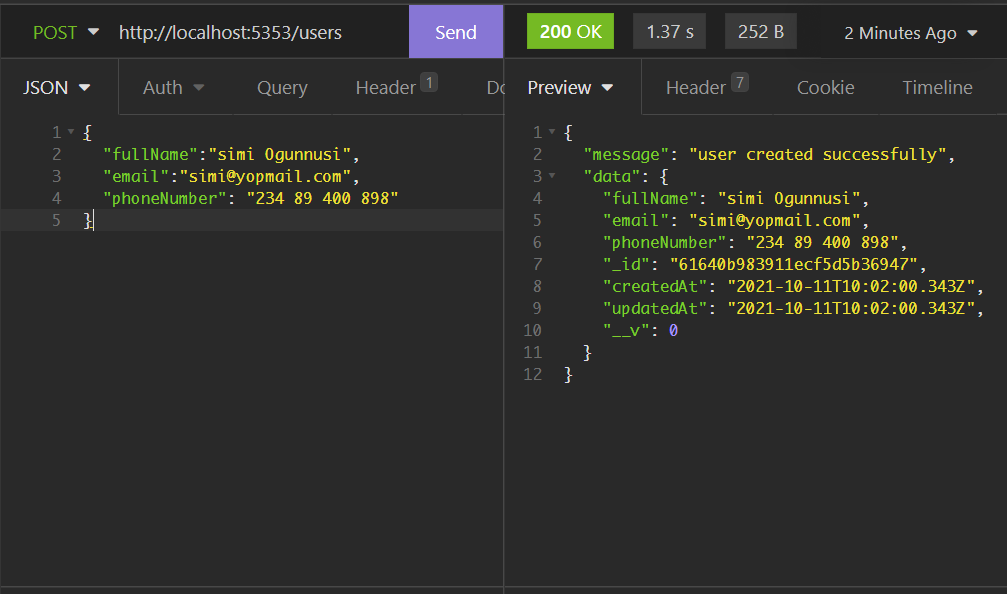

## Moment of truth 🦚

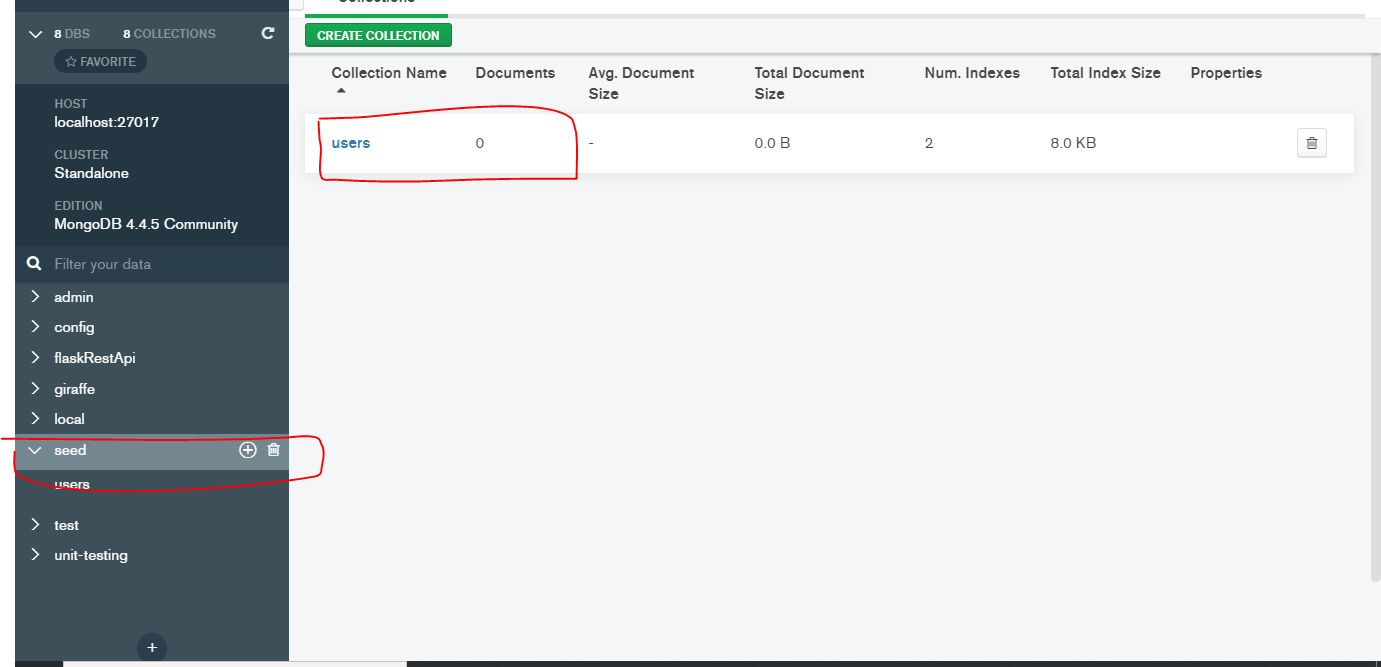

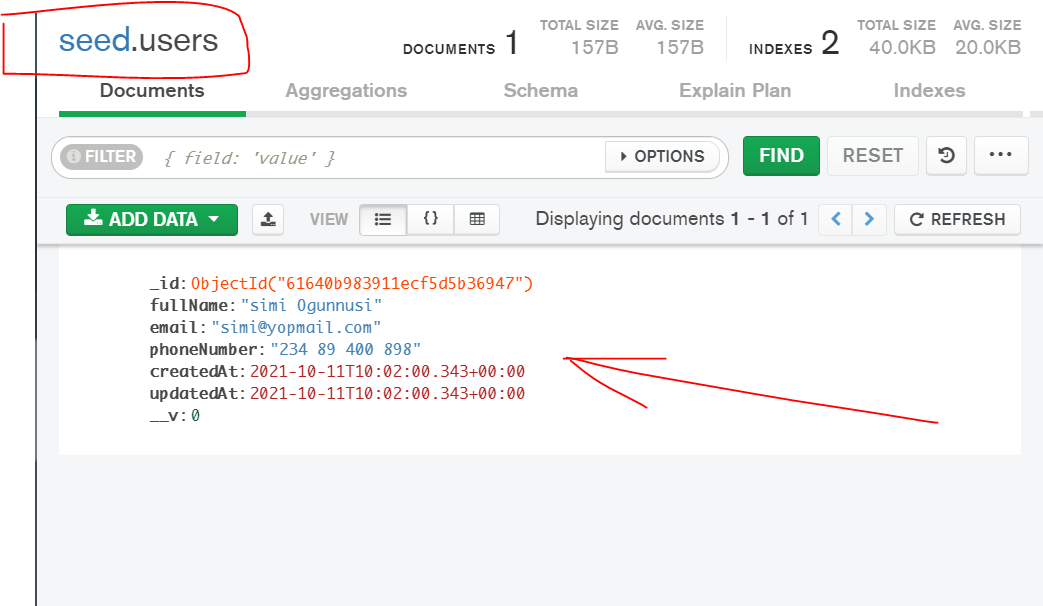

Open postman or insomnia and let's create a new user

Check if data was produced by refreshing your MongoDB compass.

---

## You have made it this far... now let's seed dummy data into our DB.

Let's add some additional development data to our database now.

Make a folder called data and a file called dev-data.js in it.

To read the JSON file, we first require access to the file system module.

```Javascript

import * as fs from 'fs'; // to read our data from the json file

```

Also we need access to the user model

```Javascript

import * as fs from 'fs';

import mongoose from 'mongoose';

import dotenv from 'dotenv';

dotenv.config();

import User from '../models/user.js';

```

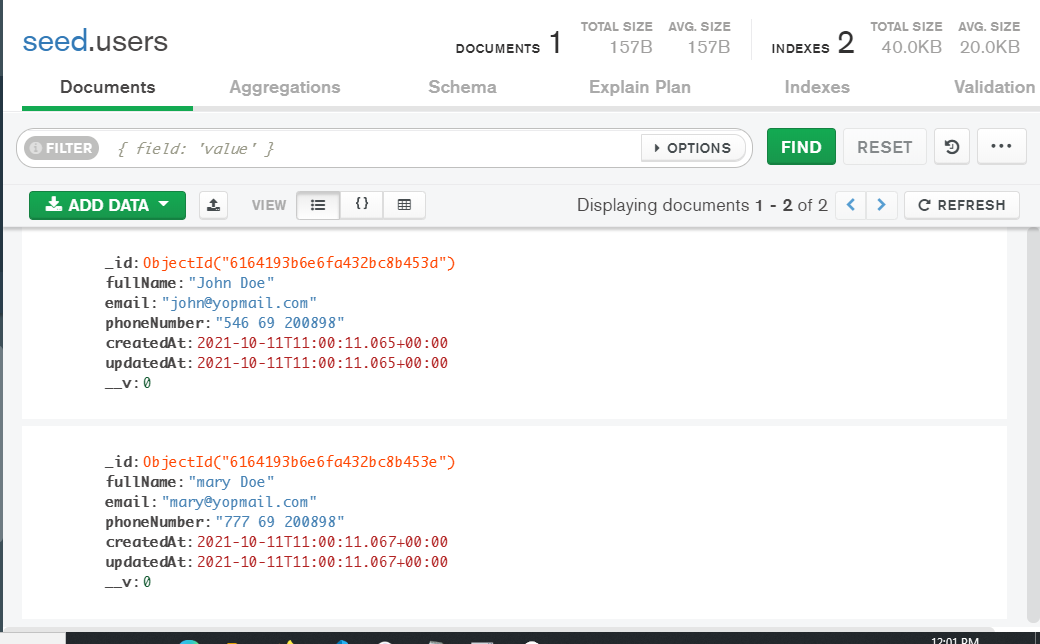

## Create a `data.json` file inside the data folder.

```Javascript

[{

"fullName": "John Doe",

"email": "john@yopmail.com",

"phoneNumber": "546 69 200898",

},

{

"fullName": "mary Doe",

"email": "mary@yopmail.com",

"phoneNumber": "777 69 200898",

}

]

```

Now we'll read our json file, but first we'll need to convert data to a Javasript object using (JSON.parse)

```Javascript

const users = JSON.parse(fs.readFileSync(`${__dirname}/data.json`, 'utf-8'));

```

---

The data is then imported into our database.

```Javascript

//seed or import Data into DB

const importData = async() => {

try {

await User.create(users);

console.log('Data seeded successfully....');

} catch (error) {

console.log(error)

process.exit();

}

}

```

We may also clean all databases using a single script.

```Javascript

//delete Data in DB

const deleteData = async() => {

try {

await Techie.deleteMany();

console.log('Data successfully deleted');

} catch (error) {

console.log(error)

}

process.exit();

}

```

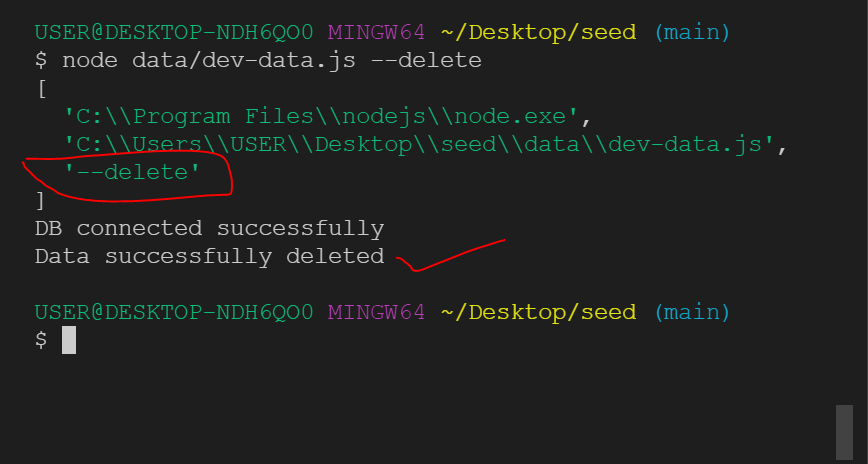

🥦 Finally, to start our script, we construct a conditional statement.

```Javascript

if (process.argv[2] === '--import') {

importData();

} else if (process.argv[2] === '--delete') {

deleteData()

}

console.log(process.argv);

```

🥦 Explanation:

If the third index output of process.argv equals —-import, the importData() function will be called.

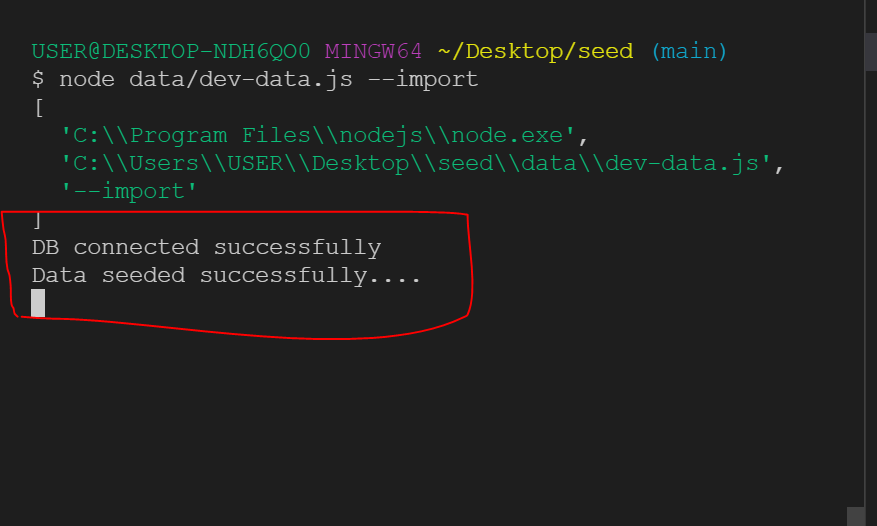

🥦 So, let's try it in our terminal.

Delete data: ` node data/dev-data.js --delete`

Import data: ` node data/dev-data.js --import`

Let me destroy all data in our DB first and then import the development data.

As explained earlier, because the third index was "delete" we fired the delete function and our DB got deleted.

Let's see if it was removed from our database.

When the MongoDB compass is refreshed.

🥦 Now let's import our mock data by using ` node data/dev-data.js --import`

🥦 Let's double-check with our compass...

Yay!...and it worked.

## Conclusion

With a single script, we were able to import and delete data.

It takes some practice to get the hang of this method.

It is, nonetheless, worthwhile.

## Reference

[Importing development data by Jonas IO ](https://www.youtube.com/watch?v=PWeDNjbUcUU&t=6s)

| drsimplegraffiti |

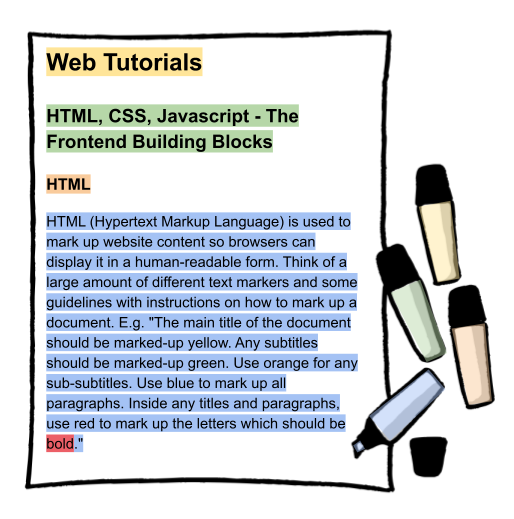

858,310 | HTML, CSS, JavaScript - The Frontend Building Blocks | HTML HTML (Hypertext Markup Language) is used to mark up website content so browsers can... | 0 | 2021-10-10T11:50:19 | https://dev.to/cyrillmartin/html-css-javascript-the-frontend-building-blocks-1cla | ## HTML

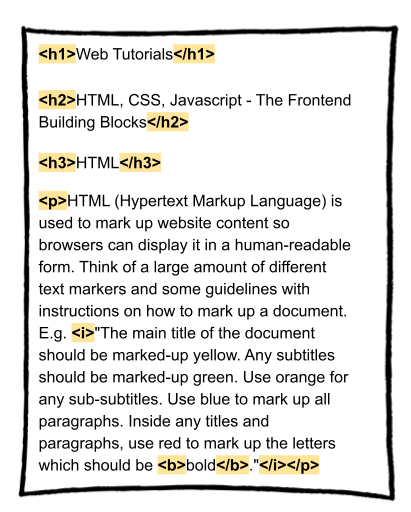

HTML (Hypertext Markup Language) is used to mark up website content so browsers can display it in a human-readable form. Think of a large amount of different text markers and some guidelines with instructions on how to mark up a document. E.g. "The main title of the document should be marked-up yellow. Any subtitles should be marked-up green. Use orange for any sub-subtitles. Use blue to mark up all paragraphs. Inside any titles and paragraphs, use red to mark up the letters which should be **bold**."

Instead of text markers, HTML makes use of **HTML tags**. An HTML tag has a name (instead of a color) to indicate what is being marked up. There’s an **opening tag** and an **ending tag** to wrap parts of a text in order to indicate where the markup starts and ends. There's also a guideline on which tags to use for which parts of a text. E.g. the main title of a text should be wrapped in a **h1** tag (h1 for heading 1). Any subtitles should be wrapped in **h2** tags. Sub-subtitles go into **h3** tags, etc. Paragraphs are wrapped inside **p** tags and letters which should be bold are wrapped inside **b** tags. There are many more tags available, of course. In the image below you can also see an **i** tag for italic.

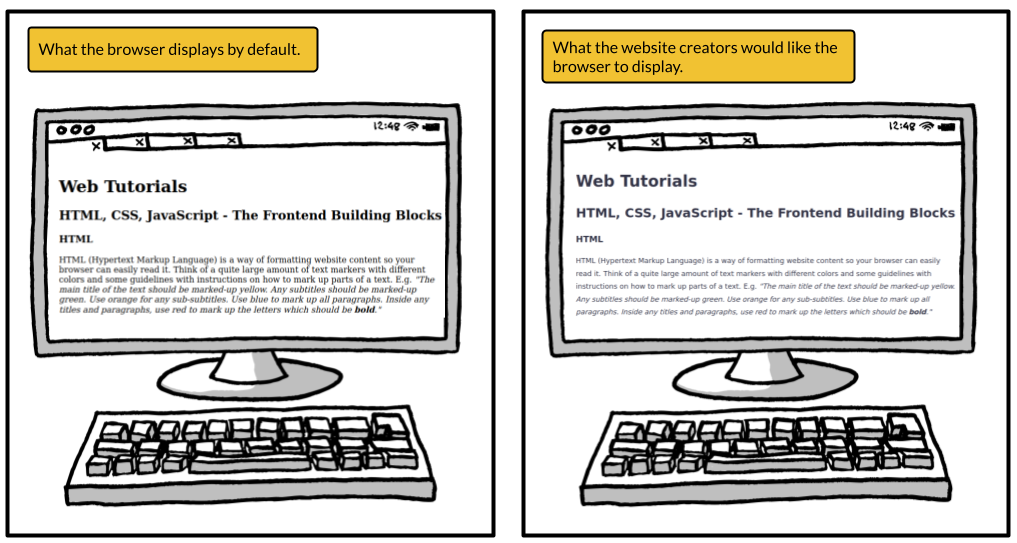

When displaying an HTML response, browsers take care of not showing you any of the actual tags but they consider them by the use of default styles. For any text inside an h1 tag, browsers will use a bigger font. For paragraphs (p tag), they will add some spacing before and after the marked up text, etc. However, website creators might not want the browsers to apply their default stylings. Maybe they want to see different spacings between paragraphs and different font sizes for titles.

Such custom styles are achieved by introducing CSS (cascading style sheets).

## CSS

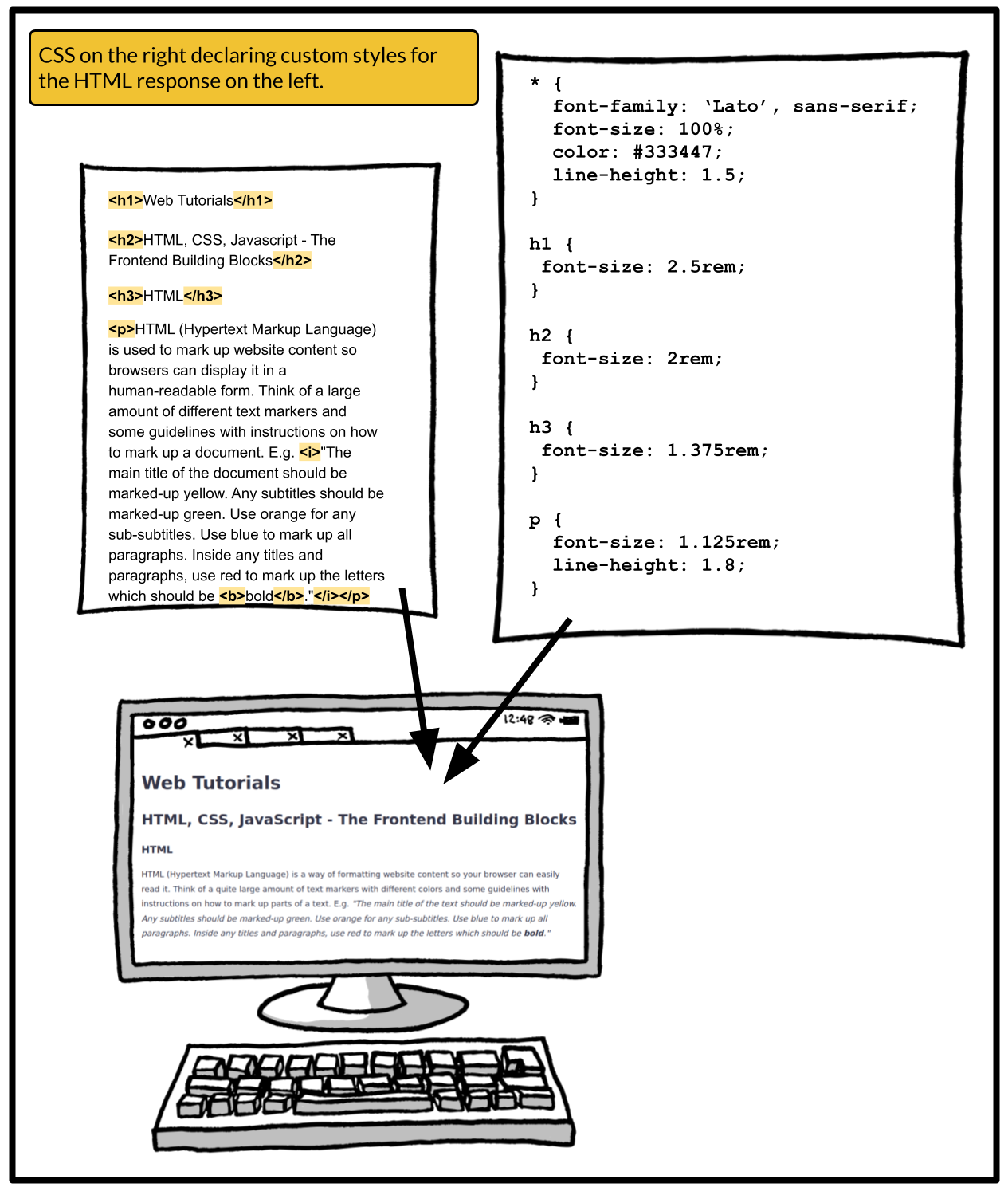

Styles declared in CSS are part of the additional resources your browser might request when you access a URL. So, the initial HTML response probably lists at least one CSS resource declaring the custom styles for this current HTML response. In its simplest form, CSS lets you write down the names of the HTML tags and declare custom styles for these, overriding the browser's default ones. There is much, much more to CSS than this simple approach but this should be enough to get the idea. Just keep in mind that any CSS is tightly bound to an HTML response. CSS requested in the context of one response has no effect on the styles of another one, unless they are referencing the exact same CSS.

While accessing the URL of this tutorial, the HTML response also listed some CSS as one of the additional resources to be requested by your browser. The CSS instructs your browser to override its default styles by applying a different font, using a very dark greyish blue as the text color, using different font sizes for titles, and applying different line heights for titles and paragraphs. Don't worry about the details of CSS syntax. Just be aware that any CSS goes hand in hand with a corresponding HTML document.

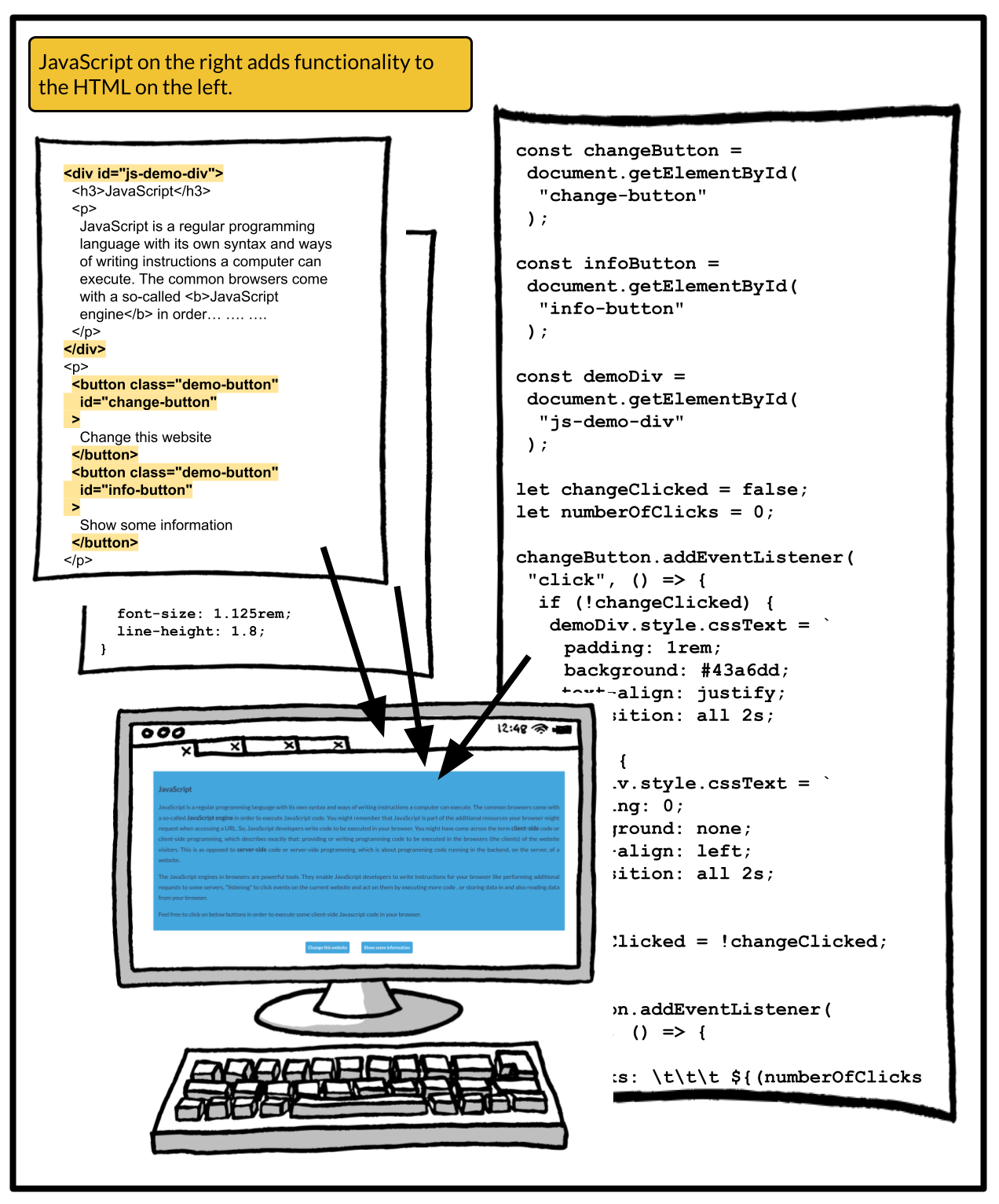

On most websites you have some user experience in the form of interactions. Maybe you click a button and some additional elements are loaded to the website. Maybe you get notified that you missed entering some crucial information when trying to check out and order something online. The changes of the website usually happen without a reload of the website or the requests of new HTML and stylings - it's really just parts of the website that change. This website experience you are so used to is achieved through the use of **JavaScript**.

## JavaScript

JavaScript is a regular programming language with its own syntax and ways of writing instructions a computer can execute. The common browsers come with a so-called **JavaScript engine** and **JavaScript runtime** in order to execute JavaScript code. Remember that JavaScript is part of the additional resources your browser might request when accessing a URL. So, JavaScript developers write code to be executed in your browser. You might have come across the term **client-side** code or client-side programming, which describes exactly that: providing or writing programming code to be executed in the browsers (the clients) of the website visitors. This is as opposed to **server-side** code or server-side programming, which is about programming code running in the backend, on the server, of a website.

The JavaScript runtimes in browsers are powerful tools. They enable JavaScript developers to write instructions for your browser like performing additional requests to some servers, "listening" to click events on the current website and act on them by executing more code, or storing data in and also reading data from your browser. You don't need to understand JavaScript syntax but I hope you now have an idea of client-side JavaScript code and its powerful role in your website experiences.

| cyrillmartin | |

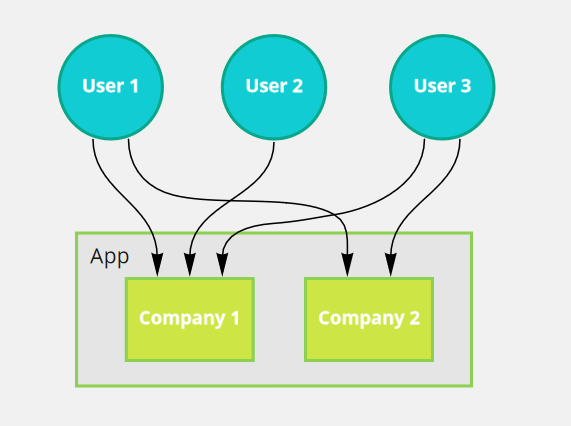

858,325 | Store Persist State to URL Nuxt Js | Multi-tenancy become more complex problem for authentication and authorization. Nuxt js as front-end... | 0 | 2021-10-10T11:23:47 | https://dev.to/riochndr/store-persist-state-to-url-nuxt-js-3kla | javascript, nuxt, state | Multi-tenancy become more complex problem for authentication and authorization. Nuxt js as front-end app need to adapte to this problem

The scenario :

- Nuxt Js is a front-end App,

- a user can login as company 1 or company 2. each company have an id.

- when hit an API, front-end must send company id

The problem is, how to make Front-end store company id and can be used when Request API. I have some approach to store company id but only 1 way to solve this problem.

### Token

You can store company id on token, this is good from API perspective immediately know user information, but you need to recall API to re-create token if user change company id, which is this is not ideal when user switch to other company id periodically

### Localstorage

Localstorage is deadly simple to store information in browser client. we can store it to localstorage and use it when it needed. But, the problem is when we want to use on Server Side. Since Nuxt Js Support Server Side Rendering, nuxt cannot read localstorage because localstorage only accessable on client side.

### State Management (Vuex)

State management solve problem localstorage to access from Server Side and accessable from any page on website. But, the problem is state management will be reset when user refresh the page.

### Param URL

We can store some variable on Parameter URL and use the param when it needed, it persistence and user know 'where are they' only from URL.

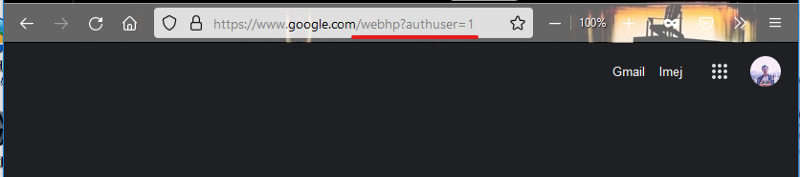

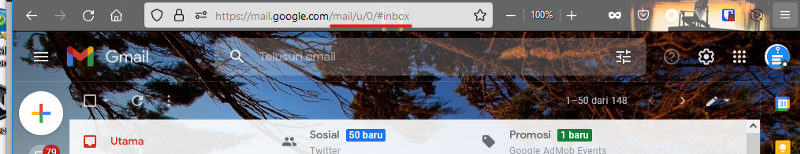

I see this is the absolute solution to store company id or other variable. Google do this for a long time, when you logged in to google with different account, google

store state current user on query URL

How to Google store user logged in on URLHow to Google store user logged in on URL (Gmail.com)Quite a nice idea. But we found new problem, we should add query or param to every `<a href />` tags. Every programmer never do it manually. Lets create Middleware to solve this problem

Automatically Query Url Update Middleware Nuxt (AQUUMN)

I have no idea about this technique name

We need something to update query URL every change route, we can do this in Middleware that provide by Nuxt Js (because we use Nuxt Js). We will store the company id in State Management and URL, you got the point right ?. We will create middleware with flow like this :

Whenever User change Route, get the Company id from state management

Redirect user to new URL that Query company id included

Whenever browser refresh the page, state management will be reset and we need to restore from URL query company id

Now we have persist parameter user's company id on URL, no matter what URL requested because middleware will put Query company id to URL.

Middleware Code

First of all, we need to create state management for company id. In this case I use Vuex. This is companyStore.js

```typescript

export const state = () => ({

companyId: null

})

export const mutations = {

setCompanyId(state:any, companyId:string){

state.companyId = labId

}

}

Create new middleware called authApp.ts in folder middleware (if there is no folder middleware, create one).

import { Context } from "@nuxt/types"

export const companyId = "companyId"

/**

* this middleware make apps to require query `companyId`

* ex: /home/?companyId=123-24123-4123

*/

export default function(context:Context){

let excludeRoute = [

"/login",

"/login/app"

]

let companyIdQuery = context.query[companyId]

let companyIdStore = context.store.state.companyStore[companyId]

if(!companyIdQuery){

if(companyIdStore){

return context.redirect(context.route.path + "?companyId=" + companyIdStore)

}

if(!excludeRoute.includes(context.route.path)){

return context.redirect('/login/app');

}

}

else {

if(!companyIdStore){

context.store.commit('companyStore/setcompanyId', companyIdQuery)

} else {

if(companyIdStore !== companyIdQuery){

context.store.commit('companyStore/setcompanyId', companyIdQuery)

}

}

}

}

```

I add array excludeRoute as a route redirect if company id is null, user require to select their current company that selected on the app.

lastly, register this middleware to `nuxt.config.js`

```javascript

router: {

middleware: ['authApp', ...]

},

```

You can get the company id from $route or $store

```javascript

let companyIdStore = this.$store.state.companyStore.companyId

let companyIdRoute = this.$route.query.companyId

```

###

Finish

That's it how I solve this problem, I didn't found another article write about Nuxt to create persistence state like this, so I create one. Hope this help you. | riochndr |

858,339 | Be careful Of This Java Optional Method | Let's Remember Java Optional 🤓 According to Oracle it's "A container object which may or... | 0 | 2021-10-13T22:21:16 | https://dev.to/jarjanazy/be-careful-of-this-java-optional-method-439 | java, programming, tutorial, webdev | ## Let's Remember Java Optional 🤓

According to **Oracle** it's "A container object which may or may not contain a non-null value."

Optional was introduced in Java 8 and has been used by the SpringBoot team in many projects.

---

The most common usage of Optionals is in the Spring Data project. Let's look at the `JpaRepository` interface and an example method.

Say we have a User entity with an Id type of integer and that we have a JpaRepository for it

```java

@Repository

public interface IUserRepo extends JpaRepository<User, Integer>

{

Optional<User> findByUserName(String userName);

}

```

We defined a method that searches for a user via their user name and returns an `Optional` of a User.

---

## Optional's Convenience Methods 🙌

Optional comes in with many method meant to enable us to write clean and readable code.

- map(..).or(...)

- map(...).orElse(...)

- check out Oracle's [docs](https://docs.oracle.com/javase/8/docs/api/java/util/Optional.html) for the full list.

**However, there is one method with a dangerously unexpected behavior**

---

## Meet The `orElse` Method 👀

According to Oracle's doc:

> public T orElse(T other)

>

> Return the value if present, otherwise return other.

Now, we can add a method call as the parameter of the orElse, which will be run if the Optional is empty, right?

Yes, that's correct, BUT, what if I tell you that it will run anyways regardless of the presence of the value in Optional or not.

---

Let's test it ✍️

```java

@Test

public void orElseTest()

{

String result = Optional.of("hello").orElse(someMethod());

assertThat(result).isEqualTo("hello");

}

private String someMethod()

{

System.out.println("I am running !!");

return "hola";

}

```

The test does pass, but we notice that on the console we have the string **"I am running"** printed out.

---

### Why is that? 🤨

- Java runs the method to provide a value to be returned in the *Else* case.

## So Be Careful ⛔️

We want to be careful if the method inside the `orElse` might have a side effect, because it will be run anyways.

---

## What To Do then?