id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

875,261 | How To Build A Successful Cybersecurity Program For Your Business? | According to the Verizon Data Breach Investigation report, there were more than 53000 cybersecurity... | 0 | 2021-10-25T12:14:33 | https://dev.to/michael56812375/how-to-build-a-successful-cybersecurity-program-for-your-business-1dn4 | According to the Verizon Data Breach Investigation report, there were more than 53000 cybersecurity incidents and 2200 data breaches. This trend is not going to subside and will continue. This clearly indicates that cybersecurity attacks will increase in number and grow in complexity.

If you are a small business thinking that these cyber-attacks only target large-scale enterprises, then you are wrong. We have seen instances where cybercriminals have attacked small businesses as well. Small businesses are more vulnerable as they serve as a soft target for attackers due to a lack of resources and cybersecurity policies.

Whether you have a small business or a large one, having a robust cybersecurity program at your disposal helps protect against modern-day cybersecurity threats. How can I create a cybersecurity program for my business? Is that what you are thinking? If yes, then you are at the right place.

In this article, you will learn about a step-by-step process of creating a cybersecurity program for your business.

Lay The Foundation

The first step of creating a winning cybersecurity program is to lay the groundwork. Establish a foundation that you can expand upon later. Thankfully, you don’t have to create everything from scratch as there are many frameworks you can use for this purpose. You can either opt for the NIST framework or choose COBIT5.

As soon as you start working with the framework, you will realize that many functions, categories and subcategories are not relevant to your business. Now, you will have to align your operational needs with the framework. Use standards of good practice for information security laid out by Information Security Forum and identify which operational services align with cybersecurity definitions of a particular framework.

Perform Audit of Assets And Policies

After you have laid a solid foundation, it is time to perform a detailed audit of your current assets and cybersecurity policies. Ask yourself questions like, “Which IT assets are critical for your business and need more protection?” Take some time to develop a better understanding of how and where your sensitive data is stored and how it is being accessed.

Instead of focusing solely on hardware such as devices and [Los Angeles dedicated servers](https://hostnoc.com/los-angeles-dedicated-servers/), you should also consider the software running on your IT assets, which needs to be kept patched and up to date. Next, critically analyze your current cybersecurity policies. If you identify loopholes or outdated policies, fix them as soon as possible.

This analysis should include everything from access rights of employees to authentication systems to password policies. The more stringent your cybersecurity policies are, the harder it is for cybercriminals to break into your network and wreak havoc on your critical business infrastructure.

Control Access To Your Data

Once you have a clear understanding of your data and where it resides, it is time to manage access to that data in an efficient and secure manner. The easiest way to do that is to adopt the principle of least privilege. Give employees access only to the information they need to perform their tasks. For instance, the sales team should not have access to your HR data, and your marketing team should not have access to your financial transactions.

You cannot afford to give access to all your data to all your employees. That is where controlling access to data comes into play. Assign role-based access and monitor access constantly to prevent any hiccup. Force employees who have resigned to hand over their login credentials to you before leaving your organization. Most businesses tend to ignore this and end up paying a hefty price for it. Restrict removable storage usage and constantly monitor employee activities online to protect your sensitive data.
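The least-privilege idea described above can be sketched in a few lines of JavaScript. This is only an illustration under stated assumptions: the role names, the resource names, and the `canAccess` helper are all hypothetical and do not come from any particular product or framework.

```javascript
// Hypothetical role-to-resource map (names are illustrative assumptions).
const permissions = new Map([
  ["sales", new Set(["crm"])],
  ["hr", new Set(["hr-records"])],
  ["marketing", new Set(["campaign-data"])],
  ["finance", new Set(["financial-transactions"])],
]);

// Deny by default: access is granted only when the role explicitly lists the resource.
function canAccess(role, resource) {
  const allowed = permissions.get(role);
  return allowed !== undefined && allowed.has(resource);
}

console.log(canAccess("sales", "crm"));                        // true
console.log(canAccess("sales", "hr-records"));                 // false
console.log(canAccess("marketing", "financial-transactions")); // false
console.log(canAccess("unknown-role", "crm"));                 // false
```

The design choice worth noting is the default: an unknown role or an unlisted resource results in a refusal, so forgetting to configure something fails closed rather than open.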

Build an IT Security Team

Creating a cybersecurity program is useless if you don’t have the right team to implement it. Hire IT professionals and industry experts and form a dedicated team that will be responsible for overseeing program implementation. Your IT team would be responsible for enforcing the company’s cybersecurity policies. Moreover, this team can also identify and tackle intrusion attempts and cybersecurity attacks to prevent any damage.

Before building a team, you should ask yourself questions like, “How many people do you need in your team?” or “What skills should your IT security team have?” This will simplify the process of building your IT security team. Small businesses that do not have the resources to build their team internally can use the services of managed security providers. This way, they can get the full services of an IT security team at a fraction of the cost.

Another advantage of hiring an IT security provider for [Increasing conversions on Shopify](https://www.engati.com/shopify-chatbot) is that they are quick to identify shortcomings of your cybersecurity program and offer suggestions and recommendations on correcting it. This can come in handy as you can enhance your cybersecurity program and fill in those gaps to ensure smoother sailing for your business.

Invest In Training and Education

You may have created one of the best cybersecurity programs and hired IT experts, but none of that will help if you don’t educate and train your employees. Invest in employee education and training and it will do a world of good in keeping your business assets safe. By spending money on employees’ cybersecurity education and training, you can significantly reduce the risk of attacks such as social engineering. The more aware your staff is of cybersecurity, the harder it will be for hackers to trick them into taking an action of their choice.

How do you create a cybersecurity program for your business? Feel free to share it with us in the comments section below.

| michael56812375 | |

875,486 | The 3 ways you can check for NaN in JavaScript (and 2 you can't!) | NaN isn't something you will often (if ever) write into your code. But it can crop up from time to... | 0 | 2021-10-25T14:14:43 | https://www.codemzy.com/blog/check-for-nan-javascript | javascript, beginners | **NaN isn't something you will often (if ever) write into your code. But it can crop up from time to time. And when it does, you can't check for it in the same way you could check for other values. Because NaN does not equal NaN. Are you confused? Don't be! Let's get to know NaN.**

`NaN` is an awkward member of the JavaScript family. We spend most of our time avoiding it, and when we do meet `NaN`, we can't get away quick enough! `NaN` is often misunderstood. It doesn't even understand itself. If you ask, "Hey `NaN` are you a `NaN`?" nearly half the time, it will say no!

```javascript

let x = NaN;

// 2 ways that don't work

console.log( x === NaN); // false

console.log( x == NaN); // false

// 3 ways that do work

console.log(Number.isNaN(x)); // true

console.log(Object.is(x, NaN)); // true

console.log(x !== x); // true

```

If you are confused about why some of these ways of checking for `NaN` don't work, and why some do, you're not alone! Because the `===` operator is the way we usually check for equality, and when `NaN` gets involved, it messes everything up. So why is `NaN` so troublesome? And what can we do about it?

`NaN` can happen for a wide range of reasons, usually if you try to do a calculation that JavaScript considers to be invalid math. Maybe you did `0 / 0`. And once it happens and `NaN` appears, it messes up all your future calculations. Not cool, `NaN`.
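To make that concrete, here is a small sketch of a few operations that produce `NaN`, and of how it spreads through later arithmetic. The variable names (`results`, `total`) are just illustrative.

```javascript
// A few operations that evaluate to NaN.
const results = {
  zeroDividedByZero: 0 / 0,
  failedParse: Number("twelve"),
  negativeSquareRoot: Math.sqrt(-1),
  undefinedArithmetic: undefined + 1,
};

for (const [name, value] of Object.entries(results)) {
  console.log(name, value); // each value prints as NaN
}

// Once NaN enters a calculation, everything derived from it is NaN too.
const total = results.zeroDividedByZero + 10;
console.log(total * 2); // NaN
```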

So let's say you have a number, `x`, and you want to check that it's valid. You need to know nothing has gone wrong in your code that's turned your number into not-a-number. So how can you avoid the dreaded `NaN`?

For the rest of this post, `x` is `NaN`. That's right - your number is not a number. And we need to write some simple code to detect it. So let's start by pointing the variable `x` to `NaN`.

```javascript

let x = NaN;

```

Ok, now we can begin.

## Ways you can't check for NaN

1. `x === NaN`

2. `x == NaN`

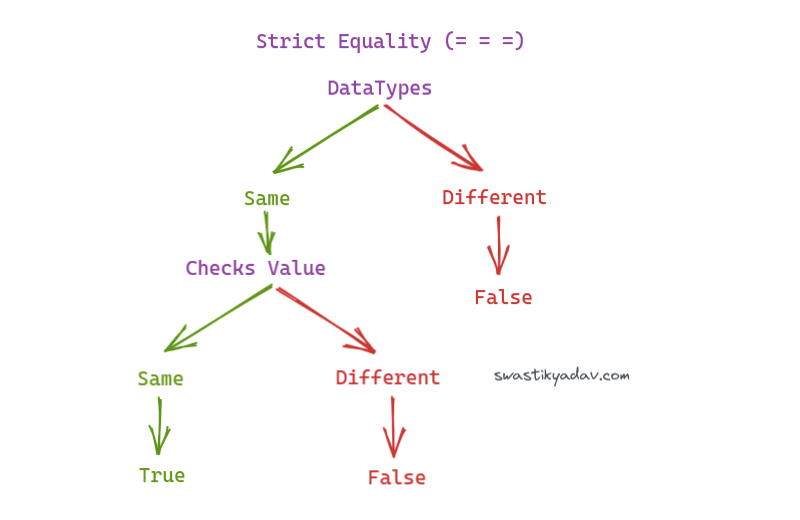

### Strict Equality

If you know a little JavaScript, you might bring out the old trusted **strict equality** operator. And this is the go-to operator for checking equality - it even says it in the name. So, of course, this *should* work.

```javascript

console.log(x === NaN); // false

```

Hmmm. That didn't work. Maybe my number is a number after all. Let's see what's going on here.

```javascript

console.log(NaN === NaN); // false (WTF??)

```

So, as it turns out, `NaN` does not equal `NaN`. At least not strictly.

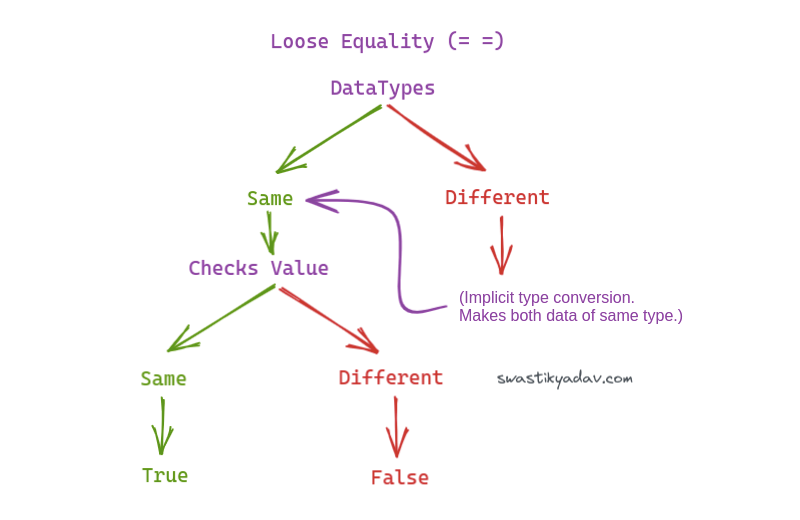

### Loose equality

I still use **loose equality** `==` from time to time, although it's frowned upon (and sometimes banned in some codebases) because the results can be unexpected. But can it check for `NaN`?

```javascript

console.log(x == NaN); // false

console.log(NaN == NaN); // false (WTF again!!)

```

At this point, I thought maybe equality with `NaN` might work in the same way as an object, and each time you write it, you create a new `NaN`. But I know that NaN is a primitive value, so that's not true either.

```javascript

// objects don't equal each other

console.log({} === {}); // false

console.log({} == {}); // false

// but two variables can point to the same object

let myObject = {};

let sameObject = myObject;

console.log(myObject === sameObject); // true

// but that's not how NaN works either

let myNaN = NaN;

let sameNaN = myNaN;

console.log(myNaN === sameNaN); // false

```

Eugh, infuriating! `NaN` is its own thing, and we will never be able to check for equality like this. You could call it a bug, or you could call it a feature. Either way, we need to accept it for what it is and move on. So let's look at three ways you can check for `NaN` (way number 3 is super cool once you get your head around it).

## Ways you can check for NaN

So `NaN` is a number, but it's a special type of number, that's for sure. Because it's **NOT A NUMBER!** Although the two most obvious ways to check for a value don't work with `NaN`, there are three pretty cool ways that do work.

1. `Number.isNaN(x)`

2. `Object.is(x, NaN)`

3. `x !== x`

### Ask a number if it's not a number

Ok, so I mentioned that `NaN` is a type of number.

```javascript

console.log(typeof NaN); // 'number'

```

And for that reason, there's a method on the Number constructor specifically for checking NaN. `Number.isNaN()`. That's right - you can ask a number if it's not a number!

```javascript

console.log(Number.isNaN(x)); // true

```
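One thing worth knowing here: `Number.isNaN()` is not the same as the older global `isNaN()` function. The global version converts its argument to a number first, so it reports `true` for many values that are not actually `NaN`. The sketch below compares the two on a handful of sample values (the `samples` array is just an illustration).

```javascript
const samples = [NaN, "hello", undefined, "42", 42];

// Global isNaN coerces to a number first; Number.isNaN does not coerce.
const looseResults = samples.map((value) => isNaN(value));
const strictResults = samples.map((value) => Number.isNaN(value));

console.log(looseResults);  // [ true, true, true, false, false ]
console.log(strictResults); // [ true, false, false, false, false ]
```

So if the question is "is this value exactly `NaN`?", `Number.isNaN()` is the one that answers it.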

### Object.is(x, NaN)

The [`Object.is()` method](https://developer.mozilla.org/en-US/docs/Web/JavaScript/Reference/Global_Objects/Object/is) checks if two values are the same value. Unlike strict equality, it works with `NaN`.

```javascript

console.log(Object.is(x, x)); // true

console.log(Object.is(x, NaN)); // true

console.log(Object.is(NaN, NaN)); // true

console.log(Object.is(NaN, Number.NaN)); // true

```
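`NaN` is not the only place where `Object.is()` and `===` disagree: they also differ on positive and negative zero. A related quirk shows up in arrays, where `indexOf` uses strict equality and `includes` uses a comparison that treats `NaN` as equal to itself. A quick sketch (the `readings` array is just an example):

```javascript
// Object.is distinguishes +0 from -0; strict equality does not.
console.log(Object.is(0, -0)); // false
console.log(0 === -0);         // true

// indexOf compares with ===, so it can never find NaN; includes can.
const readings = [1, NaN, 3];
console.log(readings.indexOf(NaN));  // -1
console.log(readings.includes(NaN)); // true
```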

### Strict inequality

Remember how I said that strict *equality* is one of the two ways you can't check for `NaN`? Well, if you tweak your thinking slightly and check for *inequality* instead, you can.

This way is my personal favourite. I almost see it as a way of tricking `NaN` into revealing itself. Since `NaN !== NaN`, and it's kinda weird, we can use that logic to check for `NaN`.

```javascript

console.log(x !== x); // true

```

If you know that `x` should be a number, and you run `x !== x` and get true, you know you have found `NaN`.

And the reverse is true too. Say you want to validate that you have a valid number in your code, you can do `if (myNumber === myNumber)` because if the number doesn't equal itself, it's in denial about who it is. There's only one type of number that's in that kind of denial, and you can be sure it's not a number (`NaN`).
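Here is one way that self-comparison could be wrapped up as a small validation helper. The name `isRealNumber` is made up for this sketch, and the `typeof` check is an extra assumption on top of the post's `x === x` trick, added so that strings and other non-number values are rejected too.

```javascript
// Hypothetical helper: true for any number value except NaN.
function isRealNumber(value) {
  return typeof value === "number" && value === value;
}

console.log(isRealNumber(42));       // true
console.log(isRealNumber(0 / 0));    // false
console.log(isRealNumber("42"));     // false
console.log(isRealNumber(Infinity)); // true
```

Note that `Infinity` passes this check because it equals itself. If infinite values should also be rejected, `Number.isFinite(value)` is the stricter test.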

---

And that's it, the three ways you can check for `NaN` in JavaScript, and the two ways you can't. Hopefully, you find `NaN` a little less confusing now and a lot more interesting! | codemzy |

875,512 | LaTeX: Customizing the Page | Hello, how are you? :) In this post I tell you how to change the position of the numbers in your... | 0 | 2021-10-27T14:36:04 | https://dev.to/latexteada/latex-customizing-the-page-4e1b | codenewbie, latex | Hello, how are you? :)

In this post I tell you how to change the position of the numbers in your document or delete them. Let's start!!!

Let me introduce you to the instruction

```LaTeX

\thispagestyle{STYLE}

```

Where *STYLE* can be

- `empty` no header, no footer

- `plain` the page number is on the footer

- `headings` the page number is on the header

You can put that instruction on every single page of your document, or just on some of them.

[Here](https://texlive.net/run?%5Cdocumentclass%7Barticle%7D%0A%5Cusepackage%7Bamsmath%7D%0A%5Cbegin%7Bdocument%7D%0A%0A%5Cthispagestyle%7Bempty%7D%0A%0AHello%0A%0A%5Cnewpage%0A%0A%5Cthispagestyle%7Bheadings%7D%0A%0APeople%0A%0A%5Cnewpage%0A%0A%5Cthispagestyle%7Bplain%7D%0A%0AGreetings%0A%0A%5Cend%7Bdocument%7D) is an example; you can play with it and try all the commands.

This is all for today, thank you!!!

Do not forget to follow me on Twitter `@latexteada`

Greetings :)

| latexteada |

875,547 | Pointer Fu: An adventure in the Tokio code base | This post was originally posted on my blog In an effort to understand the internals of... | 0 | 2021-10-25T16:13:14 | https://dev.to/senyeezus/pointer-fu-an-adventure-in-the-tokio-code-base-120f | rust, tokio, programming | [Tokio]: https://tokio.rs/

This post was originally posted on my [blog](https://senyosimpson.com/short-forms/pointer-fu-tokio/)

***

In an effort to understand the internals of asynchronous runtimes, I've been spending time reading [Tokio]'s source code. I've still got a long way to go but it has been a great journey so far.

{% tweet 1450127351917027332 %}

Raw pointers are used all over Tokio. In one particular instance, the way they used them really blew my mind 🤯. So here I am, writing about it. Buckle in 💺.

## Setting the scene

We have a type `Cell` that is defined as (taken directly from Tokio source)

```rust

#[repr(C)]

pub(super) struct Cell<T: Future, S> {

/// Hot task state data

pub(super) header: Header,

/// Either the future or output, depending on the execution stage.

pub(super) core: Core<T, S>,

/// Cold data

pub(super) trailer: Trailer,

}

```

It's used when initialising a struct `RawTask`

```rust

struct RawTask {

ptr: NonNull<Header>

}

// This is pseudocode

impl RawTask {

fn new() -> RawTask {

let ptr = Cell::new(); // returns a raw pointer to a Cell

// Cast pointer to one that points to a Header

let header_ptr = ptr as *mut Header;

let ptr = NonNull::new(header_ptr);

RawTask { ptr }

}

}

```

## What is so interesting?

First, a necessary detour! Rust can represent structs in memory in multiple ways. It's covered in detail in [The Rust Reference](https://doc.rust-lang.org/reference/type-layout.html#representations). By default, Rust offers *no guarantee* on the memory layout of your struct; it is free to modify the layout however it wants. From a developer perspective, it means you cannot write any code that makes assumptions on the memory layout of your struct. To change the representation of the struct, you can use the `repr` attribute (as shown in the above definition of `Cell`).

Now, here's where it gets good! I omitted the comment for the `Cell` struct, which is

```rust

/// The task cell. Contains the components of the task.

///

/// It is critical for `Header` to be the first field as the task structure will

/// be referenced by both *mut Cell and *mut Header.

```

Hopefully some alarm bells started ringing in your head 🚨. `Header` has to be the first field in the struct, so that you can dereference a pointer to `Cell` into either a `Cell` or a `Header` (if this doesn't make sense to you, check out the [addendum](#addendum)). However, Rust's default representation provides no guarantee that `Header` will remain the first struct field! Instead, as you can see, they've changed the representation to the `C` layout. In `C`, struct fields are stored in the order they are declared. This gives us the guarantee we need. Now we can go around dereferencing the pointer to `Header` without any worry!

```rust

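// Illustrative only: generic parameters are elided, `Cell::new()` is treated
// as returning a value here, and `Header` is assumed to be `Copy`.
let mut cell = Cell::new();
// A `*mut` pointer has to come from a mutable borrow (`&cell as *mut Cell` does not compile)
let cell_ptr = &mut cell as *mut Cell;
// Valid because `#[repr(C)]` guarantees `header` sits at offset 0
let header_ptr = cell_ptr as *mut Header;
let header = unsafe { *header_ptr };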

```

## Addendum

View in [Rust playground](https://play.rust-lang.org/?version=stable&mode=debug&edition=2021&gist=147f74ccb012c0ef292ffe6e781c685a)

```rust

#[derive(Debug, Clone, Copy)]

struct Header {

x: u32

}

#[derive(Debug, Clone, Copy)]

struct Core {

y: u8

}

#[derive(Debug, Clone, Copy)]

#[repr(C)]

struct Task {

header: Header,

core: Core

}

fn main() {

let header = Header { x: 256 };

let core = Core { y: 10 };

let task = Task { header, core };

// Create raw pointer to task

let task_ptr = &task as *const Task;

// Cast pointer to point to Header

let header_ptr = task_ptr as *const Header;

// Dereference header_ptr

// We expect this to give us our Header struct as defined above.

// Because Header is the first field and the pointer is pointing

// to the beginning of the Task struct, it is valid to perform

// this dereference

let header_from_ptr_deref = unsafe { *header_ptr };

// Dereference task_ptr

// We expect this to give us our Task struct as defined above

let task_from_ptr_deref = unsafe { *task_ptr };

println!("Header from deref: {:#?}", header_from_ptr_deref);

println!("Task from deref: {:#?}", task_from_ptr_deref);

// Bonus: we can also cast task_ptr into a pointer to Core

// However, because its struct member is a u8 and Header's member

// is storing 256, which u8 cannot represent, dereferencing

// core_ptr will produce a corrupted Core struct

let core_ptr = task_ptr as *const Core;

let core_from_ptr_deref = unsafe { *core_ptr };

println!("Core from deref: {:#?}", core_from_ptr_deref);

}

```

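The output of running this is shown below. Note that the `Core` we obtained from dereferencing has `y=0` instead of `y=10`. That's because `core_ptr` points at the start of `Task`, so the read picks up the first byte of `header.x` rather than the real `core` field, and the first byte of 256 (`0x00000100`) is 0.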

```

Header from deref: Header {

x: 256,

}

Task from deref: Task {

header: Header {

x: 256,

},

core: Core {

y: 10,

},

}

Core from deref: Core {

y: 0,

}

```

***

Shoutouts to [Ana](https://twitter.com/a_hoverbear) for reviewing this post.

| senyeezus |

875,565 | The Structure of an HTML Tag | Hey guys, this is Bhanu Stark from Public App. Today we are going to talk about the structure of... | 0 | 2021-10-25T16:55:20 | https://dev.to/publicapp/the-structure-of-an-html-tag-2m2m | html, beginners, tutorial | {% youtube MHf2Fd6cxFU %}

Hey guys, this is Bhanu Stark from Public App. Today we are going to talk about the structure of an HTML Tag.

In this post, you will find answers to:

What is HTML tag?

What is HTML element?

What is opening tag?

What is closing tag?

What is self-closing tag?

What is an attribute?

How to know everything about an HTML element?

How to know about all the HTML elements?

Read the full post here or watch the video above:

[The Structure of an HTML Tag](https://www.publicapp.in/the-structure-of-an-html-tag/)

| publicapp |

876,273 | Hashing | Hashing is the process of converting an input of any length into a fixed size string or a number... | 0 | 2021-10-26T04:53:16 | https://dev.to/divya08296/hashing-1j75 | Hashing is the process of converting an input of any length into a fixed size string or a number using an algorithm. In hashing, the idea is to use a hash function that converts a given key to a smaller number and uses the small number as an index in a table called a hash table.

Hashing in Data Structures

We generate a hash for the input using the hash function and then store the element using the generated hash as the key in the hash table.

Hash Table

Hash Table: The hash table is a collection of key-value pairs. It is used when the searching or insertion of an element is required to be fast.

Operations on a hash table:

Insert - T[ h(key) ] = value;

It calculates the hash, uses it as the key and stores the value in hash table.

Delete - T[ h(key) ] = NULL;

It calculates the hash, resets the value stored in the hash table for that key.

Search - return T[ h(key) ];

It calculates the hash, finds and returns the value stored in the hash table for that key.

Hash Collision: A collision occurs when two or more inputs are mapped to the same index in the hash table. Example: h(“John”) == h( “joe”)

A collision cannot be completely avoided but can be minimized using a ‘good’ hash function and a bigger table size.

The chances of hash collision are less if the table size is a prime number.

How to choose a Hash Function

An efficient hash function should be built such that the index value of the added item is distributed equally across the table.

An effective collision resolution technique should be created to generate an alternate index for a key whose hash index corresponds to a previously inserted position in a hash table.

We must select a hash algorithm that is fast to calculate.

Characteristics of a good Hash Function

Uniform Distribution: For distribution throughout the constructed table.

Fast: The generation of hash should be very fast, and should not produce any considerable overhead.

Collision Hashing Techniques

Open Hashing (Separate Chaining): It is the most commonly used collision handling technique and is implemented using a linked list. When two or more elements collide at the same location, these elements are chained into a singly linked list called a chain. In other words, we chain together all the elements that hash to the same slot.

Let’s consider an example of a simple hash function.

h(key) = key%table size

In a hash table with the size 7

h(27) = 27%7 = 6

h(130) = 130%7 = 4

Hash Map Example

If we insert a new element (18, “Saleema”), that would also go to the 4th index.

h(18) = 18%7 = 4

Hash Map Example;

For separate chaining, the worst-case scenario is when all of the keys will get the same hash value and will be inserted in the same linked list. We can avoid this by using a good hash function.
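The separate chaining example above can be sketched as follows. The sketch is written in JavaScript purely for brevity, and it uses plain arrays as the chains instead of linked lists. The table size of 7 and the keys 27, 130 and 18 come from the text; the helper names and the placeholder values stored for 27 and 130 are assumptions.

```javascript
const TABLE_SIZE = 7;

// h(key) = key % table size
const hash = (key) => key % TABLE_SIZE;

// One chain (here: an array) per slot.
const table = Array.from({ length: TABLE_SIZE }, () => []);

function insert(key, value) {
  table[hash(key)].push([key, value]);
}

function search(key) {
  const entry = table[hash(key)].find(([storedKey]) => storedKey === key);
  return entry ? entry[1] : undefined;
}

insert(27, "placeholder A");  // goes to slot 6
insert(130, "placeholder B"); // goes to slot 4
insert(18, "Saleema");        // also slot 4, so it joins the same chain

console.log(hash(27), hash(130), hash(18)); // 6 4 4
console.log(table[4].length);               // 2
console.log(search(18));                    // Saleema
```

A lookup only walks the chain in one slot, which is why the length of the chains (and therefore the quality of the hash function) decides how fast the table is.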

Closed Hashing (Open Addressing): In this, we find the “next” vacant bucket in Hash Table and store the value in that bucket.

Linear Probing: We linearly go to every next bucket and see if it is vacant or not.

rehash(key) = (n+1)%tablesize

Quadratic Probing: We go to the 1st, 4th, 9th … bucket and check if they are vacant or not.

rehash(key) = (n + k^2) % tablesize

Double Hashing: Here we subject the generated key from the hash function to a second hash function.

h2(key) != 0 and h2 != h1
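As a rough illustration of the first two probing strategies, the sketch below (in JavaScript, for brevity) looks for the slot a new key would land in. The function names and the pre-filled slots are assumptions made for the example; the table size of 7 and the key 18 are reused from the earlier example.

```javascript
// Linear probing: try home, home+1, home+2, ... (wrapping around).
function findSlotLinear(slots, key) {
  const size = slots.length;
  const home = key % size;
  for (let step = 0; step < size; step++) {
    const index = (home + step) % size;
    if (slots[index] === null) return index;
  }
  return -1; // table is full
}

// Quadratic probing: try home, home+1, home+4, home+9, ... (wrapping around).
function findSlotQuadratic(slots, key) {
  const size = slots.length;
  const home = key % size;
  for (let k = 0; k < size; k++) {
    const index = (home + k * k) % size;
    if (slots[index] === null) return index;
  }
  return -1; // no free slot found along this probe sequence
}

const slots = new Array(7).fill(null);
slots[4] = 130; // 130 % 7 === 4
slots[5] = 12;  // 12 % 7 === 5

console.log(findSlotLinear(slots, 18));    // 6 (slots 4 and 5 are taken)
console.log(findSlotQuadratic(slots, 18)); // 1 (4 and 5 are taken, then (4 + 4) % 7)
```

Unlike linear probing, a quadratic probe sequence is not guaranteed to visit every slot, so the second function can give up even when a free slot exists somewhere in the table.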

Load Factor: This is a measurement of how full a hash table may become before its capacity is increased.

The hash table’s load factor is defined as follows:

N = number of elements in T - Current Size

M = size of T - Table Size

e = N/M - Load factor

Generally, if the load factor is greater than 0.5, we increase the size of the bucket array and rehash all the key-value pairs again.

How Hashing gets O(1) complexity?

Given the above examples, one would wonder how hashing may be O(1) if several items map to the same place…

The solution to this problem is straightforward. We use the load factor to ensure that each block (for example, each linked list in the separate chaining strategy) stores, on average, no more elements than the load factor. In practice, this load factor is a constant (generally 10 or 20), so searching through 10 or 20 elements takes constant time.

If the average number of items in a block exceeds the load factor, the elements are rehashed with a larger hash table size.

Rehashing

When the load factor gets “too high” (specified by the threshold value), collisions would become more common, so rehashing comes as a solution to this problem.

We increase the size of the hash table, typically, doubling the size of the table.

All existing items must be reinserted into the new doubled size hash table.

Now let’s deep dive into the code. I will implement everything in code that we have learned till now.

#include<iostream>

using namespace std;

class node{

public:

string name;

int value;

node* next;

node(string key,int data){

name=key;

value=data;

next=NULL;

}

};

class hashmap{

node** arr;

int ts;

int cs;

int hashfn(string key){

int ans=0;

int mul=1;

for(int i=0; key[i]!='\0';i++){

ans = (ans + ((key[i]/ts)*(mul%ts))%ts);

mul *= 37;

mul %=ts;

}

ans = ans %ts;

return ans;

}

void reHash(){

node** oldarr=arr;

int oldts=ts;

arr= new node*[2*ts];

ts *= 2;

cs=0;

for(int i=0;i<ts;i++){

arr[i]=NULL;

}

//insert in new table

for(int i=0;i<oldts;i++){

node* head = oldarr[i];

while(head){

insert(head->name,head->value);

head=head->next;

}

}

delete []oldarr;

}

public:

hashmap(int s=7){

arr = new node*[s];

ts=s;

cs=0;

for(int i=0;i<s;i++){

arr[i]=NULL;

}

}

void insert(string key, int data){

int i=hashfn(key);

node* n=new node(key,data);

n->next=arr[i];

arr[i]=n;

cs++;

if(cs/(1.0*ts) > 0.6){

reHash();

}

}

node* search(string key){

int i=hashfn(key);

node*head= arr[i];

while(head){

if(head->name==key){

return head;

break;

}

head=head->next;

}

if(head==NULL){ | divya08296 | |

876,646 | Web, backend, mobile resources and news. | Dev Resources Here are the latest articles and news from last week's biweekly newsletter.... | 0 | 2021-10-26T10:54:56 | https://dev.to/developernationsurvey/web-backend-mobile-resources-and-news-349g | devnews, webdev, mobile, backend | ---

title: Web, backend, mobile resources and news.

published: true

description:

tags: #devnews #webdev #mobile #backend

//cover_image: https://direct_url_to_image.jpg

---

## Dev Resources

Here are the latest articles and news from last week's biweekly [newsletter](https://content.developereconomics.com/developer-nation-newsletter-signup-form). Enjoy!

## Dev Resources & Articles

[Emojis in commit messages: ✅ or ❌?](https://dev.to/scottshipp/emojis-in-commit-messages-or-2d32) See what others think, and contribute your own opinion. [DEVTO]

[Will the real senior dev please stand up? (2021 edition).](https://dzone.com/articles/will-the-real-senior-dev-please-stand-up-2021-edit) An update to Tomasz Waraksa's ironic look at the realities of hiring in IT. It's 2021, have companies finally learned what a real senior developer looks like? [DZONE]

[10 best product backlog tools for backlog management.](https://theproductmanager.com/tools/best-product-backlog-tools/) Without the product backlog, it would not be easy for software development teams to have a coherent idea of the processes they should be prioritizing. [THEPRODUCTMANAGER]

[8 mobile app security best practices developers should follow.](https://dev.to/kovalchuk/8-mobile-app-security-best-practices-developers-should-follow-544o) Best protected applications are those developed using the security by design approach when security is taken into account on the initial stages of the development process. [DEVTO]

[Difference between MVVM vs MVC.](https://www.educba.com/mvvm-vs-mvc/) The major key differences such as architecture, operation, advantages, and limitations are discussed in this article. [EDUCBA]

[Sending iOS push notifications via APNs.](https://blog.engineyard.com/ios-push-notifications-via-apns) This blog post discusses in detail the APN services, enable and register for remote notifications, device token ids, and APN node package with the help of a sample iOS. [ENGINEYARD]

[How I hacked an Android app for a premium feature.](https://proandroiddev.com/how-i-hacked-an-android-app-for-a-premium-feature-d9ca74e797ad) Merab rewrote the bytecode to unlock premium features of the app on his device and now he shows you how to prevent it from happening to your apps. [PROANDROIDDEV]

[Using WebSockets in Flutter.](https://blog.logrocket.com/using-websockets-flutter/) This article explores how we can use our own WebSockets to create applications that display real-time data to our users. [LOGROCKET]

[Front-end developer skills you must have.](https://www.interviewbit.com/blog/front-end-developer-skills/) Front-end developers require a combination of technical and soft abilities. If you’re pursuing a career as a Front-end Developer, these are the talents you’ll need to develop. [INTERVIEWBIT]

[Top 50 data structure and algorithms interview questions for programmers.](https://medium.com/javarevisited/50-data-structure-and-algorithms-interview-questions-for-programmers-b4b1ac61f5b0) Frequently asked programming interview questions from different interviews for programmers at different levels of experience. [MEDIUM.JAVAREVISITED]

[JavaScript worst practices.](https://blog.bitsrc.io/javascript-worst-practices-dc78e19d6f12) Let’s take a quick look at 5 of the worst things you can do to your code while writing JavaScript. [BITSRC]

[You can’t keep building on a broken system: why managing technical debt is so important.](https://dzone.com/articles/you-cant-keep-building-on-a-broken-system-why-mana) Managing technical debt is important if you want to move more quickly, retain top engineering talent, and avoid re-writing your app from scratch. [DZONE]

[What is Google’s Dev Library?](https://developers.googleblog.com/2021/10/what-is-the-dev-library.html) Google decided to create a space where the best projects related to Google technologies can be highlighted in one place. [DEVELOPERS.GOOGLEBLOG]

[What is data virtualization and why use it?](https://dev.to/lambdatesting/what-happens-when-you-use-virtualization-in-software-testing-1l92) What happens when you use virtualization in software testing? [DEVTO]

[What are microservices? Code examples, best practices, tutorials and more.](https://stackify.com/what-are-microservices/) We’ll take a look at microservices, the benefits of using this capability, and a few code examples. [STACKIFY]

[Node TDS module for connecting to SQL Server databases.](https://github.com/tediousjs/tedious) Tedious is a pure-Javascript implementation of the TDS protocol, which is used to interact with instances of Microsoft's SQL Server. [TEDIOUSJS]

[Introducing Serverless Cloud.](https://www.serverless.com//blog/introducing-serverless-cloud-public-preview) The hyper-productive #serverless app platform with single second deployments is now open to everyone. [SERVERLESS]

[Rails 7 adds the ability to use pre-defined variants.](https://www.bigbinary.com/blog/rails-7-adds-ability-to-use-predefined-variants) See how. [BIGBINARY]

[Crack the top 40 machine learning interview questions.](https://dev.to/educative/crack-the-top-40-machine-learning-interview-questions-1e2c) From basic to more advanced questions. [DEVTO]

[How to build a strong machine learning resume.](https://www.springboard.com/blog/ai-machine-learning/machine-learning-resume/) Here are several pointers to ensure that your resume is in top shape before you send it to the next recruiter or hiring manager. [SPRINGBOARD]

[A tour of attention-based architectures.](https://machinelearningmastery.com/a-tour-of-attention-based-architectures/) Gain a better understanding of how the attention mechanism is incorporated into different neural architectures and for which purpose. [MACHINELEARNINGMASTERY]

[The importance of data drift detection that data scientists do not know.](https://www.analyticsvidhya.com/blog/2021/10/mlops-and-the-importance-of-data-drift-detection/) Understand the different types of data drift and the method for detecting them. [ANALYTICSVIDHYA]

[Artificial networks learn to smell like the brain.](https://news.mit.edu/2021/artificial-networks-learn-smell-like-the-brain-1018) When asked to classify odors, artificial neural networks adopt a structure that closely resembles that of the brain’s olfactory circuitry. [MIT]

## Industry News

[Google says it’s dropping Material Design components on iOS in favour of Apple’s UIKit.](https://www.theverge.com/2021/10/12/22722130/google-ios-app-material-design-components-uikit) Google says the result of the switch should be less work for its iOS development team, but, more importantly, it’s likely the change will mean that Google’s iOS apps will feel less like interlopers on Apple devices. [THEVERGE]

[Thanks to a nasty GPSD bug, real-life time travel trouble arrives this weekend.](https://www.zdnet.com/article/thanks-to-a-nasty-gpsd-bug-real-life-time-travel-trouble-arrives-this-weekend/) On October 24, 2021, some time-keeping systems are going to take a trip back in time to March 2012, unless you update your GPSD programs. Find out more. [ZDNET] (An older article from last week. Did you experience any issues?)

[GitLab goes public.](https://about.gitlab.com/blog/2021/10/14/gitlab-inc-takes-the-devops-platform-public/) GitLab was the first company to publicly live stream the entire end-to-end listing day at Nasdaq. Sid Sijbrandij shares the news and a copy of the founders letter. [GITLAB]

[IBM will offer free COBOL training to address overloaded unemployment systems.](https://www.inputmag.com/tech/ibm-will-offer-free-cobol-training-to-address-overloaded-unemployment-systems) Many systems that process unemployment claims in the USA still run on a 60-year-old programming language that relatively few coders understand. [INPUTMAG]

[Microsoft highlights the UI changes coming in Visual Studio 2022, including new icons.](https://www.neowin.net/news/microsoft-highlights-the-ui-changes-coming-in-visual-studio-2022-including-new-icons/) The IDE offers numerous improvements upon its predecessor, Visual Studio 2019, including the fact that it comes in a 64-bit flavor, supports .NET 6 and C++ 20, offers better performance for the core debugger, and supports text chat during Live Share sessions. [NEOWIN]

[DevFest 2021.](https://developers.googleblog.com/2021/10/announcing-devfest-2021.html) Hosted by Google Developer Groups (GDG) all across the globe, DevFest events are uniquely curated by their local GDG organizers to fit the needs and interests of the local community. [DEVELOPERS.GOOGLEBLOG]

## Quick Hits

[Announcing .NET MAUI Preview 9.](https://devblogs.microsoft.com/dotnet/announcing-net-maui-preview-9/)

[Parcel v2.0.0 stable is now available.](https://parceljs.org/blog/v2/)

[Crystal 1.2.0 is released.](https://crystal-lang.org/2021/10/14/1.2.0-released.html)

[PhpStorm 2021.2.3 is released.](https://blog.jetbrains.com/phpstorm/2021/10/phpstorm-2021-2-3-is-released/) | developernationsurvey |

876,673 | 👨💻[FirstDevRole #1] What are the Real Requirements for Becoming a Programmer? | What is this about? 🥱 In my opinion, the point of writing a blog is to document your... | 15,181 | 2021-10-26T11:21:49 | https://dev.to/antoniopk/firstdevrole-1-what-are-the-real-requirements-for-becoming-a-programmer-2e1p | webdev, javascript, beginners, programming | ## What is this about? 🥱

In my opinion, the point of writing a blog is to document your journey and share it with other people so hopefully someone finds the information useful.

That said, I have decided to share my experience and what I have learned about getting a first software developer job.

I have been through the process three times already. While everything is still relatively fresh, and since quite a few people have asked me for advice on [Twitter](https://twitter.com/antoniopkvc), I decided to document it before it all evaporates into the void.

This is definitely going to be a series of blog posts with no specific order where I will try to extract the most important information as it comes to me. 🤷♂️

## Who is this for?

Anyone who wants to make a career change to IT, is graduating from college, is struggling to get a first job as a programmer or just wants to give programming a try.

## Topics that will be covered

These are some of the topics that I will definitely cover, but I might add more if I remember something else important:

- What are the requirements for becoming a programmer

- How to write a no bullshit CV/Resume that will increase your chances of getting hired

- What programming language / framework to start with

- Where to apply for your first job and which ones to consider

- How to build an effective portfolio website

- What projects to build and how

- Creating an effective GitHub profile

- How much experience is needed to start applying to jobs

- When to apply to an Internship vs Real Developer role

- Is your code good enough

- What to be ready for while trying to get your first programming role

- Mindset and motivation

The first topic is covered in this post as the title says so let's start! 🦾

<hr/>

## What are the REAL Requirements for Becoming a Programmer?

Do you need someone to tell you whether you are smart enough or have what it takes to become a programmer?

Let me tell you this: **Programming is not for everyone and it's not enough if you can just install Windows on your laptop. It's only for extremely talented people with IQ over 200.**

Anxiety kicks in?

Don't worry, I'm just kidding.

Let's put it this way. If you can read and comprehend what I have written up to this point then you have everything that it takes to become a programmer. You may not be a great one, but it's definitely enough to get a job.

Physical requirements ✔️

You did some googling or found this post buried deep down in the #TechTwitter history. That means you have enough interest.

Interest ✔️

Your friend who is incredibly smart is a software engineer and there is no way that you can be that good?

Actually, you can.

I actually know a few people who are generally not that intelligent but are still working as developers.

If it makes it any easier for you, I had the WORST grades in informatics in elementary school and in programming in college. At least get school out of the way because it's not a valid measure for anything.

So what's the thing that separates people who can and who cannot become programmers?

Well, the important things are exactly those two words: CAN and **CANNOT**.

Just pick one word and fill in the sentence below to find out the answer.

> I ___________ become a programmer.

Got it?

It's REALLY a matter of decision. Do you want to become a programmer?

## Why is it a matter of decision?

Because if you don't decide firmly, you will quit somewhere along the road. Just make a decision with yourself, and even better write it somewhere, and whenever you think about quitting (which will happen a lot) just remind yourself that you have decided and that there is no way back.

If you are starting from zero, don't expect a role in the next 1-2 years and be ready to dedicate A LOT of time to learn everything that is necessary to get the job. If you get it earlier than that then even better!

Not a lot of people can sacrifice that much time and be that patient and that's why programming is labeled as "hard".

It's not hard because you have to study some out of this world science, complex algorithms or math formulas - it's hard because it requires TIME and PATIENCE.

So basically you have all the "skills" that you need. The only question is whether you are ready to throw yourself into the fire.

If you were looking for an actual list of requirements in a video game style then here you have it:

## Requirements for becoming a software engineer:

- Having a normal functioning brain and being able to type

- Being ready to not get a job even after learning every day for a year

- Basic English language knowledge

- Being at peace with the fact that learning will NEVER end

- Being at peace with the fact that you will fail a lot

- Being comfortable with feeling stupid at times

- Being ready to work on your temper and impulsive reactions

- Being in front of a computer every day

- Being ready to code every day

- Being ready to learn the boring stuff so you can enjoy building fun stuff later

- Being ready to deal with difficult people

- Being ready to read boring or poorly written documentation

- Decreased social life at times

- Being comfortable with dreaming code (literally)

- Being able to think when exhausted at times

- Being able to think under pressure

And last but not least: being ready to help everyone alive with their printers or failing internet connections. (funny but true, you will see)

If you can sign up for all of these points above then there is nothing else I want to tell you.

You have it all and are ready to start. ✔️✔️✔️

All I can tell you is good luck, DON'T QUIT, and you will get your job. I'm sure of that! 😄

<a href='https://ko-fi.com/S6S06RIRQ' target='_blank'><img height='36' style='border:0px;height:36px;' src='https://cdn.ko-fi.com/cdn/kofi1.png?v=3' border='0' alt='Buy Me a Coffee at ko-fi.com' /></a>

**If there is a topic that you want me to cover please let me know in the comments and I will gladly add it to my list.** | antoniopk |

886,786 | Algorithm Series - Selection Sort | Photo by Jess Bailey on Unsplash This is a quick tutorial on the selection sort algorithm and its... | 0 | 2021-11-03T14:22:16 | http://tatyanacelovsky.com/algorithm_series_-_selection_sort | algorithms | ---

title: Algorithm Series - Selection Sort

published: true

date: 2021-10-27 08:50:00 UTC

tags: Algorithms

canonical_url: http://tatyanacelovsky.com/algorithm_series_-_selection_sort

---

Photo by [Jess Bailey](https://unsplash.com/@jessbaileydesigns) on [Unsplash](https://unsplash.com/)

_This is a quick tutorial on the selection sort algorithm and its implementation in Javascript._

### What is Selection Sort Algorithm

Selection sort is a [sorting algorithm](https://en.wikipedia.org/wiki/Sorting_algorithm) that divides the input list into two parts: a sorted sublist that is built up from left to right and a sublist of the remaining unsorted values. The sorted sublist sits at the front of (to the left of) the unsorted sublist. Initially, the sorted sublist is empty and the unsorted sublist consists of the entire input list. The algorithm selects the smallest (or largest, depending on the required order) element in the unsorted sublist, swaps it with the first element of the unsorted sublist, and moves the sublist boundary one element to the right (the sorted sublist has grown by one element, while the unsorted sublist has shrunk by one).

Let’s take a look at selection sort when trying to sort the elements of an array in an ascending order:

1. Set the first element of the unsorted sublist as `minimum` (on the first pass, this is the first element of the array);

2. Compare `minimum` with the next element; if that element is smaller than `minimum`, make it the new `minimum`, otherwise do nothing;

3. Compare `minimum` with the following element in the same way: if that element is smaller than `minimum`, make it the new `minimum`, otherwise do nothing;

4. Repeat step 3 until the last element has been reached;

5. Swap `minimum` with the first element of the unsorted sublist and move the sublist boundary one element to the right (the sorted sublist has grown by one element, while the unsorted sublist has shrunk by one);

6. Repeat steps 1 - 5 on the remaining unsorted sublist until all elements are in their sorted positions.

The array is sorted when all the unsorted elements are placed in their correct positions.

### Selection Sort Code in Javascript

Let’s take a look at the code for the selection sort algorithm described above (ascending order):

{% gist https://gist.github.com/tcelovsky/4c7b1b5a852adacf13ba7a3604000f79.js %}

The above code sorts the array in ascending order. To sort an array in descending order, replace the “greater than” sign in the `if` statement with a “less than” sign.
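Since the embedded gist may not render in every reader, here is a minimal TypeScript sketch of the steps described above. It is an illustration under assumptions, not a copy of the gist: the function name `selectionSort`, the choice to copy the input instead of sorting in place, and the sample array are all made up for this example.

```typescript
// Minimal selection sort sketch (ascending order).
// `selectionSort` is a hypothetical name chosen for this example.
function selectionSort(input: number[]): number[] {
  const arr = [...input]; // work on a copy so the caller's array is untouched

  for (let i = 0; i < arr.length - 1; i++) {
    // Step 1: assume the first unsorted element is the minimum.
    let minIndex = i;

    // Steps 2-4: scan the rest of the unsorted sublist for a smaller value.
    for (let j = i + 1; j < arr.length; j++) {
      if (arr[minIndex] > arr[j]) {
        minIndex = j;
      }
    }

    // Step 5: swap the minimum into the first unsorted position.
    if (minIndex !== i) {
      [arr[i], arr[minIndex]] = [arr[minIndex], arr[i]];
    }
  }

  return arr;
}

console.log(selectionSort([29, 10, 14, 37, 13])); // [ 10, 13, 14, 29, 37 ]
```

In this sketch, flipping the `>` in the `if` statement to `<` would produce a descending order instead.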

### Selection Sort and Big-O

Selection sort compares the current `minimum` with every remaining element of the unsorted sublist on each pass, hence the number of comparisons is:

(n-1) + (n-2) + (n-3) +…..+ 1 = n(n-1)/2

This grows in proportion to n², therefore Big-O is O(n²), or quadratic time. We can also deduce this from the code: selection sort uses two nested loops, each bounded by the length of the array, therefore Big-O is expected to be O(n²).
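The comparison count can be checked with a small experiment. The sketch below only counts how often the inner loop of a selection sort would compare two elements; `countComparisons` is a hypothetical helper written for this illustration and does not sort anything.

```typescript
// Counts the comparisons selection sort performs on a list of length n.
// Hypothetical helper for illustration only.
function countComparisons(n: number): number {
  let comparisons = 0;
  for (let i = 0; i < n - 1; i++) {
    for (let j = i + 1; j < n; j++) {
      comparisons++; // one comparison per inner-loop iteration
    }
  }
  return comparisons;
}

for (const n of [1, 5, 10, 100]) {
  // The measured count next to the closed form n(n-1)/2.
  console.log(n, countComparisons(n), (n * (n - 1)) / 2);
}
// 1 0 0
// 5 10 10
// 10 45 45
// 100 4950 4950
```

Multiplying n by 10 (from 10 to 100) multiplies the work by roughly 100, which is the quadratic growth that O(n²) describes.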

### Conclusion

Selection sort finds the lowest value of the array and moves that value to the beginning of the array. It then looks for the next lowest value and moves it to the front of the unsorted elements. This continues until all values of the array have been sorted. It is a simple way to sort a list when time complexity does not matter and the list that needs sorting is short.

### Resources

[Selection Sort Algorithm gist](https://gist.github.com/tcelovsky/4c7b1b5a852adacf13ba7a3604000f79)

[Let’s Talk About Big-O](https://dev.to/tcelovsky/let-s-talk-about-big-o-2ah9) | tcelovsky |

887,079 | Tags, Attributes, Form and lebel | HTML Tags Tags I learnt recently. <mark> : Highlight <abbr> : When you hover... | 0 | 2021-11-03T19:55:43 | https://dev.to/daaahailey/tags-attributes-form-and-lebel-3m8p | html, attribute, form, label | ## HTML Tags

Tags I learnt recently.

<code>&lt;mark&gt;</code> : Highlights text.

<code>&lt;abbr&gt;</code> : When you hover over an element wrapped in <code>&lt;abbr&gt;</code>, the browser shows the description given in its <code>title</code> attribute.

<p>You can use <abbr title="Cascading Style Sheets">CSS</abbr> to style your <abbr title="HyperText Markup Language">HTML</abbr>.</p>

```html

<p>You can use <abbr title="Cascading Style Sheets">CSS</abbr> to style your <abbr title="HyperText Markup Language">HTML</abbr>.</p>

```

<code>&lt;sub&gt;</code> : Subscript text appears half a character below the normal line, and is sometimes rendered in a smaller font, like H<sub>2</sub>O.

<code>&lt;sup&gt;</code> : Superscript text appears half a character above the normal line, and is sometimes rendered in a smaller font. Superscript text can be used for footnotes, like WWW<sup>[1]</sup>.

```html

<p>H<sub>2</sub>O</p>

<p>x<sup>2</sup>=4</p>

```

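<code>&lt;blockquote&gt;</code> : A section quoted from another source. <code>&lt;cite&gt;</code> names the source, and <code>&lt;q&gt;</code> marks a short inline quotation.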

<blockquote>

<p>I Don’t Have A Home To Go Back To. In Here, I Stand A Chance At Least. But Out There? I Got Nothing Out There.</p>

<cite>Player 322</cite>

</blockquote>

<p><q>Would you like to play a game with me?</q>He asked.</p>

```html

<blockquote>

<p>I Don’t Have A Home To Go Back To. In Here, I Stand A Chance At Least. But Out There? I Got Nothing Out There.</p>

<cite>Player 322</cite>

</blockquote>

<p><q>Would you like to play a game with me?</q>He asked.</p>

```

<code>&lt;pre&gt;</code> : Preformatted text which is to be presented exactly as written in the HTML file.

<code>&lt;kbd&gt;</code> : Span of inline text denoting textual user input from a keyboard, voice input, or any other text entry device. By convention, the user agent defaults to rendering the contents of a <code>&lt;kbd&gt;</code> element using its default monospace font, although this is not mandated by the HTML standard.

<p>Please press <kbd>Ctrl</kbd> + <kbd>Shift</kbd> + <kbd>R</kbd> to re-render an MDN page.</p>

```html

<p>Please press <kbd>Ctrl</kbd> + <kbd>Shift</kbd> + <kbd>R</kbd> to re-render an MDN page.</p>

```

<code>&lt;code&gt;</code> : A short fragment of computer code.

<p>The <code>push()</code> method adds one or more elements to the end of an array and returns the new length of the array.</p>

```html

<p>The <code>push()</code> method adds one or more elements to the end of an array and returns the new length of the array.</p>

```

<code>&lt;figure&gt;</code> : Specifies self-contained content, like illustrations, diagrams, photos, code listings, etc.

<code>&lt;figcaption&gt;</code> : Defines a caption for a <code>&lt;figure&gt;</code> element. It can be placed as the first or last child of the <code>&lt;figure&gt;</code> element, and it can only be used as a child of <code>&lt;figure&gt;</code>.

#### srcset Attribute

Defines multiple sizes of the same image, allowing the browser to select the appropriate image source.

Each set of image information is separated from the previous one by a comma.

Find out more about [srcset](https://developer.mozilla.org/ko/docs/Learn/HTML/Multimedia_and_embedding/Responsive_images).

```html

<img src="a.jpg" srcset="a.jpg, a.jpg 2x" alt="alternative text">

<img

width="200"

srcset="img/logo_1.png 300w,

img/logo_2.png 600w,

img/logo_3.png 700w"

src="a.jpg"

alt="test">

```

## Attributes

poster: A URL for an image to be shown while the video is downloading. If this attribute isn't specified, nothing is displayed until the first frame is available, then the first frame is shown as the poster frame.

```html

<video src="" poster="" preload="" controls playsinline>

<source src="" type="">

<track kind="" src="" srclang="" label="">

</video>

```

## Form

A <code>&lt;form&gt;</code> is a document section containing interactive controls for submitting information.

### Get, Post

With get, the form data is appended to the URL, so sensitive information such as a password would be visible in the address bar. It should not be sent via get; it's better to use post.

Post is also better suited than get for sending large amounts of data, such as large images. If the data needs to be kept out of the URL, use post as well.

```html

<form action="./010.html" method="get">

<label for="one">Name : </label>

<input type="text" name="id" id="one">

<label for="two">Password : </label>

<input type="password" name="pw" id="two">

<button type="submit">Log in</button><br>

</form>

```

The form sends the data to the file given in the <code>action</code> attribute, which is ./010.html.

After it is sent (when you click the submit button), this is what you see in the browser's address bar: <kbd>file:///Users/dahyeji/Desktop/likelion/01_11_2021/010.html?id=hailey707&pw=12345</kbd>

You can see 👉 <em><strong>?id=hailey707&pw=12345</strong></em>

Each pair in the query string has the form key(name)=value, for example ?device=iPhone, and pairs are separated by &.
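To make the key=value structure concrete, here is a small TypeScript sketch that splits such a URL into its pairs. `parseQuery` is a hypothetical helper written for this note, and it is deliberately simplified: it assumes each key appears once and that values contain no `=` sign.

```typescript
// Hypothetical helper: turns "?id=hailey707&pw=12345" into { id: "hailey707", pw: "12345" }.
// Simplified sketch: repeated keys overwrite each other and values may not contain "=".
function parseQuery(url: string): Record<string, string> {
  const result: Record<string, string> = {};
  const queryStart = url.indexOf("?");
  if (queryStart === -1) {
    return result; // no query string at all
  }

  const query = url.slice(queryStart + 1);
  for (const pair of query.split("&")) {
    if (pair === "") continue; // skip empty segments such as a trailing "&"
    const [rawKey, rawValue = ""] = pair.split("=");
    // Forms encode spaces as "+", so restore them before decoding.
    result[decodeURIComponent(rawKey)] = decodeURIComponent(rawValue.replace(/\+/g, " "));
  }
  return result;
}

console.log(parseQuery("./010.html?id=hailey707&pw=12345"));
// { id: 'hailey707', pw: '12345' }
```

The output shows why a password should not travel via get: it sits in plain text in the URL. In real code, the built-in `URLSearchParams` class covers this job, including repeated keys such as several checked checkboxes sharing one name.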

## Label

To associate the <code>&lt;label&gt;</code> with an <code>&lt;input&gt;</code> element, you need to give the <code>&lt;input&gt;</code> an <code>id</code> attribute. The <code>&lt;label&gt;</code> then needs a <code>for</code> attribute whose value is the same as the input's <code>id</code>.

```html

<label for="id">ID: </label>

<input type="text" name="userId" id="id">

```

A radio group is defined by giving each of radio buttons in the group <strong>the same name</strong>. Once a radio group is established, selecting any radio button in that group automatically deselects any currently-selected radio button in the same group.

```html

<label for="male">Male</label>

<input type="radio" name="sex" id="male" value="male">

<label for="female">Female</label>

<input type="radio" name="sex" id="female" value="female">

```

```html

<form action="./011.html" method="get">

<label for="id">ID: </label>

<input type="text" name="userId" id="id">

<label for="pw">Password: </label>

<input type="password" name="userPw" id="pw"><br>

<label for="male">Male</label>

<input type="radio" name="sex" id="male" value="male">

<label for="female">Female</label>

<input type="radio" name="sex" id="female" value="female"><br>

<p>Favorite programming languages</p><br>

<label for="js">javaScript</label>

<input type="checkbox" name="언어" id="js" value="javaScript">

<label for="python">python</label>

<input type="checkbox" name="언어" id="python" value="python">

<label for="c">C</label>

<input type="checkbox" name="언어" id="c" value="c">

<label for="go">Go</label>

<input type="checkbox" name="언어" id="go" value="go">

<button type="submit">Sign up</button>

</form>

```

Alternatively, you can nest the <code>&lt;input&gt;</code> directly inside the <code>&lt;label&gt;</code>, in which case the <code>for</code> and <code>id</code> attributes are not needed because the association is implicit:

```html

<label>Do you like dogs?

<input type="checkbox" name="dogs">

</label>

```

| daaahailey |

887,107 | How to make object properties string type values to string literal type in TypeScript? | Originally posted here! To make the object properties string type values to string literals, we can... | 0 | 2021-11-03T00:00:00 | https://melvingeorge.me/blog/make-object-property-string-type-values-to-string-literal-type-typescript | typescript | ---

title: How to make object properties string type values to string literal type in TypeScript?

published: true

tags: TypeScript

date: Wed Nov 03 2021 05:30:00 GMT+0530 (India Standard Time)

canonical_url: https://melvingeorge.me/blog/make-object-property-string-type-values-to-string-literal-type-typescript

cover_image: https://melvingeorge.me/_next/static/images/main-3e2d9afd37c6cefc6f8490ddeac7e8dc.jpg

---

[Originally posted here!](https://melvingeorge.me/blog/make-object-property-string-type-values-to-string-literal-type-typescript)

To narrow an object property's `string` type to a `string literal` type, we can use the `as` keyword after the property's `string` value and then type out the string literal, or we can use the `as` keyword after accessing the property and then type out the string literal.

### TL;DR

**METHOD 1**

```ts

// a simple object

// with "name" property type changed

// from "string" to "John Doe" string literal

const person = {

name: "John Doe" as "John Doe",

age: 23,

};

```

**METHOD 2**

```ts

// a simple object

const person = {

name: "John Doe",

age: 23,

};

// a simple function call

// with "name" property type changed

// from "string" to "John Doe" string literal

sayName(person.name as "John Doe");

```

To understand it better, let's say we have an object called `person` with two properties called `name` and `age` with values of `John Doe` and `23` respectively like this,

```ts

// a simple object

const person = {

name: "John Doe",

age: 23,

};

```

Now if you hover over the `name` property in the `person` object, the editor shows the type of the `name` property as `string`.

This is OK for most cases. But in some cases, you may need to convert the `name` property's type from just `string` to a `string literal` to pass it to a function call as an argument.

To do that, we can use the `as` keyword after the `name` property's string value and then type the `string literal` we need to use. In our case, we need to change it to `John Doe` string literal type. It can be done like this,

```ts

// a simple object

// with "name" property type converted

// from just "string" to "John Doe" string literal

const person = {

name: "John Doe" as "John Doe",

age: 23,

};

```

Now if you hover over the `name` property, you can see that its type has changed from `string` to the `John Doe` string literal, which is what we want.
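A related option, not covered in the original walkthrough, is a const assertion (`as const`), which avoids typing the literal twice. The sketch below applies it to the whole object; note that this also makes `age` the literal type `23` and marks both properties `readonly`, which may or may not be what you want. The variable `literalName` is only there to show that the assignment type-checks.

```typescript
// A const assertion narrows every property to its literal type
// and marks the properties as readonly.
const person = {
  name: "John Doe",
  age: 23,
} as const;
// inferred type: { readonly name: "John Doe"; readonly age: 23 }

// This assignment only compiles because person.name is the literal type "John Doe".
const literalName: "John Doe" = person.name;

console.log(literalName); // John Doe
```

To narrow only one property, the assertion can be placed on the value itself, as in `name: "John Doe" as const`.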

Another way of achieving the same functionality is using the `as` keyword and typing the string literal when we are only accessing the property from the object.

For example, let's assume a function called `sayName`, and on calling the function let's pass the `name` property value like this,

```ts

// a simple object

const person = {

name: "John Doe",

age: 23,

};

// a simple function call

sayName(person.name);

```

Now to change the type of the `name` property from `string` to "John Doe" `string literal` we can use the `as` keyword after the `person.name` and type the string literal like this,

```ts

// a simple object

const person = {

name: "John Doe",

age: 23,

};

// a simple function call

// with "name" property type changed

// from "string" to "John Doe" string literal

sayName(person.name as "John Doe");

```

This is also a valid way of achieving the same functionality.
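Because `sayName` is never defined in the snippets above, here is a self-contained sketch with an assumed definition. The signature `(name: "John Doe") => string` and the greeting text are hypothetical; the point is only to show why the plain call is rejected and how the `as` assertion, or an equality check, gets past the compiler.

```typescript
// Hypothetical definition: the parameter only accepts the "John Doe" literal type.
function sayName(name: "John Doe"): string {
  return `Hello, ${name}!`;
}

// a simple object; `name` is inferred as plain `string`
const person = {
  name: "John Doe",
  age: 23,
};

// sayName(person.name);
// ^ compile error: 'string' is not assignable to parameter of type '"John Doe"'

// Option 1: assert the literal type at the call site (not checked at runtime).
console.log(sayName(person.name as "John Doe")); // Hello, John Doe!

// Option 2: let the compiler narrow the type with an equality check.
if (person.name === "John Doe") {
  console.log(sayName(person.name)); // Hello, John Doe!
}
```

The assertion in option 1 is a promise to the compiler rather than a check, so it would still compile if `person.name` held a different string. The equality check in option 2 is more verbose but verified at runtime.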

See the above code running live in [codesandbox](https://codesandbox.io/s/make-object-property-string-values-to-string-literals-in-typescript-qdej0?file=/src/index.ts).

That's all 😃!

### Feel free to share if you found this useful 😃.

---

| melvin2016 |

887,144 | Behavioral Design Patterns In C# | if you are new , I highly recommend you to read my first and second articles which are about... | 0 | 2021-11-04T10:03:26 | https://dev.to/mstbardia/behavioral-design-patterns-in-c-4o14 | csharp, design, productivity, dotnet | If you are new here, I highly recommend reading my first and second articles, which cover [Creational Design Patterns](https://dev.to/mstbardia/creational-design-patterns-in-c-10c6) and [Structural Design Patterns](https://dev.to/mstbardia/structural-design-patterns-in-c-2khn) in C#.

As explained in the earlier posts, design patterns fall into three main categories:

1. Creational

2. Structural

3. Behavioral

**Behavioral** design patterns mainly focus on how objects communicate and interact with each other, and on how algorithms and responsibilities are divided between them.

**Chain of Responsibility**

> This pattern is used to pass a request along a chain of handlers, so the request is not coupled to a single handler.

```c#

public interface IHandler

{

public IHandler SetNext(IHandler handler);

public object Handle(object input);

}

public class Handler : IHandler

{

private IHandler _handler;

public IHandler SetNext(IHandler handler)

{

_handler = handler;

return handler;

}

public virtual object Handle(object input)

{

return _handler?.Handle(input);

}

}

public class HandlerA : Handler

{

public override object Handle(object input)

{

if (input as string == "A")

{

Console.WriteLine("HandlerA said : gotcha you ! that's enough go back");

return true;

}

Console.WriteLine("HandlerA said : i can not do anything calling next handler");

return base.Handle(input);

}

}

public class HandlerB : Handler

{

public override object Handle(object input)

{

if (input as string == "B")

{

Console.WriteLine("HandlerB said : gotcha you ! that's enough go back");

return true;

}

Console.WriteLine("HandlerB said : i can not do anything calling next handler");

return base.Handle(input);

}

}

public class HandlerC : Handler

{

public override object Handle(object input)

{

if (input as string == "C")

{

Console.WriteLine("HandlerC said : gotcha you ! that's enough go back");

return true;

}

Console.WriteLine("HandlerC said : chain is useless for you , this is end of the road bro.");

return base.Handle(input);

}

}

public static class ChainOfResponsibilityExample

{

public static void Test()

{

var handlerA = new HandlerA();

var handlerB = new HandlerB();

var handlerC = new HandlerC();

handlerA.SetNext(handlerB).SetNext(handlerC);

var resultOne = handlerA.Handle("A");

var resultTwo = handlerA.Handle("B");

var resultThree = handlerA.Handle("C");

var resultFour = handlerA.Handle("D");

}

//results:

//

// resultOne :

// HandlerA said : gotcha you ! that's enough go back

//

// resultTwo :

// HandlerA said : i can not do anything calling next handler

// HandlerB said : gotcha you ! that's enough go back

//

// resultThree :

// HandlerA said : i can not do anything calling next handler

// HandlerB said : i can not do anything calling next handler

// HandlerC said : gotcha you ! that's enough go back

//

// resultFour :

// HandlerA said : i can not do anything calling next handler

// HandlerB said : i can not do anything calling next handler

// HandlerC said : chain is useless for you , this is end of the road bro.

}

```

**Command**

> This pattern is used to turn a request into an object, so you can delay, queue, or parameterize requests and support undoable operations.

```c#

public interface ICommand

{

public void Execute();

}

public class ExampleCommand : ICommand

{

private readonly string _parameter;

public ExampleCommand(string parameter)

{

_parameter = parameter;

}

public void Execute()

{

Console.WriteLine(_parameter);

}

}

public static class Invoker

{

public static void SendAction(ICommand command)

{

command.Execute();

}

}

public static class CommandExample

{

public static void Test()

{

var command = new ExampleCommand("Query filter setup and executed");

Invoker.SendAction(command);

}

//results:

//Query filter setup and executed

}

```

**Iterator**

> Its main goal is to traverse the elements of a collection without exposing the collection's underlying representation.

```c#

public abstract class IteratorBase

{

public abstract bool EndOfDocument();

public abstract object Current();

public abstract object Next();

public abstract object First();

}

public class Iterator : IteratorBase

{

private readonly List<object> _customList;

private int current = 0;

public Iterator(List<object> customList)

{

_customList = customList;

}

public override bool EndOfDocument()

{

if (current >= _customList.Count - 1)

return true;

return false;

}

public override object Current()

{

return _customList[current];

}

public override object Next()

{

if (current < _customList.Count - 1)

return _customList[++current];

return null;

}

public override object First()

{

current = 0; // restart the traversal from the first element

return _customList[0];

}

}

public static class IteratorExample

{

public static void Test()

{

var ourList = new List<object>() {"a", "b", "c", "d"};

var iterator = new Iterator(ourList);

Console.WriteLine("lets Iterate on list");

var item = iterator.First();

while (item != null)

{

Console.WriteLine(item);

item = iterator.Next();

}

if (iterator.EndOfDocument())

Console.WriteLine("Iterate done");

}

//results:

// lets Iterate on list

// a

// b

// c

// d

// Iterate done

}

```

**Interpreter**

>It is used to evaluate the same context with different interpreters, each with its own behavior, and it usually appears in language-processing scenarios in software design.

```c#

internal class Context

{

public string Value { get; private set; }

public Context(string value)

{

Value = value;

}

}

internal abstract class Interpreter

{

public abstract void Interpret(Context context);

}

internal class EnglishInterpreter : Interpreter

{

public override void Interpret(Context context)

{

switch (context.Value)

{

case "1" :

Console.WriteLine("One");

break;

case "2" :

Console.WriteLine("Two");

break;

}

}

}

internal class FarsiInterpreter : Interpreter

{

public override void Interpret(Context context)

{

switch (context.Value)

{

case "3" :

Console.WriteLine("سه");

break;

case "4" :

Console.WriteLine("چهار");

break;

}

}

}

public static class InterpreterExample

{

public static void Test()

{

var interpreters = new List<Interpreter>()

{

new EnglishInterpreter(),

new FarsiInterpreter()

};

var context = new Context("2");

interpreters.ForEach(c => c.Interpret(context));

}

//results:

//Two

}

```

**Mediator**

>The goal of this pattern is to decouple objects by removing direct communication between them and forcing them to communicate through a mediator object.

```c#

public interface IMediator

{

public void Send(string message, Caller caller);

}

public class Mediator : IMediator

{

public CallerA CallerA { get; set; }

public CallerB CallerB { get; set; }

public void Send(string message, Caller caller)

{

if (caller.GetType() == typeof(CallerA))

{

CallerB.ReceiveRequest(message);

}

else

{

CallerA.ReceiveRequest(message);

}

}

}

public abstract class Caller

{

protected readonly IMediator _mediator;

public Caller(IMediator mediator)

{

_mediator = mediator;

}

}

public class CallerA : Caller

{

public void SendRequest(string msg)

{

_mediator.Send(msg,this);

}

public void ReceiveRequest(string msg)

{

Console.WriteLine("CallerA Received : " + msg);

}

public CallerA(IMediator mediator) : base(mediator)

{

}

}

public class CallerB : Caller

{

public void SendRequest(string msg)

{

_mediator.Send(msg,this);

}

public void ReceiveRequest(string msg)

{

Console.WriteLine("CallerB Received : " + msg);

}

public CallerB(IMediator mediator) : base(mediator)

{

}

}

public static class MediatorExample

{

public static void Test()

{

var mediator = new Mediator();

var callerA = new CallerA(mediator);

var callerB = new CallerB(mediator);

mediator.CallerA = callerA;

mediator.CallerB = callerB;

callerA.SendRequest("hello how are you ?");

callerB.SendRequest("fine tnx");

}

//results:

//CallerB Received : hello how are you ?

//CallerA Received : fine tnx

}

```

**Memento**

>This pattern is used to save the state of an object without breaking its encapsulation, so you can restore the object's earlier state even after multiple modifications.

```c#

public class Memento

{

private readonly string _state;

public Memento(string state)

{

_state = state;

}

public string GetState()

{

return _state;

}

}

public class CareTaker

{

private Memento _memento;

public void SaveMemento(Originator originator)

{

_memento = originator.CreateMemento();

}

public void RestoreMemento(Originator originator)

{

originator.RestoreState(_memento);

}

}

public class Originator

{

public string State { get; set; }

public Originator(string state)

{

State = state;

}

public Memento CreateMemento()

{

return new Memento(State);

}

public void RestoreState(Memento memento)

{

State = memento.GetState();

}

}

public static class MementoExample

{

public static void Test()

{

var originator = new Originator("First Value");

var careTaker = new CareTaker();

careTaker.SaveMemento(originator);

Console.WriteLine(originator.State);

originator.State = "Second Value";

Console.WriteLine(originator.State);

careTaker.RestoreMemento(originator);

Console.WriteLine(originator.State);

}

//results:

//First Value

//Second Value

//First Value

}

```
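
The caretaker above keeps a single memento. A common variation, sketched here as an assumption rather than as part of the original example, stores several snapshots on a stack so that changes can be undone one step at a time. `Editor`, `EditorMemento`, and `History` are hypothetical names.

```c#
using System;
using System.Collections.Generic;

// Immutable snapshot of the editor's text.
public class EditorMemento
{
    public string Text { get; }

    public EditorMemento(string text)
    {
        Text = text;
    }
}

// Originator: creates snapshots of itself and restores from them.
public class Editor
{
    public string Text { get; set; } = "";

    public EditorMemento Save() => new EditorMemento(Text);

    public void Restore(EditorMemento memento)
    {
        Text = memento.Text;
    }
}

// Caretaker: keeps snapshots in last-in, first-out order.
public class History
{
    private readonly Stack<EditorMemento> _snapshots = new Stack<EditorMemento>();

    public void Backup(Editor editor) => _snapshots.Push(editor.Save());

    public void Undo(Editor editor)
    {
        // Nothing to undo when no snapshot has been saved.
        if (_snapshots.Count > 0)
        {
            editor.Restore(_snapshots.Pop());
        }
    }
}

public static class UndoExample
{
    public static void Test()
    {
        var editor = new Editor();
        var history = new History();

        editor.Text = "v1";
        history.Backup(editor);
        editor.Text = "v2";
        history.Backup(editor);
        editor.Text = "v3";

        history.Undo(editor);
        Console.WriteLine(editor.Text); // prints v2
        history.Undo(editor);
        Console.WriteLine(editor.Text); // prints v1
    }
}
```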

**Observer**

>It is used to handle one-to-many communication between objects: when one object's state changes, its dependent objects are notified and updated.

```c#

public class Updater

{

public string NewState { get; }

private readonly List<ObserverBase> _observers = new List<ObserverBase>();

public Updater(string newState)

{

NewState = newState;

}

public void AddObserver(ObserverBase observerBase)

{

_observers.Add(observerBase);

}

public void BroadCast()

{

foreach (var observer in _observers)

{

observer.Update();

}

}

}

public abstract class ObserverBase

{

public abstract void Update();

}

public class Observer : ObserverBase

{

private readonly string _name;

public string State;

private readonly Updater _updater;

public Observer(string name, string state, Updater updater)

{

_name = name;

State = state;

_updater = updater;

}

public override void Update()

{

State = _updater.NewState;

Console.WriteLine($"Observer {_name} State Changed to : " + State);

}

}

public static class ObserverExample

{

public static void Test()

{

var updater = new Updater("Fire");

updater.AddObserver(new Observer("1", "dust", updater));

updater.AddObserver(new Observer("2", "water", updater));

updater.AddObserver(new Observer("3", "air", updater));

updater.BroadCast();

}

//results:

//Observer 1 State Changed to : Fire

//Observer 2 State Changed to : Fire

//Observer 3 State Changed to : Fire

}

```
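
In C#, the same one-to-many notification can also be expressed with the language's `event` keyword instead of a hand-maintained list of observers. The sketch below assumes hypothetical `StatePublisher` and `StateSubscriber` classes and is only one possible way to write it.

```c#
using System;

public class StatePublisher
{
    // Subscribers attach handlers to this event instead of being stored in a list.
    public event Action<string> StateChanged;

    public void Publish(string newState)
    {
        // The null-conditional call does nothing when there are no subscribers.
        StateChanged?.Invoke(newState);
    }
}

public class StateSubscriber
{
    public string Name { get; }
    public string State { get; private set; }

    public StateSubscriber(string name, string state)
    {
        Name = name;
        State = state;
    }

    // Signature matches Action<string>, so it can be attached to the event.
    public void OnStateChanged(string newState)
    {
        State = newState;
        Console.WriteLine($"Subscriber {Name} state changed to: {State}");
    }
}

public static class EventObserverExample
{
    public static void Test()
    {
        var publisher = new StatePublisher();
        var first = new StateSubscriber("1", "dust");
        var second = new StateSubscriber("2", "water");

        publisher.StateChanged += first.OnStateChanged;
        publisher.StateChanged += second.OnStateChanged;
        publisher.Publish("Fire");

        // A subscriber that detaches its handler stops receiving updates.
        publisher.StateChanged -= second.OnStateChanged;
        publisher.Publish("Ice");
    }
}
```

Here the new state is passed as an argument, so subscribers do not need a reference back to the publisher.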

**State**

>With this pattern, an object can change its behavior whenever its state changes.

```c#

internal interface IState

{

public void Handle(Context context);

}

internal class StateA : IState

{

public void Handle(Context context)

{

context.State = new StateB();

}

}

internal class StateB : IState

{

public void Handle(Context context)

{

context.State = new StateA();

}

}

internal class Context

{

private IState _state;

public IState State

{

get => _state;

set

{

_state = value;

Console.WriteLine("State: " + _state.GetType().Name);

}

}

public Context(IState state)

{

State = state;

}

public void Action()

{

State.Handle(this);

}

}

public static class StateExample

{

public static void Test()

{

var context = new Context(new StateA());

context.Action();

context.Action();

context.Action();

context.Action();

}

//results:

//State: StateA

//State: StateB

//State: StateA

//State: StateB

//State: StateA

}

```

**Strategy**

>This pattern is used to encapsulate a family of algorithms and make them interchangeable, so they can change independently without tight coupling.

```c#

internal interface IStrategy

{

public void AlgorithmAction();

}

internal class AlgorithmStrategyA : IStrategy

{

public void AlgorithmAction()

{

Console.WriteLine("Strategy A is taking place");

}

}

internal class AlgorithmStrategyB : IStrategy

{

public void AlgorithmAction()

{

Console.WriteLine("Strategy B is taking place");

}

}

internal class Context

{

private readonly IStrategy _strategy;

public Context(IStrategy strategy)

{

_strategy = strategy;

}

public void GeneralAction()

{

_strategy.AlgorithmAction();

}

}

public static class StrategyExample

{

public static void Test()

{

var context = new Context(new AlgorithmStrategyA());

context.GeneralAction();

context = new Context(new AlgorithmStrategyB());

context.GeneralAction();

}

//results:

//Strategy A is taking place

//Strategy B is taking place

}

```
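
Because the strategies are interchangeable, the context does not have to be recreated to switch between them. The sketch below illustrates this with a settable strategy property; `IDiscountStrategy`, `NoDiscount`, `PercentageDiscount`, and `Checkout` are hypothetical names chosen for the illustration.

```c#
using System;

public interface IDiscountStrategy
{
    decimal Apply(decimal price);
}

// Strategy that leaves the price unchanged.
public class NoDiscount : IDiscountStrategy
{
    public decimal Apply(decimal price) => price;
}

// Strategy that subtracts a percentage of the price.
public class PercentageDiscount : IDiscountStrategy
{
    private readonly decimal _percent;

    public PercentageDiscount(decimal percent)
    {
        _percent = percent;
    }

    public decimal Apply(decimal price) => price - price * _percent / 100m;
}

public class Checkout
{
    // The strategy can be replaced at runtime without creating a new Checkout.
    public IDiscountStrategy Discount { get; set; } = new NoDiscount();

    public decimal Total(decimal price) => Discount.Apply(price);
}

public static class SwappableStrategyExample
{
    public static void Test()
    {
        var checkout = new Checkout();
        Console.WriteLine(checkout.Total(200m)); // prints 200

        checkout.Discount = new PercentageDiscount(10m);
        Console.WriteLine(checkout.Total(200m)); // prints 180
    }
}
```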

**Template Method**

>In simple words, this pattern defines a sequence of operations in a base class and allows subclasses to override only some of them.

```c#

internal abstract class TemplateBase

{

public void Operate()

{

FirstAction();

SecondAction();

}

private void FirstAction()

{

Console.WriteLine("First action from template base and it is necessary");

}

protected virtual void SecondAction()

{

Console.WriteLine("Second action from template base and it is overrideable");

}

}

internal class TemplateMethodA : TemplateBase

{

}

internal class TemplateMethodB : TemplateBase

{

protected override void SecondAction()

{

Console.WriteLine("Second action from templateMethodB");

}

}

internal class TemplateMethodC : TemplateBase

{

protected override void SecondAction()

{

Console.WriteLine("Second action from templateMethodC");

}

}

public static class TemplateMethod

{

public static void Test()

{

var templateMethodA = new TemplateMethodA();

var templateMethodB = new TemplateMethodB();

var templateMethodC = new TemplateMethodC();

templateMethodA.Operate();

templateMethodB.Operate();

templateMethodC.Operate();

}

//results:

//First action from template base and it is necessary

//Second action from template base and it is overrideable

//First action from template base and it is necessary

//Second action from templateMethodB

//First action from template base and it is necessary

//Second action from templateMethodC

}

```

**Visitor**

> The main goal is to add new behavior to a class hierarchy without changing the code of its classes.

```c#

internal interface IVisitor

{

public void VisitItem(ItemBase item);

}

internal class VisitorA : IVisitor

{

public void VisitItem(ItemBase item)

{

Console.WriteLine("{0} visited by {1}",item.GetType().Name , this.GetType().Name);

}

}

internal class VisitorB : IVisitor

{

public void VisitItem(ItemBase item)

{

Console.WriteLine("{0} visited by {1}",item.GetType().Name , this.GetType().Name);

}

}

internal abstract class ItemBase

{

public abstract void Accept(IVisitor visitor);

}

internal class ItemA : ItemBase

{

public override void Accept(IVisitor visitor)

{

visitor.VisitItem(this);

}

public void ExtraOperationA()

{

}

}

internal class ItemB : ItemBase

{

public override void Accept(IVisitor visitor)

{

visitor.VisitItem(this);

}

public void ExtraOperationB()

{

}

}

internal class StructureBuilder

{

readonly List<ItemBase> _items = new();

public void AddItem(ItemBase element)

{

_items.Add(element);

}