id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,306,454 | The Four Horsemen of Software Complexity — Architecture Decision Records to the Rescue | When we try to reduce complexity in software development — we always address accidental... | 0 | 2022-12-22T22:39:23 | https://dev.to/karvozavr/the-four-horsemen-of-software-complexity-architecture-decision-records-to-the-rescue-1211 | architecture, productivity, softwaredesign, methodology | When we try to reduce complexity in software development — we always address accidental complexity.

Accidental complexity refers to the complexity that arises from the way a system is designed or implemented, rather than from the inherent nature of the problem being solved. This type of complexity can often be avoided by making design decisions that are simpler and more straightforward.

Essential complexity, on the other hand, is inherent in the problem being solved. It cannot be avoided and is a necessary part of the solution.

Let’s take a look at several potential sources of accidental complexity in software development:

1. Over-engineering: when a system is designed with more features or functionality than are actually needed, it can introduce unnecessary complexity.

2. Poorly chosen abstractions: abstractions, such as design patterns or software architectures, can help simplify complex systems. However, choosing the wrong abstractions can actually add complexity, rather than reducing it.

3. Unnecessary dependencies: adding code dependencies, such as external libraries or frameworks, can provide useful functionality, but it can also introduce complexity. When dependencies are not carefully managed, they can become a source of accidental complexity.

4. Inconsistent or conflicting design choices: when different parts of a system are designed in incompatible or inconsistent ways, it can introduce complexity. For example, using different programming languages or frameworks in different parts of the same system can make it more difficult to understand and maintain.

By understanding the sources of accidental complexity and taking steps to minimize it, it is possible to build software systems that are more maintainable, more prepared for the changes, and easier to work on.

---

Let’s first take a closer look at these sources of the problem and then get familiar with the practices that might help to mitigate accidental complexity caused by these reasons.

## Over-engineering

When a system is over-engineered, it may include features that are not needed or are only used in rare or edge cases. This can make the system more difficult to understand and maintain, as developers must spend time and effort understanding and working with unnecessary features. In addition, over-engineering can lead to code bloat, where the size of the system grows unnecessarily, which can make it more difficult to navigate and debug.

## Poorly chosen abstractions

Abstractions, such as design patterns or software architectures, can be a useful tool for simplifying complex systems by providing a common language and framework for understanding and organizing the various parts of a system. However, choosing the wrong abstractions or using them in an inappropriate way can actually add complexity to a system, rather than reducing it.

For example, using an overly complicated or poorly understood design pattern can make it more difficult for developers to understand and work with the system, as they must spend time and effort learning and working with the abstractions. Similarly, using an architecture that is not well suited to the needs of the system can introduce unnecessary complexity and make it more difficult to maintain and scale the system.

## Unnecessary dependencies

Code dependencies, such as external libraries or frameworks, can be a valuable resource in software development, as they can provide useful functionality and streamline the development process. However, when dependencies are not carefully managed, they can also introduce complexity to a system. This is considered accidental complexity, as it arises from the way the system is designed or implemented, rather than from the inherent nature of the problem being solved.

Unnecessary dependencies can contribute to complexity in a number of ways. For example:

1. Size and complexity: adding unnecessary dependencies can increase the size and complexity of the system, as developers must include and manage additional code in the project.

2. Compatibility issues: dependencies that are not needed may not be compatible with the rest of the system, which can introduce complexity when integrating them into the project.

3. Maintenance and updates: unnecessary dependencies may require regular updates and maintenance, which can add additional overhead to the project.

4. Risk of security vulnerabilities: unnecessary dependencies may introduce security vulnerabilities to the system, which can increase the risk of a data breach or other security incident.

## Inconsistent design choices

Inconsistent or conflicting design choices can contribute to complexity in software development by making it more difficult to understand and work with the system. When different parts of a system are designed in incompatible or inconsistent ways, it can make it more challenging for developers to understand how the system fits together and how to make changes to it.

For example, using different programming languages or frameworks in different parts of the same system can introduce complexity, as developers must switch between different languages and frameworks and adapt to different conventions and APIs. Similarly, using different design patterns or architectures in different parts of the system can make it more difficult to understand and work with the system as a whole.

> So how do we fight these four horsemen of software complexity?

All these problems have one thing in common — they are the result of chaotic, ungoverned decisions and "accidental" software architecture process.

## Architecture Decision Records

One tool that I found really useful in my experience to help with it is Architecture Decision Records.

Architecture Decision Records (ADRs) are a way to document important design decisions made during the development of a software system. They are used to capture the reasoning behind these decisions and the trade-offs that were considered, as well as the consequences of the decisions. This documentation can be useful for a number of purposes, including:

1. Providing context: ADRs can provide context for developers working on the system, helping them to understand the reasoning behind certain design choices and the constraints that were considered.

2. Facilitating communication: ADRs can help to facilitate communication between team members and stakeholders by providing a clear and concise record of the decisions that have been made.

3. Facilitating future decision-making: ADRs can serve as a reference for future decision-making, helping to ensure that new design decisions are aligned with the overall architecture of the system.

4. Improving transparency: by documenting design decisions, ADRs can increase transparency and help to ensure that all relevant parties are aware of the decisions that have been made.

To create an ADR, it is important to include a clear and concise description of the decision that was made, the context in which the decision was made, the alternatives that were considered, and the consequences of the decision. It is also important to include any relevant supporting documentation, such as diagrams or code examples, to help provide context and clarify the decision.

## Summary

A huge advantage of Architecture Decision Records is that one ADR document can be really small, and doesn’t take much time to write down or review. Yet it gives you a whole versioned history of how your project architecture developed over time and gives an insight into all places where accidental complexity was introduced and what was the reasoning behind doing this. It helps you to keep consistency with your decisions and critically revisit them in face of new requirements emerging.

There are several interesting links on the topic of ADR:

- [https://adr.github.io/](https://adr.github.io/) — homepage of a community popularising ADR technique, improving and introducing tooling for it and sharing knowledge and experience.

- [AWS](https://docs.aws.amazon.com/prescriptive-guidance/latest/architectural-decision-records/adr-process.html), [Google](https://cloud.google.com/architecture/architecture-decision-records), [RedHat](https://www.redhat.com/architect/architecture-decision-records) — articles where the companies share their experience and tips in ADRs.

- [A very interesting serverless cloud architecture example project](https://github.com/GoogleCloudPlatform/emblem/tree/main/docs/decisions) where Google Cloud team shows not only the tech, but the development process as well, and a part of it are some really good real-world Architecture Decision Records examples. | karvozavr |

1,306,602 | Django Object Level Permission In APIView Class | Is Owner? | How to check if requested user is owner of a object in django. video: https://youtu.be/nQeOJl8uEp0 | 0 | 2022-12-23T02:54:03 | https://dev.to/veewebcode/django-object-level-permission-in-apiview-class-is-owner-5216 | django, python, coding, permission | How to check if requested user is owner of a object in django.

video: https://youtu.be/nQeOJl8uEp0 | veewebcode |

1,306,606 | How to Use Jasypt or Jce to Encrypt Passwords in Spring Config | Jasypt and JCE are two encryption protocols that we can use in our Spring config to secure passwords.... | 0 | 2022-12-23T02:59:08 | https://www.czetsuyatech.com/2021/06/spring-config-jasypt-jce.html | jasypt, jce, spring, encryption | Jasypt and JCE are two encryption protocols that we can use in our Spring config to secure passwords. For example, if you wanted to encrypt the API token of your Github repository. Or encrypting the Spring config server's security.user.password value.

## Jasypt Example

Instruction on how we can use Jasypt in our Spring Boot application security.

1. Create a new Spring project, we will use it to encrypt our password.

2. Include jasypt dependency.

```

<dependency>

<groupId>com.github.ulisesbocchio</groupId>

<artifactId>jasypt-spring-boot-starter</artifactId>

<version>3.0.3</version>

</dependency>

```

3. Use this code block to encrypt a string.

```

private static void encryptString() {

StandardPBEStringEncryptor encryptor = new StandardPBEStringEncryptor();

encryptor.setPassword("password");

encryptor.setAlgorithm("PBEWITHSHA1ANDDESEDE");

encryptor.setIvGenerator(new RandomIvGenerator());

String result = encryptor.encrypt("Hello World!");

System.out.println("encrypted=" + result); // prints differently on each run

result = encryptor.decrypt(result);

System.out.println("decrypted=" + result);

}

```

4. To use it in Spring security, we must set add these security lines in Spring config's bootstrap.xml file

```

security:

user:

name: czetsuya

password: ENC(3E31QZ4Ih8kbEYl141+Hd8zG1N/Pt9c60nHkGX9lnG4=)

```

5. And on the service side Spring application, we need to configure the Spring cloud config location and jasypt encryptor password.

```

spring:

cloud:

config:

uri: http://localhost:8888

username: czetsuya

password: ENC(T9aWpcoGGXGV6x+D/oiJGWkvJSBjwEmpLaBy7utknQo=)

jasypt:

encryptor:

password: password # or you can replace this with an environment variable ${JASYPT_ENCRYPTOR_PASSWORD}

```

JCE Example

Instruction on how we can use JCE in our Spring Boot application security.

To make this exercise easier on Windows, I'll be using WSL2 to run Ubuntu and install sdkman.

You must also take note of the latest spring-boot-cli version from https://mvnrepository.com/artifact/org.springframework.cloud/spring-cloud-cli

Follow this guide https://sdkman.io/install. Check if it succeeded by running the command `sdkman version` in a terminal.

Execute the following commands:

```

# install spring

sdk install springboot

# install spring-cloud-cli

spring install org.springframework.cloud:spring-cloud-cli:3.0.2

# encrypt your text

spring encrypt 'Hello World!' --key 'password'

# results in 5f8aaa3be65f159b439008faf1d4efb5eb6c6d3d8ccd9ddfe5028decb5c3b2c1

# should be different on each run

# decrypt the text

spring decrypt 5f8aaa3be65f159b439008faf1d4efb5eb6c6d3d8ccd9ddfe5028decb5c3b2c1 --key 'password'

```

As before we need to set the encrypted password both in the Spring cloud config server and client. This time instead of using 'ENC', we will use 'cipher'.

Server

```

security:

user:

name: czetsuya

password: 'cipher{5f8aaa3be65f159b439008faf1d4efb5eb6c6d3d8ccd9ddfe5028decb5c3b2c1}'

```

Client

```

spring:

cloud:

config:

uri: http://localhost:8888

username: czetsuya

password: 'cipher{3079cb49646bf1a11dc15e3563c16cb3fb614aebdb5fe389f75d48d3ac43ae6f}'

encrypt:

key: password # or you can replace this with an environment variable ${ENCRYPT_KEY}

```

And there you go folks, stop committing your password in plaintext on public repositories :-)

| czetsuya |

1,306,611 | Raku on asdf | I've created a plugin for installing Raku using the asdf version manager 🎉 Check it out here:... | 0 | 2022-12-23T03:35:43 | https://dev.to/dango/raku-on-asdf-l19 | asdf, languages, opensource, raku | I've created a plugin for installing Raku using the asdf version manager 🎉

Check it out here: https://github.com/m-dango/asdf-raku | dango |

1,306,785 | Upload a file from AWS S3 bucket to another S3 bucket using AWS Lambda (Nodejs) | By using Aws lambda (Nodejs) we can upload a file to from source s3 bucket to the s3 bucket we want... | 0 | 2022-12-23T11:45:32 | https://dev.to/kprasannamahesh/upload-a-file-from-aws-s3-bucket-from-another-s3-bucket-using-aws-lambda-nodejs-299g | node, aws | By using Aws lambda (Nodejs) we can upload a file to from source s3 bucket to the s3 bucket we want to upload.

```

const AWS = require('aws-sdk');

const s3 = new AWS.S3();

exports.handler = async (event) => {

console.log('event',event);

//Reading Source file from source bucket

const sourceFile = await s3.getObject({ Bucket: event.sourceBucket, Key : event.sourceFilePath}, (err,data) =>{

if(err) throw err;

else{

return data.Body.toString('utf-8');

});

//uploading to destination bucket

const param = {

Bucket : event.destination,

Key : event.destinationFilePath,

Body : sourceFile,

ContentType : 'csv'

};

await s3.putObject(params).promise().then((data) =>{console.log('uploaded');

return data}).catch((err) =>{console.log('err',err)

return err;});

```

| kprasannamahesh |

1,306,827 | How to import or use js file configuration into any vue page in your nuxt app | hello i have a nuxtjs project of listing booking I have done all my API calls and configurations... | 0 | 2022-12-23T10:37:43 | https://dev.to/nonciok89/how-to-import-or-use-js-file-configuration-into-any-vue-page-in-your-nuxt-app-2he3 | javascript, vue, api, nuxt | ```

hello

i have a nuxtjs project of listing booking

I have done all my API calls and configurations on " index.js in the store folder " and would like to display the listing data gotten from the API call on my "listing.vue page " please how do I call do to print my data gotten or make my index.js accessable all over the app

app setup

{

"name": "lisngbook,,

"version": "1.0.0",

"scripts": {

"dev": "nuxt",

"build": "nuxt build",

"start": "nuxt start",

"generate": "nuxt generate"

},

"dependencies": {

"@nuxtjs/axios": "^5.13.6",

"core-js": "^3.9.1",

"nuxt": "^2.15.3",

},

"devDependencies": {

"@nuxtjs/moment": "^1.6.1"

},

```

```

| nonciok89 |

1,306,991 | My Journey in Open Source - rate-limiter-fn | Link to the repo What is this? A higher-order function to provide a Rate Limiting... | 20,983 | 2023-01-24T14:00:00 | https://dev.to/cadienvan/my-journey-in-open-source-rate-limiter-fn-735 | javascript, typescript, opensource, github | [Link to the repo](https://github.com/Cadienvan/rate-limiter-fn)

## What is this?

A higher-order function to provide a Rate Limiting mechanism to the given function.

## How do I install it?

```bash

npm install rate-limiter-fn

```

## How can I use it?

```javascript

const rateLimiter = require('rate-limiter-fn');

const rateLimitedFn = rateLimiter(fn, {

limit: 10,

interval: 1000,

});

rateLimitedFn();

```

## API

The module exports a single function (`rateLimit`) that takes two arguments:

- `fn` - The function to be rate limited.

- `options` - An object with the following properties:

- `limit` - The number of times the function can be called within the given interval.

- `interval` - The interval in milliseconds.

- `onLimitReached` - A function that will be called when the limit is reached. It will be called with the following arguments:

- `limit` - The limit that was reached.

- `interval` - The interval that was reached.

- `fn` - The function that was rate limited.

- `args` - The arguments that were passed to the function.

- `identifierFn` - A function that will be called to get the identifier for the rate limit. It will be called with the following arguments:

- `fn` - The function that was rate limited.

- `args` - The arguments that were passed to the function.

## Tests

You can run the tests by using the following command:

```bash

npm test

``` | cadienvan |

1,307,262 | 100 APIs for Indian devs to integrate different financial services in your apps | In my early 10 yrs of my developer career, only FinTech APIs I knew were Stripe and CCAvenue. My... | 0 | 2023-01-11T14:06:14 | https://dev.to/ekodevs/100-apis-for-indian-devs-to-integrate-different-financial-services-in-your-apps-72k | fintech, api | In my early 10 yrs of my developer career, only FinTech APIs I knew were Stripe and CCAvenue. My ideas to build something cool in FinTech were limited to usage of these APIs only. I had little hopes from Indian FinTech ecosystem. Only when I started to look around, I was surprised to find plethora of FinTech services that are accessible with APIs and this exploded the use cases I could build with these APIs. It's been almost a month since I started collecting these APIs down and here I present them to you, the only thing I expect in return from you is - share your ideas what do you wish to build with these APIs or share what's missing here. Deal? Let's go.

> Brought to you by [Eko team](http://developers.eko.in)

First, let's understand different categories of FinTech APIs

## Categories of FinTech APIs

1. Payment processing APIs: These APIs enable online and mobile payments, including payment gateway APIs, digital wallet APIs, and online invoicing APIs.

2. Credit and lending APIs: These APIs provide access to credit scoring, loan origination, and other lending-related services.

3. Insurance APIs: These APIs provide access to insurance products and services, including APIs for health insurance, life insurance, car insurance, and other types of insurance.

4. Wealth management APIs: These APIs provide access to investment products and services, including mutual fund APIs, stock trading APIs, and wealth management platform APIs.

5. Personal finance APIs: These APIs provide access to personal finance management tools, including budgeting and expense tracking APIs, credit card management APIs, and more.

6. Business finance APIs: These APIs provide access to business finance management tools, including invoicing and accounting APIs, financial reporting APIs, and more.

7. Financial comparison APIs: These APIs provide access to financial product comparison tools, including APIs for banking products, insurance products, and mutual funds.

8. Financial market data APIs: These APIs provide access to real-time or historical financial market data, including stock prices, currency exchange rates, and more.

9. Foreign exchange APIs: These APIs provide access to foreign exchange rates and currency conversion services.

10. Cryptocurrency APIs: These APIs provide access to cryptocurrency market data and trading services.

11. Real estate APIs: These APIs provide access to real estate market data and property listings.

12. Financial planning and advice APIs: These APIs provide access to financial planning and investment advice tools.

Banking APIs: These APIs provide access to banking products and services, including APIs for account management, money transfers, and more.

13. Credit card APIs: These APIs provide access to credit card products and services, including APIs for credit card application and management.

14. Lending and borrowing APIs: These APIs provide access to peer-to-peer lending and borrowing platforms.

15. Payment gateway APIs: These APIs enable merchants to accept payments from customers through a variety of payment methods.

16. E-commerce APIs: These APIs provide access to e-commerce platforms and tools, including APIs for product listings, inventory management, and more.

17. Personal identification and verification APIs: These APIs enable identity verification and authentication services.

18. Customer relationship management (CRM) APIs: These APIs provide access to customer relationship management tools, including APIs for customer data management,

19. Data analysis and visualization APIs: These APIs provide access to tools for analyzing and visualizing financial data.

20. Fraud detection and prevention APIs: These APIs provide access to tools for detecting and preventing financial fraud.

21. Financial reporting APIs: These APIs provide access to financial reporting and analysis tools.

22. Financial modeling and simulation APIs: These APIs provide access to tools for creating financial models and simulations.

Financial risk management APIs: These APIs provide access to tools for managing financial risks, such as credit risk, market risk, and more.

23. Supply chain finance APIs: These APIs provide access to tools for managing supply chain financing, including APIs for invoice financing and trade finance.

24. Financial messaging APIs: These APIs provide access to financial messaging and communication platforms, such as SWIFT.

25. Financial education and training APIs: These APIs provide access to financial education and training resources.

There are so many of them, let's apply 80-20 rule and list down the APIs in the most useful categories

## Top Payment Processing APIs in India

- [Paytm API](https://developer.paytm.com/) - Payment processing API that offers a range of payment and financial services, including mobile payments, e-commerce, and more.

- [Google Pay API](https://developers.google.com/pay/api) - Payment processing API that offers a range of payment and financial services, including mobile payments, e-commerce, and more.

- [Eko](https://developers.eko.in) - Domestic Money Transfer, Bill Payment, AePS and distribution of multiple financial services via 150k retail shops in India

- [PhonePe API](https://www.phonepe.com/) - Payment processing API that offers a range of payment and financial services, including mobile payments, e-commerce, and more.

- [Amazon Pay API](https://www.amazon.in/b?ie=UTF8&node=14343320031) - Payment processing API that offers a range of payment and financial services, including mobile payments, e-commerce, and more.

- [MobiKwik API](https://www.mobikwik.com/) - Payment processing API that offers a range of payment and financial services, including mobile payments, e-commerce, and more.

- [Ola Money API](https://www.olamoney.com/) - Payment processing API that offers a range of payment and financial services, including mobile payments, e-commerce, and more.

- [PayU API](https://www.payu.in/) - Payment processing API that offers a range of payment and financial services, including mobile payments, e-commerce, and more.

- [Razorpay API](https://razorpay.com/) - Payment processing API that offers a range of payment and financial services, including mobile payments, e-commerce, and more.

- [Instamojo API](https://www.instamojo.com/) - Payment processing API that offers a range of payment and financial services, including mobile payments, e-commerce, and more.

- [PayPal API](https://www.paypal.com/) - Payment processing API that offers a range of payment and financial services, including mobile payments, e-commerce, and more.

## Top Banking APIs in India

- [HDFC Bank API](https://www.hdfcbank.com/) - Banking API that offers a range of banking products and services, including checking and savings accounts, loans, and more.

- [ICICI Bank API](https://www.icicibank.com/) - Banking API that offers a range of banking products and services, including checking and savings accounts, loans, and more.

- [Eko](https://developers.eko.in) - Domestic Money Transfer, Bill Payment, AePS and distribution of multiple financial services via 150k retail shops in India

- [Axis Bank API](https://www.axisbank.com/) - Banking API that offers a range of banking products and services, including checking and savings accounts, loans, and more.

- [Kotak Mahindra Bank API](https://www.kotak.com/) - Banking API that offers a range of banking products and services, including checking and savings accounts, loans, and more.

- [SBI Bank API](https://www.sbi.co.in/) - Banking API that offers a range of banking products and services, including checking and savings accounts, loans, and more.

- [PNB Bank API](https://www.pnb.co.in/) - Banking API that offers a range of banking products and services, including checking and savings accounts, loans, and more.

- [Bank of Baroda API](https://www.bankofbaroda.in/) - Banking API that offers a range of banking products and services, including checking and savings accounts, loans, and more.

- [Canara Bank API](https://www.canarabank.in/) - Banking API that offers a range of banking products and services, including checking and savings accounts, loans, and more.

- [Union Bank of India API](https://www.unionbankofindia.co.in/) - Banking API that offers a range of banking products and services, including checking and savings accounts, loans, and more.

- [Bank of India API](https://www.bankofindia.co.in/) - Banking API that offers a range of banking products and services, including checking and savings accounts, loans, and more.

## Top Investment and Wealth Management APIs in India

- [Zerodha API](https://kite.trade/docs/) - Investment API that offers low-cost brokerage services for trading in multiple asset classes.

- [Upstox API](https://developer.upstox.com/docs/) - Investment API that offers a high-speed trading platform for multiple asset classes.

- [5paisa API](https://developer.5paisa.com/docs/) - Investment API that offers a user-friendly trading platform with advanced charting tools.

- [TradeJini API](https://www.tradejini.com/) - Investment API that offers brokerage services with a focus on customer service and support.

- [Edelweiss API](https://www.edelweiss.in/) - Investment API that offers a range of financial products and services, including brokerage, mutual funds, and more.

- [Angel Broking API](https://developer.angelbroking.com/) - Investment API that offers a range of brokerage services for multiple asset classes, including equities, derivatives, and more.

- [ICICI Direct API](https://www.icicidirect.com/) - Investment API that offers brokerage and financial services, including equity trading, mutual funds, and more.

- [HDFC Securities API](https://www.hdfcsec.com/) - Investment API that offers brokerage services for multiple asset classes, including equities, derivatives, and more.

- [Kotak Securities API](https://www.kotaksecurities.com/) - Investment API that offers brokerage services for multiple asset classes, including equities, derivatives, and more.

- [Motilal Oswal API](https://www.motilaloswal.com/) - Investment API that offers brokerage and financial services, including equity trading, mutual funds, and more.

## Top Insurance APIs in India

- [PolicyBazaar API](https://www.policybazaar.com/) - Insurance API that offers a comparison platform for insurance products, including life, health, and car insurance.

- [Tata AIA API](https://www.tataaia.com/) - Insurance API that offers a range of insurance products, including life, health, and car insurance.

- [HDFC ERGO API](https://www.hdfcergo.com/) - Insurance API that offers a range of insurance products, including life, health, and car insurance.

- [Bajaj Allianz API](https://www.bajajallianz.com/) - Insurance API that offers a range of insurance products, including life, health, and car insurance.

- [Max Life Insurance API](https://www.maxlifeinsurance.com/) - Insurance API that offers a range of insurance products, including life, health, and car insurance.

- [SBI Life Insurance API](https://www.sbilife.co.in/) - Insurance API that offers a range of insurance products, including life, health, and car insurance.

- [ICICI Prudential API](https://www.iciciprulife.com/) - Insurance API that offers a range of insurance products, including life, health, and car insurance.

- [LIC API](https://www.licindia.in/) - Insurance API that offers a range of insurance products, including life, health, and car insurance.

- [Birla Sun Life Insurance API](https://www.birlasunlife.com/) - Insurance API that offers a range of insurance products, including life, health, and car insurance.

- [Reliance Nippon Life Insurance API](https://www.reliancenipponlife.com/) - Insurance API that offers a range of insurance products, including

## Top Credit and Lending APIs in India

- [Lendingkart API](https://www.lendingkart.com/) - Credit and lending API that offers working capital loans to small businesses.

- [Capital Float API](https://www.capitalfloat.com/) - Credit and lending API that offers a range of financial products, including loans and credit lines, to small businesses.

- [Indifi API](https://www.indifi.com/) - Credit and lending API that offers working capital loans to small businesses.

- [Loanzen API](https://www.loanzen.in/) - Credit and lending API that offers working capital loans to small businesses.

- [InCred API](https://www.incred.com/) - Credit and lending API that offers a range of financial products, including loans, to individuals and small businesses.

- [KreditBee API](https://www.kreditbee.in/) - Credit and lending API that offers short-term personal loans to individuals.

- [EarlySalary API](https://www.earlysalary.com/) - Credit and lending API that offers short-term personal loans to individuals.

- [Credy API](https://www.credy.in/) - Credit and lending API that offers short-term personal loans to individuals.

- [StashFin API](https://www.stashfin.com/) - Credit and lending API that offers short-term personal loans to individuals.

- [MoneyTap API](https://www.moneytap.com/) - Credit and lending API that offers personal credit lines to individuals.

## Top Identity verification APIs in India

- [Authy API](https://www.authy.com/) - Identity verification API that offers two-factor authentication and phone verification services.

- [Truemail API](https://truemail.io/) - Identity verification API that offers email verification services.

- [Jumio API](https://www.jumio.com/) - Identity verification API that offers identity verification services using artificial intelligence and machine learning.

- [Onfido API](https://www.onfido.com/) - Identity verification API that offers identity verification services using artificial intelligence and machine learning.

- [SumSub API](https://www.sumsub.com/) - Identity verification API that offers identity verification and compliance services for financial institutions and e-commerce companies.

- [Shufti Pro API](https://www.shuftipro.com/) - Identity verification API that offers identity verification services using artificial intelligence and machine learning.

- [IDology API](https://www.idology.com/) - Identity verification API that offers identity verification and fraud prevention services.

- [IDnow API](https://www.idnow.de/) - Identity verification API that offers identity verification services using video identification technology.

- [IdentityMind API](https://www.identitymind.com/) - Identity verification API that offers identity verification and risk assessment services.

- [Veratad API](https://www.veratad.com/) - Identity verification API that offers identity verification and age verification services.

## FinTech API Aggregators in India

- [Eko Node SDK](https://github.com/ekoindia/eko-sdk-node) - Aggregator of all financial services APIs into one SDK

If you want me to create such useful content on regular basis without getting fired from my job :) , do check out the [FinTech APIs for Money Transfer and Bill Payments](http://developers.eko.in) that my team is building. And do check out a new [Open-Source Node.js SDK](https://github.com/ekoindia/eko-sdk-node) we recently started building to make it easy to integrate any FinTech service in your app. | ekodevelopers |

1,307,412 | Merry Christmas Codepen ! | Little experiment with parallax effect thanks to 3D perspective | 0 | 2022-12-23T22:56:28 | https://dev.to/one_div/merry-christmas-codepen--53jk | codepen | <p>Little experiment with parallax effect thanks to 3D perspective</p>

{% codepen https://codepen.io/onediv/pen/zYLGeQw %} | one_div |

1,307,460 | Runtime check on borrowing rule with RefCell | Rust does compile time check on borrowing rule. But there are some scenarios we need to defer it to... | 0 | 2022-12-24T02:39:54 | https://dev.to/franzwong/runtime-check-on-borrowing-rule-with-refcell-5b13 | rust, refcell | Rust does compile time check on borrowing rule. But there are some scenarios we need to defer it to runtime. Let me show a simplified version from one of the examples in [the book](https://doc.rust-lang.org/book/ch15-05-interior-mutability.html)

We have a trait with one method. No change on internal states is allowed inside this method. (`&self` is used instead of `&mut self`)

```rust

pub trait Messenger {

fn send(&self, msg: &str);

}

```

What if we want to store the messages sent? (e.g. it's common when we create a mock object for testing) It means we need to change the internal states. For some reasons (e.g. it is from 3rd party), we can't modify this trait, so we can't change to `&mut self`. Anyway, let's do it first and see what will happen.

```rust

struct MyMessenger {

messages: Vec<String>

}

impl MyMessenger {

fn new() -> MyMessenger {

MyMessenger { messages: vec![] }

}

}

impl Messenger for MyMessenger {

fn send(&self, msg: &str) {

self.messages.push(String::from(msg));

}

}

```

On the line `push` method is called, the compiler complains.

```

cannot borrow `self.messages` as mutable, as it is behind a `&` reference

`self` is a `&` reference, so the data it refers to cannot be borrowed as mutablerustcClick for full compiler diagnostic

main.rs(2, 13): consider changing that to be a mutable reference: `&mut self`

```

We want to make it passes the compilation. Let's rewrite it with `RefCell`.

```rust

use std::cell::RefCell;

struct MyMessenger {

messages: RefCell<Vec<String>>

}

impl MyMessenger {

fn new() -> MyMessenger {

MyMessenger { messages: RefCell::new(vec![]) }

}

}

impl Messenger for MyMessenger {

fn send(&self, msg: &str) {

self.messages.borrow_mut().push(String::from(msg));

}

}

```

We use `borrow_mut` to borrow a mutable value. Compilation passes this time.

The borrowing rule is still checked in runtime. Runtime error will occur if we do this.

(This violates the rule "At any given time, we can have either one mutable reference or any number of immutable references".)

```rust

let x = RefCell::new(String::from("Hello World"));

let y = x.borrow_mut();

let z = x.borrow(); // Error occurs here in runtime

``` | franzwong |

1,307,587 | Day 24: CI for perl5-MIME-Types | Sometimes it is very easy. | 20,737 | 2022-12-24T07:47:00 | https://code-maven.com/ci-for-perl5-mime-types | perl, ci, programming, devops | ---

title: Day 24: CI for perl5-MIME-Types

published: true

description: Sometimes it is very easy.

tags: perl, ci, programming, devops

# cover_image: https://direct_url_to_image.jpg

# Use a ratio of 100:42 for best results.

published_at: 2022-12-24 07:47 +0000

canonical_url: https://code-maven.com/ci-for-perl5-mime-types

series: ci-2022-12

---

After the heavy-lifting of the previous entry in the Daily CI series this was a very easy task. Looking at [CPAN Digger](https://cpan-digger.perlmaven.com/) I saw [MIME-Types](https://metacpan.org/dist/MIME-Types). That sounded like a simple Perl module and indeed adding CI was straight forward.

[Pull-request](https://github.com/markov2/perl5-MIME-Types/pull/14) It was already accepted.

## Conclusion

There are many projects that are low-hanging fruits where you can add GitHub Actions in a matter of minutes and get the benefits immediately.

| szabgab |

1,307,818 | Free Developer T-Shirt Design | Download My Free T-Shirt Design and follow me for more. The Link if you want any custom... | 0 | 2022-12-24T14:08:04 | https://dev.to/ahmed_onour/free-developer-t-shirt-design-5dpg | free, freedesign | Download My Free T-Shirt Design and follow me for more.

[The Link](https://ahmedonour.gumroad.com/l/Developer-t-shirt-design)

## if you want any custom design i am available free of charge just DM me in [twitter](https://twitter.com/ahmed_onour) | ahmed_onour |

1,307,910 | New Joiner of Dev platform | Hi All, Firstly I would like to introduce myself I'm Shivani Goyal(25F) from India. I'm working in... | 0 | 2022-12-24T18:02:52 | https://dev.to/shivanigoyal/new-joiner-of-dev-platform-4498 | python, aws, beginners, knowledge | Hi All,

Firstly I would like to introduce myself I'm Shivani Goyal(25F) from India. I'm working in IT field as a System Engineer. I have completed MCA degree in 2021. I'm new joiner on this Dev platform. I would be very thankful to all of you if you'll give my some guidelines, tips and tricks, guidance, knowledge etc. Anything which will be helpful to enhance my knowledge. **If someone wanna connect with me please lemme know in terms of knowledge sharing, guidance only.** I hope we all are here to help each other and enhance the knowledge and programming skills as well.

## > I wish you Merry Christmas and Happy New Year to you and your family. Spread good thoughts, knowledge and happiness.

| shivanigoyal |

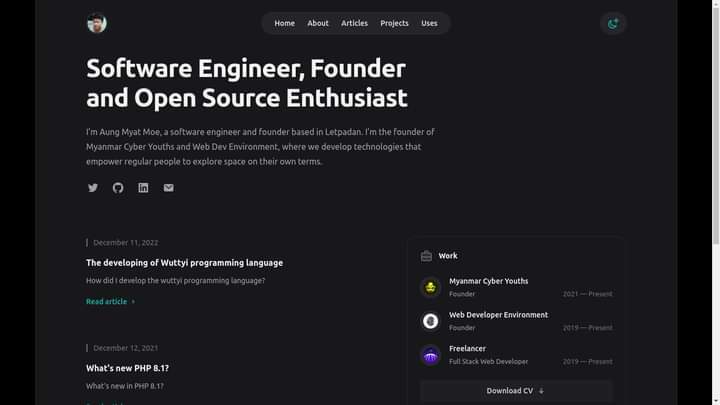

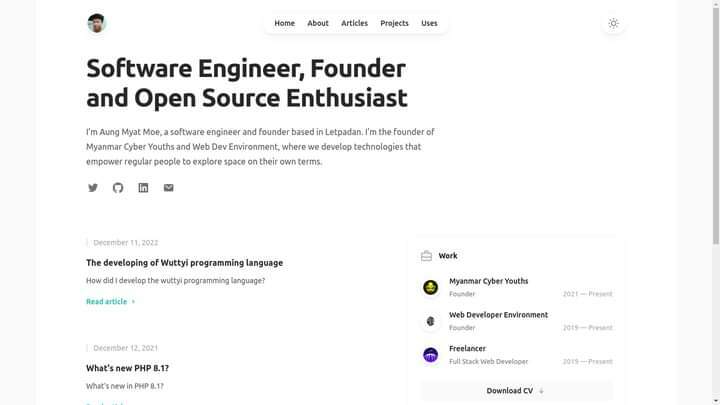

1,308,203 | My new portfolio which is built on top of next 13 app directory | I have been working around with my portfolio website in last two week ago. I forgot to grab review... | 0 | 2022-12-25T06:21:39 | https://dev.to/aungmyatmoe/my-new-portfolio-which-is-build-on-top-of-next-13-app-directory-1o6i | nextjs, portfolio, webdev, javascript | I have been working around with my portfolio website in last two week ago. I forgot to grab review from dev community.

I am warmly welcome to grab your review and feedback.

**My website is alive here.**

[https://www.aungmyatmoe.me/](https://www.aungmyatmoe.me/)

## Project can be found here

https://github.com/amm834/aungmyatmoe.me

## See review

I inspired tailwind's portfolio template. It is so cool and elegant to me. So, u don't need to invent a new wheel with figma 👻.

Figma is a bit hard for small screen.

I use,

- Next

- Tailwind

to accomplish my app's domain and then I inspired tailwind's open source documentation website to config my tailwind config.

It's open source by default. I love open source projects. They give me an opportunity to learn their code and how should I improve well in future.

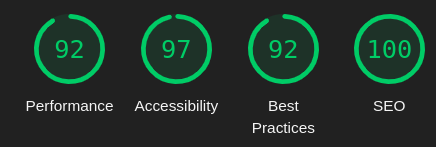

## Lighthouse Result

| aungmyatmoe |

1,308,310 | Microservice architecture for software development | Microservice architecture is a software design pattern that decomposes a large, complex system into a... | 0 | 2022-12-25T10:08:10 | https://dev.to/giasuddin90/microservice-architecture-for-software-development-58kd | webdev, programming, architecture | Microservice architecture is a software design pattern that decomposes a large, complex system into a set of independent, self-contained services that communicate with each other over well-defined interfaces. This approach is becoming increasingly popular in the software industry due to its many benefits, including:

**1.Modularity:** Microservices are designed to be self-contained, with each service responsible for a specific set of functionality. This makes it easier to develop and maintain the system, as changes to one service are less likely to affect the rest of the system.

**2.Scalability:** Microservices can be scaled independently of each other, allowing the system to handle increased workloads without having to scale the entire system. This makes it easier to manage resource utilization and cost.

**3.Flexibility:** Microservices can be developed and deployed independently, allowing the system to be more flexible and adaptable to change. This makes it easier to add new features and capabilities to the system.

**4.Reliability:** Microservices are designed to be fault-tolerant, with each service able to operate independently of the others. This makes the system more resilient to failures, as the rest of the system can continue to operate even if one service goes down.

**5.Reusability:** Microservices can be reused in multiple applications, allowing developers to build new systems faster and more efficiently.

However, microservice architecture also has its challenges, including:

**1.Complexity:** Microservice architecture can be more complex to design and implement than traditional monolithic architecture, as it requires more coordination between the different services.

**2.Testing:** Testing microservices can be more difficult, as each service must be tested independently and in combination with the other services.

**3.Deployment:** Deploying microservices can be more challenging, as each service must be deployed and managed separately.

**4.Monitoring:** Monitoring microservices can be more difficult, as there are more components to keep track of.

Despite these challenges, microservice architecture is becoming increasingly popular in the software industry due to its many benefits. It allows developers to build scalable, flexible, and reliable software systems that can adapt to changing requirements and workloads. If you are considering using microservice architecture for your next software project, it is important to carefully evaluate the benefits and challenges to determine if it is the right fit for your needs.

Thank you for reading my article! If you enjoyed it and would like to support my work, please consider buying me a coffee at **[Buy Me a Coffee](https://www.buymeacoffee.com/giasuddin)**. You can also learn more about me and my work by visiting my **[Giasuddin Bio ](https://bio.link/giasuddi)**and following me on LinkedIn and Twitter. Thank you for your support!

| giasuddin90 |

1,308,356 | Managing React State Like A Superhero 🦸 | The state is an important concept in React, as it allows components to store and manage data that can... | 0 | 2022-12-25T13:17:22 | https://dev.to/majdsufian/managing-react-state-like-a-superhero-5gnp | react, javascript, typescript, webdev | The state is an important concept in React, as it allows components to store and manage data that can change over time. Properly managing state can help make your React application more efficient and easier to maintain. In this article, we will explore different ways to manage state in React and provide code examples for each method.

**1- Using the useState Hook:**

One of the most common ways to manage state in React is by using the `useState` hook. This hook allows you to add state to functional components, which were previously unable to have state. Here is an example of how to use the `useState` hook:

```ts

import React, { useState } from 'react';

function Example() {

const [count, setCount] = useState(0);

return (

<div>

<p>You clicked {count} times</p>

<button onClick={() => setCount(count + 1)}>

Click me

</button>

</div>

);

}

```

In this example, we are using the `useState` hook to create a piece of state called `count`, which is initially set to 0. The `useState` hook returns an array with two elements: the current value of the state (in this case, `count`) and a function to update the state (in this case, `setCount`). We can use the `setCount` function to update the value of `count` by passing a new value as an argument.

**2- Using the useReducer Hook:**

Another way to manage state in React is by using the `useReducer` hook. This hook is similar to the `useState` hook, but it allows you to manage complex state logic with reducers, which are functions that take in the current state and an action, and return a new state. Here is an example of how to use the `useReducer` hook:

```ts

import React, { useReducer } from 'react';

const initialState = { count: 0 };

function reducer(state, action) {

switch (action.type) {

case 'increment':

return { count: state.count + 1 };

case 'decrement':

return { count: state.count - 1 };

default:

throw new Error();

}

}

function Example() {

const [state, dispatch] = useReducer(reducer, initialState);

return (

<div>

<p>You clicked {state.count} times</p>

<button onClick={() => dispatch({ type: 'increment' })}>

Increment

</button>

<button onClick={() => dispatch({ type: 'decrement' })}>

Decrement

</button>

</div>

);

}

```

**3- Using the useContext Hook:**

Another way to manage the state in React is by using the `useContext` hook in combination with the `createContext` function. The `createContext` the function allows you to create a context object, which can be used to pass data down the component tree without the need for props drilling. The `useContext` hook allows you to access the context object from a functional component. Here is an example of how to use them `createContext` && `useContext` hooks:

```ts

import React, { createContext, useContext } from 'react';

const CountContext = createContext();

function Example() {

const count = useContext(CountContext);

return (

<div>

<p>You clicked {count} times</p>

</div>

);

}

function App() {

const [count, setCount] = useState(0);

return (

<CountContext.Provider value={count}>

<Example />

<button onClick={() => setCount(count + 1)}>

Click me

</button>

</CountContext.Provider>

);

}

```

**4- Using a Higher-Order Component:**

Another way to manage the state in React is by using a higher-order component (HOC). A HOC is a function that takes a component as an argument and returns a new component with additional functionality. Here is an example of how to use a HOC to manage the state:

```ts

import React from 'react';

function withState(WrappedComponent) {

return class extends React.Component {

state = { count: 0 };

increment = () => {

this.setState({ count: this.state.count + 1 });

}

render() {

return (

<WrappedComponent

count={this.state.count}

increment={this.increment}

{...this.props}

/>

);

}

};

}

function Example(props) {

return (

<div>

<p>You clicked {props.count} times</p>

<button onClick={props.increment}>

Click me

</button>

</div>

);

}

const EnhancedExample = withState(Example);

function App() {

return (

<EnhancedExample />

);

}

```

In this example, we are using a HOC called withState to add state management functionality to the `Example` component. The `withState` HOC returns a new component that has a `count` state and an `increment` function, which updates the `count` state. The `Example` component receives the `count` and `increment` props from the HOC and uses them to render the component.

**5- Using a State Management Library:**

Finally, another way to manage the state in React is by using a state management library.

Using a state management library is a popular approach to managing the state in larger React applications. A state management library is a separate package that provides additional functionality for managing the state, such as support for handling asynchronous actions and managing a global state.

One popular state management library for React is Redux. Redux is a predictable state container for JavaScript applications that helps you write applications that behave consistently. It works by creating a store that holds the application's state and provides functions for updating the state in a predictable way.

Here is an example of how to use Redux to manage the state in a React application:

```ts

import React from 'react';

import { createStore } from 'redux';

import { Provider, connect } from 'react-redux';

const initialState = { count: 0 };

function reducer(state = initialState, action) {

switch (action.type) {

case 'INCREMENT':

return { count: state.count + 1 };

case 'DECREMENT':

return { count: state.count - 1 };

default:

return state;

}

}

const store = createStore(reducer);

function Example(props) {

return (

<div>

<p>You clicked {props.count} times</p>

<button onClick={props.increment}>

Increment

</button>

<button onClick={props.decrement}>

Decrement

</button>

</div>

);

}

function mapStateToProps(state) {

return {

count: state.count

};

}

function mapDispatchToProps(dispatch) {

return {

increment: () => dispatch({ type: 'INCREMENT' }),

decrement: () => dispatch({ type: 'DECREMENT' })

};

}

const EnhancedExample = connect(

mapStateToProps,

mapDispatchToProps

)(Example);

function App() {

return (

<Provider store={store}>

<EnhancedExample />

</Provider>

);

}

```

In this example, we are using Redux to create a store that holds the application's state and a reducer function that updates the state in a predictable way. We are then using the `connect` function from the `react-redux` library to connect the `Example` component to the Redux store. The `connect` function returns a new component that receives the state and dispatch functions as props. The `Example` component uses the count prop to render the component and the `increment` and `decrement` props to update the state.

------------------------------------------------

In conclusion, there are several different ways to manage the state in React, each with its own advantages and disadvantages. It is important to choose the right approach for your specific use case and to consider factors such as the complexity of your state, the size of your application, and the needs of your team.

| majdsufian |

1,308,378 | Multiple Checkbox Form in React Js | Multiple Checkbox list is very often seen on the websites like Amazon, Flipkart etc. So today we... | 0 | 2022-12-25T14:15:44 | https://dev.to/mayankkashyap681/multiple-checkbox-form-in-react-js-3elb | javascript, webdev, react, tutorial | Multiple Checkbox list is very often seen on the websites like Amazon, Flipkart etc.

So today we are going to build multiple checkbox list from scratch using React.

So, our final result will look like the gif below

This is **App.js**, make a React project and you will find it over there.

You can create a separate file for the Checkbox list or simple write your code in the App.js but I prefer to create a new file for Checkbox list. Import the Checkbox from the File Checkbox.js and make it a component. Styling is up to you. You can style it anyway you want.

```

import "./styles.css";

import Checkbox from "./Checkbox";

export default function App() {

return (

<div className="App">

<Checkbox />

</div>

);

}

```

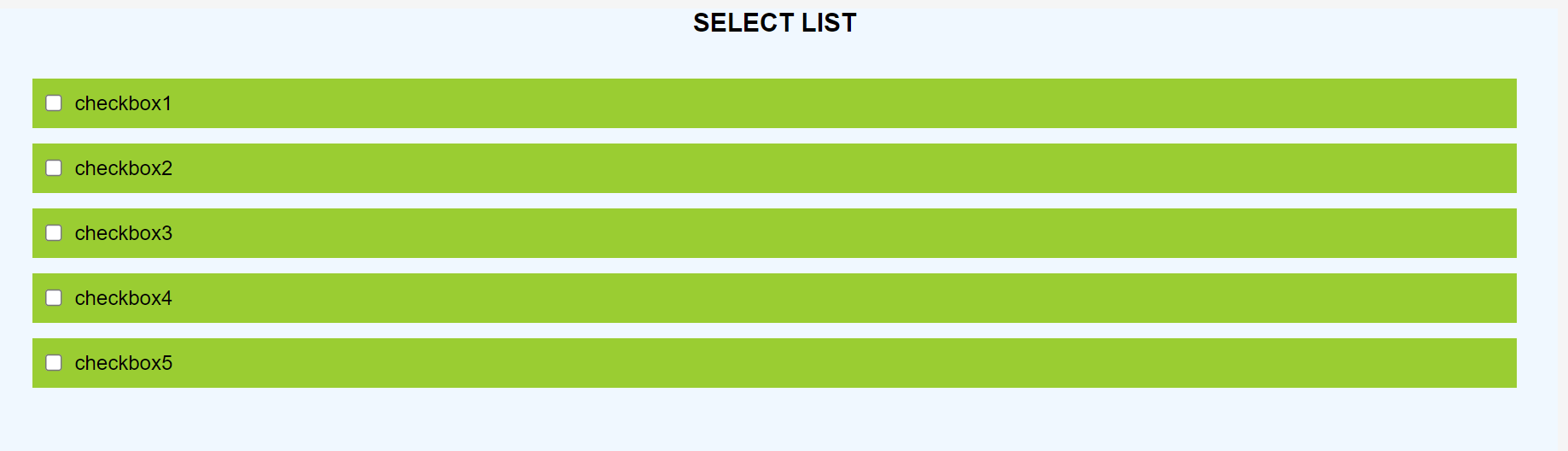

This is our Checkbox.js

```

import React from "react";

const Checkbox = () => {

const list = [

"checkbox1",

"checkbox2",

"checkbox3",

"checkbox4",

"checkbox5"

];

return (

<div

className="selector-bg"

style={{ background: "aliceblue", height: "100vh", margin: "20px" }}

>

<div>

<h1

style={{

textAlign: "center",

fontWeight: "bold",

textTransform: "uppercase",

fontSize: "20px"

}}

>

Select List

</h1>

<form>

<div style={{ padding: "20px" }}>

{list.map((val) => (

<div

style={{

display: "flex",

backgroundColor: "yellowgreen",

padding: "10px",

margin: "12px"

}}

key={val}

>

<input

type="checkbox"

id={val}

value={val}

name={val}

style={{ marginRight: "10px" }}

/>

<label for={val}>{val}</label>

</div>

))}

</div>

</form>

</div>

</div>

);

};

export default Checkbox;

```

So in the Checkbox.js we have firstly made the list of all the tags which we want to display and to keep everything simple I have named them checkbox1, checkbox2, checkbox3 and so on you can also replace them with some real tags like brand names, color names or whatever you like.

> const list = [ "checkbox1", "checkbox2", "checkbox3", "checkbox4", "checkbox5"];

we are making this list so that we don't need to hardcode tags and write repetitive code, instead of that we can simply use map on the list of the tags and display them by writing code once.

`<div

className="selector-bg"

style={{ background: "aliceblue", height: "100vh", margin: "20px" }}

>

<div>

<h1

style={{

textAlign: "center",

fontWeight: "bold",

textTransform: "uppercase",

fontSize: "20px"

}}

>

Select List

</h1>

<form>

<div style={{ padding: "20px" }}>

{list.map((val) => (

<div

style={{

display: "flex",

backgroundColor: "yellowgreen",

padding: "10px",

margin: "12px"

}}

key={val}

>

<input

type="checkbox"

id={val}

value={val}

name={val}

style={{ marginRight: "10px" }}

/>

<label for={val}>{val}</label>

</div>

))}

</div>

</form>

</div>`

In the code written above we have only done some styling and created a form, iterated over the list of tags and displayed them. After all this we will get the output something like the image below.

**Now, lets add state and add logic to make things work**

Import useState() from React

> import {useState} from 'react'

A). Add a state checked to keep track of all the tags which are checked.

_const [checked, setChecked] = useState([]);_

B). Add onChange Event handler on the input field

_onChange={() => handleToggle(val)}_

C). Write the logic for the handleToggle function, so that whenever a box is checked or unchecked the handleToggle function get triggered.

```

const handleToggle = (val) => {

var currentIndex = checked.indexOf(val);

var newArr = [...checked];

if (currentIndex === -1) {

newArr.push(val);

} else {

newArr.splice(currentIndex, 1);

}

setChecked(newArr);

};

```

Handle Toggle function doing following things

1). It is finding the index of the tag in the checked array,

if the tag is present then we will get the index, otherwise we will get -1

2). we are creating another array newArray and initializing it with all the tags which are currently in the checked array, in simple words we are making copy.

3). If the currentIndex is -1, it means the tag is not present in the array so we are pushing it in the newArr by using push() method.

4). If the currentIndex is not equal to -1 it means the tag is already checked thus present in the checked array, so we are removing it using splice() method.

5). At last we are setting the checked array using setChecked(newArr);

**To display all the checked tags we are adding another div which get diplayed only when the size of checked array is greater than 0 means the checked array is having atleast one element**

```

<div style={{ display: "flex", backgroundColor: "gray" }}>

{checked.map((item) => (

<p

key={item}

style={{

margin: "10px",

color: "whitesmoke",

border: "2px solid greenyellow",

padding: "2px"

}}

>

{item}

</p>

))}

</div>

```

**_The link for the final code of Checkbox.js_**

https://github.com/MaYaNkKashyap681/React-Native-Features-Implementation/blob/main/Selection%20Checkbox%20in%20React.js

| mayankkashyap681 |

1,308,438 | Justify-content in Flex Box | Simply we can say that justify contet set items horizontal. but with it has also few... | 0 | 2022-12-25T14:44:35 | https://dev.to/sutharrahul/justify-content-in-flex-box-pi3 | Simply we can say that justify contet set items horizontal.

but with it has also few property.

`.flxbox{

justify-content: flex-start | flex-end | center | space-between | space-around | space-evenly | start | end | left | right;

}`

## Flex-star (default) :

Item are set on star (left side of page). If we use `row-revers` but we want that item should be start from left side. so we use `justify-content: flex-start`.

`justify-content: flex-start;`

## flex-end:

Item are set on end (right side of page)

`justify-content: flex-end;`

## flex-center:

This property set out item on center of the page

`justify-content: center;`

## space-between:

Items are evenly distributed in the line; first item is on the start line, last item on the end line.

`justify-content: space-between;`

## space-around:

To create evenly space around items

`justify-content: space-around;`

## space-evenly:

Items are distributed so that the spacing between any two items (and the space to the edges) is equal.

| sutharrahul | |

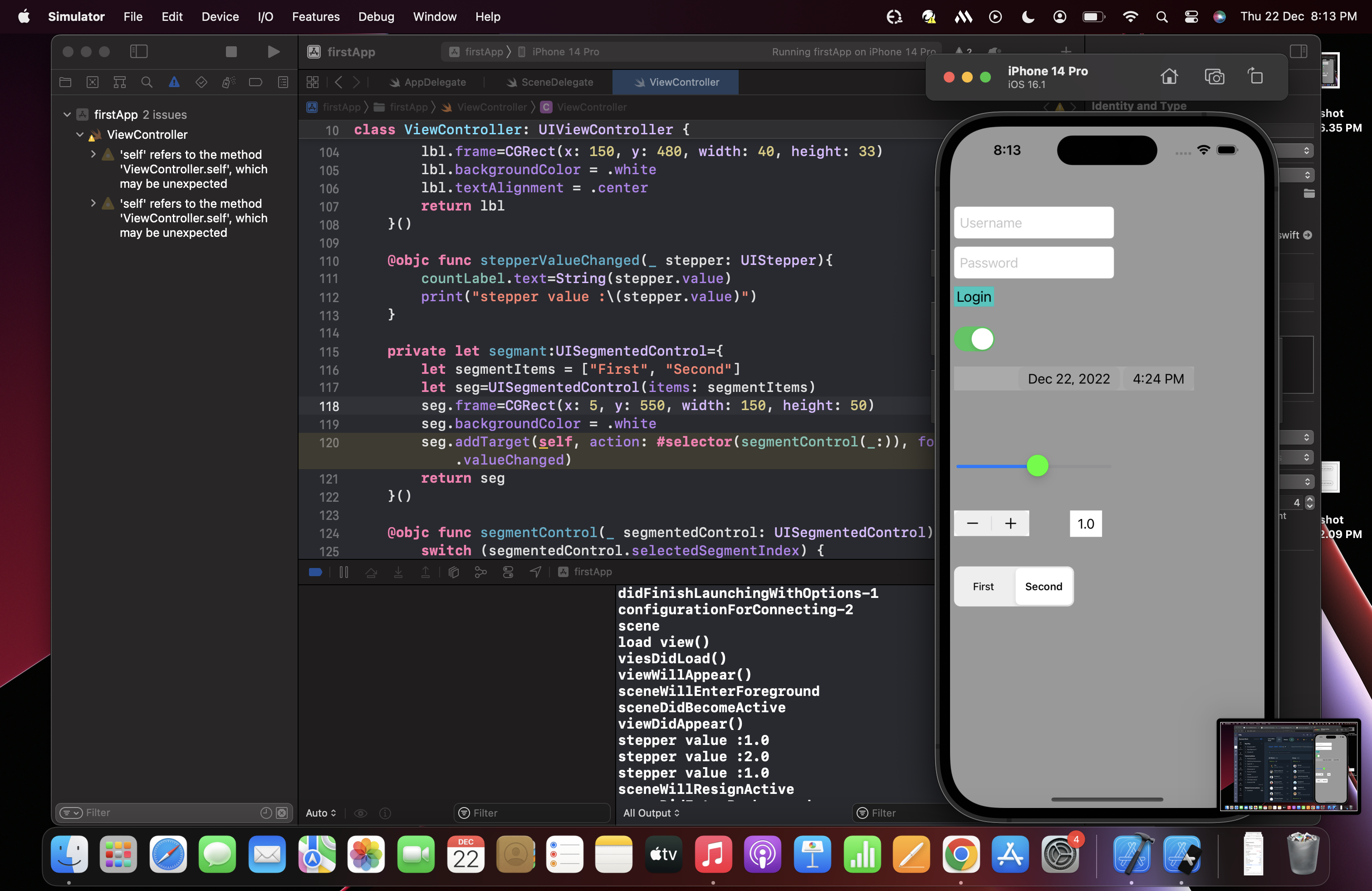

1,308,922 | UI Controls | Label a label is a visual element that displays text. It is an instance of the UILabel... | 21,130 | 2022-12-26T10:02:03 | https://dev.to/ajith_karuna/ui-controls-4122 | ## Label

a label is a visual element that displays text. It is an instance of the UILabel class, which is a subclass of UIView. Labels are commonly used to display static text, such as labels for form fields or titles for sections of content.

A label has a number of properties that you can use to customise its appearance, such as its font, text colour, and alignment and more.

```

let label=UILabel()

label.text="Welcome to zoho!"

label.textAlignment = .center

label.textColor = .blue

label.frame=CGRect(x: 5, y: 60, width: 150, height: 20)

view.addSubview(label)

```

## Button

It is a control that enables the user to interact with the application. It is used to trigger the events performed by the user. It executes the custom code in response to user interactions.

The buttons are one of the most important parts of iOS applications. Buttons are associated with the actions that are performed when the user interacts with the button. We can add the button to the iOS application programmatically or by using interface builder.

```

private let Button:UIButton={

let btn=UIButton()

btn.setTitle("Login", for: .normal)

btn.setTitleColor(UIColor.black, for: .normal)

btn.frame = CGRect(x: 5, y: 200, width: 50, height: 25)

btn.backgroundColor = .systemMint

btn.addTarget(target, action: #selector(call),for: .touchUpInside)

return btn

}()

@objc func call(){

print("button clicked")

Button.backgroundColor = .red

}

```

## TextField

It can be defined as an object which is used to display an editable text area in the interface. Textfields are used to get the text-based input from the user.

```

private let userNameText:UITextField={

let text=UITextField()

text.placeholder="Username"

text.borderStyle = .roundedRect

text.textColor = .black

text.frame = CGRect(x: 5, y:100 , width: 200, height: 40)

return text

}()

```

## DatePicker

DatePicker is a control used in IOS applications to get the date and time values from the user. We can allow the user to either enter the time in point or time interval.

```

private let DatePicker:UIDatePicker={

let picker=UIDatePicker()

picker.frame=CGRect(x: 5, y: 300, width: 300, height: 30)

picker.backgroundColor = .lightGray

return picker

}()

```

## Slider

A slider can be defined as a UIControl, which provides the contiguous range of values on a single scale to the user, out of which the user is prompted to select a single value.

The slider is connected with the action method, which is notified every time the user moves the thumb onto a slider. The value of the slider can be retrieved each time the action method is called.

```

private let Slider:UISlider={

let slider=UISlider()

slider.frame=CGRect(x: 5, y: 400, width: 200, height: 50)

slider.minimumValue=0

slider.maximumValue=100

slider.thumbTintColor = .green

return slider

}()

```

## Stepper

It is a type of UIControl which is used to increase and decrease value. The stepper consists of two buttons. It is associated with a value which gets repeatedly increased or decreased on holding down two of the buttons once at a time. The rate of the change depends on the duration the user presses the control.

```

private let Stepper={

var steper=UIStepper()

steper.frame=CGRect(x: 5, y: 480, width: 30, height: 60)

steper.backgroundColor = .white

steper.value=0

steper.minimumValue = -10

steper.maximumValue = 10

steper.addTarget(self, action: #selector(stepperValueChanged(_:)), for: .valueChanged)

return steper

}()

```

## Switch

The switch can be defined as the UIControl, which provides binary choices to the user either on or off. The state of a switch is managed by properties and methods defined in the UISwitch class, which is the subclass of UIControl.

```

private let Switch:UISwitch={

let Switch=UISwitch()

Switch.frame=CGRect(x: 5, y: 250, width: 50, height: 30)

Switch.isOn=true

Switch.setOn(true, animated: false)

return Switch

}()

```

## Segment

Segment control can be defined as the horizontal control, which controls multiple segments where a discrete button controls each segment. A segment control can be used to display multiple views within a single view controller, where each view can be displayed by using a discrete button.

```

private let segmant:UISegmentedControl={

let segmentItems = ["First", "Second"]

let seg=UISegmentedControl(items: segmentItems)

seg.frame=CGRect(x: 5, y: 550, width: 150, height: 50)

seg.backgroundColor = .white

seg.addTarget(self, action: #selector(segmentControl(_:)), for: .valueChanged)

seg.selectedSegmentIndex=0

seg.selectedSegmentTintColor = .lightGray

seg.tintColor = .blue

return seg

}()

```

**Sample App**

| ajith_karuna | |

1,418,643 | Membuat Simple Bank App Menggunakan Strapi v4 | Hi, selamat datang di artikel kami. Untuk kali ini kita akan sharing dan belajar bareng terkait... | 0 | 2023-04-02T07:47:52 | https://dev.to/wildananugrah/membuat-simple-bank-app-menggunakan-strapi-v4-386h | Hi, selamat datang di artikel kami. Untuk kali ini kita akan sharing dan belajar bareng terkait [Strapi](https://strapi.io). Namun, sebelum kita mulai, ada beberapa hal yang mesti temen-temen pahami.

Pertama, [Strapi](https://strapi.io) adalah *Headless Content Management System* atau *Headless CMS* yang dimana sangat membantu untuk mempercepet waktu _developer_ untuk membuat sebuah aplikasi.

Lalu apa itu *Headless Content Management System* atau *Headless CMS*? *Headless CMS* adalah sebuah CMS tanpa [Frontend](https://en.wikipedia.org/wiki/Front-end_web_development) yang dimana *content*-nya dapat diakses melalui [API](https://en.wikipedia.org/wiki/API). Sehingga dengan sebuah *Headless CMS* membantu temen-temen *developer* membuat backend aplikasi lebih cepat dan mudah.

[API](https://en.wikipedia.org/wiki/API) memungkinan dua aplikasi untuk saling berkomunikasi melalui sebuah *protocol* dengan *message contract* tertentu. Untuk pembahasan kali ini, kita akan menggunakan [HTTP](https://en.wikipedia.org/wiki/HTTP) dan [JSON](https://en.wikipedia.org/wiki/JSON)

[Strapi](https://strapi.io) biasanya digunakan para developer untuk mengembangkan *Static Websites*, *Mobile Apps*, *e-Commerce*, *Editorial*, dan *Corporate websites*. Namun, tidak menutup kemungkinan untuk mengembangankan aplikasi lebih dari itu. Untuk di artikel kami kali ini, kami akan coba membuat aplikasi bank yang sederhana atau **API Simple Bank App**.

Kami merasa dengan mempelajari **API Simple Bank App**, dapat membantu kita untuk mempelajari sistem perbankan dalam scope kecil dan manfaat dari API terutama di era digital saat ini. Adapun yang akan kita pelajari di artikel ini antara lain:

1. Business Requirements

2. Design System

3. Development

## Business Requirements

**API Simple Bank App** melayani user dalam beberapa hal antara lain:

1. User dapat mendaftarkan diri menjadi nasabah baru dan mendapatkan nomor *Customer Identification File* (CIF) dengan meng-input data antara lain:

1. Nomor ID atau KTP

2. Nama lengkap

2. Nasabah dapat membuat nomor rekening baru dengan menginput data CIF

3. Dapat melihat daftar nomor rekening dengan menginput data CIF

4. Nasabah dapat men-topup uang ke nomor rekeningnya sendiri

1. Nomor Rekening

2. *Amount*

5. Nasabah dapat menarik uang dari nomor rekeningnya sendiri dengan data antara lain:

1. Nomor ID atau KTP

2. Nomor Rekening

3. *Amount*

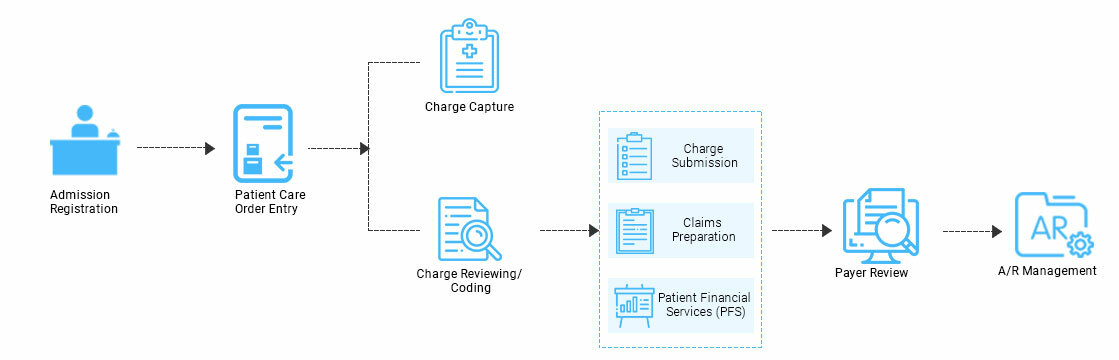

## Design system

Berdasarkan Business Requirement di sebelumnya, kami melihat bahwa ada beberapa *service* yang perlu kita bangun di dalam sistem kita antara lain:

1. Customer service: service yang melayani nasabah dalam [CRUD](https://www.codecademy.com/article/what-is-crud) customer.

2. Account service: service yang melayani nasabah dalam [CRUD](https://www.codecademy.com/article/what-is-crud) account.

3. Transaction service: service yang melayani nasabah dalam [CRUD](https://www.codecademy.com/article/what-is-crud) transaction.

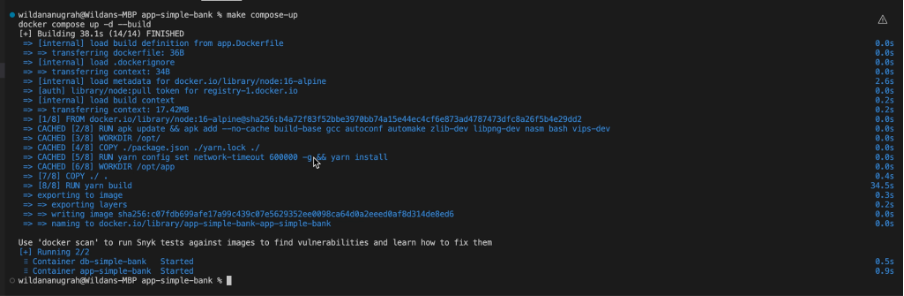

## Development

- Buka Terminal lalu jalankan perintah dibawah

```sh

npx create-strapi-app@latest app-simple-bank

```

- Masuk ke folder _app-simple-bank_ dengan menjalankan code di bawah

```sh

cd app-simple-bank

```

- Buat dua file yaitu _app.Dockerfile_, _docker-compose.yml_, dan _Makefile_

- Untuk _app.Dockerfile_, ketik kode di bawah ini

```dockerfile

FROM node:16-alpine

# Installing libvips-dev for sharp Compatibility

RUN apk update && apk add --no-cache build-base gcc autoconf automake zlib-dev libpng-dev nasm bash vips-dev

ARG NODE_ENV=development

ENV NODE_ENV=${NODE_ENV}

WORKDIR /opt/

COPY ./package.json ./yarn.lock ./

ENV PATH /opt/node_modules/.bin:$PATH

RUN yarn config set network-timeout 600000 -g && yarn install

WORKDIR /opt/app

COPY ./ .

RUN yarn build

EXPOSE 1337

CMD ["yarn", "develop"]

```

- Untuk compose-docker.yml. ketik kode di bawah ini

```yaml

version: "3.7"

services:

app-simple-bank:

build:

context: .

dockerfile: ./app.Dockerfile

container_name: app-simple-bank

environment:

- DATABASE_HOST=db-simple-bank

- DATABASE_PORT=5432

- DATABASE_NAME=postgres

- DATABASE_USERNAME=postgres

- DATABASE_PASSWORD=password

- HOST=0.0.0.0

- PORT=1337

- APP_KEYS=mc68ZV26OzOPQ0A1ESUvNA==,b4sM7ksQE3eqfteYmqWzwA==,UUWwjcxNoDrGvSwnDDhwxA==,j7T57Bls1mSggOLIrsljkg==

- API_TOKEN_SALT=nagThNX7aJINn6oaOpMOyg==

- ADMIN_JWT_SECRET=9VrfUyP40Cqxy+6qNvucQQ==

- TRANSFER_TOKEN_SALT=ar4K31sLK7NONBhHQ6t2zw==

ports:

- "8000:1337"

depends_on:

- db-simple-bank

volumes:

- ./src:/opt/app/src

- ./config:/opt/app/config

- ./package.json:/opt/app/package.json

- ./public/uploads:/opt/app/public/uploads

networks:

app-net: {}

db-simple-bank:

image: postgres:alpine

container_name: db-simple-bank

environment:

- POSTGRES_PASSWORD=password

volumes:

- ./data/pg-data:/var/lib/postgresql/data

networks:

app-net: {}

networks:

app-net:

external: true

name: 'dev-to-network'

```

- Terakhir untuk _Makefile_, ketik kode di bawah ini

```Makefile

compose-up:

docker compose up -d --build

compose-down:

docker compose down

```

- Buka terminal di bawah, buat network baru di docker dengan menjalankan kode di bawah ini

```sh

docker network create dev-to-network

```

- Untuk menjalankan aplikasi nya, ketik kode di bawah ini di terminal

```sh

make compose-up

```

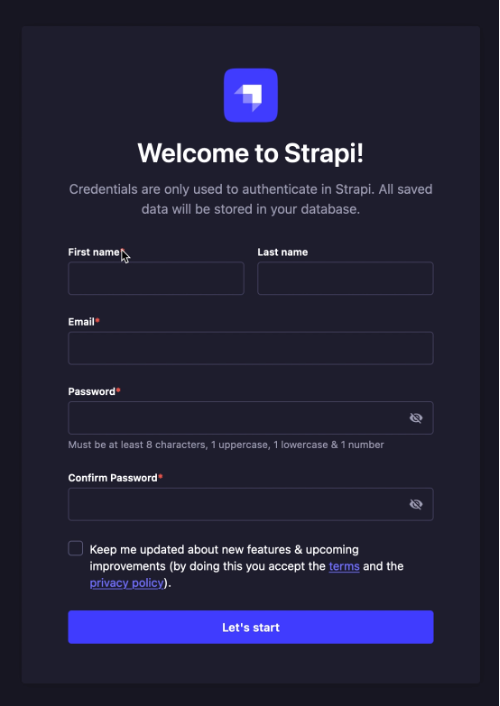

- Buka browser, ketik di address bar http://localhost:8000/admin, maka tampilan akan seperti di bawah ini

- Masukan data sesuai dengan yang diminta

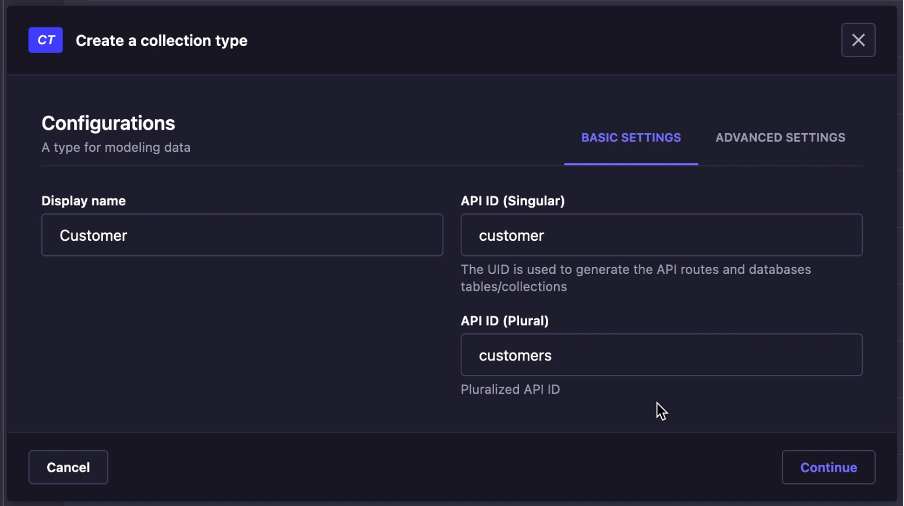

- Setelah masuk ke halaman dashboard admin, pilih menu _Content-Type builder_

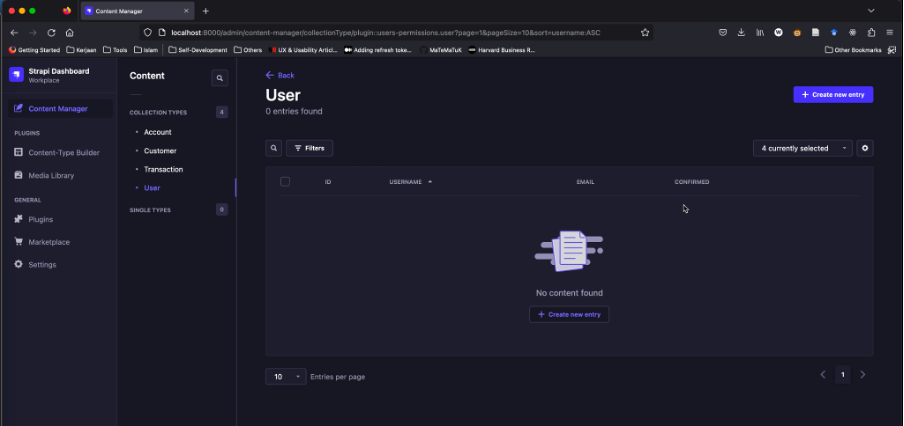

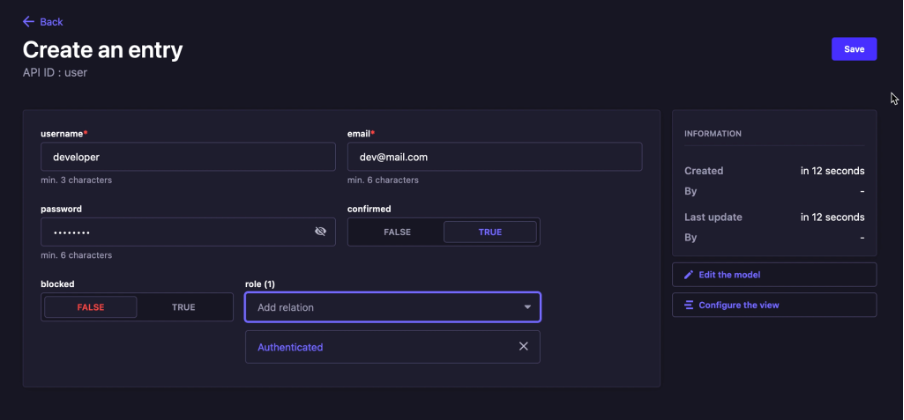

- Klik _Create new collection type_, lalu create _Customer_

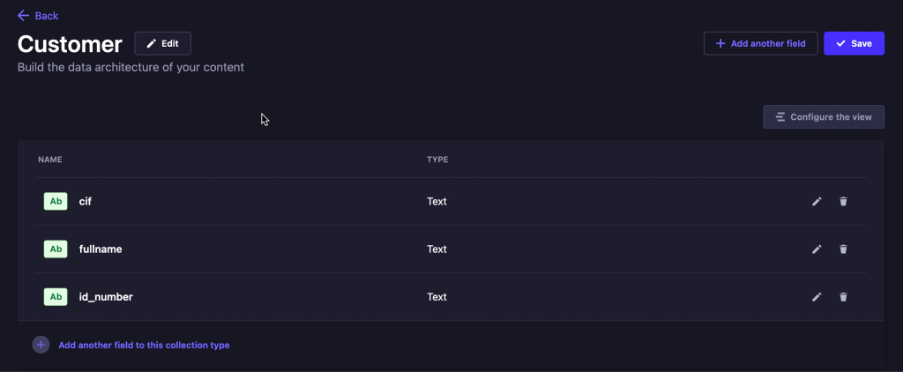

- Create beberapa field seperti dibawah

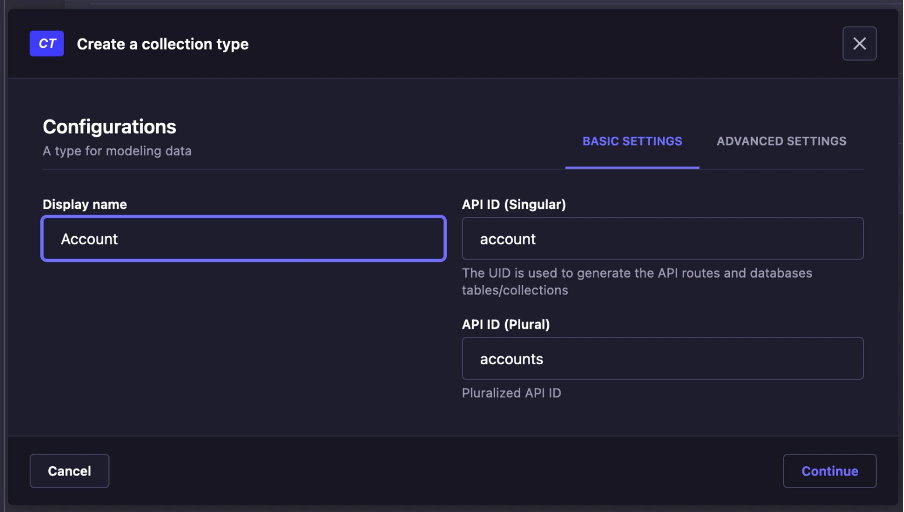

- Klik _Create new collection type_, lalu create _Account_

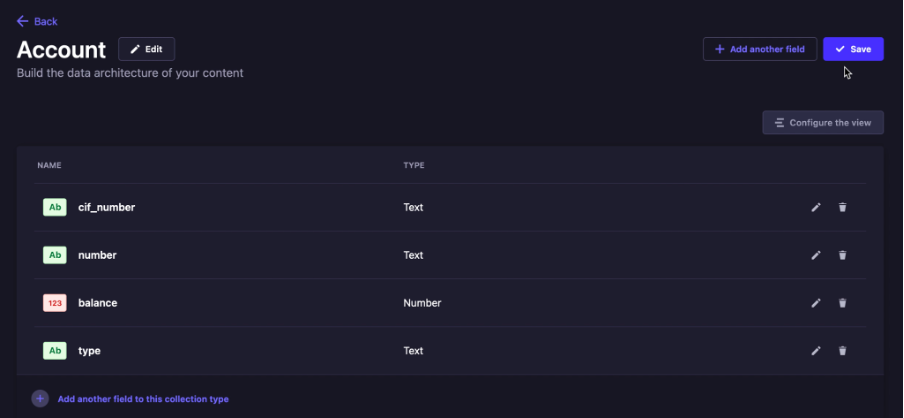

- Create beberapa field seperti dibawah

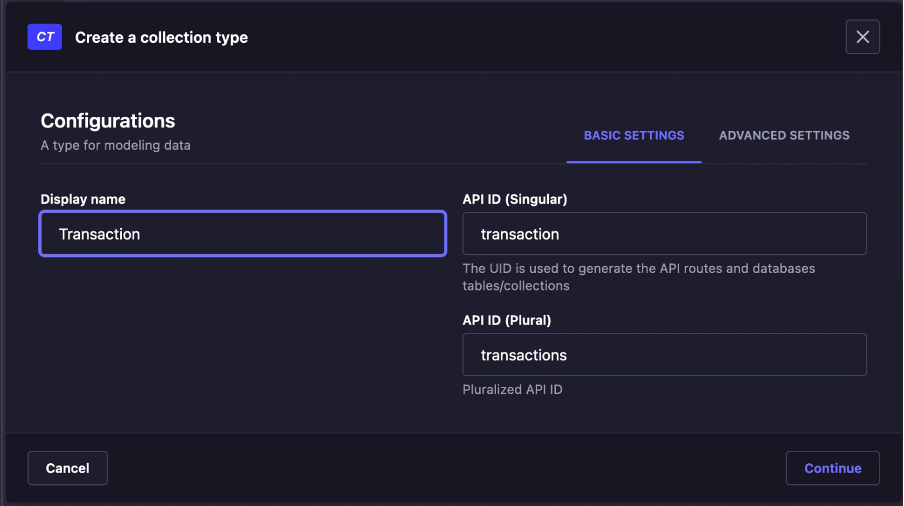

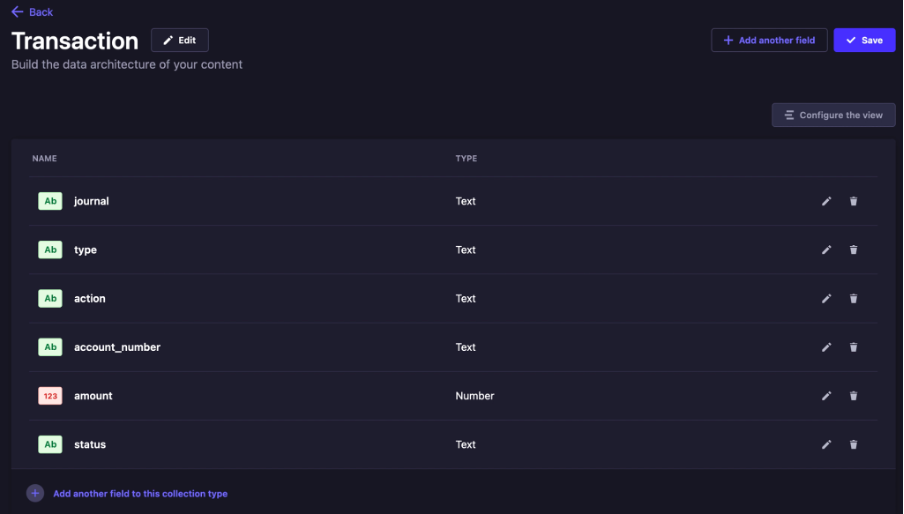

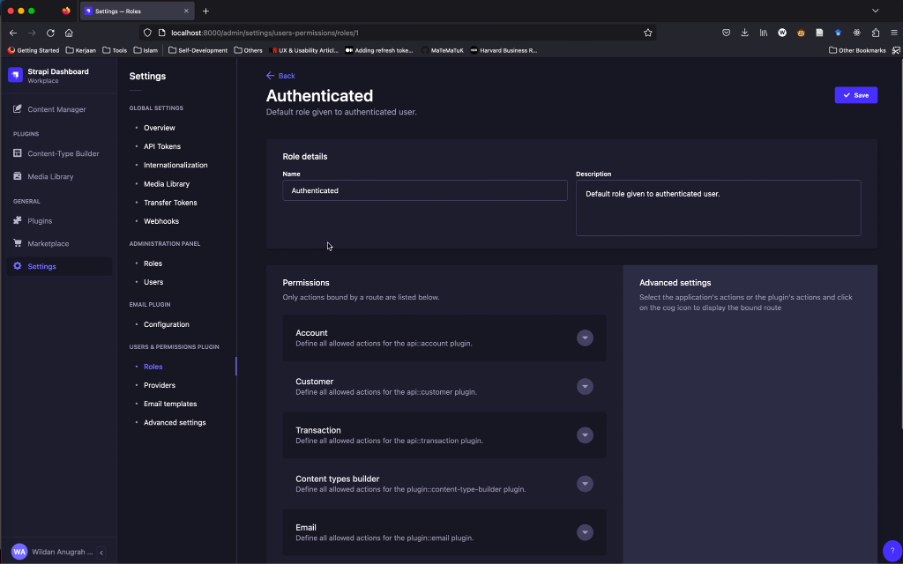

- Klik Create new collection type, lalu create Transaction

- Create beberapa field seperti dibawah

- Create _lifecycles.js_ file di _src/api/content-types/customer/lifecycles.js_, lalu ketikan kode di bawah ini

```javascript

// src/api/content-types/customer/lifecycles.js

module.exports = {

beforeCreate: async (event) => {

const generate_cif = async (length) => {

let result = '';

const characters = '0123456789';

const charactersLength = characters.length;

let counter = 0;

while (counter < length) {

result += characters.charAt(Math.floor(Math.random() * charactersLength));

counter += 1;

}

return result;

}

const get_cif = async () => {

let cif = ""

let customer = []

do {

cif = await generate_cif(10)

customer = await strapi.entityService.findMany("api::customer.customer", {

filters: {

cif: cif

}

})

console.log(`customer: ${customer}`)

console.log(`cif: ${cif}`)

} while (customer.length != 0)

return cif

}

const { data } = event.params;

data.cif = await get_cif()

},

};

```

- Create _lifecycles.js_ file di _src/api/content-types/account/lifecycles.js_, lalu ketikan kode di bawah ini

```javascript

// src/api/content-types/account/lifecycles.js

const utils = require('@strapi/utils');

const { ApplicationError } = utils.errors;

module.exports = {

beforeCreate: async (event) => {

const generate_account_number = async (length) => {

let result = '';

const characters = '0123456789';

const charactersLength = characters.length;

let counter = 0;

while (counter < length) {

result += characters.charAt(Math.floor(Math.random() * charactersLength));

counter += 1;

}

return result;

}

const get_account_number = async () => {

let account_number = ""

let account = []

do {

account_number = await generate_account_number(10)

account = await strapi.entityService.findMany("api::account.account", {

filters: {

number: account_number

}

})

console.log(`account: ${account}`)

console.log(`account_number: ${account_number}`)

} while (account.length != 0)

return account_number

}

const { data } = event.params;

const customer = await strapi.entityService.findMany("api::customer.customer", {

filters: {

cif: data.cif_number

}

})

if(customer.length == 0)

{

throw new ApplicationError('Invalid cif number', { message: `can not find '${data.cif_number}' cif number` });

}

else

{

data.number = await get_account_number()

data.balance = 0

}

},

};

```

- Create file _custom-transaction.js_ di _src/api/transaction/controllers/custom-transaction.js_, lalu ketikan kode di bawah ini

```javascript

// src/api/transaction/controllers/custom-transaction.js

const utils = require('@strapi/utils');

const { ApplicationError } = utils.errors;

const get_account = async (account_number) => {

const accountResponse = await strapi.entityService.findMany("api::account.account",

{

filters: {

number: account_number

}

}

)

return accountResponse[0]

}

const generate_journal = async (length) => {

let result = '';

const characters = '0123456789';

const charactersLength = characters.length;

let counter = 0;

while (counter < length) {

result += characters.charAt(Math.floor(Math.random() * charactersLength));

counter += 1;

}

return result;

}

const get_journal = async () => {

let journal = ""

let transaction = []

do {

journal = await generate_journal(10)

transaction = await strapi.entityService.findMany("api::transaction.transaction", {

filters: {

journal: journal

}

})

console.log(`journal: ${journal}`)

} while (transaction.length != 0)

return journal

}

module.exports = {

async debit(ctx, next) {

const requestBody = ctx.request.body.data

const account = await get_account(requestBody.account_number)

if (account.length == 0) {

throw new ApplicationError('Invalid account number', { message: `Can not find ${requestBody.account_number} account number` });

}

else {

const new_balance = account.balance - requestBody.amount

const transaction = {

data: {

journal: await get_journal(),

account_number: requestBody.account_number,

type: requestBody.type,

status: "SUCCEED",

action: "DEBIT",

amount: requestBody.amount,

publishedAt: new Date()

}

}

await strapi.entityService.create("api::transaction.transaction", transaction)

await strapi.entityService.update("api::account.account", account.id, {

data: {

balance: new_balance

}

})

return transaction

}

},

async credit(ctx, next) {

const requestBody = ctx.request.body.data

const account = await get_account(requestBody.account_number)

console.log(account)

if (account.length == 0) {

throw new ApplicationError('Invalid account number', { message: `Can not find ${requestBody.account_number} account number` });

}

else {

const new_balance = account.balance + requestBody.amount

const transaction = {

data: {

journal: await get_journal(),

account_number: requestBody.account_number,

type: requestBody.type,

status: "SUCCEED",

action: "CREDIT",

amount: requestBody.amount,

publishedAt: new Date()

}

}

await strapi.entityService.create("api::transaction.transaction", transaction)

await strapi.entityService.update("api::account.account", account.id, {

data: {

balance: new_balance

}

})

return transaction

}

}

}

```

- Ubah nama file _transaction.js_ menjadi _01-transaction.js_ di folder _src/api/transaction/routes/_

- Create file 02-transactions.js di folder src/api/transaction/routes/, lalu ketikan kode di bawah ini

```javascript

module.exports = {

routes: [

{ // Path defined with an URL parameter

method: 'POST',

path: '/transactions/debit',