id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,271,211 | Database Management With CI/CD | Photo by Olav Ahrens Røtne on Unsplash I remember my first day as a junior dev. It’s still fresh in... | 0 | 2022-11-24T18:29:15 | https://semaphoreci.com/blog/database-management | database, beginners, webdev, testing | Photo by <a href="https://unsplash.com/@olav_ahrens?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText">Olav Ahrens Røtne</a> on <a href="https://unsplash.com/s/photos/rubik?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText">Unsplash</a>

I remember my first day as a junior dev. It’s still fresh in my mind like it was yesterday. I was terribly nervous and had no idea what I was doing. My anxiety must have been evident because a kind soul decided to take me under their wing. That day I learned how to write SQL in my PHP code to do interesting things with the database.

Before I could start, though, I had to ask the database administrator (DBA) to create a few tables. I quickly realized that the DBA was the go-to person if you wanted to get anything done. Need a new column? Call the DBA. A stored procedure has to be edited? It was a job for the DBA. I looked up to him. He was such a superstar that I went on to be a DBA myself for a spell later in my career.

Of course, now I realize that depending on someone for everything inevitably causes bottlenecks. [It’s reckless](https://www.youtube.com/watch?v=X6NJkWbM1xk), stressful, and, worst of all, a waste of the DBA’s talents.

## Managing data with CI/CD

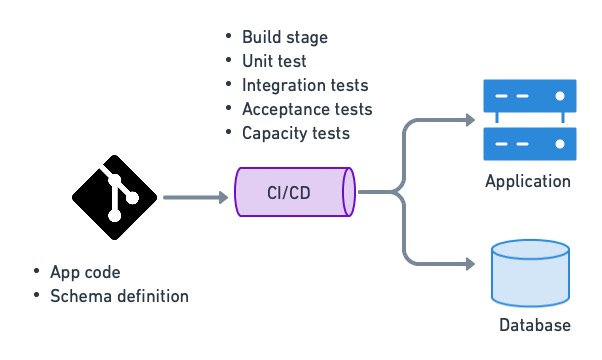

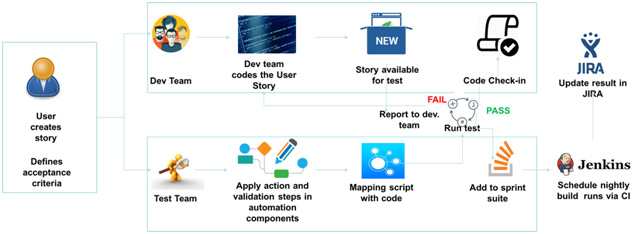

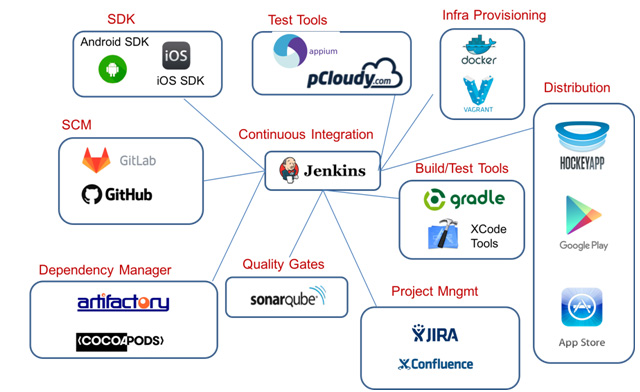

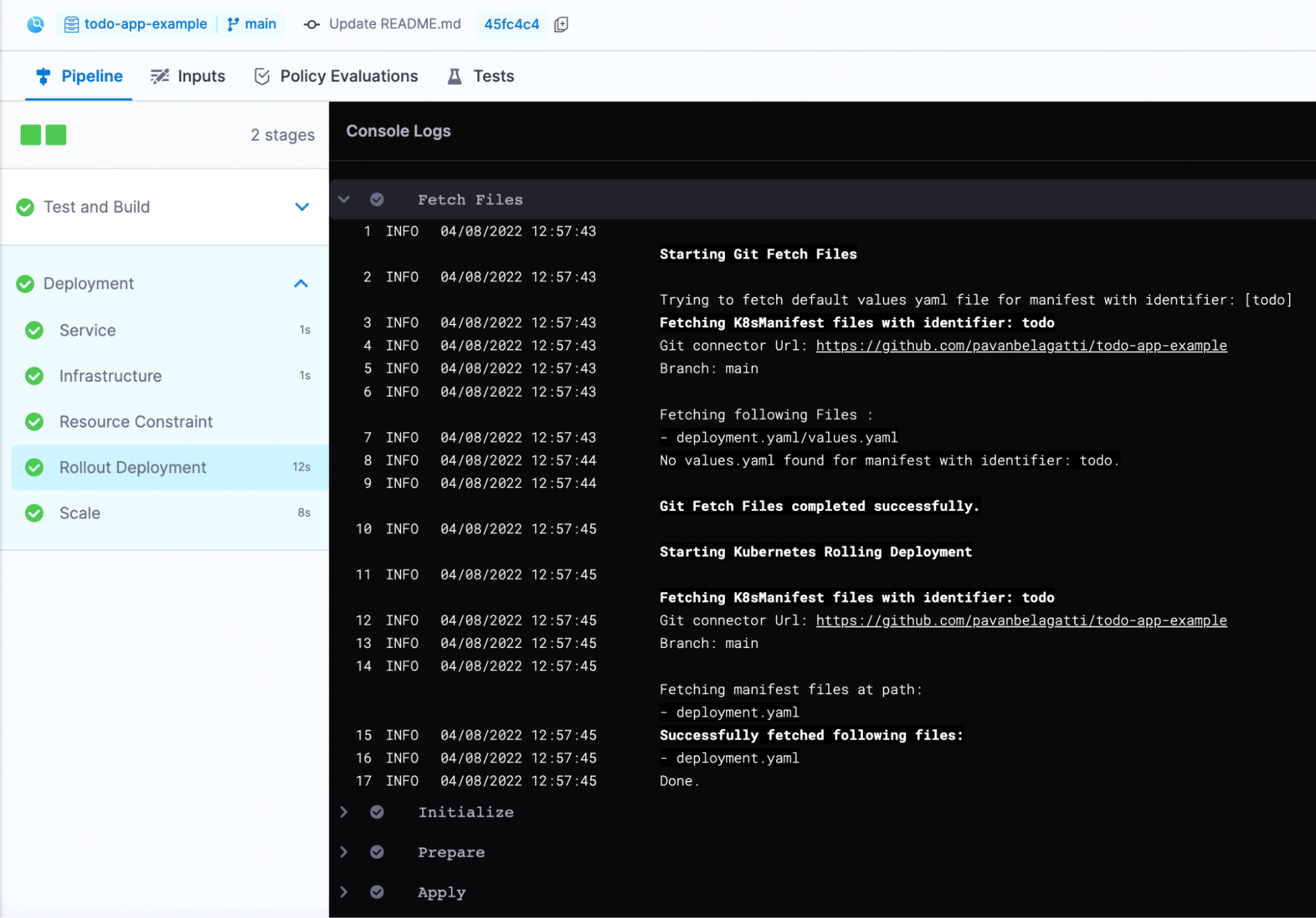

Automating data management with CI/CD allows us to stay agile by keeping the database schema updated as part of the delivery or deployment process. We can initialize test databases under different conditions and migrate the schema as needed, ensuring testing is done on the correct database version. We can upgrade and downgrade simultaneously when we deploy our applications. Automated data management allows us to keep track of every change in the database, which helps debug production problems.

Using CI/CD to manage data is the only way to properly perform [continuous deployment](https://semaphoreci.com/cicd).

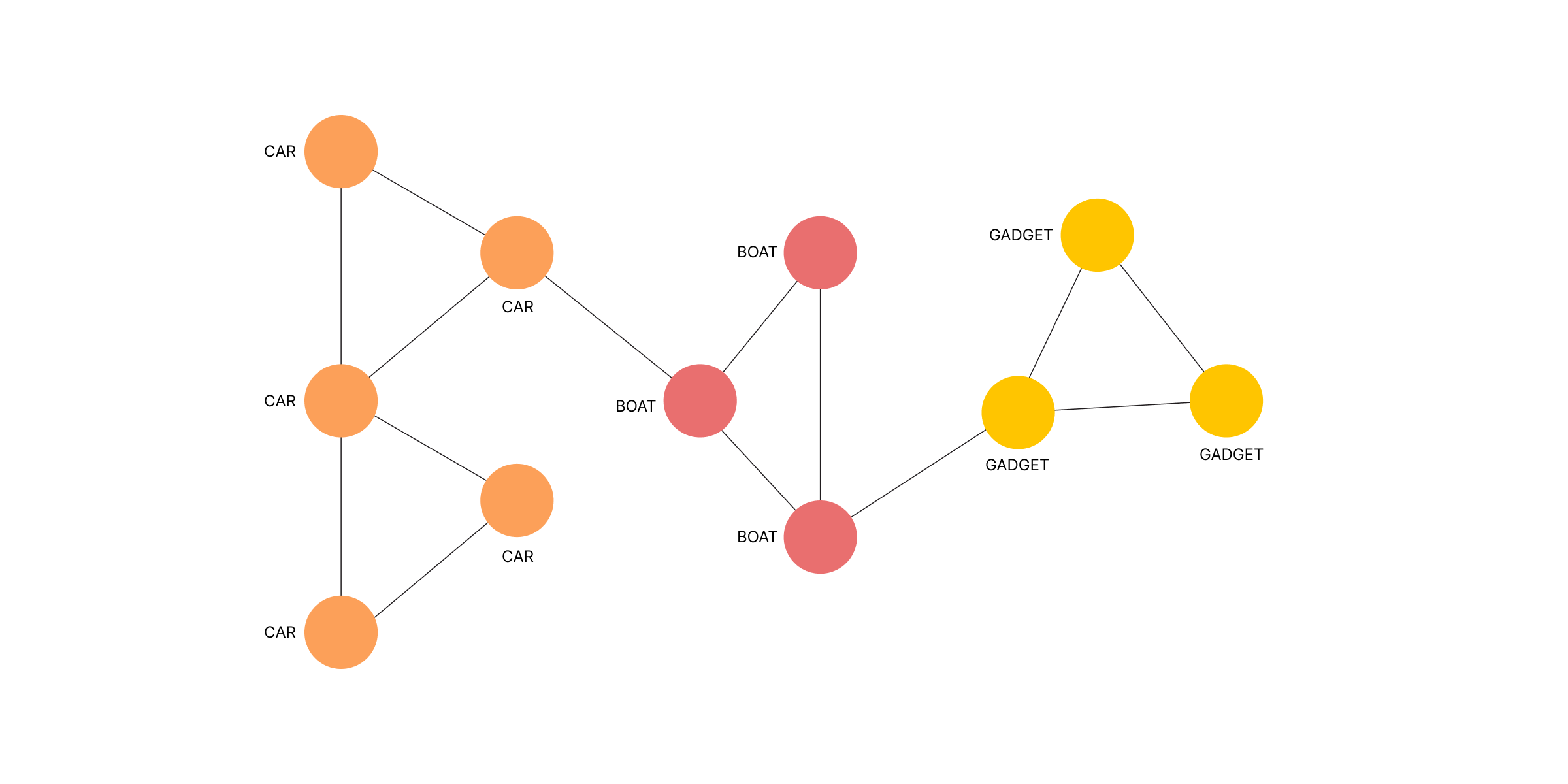

CI/CD is used to deploy applications and update database structures.

## The role of the DBA

What’s the role of the DBA when data management is automated? Are they irrelevant? On the contrary, relieved from menial chores, they are now free to focus on value-adding work that’s far more engaging, like:

- Monitoring and optimizing database engine performance.

- Advising schema design.

- Planning data normalization.

- Peer reviewing database changes and migration scripts while considering their impact on database operations.

- Deciding the best moment to apply migrations.

- Ensuring the recovery strategy works according to SLA needs.

- Writing or improving migration scripts.

## Techniques for data management with CI/CD

What makes database management complicated is that we must preserve the data while making changes to the schema. We can't replace the database with each release as we do with the application.

This problem is even more challenging when we consider that the database must remain online during migrations, and nothing can be lost in the event of a rollback.

So, let’s explore a few techniques to help us make migrations safe.

### Commit database scripts to version control

Generally, there are two kinds of database scripts: data-definition language (DDL) and data-manipulation language (DML). DDL creates and modifies database structures such as tables, indexes, triggers, stored procedures, permissions, or views. DML is used to manipulate the actual data in the tables.

Like all code, both kinds of scripts should be kept in version control. Keeping changes in version control lets us reconstruct the entire history of the database schema. This makes changes visible to the team, so they can be peer-reviewed. Database scripts include:

- Scripts to roll the database version forward and backward between different versions.

- Scripts to generate custom datasets for acceptance and capacity testing.

- Database schema definitions used to initialize a new database.

- Any other scripts that change or update data.

### Use database migration tools

There are many tools for writing and maintaining migration scripts. Some frameworks, like Rails, Laravel, and Django, come with them built-in. But if that’s not the case for your stack, there are generic tools like [Flyway](https://flywaydb.org/), [DBDeploy](http://dbdeploy.com/), and [SQLCompare](https://www.red-gate.com/products/sql-development/sql-compare/) to do the job.

The aim of all these tools is to maintain an uninterrupted set of delta scripts that upgrade and downgrade the database schema as needed. These tools can determine which updates are needed by examining the existing schema and running the update scripts in the correct sequence. They are a much safer alternative than writing scripts by hand.

For instance, to go from version 66 to 70, the migration tool would execute scripts numbered 66, 67, 68, 69, and 70. The same can be done the other way around to roll the database backward.

| Version | Upgrade script | Rollback script | Schema DDL |

| -------- | -------------- | --------------- | ---------- |

| ... | | | |

| 66 | delta-66.sql | undo-66.sql | schema-66.sql |

| 67 | delta-67.sql | undo-67.sql | schema-67.sql |

| 68 | delta-68.sql | undo-68.sql | schema-68.sql |

| 69 | delta-69.sql | undo-69.sql | schema-69.sql |

| 70 | delta-70.sql | undo-70.sql | schema-70.sql |

| ... | | | |

Automated migrations cover 99% of your data management needs. Are there cases where management must take place outside CI/CD? Yes, but they are typically one-shot or situationally specific changes, where massive amounts of data must be moved as part of an extensive engineering effort. An excellent example of this is [Stripe’s bajillion record migration](https://robertheaton.com/2015/08/31/migrating-bajillions-of-database-records-at-stripe/).

### Keep changes small

In software development, we go faster when we can [walk in safe, small steps](https://trunkbaseddevelopment.com/). This is a policy that also applies to data management. Making broad, sweeping changes all at once can lead to unexpected results, like losing data or locking up a table. It’s best to parcel out changes in pieces and apply them over time.

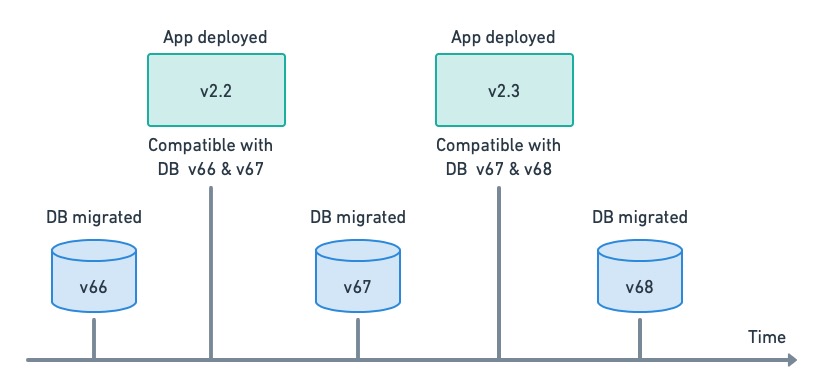

### Decouple deployment from data migrations

Application deployment and data migration have very different characteristics. While a deployment usually takes seconds and can occur several times a day, database migrations are more infrequent and executed outside peak hours.

We must separate data migration from application deployment since they need different approaches. Decoupling makes both tasks easier and safer.

Decoupling app deployment and DB migrations. Each release has a range of compatible DB versions.

Decoupling can only work if the application has some leeway regarding database compatibility, i.e. the application’s design should strive to make it as backward-compatible as possible.

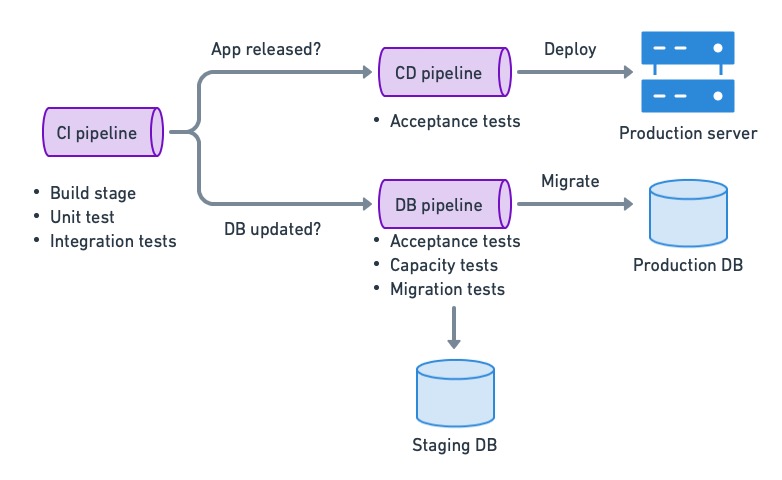

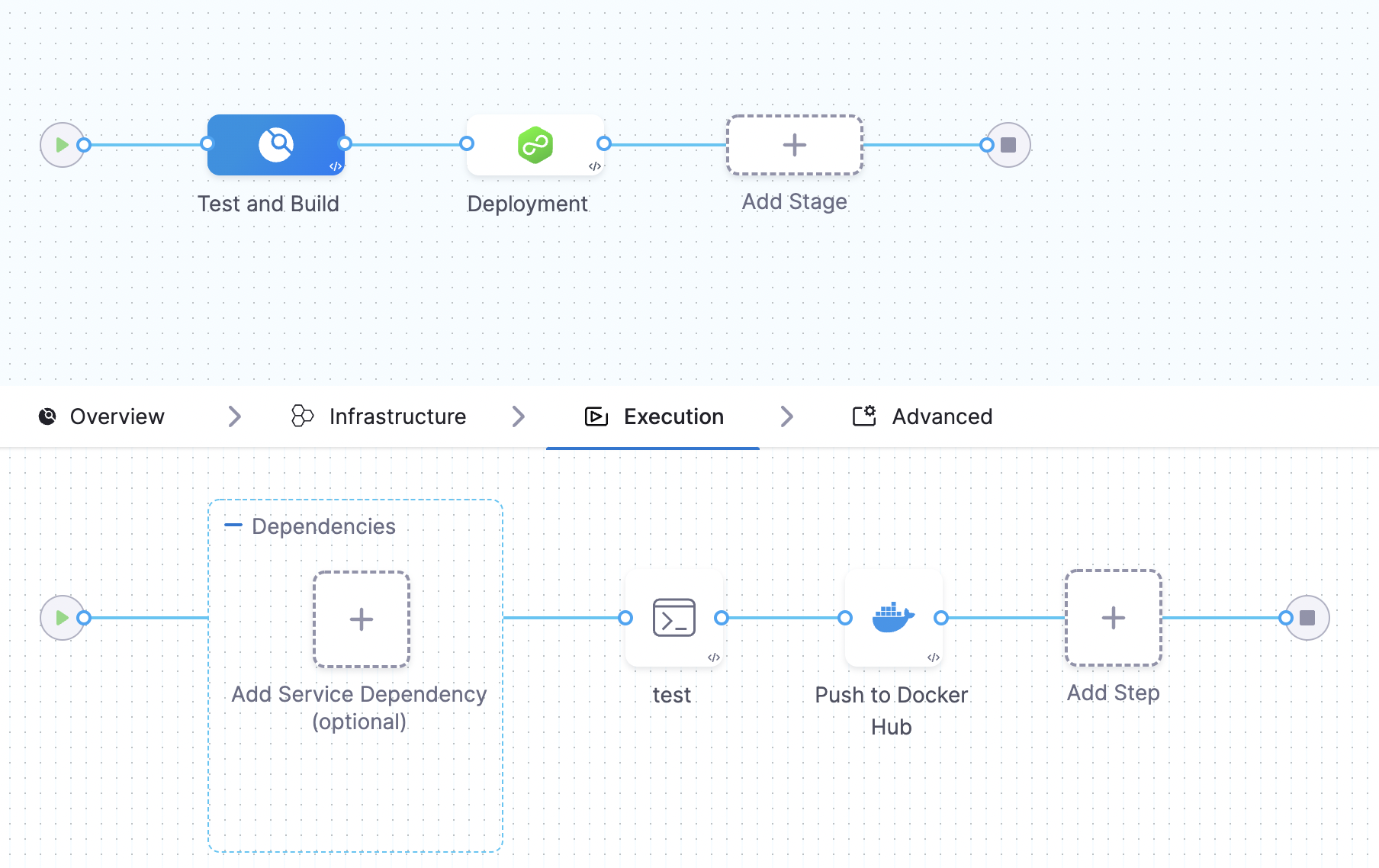

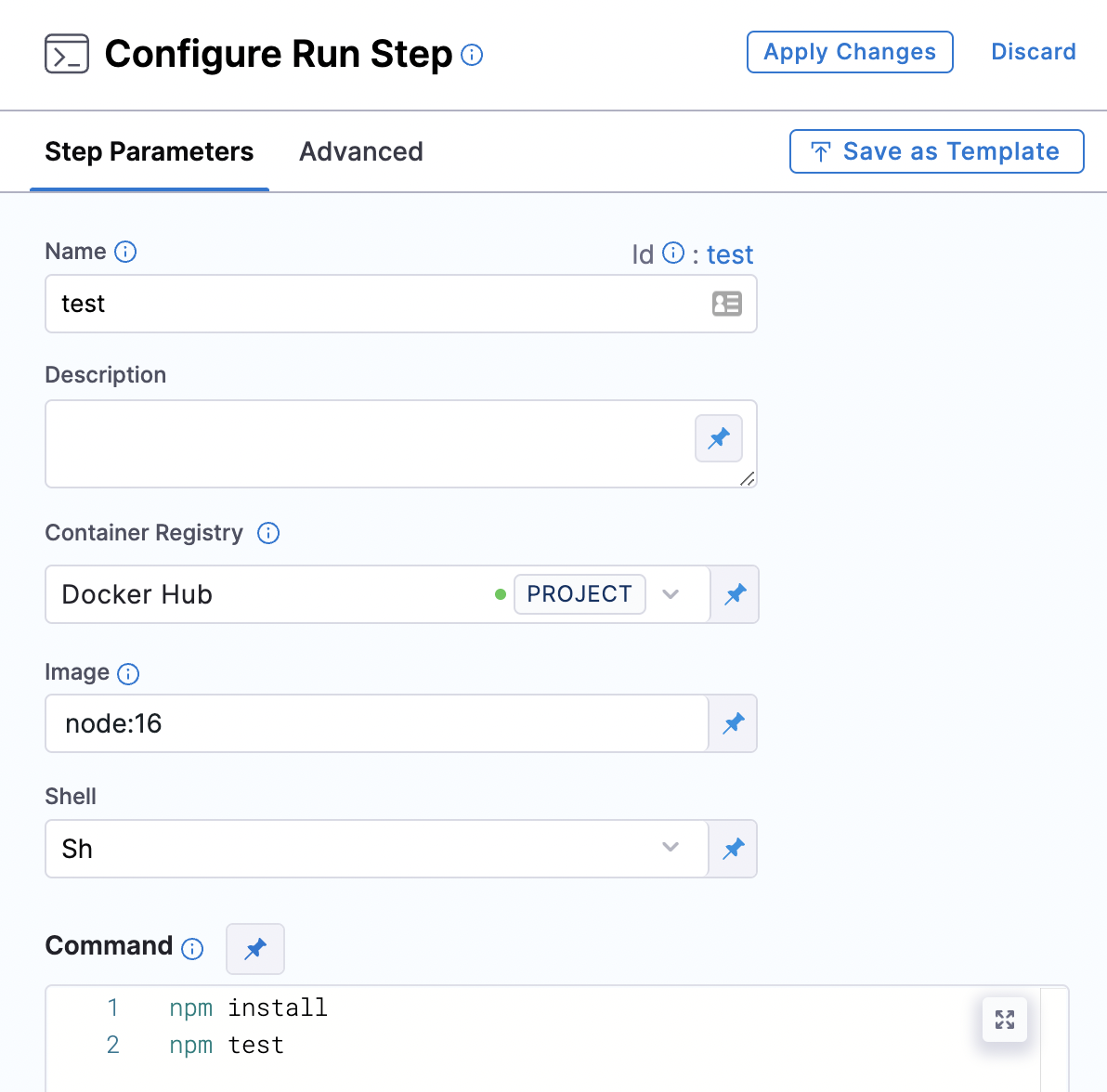

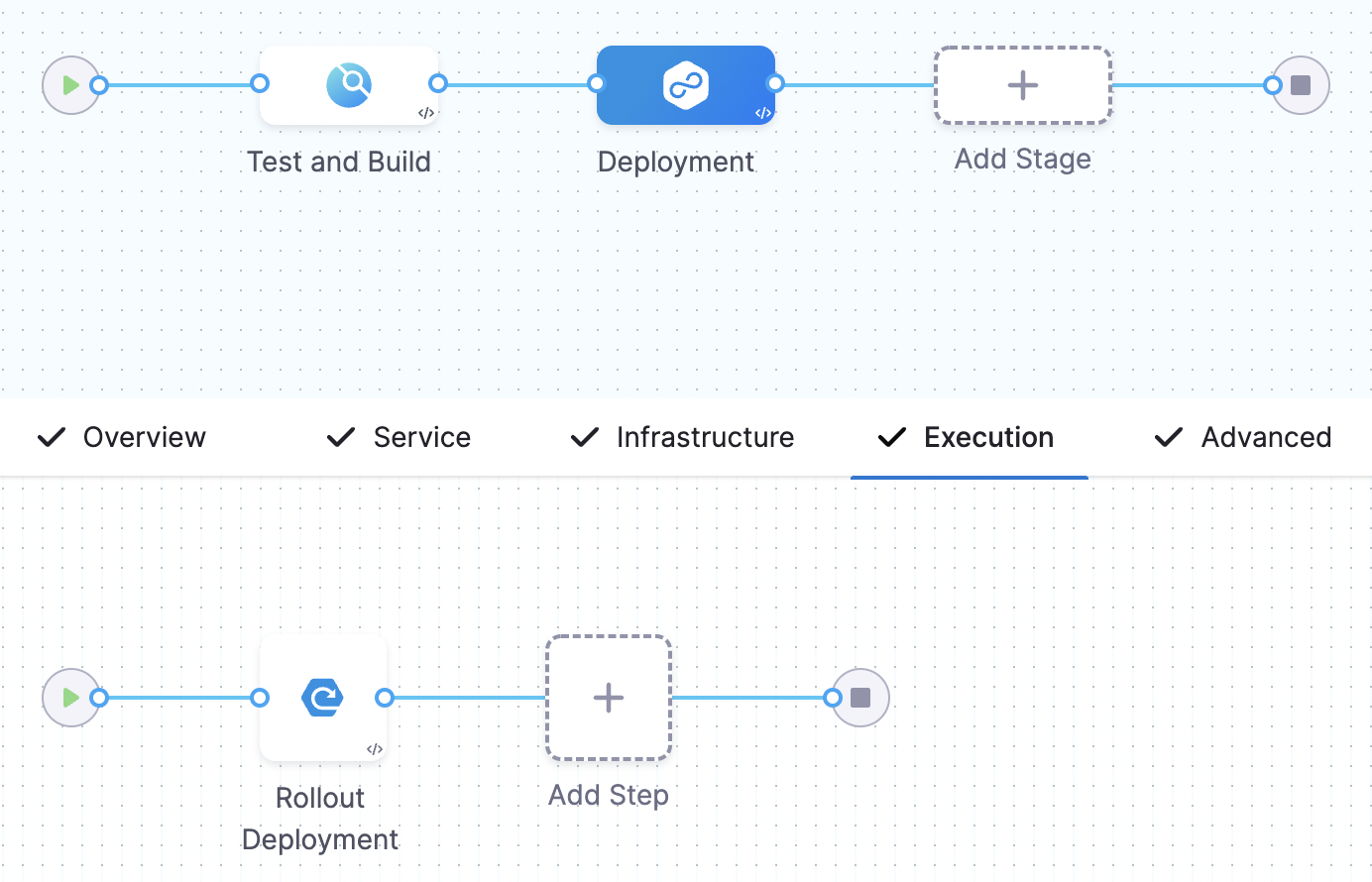

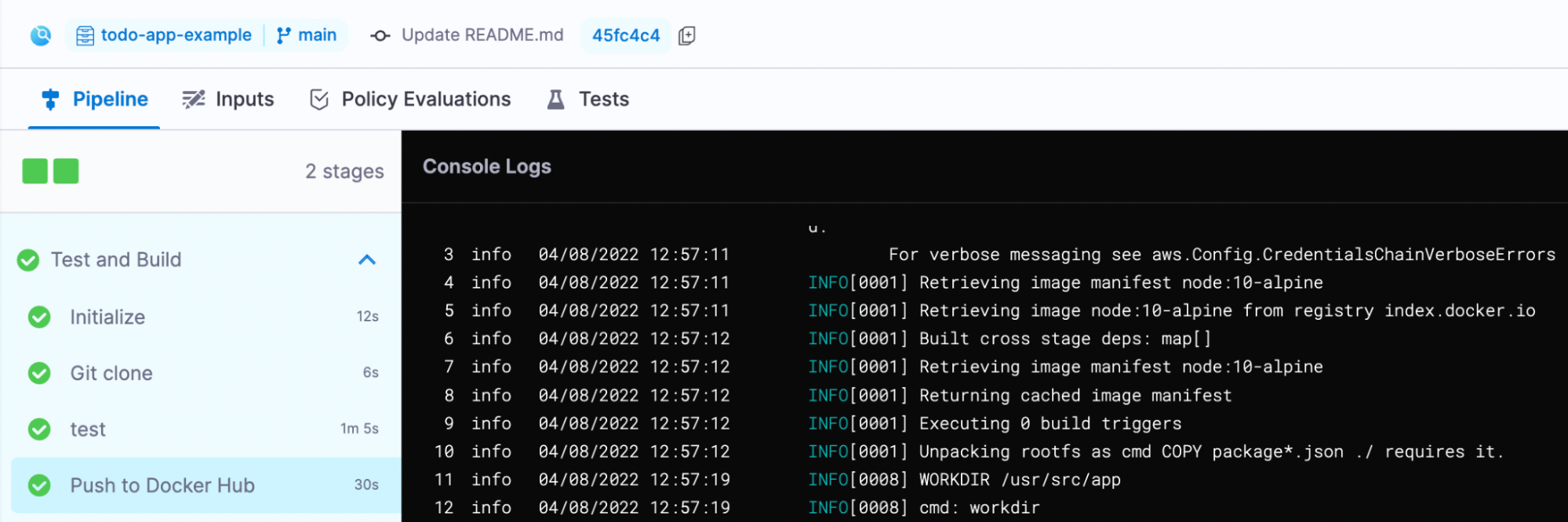

### Set up continuous deployment and migration pipelines

Uncoupling migration from deployment allows us to split the [continuous delivery pipelines](https://semaphoreci.com/blog/cicd-pipeline) in two: one for the migration of the database and one for the deployment of the application. This gives us the benefit of continuously deploying the application while controlling when migrations run. On Semaphore, we can use [change-based workflows](https://docs.semaphoreci.com/essentials/building-monorepo-projects/) to automatically trigger the relevant pipeline.

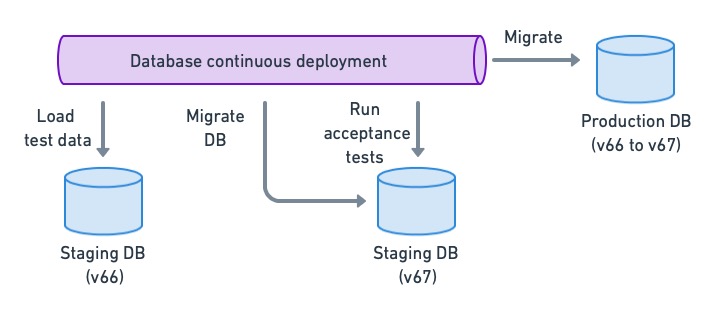

Continuous deployment for databases using the decoupled strategy.

### Make migrations additive

Additive database changes create new tables, columns, or stored procedures instead of renaming, overwriting, or deleting them. These kinds of changes are safer because they can be rolled back with the guarantee that data is not lost.

For example, let’s say we have the following table in our production database.

```sql

CREATE TABLE pokedex (

id BIGINT GENERATED BY DEFAULT AS IDENTITY (START WITH 1) PRIMARY KEY,

name VARCHAR(255)

category VARCHAR(255)

);

```

Adding a new column would be an additive change:

```sql

ALTER TABLE pokedex ADD COLUMN height float;

```

Rolling back the change is simply a matter of deleting the new column:

```sql

ALTER TABLE pokedex DROP COLUMN height;

```

We can’t always make additive changes, however. When we need to change or delete data, we can keep data integrity by temporarily saving the original data. For example, changing a column type may truncate the original data. We can make the change safer by saving the old data in a temporary column.

```sql

ALTER TABLE pokedex RENAME COLUMN description to description_legacy;

ALTER TABLE pokedex ADD COLUMN description JSON;

UPDATE pokedex SET description = CAST(description_legacy AS JSON);

```

Having taken that precaution, we can rollback without risk:

```sql

ALTER TABLE pokedex DROP COLUMN description;

ALTER TABLE pokedex RENAME COLUMN description_legacy to description;

```

### Rollback with CI/CD

Be it to downgrade the application or because a migration failed, there are some situations in which we have to undo database changes, effectively rolling it back to a past schema version. This is not a big problem as long as we have the rollback script and have kept changes non-destructive.

As with any migration, the rollback should also be scripted and automated (I’ve seen plenty of cases where a manual rollback made things worse). On Semaphore, this can be achieved with a rollback pipeline and [promotion conditions](https://docs.semaphoreci.com/essentials/deploying-with-promotions/).

### Don’t do a full backup unless it’s fast

Despite all precautions, things can go wrong, and a failed upgrade can corrupt the database. There must always be some backup mechanism to restore the database to a working state.

The question is: should we make a backup before every migration? The answer depends on the size of the database. If the database backup takes a few seconds, we can do it. However, most databases are too big and take too long to back up to be practical. We must then rely on whichever restore strategy we have available, like daily or weekly full dumps coupled with transaction point-in-time recovery.

As a sidebar, we should test our recovery strategy periodically. It’s easy to grow confident that we have valid backups, but we can’t be sure until we try them. Don’t wait for a disaster to try restoring the database — have some disaster recovery plan in place and play it out from time to time.

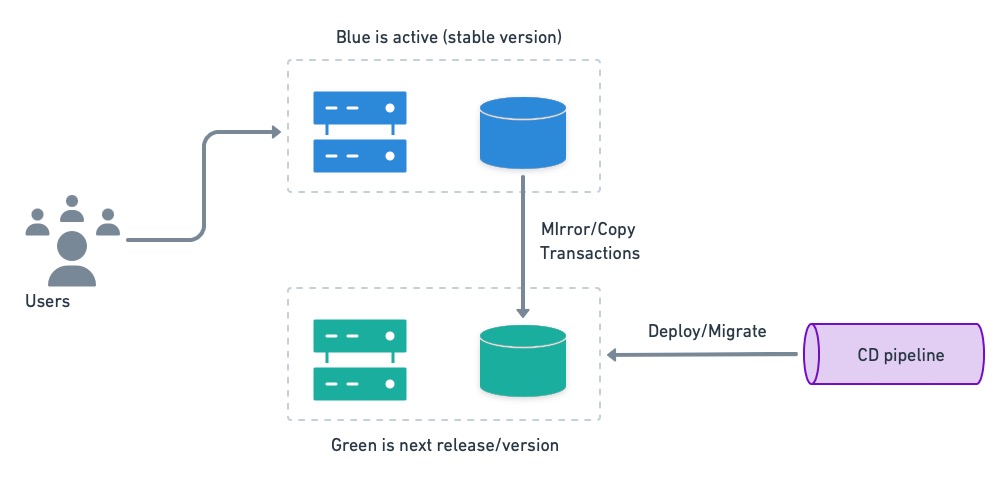

### Consider blue-green deployments

⚠️ Blue-green deployments are a more sophisticated technique that requires a good deal of familiarity with how database engines work. So, I recommend using it with care and once you have confidence in managing data in the CI/CD process.

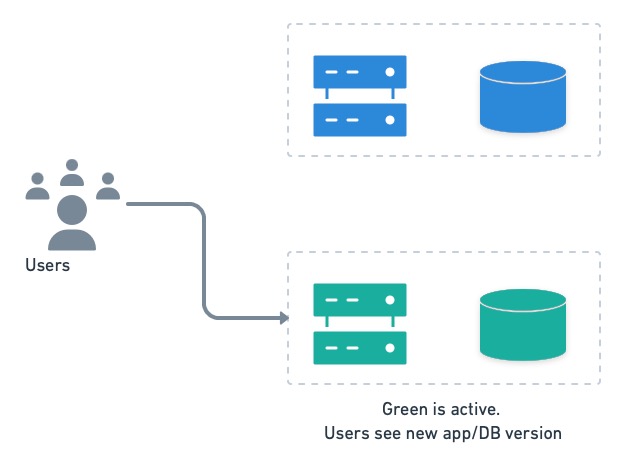

[Blue-green deployments](https://semaphoreci.com/blog/blue-green-deployment) is a strategy that allows us to instantly switch between versions. The gist of blue-green deployments is to have two separate environments, dubbed blue and green. One is active (has users), while the other is upgraded. Users are switched back and forth as needed.

We can put blue-green’s instant rollback feature to good use if we have separate databases. Before deployment, the inactive system (green in the figure below) receives a current database restore from blue, and it’s kept in sync with a mirroring mechanism. Then, it is migrated to the next version.

Once the inactive system is upgraded and tested, users are switched over.

Users are switched to the next version running on green.

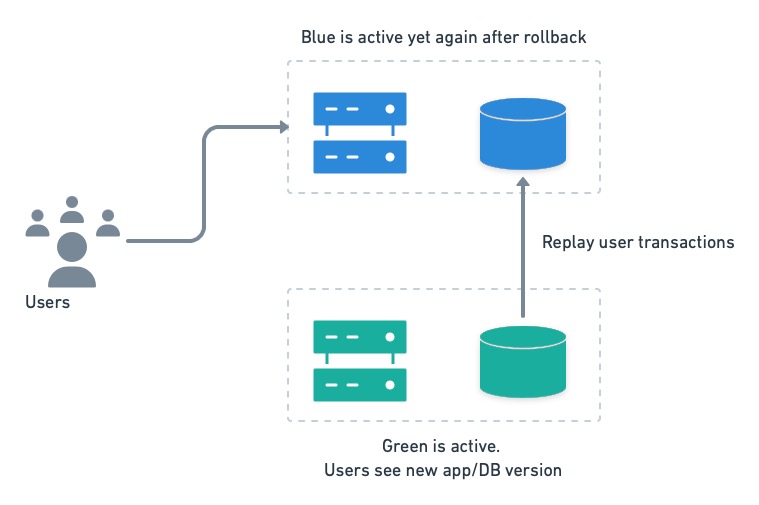

In case of trouble, users can be switched back to the old version in an instant. The only catch with this setup is that transactions executed by the users on the green side must be replayed on blue after the rollback.

On rollback, we must rerun green’s transactions on blue to avoid losing data.

## Testing techniques

Because a migration can destroy data or cause an outage, we want to be extra careful and test it thoroughly before going to production. Fortunately, there are quite a few testing techniques available to help us.

### Unit and integration tests

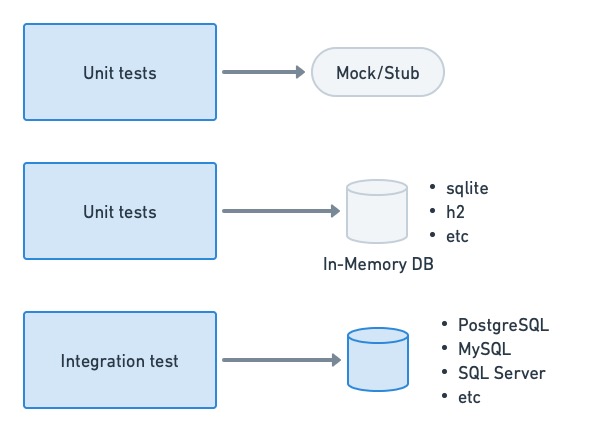

[Unit tests](https://semaphoreci.com/blog/unit-testing), as a general rule, should not depend on or access a database if possible. The objective of a unit test is to check the behavior of a function or method. We can usually get away with stubs or mocks for this. When that’s not possible or is too inconvenient, we can use in-memory databases for the job.

On the other hand, actual databases are commonly seen in [integration testing](https://semaphoreci.com/blog/integration-tests). These can be spun up on-demand for the test, loaded with empty tables or a specially-crafted dataset, and shut down after testing.

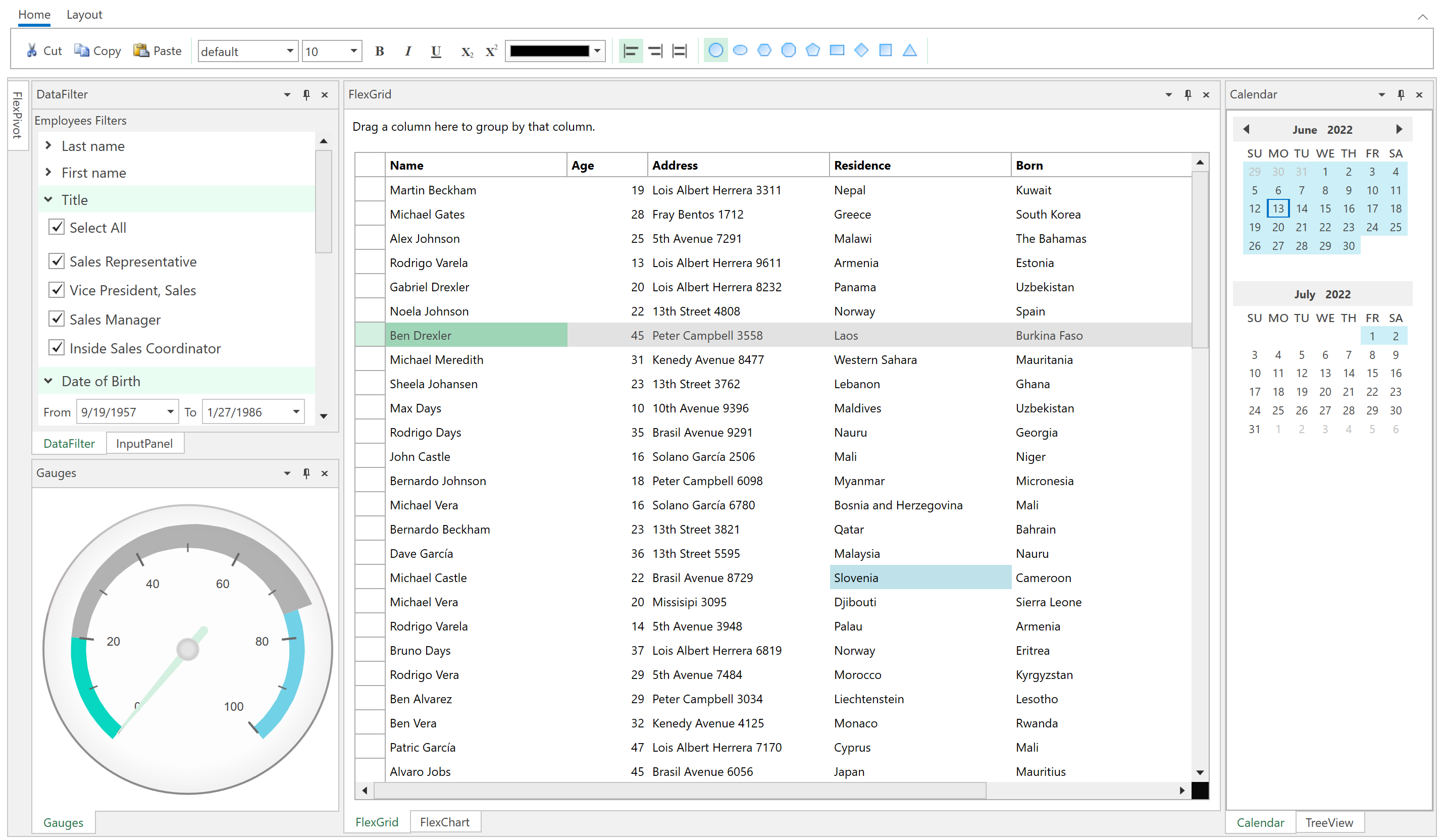

Unit tests should not depend too heavily on database access. For integration tests, we tend to use a real database engine.

### Acceptance and end-to-end tests

We need an environment that closely resembles production for [acceptance testing](https://semaphoreci.com/blog/the-benefits-of-acceptance-testing). While it’s tempting to use anonymized, production backups in the test database, they tend to be too big and unwieldy to be useful. Instead, we can use crafted datasets or, even better, create the empty schema and use the application’s internal API to populate it with test data.

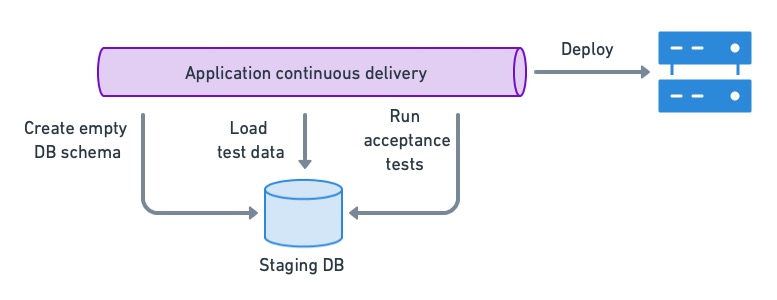

To ensure that the app is compatible with the current DB version, we load a test dataset in a staging DB and run acceptance tests. If they pass, we can deploy the application.

### Compatibility and migration tests

We must perform regression testing if we’re aiming for the application to be backward and forward compatible with multiple database versions. This can be done by running acceptance tests on the database schema before and after the migration.

On an uncoupled setup like [the one described earlier](#split), the application’s continuous deployment pipeline would perform acceptance testing on the current schema version. So, we only need to acceptance test the next database version when a migration takes place:

1. Load the test database with the current production schema.

2. Run the migration.

3. Run acceptance tests.

This method has the added benefit of detecting problems in the migration script itself, as many things can go wrong, like new constraints failing due to existing data, name collisions, or tables getting locked up for too long.

Running acceptance tests on the migrated DB schema allows us to detect regressions and find possible migration conflicts.

## Closing thoughts

Database scripts should be treated the same as the rest of the code — the same principles apply. Ensure your DBAs have access to the code repository so they can help setup, revise & peer-review the data management scripts. These scripts should be versioned and subjected to the same level of scrutiny as the code.

The effort invested in setting up automated data management with CI/CD will be repaid many times over in speed, stability, and productivity. Developers can work unencumbered while DBAs do what they’re best at: keeping the database clean and well-oiled.

Thanks for reading!

| tomfern |

1,271,225 | Mail verification for firebase users not working correctly | Mail verification for firebase users not... | 0 | 2022-11-24T19:04:58 | https://dev.to/prawlercode/mail-verification-for-firebase-users-not-working-correctly-1dmn | {% stackoverflow 74565205 %} | prawlercode | |

1,271,253 | I'm doing this one thing differently next time I build a SaaS product | As one does the further they progress in their career, they develop wisdom based on their experiences... | 0 | 2022-11-24T20:56:59 | https://dev.to/theaccordance/im-doing-this-one-thing-differently-next-time-i-build-a-saas-product-2efp | devjournal, productivity, architecture, startup | As one does the further they progress in their career, they develop wisdom based on their experiences and apply it with future opportunities. When it comes time for me to build my next SaaS product, one piece of wisdom I intend to apply is to **host my SaaS Product and Marketing Website on separate domains**. Seems simple enough, but why is this a wise piece of advice? As products scale and businesses mature, the necessity to demonstrate the integrity of your product becomes more paramount.

Since _Information Security_ falls under my domain as Director of Infrastructure for four B2B enterprise SaaS products, I regularly have to interact with external stakeholders: _Customers, closing deals (sales), auditors, and even insurance providers._ At least once a month, someone will conduct a due-diligence task on their end by publicly scanning my domains and confront us with the findings.

While I think it's important to address vulnerabilities, not all vulnerabilities are the same:

- Some vulnerabilities are benign because your use case is not applicable

- Some vulnerabilities cannot be reconciled as they were past decisions that are unable to be changed

- But most importantly, **some vulnerabilities create a liability for customer data, and others do not.**

In my context, 99% of public probing does not identify vulnerabilities that meet the third point, **but it's the only reason why the feedback is being given.** And because people think they've identified a risk to their data, they're often times unwilling to accept the simple answer, instead sucking up my time through multiple interactions to effectively communicate our integrity. If I separate the marketing website from the actual SaaS product, I'm better positioned to deflect these reports, as I can instead encourage them to rescan the domain where the customer data is accessible.

So, for my next SaaS product, expect the following:

- Marketing Website will be hosted with a `.com` address

- SaaS product will live on another tld like `.app`, `.io`, etc

While I don't expect many of you to have encountered this type of situation, I'd welcome your thoughts or experiences if you do have similar.

| theaccordance |

1,271,753 | Adding Tests for ESM using Jest framework | Writing tests for an application is a crucial process, as it helps make debugging easier and forces... | 20,714 | 2022-11-25T22:01:42 | https://dev.to/tdaw/adding-tests-for-esm-using-jest-framework-1nao | opensource, tutorial, webdev, javascript | Writing tests for an application is a crucial process, as it helps make debugging easier and forces us, developers, to write better code.

## What is Testing?

In essence, testing is writing code to _test_ that the functionality of your codebase is working and is not breaking in ways unimagined.

## Using Jest

[Jest](https://jestjs.io) is a testing framework for Javascript projects. It ensures that the implemented core functionality of the codebase is not prone to errors by allowing developers to write convenient tests.

### Setting up Jest for [ESM](https://nodejs.org/api/esm.html)

The initial step is to install Jest as a `dev` dependency using `npm` or `yarn`

Since I use `npm` to install `node` packages, I ran the following command:

```bash

npm install --save-dev jest

```

The next step is to create a `jest.config.js` file with the following content:

```node

export default { transform: {} }

```

Finally, we need to modify the `test` script in `package.json`.

Typically you would replace the default `value` of the test with either `jest --` or `jest`. However, since we are using ECMAScript modules in our project, we have to set up the test `script`[accordingly](https://jestjs.io/docs/ecmascript-modules) as follows:

```node

"scripts": {

"test": "node --experimental-vm-modules node_modules/jest/bin/jest.js"

},

```

### Writing the first test

Before you write your first test, create a `tests` or `__tests__` directory in the project's `root` directory.

Here's a sample function that checks if the user is eligible to cast a vote.

```node

// can-vote.js

const canVote = (age) => {

if(age < 18){

return false;

}

return true;

}

export default canVote;

```

Here's the test for the above function:

```node

import canVote from '../src/can-vote';

test('should return false if no value for age given', () => {

expect(canVote()).toBe(false);

});

```

Let's run the test as follows:

```bash

npm test

```

In the above `test`, we expect `canVote` to return `false` when no argument is passed. This test should fail because we are not dealing with a use case in which the value for `age` is `undefined`. As a result, the function returns `true` with the above implementation. This mistake could go unnoticed when the function definition is more extended and complex.

I encountered a similar bug in the `main` function of [my SSG](https://github.com/SerpentBytes/siteit), because there were no instances where the `main` function was invoked without passing a `value` in the codebase.

To fix the problem in the test we wrote, we could modify our `canVote` function as follows:

```node

// can-vote.js

const canVote = (age = 0) => {

if(age < 18){

return false;

}

return true;

}

export default canVote;

```

Now, if we run `npm test` the `test` we wrote for `canVote` should pass, since we are using [default parameter](https://developer.mozilla.org/en-US/docs/Web/JavaScript/Reference/Functions/Default_parameters) value in the function declaration.

## Final Thoughts

Writing tests for my project helped me find bugs that would have otherwise gone unnoticed. It's not easy to write tests, but it's a skill I would like to improve. Also, I learned it is challenging to write tests for code you do not understand. | tdaw |

1,271,845 | How to Build a Text Editor With Java (Part 3) | Let's continue building our Java based, command-line text editor that we started here @... | 0 | 2022-11-25T08:44:00 | https://dev.to/marcobehler/how-to-build-a-text-editor-with-java-part-3-222p | java, programming, tutorial | Let's continue building our Java based, command-line text editor that we started here @ https://youtu.be/kT4JYQi9w4w .

This is Part 3 and we will cover:

- How to implement Page Up & Page Down functionality.

- How to make the End key work properly, including cursor snapping.

- And how to make our text editor work on all operating systems, including macOS and Windows - not just Linux.

You're going to be in for a ride!

{% embed https://youtu.be/72Dt_U9DQh4 %} | marcobehler |

1,272,244 | The ultimate truth about you | The ultimate truth about youAfter counting four days and disappearing from the scene, people will... | 0 | 2022-11-25T14:05:03 | https://dev.to/azhar_maken/the-ultimate-truth-about-you-b1a | <h1 style="text-align: center;"><div class="separator" style="clear: both; text-align: center;">The ultimate truth about you</div><div class="separator" style="clear: both; text-align: center;"><a href="https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEhjLLWqR4qXhmaSg8k_oupUQ4O-2Rr9rcUoHqABECadsbToCwJPCs-29vanEumQ9IPzQXXSkJIudylR6d1tcuS0GVmTVYyOGwQmbIsZXUqEYgLZOG9vFWYtloV5bBiLUd02Fm-dToR_-dNWtm9GyGAgs1UcbEtpBf1HUVUUryGkpXhITE_CzrxbIWts/s960/ssa.jpg" style="margin-left: 1em; margin-right: 1em;"><img alt="The ultimate truth about you" border="0" data-original-height="960" data-original-width="640" height="640" src="https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEhjLLWqR4qXhmaSg8k_oupUQ4O-2Rr9rcUoHqABECadsbToCwJPCs-29vanEumQ9IPzQXXSkJIudylR6d1tcuS0GVmTVYyOGwQmbIsZXUqEYgLZOG9vFWYtloV5bBiLUd02Fm-dToR_-dNWtm9GyGAgs1UcbEtpBf1HUVUUryGkpXhITE_CzrxbIWts/w426-h640/ssa.jpg" title="Who I Am?" width="426" /></a></div><br /><div class="separator" style="clear: both; text-align: center;"><span style="font-size: medium; text-align: left;">After counting four days and disappearing from the scene, people will forget your love, hard work, even your name.</span></div></h1><p>Man spends his whole life in the delusion that he is important to others, but the truth is that no one cares if we are not. Until death makes no difference to anyone's life, life will continue to grow.</p><p>These people will be lost in their own lives, resting in peace and feeling sad or broken. This is the bitter truth we know and take for granted.</p><p>Devote your life to the path of Allah and do things that will please Allah and give you spiritual peace. For the sake of Allah, remove yourselves from ignorance and follow the truth.</p><span><!--more--></span><p>You can also read</p><p><a href="https://azhardialog.blogspot.com/2022/11/paralyzed-people-will-now-be-able-to.html">Paralyzed people will now be able to walk again</a></p><span><!--more--></span><p>The world is an illusion, do not destroy yourself in it.</p><p>If you allow me to shed tears, they are not due to doubt, but because of your gratitude, you are in the eyes of gratitude and "I" in the eyes of doubt!</p><h4 style="text-align: left;">*Allow me beyond my desires, when this look rises on you, it rises for you.</h4><h4 style="text-align: left;"><br />*Give me the sweetness of your love in the bitterness of the cycle.</h4><h4 style="text-align: left;"><br />*Open the treasures of your forgiveness and reveal your truth.</h4><p>Give me Tawfik to live in Your consciousness and quality in Your presence.. Give me Tawfik in life so that I am not oblivious to my death, no desire, no sadness, no sorrow, no thought should come between me and You.. So give me your consciousness and your feeling. overcome me</p><p>Give me Tawfik, when I ask you for something, I ask you, I surrender myself to you.. Then only my truth is my peace.. Peace without you is forbidden. Give the pure love of the Prophet (peace and blessings of Allah be upon him) and take it in its true color</p>

[READ MORE.](https://azhardialog.blogspot.com/2022/11/the-ultimate-truth-about-you.html) | azhar_maken | |

1,272,479 | Rust devlog: Part 4 | Hello! Welcome to the fourth part of my devlog where I develop my multiplayer turn-based game. I... | 19,842 | 2022-11-25T14:50:56 | https://dev.to/thousandthstar/rust-devlog-part-4-5137 | bevy, programming, gamedev, showdev | Hello! Welcome to the fourth part of my devlog where I develop my multiplayer turn-based game. I previously didn't have a name, but I have decided to name it 8bit Duels! I think this fits the theme pretty nicely. Anyways, on with the devlog!

### Refactoring

This devlog is all about refactoring, and how I made my code cleaner. I did this by creating a workspace shared between the client and the server. This allows me to directly serialize and deserialize packets into `ClientMessage` and `ServerMessage` enum variants. One reason I adore Rust is because you can pass parameters to some enums. This is great in my case, as I need to send some data between the client and the server. These changes were suggested by a user in the Rust gamedev Discord server ([link to their website](https://gamedev.rs/)).

This refactoring was done over most of the networking code, so I won't show everything here. The code is on Github [here](https://github.com/ThousandthStar/8bit-duels) if you want to check it out (stars are greatly appreciated).

The main thing is that I now need less logic to handle packets. Before, I was creating some raw JSON in the client and putting a `packet-type` value in the object. I was then matching that value on the server-side, and vice-versa. This is an extremely bad practice, and it resulted in longer tedious code, since I needed to also get the other variables in the packets by their name in the JSON object. Now, I've just created a shared `common` package along with the client and server packages. It contains these simple API packets:

```rust

#[derive(Serialize, Deserialize, Debug)]

pub enum ServerMessage {

// 1st param: whether or not the player is player_1

StartGame(bool),

StartTurn,

// 1st param: the `CardEntity` to spawn

SpawnCard(CardEntity),

/*

1st param: the initial x position

2nd param: the initial y position

3rd param: the final x position

4th param: the final y position

*/

MoveTroop(i32, i32, i32, i32),

AttackTroop(i32, i32, i32, i32),

}

#[derive(Serialize, Deserialize, Debug)]

pub enum ClientMessage {

Deck(Vec<Card>),

MoveTroop(i32, i32, i32, i32),

AttackTroop(i32, i32, i32, i32),

}

```

Using `serde_json`, a great JSON library, I can just serialize and deserialize packets into enum variants. These are handled like so on the client (I removed, the logic, since it's very straightforward):

```rust

if let Some(message) = guard.pop_front() {

match message {

ServerMessage::StartGame(is_player_1) => {

// Starting the game

}

ServerMessage::SpawnCard(card_entity) => {

// Spawning troops

}

ServerMessage::MoveTroop(start_x, start_y, end_x, end_y) => {

// Moving troops

}

ServerMessage::AttackTroop(start_x, start_y, end_x, end_y) => {

// Attacking troops

}

_ => {}

}

}

```

On the server, we have something similar, but with `ClientMessage` instead.

### Conclusion

If you made it this far, thanks again for reading! This devlog was pretty short since there wasn't much to explain. I do plan on finishing this game as soon as possible, but I am very busy at the moment, so progress is very slow. With that, I hope you enjoyed and are somewhat hoping to try out my game in the future. See you next time!

**Special thanks to the user who helped me improve my code. Hints on grammar and best practices are always greatly appreciated.**

| thousandthstar |

1,273,415 | 3 Features You Should Add To Your Power App | One of the key strengths to Power Apps is the breadth of solutions apps can deliver (In this article... | 19,972 | 2022-12-15T09:59:29 | https://dev.to/wyattdave/3-features-you-should-add-to-your-power-app-19np | powerapps, powerplatform, lowcode | One of the key strengths to Power Apps is the breadth of solutions apps can deliver (In this article Im focusing on Canvas apps), often you are only limited by your imagination. From mobile to desktop, consumption to creation, you can pretty much do anything. Even though you can create anything I still believe there are a few key functions/features every app should have. Unfortunately they are not out of the box in Power Apps, so you either need to code it in Power FX.

I wanted to share the 3 key ones that I always use and how I implemented them:

1. Translation

2. Inactivity/Session Timeouts

3. Dark Mode

---

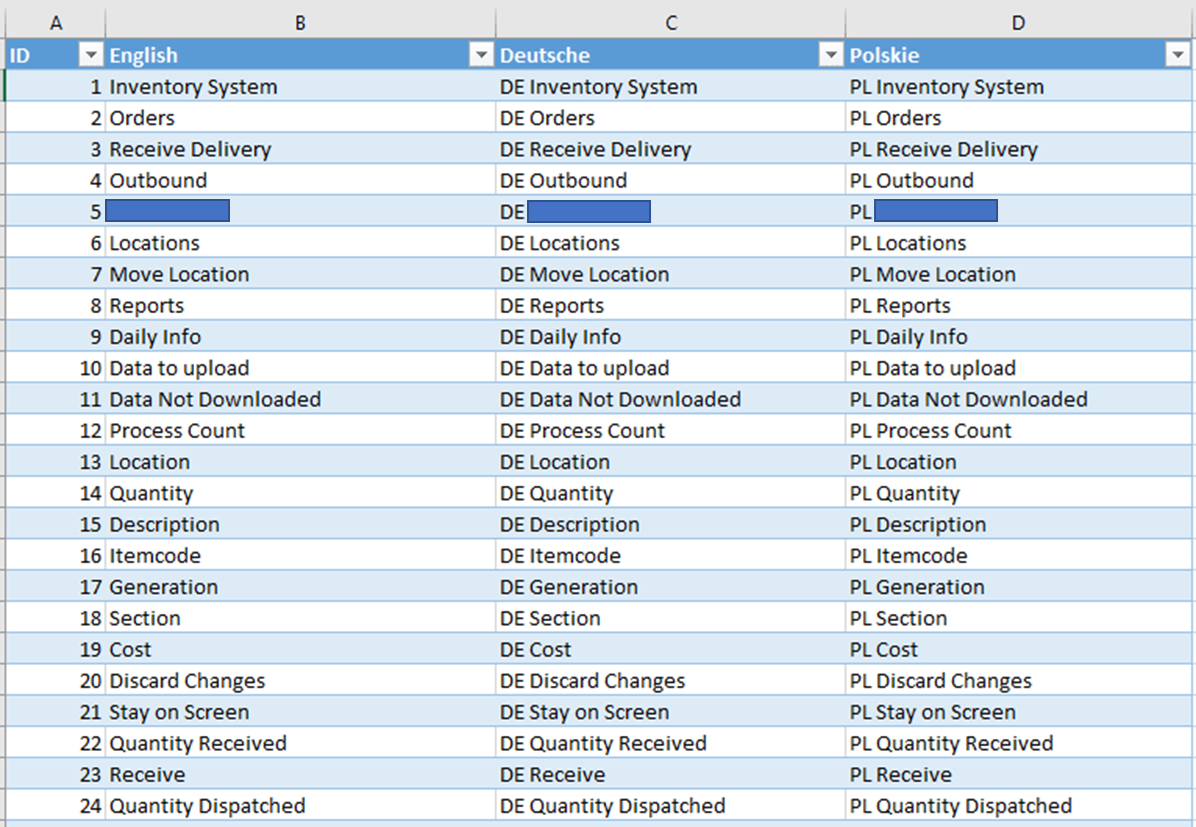

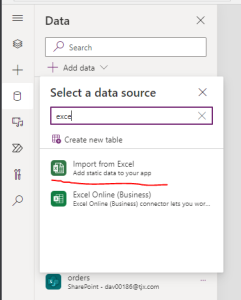

## 1. Translation

It is a big surprise that there is no easy way to implement multiple languages in Power Apps (Even MS Forms has added it recently), fortunately there are multiple ways to implement it.

My solution focus on 2 main areas, performance and flexiability. With the trade off development is a little more complex. You can flip this and make it easier to implement, but in my expierence the trade off in app perfomance isnt worth it.

The solution structure is

- Excel Table with Translations

- Translation Collection

- Labels etc (Referencing row in collection through Index)

As you can see, a quick change would be to use lookups in the labels instead of Index, but thats where I found performance can be impacted, especially in complex apps.

**Excel Table with Translations**

_As you can tell Im not mulit-lingeral, so in development I often just have place holders for other languages. This allows me to test the functionality, but not request a translation until complete_

The structure is simple, an ID column that represents your Index value (this is purely to make development easier), then each langauge has its own column. And this is where the flexability of this approach shines, as to add languages you simply add a new column. To change a label or translation just update the required cell.

Once complete the excel file needs to be up uploaded into the app, we dont want to link it, as in theory it shouldnt get updated very often.

**Translation Collection**

Now we have out translation we need a way of setting them, so I transfer the Excel table to a collection, but I use either the `Language` function, or I default to my required Language (as I often find the Langugage function inconsistent, due to how the laptop may have been setup by the IT dept)

```

ClearCollect(colTranslation,

RenameColumns(

ShowColumns(TranslationTable,"English")

,

"English"

,

"local"

)

)

```

_TranslationTable is the name of the Excel table_

I use the ShowColumns to select the language I want, and the RenameColumns so that all the lables etc reference same field. This way I only have to do one calcualtion on app starts, instead on every label.

To change the language a simple drop down can be configured to update the collection the same way, but selecting a different language field in the ShowColumns

```

Switch(Self.SelectedText.Value,

Deutsche",ClearCollect(colTranslation,

RenameColumns(ShowColumns(TranslationTable,"Deutsche"),"Deutsche","local")),

"Polskie",ClearCollect(colTranslation,

RenameColumns(ShowColumns(TranslationTable,"Polskie"),"Polskie","local")),

ClearCollect(colTranslation,

RenameColumns(ShowColumns(TranslationTable,"English"),"English","local"))

)

```

**Labels etc**

The last stage is how to use the transalation, and where the development complexity is added. You cant simply type the text you want, but you have to return the require row from the translation collection.

```

Index(trans,24).local

```

So in my example Excel table, the above would return 'Quantity Dispatched', in which ever language required (row/ID 24). So as a developer you will need to have the table availabe to lookup (another good reason to use an Excel file).

---

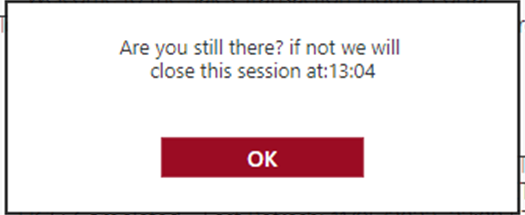

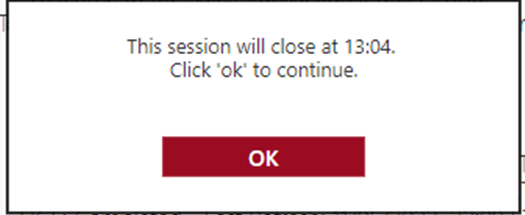

## 2. Inactivity/Session Timeouts

To say Im frustrated that this is not available out of the box is an understatment. Even more annoying is it is an environment config, but it only covers Model Driven Apps and not Canvas Apps (even more annoying this isnt clearly stated and hidden away in some documentation).

So if we need to add inactivity and session timeouts, we need to do it ourselves (and in my expierence this is a basic requirement asked for my Security). The solutions is not pretty, and requires the following:

- Configuration

- Timer to track if timed out

- Popup/logic to end sessions

- Resets on every interaction

**Configuration**

With timeouts it isnt actually one timeout, but normally 4.

- Inactivity Warning

- Inactivity Timeout

- Session Warning

- Session Timeout

_Session timeouts are the maximun amount of time someone can stay logged into an App, it doesnt stop people logging out and straight back in, but does enforce a check to see if the user still hase access_

I strongly recommend settings these values as environment variables, as that way you can set a lower limit in dev to help with testing (imagine having to wait 8 hours to test the session timeout).

I also genuinely put all the values in a single object to keep it tidy

```

Set(voMinutes,{

inactivityWarning:LookUp('Environment Variable Values',

'Environment Variable Definition'.'Schema Name'="new_STIPInactivityWarning").Value,

inactivity:LookUp('Environment Variable Values',

'Environment Variable Definition'.'Schema Name'="new_STIPInactivity").Value,

sessionWarning:LookUp('Environment Variable Values',

'Environment Variable Definition'.'Schema Name'="new_STIPSessionWarning").Value,

session:LookUp('Environment Variable Values',

'Environment Variable Definition'.'Schema Name'="new_STIPSession").Value

}

);

```

voMinutes holds the minutes for each timeout, e.g

- Inactivity Warning = 10 minutes

- Inactivity Timeout = 15 minutes

- Session Warning = 465 minutes (7hrs 45 mins)

- Session Timeout = 480 minutes (8hrs)

We now need to set the actual time stamps that the timer will validate agains.

```

Set(voTimer,{

inactivityWarning:DateAdd(Now(),voMinutes.inactivityWarning,Minutes),

inactivity:DateAdd(Now(),voMinutes.inactivity,Minutes),

sessionWarning:DateAdd(Now(),voMinutes.sessionWarning,Minutes),

session:DateAdd(Now(),voMinutes.session,Minutes)

}

);

```

These both should be ran on the App onStart action.

**Timer to track if timed out**

The timer is the main component of the timeouts, but unfortuantly it isnt as simple as a timer. When the Power App window loses focus the timer stops, so we cant have a simple timer. Instead we need to record a timestamp, and every second check that time stamp to see if we had hit a timeout threshould. We have set the timestamps and stored them in an object variable called `voTimer`, and we need to configured the timer with the following parameters

- AutoStart: true

- Duration: 1000 (1 second)

- Repeat: true

- Reset: true

- OnTimerEnd: (see below)

**Popup/logic to end sessions**

```

If(vbSessionStart,

If(Now()>=voTimer.session Or Now()>=vsSessionTimeOut,

//actions if session timer expired

);

If(Now()>=voTimer.inactivity,

//actions if inactivity timer expired e.g

Notify("Inactivity Timeout",NotificationType.Error,6000);

Launch(vsExitURL,{source:"Inactivity"},LaunchTarget.Replace);

Exit()

);

If(Now()>=voTimer.inactivityWarning,

//actions to show inactivity warning popup

);

If(Now()>=voTimer.sessionWarning,

//actions to show session warning popup e.g

Set(vsExitMess,"Approaching "&voMinutes.session&" minutes limit, this session will end at:"&Text(voTimer.session,"hh:mm"));

Set(visPopup,true);

)

)

```

We are first checking to see if voTimer has been loaded (`vbSessionStart`) as the timer is on AutoStart. Next we check if now is after the session timeout, then the inactivity timeout, then inactivity warning and finally session warning.

In the example for Inactivity timeout out, with flag a error notifcation, open a web page we want to show when signed out (`vsExitURL` variable) and then we exit the app. The warning is probably not needed as everything happens so fast, the browser will send us to the new page in the tab, and as a backup the app will close the app (add true with the Exit() if you wish to log them out of all Microsoft apps too).

The warning example sets a label variable to the required warning (that way re only have one popup for both warnings), and we set the popup to true with `visPopup`.

**Resets on every interaction**

So we have our timeouts set, how do we stop the inactivity warnings. Well heres the reason I realy wish there was an out of the box solution, we have to update the inactivity warning and timeout on every interaction (button click, dropdown change, even background click).

The function is pretty simple:

```

Set(voTimer,{

inactivityWarning:DateAdd(Now(),voMinutes.inactivityWarning,Minutes),

inactivity:DateAdd(Now(),voMinutes.inactivity,Minutes),

sessionWarning:voTimer.sessionWarning,

session:voTimer.session

}

);

```

---

## 3. Dark Mode

Nearly all apps and websites now support dark mode, and although Power Apps doesn't have an out the box solution, its easy to create (fingers crossed Power Apps will in the furture support reading the OS and/or browsers current mode).

To create dark mode each component requires its colour parameters to be set to variables (RGBA can be stored directly in a variable). This has the added benefits of bening able to change the theme of your app quickly in future.

For simplicity I work on a 3 colour pallet, with each fill colour having corresponding text colour.

```

Set(voColour1,RGBA(60,60,58,1));

Set(voText1,bBlacklack);

Set(voColour2,RGBA(155,12,35,1));

Set(voText2,Black);

Set(voColour3,RGBA(219, 219, 219, 1));

Set(voText3,White);

Set(vbDarkMode,false);

```

A simple switch can then be used to flip darkmode on:

```

if(vbDarkMode,

Set(voColour1,Black);

Set(voText1,White);

Set(voColour2,Black);

Set(voText2,White);

,

Set(voColour1,RGBA(60,60,58,1));

Set(voText1,Black);

Set(voColour2,RGBA(155,12,35,1));

Set(voText2,Black);

);

Set(vbDarkMode,Not(vbDarkMode);

```

As you can see, not all colours may need changing.

The key to dark mode is to setup before development, as it is easy to copy and paste configured components, then configuring each as they are added.

---

There are lots of features that I often reuse in my apps, but these 3 are pretty much consistently now in every app. And all of the benefit from planning ahead and implementing at the beginning, rather then trying to add retrospectively. | wyattdave |

1,273,547 | How to run an open-source design project? | I actually need to find the answer. | 0 | 2022-11-26T10:49:51 | https://dev.to/angelod1as/how-to-run-an-open-source-design-project-2mbd | opensource, design, collaboration, question | ---

title: How to run an open-source design project?

published: true

description: I actually need to find the answer.

tags:

- opensource

- design

- collaboration

- question

cover_image: https://64.media.tumblr.com/b55b071933105d10e126b3d8fed4e276/tumblr_mrvrtsA17n1rpgpe2o1_1280.png

# published_at: 2022-11-26 10:13 +0000

---

*Disclaimer: no links in this article are NSFW, but they aren't SFW exactly. Please read further*

## Introduction

I'm working with the BDSMtest team to open-source and modernize it. This work is a personal endeavor — doing something many peers have talked about but never actually started.

A quick introduction: [BDSMtest.org](bdsmtest.org/) is a website where you can test how kinky you are and what kinds of kinks you are mainly into. It's not precisely NSFW — there are no images or explicit wording — but as the theme is sexuality and kink, your work might not approve of you clicking the link during your shift. I'd recommend taking the test, even if you are vanilla.

Don't know what *vanilla* is? Then, well, take the test.

The website has been up since 2014. Every kinky friend — quite a lot — and even non-kinky people have been using it as an essential source of information on their kinky preferences.

I am a front-end developer looking forward to redesigning the website and modernizing its codebase. After doing that, the idea is to revisit the test structure, questions, and results with the help of data scientists (not my area, but that's what open-source is for, right?)

## The question

The first part of any modernization project is to think about architecture: how is the current website organized, and what's the goal of the modernization. In this project, one of my goals is to make it look *better*. It's not exactly ugly; it's just... old. So, it would need a new design.

I, as a front-end developer, have some knowledge of how open-source coding happens:

1. you find a GitHub repository;

1. fork it;

1. work;

1. open a PR respecting the `CONTRIBUTING` guidelines;

1. Answer comments;

1. Make requested changes;

1. (Hopefully) get it approved;

1. ...

1. (Not) profit!

But, as a designer... how could that work?

## Tools

First, design does not have straightforward collaboration tools like GitHub, with granular versioning. There's Figma, of course, but having multiple collaborators in it costs money, and someone can just come and erase everything, right?

It would be necessary to have a clear conduct guideline on how to work on that shared project and also be sure no one is breaking those rules — which would be a manual task, as there are no automated tests or way to compare the current work with the former.

There are also no PRs: any work done happens in the same space. If someone deletes something or changes a component, we'd only be able to notice it after they already done it.

> In Figma, all the commits are in *main*.

So, how to collaborate knowing the source will be preserved while making it possible for new people to add work?

## Behavior

If you are a professional programmer, you may know how to group code. The whole GitHub — or GitLab, or whatever tool you prefer — ecosystem is natural to us, as we've been using it forever.

There are straightforward tools inside GitHub, for instance, to prevent commits on main, block PRs if the workflows fail, require specific approvals when merging, etc. There's nothing like that in Figma, for instance.

*A quick thought*: I'm using Figma as an example because there are no other collaborative design tools in my mind right now. After Figma's acquisition by Adobe, XD isn't even on the table anymore.

How to guide the behavior of new collaborators in an open-source design project? How to avoid destructive workflows? How to maintain project structure while allowing collaboration?

## Blocked

This situation blocks my progress with this task. I will start my design process by myself, but I would love for it to be public and collaborative — I don't know where to begin to make this happen.

Do you know the answer? Could you share this with designer friends or start this discussion with your colleagues? Will Figma ever get their hands on this and make it free for open-source software?

I can only kneel and pray — oops, new kink unlocked. | angelod1as |

1,273,624 | AWS Parameter and Secrets Lambda extension - Node.js example | TLDR; This blog walks through how to access values stored in AWS Systems Manager Parameter... | 0 | 2022-11-26T15:18:59 | https://dev.to/prabusah_53/aws-parameter-and-secrets-lambda-extension-nodejs-example-37h0 | lambdaextension, parameterstore, lambda, extension | ### TLDR;

This blog walks through how to access values stored in AWS Systems Manager Parameter Store via Lambda extension using Node.js code.

### What is Lambda extension:

AWS releases Lambda extensions as Layer to make developers life easier by helping them integrate Lambda with other AWS Services features (like AppConfig, AWS Systems Manager Parameter Store etc.).

### How Lambda extension works:

Lambda lifecycle has 3 phases: init, invoke and shutdown.

_Init phase_ - Combination of Extension INIT, Runtime INIT and Function INIT. Extension setup happens during Extension INIT phase.

_Invoke phase_ - Extension exposes HTTP endpoint that can be called from Lambda function runtime.

_Shutdown phase_ - Extension runtime shutdown along with Lambda function runtime.

#### Why use AWS Systems Manager Parameter Store:

To store connection details, credentials or keys etc.

#### How AWS Parameters and Secrets Lambda extension works:

Provides in-memory cache for parameters and secrets. Upon Lambda requesting a parameter, the extension fetches the parameter data from local cache, if available. If data not in cache or stale, the extension fetches parameter value from AWS Systems Manager service. This reduces aws-sdk initialization, API calls, reduces cost and improves application performance.

#### Nodejs example:

```

const http = require('http');

let getParameterValue = function(paramName) {

const headers = {

"X-Aws-Parameters-Secrets-Token': process.env.AWS SESSION TOKEN

}

let options = {

host: "localhost',

port: '2773',

path: `/systemsmanager/parameters/get?name=${paramName}`,

method: 'GET',

headers: headers

}

return new Promise((resolve, reject) => {

const req = http.get(options, (res) => {

if (res.statusCode < 200 || res.statusCode >= 300) {

return reject(new Error('statusCode=' + res.statusCode));

}

var body = [];

res.on('data', function(chunk) {

body.push(chunk);

});

res.on('end', function() {

resolve(Buffer.concat(body).toString());

});

});

rea.on('error', (e) => {

reject(e.message);

});

req.end();

});

};

exports.handler = async (event) => {

let pass = await getParameterValue('/serivce/password');

let passValue = JSON.parse(pass).Parameter.Value;

//passValue has the password value

};

```

#### Code walkthrough:

AWS Parameters and Secrets Lambda extension exposes HTTP endpoint localhost under 2773 port to Lambda function runtime. AWS SESSION_TOKEN is an in-built environment variable populated by AWS internally. If this secret token not passed to HTTP endpoint - a 401 error will occur.

#### Parameter store Securestring value retrieval using extension:

Just add '**&withDecryption=true**' to the suffix of options objects path field-given below:

```

let options = {

host: 'localhost',

path: `/systemsmanager/parameters/get?name=${paramName}&withDecryption=true`,

port: '2773',

headers: headers

method: 'GET',

}

```

Image by <a href="https://pixabay.com/users/radekkulupa-1045852/?utm_source=link-attribution&utm_medium=referral&utm_campaign=image&utm_content=1380134">Radosław Kulupa</a> from <a href="https://pixabay.com//?utm_source=link-attribution&utm_medium=referral&utm_campaign=image&utm_content=1380134">Pixabay</a> | prabusah_53 |

1,274,200 | The Fintech Entrepreneur’s Guide: Create Successful Tech Startups . | The Fintech Entrepreneur’s Guide: Create Successful Tech Startups with a Robust Tech Stack, Security,... | 0 | 2022-11-27T04:27:34 | https://dev.to/legaciespanda/the-fintech-entrepreneurs-guide-create-successful-tech-startups-with-a-robust-43kb | webdev, news, tutorial | **The Fintech Entrepreneur’s Guide: Create Successful Tech Startups with a Robust Tech Stack, Security, Scalability Plan, and Convincing Investment Pitch**

A Complete Overview of the Lending Space Within the Fintech Segment

Key Features

**- Creating a thriving Fintech platform for the lending industry that can last for the long run.

- Realizing the importance of voice, video, and vernacular in financial technology.

- Preparing investment pitches for different start-ups in the financial technology industry.**

For anyone interested in learning more about the Fintech business in general and the Lending space in particular, this book is an excellent resource because it is based primarily on the author’s practical experience rather than on theoretical frameworks.

This book provides insights into how to construct the technological platform and craft a vision document, thus making it valuable for aspiring entrepreneurs who wish to launch careers in Fintech, whether in lending or otherwise. That way, they’ll understand how to present their proposal to potential investors in a better way.

New grads looking to break into the Fintech business can also benefit from this guide, as it will help them understand the sector and prepare them for the rigors of the hiring process. Leaders at the highest level of an organization can also learn from this book, as it contains numerous examples of actual problems and solutions that have been tried and tested in the real world. Ultimately, this book is for anyone with any connection to the Fintech industry.

What you will learn

- Use this book as a manual to ensure your endeavors are successful and within calculated risks.

- Includes Fintech definitions, terminologies, and the evolution of Fintech.

- Assess the technology landscape and availability of various tools for your digital Fintech.

- Uncover every technical aspect to strengthen your Fintech platform.

- Expert tips for pitching a Fintech idea to investors

Complete knowledge of investors’ availability at different start-up stages.

Download here: https://www.lenbookz.com/item/831/the-fintech-entrepreneurs-guide-create-successful-tech-startups-with-a-robust | legaciespanda |

1,274,551 | What are the plans for this week? | Greetings family,How have you been? Feeling pressure to meet year end targets? Well, I have a piece... | 0 | 2022-11-27T15:44:33 | https://dev.to/mitchiemt11/what-are-the-plans-for-this-week-5336 | javascript, programming, discuss | Greetings family,How have you been? Feeling pressure to meet year end targets? Well, I have a piece that I think you'll adore.

So, to get started, I would like to hear what are the plans for this coming week and the rest of the year. Let's shoot in the comment section and have fun. My last couple weeks have been hectic with work pressure from clients. I'm starting to feel worn as we are reaching the end of year. Any motivation??

Lets goo!!!

| mitchiemt11 |

1,274,720 | Setting up Your First React TypeScript Project From Scratch | Are you looking to create your own React TypeScript project, but don't know where to start? With this... | 0 | 2022-11-27T17:49:50 | https://hackteam.io/blog/setting-up-first-react-typescript-project-from-scratch | webdev, typescript, react, javascript | Are you looking to create your own React TypeScript project, but don't know where to start? With this blog post, you'll get a comprehensive guide to setting up a React TypeScript project from scratch. We'll discuss the necessary components and considerations for environment setup, creating a basic project structure and running the application. With this comprehensive guide in hand, you'll have all the information you need to get started on your React TypeScript journey and create something truly amazing. So, let's dive in and get started on your React TypeScript project!

Click the image below to watch the YouTube video version of this blog post:

[](http://www.youtube.com/watch?v=ek6rGKXk4e4)

## Installing Create React App

Today, Create React App is the most popular way to create a React project. It's a tool that allows you to create a React project without having to worry about the configuration. It's a great way to get started with React and TypeScript. You can create a new project with Create React App using `npx` with the following command:

```bash

npx create-react-app my-app

```

This will create a new React project in the `my-app` directory. Now that your React project is set up, it's time to run the application. You can then run the project with the following command:

```bash

cd my-app

npm start

```

This will start the development server and open the application in your browser in `http://localhost:3000`. You can now start developing your React TypeScript project!

> Note: `npx` is installed on your machine when you install Node.js.

## Installing TypeScript

To use TypeScript in your Create React App project, you need to add a `tsconfig.json` file that holds the TypeScrupt configuration. You can do this by running the following command:

```bash

touch tsconfig.json

```

And add this configuration to the `tsconfig.json` file:

```json

{

"compilerOptions": {

"outDir": "dist",

"rootDir": "src",

"sourceMap": true,

"noImplicitAny": true,

"allowJs": true,

"moduleResolution": "node",

"module": "commonJS",

"lib": ["es6", "dom"],

"target": "ES5",

"jsx": "react"

},

"exclude": ["node_modules", "dist"]

}

```

To use TypeScript in your project, you only need to restart the development server by running `npm start` again. This will now compile your TypeScript code to JavaScript and run the application.

Every file in your application can be renamed from `js` to `tsx` to use TypeScript. You can also add the `ts` extension to your files, but it's needed to use `tsx` for React components as these files contain JSX.

You can now start developing your React TypeScript project!

## Allowing synthetic default imports

In your IDE you might see some errors highlighted about synthetic default imports. This is because TypeScript doesn't know how to import the default export from a module. By default, imports in TypeScript have the following syntax:

```typescript

import * as React from 'React';

```

If we want to keep importing our modules as we did with Babel, we need to change some settings in our `tsconfig.json` file:

```json

{

"compilerOptions": {

"allowSyntheticDefaultImports": true,

"esModuleInterop": true,

...

}

}

```

After this, we can deconstruct our imports again and avoid the obligatory asterisk `*`:

```typescript

import React, { FC } from 'react';

```

This will allow us to import our modules as we did before. But there are more things we should do to make our TypeScript project more robust.

## Adding global type definitions

Another highlighted error in your IDE (I'm using VS code) is that it cannot find the type definitions for the SVG files we're importing. To fix this, we need to add this type definition to our project. We can do this by creating a `global.d.ts` file in the `src` directory and adding the following code:

```typescript

declare module '*.svg' {

const content: string;

export default content;

}

```

This will allow us to import SVG files in our project without any errors.

## Creating a TypeScript React component

Now that we've set up our project, it's time to create our first TypeScript React component. We can do this by creating a `components/Link.tsx` file in the `src` directory and adding the following code:

```typescript

import * as React from 'react';

type LinkProps = {

href: string;

targetBlank: boolean;

children: React.ReactNode | string;

};

export default function Link({

href,

targetBlank = false,

children,

}: LinkProps) {

return (

<a

className='App-link'

href={href}

target={targetBlank ? '_blank' : ''}

rel={targetBlank ? 'noopener noreferrer' : ''}

>

{children}

</a>

);

}

```

This will create a simple `Link` component that we can use in our application. We can now import this component in our `App.tsx` file and use it in our application.

For example, we can replace the `a` tag in the `App.tsx` file with our `Link` component:

```typescript

import * as React from 'react';

import logo from './logo.svg';

import './App.css';

import Link from './components/Link';

function App() {

return (

<div className='App'>

<header className='App-header'>

<img src={logo} className='App-logo' alt='logo' />

<p>

Edit <code>src/App.js</code> and save to reload.

</p>

<Link href='https://reactjs.org' targetBlank>

Learn React

</Link>

</header>

</div>

);

}

export default App;

```

This will now render the `Link` component in our application. You can now start developing your React TypeScript project by adding more components!

## Conclusion

By the end of this blog post, you should have all the information you need to get started on React TypeScript development. We've discussed how to set up an environment, create a project structure and run the application. And that’s it! With this comprehensive guide in hand, you now have all the information you need to set up and run your React TypeScript project from scratch. I hope this guide was helpful and wish you luck on your React TypeScript journey!

Good luck and happy coding!

P.S. Follow [Roy Derks on Twitter](https://www.twitter.com/gethackteam) for more React, GraphQL and TypeScript tips & tricks. And subscribe to my [YouTube channel](https://www.youtube.com/@gethackteam) for more React, GraphQL and TypeScript tutorials.

***

This post was originally published on [Hackteam](https://hackteam.io/blog/setting-up-first-react-typescript-project-from-scratch) using [Reposted.io](https://reposted.io/?utm_source=reposted). A free tool to repost your content across all blogging platforms. | gethackteam |

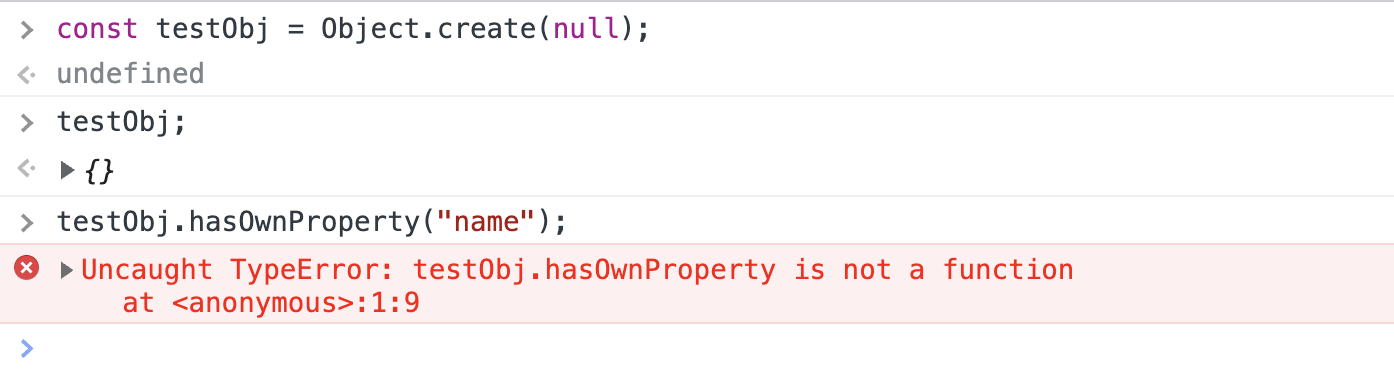

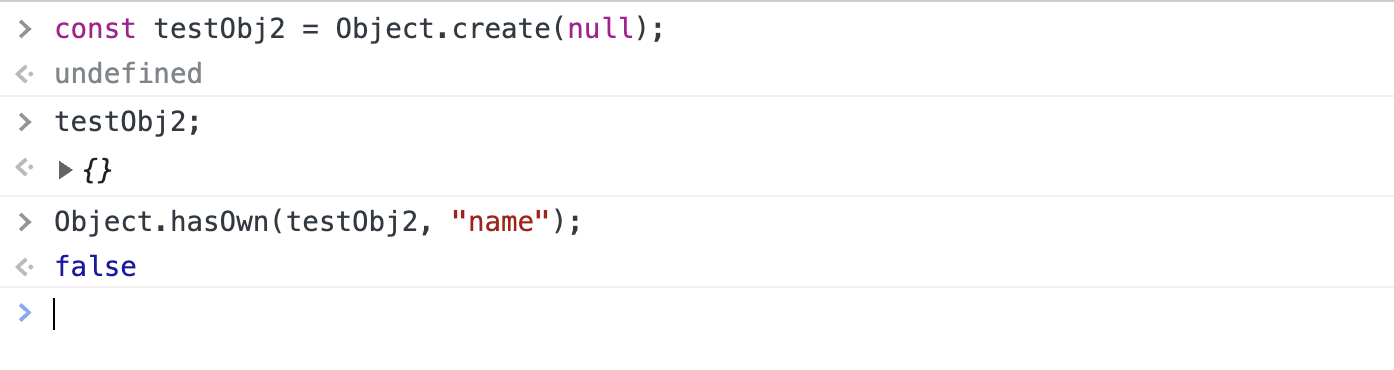

1,274,725 | Two Ways to Check an Object for Keys | The Old Way Object.prototype.hasOwnProperty() Object.prototype.hasOwnProperty()... | 0 | 2022-11-27T18:08:50 | https://dev.to/smilesforgood/two-ways-to-check-an-object-for-keys-h2m | javascript, object | ## The Old Way

### `Object.prototype.hasOwnProperty()`

[`Object.prototype.hasOwnProperty()`](https://developer.mozilla.org/en-US/docs/Web/JavaScript/Reference/Global_Objects/Object/hasOwnProperty) is called on an object with the key (or property) for which you are checking passed in as an argument. This returns true if the property exists or false if not.

**Note:** this only checks for declared or _own_ properties. Inherited properties will also return false.

```js

const obj1 = {

name: "Sam",

age: 25,

},

obj1.hasOwnProperty("name")

// => true

obj1.hasOwnProperty("address")

// => false

```

### Gotchas

One drawback to this method is that it is not accessible on an object created with `Object.create(null)` and will error in that case:

## The Recommended Way

### `Object.hasOwn()`

Per MDN, [`Object.hasOwn()`](https://developer.mozilla.org/en-US/docs/Web/JavaScript/Reference/Global_Objects/Object/hasOwn) is a replacement for the previously existing `.hasOwnProperty` method. It is nearly identical to `.hasOwnProperty` - it returns true if the object has the property as an _own_ property and false for inherited properties as well as properties that do not exist.

```js

const obj2 = {

name: "Tim",

age: 10,

}

Object.hasOwn(obj2, "name");

// => true

Object.hasOwn(obj2, "address");

// => false

```

However, `.hasOwn` also works on objects created with `Object.create(null)` and therefore is recommended for use on all browsers that support it:

| smilesforgood |

1,274,745 | 5 Free Resources to Learn Frontend Development (+ Extras) | Although there are so many options out there, learning by yourself can be overwhelming. You don’t... | 0 | 2022-11-27T18:28:47 | https://dev.to/patriciacosma/5-free-resources-to-learn-frontend-development-extras-43gk | career, beginners, frontend | Although there are so many options out there, learning by yourself can be overwhelming. You don’t know which ones are worth your time - and we all know how important that is.

During my own learning path, I have discovered some interesting resources, ready to boost your skills in the frontend development field. From videos to reading, exercises and even games, this article will cover all your needs so you can start your stress-free learning today.

<br>

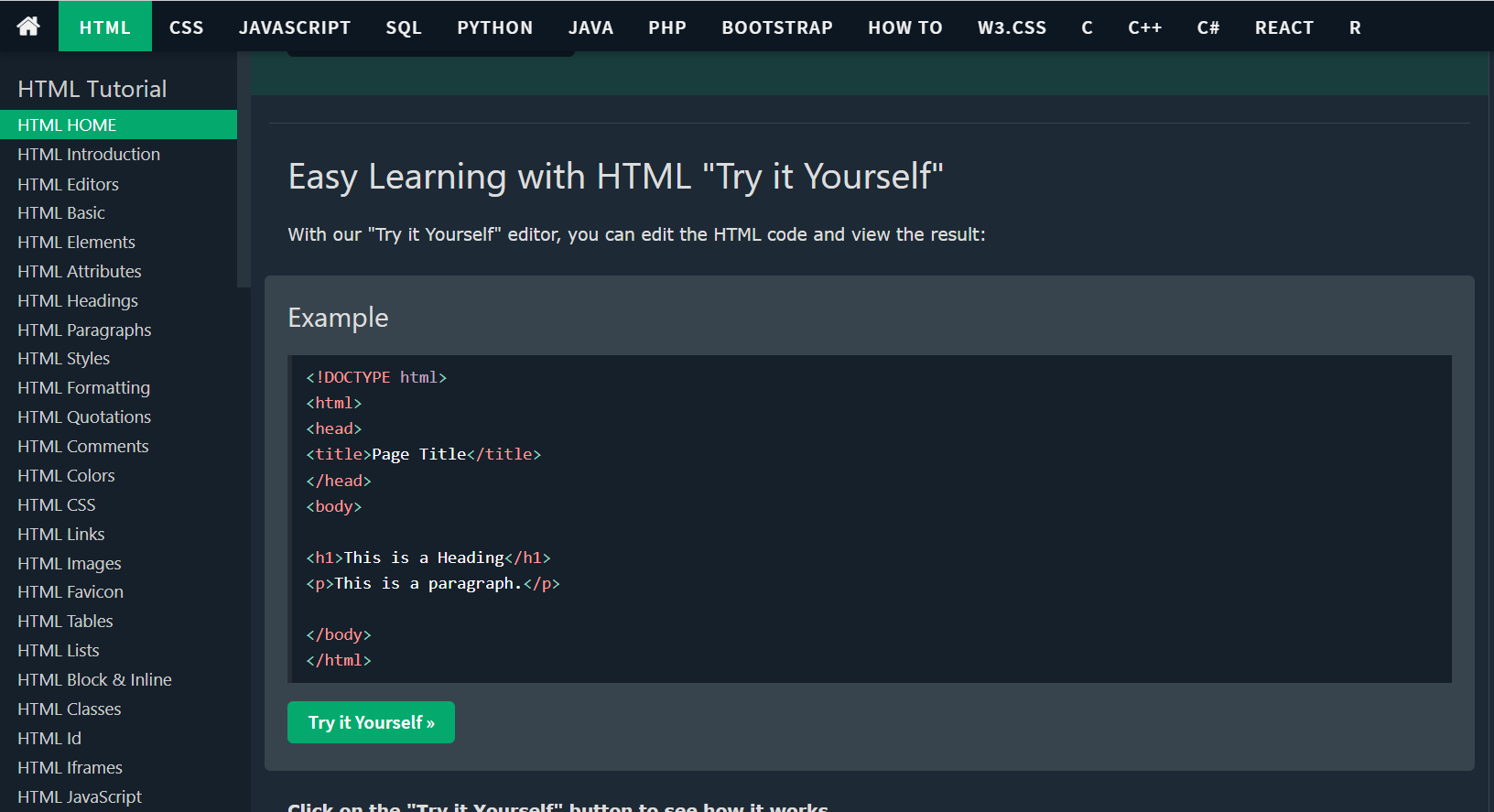

### 1. [**W3Schools**](https://www.w3schools.com/html/default.asp)

On this free-to-use website, there are pleeeeeenty of tutorials - HTML, CSS, JavaScript, Bootstrap etc. -, which take you from 0 to intermediate in a couple of lessons. You can create an account and even track your progress on each tutorial. I suggest doing that to be able to see how far you’ve come and see which exercises you want to practice again.

<br>

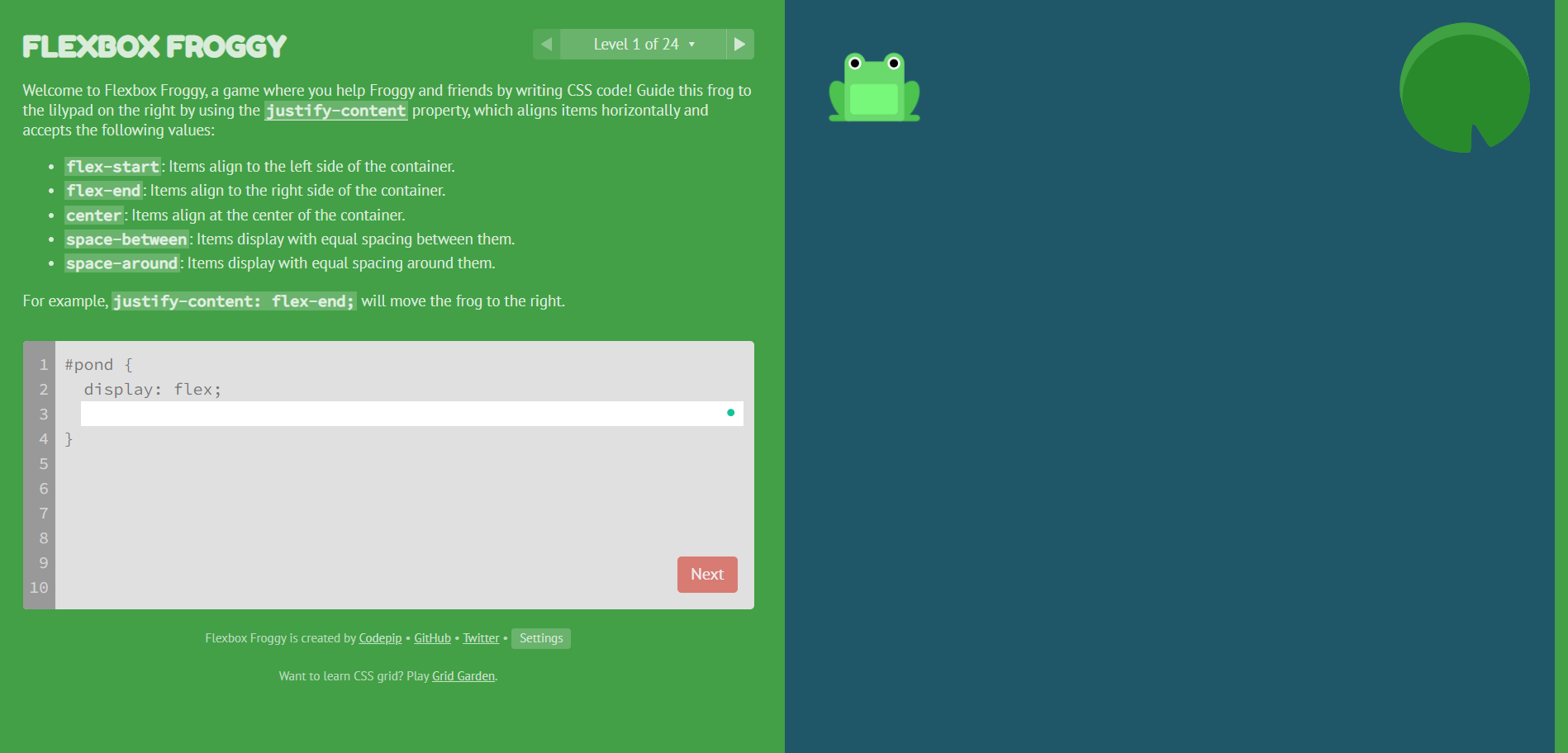

### 2. [Flexbox Froggy](https://flexboxfroggy.com/) & [Grid Garden](https://cssgridgarden.com/)

Learning CSS you probably noticed that knowing how flexbox and grid work is essential. If you are more of a visual person, as I am, playing these two games will not feel like learning at all. But guess what? The notions will stick with you!

<br>

### 3. **[Programming with Mosh](https://www.youtube.com/c/programmingwithmosh/featured)**

Even if you learn by yourself, having someone to guide you through some notions comes in handy from time to time. This YouTube channel will walk you through the basics of programming and it also comes with a Front-end Development playlist.

<br>

### 4. [MDN Web Docs](https://developer.mozilla.org/en-US/)

Although some games and visual aid is the extra help you need, it is important to take your time to read and understand programming to its core. This site provides you with information about different technologies, including HTML, CSS, JavaScript and DOM - and yes, you also have practical examples and exercises to solidify your knowledge.

<br>

### 5. Open Source Contributions

After getting some basics in, you need to start practicing in real life. There is no better way to do that than contributing to open-source projects on GitHub like [this one](https://github.com/firstcontributions/first-contributions) for example. You can also cement some of your knowledge by explaining basic concepts to others or trying to fix some minor issues for practice using [StackOverflow](https://stackoverflow.com/).

<br>

### Extra resources

- Florin’s YouTube [channel](https://www.youtube.com/c/FlorinPop)

- FreeCodeCamp [website](https://www.freecodecamp.org/)

- Open-source free CSS framework [Bootstrap](https://getbootstrap.com/)

Take your time in learning all of this - it is a lot of information, but stay consistent. You’ll thank yourself later **🤭.**

What other resources have you discovered and would recommend to others?

---

_Cover photo by <a href="https://unsplash.com/@chiklad?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText">Ochir-Erdene Oyunmedeg</a> on <a href="https://unsplash.com/?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText">Unsplash</a>_

| patriciacosma |

1,275,109 | Free Fire OB37 update download link for Android | IOS gadget and PC | Free Fire OB37 update download interface for Android , IOS gadget and PC-By-: Jayesh Garena has at... | 0 | 2022-11-28T02:42:40 | https://dev.to/jay0339n/free-fire-ob37-update-download-link-for-android-ios-gadget-and-pc-50lp | <p><br /></p><div class="separator" style="clear: both; text-align: center;"><a href="https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEihiQ-uhonc9SW9AVLUiYd1wChRKgpKwYe0k-kkgyDnO-DhWrDFWZo3b7xvJMu3OiL3ofo00baytXRve3CInX6HPXScn3OzeL01UJ7kVOhKD9IZsVAtAx93Napm435b6AzU8ptVMYo9FxAfLPbJLacX2d05d9CExXoHHSZ5fG8qCPBn8jF5xObHY-mR/s678/images%20(4).jpeg" style="margin-left: 1em; margin-right: 1em;"><img alt="Free Fire OB37 update download link for Android" border="0" data-original-height="452" data-original-width="678" height="426" src="https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEihiQ-uhonc9SW9AVLUiYd1wChRKgpKwYe0k-kkgyDnO-DhWrDFWZo3b7xvJMu3OiL3ofo00baytXRve3CInX6HPXScn3OzeL01UJ7kVOhKD9IZsVAtAx93Napm435b6AzU8ptVMYo9FxAfLPbJLacX2d05d9CExXoHHSZ5fG8qCPBn8jF5xObHY-mR/w640-h426/images%20(4).jpeg" title="Free Fire OB37 update download link for Android" width="640" /></a></div><br /><p><br /></p><h1 style="text-align: left;"><span style="font-size: x-large;">Free Fire OB37 update download interface for Android , IOS gadget and PC-By-: Jayesh</span></h1><h4 style="background-color: white; border: 0px; line-height: 1.2em; margin: 0px; padding: 0px; text-align: left;"><span style="color: #3d85c6; font-family: verdana; font-size: x-small;">Garena has at last delivered its most anticipated update i.e., OB37 to praise its fifth commemoration. Every one of the clients enthusiastically anticipate this update as they have exceptionally exclusive requirements for it. The authorities have additionally teamed up with the worldwide symbol Justin Bieber to make the game very intriguing.</span></h4><p><br /></p><h4 style="text-align: left;"><span style="color: red; font-family: times; font-size: x-large;">FF LINK OB 37 -:</span> <a class="short_url_l" href="https://cutt.ly/U1j3PVT" style="background-color: white; border: 0px; box-sizing: border-box; cursor: pointer; font-family: ubuntu, sans-serif; font-stretch: inherit; font-variant-east-asian: inherit; font-variant-numeric: inherit; line-height: inherit; margin: 0px; outline: 0px; padding: 0px; text-decoration-line: none; transition: all 0.1s ease-in-out 0s; vertical-align: baseline;" target="blank"><span style="color: #2b00fe; font-size: large;">https://cutt.ly/U1j3PVT</span></a></h4><p><br /></p><p>All clients hanging tight for the update won't need to stand by any longer as the authority has at last delivered the OB37 Update. You can now download the report on your gadget and play it. The authorities delivered the update online on November 2022. Peruse the accompanying article completely to find out about the Free Fire OB37 update download interface.</p><p><br /></p><p>Free Fire OB37 update download connect</p><p><br /></p><p>Free Fire is one of the world's most played multiplayer endurance games. The game's designers continue gaming fascinating by delivering new weapons, better innovation and so forth. The new OB37 has outperformed every one of the past updates. The engineers of the game have really buckled down for the new update. Every one of the clients who need to utilize the new update can now make it happen. In the accompanying article, you will see the subtleties like FF OB37 Downloading Cycle, connect, and so forth. We have additionally given direct connections to get the update in our article.</p><p><br /></p><p><b>Garena FF OB37 update: Features</b></p><p><b><br /></b></p><p><b>Name of the Game Garena Free Fire</b></p><p><b><br /></b></p><p><b>Name of the Update FF OB37 Update</b></p><p><b><br /></b></p><p><b>Designer of the game 111dots Studio</b></p><p><b><br /></b></p><p><b>Update Accessibility Status Now Accessible</b></p><p><b><br /></b></p><p><b>OB37 Accessibility Mode Online Mode</b></p><p><b><br /></b></p><p><b>OB37 Delivering Date November 2022</b></p><p><b><br /></b></p><p><b>Recipients Every one of the clients</b></p><p><b><br /></b></p><p><b>Official Gateway https://ff.garena.com/</b></p><p><br /

Full Page Info -https://gamingnewsviralal.blogspot.com/2022/11/free-fire-ob37-update-download-link-for.html?m=1 | jay0339n | |

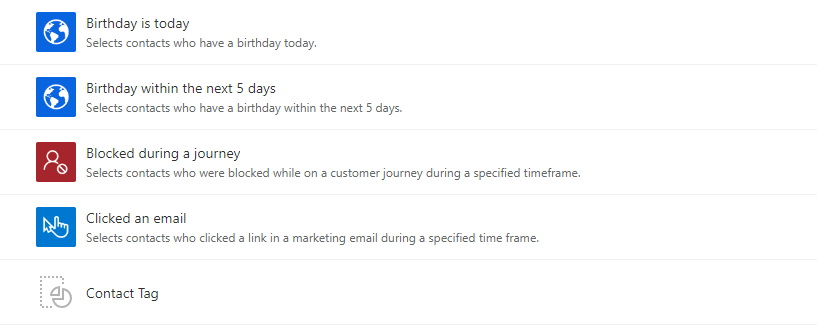

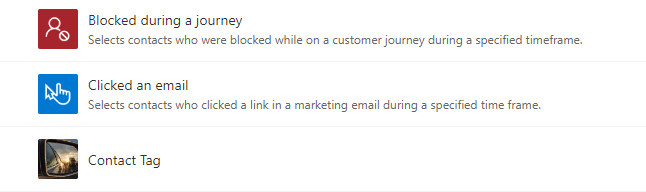

1,275,188 | How to add an icon to a D365 segment template | As a D365 Marketing user, have you ever wondered why segment templates you created do not have icons... | 0 | 2022-11-28T07:10:02 | https://dev.to/rainforss/how-to-add-an-icon-to-a-d365-segment-template-2d55 | _As a D365 Marketing user, have you ever wondered why segment templates you created do not have icons like the system provided segment templates?_

<figcaption>Find the imposter!</figcaption>

---

Although we can use filters, naming conventions and descriptions to locate the template we need, a visual hint is always good to have. Several clients and community members have asked about how to add custom icons to user created segment templates so I am going to demonstrate the simple steps to enable custom icons in this article.

---

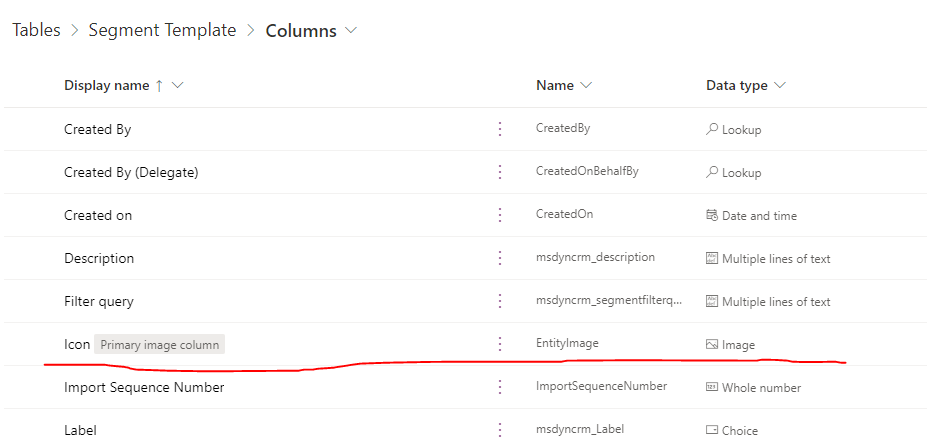

Conceptual aside - the icon image we see on a segment template record is stored in an "Image" column of "Segment Template" table, named "Icon".

<figcaption>Image column of a table</figcaption>

---

A table can have multiple image columns but only one can be dedicated as a "Primary Image Column". In a normal table (without further customization), the picture stored in the primary image column will be the icon shown on a record. The tables without a primary image column will not have this icon on the table form.

<figcaption>Primary image column shown on the main form</figcaption>

---

On the segment template table, the image stored in primary image column will be shown directly on the segment templates list view, tile view and main form.

<figcaption>List view</figcaption>

---

<figcaption>Tile view</figcaption>

---

<figcaption>Main form</figcaption>

---

Now to the actual process. I mentioned steps, but it is actually **one step** - simply left click on the icon on the main form and a dialog will pop up for you to view or edit the image. It is so simple that this step did not even cross my mind. Previously, I was under the assumption that the primary image column would have to be added to the main form for users to modify. However, [Megan](https://meganvwalker.com/) told me about the simple approach which made me rethink about solutioning for any requirements or challenges - there always might be an out-of-box approach to look for before thinking about a customized solution.

<figcaption>A simple click and you can change the icon</figcaption>

---

Voila! Now you have the ability to add visuals to help other users understand what your segment template does. Just remember that any "Image" column data can only be populated after a record is saved, not when a record is being created.

---

_Some additional words for the adventurous type of people: being able to add more visuals is fantastic, but the image column should be used sparingly since it will **eat up the allocated file storage** within an environment if a lot of tables are configured to have image columns. Only use the image column when it is necessary and make sure you set a reasonable max image size when creating an image column. Otherwise, always use less expensive solutions such as **[SharePoint integration](https://learn.microsoft.com/en-us/power-platform/admin/set-up-dynamics-365-online-to-use-sharepoint-online)**._

<figcaption>The maximum image size can only be configured when the column is being created, not afterwards<figcaption>

I hope that this article is somewhat useful to you and feel free to reach out if you have any questions. Happy marketing!

| rainforss | |

1,275,353 | What Are Some Benefits of Custom Mobile App Development? | The truth is that a business requires specialised mobile apps if it wants to remain relevant in the... | 0 | 2022-11-28T09:44:08 | https://dev.to/codesparrk/what-are-some-benefits-of-custom-mobile-app-development-lbf | The truth is that a business requires specialised mobile apps if it wants to remain relevant in the present industry. With so many companies vying for customers' attention, having a unique app that sets you apart from the competition is more important than ever. A custom app not only improves the perception of your business as innovative and creative, but it also has many beneficial benefits for it. Catch [full store here](https://medium.com/@Code_Sparrk/what-are-some-benefits-of-custom-mobile-app-development-893d819371eb) | codesparrk | |

1,275,647 | Les 3 tendances à venir d'internet | Cet article est rédigé par Benjamin Metzger animateur de ce Blog L'ère numérique est là pour rester... | 0 | 2022-11-28T12:33:06 | https://dev.to/bm731/essai-ie8 | Cet article est rédigé par Benjamin Metzger animateur de ce [Blog](https://benmetzger.net)

L'ère numérique est là pour rester et continuera à changer notre façon de vivre, de travailler et de communiquer. Tout comme les précédentes révolutions technologiques, la prochaine va se dérouler par vagues. En effet, tous les deux ans, un nouveau changement de paradigme amène les gens à remettre en question ce qu'ils pensaient savoir sur le monde. C'est exactement ce qui se passe actuellement avec l'essor de l'internet des objets (IoT), de l'intelligence artificielle (IA), de la réalité virtuelle (VR) et de la technologie blockchain. Ces technologies émergentes vont changer la façon dont nous faisons des affaires, consommons du contenu et interagissons les uns avec les autres d'une manière que nous ne pouvons même pas encore imaginer. Cela étant dit, vous devez savoir quelles sont ces tendances à venir pour ne pas devenir obsolète en tant que marketeur avant même qu'elles ne se produisent. Lisez la suite pour en savoir plus...

## Internet des objets (IoT)

L'internet des objets (IdO) est la connexion en réseau d'objets physiques à l'internet. Il s'agit d'un ensemble d'objets différents dotés de composants électroniques, de logiciels, de capteurs, d'actionneurs et d'une connectivité qui permet à ces objets ou composants d'envoyer et de recevoir des données. Un récent rapport du Pew Research Center montre que 93 % des Américains connaissent le concept de la technologie IoT. L'application la plus courante des dispositifs IoT est celle des gadgets domestiques intelligents, tels que les thermostats intelligents, les détecteurs de fumée et les caméras de sécurité. Par exemple : * Une voiture connectée peut vous aider à suivre votre trajet, à trouver des places de stationnement à proximité ou à éviter les embouteillages. * Un entrepôt automatisé utilise des dispositifs IoT pour identifier les articles en rupture de stock. * Une maison connectée vous aide à surveiller votre consommation d'énergie et à contrôler les paramètres de chauffage/refroidissement de manière beaucoup plus efficace.

## Intelligence artificielle (IA)