id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,330,000 | Understanding gRPC Concepts, Use Cases & Best Practices | Original blog post As we progress with application development, among various things, there is one... | 0 | 2023-01-15T17:03:40 | https://dev.to/hiteshrepo/understanding-grpc-concepts-use-cases-best-practices-2npk | grpc, go, communication, framework | [Original blog post](https://www.infracloud.io/blogs/understanding-grpc-concepts-best-practices/)

As we progress with application development, among various things, there is one primary thing we are less worried about i.e. computing power. With the advent of cloud providers, we are less worried about managing data centers. Everything is available within seconds, and that too on-demand. This leads

With the increase in the size of data, we have activities like serializing, deserializing and transportation costs added to it. Though we are not worried about computing resources, the latency becomes an overhead. We need to cut down on transportation. A lot of messaging protocols have been developed in the past to address this. SOAP was bulky, and REST is a trimmed-down version, but we need an even more efficient framework. That’s where Remote Procedure Calls (RPC) comes in.

In this blog post, we will understand what RPC is and the various implementations of RPC with a focus on gRPC, which is Google's implementation of RPC. We'll also compare REST with RPC and understand various aspects of gRPC, including security, tooling, and much more. So, let's get started!

## What is RPC?

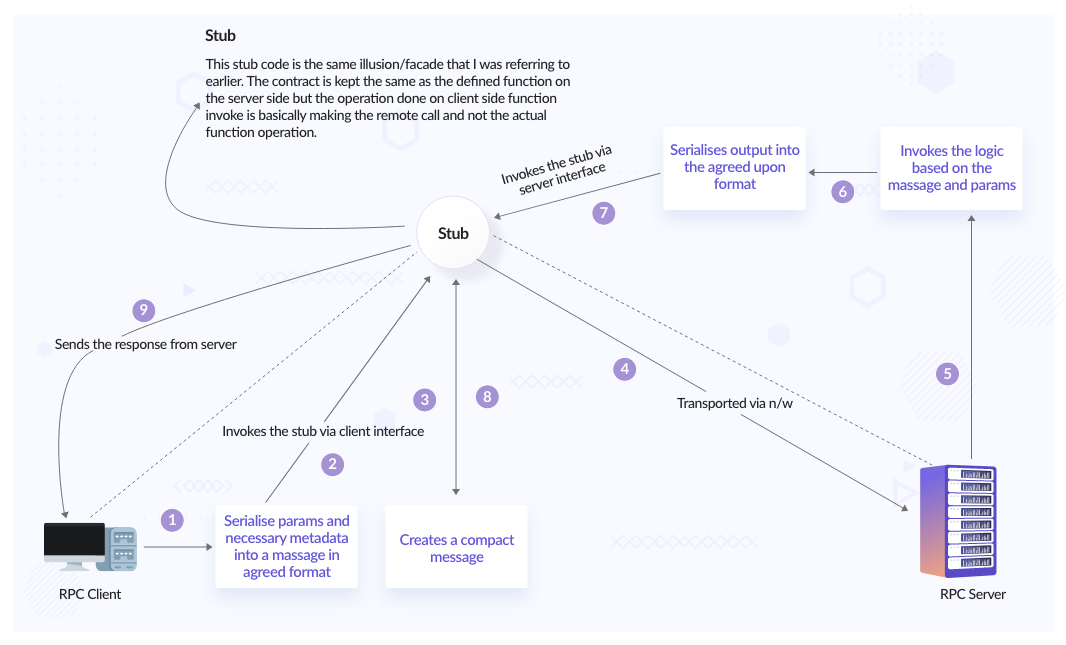

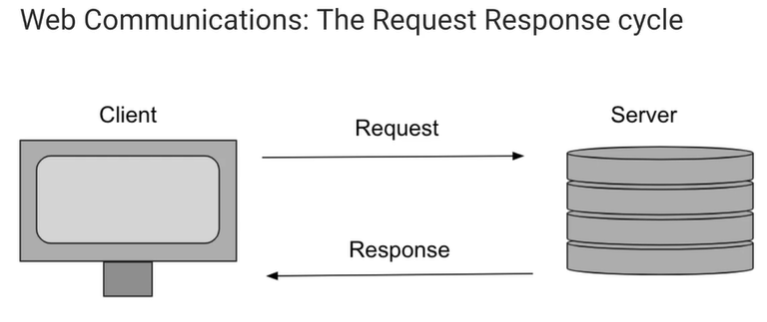

RPC stands for ‘Remote Procedure Calls’. The definition is in the name itself. Procedure calls simply mean function/method calls; it's the ‘Remote’ word that makes all the difference. What if we can make a function call remotely?

Simply put, if a function resides on a ‘server’ and in order to be invoked from the ‘client’ side, could we make it as simple as a method/function call? Essentially what an RPC does is it gives the ‘illusion’ to the client that it is invoking a local method, but in reality, it invokes a method in a remote machine that abstracts the network layer tasks. The beauty of this is that the contract is kept very strict and transparent (we will discuss this later in the article).

Steps involved in an RPC call:

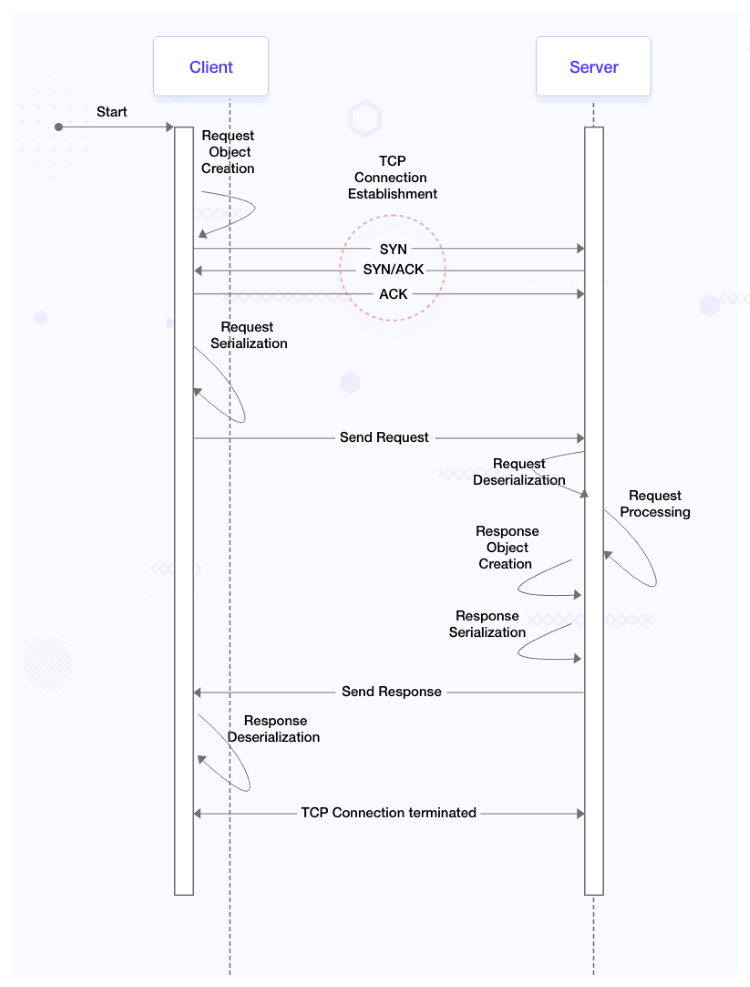

This is how a typical REST process looks like:

RPCs boil down the process to below:

This is because all the complications associated with making a request are now abstracted from us (we will discuss this in code-generation). All we need to worry about is the data and logic.

## gRPC - what, why, and how of it

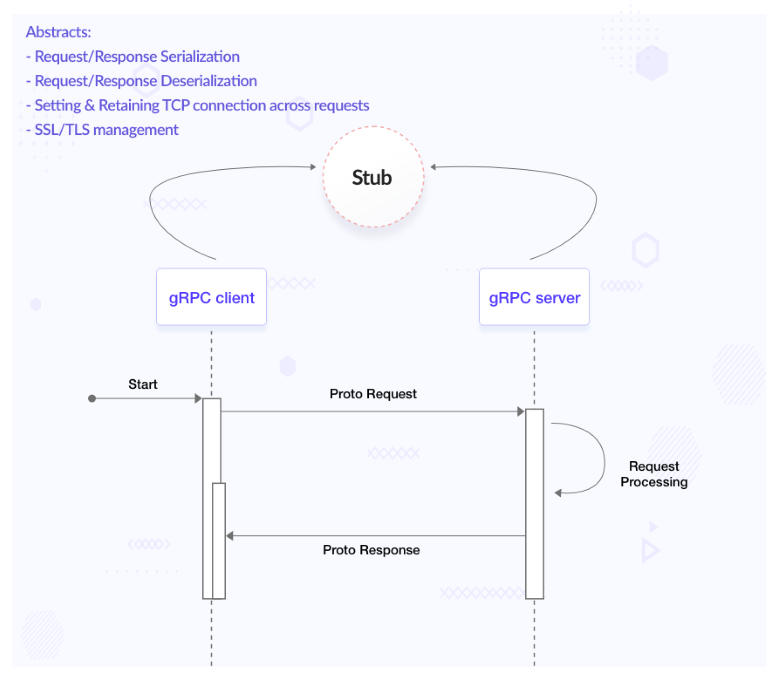

So far, we discussed RPC, which essentially means making function/method calls remotely. Thereby giving us the benefits like ‘[strict contract definition](#clean-contract)’, ‘abstracting transmission and conversion of data’, ‘reducing latency’, etc. Which we will be discussing as we proceed with this post. What we would really like to dive deep into is one of the implementations of RPC. RPC is a concept, and gRPC is a framework based on it.

There are various implementations of RPCs. They are:

- gRPC (Google)

- Thrift (Facebook)

- Finagle (Twitter)

Google’s version of RPC is referred to as gRPC which was introduced in 2015 and has been gaining traction since. It is one of the most chosen communication mechanisms in a microservice architecture.

gRPC uses [protocol buffers](https://developers.google.com/protocol-buffers) (it is an open source message format) as the default method of communication between client and server. Also, gRPC uses HTTP/ 2 as the default protocol.

There are again four types of communication that gRPC supports:

- [Unary](https://grpc.io/docs/what-is-grpc/core-concepts/#unary-rpc) (typical client and server communication)

- [Client side streaming](https://grpc.io/docs/what-is-grpc/core-concepts/#client-streaming-rpc)

- [Server side streaming](https://grpc.io/docs/what-is-grpc/core-concepts/#server-streaming-rpc)

- [Bidirectional streaming](https://grpc.io/docs/what-is-grpc/core-concepts/#bidirectional-streaming-rpc)

Coming on to the message format that is being used widely in gRPC - protocol buffers a.k.a protobufs. A protobuf message looks something like below:

```protobuf

message Person {

string name = 1;

string id = 2;

string email = 3;

}

```

Here, `Person` is the message we would like to transfer (as a part of request/response), which has fields `name` (string type), `id` (string type) and `email` (string type). The numbers 1, 2, 3 represent the position of the data (as in `name`, `id`, and `has_ponycopter`) when it is serialized to binary format.

Once the developer has created the Protocol Buffer file(s) with all messages, we can use a ‘protocol buffer compiler’ (a binary) to compile the written protocol buffer file, which will generate all the utility classes and methods which are needed to work with the message. For example, as shown in the above `Person` message, depending on the chosen language, the [generated code will look like this](https://github.com/infracloudio/grpc-blog/blob/master/proto/example/person.pb.go).

### How do we define services?

We need to define services that use the above messages to be sent/received.

After writing the necessary request and response message types, the next step is to write the service itself.

gRPC services are also defined in Protocol Buffers and they use the ‘service’ and ‘rpc’ keywords to define a service.

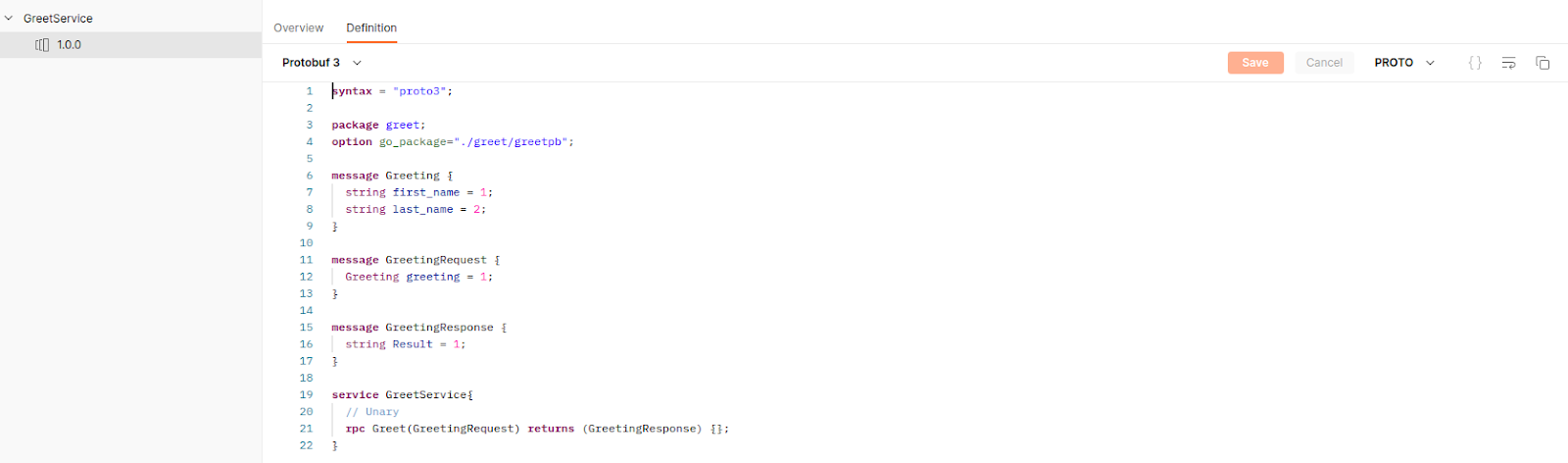

Take a look at the content of the below proto file:

```protobuf

message HelloRequest {

string name = 1;

string description = 2;

int32 id = 3;

}

message HelloResponse {

string processedMessage = 1;

}

service HelloService {

rpc SayHello (HelloRequest) returns (HelloResponse);

}

```

Here, `HelloRequest` and `HelloResponse` are the messages and `HelloService` is exposing one unary RPC called `SayHello` which takes `HelloRequest` as input and gives `HelloResponse` as output.

As mentioned, `HelloService` at the moment contains a single unary RPC. But it could contain more than one RPC. Also, it can contain a variety of RPCs (unary/client-side streaming/server-side streaming/Bidirectional).

In order to define a streaming RPC, all you have to do is prefix ‘stream’ before the request/response argument, [Streaming RPCs proto definitions, and generated code](https://github.com/infracloudio/grpc-blog/tree/master/proto/streaming).

In the above code-base link:

- [streaming.proto](https://github.com/infracloudio/grpc-blog/blob/master/proto/streaming/streaming.proto): this file is user defined

- [streaming.pb.go](https://github.com/infracloudio/grpc-blog/blob/master/proto/streaming/streaming.pb.go) & [streaming_grpc.pb.go](https://github.com/infracloudio/grpc-blog/blob/master/proto/streaming/streaming_grpc.pb.go): these files are auto-generated on running [proto compiler command](https://github.com/infracloudio/grpc-blog/blob/883e25e207b8e7d3fdf8384b98fb0828a982d5b3/proto/Taskfile.yaml#L18) command.

## gRPC vs REST

We did talk about gRPC a fair bit. Also, there was a mention of REST. What we missed was discussing the difference. I mean when we have a well-established, lightweight communication framework in the form of REST, why was there a need to look for another communication framework? Let us understand more about gRPC with respect to REST along with the pros and cons of each of it.

In order to compare what we require are parameters. So let’s break down the comparison into the below parameters:

- **Message format: protocol buffers vs JSON**

- Serialization and deserialization speed is way better in the case of protocol buffers across all data sizes (small/medium/large). [Benchmark-Test-Results](https://github.com/infracloudio/grpc-blog/blob/master/proto/test.out).

- Post serialization JSON is human readable while protobufs (in binary format) are not. Not sure if this is a disadvantage or not because sometimes you would like to see the request details in the Google developers tool or Kafka topics and in the case of protobufs you can't make out anything.

- **Communication protocol: HTTP 1.1 vs HTTP/2**

- REST is based on HTTP 1.1; communication between a REST client and server would require an established TCP connection which in turn has a 3-way handshake involved. When we get a response from the server upon sending a request from the client, the TCP connection does not exist after that. A new TCP connection needs to be spun up in order to process another request. This establishment of a TCP connection on each and every request adds up to the latency.

- So gRPC which is based on HTTP 2 has encountered this challenge by having a persistent connection. We must remember that persistent connections in HTTP 2 are different from that in web sockets where a TCP connection is hijacked and the data transfer is unmonitored. In a gRPC connection, once a TCP connection is established, it is reused for several requests. All requests from the same client and server pair are multiplexed onto the same TCP connection.

- **Just worrying about data and logic: Code generation being a first-class citizen**

- Code generation features are native to gRPC via its in-built protoc compiler. With REST APIs, it’s necessary to use a third-party tool such as Swagger to auto-generate the code for API calls in various languages.

- In the case of gRPC, it abstracts the process of marshaling/unmarshalling, setting up a connection, and sending/receiving messages; what we all need to worry about is the data that we want to send or receive and the logic.

- **Transmission speed**

- Since the binary format is much lighter than JSON format, the transmission speed in the case of gRPC is 7 to 10 times faster than that of REST.

|**Feature**|**REST**|**gRPC**|

|:---:|:---:|:---:|

|Communication Protocol|Follows request-response model. It can work with either HTTP version but is typically used with HTTP 1.1|Follows client-response model and is based on HTTP 2. Some servers have workarounds to make it work with HTTP 1.1 (via rest gateways)|

|Browser support|Works everywhere|Limited support. Need to use [gRPC-Web](https://github.com/grpc/grpc-web), which is an extension for the web and is based on HTTP 1.1|

|Payload data structure|Mostly uses JSON and XML-based payloads to transmit data|Uses protocol buffers by default to transmit payloads|

|Code generation|Need to use third-party tools like Swagger to generate client code|gRPC has native support for code generation for various [languages](https://grpc.io/docs/languages/)|

|Request caching|Easy to cache requests on the client and server sides. Most clients/servers natively support it (for example via cookies)|Does not support request/response caching by default|

Again for the time being gRPC does not have browser support since most of the UI frameworks still have limited or no support for gRPC. Although gRPC is an automatic choice in most cases when it comes to internal microservices communication, it is not the same for external communication that requires UI integration.

Now that we have done a comparison of both the frameworks: gRPC and REST. Which one to use and when?

- In a microservice architecture with multiple lightweight microservices, where the efficiency of data transmission is paramount, gRPC would be an ideal choice.

- If code generation with multiple language support is a requirement, gRPC should be the go-to framework.

- With gRPC’s streaming capabilities, real-time apps like trading or OTT would benefit from it rather than polling using REST.

- If bandwidth is a constraint, gRPC would provide much lower latency and throughput.

- If quicker development and high-speed iteration is a requirement, REST should be a go-to option.

## gRPC Concepts

### Load balancing

Even though the persistent connection solves the latency issue, it props up another challenge in the form of load balancing. Since gRPC (or HTTP2) creates persistent connections, even with the presence of a load balancer, the client forms a persistent connection with the server which is behind the load balancer. This is analogous to a sticky session.

We can understand the challenge via a demo & the code and deployment files for the same are present [in this repository](https://github.com/infracloudio/grpc-blog/tree/master/grpc-loadbalancing).

From the above demo code base, we can find out that the onus of load balancing falls on the client. This leads to the fact that the advantage of gRPC i.e. persistent connection does not exist with this change. But gRPC can still be used for its other benefits.

Read more about [load balancing in gRPC](https://grpc.io/blog/grpc-load-balancing/).

In the above demo code-base, only a ‘round-robin’ load balancing strategy is used/showcased. But gRPC does support another client-based load balancing strategy OOB called ‘pick-first’.

Furthermore, [custom client-side](https://learn.microsoft.com/en-us/aspnet/core/grpc/loadbalancing?view=aspnetcore-6.0) load balancing is also supported.

### Clean contract

In REST, the contract between the client and server is documented but not strict. If we go back even further to SOAP, contracts were exposed via wsdl files. In REST we expose contracts via Swagger and other provisions. But the strictness is lacking, we cannot for sure know if the contract has changed on the server's side while the client code is being developed.

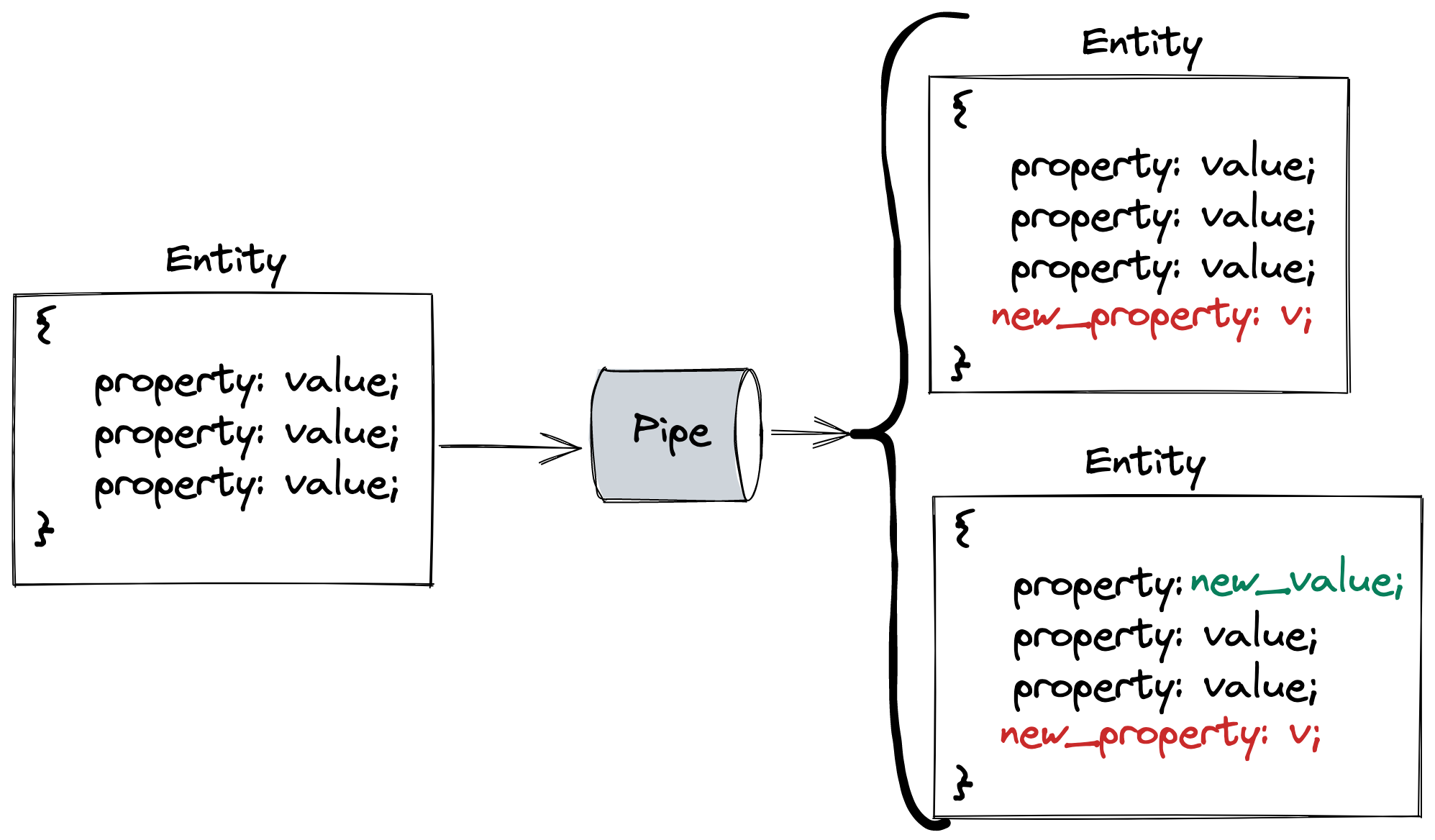

With gRPC, the contract is shared with both the client and server either directly via proto files or generated stub from proto files. This is like making a function call but remotely. And since we are making a function call we exactly know what we need to send and what we are expecting as a response. The complexity of making connections with the client, taking care of security, serialization-deserialization, etc are abstracted. All we care about is the data.

Lets consider the code base for [Greet App](https://github.com/infracloudio/grpc-blog/tree/master/greet_app).

The client uses the [stub](https://github.com/infracloudio/grpc-blog/blob/883e25e207b8e7d3fdf8384b98fb0828a982d5b3/greet_app/internal/app/client/client.go#L6) (generated code from proto file) to create a client object and invoke remote function call:

```go

import greetpb "github.com/infracloudio/grpc-blog/greet_app/internal/pkg/proto"

cc, err := grpc.Dial("<server-address>", opts)

if err != nil {

log.Fatalf("could not connect: %v", err)

}

c := greetpb.NewGreetServiceClient(cc)

res, err := c.Greet(context.Background(), req)

if err != nil {

log.Fatalf("error while calling greet rpc : %v", err)

}

```

Similarly, the server too uses the same [stub](https://github.com/infracloudio/grpc-blog/blob/883e25e207b8e7d3fdf8384b98fb0828a982d5b3/greet_app/internal/app/server/server.go#L6) (generated code from proto file) to receive request object and create response object:

```go

import greetpb "github.com/infracloudio/grpc-blog/greet_app/internal/pkg/proto"

func (*server) Greet(_ context.Context, req *greetpb.GreetingRequest) (*greetpb.GreetingResponse, error) {

// do something with 'req'

return &greetpb.GreetingResponse{

Result: result,

}, nil

}

```

Both of them are using the same stub generated from the proto file [greet.proto](https://github.com/infracloudio/grpc-blog/blob/master/greet_app/internal/pkg/proto/greet.proto).

And the stub was generated using ‘proto’ compiler and the command to generate is [this](https://github.com/infracloudio/grpc-blog/blob/883e25e207b8e7d3fdf8384b98fb0828a982d5b3/greet_app/Taskfile.yaml#L10).

```bash

protoc --go_out=. --go_opt=paths=source_relative --go-grpc_out=. --go-grpc_opt=paths=source_relative internal/pkg/proto/*.proto

```

### Security

gRPC authentication and authorization works on two levels:

- Call-level authentication/authorization is usually handled through tokens that are applied in metadata when the call is made. [Token based authentication example](https://github.com/infracloudio/grpc-blog/compare/master...secure_token).

- Channel-level authentication uses a client certificate that's applied at the connection level. It can also include call-level authentication/authorization credentials to be applied to every call on the channel automatically. [Certificate based authentication example](https://github.com/infracloudio/grpc-blog/compare/secure_grpc).

Either or both of these mechanisms can be used to help secure services.

### Middlewares

In REST, we use middlewares for various purposes like:

- Rate limiting

- Pre/Post request/response validation

- Address security threats

We can achieve the same with gRPC as well. The verbiage is different in gRPC, they are referred as ‘interceptors’ but they do similar activities.

In [the middlewares branch](https://github.com/infracloudio/grpc-blog/tree/middlewares/greet_app/internal/app) of the `greet_app` code base, we have integrated logger and Prometheus interceptors.

Look how the interceptors are configured to use Prometheus and logging packages in [middleware.go](https://github.com/infracloudio/grpc-blog/blob/7700323e1e488eb8777a06ca762e4d29602d2424/greet_app/internal/pkg/middleware/middleware.go#L29).

```go

// add middleware

AddLogging(&zap.Logger{}, &uInterceptors, &sInterceptors)

AddPrometheus(&uInterceptors, &sInterceptors)

```

But we can integrate other packages to interceptors for purposes like preventing panic and recovery (to handle exceptions), tracing, even authentication, etc.

[Supported middlewares by gRPC framework](https://github.com/grpc-ecosystem/go-grpc-middleware).

### Packaging, versioning and code practices of proto files

#### Packaging

Let's follow [the packaging branch](https://github.com/infracloudio/grpc-blog/blob/packaging/proto/packaging/processor.proto).

First start with `Taskfile.yaml`, the task `gen-pkg` says `protoc --proto_path=packaging packaging/*.proto --go_out=packaging`. This means `protoc` (the compiler) will convert all files in `packaging/*.proto` into its equivalent Go files as denoted by flag `--go_out=packaging` in the `packaging` directory itself.

Secondly in the ‘processor.proto’ file, 2 messages have been defined namely ‘CPU’ and ‘GPU’. While CPU is a simple message with 3 fields of in-built data types, GPU message on the other hand has an additional custom data type called ‘Memory’ along with in-built data types same as CPU message. ‘Memory’ is a separate message and is defined in a different file altogether.

So how do you use the ‘Memory’ message in the ‘processor.proto’ file? By using [import](https://github.com/infracloudio/grpc-blog/blob/436d84358868f463ea7929eb14120eb80801fde1/proto/packaging/processor.proto#L6).

```protobuf

syntax = "proto3";

package laptop_pkg;

option go_package = "/pb";

import "memory.proto";

message CPU {

string brand = 1;

string name = 2;

uint32 cores = 3;

}

message GPU {

string brand = 1;

string name = 2;

uint32 cores = 3;

Memory memory = 4;

}

```

```protobuf

syntax = "proto3";

package laptop_pkg;

option go_package = "/pb";

message Memory {

enum Unit {

UNKNOWN = 0;

BIT = 1;

BYTE = 2;

KILOBYTE = 3;

MEGABYTE = 4;

GIGABYTE = 5;

}

uint64 value = 1;

Unit unit = 2;

}

```

Even if you try to generate a proto file by running task `gen-pkg` after mentioning import, it will throw an error. As by default `protoc` assumes both files `memory.proto` and `processor.proto` to be in different packages. So you need to mention the same package name in both files.

The optional `go_package` indicates the compiler to create a package name as `pb` for Go files. If any other language-d proto files were to be created, the package name would be `laptop_pkg`.

#### Versioning

There can be two kinds of changes in gRPC breaking and non-breaking changes:

- Non-breaking changes include adding a new service, adding a new method to a service, adding a field to request or response proto, and adding a value to enum

- Breaking changes like renaming a field, changing field data type, field number, renaming or removing a package, service or methods require versioning of services

- In order to distinguish between same name messages or services across proto files, [optional packaging](https://developers.google.com/protocol-buffers/docs/proto#packages) can be implemented.

#### Code practices

- Request message must suffix with request `CreateUserRequest`.

- Response message must suffix with request `CreateUserResponse`.

- In case the response message is empty, you can either use an empty object `CreateUserResponse` or use the `google.protobuf.Empty`.

- Package name must make sense and must be versioned, for example: package `com.ic.internal_api.service1.v1`.

### Tooling

gRPC ecosystem supports an array of tools to make life easier in non-developmental tasks like documentation, rest gateway for a gRPC server, integrating custom validators, linting, etc. Here are some tools that can help us achieve the same:

- [protoc-gen-grpc-gateway](https://github.com/grpc-ecosystem/grpc-gateway) — plugin for creating a gRPC REST API gateway. It allows gRPC endpoints as REST API endpoints and performs the translation from JSON to proto. Basically, you define a gRPC service with some custom annotations and it makes those gRPC methods accessible via REST using JSON requests.

- [protoc-gen-swagger](https://github.com/grpc-ecosystem/grpc-gateway) — a companion plugin for grpc-gateway. It is able to generate swagger.json based on the custom annotations required for gRPC gateway. You can then import that file into your REST client of choice (such as [Postman](https://www.postman.com/)) and perform REST API calls to the methods you exposed.

- [protoc-gen-grpc-web](https://github.com/grpc/grpc-web) — a plugin that allows our front end to communicate with the backend using gRPC calls. A separate blog post on this coming up in the future.

- [protoc-gen-go-validators](https://github.com/mwitkow/go-proto-validators) — a plugin that allows to define validation rules for proto message fields. It generates a `Validate() error` method for proto messages you can call in Go to validate if the message matches your predefined expectations.

- [protolint](https://github.com/yoheimuta/protolint) - a plugin to add lint rules to proto files.

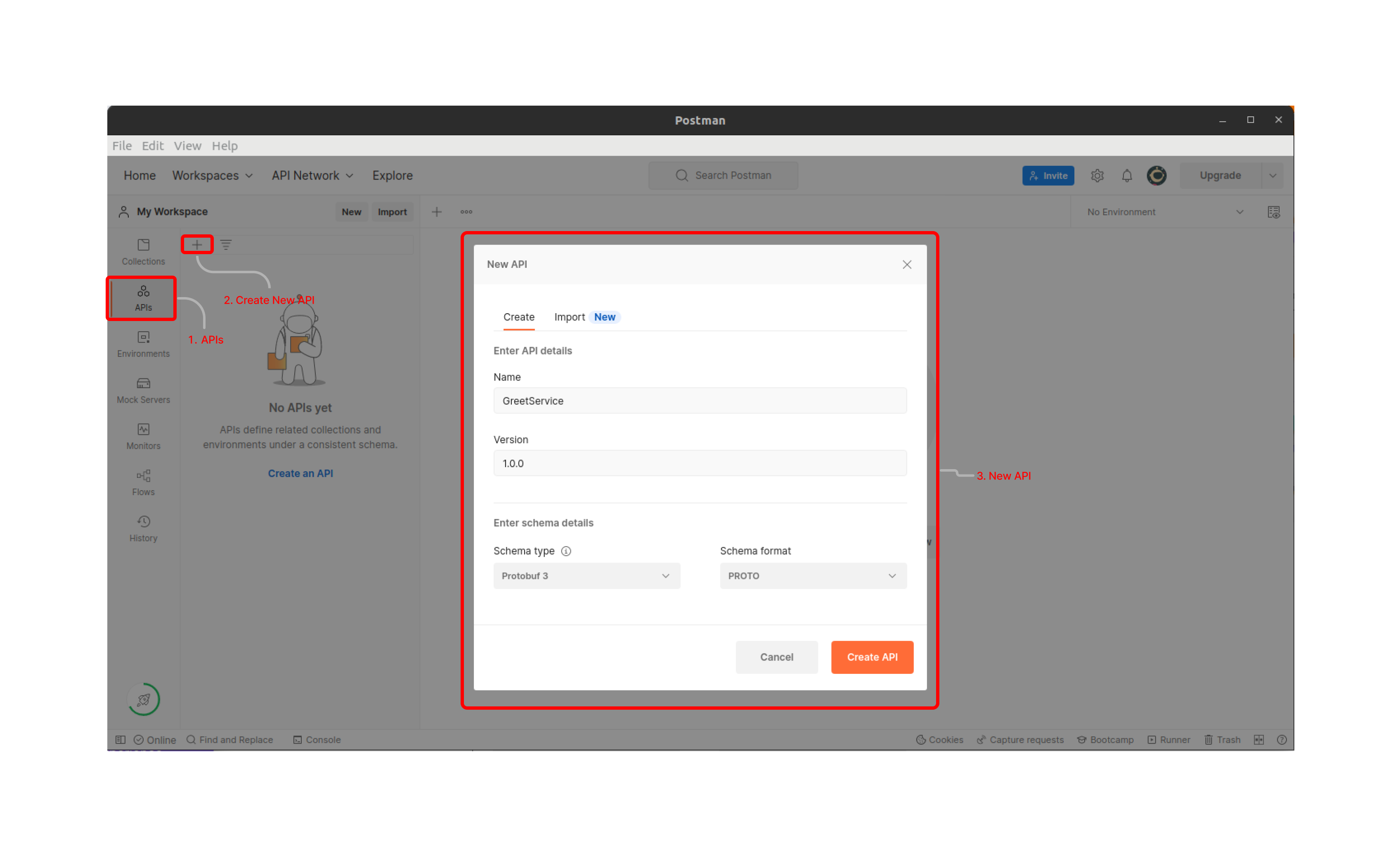

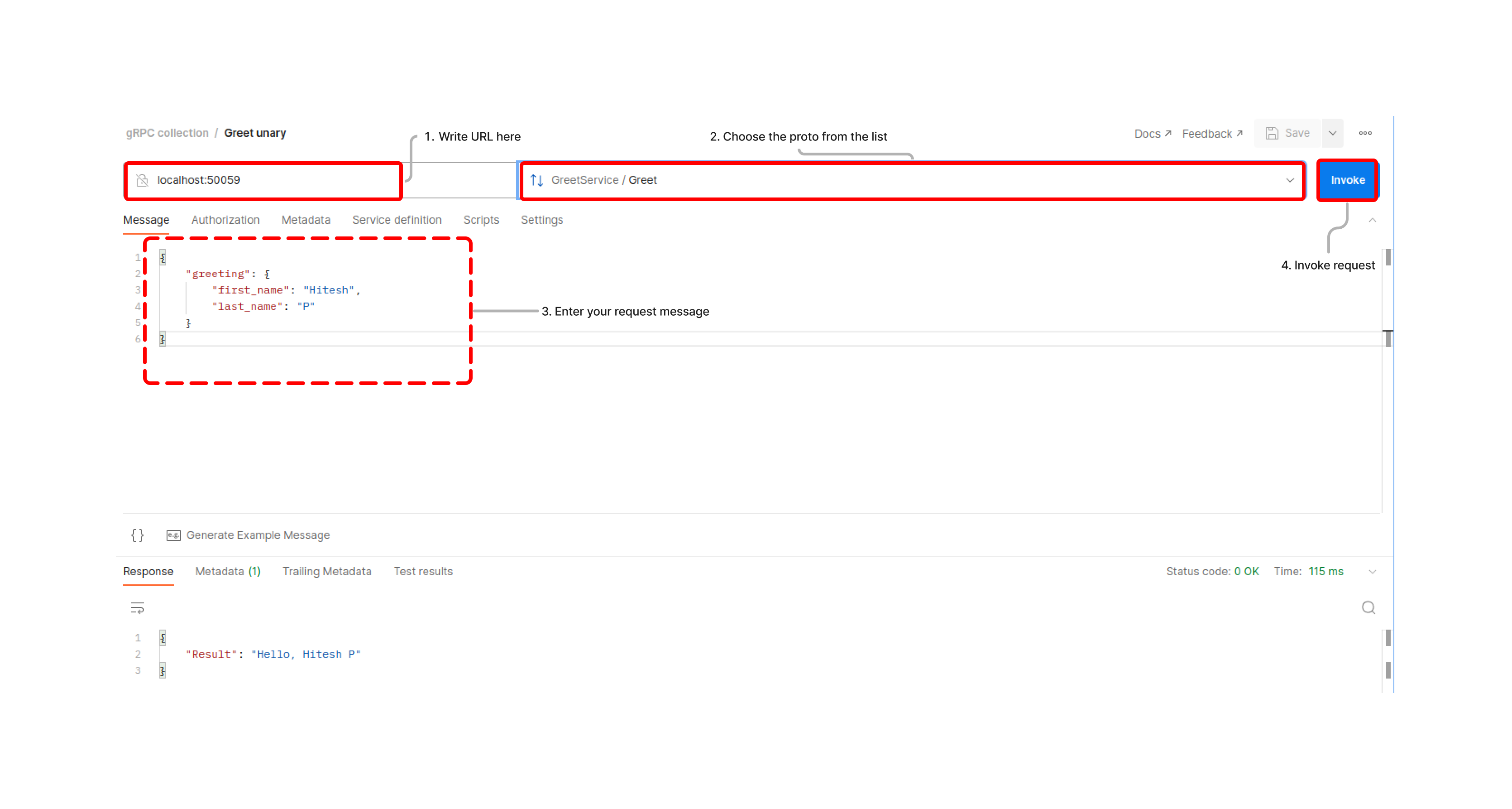

## Testing using Postman

Unlike testing REST APIs with Postman or any equivalent tools like Insomnia, it is not quite comfortable to test gRPC services.

**Note:** gRPC services can also be tested from CLI using tools like [evans-cli](https://github.com/ktr0731/evans). But for that reflection needs (if not enabled the path to the proto file is [required](https://github.com/infracloudio/grpc-blog/blob/ed390485e12ce6b63fd9fd53f867cf6e818a5407/greet_app/Taskfile.yaml#L82)) to be enabled in gRPC servers. This [compare link](https://github.com/infracloudio/grpc-blog/compare/evans) shows the way to enable reflection and how to enter into evans-cli repl mode. Post entering repl mode of evans-cli, gRPC services can be tested from CLI itself and the process is described in [evans-cli GitHub page](https://github.com/ktr0731/evans).

Postman has a [beta version](https://blog.postman.com/postman-now-supports-grpc/) of testing gRPC services.

Here are the steps of how you can do it:

1. Open Postman, goto ‘APIs’ in the left sidebar and click on ‘+’ sign to create new api. In the popup window, enter ‘Name’, ‘Version’, and ‘Schema Details’ and click on create [unless you need to import from some sources like GitHub, Bitbucket].

2. Once Your API gets created then go to definition and enter your proto contract.

3. Remember importing does not work here, so it would be better to keep all dependent protos at one place.

4. The above steps will help to retain contracts for future use.

5. Then click on ‘New’ and select ‘gRPC request', enter the URI and choose the proto from the list of saved ‘APIs’ and finally enter your request message and hit ‘Invoke’

In the above steps we figured out the process to test our gRPC APIs via Postman. The process to test gRPC endpoints is different from that of REST endpoints using Postman. One thing to remember is that while creating and saving proto contract as in 5, all proto message and service definitions need to be in the same place. As there is no provision to access proto messages across versions in Postman.

## Conclusion

In this post, we developed an idea about RPC, drew parallels with REST as well as discussed their differences, then we went on to discuss an implementation of RPC i.e. gRPC developed by Google.

gRPC as a framework can be crucial, especially for microservice-based architecture for internal communication. It can be used for external communication as well but will require a REST gateway. gRPC is a must for streaming and real-time apps.

The way Go is proving itself as a server-side scripting language, gRPC is proving itself as a de-facto communication framework.

That's it folks! Feel free to reach out to [Hitesh](https://www.linkedin.com/in/hitesh-pattanayak-52290b160/)/[Pranoy](https://www.linkedin.com/in/pranoy-kundu-74b179167) for any feedback and thoughts on this topic.

Looking for help with building your DevOps strategy or want to outsource DevOps to the experts? Learn why so many startups & enterprises consider us as one of the [best DevOps consulting & services companies](https://www.infracloud.io/devops-consulting-services/).

**Further reads**

- [gRPC official documentation](https://grpc.io/docs/)

- [Protobuff golang documentation](https://developers.google.com/protocol-buffers/docs/gotutorial)

- [gRPC ecosystem](https://github.com/grpc-ecosystem)

- [REST vs gRPC](https://www.baeldung.com/rest-vs-grpc)

- [RPC concepts](https://www.ibm.com/docs/en/aix/7.1?topic=concepts-remote-procedure-call) | hiteshrepo |

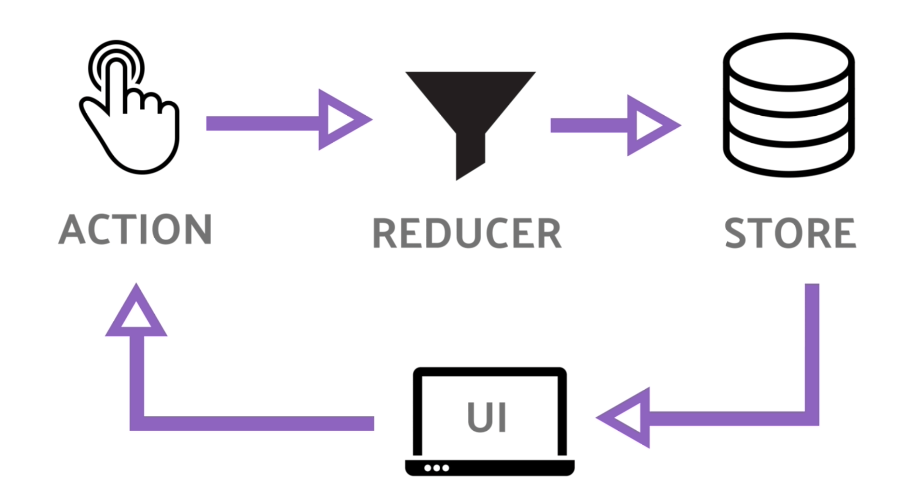

1,330,079 | Redux Vs Redux Toolkit | Difference Between Redux & Redux Toolkit. First of all, before knowing the difference... | 0 | 2023-01-15T19:16:03 | https://dev.to/azadulkabir455/redux-vs-redux-toolkit-472b | react, redux, javascript, webdev | ## Difference Between Redux & Redux Toolkit.

First of all, before knowing the difference between redux and redux toolkit we must know what definition two of this is.

**Redux:** Redux is an open-source JavaScript library for managing and centralizing application states.

That means if you have a state(like a variable) or many states that need to many components or modules. So you need to make globalize those states to easily access them. Redux does this work for us.

**Redux Toolkit:** Redux Toolkit is a set of tools that helps simplify Redux development.

In other words, if I configure something through redux, as hard as it will be, but the same task we can perform it easily with the redux toolkit.

## Redux VS Redux Toolkit

Some of the differences are highlighted below

1. In Redux you must configure Devtools but in the Redux toolkit, you don't have to configure. Because it already has.

2. In Redux for asynchronous performance you need to add Redux Thunk, But in the Redux toolkit, it's already built-in.

3. Redux requires too much boilerplate code but the Redux toolkit doesn't.

## What Should I learn?

In my preference, you should learn the Redux toolkit. It also depends on other requirements.

But before learning the Redux toolkit you must learn Redux basics. Because, you have to crystal clear concept about **Action**, **Reducer**, and **Store **and their work procedure. After that, you can jump to the Redux toolkit.

## React Redux Toolkit Roadmap.

Their three things that you have to learn to use redux in your react app and the learning sequence is below. (Top to bottom)

1. Redux (Basic must).

2. React Redux. After that

3. Redux Toolkit.

If you are a beginner you can follow this tutorial playlist. I hope it helps you a lot.

[Tutorial Link](https://www.youtube.com/watch?v=0awA5Uw6SJE&list=PLC3y8-rFHvwiaOAuTtVXittwybYIorRB3) | azadulkabir455 |

1,330,209 | Building a Resilient Static Website on AWS | Do you remember the AWS outage in December 2021? I do. My client at work had a P1 incident. Many of... | 0 | 2023-01-15T23:58:29 | https://jasonbutz.info/2023/01/website-cdn/?utm_source=dev.to | aws, cloudfront, s3, resiliency |

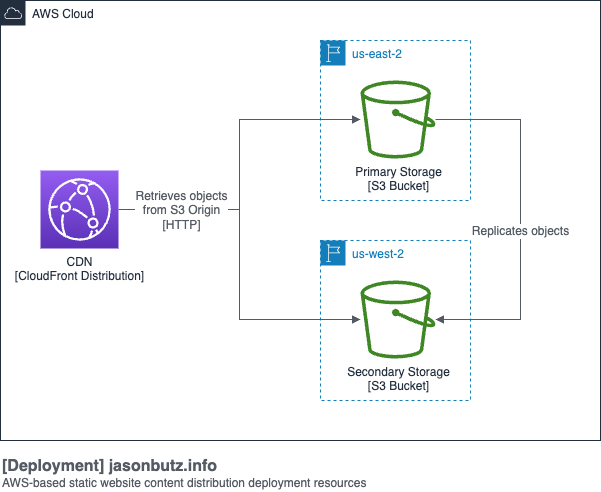

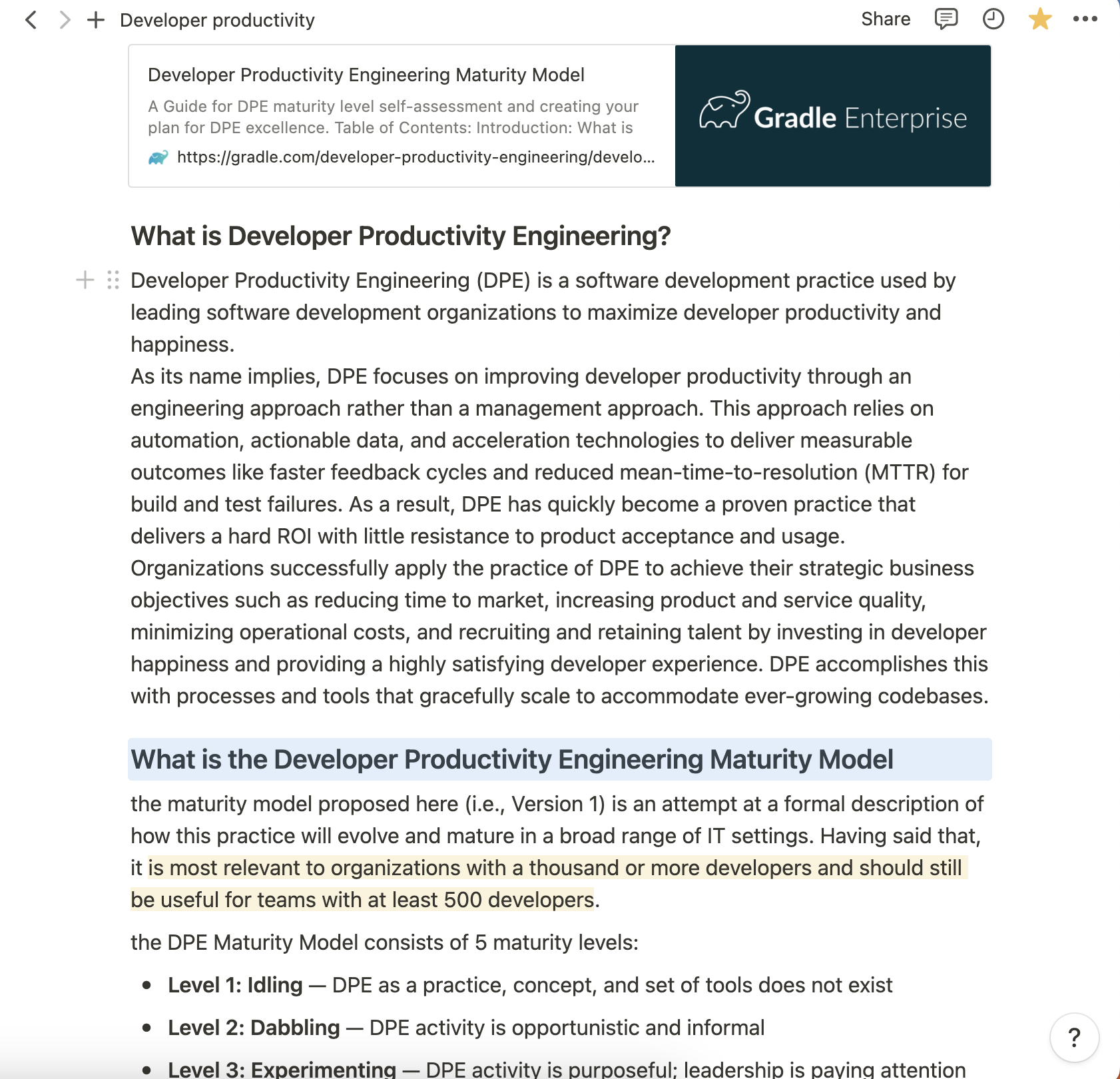

Do you remember the [AWS outage in December 2021](https://aws.amazon.com/message/12721/)? I do. My client at work had a P1 incident. Many of their applications were unresponsive or returned errors that weren't user-friendly. It drove home the importance of fault-tolerant redundant architectures when using a cloud provider. If my client's application had been built to be redundant across regions and not just availability zones the application could have survived the outage with limited impact.

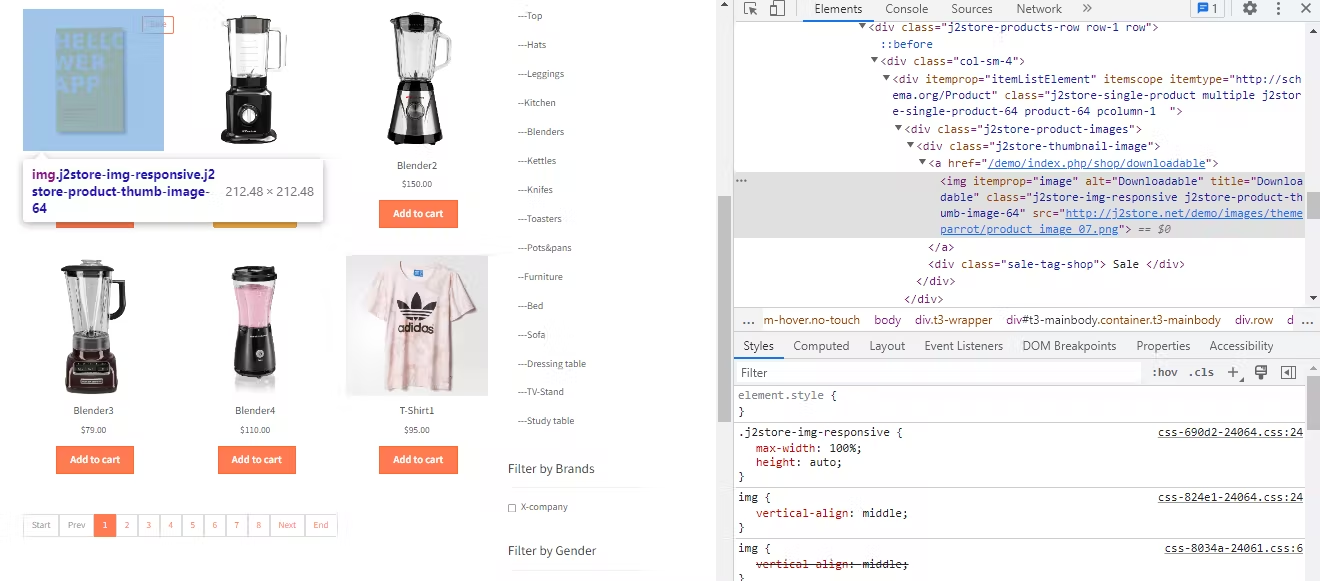

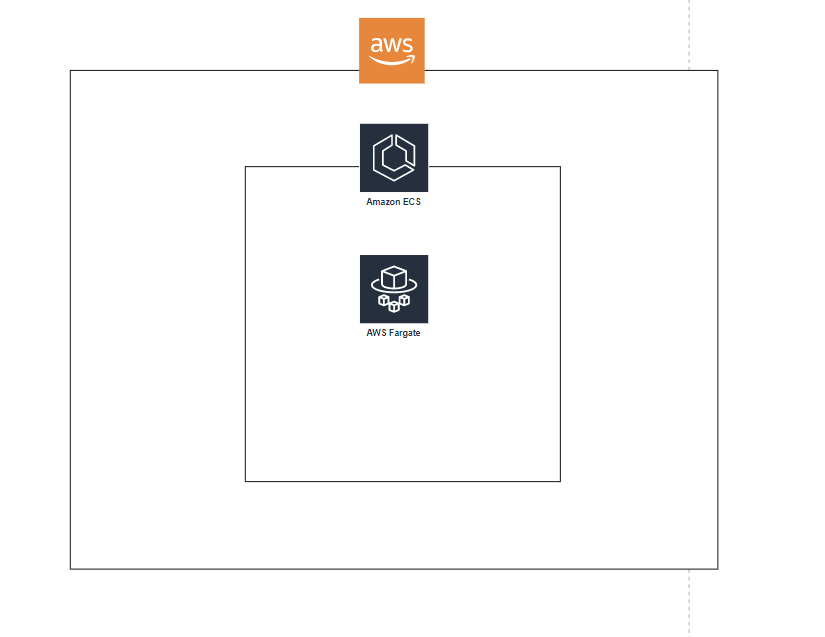

At the time of the outage this website was hosted on Netlify, so I wasn't affected by the outage, but it got me thinking about what it would take to deploy the site to AWS in a fault-tolerant and redundant configuration. "How difficult was it to host a static site on AWS with a multi-region configuration?" Turns out, it isn't that difficult. The tricky part was deploying everything with the [AWS CDK].

I knew I needed to use Amazon CloudFront for a CDN to deliver the content. Exposing an S3 bucket website can be more expensive, and caching is a beneficial feature. If you're interested in the price difference, I've put together an [estimate]. I hadn't used the feature before, but CloudFront's [Origin Groups] turned out to be a key part of creating the redundancy I wanted. To have two origins, I knew I would need two S3 buckets in different regions. To save on trying to upload to both placed, I knew I could replicate objects from one bucket to the other.

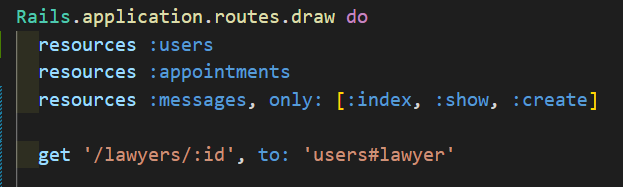

<figure style="text-align: center">

</figure>

Figuring out how to deploy this architecture using the CDK took some experimentation, the architecture builds on itself which means you need to consider the dependencies. In the end, I have one stack for the CloudFront distribution, one stack for the primary S3 bucket, and one stack for the secondary S3 bucket. The trick is they are deployed in the opposite of that order. First, the secondary bucket gets deployed, fulfilling a dependency for the primary bucket and enabling replication to be configured. Those two buckets being deployed allows the distribution to be created and to point to both buckets.

To reduce duplication I was able to use inheritance for the primary bucket's stack. It inherits from the secondary bucket's stack and layers on the bucket replication.

Here is an example of what my stack looks like to create the S3 bucket and prepare it for replication and use with CloudFront. Versioning is required for replication, but I don't want to have useless object versions building up, so I provide a lifecycle rule for cleanup.

```typescript

import { Duration, RemovalPolicy, Stack, StackProps } from 'aws-cdk-lib';

import {

AccountRootPrincipal,

Effect,

PolicyStatement,

} from 'aws-cdk-lib/aws-iam';

import { Bucket, IBucket } from 'aws-cdk-lib/aws-s3';

import { Construct } from 'constructs';

export type CommonStackProps = StackProps & {

resourceKey: string;

};

export class CommonBucketStack extends Stack {

public readonly bucket: IBucket;

constructor(scope: Construct, id: string, props: CommonStackProps) {

super(scope, id, props);

this.bucket = new Bucket(this, 'bucket', {

bucketName: `YOUR-BUCKET-NAME-${this.region}`,

removalPolicy: RemovalPolicy.RETAIN,

versioned: true,

lifecycleRules: [

{

enabled: true,

noncurrentVersionExpiration: Duration.days(1),

expiredObjectDeleteMarker: true,

abortIncompleteMultipartUploadAfter: Duration.days(1),

},

],

websiteIndexDocument: 'index.html',

websiteErrorDocument: '404.html',

});

this.bucket.addToResourcePolicy(

new PolicyStatement({

effect: Effect.ALLOW,

principals: [new AccountRootPrincipal()],

actions: ['s3:*'],

resources: [this.bucket.bucketArn, this.bucket.arnForObjects('*')],

})

);

this.bucket.grantPublicAccess();

}

}

```

There surprisingly isn't a CDK L2 Construct to help with replication, so you have to make do with the L1 constructs, below is an example of how I accomplished it.

```typescript

import {

Effect,

Policy,

PolicyStatement,

Role,

ServicePrincipal,

} from 'aws-cdk-lib/aws-iam';

import { Bucket, CfnBucket } from 'aws-cdk-lib/aws-s3';

import { Construct } from 'constructs';

import {

CommonBucketStack,

CommonBucketStackProps,

} from './common-bucket-stack';

export type PrimaryStackProps = CommonBucketStackProps & {

secondaryBucketArn: string;

};

export class PrimaryStack extends CommonBucketStack {

constructor(scope: Construct, id: string, props: PrimaryStackProps) {

super(scope, id, props);

const sourceBucket = this.bucket;

const destinationBucket = Bucket.fromBucketArn(

this,

'destination-bucket',

props.secondaryBucketArn

);

const replicationRole = new Role(this, 'bucket-replication-role', {

assumedBy: new ServicePrincipal('s3.amazonaws.com'),

});

replicationRole.attachInlinePolicy(

new Policy(this, 'replication-policy', {

statements: [

new PolicyStatement({

effect: Effect.ALLOW,

actions: [

's3:GetObjectVersionForReplication',

's3:GetObjectVersionAcl',

's3:GetObjectVersionTagging',

],

resources: [sourceBucket.arnForObjects('*')],

}),

new PolicyStatement({

effect: Effect.ALLOW,

actions: ['s3:ListBucket', 's3:GetReplicationConfiguration'],

resources: [sourceBucket.bucketArn],

}),

new PolicyStatement({

effect: Effect.ALLOW,

actions: [

's3:ReplicateObject',

's3:ReplicateDelete',

's3:ReplicateTags',

],

resources: [destinationBucket.arnForObjects('*')],

}),

],

})

);

const cfnBucket = sourceBucket.node.defaultChild as CfnBucket;

cfnBucket.replicationConfiguration = {

role: replicationRole.roleArn,

rules: [

{

destination: {

bucket: destinationBucket.bucketArn,

},

priority: 1,

deleteMarkerReplication: {

status: 'Enabled',

},

filter: {

prefix: '',

},

status: 'Enabled',

},

],

};

}

}

```

Once you have all that sorted out, defining a stack for the CloudFront distribution takes a little trial and error to get the origins and behaviors properly configured but is much easier than everything else.

```typescript

import { Stack, StackProps } from 'aws-cdk-lib';

import {

Certificate,

CertificateValidation,

} from 'aws-cdk-lib/aws-certificatemanager';

import {

AllowedMethods,

Distribution,

OriginProtocolPolicy,

PriceClass,

ViewerProtocolPolicy,

} from 'aws-cdk-lib/aws-cloudfront';

import { HttpOrigin, OriginGroup } from 'aws-cdk-lib/aws-cloudfront-origins';

import { Construct } from 'constructs';

export type CdnStackProps = StackProps & {

primaryBucketWebsite: string;

secondaryBucketWebsite: string;

cnames: string[];

};

export class CdnStack extends Stack {

readonly distribution: Distribution;

constructor(scope: Construct, id: string, props: CdnStackProps) {

super(scope, id, props);

const primaryBucketOrigin = new HttpOrigin(props.primaryBucketWebsite, {

protocolPolicy: OriginProtocolPolicy.HTTP_ONLY,

});

const secondaryBucketOrigin = new HttpOrigin(props.secondaryBucketWebsite, {

protocolPolicy: OriginProtocolPolicy.HTTP_ONLY,

});

const originGroup = new OriginGroup({

primaryOrigin: primaryBucketOrigin,

fallbackOrigin: secondaryBucketOrigin,

fallbackStatusCodes: [400, 403, 416, 500, 502, 503, 504],

});

this.distribution = new Distribution(this, 'cdn', {

enabled: true,

priceClass: PriceClass.PRICE_CLASS_100,

defaultBehavior: {

allowedMethods: AllowedMethods.ALLOW_GET_HEAD,

origin: originGroup,

viewerProtocolPolicy: ViewerProtocolPolicy.REDIRECT_TO_HTTPS,

compress: true,

cachedMethods: AllowedMethods.ALLOW_GET_HEAD,

},

domainNames: props.cnames,

certificate: new Certificate(this, 'Certificate', {

domainName: props.cnames[0],

subjectAlternativeNames: props.cnames.slice(1) || [],

validation: CertificateValidation.fromDns(), // Records must be added manually

}),

defaultRootObject: 'index.html',

errorResponses: [

{

httpStatus: 404,

responseHttpStatus: 404,

responsePagePath: '/404.html',

},

],

});

}

}

```

All of these code snippets are a bit different than what I have in use, where possible I broke things out into their own constructs to help with composing the architecture but they are enough to help give you an idea.

Overall this architecture should be able to withstand regional outages for either bucket. I already have ideas on how to make the architecture even more resilient, but I don't need for them yet. I expect I'll write more about that idea later. I think it has significant potential for improving user experience in some situations.

[AWS CDK]: https://docs.aws.amazon.com/cdk/v2/guide/home.html

[estimate]: https://calculator.aws/#/estimate?id=60863c4c1ab49cb3654f5df861674d16d14a63e7

[Origin Groups]: https://docs.aws.amazon.com/AmazonCloudFront/latest/DeveloperGuide/DownloadDistS3AndCustomOrigins.html#concept_origin_groups

| jbutz |

1,330,391 | Write Clear and Meaningful Git Commit Messages | There are no strict rules for writing commit messages but When working on a project on GitHub, it's... | 0 | 2023-01-16T05:37:16 | https://dev.to/ashishxcode/mastering-the-art-of-writing-effective-github-commit-messages-5d2p | webdev, productivity, github, git | There are no strict rules for writing commit messages but When working on a project on GitHub, it's important to communicate clearly and concisely about the changes you've made. One way to do this is through the use of keywords in your commit messages.

These keywords, or labels, help to indicate the nature of the changes and make it easier for others to understand the context of your contributions.

Here are some common keywords and what they indicate:

FEAT: Use this keyword to indicate that you are committing to a new feature.

`"FEAT: Add new login functionality."`

FIX: Use this keyword to indicate that you are committing a fix for a specific problem or issue.

`"FIX: Fix bug causing crashes on certain devices."`

STYLE: Use this keyword to indicate that you are making changes to the style or formatting of the code, but not its functionality.

`"STYLE: Update indentation in main.js."`

REFACTOR: Use this keyword to indicate that you are making changes to the code that improve its structure or organisation, but do not add new features or fix bugs.

`"REFACTOR: Refactor the code to improve readability."`

TEST: Use this keyword to indicate that you are adding or updating tests for the code.

`"TEST: Add new unit tests for login functionality."`

CHORE: Use this keyword to indicate that you are making changes to the build process or other tasks that are not directly related to the code itself.

`"CHORE: Update dependencies in package.json."`

PERF: Use this keyword to indicate that you are making changes to improve the performance of the code.

`"PERF: Optimize image loading for faster performance."`

CI: Use this keyword to indicate that you are making changes to the continuous integration process.

`"CI: Fix issue with test pipeline on Dashboard CI."`

BUILD: Use this keyword to indicate that you are making changes to the build process.

`"BUILD: Add new script for building the production version of the app."

`

By using these keywords in your commit messages, you can help to make your contributions more clear and more understandable to others. However, it is important to note that these are just suggestions and not all projects use them, it's important to check the project's documentation to see if there are any specific guidelines you should follow.

In summary, clear and concise commit messages are a key aspect of good development practices. Using keywords in your commit messages can help to indicate the nature of the changes you've made, making it easier for others to understand and review your contributions.

My Other Blogs

**[BEM Methodology for CSS - A Guide for Developers](https://dev.to/ashishxcode/bem-methodology-for-css-a-guide-for-developers-34jl)** | ashishxcode |

1,330,396 | QR Code Generator + Download QR as Image | 🙋♂️Hello Friends! 👨💻So I tried building QR code Generator from input link with simple API And... | 0 | 2023-01-18T07:27:20 | https://dev.to/developedbyjk/qr-code-generator-download-qr-as-image-15gg | javascript, qrcodegenerator, api, webdev |

🙋♂️Hello Friends!

👨💻So I tried building QR code Generator from input link with simple [API](https://goqr.me/api/doc/) And added the feature to Download That QR Code & Yeah🥵 faced a lot of bugs dealing with two different APIs but finally Made it🤩

Let me show you how simple it is!😉

(full code below the article)

---

📌**Step 1**

👉 We will need an input to paste our URL and a button to do work with that input

```

<input type="text" id="input"/>

<button onclick="generate()">Generate</button>

```

👉we added generate function that will trigger JavaScript

🤾♂️But we need to show out output so we will create an an image

---

📌***Step 2***

```

\\create image element

let img = document.createElement("img");

\\select the div from html

let qr = document.querySelector(".qr-code");

\\ stick the generated image to html div

qr.appendChild(img);

```

👉Okay so now 🏖

Input and button - Ready ✅

div to get output - Ready ✅

---

📌***Step 3***

👉The Third and main step is to add JavaScript code to generate QR

🚦Let get the input value from input tab

```

function generate(){

let input = document.getElementById("input");

}

```

👉Next we will check if the value is inserted or not before clicking the generate button 🎯

The code will only run if there is value in input 😉

if yes!🤩

then we will search the qr img by API:🤓

👉we will use [template literal](https://www.w3schools.com/js/js_string_templates.asp) to Pass Value of input that we got

`

https://api.qrserver.com/v1/create-qr-code/?size=180x180&data=${input.value}

`

👉And Boom💥 The API will do its work😎

```

if(input.value){

img.src = `https://api.qrserver.com/v1/

create-qr-code/?size=180x180&data=${input.value}`

};

```

---

## Now Let's Download Our QR 😍

👉To download our image we will use Another API [FileSaver.js](https://github.com/eligrey/FileSaver.js/)

📌**Step 1**

👉Adding the Cdn in Head of Html:

```

<script src="https://cdnjs.cloudflare.com/ajax/libs/FileSaver.js/2.0.0/FileSaver.min.js" integrity="sha512-csNcFYJniKjJxRWRV1R7fvnXrycHP6qDR21mgz1ZP55xY5d+aHLfo9/FcGDQLfn2IfngbAHd8LdfsagcCqgTcQ==" crossorigin="anonymous" referrerpolicy="no-referrer"></script>

```

👉Now Lets Create Download Button :🎨

```

<button id="mybutton" >Download</button>

```

And👀

```

//get the html button

let btnDownload = document.querySelector('#mybutton');

//get the html image

let imgdwn = document.querySelector('img');

```

---

📌**Step 2**

👉Now Add a Event Listener that work when we click download button

So when we click it:🧐

-🐠first get the img src and set in imagePath ✅

```

btnDownload.addEventListener('click', () => {

//getting the src of image

let imagePath = imgdwn.getAttribute('src');

});

```

-🦐second get the img name from the url✅

eg : https://httpbin.org/image get the >>image and add + '.jpg' at end

```

btnDownload.addEventListener('click', () => {

//getting the src of image

let imagePath = imgdwn.getAttribute('src');

//image format

let fileName = getFileName(imagePath);

//eg :saveAs("https://httpbin.org/image", "image.jpg");

saveAs(imagePath, fileName);

});

```

🧐so it will be image.jpg from the url to do that we will create `getFileName(imagePath)` Function

👉after getting the name of image (eg: image.jpg) from function

we will pass into `saveAs(imagePath, fileName)`; 😀

```

function getFileName(str) {

//search '=' from url starting from end and give the output

let gotstr = str.substring(str.lastIndexOf('=') + 1 );

let format = ".jpg";

// return the format ie image.jpg

return gotstr.concat(format);

}

```

**Congratulation**🎉✨

You Successfully🏆 Created a QR code Generator 🎀 and with Download Button😍

## Full Code Below 👇

**📌 HTML 📙 **

```

<!DOCTYPE html>

<html>

<head>

<script src="https://cdnjs.cloudflare.com/ajax/libs/FileSaver.js/2.0.0/FileSaver.min.js" integrity="sha512-csNcFYJniKjJxRWRV1R7fvnXrycHP6qDR21mgz1ZP55xY5d+aHLfo9/FcGDQLfn2IfngbAHd8LdfsagcCqgTcQ==" crossorigin="anonymous" referrerpolicy="no-referrer"></script>

</head>

<body>

<div class="container">

<div class="input-container">

<input type="text" id="input" placeholder="Enter

Url" autocomplete="off"/>

<button onclick="generate()">Generate</button>

</div>

<div class="qr-code"></div>

<button id="maindwnbtn" ><a href="#" id="mybutton"

download="qr">Download</a></button>

</div>

<a href="linktr.ee/developedbyjk">@developedbyjk</a>

</body>

</html>

```

**📌 CSS - if you want 😉 📗 **

```

@import url('https://fonts.googleapis.com/css2?family=Space+Mono&display=swap');

body{

font-family: 'Space Mono', monospace;

background-color: aliceblue;

}

.container{

max-width: 400px;

margin: 10%;

max-height: 500px;

padding: .2rem;

display: flex;

flex-direction: column;

justify-content: space-evenly;

background-color:aliceblue;

}

.input-container{

display: flex;

flex-direction: column;

}

.input-container input{

padding: 12px;

outline: none;

border-radius: 5px;

border: 2px dashed #c7c7c7;

font-family: 'Space Mono', monospace;

}

.qr-code{

margin-top: 100px;

height: 200px;

width: 200px;

border: 2px dashed #c7c7c7;

}

.input-container button{

padding: 0.5rem;

height: 40px;

color: #fff;

font-size: 1rem;

margin-top: .5rem;

outline: none;

border-radius: 90px;

border: none;

background-color: #332fd0;

cursor: pointer;

font-family: 'Space Mono', monospace;

}

#maindwnbtn{

padding: 0.5rem;

height: 40px;

font-size: 1rem;

margin-top: 80%;

outline: none;

border-radius: 90px;

border: none;

background-color: #332fd0;

cursor: pointer;

}

#maindwnbtn a{

color: #fff;

text-decoration:none;

font-family: 'Space Mono', monospace;

}

.qr-code{

width: 100%;

height: 180px;

text-align: center;

}

```

**📌 JavaScript 📗 **

```

let img = document.createElement("img");

let qr = document.querySelector(".qr-code");

qr.appendChild(img);

function generate(){

let input = document.getElementById("input");

if(input.value){

input.style.borderColor = "#c7c7c7";

img.src = `https://api.qrserver.com/v1/create-qr-code/?size=180x180&data=${input.value}`;

}

else{

input.style.borderColor="red";

return false;

}

input.value = "";

}

let btnDownload = document.querySelector('#mybutton');

let imgdwn = document.querySelector('img');

btnDownload.addEventListener('click', () => {

let imagePath = imgdwn.getAttribute('src');

let fileName = getFileName(imagePath);

saveAs(imagePath, fileName);

});

function getFileName(str) {

let gotstr = str.substring(str.lastIndexOf('=') + 1 );

let format = ".jpg";

return gotstr.concat(format);

}

```

Wait 😳

You reached so Below 🤯 I can't Believe it

You seem great Diver 😍😉

So Hi👋 My diver Friend👨

Myself JK 👨💻

I share Stuff Related to Web Design & Development

If you like this😄

I'm Sure you'll Love My [Instagram Page](https://www.instagram.com/developedbyjk) Where I share the same,but with Visual Taste.🤝😎👀

Hope we meet There😉🎀🏝

Happy Coding 💪✨🎉

My All Links [linktr.ee/developedbyjk](linktr.ee/developedbyjk)

| developedbyjk |

1,330,694 | How to use PHP native enum? | PHP 8.1 offers natively the new enum structure. Let's see how we can use them in an advanced way! 🚀... | 0 | 2023-01-16T11:35:45 | https://dev.to/pierre/php-enum-2ac7 | php, programming, cleancode, php8 | [PHP 8.1](https://www.php.net/manual/en/language.types.enumerations.php) offers natively the new `enum` structure. Let's see how we can use them in an advanced way! 🚀 And how it can make your code cleaner, more consistent, and easier to understand! 💡

{% youtube https://youtu.be/c080A4Lrnps %} | pierre |

1,330,774 | Becoming a Cloud Engineer in 2023 (A Roadmap) | Here is Simon Holdorf’s recommendation for becoming a Cloud Engineer in 2023. Step 1: The Role On a... | 0 | 2023-01-16T13:38:44 | https://dev.to/ileriayo/becoming-a-cloud-engineer-in-2023-a-roadmap-1f6k | cloud, aws, devops, beginners |

Here is Simon Holdorf’s recommendation for becoming a Cloud Engineer in 2023.

**Step 1: The Role**

On a high level, a cloud engineer is responsible for designing, building, and maintaining an organization's cloud computing infrastructure and systems.

**Step 2: The Fundamentals**

Don't skip the Fundamentals!

Learn:

- General Cloud Computing

- Networking

- Operating systems

- Virtualization

- Security

**Step 3: Selecting a Cloud Provider**

It is beneficial to have experience with multiple cloud providers as a cloud engineer.

If you are starting, I would highly recommend concentrating on one cloud provider first: AWS, Azure, or GCP.

**Step 4: Acquiring programming knowledge**

As a cloud engineer, it is important to understand at least one programming or scripting language, especially for automating tasks and provision resources in the cloud.

Know your way around Git and a product like GitHub or Gitlab.

**Step 5: Learning DevOps principles & tools**

DevOps is a set of practices that aims to improve collaboration between development and operations teams and increase software delivery speed and reliability.

Know:

- CI/CD

- IaC

- Monitoring & Logging

- Collaboration & Communication

**Step 6: Gaining Hands-On experience**

Seeking opportunities to gain practical, hands-on experience with cloud technologies is essential to becoming a successful cloud engineer.

The first step is to create an account with one of the big cloud providers, AWS, Azure, or GCP.

**Step: 7 Earning Certifications**

Obtaining a certification can be beneficial for several reasons:

- Validation of skills

- Improved job prospects

- Professional development

- Increased earning potential

- Fun challenge

To become a successful Cloud Engineer, you need to have a mindset focused on delivering scalable, reliable, and secure solutions.

Check out Simon's video https://www.youtube.com/watch?v=6Yi3c259RE0

Follow Ileriayo Adebiyi on LinkedIn & Twitter for more on Cloud & DevOps.

LinkedIn: https://www.linkedin.com/in/ileriayoadebiyi

Twitter: https://twitter.com/ileriayooo | ileriayo |

1,330,952 | What are your Goals for the week of January 16? | What are your goals for this week? What are you building? What will be a good result by week's... | 19,128 | 2023-01-16T15:58:03 | https://dev.to/jarvisscript/what-are-your-goals-for-the-week-of-january-16-38o1 | discuss, watercooler, motivation | What are your goals for this week?

- What are you building?

- What will be a good result by week's end?

- Did you meet your goals last week?

**Last Week's Goals**

- [:white_check_mark:] Update resume/linkedIN/portfolio.

- [:white_check_mark:] Job Search. Spending my mornings hitting LinkedIn and sending DMs. Applying to open roles.

- [:white_check_mark:] Hit milestone in blog views.

**Last Week's Wins**

- I received my HacktoberFest 9 (2022) Tee-shirt and my Trusted User badge for DEV.

### Still working on:

- Update resume/linkedIN/portfolio.

- I'm taking a course starting later this month, course hasn't dropped yet.

**This Week's Goals**

- Update resume/linkedIn/portfolio.

- Continue Job Search.

- Learn something. Planning a project out.

- Encourage Virtual Coffee members in our Month of Learning Challenge.

- Blog.

**This Month's Goals**

- Gain more followers here. I would like to have more followers on DEV than I had on the bird site. Need about 100 more here.

- Gain more followers on Mastodon. @jarvisscript@hachyderm.io I'm nowhere near my DEV or bird numbers but that's ok.

You've read my goals so I'll throw that question back to you.

What are your goals for the week?

```

-$JarvisScript git push

``` | jarvisscript |

1,330,955 | Java 101 for software testing (Using fruits and vehicle as example) | I think many of you might have similar experience like me where I found myself struggle to... | 0 | 2023-01-16T16:03:42 | https://dev.to/alanliew88/java-101-for-software-testing-12bg | testing, bugs, automationtest, java |

I think many of you might have similar experience like me where I found myself struggle to understand of Java language.

There was a time I try to avoid myself from writing selenium testing script in Java, instead, I'm using Python to code.

And when I decided to learn and write selenium testing script using java but I'm devastated because of the endless of error seem to appear.. I can't understand the error log, the console log and etc. And I knew that was because lack of basic understanding of Java language.

Hence, I strongly recommend you to read this article before you write any selenium test scripts because you must build your foundation right before you write any scripts.

**

## First, we ask WHY ? Why Java ?

**

✅ Java is a programming language that is commonly used in combination with the Selenium web automation tool.

✅ Selenium is a powerful tool for automating web browsers, and it can be used to automate a wide variety of web-based tasks.

✅ When used with Java, Selenium allows developers to write automated tests for web applications using the Java programming language.

✅ This can be useful for a variety of purposes, including functional testing, performance testing, and regression testing. Not only that, selenium has a number of libraries that can be used to extend its functionality or make it easier to use in certain situations.

**

## Next, what is Java ?

**

✅ Java is an object-oriented programming (OOP) language.

You may wonder what is OOP means ? Don't freak out.

Let me make this simple for you. For example:

Fruits.

There are bananas, cherries, oranges, kiwis, grapes and apples in the picture.

✅ These are called Objects.

✅ And what are they categories as ? Fruits

Hence, Fruits is the **Class** and bananas, cherries, oranges, kiwis, grapes and apples is the **Objects**.

Is this sound simple ?

What about this picture ?

We can see trucks , ambulance, bus and car.

And what are they ? **Vehicles**

So we know **Vehicles** is **Class** and trucks , ambulance , bus and car is an **Object**.

Now, what is the color of the car ? **Blue**

Blue is the **attribute** of the Car.

How do we operate the vehicles ? **Drive**

Drive is the **method**

We can conclude 4 things from this , we know the class (vehicles), the object (car, bus, trucks, ambulance), attributes (blue, 4 tires , etc) and method (drive).

***

public class Vehicle { **<- this is class = vehicles**

String carBrand = honda; **<- this is objects and attributes**

Int numOfTires = 4; **<- this is objects and attributes**

void Drive(){ **<- this is drive method**

System.out.print("Car is driving");

}

public static void main(String[]args){

Car myCar = new Car();

myCar.drive();

***

This is just a simple java program to show you how the java structure it is. It's OK not to understand everything in the first place. Just get along with me , and I can assure you will have sufficient java knowledge to write your first selenium script with Java.

❤️

Related Articles:

[1. Cloud Computing , let’s talk about it [Part 2]](https://dev.to/alanliew88/cloud-computing-lets-talk-about-it-part-2-2mgj)

[2. AWS ! the terms you must know 🤫](https://dev.to/alanliew88/aws-the-terms-you-must-know-2k8p)

[3. ISTQB 🧙🏻♀️The software testing Myth, do you need that?](https://dev.to/alanliew88/istqb-the-software-testing-myth-do-you-need-that-1ajj)

[4. Why Software Testing is necessary in the first place?👀 ](https://dev.to/alanliew88/why-software-testing-is-necessary-in-the-first-place-181)

❤️

Connect with me in [Linkedin](https://www.linkedin.com/in/alan-liew/) !

| alanliew88 |

1,331,362 | Interfaces and Polymorphism in PHP - Practical Guide | Polymorphism Polymorphism in OOP's is a concept that allows you to create classes with... | 0 | 2023-01-21T03:58:38 | https://dev.to/saravanasai/interfaces-and-polymorphism-in-php-practical-guide-pfc | php, webdev, explanation, cleancode | ## Polymorphism

Polymorphism in OOP's is a concept that allows you to create classes with different functionalities in a single interface generally, it is of two types:

1. compile-time (overloading)

2. run time (overriding)

But polymorphism in PHP does not support overloading, or in other words, compile-time polymorphism.

! Let don’t have to worry about the technicalities & jargon's. Let see a example with a explanation.

## Scenario:

Let consider that we have to write a code for payment gateway integration & they may be more than one payment gateway (stripe & pay-pal) . The different users may choose different payment method & also in future there may be a some other payment methods also.

Just take a min & roll up you heads for a solution . There are different ways to approach this with simple if-else condition but by using a PHP interface we can get a clean implementation.

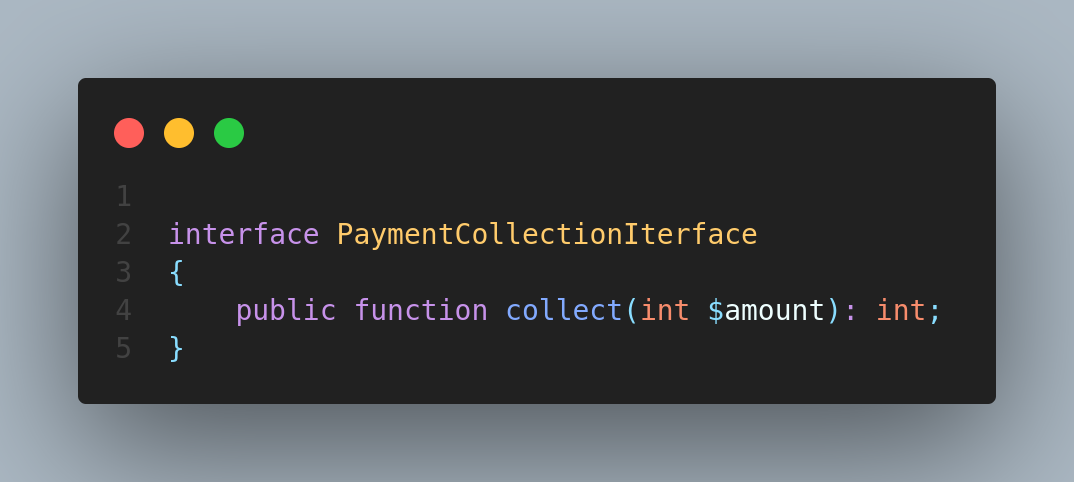

let's create an interface

The class which uses or implements PaymentCollectionIterface en-forces the class to implement the collect method.

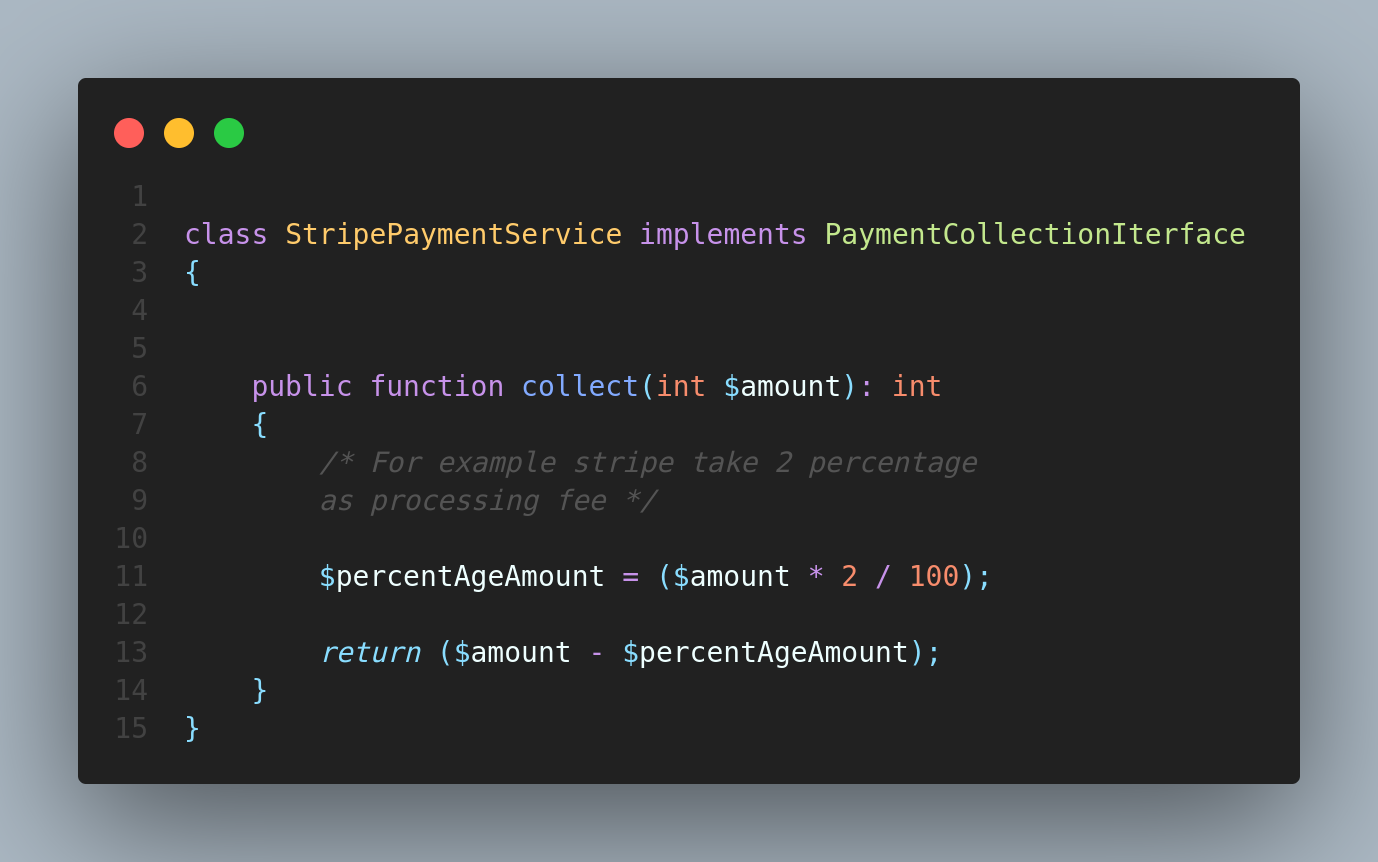

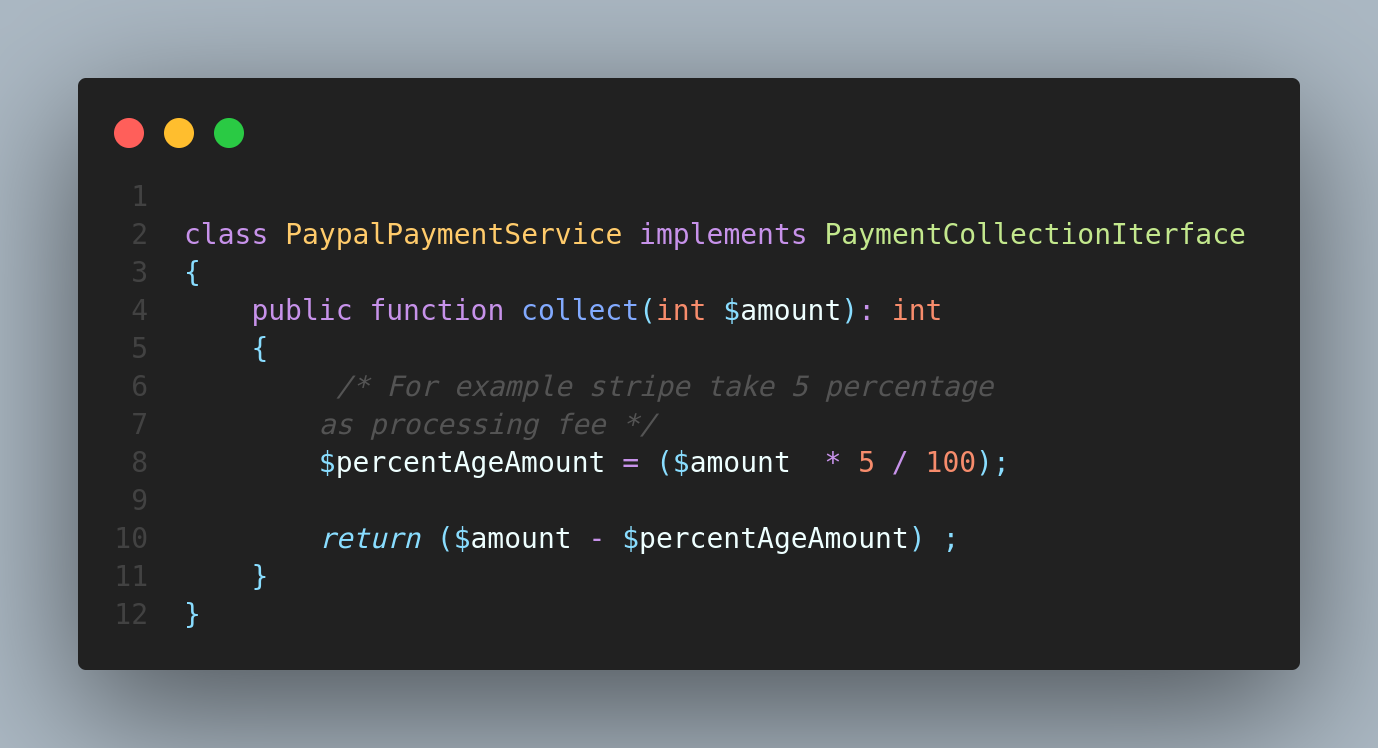

StripePaymentService implements PaymentCollectionIterface

as well as for PaypalPaymentService.

Don't take the implementation part of collect method so seriously that is just an example. Let's focus on a problem. Till now we have a two separate class for each payment method.

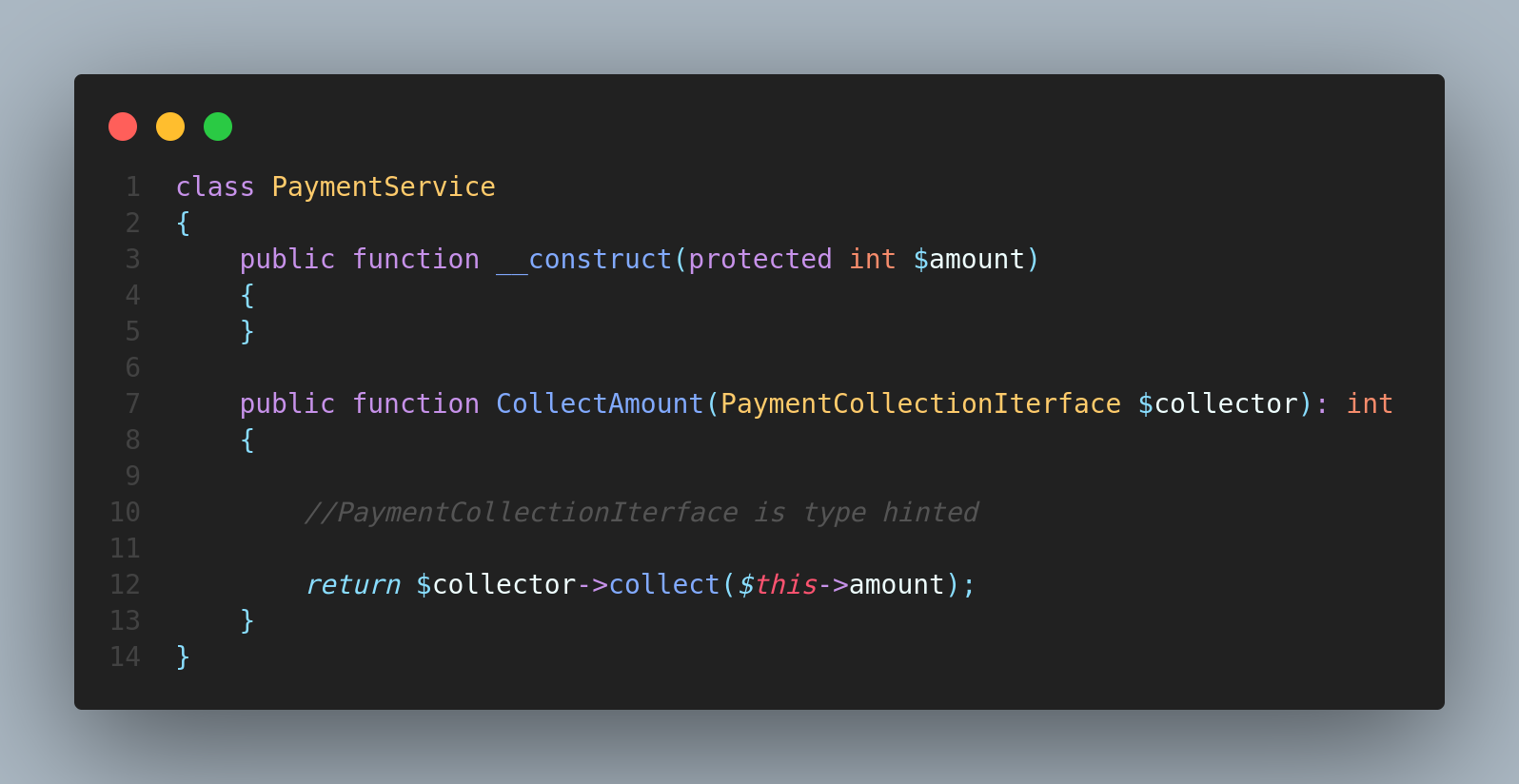

Now let's create a PaymentService class which handle the payment detection logic.

In above code we have type hinted the

`CollectAmount(PaymentCollectionIterface $collector)` method with PaymentCollectionIterface.This is where the polymorphism come in to play.The argument passed to the `CollectAmount()` method should the the instance of a class which implements the `PaymentCollectionIterface` else it will throw an error.

As we know that both **StripePaymentService & PaypalPaymentService** implements PaymentCollectionIterface. So , PHP is smart enough to resolve that collect method on the class which the instance is passed.

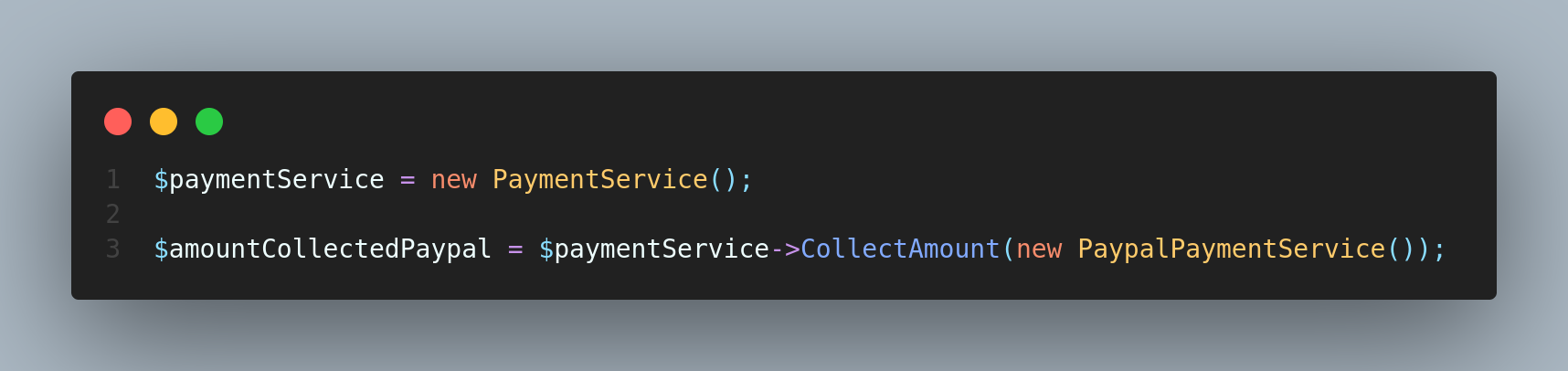

It resolves the payment method on run time.Now if user want to pay with paypal.you can invoke a paymentService class like below.

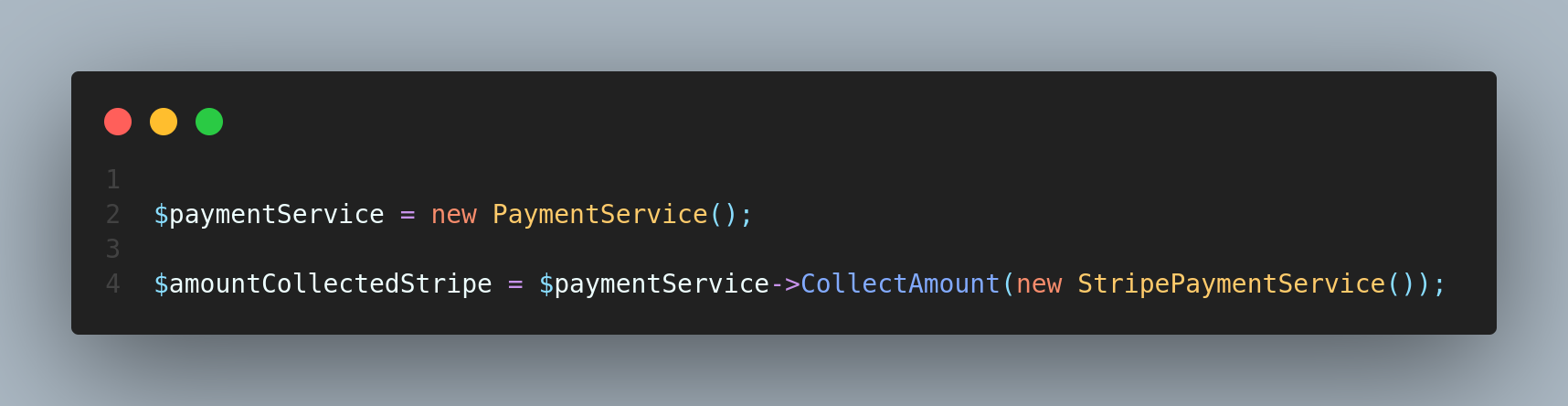

if user want to pay with stripe then you can do like this below.

**Conclusion**

... So that's it a Interfaces and Polymorphism in PHP using in a more practical way

Hopefully this will be useful to some of you :)

I'd love to hear what you're comments & more example let me know in the comments below!

Follow for more content like this & share

| saravanasai |

1,331,392 | Day 12 #100DaysofCode | What I Learned Today Difference between CSS Grid and CSS Flexbox Creating a... | 21,279 | 2023-01-17T03:38:42 | https://dev.to/developing-vic/day-12-100daysofcode-2k5p | webdev, beginners, 100daysofcode | ## What I Learned Today

* Difference between CSS Grid and CSS Flexbox

* Creating a Grid

* Positioning Grid Elements

* Advanced Grid Properties

I am 91% completed with the TOP Intermediate HTML and CSS Course. The last project in this section is an Admin Dashboard. | developing-vic |

1,331,404 | Prometheus: How is the Remote Write API code Path different from the Scrape Code Path | In my last post, I wanted to dig into the remote write code to learn more about how it worked and see... | 0 | 2023-01-17T03:59:23 | https://dev.to/mikkergimenez/prometheus-how-is-the-remote-write-api-code-path-different-from-the-scrape-code-path-579c | prometheus, observability | In my last post, I wanted to dig into the remote write code to learn more about how it worked and see if you could use it to simulate a push based agent. This is all just experimental to learn how Prometheus works. I think you can, and I'm working on testing it out, but before I get there, I wanted to explore the other side: that is the prometheus remote-write receiver.

I came up with a few questions I want to answer while exploring the remote write API:

1. Where does the code path between scraping a prometheus endpoint and remote write differ?

2. In what format does Prometheus write data to disk?

3. What exactly in the code makes Prometheus pull based over push based. Can I identify the specific code blocks where algorithms are implemented that will optimize for one over the other?

## Web API write endpoint

[main/web/api/v1/api.go](https://github.com/prometheus/prometheus/blob/main/web/api/v1/api.go#L364)

```go

// Line 364

r.Post("/write", api.ready(api.remoteWrite))

```

The remote write handler (api.remoteWrite) is created by the following code block.

[storage/remote/write_handler.go](https://github.com/prometheus/prometheus/blob/main/storage/remote/write_handler.go#L37)

```go

// NewWriteHandler creates a http.Handler that accepts remote write requests and

// writes them to the provided appendable.

func NewWriteHandler(logger log.Logger, appendable storage.Appendable) http.Handler {

return &writeHandler{

logger: logger,

appendable: appendable

}

}

```

I guess I'm surprised how quickly we get from remote write endpoint to the appender, it seems like there's no real special buffering on remote write to make it different from the scraper? In the previous article, we traced remote write back to an appender, so write is writing to an appender which may then re-write to another upstream pretty directly. I don't know why Prometheus writing would be more complicated than this, but as an SRE who doesn't write low level code, I'm curious to go back to the question "What makes us say that Prometheus isn't push based" Seems like you could write a push agent pretty easily.

I traced this back quite a bit, but this appendable is injected from the prometheus command code [here](https://github.com/prometheus/prometheus/blob/main/cmd/prometheus/main.go#L566) (cfg.web.storage is passed) fanout has primary and secondary storages. The local storage configured below is the 'primary' storage and remote storage is the 'secondary' storage. Ready Storage is a struct.

```go

var (

localStorage = &readyStorage{stats: tsdb.NewDBStats()}

scraper = &readyScrapeManager{}

remoteStorage = remote.NewStorage(log.With(logger, "component", "remote"), prometheus.DefaultRegisterer, localStorage.StartTime, localStoragePath, time.Duration(cfg.RemoteFlushDeadline), scraper)

fanoutStorage = storage.NewFanout(logger, localStorage, remoteStorage)

)

// Line 1287

type readyStorage struct {

mtx sync.RWMutex

db storage.Storage

startTimeMargin int64

stats *tsdb.DBStats

}

```

https://github.com/prometheus/prometheus/blob/main/cmd/prometheus/main.go#L566

[storage/interface.go](https://github.com/prometheus/prometheus/blob/136956cca40b7bf29dc303ad497d01c30534e373/storage/interface.go#L57)

```go

type Appendable interface {

// Appender returns a new appender for the storage. The implementation

// can choose whether or not to use the context, for deadlines or to check

// for errors.

Appender(ctx context.Context) Appender

}

```

https://github.com/prometheus/prometheus/blob/main/storage/remote/write_handler.go#L86

https://github.com/prometheus/prometheus/blob/136956cca40b7bf29dc303ad497d01c30534e373/storage/interface.go#L219

```go

type Appender interface {

// Append adds a sample pair for the given series.

// An optional series reference can be provided to accelerate calls.

// A series reference number is returned which can be used to add further

// samples to the given series in the same or later transactions.

// Returned reference numbers are ephemeral and may be rejected in calls

// to Append() at any point. Adding the sample via Append() returns a new

// reference number.

// If the reference is 0 it must not be used for caching.

Append(ref SeriesRef, l labels.Labels, t int64, v float64) (SeriesRef, error)

// Commit submits the collected samples and purges the batch. If Commit

// returns a non-nil error, it also rolls back all modifications made in

// the appender so far, as Rollback would do. In any case, an Appender

// must not be used anymore after Commit has been called.

Commit() error

// Rollback rolls back all modifications made in the appender so far.

// Appender has to be discarded after rollback.

Rollback() error

ExemplarAppender

HistogramAppender

MetadataUpdater

}

```

## Scrape Code Path:

Prometheus works by scraping endpoints periodically according to a scrape interval. This process is a loop, defined very clearly by the process entitied "mainLoop":

[scrape/scrape.go](https://github.com/prometheus/prometheus/blob/6fc5305ce904a75f56ca762281c7a1b052f19092/scrape/scrape.go#L1235)

```go

mainLoop:

for {

...

}

```

Alot of these are really long functions so I'm going to pull out the key pieces.

Calls "Scrape and Report":

https://github.com/prometheus/prometheus/blob/6fc5305ce904a75f56ca762281c7a1b052f19092/scrape/scrape.go#L1264

```last = sl.scrapeAndReport(last, scrapeTime, errc)```

Calls "Scrape":

https://github.com/prometheus/prometheus/blob/6fc5305ce904a75f56ca762281c7a1b052f19092/scrape/scrape.go#L1340

```

scrapeCtx, cancel := context.WithTimeout(sl.parentCtx, sl.timeout)

contentType, scrapeErr = sl.scraper.scrape(scrapeCtx, buf)

#... Line 1364

total, added, seriesAdded, appErr = sl.append(app, b, contentType, appendTime)

```

This function is what actually makes the HTTP request to Scrape a Destination:

https://github.com/prometheus/prometheus/blob/6fc5305ce904a75f56ca762281c7a1b052f19092/scrape/scrape.go#L792

```

func (s *targetScraper) scrape(ctx context.Context, w io.Writer) (string, error) {

if s.req == nil {

req, err := http.NewRequest("GET", s.URL().String(), nil)

if err != nil {

return "", err

}

req.Header.Add("Accept", s.acceptHeader)

req.Header.Add("Accept-Encoding", "gzip")

req.Header.Set("User-Agent", UserAgent)

req.Header.Set("X-Prometheus-Scrape-Timeout-Seconds", strconv.FormatFloat(s.timeout.Seconds(), 'f', -1, 64))

s.req = req

}

resp, err := s.client.Do(s.req.WithContext(ctx))

if err != nil {

return "", err

}

defer func() {

io.Copy(io.Discard, resp.Body)

resp.Body.Close()

}()

if resp.StatusCode != http.StatusOK {

return "", errors.Errorf("server returned HTTP status %s", resp.Status)

}

if s.bodySizeLimit <= 0 {

s.bodySizeLimit = math.MaxInt64

}

if resp.Header.Get("Content-Encoding") != "gzip" {

n, err := io.Copy(w, io.LimitReader(resp.Body, s.bodySizeLimit))

if err != nil {

return "", err

}

if n >= s.bodySizeLimit {

targetScrapeExceededBodySizeLimit.Inc()

return "", errBodySizeLimit

}

return resp.Header.Get("Content-Type"), nil

}

if s.gzipr == nil {

s.buf = bufio.NewReader(resp.Body)

s.gzipr, err = gzip.NewReader(s.buf)

if err != nil {

return "", err

}

} else {

s.buf.Reset(resp.Body)

if err = s.gzipr.Reset(s.buf); err != nil {

return "", err

}

}

n, err := io.Copy(w, io.LimitReader(s.gzipr, s.bodySizeLimit))

s.gzipr.Close()

if err != nil {

return "", err

}

if n >= s.bodySizeLimit {

targetScrapeExceededBodySizeLimit.Inc()

return "", errBodySizeLimit

}

return resp.Header.Get("Content-Type"), nil

}

```

Sets s.buf and s.gzipr:

https://github.com/prometheus/prometheus/blob/6fc5305ce904a75f56ca762281c7a1b052f19092/scrape/scrape.go#L835

Loop through and switch based on datatype:

https://github.com/prometheus/prometheus/blob/6fc5305ce904a75f56ca762281c7a1b052f19092/scrape/scrape.go#L1535

```

### For example, this switches on whether or not to append a histogram or just append:

if isHistogram {

if h != nil {

ref, err = app.AppendHistogram(ref, lset, t, h)

}

} else {

ref, err = app.Append(ref, lset, t, val)

}

```

This is where it gets back to the same 'Append' function as before, there are a few different types of Appenders, the one mentioned above seems to be a base struct. The appender is assigned by the following function in scrape.go.

```

// appender returns an appender for ingested samples from the target.

func appender(app storage.Appender, limit int) storage.Appender {

app = &timeLimitAppender{

Appender: app,

maxTime: timestamp.FromTime(time.Now().Add(maxAheadTime)),

}

// The limit is applied after metrics are potentially dropped via relabeling.

if limit > 0 {

app = &limitAppender{

Appender: app,

limit: limit,

}

}

return app

}

```

So, where does it go from here?

The /tsdb folder contains the code that we use to write Prometheus data to disk. Specifically blockwriter.go has a method called flush

[tsdb/blockwriter.go](https://github.com/prometheus/prometheus/blob/main/tsdb/blockwriter.go#L92)

```go

// Flush implements the Writer interface. This is where actual block writing

// happens. After flush completes, no writes can be done.

func (w *BlockWriter) Flush(ctx context.Context) (ulid.ULID, error) {

mint := w.head.MinTime()

// Add +1 millisecond to block maxt because block intervals are half-open: [b.MinTime, b.MaxTime).

// Because of this block intervals are always +1 than the total samples it includes.

maxt := w.head.MaxTime() + 1

level.Info(w.logger).Log("msg", "flushing", "series_count", w.head.NumSeries(), "mint", timestamp.Time(mint), "maxt", timestamp.Time(maxt))

compactor, err := NewLeveledCompactor(ctx, nil, w.logger []int64{w.blockSize}, chunkenc.NewPool(), nil)

if err != nil {

return ulid.ULID{}, errors.Wrap(err, "create leveled compactor")

}

id, err := compactor.Write(w.destinationDir, w.head, mint, maxt, nil)

if err != nil {

return ulid.ULID{}, errors.Wrap(err, "compactor write")

}

return id, nil

}

```

This process takes us to the 'LeveledCompactor` which has a write method:

[tsdb/compact.go](https://github.com/prometheus/prometheus/blob/b768247df877ce244eabf66cee30e36a743dba30/tsdb/compact.go#L549)

```

I think I'm close now but oddly can't find the specific method that writes the data files. [This block here](https://github.com/prometheus/prometheus/blob/b768247df877ce244eabf66cee30e36a743dba30/tsdb/compact.go#L625

) writes the metadata file, the tombstones and I assume the samples as well:

```go

### The above block is very long, but for example, ehre is where it writes metadata and tombesones

if _, err = writeMetaFile(c.logger, tmp, meta); err != nil {

return errors.Wrap(err, "write merged meta")

}

// Create an empty tombstones file.

if _, err := tombstones.WriteFile(c.logger, tmp, tombstones.NewMemTombstones()); err != nil {

return errors.Wrap(err, "write new tombstones file")

}

```

And that's about it for our overview of the different scrape types. Let's look back at the questions we had before exploring this API:

**Where does the code path between scraping a prometheus endpoint and remote write differ?

**

**In what format does Prometheus write data to disk?**

* According to [this](https://prometheus.io/docs/prometheus/latest/storage/#local-storage), it's the 'tsdb' format: https://github.com/prometheus/prometheus/blob/release-2.41/tsdb/docs/format/README.md

* **What exactly in the code makes Prometheus pull based over push based. Can I identify the specific code blocks where algorithms are implemented that will optimize for one over the other?**

* I'm going to save the details of this for the next post, but I think the answer might be... Nothing.

In the next blog post, I'll show my demo of a push-based agent, and summarize my findings from all the posts.

| mikkergimenez |

1,331,641 | Books for System Design | "Designing Data-Intensive Applications" by Martin Kleppmann is a comprehensive guide to designing,... | 0 | 2023-01-17T09:31:27 | https://dev.to/ridhisingla001/books-for-system-design-2l3l | books, systems, design, beginners |

1. "Designing Data-Intensive Applications" by Martin Kleppmann is a comprehensive guide to designing, implementing, and maintaining data-intensive applications.

2. "Building Microservices" by Sam Newman is a book that discusses the principles and practices of building microservices-based systems.

3. "Systems Performance: Enterprise and the Cloud" by Brendan Gregg is a book that covers performance analysis and optimization for systems running in the cloud.

4. "Site Reliability Engineering" by Betsy Beyer, Chris Jones, Jennifer Petoff, and Niall Richard Murphy is a book that discusses the principles and practices of site reliability engineering, which is a discipline that combines software engineering and systems engineering to build and run large-scale, fault-tolerant systems.

5. "Designing Distributed Systems" by Brendan Burns is a book that covers the fundamental patterns and practices for designing distributed systems.

I hope these recommendations are helpful! | ridhisingla001 |

1,331,698 | Six Agile Team Behaviors to Consider | Are members of agile teams different from members of other teams? Both yes and no. Yes, because some... | 0 | 2023-01-17T10:56:06 | https://www.lambdatest.com/blog/agile-team-behaviors-to-consider/ | agile, testing, devlopment, webdev | Are members of agile teams different from members of other teams? Both yes and no. Yes, because some of the behaviors we observe in agile teams are more distinct than in non-agile teams. And no, because we are talking about individuals!

However, effective agile team members exhibit certain traits more often than non-agile project team members because agile requires these behaviors to create a successful team and product. What traits should you look for in an agile team? Below are six essential habits that members of a successful agile team exhibit. I have also included interview questions to help determine if a candidate for an agile team has what it takes to join a strong agile team.

## People Willing to Work Outside of Their Expertise

A person’s willingness to work beyond his or her area of expertise is an indicator of adaptability. I do not recommend anyone to do things they do not know anything about — a programmer should not become a salesman, for example. I believe that someone good with the database should try to work a little on the user interface as well. If she knows middleware, she might want to do some work on the platform or a higher level of the application. If she’s always been an inquisitive tester, she might be willing to try some scripting.