id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,376,716 | npm i или npm ci? | npm i vs npm ci — какую команду использовать. npm i (npm install) npm i... | 0 | 2023-02-23T13:38:09 | https://dev.to/jennypollard/npm-i-ili-npm-ci-1cn8 | npm | ## npm i vs npm ci — какую команду использовать.

### npm i (npm install)

`npm i` устанавливает пакеты и их зависимости в директорию `node_modules`. По-умолчанию, список пакетов берется из раздела `dependencies` в `package.json`.

`npm` обновляет файл `package-lock.json` после любых измений `package.json` или `node_modules`. То есть, если в результате выполнения какой-либо команды `npm`, будет обновлен `package.json` или содержимое `node_modules`, то будет обновлен `package-lock.json`.

`package-lock.json` описывает фактическое дерево пакетов, которое было уставновлено и нужен для того, чтобы выполнение `npm i` приводило к одному и тому же набору пакетов. Другими словами, `package-lock.json` это описание того, что установлено в `node_modules`.

`npm i` может устанавливать пакеты по-одному, по-умолчанию `npm i` добавляет установленный пакет в раздел `dependencies` (и обновляет `package-lock.json`).

### npm ci

Команда `npm ci` похожа на `npm i` — тоже устанавливает зависимости, но предназначена для установки зависимостей с чистого листа — при сборке приложения, в релизных пайплайнах, в проверках пулреквестов, используется автоматикой. `npm ci` быстрее, чем `npm i` и имеет существенные отличия:

- удаляет `node_modules` перед установкой.

- `npm ci` требует для работы наличие `package-lock.json`, иначе получим ошибку:

> npm ERR! cipm can only install packages with an existing package-lock.json or npm-shrinkwrap.json with lockfileVersion >= 1. Run an install with npm@5 or later to generate it, then try again.

>

- `npm ci` не может устанавливать пакеты по-одному, устанавливает все сразу как описано в `package-lock.json`.

- `npm ci` не обновляет `package-lock.json` и `package.json`, так как предназначен для автоматики, а в этом случае репозиторий только на чтение.

- если версии пакетов в `package.json` и `package-lock.json` расходятся, команда завершится с ошибкой:

> npm ERR! cipm can only install packages when your package.json and package-lock.json or npm-shrinkwrap.json are in sync. Please update your lock file with `npm install` before continuing.

npm ERR! Invalid: lock file's lodash.get@4.4.2 does not satisfy lodash.get@4.4.0

>

Иногда в `node_modules` могут накапливаться проблемы (например, рассинхрон фактических и ожидаемых версий пакетов), для таких случаев `npm ci` — быстрый способ переустановить все зависимости.

---

_Photo by [Paul Teysen](https://unsplash.com/fr/@hooverpaul55?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText) on [Unsplash](https://unsplash.com/photos/bukjsECgmeU?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText)_

| jennypollard |

1,376,723 | Where publish nodeJs+Angular app for free | I needed to publish a simple web application composed of Angular frontend and Node backend on a... | 0 | 2023-02-23T13:44:19 | https://dev.to/eleonorarocchi/where-publish-nodejsangular-app-for-free-43g1 | I needed to publish a simple web application composed of Angular frontend and Node backend on a server to make it accessible on the network. I was looking for a hosting service for this architecture, perhaps free, and I discovered [https://www.render.com](https://www.render.com)

Render.com is a fully-managed cloud platform, which can host sites, backend APIs, databases, cron jobs and all applications in one place.

Static site publishing is completely free on Render and includes the following:

- Continuous, automatic builds & deploys from GitHub and GitLab.

- Automatic SSL certificates through Let's Encrypt.

- Instant cache invalidation with a lightning fast, global CDN.

- Unlimited contributors.

- Unlimited custom domains.

- Automatic Brotli compression for faster sites.

- Native HTTP/2 support.

- Pull Request Previews.

- Automatic HTTP → HTTPS redirects.

- Custom URL redirects and rewrites.

If you have a github account, it is very convenient to release updates, as you can directly link a repository to render.com, and automate the deployment. | eleonorarocchi | |

1,376,819 | All About ChatGPT | ChatGPT is an advanced artificial intelligence language model that was developed by OpenAI. It is... | 0 | 2023-02-23T14:38:50 | https://dev.to/manoranjand/all-about-chatgpt-5aga | chatgpt, openai, ai |

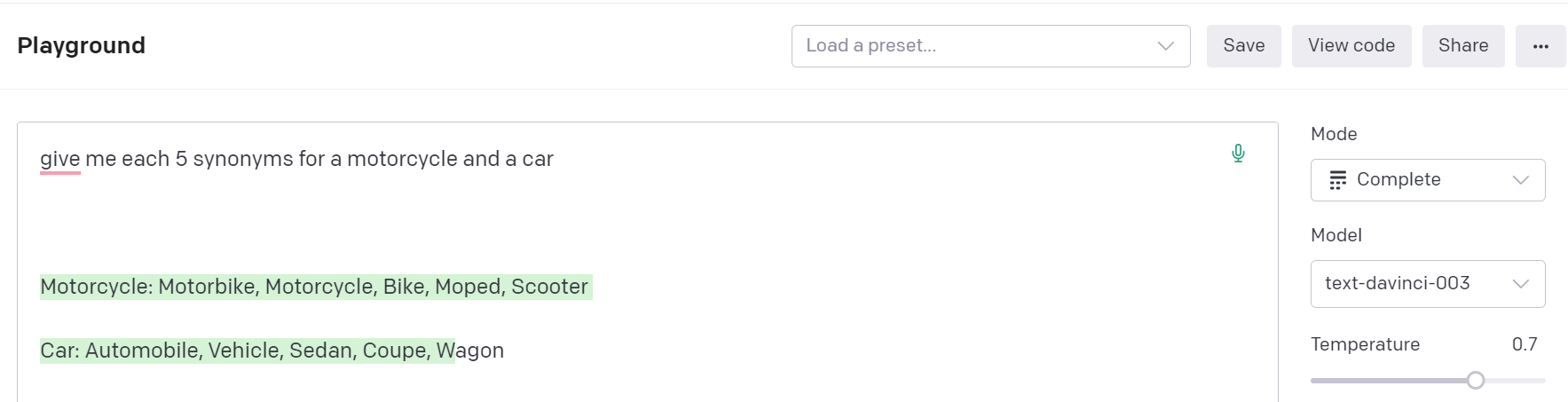

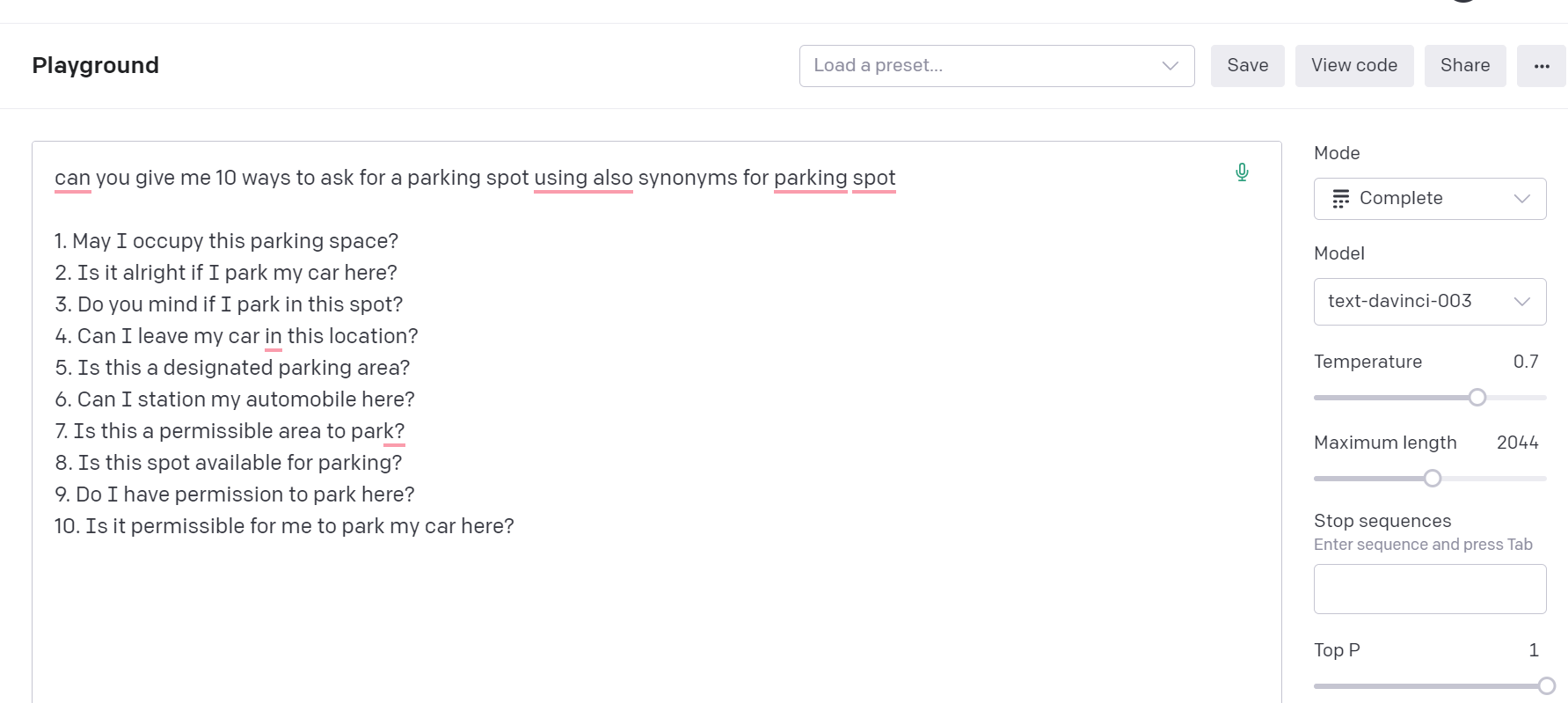

ChatGPT is an advanced artificial intelligence language model that was developed by OpenAI. It is considered one of the most powerful and sophisticated language models in the world, with the ability to understand and generate human-like language. In this article, we will take a closer look at what ChatGPT is, how it works, and its potential applications.

###What is ChatGPT?

ChatGPT stands for "Chat Generative Pre-training Transformer." It is an advanced language model that is based on the transformer architecture, which was first introduced by Google in 2017. The transformer architecture is a neural network that uses self-attention mechanisms to process input data, allowing it to understand the context and meaning of text.

ChatGPT takes this architecture to the next level, using a technique called "pre-training" to improve its language generation capabilities. Pre-training involves training the model on a large corpus of text data, such as books, articles, and other written content, to help it understand the nuances of language and grammar.

###How does ChatGPT work?

ChatGPT works by analyzing text inputs and generating responses based on what it has learned from its pre-training. When a user inputs a question or statement, ChatGPT uses its self-attention mechanisms to process the input and generate a response that is based on the context and meaning of the text.

The model is designed to continuously learn and improve its language generation capabilities over time, allowing it to generate more accurate and nuanced responses. This is achieved through a process called "fine-tuning," which involves training the model on specific tasks, such as language translation or sentiment analysis, to improve its performance.

###What are the potential applications of ChatGPT?

ChatGPT has a wide range of potential applications across various industries and fields. Some of the most common applications include:

Customer service: ChatGPT can be used to create chatbots and virtual assistants that can handle customer inquiries and provide personalized support.

Content creation: ChatGPT can be used to generate high-quality content for websites, social media, and other digital platforms.

Language translation: ChatGPT can be used to translate text from one language to another, providing a more accurate and nuanced translation than traditional translation tools.

Education: ChatGPT can be used to create interactive learning experiences that provide personalized feedback and support to students.

Healthcare: ChatGPT can be used to analyze patient data and provide personalized recommendations for treatment and care.

Overall, ChatGPT represents a significant advancement in the field of natural language processing and has the potential to transform the way we communicate and interact with technology. As the technology continues to evolve, we can expect to see even more exciting applications and use cases emerge in the years to come.

| manoranjand |

1,376,870 | CodePen Designs - 40 : 3D First Person Art Gallery - No Javascript! | We are excited to introduce our new series that will explore the best design ideas from the... | 20,957 | 2023-02-23T16:15:44 | https://dev.to/jon_snow789/codepen-designs-40-3d-first-person-art-gallery-no-javascript-418l | codepen, css, webdev, design | ### We are excited to introduce our new series that will explore the best design ideas from the Codepen community.

We will be publishing these each day and hope you find them insightful and inspiring as we showcase some of the most talented designers in this space.

---

---

## Video Tutorial

Don't miss the amazing video we've embedded in this post! Click the play button to be inspired

{% youtube p6t7Iprd-38 %}

---

---

### Codepen Design - 40

<p>A CSS art gallery you can look around. Images of pure CSS art, but unfortunately peoples computer would explode if I didn't use images. Everything else is pure CSS though. </p>

<ul>

<li>There are a few Easter Eggs scattered about.</li>

</ul>

<p>A very short video of some of the coding:

<a href="https://youtu.be/4s0PT709Ia0" target="_blank">https://youtu.be/4s0PT709Ia0</a>.

Subscribe for more or follow me to say hi:

<a href="https://www.instagram.com/ivorjetski" target="_blank">https://www.instagram.com/ivorjetski</a>

<a href="https://twitter.com/ivorjetski" target="_blank">https://twitter.com/ivorjetski</a></p>

{% codepen https://codepen.io/ivorjetski/pen/gOPOvdB %}

---

## For more information

1. Check my GitHub profile

[https://github.com/amitSharma7741](https://github.com/amitSharma7741)

2. Check out my Fiver profile if you need any freelancing work

[https://www.fiverr.com/amit_sharma77](https://www.fiverr.com/amit_sharma77)

3. Check out my Instagram

[https://www.instagram.com/fromgoodthings/](https://www.instagram.com/fromgoodthings/)

4. Linktree

[https://linktr.ee/jonSnow77](https://linktr.ee/jonSnow77)

5. Check my project

- EVSTART: Electric Vehicle is the Future

[https://evstart.netlify.app/](https://evstart.netlify.app/)

- News Website in react

[https://newsmon.netlify.app/](https://newsmon.netlify.app/)

- Hindi jokes API

[https://hindi-jokes-api.onrender.com/](https://hindi-jokes-api.onrender.com/)

- Sudoku Game And API

[https://sudoku-game-and-api.netlify.app/](https://sudoku-game-and-api.netlify.app/)

---

---

| jon_snow789 |

1,377,079 | JavaScript Tutorial Series: Date Object | A built-in Date object in JavaScript makes working with dates simple. We will discuss how to use... | 0 | 2023-02-23T18:24:00 | https://dev.to/fullstackjo/javascript-tutorial-series-date-object-gb5 | A built-in Date object in JavaScript makes working with dates simple. We will discuss how to use dates in this article.

## Working with dates

A new Date object can be easily created. A new instance of the Date object can be made using this syntax:

```JavaScript

let currentDate = new Date();

```

By doing this, a new Date object will be created with the current date and time.

## Date methods and properties

The Date object has multiple built-in methods and properties. We're going to discuss the most commonly used.

Once you have a Date object, you can extract a variety of data from it. For example, you can find the current year, month, day, and time.

```JavaScript

let currentDate = new Date();

let currentYear = currentDate.getFullYear();

let currentMonth = currentDate.getMonth();

let currentDay = currentDate.getDate();

let currentTime = currentDate.getTime();

console.log(currentYear); //2023

console.log(currentMonth); //1

console.log(currentDay); //23

console.log(currentTime); //1677176048519

```

Keep in mind `getMonth()` returns zero-based index and `getTime()` returns the amount of milliseconds that have passed since the epoch, which is recognized as the beginning of January 1, 1970, UTC at midnight.

`setFullYear()`, `setMonth()`, `setDate()`, and `setTime()` methods allow you to set a specific date and time.

```JavaScript

let currentDate = new Date();

currentDate.setFullYear(2021);

currentDate.setMonth(9); // september (zero-based)

currentDate.setDate(15);

currentDate.setTime(0); // Sets the time to midnight

```

Along with getting and setting specific date and time components, you also have the choice of performing operations on dates. For instance, you can add or subtract a certain number of days, hours, or minutes from a date by using the setDate(), setHours(), and setMinutes() methods.

```JavaScript

let currentDate= new Date();

currentDate.setDate(currentDate.getDate() + 3);

// Adds 3 days

currentDate.setHours(currentDate.getHours() - 5);

// Subtracts 5 hours

currentDate.setMinutes(currentDate.getMinutes() + 10);

// Adds 10 minutes

```

Last but not least, you can format dates for display by using the toLocaleDateString() and toLocaleTimeString() methods. The user's locale settings will be used by these methods to format the date and time.

_Do not forget to try these snippets and output the result on your own to get the hang of using the date object._ | fullstackjo | |

1,377,088 | LWP - Layered WallPaper engine | I've created an open-source app, that lets You set multi-layered wallpapers, moving with Your... | 0 | 2023-02-23T18:52:53 | https://dev.to/jszczerbinsky/lwp-layered-wallpaper-engine-5fg4 | showdev, opensource, github | {% embed https://youtu.be/nvrj7hAs694 %}

I've created an open-source app, that lets You set multi-layered wallpapers, moving with Your mouse cursor as a desktop background. It works on Windows and Linux.

Link: https://github.com/jszczerbinsky/lwp

If You like this project, give me a feedback. It really helps ;D

| jszczerbinsky |

1,377,152 | Recreating my Portfolio in Next.js | I have recently started learning Next.js/React and I wanted to compare the speeds of Next.js and the... | 0 | 2023-02-23T20:41:54 | https://dev.to/vulcanwm/recreating-my-portfolio-in-nextjs-1ll7 | nextjs, webdev, javascript, react | I have recently started learning Next.js/React and I wanted to compare the speeds of Next.js and the framework I used to use previously: Flask (a Python framework).

So I decided to recreate my portfolio in Next.js (the portfolio was initially created in Flask). This way I would be able to easily see the difference in load times between Next.js and Flask.

Over the last 4 days, I properly learnt how to do:

- Components in React

- Loops in React (this took a while!)

- Assets in Next.js

- How to put a Next.js website together

---

This is the [Next.js Portfolio](https://vulcanwm.vercel.app) and this is the [Flask Portfolio](https://vulcanwm.is-a.dev).

See the difference in load times!

---

If you want to see the source code for either portfolios, here they are.

Next.js Portfolio:

{% embed https://github.com/VulcanWM/vulcanwm-nextjs %}

Flask Portfolio:

{% embed https://github.com/VulcanWM/vulcanwm %}

---

Thanks for reading, and thanks for supporting me on my Next.js journey! | vulcanwm |

1,377,734 | Minsheng Bank, openGauss, MogDB | With the rapid development of domestic digital construction and transformation, the scale and volume... | 0 | 2023-02-24T07:41:12 | https://dev.to/490583523leo/minsheng-bank-opengauss-mogdb-5e24 | With the rapid development of domestic digital construction and transformation, the scale and volume of data are increasing year by year. In addition, open source and cloud deployment have become important trends. More and more organizations are beginning to consider replacing existing traditional databases to cope with new and more Complex business requirements.

The replacement of the database will inevitably bring about the relocation of massive data and the migration and transformation of a large number of data processing programs. How to perform smooth and seamless migration and ensure business continuity and data consistency has become an important factor that enterprises have to consider when selecting database replacements.

►Minsheng Bank embraces openGauss

As the first national commercial bank established by private capital in mainland China, Minsheng Bank has always adhered to the original aspiration and mission of "living for the people and co-existing with the people", and is committed to providing customers with professional and modern financial services. The industry explored the road to the construction of modern commercial banks.

As we all know, various data information such as users' financial status and transaction records are the "lifeline" of banks, and ensuring their security is the top priority. At the same time, with the diversification, complexity, and Internet-based business development, the centralized traditional commercial database originally deployed by Minsheng Bank can hardly bear the sudden burst of peak pressure, cannot cope with challenges such as mixed loads, and is costly and difficult to expand. The method of piling up resources to ensure business continuity and stability is no longer sustainable. In order to ensure data security, reduce costs and increase efficiency, and meet new business challenges, Minsheng Bank conducted rigorous testing and evaluation of candidate products, and finally selected openGauss with excellent performance to replace and transform the database in key business scenarios .

►Database migration keywords: compatible, stable, continuous

After determining openGauss as a product to replace the original database, a difficult problem facing Minsheng Bank is how to efficiently realize the complete migration of database objects and full data between heterogeneous databases.

The 2021 annual report of Minsheng Bank shows that the number of retail customers of the company (referring to personal customers with normal customer status (including accounts I, II, and III), pure credit card customers, and small and micro enterprise legal person customers) reached 110.1378 million. At the end of the year, the growth rate was 5.41%. With the addition of other types of customers, the total amount of bank business data can be imagined. If the migration is performed purely by hand, the project volume will be huge, which will definitely affect the normal business development of the company. Therefore, customers need mature migration tools to improve the efficiency of heterogeneous database migration.

Minsheng Bank mainly migrates data from databases such as Oracle, MySQL, and DB2 to openGauss . Customers require migration tools to be well adapted to different types of databases and integrated into the openGauss ecosystem. It is understood that the amount of data that needs to be migrated by each system ranges from tens of GB to tens of TB. After the migration is completed, all data must be complete and accurate to ensure availability. In addition, in order to provide better services to bank customers, the migration process needs to ensure system stability and business continuity.

Of course, Minsheng Bank also encountered some common problems in database migration, such as SQL syntax conversion. During the migration process, SQL-related database objects, such as tables, storage, and functions, may be incompatible. The migration tool must accurately identify incompatible SQL.

►

MTK escorts the smooth replacement of openGauss

In fact, in order to make the database replacement go far and steadily, Minsheng Bank adopts a safe three-party cooperation model for system construction . The bank is responsible for database product testing, application transformation, and online operation and maintenance; the openGauss community is mainly responsible for database core function development and ecological construction; Yunhe Enmo, as a third-party manufacturer, is responsible for product defect repair, technical support, and peripheral tool development. is one of them .

MTK is a database migration tool (Database Migration Toolkit) for the cloud and Enmo database MogDB. Since MogDB is an enterprise-level relational database commercial release based on the openGauss kernel, MTK has natural advantages in adaptation and compatibility when it comes to migration tasks that use openGauss as the target library. In addition, MTK supports database migration with Oracle, DB2, and MySQL source databases, which can be said to perfectly match the migration needs of Minsheng Bank and become a powerful tool to promote customer database replacement.

After MTK is deployed in the Minsheng Bank system, it only needs to execute six commands to complete the migration task , specifically:

1. Check the configuration file: ./mtk -c config.json config-check, check the file for syntax errors.

2. Pre-migration test: ./mtk -c config.json --preRun , check possible problems in the migration process in advance, and estimate the time to complete the entire migration task, so as to coordinate the time window for suspending the business.

Next, Minsheng Bank migrated step by step according to its own business needs to accurately locate problems during the migration process and reduce the impact of the migration task on the system.

3. Table structure: ./mtk -c config.json mig-tab-pre

4. Data migration: ./mtk -c config.json mig-tab-data

5. Index/constraint object migration: ./mtk -c config.json mig-tab-post

6. Stored procedure/function migration: ./mtk -c config.json mig-tab-other

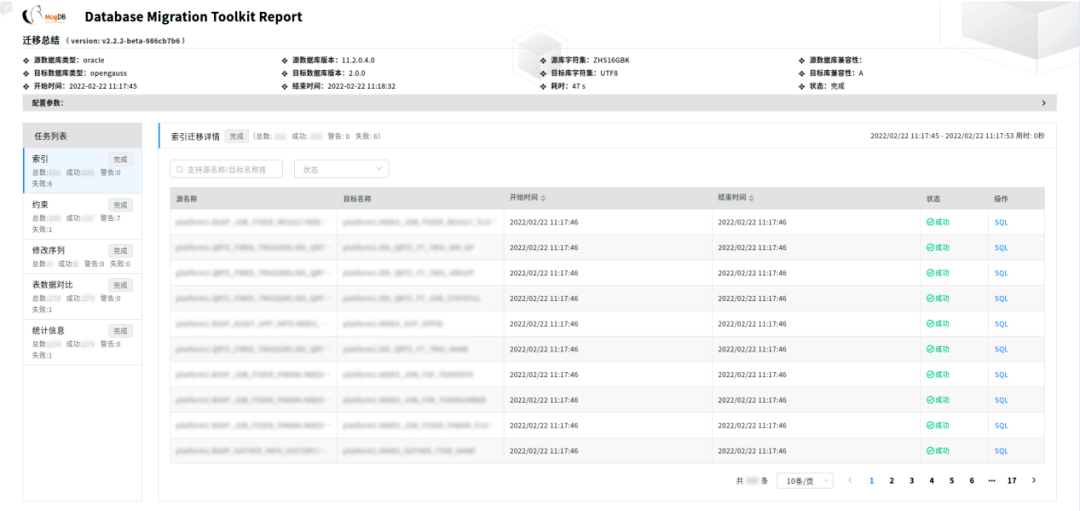

To solve the problem of SQL syntax conversion in database migration, MTK has embedded multiple SQL rules, and has realized most of the syntax conversion . In addition, in order to facilitate users to intuitively understand the migration status and problems encountered in the migration, an HTML report will be generated after the MTK parameter configuration reportFile , with detailed content, shows the SQL statement of each step in the migration process, and the user can directly view the problem SQL and modify it.

MTK's work of migrating data from Oracle, DB2 and other databases to openGauss has been recognized by Minsheng Bank. During the entire migration process, MTK performed stably, maintaining a migration speed of 1TB per hour, ensuring data consistency and smooth business operation . By the end of 2022, Yunhe Enmo has completed the task of migrating 100 sets of heterogeneous databases to openGauss for Minsheng Bank .

See kung fu in the subtleties, and strive for excellence in the extreme. Data is the top priority of the business system, ensuring zero data loss, instant data access and data security are the key points in the process of database migration. In fact, besides MTK, Cloud and Enmo's Migrate to openGauss / MogDB solution also includes SQL compatibility assessment tool SCA before heterogeneous database migration, data consistency verification tool MVD, and heterogeneous data synchronization tool MDB . Through the continuous innovation of the MogDB database and its supporting migration solutions, Yunhe Enmo will continue to promote the smooth progress of the database replacement work of Minsheng Bank.

| 490583523leo | |

1,377,240 | Useful: Lombok Annotations | Summary Configuration @ToString @Tolerate @StandardException ... | 21,123 | 2023-02-23T22:13:10 | https://dev.to/selllami/useful-lombok-annotations-3fh2 | java, spring, springboot, lombok | ## Summary

- Configuration

- @ToString

- @Tolerate

- @StandardException

## Configuration

-- Gradle: `build.gradle`

```

compileOnly 'org.projectlombok:lombok:1.18.20'

annotationProcessor 'org.projectlombok:lombok:1.18.20'

```

-- Maven: `pom.xml`

```

<dependency>

<groupId>org.projectlombok</groupId>

<artifactId>lombok</artifactId>

<version>${lombok.version}</version>

<scope>provided</scope>

</dependency>

<plugins>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-compiler-plugin</artifactId>

<version>3.8.0</version>

<configuration>

<source>11</source> <!-- depending on your project -->

<target>11</target>

<annotationProcessorPaths>

<path>

<groupId>org.projectlombok</groupId>

<artifactId>lombok</artifactId>

<version>${lombok.version}</version>

</path>

</annotationProcessorPaths>

</configuration>

</plugin>

</plugins>

```

## @ToString

Generate an implementation of the toString() method.

```

@ToString(doNotUseGetters = true)

public class Account {

private String id;

private String name;

@ToString.Exclude // Exclusion Field

private String adress;

// ignored getter

public String getId() {

return "this is the id:" + id;

}

}

@ToString(onlyExplicitlyIncluded = true)

public class Account {

@ToString.Include(name = "accountId") // Modifying Field Names

private String id;

@ToString.Include(rank = 1) // Ordering Output

private String name;

private String adress;

@ToString.Include // Method Output

String description() {

return "Account description";

}

} => Account(name=An account, accountId=12345, description=Account description)

```

## @Tolerate

Skip, jump, and forget! Make lombok disregard an existing method or constructor.

```

public class TolerateExample {

@Setter

private Date date;

@Tolerate

public void setDate(String date) {

this.date = Date.valueOf(date);

}

}

```

## @StandardException

Put this annotation on your own exception types.will generate 4 constructors:

- `MyException()`: representing no message, and no cause.

- `MyException(String message)`: the provided message, and no cause.

- `MyException(Throwable cause)`: which will copy the message from the cause, if there is one, and uses the provided cause.

- `MyException(String message, Throwable cause)`: A full constructor.

| selllami |

1,377,488 | Cookie Management in ExpressJS to Authenticate Users | Express.js is a widely used NodeJs framework by far and if you’re familiar with it, probably cookie... | 0 | 2023-02-26T05:00:00 | https://www.permify.co/post/cookie-management-in-expressjs-to-authenticate-users | node, javascript, tutorial, webdev |

Express.js is a widely used NodeJs framework by far and if you’re familiar with it, probably cookie management is not a very painful concept for you.

Although there are numerous use cases of the cookies; session management, personalization, etc. We will create a demo app that demonstrates a simple implementation of cookie management in your Express.js apps to authenticate users.

Before we create our demo app, let's talk a little bit about Cookies;

## So what are HTTP cookies?

HTTP cookies are small pieces of data that are sent from a web server and stored on the client side.

To set up a cookie on the client's side, the server sends a response with the Set-Cookie header.

When the client receives the response message from the server containing the Set-Cookie header, it'll set up the cookie on the client-side.

Such that each subsequent request going from the client-side will explicitly include;

- A header field called “Cookie”

- An actual header that contains the value.

- The cookie information that has been sent by the server in the response message.

Actually, this is enough for scope of our article but If you want to learn more about browser cookies, I recommend reading this [article](https://www.digitalocean.com/community/tutorials/js-what-are-cookies).

## Step 1: Setting up our demo Express app

For a kick-off, we need to create a demo express application where we can implement our cookie management. To quickly create one I’ll use the express app generator tool, express-generator.

Run the following command to create,

```js

npx express-generator express-cookie

```

For earlier Node versions, install the application generator as a global npm package and then launch it, for more check out [express documentation](https://expressjs.com/en/starter/generator.html).

All necessary starter modules and middleware that we will use should already be generated with express-generator, your project folder structure should look like below

All necessary starter modules and middleware that we will use should already be generated with express-generator, your project folder structure should look like below

## Step 2: Create Basic Authentication middleware

To demonstrate the use of cookies for authentication, we won’t need to implement a fully-fledged authentication system.

So for simplicity, I will use Basic Authentication. The very basic mechanism that will enable us to authenticate users.

### How does Basic authentication work?

When the server receives the request, the server will extract authorization information from the client's request header. And then, use that for authenticating the client before allowing access to the various operations on the server-side.

If this client request does not include the authorization information, then the server will challenge the client, they're asking for the client to submit this information with the user name and password fields.

So, every request message originating from a client should include the encoded form of the username and password in the request header that goes from the client to the server-side.

Open your app.js and add our auth middleware with the logic above as follows:

```js

. . .

function auth (req, res, next) {

//server will extract authorization information from the client's request header

var authHeader = req.headers.authorization;

if (!authHeader) {

var err = new Error('You are not authenticated!');

res.setHeader('WWW-Authenticate', 'Basic');

err.status = 401;

next(err);

return;

}

//If this client request does not include the authorization information

var auth = new Buffer.from(authHeader.split(' ')[1], 'base64').toString().split(':');

var user = auth[0];

var pass = auth[1];

//static credential values

if (user == 'admin' && pass == 'password') {

next(); // user authorized

} else {

var err = new Error('You are not authenticated!');

res.setHeader('WWW-Authenticate', 'Basic');

err.status = 401;

next(err);

}

}

app.use(auth);

. . .

```

**Note:** *You should add auth middleware on top of the routers so that the authorization middleware can be triggered correctly when a request is received.*

### Cookie-based authentication

We want only authenticated users to access various operations on the server-side. Here’s how cookie-based workflow should work;

- The first time that the user tries to access the server, we will expect the user to authorize himself/herself.

- Thereafter, we will set up the cookie on the client-side from the server.

- Subsequently, the client doesn't have to keep sending the basic authentication information. Instead, the client will need to include the cookie in the outgoing request.

## Step 3: Setting the cookie on the Client-Side

Express has a cookie property on the response object, so we do not need to implement any other library, lets send user name as cookie:

```js

// sentUserCookie creates a cookie which expires after one day

const sendUserCookie = (res) => {

// Our token expires after one day

const oneDayToSeconds = 24 * 60 * 60;

res.cookie('user', 'admin', { maxAge: oneDayToSeconds});

};

```

### Getting the Cookies on request

We will use **cookie-parser** middleware to handle cookies. If you open app.js you will notice that the cookie-parser is already included in our express application, because we generated our project with **express-generator**.

Note: If you need to explicitly install cookie-parser, the installation command is:

```js

npm install cookie-parser.

```

cookie-parser parses cookie header and attach on request, so we can access cookies with: req.cookie

Check out the [source code](https://github.com/expressjs/cookie-parser/blob/master/index.js) of the cookie-parser for more information.

## Step 4: Auth mechanism with cookies

We looked at how we can get and set cookies, let's modify our auth middleware for creating a simple authentication mechanism with cookies;

```js

function auth (req, res, next) {

//check client has user cookie

if (!req.cookie.user) {

//get authorization

var authHeader = req.headers.authorization;

if (!authHeader) {

var err = new Error('You are not authenticated!');

res.setHeader('WWW-Authenticate', 'Basic');

err.status = 401;

next(err);

return;

}

//If this client request does not include the authorization information

var auth = new Buffer.from(authHeader.split(' ')[1], 'base64').toString().split(':');

var user = auth[0];

var pass = auth[1];

if (user == 'admin' && pass == 'password') {

sendUserCookie(res)

next(); // user authorized

} else {

var err = new Error('You are not authenticated!');

res.setHeader('WWW-Authenticate', 'Basic');

err.status = 401;

next(err);

}

}

else {

//client request has cookie, check is valid

if (req.cookie.user === 'admin') {

next();

}

else {

var err = new Error('You are not authenticated!');

err.status = 401;

next(err);

}

}

}

```

## Conclusion

In a nutshell, we build a mechanism that requests some information on the browser for authentication. Afterward, we examine how to persist this auth information by using cookies to prevent the resending of auth information.

Now expanding this further, if your server wants to track information about your client, then the server may explicitly set up a session-tracking mechanism. Cookies are small, and can't store lots of information.

Now, if we want a lot more information to be tracked about a client on the server side, then express sessions enable us to do that. If you have any related questions just ping me :)

| egeaytin |

1,377,492 | Beware of Fake Job Offers: My Encounter with a Scammer | TL;DR A scammer will email a job seeker trying to set up an interview. Check the email... | 0 | 2023-02-24T03:10:05 | https://dev.to/davidchedrick/beware-of-fake-job-offers-my-encounter-with-a-scammer-nf9 | webdev, career, interview, scam | ####TL;DR

A scammer will email a job seeker trying to set up an interview. Check the email address, it will be from gmail or something similar not an official company domain, e.g. firstname.lastname.company @gmail.com. The Interview process will move very fast. The scammer will send a job offer, then send a check to set up your home office. The check is fake and will be declined. The scammer will say you need to purchase items from a preferred vendor. The scammer will say you have to use a pay app to be able to get the equipment in time to start, and as usual, the scammer will be very pushy trying to make you do things quickly.

---

Below is my personal story of talking with one of these scammers. I started talking with them because I did happen to apply to the company they were impersonating. Luckily I deal with scammers all the time and noticed red flags before giving them money.

I have talked to others that have had the same scam run on them. The scammers will change details, and company names, and sometimes they will take longer with the scam but it is the same basic setup.

---

This blog is a cautionary tale so others don’t fall victim.

Below is how the scam played out with me. The names in quotes are the actual names the scammers used while talking with me.

---

This is how it all went down:

###Monday ~10 am:

-

I receive an email from “Donald” at “Intelletec”. The email states that they have reviewed my application and want to set up a time for an interview.

Nice! Sounds good.

-

I double check my Huntr app and see I applied to Intelletec for a Software Engineer position about two weeks ago. So I set up a call with “Donald” for the following Day.

###Tuesday ~11 am:

-

I have my interview with “Donald”. Despite some initial nerves, everything seemed normal and we went into a standard conversation about my experience and their tech stack. Donald seemed seasoned and well-versed in the technologies. We talked about the company culture, and the role itself. At the end “Donald” said that he thought I could be a good fit and said the next step would be a technical test and to answer some behavior questions. “Donald,” said I would receive an email where I could set up a time.

###Tuesday ~4 pm:

-

I receive the email “Donald” spoke of, and I was able to set up my next interview for the next morning.

In this process, this was the first time I thought. “Uh weird…”, none of these companies move this fast. Usually, I have to wait a week to even hear anything about the next interview, especially to set up something the next day. But whatever, maybe this company just has their hiring practice down.

###Wednesday ~11 am:

-

I get on a call with the “Technical Team”, and [don’t remember his “name”] tells me that he is having problems with zoom and that we will just do it over the phone. He asks me to open up Replit and he would ask me Data Structure and Algorythm-style questions and I would talk my way through the problem over the phone. We did three of those questions. After the questions, he said I will shortly receive an email from HR with the behavior portion.

This was an odd experience but still relatively normal.

###Wednesday ~ 12 pm:

-

The email from “HR” comes in. It is a list of standard behavior-type questions. Ones like “Tell me about a time when you had to solve a particularly challenging problem.” and “Give an example of how you've collaborated with cross-functional teams to deliver a project.” All were reasonable questions. I have to answer the questions and email them back.

###Thursday ~ 1 pm:

-

I received an email from “HR” saying they liked all my answers and that the “Technical team” agreed. The email stated that I was in consideration for the position and they would get back to me when they reviewed all other candidates.

Okay, now things definitely seem off. This moved really fast. But still, this is my first job in tech so I don’t know what to expect.

###Friday ~ 4 pm:

-

I receive an offer letter from “Intelletec”.

That was way too quick, okay, now I am really second-guessing the validity of this offer. Plus, I only talked to two people over the phone and never even on video.

-

The email states that if I am interested in the position I should review the documents and sign and send them back by Monday afternoon.

At this point, I decided to just go along with it, and see what happens, at least I’ll have a fun story and at best I wasted a scammer's time. And who knows, maybe I am wrong and I have a job.

One thing I have to give to the scammers, the offer letter was very well done. They put time into that fake letter.

###Monday ~ 7 am

-

“Donald” doesn’t waste any time, he is texting me now. Tells me that if I am accepting the job I should send the papers back soon. He really wants me to set it up in time for the next project. I send the papers back right away.

Shortly after I receive a text from “Paul” from HR. He tells me that he needs to get me set up with my home office. He says that I have to use their equipment that would be supplied by a “preferred vendor” and that I would receive a check for $6000 to pay the vendor.

Huh? Why wouldn’t they just pay the vendor and have it shipped to me? Very strange, doesn't seem legit. I’ll ask “Paul”.

-

“Paul” tells me a story about how they used to just send equipment to employees, but too many people tried to scam them and say they never received the equipment, so now it's on the employee to order the equipment with the stipend check.

Okay, “Paul”, sure.

-

I tell “Paul” that “Donald” is in a hurry for me to get started so I should just go to Best Buy and get the equipment so I can start right away.

“Paul” tells me that I can’t. It must be through the “preferred vendor” so that they know I have the correct equipment.

###Monday ~ 11 am:

-

I get an email from “HR” with a personal check attached. The email says that the check is from a “trusted partner” of theirs. The email goes on that the check has been certified by the “trusted partner” so it clears the bank the same day.

Lol, now a “trusted partner” is involved.

-

The email continues, I must deposit the check by mobile, and I must screenshot the deposit and send it back to them so they know the process is underway.

Okay, whatever. I have fraud protection so I don’t have to pay for bounced checks. Let's do this.

-

Obviously, the check is automatically declined and flagged as fraudulent. An alert pops up on my banking app. I screenshot it and send it to “Paul”

“Paul” is “shocked” to see the check is declined. It was even certified! Paul says it must be my bank flagging it because I never cashed a check so big.

Um… did “Paul” just neg me?

-

“Paul” will have to get back to me, to see what we can do.

###Monday ~ 1 pm:

-

“Donald” is texting again. Tells me he heard from “Paul” what happened. “Donald,” says not to worry, he has seen this before. He says that the bank is just delaying the funds, but it will clear, probably in three days. But “Donald” is worried that this will delay the project. We really need to get that equipment to me.

“Donald” has an idea! The only thing that I need to get started in the first week is the computer. “Donald,” says the computer is only $2000. “Donald” wants to know if I could raise the funds by the end of the day to pay for it myself and then just keep the $2000 from the stipend check once it clears.

Wait… did “Donald” just say “can you raise the funds”? Who raises funds? This is America, I am already in crippling dept what's another $2000 on the card.

I am definitely not going to do that, but let's play along with “Donald”.

-

I tell “Donald” that I can definitely pay for the computer, I will just use my credit card. “Donald” says he doesn’t know if that would work, he will ask the “preferred vendor manager” if it is okay that I put it on my credit card.

Why wouldn’t a company take a credit card? Seems suspicious. Oh, and shouldn’t this “preferred vendor” have some sort of website I could see? How am I supposed to buy the equipment anyways? Seems like I was never meant to buy the equipment.

###Monday ~ 3 pm:

-

“Donald” is back and he says the “preferred vendor manager” can not take a credit card. Credit cards take too long to clear the funds. But he has a new idea! He can take Cash App!

OMG, did he really? At least he didn’t ask for a Visa gift card.

I knew it was a scam for a bit now, but that one was the proof. Time to go talk to the real Intelletec.

-

I tell “Donald” that I have to download the app and get back to him.

###Monday ~ 6 pm:

-

I look up the management of the real Intelletec. I reach out to Sally [not her real name] and ask if she knows that scammers are impersonating their company. She replies back pretty quickly.

Sally is aware of the scammers and thanks me for reaching out to inform them. I tell her that I have a lot of information on them: multiple emails, phone numbers, an offer letter, a check, and I saved all the conversations. I offer to give Sally whatever she needs to help take the scammers down.

When copying the emails, I looked at the full email address for the first time. “Donalds” email was Donald.Fred.Intelletec @gmail.com and “HR” was HR.Intelletec @mail.com.

Oh… no…. This should have been a huge red flag. Now I check every email address from anyone I don’t know.

###Monday ~ 9 pm:

-

“Donald” reaches back on text and said the “preferred vendor manager” is waiting to place the order, but it needs to be soon to make the deadline.

What a nice “preferred vendor manager”, willing to place my order at 9 pm.

-

I tell “Donald” that I reached out to Sally to see what she thought and she said it was best to wait for the check to clear.

Note, Sally did not say this, I am just messing with the scammers at this point.

-

“Donald” asked who Sally was.

How does he not know the head of HR?

-

I tell “Donald” Sally is the head of HR, and ask how come he doesn’t know her? I tell “Donald” he better talk to “Paul”.

This was the last communication I had with the scammers. I suppose they realized I was just wasting their time.

---

Stay aware, stay safe.

---

❤️❤️❤️

Follow me on [LinkedIn ](https://www.linkedin.com/in/davidchedrick/)for all the updates and future blog posts | davidchedrick |

1,377,544 | 人工智能对联生成 API 数据接口 | 人工智能对联生成 API 数据接口 基于百万数据训练,AI 训练与应答,多结果返回。 1. 产品功能 AI... | 0 | 2023-02-24T03:43:35 | https://dev.to/gugudata/ren-gong-zhi-neng-dui-lian-sheng-cheng-api-shu-ju-jie-kou-bb6 | 人工智能对联生成 API 数据接口

基于百万数据训练,AI 训练与应答,多结果返回。

# 1. 产品功能

- AI 基于百万历史对联数据训练应答模型;

- 机器学习持续训练学习;

- 一个上联可返回多个下联应答;

- 毫秒级响应性能;

- 数据持续更新与维护;

- 全接口支持 HTTPS(TLS v1.0 / v1.1 / v1.2 / v1.3);

- 全面兼容 Apple ATS;

- 全国多节点 CDN 部署;

- 接口极速响应,多台服务器构建 API 接口负载均衡;

- [接口调用状态与状态监控](https://www.gugudata.com/status)

# 2. API 文档

**接口详情:** [https://www.gugudata.com/api/details/coupletai](https://www.gugudata.com/api/details/coupletai)

**接口地址:** https://api.gugudata.com/text/coupletai

**返回格式:** application/json; charset=utf-8

**请求方式:** POST

**请求协议:** HTTPS

**请求示例:** https://api.gugudata.com/text/coupletai

**数据预览:** [https://www.gugudata.com/preview/coupletai](https://www.gugudata.com/preview/coupletai)

**接口测试:** [https://api.gugudata.com/text/coupletai/demo](https://api.gugudata.com/text/coupletai/demo)

# 3. 请求参数

| 参数名 | 参数类型 | 是否必须 | 默认值 | 备注 |

| :-------: | :------: | :------: | :---------: | :-----------------: |

| appkey | string | 是 | YOUR_APPKEY | 付费后获取的 APPKEY |

| firstpair | string | 是 | YOUR_VALUE | 对联上联文本 |

# 4. 返回参数

| 参数名 | 参数类型 | 备注 |

| :--------------------------: | :------: | :----------------------------------: |

| DataStatus.StatusCode | int | 接口返回状态码 |

| DataStatus.StatusDescription | string | 接口返回状态说明 |

| DataStatus.ResponseDateTime | string | 接口数据返回时间 |

| DataStatus.DataTotalCount | int | 此条件下的总数据量,一般用于分页计算 |

| DataStatus.RequestParameter | string | 请求参数,一般用于调试 |

| Data | string | 下联文本,多次请求会随机应答多个下联 |

# 5. 接口 HTTP 响应标准状态码

| 状态码 | 状态码解释 | 备注 |

| :----: | :----------: | :----------------------------------------------------------: |

| 200 | 接口正常响应 | 业务状态码参见下方 **接口自定义状态码** |

| 403 | 请求频率超限 | CDN 层通过 IP 请求频率智能判断,一般的高频请求不会触发此状态码 |

# 6. 接口响应状态码

| 状态码 | 状态码解释 | 备注 |

| :----: | :----------------------: | :----------------------------------------------------------: |

| 100 | 正常返回 | 可通过判断此状态码断言接口正常返回 |

| -1 | 请求失败 | 请求处理请求失败 |

| 501 | 参数错误 | 请检查您传递的参数个数以及参数类型是否匹配 |

| 502 | 请求频率受限 | 一般建议每秒请求不超过 100 次 |

| 503 | APPKEY 权限超限/订单到期 | 请至开发者中心检查您的 APPKEY 是否到期或是否权限超限 |

| 504 | APPKEY 错误 | 请检查传递的 APPKEY 是否为开发者中心获取到的值 |

| 505 | 请求的次数超出接口限制 | 请检查对应接口是否有请求次数限制以及您目前的接口请求剩余次数 |

| 900 | 接口内部响应错误 | 接口可用性为 99.999%,如获取到此状态码请邮件联系我们 |

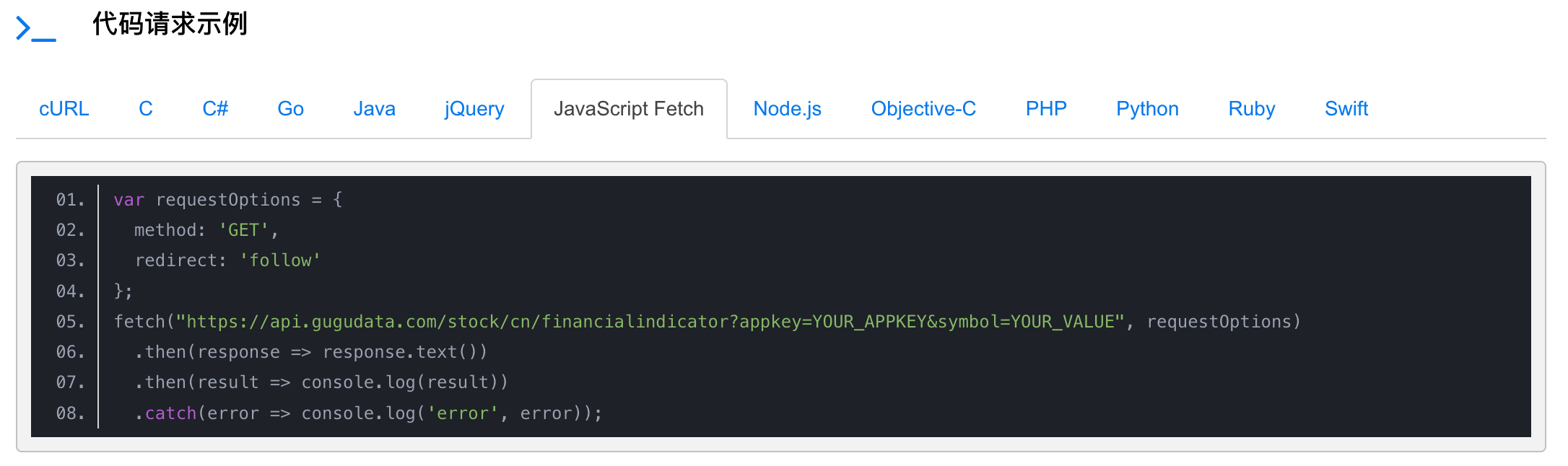

# 7. 开发语言请求示例代码

示例代码包含的开发语言:C#, Go, Java, jQuery, Node.js, Objective-C, PHP, Python, Ruby, Swift 等,其他语言进行对应的 RESTful API 请求实现即可。

# 8. 常见问题 Q&A

- Q: 数据请求有缓存吗?

A: 所有的数据都是直接返回的,部分周期性数据在更新周期内数据是缓存的。

- Q: 如何保证请求时 key 的安全性?

A: 一般建议将对我们 API 的请求放置在您应用的后端服务中发起,您应用的所有前端请求都应该指向您自己的后端服务,这样的架构也更加地纯粹且易维护。

- Q: 接口可以用于哪些开发语言?

A: 可以使用于所有可以进行网络请求的开发语言,用于您项目快速地进行数据构建。

- Q: 接口的性能可以保证吗?

A: 接口后台架构和我们给企业提供的商业项目架构一致,您可以通过访问测试接口查看接口相关返回性能与信息。

-----

咕咕数据,专业的数据提供商,提供专业全面的数据接口、商业数据分析,让数据成为您的生产原料。

咕咕数据基于我们七年来为企业客户提供的千亿级数据存储与性能优化、相关海量基础数据支撑,将合规的部分通用数据、通用功能抽象成产品级数据 API,大大满足了用户在产品开发过程中对基础数据的需求,同时降低了海量数据的存储与运维成本,以及复杂功能的技术门槛与人力开发成本。

除了我们已开放的分类数据与功能接口外,还有海量数据正在整理、清洗、整合、构建中,后期会开放更多的数据与云端功能接口 API 供用户使用。

# **目前已开放的数据接口 API**

- [[条码工具] 通用二维码生成](https://www.gugudata.com/api/details/qrcode)

- [[条码工具] Wi-Fi 无线网二维码生成](https://www.gugudata.com/api/details/wifiqrcode)

- [[条码工具] 通用条形码生成](https://www.gugudata.com/api/details/barcode)

- [[图像识别] 通用 OCR](https://www.gugudata.com/api/details/ocr)

- [[图像识别] 通用图片 OCR 到 Word](https://www.gugudata.com/api/details/ocr2word)

- [[图像识别] HTML 转 PDF](https://www.gugudata.com/api/details/html2pdf)

- [[图像识别] HTML 转 Word](https://www.gugudata.com/api/details/html2word)

- [[图像识别] Markdown 转 PDF](https://www.gugudata.com/api/details/markdown2pdf)

- [[区域/坐标] 全国大学高校基础信息](https://www.gugudata.com/api/details/college)

- [[区域/坐标] 地理坐标逆编码](https://www.gugudata.com/api/details/geodecode)

- [[区域/坐标] IP 地址定位](https://www.gugudata.com/api/details/iplocation)

- [[区域/坐标] 全国省市区街道区域信息](https://www.gugudata.com/api/details/chinaregions)

- [[区域/坐标] 地理坐标系转换](https://www.gugudata.com/api/details/coordinateconverter)

- [[元数据/字典] 历年高考省录取分数线](https://www.gugudata.com/api/details/ceeprovince)

- [[元数据/字典] 历年高考高校录取分数线](https://www.gugudata.com/api/details/ceecollegeline)

- [[元数据/字典] 历年高考专业录取分数线](https://www.gugudata.com/api/details/ceemajorline)

- [[新闻/资讯] 软件开发技术博文头条](https://www.gugudata.com/api/details/techblogs)

- [[新闻/资讯] 获取任意链接文章正文](https://www.gugudata.com/api/details/fetchcontent)

- [[新闻/资讯] 公众号头条文章](https://www.gugudata.com/api/details/wxarticle)

- [[新闻/资讯] 获取任意链接正文图片](https://www.gugudata.com/api/details/fetchcontentimages)

- [[新闻/资讯] 获取公众号文章封面](https://www.gugudata.com/api/details/wxarticlecover)

- [[新闻/资讯] 幽默笑话大全](https://www.gugudata.com/api/details/joke)

- [[短信/语音] 手机归属地查询](https://www.gugudata.com/api/details/mobileattribution)

- [[短信/语音] 国际手机号码检查纠正](https://www.gugudata.com/api/details/internationalphone)

- [[文字/文本] 中文文本分词](https://www.gugudata.com/api/details/segment)

- [[文字/文本] 中英文排版规范化](https://www.gugudata.com/api/details/formatarticle)

- [[文字/文本] 百万中国对联数据](https://www.gugudata.com/api/details/couplet)

- [[文字/文本] 国际标准书号 ISBN](https://www.gugudata.com/api/details/isbn)

- [[文字/文本] 简体繁体互转](https://www.gugudata.com/api/details/stconvert)

- [[文字/文本] 唐诗宋词大全](https://www.gugudata.com/api/details/chinesepoem)

- [[文字/文本] 关键字摘要智能提取](https://www.gugudata.com/api/details/nlpabstract)

- [[文字/文本] 文本语义相似度检测](https://www.gugudata.com/api/details/nlpsimilarity)

- [[文字/文本] NLP中文智能纠错](https://www.gugudata.com/api/details/nlpcorrect)

- [[文字/文本] 人工智能对联生成](https://www.gugudata.com/api/details/coupletai)

- [[文字/文本] NLP 语种检测](https://www.gugudata.com/api/details/nlpdetectlanguage)

- [[天气/空气质量] 全国天气预报信息](https://www.gugudata.com/api/details/weatherinfo)

- [[天气/空气质量] 全国实时空气质量指数](https://www.gugudata.com/api/details/airquality)

- [[天气/空气质量] 日出与日落时间](https://www.gugudata.com/api/details/sunriseandsunset)

- [[天气/空气质量] 农历与二十四节气](https://www.gugudata.com/api/details/lunarcalendar)

- [[网站工具] 获取任意站点标题与图标](https://www.gugudata.com/api/details/favicon)

- [[股票行情] 美股实时行情数据](https://www.gugudata.com/api/details/stockusrealtime)

- [[股票行情] 美股历史行情数据](https://www.gugudata.com/api/details/stockus)

- [[股票行情] 美股分时交易数据](https://www.gugudata.com/api/details/stockusperminute)

- [[股票行情] 美股历年基本财务数据](https://www.gugudata.com/api/details/usfundamental)

- [[股票行情] 港股实时行情数据](https://www.gugudata.com/api/details/stockhkrealtime)

- [[股票行情] 港股历史行情数据](https://www.gugudata.com/api/details/stockhk)

- [[股票行情] 港股分时交易数据](https://www.gugudata.com/api/details/stockhkperminute)

- [[股票行情] 港股上市公司公告](https://www.gugudata.com/api/details/stockhkbulletin)

- [[股票行情] 港股历年三大财务报表](https://www.gugudata.com/api/details/hkannualreport)

- [[股票行情] A 股实时行情数据](https://www.gugudata.com/api/details/stockcnrealtime)

- [[股票行情] A 股历史行情数据](https://www.gugudata.com/api/details/stockcn)

- [[股票行情] A 股分时交易数据](https://www.gugudata.com/api/details/stockcnperminute)

- [[股票行情] A 股历年三大财务报表](https://www.gugudata.com/api/details/annualreport)

- [[股票行情] 中国股票指数数据](https://www.gugudata.com/api/details/stockcnrealtimeindex)

- [[股票行情] A 股个股信息查询](https://www.gugudata.com/api/details/fundamentalinfo)

- [[股票行情] A 股历年财务指标](https://www.gugudata.com/api/details/financialindicator)

- [[股票行情] A 股指数成分数据](https://www.gugudata.com/api/details/stockcnindexcontains)

- [[股票行情] A 股指数历史数据](https://www.gugudata.com/api/details/stockcnindexhistory)

- [[股票行情] A 股盘前数据](https://www.gugudata.com/api/details/stockcnpreopen)

- [[股票行情] A 股分笔交易数据](https://www.gugudata.com/api/details/stockcnticks)

- [[股票行情] A 股交易日历](https://www.gugudata.com/api/details/stockcntradecalendar)

- [[股票行情] 期权实时行情数据](https://www.gugudata.com/api/details/stockcnoptions)

- [[股票行情] 基金基本信息列表](https://www.gugudata.com/api/details/fundinfolist)

- [[股票行情] 指数型基金基本信息](https://www.gugudata.com/api/details/fundbasicindex)

- [[股票行情] 开放式基金净值实时数据](https://www.gugudata.com/api/details/fundopennavrealtime)

- [[股票行情] 开放式基金净值历史数据](https://www.gugudata.com/api/details/fundopennavhistory)

- [[股票行情] 科创板历史行情数据](https://www.gugudata.com/api/details/stockkcb)

- [[股票行情] 美股粉单实时行情数据](https://www.gugudata.com/api/details/pinksheetsrealtime)

- [[股票行情] 分类美股实时行情数据](https://www.gugudata.com/api/details/usfamous)

- [[股票行情] 公募开放式基金实时数据](https://www.gugudata.com/api/details/fundopenrealtime)

- [[股票行情] 公募开放式基金历史数据](https://www.gugudata.com/api/details/fundopenrealtime)

- [[股票行情] 场内交易基金实时数据](https://www.gugudata.com/api/details/fundopenetfrealtime)

- [[股票行情] 场内交易基金历史数据](https://www.gugudata.com/api/details/fundopenetfhistory)

- [[股票行情] 场内交易基金分时行情](https://www.gugudata.com/api/details/fundhistoryminute)

- [[体育/比赛] 历年奥运比赛数据](https://www.gugudata.com/api/details/olympic))

| gugudata | |

1,377,667 | openGauss Parallel Page-based Redo For Ustore | Availability This feature is available since openGauss 2.1.0. ... | 0 | 2023-02-24T07:02:20 | https://dev.to/liyang0608/opengauss-parallel-page-based-redo-for-ustore-1l03 | opengauss | ## Availability

This feature is available since openGauss 2.1.0.

## Introduction

Optimized Ustore inplace update WAL write and improved the degree of parallelism for Ustore DML operation replay.

## Benefits

The WAL space used by the update operation is reduced, and the degree of parallelism for Ustore DML operation replay is improved.

## Description

Prefixes and suffixes are used to reduce the write times of WAL update. Replay threads are classified to solve the problem that most Ustore DML WALs are replayed on multiple pages. In addition, Ustore data pages are replayed based on blkno.

## Enhancements

None.

## Constraints

None.

| liyang0608 |

1,377,743 | Recoil atom effects | ..are effing cool! I've been using Recoil.js for a while now, but I've never taken the time to dive... | 0 | 2023-02-24T07:57:36 | https://dev.to/atlefren/recoil-atom-effects-3n93 | typescript, recoiljs | ..are effing cool!

I've been using Recoil.js for a while now, but I've never taken the time to dive into atom effects before recently.

Why did I do so? Because I needed a timer. Or a clock if you will. Running in recoil. And atom effects seems to do the trick. Just look here

```ts

import {AtomEffect, atomFamily} from 'recoil';

export type Millisecounds = number;

const getUnixNow = () => Math.floor(Date.now() / 1000);

const clockEffect =

(interval: Millisecounds): AtomEffect<number> =>

({setSelf, trigger}) => {

if (trigger === 'get') {

setSelf(getUnixNow());

}

const timer = setInterval(() => setSelf(getUnixNow()), interval);

return () => clearInterval(timer);

};

/**

* Atom that contains the current unix timestamp

* Updates at the provided interval

*/

export const clockState = atomFamily<number, Millisecounds>({

key: 'clockState',

default: getUnixNow(),

effects: (interval: Millisecounds) => [clockEffect(interval)],

});

```

This gives you an atomFamiliy that can be instantiated with the desired interval, and this atom automagically updates each interval, in this case returning the current unix timestamp

```ts

const time = useRecoilValue(clockState(1000)); //new clock state that updates every secound

return <div>The current Unix time is now: {time}</div>

```

Neat?

Well. But what you can do is use this as a trigger in a selector that needs to run periodically

```ts

export const pollerState = selector<SomeData[]>({

key: 'pollerState ',

get: async ({get}) => {

//add this to referesh every minute

get(clockState(60000));

return await myApi.getSomeData();

},

});

```

And this is pretty neat!

And if this doesn't get you hooked on atom effects, take a look at this, straight outta the [recoil docs](https://recoiljs.org/docs/guides/atom-effects) (Just some TypeScript added):

```ts

export const localStorageEffect =

<T>(key: string): AtomEffect<T> =>

({setSelf, onSet}) => {

const savedValue = localStorage.getItem(key);

if (savedValue != null) {

setSelf(JSON.parse(savedValue));

}

onSet((newValue, _, isReset) => {

isReset ? localStorage.removeItem(key) : localStorage.setItem(key, JSON.stringify(newValue));

});

};

const syncedState = atom<string>({

key: 'syncedState',

default: '',

effects: [localStorageEffect('local_storage_key')],

});

```

This actually syncs your atom to local storage. Sweet! | atlefren |

1,377,828 | State of Flowbite: learn more about our results from 2022 and what we plan to build this year | Disclaimer: this article was NOT generated by chatGPT 🤖 My name is Zoltán and I am one of the... | 0 | 2023-02-24T09:45:38 | https://flowbite.com/blog/state-of-flowbite-2022/ | flowbite, opensource, webdev, tailwindcss | _Disclaimer: this article was NOT generated by chatGPT 🤖_

My name is [Zoltán](https://twitter.com/zoltanszogyenyi) and I am one of the original founders and current project maintainers of the Flowbite ecosystem alongside with [Robert](https://twitter.com/roberttanislav) – for without his design skills we couldn't enjoy the beautiful UI/UX components that are now used by over 2 million projects on NPM.

I've been wanting to write a blog post that encapsulates the results of what the Flowbite Ecosystem and Community achieved last year and it's going to be pretty hard to summarize it all as this was the year with the highest growth we experienced in terms of adoption, community growth, but also challenges.

I'll try to break it up in a chronological order and focus on the different tools and libraries that we've developed as the year passed - so let's get started!

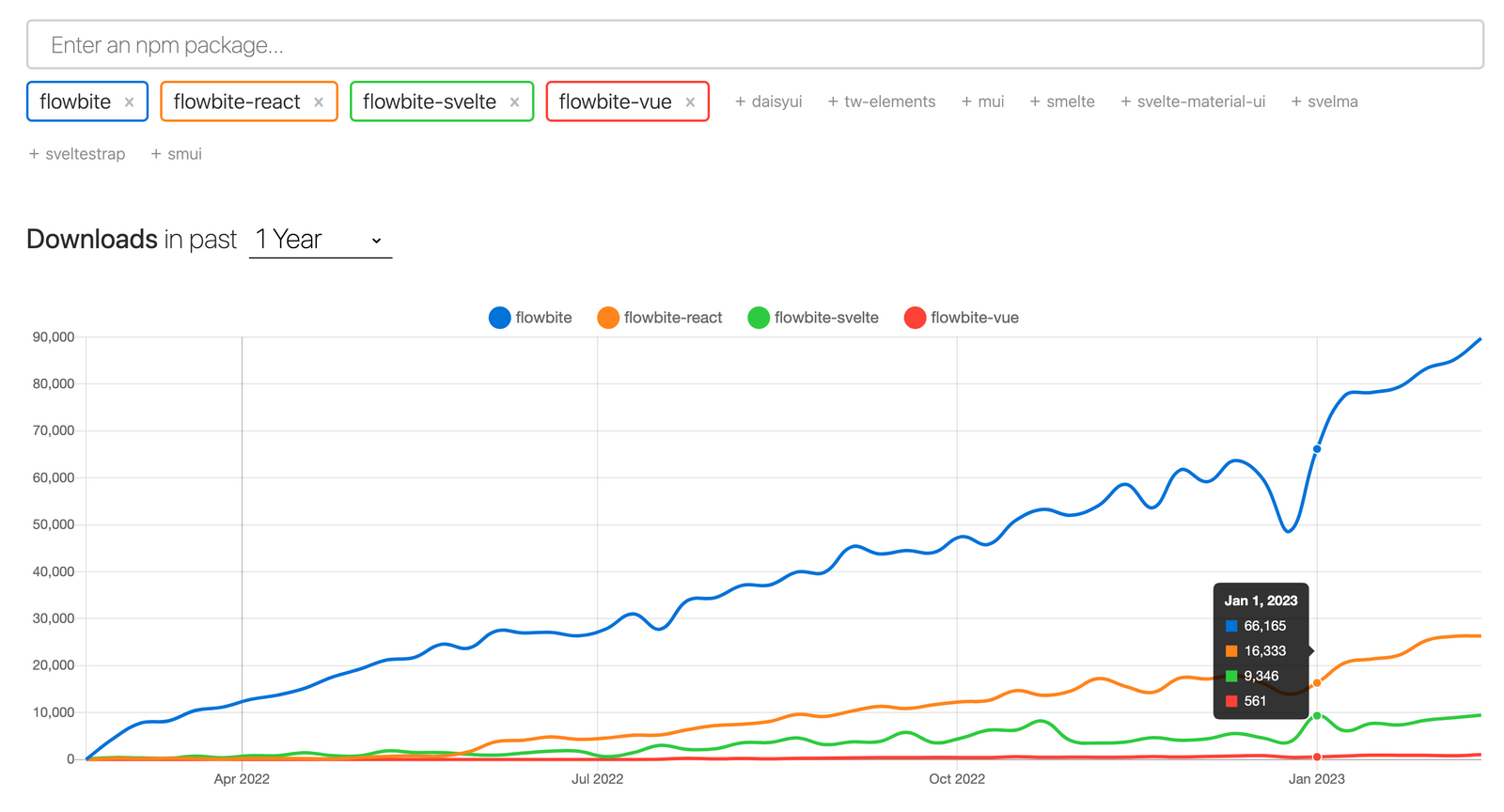

## One million downloads on NPM

One of the best metrics that you can use to measure the adoption rate of a framework is to look at the total amount of NPM downloads – we reached over [one million downloads](https://www.npmjs.com/package/flowbite) sometime in the middle of the year and the weekly download rate has only been increasing.

[](https://www.npmjs.com/package/flowbite)

At the time of the writing of this article the main Flowbite library is rocking about 94k downloads per week, while Flowbite React is standing at 26k, Flowbite Svelte 10k and Flowbite Vue slowly catching up with 1.2k.

Big shout-out to all the open-source contributors and project maintainers of the adjacent libraries based on the main Flowbite library!

Growth has been steady and adoption has been increasing at a pace of about 10-15% on a monthly basis – this gives us a decent amount of time to keep improving the library while keeping growth intact.

## Flowbite Library

The main growth factor of the Flowbite Ecosystem is the [open-source UI component library](https://github.com/themesberg/flowbite) that is based on the utility classes from Tailwind CSS – there are over 54 standalone components and 16 framework integration guides and we have big plans this year to build even more.

[](https://github.com/themesberg/flowbite)

We have provided [official TypeScript support](https://flowbite.com/docs/getting-started/typescript/) to the interactive JS API, added brand new components, provided new functionality and features, improved the documentation styles, and fixed over 194 issues as of date.

We also built countless of integration guides with other frameworks, such as [Astro](https://flowbite.com/docs/getting-started/astro/), [SolidJS](https://flowbite.com/docs/getting-started/solid-js/), [Gatsby](https://flowbite.com/docs/getting-started/gatsby/), and [Flowbite with Symfony](https://flowbite.com/docs/getting-started/symfony/) being the latest one and we have plans to build quite a few more.

The next phase for the development of the Flowbite Library will be to start building more complex components such as data grids, autocomplete input elements, custom chart components, and others.

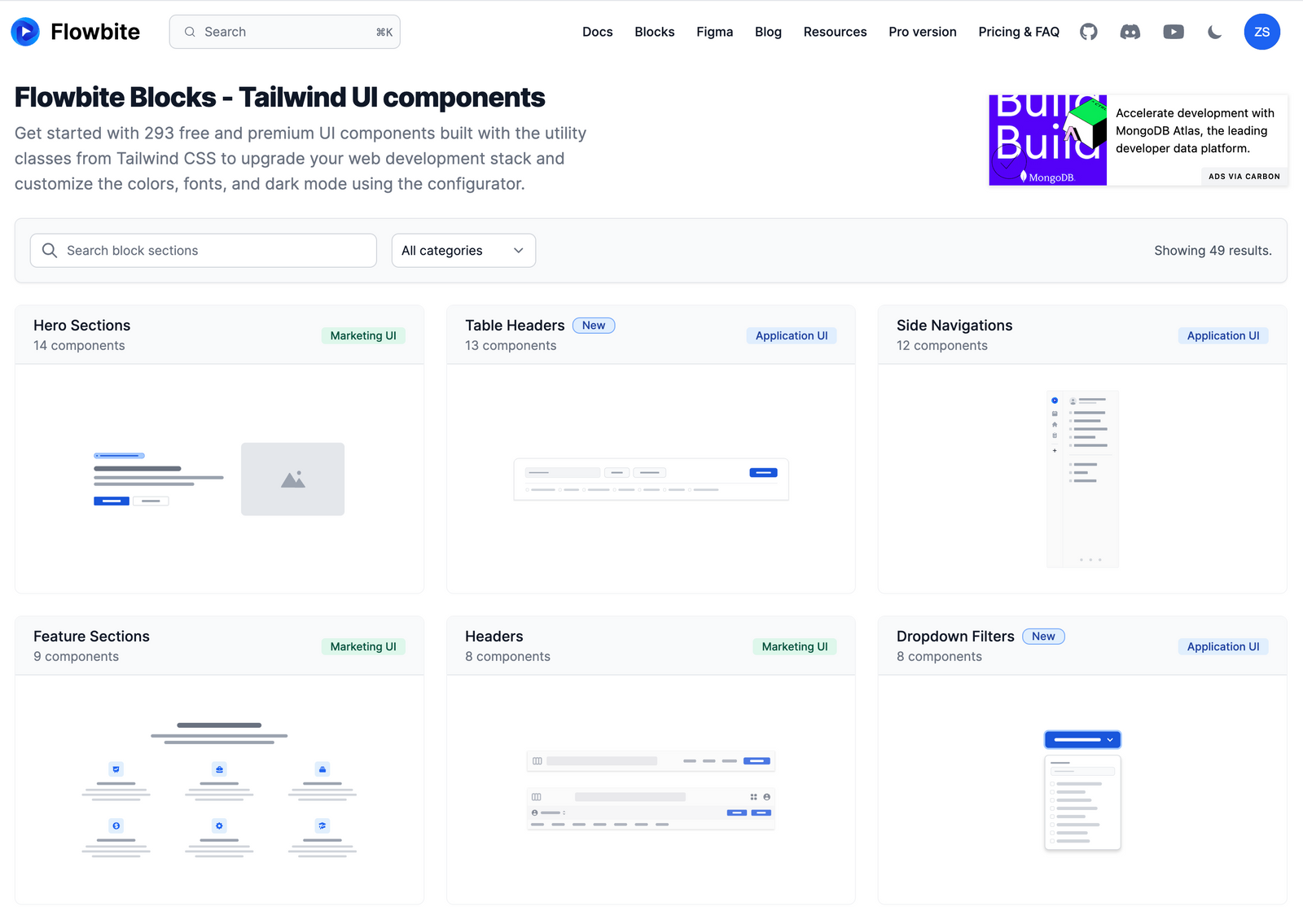

## We launched Flowbite Blocks

This is probably the most notable launch that we have made and it is a collection of modular websites sections that you can use to quickly wrap up a website by copy-pasting the source code directly from the Flowbite platform.

[](https://flowbite.com/blocks/)

Right now there are over 293 block sections that have been planned, prototyped, designed, coded, verified, and launched on the [Flowbite Blocks](https://flowbite.com/blocks/) page which include essential building components for a website such as hero sections, CRUD layouts, pricing pages, blog templates, and more.

We will keep adding new content as we design new components, sections, and pages and while we also collaborate with the open-source community to provide support for libraries and frameworks such as React, Vue, Svelte, and more.

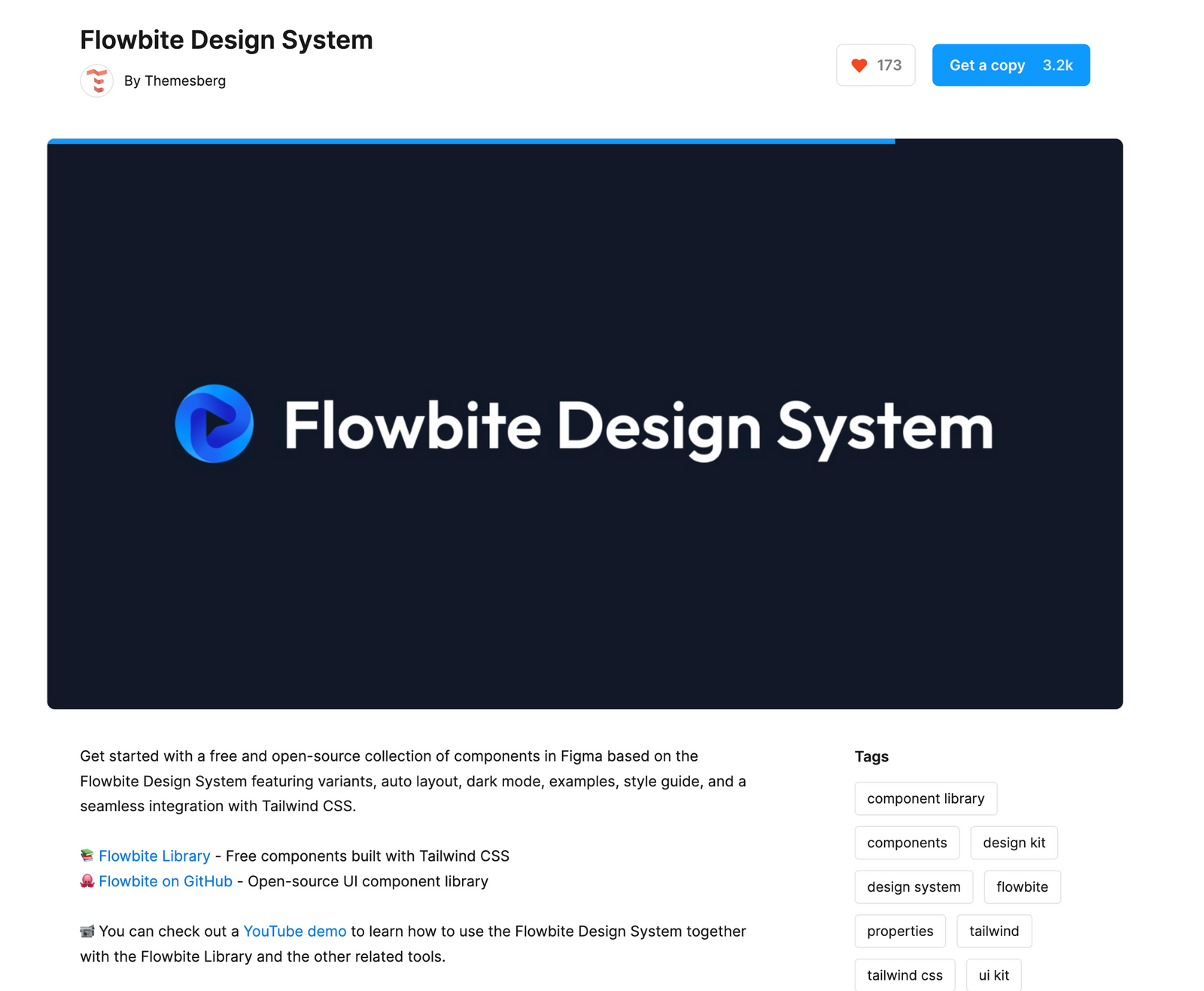

## Flowbite Figma Design System

I'm not sure if many of you know but the first instance of Flowbite was in fact a Figma file that we built for integration with Tailwind CSS – originally named [tailwind-figma.com](https://tailwind-figma.com/) we had to rename it per request from [Steve Schoger](https://twitter.com/steveschoger) from Tailwind Labs to prevent confusion – I have to thank Steve for that because I found the beautiful "Flowbite" name and domain then 😜

[](https://www.figma.com/community/file/1179442320711977498)

Long story short, last year we decided to open-source the core UI components from the Flowbite Library and it has been generally very well received – you can [duplicate the file](https://www.figma.com/community/file/1179442320711977498) on Figma Community right now.

Of course, this meant that we had to "up our game" with the [pro version's design system](https://flowbite.com/figma/) and we have provided updates all the way up to `v2.4.1` and we are super close to launching `v2.5.0` with countless new components, landing pages, Figma features, and more.

## Flowbite Admin Dashboard

Towards the end of the year we also had a big surprize to the open-source community by releasing an [admin dashboard template](https://github.com/themesberg/flowbite-admin-dashboard) that leverages not only the Flowbite design system, but also the library's interactive UI components and more.

[](https://github.com/themesberg/flowbite-admin-dashboard)

The [repository](https://github.com/themesberg/flowbite-admin-dashboard) reached over 200 stars on GitHub and we plan to release new pages based on demand and feature requests from the open-source community. We are currently improving the pro version of this dashboard and we are excited to share it in the coming months with our [Flowbite Pro](https://flowbite.com/pro/) community.

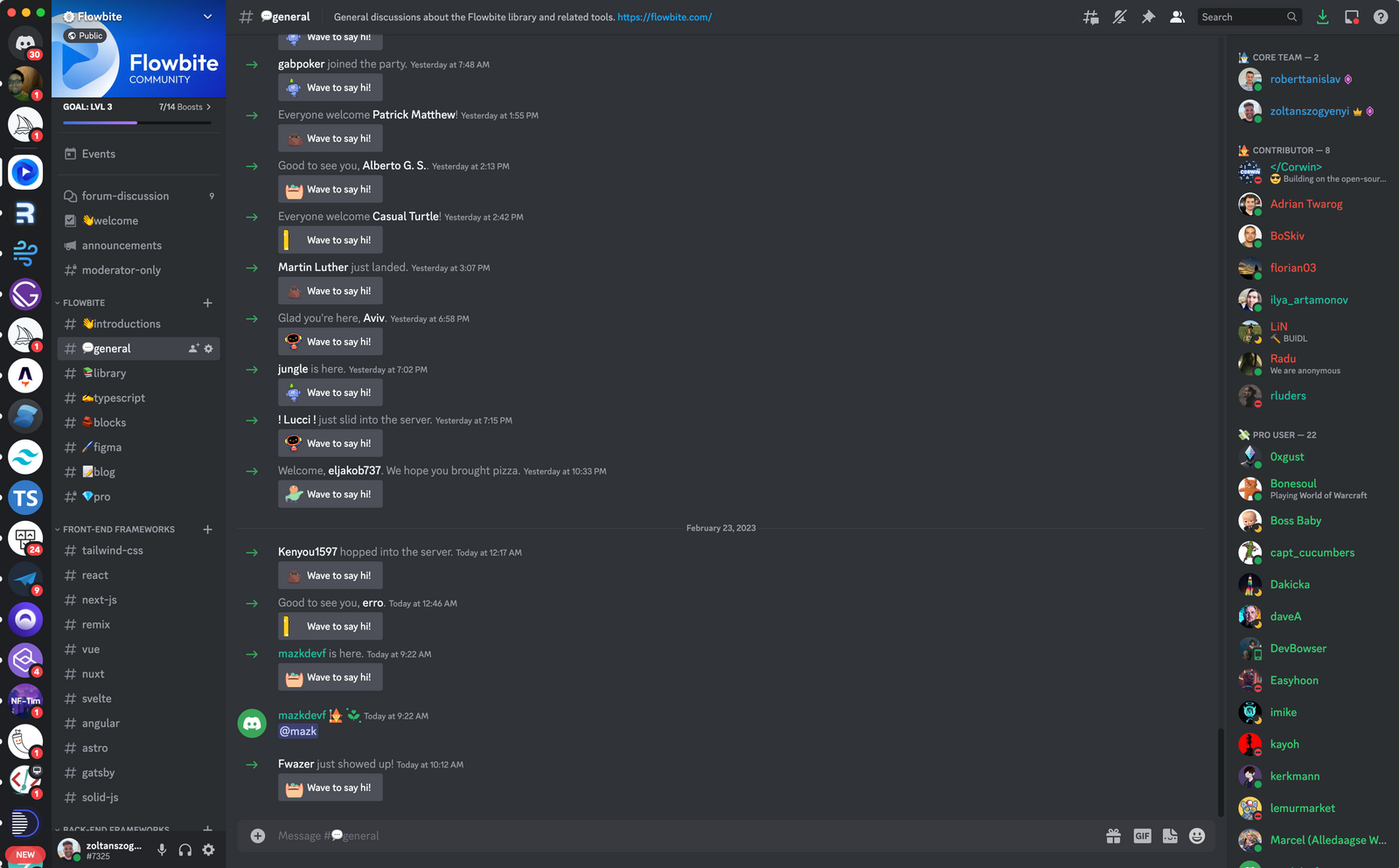

## Flowbite Community and libraries

Probably the most amazing thing about Flowbite is the awesome and flourishing open-source community that is growing around it – we have recently reached over 2,000 members on the [Flowbite Discord server](https://discord.gg/4eeurUVvTy) which is quite breathtaking.

[](https://discord.gg/4eeurUVvTy)

The open-source Flowbite libraries such as [Flowbite React](https://github.com/themesberg/flowbite-react), [Flowbite Svelte](https://github.com/themesberg/flowbite-svelte), and [Flowbite Vue](https://github.com/themesberg/flowbite-vue) have been nicely growing in terms of number of components, functionality, documentation, and also usage.

This year, we plan to collaborate even more with these libraries to increase consistency across the frameworks and provide assistance with building new components and documentations.

## What about the future?

I've been personally working over 2 years on the Flowbite ecosystem and starting from creating the first instance of the repository, pushing the first commit, building the Flowbite platform, managing the community, it's been quite a lot.

My strength is primarily being driven by the awesome community that we have built around the Flowbite concept and ecosystem and as long as we can we will make sure that our main mission will be to provide open-source UI components to all software developers and teams regardless of frameworks.

A big thank you to all open-source contributors, the core Flowbite team, our partners, and developers and designers of Flowbite – let's rock this year even more! ❤️

PS: here's a video on Twitter where I talk about this:

{% twitter 1628746566507831300 %}

| zoltanszogyenyi |

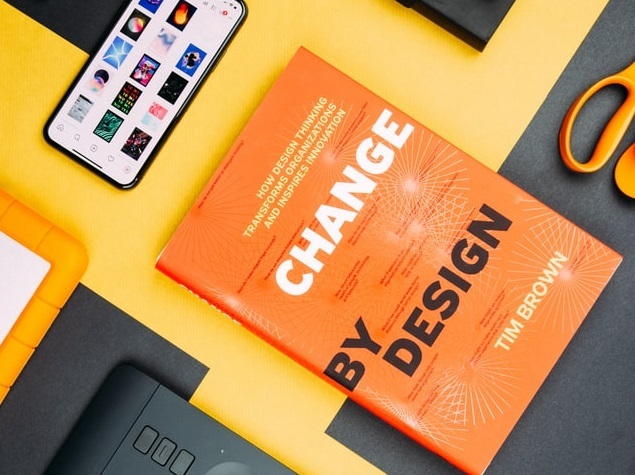

1,378,062 | Streamlining your workflow: How AI-assisted code completion can improve productivity | As software developers, we are all too familiar with the challenges of staying productive in our... | 0 | 2023-02-24T13:22:57 | https://bito.co/streamlining-your-workflow-how-ai-assisted-code-completion-can-improve-productivity/ | As software developers, we are all too familiar with the challenges of staying productive in our work. Long hours spent on manual coding and debugging can make it difficult to keep up with the demands of our job. However, there is a solution to these challenges — AI-assisted code completion.

AI-assisted code completion employs machine learning methods to suggest and finish code snippets, reducing manual coding and debugging time. Deloitte found that such solutions cut requirements review time by over 50%. ([Source: Deloitte](https://www2.deloitte.com/us/en/insights/focus/signals-for-strategists/ai-assisted-software-development.html/#endnote-14))

This blog discusses AI-assisted code completion, its benefits, and how to integrate it into your development workflow.

## I. Understanding AI-Assisted Code Completion

AI algorithms to suggest and complete code snippets based on the context of the code. This can save developers time and effort because they won’t have to type and fix bugs by hand as much. There are a few different types of AI-assisted code completion tools available, each with their own unique features and capabilities.

Real-life examples of AI-assisted code completion in action include Bito AI, DeepCode, Github Copilot, TabNine, CodeRush. According to a report by MarketsandMarkets, the AI-assisted code completion market is expected to grow at a CAGR of 21.8% during the forecast period 2020–2026. ([Source: Markets and Markets](https://www.marketsandmarkets.com/Market-Reports/conversational-ai-market-49043506.html))

One important thing to consider when comparing AI-assisted code completion tools is how well they integrate with your existing development workflow. Some tools may require additional setup or configuration, while others may be able to integrate seamlessly with your existing tools and processes.

## II. The Benefits of AI-Assisted Code Completion

**Reducing the time spent on repetitive tasks:** By using AI-assisted code completion, developers can focus on the more complex and creative aspects of their work, while the tool handles the more repetitive tasks. This can lead to faster development times and higher-quality code.

**Enhancing code quality and reducing errors:** AI-assisted code completion reduces errors by eliminating human coding and debugging. This can speed up debugging and testing and improve development.

**Improving collaboration within the development team:** According to a study by Accenture, with AI process automation, workforces can process 5X larger volumes and scale up as new business needs arise to help quickly act on new opportunities. ([Source: Accenture](https://www.accenture.com/us-en/services/applied-intelligence/solutions-ai-processing))

**Enabling faster debugging and testing:** AI-assisted code completion reduces errors by eliminating human coding and debugging. This can speed up debugging and testing and improve development.

## III. Implementing AI-Assisted Code Completion

Implementing AI-assisted code completion in your development workflow is relatively straightforward. There are many tools and platforms available, such as DeepCode, Kite, TabNine, CodeRush, IntelliCode that you can use.

When choosing a tool, it is important to consider how well it integrates with your existing development workflow. Some tools may require additional setup or configuration, while others may be able to integrate seamlessly with your existing tools and processes.

Once you have chosen a tool, the next step is to integrate it into your development workflow. This may involve installing the tool and configuring it to work with your existing tools and processes.

## Conclusion

In conclusion, AI-assisted code completion is a powerful technology that can help to streamline your development workflow and improve your productivity as a software developer. By using AI-assisted code completion, developers. | ananddas | |

1,378,368 | 9 Must-Have SaaS Tools for Startups to Boost Growth | 9 Must-Have SaaS Tools for... | 0 | 2023-02-24T17:38:55 | https://dev.to/seeratawan01/9-must-have-saas-tools-for-startups-to-boost-growth-2b07 | startup, saas, tooling, productivity | {% embed https://www.seeratawan.me/9-must-have-saas-tools-for-startups/ %} | seeratawan01 |

1,378,377 | A detailed guide on how to implement Server-side Rendering (SSR) in a NextJs Application | Introduction Server-side Rendering (SSR) is becoming increasingly important in web... | 0 | 2023-03-29T10:28:40 | https://dev.to/onlyoneerin/a-detailed-guide-on-how-to-implement-server-side-rendering-ssr-in-a-nextjs-application-1mpp | nextjs, webdev, react, javascript | ## Introduction

Server-side Rendering (SSR) is becoming increasingly important in web development due to its ability to improve website performance and user experience. Unlike Client-side Rendering (CSR), where the website's content is generated on the user's device, server-side rendering generates the HTML on the server and sends it to the client. This method can improve website load time, search engine optimization, and accessibility.

[Next.Js](https://nextjs.org/) is a popular framework for building React applications, and it offers built-in support for server-side rendering. With Next.js, we can easily set up our application to generate HTML on the server and deliver it to the client, providing a seamless user experience and optimized website performance. In this detailed guide, we will build a cryptocurrency web app to show how to implement SSR in a Next.js application. We will also cover the basic concepts behind server-side rendering and walk through the steps required to set up SSR in our Next.js application. By the end of this article, you will have a solid understanding of improving your website's performance and SEO by implementing SSR in your Next.js application.

<p> </p>

## Pre-rendering: A built-in Feature in Next.js

Regarding page rendering with Next.js, pre-rendering is a fundamental component. It is a key feature of Next.js, which means that static HTML content is generated in advance rather than dynamically on each request.

When comparing the page source of a traditional [React.js](https://react.dev/) web app and a Next.js web app, it is clear that the Javascript code is loaded before the contents are rendered to the user, which is a bad user experience. However, when inspecting the contents of a Next.js page source, the HTML is already generated with all the necessary data, making Next.js the most efficient method for improved web performance and user experience.

Next.js gives us the option of selecting one of two pre-rendering modes:

1. SSG (Static Side Generation): This is Next.js's default pre-rendering mode, in which HTML pages are generated at build time and served to the user as static files. This approach is appropriate for websites with static content because it reduces server load and provides the fastest possible performance.

2. Server-side rendering (SSR): SSR, on the other hand, generates HTML pages on the server whenever a user requests them. This approach is useful for websites with frequently changing content or that require dynamic data because it can provide a more responsive user experience while ensuring the content is always up to date.

## Understanding the Server-Side Rendering Process

Server-side rendering is fast becoming a popular technique widely used in web development that involves rendering a web page on the server before sending it to the client, unlike client-side rendering, where the page is rendered in the browser first after the server has sent the necessary HTML, CSS, and JavaScript bundled files.

To fully understand the server-side rendering process, it is important to know the key players involved. It includes the server and the client.

The server is responsible for handling all incoming requests made from the client side and sending the appropriate response. In the context of SSR, this involves rendering the requested web page on the server and sending the resulting HTML, CSS, and JavaScript to the client.

The client is the web browser through which a user accesses the web application. In SSR, the client gets the rendered HTML, CSS, and JavaScript from the server and displays the contents on the web page.

Now that we've identified the two major players in server-side rendering, let's look at the actual thought process behind it.

The client requests the server for a specific web page as the first step in the server-side rendering process.

The server will receive the request and determine which page the client is looking for. The server will then render the requested page on the server, which includes generating the page's HTML, CSS, and JavaScript and compiling them into a complete web page.

After rendering the web page on the server, the server will send the resulting HTML, CSS, and JavaScript to the client. The client will then use these files to show the user the web page.

<p> </p>

## Implementing SSR with Data Fetching in Next.js

Data fetching is an essential part of developing any web application. Next.Js provides several methods for retrieving data, including server-side rendering, static site generation, client-side rendering, incremental static regeneration, and dynamic routing. However, for this article, we will only look at server-side generation. You can learn about the other types by reading the [Next.js documentation](https://nextjs.org/docs/getting-started).

<p> </p>

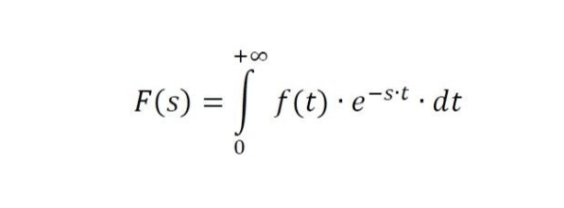

## getServerSideProps: a built-in Function for Data Fetching in Next.Js

Next.js includes a built-in function called getServerSideProps that allows us to fetch data from the server with each request. To use server-side rendering on a page, we must export getServerSideProps, and the server will call this function on every request.

**getServerSideProps Syntax**

```javaScript

export default function Page( {data} ){

return <>YOU CAN DISPLAY YOUR DATA ACCORDINGLY</>

}

export async function getServerSideProps() {

// Your code

const data = .... ;

// Passing data to the page using props

return {

props : {data}

}

}

```

In place of `data` we can use a different variable name. We can also pass multiple props by using commas "," to separate them.

<p> </p>

### <u>Key Notes about getServerSideProps</u>

1. `getServerSideProps` only runs on the server and never on the client.

2. It runs at the request time, and the web page is pre-rendered with the returned props specified inside.

3. `getServerSideProps` can only be exported from a page. You cannot export it from non-page files. It will not work if you make `getServerSideProps` a page component property.

4. `getServerSideProps` should only render a page whose data must be fetched at the requested time. If the data does not need to be rendered during the request, consider fetching it on the client side or using static side generation.

5. The `getServerSideProps` function must return an object containing the data that will be passed to the page component as props. If the function does not have a return statement, it cannot pass data to the page component.

<p> </p>

## Fetching data using getServerSideProps

<p> </p>

**Step 1: Setting up a Next.Js Application**

Now that we understand the server-side rendering process, we can go ahead and make requests to the server and fetch data from it.

Next.Js includes server-side rendering support; with this framework, we can easily make server requests and pre-render our web content without writing complex coding functions or methods.