id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,416,590 | Linked Lists (implementation using Python) and it’s applications | Let's talk about Lists first In computer programming Lists are a type of data structure through... | 0 | 2023-03-27T14:58:01 | https://dev.to/kainatraisa21/linked-lists-implementation-using-python-and-its-applications-15pc | **Let's talk about Lists first**

In computer programming Lists are a type of data structure through which we store data. List data structures are similar to the "real life list" concept. The way all of the elements of a real life list comes one after another list data structures also store the data contiguously in the memory. We can access, modify as well as delete the elements of a list data structure.

(The theoretical part)

**What are Linked Lists**

Linked Lists are the data structures which store data linearly (like a list) but they don’t store the elements contiguously in the memory. Each element of a Linked List is called a Node. The links among the nodes is a unique feature of a Linked List. Based on the connections there are 4 types of Linked Lists. The 4 types of Linked Lists are: Singly Linked List, Circular Singly Linked List, Doubly Linked List and Circular Doubly Linked List.

Enough of the bookish definition of Linked Lists now we'll learn about Linked Lists in our own words.

Every linked list has atleast concepts/parts. A head node/value, a tail node/value, next and pervious node's reference.

🔶 Singly Linked List:

Let's assume the Singly Linked list as a train. The way the train compartments are connected to their next compartments with a link, the nodes of a Singly Linked List are linked to their next nodes. Every element of a Linked List is called a node. The very first node of the Linked List is called the Head. The last node is called the tail(the node after which no newnode will exist is the last node). As we know that Linked Lists don’t store data sequentially so index 1 stores the tail node(this is the 2nd node we create). A node stores the memory address of the next node. We can access the next node of a linked list using the node.

Real life application: Back buttons(for example:- the smartphone back buttons are created using Singly Linked List so they allow us to go only in a single direction).

🔶 Circular Singly Linked List:

The only structural difference between Singly Linked List and Circular Singly Linked List is that the Circular Singly Linked List has a link between the Head and Tail node. Which means the Tail node stores the memory value of the Head node.

Real life application: The Circular Singly Linked List is used in our personal computers. When multiple applications are run on a PC all of the running apps are stored in a CSLL.

🔶 Doubly Linked List:

The nodes of a Doubly Linked List store the memory locations of both the previous and next node. Which means we can access both the previous and next node using the current node. Otherwise all of the features of DLL are similar to SLL.

Real life applications: Web browser cache, music player etc.

🔶 Circular Doubly Linked list:

The only structural difference between Circular Singly Linked List and Circular Doubly Linked List is that in CDLL the Head and Tail nodes are connected with eachother both ways so the tail's next node will be the head and the head's previous node will be the tail node.

Real life applications: Shopping cart on online websites etc.

(The implementation part will be added here soon)

| kainatraisa21 | |

1,416,620 | Exploring a Twitter Network With Memgraph in a Jupyter Notebook | Through this short tutorial, you will learn how to install Memgraph, connect to it from a Jupyter... | 0 | 2023-03-27T14:38:55 | https://memgraph.com/blog/jupyter-notebook-twitter-network-analysis | python, algorithms, twitter, memgraph | Through this short tutorial, you will learn how to install Memgraph, connect to

it from a Jupyter Notebook and perform data analysis using graph algorithms. You

can find the original Jupyter Notebook in our open-source [GitHub

repository](https://github.com/memgraph/jupyter-memgraph-tutorials/tree/main/twitter_network_analysis).

If at any point you experience problems with this tutorial or something is

unclear to you, reach out on our [Discord server](https://discord.gg/memgraph).

The dataset from this tutorial is also available in the form of a [Playground

sandbox](https://playground.memgraph.com/sandbox/twitter-christmas-retweets)

which you can query from your browser.

## 1. Prerequisites

For this tutorial, you will need to install:

- **[Jupyter](https://jupyter.org/install)**: Jupyter is necessary to run the

notebook available here.

- **[Docker](https://docs.docker.com/get-docker/)**: Docker is used because

Memgraph is a native Linux application and cannot be installed on Windows and

macOS.

- **[GQLAlchemy](https://pypi.org/project/gqlalchemy/)**: A Python OGM (Object

Graph Mapper) that connects to Memgraph.

- **[Pandas](https://pypi.org/project/pandas/)**: A popular data science

library.

## 2. Installation using Docker

After you install Docker, you can set up Memgraph by running:

```

docker run -it -p 7687:7687 -p 3000:3000 memgraph/memgraph-platform

```

This command will start the download and after it finishes, run the Memgraph

container.

## 3. Connecting to Memgraph with GQLAlchemy

We will be using the **GQLAlchemy** object graph mapper (OGM) to connect to

Memgraph and execute **Cypher** queries easily. GQLAlchemy also serves as a

Python driver/client for Memgraph. You can install it using:

```

pip install gqlalchemy

```

> **Hint**: You may need to install [CMake](https://cmake.org/download/) before

> installing GQLAlchemy.

Maybe you got confused when I mentioned Cypher. You can think of Cypher as SQL

for graph databases. It contains many of the same language constructs like

`CREATE`, `UPDATE`, `DELETE`... and it's used to query the database.

```python

from gqlalchemy import Memgraph

memgraph = Memgraph("127.0.0.1", 7687)

```

Let's make sure that Memgraph is empty before we start with anything else.

```python

memgraph.drop_database()

```

Now let's see if the database is empty:

```python

results = memgraph.execute_and_fetch(

"""

MATCH (n) RETURN count(n) AS number_of_nodes ;

"""

)

print(next(results))

```

Output:

{'number_of_nodes': 0}

## 4. Define a graph schema

We are going to create Python classes that will represent our graph schema. This

way, all the objects that are returned from Memgraph will be of the correct type

if the class definition can be found.

```python

from typing import Optional

from gqlalchemy import Field, Node, Relationship

class User(Node):

username: str = Field(index=True, unique=True, db=memgraph)

class Retweeted(Relationship, type="RETWEETED"):

pass

```

## 5. Creating and returning nodes

We are going to create `User` nodes, save them to the database and return them

to our program:

```python

user1 = User(username="ivan_g_despot")

user2 = User(username="supe_katarina")

user1.save(memgraph)

user2.save(memgraph)

print(user1)

print(user2)

```

Output:

<User id=1874 labels={'User'} properties={'username': 'ivan_g_despot'}>

<User id=1875 labels={'User'} properties={'username': 'supe_katarina'}>

Now, let's try to create a node using the Cypher query language. We are going to

create a node with an existing username just to check if the existence

constraint on the property `username` is set correctly.

```python

try:

results = memgraph.execute(

"""

CREATE (:User {username: "supe_katarina"});

"""

)

except Exception:

print("Error: A user with the username supe_katarina is already in the database.")

```

Output:

Error: A user with the username supe_katarina is already in the database.

## 6. Creating and returning relationships

We are going to create a `Retweeted` relationship, save it to the database and

return it to our program:

```python

retweeted = Retweeted(_start_node_id=user1._id, _end_node_id=user2._id)

retweeted.save(memgraph)

print(retweeted)

```

Output:

<Retweeted id=1670 start_node_id=1874 end_node_id=1875 nodes=(1874, 1875) type=RETWEETED properties={}>

## 7. Importing data from CSV files

You will need to download [this file](https://github.com/memgraph/jupyter-memgraph-tutorials/blob/main/twitter_network_analysis/scraped_tweets.csv) which contains a simple dataset of

scraped tweets. To import it into Memgraph, we will first need to copy it to the

Docker container where Memgraph is running. Find the `CONTAINER_ID` by running:

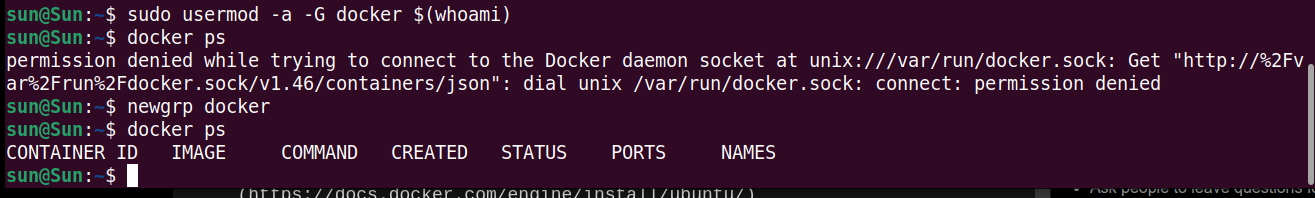

```

docker ps

```

Copy the file with the following command (don't forget to replace

`CONTAINER_ID`):

```

docker cp scraped_tweets.csv CONTAINER_ID:scraped_tweets.csv

```

We are going to see what our CSV file looks like with the help of the pandas

library. To install it, run:

```

pip install pandas

```

Now let's see what the CSV file looks like:

```python

import pandas as pd

data = pd.read_csv("scraped_tweets.csv")

data.head()

```

<div>

<style scoped>

.dataframe tbody tr th:only-of-type {

vertical-align: middle;

}

.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

Output:

</style>

<table border="1" class="dataframe">

<thead>

<tr style="text-align: right;">

<th></th>

<th>source_username</th>

<th>target_username</th>

</tr>

</thead>

<tbody>

<tr>

<th>0</th>

<td>CapeCodGiftShop</td>

<td>RetroCEO</td>

</tr>

<tr>

<th>1</th>

<td>CodeAttBot</td>

<td>LeeHillerLondon</td>

</tr>

<tr>

<th>2</th>

<td>BattlegroundHs</td>

<td>getwhalinvest</td>

</tr>

<tr>

<th>3</th>

<td>botpokemongofr1</td>

<td>TrevorAllenPKMN</td>

</tr>

<tr>

<th>4</th>

<td>AnyaSha13331181</td>

<td>WORLDMUSICAWARD</td>

</tr>

</tbody>

</table>

</div>

<br>

Now, we can execute the Cypher command `LOAD CSV`, which is used for loading

data from CSV files:

```python

memgraph.execute(

"""

LOAD CSV FROM "/scraped_tweets.csv" WITH HEADER AS row

MERGE (u1:User {username: row.source_username})

MERGE (u2:User {username: row.target_username})

MERGE (u1)-[:RETWEETED]->(u2);

"""

)

```

You can think of the `LOAD CSV` clause as a loop that will go over every row in

the CSV file and execute the specified Cypher commands.

## 8. Querying the database and retrieving results

Let's make sure that our data was imported correctly by retrieving it:

```python

results = memgraph.execute_and_fetch(

"""

MATCH (u:User)

RETURN u

ORDER BY u.username DESC

LIMIT 10;

"""

)

results = list(results)

for result in results:

print(result["u"])

```

Output:

<User id=3692 labels={'User'} properties={'username': 'zziru67'}>

<User id=3240 labels={'User'} properties={'username': 'zippydjh'}>

<User id=3725 labels={'User'} properties={'username': 'zee_row_ex'}>

<User id=3591 labels={'User'} properties={'username': 'yvonneqqm'}>

<User id=3212 labels={'User'} properties={'username': 'yujulia999'}>

<User id=2378 labels={'User'} properties={'username': 'yudhapati88'}>

<User id=2655 labels={'User'} properties={'username': 'yu100_kun'}>

<User id=2302 labels={'User'} properties={'username': 'youth_tree'}>

<User id=2432 labels={'User'} properties={'username': 'yourkpopsoul'}>

<User id=2132 labels={'User'} properties={'username': 'your_harrogate'}>

We can also check the type of the retrieved records:

```python

u = results[0]["u"]

print("User: ", u.username)

print("Type: ", type(u))

```

Output:

User: zziru67

Type: <class '__main__.User'>

Let's try to execute the same query with the GQLAlchemy query builder:

```python

from gqlalchemy import match

results_from_qb = (

match()

.node(labels="User", variable="u")

.return_()

.order_by("u.username DESC")

.limit(10)

.execute()

)

results_from_qb = list(results_from_qb)

for result in results_from_qb:

print(result["u"])

```

Output:

<User id=3692 labels={'User'} properties={'username': 'zziru67'}>

<User id=3240 labels={'User'} properties={'username': 'zippydjh'}>

<User id=3725 labels={'User'} properties={'username': 'zee_row_ex'}>

<User id=3591 labels={'User'} properties={'username': 'yvonneqqm'}>

<User id=3212 labels={'User'} properties={'username': 'yujulia999'}>

<User id=2378 labels={'User'} properties={'username': 'yudhapati88'}>

<User id=2655 labels={'User'} properties={'username': 'yu100_kun'}>

<User id=2302 labels={'User'} properties={'username': 'youth_tree'}>

<User id=2432 labels={'User'} properties={'username': 'yourkpopsoul'}>

<User id=2132 labels={'User'} properties={'username': 'your_harrogate'}>

## 9. Calculating PageRank

Now, let's do something clever with our graph. For example, calculating PageRank

for each node and then adding a `rank` property that stores the PageRank value

to each node:

```python

results = memgraph.execute_and_fetch(

"""

CALL pagerank.get()

YIELD node, rank

SET node.rank = rank

RETURN node, rank

ORDER BY rank DESC

LIMIT 10;

"""

)

for result in results:

print("The PageRank of node ", result["node"].username, ": ", result["rank"])

```

Output:

The PageRank of node WORLDMUSICAWARD : 0.13278838151391434

The PageRank of node Kidzcoolit : 0.018924764871246207

The PageRank of node HuobiGlobal : 0.011314994833838172

The PageRank of node ChloeLe39602964 : 0.010011755296388128

The PageRank of node getwhalinvest : 0.007228675936490175

The PageRank of node Cooper_Lechat : 0.005577971882231625

The PageRank of node Phemex_official : 0.005413803151353543

The PageRank of node HamleysOfficial : 0.005325936307836382

The PageRank of node bmstores : 0.00524546649693655

The PageRank of node TheStourbridge : 0.004422198431576731

Visit the [Memgraph MAGE](https://github.com/memgraph/mage) graph library (and

throw us a star ⭐) and take a look at all of the graph algorithms that have been

implemented. You can also implement and submit your own algorithms and utility

procedures.

## 10. Visualizing the graph in Memgraph Lab

Open Memgraph Lab in your browser on the address

[localhost:3000](http://localhost:3000). Execute the following Cypher query:

```cypher

MATCH (n)-[r]-(m)

RETURN n, r, m

LIMIT 100;

```

Now apply the following graph style to make your graph look more descriptive:

```

@NodeStyle {

size: Sqrt(Mul(Div(Property(node, "rank"), 1), 200000))

border-width: 1

border-color: #000000

shadow-color: #1D9BF0

shadow-size: 10

image-url: "https://i.imgur.com/UV7Nl0i.png"

}

@NodeStyle Greater(Size(Labels(node)), 0) {

label: Format(":{}", Join(Labels(node), " :"))

}

@NodeStyle HasLabel(node, "User") {

color: #1D9BF0

color-hover: Darker(#dd2222)

color-selected: #dd2222

}

@NodeStyle HasProperty(node, "username") {

label: AsText(Property(node, "username"))

}

@EdgeStyle {

width: 1

}

```

<center>Image 1. The radius of nodes is proportional to their PageRank value</center>

<br>

## What's next?

Now it's time for you to use Memgraph on a graph problem!

You can always check out [Memgraph Playground](https://playground.memgraph.com/)

for some cool use cases and examples. If you have any questions, or want to

share your work with the rest of the community, join our **[Discord

Server](https://discord.gg/memgraph)**.

[](https://memgraph.com/blog?topics=Python&utm_source=devto&utm_medium=referral&utm_campaign=blog_repost&utm_content=banner#list) | memgraphdb |

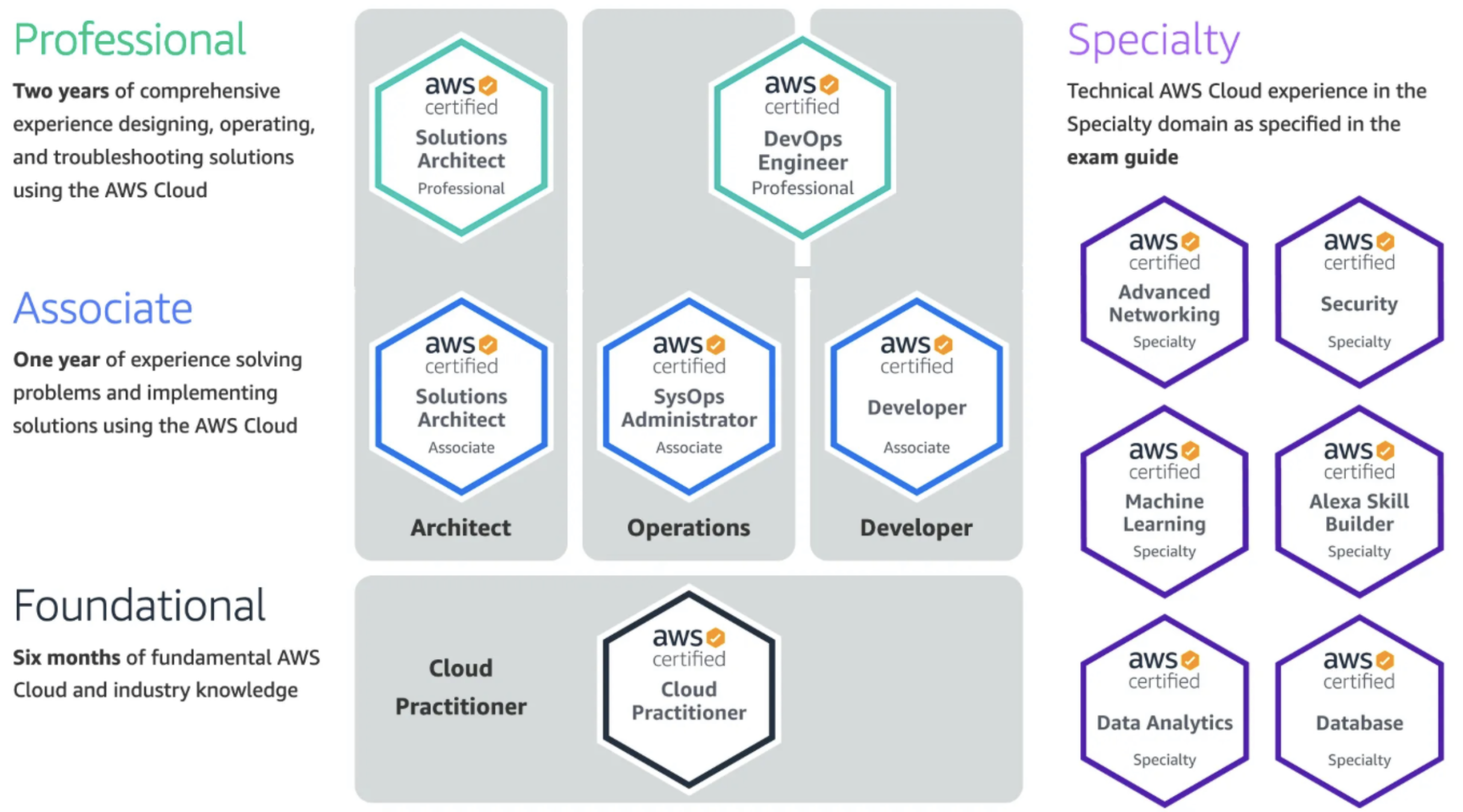

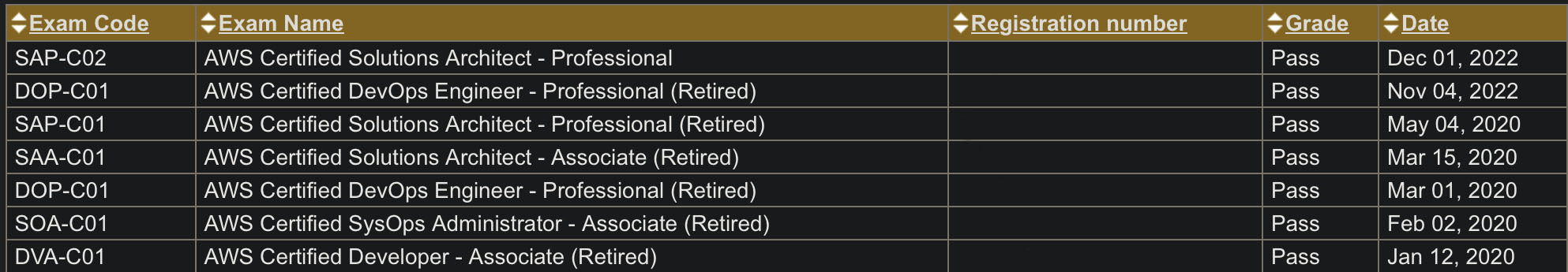

1,416,631 | Cloud Bootcamp 2023 | Excited to start new journey in the cloud. Just started an 2 weeks bootcamp by cloud community, I'm... | 0 | 2023-03-27T15:08:32 | https://dev.to/akk_dev/cloud-bootcamp-2023-5gj2 | cloud, github, kubernetes, azure | Excited to start new journey in the cloud. Just started an 2 weeks bootcamp by [cloud community](https://www.linkedin.com/company/thecloudfolk/), I'm excited to learn new things. They have been organized in three tracks like AWS, Azure, GCP and provide an mentors for each of this...

| akk_dev |

1,416,638 | Design: Monolitos Modulares - Parte 1 | Design: Monolitos Modulares Olá! Este é mais um post da sessão Design e, desta vez,... | 0 | 2023-03-28T11:53:19 | https://dev.to/wsantosdev/design-monolitos-modulares-parte-1-3fli | braziliandevs, designpatterns, architecture, programming | # Design: Monolitos Modulares

Olá!

Este é mais um post da sessão **Design** e, desta vez, falaremos sobre um tema que, volta e meia, reaparece: monolitos.

Entretanto, a intenção deste post é expor uma forma diferente de lidar com este estilo arquitetural: monolitos modulares.

Esta abordagem ajuda a manter o monolito organizado, coeso e menos acoplado e, por isso, é ótima para evitar a chamada "big ball of mud" (grande bola de lama), que se caracteriza por um código com muitas dependências entre as funcionalidades, circulares inclusive, o que torna mais difícil manter e evoluir uma dada aplicação.

Vamos lá!

## O Monolito

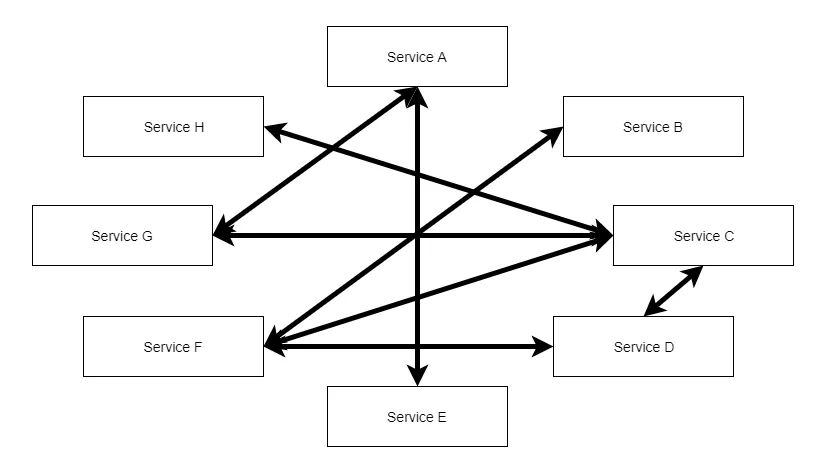

Durante a febre dos microsserviços, o monolito passou a ser tratado como obsoleto. E muito desse tratamento veio da falta de compreensão sobre quais cenários podem se beneficiar deste modelo, tornando muitas aplicações desnecessariamente complexas quando poderiam ser mais simples. Infelizmente, como efeito colateral deste tratamento, acabamos por ver a repetição da "big ball of mud" neste estilo, o que chamamos de "monolito distribuído", uma vez que os mesmos erros de gestão de dependências que ocorriam nos monolitos, afetaram também os processos distribuídos.

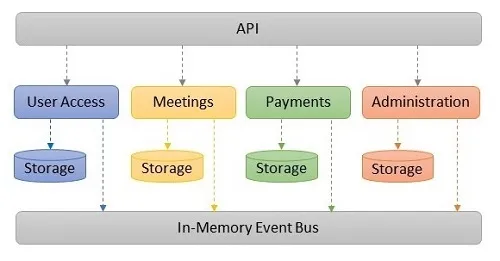

A imagem abaixo ilustra este cenário:

> **Nota:** Algo importante, a esta altura, é entender que, além de repetir a "big ball of mud", há um outro efeito colateral: aumento da complexidade, que leva a um aumento direto do custo do software. Este aumento de complexidade se dá pela preocupação com falhas na rede, mensageria, aglutinação de logs entre outras. Ou seja, uma escolha inadequada por um estilo arquitetural pode levar o negócio a ter maiores despesas com o software, aumentando seu custo operacional.

Vejamos, então, quando o monolito faz sentido para, em seguida, entendermos como a ideia de módulos se ajusta a ele.

### Lei de Conway

A [Lei de Conway](https://www.melconway.com/Home/Committees_Paper.html) (em inglês) nos diz que, em organizações onde se desenvolve software, este tende a replicar a estrutura de comunicação dessas organizações.

Isso, no que tange à escolha de um estilo arquitetural, significa dizer que não faz sentido utilizar microsserviços quando uma dada aplicação não é mantida por múltiplos times, onde cada um é responsável por um dado conjunto de funcionalidades e, mais importante, onde todos precisem realizar deploys independentemente.

Este último critério, por sinal, é a régua do polegar para a escolha de microsserviços em detrimento do monolito. Se não há a necessidade de deploys independentes, não há razão para que se escolha microsserviços e, neste caso, monolito se torna o estilo de primeira escolha.

## A ideia de modularidade

Modularidade, em software, significa criar conjuntos de funcionalidades agrupadas por coerência, e separadas do programa principal, aquele que serve de ponto de entrada.

Para os desenvolvedores dotnet e Java, por exemplo, isso seria equilavente a ter bibliotecas/pacotes, que vou chamar de componentes para generalizar, que representam as funcionalidades, separadas do programa que executa o método `Main`.

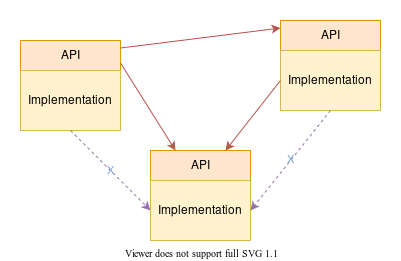

A partir desta separação, e do agrupamento entre as funcionalidades, seria definida uma API (não confundir com Web API) que serviria de ponto de entrada a este grupo, a partir do qual toda a comunicação entre o este grupo e os demais seria mediada.

### Encapsulamento

Para garantir que a modularidade seja respeitada, é necessário isolar o código da API do restante do código contido no módulo e, para isso, é necessário ocultar o código interno às funcionalidade.

Para programadores dotnet, por exemplo, isso se faz possível pelo uso da palavra-chave `internal`, que permite que um dado código seja executado apenas a partir do arquivo onde o mesmo se encontra.

Como neste cenário apenas o código da API ficará visível, não será possível invocar o código ocultado em seu componente, tornando mais fácil manter a modularidade.

O resultado final seria um esquema como o da imagem abaixo:

#### Isolando a interface com o usuário

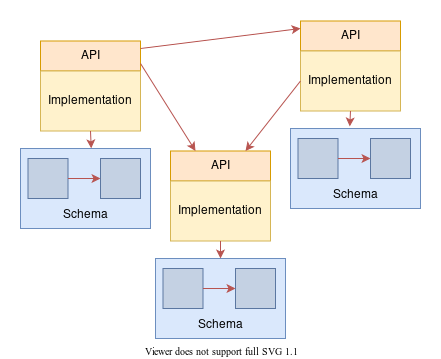

Outro ponto de isolamento importante é aquele feito sobre a interface do usuário. Da mesma forma que o acesso a dados é entendido como parte da infraestrutura da aplicação, assim o é para as interfaces (ou controllers no caso de uma Web API). Assim sendo, é recomendável que sejam criados componentes separados para essas interfaces.

Em um projeto ASP.NET, por exemplo, haveria em uma solução um projeto como ponto de entrada da aplicação (aquele que possuirá o método `Main` e a classe de `Startup`), e um outro projeto para cada módulo com seus respectivos controllers (e/ou views).

Esta separação é importante pois, conforme a evolução da organização, caso surja a necessidade de realizar a decomposição da aplicação em serviços, basta mover estes projetos para uma nova solução, mantendo a infra (aplicação Web) separada do domínio (componente).

#### Isolando o acesso a dados

Outro ponto muito importante é manter o mesmo isolamento das funcionalidades no nível dos dados. Para que a modularidade se mantenha, cada módulo é exclusivamente responsável por obter seus dados, condição esta que pode ser forçada por meio da criação de esquemas separados em uma base de dados (ou mesmo o uso de múltiplas bases), e impedindo que `joins` sejam feitos entre eles a partir de restrições a cada usuário do banco associado a um módulo.

Desta forma, chegaríamos a um esquema semelhante ao da imagem abaixo:

### DDD

A abordagem que considero mais indicada para a correta separação dos módulos é a ideia de `bounded contexts` do DDD. Modelando-se o domínio a partir deles, a separação entre os módulos se define automaticamente, assim como a comunicação entre os mesmos pode ser mapeada com maior precisão.

Esta abordagem nos faria chegar a um esquema semelhante ao da imagem abaixo:

## Conclusão

Com este simples conjunto de princípios é possível manter os módulos de sua aplicação isolados, evitando assim que haja dependências indesejáveis seja por porções de código (classes e interfaces, ou funções), seja por tabelas e outros objetos de banco de dados.

Procedendo desta forma, a aplicação se mantém coesa, fácil de manter e evoluir e, portanto, com menor custo para o negócio (tempo) e para o time (estresse).

É possível que, a esta altura, você tenha notado algo interessante: os princípios mencionados acima são uma reprodução daqueles utilizados em microsserviços. A única diferença é o meio pelo qual a troca de mensagens acontece: com microsserviços ela se dá pela rede e, com monolitos, ela se dá pela memória do processo.

Isso significa, conforme dito na seção "Isolando a interface com o usuário", que uma eventual decomposição da aplicação em serviços, se assim se fizer necessário no futuro, seja facilitada, o que reduz -- e muito! -- a necessidade de uma eventual reescrita por conta de dependências diretas entre porções de diferentes módulos.

Na Parte 2 deste artigo vou me concentrar na aplicação destes princípios em uma aplicação de demonstração. A ideia é mostrar não apenas como o código seria organizado como, também, as diferentes formas de comunicação entre os módulos (síncrona e assíncrona).

Gostou? Me deixe saber pelos indicadores. Tem dúvidas ou sugestões? Deixe um comentário ou me procure pelas redes sociais.

Até a próxima! | wsantosdev |

1,416,647 | Functions in JavaScript | Functions are a fundamental building block in JavaScript that allow you to encapsulate code and reuse... | 22,403 | 2023-03-27T15:29:07 | https://makstyle119.medium.com/functions-in-javascript-5466ae5e3e6a | javascript, beginners, makstyle119, tutorial | **Functions** are a **fundamental** building block in **JavaScript** that allow you to **encapsulate** **code** and **reuse** it throughout your **program**. In this blog post, we'll explore **functions** in **JavaScript**, **including** their **syntax**, **parameters**, and **return values**.

**Syntax** of **Functions** in **JavaScript:**

In **JavaScript**, **functions** are **declared** using the ``function`` **keyword**, followed by the **function name**, a set of **parentheses**, and a set of **curly braces**. Here's an example of a simple **function** that logs a message to the **console**:

{% embed https://gist.github.com/makstyle119/7cfb0f2dbfc9c5ce013127f69ea09176.js %}

In this **code**, we **declare** a **function** called ``myFunction`` that **logs** the message ``"Hello, world!"`` to the **console** when it is called. To call the function, we simply use its **name** followed by a set of **parentheses**:

{% embed https://gist.github.com/makstyle119/b00bd3cbb2a1ee9210d6f714c96fb0eb.js %}

**Parameters in Functions:**

**Functions** can also accept **input values** called **parameters**, which allow you to **pass data** into the **function** when it is called. **Parameters** are **declared** inside the **parentheses** when the function is **declared**. Here's an **example** of a **function** that **takes** two **parameters** and logs their **sum** to the **console**:

{% embed https://gist.github.com/makstyle119/731a6b2b5e993de3da1087cfd7700577.js %}

In this **code**, we **declare** a **function** called ``addNumbers`` that takes two **parameters**: ``num1`` and ``num2``. When the **function** is **called**, it **logs** the **sum** of ``num1`` and ``num2`` to the **console**. To call the **function** with **specific values** for the **parameters**, we pass them in as **arguments** inside the **parentheses**:

{% embed https://gist.github.com/makstyle119/c50bbfe14eb1574ac2f818e0f460e017.js %}

**Return** Values in **Functions**:

**Functions** can also ``return`` **values** back to the calling **code** using the **return keyword**. Here's an **example** of a **function** that takes two **parameters** and **returns** their **sum**:

{% embed

https://gist.github.com/makstyle119/5cfaae84e73b6cee4d6d88588d55fb09.js %}

In this **code**, we **declare** a **function** called ``addNumbers`` that takes two **parameters**: ``num1`` and ``num2``. When the **function** is called, it **returns** the **sum** of ``num1`` and ``num2`` using the ``return`` **keyword**. To use the **return value**, we assign the **function** call to a **variable**:

{% embed

https://gist.github.com/makstyle119/a9efc7dd102cc28c1795312ef919b066.js %}

**Conclusion:**

**Functions** are a **powerful tool** in **JavaScript** that allow you to **encapsulate code** and **reuse** it **throughout** your **program**. In **JavaScript**, **functions** are **declared** using the ``function`` **keyword**, followed by the **function name**, a set of **parentheses**, and a set of **curly braces**. Functions can also **accept input values** called **parameters**, and **return values** using the ``return`` **keyword**. By **understanding** how to **use functions** in **JavaScript**, you can **write** more **efficient** and **maintainable code**.

| makstyle119 |

1,416,840 | 5 Awesome GitHub Repositories To Contribute To! | Introduction An open-source project is a type of project that is being developed and... | 0 | 2023-04-02T12:30:00 | https://dev.to/sriparno08/5-awesome-github-repositories-to-contribute-to-1hnb | opensource, git, github, beginners | ## Introduction

An open-source project is a type of project that is being developed and maintained collaboratively. Lots of contributors from around the world come together to collaborate on different open-source projects.

If you are into open source, you have probably heard about Git and GitHub. The former is a distributed version control system, and the latter is a web-based service that hosts Git repositories.

Once you've mastered these tools, it's time to start contributing to open source. However, finding beginner-friendly open-source projects is a difficult job.

So I've compiled a list of beginner-friendly GitHub repositories to help you get started with open-source contributions. Here we go!

### LinkFree

LinkFree is an open-source alternative to LinkTree. This is a platform where people in the tech industry can have a single hub to showcase their content to advance their careers, while also contributing to an open-source project and being part of a community that has a say in where the project goes.

**Contributing:**

* Create an issue

* Make a Pull Request

* Add your profile

* Improve documentation

* Improve codebase

{% embed https://github.com/EddieHubCommunity/linkfree %}

### freeCodeCamp

freeCodeCamp.org is a friendly community where you can learn to code for free. It is a noble initiative to help millions of busy adults transition into tech. Their community has already helped more than 40,000 people get their first developer job.

**Contributing:**

* Create an issue

* Make a Pull Request

* Improve documentation

* Improve codebase

{% embed https://github.com/freeCodeCamp/freeCodeCamp %}

### Abbreve

Abbreve is an open-source dictionary for slang. It solves this problem by providing definitions for these abbreviations with a simple search, making it easy for you to understand and participate in online conversations with confidence.

**Contributing:**

* Create an issue

* Make a Pull Request

* Add an abbreviation

* Improve documentation

{% embed https://github.com/Njong392/Abbreve %}

### Bootstrap Icons

This repository is the house of icons! Whether it's a brand or a programming language, the icons cover all the topics. It is the official open-source SVG icon library for Bootstrap, with over 1,900 icons.

**Contributing:**

* Create an issue

* Make a Pull Request

* Improve documentation

* Improve codebase

{% embed https://github.com/twbs/icons %}

### Start Contributing

This repository is a collection of resources that will help you get started with contributing to open source. It includes the following topics:

* Introduction to Open Source

* Learn Git and GitHub

* Contributing to Open Source

**Contributing:**

* Create an issue

* Make a Pull Request

* Add a resource

* Add a topic

{% embed https://github.com/Sriparno08/Start-Contributing %}

## Conclusion

That's all! The repositories listed above will undoubtedly help you understand the concept of open-source contribution. So, what are you waiting for? Jump into these repositories and start contributing right now! | sriparno08 |

1,416,973 | Mind Tracker — application for mental health | The application shows analytics on the psychological well-being of the user. My brother and I... | 0 | 2023-03-27T20:57:54 | https://dev.to/mind_tracker/mind-tracker-application-for-mental-health-5e68 | flutter, ios, android, mentalhealth |

The application shows analytics on the psychological well-being of the user.

My brother and I started making the app in the midst of COVID, when many people were facing emotional and mental problems. At that time, I was working on launching an online bank in the Philippines, which created an especially favorable environment for being out of my mind.

Before development began, I began to keep a diary of emotions in order to somehow deal with a depressive state. The process of reflection and structuring of emotions helped. I wrote down my moods, emotions, and the events that triggered them. When assessing the mood, I built a graph in Excel. It became clear that in this format it is inconvenient to do it. I went through many applications, but they did not suit me for several reasons:

- A five-point scale is used everywhere, which is not suitable for assessing mood;

- No mood analytics by time of day;

- There is no analytics of the impact of events on well-being;

- No ability to mark energy level;

- Recommendations are not customized in any way.

Then the idea came to make my own application.

**Seven-point scale**

First of all, we abandoned the five-point scale and started using the seven-point scale. On a five-point scale, a score of 3 is negative, whether it’s a 3 out of 5 for a movie, or a C at school. In our scale, the average mood score is the norm and does not cause negative associations.

We also decided to get away from mood visualization through emoji as much as possible. If with positive emoticons everything was not too bad, then choosing a “sad” face, when you are already in a bad mood, only infuriates. A person can experience such a strong emotion that all sad faces will look naive.

Since I am a designer, I decided to use only color coding and avoid unnecessary associations.

**Times of Day**

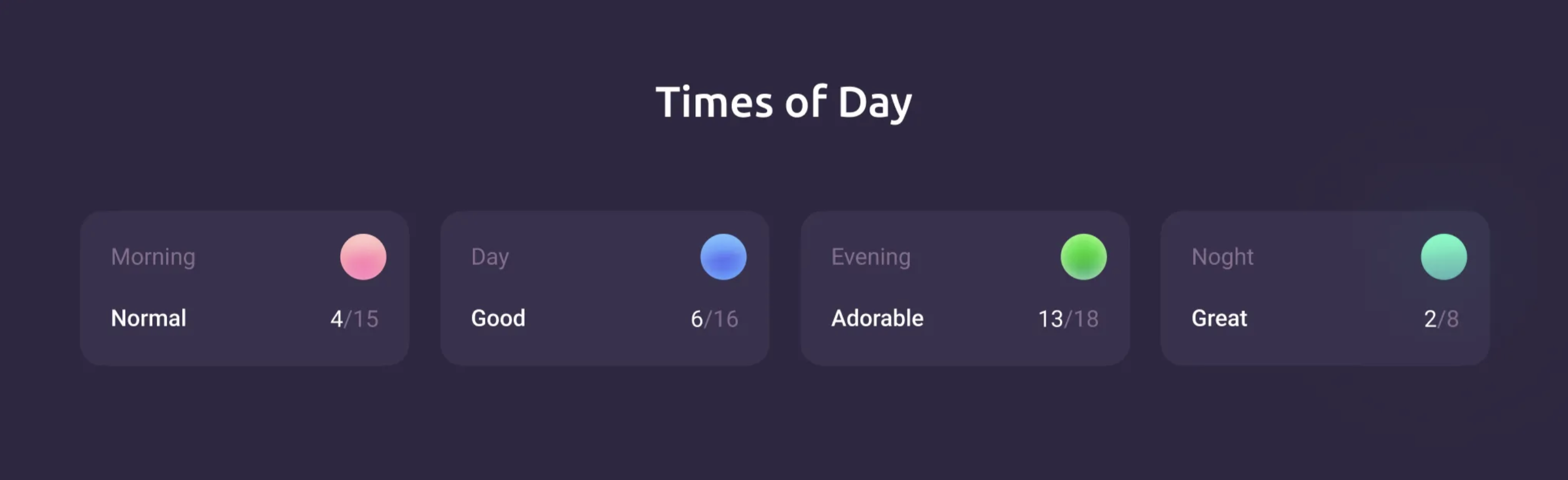

During the day, mood and general well-being change greatly. It is useful to understand what time of day is the most productive, and what time of the day is better to relax. We have added the display of analytics, including by time of day. After I started using the app I realized that I’m definitely not a night person and clearly feel better in the morning.

**Energy**

In the process, we realized that one scale for assessing well-being is not enough. The first test users confirmed this. Often there were situations when you were tired, but your mood was not bad, or you were angry and upset, however, more than ever full of energy. In such situations, users noted their mood as worse than it actually was, or found it difficult to assess. It was necessary to separate the mood and the amount of energy, because it is important to correctly prioritize in different states.

**For example:**

Good mood, but little energy — now it’s better to read a book or do any inactive tasks, it’s better not to take on 10 things at once.

A bad mood, but there is still a lot of energy left — you can go through all the old routine things that do not require much emotional involvement.

**Events**

Events in life directly affect our mental state.

It will not be superfluous to take into account that event provoked this or that state of health. So one of the important mechanics of the application is adding events, and the ability to see their impact on yourself. A person cannot always find a direct connection between an event and well-being. Especially in the long term.

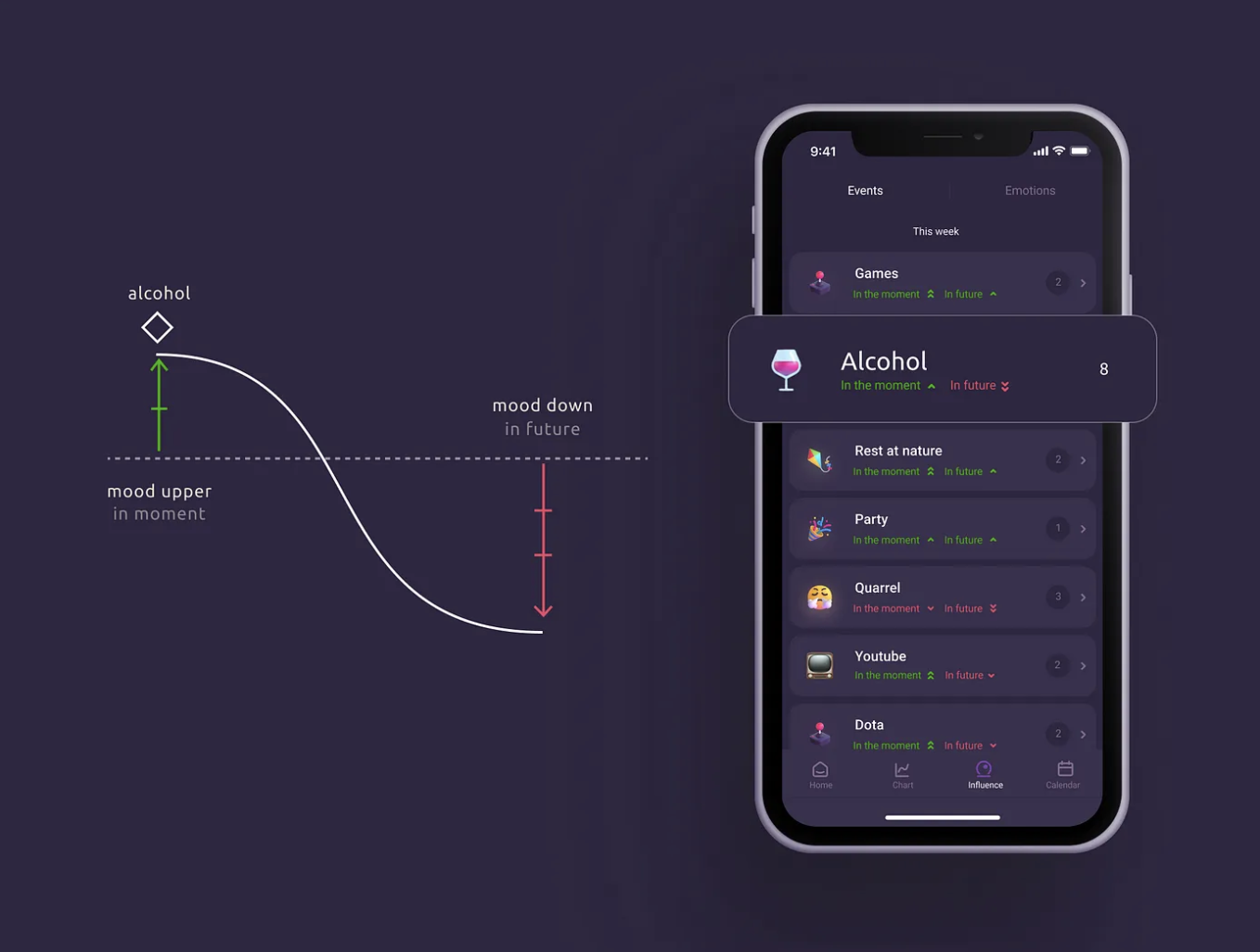

So we made it possible to add any events, so that the application analyzes and displays their impact on the emotion graph. We take the average mood of each user, including depending on the day of the week and time of day, compare it with the median mood, and show how the event affected well-being at the moment and in the future.

The simplest examples:

- Alcohol at the moment improves mood, but in the next 24 hours the person’s mood is worse than normal.

- During sports, the mood is average, but in the next 24 hours it is much better.

There are many such connections, and they are far from always obvious, and sometimes even surprising. Who would have thought that Dota has a very bad effect on my well-being?. Almost always after the game, my mood will be worse than my usual state. And after cleaning, on the contrary, it is better, and significantly. Of course, I will not stop playing Dota, but it has become easier to take up cleaning with this knowledge.

**Emotions**

Initially, we wanted to visualize emotions in the form of a circle by Robert Plutchik. Plutchik believes that there are opposite emotions that cannot exist simultaneously. Now this idea is often disputed, in my own example, I realized that a person can experience opposite emotions at some points. Even just trying to choose what emotions you experience, especially if they are negative, you switch from an emotionally destructive state to a more rational one, and negative emotions become less destructive.

**Notes**

You can create notes in the app, and they are tied to a mood tag. Sometimes it is enough to formulate negative thoughts, and it will already become better. The plans are to make notes “smarter”, so that the application itself isolates events and emotions from the text.

**Graph**

We’ve done a lot of work on how to average the data. The graph should be readable at a large scale, while maintaining the top and bottom peaks. We added different weights depending on the distance from the norm.

The graph is an important tool for introspection. We all have certain cycles that run differently. The cycle can last several days or months, and the seasons also strongly affect the state. This is especially important to consider for people with bipolar disorder, borderline disorder, or cyclic depression. Find cycles, patterns on the graph and be able to “put a straw” in advance.

**What else**

When working with a psychologist or psychiatrist, it happens that you paint events in the color of momentary emotions, and you forget what it was like before. And if you remember, you can’t always remember the reason. The application will be able to remind. The specialist will be able to say more using your data.

There are 2 of us in the team, me and my brother. Now we do not monetize the application in any way, it is completely free, although applications with even less functionality work by subscription. We are currently working on personalized recommendations and a user profile. We are very careful in this work, because we do not want to give “bad advice”. We will always need the help of specialists from the area of psychotherapy and psychiatry. If you are the one, or if you have friends who might be interested, let us know. We are open to cooperation.

I do not want to say that now is a time when it is especially important to take care of yourself and your psyche. There is always a way to go into an apathetic or depressive state. If you have tools that support you, use them. Even formulating your experiences and writing them down on a piece of paper helps you feel better.

**iOS:**

https://apps.apple.com/us/app/mind-tracker-journal/id1564080533

**Android:**

https://play.google.com/store/apps/details?id=com.fedosov.mindtracker | mind_tracker |

1,417,038 | Deploy cPanel & WHM on AWS EC2 Instance | cPanel & WHM is a web hosting control panel software that allows website owners, system... | 0 | 2023-03-27T23:20:34 | https://dev.to/aws-builders/deploy-cpanel-whm-on-aws-ec2-instance-36i5 | aws, cpanel, ec2, hosting | - cPanel & WHM is a web hosting control panel software that allows website owners, system administrators, and resellers to manage their websites and servers through a user-friendly interface. cPanel provides an easy-to-use graphical interface for managing various aspects of web hosting, such as creating email accounts, managing domains, uploading files, creating databases, installing applications, and monitoring server performance.

- WHM (Web Host Manager) is a separate administrative interface that comes with cPanel. It allows system administrators and web hosting resellers to manage multiple cPanel accounts on a single server or across multiple servers. With WHM, you can create and manage hosting packages, set up new cPanel accounts, monitor server health and resource usage, and automate server backups and updates.

- Together, cPanel & WHM provide a complete web hosting solution for both individual website owners and web hosting providers. They are widely used in the industry due to their ease of use, reliability, and comprehensive feature set.

## Implementation

### Creating an EC2 INSTANCE and Choosing CentOS 7 AMI

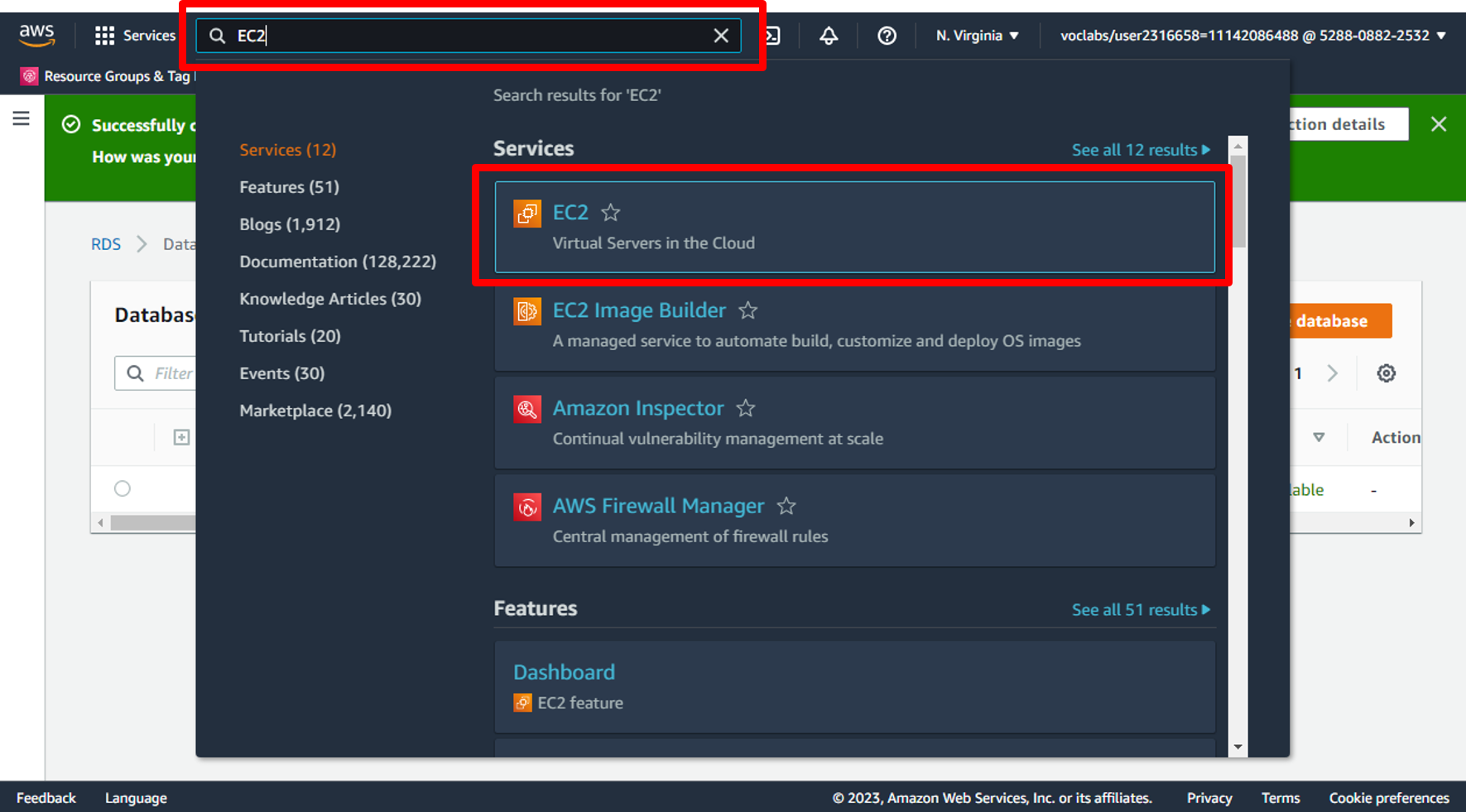

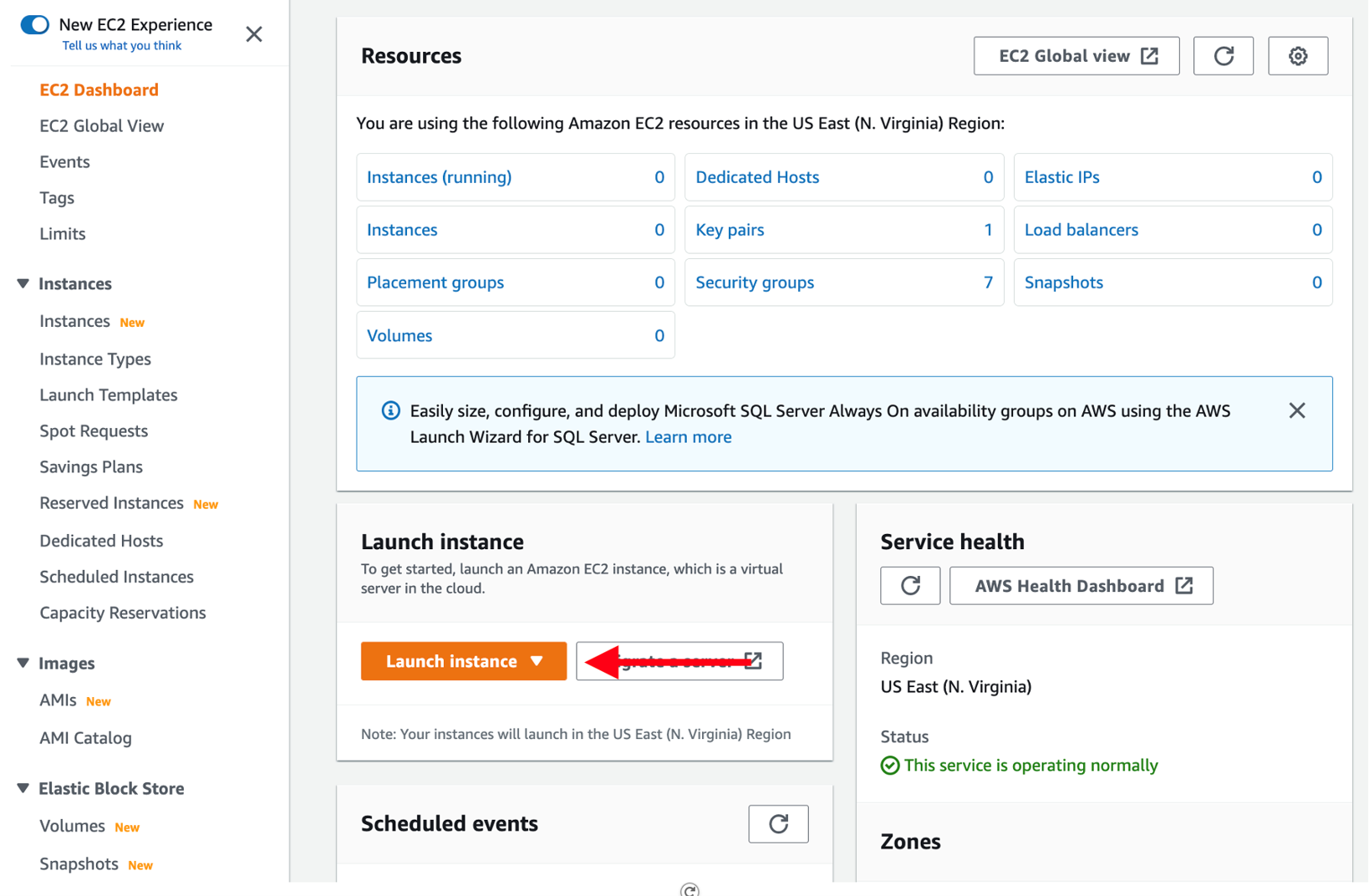

a. Open the [AWS Management Console](https://console.aws.amazon.com/console/home). When the screen loads, enter **EC2** in the search bar, then select **EC2** to open the service console.

b. Choose the **Launch instance** button to open the instance creation wizard.

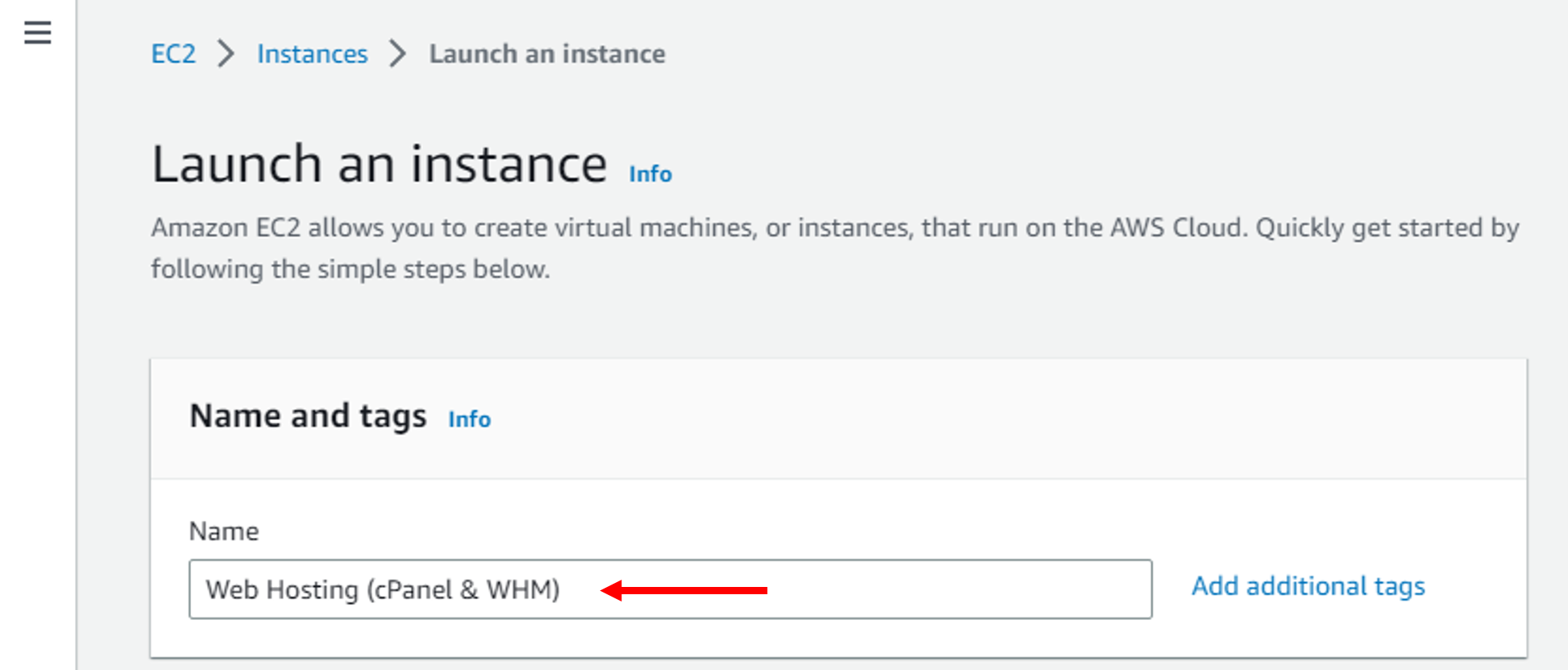

c. On the first page, enter _Web Hosting (cPanel & WHM)_ as your instance name.

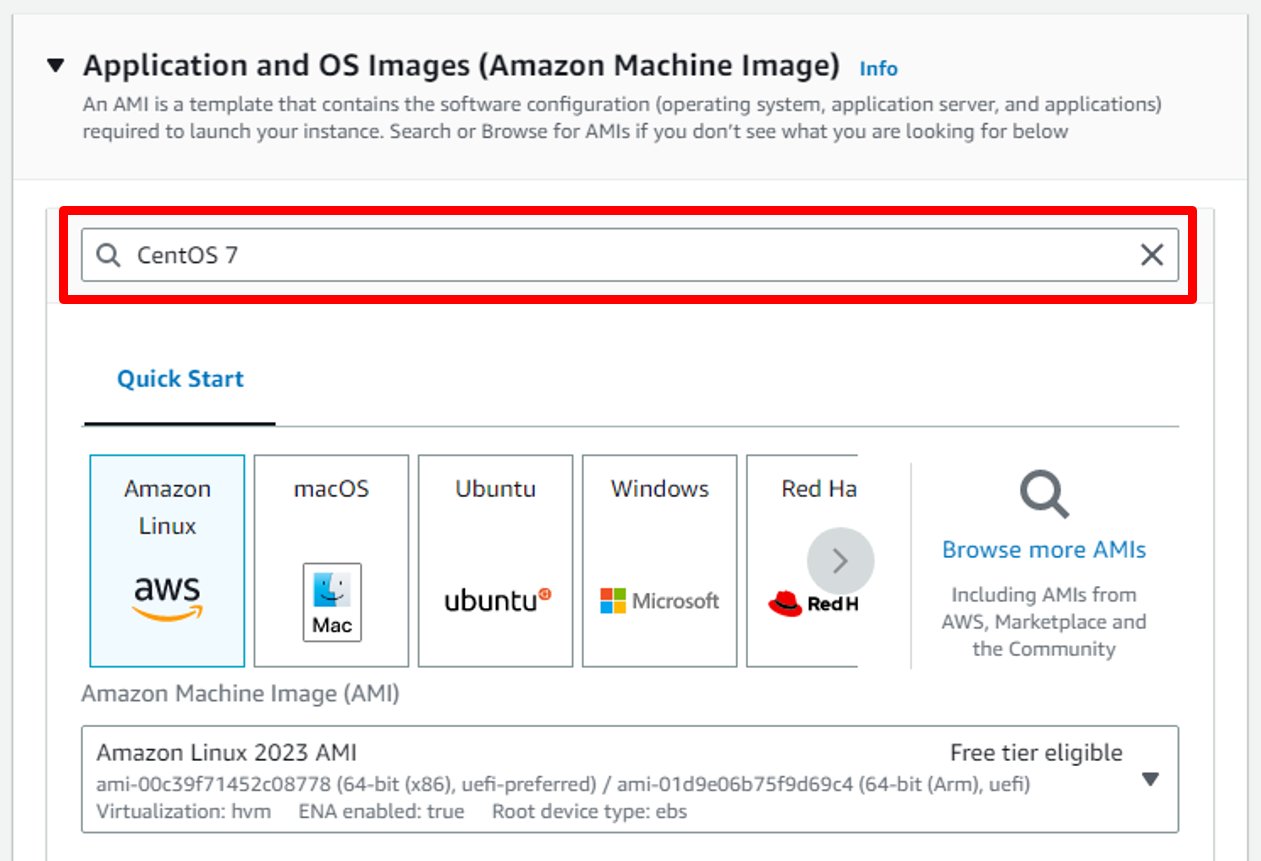

d. Next, choose an Amazon Machine Image (AMI). The AMI you choose will determine the base software that is installed on your new EC2 instance. This includes the operating system (Amazon Linux, Red Hat Enterprise Linux, Ubuntu, Microsoft Server, etc.), and the applications that are installed on the machine.

Many AMIs are general-purpose AMIs for running many different applications, but some are purpose-built for specific use cases, such as the Deep Learning AMI or various AWS Marketplace AMIs.

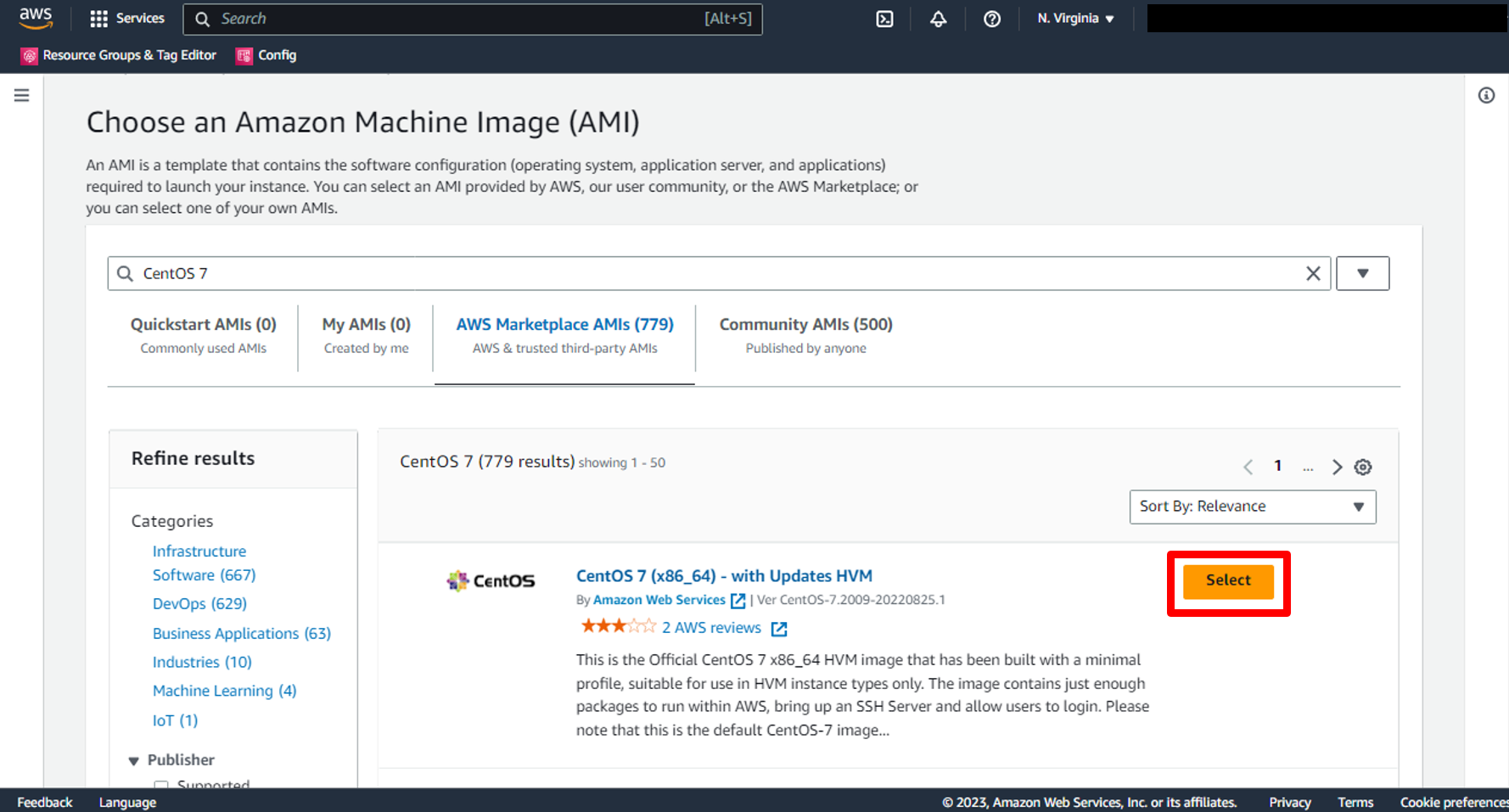

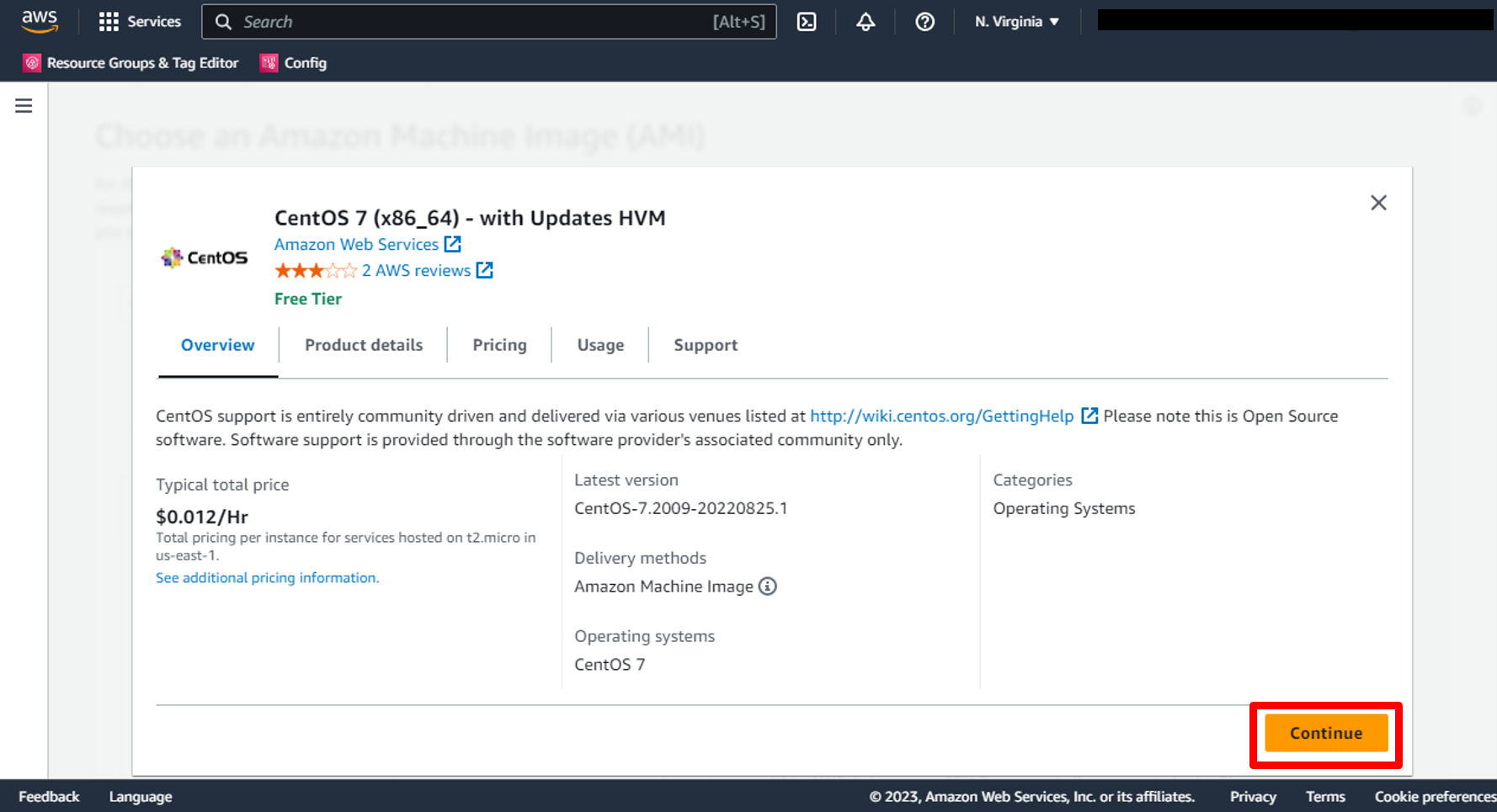

CentOS 7 image is the Official CentOS 7 x86_64 HVM image that has been built with a minimal profile, suitable for use in HVM instance types only, so choose [CentOS 7 (x86_64) - with Updates HVM](https://aws.amazon.com/marketplace/pp/prodview-qkzypm3vjr45g) after make search on AWS Marketplace AMIs in the AMI selection view.

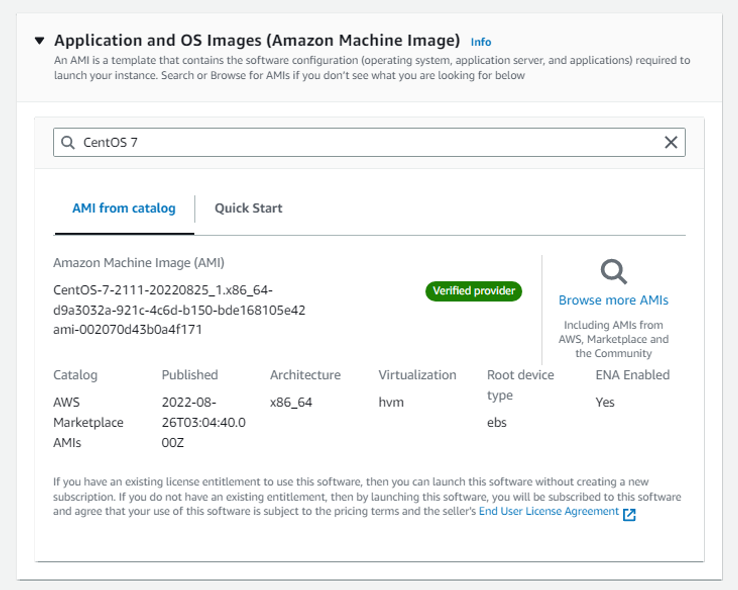

so write **CentOS 7** on search bar then click **Enter**

e. You will find the first image, choose it and press on **Select**

f. Read overview and all details about AMI then click **Continue**

g. You will return to the AMI Section and you will find that the image has been selected.

### Choosing an instance type

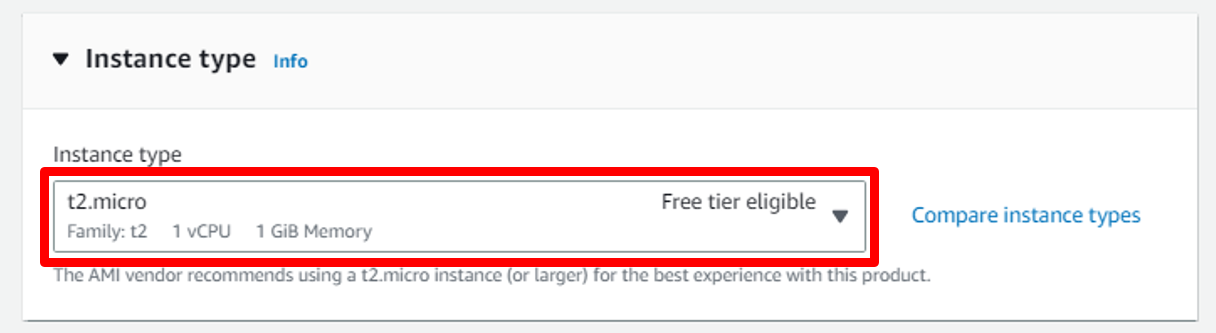

Scroll down to select an EC2 instance type. An instance type is a particular configuration of CPU, memory (RAM), storage, and network capacity.

AWS has a huge selection of [instance types](https://aws.amazon.com/ec2/instance-types/) that cover many different workloads. Some are geared toward memory-intensive workloads, such as databases and caches, while others are aimed at compute-heavy workloads, such as image processing or video encoding.

Amazon EC2 allows you to run 750 hours per month of a t2.micro instance under the [AWS Free Tier](https://aws.amazon.com/free/).

a. Select the **t2.micro** instance.

### Configuring an SSH key

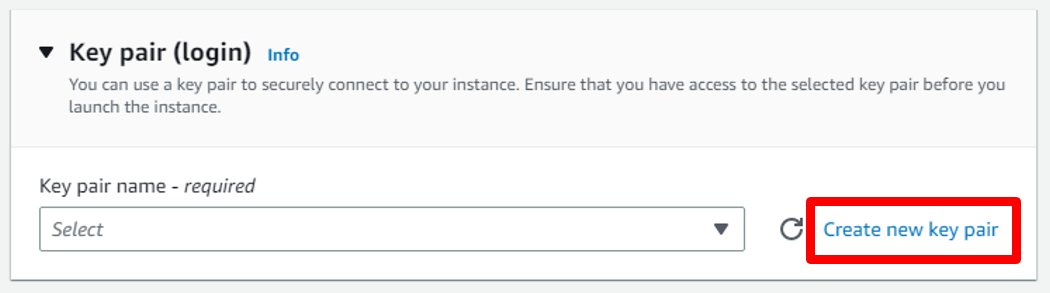

You will see a details page on how to configure a key pair for your instance. You will use the key pair to [SSH](https://en.wikipedia.org/wiki/Secure_Shell) into your instance, which will give you the ability to run commands on your server.

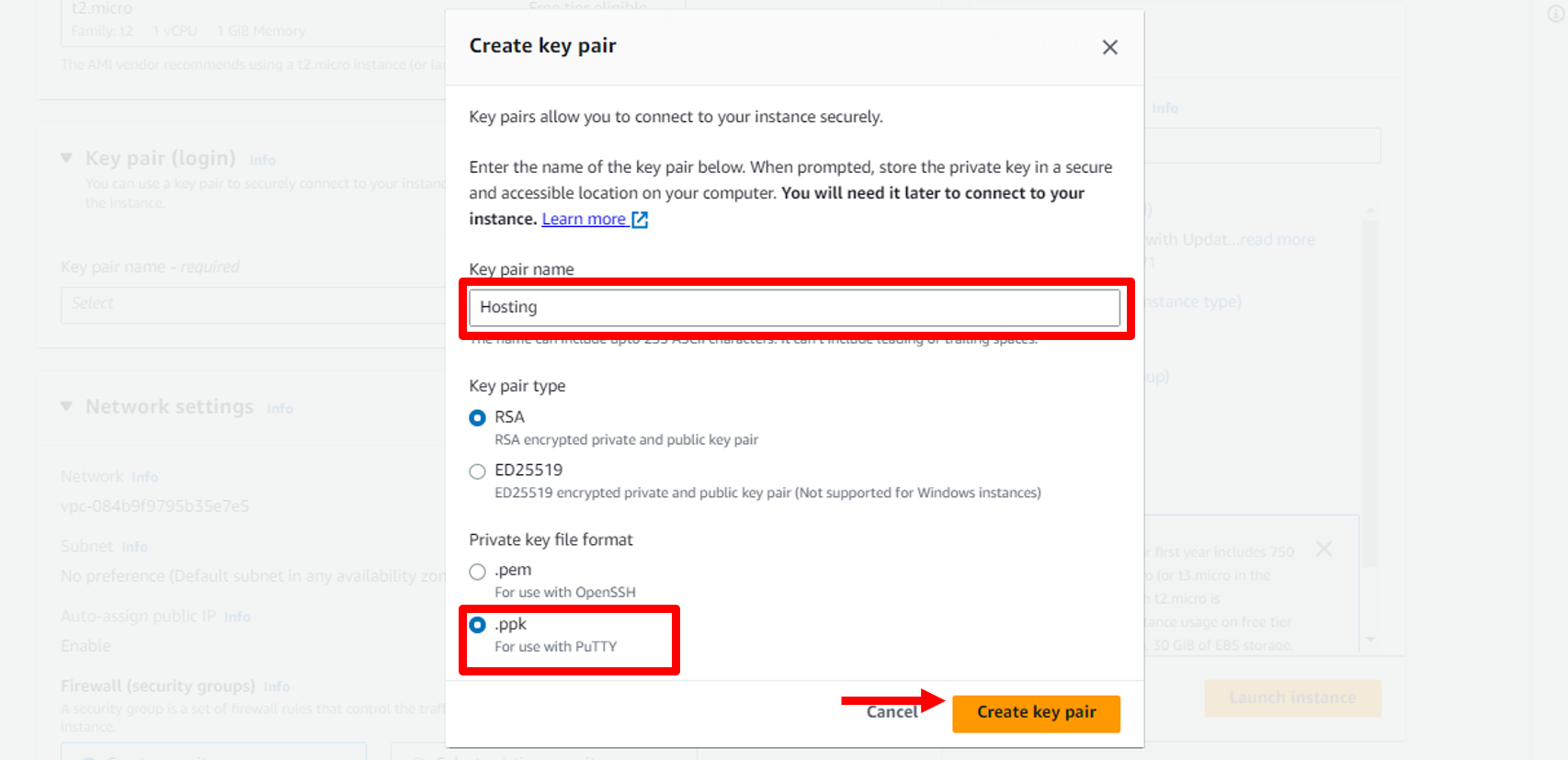

a. Open the **key pair (login)** section and choose **Create new key pair** for your instance.

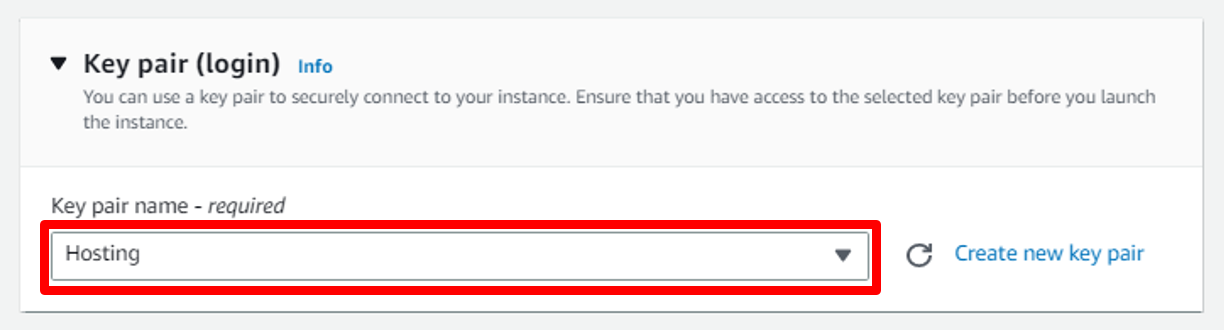

b. Give your key pair a name. Then choose **.ppk** in Private Key file format to can use with PuTTYThen. Then choose the **Create key pair** button, which will download the .ppk file to your machine. You will use this file later.

c. You will return to Key pair (login) Section and you will find that Key has been selected.

### Configuring a security group and launching your instance

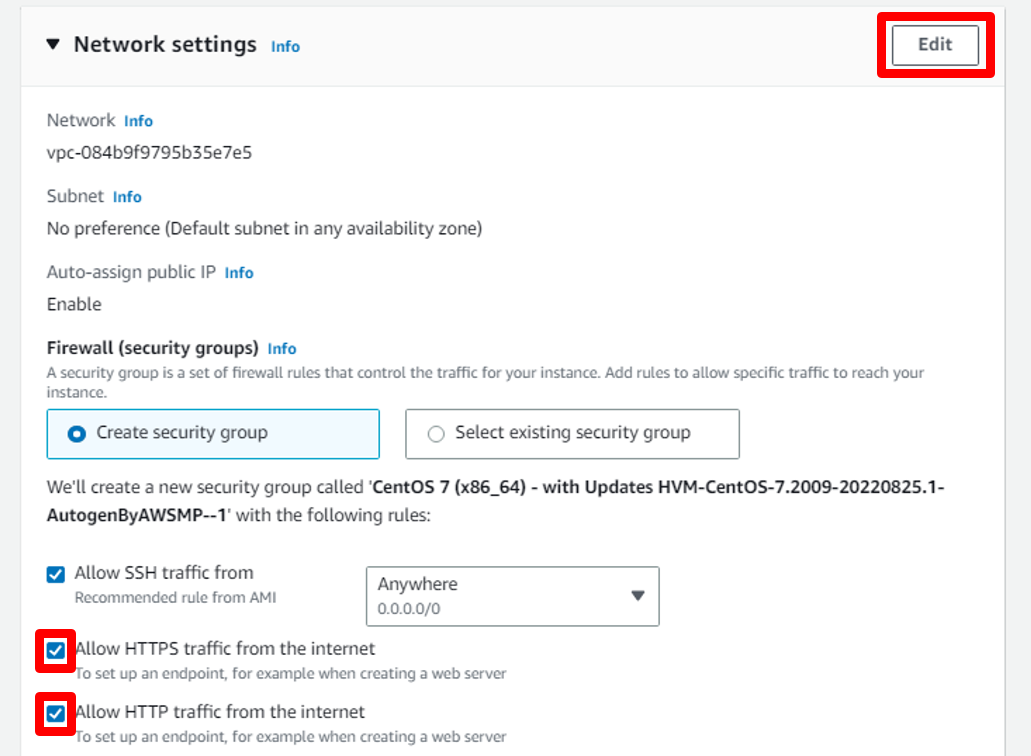

You need to configure a security group before launching your instance. Security groups are networking rules that describe the kind of network traffic that is allowed to your EC2 instance. You want to allow traffic to your instance:

- SSH traffic from all IP addresses so you can use the SSH protocol to log in to your EC2 instance and configure WordPress.

- HTTPS traffic from all IP addresses so that users can view your WordPress site Secured.

- HTTP traffic from all IP addresses so that users can view your WordPress site.

a. To configure this, select **Allow SSH traffic from Anywhere** and select **Allow HTTPS & HTTP traffic from the internet**.

then Click on **Edit**

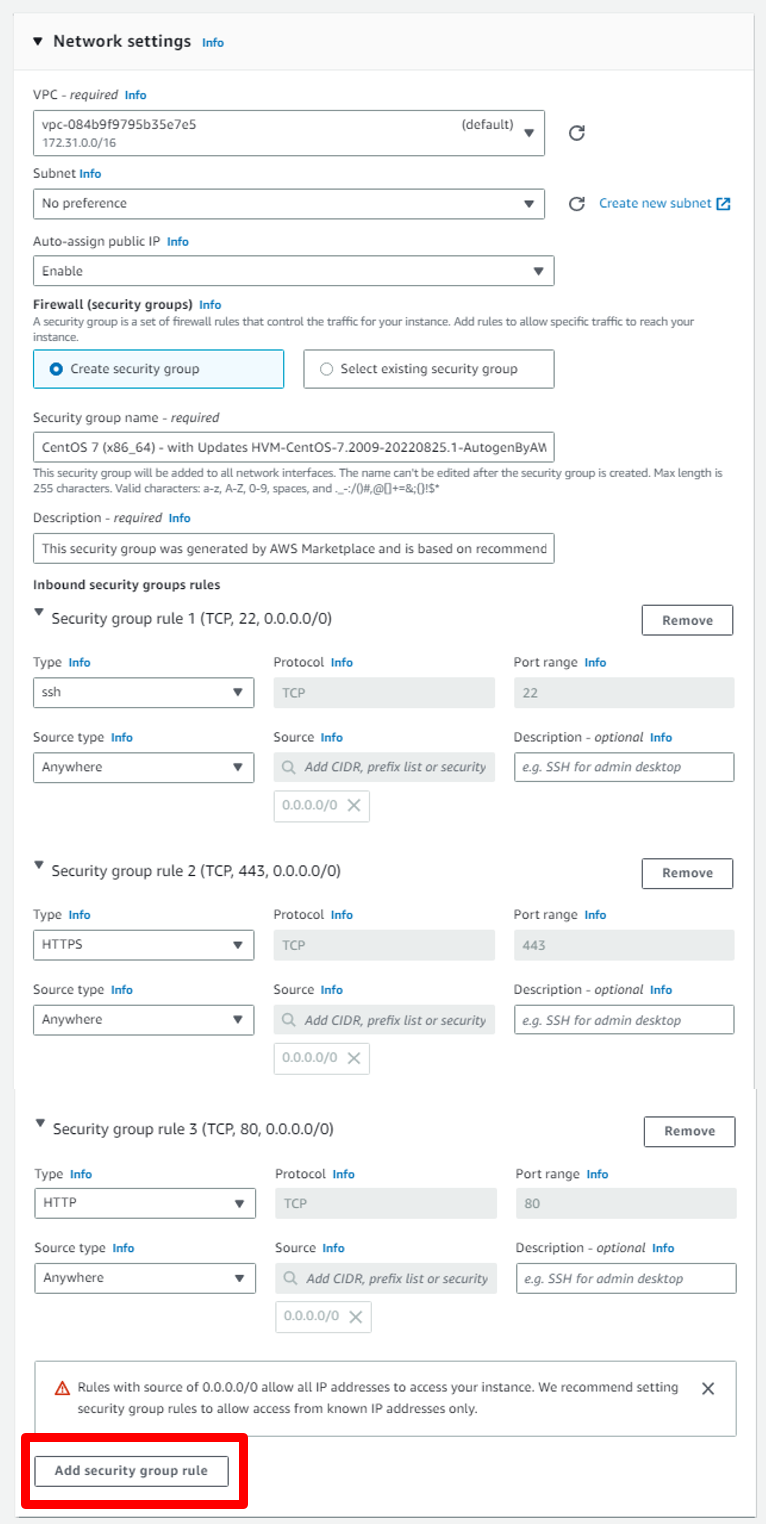

b. Click on **Add Security group rule** to add group rule.

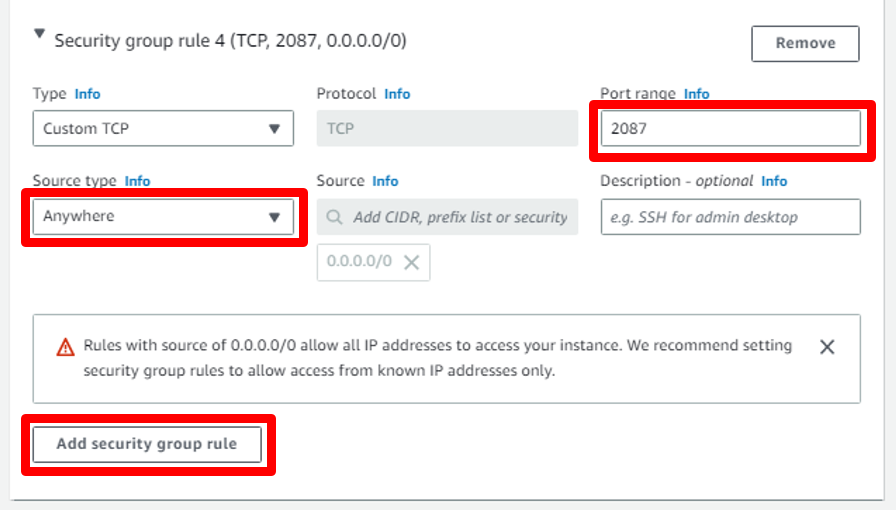

c. First Security Group: In Port Range write **2087** and in Source type select **Anywhere** this for open WHM Dashboard Port.

Then Click on **Add Security group rule** to add another group rule.

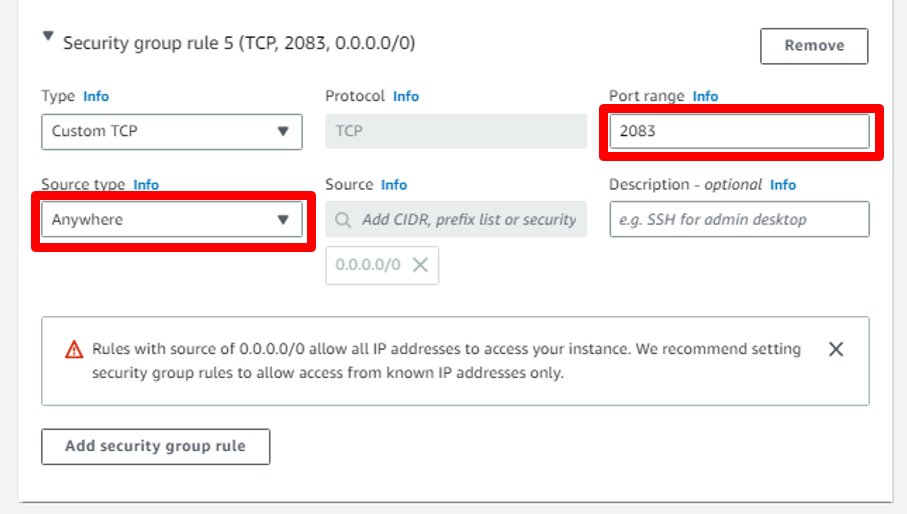

d. Second Security Group: In Port Range write **2083** and in Source type select **Anywhere** this for open cPanel Dashboard Port.

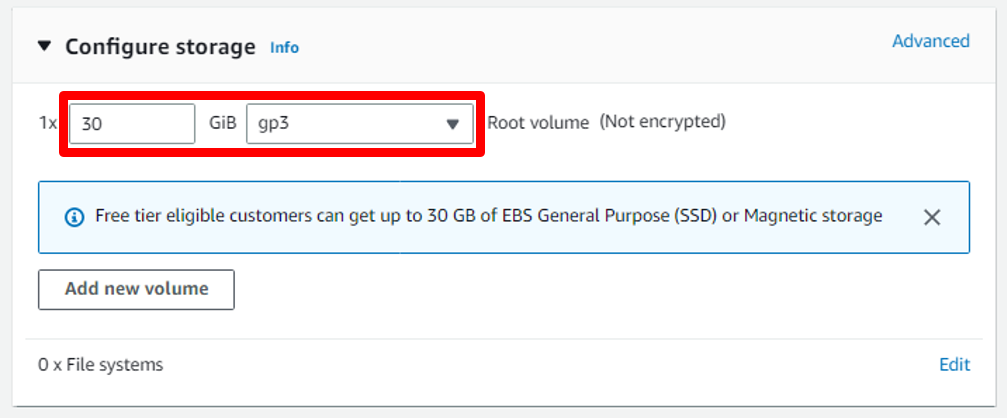

### Configuring storage on instance

a. Here we can increase the storage space for the Root volume and also can be added more volume storage.

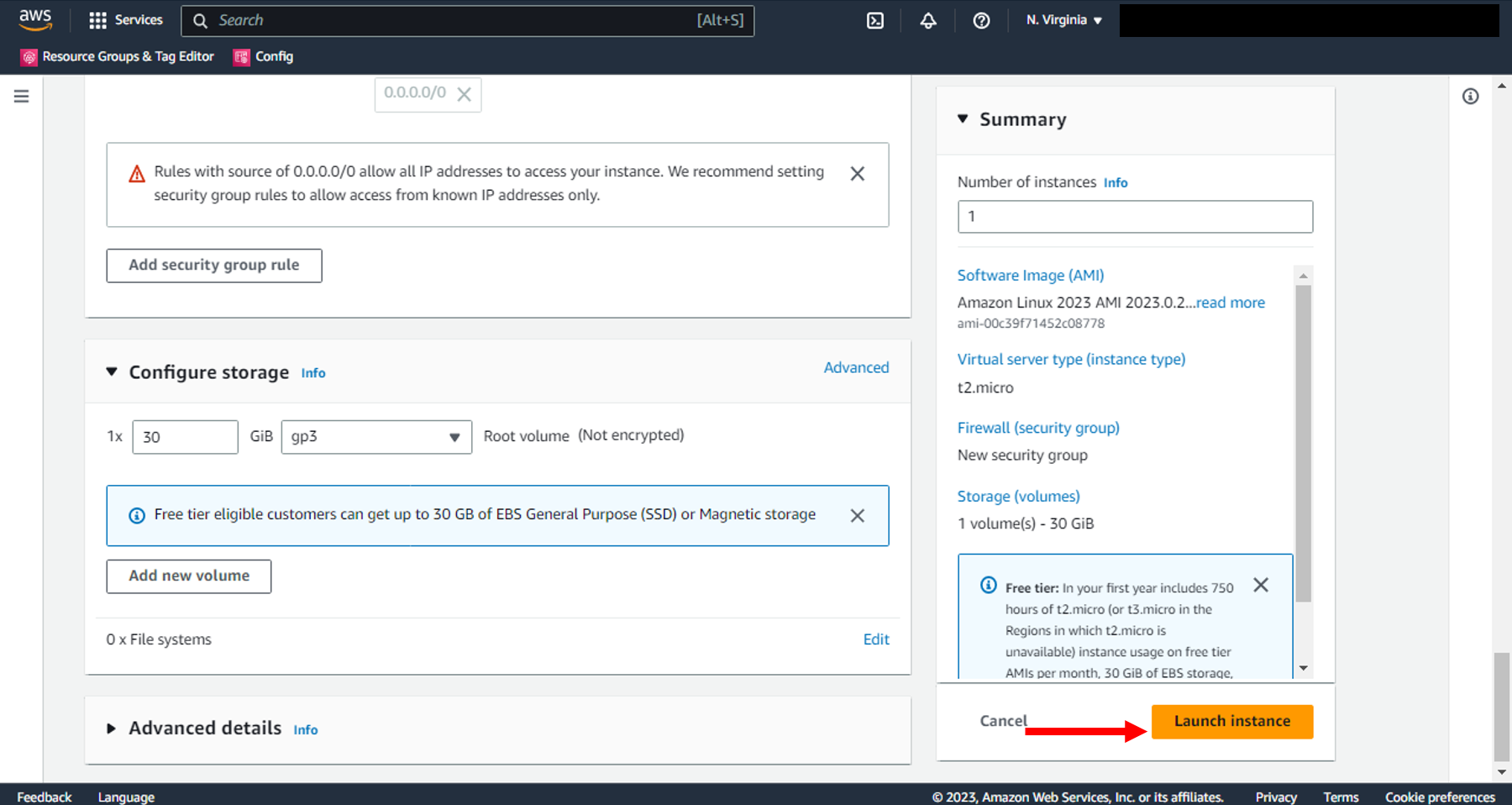

### Launch

It is now time to launch your EC2 instance.

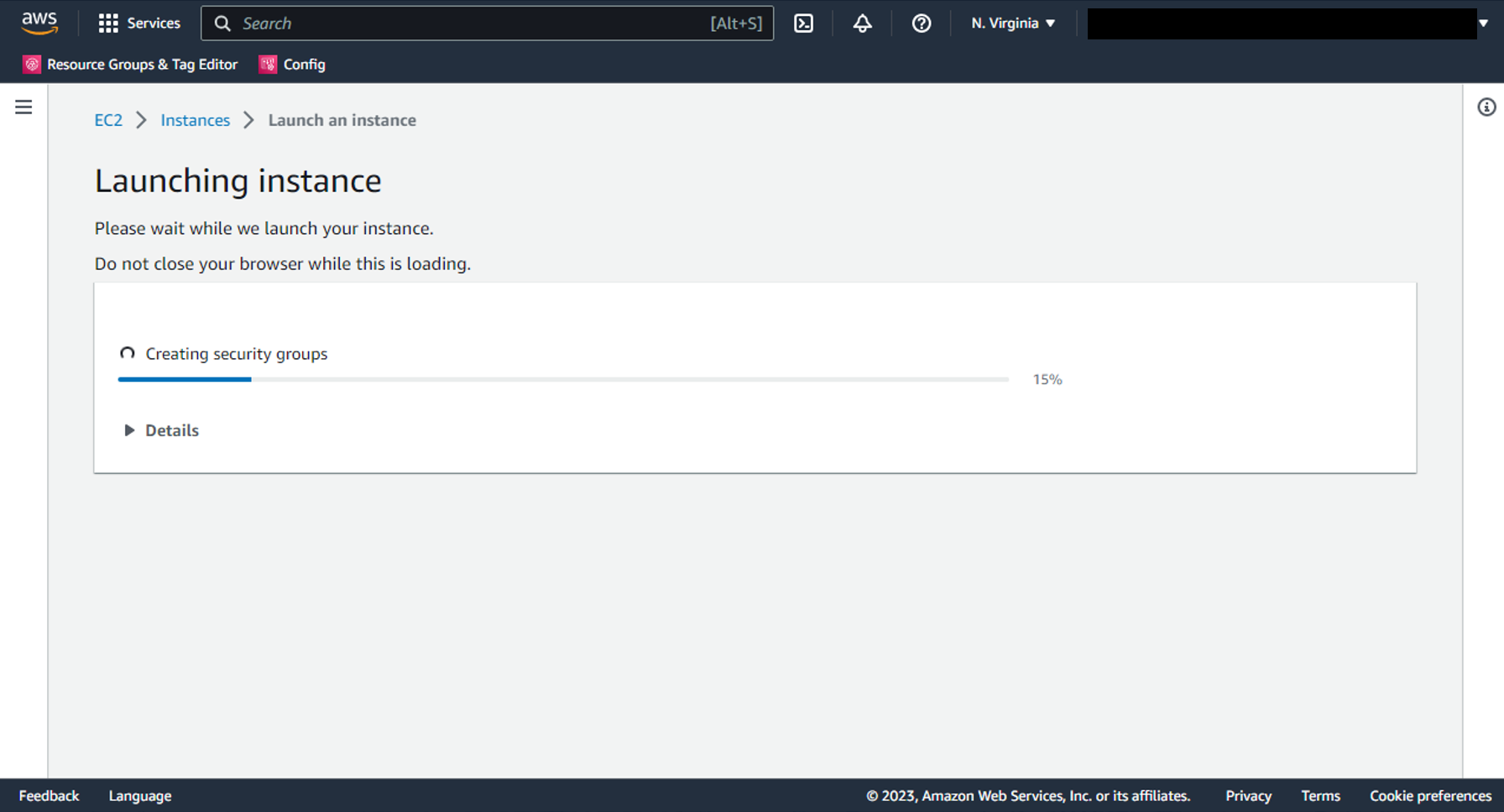

a. Choose the **Launch instance** button to create your EC2 instance.

b. Wait for instance to launch.

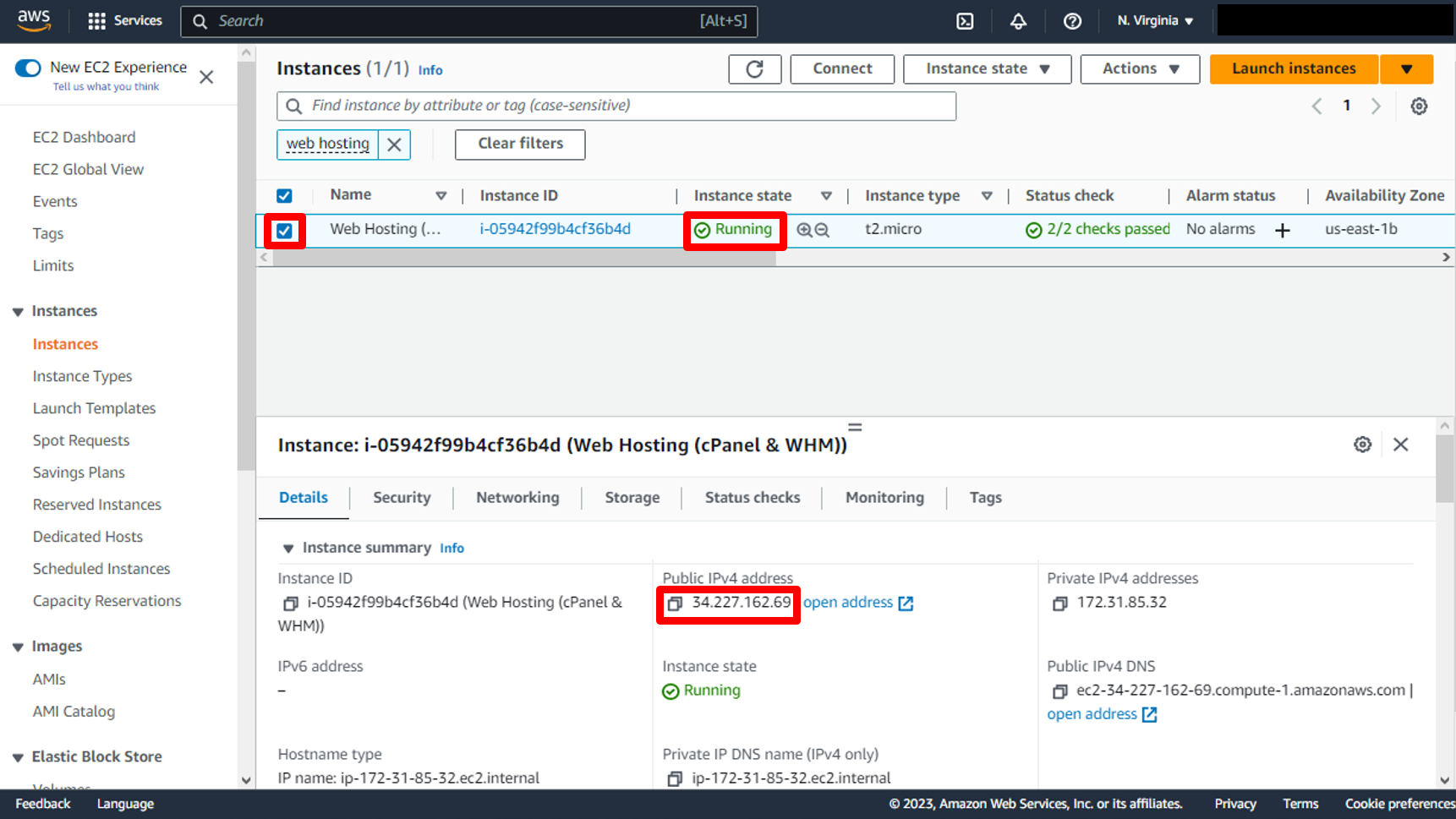

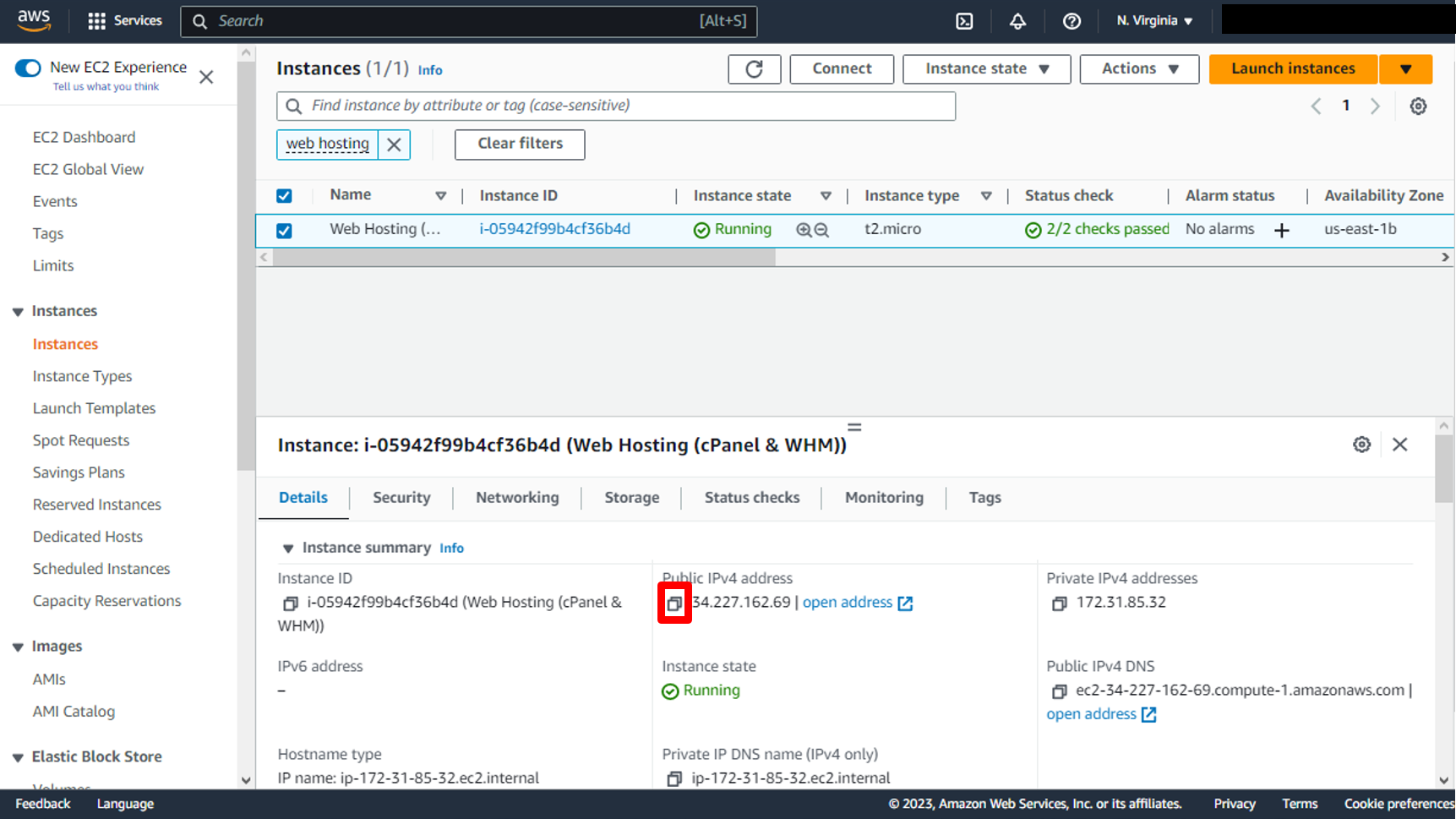

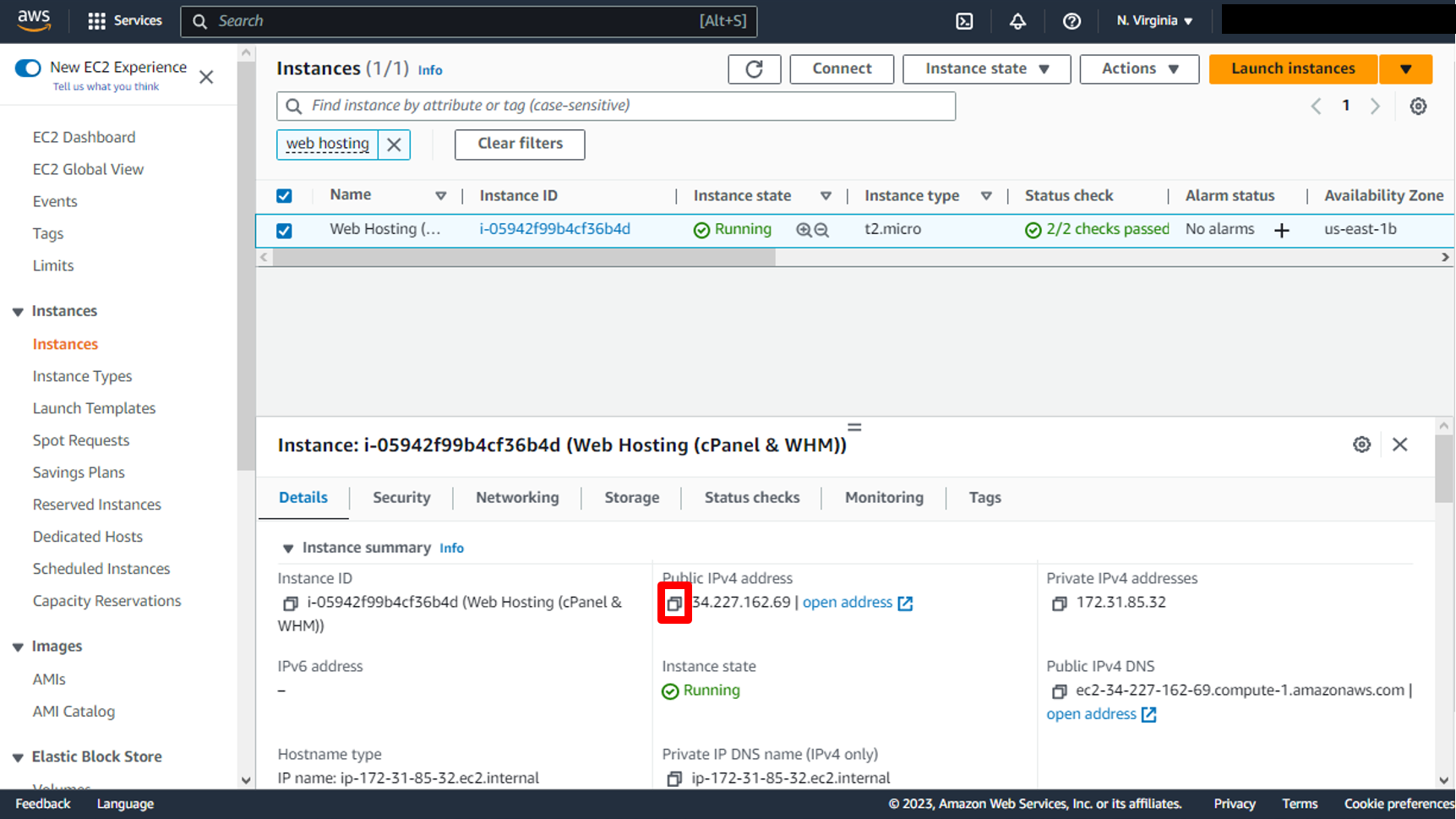

c. You have successfully launched your EC2 instance and You can get Public IP.

### SSH into your EC2 instance to know login information

You will use SSH to connect to your EC2 instance and run some commands.

a. Go to the [EC2 instances page](https://console.aws.amazon.com/ec2/v2/home#Instances) in the console. You should see the EC2 instance you created for the WordPress installation. Select it and you will see the Public IPv4 address and the Public IPv4 DNS in the instance description.

Click on **Copy** icon to copy public ip

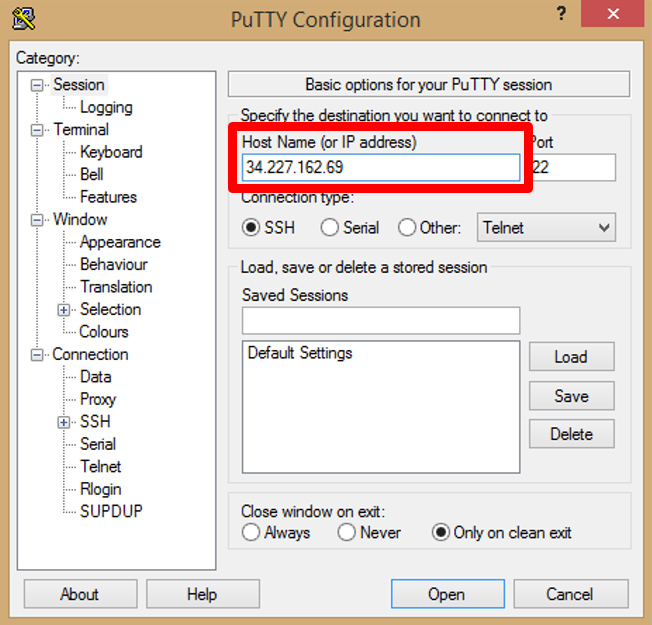

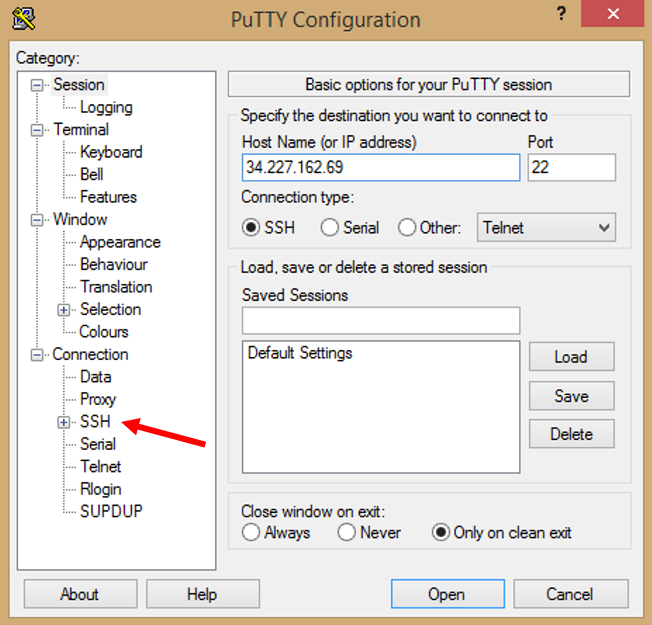

b. Open [PuTTY](https://www.putty.org/) appliction and paste public ip in _Host Name (or IP address)_.

c. Click on **+** the one next to SSH in Category list.

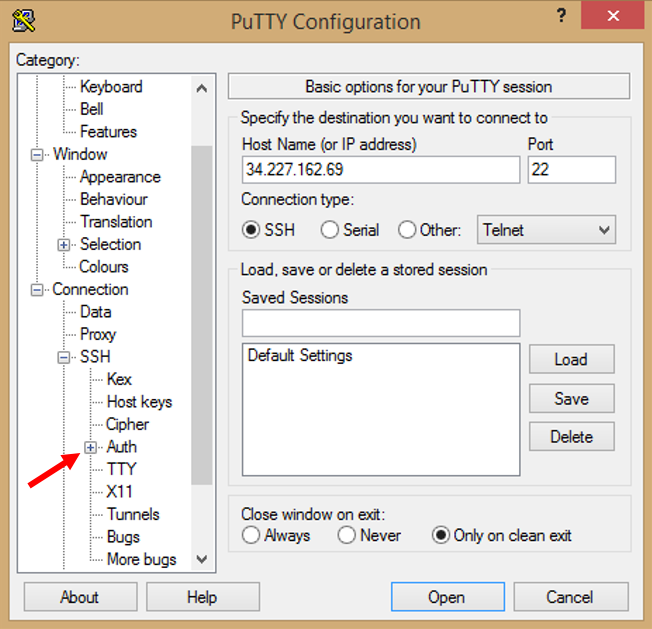

d. Then click on **Auth**.

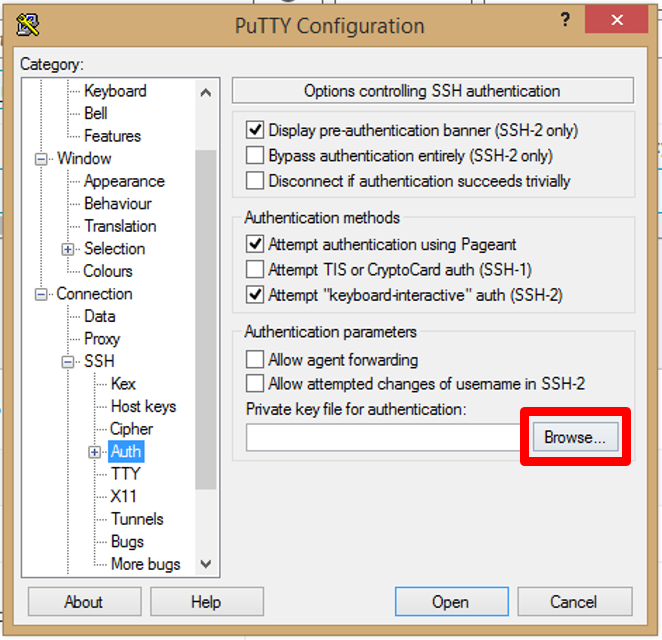

e. In Private Key file for authentication, Click on **Browse**

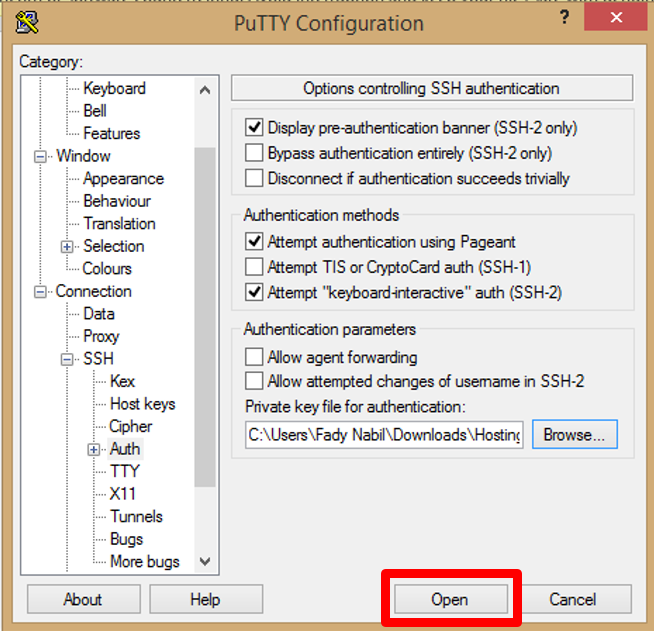

Previously, you downloaded the .ppk file for the key pair of your instance. Locate that file now. It will likely be in a Downloads folder on your desktop.

open this .ppk file

f. Click on **Open**.

### Configuration for CentOS to install cPanel & WHM

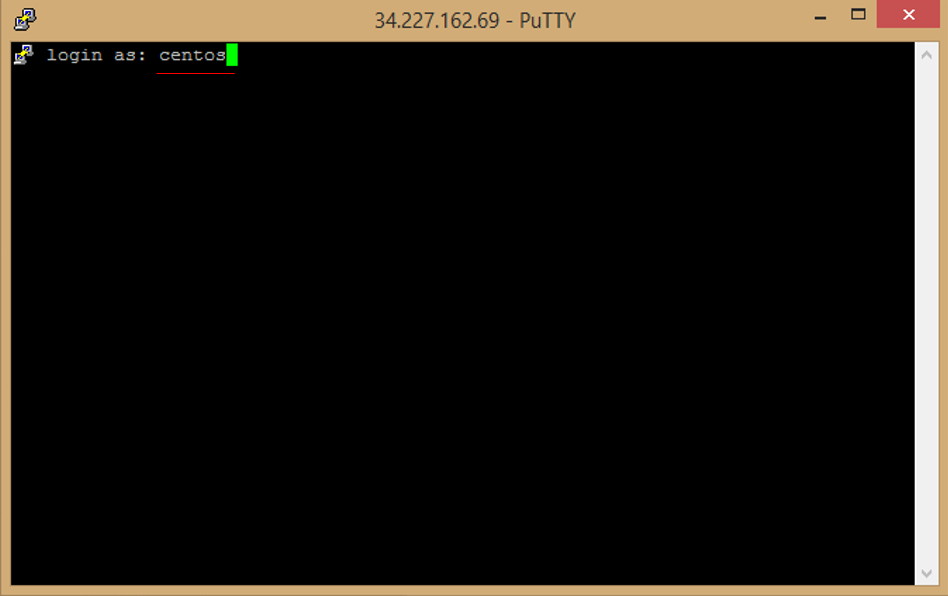

a. You should see the following in your terminal to indicate that you connected successfully.

then you will log in with **centos** (centos is deafult).

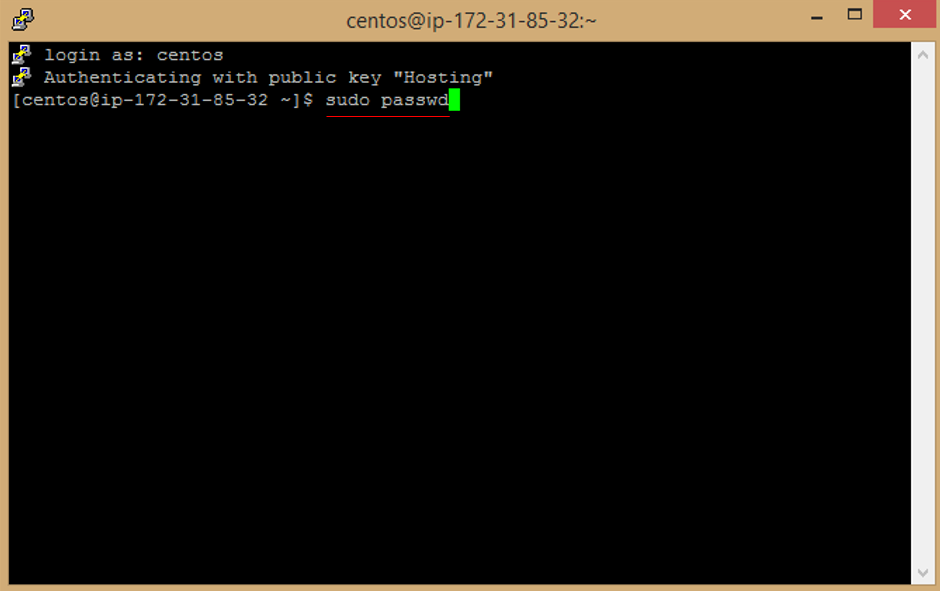

b. Enter this command to change password

```

sudo passwd

```

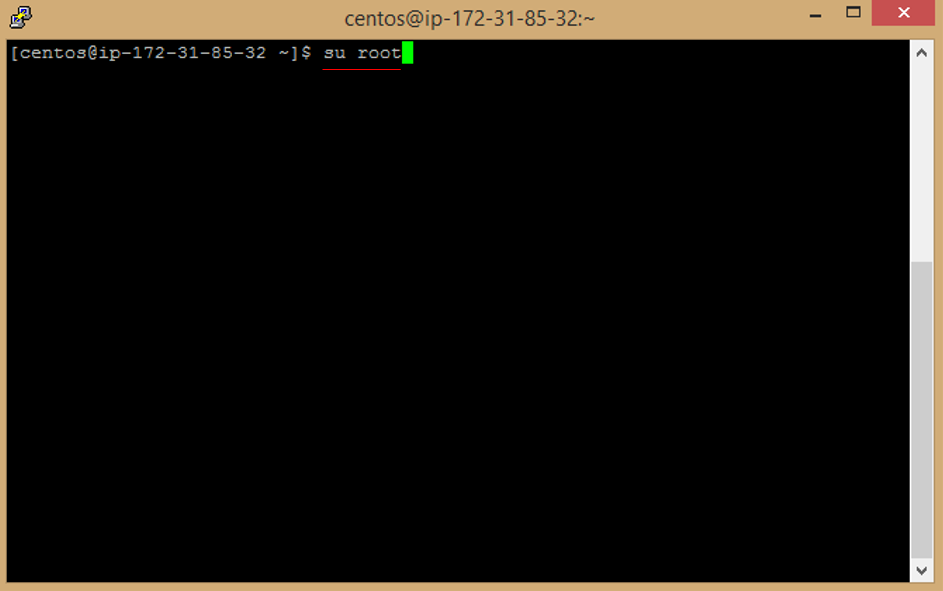

c. Enter this command to be root user

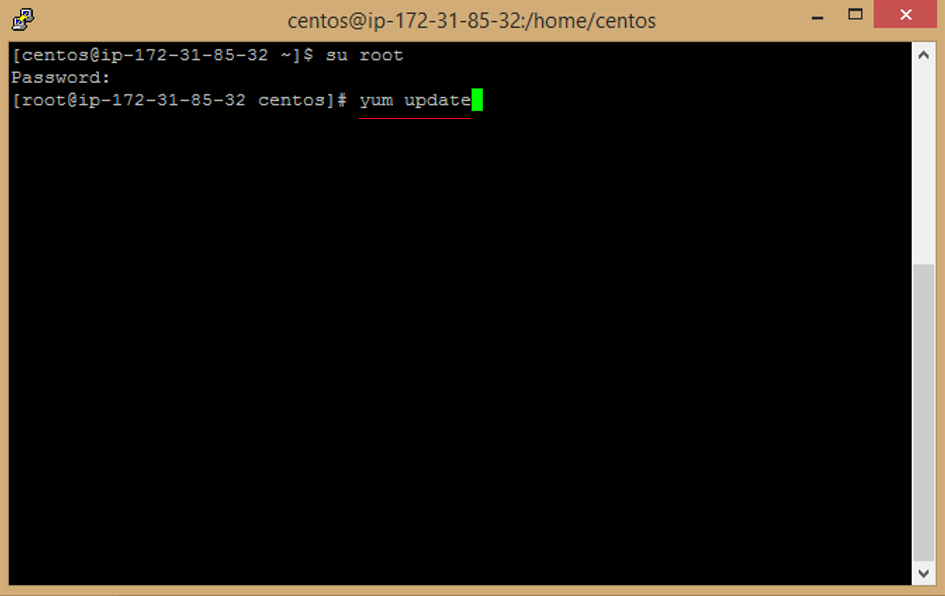

```

su root

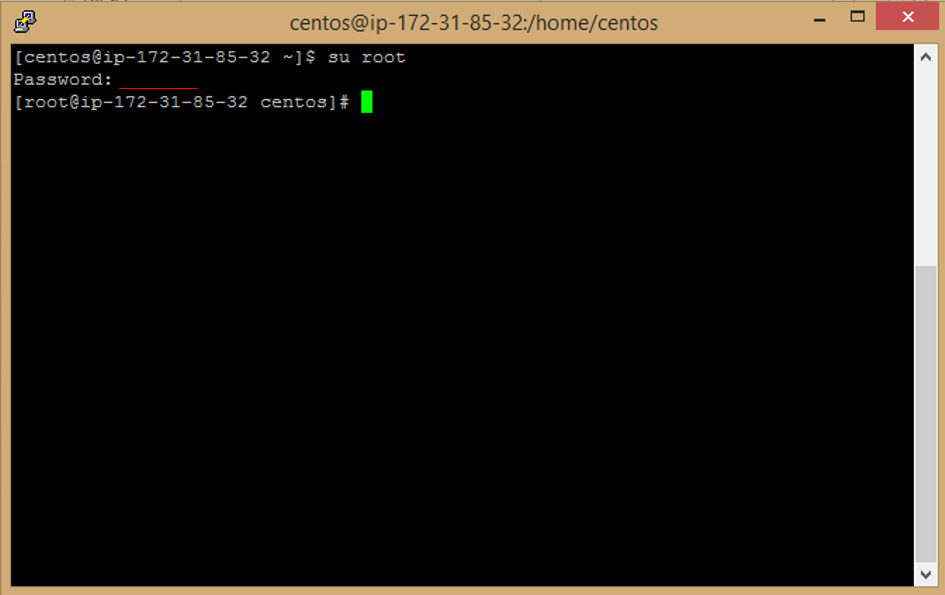

```

d. Enter Password to be root user (Password you added a while ago in a sudo passwd step).

e. Enter this command to update image

```

yum update

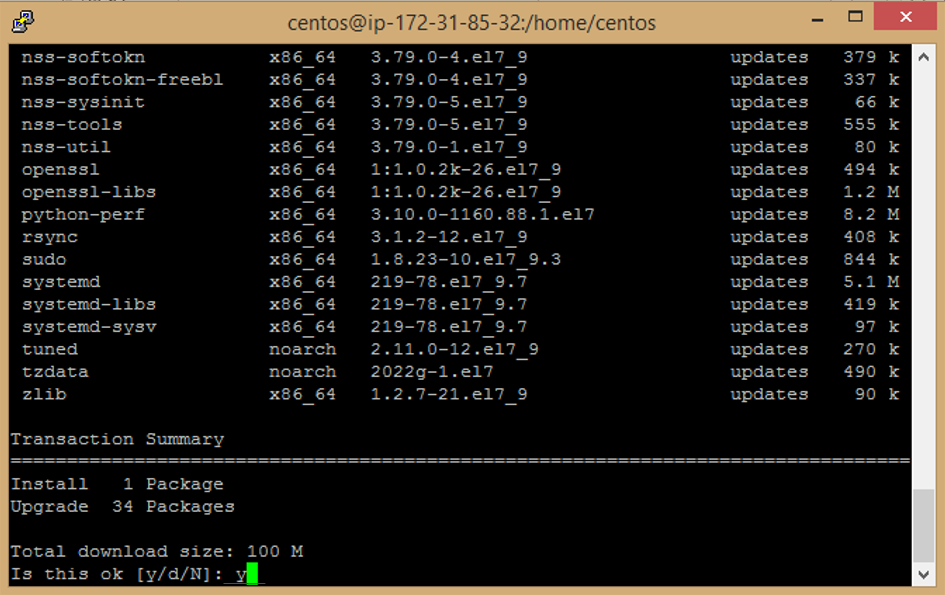

```

f. Enter **y** to accept download Package.

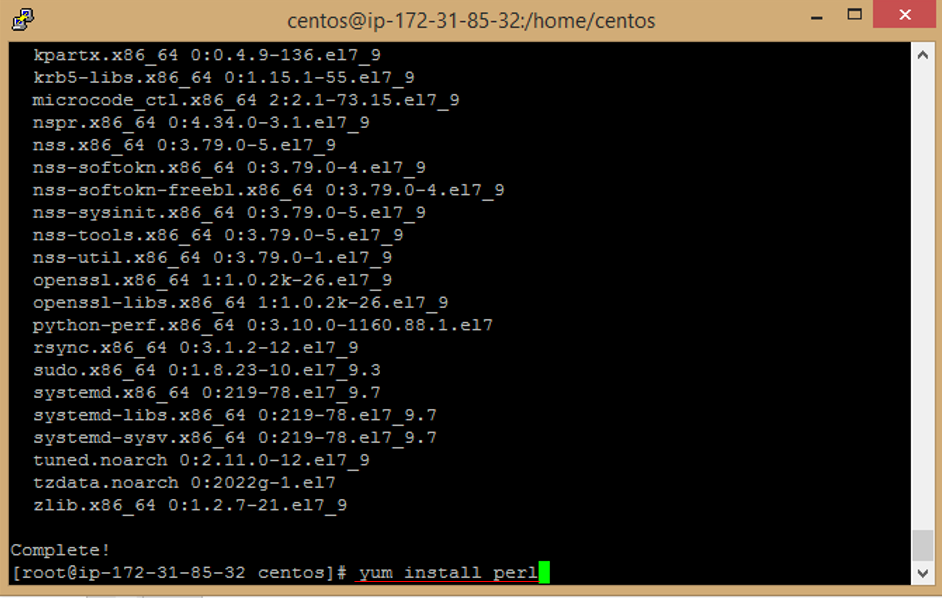

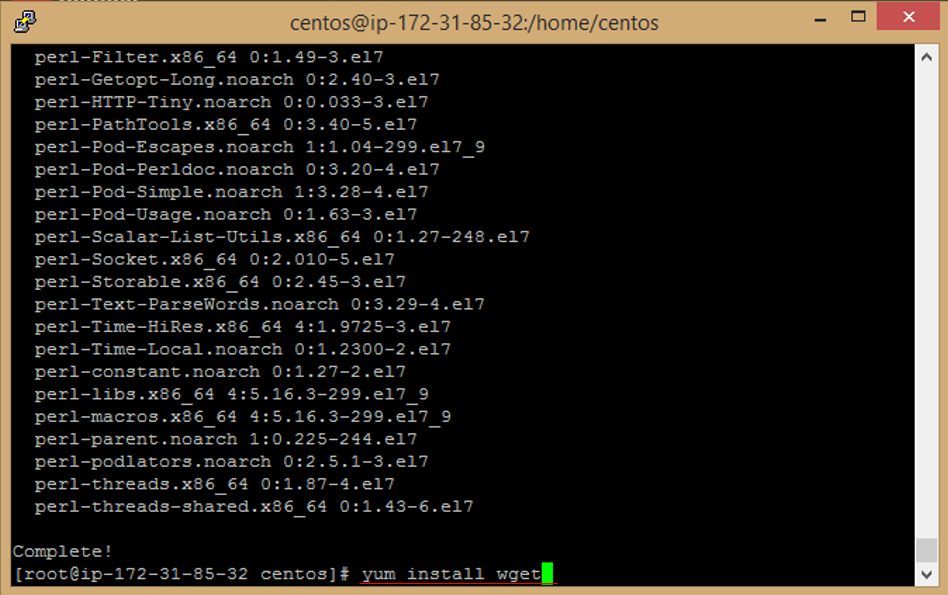

g. Enter this command to install perl package

```

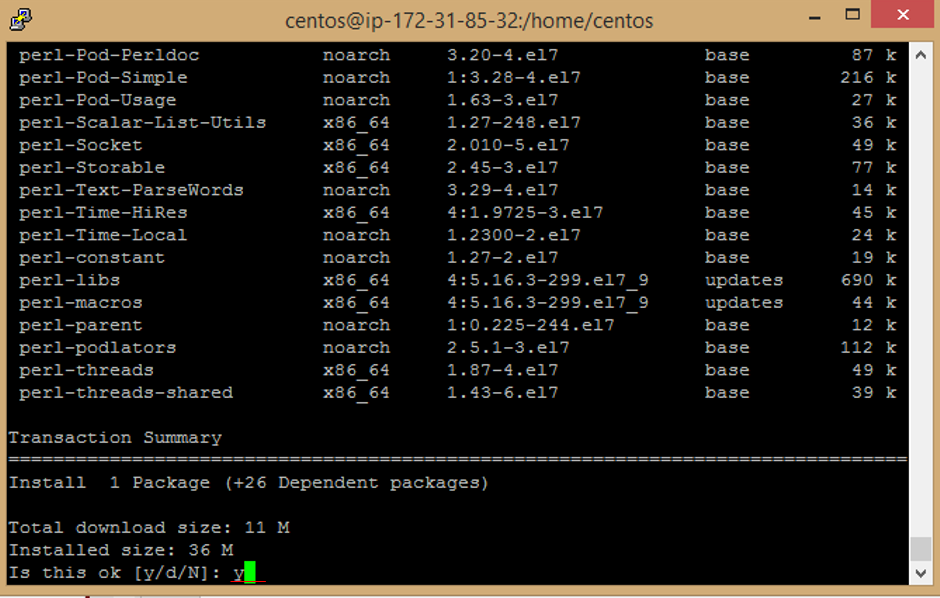

yum install perl

```

h. Enter **y** to accept download Package.

i. Enter this command to install wget package

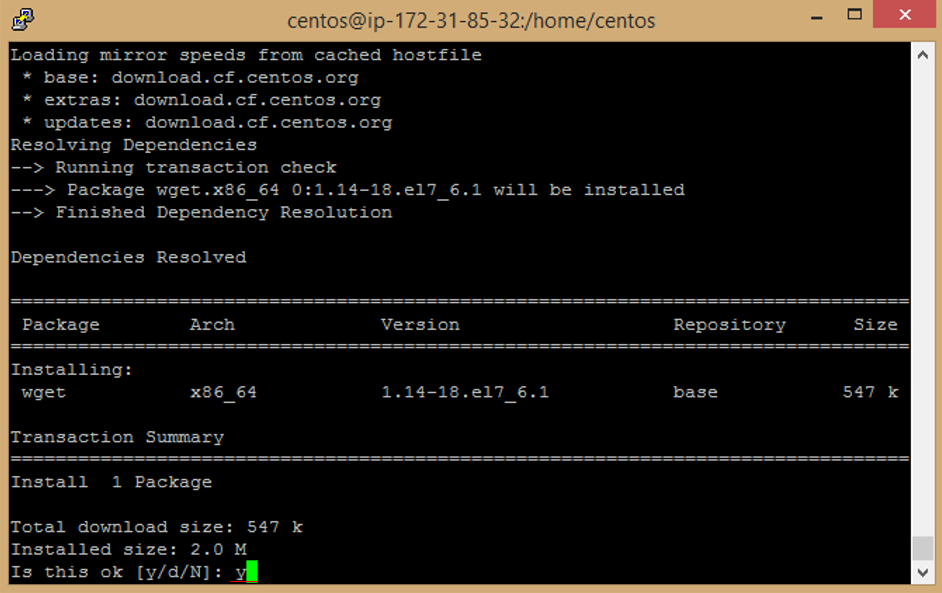

```

yum install wget

```

j. Enter **y** to accept download Package.

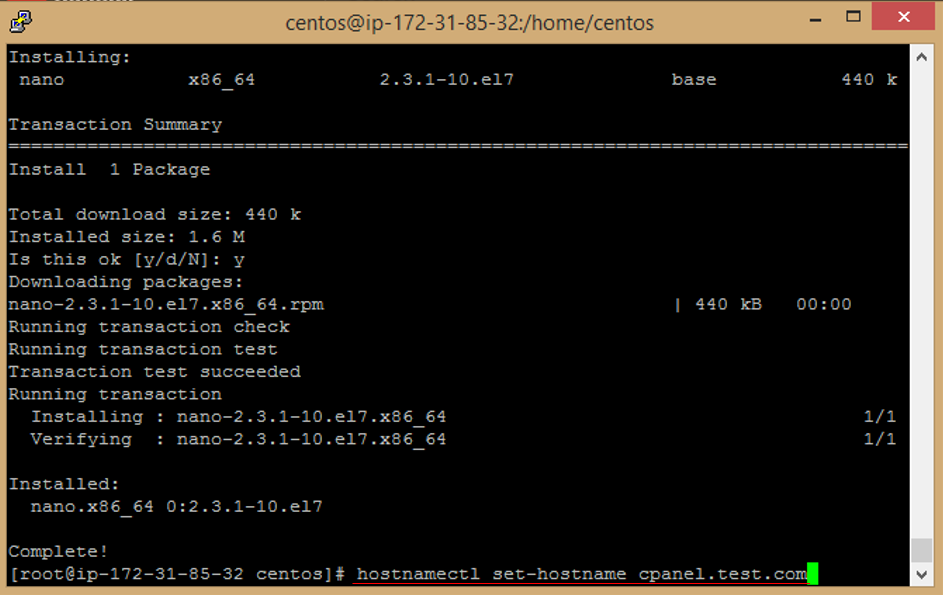

k. Enter this command to install nano package

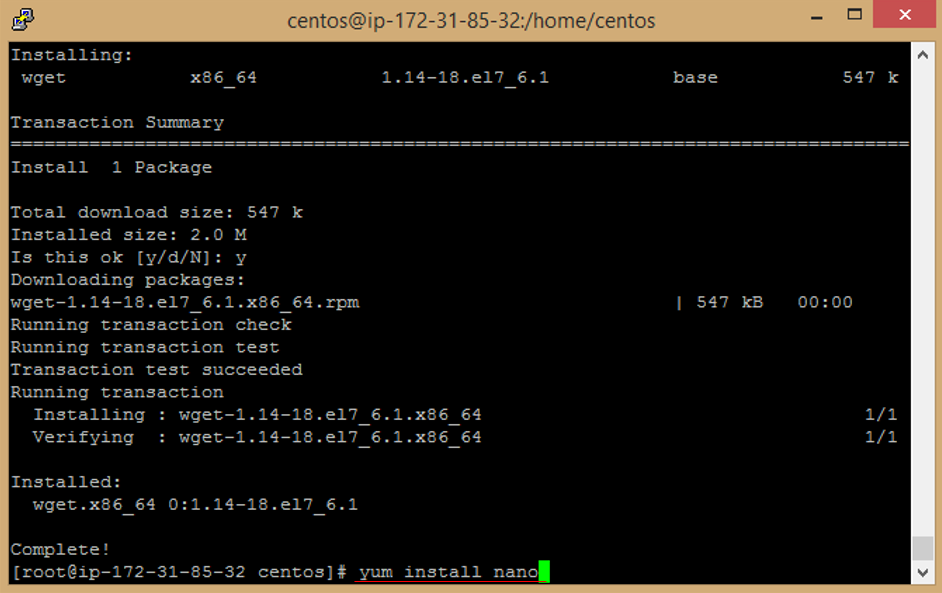

```

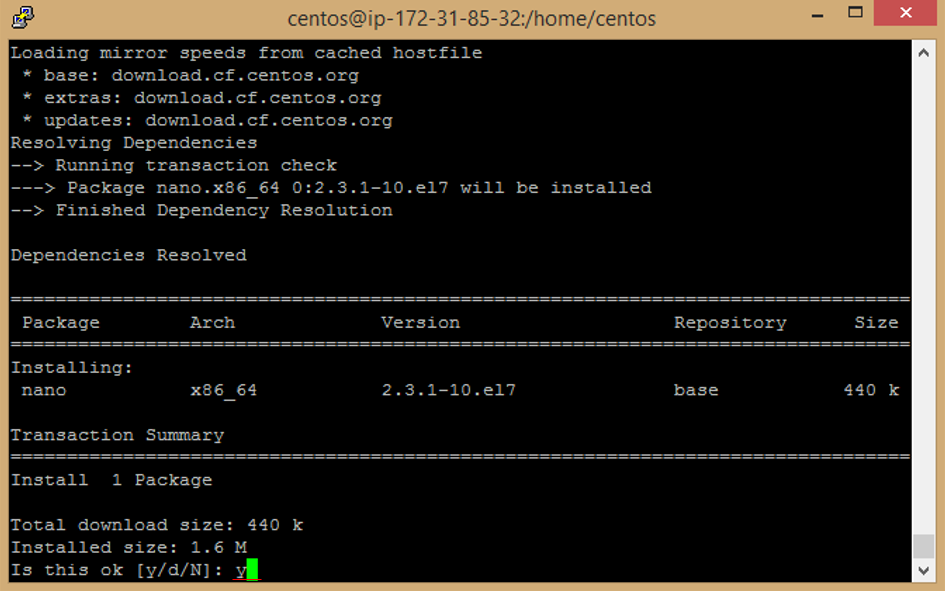

yum install nano

```

l. Enter **y** to accept download Package.

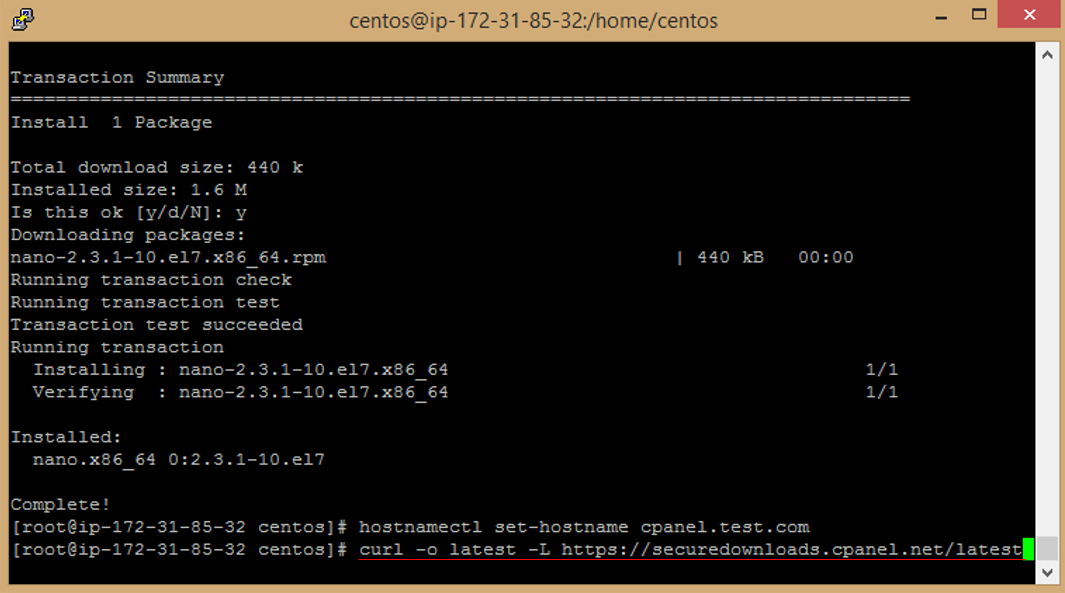

m. set the hostname with your domain name. Replace yourdomainname.com with your domain name.

```

hostnamectl set-hostname cpanel.yourdomainname.com

```

n. Download the latest installation script from cPanel's website by running the following command

```

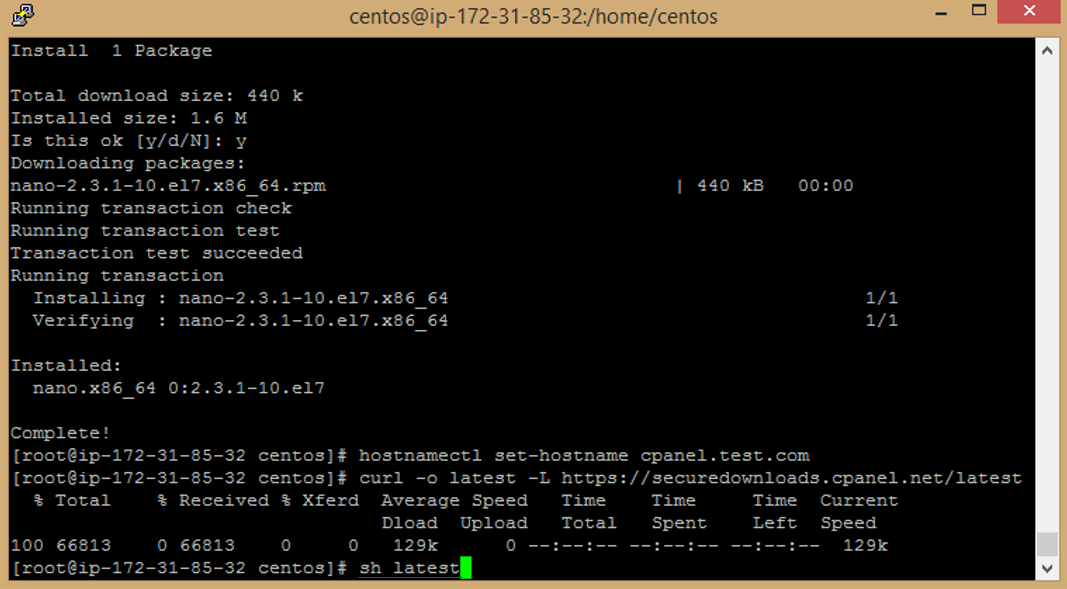

curl -o latest -L https://securedownloads.cpanel.net/latest

```

o. Once you have downloaded the installation script, run the following command to start the installation process

```

sh latest

```

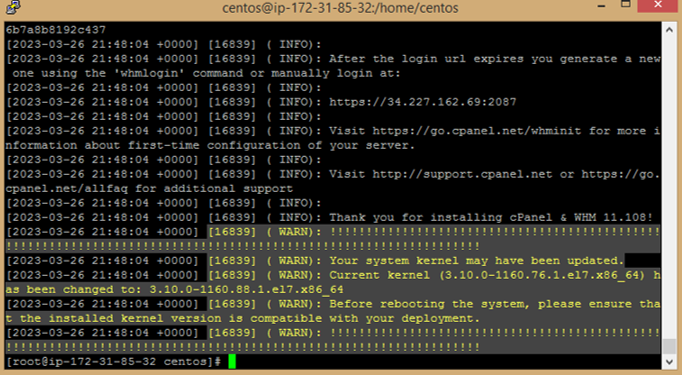

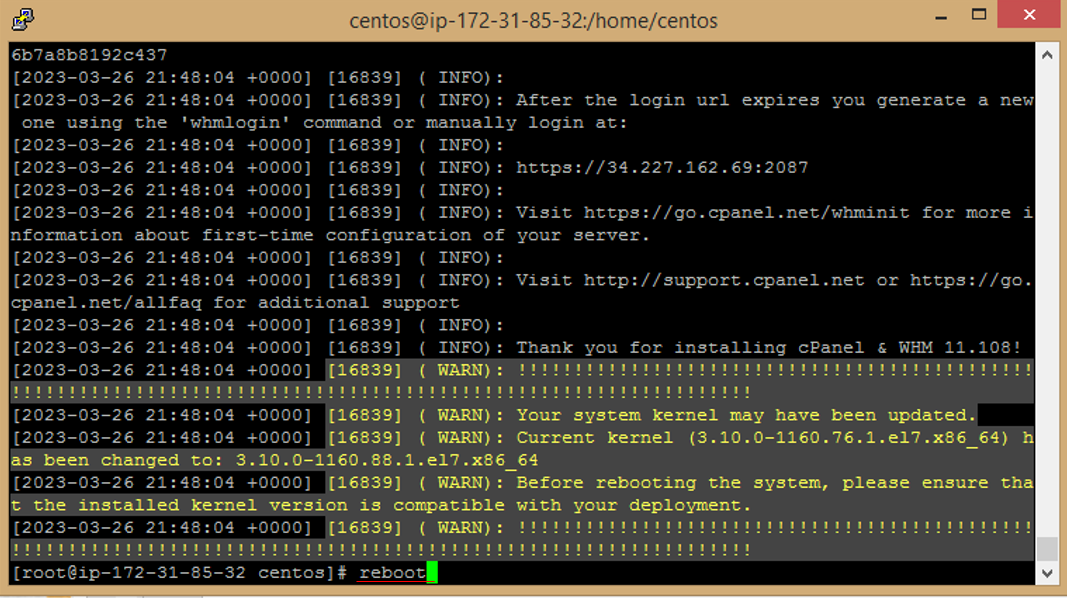

p. The installation process will now begin. Follow the on-screen instructions to complete the installation.

q. Make reboot for server, run the following command

```

reboot

```

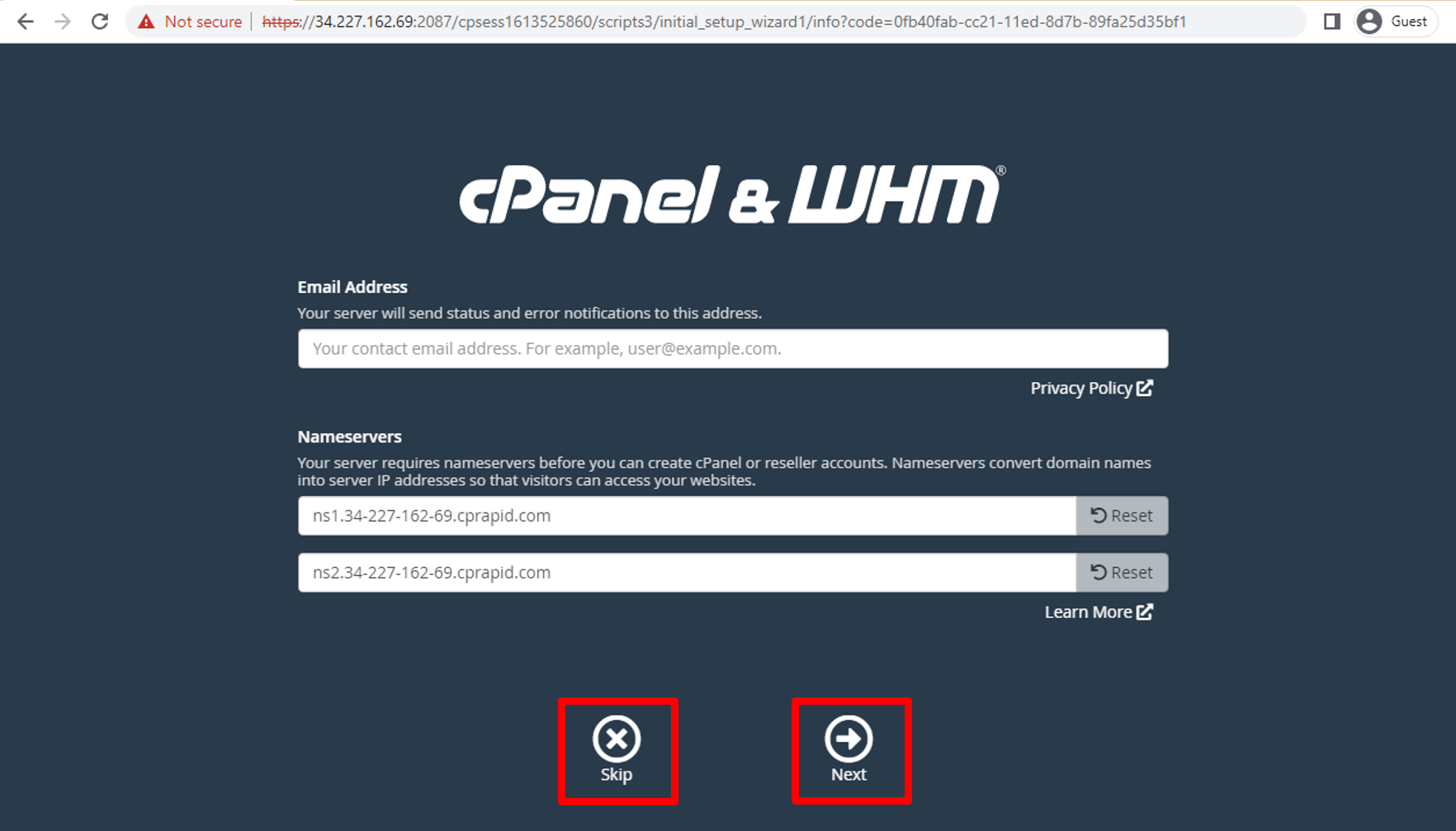

### WHM & cPanel setup

a. back to **EC2 Instance Dashboard** and **select** EC2 then **Copy** Public Ip.

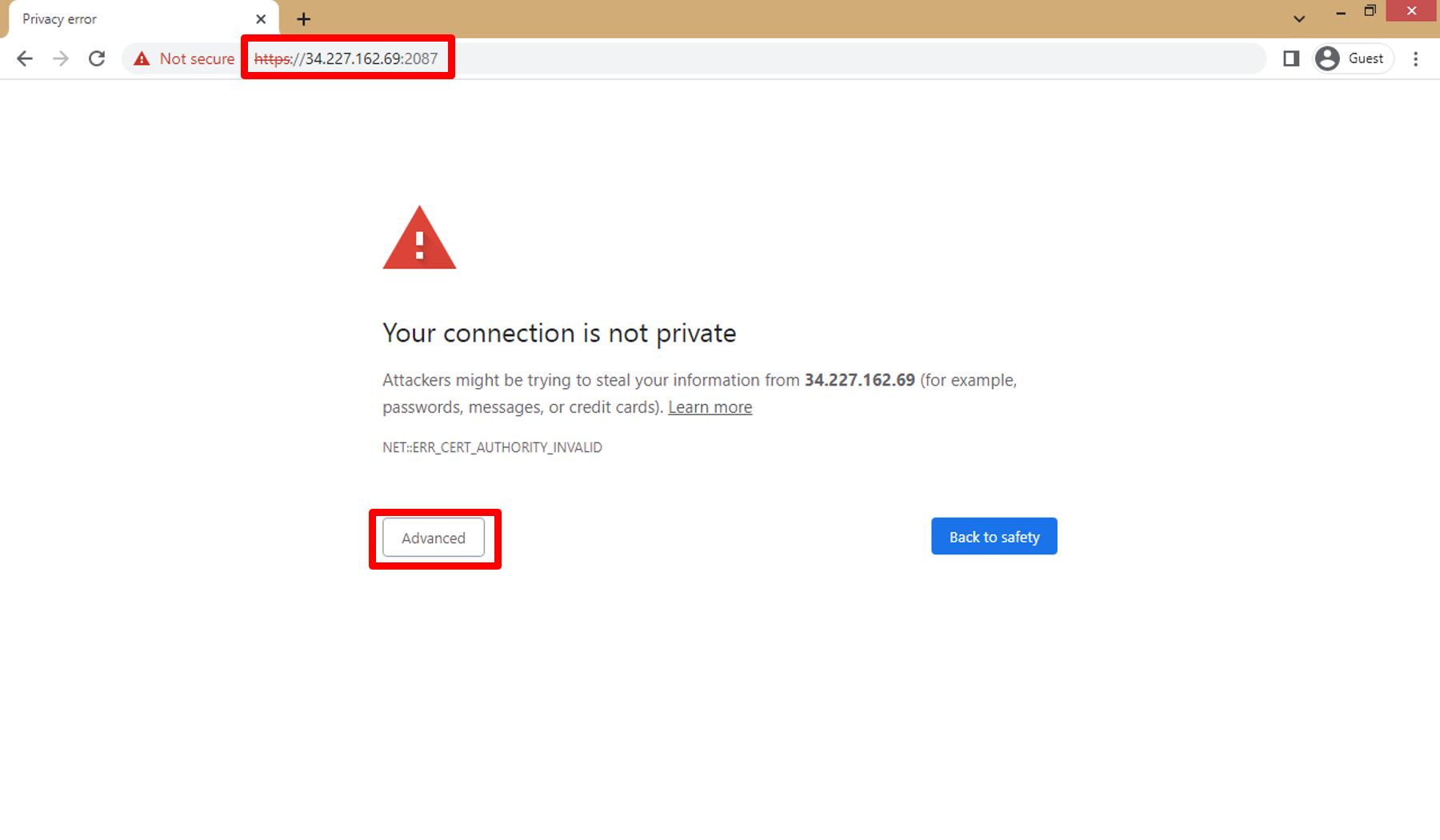

b. Once the installation is complete, you can access the WHM interface by visiting https://your-server-public-ip:2087 in your web browser then click on **Advanced**

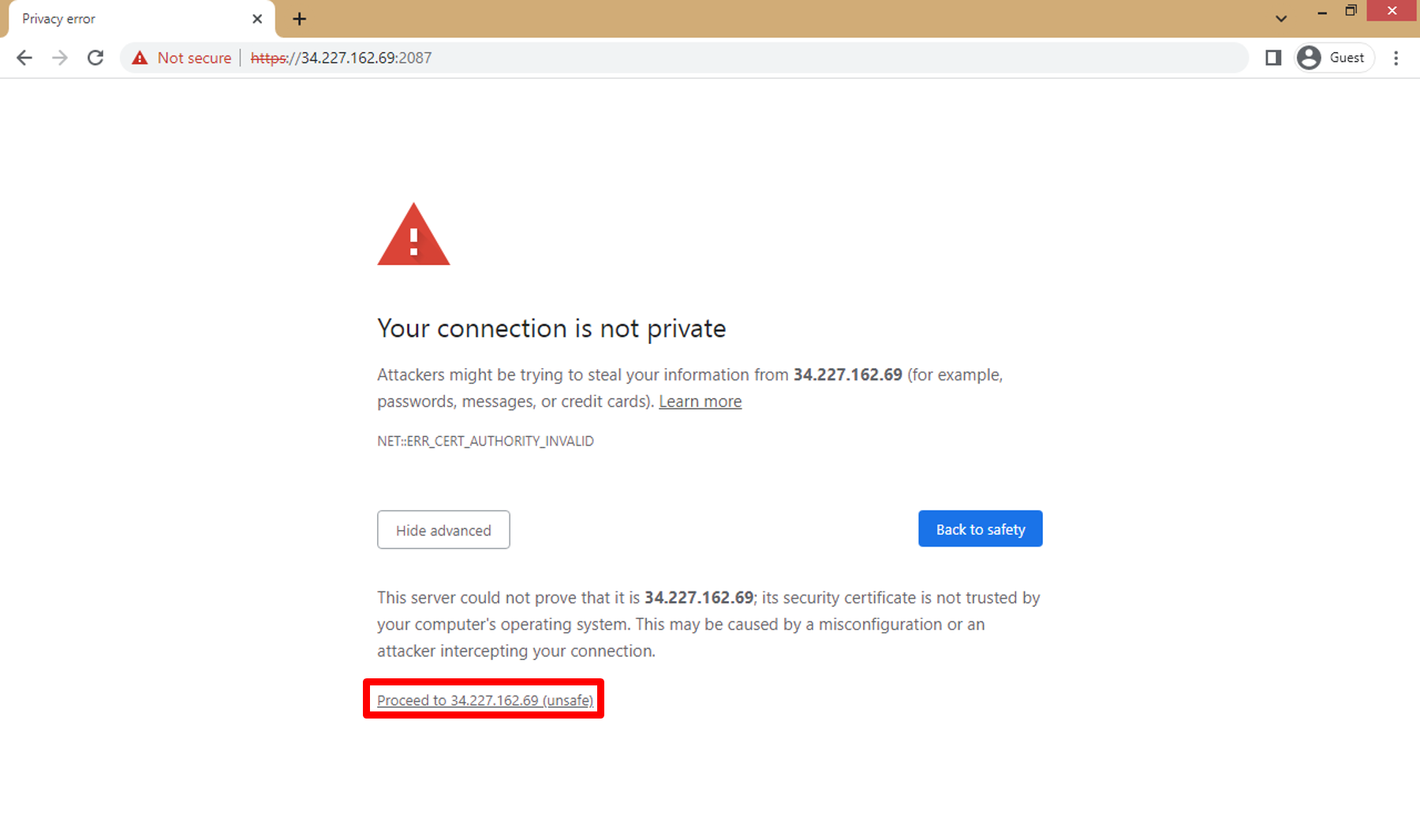

c. Click on **Proceed to ... (unsafe)**

d. WHM Login Page Open.

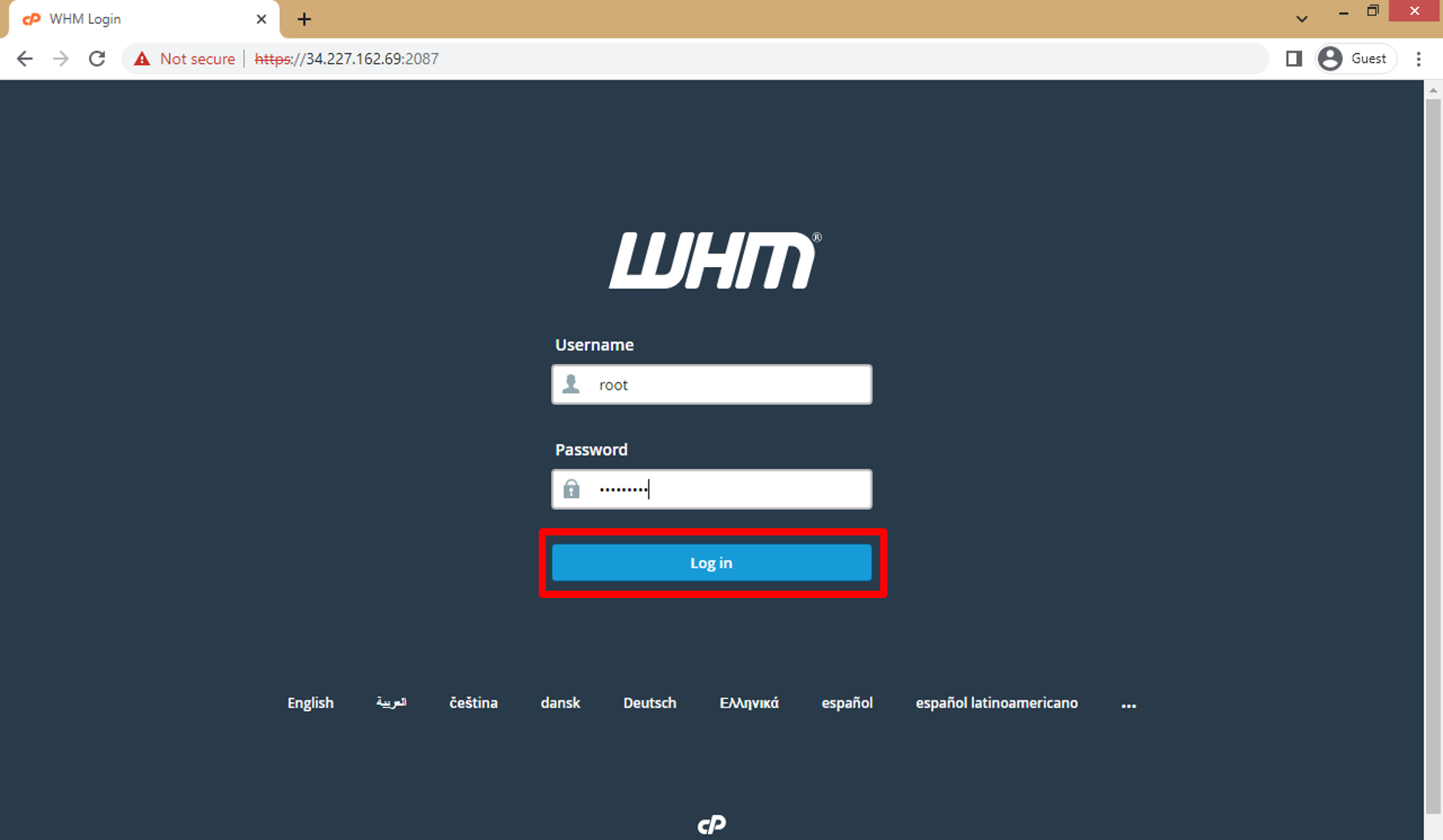

On the WHM login page, enter the "root" username and the password you set during the instance launch process then click on **Login**.

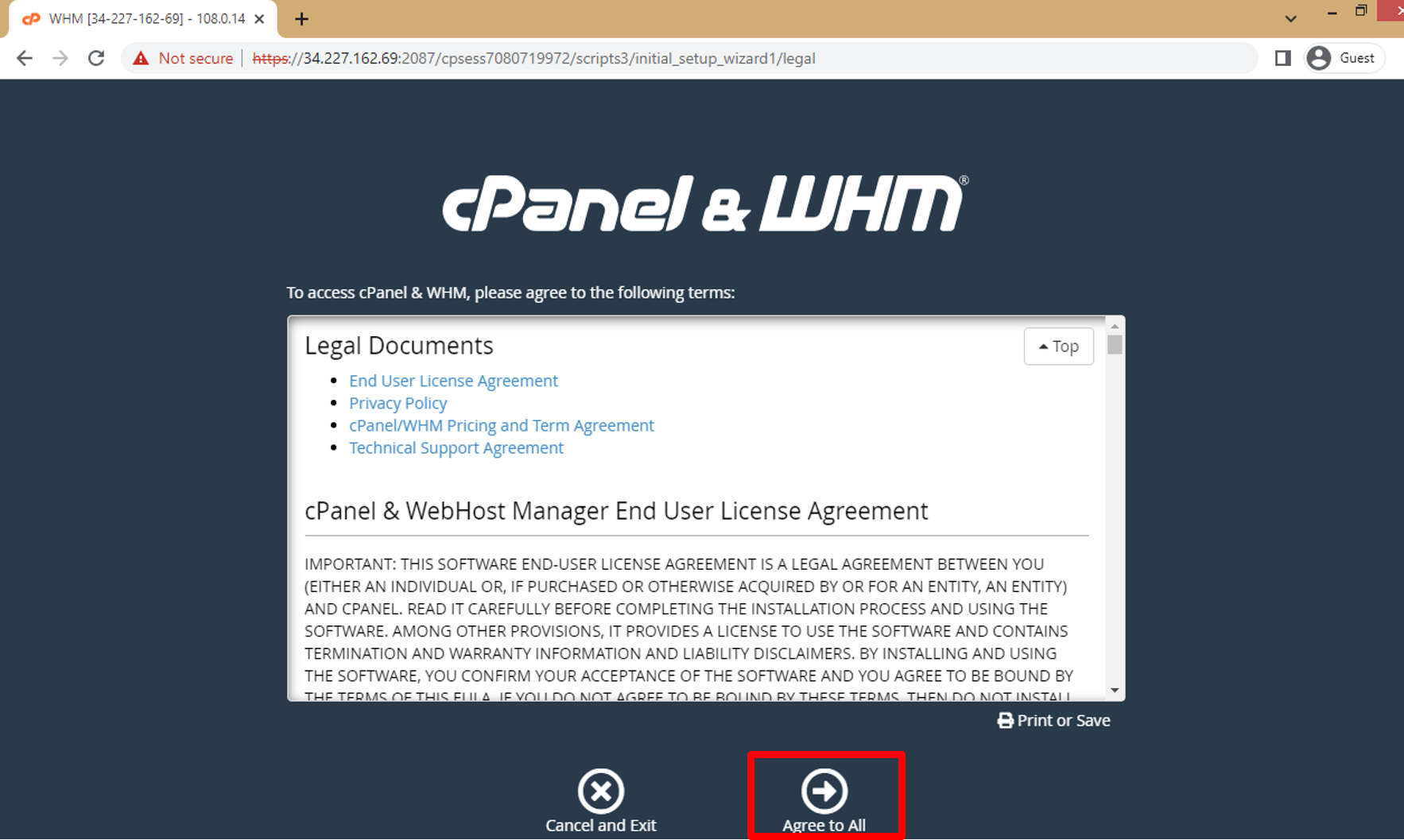

e. Click on **Agree to All**.

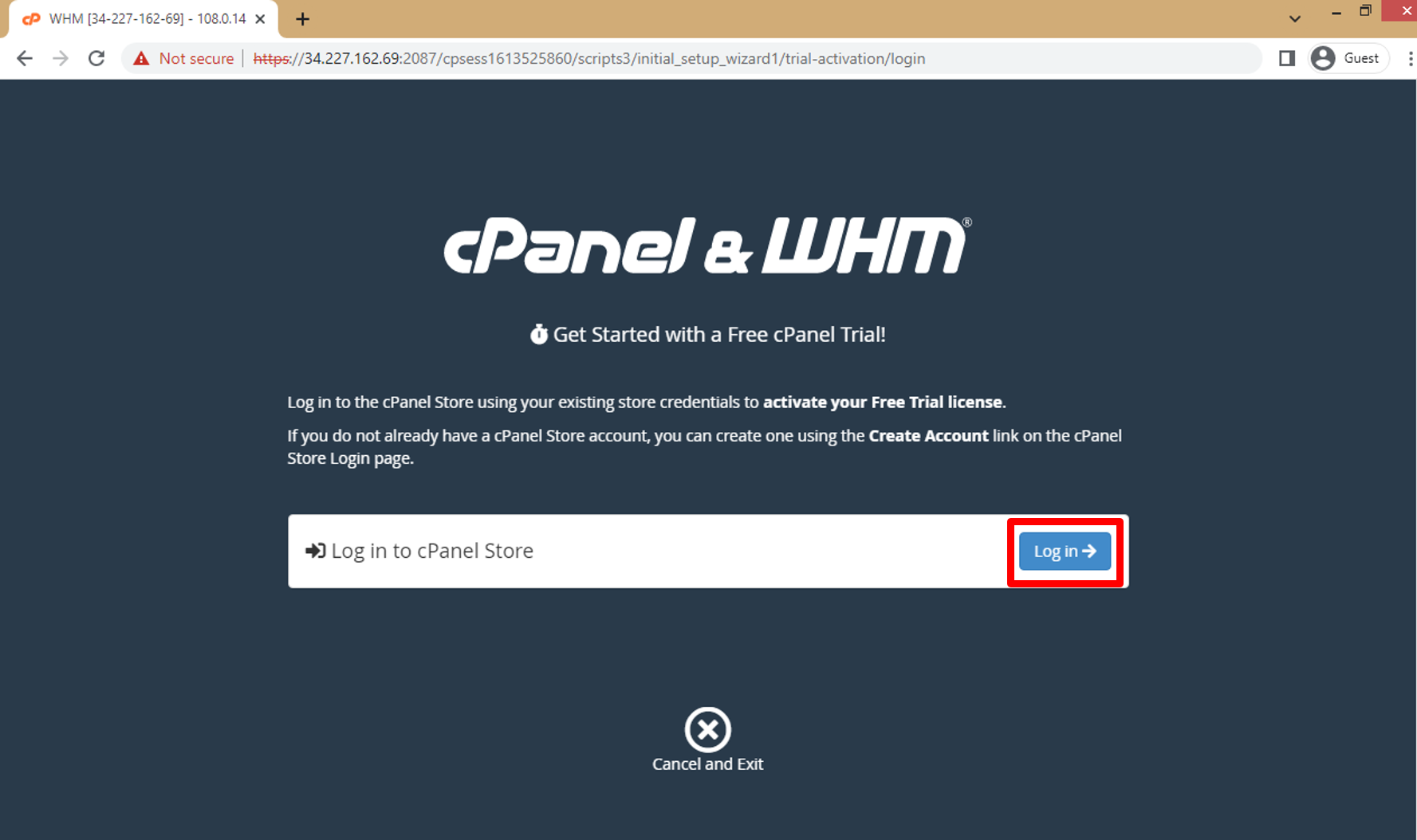

f. Click on **Log in** to log in to cpanel store.

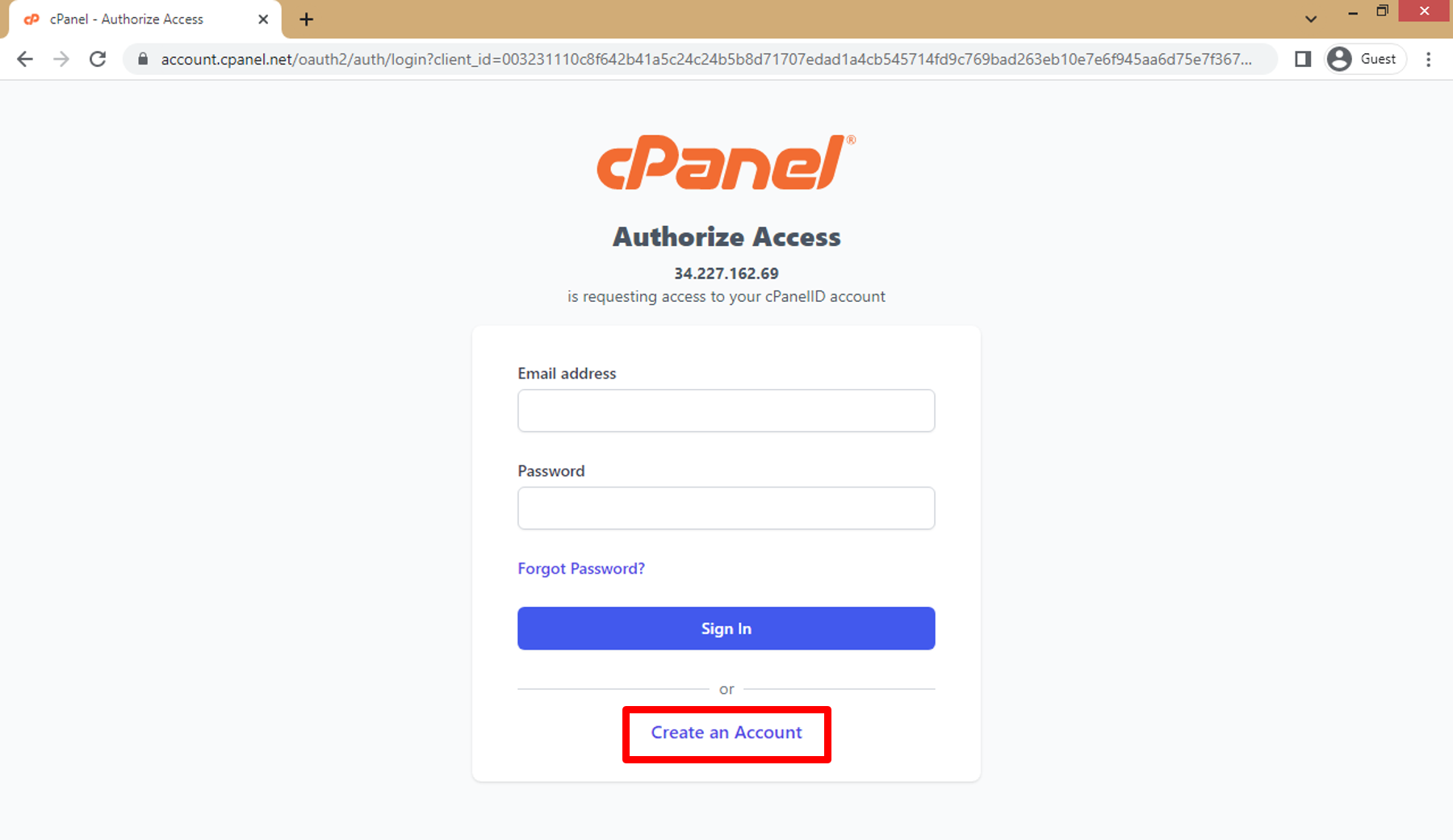

g. Click on **Create an Account**.

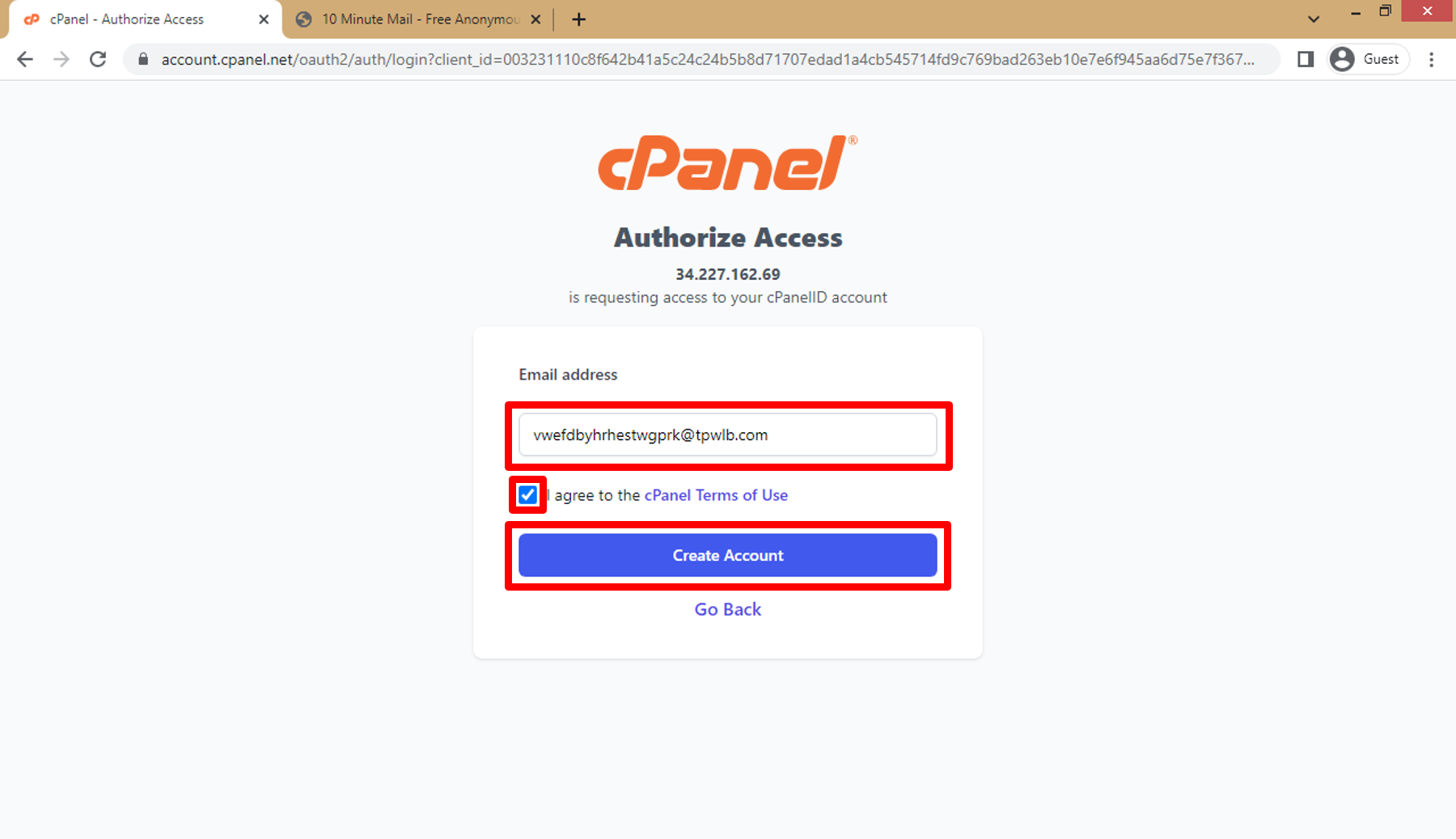

h. Enter **email address** then click on **the approval box** (I agree ...) then click on **Create Account**.

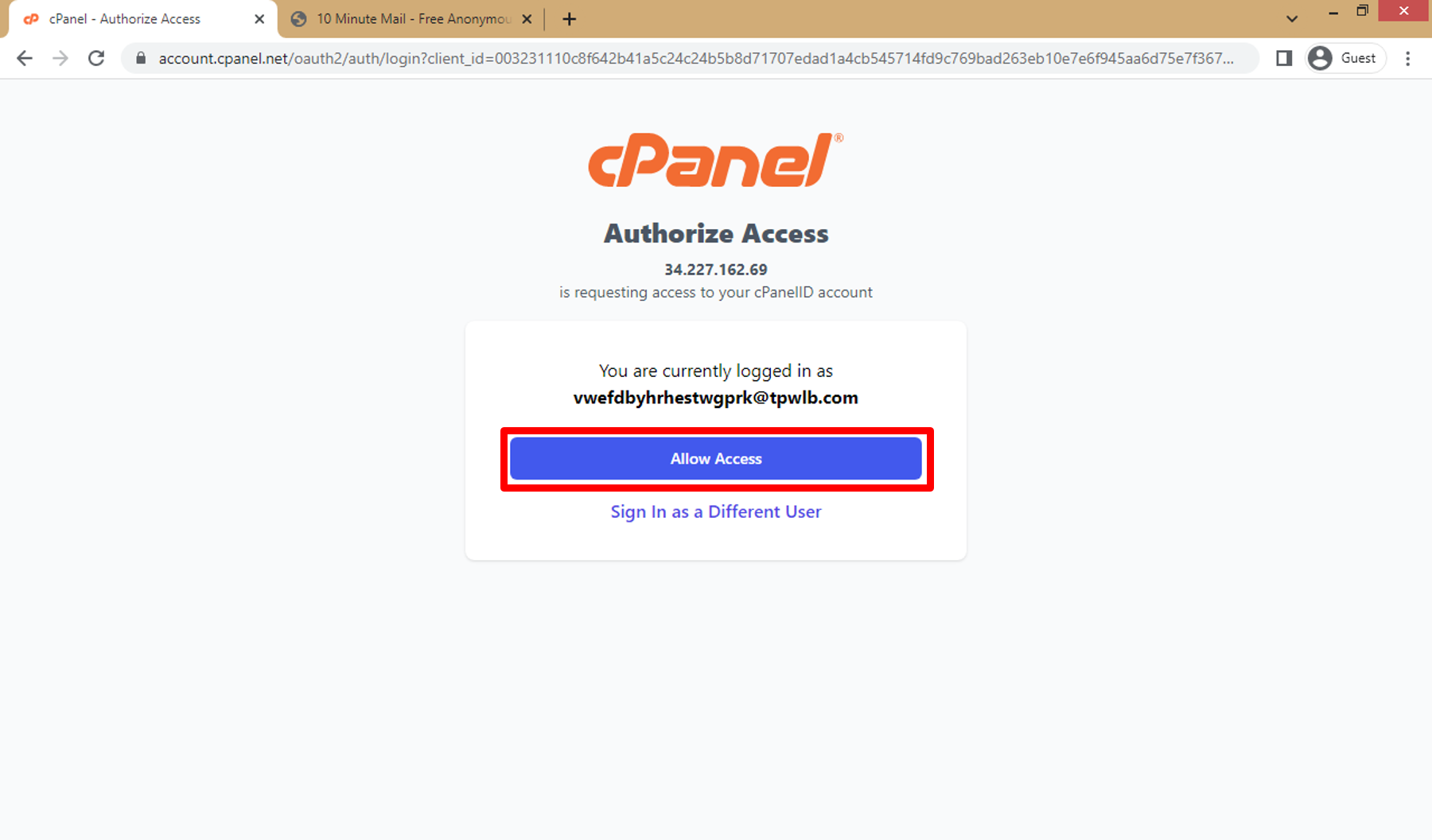

i. Click on **Allow Access**.

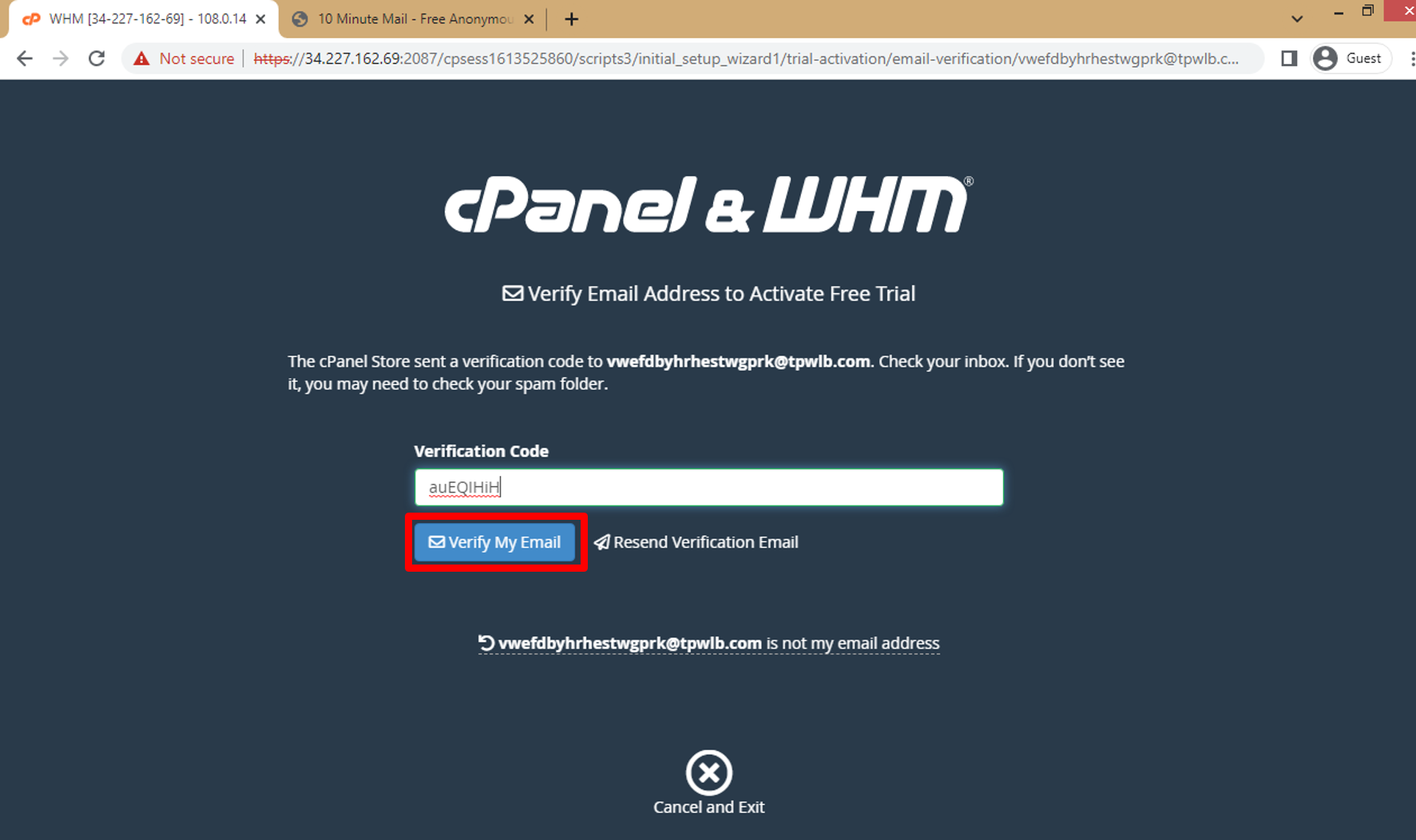

j. After recieve verification code on email copy it and enter it in WHM then click on **Verify My Email**.

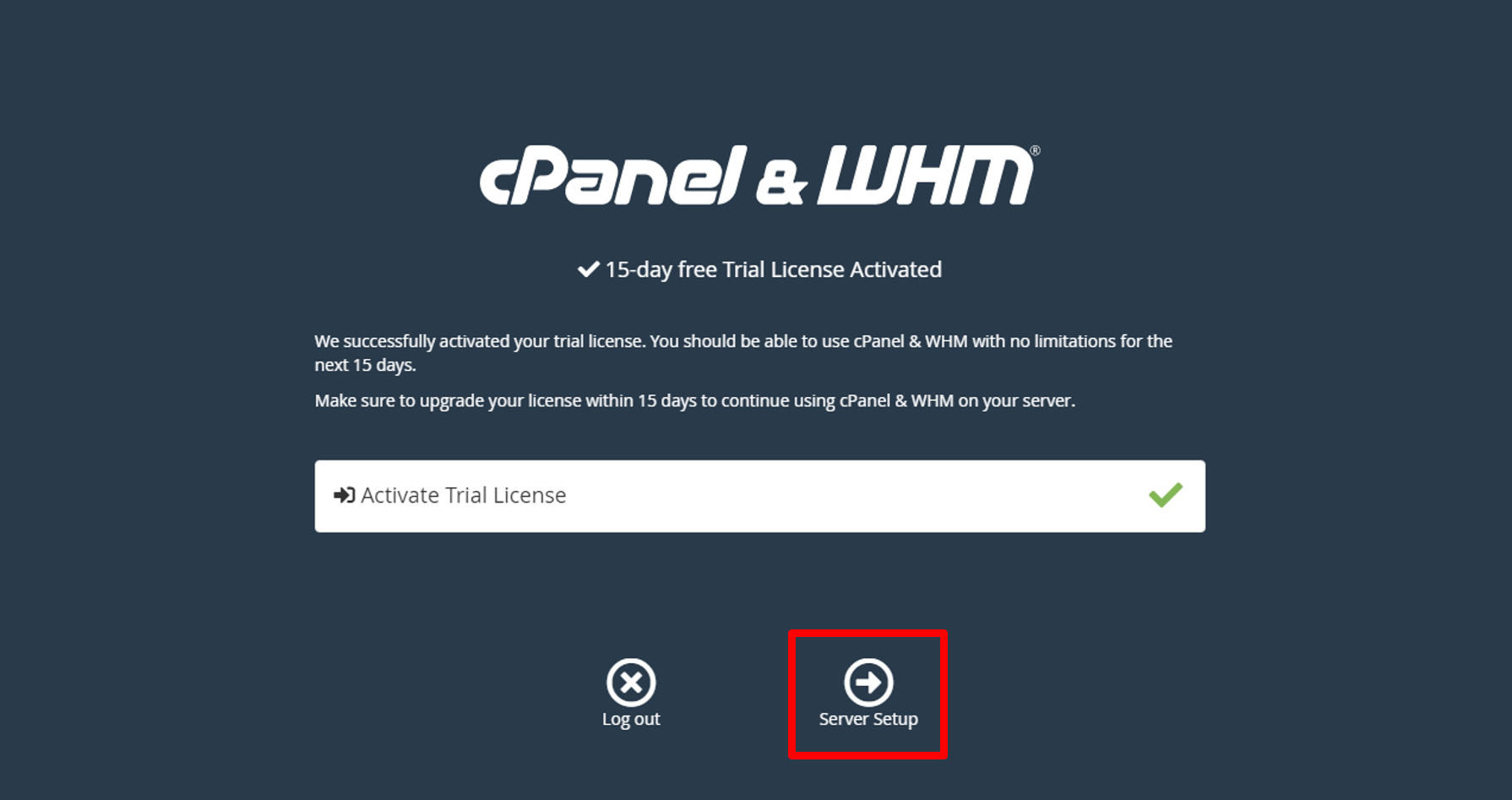

k. Click on **Server Setup**.

l. Here you can add Nameservers and setup domain or click on **Skip** and edit in it later.

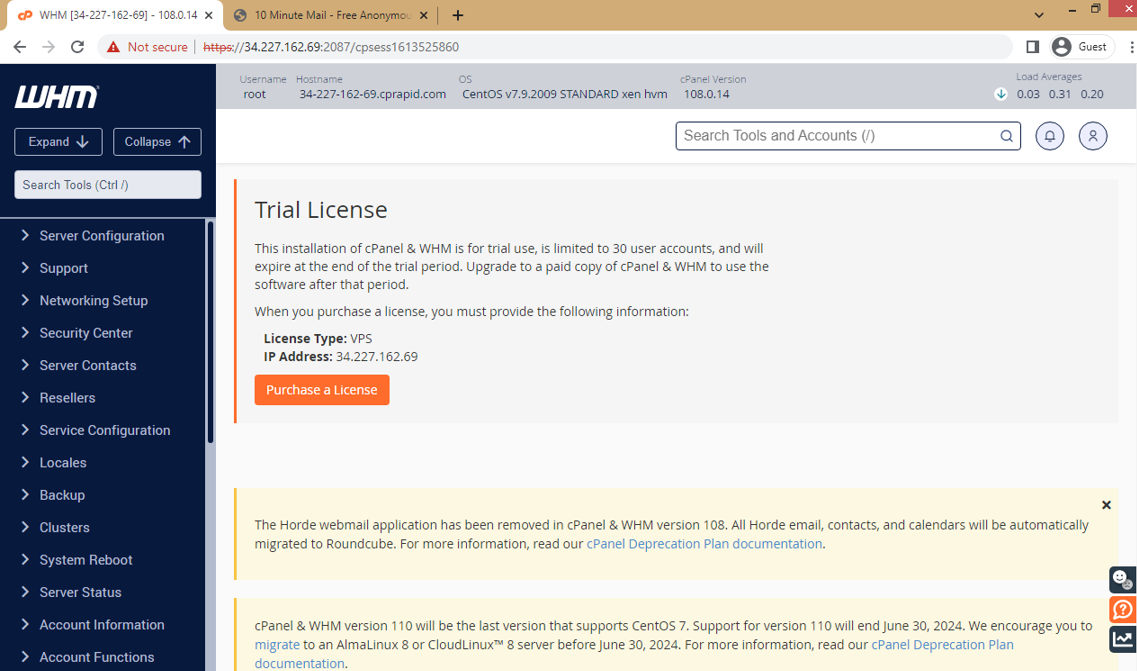

m. Now you will be taken to the WHM home page.

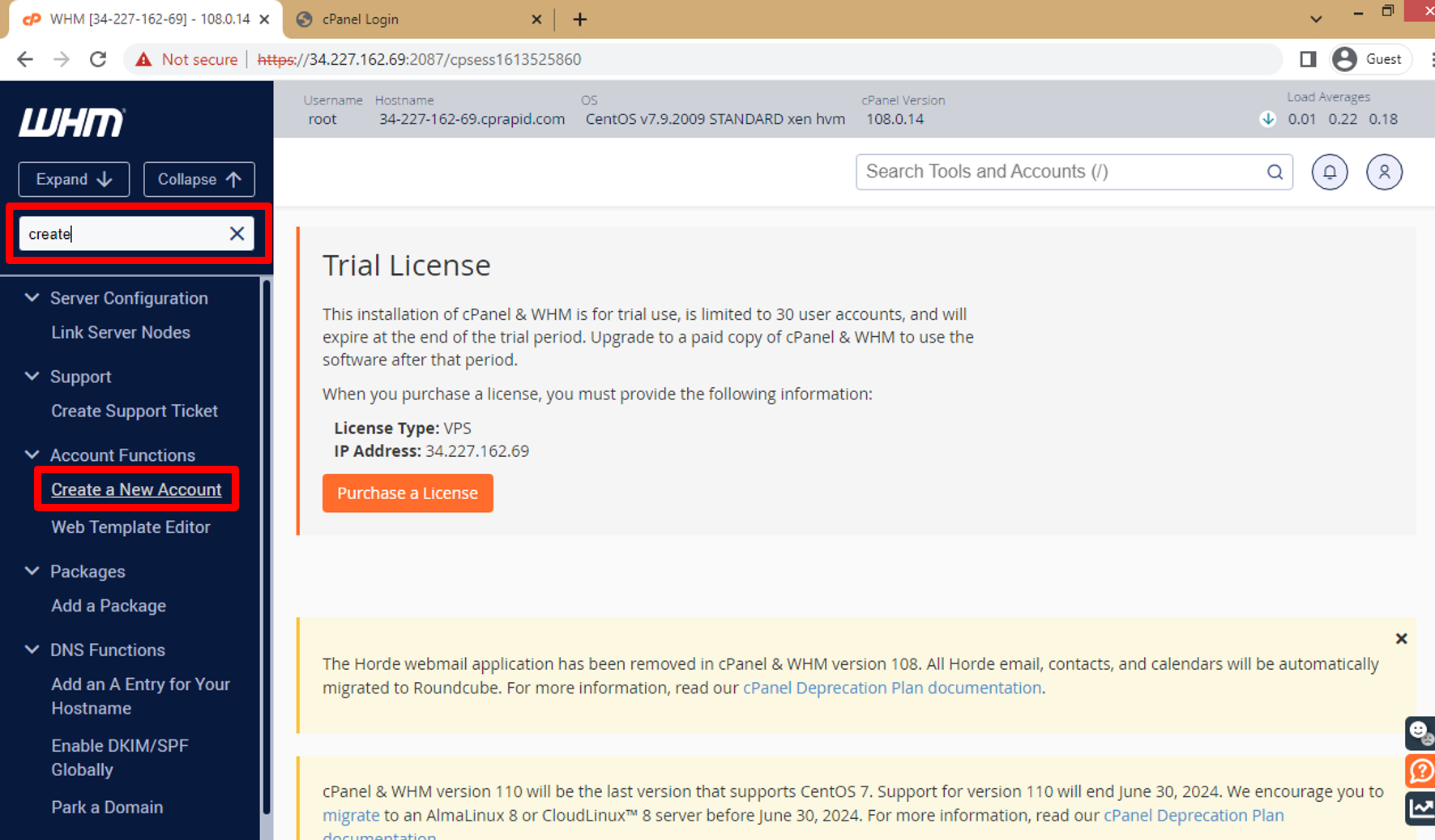

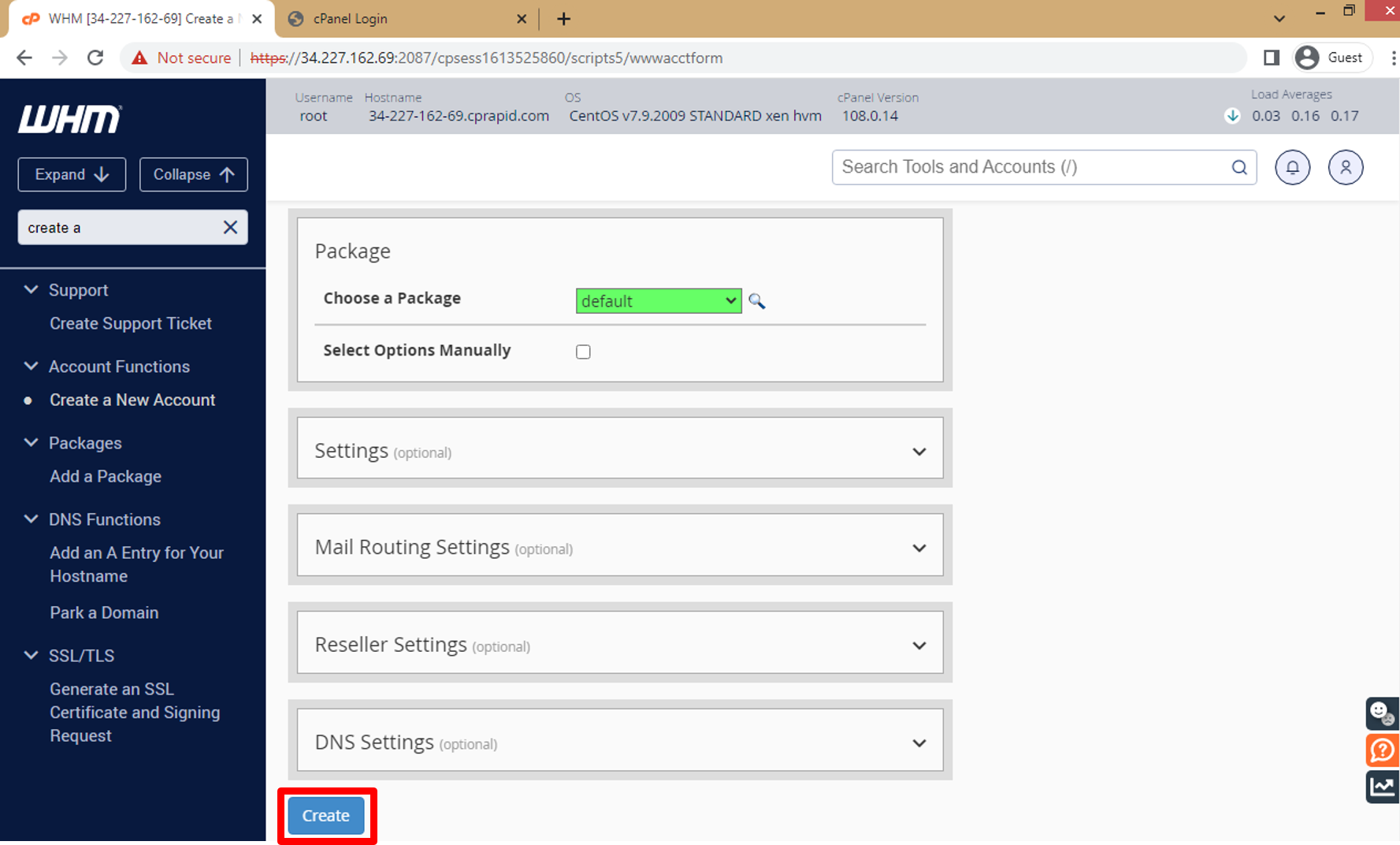

n. In Search Tools write **Create** and click on **Enter** to make Search then click on **Create a New Account** to use it to open Cpanel Login.

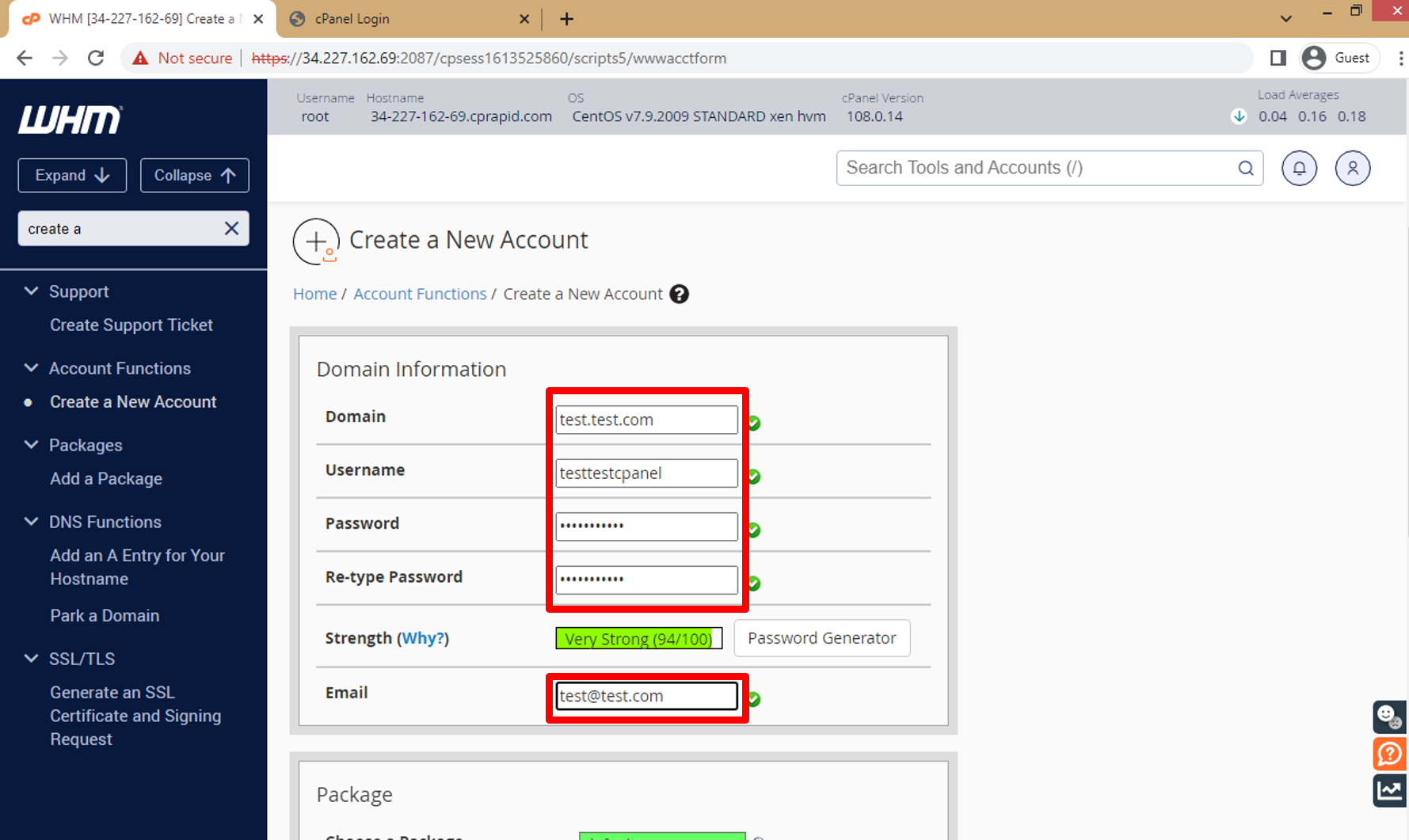

o. Enter all data about account you need to create then click on **Create** and this data will use to Log in Cpanel dashboard.

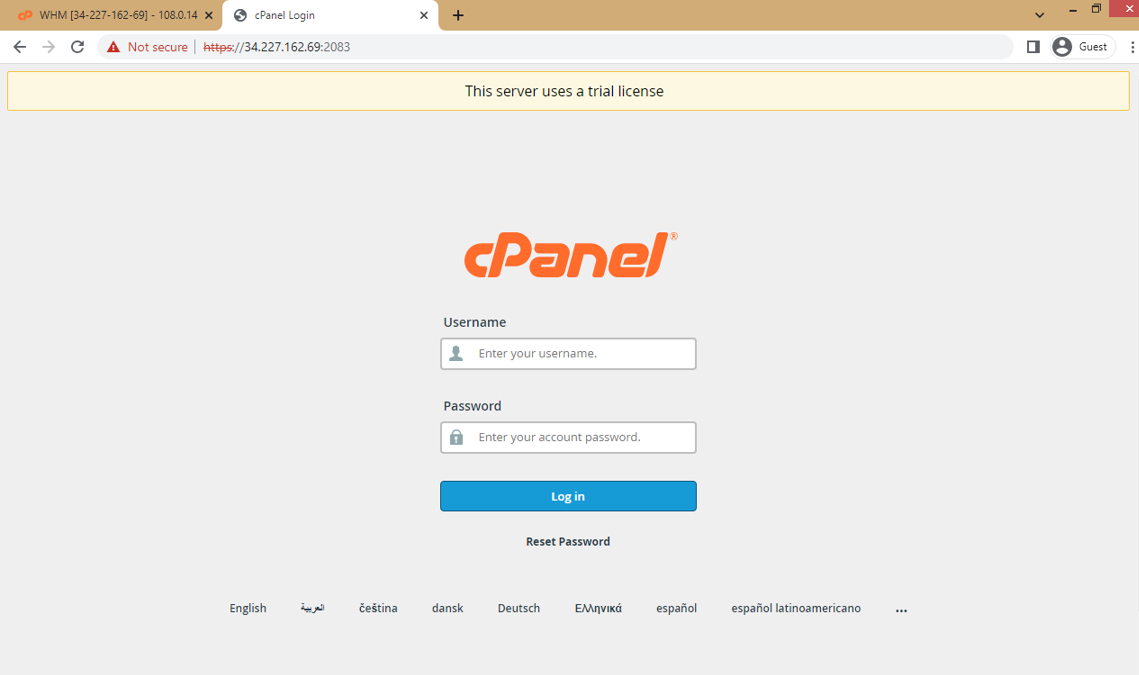

p. Now you can access the cPanel interface by visiting https://your-server-public-ip:2083 and enter username and password to login.

That's it! You now have cPanel & WHM installed on your AWS EC2 instance. From here, you can manage your server, create hosting accounts, and more. | fady_nabil10 |

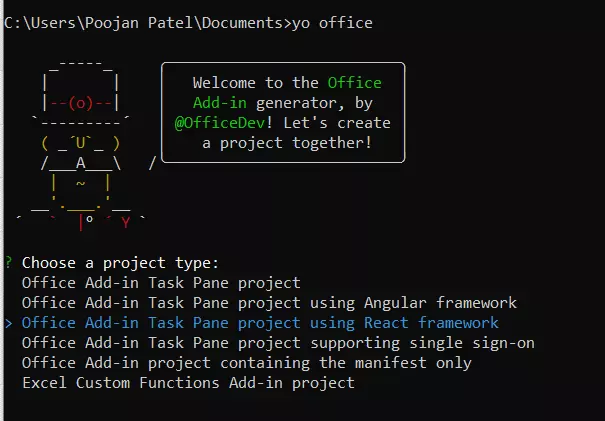

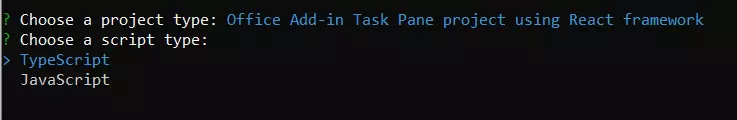

1,417,113 | 26- A Guide to Creating QGIS Plugins in a Step-by-Step Manner | If you're looking to create QGIS plugins, then you've come to the right place! In this step-by-step... | 22,345 | 2023-03-27T23:01:37 | https://dev.to/azad77/26-a-guide-to-creating-qgis-plugins-in-a-step-by-step-manner-2gkg | programming, python, pyqgis | --- series: A beginner's guide to using Python with QGIS ---

If you're looking to create QGIS plugins, then you've come to the right place! In this step-by-step guide, we'll show you how to create your own plugin by creating essential files such as metadata.txt and [mainPlugin.py](http://mainPlugin.py), as well as how to use Python to create the [**init**.py](http://init.py) file. We'll also walk you through how to install your plugin and provide a tip on using the Plugin Builder to create a template for your plugin. Follow these instructions to create your own QGIS plugin and take your GIS analysis to the next level!

The first requirement for plugins is metadata.txt.

Use a text editor to create a metadata file:

```plaintext

[general]

name=TestPlugin

email=azad.rasul@soran.edu.iq

author=Azad Rasul

qgisMinimumVersion=3.0

description=This is an example plugin for greeting the world.

version=version 0.1

```

Save it as a "metadata" text document.

The second file is called "\_\_init\_\_.py" which includes the classFactory() method.

You can use Python to create a "\_\_**init\_\_**.py" file

```python

from .mainPlugin import TestPlugin

def classFactory(iface):

return TestPlugin(iface)

```

The third necessary file contains the main logic of the plugin. It must have initGui(), unload() and run() methods. We name it "[mainPlugin.py](http://mainPlugin.py)" file that includes:

```python

import os

import inspect

from PyQt5.QtWidgets import QAction

from PyQt5.QtGui import QIcon

# get the directory containing this script

cmd_folder = os.path.split(inspect.getfile(inspect.currentframe()))[0]

# define the TestPlugin class

class TestPlugin:

def __init__(self, iface):

self.iface = iface

# initialize the plugin interface

def initGui(self):

# get the path to the plugin icon

icon = os.path.join(os.path.join(cmd_folder, 'logo.png'))

# create a new QAction with the plugin icon and label

self.action = QAction(QIcon(icon), 'TestPlugin', self.iface.mainWindow())

# connect the action to the run() method

self.action.triggered.connect(self.run)

# add the action to the toolbar and menu

self.iface.addToolBarIcon(self.action)

self.iface.addPluginToMenu("&TestPlugin", self.action)

# unload the plugin

def unload(self):

# remove the action from the toolbar and menu

self.iface.removePluginMenu("&TestPlugin", self.action)

self.iface.removeToolBarIcon(self.action)

# delete the action object

del self.action

# run the plugin

def run(self):

# display a message in the QGIS message bar

self.iface.messageBar().pushMessage('Hello from TestPlugin!')

```

Save these three files and a logo in one folder and name it "TestPlugin" or the name of the plugin.

Then, copy the "TestPlugin" folder and paste it into QGIS 3 Plugins directory. On Windows, it is typically located at:

C:\\Users\\username\\AppData\\Roaming\\QGIS\\QGIS3\\profiles\\default\\python\\plugins

Restart QGIS software, and you will be able to see your "TestPlugin" plugin in the list of installed plugins. By clicking on the TestPlugin icon, you can view the "Hello from TestPlugin!" text.

Alternatively, you can use the "Plugin Builder" to create a template plugin and then modify it to suit your needs.

In conclusion, creating QGIS plugins may seem daunting, but this step-by-step guide can help anyone create their customized plugin. By following the instructions provided, users can create necessary files such as metadata.txt and [mainPlugin.py](http://mainPlugin.py), and use Python to create the [init.py](http://init.py) file. The article also provides a tip on using the Plugin Builder to create a template for the plugin. With the plugin created and installed, users can take their GIS analysis to the next level.

The credit for my tutorials goes to Anita Graser and Ujaval Gandhi.

External resources:

[1- PyQGIS Developer Cookbook](https://docs.qgis.org/3.16/en/docs/pyqgis_developer_cookbook/intro.html#scripting-in-the-python-console)

[2- PyQGIS 101: Introduction to QGIS Python programming for non-programmers](https://anitagraser.com/pyqgis-101-introduction-to-qgis-python-programming-for-non-programmers/)

[3- Customizing QGIS with Python (Full Course Material)](https://courses.spatialthoughts.com/pyqgis-in-a-day.html#hello-world)

[4- PyQGIS samples](https://webgeodatavore.github.io/pyqgis-samples/)

> If you like the content, please [SUBSCRIBE](https://www.youtube.com/channel/UCpbWlHEqBSnJb6i4UemXQpA?sub_confirmation=1) to my channel for the future content

| azad77 |

1,417,114 | Avoid this common mistakes when building a websites | When building a website, there are a lot of things that you need to think about. A developer or a web... | 0 | 2023-03-27T23:06:34 | https://dev.to/jenueldev/avoid-this-common-mistakes-when-building-a-websites-1plk | webdev, javascript, beginners, programming | When building a website, there are a lot of things that you need to think about. A developer or a web designer or a web developer should know this and must avoid it at all costs. If not check carefully, your work will be going to fail.

[Read More](https://brojenuel.com/blog/Avoid-this-common-mistakes-when-building-a-websites) | jenueldev |

1,417,151 | WordPress plugin updates shows -1 | Problem We had an issue with a WordPress website, which wouldn't allow us to update or... | 0 | 2023-03-27T23:53:24 | https://dev.to/edwardanil/wordpress-plugin-updates-shows-1-1j97 | wordpress, bugfixing, solution, programming | ## Problem

We had an issue with a WordPress website, which wouldn't allow us to update or install any plugins.

When we try updating a plugin, the page would show just "**-1**".

Even adding a new plugin would show a "-1" error.

There wouldn't be any error messages or clue on what went wrong.

Even enabling debugging the WordPress site did not provide any clue on what caused this issue.

If you check the developer console of the browser, it would show that the "**wp-admin/admin-ajax.php**" throws a "[403 error](https://developer.mozilla.org/en-US/docs/Web/HTTP/Status/403)".

And the problem is that WordPress would create a ".maintenance" file when you update the plugin. Now the site would go to Maintenance mode and will not be available, unless you manually delete the ".maintenance" file via FTP.

## Details

The WordPress website had many plugins. And it had the following plugins installed in it:

- Autoptimize

- Elementor

- Page Builder by SiteOrigin

- Really Simple SSL

- Starter Templates (from Astra)

- Sucuri Security - Auditing, Malware Scanner and Hardening

- Super Page Cache for Cloudflare

- Wordfence Security

And the domain was using Cloudflare to make the site load faster and use the security feature provided by it.

The site was hosted in Ionos Web hosting package.

## Steps that did NOT work

- Tried to find out the issue by bypassing Cloudflare. It did not work.

- Tried to stop the caching on the site and Cloudflare. It did not solve the problem.

- Tried deactivating the security plugins. It did not solve the problem.

- Tried removing "Really Simple SSL" plugin security options. Still the problem persisted.

## Solution

We were on the verge of moving the site to another hosting provider, as we could not find the source of this issue. And the hosting support could not find anything wrong with their servers or system.

And then finally, I came upon this solution on WordPress support forum.

[https://wordpress.org/support/topic/plugin-updates-shows-1/](https://wordpress.org/support/topic/plugin-updates-shows-1/)

It stated that the issue is with **[Starter Templates](https://wpastra.com/starter-templates/)** (from Astra).

I just deactivated that plugin and the updates of other plugins worked smoothly.

And later on, I updated the **Starter Templates** plugin to the latest version.

If you face this kind of issue in the future, please check if you have installed **Starter Templates**. Just deactivate it and work on the other updates.

| edwardanil |

1,417,173 | Wrote a program to select a restaurant in python | Did this for a codecademy project, select from a group of restaurants based on type, name, rating,... | 0 | 2023-03-28T01:01:30 | https://dev.to/austinharry777/wrote-a-program-to-select-a-restaurant-in-python-1l13 | Did this for a codecademy project, select from a group of restaurants based on type, name, rating, price, or location!

https://github.com/austinharry777/restaurant_recommendation_project | austinharry777 | |

1,417,214 | Kubernetes Labels, Selectors, and Annocation | Annotations, labels, and selectors are used to manage metadata attached to your Kubernetes objects.... | 0 | 2023-03-28T02:59:28 | https://dev.to/s3cloudhub/kubernetes-labels-selectors-and-annocation-dno | webdev, beginners, tutorial, kubernetes | [](http://www.youtube.com/watch?v=XabYkFhKtnE)

Annotations, labels, and selectors are used to manage metadata attached to your Kubernetes objects. Annotations and labels define the data while selectors provide a way to query it. Here are the differences between the three concepts, what they’re designed for, and how you can use them to manage your resources. Annotations | s3cloudhub |

1,417,384 | Imported Italian Artichokes | Are you looking for good Italian restaurants? DC PIE CO believes in serving only the most delicious... | 0 | 2023-03-28T06:52:21 | https://dev.to/dc_pie_co/imported-italian-artichokes-4lgd | Are you looking for good Italian restaurants? DC PIE CO believes in serving only the most delicious food that will have you coming back for seconds! All of our food is made with the freshest ingredients. Our experienced chefs make the best **[Imported Italian Artichokes](https://www.dcpieco.com/menu)**. We only serve the most delicious, freshly made food, and our personal Brooklyn brick oven pie is to absolutely die for! | dc_pie_co | |

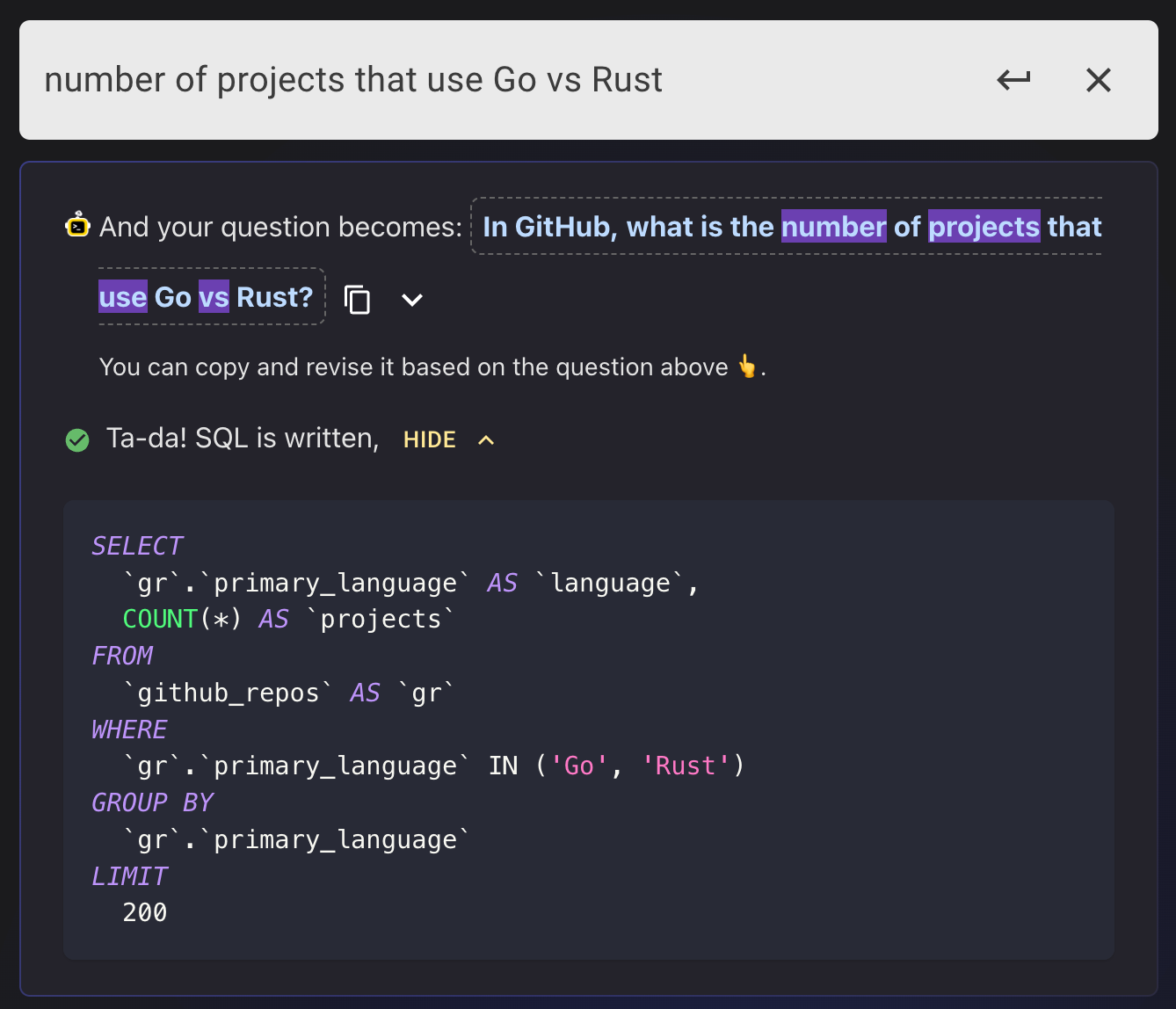

1,417,392 | 6 ChatGPT Enhanced Database Tools to Make Your Life Easier | ChatGPT has only been around for less than half a year and we already cannot live without it. We were... | 0 | 2023-03-28T07:08:27 | https://dev.to/bytebase/a-non-exhaustive-summary-of-chatgpt-enhanced-database-tools-1oe5 | chatgpt, ai, database, programming | ChatGPT has only been around for less than half a year and we already cannot live without it. We were wondering if it could help us in the field of database administration, and we dug deep into some toolings that have incorporated ChatGPT to make our life easier.

## sqlTranslate

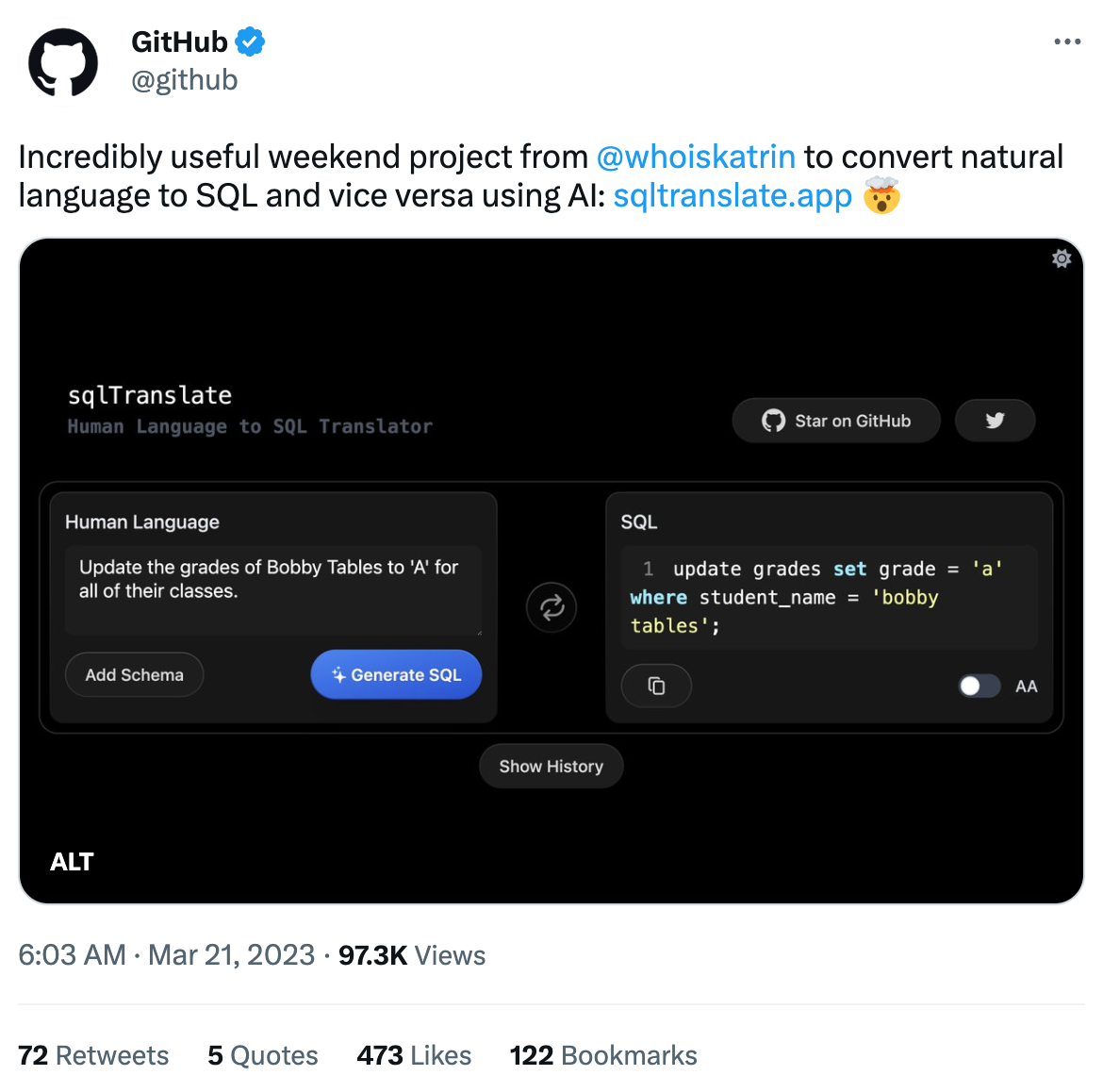

When it comes to databases, the first thing that comes to mind is whether ChatGPT can help us write SQL. [sqlTranslate](https://www.sqltranslate.app/) is a very simple tool that uses the OpenAI API to obtain the corresponding SQL statement by inputting natural language, or vice versa. You can also upload your own schema. Since it open-sourced three weeks ago, it has already gotten 2.6k stars and is [described by GitHub](https://twitter.com/github/status/1637937834865704960/photo/1) as an "incredibly useful weekend project". Looks like people have been suffering from SQL for way too long.

## AI2sql

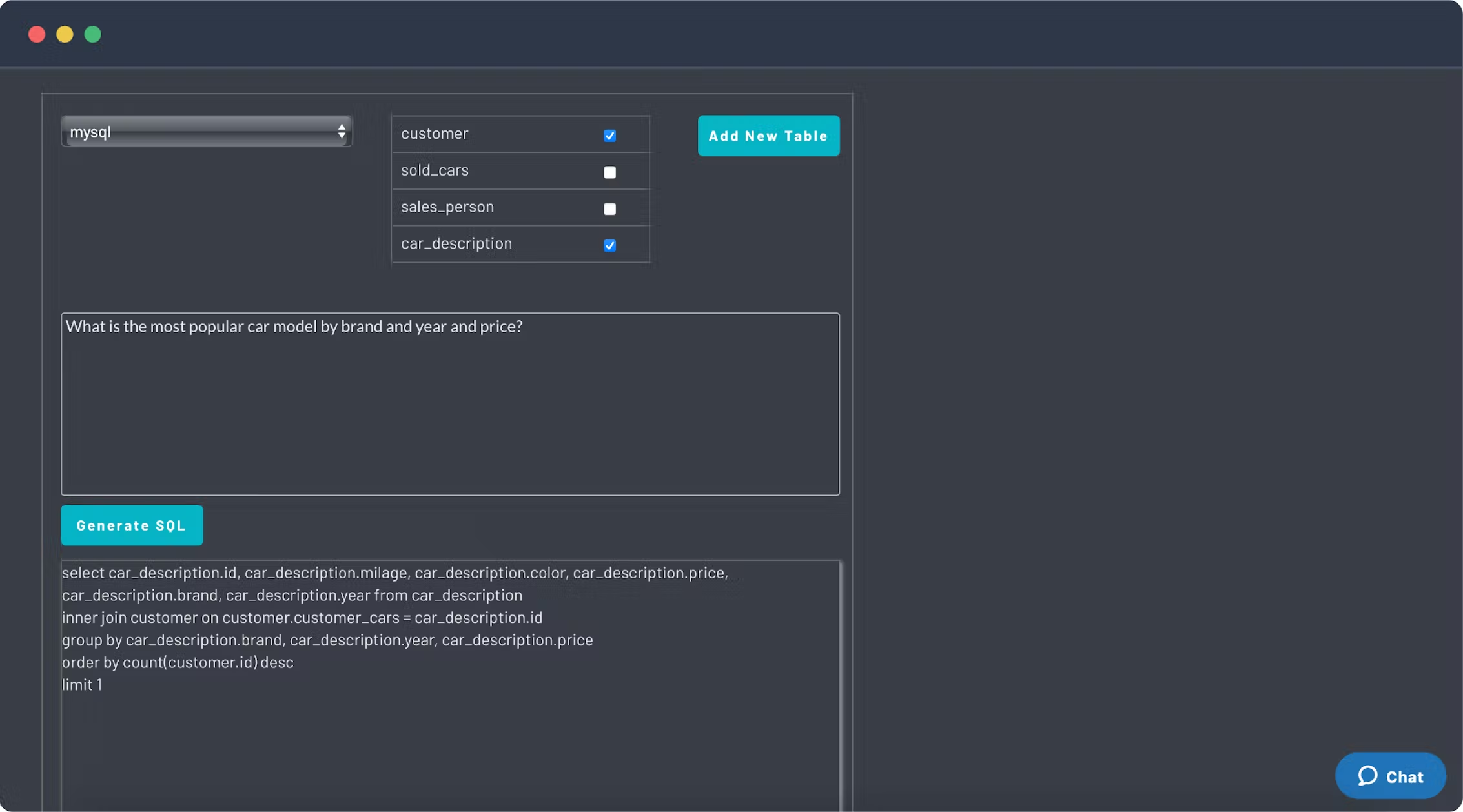

[AI2sql](https://www.ai2sql.io/) is an AI-driven SQL query generator that has been around since 2021, and has recently incorporated OpenAI's GPT-3. Now engineers and non-engineers can write SQL easily without knowing the syntax. AI2sql is more comprehensive than sqlTranslate. The features include SQL syntax checking, formatting, and query generation, the databases supported covers the most popular ones on the market (such as MySQL, PostgreSQL, MongoDB, Oracle, etc.).

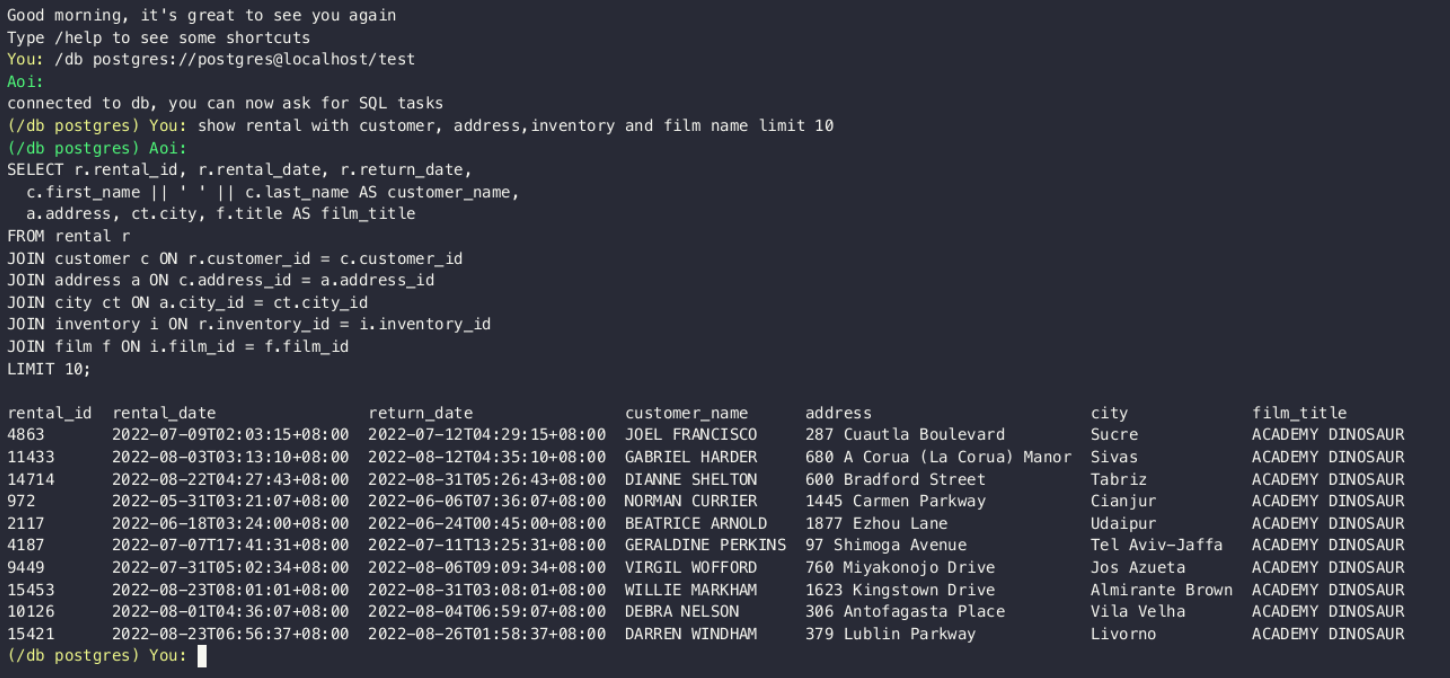

## Aoi (葵)

[Aoi](https://github.com/shellfly/aoi) is a ChatGPT-driven dialogue agent program that can conduct natural language conversations with AI in your Terminal, and it can also be connected to your database to perform SQL tasks.

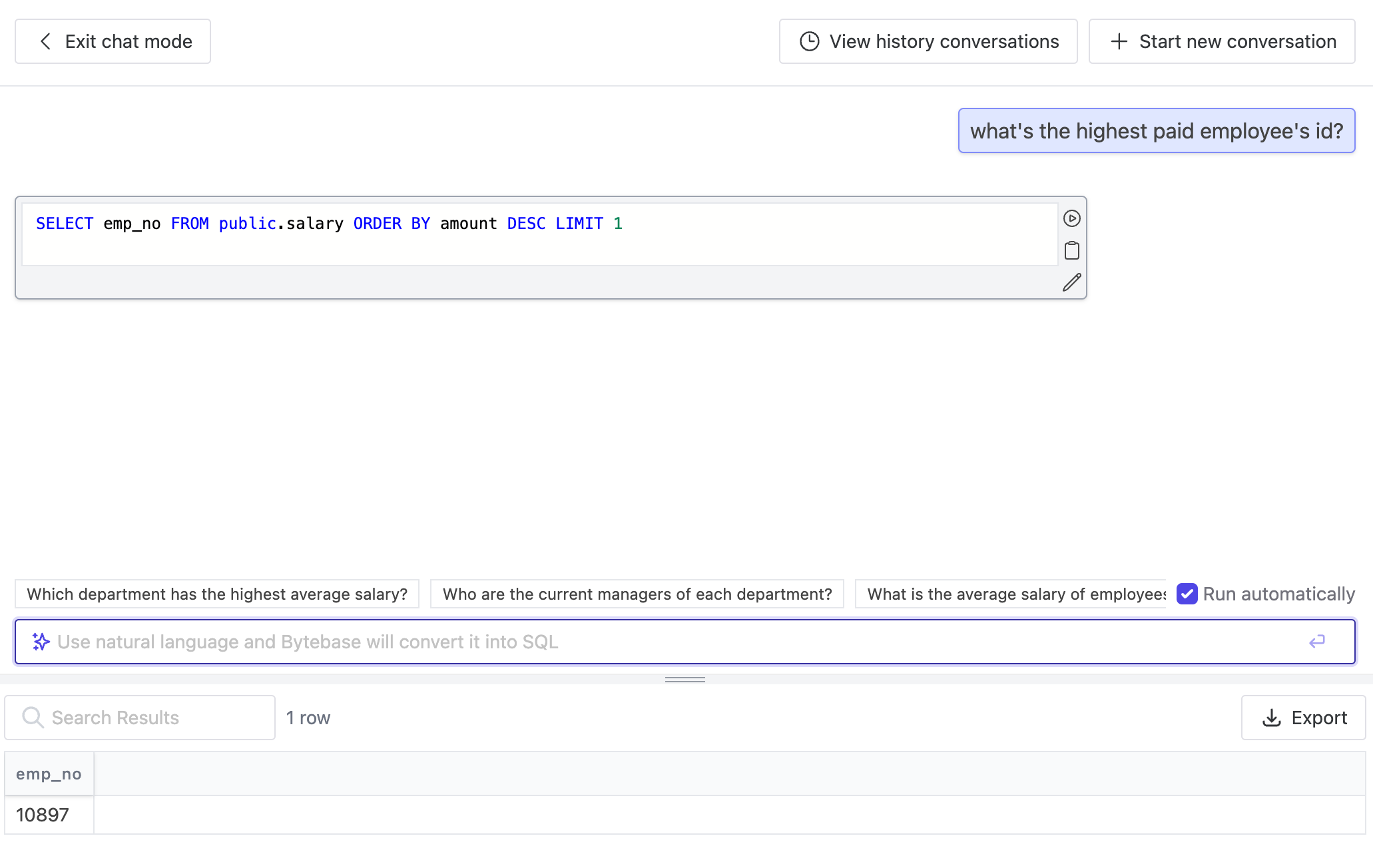

## Bytebase:

[Bytebase](https://www.bytebase.com/) is a database CI/CD tool that covers the entire life cycle of database development, and its SQL editor has also incorporated OpenAI's gpt-3.5-turbo in [its recent release](https://www.bytebase.com/changelog/bytebase-1-14-0), where you input natural language and have it converted to SQL. Stay tuned for a more advanced version chat bot 🤖️!

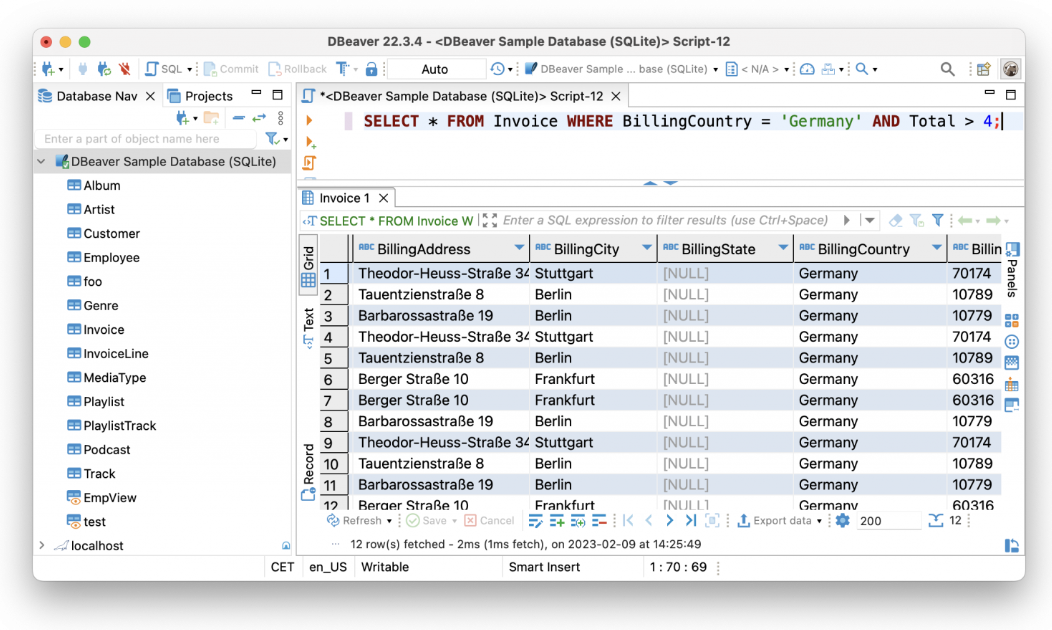

## DBeaver

As a veteran SQL client, in addition to visualization and management capabilities, [DBeaver](https://dbeaver.com/) also has a SQL editor, along with data & schema migration capabilities, and database connection monitoring. In early February, DBeaver also incorporated GPT-3 to have AI convert natural language to SQL. For instance, you can askfor "all invoices from Germany with a total of more than 4," or in German, "Zeig alle Rechnungen aus Deutschland mit der Gesamtsumme über 4," and it will automatically convert the request into a query.

## OSSInsight

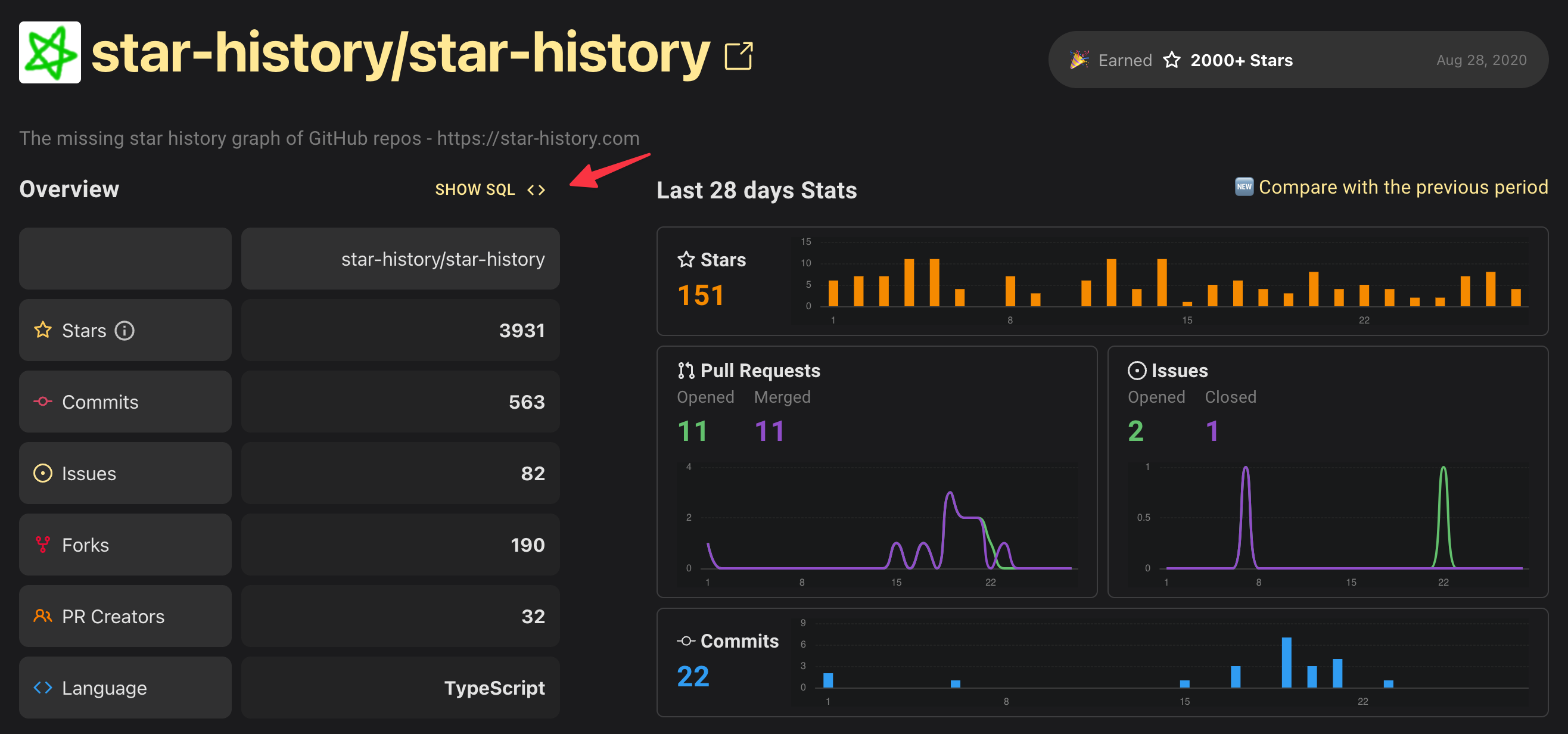

In a sense, [OSSInsight](https://ossinsight.io/) has been helping you write SQL since way before.

But recently they embraced OpenAI and launched a new tool called "Data Explorer", making it easier to explore GitHub data. You can ask whatever interests you in plain language, and AI will generate SQL for them (and then query it).

The database behind OSSInsight is TiDB, and TiDB Cloud recently also launched an intelligent data exploration feature using OpenAI: [Chat2Query](https://www.pingcap.com/chat2query-an-innovative-ai-powered-sql-generator-for-faster-insights/), which is another tool that generates SQL queries based on your input and then queries and visualizes the database for you.

## Outerbase

[Outerbase](https://outerbase.com/) is a new tool that was officially released on Feb.15, 2023. Compared with traditional database management tools, Outerbase completely conforms to current aesthetics. Its user experience is similar to that of an Excel spreadsheet, and it uses GPT-3 to help users write SQL queries and generate dashboards, making it useful for both developers and data analysts.

## To wrap up

It looks like using ChatGPT to enhance data management and analysis for your databases is gonna be an SOP in the database industry. Of course, these tools are just the tip of the iceberg, and their integration with ChatGPT is still in its early stages. However, it is clear that the benefits of combining ChatGPT with database management tools are enormous. Moreover, besides text2sql, ChatGPT can be used in many other database-related fields, such as customer support, query assistance, database management, and data analysis(you can also consult ChatGPT about what else it's capable of).

DBAs: get ready to retire officially. (Just kidding, but perhaps the long-established SQL clients such as Navicat are already feeling a vague sense of crisis.) | milasuperstar |

1,417,393 | JavaScript Promise ! | In JavaScript, a Promise is an object that represents the eventual completion (or failure) of an... | 0 | 2023-03-28T07:13:33 | https://dev.to/neyaznafiz/promise-in-javascript-33eo | In JavaScript, a Promise is an object that represents the eventual completion (or failure) of an asynchronous operation and its resulting value. Promises provide a way to handle asynchronous operations that involve waiting for some action to complete before proceeding with the rest of the code. This can be very useful when dealing with time-consuming or resource-intensive tasks, such as network requests or database queries.

A Promise can be in one of those three states: **pending**, **fulfilled**, or **rejected**.

- When a Promise is in the pending state, it means that the asynchronous operation is still in progress and the result is not yet available.

- When the operation completes successfully, the Promise is fulfilled with the resulting value.

- The Promise is rejected with an error object if an error occurs during the operation.

Promises can be created using the **Promise()** constructor, which takes a function that defines the asynchronous operation to be performed. The function should accept two parameters: **resolve()** and **reject()**.

- resolve() is called when the operation completes successfully and returns the resulting value.

- reject() is called if an error occurs during the operation and returns an error object.

Promises can be consumed using methods like **then()**, **catch()**, and **finally()**, which allow you to handle the result of the Promise or any errors that may occur. Chaining these methods together can make it easier to write and manage complex asynchronous code.

Overall, Promises are a powerful tool in modern JavaScript development and provide a simpler, more organized way to handle asynchronous operations. | neyaznafiz | |

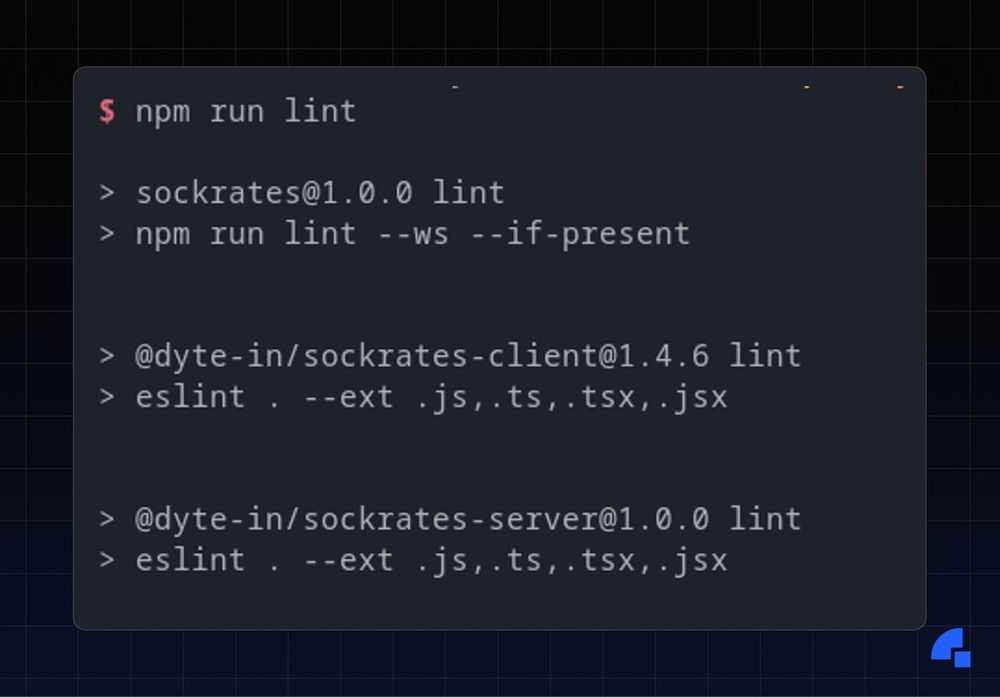

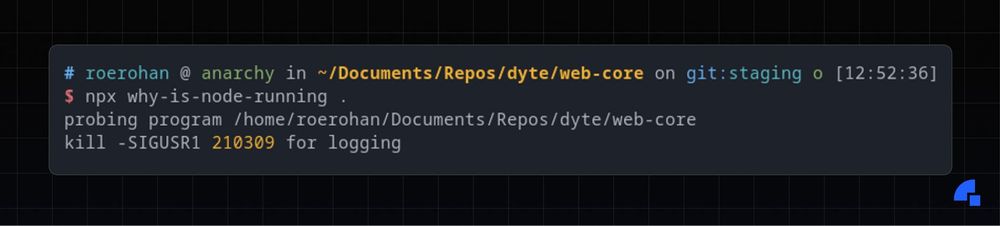

1,417,426 | Discovering package.json | Introduction Back in the day, dependency management was a mounting ache, especially in... | 0 | 2023-03-28T07:53:20 | https://dyte.io/blog/package-json/ | webdev, javascript, beginners, tutorial |

## Introduction

Back in the day, dependency management was a mounting ache, especially in languages like C/C++. There was no standardized tool for managing dependencies and their versions, and it took several hours of developer effort to manage them for a project.

Fast forward to 2023, there are several tools for dependency management, like [maven](https://maven.apache.org/) and [gradle](https://gradle.org/) for Java, [pip](https://pypi.org/project/pip/) for Python, [npm](https://docs.npmjs.com/cli/v9/), [pnpm](https://pnpm.io/) and [yarn](https://yarnpkg.com/) for Javascript, and [Cargo](https://doc.rust-lang.org/cargo/) for Rust, to name a few. Now, each package manager needs a way to keep track of which versions of which packages are supposed to be used in your current project. Generally, a file is created that maps these dependencies to their corresponding versions - for instance you’ll generally find a `[requirements.txt](https://pip.pypa.io/en/stable/reference/requirements-file-format/)` file in most Python projects.

Similarly, the primary job of the `package.json` file is to keep track of all of the dependencies and developer dependencies. that are required in your project. On running the `install` command on your favorite JS package manager, it will install the corresponding versions of the packages mentioned in the `package.json` file. Besides keeping track of dependencies, the `package.json` file also stores the name and version of your package - which is generally considered metadata for certain tools. Let’s say you were to publish your project on [npmjs](https://www.npmjs.com/) (or any other NPM registry), you’d require to have all the metadata about the package in your `package.json` file located in the root directory of your project.

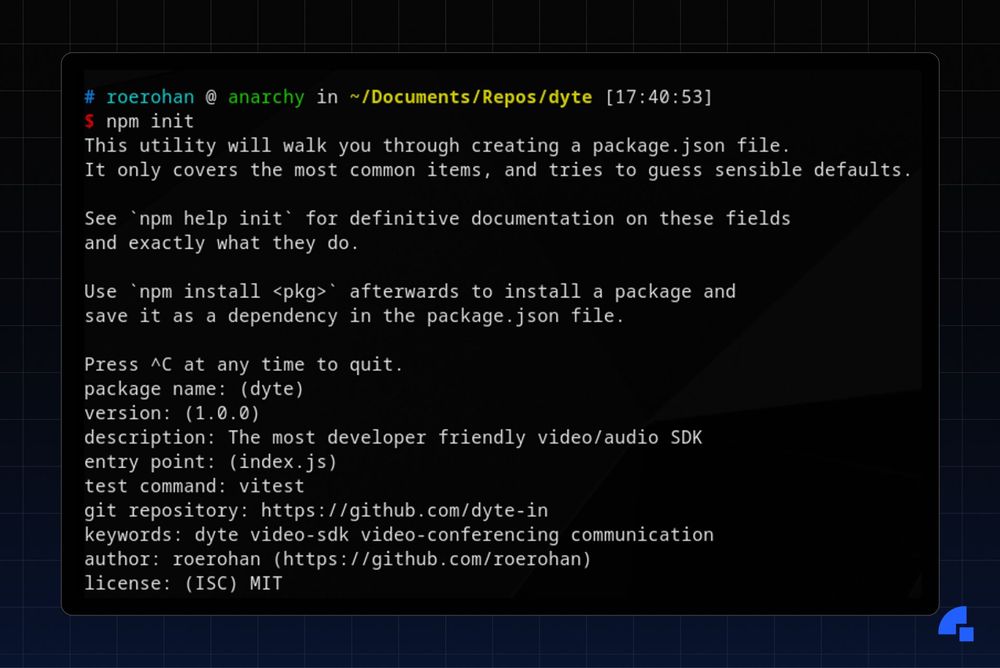

## Creating a package.json file

You can create a `package.json` file in your Javascript/Typescript project using the `npm init` command. It’ll ask you a series of questions when you run that command, and all the answers that you enter will show up in your `package.json` file.

Here’s the corresponding `package.json` file that’s generated when running the above `npm init` command.

```json

{

"name": "dyte",

"version": "1.0.0",

"description": "Dyte is the most developer-friendly video and audio SDK.",

"main": "index.js",

"scripts": {

"test": "vitest"

},

"repository": {

"type": "git",

"url": "https://github.com/dyte-in"

},

"keywords": [

"dyte",

"video-sdk",

"video-conferencing",

"communication"

],

"author": "roerohan (https://github.com/roerohan)",

"license": "MIT"

}

```

More often than not, the keys other than `scripts` and `dependencies` come into play when publishing a package. From this point onwards, the discussion will be more relevant to packages that are supposed to be published to any NPM registry. However, if you have a standalone Node.js project for example, the properties in the `package.json` file still mean the same.

## Common keys in package.json

The properties in `package.json` are either descriptive or functional. For instance, the `name` of the package is a descriptive property, whereas the `scripts` that are defined in `package.json` are functional properties.

Here are some of the most useful properties in `package.json` and what they signify.

### Descriptive keys

Some keys in `package.json` are used to describe some fields, which is used by package managers and other tools to gather information about a package.

### [name](https://docs.npmjs.com/cli/v9/configuring-npm/package-json#name)

The name field is used to identify the package. The `name` and the `version` fields are mandatory in the `package.json` file, and together they’re assumed to be unique. For instance, if the name is `web-core` and the version is `0.1.2`, then it is assumed that `web-core@0.1.2` is unique and doesn’t refer to any other package. A package name has certain restrictions - it can’t be more than 214 characters in length, and must contain all small letters. The `name` can not begin with a `.` or an `_`. Additionally, the name is often part of a URL so it must be URL-safe.

Package names may also be scoped. For instance, the name of a package can be `[@dytesdk/web-core](https://www.npmjs.com/package/@dytesdk/web-core)`. This is of the form `@organization/package`.

### [version](https://docs.npmjs.com/cli/v9/configuring-npm/package-json#version)

The version field is one of the keys used to identify a package. Typically, this version number must be parseable by `[node-semver](https://github.com/npm/node-semver)`. Semantic versioning is a set of rules for versioning so that the change in the version number is indicative of the kind of changes in the package. The version is written in the form of `MAJOR.MINOR.PATCH`. If there’s a bug fix in the new version, the `PATCH` is incremented. If there’s a new feature, the `MINOR` part of the version is incremented. If the new version has a breaking change or is not compatible with older versions, the `MAJOR` part of the version is incremented.

For instance, if the current version of a package is `1.0.9`:

- If the next release has bug fixes only, the new version should be `1.0.10`.

- If the next release has a new feature, the new version should be `1.1.0`.

- If the next release has a breaking change, the new version should be `2.0.0`.

### [description](https://docs.npmjs.com/cli/v9/configuring-npm/package-json#description)

The description field in the package describes in brief what the package does. It’s also useful in SEO as it helps other people find your package.

### [keywords](https://docs.npmjs.com/cli/v9/configuring-npm/package-json#keywords)

Just like the description, the `keywords` field is also used for SEO. It’s an array of words that describes the package. If someone searches for any of the words in the `keywords` field, it’s likely that your package will show up.

### [homepage](https://docs.npmjs.com/cli/v9/configuring-npm/package-json#homepage)

Typically you would link your project’s website in this field. Alternatively, you can also point to the projects `README` or documentation.

### [bugs](https://docs.npmjs.com/cli/v9/configuring-npm/package-json#bugs)

The purpose of this field is to point to your project’s issue tracker, or any support email. It could be of the form

```json

{

"url": "https://github.com/dyte-io/html-samples/issues",

"email": "support@dyte.io"

}

```

If you don’t want to provide a support email, you can directly assign a URL to the `bugs` property.

### [license](https://docs.npmjs.com/cli/v9/configuring-npm/package-json#license)

The license is an important field as it describes to the users of your package the permissions and restrictions placed by you while using this package. Ideally, for open-source packages, the license should be one that’s approved by [OSI](https://opensource.org/licenses/). If you do not wish to grant any permissions to the users of the package under any terms, you can set this field to `UNLICENSED`. You should consider setting the `private` field in the `package.json` file to true to prevent yourself from accidentally publishing the package.

### [author](https://docs.npmjs.com/cli/v9/configuring-npm/package-json#people-fields-author-contributors)

The author field is used to provide information about the developer of the package. It consists of a `name`, and an optional `email` and `url` field. Here’s an example:

```json

{

"name": "Rohan Mukherjee",

"email": "rohan@dyte.io",

"url": "https://dyte.io"

}

```

All the information can also be downsized into a single string of the following format:

```json

{

"author": "Name <Email> (Site)"

}

```

For instance, you can specify the same author as above in this format:

```json

{

"author": "Rohan Mukherjee <rohan@dyte.io> (https://dyte.io)"

}

```

### [contributors](https://docs.npmjs.com/cli/v9/configuring-npm/package-json#people-fields-author-contributors)

Just like the `author` field, the `contributors` field provides information about the developers of the package. It holds an array of authors.

### [funding](https://docs.npmjs.com/cli/v9/configuring-npm/package-json#funding)

In this field, you can specify any links for funding your open-source package. For example, if you have a [Patreon](https://www.patreon.com/) or a [buymeacoffee](https://www.buymeacoffee.com/) link for funding your project, you can add it in this field. This can also take an array of multiple funding URLs. This is the URL that gets opened when a user runs `npm fund <projectname>`.

### Functional keys

These keys have some special meaning to certain tools, or while importing code from packages.

### [files](https://docs.npmjs.com/cli/v9/configuring-npm/package-json#files)

The `files` field comprises an array of files that need to be uploaded to the registry when your package gets published. File patterns follow a similar syntax to `.gitignore`. The only difference is that the files specified in a `.gitignore` are excluded, whereas these files are included. You can also use glob patterns such as `*`, and `**/*`, just like in `.gitignore` files. The `files` field defaults to `["*"]` if not specified otherwise.

You should note that `package.json`, `README`, and `LICENSE/LICENCE` files are always included, irrespective of your settings. The `README` and `LICENSE/LICENCE` files can have any extension

### [main](https://docs.npmjs.com/cli/v9/configuring-npm/package-json#main)

The entry point to your program is defined in the `main` property. When you require a package, you actually import the file described in the `main` property. There is a Node.js 12+ alternative to this field known as `exports`, which is described below.

### [exports](https://nodejs.org/api/packages.html#exports)

You can define entry points to your package using the `exports` field as an alternative to the `main` field. Unlike `main`, `exports` allows you to define [subpath exports](https://nodejs.org/api/packages.html#subpath-exports) and [conditional exports](https://nodejs.org/api/packages.html#conditional-exports).

For example, you can export the `submodule.js` file of your project using the following `exports` property:

```json

{

"exports": {

".": "./index.js",

"./submodule.js": "./src/submodule.js"

}

}

```

It is also possible to export conditionally - depending on whether the user of the package uses `require` or `import`.

```json

{

"exports": {

"import": "./index-module.js",

"require": "./index-require.cjs"