id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,398,041 | Java str into obj data func | <!DOCTYPE html> Converting a string into a date object. const... | 0 | 2023-03-12T15:27:35 | https://dev.to/yourdadaf/java-str-into-obj-data-func-3p98 | <!DOCTYPE html>

<html>

<body>

<h2>Converting a string into a date object.</h2>

<p id="demo"></p>

<script>

const text='{"name":"Munira","birth":"1986-1-11","city":"Vadodara"}';

const obj=JSON.parse(text);

obj.birth= new Date(obj.birth);

document.getElementById("demo").innerHTML=obj.name+","+obj.birth;

</script>

</body>

</html>

| yourdadaf | |

1,398,117 | TypeScript: Generic Types | TypeScript is a statically typed superset of JavaScript that allows developers to write code with... | 0 | 2023-03-12T16:47:12 | https://dev.to/donstefani/typescript-generic-types-4h80 | typescript, javascript, webdev, programming | TypeScript is a statically typed superset of JavaScript that allows developers to write code with more safety and accuracy. Generic types in TypeScript make it possible to write reusable and flexible code by creating generic types that can be used with different data types.

You can use generic types to write functions, classes, and interfaces that can work with any data type. The syntax for creating a generic type in TypeScript is to use angle brackets `<>` to define the generic type parameter. For example:

```

function identity<T>(arg: T): T {

return arg;

}

```

Here, `identity` is a generic function that takes a type parameter `T` and returns a value of type `T`. The `arg` parameter is of type `T`, and the return value is also of type `T`. This means that the function can work with any data type.

We can call the `identity` function with different data types as follows:

```

let output1 = identity<string>("hello");

let output2 = identity<number>(10);

```

In this example, we called the `identity` function with a string and a number as the type argument.

Another way of using generic types in TypeScript is with interfaces. We can create a generic interface that can work with any data type, as follows:

```

interface List<T> {

data: T[];

add(item: T): void;

remove(item: T): void;

}

```

In this example, `List` is a generic interface that has a `data` property of type `T[]` and two methods `add` and `remove` that take a parameter of type `T`. This means that we can create a `List` of any data type.

We can create a `List` of strings as follows:

```

let list = { data: ["apple", "banana"], add: function(item) { this.data.push(item); }, remove: function(item) { this.data.splice(this.data.indexOf(item), 1); } };

```

In this example, we created a `List` of strings and added two items to it. We used anonymous object syntax to define the `data`, `add`, and remove properties.

In conclusion, generic types in TypeScript allow you to write flexible and reusable code that can work with any data type. It's possible to create generic functions, classes, and interfaces that can be used with different data types by defining a generic type parameter.

A good question is, "when should I use generic types, and are there any drawbacks to using them?".

More to come... | donstefani |

1,398,136 | What is the difference between null and undefined? | The convention in TypeScript is that undefined values have not been defined yet, whereas null values... | 0 | 2023-03-12T17:28:15 | https://dev.to/typescripttv/what-is-the-difference-between-null-and-undefined-5h76 | javascript, typescript, webdev, programming | The convention in TypeScript is that `undefined` values have not been defined yet, whereas `null` values indicate intentional absence of a value.

<!--more-->

## Example with `null`

The below function shows how `null` can be used by returning an object that always has the same structure, but with **intentionally assigned** `null` values when the function does not return an `error` or `result`:

```ts

function divide(a: number, b: number) {

if (b === 0) {

return {

error: 'Division by zero',

result: null

};

} else {

return {

error: null,

result: a / b

};

}

}

```

## Example with `undefined`

On the other hand, `undefined` represents the absence of any value. It is a value that is **automatically assigned** to a variable when no other value is assigned. It often indicates that a variable has been declared but not initialized. It can also signify a programming mistake, such as when a property or function parameter was not provided:

```ts

let ratio: number | undefined;

if (ratio === undefined) {

console.log('Someone forgot to assign a value.');

} else if (ratio === null) {

console.log('Someone chose not to assign a value.');

}

```

## Best Practice

The **TypeScript Coding guidelines** recommend using only `undefined` and discouraging the use of `null` values (see [here](https://github.com/Microsoft/TypeScript/wiki/Coding-guidelines#null-and-undefined)). It is important to note, however, that these guidelines are tailored towards the TypeScript project's codebase and may not necessarily be applicable to your own projects.

## Want more?

If you found this short explainer helpful, hit that **subscribe button** on my [YouTube channel](https://www.youtube.com/typescripttv?sub_confirmation=1) or give me a [follow on Twitter](https://twitter.com/bennycode) to level up your TypeScript game. | bennycode |

1,398,150 | TIL 03/12/23 | Today I learned about loops in Ruby. *Simple Loops* loop do print "Do you want to continue? (y/n)... | 0 | 2023-03-12T18:06:56 | https://dev.to/jazmineubanks/til-031223-52l3 | Today I learned about loops in Ruby.

****Simple Loops****

loop do

print "Do you want to continue? (y/n) "

answer = gets.chomp.downcase

end

**While loops:**

answer = ""

while answer != "n"

print "Do you want me to repeat this pointless loop again? (y/n) "

answer = gets.chomp.downcase

end

**Until Loops****

answer = ""

until answer == "no" do

print "Do you want this loop to continue? (y/n) "

answer = gets.chomp

end | jazmineubanks | |

1,398,630 | Yet Another Functions of Functions Python Tutorial | The Topic Are you confused by Python functions that return functions and the places and... | 0 | 2023-03-13T06:31:00 | https://dev.to/techocodger/yet-another-functions-of-functions-python-tutorial-3809 | python, tutorial | ## The Topic

Are you confused by Python functions that return functions and the places and ways that Python requires you to understand that? If so, perhaps this description of one example use case may help you.

## Rationale

This article was written this as a note-to-self - and if anyone finds it helpful then that's good too. Some opinions will be expressed that hopefully are relevant to the code that's being discussed.

## The Example

This example comes from a very small part of the process of writing the Python program Foldatry. However, that program is merely incidental for this article. It is however, a real example, from a real application that is being written and used.

## Dictionary of Single Value Items

There is a part of Foldatry where it traverses a folder tree and noting the file extensions - the dot-something at the end of the name - as it goes.

To store those, it created a dictionary, where each new extension became a new key, and the value at the key was the count of files it had seen with that extension.

Thus, after the traversal was done, and the dictionary built, it was desired to output the findings, in order from the extension with the most files found, to the least found.

- Something to note about Python and dictionaries, is that its idea of how the keys _are_ has changed. In earlier Python the .keys() list was either considered unsorted or would be in the order that the keys were added. Perhaps to remove the ambiguity and/or to match the under-the-hood implementation of Python, that list is now defined as being tp preserve their order of insertion.

So, with the dictionary collected and held as the variable `i_dct_counts` - if we didn't care at all about the order to print them, we could do:

``` Python

for k_ext in i_dct_counts.keys() :

print( i_dct_counts[ k_ext ] )

```

- for the Python pedants, yes, this is overlooking that iterating over the dictionary inherently goes through the keys - without explictly calling on `.keys()`

Most likely though, what a user wants to see, is which extension accounts for the most files, so to do that we will want to re-sort the list of keys - to be in descending order by the discovered counts.

To do that, we can use a generic `sorted` function that Python offers. The code will then look like:

``` Python

lst_keys_sorted = sorted( i_dct_counts, key=i_dct_counts.get)

for k_ext in lst_keys_sorted :

print( i_dct_counts[ k_ext ] )

```

Frankly that kind of thing - the use of `sorted` is easy to look up online and plug into place - and indeed that's what was done here. In doing so, note that the `sorted` function needed to be passed the slighlty non-obvious thing: `i_dct_counts.get` but it clearly worked so all was good.

## Dictionary of Tuples

For a revision of the program, it was decided to have the dictionary also hold information about the sizes of the files it found. To that end a "named tuple" was made to be used as the new data item.

Here's the definition for it:

``` Python

tpl_Extn_Info = namedtuple('tpl_Extn_Info', 'ei_Count ei_SizeSum ei_SizeMin ei_SizeMax')

```

for which you can ignore the Pythonist mechanics and just see it as a non-simple data type to hold named elements of:

- `ei_Count` - to hold the counts of files

- `ei_SizeSum` - to hold the total size of files encountered

- `ei_SizeMin` - to hold the largest size of file encountered

- `ei_SizeMax` - to hold the smallest size of file encountered

where these are all things that could just be updated as files are encountered during the traversal, and are meaningful at the end of the traversal.

This change required some other bits of code to handle creating and updating these tuples during the folder tree travervals - but those don't matter for this tutorial, where we will merely assume it resuls in a suitably created dictionary of tuples.

But what this did change is what happens where this line executes:

``` Python

lst_keys_sorted = sorted( i_dct_counts, key=i_dct_counts.get)

```

Because unlike before, the part `key=i_dct_counts.get` no longer tells the `sorted` function how to get a simple value that can control the sorting - and instead that will now deliver a tuple. This causes an error at run time, from inside the `sorted` function - because it "gets" something that has no defined comparison operation.

This means needing to have to done one of two things:

- have our dictionary item be a kind of thing that is inherently sortable ;

- provide a better function to `sorted` than the `.get`

Here we will ignore the first of those options - partly because it just wasn't the implemented resolution, but also because requires tackling a different aspect of how Python operates - and would thus be a tutorial about something else.

So the question then, became: what kind of function needs to be provided to `sorted` ? And how do we make such a thing?

## Function that returns a Function

It is very tempting to show the blundering steps that was taken to work this out. It didn't take long - maybe 20 or 30 minutes - but those steps are harder to write about.

- And to be very clear, this is certainly **not** claiming this is the best way to do this.

So let's jump to the code, and then talk it through.

``` Python

tpl_Extn_Info = namedtuple('tpl_Extn_Info', 'ei_Count ei_SizeSum ei_SizeMin ei_SizeMax')

def ei_Count_of( p_tpl_Extn_Info ):

return p_tpl_Extn_Info.ei_Count

def ei_Count_at_key( p_dct_tpl_Extn_Info, p_key ):

return ei_Count_of( p_dct_tpl_Extn_Info[ p_key ] )

def fn_ei_Count_at_key( p_dct_tpl_Extn_Info ):

def fn_ei_Count_of_dct_at_key( p_key ):

return ei_Count_at_key( p_dct_tpl_Extn_Info, p_key )

return fn_ei_Count_of_dct_at_key

```

The first function is simple - `ei_Count_of` when passed a tuple, will return its `ei_Count` value. Of course, when you have a particular tuple item the code for this is so trivial as to not be worth writing a function - but we know our goal here is to have a function we can quote. At first glance, this can seem to be the function required by `sorted` but cannot work because there is no way to tell `sorted` about this function as operating on the specific dictionary that it is dealing with.

The next function `ei_Count_at_key` is one that can be passed *two* things, the *dictionary* and the *key*, and will then return the count for that key. At first glance, this can seem to be the function required by `sorted` but cannot work because `sorted` needs to given a function that only takes the key as a parameter.

Finally we have the tricky bit. This is a function with a nested function. While that idea is not itself obscure, the reason for doing this is different to the reason we often do this kind of nesting - because here the reason for the inner function is so that **it** rather than what it does at execution time, is what the outer function returns.

Because, yes, when we call `fn_ei_Count_at_key` - passing it the dictionary we have in mind - what we get back is a *function* - and notably is a function that is customised for just that dictionary.

The reason this works is perhaps subtle - it is because the inner function makes use of the parameter passed to the outer function, so that it (the inner function) does not need the dictionary as a parameter. This makes the inner function - customised by the outer call - to be the kind of function that `sorted` needs to be told to use.

And here is how that new function gets used for supplying to the `sorted` function.

``` Python

lst_keys_sorted = sorted( i_dct_counts, key=fn_ei_Count_at_key( i_dct_counts), reverse=True )

for k_ext in lst_keys_sorted :

print( i_dct_counts[ k_ext ] )

```

A major part of understanding what happens at run-time is that when the above line is executed, the `key=` calling clause derives a function for the specific dictionary and then passed that in to the `sorted` function for it to use as it iterates through the dictionary.

A lesser part to be clear about there, is that when it does that iteration, it will iterate through the keys of the dictionary but operate on the items of the dictionary. Depending on your viewpoint, that is either obvious, or a subtle thing about handling dictionaries. It is worth noting that `sorted` is quite generic, and operates on anything (or almost anything?) that is iterable.

## Process of Discovery and Aftermath Options

A reality of those functions, is that they represent the steps of building a way to having a *function* to pass to the `sorted` function. What is needed is a function, not to execute immediately in the `sorted` call, but for it to use as it iterates through the dictionary. Hence started by writing *functions* - rather than object de-references.

Indeed the meta-function approach arrived from realising that `sorted` was not happy to be given the function `ei_Count_at_key` or even a use of `ei_Count_of` combined with the `get` from the simpler non-tuple method.

Having solved the problem - of how to get a suitably sorted list of keys - the functions have been left in place. But should they now be revised to be more Pythonic, perhaps even to use a `lambda` in the `sorted` line?

Perhaps as written makes it quite clear what is going on. In terms of performance, there isn't much concern because the set of extensions is generally small - often finding between 5 and 100 discovered extension in typical usage.

Also, consider that maybe:

- code that is more brief can be problematic by depending on deeper understanding of Python at run-time;

- there isn't a problem with functions that are constructed but only used *once* - i.e. that this is not a good enough reason to use *nameless* methods, such as `lambda` | techocodger |

1,398,210 | The Power of JavaScript: Tips and Tricks for Writing Clean, Efficient Code | JavaScript is a powerful programming language that has become an essential tool for web developers.... | 21,805 | 2023-03-12T18:46:37 | https://dev.to/zuzexx/the-power-of-javascript-tips-and-tricks-for-writing-clean-efficient-code-20p | webdev, javascript, beginners, programming | JavaScript is a powerful programming language that has become an essential tool for web developers. With its dynamic and versatile nature, JavaScript is ideal for creating interactive web applications that can run on a variety of platforms. However, as with any programming language, writing clean and efficient code in JavaScript can be a challenge.

Here are some tips and tricks that can help you write clean and efficient JavaScript code:

### Use Variables Properly

One of the most important things you can do to write clean and efficient code in JavaScript is to use variables properly. Variables allow you to store data and manipulate it as needed throughout your code. However, if you're not careful, you can end up creating too many variables or using them in ways that slow down your code.

To use variables properly, you should aim to:

- Use descriptive variable names that make it clear what the variable represents.

- Avoid global variables whenever possible, as they can cause naming conflicts and memory leaks.

- Use `let` and `const` instead of `var` to define variables, as they have better scoping rules and prevent unwanted changes to your code.

- Use destructuring to extract values from objects and arrays, as it makes your code more concise and easier to read.

Here's an example of how to use destructuring to extract values from an array:

```js

const numbers = [1, 2, 3, 4, 5];

const [first, second, ...rest] = numbers;

console.log(first); // Output: 1

console.log(second); // Output: 2

console.log(rest); // Output: [3, 4, 5]

```

### Use Functions Wisely

Functions are a fundamental building block of JavaScript code. They allow you to break down complex tasks into smaller, more manageable pieces. However, if you're not careful, you can end up creating functions that are too large or that do too many things at once.

To use functions wisely, you should aim to:

- Use descriptive function names that make it clear what the function does.

- Aim for functions that do one thing and do it well. This makes your code more modular and easier to read.

- Avoid nested functions whenever possible, as they can make your code more complex and harder to debug.

- Use arrow functions instead of traditional function expressions, as they have a more concise syntax and don't change the value of this.

Here's an example of how to use arrow functions to write more concise code:

```js

const numbers = [1, 2, 3, 4, 5];

const evenNumbers = numbers.filter(number => number % 2 === 0);

console.log(evenNumbers); // Output: [2, 4]

```

### Optimize Loops

Loops are an essential tool for processing data in JavaScript. They allow you to iterate over arrays and objects and perform operations on each item. However, if you're not careful, you can end up creating loops that are slow and inefficient.

To optimize loops, you should aim to:

- Use the right type of loop for the task at hand. For example, use a `for` loop when you need to iterate over an array with a known length, and use a `for...in` loop when you need to iterate over an object's properties.

- Avoid creating unnecessary variables or doing unnecessary calculations inside a loop.

Use the `Array.prototype` methods like `map()`, `filter()` and `reduce()` to transform and extract data from arrays.

- Use the break statement to exit a loop early if the condition has already been met.

Here's an example of how to use the `map()` method to transform data in an array:

```js

const numbers = [1, 2, 3, 4, 5];

const doubledNumbers = numbers.map(number => number * 2);

console.log(doubledNumbers); // Output: [2, 4, 6, 8, 10]

```

### Avoid Callback Hell

Callback hell is a common problem in JavaScript code, especially when dealing with asynchronous operations. It happens when you have nested callbacks that become difficult to read and maintain. To avoid callback hell, you should use `Promises` or `async/await` functions.

Here's an example of how to use `Promises` to handle asynchronous code:

```js

function getUser(userId) {

return new Promise((resolve, reject) => {

// Code to fetch user data

// ...

if (user) {

resolve(user);

} else {

reject("User not found");

}

});

}

getUser(123)

.then(user => {

// Code to handle user data

// ...

})

.catch(error => {

console.log(error);

});

```

### Use Debugging Tools

Debugging is an essential part of writing clean and efficient JavaScript code. It helps you identify and fix errors in your code, and improve its overall performance. There are several tools and techniques you can use to debug your JavaScript code, such as `console.log()`, breakpoints, and browser dev tools.

Here's an example of how to use console.log() to debug your code:

```js

function calculateSum(numbers) {

let sum = 0;

for (let i = 0; i < numbers.length; i++) {

sum += numbers[i];

console.log(sum); // Output the sum at each iteration

}

return sum;

}

const numbers = [1, 2, 3, 4, 5];

const total = calculateSum(numbers);

console.log(total); // Output: 15

```

In conclusion, JavaScript is a powerful language that can be used to create complex and dynamic web applications. By following these tips and tricks, you can write clean and efficient code that is easy to read, maintain, and debug. Remember to use variables properly, functions wisely, optimize loops, avoid callback hell, and use debugging tools to improve your code's performance. | zuzexx |

1,398,246 | Rustling Up Cross-Platform Development | My experience with cross-platform mobile development lacks some important elements, such as Flutter... | 0 | 2023-03-12T21:26:11 | https://dev.to/complexityclass/rustling-up-cross-platform-development-5en | rust, ios, crossplatform | _My experience with cross-platform mobile development lacks some important elements, such as Flutter or Xamarin. Therefore, this article is not a comprehensive analysis of tools in this space._

Over the years, I've tried a few different tools for cross-platform development, including PhoneGap (which, in hindsight, I probably should have avoided), React Native, Qt, and a bit of Kotlin Native. Generally speaking, I firmly believe that the UI should be native, and that tools like PhoneGap just don't cut it for anything more than a simple app. While React Native has its pros and cons, it hasn't won me over as a developer. Instead, I prefer the idea of having a cross-platform core and native UI. As someone who switched from Android to iOS around the time of KitKat (4.4? 🤔), As I'm naturally more inclined towards llvm based languages, C++ was my first choice for a cross-platform code. On iOS it's relatively easy to bridge C++ and Objective-C through a mix of both called Objective-C++. I've worked on some big projects that were heavily based on this idea, and I can attest that it's a working solution. However, Objective-C is becoming less popular every day, and Objective-C++ is an even scarier beast to work with. I can't say that I found writing it enjoyable. Furthermore, I can't see any compelling reason to write application-level code in C++. Perhaps for OS-level code, but that's a topic for another discussion. After a few attempts with C++, I tried Kotlin Native (KN), which had much better tooling and IDE support, even in the earliest versions. Kotlin is a fun language to read and write, and with the "Native" part, we can even rid ourselves of the JVM. So if you're already immersed in the Android ecosystem, love Kotlin, and enjoy working in Android Studio, then KN should be a good choice for you. However, in this article, I'd like to explore a more "rusty" perspective. Let's dive in.

I've dabbled with Rust on iOS a few times, and it seemed a lot like C++. You build a static library, use C headers as glue, and end up struggling with debugging. This approach is straightforward when you're only extracting a small piece of logic into a shared library and interacting with it through a thin interface. But what if you want to put most of the app logic into the shared lib? That's when things get tricky.

Recently, I stumbled upon a project at the Rust London conference that caught my eye. It's called [Crux](https://github.com/redbadger/crux), and it's a library that helps you implement a functional core and imperative shell paradigm. In other words, it allows you to separate your app logic from your UI code, and share it between platforms.

Although the idea of a functional core and imperative shell might sound straightforward, the actual implementation can be tricky. As you start working on it, you'll inevitably run into obstacles and challenges, especially when it comes to separating the core logic from the user interface.

Second biggest challenges after "variable naming" is finding the appropriate architecture to use. Traditional MVC/MVP architectures may not always be the best fit, and I found it difficult to keep track of all the data flows in applications I used to work with. Additionally, real-world user interfaces can be complex and dynamic, which adds even more states and interactions to the UI layer.

This is where functional concept of free from side-effects Core comes in. Crux helps to build foundation. For me, it's been really helpful in figuring out how to structure my code and how to isolate the core logic in a way that's both ergonomic and easy to read. In a few hours I created a small app that interacts with the DALL-E APIs (pretty obvious, right?) and works on 3 platforms (actually 2.5 as I haven't finished web 😅). In the following section, I'll share my initial impressions.

### Setup

Since the project is in its very early stages, setting it up isn't quite as seamless as with React Native. However, it's not a big deal to contribute to the tooling in-house if you decide to go with this stack for a real project. In fact, most big projects, even single-platform ones, contain a zoo of different bash scripts and make files anyway. The [book](https://redbadger.github.io/crux/overview.html) has a really good explanation of how it works and even provides example apps.

Personally, I found it better to set up the project from scratch using the book. That way, I was able to see all the places to look if something went wrong. It took me less than an hour to set up the core and iOS project, and the process was straightforward. Luckily, the core configuration is in .rs and toml files, which are very easy to follow.

For iOS, you need some bash scripts (oh, I hate writing bash). But in my case, copy-pasting was enough, and ChatGPT made life bearable even if some customisation in bash is needed. Long story short, you need to compile the core as a static library, generate UI languages bindings using the [uniffi](https://crates.io/crates/uniffi) crate, and add these steps to the Xcode project so you don't need to rebuild and relink the core manually. The uniffi requires to write an IDL Interface Definition Language file describing the methods and data structures available to the targeted languages. I generated Swift/ Kotlin and TS for iOS/Android and Web respectively.

UDL looks like this:

```

namespace core {

sequence<u8> handle_event([ByRef] sequence<u8> msg);

sequence<u8> view();

};

```

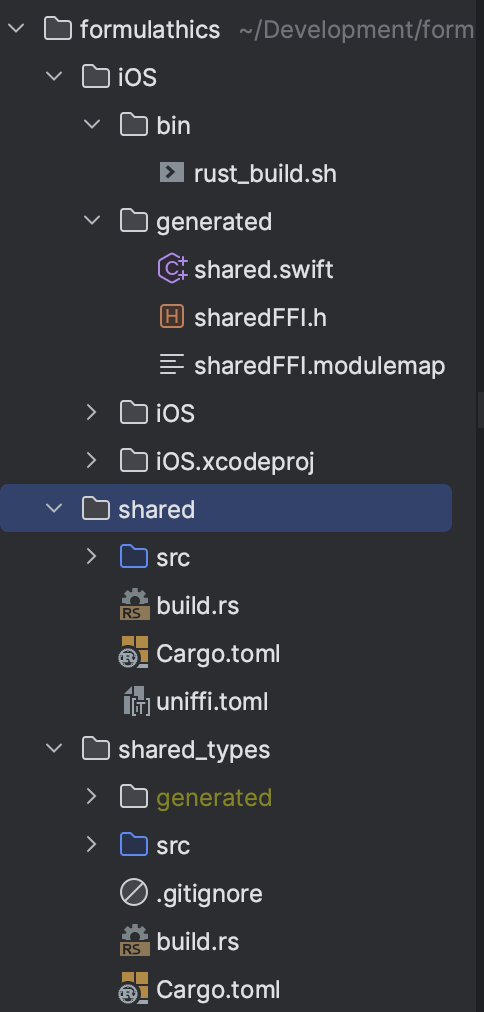

At the end, the project structure looks like this (no Android and web on the screenshot):

### Development

When it comes to development, you'll probably be splitting your time between Xcode/Android Studio and whatever you prefer for Rust and web development. I've seen some brave souls trying to do mobile development in Emacs, but at the end of the day, they were significantly slower than their teammates.

The good news is that it's quite convenient to work on the core first, crafting the interface and writing tests, and then switching to Xcode/Studio to polish bits of the Core in parallel. Personally, I use CLion for Rust and I don't dare to open more than 2 out of the 3 (CLion/Xcode/Android Studio) at once. Rust compiles quite slowly, which isn't a problem for me since my Swift/ObjC project at work took around 50 minutes for a clean build on a top configuration MacPro(not a MacBook 🐌). However, for web developers, this might be a bit of a drag. But proper project modularization can help with this.

Writing code in Rust can be a bit challenging at first, but I found that a lot of the ideas are similar to Swift, so it's not like a completely different experience. Enums like in Swift, isn't it? 😁

```

#[derive(Serialize, Deserialize)]

pub enum Event {

Reset,

Ask(String),

Gen(String),

#[serde(skip)]

Set(Result<Response<gpt::ChatCompletion>>),

#[serde(skip)]

SetImage(Result<Response<gpt::PictureMetadata>>),

}

```

When it comes to debugging, you can use breakpoints through the **lldb "breakpoint set"** command to debug both the Swift and Rust code in your linked static library. It's not as convenient as debugging a pure Kotlin project in Android Studio, but it still gets the job done.

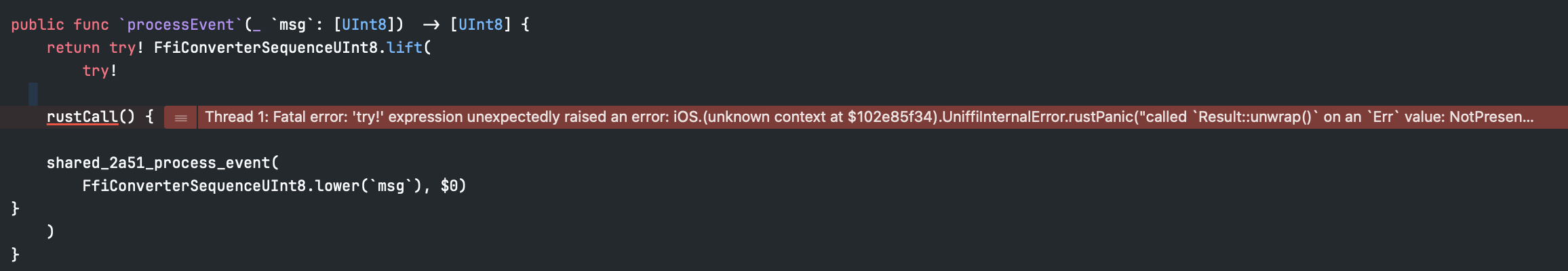

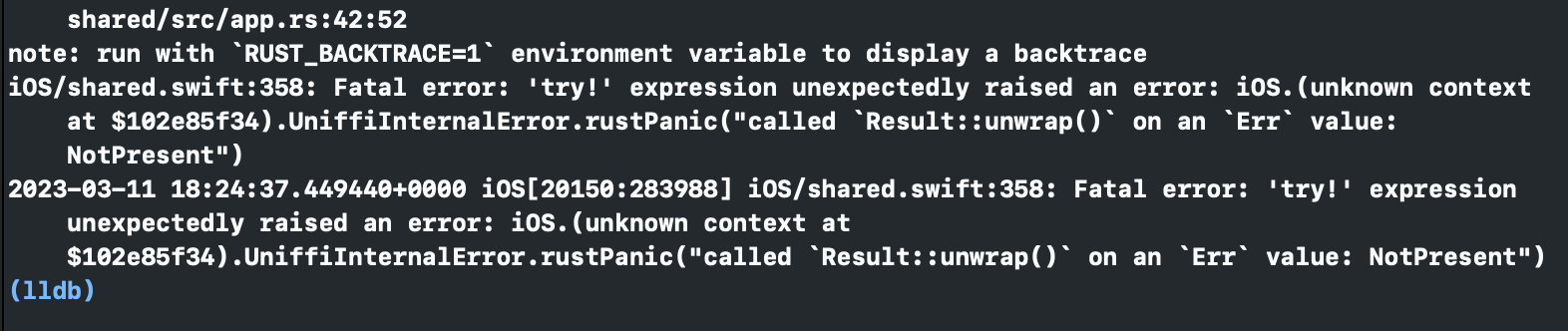

E.g missing .env variable error easily identifiable even from within Xcode.

Exact line in the logs:

However, I couldn't see any issues with debugging the core and shell separately. In fact, it can be quite helpful to be able to debug each component independently, as it can make it easier to pinpoint the source of any bugs or issues.

What about interop... I'm not going to lie, it's not ideal. In particular, interop between Rust and Swift isn't as seamless as it is between Swift/Objective-C and Kotlin/Java. For example, **f64** can't be passed as is through the boundary ( _which is logical, but still_). However, there are some cheat sheets available to help make sense of the interop rules. For Swift, the following rules apply:

- Primitives map to their obvious Swift counterpart (e.g. **`u32`** becomes **`UInt32`**, **`string`** becomes **`String`**, etc.).

- An object interface declared as **`interface T`** is represented as a Swift protocol **`TProtocol`** and a concrete Swift class **`T`** that conforms to it.

- An enum declared **`enum T`** or **`[Enum] interface T`** is represented as a Swift enum **`T`** with appropriate variants.

- Optional types are represented using Swift's built-in optional type syntax **`T?`**.

- Sequences are represented as Swift arrays, and maps as Swift dictionaries.

- Errors are represented as Swift enums that conform to the **`Error`** protocol.

- Function calls that have an associated error type are marked with **`throws`** in Swift.

I remember similar rules for Kotlin Native. Actually, the interface between the core and shell should be laconic. I don't think these limitations are good, but they don't hurt too much either.

### Architecture

Talking about architectural patterns. _Have you seen mobile Eng who are not talking about patterns?_ Crux is inspired by Elm, there is quite good page in the [book](https://redbadger.github.io/crux/guide/elm_architecture.html) and also [Elm docs](https://guide.elm-lang.org/architecture/) worth reading, so let’s skip the description. In general I see movement to unidirectional and message passing architectures. They are clean and quite strict, which makes it easier to update code and not introduce inconsistency when one text field has three different states across layers. True that UIKit or Vanila android libraries are not the best fit (though still possible to reuse some ideas), but SwiftUI and Jetpack Compose fit quite nice. If you write gesture interaction and animation heavy UIs - this would be challenging. Like if you do some gesture driven transition, should you keep current state in UI or pass it to the core? Or UITableView (iOS) and RecyclerView (Android) have a bit different lifecycle for cells, hence for cell models, how core will be dealing with it. A bit challenging, but still possible, no silver bullets as always.

The part that I liked the most, though, was the capabilities feature. Capabilities provide a nice and clear way to deal with side effects, such as networking, databases, and system frameworks. Sure, you could write a single HTTP library in C and use it everywhere, and maybe you could even standardize persistence to use only SQLite. But there are so many different things to consider, such as audio/video, file systems, notifications, biometrics, or even peripherals like the Apple Pencil. And your system already has good libraries to deal with these things, which might even be optimized ( quality of service or URLSession configuration on iOS) to be more effective. That's where capabilities come in - they allow you to declare what you need, while keeping the implementation specifics for the platform code. It's a great way to keep your code modular and maintainable.

When core handles event that need to make an HTTP call, it's actually instructing Shell to do the call.

```

fn update(&self, event: Self::Event, model: &mut Self::Model, caps: &Self::Capabilities) {

match event {

Event::Ask(question) => {

model.questions_number += 1;

gpt::API::new().make_request(&question, &caps.http).send(Event::Set);

},

...

```

And shell is sending request

```

switch req.effect {

...

case .http(let hr):

// create and start URLSession task

}

```

The same logic can be applied to databases (just separate KV-storages and relational), biometric, whatever else.

### Final Thoughts

Despite the fact that I'm new to Crux and not yet fluent in Rust, I was able to build a simple app that works on iOS, Android, and Web (almost) in less time than it would have taken to build all three from scratch.

Crux is still in its early stages, e.g. at the time of my note, the HTTP capability didn't support headers and body. But I have high hopes that this project will continue to grow and attract more contributors, as the idea behind it is really cool.

Even if you don't want to use Rust for cross platform development, I think it's worth taking a look at this project to see how you might be able to reuse some of the ideas in your favourite stack. At the end of the day, anything that helps us write better, more modular, and more maintainable code is a win. | complexityclass |

1,398,343 | Multilingual sites in React | by Suprabhat Kumar Our chances of converting visitors into clients considerably rise if our website... | 0 | 2023-03-12T21:56:11 | https://blog.openreplay.com/multilingual-sites-in-react/ | webdev, react | by [Suprabhat Kumar](https://blog.openreplay.com/authors/suprabhat-kumar)

Our chances of converting visitors into clients considerably rise if our website is translated into the user's native language. This article will explain implementing the multilingual feature in a website using React. Hopefully, by the end of this article, we will be able to translate any website into any language we want. The multilingual functionality makes navigating sites in different languages easier for our readers.

## Getting Started

At first, we create a React app using the following:

```

npx create-react-app app-name

```

This command lets us create a basic react app.

After that, we change the working directory using the following command -

```

cd app-name

```

Then we start the React application using -

```

npm start

```

Next, we follow mentioned steps below -

1. Create an `i18n` file.

2. Create a `LanguageSelector` file enabling us to select the website's language.

3. Create a `locales` folder where we store the translations in different languages used by our site.

4. Map `contents` with languages.

Following are the npm packages used in translation -

> * i18next

> * react-i18next

Command to install the packages -

```

npm install react-i18next i18next

```

Let’s dive deeper now.

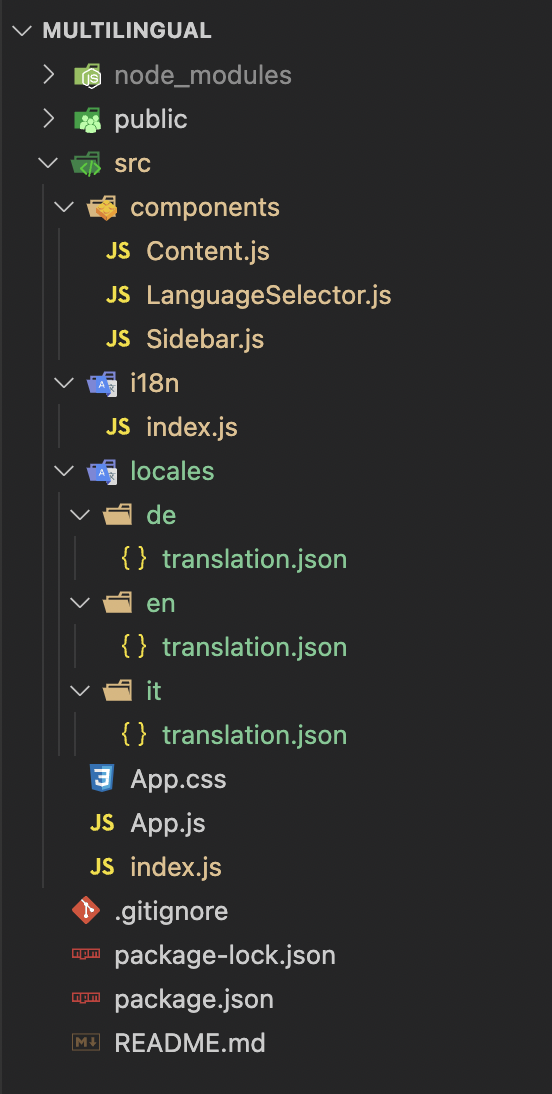

Following is the project structure -

### 1. Creating i18n file

src > i18n > index.js

```javascript

import i18n from "i18next";

import { initReactI18next } from "react-i18next";

import translationsInEng from '../locales/en/translation.json';

import translationsInGerman from '../locales/de/translation.json';

import translationsInItalian from '../locales/it/translation.json';

// the translations

const resources = {

en: {

translation: translationsInEng

},

de: {

translation: translationsInGerman

},

it: {

translation: translationsInItalian

},

};

i18n

.use(initReactI18next) // passes i18n down to react-i18next

.init({

resources, // resources are important to load translations for the languages.

lng: "it", // It acts as default language. When the site loads, content is shown in this language.

debug: true,

fallbackLng: "de", // use de if selected language is not available

interpolation: {

escapeValue: false

},

ns: "translation", // namespaces help to divide huge translations into multiple small files.

defaultNS: "translation"

});

export default i18n;

```

In this file, we described

a. resources (for translating in different languages): It contains the files helping map the translations with the selected languages. If we interchange `translationsInEng` and `translationsInGerman`, then the content available in the `translation.json` file of the `en` and `de` folders will be shown in `German` and `English`, respectively. The translations in the `en` and `de` folders get mapped in German and English.

b. lng: The `default` language is responsible for showing the translations when the site loads. If we change it to `de`, the website will be translated to `de` on loading the site.

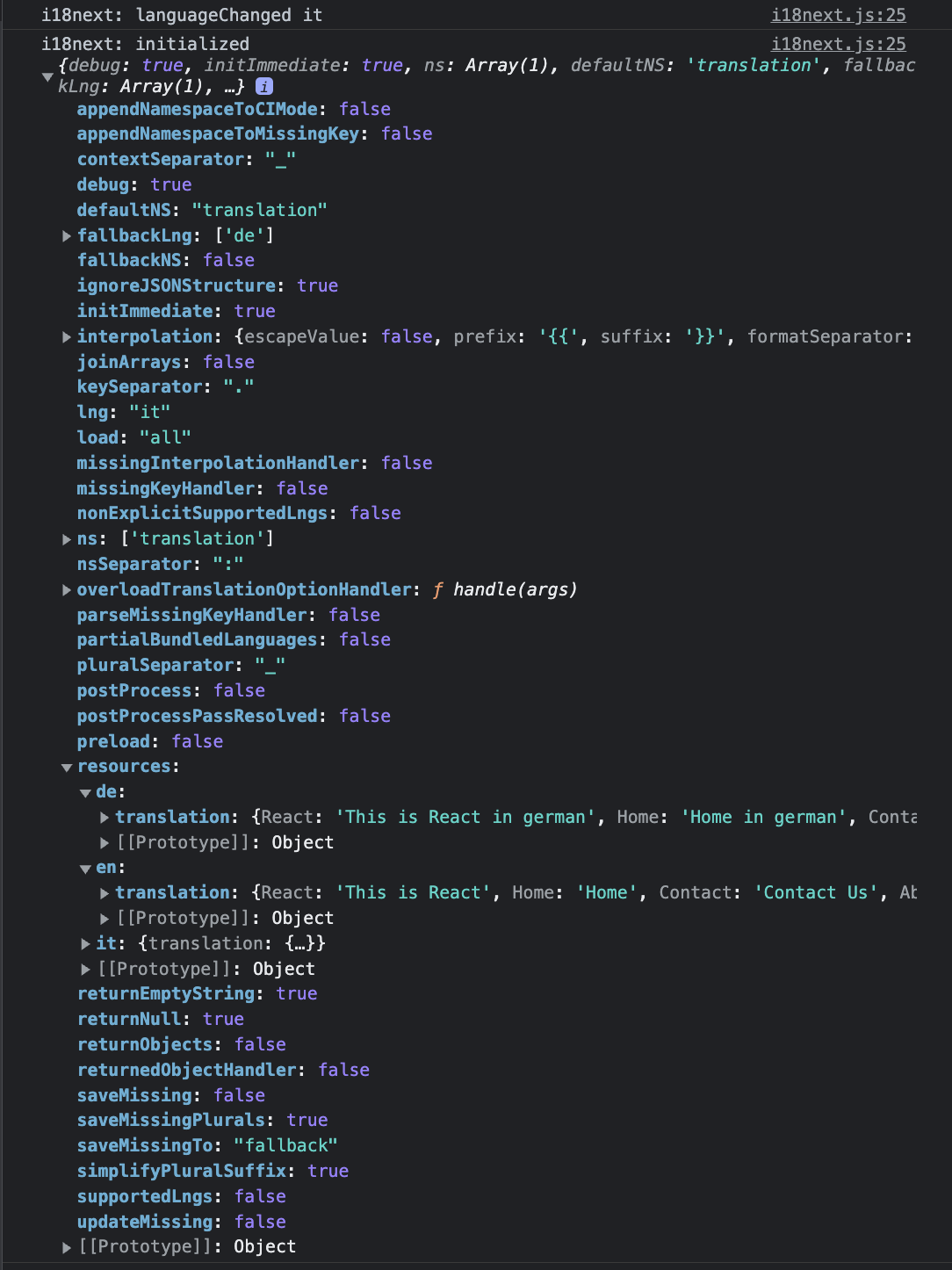

c. debug: It is `boolean` and gives detailed information on the console if assigned `true`. It also helps in analyzing the issues(if any occurred). Below is the screenshot attached for more information -

d. fallbackLng: This language is used when the selected language(`lng`) is unavailable. Acts as default language in the absence of `lng`.

e. ns (Namespaces): It allows us to break huge translations into multiple small files instead of writing in a single large file.

### 2. Create a LanguageSelector file enabling us to select the language of the website

The language can be changed by using i18n imported from the i18n.js file.

src > components > LanguageSelector.js

```javascript

import React, {useState} from "react";

import i18n from '../i18n';

const LanguageSelector = () => {

const [selectedLanguage, setSelectedLanguage] = useState(i18n.language); // i18n.language contains the language assigned to lng in i18n.js file.

const chooseLanguage = (e) => {

e.preventDefault();

i18n.changeLanguage(e.target.value); // i18n.changeLanguage() is used to change the language assigned to lng in i18n.js file.

setSelectedLanguage(e.target.value);

}

return (

<select defaultValue={selectedLanguage} onChange={chooseLanguage}>

<option value="de">German</option>

<option value="en">English</option>

<option value="it">Italian</option>

</select>

);

};

export default LanguageSelector;

```

### 3. Create a locales folder to store the translations in our site's different languages.

1. de

src > locales > de > translation.json

```json

{

"React" : "Das ist React",

"Home": "Daheim",

"Contact": "Kontaktiere uns",

"About": "Über uns",

"username": {

"label": "Nutzername",

"placeholder": "Platzhalter..."

},

"password": {

"label": "Kennwort",

"placeholder": "passwort platzhalter..."

},

"location": "Geben Sie den Standort ein",

"Address": "Gib die Adresse ein"

}

```

2. en

src > locales > en > translation.json

```json

{

"React" : "This is React",

"Home": "Home",

"Contact": "Contact Us",

"About": "About Us",

"username": {

"label": "username",

"placeholder": "placeholder..."

},

"password": {

"label": "password",

"placeholder": "password placeholder..."

},

"location": "Enter the location",

"Address": "Enter the address"

}

```

3. it

src > locales > it > translation.json

```json

{

"React" : "Questo è React",

"Home": "Casa",

"Contact": "Contattaci",

"About": "Riguardo a noi",

"username": {

"label": "nome utente",

"placeholder": "segnaposto..."

},

"password": {

"label": "parola d'ordine",

"placeholder": "segnaposto password..."

},

"location": "Inserisci la posizione",

"Address": "Inserisci l'indirizzo"

}

```

### 4. Map contents with the languages.

Following is the App.js -

src > App.js

```javascript

import './App.css';

import LanguageSelector from './components/LanguageSelector';

import Content from './components/Content';

import Sidebar from './components/Sidebar';

function App() {

return (

<div className="App">

<LanguageSelector />

<Content />

<Sidebar />

</div>

);

}

export default App;

```

In Sidebar.js, we import `useTranslation()` hook to implement the translation of the words. We get the `t` function and `i18n` instance from `useTranslation()`.

The `t` function is used to translate our contents while `i18n` changes the language.

But, we will only use `t` here.

The following code will translate words like Home, Contact, and About.

src > components > Sidebar.js

```javascript

import { useTranslation } from 'react-i18next'

import React from 'react'

const Sidebar = () => {

const { t } = useTranslation();

return (

<div style={{marginTop: "10px"}}>

<button>{t("Home")}</button>

<button>{t("Contact")}</button>

<button>{t("About")}</button>

</div>

)

}

export default Sidebar

```

`Content.js` shows how to translate the content.

src > components > Content.js

```javascript

import React from 'react'

import { useTranslation } from 'react-i18next'

const Content = () => {

const { t } = useTranslation();

return (

<div>

<p>{t("React")}</p>

<hr/>

<p>{t("username.label")}</p>

<p>{t("username.placeholder")}</p>

<hr/>

<p>{t("password.label")}</p>

<p>{t("password.placeholder")}</p>

<hr/>

<p>{t("location")}</p>

<p>{t("Address")}</p>

</div>

)

}

export default Content;

```

Translation of contents takes time. As a result, we wrap the `<App/>` component within `Suspense` with `fallback`.

`Loading...` will be shown on the screen until the translation is done.

src > index.js

```javascript

import React, { Suspense } from 'react';

import ReactDOM from 'react-dom/client';

import App from './App';

const root = ReactDOM.createRoot(document.getElementById('root'));

root.render(

<React.StrictMode>

<Suspense fallback={<div>Loading....</div>}>

<App />

</Suspense>

</React.StrictMode>

);

```

<h2>Session Replay for Developers</h2>

<p><em>

Uncover frustrations, understand bugs and fix slowdowns like never before with <strong><a href="https://github.com/openreplay/openreplay" target="_blank">OpenReplay</a></strong> — an open-source session replay suite for developers. It can be <strong>self-hosted</strong> in minutes, giving you complete control over your customer data

</em></p>

<img alt="OpenReplay" width="768" height="400" src="https://blog.openreplay.com/assets/overview_Z17Qdyg.png" class="astro-UXNKDZ4E" loading="lazy" decoding="async">

<em>Happy debugging! <a href="https://openreplay.com" target="_blank">Try using OpenReplay today.</a></em>

## Common Mistake

Till this point, everything seems OK, but it’s not.

Once you select a particular language from the dropdown, the contents of the website change accordingly, but on `refreshing` the webpage, the selected language changes back to that language which is mentioned in `lng` in the `i18n.js` file because we assigned `i18n.language` to `selectedLanguage` (check 6th line in `LanguageSelector.js`).

### Fun Activity

Change the `lng` (in i18n.js file) to `en` and now change the language from the dropdown on the webpage; you’ll see contents change as per the selected language but do refresh the page. This time you’ll notice the content is in `English` as the `selectedLanguage`; this time is `en`.

The problem, as of now, is refreshing the webpage changes the selected language of the website. So, what to do now?

### Solution

The solution is to use the `localStorage` object. This object stores the data in the browser's `key:value pair` with no expiration date.

* Let’s see what changes we must introduce in the already available code.

1. In `chooseLanguage() in LanguageSelector.js`, we set the key as lang and its value equal to the language selected by the user.

src > components > LanguageSelector.js

```javascript

import React, {useState} from "react";

import i18n from '../i18n';

const LanguageSelector = () => {

const [selectedLanguage, setSelectedLanguage] = useState(i18n.language); // i18n.language contains the language assigned to lng in i18n.js file.

const chooseLanguage = (e) => {

e.preventDefault();

i18n.changeLanguage(e.target.value); // i18n.changeLanguage() is used to change the language assigned to lng in i18n.js file.

setSelectedLanguage(e.target.value);

localStorage.setItem("lang", e.target.value);

}

return (

<select defaultValue={selectedLanguage} onChange={chooseLanguage}>

<option value="de">German</option>

<option value="en">English</option>

<option value="it">Italian</option>

</select>

);

};

export default LanguageSelector;

```

2. In `i18n.js`, `lng` gets the value available in the `lang` key (in our case, it’s `it`) because of `lng: localStorage.getItem("lang").`

src > i18n > index.js

```javascript

import i18n from "i18next";

import { initReactI18next } from "react-i18next";

import translationsInEng from '../locales/en/translation.json';

import translationsInGerman from '../locales/de/translation.json';

import translationsInItalian from '../locales/it/translation.json';

// the translations

const resources = {

en: {

translation: translationsInEng

},

de: {

translation: translationsInGerman

},

it: {

translation: translationsInItalian

},

};

i18n

.use(initReactI18next) // passes i18n down to react-i18next

.init({

resources, // resources are important to load translations for the languages.

lng: localStorage.getItem("lang"), // It acts as default language. When the site loads, content is shown in this language.

debug: true,

fallbackLng: "de", // use de if selected language is not available

interpolation: {

escapeValue: false

},

ns: "translation", // namespaces help to divide huge translations into multiple small files.

defaultNS: "translation"

});

export default i18n;

```

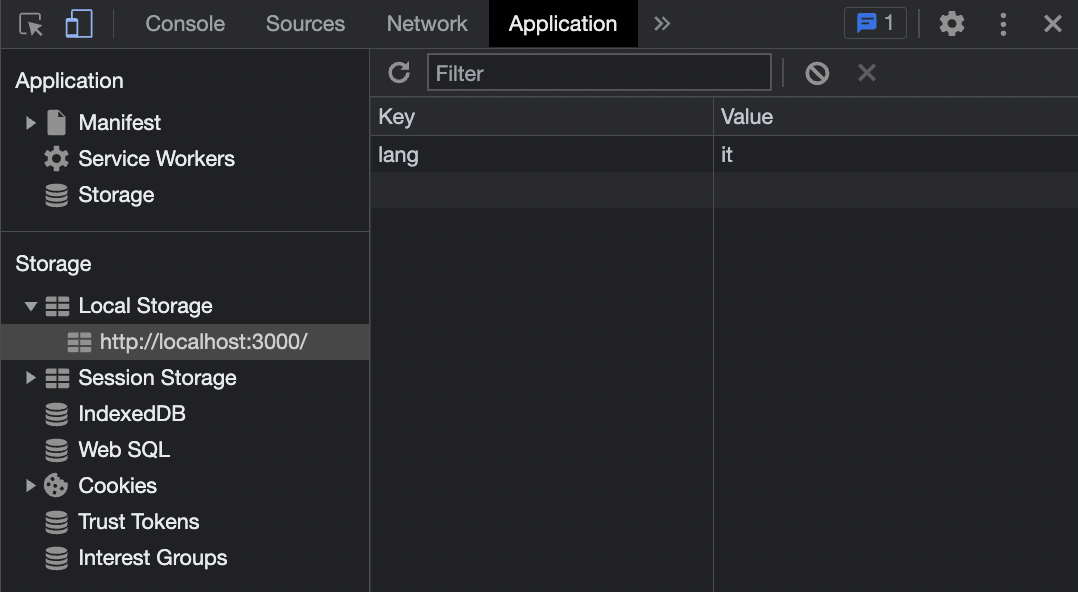

Check the `Local Storage` section under the `Application` tab in the developer console. The selected language is `it`. Now if you refresh the browser, the selected language will still be `it`.

## Output

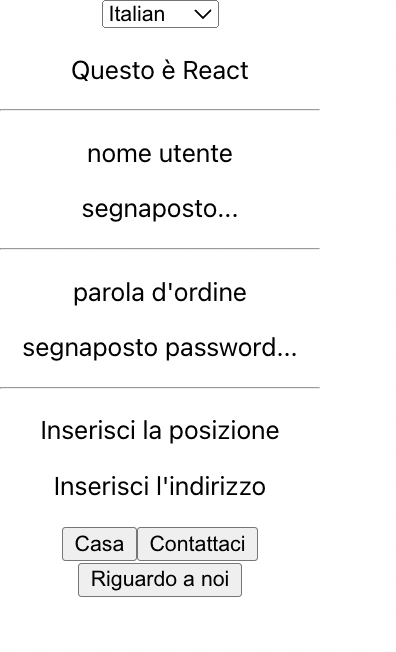

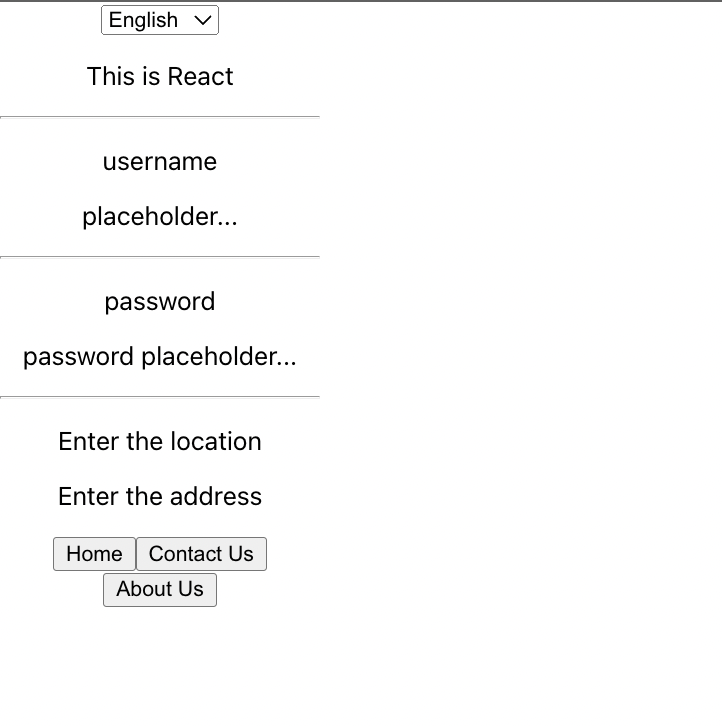

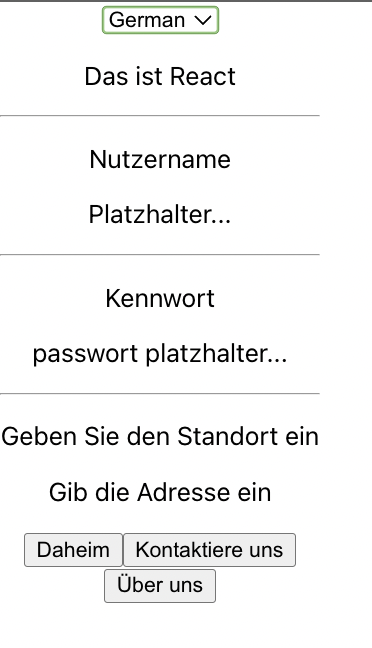

We are focusing on translating, so there is no styling in it. The final output looks like this -

Selected Language is `it(Italian)`

Selected Language is `en(English)`

Selected Language is `de(German)`

## Conclusion

With this, we come to the end of this article. I believe you have learned how to implement the multilingual feature in a website.

## References

https://react.i18next.com/

[](https://newsletter.openreplay.com/)

| asayerio_techblog |

1,398,576 | Animation -3 : CSS push pop loaders | Are you looking for animations to enhance your website? We've got you covered with our wide selection... | 22,215 | 2023-03-13T14:30:00 | https://dev.to/jon_snow789/animation-3-css-push-pop-loaders-4o23 | css, webdev, animation, design | Are you looking for animations to enhance your website? We've got you covered with our wide selection of creative and engaging #animations. Say goodbye to endless searching and hello to seamless integration on your website!

#### We're excited to introduce our latest YouTube series, which features diverse creative and inspiring website animations.

---

In our short videos, you'll find a variety of #animations that can be easily implemented on your website to enhance user experience.

---

### CSS push pop loaders

---

{% youtube CZcEDsdrkI4 %}

---

### [Source code](https://codepen.io/jh3y/pen/ZEEEGWr)

---

## For more information

1. Check my GitHub profile

[https://github.com/amitSharma7741](https://github.com/amitSharma7741)

2. Check out my Fiver profile if you need any freelancing work

[https://www.fiverr.com/amit_sharma77](https://www.fiverr.com/amit_sharma77)

3. Check out my Instagram

[https://www.instagram.com/fromgoodthings/](https://www.instagram.com/fromgoodthings/)

4. Linktree

[https://linktr.ee/jonSnow77](https://linktr.ee/jonSnow77)

5. Check my project

- EVSTART: Electric Vehicle is the Future

[https://evstart.netlify.app/](https://evstart.netlify.app/)

- News Website in react

[https://newsmon.netlify.app/](https://newsmon.netlify.app/)

- Hindi jokes API

[https://hindi-jokes-api.onrender.com/](https://hindi-jokes-api.onrender.com/)

- Sudoku Game And API

[https://sudoku-game-and-api.netlify.app/](https://sudoku-game-and-api.netlify.app/)

---

--- | jon_snow789 |

1,398,603 | The 10 Best Task Management Software In 2023 (For The Developer Team) | Achieving business goals is impossible without effective task management. Reaching your business... | 0 | 2023-07-07T04:04:24 | https://medium.com/@smartONES/the-10-best-task-management-software-in-2023-for-the-developer-team-749e7d27420a | webdev, developertools, productivity, agile | ---

title: The 10 Best Task Management Software In 2023 (For The Developer Team)

published: true

date: 2023-01-04 14:00:10 UTC

tags: webdev,developertools,productivity,agile

canonical_url: https://medium.com/@smartONES/the-10-best-task-management-software-in-2023-for-the-developer-team-749e7d27420a

---

Achieving business goals is impossible without effective task management. Reaching your business goals requires you and your team to perform various tasks. For this, you need to manage them effectively. Holistic task management brings you closer to your goals and lets you achieve them efficiently.

Over time, modern task management tools and practices have replaced traditional methodologies. Several task management software solutions are available for businesses of all scales and operating in all industries.

So, what is task management software?

### What Is Task Management Software?

**Task management software** is a software solution that helps you organize, prioritize, and streamline your business tasks to achieve your goals. It enables you to fulfill your goals or execute your projects effectively. Modern task management software provides automated tools that prevent your team from performing repetitive processes manually.

The essence and purpose of task management are similar to **project management**. The two services’ software solutions often overlap and provide similar business tools. However, it is essential to understand the subtle difference between task management and [project management](https://blog.ones.com/enterprise-project-management?hsLang=en).

Project management has a broader scope than task management. It includes identifying a core goal to be achieved and several aspects involved in reaching that goal. On the other hand, task management involves handling each project element simultaneously. Instead of worrying about the project timeline, task management is concerned with the team’s specific task(s) deadline.

### The 10 Best Task Management Software In 2023 For Your Developer Team

Software development involves multiple team members performing different tasks. Especially if you follow the [Agile development methodology](https://blog.ones.com/how-does-agile-product-development-work?hsLang=en), it is crucial to ensure seamless coordination between your team members as they perform different tasks. Task management software helps your developer team track their processes and work towards a common goal.

Here are 10 of the best task management software in 2023 for your developer team:

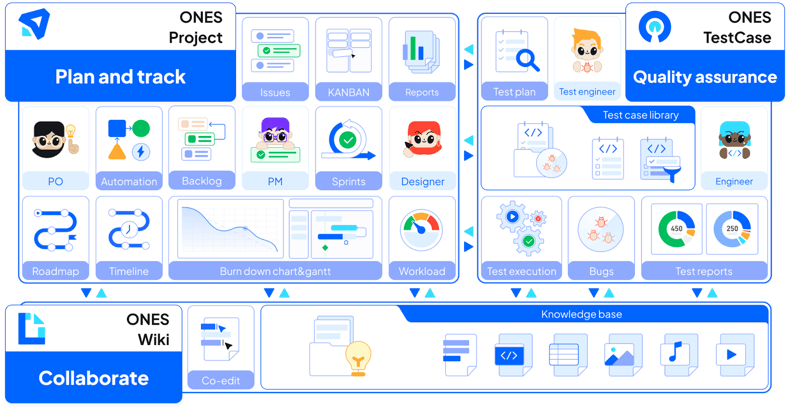

#### 1. ONES.com

[ONES.com](https://ones.com/)is a powerful project and task management software that helps you organize your performance and achieve your goals in the best way possible. Designed especially for managing software development projects, [ONES.com](https://ones.com/) allows you to identify, define, and track all developer tasks you need for your software development project.

[ONES.com](https://ones.com/) provides your developer team with manageable milestones, roadmaps, sprints, and more to align them with your objectives. Depending on your business requirements, you can create, track, and perform tasks using a centralized platform. The task management software also allows all users to view their teams’ workload and the status of the ongoing tasks while highlighting the most productive aspects.

#### 2. ClickUp

_Via ClickUp_

ClickUp is another major task management software used by some of the industry’s biggest names, including Google, Airbnb, and Webflow. It provides users with several features that boost their productivity and ensures effective management of all tasks.

ClickUp also allows businesses to track multiple tasks performed for multiple projects. Some of the major tools and features of ClickUp include templates, recurring tasks, reminders, priorities, notifications, assigned comments, views, etc.

#### 3. ProofHub

_Via ProofHub_

ProofHub is a comprehensive project and task management tool dedicated to improving your teams’ productivity and efficacy. It gives managers a 360-degree view of all their tasks and complete control over communication, projects, and tasks.

ProofHub also provides users with seamless collaboration tools that allow multiple team members to work on a centralized platform. It allows the management to make important company announcements hassle-free. Moreover, ProofHub readily integrates with third-party applications and services like OneDrive, Google Drive, Dropbox, Box, and more.

#### 4. Todoist

_Via Todoist_

Todoist is a simple, straightforward, and easy-to-understand task management software for businesses. It is designed like a holistic to-do list app that allows you and your team to manage multiple tasks using a single unified platform. Its no-frills UI is built around a simple, minimalistic design with advanced task-tracking features.

Some of the key features of Todoist include priorities, sub-tasks, recurring due dates, mobile applications, and more.

#### 5. Any.do

_Via Any. do_

Any. do is a unique task management software that labels different items as “today”, “tomorrow”, and “upcoming”. This gives users a quick overview of the tasks and the timeline they need to follow.

Unlike most of its competitors, Any.do provides users with an intuitive calendar to help them see how their tasks and events fit together. It also helps you organize tasks and filter workflows by specific categories and/or due dates.

#### 6. Chanty

_Via Chanty_

Chanty is a collaborative platform with modern task management features. It employs a chat-first model to create a holistic task management system. Task management software allows users to convert any message into a dedicated assignment, allocate it to the right person, and set a due date for the same.

#### 7. Taskque

_Via Taskque_

Taskque is an ideal task management software to implement if you want to move just a notch about a to-do list application. A brilliant tool for resource management, Taskque works best for teams performing similar functions using similar skills.

Some key features Taskque offers users include discussions, a calendar, automatic task assignment, workflow management, a to-do list app, and more.

#### 8. Flow

_Via Flow_

Flow should be your first choice of task management software if you are a project manager who prefers using a Grantt chart. It provides users with color-coded projects that help them easily manage and switch between different tasks.

Moreover, Flow provides your team members with templates and repeat projects to help them work on a project repeatedly or stage a different event easily.

#### 9. Hitask

_Via Hitask_

Hitask is a holistic task and project management solution that offers a centralized dashboard to its users featuring all ongoing tasks along with their due dates, teams, projects, and other relevant details. The simple UI and functionality make it easy for users to create, edit and assign the right tasks to the right team members.

Some key features offered by Hitask include file storage, task management, email creation, reports, mobile application, time tracking, and more.

#### 10. Trello

_Via Trello_

Trello is one of the most popular task management tools that lets you manage the simplest and the most complicated tasks to be performed. Its Kanban interface allows you to set up dedicated cards for different tasks and projects as if they are post-it notes.

### The Final Word

These were some of the best task management software you can choose from for your developer team in 2023. Always make sure you implement a software solution according to your project’s complexity and scope.

_Originally published at_ [_https://blog.ones.com_](https://blog.ones.com/10-best-task-management-software-in-2023-for-the-developer-team) _on January 4, 2023._ | josiel677 |

1,398,717 | Start use chrome extension of The Marvellous Suspender | Start use chrome extension of The Marvellous Suspender Everyday do development and investigation in... | 0 | 2023-03-13T13:26:09 | https://dev.to/fukajun/start-use-chrome-extension-of-the-marvellous-suspender-1cl4 | development | ---

title: Start use chrome extension of The Marvellous Suspender

published: true

description:

tags: development

# cover_image: https://direct_url_to_image.jpg

# Use a ratio of 100:42 for best results.

# published_at: 2023-03-13 07:28 +0000

---

Start use chrome extension of The Marvellous Suspender

Everyday do development and investigation in work and hobby.

At such time I using chrome. Open many web site in tab.

So my mac to be heavy. I have think this reason is javascript that are processing while no access the pages. That process are polling etc...

This extension suspend tab which long time no access, and display snapshot image each pages.

[The Marvellous Suspender - Chrome ウェブストア](https://chrome.google.com/webstore/detail/the-marvellous-suspender/noogafoofpebimajpfpamcfhoaifemoa) | fukajun |

1,398,731 | Remote Single file component without any framework | I read an awesome article I and wanted to share it with the community. Implementing single-file Web... | 0 | 2023-03-13T08:07:13 | https://dev.to/artydev/single-file-component-without-any-framework-47ek | I read an awesome article I and wanted to share it with the community.

[Implementing single-file Web Components](https://ckeditor.com/blog/implementing-single-file-web-components/)

The idea is to be able to load and run a remote single file component like the one below.

This one is accessible from the following url :

[https://blog.comandeer.pl/assets/jednoplikowe-komponenty/HelloWorld.wc

](https://blog.comandeer.pl/assets/jednoplikowe-komponenty/HelloWorld.wc)

Notice the extension.

```html

<template>

<div class="hello">

<p>Hello, world! My name is <slot></slot>.</p>

</div>

</template>

<style>

div {

background: red;

border-radius: 30px;

padding: 20px;

font-size: 20px;

text-align: center;

width: 300px;

margin: 0 auto;

}

</style>

<script>

export default {

name: 'hello-world',

onClick() {

alert( `Don't touch me!` );

}

};

</script>

```

Here is the loader :

```js

let loadComponent = (function() {

function fetchAndParse( URL ) {

return fetch( URL ).then( ( response ) => {

return response.text();

} ).then( ( html ) => {

const parser = new DOMParser();

const document = parser.parseFromString( html, 'text/html' );

const head = document.head;

const template = head.querySelector( 'template' );

const style = head.querySelector( 'style' );

const script = head.querySelector( 'script' );

return {

template,

style,

script

};

} );

}

function getSettings( { template, style, script } ) {

const jsFile = new Blob( [ script.textContent ], { type: 'application/javascript' } );

const jsURL = URL.createObjectURL( jsFile );

function getListeners( settings ) {

return Object.entries( settings ).reduce( ( listeners, [ setting, value ] ) => {

if ( setting.startsWith( 'on' ) ) {

listeners[ setting[ 2 ].toLowerCase() + setting.substr( 3 ) ] = value;

}

return listeners;

}, {} );

}

return import( jsURL ).then( ( module ) => {

const listeners = getListeners( module.default );

return {

name: module.default.name,

listeners,

template,

style

}

} );

}

function registerComponent( { template, style, name, listeners } ) {

class UnityComponent extends HTMLElement {

connectedCallback() {

this._upcast();

this._attachListeners();

}

_upcast() {

const shadow = this.attachShadow( { mode: 'open' } );

shadow.appendChild( style.cloneNode( true ) );

shadow.appendChild( document.importNode( template.content, true ) );

}

_attachListeners() {

Object.entries( listeners ).forEach( ( [ event, listener ] ) => {

this.addEventListener( event, listener, false );

} );

}

}

return customElements.define( name, UnityComponent );

}

function loadComponent( URL ) {

return fetchAndParse( URL ).then( getSettings ).then( registerComponent );

}

return loadComponent;

})()

let url = "https://blog.comandeer.pl/assets/jednoplikowe-komponenty/HelloWorld.wc" + `?x=${Math.random()}`

loadComponent(url) .then( ( component ) => {

console.log( 'Component loaded' );

document.body.insertAdjacentHTML( 'beforeend', '<hello-world>Comandeer</hello-world>' );

} );

document.querySelector( 'button' ).addEventListener( 'click', () => {

document.body.insertAdjacentHTML( 'beforeend', '<hello-world>Comandeer</hello-world>' );

} );

```

You can see a demo here [SFC](https://flems.io/#0=N4IgtglgJlA2CmIBcA2AnAOgAwEYA0IAZhAgM7IDaoAdgIZiJIgYAWALmLCAQMYD21NvEHIQAHgBGAVzZsBAPgA61AAQqAgjBXV4AdxX8wABwHC2ysQHppshdxCl4CHmwgDyTHEhxoQAXzwaekZmACtyXgEhESYENhVYPlooAGE+Y1NBFQBeFQAKQilqFzdqPIBKFWBlRTZC4tcBFUJ4Nh4WdWooAAVaACdHPJUAVQAlABkVSurqWtq+1qk+1Ra2liGxyfKMNhZhIaGF0hNqRymc+Sqa8xuFtiXVI5PHHfgADzYKgG5r2r8pnZ7Mr5FTsTjnbKXGZzG78U7xIz9Rx9HLaPQqAAiAHkALK9AbwPrfX6w9zxKB8HhSBhZXKIgl9DD0xwAMT66QAymw+hBqABzIZg2B4FQAciEH0sQtFUx+sxutThpHie2SqIpVJpbFY8GScphirJKiExlgtCEqNVUAwAEcpISAJ4cpzwFx8Ilik1GM1CGXlfUKtpG5UOhCW3XWu2O53OOQe0UhhB+gMwpXxUg8HlGeK5K22+19J0ut3xjNZtjJmrymF3B5XauBr0++B4EkwxMtts3MsQbNdvwptj-f3XPxV2r1EpNPmtZ2yXl80hDYDG+Cm80tlQdkU97MqYf11NG8IskjwVE6fQAIUSEiGFC3md72olbDSgjMKgAuiKV2wHUY8BIGKtBGN6EA8OapSWKEtAAG60LuFb7rKJJpio4SbKimwYJmupCFiEihK6bCbEMJ5nqhqhqCoJKTo0qgzmw4wQMqwiEkuW6tK4-KkOc0KBrWywqIRxEuBgZg8vAnGOPOvEAgsUBSDw8AHAkrHRBxIoPrJPF8iKCGwPa34QlCXa1BAhAgrpC4YMq-RsKQADqEC7EMooCH6-HmTcsAaexAw6dxC4PgATN+Ox8OMfC6ISKSIaplQANRcXJfJ2VIEjKh6ADM5xfqihn2oOMJjrMDY1oswl+WxOgDCVQ6-sOg5lSSQmqBAGR9J8GGkFh2y7PsIJgHwSlhpUkKHoG6E1ZpAyokxLG1RxQwjWN8AYFA8CELQUiwPEI7lQa3JVaoAnHXQDDAWte0bVtO17dql2dhVvn+XVpCtq9tRNhuX3HbUHb9iSzWjuOdRFFOjzwHy-l9GkGQ6IIy6ruuQg7v+CAis9IqzQFfEHudbRmqQfHDNQrkOgjJyfu80RQHxAASAAqOLjAAoggWpTUe1A6C48CpLQsCwBItA8AA1hUPONiwrEYAA+lIRiQcqxLfbIcukIr5psOLLBLXNS6HcdrWvUrKuIZ80w+ehpAsMkMWors8u6-rHIOxSugo2tQFinwgHUDKoNHYGgOezFGCgYHqRy7AUBDB2uGJDoAByo2qcafTGZUJth45Ee6FHYHCLHJAJyoGrUmYGCdSY3Xp1tQy-UIuFRGYIrcjnVGm+DMIK277SG-j0tEzCokkRJghSZxeMfQChDuuz+tqQ+8BwR36nLSiBUTWZGs-VrUcwOzG+CMPdVDOvm9z4SIo7bAZx56bPeBq1Nxm5V9zCVSyrpJza4zDa3uryTOOMRgU3-NTTIB0Az-BomocG9FSgJCSKkdINNkYjAmN5Bs7VmitHaJ0HoSJM79UBENJic49J8QGkCQ4MM4bQKRrAhBYNQ74MSMkZhZgvi0WoH4coFRlBxBUEsWAqJFAgHYGwIwpAkCWGsIkdKhhaBdHgISJksBLCIVkqQGCgtqABz8hLGK8AAC0pjEZmAdJYRmThEhOXdPHDAugeBSJUClAABgAfjeNkAAJMAHE5oWAYD6GoikYAKh+C8Qg-hVYuHoOscjcRlQKHAiGIYTBB0Lgy2OkqPgCAMDKPcjwrISTBbJn4fE+JVctQYAkKNB0tdTiEjYJoWCKlBAszZu5CQ213QaK6KKEUooxB7BFnwcxuhnFQHkAjSJGi+hWEmYkGZcz5CVg1iHZQ9Sa5RkLDGEi7p+kyDkEHAEyQoCnzMBfQk7keB+UlqM-Ie96xqH2YIRpzTWnIg6VALpZhenjH6YMhYpdXnjLWdM2ZfR44LPSEswkqyHGws2dskO9hHCxlKB4EAAB2FA3gcDmKJUgAAHDgfwgQQDPVELhUm9g4TRDYKIfwP46XBFEByFkKR-BAA)

| artydev | |

1,398,867 | sdfdssdfdsf | sdfdf | 0 | 2023-03-13T10:00:44 | https://dev.to/ar1f007/sdfds-n62 | sdfdf | ar1f007 | |

1,399,005 | Confused coder life !! | confused question is : I'm a code lover, i have very good basics in most of programming languages... | 0 | 2023-03-13T12:54:29 | https://dev.to/cryptographer3301/confused-coder-life--4ofb | confused question is :

I'm a code lover, i have very good basics in most of programming languages such as C++, JS, Golang, Perl

But i didn't find my passion yet, sometimes i learn web dev for while i find myself learning networking and a moment again i find myself in game dev, but my soul is in love something call hacking,

how to access inside systems and networks

that's why i decide to invest my programming knowledge in cyber security especially in malware programming , i want one day to make a tool like wireshark or metasploit or a strong malware,

but i see a lot of people talking about certifications

and for me i don't have money to pass certifications , I can just study them and gain knowledge is that good to work as an ethical hacker in freelance or with a company without certifications but with skills ? | cryptographer3301 | |

1,399,008 | Reinvent the wheel to understand Python asyncio. | During the last years coroutine based concurrency and the async/await syntax has exploded over a lot... | 0 | 2023-03-29T12:46:52 | https://dev.to/dontpanico/reinvent-the-wheel-to-understand-python-asyncio-3084 | python, asyncio, concurrency | During the last years coroutine based concurrency and the `async/await` syntax has exploded over a lot of languages, and so it has in Python too.

I found that people start using it recently (who haven't been there while it was implemented) are experiencing different kinds of problems while coding or debugging.

In this article we're gonna write our own multitasking library without using neither `asyncio`, nor the `async` and the `await` keywords, after exploring the basics of concurrency.

*This post assumes you're already familiar with python, python iterators and generators and with socket programming.*

## What is concurrency anyway?

According to wikipedia

> Concurrent computing is a form of computing in which several computations are executed concurrently—**during overlapping time periods**

In practice it means that while our function `a()` is executing, other functions **may** execute too and they run to completion in an interleaved manner.

However our program is still executing one thing at a time (as our cpu core is capable).

Parallelism (executing more things at once) is a special form of concurrency, but we're not talking about it today.

<!--Inserisci immagine per spiegare la differenza-->

## How do we reach concurrency in our programs

The easiest way to write a concurrent program is by using threads: you spawn a function in a thread, it starts running and any time the opportunity arises our cpu will switch between threads.

However, there are well known problems with threading programming like synchronization, memory usage, not having the control over context switches, etc, which all combined lead to some scalability limitation (you can find useful resources and articles on those problems searching online). Developers looked for something more lightweight and scalable that can be combined with multi-threading if needed and they came out with.. *iterators*.

## Concurrency with iterators

How do we achieve concurrency with iterators? There are two core concepts to keep into account:

* Interleaving.

* Execution during overlapping time periods.

If you think about how we can interleave execution of different units of code without spawning threads, you'll probably find out that you need a way to *pause/resume* that unit of code.

Look at the very basic implementation of an iterator:

```python

class ConcurrentUnit:

def __init__(self, to: int):

self._to = to

self._i = -1

def __iter__(self):

return self

def __next__(self):

self._i += 1

if self._i >= self._to:

raise StopIteration

return self._i

```

As you already know a for loop just keeps calling `.__next__` until `StopIteration` raises. Let's abuse this to execute code concurrently.

```python

from typing import TypeVar

T = TypeVar('T')

class ConcurrentUnit:

def __init__(self, to: int, return_value: T):

self._to = to

self._i = -1

self._return_value = return_value

def __iter__(self):

return self

def __next__(self):

self._i += 1

if self._i >= self._to:

raise StopIteration(self._return_value)

return self._i

if __name__ == '__main__':

cu1 = ConcurrentUnit(5, 'cu1')

cu2 = ConcurrentUnit(3, 'cu2')

tasks = [cu1, cu2]

while tasks:

t = tasks.pop(0)

try:

step = next(t)

print(step)

except StopIteration as e:

print(e.value)

else:

tasks.append(t)

```

If you run that code, the output will be:

```

0

0

1

1

2

2

3

cu2

4

cu1

```

You can see that our units have executed in an interleaved manner during overlapping periods of time and so yes, even without any benefit yet, we have written concurrent code. Let's look at it in detail.

The `ConcurrentUnit` class should be very easy to understand, from a behavioral point of view it's simulating the usage of `range(x)` (I've omitted `start` to keep it simple), but it also has a `return_value` parameter with a generic type annotation, that enable returning values from the execution. The `return_value` is bound to `StopIteration` when raised by `__next__` and we need to manually handle it calling `__next__` in a `try/except` block (we can't simply use a for loop which would handle the exception silently).

In our main block we create two concurrent units (they could've been more) and we store them in a list (like we would've done with a scheduler, more on this later) and we run our loop:

* First we pop our first unit in the list.

* We call `next` on our unit in a `try/except` block, and print the result out.

* If it raises `StopIteration` we get the `return_value` and print it out. At this point we know that our unit is done.

* Otherwise, we know that our unit is not done, so we append it to our list.

## Python Generators

The code above is very odd, and wrapping the logic of functions in iterator classes will really soon lead to big spaghetti code.

Luckily for us python has generators, which will let us declare functions that behave like iterators, and in addition they bound the value of the `return` statement to the `StopIteration` instance.

We can convert our above code into:

```python

def concurrent_unit(to: int) -> Generator[str]:

for i in range(to):

yield i

return f"run for {to} times"

if __name__ == '__main__':

cu1 = concurrent_unit(5)

cu2 = concurrent_unit(3)

tasks = [cu1, cu2]

while tasks:

t = tasks.pop(0)

try:

step = next(t)

print(step)

except StopIteration as e:

print(e.value)

else:

tasks.append(t)

```

and the code will behave the same.

There's an important concept you need to understand before we can move on and that's the difference between *generator objects* and *generator functions*.

A *generator function* is a function that just return a *generator object*: it does not execute any code other than creating the *generator object*. They can be recognized as the function body contains at least one `yield`.

The resulting *generator object* than implement the iteration protocol with `__next__` executing code up to the next `yield` statement.

This concept applies to coroutines too: `async def` functions are *coroutine functions* that return *coroutine objects* when called.

From now on, when I'll say *generator* I could either refer to functions or objects, the context will make it clear.

## Build our own concurrency library

In this section we're gonna use generators to develop the basic of our concurrency library.

First we'll define a `Task` object to wrap around generators and providing a layer of abstraction over our dude spaghetti. Then we'll write a scheduler to handle tasks execution. Let's dive into it.

```python

from collections.abc import Generator

from typing import Any, TypeVar

T = TypeVar("T")

class Task:

def __init__(

self,

generator: Generator[Any, Any, T],

*,

name: str | None = None,

debug: bool = False,

) -> None:

self._generator = generator

self._name = name

self._debug = debug

self._result: T | None = None

self._exception: Exception | None = None

self._done = False

def __repr__(self) -> str:

return f"<Task: {self._name}, done: {self._done}>"

def _step(self) -> None:

if self._done:

raise RuntimeError(f"{self}: Cannot step a done task")

try:

step = self._generator.send(None)

if self._debug:

print(f"{self}: {step}")

except StopIteration as e:

self._done = True

self._result = e.value

except Exception as e:

self._done = True

self._exception = e

if self._debug:

print(f"{self}: Exception: {e}")

def result(self) -> T:

return self._result

def exception(self) -> Exception:

return self._exception

def done(self) -> bool:

return self._done

```

Our `Task` class stores a generator object and has 3 attributes that are worth looking at:

* `_done` indicating whether the task can be considered completed or not.

* `_result` indicating the generator return value, if any.

* `_exception` any exception other than `StopIteration` that our generator may raises.

The `_step` method builds upon the execution logic used before with iterators: it represents a single *"step"* of our task. It calls `next` on `self._generator` (`gen.send(None)` is the same as `next(gen)`) and if we get either a result (wrapped in a `StopIteration` error) or an exception, stores it in the corresponding attribute.

You're may asking yourself *"why is he just storing the exception instead of raising it?"*. In the next section I'm answering that question. By now, go on to build a scheduler for our tasks:

```python

from collections.abc import Callable, Generator

from typing import Any, TypeVar

from .tasks import Task

T = TypeVar("T")

class EventLoop:

def __init__(self, *, debug: bool = False) -> None:

self._debug = debug

self._tasks: list[Task] = []

self._tasks_counter: int = 0

def create_task(

self, generator: Generator[Any, Any, T], *, name: str | None = None

) -> Task:

task = Task(

generator,

name=name or f"Task-{self._tasks_counter}",

debug=self._debug,

)

self._tasks.append(task)

self._tasks_counter += 1

return task

def run_until_complete(

self,