id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

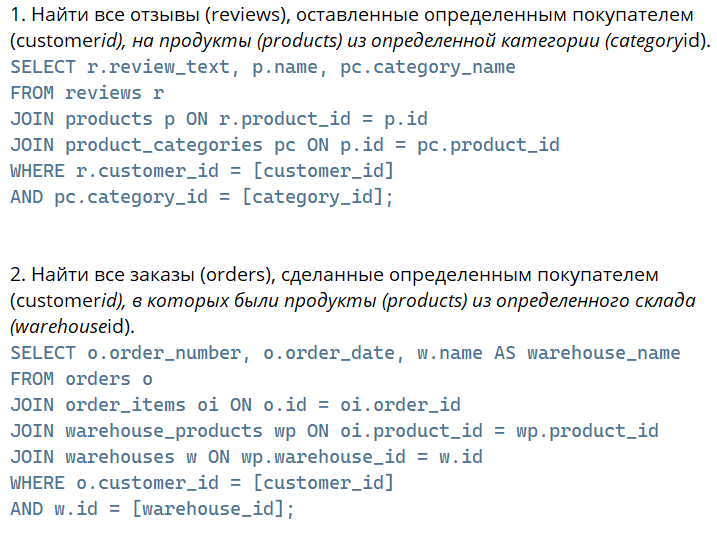

|---|---|---|---|---|---|---|---|---|

1,432,291 | Innovative MVP Development for Entrepreneurs and Businesses | As an entrepreneur or business owner, you understand the importance of creating a Minimum Viable... | 0 | 2023-04-11T06:00:09 | https://dev.to/amplework/innovative-mvp-development-for-entrepreneurs-and-businesses-1mg1 | mvp, development | As an entrepreneur or business owner, you understand the importance of creating a [Minimum Viable Product](https://en.wikipedia.org/wiki/Minimum_viable_product) (MVP) before launching a full-scale product or service. An MVP allows you to test the market, validate your idea, and gather feedback from potential customers without investing too much time or money.

But, how do you go about developing an MVP? Should you hire an in-house team or outsource the development work to an MVP development company? In this article, we will explore the benefits of working with an MVP app development company or MVP development consultant and how they can help you build a successful MVP.

Why work with an MVP development company or consultant?

**1. Access to expertise**

MVP development companies and consultants have a team of experienced developers, designers, and project managers who have worked on numerous MVPs. They have a deep understanding of the development process and can guide you through every step of the way, from ideation to launch.

**2. Time and cost-effective**

Building an in-house team to develop your MVP can be time-consuming and expensive. It involves hiring, training, and managing a team of developers, designers, and project managers. On the other hand, outsourcing your MVP development to an MVP app development company or consultant can save you time and money.

**3. Focus on your core business**

By outsourcing your MVP development, you can focus on your core business activities, such as marketing and sales. You don't have to worry about the technical details of building an MVP, leaving you with more time to focus on what you do best.

**4. Access to the latest technologies**

MVP development companies and consultants have access to the latest technologies and tools needed to build a successful MVP. They can help you choose the right technology stack for your product and ensure that your MVP is scalable and flexible.

**5. Flexibility and scalability**

Working with an MVP development company or consultant gives you the flexibility to scale your development team up or down based on your business needs. You can easily add or remove team members as your project progresses, saving you time and money.

Choosing the right MVP development company or consultant

Choosing the right MVP development company or consultant can be challenging. Here are a few things to consider before making a decision:

**Portfolio:** Look at the company's or consultant's portfolio to see if they have experience building MVPs similar to yours.

**Expertise:** Check if the company or consultant has expertise in the technology stack you want to use.

**Communication:** Communication is crucial when working with an MVP development company or consultant. Make sure they have a clear communication process in place, and they are responsive to your queries.

**Cost:** Compare the costs of different MVP development companies or consultants before making a decision.

**Conclusion**

Building an MVP is a crucial step in launching a successful product or service. Working with an MVP development company or consultant can help you build a successful MVP that meets your business needs. They offer expertise, time and cost-effectiveness, and access to the latest technologies. When choosing an [MVP development company](https://www.amplework.com/mvp-development/) or consultant, consider their portfolio, expertise, communication, and cost. With the right partner, you can build an innovative MVP that sets your business up for success. | amplework |

1,432,639 | WebAssembly reminds me of Java Applets and Macromedia Flash | Rust programming language is meant to write software for low-powered hardware devices, the majority... | 0 | 2023-04-11T12:45:47 | https://blog.chetanmittaldev.com/webassembly-reminds-me-of-java-applets-and-macromedia-flash | ---

title: WebAssembly reminds me of Java Applets and Macromedia Flash

published: true

date: 2023-04-08 11:45:36 UTC

tags:

canonical_url: https://blog.chetanmittaldev.com/webassembly-reminds-me-of-java-applets-and-macromedia-flash

---

Rust programming language is meant to write software for [low-powered hardware devices](https://blog.chetanmittaldev.com/10-best-use-cases-of-rust-programming-language-in-2023), the majority of which include IOT devices, embedded devices such as industrial machines, robotic devices, etc.

WebAssembly is a virtual machine (a software program) that is inbuilt into modern browsers, such as Chrome, Firefox, Safari, Edge, etc., to download and execute a binary file(s) (a compiled software program specified in the `<script>` tag in your HTML file) inside the browser.

A majority of WASM software programs are written in the Rust programming language. Though these can be written in C/C++ and Ruby-Lang too.

See the diagram below:-

WASM binary files are included in the HTML files the same way Javascript files are included inside a <script> to tell your browser that there is a web-assembly file that it needs to download and execute.

<script src="mymodule.wasm"></script>

Unlike Javascript which is interpreted on the run, from the source file, without any compiling; WASM binaries are pre-compiled files meant for fast execution and safety.

Java Applets and Adobe Flash were the same, a software program pre-compiled into a binary file running within the browser.

And similar to WASM binaries needing a software program in the browser to get downloaded and executed, both the Java Applets and Adobe Flash needed a browser plugin to get downloaded and executed within the browser.

[https://steveklabnik.com/writing/is-webassembly-the-return-of-java-applets-flash](https://steveklabnik.com/writing/is-webassembly-the-return-of-java-applets-flash)

During my early days in software programming, I built a few websites based on Java Applets and I seriously remember the nightmares I used to have while debugging those.

[https://stackoverflow.com/questions/868111/how-do-you-debug-java-applets](https://stackoverflow.com/questions/868111/how-do-you-debug-java-applets)

In 2023, I use either Ruby or JavaScript for building software applications, using [VIM](https://www.vim.org/) and [Visual Studio Code](https://code.visualstudio.com/), and both programming languages are really easy to debug on the fly than C/C++ and Java, and Rust.

I wouldn't definitely want to go back to the old days of having debugging nightmares and waiting hours while my Rust or C/C++ files for WASM are getting compiled.

[https://media.giphy.com/media/tXL4FHPSnVJ0A/giphy.gif](https://media.giphy.com/media/tXL4FHPSnVJ0A/giphy.gif)

I would say, using WASM would make more sense than JavaScript only where there is a need for a CPU-intensive software program execution because of being written in Rust and compiled.

The best examples could be:-

- Games inside the browser

- Image, audio, and video editing inside the browser

- Machine learning computations inside the browser

- Etc

Do you have any other examples where WASM could be used best? Please comment. | chetanmittaldev | |

1,432,779 | Amazon EC2: Powering Cloud Computing with Virtual Servers | Unveiling the Power of Elastic Compute Cloud for Scalable and Flexible Virtual Servers ... | 0 | 2023-04-11T16:14:32 | https://blog.seancoughlin.me/amazon-ec2-powering-cloud-computing-with-virtual-servers | cloud, cloudcomputing, ec2, aws | ---

title: Amazon EC2: Powering Cloud Computing with Virtual Servers

published: true

date: 2023-04-11 15:19:02 UTC

tags: #cloud #cloudcomputing #ec2 #aws

canonical_url: https://blog.seancoughlin.me/amazon-ec2-powering-cloud-computing-with-virtual-servers

---

Unveiling the Power of Elastic Compute Cloud for Scalable and Flexible Virtual Servers

## Introduction

[Amazon Elastic Compute Cloud (EC2)](https://aws.amazon.com/ec2/) is a core component of [Amazon Web Services (AWS)](https://aws.amazon.com), offering scalable, on-demand virtual server instances for various computing needs. In this post, we'll explore what EC2 is, its history, potential applications, and how it has revolutionized the cloud computing landscape.

## What is Amazon EC2?

Amazon EC2 is an [Infrastructure as a Service (IaaS)](https://dev.to/scc33/exploring-cloud-computing-types-iaas-paas-saas-and-beyond-1cd0) offering that allows users to provision and manage virtual servers, called instances, [in the cloud](https://dev.to/scc33/demystifying-the-cloud-understanding-the-fundamentals-and-impact-of-cloud-computing-2bpd). These instances can be customized with various configurations, such as CPU, memory, storage, and network capacity, to suit specific workloads and requirements. EC2 instances run on Amazon's global infrastructure, ensuring high availability, performance, and security. Users can scale their infrastructure up or down as needed, paying only for the resources they consume.

## The History of Amazon EC2

[Launched in 2006](https://en.wikipedia.org/wiki/Amazon_Elastic_Compute_Cloud#History), Amazon EC2 was one of the first commercially available cloud computing services, marking the beginning of the modern cloud era. The introduction of EC2 was a game-changer, providing developers and businesses with an alternative to traditional, capital-intensive server infrastructure. Since its inception, EC2 has continually evolved, adding new features, instance types, and additional services to enhance its capabilities and cater to the ever-growing demand for cloud computing resources.

## Potential Applications of Amazon EC2

Amazon EC2 has a wide range of potential applications, making it a versatile solution for various computing needs:

- Web Hosting: EC2 instances can be used to host websites and web applications, providing scalable resources to handle fluctuating traffic loads.

- Big Data Processing: EC2 can be utilized for processing large datasets, running analytics, and deploying machine learning models, with the ability to scale resources dynamically as workloads increase.

- Backup and Disaster Recovery: EC2 instances can serve as backup servers or form part of a disaster recovery strategy, ensuring data is stored redundantly and can be quickly restored in case of an outage or data loss.

- Development and Testing: Developers can use EC2 instances to create and test applications in isolated environments, without impacting their local machines or production systems.

- High-Performance Computing ([HPC](https://en.wikipedia.org/wiki/High-performance_computing)): EC2 offers specialized instance types optimized for compute-intensive workloads, such as scientific simulations, rendering, and financial modeling.

## Complementary Services in the AWS Ecosystem

Amazon EC2 is tightly integrated with other AWS services, enabling users to build comprehensive cloud-based solutions:

- [Amazon Elastic Block Store (EBS)](https://aws.amazon.com/ebs/): Provides persistent block storage for EC2 instances, ensuring data is retained even after an instance is terminated.

- [Amazon Virtual Private Cloud (VPC)](https://aws.amazon.com/vpc/): Offers isolated virtual networks within the AWS infrastructure, allowing users to configure and control their network environment.

- [Amazon Elastic Load Balancing (ELB)](https://aws.amazon.com/elasticloadbalancing/): Distributes incoming traffic across multiple EC2 instances, improving availability and fault tolerance.

- [Amazon Relational Database Service (RDS)](https://aws.amazon.com/rds/): A managed database service that can be used in conjunction with EC2 instances to store and manage application data.

## Conclusion

Amazon EC2 has played a pivotal role in the growth and adoption of cloud computing, offering a flexible, cost-effective alternative to traditional server infrastructure. As businesses embrace the cloud, EC2 is a pivotal component of the AWS ecosystem, providing scalable, on-demand computing resources for many applications and workloads.

---

Originally published at https://blog.seancoughlin.me. | scc33 |

1,432,897 | Building a QR code generator | What is a QR code? QR code is a later version of a data matrix barcode invented in japan... | 0 | 2023-04-16T02:07:47 | https://dev.to/cedsengine/building-a-qr-code-generator-onj | webdev, javascript, programming, tutorial |

##What is a QR code?

QR code is a later version of a data matrix barcode invented in japan in 1994, data matrix barcode was used to label automotive parts, these labels when scanned would output information pertaining to the automotive part the code referenced. This was a great way to have accessible information contained and hidden only to be accessed when needed.

Qr codes are widely used to store a variety information for business and personal use. From links to websites, advertisements, contact info, restaurant menus and payment processing etc. QR codes hold more information than data matrix barcode and makes information easier to access for anyone with a smart device camera.

I will be walking you through a simple QR code generator application made with Javascript and HTML using the JS library called qrcode on the CDNJS platform (https://cdnjs.com/libraries/qrcode). However, for those who want to use a Framework there are Node packages for QR code generation as well (https://www.npmjs.com/package/qrcode#highlights).

###Let’s Build!

To begin the Process of building out your QR code generator we will add the Qr code script into the head of our HTML structure.

```

<script

src="https://cdnjs.cloudflare.com/ajax/libs/qrcodejs/1.0.0/qrcode.min.js"

integrity="sha512-CNgIRecGo7nphbeZ04Sc13ka07paqdeTu0WR1IM4kNcpmBAUSHSQX0FslNhTDadL4O5SAGapGt4FodqL8My0mA=="

crossorigin="anonymous" referrerpolicy="no-referrer">

</script>

```

In the example below a form element is created for users to submit a URL and the size of the Qr code they prefer. (This library will also allow users to pick the color of the Qr code also if you are interested.)

```

<form id="generate-form">

<input id="url"

type="url"

placeholder="Enter URL here"

/>

<select id="size">

<option value="100">100x100</option>

<option value="200">200x200</option>

<option value="300" selected>300x300</option>

<option value="400">400x400</option>

<option value="500">500x500</option>

<option value="600">600x600</option>

<option value="700">700x700</option>

</select>

<button type="submit">

Generate QR Code

</button>

</form>

```

The form element will submit the URL and size value needed for QR code generator functionality.

### Javascript functionality

Begin with declaring two variables, form element and the element that will display the Qr code.

```

const form = document.getElementById('generate-form');

const qr = document.getElementById('qrcode');

```

Next add this code provided by the library we are using, generateQRCode() will take in two arguments and generate the QR code correlated to the arguments passed in.

```

const generateQRCode = (url, size) => {

const qrcode = new QRCode('qrcode', {

text: url,

width: size,

height: size

})

}

```

### Submit function

```

const form = document.getElementById('generate-form');

const qr = document.getElementById('qrcode');

const generateQRCode = (url, size) => {

const qrcode = new QRCode('qrcode', {

text: url,

width: size,

height: size

})

};

const onSubmit = (e) => {

e.preventdefault();

const url = document.getElementById('url').value;

const size = document.getElementById('size').value;

if(url === '') {

alert('Please enter a URL');

} else {

generateQRCode(url, size);

}

};

form.addEventListener("submit", onSubmit);

```

Two variables are declared to contain the input value, a if statement to ensure filled input, if not alert user to fill input and lastly calling the generateQRCode function with the passed in arguments.

To give onSubmit functionality to form element we add event listener to listen for form submissions of Qr code and pass in the event "submit" follow by the function onSubmit.

####With this you should have a functioning Qr code generator!

| cedsengine |

1,433,002 | Maximizing Your Potential as a Programmer with AI Assistance | As software development continues to advance, LLM-powered tools and services are emerging as a key... | 0 | 2023-04-11T19:54:04 | https://dev.to/fredericocarneiro/maximizing-your-potential-as-a-programmer-with-ai-assistance-5eg9 | llmpowered, aipowered, productivity, tooling | As software development continues to advance, LLM-powered tools and services are emerging as a key component of the field. By automating routine tasks and enhancing code quality, these advanced technologies can be a powerful tool for developers looking to enhance their skills and stay competitive in a fast-paced industry. In this article, we'll explore how LLM-powered approaches can elevate your software development skills and assist you in achieving your goals as a programmer.

But how can software engineers potentialize their skills with AI assistance?

Let’s dive into this new universe of AI powered tools that certainly will boost your quality and productivity.

## Automating routine tasks

One of the most significant advantages of using AI in software development is the ability to automate routine tasks. With AI-powered tools, developers can automate repetitive tasks such as code formatting, testing, and debugging. This frees up valuable time that can be spent on more complex and challenging tasks, such as improving software functionality and creating innovative solutions.

Take the example of code formatting. This task can be incredibly time-consuming and often requires a significant amount of effort. However, with AI-powered tools, code formatting can be automated, allowing developers to focus on more pressing issues. Similarly, testing processes can also be automated using tools like Testim.io. This tool can identify and correct test cases after detect changes in the codebase. This allows developers to quickly identify and fix test case scenarios issues, without having to spend time manually on it.

Security is also a significant concern in software development. With the rise of cyber threats, it's more critical than ever to ensure that code is secure. This is where tools like GitHub's CodeQL come into play. Using machine learning, CodeQL code analysis tool can help identify security vulnerabilities in code changes. By automating this process, CodeQL enables developers to find and fix security issues more efficiently.

Artificial intelligence (AI) tools are revolutionizing the software development industry, and Refraction is at the forefront of this trend. This web-based tool uses AI to speed up unit testing, improve code quality, and enhance code reliability. Refraction's ability to automatically generate tests based on code changes saves developers time and reduces the risk of missing critical issues. By identifying potential issues early on in the development cycle, developers can reduce the risk of costly errors and improve the overall quality of the code. This can lead to faster development cycles, fewer bugs, and ultimately, better software.

Refraction AI capabilities also enable it to improve code quality by identifying potential bugs, security vulnerabilities, or performance issues. The tool can provide recommendations for improving the code, such as suggesting alternative coding patterns or highlighting potential areas of optimization. By automatically analyzing code and identifying potential issues, Refraction allows developers to focus on writing high-quality code that meets the needs of their users.

Ultimately, the use of AI in software development can greatly improve the quality of code by providing real-time feedback and insights, automating routine tasks, and identifying potential issues before they become larger problems. By using these tools to improve the quality of their code, developers can produce software that meets the needs of users and customers, while also staying ahead of the competition in a rapidly changing industry.

## Enhancing collaboration

AI-powered collaboration tools can help to connect developers across teams and geographies, providing a platform for real-time collaboration and feedback on projects.

Notion, the popular productivity and collaboration tool, has added a new feature that allows users to ask an AI to continue writing in the same style and tone as their previous text. This new feature, called NotionAI, is powered by OpenAI's GPT-3 language model and is designed to save users time and effort by automating the writing process.

With NotionAI, users can simply type in a few words or sentences and let the AI take over, generating text that matches the style and tone of their previous writing. This can be especially useful for tasks such as drafting emails, writing reports, or creating project documentation. By automating the writing process, NotionAI frees up users' time and allows them to focus on more important tasks, such as strategizing or analyzing data.

Another interesting tool similar to Notion is Taskade AI, also driven by advanced LLM technology. Taskade is a all-in-one solution designed to elevate team collaboration for the modern workforce. This multifaceted platform unites five essential AI-powered features such as content writing, task and project management, note-taking, document editing, mind mapping, and video chat. All this to streamline organization and enhance productivity. The benefits of this tool include accelerated content generation, customizable project views, and real-time collaboration, enabling users to unlock their full potential and achieve greater success in their projects.

## Boosting creativity

AI-powered tools can help developers to generate new ideas and approaches to coding, leveraging machine learning and data analysis to identify patterns and make suggestions for improvement.

In today's globalized software development landscape, it's more important than ever to have tools that enhance collaboration across teams and geographies. AI-powered collaboration tools can provide real-time feedback and connection between developers, improving communication and productivity. For example, GitHub's AI-powered tool, CoPilot, can suggest code based on the context of the project and the codebase. This allows developers to work together more effectively and efficiently, as they can quickly see how their changes fit into the overall project.

When it comes to code reviews,Codeball, an AI-powered code-review bot, streamlines the software development process by approving Pull Requests (PRs) that require no further feedback or objections. Handling 63% of PRs typically marked as LGTM (Looks good to me), Codeball allows developers to concentrate on more complex PRs. Utilizing a deep learning model trained on millions of PRs, Codeball examines numerous indicators to make accurate approval decisions, ensuring efficiency and code quality in the development process.

Regarding data retrieving tasks, developers can significantly boost their productivity using Text2Sql.ai by effortlessly generating complex SQL queries from simple English instructions, eliminating the need for manual query writing. The tool's enhances developers' productivity by enabling easy SQL query generation from English instructions and translating queries back into plain English for better understanding. Its compatibility with various SQL dialects and custom database schema connections promotes seamless database management, ultimately allowing developers to focus on innovation and expedite project completion.

Overall, there are many AI-powered services available to help programmers improve their code quality and boost their creativity. By leveraging these services, developers can optimize their code, save time on tedious tasks, and focus on more creative and innovative aspects of software development.

## Staying up-to-date

AI-powered tools can help developers stay current with the latest trends and technologies in the field, providing access to real-time data and insights that can inform their work and keep them ahead of the curve.

A handy tool to help developers to stay up-to-date with technology is Phind search engine. Phind’s intuitive design and AI-driven capabilities make it an invaluable resource for developers in their day-to-day tasks. By using Phind, developers can quickly obtain accurate solutions, saving time and reducing frustration often associated with conventional search engines. Its ability to parse complex questions and provide relevant code snippets streamlines the troubleshooting process, allowing developers to focus on higher-value tasks. Additionally, Phind's simple explanations facilitate better understanding of concepts, promoting continuous learning and skill development. Ultimately, integrating Phind into their routine empowers developers to work more efficiently, enhance their expertise, and deliver exceptional results in their projects.

## Conclusion

In conclusion, the use of AI in software development has the potential to revolutionize the way we work and improve the quality and efficiency of our code. By leveraging the power of machine learning, data analysis, and automation, developers can unlock new possibilities for creativity, collaboration, and innovation. Whether you're a seasoned pro or just starting out, incorporating AI tools and techniques into your development workflow can help you to become a better developer and achieve your professional goals. So why not start exploring the possibilities today and see how AI can take your skills to the next level?

Original article: [joinplank.com](https://www.joinplank.com/articles/maximizing-your-potential-as-a-programmer-with-ai-assistance)

| fredericocarneiro |

1,433,310 | The history of HTTP in under 5 minutes | The Hypertext Transfer Protocol (HTTP) is the foundation of data communication on the World Wide Web.... | 0 | 2023-04-12T04:42:03 | https://dev.to/andreasbergstrom/the-history-of-http-in-under-5-minutes-4b7p | web, http, networking, browsers | The Hypertext Transfer Protocol (HTTP) is the foundation of data communication on the World Wide Web. Since its inception, HTTP has undergone several iterations to improve its performance, security, and efficiency. In this blog post, we will take a deep dive into the history and key features of each HTTP version, from HTTP/0.9 to the latest HTTP/3, with a focus on new headers and technologies introduced in each version.

### HTTP/0.9: The Beginning

HTTP/0.9, also known as the "One-Line Protocol," was the first version of HTTP introduced by Tim Berners-Lee in 1991. It was a simple text-based protocol that allowed clients to request documents from servers using a single line command.

The client would send a request in the format "GET <path>", and the server would respond with the requested document. There were no headers or metadata, and the connection would close immediately after the transfer. While HTTP/0.9 was extremely limited, it laid the groundwork for future versions of the protocol.

### HTTP/1.0: Expanding Functionality

HTTP/1.0, officially released as RFC 1945 in 1996, expanded upon HTTP/0.9 by introducing a more robust request and response format. HTTP/1.0 added support for request and response headers, which allowed clients and servers to exchange metadata about the request and the resource being transferred. Some of the key headers introduced in HTTP/1.0 include:

**Content-Type:** Specifies the media type of the resource being transferred.

**Content-Length:** Indicates the size of the resource in bytes.

**Last-Modified:** Represents the date and time the resource was last modified.

**Expires:** Provides a date and time after which the resource is considered stale.

These headers enabled new features such as caching, content negotiation, and conditional requests. However, HTTP/1.0 still had some significant limitations, such as opening a new TCP connection for each request, which negatively impacted performance.

### HTTP/1.1: Performance and Efficiency Improvements

HTTP/1.1, first released as RFC 2068 in 1997 and later updated in RFC 2616 (1999) and RFC 7230-7235 (2014), aimed to address the performance issues present in HTTP/1.0. The most notable improvement was the introduction of persistent connections, which allowed multiple requests and responses to be sent over a single TCP connection, reducing the overhead of establishing and closing connections. HTTP/1.1 also introduced features such as pipelining, chunked transfer encoding, and additional caching mechanisms.

Some important headers introduced in HTTP/1.1 include:

**Host:** Specifies the domain name and port number of the server hosting the requested resource.

**Cache-Control:** Allows clients and servers to specify caching policies, such as max-age or no-cache.

**ETag:** Provides a unique identifier for a specific version of a resource, allowing for conditional requests and more efficient caching.

**Connection:** Indicates whether the connection should remain open for further requests or be closed after the current request.

HTTP/1.1 also paved the way for real-time communication with the introduction of WebSockets and Server-Sent Events (SSE), enabling efficient bidirectional communication between clients and servers.

### HTTP/2: A Major Leap Forward

HTTP/2, standardized as RFC 7540 in 2015, brought a fundamental shift in the way HTTP communicated over the network. Instead of using plain text, HTTP/2 utilized a binary framing layer, making it more efficient and less error-prone.

One of the most significant advancements was the introduction of multiplexing, which allowed multiple requests and responses to be sent concurrently over a single connection. This eliminated the head-of-line blocking problem present in HTTP/1.1, where a single slow request could block subsequent requests.

HTTP/2 also introduced server push, a feature that enabled servers to proactively send resources to the client's cache, anticipating future requests. Additionally, HTTP/2 offered header compression using HPACK, which reduced the overhead of sending redundant header data.

Some enhancements in HTTP/2 include:

**Stream Prioritization:** Enables clients to specify the priority of multiple requests, allowing servers to optimize resource delivery.

**ALPN (Application-Layer Protocol Negotiation):** Allows clients and servers to negotiate the specific version of HTTP during the TLS handshake, improving connection establishment time.

### HTTP/3: Embracing QUIC

HTTP/3, which began as an experimental protocol called QUIC (Quick UDP Internet Connections) at Google, was officially standardized by the IETF in 2021. The most significant change in HTTP/3 is the shift from using TCP as the transport layer protocol to QUIC, which uses the User Datagram Protocol (UDP) instead. This transition improves latency and connection establishment times by reducing the number of round trips required during the handshake process.

QUIC also introduces built-in encryption, making it more secure by default. Another notable feature is the improved handling of packet loss, which is particularly beneficial for users on unreliable networks or experiencing high latency. By employing a more efficient loss recovery mechanism and allowing for independent streams, QUIC ensures that packet loss on one stream does not impact the performance of other streams.

HTTP/3 also retains the performance features of HTTP/2, such as multiplexing, header compression, and server push. However, due to the fundamental differences between TCP and UDP, some adjustments were required. For example, HPACK compression was replaced with QPACK, a new compression scheme specifically designed for QUIC's unique characteristics. | andreasbergstrom |

1,433,486 | 7 best practices for arrays in javascript | Arrays are a fundamental data structure in JavaScript, and are used in nearly every application.... | 0 | 2023-04-12T10:31:33 | https://dev.to/navinmishra1717/7-best-practices-for-arrays-in-javascript-9ge | javascript, programming |

Arrays are a fundamental data structure in JavaScript, and are used in nearly every application. But working with arrays can be messy and inefficient if you don't use the right methods and techniques. In this post, we'll explore some array methods and techniques that will help you write cleaner, more efficient code.

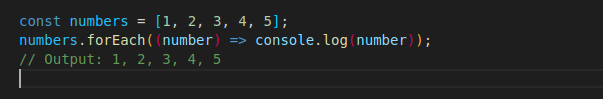

1. forEach : The Gentle Giant of Array Methods

---

When it comes to iterating over an array, the forEach() method is the gentle giant. It quietly steps through each element in the array, without making a fuss. It doesn't care if you mutate the array or not. It just wants to be helpful.

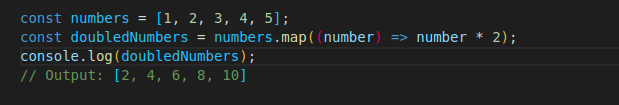

2. map : The Transformer

---

The map() method transforms each element in an array into a new element. It's like a magical machine that takes in old junk and spits out shiny new things.

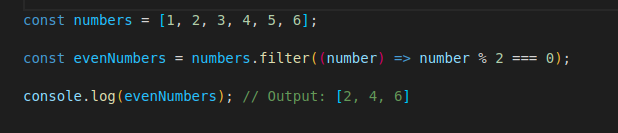

3. filter : The Cleaner

---

The filter() method is like a cleaning crew for your array. It filters out elements that don't belong, leaving behind only the good stuff.

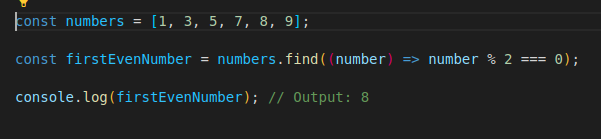

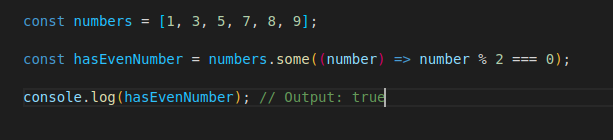

_**Bonus**_

some() method checks if at least one element in an array satisfies a condition and returns boolean value. **And**, find() method returns the first element in an array that satisfies a provided testing function. It returns **undefined** if no element satisfies the condition

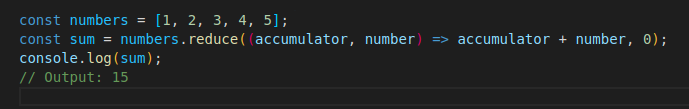

4. reduce : The Calculator

---

The reduce() method is like a calculator for your array. It takes in an array of numbers and crunches them down into a single number.

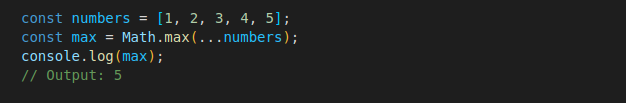

5. spread operator : The Expander

---

The spread operator is like a can of compressed air that expands when you use it. It expands an array into individual elements, making it easy to pass them around as separate arguments.

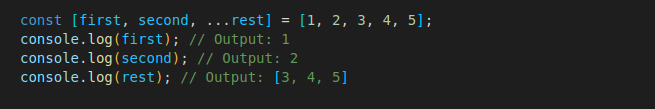

6. destructuring : The Unpacker

---

Destructuring is like a reverse present wrapper. It unpacks the contents of an array and assigns them to individual variables.

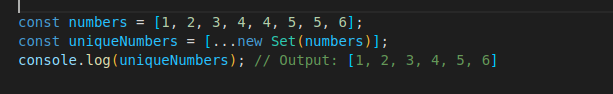

7. deduplication : The Remover

---

Deduplication is the process of removing duplicate values from an array. The **Set** constructor is used to create a new set object, which automatically removes duplicates.

***

####**Finally**

**Thanks for reading**. I Hope you find this article useful. Please leave a comment for mistakes so that i can improve in my next articles. And follow for more quality articles. *Happy reading!!*

| navinmishra1717 |

1,433,552 | How to Write a GraphQL Query | A beginner’s guide to writing your first GraphQL query In 2015, GraphQL was introduced by... | 0 | 2023-04-12T09:52:31 | https://dev.to/fimber01/how-to-write-a-graphql-query-5bbn | webdev, javascript, graphql, database |

#### A beginner’s guide to writing your first GraphQL query

In 2015, GraphQL was introduced by Meta as an alternative to REST APIs for building web applications and it’s safe to say GraphQL has taken the world by storm. A [recent report](https://devops.com/key-findings-from-the-2022-state-of-graphql-report/#:~:text=The%20report%20found%2047.9%25%20of,for%20third%2Dparty%20developer%20consumption.) showed that 47.9% of developers use GraphQL and that number is expected to keep rising. But what is GraphQL? How do you get started with it, and write your first query?

In this article, we’re going to be discussing the fundamentals of GraphQL, its benefits, and most importantly how to write a GraphQl query. We will walk you through the GraphQL Schema, the syntax you will need to create and execute a query, and common mistakes to avoid while writing a query. By the end of this article, you'll have a great understanding of how GraphQL works and be able to write your query with confidence.

Without further ado, let's get started!

### Prerequisites

While this is a beginner friendly article, it's still expected that you're familiar with javascript and Object Oriented Programming. If you do not, you can still read on and follow through without sruggling, as we'll go through everything together.

## What is GraphQL

[GraphQL](https://graphql.org/) is a query language for APIs that provides a more efficient, powerful, and flexible alternative to traditional REST-based APIs. GraphQL query language is used to access, query, and modify data in databases, as well as build APIs to access the data.

GraphQL simplifies the process of fetching and manipulating data from an API by allowing developers to specify exactly what data they need, in one request. This means fewer requests are needed to obtain the data and no over-fetching of data occurs. GraphQL also provides developers with more control over the shape of the data that is returned, so they can structure it exactly as they need.

Think of it this way. Let’s say you need to go on vacation, and you need clothes for your trip. If you’re using the RESTful approach to pack your clothes, you’d just take all your clothes with you and then sort them when you get there. Even if you had to take several trips.

However, if you had something like GraphQL to help you with the task, it would be able to fetch and pack only the specific clothes that you’d need, saving you the stress of overpacking and multiple trips. Pretty neat right?

Well, that’s exactly how GraphqL works. It helps you get specific data from a database base at once, cutting out repetitive requests and over-fetching. GraphQl shines particularly in [React](https://react.dev/) because it enables efficient data fetching which improves the performance of your React application, and it also helps you create more simplified state management which the complexity of your code.

Now that we know what GraphQL is, let’s talk about how to use it, and how to create your first query. The first thing we’ll be looking at is a GraphQL schema.

## Understanding the GraphQL Schema

Now we know that with GraphQL we can get specific data from an API, but getting that data would not be possible without the schema. GraphQL schemas define the structure and shape of the data that can be queried in a GraphQL API. Basically a schema is essentially a blueprint that defines the types of data that can be queried by a client application.

Let’s revisit the vacation analogy. A GraphQL schema is like a shopping list that’ll contail all your clothes, along with details about them. The schema will contain the type of cloth, the color, texture, and every other detail that will enable GraphQL pick the specific clothes you’d need.

The schema has two main types of data: objects and scalars. Objects represent complex data structures with multiple fields, while scalars represent single values like strings, integers, or booleans. That may be a bit hard to understand, so let’s explain it further using an example.

Schema’s are written using schema language. Continuing wih our clothes analogy let’s draw up a schema for your wardrobe.

```

type Wardrobe {

shorts: String!

shirts: String!

dresses: int!

underwear: {pants: draws}!

}

```

In this example, `Wardrobe` is an object type with four fields:` shorts`, `shirts`, `dresses` and `underwear`. Shorts and shirts both have a type `String`, dresses has an `int` type, and underwear has a nested field called `pants`. `String` and `int` are examples of a scalar type, which represents a single value like a string of characters, while `underwear` is an object type with its own fields.

In addition to defining types, the schema also defines the queries and mutations that can be executed against the API. Queries are used to fetch data from the API, while mutations are used to modify data. We’re going to be focusing only on the queries part of that because, well, that’s why you’re here.

## Syntax of a GraphQL Query

The syntax of a GraphQL query is designed to be human-readable and expressive, allowing clients to request exactly the data they need from a GraphQL API. It’s easy to understand, and easier to use.

Let’s use the schema above as an example. If we want to get just shorts in our wardrobe, here’s what the query would look like.

```

query GetClothes{

wardrobe {

shorts

}

}

```

That’s exactly what a GraphQL query looks like. Now, let’s break down the different parts of the query.

* ‘**query**’: the query keyword basically indicates that the operation is a query operation.

* ‘**wardrobe**’: is the name of the field that is being requested. In this case, it is a custom field defined in the GraphQL schema.

* ‘**shorts**’: is a subfield of the ‘wardrobe’ field being requested.

There are other things you can add to a query, like arguments, variables, and fragments, but we’ll talk about that later.

Now that you understand the syntax of a basic GraphQL query, let’s construct a query using data from a real live GraphQL API.

## Constructing a GraphQL Query

To write our first query, we’ll be using the GraphQL [Rick and Morty API playground](https://rickandmortyapi.com/graphql). The GraphQL Rick and Morty Playground is an online tool that allows developers to explore and interact with the GraphQL API for the TV show "Rick and Morty". It provides a web-based interface where developers can enter queries, see the corresponding responses, and experiment with the available data.

To make a query, we’ll first have to take a look at the schema for the API, which is available [here](https://rickandmortyapi.com/documentation/). There’s a lot of information there about the character schema, the location schema, and the location schema. For our first query, we’re going to get the names and genders of characters in the TV show.

Here’s what that query would look like. Remember, we’re using this playground and just writing the query on the left-hand side. You can try it out if you’d like.

```

query GetInfo {

characters {

results {

name

gender

}

}

}

```

As you can see, this query basically uses the “characters” field to retrieve data on all characters in the API, and then uses the “results” field to get the name and gender of each character. The name and gender fields are scalar fields that are already defined in the [GraphQL schema](https://rickandmortyapi.com/documentation/). The result of that query will be a JSON-like object, and here’s what that looks like.

```

{

"data": {

"characters": {

"results": [

{

"name": "Rick Sanchez",

"gender": "Male"

},

{

"name": "Morty Smith",

"gender": "Male"

},

{

"name": "Summer Smith",

"gender": "Female"

},

{

"name": "Beth Smith",

"gender": "Female"

},

{

"name": "Jerry Smith",

"gender": "Male"

},

{

"name": "Abadango Cluster Princess",

"gender": "Female"

},

{

"name": "Abradolf Lincler",

"gender": "Male"

},

{

"name": "Adjudicator Rick",

"gender": "Male"

},

{

"name": "Agency Director",

"gender": "Male"

},

{

"name": "Alan Rails",

"gender": "Male"

},

{

"name": "Albert Einstein",

"gender": "Male"

},

{

"name": "Alexander",

"gender": "Male"

},

{

"name": "Alien Googah",

"gender": "unknown"

},

{

"name": "Alien Morty",

"gender": "Male"

},

{

"name": "Alien Rick",

"gender": "Male"

},

{

"name": "Amish Cyborg",

"gender": "Male"

},

{

"name": "Annie",

"gender": "Female"

},

{

"name": "Antenna Morty",

"gender": "Male"

},

{

"name": "Antenna Rick",

"gender": "Male"

},

{

"name": "Ants in my Eyes Johnson",

"gender": "Male"

}

]

}

}

}

```

Just like that, we’ve successfully written our first GraphQL query. But that’s not all there is to these queries. We can use these queries to target specific data with the use of arguments. For instance, if we only wanted to get the data of characters named “rick”, we’ll add an argument to the characters field, like this.

```

query GetInfo {

characters(filter: { name: "Rick" }) {

results {

name

gender

}

}

}

```

In this query, we've simply added a filter argument to the characters field that specifies a filter object with a name field set to the value "Rick". This filter then restricts the results we get to characters whose name matches "Rick". That’s the beauty of GraphQL arguments: like a surgeon’s scalpel, it enables you to cut through the data and get exactly what you want.

With that, we’ve successfully written a GraphQL query. Of course, there’s a bit more to queries like [fragments](https://graphql.org/learn/queries/#fragments:~:text=in%20one%20request.-,Fragments%23,-Let%27s%20say%20we), [variables](https://graphql.org/learn/queries/#fragments:~:text=different%20GraphQL%20requests.-,Variables%23,-So%20far%2C%20we), and [mutations](https://graphql.org/learn/queries/#fragments:~:text=completely%20new%20directives.-,Mutations%23,-Most%20discussions%20of), but you can read up on that later in your spare time. Next, let’s look at the best practices you should follow when writing GraphQL queries.

## Best practices for writing a GraphQL query

* Name all operations: In all our operations above, we gave the queries a name, i.e GetClothes, and GetInfo. The truth is, we don’t necessarily have to do so, as the queries would still work without us giving the operation a name. However, it’s best to always name your operations for clarity of purpose and to to avoid unexpected errors.

* Be specific with your query: Only ask for the data you need. Avoid requesting unnecessary fields or data that you won't use. This will make your query more efficient and faster, which is the entire point of using GraphQL over the RESTful approach

* Avoid nested queries: While GraphQL allows for nested queries, it's best to avoid them as much as possible. They can lead to performance issues and make queries more difficult to read and maintain.

* Use the GraphiQL tool: The [GraphiQL tool](https://www.onegraph.com/graphiql) is a powerful development tool that allows you to interactively build and test your queries. You can set it up locally on your computer or use the online versions to experiment with different queries and ensure they return the expected data.

## Common Mistakes to Avoid when writing a GraphQL query

* Over-fetching or under-fetching data: Over-fetching means retrieving more data than you need, which can slow down your application. Under-fetching, on the other hand, means not retrieving enough data, which can result in multiple requests being made to the server.

* Nesting too deeply: Nesting too deeply in GraphQL can make your queries difficult to read and maintain. It is best to keep your queries shallow and only nest when necessary.

## Conclusion

If you’ve made it this far, then congratulations you’ve learned how to successfully write your first GraphQL query, and you’ve also learned the best practices and mistakes to avoid. If you’d like to learn more about GraphQL in general, you can check out this [video](https://www.youtube.com/watch?v=BcLNfwF04Kw&t=211s). It was quite useful to me when I was starting out.

I hope this article was useful to you. See you in the next one!

| fimber01 |

1,433,619 | Best Resources for Machine Learning | How to Extract Tables from PDF files and save them as CSV using Python Face Recognition-Based... | 0 | 2023-04-12T12:04:53 | https://dev.to/sharmaji27/best-resources-for-machine-learning-15lc | deeplearning, machinelearning, computervision, python | [How to Extract Tables from PDF files and save them as CSV using Python](https://machinelearningprojects.net/extract-tables-from-pdf-files/)

[Face Recognition-Based Attendance System with source code – Flask App – With GUI](https://machinelearningprojects.net/face-recognition-based-attendance-system/)

[10+ Unique Flask Projects with Source Code](https://machinelearningprojects.net/flask-projects/)

[Easiest way to Train yolov7 on the custom dataset](https://machinelearningprojects.net/train-yolov7-on-the-custom-dataset/)

[30+ Unique Computer Vision Projects with Source Code](https://machinelearningprojects.net/opencv-projects/)

[20+ Unique Machine Learning Projects with Source Code](https://machinelearningprojects.net/machine-learning-projects/)

[How to Install TensorFlow with Cuda and cuDNN support in Windows](https://machinelearningprojects.net/install-tensorflow-with-cuda-and-cudnn/)

[How to build OpenCV with Cuda and cuDNN support in Windows](https://machinelearningprojects.net/build-opencv-with-cuda-and-cudnn/)

[20+ Unique Deep Learning Projects with Source Code](https://machinelearningprojects.net/deep-learning-projects/)

[How to Deploy a Flask app online using Pythonanywhere](https://machinelearningprojects.net/deploy-a-flask-app-online/) | sharmaji27 |

1,433,833 | What are the best books about programming that aren't cookbooks or 'how to code in X'? | Anything with a narrative? Light reading? Fun? Not just looking for technical tutorials. | 0 | 2023-04-12T15:15:01 | https://dev.to/amyliumaiyi/what-are-the-best-books-about-programming-that-arent-cookbooks-or-how-to-code-in-x-4mj | discuss, help, books, career | Anything with a narrative? Light reading? Fun? Not just looking for technical tutorials. | amyliumaiyi |

1,433,848 | PostgreSQL 15: Breaking Barriers and Unleashing Creativity with Native JIT Compilation, Improved Parallelism, and More P2 | Welcome to the second part of our series of posts on the latest features of PostgreSQL 15. In this... | 0 | 2023-04-12T15:46:18 | https://dev.to/nightbird07/postgresql-15-breaking-barriers-and-unleashing-creativity-with-native-jit-compilation-improved-parallelism-and-more-p2-7p | apache, features, database, postgres | Welcome to the second part of our series of posts on the latest features of PostgreSQL 15. In this part, we will dive deeper into more exciting and innovative features that PostgreSQL 15 has to offer. PostgreSQL is a powerful and widely-used open-source relational database management system that has been gaining popularity among developers and enterprises alike. It has a reputation for being a robust, scalable, and secure database system that can handle large amounts of data and complex queries.

## Parallel query execution on remote databases

In a distributed database environment, data is stored across multiple databases that may be located in different physical locations. In such an environment, performing queries that involve data from multiple databases can be challenging due to the need to transfer large amounts of data among the databases. we tend to use this for durability of the database servers, or when the time needed to retrieve all the data across the servers in different zones.

To overcome this challenge, parallel query execution on remote databases can be achieved through various techniques. Parallel query planning involves dividing a query into smaller sub-tasks that can be executed in parallel on different databases. This approach involves optimizing the plan to minimize data movement between databases, which helps to reduce the time required to complete the query.

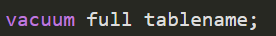

## Storage interface

when creating the tables default in the Postgres are stored in a B-tree which is optimized for sorting and searching across the data. and there are several types: Hash,GiST, SP-GiST, GIN, BRIN. now using this method you can change the table storage type and this is actually very useful excluding the time needed to regenerate the table from the ALTER we will be able to switch between the storage methods experiencing the feature of each one.

we are not done yet next time we will have a talk about more top features and by the end we will start the series of discovering the Architecture that support such Top features.

## SELECT DISTINCT

continue the talks about the parallelism of the previous features you can now do the above Query in parallel database.

I mentioned the TOP features on PostgresSQL 15

to read [P1](https://dev.to/nightbird07/top-down-postgresql-15-top-features-2c6o)

Next time we will start thinking about the implementation behind each feature.

### Reference

1- My brain

2- [PostgreSQL Doc](https://www.postgresql.org/docs/current/install-procedure.html)

Thanks for reading

| nightbird07 |

1,433,999 | Custodial vs. Non-Custodial wallets: What are the differences? | Choosing the best type of wallet for storing and safeguarding digital assets is critical for crypto... | 0 | 2023-04-12T18:14:05 | https://dev.to/timilehin08/custodial-vs-non-custodial-wallets-what-are-the-differences-1d8m | crypto, blockchain, beginners, cryptowallet | _Choosing the best type of wallet for storing and safeguarding digital assets is critical for crypto ownership. There are many different types of wallets on the market, and deciding which one to choose can be difficult. This article delves deeper into the differences between custodial and non-custodial wallets. Continue reading for a quick guide on whether you should keep your own crypto key or delegate responsibility._

_**Important Takeaways:**_

- With a custodial wallet, a third party manages your private key rather than you, the crypto owner.

- With a non-custodial wallet, you control your own private key and thus your funds.

- One disadvantage of non-custodial wallets is the lack of recovery options if you forget your passwords.

- Non-custodial wallets, on the other hand, give you complete ownership of your cryptocurrency, making you responsible for the security of your own private keys and funds.

**Custodial Wallets**

To understand how a custodial wallet works, you must first understand how crypto wallets work. A cryptocurrency wallet is a software or hardware device that allows you to store, access, and interact with cryptocurrencies such as Bitcoin and Ethereum. A user's funds are not stored in a crypto wallet. Instead, they contain the public key, which allows you to set up transactions as the user, and the private key, which is used to authorize transactions. Software wallets are installed on a user's device, whereas hardware wallets are standalone physical devices used to store digital assets. (desktop or mobile).

Private keys—strings of letters and numbers that act as a highly sensitive password—are stored in both hardware and software wallets. Possession of a private key allows you to send crypto assets from a specific public address, making private key management critical.

A custodial wallet, as the name implies, is one in which a third party stores private keys on behalf of users. The third-party has complete control over the crypto assets, managing the user's wallet key, signing transactions, and protecting the user's crypto assets. Custodial wallets are available from custodial wallet providers or cryptocurrency exchanges as mobile or web applications. You use the wallet provider's interface to manage your funds and make transactions once you log in to your wallet account.

This implies that you must have trust in the service provider's ability to securely store your tokens and implement strong security measures to prevent unauthorized access. Two-factor authentication (2FA), email confirmation, and biometric authentication, such as facial recognition or fingerprint verification, are examples of such measures.

Custodial wallets are a low-entry barrier if you are a newbie in the crypto space as they are simple to use and can be accessed from any device with an internet connection. Security, on the other hand, is a major concern because centralized exchanges have previously been victims of cyberattacks, compromising users' keys and cryptocurrency assets.

Custodial wallets however have some advantages, such as requiring less user involvement in private key management. When you outsource wallet custody to a company, you effectively outsource your private information to that company. You are not responsible for keeping the private key to the wallet safe, so you rely on the company to do so. Binance and Coinbase are some examples of custodial wallets. Only after identity verification are you able to hold crypto assets on these custodial exchanges.

**Non-Custodial Wallets**

A non-custodial wallet, also known as a "self-custody wallet," is one in which you are entirely responsible for managing your own funds as a cryptocurrency owner. You have complete control over your crypto assets, as well as the ability to manage your own private key and handle transactions on your own. Non-custodial wallets are preferred by crypto experts, security advocates, and the larger decentralized community because they do not require trust to be outsourced to an institution, and no institution can refuse to complete transactions.

Non-custodial wallets come in various forms. Browser-based wallets, which are browser extensions that allow you to enter your private key and initiate transactions, or mobile wallets, which are downloadable mobile apps, are two options. It could also take the form of hardware wallets, which are physical devices. Many people believe that hardware wallets are the most secure option because they can be accessed and managed while not connected to the internet.

A seed phrase is provided by non-custodial wallets. When you create the wallet, you will be asked to write down and save a sequence of 12 randomly generated words known as a 'recovery, ‘seed,' or 'mnemonic' phrase. The public and private keys can be generated from this phrase. It also functions as a backup or recovery mechanism if users lose access to the original device. Anyone who knows the seed phrase will have complete control over the funds in your wallet.

If the seed phrase is lost, you will no longer have access to your funds. Each transaction is your responsibility to manage and complete. To send money and complete other transactions, you'll need your private keys. The transaction can be reflected in real-time on-chain depending on the non-custodial wallet used, or it can be signed offline and uploaded to the blockchain for confirmation later.

Hardware, or "cold" wallets, which store private keys offline on a standalone device that looks and feels similar to a USB drive, are one of the most popular types of non-custodial wallets. When you want to send a cryptocurrency transaction, hardware wallets connect to the internet. A non-custodial wallet, particularly a hardware wallet, may be the best option if you need to store a large amount of crypto assets.

Furthermore, in order to interact with decentralized applications, you will need a non-custodial wallet if you want to invest in DeFi. Keep in mind that having complete control over your assets entails greater responsibility. You must backup your wallet and keep your private keys in a secure location. Non-custodial wallets, such as Bitpay, Electrum, Trust Wallet, and MetaMask, are examples of software that you install on your computer or mobile device.

**How Can I Tell What Kind of Wallet I'm Using?**

In contrast to custodial wallets, non-custodial wallets provide users with complete control over their private keys associated with the wallet's public address. You have a custodial wallet if you can only access it with a login and password and do not have your own private key. Otherwise, you have a non-custodial wallet.

**Conclusion — Choosing between a Custodial or Non-Custodial Wallet**

Custodial and non-custodial wallets both have benefits and disadvantages. If you value convenience as well as backup and recovery options, custodial wallets are a good choice. Non-custodial wallets, on the other hand, may be exactly what you're looking for if you want complete control and ownership over your private keys.

**Do Your Own Research and Due Diligence**

This article's examples are provided solely for informational purposes. Any of this information or other material should not be construed as legal, tax, investment, financial, cyber-security, or other advice. Past results do not assure or predict future outcomes. The value of crypto assets can fluctuate, and you could lose all or a portion of your investment. You must conduct thorough research and due diligence when evaluating a crypto asset in order to make the best possible decision, as any decision you make is solely your responsibility.

_Cover Photo by Regularguy-eth from Unsplash_

| timilehin08 |

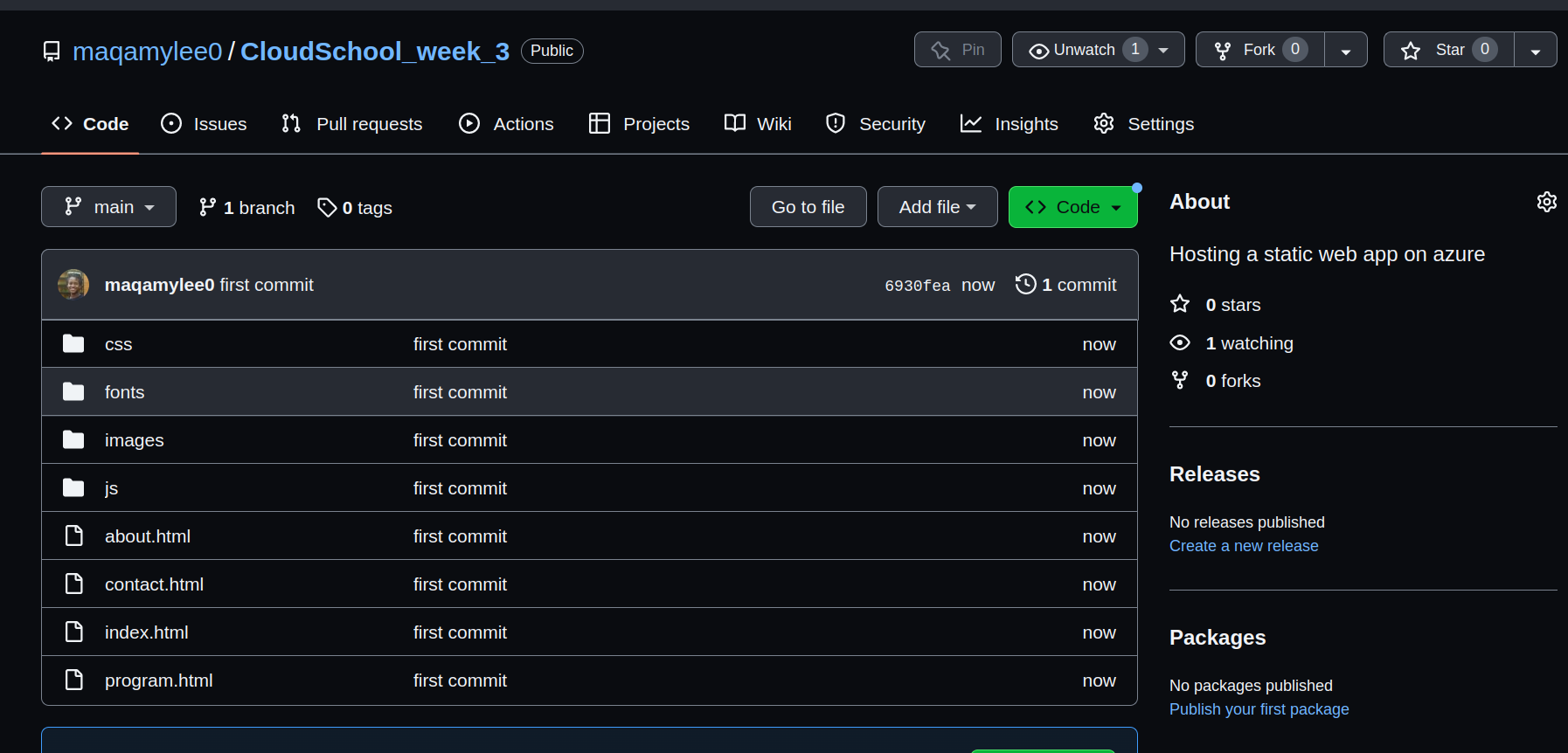

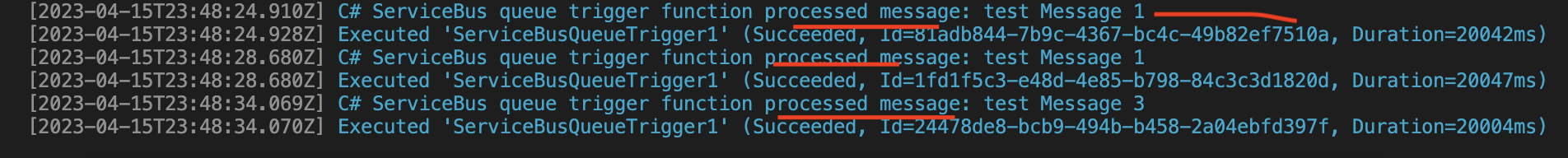

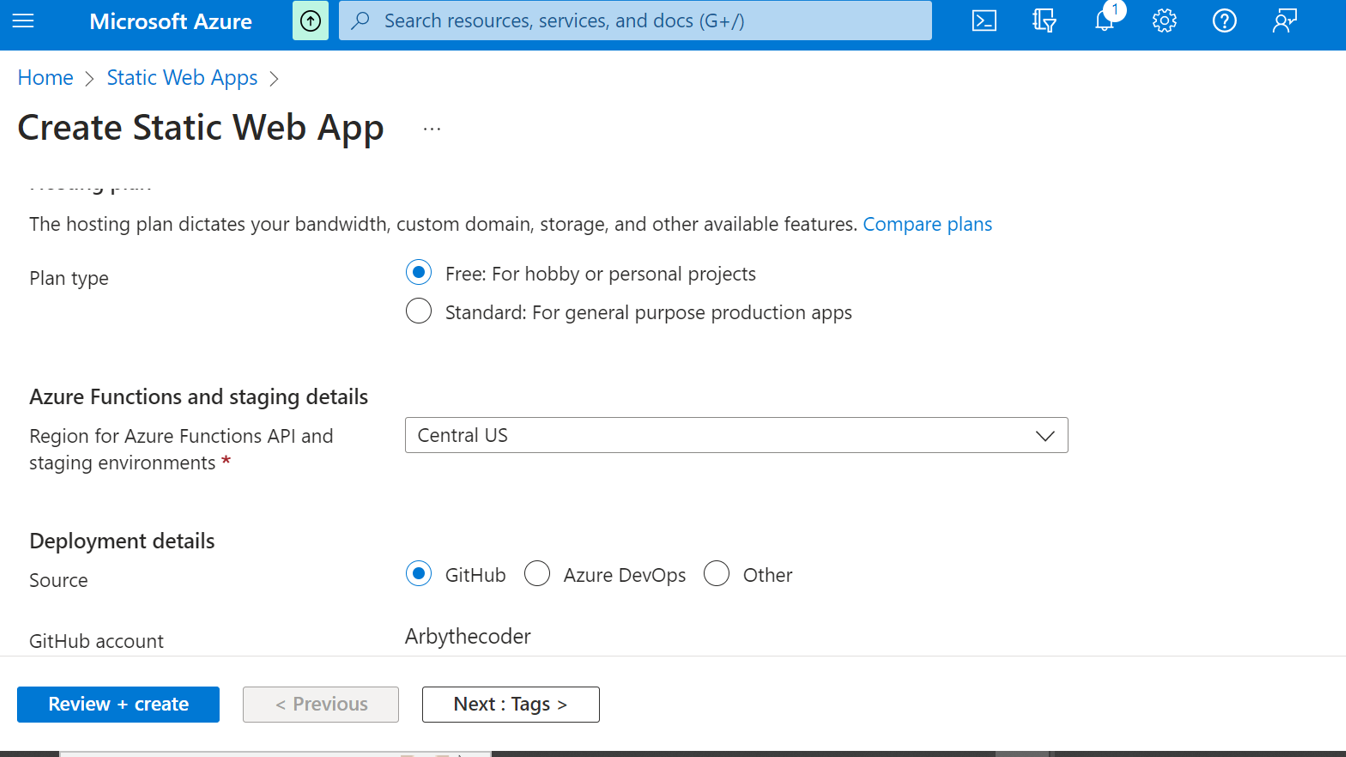

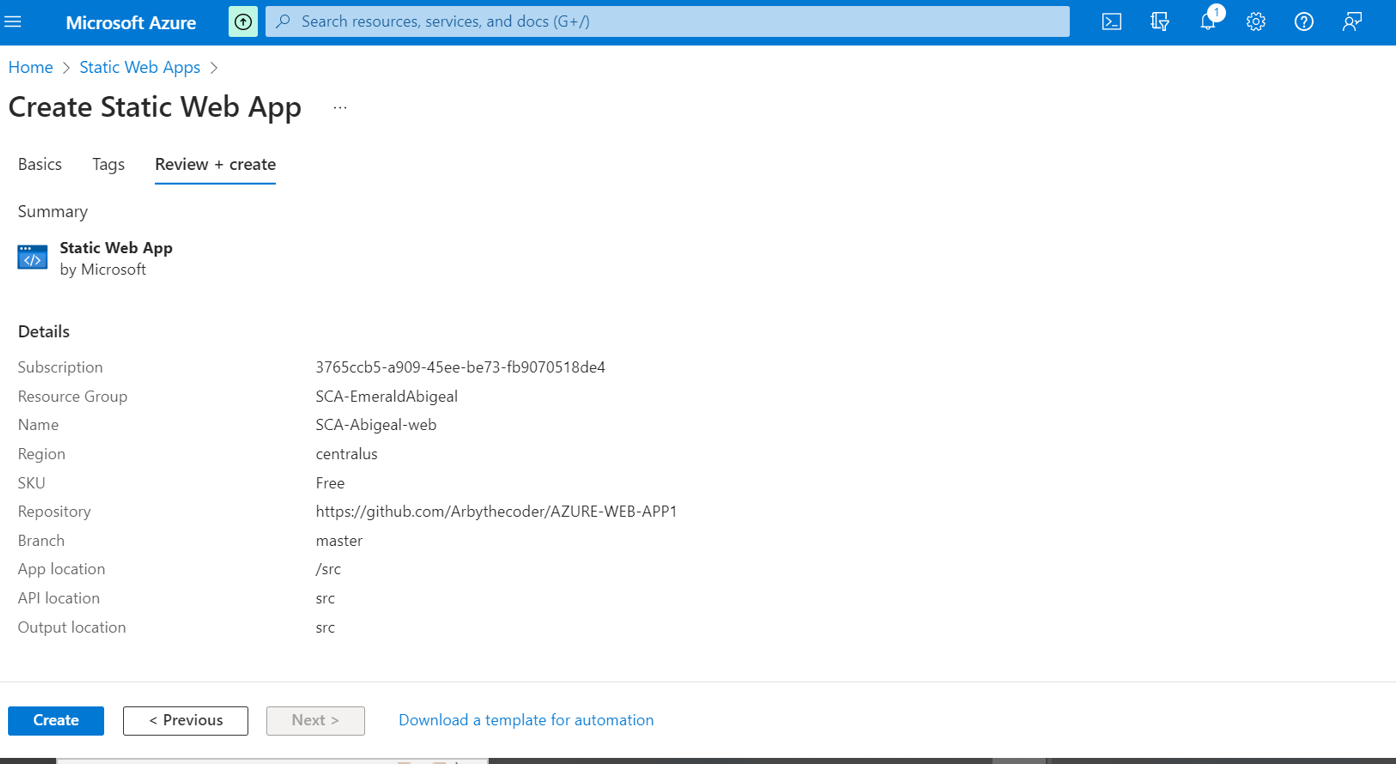

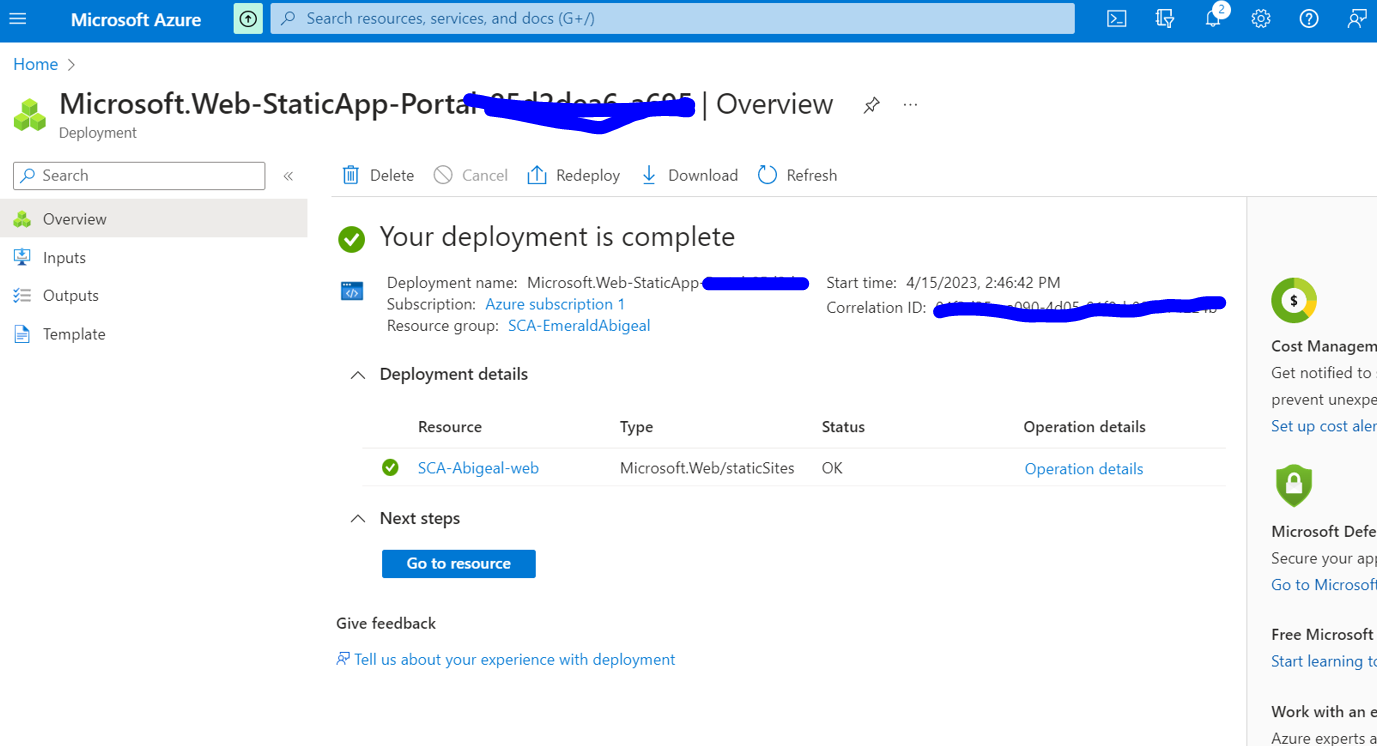

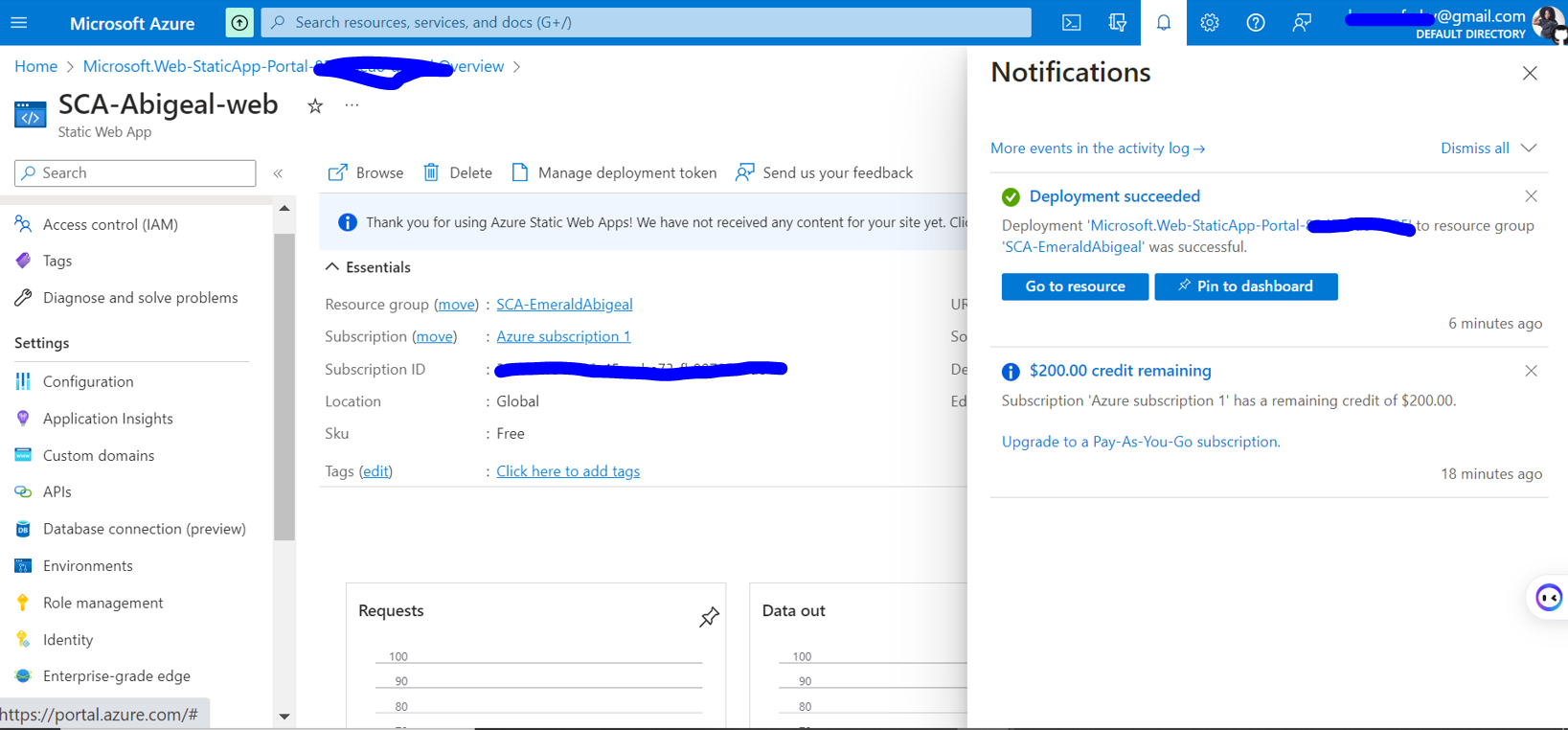

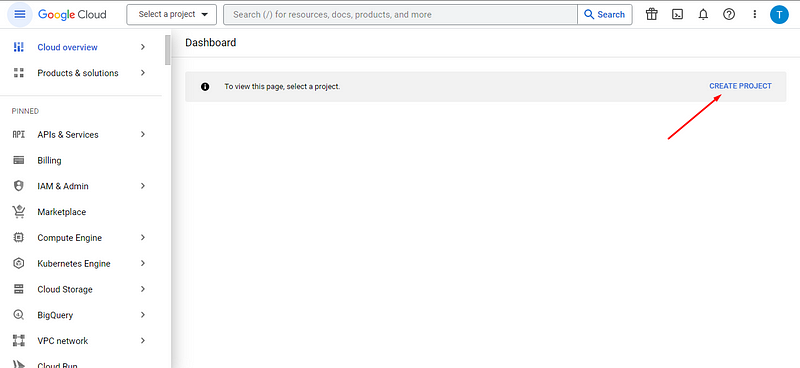

1,435,805 | Hosting a static web app on Azure. | We shall go over how to host a custom web app on azure platform. We assume that we have our code is... | 0 | 2023-04-14T12:42:26 | https://dev.to/maqamylee0/hosting-a-static-web-app-on-azure-3af6 | azure, staticwebapps, cloud, devops | We shall go over how to host a custom web app on azure platform.

We assume that we have our code is on github.

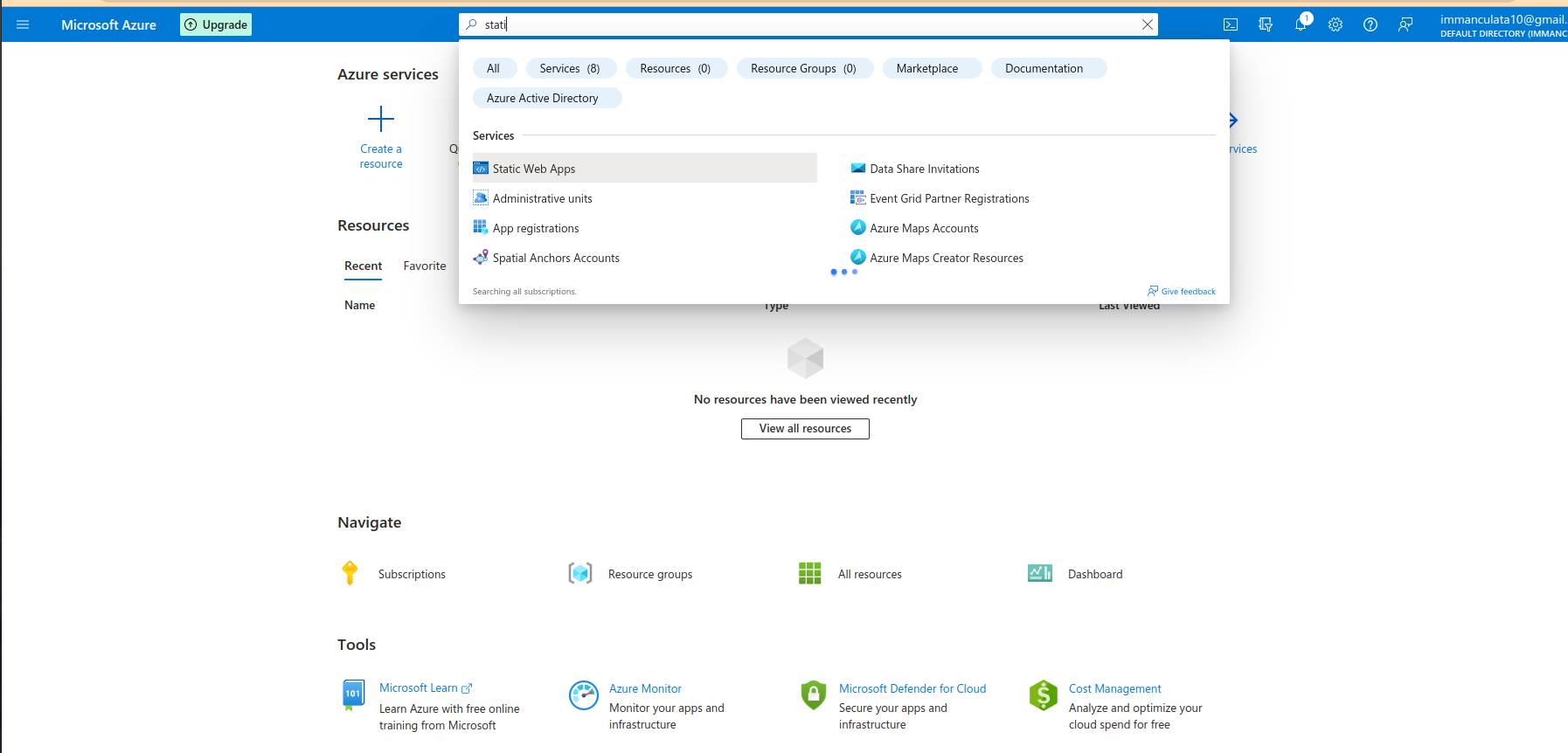

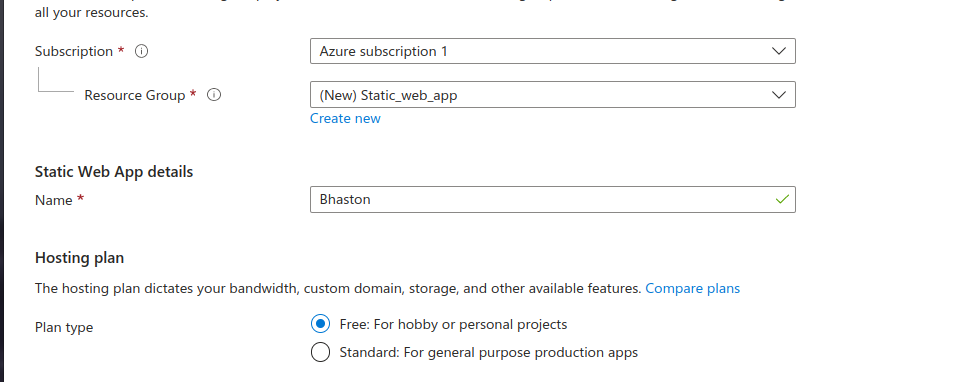

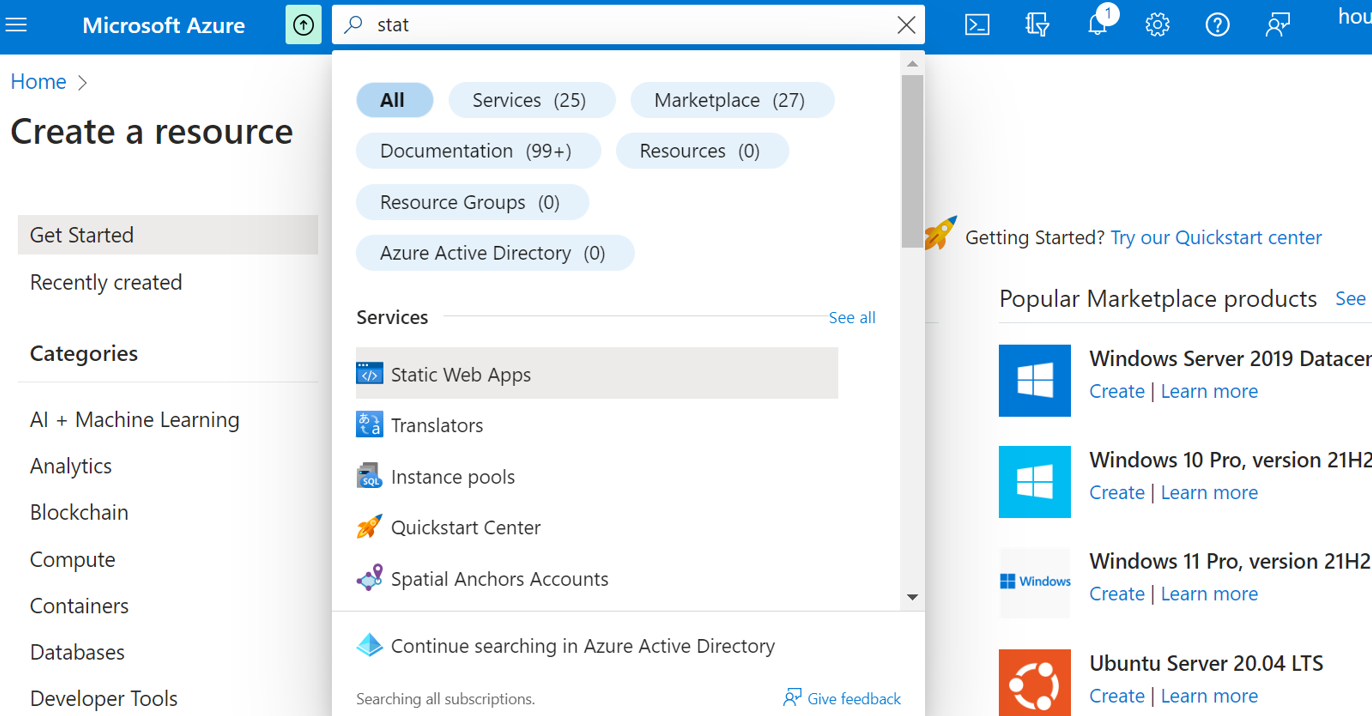

Next we head over to the Azure platform login in and search for the Static Web App service.

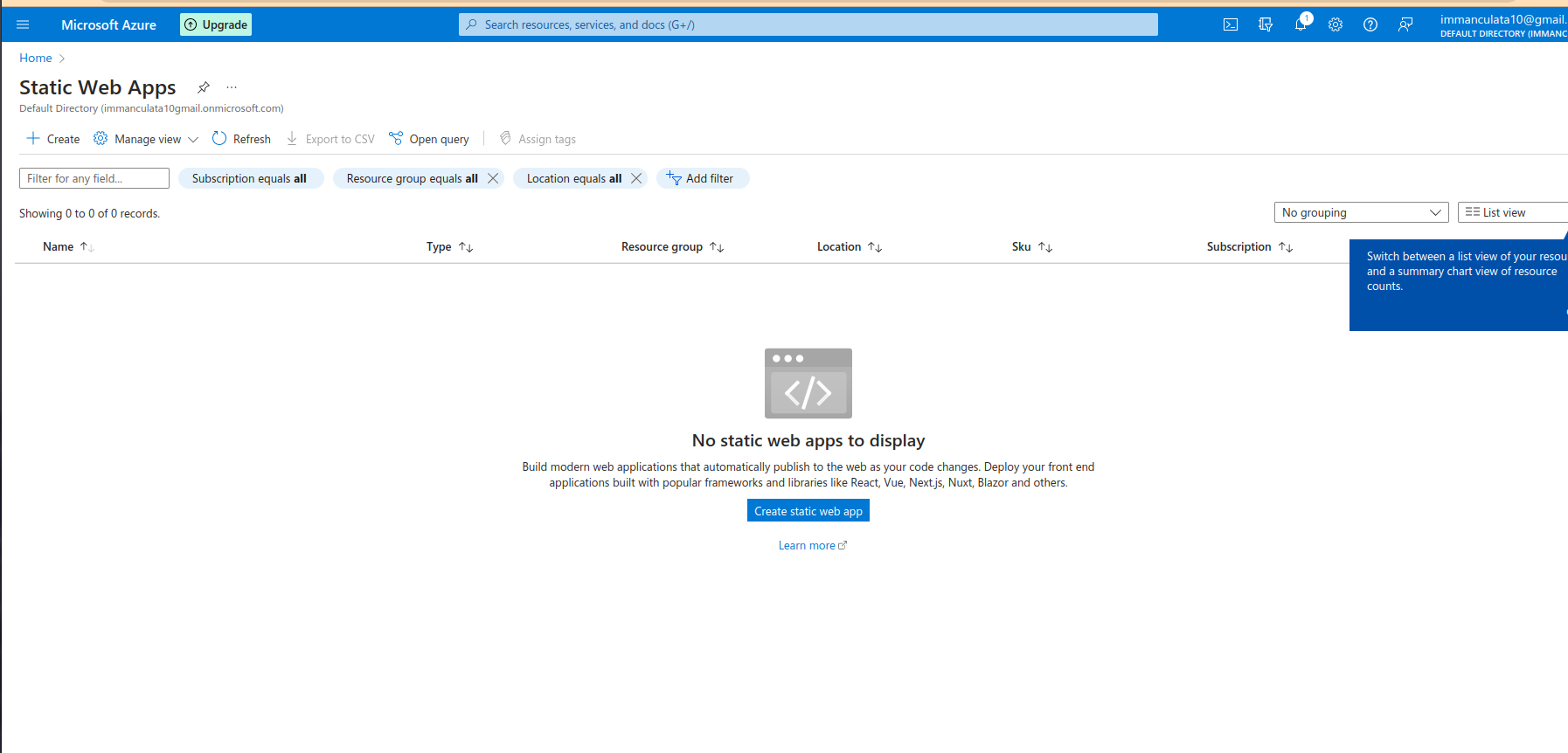

Once the service is loaded, click create.

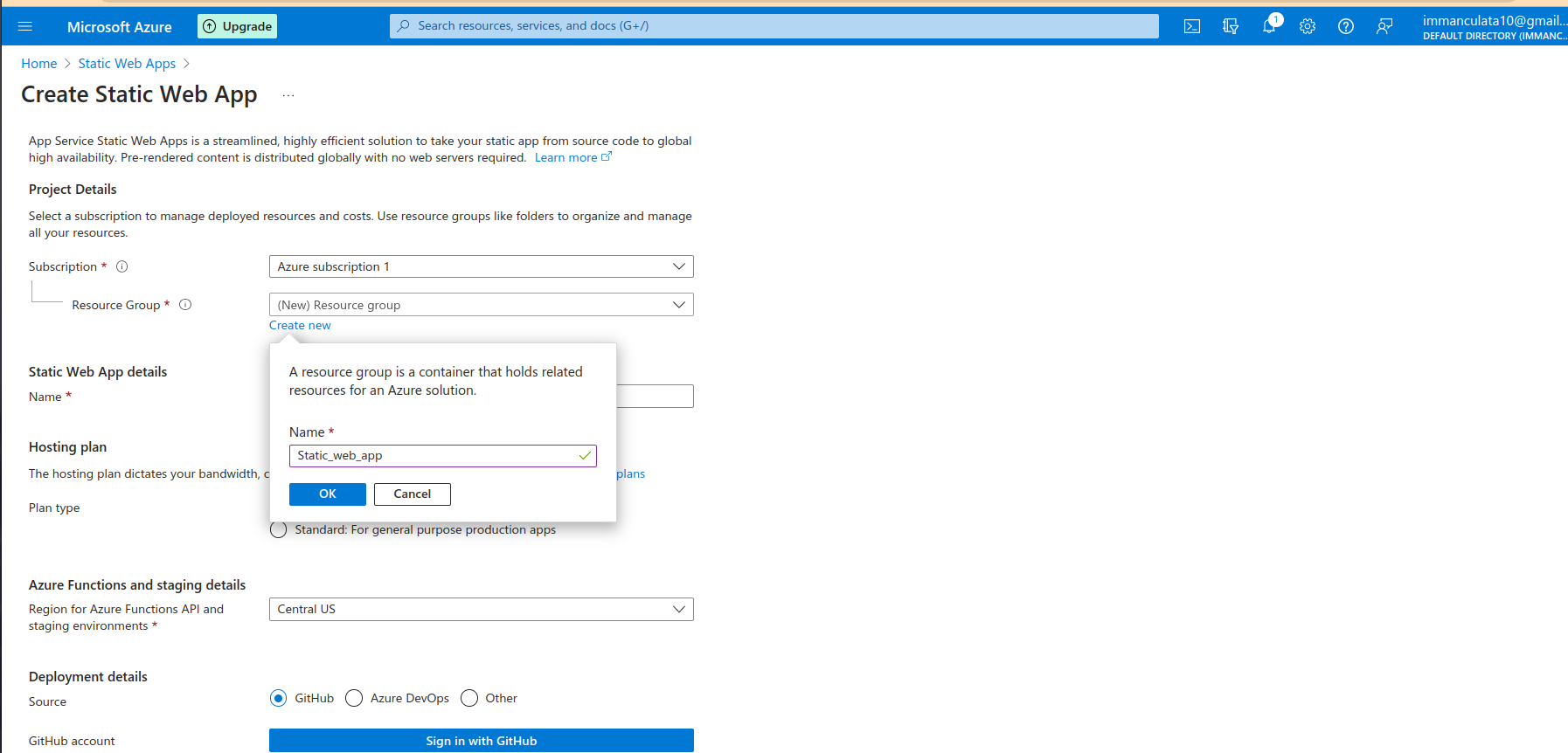

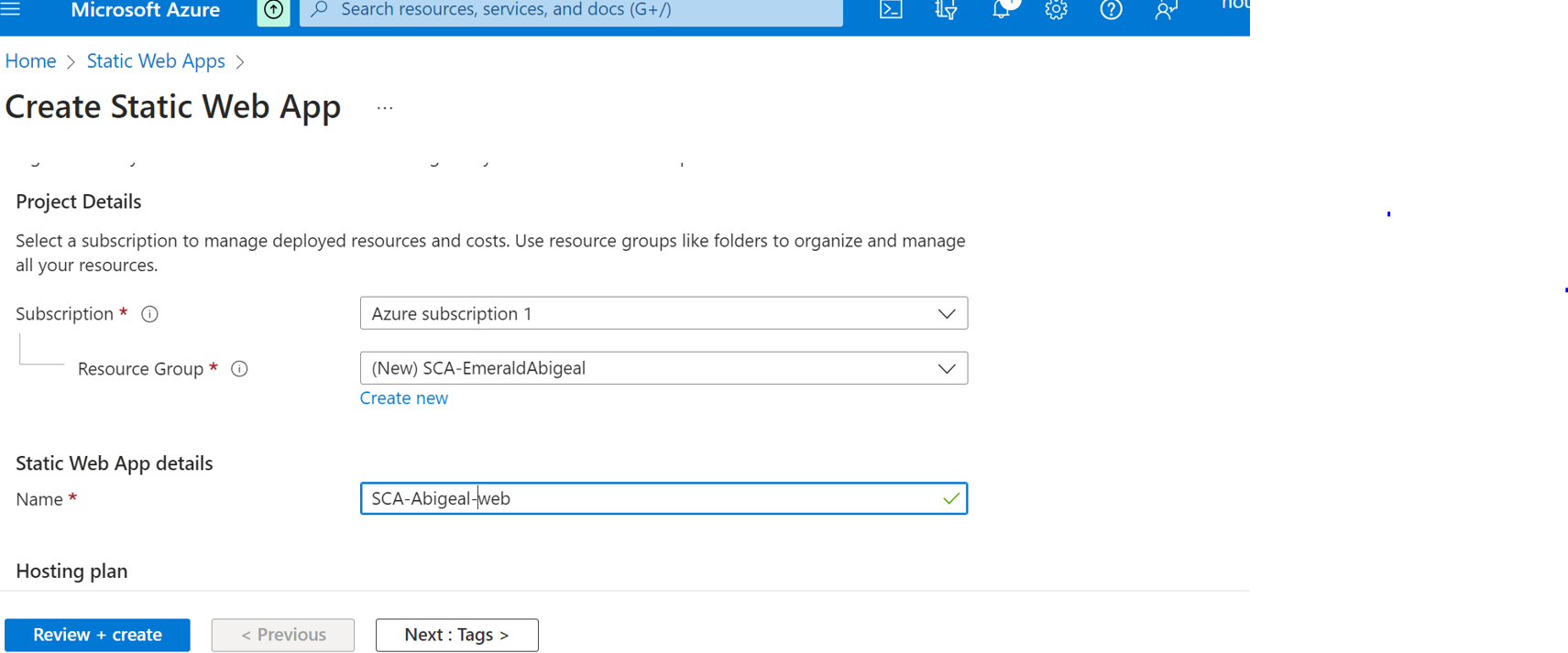

Then start filling in neccessary information about your app such as resource group it belongs to.Here we shall create one.

Then we shall name our app.

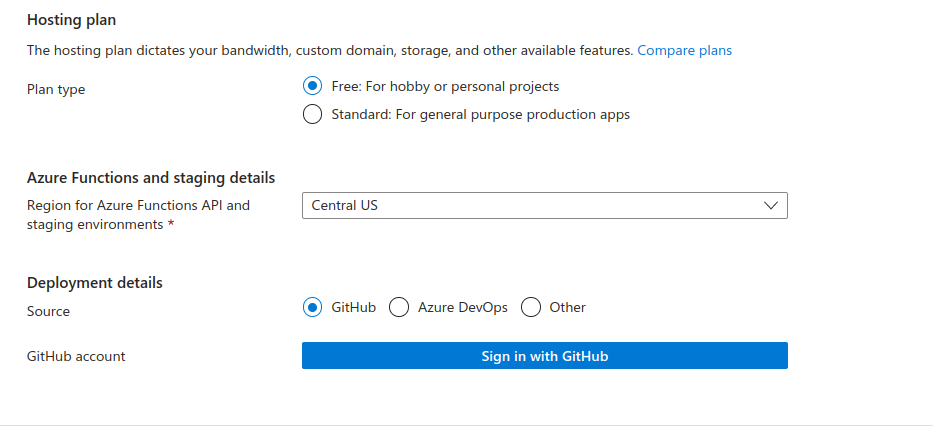

Next we shall choose free hosting, and github as our deployment source.

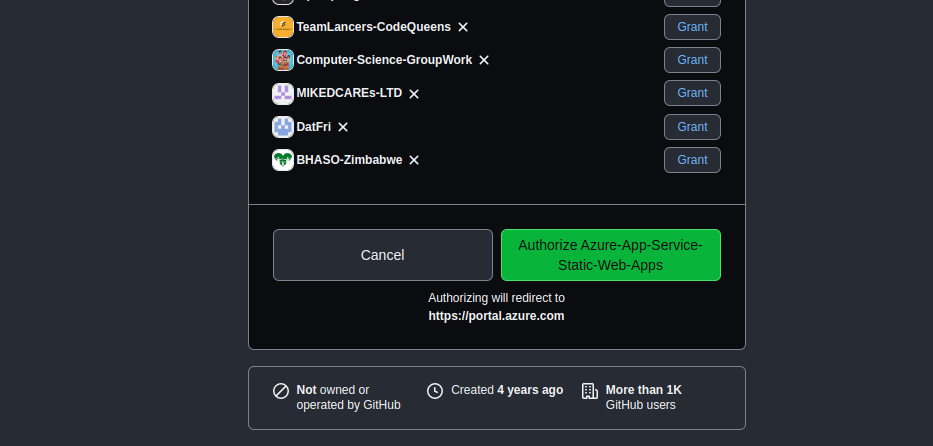

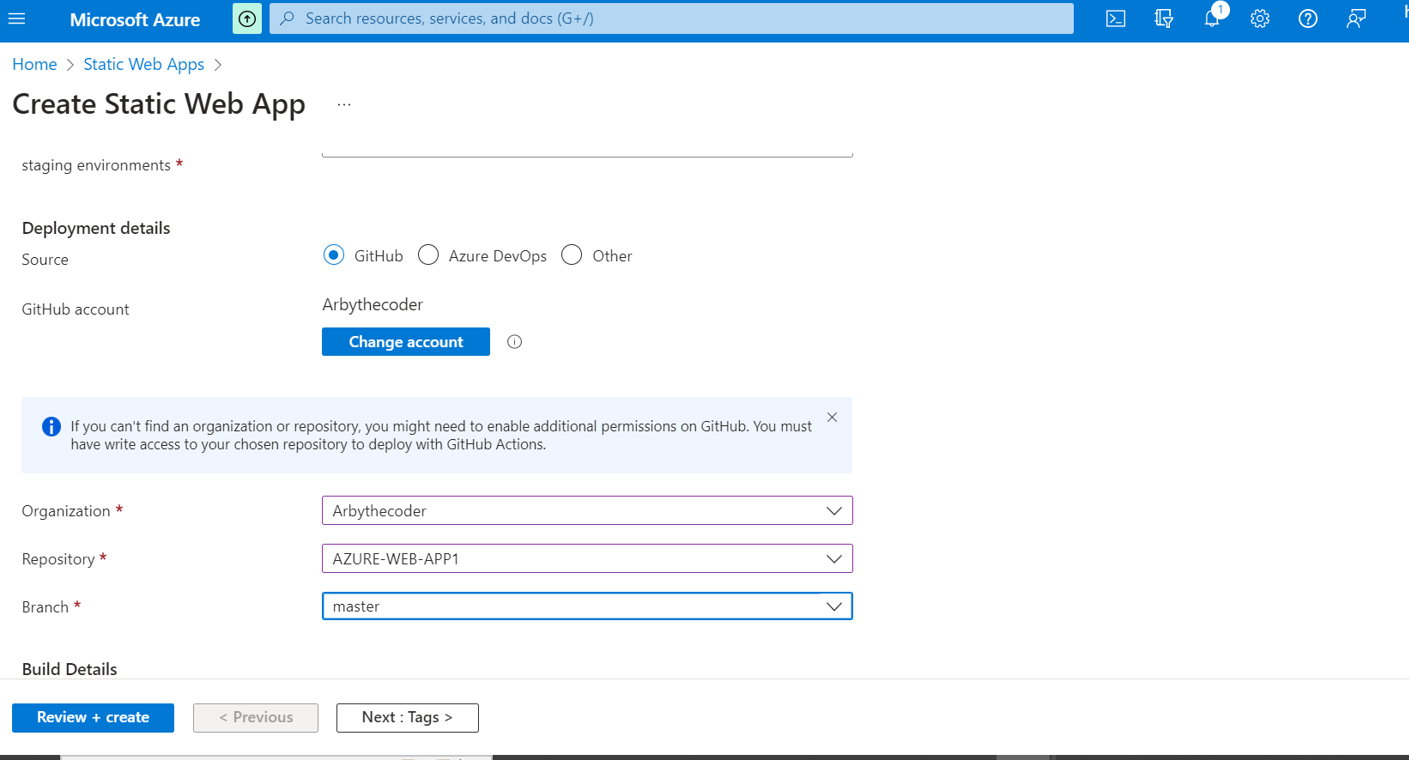

Then we shall grant access to github by clicking sign into github.

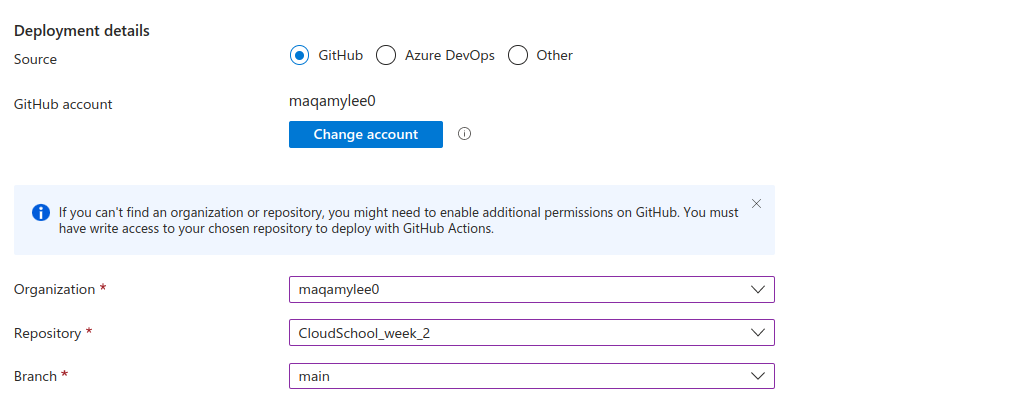

Once github has access it will look like this.

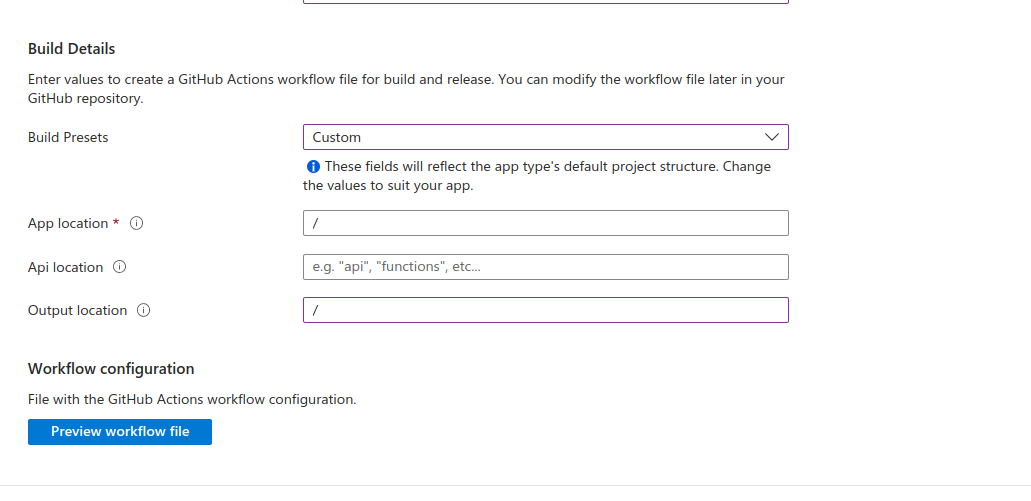

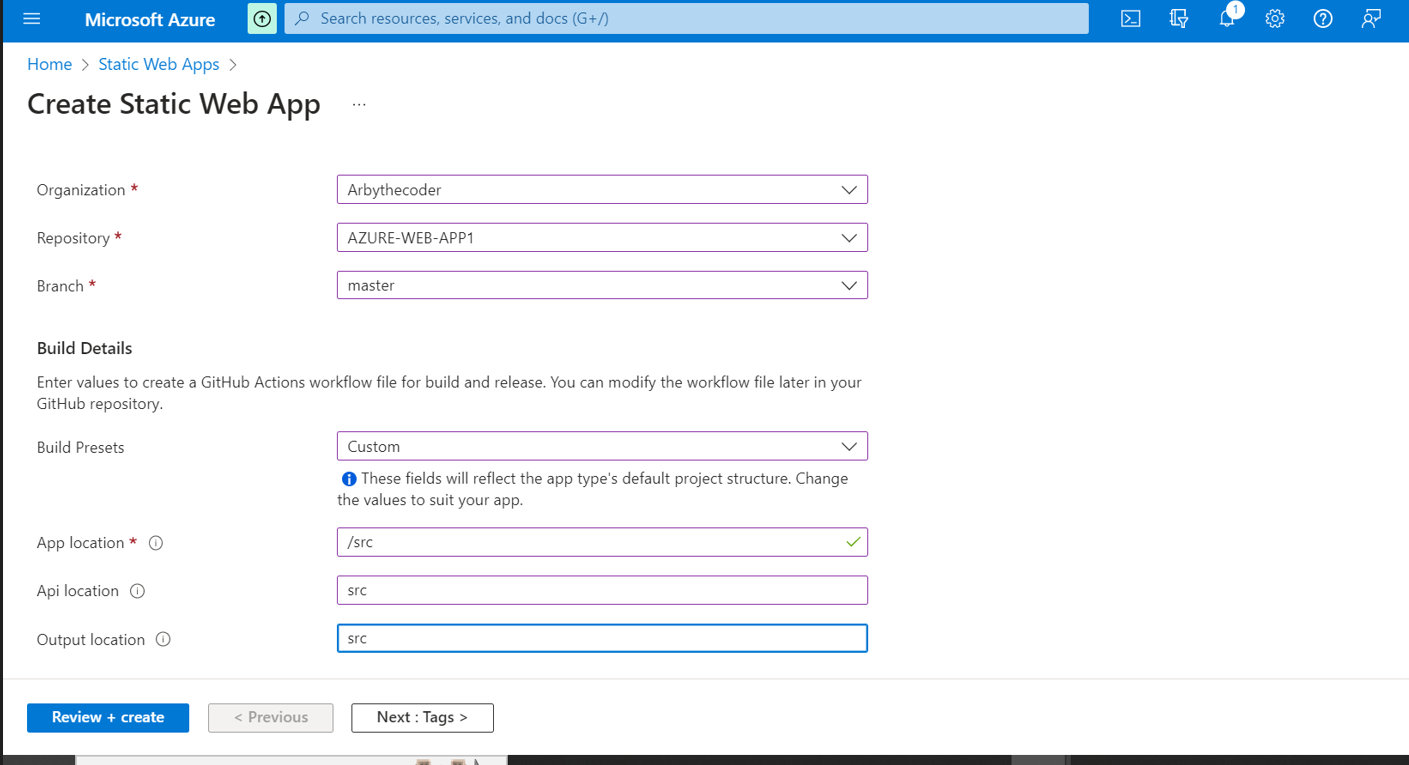

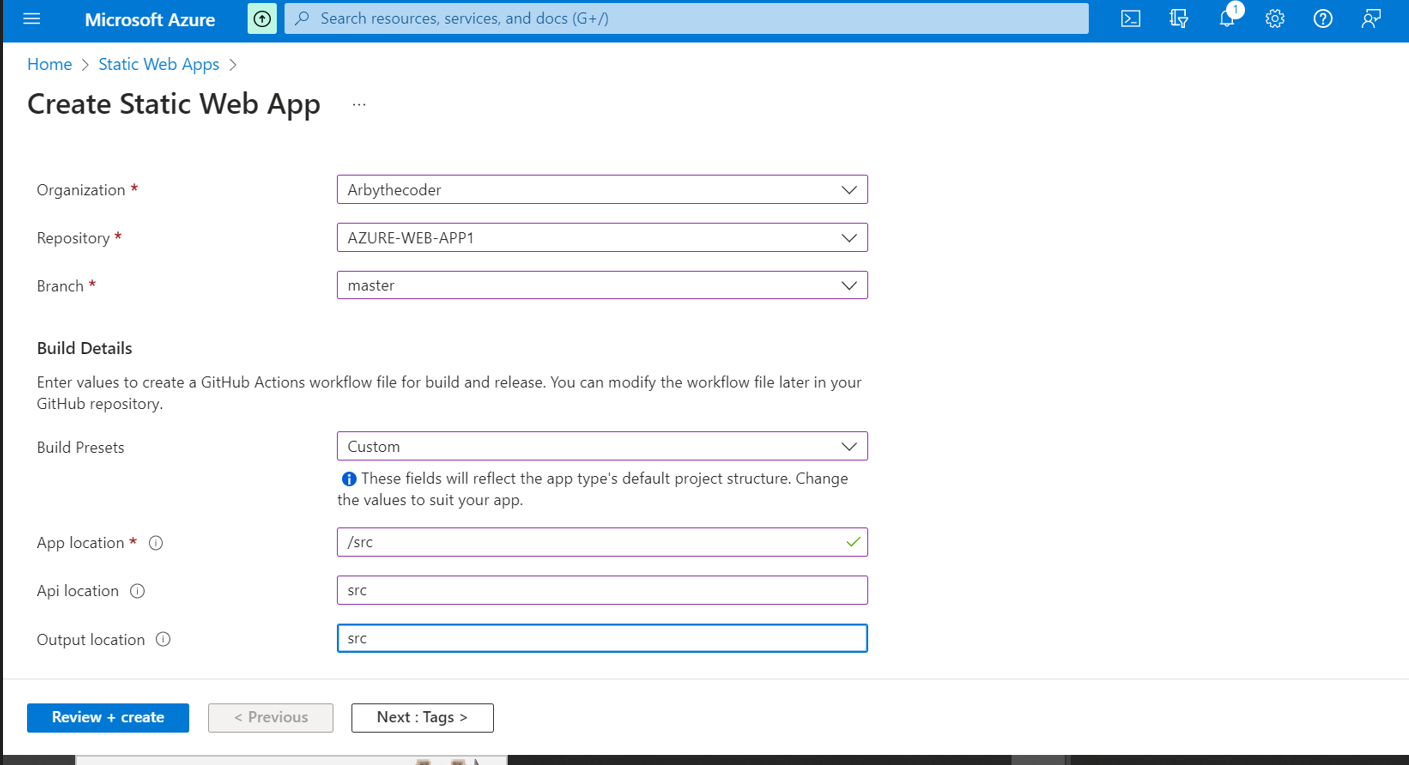

Now its time to indicate where our index.html file is located.In my case the root folder so i will use a slash.

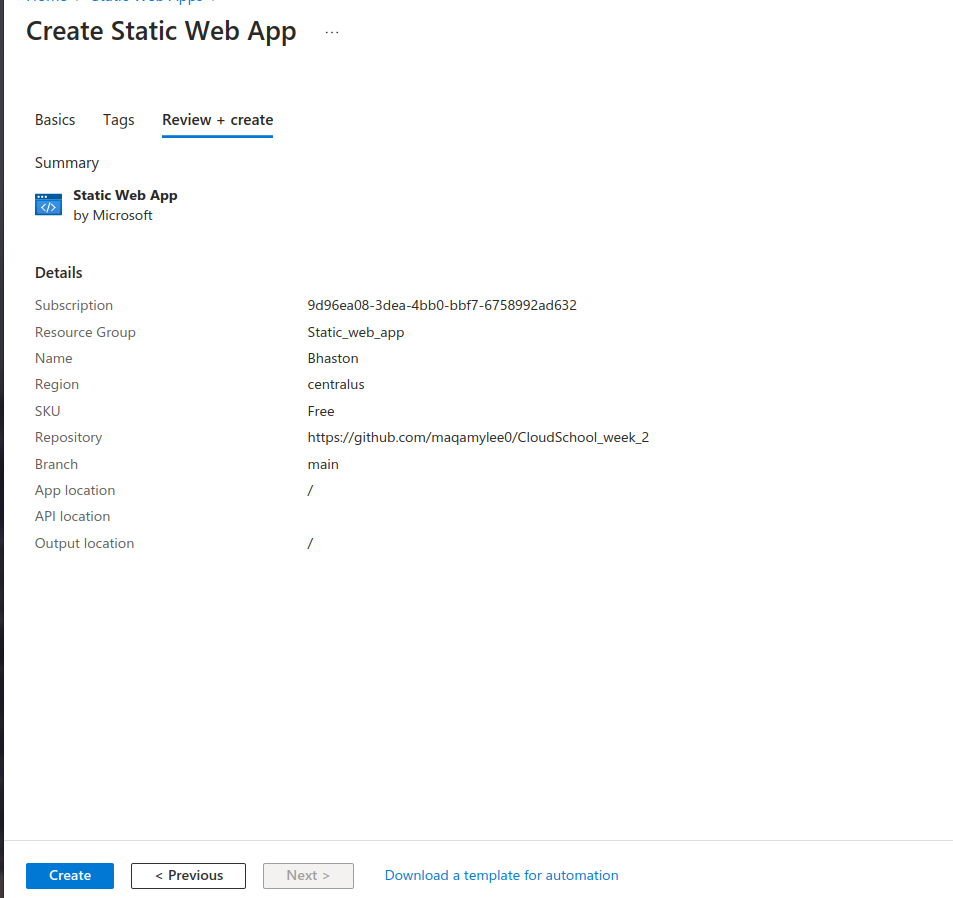

Finally lets preview our app settings by clicking preview and finally click create.

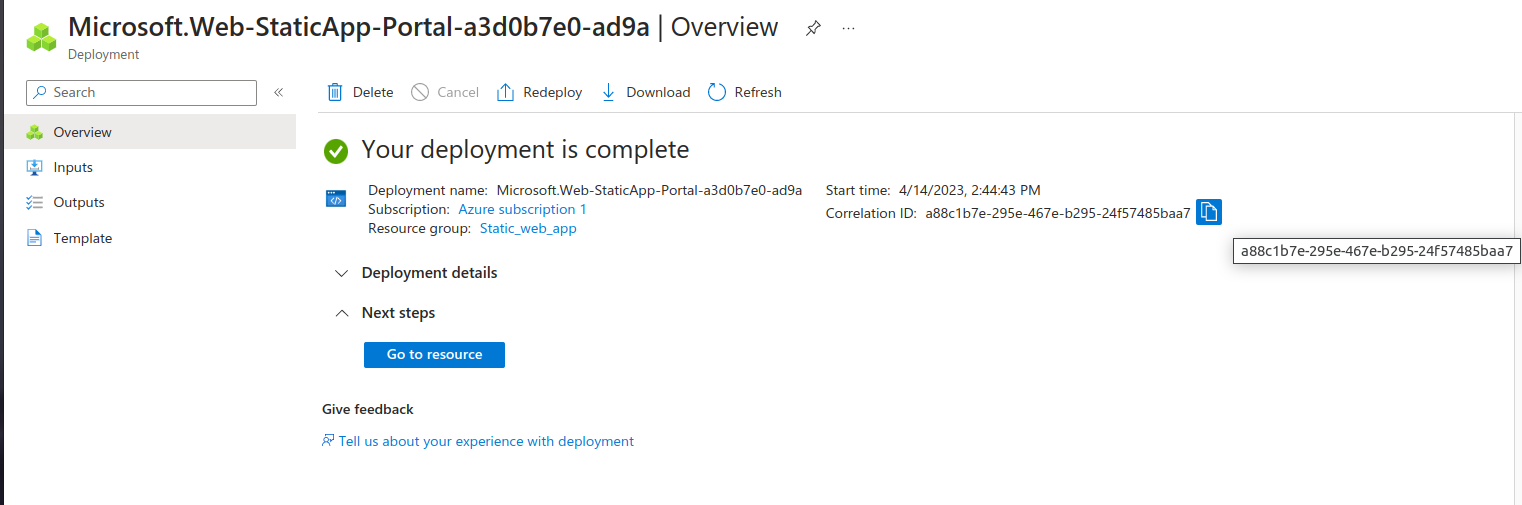

Give it some 3 minutes and we shall have our page ready to view.

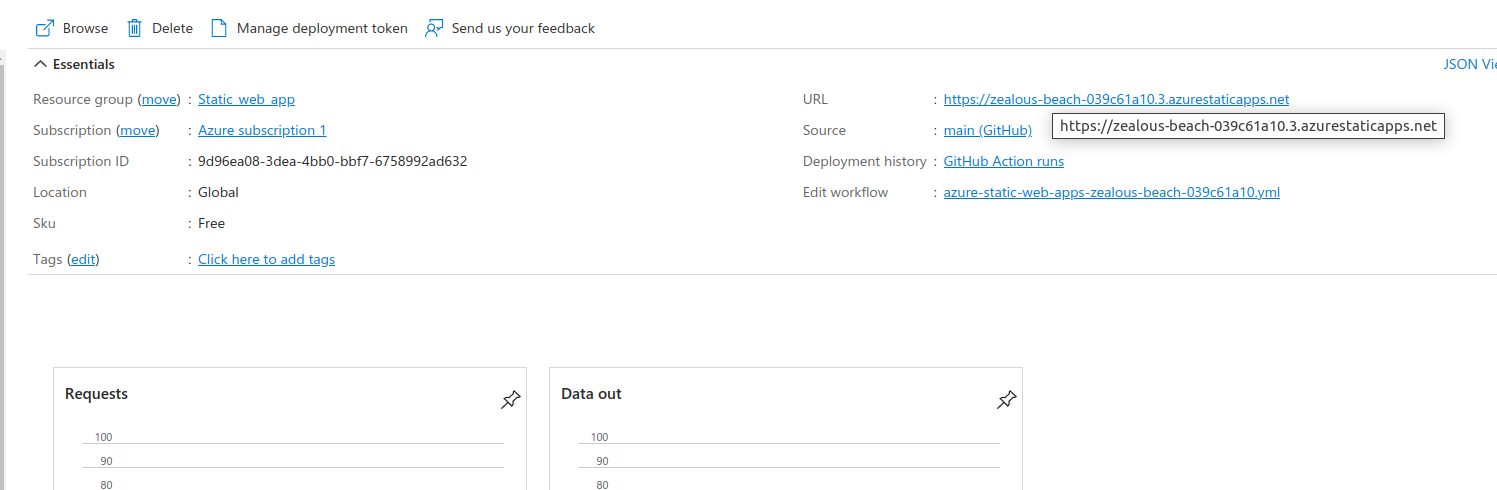

Next click on the go to resource and you can see the URL link to the web app.

Then you will see the hosted web application.

| maqamylee0 |

1,434,115 | 4 raisons d’utiliser Tailwind et Styled Components avec React | Aujourd’hui, dans cet article je vais vous donner les raisons pour lesquelles j’utilise Tailwind et... | 0 | 2023-04-12T20:46:25 | https://dev.to/tontz/4-raisons-dutiliser-tailwind-et-styled-components-avec-react-2278 | react, tailwindcss, css, french | Aujourd’hui, dans cet article je vais vous donner les raisons pour lesquelles j’utilise Tailwind et Styled Components sur mes projets React. Si vous êtes intéressé sur la façon de setup un projet avec ces outils, je vous redirige sur mon article précédent : [https://dev.to/vincent_react/comment-configurer-reactjs-vite-tailwind-styled-components-1m72](https://dev.to/vincent_react/comment-configurer-reactjs-vite-tailwind-styled-components-1m72).

Mon but n’est pas de vous convaincre d’utiliser ces outils mais plutôt de vous faire un retour d’expérience et vous montrer leur potentiel.

Gardez en tête qu’un outil n’est utile que si on en a vraiment besoin !

# 1 - Ne pas louper le train

**Tailwind** est une librairie fournissant des classes utilitaires CSS et des composants qui est concurrente de **bootstrap.** **Styled Components** est une librairie permettant de faire des composants stylisés en JavaScript. Ces deux outils permettent de travailler plus facilement avec le CSS.

Une des raisons pour lesquelles j’utilise Tailwind et Styled Components est que ce sont des outils qui **attirent** de plus en plus de développeur. En effet, Tailwind prend particulièrement une place de plus en plus **importante** d’année en année. Je vous ai sélectionner trois graphiques permettant d’illustrer ce phénomène.

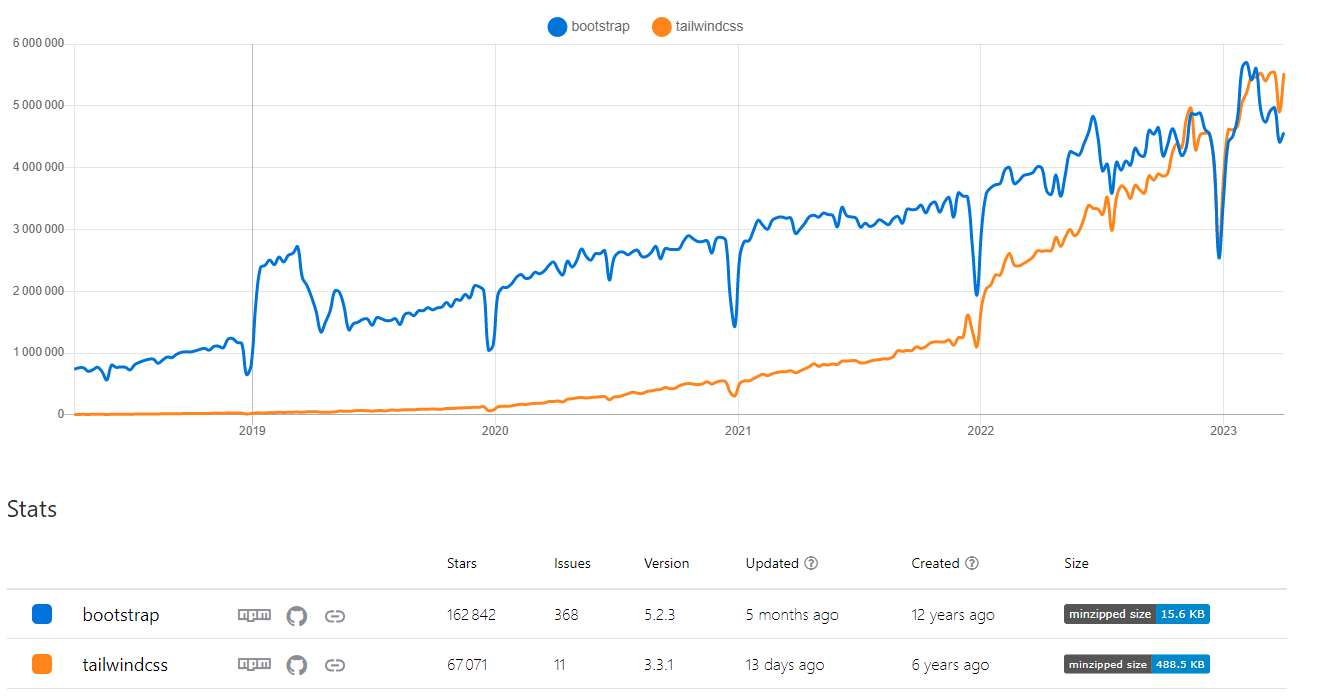

Ce graphique est issus de **npm trend,** un site permettant d’avoir les statistiques de téléchargement des package sur **npm**. On voit sur celui-ci qu’en 2023, **Tailwind** a dépassé **Bootstrap** sur le ****nombre de téléchargement. Cette métrique montre que Tailwind représente un véritable **outil robuste** qui tend à devenir la **norme**.

[bootstrap vs tailwindcss | npm trends](https://npmtrends.com/bootstrap-vs-tailwindcss)

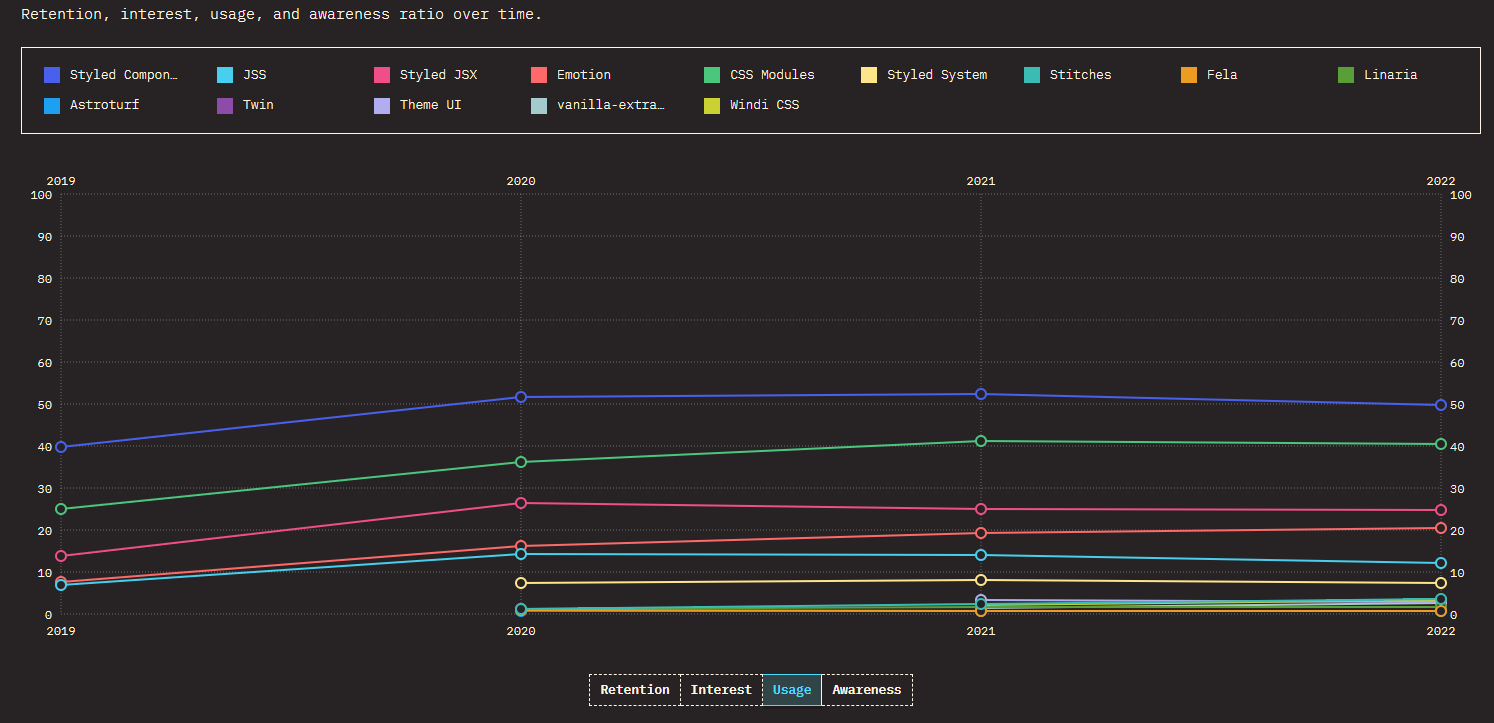

Je tiens à ajouter cette deuxième source pour contre balancer le premier. En effet, sur ce deuxième graphique issue du site **State of CSS,** on voit l’utilisation des différentes librairies / Framework css. Ici on voit bien que Bootstrap domine encore le marché avec **80%** d’utilisation. Cela s’explique probablement par le fait que Bootstrap existe depuis 12 ans alors que Tailwind existe depuis 6 ans. Néanmoins, on peut voir que la tendance d’utilisation de Tailwind ces dernières années n’a fait qu’augmenter alors que Bootstrap à diminuée. Si les choses évoluent en l’état, dans deux ou trois ans **Tailwind devrait dépasser Bootstrap**.

[The State of CSS 2022: CSS Frameworks](https://2022.stateofcss.com/en-US/css-frameworks/)

Comme je sais que vous êtes friands de chiffre, je vous donne la courbe d’utilisation de la librairie styled-components. On voit nettement que c’est la librairie la plus populaire du moment.

[The State of CSS 2022: CSS-in-JS](https://2022.stateofcss.com/en-US/css-in-js/)

Ce que je veux vous montrer avec ces statistiques, c’est le fais que Tailwind et Styled Components sont deux outils qui prennent de plus en plus de place dans l’élaboration des projets et qu’il est très probable que Tailwind surpasse bootstrap. Comme vous le savez, l’univers JavaScript évolue à toute vitesse et il est important de se positionner sur les technologies pormetteuses de demain afin de ne pas louper le train.

Nous ne sommes sûrs de rien, mais commencer à vous intéresser à ces outils pourrait se trouver être un gros avantage pour le futur.

# 2 - Uniformiser le CSS

Tailwind est de plus en plus populaire et se fait une place dans le milieu des Framework CSS car il répond à une problématique qui touche tous les projets Web : **la difficulté d’uniformiser l’écriture du CSS au sein d’une équipe de plusieurs développeurs !**

Tailwind propose un ensemble de **classes utilitaires** permettant de rédiger du CSS de façon **uniforme** et **structuré**. Plus besoin de créer des classes CSS et de les appeler dans le HTML. Tout le monde utilise la même syntaxe au sein du projet.

Selon moi, le point sur lequel Tailwind excelle est la configuration. La plupart des autres Framework sont rigides et difficilement configurables. Avec Tailwind, en deux lignes il est aisé de changer la configuration de style en ajoutant des fonts, en changeant les couleurs utilisées dans l’application ou encore en modifiant certaine propriété Tailwind.

Imaginez que vous avez une couleur primaire et une couleur secondaire dans votre UI. Il est simple de configurer Tailwind pour renseigner ces couleurs. Vous vous rendez dans le fichier de configuration de Tailwind et vous modifiez l’objet colors avec les couleurs dont vous avez besoins.

```jsx

colors: {

primary: {

light: '#CDF0DB',

DEFAULT: '#007A33'

},

},

```

Vous pouvez ensuite utiliser cette couleur avec toutes les classes utilitaires de Tailwind de la façon suivante :

```jsx

text-primary //couleur du text

bg-primary-light // couleur de background

border-primary // couleur de la bordure

```

Ces quelques exemples illustrent la facilité de setup un thème dans votre application. Tailwind vous évite de devoir créer un fichier style.css qui centralise les constantes de couleur de l’application. Ce fichier doit être importer partout ou l’on utilise cette constante. Cela génère de la contrainte lors du développement.

Outre les classes utilitaires et la facilité de customiser ces dernières, Tailwind facilite l’implémentation des interfaces responsives. En effet, il est possible d’utiliser les breakpoints par défaut ou de customiser ces derniers.

Par défaut tailwind utilisera les breakpoints suivants :

```jsx

screens: {

'sm': '640px',

// => @media (min-width: 640px) { ... }

'md': '768px',

// => @media (min-width: 768px) { ... }

'lg': '1024px',

// => @media (min-width: 1024px) { ... }

'xl': '1280px',

// => @media (min-width: 1280px) { ... }

'2xl': '1536px',

// => @media (min-width: 1536px) { ... }

}

```

Il est possible de surcharger les différents breakpoints en ajoutant à l’objet ci-dessus les valeurs adaptées à votre besoin, dans le thème Tailwind.

Voici quelques exemples d’utilisation des breakpoints :

```jsx

// on applique la classe px-36 pour tous les écrans égaux ou supérieur à xl

xl:px-36

// on applique un background bleu pour les écrans inférieurs à md

// on applique un background vert pour les écrans supérieur à md et strictement inférieur à xl

// on applique un background rouge pour tous les écrans supérieur à xl

bg-blue

md:bg-green

xl:bg-red

```

Tailwind évite de devoir utiliser les media query. Le code ressemblerait à cela :

```jsx

// on applique un background bleu pour les écrans inférieurs à md

@media only screen and (max-width: 639px) {

.custom-background {

background-color: blue;

}

}

// on applique un background vert pour les écrans supérieur à md et strictement inférieur à xl

@media only screen and (min-width: 640px) {

.custom-background {

background-color: green;

}

}

// on applique un background rouge pour tous les écrans supérieur à xl

@media only screen and (min-width: 1280px) {

.custom-background {

background-color: red;

}

}

```

La différence est flagrante, Tailwind simplifie la vie et rend le style plus lisible en utilisant une syntaxe minimaliste. Ainsi, si l’on veut changer une couleur ou une font, c’est très **simple** !

# 3 - Rendre le HTML plus lisible

Le but du code est de raconter une histoire. Pour qu’une histoire soit belle et touche le lecteur, il faut trouver les mots justes. En utilisant Styled Components, il est possible de nommer ses balises. Vous pouvez vous dire que c’est un détail mais c’est un détail qui a beaucoup d’importance.

Prenons l’exemple de code suivant :

```html

<div className="header-container">

<div className="links-container">

<a className="link" href="#">Tous les articles</a>

<a className="link" href="#">A propos</a>

</div>

<div className="logo-container"></div>

</div>

```

Voici le même code avec Styled Components :

```html

<HeaderContainer>

<LinksContainer>

<Links href="#">Tous les articles</Links>

<Links href="#">A propos</Links>

</LinksContainer>

<LogoContainer />

</HeaderContainer>

```

Personnellement, je suis tombé amoureux de Styled Components car il permet vraiment de décrire ce que représente le template. De plus, il nous force à nommer ce qu’on utilise, ce qui est très important.

Il y a une règle que j’essaie au maximum de respecter lorsque je programme : “S’il est difficile de trouver un nom pour un élément de code c’est qu’il est probablement mal implémenter ou juste inutile”. En suivant cette règle vous vous retrouverez à faire du code plus propre et qui aura plus de sens. Je peux maintenant appliquer cette règle au template grâce à Styled Component.

Cette librairie m’apporte énormément de lisibilité. Fini les noms de classe qui n’ont aucun sens.

# 4 - Centraliser les informations

Partons pour un historique de la programmation Web !

A ces débuts, la façon de programmer en Web était différente d’aujourd’hui. Bien entendu, nous utilisons toujours du HTML, du CSS et du Javascript mais la façon d’organiser tout ce petit monde à évoluée au fur et à mesure du temps.

Au début il n’était question que de balise HTML décorées de style CSS. On ne parlait pas d’application mais de page Web. On avait donc le HTML et le CSS dans un même fichier.

Ensuite, le JavaScript a fait son apparition avec l’opportunité de rendre les pages interactives ! Superbe révolution. Au début on met le JavaScript dans le même fichier que le HTML et le CSS.

Le temps passe et les pages Web se transforment en Application Web composées de plusieurs pages, la quantité de JavaScript augmente de plus en plus pour avoir des pages très riches en interaction. Il commence à y avoir beaucoup de monde au même endroit et les fichiers deviennent très volumineux. La séparation des trois colocataires devient la norme.

Vient ensuite l’air de la séparation et le style suivant se repend dans la programmation web :

- un fichier **HTML** pour la structure

- un fichier **CSS** pour le style

- un fichier **JavaScript** pour la logique

Les Framework Web modernes utilisant la programmation par composant se sont bâtis sur ce modèle en favorisant la séparation de la structure la logique et le style. Les grands fichiers de code entremêlés de HTML, de CSS et de JS se transforment en une multitude de composants qui sont des briques **unitaires réutilisables** dans l’application possédant leur propre fichier JS, CSS et HTML.

React décide de prendre une autre direction en proposant la possibilité d’écrire du **JavaScript** et du **HTML** au sein d’un même fichier grâce au **JSX** (JavaScript XML). Il n’y a plus trois fichiers différents par composant mais deux, un JSX et un CSS.

Normalement, vous devez vous poser la question suivante : pourquoi React décide de fusionner les fichiers si l’évolution du dev web nous a amené à séparer les fichiers ?

Je vous répondrais que la réponse est dans la question. L’évolution du developpement web nous a amené au développement par composant. Des briques de logique unitaires, donc beaucoup plus petites, qui ont un code plus petit également. Ainsi, il n’est plus avantageux d’avoir une séparation, qui va créer beaucoup de charge cognitive. En effet, il est plus complexe de naviguer à travers 9 fichiers différents pour 3 composants.

Le JSX permet de gagner en lisibilité car nous avons la logique et le template qui coexiste au sein d’un même fichier. React prouve qu’il n’est pas problématique d’avoir le HTML et le JavaScript dans le même fichier, et que cela fait même gagner beaucoup de temps en simplifiant la compréhension et en évitant de devoir naviguer entre plusieurs fichiers afin de faire des liens mentaux de qui fait quoi.

Bon, maintenant vous vous dites, mais je suis venu lire un article sur Tailwind et Styled Component ! Ne soyez pas si impatient nous y venons. Après ces explications vous devez avoir une intuition de ce que je vais vous expliquer.

De la même façon que React a réuni HTML et JS, je trouve qu’il est pertinent de joindre le CSS à la fête. Ainsi j’utilise Styled Components pour inclure le CSS au sein de mon fichier JSX. Cela permet d’avoir toutes les informations du composant que je consulte dans le fichier.

Vous pouvez vous dire que Styled Components n’est pas utile et que l’attribut styles de React suffit à inclure le CSS. Croyez moi, lorsque votre application est conséquente, il est très difficile de lire un composant utilisant styles.

De plus, avec Styled Components allié à Tailwind qui simplifie le CSS et uniformise la façon d’écrire le style, il est beaucoup plus facile de lire le style du composant. Tous les composants sont rédigés sous le même format, tout est à sa place.

# Conclusion

Nous avons vu dans cet article 4 raisons pour lesquelles j’utilise Tailwind et Styled Components dans mes projets React.

La première raison est la popularité montante de ces outils et le potentiel qu’ils représentent.

J’utilise également ces outils car il me permettent de mieux **structurer** mes projets en écrivant du CSS **uniforme** et facilement **modulable** au sein de toute mon application. La création de design **responsif** est beaucoup plus **facile**. J’obtient également des fichier HTML ayant beaucoup plus de sens car il n’y a plus un empilement de div mais mon template raconte une histoire et reflète ce qu’il est vraiment.

Une autre raison pour laquelle j’utilise ces outils est le fait qu’il **centralise** tout le code de mes composants au sein d’un unique fichier. Lorsque je relis mon code, cela **constitue un gain de temps non négligeable** car je ne dois plus naviguer entre les différents fichiers de code.

Enfin pour conclure cet article, je vous donne une dernière raison. J’utilise Tailwind et Styled Components car je prend du **plaisir** à coder et j’aime la philosophie derrière ces outils.

Si vous utilisez ces outils n’hésitez pas à me partager votre expérience et votre avis. | tontz |

1,434,273 | Will AI take your job or allow you to work faster? | With the rapid evolution of AI from all different aspects whether its writing blogs, creating images,... | 22,564 | 2023-04-13T01:24:10 | https://dev.to/benhultin/will-ai-take-your-job-or-allow-you-to-work-faster-4m55 | With the rapid evolution of AI from all different aspects whether its writing blogs, creating images, generating code, etc, what will happen to our jobs?

I have been pondering various questions about this the impact of AI as we all have. I have developed some thoughts about these questions:

**Will AI completely replace us?**

- I would tend to argue no. I am going off this conclusion based on business and competition reasoning.

- Companies are always trying to get more done faster with less cost.