id | title | description | collection_id | published_timestamp | canonical_url | tag_list | body_markdown | user_username |

|---|---|---|---|---|---|---|---|---|

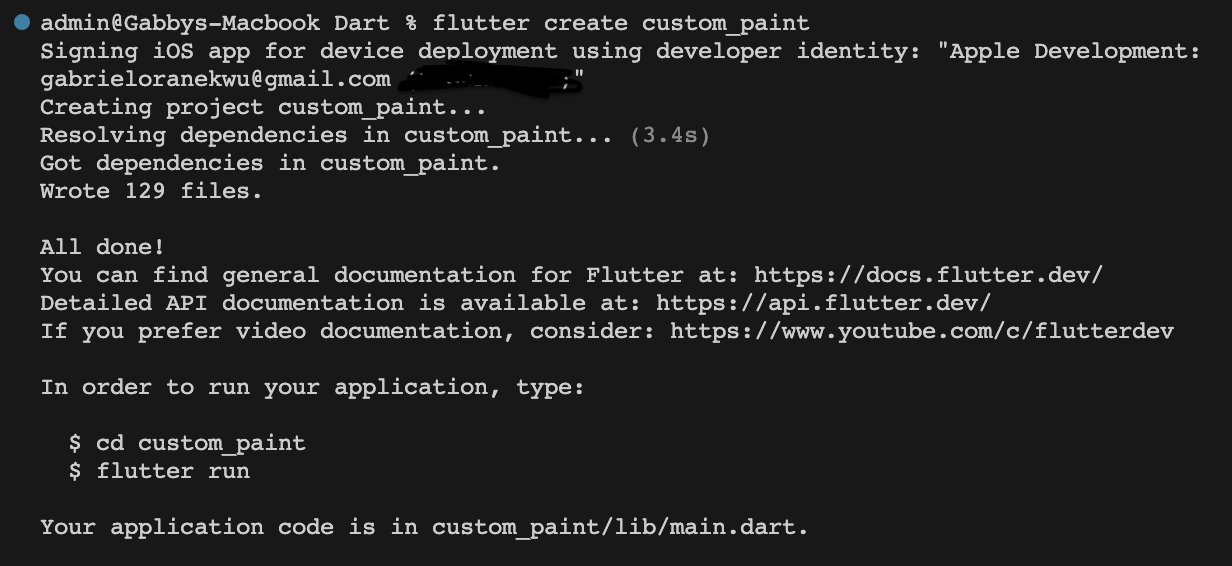

1,736,899 | Automating Unit Testing for Your Flutter Project with GitHub Actions | In the world of software development, ensuring the quality and reliability of your code is paramount.... | 0 | 2023-02-20T09:00:00 | https://remelehane.dev/posts/automated-flutter-unit-testing-with-github-actions/ | flutter, flutterweb, unittesting, githubactions | ---

stackbit_url_path: posts/automated-flutter-unit-testing-with-github-actions

title: "Automating Unit Testing for Your Flutter Project with GitHub Actions"

date: '2023-02-20T09:00:00.000Z'

excerpt: >-

tags:

- flutter

- flutterweb

- unittesting

- githubactions

template: post

thumb_img_path: https://images.unsplash.com/photo-1556075798-4825dfaaf498?q=80&w=1000&auto=format&fit=crop&ixlib=rb-4.0.3&ixid=M3wxMjA3fDB8MHxzZWFyY2h8N3x8bG9naWN8ZW58MHx8MHx8fDA%3D

cover_image: https://images.unsplash.com/photo-1556075798-4825dfaaf498?q=80&w=1000&auto=format&fit=crop&ixlib=rb-4.0.3&ixid=M3wxMjA3fDB8MHxzZWFyY2h8N3x8bG9naWN8ZW58MHx8MHx8fDA%3D

published: true

published_at: '2023-02-20T09:00:00.000Z'

canonical_url: https://remelehane.dev/posts/automated-flutter-unit-testing-with-github-actions/

---

In the world of software development, ensuring the quality and reliability of your code is paramount. One way to achieve this is through automated unit testing. By automating the process of running tests on your code, you can catch issues early, prevent broken code from going into production, and improve overall code quality. In this article, we will explore how you can use GitHub Actions to automate unit testing for your Flutter project.

What is GitHub Actions?
-----------------------

GitHub Actions is a powerful workflow automation tool provided by GitHub. It allows you to define custom workflows that can be triggered by various events, such as code pushes, pull requests, or manual triggers. With GitHub Actions, you can automate tasks and processes within your software development workflow, including building, testing, and deploying your code.

Setting Up the Workflow
-----------------------

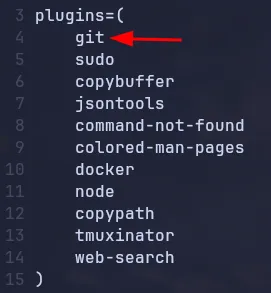

To get started with automating unit testing for your Flutter project, you'll need to define a workflow in your GitHub repository. The workflow is written in YAML, saved in the repository's `.github/workflows/` directory, and consists of a series of steps to be executed. Let's take a look at an example workflow:

```yaml
name: Flutter Testing

on:
  workflow_dispatch:
  pull_request:
    branches: [main]

jobs:
  test:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v2.3.4
      - uses: subosito/flutter-action@v1.5.3
      - name: Install packages
        run: flutter pub get
      - name: Run generator
        run: flutter pub run build_runner build
      - name: Run test
        run: flutter test test
```

In this example, we define a workflow called "Flutter Testing" that is triggered either manually (`workflow_dispatch`) or when a pull request targets the `main` branch. The workflow consists of a single job called "test" that runs on the `ubuntu-latest` environment.
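This workflow only listens for manual runs and pull requests. If you also want the tests to run whenever commits land on `main`, GitHub Actions accepts a `push` event next to the others. A minimal sketch of the trigger block, assuming `main` is the branch you care about:

```yaml
on:
  workflow_dispatch:
  pull_request:
    branches: [main]
  # Also run the tests when commits are pushed directly to main
  push:
    branches: [main]
```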

Understanding the Workflow
--------------------------

Now let's take a closer look at each step in the workflow and understand what it does.

### Step 1: Checkout the Code

The first step in the workflow is to check out the repository onto the runner, the virtual machine that executes the job. This is done using the `actions/checkout` action:

```yaml

- uses: actions/checkout@v2.3.4

```

Checking out the code makes the commit that triggered the workflow available to all subsequent steps.

### Step 2: Installing Flutter

Since we are working with a Flutter project, we need to install Flutter on the runner. This is done using the `subosito/flutter-action` action:

```yaml

- uses: subosito/flutter-action@v1.5.3

```

By default, this action installs the latest stable release of Flutter. However, you can configure it to use a different channel or pin it to a specific version.
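A minimal sketch of pinning, assuming the `channel` and `flutter-version` inputs described in the action's README; the version number below is only a placeholder for whichever release your project is built with:

```yaml
- uses: subosito/flutter-action@v1.5.3
  with:
    channel: 'stable'
    # Placeholder version: replace with the Flutter release your project targets
    flutter-version: '3.7.3'
```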

### Step 3: Installing Packages

Next, we need to install all the required packages for our Flutter project. This is done by running the following command:

```yaml
- name: Install packages
  run: flutter pub get
```

This step ensures that all the necessary dependencies are installed and ready for testing.

### Step 4: Running Code Generation (Optional)

If your project makes use of code generation, you can include a step to run the code generator. This step is optional and can be skipped if your project doesn't require code generation. Here's an example:

```yaml
- name: Run generator
  run: flutter pub run build_runner build
```

Running the code generator will generate code based on annotations in your project, such as serializers, routes, or database models.
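If generated files are also committed to the repository, `build_runner` may stop with a conflicting-outputs error on CI. It has a `--delete-conflicting-outputs` flag that tells it to overwrite those files instead; a sketch of the same step with that flag:

```yaml
- name: Run generator
  # Overwrite previously generated files instead of failing on conflicts
  run: flutter pub run build_runner build --delete-conflicting-outputs
```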

### Step 5: Running Unit Tests

Finally, we come to the most important step: running the unit tests for your Flutter project. This is done using the following command:

```yaml
- name: Run test
  run: flutter test test
```

This command runs all the test files (files whose names end in `_test.dart`) located in the `test` directory of your project. You can customize the path if your tests are located in a different directory.
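For example, if your unit tests lived in a `test/unit` folder (a hypothetical layout, not something this project requires), the step could point `flutter test` at that directory only:

```yaml
- name: Run unit tests
  # Hypothetical directory: only the tests under test/unit are run
  run: flutter test test/unit
```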

Running the Workflow
--------------------

Once you have defined your workflow, it will be automatically triggered whenever a pull request is made against the `main` branch or manually triggered using the GitHub Actions interface. The workflow will run on the specified environment (in this case, `ubuntu-latest`) and execute each step in the defined order.

The time it takes to run the automated tests will depend on the size and complexity of your project. Smaller projects with a handful of files may complete in just over a minute, while larger projects with thousands of files and extensive test coverage may take several minutes to complete.

Conclusion
----------

Automating unit testing for your Flutter project using GitHub Actions is a simple yet powerful way to ensure code quality and prevent issues from reaching production. By defining a workflow and specifying the necessary steps, you can easily run tests on your code with every pull request, or on demand. This helps catch bugs early, improves overall code quality, and gives you confidence in the reliability of your codebase.

If you have any questions, comments, or improvements, feel free to drop a comment below. Happy testing and enjoy your Flutter development journey!

| remejuan |

1,737,060 | 🚗✨ Introducing the Ultimate Car Glass Cleaning Solution! ✨🚗 | 🚗✨ Introducing the Ultimate Car Glass Cleaning Solution! ✨🚗 Struggling with foggy and rainy weather... | 0 | 2024-01-21T18:34:18 | https://dev.to/sara13/introducing-the-ultimate-car-glass-cleaning-solution-376h | [🚗✨ Introducing the Ultimate Car Glass Cleaning Solution! ✨🚗](https://supershop-amzn.blogspot.com/2024/01/scrubit-ice-scraper-for-cars-foam.html)

Struggling with foggy and rainy weather obstructing your view while driving? We've got the game-changer you need! 🌧️🔍

Say goodbye to unclear visibility with the TSWDDLA Car Rearview Mirror Wiper! 🌟 This retractable auto glass squeegee is not just a tool; it's a revolution in car care. 🚀

Key Features:

[🧲 Magnetic Attraction](https://www.toprevenuegate.com/tmstihh3?key=afe61164450201a338fcc9b49f71a035): Effortlessly attach and detach for quick, convenient use.

[🌐 Telescopic Long Rod:](https://www.toprevenuegate.com/tmstihh3?key=afe61164450201a338fcc9b49f71a035) Reach every corner of your vehicle's glass with ease.

[💧 Water Cleaner:](https://www.toprevenuegate.com/tmstihh3?key=afe61164450201a338fcc9b49f71a035) Efficiently wipe away raindrops, ensuring a crystal clear view.

[🚗 Portable Design:](https://www.toprevenuegate.com/tmstihh3?key=afe61164450201a338fcc9b49f71a035) Take it with you on the go for instant car glass cleaning.

Why Choose TSWDDLA?

Our cutting-edge technology is designed for optimal performance in all weather conditions. No more compromised visibility during rainy or foggy drives! 🌦️

How to Use:

[Simply extend the telescopic rod, engage the magnetic wiper, and experience the magic of clear, streak-free glass. Drive safer and smarter with TSWDDLA! 🚦🌈](https://www.toprevenuegate.com/tmstihh3?key=afe61164450201a338fcc9b49f71a035)

👉 Grab Yours Now and Experience the Difference!

[[Link to Purchase](https://supershop-amzn.blogspot.com/2024/01/scrubit-ice-scraper-for-cars-foam.html)]

🌐 Website: [https://supershop-amzn.blogspot.com/]

📱 [Follow Us for More Innovations](https://www.toprevenuegate.com/tmstihh3?key=afe61164450201a338fcc9b49f71a035)

[Join the revolution in car care! Let's navigate the roads with confidence. 💪🚗✨](https://www.toprevenuegate.com/tmstihh3?key=afe61164450201a338fcc9b49f71a035) #CarCare #Innovation #TSWDDLA #DriveSafe #VisibilityMatters #CarGlassCleaning #TechRevolution #CarMaintenance #RoadSafety #DevToCommunity | sara13 | |

1,746,772 | Future Tech Trends: Navigating Advanced Technology in Edmonton | In the ever-evolving landscape of Edmonton's technological scene, staying ahead of the curve is... | 0 | 2024-01-31T06:55:53 | https://dev.to/umanologic/future-tech-trends-navigating-advanced-technology-in-edmonton-3152 |

In the ever-evolving landscape of Edmonton's technological scene, staying ahead of the curve is imperative for businesses aiming for sustained success. From innovative solutions to transformative technologies, let's navigate the path of advanced technology and discover the possibilities it holds for the thriving businesses in Edmonton.

**The Rise of Artificial Intelligence (AI):** Edmonton is witnessing a surge in the adoption of artificial intelligence, revolutionizing various industries. From predictive analytics to automated processes, businesses are leveraging AI to enhance efficiency and decision-making.

**Blockchain Innovations:** Explore the growing influence of blockchain technology in Edmonton. Beyond cryptocurrencies, blockchain is finding applications in supply chain management, secure transactions, and data integrity. We'll uncover the potential impact of blockchain on businesses and how it's contributing to a more transparent and secure business environment.

**Smart City Initiatives:** Edmonton is embracing smart city initiatives, integrating technology to enhance urban living. From IoT-enabled infrastructure to data-driven governance, discover how these initiatives are creating a more connected and efficient city. Businesses that align with these smart city trends can position themselves for success in the emerging digital ecosystem.

**5G Connectivity and IoT Integration:** As 5G networks roll out, Edmonton is poised to experience a significant leap in connectivity. Explore how the synergy between 5G and the Internet of Things (IoT) is opening new possibilities for businesses.

**Conclusion:** As Edmonton continues to embrace advanced technology solutions, businesses have a unique opportunity to pioneer innovation and navigate the future successfully. From AI advancements to blockchain innovations and smart city initiatives, staying informed and adaptable is key.

Contact Umano Logic today and let's navigate the future of technology together. Your success is our priority, and at Umano Logic, we make the future happen.

| umanologic | |

1,737,078 | Unlocking Automotive Expertise: A Comprehensive Guide to Car Workshop Manuals" | In the fast-paced world of automotive maintenance and repair, having access to reliable resources is... | 0 | 2024-01-21T19:21:39 | https://dev.to/workshopmanual5/unlocking-automotive-expertise-a-comprehensive-guide-to-car-workshop-manuals-5bo1 | In the fast-paced world of automotive maintenance and repair, having access to reliable resources is crucial for both seasoned mechanics and DIY enthusiasts. One indispensable tool that stands out in the realm of vehicle maintenance is the Car Workshop Manual. This comprehensive guide aims to delve into the significance of these manuals, exploring their features, benefits, and the vital role they play in keeping our vehicles running smoothly.

1. Understanding Car Workshop Manuals:

**[Car Workshop Manuals](https://workshopmanuals.org/)** serve as treasure troves of information for anyone keen on mastering the art of automotive maintenance. These manuals provide an intricate roadmap, guiding users through the intricacies of their vehicle's systems, components, and functionalities.

2. In-Depth Vehicle Knowledge:

One of the primary advantages of Car Workshop Manuals is their ability to offer in-depth knowledge about specific car models. Whether you're dealing with engine diagnostics, electrical systems, or suspension setups, these manuals provide step-by-step instructions, allowing both professionals and novices to navigate the intricacies of their vehicle.

3. Troubleshooting and Diagnostics:

Car Workshop Manuals are indispensable for troubleshooting and diagnostics. They equip users with the tools to identify and rectify issues efficiently. From deciphering warning lights to addressing unusual noises, these manuals empower individuals to diagnose problems with precision.

4. Step-by-Step Repair Procedures:

Navigating the repair process can be challenging, especially for those without extensive mechanical backgrounds. Car Workshop Manuals break down complex repair procedures into manageable, step-by-step instructions, enabling users to confidently address issues ranging from brake replacements to transmission overhauls.

5. Maintenance Schedules and Procedures:

Regular maintenance is key to extending the lifespan of a vehicle. Workshop Manuals provide detailed maintenance schedules and procedures, ensuring that users can adhere to manufacturer-recommended guidelines for tasks such as oil changes, filter replacements, and fluid checks.

6. Cost-Effective Repairs:

Investing in a Car Workshop Manual can lead to significant cost savings. By understanding their vehicle's intricacies, users can undertake many repairs on their own, reducing the need for costly professional services. This empowerment fosters a sense of self-reliance and financial prudence.

7. Compatibility Across Brands and Models:

Car Workshop Manuals are not limited to a specific brand or model. They are available for a wide range of vehicles, making them versatile resources for enthusiasts and professionals working on various cars. This universal applicability enhances their value in the automotive community.

8. Evolving with Automotive Technology:

As automotive technology continues to advance, so do Car Workshop Manuals. Modern manuals integrate digital formats, interactive diagrams, and multimedia elements, providing users with a dynamic and engaging learning experience. This adaptability ensures that users stay current with the latest technological developments.

9. Community and Knowledge Sharing:

Owning a Car Workshop Manual also connects individuals with a larger community of like-minded enthusiasts. Online forums, discussion groups, and social media platforms provide a space for knowledge sharing, troubleshooting discussions, and collaborative problem-solving.

Conclusion:

In conclusion, Car Workshop Manuals stand as indispensable companions for anyone seeking to unravel the mysteries beneath the hood of their vehicles. From empowering DIY enthusiasts to serving as essential references for professional mechanics, these manuals bridge the gap between automotive curiosity and hands-on expertise. Embrace the wealth of knowledge they offer, and embark on a journey of automotive mastery.

| workshopmanual5 | |

1,737,095 | # Comprehensive Guide to Shell Scripting (0-1)🚀 | Introduction Welcome to the exciting world of shell scripting! This comprehensive guide... | 0 | 2024-01-21T20:10:29 | https://dev.to/surajvast1/-comprehensive-guide-to-shell-scripting-0-1-4j2g | devops, linux, programming, beginners |

## Introduction

Welcome to the exciting world of shell scripting! This comprehensive guide will take you from the fundamentals to advanced concepts, helping you automate tasks on Linux environments using shell scripts. 🐚

## Table of Contents

1. [Creating and Viewing Files](#creating-and-viewing-files)

2. [Understanding Commands](#understanding-commands)

3. [Executing Shell Scripts](#executing-shell-scripts)

4. [Managing Permissions](#managing-permissions)

5. [Useful Commands](#useful-commands)

6. [Example: Basic Shell Script](#example-basic-shell-script)

7. [Shell Scripting Roles](#shell-scripting-roles)

## Creating and Viewing Files

- To **create a file**, use the `touch` command: `touch filename`.

- Shell scripts typically have the extension `.sh`.

- Use `ls` to list files and directories.

- `ls -ltr` lists files in long format, sorted by modification time with the oldest first (`-t` sorts newest first, `-r` reverses the order).
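A short session tying these commands together. It runs in a throwaway directory created with `mktemp -d`, so nothing in your own folders is touched; the file names are only examples:

```bash
#!/bin/bash
# Work in a throwaway directory so the demo leaves your files alone
workdir=$(mktemp -d)
cd "$workdir" || exit 1

touch first.sh     # create an empty script file
sleep 1            # make sure the second file is clearly newer
touch second.sh

# -l long listing, -t sort by modification time (newest first), -r reverse:
# first.sh (oldest) is listed first, second.sh (newest) last
ls -ltr
```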

## Understanding Commands

- Use `man <command>` to get a manual about a command.

- Editing files: use `vi filename` to open a file, press `i` to enter insert mode, then press `Esc` and type `:wq!` to save and exit.

- The first line in a shell script should contain the shebang (`#!/bin/bash`).

## Executing Shell Scripts

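- To execute a shell script: `sh filename.sh` or `./filename.sh`. The `./` form needs execute permission and runs the interpreter named in the shebang.

- Permissions: use `chmod` to grant permissions, e.g. `chmod 777 filename.sh`. Note that `777` gives read, write and execute access to everyone; `chmod 755` (or `chmod +x`) is usually enough to make a script runnable.

- Permissions include read (4), write (2), and execute (1).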
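Each octal digit in a `chmod` mode is just the sum of those three values, one digit each for the owner, the group and everyone else. The helper below (`perm_digit` is a name made up for this sketch, not a standard command) adds them up for an `rwx`-style triplet:

```bash
#!/bin/bash
# Convert a permission triplet such as "rwx" or "r-x" to its octal digit
# by adding read (4), write (2) and execute (1).
perm_digit() {
  local triplet=$1 digit=0
  [[ ${triplet:0:1} == r ]] && digit=$((digit + 4))
  [[ ${triplet:1:1} == w ]] && digit=$((digit + 2))
  [[ ${triplet:2:1} == x ]] && digit=$((digit + 1))
  echo "$digit"
}

# Owner rwx, group r-x, others r-x  ->  prints 755
echo "$(perm_digit rwx)$(perm_digit r-x)$(perm_digit r-x)"
```

So `chmod 755 shellscript.sh` makes a script runnable without the write access for everyone that `777` grants.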

## Managing Permissions

- `chmod 444 filename` gives read-only access to the owner, the group, and everyone else.

- Security: `chmod` sets permissions separately for the owning user, the group, and others (everyone else).
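To see the effect of a mode, `ls -l` prints the permission string in owner, group, others order. A small sketch in a temporary directory (the file name is arbitrary); `cut -c1-10` keeps only the permission column:

```bash
#!/bin/bash
workdir=$(mktemp -d)
touch "$workdir/notes.txt"

# 444: read-only for owner, group and others
chmod 444 "$workdir/notes.txt"
ls -l "$workdir/notes.txt" | cut -c1-10   # -r--r--r--

# Symbolic form: add execute for the owner (u) only
chmod u+x "$workdir/notes.txt"
ls -l "$workdir/notes.txt" | cut -c1-10   # -r-xr--r--
```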

## Useful Commands

- `history`: View executed commands.

- `pwd`: Know the current directory.

- `mkdir`: Create a directory.

- `cd`: Change directory.
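A quick sketch of `mkdir`, `cd` and `pwd` working together, again inside a throwaway directory; `projects` is just an example name:

```bash
#!/bin/bash
base=$(mktemp -d)       # throwaway parent directory for the demo
cd "$base" || exit 1

mkdir projects          # create a directory
cd projects || exit 1   # change into it
pwd                     # prints the full path, ending in /projects
cd ..                   # move back up one level
pwd                     # prints the parent directory again
```

Note that `history` only records commands typed in an interactive shell, so it shows nothing useful inside a script like this one.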

## Example: Basic Shell Script

```bash

#!/bin/bash

# Creating a folder

mkdir user

# Creating 2 files

cd user

touch firstfile secondfile

```

## Shell Scripting Roles

Shell scripting serves three main roles:

1. **Infrastructure Management**

2. **Code Management**

3. **Configuration Management**

## User-Friendly Example

Now, let's walk through an example using the username "user":

1. **List files in the current directory:**

```bash

user@hostname:~/path/to/directory$ ls

```

2. **Create and open a shell script file named `shellscript.sh`:**

```bash

user@hostname:~/path/to/directory$ vim shellscript.sh

```

3. **List files again to confirm the creation of the script:**

```bash

user@hostname:~/path/to/directory$ ls

shellscript.sh otherfile

```

4. **View the contents of the shell script:**

```bash

user@hostname:~/path/to/directory$ cat shellscript.sh

#!/bin/bash

# Create a folder

mkdir user

# Create 2 files

cd user

touch firstfile secondfile

```

5. **Attempt to execute the script (permission denied):**

```bash

user@hostname:~/path/to/directory$ ./shellscript.sh

bash: ./shellscript.sh: Permission denied

```

6. **Grant execute permission to the script:**

```bash

user@hostname:~/path/to/directory$ chmod 777 shellscript.sh

```

7. **Execute the script successfully:**

```bash

user@hostname:~/path/to/directory$ ./shellscript.sh

```

8. **List files to verify changes:**

```bash

user@hostname:~/path/to/directory$ ls

shellscript.sh user otherfile

```

9. **Navigate to the 'user' directory:**

```bash

user@hostname:~/path/to/directory$ cd user

```

10. **List files in the 'user' directory:**

```bash

user@hostname:~/path/to/directory/user$ ls

firstfile secondfile

```

11. **Attempt to execute the script inside the 'user' directory (file not found):**

```bash

user@hostname:~/path/to/directory/user$ ./shellscript.sh

bash: ./shellscript.sh: No such file or directory

```

12. **Navigate back to the parent directory:**

```bash

user@hostname:~/path/to/directory/user$ cd ..

```

13. **Attempt to execute the script again (folder already exists):**

```bash

user@hostname:~/path/to/directory$ ./shellscript.sh

mkdir: cannot create directory ‘user’: File exists

```
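The error in the last step happens because `mkdir` refuses to create a directory that already exists. A sketch of the same script that can be re-run safely: `mkdir -p` succeeds silently when the folder is already there, and the files are created by path instead of changing directory:

```bash
#!/bin/bash
# Stop at the first command that fails
set -e

# -p: no error if the folder already exists
mkdir -p user

# touch creates missing files and only updates timestamps of existing ones
touch user/firstfile user/secondfile
```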

[Advanced shell scripting ➡️](https://dev.to/surajvast/advance-shell-script-1-100-5756) | surajvast1 |

1,737,117 | Mastering CSS Custom Properties: The Senior Developer's Approach to CSS Custom Properties. | Introduction Working with CSS (Cascading Style Sheets) is a very important part of our... | 0 | 2024-01-21T20:54:42 | https://dev.to/dunia/mastering-css-custom-properties-the-senior-developers-approach-to-css-custom-properties-4088 | css, html, webdev, beginners | ## Introduction

Working with CSS (Cascading Style Sheets) is a very important part of building our applications, as it allows us to change the colors, fonts, and layouts of all our HTML elements.

It usually involves [_class selectors_](https://www.freecodecamp.org/news/css-selectors-cheat-sheet-for-beginners/) that target elements with a specific class, [_ID selectors_](https://www.w3schools.com/css/css_selectors.asp) that target elements with a specific ID, and [_element selectors_](https://www.w3schools.com/css/css_selectors.asp) that target specific HTML elements.

**_Note: I'll assume you already have basic knowledge of CSS before delving into this project._**

**_Also, the words colored blue in this article are links. You can click on them to learn more._**

Now back to our work 👇 :

For our project, we will be making use of this code with [Internal CSS](https://www.geeksforgeeks.org/internal-css/).

```html
<!DOCTYPE html>
<html>
<head>
  <title>Page Title</title>
  <link rel="preconnect" href="https://fonts.googleapis.com">
  <link rel="preconnect" href="https://fonts.gstatic.com" crossorigin>
  <link href="https://fonts.googleapis.com/css2?family=Lato:ital,wght@0,100;0,300;0,400;0,700;0,900;1,100;1,300;1,400;1,700;1,900&family=Montserrat:ital,wght@0,100;0,300;0,400;0,500;0,600;0,700;0,800;0,900;1,100&family=Poppins:ital,wght@0,100;0,200;0,300;0,400;0,500;0,600;0,700;0,800;0,900;1,100;1,200;1,300;1,400;1,500;1,600;1,700;1,800;1,900&display=swap" rel="stylesheet">
  <style>
    body {
      font-family: "Poppins", sans-serif;
      background-color: #e8d300;
      color: #333;
      margin: 20px;
    }

    h1 {
      color: white;
      text-align: center;
      padding-top: 30px;
      font-size: 80px;
      line-height: 1.1em;
    }

    p {
      font-size: 25px;
      font-weight: 600;
      text-align: center;
      color: rgb(53, 53, 53);
    }

    .custprop {
      color: rgb(61, 61, 61);
    }
  </style>
</head>
<body>
  <h1>CSS <br/> <span class="custprop">Custom Properties</span> <br/> Project</h1>
  <p>If you have reached here, then you got it right</p>
</body>
</html>
```

If we copy and paste this into our code editor and then save it, we'll end up with a page that looks like this:

Notice I wrote **"CSS CUSTOM PROPERTIES"**. Good! That's what we will be learning today. Let us start with a definition.

## What are CSS properties?

A CSS property is a named styling feature, such as `font-size` or `color`. Paired with a value, it forms a declaration that defines a specific styling applied to an HTML element.

Here is what I mean 👇

```css
p {
  font-size: 25px;
}
```

In the example above, **"font-size"** is a property, and its value is set to **"25px."**

While this approach works, it has some limitations.

For instance,

1. If another rule somewhere in the application also uses a **font-size** of **25px** and the two must stay consistent, changing one means finding the other and changing it too. If the value appears in 10 places, we have to edit all 10 places on the website. We cannot update it once across the entire codebase.

2. We cannot create user-defined names. We are limited to the [built-in properties](https://www.w3schools.com/cssref/index.php).

**_You may have just seen something that sounds strange: "user-defined variables". We will get to that shortly._**

So doing it the traditional way comes with limitations. Because of this, on websites with a lot of content (which also means a lot of styling), we can use a better approach called **CSS custom properties, or custom variables**.

The term "Custom" is used because these **properties are defined by the developer rather than being built-in or predefined by the browser**.

CSS custom properties, or CSS variables, are user-defined values in CSS that hold specific values.

Take note of these **key statements** in its definition:

1. User-defined values.

2. Custom variables.

Let's try to progressively break them down.

## User-defined Values

In our previous code, the name **font-size** cannot be changed by the developer, because it is built in. If we misspell or rename it, the browser simply ignores the declaration. 👇

```css
p {
  /* None of these are built-in property names, so the browser ignores them */
  myfontsize: 25px;
  front-size: 10px;
  fontsize: 10px;
}
```

The browser ignores every declaration above, because none of them is a property it recognizes.

Custom properties let us break these rules.

With custom properties, we can choose any name we want, as long as it starts with two dashes. If a color is yellow, we can name the property **--spongebob**, give it a **yellow** value, and it will work.

**Giving it any name we want is the feature that makes it a User-defined value.**

If the user (you and I as developers) can customise it to any type of name we want, then it is a user-defined value.

## Customised Variables

Surprisingly, that same control you and I have is also what makes it a **Customised Variable**.

We store information inside them, and in programming, a **variable** is a named place to store data so it can be used again later in a program.

The custom properties are the user-defined variables.

If that has been understood, then we now need to understand the **CSS pseudo-class selector**.

## What is a Pseudo-Class Selector?

A pseudo-class is a keyword added to a selector to indicate a special state or condition of the selected elements.

For instance, there are **:hover** and **:root**.

If this is gibberish to you, then you should [read this to learn more about **pseudo-classes**](https://www.w3schools.com/css/css_pseudo_classes.asp).

In our case, we'll be making use of the **:root**.

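The **:root** pseudo-class matches the root element of the document, which in HTML is the `<html>` element, the highest-level parent of every other element. Because it is a pseudo-class, it is also more specific than the plain `html` type selector.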

```css
:root {
  background-color: red;
}
```

When you apply the code above, the whole page turns red, because `:root` styles the `<html>` element that contains everything else.

```css
html {
  background-color: red;
  color: white;
}

body {
  background-color: red;
}

:root {
  background-color: red;
}
```

In the code above, `:root` and `html` select the same element, but `:root` is more specific (a pseudo-class outranks a type selector), so its declarations win whenever the two rules conflict. The `body` selector targets a different, lower-level element. This is why `:root` is the usual place for values that should apply to the entire HTML document.

## How can the Custom Variables be used?

To declare a CSS custom variable, use the **-- prefix followed by your preferred name for the variable**.

```css
:root {
  --yellow-background: #e8d300;
  --dark-color: #333;
  --white-color: white;
  --heading-font-size: 80px;
  --paragraph-font-size: 25px;
}
```

Here, **--** is our **prefix**, and **--yellow-background**, **--dark-color**, **--white-color**, **--heading-font-size**, and **--paragraph-font-size** are our custom variables/properties, defined within the **:root** pseudo-class, which represents the highest-level parent element in the document.

As you may have noticed, all the property names are user-defined.

We can name them anything we want and use them globally.

The only way we are allowed to step outside the [built-in or predefined names](https://www.w3schools.com/cssref/index.php) is by using the **-- prefix** **followed by our preferred name for the variable**.

Note: Just to see if it works, you can name it whatever you wish.

Now that we have declared it, how do we use it?

```css
:root {
  --yellow-background: #e8d300;
  --dark-color: #333;
  --white-color: white;
  --heading-font-size: 80px;
  --paragraph-font-size: 25px;
}

body {
  font-family: "Poppins", sans-serif;
  background-color: var(--yellow-background);
  color: var(--dark-color);
  margin: 20px;
}

h1 {
  color: var(--white-color);
  text-align: center;
  padding-top: 30px;
  font-size: var(--heading-font-size);
  line-height: 1.1em;
}

p {
  font-size: var(--paragraph-font-size);
  font-weight: 600;
  text-align: center;
  color: var(--dark-color);
}

.custprop {
  color: var(--dark-color);
}
```

As you may notice, whenever we want to use a value declared in **:root**, we have to wrap its name in **var()**.

The **var()** function in CSS is used to insert the value of a custom property/variable wherever it is called. That is exactly what we did above.
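`var()` also accepts an optional second argument: a fallback used when the custom property has not been defined. In this sketch `--accent-color` is a hypothetical name that is deliberately never declared in `:root`, so the fallback value is the one that applies:

```css
.custprop {
  /* --accent-color is never declared, so the fallback #333 is used */
  color: var(--accent-color, #333);
}
```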

With this, the styling should work in your browser, and you should get essentially the same result as the original code. 👇

CSS custom properties/variables make it simple to change values globally. If we want to change the heading font size, we only need to update it in one place:

```css
:root {
  --heading-font-size: 40px;
}
```

**_Notice it was 80px earlier._**

With that single adjustment, it changes to 👇

If we had more classes with more headings, we would only need to change the **--heading-font-size** value once, and every rule that uses it would update across the application.
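Because the value lives in one place, it can also be changed conditionally. A sketch that shrinks the heading on narrow screens by redeclaring the variable inside a media query (the 600px breakpoint is an arbitrary example):

```css
:root {
  --heading-font-size: 80px;
}

/* On viewports 600px wide or narrower, every rule that uses
   var(--heading-font-size) picks up the smaller value */
@media (max-width: 600px) {
  :root {
    --heading-font-size: 40px;
  }
}
```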

I hope this is clear.

Now we can effortlessly update our website across the entire codebase, improve readability through user-defined variable names, and keep our style values reusable.

| dunia |

1,737,141 | Hello | Hello | 0 | 2024-01-21T21:33:04 | https://dev.to/tryshenka1980/hello-2f9f | Hello | tryshenka1980 | |

1,737,190 | Postmortem: Web Stack Downtime january 22, 2024 | Issue Summary: Duration: Start Time: january 22, 2024, 09:30 AM (UTC) End Time: january 22, 2024,... | 0 | 2024-01-21T23:18:26 | https://dev.to/hassanhai/postmortem-web-stack-downtime-january-22-2024-2aae | **Issue Summary:**

**Duration:**

Start Time: January 22, 2024, 09:30 AM (UTC)

End Time: January 22, 2024, 11:45 AM (UTC)

**Impact:**

The user authentication service was degraded, affecting about 30% of users.

Root cause: Misconfigured load balancing settings resulted in increased traffic to the authentication server.

Timeline:

14:30: The issue was detected through an increased error rate in the authentication service.

14:35: A monitoring alert was triggered by the high error rate.

14:40: Initial investigation began and pointed to a possible problem with the database connection.

15:00: The database connection was checked, and the focus shifted to the application servers.

15:30: Recent code deployments were incorrectly assumed to be the cause, and recovery began.

16:00: Restore completed. No improvement was seen. The issue was escalated to the infrastructure team.

16:30: Further investigation revealed that the load balancing settings were configured incorrectly, resulting in uneven distribution of traffic.

17:00: The load balancing configuration was fixed and traffic began to normalize.

18:45: Full service restoration confirmed.

Root cause: Misconfigured load balancing settings caused traffic to be distributed unevenly, overloading the authentication server.

**Resolution:**

Fixed the load balancing configuration so that traffic is distributed evenly across the application servers, eliminating the bottleneck.

**Corrective and Preventive Measures:**

**Improvements/Fixes:**

**Automatic Load Balancing Configuration Validation:**

Run automated tests to validate the load balancing configuration and catch configuration errors.

**Advanced Monitoring:**

Improve the monitoring system to detect and alert on load balancing anomalies in real time.

**Incident Response Training:**

Provide additional training to the team on effective incident response and troubleshooting strategies.

**Tasks to resolve the issue:**

Automatic load balancer checks: Implement a script that periodically checks the load balancer configuration and alerts you to deviations from best practices.
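As an illustration only: the postmortem does not say which load balancer is involved, so this sketch assumes an nginx-style `upstream` block where each backend is a `server` line with an optional `weight=` parameter (nginx treats a missing weight as 1). The hypothetical `check_weights` function reads such a block on stdin and flags any backend whose weight differs from the first one:

```bash
#!/bin/bash
# Hypothetical check: report upstream servers whose weight differs from
# the first server's weight. Exits non-zero if any mismatch is found.
check_weights() {
  awk '
    $1 == "server" {
      w = 1                                   # nginx default weight
      for (i = 2; i <= NF; i++)
        if ($i ~ /^weight=/) { split($i, kv, "="); w = kv[2] + 0 }
      if (n == 0) ref = w
      n++
      if (w != ref) { print "uneven weight: " $2 " (" w " vs " ref ")"; bad = 1 }
    }
    END { exit bad }
  '
}

# Example config with one overweighted backend
check_weights <<'EOF' || echo "configuration check failed"
upstream auth_backend {
    server 10.0.0.1:8080 weight=1;
    server 10.0.0.2:8080 weight=5;
}
EOF
```

A scheduled job (cron or a CI schedule) could run a check like this and raise an alert whenever it exits non-zero.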

**Monitoring improvements:**

Add custom monitoring metrics specific to load balancer health and distribution.

**Documentation Update:**

Update the incident response playbook with steps to investigate and resolve issues related to load balancing.

**Team Training:**

Conduct a load balancing management and troubleshooting workshop for the infrastructure team.

** Conclusion: **

The January 22, 2024 outage was caused by misconfigured load balancing settings that sent excessive traffic to the authentication server, making it unavailable.

Early in the investigation, effort was temporarily misdirected by the initial assumption of a database problem.

Once the cause was identified, the corrective action was to adjust the load balancing settings to distribute traffic evenly, which restored service.

Automated load balancing configuration testing, improved monitoring, and additional incident response training were identified as key improvements to prevent future incidents.

To address these issues and harden the system against similar problems in the future, a number of specific tasks are outlined, including scripted automated checks and workshops. | hassanhai | |

404,397 | Learning JavaScript...again | An article about holding yourself accountable, levelling up and learning. | 0 | 2020-07-20T02:29:48 | https://dev.to/robinhoeh/learning-javascript-again-2of4 | learning, javascript, career, selfimprovement | ---

title: Learning JavaScript...again

published: true

description: An article about holding yourself accountable, levelling up and learning.

tags: Learning , JavaScript, Career, SelfImprovement

//cover_image: https://i.picsum.photos/id/175/2896/1944.jpg?hmac=djMSfAvFgWLJ2J3cBulHUAb4yvsQk0d4m4xBJFKzZrs

---

# I want to get better

### Current day

For the past two and a half years I have been working as a Front End Developer. I have learned a ton since I started. I've been at the same job since I was hired in late 2017. Day to day we use Vue.js and CSS, plus Cypress and Mocha + Chai for testing. I have come a long way since my first few months at work, but I still feel, daily, like I have a huge knowledge gap when writing and developing. Specifically, I get stuck when coming up with the logic for a component.

Last month I got really serious about note taking and started adding to my daily notes, breaking down all of the sections of the Front End ecosystem I could find across multiple resources, as well as what I have encountered at work.

I started taking notes at the end of the week on things I had learned from my co-workers, not just about building a component but things like how we structure our app and why we do things the way we do. I would sometimes pick up a ticket from the scrum board and be like, "Ya ok cool. So build this component and use it on this page". But around the halfway mark I would get stuck and be like "Wait a sec, how come my component works here but not here?" And when I asked one of the more senior devs about something I was stuck on, I would typically receive wayyy more info than I thought I was going to get, with so many more considerations. Then my feeling about building that component quickly escalated to "What in the F am I doing", and my confidence dropped to an all-time low for that day.

### APPROVED

My boss has always advocated that I get my JS skills super solid before anything else. I totally agree with him. Becoming better at JavaScript will make working with the framework we use so much easier. And some days I actually get to put some newfound JS and Vue skills to work, which is a great feeling! Something finally clicks and I'm like "Yee I know my stuff!". I want to have this feeling more though. I want to be able to wake up and be like "I am going to crush some JS" and build a component so DRY and clean that when I make a PR my coworkers are like "APPROVED".

Let me be clear here though, I'm not chasing comments and praise for my good work. I want to be able to contribute to our projects with a confidence that I can build on, which will lead to improving my skills. So why not learn what I can during the day, apply that to side projects and build cool shit outside of work? Well, I tried that, or so I thought.

### Side projects

I would get a great idea for an app. I would tell my wife and be like "you know that new car we wanted?? I will buy it for you once this app takes off". Hmm...not really, but I was so excited to work on my side project. Shortly after doing some scaffolding, base styles and planning out some UX, I would stop. I got busy with another idea or got lazy. But that's not the real reason I didn't follow through on projects. I stopped because I didn't actually know how to code the thing from scratch. I panicked at the thought of asking someone from work for help on it because it was a super "easy" app. I didn't wanna let them know that the person who works on cool components during the day can't code a small project from scratch. I told myself I would just stop attempting projects because I didn't wanna have to face myself and the feeling of failure. For a couple years now I have been feeling this inner pressure to pump out high-quality side projects that show off my skills, and to have fun doing it. But I haven't finished a single side project since I started working full time. I have taken a ton of courses, but the concepts never stuck quite the same way as when I would f*#& something up at work and be like, ohhh got it now.

### Changing it up

A few months ago, I found an article from this dude Zell Liew. He explained things extremely well and in a way I could understand. Not only understand, but actually retain. Then I started getting emails about this course he had. I was sold. These emails were like "Do you get nervous when you think about coding from scratch? Are you afraid to start because you don't wanna fail? I'll show you how to learn and retain JavaScript skills so you don't have that feeling anymore". I answered all of these questions with "Hells ya"... I have only just started the course, and it prompts you to build accountability and write out what you have learned. So, I'm doing just that. For a couple years now I have avoided my knowledge gaps and avoided tutoring because I was scared of being labeled "A fraud". I avoided hackathons cuz I didn't wanna be like "But wait, how should I loop over this nested array to display the desired data?". I was scared of "getting caught" because I didn't know JS.

## Making a crazy comparison

My former profession was playing and teaching drums. I taught quite a lot actually and had fun doing it. I knew what my limitations were and wasn't scared to let students know when I didn't know how to do something. I started teaching privately after playing drums for about 10 years. Maybe time = confidence? By contrast, I took a 3-month coding bootcamp and was working full time 2.5 months after completing it. WTF! Imagine you learned the drums in 3 months and then had a yearly salary alongside other professionals who treated you nicely and didn't give you a hard time for being a newbie?!

## Objective

So, why am I writing this article? I'm taking the advice from Zell's course. I'm changing the way I learn, and the way I have learned JavaScript in the past. I'm building accountability. I'm going to be writing about the concepts and things I learn about. I wanna share it with people. I wanna get feedback from people in the comments about how solid my understanding of the concepts I write about is. Also, the buy-in was big: close to $600 CDN. There's money on the line. As well, writing about JS makes me confront my own skills and ego. It's uncomfortable.

My hope is that I become way more confident in JS so that I can write clean, DRY components, help others learn and build cool shit that can help people. Nothing too crazy right? I know writing about JS on a blog is nothing new but you gotta start somewhere.

Please share if any part of this article resonates with you or someone you know! Also, it's been a while since I have written an article so any formatting or readability feedback is welcomed as well! I know I used "I" like 400 times. Thanks for reading :)

| robinhoeh |

1,737,315 | The Queue-Based Load Leveling Pattern | Applications in the cloud can be subjected to heavy peaks of traffic in intermittent phases. If our... | 0 | 2024-01-22T04:04:06 | https://dev.to/willvelida/the-queue-based-load-leveling-pattern-1ij2 | azure, architecture, tutorial, beginners | ---

title: The Queue-Based Load Leveling Pattern

published: true

description:

tags: azure,architecture,tutorial,beginner

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/rkoxyv5i0jkre7jmui74.png


# published_at: 2024-01-22 02:32 +0000

---

Applications in the cloud can be subjected to intermittent, heavy peaks of traffic. If our applications can't handle these peaks, this can lead to performance, availability and reliability issues. For example, imagine we have an application that stores state temporarily in a cache. We could have a single task within our application that performs this for us, and we could have a good idea of how many times a single instance of our application would perform this task.

However, if the same service has multiple instances running concurrently, the volume of requests made to the cache becomes difficult to predict. Peaks in demand could cause our application to overload the cache, making it unresponsive due to the number of requests flooding in.

This is where the **Queue-Based Load Leveling** pattern can help us out. Instead of having a service invoke another service directly, we use a queue that acts as a buffer between our application and the service it invokes, preventing heavy traffic from overloading services and causing failures or timeouts.

In this article, I'll talk about how we can use Queue-based load leveling to prevent traffic overloading external services, what other benefits this pattern provides, and some things we need to keep in mind when using queue-based load leveling.

## Implementing Queue-Based Load Leveling in Azure

To prevent our applications from directly overloading services, we can introduce a queue between our application and the service so that they run asynchronously. Our application posts a message containing the data the service needs to the queue, and the queue acts as a buffer between the application and the service. The message stays on the queue until the service retrieves it.

Say we have an API hosted on Container Apps that interacts with a Cosmos DB database. Without going too much into the internal mechanics of Cosmos DB, if we don't provision enough throughput for our database, we're going to see 429 (Too Many Requests) errors returned to our API when we try to process too many requests against our database.

To solve this, we can put a Service Bus queue between the replicas of our API and our Cosmos DB database. A separate Container App then reads messages from the queue and performs the reads/writes against our Cosmos DB account instead.
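The following minimal in-process sketch makes the buffering idea concrete. It uses Python's standard-library `queue.Queue` as a stand-in for a real message broker such as Service Bus; it is not the Service Bus SDK. The producer only enqueues messages and moves on, while a separate consumer thread retrieves them whenever it is ready.

```python
import queue
import threading


def producer(q, items):
    # The producer only enqueues; it never waits for the service to do the work
    for item in items:
        q.put(item)
    q.put(None)  # sentinel: no more messages


def consumer(q, handled):
    # The consumer pulls messages at its own pace until it sees the sentinel
    while True:
        msg = q.get()
        if msg is None:
            break
        handled.append(f"processed {msg}")


buffer = queue.Queue()
results = []
worker = threading.Thread(target=consumer, args=(buffer, results))
worker.start()
producer(buffer, ["order-1", "order-2", "order-3"])
worker.join()
print(results)  # ['processed order-1', 'processed order-2', 'processed order-3']
```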

Within our Container App that reads messages from the queue, we can implement logic that controls the rate at which messages are read from the queue to prevent our Cosmos DB datastore from being overloaded (otherwise we've just moved the problem, rather than actually solving it 😅).
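One simple way to control that rate is a fixed-interval limiter that spaces out message handling so that no more than N messages are processed per second. This is a minimal sketch, not a feature of Service Bus or any Azure SDK. `RateLimiter` and `drain` are illustrative names, and the clock and sleep functions are injectable so the pacing can be tested without real waiting. A token bucket is a common alternative when short bursts should be allowed.

```python
import time


class RateLimiter:
    """Space calls at least 1 / max_per_second seconds apart."""

    def __init__(self, max_per_second, clock=time.monotonic, sleep=time.sleep):
        self.interval = 1.0 / max_per_second
        self.clock = clock
        self.sleep = sleep
        self.next_allowed = clock()

    def wait(self):
        now = self.clock()
        if now < self.next_allowed:
            # Too early: block until the next slot opens
            self.sleep(self.next_allowed - now)
            now = self.next_allowed
        self.next_allowed = now + self.interval


def drain(messages, limiter, handle):
    # Process each message only when the limiter allows it
    for msg in messages:
        limiter.wait()
        handle(msg)


processed = []
drain(["msg-1", "msg-2", "msg-3"], RateLimiter(max_per_second=20), processed.append)
print(processed)  # ['msg-1', 'msg-2', 'msg-3'], spread over roughly 0.1 seconds
```

The `max_per_second` value would be derived from what the downstream datastore can handle, rather than from how fast messages arrive.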

Our Service Bus queue decouples our application from the database, and the container app reading messages from the queue can do so at its own pace, regardless of how many messages are being sent to the queue concurrently.

This helps increase availability because any delays in our consuming service won't have an impact on our producer application, which can continue to post messages to the queue. It can also help when we need to scale, since the number of queues and consumer services can be scaled to meet demand.

Queue-based load leveling can also help us control our cloud costs. If you have an idea of your application's average load, you can size your consumer application to handle that average load rather than the peak load.

Depending on which external service you use (whether that is Cosmos DB or any other type of datastore), it's likely that the datastore will throttle requests once demand reaches a certain threshold. You can use that threshold to configure the rate at which your consumer application processes messages, so that the external service's throttling limit isn't reached.
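A quick simulation shows the trade-off of sizing a consumer for average rather than peak load. The consumer is assumed to have a fixed capacity in messages per second, and the traffic pattern includes a 10-second spike; the numbers are made up for illustration, not measurements. The queue absorbs the spike as a backlog that drains once traffic subsides.

```python
def simulate_backlog(arrivals_per_second, consumer_rate):
    """Return (max_backlog, seconds_until_drained) for a consumer with a fixed capacity."""
    backlog = 0
    max_backlog = 0
    seconds = 0
    for arrivals in arrivals_per_second:
        # Each second, new messages join the queue and the consumer removes up to consumer_rate
        backlog = max(0, backlog + arrivals - consumer_rate)
        max_backlog = max(max_backlog, backlog)
        seconds += 1
    # Once traffic stops, the consumer keeps draining whatever is left
    while backlog > 0:
        backlog = max(0, backlog - consumer_rate)
        seconds += 1
    return max_backlog, seconds


# 60 seconds of traffic: 100 msg/s, a 10-second spike at 500 msg/s, then back to 100 msg/s
traffic = [100] * 25 + [500] * 10 + [100] * 25
print(sum(traffic) / len(traffic))     # average load, about 166.7 msg/s
print(simulate_backlog(traffic, 200))  # (3000, 63): sized just above average
print(simulate_backlog(traffic, 500))  # (0, 60): sized for the peak
```

The 200 msg/s consumer never hits the datastore harder than 200 msg/s. The cost is latency: the last message of the spike waits behind 3000 others, roughly 3000 / 200 = 15 seconds.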

## What should we keep in mind before implementing this pattern?

You want to avoid moving the problem from the producer side to the consumer side. Within your application logic that receives messages from the queue, you'll want to control the rate at which messages are consumed to avoid overloading external services. This will need to be tested under load to ensure that your incoming load is actually leveled. From those tests, you'll be able to determine how many queues and consumer instances you'll need to achieve the required load leveling.

If you expect a reply from the service that you send a request to, you will need to implement a mechanism for this, since message queues are a one-way communication mechanism. If your application needs to receive a response from the consuming service with low latency, then queue-based load leveling may not be the right pattern for your use case.

You may also find yourself falling behind on requests, since they are queued up rather than processed right away. Simply autoscaling the number of instances consuming messages from the queue may also cause resource contention on the queue, decreasing its effectiveness at leveling the incoming traffic load.

The persistence mechanism of your chosen queue technology is also important here. Messages could be lost, or the queue itself could crash. Choose a message broker that meets the needs of your desired load-leveling behavior, and keep that broker's limitations in mind.
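A common way to build that mechanism uses a separate reply queue plus a correlation ID. The caller attaches the ID to each request, and the consumer copies it onto its reply. The sketch below uses in-memory standard-library queues as stand-ins for a broker, so it illustrates the idea rather than any Service Bus feature. The caller blocks while it waits for the reply, which is exactly where the extra latency comes from.

```python
import queue
import threading
import uuid

requests_q = queue.Queue()
replies_q = queue.Queue()


def service_worker():
    # Consumer: handle each request and reply on the reply queue with the same correlation ID
    while True:
        msg = requests_q.get()
        if msg is None:  # sentinel: shut down
            break
        replies_q.put({"correlation_id": msg["correlation_id"], "body": msg["body"].upper()})


def send_and_wait(body, timeout=5.0):
    # Producer: tag the request, then wait for the reply that carries the same tag
    correlation_id = str(uuid.uuid4())
    requests_q.put({"correlation_id": correlation_id, "body": body})
    while True:
        reply = replies_q.get(timeout=timeout)  # raises queue.Empty if nothing arrives in time
        if reply["correlation_id"] == correlation_id:
            return reply["body"]
        # A reply meant for a different request is skipped here; a multi-caller client would route it to its owner


worker = threading.Thread(target=service_worker, daemon=True)
worker.start()
result = send_and_wait("hello")
print(result)  # HELLO
requests_q.put(None)
worker.join()
```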

## Conclusion

In this article, we discussed what the **Queue-Based Load Leveling** pattern is, how we can use it to prevent traffic overloading external services, what other benefits this pattern provides, and some things we need to keep in mind when using queue-based load leveling.

If you want to read more about this pattern, check out the following resources:

- [Azure Architecture doc on the Queue-Based Load Leveling pattern](https://learn.microsoft.com/en-us/azure/architecture/patterns/queue-based-load-leveling)

If you have any questions, feel free to reach out to me on X/Twitter [@willvelida](https://twitter.com/willvelida)

Until next time, Happy coding! 🤓🖥️

| willvelida |

1,737,398 | Selenium Webdriver False Positive Evidence. | Does Selenium webdriver contains any documents for validating false positive scenarios. | 0 | 2024-01-22T06:49:50 | https://dev.to/shanmugapriyam202020/selenium-webdriver-false-positive-evidence-2on8 | selenium | Does Selenium WebDriver have any documentation on validating false-positive scenarios? | shanmugapriyam202020 |

1,737,407 | SAP Training Institute in Gurgaon | Enrolling in a SAP MM training programme is a financial commitment towards your future with... | 0 | 2024-01-22T07:06:13 | https://dev.to/techspirals/sap-training-institute-in-gurgaon-2l7p | sap, mm, course | Enrolling in a **[SAP MM training](https://techspirals.com/sub-service/sap-mm)** programme is a financial commitment towards your future with Techspirals. It's a pass to a world of rewarding job opportunities, expanded knowledge, and the capacity to greatly impact organisational success. So why hold off? Get started on your SAP MM career right now to unlock the door to opportunity!

More Courses offered by Techspirals Technologies:

- SAP SD

- SAP HR

- SAP HANA

- SAP SF

- SAP PP

- SAP FICO

Bonus Tip: Research the SAP MM training courses you are considering before enrolling! Look for reputable providers with qualified teachers, real-world expertise, and hands-on training. You can get SAP MM training with the best institute.

All things considered, taking an **SAP MM training course** is a wise investment for anyone hoping to learn more, advance their career, and benefit a company.

Naturally, the particular advantages you experience are going to differ depending on your background, your professional objectives, and the quality of the training course you select.

| techspirals |

1,737,422 | LRO Limited: Sector Rotation - The ‘Tides’ in the Ocean of Investment Strategies | LRO Limited, a prominent investment advisory firm based in India, underscores the importance of... | 0 | 2024-01-22T07:25:21 | https://dev.to/lro03/lro-limited-sector-rotation-the-tides-in-the-ocean-of-investment-strategies-1ohm | LRO Limited, a prominent investment advisory firm based in India, underscores the importance of understanding sector rotation, a commonly used investment strategy that can significantly impact an investor’s portfolio.

Sector rotation can be likened to the shifting tides in the ocean. Just as tides ebb and flow based on the gravitational pull of the moon, sector rotation involves shifting investments from one sector of the economy to another, based on the stages of the economic cycle.

At LRO Limited, we view sector rotation as a dynamic dance performed in sync with the rhythm of the market. Much like a skilled dancer who adjusts their movements to the changing beat of the music, successful investors adjust their portfolio allocations according to the ever-changing economic cycle.

For instance, during an economic expansion, sectors such as technology and consumer discretionary tend to outperform. This scenario is similar to a high tide, where the water level (or sector performance) is at its peak. Conversely, during an economic downturn, defensive sectors like utilities and healthcare often fare better, akin to a low tide, where the water level recedes.

However, navigating the tides of sector rotation is not a simple task. It requires a deep understanding of the economic cycle, sector-specific factors, and market trends - much like how a sailor needs to understand the lunar cycle, weather patterns, and sea currents to navigate the ocean tides successfully.

In conclusion, LRO Limited emphasizes the crucial role of understanding sector rotation in making informed investment decisions. As we continue to guide our clients on their investment journey, we remain committed to providing expert advice and strategic guidance, helping them navigate the ‘tides’ of sector rotation in the vast ocean of investment strategies.

| lro03 | |

1,737,447 | The PHP library for Turso HTTP | I was one of those waiting for the Turso PHP SDK, but many said just use the HTTP SDK. OK that's very... | 0 | 2024-01-22T14:30:00 | https://dev.to/darkterminal/the-php-library-for-turso-http-1p47 | php, turso, database, webdev | I was one of those waiting for the Turso PHP SDK, but many said to just use the HTTP SDK. OK, that's very sad; in the end, I made this stupid decision.

---

When **[Turso](https://turso.tech/)** was just born and started to be talked about on the Internet, I started to pay a little attention, especially since **[Turso](https://turso.tech/)** was often mentioned by [ThePrimeagen](https://twitch.tv/ThePrimeagen) (sorry, I wrote this article using Google Translate).

Then I searched about **[Turso](https://turso.tech/)** Database and found something very interesting!

> SQLite is the most widely used database engine in the world because it is the easiest and works best. **[Turso](https://turso.tech/)** takes it everywhere.

With that as my starting point, as an SFE (Software Freestyle Engineer) curious about how to get started, I opened the documentation and looked at the available SDKs.

Sadly, I didn't see PHP. Yes, I can use TypeScript/JS, I'm stupid when it comes to Rust, childlike when it comes to Go, and playful when it comes to Python. Since I'm a Lambo Programmer living in a Warehouse, I want PHP to be there!

---

## Turso over HTTP

When I saw the words "Turso over HTTP" on the card in the SDK documentation, I was a little offended because there was a paragraph that said: _"Using something else? Learn how to use Turso over HTTP."_

Wait a minute, why should I be offended?!

Maybe they thought PHP was a difficult language and it wasn't easy to make a compatible SDK, so they gave up and offered HTTP only.

Wait a minute, why am I so stupid?!

HTTP/PHP/HTTP/Hypertext Preprocessor, yeah! Thank you, my stupid brain! They didn't give up on PHP; they gave us the freedom to be creative and innovate. So my decision was very correct! (Yes I am! Because I'm too handsome to do this)

---

I created the **TursoHTTP Library** for PHP, which is equipped with a **SadQuery Generator** to make it easier to create SQL queries for lazy people who will ask: "Where is the accompanying ORM?" This is Madapunker!

The `TursoHTTP` library is a PHP wrapper for **[Turso](https://turso.tech/)** HTTP Database API. It simplifies interaction with Turso databases using the Hrana over HTTP protocol. This library provides an object-oriented approach to build and execute SQL queries, retrieve query results, and access various response details.

```php
<?php

use Darkterminal\DataType;
use Darkterminal\SadQuery;
use Darkterminal\TursoHTTP;

// Composer autoloader; the path assumes this script sits next to the vendor/ directory
require_once __DIR__ . '/vendor/autoload.php';

$databaseName = "database-name";
$organizationName = "organization-name";
$token = "your-turso-database-token";

$tursoAPI = new TursoHTTP($databaseName, $organizationName, $token);

$query = new SadQuery();

// Build a CREATE TABLE statement for a simple users table
$createTableUsers = $query->createTable('users')
    ->addColumn('userId', DataType::INTEGER, ['PRIMARY KEY', 'AUTOINCREMENT'])
    ->addColumn('name', DataType::TEXT)
    ->addColumn('email', DataType::TEXT)
    ->endCreateTable();

// Build an INSERT statement for one user
$createNewUser = $query->insert('users', ['name' => 'darkterminal', 'email' => 'darkterminal@duck.com'])->getQuery();

// Execute the CREATE TABLE statement, then close the stream
$tableCreated = $tursoAPI
    ->addRequest("execute", $createTableUsers)
    ->addRequest("close")
    ->queryDatabase()
    ->toJSON();

echo $tableCreated . PHP_EOL;

// Execute the INSERT statement, then close the stream
$userCreated = $tursoAPI
    ->addRequest("execute", $createNewUser)
    ->addRequest("close")
    ->queryDatabase()
    ->toJSON();

echo $userCreated . PHP_EOL;
```

Isn't this interesting!? Very easy and true to reality!
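Here is what travels over the wire. Turso's HTTP API accepts a list of requests posted to a `/v2/pipeline` endpoint, and the `addRequest("execute", …)` / `addRequest("close")` calls above appear to mirror that list. The Python sketch below only builds such a request, without sending it. It assumes the `https://<database>-<organization>.turso.io` hostname pattern; check the exact URL your database shows, since it may differ. It is not necessarily the exact payload TursoHTTP itself produces.

```python
import json
import urllib.request


def build_pipeline_request(database, organization, token, sql_statements):
    """Build (but do not send) an HTTP request for Turso's /v2/pipeline endpoint."""
    url = f"https://{database}-{organization}.turso.io/v2/pipeline"
    pipeline = [{"type": "execute", "stmt": {"sql": sql}} for sql in sql_statements]
    pipeline.append({"type": "close"})  # close the stream after the last statement
    body = json.dumps({"requests": pipeline}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Authorization": f"Bearer {token}", "Content-Type": "application/json"},
        method="POST",
    )


req = build_pipeline_request(
    "database-name", "organization-name", "your-turso-database-token", ["SELECT * FROM users"]
)
print(req.full_url)  # https://database-name-organization-name.turso.io/v2/pipeline
print(req.data.decode())
```

Sending it with `urllib.request.urlopen(req)` would execute the statements, so only use a real token against a database you are happy to modify.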

If you are curious about the complete documentation, please visit the GitHub repository.

{% github https://github.com/darkterminal/turso-http %}

And who dares to integrate [fck-htmx](https://github.com/darkterminal/fck-htmx) with [turso-http](https://github.com/darkterminal/turso-http)? Let me know in the comment section!

| darkterminal |

1,737,538 | Significance of Long-Term Investment: Building a Sustainable Financial Future | In a world driven by rapid changes and instant gratification, the concept of long-term investment... | 0 | 2024-01-22T09:25:21 | https://dev.to/mahesar21/significance-of-long-term-investment-building-a-sustainable-financial-future-1p1j | In a world driven by rapid changes and instant gratification, the concept of long-term [investment](https://shahbaz54.blogspot.com/2024/01/the-significance-of-long-term.html) often takes a backseat to short-term gains. However, understanding the importance of a long-term [investment](https://shahbaz54.blogspot.com/2024/01/the-significance-of-long-term.html) strategy is crucial for individuals seeking financial stability, growth, and [security](https://shahbaz54.blogspot.com/2024/01/the-significance-of-long-term.html). In this blog, we will delve into the reasons why long-term investment is vital for building a sustainable [financial future](https://shahbaz54.blogspot.com/2024/01/the-significance-of-long-term.html).

| mahesar21 | |

1,737,550 | CoinTurk Analytics:Dogecoin Reaches Weekly High Following New Payment Account Creation | The market value of the cryptocurrency market’s largest memecoin project, Dogecoin, reached its... | 0 | 2024-01-22T09:48:12 | https://dev.to/victordelpino/cointurk-analyticsdogecoin-reaches-weekly-high-following-new-payment-account-creation-7h5 | crypto, cryptocurrency, blockchain, analytics | **The market value of the cryptocurrency market’s largest memecoin project, Dogecoin, reached its highest level of the week after the creation of a new XPayments account on platform X, which has over 100,000 followers.**

DOGE surged by 12.8% in a nine-hour period on January 21 and reached the highest level of seven days at $0.08978 in the early hours of January 22.

A falling trend line is noticeable on the four-hour DOGE chart. Following the development on the X side on January 21, DOGE managed to break this trend line but failed to close the four-hour bar above it, which led to selling pressure on the DOGE front. The EMA 200 level (red line) indicates a short-term negative process for DOGE due to selling pressure.
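For readers unfamiliar with the indicator, an EMA (exponential moving average) weights recent closing prices more heavily than older ones. It uses the smoothing factor α = 2 / (N + 1); for EMA 200 on a four-hour chart, N = 200 four-hour closes. The minimal sketch below seeds the average with the simple mean of the first N prices. That is one common convention, and charting platforms differ in how they seed it, so values may not match a given chart exactly.

```python
def ema(closes, period):
    """Exponential moving average of closing prices, seeded with the mean of the first `period` closes."""
    if period < 1 or len(closes) < period:
        raise ValueError("need period >= 1 and at least `period` closing prices")
    alpha = 2 / (period + 1)
    value = sum(closes[:period]) / period  # seed: simple average of the first `period` closes
    series = [value]
    for close in closes[period:]:
        # New EMA = alpha * latest close + (1 - alpha) * previous EMA
        value = alpha * close + (1 - alpha) * value
        series.append(value)
    return series


print(ema([1, 2, 3, 4, 5], period=3))  # [2.0, 3.0, 4.0]
```

With real data, `ema(four_hour_closes, 200)[-1]` would give the latest value of the red line, where `four_hour_closes` is a hypothetical list of closing prices.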

The most important support levels to watch on the four-hour DOGE chart are, respectively; $0.08246 / $0.07961 and $0.07677. A four-hour bar close below the $0.08246 level, which played an important role in the recent selling pressure, will increase the selling pressure on DOGE.

The most important resistance levels to follow on the DOGE chart are, respectively; $0.08410 / $0.08630 and $0.08875. Especially a four-hour bar close above the $0.08875 level following a news-driven rise will accelerate the momentum of the DOGE price.

| victordelpino |

1,737,692 | The Benefits, features, and opportunities of corporate taxi booking systems | Business demands a high level of efficiency as well as a high level of comfort. Businesses are... | 0 | 2024-01-22T12:02:27 | https://dev.to/accivatravels/the-benefits-features-and-opportunities-of-corporate-taxi-booking-systems-31g7 |

Business demands a high level of efficiency as well as a high level of comfort. Businesses are constantly searching for innovative ways to streamline their operations and improve the work environment for their employees. One such game-changer is the adoption of corporate taxi booking systems, with Accivatravels leading the charge. In this blog post, we will explore the host of benefits, state-of-the-art features, and exciting possibilities that Best Corporate Cab Services in Bangalore booking systems bring to the table.

1. Streamlined Corporate Travel:

Gone are the days of grappling with manual booking processes and the hassle of reimbursement paperwork. Corporate taxi booking systems like Accivatravels empower companies to streamline their travel arrangements effortlessly. These systems integrate seamlessly with corporate systems, allowing employees to book and manage their rides with just a few clicks. This not only saves time but also ensures a smoother experience for all stakeholders involved.

2. Cost-Efficiency and Expense Management:

Accivatravels' corporate taxi booking system brings a level of cost-efficiency that traditional travel methods struggle to match. By leveraging advanced algorithms and optimized routes, companies can drastically reduce travel expenses. Additionally, the system provides comprehensive expense reports, presenting a clear overview of all travel-related costs. This not only aids in financial planning but also simplifies the reimbursement process.

3. Enhanced Employee Safety and Security:

Employee safety is a top priority for any responsible organization. Corporate taxi booking systems play a pivotal role in ensuring the well-being of employees during their travels. Accivatravels, for instance, incorporates safety features such as real-time tracking, driver verification, and emergency assistance. Employers can rest easy knowing that their workforce is in safe hands, fostering a sense of security and trust within the organization.

4. Time-Saving Features:

Time is money, and corporate taxi booking systems are designed to save both. Accivatravels' platform, for instance, allows users to pre-schedule rides, eliminating the need for last-minute scrambling. Automated reminders and notifications keep employees informed about their ride status, reducing unnecessary waiting times. Such time-saving features contribute to improved productivity and a more efficient use of resources.

5. Customization for Corporate Needs:

Not all companies are the same, and Accivatravels knows this well. The platform offers a high degree of customization to cater to the unique needs of individual companies. Whether it is setting specific travel policies, defining expense limits, or tailoring reporting structures, the flexibility of these systems ensures that they align seamlessly with the company's culture and requirements.

6. Environmental Sustainability:

As organizations increasingly prioritize sustainability, corporate taxi booking systems emerge as a beacon of eco-friendly travel. Accivatravels encourages the use of shared rides and optimized routes, reducing the carbon footprint associated with corporate travel. By promoting greener transportation options, companies can actively contribute to environmental conservation while showcasing their commitment to corporate social responsibility.

7. Data-Driven Insights:

The Best Corporate Cab Services in Bangalore system offered by Accivatravels goes beyond transportation logistics; it provides users with valuable insights based on a comprehensive analysis of data. A variety of statistics are available on the platform, including travel patterns and expenditure trends that can help companies make strategic decisions. By using these insights, you will be able to optimize your travel policies, negotiate better deals with suppliers, and ultimately save on costs.

Opportunities for Growth:

Beyond the immediate benefits, the adoption of corporate taxi booking systems opens up exciting possibilities for business growth. Companies that embrace these innovative solutions position themselves as forward-thinking and employee-centric. This, in turn, can enhance their appeal to top talent and clients alike. Additionally, partnerships with reputable service providers like Accivatravels can lead to exclusive perks and discounts, creating a win-win scenario for all parties involved.

Conclusion:

The AccivaTravels corporate taxi booking system stands out among the Best Corporate Cab Services in Bangalore as a beacon of efficiency, convenience, and innovation. The advantages of this system range from streamlined travel procedures and cost-efficiency to enhanced employee safety and environmental sustainability. Offering an array of features and opportunities for growth, this platform is more than just a tool for today; it is a strategic asset for the future. A company that adopts a taxi booking system positions itself at the forefront of innovation, equipped to address the challenges and opportunities of tomorrow's business environment.

Address:

Call Us: +91-9035012166

Landline: +91 8023541166

Address: #52, 1 Main Road, Anand Nagar, Hebbal, Bengaluru 560024.

Email: info@accivatravels.com

Website: https://www.accivatravels.com/

| accivatravels | |

1,737,702 | What is Language Tourism? | Language tourism is travel motivated by learning a new language. Language tourism is considered as... | 0 | 2024-01-22T12:24:18 | https://dev.to/aliriodi/what-is-language-tourism-4g6l | tourism, idiomatic, spanish, espanolcone | Language tourism is travel motivated by learning a new language. A trip counts as language tourism as long as it is to a place other than the tourist's residence (generally another country) and the stay is shorter than one year.

Contrary to what is usually thought, language tourism does not always involve traveling abroad; it can take place in the tourist's own country of residence. So this type of tourism can be a good option for those who want to learn a new language that happens to be spoken in their own country.

**Examples of language tourism**

<li> Take Spanish classes in **Córdoba, Argentina** with highly qualified **Español con E** teachers.</li>

<li> Enjoy the beautiful scenery of the city of **Córdoba, Argentina**.</li>

<li> Taste a variety of world-class wines and exquisite local meats in <b>Córdoba, Argentina</b>.</li>

<li> Enjoy learning a new language with people from different cultures and share unique experiences accompanied by highly qualified <span style='color:var(--primary)'><b>Español con E</b></span> staff.</li>

<p>That is why at <b>Español con E</b> we design and promote a preparatory teaching strategy that improves the language tourism experience, delivered by staff with strong academic credentials in teaching Spanish.</p>

<p> For more information, contact us at: espanolconeacademy@gmail.com </p>

| aliriodi |

1,738,267 | More than your reputation is at stake: What you do can affect other people (for good or bad)! | In this podcast, Krish discusses how each individual represents not only themselves but also a larger... | 0 | 2024-01-22T22:41:21 | https://dev.to/vpalania/more-than-your-reputation-is-at-stake-always-remember-that-57ge | In this podcast, Krish discusses how each individual represents not only themselves but also a larger population. He emphasizes the importance of credibility, professionalism, clear communication, and commitment to deliverables. Krish also highlights the significance of reputation and how it can impact others who share similarities. He advises learning the paradigms of the organization and reacting gracefully to transitions. Krish concludes by reminding listeners that a job does not define their worth as a person.

## Takeaways

* Representing oneself also means representing a larger population.

* Credibility is crucial in building trust and reputation.

* Clear communication and professionalism are essential in the workplace.

* Commitment to deliverables and meeting deadlines is important.

* Helping others and reacting gracefully to transitions can have a positive impact.

* A job does not define an individual's worth.

## Chapters

00:00 Introduction

00:58 Representing a Larger Population

03:25 Changes in the Hiring Process

08:05 Credibility

09:58 Location and Availability

12:17 Professionalism

13:19 Communication

15:25 Commitment to Deliverables

16:48 Reputation

18:31 Learning Organizational Paradigms

19:53 Confidence

20:58 Helping Others

23:01 Reacting to Transitions

25:20 Job Does Not Define You

26:39 Conclusion

## Video

{% embed https://youtu.be/lkiKxwVRFMI %}

## Transcript

[https://products.snowpal.com/api/v1/file/07018bb9-eb8a-4519-a473-e049c7f4db3c.pdf](https://products.snowpal.com/api/v1/file/07018bb9-eb8a-4519-a473-e049c7f4db3c.pdf) | vpalania | |

1,737,855 | Meme Monday | Meme Monday! Today's cover image comes from last week's thread. DEV is an inclusive space! Humor in... | 0 | 2024-01-22T14:15:19 | https://dev.to/ben/meme-monday-25a4 | discuss, watercooler, jokes | **Meme Monday!**

Today's cover image comes from [last week's thread](https://dev.to/ben/meme-monday-5h8i).

DEV is an inclusive space! Humor in poor taste will be downvoted by mods. | ben |

1,737,876 | How to Create a DEX: Important Steps and Considerations | In the dynamic landscape of decentralized finance, the creation of a decentralized exchange stands as... | 0 | 2024-01-22T14:54:19 | https://dev.to/rocknblock/how-to-create-a-dex-important-steps-and-considerations-3hja | createadex, createdex, dexdevelopment | In the dynamic landscape of decentralized finance (DeFi), creating a decentralized exchange (DEX) is a gateway to the possibilities of this transformative ecosystem.

This article is here to help you develop your own DEX. Follow along as we explore the steps to unlock the true potential of DeFi! We'll provide you with key insights and steps to [create a DEX](https://rocknblock.io/dex) that stands out in the competitive DeFi landscape.

# The Rise of Decentralized Exchanges (DEX)

Decentralized exchanges (DEXs) are pivotal in transforming traditional finance within the evolving DeFi landscape. Creating a DEX involves understanding its significance and advantages over Centralized Exchanges (CEX). DEXs empower users with full control over assets, promoting financial autonomy and inclusivity by eliminating central control. Aligned with core DeFi principles, DEXs offer transparent and trustless platforms for seamless asset trading, emphasizing enhanced security, improved financial privacy, and a resilient trading environment. The absence of central authority mitigates manipulation and failure risks, providing users with a reliable and globally accessible financial ecosystem.

**_👀👉 Read [the full guide here](https://rocknblock.io/blog/how-to-create-a-dex-key-steps-and-considerations)!_**

# What to Keep in Mind When Creating Your DEX

Before embarking on the journey to create a DEX, thorough considerations are essential to lay a robust foundation for success. In this section, we will explore the crucial aspects that demand attention before initiating DEX development.

**Market Research and Analysis**

To build a successful DEX, start with thorough market research to identify your target audience and their needs, then build user-centric features that give you a competitive edge. Addressing diverse user needs makes the DEX valuable in decentralized finance. Analyze competing DEX platforms to differentiate yours and position it strategically.

**Legal and Regulatory Considerations**

Building a DEX requires understanding how different jurisdictions regulate decentralized finance. Compliance is crucial to avoid legal issues. During pre-development, consult legal experts in blockchain and cryptocurrency for guidance on compliance, mitigating legal risks, and ensuring the DEX operates within the law.

**Tokenomics and Economic Model**

Consider including a native token in your DEX to provide practical utility and set the platform apart. Focus on sustainable tokenomics, weighing both utility and distribution. Plan revenue, resources, and incentives strategically before development begins. Define a token utility aligned with the platform's functionality, and reward liquidity providers. This helps ensure long-term viability by attracting and retaining users.
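To make "reward liquidity providers" concrete, here is a minimal sketch of one common approach: splitting collected trading fees pro rata by each provider's share of the pool. It is written in Python purely to illustrate the arithmetic, not as contract code; the function name `distribute_fees`, the provider names, and the figures are hypothetical. Amounts are integers in the token's smallest unit, as they would be on-chain.

```python
def distribute_fees(total_fees: int, lp_shares: dict[str, int]) -> tuple[dict[str, int], int]:
    """Split total_fees among liquidity providers in proportion to their pool shares.

    All amounts are integers in the token's smallest unit. Integer division
    rounds each payout down, and the leftover "dust" is returned separately
    so that no tokens are created or lost by rounding.
    """
    total_shares = sum(lp_shares.values())
    if total_shares == 0:
        raise ValueError("pool has no liquidity")
    payouts = {lp: total_fees * shares // total_shares for lp, shares in lp_shares.items()}
    dust = total_fees - sum(payouts.values())
    return payouts, dust


payouts, dust = distribute_fees(1_000, {"alice": 1, "bob": 1, "carol": 1})
print(payouts, dust)  # {'alice': 333, 'bob': 333, 'carol': 333} 1
```

Rounding down and tracking the remainder explicitly is a deliberate choice: it keeps the sum of payouts from ever exceeding the fees actually collected.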

**Defining Technology Stack**

Selecting the right technology stack is crucial for optimal DEX performance. The choice of blockchain platform influences scalability and security, so consider each platform's characteristics and smart contract capabilities. Frontend technologies like React and Vue.js help deliver a seamless user experience. A well-chosen stack is essential for DEX success in decentralized finance.

**Choosing a DEX Development Partner**

Thorough research on DEX development companies, including the evaluation of past projects and client testimonials, is essential to ensure expertise and reliability. A collaborative approach, emphasizing effective communication and a clear development timeline, is crucial for a smooth and successful development process. Ensuring a partner's deep understanding of key DEX development features is pivotal for creating a competitive and functional decentralized exchange within the dynamic DeFi landscape.

# How to Create Your Own DEX

Creating your own DEX involves several critical steps that contribute to the development of a robust and user-friendly decentralized exchange. In this section, we'll provide an overview of the basic steps to create your own DEX.

# Setting Up Development Environment

To create a DEX, you first need to establish a conducive development environment. This involves configuring the necessary tools and frameworks for a seamless and efficient process. Commonly used tools include version control systems like Git for code management and collaboration. For developing, testing, and deploying smart contracts on Ethereum, popular frameworks such as Truffle and Hardhat provide a solid foundation for DEX development.

# Smart Contracts and Blockchain Integration

This phase encompasses writing smart contracts and deploying them to the blockchain, laying the groundwork for the DEX's core functionality.

The role of smart contracts in DEX development is essential. These self-executing contracts define the rules and operations within the exchange. Writing smart contracts involves using languages like Solidity to implement functionalities such as order matching, trade execution, and token transfers.
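For an order-book style DEX, the matching rule is the heart of the contract. The sketch below models one common rule, price-time priority, in Python so the logic is easy to follow; it is not Solidity and not any particular DEX's implementation. The `Order` class and `match_buy` function are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Order:
    order_id: int
    price: int     # quote-token units per base token (integer, as on-chain)
    quantity: int  # remaining base-token amount


def match_buy(buy: Order, asks: list[Order]) -> list[tuple[int, int, int]]:
    """Match an incoming buy order against resting asks (price-time priority).

    `asks` must already be sorted by price ascending, then by arrival time.
    Each fill executes at the resting ask's price. Fully filled asks are
    removed from the list, and the buy order's quantity is reduced in place.
    Returns fills as (ask_order_id, fill_price, fill_quantity).
    """
    fills = []
    while buy.quantity > 0 and asks and asks[0].price <= buy.price:
        best = asks[0]
        qty = min(buy.quantity, best.quantity)
        fills.append((best.order_id, best.price, qty))
        buy.quantity -= qty
        best.quantity -= qty
        if best.quantity == 0:
            asks.pop(0)  # best ask exhausted; the next one becomes best
    return fills


asks = [Order(1, 100, 5), Order(2, 101, 10), Order(3, 105, 4)]
print(match_buy(Order(99, 102, 8), asks))  # [(1, 100, 5), (2, 101, 3)]
```

The buy for 8 units at a limit of 102 takes all 5 units at 100, then 3 of the 10 units at 101; the ask at 105 is above the limit and stays on the book.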

# User Experience and User Interface Design

To create a DEX that can be used by people of any skill and technical level, it is critical to design a simple and intuitive UX that improves accessibility and encourages user engagement within the DEX. It also improves user interactions and makes functionalities such as trading, asset management, and liquidity provision more accessible. Clear navigation, concise presentation of information, and a visually appealing layout contribute to a positive user experience.

# Security Considerations

**Smart Contract Audits**

Following sound security practices is crucial in decentralized exchange development, and smart contract audits play a pivotal role. Audits identify vulnerabilities in the code so they can be fixed before deployment, serving as a proactive measure to improve the reliability of your DEX's core functionality.

**User Data Protection**

When creating a DEX, prioritizing user data protection and fortifying against potential threats is essential. Implementing encryption protocols for user data is a foundational step. Encrypting sensitive user information ensures its security, making the data unreadable and safeguarded in the event of unauthorized access.

**Measures Against Hacks and Exploits**

In the dynamic decentralized exchange development landscape, securing your DEX from hacks is essential. Implement continuous monitoring for real-time threat detection, using security tools to swiftly respond to anomalies.
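Continuous monitoring usually boils down to rules evaluated against a live stream of events. As a minimal sketch, assuming trade amounts are already being streamed from the chain by some indexer (not shown), the hypothetical `TradeMonitor` class below flags any trade that is far larger than the recent average. Production monitoring would combine many such signals; this shows only the shape of one rule.

```python
from collections import deque


class TradeMonitor:
    """Flags trades whose size is far above the recent average.

    A deliberately simple rule: a trade is anomalous if it exceeds
    `multiplier` times the mean of the last `window` trades. No alerts are
    raised until at least `min_history` trades have been seen.
    """

    def __init__(self, window: int = 50, multiplier: float = 10.0, min_history: int = 5):
        self.recent = deque(maxlen=window)  # oldest trades drop off automatically
        self.multiplier = multiplier
        self.min_history = min_history

    def observe(self, amount: int) -> bool:
        """Record a trade amount and return True if it should raise an alert."""
        alert = False
        if len(self.recent) >= self.min_history:
            mean = sum(self.recent) / len(self.recent)
            alert = amount > self.multiplier * mean
        self.recent.append(amount)
        return alert


monitor = TradeMonitor(window=10, multiplier=10.0, min_history=3)
print([monitor.observe(a) for a in [100, 120, 90, 110, 5000, 100]])
# [False, False, False, False, True, False]
```

Note that flagged trades still enter the rolling window, which raises the baseline for a while afterwards; whether to exclude them is a tuning decision.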

# DEX Quality Assurance (QA)

**Testnet Deployment and Simulation**

Testnet deployment enables DEX developers to evaluate the performance of the DEX in a controlled environment that replicates mainnet conditions. This is important to identify and fix any bugs, glitches, or vulnerabilities before the DEX is exposed to real user transactions.

**Simulating Real-World Scenarios**

To comprehensively test your DEX, it's important to simulate real-world scenarios. By creating scenarios that mirror actual user interactions, market conditions, and trading activity, developers can observe how the DEX performs under various conditions. This includes order matching, trade execution, and the overall responsiveness of the platform.
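One practical way to simulate many scenarios is randomized testing against invariants: generate thousands of random trades and check, after each one, that properties which must always hold still hold. The sketch below applies this to a toy two-token ledger in Python; the ledger, the token names `BASE` and `QUOTE`, and `simulate_trades` are hypothetical stand-ins for a real DEX's contracts, which you would exercise through your testing framework instead.

```python
import random


def simulate_trades(num_accounts: int, num_trades: int, seed: int = 0) -> dict[str, list[int]]:
    """Run randomized trades on a toy two-token ledger and check invariants.

    Every account starts with 1_000 BASE and 1_000 QUOTE. A trade moves `qty`
    BASE from seller to buyer and `qty * price` QUOTE from buyer to seller,
    and is skipped if either side lacks funds. After every trade, the total
    supply of each token must be unchanged and no balance may be negative.
    """
    rng = random.Random(seed)  # fixed seed makes any failure reproducible
    balances = {"BASE": [1_000] * num_accounts, "QUOTE": [1_000] * num_accounts}
    supply = {token: sum(vals) for token, vals in balances.items()}
    for _ in range(num_trades):
        buyer, seller = rng.sample(range(num_accounts), 2)  # two distinct accounts
        qty = rng.randint(1, 50)
        cost = qty * rng.randint(1, 5)
        if balances["BASE"][seller] < qty or balances["QUOTE"][buyer] < cost:
            continue  # insufficient funds: a real DEX would reject this trade
        balances["BASE"][seller] -= qty
        balances["BASE"][buyer] += qty
        balances["QUOTE"][buyer] -= cost
        balances["QUOTE"][seller] += cost
        for token, vals in balances.items():
            assert sum(vals) == supply[token], f"{token} supply changed"
            assert min(vals) >= 0, f"negative {token} balance"
    return balances


final = simulate_trades(num_accounts=20, num_trades=10_000, seed=42)
```

Seeding the generator matters: when an invariant breaks, rerunning with the same seed replays the exact sequence of trades that caused it.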

**Stress Testing**

Security stress testing involves intentionally putting the DEX through a variety of attacks and exploits to assess its resilience. By identifying vulnerabilities and weak points, DEX developers can implement additional security measures, which will strengthen the DEX's defenses against potential threats.

**Performance Load Testing**

Performance load testing evaluates how well the DEX performs under different levels of user activity. By simulating high transaction volumes and user loads, developers can ensure that the DEX remains responsive and efficient even during peak usage periods. Optimizing performance contributes to a positive user experience.

# Mainnet Deployment and Launch

**Final Security Checks**

Before mainnet deployment, conducting final security checks is imperative. A thorough review of implemented security measures ensures a secure DEX that inspires user trust.

**Gradual Rollout and Monitoring**

Choosing a gradual rollout allows you to closely observe actual performance in live scenarios, ensuring a controlled introduction and prompt issue resolution.
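A gradual rollout is often implemented by admitting a growing percentage of users. The sketch below shows one common technique, deterministic hash bucketing, in Python. It assumes the rollout is gated in an off-chain layer such as the frontend or an API, since deployed contracts remain publicly callable; the function name `in_rollout` and the salt value are hypothetical.

```python
import hashlib


def in_rollout(user_address: str, rollout_percent: int, salt: str = "dex-launch") -> bool:
    """Return True if this user falls inside the current rollout percentage.

    The address is hashed with a salt into a stable bucket from 0 to 99, so a
    user's assignment does not change between requests, and raising
    rollout_percent only ever adds users (it never removes existing ones).
    """
    if not 0 <= rollout_percent <= 100:
        raise ValueError("rollout_percent must be between 0 and 100")
    # Lowercase so differently-cased spellings of the same address map to one bucket.
    digest = hashlib.sha256(f"{salt}:{user_address.lower()}".encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100
    return bucket < rollout_percent
```

Raising the percentage from, say, 10 to 50 keeps every user who was already admitted, which makes it safe to widen the rollout step by step while monitoring.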

**Ongoing Improvements**

Post-launch, staying agile and implementing improvements based on user feedback and market conditions contribute to long-term success in the dynamic DeFi landscape.

# Summary of Key Takeaways

In summary, creating a DEX is a multifaceted journey that demands a comprehensive understanding of DeFi, technical proficiency, strategic considerations, and a commitment to security and user experience.