id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,748,598 | Unleashing Creativity: A Beginner's Guide to Adobe Illustrator | Embarking on a journey into the world of graphic design can be both exciting and a little... | 0 | 2024-02-01T14:22:10 | https://dev.to/khushi2025/unleashing-creativity-a-beginners-guide-to-adobe-illustrator-2fj2 | Embarking on a journey into the world of graphic design can be both exciting and a little overwhelming, especially when faced with powerful tools like Adobe Illustrator. Fear not, aspiring artists and designers! This beginner's guide is your compass through the vast landscapes of vectors and illustrations, helping you navigate Adobe Illustrator with confidence.

**1. Introduction to Adobe Illustrator: The Creative Canvas**

Adobe Illustrator is a vector-based design software that allows you to create stunning graphics, illustrations, and logos. Unlike raster images, vectors maintain their quality regardless of size, making Illustrator the go-to tool for scalable and precise designs.

**2. Getting Started: The Illustrator Workspace**

Familiarize yourself with the Illustrator workspace. Understand the toolbar, panels, and artboard—the canvas where your creative ideas will come to life. Take a moment to explore menus and palettes, setting the stage for your design journey.

**3. Mastering Basic Tools: A Palette of Possibilities**

Learn the essentials:

- **Selection Tools**: Navigate, group, and manipulate objects.

- **Shape Tools**: Create rectangles, ellipses, and polygons.

- **Pen Tool**: Master the art of drawing paths for custom shapes.

- **Type Tool**: Add text to your designs with various formatting options.

**4. Colors and Gradients: Infusing Life into Your Art**

Discover the color palette and swatches panel. Experiment with solid colors, gradients, and patterns. Learn how to apply and adjust fills and strokes to add depth and dimension to your designs.

**5. Layers: Organizing Your Artistic Universe**

Unlock the power of layers to organize and control elements within your artwork. Understand how layers facilitate easy editing, hiding, and reordering of design components.

**6. Transformations and Effects: Shaping Your Vision**

Explore transformations like scaling, rotating, and reflecting to manipulate objects effortlessly. Delve into Illustrator's vast array of effects, from drop shadows to blurs, to elevate your designs.

**7. Working with Text: Typography Magic**

Master the art of text manipulation. Learn to create and edit text, experiment with fonts, and use character and paragraph styles for consistent and aesthetically pleasing typography.

**8. Saving and Exporting: From Artboard to the World**

Understand the various file formats, save options, and export settings. Learn how to save your work for future edits and export it for print, web, or social media platforms.

**9. Practice, Practice, Practice: Hone Your Skills**

The best way to become proficient in Adobe Illustrator is through hands-on practice. Experiment with different tools, recreate existing designs, and gradually challenge yourself with more complex projects.

**10. Community and Resources: Learn and Grow Together**

Join the vibrant community of designers and illustrators. Explore online tutorials, forums, and Adobe's official resources to stay updated on new features and techniques. Embrace a mindset of continuous learning and improvement.

Embarking on your Adobe Illustrator journey is like stepping into a boundless realm of creativity. With dedication, practice, and the guidance of this beginner's guide, you'll soon find yourself confidently crafting captivating designs and illustrations. So, unleash your imagination, let the vectors flow, and enjoy the exhilarating ride through the artistic wonders of Adobe Illustrator!

| khushi2025 | |

1,748,860 | A multi-dimensional array in PostgreSQL | *Memos: An array has elements from [1] but not from [0] so [0] returns NULL. Basically, you should... | 0 | 2024-02-02T20:03:57 | https://dev.to/hyperkai/a-multi-dimensional-array-in-postgresql-eak | postgres, array, multidimensional, arrays | *Memos:

- By default, array elements start at `[1]`, not `[0]`, so `[0]` returns `NULL`.

- As a rule, use type conversion when you create an array, except when you declare a non-empty array in a `DECLARE` clause of a function, procedure or `DO` statement. Without it, the type may differ from what you expect, and in some cases you cannot create an array at all. *[My answer](https://stackoverflow.com/questions/13809547/convert-integer-to-string-in-postgresql#77723276) explains type conversion in detail.

- [The doc](https://www.postgresql.org/docs/current/arrays.html) explains a multi-dimensional array in detail.

- [My answer](https://stackoverflow.com/questions/71406989/declare-an-array-in-postgresql#77723752) explains how to create and use the 1D(one-dimensional) array with `VARCHAR[]` in detail.

- [My answer](https://stackoverflow.com/questions/11831555/get-nth-element-of-an-array-that-returns-from-string-to-array-function/#77723802) explains how to create and use the 1D array with `INT[]` in detail.

- [My answer](https://stackoverflow.com/questions/23924815/create-an-empty-array-in-an-sql-query-using-postgresql-instead-of-an-array-with#77932610) explains how to create and use an empty array in detail.

- [My post](https://dev.to/hyperkai/foreach-and-for-statements-with-arrays-in-postgresql-2bnc) explains how to iterate a 1D and 2D array with a [FOREACH](https://www.postgresql.org/docs/current/plpgsql-control-structures.html#PLPGSQL-FOREACH-ARRAY) and [FOR](https://www.postgresql.org/docs/current/plpgsql-control-structures.html#PLPGSQL-INTEGER-FOR) statement.

You can create and use a 2D (two-dimensional) array in the following ways:

```sql

DO $$

DECLARE

_2d_arr VARCHAR[] := ARRAY[

['a','b','c','d'],

['e','f','g','h'],

['i','J','k','l']

];

BEGIN

RAISE INFO '%', _2d_arr; -- {{a,b,c,d},{e,f,g,h},{i,J,k,l}}

RAISE INFO '%', _2d_arr[0][2]; -- NULL

RAISE INFO '%', _2d_arr[2]; -- NULL

RAISE INFO '%', _2d_arr[2][0]; -- NULL

RAISE INFO '%', _2d_arr[2][3]; -- g

RAISE INFO '%', _2d_arr[2:2]; -- {{e,f,g,h}}

RAISE INFO '%', _2d_arr[1:1][2:3]; -- {{b,c}}

RAISE INFO '%', _2d_arr[2:2][2:3]; -- {{f,g}}

RAISE INFO '%', _2d_arr[3:3][2:3]; -- {{J,k}}

RAISE INFO '%', _2d_arr[1:3][2:3]; -- {{b,c},{f,g},{J,k}}

RAISE INFO '%', _2d_arr[:][:]; -- {{a,b,c,d},{e,f,g,h},{i,J,k,l}}

RAISE INFO '%', _2d_arr[1][2:3]; -- {{b,c}} -- Tricky

RAISE INFO '%', _2d_arr[2][2:3]; -- {{b,c},{f,g}} -- Tricky

RAISE INFO '%', _2d_arr[3][2:3]; -- {{b,c},{f,g},{J,k}} -- Tricky

END

$$;

```

*Memos:

- The type of the array above is `VARCHAR[]`(`CHARACTER VARYING[]`).

- You can declare `_2d_arr` as `VARCHAR[][]`, `VARCHAR[][][]`, etc., and its type is still `VARCHAR[]`(`CHARACTER VARYING[]`), but you cannot declare it as `VARCHAR`; otherwise you get [the error](https://stackoverflow.com/questions/71397289/apply-subscripting-to-text-data-type-in-postgresql#77924037).

- The last 3 `RAISE INFO ...` are tricky: if any subscript is written as a slice, all subscripts are treated as slices, and a single number `N` is treated as `1:N`, so `_2d_arr[2][2:3]` is the same as `_2d_arr[1:2][2:3]`.

- If the 1D arrays in `_2d_arr` have different numbers of elements, there is an error.

- Don't create a 2D array that mixes numbers and strings; otherwise you get [the error](https://stackoverflow.com/questions/72975653/why-do-i-get-an-invalid-input-syntax-for-type-integer-error#77919995).

Or:

```sql

DO $$

DECLARE

_2d_arr VARCHAR[] := '{

{a,b,c,d},

{e,f,g,h},

{i,J,k,l}

}';

BEGIN

RAISE INFO '%', _2d_arr; -- {{a,b,c,d},{e,f,g,h},{i,J,k,l}}

RAISE INFO '%', _2d_arr[0][2]; -- NULL

RAISE INFO '%', _2d_arr[2]; -- NULL

RAISE INFO '%', _2d_arr[2][0]; -- NULL

RAISE INFO '%', _2d_arr[2][3]; -- g

RAISE INFO '%', _2d_arr[2:2]; -- {{e,f,g,h}}

RAISE INFO '%', _2d_arr[1:1][2:3]; -- {{b,c}}

RAISE INFO '%', _2d_arr[2:2][2:3]; -- {{f,g}}

RAISE INFO '%', _2d_arr[3:3][2:3]; -- {{J,k}}

RAISE INFO '%', _2d_arr[1:3][2:3]; -- {{b,c},{f,g},{J,k}}

RAISE INFO '%', _2d_arr[:][:]; -- {{a,b,c,d},{e,f,g,h},{i,J,k,l}}

RAISE INFO '%', _2d_arr[1][2:3]; -- {{b,c}} -- Tricky

RAISE INFO '%', _2d_arr[2][2:3]; -- {{b,c},{f,g}} -- Tricky

RAISE INFO '%', _2d_arr[3][2:3]; -- {{b,c},{f,g},{J,k}} -- Tricky

END

$$;

```

*Memos:

- The type of the array above is `VARCHAR[]`(`CHARACTER VARYING[]`).

- You can declare `_2d_arr` as `VARCHAR[][]`, `VARCHAR[][][]`, etc., and its type is still `VARCHAR[]`(`CHARACTER VARYING[]`), but you cannot declare it as `VARCHAR`; otherwise you get [the error](https://stackoverflow.com/questions/71397289/apply-subscripting-to-text-data-type-in-postgresql#77924037).

- The last 3 `RAISE INFO ...` are tricky: if any subscript is written as a slice, all subscripts are treated as slices, and a single number `N` is treated as `1:N`, so `_2d_arr[2][2:3]` is the same as `_2d_arr[1:2][2:3]`.

- If the 1D arrays in `_2d_arr` have different numbers of elements, there is an error.
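The "tricky" slice results in the blocks above can be reproduced with a small model. The sketch below is written in Python rather than SQL and is only a toy: `pg_subscript` is a hypothetical helper, it assumes a rectangular 2D array with the default lower bound of 1, and it ignores edge cases that PostgreSQL handles differently (for example, how empty results are printed).

```python
from typing import List, Optional, Tuple, Union

# A subscript is either a single 1-based index or an inclusive (lower, upper) slice.
Subscript = Union[int, Tuple[int, int]]


def pg_subscript(arr: List[List[str]],
                 first: Subscript,
                 second: Optional[Subscript] = None):
    """Toy model of subscripting a rectangular 2D array (hypothetical helper)."""
    n_rows, n_cols = len(arr), len(arr[0])
    subs = [s for s in (first, second) if s is not None]

    if not any(isinstance(s, tuple) for s in subs):
        # Plain element access: both subscripts are required and must be in bounds.
        if len(subs) < 2:
            return None  # models _2d_arr[2] -> NULL
        row, col = subs
        if 1 <= row <= n_rows and 1 <= col <= n_cols:
            return arr[row - 1][col - 1]
        return None  # models _2d_arr[0][2] -> NULL

    def bounds(sub: Optional[Subscript], size: int) -> Tuple[int, int]:
        if sub is None:
            return 1, size            # omitted dimension: whole range
        if isinstance(sub, int):
            return 1, min(sub, size)  # a single number N is read as 1:N
        lower, upper = sub
        return max(lower, 1), min(upper, size)

    row_lo, row_hi = bounds(first, n_rows)
    col_lo, col_hi = bounds(second, n_cols)
    return [row[col_lo - 1:col_hi] for row in arr[row_lo - 1:row_hi]]


arr = [list("abcd"), list("efgh"), list("iJkl")]
print(pg_subscript(arr, 2, 3))       # g
print(pg_subscript(arr, 2, (2, 3)))  # [['b', 'c'], ['f', 'g']]
```

The key line is the one that turns a single number `N` into the range `1:N` once any other subscript is a slice; that is why `_2d_arr[3][2:3]` returns three rows instead of one.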

In addition, even if you declare the array as `VARCHAR(2)[2]`, the result is the same and the type of the 2D array is `VARCHAR[]`(`CHARACTER VARYING[]`) as shown below:

```sql

DO $$

DECLARE -- ↓ ↓ ↓ ↓ ↓ ↓

_2d_arr VARCHAR(2)[2] := ARRAY[

['a','b','c','d'],

['e','f','g','h'],

['i','J','k','l']

];

BEGIN

RAISE INFO '%', _2d_arr; -- {{a,b,c,d},{e,f,g,h},{i,J,k,l}}

RAISE INFO '%', pg_typeof(_2d_arr); -- character varying[]

END

$$;

```

And even if you cast 'a' with `::TEXT`, the type of 'a' is `VARCHAR`(`CHARACTER VARYING`) rather than `TEXT`, as shown below, because the type `VARCHAR[]` declared for `_2d_arr` takes priority. *You cannot apply `::TEXT[]` to a 1D array inside the 2D array unless you put the keyword `ARRAY` just before that 1D array; otherwise there is an error. Even then, the type of each row is `VARCHAR[]`(`CHARACTER VARYING[]`) rather than `TEXT[]`, for the same reason:

```sql

DO $$

DECLARE

_2d_arr VARCHAR[] := ARRAY[

['a'::TEXT,'b','c','d'],

['e','f','g','h'],

['i','J','k','l']

];

BEGIN

RAISE INFO '%', _2d_arr[1][1]; -- a

RAISE INFO '%', pg_typeof(_2d_arr[1][1]); -- character varying

END

$$;

``` | hyperkai |

1,748,877 | Mastering The HTML Details And Summary Elements | by Japheth Basil In the field of web development, creating experiences that are both engaging and... | 0 | 2024-02-01T18:49:56 | https://blog.openreplay.com/mastering-html-details-and-summary-elements/ | by [Japheth Basil](https://blog.openreplay.com/authors/japheth-basil)

<blockquote><em>

In the field of web development, creating experiences that are both engaging and user-friendly is crucial. Among the tools that facilitate this pursuit of seamless interaction, the `<details>` and `<summary>` elements are excellent examples. With the help of these modest yet effective HTML tags, content management can be handled dynamically, allowing users to explore information at their own pace, as this article will show.

</em></blockquote>


In the constantly growing field of web development, the importance of HTML Details and Summary elements exceeds their seemingly modest stature. These elements matter significantly for several compelling reasons, each contributing to a more refined and user-centric approach to crafting web pages.

* Enhanced User Interaction:

HTML Details and Summary elements offer dynamic user interaction, making content collapsible and expandable. This feature empowers users to control information flow, enhancing their experience and allowing engagement based on preferences and needs.

* Streamlined Code Structure:

From a developer's standpoint, Details and Summary elements enhance code elegance. They provide a concise and semantically meaningful structure, resulting in cleaner and more maintainable code. This simplicity is particularly valuable in collaborative and large-scale projects.

* Improved Accessibility:

HTML Details and Summary elements are pivotal for web accessibility and organizing content in a logical hierarchy. This ensures a positive experience for users with disabilities, particularly those relying on screen readers or assistive technologies.

* Progressive Disclosure of Information:

Details and Summary elements are instrumental in achieving progressive disclosure—a fundamental concept for user engagement. They allow developers to strategically unveil information as users interact with the page, avoiding overwhelming them with excessive content upfront. This progressive approach guides users through a seamless and more digestible exploration of the provided information.

* Versatility in Content Presentation:

HTML Details and Summary elements are not confined to a singular use case. Their versatility allows developers to present various types of content, including text, images, or multimedia, in a structured and visually appealing manner. This flexibility is particularly advantageous when dealing with diverse content formats within a single webpage.

## Understanding HTML Details Element

The HTML `<details>` element is a powerful tool that allows web developers to create collapsible sections of content on a webpage. When combined with the `<summary>` element, this element provides an interactive and user-friendly way to present information.

The basic syntax of a `<details>` element involves encapsulating content within the opening and closing `<details>` tags:

```html

<details>

<!-- Content goes here -->

</details>

```

In this structure:

`<details>`: This tag defines the container for the collapsible section.

Content: This is the actual content that will be hidden or revealed based on user interaction.

Adding content to the syntax:

```html

<details>

<p>Lorem ipsum dolor, sit amet consectetur adipisicing elit. Molestiae impedit voluptatum esse at eius repellat, enim nihil ipsum cum ducimus eligendi qui sunt distinctio possimus earum laudantium facere exercitationem sit.</p>

</details>

```


## Exploring the Purpose of the Details Element

To understand the purpose of the `<details>` element, consider how it helps organize and present content on the web, and how that contributes to a dynamic and engaging user experience.

* Content Organization:

The primary purpose of `<details>` is to organize content effectively. It allows developers to structure information hierarchically, preventing information overload on a webpage. By presenting content in collapsible sections, users can focus on what is relevant to them, promoting a more user-friendly experience.

* Progressive Disclosure:

`<details>` facilitates progressive disclosure, a user experience strategy where information is revealed gradually. Instead of overwhelming users with all information at once, details can be disclosed progressively, keeping the user engaged and preventing cognitive overload.

* Interactive User Experience:

`<details>` enhances interactivity by allowing users to control which sections they want to expand or collapse. This interactive feature empowers users, providing a more engaging and personalized browsing experience.

## Customization with the Open Attribute

The `open` attribute of the HTML `<details>` element is a useful customization option: when it is present on the `<details>` tag, the content is visible when the page loads; when it is absent, the content starts out hidden.

Here's a simple example:

```html

<details open>

<p>Lorem ipsum dolor, sit amet consectetur adipisicing elit. Molestiae impedit voluptatum esse at eius repellat, enim nihil ipsum cum ducimus eligendi qui sunt distinctio possimus earum laudantium facere exercitationem sit.</p>

</details>

```


In this example, the open attribute ensures the content is visible when the page loads. Without the open attribute, the content would be initially hidden.

The open attribute is handy when you want specific sections to be expanded by default, providing users immediate access to certain information.

Here’s an example:

```html

<details open>

<p>This is the initially visible section with the open attribute.</p>

</details>

<details>

<p>This is the content of section 2.</p>

</details>

<details>

<p>This is the content of section 3.</p>

</details>

<details>

<p>This is the content of section 4.</p>

</details>

```


## Unveiling the Role of the Summary Element

The `<summary>` element in HTML plays a pivotal role in conjunction with the `<details>` element, providing a clickable header or label for a collapsible section of content. If a `<details>` element has no `<summary>` child, the browser supplies a default clickable label, typically the text "Details".

### Basic Structure of `<summary>`

The `<summary>` element is typically nested within the `<details>` element, creating a structured hierarchy:

```html

<details>

<summary>Clickable Header</summary>

<p>Lorem ipsum dolor, sit amet consectetur adipisicing elit. Molestiae impedit voluptatum esse at eius repellat, enim nihil ipsum cum ducimus eligendi qui sunt distinctio possimus earum laudantium facere exercitationem sit.</p>

</details>

```


### Essential Functionality

The essential functionalities of the `<summary>` tag include:

* Clickable Header:

The primary functionality of `<summary>` is to serve as a clickable header. When users interact with the `<summary>` element, it toggles the visibility of the content within the associated `<details>` container. This interactivity is key to implementing collapsible sections on a webpage.

* Accessible Labeling:

`<summary>` provides an accessible and descriptive label for the collapsible section. It should be chosen thoughtfully to convey the nature of the content it represents. Screen readers use this label to announce the purpose of the collapsible section to users with visual impairments.

* Versatility in Content:

While commonly used for text, `<summary>` is versatile and can include various content types such as images, icons, or other HTML elements. This flexibility allows developers to create visually appealing and informative headers.


## Practical Applications of Details and Summary Elements

HTML Details and Summary elements offer a versatile set of tools for web developers, providing a range of practical applications that enhance user experience, content organization, and interactivity.

Let's explore some key practical applications of Details and Summary elements:

* FAQ Sections:

Details and Summary elements are ideal for creating web FAQ (Frequently Asked Questions) sections. Each question can be represented by a `<summary>` element, and the corresponding answer can be revealed when users click the summary.

Here’s an example with some styling:

```html

<!DOCTYPE html>

<html lang="en">

<head>

<style>

body { font-family: 'Arial', sans-serif; background-color: #f7f7f7; margin: 0; padding: 20px; }

details { background-color: #fff; border: 1px solid #ddd; margin-bottom: 15px; border-radius: 5px; overflow: hidden; }

summary { padding: 15px; cursor: pointer; outline: none; background-color: #28a745; color: #fff; border: none; border-radius: 5px; transition: background-color 0.3s; }

summary:hover { background-color: #0f842a; }

p { padding: 15px; margin: 0; }

</style>

</head>

<body>

<details>

<summary>How do I reset my password?</summary>

<p>Follow these steps to reset your password...</p>

</details>

<details>

<summary>What payment methods do you accept?</summary>

<p>We accept various payment methods, including...</p>

</details>

<details>

<summary>Is there a refund policy?</summary>

<p>Yes, we have a refund policy. If you are not satisfied with your purchase, please review our refund policy...</p>

</details>

</body>

</html>

```


In this example:

The background color, border, and rounded corners style the `<details>` element, creating a clean card-like appearance.

The `<summary>` element has a distinct background color, and its appearance changes on hover to provide a visual cue that it is clickable.

The `<p>` element, representing the answer, has padding to enhance readability.

* Content Clarity and Readability:

Use Details and Summary elements to structure long-form content, allowing users to expand sections they find interesting while keeping the overall page layout clean and concise.

Here’s an example with some styling:

```html

<!DOCTYPE html>

<html lang="en">

<head>

<style>

body{font-family:'Arial',sans-serif;background-color:#f7f7f7;margin:0;padding:20px;}

details{background-color:#fff;border:1px solid #ddd;margin-bottom:15px;border-radius:5px;overflow:hidden;}

summary{padding:15px;cursor:pointer;outline:none;background-color:#bc3d24;color:#fff;border:none;border-radius:5px;transition:background-color .3s;}

summary:hover{background-color:#a92b11;}

p{padding:15px;margin:0;}

</style>

</head>

<body>

<details><summary>Introduction</summary><p>This is the introduction to our topic. It provides a brief overview of what you can expect to learn and sets the stage for the key concepts...</p></details>

<details><summary>Key Concepts</summary><p>Here are the key concepts you need to understand. Each concept is explained in detail, allowing you to grasp the fundamental ideas that form the foundation of this topic...</p></details>

<details><summary>Implementation</summary><p>Explore the practical implementation of the concepts learned. Understand how to apply the key ideas in real-world scenarios...</p></details>

</body>

</html>

```


* Interactive Tutorials:

Details and Summary elements can be employed to create interactive tutorials, where each step is hidden by default, and users can reveal the steps one at a time.

Here’s an example with styling:

```html

<!DOCTYPE html>

<html lang="en">

<head>

<style>

body{font-family:'Arial',sans-serif;background-color:#f7f7f7;margin:0;padding:20px;}

details{background-color:#fff;border:1px solid #ddd;margin-bottom:15px;border-radius:5px;overflow:hidden;}

summary{padding:15px;cursor:pointer;outline:none;background-color:#416696;color:#fff;border:none;border-radius:5px;transition:background-color .3s;}

summary:hover{background-color:#2d5181;}

p{padding:15px;margin:0;}

</style>

</head>

<body>

<details open>

<summary>Step 1: Getting Started</summary>

<p>Follow these instructions to get started. This step covers the initial setup and introduces you to the basics...</p>

</details>

<details>

<summary>Step 2: Customization</summary>

<p>Learn how to customize your experience. This step provides insights into customization options and advanced features...</p>

</details>

<details>

<summary>Step 3: Troubleshooting</summary>

<p>Discover troubleshooting tips to address common issues. This step equips you with the knowledge to overcome challenges...</p>

</details>

</body>

</html>

```


* Product Descriptions:

In e-commerce websites, use Details and Summary elements to present detailed product descriptions. Users can expand sections for specifications, features, and customer reviews.

Here’s an example with some styling:

```html

<!DOCTYPE html>

<html lang="en">

<head>

<style>

body{font-family:'Arial',sans-serif;background-color:#f7f7f7;margin:0;padding:20px;}

details{background-color:#fff;border:1px solid #ddd;margin-bottom:15px;border-radius:5px;overflow:hidden;}

summary{padding:15px;cursor:pointer;outline:none;background-color:#2727d9;color:#fff;border:none;border-radius:5px;transition:background-color .3s;}

summary:hover{background-color:#08088e;}

p{padding:15px;margin:0;}

</style>

</head>

<body>

<details>

<summary>Product Specifications</summary>

<p>Explore the technical specifications of our product. This section provides details on size, weight, and other key specifications...</p>

</details>

<details>

<summary>Product Features</summary>

<p>Discover the features that make our product unique. From advanced functionalities to innovative design, this section covers it all...</p>

</details>

<details>

<summary>Customer Reviews</summary>

<p>Read what our customers have to say about this product. Find authentic reviews to help you make an informed decision before purchasing...</p>

</details>

</body>

</html>

```


## Conclusion

In summary, HTML Details and Summary elements offer web developers powerful tools to enhance user experience. These elements, from optimizing content clarity to creating interactive tutorials, are versatile and valuable. Embracing them in web development not only streamlines processes but also contributes to creating more accessible, organized, and engaging websites. As we navigate the dynamic landscape of the digital world, HTML Details and Summary elements remain essential in shaping the future of web development.

| asayerio_techblog | |

1,748,937 | Fundamentals of Site Reliability Engineering | Understanding Reliability Exploring Site Reliability Engineering (SRE) Why SRE Matters in Modern... | 0 | 2024-02-01T21:03:34 | https://dev.to/sre_panchanan/fundamentals-of-site-reliability-engineering-373l | performance, devops, sitereliabilityengineering, observability |

- [Understanding Reliability](#understanding-reliability)

- [Exploring Site Reliability Engineering (SRE)](#exploring-site-reliability-engineering-sre)

- [Why SRE Matters in Modern IT](#why-sre-matters-in-modern-it)

- [Key Pillars of SRE](#key-pillars-of-sre)

- [Navigating the Error Budget](#navigating-the-error-budget)

- [Implementing the Error Budget](#implementing-the-error-budget)

- [Spending Leftover Error Budget](#spending-leftover-error-budget)

- [Understanding Service Level Indicators (SLIs)](#understanding-service-level-indicators-slis)

- [Formula](#formula)

- [Choosing Effective SLIs](#choosing-effective-slis)

- [User Requests Metrics](#user-requests-metrics)

- [Data Processing Metrics](#data-processing-metrics)

- [Storage Metrics](#storage-metrics)

- [Measurement strategies for SLIs](#measurement-strategies-for-slis)

- [1. User-Centric Measurement:](#1-user-centric-measurement)

- [2. Instrumentation in Application Code:](#2-instrumentation-in-application-code)

- [3. Infrastructure and Server Monitoring:](#3-infrastructure-and-server-monitoring)

- [4. Database Query and Transaction Monitoring:](#4-database-query-and-transaction-monitoring)

- [5. API Endpoint Monitoring:](#5-api-endpoint-monitoring)

- [6. Microservices Interaction Analysis:](#6-microservices-interaction-analysis)

- [7. Load Balancer Effectiveness Assessment:](#7-load-balancer-effectiveness-assessment)

- [Setting Service Level Objectives (SLOs)](#setting-service-level-objectives-slos)

- [Effective SLO Targets](#effective-slo-targets)

- [Service Level Agreements (SLAs)](#service-level-agreements-slas)

- [Best Practices for SLAs](#best-practices-for-slas)

- [Final Thoughts](#final-thoughts)

---

## Understanding Reliability

Reliability in IT means that a system or service consistently performs its functions without errors or interruptions. It ensures users can rely on technology for a smooth experience.

## Exploring Site Reliability Engineering (SRE)

Site Reliability Engineering (SRE) blends software engineering with IT operations, focusing on scalable and highly reliable software systems. Its importance lies in balancing reliability, system efficiency, and rapid innovation in modern IT.

### Why SRE Matters in Modern IT

1. **Reliability as a Competitive Edge:** SRE builds dependable systems, fostering customer trust and satisfaction for a competitive advantage.

2. **Resilience Amid Complexity:** In intricate IT environments, SRE provides a structured approach to manage complex systems while maintaining reliability.

3. **Balancing Innovation with Stability:** SRE empowers teams to innovate without compromising system stability, achieving a balance between agility and dependability.

4. **Cost-Efficiency through Automation:** Automation reduces downtime, saving time and resources.

5. **Enhanced Incident Response:** SRE's proactive incident management ensures quick responses, minimizing failure impact and reducing recovery time.

---

## Key Pillars of SRE

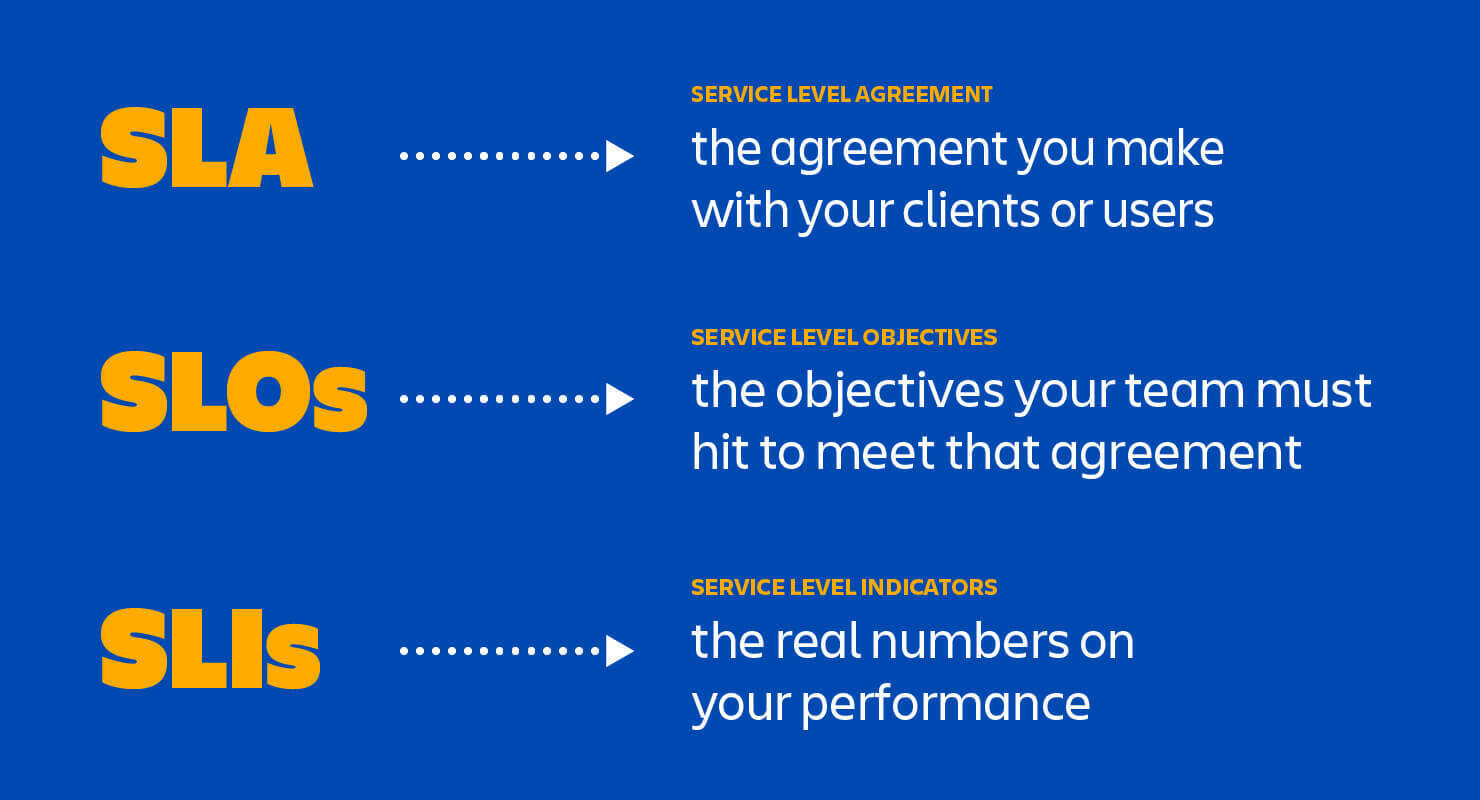

SRE is built on Service Level Indicators (SLIs), Service Level Objectives (SLOs), and Service Level Agreements (SLAs), along with the crucial concept of the Error Budget.

---

## Navigating the Error Budget

The Error Budget represents the permissible margin of errors or disruptions in a system's reliability over time. SREs use it to balance innovation and reliability.
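As a rough illustration, the budget can be derived from an availability target: whatever the target leaves over is the permissible margin. The sketch below assumes a time-based availability SLO; the function names and the 99.9% / 30-day figures are illustrative choices, not values from this article.

```python
from datetime import timedelta


def error_budget(slo_target: float, window: timedelta) -> timedelta:
    """Allowed unavailability for an availability target over a time window."""
    if not 0.0 < slo_target <= 1.0:
        raise ValueError("slo_target must be a fraction in (0, 1]")
    return window * (1.0 - slo_target)


def budget_remaining(slo_target: float, window: timedelta,
                     downtime: timedelta) -> timedelta:
    """Budget left after the downtime already observed (negative if overspent)."""
    return error_budget(slo_target, window) - downtime


# Hypothetical example: a 99.9% target over 30 days allows about 43.2 minutes.
budget = error_budget(0.999, timedelta(days=30))
print(budget.total_seconds() / 60)

# With 10 minutes of downtime so far, some budget is still available.
left = budget_remaining(0.999, timedelta(days=30), timedelta(minutes=10))
print(left > timedelta(0))
```

A positive remainder suggests there is room for riskier work such as releases or experiments; a negative one suggests prioritizing reliability until the window rolls over.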

### Implementing the Error Budget

- Set clear reliability targets and acceptable downtime levels.

- Implement robust monitoring for real-time performance tracking.

- Encourage cross-team collaboration.

- Monitor service performance and adjust to stay within the Error Budget.

- Regularly review and learn from incidents to improve processes.

### Spending Leftover Error Budget

- Release new features.

- Implement expected changes.

- Address hardware or network failures.

- Plan scheduled downtime.

- Conduct controlled, risk-managed experiments.

---

## Understanding Service Level Indicators (SLIs)

SLIs are metrics quantifying a service's performance. They provide measurable insights into how well a service is operating.

### Formula:

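An SLI is commonly expressed as the ratio of good events to valid events:

`SLI = (good events / valid events) × 100%`

This formula provides a ratio indicating the proportion of successful events, serving as a key metric to assess the reliability and performance of a service.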
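A minimal sketch of that ratio in code, assuming the SLI is computed as good events divided by valid events and reported as a percentage. The helper names, and the rule that any HTTP status below 500 counts as "good", are assumptions made for illustration; each service has to define success and failure for itself.

```python
from typing import Iterable


def sli_percent(good_events: int, valid_events: int) -> float:
    """Proportion of good events among valid events, as a percentage."""
    if valid_events <= 0:
        raise ValueError("valid_events must be positive")
    if not 0 <= good_events <= valid_events:
        raise ValueError("good_events must be between 0 and valid_events")
    return 100.0 * good_events / valid_events


def availability_sli(status_codes: Iterable[int]) -> float:
    """Availability SLI from HTTP status codes (assumption: < 500 is a success)."""
    codes = list(status_codes)
    good = sum(1 for code in codes if code < 500)
    return sli_percent(good, len(codes))


# Hypothetical sample: three successful responses and one server error.
print(availability_sli([200, 200, 404, 503]))  # 75.0
```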

---

### Choosing Effective SLIs

- Identify critical aspects impacting user experience.

- Choose measurable, relevant metrics aligned with user expectations.

- Prioritize simplicity and clarity in defining SLIs.

- Collaborate with stakeholders to understand user priorities.

- Regularly review and update SLIs to adapt to changing needs.

> "**100%** is the **wrong** reliability target for basically everything."

> > **`- Betsy Beyer`**, Site Reliability Engineering: How Google Runs Production Systems

---

Let's understand good SLIs through some examples.

#### User Requests Metrics

1. **Latency SLI:**

- **Metric:** Response time for user requests

2. **Availability SLI:**

- **Metric:** System uptime and availability

3. **Error Rate SLI:**

- **Metric:** Percentage of failed requests

4. **Quality SLI:**

- **Metric:** User satisfaction ratings

---

#### Data Processing Metrics

1. **Coverage SLI:**

- **Metric:** Percentage of data processed compared to total incoming data.

2. **Correctness SLI:**

- **Metric:** Percentage of accurate results compared to expected outcomes.

3. **Freshness SLI:**

- **Metric:** Time taken for the system to process and make data available.

4. **Throughput SLI:**

- **Metric:** The number of data records processed per unit of time.

---

#### Storage Metrics

1. **Latency SLI:**

- **Metric:** Time taken for storage operations to be completed.

2. **Throughput SLI:**

- **Metric:** Rate of data transfer to and from the storage system.

3. **Availability SLI:**

- **Metric:** Percentage of time the storage system is available for read and write operations.

4. **Durability SLI:**

- **Metric:** Assurance that data written to the storage system is not lost.

**NOTE:** Clearly define what is considered a **success** and **failure** for your **SLIs**.

---

## Measurement Strategies for SLIs

Clear strategies for effective SLI measurement:

### 1. User-Centric Measurement:

- **Measure:** User experience metrics.

- **Tools:** Real user monitoring (RUM).

### 2. Instrumentation in Application Code:

- **Measure:** Code-level SLIs.

- **Tools:** Application performance monitoring (APM).

### 3. Infrastructure and Server Monitoring:

- **Measure:** Resource utilization and response times.

- **Tools:** Server monitoring solutions.

### 4. Database Query and Transaction Monitoring:

- **Measure:** Database operation SLIs.

- **Tools:** Database monitoring tools.

### 5. API Endpoint Monitoring:

- **Measure:** Endpoint response times and efficiency.

- **Tools:** API monitoring tools.

### 6. Microservices Interaction Analysis:

- **Measure:** Microservices interaction points.

- **Tools:** Distributed tracing tools.

### 7. Load Balancer Effectiveness Assessment:

- **Measure:** Load balancer efficiency.

- **Tools:** Load balancer logs.

These strategies offer concise approaches to gathering valuable insights into SLIs at different system levels.

---

## Setting Service Level Objectives (SLOs)

SLOs are specific, measurable targets set for SLIs to quantify the desired performance and reliability of a system, acting as a bridge between business expectations and technical metrics.

### Effective SLO Targets

- Understand user expectations and critical service aspects.

- Review historical performance data for patterns and improvements.

- Align SLO targets with broader business goals.

- Consider dependencies on other services or components.

- Involve stakeholders, including product owners and end-users.

- Prioritize SLIs with a significant impact on user satisfaction.

- Set realistic yet challenging SLO targets.

- Embrace an iterative approach for refinement over time.

---

Here are real-world examples of SLOs based on various SLIs:

1. **E-commerce Platform - Latency:**

- **SLI:** Time taken to load product pages.

- **SLO:** Ensure that 90% of page loads occur within 3 seconds.

- **Alert:** Trigger an alert if the 90th-percentile page load time exceeds 4 seconds for more than 5 minutes. Notify the on-call engineer via Slack.

2. **Cloud Storage Service - Availability:**

- **SLI:** Percentage of time the storage service is accessible.

- **SLO:** Maintain an availability of 99.99% over a monthly period.

- **Alert:** Send an alert and create a high-priority JIRA ticket if the availability falls below 99.95% in a given month. Notify the operations team.

3. **Video Streaming Service - Buffering Rate:**

- **SLI:** Percentage of video playback without buffering interruptions.

- **SLO:** Limit buffering interruptions to less than 1% of total viewing time.

- **Alert:** Trigger an alert, escalate to video streaming engineers, and create a critical incident in PagerDuty if the buffering rate exceeds 1.5% during peak hours.

4. **Financial Transactions - Error Rate:**

- **SLI:** Percentage of error-free financial transactions.

- **SLO:** Keep the error rate below 0.1% for all monetary transactions.

- **Alert:** Issue an alert, notify the security team, and initiate a forensic analysis if the error rate surpasses 0.2% on a given day.

5. **Healthcare Application - Response Time:**

- **SLI:** Time taken to process and display patient records.

- **SLO:** Ensure that 95% of requests for patient records are served within 2 seconds.

- **Alert:** Send an alert, notify the support team, and create a ticket for the development team if the 95th-percentile response time for patient records exceeds 2.5 seconds for more than 2 hours.

6. **Social Media Platform - Throughput:**

- **SLI:** Number of user posts processed per second.

- **SLO:** Maintain a throughput of at least 1000 posts per second during peak usage.

- **Alert:** Trigger an alert, notify the performance team, and create a post-incident report if the throughput falls below 900 posts per second for more than 10 minutes.

7. **Online Learning Platform - Uptime:**

- **SLI:** Percentage of time the platform is operational.

- **SLO:** Achieve an uptime of 99.9% throughout the academic year.

- **Alert:** Issue an alert, notify the operations team, and engage incident response if the platform's uptime drops below 99.5% within a 24-hour period.

8. **Telecommunications Network - Call Drop Rate:**

- **SLI:** Percentage of completed phone calls without dropping.

- **SLO:** Keep the call drop rate below 1% during peak hours.

- **Alert:** Send an alert, escalate to network operations, and initiate a bridge call with the engineering team if the call drop rate exceeds 1.2% during peak hours for more than 15 minutes.
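
To make the link between an SLI and its SLO target concrete, here is a minimal sketch for a latency SLO of the form "N% of requests complete within a threshold", as in the e-commerce example. The function name, the result shape, and the sample data are all made up for illustration.

```javascript
// Hypothetical sketch: checks "targetPercent of requests finish within thresholdMs".
function evaluateLatencySlo(latenciesMs, thresholdMs, targetPercent) {
  const good = latenciesMs.filter((ms) => ms <= thresholdMs).length;
  // SLI: percentage of requests that met the threshold (100 if there is no data).
  const sli = latenciesMs.length === 0 ? 100 : (good / latenciesMs.length) * 100;
  return { sli, met: sli >= targetPercent };
}

// Made-up sample of ten page-load times in milliseconds.
const pageLoads = [1200, 800, 2900, 3100, 1500, 950, 2200, 4100, 1800, 2700];

// SLO from the example above: 90% of page loads within 3 seconds.
const result = evaluateLatencySlo(pageLoads, 3000, 90);
console.log(result); // sli is 80 for this sample, so the 90% target is missed
```

In practice the latencies would come from your monitoring system over the SLO window, and the `met` flag (or the gap to the target) would feed the alerting rules.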

---

## Service Level Agreements (SLAs)

SLAs are formal agreements between service providers and customers outlining the expected level of service, including performance metrics, responsibilities, and guarantees.

### Best Practices for SLAs

- Set SLOs slightly above the contracted SLA to provide a buffer, avoiding user dissatisfaction.

- Ensure SLIs do not fall below the SLA, maintaining a commitment to agreed-upon service levels.

---

## Final Thoughts

In concluding our exploration of Site Reliability Engineering, remember that SRE is more than a set of practices; it's a mindset fostering reliability, collaboration, and continuous improvement. May your systems be resilient, incidents few, and services remain reliable. Happy engineering! | sre_panchanan |

1,749,003 | Go Bun ORM with PostgreSQL Quickly Example | Install dependencies and environment variable Replace the values from database connection... | 0 | 2024-02-12T00:05:54 | https://dev.to/luigiescalante/go-bun-orm-with-postgresql-quickly-example-394o | go, orm, postgres | ## Install dependencies and environment variable

Replace the database connection values with your own.

```
export DB_USER=admin
export DB_PASSWORD=admin123
export DB_NAME=code_bucket
export DB_HOST=localhost
export DB_PORT=5432

go get github.com/uptrace/bun@latest
go get github.com/uptrace/bun/driver/pgdriver
go get github.com/uptrace/bun/dialect/pgdialect
```

## Create table

Bun has a migration system, but it deserves its own post. For this quick example, create the table directly with SQL:

```
create table customers(
    id serial not null,
    first_name varchar(80) not null,
    last_name varchar(80) not null,
    email varchar(50) not null unique,
    age int not null default 1,
    created_at timestamp not null default now(),
    updated_at timestamp null,
    deleted_at timestamp null
);
```

## Manager DB

Create a file named manage.go. It contains a function that returns a shared database connection, so other modules and services can reuse the same instance.

```
import (
	"database/sql"
	"fmt"
	"os"
	"sync"

	"github.com/uptrace/bun"
	"github.com/uptrace/bun/dialect/pgdialect"
	"github.com/uptrace/bun/driver/pgdriver"
)

var (
	dbConnOnce sync.Once // guards the one-time initialization of conn
	conn       *bun.DB   // shared connection handle
)

// Db returns the shared *bun.DB, creating it on first use.
func Db() *bun.DB {
	dbConnOnce.Do(func() {
		dsn := postgresqlDsn()
		sqldb := sql.OpenDB(pgdriver.NewConnector(pgdriver.WithDSN(dsn)))
		conn = bun.NewDB(sqldb, pgdialect.New())
	})
	return conn
}

// postgresqlDsn builds the connection string from the DB_* environment variables.
func postgresqlDsn() string {
	return fmt.Sprintf("postgres://%s:%s@%s:%s/%s?sslmode=disable",
		os.Getenv("DB_USER"),
		os.Getenv("DB_PASSWORD"),
		os.Getenv("DB_HOST"),
		os.Getenv("DB_PORT"),
		os.Getenv("DB_NAME"),
	)
}
```

## Create Struct entity

On the Customers entity, add struct tags to map its fields to the table columns.

```
// Requires the imports "time" and "github.com/uptrace/bun".
type Customers struct {
	bun.BaseModel `bun:"table:customers,alias:cs"`

	ID        uint      `bun:"id,pk,autoincrement"`
	FirstName string    `bun:"first_name"`
	LastName  string    `bun:"last_name"`
	Email     string    `bun:"email"`
	Age       uint      `bun:"age"`
	CreatedAt time.Time `bun:"-"` // ignored by bun; the column default fills created_at
	UpdatedAt time.Time `bun:"-"` // ignored by bun
	DeletedAt time.Time `bun:",soft_delete,nullzero"` // marks the row as deleted
}
```

* The `bun:"-"` tag omits the field from queries.

You can review all tag options in the official Bun documentation.

[Bun tags](https://bun.uptrace.dev/guide/models.html#struct-tags)

## Create a struct to manage the entity (customer) repository

Create a struct that holds the database connection and exposes all the methods that interact with the database entity (CRUD operations and queries).

With this struct, any time we need to access the entity (customer) data, we can instantiate it and use it as a repository.

```
type CustomersRepo struct {
	Repo *bun.DB
}

func NewCustomerRepo() (*CustomersRepo, error) {
	db := Db()
	return &CustomersRepo{
		Repo: db,
	}, nil
}
```

## CRUD methods

Below are example methods to store, update, or get information from the entity.

These methods are defined on the CustomersRepo struct.

Each one receives a customer entity (or a lookup value) and returns the result of the operation.

### Save a new customer

```
func (cs CustomersRepo) Save(customer *models.Customers) (*models.Customers, error) {
	err := cs.Repo.NewInsert().Model(customer).Scan(context.TODO(), customer)
	if err != nil {
		return nil, err
	}
	return customer, nil
}
```

### Update customer data (requires the ID field)

```
func (cs CustomersRepo) Update(customer *models.Customers) (*models.Customers, error) {
	_, err := cs.Repo.NewUpdate().Model(customer).Where("id = ?", customer.ID).Exec(context.TODO())
	return customer, err
}
```

### Get a customer from one field

```
func (cs CustomersRepo) GetByEmail(email string) (*models.Customers, error) {
	var customer models.Customers
	err := cs.Repo.NewSelect().Model(&customer).Where("email = ?", email).Scan(context.TODO(), &customer)
	return &customer, err
}
```

### Get a list of customers

```
func (cs CustomersRepo) GetCustomers() ([]*models.Customers, error) {
	customers := make([]*models.Customers, 0)
	err := cs.Repo.NewSelect().Model(&models.Customers{}).Scan(context.TODO(), &customers)
	return customers, err
}
```

### Delete a customer (soft delete)

```
func (cs CustomersRepo) Delete(customer *models.Customers) error {
	_, err := cs.Repo.NewDelete().Model(customer).Where("id = ?", customer.ID).Exec(context.TODO())
	return err
}
```

## Review the code example

[BUN CRUD Example](https://github.com/luigiescalante/go-code-bucket/tree/main/bun-orm)

| luigiescalante |

1,749,017 | Run Docker Without sudo in WSL2 | My problem is, when run docker has a problem like this permission denied while trying to connect... | 0 | 2024-02-01T23:13:35 | https://dev.to/bukanspot/run-docker-without-sudo-in-wsl2-4kfl | docker, devops | When I run Docker without sudo, I get this error:

```

permission denied while trying to connect to the Docker daemon socket at unix:///var/run/docker.sock: Get "http://%2Fvar%2Frun%2Fdocker.sock/v1.24/containers/json": dial unix /var/run/docker.sock: connect: permission denied

```

I solved it with these commands:

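```
# Create the docker system group
sudo addgroup --system docker
# Add the current user to the docker group
sudo adduser $USER docker
# Start a shell with the new group membership applied
newgrp docker
# Give the docker group write access to the Docker socket
sudo chown root:docker /var/run/docker.sock
sudo chmod g+w /var/run/docker.sock
```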

---

### Reference

- [stackoverflow.com](https://stackoverflow.com/questions/64710480/docker-client-under-wsl2-doesnt-work-without-sudo) | bukanspot |

1,749,090 | 5 Google My Business Management Tools to Boost Your Visibility | In the ever-evolving landscape of online marketing, local search visibility has become paramount for... | 0 | 2024-02-02T01:59:53 | https://dev.to/socialmediatime/5-google-my-business-management-tools-to-boost-your-visibility-38c3 | digital, marketing | In the ever-evolving landscape of online marketing, local search visibility has become paramount for businesses. Harnessing the power of Google tools and Google My Business (GMB) is a surefire way to bolster your presence on Google’s search engine results pages (SERPs). However, to maximise the impact of your Google Business Online Profile, you need the right management tools at your disposal.

Understanding the Significance of Google Business Profile

Google My Business serves as the cornerstone for local SEO strategies. It's a potent tool that allows businesses to manage their online presence, display crucial and accurate information, engage with customers, and ultimately, stand out in local business searches and Google's search results.

The Role of Management Tools in GMB Search Engine Optimisation

Effective business account management tools streamline the process of maintaining and optimising your Google Business Profile account. From managing reviews to updating business information like your business address and opening hours, these business tools simplify tasks, enhance efficiency, and drive better visibility, helping you appear on Google Maps.

Let’s Explore the Top Five Google Business Profiles Management Tools:

1. Google My Business Dashboard

The GMB Dashboard is the command centre for your business profile. It offers a centralised platform to manage essential information like business operating hours, services, and updates. It's your go-to hub for updating business hours, responding to online reviews, and uploading images and business posts, all of which significantly affect your local SEO efforts.

2. Moz Local

Moz Local is a comprehensive tool for managing business listings across various platforms, ensuring consistency and accuracy in information across multiple locations. Its functionality aids in local search results ranking by optimising listings and identifying opportunities to improve the visibility of business locations.

3. BrightLocal

BrightLocal is a robust suite of tools catering to local SEO needs. It offers functionalities like rank tracking, citation building, and managing both positive and negative reviews on search engines, enabling businesses to monitor and enhance their local Google search visibility effectively.

4. SEMrush

SEMrush provides a wide array of SEO tools, including local search engine optimisation features, keyword suggestions, post scheduling, and competitor analysis. Its comprehensive insights help in devising a local SEO strategy, optimising content, and improving online marketing campaigns.

5. Hootsuite

While primarily known for social media management, Hootsuite can play a crucial role in GMB management. It allows businesses to schedule GMB posts, ensuring consistent engagement and visibility across multiple platforms.

Optimise Your Google My Business Profile Today!

With the ever-increasing competition in the digital sphere, leveraging Google My Business effectively is crucial for businesses seeking local visibility. These top five management tools empower businesses to optimise their Google Business Profiles, improve local SEO rankings, and ultimately attract more customers.

By harnessing the functionalities of these tools, businesses can not only manage their GMB profiles effectively but also pave the way for sustained growth in the highly competitive online landscape.

Optimising your Google Business Profile isn’t a one-time task; it’s an ongoing effort. Regular updates, prompt responses to business reviews, and consistent use of these management tools can significantly improve your listing's local search visibility, attracting potential customers and boosting your business page's online presence.

**Website URL:**

https://www.socialmediatime.co.uk/

| socialmediatime |

1,749,116 | Hướng Dẫn Chơi Baccarat Online: Kỹ Thuật Chiến Thắng Tại 188bet | Đối với những người mới chơi Baccarat và chưa nắm chắc quy tắc, việc đặt cược mà không có một chiến... | 0 | 2024-02-02T02:42:16 | https://dev.to/88betg/huong-dan-choi-baccarat-online-ky-thuat-chien-thang-tai-188bet-23gj |

For those who are new to Baccarat and have not yet mastered the rules, betting without a specific strategy usually leads to losses. When playing this game, players therefore need to apply betting strategies to increase their chances of winning.

Every Baccarat player can develop their own strategy. Over time, accumulated experience helps them stick to their chosen method and achieve better results. It is important to avoid betting arbitrarily based on emotion, since this can lead to wasting money unnecessarily.

See more: https://88betg.com/cach-choi-baccarat-online/

See more: https://flipboard.com/@88betg

#baccarat #88betg #188BET #nha_cai_188BET #nha_cai #casino

| 88betg | |

1,749,151 | Regression Testing Fundamentals – All You Need To Know | Regression testing is software testing that verifies an app’s continued performance, irrespective of... | 0 | 2024-02-02T04:01:18 | https://dev.to/berthaw82414312/regression-testing-fundamentals-all-you-need-to-know-15ne | [Regression testing](https://www.headspin.io/blog/regression-testing-a-complete-guide) is software testing that verifies an app’s continued performance, irrespective of the number of updates, upgrades, or code changes.

Developers run regression tests whenever they modify the code. Because regression testing safeguards the app’s functionality and stability, it confirms that the app keeps working despite those changes.

This test is essential because when developers perform code changes, it can cause a ripple effect and introduce malfunctions, dependencies, or flaws in the code. Regression tests ensure that previously tested code is unaffected by these changes.

Regression tests typically run late in the SDLC, after new changes have been integrated. They check the product’s behavior, verify its functionality, and help improve time to market.

## Differences Between Re-testing and Regression Testing

To those new to automation, regression testing and re-testing can be confusing. Although these tests sound similar, they are very different from each other.

Re-testing involves repeating a test for a particular purpose. Testers can re-test the app and repair the source code if they find a fault. Sometimes test cases can fail in their execution. In such cases, testers will need to re-test or repeat the test.

Regression testing, however, involves verifying updates or changes to the code to find out if these changes introduced flaws in existing functions.

Organizations usually perform re-testing before regression tests. Re-testing confirms that previously failed test cases now pass, while regression testing re-runs previously passing cases to confirm that the changes introduced no new flaws. Another significant distinction is that, unlike regression testing, re-testing involves verifying that a specific reported error has been fixed.

Automation plays a significant role in regression testing, allowing testers to focus on interpreting test case results.

**Regression Testing in Agile**

Agile development strategies can help teams achieve benefits such as increased ROI, product improvements, better customer support, and faster time-to-market. The challenge is to balance iterative testing with sprint development so that the two do not conflict.

Regression testing in agile keeps existing and upgraded capabilities in sync, which prevents future rework and helps keep business functions reliable and sustainable.

With stable functionality in place, developers have more time to build new features for their apps rather than constantly fixing existing problems. Regression testing in agile also helps testers identify unexpected issues early, so they can respond more quickly and efficiently.

## Why is regression testing necessary?

Automated regression testing helps improve time to market by improving feedback time. The quicker product teams receive feedback, the faster they can identify flaws. Early identification of faults can help save organizations a lot of money and time.

Even a small change can have a cascading effect on an app’s major functionality. Developers therefore cannot afford to skip regression tests if they want seamless app performance.

Functional tests are undoubtedly beneficial; however, while they do a great job examining the app’s new features, they do not check their compatibility with existing features.

Adding regression testing to the mix is crucial as it can help testers perform a root cause analysis, serving as a filter to ensure improvements in product quality. Without regression testing, this process would be highly time-consuming and challenging.

## When is it beneficial to perform Regression testing?

You will find it beneficial to perform regression testing in the following scenarios:

- When the product you’re developing repeatedly needs new features.

- When you are testing product enhancements.

- When you want to reduce manual testing effort and extend your product testing capabilities.

- When you need to validate customer defect reports.

- When your product is due for a performance upgrade.

**How to Conduct Regression Testing**

While strategies for regression testing differ from organization to organization, here are some fundamental steps you must take:

**Detect Changes in the Source Code**

It is essential to detect optimizations and changes to the source code. This check helps you track the components that were changed and understand the impacts these changes have had on existing features.

**Prioritize Changes and Product Requirements**

After identifying the modifications, prioritize them to help streamline the testing process. Ensure you assign appropriate test cases to help fix the issues.

**Determine Entry/Exit Criteria**

Before executing the regression test, ensure your application meets the required eligibility criteria. Also, determine an exit point.

**Schedule The Test**

Once you identify all test components, execute the regression test.
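
The steps above can be sketched in a few lines. This is a minimal illustration, not a real test framework: `applyDiscount` stands in for a hypothetical function that was just modified, and `baseline` stands in for results recorded from the previous, known-good release.

```javascript
// Hypothetical function under test: a price calculation that was just changed.
function applyDiscount(price, percent) {
  return Math.round(price * (1 - percent / 100) * 100) / 100;
}

// Baseline cases captured from the last known-good version (made-up data).
const baseline = [
  { args: [100, 10], expected: 90 },
  { args: [59.99, 0], expected: 59.99 },
  { args: [20, 50], expected: 10 },
];

// Re-runs every baseline case and returns only the ones whose output changed.
function runRegressionSuite(fn, cases) {
  return cases
    .map(({ args, expected }) => ({ args, expected, actual: fn(...args) }))
    .filter(({ expected, actual }) => actual !== expected);
}

const failures = runRegressionSuite(applyDiscount, baseline);
console.log(failures.length === 0 ? "No regressions" : failures);
```

An empty `failures` list plays the role of the exit criterion here: the change did not alter any previously verified behavior.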

## Conclusion

Regression testing helps improve user experience and product quality. Choosing the right regression intelligence tool can help detect surface and deep-rooted flaws early in the pipeline. Regression testing can make things easier for manual testers.

Furthermore, using agile methodologies in regression testing ensures technical and business advantages. However, to ensure you meet all your regression test requirements, it’s essential to start planning early and have a comprehensive strategy ready.

[HeadSpin](https://www.headspin.io/enterprise) offers AI-based testing insights that significantly improve the quality of your regression tests. The AI provides actionable insights that can help you solve problems quickly and improve your time to market.

Original source: https://complextime.com/regression-testing-fundamentals-all-you-need-to-know/ | berthaw82414312 | |

1,749,194 | Why is Web App and API Security Testing So Critical? | When you search for the most common causes of cyberattacks; phishing and unpatched vulnerabilities... | 26,275 | 2024-02-02T13:30:00 | https://dev.to/jigar_online/why-is-web-app-and-api-security-testing-so-critical-3i2i | webdev, security, cybersecurity, learning | When you search for the most common causes of cyberattacks, phishing and unpatched vulnerabilities top the list. Hackers mostly exploit inherent vulnerabilities in APIs and web apps to gain unauthorized access and steal data. Injection remains a widespread concern: the [OWASP Top 10 report](https://owasp.org/Top10/A03_2021-Injection/) states that 94% of the applications in its data set were tested for some form of injection, with a maximum incidence rate of 19%.

So, why do vulnerabilities become a security challenge? It usually happens because API and web app security testing is neglected entirely or carried out with ineffective measures. Unfortunately, organizations often fail to understand the importance of security testing.

As a result, such organizations suffer significant losses. In 2023, the [average cost of a data breach was $4.45 million](https://www.ibm.com/reports/data-breach). This shows how disastrous vulnerabilities can be.

## A Brief Note on Web App and API Security Testing

The aim of security testing is to evaluate whether a web app or API is susceptible to hacking. It involves performing various tests and examining the responses to detect unexpected behavior. Professional security testers use automated and/or manual procedures to perform these tests. Any vulnerability is a chink in your armour that attackers can exploit. Finding and removing vulnerabilities is vital to fortify your digital landscape. Hence, API and web application security testing is essential.

## Why is API and Web App Security Testing So Important?

Every web app and API has one thing in common: they all handle data. As a valuable business asset, data must be handled and protected efficiently. However, security loopholes in your API or web app can result in data breaches that will impact your business. Finding and fixing vulnerabilities that can compromise security helps to protect these assets.

Let’s check its importance as follows.

**1. Data Security**

Data protection is important to secure it from theft, unauthorized access, and corruption. Organizations must protect their data for various ethical and legal reasons. For example, GDPR is a well-known data protection law that safeguards data of EU citizens. Organizations failing to comply with this law must face heavy penalties.

Vulnerabilities in web apps and APIs can cause data breaches that will violate laws and risk confidential information. However, you can prevent such scenarios by testing the applications and APIs for security issues and addressing them before they get exploited. For example, a quick web app vulnerability scan with an automated tool can reveal weaknesses related to data safety.

**2. Reputation**

Security breaches result in financial and reputational damage for organizations. Many customers will stop doing business with you, which will affect your sales and future growth. Further, many of them will also share their experiences with others through verbal communication or on social media.

To avoid this nightmare, you need to work on the root cause of the problem: the vulnerabilities. With security testing, you can discover potential vulnerabilities, such as those in the OWASP Top 10, that cause security breaches, and protect your web apps and APIs.

**3. Customer Confidence**

Trust is the reason that customers are ready to share their personal or financial information when using various applications. However, security breaches can erode customer trust and regaining their confidence will be extremely challenging. They are likely to choose competitors after this.

However, you can maintain customer trust by better protecting their information. API and web app security testing plays a critical role here. It helps you find weaknesses in the application and API so you can strengthen their security.

**4. Smooth Business Operations**

Another advantage of API and web application testing is avoiding disruptions in your business. Business operations are affected after a security issue as hackers can cause service downtime with attacks like DDoS. They can make your web application unavailable to users or damage the system to stop it from running.

You can perform an automated web app or API vulnerability scan to find vulnerabilities that cause DDoS and similar attacks to avoid disruptions. It also helps you build a strong response plan to allow users to continue using the application even in case of a cyberattack.

**5. Compliance Requirements**

Adhering to legal and regulatory standards is another benefit of thorough security testing. Many automated vulnerability scanning tools not only detect application weaknesses but also highlight compliance issues. Hence, with web app security testing, you can ensure that your application complies with standards like HIPAA, ISO 27001, PCI DSS, SOC 2, and more.

## Different Methods of Web App and API Security Testing

While security testing is crucial for web apps and APIs without any doubt, choosing the right method is equally important. The following are the different approaches to application testing you can choose from.

**Black-Box Testing**

In this type of testing method, a tester hunts for security issues without knowing the internal details of the application or API. The tester performs tests “outside in” with simulated attacks just like a hacker from the front-end.

**White-Box Testing**

The tester knows the internal details of an application or API and checks the source code to detect potential security issues. It is a static code analysis method also known as “inside-out” testing.

**Gray-Box Testing**

It is a combination of both and allows a tester to identify issues with improper use or design of the application or API. Having partial knowledge of the application’s implementation, the tester checks the target both inside-out and outside-in.
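
As a toy illustration of the "outside-in" idea, the sketch below probes a handler with attack-like inputs and inspects only its output, without looking at its internals. Everything here is hypothetical: the two render functions, the payload list, and the check itself are simplified stand-ins, not a substitute for a real scanner.

```javascript
// Escapes characters that have special meaning in HTML.
function escapeHtml(text) {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;")
    .replace(/'/g, "&#39;");
}

// Two hypothetical handlers under test: one escapes user input, one does not.
const safeRender = (query) => `<p>Results for: ${escapeHtml(query)}</p>`;
const unsafeRender = (query) => `<p>Results for: ${query}</p>`;

// Sample payloads resembling common cross-site scripting probes.
const payloads = ["<script>alert(1)</script>", "<img src=x onerror=alert(1)>"];

// Black-box check: returns the payloads that come back in the output unescaped.
function findReflectedPayloads(handler, inputs) {
  return inputs.filter((payload) => handler(payload).includes(payload));
}

console.log(findReflectedPayloads(safeRender, payloads).length); // 0
console.log(findReflectedPayloads(unsafeRender, payloads).length); // 2
```

A white-box review of the same code would instead read the source and notice that `unsafeRender` never calls `escapeHtml`; a gray-box approach would combine both views.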

## Final Note

As cyberattacks grow in number and complexity, keeping your systems, applications, and APIs secure becomes more challenging. Security testing tools like [ZeroThreat](https://zerothreat.ai/) help you evaluate your APIs and applications to find flaws and weaknesses that allow cyberattacks. The tests help to pinpoint the issues and their causes so you can resolve them effectively and mitigate security risks. | jigar_online |

1,749,197 | JavaScript Functions: The Heroes of Your Code! ⚡️ | Welcome, fellow code adventurers, to the thrilling world of JavaScript functions! 🌟 In this epic... | 25,941 | 2024-02-17T08:47:51 | https://dev.to/aniket_botre/javascript-functions-the-heroes-of-your-code-4l2d | javascript, webdev, programming, frontend | Welcome, fellow code adventurers, to the thrilling world of JavaScript functions! 🌟 In this epic journey, we'll delve deep into the heart of functions, exploring their myriad forms and unraveling their mysteries. So buckle up, sharpen your swords (or rather, your keyboards), and let's embark on this exhilarating quest! 🎮

[JavaScript Functions - Fireship video on YouTube](https://medium.com/r/?url=https%3A%2F%2Fwww.youtube.com%2Fwatch%3Fv%3DgigtS_5KOqo%26list%3DPL0vfts4VzfNixzfaQWwDUg3W5TRbE7CyI%26index%3D4)

---

## Introduction: What's the "Function" of Functions? 🌟

A function, my friends, is a real workhorse. It's a reusable block of code that performs a specific task. Think of it like a personal assistant who's always ready to perform a particular task whenever you snap your fingers. 🤖

And, boy oh boy, are they important! They offer encapsulation, reusability, modularity, and abstraction, and they make testing a breeze. In short, they're the superheroes of your code, silently saving the day! 🦸‍♂️ Function declarations are also hoisted, so they can be called before they appear in the code.

### The Syntax of a Function 📝

Declaring a function is as easy as saying "Abracadabra!". To create a function, you start with the `function` keyword, followed by a unique name, and then a pair of parentheses that may or may not include parameters. The body of the function, where the magic happens, is wrapped in curly braces.

Here's a simple example:

```javascript
function sayHello() {
  console.log("Hello world");
}

sayHello(); // Output: Hello world
```

Just like magic, isn't it? 🎩

---

## Parameters vs. Arguments: What's the Difference? 🤔

Parameters are like placeholders in a recipe, waiting to be filled with actual ingredients, which are the arguments. When you declare a function, you define parameters. When you call a function, you pass arguments.

Inside the function, parameters behave like local variables: each one holds the value passed in for that call, which is what lets the same function operate on different data every time. Arguments are the concrete values you supply when you invoke it.

Together they make functions versatile and adaptable. JavaScript also offers a few variations on plain parameters: default parameters, rest parameters, and the special array-like `arguments` object, each adding flexibility in how a function receives its input.

Parameters and arguments are like relay runners. The former waits for the baton (value) that the latter passes. Here's how they team up:

```javascript
function multiply(num1, num2 = 2) {
  return num1 * num2;
}

console.log(multiply(5, 10)); // Output: 50
```

In this race, `num1` and `num2` are the parameters, and `5` and `10` are the arguments. They make a great team, don't they? 🏆
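
The flavors mentioned above (default parameters, rest parameters, and the `arguments` object) are easier to grasp side by side. Below is a minimal sketch; `sum` and `countArgs` are hypothetical helpers invented for this example, while `multiply` is the same function as before, this time called with only one argument.

```javascript
// Default parameter: num2 falls back to 2 when no second argument is passed.
function multiply(num1, num2 = 2) {
  return num1 * num2;
}

// Rest parameter: gathers any number of arguments into a real array.
function sum(...numbers) {
  return numbers.reduce((total, n) => total + n, 0);
}

// The array-like `arguments` object exists in regular (non-arrow) functions.
function countArgs() {
  return arguments.length;
}

console.log(multiply(5));         // Output: 10
console.log(sum(1, 2, 3));        // Output: 6
console.log(countArgs("a", "b")); // Output: 2
```

In modern code, rest parameters are generally the friendlier choice over `arguments`, because you get a genuine array with methods like `reduce`.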

---

## Function Expressions 🎭

Function expressions offer a dynamic approach to function creation: the function is created as a value and assigned to a variable or constant. Like a secret identity, it goes by the name of that variable. But remember, unlike a function declaration, it can't be called before the line that defines it!

```javascript
const greet = function(name) {
  console.log(`Hello, ${name}!`);
};

greet('Jane'); // Output: Hello, Jane!
```
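
To see what "can't be called early" means in practice, here's a small sketch. The names `welcome` and `message` are invented for the illustration, and it assumes the function is stored in a `const` (with `var` you'd get a `TypeError` instead, because the variable would exist but hold `undefined`).

```javascript
let message;

try {
  // `welcome` is declared with const further down, so at this point it is
  // still uninitialized and any attempt to use it throws.
  welcome("Jane");
} catch (error) {
  message = error.name;
}

const welcome = function (name) {
  return `Hello, ${name}!`;
};

console.log(message);         // Output: ReferenceError
console.log(welcome("Jane")); // Output: Hello, Jane!
```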

---

## Arrow Functions: The Cool Kids of JavaScript ➡️🏹

Arrow functions, introduced in ES6, are the sleek sports cars of the function world. They have a shorter syntax and some unique features compared to traditional functions. They might seem like a mystical entity with their different syntax and behavior, but fear not, for we're about to demystify them! 🕵️‍♂️

### Syntax: Short and Sweet 🍭

Arrow functions feature a shorter syntax compared to traditional function expressions. Here's an example:

```javascript
const greet = name => console.log(`Hello, ${name}!`);

greet('Tom'); // Output: Hello, Tom!
```

This is equivalent to:

```javascript
const greet = function(name) {
  console.log(`Hello, ${name}!`);
};

greet('Tom'); // Output: Hello, Tom!
```

Notice how we saved some keystrokes with the arrow function? That's the beauty of its syntax!
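
The one-parameter form above is just one shape an arrow function can take. Here's a quick sketch of the other common ones; all the function names (`getGreeting`, `add`, `square`, `makeUser`) are made up for this example.

```javascript
// No parameters: empty parentheses are required.
const getGreeting = () => "Hello!";

// Several parameters: wrap them in parentheses.
const add = (a, b) => a + b;

// Block body: with curly braces you need an explicit return.
const square = (n) => {
  const result = n * n;
  return result;
};

// Returning an object literal: wrap it in parentheses so the braces
// are not read as a function body.
const makeUser = (name) => ({ name });

console.log(getGreeting());        // Output: Hello!
console.log(add(2, 3));            // Output: 5
console.log(square(4));            // Output: 16
console.log(makeUser("Tom").name); // Output: Tom
```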

### The Deal with 'this' 🎭

One of the key differences between arrow functions and regular functions is how they handle the `this` keyword. In regular functions, `this` is a shape-shifter, changing its value based on the context in which the function is called (such as the calling object).

However, arrow functions are more predictable - their `this` is lexically bound. This means it takes on the value of `this` from the surrounding code where the arrow function is defined. Let's look at an example:

[P.S. We will be covering concepts like context, object and lexical scoping in later blogs...]

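```javascript
const myObject = {
  name: 'John',
  sayHello: function() {
    setTimeout(function() {
      console.log(`Hello, ${this.name}!`);
    }, 1000);
  }
};

myObject.sayHello(); // Output (Node.js): Hello, undefined!
```

In this example, `this.name` isn't `'John'` because `this` inside the regular callback passed to `setTimeout` is not `myObject`. In Node.js it is the timer object, so `this.name` is `undefined`. In a browser it is the global `window` object, whose `name` property is usually an empty string, so you'd see `Hello, !` instead.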

Now, let's use an arrow function:

```javascript
const myObject = {
  name: 'John',
  sayHello: function() {
    setTimeout(() => {
      console.log(`Hello, ${this.name}!`);
    }, 1000);
  }
};

myObject.sayHello(); // Output: Hello, John!
```

This time, `this.name` correctly refers to the `name` property of `myObject`, because the arrow function takes its `this` from the surrounding (lexical) context.

In conclusion, arrow functions are a powerful addition to JavaScript, offering a shorter syntax and a predictable `this`. However, they come with their own caveats: they have no `this` or `arguments` of their own and can't be used as constructors, so use them wisely!

Remember, the right tool for the right job. Sometimes, an arrow is perfect for the target, at other times, you might need the whole quiver! 🏹
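
To make those caveats concrete, here's a small sketch of two places where an arrow function is the wrong arrow for the target. The `counter` object and the `Hero` function are hypothetical, and the `undefined` result assumes there's no global variable called `count` lying around.

```javascript
const counter = {
  count: 10,
  // Regular method: `this` is the object the method is called on.
  regular() {
    return this.count;
  },
  // Arrow function: `this` comes from the surrounding scope, not from
  // `counter`, so it does not see `count`. The `?.` guards against
  // environments (such as ES modules) where the outer `this` is undefined.
  arrow: () => this?.count,
};

console.log(counter.regular()); // Output: 10
console.log(counter.arrow());   // Output: undefined

// Arrow functions cannot be used with `new`.
const Hero = () => {};
let constructorError;

try {
  new Hero();
} catch (error) {
  constructorError = error.name;
}

console.log(constructorError); // Output: TypeError
```

As a rule of thumb, reach for regular functions for object methods and constructors, and arrow functions for callbacks that should share the surrounding `this`.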

---

## IIFE: The Undercover Agent of JavaScript 🕵️‍♀️

IIFE or Immediately Invoked Function Expression is like an undercover agent in the world of JavaScript. It's a function that runs as soon as it is defined. Think of it as a fire-and-forget missile; it does its job and then self-destructs. Let's dive deeper into this intriguing concept.

### Syntax: Now You See Me, Now You Don't! 🎩

Here's what an IIFE looks like:

```javascript
(function() {
  console.log('Hello World!');
})(); // Output: Hello World!
```

Notice the parentheses? The pair wrapped around the function turns it into an expression, and the extra pair at the end is the secret sauce that makes it execute immediately. It's like saying, "Hey function, don't just stand there, do your job NOW!".
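
An IIFE is still a function, so it can take arguments and hand back a value. Here's a brief sketch with made-up names (`total`, `label`), including the arrow-function flavor of the same trick.

```javascript
// An IIFE can receive arguments and return a value like any other function.
const total = (function (a, b) {
  return a + b;
})(2, 3);

// Arrow functions can be immediately invoked too.
const label = (() => "ready")();

console.log(total); // Output: 5
console.log(label); // Output: ready
```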

### Use Cases: When to Deploy the Agent? 🚀

IIFEs are particularly good in two scenarios:

- **Variable Isolation:** When you need to isolate variables and prevent them from polluting the global scope, IIFEs are the way to go.

```javascript
(function() {
  let secretAgent = 'James Bond';
  console.log(secretAgent); // Output: James Bond
})();

console.log(secretAgent); // ReferenceError: secretAgent is not defined
```

In this example, `secretAgent` is only accessible inside the IIFE and doesn't pollute the global scope. This is a great way to encapsulate logic in your code.

- **Temporary Work:** If you need to do some temporary work that doesn't need to be reused or leave behind any global variables or functions, an IIFE is perfect.

```javascript
(function() {
  let temp = 'Processing some data...';
  console.log(temp); // Output: Processing some data...
})();
```

### Caveats: Every Hero has a Kryptonite! 🤷‍♂️

While IIFEs are powerful, they have some limitations:

1. No Reusability: Since an IIFE is executed immediately, it cannot be reused. This is by design, but it also means you have to create a new function if you need the same functionality again.

2. Debugging: Debugging can be trickier with IIFEs when they are anonymous, since stack traces then have no function name to show. Giving the function expression a name makes it easier to follow.
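
An IIFE doesn't have to be anonymous, by the way. Here's a minimal sketch of a named one; the name `setup` is purely illustrative. The name is visible inside the function (and in stack traces) but doesn't leak into the surrounding scope.

```javascript
const result = (function setup() {
  // The name `setup` is available here, inside the function itself.
  return setup.name;
})();

console.log(result);       // Output: setup
console.log(typeof setup); // Output: undefined
```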

In the world of JavaScript, IIFEs are a powerful tool to run a piece of code immediately and keep the global scope clean. They are like secret agents, doing their job without leaving a trace.

Remember, with great power comes great responsibility. Use IIFEs wisely, and they can be a valuable asset in your coding arsenal.

---

## Wrapping Up 🎁

Functions in JavaScript are essential tools for writing clean, reusable, and modular code. Whether you're using a normal function, an arrow function, or an IIFE, understanding how they work and when to use them is key to becoming a JavaScript pro.

And remember, just like in cooking, the right function can make your code a delightful dish to serve! 🍽️👨‍🍳

We've just scratched the surface of JavaScript functions today. There's more to come in our next blog posts. Stay tuned, and keep on coding! 🚀

| aniket_botre |

1,749,235 | Streamlining Communication: Unveiling the Best Shopify Contact Form Plugins | In the dynamic world of e-commerce, establishing seamless communication channels between businesses... | 0 | 2024-02-02T06:14:45 | https://dev.to/cftoanyapi/streamlining-communication-unveiling-the-best-shopify-contact-form-plugins-1de9 | contactforemtoanyapi, wordpressplugins | In the dynamic world of e-commerce, establishing seamless communication channels between businesses and customers is paramount. One of the key components in achieving this is a robust and user-friendly contact form. Shopify, being a popular e-commerce platform, provides a myriad of options for integrating contact forms into your online store. In this blog post, we will explore and highlight the best [Shopify contact form plugins](https://www.contactformtoapi.com/shopify/) that can elevate your customer interaction game.

### The Importance of Contact Forms

Before delving into the specifics of the best Shopify contact form plugins, let's first understand the significance of having an effective contact form on your e-commerce website.

1. **Customer Engagement**: A well-designed contact form encourages customers to reach out with queries, feedback, or concerns. This engagement fosters a sense of trust and transparency between the customer and the business.

2. **Problem Resolution**: Contact forms serve as a direct link for customers to communicate issues they may be facing. Quick and efficient resolution of problems can significantly impact customer satisfaction and loyalty.

3. **Lead Generation**: Contact forms are not just for troubleshooting; they can also be powerful tools for lead generation. By collecting customer information through these forms, businesses can build their email lists and tailor marketing strategies.

4. **Professionalism**: A professionally designed contact form adds a touch of legitimacy to your online store. It signals to customers that your business is open to communication and takes their concerns seriously.

### Top Shopify Contact Form Plugins

Now, let's explore some of the best Shopify contact form plugins that can enhance your store's communication capabilities.

#### 1. **Form Builder with File Upload by HulkApps**

HulkApps' Form Builder is a versatile tool that empowers **[Shopify](https://www.contactformtoapi.com/shopify/)** store owners to create customizable contact forms effortlessly. What sets this plugin apart is its file upload feature, enabling customers to attach relevant documents or images when submitting their inquiries. This is particularly useful for resolving specific issues or addressing product-related queries that may require visual clarification.

**Key Features:**

- Drag-and-drop form builder for easy customization

- File upload capability for added convenience

- Mobile-responsive forms for a seamless user experience

- Integration with popular email marketing tools

#### 2. **Zendesk Chat + Email by Zendesk**

Zendesk is a renowned name in customer support, and their Shopify integration brings forth a powerful combination of live chat and email. The contact form feature seamlessly integrates with Zendesk's ticketing system, allowing for efficient management and resolution of customer inquiries. Real-time chat functionality also enhances customer engagement.

**Key Features:**

- Live chat for instant customer support

- Email ticketing system for organized query resolution

- Customizable contact forms to align with your brand

- Analytics to track and improve customer interactions

#### 3. **Contact Us Form + Captcha by POWr.io**

POWr.io's Contact Us Form is a user-friendly solution for creating sleek and functional contact forms. With an intuitive interface, this plugin makes it easy to design and embed contact forms anywhere on your Shopify store. The inclusion of a captcha feature enhances security and prevents spam submissions.

**Key Features:**

- Simple drag-and-drop form builder

- Captcha integration for spam prevention

- Customizable design to match your store's aesthetic

- Analytics to track form submissions

#### 4. **Reamaze by Reamaze**