id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,720,395 | Perl Weekly #650 - Perl in 2024 | Originally published at Perl Weekly 650 Hi there, What is in for Perl in 2024? We had a big... | 20,640 | 2024-01-08T06:22:57 | https://perlweekly.com/archive/650.html | perl, news, programming | ---

title: Perl Weekly #650 - Perl in 2024

published: true

description:

tags: perl, news, programming

canonical_url: https://perlweekly.com/archive/650.html

series: perl-weekly

---

Originally published at [Perl Weekly 650](https://perlweekly.com/archive/650.html)

Hi there,

<strong>What is in for Perl in 2024?</strong>

We had a big release <strong>Perl v5.38</strong> last year, <strong>July 3, 2023</strong>. It was the first release introducing <strong>Corinna</strong> into core. Although it was good enough for starter but I am looking for another push in the next release in the year 2024. I am sure you must have tried the new OO in core. One thing that I really wanted was the ability to create a <strong>role</strong> using new shiny OO. I have no idea what is in the plan for the next release. I am looking forward to another update to the new OO ecosystem.

Personally, I am also looking forward to the next <strong>Perl and Raku Conference in Las Vegas</strong>. I had a wonderful experience last year in <strong>Canada</strong>. I hope things get back to normal before the event. I don't know about the conference in Europe. I missed it last year as it clashed with my India visit. It used to be my only opportunity to meet fellow <strong>Perl</strong> and <strong>Raku</strong> friends. I miss the local event, <strong>London Perl Workshop</strong>. I hope it gets fresh boost this year.

My pet project, <a href="https://theweeklychallenge.org">The Weekly Challenge</a> recently completed <strong>250 weeks</strong>. It has been a great learning experience for me personally. I was introduced to <strong>Raku</strong> and <strong>Python</strong>. Thanks to the team members we get regular blog posts talking about magical <strong>Perl</strong> code. Talking about blog post, <strong>Olaf Alders</strong>, invites us to join the <a href="https://wundersolutions-com.eo.page/k22w4">newsletter</a>. I have accepted his invitation. How about you?

Stay safe and enjoy rest of the week.

--

Your editor: Mohammad S. Anwar.

## Announcements

### [Cosmoshop unterstützt den Deutschen Perl/Raku-Workshop](https://blogs.perl.org/users/max_maischein/2024/01/cosmoshop-unterstutzt-den-deutschen-perlraku-workshop.html)

We are happy to announce that CosmoShop supports the German Perl/Raku-Workshop.

### [This Week in PSC (130) | 2024-01-04](https://blogs.perl.org/users/psc/2024/01/this-week-in-psc-130.html)

Happy New Year and weekly update from Perl Steering Council.

### [Let's keep in touch](https://wundersolutions-com.eo.page/k22w4)

Invitation from Olaf to join the newsletter for interesting and fun post.

---

## The Weekly Challenge

<a href="https://theweeklychallenge.org/">The Weekly Challenge</a> by <a href="http://www.manwar.org/">Mohammad Anwar</a> will help you step out of your comfort-zone. You can even win prize money of $50 Amazon voucher by participating in the weekly challenge. We pick one winner at the end of the month from among all of the contributors during the month. The monthly prize is kindly sponsored by Peter Sergeant of <a href="https://perl.careers/">PerlCareers</a>.

### [The Weekly Challenge - 251](https://theweeklychallenge.org/blog/perl-weekly-challenge-251)

Welcome to a new week with a couple of fun tasks: "Concatenation Value" and "Lucky Numbers". If you are new to the weekly challenge, why not join us and have fun every week? For more information, please read the <a href="https://theweeklychallenge.org/faq">FAQ</a>.

### [RECAP - The Weekly Challenge - 250](https://theweeklychallenge.org/blog/recap-challenge-250)

Enjoy a quick recap of last week's contributions by Team PWC dealing with the "Smallest Index" and "Alphanumeric String Value" tasks in Perl and Raku. You will find plenty of solutions to keep you busy.

### [TWC250](https://deadmarshal.blogspot.com/2024/01/twc250.html)

The real power of max() and map() in display. Perl is unbeatable truly.

### [String Index](https://raku-musings.com/string-index.html)

Compact one liner in Raku for both tasks. Line by line discussion is really handy too.

### [Leaping from Tree to Tree as They Float Down the Mighty Rivers of British Columbia](https://jacoby.github.io/2024/01/01/leaping-from-tree-to-tree-as-they-float-down-the-mighty-rivers-of-british-columbia-weekly-challenge-250.html)

Lots of fun facts will keep you busy as well as elegant solutions. Thanks for sharing.

### [Perl Weekly Challenge: Week 250](https://www.braincells.com/perl/2024/01/perl_weekly_challenge_week_250.html)

Incredibly straight forward solutions both in Perl and Raku. Plenty of magics for you.

### [Alphanumeric Moduli](https://github.sommrey.de/blog/pwc/challenge-250)

Simple loop and max/map enough for this week, nice to see variations. Keep it up great work.

### [Perl Weekly Challenge 250: Smallest Index](https://blogs.perl.org/users/laurent_r/2024/01/perl-weekly-challenge-250-smallest-index.html)

You don't need map power, just regular loop is good enough. Well done and thanks for sharing.

### [the first one of 2024!](https://fluca1978.github.io/2024/01/04/PerlWeeklyChallenge250.html)

Raku magical one-liner showing off. Sweet and short discussion is very handy.

### [Perl Weekly Challenge 250](https://wlmb.github.io/2024/01/01/PWC250/)

Pure one-liner in Perl using CPAN is so refreshing. Great work and keep it up.

### [Weekly Challenge #250](https://github.com/manwar/perlweeklychallenge-club/blob/master/challenge-250/mark-anderson/raku/blog-1.md)

Welcome to blogging and thanks for sharing your first blog post. Hope to see more often. Thanks for sharing.

### [Two-Hundred Fifty Perl Weekly Challenges! Two-Hundred Fifty problems so clear…](https://packy.dardan.com/2024/01/01/perl-weekly-challenge-2/)

Cute little solutions in Perl, Python and Raku with engaging discussions. Keep it up great work.

### [Smallest index, largest element](http://ccgi.campbellsmiths.force9.co.uk/challenge/250)

DIY solutions like always. Well structured and presented too. Highly recommended.

### [The Weekly Challenge #250](https://hatley-software.blogspot.com/2024/01/robbie-hatleys-solutions-to-weekly.html)

Use of CPAN module makes the solution elegant and compact. Well done and keep it up.

### [Smallest Viable Value](https://blog.firedrake.org/archive/2024/01/The_Weekly_Challenge_250__Smallest_Viable_Value.html)

One of the longest serving member of the team. Thanks for your support and contributions. Like always we have a detailed and meaningful post.

### [Get well soon and thanks for the post. Just love how Python gets translated to Perl. Cool.](https://dev.to/simongreennet/small-and-large-2ap)

### [PWC #250](https://thiujiac.blogspot.com/2023/12/pwc-250.html)

I really enjoy Perl 4 code. Never got the opportunity to play with it. Thanks for the refresher.

---

## Rakudo

### [2024.01 Happy (2..9).map(*³).sum](https://rakudoweekly.blog/2024/01/02/2024-01-happy-2-9-map%c2%b3-sum/)

---

## Weekly collections

### [NICEPERL's lists](http://niceperl.blogspot.com/)

<a href="">Great CPAN modules released last week</a>;<br><a href="https://niceperl.blogspot.com/2024/01/cdlxxvii-10-great-cpan-modules-released.html">MetaCPAN weekly report</a>;<br><a href="https://niceperl.blogspot.com/2024/01/dlxxviii-metacpan-weekly-report-meta.html">StackOverflow Perl report</a>.

---

## The corner of Gabor

A couple of entries sneaked in by Gabor.

### [How to handle number overflow or underflow?](https://rust.code-maven.com/how-to-handle-overflow)

As a Perl prorgammer I never thought what happens if my posts get too many likes. Will the counter overflow? Now, as I write Rust it makes me think about all kinds of, sometimes rather unrealistic, edge-cases.

---

You joined the Perl Weekly to get weekly e-mails about the Perl programming language and related topics.

Want to see more? See the [archives](https://perlweekly.com/archive/) of all the issues.

Not yet subscribed to the newsletter? [Join us free of charge](https://perlweekly.com/subscribe.html)!

(C) Copyright [Gabor Szabo](https://szabgab.com/)

The articles are copyright the respective authors.

| szabgab |

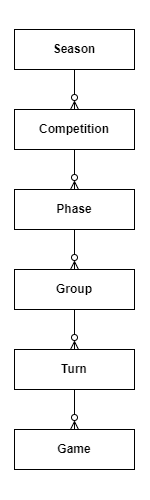

1,720,400 | Deploy and Stake a Shardeum Validator on Spheron in Minutes | In blockchain technology, solutions addressing the scalability trilemma of scalability, security, and... | 0 | 2024-01-18T14:36:03 | https://blog.spheron.network/deploy-and-stake-a-shardeum-validator-on-spheron-in-minutes | shardeum, web3, blockchain, deployment |

---

title: Deploy and Stake a Shardeum Validator on Spheron in Minutes

published: true

date: 2024-01-08 04:30:24 UTC

tags: shardeum, web3, blockchain, deployment

canonical_url: https://blog.spheron.network/deploy-and-stake-a-shardeum-validator-on-spheron-in-minutes

---

In blockchain technology, solutions addressing the scalability trilemma of scalability, security, and decentralization have remained an ongoing challenge. [Shardeum](https://shardeum.org/) emerges as a groundbreaking EVM-compatible Layer 1 (L1) smart contract platform, revolutionizing this space by leveraging dynamic state sharding. This innovative approach redefines the validation process, distributing transaction verification across shards in the network for enhanced throughput and scalability.

This article delves into the seamless deployment and staking process for a Shardeum validator using Spheron, a user-friendly platform simplifying node configuration and participation in the Shardeum ecosystem. Through step-by-step guidance, users can easily deploy a Shardeum node, contribute to network security, and stake testnet SHM (Shardeum's native token) to actively participate in the testnet.

## What is Shardeum?

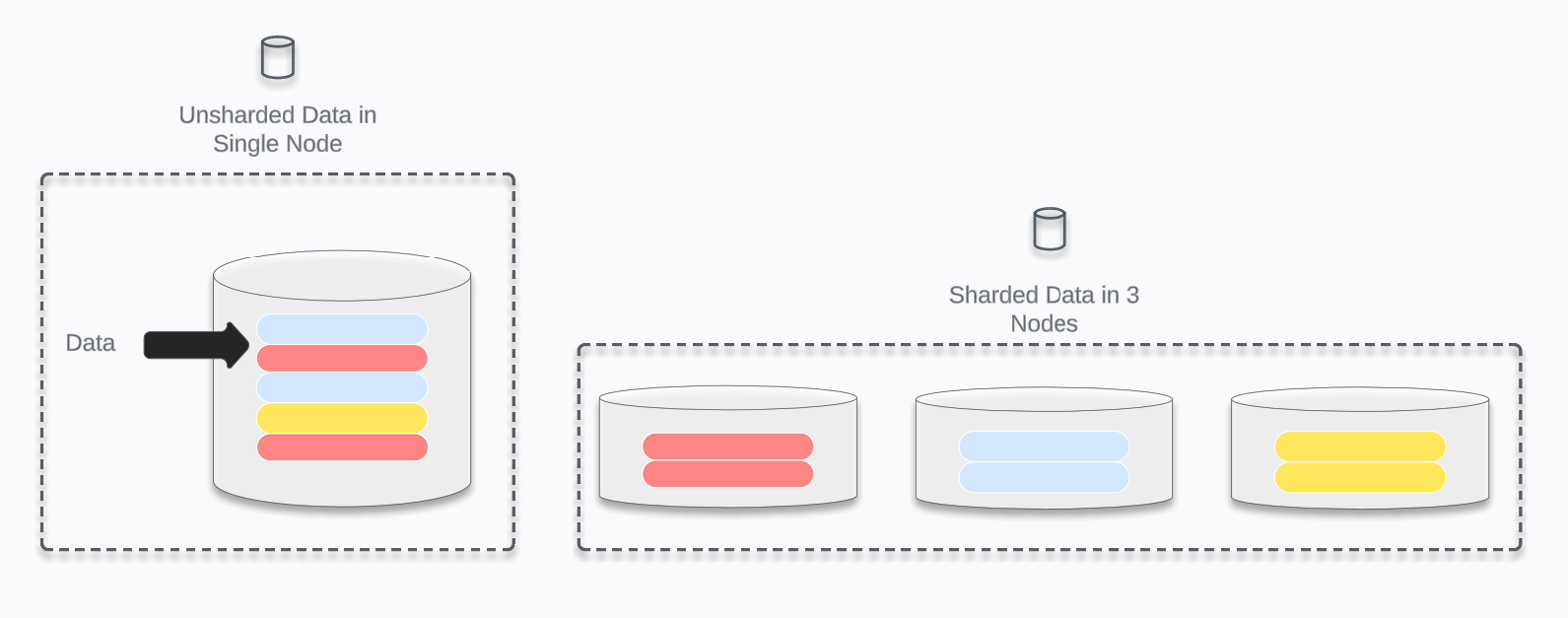

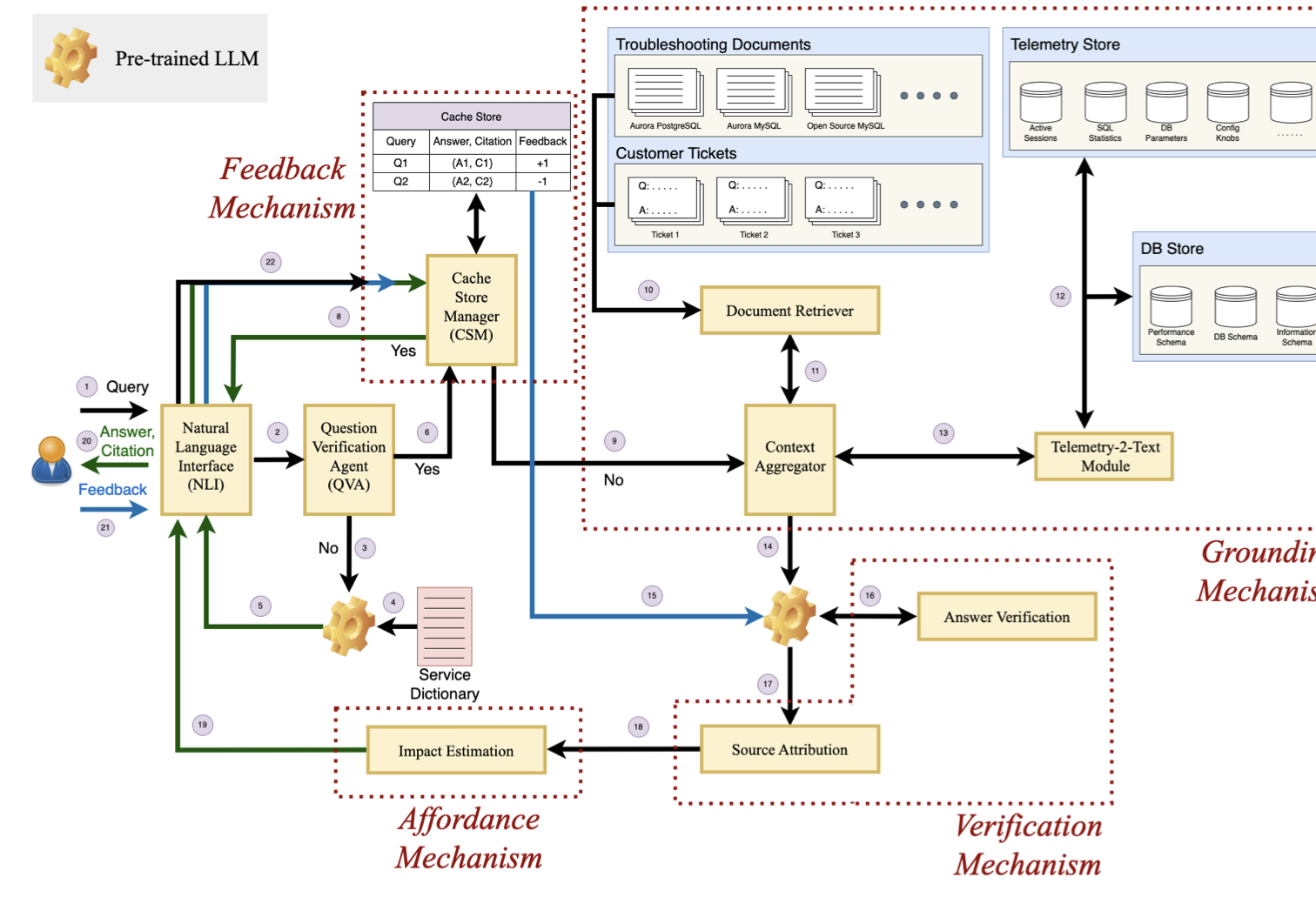

[Shardeum](https://shardeum.org/) is an EVM-compatible, [dynamically state sharded](https://docs.shardeum.org/introduction/dynamicstatesharding) L1 smart contract platform. Expressed simply, sharding breaks the job of validating and confirming transactions into small pieces or shards and spreads them out in the network.

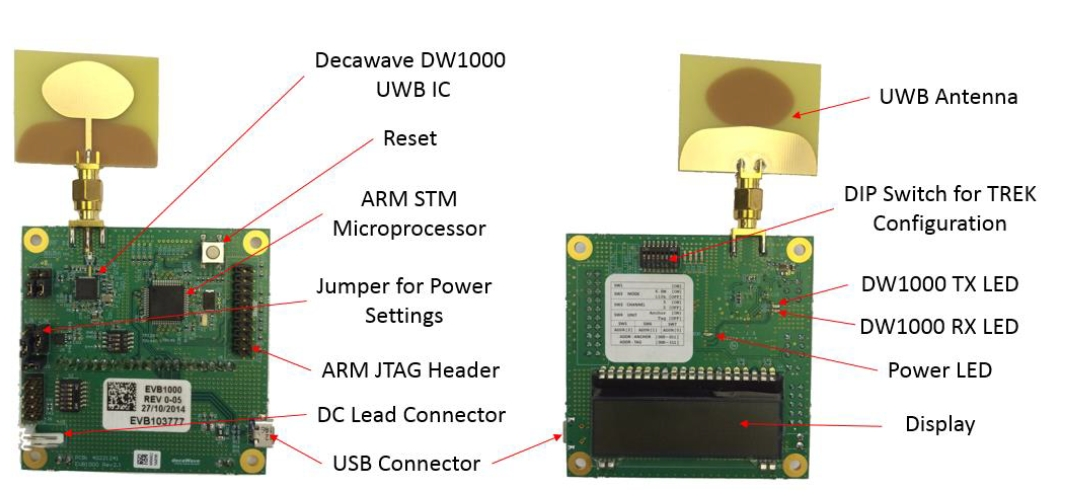

_Here is a diagram demonstrating how un-sharded data in a single node can be sharded amongst three nodes:_

_Image created with LucidChart_

Shardeum utilizes parallel processing across shards to achieve high throughput with linear scalability. This is achieved while concurrently enabling low gas fees & and ensuring security with a combined [proof-of-stake and proof-of-quorum for consensus](https://docs.shardeum.org/introduction/consensus), while block-level consensus is not required for finality.

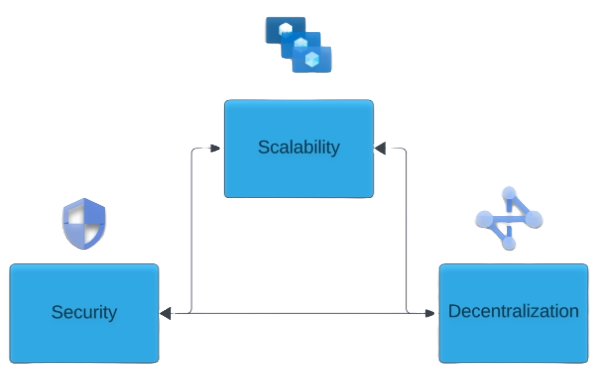

## Solving the Scalability Trilemma

The [scalability trilemma](https://www.researchgate.net/publication/342639281_Scaling_Blockchains_A_Comprehensive_Survey) is a set of trade-offs originally posited by Vitalik Buterin, where blockchains can generally only prioritize two out of 3 of the following characteristics:

1. Scalability

2. Security

3. Decentralization

Vitalik did add the caveat that achieving all three is actually possible but very difficult.

Shardeum solves this problem with the following:

- Performing consensus and processing at the transaction level instead of the block level.

- Using dynamic state sharding to achieve scalability through parallelization by distributing workload throughout the network.

- Reduced overhead for validator nodes because they only need to store state data for their own shard.

- This allows the network to auto-scale horizontally by adding additional nodes instead of being forced to scale vertically by increasing the computing resources of each node.

- This allows for fast finality and atomic shard composability.

## What is Spheron Network?

[Spheron Network](https://spheron.network/) is a next-generation PaaS that offers low-cost and seamless access to Web3 infrastructure across multiple chains. It is explicitly designed to serve a broader audience, including startups, developers, and organizations looking to scale their infrastructure.

Spheron Network offers a Compute Marketplace that you can use to set up useful tools like Avail Node quickly. With Spheron, you don't have to worry about the technical stuff, and you can focus on deploying your Avail Node with ease. In addition to compute, web hosting, and storage capabilities, Spheron offers many features to enhance productivity and enable stakeholders to reach new heights while prioritizing privacy, security, and reliability.

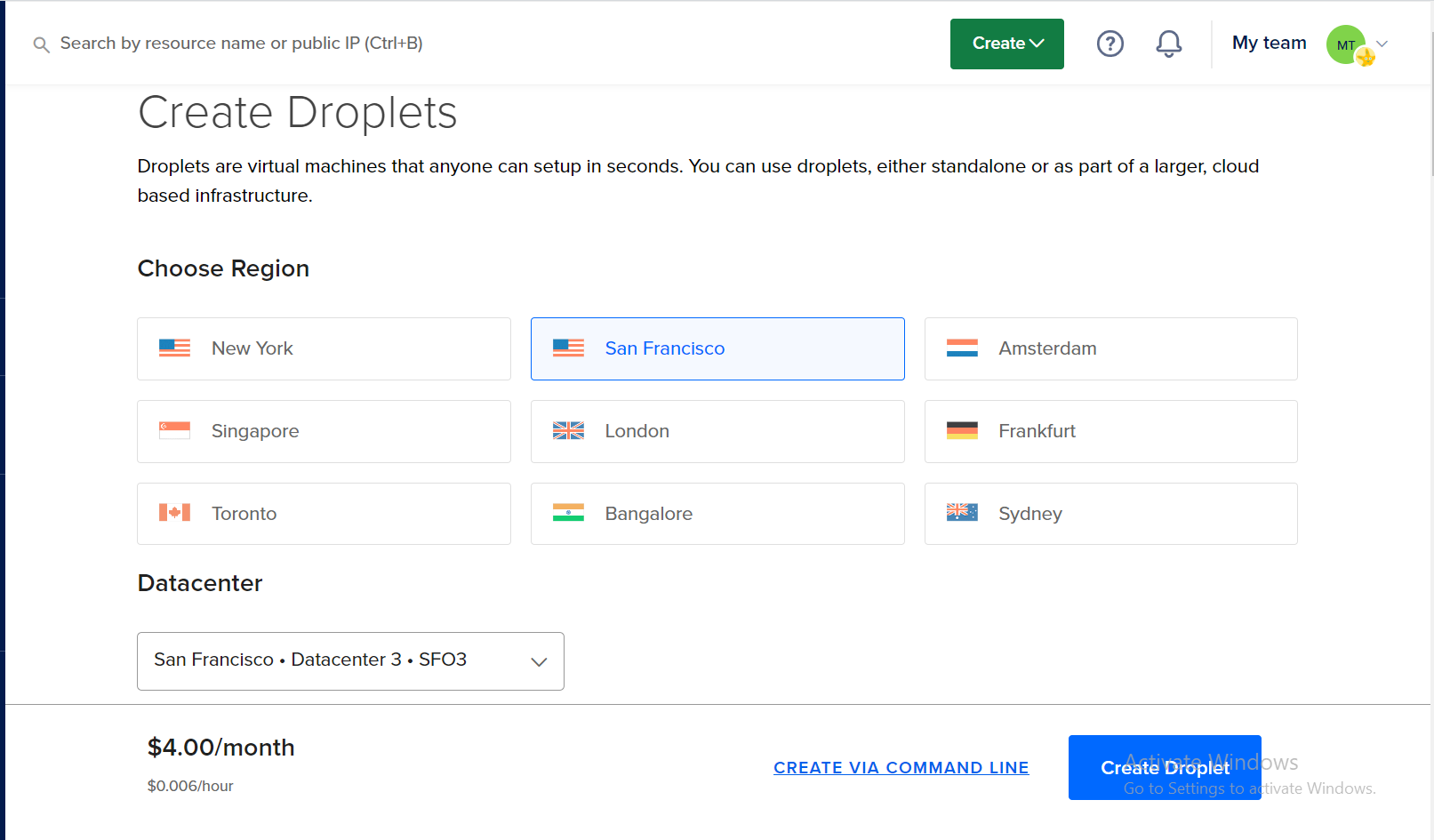

## Deploy a Shardeum Validator on Spheron

Deploying a Shardeum Validator on Spheron is a straightforward process. Running a Shardeum validator allows you to contribute to network security while also earning testnet SHM rewards. Here's a step-by-step guide to setting it up:

**Step 1:** If you dont already have a [Spheron account then sign up here](https://app.spheron.network/#/signup)!

**Step 2:** After logging into Spheron, navigate to the [Compute Dashboard](https://app.spheron.network/#/compute/dashboard) and click New Cluster.

**Step 3:**

1. Select Compute and Compute Type as On Demand

2. Then Select Start from the marketplace app

3. In the search box, type "Shardeum"

Spheron marketplace has a pre-baked docker image for the [Shardeum node](https://app.spheron.network/#/compute/marketplace?template=Shardeum%20Testnet%20Validator&templateId=6496eb9ba579a6bc8507cae7) ready to go for you!

**Step 4:** Select your preferred region for deployment. By default, The container will be deployed in the EU-east region for On Demand. Choosing a region closer to your users can improve performance and reduce latency.

**Step 5:** Then, Spheron will pre-populate the recommended instance plan, Terra Large Ti, and SSD storage, 256GB.

This instance and storage plan were specially selected to meet the [Shardeum minimum hardware requirements noted here](https://docs.shardeum.org/node/run/validator#minimum-hardware-requirements).

**Step 6:** The marketplace app will also automatically configure networking, ports, parameters, and commands for you. Check out the [Shardeum validator docs to learn more about these settings](https://docs.shardeum.org/node/run/validator#step-2-download-and-install-validator).

**Step 7:** Spheron abstracts away the difficulty of configuring a node. You just need to click Deploy! Check out the [Spheron docs for more info](https://docs.spheron.network/marketplace-guide/shardeum/).

Now, theres just one final step to get our validator working after this initial deployment is complete.

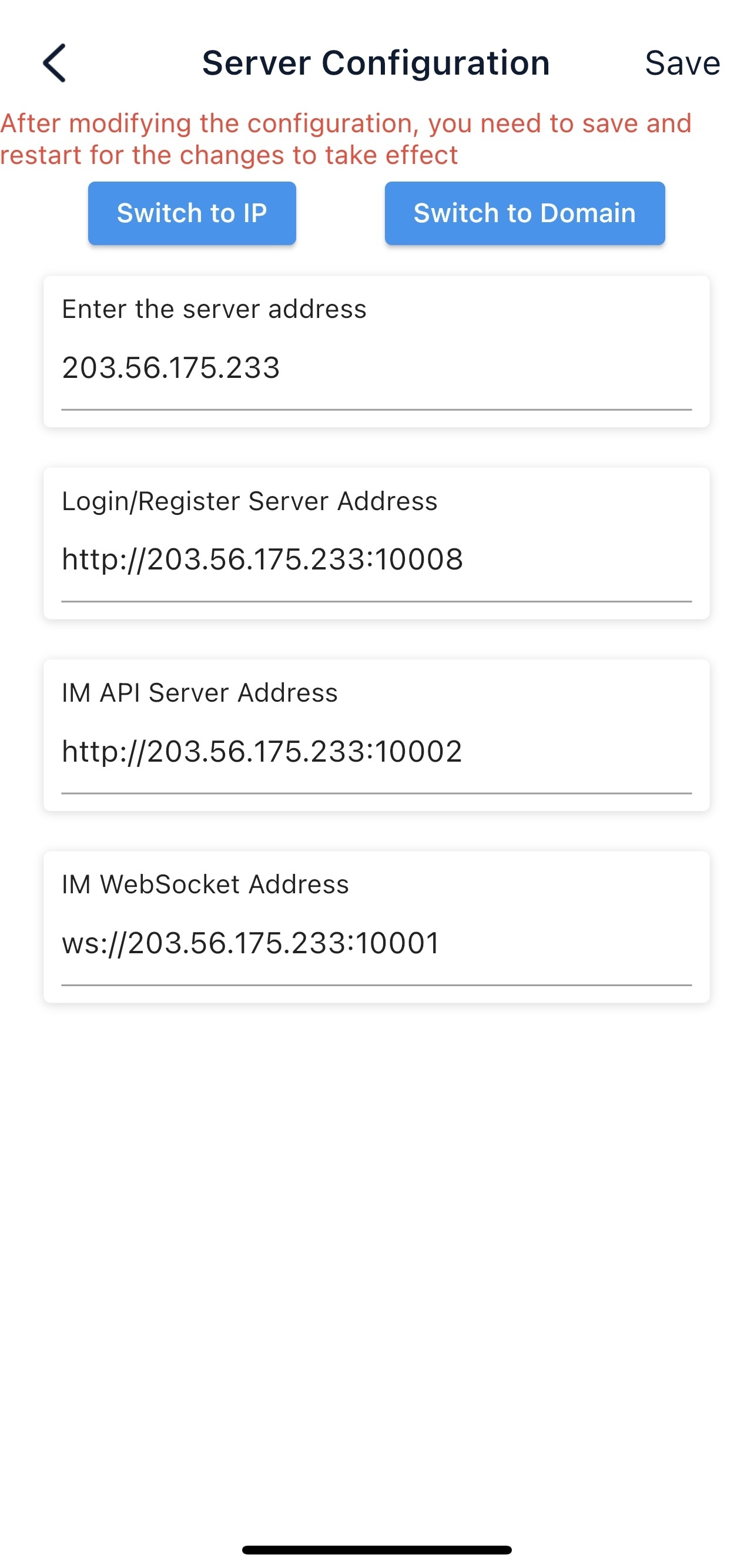

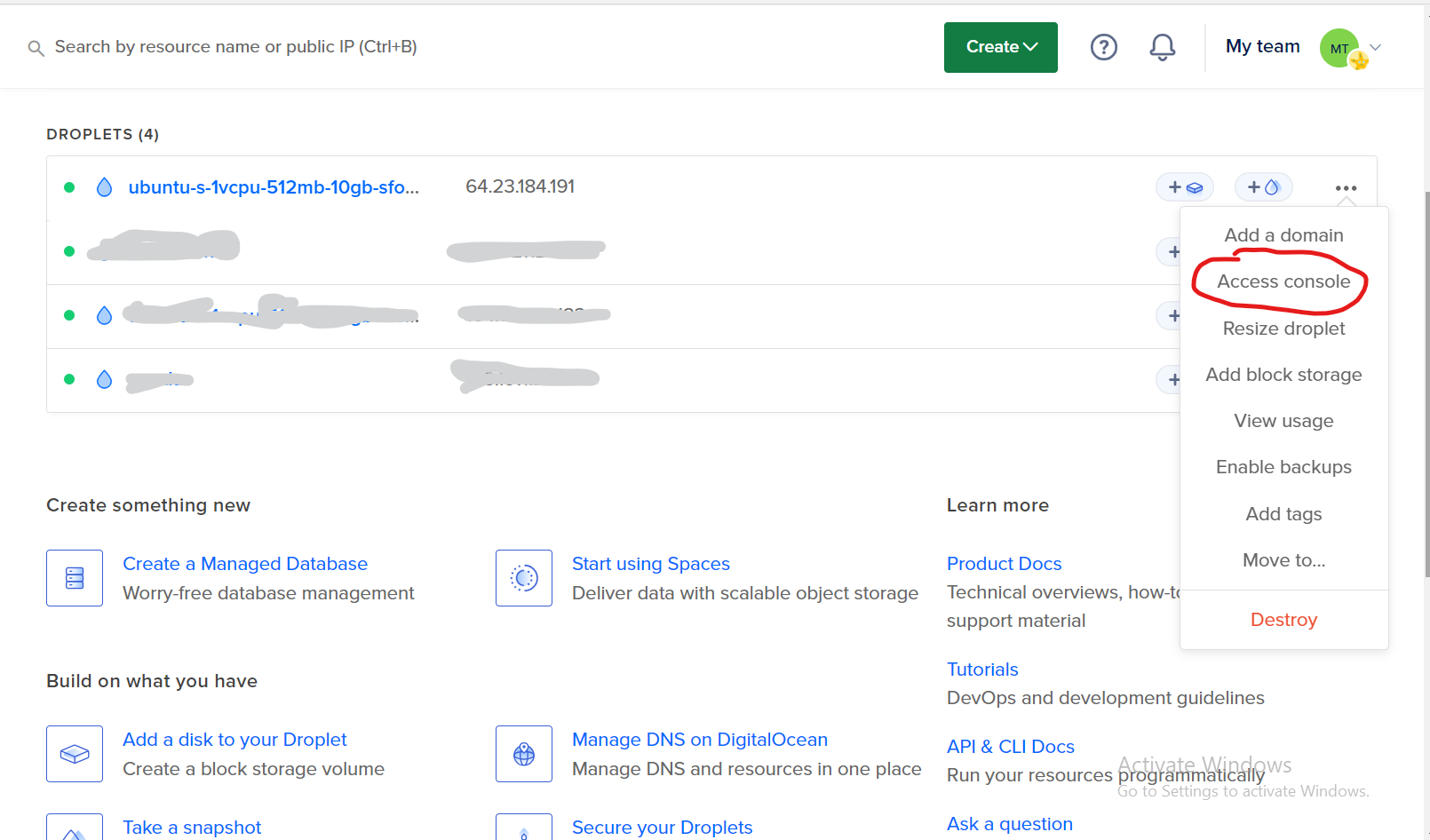

### Updating the Config on the Spheron Instance

After your deployment is complete, grab the hostname of your provider. **_e.g._** [**_provider.us-east.spheron.wiki_**](https://shardeum.org/)

**Step 1:** Now, lets get the IP address of that host. In a console, execute the following command:

```

ping provider.us-east.spheron.wiki

```

**Step 2:** Copy the IP address returned from that command:

**Step 3:** On your instance page, in the **Port Policy Info** section, grab the external ports that map to internal ports **9001** and **10001** , respectively.

**Step 4:** Then navigate to the Settings tab on your Compute instance page and click the Update Instance button.

**Step 5:** After clicking Update Instance, scroll down to the Template Configuration section and paste in the IP address of your provider from the previous step for the parameters:

- SERVERIP

- LOCALLANIP

**Step 6:** Then, update the parameters SHMEXT and SHMINT with the mapped external ports you grabbed from the previous step.

**Example:**

- SHMEXT: 30628

- SHMINT: 30194

**Step 7:** click Update to restart the instance with the updated config. After your instance starts back up, we will update the default GUI password.

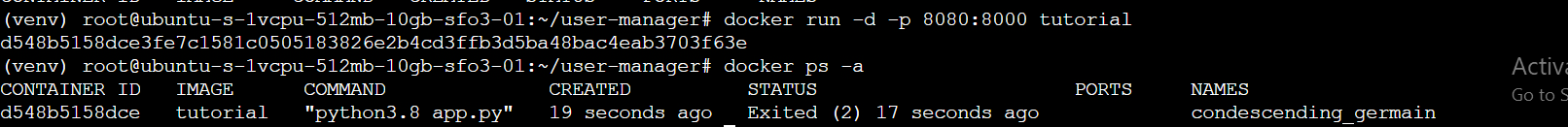

You will know the instance is running when you see the following in the logs:

```

Starting operator guidone

```

### Update your GUI Password

The marketplace app sets a default password for your instance, but you need to change that ASAP!

**Step 1:** Navigate to the Shell tab on your Spheron instance:

**Step 2:** Enter the below command in the box and click Run Command:

```

operator-cli gui set password YOUR_PASSWORD_HERE

```

Be sure to select a secure password! Check out the [Spheron docs for more info](https://docs.spheron.network/marketplace-guide/shardeum/#how-to-run-the-node).

## Staking your Shardeum Validator to Join the Testnet

Now that we have a Shardeum node deployed, the next step is to stake our validator with SHM so that it can be included in the network during the next rotation.

### Acquire SHM in your Wallet for Staking

Follow these steps to set up your wallet for staking:

**Step 1:** [Add the Shardeum network](https://docs.shardeum.org/wallets/metamask/add-shardeum-network) to your wallet, such as [Metamask](https://metamask.io/)

1. **Network Name:** Shardeum Sphinx Validator 1.X

2. **New RPC URL:** [https://sphinx.shardeum.org/](https://shardeum.org/)

3. **Chain ID:** 8082

4. **Currency symbol:** SHM

5. **Block Explorer URL:** [https://explorer-sphinx.shardeum.org/](https://shardeum.org/)

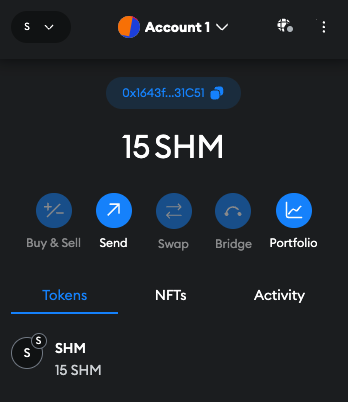

**Step 2:** Claim some [testnet SHM from the faucet](https://docs.shardeum.org/faucet/claim) on the Shardeum Discord. You should receive 15 SHM from the faucet, and you will need 10+ gas fees.

**Step 3:** Verify on the Discord server, then go to the **validator-faucet** channel and use the **/faucet** command to request SHM.

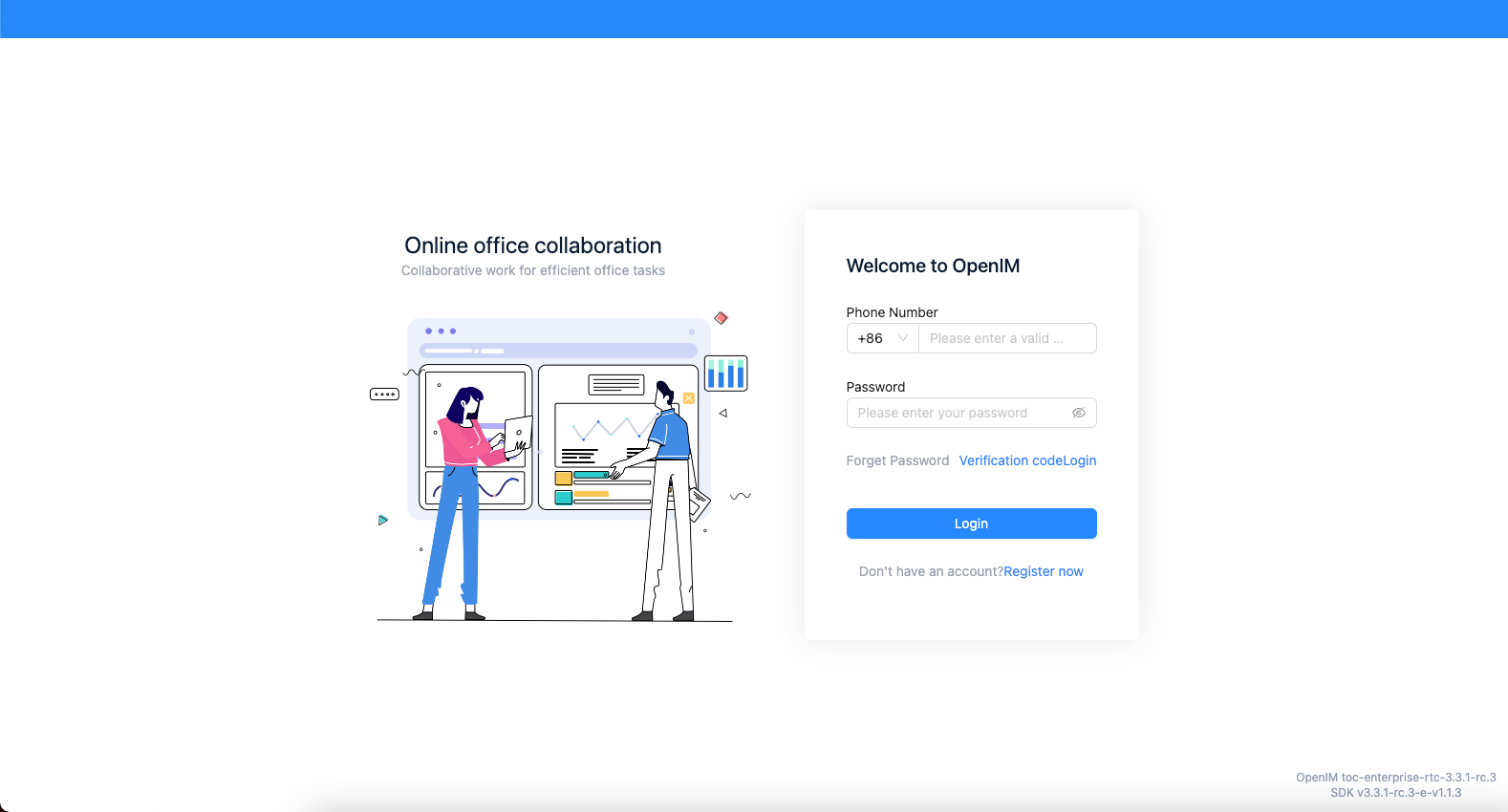

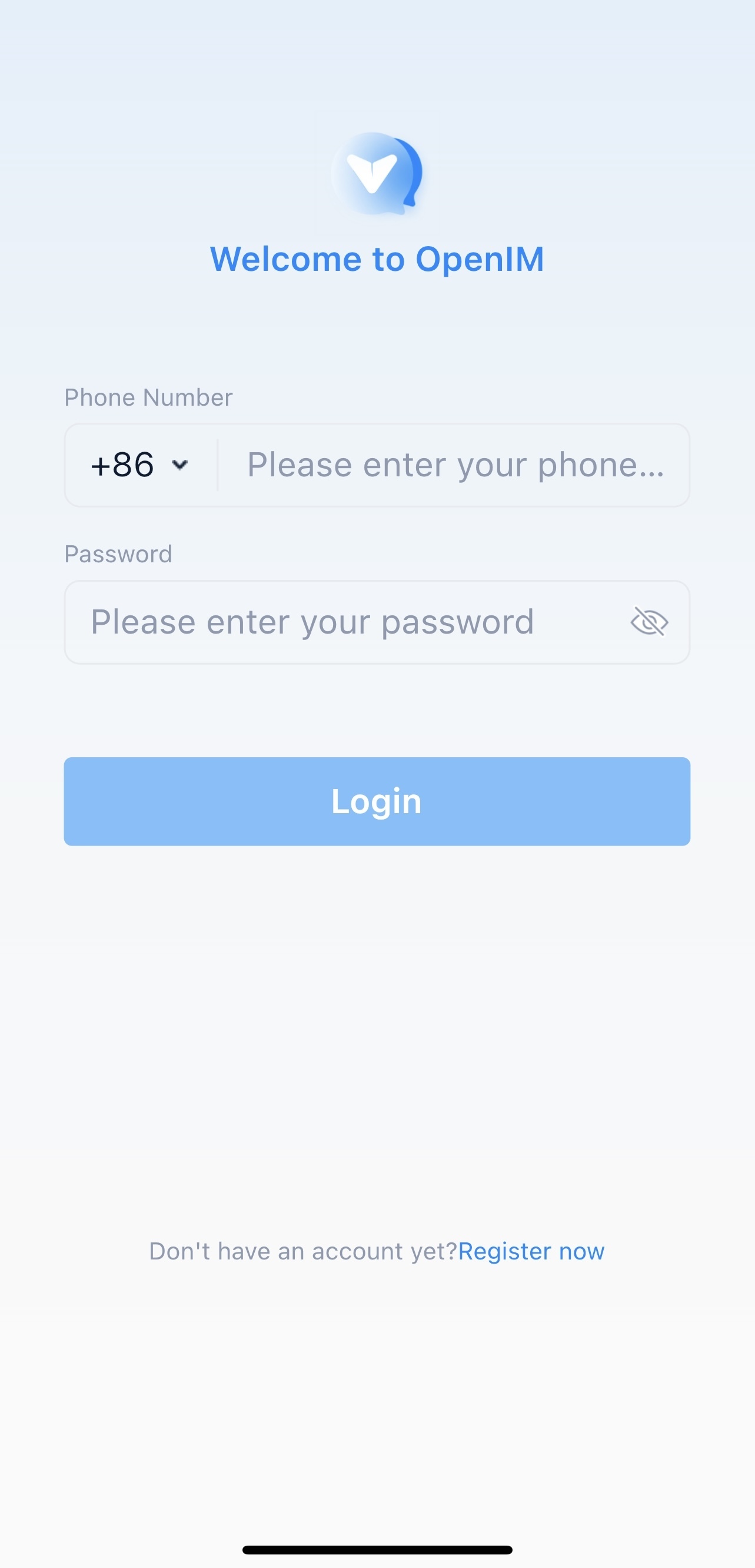

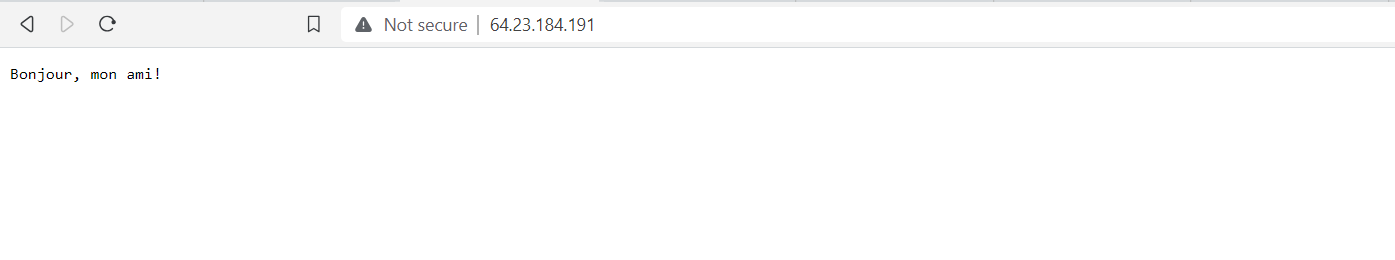

### Navigate to your Validator GUI

Spheron is forwarding port 8080, which the GUI runs on. Get the Connection URL, which maps from port 8080 internally, and enter that URL into a browser to access the Shardeum validator dashboard.

Be sure to access the URL appended with [https://](https://shardeum.org/)

_e.g._ [_https://provider.us-west.spheron.wiki:32003/_](https://shardeum.org/)

**Note: You may get a warning b/c of invalid SSL. The Shardeum docs have a** [**fix for that SSL issue**](https://docs.shardeum.org/Node/Run/Validator#step-4-open-validator-gui) **.**

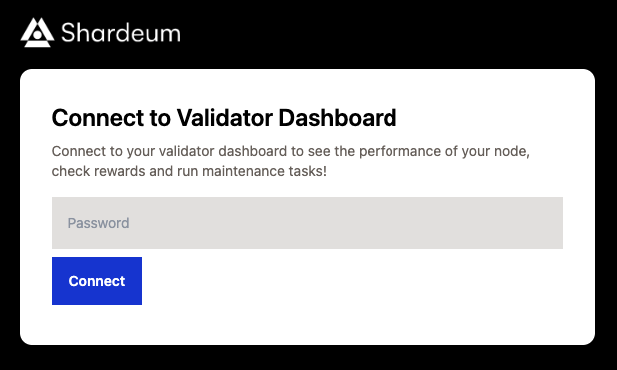

**Step 1:** Then log in with the password you set up earlier.

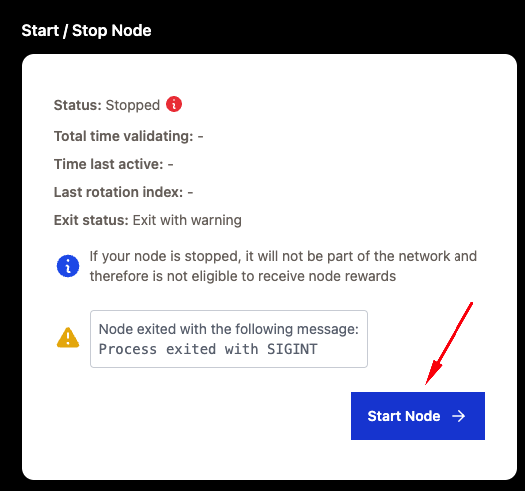

**Step 2:** Next, lets start up the Validator. Go to the Maintenance tab, then click the Start Node if its not already running.

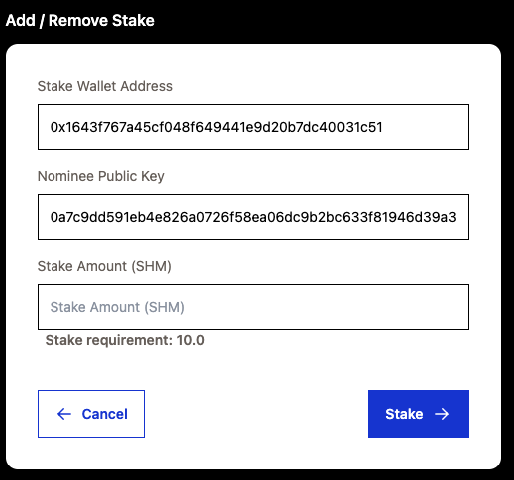

**Step 3:** Next, on that same page, click Connect Wallet. Approve the prompt that pops up in your wallet. After that, click Add Stake. You should have received 15 SHM from the faucet from the step above.

- Stake Wallet Address _[pre-populated with connected wallet]_

- Nominee Public Key _[pre-populated by validator]_

- Stake amount (SHM) _[In terms of ETH, not WEI]_

It is recommended to stake 10 SHM.

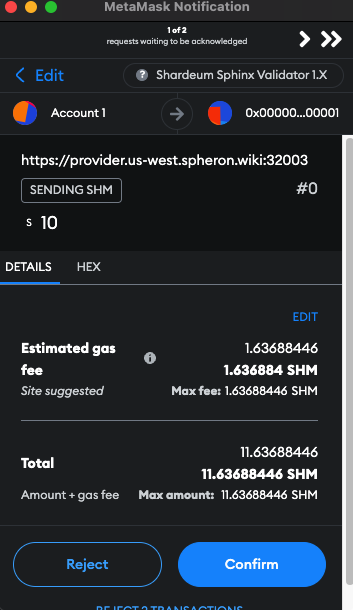

**Step 4:** Enter 10 into the Stake Amount field and click Stake then sign the wallet transaction.

**Step 5:** You can view the staking transaction on the Sphinx Shardeum block explorer.

**Example:**

[_https://explorer-sphinx.shardeum.org/tx/0x5f55856fc3a0476d65037ccca099594fc8e345699fada0d3686e9e32f2a1e686_](https://shardeum.org/)

### Joining the network

Your node will initially be in **Standby** mode but become **Active** during the next rotation.

Alternatively, if you prefer, you can stake directly via the CLI; check the [Shardeum docs for more info](https://docs.shardeum.org/node/run/validator#cli). This will require you to expose your private key as a secret ENV var, so be sure your wallet does not contain any mainnet assets.

After completing your stake, you can check the node status with the **operator-cli** on the **Shell** tab on your Spheron instance page.

Check the node status with the following command:

```

operator-cli status

```

You can also check the status of your GUI with:

```

operator-cli gui status

```

Check back on your node after taking a break; eventually, your node will transition from Standby mode to Active!

Congratulations! You are now running a staked Shardeum validator. Spheron will do all the heavy lifting to ensure your node is a resilient and responsive network member!

Check out this [Shardeum FAQ](https://shardeum.org/blog/how-to-run-a-validator-node-on-shardeum-sphinx/#Frequently_Asked_Questions_FAQs) for answers to common validator issues.

## Conclusion

In conclusion, deploying and staking a Shardeum validator on Spheron is a straightforward process that can be completed in a few simple steps. With Spheron's user-friendly interface and automated configuration, users can easily participate in the Shardeum testnet and contribute to the network's security while earning testnet SHM rewards.

Following the instructions in this article, users can deploy a Shardeum node, update the config, and stake their validator to join the testnet. This combination of advanced technology and user-friendly platforms makes blockchain participation more accessible and rewarding. | spheronstaff |

1,720,414 | Conan Zero to Hero: A Beginner’s Guide with a Practical Project Example Using gtest | Conan is an open-source, cross-platform package manager designed to simplify the process of managing... | 0 | 2024-01-08T06:43:22 | https://dev.to/lightcity/conan-zero-to-hero-a-beginners-guide-with-a-practical-project-example-using-gtest-1b9d | beginners, cpp, conan, gtest | Conan is an open-source, cross-platform package manager designed to simplify the process of managing and distributing C and C++ libraries. It helps developers handle dependencies efficiently by providing a centralized system to package, share, and reuse code. Conan is particularly useful for managing dependencies in C and C++ projects, where manual management can become complex.

let us use conan to write an actual gtest project.

https://levelup.gitconnected.com/conan-zero-to-hero-a-beginners-guide-with-a-practical-project-example-using-gtest-1299baac970e

| lightcity |

1,720,556 | ServiceNow: The Future of Digital Workflow Automation | In this blog, we are going to discuss ServiceNow: The Future of Digital Workflow Automation. Before... | 0 | 2024-01-08T08:51:56 | https://dev.to/srikanthbollu/servicenow-the-future-of-digital-workflow-automation-4b48 | servicenow, webdev, career, discuss | In this blog, we are going to discuss ServiceNow: The Future of Digital Workflow Automation.

Before moving further, you can get accredited with this [ServiceNow online training](https://itcanvass.com/servicenow-training) certification available so as to comprehend application development’s core principles on the ServiceNow Platform, which would assist you in improving your professional career.

**1. What is ServiceNow?

**

ServiceNow is a powerful cloud-based platform that enables businesses to streamline and automate their digital workflows, making it an indispensable tool in today's fast-paced business environment. Its versatile capabilities extend across IT service management, human resources, customer service, and security operations. One of the most compelling aspects of ServiceNow is its ability to consolidate various business processes into a single unified system, allowing for seamless communication and collaboration across departments. This not only enhances operational efficiency but also fosters a culture of transparency and accountability within the organization.

Moreover, ServiceNow empowers organizations to create custom applications without the need for extensive coding knowledge, thereby democratizing the development process and enabling rapid innovation. By utilizing ServiceNow's low-code or no-code app development tools, businesses can swiftly respond to evolving market demands and internal requirements. Additionally, as companies increasingly recognize the importance of delivering engaging digital experiences to both employees and customers alike, ServiceNow stands out as an invaluable platform for achieving this goal by providing intuitive interfaces and personalized user experiences that enhance productivity and satisfaction.

In conclusion, ServiceNow represents a pivotal shift in how organizations approach workflow management by centralizing processes on a single platform while offering unparalleled flexibility in application development. As digital transformation continues to redefine industry standards, embracing platforms like ServiceNow will be crucial for staying competitive in today's dynamic business landscape.

**2. Benefits of Digital Workflow Automation

**

In today's fast-paced business environment, digital workflow automation offers numerous benefits that can streamline processes and drive efficiency. One major advantage is the reduction of manual tasks, which can free up employees to focus on more strategic and value-added activities. By automating repetitive tasks such as data entry, approval processes, and notifications, organizations can significantly increase productivity while minimizing errors.

Furthermore, digital workflow automation enables enhanced visibility and transparency across an organization. With real-time tracking and reporting capabilities, stakeholders gain a complete overview of the status of various processes, allowing for better decision-making and accountability. This increased transparency not only fosters collaboration but also improves compliance with regulations and organizational policies. Ultimately, by leveraging digital workflow automation through platforms like ServiceNow, businesses can achieve operational excellence while driving innovation in their operations.

**3. ServiceNow's Role in Enterprise Efficiency

**

ServiceNow has emerged as a pivotal player in driving enterprise efficiency through its robust digital workflow automation platform. By seamlessly integrating various business processes across departments, ServiceNow allows companies to streamline their operations, eliminate inefficiencies, and boost productivity. This is achieved through the automation of repetitive tasks, enabling employees to focus on more strategic and value-added activities. Moreover, ServiceNow's role in providing real-time visibility into different workflows enables organizations to make data-driven decisions promptly, leading to enhanced operational agility and responsiveness.

Furthermore, ServiceNow's ability to facilitate collaboration among cross-functional teams fosters a culture of transparency and accountability within enterprises. By creating a centralized platform for communication and task management, ServiceNow promotes synergy among various business functions and empowers employees to work cohesively towards shared company objectives. This not only accelerates problem-solving but also enhances the overall quality of deliverables. Additionally, by offering advanced analytics capabilities that provide insights into performance metrics and key operational trends, ServiceNow equips organizations with the tools needed to continuously optimize their workflows for maximum efficiency.

**4. Case Studies: Real-World Application

**

In the realm of digital workflow automation, case studies serve as powerful demonstrations of how ServiceNow's platform is transforming businesses across diverse industries. Take for example a multinational telecommunications company that utilized ServiceNow to streamline its customer service operations. By implementing automated workflows and intelligent case routing, the company significantly reduced resolution times and improved customer satisfaction scores. Similarly, a leading financial services organization leveraged ServiceNow to orchestrate complex IT processes, resulting in substantial cost savings and enhanced operational efficiency.

Furthermore, in the healthcare sector, a prominent hospital system harnessed ServiceNow's capabilities to digitize patient intake processes and automate administrative tasks. This not only boosted staff productivity but also led to faster patient care delivery and resource optimization. These real-world case studies underscore the tangible impact of embracing digital workflow automation through innovative platforms like ServiceNow, transforming businesses by driving operational excellence and delivering significant bottom-line results.

**5. Future Trends and Innovations in ServiceNow

**

As businesses continue to embrace digital transformation, the future of ServiceNow is poised for exciting innovations and trends that will further revolutionize workflow automation. One major trend to watch out for is the integration of artificial intelligence (AI) and machine learning capabilities into ServiceNow platforms. This will empower organizations to make smarter decisions, predict and prevent issues, and automate repetitive tasks more effectively than ever before.

Another key area of innovation in ServiceNow is the expansion of its capabilities in cybersecurity and risk management. With cyber threats becoming increasingly sophisticated, ServiceNow is likely to continue enhancing its security operations products to provide comprehensive threat detection, incident response, and vulnerability management solutions. Moreover, we can anticipate a greater focus on sustainability within ServiceNow as organizations seek ways to incorporate eco-friendly practices into their workflows. We may see new features that enable companies to track their carbon footprint, manage sustainable development goals, and implement green initiatives seamlessly into their operations through the platform. These expected advancements in AI integration, cybersecurity enhancements, and sustainability features are just a glimpse of the exciting future trends that will shape the landscape of ServiceNow. As innovation continues to accelerate at a rapid pace across various industries, it's clear that ServiceNow will play a pivotal role in driving digital transformation forward with unparalleled efficiency and effectiveness.

**6. Challenges and Considerations

**

When it comes to implementing ServiceNow for digital workflow automation, organizations often face various challenges and considerations. One of the key challenges is the need to align different departments and stakeholders to ensure a smooth transition to the new system. This requires effective change management strategies and clear communication to address any resistance or concerns that may arise during the process. Additionally, organizations need to carefully consider data security and compliance requirements when leveraging ServiceNow for automation, especially in industries with strict regulations such as healthcare or finance.

Furthermore, another important consideration is the customization and scalability of the ServiceNow platform. Each organization has unique workflow requirements, so it's crucial to ensure that the platform can be tailored to specific needs while also being able to scale as the business grows. This involves thorough analysis of current processes, identification of areas for improvement, and a well-thought-out plan for implementation that takes into account future expansion. By addressing these challenges and considerations proactively, organizations can maximize the potential benefits of ServiceNow as they embrace digital transformation.

**7. Conclusion: Embracing the Power of ServiceNow

**

In conclusion, embracing the power of ServiceNow is not just about adopting a digital workflow automation tool, but about fundamentally transforming the way organizations operate. ServiceNow offers a holistic approach to managing workflows, from IT and customer service to HR and beyond, enabling businesses to streamline processes, improve productivity, and enhance collaboration across departments. By leveraging the capabilities of ServiceNow, companies can unlock new opportunities for innovation and growth in an increasingly competitive digital landscape.

Furthermore, embracing the power of ServiceNow means embracing a culture of continuous improvement and agility. With its ability to adapt to evolving business needs and market trends, ServiceNow becomes not just a technology platform but a strategic enabler for organizational success. By recognizing the potential of ServiceNow as more than just an IT tool but as a driver of digital transformation across the entire enterprise, businesses can position themselves at the forefront of innovation and efficiency in today's fast-paced business environment. | srikanthbollu |

1,720,577 | Arrow Function in JavaScript - Helps you make your code short video out now | Once upon a time, in the land of JavaScriptia, there lived a group of functions. These functions... | 0 | 2024-01-08T09:18:30 | https://dev.to/codingmadeeasy/arrow-function-in-javascript-helps-you-make-your-code-short-video-out-now-4lhb | [](https://youtu.be/i0HX_D269gY)

Once upon a time, in the land of JavaScriptia, there lived a group of functions. These functions were well-known for their traditional style and syntax. They had been the backbone of the kingdom's code for many years, but change was on the horizon.

One sunny day, a new function named Arrow appeared in JavaScriptia. Arrow was different from the others. It was sleek, concise, and had a unique way of expressing itself. Arrow had a symbol that set it apart - an arrow (=>) - which gave it a distinct identity.

The other functions were intrigued by Arrow's arrival. They gathered around, curious to learn more about this newcomer. Arrow explained that it was an arrow function, a modern way of writing functions in JavaScript that offered a shorter syntax and lexically scoped this keyword.

At first, the traditional functions were hesitant to accept Arrow into their circle. They were used to their familiar ways and were wary of change. But as Arrow demonstrated its abilities and showcased its efficiency, the functions began to see the value it brought to the kingdom.

Arrow's concise syntax made it perfect for shorter tasks. It didn't require the function keyword or the return keyword for simple operations. Its implicit return and ability to maintain the context of this within its scope made it a favorite among developers for handling callbacks and array methods.

Gradually, the functions started adopting Arrow's style. They realized that both the traditional functions and Arrow could coexist harmoniously in JavaScriptia. Some tasks were better suited for the familiar functions, while others were more efficiently handled by Arrow.

Over time, Arrow became an integral part of the kingdom's codebase, working alongside the traditional functions to create powerful and versatile programs.

And so, in the land of JavaScriptia, the arrival of Arrow marked a shift towards modernity while honoring the traditions of the past, creating a balanced and diverse coding landscape for all its inhabitants. | codingmadeeasy | |

1,721,048 | Love at First Compile | Not only with women have I fallen in love, but with programming languages too. Embarrassing to look... | 0 | 2024-01-08T15:51:44 | https://dev.to/offcode/love-at-first-compile-2ji6 | java, beginners, terminal, nostalgia | Not only with women have I fallen in love,

but with programming languages too.

Embarrassing to look back now

and admit I was in love with Java.

It started with a flirt in the office.

We were a Microsoft shop,

dedicated to everything Windows and Visual Basic,

everything click and drag-and-drop.

Maybe this is why I got excited

when I heard a lecture about Java at the university.

White code written in chalk on the blackboard.

Almost like typing in a terminal.

Background color should not play a role

when choosing between programming languages.

The logos of the companies behind them should be irrelevant.

But Sun, the creator of Java, designed a magical logo

that read Sun from all four directions.

A sexy logo in the finest shade of purple.

Besides personality, we fall in love

with brown eyes, with blue eyes.

It was half past five, the office

was empty, except for me and my friend.

I entered sun.com/java in the browser,

an easy to remember URL.

The link to the installer sat on the top of the page,

no need to scroll down, just click and wait.

Modern technology always takes long to download.

The proverbial fifteen minutes of

"make a coffee, smoke a cigarette, and it will be ready."

Downloading was not enough, you had to install it.

Answer questions that should not be asked.

I did not know yet how many times

I would answer these same questions

and accept the default in every case.

We used an integrated environment to write BASIC code,

the keywords were displayed

in bold and in a darker shade of carmine.

Here is how you count to ten without using your fingers:

`FOR I = 1 TO 10`

And imagine the colors.

You click on the yellow arrow icon to run the program.

We had no editor for Java.

It probably didn't even exist yet, the language was so new.

We used what we had.

Our BASIC editor automatically checked our Java code

and highlighted all the errors.

Imagine writing in German

and running it through an English spell checker.

I knew the code was correct,

I just copied it from the notes I had taken at the class.

Clicking the yellow arrow wouldn't make sense either.

I had to leave our integrated environment behind

and open a terminal.

Type `javac Counter.java`.

The whole world was black and white now,

the whole screen.

The command I typed and the error message

I received a few seconds later.

A semicolon is missing on line 8.

Go back to the editor and fix it.

It took us more than half an hour to make our first Java program work

and do some very basic stuff.

Count to ten and print the numbers.

This doesn't sound like the beginning of a love story.

But what is love if not sweat and tears?

I may tell you later about the tears. | offcode |

1,720,630 | Top 10 Success Stories in App Development - Highlights of 2023 | 2023 proved to be a banner year for mobile app development trends, with innovative concepts growing... | 0 | 2024-01-08T09:59:19 | https://dev.to/quokkalabs/top-10-success-stories-in-app-development-highlights-of-2023-46af | app, appdevelopment, 2023 | 2023 proved to be a banner year for mobile app development trends, with innovative concepts growing into thriving businesses. This year, we witnessed apps transcend mere utility, becoming cultural touchstones and game-changers in their respective industries. Let's dive into the top 10 m and explore the trends that propelled them to the forefront.

## Top 10 Success Stories in App Development 2023 - Highlights

Let's look at these fantastic success stories that will inspire you! (download counts may vary)

### 1. Canva Pro for iPad

The beloved graphic design platform's iPad debut redefined mobile creativity. Boasting over 5 million downloads on iOS already, Canva Pro lets users create stunning visuals on the go.

### 2. Duolingo ABC

Gamified language learning for kids never looked so cute! This playful app saw over 10 million downloads on Android and became a favorite among parents and educators, proving the power of [engaging mobile app development trends](https://quokkalabs.com/blog/top-15-mobile-app-development-trends/?utm_source=Dev&utm_medium=Blog&utm_campaign=Blog).

### 3. Reflectly AI Journal

Mental health took center stage with Reflectly's AI-powered journaling app. With over 7 million downloads on both iOS and Android, it demonstrates the growing demand for mental wellness solutions through [innovative mobile app development processes](https://quokkalabs.com/blog/a-step-by-step-guide-to-the-mobile-app-development-process/?utm_source=Dev&utm_medium=Blog&utm_campaign=Blog), and it's among the success stories in app development 2023.

### 4. Temtem

This monster-collecting RPG captured the hearts of Pokemon fans, racking up over 15 million downloads across platforms. Its success highlights the enduring appeal of well-executed mobile gaming experiences.

### 5. TikTok Shop

Social commerce reached new heights with TikTok Shop's seamless integration. It's not just about lip-syncing anymore; over 20 million downloads on Android paint a picture of a thriving e-commerce ecosystem within the app.

### 6. Lensa AI

Another leader among Success Stories in App Development 2023. Transforming selfies into stunning artworks, Lensa AI became a viral sensation, amassing over 12 million downloads on iOS. It showcases the potential of AI for personalized and engaging mobile experiences.

### 7. Sleep Cycle

Battling bedtime blues, Sleep Cycle's innovative alarm features helped over 5 million users (primarily on Android) achieve restful slumber. It highlights the growing focus on health and well-being within the app space.

### 8. Bumble Bizz

It's among the Success Stories in App Development 2023 after Bumble for dating. Professional networking took a swipe for the better with Bumble Bizz. Connecting over 3 million users on iOS and Android, it redefines career networking through the familiar dating app format.

### 9. Headspace for Work

Mindfulness found its way into the corporate world with Headspace for Work. With over 8 million downloads across platforms, it demonstrates the increasing importance of workplace well-being.

### 10. HiHello

Bharat's Local Social Network: Building community in India, HiHello saw over 10 million downloads on Android alone. It highlights the potential for localized app development catering to specific regional needs.

## Best Practices for Success in App Development: From Spark to Download Storm

Witnessing these 2023 triumphs is inspiring, but how do you translate such brilliance into your own app? Let's dive deeper into the secrets of success with some golden best practices and mobile app development process:

- Define Your North Star: A Problem Worth Solving

Before coding a single line, ask yourself: what problem am I solving for users? Is it a burning need, a delightful inconvenience, or simply a fresh take on an existing solution? A laser-sharp focus on user value is the foundation for an app that resonates.

- Design Thinking: Walk in Your User's Shoes

Empathy is key. Research your target audience, understand their pain points, and craft an intuitive user experience that feels like a natural extension of their needs. Remember, a beautiful interface without purpose is like a Ferrari stuck in traffic.

- Use Agility: Build, Iterate, Refine

The mobile world is a fast-paced dance. Don't aim for a grand launch followed by radio silence. Employ agile development methodologies, releasing minimum viable products (MVPs) and gathering user feedback to continuously refine your app. Think of it as sculpting a masterpiece, not constructing a castle overnight.

- Master the Mobile Arena: Platform Choice Matters

Android or iOS? Both offer unique strengths and user preferences. Understanding your target market's platform bias is crucial for maximizing reach and engagement.

- Market Like a Magician: Storytelling, Not Just Selling

Your app might be a game-changer, but no one knows it exists if you're whispering in an empty room. Utilize captivating storytelling, targeted marketing campaigns, and strategic app store optimization (ASO) to make your app the talk of the town.

- Metrics Maestro: Data Drives Decisions

App analytics are your crystal ball. Monitor user behavior, track key performance indicators (KPIs), and identify areas for improvement. Data-driven insights will guide your development roadmap and ensure your app stays relevant and engaging.

- Security Sentinel: Guarding User Trust

In today's digital landscape, user data is sacred. Implement robust security measures, prioritize data privacy, and be transparent about your practices. Building trust is paramount for long-term success.

- The Power of Community: Listen, Engage, Respond

Foster a community around your app. Actively engage with users on social media, respond to feedback, and encourage open communication. This two-way dialogue is gold; it fuels innovation and strengthens user loyalty.

## Success Stories in App Development 2023: Ready to Convert To 2024 And beyond?

If you're looking for the [Best Mobile App Development Company in India](https://quokkalabs.com/mobile-app-development?utm_source=Dev&utm_medium=Blog&utm_campaign=Blog) to craft your own success story, look no further! Hire or contact those who utilize the latest app development trends and a meticulous Mobile App Development Process to bring your vision to life. Let's turn your app idea into a reality, download the applause!

Remember, innovation, empathy, and a dash of digital magic are the ingredients for app success. So, unleash your creativity and join the league of 2024's app heroes! | labsquokka |

1,720,678 | How to Create Emotionally Resonant Brand Experiences for Your Target Audience | A thorough familiarity with the target demographic is essential for effective audience design. You... | 0 | 2024-01-08T11:07:10 | https://dev.to/alexparker21/how-to-create-emotionally-resonant-brand-experiences-for-your-target-audience-g27 | web, design | A thorough familiarity with the target demographic is essential for effective audience design. You can't expect to succeed if you have no idea who you're interacting with.

Having the correct ingredients on hand is, thus, crucial. Personalization, humanization, captivating storytelling abilities, and a consistent brand narrative are a few examples.

Here are some fantastic pointers for creating brand experiences that strike an emotional chord with your target demographic.

In today's world, no company can succeed without an online presence, and the most crucial part of that presence is the design of the company's website. MavericksMedia, without a doubt, employs the **[web designing company in toronto](https://mavericksmedia.ca/toronto-website-design/)**, a team that is enthusiastic about creating innovative websites with outstanding and creative designs.

## 1, Make Sensational Experiences

Engaging any of the five human senses—sight, sound, smell, touch, and taste—is the essence of a sensory brand experience. The aroma of freshly ground coffee beans, for instance, greets you warmly the moment you step foot in a Starbucks. An overwhelming sense of comfort and joy washes over you. At this point, you're likely to want to stay a while, indulge in your favorite treats, and take it easy.

## 2 Recount Stories With Feelings

Incorporate narrative into the design of your brand's experience. Make your readers care about the people you portray and the stories you tell them so that they can find inspiration in your goals and principles. Websites, social media, and other forms of paid advertising and marketing should all make use of the skill of storytelling.

Dove, for one, has never been one to back down from a fight for equality and diversity. The message this time is for young people to #detoxyourfeed and stop taking beauty advice that is toxic, negative, and bad. Trying to achieve ideal body and skin conditions is an endless cycle for millennials and Gen Z, as you are well aware. Someone has to hit the brakes every year because the trend is getting stronger.

## 3. be sure to incorporate relatable visual elements.

Typography, pictures, icons, textures, and colors are all examples of visual graphics that can impact an audience on an emotional, mental, bodily, and spiritual level. The one catch is that you'll need some knowledge of visuals, design customization, and composition in order to pull this off.

In honor of the World Series, the Los Angeles Times collaborated with Golden Road Brewing to produce an AR web activation video, which Dodgers fans could enjoy. Text, shapes, and colors were utilized to enhance the video, as is evident.

## 4 Garner knows your target audience's demographics and psychographics inside and out.

Get to know their wants, requirements, and what sets them off. Learn about the market, read reviews, and use data to read people's emotions and goals by watching how they act and react.

Brands are going to extreme lengths to help their consumers, from offering feedback forms and encouraging positive attitudes to engaging in conversational commerce, in the hopes of increasing sales and positive testimonials.

## 5. Figure Out What It Is You Want To Do

Always have a plan in place before beginning any project that could affect your brand. Think about the feelings you want your listeners to have. Invoking what emotions are you aiming for? Feelings of empowerment, nostalgia, trust, or enthusiasm.

A better and more immersive brand experience can be designed with goals and direction in mind.

Fans of Netflix may have come across the Netflix Laughs feature, which compiles a collection of comedic videos from the service's library and allows users to browse, like, comment, and share them. Is the goal to improve the brand experience or to compete with TikTok? Many are wondering. Watching these videos has made me laugh, for some reason.

## - Launch a Campaign for Storytelling

Let your audience's hopes and fears inform the creation of enthralling stories. Make content that makes people feel something through utilizing different forms of communication like articles, infographics, videos, installations, and virtual worlds.

## Support Charitable Initiatives

Find a nonprofit to work with or join forces with, and make sure their mission and values mesh with your own. Make advertisements that get people involved in a bigger cause. Get them involved with a beach cleanup, a book drive, or a fundraiser.

## - Present Commonalities

Plan gatherings and activities that will unite your target audience in a common feeling of belonging. Help cultivate healthy relationships by holding retreats, workshops, or challenges.

## Imbue Packaging With Emotions

Packaging is an opportunity for customers to experience your brand through sight, touch, and smell, so give it your full attention. Get in line with your brand's personality by using images, textures, and colors that make people feel something. To give just one example, audiences can get pumped up for product demos and unboxing videos.

## Marketing through Social Media

Fast food restaurants like Wendy's, Five Guys, and McDonald's are finding success with social media applications such as TikTok. Add some flair to your feed by sharing videos, stories, and still images with text and icons.

## - Joy and Astonishment

Write customers personalized messages, give them gifts, and do unique and creative things that exceed their expectations to surprise them with moments of joy and delight.

In a highly competitive and rapidly expanding market, brands can gain an edge by appealing to consumers' emotions through their products and services. During difficult times, such as the COVID-19 pandemic, brands were able to recover from losses and setbacks thanks to emotional brand experiences.

Creating brand experiences that strike an emotional chord with consumers greatly impacts their actions, loyalty, and opinion of the brand. Making lasting connections is beneficial. | alexparker21 |

1,720,684 | Disable any animations in Angular by prefers-reduced-motion | Introduction Animations today are an integral part of modern web applications. Animations... | 25,995 | 2024-01-08T11:12:11 | https://maks-dolgikh.medium.com/advanced-animation-in-angular-part-2-disable-any-animations-by-prefers-reduced-motion-8f9af185cd5d | angular, css, ui, tutorial | ## Introduction

Animations today are an integral part of modern web applications. Animations help us to improve the perception of our application to the users by giving them feedback about their actions, which helps to make the user experience pleasant and memorable.

But there are cases when we should not use animations:

* **Users with restrictions**. For them, animation is not an embellishment, but a distracting part that may be less accessible and interfere with the perception

* **E2E tests**. It takes time to wait for each animation and transition. The more tests there are, the more time is spent waiting for them to be completed

For these 2 cases, you can’t build a separate application without animations, you need to have an opportunity to disable animations.

> *And there is an easy way to do this.*

## Prefers-reduced-motion

> The **prefers-reduced-motion** CSS media feature is used to detect if a user has enabled a setting on their device to minimize the amount of non-essential motion. The setting is used to convey to the browser on the device that the user prefers an interface that **removes, reduces, or replaces** motion-based animations. ( You can find ways to activate it [here](https://developer.mozilla.org/en-US/docs/Web/CSS/@media/prefers-reduced-motion#user_preferences) )

Since this is an `@media` setting, all code can be handled in the `@media` block

```css

@media (prefers-reduced-motion) {

transition: all 0 linear;

/* others styles */

}

```

Knowing about this customization, we just need to learn how to handle it properly in the Angular application [read more...](https://maks-dolgikh.medium.com/advanced-animation-in-angular-part-2-disable-any-animations-by-prefers-reduced-motion-8f9af185cd5d) | misterion96 |

1,720,751 | Week 1: The Meet and Greet | After the results announced for the December - March 2024 Cohort we head on to a week of discussion... | 25,997 | 2024-01-08T15:37:22 | https://dev.to/yokwejuste/week-1-the-meet-and-greet-3iml | outreachy, opensource, teamwork, developers | After the results announced for the December - March 2024 Cohort we head on to a week of discussion around with the other community members.

## The community

The Unstructured studio community is a worldwide community aimed at empowering children from different underrepresented communities with several creative learning experiences. They work on several projects such as Zubhub to achieve their goal. Unstructured Studio has already put some footprints in countries like Nigeria and India.

## The Vision

The vision of Unstructured Studio is to engage children from underserved communities in creative learning experiences. They aim to achieve this through a variety of tools, activities, and resources, focusing on accessible, hands-on, and innovative educational methods. Their approach emphasizes empowering both children and educators, fostering creativity, collaboration, and practical skills in a supportive, resourceful environment

## The Project

ZubHub, developed by Unstructured Studio, is a dynamic, open-source platform designed to enhance creative education for children and educators. It stands as a collaborative and documentation tool for activity-based learning, easily adaptable for schools, libraries, and educational organizations. Developed by educators and technologists, ZubHub aims to democratize access to low-cost creative learning opportunities. This innovative platform is part of Unstructured Studio's mission to bring creative education to under-resourced communities globally, fostering essential skills like creativity, critical thinking, and collaboration. ZubHub serves as a hub for creative, hands-on projects, encouraging children to engage with low-cost materials and an engaging community of learners and educators

## The Mentors

To work on the Zubhub project I was assigned several mentors, each with much a lot of experience and diverse into different tech domains.

- [Srishti Sethi](https://twitter.com/Srish_Aka_Tux)

- [Suchakra Sharma](https://twitter.com/tuxology)

- [Ndibe Raymond Olisaemeka](https://twitter.com/CodeBlooded_JS)

## My Point of View

Unstructured Studio is a great way to have a positive impact in the young child education and helping the next generation grow.

## Conclusion

More to the above content, working for opensource, education and working for the next generation is a great footprint made in the world. Looking forward for more. | yokwejuste |

1,720,923 | How to collect marketing campaign email leads with Mailgun and Appwrite in minutes | Collecting marketing campaign email leads is fundamental to modern digital marketing strategies. It... | 0 | 2024-01-08T16:22:31 | https://dev.to/hackmamba/how-to-collect-marketing-campaign-email-leads-with-mailgun-and-appwrite-in-minutes-83g | Collecting marketing campaign email leads is fundamental to modern digital marketing strategies. It systematically gathers contact information, primarily email addresses, from individuals interested in a brand's products, services, or content.

This process is crucial for building and maintaining an email marketing list, a valuable tool for organizations to establish and nurture connections with their audience.

Various methods can be employed to collect email leads, such as utilizing web opt-in forms on websites and incorporating incentives like discounts or exclusive content. Web surveys, content or product upgrades, and online events like webinars or virtual summits can also capture email addresses during audience participation.

One of the significant challenges businesses face while setting up the collection of their email leads is the choice of technology. This choice can affect the speed at which organizations set up their campaign, the effectiveness of managing the email list, and the integration with their other existing platforms. This is where Appwrite, through [Appwrite Functions](https://appwrite.io/docs/products/functions?utm_source=hackmamba&utm_medium=hackmamba-blog) and [Mailgun](https://www.mailgun.com/?utm_source=hackmamba&utm_medium=hackmamba-blog) — a powerful email service provider — comes in.

Appwrite provides ready-made function templates that allow businesses to set up applications easily. One of these templates is the **email contact form**. The email contact form template is designed to help gather email leads for marketing campaigns. It also includes a prebuilt contact form user interface that businesses can customize to meet their specific needs.

This tutorial demonstrates how you can set up the email leads collection system on your Next.js website using the email contact form provided by Appwrite and the Mailgun email service provider.

## Prerequisites

To follow along comfortably with this tutorial, you’ll need a few things:

- Basic web development knowledge and a basic understanding of Next.js.

- An active [Appwrite cloud account](https://cloud.appwrite.io/register?utm_source=hackmamba&utm_medium=hackmamba-blog) and a [Mailgun account](https://signup.mailgun.com/new/signup?utm_source=hackmamba&utm_medium=hackmamba-blog).

- A code editor or Integrated Development Environment (IDE), preferably VScode.

- Basic knowledge of Git, a [GitHub account](https://github.com/signup), and a repository on that account.

- [Node.js](https://nodejs.org/en/download/current) installed.

- A CLI terminal.

## Repository

In case you want to view the repository of the entire Next.js leads collection website, check out this [link](https://github.com/Otrex/hackmamba-appwrite-nextjs-leads-page?utm_source=hackmamba&utm_medium=hackmamba-blog).

## Configuring Mailgun

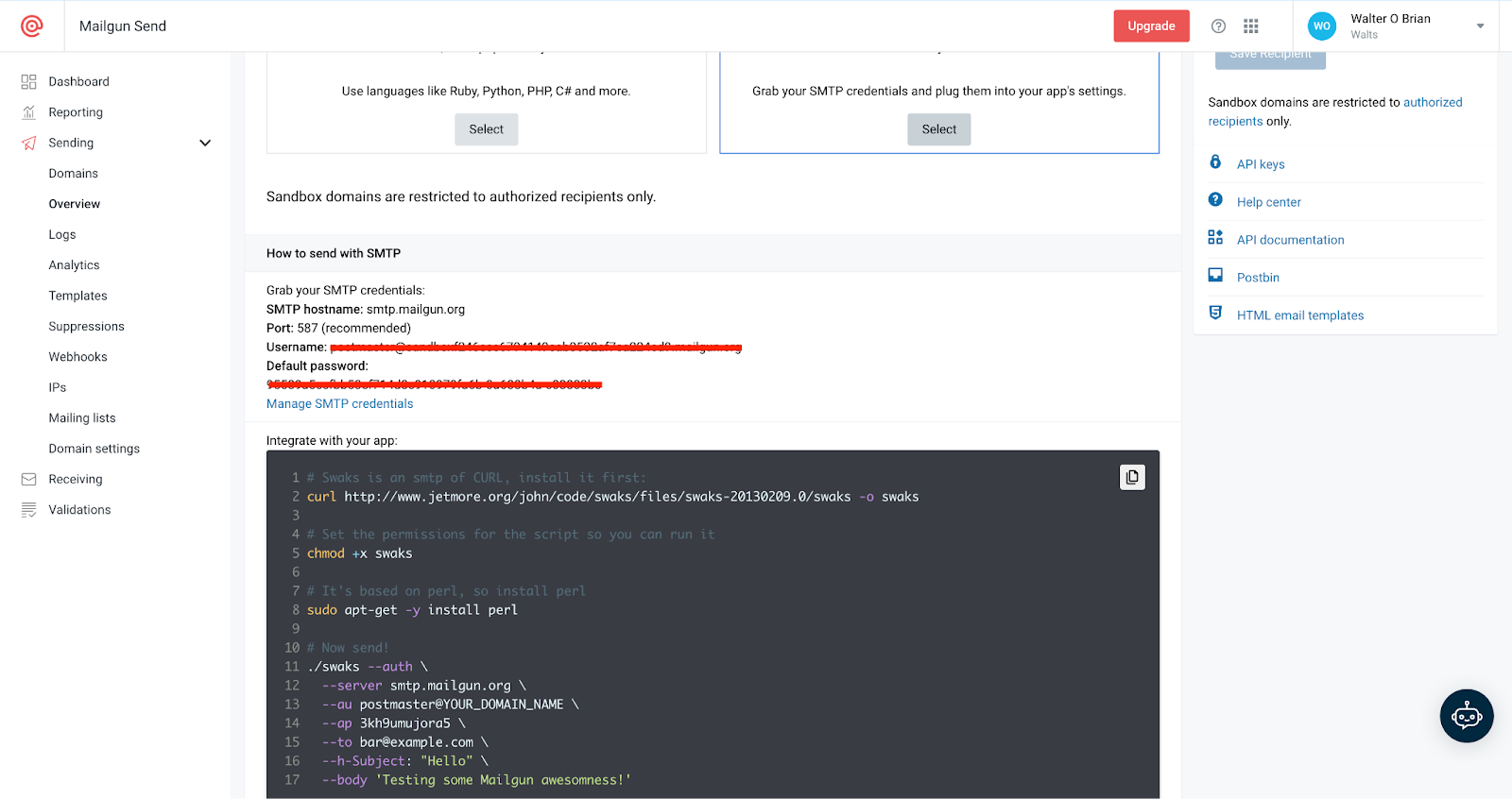

To initiate email communications through Mailgun, you must retrieve the Simple Mail Transfer Protocol (SMTP) keys that Appwrite requires for sending emails through Mailgun.

> In this tutorial, you'll be using the sandbox environment. For instructions on using the live environment, refer to the detailed [guidelines](https://documentation.mailgun.com/en/latest/user_manual.html#verifying-your-domain).

**Retrieving the SMTP keys**

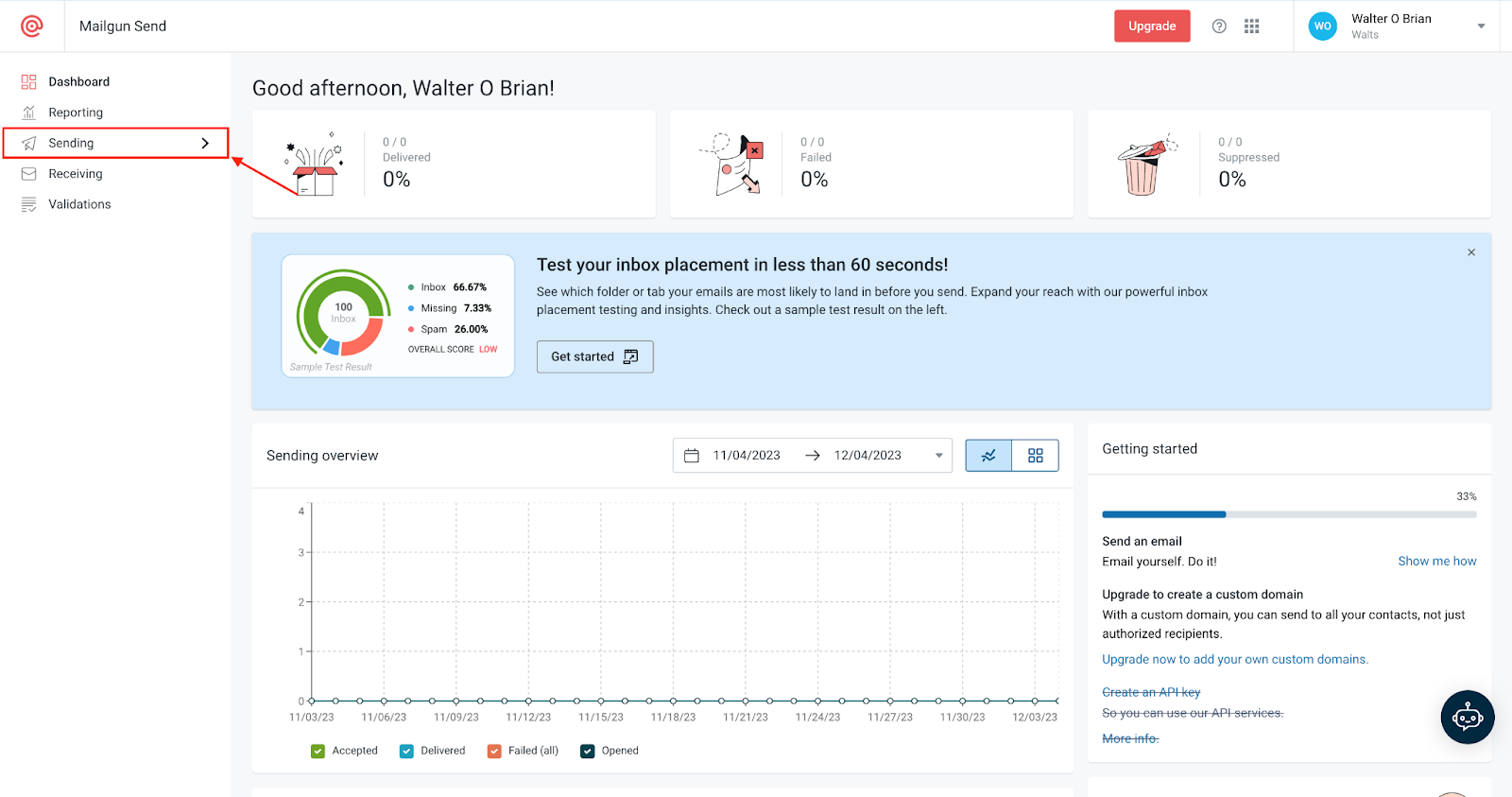

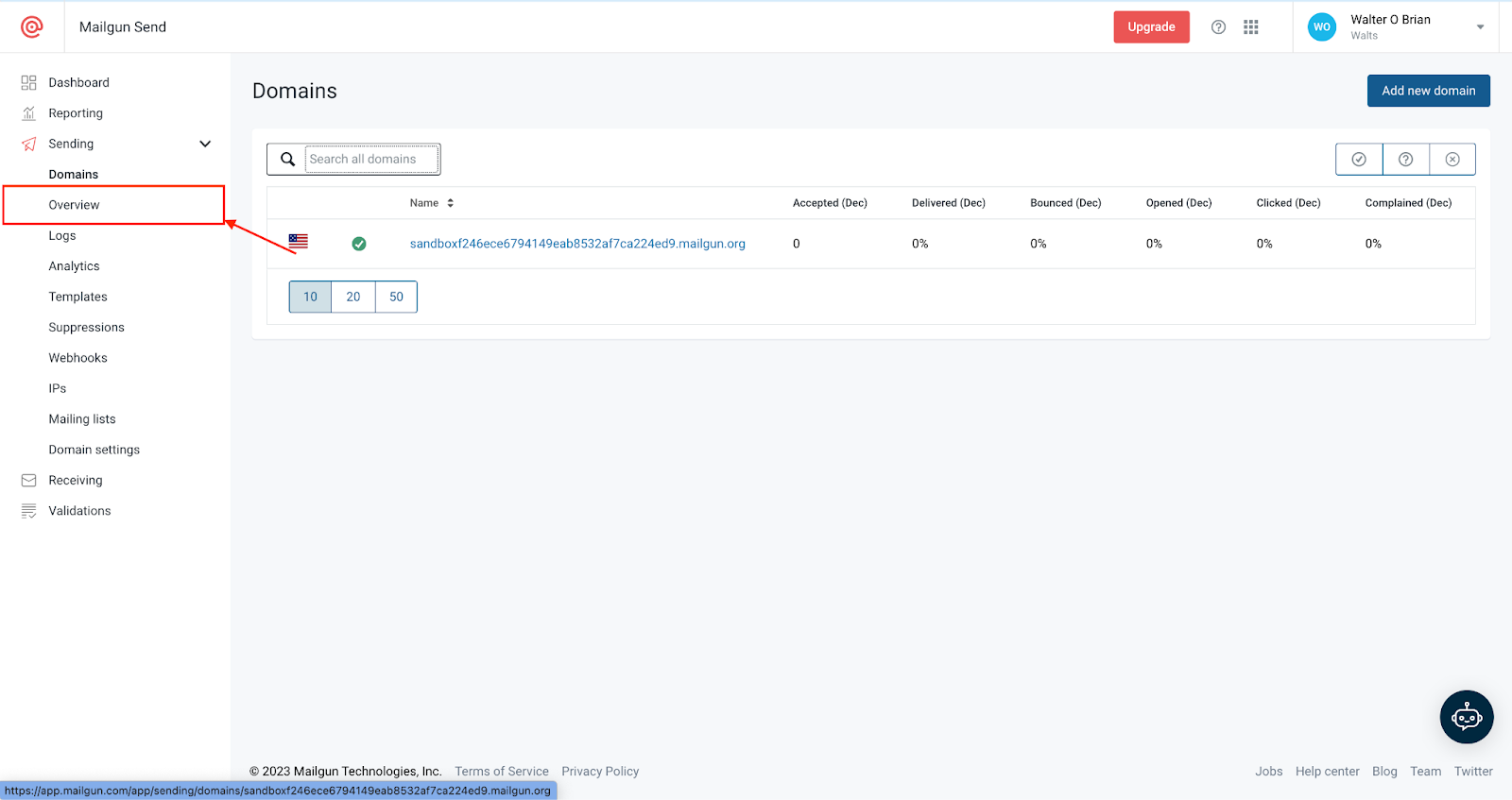

To perform this task, go to your Mailgun account dashboard. Once there, locate and click on the **Sending** menu.

Next, select **Overview.**

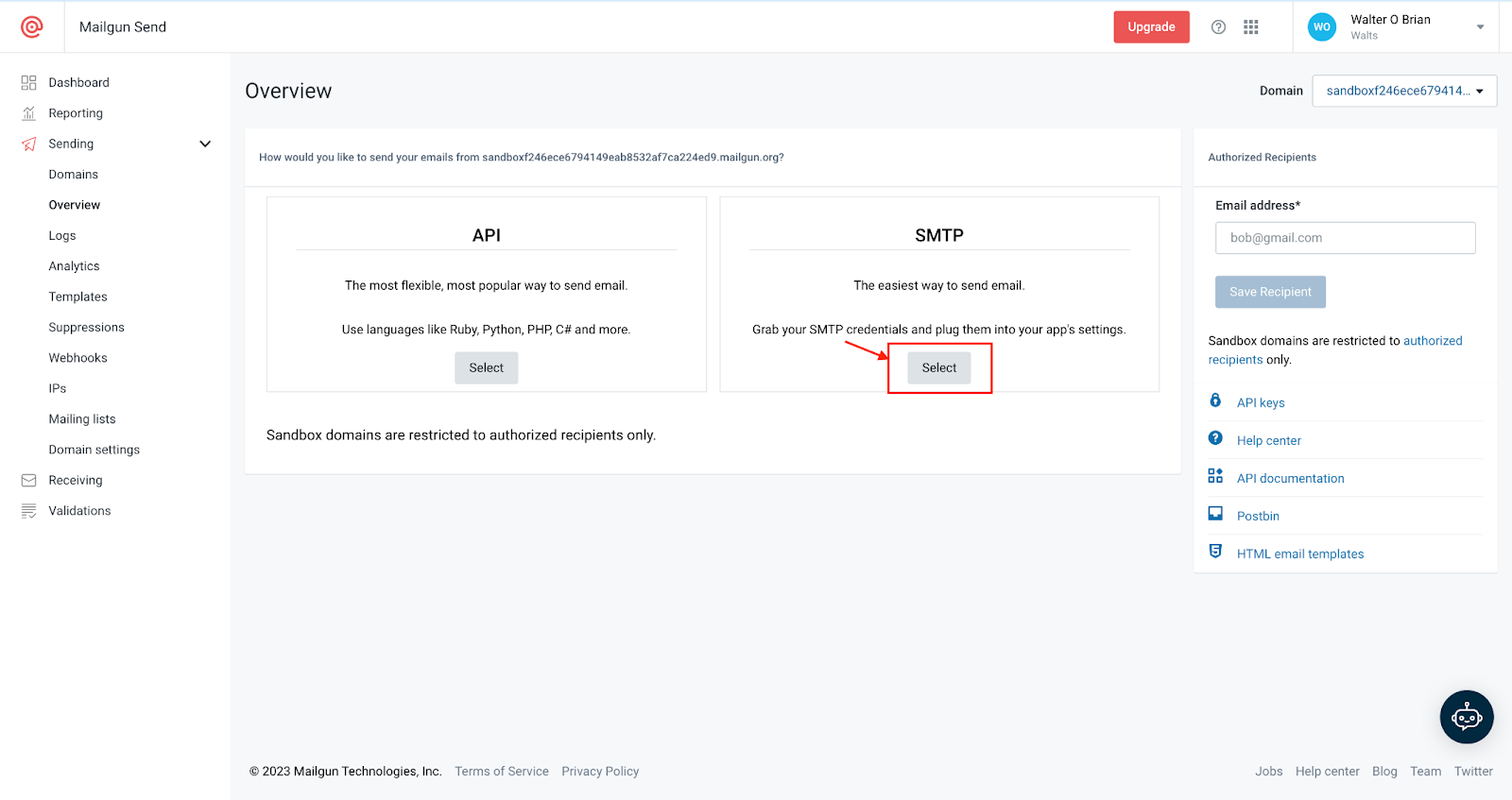

Afterward, navigate to the **SMTP** section and click **Select**.

Once complete, you can retrieve the SMTP credentials from the section shown below.

## Configuring Appwrite

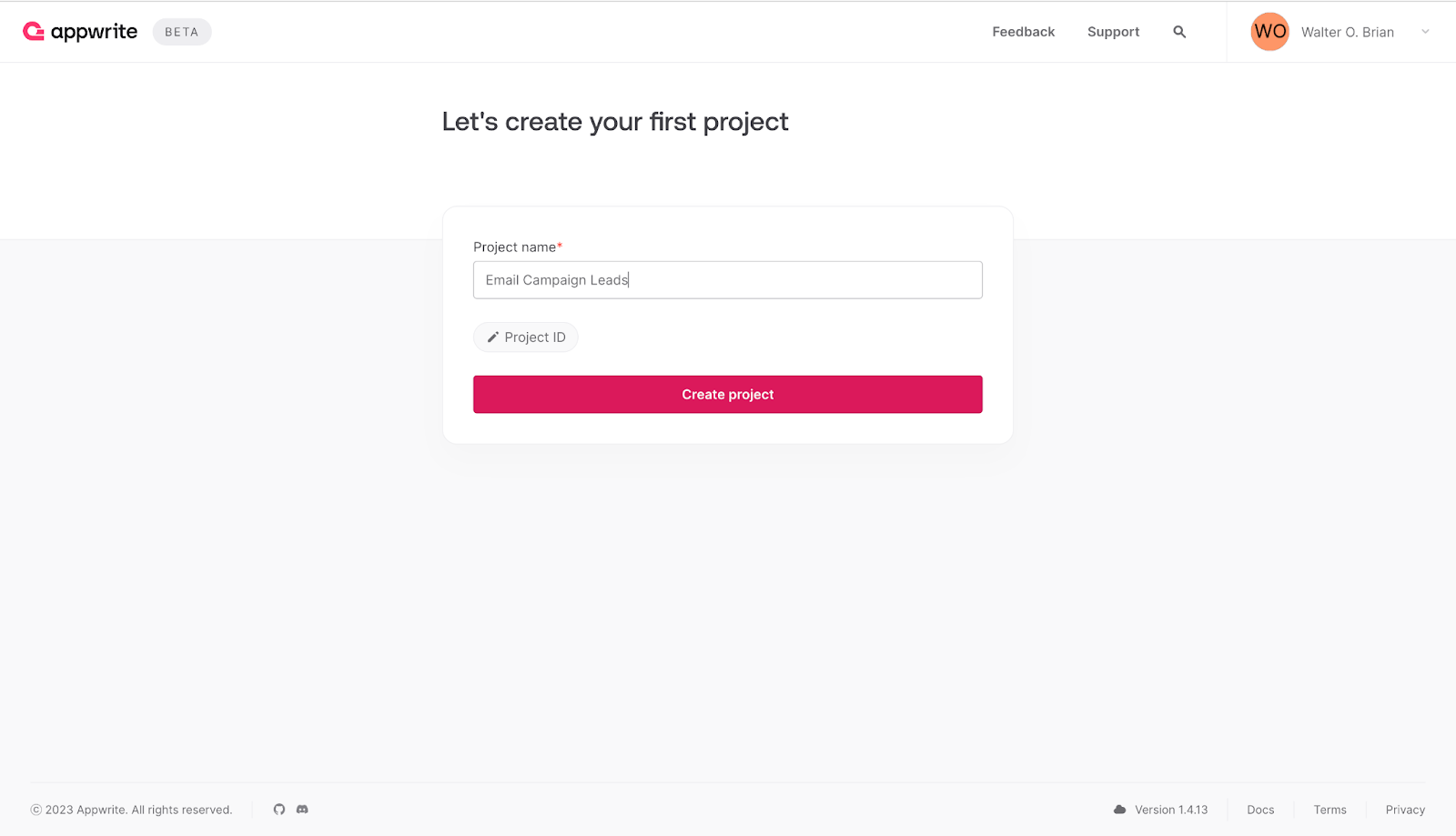

To proceed with the configuration, navigate to your Appwrite Cloud account dashboard, create a new project by entering the desired project name, and click **Create project,** as indicated below.

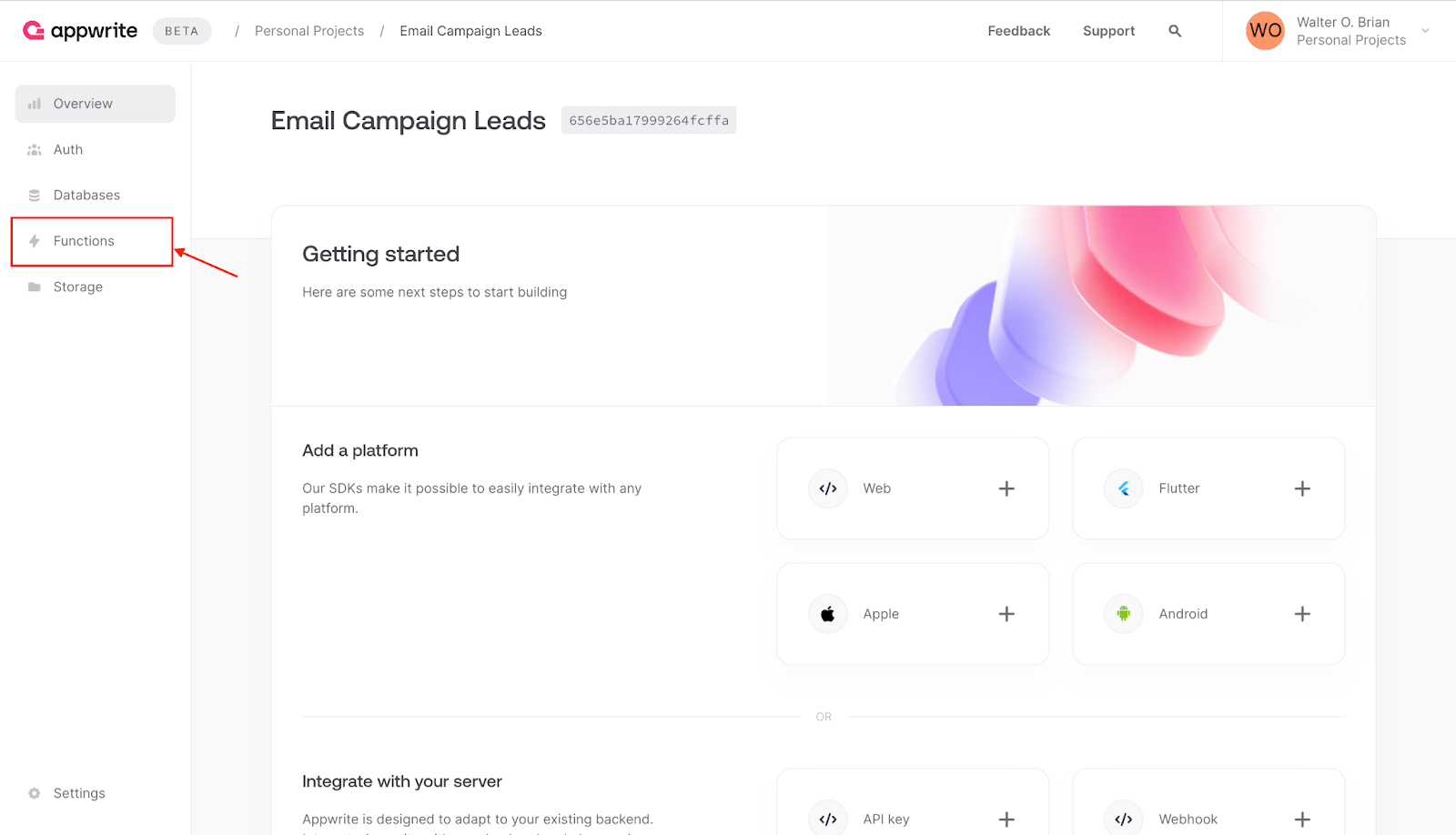

After that, click on the **Functions** menu.

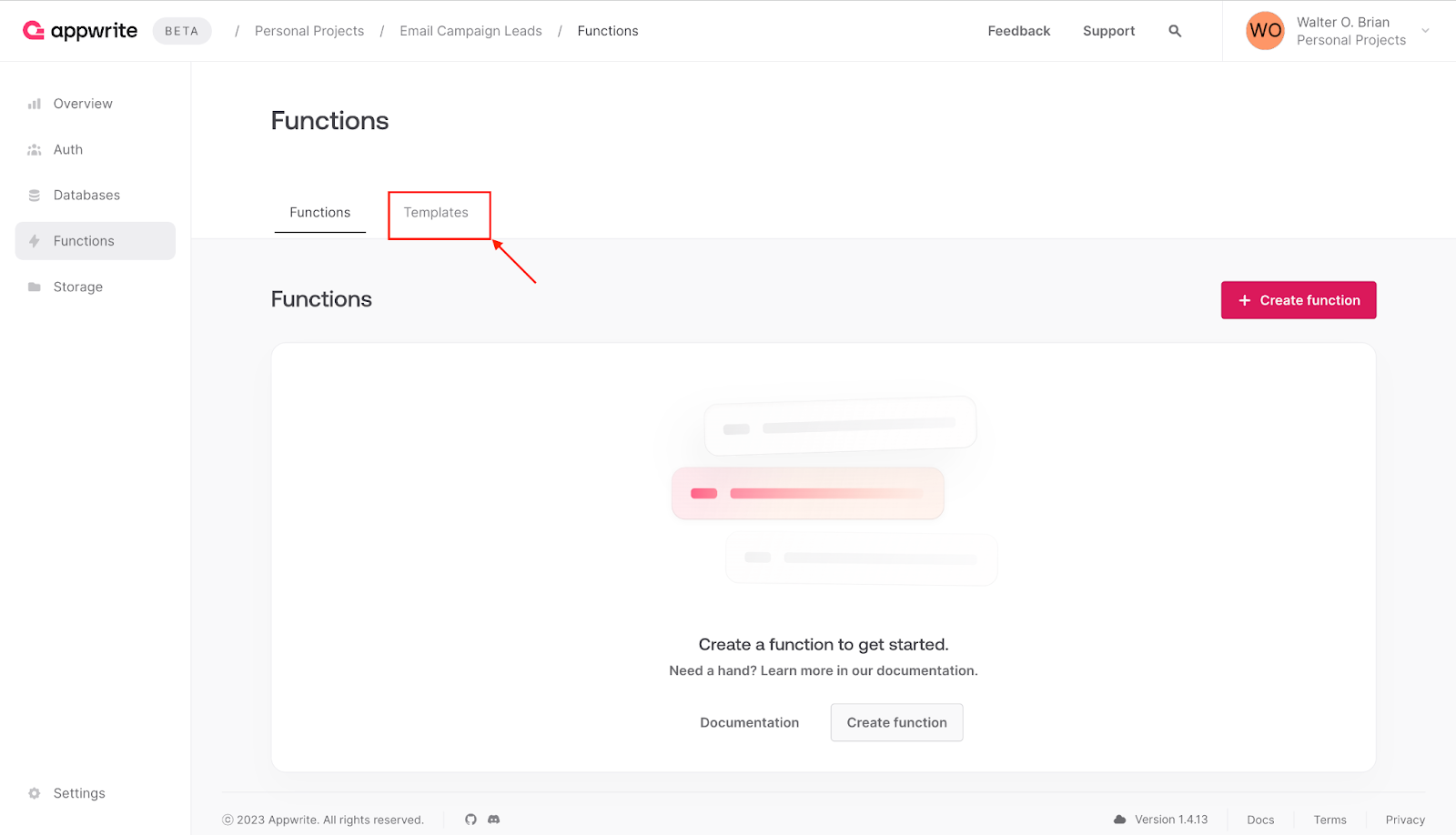

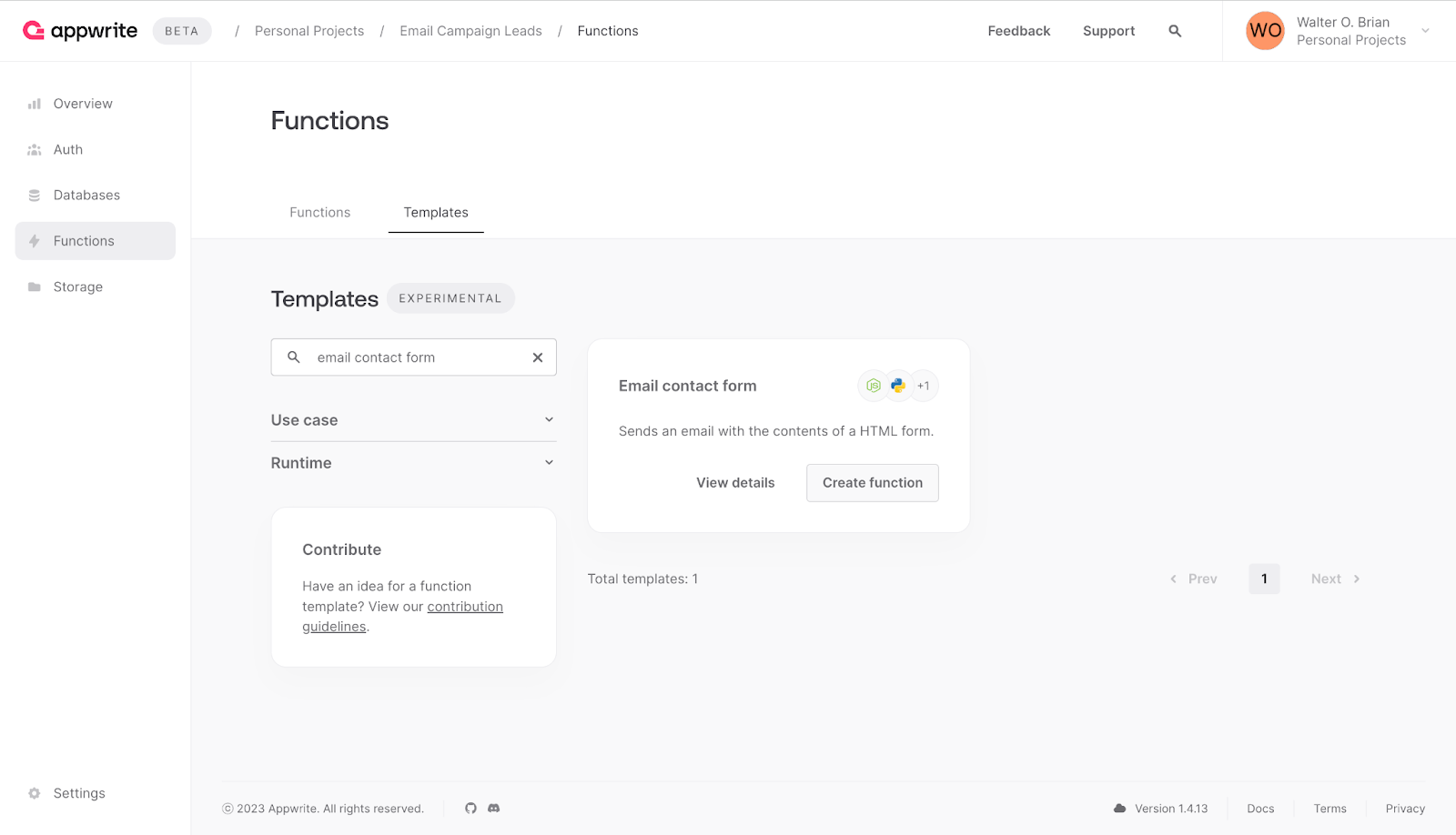

Next, click the **templates** tab to view the available templates.

In the search field, enter **email contact form** to search for the template.

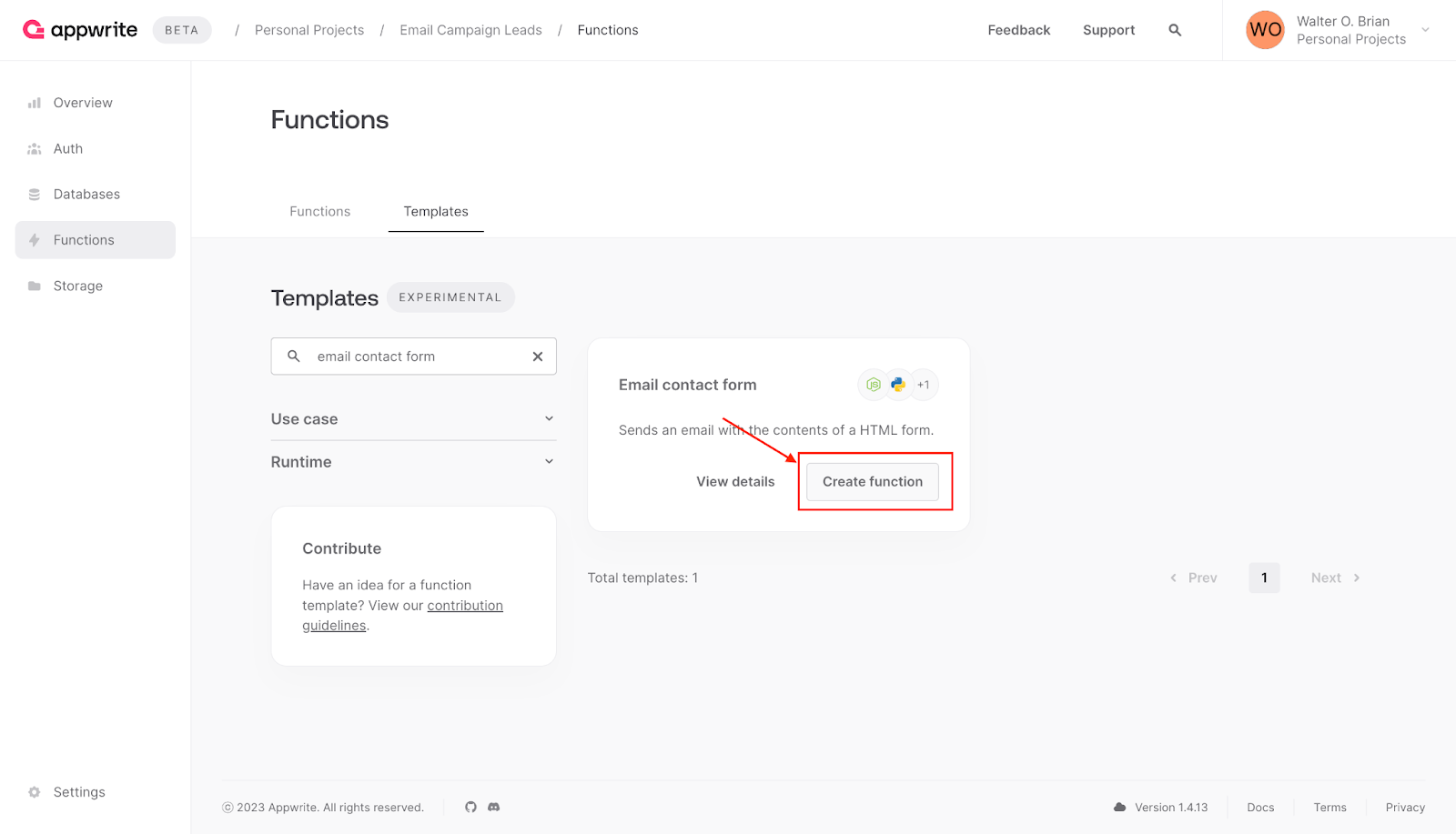

Afterward, click **Create function** to initiate the setup.

**Setting up the email contact form**

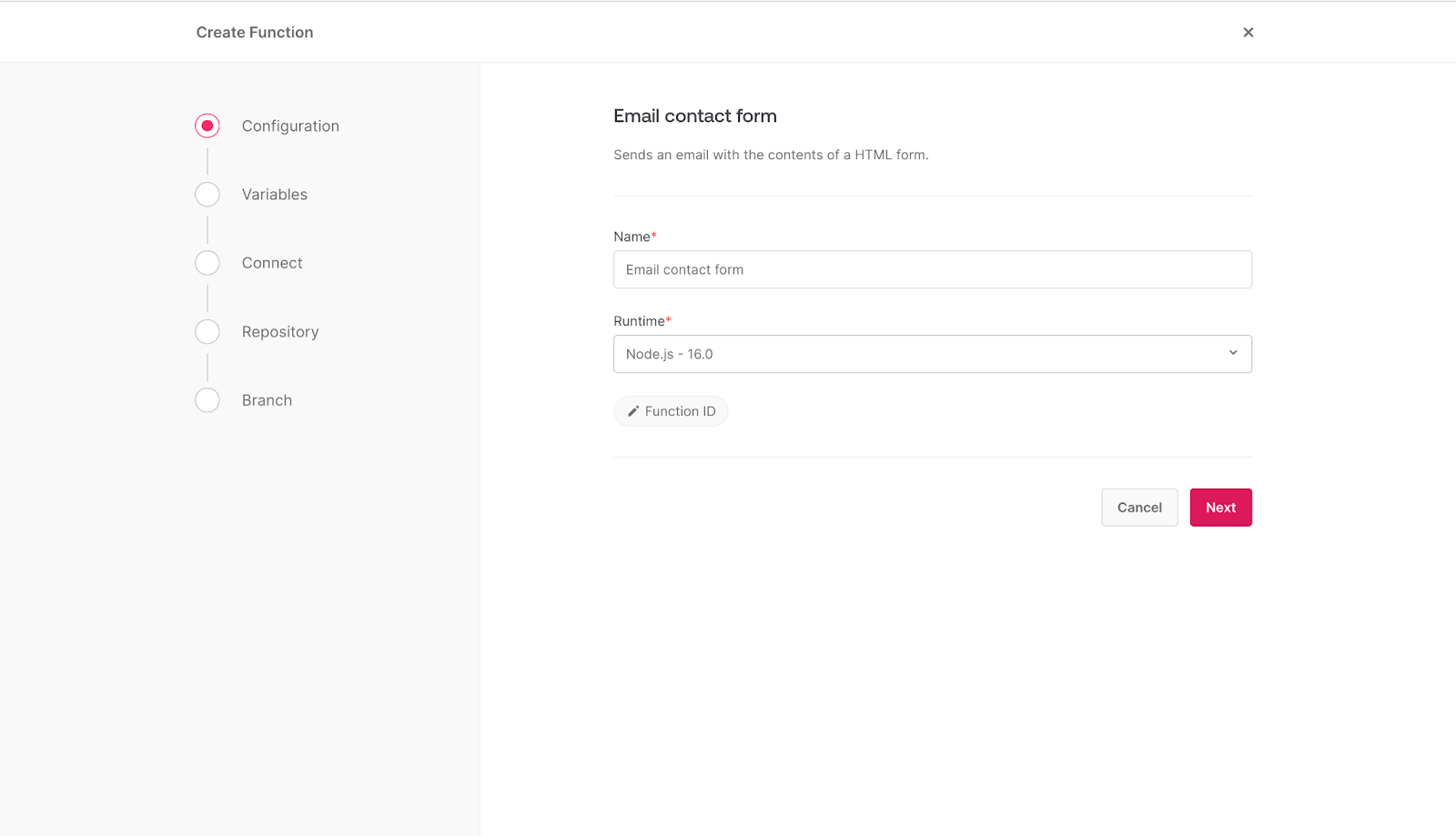

This phase involves selecting a runtime environment, adding the credentials obtained from Mailgun, and setting up the GitHub repository.

Select **Node.js - 16.0** as the runtime environment, then click **Next.**

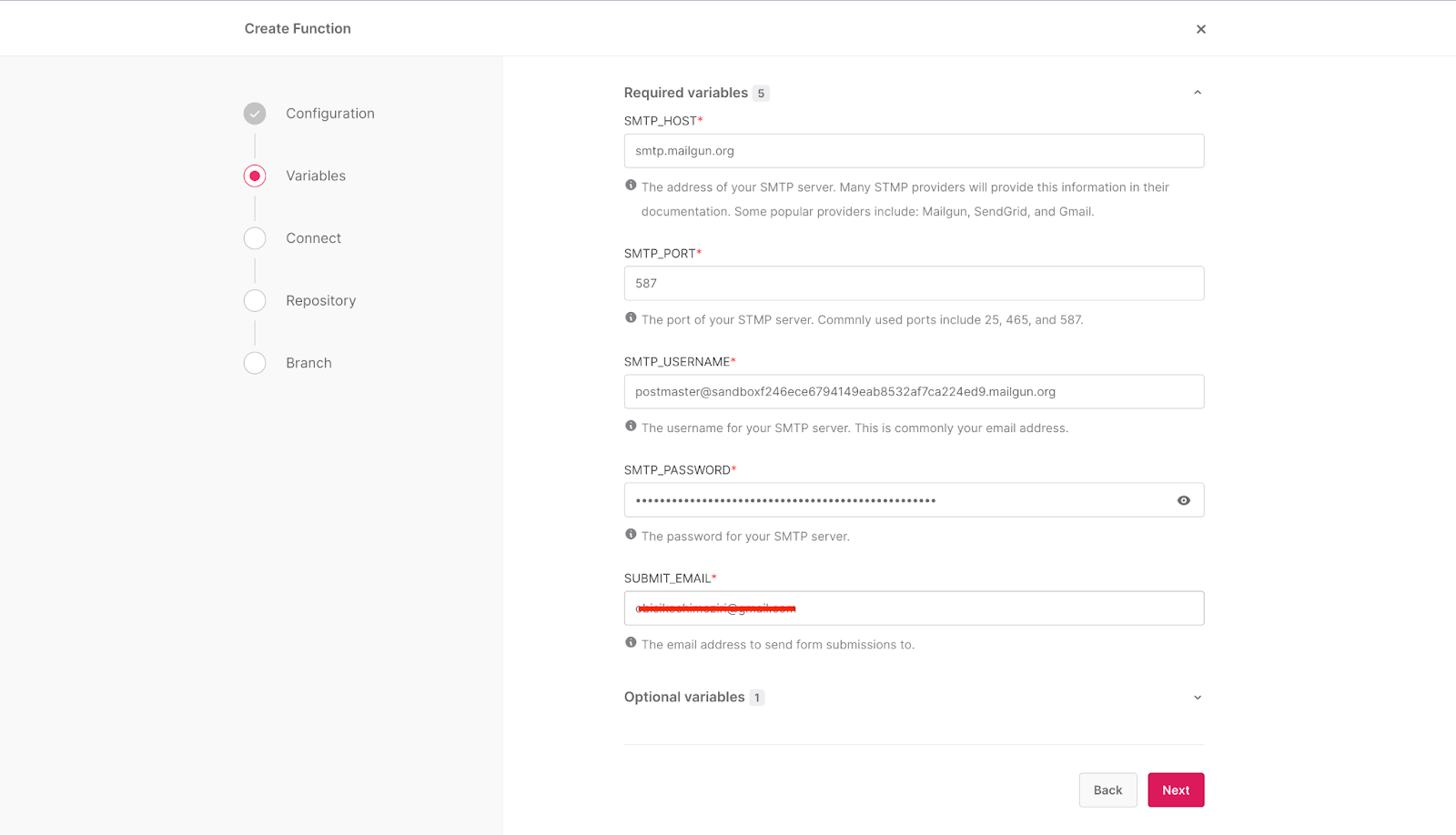

After that, add the credentials obtained from Mailgun, as shown below.

The template sends the email leads to the email address provided in the **SUBMIT_EMAIL** field. Add your preferred email to the field, and then click **Next**.

> When using Mailgun's sandbox environment, make sure the email address you provided in the **SUBMIT_EMAIL** field is authorized within Mailgun's system. For additional information, please visit this [link](https://help.mailgun.com/hc/en-us/articles/217531258-Authorized-Recipients?utm_source=hackmamba&utm_medium=hackmamba-blog). Also, note that emails sent using the sandbox environment are usually sent to the spam folder of the receiving email account.

**Connecting and setting up your GitHub repository**

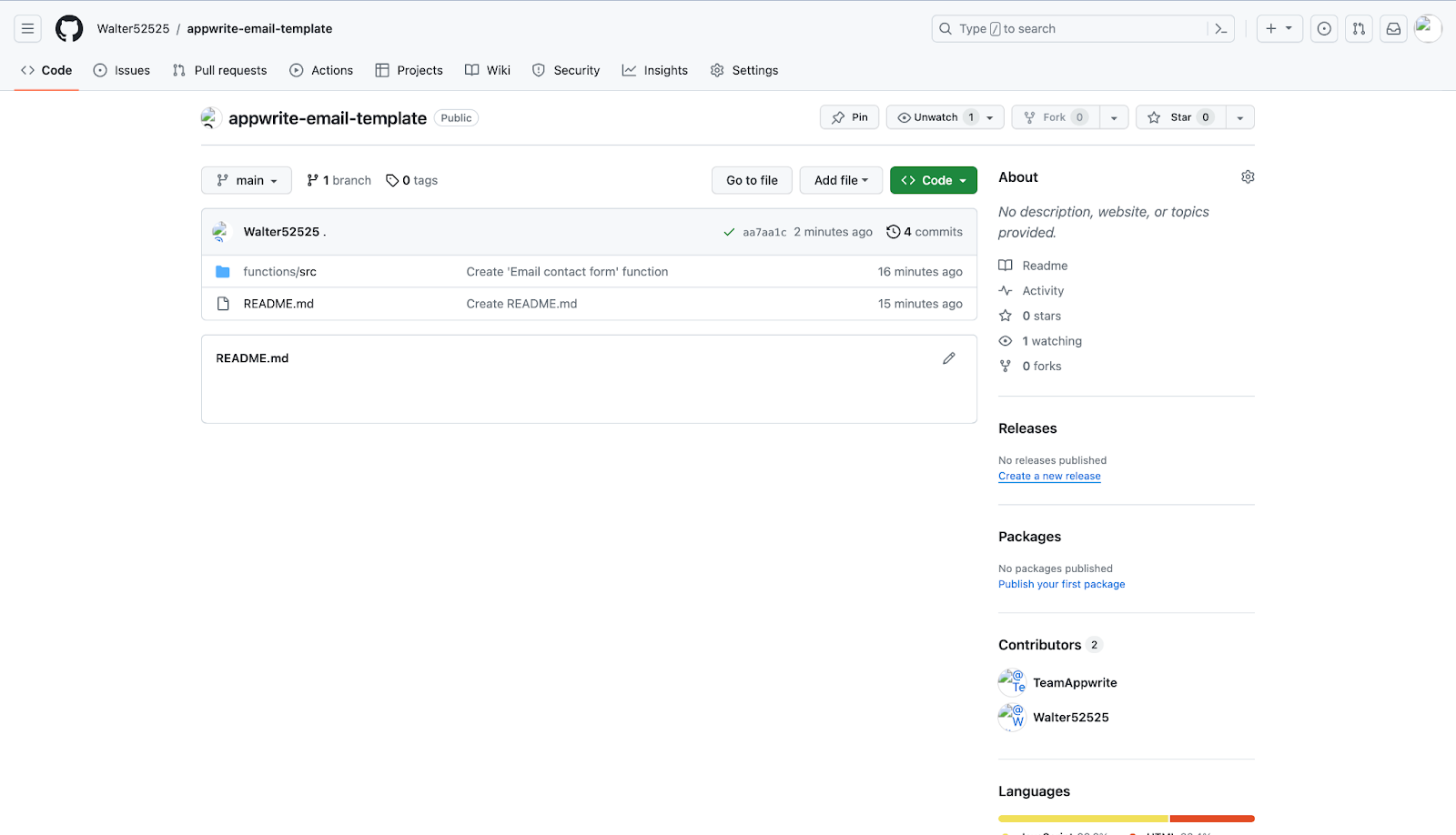

At this point, you need to connect your GitHub repository to the platform. Appwrite pushes a copy of the template to your GitHub account, allowing you to personalize your version as required.

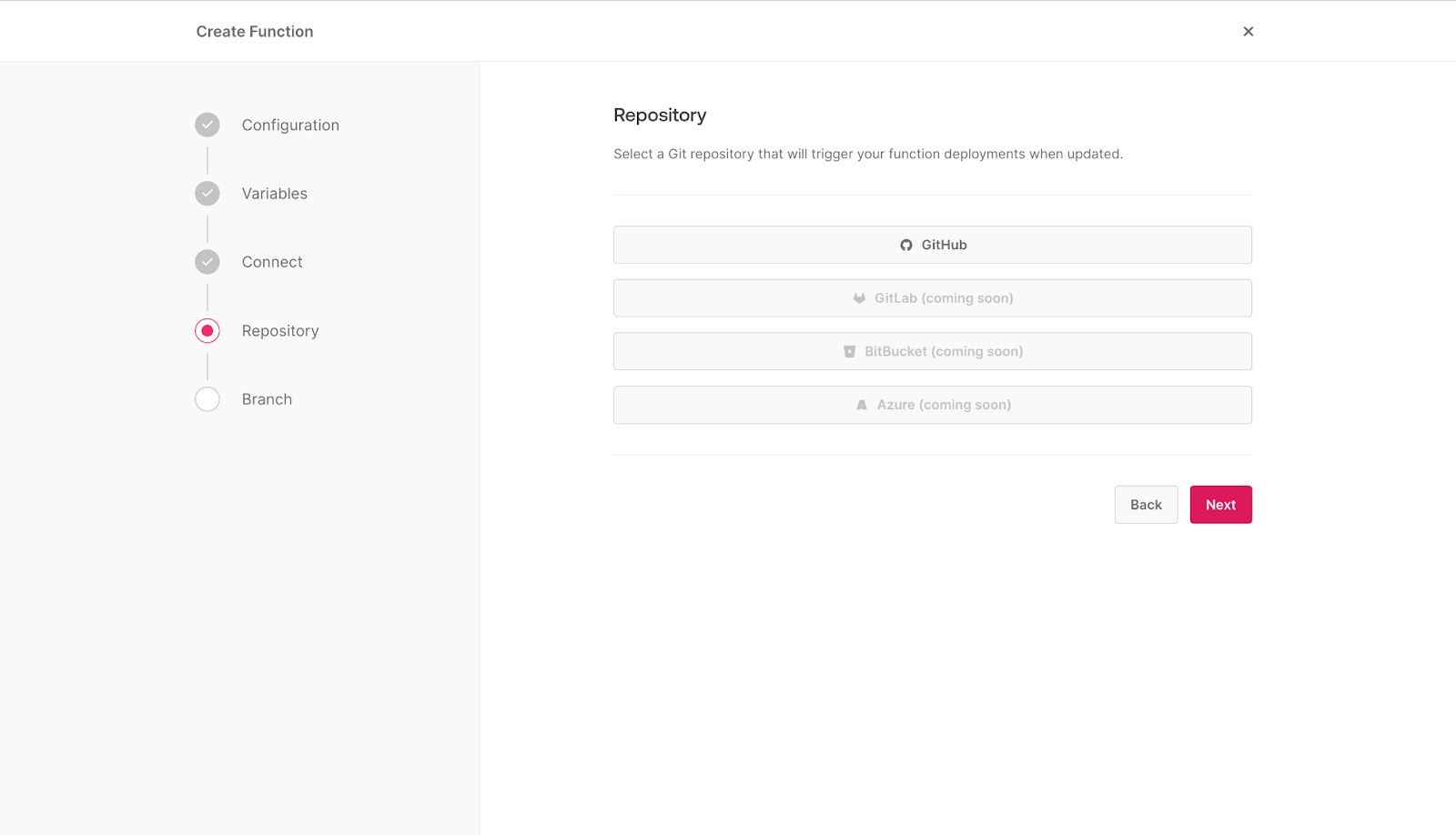

Click on the GitHub option as indicated below.

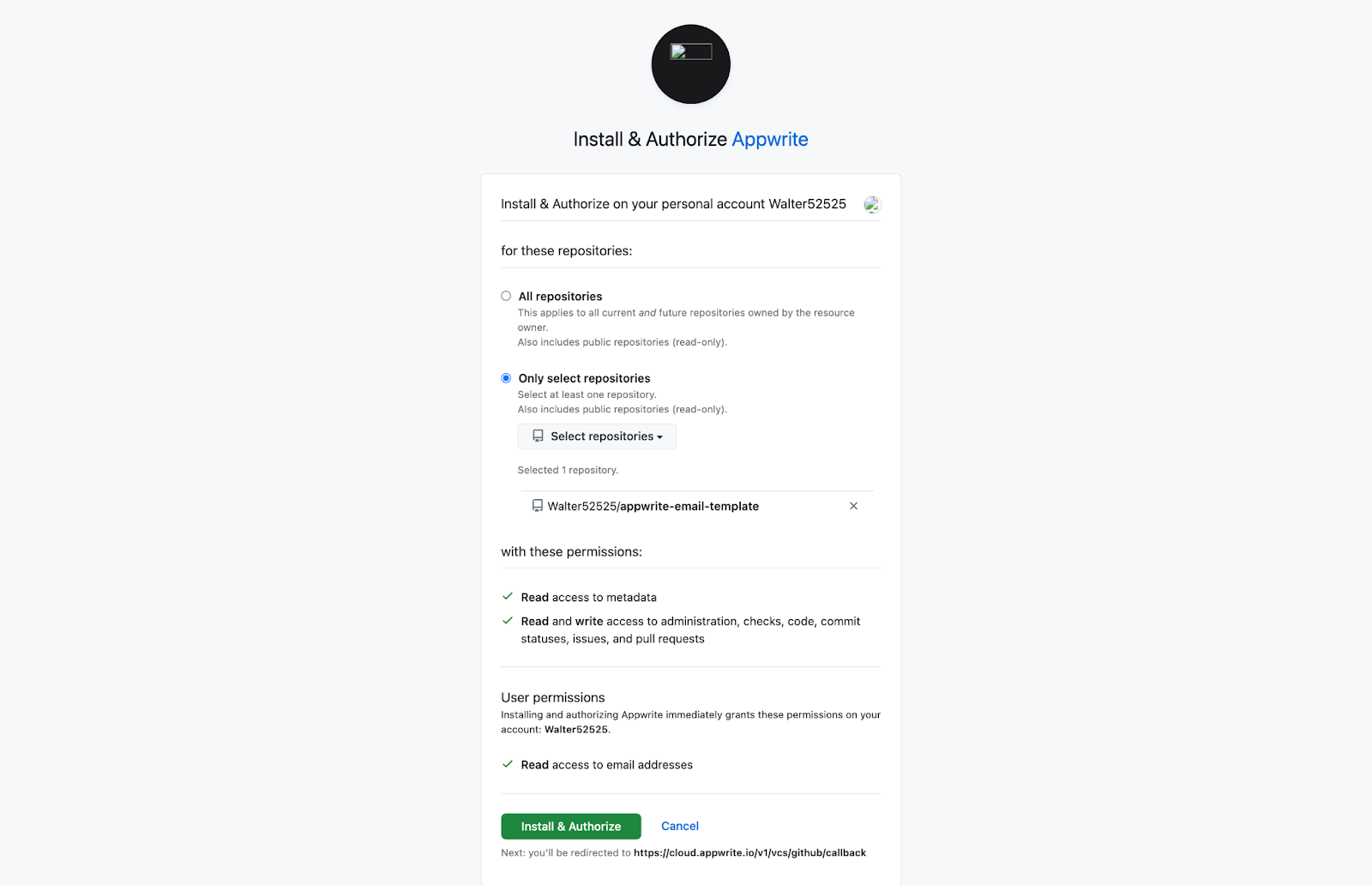

If your GitHub account is not yet connected to Appwrite, you will be redirected to GitHub to complete the connection process.

Afterward, choose the repository that was created as part of the prerequisite.

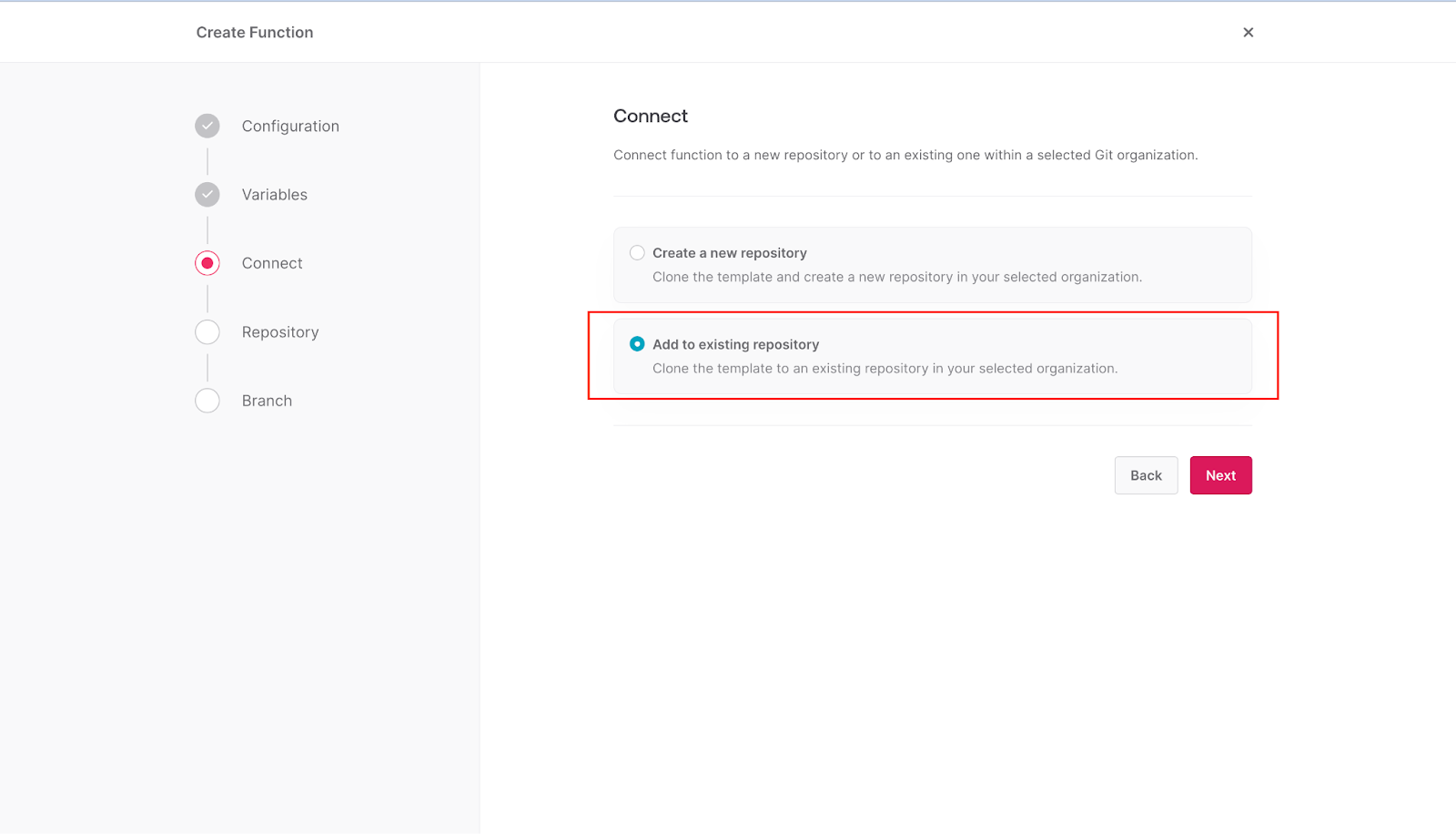

If you already have a GitHub account connected to your Appwrite cloud account, select the **Add to existing repository** option instead of **Create a new repository** and click **Next**.

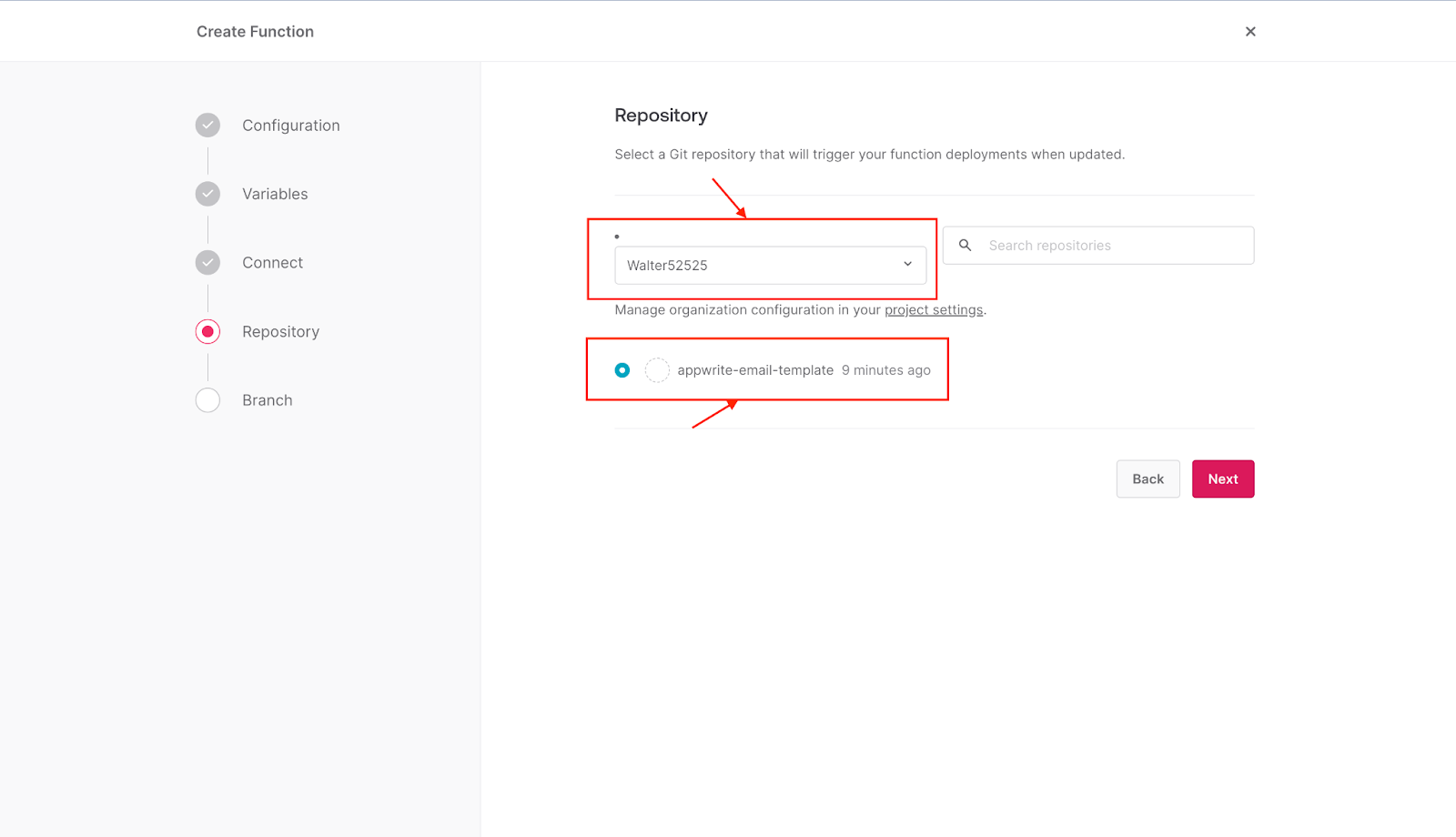

Select the GitHub account and the repository of your choice, then click **Next**.

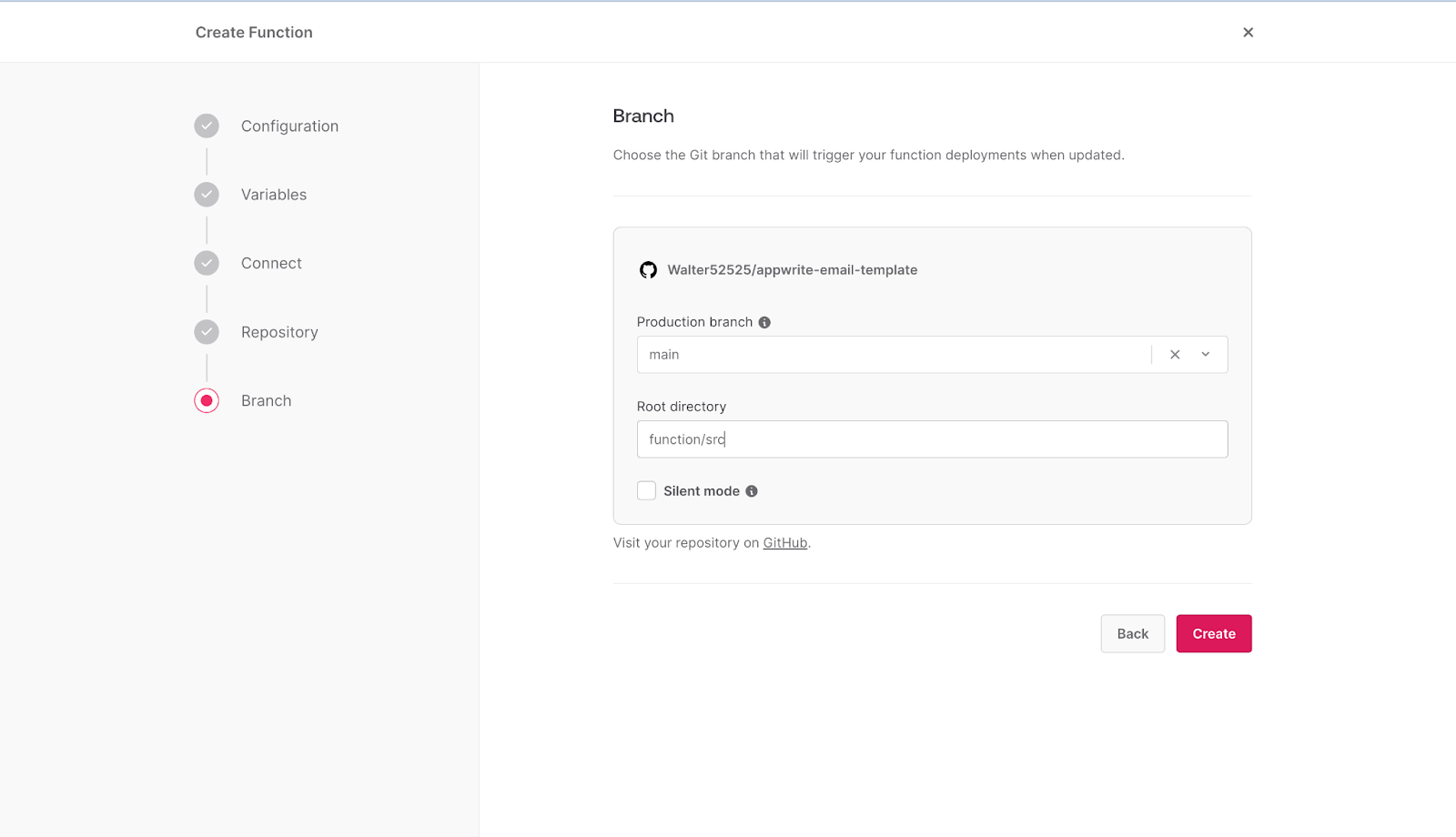

After that, select the branch you want to use and add the root directory as **function/src,** then click **Create**.

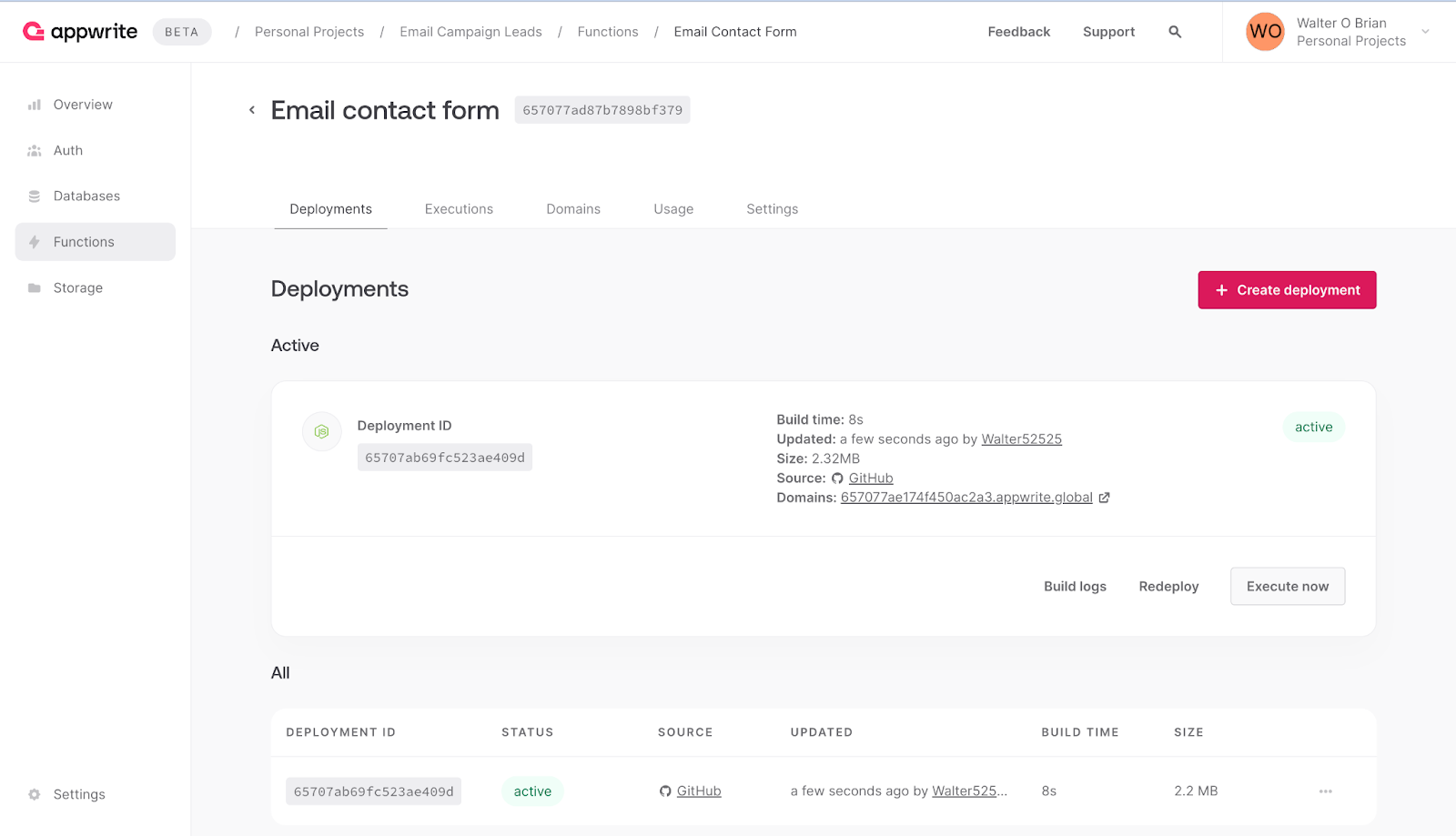

Your email contact form template is set to deploy automatically once a copy of the template's code is successfully pushed to your repository.

Next, navigate to your Appwrite cloud dashboard to confirm the function was deployed successfully.

## Setting up your leads collection Next.js website

For this step, you'll create your Next.js leads collection page and integrate Appwrite into the project.

To get started, navigate to your terminal and run the following command:

npx create-next-app@latest leads_pages

This command prompts you to select other packages to be used by the project. Select the options as indicated in the screenshot below.

Once completed, run the command below to change the current working directory to the project's directory.

cd leads_pages

Next, install your project's Appwrite SDK dependency by running this command:

yarn add appwrite

Afterward, open the project on your code editor.

**Adding your environment variables.**

In the root directory of your project, create a file **.env,** then add the following:

NEXT_PUBLIC_APPWRITE_FUNCTION_ID=<function_id>

NEXT_PUBLIC_APPWRITE_BASE_URL=https://cloud.appwrite.io/v1

NEXT_PUBLIC_APPWRITE_PROJECT_ID=<project_id>

You can retrieve your `<function_id>` and `<project_id>` Appwrite credentials from the Appwrite dashboard. Replace the `<function_id>` and `<project_id>` with the corresponding credentials.

**Creating your leads collection custom hook**

To promote reusability and modularity, you'll need to create the logic that handles collecting and sending the user's input to Appwrite.

To proceed, create a new folder, **hooks** in the **src/** directory.

Next, create a file **useLeadCollector.tsx,** then add the following:

{% gist https://gist.github.com/Otrex/45ac69ede7a5a54be06e69d0b6c53a39

%}

In the snippet above, there are two states, which include:

- `request` state: This keeps track of the state of the asynchronous action when the collectLeads function is called.

- `form` state: This stores the value coming from the user input.

The **collectLeads** function handles requests to the Appwrite Function specified by the `process.env.NEXT_PUBLIC_APPWRITE_FUNCTION_ID` and `process.env.NEXT_PUBLIC_APPWRITE_FUNCTION_KEY`. The email contact form function requires the request body to be sent using the **content-type** `application/x-www-form-urlencoded.` Additionally, be sure to set the **referrer** header for proper functionality.

Once the **collectLeads** function is called, the request state is toggled to **loading**, which changes the user interface to the loading interface. Next, the **throwErrorIfNot200** function handles errors returned from the Appwrite Function. If the request is successful, then the request state will be set to **completed**; otherwise, it will be set to **error**. After a 5-second delay on **error**, the state will be toggled to **idle**.

**Creating your page components**

To build the leads page, you'll be creating various components. Here's a breakdown:

- **Header:** This component houses the introductory content for the leads page.

- **About:** Offers additional information to capture the lead's interest.

- **JoinSteps:** Elaborates on the information discussed in the previous sections.

- **Footer:** Provides hyperlinks to different application sections and includes copywriter information.

- **Form:** An abstracted form interface for user interaction.

- **Contact:** The crucial section for collecting leads.

To organize your project, navigate to the **src/** directory and create a folder named **components** to store these page components.

Starting with the **Header** component, create a file **Header.tsx** in the **components** folder, then add the following code snippet.

{% gist https://gist.github.com/Otrex/a8dc4c170e8826757cef86fa0e05b6a0 %}

Next, create the About component by repeating the steps above, create the **About.tsx** file, and add the following:

{% gist https://gist.github.com/Otrex/d23b913412e12c7db73a205b26f2393b %}

After that, create the **Footer** component by creating the **Footer.tsx** file and adding the following:

{% gist https://gist.github.com/Otrex/109d61f4d102aab6ed548f95da045f1b

%}

Moving on to the **JoinSteps** component, create the **JoinSteps.tsx** file and add the code snippet below.

{% gist https://gist.github.com/Otrex/cd1014964247e863206aac19c2908a42

%}

Next, let's create the Form component. Start by creating a folder named **partials** within the **components** directory. Inside this **partials** folder, create a file **Form.tsx**. Copy and paste the following snippet into the **Form.tsx** file:

{% gist https://gist.github.com/Otrex/537cf99f68ae6ae831f71f9930e6bd99

%}

Last but not least is the **Contact** component. Create the **Contact.tsx** file and add the following:

{% gist https://gist.github.com/Otrex/effa073575c4dcaac264f12bb77bd906 %}

In the provided snippet, the Form component is rendered based on the value of the `request` state. If the request state is **idle**, then the Form component is rendered. Similarly, other request states are handled likewise.

The use of "use client" signifies that the Contact component is client-side. This is necessary because, by default, Next.js sets all components to server-side.

**Adding the components to the leads page**

Once the components are created successfully, you’ll need to add them to the leads landing page.

To do so, open the **page.tsx** file in the **src/app** directory and add the following:

```ts

import About from "@/components/About";

import Contact from "@/components/Contact";

import Footer from "@/components/Footer";

import Header from "@/components/Header";

import JoinSteps from "@/components/JoinSteps";

export default function Home() {

return (

<main className="min-h-screen text-center px-24 py-10">

<div className="max-w-[1000px] mx-auto pb-24 bg-white">

<Header />

<About />

<JoinSteps />

<Contact />

<Footer />

</div>

</main>

);

}

```

The above snippet renders the components for the user to interact with.

**Testing your leads page**

To display the page on the browser, navigate to your project's terminal and run the following:

yarn dev

This command sets up a local server environment from the project.

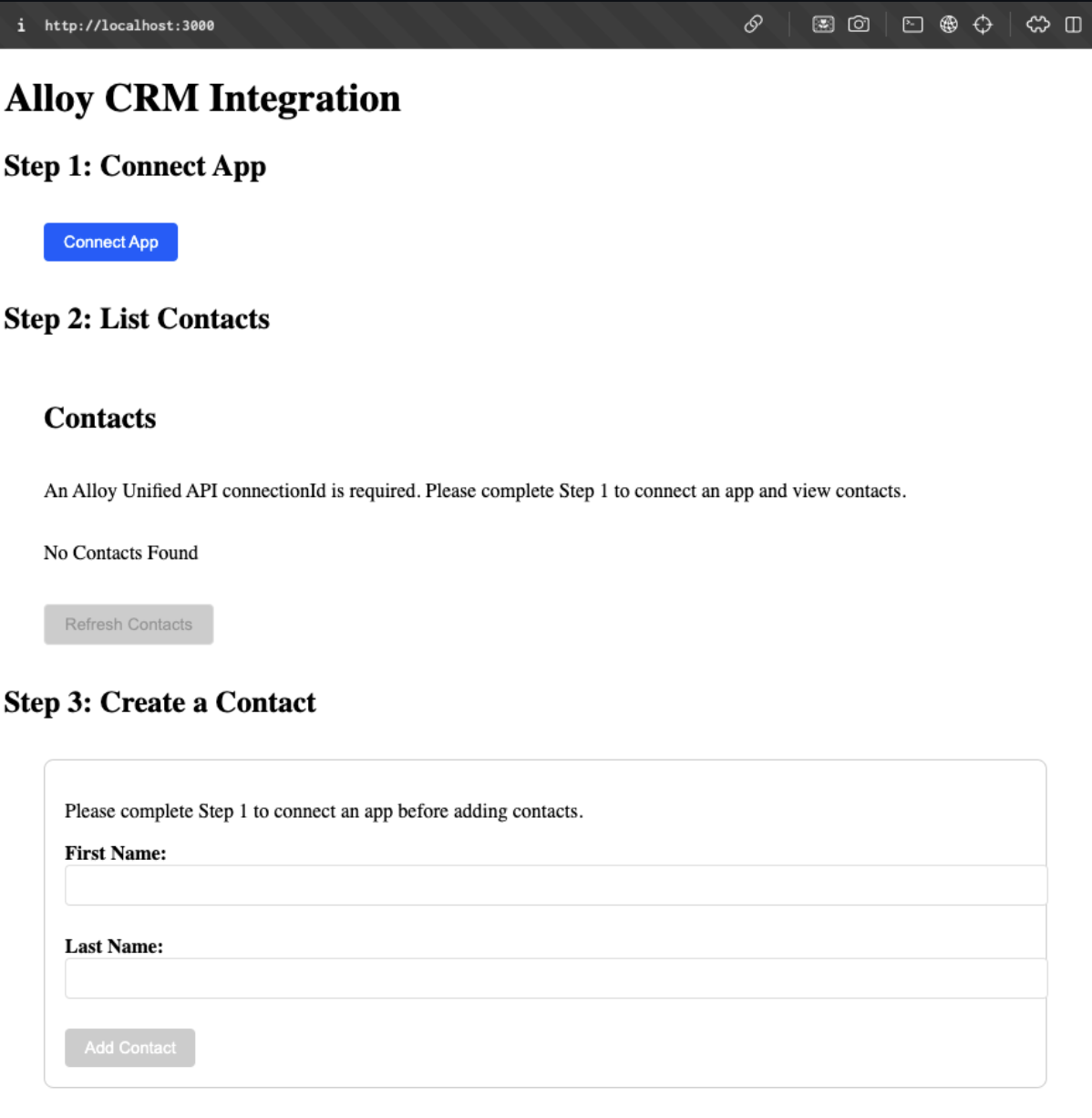

Extract the URL (http://localhost:3000) from your terminal and visit the page on your browser.

Here is a demonstration of the working system.

{% embed https://www.loom.com/share/77f6c30646764888b3f5b7e7b5f0a675?sid=f8609305-ab81-420b-94c9-f770ef9ef5ce %}

## Conclusion

By combining the capabilities of Appwrite and Mailgun, you can quickly set up your email marketing leads collector, streamline lead collection, and ultimately launch more successful marketing campaigns. The user-friendly setup and detailed instructions make this tutorial accessible even for those with minimal JavaScript and Git knowledge.

## Resources

- [How to automate Appwrite Functions deployment with GitHub](https://dev.to/hackmamba/how-to-automate-appwrite-functions-deployment-with-github-95e)

- [Appwrite Function documentation](https://appwrite.io/docs/products/functions?utm_source=hackmamba&utm_medium=hackmamba-blog)

- [Mailgun documentation](https://documentation.mailgun.com/en/latest/?utm_source=hackmamba&utm_medium=hackmamba-blog)

- [Git](https://docs.github.com/en)[H](https://docs.github.com/en)[ub documentation](https://docs.github.com/en)

- [Next.js documentation](https://nextjs.org/docs/)

| otrex | |

1,721,056 | How to safely use dangerouslySetInnerHTML in React | As the name suggests dangerouslySetInnerHTML should be used cautiously. It is like the innerHTML... | 0 | 2024-01-08T18:48:31 | https://deadsimplechat.com/blog/how-to-safely-use-dangerouslysetinnerhtml-in-react/ | As the name suggests `dangerouslySetInnerHTML` should be used cautiously. It is like the `innerHTML` property that is exposed by the DOM node.

With `dangerouslySetInnerHTML` you can set the HTML of the element. React does not perform any sanitization on the HTML set using `dangerouslySetInnerHTML`

It is called `dangerouslySetInnerHTML` because it is dangerous if the HTML that is set is unfiltered or unsanitized because it exposes the risk of injecting malicious code, XSS attack and other security threats that could compromise the application.

Hence `dangerouslySetInnerHTML` should be avoided unless absolutely necessary and before `dangerouslySetInnerHTML`, the HTML input should be sanitized.

In this blog post, we will look at some examples of how to use `dangerouslySetInnerHTML` and how to safely sanitize the HTML before setting using `dangerouslySetInnerHTML`.

## Basic Usage Example

Here is the basic usage example of `dangerouslySetInnerHTML`

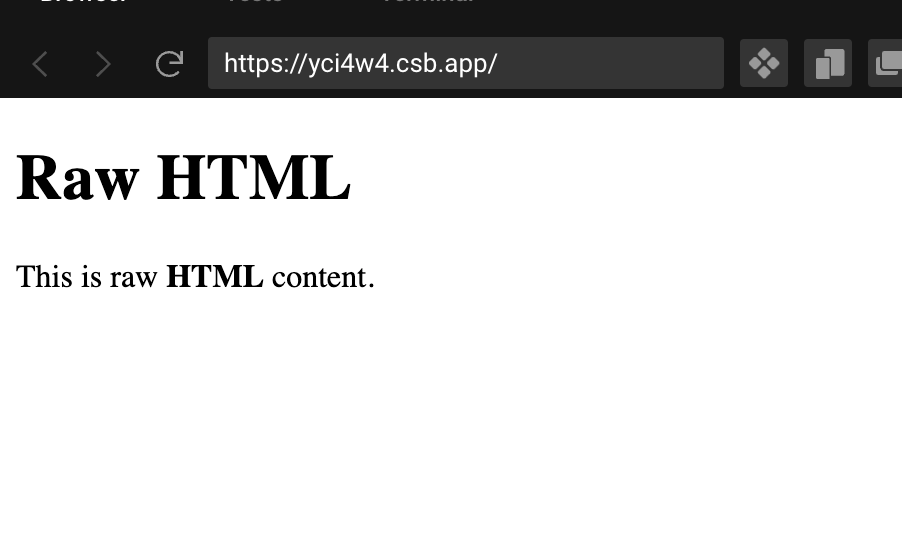

```js

import React from "react";

export default function App() {

const htmlContent = "<p>This is raw <strong>HTML</strong> content.<p>";

return (

<div className="App">

<h1>Raw HTML</h1>

<div dangerouslySetInnerHTML={{ __html: htmlContent }}></div>

</div>

);

}

```

In the above example, we set the raw html string stored in the variable `htmlContent`

The `htmlContent` will be set as `innerHTML` of the `<div></div>` tag. We pass `dangerouslySetInnerHTML` prop an object with the key `__html` and the value should contain the HTML string that we want to set.

The HTML should be sanitized before being used with `dangerouslySetInnerHTML` as it exposes security risks. In the above example the HTML is not sanitization and doing this not recommend as it is a bad practice and would result in sever security risks.

In the next section, we will see how to sanitize the HTML string before setting it as a value using `dangerouslySetInnerHTML`.

## Sanitizing with DOMPurify

In the previous section, we specified the HTML string and setting it directly to `dangerouslySetInnerHTML` which is not a good practice.

In this section, we will use the package **DOMPurify** to santize our HTML string before using it in `dangerouslySetInnerHTML`.

Let's first install the DOMPurify package using npm install

```bash

npm install dompurify

```

Then we will update our component to use DOMPurify:

```js

import React from "react";

import DOMPurify from "dompurify";

export default function App() {

const htmlContent = "<p>This is raw <strong>HTML</strong> content.<p>";

const sanitizedHtmlContent = DOMPurify.sanitize(htmlContent);

return (

<div className="App">

<h1>Raw HTML</h1>

<div dangerouslySetInnerHTML={{ __html: sanitizedHtmlContent }}></div>

</div>

);

}

```

Using the DOMPurify library is very easy, we just need to call the `santize` method on the DOMPurify library and it returns the sanitized version of the HTML.

We can then pass the sanitized version to dangerouslySetInnerHTML prop.

### Verifying HTML Sanitization

To check if our DOMPurify, we will inject an XSS payload into our HTML string and see if our DOMPurify correctly escapes the HTML script.

```js

import React from "react";

import DOMPurify from "dompurify";

export default function App() {

const htmlContent = "<script>alert(1);</script>";

const sanitizedHtmlContent = DOMPurify.sanitize(htmlContent);

return (

<div className="App">

<h1>Raw HTML</h1>

<div dangerouslySetInnerHTML={{ __html: sanitizedHtmlContent }}></div>

</div>

);

}

```

We have updated our `htmlContent` to `<script>alert(1);</script>` and if our HTML is not sanitized correctly then the page will display an alert with 1.

Apart from DOMPurify there are other sanitization libraries available, that you can use like sanitize-HTML. But when choosing a library make sure you use a library that is actively developed, has a large user base and is widely used.

## Alternatives to Consider before using dangerouslySetInnerHTML

dangerouslySetInnerHTML should be used only when it is absolutely necessary and should be avoided whenever possible due to the security risks.

Always other options should be considered before using dangerouslySetInnerHTML, and here are some of the options that you should consider, but make sure to santize your HTML using a library like DOMPurify first:

1. **Try to use JSX First:** You should first try to use JSX, if you have legacy code that you want to integrate, or you are integrating some 3rd party library try to use it JSX and with refs and only use dangerouslySetInnerHTML as the last resort.

2. **Use Library that converts HTML to JSX:** There are multiple libraries available which parses HTML into JSX, you can try to use those libraries as well, some of the popular options include:

- html-react-parser: It allows you to parse raw HTML and convert it into React elements. It is a safer alternative to dangerouslySetInnerHTML. However you still need to sanitize the HTML. As of writing this library has 1.6K stars on Github and 990,820 weekly downloads on npm

- react-html-parser: This also allows you to convert raw HTML into react components, and it is similar to html-react-parser. It has 742 starts on github as of writing and 277k weekly download on NPM. Also thing to note that it was last updated in 2020.

Before using these libraries make sure to sanitize the HTML using HTML sanitization libraries like DOMPurify.

## Scenarios where dangerouslySetInnerHTML could be used

Sometimes it is inevitable to use dangerouslSetInnerHTML and there are cases where you cannot get away without using dangerouslySetInnerHTML, let. discuss some of those scenarios:

1. **HTML data coming from a Trusted Source:** When your HTML content is coming from a Trusted Source like your Content Management System or from the Server Generated content. In these cases, you can use the dangerouslSetInnerHTML. But make sure that you trust the source of the data.

2. **Properly Sanitized Content:** You can safely use dangerouslySetInnerHTML content that is properly sanitized. Make sure you use a robust and well-tested sanitization library to escape any unsafe tags and XSS code.

3. **When Integrating 3rd Party Libraries:** Some 3rd Party libraries do not integrate well in React, in those cases you have to use dangerouslySetInnerHTML to integrate the library with your code. But before doing that make sure you trust the library and is well-vetted and does not expose your application to any security risk, and generate content is well-sanitized.

## Building a Markdown Editor in React using ShowDown, DOMPurify and dangerouslySetInnerHTML

Let's build a Markdown editor, that displays that Markdown output in HTML in real-time.